# Statistical physics for artificial neural networks

**Authors**:

- Zongrui Pei (New York University, New York, NY 10003, USA)

- E-mail: peizongrui@gmail.com; zp2137@nyu.edu

Abstract

The 2024 Nobel Prize in Physics was awarded for pioneering contributions at the intersection of artificial neural networks (ANNs) and spin-glass physics, underscoring the profound connections between these fields. The topological similarities between ANNs and Ising-type models, such as the Sherrington-Kirkpatrick model, reveal shared structures that bridge statistical physics and machine learning. In this perspective, we explore how concepts and methods from statistical physics, particularly those related to glassy and disordered systems like spin glasses, are applied to the study and development of ANNs. We discuss the key differences, common features, and deep interconnections between spin glasses and neural networks while highlighting future directions for this interdisciplinary research. Special attention is given to the synergy between spin-glass studies and neural network advancements and the challenges that remain in statistical physics for ANNs. Finally, we examine the transformative role that quantum computing could play in addressing these challenges and propelling this research frontier forward.

Contents

- 1 Introduction
- 2 Spin-glass physics for artificial neural networks
  - 2.1 Relations of spin glasses, biological neurons, and associative memory
  - 2.2 Dictionary of corresponding concepts
  - 2.3 Hopfield neural network and Boltzmann machines
  - 2.4 Replica theory and the cavity method
  - 2.5 Overparameterization and double-descent behavior
- 3 Challenges and perspectives
  - 3.1 Challenges in ANNs and spin glasses for ANNs
  - 3.2 Spin-glass physics helps understand ANNs
  - 3.3 Do we need new order parameters for ANNs?
  - 3.4 Quantum computing for spin glasses and ANNs
  - 3.5 More opportunities
- 4 Conclusions

## 1 Introduction

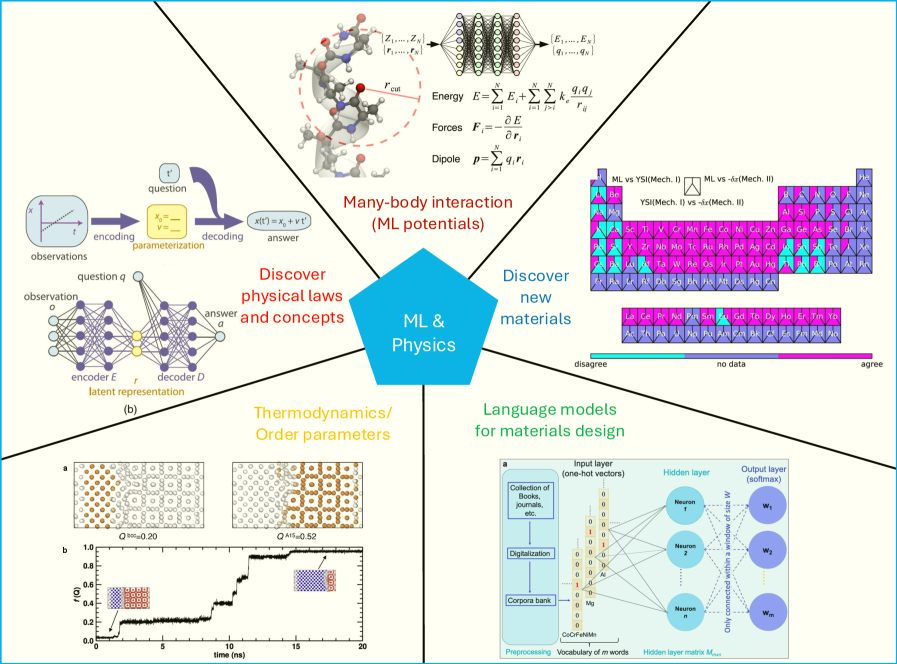

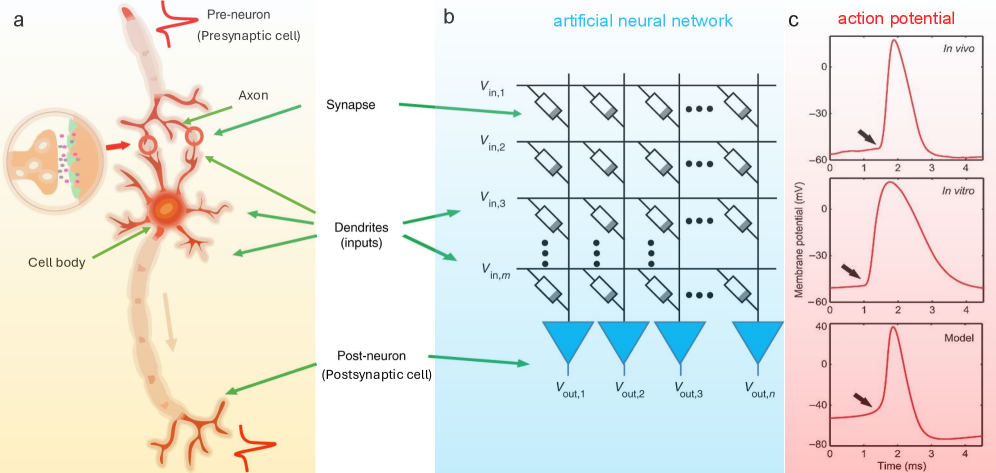

Artificial neural networks (ANNs) have had a profound influence in many sectors, as demonstrated by numerous milestones in ANN applications. For example, AlexNet was one of the first models to show the impressive capabilities of ANNs in classification tasks on the ImageNet dataset krizhevsky2012imagenet. AlphaGo demonstrated that ANNs can outperform human players in games silver2016mastering; silver2017mastering. ANNs are also applied in self-driving cars badue2021self. Recently, large language models chatGPT; pei2025language, which are complex ANN models, have fundamentally changed how we work, learn, and teach, influencing nearly everyone.

ANNs have also been widely adopted in scientific research. An outstanding example is AlphaFold, which solved a half-century-old challenge in structural biology jumper2021highly and accelerated the determination of three-dimensional (3D) protein structures, potentially revolutionizing drug discovery and the healthcare industry. A few more typical examples in physics are shown in Figure 1. ANNs have been used to describe many-body interactions or construct empirical potentials, such as PhysNet unke2019physnet. Some ANN potentials with multiple neural layers are also referred to as deep potentials zhang2018deep. Recently, inspired by the success of foundation models for natural languages, foundation models for potentials have been proposed, and some initial studies have been conducted batatia2023foundation. Order parameters describe phase transitions in thermodynamics, and ANNs have been used successfully to construct new ones yin2021neural; rogal2019neural; jung2025roadmap. As a proof of concept, ANNs have also been used to rediscover physical laws and concepts iten2020discovering. ANN models have been developed to design novel materials pei2023toward; Tshitoyan2019; pei2024designing; pei2024computer. They have also been used to link the physical properties of various materials to their crystal structures, microstructures, and processing pei2021machine; pei2024towards. Examples of applications include low-dimensional, structural, and functional materials, among others.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Interdisciplinary Applications of Machine Learning in Physics

### Overview

This image is a conceptual infographic illustrating five key research areas at the intersection of Machine Learning (ML) and Physics. A central blue hexagon labeled "ML & Physics" connects to five surrounding thematic blocks, each containing specific diagrams, equations, and data visualizations. The overall layout is a radial diagram with a light beige background.

### Components/Axes

The diagram is segmented into five primary regions radiating from the center:

1. **Top-Center:** "Many-body interaction (ML potentials)"

2. **Top-Right:** "Discover new materials"

3. **Bottom-Right:** "Language models for materials design"

4. **Bottom-Left:** "Thermodynamics/Order parameters"

5. **Top-Left:** "Discover physical laws and concepts"

A secondary, smaller diagram labeled "(b)" is nested within the "Discover physical laws and concepts" section.

### Detailed Analysis

#### 1. Many-body interaction (ML potentials)

* **Visual:** A molecular structure (ball-and-stick model) with atoms in red, blue, and white. A dashed red circle highlights an interaction radius `r_cut`.

* **Equations:** To the right of the molecule, three equations are presented:

* **Energy:** `E = Σ_i E_i + k_e Σ_i Σ_{j>i} (q_i * q_j)/r_ij`

* **Forces:** `F_i = - ∂E/∂r_i`

* **Dipole:** `p = Σ_i q_i * r_i`

* **Neural Network Diagram:** Above the equations, a schematic shows a neural network processing nuclear charges `{Z_1,...,Z_N}` and atomic positions `{r_1,...,r_N}` to output energies `{E_1,...,E_N}` and charges `{q_1,...,q_N}`.

#### 2. Discover new materials

* **Visual:** A large, complex heatmap or comparison matrix. The matrix is composed of many small triangular cells.

* **Legend:** Located at the bottom of this section. It defines a color scale:

* **Purple/Magenta:** "disagree"

* **Grey:** "no data"

* **Cyan/Teal:** "agree"

* **Matrix Labels:** The matrix is divided into sections with text labels:

* Top-left block: "ML vs YSI(Mech. II)"

* Top-right block: "ML vs -ln(Mech. II)"

* Bottom block: "YSI(Mech. II) vs -ln(Mech. II)"

* **Data Trend:** The matrix shows a patchwork of colors. The "ML vs YSI" block has significant areas of both "agree" (cyan) and "disagree" (purple). The "ML vs -ln" block is predominantly "agree" (cyan). The "YSI vs -ln" block is almost entirely "disagree" (purple).

#### 3. Language models for materials design

* **Visual:** A schematic of a neural network architecture.

* **Components (Left to Right):**

* **Input:** "Collection of Books, patents, etc." -> "Digitization" -> "Corpora bank" -> "Preprocessing" -> "Vocabulary of M words".

* **Network Layers:**

* "Input layer (one-hot vectors)": Shows a vertical vector of 0s and a single 1.

* "Hidden layer": Contains circles labeled "Neuron 1", "Neuron 2", ..., "Neuron N". Connections are labeled "Only connected with window of k W".

* "Output layer (softmax)": Contains circles labeled "W_1", "W_2", ..., "W_M".

* **Text Annotations:** "CoD/PtNiAl" is written near the input. "Hidden layer matrix M_h" is noted below the hidden layer.

#### 4. Thermodynamics/Order parameters

* **Visual (Top):** Two lattice diagrams labeled **a**.

* Left lattice: Disordered arrangement of brown and white circles. Label: `Q^M=0.20`.

* Right lattice: Ordered, checkerboard-like arrangement. Label: `Q^M=0.52`.

* **Visual (Bottom):** A line graph labeled **b**.

* **Y-axis:** Label is `Q^M`. Scale runs from 0.0 to 1.0 with increments of 0.2.

* **X-axis:** Label is `time [ns]`. Scale runs from 0 to 20 with increments of 5.

* **Data Trend:** The black line shows `Q^M` starting near 0, rising sharply around 2 ns to ~0.4, plateauing, then rising again around 12 ns to a final plateau near 0.9. Two small lattice insets are placed on the graph, corresponding to the low-order (start) and high-order (end) states.

#### 5. Discover physical laws and concepts

* **Main Diagram:** A flowchart showing a process from "observations" to "answer".

* **Steps:** "observations" (graph of `x` vs `t`) -> "encoding" -> "parameterization" (box with `x_0`, `v`) -> "decoding" -> "answer" (`x(t) = x_0 + v*t`).

* **Question:** A "question" input (box with `t*`) feeds into the parameterization step.

* **Sub-diagram (b):** A detailed neural network architecture.

* **Components:** "observation o" (input layer), "encoder E", "latent representation" (bottleneck layer), "decoder D", "answer a" (output layer).

* **Question Flow:** A "question q" is shown as a separate input that interacts with the latent representation.

### Key Observations

1. **Interdisciplinary Flow:** The diagram explicitly connects abstract ML concepts (encoders, decoders, neural networks) to concrete physical outputs (molecular energies, material properties, thermodynamic order parameters, symbolic equations).

2. **Data Representation:** Different physical problems require different data representations: 3D coordinates for molecules, text corpora for materials design, lattice configurations for thermodynamics, and time-series observations for law discovery.

3. **Validation Methods:** The "Discover new materials" section highlights a key challenge in computational science: comparing predictions from different models (ML, YSI, -ln) and visualizing areas of agreement and disagreement.

4. **Scale Bridging:** The "Thermodynamics" section shows ML being used to track an order parameter (`Q^M`) over time (nanoseconds), linking microscopic lattice configurations to a macroscopic thermodynamic measure.

### Interpretation

This infographic serves as a high-level map for how modern machine learning techniques are being integrated into physics research. It argues that ML is not a single tool but a versatile toolkit applicable across multiple sub-disciplines:

* **For Fundamental Interactions:** ML potentials (top-center) aim to replace or augment expensive quantum mechanical calculations for simulating molecular dynamics, using neural networks to learn the potential energy surface.

* **For Discovery:** The heatmap (top-right) and language models (bottom-right) represent two approaches to materials discovery. One uses direct property comparison (heatmap), while the other leverages the vast, unstructured knowledge in scientific text (language models) to suggest new materials.

* **For Understanding:** The sections on thermodynamics (bottom-left) and discovering laws (top-left) position ML as a tool for scientific insight. It can identify phase transitions from simulation data and, in a more ambitious goal, distill raw observational data into interpretable physical equations.

The central "ML & Physics" hexagon emphasizes a bidirectional relationship: physics provides rich, structured problems and data for ML, while ML offers powerful new methods for simulation, discovery, and analysis in physics. The diagram suggests a future where computational physics is increasingly driven by data-centric, learning-based approaches.

</details>

Figure 1: The applications of artificial neural networks in physics. Artificial neural networks have been widely utilized to solve a diverse range of problems, including those in scientific research. Here, we show a few typical examples in physical research, i.e., (i) many-body interactions (machine-learning potentials) unke2019physnet; zhang2018deep, (ii) thermodynamics (proposal of order parameters to describe phase transitions) yin2021neural; rogal2019neural, (iii) discovery of physical laws and concepts iten2020discovering, (iv) discovery or design of new materials pei2021machine, and (v) language models for materials design pei2023toward; Tshitoyan2019; pei2024towards.

ANNs have been employed to understand Ising-like physical systems mills2020finding; carrasquilla2017machine; hibat2021variational; fan2023searching, including spin glasses hibat2021variational; fan2023searching. They provide a new opportunity to understand the physics of spin glasses. Fan et al. searched for spin-glass ground states through deep reinforcement learning fan2023searching. Huang et al. confirmed the efficiency of classical machine learning in describing quantum phases, taking 2D random Heisenberg models as an example huang2022provably. Autoregressive neural networks can boost Monte Carlo simulations of spin glasses mcnaughton2020boosting, and tropical tensor networks can be used to search for spin-glass ground states liu2021tropical.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Timeline of Neural Network and AI Architecture Evolution

### Overview

The image is a complex informational diagram illustrating the historical evolution of key concepts, architectures, and models in artificial intelligence and neural networks. It features a central timeline at the bottom, with various diagrams and charts positioned above it, connected by colored arrows indicating their chronological relationship to specific years. The overall narrative traces the path from early theoretical models to modern large language models (LLMs) and protein structure prediction.

### Components/Axes

The diagram is segmented into several distinct visual components:

1. **Central Timeline (Bottom):** A horizontal black-to-red gradient arrow labeled "Year" at the far right. It marks specific years from 1943 to "Now" (implied present, circa 2024/2025). Below each year is a corresponding milestone.

2. **Network Architecture Diagrams (Top Left):** Three network graphs enclosed in a yellow-bordered box.

* **Hopfield Network:** A fully connected graph with 4 nodes labeled with binary states (1, 0, 0, 1).

* **Boltzmann Machine:** A fully connected graph with 4 nodes (2 black, 2 white) and visible/hidden node legend.

* **Restricted Boltzmann Machine:** A bipartite graph with 2 black "Visible nodes" and 2 white "Hidden nodes," with connections only between layers.

* **Legend:** Below the networks, a key defines "Visible nodes" (black circle) and "Hidden nodes" (white circle).

3. **Spin Glass Diagram (Left):** A red-bordered box containing a triangular diagram labeled "Spin glass." It shows three arrows (two blue, one black) with "+" and "-" signs and a red question mark, representing frustrated states.

4. **Protein Structure (Top Right):** A green-bordered box containing a 3D ribbon diagram of a protein structure, colored in blue, yellow, and orange. This is visually linked to the "AlphaFold" milestone on the timeline.

5. **Transformer Architecture Diagram (Center Right):** A detailed schematic of the Transformer model architecture, showing components like "Input embedding," "Positional Encoding," "Multi-Head Attention," "Feed Forward," "Add & Norm," and "Output (shifted right)."

6. **LLM Evolution Bar Chart (Right):** A blue-bordered box containing a stacked bar chart titled "Evolution of LLM Architecture."

* **Y-axis:** "Number of major releases," scaled from 0 to 20.

* **X-axis:** Years from 2018 to 2024.

* **Legend:** Four categories: "Encoder-Only" (orange), "Encoder-Decoder" (yellow), "Decoder-Only" (green), "Linear-Attn" (brown).

* **Data:** Stacked bars for each year showing the count of major model releases per architecture type.

### Detailed Analysis

**Timeline Milestones (Chronological Order):**

* **1943:** McCulloch-Pitts network

* **1949:** Hebb's rule

* **1958:** Perceptron

* **1974:** Little's associative network

* **1975:** EA/SK Spin glass (connected via red arrow to the "Spin glass" diagram)

* **1982:** Hopfield network (connected via yellow arrow to the "Hopfield Network" diagram)

* **1985:** Boltzmann Machine (connected via yellow arrow to the "Boltzmann Machine" and "Restricted Boltzmann" diagrams)

* **...** (ellipsis indicating a gap)

* **2017:** Transformer (connected via blue arrow to the "Transformer Architecture" diagram)

* **2021:** AlphaFold (connected via green arrow to the "Protein Structure" image)

* **Now:** LLMs (connected via blue arrow to the "Evolution of LLM Architecture" chart)

**LLM Evolution Chart Data (Approximate Values):**

* **2018:** ~2 Encoder-Only, ~1 Encoder-Decoder. Total: ~3.

* **2019:** ~4 Encoder-Only, ~3 Encoder-Decoder, ~1 Decoder-Only. Total: ~8.

* **2020:** ~3 Encoder-Only, ~2 Encoder-Decoder, ~1 Decoder-Only. Total: ~6.

* **2021:** ~2 Encoder-Only, ~1 Encoder-Decoder, ~9 Decoder-Only. Total: ~12.

* **2022:** ~1 Encoder-Only, ~1 Encoder-Decoder, ~17 Decoder-Only. Total: ~19.

* **2023:** ~1 Encoder-Only, ~1 Encoder-Decoder, ~7 Decoder-Only. Total: ~9.

* **2024:** ~1 Encoder-Only, ~1 Encoder-Decoder, ~9 Decoder-Only, ~1 Linear-Attn. Total: ~12.

**Trend Verification (LLM Chart):**

* **Encoder-Only (Orange):** Shows a declining trend from a peak in 2019 to minimal presence by 2022-2024.

* **Encoder-Decoder (Yellow):** Remains a small, relatively stable component from 2018 to 2024.

* **Decoder-Only (Green):** Shows a dramatic, dominant upward trend starting in 2020, peaking in 2022, and remaining the most common architecture through 2024.

* **Linear-Attn (Brown):** Appears only in 2024 as a very small segment.

### Key Observations

1. **Architectural Shift:** The bar chart clearly illustrates a major industry shift from Encoder-Only and Encoder-Decoder models towards Decoder-Only architectures (like GPT) starting around 2020-2021.

2. **Theoretical Foundations:** The diagram explicitly links modern deep learning (Transformers, LLMs) to much older theoretical concepts in statistical physics (Spin glasses, 1975) and associative memory (Hopfield networks, 1982).

3. **Convergence of Fields:** The inclusion of AlphaFold (2021) alongside LLMs highlights the parallel, significant impact of deep learning in the scientific domain of protein structure prediction.

4. **Pacing of Innovation:** The timeline shows long gaps between foundational theories (1940s-1980s) and their explosive application in the 2010s and 2020s, suggesting a period of incubation followed by rapid scaling.

### Interpretation

This diagram constructs a **genealogy of modern AI**. It argues that today's most powerful AI systems, particularly LLMs, are not sudden inventions but the culmination of decades of research in interconnected fields.

* **From Physics to Computation:** It draws a direct line from concepts in statistical mechanics (spin glasses, Boltzmann machines) which deal with disordered systems and energy states, to neural network models that use similar principles for optimization and learning (e.g., using energy functions in Hopfield and Boltzmann networks).

* **The Transformer as a Pivot Point:** The year 2017 is marked as a critical juncture with the introduction of the Transformer. Its architecture diagram is centrally placed, acting as the bridge between earlier network theories and the subsequent explosion of LLMs.

* **The "Decoder-Only" Takeover:** The LLM chart provides quantitative evidence for a paradigm shift in AI research and development. The dominance of Decoder-Only models suggests a convergence on a specific, scalable architecture for generative tasks, which has become the foundation for the current generation of AI assistants and tools.

* **AI as a General-Purpose Tool:** By placing AlphaFold (a specialized scientific tool) on the same timeline as general-purpose LLMs, the diagram suggests that the underlying deep learning revolution is domain-agnostic, capable of driving breakthroughs in both open-ended language tasks and precise scientific problems.

In essence, the image is a visual thesis on the **historical continuity and accelerating convergence** of ideas that have led to the current state of artificial intelligence.

</details>

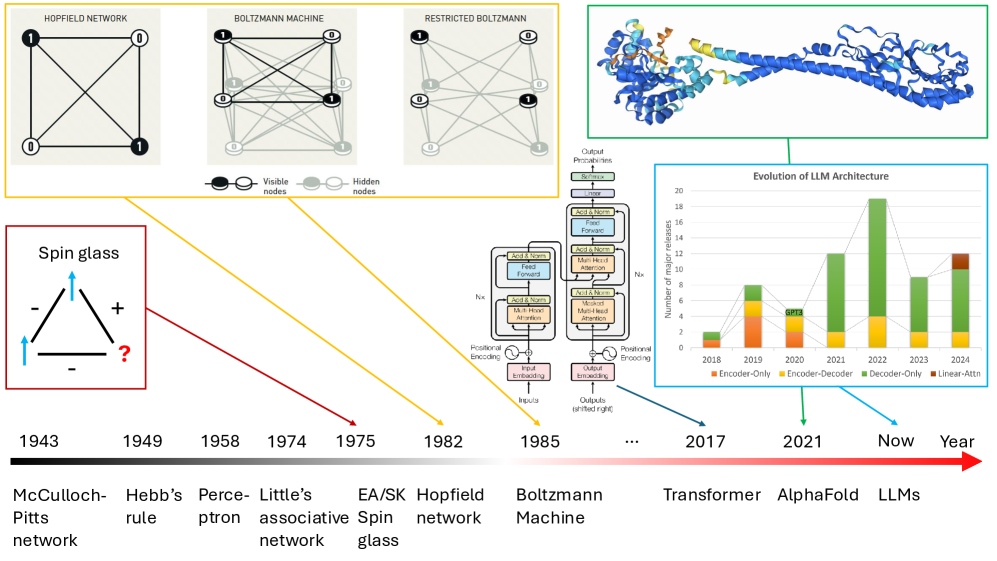

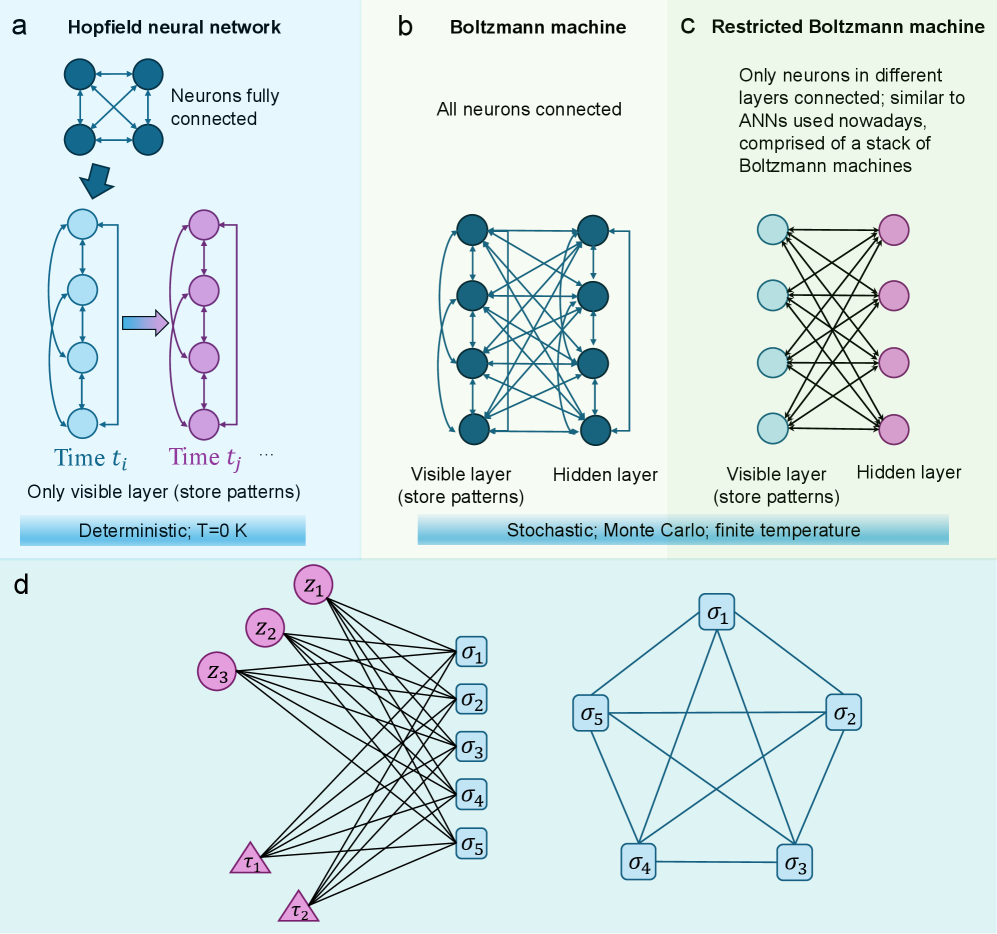

Figure 2: The history of artificial neural networks and relevant physical models. Other landmarks include backpropagation in the 1970s and AlexNet in 2012. Interestingly, bio-inspired ANNs ultimately led to the solution of the protein folding problem that has plagued us for half a century.

The significant breakthroughs in developing ANNs highlight the importance of convergence across different disciplines. ANNs mimic the structure and function of biological neurons, inspired by the human brain. In addition to their biological origin, the physical foundation of ANNs was recognized by the 2024 Nobel Prize in Physics, awarded to physicist John J. Hopfield and computer scientist Geoffrey E. Hinton hinton2025nobel. Their foundational discoveries enabled the development of ANNs and their applications today. ANNs are closely connected with spin glasses, a long-standing topic that was theorized by Edwards and Anderson in 1975 edwards1975theory and has attracted considerable attention in recent years. The importance of spin glasses as a representative complex system in different research domains across various length scales was demonstrated by the 2021 Nobel Prize in Physics, awarded partly to Giorgio Parisi parisi1979infinite. The 2021 and 2024 prizes have themselves contributed to this increased attention. The prize-winning studies revealed a profound connection between spin glasses and diverse disciplines, ranging from large to small scales, encompassing complexity science, biology, and computer science.

There are a few landmarks in the history of ANNs [Figure 2]: the seminal work of McCulloch and Pitts on neural networks in 1943 mcculloch1943logical, which initiated the research domain; the Hebbian mechanism, or Hebb’s rule, proposed in 1949, which states that connections between neurons that fire together are strengthened hebb2005organization; the perceptron, a simple ANN proposed by Rosenblatt in 1958 rosenblatt1958perceptron; and the associative neural networks proposed by Little in 1974 little1974existence and later by Hopfield in 1982 hopfield1982neural. In 1985, Hinton and colleagues proposed the Boltzmann machine ackley1985learning, a stochastic recurrent neural network (RNN) in which the energy of each network configuration follows the Boltzmann distribution. Restricted Boltzmann machines were proposed later; they remove the connections between neurons within the same layer to improve the network’s efficiency. The proposal of backpropagation in the 1970s, which Hinton and colleagues utilized to optimize ANNs like AlexNet in 2012, is another significant milestone. In 2017, the transformer architecture was proposed as an alternative to RNN structures, with the attention mechanism as its key novel component. Owing to its high efficiency and suitability for optimizing large models, it opened a new era for deep neural networks. AlphaFold jumper2021highly, the Nobel-Prize-winning model, and popular large language models like GPT-3.5/4/4.5 and Llama 2/3/4 all adopt the transformer architecture.

Despite this enormous success, the mechanisms behind it remain enigmatic. Fortunately, it has been demonstrated that idealized versions of these powerful networks are mathematically equivalent to older, simpler machine learning models, such as kernel machines belkin2018understand; jacot2018neural, or to physical models jcrn-3nrc. In particular, the physical foundation of ANNs is closely related to magnetism (spins) and statistical physics amit1985spin; amit1987statistical; watkin1993statistical; mezard2024spin. Hopfield networks hopfield1982neural; hopfield1999brain; krotov2023new and Boltzmann machines ackley1985learning are essentially Sherrington-Kirkpatrick (SK) spin glasses with random interaction parameters sherrington1975solvable, where each neuron or spin is connected to all others except itself. When the spin-glass structure is mapped to an ANN, each metastable state corresponds to a pattern or memory in the Hopfield network. A finite number of metastable states therefore indicates the limited storage capacity of the Hopfield network folli2017maximum. It is essential to determine whether general spin glasses exhibit infinitely many metastable states, as is the case with the mean-field SK model. Spherical spin-glass models have been used to study simplified, idealized ANNs choromanska2015loss. Parisi proposed a replica symmetry breaking (RSB) solution parisi1979infinite; charbonneau2023spin to the SK model sherrington1975solvable. The RSB theory has also been used to study ANNs, and steps toward some rigorous results have been discussed agliari2020replica. Ghio et al. analyzed sampling with flows, diffusion, and autoregressive neural networks from a spin-glass perspective ghio2024sampling. If this equivalence between ANNs and spin-glass systems can be extended beyond idealized neural networks, it may explain how practical ANNs achieve their astonishing results.
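To make the mapping between stored patterns and low-energy spin states concrete, the following is a minimal sketch of a Hopfield-type associative memory in plain Python. It assumes the standard Hebbian coupling rule and zero-temperature asynchronous updates; the two 16-spin patterns and all function names are hypothetical choices made for illustration and are not taken from the cited works.

```python
import random

def train_hebbian(patterns):
    """Build symmetric couplings J_ij = (1/n) * sum_mu p_i p_j from +/-1 patterns."""
    n = len(patterns[0])
    J = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-coupling, as in the SK model
                    J[i][j] += p[i] * p[j] / n
    return J

def energy(J, s):
    """Ising-type energy E = -(1/2) * sum_ij J_ij s_i s_j."""
    n = len(s)
    return -0.5 * sum(J[i][j] * s[i] * s[j] for i in range(n) for j in range(n))

def recall(J, s, sweeps=10, seed=0):
    """Asynchronous zero-temperature dynamics: align each spin with its local field."""
    rng = random.Random(seed)
    s = list(s)
    n = len(s)
    for _ in range(sweeps):
        order = list(range(n))
        rng.shuffle(order)
        for i in order:
            h = sum(J[i][j] * s[j] for j in range(n))  # local field on spin i
            s[i] = 1 if h >= 0 else -1
    return s

# Two hypothetical, mutually orthogonal 16-spin memories
pattern_a = [1, -1] * 8
pattern_b = [1, 1, -1, -1] * 4
J = train_hebbian([pattern_a, pattern_b])

# Corrupt three spins of pattern_a and let the network relax
noisy = list(pattern_a)
for i in (0, 5, 10):
    noisy[i] = -noisy[i]
recovered = recall(J, noisy)

print("energy before relaxation:", energy(J, noisy))
print("energy after relaxation: ", energy(J, recovered))
print("pattern_a recovered:     ", recovered == pattern_a)
```

In this toy setting the corrupted input lies in the basin of attraction of the stored pattern, so the dynamics lowers the energy until the memory is retrieved. Storing many more patterns than the network can hold would create spurious minima, which is the capacity limit mentioned above.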

Box 1 | Basic Concepts of Statistical Physics Used by ANNs

## Thermodynamics

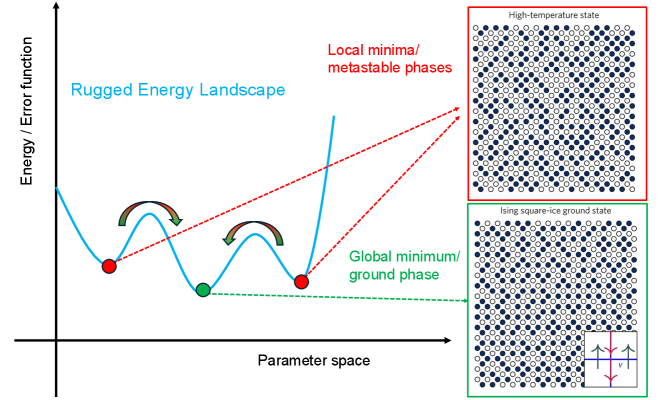

**Energy Landscapes and Loss Functions.** The loss functions in ANNs are similar to the total energy of physical systems [Figure 3]. Statistical physics concepts, such as energy landscapes, are used to understand the dynamics and optimization of neural networks. For example, the weights and states in neural networks can be thought of as occupying a rugged energy landscape with many local minima, just like glassy Ising models. To optimize or train ANNs, we use methods such as simulated annealing, which is directly inspired by statistical physics, and stochastic gradient descent, whose noise can be analyzed with statistical-physics tools.
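As an illustration of how a rugged landscape can be explored, the sketch below runs simulated annealing with single-spin Metropolis updates on a small random SK-type instance. The system size, the geometric cooling schedule, and all names are assumptions chosen for illustration; this is not a tuned solver.

```python
import math
import random

def sk_couplings(n, rng):
    """Symmetric Gaussian couplings with standard deviation 1/sqrt(n), zero diagonal."""
    J = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            J[i][j] = J[j][i] = rng.gauss(0.0, 1.0 / math.sqrt(n))
    return J

def sk_energy(J, s):
    """E = -sum_{i<j} J_ij s_i s_j."""
    n = len(s)
    return -sum(J[i][j] * s[i] * s[j] for i in range(n) for j in range(i + 1, n))

def anneal(J, steps=20000, t_start=2.0, t_end=0.05, seed=1):
    """Metropolis single-spin flips while the temperature decays geometrically."""
    rng = random.Random(seed)
    n = len(J)
    s = [rng.choice((-1, 1)) for _ in range(n)]
    e_start = e = sk_energy(J, s)
    e_best = e
    for k in range(steps):
        t = t_start * (t_end / t_start) ** (k / (steps - 1))
        i = rng.randrange(n)
        h = sum(J[i][j] * s[j] for j in range(n))  # local field on spin i
        delta = 2.0 * s[i] * h                     # energy change if spin i flips
        # Always accept downhill moves; accept uphill moves with Boltzmann probability
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            s[i] = -s[i]
            e += delta
            e_best = min(e_best, e)
    return s, e, e_best, e_start

rng = random.Random(0)
J = sk_couplings(20, rng)
spins, e_final, e_best, e_start = anneal(J)
print("start energy:", e_start, "final energy:", e_final, "best seen:", e_best)
```

Accepting uphill moves at high temperature lets the configuration cross barriers between minima, while the slow cooling gradually traps it in a deep valley. Plain gradient descent lacks this escape mechanism.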

**Entropy and Information Theory.** Entropy from statistical physics is used to measure uncertainty in predictions and model behavior. One type of entropy, known as "cross entropy," between the actual values and predictions can be used as an error function. In addition, the entropy concept is closely related to the Information Bottleneck Principle, which explains how neural networks compress information during training, much like physical systems reduce entropy under certain conditions.
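A short sketch of the cross-entropy error may help here. It uses the standard definition H(p, q) = -Σ_i p_i ln q_i for discrete distributions; the three-class label and the two prediction vectors are hypothetical values chosen for illustration.

```python
import math

def cross_entropy(p_true, q_pred, eps=1e-12):
    """H(p, q) = -sum_i p_i * ln(q_i); eps guards against log(0)."""
    return -sum(p * math.log(max(q, eps)) for p, q in zip(p_true, q_pred))

def entropy(p):
    """Shannon entropy, i.e. the cross entropy of a distribution with itself."""
    return cross_entropy(p, p)

label = [0.0, 1.0, 0.0]             # one-hot target (hypothetical example)
confident = [0.05, 0.90, 0.05]      # prediction concentrated on the correct class
uniform = [1.0 / 3.0] * 3           # maximally uncertain prediction

print("loss, confident prediction:", cross_entropy(label, confident))
print("loss, uniform prediction:  ", cross_entropy(label, uniform))
print("entropy of uniform guess:  ", entropy(uniform))
```

The confident, correct prediction yields a lower loss than the uniform one, and the cross entropy never falls below the entropy of the target distribution, so minimizing it drives the prediction toward the target.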

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Rugged Energy Landscape and Corresponding Physical States

### Overview

This image is a conceptual scientific diagram illustrating the relationship between a "rugged energy landscape" in parameter space and the corresponding physical states of a system, likely related to statistical mechanics or optimization problems. It combines a 2D graph with two lattice structure diagrams to explain the concepts of local minima (metastable phases) and the global minimum (ground state).

### Components/Axes

**Main Graph (Left Panel):**

* **Y-axis:** Labeled "Energy / Error function". The axis is vertical with an arrow pointing upward, indicating increasing energy or error.

* **X-axis:** Labeled "Parameter space". The axis is horizontal with an arrow pointing to the right, indicating a change in system parameters.

* **Primary Data Series:** A thick, blue, wavy line labeled "Rugged Energy Landscape". This line represents the energy function over the parameter space.

* **Key Points on the Curve:**

* Two **red dots** are placed at the bottom of two separate valleys (local minima) on the blue curve.

* One **green dot** is placed at the bottom of the deepest valley (global minimum) on the blue curve.

* **Annotations:**

* Text "Local minima/metastable phases" in red, positioned in the upper right quadrant of the graph. Two red dashed arrows originate from this text, each pointing to one of the red dots.

* Text "Global minimum/ground phase" in green, positioned below the green dot. A green dashed arrow originates from this text and points to the green dot.

* Two sets of curved, double-headed arrows (brown/green) are drawn over the peaks between the minima, suggesting the possibility of transitions or barriers between states.

**Lattice Diagrams (Right Panel):**

* **Top Diagram (Red Border):**

* **Title:** "High-temperature state" (text above the box).

* **Content:** A square lattice of circles. The circles are a mix of filled (dark blue) and empty (white), arranged in a seemingly random or disordered pattern. This visually represents a high-entropy, disordered phase.

* **Bottom Diagram (Green Border):**

* **Title:** "Ising square-ice ground state" (text above the box).

* **Content:** A square lattice of circles. The circles are arranged in a highly ordered, alternating pattern of filled and empty circles, forming a checkerboard-like structure. This represents an ordered, low-energy ground state.

* **Inset (Bottom-right corner of this diagram):** A small schematic showing a vertex with four arrows. Two arrows point inward (↑ and →) and two point outward (↓ and ←), illustrating the "ice rule" or local constraint for this specific ground state.

### Detailed Analysis

The diagram establishes a direct visual correlation between abstract energy landscape features and concrete physical configurations.

1. **Graph Analysis:** The "Rugged Energy Landscape" (blue line) is non-convex, featuring multiple valleys. The **red dots** mark local minima—points where the system is stable against small perturbations but not the lowest possible energy state. The **green dot** marks the global minimum—the configuration with the absolute lowest energy. The curved arrows over the peaks imply that moving from one minimum to another requires overcoming an energy barrier.

2. **Lattice Correspondence:**

* The **red dots** (local minima) are linked via red dashed arrows to the "High-temperature state" lattice. This indicates that a disordered, high-temperature configuration corresponds to a metastable state in the energy landscape.

* The **green dot** (global minimum) is linked via a green dashed arrow to the "Ising square-ice ground state" lattice. This indicates that the perfectly ordered, low-temperature configuration corresponds to the true ground state of the system.

3. **Spatial Grounding:** The legend (color-coded text and dots) is integrated directly onto the graph. The "High-temperature state" diagram is positioned in the top-right of the overall image, aligned with the "Local minima" annotation. The "Ising square-ice ground state" diagram is in the bottom-right, aligned with the "Global minimum" annotation.

### Key Observations

* **Visual Metaphor:** The diagram effectively uses the "landscape" metaphor common in physics and machine learning, where finding the global minimum is akin to finding the optimal solution.

* **State Dichotomy:** It presents a clear dichotomy between disorder (high-temperature, local minima) and order (ground state, global minimum).

* **Barrier Illustration:** The curved arrows explicitly show that transitions between states are not free but require traversing higher-energy "hills."

* **Specific Model:** The ground state is specified as an "Ising square-ice" state, which is a particular model in statistical physics with local "ice rule" constraints, as shown in the inset.

### Interpretation

This diagram is a pedagogical tool explaining why certain systems can get "stuck" in suboptimal, disordered states (metastable phases) instead of reaching their true, ordered ground state. The "rugged" nature of the energy landscape—with many local minima separated by barriers—makes it difficult for the system to find the global minimum through random thermal fluctuations alone. This concept is fundamental to understanding phenomena like glassy dynamics, protein folding, and the challenges of training complex neural networks (where the "error function" landscape is similarly rugged). The lattices provide a tangible, microscopic picture of what these abstract energy states actually look like: a messy, high-entropy arrangement versus a pristine, low-entropy pattern. The diagram argues that the system's final state depends critically on its ability to navigate this complex landscape.

</details>

Figure 3: Basic concepts in statistical physics and thermodynamics. Here, we show that each pattern (memory or configuration) corresponds to an energy state in the rugged energy landscape of the free energy function or an error function.

Phase Transitions The concept of phase transitions is useful to describe the behavior of ANNs. ANNs exhibit phase transitions during training when their prediction and reliability suddenly change upon small changes of ANN structures (e.g., neural layers, parameter size in each layer), learning rate, etc., around critical values. This is similar to physical systems undergoing structural changes (e.g., spin glasses).

## Bayesian Inference and Partition Functions

Bayesian statistics finds its application in ANNs. It uses Bayes’ theorem to calculate and update the probability distribution when new data or knowledge is available. This conditional feature meets the flexibility requirement of real-world problems, making it practically useful. Bayesian neural networks use principles from statistical physics for probabilistic inference. The partition function, a cornerstone of statistical mechanics, is used to calculate probabilities in probabilistic graphical models, including some neural networks.
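As a minimal sketch of how a partition function turns energies into probabilities, the snippet below enumerates every state of a three-spin Ising system and normalizes the Boltzmann weights. The function name `boltzmann_distribution` and the coupling values are illustrative assumptions, not taken from the references above.

```python
import itertools
import math


def boltzmann_distribution(couplings, n_spins, beta):
    """Enumerate all 2**n_spins Ising states and return (states, probs, Z)."""
    states = list(itertools.product((-1, 1), repeat=n_spins))
    # Energy of each configuration: H = -sum_{i<j} J_ij * s_i * s_j
    energies = [
        -sum(J * s[i] * s[j] for (i, j), J in couplings.items())
        for s in states
    ]
    weights = [math.exp(-beta * e) for e in energies]
    Z = sum(weights)  # partition function
    probs = [w / Z for w in weights]
    return states, probs, Z


# Illustrative couplings for three spins (values chosen arbitrarily).
couplings = {(0, 1): 1.0, (1, 2): -0.5, (0, 2): 0.3}
states, probs, Z = boltzmann_distribution(couplings, n_spins=3, beta=1.0)
for s, p in zip(states, probs):
    print(s, round(p, 4))
```

Exhaustive enumeration scales as $2^N$, so it is only practical for very small systems; larger models are usually handled with sampling or approximations.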

## Learning Dynamics and Langevin Equations

There are a few connections between Langevin equations and ANNs. Here, we mention two of them: (i) The learning dynamics of ANNs can be described by Langevin equations, analogous to the motion of particles in a thermal bath. In studies of stochastic gradient descent (SGD), the noise introduced by mini-batch sampling is found to resemble thermal noise goldt2019dynamics. (ii) Diffusion models for image generation are similar to Langevin dynamics albergo2023stochastic, since both start from a white-noise background and gradually recover the true state through successive changes in the status of each particle or pixel.
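The Langevin picture of learning can be sketched with a toy example: gradient descent on a one-dimensional quadratic loss with added Gaussian noise whose strength is set by an inverse temperature. The loss, the function name `langevin_sgd`, and all parameter values are assumptions chosen for illustration; real mini-batch noise is generally neither Gaussian nor isotropic.

```python
import math
import random


def langevin_sgd(grad, w0, lr, beta, n_steps, rng):
    """Overdamped Langevin update: w <- w - lr*grad(w) + sqrt(2*lr/beta)*xi."""
    w = w0
    trajectory = []
    noise_scale = math.sqrt(2.0 * lr / beta)
    for _ in range(n_steps):
        w = w - lr * grad(w) + noise_scale * rng.gauss(0.0, 1.0)
        trajectory.append(w)
    return trajectory


# Toy quadratic loss L(w) = (w - 2)^2 / 2 with its minimum at w = 2.
rng = random.Random(0)
traj = langevin_sgd(lambda w: w - 2.0, w0=-3.0, lr=0.01, beta=10.0,
                    n_steps=20000, rng=rng)
samples = traj[5000:]  # discard burn-in
mean_w = sum(samples) / len(samples)
var_w = sum((w - mean_w) ** 2 for w in samples) / len(samples)
print(mean_w, var_w)  # mean should sit near 2, variance near 1/beta
```

In this sketch the weight does not settle at the minimum but fluctuates around it with a variance of roughly $1/β$, which is the sense in which the noise acts like a temperature.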

## 2 Spin-glass physics for artificial neural networks

Just as neurons are the biological analogue of ANNs, spin glasses could be considered the physical analogue of ANNs: they were the inspiration for the original Hopfield model hopfield1982neural, which itself is viewed by some as a type of spin glass. We will discuss their relationships below.

### 2.1 Relations of spin glasses, biological neurons, and associative memory

Spin glass A spin glass is a type of magnetic system in which the quenched interactions between localized spins may be either ferromagnetic or antiferromagnetic with roughly equal probability. It exhibits an array of experimental properties: spin freezing with no long-range magnetic order at low temperature, a magnetic susceptibility cusp at a critical temperature accompanied by the absence of a singularity in the specific heat, slow relaxation, aging, and several others stein2013spin; stein2011spin. Most theoretical work has focused on the Edwards-Anderson (EA) spin glass edwards1975theory proposed in 1975 and its infinite-range analogue, the Sherrington-Kirkpatrick (SK) model sherrington1975solvable, proposed the same year.

The EA Hamiltonian in the absence of an external field is given by

$$

H=-\sum_{\langle ij\rangle}J_{ij}\sigma_i\sigma_j , \tag{1}

$$

where the couplings $J_{ij}$ are i.i.d. random variables representing the interaction between spins $\sigma_i$ and $\sigma_j$ at nearest-neighbor sites $i$ and $j$ (denoted by the angle brackets in (1)). One is free to choose the distribution of the couplings: a common choice, which will be used here, is a Gaussian distribution with zero mean and unit variance. This distinguishes the spin glass from more conventional magnets: if $J_{ij}$ were a constant $J$, the model would become either a ferromagnet ($J>0$) or an antiferromagnet ($J<0$), both with an ordered ground state.

As noted above, when every pair of spins interacts with each other, the model becomes the SK model, a mean-field spin glass with Hamiltonian

$$

H=-\frac{1}{\sqrt{N}}\sum_{i<j}J_{ij}\sigma_i\sigma_j , \tag{2}

$$

where the couplings $J_{ij}$ are again drawn from a Gaussian distribution with zero mean and unit variance. The thermodynamic properties of the SK model are well understood, and analytical expressions were found for its free energy and order parameters parisi1979infinite. Whether similar properties exist in short-range spin glasses remains an open question. Understanding short-range spin glasses in finite dimensions remains a major challenge in both condensed matter physics and statistical mechanics.
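A minimal sketch of the SK Hamiltonian in Eq. (2) is given below: it draws symmetric Gaussian couplings and evaluates the energy of a spin configuration. The helper names `sample_sk_couplings` and `sk_energy`, the system size, and the random seed are illustrative assumptions.

```python
import math
import random


def sample_sk_couplings(n, rng):
    """Symmetric Gaussian couplings J_ij (zero mean, unit variance), J_ii = 0."""
    J = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            J[i][j] = J[j][i] = rng.gauss(0.0, 1.0)
    return J


def sk_energy(spins, J):
    """H = -(1/sqrt(N)) * sum_{i<j} J_ij * s_i * s_j, as in Eq. (2)."""
    n = len(spins)
    pair_sum = sum(J[i][j] * spins[i] * spins[j]
                   for i in range(n) for j in range(i + 1, n))
    return -pair_sum / math.sqrt(n)


rng = random.Random(1)
n = 6
J = sample_sk_couplings(n, rng)
spins = [rng.choice((-1, 1)) for _ in range(n)]
energy = sk_energy(spins, J)
flipped_energy = sk_energy([-s for s in spins], J)  # global spin flip
# Uniform couplings J_ij = 1 give a ferromagnet: 4 aligned spins have 6 bonds.
ferro = [[0.0 if i == j else 1.0 for j in range(4)] for i in range(4)]
ferro_energy = sk_energy([1, 1, 1, 1], ferro)  # -6 / sqrt(4) = -3.0
print(energy, flipped_energy, ferro_energy)
```

The energy is unchanged under a global spin flip because every term is quadratic in the spins, so each state comes with a degenerate mirror image in the absence of an external field.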

ANNs and spin glasses Magnetic systems have long been used to model neural networks, going back (at least) to the work of McCulloch and Pitts mcculloch1943logical. In its simplest form, a neuron is assumed to have only two states: ‘on’ or firing, and ‘off’ or quiescent. Therefore, its state can be modelled as an Ising spin, where $+1$ corresponds to the firing state and $-1$ to the quiescent state. A state, or firing pattern, of the entire neural network then corresponds to a spin configuration $\{\sigma\}$. The interactions between neurons, i.e., synaptic efficiencies, correspond to the couplings $J_{ij}$ between spins in a magnetic system.

The Hebb learning rule is used to determine the coupling parameters hebb2005organization. In associative neural networks, such as the Hopfield network, suppose there are $p$ patterns (corresponding to memories). Then the interactions $J_ij$ between neurons are modelled as

$$

J_{ij}=\frac{1}{N}\sum_{\mu=1}^{p}\xi_i^\mu\xi_j^\mu, \tag{3}

$$

where the state of the $i^{\rm th}$ spin in the $\mu^{\rm th}$ pattern (spin configuration) is represented by $\xi_i^\mu$, which also takes on the values $\pm 1$. When $p=1$, the system is equivalent to a Mattis model mattis1976solvable, which is gauge-equivalent to a ferromagnet. Setting $p>1$ introduces frustration into the system, and its behavior becomes more spin-glass-like. Apart from its interpretation as a model for neural networks, the Hopfield model represents an interesting statistical mechanical system in its own right and has been the subject of considerable study. A few studies have used statistical mechanics methods to investigate phase transitions in the Hopfield model hopfield1982neural; amit1985storing; gardner1988space and have found an upper bound on the number $p$ of memories that can be faithfully recalled from any initial state in a system of $N$ spins or neurons.
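The Hebb rule of Eq. (3) and associative recall can be sketched as follows. The example stores two orthogonal patterns on eight neurons, corrupts one bit, and relaxes the state with zero-temperature asynchronous updates. The function names, the choice of patterns, and the convention $J_{ii}=0$ are assumptions made for this illustration.

```python
def hebbian_couplings(patterns):
    """J_ij = (1/N) * sum_mu xi_i^mu * xi_j^mu with J_ii = 0 (Eq. 3)."""
    n = len(patterns[0])
    J = [[0.0] * n for _ in range(n)]
    for xi in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    J[i][j] += xi[i] * xi[j] / n
    return J


def recall(J, state, max_sweeps=10):
    """Zero-temperature asynchronous dynamics: align each spin with its field."""
    s = list(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(s)):
            h = sum(J[i][j] * s[j] for j in range(len(s)))
            new = s[i] if h == 0 else (1 if h > 0 else -1)
            if new != s[i]:
                s[i], changed = new, True
        if not changed:
            break  # fixed point reached
    return s


# Two orthogonal patterns (p = 2) on N = 8 neurons; values are illustrative.
patterns = [[1, 1, 1, 1, -1, -1, -1, -1],
            [1, -1, 1, -1, 1, -1, 1, -1]]
J = hebbian_couplings(patterns)
noisy = list(patterns[0])
noisy[0] = -noisy[0]  # corrupt one bit of the first memory
recovered = recall(J, noisy)
print(recovered == patterns[0])
```

Orthogonal patterns are used here so that the crosstalk between memories is small and the outcome is easy to verify by hand; random patterns behave similarly only when $p$ is small compared with $N$.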

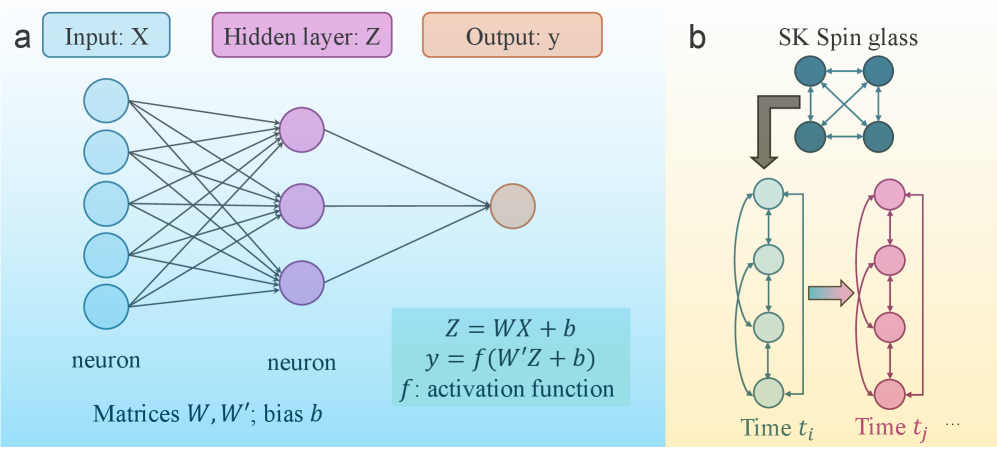

In addition to Hopfield networks, some idealized ANNs also have similar correspondences with spin glasses little1974existence, such as the spherical SK spin glass models choromanska2015loss [Figure 4]. In a spherical spin glass, the spins have varying magnitudes subject to the constraint $\sum_{i=1}^{N}\sigma_i^2=N$. Given training data $\{X_i\},y$, a neural network with $H-1$ hidden layers can be written as choromanska2015loss $y=q\sigma\big(W_H^T\sigma(W_{H-1}^T\cdots\sigma(W_1^TX))\cdots\big)$, where $q$ is a normalization factor. The loss function of this ANN is

$$

L_{\Lambda,H}(\tilde{\bm{w}})=\frac{1}{\Lambda^{(H-1)/2}}\sum_{i_1,i_2,\ldots,i_H=1}^{\Lambda}X_{i_1,i_2,\ldots,i_H}\tilde{w}_{i_1}\tilde{w}_{i_2}\cdots\tilde{w}_{i_H}, \tag{4}

$$

where $\Lambda$ is the number of weights. By imposing the spherical constraint above on the weights, i.e., $\frac{1}{\Lambda}\sum_{i=1}^{\Lambda}\tilde{w}_i^2=1$, the loss function of this neural network was found to be mathematically equivalent to the Hamiltonian formulation of a spin glass.
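To make Eq. (4) concrete, the sketch below evaluates the polynomial loss by brute force for a tiny number of weights and rescales a weight vector onto the sphere. Storing the coefficients $X$ in a dictionary keyed by index tuples, and the names `spherical_loss` and `project_to_sphere`, are assumptions made for this illustration.

```python
import itertools
import math


def spherical_loss(X, w, H):
    """Eq. (4): Lambda^{-(H-1)/2} * sum X[i_1..i_H] * w[i_1] * ... * w[i_H]."""
    lam = len(w)
    total = 0.0
    for idx in itertools.product(range(lam), repeat=H):
        total += X[idx] * math.prod(w[i] for i in idx)
    return total / lam ** ((H - 1) / 2)


def project_to_sphere(w):
    """Rescale w so that (1/Lambda) * sum_i w_i^2 = 1."""
    lam = len(w)
    norm = math.sqrt(sum(v * v for v in w) / lam)
    return [v / norm for v in w]


# Smallest non-trivial case: Lambda = 2 weights, H = 2, all coefficients equal to 1.
lam, H = 2, 2
X = {idx: 1.0 for idx in itertools.product(range(lam), repeat=H)}
w = project_to_sphere([3.0, 3.0])       # becomes [1.0, 1.0]
loss = spherical_loss(X, w, H)          # (1 + 1 + 1 + 1) / sqrt(2)
constraint = sum(v * v for v in w) / lam
print(loss, constraint)
```

The sum has $Λ^H$ terms, so this direct evaluation is only meant to show the structure of the expression; with Gaussian coefficients $X$ it has the form of a spherical $H$-spin glass energy.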

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Neural Network and Spin Glass Model Comparison

### Overview

The image is a two-panel technical diagram (labeled **a** and **b**) illustrating the structural analogy between a feedforward neural network and a Sherrington-Kirkpatrick (SK) spin glass model. Panel **a** details the architecture and mathematics of a simple neural network. Panel **b** depicts the SK spin glass model and its conceptual mapping to a time-evolving system.

### Components/Axes

**Panel a (Left): Neural Network**

* **Labels & Structure:**

* Top: Three colored boxes label the layers: `Input: X` (light blue), `Hidden layer: Z` (purple), `Output: y` (orange).

* Diagram: A network graph with:

* An input layer of 5 light blue circles (neurons).

* A hidden layer of 3 purple circles (neurons).

* An output layer of 1 orange circle (neuron).

* Directed lines (edges) connect all input neurons to all hidden neurons, and all hidden neurons to the output neuron.

* Bottom Text: `neuron` is written below the input and hidden layers. `Matrices W, W'; bias b` is written at the bottom.

* **Mathematical Equations (Bottom Right Box):**

* `Z = WX + b`

* `y = f(W'Z + b)`

* `f : activation function`

**Panel b (Right): SK Spin Glass**

* **Labels & Structure:**

* Top: Title `SK Spin glass`.

* Diagram: A network of 4 dark teal circles (spins) fully interconnected with double-headed arrows.

* A large brown arrow points from this network down to a sequence of states.

* The sequence shows two vertical columns of circles connected by curved arrows, representing time evolution:

* Left column: 4 light green circles, labeled `Time t_i` at the bottom.

* Right column: 4 pink circles, labeled `Time t_j` at the bottom, followed by `...`.

* A horizontal arrow points from the green column to the pink column.

* Within each column, curved arrows connect the circles in a loop.

### Detailed Analysis

**Panel a: Neural Network Details**

* **Architecture:** A 3-layer fully connected network. Input dimension = 5, hidden layer dimension = 3, output dimension = 1.

* **Data Flow:** Information flows left to right: Input `X` -> Hidden layer `Z` -> Output `y`.

* **Mathematical Model:** The hidden layer activation `Z` is a linear transformation (`W` is the weight matrix, `b` is the bias) of the input `X`. The final output `y` is a nonlinear function `f` (the activation function) applied to another linear transformation (`W'` is a second weight matrix, with a potentially different bias `b`) of the hidden layer `Z`.

**Panel b: Spin Glass & Time Evolution Details**

* **SK Spin Glass:** Depicted as a fully connected graph of 4 nodes (spins), representing a system where each spin interacts with every other spin.

* **Temporal Mapping:** The diagram suggests a mapping from the static spin glass configuration to a dynamic, time-indexed process. The state of the system at `Time t_i` (green circles) evolves to a state at `Time t_j` (pink circles). The internal looping arrows within each time-slice column imply recurrent or iterative dynamics within that time step.

### Key Observations

1. **Structural Analogy:** The diagram visually proposes a correspondence between the layers of a neural network (a) and the time-evolved states of a spin glass system (b). The hidden layer `Z` in the network may be analogous to the state of the spin system at a given time.

2. **Connectivity:** Both systems are characterized by dense connectivity. The neural network is fully connected between layers. The SK spin glass is fully connected among all its components.

3. **Directionality vs. Recurrence:** Panel **a** shows a strict feedforward (acyclic) flow. Panel **b** introduces recurrence (the loops within each time column) and a temporal progression from `t_i` to `t_j`.

4. **Color Coding:** Colors are used consistently to link concepts: purple for the hidden layer/state, and a progression from green to pink for time evolution.

### Interpretation

This diagram is likely from a technical paper exploring the intersection of **machine learning** (specifically, neural networks) and **statistical physics** (specifically, spin glass theory). The core message is that the mathematical framework used to describe disordered magnetic systems like spin glasses can provide insights into the behavior and training dynamics of neural networks.

* **What it Suggests:** The training process of a neural network, which navigates a complex "loss landscape," may be formally similar to the energy minimization or dynamics of a spin glass. The "hidden layer" state `Z` is a key intermediate representation whose evolution (perhaps during training epochs) is being compared to the time evolution of spin configurations.

* **Relationships:** The mapping implies that concepts from spin glass theory—such as replica symmetry breaking, metastable states, and complex energy landscapes—could be used to analyze issues in neural networks like generalization, convergence, and the presence of local minima.

* **Notable Anomaly/Point of Interest:** The explicit inclusion of time (`t_i`, `t_j`) in the spin glass panel, but not in the neural network panel, is significant. It suggests the author is focusing on the *dynamics* of the system (how it changes over time/iterations), not just its static architecture. The `...` after `t_j` indicates this is an ongoing process.

</details>

Figure 4: Topology of an artificial neural network and Sherrington-Kirkpatrick (SK) model. a, A simple, fully connected neural network with one hidden layer. b, A four-spin mean field SK spin-glass model.

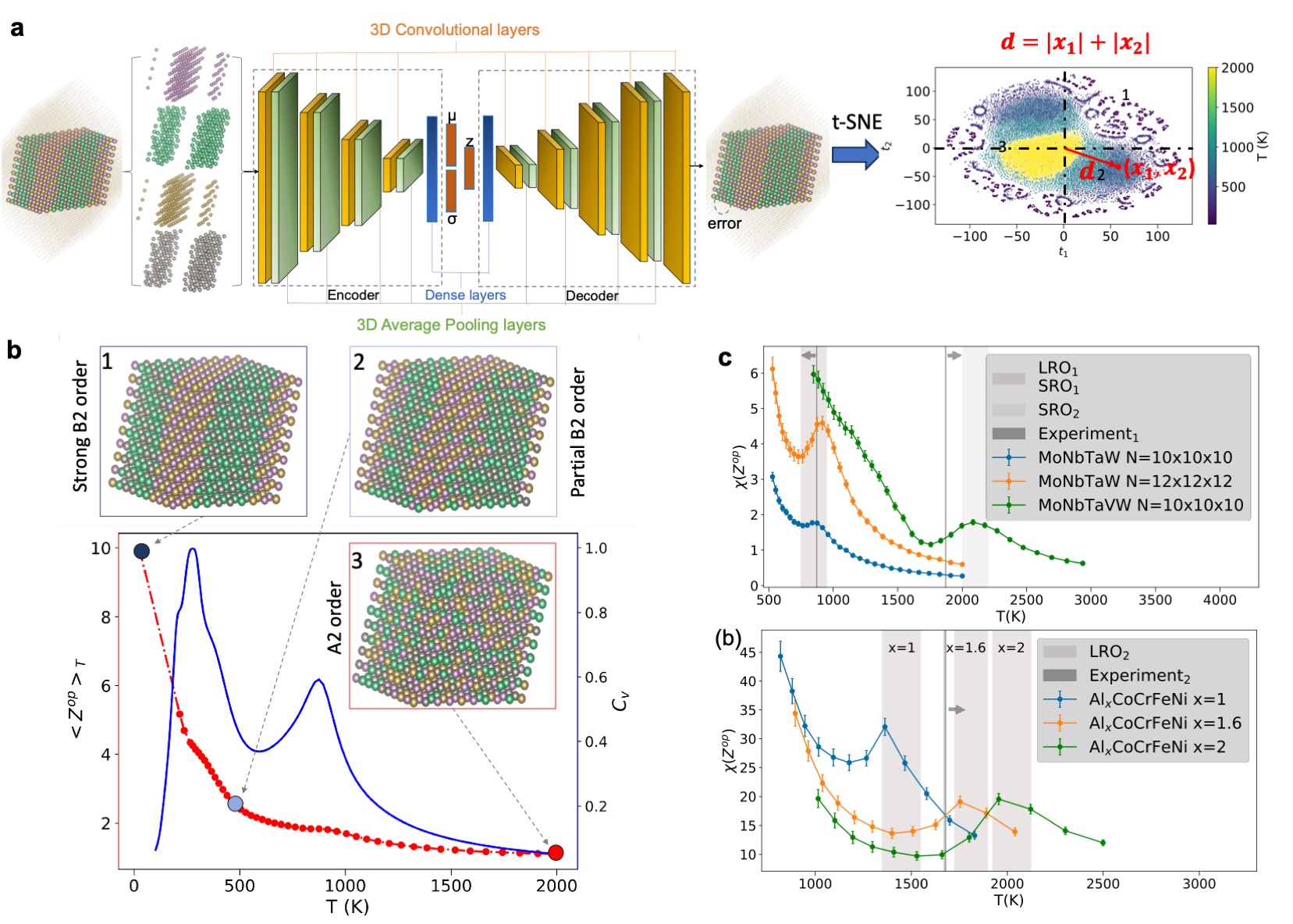

Similarity between biological neurons and ANNs ANNs are designed to imitate the structure of biological neurons [Figure 5] duan2020spiking. A neuron or nerve cell consists of three major parts, i.e., a cell body (soma), dendrites (receiving extensions), and an axon (conducting extension). When two neurons are connected, the presynaptic cell sends an electromagnetic signal to the postsynaptic cell. The signal travels from the axon of the presynaptic cell to the dendrites of the postsynaptic cell, which are directly connected. A threshold potential or action potential controls the activation of a neural connection [Figure 5 c]. The postsynaptic potential decides when to fire an electromagnetic signal. The potential at a specific neuron is a function of all postsynaptic potentials from its presynaptic neurons, i.e.,

$$

V_i=\sum_jJ_{ij}(S_j+1). \tag{5}

$$

When $V_i$ exceeds a threshold $U_i$, i.e., $V_i-U_i>0$, the neuron is active ($S_i=1$). Using the Heaviside function $H(x)$, the state of the neuron can be written as $S_i=H(V_i-U_i)$. We use $h_i$ to represent the molecular field, $h_i=V_i-U_i$. When the spin direction is parallel to $h_i$, the local configuration is stable, i.e., $h_iS_i>0$. Usually, the threshold $U_i$ is assumed to satisfy $U_i\approx\sum_jJ_{ij}$, so that $h_i\approx\sum_jJ_{ij}S_j$ and $-\frac{1}{2}\sum_ih_iS_i\approx H=-\frac{1}{2}\sum_{ij}J_{ij}\sigma_i\sigma_j$, which has the same form as Eq. 1. The above formulation is for zero temperature. At finite temperature, the probability of activating one neuron is $P(S_i=1)=\frac{1}{\exp[-\beta(V_i-U_i)]+1}$, which has the form of a Fermi-Dirac distribution. Here, $\beta$ is the inverse temperature, defined by $\beta=1/k_BT$, with $k_B$ the Boltzmann constant and $T$ the temperature.
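The threshold rule and its finite-temperature version can be sketched in a few lines. The coupling values and neuron states below are hypothetical, and the threshold is set to $U_i=\sum_jJ_{ij}$ as assumed in the text.

```python
import math


def membrane_potential(J_row, S):
    """V_i = sum_j J_ij * (S_j + 1), Eq. (5), for one neuron i."""
    return sum(J_ij * (S_j + 1) for J_ij, S_j in zip(J_row, S))


def firing_probability(V_i, U_i, beta):
    """P(S_i = 1) = 1 / (exp(-beta * (V_i - U_i)) + 1)."""
    return 1.0 / (math.exp(-beta * (V_i - U_i)) + 1.0)


J_row = [0.5, -0.2, 0.8]      # hypothetical couplings into neuron i
S = [1, -1, 1]                # states of the presynaptic neurons
U_i = sum(J_row)              # threshold choice U_i ~ sum_j J_ij
V_i = membrane_potential(J_row, S)

for beta in (0.1, 1.0, 10.0):
    print(beta, firing_probability(V_i, U_i, beta))
```

As $β$ grows, the probability approaches the zero-temperature step function: the neuron fires almost surely when $V_i>U_i$ and almost never when $V_i<U_i$.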

ANNs inherit these key features. Each artificial neuron sends data to the neurons in its following layers, which react collectively. This response is calculated using matrix multiplication plus a bias variable. The activation and updated connections of neurons follow Hebb’s rule hebb2005organization, which states that when two neurons fire together, the excitatory (ferromagnetic in magnetic terms) component of their coupling is enhanced. The more often two neurons are active together, the stronger their excitatory connection becomes. This holds for both biological and artificial neural networks.

<details>

<summary>x5.png Details</summary>

### Visual Description

## [Scientific Diagram]: Comparison of Biological Neuron, Artificial Neural Network, and Action Potential Waveforms

### Overview

The image is a three-panel scientific figure (labeled a, b, c) illustrating the analogy between a biological neuron, an artificial neural network (ANN) architecture, and the resulting action potential waveforms. Panel (a) depicts a biological neuron with its key components. Panel (b) shows a schematic of an artificial neural network layer, mapping biological components to artificial ones. Panel (c) presents three graphs comparing action potential waveforms from different sources: *in vivo*, *in vitro*, and a computational model.

### Components/Axes

**Panel (a): Biological Neuron**

* **Main Labels (with approximate positions):**

* `Pre-neuron (Presynaptic cell)`: Top-left, pointing to the axon terminal of a preceding neuron.

* `Axon`: Upper-middle, pointing to the long projection from the cell body.

* `Synapse`: Middle, pointing to the junction between the axon terminal and the dendrite.

* `Dendrites (inputs)`: Middle-right, pointing to the branching input structures of the neuron.

* `Cell body`: Center-left, pointing to the main soma of the neuron.

* `Post-neuron (Postsynaptic cell)`: Bottom-right, pointing to the axon terminal of the depicted neuron, indicating it connects to a subsequent cell.

* **Inset:** A circular inset on the left shows a magnified view of a synapse, with a red arrow pointing from it to the synapse label on the main neuron diagram.

**Panel (b): Artificial Neural Network**

* **Title:** `artificial neural network` (top center, in blue).

* **Input Labels (Left side, vertical column):**

* `V_in,1`

* `V_in,2`

* `V_in,3`

* `V_in,m` (indicating an arbitrary number of inputs).

* **Output Labels (Bottom, horizontal row):**

* `V_out,1`

* `V_out,2`

* `V_out,3`

* `V_out,n` (indicating an arbitrary number of outputs).

* **Structure:** A grid of horizontal and vertical lines. At each intersection is a rectangular symbol representing a resistor or synaptic weight. The vertical lines terminate in downward-pointing blue triangles, representing output neurons or activation functions.

* **Mapping Arrows:** Green arrows connect labels from panel (a) to panel (b):

* `Synapse` → points to a resistor symbol in the grid.

* `Dendrites (inputs)` → points to the `V_in` labels.

* `Post-neuron (Postsynaptic cell)` → points to the blue triangle output units.

**Panel (c): Action Potential Graphs**

* **Title:** `action potential` (top center, in red).

* **Three vertically stacked graphs.** Each shares the same axes:

* **Y-axis:** `Membrane potential (mV)`. Scale ranges from approximately -80 mV to +40 mV.

* **X-axis:** `Time (ms)`. Scale ranges from 0 to 4 ms.

* **Graph Labels (Top-right of each plot):**

* Top graph: `In vivo`

* Middle graph: `In vitro`

* Bottom graph: `Model`

* **Data Series:** Each graph contains a single red line plotting membrane potential over time. A black arrow in each graph points to the initial depolarization phase (the "foot" of the action potential).

### Detailed Analysis

**Panel (a) - Biological Neuron:**

The diagram shows a typical multipolar neuron. The signal flow is implied: input arrives at the dendrites/cell body from a pre-neuron via a synapse, is processed, and an output signal travels down the axon to the next (post-) neuron. The inset clarifies the synaptic cleft structure.

**Panel (b) - Artificial Neural Network:**

This is a schematic of a fully connected layer or a crossbar array, often used in neuromorphic engineering. Each `V_in` is connected to each output unit via a programmable weight (the resistor symbol). The output `V_out,j` is likely a function of the weighted sum of inputs. The green arrows explicitly map the biological concepts (synapse = weight, dendrites = input lines, post-neuron = output unit) to the artificial construct.

**Panel (c) - Action Potential Waveforms:**

* **Trend Verification:** All three waveforms show the classic action potential shape: a rapid depolarization (rising phase) to a peak, followed by repolarization (falling phase) and a hyperpolarization undershoot before returning to resting potential (~-60 to -70 mV).

* **Data Point Extraction (Approximate):**

* **Resting Potential:** ~ -65 mV for all three.

* **Peak Depolarization:**

* `In vivo`: ~ +35 mV

* `In vitro`: ~ +30 mV

* `Model`: ~ +40 mV

* **Timing:** The action potential begins at ~1 ms and peaks at ~1.5-2 ms in all cases. The duration (width at half-maximum) appears slightly broader in the `In vitro` trace compared to the `In vivo` and `Model` traces.

* **Key Feature (Arrows):** The black arrows highlight the pre-potential or "foot" of the action potential, a small depolarization before the rapid upstroke, which is present in all three traces.

### Key Observations

1. **Direct Analogy:** The figure constructs a clear visual analogy: Biological Neuron (a) → Artificial Network Model (b) → Functional Output (c).

2. **Waveform Similarity:** The action potential waveforms from the biological sources (*in vivo*, *in vitro*) and the computational `Model` are qualitatively very similar, suggesting the model successfully captures the essential dynamics.

3. **Minor Discrepancies:** The `Model` produces a slightly higher peak depolarization (+40 mV) compared to the biological examples (+30 to +35 mV). The `In vitro` waveform shows a slightly more pronounced after-hyperpolarization (dip below resting potential).

4. **Spatial Grounding:** The legend/labels are consistently placed: titles at the top of each panel, axis labels on the left and bottom of graphs, and component labels adjacent to their targets with guiding arrows.

### Interpretation

This figure serves as a pedagogical or conceptual bridge between neuroscience and artificial intelligence/neuromorphic engineering. It demonstrates how the fundamental information-processing unit of the brain (the neuron) is abstracted into a mathematical/computational model (the ANN layer). The action potential graphs in panel (c) validate the model by showing it can reproduce the key electrophysiological signature of a biological neuron.

The **Peircean investigative reading** reveals:

* **Iconic Sign:** The diagrams in (a) and (b) are icons—they resemble their real-world counterparts (a neuron, a circuit).

* **Indexical Sign:** The green arrows are indices—they point directly from the biological feature to its artificial analogue, establishing a causal or correlational relationship.

* **Symbolic Sign:** The labels (`V_in`, `mV`, `ms`) and the graph itself are symbols, relying on shared scientific convention to convey precise meaning.

The underlying message is one of biomimicry: by understanding and replicating the structure (panels a & b) of biological neural systems, we can recreate their functional dynamics (panel c). The minor discrepancies between the `Model` and biological traces highlight areas for potential model refinement, such as adjusting parameters to match peak amplitude or repolarization kinetics more precisely. The figure argues for the validity of the artificial construct by grounding it in observable biological data.

</details>

Figure 5: Biological neurons, artificial neural networks, and action potentials. a, Biological neuron and its associated concepts duan2020spiking. b, Artificial neural network with input features $V_{{\rm in},i}$ and output features $V_{{\rm out},i}$ duan2020spiking. Here, the resistor symbols (rectangles) represent the activation functions that switch the signals from individual inputs on or off. c, Action potentials naundorf2006unique. The top panel represents an action potential in a cat visual cortex neuron in vivo. The middle panel is an action potential from a cat visual cortical slice in vitro at 20°C. The bottom panel is a model potential. The arrow indicates the characteristic kink at the onset of the action potential. This figure is adapted from Refs. duan2020spiking; naundorf2006unique and modified.

### 2.2 Dictionary of corresponding concepts

To clarify the connections across the three research domains, we list analogous features of ANNs, biological neurons, and spin glasses in Table 1. A few concepts in the table have been discussed above; for example, a spin in a spin glass corresponds to a biological neuron in biology and an artificial neuron in ANNs. This comparison helps identify interesting concepts that have not yet been studied, thus deepening our understanding and providing new research opportunities. For example, the Hamiltonian of a spin glass yields the total energy of the system and plays a role similar to that of the loss function in ANNs, yet it has no known counterpart in biological neurons. The effective learning mechanism of neurons remains elusive, although a few explanations have been proposed, such as the principle of predictive coding luczak2022neurons. The concept of a Hamiltonian, if it can capture the overall activity of biological neurons, may be relevant to what dominates the coordination of specific neurons and the choice of particular information propagation paths friston2010free; friston2009free. Various forms of order parameters, including spin overlap parameters, magnetization, giant cluster size (commonly used in percolation theory), etc., are physical quantities that describe the average information of spin variables in spin systems and capture phase states. An order parameter can be calculated as the overall activity of biological or artificial neurons, for example, how closely the current firing pattern matches a learned activity pattern in the hippocampus or cortex. Studying order parameters in ANNs can help understand how many neurons are generally used or activated, which is valuable for understanding the fundamental principles of ANNs as kernels.

Table 1: Dictionary for artificial neural networks (ANNs), spin glasses, and biological neurons. The terms in spin glasses and their corresponding terms in ANNs are compared. Interestingly, no term in ANNs corresponds to the order parameter, which is closely connected to the value of loss functions. The common features of ANNs and spin glasses explain (i) why Monte Carlo methods can explore their energy landscapes and (ii) why spin glass methods can be directly used to study ANNs. Another interesting question is whether we need to introduce new order parameters for spin glass physics.

| spin | biological neuron | artificial neuron |
| --- | --- | --- |
| spin variables | neuron state (active/inactive) | features |
| interaction of two spins | electromagnetic signal | weight between two neurons |
| interaction strengths | action potential | weight matrix |
| total energy (Hamiltonian) | ? (unclear) | loss function |
| stable or metastable states | memory | memorized patterns in associative network |
| connectivity of spins | synapses | activation function |
| spherical SG models | ? (unknown) | topology of fully connected ANNs |
| order parameter | overall activity of neurons | ? (no correspondence) |

### 2.3 Hopfield neural network and Boltzmann machines

The Hopfield neural network hopfield1982neural consists of a single layer of fully interconnected neurons, with each neuron linked to every other neuron [Figure 4 a]. The metastable states, or local minima of the network, correspond to the patterns, which allows the creation of associative or content addressable memories. Patterns are memorized and encoded in the network parameters. A similar idea was proposed by Little eight years prior to Hopfield’s discovery little1974existence. Structurally, it bears a topological resemblance to the SK model sherrington1975solvable. The phase diagram of the Hopfield network is also similar to the SK model sherrington1975solvable. The original motivation of the Hopfield network was to create a model of associative memory, in which a stored pattern can be retrieved from an incomplete or noisy input.

The Hopfield network evolves over time, with the state of each neuron changing dynamically (Figure 4 b). This temporal evolution allows it to be viewed as a multi-layered system, where each “layer” represents a different time step. In this sense, it functions as a fully connected neural network, with each neuron capable of reading and outputting data. The network stores patterns by adjusting connection weights, and the number of patterns it can retain is proportional to the number of neurons folli2017maximum.

The Hopfield neural network influenced the development of recurrent neural networks (RNNs), such as the long short-term memory networks and the more efficient gated recurrent units. RNNs, characterized by recurrent neural layers, are designed to process sequential data, such as speech and natural languages. The transformer architecture has gradually replaced these methods, where the self-attention mechanism is adopted to replace recurrence for processing sequential data. The Hopfield neural network and its successors remain an active area of research agliari2019relativistic; saccone2022direct; negri2023storage.

Similar to the Hopfield neural network, the Boltzmann machine is a system where each spin interacts with all the others ackley1985learning [Figure 6 b]. It consists of a visible layer and a hidden layer, with all neurons—whether in the visible (input/output) or hidden layer—fully connected. Data is both inputted and outputted through the visible layer. A defining characteristic of Boltzmann machines is their stochastic nature: neuron activation is probabilistic rather than deterministic, influenced by connection weights and inputs. The probability of activation is determined by the Boltzmann distribution.
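The stochastic activation rule can be sketched with heat-bath (Gibbs) updates on a tiny fully connected network of $±1$ units. For simplicity the sketch treats all units alike, without distinguishing visible from hidden ones, and compares the sampled frequency of the fully aligned states with the exact Boltzmann probability. The coupling strength, the number of sweeps, and the function name `gibbs_sweep` are illustrative assumptions.

```python
import itertools
import math
import random


def gibbs_sweep(s, J, beta, rng):
    """One sweep of heat-bath updates on +/-1 units with symmetric couplings J."""
    n = len(s)
    for i in range(n):
        h = sum(J[i][j] * s[j] for j in range(n) if j != i)  # local field
        p_up = 1.0 / (1.0 + math.exp(-2.0 * beta * h))       # P(s_i = +1 | rest)
        s[i] = 1 if rng.random() < p_up else -1
    return s


n, beta = 3, 1.0
J = [[0.0 if i == j else 0.5 for j in range(n)] for i in range(n)]

# Exact probability that all units agree, from the Boltzmann distribution
# with energy E(s) = -sum_{i<j} J_ij * s_i * s_j.
weights = {}
for s in itertools.product((-1, 1), repeat=n):
    e = -sum(J[i][j] * s[i] * s[j] for i in range(n) for j in range(i + 1, n))
    weights[s] = math.exp(-beta * e)
Z = sum(weights.values())
exact_aligned = (weights[(1, 1, 1)] + weights[(-1, -1, -1)]) / Z

# Empirical estimate from Gibbs sampling.
rng = random.Random(0)
state = [1, -1, 1]
n_sweeps, hits = 20000, 0
for _ in range(n_sweeps):
    gibbs_sweep(state, J, beta, rng)
    hits += abs(sum(state)) == n
sampled_aligned = hits / n_sweeps
print(exact_aligned, sampled_aligned)
```

Each unit is switched on with a probability that depends only on its local field, so repeated sweeps draw configurations from the Boltzmann distribution without ever computing the partition function explicitly.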

Due to the computational complexity of Boltzmann machines, more tractable “restricted” Boltzmann machines (RBMs) were introduced smolensky1986information; nair2010rectified [Figure 6 c]. RBMs adopt a bipartite structure, where the digital layer (or visible layer) and the analog layer (hidden layer) are fully connected to each other, but there are no intra-layer connections. This structural constraint allows the use of more computationally efficient training algorithms than are available for general Boltzmann machines fischer2014training. Boltzmann machines have been widely applied to physical and chemical problems, including quantum many-body wavefunction simulations nomura2017restricted; melko2019restricted, modeling polymer conformational properties yu2019generating, and representing quantum states with non-Abelian or anyonic symmetries vieijra2020restricted.

Barra et al. studied the equivalence of Hopfield networks and Boltzmann machines (Figure 6 d) barra2012equivalence. The study is based on a “hybrid” Boltzmann machine (HBM) model, where the $P$ hidden units in the analog layer are continuous (for pattern storage) and the $N$ visible neurons are discrete and binary. They showed that the HBM, when marginalized over the hidden units, and the Hopfield network are statistically equivalent. Assume $P(\sigma,z)$ is the joint distribution for the HBM, $P(z)$ is the distribution for the continuous hidden variables, which usually follow a Gaussian distribution, and $P(\sigma)$ is the distribution for the Hopfield network. These quantities follow Bayes’ rule $P(\sigma,z)=P(\sigma|z)P(z)=P(z|\sigma)P(\sigma)$.

Since this work involves several key concepts used in ANNs, such as the diffusion model and pattern overlap, we discuss its theoretical details further here. The activity of the hidden layer follows a stochastic differential equation $T\frac{dz_\mu}{dt}=-z_\mu(t)+\sum_i\xi_i^\mu\sigma_i+\sqrt{\frac{2T}{\beta}}\zeta_\mu(t)$, where $\zeta_\mu$ is a white Gaussian noise. The idea is similar to the diffusion model widely used in image and video generation. The stationary probability for $z_\mu$ described by the stochastic differential equation above is $P(z_\mu|\sigma)=\sqrt{\frac{\beta}{2\pi}}\exp\bigg[-\frac{\beta}{2}\bigg(z_\mu-\sum_i\xi_i^\mu\sigma_i\bigg)^2\bigg]$. The Hamiltonian of the HBM shown in Figure 6 d is $H_{\rm hbm}(\sigma,z,\tau;\xi,\eta)=\frac{1}{2}\bigg(\sum_\mu z_\mu^2+\sum_\nu\tau_\nu^2\bigg)-\sum_i\sigma_i\bigg(\sum_\mu\xi_i^\mu z_\mu+\sum_\nu\eta_i^\nu\tau_\nu\bigg)$. Then, the joint probability for the HBM can be calculated by $P(\sigma,z,\tau)=\exp[-\beta H_{\rm hbm}(\sigma,z,\tau;\xi,\eta)]/Z(\beta,\xi,\eta)$, where the partition function is $Z(\beta,\xi,\eta)=\sum_\sigma\int\prod_{\mu=1}^{P}dz_\mu\int\prod_{\nu=1}^{K}d\tau_\nu\exp[-\beta H_{\rm hbm}(\sigma,z,\tau;\xi,\eta)]$. Assisted by the Gaussian integral and Bayes’ rule, the marginal probability for $\sigma$ is $P(\sigma)\propto\exp\bigg(\frac{\beta}{2}\sum_{i,j}\Big(\sum_\mu\xi_i^\mu\xi_j^\mu\Big)\sigma_i\sigma_j\bigg)$, with an analogous contribution from the $\eta$ patterns of the $\tau$ layer. If we set $J_{ij}=\sum_\mu\xi_i^\mu\xi_j^\mu$, this is the Boltzmann weight $\exp(-\beta H)$ of the fully connected Hamiltonian $H=-\frac{1}{2}\sum_{i,j}J_{ij}\sigma_i\sigma_j$, which has the same form as the SK spin glass model. After determining the HBM Hamiltonian, it is not difficult to find that the concept of pattern overlap is mathematically equivalent to the overlap of replicas, an order parameter used in spin glasses edwards1975theory.
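The Gaussian-integral step can be written out explicitly. The following is a sketch under two simplifying assumptions: the coupling term of $H_{\rm hbm}$ is linear in $\sigma_i$, and only the $z$ layer is kept (the $\tau$ layer is handled identically). The shorthand $m_\mu$ is introduced here for convenience. For each hidden unit,

$$

\int dz_\mu\,\exp\Big[-\frac{\beta}{2}z_\mu^2+\beta z_\mu m_\mu\Big]=\sqrt{\frac{2\pi}{\beta}}\,\exp\Big[\frac{\beta}{2}m_\mu^2\Big],\qquad m_\mu\equiv\sum_i\xi_i^\mu\sigma_i .

$$

Taking the product over $\mu$ and expanding the squares gives

$$

\sum_\mu m_\mu^2=\sum_{i,j}\Big(\sum_\mu\xi_i^\mu\xi_j^\mu\Big)\sigma_i\sigma_j ,

$$

so that, under these assumptions, the marginal weight of a configuration $\sigma$ is proportional to $\exp\big[\frac{\beta}{2}\sum_{i,j}J_{ij}\sigma_i\sigma_j\big]$ with $J_{ij}=\sum_\mu\xi_i^\mu\xi_j^\mu$. The exponent is positive, which corresponds to $\exp(-\beta H)$ with $H=-\frac{1}{2}\sum_{i,j}J_{ij}\sigma_i\sigma_j$.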

Both Boltzmann machines and Hopfield neural networks have been foundational in the advancement of ANNs and deep learning. While newer, more efficient algorithms continue to emerge, these models demonstrate the profound impact of statistical physics on shaping machine-learning techniques.

### 2.4 Replica theory and the cavity method

**Replica theory.** Replicas are copies of the same system. The key idea is to consider $n$ replicas when calculating the free energy of the system, which is given by

$$

F=-k_BT⟨\ln(Z)⟩, \tag{6}

$$

where $⟨·⟩$ represents the average over the ensemble of disorder realizations. Then, the mathematical identity

$$

⟨\ln(Z)⟩=\lim_{n→ 0}\frac{⟨ Z^n⟩-1}{n} \tag{7}

$$

can be used in the limit $n→ 0$ to find the free energy. This step is purely mathematical rather than physical because the initially assumed integer $n$ is treated as a real number that can be smaller than one and arbitrarily close to 0. The mathematician Talagrand later placed results obtained with this approach on a rigorous footing talagrand2003spin. In the replica-symmetric ansatz, all replicas are treated identically, which is the origin of the negative entropy in the mean-field solution of the SK model. Parisi proposed a replica symmetry-breaking scheme that resolved the negative-entropy issue by constructing a matrix ansatz in which replicas can have different ordering states.
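The identity in Eq. (7) can be illustrated numerically without any replica ansatz. The following sketch is a toy check, not the analytical replica calculation: it enumerates the partition function of a small SK-like system (an assumed size of 6 spins with Gaussian couplings of variance $1/N$) for many disorder samples and compares $(⟨Z^n⟩-1)/n$ with $⟨\ln Z⟩$ as $n$ shrinks. All function names and parameter values are hypothetical choices for this illustration.

```python
import itertools
import numpy as np

def sample_log_Z(n_spins=6, n_samples=500, beta=1.0, seed=0):
    """Return ln Z for many random coupling matrices, by exact enumeration."""
    rng = np.random.default_rng(seed)
    states = np.array(list(itertools.product([-1, 1], repeat=n_spins)))
    J = rng.normal(0.0, 1.0 / np.sqrt(n_spins),
                   size=(n_samples, n_spins, n_spins))
    J = J * np.triu(np.ones((n_spins, n_spins)), k=1)   # keep pairs i < j only
    # E_s(sigma) = -sum_{i<j} J_ij sigma_i sigma_j for sample s and state sigma
    energies = -np.einsum("ki,sij,kj->sk", states, J, states)
    return np.log(np.exp(-beta * energies).sum(axis=1))

def replica_estimate(log_Z, n):
    """(<Z^n> - 1) / n, written with expm1 to avoid cancellation at small n."""
    return np.mean(np.expm1(n * log_Z)) / n

log_Z = sample_log_Z()
quenched = log_Z.mean()                                  # <ln Z> over disorder
for n in (1.0, 1e-1, 1e-2, 1e-4, 1e-6):
    gap = replica_estimate(log_Z, n) - quenched
    print(f"n = {n:g}: (<Z^n> - 1)/n - <ln Z> = {gap:.3e}")
```

At $n=1$ the expression reduces to the annealed quantity $⟨Z⟩-1$, which is far from $⟨\ln Z⟩$; the gap shrinks roughly linearly in $n$ because $Z^n=1+n\ln Z+O(n^2)$.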

The replica theory was initially developed to study glassy, disordered systems, such as spin glasses kirkpatrick1978infinite; parisi1983order; charbonneau2023spin; newman2024critical, and was later used to understand the macroscopic behavior of learning algorithms and capacity limits gardner1988space; amit1985storing. It has been used to analyze the phase transitions of associative neural networks (e.g., the Hopfield network) and the overparameterization and generalization of ANNs rocks2022memorizing; baldassi2022learning. One crucial question in associative neural networks is the storage capacity of memory. A network of $N$ neurons can provide associative memory for only $p≤α_cN$ patterns at zero temperature, with $α_c$ between 0.1 and 0.2 for Hopfield models hopfield1982neural; amit1985storing. For example, Amit and colleagues found $α_c≈ 0.138$ with Hebb’s rule $J_{ij}=\frac{1}{N}∑_μξ_i^μξ_j^μ$ amit1985storing. When different or no constraints are imposed on $J_{ij}$, different values of $α_c$ are found gardner1988space; gardner1987maximum; gardner1988optimal; krauth1989storage. When $p>α_cN$, memory quality degrades quickly; that is, there is a phase transition at $α_c$. Studying this problem is equivalent to finding the number of ground states of a spin glass.
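The capacity limit can be seen qualitatively in a small simulation. The sketch below is a finite-size illustration under assumptions of its own: $N=200$ neurons, random binary patterns, Hebbian couplings normalized by $1/N$ with zero diagonal, and zero-temperature asynchronous updates started from a stored pattern. The function name and the two loads ($α=0.05$ and $α=0.3$) are hypothetical choices, and a network this small only approximates the sharp transition at $α_c≈0.138$ obtained in the thermodynamic limit.

```python
import numpy as np

def retrieval_overlap(n_neurons, n_patterns, n_sweeps=10, seed=0):
    """Mean overlap between stored patterns and the states they relax to."""
    rng = np.random.default_rng(seed)
    xi = rng.choice([-1, 1], size=(n_patterns, n_neurons))
    J = xi.T @ xi / n_neurons                  # Hebb's rule, normalized by 1/N
    np.fill_diagonal(J, 0.0)                   # no self-coupling
    overlaps = []
    for mu in range(min(n_patterns, 5)):       # test the first few patterns
        sigma = xi[mu].copy()                  # start exactly at the pattern
        for _ in range(n_sweeps):
            for i in rng.permutation(n_neurons):
                local_field = J[i] @ sigma
                sigma[i] = 1 if local_field >= 0 else -1   # T = 0 update
        overlaps.append(abs(sigma @ xi[mu]) / n_neurons)
    return float(np.mean(overlaps))

n_neurons = 200
for n_patterns in (10, 60):                    # alpha = 0.05 and alpha = 0.3
    alpha = n_patterns / n_neurons
    m = retrieval_overlap(n_neurons, n_patterns)
    print(f"alpha = {alpha:.2f}: mean retrieval overlap = {m:.3f}")
```

An overlap close to 1 means the stored pattern is a stable fixed point of the dynamics; a clearly smaller overlap at the higher load indicates that the network has drifted away from the pattern it was meant to retrieve.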

The replica theory has been used to understand the phase transition or the critical behavior of ANNs in supervised and unsupervised learning. Hou et al. proposed a statistical physics model of unsupervised learning and found that the sensory inputs drive a series of continuous phase transitions related to spontaneous intrinsic-symmetry breaking hou2020statistical. Baldassi et al. studied the subdominant dense clusters in ANNs with discrete synapses baldassi2015subdominant. They found these clusters enabled high computational performance in these systems.

**Cavity method.** Later, Parisi and coauthors proposed another method to solve the SK model, the cavity method mezard1987spin. It is a statistical method for calculating thermodynamic properties and serves as an alternative to the replica theory. It removes one spin and its interactions with its neighbors to create a cavity and then calculates the response of the rest of the system. At zero temperature, the response is determined by energy minimization. Rocks et al. adopted the “zero-temperature cavity method” for the random nonlinear features model to study the double-descent behavior rocks2022memorizing, an important phenomenon that we will discuss later.
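The cavity idea is easiest to see on a graph without loops, where it is exact. The sketch below is a deliberately simple stand-in, not the SK calculation or the zero-temperature method of Rocks et al.: it treats an open Ising chain with random couplings and fields (an assumed toy system) at finite temperature. For each spin, the neighbors on either side are summarized by an effective bias computed as if that spin were absent, using $u(J,h)=β^{-1}\,\mathrm{artanh}[\tanh(βJ)\tanh(βh)]$. The resulting magnetizations are compared with brute-force enumeration. All names are hypothetical.

```python
import itertools
import numpy as np

def cavity_bias(J, h, beta):
    """Effective field passed through a bond J by a spin with cavity field h."""
    return np.arctanh(np.tanh(beta * J) * np.tanh(beta * h)) / beta

def cavity_magnetizations(J, h, beta):
    """Magnetizations of an open chain; J[i] couples spin i and spin i + 1."""
    n = len(h)
    left = np.zeros(n)     # bias on spin i from everything to its left
    right = np.zeros(n)    # bias on spin i from everything to its right
    for i in range(1, n):
        # Cavity field of spin i-1 with spin i removed: h[i-1] + left[i-1].
        left[i] = cavity_bias(J[i - 1], h[i - 1] + left[i - 1], beta)
    for i in range(n - 2, -1, -1):
        right[i] = cavity_bias(J[i], h[i + 1] + right[i + 1], beta)
    return np.tanh(beta * (h + left + right))

def exact_magnetizations(J, h, beta):
    """Brute-force thermal averages <sigma_i> over all 2^n configurations."""
    states = np.array(list(itertools.product([-1, 1], repeat=len(h))))
    energy = -(states[:, :-1] * states[:, 1:]) @ J - states @ h
    weights = np.exp(-beta * energy)
    return (weights @ states) / weights.sum()

rng = np.random.default_rng(0)
couplings = rng.normal(size=7)                 # 8 spins, 7 bonds
fields = rng.normal(size=8)
m_cavity = cavity_magnetizations(couplings, fields, beta=0.8)
m_exact = exact_magnetizations(couplings, fields, beta=0.8)
print("max |m_cavity - m_exact| =", np.max(np.abs(m_cavity - m_exact)))
```

On fully connected models such as the SK model, the same removal argument is applied statistically, to the distribution of cavity fields, rather than bond by bond as in this chain.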

<details>

<summary>x6.png Details</summary>

### Visual Description

## Diagram: Neural Network Architectures Comparison

### Overview

The image is a technical diagram comparing four types of neural network architectures, labeled **a**, **b**, **c**, and **d**. It visually explains the structural differences between Hopfield networks, Boltzmann machines, Restricted Boltzmann Machines (RBMs), and a more complex probabilistic graphical model. The diagram uses color-coded nodes and connection lines to illustrate connectivity patterns and includes descriptive text for each model.

### Components/Axes

The image is divided into four main panels:

* **Panel a (Top Left):** Titled "Hopfield neural network". Contains two sub-diagrams.

* **Panel b (Top Center):** Titled "Boltzmann machine". Contains one diagram.

* **Panel c (Top Right):** Titled "Restricted Boltzmann machine". Contains one diagram.

* **Panel d (Bottom):** Contains two unlabeled diagrams showing more complex network structures.

**Common Elements:**

* **Nodes:** Represented as circles. Colors are used to distinguish layers or types (e.g., dark blue, light blue, pink).

* **Connections:** Represented as lines between nodes. Arrows indicate directed connections; plain lines indicate undirected connections.

* **Text Labels:** Provide model names, descriptions of connectivity, layer functions, and operational characteristics.

### Detailed Analysis

#### **Panel a: Hopfield Neural Network**

* **Top Diagram:** Shows four dark blue circles arranged in a square, fully connected with bidirectional arrows. Label: "Neurons fully connected".

* **Bottom Diagram:** Shows two vertical chains of four light blue circles each, connected sequentially with downward arrows. An arrow points from the left chain to the right chain.

* Left chain label: "Time t_i"

* Right chain label: "Time t_j ..."

* **Descriptive Text:**

* "Only visible layer (store patterns)"

* "Deterministic; T=0 K" (where T likely represents temperature).

#### **Panel b: Boltzmann Machine**

* **Diagram:** Shows two vertical columns of four dark blue circles each. The left column is labeled "Visible layer (store patterns)" and the right "Hidden layer". Every node in the visible layer is connected to every node in the hidden layer, and all nodes within each layer are also fully connected to each other. Connections are undirected lines.

* **Descriptive Text:**

* "All neurons connected"

* "Stochastic; Monte Carlo; finite temperature"

#### **Panel c: Restricted Boltzmann Machine (RBM)**

* **Diagram:** Shows two vertical columns. The left column has four light blue circles labeled "Visible layer (store patterns)". The right column has four pink circles labeled "Hidden layer". Connections exist **only** between nodes in the visible layer and nodes in the hidden layer (a bipartite graph). There are no connections within the same layer.

* **Descriptive Text:**

* "Only neurons in different layers connected; similar to ANNs used nowadays, comprised of a stack of Boltzmann machines"

#### **Panel d: Complex Probabilistic Models**

* **Left Diagram:** A more intricate network.

* **Pink Circles (Top Left):** Labeled `z₁`, `z₂`, `z₃`. These connect to all blue squares.

* **Blue Squares (Right):** Labeled `σ₁`, `σ₂`, `σ₃`, `σ₄`, `σ₅`. These are fully interconnected with each other and also receive connections from the pink circles and pink triangles.

* **Pink Triangles (Bottom Left):** Labeled `τ₁`, `τ₂`. These connect to all blue squares.

* **Right Diagram:** A pentagon-shaped network.

* **Blue Squares (Vertices):** Labeled `σ₁`, `σ₂`, `σ₃`, `σ₄`, `σ₅`.

* **Connections:** Each `σ` node is connected to every other `σ` node, forming a complete graph (K₅). The connections are undirected lines.

### Key Observations

1. **Connectivity Evolution:** The diagrams show a clear progression from fully connected networks (Hopfield, Boltzmann) to restricted, layer-based connectivity (RBM), which is noted as the precursor to modern Artificial Neural Networks (ANNs).

2. **Deterministic vs. Stochastic:** A key distinction is made between the deterministic Hopfield network (operating at T=0 K) and the stochastic Boltzmann/RBM models (using Monte Carlo methods at finite temperature).

3. **Layer Function:** In models **b** and **c**, the "visible layer" is explicitly noted to "store patterns," indicating its role as the input/observation layer.

4. **Structural Analogy:** Panel **d**'s right diagram shows a structure (`σ` nodes in a pentagon) that is topologically identical to the fully connected Hopfield network in panel **a**, but with different labeling, suggesting it represents a similar all-to-all connectivity pattern in a different context.

5. **Model Complexity:** Panel **d**'s left diagram introduces multiple latent variable types (`z`, `τ`) influencing a set of interconnected observed variables (`σ`), representing a more advanced probabilistic graphical model.

### Interpretation

This diagram serves as a pedagogical tool to contrast foundational energy-based and probabilistic neural network models. It highlights the architectural constraints that define each model's capabilities and computational methods.

* **Hopfield Networks (a)** are presented as simple, deterministic associative memories with a single layer of fully connected neurons, capable of storing and retrieving patterns but limited by their fully connected, recurrent nature.

* **Boltzmann Machines (b)** introduce stochasticity and a separation into visible and hidden layers, allowing them to model more complex probability distributions, but their fully connected nature makes them computationally intractable for large systems.

* **Restricted Boltzmann Machines (c)** are shown as the critical simplification that enables practical learning. By removing intra-layer connections, they become tractable to train (e.g., using Contrastive Divergence) and can be stacked to form Deep Belief Networks, bridging the gap to modern deep learning.

* **Panel d** likely illustrates extensions or related models. The left diagram may represent a model with multiple types of latent variables (`z`, `τ`) influencing observed data (`σ`), common in advanced topic models or structured prediction tasks. The right diagram reinforces the concept of a fully connected graph, perhaps as a component within a larger model or to contrast with the restricted connectivity of RBMs.

The overall narrative is one of increasing structural refinement for practical machine learning: moving from theoretically interesting but intractable fully connected models to restricted, layer-wise architectures that balance expressive power with computational feasibility, ultimately leading to the ANNs used today.

</details>

Figure 6: Comparison of Hopfield neural network and Boltzmann machines. a, Principle of the Hopfield neural network. b, Illustration of a Boltzmann machine. c, Restricted Boltzmann machine, generated by removing the intra-layer connections of visible and hidden layers. d, The equivalence of the Hopfield neural network and a hybrid restricted Boltzmann machine. Here, the visible variables $\{σ_i\}$ are discrete and binary, and the hidden variables $\{z_μ\}$ and $\{τ_ν\}$ are continuous.

### 2.5 Overparameterization and double-descent behavior

**Overparameterization.** The bias-variance trade-off is prevalent and has been observed in numerous models, and the reason is intuitive: fewer parameters result in low variance and high bias, while more parameters lead to high variance and low bias for each prediction. There is an optimal, intermediate number of parameters at which the model achieves its best performance. However, this does not seem to hold for neural networks, whose optimal performance is achieved with overparameterization belkin2019reconciling; rocks2022memorizing; baldassi2022learning. A neural network is considered overparameterized when it has more parameters than training data points. In simple models, such as linear regression, this typically becomes problematic, a phenomenon known as overfitting. One long-standing mystery of ANNs is that they seemingly subvert traditional machine learning theory, as their number of parameters can exceed the number of training data points without signs of overfitting.
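For reference, the trade-off is usually stated through the standard decomposition of the expected squared test error. The notation here is an assumption of this note and does not appear elsewhere in the text: the data follow $y=f(x)+ε$ with zero-mean noise $ε$, and $\hat{f}_D$ is the model fitted on a random training set $D$.

$$

E_{D,ε}\big[(y-\hat{f}_D(x))^2\big]=\big(E_D[\hat{f}_D(x)]-f(x)\big)^2+E_D\big[(\hat{f}_D(x)-E_D[\hat{f}_D(x)])^2\big]+\mathrm{Var}(ε)

$$

The first term is the squared bias, the second is the variance of the fitted model across training sets, and the third is the irreducible noise. In the classical picture, increasing the number of parameters lowers the first term and raises the second.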

**Double-descent behavior.** In the classical picture, the test error of a model as a function of the number of parameters has a “U” shape, and its minimum corresponds to the optimal model size. The training error vanishes at a critical number of parameters, which equals the number of training data points. The test error diverges at this critical size, analogous to the specific heat at the transition temperature. In the traditional regime, the test error rises as the model size approaches this critical value; in overparameterized models, the test error first decreases, then increases toward the critical value, and then decreases again beyond it. This phenomenon is referred to as double-descent behavior belkin2019reconciling; rocks2022memorizing; baldassi2022learning. As a result, the minimal test error can lie in the overparameterized region.
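The peak at the interpolation threshold can be reproduced in a toy setting. The sketch below is an assumption-laden illustration rather than the model analyzed in the cited works: a noisy linear teacher, fixed random $\tanh$ features, and a minimum-norm least-squares fit obtained with `numpy.linalg.lstsq`. The function name, sizes, and noise level are hypothetical. With 40 training points, the test error is expected to be largest when the number of features is close to 40 and to drop again for much wider models.

```python
import numpy as np

def random_features_errors(n_features, n_train=40, n_test=500, dim=20,
                           noise=0.5, n_trials=20, seed=0):
    """Average train/test mean squared error of a min-norm random-features fit."""
    rng = np.random.default_rng(seed)
    train_errors, test_errors = [], []
    for _ in range(n_trials):
        w_true = rng.normal(size=dim) / np.sqrt(dim)            # linear teacher
        W = rng.normal(size=(dim, n_features)) / np.sqrt(dim)   # fixed features
        X_train = rng.normal(size=(n_train, dim))
        X_test = rng.normal(size=(n_test, dim))
        y_train = X_train @ w_true + noise * rng.normal(size=n_train)
        y_test = X_test @ w_true + noise * rng.normal(size=n_test)
        phi_train = np.tanh(X_train @ W)
        phi_test = np.tanh(X_test @ W)
        # lstsq returns the minimum-norm solution when the system is
        # underdetermined (more features than training points).
        coef = np.linalg.lstsq(phi_train, y_train, rcond=None)[0]
        train_errors.append(np.mean((phi_train @ coef - y_train) ** 2))
        test_errors.append(np.mean((phi_test @ coef - y_test) ** 2))
    return float(np.mean(train_errors)), float(np.mean(test_errors))

for n_features in (10, 20, 40, 80, 200):
    train_mse, test_mse = random_features_errors(n_features)
    print(f"features = {n_features:3d}: train MSE = {train_mse:.2e}, "
          f"test MSE = {test_mse:.2e}")
```

The training error drops to numerical zero once the number of features reaches the number of training points, while the test error near that threshold is dominated by the nearly singular feature matrix, which is the finite-size counterpart of the divergence described above.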

Understanding the bias-variance trade-off of overparameterized neural networks is crucial in deep learning. There are different views on the overparameterization phenomenon, and its analysis relies on methods from statistics and probability theory. For example, this behavior can be studied using the replica trick. The neural network undergoes a phase transition when the number of parameters increases across the critical threshold. The Information Bottleneck Principle (IBP) proposes that deep neural networks first fit the training data and then discard irrelevant information by going through an information bottleneck, which helps them generalize tishby2000information; tishby2015deep. Since these results are based on a particular type of ANN, it is controversial whether the IBP conclusion holds generally for all deep neural networks saxe2019information.