# Encoder-Free Knowledge-Graph Reasoning with LLMs via Hyperdimensional Path Retrieval

**Authors**:

- Calvin Yeung Mohsen Imani (University of California, Irvine)

## Abstract

Recent progress in large language models (LLMs) has made knowledge-grounded reasoning increasingly practical, yet KG-based QA systems often pay a steep price in efficiency and transparency. In typical pipelines, symbolic paths are scored by neural encoders or repeatedly re-ranked by multiple LLM calls, which inflates latency and GPU cost and makes the decision process hard to audit. We introduce PathHD, an encoder-free framework for knowledge-graph reasoning that couples hyperdimensional computing (HDC) with a single LLM call per query. Given a query, PathHD represents relation paths as block-diagonal GHRR hypervectors, retrieves candidate paths using a calibrated blockwise cosine similarity with Top- $K$ pruning, and then performs a one-shot LLM adjudication that outputs the final answer together with supporting, citeable paths. The design is enabled by three technical components: (i) an order-sensitive, non-commutative binding operator for composing multi-hop paths, (ii) a robust similarity calibration that stabilizes hypervector retrieval, and (iii) an adjudication stage that preserves interpretability while avoiding per-path LLM scoring. Across WebQSP, CWQ, and GrailQA, PathHD matches or improves Hits@1 compared to strong neural baselines while using only one LLM call per query, reduces end-to-end latency by 40-60%, and lowers GPU memory by 3-5 $\times$ due to encoder-free retrieval. Overall, the results suggest that carefully engineered HDC path representations can serve as an effective substrate for efficient and faithful KG-LLM reasoning, achieving a strong accuracy-efficiency-interpretability trade-off.

## 1 Introduction

Large Language Models (LLMs) have rapidly advanced reasoning over both text and structured knowledge. Typical pipelines follow a retrieve–then–reason pattern: they first surface evidence (documents, triples, or relation paths), then synthesize an answer using a generator or a verifier [21, 32, 46, 43, 47]. In knowledge-graph question answering (KGQA), this often becomes path-based reasoning: systems construct candidate relation paths that connect the topic entities to potential answers and pick the most plausible ones for final prediction [38, 17, 15, 16, 25]. While these approaches obtain strong accuracy on WebQSP, CWQ, and GrailQA, they typically depend on heavy neural encoders (e.g., Transformers or GNNs) or repeated LLM calls to rank paths, which makes them slow and expensive at inference time, especially when many candidates must be examined.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Two Major Pain Points in KG-LLM Reasoning

### Overview

The image is a technical diagram illustrating two primary failure modes or challenges in systems that combine Knowledge Graphs (KG) with Large Language Models (LLMs) for reasoning tasks. It uses a specific example query to demonstrate the first problem and a process flowchart to illustrate the second.

### Components/Axes

The diagram is divided into two main panels, separated by a vertical dashed line.

**Title:** "Two Major Pain Points in KG-LLM Reasoning" (centered at the top).

**Legend (Top Center):**

* `-->` (Green, dashed arrow): "correct (not chosen)"

* `-->` (Red, solid arrow): "chosen wrong"

**Left Panel - Knowledge Graph Reasoning Example:**

* **Input Query (in a thought bubble):** "Which company acquired SolarCity?"

* **Knowledge Graph Nodes (Blue rounded rectangles):** `SolarCity`, `Renewable Energy`

* **Knowledge Graph Entities (Other colored rounded rectangles):** `Tesla` (Green), `Elon Musk` (Blue), `SpaceX` (Pink), `SolarEdge` (Pink)

* **Relationship Edges (Arrows with labels):**

* `acquired_by` (Green, dashed arrow from SolarCity to Tesla)

* `founded_by` (Red, solid arrow from SolarCity to Elon Musk)

* `CEO_of` (Red, solid arrow from Elon Musk to SpaceX)

* `operates_in` (Red, solid arrow from SolarCity to Renewable Energy)

* `related_to` (Red, solid arrow from Renewable Energy to SolarEdge)

* **Outcome Text (Below the graph):** "Outcome: Incorrect reasoning despite access to KG (selected path mismatches the query relation)."

* **Issue Box (Pink background, bottom left):**

* **Header:** "Issue:"

* **Bullet 1:** "Mismatch between query relation ("acquired_by") and used path (founder/CEO)."

* **Bullet 2:** "Hallucination due to non-faithful reasoning over KG."

**Right Panel - Process Flowchart:**

* **Subtitle:** "LLM-based Path Scoring Methods"

* **Annotation:** "*A class of prior methods: per-path LLM evaluation*"

* **Process Flow:**

1. `Candidate Path Generator` (Circle) --> produces multiple paths.

2. Paths are shown as `Path #1`, `Path #2`, `...` (Text).

3. Each path is evaluated by an `LLM` (Diamond shape with a dollar sign `$` icon).

4. Each evaluation produces a `Score s1`, `Score s2` (Text with a stopwatch icon).

5. Scores are used to select the `Best Path` (Green rounded rectangle with a checkmark).

* **Issue Box (Pink background, bottom right):**

* **Header:** "Issue:"

* **Bullet 1:** "Repeated LLM calls per candidate path → high latency & cost."

* **Bullet 2:** "Evaluation is sequential / hard to parallelize."

* **Bullet 3:** "Scalability degrades as candidate count increases."

### Detailed Analysis

**Left Panel Analysis (Trend Verification):**

The visual trend shows a reasoning path that diverges from the correct answer. The correct relationship (`acquired_by`) is present in the graph (green dashed line to `Tesla`) but is not selected. Instead, the system follows a red, solid "chosen wrong" path: `SolarCity` → `founded_by` → `Elon Musk` → `CEO_of` → `SpaceX`. A second, also incorrect, path is shown: `SolarCity` → `operates_in` → `Renewable Energy` → `related_to` → `SolarEdge`. This demonstrates a failure to align the query's intent with the correct graph relation.

**Right Panel Analysis (Component Isolation):**

This section isolates the computational bottleneck. The flow is linear and sequential: generate paths, then evaluate each one individually with an LLM call. The dollar sign (`$`) and stopwatch icons explicitly symbolize the cost and latency associated with each evaluation step. The "..." indicates this process repeats for an arbitrary number of candidate paths.

### Key Observations

1. **Spatial Grounding:** The legend is positioned at the top center, applying to both panels. In the left panel, the correct path (green, dashed) is visually distinct but bypassed. The incorrect paths (red, solid) are prominently displayed.

2. **Dual Failure Modes:** The diagram highlights two distinct but related problems: a *semantic* failure (wrong reasoning path chosen) and a *systemic* failure (inefficient evaluation process).

3. **Symbolism:** Icons are used effectively: a lightbulb for "Issue," a dollar sign for cost, a stopwatch for latency, a checkmark for correct/best, and an 'X' for incorrect.

4. **Color Coding:** Consistent use of green for correct/optimal and red for incorrect/problematic reinforces the message.

### Interpretation

This diagram argues that current KG-LLM reasoning systems suffer from a fundamental trade-off between **faithfulness** and **efficiency**.

* **The Left Panel (Faithfulness Problem)** demonstrates that even with a structured knowledge graph, an LLM can hallucinate or follow a plausible but incorrect reasoning chain (`founder/CEO` instead of `acquired_by`). This suggests the model may not be grounding its reasoning sufficiently in the provided graph structure, leading to unfaithful answers.

* **The Right Panel (Efficiency Problem)** shows that the common method of scoring each candidate path with a separate LLM call is inherently unscalable. It creates a direct linear relationship between the number of potential answers (candidate paths) and both cost and response time. This sequential bottleneck makes the approach impractical for complex queries that generate many candidate paths.

**Underlying Message:** The two pain points are interconnected. Solving the faithfulness problem (left) might require generating and evaluating more candidate paths to find the correct one, which in turn exacerbates the efficiency problem (right). Therefore, advances in this field likely require new methods that can both accurately identify the correct reasoning path *and* do so without prohibitive computational cost, perhaps through more efficient scoring mechanisms or parallelizable evaluation.

</details>

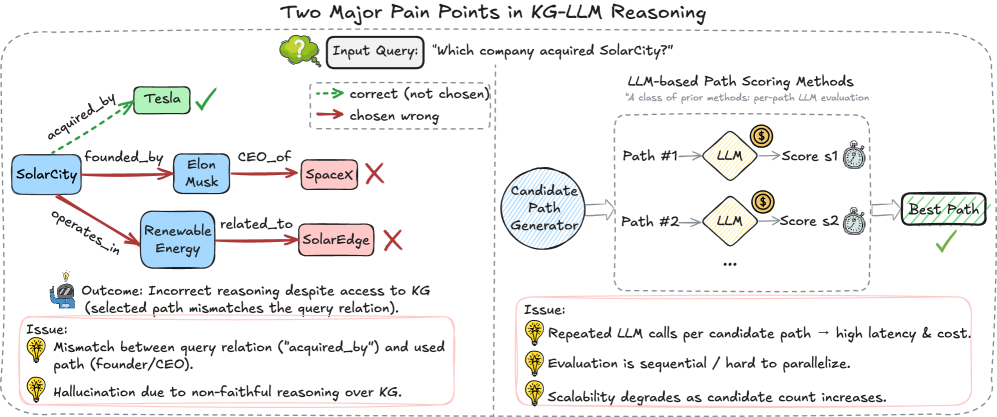

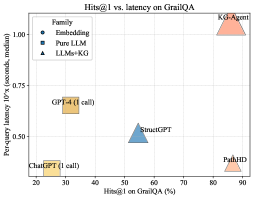

Figure 1: Two major pain points in KG-LLM reasoning. Left: A path-based KGQA system selects a candidate path whose relation sequence does not match the query relation (“acquired_by”), leading to an incorrect answer even though the KG contains the correct evidence. Right: LLM-based scoring evaluates each candidate path in a separate LLM call, which is sequential, hard to parallelize, and incurs high latency and token cost as the candidate set grows.

Figure ˜ 1 highlights two recurring issues in KG–LLM reasoning. ❶ Path–query mismatch: Order-insensitive encodings, weak directionality, and noisy similarity often favor superficially related yet misaligned paths, blurring the question’s intended relation. ❷ Per-candidate LLM scoring: Many systems score candidates sequentially, so latency and token cost grow roughly linearly with set size; batching is limited by context/API, and repeated calls introduce instability, yet models can still over-weight long irrelevant chains, hallucinate edges, or flip relation direction. Most practical pipelines first detect a topic entity, enumerate $10\!\sim\!100$ length- $1$ – $4$ paths, then score each with a neural model or LLM, sending top paths to a final step [38, 25, 16]. This hard-codes two inefficiencies: (i) neural scoring dominates latency (fresh encoding/prompt per candidate), and (ii) loose path semantics (commutative/direction-insensitive encoders conflate founded_by $\!\to$ CEO_of with its reverse), which compounds on compositional/long-hop questions.

Hyperdimensional Computing (HDC) offers a different lens: represent symbols as long, nearly-orthogonal hypervectors and manipulate structure with algebraic operations such as binding and bundling [18, 31]. HDC has been used for fast associative memory, robust retrieval, and lightweight reasoning because its core operations are elementwise or blockwise and parallelize extremely well on modern hardware [7]. Encodings tend to be noise-tolerant and compositional; similarity is computed by simple cosine or dot product; and both storage and computation scale linearly with dimensionality. Crucially for KGQA, HDC supports order-sensitive composition when the binding operator is non-commutative, allowing a path like $r_{1}\!\to r_{2}\!\to r_{3}$ to be distinguished from its permutations while remaining a single fixed-length vector. This makes HDC a promising substrate for ranking many candidate paths without invoking a neural model for each one.

Motivated by these advantages, we introduce PathHD (Hyper D imensional Path Retrieval), a lightweight retrieval-and-reason framework for efficient KGQA with LLMs. First, we map every relation to a block-diagonal unitary representation and encode a candidate path by non-commutative Generalized Holographic Reduced Representation (GHRR) binding [48]; this preserves order and direction in a single hypervector. In parallel, we encode the query into the same space to obtain a query hypervector. Second, we score all candidates via cosine similarity to the query hypervector and keep only the top- $K$ paths with a simple, parallel Top- $K$ selection. Finally, instead of per-candidate LLM calls, we make one LLM call that sees the question plus these top- $K$ paths (verbalized), and it outputs the answer along with cited supporting paths. In effect, PathHD addresses both pain points in Figure ˜ 1: order-aware binding reduces path–query mismatch, and vector-space scoring eliminates per-path LLM evaluation, cutting latency and token cost.

Our contributions are as follows:

- A fast, order-aware retriever for KG paths. We present PathHD, which uses GHRR-based, non-commutative binding to encode relation sequences into hypervectors and ranks candidates with plain cosine similarity, without learned neural encoders or per-path prompts. This design keeps a symbolic structure while enabling fully parallel scoring with $\mathcal{O}(Nd)$ complexity.

- An efficient one-shot reasoning stage. PathHD replaces many LLM scoring calls with a single LLM adjudication over the top- $K$ paths. This decouples retrieval from generation, lowers token usage, and improves wall-clock latency while remaining interpretable: the model cites the supporting path(s) it used.

- Extensive validation and operator study. On WebQSP, CWQ, and GrailQA, PathHD achieves competitive Hits@1 and F1 with markedly lower inference cost. An ablation on binding operators shows that our block-diagonal (GHRR) binding outperforms commutative binding and circular convolution, and additional studies analyze the impact of top- $K$ pruning and latency–accuracy trade-offs.

Overall, PathHD shows that carefully designed hyperdimensional representations can act as an encoder-free, training-free path scorer inside KG-based LLM reasoning systems, preserving competitive answer accuracy while substantially improving inference efficiency and providing explicit path-level rationales.

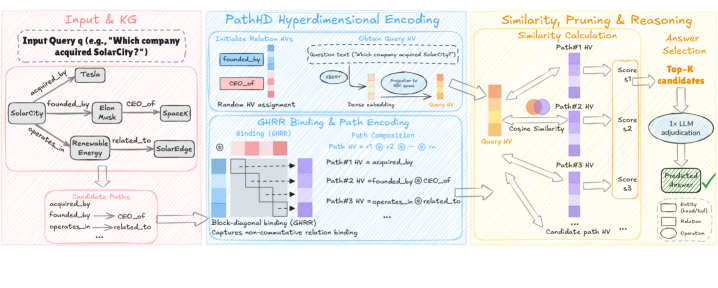

## 2 Method

The proposed PathHD follows a Plan $\rightarrow$ Encode $\rightarrow$ Retrieve $\rightarrow$ Reason pipeline (Figure ˜ 2). (i) We first generate or select relation plans that describe how an answer can be reached (schema enumeration optionally refined by a light prompt). (ii) Each plan is mapped to a hypervector via a non-commutative GHRR binding so that order and direction are preserved. (iii) We compute a blockwise cosine similarity in the hypervector space and apply Top- $K$ pruning. (iv) Finally, a single LLM call produces the answer with path-based explanations. This design keeps the heavy lifting in cheap vector operations, delegating semantic adjudication to one-shot LLM reasoning.

### 2.1 Problem Setup & Notation

Given a question $q$ , a knowledge graph (KG) $\mathcal{G}$ , and a set of relation schemas $\mathcal{Z}$ , the goal is to predict an answer $a$ . Formally, we write $\mathcal{G}=(\mathcal{V},\mathcal{E},\mathcal{R})$ , where $\mathcal{V}$ is the set of entities, $\mathcal{R}$ is the set of relation types, and $\mathcal{E}\subseteq\mathcal{V}\times\mathcal{R}\times\mathcal{V}$ is the set of directed edges $(e,r,e^{\prime})$ . We denote entities by $e\in\mathcal{V}$ and relations by $r\in\mathcal{R}$ . A relation schema $z\in\mathcal{Z}$ is a sequence of relation types $z=(r_{1},\dots,r_{\ell})$ . Instantiating a schema $z$ on $\mathcal{G}$ yields concrete KG paths of the form $(e_{0},r_{1},e_{1},\dots,r_{\ell},e_{\ell})$ such that $(e_{i-1},r_{i},e_{i})\in\mathcal{E}$ for all $i$ . For a given question $q$ , we denote by $\mathcal{P}(q)$ the set of candidate paths instantiated from schemas in $\mathcal{Z}$ and by $N=|\mathcal{P}(q)|$ its size. We write $d$ for the dimensionality of the hypervectors used to represent relations and paths.

A key challenge is to efficiently locate a small set of plausible paths for $q$ from this large candidate pool, and then let an LLM reason over only those paths. A summary of the notation throughout the paper can be found in Appendix ˜ A.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Technical Diagram: PathHD Hyperdimensional Encoding Pipeline

### Overview

This image is a technical flowchart illustrating a system called "PathHD Hyperdimensional Encoding." The system processes a natural language query against a knowledge graph (KG) to find an answer by converting entities and relationships into hyperdimensional vectors (HVs), composing path representations, and performing similarity-based reasoning. The diagram is divided into three main processing stages, flowing from left to right.

### Components/Axes

The diagram is segmented into three primary colored regions, each representing a major processing stage:

1. **Input & KG (Left, Pink Border):**

* **Input Query:** "Input Query (e.g., 'Which company acquired SolarCity?')"

* **Knowledge Graph:** A visual graph with nodes and labeled edges.

* **Nodes:** Tesla, SolarCity, Elon Musk, SpaceX.

* **Edges (Relationships):** `acquired_by`, `founded_by`, `CEO_of`, `operates_in`, `related_to`.

* **Candidate Paths:** A list derived from the graph: `acquired_by`, `founded_by`, `CEO_of`, `operates_in`, `related_to`, etc.

2. **PathHD Hyperdimensional Encoding (Center, Blue Border):**

* **Sub-section 1: Initialize Relation HVs**

* Text: "Initialize Relation HVs" and "Random HV assignment."

* Visual: A list of relations (`acquired_by`, `CEO_of`, etc.) mapped to colored bars representing their initial random hyperdimensional vectors.

* **Sub-section 2: Encode Query HV**

* Text: "Encode Query HV" and "Data encoding."

* Process: The query text is tokenized (`Which`, `company`, `acquired`, `SolarCity`, `?`), encoded, and aggregated into a single "Query HV."

* **Sub-section 3: GHRR Binding & Path Encoding**

* Text: "GHRR Binding & Path Encoding," "Binding (GHRR)," "Path Composition," and "Block-diagonal binding (GHRR)."

* Process: Shows the composition of path HVs using binding (⊗) and permutation (ρ) operations. Example: `Path #1 HV = ρ¹ ⊗ acquired_by`.

* Note: "Captures non-commutative relation binding."

3. **Similarity, Pruning & Reasoning (Right, Yellow Border):**

* **Sub-section 1: Similarity Calculation**

* Text: "Similarity Calculation" and "Cosine Similarity."

* Process: The Query HV is compared via cosine similarity to multiple "Candidate path HVs" (Path #1 HV, Path #2 HV, Path #3 HV...).

* Output: A list of similarity scores (Score #1, Score #2, Score #3...).

* **Sub-section 2: Answer Selection**

* Text: "Answer Selection," "Top-K candidates," "1x LLM re-evaluation," and "Predicted Answer."

* Process: The top-K candidate paths (based on score) are sent to an LLM for re-evaluation, which outputs the final "Predicted Answer."

* **Legend (Bottom Right):** Defines symbols for `entity`, `Relation`, and `Operation`.

### Detailed Analysis

The diagram details a multi-step pipeline for answering a query using hyperdimensional computing:

1. **Input Processing:** A natural language query is parsed. A knowledge graph provides structured data (entities and relations) relevant to the query.

2. **Vector Initialization:** Each relation type in the knowledge graph (e.g., `acquired_by`) is assigned a random, high-dimensional vector (HV).

3. **Query Encoding:** The query text is transformed into a single, dense Query HV.

4. **Path Encoding:** Potential reasoning paths through the knowledge graph (e.g., `Tesla --acquired_by--> ?`) are represented as HVs. This is done using a "Generalized Holographic Reduced Representation (GHRR)" binding operation, which is non-commutative, preserving the order of relations in the path.

5. **Similarity Search:** The system calculates the cosine similarity between the Query HV and all candidate Path HVs. This measures how well each path "matches" the query.

6. **Answer Generation:** The highest-scoring candidate paths are filtered (Top-K) and passed to a Large Language Model (LLM) for final evaluation and answer generation (e.g., "Tesla").

### Key Observations

* **Hybrid Architecture:** The system combines symbolic AI (structured knowledge graphs, explicit paths) with connectionist AI (hyperdimensional vectors, neural LLM).

* **Non-Commutative Binding:** The use of GHRR and permutation (ρ) explicitly encodes the *order* of relations in a path, which is crucial for meaning (e.g., "A acquired by B" vs. "B acquired by A").

* **Two-Stage Reasoning:** A fast, vector-based similarity search prunes the search space, followed by a slower but more powerful LLM re-evaluation for final answer selection.

* **Example Query:** The entire pipeline is illustrated with the concrete example: "Which company acquired SolarCity?" The expected answer, based on the knowledge graph, is "Tesla."

### Interpretation

This diagram presents a novel approach to **neuro-symbolic question answering**. It addresses a key challenge: efficiently searching a vast space of possible reasoning paths in a knowledge graph.

* **What it demonstrates:** The PathHD method translates the symbolic problem of "path finding" into a vector space problem of "similarity search." Hyperdimensional vectors act as a compressed, distributed representation of entire relational paths. This allows for rapid, parallel comparison of many candidate answers.

* **How elements relate:** The Knowledge Graph provides the raw facts. The Hyperdimensional Encoding stage creates a "fuzzy" vector search space from these crisp facts. The Similarity stage performs the core retrieval. The LLM acts as a sophisticated, context-aware filter and answer formatter.

* **Notable implications:** This architecture could offer advantages in **scalability** (handling large KGs) and **interpretability** (the top-scoring paths can be inspected) compared to pure end-to-end neural models. The "non-commutative binding" is a critical technical detail ensuring the semantic integrity of composed paths. The system's effectiveness hinges on the quality of the initial random HVs and the binding operation's ability to create unique, meaningful path representations.

</details>

Figure 2: Overview of PathHD: a Plan $\rightarrow$ Encode $\rightarrow$ Retrieve $\rightarrow$ Reason pipeline. A schema-based planner first generates relation plans over the KG; PathHD encodes these plans and instantiates candidate paths into order-aware GHRR hypervectors, ranks candidates with blockwise cosine similarity and Top- $K$ selection, and then issues a single LLM adjudication call to answer with cited paths, with most computation handled by vector operations and modest LLM use.

### 2.2 Hypervector Initialization

We work in a Generalized Holographic Reduced Representations (GHRR) space. Each atomic symbol $x$ (relation or, optionally, entity) is assigned a $d$ -dimensional hypervector $\mathbf{v}_{x}\!\in\!\mathbb{C}^{d}$ constructed as a block vector of unitary matrices:

$$

\mathbf{v}_{x}\;=\;[A^{(x)}_{1};\dots;A^{(x)}_{D}],\qquad A^{(x)}_{j}\in\mathrm{U}(m),\;\;d=Dm^{2}. \tag{1}

$$

In practice, we sample each block from a simple unitary family for efficiency, e.g.,

$$

A^{(x)}_{j}=\operatorname{diag}\!\big(e^{i\phi_{j,1}},\ldots,e^{i\phi_{j,m}}\big),\qquad\phi_{j,\ell}\sim\operatorname{Unif}[0,2\pi),

$$

or a random Householder product. Blocks are $\ell_{2}$ -normalized so that all hypervectors have unit norm. This initialization yields near-orthogonality among symbols, which concentrates with dimension (cf. Prop. 1). Hypervectors are sampled once and kept fixed; the retriever itself has no learned parameters.

#### Query hypervector.

For a question $q$ , we obtain a query hypervector in two ways, depending on the planning route used in Section ˜ 2: (i) plan-based —encode the selected relation plan $z_{q}=(r_{1},\dots,r_{\ell})$ using the same GHRR binding as paths (see Eq. equation 3); or (ii) text-projection —embed $q$ with a sentence encoder (e.g., SBERT) to $\mathbf{h}_{q}\in\mathbb{R}^{d_{t}}$ and project it to the HDC space using a fixed random linear map $P\in\mathbb{R}^{d\times d_{t}}$ , then block-normalize:

$$

\mathbf{v}_{q}\;=\;\mathcal{N}_{\text{block}}\!\big(P\,\mathbf{h}_{q}\big). \tag{2}

$$

Both choices produce a query hypervector compatible with GHRR scoring. Unless otherwise specified, we use the plan-based encoding in all main experiments, and report the text-projection variant only in ablations (Section ˜ J.2).

### 2.3 GHRR Binding and Path Encoding

A GHRR hypervector is a block vector $\mathbf{H}=[A_{1};\dots;A_{D}]$ with $A_{j}\in\mathrm{U}(m)$ . Given two hypervectors $\mathbf{X}=[X_{1};\dots;X_{D}]$ and $\mathbf{Y}=[Y_{1};\dots;Y_{D}]$ , we define the block-wise binding operator $\mathbin{\raisebox{0.86108pt}{\scalebox{0.9}{$\circledast$}}}$ and the encoding of a length- $\ell$ relation path $z=(r_{1},\dots,r_{\ell})$ by:

$$

\mathbf{v}_{z}\;=\;\mathbf{v}_{r_{1}}\mathbin{\raisebox{0.86108pt}{\scalebox{0.9}{$\circledast$}}}\mathbf{v}_{r_{2}}\mathbin{\raisebox{0.86108pt}{\scalebox{0.9}{$\circledast$}}}\cdots\mathbin{\raisebox{0.86108pt}{\scalebox{0.9}{$\circledast$}}}\mathbf{v}_{r_{\ell}},\qquad\mathbf{X}\mathbin{\raisebox{0.86108pt}{\scalebox{0.9}{$\circledast$}}}\mathbf{Y}=[X_{1}Y_{1};\dots;X_{D}Y_{D}], \tag{3}

$$

followed by block-wise normalization to unit norm. Binding is applied left-to-right along the path, and because the matrix multiplication is non-commutative ( $X_{j}Y_{j}\neq Y_{j}X_{j}$ ), the encoding preserves the order and directionality of relations, which are critical for multi-hop KG reasoning.

#### Properties and choice of binding operator.

Although PathHD only uses forward binding for retrieval, GHRR also supports approximate unbinding: for $Z_{j}=X_{j}Y_{j}$ with unitary blocks, we have $X_{j}\approx Z_{j}Y_{j}^{\ast}$ and $Y_{j}\approx X_{j}^{\ast}Z_{j}$ . This property enables inspection of the contribution of individual relations in a composed path and underpins our path-level rationales.

Classical HDC bindings (XOR, element-wise multiplication, circular convolution) are commutative, which collapses $r_{1}{\to}r_{2}$ and $r_{2}{\to}r_{1}$ to similar codes and hurts directional reasoning. GHRR is non-commutative, invertible at the block level, and offers higher representational capacity via unitary blocks, leading to better discrimination between paths of the same multiset but different order. We empirically validate this choice in the ablation study (Table ˜ 3), where GHRR consistently outperforms commutative bindings. Additional background on binding operations is provided in Appendix ˜ K.

### 2.4 Query & Candidate Path Construction

We obtain a query plan $z_{q}$ via schema-based enumeration on the relation-schema graph (depth $\leq L_{\max}$ ). In all main experiments reported in Section ˜ 3, this planning stage is purely symbolic: we do not invoke any LLM beyond the single final reasoning call in Section ˜ 2.6. The query hypervector $\mathbf{v}_{q}$ is then constructed from the selected plan $z_{q}$ using the plan-based encoding described above (Eq. equation 3 or, for text projection, Eq. equation 2).

Given a query plan, candidate paths $\mathcal{Z}$ are instantiated from the KG either by matching plan templates to existing edges or by a constrained BFS with beam width $B$ , both of which yield symbolic paths. These paths are then deterministically encoded into hypervectors and scored by our HDC module (Sec. 2.5). An optional lightweight prompt-based refinement of schema plans is described in the appendix as an extension; it is not used in our main experiments and does not change the single-call nature of the system.

### 2.5 HD Retrieval: Blockwise Similarity and Top- $K$

Let $\langle A,B\rangle_{F}:=\mathrm{tr}(A^{\ast}B)$ be the Frobenius inner product. Given two GHRR hypervectors $\mathbf{X}=[X_{j}]_{j=1}^{D}$ and $\mathbf{Y}=[Y_{j}]_{j=1}^{D}$ , we define the blockwise cosine similarity

$$

\mathrm{sim}(\mathbf{X},\mathbf{Y})=\frac{1}{D}\sum_{j=1}^{D}\,\frac{\Re\,\langle X_{j},\,Y_{j}\rangle_{F}}{\|X_{j}\|_{F}\,\|Y_{j}\|_{F}}. \tag{4}

$$

For each candidate $z\in\mathcal{Z}$ we compute $\mathrm{sim}(\mathbf{v}_{q},\mathbf{v}_{z})$ and (optionally) apply a calibrated score

$$

s(z)\;=\;\mathrm{sim}(\mathbf{v}_{q},\mathbf{v}_{z})\;+\;\alpha\,\mathrm{IDF}(z)\;-\;\beta\,\lambda^{|z|}, \tag{5}

$$

where $\mathrm{IDF}(z)$ is a simple inverse-frequency weight on relation schemas. Let $\text{schema}(z)$ denote the relation schema of path $z$ and $\mathrm{freq}(\text{schema}(z))$ be the number of training questions whose candidate sets contain at least one path with the same schema. With $N_{\text{train}}$ the total number of training questions, we define:

$$

\mathrm{IDF}(z)=\log\!\left(1+\frac{N_{\text{train}}}{1+\mathrm{freq}(\text{schema}(z))}\right). \tag{6}

$$

Thus, frequent schemas (large $\mathrm{freq}(\text{schema}(z))$ ) receive a smaller bonus, while rare schemas receive a larger one. All similarity scores and calibrated scores can be computed fully in parallel over candidates, with overall cost $\mathcal{O}(|\mathcal{Z}|d)$ .

### 2.6 One-shot Reasoning with Retrieved Paths

Putting the pieces together, PathHD turns a question into (i) a schema-level plan $z_{q}$ , (ii) a set of candidate paths ranked in hypervector space, and (iii) a single LLM call that adjudicates among the top-ranked candidates.

We linearize the Top- $K$ paths into concise natural-language statements and issue a single LLM call with a minimal, citation-style prompt (see Table ˜ 8 from Appendix ˜ C). The prompt lists the question and the numbered paths, and requires the model to return a short answer, the index(es) of supporting path(s), and a 1-2 sentence rationale. This one-shot format constrains reasoning to the provided evidence, resolves near-ties and direction errors, and keeps LLM usage minimal.

### 2.7 Theoretical & Complexity Analysis

| Method | Candidate Path Gen. | Scoring | Reasoning |

| --- | --- | --- | --- |

| StructGPT [15] | ✓ | ✓ | ✓ |

| FiDeLiS [37] | ✓ | ✗ | ✓ |

| ToG [39] | ✓ | ✓ | ✓ |

| GoG [44] | ✓ | ✓ | ✓ |

| KG-Agent [16] | ✓ | ✓ | ✓ |

| RoG [25] | ✓ | ✗ | ✓ |

| PathHD | ✗ | ✗ | ✓ ( $1$ call) |

Table 1: LLM usage across pipeline stages. A checkmark indicates that the method uses an LLM in that stage. Candidate Path Gen.: using an LLM to propose or expand relation paths; Scoring: using an LLM to score or rank candidates (non-LLM similarity or graph heuristics count as “no”); Reasoning: using an LLM to produce the final answer from the retrieved paths. PathHD uses a single LLM call only in the final reasoning stage.

We briefly characterize the behavior of random GHRR hypervectors and the computational cost of PathHD.

**Proposition 1 (Near-orthogonality and distractor bound)**

*Let $\{\mathbf{v}_{r}\}$ be i.i.d. GHRR hypervectors with zero-mean, unit Frobenius-norm blocks. For a query path $z_{q}$ and any distractor $z\neq z_{q}$ encoded via non-commutative binding, the cosine similarity $X=\mathrm{sim}(\mathbf{v}_{z_{q}},\mathbf{v}_{z})$ (Equation ˜ 4) satisfies, for any $\epsilon>0$ ,

$$

\Pr\!\left(|X|\geq\epsilon\right)\leq 2\exp\!\left(-c\,d\,\epsilon^{2}\right), \tag{7}

$$

for an absolute constant $c>0$ depending only on the sub-Gaussian proxy of entries.*

* Proof sketch*

Each block inner product $\langle X_{j},Y_{j}\rangle_{F}$ is a sum of products of independent sub-Gaussian variables (closed under products for the bounded/phase variables used by GHRR). After normalization, the average in Equation ˜ 4 is a mean-zero sub-Gaussian average over $d$ degrees of freedom, so a standard Bernstein/Hoeffding tail bound applies. Details are deferred to Appendix ˜ E. ∎

**Corollary 1 (Capacity with union bound)**

*Let $\mathcal{M}$ be a collection of $M$ distractor paths scored against a fixed query. With probability at least $1-\delta$ ,

$$

\max_{z\in\mathcal{M}}\mathrm{sim}(\mathbf{v}_{z_{q}},\mathbf{v}_{z})\leq\epsilon\quad\text{whenever}\quad d\;\geq\;\frac{1}{c\,\epsilon^{2}}\,\log\!\frac{2M}{\delta}. \tag{8}

$$*

Thus, the probability of a false match under random hypervectors decays exponentially with the dimension $d$ , and the required dimension scales as $d=\mathcal{O}(\epsilon^{-2}\log M)$ for a target error tolerance $\epsilon$ .

#### Complexity comparison with neural retrievers.

Let $N$ be the number of candidates, $d$ the embedding dimension, and $L$ the number of encoder layers used by a neural retriever. A typical neural encoder (e.g., Transformer-based path encoder as in RoG) incurs $\mathcal{O}(NLd^{2})$ cost for encoding and scoring. In contrast, PathHD forms each path vector by $|z|\!-\!1$ block multiplications plus one similarity in Equation ˜ 4, i.e., $\mathcal{O}(|z|d)+\mathcal{O}(d)$ per candidate, giving a total of $\mathcal{O}(Nd)$ and an $\mathcal{O}(Ld)$ -fold reduction in leading order.

Beyond the $\mathcal{O}(Nd)$ vs. $\mathcal{O}(NLd^{2})$ compute gap, end-to-end latency is dominated by the number of LLM calls. Table ˜ 1 contrasts pipeline stages across methods: unlike prior agents that query an LLM for candidate path generation and sometimes for scoring, PathHD defers a single LLM call to the final reasoning step. This design reduces both latency and API cost; empirical results in Section ˜ 3.3 confirm the shorter response times.

## 3 Experiments

We evaluate PathHD against state-of-the-art baselines on reasoning accuracy, measure efficiency with a focus on end-to-end latency, and conduct module-wise ablations followed by illustrative case studies.

### 3.1 Datasets, Baselines, and Setup

We evaluate on three standard multi-hop KGQA benchmarks: WebQuestionsSP (WebQSP) [49], Complex WebQuestions (CWQ) [40], and GrailQA [9], all grounded in Freebase [2]. These datasets span increasing reasoning complexity (roughly 2-4 hops): WebQSP features simpler single-turn queries, CWQ adds compositional and constraint-based questions, and GrailQA stresses generalization across i.i.d., compositional, and zero-shot splits. Our study is therefore scoped to Freebase-style KGQA, extending PathHD to domain-specific KGs are left for future work.

We compare against four families of methods: embedding-based, retrieval-augmented, pure LLMs (no external KG), and LLM+KG hybrids. All results are reported on dev (IID) splits under a unified Freebase evaluation protocol using the official Hits@1 and F1 scripts, so that numbers are directly comparable across systems. Unless otherwise noted, PathHD uses the schema-based planner from Section ˜ 2.4 (no LLM calls during planning), the plan-based query hypervector in Equation ˜ 3, and a single LLM adjudication call as described in Section ˜ 2.6, with all LLMs and sentence encoders used off the shelf (no fine-tuning). Detailed dataset statistics, baseline lists, model choices, and additional training-free hyperparameters are provided in Appendices ˜ G, H and I.

### 3.2 Reasoning Performance Comparison

| | | WebQSP | CWQ | GrailQA (F1) | | | |

| --- | --- | --- | --- | --- | --- | --- | --- |

| Type | Methods | Hits@ $1$ | F1 | Hits@ $1$ | F1 | Overall | IID |

| KV-Mem [27] | $46.7$ | $34.5$ | $18.4$ | $15.7$ | $-$ | $-$ | |

| EmbedKGQA [35] | $66.6$ | $-$ | $45.9$ | $-$ | $-$ | $-$ | |

| NSM [10] | $68.7$ | $62.8$ | $47.6$ | $42.4$ | $-$ | $-$ | |

| Embedding | TransferNet [36] | $71.4$ | $-$ | $48.6$ | $-$ | $-$ | $-$ |

| GraftNet [38] | $66.4$ | $60.4$ | $36.8$ | $32.7$ | $-$ | $-$ | |

| SR+NSM [50] | $68.9$ | $64.1$ | $50.2$ | $47.1$ | $-$ | $-$ | |

| SR+NSM+E2E [50] | $69.5$ | $64.1$ | $49.3$ | $46.3$ | $-$ | $-$ | |

| Retrieval | UniKGQA [17] | $77.2$ | $72.2$ | $51.2$ | $49.1$ | $-$ | $-$ |

| ChatGPT [30] | $67.4$ | $59.3$ | $47.5$ | $43.2$ | $25.3$ | $19.6$ | |

| Davinci - 003 [30] | $70.8$ | $63.9$ | $51.4$ | $47.6$ | $30.1$ | $23.5$ | |

| Pure LLMs | GPT - 4 [1] | $73.2$ | $62.3$ | $55.6$ | $49.9$ | $31.7$ | $25.0$ |

| StructGPT [15] | $72.6$ | $63.7$ | $54.3$ | $49.6$ | $54.6$ | $70.4$ | |

| ROG [25] | $\underline{85.7}$ | $70.8$ | $62.6$ | $56.2$ | $-$ | $-$ | |

| Think-on-Graph [39] | $81.8$ | $76.0$ | $68.5$ | $60.2$ | $-$ | $-$ | |

| GoG [44] | $84.4$ | $-$ | $\mathbf{75.2}$ | $-$ | $-$ | $-$ | |

| KG-Agent [16] | $83.3$ | $\mathbf{81.0}$ | $\underline{72.2}$ | $\mathbf{69.8}$ | $\underline{86.1}$ | $\underline{92.0}$ | |

| FiDeLiS [37] | $84.4$ | $78.3$ | $71.5$ | $64.3$ | $-$ | $-$ | |

| LLMs + KG | PathHD | $\mathbf{86.2}$ | $\underline{78.6}$ | $71.5$ | $\underline{65.8}$ | $\mathbf{86.7}$ | $\mathbf{92.4}$ |

Table 2: Comparison on Freebase-based KGQA. Our method PathHD follows exactly the same protocol. “–” indicates that the metric was not reported by the original papers under the Freebase+official-script setting. We bold the best and underline the second-best score for each metric/column.

We evaluate under a unified Freebase protocol with the official Hits@1 / F1 scripts on WebQSP, CWQ, and GrailQA (dev, IID); results are in Table ˜ 2. Baselines cover classic KGQA (embedding/retrieval), recent LLM+KG systems, and pure LLMs (no KG grounding). Our PathHD uses hyperdimensional scoring with GHRR, Top- $K$ pruning, and a single LLM adjudication step.

Key observations are as follows. Obs.❶ SOTA on WebQSP/GrailQA; competitive on CWQ. PathHD attains best WebQSP Hits@1 ( $86.2$ ) and best GrailQA F1 (Overall/IID $86.7/92.4$ ), while staying strong on CWQ (Hits@1 $71.5$ , F1 $65.8$ ), close to the top LLM+KG systems (e.g., GoG $75.2$ Hits@1; KG-Agent $69.8$ F1). Obs.❷ One-shot adjudication rivals multi-step agents. Compared to RoG ( $\sim$ 12 calls) and Think-on-Graph/GoG/KG-Agent ( $3$ – $8$ calls), PathHD matches or exceeds accuracy on WebQSP/GrailQA and remains competitive on CWQ with just one LLM call, which reduces error compounding and focuses the LLM on a high-quality shortlist. Obs.❸ Pure LLMs lag without KG grounding. Zero/few-shot GPT-4 or ChatGPT underperform LLM+KG systems, e.g., on CWQ GPT-4 Hits@1 $55.6$ vs. PathHD $71.5$ . Obs.❹ Classic embedding/retrieval trails modern LLM+KG. KV-Mem, NSM, SR+NSM rank subgraphs well but lack a flexible language component for composing multi-hop constraints, yielding consistently lower scores.

Candidate enumeration strategy. Before turning to efficiency (Section ˜ 3.3), we briefly clarify how candidate paths are enumerated, since this affects both accuracy and cost. In our current implementation, we use a deterministic BFS-style enumeration of relation paths, controlled by the maximum depth $L_{\max}$ and beam width $B$ . This choice is (i) simple and efficient, (ii) guarantees coverage of all type-consistent paths up to length $L_{\max}$ under clear complexity bounds, and (iii) makes it easy to compare against prior KGQA baselines that also rely on BFS-like expansion. In practice, we choose $L_{\max}$ and $B$ to achieve high coverage of gold answer paths while keeping candidate set sizes comparable to RoG and KG-Agent. More sophisticated, adaptive enumeration strategies (e.g., letting the HDC scores or the LLM guide, which relations to expand next) are an interesting extension, but orthogonal to our core contribution.

### 3.3 Efficiency and Cost Analysis

<details>

<summary>x3.png Details</summary>

### Visual Description

## Scatter Plot: H@n1 vs. Latency on WebQSP

### Overview

This image is a scatter plot comparing the performance of various AI models on the WebQSP benchmark. The chart plots two key metrics: accuracy (H@n1 percentage) on the x-axis and per-query latency (in seconds, on a logarithmic scale) on the y-axis. The data points are categorized into three methodological approaches, indicated by different marker shapes and colors. The plot reveals a general trade-off between higher accuracy and increased latency, with distinct clustering of model types.

### Components/Axes

* **Chart Title:** "H@n1 vs. latency on WebQSP"

* **X-Axis:**

* **Label:** "H@n1 on WebQSP (%)"

* **Scale:** Linear scale from 0 to 90, with major tick marks at 0, 10, 20, 30, 40, 50, 60, 70, 80, 90.

* **Y-Axis:**

* **Label:** "Per-query latency (seconds, log scale)"

* **Scale:** Logarithmic scale from -0.25 to 2.50, with labeled ticks at -0.25, 0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00, 2.25, 2.50.

* **Legend (Top-Left Corner):**

* **Fine-tuning:** Represented by a green circle (●).

* **Base LLM:** Represented by a blue square (■).

* **LLMs+KG:** Represented by an orange triangle (▲).

* **Data Points (Models):** Each point is labeled with a model name. The approximate coordinates (H@n1%, Latency) are extracted below.

### Detailed Analysis

**Data Point Extraction (Approximate Values):**

* **Fine-tuning (Green Circles):**

* **KGSilicon:** Positioned at the far left, near (0%, ~0.00s). This is an outlier with near-zero latency but also near-zero accuracy.

* **GPT-4 (1 call):** Positioned at (~42%, ~0.00s). Shows moderate accuracy with very low latency.

* **Base LLM (Blue Squares):**

* **ChatGPT (1 call):** Positioned at (~38%, ~0.25s).

* **GPT-4 (1 call):** Positioned at (~55%, ~0.50s). *Note: This appears to be a separate data point from the Fine-tuning GPT-4, possibly representing a different configuration.*

* **StreamGPT:** Positioned at (~58%, ~0.50s).

* **GPT-4 (5 calls):** Positioned at (~62%, ~0.75s). Shows that increasing calls improves accuracy but also latency.

* **Think-on-Graph:** Positioned at (~72%, ~1.25s).

* **KG-Agent:** Positioned at (~78%, ~1.50s).

* **LLMs+KG (Orange Triangles):**

* **CoT-LLM:** Positioned at (~75%, ~1.00s).

* **ToG:** Positioned at (~78%, ~1.25s).

* **PaL-HD:** Positioned at (~82%, ~0.25s). This is a notable outlier, achieving high accuracy with relatively low latency.

* **KGSilicon:** Positioned at the top-right, near (~88%, ~2.25s). This is the highest accuracy model but also has the highest latency. *Note: The name "KGSilicon" appears twice, once as a Fine-tuning model with low performance and once as an LLMs+KG model with high performance. This likely represents two different systems or configurations with the same name.*

### Key Observations

1. **Performance Clusters:** The models form three loose clusters:

* **Low Accuracy, Low Latency:** The Fine-tuning models (KGSilicon, GPT-4 1 call) and one Base LLM (ChatGPT 1 call) are in the bottom-left quadrant.

* **Mid-Range:** A cluster of Base LLMs (StreamGPT, GPT-4 5 calls, Think-on-Graph, KG-Agent) and one LLMs+KG model (CoT-LLM) occupy the center of the plot.

* **High Accuracy, High Latency:** The top-right quadrant contains advanced LLMs+KG models (ToG, KGSilicon) and the high-performing Base LLM (KG-Agent).

2. **Significant Outliers:**

* **PaL-HD (LLMs+KG):** Breaks the general trend by achieving high accuracy (~82%) with low latency (~0.25s), suggesting a highly efficient architecture.

* **KGSilicon (Fine-tuning):** Shows near-zero performance on both metrics, indicating a failed or baseline configuration.

3. **Latency-Accuracy Trade-off:** The overall trend slopes upward from left to right, illustrating that higher accuracy on the WebQSP benchmark generally comes at the cost of significantly higher per-query latency, especially when moving from seconds to multiple seconds.

4. **Impact of Methodology:** The "LLMs+KG" (Large Language Models + Knowledge Graphs) approach generally populates the higher-accuracy region of the plot compared to "Base LLM" and "Fine-tuning" approaches, though with a wide latency spread.

### Interpretation

This scatter plot provides a technical comparison of AI question-answering systems, evaluating their effectiveness (accuracy) against their computational cost (latency). The data suggests a fundamental engineering trade-off: more sophisticated systems that integrate external knowledge graphs (LLMs+KG) or use more inference calls (GPT-4 5 calls) achieve better results but require more time per query.

The presence of **PaL-HD** is particularly significant. Its position indicates a potential breakthrough in efficiency, achieving top-tier accuracy without the severe latency penalty seen in other high-performing models like KGSilicon or KG-Agent. This could be due to a novel retrieval mechanism, a more optimized model architecture, or a different approach to knowledge integration.

The duplicate **KGSilicon** label highlights the importance of methodology. The same name applied to a "Fine-tuning" approach yields poor results, while the "LLMs+KG" version is the state-of-the-art in accuracy. This underscores that the system's design and integration strategy are more critical than the base model name alone.

For a technical document, this chart argues that selecting a model involves balancing the need for accuracy against the constraint of response time. Applications requiring real-time answers might favor models like PaL-HD or GPT-4 (1 call), while applications where accuracy is paramount and latency is less critical could justify the use of models like KGSilicon (LLMs+KG) or KG-Agent.

**Language Note:** The model name "KGSilicon" appears to be a proper noun/brand name. No other non-English text is present in the chart.

</details>

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Scatter Plot: Hits@1 vs. latency on CWQ

### Overview

The image is a scatter plot comparing various AI models and systems on two performance metrics: accuracy (Hits@1 on the CWQ benchmark) and speed (per-query latency). The plot categorizes models into three families, distinguished by color and marker shape, showing a general trade-off between higher accuracy and increased latency.

### Components/Axes

* **Title:** "Hits@1 vs. latency on CWQ"

* **X-Axis:** "Hits@1 on CWQ (%)". Scale ranges from 0 to 80, with major ticks at 0, 20, 40, 60, 80.

* **Y-Axis:** "Per-query latency (seconds, median)". Scale ranges from -0.25 to 1.50, with major ticks at -0.25, 0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50.

* **Legend (Top-Left Corner):**

* **Family:** (Header)

* **Fine-tuning:** Green square marker.

* **Pure LLM:** Blue circle marker.

* **LLMs+KG:** Orange triangle marker.

### Detailed Analysis

The plot contains 10 distinct data points, each labeled with a model/system name. Their approximate coordinates (x=Hits@1 %, y=Latency seconds) are as follows:

**Fine-tuning Family (Green Squares):**

1. **XVSMon:** Positioned at the far left and bottom. Approximate coordinates: (10%, -0.15s). This indicates very low accuracy and the lowest latency on the chart.

2. **GPT4-T:** Positioned near the center-bottom. Approximate coordinates: (40%, -0.10s). Shows moderate accuracy with very low latency.

**Pure LLM Family (Blue Circles):**

3. **ChatQA-2:** Positioned left of center. Approximate coordinates: (35%, 0.25s).

4. **UnkQA:** Positioned near the center. Approximate coordinates: (45%, 0.35s).

5. **GPT-4 (1-shot):** Positioned right of center. Approximate coordinates: (50%, 0.40s). This cluster shows a trend of increasing latency with modest gains in accuracy.

**LLMs+KG Family (Orange Triangles):**

6. **PaLM2:** Positioned on the right side, lower than its group. Approximate coordinates: (70%, 0.20s). An outlier within its family, showing high accuracy with relatively low latency.

7. **Think-and-Execute:** Positioned in the upper-right quadrant. Approximate coordinates: (65%, 0.85s).

8. **KG-GPT:** Positioned in the upper-right quadrant. Approximate coordinates: (75%, 0.90s).

9. **KG-LLaMA:** Positioned in the upper-right quadrant. Approximate coordinates: (72%, 1.00s).

10. **RoG:** Positioned at the top of the chart. Approximate coordinates: (60%, 1.40s). This model has the highest latency by a significant margin.

### Key Observations

1. **Clear Family Clustering:** The three model families form distinct clusters. "Fine-tuning" models are in the low-latency, low-to-moderate accuracy region (bottom-left). "Pure LLM" models form a central cluster with moderate latency and accuracy. "LLMs+KG" models dominate the high-accuracy region (right side) but with a wide spread in latency.

2. **Accuracy-Latency Trade-off:** There is a general positive correlation between Hits@1 accuracy and latency. Moving from left to right (increasing accuracy), the data points generally move upward (increasing latency).

3. **Notable Outliers:**

* **PaLM2 (LLMs+KG):** Achieves high accuracy (~70%) with latency (~0.20s) comparable to the "Pure LLM" cluster, making it highly efficient.

* **RoG (LLMs+KG):** Has the highest latency (~1.40s) but only moderate accuracy (~60%), suggesting a potential inefficiency.

* **XVSMon (Fine-tuning):** Has the lowest accuracy and latency, potentially representing a very fast but less capable baseline.

### Interpretation

This chart visualizes the performance landscape of different approaches to complex question answering (on the CWQ benchmark). The data suggests a fundamental trade-off: achieving higher accuracy (Hits@1) typically requires more computational time (latency).

* **Fine-tuning** approaches (green) prioritize speed, offering the lowest latencies but at a significant cost to accuracy. They are suitable for scenarios where response time is critical and some error is acceptable.

* **Pure LLM** approaches (blue) represent a middle ground, offering a balance between reasonable accuracy and moderate speed.

* **LLMs augmented with Knowledge Graphs (LLMs+KG)** (orange) generally achieve the highest accuracy, demonstrating the value of structured knowledge for complex reasoning. However, this comes at the cost of higher and more variable latency, likely due to the overhead of KG retrieval and integration. The wide latency spread within this group (from PaLM2's ~0.20s to RoG's ~1.40s) indicates significant differences in the efficiency of their KG integration mechanisms.

The standout model is **PaLM2**, which breaks the general trend by delivering high accuracy with low latency, suggesting a particularly efficient architecture or integration method. Conversely, **RoG** appears to be the least efficient, incurring a very high latency penalty for its level of accuracy. This analysis would be crucial for a practitioner selecting a model based on their specific constraints for accuracy versus response time.

</details>

<details>

<summary>x5.png Details</summary>

### Visual Description

## Scatter Plot: H@1 vs. Latency on GraIL QA

### Overview

This image is a scatter plot comparing the performance of various AI models on the GraIL QA task. It plots model accuracy (H@1) against inference latency (95th percentile). The chart includes a legend categorizing models into three families: Fine-tuning, Pure LLM, and LLM+KG.

### Components/Axes

* **Chart Title:** "Hin@1 vs. latency on GraIL QA" (Note: "Hin@1" appears to be a typo or specific metric name, likely meaning "Hits@1").

* **X-Axis:** "Hin@1 on GraIL QA (%)". Scale ranges from 0 to 90, with major ticks at 0, 10, 20, 30, 40, 50, 60, 70, 80, 90.

* **Y-Axis:** "Percentile latency 95% (seconds median)". Scale ranges from 0.00 to 1.00, with major ticks at 0.00, 0.20, 0.40, 0.60, 0.80, 1.00.

* **Legend (Top-Left Corner):**

* **Family:**

* Fine-tuning (Orange square symbol)

* Pure LLM (Blue triangle symbol)

* LLM+KG (Pink triangle symbol)

* **Data Points (Models):** Each point is labeled with a model name and, for some, a note about the number of calls.

### Detailed Analysis

The plot contains five distinct data points, each representing a model. Their approximate coordinates (Hin@1 %, Latency seconds) are:

1. **ChatGPT (1 call)**

* **Family:** Fine-tuning (Orange square)

* **Position:** Bottom-left quadrant.

* **Approximate Values:** Hin@1 ≈ 15%, Latency ≈ 0.10 seconds.

* **Trend:** Lowest accuracy and lowest latency among the plotted models.

2. **GPT-4 (1 call)**

* **Family:** Fine-tuning (Orange square)

* **Position:** Left-center, above ChatGPT.

* **Approximate Values:** Hin@1 ≈ 25%, Latency ≈ 0.40 seconds.

* **Trend:** Higher accuracy and higher latency than ChatGPT.

3. **SimounGPT**

* **Family:** Pure LLM (Blue triangle)

* **Position:** Center of the plot.

* **Approximate Values:** Hin@1 ≈ 55%, Latency ≈ 0.50 seconds.

* **Trend:** Mid-range accuracy and latency.

4. **PahKD**

* **Family:** LLM+KG (Pink triangle)

* **Position:** Bottom-right quadrant.

* **Approximate Values:** Hin@1 ≈ 85%, Latency ≈ 0.20 seconds.

* **Trend:** High accuracy with relatively low latency.

5. **K-LoRAm**

* **Family:** LLM+KG (Pink triangle)

* **Position:** Top-right corner.

* **Approximate Values:** Hin@1 ≈ 90%, Latency ≈ 1.00 seconds.

* **Trend:** Highest accuracy but also the highest latency.

### Key Observations

* **Performance-Latency Trade-off:** There is a general, but not strict, positive correlation between accuracy (Hin@1) and latency. Models with higher accuracy tend to have higher latency.

* **Family Clustering:** Models from the same family (Fine-tuning, LLM+KG) tend to cluster in specific regions of the plot. Fine-tuning models are in the low-accuracy/low-latency region. LLM+KG models are in the high-accuracy region, but with divergent latency.

* **Notable Outlier:** **PahKD** (LLM+KG) is a significant outlier. It achieves very high accuracy (≈85%) with low latency (≈0.20s), breaking the general trend. This suggests a highly efficient architecture or method.

* **Latency Range:** Latencies vary by an order of magnitude, from ~0.1s (ChatGPT) to ~1.0s (K-LoRAm).

* **Accuracy Range:** Accuracy varies widely, from ~15% to ~90%.

### Interpretation

This chart visualizes the core engineering trade-off between model performance (accuracy) and computational cost (latency) for the GraIL QA task. The data suggests:

1. **Methodology Matters:** The "LLM+KG" (Large Language Model + Knowledge Graph) family demonstrates the potential for achieving state-of-the-art accuracy (PahKD, K-LoRAm). This implies that augmenting LLMs with structured knowledge is a powerful strategy for this QA task.

2. **Efficiency is Achievable:** The stark contrast between **PahKD** (high accuracy, low latency) and **K-LoRAm** (high accuracy, high latency) within the same family indicates that not all LLM+KG approaches are equal. PahKD likely represents a more optimized or efficient integration method, making it a more practical choice for real-time applications.

3. **Baseline Performance:** The "Fine-tuning" models (ChatGPT, GPT-4) serve as a baseline, showing that standard fine-tuning of general-purpose LLMs yields lower performance on this specialized task compared to knowledge-augmented approaches.

4. **Task-Specific Insight:** The GraIL QA task appears to benefit significantly from external knowledge (KG), as the top two performing models are from the LLM+KG family. The "Pure LLM" (SimounGPT) sits in the middle, suggesting its parametric knowledge alone is insufficient for top performance.

**In summary, the chart argues for the effectiveness of LLM+KG systems for complex QA, while highlighting that the specific implementation (as seen with PahKD) is critical for balancing accuracy with practical latency constraints.**

</details>

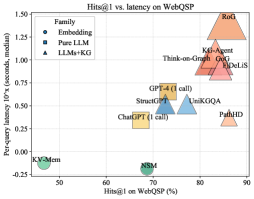

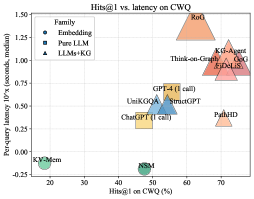

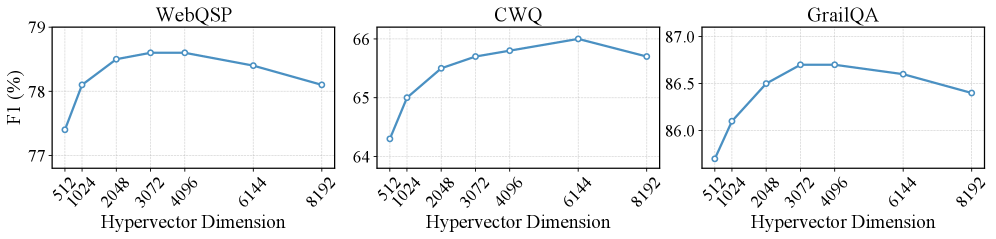

Figure 3: Visualization of performance and latency. The x-axis is Hits@ $1$ (%), the y-axis is per-query latency in seconds (median, log scale). Bubble size indicates the average number of LLM calls; marker shape denotes the method family. PathHD gives strong accuracy with lower latency than multi-call LLMs+KG baselines.

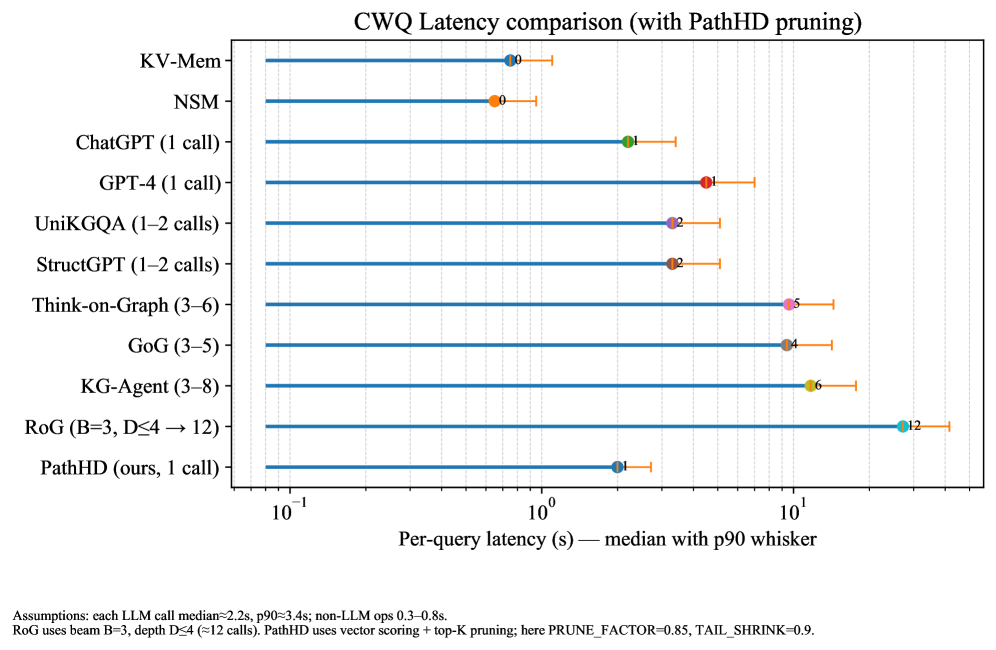

We assess end-to-end cost via a Hits@1–latency bubble plot (Figure ˜ 3) and a lollipop latency chart (Figure ˜ 6). In Figure ˜ 3, $x$ = Hits@1, $y$ = median per-query latency (log-scale); bubble size = average #LLM calls; marker shape = method family. All latencies are measured under a common hardware and LLM-backend setup to enable fair relative comparison. Latencies in Figure ˜ 6 follow a shared protocol: in our implementation, each LLM call takes on the order of a few seconds, whereas non-LLM vector/graph operations are typically within $0.3$ – $0.8$ s. PathHD uses vector-space scoring with Top- $K$ pruning and a single LLM decision; RoG uses beam search ( $B{=}3$ , depth $\leq$ dataset hops). A factor breakdown (#calls, depth $d$ , beam $b$ , tools) appears in Table ˜ 10 (Section ˜ I.1).

Key observations are: Obs.❶ Near-Pareto across datasets. With comparable accuracy to multi-call LLM+KG systems (Think-on-Graph/GoG/KG-Agent), PathHD achieves markedly lower latency due to its single-call design and compact post-pruning candidate set. Obs.❷ Latency is dominated by #LLM calls. Methods with 3–8 calls (agent loops) or $\approx d\times b$ calls (beam search) sit higher in Figure ˜ 3 and show longer whiskers in Figure ˜ 6; PathHD avoids intermediate planning/scoring overhead. Obs.❸ Moderate pruning improves cost–accuracy. Shrinking the pool before adjudication lowers latency without hurting Hits@1, especially on CWQ, where paths are longer. Obs.❹ Pure LLMs are fast but underpowered. Single-call GPT-4/ChatGPT has similar latency to our final decision yet notably lower accuracy, underscoring the importance of structured retrieval and path scoring.

### 3.4 Ablation Study

We analyze the contribution of each module/operation in PathHD. Our operation study covers: (1) Path composition operator, (2) Single-LLM adjudicator, and (3) Top- $K$ pruning.

| Operator | WebQSP | CWQ |

| --- | --- | --- |

| XOR / bipolar product | 83.9 | 68.8 |

| Element-wise product (Real-valued) | 84.4 | 69.2 |

| Comm. bind | 84.7 | 69.6 |

| FHRR | 84.9 | 70.0 |

| HRR | 85.1 | 70.2 |

| GHRR | 86.2 | 71.5 |

Table 3: Effect of the path–composition operator. GHRR yields the best performance.

#### Which path–composition operator works best?

We isolate relation binding by fixing retrieval, scoring, pruning, and the single LLM step, and only swapping the encoder’s path–composition operator. We compare six options (defs. in Appendix ˜ K): (i) XOR/bipolar and (ii) real-valued element-wise products, both fully commutative; (iii) a stronger commutative mix of binary/bipolar; (iv) FHRR (phasors) and (v) HRR (circular convolution), efficient yet effectively commutative; and (vi) our block-diagonal GHRR with unitary blocks, non-commutative and order-preserving. Paths of length $1$ – $4$ use identical dimension/normalization. As in Table ˜ 3, commutative binds lag, HRR/FHRR give modest gains, and GHRR yields the best Hits@1 on WebQSP and CWQ by reliably separating founded_by $\rightarrow$ CEO_of from its reverse.

| Final step | WebQSP | CWQ |

| --- | --- | --- |

| Vector-only | 85.4 | 70.8 |

| Vector $\rightarrow$ 1 $\times$ LLM | 86.2 | 71.5 |

Table 4: Ablation on the final decision maker. Passing pruned candidates and scores to a single LLM for adjudication yields consistent gains over vector-only selection.

#### Do we need a final single LLM adjudicator?

We test whether a lightweight LLM judgment helps beyond pure vector scoring. Vector-only selects the top path by cosine similarity; Vector $\rightarrow$ 1 $\times$ LLM instead forwards the pruned top- $K$ paths (with scores and end entities) to a single LLM using a short fixed template (no tools/planning) to choose the answer without long chains of thought. As shown in Table ˜ 4, Vector $\rightarrow$ 1 $\times$ LLM consistently outperforms Vector-only on both datasets, especially when the top two paths are near-tied or a high-scoring path has a subtle type mismatch; a single adjudication pass resolves such cases at negligible extra cost.

| Pruning | Hits@ $1$ (WebQSP) | Lat. | Hits@ $1$ (CWQ) | Lat. |

| --- | --- | --- | --- | --- |

| No-prune | 85.8 | 2.42s | 70.7 | 2.45s |

| $K{=}2$ | 86.0 | 1.98s | 71.2 | 2.00s |

| $K{=}3$ | 86.2 | 1.92s | 71.5 | 1.94s |

| $K{=}5$ | 86.1 | 2.05s | 71.4 | 2.06s |

Table 5: Impact of top- $K$ pruning before the final LLM. Small sets (K=2–3) retain or slightly improve accuracy while reducing latency. We adopt $K{=}3$ by default.

#### What is the effect of top- $K$ pruning before the final step?

Finally, we study how many candidates should be kept for the last decision. We vary the number of paths passed to the final LLM among $K\!\in\!\{2,3,5\}$ and also include a No-prune variant that sends all retrieved paths. Retrieval and scoring are fixed; latency is the median per query (lower is better). As shown in Table ˜ 5, $K{=}3$ achieves the best Hits@ $1$ on both WebQSP and CWQ with the lowest latency, while $K{=}2$ is a close second and yields the largest latency drop. In contrast, No-prune maintains maximal recall but increases latency and often introduces near-duplicate/noisy paths that can blur the final decision. We therefore adopt $K{=}3$ as the default.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Charts: F1 Score vs. Hypervector Dimension for Three Datasets

### Overview

The image displays three separate line charts arranged horizontally. Each chart plots the F1 score (a performance metric) as a percentage against the "Hypervector Dimension" for a different dataset: WebQSP, CWQ, and GrailQA. The charts collectively illustrate how model performance, measured by F1 score, changes as the dimensionality of the hypervector representation is varied.

### Components/Axes

* **Chart Titles (Top Center of each plot):** "WebQSP", "CWQ", "GrailQA".

* **X-Axis (All Charts):** Label: "Hypervector Dimension". The axis is categorical with the following tick marks: 512, 1024, 2048, 3072, 4096, 6144, 8192.

* **Y-Axis (All Charts):** Label: "F1 (%)". The scale and range differ for each chart:

* **WebQSP:** Range approximately 77% to 79%. Major ticks at 77, 78, 79.

* **CWQ:** Range approximately 64% to 66%. Major ticks at 64, 65, 66.

* **GrailQA:** Range approximately 86.0% to 87.0%. Major ticks at 86.0, 86.5, 87.0.

* **Data Series:** Each chart contains a single blue line with circular markers at each data point. There is no separate legend, as each plot represents one dataset.

### Detailed Analysis

**1. WebQSP Chart (Left)**

* **Trend:** The line shows an initial steep increase, peaks in the middle range of dimensions, and then gradually declines.

* **Data Points (Approximate F1 %):**

* Dimension 512: ~77.4%

* Dimension 1024: ~78.1%

* Dimension 2048: ~78.5%

* Dimension 3072: ~78.6% (Appears to be the peak)

* Dimension 4096: ~78.6% (Similar to 3072)

* Dimension 6144: ~78.4%

* Dimension 8192: ~78.1%

**2. CWQ Chart (Center)**

* **Trend:** The line shows a consistent upward trend that begins to plateau and then slightly dips at the highest dimension.

* **Data Points (Approximate F1 %):**

* Dimension 512: ~64.3%

* Dimension 1024: ~65.0%

* Dimension 2048: ~65.5%

* Dimension 3072: ~65.7%

* Dimension 4096: ~65.8%

* Dimension 6144: ~66.0% (Appears to be the peak)

* Dimension 8192: ~65.8%

**3. GrailQA Chart (Right)**

* **Trend:** The line rises sharply to a peak and then follows a steady, gradual decline.

* **Data Points (Approximate F1 %):**

* Dimension 512: ~86.2%

* Dimension 1024: ~86.5%

* Dimension 2048: ~86.7%

* Dimension 3072: ~86.8% (Appears to be the peak)

* Dimension 4096: ~86.8% (Similar to 3072)

* Dimension 6144: ~86.7%

* Dimension 8192: ~86.5%

### Key Observations

1. **Common Pattern:** All three datasets exhibit a similar inverted-U or "rise-then-fall" pattern. Performance improves as the hypervector dimension increases from 512, reaches an optimal point, and then degrades as the dimension increases further.

2. **Optimal Dimension Range:** The peak performance for all datasets occurs in the mid-range of dimensions tested, specifically between 3072 and 6144.

3. **Dataset Sensitivity:** The magnitude of performance change varies. The CWQ dataset shows the most dramatic relative improvement (from ~64.3% to ~66.0%), while the GrailQA dataset operates in a higher, narrower performance band (86.2% to 86.8%).

4. **Performance Degradation:** For WebQSP and GrailQA, the decline after the peak is more pronounced and begins at a lower dimension (after 4096) compared to CWQ, which peaks later (at 6144) and shows a very slight drop.

### Interpretation

This data demonstrates a critical hyperparameter tuning insight for models using hypervector representations. The relationship between hypervector dimension and task performance (F1 score) is not linear; there is a clear point of diminishing returns followed by negative returns.

* **The "Sweet Spot":** The charts suggest that simply increasing dimensionality does not guarantee better performance. There is an optimal complexity (dimension) for representing the knowledge required by each dataset. Dimensions that are too low may lack the capacity to encode necessary information, while dimensions that are too high may introduce noise, overfit, or make the representation less efficient, leading to performance degradation.

* **Dataset-Dependent Optimum:** The exact optimal dimension varies by dataset (e.g., ~3072-4096 for WebQSP/GrailQA vs. ~6144 for CWQ). This implies that the ideal model configuration is dependent on the specific characteristics and complexity of the target data.

* **Practical Implication:** For practitioners, this underscores the importance of empirical validation across a range of dimensions when designing hypervector-based systems. The charts provide a clear guide that testing dimensions from 512 to 8192 is necessary to identify the peak, and that the optimal value likely lies between 2048 and 6144 for similar question-answering tasks.

</details>

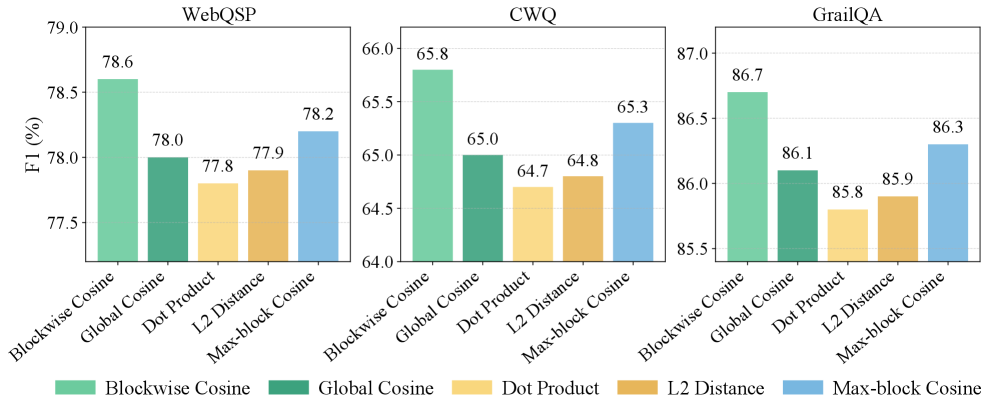

Figure 4: Hypervector dimension study. Each panel reports F1 (%) of PathHD on WebQSP, CWQ, and GrailQA as a function of the hypervector dimension. Overall, performance rises from $512$ to the mid-range and then tapers off: WebQSP and GrailQA peak around 3k–4k, while CWQ prefers a slightly larger size (6k), after which F1 decreases mildly.

### 3.5 Case Study

To better understand how our model performs step-by-step reasoning, we present two representative cases from the WebQSP dataset in Table ˜ 6. These cases highlight the effects of candidate path pruning and the contribution of LLM-based adjudication in improving answer accuracy. Case 1: Top- $K$ pruning preserves paths aligned with both film.film.music and actor cues; the vector-only scorer already picks the correct path, and a single LLM adjudication confirms Valentine’s Day, illustrating that pruning reduces cost while retaining high-coverage candidates. Case 2: A vector-only top path (film.film.edited_by) misses the actor constraint and yields a false positive, but adjudication over the pruned set, now including performance.actor, corrects to The Perks of Being a Wallflower, showing that LLM adjudication resolves compositional constraints beyond static similarity.

| Case 1: which movies featured Taylor Swift and music by John Debney | |

| --- | --- |

| Top-4 candidates | 1) film.film.music (0.2567) |

| 2) person.nationality $\rightarrow$ film.film.country (0.2524) | |

| 3) performance.actor $\rightarrow$ performance.film (0.2479) | |

| 4) people.person.languages $\rightarrow$ film.film.language (0.2430) | |

| Top- $K$ after pruning (K=3) | film.film.music |

| person.nationality $\rightarrow$ film.film.country | |

| performance.actor $\rightarrow$ performance.film | |

| Vector-only (no LLM) | Pick film.film.music ✓ — directly targets the composer-to-film mapping; relevant for filtering by music. |

| 1 $\times$ LLM adjudication | Rationale: “To find films with both Taylor Swift and music by John Debney, use actor-to-film and music-to-film relations. The chosen path targets the latter directly.” |

| Final Answer / GT | Valentine’s Day (predict) / Valentine’s Day ✓ |

| Case 2 : in which movies does Logan Lerman act in that was edited by Mary Jo Markey | |

| Top-4 candidates | 1) film.film.edited_by (0.2548) |

| 2) person.nationality $\rightarrow$ film.film.country (0.2527) | |

| 3) performance.actor $\rightarrow$ performance.film (0.2505) | |

| 4) award.award_winner.awards_won $\rightarrow$ | |

| award.award_honor.honored_for (0.2420) | |

| Top- $K$ after pruning (K=3) | film.film.edited_by |

| person.nationality $\rightarrow$ film.film.country | |

| performance.actor $\rightarrow$ performance.film | |

| Vector-only (no LLM) | Pick film.film.edited_by ✗ — identifies edited films, but lacks actor constraint; leads to false positives. |

| 1 $\times$ LLM adjudication | Rationale: “The question requires jointly filtering for actor and editor. While film.edited_by is relevant, combining it with performance.actor improves precision by ensuring Logan Lerman is in the cast.” |

| Final Answer / GT | Perks of Being a Wallflower (predict) / Perks of Being a Wallflower ✓ |

Table 6: Case studies on multi-hop reasoning over WebQSP. Top- $K$ pruning is applied before invoking LLM, reducing cost while retaining plausible candidates.

Discussion. As PathHD operates in a single-call, fixed-candidate regime, its performance ultimately depends on (i) the Top- $K$ retrieved paths covering at least one valid reasoning chain and (ii) the adjudication LLM correctly ranking these candidates. In practice, we mitigate this by using a relatively generous $K$ (e.g., $K=3$ ) and beam widths that yield high coverage of gold paths (see Section ˜ K.2), but extreme cases can still be challenging. Note that all LLM reasoning systems that first retrieve a Top- $K$ set of candidates will face the same challenge.

## 4 Related Work

### 4.1 LLM-based Reasoning

Large language models (LLMs) are now widely adopted across research and industry—powering generation, retrieval, and decision-support systems at scale [5, 28, 22, 23]. LLM-based Reasoning, such as GPT [33, 3], LLaMA [41], and PaLM [6], have demonstrated impressive capabilities in diverse reasoning tasks, ranging from natural language inference to multi-hop question answering [45]. A growing body of work focuses on enhancing the interpretability and reliability of LLM reasoning through symbolic path-based reasoning over structured knowledge sources [38, 4, 11]. For example, Wei et al. [43] proposed chain-of-thought prompting, which improves reasoning accuracy by encouraging explicit intermediate steps. Wang et al. [42] introduced self-consistency decoding, which aggregates multiple reasoning chains to improve robustness.

Knowledge graphs are widely deployed in real-world systems (e.g., web search, recommendation, biomedicine) and constitute an active research area for representation learning and reasoning [14, 52, 24, 51]. In the context of knowledge graphs, recent efforts have explored hybrid neural-symbolic approaches to combine the structural expressiveness of graph reasoning with the generative power of LLMs. Fan et al. [25] proposed Reasoning on Graphs (RoG), which first prompts LLMs to generate plausible symbolic relation paths and then retrieves and verifies these paths over knowledge graphs. Similarly, Khattab et al. [20] leveraged demonstration-based prompting to guide LLM reasoning grounded in external knowledge. Despite their interpretability benefits, these methods rely heavily on neural encoders for path matching, incurring substantial computational and memory overhead, which limits scalability to large KGs or real-time applications.

### 4.2 Hyperdimensional Computing (HDC)

HDC is an emerging computational paradigm inspired by the properties of high-dimensional representations in cognitive neuroscience [18, 19]. In HDC, information is represented as fixed-length high-dimensional vectors (hypervectors), and symbolic structures are manipulated through simple algebraic operations such as binding, bundling, and permutation [8]. These operations are inherently parallelizable and robust to noise, making HDC appealing for energy-efficient and low-latency computation.

HDC has been successfully applied in domains such as classification [34], biosignal processing [29], natural language understanding [26], and graph analytics [12]. For instance, Imani et al. [12] demonstrated that HDC can encode and process graph-structured data efficiently, enabling scalable similarity search and inference. Recent studies have also explored neuro-symbolic integrations, where HDC complements neural networks to achieve interpretable yet computationally efficient models [13, 34]. However, the potential of HDC in large-scale reasoning over knowledge graphs, particularly when combined with LLMs, remains underexplored. Our work bridges this gap by leveraging HDC as a drop-in replacement for neural path matchers in LLM-based reasoning frameworks, thereby achieving both scalability and interpretability.

Existing KG-LLM reasoning frameworks typically rely on learned neural encoders or multi-call agent pipelines to score candidate paths or subgraphs, often with Transformers, GNNs, or repeated LLM calls. In contrast, our work keeps the retrieval module entirely encoder-free and training-free: PathHD replaces neural path scorers with HDC-based hypervector encodings and similarity, while remaining compatible with standard KG-LLM agents. Our goal is thus not to introduce new VSA theory, but to show that such carefully designed HDC representations can replace learned neural scorers in KG-LLM systems while preserving accuracy and substantially improving latency, memory footprint, and interpretability.

## 5 Conclusion

In this work, we presented PathHD, an encoder-free and interpretable retrieval mechanism for path-based reasoning over knowledge graphs with LLMs. PathHD replaces neural path scorers with hyperdimensional Computing (HDC): relation paths are encoded into order-aware GHRR hypervectors, ranked via simple vector operations, and passed to a single LLM adjudication step that outputs answers together with cited supporting paths. This Plan $\rightarrow$ Encode $\rightarrow$ Retrieve $\rightarrow$ Reason design removes the need for costly neural encoders in the retrieval module and shifts most computation into cheap, parallel hypervector operations. Experimental results on three standard Freebase-based KGQA benchmarks (WebQSP, CWQ, GrailQA) show that PathHD attains competitive Hits@1 and F1 while substantially reducing end-to-end latency and GPU memory usage, and produces faithful, path-grounded rationales that aid error analysis and controllability. Taken together, these findings indicate that carefully designed HDC representations are a practical substrate for efficient KG-LLM reasoning, offering a favorable accuracy-efficiency-interpretability trade-off. An important direction for future work is to apply CS to domain-specific graphs, such as UMLS and other biomedical or enterprise KGs, as well as to tasks beyond QA (e.g., fact checking or rule induction), and to explore how the HDC representations and retrieval pipeline can be adapted in these settings while retaining the same efficiency benefits.

## Acknowledgements

This work was supported in part by the DARPA Young Faculty Award, the National Science Foundation (NSF) under Grants #2127780, #2319198, #2321840, #2312517, and #2235472, #2431561, the Semiconductor Research Corporation (SRC), the Office of Naval Research through the Young Investigator Program Award, and Grants #N00014-21-1-2225 and #N00014-22-1-2067, Army Research Office Grant #W911NF2410360. Additionally, support was provided by the Air Force Office of Scientific Research under Award #FA9550-22-1-0253, along with generous gifts from Xilinx and Cisco.

## References

- [1] Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. Gpt-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- [2] Kurt Bollacker, Colin Evans, Praveen Paritosh, Tim Sturge, and Jamie Taylor. Freebase: a collaboratively created graph database for structuring human knowledge. In Proceedings of the 2008 ACM SIGMOD international conference on Management of data, pages 1247–1250, 2008.

- [3] Tom B Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners. In Advances in Neural Information Processing Systems (NeurIPS), volume 33, pages 1877–1901, 2020.

- [4] Shulin Cao, Jiaxin Shi, Liangming Pan, Lunyiu Nie, Yutong Xiang, Lei Hou, Juanzi Li, Bin He, and Hanwang Zhang. Kqa pro: A dataset with explicit compositional programs for complex question answering over knowledge base. In Proceedings of the 60th annual meeting of the Association for Computational Linguistics (volume 1: long papers), pages 6101–6119, 2022.

- [5] Yupeng Chang, Xu Wang, Jindong Wang, Yuan Wu, Linyi Yang, Kaijie Zhu, Hao Chen, Xiaoyuan Yi, Cunxiang Wang, Yidong Wang, et al. A survey on evaluation of large language models. ACM transactions on intelligent systems and technology, 15(3):1–45, 2024.

- [6] Aakanksha Chowdhery, Sharan Narang, Jacob Devlin, Maarten Bosma, Gaurav Mishra, Adam Roberts, Paul Barham, Hyung Won Chung, Charles Sutton, Sebastian Gehrmann, et al. Palm: Scaling language modeling with pathways. Journal of Machine Learning Research, 24(240):1–113, 2023.

- [7] E. Paxon Frady, Denis Kleyko, and Friedrich T. Sommer. Variable binding for sparse distributed representations: Theory and applications. Neural Computation, 33(9):2207–2248, 2021.

- [8] Ross W Gayler. Vector symbolic architectures answer jackendoff’s challenges for cognitive neuroscience. arXiv preprint cs/0412059, 2004.

- [9] Yu Gu, Sue Kase, Michelle Vanni, Brian Sadler, Percy Liang, Xifeng Yan, and Yu Su. Beyond iid: three levels of generalization for question answering on knowledge bases. In Proceedings of the web conference 2021, pages 3477–3488, 2021.