# Cognitive Mirrors: Exploring the Diverse Functional Roles of Attention Heads in LLM Reasoning

**Authors**:

- Tongliang Liu, James Bailey (The University of Melbourne, The University of Sydney)

> This work is not related to Amazon.

Abstract

Large language models (LLMs) have achieved state-of-the-art performance in a variety of tasks, but remain largely opaque in terms of their internal mechanisms. Understanding these mechanisms is crucial to improve their reasoning abilities. Drawing inspiration from the interplay between neural processes and human cognition, we propose a novel interpretability framework to systematically analyze the roles and behaviors of attention heads, which are key components of LLMs. We introduce CogQA, a dataset that decomposes complex questions into step-by-step subquestions with a chain-of-thought design, each associated with specific cognitive functions such as retrieval or logical reasoning. By applying a multi-class probing method, we identify the attention heads responsible for these functions. Our analysis across multiple LLM families reveals that attention heads exhibit functional specialization, characterized as cognitive heads. These cognitive heads exhibit several key properties: they are universally sparse, and vary in number and distribution across different cognitive functions, and they display interactive and hierarchical structures. We further show that cognitive heads play a vital role in reasoning tasks—removing them leads to performance degradation, while augmenting them enhances reasoning accuracy. These insights offer a deeper understanding of LLM reasoning and suggest important implications for model design, training and fine-tuning strategies. The code is available at https://github.com/sihuo-design/CognitiveMirrors.

1 Introduction

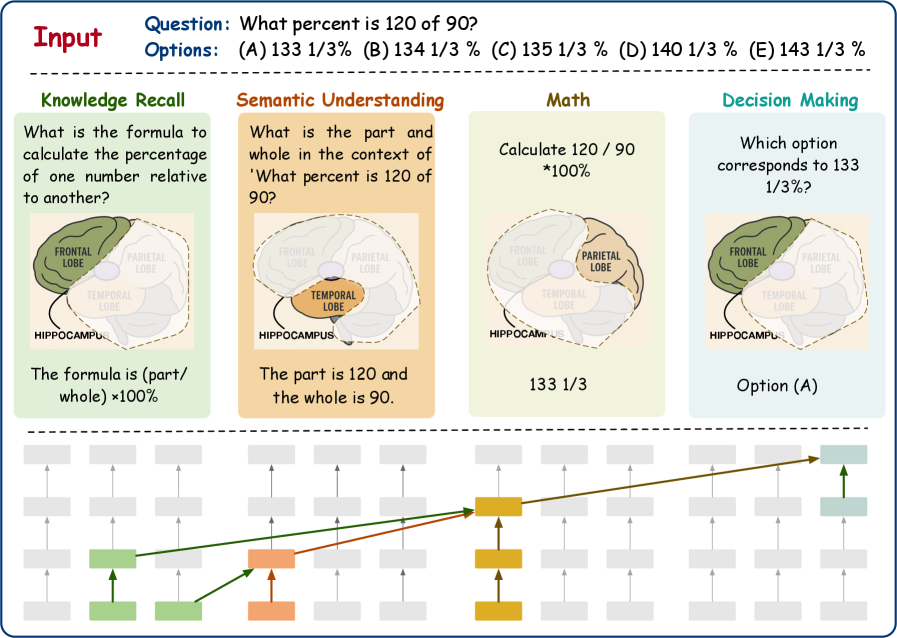

Large language models (LLMs) achiam2023gpt; grattafiori2024llama; touvron2023llama; yang2024qwen2, built on neural networks that mimic the structure of the human brain, have demonstrated exceptional performance across various natural language processing (NLP) tasks, often exceeding human capabilities. This has sparked growing interest in exploring the potential similarities between the cognitive processes of LLMs and the human brain. Prior studies have demonstrated that LLMs can predict brain responses to natural language caucheteux2022deep; schrimpf2021neural, indicating a functional alignment between artificial models and biological systems. However, to the best of our knowledge, systematic efforts to align reasoning processes between LLMs and human cognitive agents remain scarce. When solving complex reasoning tasks (e.g., a mathematical multiple-choice question; Figure 1), the human brain engages a network of specialized regions: the frontal lobe recalls relevant knowledge wheeler1997toward, language areas (e.g., Wernicke’s and Broca’s) support semantic processing ono2022bidirectional; meyer2005language, and the parietal and prefrontal cortices carry out higher-order reasoning barsalou2014cognitive; hubbard2005interactions.

Analogously, recent research suggests that components within LLMs may also take on specialized roles. For example, multi-head attention mechanisms in transformers vaswani2017attention have been found to handle distinct functions, such as information retrieval wu2404retrieval or maintaining answer consistency truthful, pointing toward a form of architectural division of labor. However, most of these findings are based on relatively simple tasks zheng2409attention, leaving open how such specialization operates under complex, multi-step reasoning scenarios.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Cognitive Process Diagram: Percentage Calculation

### Overview

The image illustrates the cognitive processes involved in solving a percentage problem. It breaks down the problem-solving process into four stages: Knowledge Recall, Semantic Understanding, Math, and Decision Making. Each stage is represented with a brain diagram highlighting the relevant brain region and a textual description of the cognitive task. The diagram also includes a neural network representation showing the flow of information between these stages.

### Components/Axes

* **Header:**

* "Input" label in red.

* Question: "What percent is 120 of 90?"

* Options: (A) 133 1/3% (B) 134 1/3% (C) 135 1/3% (D) 140 1/3% (E) 143 1/3%

* **Main Sections (Left to Right):**

* **Knowledge Recall:**

* Title: "Knowledge Recall"

* Text: "What is the formula to calculate the percentage of one number relative to another?"

* Brain Diagram: Frontal lobe highlighted in green. Parietal and Temporal lobes are also labeled. Hippocampus is labeled.

* Text: "The formula is (part/whole) x100%"

* **Semantic Understanding:**

* Title: "Semantic Understanding"

* Text: "What is the part and whole in the context of 'What percent is 120 of 90?'"

* Brain Diagram: Temporal lobe highlighted in orange. Frontal and Parietal lobes are also labeled. Hippocampus is labeled.

* Text: "The part is 120 and the whole is 90."

* **Math:**

* Title: "Math"

* Text: "Calculate 120/90 *100%"

* Brain Diagram: Parietal lobe highlighted in tan. Frontal and Temporal lobes are also labeled. Hippocampus is labeled.

* Text: "133 1/3"

* **Decision Making:**

* Title: "Decision Making"

* Text: "Which option corresponds to 133 1/3%?"

* Brain Diagram: Frontal lobe highlighted in green. Parietal and Temporal lobes are also labeled. Hippocampus is labeled.

* Text: "Option (A)"

* **Neural Network Representation (Bottom):**

* A series of interconnected nodes representing a neural network.

* Nodes are colored grey, green, orange, and yellow, corresponding to the stages above.

* Arrows indicate the flow of information between nodes.

### Detailed Analysis

* **Knowledge Recall:** The frontal lobe is highlighted, suggesting its role in retrieving relevant formulas. The text states the formula for calculating percentage.

* **Semantic Understanding:** The temporal lobe is highlighted, indicating its involvement in understanding the context of the problem. The text identifies the part and the whole from the given question.

* **Math:** The parietal lobe is highlighted, suggesting its role in mathematical calculations. The text shows the calculation and the result.

* **Decision Making:** The frontal lobe is highlighted again, indicating its role in selecting the correct answer based on the calculated result. The text identifies the correct option.

* **Neural Network:**

* The network consists of multiple layers of nodes.

* The initial nodes are green, corresponding to Knowledge Recall.

* These connect to orange nodes, corresponding to Semantic Understanding.

* The orange nodes connect to yellow nodes, corresponding to Math.

* Finally, the yellow nodes connect to green nodes, corresponding to Decision Making.

* The arrows indicate the flow of information from one stage to the next.

### Key Observations

* The diagram clearly illustrates the different cognitive stages involved in solving a percentage problem.

* Each stage is associated with a specific brain region and a corresponding cognitive task.

* The neural network representation shows the flow of information between these stages.

* The diagram highlights the importance of both knowledge recall and semantic understanding in solving mathematical problems.

### Interpretation

The diagram demonstrates a simplified model of how the brain processes a mathematical problem. It suggests that solving such problems involves a sequence of cognitive stages, each relying on different brain regions and cognitive functions. The flow of information between these stages is crucial for arriving at the correct solution. The diagram also emphasizes the interconnectedness of different cognitive processes, highlighting the importance of both knowledge and understanding in problem-solving. The neural network representation, although simplified, provides a visual analogy for how information might be processed and transmitted within the brain during this process.

</details>

Figure 1: To solve a complex question, the human brain engages multiple regions to perform distinct cognitive functions necessary for generating a response. We explore whether specific attention heads in LLMs play analogous functional roles in producing answers.

In parallel, prompting techniques like chain-of-thought (CoT) cot have been shown to improve LLM performance by decomposing complex problems into intermediate steps, a strategy reminiscent of human problem-solving, like the example in Figure 1. We hypothesize that such prompting may activate and coordinate specialized components within the model. Thus, analyzing the behavior of attention heads under CoT reasoning could contribute insights for a deeper understanding of the internal workings of LLMs and how they process complex tasks.

In this work, we present a novel interpretability framework to systematically analyze the cognitive roles of attention heads during complex reasoning. To facilitate this, we introduce Cognitive Question&Answering (CogQA), a benchmark dataset that decomposes natural language questions into structured subquestions annotated with fine-grained cognitive functions, such as retrieval, logical inference, and knowledge recall. Leveraging CogQA, we develop a multi-class probing method to identify and characterize attention heads responsible for distinct cognitive operations within the transformer architecture.

We conduct extensive experiments on three major LLM families, including LLaMA (touvron2023llama), Qwen (yang2024qwen2), and Yi (young2024yi). Our results reveal the existence of cognitive heads that consistently exhibit universality, sparsity, and layered functional organization across architectures. Further analysis of the correlations among these cognitive heads reveals clear functional clustering, with heads grouping based on cognitive roles, and uncovers a hierarchical structure in which lower-level heads modulate higher-level ones—mirroring the modular and distributed processing observed in the human cortex (barsalou2014cognitive; ono2022bidirectional).

Furthermore, we validate the functional importance of these heads by showing that their removal degrades performance on complex tasks and leads to specific error patterns, while their enhancement improves reasoning capabilities. Our findings shed light on the structured cognitive architecture embedded in LLMs and open avenues for function-aware model design and analysis.

2 CogQA

In this section, we present a detailed account of our benchmark dataset CogQA’s construction and key characteristics. Although extensive existing benchmark collections span a wide array of NLP tasks, to our knowledge no resource explicitly evaluates LLM reasoning across diverse cognitive functions. To address this, we introduce CogQA, a dataset containing 570 main questions and 3,402 subquestions. Each example comprises a question, its answer, and an annotation specifying the cognitive function required for resolution.

2.1 Cognitive Function

To systematically capture the cognitive processes involved in complex reasoning tasks, we categorize cognitive functions into two groups: low-level functions and high-order functions, inspired by established frameworks in cognitive science anderson2014rules; diamond2013executive. Low-level functions primarily involve information retrieval and linguistic analysis, while high-order functions engage more abstract reasoning, problem-solving, and decision-making. Detailed descriptions of these cognitive functions are provided in Appendix A.4.

The low-level cognitive functions include:

- Retrieval: locating relevant information from an external source or prior context.

- Knowledge Recall: accessing stored factual or procedural knowledge from memory.

- Semantic Understanding: interpreting the meaning of words, phrases, or concepts.

- Syntactic Understanding: analyzing the grammatical structure of a sentence.

The high-order cognitive functions include:

- Mathematical Calculation: performing arithmetic or numerical operations.

- Logical Reasoning: drawing conclusions based on formal logical relationships.

- Inference: deriving implicit information that is not directly stated.

- Decision-Making: selecting the best outcome among alternatives based on reasoning.

This categorization reflects a natural progression from basic information processing to complex cognitive integration. Both the human brain and LLMs encompass a wide range of functional modules. Our focus in this work is specifically on reasoning-related cognitive functions. By identifying and organizing these eight core reasoning functions, we can more clearly examine how LLMs handle different types of thinking steps, in a way that is both systematic and easy to interpret.

2.2 Data Collections

Based on our categorization of cognitive functions, we sampled 750 diverse questions from NLP reasoning benchmarks, selecting 150 examples from each of AQuA aqua, CREAK creak, ECQA ecqa, e-SNLI esnli, and GSM8K gsm8k. These datasets cover a range of reasoning types, including logical, mathematical, and commonsense reasoning. Using the CoT paradigm, we prompted GPT-4o hurst2024gpt to decompose each question into subquestions, each targeting a single cognitive function. The prompt encourages structured, step-by-step reasoning, with each subquestion being clear, answerable, and sequentially dependent. This yields a set of subquestion-answer-cognitive function (subQAC) triples for each QA pair: $\operatorname{subQACs}=\left\{\left(q_{i},a_{i},c_{i}\right)\right\}_{i=1}^{k}$ , where each triple contains a subquestion $q_{i}$ , its concise answer $a_{i}$ , and the corresponding cognitive function label $c_{i}$ . The prompt for generating subquestions and examples are listed in Appendix A.4 and Appendix A.6, respectively.
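As a minimal sketch of the subQAC structure, the Figure 1 example can be written as four triples. The class name `SubQAC`, its field names, and the mapping of the figure's "Math" stage to the Mathematical Calculation label are illustrative assumptions, not the released data schema.

```python
from dataclasses import dataclass

# The eight cognitive function labels listed in Section 2.1.
COGNITIVE_FUNCTIONS = (
    "Retrieval", "Knowledge Recall", "Semantic Understanding",
    "Syntactic Understanding", "Mathematical Calculation",
    "Logical Reasoning", "Inference", "Decision-Making",
)


@dataclass(frozen=True)
class SubQAC:
    """One (q_i, a_i, c_i) triple; field names are hypothetical."""
    subquestion: str
    answer: str
    function: str

    def __post_init__(self):
        # Reject labels outside the eight-function taxonomy.
        if self.function not in COGNITIVE_FUNCTIONS:
            raise ValueError(f"unknown cognitive function: {self.function}")


# Decomposition of "What percent is 120 of 90?" as shown in Figure 1.
example = [
    SubQAC("What is the formula to calculate the percentage of one number "
           "relative to another?", "(part/whole) x 100%", "Knowledge Recall"),
    SubQAC("What is the part and whole in the context of "
           "'What percent is 120 of 90?'",
           "The part is 120 and the whole is 90.", "Semantic Understanding"),
    SubQAC("Calculate 120/90 * 100%", "133 1/3", "Mathematical Calculation"),
    SubQAC("Which option corresponds to 133 1/3%?", "Option (A)",
           "Decision-Making"),
]
```

Validating the label at construction time keeps every stored triple inside the taxonomy, which matters when the labels later serve as classification targets.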

2.3 Data Filtering and Annotation

Recent advances have made it increasingly feasible to use LLMs for dataset construction, owing to their strong reasoning abilities and capacity to generate high-quality annotations at scale llm_annotate. Although our dataset is constructed automatically using an LLM to reduce manual effort, we implement a strict two-stage human verification pipeline to ensure data quality and mitigate hallucinations. In the first stage, three expert annotators independently assess whether the subquestions are logically structured and align with natural human reasoning. QA pairs with inconsistent or incoherent decompositions are filtered out. In the second stage, annotators verify and, if necessary, relabel the cognitive function associated with each subquestion to ensure alignment with the intended mental process. Finally, we validate the subanswers by cross-checking them using the GPT-o4-mini model o4mini2024, followed by human adjudication where discrepancies arise. Details of the annotation process and rubric can be found in Appendix A.5. This multi-step filtering ensures that each retained subQAC triple reflects a coherent, interpretable reasoning step grounded in core cognitive functions. After this refinement, our final dataset contains 570 main QA pairs and 3,402 validated subQAC triples.

3 Cognitive Function Detections

Given the CogQA dataset, we aim to identify which attention heads in LLMs are associated with specific cognitive functions. We adopt a probing-based framework, a widely used interpretability technique in which an auxiliary classifier is trained to predict properties from intermediate model representations alain2016understanding; belinkov2022probing; tenney2019bert. We frame this as a multi-class classification task: for each cognitively annotated subquestion, we extract head activations (see Section 3.1), train a classifier, and compute importance scores to identify contributing heads (see Section 3.2). Unlike prior work that focuses on a single class, our method captures many-to-many relationships between heads and functions, enabling a more detailed analysis of functional specialization and overlap.

3.1 Head Feature Extraction

Given a large language model $\mathcal{M}$ , we generate an answer $a_{i}^{\mathcal{M}}$ for each subquestion $q_{i}$ derived from a main question $Q_{i}$ . To support coherent multi-step reasoning, we include preceding subquestions and their answers as contextual input, emulating the incremental reasoning process observed in human cognition.

During inference, input tokens are embedded and processed through successive transformer layers. At each layer, attention and feedforward operations update the residual stream, which is ultimately decoded into token predictions. For each generated token $i$ , we extract attention head outputs $X_{i}=\{x_{l}^{m}(i)\mid l=1,...,L,\ m=1,...,M\}$ across all layers, where $x^{m}_{l}$ denotes the value vector from the $m$ -th head in layer $l$ projected into the residual stream, with $M$ the number of heads per layer and $L$ the total number of layers.

Let $N_{t}$ denote the number of tokens in the generated answer $a_{i}^{\mathcal{M}}$ . To isolate semantically informative content relevant to reasoning, we select the top- $k$ most important tokens, determined by prompting GPT-o4-mini o4mini2024 (skilled in reasoning), yielding an index set $\mathcal{I}_{k}$ with $|\mathcal{I}_{k}|=k$ (top- $k$ token examples with $k=5$ are in Appendix A.10; an ablation study in Appendix A.9 analyzes the impact of using alternative token positions). For each index $j\in\mathcal{I}_{k}$ , we extract the corresponding attention head activations $X_{j}$ , and compute the averaged activation feature for the $m$ -th head in layer $l$ as $\bar{x}_{l}^{m}=\frac{1}{k}\sum_{j\in\mathcal{I}_{k}}x_{l}^{m}(j)$ . This results in a full set of head-level features $\bar{X}=\{\bar{x}_{l}^{m}\mid l=1,...,L,\ m=1,...,M\}$ .

Given prior findings suggesting that cognitive functions may vary by layer depth zheng2409attention, we incorporate layer-wise information by computing the average activation $\bar{x}_{l}=\frac{1}{M}\sum_{m=1}^{M}\bar{x}_{l}^{m}$ for each layer. We then augment each head-level vector with its corresponding layer summary, resulting in enriched features $\bar{x}^{m^{\prime}}_{l}=[\bar{x}^{m}_{l};\bar{x}_{l}]$ . For each subQA triplet $(q_{i},\ a_{i},\ c_{i})$ , the final input to the probing classifier is given by $\{\bar{x}^{m^{\prime}}_{l}\mid l={1,...,L},\ m={1,...,M}\}$ .
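The averaging and layer-augmentation steps of this subsection can be sketched in NumPy as follows. The array layout `(N_t, L, M, d)` and the function name `head_features` are assumptions made for illustration; how the per-head outputs are captured from a real model is not shown.

```python
import numpy as np


def head_features(acts, top_k_idx):
    """Build enriched head features from per-token head outputs.

    acts: array of shape (N_t, L, M, d) with one d-dim vector per token,
          layer, and head (hypothetical layout).
    top_k_idx: the k selected token positions (the index set I_k).
    Returns an array of shape (L, M, 2d).
    """
    # Average each head's output over the k selected token positions.
    x_bar = acts[list(top_k_idx)].mean(axis=0)            # (L, M, d)
    # Layer summary: mean over the M heads in each layer.
    layer_mean = x_bar.mean(axis=1, keepdims=True)        # (L, 1, d)
    layer_mean = np.broadcast_to(layer_mean, x_bar.shape)
    # Concatenate [x_bar_l^m ; x_bar_l] along the feature axis.
    return np.concatenate([x_bar, layer_mean], axis=-1)   # (L, M, 2d)


# Toy example: 7 generated tokens, 2 layers, 3 heads, 4-dim head outputs.
rng = np.random.default_rng(0)
acts = rng.normal(size=(7, 2, 3, 4))
features = head_features(acts, top_k_idx=[0, 2, 5])
```

In this layout the first `d` entries of each feature are head-specific and the last `d` are shared by all heads in the same layer.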

3.2 Heads Importance

For the CogQA dataset with $N$ subQA pairs, we collect all activations to construct the probing dataset:

$$
\mathcal{D}_{\text{probe}}=\left\{(\bar{x}^{m^{\prime}}_{l},\ c)_{i}\right\}_{i=1}^{N},\quad l\in\{1,\ldots,L\},\ m\in\{1,\ldots,M\} \tag{1}
$$

We split the dataset into training and validation sets with a $4{:}1$ ratio. Each attention head feature is first passed through a trainable linear projection for dimensionality reduction, followed by a two-layer MLP that performs multi-class classification over cognitive functions (training details are provided in Appendix A.3). To interpret the contribution of individual heads to each function, we use a gradient-based attribution method. Specifically, for each function class $c$ , we compute the contribution of each head feature via the gradient $\times$ activation technique:

$$
I^{(c)}_{j}=\mathbb{E}_{(\bar{x},c)\sim\mathcal{D}_{\text{probe}}}\left[\frac{\partial\hat{y}_{c}}{\partial\bar{x}_{j}}\cdot\bar{x}_{j}\right], \tag{2}
$$

where $\bar{x}_{j}$ is the $j$ -th head input feature, and $\hat{y}_{c}$ is the classifier’s predicted logit for class $c$ . This yields an importance score for each attention head with respect to each cognitive function. We aggregate the scores into a matrix $\mathbf{I}\in\mathbb{R}^{C\times(L\cdot M)}$ , where each row corresponds to a function class and each column to a specific head in a specific layer.
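A minimal NumPy sketch of Eq. (2) for a probe with a shared linear projection and a two-layer ReLU MLP is given below. The weights are random placeholders rather than a trained probe, the projection is assumed to be shared across heads, and the expectation for class $c$ is assumed to run over the examples labeled $c$; the paper's actual probe configuration is described in Appendix A.3 and may differ.

```python
import numpy as np


def logits(X, params):
    """Forward pass: per-head projection, flatten, two-layer ReLU MLP."""
    Wp, W1, b1, W2, b2 = params
    z = (X @ Wp).reshape(X.shape[0], -1)            # (n, H*p)
    return np.maximum(z @ W1 + b1, 0.0) @ W2 + b2   # (n, C)


def grad_times_activation(X, params, c):
    """Per-head gradient x activation scores for class c.

    X: (n, H, d) head features for n examples and H = L*M heads.
    Returns a length-H vector (one row of the importance matrix).
    """
    Wp, W1, b1, W2, _ = params
    n, H, _ = X.shape
    pre = (X @ Wp).reshape(n, -1) @ W1 + b1         # hidden pre-activations
    # Manual backward pass for logit c through the ReLU and both linear maps.
    g_pre = (pre > 0) * W2[:, c]                    # (n, hidden)
    g_z = (g_pre @ W1.T).reshape(n, H, -1)          # (n, H, p)
    g_x = g_z @ Wp.T                                # (n, H, d)
    # Dot product of gradient and activation per head, averaged over examples.
    return (g_x * X).sum(axis=-1).mean(axis=0)


# Toy setup with placeholder (untrained) weights and zero biases.
rng = np.random.default_rng(0)
n, H, d, p, hidden, C = 16, 6, 4, 2, 8, 3
params = (rng.normal(size=(d, p)), rng.normal(size=(H * p, hidden)),
          np.zeros(hidden), rng.normal(size=(hidden, C)), np.zeros(C))
X = rng.normal(size=(n, H, d))
y = np.arange(n) % C                                # every class is present
importance = np.stack(
    [grad_times_activation(X[y == c], params, c) for c in range(C)]
)                                                   # shape (C, H)
```

With zero biases the network is piecewise linear through the origin, so each row of `importance` sums to the mean logit of its class. This gives a quick sanity check on the manual gradient.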

We hypothesize that attention heads with higher importance scores contribute more significantly to each cognitive function. By ranking heads according to their importance, we can identify which heads and layers are specialized for specific functions. Subsequent targeted interventions on these heads validate the effectiveness of this approach.
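Ranking heads from the importance matrix can be sketched as below. The layer-major flattening (column index $j = l\cdot M + m$) and the function name `top_heads` are assumptions; the source does not state how columns are ordered.

```python
import numpy as np


def top_heads(importance, heads_per_layer, k):
    """Return, for each function row, the k highest-scoring (layer, head) pairs.

    importance: (C, L*M) matrix; columns are assumed layer-major.
    """
    order = np.argsort(-importance, axis=1)[:, :k]
    return [[divmod(int(j), heads_per_layer) for j in row] for row in order]


# Two functions, two layers of two heads each (toy scores).
toy = np.array([[0.1, 0.9, 0.0, 0.5],
                [0.3, 0.2, 0.8, 0.1]])
ranked = top_heads(toy, heads_per_layer=2, k=2)
# ranked == [[(0, 1), (1, 1)], [(1, 0), (0, 0)]]
```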

4 Experiments

We conduct a series of experiments on three LLM families across various model scales, including LLaMA touvron2023llama (Llama3.1-8B-instruct and Llama3.2-3B-instruct), Qwen yang2024qwen2 (Qwen3-8B and Qwen3-4B), and Yi young2024yi (Yi1.5-9B and Yi1.5-6B). Our goal is to identify cognitive attention heads associated with specific reasoning functions and evaluate their roles via targeted interventions. By selectively masking these heads, we assess their functional significance in supporting downstream performance. We evaluate our method in terms of functional alignment, consistency across models, and causal impact on reasoning tasks. Results confirm the existence of sparse, function-specific heads and highlight their critical contribution to structured cognitive processing within LLMs.
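The masking intervention and its random baseline can be sketched as follows. This assumes that masking a head means zeroing its output before it enters the residual stream, which is one common choice and not necessarily the exact operation used here; `mask_heads` and `random_heads` are hypothetical helper names.

```python
import random

import numpy as np


def mask_heads(head_outputs, masked):
    """Zero the outputs of the selected heads.

    head_outputs: (L, M, d) per-head outputs for one token position.
    masked: iterable of (layer, head) index pairs.
    """
    out = head_outputs.copy()
    for layer, head in masked:
        out[layer, head] = 0.0
    return out


def random_heads(num_layers, heads_per_layer, k, seed=0):
    """Sample k distinct heads uniformly as a control for the intervention."""
    rng = random.Random(seed)
    all_heads = [(layer, head) for layer in range(num_layers)
                 for head in range(heads_per_layer)]
    return rng.sample(all_heads, k)


# Toy example: 4 layers, 8 heads, 16-dim head outputs.
outputs = np.ones((4, 8, 16))
cognitive = [(1, 3), (2, 5)]                 # placeholder "cognitive" heads
masked_out = mask_heads(outputs, cognitive)
control = random_heads(4, 8, k=len(cognitive))
```

Matching the number of random heads to the number of cognitive heads keeps the two conditions comparable.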

4.1 Properties of Cognitive Heads

<details>

<summary>x2.png Details</summary>

### Visual Description

## Heatmap: Heads Importance by Layer and Task

### Overview

The image presents a series of heatmaps visualizing the importance of different "heads" within a neural network across various layers for different cognitive tasks. Each heatmap represents a specific task (Knowledge Recall, Retrieval, Logical Reasoning, Decision-making, Semantic Understanding, Syntactic Understanding, Inference, and Math Calculation). The x-axis represents the "Head" number (0-30), and the y-axis represents the "Layer" number (0-30). The color intensity indicates the "Heads Importance," ranging from dark purple (0.0000) to bright yellow (0.0030+).

### Components/Axes

* **Titles:** The heatmaps are titled with the cognitive tasks: Knowledge Recall, Retrieval, Logical Reasoning, Decision-making, Semantic Understanding, Syntactic Understanding, Inference, and Math Calculation.

* **X-axis:** Labeled "Head," with tick marks at intervals of 6, ranging from 0 to 30.

* **Y-axis:** Labeled "Layer," with tick marks at intervals of 6, ranging from 0 to 30.

* **Color Legend (Heads Importance):** Located on the right side of the image.

* Dark Purple: 0.0000

* Dark Blue: 0.0005

* Light Blue: 0.0010

* Green: 0.0015

* Yellow-Green: 0.0020

* Yellow: 0.0025

* Bright Yellow: 0.0030+

### Detailed Analysis

Each heatmap represents the importance of each head at each layer for a specific task. The color intensity indicates the level of importance.

* **Knowledge Recall:** Shows some higher importance heads concentrated around layers 12-18, with a few scattered high-importance heads in other layers.

* **Retrieval:** Similar to Knowledge Recall, with some concentration of higher importance heads around layers 12-18, and a few scattered elsewhere.

* **Logical Reasoning:** Shows a more dispersed pattern, with some higher importance heads scattered throughout the layers, but a slight concentration around layer 12.

* **Decision-making:** Shows a relatively even distribution of head importance across layers, with some slightly higher importance heads around layers 12-18.

* **Semantic Understanding:** Shows a few high-importance heads scattered throughout the layers, with no clear concentration.

* **Syntactic Understanding:** Shows a concentration of high-importance heads around layers 12-18, with a few scattered elsewhere.

* **Inference:** Shows a relatively even distribution of head importance across layers, with a few scattered high-importance heads.

* **Math Calculation:** Shows a concentration of high-importance heads in the later layers (24-30), with a few scattered elsewhere.

### Key Observations

* **Layer 12-18 Importance:** Many tasks (Knowledge Recall, Retrieval, Syntactic Understanding, Decision-making) show a concentration of high-importance heads in the middle layers (around layers 12-18).

* **Math Calculation Anomaly:** Math Calculation stands out with a concentration of high-importance heads in the later layers (24-30).

* **Sparse Activation:** The heatmaps are generally sparse, indicating that only a small subset of heads are highly important for each task at each layer.

### Interpretation

The heatmaps provide insights into which heads within a neural network are most important for different cognitive tasks at different layers. The concentration of high-importance heads in the middle layers (12-18) for many tasks suggests that these layers may be crucial for general cognitive processing. The unique pattern for Math Calculation, with high-importance heads in the later layers, may indicate that this task relies on different processing mechanisms or representations compared to the other tasks. The sparsity of the heatmaps suggests that the network learns to use a specialized subset of heads for each task, rather than relying on all heads equally. This specialization could be a key factor in the network's ability to perform diverse cognitive tasks.

</details>

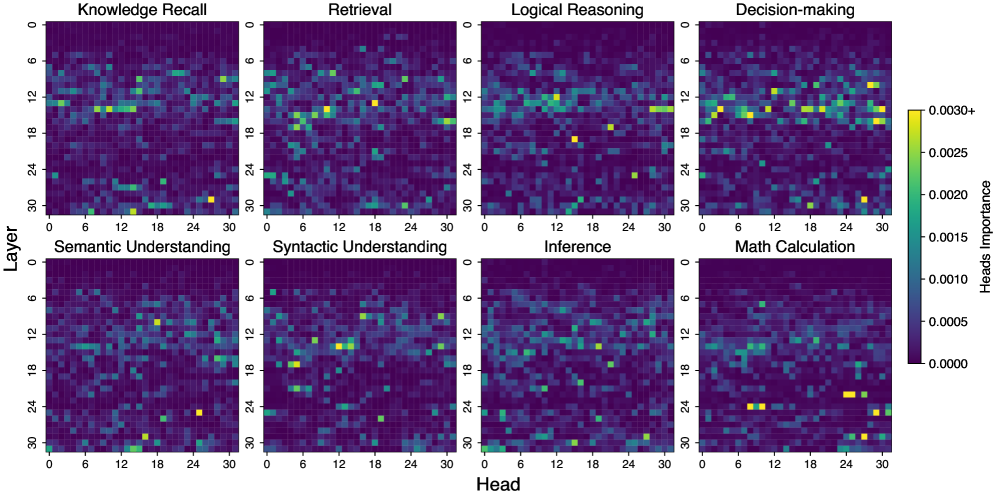

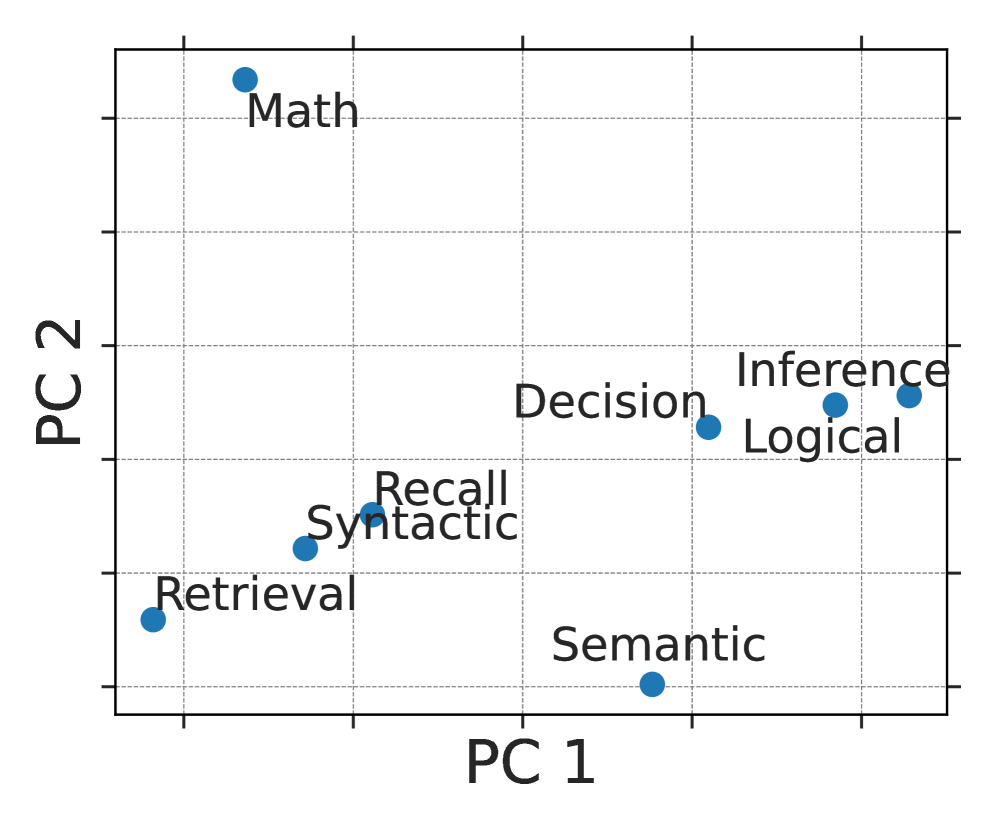

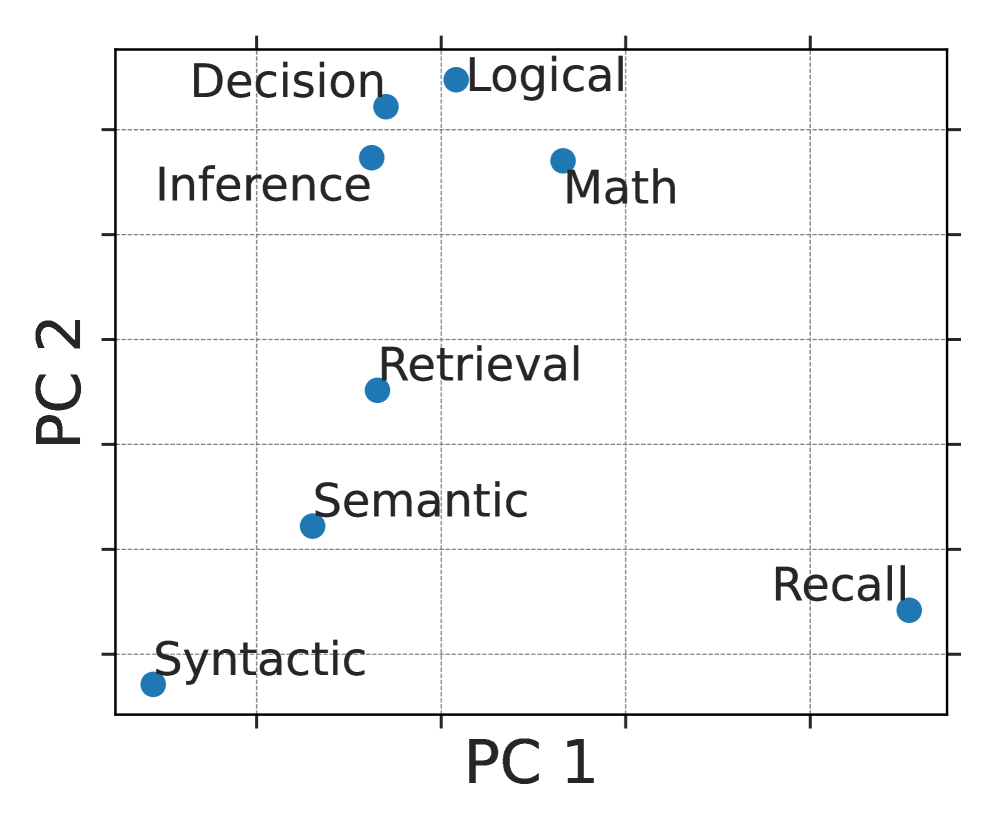

Figure 2: The existence of cognitive heads in Llama3.1-8B-instruct responsible for eight distinct functions in complex reasoning tasks. The x-axis represents the head index, while the y-axis indicates the layer index.

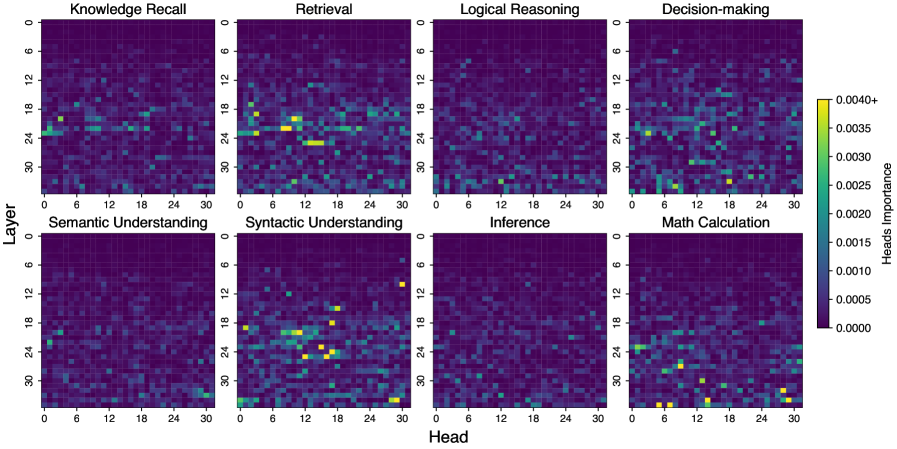

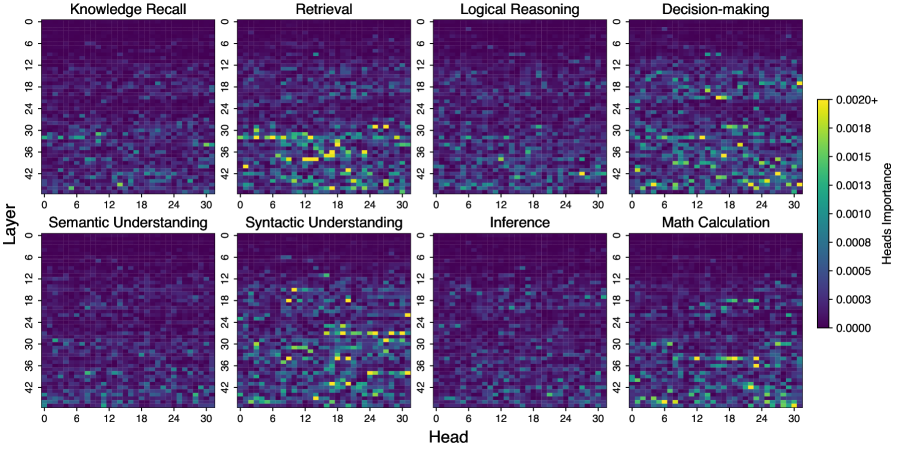

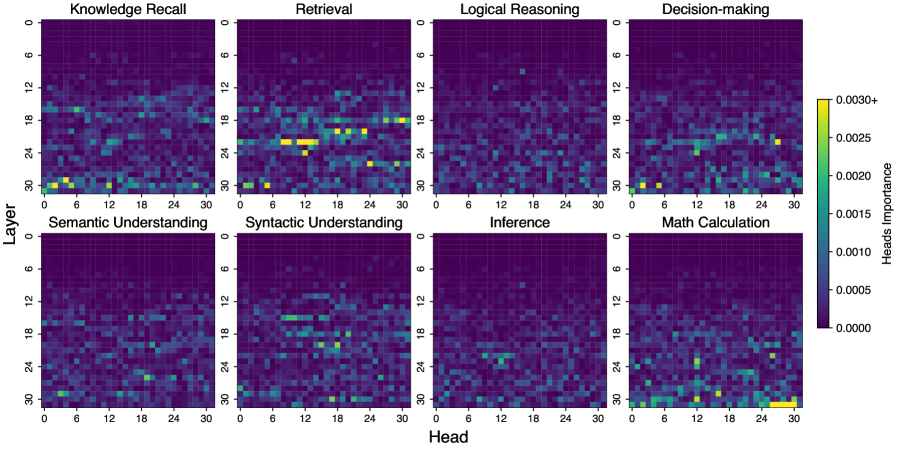

Our analysis reveals that cognitive head importance in large language models exhibits three key properties: sparsity, universality, and layered functional organization. To illustrate these characteristics, we present the heatmap of attention head importance scores across eight cognitive functions in Llama3.1-8B-instruct (Figure 2).

Sparsity and Universality: As shown in Figure 2, each cognitive function activates only a small number of high-importance attention heads, revealing a strikingly sparse pattern. In Llama3.1-8B-instruct, fewer than 7% of all heads have importance scores above 0.001 across the eight functions, suggesting that only a compact subset of heads meaningfully contributes to task performance. This sparsity is not uniform: Retrieval contains the highest proportion of salient heads (6.45% exceeding 0.001), while Inference has the fewest (3.42%). These results highlight that LLMs rely on highly specialized, localized components for different cognitive abilities. Importantly, we observe that this sparse functional organization is consistent across different model architectures and sizes. Additional heatmaps for five other models are provided in Appendix A.1, supporting the universality of this phenomenon.
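The proportion of salient heads is a simple thresholded count. The sketch below uses synthetic scores and assumes a 32-layer, 32-head grid (1,024 heads, the publicly documented Llama 3.1 8B configuration), under which 66 salient heads correspond to the reported 6.45%; the function name and threshold value are illustrative.

```python
def salient_fraction(scores, threshold):
    """Fraction of heads whose importance score exceeds the threshold."""
    flat = [s for row in scores for s in row]
    return sum(s > threshold for s in flat) / len(flat)


# Synthetic 32 x 32 grid with 66 heads set above the threshold.
scores = [[0.0] * 32 for _ in range(32)]
for flat_idx in range(66):
    scores[flat_idx // 32][flat_idx % 32] = 1.0

fraction = salient_fraction(scores, threshold=0.5)
print(f"{100 * fraction:.2f}%")  # 6.45%
```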

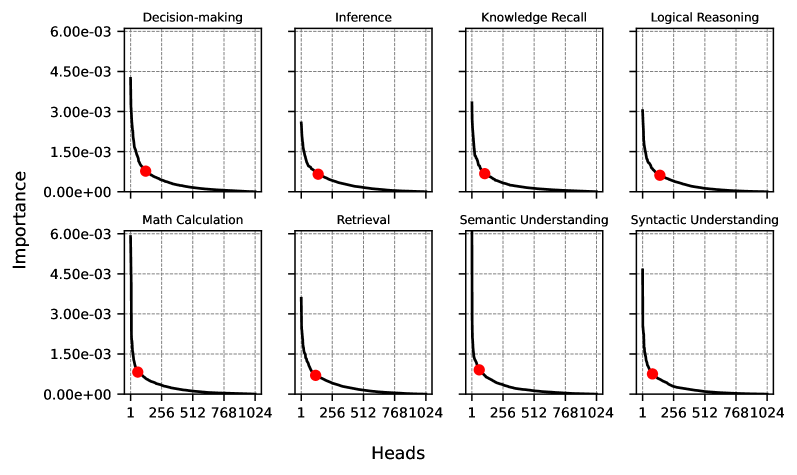

Layered Functional Organization: In addition to sparsity, attention heads show a structured distribution across model layers. Retrieval-related heads cluster primarily in the middle layers, while math-related heads appear more frequently in higher layers. This structured, task-dependent localization points to an emergent modular organization, where different layers support distinct cognitive operations. Further, we identify cognitive heads by selecting those before the elbow point of each function’s descending importance curve (Appendix A.2), and find notable variation in head counts across functions (Appendix A.8). For example, in the LLaMA family, mathematical calculation requires fewer heads (59 in Llama3.1-8B-Instruct, 35 in Llama3.2-3B-Instruct), while inference draws on substantially more (139 and 98, respectively), reflecting differences in representational and computational complexity.
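Selecting the heads before an elbow point can be sketched with a common heuristic: take the point on the sorted curve that lies farthest from the straight line joining its endpoints. This heuristic is an assumption made for illustration; the procedure actually used is described in Appendix A.2 and may differ.

```python
def elbow_index(scores):
    """Index of the elbow in the descending score curve.

    Uses the maximum-distance-to-chord heuristic (an assumed stand-in).
    """
    ys = sorted(scores, reverse=True)
    n = len(ys)
    if n < 3:
        return n - 1
    x2, y1, y2 = n - 1, ys[0], ys[-1]

    def dist(i):
        # Unnormalized distance from point (i, ys[i]) to the chord between
        # (0, y1) and (x2, y2); the shared denominator is omitted.
        return abs((y2 - y1) * i - x2 * ys[i] + x2 * y1)

    return max(range(n), key=dist)


def cognitive_heads(scored_heads):
    """Keep the heads ranked before the elbow.

    scored_heads: list of ((layer, head), score) pairs.
    """
    ranked = sorted(scored_heads, key=lambda item: item[1], reverse=True)
    cut = elbow_index([score for _, score in ranked])
    return [head for head, _ in ranked[:cut]]


# Toy curve: three clearly dominant heads followed by a flat tail.
toy = [((0, 0), 10.0), ((1, 2), 9.0), ((3, 1), 8.0), ((2, 2), 1.0),
       ((0, 3), 0.9), ((1, 1), 0.8), ((3, 3), 0.7)]
selected = cognitive_heads(toy)
# selected == [(0, 0), (1, 2), (3, 1)]
```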

Table 1: Intervention results (%) of cognitive heads vs. random heads across 8 cognitive functions: Retrieval, Knowledge Recall, Semantic Understanding, Syntax Understanding, Math Calculation, Inference, Logic Reasoning, and Decision Making. Lower values indicate more effective intervention outcomes, suggesting that the corresponding heads play a greater role in the cognitive function.

Retrieval, Recall, Semantic, and Syntactic are Information Extraction and Analysis Functions; Math, Inference, Logic, and Decision are Higher-Order Processing Functions. Each function reports comet and acc.

| Model | Inter_Head | Retrieval comet | Retrieval acc | Recall comet | Recall acc | Semantic comet | Semantic acc | Syntactic comet | Syntactic acc | Math comet | Math acc | Inference comet | Inference acc | Logic comet | Logic acc | Decision comet | Decision acc |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Llama3.1-8B | random | 90.83 | 84.71 | 87.85 | 83.84 | 91.44 | 97.50 | 87.81 | 66.17 | 94.25 | 83.08 | 91.90 | 70.18 | 91.39 | 54.69 | 97.64 | 90.91 |
| Llama3.1-8B | cognitive | 44.96 | 8.24 | 56.93 | 38.38 | 81.98 | 75.00 | 69.20 | 40.00 | 87.81 | 66.17 | 76.65 | 52.63 | 52.07 | 4.69 | 56.02 | 4.55 |
| Llama3.2-3B | random | 87.89 | 86.47 | 76.35 | 68.69 | 90.54 | 90.00 | 75.82 | 40.00 | 94.98 | 69.65 | 95.66 | 85.96 | 92.75 | 76.56 | 93.30 | 81.82 |
| Llama3.2-3B | cognitive | 49.47 | 17.06 | 49.69 | 13.13 | 52.29 | 10.00 | 43.62 | 0.00 | 92.01 | 80.10 | 53.60 | 7.02 | 46.69 | 0.00 | 49.25 | 0.00 |
| Qwen3-8B | random | 92.81 | 75.29 | 89.90 | 53.54 | 92.73 | 42.50 | 88.60 | 80.00 | 92.69 | 60.20 | 94.45 | 24.56 | 94.15 | 20.31 | 96.52 | 31.82 |
| Qwen3-8B | cognitive | 59.19 | 38.24 | 64.81 | 30.30 | 85.95 | 47.50 | 46.26 | 0.00 | 89.29 | 53.23 | 72.77 | 35.09 | 87.61 | 21.88 | 83.17 | 54.55 |
| Qwen3-4B | random | 94.17 | 84.71 | 84.61 | 77.78 | 86.91 | 77.50 | 98.15 | 80.00 | 87.15 | 44.78 | 96.89 | 87.72 | 92.00 | 75.00 | 94.79 | 72.73 |
| Qwen3-4B | cognitive | 80.13 | 64.71 | 63.10 | 35.35 | 65.95 | 60.00 | 46.25 | 0.00 | 82.40 | 46.27 | 84.88 | 64.91 | 82.79 | 39.06 | 45.49 | 13.64 |
| Yi-1.5-9B | random | 86.83 | 79.41 | 82.02 | 54.55 | 77.40 | 35.00 | 81.53 | 60.00 | 76.04 | 36.32 | 89.83 | 36.84 | 87.53 | 42.19 | 86.27 | 63.64 |
| Yi-1.5-9B | cognitive | 52.76 | 21.76 | 45.99 | 9.09 | 47.25 | 2.50 | 48.10 | 40.00 | 54.22 | 16.92 | 52.41 | 15.79 | 82.75 | 26.56 | 62.85 | 18.18 |
| Yi-1.5-6B | random | 80.64 | 69.41 | 68.82 | 38.38 | 77.83 | 55.00 | 69.61 | 60.00 | 73.33 | 43.78 | 77.71 | 22.81 | 81.65 | 29.69 | 88.54 | 72.73 |
| Yi-1.5-6B | cognitive | 49.90 | 15.29 | 68.23 | 41.41 | 49.54 | 2.50 | 42.92 | 0.00 | 76.64 | 43.78 | 68.53 | 14.04 | 44.94 | 0.00 | 86.28 | 50.00 |

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Charts: Performance Comparison with Masked Heads

### Overview

The image contains four line charts comparing the performance of "TopK" and "RandomK" methods across different tasks: Retrieval, Knowledge Recall, Math Calculation, and Inference. The x-axis represents the number of masked heads (16, 32, 64, 128), and the y-axis represents the score (from 0.0 to 1.0). Each chart displays two solid lines ("TopK Accuracy" and "TopK Comet") and two dashed lines ("RandomK Accuracy" and "RandomK Comet").

### Components/Axes

* **X-axis:** "# Masked Heads" with values 16, 32, 64, and 128.

* **Y-axis:** "Score" ranging from 0.0 to 1.0 in increments of 0.2.

* **Chart Titles:** Retrieval, Knowledge Recall, Math Calculation, Inference.

* **Legend (Top):**

* Blue solid line: "TopK Accuracy"

* Blue dashed line: "RandomK Accuracy"

* Red solid line: "TopK Comet"

* Red dashed line: "RandomK Comet"

### Detailed Analysis

#### Retrieval Chart

* **TopK Accuracy (Blue Solid):** Starts at approximately 0.95 at 16 masked heads, decreases to approximately 0.55 at 32 masked heads, remains relatively stable around 0.60 until 64 masked heads, then drops sharply to approximately 0.0 at 128 masked heads.

* **RandomK Accuracy (Blue Dashed):** Starts at approximately 0.95 and remains relatively stable between 0.95 and 0.80 across all values of masked heads.

* **TopK Comet (Red Solid):** Starts at approximately 0.95 at 16 masked heads, decreases to approximately 0.75 at 32 masked heads, remains relatively stable around 0.75 until 64 masked heads, then decreases to approximately 0.40 at 128 masked heads.

* **RandomK Comet (Red Dashed):** Starts at approximately 0.95 and remains relatively stable between 0.95 and 0.80 across all values of masked heads.

#### Knowledge Recall Chart

* **TopK Accuracy (Blue Solid):** Starts at approximately 0.90 at 16 masked heads, decreases to approximately 0.10 at 128 masked heads, with a slight increase at 64 masked heads.

* **RandomK Accuracy (Blue Dashed):** Starts at approximately 0.95 and decreases to approximately 0.80 at 128 masked heads.

* **TopK Comet (Red Solid):** Starts at approximately 0.95 at 16 masked heads, decreases to approximately 0.20 at 128 masked heads.

* **RandomK Comet (Red Dashed):** Starts at approximately 0.95 and decreases to approximately 0.85 at 128 masked heads.

#### Math Calculation Chart

* **TopK Accuracy (Blue Solid):** Starts at approximately 0.95 at 16 masked heads, decreases to approximately 0.20 at 128 masked heads.

* **RandomK Accuracy (Blue Dashed):** Starts at approximately 0.95 and decreases to approximately 0.90 at 128 masked heads.

* **TopK Comet (Red Solid):** Starts at approximately 0.95 at 16 masked heads, decreases to approximately 0.60 at 128 masked heads.

* **RandomK Comet (Red Dashed):** Starts at approximately 0.95 and decreases to approximately 0.90 at 128 masked heads.

#### Inference Chart

* **TopK Accuracy (Blue Solid):** Starts at approximately 0.95 at 16 masked heads, decreases to approximately 0.65 at 128 masked heads.

* **RandomK Accuracy (Blue Dashed):** Starts at approximately 0.95 and decreases to approximately 0.80 at 128 masked heads.

* **TopK Comet (Red Solid):** Starts at approximately 0.95 at 16 masked heads, decreases to approximately 0.75 at 128 masked heads.

* **RandomK Comet (Red Dashed):** Starts at approximately 0.95 and decreases to approximately 0.85 at 128 masked heads.

### Key Observations

* In all four tasks, the "RandomK Accuracy" and "RandomK Comet" lines (dashed) show more stable performance as the number of masked heads increases, compared to the "TopK Accuracy" and "TopK Comet" lines (solid).

* The "TopK Accuracy" line experiences the most significant drop in performance, especially in the Retrieval and Knowledge Recall tasks.

* The "TopK Comet" line also shows a decrease in performance, but not as drastic as the "TopK Accuracy" line.

### Interpretation

The charts suggest that the model is far more sensitive to masking the top-K cognitive heads than to masking the same number of randomly chosen heads. Under random masking, accuracy and COMET stay roughly between 0.80 and 0.95 even at 128 masked heads. Under top-K masking, both metrics fall as K grows, which indicates that each function relies on a specific subset of heads rather than on heads spread evenly across the model. Retrieval and Knowledge Recall degrade the most under top-K masking (accuracy near 0.0 and 0.10 at 128 heads), while Math Calculation and Inference retain more of their performance, suggesting that these functions depend less on a small set of specific heads.

</details>

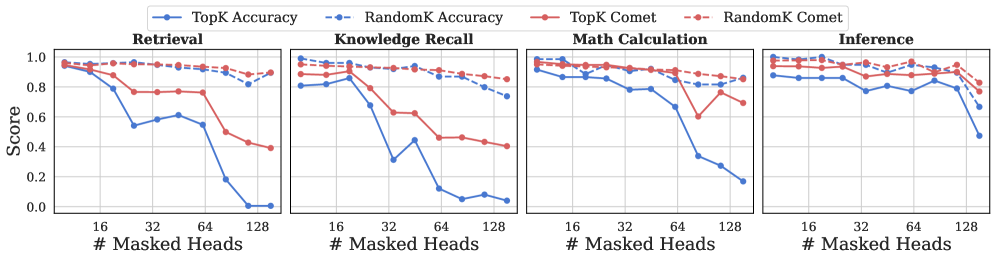

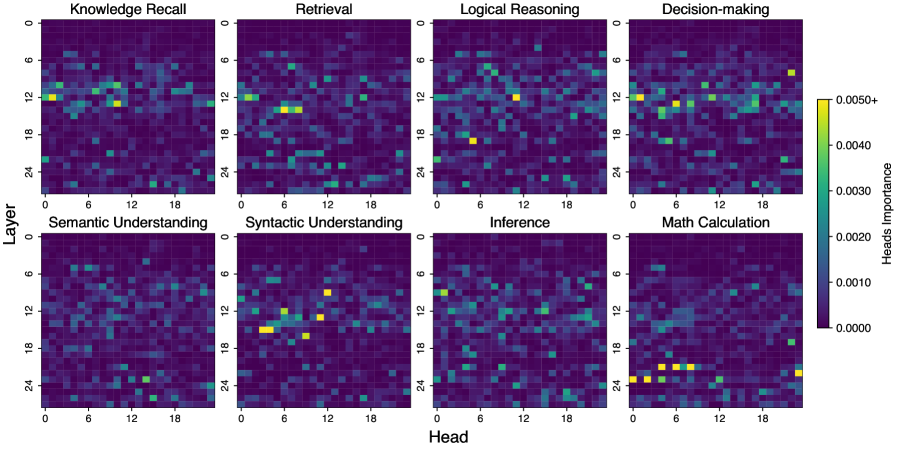

Figure 3: Performance of Llama3.1-8B-instruct when masking the top-K cognitive heads versus K random heads on retrieval, knowledge recall, math calculation, and inference.

4.2 Functional Contributions of Cognitive Heads

After identifying the cognitive heads associated with each function, we examine their functional roles by evaluating the model’s behavior on the CogQA test set under targeted interventions. We perform head ablation by scaling the output of a specific attention head with a small factor $\epsilon$ (e.g., 0.001), effectively suppressing its contribution:

$$
x_{i}^{\text{mask}}=\operatorname{Softmax}\left(\frac{W_{q}^{i}W_{k}^{iT}}{\sqrt{d_{k}/n}}\right)\cdot\epsilon W_{v}^{i} \tag{3}
$$
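The ablation in Eq. (3) can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`softmax`, `head_output`, `multi_head`) are hypothetical, the inputs are taken to be already-projected per-head query, key, and value vectors, and the per-head dimension stands in for $d_{k}/n$.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def head_output(q, k, v, scale=1.0):
    """Scaled dot-product attention for one head.

    q, k, v are lists of per-token vectors (assumed already projected
    for this head). `scale` multiplies the head's output, which is
    equivalent to scaling its values: 1.0 keeps the head, a small
    epsilon suppresses it as in Eq. (3).
    """
    head_dim = len(q[0])  # plays the role of d_k / n in Eq. (3)
    outputs = []
    for q_tok in q:
        scores = [
            sum(a * b for a, b in zip(q_tok, k_tok)) / math.sqrt(head_dim)
            for k_tok in k
        ]
        weights = softmax(scores)
        outputs.append([
            scale * sum(w * v_tok[c] for w, v_tok in zip(weights, v))
            for c in range(len(v[0]))
        ])
    return outputs


def multi_head(qs, ks, vs, masked_heads=(), eps=1e-3):
    """Concatenate per-head outputs, scaling heads in `masked_heads` by eps."""
    per_head = [
        head_output(q, k, v, eps if i in masked_heads else 1.0)
        for i, (q, k, v) in enumerate(zip(qs, ks, vs))
    ]
    n_tokens = len(per_head[0])
    return [[x for head in per_head for x in head[t]] for t in range(n_tokens)]
```

Calling `multi_head(qs, ks, vs, masked_heads={1})` leaves head 0 untouched and shrinks head 1's contribution by a factor of `eps`. Scaling rather than zeroing keeps the tensor shapes and the rest of the forward pass unchanged.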

Specifically, we compare model performance when masking the identified cognitive heads against masking an equal number of randomly selected heads. To quantify the impact of masking, we compare the model’s outputs before and after intervention using several standard metrics: COMET rei2020comet, BLEU papineni2002bleu, ROUGE chin2004rouge, and semantic similarity. We count an output as unaffected if its BLEU score exceeds 0.8, or if either its ROUGE or semantic similarity score exceeds 0.6, and compute accuracy as the share of unaffected outputs.
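The thresholding rule above can be written as a short helper. This is a sketch under assumptions: the scores are taken to be precomputed and normalized to [0, 1], the function names are hypothetical, and the choice of ROUGE variant and similarity model is left open because the text does not specify them.

```python
def is_unaffected(bleu, rouge, semantic_sim,
                  bleu_threshold=0.8, other_threshold=0.6):
    """Return True if the post-intervention output counts as unaffected.

    Thresholds follow the text: BLEU > 0.8, or ROUGE > 0.6, or
    semantic similarity > 0.6. All scores are assumed to lie in [0, 1].
    """
    return (bleu > bleu_threshold
            or rouge > other_threshold
            or semantic_sim > other_threshold)


def accuracy(score_triples):
    """Percentage of outputs judged unaffected.

    `score_triples` is an iterable of (bleu, rouge, semantic_sim) tuples,
    one per test example.
    """
    triples = list(score_triples)
    if not triples:
        return 0.0
    unaffected = sum(is_unaffected(*t) for t in triples)
    return 100.0 * unaffected / len(triples)
```

For example, four outputs of which three pass at least one threshold yield an accuracy of 75%.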

As shown in Table 1, masking cognitive heads leads to a significant decline in performance, whereas masking an equal number of random heads results in only marginal degradation across all LLMs. In some cases, masking the identified cognitive heads causes the accuracy to drop to zero, indicating that the model cannot execute the corresponding function without them. This sharp contrast highlights the essential role cognitive heads play in enabling specific reasoning capabilities. To further validate the functional specialization, we conduct experiments where we mask the retrieval heads during the evaluation of knowledge recall (Recall), and conversely, mask knowledge recall heads during the evaluation of retrieval performance. The results in Table 2 show that masking the corresponding cognitive heads causes a significantly larger performance drop than masking others.

Table 2: Intervention results (%) of different cognitive heads and random heads across Retrieval and Knowledge Recall functions.

| Model | Masked heads | Retrieval COMET | Retrieval Acc | Recall COMET | Recall Acc |
| --- | --- | --- | --- | --- | --- |
| Llama3.1-8B | random | 90.83 | 84.71 | 87.85 | 83.84 |
| Llama3.1-8B | retrieval | 44.96 | 8.24 | 72.05 | 33.33 |
| Llama3.1-8B | recall | 86.79 | 75.29 | 56.93 | 38.38 |
| Qwen3-8B | random | 92.81 | 75.29 | 89.90 | 53.54 |
| Qwen3-8B | retrieval | 59.19 | 38.24 | 79.26 | 57.58 |
| Qwen3-8B | recall | 83.31 | 71.18 | 64.81 | 30.30 |

We further investigate the model's performance under different numbers of masked attention heads. As shown in Figure 3, increasing the number of randomly masked heads has minimal impact on the overall performance of Llama3.1-8B-instruct. In contrast, masking cognitive heads results in a significant drop in performance across various functions. Notably, masking heads associated with Retrieval and Knowledge Recall causes a pronounced degradation in their respective functions, whereas functions such as Math Calculation and Inference exhibit more resilience. This suggests that certain cognitive functions depend more heavily on specific, distinguishable attention heads, while others are distributed more broadly across the model.
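The comparison between top-K and random-K masking comes down to how the masked set is chosen. The sketch below illustrates one way to build both sets for a sweep over K. It assumes a per-head importance score is already available for each function (for example, from the probing step); the dictionary layout and the function names are hypothetical.

```python
import random


def top_k_heads(importance, k):
    """Return the k (layer, head) pairs with the highest importance score.

    `importance` maps (layer, head) -> score for one cognitive function.
    """
    return sorted(importance, key=importance.get, reverse=True)[:k]


def random_k_heads(importance, k, seed=0):
    """Return k (layer, head) pairs drawn uniformly without replacement."""
    rng = random.Random(seed)
    return rng.sample(sorted(importance), k)


def masking_plan(importance, ks=(16, 32, 64, 128), seed=0):
    """For each K, pair the top-K set with a size-matched random baseline."""
    return {
        k: {"top": top_k_heads(importance, k),
            "random": random_k_heads(importance, k, seed)}
        for k in ks
    }
```

Each entry of the plan can then be passed to the ablation routine and scored with the same metrics, so that the two curves in Figure 3 differ only in which heads are masked.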

4.3 Relationship Among Cognitive Heads

While cognitive heads are specialized for distinct functions, understanding their relationships is crucial for revealing how complex reasoning emerges from their cooperation.

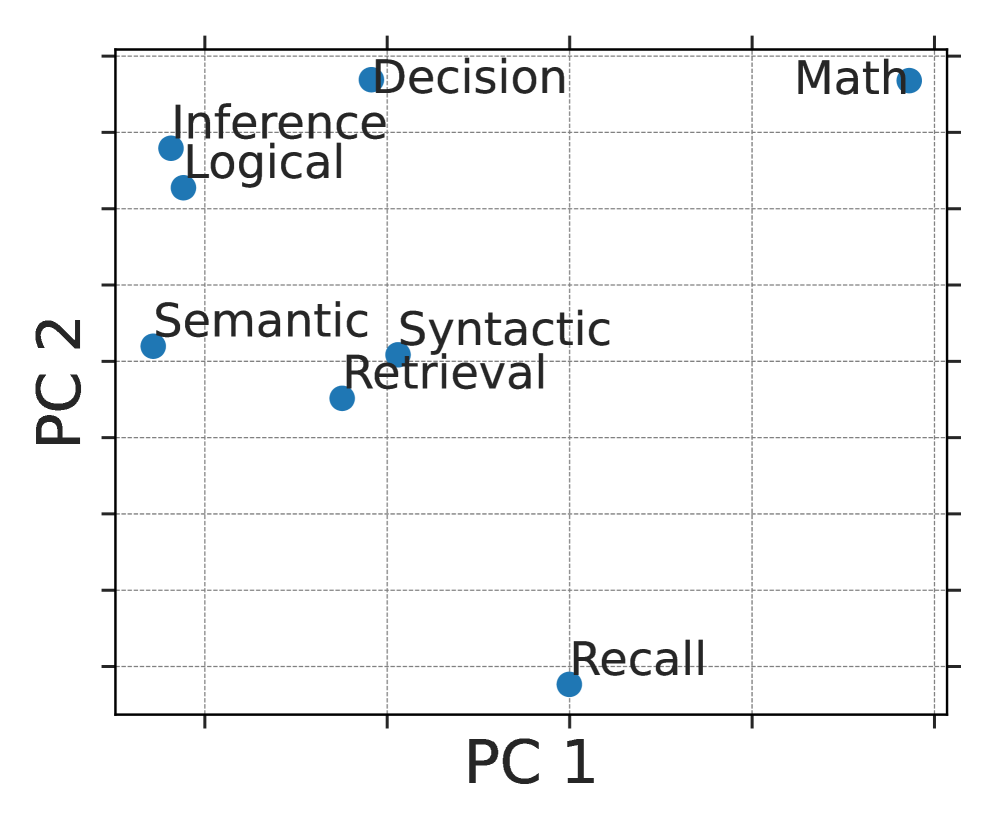

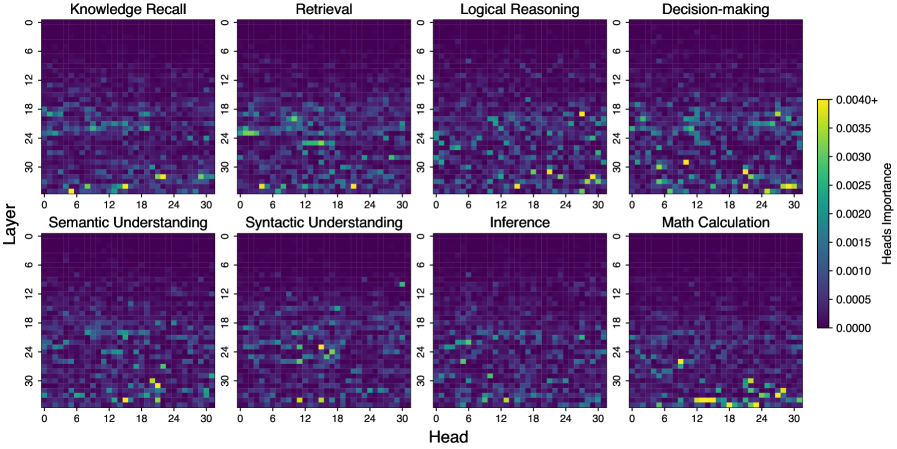

Functional Clustering: Inspired by neuroscience findings that related cognitive functions localize in overlapping brain regions (e.g., prefrontal cortex for reasoning and inference barsalou2014cognitive), we investigate whether LLM attention heads show similar patterns. We rank each head’s importance across eight cognitive functions, form ranking vectors, and apply principal component analysis (PCA) to visualize their organization (Figure 4). The results reveal clear clustering: heads linked to reasoning, inference, and decision-making group closely, while those related to mathematical computation form a distinct cluster in Llama and Qwen, and lie adjacent to reasoning heads in Yi. Lower-level functions also show moderate clustering. These patterns suggest a modular functional architecture in LLMs akin to that in the human brain.
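The ranking-and-projection step described above can be sketched as follows. This is an illustrative reconstruction under assumptions, not the authors' code: importance scores are toy inputs, ties are broken by head index, and PCA is computed with power iteration on the Gram matrix as a dependency-free stand-in for a library routine.

```python
import math
import random


def rank_vector(scores):
    """Rank heads by importance: rank 0 is the most important head."""
    order = sorted(range(len(scores)), key=lambda h: -scores[h])
    ranks = [0.0] * len(scores)
    for rank, head in enumerate(order):
        ranks[head] = float(rank)
    return ranks


def pca_2d(rows, iters=500, seed=0):
    """Project each row onto the first two principal components.

    Works on the Gram matrix of the centered rows, so the cost depends on
    the number of functions (rows), not the number of heads (columns).
    """
    n, dim = len(rows), len(rows[0])
    means = [sum(r[c] for r in rows) / n for c in range(dim)]
    centered = [[r[c] - means[c] for c in range(dim)] for r in rows]
    gram = [[sum(a * b for a, b in zip(ri, rj)) for rj in centered]
            for ri in centered]
    rng = random.Random(seed)
    coords = [[0.0, 0.0] for _ in range(n)]
    for comp in range(2):
        vec = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        for _ in range(iters):
            nxt = [sum(gram[i][j] * vec[j] for j in range(n)) for i in range(n)]
            norm = math.sqrt(sum(x * x for x in nxt))
            if norm < 1e-12:  # no variance left to extract
                break
            vec = [x / norm for x in nxt]
        eig = sum(vec[i] * gram[i][j] * vec[j]
                  for i in range(n) for j in range(n))
        for i in range(n):
            # Row coordinate on this component is sqrt(eigenvalue) * u_i.
            coords[i][comp] = math.sqrt(max(eig, 0.0)) * vec[i]
        # Deflate so the next pass finds the following component.
        for i in range(n):
            for j in range(n):
                gram[i][j] -= eig * vec[i] * vec[j]
    return coords
```

With one row of importance scores per cognitive function, `pca_2d([rank_vector(s) for s in importance])` yields one 2D point per function. Functions that rank heads similarly land close together, which is the property Figure 4 visualizes.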

<details>

<summary>x4.png Details</summary>

### Visual Description

## Scatter Plot: Cognitive Task Distribution in PC1 and PC2 Space

### Overview

The image is a scatter plot displaying the distribution of various cognitive tasks along two principal components, PC1 and PC2. Each task is represented by a blue dot, with its name labeled nearby. The plot provides a visual representation of how these tasks relate to each other in a two-dimensional space defined by the principal components.

### Components/Axes

* **X-axis (PC1):** Labeled "PC 1".

* **Y-axis (PC2):** Labeled "PC 2".

* **Data Points:** Blue dots representing cognitive tasks.

* **Gridlines:** Dashed gray lines forming a grid.

* **Cognitive Tasks (Categories):**

* Inference

* Logical

* Semantic

* Syntactic

* Retrieval

* Decision

* Math

* Recall

### Detailed Analysis

Here's a breakdown of the approximate coordinates of each cognitive task:

* **Inference:** PC1 ≈ -1.0, PC2 ≈ 1.0

* **Logical:** PC1 ≈ -1.0, PC2 ≈ 1.0

* **Semantic:** PC1 ≈ -1.0, PC2 ≈ 0.2

* **Syntactic:** PC1 ≈ -0.2, PC2 ≈ 0.3

* **Retrieval:** PC1 ≈ -0.2, PC2 ≈ 0.3

* **Decision:** PC1 ≈ 0.0, PC2 ≈ 1.2

* **Math:** PC1 ≈ 1.2, PC2 ≈ 1.3

* **Recall:** PC1 ≈ 0.0, PC2 ≈ -1.0

### Key Observations

* "Inference" and "Logical" are clustered closely together in the top-left quadrant.

* "Semantic," "Syntactic," and "Retrieval" are grouped in the middle-left region.

* "Decision" and "Math" are located in the top-right quadrant.

* "Recall" is isolated in the bottom-center region.

### Interpretation

The scatter plot visualizes the relationships between different cognitive tasks based on their projections onto the first two principal components (PC1 and PC2). The proximity of tasks suggests similarities in the underlying cognitive processes captured by these principal components. For example, "Inference" and "Logical" tasks being close together indicates that PC1 and PC2 may similarly influence these tasks. "Recall" being distant from the other tasks suggests it involves different cognitive processes. The plot provides a dimensionality reduction of the cognitive tasks, allowing for a simplified comparison of their characteristics.

</details>

(a) Llama3.1-8B

<details>

<summary>x5.png Details</summary>

### Visual Description

## Scatter Plot: Cognitive Task Distribution in PC1 and PC2 Space

### Overview

The image is a scatter plot displaying the distribution of various cognitive tasks in a two-dimensional space defined by Principal Component 1 (PC1) and Principal Component 2 (PC2). Each task is represented by a blue dot, with its position indicating its relative loading on the two principal components. The plot provides a visual representation of the relationships and distinctions between these cognitive tasks based on their underlying cognitive demands.

### Components/Axes

* **X-axis (PC1):** Labeled "PC 1". The axis ranges from approximately -1 to 1, with no explicit numerical markers.

* **Y-axis (PC2):** Labeled "PC 2". The axis ranges from approximately -1 to 1, with no explicit numerical markers.

* **Data Points:** Each data point is a blue circle representing a cognitive task.

* **Gridlines:** Gray dashed gridlines are present to aid in visually estimating the coordinates of each data point.

### Detailed Analysis

The following cognitive tasks are plotted:

* **Math:** Located in the top-left quadrant, with approximate coordinates of PC1 = -0.7, PC2 = 0.8.

* **Retrieval:** Located in the bottom-left quadrant, with approximate coordinates of PC1 = -0.8, PC2 = -0.4.

* **Syntactic:** Located slightly to the left of the center, with approximate coordinates of PC1 = -0.5, PC2 = -0.2.

* **Recall:** Located slightly to the left of the center, with approximate coordinates of PC1 = -0.4, PC2 = -0.1.

* **Semantic:** Located in the bottom-right quadrant, with approximate coordinates of PC1 = 0.2, PC2 = -0.7.

* **Decision:** Located in the top-right quadrant, with approximate coordinates of PC1 = 0.1, PC2 = 0.4.

* **Inference:** Located in the top-right quadrant, with approximate coordinates of PC1 = 0.6, PC2 = 0.5.

* **Logical:** Located in the top-right quadrant, with approximate coordinates of PC1 = 0.7, PC2 = 0.4.

### Key Observations

* **Clustering:** The tasks "Inference" and "Logical" are clustered closely together in the top-right quadrant. The tasks "Recall" and "Syntactic" are also clustered together, slightly to the left of the center.

* **Distribution:** The tasks are distributed across all four quadrants, indicating that PC1 and PC2 capture different aspects of cognitive processing.

* **Extreme Points:** "Math" has a high PC2 value and a low PC1 value, while "Semantic" has a low PC2 value and a slightly positive PC1 value.

### Interpretation

The scatter plot visualizes the relationships between different cognitive tasks based on their loadings on the first two principal components (PC1 and PC2). The proximity of tasks in the plot suggests that they share similar cognitive demands as captured by these principal components. For example, "Inference" and "Logical" tasks being close together suggests they load similarly on PC1 and PC2, potentially indicating shared cognitive processes. "Math" and "Semantic" are located in opposite quadrants, suggesting that they rely on different cognitive processes as captured by PC1 and PC2. The plot provides a dimensionality reduction of the cognitive tasks, allowing for a simplified comparison of their underlying cognitive requirements.

</details>

(b) Qwen3-4B

<details>

<summary>x6.png Details</summary>

### Visual Description

## Scatter Plot: Cognitive Task Distribution in PC Space

### Overview

The image is a scatter plot displaying the distribution of various cognitive tasks in a two-dimensional space defined by Principal Component 1 (PC1) and Principal Component 2 (PC2). Each task is represented by a blue dot, with its position indicating its loading on the two principal components. The plot provides a visual representation of the relationships between these tasks based on their underlying cognitive demands.

### Components/Axes

* **X-axis:** PC 1 (Principal Component 1)

* **Y-axis:** PC 2 (Principal Component 2)

* **Data Points:** Blue dots representing cognitive tasks.

* **Gridlines:** Dashed grey lines forming a grid.

* **Cognitive Tasks:**

* Syntactic

* Semantic

* Retrieval

* Inference

* Decision

* Logical

* Math

* Recall

### Detailed Analysis

The scatter plot shows the following approximate coordinates for each cognitive task:

* **Syntactic:** PC1 ~ 0.2, PC2 ~ 0.2

* **Semantic:** PC1 ~ 0.4, PC2 ~ 0.4

* **Retrieval:** PC1 ~ 0.5, PC2 ~ 0.6

* **Inference:** PC1 ~ 0.3, PC2 ~ 0.8

* **Decision:** PC1 ~ 0.4, PC2 ~ 0.9

* **Logical:** PC1 ~ 0.7, PC2 ~ 0.9

* **Math:** PC1 ~ 0.8, PC2 ~ 0.8

* **Recall:** PC1 ~ 0.9, PC2 ~ 0.3

### Key Observations

* The tasks are distributed across the plot, indicating varying degrees of loading on PC1 and PC2.

* "Syntactic" and "Semantic" are clustered together in the bottom-left quadrant.

* "Decision" and "Logical" are clustered together in the top-right quadrant.

* "Recall" is located in the bottom-right quadrant, separated from the other tasks.

### Interpretation

The scatter plot visualizes the relationships between different cognitive tasks based on their underlying cognitive demands, as captured by Principal Component Analysis. The proximity of tasks suggests similarities in their cognitive requirements. For example, "Syntactic" and "Semantic" tasks, being close together, may share common cognitive processes. Conversely, the separation of "Recall" from other tasks suggests it relies on different cognitive mechanisms. The plot provides a simplified representation of the cognitive landscape, highlighting the dimensions along which these tasks differ. The principal components (PC1 and PC2) likely represent underlying cognitive factors that contribute to the performance of these tasks. Further analysis would be needed to determine the specific cognitive processes captured by each principal component.

</details>

(c) Yi-1.5-6B

Figure 4: PCA visualization of the 8 function heads’ clustering in three models.

Table 3: Study on the influence of low-level cognitive heads for high-order function on Llama3.1-8B-instruct. Accuracy is measured based on BLEU, ROUGE, and semantic similarity scores.

| ✗ | ✓ | ✓ | ✓ | $0.00_{\downarrow 100}$ | $0.00_{\downarrow 100}$ | $0.00_{\downarrow 100}$ | $0.00_{\downarrow 100}$ |

| --- | --- | --- | --- | --- | --- | --- | --- |

| ✓ | ✗ | ✓ | ✓ | $0.00_{\definecolor{tcbcolback}{rgb}{0.87890625,0.9609375,1}\definecolor{tcbcolframe}{rgb}{0.87890625,0.9609375,1}\par\noindent\hbox to28.45pt{\vbox to9.96pt{\pgfpicture\makeatletter\hbox{\thinspace\lower 0.0pt\hbox to0.0pt{\pgfsys@beginscope\pgfsys@invoke{ }\definecolor{pgfstrokecolor}{rgb}{0,0,0}\pgfsys@color@rgb@stroke{0}{0}{0}\pgfsys@invoke{ }\pgfsys@color@rgb@fill{0}{0}{0}\pgfsys@invoke{ }\pgfsys@setlinewidth{\the\pgflinewidth}\pgfsys@invoke{ }\nullfont\hbox to0.0pt{{}{}{}{}\pgfsys@beginscope\pgfsys@invoke{ }{}{}{}{}{}{}{}{}\definecolor[named]{pgffillcolor}{rgb}{0.87890625,0.9609375,1}\pgfsys@color@rgb@fill{0.87890625}{0.9609375}{1}\pgfsys@invoke{ }\pgfsys@fill@opacity{1.0}\pgfsys@invoke{ }{{}{}{{}}}{{}{}{{}}}{}{}{{}{}{{}}}{{}{}{{}}}{}{}{{}{}{{}}}{{}{}{{}}}{}{}{{}{}{{}}}{{}{}{{}}}{}{}\pgfsys@moveto{0.0pt}{2.84526pt}\pgfsys@lineto{0.0pt}{7.11337pt}\pgfsys@curveto{0.0pt}{8.68478pt}{1.27385pt}{9.95863pt}{2.84526pt}{9.95863pt}\pgfsys@lineto{25.60748pt}{9.95863pt}\pgfsys@curveto{27.1789pt}{9.95863pt}{28.45274pt}{8.68478pt}{28.45274pt}{7.11337pt}\pgfsys@lineto{28.45274pt}{2.84526pt}\pgfsys@curveto{28.45274pt}{1.27385pt}{27.1789pt}{0.0pt}{25.60748pt}{0.0pt}\pgfsys@lineto{2.84526pt}{0.0pt}\pgfsys@curveto{1.27385pt}{0.0pt}{0.0pt}{1.27385pt}{0.0pt}{2.84526pt}\pgfsys@closepath\pgfsys@fill\pgfsys@invoke{ }\pgfsys@invoke{ }\pgfsys@endscope\pgfsys@beginscope\pgfsys@invoke{ }{}{}{}{}{}{}{}{}\definecolor[named]{pgffillcolor}{rgb}{0.87890625,0.9609375,1}\pgfsys@color@rgb@fill{0.87890625}{0.9609375}{1}\pgfsys@invoke{ }\pgfsys@fill@opacity{1.0}\pgfsys@invoke{ 
}\text{$\downarrow$ 100}}}}}}}$ | $0.00_{\downarrow 100}$ | $0.00_{\downarrow 100}$ | $0.00_{\downarrow 100}$ |