# Hoffman-London graphs: When paths minimize $H$-colorings among trees

**Authors**: David Galvin, Phillip Marmorino, Emily McMillon, JD Nir, Amanda Redlich


David Galvin ∗ University of Notre Dame dgalvin1@nd.edu

JD Nir Oakland University jdnir@oakland.edu

Phillip Marmorino † Purdue University pmarmori@purdue.edu

Emily McMillon ‡ Rice University em72@rice.edu

Amanda Redlich University of Massachusetts Lowell amanda redlich@uml.edu

January 1, 2026

## Abstract

Given a graph $G$ and a target graph $H$, an $H$-coloring of $G$ is an adjacency-preserving vertex map from $G$ to $H$. The number of $H$-colorings of $G$, $\hom(G,H)$, has been studied for many classes of $G$ and $H$. In particular, extremal questions of maximizing and minimizing $\hom(G,H)$ have been considered when $H$ is a clique or $G$ is a tree.

In this paper, we develop a new technique using automorphisms of $H$ to show that $\hom(T,H)$ is minimized by paths as $T$ varies over trees on a fixed number of vertices. We introduce the term Hoffman-London to refer to target graphs $H$ for which paths are minimal in this sense. In particular, we define an automorphic similarity matrix, which is used to compute $\hom(T,H)$, and give matrix conditions under which $H$ is Hoffman-London.

We then apply this technique to identify several families of graphs that are Hoffman-London, including loop threshold graphs and some with applications in statistical physics (e.g. the Widom-Rowlinson model). By combining our approach with a few other observations, we fully characterize the minimizing trees for all graphs H on three or fewer vertices.

## 1 Introduction

Graph coloring is a well-known problem in which vertices of a graph are assigned colors with the restriction that adjacent vertices receive different colors. In this paper, we consider the related notion of $H$-colorings of a graph $G$, in which vertices of $G$ are labeled by vertices of $H$ in such a way that adjacent vertices in $G$ must be 'colored' by adjacent vertices of $H$. Formally, given a simple, loopless graph $G = (V(G), E(G))$ and a target graph $H = (V(H), E(H))$ without multi-edges, but possibly containing loops, an $H$-coloring of $G$ is a map $f : V(G) \to V(H)$ that preserves adjacency: whenever $u \sim_G v$ we must have $f(u) \sim_H f(v)$. Note that when $H = K_q$, the complete graph on $q$ vertices, an $H$-coloring of $G$ is an assignment of the vertices of $G$ to one of $q$ values with the only restriction that adjacent vertices receive different labels; in other words, these are exactly the proper $q$-colorings of $G$. In this sense, $H$-colorings are a generalization of proper vertex colorings, though, as we will see, $H$-colorings generalize several other common graph theoretic notions as well.

∗ Galvin is in part supported by Simons Foundation Gift no. 854277 - Travel Support for Mathematicians.

† Marmorino is in part supported by NSF AGS-10002554.

‡ McMillon is in part supported by NSF DMS-2303380.

While we mainly focus on the framework of $H$-colorings, it should be noted that an $H$-coloring of $G$ is just a graph homomorphism from $G$ to $H$. Fittingly, we denote the set of all $H$-colorings of $G$ by $\mathrm{Hom}(G,H)$ and write $\hom(G,H) = |\mathrm{Hom}(G,H)|$ for the number of such colorings.

As mentioned, $H$-colorings encompass proper $q$-colorings by allowing $H$ to be a complete graph. By setting $H = H_{\mathrm{ind}}$, which is one looped vertex connected by an edge to an unlooped vertex, we see that $H$-colorings of $G$ also encode the independent (or stable) sets of $G$: the set of vertices mapped to the unlooped vertex contains no internal edges, and each independent set arises in this way from exactly one $H_{\mathrm{ind}}$-coloring. Lovász [32] investigated connections between $H$-colorings and graph limits, quasi-randomness, and property testing. In statistical physics, the language of $H$-coloring has been adopted to describe hard-constraint spin models; see e.g. [1, 2].

This article contributes to a broader investigation into an extremal enumeration question: for a given family of graphs $\mathcal{G}$ and a target graph $H$, can we characterize those $G \in \mathcal{G}$ which maximize or minimize $\hom(G,H)$? The origins of this question are attributed to Birkhoff's work on the 4-color conjecture [3, 4], with more recent attention coming from questions of Wilf [42] and Linial [30] concerning which graph on $n$ vertices and $m$ edges admits the most proper $q$-colorings, which is to say maximizes $\hom(G,K_q)$. For further results and conjectures, we direct the reader to the surveys [10, 45].

Here we consider the case where $\mathcal{G}$ is a family of trees; in particular, we consider $\mathcal{G} = \mathcal{T}_n$, the set of all trees on $n$ vertices. As in many such questions, the path $P_n$ and the star $S_n = K_{1,n-1}$ are natural candidates for extremal graphs. When $H = H_{\mathrm{ind}}$, Prodinger and Tichy [34] proved that for each $n$, the number of independent sets among $n$-vertex trees is maximized by stars and minimized by paths, confirming that for each $T_n \in \mathcal{T}_n$,

$$\hom(P_n, H_{\mathrm{ind}}) \leq \hom(T_n, H_{\mathrm{ind}}) \leq \hom(S_n, H_{\mathrm{ind}}).$$
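The two extremes above are easy to check numerically. The Python sketch below is our own illustration, not code from the paper: it counts $H$-colorings of a tree by leaf-to-root dynamic programming, assuming $H$ is given as a 0/1 adjacency matrix with a 1 on the diagonal for each loop. The helper names `count_hom`, `path`, and `star` are hypothetical.

```python
"""Count H-colorings of small trees and compare paths with stars for H_ind."""


def count_hom(tree, H):
    """Return hom(tree, H) for a tree given as an adjacency list on 0..n-1.

    H is a square 0/1 adjacency matrix; H[x][x] == 1 means x carries a loop.
    """
    n, q = len(tree), len(H)
    parent, order, seen = [-1] * n, [0], [False] * n
    seen[0] = True
    for u in order:  # breadth-first search; `order` grows while we iterate
        for w in tree[u]:
            if not seen[w]:
                seen[w], parent[w] = True, u
                order.append(w)
    # ways[u][x]: colorings of the subtree below u in which u receives color x
    ways = [[1] * q for _ in range(n)]
    for u in reversed(order):  # children are finished before their parents
        p = parent[u]
        if p >= 0:
            for x in range(q):
                ways[p][x] *= sum(ways[u][y] for y in range(q) if H[x][y])
    return sum(ways[0])


def path(n):
    return [[j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)]


def star(n):
    return [list(range(1, n))] + [[0] for _ in range(n - 1)]


# H_ind: vertex 0 is looped, vertex 1 is unlooped (the "independent set" vertex)
H_IND = [[1, 1], [1, 0]]

for n in range(1, 9):
    print(n, count_hom(path(n), H_IND), count_hom(star(n), H_IND))
```

The path column should follow the Fibonacci numbers $2, 3, 5, 8, \ldots$, and the star column should equal $2^{n-1} + 1$ (the center is sent either to the unlooped vertex, forcing every leaf to the looped one, or to the looped vertex, leaving the leaves free).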

The Hoffman-London inequality (see [25, 31, 39]) is equivalent to the statement that $\hom(P_n,H) \leq \hom(S_n,H)$ for any choice of $H$ and $n$. Sidorenko [40] extended the Hoffman-London inequality to the following very general result:

Theorem 1.1 (Sidorenko [40]). Fix $H$ and $n \geq 1$. For any $T_n \in \mathcal{T}_n$,

$$\hom(T_n, H) \leq \hom(S_n, H).$$

In other words, for any target graph $H$ and order $n$, not only $P_n$ but every tree $T_n$ admits at most as many $H$-colorings as $S_n$. Sidorenko's result entirely resolves the maximization question for trees (and, it can be shown, connected graphs).

Given the result of Prodinger and Tichy, one might ask whether paths minimize the number of $H$-colorings: could one show a result analogous to Sidorenko's, that $\hom(P_n,H) \leq \hom(T_n,H)$ for all target graphs? Csikvári and Lin [6], following earlier work of Leontovich [29], dash such hopes by describing a target graph $H$ and a tree $E_7$ on seven vertices for which $\hom(E_7,H) < \hom(P_7,H)$. They raise the following natural problem [6, Problem 6.2]:

Problem 1.2. Characterize those $H$ for which $\hom(P_n,H) \leq \hom(T_n,H)$ holds for all $n$ and all $T_n \in \mathcal{T}_n$.

Inspired by [25, 31], we introduce the following definitions:

Definition 1.3. We say a target graph $H$ is Hoffman-London if for all $n \geq 1$,

$$\min_{T_n \in \mathcal{T}_n} \hom(T_n, H) = \hom(P_n, H).$$

If it holds that $\hom(P_n,H) < \hom(T_n,H)$ for all $T_n \in \mathcal{T}_n \setminus \{P_n\}$ and all $n \geq 1$, we say that $H$ is strongly Hoffman-London.
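Definition 1.3 quantifies over all $n$, so no finite computation can certify it, but small cases can be tested exhaustively. The sketch below is a hypothetical illustration with made-up helper names: it enumerates all labelled trees on $n$ vertices via Prüfer sequences and checks whether the path attains the minimum of $\hom(T_n,H)$ for every $n \le n_{\max}$. Since there are $n^{n-2}$ labelled trees, it is only practical for very small $n$.

```python
import heapq
from itertools import product


def count_hom(tree, H):
    """hom(tree, H) by leaf-to-root dynamic programming (H: 0/1 matrix, loops on diagonal)."""
    n, q = len(tree), len(H)
    parent, order, seen = [-1] * n, [0], [False] * n
    seen[0] = True
    for u in order:
        for w in tree[u]:
            if not seen[w]:
                seen[w], parent[w] = True, u
                order.append(w)
    ways = [[1] * q for _ in range(n)]
    for u in reversed(order):
        if parent[u] >= 0:
            for x in range(q):
                ways[parent[u]][x] *= sum(ways[u][y] for y in range(q) if H[x][y])
    return sum(ways[0])


def prufer_to_tree(seq, n):
    """Decode a Pruefer sequence of length n - 2 into an adjacency list."""
    degree = [1] * n
    for x in seq:
        degree[x] += 1
    leaves = [i for i in range(n) if degree[i] == 1]
    heapq.heapify(leaves)
    adj = [[] for _ in range(n)]
    for x in seq:
        leaf = heapq.heappop(leaves)  # smallest current leaf
        adj[leaf].append(x)
        adj[x].append(leaf)
        degree[x] -= 1
        if degree[x] == 1:
            heapq.heappush(leaves, x)
    u, v = heapq.heappop(leaves), heapq.heappop(leaves)
    adj[u].append(v)
    adj[v].append(u)
    return adj


def all_trees(n):
    """Yield every labelled tree on n vertices (n^(n-2) of them for n >= 2)."""
    if n == 1:
        yield [[]]
        return
    for seq in product(range(n), repeat=n - 2):
        yield prufer_to_tree(seq, n)


def path_is_minimal_up_to(H, n_max):
    """Check min over T in T_n of hom(T, H) == hom(P_n, H) for n = 1..n_max."""
    for n in range(1, n_max + 1):
        path = [[j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)]
        p = count_hom(path, H)
        if min(count_hom(t, H) for t in all_trees(n)) != p:
            return False
    return True


print(path_is_minimal_up_to([[1, 1], [1, 0]], 6))  # H_ind
```

With $H_{\mathrm{ind}}$, or with $K_{1,2}$ encoded as `[[0, 1, 1], [1, 0, 0], [1, 0, 0]]`, the check should succeed, consistent with Prodinger and Tichy and with Theorem 2.6. Enumerating labelled rather than unlabelled trees is wasteful, but it keeps the code short.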

We can thus restate Problem 1.2 as: which target graphs $H$ are Hoffman-London? To make progress on this problem, we develop criteria for identifying Hoffman-London and strongly Hoffman-London target graphs based on concepts introduced in a companion paper [19], which we restate here. Given a target graph $H$, possibly with loops, the orbit partition of $H$ is the partition of $V(H)$ obtained by declaring a pair of vertices $u, v$ to be equivalent if there is an automorphism that maps $u$ to $v$. We say such vertices are automorphically similar. Denote the equivalence classes of the orbit partition, which we call automorphic similarity classes, by $H_1, \ldots, H_k$, where $k$ is the number of automorphism orbits of $V(H)$. We will show in Lemma 3.1 that for each $1 \leq i \leq k$ and $1 \leq j \leq k$, there is a constant $m_{i,j}$ counting the number of neighbors any $v \in H_i$ has in $H_j$, independent of the specific choice of $v$. The automorphic similarity matrix of $H$ (relative to the specific ordering of the automorphic similarity classes) is the $k \times k$ matrix $M = M(H)$ whose $(i,j)$-entry is $m_{i,j}$.
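For small target graphs, the orbit partition and the automorphic similarity matrix can be computed by brute force. The following sketch uses hypothetical helper names and tries all $|V(H)|!$ permutations, so it is only suitable for tiny $H$. It lists the classes in order of their smallest vertex, which is just one of the possible orderings, and asserts the equitability guaranteed by Lemma 3.1.

```python
from itertools import permutations


def automorphisms(H):
    """All automorphisms of H (0/1 matrix, loops on the diagonal), by brute force."""
    q = len(H)
    for p in permutations(range(q)):
        if all(H[x][y] == H[p[x]][p[y]] for x in range(q) for y in range(q)):
            yield p


def orbit_partition(H):
    """Automorphic similarity classes, listed in order of their smallest vertex."""
    autos = list(automorphisms(H))
    classes, assigned = [], set()
    for v in range(len(H)):
        if v not in assigned:
            orbit = sorted({p[v] for p in autos})  # the orbit of v
            assigned.update(orbit)
            classes.append(orbit)
    return classes


def similarity_matrix(H, classes):
    """m[i][j] = number of neighbours in class j of any vertex in class i.

    A loop at x makes x its own neighbour once, matching the degree convention.
    """
    k = len(classes)
    M = [[0] * k for _ in range(k)]
    for i, Ci in enumerate(classes):
        for j, Cj in enumerate(classes):
            counts = {sum(H[x][y] for y in Cj) for x in Ci}
            assert len(counts) == 1, "orbit partition should be equitable"
            M[i][j] = counts.pop()
    return M


# K_{1,2}: centre 0 joined to leaves 1 and 2
K12 = [[0, 1, 1], [1, 0, 0], [1, 0, 0]]
classes = orbit_partition(K12)
print(classes, similarity_matrix(K12, classes))
```

For $K_{1,2}$ this prints `[[0], [1, 2]] [[0, 2], [1, 0]]`: the center forms one class and the two leaves form the other.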

We also make use of the following matrix property, which is related to the idea of stochastic domination.

Definition 1.4. A $k \times k$ matrix $M$ with $(i,j)$-entry $m_{i,j}$ has the increasing columns property if, for every terminal segment of columns, the row sums over that segment are non-decreasing from each row to the next: for each $1 \leq c \leq k$ and each $1 \leq i \leq k-1$,

$$\sum_{j=c}^{k} m_{i,j} \leq \sum_{j=c}^{k} m_{i+1,j}.$$
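Checking the increasing columns property, and searching for an ordering of the classes that has it (as Theorem 1.5 below requires), is mechanical. In this sketch, with hypothetical function names, reordering the classes by a permutation `perm` permutes rows and columns simultaneously, so the reordered entries are $m'_{i,j} = m_{\mathrm{perm}(i),\mathrm{perm}(j)}$.

```python
from itertools import permutations


def has_increasing_columns(M):
    """Definition 1.4: every terminal column sum is non-decreasing down the rows."""
    k = len(M)
    for c in range(k):
        tails = [sum(row[c:]) for row in M]
        if any(tails[i] > tails[i + 1] for i in range(k - 1)):
            return False
    return True


def find_good_ordering(M):
    """Return a class ordering (as a tuple) with the property, or None."""
    k = len(M)
    for perm in permutations(range(k)):
        P = [[M[perm[i]][perm[j]] for j in range(k)] for i in range(k)]
        if has_increasing_columns(P):
            return perm
    return None


print(find_good_ordering([[1, 1], [1, 0]]))  # H_ind, classes (looped, unlooped)
print(find_good_ordering([[0, 2], [1, 0]]))  # K_{1,2}, classes (centre, leaves)
```

For $H_{\mathrm{ind}}$ the search returns `(1, 0)`, i.e. listing the unlooped vertex first. For $K_{1,2}$ it returns `None`: no ordering works, even though Theorem 2.6 shows that $K_{1,2}$ is Hoffman-London, so the matrix criterion is sufficient but not necessary.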

Our first contribution, captured by Theorem 1.5, is a test that can be applied to the automorphic similarity matrix of a graph H which, when it applies, proves that H is Hoffman-London.

Theorem 1.5. Let $H$ be a target graph, possibly with loops. Suppose there is an ordering of the automorphic similarity classes $H_1, \ldots, H_k$ of $H$ such that the automorphic similarity matrix $M$ has the increasing columns property. Then $H$ is Hoffman-London.

In certain cases, the reasoning behind Theorem 1.5 can be extended to show $H$ is strongly Hoffman-London. For a graph $G$ with designated vertex $v$, let $\mathrm{Hom}_{v \to i}(G,H)$ denote the set of $H$-colorings of $G$ in which $v$ is mapped to some (arbitrary but specific) representative of $H_i$. We use $\hom_{v \to i}(G,H)$ for the number of such $H$-colorings. Although $\mathrm{Hom}_{v \to i}(G,H)$ clearly depends on the choice of representative, in Lemma 3.2 we will show that $\hom_{v \to i}(G,H)$ does not.

Corollary 1.6. Let $H$ be a target graph that satisfies the hypotheses of Theorem 1.5. Suppose that for each $t \geq 2$, there exist two classes, $H_{a(t)}$ and $H_{b(t)}$, such that there is at least one $H$-coloring of $P_t$ that sends one endvertex of $P_t$ to a vertex in $H_{a(t)}$ and the other endvertex to a vertex in $H_{b(t)}$. Suppose further that for all $s \geq 2$, it holds that $\hom_{v \to b(t)}(P_s,H) > \hom_{v \to a(t)}(P_s,H)$, where $v$ is one of the endvertices of $P_s$. Then $H$ is strongly Hoffman-London.

In Section 4 we apply Theorem 1.5 to prove (in some cases reproving known results) that several families of target graphs are Hoffman-London. These examples include vertex transitive graphs to which looped dominating vertices are added (partially recovering a result of Engbers and the first author [15]), paths (partially recovering a result of Csikvári and Lin [7]) and fully looped paths, loop threshold graphs, blow-ups of fully looped stars, and families of graphs (including the Widom-Rowlinson model) originating from statistical physics. When possible, we also apply Corollary 1.6 to show that these graphs are strongly Hoffman-London.

## 2 Preliminary observations

In this section, we gather some observations about enumerating H -colorings of trees that will prove useful at various points throughout the paper. We also present a proof that complete bipartite graphs are Hoffman-London.

We begin with two observations pertaining to target graphs with more than one component. Both results follow immediately from the definition of H -coloring.

Observation 2.1. If $H$ has components $H_1, \ldots, H_k$, then for any connected graph $G$ we have

$$\hom(G, H) = \sum_{i=1}^{k} \hom(G, H_i).$$

In particular, if it holds that $\hom(P_n,H_i) \leq \hom(T_n,H_i)$ for all $i$, $n$, and $T_n \in \mathcal{T}_n$, then it holds that $\hom(P_n,H) \leq \hom(T_n,H)$ for all $n$ and $T_n \in \mathcal{T}_n$.

That is, the set of graphs that are Hoffman-London is closed under taking disjoint unions. The second observation follows from the first, but is useful on its own when considering graphs with isolated vertices.

Observation 2.2. If $H$ has isolated vertices and $H'$ is obtained from $H$ by removing the isolated vertices, then for each $G$ without isolated vertices we have $\hom(G,H) = \hom(G,H')$. In particular, for the purpose of minimizing $H$-colorings of trees on two or more vertices, we can ignore isolated vertices.

We now make an observation about regular target graphs. Note that here and throughout this paper, the degree of a vertex $v$ is the number of edges (including loops) that contain $v$, so loops contribute 1 to the degree, not 2 as is common elsewhere in the literature.

Observation 2.3. If $H$ is regular, then all trees on $n$ vertices admit the same number of $H$-colorings. Specifically, $\hom(T,H) = |V(H)|\, d^{n-1}$, where $d$ is the degree of each vertex in $H$.

It follows that regular graphs are, trivially, Hoffman-London. To see the validity of Observation 2.3, put an ordering $v_1, \ldots, v_n$ on the vertices of $T$ such that for each $i \geq 2$, $v_i$ is adjacent to exactly one $v_j$ with $j < i$. For example, start with an arbitrary vertex as $v_1$, then list all the vertices at distance 1 from $v_1$ in some arbitrary order, then move on to all the vertices at distance 2 from $v_1$, and so on. When $H$-coloring the vertices of $T$ in this order, there are $|V(H)|$ options for the color of $v_1$, then $d$ options for the color of each successive vertex, namely the neighbors in $H$ of the color already given to its unique earlier neighbor.
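Observation 2.3 can be sanity-checked by exhaustive search on small cases. The sketch below, with hypothetical helper names, compares a brute-force count of adjacency-preserving maps against $|V(H)|\,d^{n-1}$, counting a loop once toward the degree as in the convention above; the fully looped $K_2$ exercises that convention.

```python
from itertools import product


def brute_hom(tree, H):
    """Count maps f: V(tree) -> V(H) preserving adjacency, by exhaustive search."""
    n, q = len(tree), len(H)
    return sum(
        all(H[f[u]][f[w]] for u in range(n) for w in tree[u])
        for f in product(range(q), repeat=n)
    )


def regular_formula(n, H):
    """|V(H)| * d^(n-1) from Observation 2.3; H must be regular (loops count once)."""
    degrees = {sum(row) for row in H}
    assert len(degrees) == 1, "H is not regular"
    return len(H) * degrees.pop() ** (n - 1)


K3 = [[0, 1, 1], [1, 0, 1], [1, 1, 0]]  # 2-regular, no loops
LOOPED_K2 = [[1, 1], [1, 1]]            # 2-regular: one loop plus one edge
trees5 = {
    "path": [[1], [0, 2], [1, 3], [2, 4], [3]],
    "star": [[1, 2, 3, 4], [0], [0], [0], [0]],
    "spider": [[1, 2, 3], [0], [0], [0, 4], [3]],
}
for name, t in trees5.items():
    print(name, brute_hom(t, K3), regular_formula(5, K3))
```

Each of the three five-vertex trees should report 48 proper 3-colorings, equal to $3 \cdot 2^4$.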

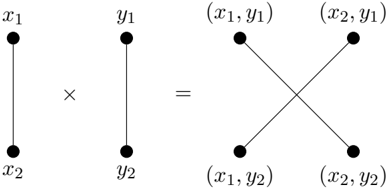

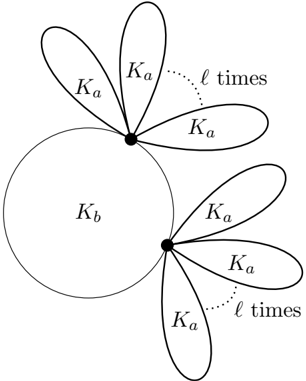

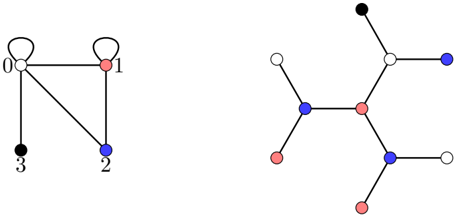

Next, we recall the tensor product of two graphs. For graphs $H_1$ and $H_2$, the graph $H_1 \times H_2$ has vertex set $V(H_1) \times V(H_2)$, and pairs $(x_1,y_1)$, $(x_2,y_2)$ are adjacent in $H_1 \times H_2$ if and only if $x_1x_2 \in E(H_1)$ and $y_1y_2 \in E(H_2)$; see Figure 1.

Figure 1: The tensor product $K_2 \times K_2$.

There is a strong connection between tensor products and $H$-colorings, namely that for any graphs $G$, $H_1$, and $H_2$ we have

$$\hom(G, H_1 \times H_2) = \hom(G, H_1)\,\hom(G, H_2)$$

(see e.g. [32, Equation (5.30)]). From this fact we draw the following two conclusions:

Observation 2.4. If $H_1, H_2$ satisfy $\hom(P_n,H_i) \leq \hom(T_n,H_i)$ for each $i \in \{1,2\}$, $n \geq 1$, and $T_n \in \mathcal{T}_n$, then

$$\hom(P_n, H_1 \times H_2) \leq \hom(T_n, H_1 \times H_2)$$

for all $n$ and $T_n \in \mathcal{T}_n$.

That is, the set of graphs that are Hoffman-London is closed under taking tensor products.

Observation 2.5. Let $R$ be a regular graph of degree $d$ with at least one edge. Then for any $H$ and any $n \geq 1$, the trees $T_n$ in $\mathcal{T}_n$ that minimize $\hom(T_n,H)$ are the same as the trees $T_n$ in $\mathcal{T}_n$ that minimize $\hom(T_n, H \times R)$.

Observation 2.5 follows from the tensor product identity above (which also underlies Observation 2.4) and from the fact that $\hom(T_n,R) = |V(R)|\, d^{n-1}$ (see Observation 2.3), which is positive and independent of the specific choice of $T_n \in \mathcal{T}_n$.

Finally, we present a result whose justification is more involved. A bipartition $V(G) = X \cup Y$ of a graph is called balanced if the part sizes are as equal as possible, i.e. $|X| = |Y|$ if $|V(G)|$ is even and $\big||X| - |Y|\big| = 1$ if $|V(G)|$ is odd.

Theorem 2.6. Each complete bipartite graph $H = K_{a,b}$ is Hoffman-London. When $a \neq b$, $T_n \in \mathcal{T}_n$ minimizes $\hom(T_n,H)$ if and only if $T_n$ has a balanced bipartition.

Proof. If $a = b$, then $H$ is a regular graph and Observation 2.3 tells us that every tree admits the same number of $H$-colorings. For the remainder of the proof we assume that $a \neq b$.

Any tree $T_n$ on $n$ vertices has a unique bipartition, say $V(T_n) = X \cup Y$, with $|X| = k$ and $|Y| = n-k$. Let $A$ be the part of $H$ containing $a$ vertices and $B$ be the part containing $b$ vertices. Let $\varphi \in \mathrm{Hom}(T_n, K_{a,b})$ and $x \in X$. Since $T_n$ is connected and every edge of $H$ joins $A$ to $B$, $\varphi$ sends vertices at even distance from $x$ to the same part as $\varphi(x)$ and vertices at odd distance from $x$ to the other part. Hence if $\varphi(x) \in A$, we have $\varphi(X) \subseteq A$ and $\varphi(Y) \subseteq B$, and if $\varphi(x) \in B$, then $\varphi(X) \subseteq B$ and $\varphi(Y) \subseteq A$. Furthermore, as $H$ is complete bipartite, the image of $x$ (and each other vertex in $X$) can be any vertex in the appropriate part, and similarly for the vertices of $Y$, so

$$\hom(T_n, H) = f(k) := a^k b^{n-k} + a^{n-k} b^k.$$

We have

$$f'(k) = (\log a - \log b)\left(a^k b^{n-k} - a^{n-k} b^k\right) = \frac{(\log a - \log b)\left(a^{2k} b^{n} - a^{n} b^{2k}\right)}{a^k b^k}.$$

As $a \neq b$, the only zero of $f'$ occurs when $a^{n-2k} = b^{n-2k}$, which, for distinct positive integers $a$ and $b$, requires $n - 2k = 0$, or $k = n/2$. Noting

$$f''(k) = (\log a - \log b)^2 \left(a^k b^{n-k} + a^{n-k} b^k\right) = \frac{(\log a - \log b)^2\left(a^{2k} b^{n} + a^{n} b^{2k}\right)}{a^k b^k} > 0,$$

we see that $k = n/2$ is a minimum of $f$, and furthermore $f$ is strictly decreasing as $k$ increases from $0$ to $n/2$. Since also $f(k) = f(n-k)$, when $n$ is even we obtain

$$\min_{T_n \in \mathcal{T}_n} \hom(T_n, H) \geq f(n/2) = 2\,a^{n/2} b^{n/2}.$$

When $n$ is odd, since $k$ must be an integer, the integer minimizers of $f$ are $k = (n-1)/2$ and $k = (n+1)/2$, so

$$\min_{T_n \in \mathcal{T}_n} \hom(T_n, H) \geq a^{(n-1)/2} b^{(n+1)/2} + a^{(n+1)/2} b^{(n-1)/2}.$$

In both cases, $P_n$ achieves the bound, as does any tree whose bipartition is balanced. If $T$ is a tree whose bipartition is not balanced, $f(k)$ is strictly greater than the minimum value.
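The extremal computation in this proof can be reproduced directly. The sketch below, with hypothetical helper names, evaluates $f(k) = a^k b^{n-k} + a^{n-k} b^k$ over the part sizes $1 \le k \le n-1$ available to a tree with $n \ge 2$ vertices, and cross-checks $f$ against a brute-force count for $K_{1,2}$ on $P_4$ and $S_4$.

```python
from itertools import product


def f(k, n, a, b):
    """hom(T_n, K_{a,b}) for a tree whose bipartition has parts of sizes k and n - k."""
    return a**k * b**(n - k) + a**(n - k) * b**k


def minimizing_sizes(n, a, b):
    """Part sizes k in 1..n-1 (those possible for trees with n >= 2) minimising f."""
    values = {k: f(k, n, a, b) for k in range(1, n)}
    best = min(values.values())
    return sorted(k for k, v in values.items() if v == best)


def brute_hom(tree, H):
    """Exhaustive count of adjacency-preserving maps from a tree into H."""
    n, q = len(tree), len(H)
    return sum(all(H[g[u]][g[w]] for u in range(n) for w in tree[u])
               for g in product(range(q), repeat=n))


K12 = [[0, 1, 1], [1, 0, 0], [1, 0, 0]]  # K_{1,2}: a = 1, b = 2
print(minimizing_sizes(6, 1, 2), minimizing_sizes(7, 1, 2))
print(brute_hom([[1], [0, 2], [1, 3], [2]], K12), f(2, 4, 1, 2))  # P_4: k = 2
print(brute_hom([[1, 2, 3], [0], [0], [0]], K12), f(1, 4, 1, 2))  # S_4: k = 1
```

This should print `[3] [3, 4]`, then `8 8`, then `10 10`: the balanced sizes win, and the path $P_4$ beats the star $S_4$.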

Csikvári and Lin [7] observed the case $a = 1$ of Theorem 2.6. The question of whether complete multipartite graphs with more than two parts are Hoffman-London remains open.

## 3 Proofs of Theorem 1.5 and Corollary 1.6

In this section, we prove Theorem 1.5, our primary condition for identifying Hoffman-London graphs $H$, and Corollary 1.6, our condition for identifying strongly Hoffman-London graphs. Recall from the introduction that if $H$ is a target graph, possibly with loops, and $u$ and $v$ are vertices in $H$, we say $u$ and $v$ are automorphically similar if there is an automorphism of $H$ that sends $u$ to $v$. Automorphic similarity defines an equivalence relation on $V(H)$, and we call the equivalence classes of that relation the automorphic similarity classes of $H$. The resulting partition of the vertices is sometimes called the orbit partition. As discussed in [23, Section 9.3], the orbit partition is an equitable partition, which means that for any two (not necessarily distinct) automorphic similarity classes $A$ and $B$, there is a constant $c(A,B)$ such that $|N(v) \cap B| = c(A,B)$ for each $v \in A$. In other words, vertices in the same automorphic similarity class have the same number of neighbors in each class, including their own. For completeness, and because the proof is brief and illustrative, we include the following lemma (which was first presented in [19]).

Lemma 3.1. Let $H$ be a graph, possibly with loops, and let $H_1, \ldots, H_k$ be the automorphic similarity classes of $H$. For any $1 \leq i \leq k$, any $u, v \in H_i$, and any $1 \leq j \leq k$,

$$|N(u) \cap H_j| = |N(v) \cap H_j|.$$

Proof. Let $\varphi$ be an automorphism of $H$ that sends $u$ to $v$. Then each $w \in N(u) \cap H_j$ is mapped to $w' = \varphi(w)$. As $w \sim u$, we see $w' \sim v$, and as $\varphi(w) = w'$, the vertices $w$ and $w'$ are automorphically similar, so $w' \in H_j$ as well. Since $\varphi$ is injective, we have shown $|N(u) \cap H_j| \leq |N(v) \cap H_j|$. Repeating the analysis with $\varphi^{-1}$, we see $|N(u) \cap H_j| \geq |N(v) \cap H_j|$, and so equality holds.

Using Lemma 3.1, given an ordering $H_1, \ldots, H_k$ of the automorphic similarity classes of $H$, we define $m_{i,j} = c(H_i,H_j)$ to be the number of neighbors that an arbitrary vertex in $H_i$ has in $H_j$. We then define an automorphic similarity matrix of $H$ to be a $k \times k$ matrix $M = M(H)$ whose $(i,j)$-entry is $m_{i,j}$, where we note that different orderings of the automorphic similarity classes of $H$ may result in different automorphic similarity matrices.

As we will see in Lemma 3.3, the matrix M , together with the sizes of the automorphic similarity classes of H , contains all of the information necessary to count H -colorings of trees. This could also be accomplished using the adjacency matrix of H , but for highly symmetric target graphs the automorphic similarity matrix is much smaller than the adjacency matrix and is thus easier to analyze. First, though, we need the following result, also presented in [19].

Lemma 3.2. Let $H$ be a graph, possibly with loops, and let $G$ be an arbitrary graph with distinguished vertex $v$. Suppose $w$ and $w'$ are automorphically similar vertices in $H$. Let $\mathcal{G}$ be the set of $H$-colorings of $G$ in which $v$ is sent to $w$, and let $\mathcal{G}'$ be the set of $H$-colorings of $G$ in which $v$ is sent to $w'$. Then $|\mathcal{G}| = |\mathcal{G}'|$.

Proof. Let $\varphi$ be an automorphism of $H$ that sends $w$ to $w'$, which exists as $w$ and $w'$ are automorphically similar. Then the function mapping $f$ to $\varphi \circ f$ is a bijection from $\mathcal{G}$ to $\mathcal{G}'$, with inverse $f \mapsto \varphi^{-1} \circ f$.

We now present another lemma, which we require in the proof of Theorem 1.5 but which we feel is also of independent interest: a generalization of the tree-walk algorithm of Csikvári and Lin [6] to the setting of automorphic similarity classes and matrices. Recall that $\mathrm{Hom}_{v \to i}(T,H)$ denotes the set of $H$-colorings of $T$ in which $v$ is mapped to some (arbitrary but specific) representative of $H_i$ and that we set $\hom_{v \to i}(T,H) = |\mathrm{Hom}_{v \to i}(T,H)|$.

Lemma 3.3. Let $H$ be a target graph with automorphic similarity classes $H_1, \ldots, H_k$ and associated automorphic similarity matrix $M$. Let $T$ be a tree with root $v$. Let $a(H)$ be the row vector whose $i$th entry is $|H_i|$ and define $h(T,v)$ to be the column vector whose $i$th entry is $\hom_{v \to i}(T,H)$.

Then $\hom(T,H) = a(H)\, h(T,v)$, and $h(T,v)$ can be explicitly computed from $T$, $a(H)$, and $M$, via recursion on $T$.

Proof. To see that $\hom(T,H) = a(H)\, h(T,v)$, partition $\mathrm{Hom}(T,H)$ according to the color given to $v$. There are $|H_i|$ classes in this partition in which $v$ is sent to some vertex in $H_i$, and by Lemma 3.2, each of these classes has size $\hom_{v \to i}(T,H)$. Hence the total count of $H$-colorings is

$$\sum_{i=1}^{k} |H_i| \hom_{v \to i}(T,H).$$

To compute $h(T,v)$, we proceed recursively. When $T$ consists of a single vertex, necessarily $v$, we have $\hom_{v \to i}(T,H) = 1$ for any $i$, and so $h(T,v)$ is the constant vector with all entries equal to 1.

When $T$ has more than one vertex, we consider separately the cases $\deg(v) = 1$ and $\deg(v) \geq 2$. When $\deg(v) = 1$, let $w$ be the neighbor of $v$. Then

$$\hom_{v \to i}(T, H) = \sum_{j=1}^{k} m_{i,j} \hom_{w \to j}(T - v, H).$$

Indeed, once we have colored $v$ with the representative vertex from $H_i$, there are, for each $j$, $m_{i,j}$ choices for a color from $H_j$ for $w$ (as the representative vertex in $H_i$ has $m_{i,j}$ neighbors in $H_j$ for each $j$). For each of these $m_{i,j}$ choices, recalling that $\hom_{w \to j}(T-v,H)$ is independent of the choice of representative from $H_j$ due to Lemma 3.2, there are $\hom_{w \to j}(T-v,H)$ ways to extend the coloring to the rest of $T$. More succinctly, we have

$$h(T,v) = M\, h(T-v, w),$$

where $M$ is the automorphic similarity matrix of $H$.
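When $T$ is a path rooted at an endvertex, every step of the recursion is the $\deg(v) = 1$ case, so $h(P_t, v) = M^{t-1}\mathbf{1}$ and $\hom(P_t,H) = a(H)\,M^{t-1}\mathbf{1}$. A minimal Python rendering of this special case follows; the function names are hypothetical, and the class sizes and matrix must be supplied by the caller.

```python
def mat_vec(M, x):
    """Plain matrix-vector product for small integer matrices."""
    return [sum(m * xj for m, xj in zip(row, x)) for row in M]


def hom_path(t, sizes, M):
    """hom(P_t, H) = a(H) M^(t-1) 1, via t - 1 uses of h(T, v) = M h(T - v, w)."""
    h = [1] * len(M)       # h(P_1, v): the all-ones vector
    for _ in range(t - 1):
        h = mat_vec(M, h)  # re-attach one more leaf v to the current end w
    return sum(s * x for s, x in zip(sizes, h))


print(hom_path(5, [1, 1], [[1, 1], [1, 0]]))  # H_ind on P_5
print(hom_path(4, [1, 2], [[0, 2], [1, 0]]))  # K_{1,2} on P_4
```

With $H_{\mathrm{ind}}$ (sizes `[1, 1]`) and $t = 5$ this gives 13, the number of independent sets of $P_5$, and with $K_{1,2}$ (sizes `[1, 2]`) and $t = 4$ it gives 8, matching a direct count.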

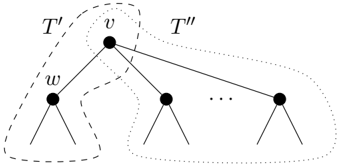

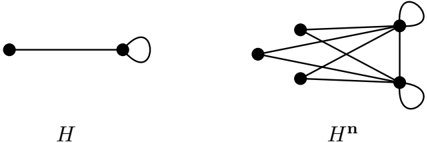

When $\deg(v) = d \geq 2$, let $w$ be any neighbor of $v$. Form the tree $T'$ by taking the component of $T - v$ that contains $w$ and adding a new vertex $v' \sim w$. Form the tree $T''$ by taking the components of $T - v$ that do not contain $w$ (of which there is at least one, because $v$ has degree at least two) and adding a new vertex $v''$ adjacent to each vertex in them that was adjacent to $v$ in $T$. Note that by gluing $v'$ to $v''$ we recover $T$. (See Figure 2.)

Figure 2: Deconstructing T into T ′ and T ′′ .


Then

$$h(T,v) = h(T',v') \odot h(T'',v''),$$

where $\odot$ represents the Hadamard product, in which entries are multiplied term-wise. Indeed, to $H$-color $T$ while sending $v$ to $H_i$, we take any $H$-coloring of $T'$ where $v'$ is sent to $H_i$ ($\hom_{v' \to i}(T',H)$ many options) and independently any $H$-coloring of $T''$ where $v''$ is sent to $H_i$ ($\hom_{v'' \to i}(T'',H)$ many options). Both $T'$ and $T''$ have fewer vertices than $T$, and $v'$ is a leaf of $T'$, so repeatedly applying these rules computes $h(T,v)$.
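Unwinding both rules, $h(T,v)$ is the Hadamard product, over the children $w$ of $v$, of $M\,h(T_w,w)$, where $T_w$ is the branch of $T$ hanging from $w$. The recursive sketch below implements that reading of Lemma 3.3 and evaluates $\hom(T,H) = a(H)\,h(T,v)$; the function names are hypothetical, and Python's recursion limit restricts it to modest trees.

```python
def h_vector(tree, v, M, parent=None):
    """h(T, v) via Lemma 3.3's rules, for a tree given as an adjacency list.

    Each child w of v contributes M h(T_w, w), where T_w is the branch at w;
    branches are combined with the Hadamard (entrywise) product, which is
    what repeatedly splitting off T' and T'' amounts to.
    """
    k = len(M)
    vec = [1] * k  # single vertex: the all-ones vector
    for w in tree[v]:
        if w == parent:
            continue
        child = h_vector(tree, w, M, v)
        branch = [sum(M[i][j] * child[j] for j in range(k)) for i in range(k)]
        vec = [x * y for x, y in zip(vec, branch)]  # Hadamard product
    return vec


def hom_via_classes(tree, sizes, M, root=0):
    """hom(T, H) = a(H) h(T, root)."""
    return sum(s * x for s, x in zip(sizes, h_vector(tree, root, M)))


# H_ind as classes (looped vertex, unlooped vertex), each of size 1
SIZES, M_IND = [1, 1], [[1, 1], [1, 0]]
spider = [[1, 2, 3], [0], [0], [0, 4], [3]]  # centre 0; one leg has length 2
print(hom_via_classes(spider, SIZES, M_IND))
```

The printed value, 14, is the number of independent sets of this five-vertex spider, which lies between the path count 13 and the star count 17; re-rooting the tree at any other vertex should give the same total.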

̸

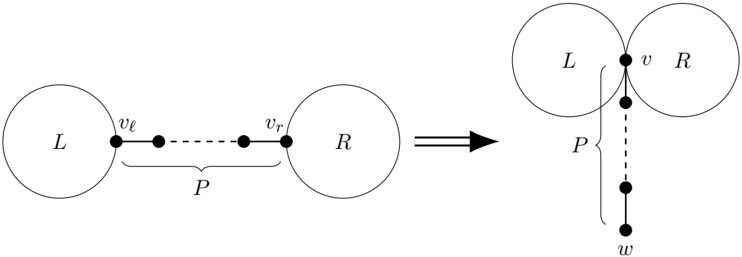

We require an additional ingredient before proving Theorem 1.5. The KC ordering, introduced by Csikv´ ari [5] (under the name generalized tree shift ) as a generalization of an operation introduced by Kelmans [28], is a partial ordering of trees on n vertices. Let T be a tree, and let v ℓ = v r be two non-leaf vertices with the property that the unique v ℓ to v r path in T is a bare path, meaning that each of the internal vertices, if they exist, have degree two. Let P denote that path on t ≥ 2 vertices.

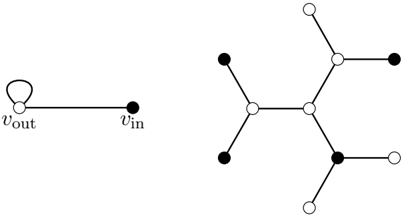

Removing the edges and internal vertices (if any) of $P$ from $T$ leaves two components; call them $L$ (the one containing $v_\ell$) and $R$ (the one containing $v_r$). We think of these as the 'left' and 'right' components. Denote by $T_{KC}$ the tree built as follows: glue $L$ and $R$ together into a single tree by identifying the vertices $v_\ell$ and $v_r$; let $v$ be the vertex created by the identification. Then complete the construction of $T_{KC}$ by appending a path with $t-1$ new vertices to $v$, so that $T_{KC}$ again has $n$ vertices; denote by $w$ the other end of the appended path. We say that $T_{KC}$ is obtained from $T$ by a KC move. Figure 3 illustrates this process. Note that $T$ has at least one such pair of non-leaf vertices $v_\ell, v_r$ exactly if $T$ is not a star.
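A KC move is easy to carry out on an adjacency-set representation. In the sketch below (hypothetical function names; trees are dictionaries mapping each vertex to its set of neighbors), the labels freed by deleting the internal vertices of $P$ and by merging $v_r$ into $v_\ell$ are reused for the $t-1$ appended vertices, so $T_{KC}$ has the same vertex labels as $T$ and $v_\ell$ plays the role of $v$.

```python
from collections import deque


def path_between(adj, s, t):
    """The unique s-t path in a tree, found by breadth-first search."""
    prev = {s: None}
    queue = deque([s])
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in prev:
                prev[w] = u
                queue.append(w)
    path = [t]
    while path[-1] != s:
        path.append(prev[path[-1]])
    return path[::-1]


def kc_move(adj, vl, vr):
    """Return T_KC for a tree given as {vertex: set of neighbours}.

    Freed labels (internal path vertices and v_r) are reused for the appended
    path, so the result has the same vertex set; v_l plays the role of v.
    """
    if vl == vr or len(adj[vl]) < 2 or len(adj[vr]) < 2:
        raise ValueError("v_l and v_r must be distinct non-leaf vertices")
    adj = {u: set(ns) for u, ns in adj.items()}  # work on a copy
    P = path_between(adj, vl, vr)
    internal = P[1:-1]
    if any(len(adj[u]) != 2 for u in internal):
        raise ValueError("the v_l-v_r path is not bare")
    for x, y in zip(P, P[1:]):  # delete the edges of P
        adj[x].discard(y)
        adj[y].discard(x)
    for u in internal:  # delete the internal vertices of P
        del adj[u]
    for u in adj.pop(vr):  # identify v_r with v_l (its neighbours lie in R)
        adj[u].discard(vr)
        adj[u].add(vl)
        adj[vl].add(u)
    prev = vl  # append a path of t - 1 vertices at v
    for u in internal + [vr]:
        adj[u] = {prev}
        adj[prev].add(u)
        prev = u
    return adj  # the far end w of the appended path is labelled vr


P6 = {i: {j for j in (i - 1, i + 1) if 0 <= j < 6} for i in range(6)}
print(kc_move(P6, 1, 4))
```

For example, on the path $0, 1, 2, 3, 4, 5$ with $v_\ell = 1$ and $v_r = 4$, the move produces a spider centred at 1 with legs of lengths 1, 1, and 3.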


Figure 3: A demonstration of a KC move.


Csikvári [5, Remark 2.4] shows that the relation $S \le T$, defined by $T$ being obtainable from $S$ by a sequence of KC moves, defines, for each $n$, a partial order on $\mathcal{T}_n$ (the *KC ordering*), with $P_n$ the unique minimal element and $S_n$ the unique maximal element.

The following lemma is necessary for the proof of Theorem 1.5. The proof that we give follows [27, Theorem 1.1, (a) ⇒ (c)]. We choose to present the full details because [27] only treats matrices whose row sums are 1.

Lemma 3.4. Let $M$ be a $k \times k$ non-negative matrix that has the increasing columns property, and let $h$ be a non-negative column vector of dimension $k$ whose entries are non-decreasing. Then the entries of $Mh$ are non-negative and non-decreasing.

Proof. Let $S$ be the $k \times k$ matrix that has 1s on and below the main diagonal and 0s everywhere else. Note that $S^{-1}$ has 1s down the main diagonal, $-1$s down the first subdiagonal, and 0s everywhere else, which can be verified by computing $SS^{-1}$.

Observe that for a vector $v = [v_1\ v_2\ \cdots\ v_k]^T$, the entries of $v$ being non-negative and non-decreasing is equivalent to the entries of $S^{-1}v$ being non-negative, because the first entry of $S^{-1}v$ is $v_1$ and, for $i > 1$, the $i$th entry is $v_i - v_{i-1}$.

We wish to show that the entries of $Mh$ are non-negative and non-decreasing. This is equivalent to $S^{-1}Mh$ having non-negative entries, and since $S^{-1}Mh = (S^{-1}MS)(S^{-1}h)$, it is implied by both factors having non-negative entries. By assumption, the entries of $h$ are non-negative and non-decreasing, so the entries of $S^{-1}h$ are non-negative. Therefore it suffices to show that $S^{-1}MS$ has non-negative entries.

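Let $A = S^{-1}MS$ and write $M = (m_{i,q})$. Since $S_{q,j} = 1$ exactly when $q \ge j$, and $(S^{-1})_{i,p}$ is $1$ for $p = i$, $-1$ for $p = i-1$, and $0$ otherwise, we have

$$A_{i,j} = \sum_{p=1}^{k}\sum_{q=1}^{k} (S^{-1})_{i,p}\, m_{p,q}\, S_{q,j} = \sum_{q=j}^{k} m_{i,q} - \sum_{q=j}^{k} m_{i-1,q},$$

where we adopt the convention that $m_{0,q} = 0$. That

$$\sum_{q=j}^{k} m_{i,q} - \sum_{q=j}^{k} m_{i-1,q} \geq 0$$

follows from the increasing columns property (and, for $i = 1$, from the non-negativity of $M$).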
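The computation of $A = S^{-1}MS$ can be checked numerically. In this Python sketch (our illustration; all function names are hypothetical), we read the increasing columns property as the condition that each tail sum $\sum_{q \ge j} m_{i,q}$ is non-decreasing in $i$, which is precisely the non-negativity of the entries of $A$ derived above. The example matrices are the automorphic similarity matrix of $H(3,2,1)$ from Section 4.2, with the added vertices as $H_1$, and the matrix from Example 4.4.

```python
def mat_mul(X, Y):
    """Product of two matrices given as lists of rows."""
    return [[sum(X[i][p] * Y[p][j] for p in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def lower_ones(k):
    """S: 1s on and below the main diagonal, 0s elsewhere."""
    return [[1 if i >= j else 0 for j in range(k)] for i in range(k)]


def lower_ones_inverse(k):
    """S^{-1}: 1s on the diagonal, -1s on the first subdiagonal, 0s elsewhere."""
    return [[1 if i == j else -1 if i == j + 1 else 0 for j in range(k)] for i in range(k)]


def conjugate(M):
    """A = S^{-1} M S."""
    k = len(M)
    return mat_mul(mat_mul(lower_ones_inverse(k), M), lower_ones(k))


def tail_difference(M):
    """A[i][j] = sum_{q >= j} (M[i][q] - M[i-1][q]), with a zero row above row 0."""
    k = len(M)
    above = [[0] * k] + M[:-1]
    return [[sum(M[i][q] - above[i][q] for q in range(j, k)) for j in range(k)]
            for i in range(k)]


M_GOOD = [[1, 1], [2, 1]]                         # H(3, 2, 1): H_1 = added vertices
M_FOLKMAN = [[0, 4, 1], [4, 0, 1], [10, 10, 1]]  # Example 4.4, order (A, B, C)

print(conjugate(M_GOOD))     # [[2, 1], [1, 0]]: non-negative
print(conjugate(M_FOLKMAN))  # [[5, 5, 1], [0, -4, 0], [16, 10, 0]]: a negative entry
print(mat_mul(M_GOOD, [[1], [3]]))  # [[4], [5]]: M h stays non-decreasing
```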

We're now prepared to prove Theorem 1.5, which we restate here for convenience.

Theorem 1.5. Let $H$ be a target graph, possibly with loops. Suppose there is an ordering of the automorphic similarity classes $H_1, \ldots, H_k$ of $H$ such that the automorphic similarity matrix $M$ has the increasing columns property. Then $H$ is Hoffman-London.

Proof. We show that when $H$ is a graph whose automorphic similarity classes can be ordered in such a way that the automorphic similarity matrix of $H$ has the increasing columns property, applying a non-trivial KC move to a tree does not decrease the number of $H$-colorings of the tree. As the path is the minimum element of the KC ordering, it must therefore be among the minimizers of $\hom(T_n, H)$.


We begin by establishing some notation. Let $T$ be a non-star tree, let $v_\ell \neq v_r$ be two non-leaf vertices of $T$ with the property that all of the internal vertices on the unique $v_\ell$ to $v_r$ path have degree two, let $t$ be the number of vertices on that path, and let $T^{\mathrm{KC}}$ be the tree obtained from $T$ by applying a KC move at $v_\ell$ and $v_r$. Suppose $H$ has automorphic similarity classes $H_1, \ldots, H_k$, labeled in such a way that the automorphic similarity matrix $M$ has the increasing columns property. For $1 \le i \le k$, denote by $\mathcal{L}_i$ the set of $H$-colorings of $L$ in which $v_\ell$ is mapped to some specific (but arbitrarily chosen) vertex $w_i$ of $H_i$, and let $\ell_i = |\mathcal{L}_i|$. Define $\mathcal{R}_i$ and $r_i$ analogously (with $L$ replaced by $R$ and $v_\ell$ by $v_r$). Note that by Lemma 3.2, $\ell_i$ and $r_i$ depend only on $i$ and not on the specific choice of $w_i$. For $w, v \in V(H)$, denote by $\mathcal{P}^t(w,v)$ the set of $H$-colorings of a path $x_1, \ldots, x_t$ (where, recall, $t$ is the number of vertices on the unique path in $T$ between $v_\ell$ and $v_r$) in which $x_1$ is mapped to $w$ and $x_t$ is mapped to $v$, and let $p^t(w,v) = |\mathcal{P}^t(w,v)|$. Finally, for $1 \le i, j \le k$, let $\mathcal{P}^t_{i,j} = \bigcup_{w \in H_i,\, v \in H_j} \mathcal{P}^t(w,v)$ and let $p^t_{i,j} = |\mathcal{P}^t_{i,j}| = \sum_{w \in H_i,\, v \in H_j} p^t(w,v)$.

The idea at the core of this proof is that $\hom(T,H)$ and $\hom(T^{\mathrm{KC}},H)$ can both be expressed in terms of the $\ell_i$, $r_j$, and $p^t_{i,j}$ by partitioning the $H$-colorings according to the automorphic similarity classes to which $v_\ell$ and $v_r$ are mapped (in the case of $T$) or to which $v$ and $w$ are mapped (in the case of $T^{\mathrm{KC}}$). In each case we get expressions that are sums of $k^2$ terms, and we show $\hom(T^{\mathrm{KC}},H) \ge \hom(T,H)$ by proving that each term in the summation expression for $\hom(T^{\mathrm{KC}},H) - \hom(T,H)$ is non-negative.

When computing $\hom(T,H)$, $v_\ell$ and $v_r$ can take any pair of values, and those pairs of values then determine the values taken by the endvertices of $P$, which gives

$$\hom(T,H) = \sum_{i=1}^{k}\sum_{j=1}^{k}\sum_{w\in H_i}\sum_{v\in H_j} \ell_i\, r_j\, p^t(w,v) = \sum_{i=1}^{k}\sum_{j=1}^{k} \ell_i\, r_j\, p^t_{i,j}. \tag{5}$$

Note here that the count of $H$-colorings of $T$ that send $v_\ell$ to some $w \in H_i$ and $v_r$ to some $v \in H_j$ should, *a priori*, involve terms that depend on $w$ and $v$, but by Lemma 3.2 these terms ($\ell_i$ and $r_j$, respectively) are independent of $w$ and $v$.

In contrast, when computing $\hom(T^{\mathrm{KC}},H)$, $v_\ell$ and $v_r$ are forced to take a common value, which determines the value at one of the endvertices of $P$, while the value at the other endvertex is free, which means

$$\hom(T^{\mathrm{KC}},H) = \sum_{i=1}^{k}\sum_{j=1}^{k}\sum_{w\in H_i}\sum_{v\in H_j} \ell_i\, r_i\, p^t(w,v) = \sum_{i=1}^{k}\sum_{j=1}^{k} \ell_i\, r_i\, p^t_{i,j}. \tag{6}$$

The term $\ell_i r_i p^t_{i,i}$ appears in both sums for each $1 \le i \le k$. Using symmetry (reversing the path) to note that $p^t_{i,j} = p^t_{j,i}$ for $1 \le i < j \le k$, we combine equations (5) and (6) to write

$$\hom(T^{\mathrm{KC}},H) - \hom(T,H) = \sum_{1 \le i < j \le k} \left(\ell_i r_i + \ell_j r_j - \ell_i r_j - \ell_j r_i\right) p^t_{i,j} = \sum_{1 \le i < j \le k} (\ell_j - \ell_i)(r_j - r_i)\, p^t_{i,j}. \tag{7}$$

Noting that trivially $p^t_{i,j} \ge 0$, we conclude that in order to prove $\hom(T^{\mathrm{KC}},H) \ge \hom(T,H)$ it suffices to show that for each $i, j$ we have $(\ell_j - \ell_i)(r_j - r_i) \ge 0$.
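Identity (7) is pure algebra once $p^t_{i,j} = p^t_{j,i}$, so it can be spot-checked on random data. The following Python sketch is a test harness of our own (the function names are hypothetical): it compares the difference of (6) and (5) with the right-hand side of (7) for random non-negative integers $\ell_i$, $r_i$ and random symmetric $p^t_{i,j}$.

```python
import random


def kc_minus_tree_direct(l, r, p):
    """(6) minus (5): sum_{i,j} l_i r_i p_ij - sum_{i,j} l_i r_j p_ij."""
    k = len(l)
    hom_kc = sum(l[i] * r[i] * p[i][j] for i in range(k) for j in range(k))
    hom_t = sum(l[i] * r[j] * p[i][j] for i in range(k) for j in range(k))
    return hom_kc - hom_t


def kc_minus_tree_pairs(l, r, p):
    """Right-hand side of (7): sum over i < j of (l_j - l_i)(r_j - r_i) p_ij."""
    k = len(l)
    return sum((l[j] - l[i]) * (r[j] - r[i]) * p[i][j]
               for i in range(k) for j in range(i + 1, k))


def random_symmetric(k, rng):
    """Random symmetric k x k matrix with entries in 0..9 (stands in for p^t)."""
    p = [[0] * k for _ in range(k)]
    for i in range(k):
        for j in range(i, k):
            p[i][j] = p[j][i] = rng.randrange(10)
    return p


rng = random.Random(0)
for _ in range(1000):
    k = rng.randrange(1, 6)
    l = [rng.randrange(20) for _ in range(k)]
    r = [rng.randrange(20) for _ in range(k)]
    p = random_symmetric(k, rng)
    assert kc_minus_tree_direct(l, r, p) == kc_minus_tree_pairs(l, r, p)

print(kc_minus_tree_pairs([1, 2], [1, 3], [[1, 2], [2, 1]]))  # (2-1)(3-1)*2 = 4
```

When the $\ell_i$ and $r_i$ are non-decreasing in $i$, every summand on the right of (7) is non-negative, which is the reduction used in the proof.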

Given any tree $T$ and distinguished vertex $v$, recall that $\hom_{v\to i}(T,H)$ denotes the number of $H$-colorings of $T$ in which the distinguished vertex $v$ is mapped to some specific (but arbitrarily chosen) vertex $w_i$ of $H_i$, where we again note that by Lemma 3.2, $\hom_{v\to i}(T,H)$ depends only on $i$ and not on the specific choice of $w_i$. As in the statement of Lemma 3.3, define $h(T,v)$ to be the $k$-dimensional column vector whose $i$th entry is $\hom_{v\to i}(T,H)$. We now prove that our condition on the columns of $M$, the automorphic similarity matrix of $H$, ensures that $h(T,v)$ is non-decreasing for any choice of $T$ and $v$, so that in particular, when $i < j$,

$$\ell_j = \hom_{v_\ell \to j}(L,H) \ge \hom_{v_\ell \to i}(L,H) = \ell_i,$$

and analogously $r_j \ge r_i$.

To see that $h(T,v)$ is always non-decreasing, we use Lemmas 3.3 and 3.4. By the proof of Lemma 3.3, $h(T,v)$ can be computed from the all-1s column vector by a sequence of steps that involve taking Hadamard products of vectors and pre-multiplying vectors by $M$. Combining the facts that the all-1s vector is non-negative and non-decreasing, that the Hadamard product of non-negative, non-decreasing vectors is both non-negative and non-decreasing, and that (by Lemma 3.4) pre-multiplying by $M$ preserves the properties of being non-negative and non-decreasing, we see that $h(T,v)$ is indeed non-negative and non-decreasing. Therefore $\ell_j \ge \ell_i$ and $r_j \ge r_i$ whenever $i < j$, so for each $i$ and $j$ we have $(\ell_j - \ell_i)(r_j - r_i) \ge 0$, and we must have $\hom(T^{\mathrm{KC}},H) \ge \hom(T,H)$, as desired.
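The inductive argument can be illustrated with a class-level version of the recursion from the proof of Lemma 3.3: start from the all-1s vector at the leaves, pre-multiply by $M$ along each edge, and take Hadamard products at branch points. The Python sketch below is a randomized sanity check, not a proof. It assumes two example matrices with the increasing columns property: $H_{\mathrm{ind}}$ with the unlooped vertex as $H_1$ and the looped vertex as $H_2$, and $H(3,2,1)$ with the added vertices as $H_1$. The function names are hypothetical.

```python
import random


def class_vector(children, root, M):
    """h(T, root): entry i counts H-colourings sending root to a fixed vertex of H_i.

    children: dict mapping each vertex of a rooted tree to its list of children.
    M:        similarity matrix, M[i][j] = neighbours in H_j of a vertex of H_i.
    """
    k = len(M)
    vec = [1] * k  # a leaf contributes the all-1s vector
    for c in children[root]:
        sub = class_vector(children, c, M)
        pushed = [sum(M[i][j] * sub[j] for j in range(k)) for i in range(k)]  # M h
        vec = [a * b for a, b in zip(vec, pushed)]  # Hadamard product
    return vec


def random_rooted_tree(n, rng):
    """Random recursive tree on 0..n-1 rooted at 0: each vertex picks an earlier parent."""
    children = {u: [] for u in range(n)}
    for u in range(1, n):
        children[rng.randrange(u)].append(u)
    return children


def non_decreasing(vec):
    return all(x <= y for x, y in zip(vec, vec[1:]))


M_IND = [[0, 1], [1, 1]]   # H_ind: H_1 = unlooped vertex, H_2 = looped vertex
M_H321 = [[1, 1], [2, 1]]  # H(3, 2, 1): H_1 = added vertices, H_2 = clique
rng = random.Random(1)
for M in (M_IND, M_H321):
    for _ in range(300):
        tree = random_rooted_tree(rng.randrange(1, 13), rng)
        vec = class_vector(tree, 0, M)
        assert min(vec) >= 0 and non_decreasing(vec)

print(class_vector({0: [1], 1: [2], 2: []}, 0, M_H321))  # [5, 7]
```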

We now restate and prove Corollary 1.6, which gives conditions under which paths uniquely minimize $\hom(T,H)$. The proof focuses on equation (7) in the special case where $T$ is a path, and aims to show that at least one of the summands on the right-hand side of equation (7) is (under certain circumstances) strictly positive. As an aside, we note that, unlike traditional corollaries, which follow directly from a theorem, Corollary 1.6 is justified using ideas from the proof of Theorem 1.5 rather than its statement. Nonetheless, we use the term 'corollary' to highlight the connection between the results.

Corollary 1.6. Let $H$ be a target graph that satisfies the hypotheses of Theorem 1.5. Suppose that for each $t \ge 2$, there exist two classes, $H_{a(t)}$ and $H_{b(t)}$, such that there is at least one $H$-coloring of $P_t$ that sends one endvertex of $P_t$ to a vertex in $H_{a(t)}$ and the other endvertex to a vertex in $H_{b(t)}$. Suppose further that for all $s \ge 2$, it holds that $\hom_{v\to b(t)}(P_s,H) > \hom_{v\to a(t)}(P_s,H)$, where $v$ is one of the endvertices of $P_s$. Then $H$ is strongly Hoffman-London.

Proof. Let $P_n^{\mathrm{KC}}$ be obtained from $P_n$ by a KC move, and suppose that the bare path involved in this move has $t$ vertices. We have

$$\hom(P_n^{\mathrm{KC}},H) - \hom(P_n,H) = \sum_{1 \le i < j \le k} (\ell_j - \ell_i)(r_j - r_i)\, p^t_{i,j} \tag{8}$$

$$\ge (\ell_{b(t)} - \ell_{a(t)})(r_{b(t)} - r_{a(t)})\, p^t_{a(t),b(t)}. \tag{9}$$

Equation (8) is an instance of equation (7), while inequality (9) uses that $(\ell_j - \ell_i)(r_j - r_i)\, p^t_{i,j} \ge 0$ for all $i, j$, which comes from the proof of Theorem 1.5.

Note that $p^t_{a(t),b(t)}$ counts the number of $H$-colorings of $P_t$ that send one endvertex of $P_t$ to something in $H_{a(t)}$ and the other end to something in $H_{b(t)}$; by hypothesis there is at least one such $H$-coloring, so $p^t_{a(t),b(t)} > 0$.

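We have that $\ell_{b(t)} = \hom_{v_\ell\to b(t)}(L,H)$ and $\ell_{a(t)} = \hom_{v_\ell\to a(t)}(L,H)$. As $L$ is a path on at least two vertices with $v_\ell$ as an endvertex (since $v_\ell$ is not a leaf of $P_n$), we have by hypothesis that $\ell_{b(t)} > \ell_{a(t)}$. Similarly, $r_{b(t)} > r_{a(t)}$. We conclude that

$$(\ell_{b(t)} - \ell_{a(t)})(r_{b(t)} - r_{a(t)})\, p^t_{a(t),b(t)} > 0.$$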

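It follows from inequality (9) that $\hom(P_n^{\mathrm{KC}},H) - \hom(P_n,H) > 0$. Now let $T_n \neq P_n$ be any tree on $n$ vertices. Since $P_n$ is the minimum element of the KC ordering, $T_n$ can be obtained from $P_n$ by a sequence of KC moves; letting $P_n^{\mathrm{KC}}$ denote the tree produced by the first move in such a sequence, the proof of Theorem 1.5 gives $\hom(P_n,H) < \hom(P_n^{\mathrm{KC}},H) \le \hom(T_n,H)$, and the result follows.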

## 4 Applications of Theorem 1.5

One goal of this paper is to use Theorem 1.5 to contribute towards the resolution of Problem 1.2. Before we state our results in this direction, we summarize some pertinent results from the literature. We then show how Theorem 1.5 can reprove and sometimes strengthen some of these results, before moving on to establishing some new Hoffman-London families.

## 4.1 Two previous results on Hoffman-London graphs

In inequality (1), we saw that the path minimizes the number of independent sets among trees, i.e., that the target graph $H_{\mathrm{ind}}$ is Hoffman-London, where, recall, $H_{\mathrm{ind}}$ consists of two vertices joined by an edge with a loop at one vertex. Engbers and Galvin [15] gave a significant generalization of this result by showing that target graphs $H$ formed by adding looped dominating vertices (looped vertices that are adjacent to all other vertices) to a regular graph are Hoffman-London, and they characterized the strongly Hoffman-London target graphs in this family.

Theorem 4.1 (Engbers, Galvin [15]). If $H$ is obtained from a regular graph by adding any number of looped dominating vertices, then $H$ is Hoffman-London. If $H$ is not regular (equivalently, if $H$ is not a fully looped complete graph), then $H$ is strongly Hoffman-London.

A special case of Theorem 4.1, namely that $H$ is strongly Hoffman-London when it is obtained from an empty (edgeless) graph by adding any non-zero number of looped dominating vertices (equivalently, when it is the join of an empty graph and a fully looped complete graph), can also be easily derived from earlier work of Wingard [43, Theorem 5.1 and Theorem 5.2] on independent sets of fixed size in trees.

Csikvári and Lin [7] showed that paths and stars are Hoffman-London.

Theorem 4.2 (Csikvári, Lin [7]). If $H$ is either a path or a star (on any number of vertices), then for all $n$ and all $T_n \in \mathcal{T}_n$ we have

$$\hom(P_n,H) \le \hom(T_n,H).$$

Note that Csikvári and Lin do not comment on when paths and stars are strongly Hoffman-London. We will address this question for paths in Section 4.3, specifically after the proof of Theorem 4.6. For stars, note that Theorem 2.6 shows that $S_k$ is not strongly Hoffman-London for any $k$.

## 4.2 Target graphs with two automorphic similarity classes

In this section we specialize Theorem 1.5 to the case in which $H$ has exactly two automorphic similarity classes and is not a regular graph. (Note that by Observation 2.3, if $H$ is regular, then $\hom(T_n,H)$ is independent of $T_n$, so $H$ is Hoffman-London but not strongly Hoffman-London.) Recall that automorphic similarity classes partition the vertex set, and so in this case all vertices of $H$ are in either $H_1$ or $H_2$. We have the following corollary of Theorem 1.5 and Corollary 1.6.

Corollary 4.3. Let $H$ be a target graph that is not regular and that has two automorphic similarity classes, $H_1$ and $H_2$, ordered so that the (common) degree of any vertex in $H_1$ is smaller than the (common) degree of any vertex in $H_2$. If $m_{1,2} \le m_{2,2}$, then $H$ is Hoffman-London. If, further, there is a vertex in $H$ that has a neighbor in $H_1$ and a neighbor in $H_2$, then $H$ is strongly Hoffman-London.

Proof. To see that $H$ is Hoffman-London, note that for any vertices $x \in H_1$ and $y \in H_2$ we have

$$\deg(x) = m_{1,1} + m_{1,2} < m_{2,1} + m_{2,2} = \deg(y). \tag{10}$$

Together with $m_{1,2} \le m_{2,2}$, inequality (10) shows that the automorphic similarity matrix of $H$ satisfies the increasing columns property, and so $H$ is Hoffman-London by Theorem 1.5.

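We now use Corollary 1.6 to establish that if $H$ has a vertex that is adjacent to vertices in both $H_1$ and $H_2$, then $H$ is strongly Hoffman-London. Observe that for $0 < a \le b$ we have

$$M\begin{bmatrix} a \\ b \end{bmatrix} = \begin{bmatrix} m_{1,1}a + m_{1,2}b \\ m_{2,1}a + m_{2,2}b \end{bmatrix} =: \begin{bmatrix} a' \\ b' \end{bmatrix}, \tag{11}$$

and our hypotheses that $m_{1,1} + m_{1,2} < m_{2,1} + m_{2,2}$ and $0 < a \le b$ imply that $0 < a' < b'$. (Indeed, $b' - a' = (m_{2,1}+m_{2,2}-m_{1,1}-m_{1,2})\,a + (m_{2,2}-m_{1,2})(b-a) > 0$, and $a' \ge (m_{1,1}+m_{1,2})\,a > 0$ because, by hypothesis, some vertex of $H_1$ has a neighbor, and all vertices of $H_1$ have the same degree.) We use this fact to show that for all $s \ge 2$, we have $\hom_{v\to 1}(P_s,H) < \hom_{v\to 2}(P_s,H)$. As in the statement of Lemma 3.3, define $h(T,v)$ to be the $k$-dimensional column vector whose $i$th entry is $\hom_{v\to i}(T,H)$. When $s = 1$, the $i$th entry of $h(P_1,v)$ is $\hom_{v\to i}(P_1,H)$, the number of $H$-colorings of $P_1$ in which $v$ is mapped to a representative of $H_i$, so $h(P_1,v) = [1\ 1]^T$, where $v$ is the unique vertex of $P_1$. Then for $s \ge 2$ we have $h(P_s,v) = M\,h(P_{s-1},v)$, so by equation (11) and induction on $s$, we conclude $\hom_{v\to 1}(P_s,H) < \hom_{v\to 2}(P_s,H)$.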
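For $H_{\mathrm{ind}}$, ordered with the unlooped vertex as $H_1$ (so that $M$ has rows $[0\ 1]$ and $[1\ 1]$ and satisfies the hypotheses of Corollary 4.3), iterating $h(P_s,v) = M\,h(P_{s-1},v)$ from $[1\ 1]^T$ produces consecutive Fibonacci numbers, and the strict inequality appears from $s = 2$ on. A short Python sketch, where the helper `path_vectors` is hypothetical and specific to two classes:

```python
def path_vectors(M, s_max):
    """h(P_s, v) for s = 1..s_max, v an endvertex: h(P_1) = [1, 1], h(P_s) = M h(P_{s-1})."""
    h = [1, 1]
    out = [h]
    for _ in range(s_max - 1):
        h = [M[0][0] * h[0] + M[0][1] * h[1], M[1][0] * h[0] + M[1][1] * h[1]]
        out.append(h)
    return out


M_IND = [[0, 1], [1, 1]]  # H_ind: H_1 = unlooped vertex, H_2 = looped vertex
for s, (a, b) in enumerate(path_vectors(M_IND, 8), start=1):
    print(s, a, b)  # consecutive Fibonacci numbers; a < b from s = 2 on
```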

To apply Corollary 1.6 and conclude that $H$ is strongly Hoffman-London, it remains to show that for all $t \ge 2$ there is an $H$-coloring of $P_t$ that sends one endvertex to $H_1$ and the other to $H_2$. Let $x$ be a vertex of $H$ that has a neighbor $y \in H_1$ and a neighbor $z \in H_2$, noting that $x$, $y$, and $z$ need not be distinct. Suppose $x \in H_1$. If $t$ is even, then we can map the vertices of $P_t$ alternately to $x$ and $z$; if $t$ is odd, then we can map the first vertex of $P_t$ to $y$, the second to $x$, and then proceed as in the even case on the remaining vertices. The argument for $x \in H_2$ is analogous.
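For small $n$ the conclusion of Corollary 4.3 can also be confirmed by exhaustive search. The Python sketch below is our own brute-force check (all names are hypothetical): it enumerates all $6^4 = 1296$ labelled trees on six vertices via Prüfer sequences and counts $H_{\mathrm{ind}}$-colorings, that is, independent sets, directly. Since $H_{\mathrm{ind}}$ satisfies the hypotheses of Corollary 4.3 with the unlooped vertex as $H_1$, the check should report $21$ for every path and a strictly larger count for every other tree.

```python
import heapq
from itertools import product


def prufer_to_edges(seq, n):
    """Decode a Prüfer sequence over {0, ..., n-1} into the edge list of a labelled tree."""
    degree = [1] * n
    for x in seq:
        degree[x] += 1
    leaves = [u for u in range(n) if degree[u] == 1]
    heapq.heapify(leaves)
    edges = []
    for x in seq:
        leaf = heapq.heappop(leaves)  # smallest current leaf
        edges.append((leaf, x))
        degree[x] -= 1
        if degree[x] == 1:
            heapq.heappush(leaves, x)
    edges.append((heapq.heappop(leaves), heapq.heappop(leaves)))
    return edges


def hom_count(edges, n, adj):
    """Brute-force hom(T, H): count maps V(T) -> V(H) sending edges to edges of H."""
    k = len(adj)
    return sum(all(adj[f[u]][f[v]] for u, v in edges)
               for f in product(range(k), repeat=n))


H_IND = [[1, 1], [1, 0]]  # looped vertex 0, unlooped vertex 1
n = 6
path_counts, other_counts = set(), set()
for seq in product(range(n), repeat=n - 2):  # all n^(n-2) labelled trees
    edges = prufer_to_edges(seq, n)
    degree = [0] * n
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    # A tree is a path exactly when its maximum degree is at most 2.
    (path_counts if max(degree) <= 2 else other_counts).add(hom_count(edges, n, H_IND))

print(path_counts, min(other_counts))  # {21} 22
```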

We can use Corollary 4.3 to recover a portion of Theorem 4.1. Specifically, Corollary 4.3 yields Theorem 4.1 in the case when looped dominating vertices are added to a vertex-transitive (and so necessarily regular) graph. Although the result below is weaker than Theorem 4.1, we include it as it makes our classification of target graphs on three vertices (see Section 5) self-contained.

Proof of special case of Theorem 4.1. Let $H$ be obtained from a vertex-transitive graph, say $H_0$, by adding some number of looped dominating vertices. If either the number of looped dominating vertices added is zero or $H_0$ is a fully looped complete graph, then $H$ is regular, and so by Observation 2.3, $H$ is Hoffman-London but not strongly Hoffman-London. For the remainder of the proof, we assume that $H_0$ is regular but not a fully looped complete graph, and that at least one looped dominating vertex is added. Let $A$ be the vertex set of $H_0$ and let $B$ be the set of added looped dominating vertices. Let $k$ be the degree of the vertices in $H_0$; note $k < |A|$.

It is easy to check that $H$ has two automorphic similarity classes, namely $H_1 = A$ and $H_2 = B$. Let $x$ and $y$ be arbitrary vertices in $H_1$ and $H_2$, respectively. We have

$$\deg(x) = k + |B| < |A| + |B| = \deg(y) \quad\text{and}\quad m_{1,2} = |B| = m_{2,2}.$$

We apply Corollary 4.3 to conclude that $H$ is Hoffman-London. Furthermore, because any vertex in $H_2$ is adjacent to itself and to all vertices in $H_1$, Corollary 4.3 also shows that $H$ is strongly Hoffman-London.

We note that Theorem 1.5 alone could not possibly imply the full strength of Theorem 4.1, which we demonstrate in Example 4.4.

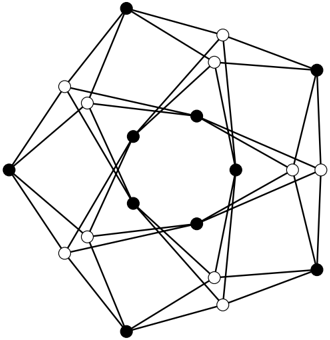

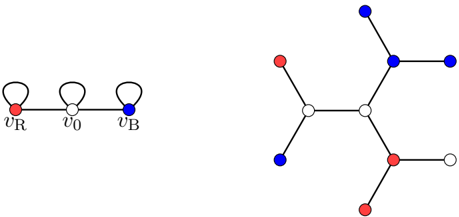

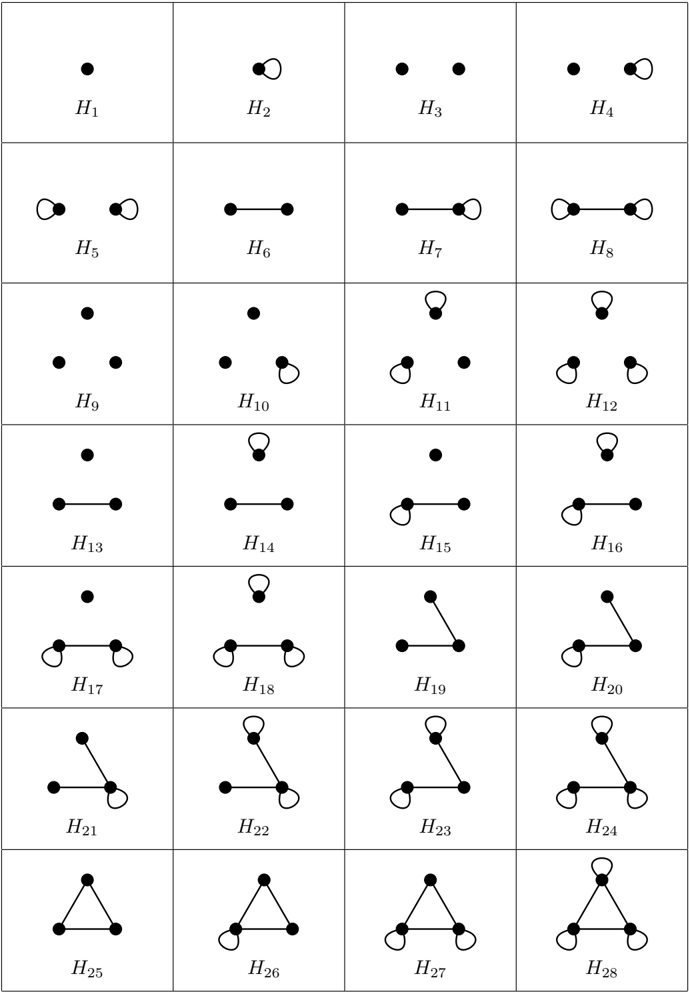

Example 4.4. Consider the Folkman graph [16], a 4-regular graph on 20 vertices constructed from a $K_5$ by first subdividing each edge and then cloning each of the vertices of the original $K_5$; see Figure 4, where the vertices of the original $K_5$ are white and those from subdivided edges are black.

The Folkman graph is bipartite and has two automorphic similarity classes, namely its two bipartition classes. By Theorem 4.1, adding a looped dominating vertex to the Folkman graph produces a target graph $H$ that is Hoffman-London. This $H$ has three automorphic similarity classes: the two bipartition classes of the Folkman graph, each of size ten, which we call $A$ and $B$, and the looped dominating vertex, which we call $C$. Vertices in $A$ (respectively, $B$) have no neighbors in $A$ (respectively, $B$), four neighbors in $B$ (respectively, $A$), and one neighbor in $C$. The vertex in $C$ has ten neighbors in $A$, ten in $B$, and one in $C$. So if we set $A = H_1$, $B = H_2$ (or $A = H_2$, $B = H_1$)

and

Figure 4: The Folkman graph.


and $C = H_3$, the corresponding automorphic similarity matrix is

$$M = \left[ \begin{array}{ccc} 0 & 4 & 1 \\ 4 & 0 & 1 \\ 10 & 10 & 1 \end{array} \right] ,$$

which fails the increasing columns property of Theorem 1.5 (for instance, $m_{1,2} + m_{1,3} = 5 > 1 = m_{2,2} + m_{2,3}$). Setting $C = H_1$ or $C = H_2$ also produces automorphic similarity matrices that fail the increasing columns property, meaning that Theorem 1.5 cannot be used to conclude that $H$ is Hoffman-London.
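The failure in Example 4.4 can be confirmed over all $3! = 6$ orderings of the classes. This Python sketch is our own (function names are hypothetical); it reads the increasing columns property as non-decreasing row tail sums $\sum_{q \ge j} m_{i,q}$, and it re-orders the classes by permuting the rows and columns of $M$ simultaneously.

```python
from itertools import permutations


def has_increasing_columns(M):
    """Our reading of the property: for every column j, the tail sums
    M[i][j] + M[i][j+1] + ... + M[i][k-1] are non-decreasing in the row index i."""
    k = len(M)
    tails = [[sum(row[j:]) for j in range(k)] for row in M]
    return all(tails[i][j] <= tails[i + 1][j] for i in range(k - 1) for j in range(k))


def reorder(M, order):
    """Similarity matrix after relabelling the classes in the given order."""
    return [[M[a][b] for b in order] for a in order]


def some_ordering_works(M):
    return any(has_increasing_columns(reorder(M, o)) for o in permutations(range(len(M))))


# Folkman graph plus a looped dominating vertex, classes (A, B, C) as in Example 4.4.
M_FOLKMAN = [[0, 4, 1], [4, 0, 1], [10, 10, 1]]
print(has_increasing_columns(M_FOLKMAN))         # False: tail sums 5 > 1 in column 2
print(some_ordering_works(M_FOLKMAN))            # False
print(has_increasing_columns([[0, 1], [1, 1]]))  # True (H_ind, unlooped class first)
```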

As an example of the utility of Corollary 4.3 in obtaining new families of Hoffman-London graphs, consider the family of constraint graphs $H(a,b,\ell)$, defined for $a, b \ge 1$ and $\ell \ge 0$. Start with a clique on $b$ vertices. At each vertex $v$ of the clique, append $\ell$ copies of a clique on $a$ vertices, with $v$ the only vertex common to the $\ell$ appended cliques; see Figure 5. This family generalizes many well-known families of graphs; for example, $H(2,1,\ell)$ is the star graph, $H(2,2,\ell)$ is the *balanced double star* (an edge with $\ell$ edges appended to each endpoint), and $H(3,1,\ell)$ is the *fan graph* (a collection of triangles sharing a single common vertex). More generally, $H(a,1,\ell)$ is a collection of $\ell$ cliques on $a$ vertices each, all sharing a single common vertex; following standard terminology from topology, we call this a *bouquet of $\ell$ $a$-cliques*.

For some choices of parameters, it is straightforward to verify whether $H(a,b,\ell)$ is Hoffman-London. In the cases $\ell = 0$; $\ell \ge 1$ and $a = 1$; or $\ell = 1$ and $b = 1$, we see that $H(a,b,\ell)$ is just a complete graph, which is regular and so Hoffman-London but not strongly Hoffman-London. If $\ell \ge 2$, $a = 2$, and $b = 1$, then $H(a,b,\ell)$ is, as observed above, a star, and so is Hoffman-London by Theorem 4.2, although, as we saw in Theorem 2.6, it is not strongly Hoffman-London. For many other choices of parameters, we can use Corollary 4.3.

Corollary 4.5. For all $a, b \ge 2$ and $\ell \ge 1$, the target graph $H(a,b,\ell)$ is strongly Hoffman-London.

Proof. The graph $H(a,b,\ell)$ has two automorphic similarity classes: $H_2$, consisting of the $b$ vertices of the initial clique, and $H_1$, consisting of all $b\ell(a-1)$ added vertices. We have $m_{1,2} = 1 \le b-1 = m_{2,2}$, and if $x$ is any vertex in $H_1$ and $y$ any vertex in $H_2$, we have $\deg(x) = a-1 < b-1+\ell(a-1) = \deg(y)$. We conclude that $H$ is Hoffman-London by Corollary 4.3.

Figure 5: A visualization of the family of graphs H ( a, b, ℓ ).


Any vertex in $H_2$ is adjacent to at least one other vertex in $H_2$ and to at least one vertex in $H_1$, and so Corollary 4.3 also shows that $H$ is strongly Hoffman-London.

The graphs $H(a,b,\ell)$ that are not covered by Corollary 4.5 or the preceding discussion are those with $a \ge 3$, $b = 1$, and $\ell \ge 2$. In these cases, as mentioned above, $H$ is a bouquet of $\ell$ $a$-cliques. Such target graphs have two automorphic similarity classes: one containing only the central vertex and another containing all other vertices. For this collection of target graphs we cannot apply Theorem 1.5. If we choose the central vertex to be $H_1$, then the associated automorphic similarity matrix has $\ell(a-1) > 1$ as its $(1,2)$-entry and $1$ as its $(2,2)$-entry, and so fails the increasing columns property; if instead we choose the central vertex to be $H_2$, then the associated automorphic similarity matrix has $1$ as its $(1,2)$-entry and $0$ as its $(2,2)$-entry, and so again fails the increasing columns property. It is unknown which graphs in this subfamily, if any, are Hoffman-London.

## 4.3 Paths and looped paths as target graphs

In this section, we consider paths and fully looped paths as target graphs. We begin by using Theorem 1.5 to recover part of Theorem 4.2, namely the case when $H$ is a path with an even number of vertices. We also strengthen this portion of Theorem 4.2 by using Corollary 1.6 to show that such paths with at least four vertices are strongly Hoffman-London.

Theorem 4.6. The path graph $P_{2m}$ is Hoffman-London for all $m \ge 1$ and strongly Hoffman-London for all $m \ge 2$.

Proof. Let $H = P_{2m}$ for some $m \ge 1$, with vertices $u_1, \ldots, u_{2m}$ and edges $u_iu_{i+1}$ for $1 \le i \le 2m-1$. We claim that $P_{2m}$ admits exactly two automorphisms: the identity and the reversal map that sends $u_i$ to $u_{2m+1-i}$ for $i = 1, \ldots, 2m$. To verify this claim, first note that an automorphism of $P_{2m}$ must preserve degrees, and thus leaves must be mapped to leaves. If $u_1$ is mapped to $u_1$, then $u_2$, the unique neighbor of $u_1$, must be mapped to $u_2$, then $u_3$ must be mapped to $u_3$, and so on, resulting in the identity map. If $u_1$ is instead mapped to $u_{2m}$, then $u_2$ must be mapped to $u_{2m-1}$, $u_3$ must be mapped to $u_{2m-2}$, and so on, resulting in the reversal map. It follows that $P_{2m}$ has $m$ automorphic similarity classes, each consisting of a pair of vertices $\{u_i, u_j\}$ with $i + j = 2m + 1$.
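The automorphism claim is easy to sanity-check by brute force for small $m$. The Python sketch below is our own illustration rather than anything from the paper: the helpers `path_edges`, `automorphisms`, and `orbits` are hypothetical, the vertex $u_i$ is labelled by the integer $i$, and we take the orbits of the automorphism group as the automorphic similarity classes, as in the argument above. Since it tries all $(2m)!$ permutations, it is only practical for very small $m$.

```python
from itertools import permutations


# Hypothetical helpers for illustration; u_i is labelled by the integer i.
def path_edges(n):
    """Edge set of the path u_1 - u_2 - ... - u_n."""
    return {frozenset((i, i + 1)) for i in range(1, n)}


def automorphisms(n, edges):
    """Brute force: all permutations of {1, ..., n} mapping the edge set onto itself."""
    verts = list(range(1, n + 1))
    autos = []
    for image in permutations(verts):
        sigma = dict(zip(verts, image))
        if {frozenset(sigma[x] for x in e) for e in edges} == edges:
            autos.append(sigma)
    return autos


def orbits(n, autos):
    """Orbits of the automorphism group (assumed here to be the similarity classes)."""
    seen, classes = set(), []
    for x in range(1, n + 1):
        if x not in seen:
            orbit = frozenset(sigma[x] for sigma in autos)
            seen |= orbit
            classes.append(orbit)
    return classes


m = 3
autos = automorphisms(2 * m, path_edges(2 * m))
print(len(autos))            # 2: the identity and the reversal
print(orbits(2 * m, autos))  # the pairs {u_i, u_{2m+1-i}}
```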

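Set $H_i = \{u_i, u_{2m+1-i}\}$ for $i = 1, \ldots, m$. The automorphic similarity matrix of $H$ with respect to this ordering of the automorphic similarity classes has the form

$$\begin{bmatrix} 0 & 1 & 0 & 0 & \cdots & 0 & 0 \\ 1 & 0 & 1 & 0 & \cdots & 0 & 0 \\ 0 & 1 & 0 & 1 & \cdots & 0 & 0 \\ \vdots & \vdots & \vdots & \vdots & \ddots & \vdots & \vdots \\ 0 & 0 & 0 & 0 & \cdots & 0 & 1 \\ 0 & 0 & 0 & 0 & \cdots & 1 & 1 \end{bmatrix}.$$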

That is, it has 1s on the first subdiagonal, 1s on the first superdiagonal, a 1 in the $(m,m)$ position, and 0s everywhere else. It is straightforward to check that all terminal sums of the $i$th row are less than or equal to the corresponding terminal sums of the $(i+1)$th row for all $i < m$, and so this matrix satisfies the increasing columns property, establishing that $P_{2m}$ is Hoffman-London by Theorem 1.5.
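The increasing columns check can be mechanized as follows. This is a minimal sketch under stated assumptions: entry $(i, j)$ of the automorphic similarity matrix is taken to be the number of neighbors in $H_j$ of any fixed vertex of $H_i$ (a loop making a vertex its own neighbor), which agrees with the matrices described in this section, and the terminal-sum test is implemented exactly as phrased above. All function names are hypothetical.

```python
# Hypothetical helpers; u_i is labelled by i, and a loop at x is frozenset({x}).
def path_edges(n):
    """Edge set of the path u_1 - ... - u_n."""
    return {frozenset((i, i + 1)) for i in range(1, n)}


def neighbours(x, edges):
    """Neighbours of x; a loop makes x its own neighbour (assumed convention)."""
    nbrs = set()
    for e in edges:
        if x in e:
            nbrs |= (e - {x}) or {x}
    return nbrs


def similarity_matrix(classes, edges):
    """Entry (i, j): neighbours in classes[j] of a representative of classes[i]."""
    matrix = []
    for cls in classes:
        nbrs = neighbours(min(cls), edges)  # any representative gives the same row
        matrix.append([len(nbrs & other) for other in classes])
    return matrix


def has_increasing_columns(matrix):
    """Each terminal sum of row i is at most the matching terminal sum of row i + 1."""
    k = len(matrix)
    return all(sum(matrix[i][j:]) <= sum(matrix[i + 1][j:])
               for i in range(k - 1) for j in range(k))


m = 4
classes = [frozenset({i, 2 * m + 1 - i}) for i in range(1, m + 1)]
M = similarity_matrix(classes, path_edges(2 * m))
print(M)                          # [[0, 1, 0, 0], [1, 0, 1, 0], [0, 1, 0, 1], [0, 0, 1, 1]]
print(has_increasing_columns(M))  # True
```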

We now turn to establishing that $P_{2m}$ is strongly Hoffman-London for $m \ge 2$. We seek to apply Corollary 1.6. Towards this goal, we will show by induction on $t \ge 2$ that

$$\hom_{v\to 1}(P_t, P_{2m}) < \hom_{v\to 2}(P_t, P_{2m}) \le \hom_{v\to 3}(P_t, P_{2m}) \le \cdots \le \hom_{v\to m}(P_t, P_{2m}), \tag{12}$$

where $v$ is an endvertex of $P_t$.

When $t = 2$, inequality (12) holds because $\hom_{v\to 1}(P_2, P_{2m}) = 1$ and $\hom_{v\to i}(P_2, P_{2m}) = 2$ for $2 \le i \le m$. For $t > 2$, let $v'$ be the unique neighbor of $v$ in $P_t$; note that $v'$ is an endvertex of the path $P_t - v \cong P_{t-1}$. Using equation (4) from the proof of Lemma 3.3, we have the recurrence relations

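$$\begin{aligned}
\hom_{v\to 1}(P_t, P_{2m}) &= \hom_{v'\to 2}(P_{t-1}, P_{2m}), \\
\hom_{v\to i}(P_t, P_{2m}) &= \hom_{v'\to i-1}(P_{t-1}, P_{2m}) + \hom_{v'\to i+1}(P_{t-1}, P_{2m}) \quad \text{for } 2 \le i \le m-1, \\
\hom_{v\to m}(P_t, P_{2m}) &= \hom_{v'\to m-1}(P_{t-1}, P_{2m}) + \hom_{v'\to m}(P_{t-1}, P_{2m}).
\end{aligned}$$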

By induction, we have

$$\hom_{v'\to 1}(P_{t-1}, P_{2m}) < \hom_{v'\to 2}(P_{t-1}, P_{2m}) \le \cdots \le \hom_{v'\to m}(P_{t-1}, P_{2m}). \tag{13}$$

When $m = 2$, we use the fact that $\hom_{v'\to 1}(P_{t-1}, P_4) > 0$ to say

$$\hom_{v\to 1}(P_t, P_4) = \hom_{v'\to 2}(P_{t-1}, P_4) < \hom_{v'\to 1}(P_{t-1}, P_4) + \hom_{v'\to 2}(P_{t-1}, P_4) = \hom_{v\to 2}(P_t, P_4),$$

which establishes inequality (12).

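For $m \ge 3$, we first establish $\hom_{v\to 1}(P_t, P_{2m}) < \hom_{v\to 2}(P_t, P_{2m})$. By inequality (13), we see that $\hom_{v'\to 2}(P_{t-1}, P_{2m}) \le \hom_{v'\to 3}(P_{t-1}, P_{2m})$. Then, again using $\hom_{v'\to 1}(P_{t-1}, P_{2m}) > 0$, we have

$$\begin{aligned} \hom_{v\to 1}(P_t, P_{2m}) &= \hom_{v'\to 2}(P_{t-1}, P_{2m}) \le \hom_{v'\to 3}(P_{t-1}, P_{2m}) \\ &< \hom_{v'\to 1}(P_{t-1}, P_{2m}) + \hom_{v'\to 3}(P_{t-1}, P_{2m}) = \hom_{v\to 2}(P_t, P_{2m}). \end{aligned}$$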

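Next, we show $\hom_{v\to i}(P_t, P_{2m}) \le \hom_{v\to i+1}(P_t, P_{2m})$ for $2 \le i \le m-2$ by using inequality (13) to say

$$\begin{aligned} \hom_{v\to i}(P_t, P_{2m}) &= \hom_{v'\to i-1}(P_{t-1}, P_{2m}) + \hom_{v'\to i+1}(P_{t-1}, P_{2m}) \\ &\le \hom_{v'\to i}(P_{t-1}, P_{2m}) + \hom_{v'\to i+2}(P_{t-1}, P_{2m}) = \hom_{v\to i+1}(P_t, P_{2m}). \end{aligned}$$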

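Finally, we demonstrate $\hom_{v\to m-1}(P_t, P_{2m}) \le \hom_{v\to m}(P_t, P_{2m})$ by once again using inequality (13):

$$\begin{aligned} \hom_{v\to m-1}(P_t, P_{2m}) &= \hom_{v'\to m-2}(P_{t-1}, P_{2m}) + \hom_{v'\to m}(P_{t-1}, P_{2m}) \\ &\le \hom_{v'\to m-1}(P_{t-1}, P_{2m}) + \hom_{v'\to m}(P_{t-1}, P_{2m}) = \hom_{v\to m}(P_t, P_{2m}), \end{aligned}$$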

which establishes inequality (12).
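The recurrences and inequality (12) can also be checked numerically for small cases. The sketch below (hypothetical helper names, vertices $u_j$ labelled by $j$) iterates the recurrence relations in matrix form starting from $\hom_{v\to i}(P_1, P_{2m}) = 1$, and cross-checks the result against a brute-force count of colorings of $P_t$ that send $v$ to the representative $u_i$. Such a finite check illustrates the induction but of course does not replace it.

```python
from itertools import product


# Hypothetical helpers for illustration only.
def path_similarity_matrix(m):
    """Automorphic similarity matrix of P_{2m}: 1s off the diagonal, 1 at (m, m)."""
    M = [[0] * m for _ in range(m)]
    for i in range(m - 1):
        M[i][i + 1] = M[i + 1][i] = 1
    M[m - 1][m - 1] = 1
    return M


def rooted_counts(m, t):
    """[hom_{v->i}(P_t, P_{2m}) for i = 1..m], iterating the recurrences."""
    M = path_similarity_matrix(m)
    h = [1] * m  # P_1: exactly one coloring sends v to a given representative
    for _ in range(t - 1):
        h = [sum(M[i][j] * h[j] for j in range(m)) for i in range(m)]
    return h


def rooted_counts_brute(m, t):
    """Same counts by brute force over all maps x_1 ... x_t -> P_{2m} with x_1 = u_i."""
    counts = []
    for i in range(1, m + 1):
        total = 0
        for rest in product(range(1, 2 * m + 1), repeat=t - 1):
            walk = (i,) + rest
            if all(abs(a - b) == 1 for a, b in zip(walk, walk[1:])):
                total += 1
        counts.append(total)
    return counts


def satisfies_12(h):
    """h[0] < h[1] <= h[2] <= ... <= h[m-1], i.e. inequality (12)."""
    return h[0] < h[1] and all(h[i] <= h[i + 1] for i in range(1, len(h) - 1))


print(rooted_counts(3, 5))        # [6, 10, 13]
print(rooted_counts_brute(3, 5))  # [6, 10, 13]
```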

It remains to use inequality (12) to show that $P_{2m}$ is strongly Hoffman-London. We consider the cases $m = 2$ and $m > 2$ separately. For $m = 2$ and $t \ge 2$, set $a(t) = 1$ and $b(t) = 2$. From inequality (12), we have $\hom_{v\to a(t)}(P_s, P_4) < \hom_{v\to b(t)}(P_s, P_4)$ for all $s \ge 2$. To apply Corollary 1.6 to show that $P_4$ is strongly Hoffman-London, it remains to show that for all $t \ge 2$ there is a $P_4$-coloring of $P_t$ that sends one endvertex $v$ of $P_t$ to some vertex in $H_1$ and the other endvertex $w$ to some vertex in $H_2$. For $t$ even, such a coloring is given by sending $v$ to $u_1 \in H_1$ and then sending the vertices of $P_t$ alternately to $u_2$ and $u_1$, ending by sending $w$ to $u_2 \in H_2$. For $t$ odd, such a coloring is given by sending $v$ to $u_1 \in H_1$ and then sending the vertices of $P_t$ alternately to $u_2$ and $u_1$, except for $w$, which is sent to $u_3 \in H_2$ instead of $u_1$.

For $m > 2$, set $a(t) = 1$ for $t \ge 2$, and set $b(t) = 2$ for even $t \ge 2$ and $b(t) = 3$ for odd $t \ge 2$. From inequality (12), we again have $\hom_{v\to a(t)}(P_s, P_{2m}) < \hom_{v\to b(t)}(P_s, P_{2m})$ for all $s \ge 2$. To exhibit a coloring of $P_t$ that sends $v$ to a vertex in $H_{a(t)}$ and $w$ to a vertex in $H_{b(t)}$, we proceed exactly as in the case $m = 2$: send $v$ to $u_1 \in H_1$ and then alternate sending the vertices of $P_t$ to $u_2$ and $u_1$, ending by sending $w$ to $u_2 \in H_2$ when $t$ is even, and deviating by sending $w$ to $u_3 \in H_3$ instead of $u_1$ when $t$ is odd.
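The endpoint colorings used in the last two paragraphs are explicit enough to write down directly. In the Python sketch below (hypothetical helper names), $P_t = x_1 \cdots x_t$ with $v = x_1$ and $w = x_t$, the vertex $u_j$ of $P_{2m}$ is labelled $j$, and $u_j$ lies in the class $H_i$ with $i = \min(j, 2m+1-j)$.

```python
# Hypothetical helpers; vertex u_j of P_{2m} is labelled by the integer j.
def endpoint_coloring(t):
    """Color x_1 .. x_t alternately u_1, u_2, ...; for odd t send x_t to u_3 instead."""
    colors = [1 if k % 2 == 0 else 2 for k in range(t)]
    if t % 2 == 1:
        colors[-1] = 3  # x_{t-1} is colored u_2, which is adjacent to u_3
    return colors


def class_index(j, m):
    """Index i of the automorphic similarity class H_i of P_{2m} containing u_j."""
    return min(j, 2 * m + 1 - j)


def is_path_coloring(colors, m):
    """Consecutive vertices of P_t must go to adjacent vertices of P_{2m}."""
    in_range = all(1 <= c <= 2 * m for c in colors)
    return in_range and all(abs(a - b) == 1 for a, b in zip(colors, colors[1:]))


for m, t in [(2, 5), (4, 5), (4, 6)]:
    f = endpoint_coloring(t)
    # prints m, t, the coloring, and the classes a(t), b(t) of the two endpoints
    print(m, t, f, class_index(f[0], m), class_index(f[-1], m))
```

For $m = 2$ the final vertex $u_3$ lies in $H_2$, while for $m > 2$ it lies in $H_3$, matching the choices of $b(t)$ above.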

Theorem 4.6 shows that $P_\ell$ is strongly Hoffman-London for all even $\ell \ge 4$, and Observation 2.3 establishes that $P_2$ is not strongly Hoffman-London, because all trees on a given number of vertices admit the same number of $P_2$-colorings. What can be said of $P_\ell$ when $\ell$ is odd? As demonstrated in Theorem 2.6, $P_3$ is not strongly Hoffman-London, but in a different manner than $P_2$: the trees with a balanced bipartition, including paths but not all trees, minimize $\hom(T_n, P_3)$. We know that paths are among the minimizers of $\hom(T_n, P_\ell)$ for odd $\ell$ due to Csikvári and Lin [7], but the precise set of trees that minimize the number of $P_\ell$-colorings for odd $\ell \ge 5$ remains open.

The fully looped path $P^\circ_n$ is the graph on vertex set $\{u_1, \ldots, u_n\}$ with edges $u_iu_{i+1}$ for $1 \le i \le n-1$ and a loop $u_iu_i$ for each $1 \le i \le n$. Unlike for unlooped paths, where we were only able to apply our techniques when the number of vertices is even, we can completely classify all fully looped paths.

Theorem 4.7. The fully looped path $P^\circ_n$ is Hoffman-London for all $n \ge 1$ and strongly Hoffman-London for all $n \ge 3$.

Proof. For $n = 1$ or $2$, $P^\circ_n$ is a fully looped complete graph, and so Observation 2.3 tells us that $P^\circ_n$ is Hoffman-London but not strongly Hoffman-London. When $n = 3$, $P^\circ_3$ is obtained from a regular graph (two nonadjacent looped vertices) by adding a looped dominating vertex, and so Theorem 4.1 establishes that $P^\circ_3$ is strongly Hoffman-London.

For $n \ge 4$, we start by considering $n$ even, say $n = 2m$. Exactly as for $P_{2m}$, we find that the automorphic similarity classes of $P^\circ_{2m}$ are $H_i = \{u_i, u_{2m+1-i}\}$, $i = 1, \ldots, m$, each of size 2, and the associated automorphic similarity matrix has the form

$$\begin{bmatrix} 1 & 1 & 0 & 0 & \cdots & 0 & 0 \\ 1 & 1 & 1 & 0 & \cdots & 0 & 0 \\ 0 & 1 & 1 & 1 & \cdots & 0 & 0 \\ \vdots & \vdots & \vdots & \vdots & \ddots & \vdots & \vdots \\ 0 & 0 & 0 & 0 & \cdots & 1 & 1 \\ 0 & 0 & 0 & 0 & \cdots & 1 & 2 \end{bmatrix}$$

(i.e., it is the automorphic similarity matrix of $P_{2m}$ plus the $m \times m$ identity matrix $I_m$). It is straightforward to check that all terminal sums of the $i$th row are less than or equal to the corresponding terminal sums of the $(i+1)$th row for all $i < m$, and so this matrix satisfies the increasing columns property and $P^\circ_{2m}$ is Hoffman-London by Theorem 1.5.

For $n \ge 5$ odd, say $n = 2m - 1$, the automorphic similarity classes of $P^\circ_{2m-1}$ are $H_i = \{u_i, u_{2m-i}\}$, $i = 1, \ldots, m-1$, each of size 2, and $H_m = \{u_m\}$, of size 1. The corresponding automorphic similarity matrix has the same structure as in the even case, except that in the final row the entries 1 and 2 are swapped, since $u_m$ has both of its non-loop neighbors, $u_{m-1}$ and $u_{m+1}$, in $H_{m-1}$. That this matrix satisfies the increasing columns property follows almost exactly as in the even case.
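The same kind of computation covers fully looped paths of both parities. In this sketch (hypothetical helper names, with the assumed convention that entry $(i, j)$ counts the neighbors in $H_j$ of a vertex in $H_i$, a loop counting the vertex once as its own neighbor) the classes are $H_i = \{u_i, u_{n+1-i}\}$ for $1 \le i \le \lceil n/2 \rceil$, which gives $H_m = \{u_m\}$ when $n = 2m - 1$.

```python
# Hypothetical helpers; u_i is labelled by i, and a loop at i is frozenset({i}).
def looped_path_edges(n):
    """Edges u_i u_{i+1} of the path plus a loop at every vertex."""
    edges = {frozenset((i, i + 1)) for i in range(1, n)}
    return edges | {frozenset({i}) for i in range(1, n + 1)}


def neighbours(x, edges):
    """Neighbours of x; a loop makes x its own neighbour (assumed convention)."""
    nbrs = set()
    for e in edges:
        if x in e:
            nbrs |= (e - {x}) or {x}
    return nbrs


def looped_path_matrix(n):
    """Automorphic similarity matrix of the fully looped path on n vertices."""
    edges = looped_path_edges(n)
    classes = [frozenset({i, n + 1 - i}) for i in range(1, (n + 1) // 2 + 1)]
    return [[len(neighbours(min(c), edges) & d) for d in classes] for c in classes]


def has_increasing_columns(matrix):
    """Each terminal sum of row i is at most the matching terminal sum of row i + 1."""
    k = len(matrix)
    return all(sum(matrix[i][j:]) <= sum(matrix[i + 1][j:])
               for i in range(k - 1) for j in range(k))


print(looped_path_matrix(6))  # [[1, 1, 0], [1, 1, 1], [0, 1, 2]]
print(looped_path_matrix(5))  # [[1, 1, 0], [1, 1, 1], [0, 2, 1]]
print(all(has_increasing_columns(looped_path_matrix(n)) for n in range(4, 20)))  # True
```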

We have established that $P^\circ_n$ is Hoffman-London for all $n$ and strongly Hoffman-London for $n = 3$. To establish that $P^\circ_n$ is strongly Hoffman-London for $n \ge 4$, we will show that Corollary 1.6 can be applied. We first observe that for all $s \ge 2$ we have $\hom_{v\to 1}(P_s, P^\circ_n) < \hom_{v\to 2}(P_s, P^\circ_n)$, where $v$ is one of the endvertices of $P_s$. We see this via a non-surjective injection from $\mathrm{Hom}_{v\to 1}(P_s, P^\circ_n)$ into $\mathrm{Hom}_{v\to 2}(P_s, P^\circ_n)$. For concreteness, we take a fixed $x \in H_1$ (necessarily one of the endvertices of $P^\circ_n$) to be the representative of $H_1$ to which $v$ is mapped, and take its unique non-loop neighbor $x' \in H_2$ to be the representative of $H_2$ to which $v$ is mapped. The injection is simple: given $f \in \mathrm{Hom}_{v\to 1}(P_s, P^\circ_n)$ with $f(v) = x$, map $f$ to $f'$ by changing the value at $v$ to $x'$. That $f' \in \mathrm{Hom}_{v\to 2}(P_s, P^\circ_n)$ (i.e., that $f'$ is actually a homomorphism) follows from the fact that $f$ maps $v'$ (the unique neighbor of $v$ in $P_s$) to a neighbor of $x$, so to either $x$ or $x'$, and both of these are neighbors of $x'$. To see that the injection is not a surjection, let $x''$ be the neighbor of $x'$ other than $x$ and $x'$ itself, and consider the map $f' \in \mathrm{Hom}_{v\to 2}(P_s, P^\circ_n)$ that sends $v$ to $x'$ and all other vertices of $P_s$ to $x''$. There is no $f \in \mathrm{Hom}_{v\to 1}(P_s, P^\circ_n)$ that maps to $f'$ under our injection, since $x$ is not adjacent to $x''$ in $P^\circ_n$.

We next observe that for all $t \ge 2$ there is a $P^\circ_n$-coloring of $P_t$ that sends one endvertex to a vertex in $H_1$ and the other to a vertex in $H_2$: simply send one endvertex to $x$ and all the others to $x'$. That $P^\circ_n$ ($n \ge 4$) is strongly Hoffman-London now follows from Corollary 1.6, with $a(t) = 1$ and $b(t) = 2$.
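For small $n$ and $s$, the injection from the proof can be verified exhaustively. The following sketch is illustrative only: helper names are hypothetical, $u_j$ is labelled $j$, and, as in the argument above, $x = u_1$, $x' = u_2$, and $x'' = u_3$ (so it assumes $n \ge 3$).

```python
from itertools import product


# Hypothetical helpers; u_j of the fully looped path is labelled by j (n >= 3 assumed).
def rooted_colorings(n, s, root):
    """All colorings of P_s = x_1 ... x_s into the looped path with x_1 = v sent to u_root."""
    colorings = []
    for rest in product(range(1, n + 1), repeat=s - 1):
        f = (root,) + rest
        # in the fully looped path, u_a ~ u_b exactly when |a - b| <= 1
        if all(abs(a - b) <= 1 for a, b in zip(f, f[1:])):
            colorings.append(f)
    return colorings


def check_injection(n, s):
    """Return (hom_{v->1}, hom_{v->2}, injective into Hom_{v->2}, witness outside image)."""
    x, x1, x2 = 1, 2, 3  # x, x' and x''
    domain = rooted_colorings(n, s, x)
    codomain = set(rooted_colorings(n, s, x1))
    image = {(x1,) + f[1:] for f in domain}  # change the value at v from x to x'
    injective_into = len(image) == len(domain) and image <= codomain
    witness = (x1,) + (x2,) * (s - 1)         # v -> x', every other vertex -> x''
    return len(domain), len(codomain), injective_into, witness in codomain - image


print(check_injection(5, 4))  # (13, 22, True, True)
```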

We end our discussion of paths with a quick corollary of Theorem 4.7 for the family of *ladder graphs*. The ladder graph $L_n$ has vertices $u_1, \ldots, u_n, v_1, \ldots, v_n$ and edges $u_iu_{i+1}$ and $v_iv_{i+1}$ for $1 \le i \le n-1$, and $u_iv_i$ for $1 \le i \le n$.

Corollary 4.8. For $n \ge 1$, the ladder graph $L_n$ is Hoffman-London. For $n \ge 3$ it is strongly Hoffman-London.

Proof. We have $L_n \cong P^\circ_n \times K_2$, so the corollary follows from Observation 2.5 and Theorem 4.7.
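The isomorphism $L_n \cong P^\circ_n \times K_2$ can be made explicit. Assuming that $\times$ denotes the tensor (categorical) product, in which $(a, c) \sim (b, d)$ exactly when $a \sim b$ and $c \sim d$, one can send the pair $(u_i, c)$, with $c \in \{0, 1\}$ the $K_2$-coordinate, to the ladder vertex $u_i$ when $i + c$ is odd and to $v_i$ otherwise. The map `phi` below is our own hypothetical choice, not taken from the paper; the sketch checks that it is an isomorphism for small $n$.

```python
# Hypothetical helpers; (i, c) is the vertex (u_i, c) of P°_n x K_2 (tensor product
# assumed), and ("u", i), ("v", i) are the vertices u_i, v_i of the ladder L_n.
def ladder_edges(n):
    """Edges u_i u_{i+1}, v_i v_{i+1} (1 <= i < n) and rungs u_i v_i of L_n."""
    edges = set()
    for i in range(1, n):
        edges.add(frozenset({("u", i), ("u", i + 1)}))
        edges.add(frozenset({("v", i), ("v", i + 1)}))
    for i in range(1, n + 1):
        edges.add(frozenset({("u", i), ("v", i)}))
    return edges


def tensor_edges(n):
    """Edges of P°_n x K_2: (i, 0) ~ (j, 1) whenever |i - j| <= 1 (loops give i = j)."""
    return {frozenset({(i, 0), (j, 1)})
            for i in range(1, n + 1) for j in range(1, n + 1) if abs(i - j) <= 1}


def phi(vertex):
    """Hypothetical isomorphism: (i, c) -> u_i if i + c is odd, else v_i."""
    i, c = vertex
    return ("u", i) if (i + c) % 2 == 1 else ("v", i)


def is_isomorphism(n):
    vertices = [(i, c) for i in range(1, n + 1) for c in (0, 1)]
    bijective = len({phi(x) for x in vertices}) == 2 * n
    mapped = {frozenset(phi(x) for x in e) for e in tensor_edges(n)}
    return bijective and mapped == ladder_edges(n)


print(all(is_isomorphism(n) for n in range(1, 10)))  # True
```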

## 4.4 Loop threshold graphs

Here we define the family of loop threshold graphs and prove that they are Hoffman-London. Denote by $K_1$ the graph on one vertex with no edges, and by $K^\circ_1$ the graph on one vertex with a loop. The family of loop threshold graphs is the minimal (by inclusion) family of graphs that includes $K_1$ and $K^\circ_1$, is closed under adding isolated vertices, and is closed under adding looped dominating vertices. Loop threshold graphs were introduced by Cutler and Radcliffe [13], who showed them to be a natural family to consider in the context of $H$-coloring; one of the results of [13] is that if $H$ is loop threshold, then among $n$-vertex $m$-edge graphs, there is a threshold graph that maximizes the number of $H$-colorings. For further work on loop threshold graphs, see also [11] (regarding $H$-coloring) and [21] (regarding enumeration).
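Because the definition is recursive, loop threshold graphs are easy to generate, and for tiny cases the Hoffman-London property can be spot-checked exhaustively. The sketch below (hypothetical helper names; a finite check, not a proof) builds a loop threshold graph from a word over `i` (add an isolated vertex) and `d` (add a looped dominating vertex), starting from the empty graph so that the first letter yields $K_1$ or $K^\circ_1$. It counts $H$-colorings of trees by dynamic programming, enumerates all labelled trees on $n$ vertices via Prüfer sequences, and checks that no tree has fewer $H$-colorings than the path.

```python
from itertools import product


# Hypothetical helpers for illustration; this is a brute-force check, not a proof.
def loop_threshold(word):
    """Adjacency matrix (loops on the diagonal) built letter by letter:
    'i' adds an isolated vertex, 'd' adds a looped dominating vertex."""
    adj = []
    for letter in word:
        dominating = letter == "d"
        for row in adj:
            row.append(dominating)
        adj.append([dominating] * (len(adj) + 1))
    return adj


def prufer_tree(seq, n):
    """Edges of the labelled tree on {0, ..., n-1} with Prufer sequence seq (n >= 2)."""
    degree = [1] * n
    for x in seq:
        degree[x] += 1
    edges = []
    for x in seq:
        leaf = min(y for y in range(n) if degree[y] == 1)
        edges.append((leaf, x))
        degree[leaf] -= 1
        degree[x] -= 1
    u, w = [y for y in range(n) if degree[y] == 1]
    edges.append((u, w))
    return edges


def hom_count(edges, n, adj):
    """Number of H-colorings of the tree, by dynamic programming from vertex 0."""
    nbrs = [[] for _ in range(n)]
    for a, b in edges:
        nbrs[a].append(b)
        nbrs[b].append(a)
    k = len(adj)

    def table(x, parent):
        # row[c] = colorings of the subtree below x in which x receives color c
        row = [1] * k
        for y in nbrs[x]:
            if y != parent:
                sub = table(y, x)
                for c in range(k):
                    row[c] *= sum(sub[d] for d in range(k) if adj[c][d])
        return row

    return sum(table(0, -1))


def path_minimizes(word, n):
    """True if the path has the fewest H-colorings among all trees on n >= 2 vertices."""
    adj = loop_threshold(word)
    path = hom_count([(i, i + 1) for i in range(n - 1)], n, adj)
    return all(path <= hom_count(prufer_tree(seq, n), n, adj)
               for seq in product(range(n), repeat=n - 2))


print(loop_threshold("id"))         # [[False, True], [True, True]]
print(path_minimizes("iidid", 6))
```

Starting from the empty graph rather than from $K_1$ or $K^\circ_1$ is only a convenience: the first letter produces exactly one of these two graphs, so the words generate the same family. For the word `"id"`, $H$ is an edge with a loop at one end, and the count is the number of independent sets of the tree.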

The following characterization of loop threshold graphs is stated in [13], where the authors suggest adapting a proof from [8]. For completeness, we present the details here.

Lemma 4.9. For a graph H on n vertices, possibly with loops, the following are equivalent.

1. H is loop threshold.

2. There is an ordering of the vertices of $H$, say $v_1, v_2, \ldots, v_n$, with the property that