# Graph-Based Exploration for ARC-AGI-3 Interactive Reasoning Tasks

**Authors**: Evgenii Rudakov, Jonathan Shock, Benjamin Ultan Cowley

## Abstract

We present a training-free graph-based approach for solving interactive reasoning tasks in the ARC-AGI-3 benchmark. ARC-AGI-3 comprises game-like tasks where agents must infer task mechanics through limited interactions, and adapt to increasing complexity as levels progress. Success requires forming hypotheses, testing them, and tracking discovered mechanics. The benchmark has revealed that state-of-the-art LLMs are currently incapable of reliably solving these tasks. Our method combines vision-based frame processing with systematic state-space exploration using graph-structured representations. It segments visual frames into meaningful components, prioritizes actions based on visual salience, and maintains a directed graph of explored states and transitions. By tracking visited states and tested actions, the agent prioritizes actions that provide the shortest path to untested state-action pairs. On the ARC-AGI-3 Preview Challenge, this structured exploration strategy solves a median of 30 out of 52 levels across six games and ranks 3rd on the private leaderboard, substantially outperforming frontier LLM-based agents. These results demonstrate that explicit graph-structured exploration, even without learning, can serve as a strong baseline for interactive reasoning and underscore the importance of systematic state tracking and action prioritization in sparse-feedback environments where current LLMs fail to capture task dynamics. The code is open source and available at https://github.com/dolphin-in-a-coma/arc-agi-3-just-explore.

## Introduction

Introduced in 2019, the Abstraction and Reasoning Corpus for Artificial General Intelligence (ARC-AGI) has become a fundamental benchmark for evaluating general fluid intelligence in artificial systems by posing novel tasks that require minimal prior knowledge (chollet_measure_2019). While the original ARC-AGI benchmarks focused on static grid-based reasoning tasks, ARC-AGI-3 represents a paradigm shift toward Interactive Reasoning Benchmarks (IRBs) that test broader capabilities, including on-the-fly learning, exploration, and memory through game-like environments where agents must perceive, plan, and act across multiple steps to achieve long-horizon goals (arc-agi-3).

ARC-AGI-3 introduces novel game environments designed to test the skill-acquisition efficiency of artificial systems, where agents interact with game environments without instructions, and must discover mechanics through exploration. Early results reveal a stark performance gap: frontier AI models scored 0% while human participants achieved 100% on the initial preview tasks (arc-agi-3). This dramatic disparity underscores fundamental limitations in current AI approaches to interactive reasoning and adaptive learning in novel environments.

The challenge of learning from sparse rewards has been central to reinforcement learning (RL) for decades. When rewards are rare and precise action sequences are required, random exploration fails to discover optimal policies. Several exploration strategies have emerged to address this challenge. Curiosity-driven methods use prediction error as intrinsic motivation, enabling agents to explore complex environments like Super Mario Bros without extrinsic rewards (pathak_curiosity-driven_2017). Go-Explore advances systematic exploration by maintaining archives of discovered states and decomposing exploration into phases: return to promising states, then explore from them (ecoffet_first_2021). This approach achieved breakthrough performance on Montezuma’s Revenge, scoring 25% higher than the human expert. For goal-conditioned tasks, Hindsight Experience Replay (HER) learns from failure by relabeling unsuccessful attempts as alternative goals, achieving sample-efficient learning without reward engineering (andrychowicz_hindsight_2017).

Model-based approaches have demonstrated remarkable sample efficiency by learning environment dynamics. MuZero combined learned latent dynamics with tree search, achieving superhuman performance on board games and Atari benchmarks without knowledge of game rules (schrittwieser_mastering_2020). EfficientZero extended this with self-supervised consistency losses, becoming the first algorithm to reach superhuman levels on Atari (194.3% mean human) with just 100k training samples (two hours of real-time experience) per game (ye_mastering_2021). BBF further improved Atari 100k results in a completely model-free manner by scaling up the value network while preserving sample efficiency (schwarzer_bigger_2023).

The Dreamer family of models takes an alternative approach, learning world models in latent space and training policies through imagined rollouts rather than via tree search, mastering over 150 diverse tasks from Atari to Minecraft with a single configuration (hafner_training_2025). Most recently, Axiom introduced object-centric world models that learn compositional representations by discovering and tracking entities, achieving competitive performance within minutes by targeting 10k-step solutions per environment (heins_axiom_2025).

Despite these advances, current approaches face fundamental limitations for few-shot discovery tasks like ARC-AGI-3. The benchmark provides only a single sparse reward signal, level completion, across no more than 10 levels per game. This scarcity of feedback severely constrains learning-based methods. The challenge is compounded by the fact that each level introduces new mechanics while retaining previous ones, creating a shifting distribution that prevents straightforward transfer learning. Curiosity-driven exploration offers no guarantee of correlation with task progress in truly novel environments where the notion of “most interesting states” may be orthogonal to goal-relevance. Sample-efficient approaches like Axiom assume object-centric compositional structure and require environments to exhibit consistent physical dynamics, assumptions that may not hold across ARC-AGI-3’s abstract and diverse game mechanics.

ARC-AGI-3 is also relevant for understanding the behaviour of large language model (LLM) agents. Unlike static reasoning benchmarks, it requires agents to infer latent rules through interaction, maintain an evolving notion of state, and design multi-step probes under sparse feedback, making it a complementary testbed for studying how explicit structure and exploration strategies can support LLM-based reasoning.

In this work, we present a graph-based exploration method that combines systematic state-space tracking with visual priority heuristics to tackle ARC-AGI-3’s interactive reasoning challenges. Our approach maintains a directed graph representation of explored states and action transitions, prioritizing actions based on visual salience while ensuring comprehensive exploration through frontier-driven navigation. Unlike learning-based approaches that require extensive training, our method operates as a strong baseline that can make progress through structured exploration alone. We demonstrate that this approach achieves competitive performance on the ARC-AGI-3 benchmark, significantly outperforming state-of-the-art LLMs while providing insights into the nature of exploration required for interactive reasoning tasks.

## ARC-AGI-3

### Benchmark Overview

ARC-AGI-3 represents a significant evolution from the original ARC challenge, shifting from static grid-based reasoning to interactive game environments that test an agent’s ability to learn through exploration (ying_assessing_2025). The benchmark consists of 6 novel game environments, with 3 public games (ft09, ls20, vc33) released for development and 3 private games (sp80, lp85, as66) used to determine final leaderboard rankings. Each game contains between 8 and 10 levels, with each subsequent level introducing new mechanics. Figure 2 in the appendix shows example screenshots from the games.

The benchmark’s evaluation criterion prioritizes both effectiveness and efficiency: agents are scored based on the number of levels completed, with the total number of actions required serving as a tiebreaker. This dual objective encourages solutions that not only discover winning strategies but do so with minimal exploration. For the final evaluation experiments by ARC-AGI-3 organizers, each run was capped at 8 hours of wall-clock time and 10 environment steps per second (sps), shared across the three private games. Under these limits, a single game can receive at most 96,000 steps when the budget is split evenly.
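The per-game figure follows directly from the stated limits. The short calculation below reproduces it, assuming the shared budget is divided evenly among the three private games; the variable names are illustrative.

```python
# Step budget implied by the evaluation limits quoted above.
HOURS = 8
STEPS_PER_SECOND = 10
NUM_PRIVATE_GAMES = 3

# Total environment steps available in one 8-hour run.
total_steps = HOURS * 3600 * STEPS_PER_SECOND

# Share per game, assuming an even split across the three private games.
steps_per_game = total_steps // NUM_PRIVATE_GAMES

print(total_steps, steps_per_game)  # prints: 288000 96000
```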

### Observation and Action Spaces

#### Visual Observations.

Agents receive visual observations as 64 $\times$ 64 pixel RGB frames with a discrete palette of 16 colors. Each frame contains both the game environment and a status bar displaying the number of steps remaining before an automatic level restart. When the step counter reaches zero, the current level resets to its initial state. In the majority of games, the number of levels passed is also displayed.

#### Action Spaces.

The benchmark features three control schemes. Games such as ls20 use arrow-based control with directional keyboard inputs (up, down, left, right), yielding an action space of size $|\mathcal{A}|=4$ . Games such as ft09, vc33, and lp85 employ click-based control, enabling spatial interaction by allowing the agent to click any pixel location in the frame, yielding an action space of size $|\mathcal{A}|=64\times 64=4{,}096$ . Private games (sp80 and as66) introduce combined control schemes that integrate both arrow and click inputs, resulting in action spaces of size $|\mathcal{A}|=4{,}100$ .

The dramatic difference in action space cardinality between control schemes poses a fundamental challenge: click-based games present over 1,000 times more possible actions at each state than arrow-based games, making exhaustive exploration intractable without intelligent action selection.

### Task Structure and Mechanics

Each game in ARC-AGI-3 embodies a distinct set of mechanics and objectives that agents must discover through interaction. The only feedback signal is level completion: the environment advances to the next level when the agent satisfies (unknown) winning conditions, or resets to the beginning when the step limit expires.

Within each game, levels progressively add new elements while retaining earlier ones. For example, level 1 of ls20 requires basic movement and the use of the transformer object to activate the exit door by adjusting the shape of a key, level 2 adds energy pellets that refill the number of steps remaining, level 3 introduces a color dimension to the key, and so forth, up to level 8, where the agent must cope with only partial observations. This progressive structure mirrors how humans naturally acquire skills in games, but poses challenges for algorithms: knowledge transfer between levels could accelerate learning, but the levels are related only at a highly abstract level.

The released games operate deterministically: the same action taken from the same state always produces the same outcome. This property enables systematic state-space exploration strategies and graph-based representations of explored states. However, determinism does not imply simplicity; the complexity arises from the large state and action spaces and the lack of prior knowledge about which actions lead toward goal states.

## Methods

Our approach comprises two primary components: a Frame Processor for extracting key visual features and a Level Graph Explorer for systematic state-space exploration.

### Frame Processor

The Frame Processor reduces irrelevant visual variability and directs exploration toward actionable regions of the game environment through the following operations:

#### Image Segmentation.

Each frame is segmented into single-color connected components, establishing the foundation for identifying distinct visual elements that may constitute interactive objects.
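A minimal sketch of single-color connected-component segmentation is shown below. It is not the authors' implementation: the function name `segment_frame`, the list-of-lists frame format, and the choice of 4-connectivity are assumptions made for illustration.

```python
from collections import deque


def segment_frame(frame):
    """Split a 2D grid of color indices into single-color connected components.

    Returns a list of (color, pixels) pairs, where pixels is a list of
    (row, col) tuples. 4-connectivity is an assumption of this sketch.
    """
    height, width = len(frame), len(frame[0])
    seen = [[False] * width for _ in range(height)]
    segments = []
    for r0 in range(height):
        for c0 in range(width):
            if seen[r0][c0]:
                continue
            color = frame[r0][c0]
            seen[r0][c0] = True
            queue = deque([(r0, c0)])
            pixels = []
            # Breadth-first flood fill over same-colored neighbours.
            while queue:
                r, c = queue.popleft()
                pixels.append((r, c))
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                    inside = 0 <= nr < height and 0 <= nc < width
                    if inside and not seen[nr][nc] and frame[nr][nc] == color:
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            segments.append((color, pixels))
    return segments


toy_frame = [
    [0, 0, 1],
    [0, 2, 1],
    [2, 2, 0],
]
segments = segment_frame(toy_frame)
print([(color, len(pixels)) for color, pixels in segments])
```

In a click-controlled game, treating each segment as one candidate click target (rather than each of the 4,096 pixels) is what makes the action space manageable.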

#### Status Bar Detection and Masking.

To prevent conflation of environment features with user interface components, the processor identifies and masks probable status bars. This preprocessing substantially reduces the number of recognized states.

#### Priority-Based Action Grouping.

For click-controlled games, visual segments are stratified into five priority tiers based on their likelihood of representing interactive buttons or objects. Prioritization is determined by segment size, morphological features, and color salience. The lowest priority tier encompasses segments identified as probable status bars, ensuring their exploration only after exhausting higher-priority alternatives.
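The text does not give the exact tiering rules, so the following is only a hypothetical illustration of how segment size, shape, and color salience could be mapped to five tiers. Every threshold, feature name, and the function `priority_tier` itself are placeholders rather than the values used in the paper.

```python
def priority_tier(size, fill_ratio, is_salient_color, is_status_bar,
                  min_size=4, max_size=400, min_fill=0.6):
    """Map a segment's features to a tier in 0..4 (0 is explored first).

    size: number of pixels in the segment.
    fill_ratio: segment pixels divided by bounding-box area (shape proxy).
    All thresholds are illustrative placeholders, not the paper's values.
    """
    if is_status_bar:
        return 4                       # probable status bar: explored last
    button_sized = min_size <= size <= max_size
    compact = fill_ratio >= min_fill   # fills most of its bounding box
    if button_sized and compact and is_salient_color:
        return 0
    if button_sized and compact:
        return 1
    if button_sized:
        return 2
    return 3                           # tiny specks or background-sized regions


print(priority_tier(36, 1.0, True, False))    # compact, salient, button-sized
print(priority_tier(3000, 1.0, True, False))  # background-sized region
```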

#### State Hashing.

The processor generates a hash representation of the masked image, serving as a unique identifier for the current game state. This hash facilitates efficient state tracking and duplicate detection during graph exploration.
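The sketch below illustrates why masking matters for hashing: two frames that differ only in the status bar map to the same state identifier. The helper `state_hash`, the choice of SHA-256, and the mask value are assumptions for illustration; the paper does not specify the hash function.

```python
import hashlib


def state_hash(frame, masked_pixels, mask_value=255):
    """Hash a frame after overwriting masked pixels with a constant.

    frame: 2D list of color indices (0..15).
    masked_pixels: iterable of (row, col) positions to ignore.
    """
    masked = set(masked_pixels)
    data = bytearray()
    for r, row in enumerate(frame):
        for c, color in enumerate(row):
            data.append(mask_value if (r, c) in masked else color)
    # Include the dimensions so frames of different shapes hash differently.
    header = f"{len(frame)}x{len(frame[0])}:".encode()
    return hashlib.sha256(header + bytes(data)).hexdigest()


frame_a = [[3, 3, 3, 3],   # row 0: toy status bar, 4 steps left
           [0, 5, 0, 0],
           [0, 0, 0, 0]]
frame_b = [[3, 3, 0, 0],   # same scene, 2 steps left
           [0, 5, 0, 0],
           [0, 0, 0, 0]]
status_bar = [(0, c) for c in range(4)]

same_with_mask = state_hash(frame_a, status_bar) == state_hash(frame_b, status_bar)
same_without_mask = state_hash(frame_a, []) == state_hash(frame_b, [])
print(same_with_mask, same_without_mask)  # prints: True False
```

Without the mask, every tick of the step counter would create a new node, and the graph would never revisit a state.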

### Level Graph Explorer

The Level Graph Explorer maintains a directed graph representation of the explored state space, where nodes correspond to unique game states and edges encode action-induced state transitions.

#### Graph Structure.

For each discovered state (graph node), the explorer maintains:

- The action space $\mathcal{A}$ (identifiers of connected components for spatial-interaction games such as ft09/vc33, keyboard inputs for games such as ls20)

- For each action $a\in\mathcal{A}$ : priority level $\pi(a)$ , exploration status, transition outcome, successor state, and minimal distance to the nearest unexplored frontier
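One possible way to hold this per-state bookkeeping is sketched below. The class and field names (`StateNode`, `ActionRecord`, `outcome`, and so on) and the outcome labels are hypothetical; they mirror the list above but are not taken from the released code.

```python
from dataclasses import dataclass, field
from typing import Dict, Hashable, List, Optional


@dataclass
class ActionRecord:
    priority: int                            # pi(a): lower value is tried earlier
    tested: bool = False                     # exploration status
    outcome: Optional[str] = None            # "changed" / "no_change" (assumed labels)
    successor: Optional[str] = None          # hash of the state reached
    frontier_distance: Optional[int] = None  # steps to the nearest untested action


@dataclass
class StateNode:
    state_hash: str
    actions: Dict[Hashable, ActionRecord] = field(default_factory=dict)

    def untested(self, max_priority: int) -> List[Hashable]:
        """Actions not yet tried whose priority is within the threshold."""
        return [a for a, rec in self.actions.items()
                if not rec.tested and rec.priority <= max_priority]

    def record(self, action: Hashable, successor_hash: str) -> None:
        """Store the observed transition for one action."""
        rec = self.actions[action]
        rec.tested = True
        rec.successor = successor_hash
        rec.outcome = "no_change" if successor_hash == self.state_hash else "changed"


node = StateNode("s0", {"up": ActionRecord(priority=0),
                        ("click", 7): ActionRecord(priority=2)})
node.record("up", "s1")
print(node.untested(max_priority=4))  # prints: [('click', 7)]
```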

#### Action Selection Strategy.

The explorer implements a hierarchical action selection policy that progressively expands the search space, as shown in Algorithm 1.

**Algorithm 1** Hierarchical Action Selection

**Require:** Current state $s$, priority threshold $p$

- **if** $\exists$ untested actions with priority $\pi(a)\leq p$ in state $s$ **then**
  - Select uniformly at random an untested action $a$ where $\pi(a)\leq p$ from $s$
  - Execute action and update graph with observed transition
- **else if** $\exists$ reachable state $s^{\prime}$ with untested actions where $\pi(a)\leq p$ **then**
  - Select action minimizing distance to reachable state $s^{\prime}$ with untested actions at priority $\leq p$
  - Execute selected action
- **else**
  - Increment priority threshold: $p\leftarrow p+1$
  - Recurse from current state $s$ with updated priority $p$
- **end if**

This policy ensures systematic exploration of high-salience actions prior to considering lower-priority alternatives, thereby focusing computational resources on likely-relevant state-action pairs.
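The selection policy can be sketched in a few lines of Python. This is an illustrative reading of Algorithm 1 under several assumptions: the graph is a plain dictionary, an edge with `"next": None` stands for an untested action, the recursion is written as a loop, and `max_priority=4` reflects the five tiers (0 to 4). None of these names come from the released code.

```python
import random
from collections import deque


def untested(graph, state, p):
    """Untested actions in `state` with priority <= p."""
    return [a for a, e in graph[state].items()
            if e["next"] is None and e["priority"] <= p]


def first_step_to_frontier(graph, start, p):
    """BFS over tested edges. Returns the first action on a shortest path to a
    state that still has untested actions with priority <= p, or None."""
    queue = deque([(start, None)])
    visited = {start}
    while queue:
        state, first = queue.popleft()
        if first is not None and untested(graph, state, p):
            return first
        for action, edge in graph[state].items():
            nxt = edge["next"]
            if nxt is not None and nxt in graph and nxt not in visited:
                visited.add(nxt)
                queue.append((nxt, action if first is None else first))
    return None


def select_action(graph, state, p, max_priority=4, rng=random):
    """Return (action, threshold); action is None if nothing is left to test."""
    while p <= max_priority:
        candidates = untested(graph, state, p)
        if candidates:                      # case 1: test something here
            return rng.choice(candidates), p
        step = first_step_to_frontier(graph, state, p)
        if step is not None:                # case 2: walk toward a frontier
            return step, p
        p += 1                              # case 3: relax the threshold
    return None, p


graph = {
    "A": {"left": {"priority": 0, "next": "B"},
          "click_bar": {"priority": 4, "next": None}},
    "B": {"left": {"priority": 0, "next": "B"},
          "right": {"priority": 0, "next": "A"},
          "up": {"priority": 1, "next": None}},
}
action, p = select_action(graph, "A", p=0)
print(action, p)  # prints: left 1
```

In the toy graph, nothing at priority 0 remains untested, so the threshold rises to 1 and the agent moves toward state `B`, where `up` is still untested, before ever trying the low-priority `click_bar`.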

#### Frontier Management.

The explorer maintains the shortest-path distances from each explored state to frontier states, those containing untested actions. These distance metrics always guide traversal toward unexplored regions.
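One standard way to obtain such distances is a multi-source breadth-first search over reversed edges, sketched below. Whether the authors recompute distances this way or update them incrementally is not stated, so this is an assumption; `frontier_distances` and the dictionary layout are illustrative.

```python
from collections import deque


def frontier_distances(transitions, frontier):
    """Shortest number of steps from each state to the nearest frontier state.

    transitions: {state: {action: successor}} containing tested edges only.
    frontier: set of states that still have at least one untested action.
    States that cannot reach any frontier state are absent from the result.
    """
    # Reverse the edges so the search can run backwards from the frontier.
    reverse = {}
    for state, edges in transitions.items():
        for successor in edges.values():
            reverse.setdefault(successor, set()).add(state)

    dist = {s: 0 for s in frontier}
    queue = deque(frontier)
    while queue:
        state = queue.popleft()
        for pred in reverse.get(state, ()):
            if pred not in dist:
                dist[pred] = dist[state] + 1
                queue.append(pred)
    return dist


transitions = {
    "A": {"right": "B"},
    "B": {"right": "C", "left": "A"},
    "C": {"left": "B"},
    "D": {"wait": "D"},   # isolated loop, cannot reach the frontier
}
dist = frontier_distances(transitions, frontier={"C"})
print(sorted(dist.items()))  # prints: [('A', 2), ('B', 1), ('C', 0)]
```

At each step the agent can then pick the action whose successor has the smallest distance, which moves it along a shortest known path to untested state-action pairs.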

## Baselines

We evaluate our approach against two baseline methods to demonstrate the effectiveness of structured exploration.

#### Random Agent.

A simple baseline that selects actions uniformly at random from the available action space at each step. This baseline provides a lower bound on performance and demonstrates the difficulty of solving tasks through undirected exploration alone.

#### LLM+DSL.

We compare against the best-performing LLM-based solution on the leaderboard (fluxon_arc_2025), which combines GPT-4.1 with domain-specific language (DSL) programming. The approach observes game frames and generates Python code to interact with the environment, attempting to discover game mechanics through programmatic reasoning. Despite using a frontier LLM, this approach demonstrates the current limitations of LLM-based methods for interactive reasoning tasks.

Because each environment step is gated by an LLM call, this baseline is severely interaction-limited: within the evaluation budget, it produces only about 4,000 interactions per game, compared to the 96,000 steps that are in principle allowed. To avoid high LLM usage costs, we do not re-run this baseline; instead, we report the results from its official evaluation on the private games, with the limitation that only a single aggregate score is available and no results are reported on the public games.

## Results

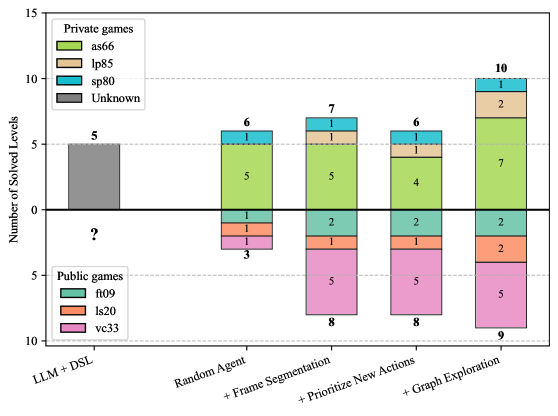

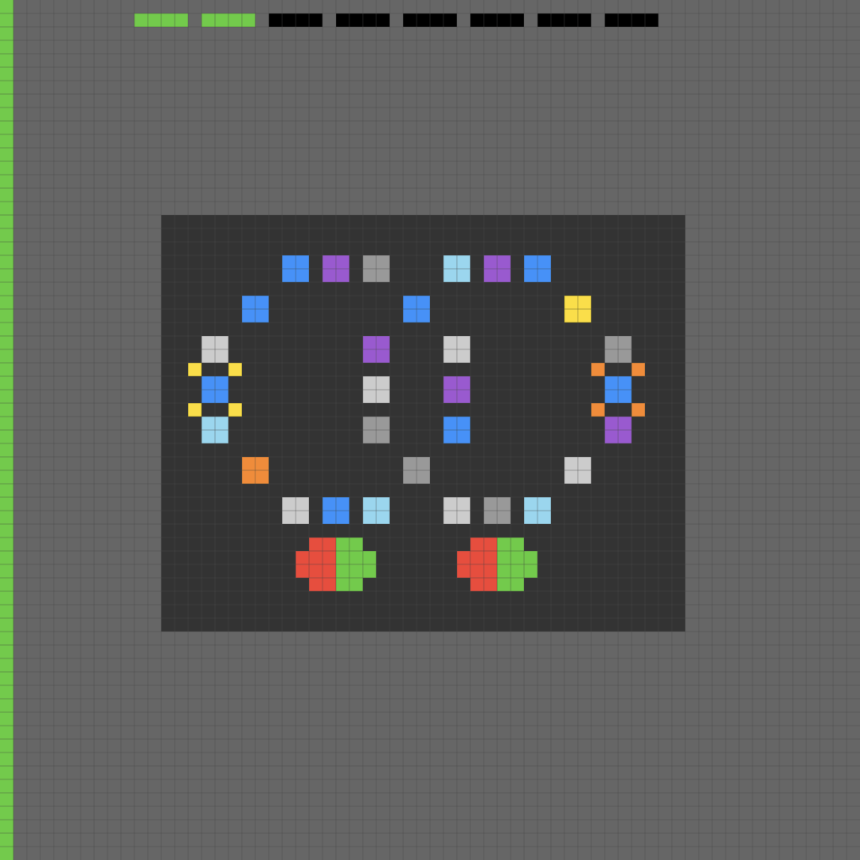

We evaluated our graph-based exploration method on all six ARC-AGI-3 games. Figure 1 reports an incremental component-addition analysis: starting from a random agent, we cumulatively add components and measure the total levels solved across games; the LLM+DSL baseline is included for comparison. Here, to ensure a fair comparison with the LLM-based baseline, all methods are capped at 4,000 interactions per game. All non-LLM configurations report the median over 5 runs, whereas the LLM+DSL baseline is shown as a single result taken from the official challenge evaluation.

<details>

<summary>x1.png Details</summary>

### Visual Description

Diverging stacked bar chart. The y-axis is "Number of Solved Levels"; bars for the private games (as66, lp85, sp80, plus an "Unknown" category used for the aggregate LLM + DSL score) extend upward from the zero line, and bars for the public games (ft09, ls20, vc33) extend downward. The x-axis lists five configurations: LLM + DSL, Random Agent, + Frame Segmentation, + Prioritize New Actions, + Graph Exploration. Labeled private-game totals are 5, 6, 7, 6, and 10, respectively; labeled public-game totals are 3, 8, 8, and 9 for the four non-LLM configurations, with a "?" for LLM + DSL.

</details>

Figure 1: Effect of progressively adding method components to a random agent, compared with the LLM+DSL baseline. For each configuration, the stacked bar above the horizontal axis shows the total number of solved levels across the three private games, and the stacked bar below shows the total across the three public games. Colors indicate how many levels are solved in each individual game. The rightmost bars correspond to the full method. All non-LLM configurations report the median over 5 runs, whereas the LLM+DSL baseline is shown as a single result taken from the official challenge evaluation.

The random agent and LLM+DSL baseline solve 6 and 5 levels on the private games, respectively, meaning that the LLM-based method underperforms even a random policy. The random agent also solves 3 levels across the public games.

Adding frame segmentation to random exploration slightly increases performance on the private games, making it possible to solve one level of lp85. It also significantly improves performance on the public games, solving 5 levels on vc33 and 2 levels on ft09.

When untested actions are favored in each state but without full state-graph exploration, performance on as66 decreases slightly: the method solves only 4 levels of that game.

Our complete approach solves 19 levels with an interaction limit of 4,000: 2 on ft09, 2 on ls20, 5 on vc33, 1 on sp80, 2 on lp85, and 7 on as66.

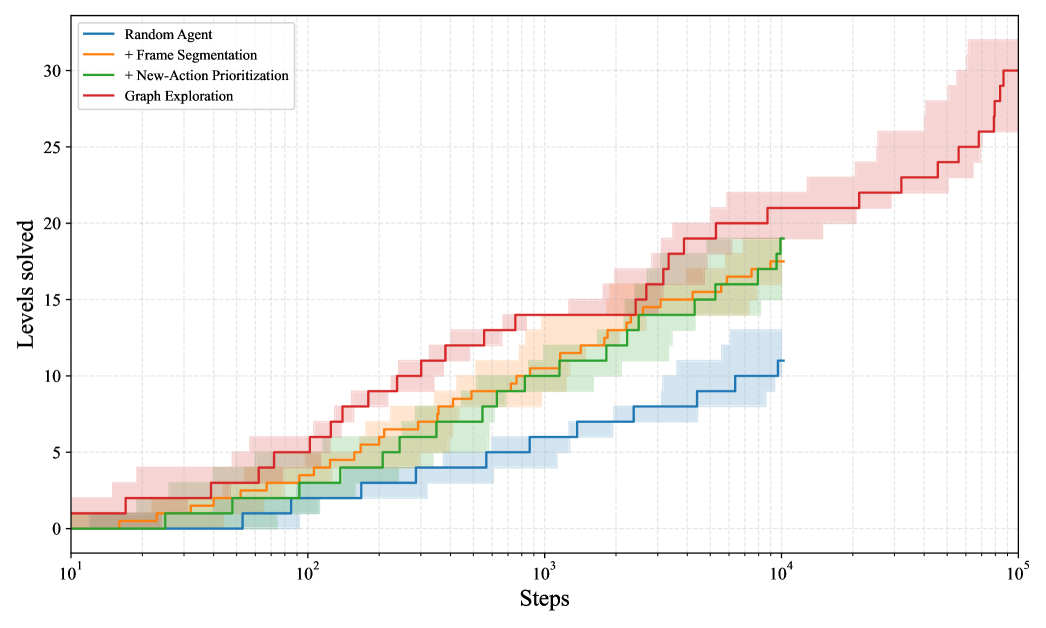

Given the full 8-hour budget, our method solves a median of 16 levels on the private games and 14 levels on the public games over 5 independent runs (see Figure 3 in the appendix). Per-level performance is reported in Tables 1 and 2 in the appendix.

On the official ARC-AGI-3 challenge evaluation, the submitted model solves 12 levels on the private games while still ranking 3rd by the number of solved levels. This discrepancy is due to an implementation bug in how reset-inducing actions are handled (see Discussion).

## Discussion

Our graph-based exploration method demonstrates that structured state-space navigation with visual prioritization significantly outperforms both random exploration and frontier LLMs with access to code writing and execution on ARC-AGI-3.

#### Performance Analysis.

The method excelled on games where visual salience aligned with interactive elements (vc33, as66). Performance degraded on games with extremely large state spaces (ft09 levels 6+, ls20 levels 3+), where exhaustive exploration becomes computationally intractable. The improvement over LLM+DSL baselines suggests that structured exploration provides a more reliable foundation for interactive reasoning than pure language-model-based approaches, which struggle to form and test hypotheses systematically.

The discrepancy between the official ARC-AGI-3 evaluation and our re-runs is due to an implementation bug in the handling of reset events. Actions that triggered a reset were not marked as tested in the game graph. Consequently, when such a state–action pair was the nearest remaining untested edge in the graph from the starting node, the agent repeatedly selected it, resetting the game and effectively entering a loop.
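The fix amounts to marking an action as tested regardless of what it caused. The fragment below is a hypothetical reconstruction of that idea, not the actual patch: the dictionary layout, the `caused_reset` flag, and the assumption that a reset returns the agent to the start state are all illustrative.

```python
def record_transition(graph, state, action, next_state, caused_reset):
    """Store the observed result of taking `action` in `state`."""
    edge = graph[state][action]
    edge["tested"] = True              # set unconditionally, including on resets
    edge["caused_reset"] = caused_reset
    edge["next"] = next_state


def untested_actions(graph, state):
    return [a for a, e in graph[state].items() if not e["tested"]]


graph = {"start": {"down": {"tested": False}, "up": {"tested": False}}}

# "down" triggers a reset that returns the agent to the start state.
record_transition(graph, "start", "down", next_state="start", caused_reset=True)
remaining = untested_actions(graph, "start")
print(remaining)  # prints: ['up']
```

If the `tested` flag were set only for non-reset outcomes, `down` would remain the nearest untested edge from the start node and would be chosen again after every reset.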

#### Limitations.

The method faces two fundamental constraints. First, computational requirements grow linearly with state space size, limiting scalability to levels with moderate complexity. Second, the approach assumes deterministic, fully observable environments and would fail under stochasticity or partial observability.

#### Future Directions.

While the first-place solution on the leaderboard (smit_driessmitarc3-solution_2025) achieved superior performance with a learning-based approach, it did not incorporate structured exploration strategies. A natural next step is to integrate our graph-based exploration framework with adaptive learning algorithms. Such hybrid approaches could leverage graph representations to guide model training and action selection, while learned world models or policies could improve sample efficiency through generalization. The key challenge remains the sparse reward signal and limited training data, making it essential to develop methods that can effectively transfer knowledge across levels while maintaining systematic exploration coverage.

## Appendix A: ARC-AGI-3 Games


<details>

<summary>Figures/vc33.png Details</summary>

### Visual Description

## Diagram: Pixel Art Level Schematic

### Overview

The image is a pixel art diagram, likely representing a level layout for a 2D platformer game or a similar grid-based schematic. It is composed of a uniform grid of small squares (pixels). The scene features two prominent vertical black structures, colored blocks at their bases, a white vertical area on the left, a green square block on the right, and a status-like bar at the top. There is no textual information present in the image.

### Components & Spatial Layout

The image can be segmented into the following regions:

1. **Header (Top ~10% of image):**

* A solid, bright green horizontal bar spans the entire width at the very top edge.

* Below this bar is a gray area. Within this gray area, near the top, is a horizontal row of small, single-pixel squares:

* Two green squares on the left.

* One yellow square to their right.

* Five white squares following the yellow one, extending to the right edge.

* **Positioning:** This row is centered horizontally within the gray area, near the top boundary.

2. **Main Area (Central ~80% of image):**

* **Background:** A uniform medium-gray grid.

* **Left Vertical Structure:** A tall, solid black vertical bar. It originates from the bottom edge and extends upward, terminating just below the top gray header area.

* **Right Vertical Structure:** A shorter, solid black vertical bar. It originates from the bottom edge and extends upward to approximately the vertical midpoint of the image.

* **White Area:** A vertical strip of white grid squares occupies the far-left side of the image, from the bottom edge up to a point about 70% of the image height. It is adjacent to the left black bar.

* **Green Block:** A solid green square block (approximately 6x6 grid units) is located in the lower-right quadrant. It sits above the bottom edge and to the right of the right black bar.

3. **Footer (Bottom ~10% of image):**

* The base of the scene. The two black bars and the white area terminate here.

* **Colored Base Blocks:**

* At the base of the **left black bar**: A blue square block on its left side and a red square block on its right side.

* At the base of the **right black bar**: A blue square block on its left side and a red square block on its right side.

* A white horizontal strip runs along the bottom edge, connecting the bases of the left and right structures.

4. **Additional Element:**

* A distinct green segment (approximately 2 grid units tall) is embedded within the **right black vertical bar**, located roughly one-third of the way up from its base.

### Detailed Analysis

* **Grid Structure:** The entire image is built on a visible, fine grid. All elements align perfectly to this grid.

* **Color Palette:** The colors are flat and distinct: bright green, medium gray, black, white, blue, red, and yellow.

* **Symmetry/Asymmetry:** The layout is asymmetric. The left side features a tall black bar and a large white area. The right side features a shorter black bar with an embedded green segment and a separate green square block.

* **Element Relationships:**

* The two black bars are the dominant vertical elements.

* The blue and red blocks are consistently paired at the base of each black bar, suggesting they may represent start points, doors, or interactive elements.

* The green elements (top bar, small squares, segment in right bar, large block) may indicate goals, collectibles, or safe zones.

* The white area on the left could represent a wall, a different terrain type, or empty space.

### Key Observations

1. **Functional Color Coding:** Colors are used functionally rather than decoratively. Green, blue, red, and yellow likely have specific meanings within the context of the diagram (e.g., player start, goal, hazard, key).

2. **Structural Contrast:** There is a clear contrast between the tall, uninterrupted left black bar and the shorter, segmented right black bar.

3. **Isolated Element:** The large green square block on the right is isolated from other structures, making it a focal point.

4. **Top Bar as HUD:** The top green bar and the row of small colored squares strongly resemble a Heads-Up Display (HUD) showing status, such as health (green bar) and collected items (the small squares).

### Interpretation

This diagram is a technical schematic for a game level or interactive grid-based environment. The elements suggest a navigational or puzzle-based challenge:

* **The Two Paths:** The tall left bar and the shorter right bar present two distinct vertical pathways or obstacles. The embedded green segment in the right bar might indicate a checkpoint, a switch, or a required item to progress.

* **Objectives:** The isolated green square block is likely a primary objective or goal location. The colored squares in the top HUD area may correspond to items that need to be collected, with the yellow square possibly representing a special or final item.

* **Start/Interaction Points:** The paired blue and red blocks at the base of each black bar are probable player start positions, enemy spawn points, or interactive triggers (e.g., doors that require a key).

* **Spatial Logic:** The layout implies a need for vertical movement (climbing the black bars) and horizontal navigation to reach the green block. The white area on the left may be an impassable boundary or a safe zone.

**In essence, the image encodes a set of rules and spatial relationships for an interactive experience, using a minimalist, color-coded pixel language. The lack of text emphasizes its role as a visual blueprint where form and color define function.**

</details>

|

<details>

<summary>Figures/ls20.png Details</summary>

### Visual Description

## Diagram: Pixel Art Maze/Game Level Layout

### Overview

The image is a pixel art representation of what appears to be a top-down view of a maze or a level in a grid-based video game. The entire scene is rendered on a dark gray grid background. The layout consists of navigable pathways (lighter gray), impassable walls (darker gray), and various colored pixel clusters that likely represent interactive elements, items, or characters. There is no textual information present in the image.

### Components/Axes

* **Grid Structure:** The entire image is built on a uniform square grid. Each "pixel" in the art corresponds to one grid cell.

* **Primary Terrain:**

* **Pathways:** Lighter gray cells forming interconnected corridors and open areas.

* **Walls/Obstacles:** Darker gray cells that block movement, creating the maze structure.

* **Interactive Elements (by color and approximate location):**

* **Purple Blocks:** Scattered single 2x2 pixel blocks. Found in the top-left quadrant, center, lower-left, and bottom-center areas.

* **Blue & Orange Clusters:**

* A 3x3 cluster in the bottom-center: top row is orange, bottom two rows are blue.

* A 2x2 cluster in the top-center: left column is blue, right column is orange.

* A 3x3 cluster in the lower-right quadrant: a mix of light blue, red, and orange pixels.

* **White & Blue Shapes:**

* A small, irregular white shape with a single blue pixel on its top-left, located in the left-center area.

* A larger, L-shaped white structure in the bottom-left corner, with a 2x2 blue block attached to its top-left.

* **Green Blocks:** A horizontal row of four 2x2 green blocks located at the very bottom edge, left of center.

* **Status/Indicator Bar (Top Edge):**

* **Left Segment:** A sequence of 5 gray squares followed by 11 purple squares.

* **Right Segment:** Three red squares in the top-right corner.

### Detailed Analysis

* **Spatial Layout:** The maze is non-linear. The main pathways form a rough, inverted "U" shape around a central wall structure. There are several dead-end pockets, particularly on the right side.

* **Element Distribution:**

* Purple blocks are distributed across different sections of the maze, possibly as collectibles or markers.

* The blue/orange clusters are placed at what appear to be junctions or significant locations within the pathways.

* The white shapes are positioned in more isolated corners (left-center and bottom-left).

* The green blocks are aligned neatly at the bottom boundary, suggesting a possible exit, goal, or inventory bar.

* The top status bar is divided into two distinct color groups (gray/purple and red), which may represent different resource counts or game states.

### Key Observations

1. **No Text:** The image contains zero alphanumeric characters. All information is conveyed through color, shape, and spatial arrangement.

2. **Color-Coded Elements:** The use of distinct, solid colors (purple, blue, orange, white, green, red) for specific pixel clusters strongly implies they are interactive or significant objects within the game's logic.

3. **Asymmetrical Design:** The maze layout is not symmetrical, with more open space on the left and a denser, more convoluted wall structure on the right.

4. **Boundary Elements:** The status bar at the top and the green blocks at the bottom frame the main play area, suggesting UI elements or level boundaries.

### Interpretation

This image is a schematic or asset for a grid-based game level. The **lighter gray pathways** define the navigable space for a player or agent. The **darker gray walls** create the puzzle or obstacle structure. The **colored pixel clusters** are almost certainly game objects:

* **Purple blocks** could be coins, keys, or points of interest.

* **Blue/Orange clusters** might represent enemies, NPCs, switches, or special terrain (e.g., water/lava).

* **White shapes** could be the player character(s) or major interactive machinery.

* **Green blocks** at the bottom likely indicate the level's goal, exit zone, or a persistent UI element like a health bar.

* The **top bar** is a classic video game HUD (Heads-Up Display) element. The sequence of gray and purple squares may track a collected resource (e.g., 5/16 units), while the three red squares could represent lives, health, or a warning indicator.

The design suggests an exploration or puzzle-solving gameplay loop where the player navigates the gray maze, interacts with or avoids the colored objects, and aims to reach the green zone or fulfill the condition shown in the top bar. The lack of text implies the game relies on visual symbolism and player experimentation to understand mechanics.

</details>

|

<details>

<summary>Figures/ft09.png Details</summary>

### Visual Description

## Diagram: Grid-Based Color Matrix

### Overview

The image displays a pixel-art style diagram composed of colored squares arranged in a grid pattern on a dark gray background. The entire composition is framed by a multi-colored border. There is no textual information, labels, axes, legends, or numerical data present in the image. The information is conveyed entirely through the arrangement and color of geometric shapes.

### Components & Spatial Layout

1. **Outer Border:** A thin frame surrounds the entire image.

* **Top Edge:** The left segment is green, the right segment is yellow.

* **Bottom Edge:** The left segment is green.

* **Left Edge:** The entire vertical strip is orange.

* **Right Edge:** The entire vertical strip is orange.

2. **Background:** A uniform dark gray field with a subtle, fine grid pattern.

3. **Central Matrix (Main Component):** A darker gray rectangular area, centered within the background, containing a 3-row by 5-column grid of larger squares.

4. **Auxiliary Element:** A small 2-row by 1-column grid is positioned to the right of the main matrix, aligned with its top edge.

### Detailed Analysis: Central Matrix Content

The 3x5 grid contains squares of three distinct types. The following describes each cell, reading left-to-right, top-to-bottom.

* **Row 1 (Top):**

1. Solid red square.

2. Solid red square.

3. Solid red square.

4. Solid blue square.

5. Solid red square.

* **Row 2 (Middle):**

1. Solid red square.

2. **Patterned Square:** A 3x3 sub-grid of white squares with a gray border, containing a single red square at its center.

3. Solid blue square.

4. **Patterned Square:** A 3x3 sub-grid of white squares with a gray border, containing a single blue square at its center.

5. Solid red square.

* **Row 3 (Bottom):**

1. Solid red square.

2. Solid red square.

3. Solid blue square.

4. Solid red square.

5. Solid blue square.

**Auxiliary Element (Right of Matrix):**

* Top cell: Solid red square.

* Bottom cell: Solid blue square.

### Key Observations

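* **Color Distribution:** Red is the dominant color. Counting each patterned square by its center color, red appears in 10 of the 15 main grid cells (9 solid, 1 patterned) and in the top auxiliary cell. Blue appears in 5 main grid cells (4 solid, 1 patterned) and in the bottom auxiliary cell.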

* **Symmetry & Pattern:** The two patterned squares in the middle row (positions 2 and 4) are symmetrically placed around the central blue square. They are identical in structure but differ in the color of their central pixel (red vs. blue).

* **Spatial Grouping:** The auxiliary red/blue pair on the right mirrors the color pairing found in the patterned squares and may serve as a key or legend, though no explicit label confirms this.

### Interpretation

This diagram appears to be an abstract representation of a system, state machine, or data matrix where color and pattern encode meaning. The lack of labels makes definitive interpretation impossible, but the structure suggests several plausible readings:

1. **State or Category Map:** Each square could represent a node, cell, or data point. Red and blue might signify two primary states (e.g., active/inactive, type A/type B). The patterned squares could represent composite states, decision points, or cells undergoing a transformation.

2. **Game Board or Puzzle:** The layout resembles a board for a logic puzzle or simple game, where the goal might involve matching colors or navigating between the patterned "special" cells.

3. **Visual Algorithm or Process Flow:** The arrangement could depict the steps or data flow in a process. The central row, with its unique patterned cells, might represent a processing or comparison stage between the more uniform top and bottom rows.

4. **Encoded Data:** The grid might be a direct visual encoding of a small dataset, where color represents a binary or categorical value for each position.

**Notable Anomaly:** The two patterned squares are the only complex elements. Their internal structure (a colored center within a white/gray field) sets them apart as potentially significant—perhaps as inputs, outputs, or operators within the system the diagram represents. The auxiliary red/blue pair strongly reinforces the idea that these two colors are the fundamental variables of the system.

**Conclusion:** The diagram conveys information through spatial relationship and color contrast. Its meaning is entirely dependent on external context not provided in the image. To be useful, it would require an accompanying legend or textual description defining what the red/blue colors and the specific pattern signify.

</details>

|

| --- | --- | --- |

|

<details>

<summary>Figures/sp80.png Details</summary>

### Visual Description

## Pixel Art Diagram: Abstract Grid Composition

### Overview

The image is a digital pixel art composition presented on a uniform grid. It features a structured arrangement of colored rectangular blocks against a dominant orange background. There is no textual information, labels, charts, data tables, or legends present. The image is purely visual and symbolic, resembling a simplified scene or a schematic for a game level.

### Components/Axes

* **Grid Structure:** The entire image is composed of a fine, uniform grid of square pixels.

* **Color Palette:** The composition uses a limited set of solid colors:

* **Background:** A dominant, uniform orange fills the majority of the grid.

* **Top Border:** A single row of light green pixels runs along the very top edge.

* **Bottom Border:** A single row of light blue pixels runs along the very bottom edge.

* **Foreground Elements:** Blocks of black, magenta, red, blue, and yellow are placed on the orange field.

### Detailed Analysis

The image is segmented into distinct horizontal regions, processed from top to bottom:

1. **Header Region (Top 10%):**

* A single row of light green pixels forms the top border.

* Centered horizontally, just below the green border, is a small vertical structure:

* A 2x2 block of black pixels.

* Directly beneath it, a 2x2 block of magenta pixels.

2. **Main Field Region (Central 80%):**

* The background is a solid orange grid.

* **Upper Element:** A horizontal red bar, approximately 12 pixels wide and 2 pixels tall, is centered horizontally in the upper third of the orange field.

* **Lower Element:** A horizontal blue bar, approximately 20 pixels wide and 2 pixels tall, is centered horizontally in the lower third of the orange field. It is wider than the red bar and positioned further down.

3. **Footer Region (Bottom 10%):**

* Three identical yellow structures are evenly spaced along the bottom of the orange field, just above the blue border.

* Each yellow structure is shaped like a wide, low "U" or a castle battlement. They are each 8 pixels wide and 4 pixels tall, with a 2-pixel-high, 4-pixel-wide gap in the top center.

* A single row of light blue pixels forms the bottom border.

### Key Observations

* **Symmetry and Alignment:** The black/magenta block, the red bar, and the blue bar are all horizontally centered. The three yellow structures are evenly spaced, creating a rhythmic pattern.

* **Layering:** Elements appear to be layered on the orange background. The yellow structures sit at the "ground" level, the blue and red bars are "floating" at different heights, and the black/magenta block is at the "top."

* **Absence of Text:** The image contains zero textual information. There are no labels, numbers, titles, or annotations of any kind.

### Interpretation

This image is an abstract, symbolic representation rather than a data-bearing chart or document. Its meaning is open to interpretation but strongly suggests a **platformer video game level schematic** or a **simple architectural cross-section**.

* **Functional Relationships:** The elements can be read as game objects:

* The **green top** could represent a sky or ceiling.

* The **black/magenta block** might be a starting point, an enemy, or a key item.

* The **red and blue bars** are likely platforms for a character to jump on.

* The **yellow "U" shapes** resemble ground-based obstacles, enemies (like classic "Goombas"), or collectible items.

* The **light blue bottom** could represent water, a pit, or the base of the level.

* **Spatial Logic:** The vertical arrangement implies a progression or challenge from top to bottom. The varying widths of the platforms (blue wider than red) could indicate increasing difficulty or different functions.

* **Notable Anomaly:** The complete lack of text or quantitative data means the image conveys information purely through color, shape, and spatial relationship. Its "data" is its compositional layout, which must be interpreted subjectively based on common visual metaphors in games and diagrams.

**Conclusion:** The image is a non-textual, pixel-art diagram. Its informational content is entirely visual and structural, depicting a layered scene with centered and patterned elements on a grid. To extract further meaning, one must infer a context (e.g., game design, abstract art) for the arrangement of colored blocks.

</details>

|

<details>

<summary>Figures/lp85.png Details</summary>

### Visual Description

## Pixel Art Diagram: Abstract Technical Schematic

### Overview

The image displays a pixel art diagram set against a dark gray grid background. The central focus is a rectangular, darker gray panel containing an arrangement of colored squares (pixels) that form two symmetrical, abstract clusters. There is no textual information, labels, axis titles, legends, or data tables present in the image. The composition is purely graphical and symbolic.

### Components/Axes

* **Background:** A uniform grid of small, dark gray squares on a slightly lighter gray field.

* **Top Bar:** A horizontal row of colored segments positioned at the very top of the image, outside the main panel.

* **Left Segment:** A bright green bar, approximately 4 grid units wide.

* **Right Segment:** A series of 7 black bars, each separated by a single grid unit.

* **Main Panel:** A darker gray rectangle centered in the image, serving as the canvas for the primary diagram.

* **Diagram Elements:** Colored squares (pixels) arranged within the main panel. The colors observed are: bright green, red, orange, yellow, light blue, blue, purple, gray, and white.

### Detailed Analysis

The diagram within the main panel is composed of two clusters of colored pixels that mirror each other in shape, though not in every pixel color, along a vertical axis.

**Left Cluster:**

* **Top:** A horizontal row of 4 pixels: blue, purple, gray, light blue.

* **Upper Left:** A diagonal line of 3 blue pixels descending to the left.

* **Left Side:** A vertical column of 5 pixels: white, blue, blue, light blue, orange.

* **Bottom Left:** A 2x2 block of red and green pixels (red on left, green on right).

* **Connecting Pixels:** Scattered gray, white, and blue pixels form loose connections between the above elements.

**Right Cluster (Mirror of Left):**

* **Top:** A horizontal row of 4 pixels: light blue, purple, blue, yellow.

* **Upper Right:** A diagonal line of 3 blue pixels descending to the right.

* **Right Side:** A vertical column of 5 pixels: gray, blue, blue, purple, orange.

* **Bottom Right:** A 2x2 block of red and green pixels (red on left, green on right).

* **Connecting Pixels:** Scattered gray, white, and blue pixels form loose connections.

**Central Axis:**

A vertical line of pixels runs down the center, acting as a mirror line. From top to bottom, it includes: purple, white, gray, gray, white.

### Key Observations

1. **Symmetry:** The most prominent feature is the near-perfect bilateral symmetry of the two main clusters around the central vertical axis.

2. **Color Coding:** Specific colors are used consistently for certain shapes (e.g., blue for diagonal lines, red/green for the bottom blocks, orange for the outermost side pixels).

3. **Lack of Text:** The diagram contains zero alphanumeric characters, labels, or a legend. All information is conveyed through color, shape, and spatial arrangement.

4. **Top Bar:** The green and black bar at the top is separate from the main diagram and may represent a status indicator, progress bar, or title area, but its meaning is undefined without context.

### Interpretation

This image is an abstract, symbolic representation rather than a data-driven chart or labeled schematic. Its meaning is entirely contextual and open to interpretation.

* **Possible Representations:** The symmetrical layout with connecting pathways strongly suggests a technical or logical diagram. It could represent:

* A simplified **circuit board** or **chip layout**, with colored blocks denoting different components (e.g., resistors, capacitors, logic gates).

* A **data flow** or **process diagram**, where the clusters are functional modules and the scattered pixels are data packets or connections.

* A **memory map** or **storage allocation** visualization, with colors indicating different data types or states.

* A **game asset** or **sprite sheet** for a retro-style video game, depicting a character, item, or interface element.

* **Functional Clues:** The red/green blocks at the bottom of each cluster are visually heavy and could signify input/output terminals, power sources, or foundational components. The orange pixels on the far left and right may represent primary connection points.

* **Missing Information:** The complete absence of text or a legend makes definitive interpretation impossible. The top bar's green and black segments are the only elements that hint at a quantitative or status-based meaning (e.g., one active segment followed by seven inactive ones), but this is purely speculative.

**Conclusion:** The image is a piece of pixel art designed to evoke the aesthetic of a technical diagram. It successfully communicates structure, symmetry, and component differentiation through color and form, but requires external context or a key to translate its symbols into specific technical information.

</details>

|

<details>

<summary>Figures/as66.png Details</summary>

### Visual Description

## Diagram: Pixel Grid Layout / Game Map Schematic

### Overview

The image displays a square, pixel-art style grid diagram, likely representing a level layout, puzzle, or schematic for a game or simulation. It consists of a central purple field populated with various colored blocks and shapes, enclosed by a multi-layered border. There is no textual information (labels, titles, legends) present in the image. The entire composition is built from a fine grid of small squares (pixels).

### Components/Axes

* **Grid Structure:** The entire image is a uniform grid of small squares. The central area is a large square field.

* **Borders:**

* **Outermost Border:** A thin, solid orange frame.

* **Second Border:** A thick, dark grey frame inside the orange one.

* **Inner Border:** A light grey frame inside the dark grey one, forming the immediate boundary of the main playfield.

* **Corner Blocks:** Four dark grey/black squares are positioned at the four corners where the inner and second borders meet.

* **Main Field:** The central area is filled with a solid light purple color.

* **Colored Regions & Blocks (within the purple field):**

* **Left Green Bar:** A vertical, solid green bar runs the full height of the purple field along its left edge, adjacent to the inner border.

* **Top Orange Region:** A rectangular orange block is located in the top-center of the field. Within this orange block, there is a smaller, dark red square positioned in its top-right quadrant.

* **White Shape:** A white, irregular shape resembling a stylized letter "A" or a simple house (a rectangle with a smaller rectangle cut out from its bottom center) is located in the lower-central part of the field.

* **Yellow Block:** A single, small yellow square is attached to the left side of a black shape in the lower-left quadrant.

* **Black Shapes:** Multiple black shapes of various sizes and forms are scattered across the field:

* An "L" shape in the upper-left quadrant.

* A small square in the upper-right quadrant.

* A horizontal rectangle in the center-right.

* A vertical rectangle on the far right.

* A horizontal rectangle in the lower-right.

* A complex shape in the lower-left, to which the yellow block is attached.

* A small square just above the white shape.

* **Bottom Bar:** A thin, segmented bar runs along the very bottom edge of the image, outside the main border. It is partially white on the left and black on the right.

### Detailed Analysis

* **Spatial Relationships:**

* The **green bar** acts as a left-side boundary or status indicator within the field.

* The **orange region with the red square** is a prominent feature in the upper area, possibly indicating a goal, hazard, or special zone.

* The **white shape** is centrally located in the lower half, suggesting it may be a player character, a key object, or a safe zone.

* The **black shapes** appear as obstacles, walls, or interactive elements distributed asymmetrically across the field, creating pathways and barriers.

* The **yellow block** is uniquely positioned, attached to a black obstacle, possibly indicating a switch, collectible, or point of interest.

* **Color Palette:** The colors are flat and distinct: light purple (background), green, orange (top region and outermost border), dark red, white, yellow, black, light grey, and dark grey. This suggests a functional, non-realistic design where color denotes category or function.

### Key Observations

1. **Asymmetrical Design:** The layout is not symmetrical. Obstacles and features are placed irregularly, suggesting a designed path or puzzle rather than a random pattern.

2. **Isolated Elements:** The yellow block and the dark red square within the orange region are the only instances of their colors, making them visually salient and likely important.

3. **Pathways:** The arrangement of black shapes creates implied pathways, particularly around the central white shape and between the orange region and the lower part of the field.

4. **Layered Borders:** The multi-layered border (orange, dark grey, light grey) creates a strong visual frame, separating the "game space" from the external environment.

### Interpretation

This image is a technical diagram of a spatial layout, most likely a level map for a grid-based game or a puzzle schematic. The absence of text means all information is conveyed through color, shape, and position.

* **Function over Form:** The design prioritizes clear visual distinction between element types (background, obstacles, special zones, player) over aesthetic detail. This is characteristic of a prototype, a design document, or a debug view.

* **Implied Narrative or Mechanics:** The layout suggests a scenario where an entity (represented by the white shape) must navigate from a starting point (possibly near the green bar or the white shape's location) through a field of obstacles (black shapes) to interact with key objectives (the yellow block, the orange/red zone). The green bar could represent a health meter, a timer, or a progress indicator.

* **Investigative Reading:** The design invites analysis of possible routes and interactions. For example: Can the white shape move? Is the yellow block a key to open a path? Does the orange region represent a goal or a danger? The isolated nature of the red square within the orange block suggests it is a specific target or trigger within that larger zone.

* **Data Content:** The image does not contain quantitative data, trends, or textual facts. Its "information" is purely relational and spatial, defining a set of rules and positions within a bounded system. To understand it fully, one would need the accompanying ruleset or context for what the colors and shapes represent (e.g., "white = player, black = wall, yellow = key, orange = exit").

</details>

|

Figure 2: Top row (Public set): vc33, ls20, ft09. Bottom row (Private set): sp80, lp85, as66.

## Appendix B: Per-Level Performance Statistics

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Chart: Performance of Different AI Agents in Level Solving Over Time

### Overview

The image displays a line chart comparing the performance of four different AI agent strategies in solving levels over a number of steps. The chart uses a logarithmic scale for the x-axis (Steps) and a linear scale for the y-axis (Levels solved). Each strategy is represented by a colored line with a corresponding shaded area that indicates the spread of results across multiple runs.

### Components/Axes

* **Chart Type:** Line chart with shaded bands showing the spread across runs.

* **X-Axis:**

* **Label:** "Steps"

* **Scale:** Logarithmic (base 10).

* **Major Tick Marks:** 10¹, 10², 10³, 10⁴, 10⁵.

* **Y-Axis:**

* **Label:** "Levels solved"

* **Scale:** Linear.

* **Range:** 0 to 30.

* **Major Tick Marks:** 0, 5, 10, 15, 20, 25, 30.

* **Legend:**

* **Position:** Top-left corner of the chart area.

* **Entries (from top to bottom):**

1. **Blue Line:** "Random Agent"

2. **Orange Line:** "+ Frame Segmentation"

3. **Green Line:** "+ New-Action Prioritization"

4. **Red Line:** "Graph Exploration"

* **Data Series:** Four distinct lines, each with a shaded band of the same color but lower opacity.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

1. **Random Agent (Blue Line & Band):**

* **Trend:** Shows the slowest, most gradual upward slope. It begins near 0 levels solved and increases in a step-like fashion.

* **Key Points:**

* At ~10² steps: ~1 level solved.

* At ~10³ steps: ~5 levels solved.

* At ~10⁴ steps: ~10 levels solved.

* The line terminates at 10⁴ steps. The shaded blue band extends slightly above and below the line, indicating variance.

2. **+ Frame Segmentation (Orange Line & Band):**

* **Trend:** Performs better than the Random Agent, with a steeper initial slope that continues steadily.

* **Key Points:**

* At ~10² steps: ~3 levels solved.

* At ~10³ steps: ~10 levels solved.

* At ~10⁴ steps: ~17 levels solved.

* The line terminates at 10⁴ steps. The orange shaded band is wider than the blue band, suggesting higher variance in performance.

3. **+ New-Action Prioritization (Green Line & Band):**

* **Trend:** Follows a very similar trajectory to the "+ Frame Segmentation" agent, often overlapping or running just above it.

* **Key Points:**

* At ~10² steps: ~3 levels solved.

* At ~10³ steps: ~10 levels solved.

* At ~10⁴ steps: ~18 levels solved (slightly above the orange line).

* The line terminates at 10⁴ steps. The green shaded band overlaps significantly with the orange band.

4. **Graph Exploration (Red Line & Band):**

* **Trend:** Demonstrates the strongest performance by a significant margin. It has the steepest slope throughout and continues to improve well beyond 10⁴ steps.

* **Key Points:**

* At ~10² steps: ~5 levels solved.

* At ~10³ steps: ~14 levels solved.

* At ~10⁴ steps: ~21 levels solved.

* At ~10⁵ steps: ~30 levels solved (the maximum on the y-axis).

* The red shaded band is the widest, especially at higher step counts, indicating the greatest variance in outcomes for this method.

### Key Observations

* **Clear Performance Hierarchy:** There is a distinct and consistent ordering of performance: Graph Exploration > + New-Action Prioritization ≈ + Frame Segmentation > Random Agent.

* **Logarithmic Progress:** All agents show progress that appears roughly linear on this semi-log plot, suggesting that the number of levels solved grows approximately linearly with the *logarithm* of the number of steps taken.

* **Divergence at Scale:** The performance gap between the Graph Exploration agent and the others widens dramatically as the number of steps increases (moving right on the x-axis).

* **Truncated vs. Full Curves:** The Random Agent, + Frame Segmentation, and + New-Action Prioritization curves end at 10⁴ steps, so the chart gives no evidence of a plateau for them. The Graph Exploration agent is the only one plotted out to 10⁵ steps, where it reaches a much higher score.

* **Variance:** The width of the shaded bands suggests that the Graph Exploration method, while highest performing, also has the most variable results. The Random Agent has the most consistent (though lowest) performance.
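The semi-log trend noted in these observations can be checked with a small least-squares fit. The sketch below is illustrative only: it uses the approximate Graph Exploration values read off the chart in the analysis above, and `fit_semilog` is a hypothetical helper name, not part of the authors' code.

```python
import math

# Approximate (steps, levels solved) points read off the Graph Exploration curve.
points = [(1e2, 5), (1e3, 14), (1e4, 21), (1e5, 30)]


def fit_semilog(points):
    """Least-squares fit of levels = intercept + slope * log10(steps)."""
    xs = [math.log10(steps) for steps, _ in points]
    ys = [levels for _, levels in points]
    n = len(points)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


slope, intercept = fit_semilog(points)
print(f"levels = {intercept:.1f} + {slope:.1f} * log10(steps)")
```

With these four approximate points, the fitted slope corresponds to roughly 8 additional levels per tenfold increase in steps. The input values are eyeballed from the plot, so the fit is only a rough consistency check.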

### Interpretation

This chart likely comes from a research paper or technical report in reinforcement learning or AI agent design, specifically for a task involving sequential problem-solving (e.g., navigating a video game or solving puzzles). The data demonstrates the effectiveness of increasingly sophisticated exploration strategies.

* **The "Random Agent"** serves as a baseline, showing that naive, unguided exploration yields slow progress.

* **"+ Frame Segmentation" and "+ New-Action Prioritization"** represent incremental improvements. They likely incorporate mechanisms to better perceive the environment or prioritize useful actions, leading to roughly double the performance of the random baseline at 10⁴ steps.

* **"Graph Exploration"** represents a more advanced paradigm. The name suggests it builds an internal model or graph of the environment's state space, allowing for more efficient, directed exploration. Its superior and sustained performance indicates that this structured approach is far more sample-efficient, solving more levels with the same number of steps and continuing to learn effectively over a longer timeframe.

* **The widening gap** implies that for complex, long-horizon tasks, the choice of exploration strategy becomes critically important. Simple heuristics provide limited gains, while a robust model-based approach like graph exploration unlocks significantly higher capability. The increased variance might be a trade-off for this higher potential, or it could reflect the inherent difficulty and stochasticity of the later levels being solved.

</details>

Figure 3: Levels solved as a function of environment steps for four methods: Random Agent, Random + Frame Segmentation, Random + Segmentation + New-Action Prioritization, and the full Graph Exploration method. The x-axis is logarithmic; each line shows the median over 5 runs and the shaded region shows the minimum–maximum range. Intermediate variants are shown up to 10,000 environment steps, while the Graph Explorer is plotted over the full evaluation budget.
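
The aggregation described in the Figure 3 caption (median over runs with a minimum–maximum band, evaluated on a logarithmic step axis) can be sketched as follows. This is a minimal illustration rather than the authors' plotting code: the logging format (a sorted list of cumulative step counts at which each level was solved), the helper names `levels_solved_at` and `aggregate_runs`, and the run data are all assumptions made for the example.

```python
from bisect import bisect_right
from statistics import median


def levels_solved_at(solve_steps, budget):
    """Number of levels solved within `budget` environment steps.

    `solve_steps` is a sorted list of cumulative step counts at which a run
    completed each successive level (hypothetical logging format).
    """
    return bisect_right(solve_steps, budget)


def aggregate_runs(runs, budgets):
    """For each budget, return (median, minimum, maximum) of levels solved."""
    summary = []
    for budget in budgets:
        counts = [levels_solved_at(run, budget) for run in runs]
        summary.append((median(counts), min(counts), max(counts)))
    return summary


# Illustrative data only: five made-up runs, not results from the paper.
runs = [
    [40, 300, 2_000],
    [55, 280, 9_000],
    [30, 900],
    [70, 310, 4_000, 60_000],
    [45, 500, 3_500],
]
budgets = [10 ** k for k in range(1, 6)]  # log-spaced: 10, 100, ..., 100000
curve = aggregate_runs(runs, budgets)
```

Each tuple in `curve` gives one point of the median line together with the lower and upper edge of the shaded band at that step budget.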

Table 1: Per-level results on public games (ft09, ls20, vc33). For each game and level, we report the number of steps to solve the level (Stp), summarized as the median together with the minimum and maximum over 5 runs, and the solve rate (SR) over the same 5 runs. We use 'NS' when a level is never solved within the step budget, and '-' when there is no such level for a given game.

| Level | ft09 Stp | ft09 SR | ls20 Stp | ls20 SR | vc33 Stp | vc33 SR |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | 125 $[48;340]$ | 1 | 124 $[72;140]$ | 1 | 9 $[5;24]$ | 1 |
| 2 | 177 $[5;433]$ | 1 | $3.2\times 10^{3}$ $[1.9\times 10^{3};4.9\times 10^{3}]$ | 1 | 7 $[4;19]$ | 1 |
| 3 | $2.0\times 10^{4}$ $[3.0\times 10^{3};2.5\times 10^{4}]$ | 1 | NS | 0 | 36 $[9;96]$ | 1 |
| 4 | NS | 0 | NS | 0 | 321 $[298;541]$ | 1 |
| 5 | NS | 0 | NS | 0 | 287 $[260;349]$ | 1 |
| 6 | NS | 0 | NS | 0 | $6.9\times 10^{4}$ $[5.4\times 10^{4};8.3\times 10^{4}]$ | 0.8 |
| 7 | NS | 0 | NS | 0 | $4.7\times 10^{3}$ $[1.5\times 10^{3};5.5\times 10^{3}]$ | 0.8 |
| 8 | NS | 0 | NS | 0 | 917 $[627;929]$ | 0.8 |
| 9 | NS | 0 | - | - | NS | 0 |
| 10 | NS | 0 | - | - | - | - |

Table 2: Per-level results on private games (sp80, lp85, as66). Conventions as in Table 1.

| Level | sp80 Stp | sp80 SR | lp85 Stp | lp85 SR | as66 Stp | as66 SR |
| --- | --- | --- | --- | --- | --- | --- |
| 1 | 227 $[153;373]$ | 1 | 143 $[106;181]$ | 1 | 39 $[13;47]$ | 1 |
| 2 | $3.6\times 10^{4}$ $[2.5\times 10^{4};5.0\times 10^{4}]$ | 1 | $2.9\times 10^{3}$ $[1.1\times 10^{3};3.2\times 10^{4}]$ | 1 | 44 $[24;65]$ | 1 |
| 3 | $3.9\times 10^{4}$ $[3.6\times 10^{4};4.2\times 10^{4}]$ | 0.4 | $1.7\times 10^{4}$ $[1.0\times 10^{4};8.2\times 10^{4}]$ | 1 | 123 $[25;339]$ | 1 |
| 4 | NS | 0 | $1.6\times 10^{3}$ $[727;2.0\times 10^{4}]$ | 1 | 99 $[69;350]$ | 1 |
| 5 | NS | 0 | $4.6\times 10^{3}$ $[2.2\times 10^{3};1.4\times 10^{4}]$ | 0.8 | $2.2\times 10^{3}$ $[1.2\times 10^{3};2.9\times 10^{3}]$ | 1 |
| 6 | NS | 0 | $1.3\times 10^{4}$ $[1.1\times 10^{4};1.5\times 10^{4}]$ | 0.4 | $1.3\times 10^{3}$ $[112;1.6\times 10^{3}]$ | 1 |
| 7 | NS | 0 | 334.5 $[104;565]$ | 0.4 | 363 $[128;670]$ | 1 |
| 8 | NS | 0 | $9.9\times 10^{3}$ $[9.9\times 10^{3};9.9\times 10^{3}]$ | 0.2 | $1.3\times 10^{3}$ $[168;2.9\times 10^{3}]$ | 1 |
| 9 | - | - | - | - | $3.4\times 10^{3}$ $[361;8.7\times 10^{3}]$ | 1 |
| 10 | - | - | - | - | - | - |
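
The Stp and SR entries can be reproduced from per-run step counts with a few lines of Python. The sketch below is not taken from the authors' code. It assumes that the step statistics are computed only over the runs that solved the level, which is not stated in the captions but is consistent with the reported values: for lp85 level 7, a solve rate of 0.4 means two of five runs succeeded, and the median 334.5 is exactly the midpoint of the reported range $[104;565]$. The function name `summarize_level` and the use of `None` for an unsolved run are illustrative choices.

```python
from statistics import median


def summarize_level(steps_per_run):
    """Summarize one level across runs.

    `steps_per_run` holds the steps a run needed to solve the level, or None
    if that run never solved it within the step budget.
    Returns (median, minimum, maximum, solve_rate); the step statistics are
    None when no run solved the level (reported as 'NS' in the tables).
    """
    solved = [s for s in steps_per_run if s is not None]
    solve_rate = len(solved) / len(steps_per_run)
    if not solved:
        return None, None, None, solve_rate
    return median(solved), min(solved), max(solved), solve_rate


# lp85 level 7 as reported in Table 2: two of five runs solve it, in 104 and
# 565 steps. Which runs succeeded is not reported; the ordering is arbitrary.
lp85_level7 = [104, None, 565, None, None]
stats = summarize_level(lp85_level7)  # (334.5, 104, 565, 0.4)

# A level that no run solves corresponds to an 'NS' entry with SR = 0.
unsolved = summarize_level([None] * 5)
```

Under this reading, a median reported alongside a low solve rate describes only the successful runs, so Stp and SR should be interpreted together.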