# Constrained Language Model Policy Optimization via Risk-aware Stepwise Alignment

## Abstract

When fine-tuning pre-trained Language Models (LMs) to exhibit desired behaviors, maintaining control over risk is critical for ensuring both safety and trustworthiness. Most existing safety alignment methods, such as Safe RLHF and SACPO, typically operate under a risk-neutral paradigm that is insufficient to address the risks arising from deviations from the reference policy and offers limited robustness against rare but potentially catastrophic harmful behaviors. To address this limitation, we propose Risk-aware Stepwise Alignment (RSA), a novel alignment method that explicitly incorporates risk awareness into the policy optimization process by leveraging a class of nested risk measures. Specifically, RSA formulates safety alignment as a token-level risk-aware constrained policy optimization problem and solves it through a stepwise alignment procedure that yields token-level policy updates derived from the nested risk measures. This design offers two key benefits: (1) it mitigates risks induced by excessive model shift away from a reference policy, and (2) it explicitly suppresses low-probability yet high-impact harmful behaviors. Moreover, we provide theoretical analysis on policy optimality under mild assumptions. Experimental results demonstrate that our method achieves high levels of helpfulness while ensuring strong safety and significantly suppresses tail risks, namely low-probability yet high-impact unsafe responses.

## I Introduction

Rapid advancements of large language models (LLMs) have propelled artificial intelligence forward at an unprecedented pace [2024_kumar_llms_survey, 2025_xiao_llms_densing_law, 2025_zhang_llm_chemistry], enabling it to demonstrate remarkable capabilities in diverse domains, including content creation [2024_chang_LLMs_evaluation_survey], human-computer interaction [2024_sadeghi_human-computer_interaction_review], and machine translation [2024_ghassemiazghandi_ChatGPT_translation_evaluation], among others [2024_zhao_llm_empowered_recommendation, 2025_yan_llm-based_multi-agent_systems_survey]. However, the increasing integration and deployment of LLMs in safety-critical applications, such as healthcare [2024_haltaufderheide_ChatGPT_healthcare], education [2024_wen_llm_education], and financial services [2024_xie_llm_financial], has heightened concerns about their potential to generate harmful outputs. For instance, in healthcare settings, LLMs may produce clinically plausible but incomplete responses, such as omitting critical diagnoses or neglecting key patient history, which can lead to dangerous recommendations. In financial advising, they may issue overconfident or contextually inappropriate suggestions due to inadequate risk assessment. Consequently, aligning LLMs with human values is essential to mitigate risks stemming from misalignment between model behavior and human intent [2024_shi_llms_safety_survey, 2025_zhang_llm_safety_security_privacy_survey, 2025_liu_llm_safety_evaluation_survey].

To address this challenge, post-training techniques [2023_ji_ai_alignment_survey, 2025_lai_llm_post-training_survey, 2025_kumar_llm_post-training] have been widely employed to align LLMs with human values and intentions. Reinforcement Learning from Human Feedback (RLHF) [2017_christiano_rlhf, 2022_bai_Anthropic-HH] and Direct Preference Optimization (DPO) [2023_rafailov_dpo] are two representative methods that have been widely adopted. However, a substantial body of literature [2024_shi_llms_safety_survey, 2025_zhang_llm_safety_security_privacy_survey] has pointed out that these methods offer only limited protection against harmful outputs because LLM alignment involves a fundamental trade-off between helpfulness and harmlessness, a balance that is difficult for a single scalar reward signal or preference objective to capture. For example, in an attempt to be helpful, an LLM can provide detailed instructions on how to build a weapon or bypass security systems. Although such responses are technically accurate and consistent with the user’s request, they are clearly harmful and ethically unacceptable. Conversely, over-prioritizing harmlessness may cause the model to become overly cautious, leading it to refuse benign queries or withdraw from providing meaningful assistance, thereby undermining its usefulness. Therefore, developing alignment approaches that explicitly balance the trade-off between helpfulness and harmlessness is essential to ensure LLM outputs are simultaneously useful, safe, and trustworthy.

To enhance safety, a promising paradigm involves decoupling the reward and safety objectives: an LLM is fine-tuned to maximize expected reward subject to a safety constraint, with the reward objective and the safety constraint each learned from distinct datasets of human or AI-generated feedback. Safe RLHF [2024_dai_safe-RLHF] is a pioneering approach that introduces safe Reinforcement Learning (RL) techniques [2015_garcia_safe_RL_survey, 2024_gu_safe-RL_review] into LLM alignment by training separate reward and cost models on human preference datasets and optimizing an LLM policy using safe RL. However, the Safe RLHF pipeline is inherently more complex than the already notoriously complex RLHF framework, as it involves training a separate cost model and solving a constrained policy optimization problem via PPO-Lagrangian [2019_ray_ppo-larg], which jointly optimizes the policy and a Lagrange multiplier. In addition, Safe RLHF often suffers from exaggerated safety behaviors, a phenomenon in which models generate responses that are harmless but unhelpful. Subsequently, several works have proposed lightweight solutions. To mention a few, one-shot safety alignment [2024_huang_one-shot_safety_alignment] leverages the closed-form solution of RLHF in the distribution space to compute an optimal dual variable, eliminating the simultaneous primal-dual update. C-DPO [2024_liu_C-DPO] replaces the primal-dual update scheme of PPO with a dual-gradient descent method over DPO, thereby simplifying the optimization pipeline and enhancing efficiency. SafeDPO [2025_kim_safedpo] integrates safety alignment implicitly into a single-stage policy update by adding one safety-focused hyperparameter and making minor adjustments to the DPO algorithm.
SACPO [2024_wachi_sacpo] proposes a stepwise alignment method with respect to individual safety metrics by leveraging simple yet effective algorithms such as DPO, thereby effectively mitigating exaggerated safety behaviors [2023_bianchi_Safety-tuned_LLaMAs]. However, these methods suffer from two key limitations: (1) they employ risk-neutral constraints that cannot effectively control low-probability yet high-impact safety violations; and (2) even though KL divergence is used to constrain deviations from the reference model, they cannot effectively control the risk of degradation in the model’s reasoning and decision-making capabilities caused by model drift.

In this paper, we focus on safety alignment of LMs from a risk-sensitive perspective. Specifically, we propose a novel risk-aware constrained policy optimization method by introducing nested risk measures into token-level policy updates, and provide the corresponding theoretical analysis and empirical results.

Main Challenges: The problem we study involves enhancing helpfulness and ensuring policy safety, which poses two key challenges: (1) improving both helpfulness and safety without degrading the model’s intrinsic reasoning and decision-making capabilities, and (2) explicitly suppressing low-probability yet high-impact harmful behaviors during policy optimization. To tackle these challenges, we propose the Risk-aware Stepwise Alignment (RSA) method, and comprehensively evaluate its effectiveness through extensive experiments.

Algorithm Novelty and Theoretical Analysis: Neither Safe RLHF nor SACPO addresses risk-aware constrained policy optimization, despite the critical importance and urgency of this challenge in the deployment of LLMs. In this paper, we propose a novel risk-aware, token-level constrained optimization objective, together with a monotonic policy improvement algorithm and a risk-aware stepwise alignment algorithm, to effectively enhance helpfulness and safety. Moreover, we theoretically establish that the optimal policy can be recovered through stepwise alignment by (1) deriving the mapping from the risk-aware state-action value function to the reward-aligned policy, and (2) establishing the relationship between the optimal policy and the reward-aligned policy.

Experiment Contributions: We evaluate the proposed method on text generation tasks and multi-turn conversations. Experimental results show that our method achieves high helpfulness scores while maintaining strong safety across a diverse set of harmful prompt benchmarks.

## II Related Works

### II-A Risk in Alignment

When fine-tuning an LM to be both safe and helpful, multiple sources of risk arise, primarily falling into the following three categories: (1) Constraint Violation: Hard-to-detect yet high-impact unsafe responses, such as tail risks under safety constraints, which have been consistently observed in empirical evaluations of LLMs [2025_liu_llm_safety_evaluation_survey, 2024_yuan_R-judge, 2024_mazeika_harmbench]; (2) Model Drift: New risks introduced by deviation from the reference model during alignment training, where aggressive policy updates may degrade behaviors implicitly encoded in the base model [2024_zeng_tdpo, 2025_zhang_ra-dpo]; and (3) Data Noise: Inherent uncertainty in human preferences due to inter-annotator disagreements [2024_ramesh_grpo], context-dependent biases [2024_peuter_preference-choice], and systematic deviations from expected utility theory in human risk perception [1992_tversky_prospect-theory, 2024_ethayarajh_kto]. In this paper, we focus on the first two types of risk by introducing risk-sensitive measures.

It is worth noting that two classes of risk measures, namely nested and static risk measures, have been widely adopted in the RL field. Static risk measures [2021_fei_static-risk, 2022_bastani_static-risk, 2023_wang_static-risk, 2023_zhao_static-risk] are straightforward to interpret but generally yield policies that are non-Markovian and history-dependent. Nested risk measures [2022_du_Iterated-CVaR, 2024_chen_Iter-CVaR, 2024_zhao_ra-pbrl], which incorporate a Bellman-type recursion, enforce risk sensitivity of the value iteration at each step, resulting in a more conservative approach. In this paper, we adopt nested risk measures because they recursively adhere to the Bellman equation and admit a Markovian formulation via state augmentation, thereby enabling tractable risk-aware policy optimization.

### II-B LLMs Alignment

With the enhanced capabilities of LLMs, new and heightened risks continue to emerge, raising growing concerns about their safety and trustworthiness [2023_ji_ai_alignment_survey, 2024_gao_PL_for_LLM_survey]. These concerns have catalyzed research into aligning LLMs with human intentions and values. RLHF [2022_bai_Anthropic-HH] and DPO [2023_rafailov_dpo] are two representative alignment algorithms and have been extensively applied in the fine-tuning of commercial LLMs such as GPT-4 [2023_achiam_gpt] and Gemini [2023_team_gemini], as well as in the training of open-source models including the Yi model family [2024_young_yi] and Mixtral 8x7B-Instruct [2024_jiang_mixtral]. Moreover, methods such as SimPO [2024_meng_simpo] and TDPO [2024_zeng_tdpo] have attracted significant attention for effectively mitigating issues such as excessively verbose generations and large KL divergence between the policy and reference models. However, these methods drive alignment under a single scalar reward or preference objective, which is insufficient for tasks that demand stringent safety guarantees.

### II-C Safe Alignment

Safety and trustworthiness in LLMs differ fundamentally from other performance-oriented metrics such as helpfulness, as they correspond to hard behavioral constraints that must be upheld regardless of utility gains, thereby necessitating explicit and decoupled treatment in alignment objectives [2024_shi_llms_safety_survey, 2025_liu_llm_safety_evaluation_survey, 2025_zhang_llm_safety_security_privacy_survey]. Safe RLHF [2024_dai_safe-RLHF] trains separate reward and cost models on human preference data and then employs safe RL to optimize a policy that jointly maximizes helpfulness and minimizes harm. One-shot Safety Alignment [2024_huang_one-shot_safety_alignment] and SafeDPO [2025_kim_safedpo] both replace RLHF with DPO and simplify the computation of dual variables, thereby improving training efficiency and circumventing iterative optimization of the Lagrange multipliers. SACPO [2024_wachi_sacpo] aligns LLMs with individual safety metrics in a stepwise manner using DPO-based updates, which mitigates over-conservative behaviors (e.g., excessive refusals) while maintaining training stability and low computational overhead. However, these methods operate under a risk-neutral paradigm that fails to control low-probability yet high-impact safety violations. Moreover, despite the use of KL divergence to limit deviation from the reference policy, they could remain vulnerable to performance degradation in reasoning and decision-making due to model drift.

## III Preliminaries

### III-A Preference-based Alignment

Consider an LM alignment task where $x$ denotes an input prompt (question) and $y$ denotes the generated response (answer), with both $x$ and $y$ being sequences of tokens. Human preference data is denoted by $y_{w}\succ y_{l}|x$, indicating that, given prompt $x$, response $y_{w}$ (win) is preferred over $y_{l}$ (lose).

To align with human preferences, a preference predictor adhering to the Bradley-Terry (BT) [1952_bradley_BT-model] model has been widely adopted, where the likelihood of a preference pair is typically formulated as follows:

$$

P_{\mathrm{BT}}\left(y_{w}\succ y_{l}|x\right)=\frac{\exp\left(r^{\ast}\left(x,y_{w}\right)\right)}{\exp\left(r^{\ast}\left(x,y_{w}\right)\right)+\exp\left(r^{\ast}\left(x,y_{l}\right)\right)}, \tag{1}

$$

where $r^{\ast}(x,y_{w})$ and $r^{\ast}(x,y_{l})$ are the rewards of the entire prompt-response pairs for the preferred and dispreferred answers, respectively. The alignment objective is commonly to maximize the KL-regularized expected reward over a constrained policy set:

$$

\pi^{\ast}=\arg\max_{\pi\in\Pi}\mathcal{J}^{r}(\pi), \tag{2}

$$

where $\mathcal{J}^{r}(\pi)=\mathbb{E}^{\pi}_{x\sim\mathcal{D}}\left[r^{*}(x,y)\right]-\beta\mathbb{D}_{\mathrm{KL}}\left[\pi_{\theta}(y|x)\,||\,\pi_{\mathrm{ref}}(y|x)\right]$, $\Pi=\left\{\pi\in\Pi\mid\mathcal{J}^{c}(\pi)\leq d\right\}$ is the feasible policy set, $\mathcal{D}$ is the human preference dataset, $\beta$ is the coefficient of the reverse KL divergence penalty, $\pi_{\mathrm{ref}}\left(\cdot|x\right)$ is the policy of a fixed reference model, and $\pi_{\theta}\left(\cdot|x\right)$ represents the policy of the trained model, initialized with $\pi_{\theta}=\pi_{\mathrm{ref}}$.
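As a small illustration of Equation (1), the Bradley-Terry probability depends only on the reward difference, since $\exp(r_w)/(\exp(r_w)+\exp(r_l))=\sigma(r_w-r_l)$. The sketch below evaluates it in that form; the function name `bt_preference_prob` and the numeric rewards are illustrative assumptions, not part of the original method.

```python
import math

def bt_preference_prob(r_w: float, r_l: float) -> float:
    """Bradley-Terry probability that the response with reward r_w is preferred."""
    # exp(r_w) / (exp(r_w) + exp(r_l)) equals sigmoid(r_w - r_l);
    # branching on the sign keeps every exponent non-positive (no overflow).
    diff = r_w - r_l
    if diff >= 0:
        return 1.0 / (1.0 + math.exp(-diff))
    e = math.exp(diff)
    return e / (1.0 + e)

# Hypothetical rewards for a preferred and a dispreferred response.
print(bt_preference_prob(2.0, 1.0))
```

Equal rewards give probability 0.5, and swapping the two arguments gives the complementary probability.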

### III-B Risk measures

It is more desirable to keep risk under control for language generation tasks rather than relying solely on a risk-neutral criterion, which ignores the distributional characteristics of rewards, especially in applications that may have potential broad societal impact. Therefore, we introduce the risk-sensitive criterion [2014_bauerle_more-RsMDP, 2022_wang_risk-averse-autonomous-systems] to quantify potential hidden risks.

Let $(\mathcal{Z},\mathcal{F})$ be a measurable space, and let $\rho:\mathcal{Z}\rightarrow\mathbb{R}$ be a risk measure of the cost that maps uncertain outcomes $Z\in\mathcal{Z}$ to the real line. The risk measure optimization problem can be formulated as follows:

$$

\min_{\pi\in\Pi}\rho^{\pi}(Z), \tag{3}

$$

where $Z$ depends on the selection policy $\pi$ . In this paper, the risk measure function is required to satisfy the following properties for all $Z,Z^{\prime}\in\mathcal{Z}$ : Concavity: $\forall\;\lambda\in\left[0,1\right]:\eta\left(\lambda Z+\left(1-\lambda\right)Z^{\prime}\right)\geq\lambda\eta\left(Z\right)+\left(1-\lambda\right)\eta\left(Z^{\prime}\right)$ ; Translation Invariance: $\forall\;\epsilon\in\mathbb{R}:\eta\left(Z+\epsilon\right)=\eta\left(Z\right)+\epsilon$ . This class captures a broad range of useful objectives, including the popular Conditional Value-at-Risk (CVaR) [1997_artzner_CVaR, 2000_rockafellar_CVaR, 2015_chow_cvar] and Entropic Risk Measure (ERM) [2002_follmer_erm, 2023_hau_ERM].
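To make the two named examples concrete, the following sketch computes empirical versions of CVaR and ERM on equally weighted samples and can be used to check translation invariance numerically. The conventions are assumptions made only for this illustration: outcomes are treated as rewards (higher is better), CVaR is the mean of the worst $\alpha$-fraction, and ERM is $-\frac{1}{\mu}\log\mathbb{E}[e^{-\mu Z}]$; the function names are hypothetical.

```python
import math

def cvar_lower(samples, alpha):
    """Mean of the worst (lowest) alpha-fraction of equally weighted samples."""
    assert 0.0 < alpha <= 1.0
    xs = sorted(samples)
    n = len(xs)
    remaining = alpha * n          # tail mass still to cover, in units of 1/n
    total = 0.0
    for x in xs:
        w = min(1.0, remaining)    # the last sample may be counted fractionally
        total += w * x
        remaining -= w
        if remaining <= 0.0:
            break
    return total / (alpha * n)

def entropic_risk(samples, mu):
    """Entropic risk -(1/mu) * log(mean(exp(-mu * x))), assuming mu > 0."""
    assert mu > 0.0
    m = min(samples)               # shift so that every exponent is <= 0
    s = sum(math.exp(-mu * (x - m)) for x in samples) / len(samples)
    return m - math.log(s) / mu

rewards = [0.0, 1.0, 2.0, 3.0]     # toy outcomes, assumed for illustration
shifted = [x + 5.0 for x in rewards]
print(cvar_lower(rewards, 0.5), cvar_lower(shifted, 0.5))
print(entropic_risk(rewards, 1.0), entropic_risk(shifted, 1.0))
```

Under these conventions both measures shift by exactly the added constant, and both fall below the plain mean for a non-degenerate sample, which is the risk-averse behavior the section relies on.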

### III-C Safe Policy Optimization

For preference-based LM alignment, it is more desirable to control risk in a sequential and time-consistent manner than to rely solely on a response-level risk-neutral criterion, which ignores the fact that responses are generated autoregressively, token by token, and overlooks the distributional characteristics of rewards or costs, especially in safety-critical tasks. Therefore, we model the preference-based safe policy optimization problem as a Constrained Markov Decision Process (CMDP): $\mathcal{M}=\langle\mathcal{S},\mathcal{A},p,r,c,d,\gamma,T\rangle$, where $\mathcal{S}$ and $\mathcal{A}$ represent the finite state and action spaces, respectively; $p:\mathcal{S}\times\mathcal{A}\rightarrow\mathcal{S}$ is the probabilistic transition function; $r$ and $c$ are the reward return and cost return over the entire prompt-response, respectively; $d$ is the cost threshold; $\gamma$ is the discount factor; and $T$ is the length of the entire prompt-response.

At each timestep $t$, the state $s_{t}=\left[x,y^{<t}\right]\in\mathcal{S}$ consists of the prompt and the generated response up to the previous step, and the action $a_{t}=y^{t}\in\mathcal{A}$ corresponds to the currently generated token. For a given prompt $x$ and the first $t-1$ tokens $y^{<t}$ of the response $y$, the probability distribution of the next token conditioned on $[x,y^{<t}]$ is denoted by $\pi_{\theta}(\cdot|[x,y^{<t}])$. Note that $y^{<1}=[\;]$ is an empty sequence. Therefore, we denote $\left[x\right]=\left[x,[\;]\right]=\left[x,y^{<1}\right]$. For convenience, we write $\pi_{t}=\pi_{\theta}\left(\cdot|\left[x,y^{<t}\right]\right)$ below. Then, under the nested risk measures, the state-action value and state value functions can be expressed in Bellman form as follows:

$$

\begin{cases}Q^{c}_{\pi}\left(s_{t},a_{t}\right)=C\left(s_{t},a_{t}\right)+\operatorname{\Phi}^{\mu}\left(V^{c}_{\pi}\left(s_{t+1}\right)\right),\\

V^{c}_{\pi}\left(s_{t}\right)=\mathbb{E}_{\pi}\left[Q^{c}_{\pi}\left(s_{t},a_{t}\right)\right],\\

V^{c}_{\pi}\left(s_{T}\right)=C\left(s_{T}\right),\end{cases} \tag{4}

$$

where $\operatorname{\Phi}(\cdot)$ is a risk measure function with a risk control parameter $\mu$ .
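The token-level state and action definitions above can be sketched as follows: each state is the prompt together with the response prefix $y^{<t}$, and each action is the next token $y^{t}$. The helper name `token_level_trajectory` and the example tokens are assumptions introduced only for illustration.

```python
def token_level_trajectory(prompt, response):
    """Return the (state, action) pairs visited while generating `response`."""
    steps = []
    for t in range(1, len(response) + 1):
        prefix = response[: t - 1]                 # y^{<t}; empty when t == 1
        state = (tuple(prompt), tuple(prefix))     # s_t = [x, y^{<t}]
        action = response[t - 1]                   # a_t = y^t
        steps.append((state, action))
    return steps

# Toy prompt and response, already split into tokens.
for state, action in token_level_trajectory(["How", "are", "you"], ["I", "am", "fine"]):
    print(state, "->", action)
```

Because $s_{t+1}$ is simply $s_{t}$ extended by $a_{t}$, every state contains all earlier states as prefixes, which is the property the augmented formulation in Section IV-A exploits.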

## IV Method

This section proposes a novel safety alignment method named Risk-aware Stepwise Alignment (RSA). Specifically, we reformulate a risk-aware Bellman equation and design a constrained optimization objective that jointly enforces safety constraints and guarantees monotonic policy improvement under a nested risk measure. Then, we establish two key theoretical connections within the stepwise alignment framework: (i) between the risk-aware state-action value function and the reward-aligned policy, and (ii) between the reward-aligned policy and the risk-aware optimal policy under a mild assumption. These connections embed risk awareness into the selection of each token, effectively mitigating model bias and suppressing low-probability, high-impact tail risks. Finally, we provide a formal analysis of the optimization objective, derive the corresponding training loss function, and present a practical algorithm for implementation.

### IV-A Risk-aware Constrained Policy Optimization

In this subsection, we aim to construct a constrained policy optimization objective that incorporates risk awareness and guarantees monotonic policy improvement. Specifically, by leveraging the property that the state at the previous timestep is a subset of the state at the current timestep, i.e., $\left[x,y^{<t-1}\right]\subset\left[x,y^{<t}\right]$, we reconstruct an augmented CMDP [2024_zhao_ra-pbrl], where the augmented value function is defined as $\tilde{V}_{\pi}(s_{t})=V_{\pi}(s_{t})+R_{1:t-1}$, to circumvent the nonlinear Bellman-type recursion and the non-law-invariant issue [2023_hau_ERM] that arise when incorporating nested risk measures. By Lemma 3.6 of [2024_zhao_ra-pbrl], the recursive Bellman equation in Equation (4) can be reformulated as a classical Bellman equation, where the risk-aware state-action value and state-value functions in terms of reward can be rewritten as

$$

\begin{cases}\tilde{Q}^{r}_{\pi}\left(s_{t},a_{t}\right)=\operatorname{\Phi}^{\mu}\left(\tilde{V}^{r}_{\pi}\left(a_{t+1}\circ\left(s_{t},a_{t}\right)\right)\right),\\

\tilde{V}^{r}_{\pi}\left(s_{t}\right)=\mathbb{E}_{\pi}\left[\tilde{Q}^{r}_{\pi}\left(s_{t},a_{t}\right)\right],\\

\tilde{V}^{r}_{\pi}\left(s_{T}\right)=R\left(s_{T}\right),\end{cases} \tag{5}

$$

where $r=\sum_{t=1}^{T}\gamma^{t-1}R\left(s_{t},a_{t}\right)$ represents the reward over the entire prompt-response, and the operator $\circ$ denotes the concatenation of the state and action. Similarly, the risk-aware state-action value and state-value functions in terms of cost can be rewritten as

$$

\begin{cases}\tilde{Q}^{c}_{\pi}\left(s_{t},a_{t}\right)=\operatorname{\Phi}^{\mu}\left(\tilde{V}^{c}_{\pi}\left(a_{t+1}\circ\left(s_{t},a_{t}\right)\right)\right),\\

\tilde{V}^{c}_{\pi}\left(s_{t}\right)=\mathbb{E}_{\pi}\left[\tilde{Q}^{c}_{\pi}\left(s_{t},a_{t}\right)\right],\\

\tilde{V}^{c}_{\pi}\left(s_{T}\right)=C\left(s_{T}\right),\end{cases} \tag{6}

$$

where $c=\sum_{t=1}^{T}\gamma^{t-1}C\left(s_{t},a_{t}\right)$ represents the cost over the entire prompt-response.

Remark: It is noteworthy that Equation (5) and Equation (6) satisfy the standard requirements for transformer-based long-sequence modeling in LLMs. In addition, there is a significant difference in the computation of $V_{\pi}\left(s_{t}\right)$ and $\tilde{V}_{\pi}\left(s_{t}\right)$ ; their relationship is derived in Appendix A-A.

Based on Equation (5), the risk-aware advantage function can be formulated as:

$$

\tilde{A}^{r}_{\pi}\left(s_{t},z\right)=\tilde{Q}^{r}_{\pi}\left(s_{t},z\right)-\left[f_{\rho}(\tilde{V}_{\pi}\left(s_{t}\right),\eta)+g_{\rho}(\eta)\right], \tag{7}

$$

where $z\sim\pi_{t}$ .

Furthermore, we design a new risk-aware objective function:

$$

\bar{\pi}_{t}=\arg\max_{\hat{\pi}_{t}}\mathbb{E}_{z\sim\hat{\pi}_{t}}\left[\tilde{A}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)-\beta\mathbb{D}_{\mathrm{KL}}\left(\hat{\pi}_{t}\|\pi_{\mathrm{ref},t}\right)\right]. \tag{8}

$$

The objective function maximizes a risk-sensitive advantage function subject to a KL divergence constraint, thereby striking a balance between reward maximization and time-consistent risk control. Then, by utilizing the upper-bound version of Theorem 1 of [2015_schulman_trpo], the following inequality holds

$$

\mathcal{J}^{c}(\bar{\pi}_{t})\leq\mathcal{J}^{c}(\pi_{t})+\mathbb{E}_{z\sim\bar{\pi}_{t}}\tilde{A}^{c}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)+\beta\mathbb{D}_{\mathrm{KL}}\left(\bar{\pi}_{t}\|\pi_{\mathrm{ref},t}\right). \tag{9}

$$

Moreover, maximizing the objective in Equation (8) leads to guaranteed policy improvement, as stated in the following proposition, whose proof is provided in Appendix A-B.

**Proposition IV.1**

*Given two policies $\pi$ and $\bar{\pi}$ , if for any state $s_{t}=\left[x,y^{<t}\right],$ $\mathbb{E}_{z\sim\bar{\pi}_{t}}\left[\tilde{A}_{\pi}\left(s_{t},z\right)\right]\geq 0$ , then we can conclude $\mathbb{E}_{x\sim\mathcal{D}}\left[\tilde{V}_{\bar{\pi}}(s_{1})\right]\geq\mathbb{E}_{x\sim\mathcal{D}}\left[\tilde{V}_{\pi}(s_{1})\right].$*

Input: Initialize a reference policy $\pi_{\mathrm{ref}}$ , a policy of the trained model $\pi$ , and a cost threshold $d$ .

1 for $t=0,1,\dots$ do

2 Compute the advantage functions $\tilde{A}^{r}_{\pi_{\mathrm{ref}}}(s_{t},z)$ and $\tilde{A}^{c}_{\pi_{\mathrm{ref}}}(s_{t},z)$ .

3 Update the policy $\bar{\pi}_{t}$ by

$$

\arg\max_{\hat{\pi}_{t}\in\overline{\Pi}}\mathbb{E}_{z\sim\hat{\pi}_{t}}\left[\tilde{A}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)-\beta\mathbb{D}_{\mathrm{KL}}\left(\hat{\pi}_{t}\|\pi_{\mathrm{ref},t}\right)\right],

$$

where $\overline{\Pi}$ is a safe policy set, given by

$$

\overline{\Pi}=\left\{\hat{\pi}_{t}\in\Pi\,\middle|\,\mathcal{J}^{c}(\pi_{t})+\mathbb{E}_{z\sim\hat{\pi}_{t}}\tilde{A}^{c}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)+\beta\mathbb{D}_{\mathrm{KL}}\left(\hat{\pi}_{t}\|\pi_{\mathrm{ref},t}\right)\leq d_{t}\right\}.

$$

4 end for

Output: An optimal policy $\bar{\pi}$ .

Algorithm 1 Policy iteration with monotonic improvement property.

To summarize, Algorithm 1 guarantees both satisfaction of the safety constraints and monotonic performance improvement, as formally stated in the following theorem.

**Theorem IV.2**

*If a sequence of learned policies $(\pi_{t})_{t=1}^{T}$ is obtained from Algorithm 1, then it has the monotonic improvement property, $\mathcal{J}^{r}(\pi_{t+1})\geq\mathcal{J}^{r}(\pi_{t})$, and satisfies the safety constraints $\mathcal{J}^{c}(\pi_{t})\leq d_{t}$.*
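The safety check used in Algorithm 1 can be illustrated at a single state: the right-hand side of Equation (9) is evaluated for a candidate next-token distribution and compared with the threshold $d_{t}$. The sketch below is only a toy instance; the function names, the three-token vocabulary, and all numeric values (current cost `j_c_current`, cost advantages, $\beta$, threshold) are assumptions.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) for discrete distributions with q > 0 wherever p > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)

def cost_upper_bound(j_c_current, pi_hat, pi_ref, adv_c, beta):
    """Right-hand side of Equation (9) for a candidate policy at one state."""
    expected_adv = sum(p * a for p, a in zip(pi_hat, adv_c))
    return j_c_current + expected_adv + beta * kl_divergence(pi_hat, pi_ref)

def in_safe_set(j_c_current, pi_hat, pi_ref, adv_c, beta, threshold):
    """True if the upper bound on the cost stays within the threshold d_t."""
    return cost_upper_bound(j_c_current, pi_hat, pi_ref, adv_c, beta) <= threshold

pi_ref = [0.5, 0.3, 0.2]       # reference next-token distribution (toy)
adv_c = [-0.4, 0.0, 1.0]       # hypothetical cost advantages per token
risky = [0.2, 0.2, 0.6]        # candidate that favors the costly token
print(in_safe_set(0.4, pi_ref, pi_ref, adv_c, beta=0.1, threshold=0.5))
print(in_safe_set(0.4, risky, pi_ref, adv_c, beta=0.1, threshold=0.5))
```

In this toy setting the reference distribution stays within the threshold, while the candidate that shifts mass toward the high-cost token is rejected.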

### IV-B Optimal Policy by Stepwise Alignment

In this subsection, we focus on how to solve the risk-aware constrained policy optimization problem in Algorithm 1. Specifically, we first introduce a standard Lagrangian, which is defined as $\mathcal{L}(\bar{\pi}_{t},\lambda,\beta):=\mathcal{J}^{r}(\bar{\pi}_{t},\beta)-\lambda(\mathcal{J}^{c}(\bar{\pi}_{t})+\mathbb{E}_{z\sim\bar{\pi}_{t}}\tilde{A}^{c}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)+\beta\mathbb{D}_{\mathrm{KL}}\left(\bar{\pi}_{t}\|\pi_{\mathrm{ref},t}\right)-d_{t})$, where $\bar{\pi}_{t}$ is the primal variable and $\lambda\in\mathbb{R}_{+}$ is the dual variable (Lagrange multiplier). Note that, for any dual variable $\lambda\in\mathbb{R}_{+}$, we can convert the original optimization problem into the following max-min problem:

$$

\max_{\hat{\pi}_{t}}\min_{\lambda\geq 0}\;\mathbb{E}_{z\sim\hat{\pi}_{t}}\left[\tilde{A}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)-\lambda\tilde{A}^{c}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)\right]-(1+\lambda)\beta\mathbb{D}_{\mathrm{KL}}\left(\hat{\pi}_{t}\|\pi_{\mathrm{ref},t}\right)+\lambda\zeta_{t}, \tag{10}

$$

where $\zeta_{t}=d_{t}-\mathcal{J}^{c}(\pi_{t})$. Unfortunately, it is not always advisable to solve the max-min problem directly, due to the scalarization fallacy [2023_ding_rpg_pd].

Inspired by [2024_wachi_sacpo], we adopt a two-step alignment method: we first align the policy with the reward, and then align it with the safety objective to obtain the optimal policy $\pi^{\ast}_{t}$ of the optimization problem (10). Specifically, we start from Equation (8) to obtain the mapping from the risk-aware state-action function $\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)$ to the reward-aligned policy $\pi_{r^{\ast}_{t}}^{\ast}$, as stated in the following proposition.

**Proposition IV.3**

*The constrained problem in Equation (8) has the closed-form solution:

$$

\pi_{r^{\ast}_{t}}^{\ast}=\frac{1}{Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)}\pi_{\mathrm{ref},t}e^{\frac{1}{\beta}\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)}, \tag{11}

$$

where $Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)=\mathbb{E}_{z\sim\pi_{\mathrm{ref},t}}e^{\frac{1}{\beta}\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)}$ is the partition function.*

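*Proof.*

By Equation (7), the advantage $\tilde{A}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)$ differs from $\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)$ only by the baseline $f_{\rho}(\tilde{V}_{\pi_{\mathrm{ref}}}\left(s_{t}\right),\eta)+g_{\rho}(\eta)$, which does not depend on $z$. Hence

$$

\begin{aligned}
&\max_{\hat{\pi}_{r_{t}}}\mathbb{E}_{z\sim\hat{\pi}_{r_{t}}}\tilde{A}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)-\beta\mathbb{D}_{\mathrm{KL}}\left(\hat{\pi}_{r_{t}}\|\pi_{\mathrm{ref},t}\right)\\
&\quad=\max_{\hat{\pi}_{r_{t}}}\mathbb{E}_{z\sim\hat{\pi}_{r_{t}}}\left[\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)-\beta\log\frac{\hat{\pi}_{r_{t}}(z|s_{t})}{\pi_{\mathrm{ref}}(z|s_{t})}\right]-\left[f_{\rho}(\tilde{V}_{\pi_{\mathrm{ref}}}\left(s_{t}\right),\eta)+g_{\rho}(\eta)\right]\\
&\quad=-\beta\min_{\hat{\pi}_{r_{t}}}\mathbb{D}_{\mathrm{KL}}\left(\hat{\pi}_{r_{t}}\,\Big\|\,\frac{\pi_{\mathrm{ref},t}e^{\frac{1}{\beta}\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)}}{Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)}\right)+\beta\log Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)-\left[f_{\rho}(\tilde{V}_{\pi_{\mathrm{ref}}}\left(s_{t}\right),\eta)+g_{\rho}(\eta)\right],
\end{aligned}

$$

where $Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)=\mathbb{E}_{z\sim\pi_{\mathrm{ref},t}}e^{\frac{1}{\beta}\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)}$. Since the KL divergence is nonnegative and vanishes only when its two arguments coincide, the maximizer is the policy in Equation (11), which finishes the proof. ∎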
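The closed form in Equation (11) can be checked numerically at a single state. The sketch below builds $\pi_{\mathrm{ref},t}\,e^{\tilde{Q}^{r}/\beta}/Z$ for a toy three-token vocabulary and compares the KL-regularized objective against a few alternative distributions. It uses $\tilde{Q}^{r}$ in place of the advantage, which leaves the maximizer unchanged because the baseline in Equation (7) does not depend on $z$. All names and numbers are assumptions for illustration.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) for discrete distributions with q > 0 wherever p > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)

def objective(pi_hat, pi_ref, q_values, beta):
    """E_{z~pi_hat}[Q(s_t, z)] - beta * KL(pi_hat || pi_ref) at one state."""
    expected_q = sum(p * q for p, q in zip(pi_hat, q_values))
    return expected_q - beta * kl_divergence(pi_hat, pi_ref)

def reward_aligned_policy(pi_ref, q_values, beta):
    """pi_ref(z) * exp(Q(z) / beta) / Z, as in Equation (11)."""
    m = max(q_values)  # subtracting the max cancels in the ratio and avoids overflow
    weights = [p * math.exp((q - m) / beta) for p, q in zip(pi_ref, q_values)]
    z = sum(weights)
    return [w / z for w in weights]

pi_ref = [0.5, 0.3, 0.2]      # toy reference distribution
q_r = [1.0, 0.0, -2.0]        # hypothetical risk-aware Q-values
beta = 0.5
pi_star = reward_aligned_policy(pi_ref, q_r, beta)
alternatives = [pi_ref, [1 / 3, 1 / 3, 1 / 3], [0.9, 0.05, 0.05], [0.6, 0.3, 0.1]]
print(objective(pi_star, pi_ref, q_r, beta))
print([objective(a, pi_ref, q_r, beta) for a in alternatives])
```

At the maximizer the objective equals $\beta\log Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)$, which gives a convenient sanity check on an implementation.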

To proceed with our theoretical analysis, we make a mild assumption regarding the Slater condition and present the following lemma about strong duality and boundedness of $\lambda^{\ast}$.

**Assumption IV.4 (Slater condition)**

*There exist a policy $\bar{\pi}_{t}\in\Pi$ and $\xi\in\mathbb{R}_{+}$ such that $\zeta_{t}\leq\xi$ .*

**Lemma IV.5 (Strong duality and boundedness of $\lambda^{\ast}$)**

*Define the dual function $D(\lambda,\beta):=\max_{\pi}\mathcal{L}(\pi,\lambda,\beta)$ and the optimal dual variable $\lambda^{\ast}:=\arg\min_{\lambda\geq 0}D(\lambda,\beta)$ . Under Assumption IV.4, there exists a primal-dual pair $(\pi^{\ast},\lambda^{\ast})$ such that $R(\pi^{\ast},\beta)=D^{\ast}(\beta)=L(\pi^{\ast},\lambda^{\ast},\beta)$ , and $0\leq\lambda^{\ast}\leq\Lambda$ , where $\Lambda:=\frac{R(\pi^{\ast},\beta)-R(\bar{\pi},\beta)}{\xi}$ .*

Based on Lemma IV.5, we can obtain the relationship between the optimal policy $\pi^{\ast}_{t}$ of the policy optimization problem (10) and the reward-aligned policy $\pi^{\ast}_{r^{\ast}_{t}}$ .

**Theorem IV.6 (Relation between $\pi^{\ast}_{r^{\ast}_{t}}$ and $\pi^{\ast}_{t}$)**

*The optimal policy of the optimization problem (10) is represented as

$$

\pi^{\ast}_{t}=\frac{1}{Y\left(s_{t};\beta\right)}\pi^{\ast}_{r^{\ast}_{t}}e^{\frac{1}{\beta^{\prime}}\tilde{Q}^{c}_{\pi_{\mathrm{ref}}}(s_{t},z)}, \tag{12}

$$

where $Y\left(s_{t};\beta\right):=\frac{Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}-\lambda^{\ast}\tilde{Q}^{c}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)}{Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)},\beta^{\prime}=(1+\lambda^{\ast})\beta/\lambda^{\ast}.$*

*Proof.*

Given an optimal $\lambda^{\ast}$ , we derive the solution to the optimization problem in Equation (10) following a similar approach to the proof of Proposition IV.3:

$$

\begin{aligned}
&\max_{\hat{\pi}_{t}}\mathbb{E}_{z\sim\hat{\pi}_{t}}\left[\tilde{A}^{r}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)-\lambda^{\ast}\tilde{A}^{c}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)\right]-(1+\lambda^{\ast})\beta\mathbb{D}_{\mathrm{KL}}\left(\hat{\pi}_{t}\|\pi_{\mathrm{ref},t}\right)+\lambda^{\ast}\zeta_{t}\\
&\quad=-(1+\lambda^{\ast})\beta\min_{\hat{\pi}_{t}}\mathbb{D}_{\mathrm{KL}}\left(\hat{\pi}_{t}\,\Big\|\,\frac{\pi_{\mathrm{ref},t}e^{\frac{1}{(1+\lambda^{\ast})\beta}\tilde{Q}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)}}{Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}-\lambda^{\ast}\tilde{Q}^{c}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)}\right)+\mathrm{const},
\end{aligned}

$$

where

$$

\tilde{Q}_{\pi_{\mathrm{ref}}}\left(s_{t},z\right)=\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}(s_{t},z)-\lambda^{\ast}\tilde{Q}^{c}_{\pi_{\mathrm{ref}}}(s_{t},z),

$$

and the normalizer $Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}-\lambda^{\ast}\tilde{Q}^{c}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)$ and the constant do not depend on $\hat{\pi}_{t}$. Therefore, we have:

$$

\pi^{\ast}_{t}=\frac{\pi_{\mathrm{ref},t}e^{\frac{1}{(1+\lambda^{\ast})\beta}\left(\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}(s_{t},z)-\lambda^{\ast}\tilde{Q}^{c}_{\pi_{\mathrm{ref}}}(s_{t},z)\right)}}{Z_{\tilde{Q}^{r}_{\pi_{\mathrm{ref}}}-\lambda^{\ast}\tilde{Q}^{c}_{\pi_{\mathrm{ref}}}}(s_{t};\beta)}.

$$

Rewriting the right-hand side in terms of the reward-aligned policy $\pi^{\ast}_{r^{\ast}_{t}}$ of Equation (11) then gives Equation (12). Therefore, we obtain the desired theorem. ∎
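As a numerical illustration of the closed-form expression for $\pi^{\ast}_{t}$ that appears in the proof above, the sketch below tilts a toy reference distribution by $\left(\tilde{Q}^{r}-\lambda\tilde{Q}^{c}\right)/\left((1+\lambda)\beta\right)$ for several fixed values of $\lambda$. Here $\lambda$ is simply supplied by hand, whereas the method obtains $\lambda^{\ast}$ from the dual problem; the function names and all numeric values are assumptions.

```python
import math

def tilt(pi_ref, scores, temperature):
    """Normalize pi_ref(z) * exp(score(z) / temperature) over the vocabulary."""
    m = max(scores)  # subtracting the max cancels in the ratio and avoids overflow
    weights = [p * math.exp((s - m) / temperature) for p, s in zip(pi_ref, scores)]
    z = sum(weights)
    return [w / z for w in weights]

def constrained_policy(pi_ref, q_r, q_c, lam, beta):
    """pi_ref * exp((Q^r - lam * Q^c) / ((1 + lam) * beta)), normalized."""
    combined = [r - lam * c for r, c in zip(q_r, q_c)]
    return tilt(pi_ref, combined, (1.0 + lam) * beta)

pi_ref = [0.5, 0.3, 0.2]
q_r = [1.0, 0.5, 2.0]    # hypothetical reward Q-values; the last token is most rewarding
q_c = [0.0, 0.2, 3.0]    # hypothetical cost Q-values; the last token is also most costly
for lam in (0.0, 1.0, 5.0):
    policy = constrained_policy(pi_ref, q_r, q_c, lam, beta=1.0)
    expected_cost = sum(p * c for p, c in zip(policy, q_c))
    print(lam, [round(p, 4) for p in policy], round(expected_cost, 4))
```

With $\lambda=0$ the expression reduces to the reward-aligned policy of Equation (11); in this toy example, larger values of $\lambda$ move probability mass away from the rewarding but costly token.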

Input: Initialize a reference policy $\pi_{\mathrm{ref}}$ , a policy of the trained model $\pi$ , and a cost threshold $d$ .

1 for $t=0,1,\dots$ do

// Reward-optimal policy alignment.

2 Compute the risk-aware state-action function $\tilde{Q}^{r}_{\pi_{t}}(s_{t},z)$ in Equation (5).

3 Compute the reward-optimal policy $\pi^{\ast}_{r^{\ast}_{t}}$ in Equation (11).

// Optimal policy alignment.

4 Compute the risk-aware state-action function $\tilde{Q}^{c}_{\pi_{t}}(s_{t},z)$ in Equation (6).

5 Compute the optimal policy $\pi^{\ast}_{t}$ in Equation (12).

6 end for

Output: The optimal policy $\pi^{\ast}$ .

Algorithm 2 Risk-aware Stepwise Alignment (RSA)

### IV-C Loss Function and Formal Analysis

By rearranging Equation (12), we obtain the expression of the cost state-action function in terms of the optimal policy:

$$

\tilde{Q}_{\pi_{\mathrm{ref},t}}^{c}=\beta\log\frac{\pi_{t}^{\ast}}{\pi^{\ast}_{r^{\ast}_{t}}}+\beta\log Y\left(s_{t};\beta\right). \tag{13}

$$

In this way, using the discounted cumulative cost $c=\sum_{t=1}^{T}\gamma^{t-1}C\left(s_{t},a_{t}\right)$, we can reformulate the BT model so that it is directly tied to the risk-aware optimal policy $\pi^{\ast}$ and the reward-aligned policy $\pi_{r^{\ast}}^{\ast}$. This result is summarized in the following theorem, whose proof is provided in Appendix A-C.

**Theorem IV.7**

*Given prompts $x$ and pairwise responses $\left(y_{w},y_{l}\right)$ , and the risk-aware objective function in Equation (8), the Bradley-Terry model expresses the human preference probability in terms of the risk-aware optimal policy $\pi^{\ast}$ and the reward-aligned policy $\pi_{r^{\ast}}^{\ast}$ :

$$

P_{\mathrm{BT}}^{\ast}\left(y_{w}\succ y_{l}|x\right)=\sigma\left(u^{\ast}\left(x,y_{w},y_{l}\right)-\delta^{\ast}\left(x,y_{w},y_{l}\right)\right), \tag{14}

$$

where

$$

u\left(x,y_{w},y_{l}\right)=\beta\log\frac{\pi\left(y_{w}|x\right)}{\pi_{r^{\ast}}^{\ast}\left(y_{w}|x\right)}-\beta\log\frac{\pi\left(y_{l}|x\right)}{\pi_{r^{\ast}}^{\ast}\left(y_{l}|x\right)}

$$

is the implicit reward margin of the DPO loss, taken with respect to $\pi_{r^{\ast}}^{\ast}$, and

$$

\delta\left(x,y_{w},y_{l}\right)=\beta\mathbb{D}_{\mathrm{SRR}}\left(x,y_{l};\pi_{r^{\ast}}^{\ast}|\pi\right)-\beta\mathbb{D}_{\mathrm{SRR}}\left(x,y_{w};\pi_{r^{\ast}}^{\ast}|\pi\right)

$$

represents the difference in Sequential Risk Ratios (SRR) between two pairs $\left(x,y_{w}\right)$ and $\left(x,y_{l}\right)$ , where $\mathbb{D}_{\mathrm{SRR}}\left(x,y;\pi_{r^{\ast}}^{\ast}|\pi\right)=\sum_{t=1}^{T}\operatorname{\Phi}^{\mu}_{z\sim\pi_{r^{\ast}}^{\ast}}\left(\log\frac{\pi_{r^{\ast}}^{\ast}\left(z|s_{t}\right)}{\pi\left(z|s_{t}\right)}\right).$*
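To make the Sequential Risk Ratio concrete, the sketch below computes $\mathbb{D}_{\mathrm{SRR}}$ for explicit per-step token distributions. The risk measure $\operatorname{\Phi}^{\mu}$ is defined elsewhere in the paper; here we assume, purely for illustration, the entropic form $\operatorname{\Phi}^{\mu}(X)=\frac{1}{\mu}\log\mathbb{E}\left[e^{\mu X}\right]$ with $\mu>0$. The function names and the toy distributions are hypothetical.

```python
import math

def entropic_risk(probs, values, mu):
    """Assumed risk measure: (1/mu) * log E_p[exp(mu * X)] for a discrete X, mu > 0."""
    exponents = [mu * v for v in values]
    m = max(exponents)  # log-sum-exp shift for numerical stability
    total = sum(p * math.exp(e - m) for p, e in zip(probs, exponents))
    return (m + math.log(total)) / mu

def sequential_risk_ratio(pi_r_steps, pi_steps, mu):
    """Sum over positions t of the risk of log(pi_r(z|s_t) / pi(z|s_t)) under z ~ pi_r."""
    total = 0.0
    for p_r, p in zip(pi_r_steps, pi_steps):
        log_ratio = [math.log(a / b) for a, b in zip(p_r, p)]
        total += entropic_risk(p_r, log_ratio, mu)
    return total

def sequential_kl(pi_r_steps, pi_steps):
    """Risk-neutral counterpart: sum over positions of KL(pi_r || pi)."""
    return sum(
        sum(a * math.log(a / b) for a, b in zip(p_r, p))
        for p_r, p in zip(pi_r_steps, pi_steps)
    )

# Hypothetical next-token distributions at two positions of one response.
pi_r_steps = [[0.7, 0.2, 0.1], [0.5, 0.25, 0.25]]
pi_steps = [[0.6, 0.3, 0.1], [0.4, 0.4, 0.2]]

srr_risk_averse = sequential_risk_ratio(pi_r_steps, pi_steps, mu=1.0)
srr_near_neutral = sequential_risk_ratio(pi_r_steps, pi_steps, mu=1e-4)
kl = sequential_kl(pi_r_steps, pi_steps)
```

Under this assumed risk measure, the quantity approaches the sequential KL divergence as $\mu\to 0$ and is never smaller than it for $\mu>0$, which is one way to see how a risk-aware ratio penalizes deviations more heavily than its risk-neutral counterpart.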

Drawing on Theorem IV.7, the BT model can be reformulated as a likelihood maximization objective for a parametrized risk-aware policy $\pi$, and the resulting loss function is given by:

$$

\mathcal{L}\left(\pi;\pi_{r^{\ast}}^{\ast}\right)=-\mathbb{E}\left[\log\sigma\left(u\left(x,y_{w},y_{l}\right)-\alpha\delta^{\prime}\left(x,y_{w},y_{l}\right)\right)\right], \tag{15}

$$

where $\alpha$ is a weight coefficient, $\delta^{\prime}\left(x,y_{w},y_{l}\right)=\beta\mathbb{D}_{\mathrm{SRR}}\left(x,y_{l};\pi_{r^{\ast}}^{\ast}|\pi\right)-\operatorname{sg}\left(\beta\mathbb{D}_{\mathrm{SRR}}\left(x,y_{w};\pi_{r^{\ast}}^{\ast}|\pi\right)\right)$, and $\operatorname{sg}$ denotes the stop-gradient operator, which blocks the propagation of gradients.
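The per-pair value of the loss in Equation (15) can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the function names are hypothetical, the sequence log-probabilities and $\mathbb{D}_{\mathrm{SRR}}$ values are assumed to be precomputed, and the stop-gradient is only noted in a comment because it affects gradients, not the forward value.

```python
import math

def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))

def rsa_pair_loss(logp_w, logp_l, ref_logp_w, ref_logp_l,
                  srr_w, srr_l, beta, alpha):
    """Per-pair value of -log sigma(u - alpha * delta') from Equation (15).

    logp_*     : log pi(y|x) of the preferred (w) and dispreferred (l) response
    ref_logp_* : log pi_{r*}^*(y|x) under the reward-aligned policy
    srr_*      : precomputed D_SRR(x, y; pi_{r*}^* | pi) values
    """
    u = beta * (logp_w - ref_logp_w) - beta * (logp_l - ref_logp_l)
    # In an autodiff framework, the srr_w term would be wrapped in a
    # stop-gradient; the forward value computed here is unchanged by that.
    delta_prime = beta * srr_l - beta * srr_w
    return -log_sigmoid(u - alpha * delta_prime)

# Hypothetical numbers for a single preference pair.
loss = rsa_pair_loss(logp_w=-12.0, logp_l=-15.0,
                     ref_logp_w=-13.0, ref_logp_l=-14.0,
                     srr_w=0.3, srr_l=0.5, beta=0.1, alpha=0.5)
```

Setting `alpha=0` removes the SRR term and leaves a DPO-style objective with $\pi_{r^{\ast}}^{\ast}$ in the role of the reference policy.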

### IV-D Practical Implementation

In standard CMDP formulations, the policy $\pi$ and the Lagrange multiplier $\lambda$ are commonly optimized via a primal-dual approach based on evaluations of reward and safety performance. However, in the context of LM alignment, such online estimation is highly susceptible to the inherent stochasticity and semantic variability of natural language responses, often resulting in unstable dual dynamics and poor convergence behavior. In this paper, we adopt a stepwise alignment scheme to avoid online dual updates, which ensures training stability while preserving a strong trade-off between helpfulness and safety.

We now introduce a practical variant of our proposed RSA algorithm, denoted RSA(P), inspired by P-SACPO [2024_wachi_sacpo] but adapted to the risk-sensitive alignment setting. After obtaining a reward-aligned policy $\pi_{r}$, RSA(P) performs safety realignment using a fixed, conservatively large Lagrange multiplier $\bar{\lambda}>\lambda^{\ast}$, resulting in an optimal policy $\pi_{r+\bar{\lambda}c}$. Rather than iteratively optimizing $\lambda$, we combine $\pi_{r}$ and $\pi_{r+\bar{\lambda}c}$ via weight averaging with a mixing ratio $q:(1-q)$, where $q\in[0,1]$, yielding a final policy $\pi=q\pi_{r}+(1-q)\pi_{r+\bar{\lambda}c}$. This approach avoids online dual updates and repeated policy optimizations, thereby mitigating instability caused by noisy evaluation in LMs. Its simplicity and compatibility with model merging make RSA(P) both computationally efficient and empirically effective, as demonstrated in Section V.
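The merging step of RSA(P) can be sketched as follows, reading "weight averaging" as a parameter-wise average of two checkpoints, as in model merging. The function name `merge_weights`, the dictionary-of-lists checkpoint layout, and the toy parameter values are assumptions made for illustration only; a real implementation would operate on the tensors of the two fine-tuned models.

```python
def merge_weights(theta_r, theta_safe, q):
    """Parameter-wise average q * theta_r + (1 - q) * theta_safe.

    theta_r    : parameters of the reward-aligned policy pi_r
    theta_safe : parameters of the conservatively safety-realigned policy
    q          : mixing ratio in [0, 1]
    """
    if not 0.0 <= q <= 1.0:
        raise ValueError("q must lie in [0, 1]")
    if theta_r.keys() != theta_safe.keys():
        raise ValueError("both checkpoints must share the same parameter names")
    return {
        name: [q * a + (1.0 - q) * b
               for a, b in zip(theta_r[name], theta_safe[name])]
        for name in theta_r
    }

# Hypothetical flattened parameters of two checkpoints.
theta_r = {"w": [1.0, 2.0], "b": [0.0]}
theta_safe = {"w": [3.0, 6.0], "b": [4.0]}

merged = merge_weights(theta_r, theta_safe, q=0.25)
```

Because the merge is a single pass over the parameters, sweeping $q$ to trace out a helpfulness-safety trade-off requires no additional training.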

## V Experiments

In this section, we empirically evaluate the effectiveness of RSA in enhancing helpfulness and safety (i.e., harmlessness) in a stepwise alignment manner. The experiments focus on answering the following questions: (1) How does RSA perform in terms of helpfulness and safety? (2) Why can RSA achieve better performance?

### V-A Experiment Setup

#### V-A 1 Dataset

We conduct experiments on the PKU-SafeRLHF-30K preference dataset (https://huggingface.co/datasets/PKU-Alignment/PKU-SafeRLHF-30K), which contains approximately 27,000 training and 3,000 testing expert evaluations. Each record in this dataset includes a pair of responses to a specific prompt, along with indicators of which response human annotators prefer in terms of helpfulness and harmlessness, respectively. Helpfulness is judged based on factors such as clarity, relevance, and overall quality. The harmlessness of a response is determined by its neutrality with respect to different risk categories, such as insults, immorality, crime, emotional harm, and privacy, among others.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Scatter Plot: Model Performance Comparison on Helpfulness vs. Harmlessness

### Overview

The image displays two side-by-side scatter plots, labeled (a) and (b), comparing the performance of various AI alignment methods. The plots measure models on two axes: "helpfulness" (x-axis) and "harmlessness" (y-axis). A legend at the top center defines the methods and their corresponding markers. A gray shaded region in the bottom-left quadrant of both plots indicates a zone of lower performance on both metrics.

### Components/Axes

* **X-Axis:** Labeled "helpfulness". Scale ranges from 0.3 to 1.0, with major tick marks at 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, and 1.0.

* **Y-Axis:** Labeled "harmlessness". Scale ranges from 0.4 to 1.0, with major tick marks at 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, and 1.0.

* **Legend (Top Center):** Defines eight methods:

* `☆ SFT` (Gray Star)

* `✖ Safe RLHF` (Purple X)

* `● DPO` (Blue Circle)

* `● Ra-DPO` (Orange Circle)

* `▶ SACPO (H → S)` (Blue Right-Pointing Triangle)

* `◀ RSCPO (H → S)` (Orange Left-Pointing Triangle)

* `■ SACPO (P)` (Green Square)

* `■ RSCPO (P)` (Red Square)

* **Gray Shaded Region:** Covers the area where helpfulness ≤ 0.5 and harmlessness ≤ 0.5. A dashed line marks the boundary at helpfulness=0.5 and harmlessness=0.5.

* **Baseline Points:** A gray star labeled `SFT` is positioned at approximately (0.5, 0.5), at the corner of the shaded region.

### Detailed Analysis

**Subplot (a):**

* **Safe RLHF (Purple X):** Three versions are plotted.

* `v1.0`: Positioned at approximately (0.58, 0.70).

* `v2.0`: Positioned at approximately (0.35, 0.79).

* `v3.0`: Positioned at approximately (0.55, 0.78).

* **DPO (H) (Blue Circle):** Positioned at approximately (0.76, 0.51).

* **Ra-DPO (H) (Orange Circle):** Positioned at approximately (0.80, 0.52).

* **SACPO (H → S) (Blue Triangles):** Points are labeled with numerical values (likely hyperparameters).

* Point labeled `0.1`: Positioned at (0.60, 0.68).

* Point labeled `0.05`: Positioned at (0.70, 0.83).

* Point labeled `0.025`: Positioned at (0.78, 0.88).

* Point labeled `0.01`: Positioned at (0.76, 0.90).

* **RSCPO (H → S) (Orange Triangles):** Points are labeled with numerical values.

* Point labeled `0.1`: Positioned at (0.68, 0.70).

* Point labeled `0.05`: Positioned at (0.78, 0.84).

* Point labeled `0.025`: Positioned at (0.75, 0.87).

* Point labeled `0.01`: Positioned at (0.73, 0.90).

**Subplot (b):**

* **Safe RLHF (Purple X):** Same three versions as in (a), with identical positions.

* **DPO (H) (Blue Circle):** Same position as in (a).

* **Ra-DPO (H) (Orange Circle):** Same position as in (a).

* **SACPO (P) (Green Squares):** Points are labeled with numerical values.

* Point labeled `0.25`: Positioned at (0.75, 0.60).

* Point labeled `0.5`: Positioned at (0.73, 0.69).

* Point labeled `0.75`: Positioned at (0.72, 0.75).

* Point labeled `0.9`: Positioned at (0.70, 0.90).

* Point labeled `0.95`: Positioned at (0.75, 0.91).

* **RSCPO (P) (Red Squares):** Points are labeled with numerical values.

* Point labeled `0.25`: Positioned at (0.82, 0.69).

* Point labeled `0.5`: Positioned at (0.85, 0.80).

* Point labeled `0.75`: Positioned at (0.87, 0.86).

* Point labeled `0.9`: Positioned at (0.85, 0.89).

* Point labeled `0.95`: Positioned at (0.84, 0.90).

### Key Observations

1. **Performance Clustering:** Methods cluster into distinct regions. Safe RLHF variants are in the mid-harmlessness, lower-helpfulness area. DPO and Ra-DPO are in the high-helpfulness, low-harmlessness area. The SACPO and RSCPO methods (both H→S and P variants) occupy the high-harmlessness region, with helpfulness varying based on the variant and hyperparameter.

2. **Hyperparameter Trend:** For both SACPO and RSCPO methods, the numerical labels (likely representing a hyperparameter like a penalty weight) show a clear trend: as the value decreases (e.g., from 0.1 to 0.01 in H→S variants, or increases from 0.25 to 0.95 in P variants), the model's position moves upward (higher harmlessness) and often rightward (higher helpfulness).

3. **Variant Comparison:** The `(P)` variants in subplot (b) generally achieve higher helpfulness scores than their `(H → S)` counterparts in subplot (a) for similar harmlessness levels. The `RSCPO (P)` (red squares) points are the furthest to the top-right, indicating the best combined performance.

4. **Baseline:** The SFT model sits at the threshold of the low-performance gray zone (0.5, 0.5). All other methods shown improve upon this baseline in at least one dimension.

### Interpretation

This visualization demonstrates the trade-off and potential synergy between helpfulness and harmlessness in AI alignment techniques. The data suggests that the **P variants of SACPO and RSCPO are the most effective** at simultaneously maximizing both metrics, pushing the Pareto frontier toward the ideal top-right corner (1.0, 1.0).

The clear trend with the hyperparameter labels indicates these methods offer a **tunable knob** to navigate the helpfulness-harmlessness trade-off. Lower values in the H→S variants and higher values in the P variants appear to prioritize harmlessness without severely sacrificing helpfulness.

The positioning of Safe RLHF suggests it achieves moderate harmlessness but at a significant cost to helpfulness. Conversely, standard DPO and Ra-DPO achieve high helpfulness but remain near the harmlessness baseline. The SACPO/RSCPO methods, particularly the P variants, appear to resolve this tension more effectively, representing a significant advancement in balancing these two critical and often competing objectives for AI systems. The gray zone serves as a visual reminder of the undesirable state (low on both metrics) that these alignment techniques aim to move models away from.

</details>

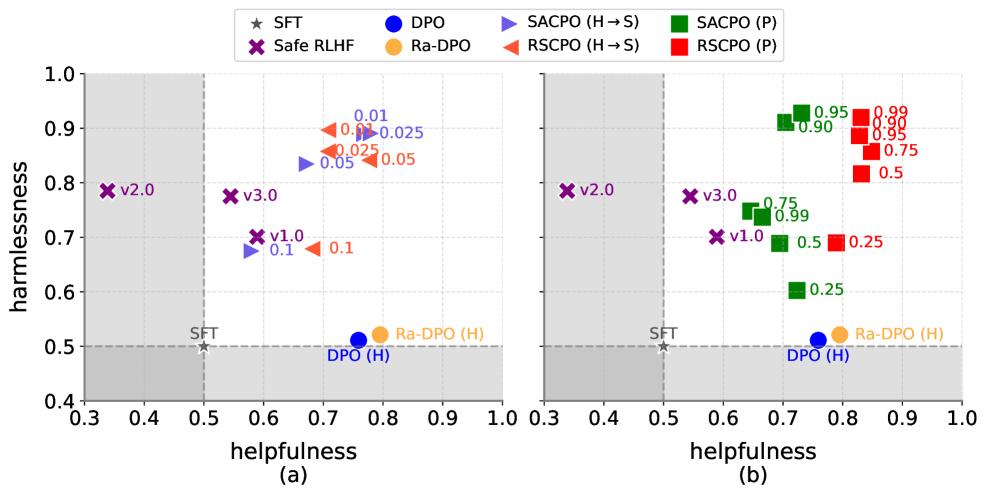

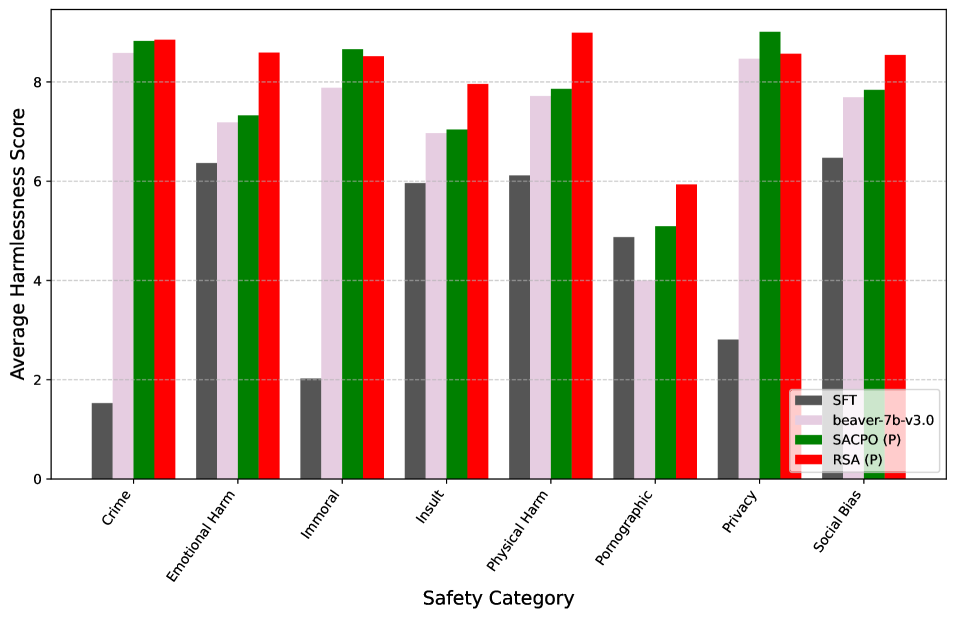

Figure 1: Win rate against the SFT model (i.e., Alpaca-7B-reproduced). H and S are abbreviations for helpfulness and safety (i.e., harmlessness), respectively. Higher values on the horizontal axis indicate better helpfulness, and higher values on the vertical axis indicate better harmlessness. In (a), the numbers indicate $\frac{1}{\beta^{\prime}}$ . In (b), the numbers represent $q$ .

#### V-A 2 Baseline

We compare our method against the following algorithms: (1) Safe RLHF [2024_dai_safe-RLHF], which integrates Safe RL with the RLHF framework; (2) SACPO [2024_wachi_sacpo], which aligns LLMs stepwise with each metric while leveraging simple yet powerful alignment algorithms; and (3) DPO [2023_rafailov_dpo] and Ra-DPO [2025_zhang_ra-dpo], which optimize a single metric as their objective. Specifically, for Safe RLHF, we directly employ the publicly released Beaver-7B-v1.0 (https://huggingface.co/PKU-Alignment/beaver-7b-v1.0), Beaver-7B-v2.0 (https://huggingface.co/PKU-Alignment/beaver-7b-v2.0), and Beaver-7B-v3.0 (https://huggingface.co/PKU-Alignment/beaver-7b-v3.0) models from Hugging Face. For DPO, Ra-DPO, SACPO, and RSA, we build upon the original SACPO implementation (https://github.com/line/sacpo), adopting a lightweight training setup with LoRA and 4-bit quantization and using nearly identical hyperparameters. Notably, Ra-DPO and RSA employ nested risk measures based on CVaR and ERM. More experimental details are reported in Appendices B-A and B-B.

#### V-A 3 Evaluation

To comprehensively evaluate RSA and the baseline methods, we assess them on two types of benchmarks: text generation tasks and multi-turn conversations.

*Text generation tasks:* Following the same evaluation protocol as SACPO, we adopt two non-overlapping sets of prompts for helpfulness and safety. For helpfulness evaluation, we employ all 129 prompts from the "helpful_base" subset of the AlpacaEval dataset (https://github.com/tatsu-lab/alpaca_eval), which are selected to avoid eliciting harmful content. For safety evaluation, we employ the full set of 83 red-teaming prompts from the Safe RLHF study, known for their high potential to trigger unsafe model responses. All evaluations are scored by DeepSeek-R1 [2025_guo_deepseek-r1].

*Multi-turn conversations:* To further evaluate harmlessness, we conduct experiments on R-Judge (https://github.com/Lordog/R-Judge) [2024_yuan_R-judge], a multi-turn dialogue benchmark comprising 569 interactions that cover 27 risk scenarios. This setting assesses a model's ability to maintain safety alignment over extended conversations, particularly under adversarial prompting.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Bar Charts: Helpfulness and Harmlessness Evaluation of AI Models

### Overview

The image contains two bar charts stacked vertically. Both charts share the same structure and plot the "Average Generate Length" on the y-axis against various AI model configurations on the x-axis. The top chart is titled "Helpfulness Evaluation," and the bottom chart is titled "Harmlessness Evaluation." The charts compare the generation lengths of different models, primarily variants of SFT, SACPO, RSA, and others, with specific parameter settings indicated in brackets.

### Components/Axes

* **Chart Titles:** Located in the top-left corner of each chart area.

* Top Chart: "Helpfulness Evaluation"

* Bottom Chart: "Harmlessness Evaluation"

* **Y-Axis (Both Charts):**

* **Label:** "Average Generate Length"

* **Scale:** Linear scale from 0 to 1200 (Helpfulness) and 0 to 1400 (Harmlessness), with major gridlines at intervals of 200.

* **X-Axis (Both Charts):**

* **Labels:** A series of model names and configurations, rotated approximately 45 degrees for readability. The labels are consistent in style but differ in order and specific models between the two charts.

* **Model/Configuration Labels (Transcribed in order from left to right for each chart):**

* **Helpfulness Evaluation Chart:** SFT, SACPO (H-S) [0.11], RSA (H-S) [0.11], beaver-7b-v2.0, SACPO (H-S) [0.05], beaver-7b-v3.0, beaver-7b-v1.0, SACPO (H-S) [0.025], RSA (H-S) [0.05], RSA (P) [0.25], RSA (H-S) [0.01], SACPO (H-S) [0.025], RSA (P) [0.5], SACPO (P) [0.90], Ra-DPO (H), SACPO (H-S) [0.01], SACPO (P) [0.95], RSA (P) [0.75], DPO (H), RSA (P) [0.90], RSA (P) [0.95], RSA (P) [0.99], SACPO (P) [0.25], SACPO (P) [0.5], SACPO (P) [0.75], SACPO (P) [0.99]

* **Harmlessness Evaluation Chart:** SFT, SACPO (H-S) [0.11], RSA (H-S) [0.11], SACPO (P) [0.90], SACPO (H-S) [0.05], SACPO (P) [0.95], SACPO (H-S) [0.025], RSA (H-S) [0.01], RSA (H-S) [0.01], SACPO (H-S) [0.05], beaver-7b-v1.0, RSA (H-S) [0.025], RSA (P) [0.5], Ra-DPO (H), RSA (P) [0.25], DPO (H), RSA (P) [0.75], RSA (P) [0.90], beaver-7b-v2.0, RSA (P) [0.99], beaver-7b-v3.0, RSA (P) [0.95], SACPO (P) [0.25], SACPO (P) [0.5], SACPO (P) [0.75], SACPO (P) [0.99]

* **Data Series & Color Coding:** The bars are color-coded, likely corresponding to model families or training methods. The colors are consistent across both charts for the same model labels.

* **Gray:** SFT

* **Purple:** Models like beaver-7b-v2.0, beaver-7b-v3.0, beaver-7b-v1.0.

* **Pink/Light Purple:** Models like RSA (H-S) [0.11], RSA (H-S) [0.05], RSA (H-S) [0.01], RSA (H-S) [0.025].

* **Red:** Models like RSA (P) [0.25], RSA (P) [0.5], RSA (P) [0.75], RSA (P) [0.90], RSA (P) [0.95], RSA (P) [0.99], DPO (H), Ra-DPO (H).

* **Blue:** Models like SACPO (H-S) [0.11], SACPO (H-S) [0.05], SACPO (H-S) [0.025], SACPO (H-S) [0.01].

* **Green:** Models like SACPO (P) [0.90], SACPO (P) [0.95], SACPO (P) [0.25], SACPO (P) [0.5], SACPO (P) [0.75], SACPO (P) [0.99].

### Detailed Analysis

**Helpfulness Evaluation Chart (Top)**

* **Trend:** The average generate length shows a general upward trend from left to right, starting at 300 for SFT and peaking at 1169 for SACPO (P) [0.99]. There is a notable, steep increase in the final four bars (all green, SACPO (P) variants).

* **Data Points (Value above each bar, left to right):** 300, 348, 395, 404, 410, 418, 444, 445, 456, 477, 477, 477, 496, 505, 511, 513, 525, 552, 555, 581, 594, 601, 690, 919, 1083, 1169.

**Harmlessness Evaluation Chart (Bottom)**

* **Trend:** Similar to the Helpfulness chart, there is a general upward trend from left to right, starting at 329 for SFT and peaking at 1512 for SACPO (P) [0.99]. The increase is more gradual in the middle section, with a very sharp rise in the final three bars (all green, SACPO (P) variants).

* **Data Points (Value above each bar, left to right):** 329, 353, 381, 406, 407, 408, 409, 424, 427, 443, 509, 511, 596, 609, 626, 655, 678, 693, 755, 774, 808, 822, 908, 1212, 1271, 1512.

### Key Observations

1. **Consistent Outlier Group:** In both evaluations, the **SACPO (P) family of models (green bars)**, particularly those with higher parameter values (e.g., [0.75], [0.99]), produce significantly longer average generate lengths than all other models. This is the most striking pattern.

2. **Baseline Performance:** The **SFT model (gray bar)** serves as a baseline and has the lowest or near-lowest average generate length in both charts (300 for Helpfulness, 329 for Harmlessness).

3. **Model Family Grouping:** The color coding reveals that models from the same family (e.g., all RSA (P) in red, all SACPO (H-S) in blue) tend to cluster in terms of generate length, though there is variation based on the specific parameter in brackets.

4. **Relative Ordering:** The order of model performance (by generate length) differs between the two evaluations. For example, `beaver-7b-v2.0` (purple) is the 4th shortest in Helpfulness but appears much later (19th) in Harmlessness. This suggests that a model's tendency to generate long text may correlate differently with perceived helpfulness versus harmlessness.

### Interpretation

The data suggests a strong correlation between the **SACPO (P) training method** and a substantial increase in the length of generated text. This effect is pronounced in both helpfulness and harmlessness evaluations, but the absolute lengths are greater in the harmlessness context (peak of 1512 vs. 1169).

This raises important questions:

* **Causality vs. Correlation:** Does the SACPO (P) method *cause* models to generate longer text, or do longer responses simply *correlate* with higher scores in these specific evaluations? The evaluation metric itself ("Average Generate Length") is a behavioral measure, not a direct quality score.

* **Evaluation Bias:** If the human or automated evaluators for "helpfulness" and "harmlessness" are biased towards preferring longer, more detailed responses, then models optimized via SACPO (P) would naturally score higher. The charts may be revealing an artifact of the evaluation process rather than an intrinsic quality of the models.

* **Efficiency Trade-off:** The dramatic increase in length for top-performing models could indicate a trade-off. While they may be deemed more helpful or harmless, they might also be less efficient, consuming more computational resources and potentially providing overly verbose answers where brevity is preferred.

* **Methodological Insight:** The clear grouping by color (model family) indicates that the core training algorithm (SACPO, RSA, DPO, etc.) is a primary determinant of output length, with the hyperparameter (the value in brackets) fine-tuning it within that family.

In summary, the charts do not directly measure "helpfulness" or "harmlessness" but rather a proxy behavior—response length—under two different evaluation frameworks. The key finding is the dominant influence of the SACPO (P) method on increasing this behavioral metric.

</details>

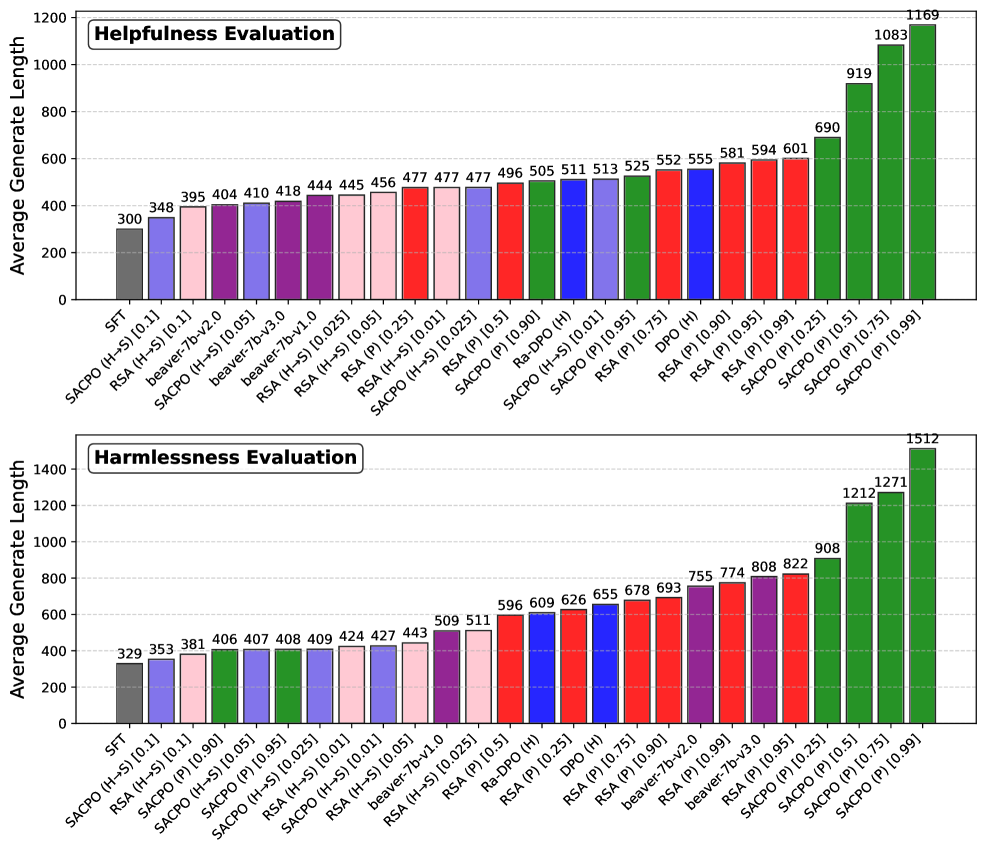

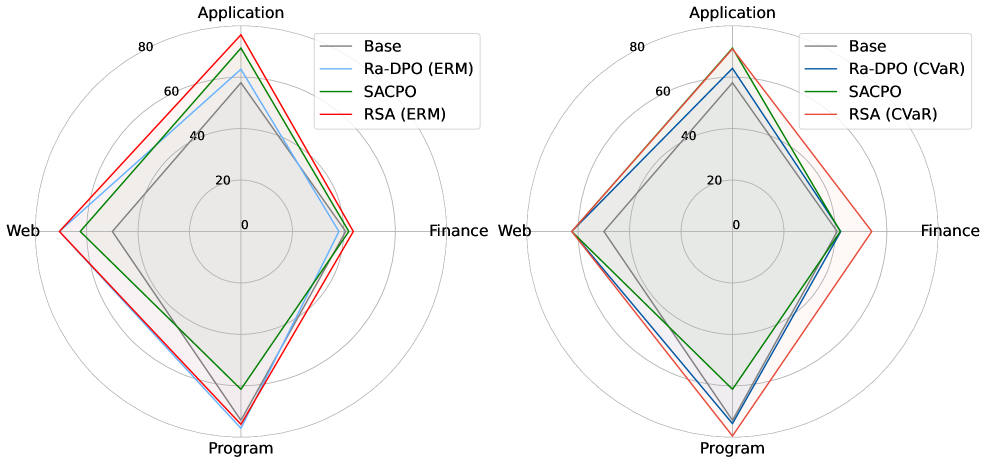

Figure 2: The average generation length of models trained with different algorithms, sampled under helpfulness and harmlessness prompts.

<details>

<summary>x3.png Details</summary>

### Visual Description

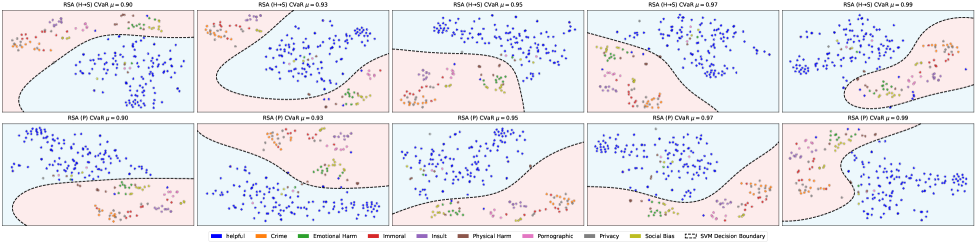

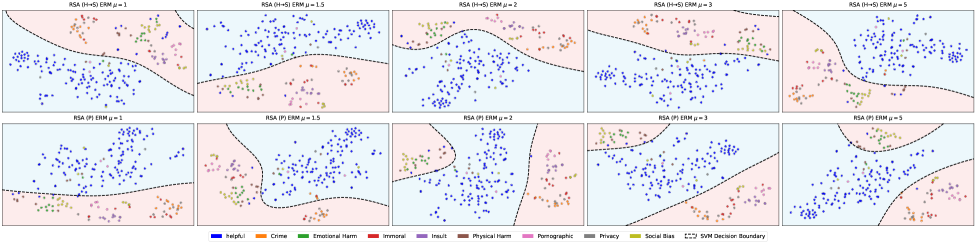

## [Scatter Plot Grid with Decision Boundaries]: Comparison of Safety Alignment Methods

### Overview

The image displays a 2x3 grid of six scatter plots, each visualizing the classification results of a different AI safety alignment method. The plots show data points representing text prompts or responses, color-coded by their safety category. A dashed black line in each plot represents the SVM (Support Vector Machine) decision boundary separating "helpful" (blue) points from various "harmful" categories. The overall purpose is to compare how effectively different training methods isolate safe, helpful content from unsafe content across multiple harm dimensions.

### Components/Axes

* **Subplot Titles (Top of each panel):**

* Top Row (Left to Right): `SFT`, `SACPO(H+S)`, `SACPO(P)`

* Bottom Row (Left to Right): `Safe-RLHF`, `RSA(H+S)`, `RSA(P)`

* **Legend (Bottom of image):** A horizontal legend defines the color coding for 10 categories and the decision boundary.

* **Categories (Colored Circles):**

* Blue: `helpful`

* Orange: `Crime`

* Green: `Emotional Harm`

* Red: `Immoral`

* Purple: `Insult`

* Brown: `Physical Harm`

* Pink: `Pornographic`

* Grey: `Privacy`

* Olive Green: `Social Bias`

* **Boundary:** Black dashed line: `SVM Decision Boundary`

* **Axes:** The plots are unlabeled 2D projections (likely from a dimensionality reduction technique like t-SNE or PCA). No numerical axis scales or titles are provided. The spatial arrangement represents relative similarity between data points.

### Detailed Analysis

The analysis is segmented by subplot region.

**Top-Left: SFT (Supervised Fine-Tuning)**

* **Layout:** The decision boundary runs roughly horizontally across the lower third of the plot.

* **Data Distribution:** The vast majority of blue (`helpful`) points are clustered in the large upper region (light blue background). Points from harmful categories (orange, green, red, purple, brown, pink, olive) are predominantly located in the smaller lower region (light pink background). There is noticeable mixing, with several harmful-category points (notably green, brown, and purple) appearing above the boundary among the helpful points.

**Top-Center: SACPO(H+S)**

* **Layout:** The decision boundary is a wavy line across the lower half.

* **Data Distribution:** Similar to SFT, blue points dominate the upper area. Harmful category points are concentrated below the boundary. The separation appears slightly cleaner than SFT, with fewer harmful points intruding into the upper blue cluster, though some green and brown points remain near or above the line.

**Top-Right: SACPO(P)**

* **Layout:** The decision boundary is a pronounced curve, creating a large pink region on the right and a smaller blue region on the left.

* **Data Distribution:** This plot shows a dramatically different spatial organization. The blue (`helpful`) points are densely clustered on the left side. All harmful category points are segregated to the right side of the boundary. The separation is visually very clean, with no obvious mixing.

**Bottom-Left: Safe-RLHF**

* **Layout:** The decision boundary is a complex, wavy line dividing the plot into a large upper pink region and a smaller lower blue region.

* **Data Distribution:** This plot inverts the common pattern. The blue (`helpful`) points are primarily clustered in the lower section. Points from harmful categories are spread across the large upper region. There is significant intermingling among the harmful categories themselves.

**Bottom-Center: RSA(H+S)**

* **Layout:** The decision boundary is a wavy line across the lower half, similar to SACPO(H+S).

* **Data Distribution:** Blue points are concentrated in the upper region. Harmful category points are mostly below the boundary. The distribution is somewhat diffuse, with harmful points (especially green and brown) scattered widely in the lower region.

**Bottom-Right: RSA(P)**

* **Layout:** The decision boundary is a sharp, near-vertical curve on the left side, creating a small pink region on the left and a large blue region on the right.

* **Data Distribution:** Similar to SACPO(P), this shows strong segregation. The blue (`helpful`) points form a dense cluster occupying most of the plot area on the right. All harmful category points are confined to the narrow strip on the left of the boundary.

### Key Observations

1. **Method-Dependent Spatial Organization:** The projection space organizes data very differently depending on the training method. SFT, SACPO(H+S), and RSA(H+S) show a rough "helpful on top, harmful on bottom" layout. Safe-RLHF inverts this. SACPO(P) and RSA(P) show a "helpful on left, harmful on right" layout.

2. **Boundary Effectiveness Varies:** The SVM decision boundary's ability to cleanly separate blue from non-blue points differs markedly.

* **Most Effective Separation:** `SACPO(P)` and `RSA(P)` demonstrate the clearest visual separation with minimal to no overlap.

* **Moderate Separation:** `SACPO(H+S)` and `RSA(H+S)` show good but imperfect separation.

* **Least Effective Separation:** `SFT` and `Safe-RLHF` show the most significant mixing of helpful and harmful points across the boundary.

3. **Harm Category Clustering:** Within the "harmful" regions, points of different colors (e.g., Crime, Insult, Physical Harm) are often intermingled, suggesting these methods do not strongly differentiate between *types* of harm in the embedding space, only between "harmful" and "helpful."

### Interpretation

This visualization provides a qualitative assessment of how different AI alignment techniques structure the model's internal representation of safety.

* **What the Data Suggests:** The methods labeled with `(P)` (the practical variants obtained by weight averaging, as introduced in Section IV-D) result in a much sharper and more effective separation (as captured by the SVM) between helpful and harmful content in the projected feature space. This implies these training objectives create a more distinct and possibly more robust boundary for safety classifiers.

* **Relationship Between Elements:** The stark difference in spatial layout between method families (e.g., SFT vs. SACPO(P)) indicates that the training objective fundamentally alters the geometry of the learned representation. The `(H+S)` variants (where, following the caption of Figure 1, H and S abbreviate helpfulness and safety) show intermediate performance, suggesting a blend of effects.

* **Notable Anomalies/Patterns:** The complete inversion of the layout in `Safe-RLHF` is a significant anomaly. It suggests this method's optimization leads to a qualitatively different embedding space where the direction of the "helpful" cluster is opposite to that of other methods. This could have implications for how safety filters or classifiers built on top of these representations would need to be designed.

* **Underlying Implication:** The clean separation in `SACPO(P)` and `RSA(P)` suggests these methods may produce models where safety is a more linearly separable concept, potentially making them easier to audit, control, or use as a base for further safety work. The mixing in `SFT` reflects the known limitation that standard supervised fine-tuning alone is often insufficient for robust safety alignment.

</details>

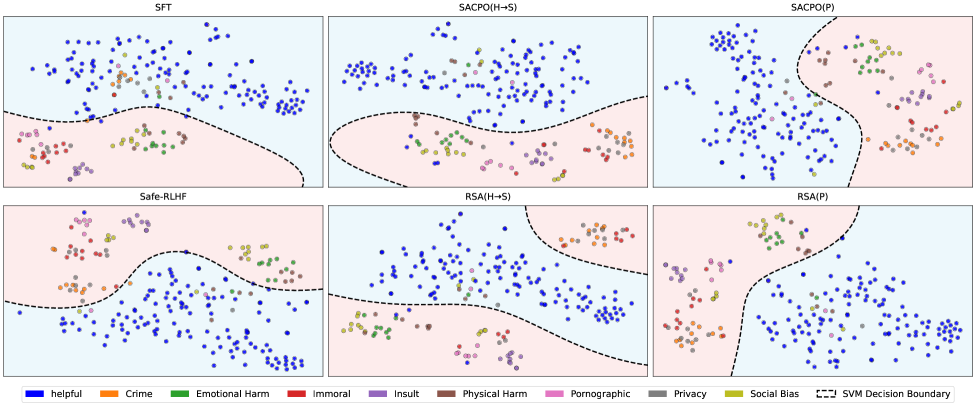

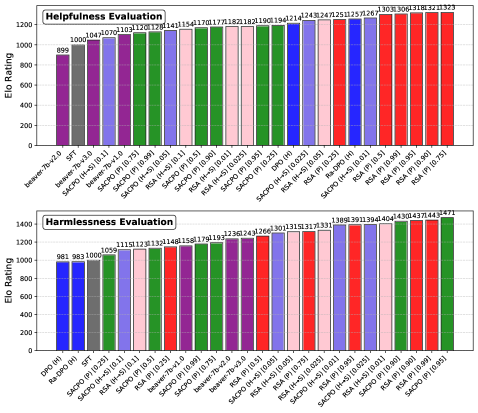

Figure 3: Visualizing decision boundaries. Each subplot shows t-SNE embeddings of model outputs for distinguishing helpful and unsafe prompts. The SVM decision boundary (dashed line) separates helpful content (blue) from harmful content (pink). In addition, different types of unsafe prompts are represented by distinct colors.

<details>

<summary>x4.png Details</summary>

### Visual Description

## [Multi-Panel Box Plot]: Average Harmfulness Scores by Model and Category

### Overview

The image displays a series of eight adjacent box plots, each representing a different category of potential harm. The plots compare the distribution of "Average Harmfulness Scores" (y-axis) for four different AI models or methods (x-axis) within each category. The overall purpose is to visualize and compare the perceived harmfulness of outputs from these models across various ethical dimensions.

### Components/Axes

* **Chart Type:** Multi-panel (faceted) box plot.

* **Y-Axis (Common to all panels):**

* **Label:** "Average Harmfulness Score"

* **Scale:** Linear scale from 0 to 10, with major tick marks at 0, 2, 4, 6, 8, and 10.

* **X-Axis (Within each panel):** Four categorical models/methods.

* **Labels (from left to right in each panel):** "GPT", "Llama2-7b-Chat-3.0", "SACLPO (P)", "RLAIF (P)".

* **Panel Titles (Top of each subplot, from left to right):**

1. "Crime"

2. "Emotional Harm"

3. "Immoral"

4. "Insult"

5. "Physical Harm"

6. "Pornographic"

7. "Privacy"

8. "Social Bias"

* **Legend/Color Mapping:** The color of each box corresponds to the model, consistent across all panels.
  * **SFT:** Grey
  * **beaver-7b-v3.0:** Light Pink/Lavender
  * **SACPO (P):** Green
  * **RSA (P):** Red

* **Spatial Grounding:** The eight panels are arranged horizontally in a single row. The y-axis label is positioned vertically on the far left. Panel titles are centered above each respective plot. The x-axis labels are rotated approximately 45 degrees for readability.

### Detailed Analysis

The analysis proceeds panel by panel, from left to right. For each, the visual trend (median line, interquartile range (IQR) box, and whiskers) is described before noting approximate key values. Scores are harmlessness scores, so higher is better.

**1. Crime**

* **Trend:** SFT shows the lowest harmlessness scores with a tight distribution. The other three models show much higher medians.

* **Data Points (Approximate Medians & Ranges):**
  * SFT: Median ~1.5, IQR ~0.5-2, range ~0-5.
  * beaver-7b-v3.0: Median ~9, IQR ~8.5-9.5, range ~0-10.
  * SACPO (P): Median ~9.5, IQR ~8-10, range ~0-10.
  * RSA (P): Median ~9.5, IQR ~9-10, range ~5-10.

**2. Emotional Harm**

* **Trend:** A clear upward trend in median harmlessness from SFT to RSA (P). SFT and beaver-7b-v3.0 have wider distributions than SACPO (P) and RSA (P).

* **Data Points (Approximate Medians & Ranges):**
  * SFT: Median ~7, IQR ~5-8, range ~0-10.
  * beaver-7b-v3.0: Median ~8, IQR ~6-9, range ~0-10.
  * SACPO (P): Median ~8.5, IQR ~7-9, range ~2-10.
  * RSA (P): Median ~9, IQR ~8-9.5, range ~5-10.

**3. Immoral**

* **Trend:** SFT has a very low median and a wide spread. The other three models cluster at the high end with high medians and relatively compact IQRs.

* **Data Points (Approximate Medians & Ranges):**
  * SFT: Median ~1.5, IQR ~0.5-3, range ~0-7.
  * beaver-7b-v3.0: Median ~9, IQR ~8-10, range ~0-10.
  * SACPO (P): Median ~8, IQR ~6-9, range ~2-10.
  * RSA (P): Median ~9, IQR ~8-9.5, range ~5-10.

**4. Insult**

* **Trend:** Medians increase from SFT to RSA (P). SFT and beaver-7b-v3.0 show very wide distributions (large IQRs and whiskers), while SACPO (P) and RSA (P) are more concentrated at higher scores.

* **Data Points (Approximate Medians & Ranges):**
  * SFT: Median ~6, IQR ~3-8, range ~0-10.
  * beaver-7b-v3.0: Median ~7, IQR ~5-8, range ~0-10.
  * SACPO (P): Median ~7.5, IQR ~5-9, range ~3-10.
  * RSA (P): Median ~8, IQR ~7.5-8.5, range ~5-10.

**5. Physical Harm**

* **Trend:** Similar to "Emotional Harm," a steady increase in median harmlessness from SFT to SACPO (P). Distributions are moderately wide for all.

* **Data Points (Approximate Medians & Ranges):**
  * SFT: Median ~7, IQR ~5-8, range ~0-10.
  * beaver-7b-v3.0: Median ~8, IQR ~7-9, range ~0-10.
  * SACPO (P): Median ~8.5, IQR ~7-9.5, range ~2-10.
  * RSA (P): Median ~7, IQR ~3-8, range ~0-10. *(Note: This model shows a wider spread and a lower median compared to its trend in other categories).*

**6. Pornographic**

* **Trend:** SFT has a low median and a very wide spread. beaver-7b-v3.0 has a low median but a tighter IQR. SACPO (P) and RSA (P) have higher medians and wide spreads.

* **Data Points (Approximate Medians & Ranges):**
  * SFT: Median ~4, IQR ~1-7, range ~0-10.
  * beaver-7b-v3.0: Median ~3, IQR ~1-6, range ~0-10.
  * SACPO (P): Median ~5, IQR ~2-8, range ~0-10.
  * RSA (P): Median ~7, IQR ~3-8, range ~0-10.

**7. Privacy**

* **Trend:** SFT has a very low median and a wide spread. The other three models show very high medians (near 9-10) with compact IQRs, indicating consistently high harmlessness scores in this category.

* **Data Points (Approximate Medians & Ranges):**
  * SFT: Median ~1.5, IQR ~0.5-3, range ~0-10.
  * beaver-7b-v3.0: Median ~9.5, IQR ~9-10, range ~0-10.
  * SACPO (P): Median ~9, IQR ~8-10, range ~0-10.
  * RSA (P): Median ~9.5, IQR ~9-10, range ~5-10.

**8. Social Bias**

* **Trend:** A clear upward trend in median harmlessness from SFT to RSA (P). SFT has a wide distribution, while the others are more concentrated at the high end.

* **Data Points (Approximate Medians & Ranges):**
  * SFT: Median ~6, IQR ~2-8, range ~0-10.
  * beaver-7b-v3.0: Median ~8.5, IQR ~7-9, range ~0-10.
  * SACPO (P): Median ~9, IQR ~8-9.5, range ~0-10.
  * RSA (P): Median ~9, IQR ~8.5-9.5, range ~5-10.

### Key Observations

1. **Consistent Hierarchy:** In most categories (with "Physical Harm" as a partial exception), the median harmlessness score increases in the order: SFT < beaver-7b-v3.0 < SACPO (P) ≈ RSA (P).

2. **SFT's Variability:** The SFT model (grey boxes) shows the widest interquartile ranges and whiskers in most categories, indicating high variance in its harmlessness scores within each category. Its median is also the lowest in most categories.

3. **High-End Clustering:** The SACPO (P) (green) and RSA (P) (red) models frequently cluster at the high end of the scale (medians between 7-10), with relatively compact IQRs, suggesting they are more consistently rated as highly harmless across these dimensions.

4. **Category Sensitivity:** The "Privacy" and "Immoral" categories show the most extreme separation, with SFT scoring very low and the other three models scoring very high. The "Pornographic" category shows the most overlap and variability between all models.

5. **Outlier Note:** The RSA (P) model in the "Physical Harm" category breaks the general trend, showing a lower median (~7) and a much wider distribution compared to its performance in other categories.

### Interpretation

This visualization suggests a significant difference in the harmlessness of outputs from the evaluated models. The SFT baseline is rated as the least harmless on average and shows the most inconsistency. The aligned models (beaver-7b-v3.0, SACPO (P), RSA (P)) are generally rated as more harmless across these categories.

The data implies that the alignment methods used for SACPO (P) and RSA (P) substantially reduce the generation of content scored as harmful in categories like Crime, Social Bias, and Privacy. The high scores for these models in "Privacy" are particularly notable given how low the SFT baseline scores in that category.

The wide spread for SFT indicates its outputs are highly variable: sometimes harmless, sometimes very harmful. In contrast, the tighter high-end clustering of SACPO (P) and RSA (P) suggests their outputs fall more consistently within a range rated as harmless. The lower and more dispersed scores in "Pornographic" for all models indicate that alignment does not uniformly reduce all forms of measured harm and may require category-specific safeguards. The anomaly in "Physical Harm" for RSA (P) warrants further investigation to understand if it's a measurement artifact or a genuine characteristic of that model's outputs.

</details>

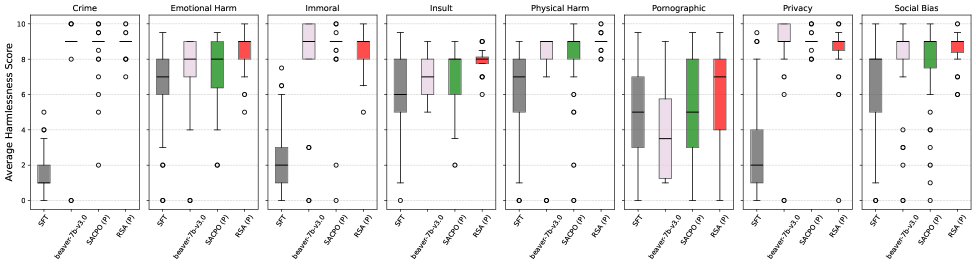

Figure 4: A comparative evaluation in terms of safety across different types of red-teaming prompts. Each boxplot shows the distribution of harmlessness scores (higher is better).

<details>

<summary>x5.png Details</summary>

### Visual Description

## Grouped Bar Chart: Average Harmlessness Score by Safety Category and Model

### Overview

This grouped bar chart compares the **average harmlessness score** (y-axis, 0–8 scale) of four models (SFT, beaver-7b-v3.0, SACPO (P), RSA (P)) across eight **safety categories** (x-axis: Crime, Emotional Harm, Immoral, Insult, Physical Harm, Pornographic, Privacy, Social Bias). The chart visualizes how each model performs in terms of harmlessness across different safety contexts.

### Components/Axes

- **X-axis**: *Safety Category* (labels: Crime, Emotional Harm, Immoral, Insult, Physical Harm, Pornographic, Privacy, Social Bias).

- **Y-axis**: *Average Harmlessness Score* (scale: 0, 2, 4, 6, 8; grid lines at these intervals).

- **Legend** (bottom-right of the chart):
  - SFT: Dark gray
  - beaver-7b-v3.0: Light pink
  - SACPO (P): Green
  - RSA (P): Red

### Detailed Analysis (Scores by Category and Model)

For each safety category, the average harmlessness score (approximate values) for each model is:

| Safety Category | SFT (Dark Gray) | beaver-7b-v3.0 (Light Pink) | SACPO (P) (Green) | RSA (P) (Red) |
|-----------------|-----------------|-----------------------------|-------------------|---------------|
| Crime | ~1.5 | ~8.5 | ~8.8 | ~8.8 |
| Emotional Harm | ~6.3 | ~7.2 | ~7.3 | ~8.5 |
| Immoral | ~2.0 | ~7.9 | ~8.6 | ~8.5 |
| Insult | ~5.9 | ~6.9 | ~7.0 | ~7.9 |
| Physical Harm | ~6.1 | ~7.7 | ~7.8 | ~8.9 |
| Pornographic | ~4.8 | ~4.0 | ~5.1 | ~5.9 |
| Privacy | ~2.8 | ~8.4 | ~8.9 | ~8.5 |
| Social Bias | ~6.4 | ~7.7 | ~7.8 | ~8.5 |

### Key Observations

1. **SFT Consistently Low**: SFT has the lowest harmlessness scores across most categories (e.g., Crime: ~1.5, Immoral: ~2.0, Privacy: ~2.8), indicating it is less harmless (more harmful) than the other models.

2. **RSA (P) Often Highest**: RSA (P) achieves the highest scores in multiple categories (e.g., Emotional Harm: ~8.5, Physical Harm: ~8.9, Social Bias: ~8.5), suggesting it is the most harmless model overall.

3. **Pornographic Category Anomaly**: In the *Pornographic* category, beaver-7b-v3.0 has the lowest score of all four models (~4.0), below even SFT (~4.8), while RSA (P) is the highest (~5.9).

4. **SACPO (P) vs. RSA (P) Similarity**: SACPO (P) and RSA (P) have nearly identical scores in some categories (e.g., Crime: ~8.8 vs. ~8.8; Immoral: ~8.6 vs. ~8.5), indicating comparable harmlessness performance there, while RSA (P) leads by roughly one point in categories such as Emotional Harm and Physical Harm.

### Interpretation

The chart reveals that model performance in harmlessness is **context-dependent** (e.g., *Pornographic* has lower overall scores, while *Crime* and *Privacy* have higher scores for non-SFT models). SFT’s consistently low scores suggest it may be less safe for applications requiring high harmlessness. In contrast, RSA (P) and SACPO (P) perform well across most categories, with RSA (P) often leading. This data is critical for evaluating model safety and selecting appropriate models for use cases where harmlessness is a priority (e.g., content moderation, AI ethics).

(Note: All values are approximate, based on visual estimation of bar heights relative to the y-axis scale.)

</details>

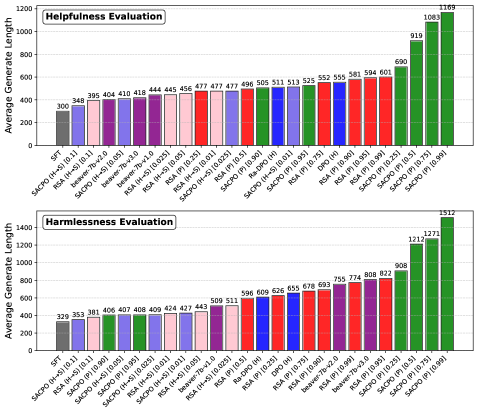

Figure 5: Average harmlessness score under different types of red-teaming prompts (higher is better).

### V-B Results on Text Generation Tasks

Fig. 1 presents pairwise win rates of various alignment methods against the SFT baseline (i.e., Alpaca-7B-reproduced, https://huggingface.co/PKU-Alignment/alpaca-7b-reproduced) along the dimensions of helpfulness and harmlessness, while Fig. 2 shows the corresponding average response lengths for prompts in each dimension. As shown in Fig. 1, both RSA and SACPO outperform Safe RLHF in overall alignment performance, with RSA achieving a superior Pareto frontier and consistently surpassing Ra-DPO across different values of $q$. Moreover, as shown in Fig. 2, RSA generates consistently long yet non-redundant responses under both prompt types, demonstrating robust expressiveness under safety constraints. In contrast, Safe RLHF tends to produce evasive or refusal-based replies, while SACPO exhibits unstable generation behavior and incoherent endings, which are further corroborated by the results in Appendix V-B. Together, these results validate that RSA’s risk-sensitive, stepwise alignment strategy effectively balances utility and safety.
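As a minimal sketch of how a pairwise win rate against a baseline can be computed, the snippet below aggregates per-prompt verdicts into one (helpfulness, harmlessness) point of the kind plotted in Fig. 1. The verdict labels, the `win_rate` helper, the example data, and the choice to count a tie as half a win are all illustrative assumptions; the source does not state how ties are handled.

```python
from collections import Counter


def win_rate(judgments, count_ties_as_half=True):
    """Return the fraction of comparisons won against the baseline.

    `judgments` holds one label per prompt: "win", "tie", or "loss".
    Counting a tie as half a win is an assumption of this sketch.
    """
    counts = Counter(judgments)
    total = counts["win"] + counts["tie"] + counts["loss"]
    if total == 0:
        raise ValueError("no judgments given")
    wins = counts["win"]
    if count_ties_as_half:
        wins += 0.5 * counts["tie"]
    return wins / total


# Hypothetical verdicts for one aligned model versus the SFT baseline.
helpfulness = ["win", "win", "tie", "loss", "win"]
harmlessness = ["win", "win", "win", "tie", "win"]

# One point in the helpfulness/harmlessness plane.
point = (win_rate(helpfulness), win_rate(harmlessness))
print(point)
```

Repeating this for each method and each hyperparameter setting would give the set of points from which a Pareto frontier can be read off.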

Fig. 3 visualizes the decision boundaries between helpful and harmful responses in the t-SNE embedding space across different alignment methods. SFT exhibits substantial overlap between safe and unsafe regions, indicating poor discrimination, while Safe RLHF and SACPO produce fragmented or overly conservative boundaries that compromise helpfulness. In contrast, RSA achieves a clear and coherent separation across multiple harm categories, including crime, social bias, and emotional harm. This well-structured latent geometry demonstrates that RSA’s risk-sensitive, stepwise alignment effectively learns a robust safety manifold, enabling strong safety guarantees without sacrificing utility.

Fig. 4 and Fig. 5 present a comprehensive evaluation of model safety across diverse red-teaming prompt categories, quantifying harmlessness performance through both distributional (boxplots) and aggregate (bar plots) metrics. RSA consistently achieves competitive average harmlessness scores across all critical domains while exhibiting tighter score distributions compared to baseline methods, indicating superior robustness and reduced variance in harmful outputs. In contrast, Safe RLHF and SACPO (P) show moderate improvements over SFT but remain outperformed by RSA, particularly in high-risk categories such as physical harm and pornography. The consistent elevation across multiple harm types reflects RSA’s effectiveness in suppressing rare yet severe tail risks, demonstrating strong control over extreme unsafe behaviors.
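To illustrate why the distributional view in Fig. 4 adds information beyond the averages in Fig. 5, the sketch below summarizes a list of harmlessness scores by mean, median, IQR, and the mean of the worst `alpha` fraction of responses. The `summarize` helper, the `alpha` value, and both score lists are hypothetical; the tail mean is a simple evaluation-side illustration and is not the nested risk measure that RSA optimizes during training.

```python
import statistics


def summarize(scores, alpha=0.1):
    """Summarize harmlessness scores (higher is better) for one model."""
    ordered = sorted(scores)
    q1, _, q3 = statistics.quantiles(ordered, n=4)  # quartile cut points
    k = max(1, int(len(ordered) * alpha))  # size of the worst-case tail
    return {
        "mean": statistics.fmean(ordered),
        "median": statistics.median(ordered),
        "iqr": q3 - q1,
        # Average of the k lowest scores, i.e. the least harmless responses.
        "tail_mean": statistics.fmean(ordered[:k]),
    }


# Two hypothetical models with the same average score.
steady = [8, 8, 8, 8, 8, 8, 8, 8, 8, 8]
spiky = [9, 9, 9, 9, 9, 9, 9, 9, 8, 0]

for name, scores in [("steady", steady), ("spiky", spiky)]:
    print(name, summarize(scores))
```

Both lists average 8, yet the second contains one severely unsafe response that only the tail statistic exposes, which is the kind of rare failure a bar chart of means would hide.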

In summary, RSA demonstrates superior performance in enhancing helpfulness and safety. As shown in Fig. 1, RSA outperforms methods such as Safe RLHF, SACPO, and Ra-DPO across both dimensions. It produces coherent and substantive responses under various prompts (Fig. 2), avoiding issues of evasiveness and instability observed in other methods. The t-SNE visualization in Fig. 3 highlights RSA’s clear separation of harmful content, indicating an effectively learned safety manifold. Further results in Fig. 4 and Fig. 5 confirm RSA’s robustness across diverse risk categories, with notable success in mitigating rare but severe risks. These results collectively validate that RSA achieves a more refined and reliable alignment between safety and helpfulness.

### V-C Results on Multi-turn Conversations

Table I and Fig. 6 present a comprehensive evaluation of alignment methods under injection attacks across multiple metrics and real-world application domains, using Llama-3-8B-Instruct (https://huggingface.co/meta-llama/Meta-Llama-3-8B-Instruct) as the base model. As shown in Table I, RSA consistently outperforms the baseline methods, achieving the highest F1 scores among all evaluated approaches. In particular, RSA (CVaR) substantially improves specificity compared to SACPO, more than doubling its value while maintaining high recall, and thus provides a more balanced and reliable safety profile. RSA (ERM), on the other hand, achieves the strongest recall among all methods, ensuring comprehensive detection of harmful behaviors. By contrast, existing approaches such as SACPO and Ra-DPO exhibit pronounced trade-off imbalances: SACPO attains high recall but suffers from very low specificity, leading to excessive false alarms, whereas Ra-DPO variants show only limited improvements in both recall and specificity.
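The trade-off between recall and specificity can be made concrete with a short sketch that derives both, plus F1, from confusion-matrix counts. It assumes the positive class is "harmful", so a false positive is a false alarm on a benign input. The `safety_metrics` helper and the counts are hypothetical and are not taken from Table I.

```python
def safety_metrics(tp, fp, tn, fn):
    """Compute recall, specificity, precision, and F1 from raw counts.

    Assumes the positive class is "harmful": tp = harmful cases caught,
    fn = harmful cases missed, fp = benign cases flagged, tn = benign
    cases passed.
    """
    recall = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {
        "recall": recall,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
    }


# Hypothetical profile with high recall but many false alarms.
over_flagging = safety_metrics(tp=95, fp=80, tn=20, fn=5)
# Hypothetical profile with slightly lower recall and far fewer false alarms.
balanced = safety_metrics(tp=90, fp=40, tn=60, fn=10)

print(over_flagging)
print(balanced)
```

In this made-up example the second profile gives up a little recall (0.95 to 0.90) but triples specificity (0.20 to 0.60) and ends with the higher F1, which mirrors the kind of balance the paragraph above attributes to RSA (CVaR) relative to SACPO.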