# Group Deliberation Oriented Multi-Agent Conversational Model for Complex Reasoning

**Authors**: Zheyu Shi, Dong Qiu, Shanlong Yu

Zheyu Shi* Brown University Providence, USA zheyu\_shi@brown.edu

Dong Qiu New England College Henniker, USA DQiu\_GPS@nec.edu

Shanlong Yu Georgia Institute of Technology Atlanta, USA joesyu779@outlook.com

Abstract - This study proposes a group deliberation multi-agent dialogue model to address the limitations of a single language model on complex reasoning tasks. The model uses a three-level role division architecture of "generation - verification - integration." An opinion-generating agent produces differentiated reasoning perspectives, an evidence-verifying agent matches external evidence and quantifies factual support, and a consistency-arbitrating agent integrates the verified viewpoints into logically coherent conclusions. A self-game mechanism expands the set of reasoning paths, and a retrieval enhancement module supplies knowledge dynamically. A composite reward function is designed, and an improved proximal policy optimization (PPO) strategy is used for collaborative training. Experiments show that, relative to GPT-3.5, the model improves multi-hop reasoning accuracy by 16.8, 14.3, and 19.2 percentage points on the HotpotQA, 2WikiMultihopQA, and MeetingBank datasets, respectively, and improves consistency by 21.5 percentage points. Its inference time is lower than that of mainstream multi-agent models, achieving a balance between accuracy, stability, and efficiency for complex reasoning.

Keywords - Multi-agent dialogue; group discussion; complex reasoning; role division; self-game mechanism; retrieval enhancement

## I. INTRODUCTION

In real-world complex reasoning tasks (such as multi-hop question answering and group decision-making), multi-agent collaboration is a core requirement for overcoming the limited reasoning depth of a single model. These tasks require integrating multi-dimensional information and verifying facts from multiple sources. Prior work has shown that multi-agent interaction such as debate can improve factuality and reasoning robustness, implicitly addressing failure modes of single-model reasoning. Furthermore, the factual accuracy of a single model relies on pre-trained knowledge, which makes it difficult to supplement external information dynamically and results in insufficient stability and reliability on complex tasks. This study proposes a group deliberation multi-agent dialogue model that (i) builds a closed collaborative reasoning loop from role-based LLM agents (viewpoint generation, evidence verification, consistency arbitration), (ii) introduces a self-game mechanism that generates multi-path reasoning chains to broaden perspectives, (iii) adds a retrieval enhancement module that dynamically supplements external knowledge to strengthen factual accuracy, and (iv) designs a reward model based on factual consistency and logical coherence, trained with proximal policy optimization for multi-agent collaboration. Multi-agent reinforcement learning has been extensively studied across a wide range of collaborative decision-making tasks.

## II. GROUP DELIBERATION MULTI-AGENT DIALOGUE MODEL DESIGN

## A. Role-Based LLM Agent Architecture

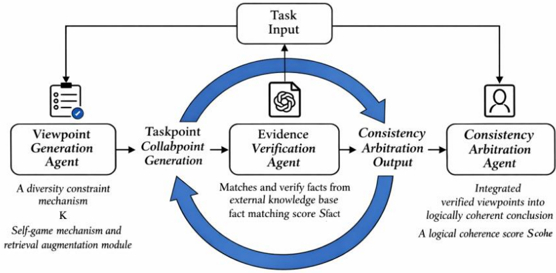

This model constructs a three-level collaborative architecture of "generation - verification - integration" (Figure 1), following the emerging paradigm of role-based multi-agent language model systems [1-2]. Through the division of labor and cooperation among LLM agents with differentiated functions, the architecture enables structured collaboration, similar to recent communicative agent frameworks [3]. The architecture starts with task input, and through a closed-loop process of opinion generation, evidence verification, and consistency arbitration, it outputs reasoning results that are diverse, factual, and logical.

Figure 1. Role-based Multi-agent Collaborative Reasoning Architecture.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Multi-Agent Collaboration Framework

### Overview

The image presents a diagram of a multi-agent collaboration framework. It illustrates the flow of information and processes between different agents to achieve a consistent and verified output. The framework starts with a Task Input and involves agents for viewpoint generation, evidence verification, and consistency arbitration.

### Components/Axes

* **Task Input:** A rectangular box at the top center, representing the initial input to the system.

* **Viewpoint Generation Agent:** A rectangular box on the left, with an icon of a checklist. It is described as having "A diversity constraint mechanism K" and a "Self-game mechanism and retrieval augmentation module."

* **Taskpoint Collabpoint Generation:** A rectangular box to the right of the Viewpoint Generation Agent.

* **Evidence Verification Agent:** A rectangular box in the center, with an icon resembling a document with a logo. It "Matches and verify facts from external knowledge base fact matching score Sfact."

* **Consistency Arbitration Output:** A rectangular box to the right of the Evidence Verification Agent.

* **Consistency Arbitration Agent:** A rectangular box on the right, with an icon of a person. It "Integrated verified viewpoints into logically coherent conclusion A logical coherence score Scohe."

* **Arrows:** Arrows indicate the flow of information between the agents. A curved blue arrow connects the Consistency Arbitration Output back to the Taskpoint Collabpoint Generation, forming a loop.

### Detailed Analysis

* **Task Input:** The Task Input is the starting point of the process. It feeds into both the Viewpoint Generation Agent and, indirectly, into the Evidence Verification Agent via the loop.

* **Viewpoint Generation Agent:** This agent generates different viewpoints based on the task input. It uses a diversity constraint mechanism (K) and a self-game mechanism with retrieval augmentation.

* **Taskpoint Collabpoint Generation:** This stage appears to be an intermediate step between viewpoint generation and evidence verification.

* **Evidence Verification Agent:** This agent verifies the generated viewpoints against an external knowledge base. It calculates a fact matching score (Sfact).

* **Consistency Arbitration Output:** The output of the evidence verification process.

* **Consistency Arbitration Agent:** This agent integrates the verified viewpoints into a logically coherent conclusion, resulting in a logical coherence score (Scohe).

* **Feedback Loop:** The blue arrow indicates a feedback loop, where the output of the Consistency Arbitration is fed back into the Taskpoint Collabpoint Generation.

### Key Observations

* The framework emphasizes both viewpoint diversity and consistency.

* The Evidence Verification Agent plays a crucial role in ensuring the accuracy of the information.

* The feedback loop suggests an iterative process of refinement and improvement.

### Interpretation

The diagram illustrates a sophisticated multi-agent system designed to generate and verify information from multiple perspectives. The system aims to produce a consistent and reliable output by incorporating diverse viewpoints, verifying them against external knowledge, and integrating them into a coherent conclusion. The feedback loop allows the system to learn and improve over time. The use of scores (Sfact and Scohe) suggests a quantitative approach to evaluating the quality of the information. The framework could be applied to various tasks, such as fact-checking, decision-making, and knowledge discovery.

</details>

In Figure 1, arrows from each agent to the "Task Input" block indicate persistent read-only access, not reverse data flow. Each agent relies on the full task input throughout reasoning: the Viewpoint Generation Agent uses it to guide diverse trajectories, the Evidence Verification Agent aligns retrieved facts with the original context, and the Consistency Arbitration Agent ensures semantic coherence in final outputs. This context-preserving design maintains factual grounding and consistency in multi-agent collaboration.
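The generation, verification, and arbitration flow described above can be sketched as a single deliberation pass. This is a minimal illustration under stated assumptions, not the authors' implementation: `deliberate` and the three toy callables are hypothetical names, the agents are stand-in functions rather than LLM calls, and the feedback loop of Figure 1 is omitted. Each callable receives the full question, mirroring the read-only access to the task input.

```python
from typing import Callable, List, Tuple


def deliberate(
    question: str,
    generate: Callable[[str, int], List[str]],
    verify: Callable[[str, str], float],
    arbitrate: Callable[[str, List[str]], str],
    k: int = 3,
    tau: float = 0.75,
) -> Tuple[str, List[Tuple[str, float]]]:
    """One generation -> verification -> arbitration pass (hypothetical sketch)."""
    views = generate(question, k)                        # K candidate viewpoints
    scored = [(v, verify(question, v)) for v in views]   # factual support per view
    kept = [v for v, s in scored if s >= tau]            # keep views with score >= tau
    return arbitrate(question, kept), scored


# Toy stand-ins for the three agents (assumptions for illustration only).
TOY_SCORES = [0.9, 0.6, 0.8]


def toy_generate(question: str, k: int) -> List[str]:
    return [f"view {i} on {question}" for i in range(k)]


def toy_verify(question: str, view: str) -> float:
    return TOY_SCORES[int(view.split()[1])]


def toy_arbitrate(question: str, views: List[str]) -> str:
    return " | ".join(views)


answer, scored_views = deliberate("Q", toy_generate, toy_verify, toy_arbitrate)
```

With the toy scores above, only the first and third viewpoints reach the threshold τ = 0.75 and are passed to arbitration.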

## 1) Viewpoint Generation Agent

The core function of this agent is to generate differentiated reasoning viewpoints based on the task input, avoiding the limitations of a single perspective. The generation of multiple differentiated viewpoints helps mitigate single-path reasoning bias, which is consistent with findings from self-consistency based reasoning methods [4]. Its generation process introduces a diversity constraint mechanism, mathematically expressed as:

<!-- formula-not-decoded -->

Here, LLMV is a dedicated LLM for opinion generation (such as a fine-tuned Llama 2), and ωk is the viewpoint weight vector, which follows a multivariate normal distribution with mean μ and covariance matrix Σ and controls the direction of opinion differentiation. This distribution is used for three reasons. First, the multivariate normal distribution offers a continuous, symmetric space around the reasoning center μ, supporting diverse yet coherent viewpoint generation without directional bias. Second, its covariance matrix Σ allows control over inter-factor correlations, enabling structured variations across reasoning dimensions. Third, Gaussian parameters align well with gradient-based learning and self-game updates, ensuring stable exploration and convergence. Thus, the normal distribution acts as an effective inductive bias balancing diversity, control, and stability, rather than assuming a fixed probabilistic form. Emb(Q) is the task input embedding, ⊙ denotes element-wise multiplication, and weight modulation lets each Vk attend to different reasoning aspects. The self-game mechanism explores varied reasoning paths, akin to tree-structured deliberation.
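To make the weight modulation concrete, the following sketch draws K weight vectors ωk from N(μ, Σ) and applies Emb(Q) ⊙ ωk. It is an illustration only: the embedding, μ, and Σ are toy values chosen here, the function names are hypothetical, and the modulated vector would be handed to LLMV in a way the paper does not specify.

```python
import math
import random


def cholesky(sigma):
    """Lower-triangular L with L L^T = sigma (sigma symmetric positive definite)."""
    n = len(sigma)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][m] * low[j][m] for m in range(j))
            if i == j:
                low[i][j] = math.sqrt(sigma[i][i] - s)
            else:
                low[i][j] = (sigma[i][j] - s) / low[j][j]
    return low


def sample_weights(mu, sigma, k, rng):
    """Draw k vectors omega ~ N(mu, sigma) as mu + L z with z ~ N(0, I)."""
    low = cholesky(sigma)
    n = len(mu)
    samples = []
    for _ in range(k):
        z = [rng.gauss(0.0, 1.0) for _ in range(n)]
        samples.append(
            [mu[i] + sum(low[i][j] * z[j] for j in range(i + 1)) for i in range(n)]
        )
    return samples


def modulate(emb_q, omega):
    """Element-wise product Emb(Q) ⊙ omega."""
    return [q * w for q, w in zip(emb_q, omega)]


rng = random.Random(0)                     # fixed seed for reproducibility
emb_q = [0.5, -1.0, 2.0]                   # toy stand-in for Emb(Q)
mu = [1.0, 1.0, 1.0]                       # toy reasoning center
sigma = [[0.04, 0.01, 0.0],                # toy covariance; the off-diagonal
         [0.01, 0.04, 0.0],                # term correlates two dimensions
         [0.0, 0.0, 0.09]]
omegas = sample_weights(mu, sigma, k=3, rng=rng)
modulated = [modulate(emb_q, w) for w in omegas]
```

The off-diagonal entries of Σ are what let two reasoning dimensions vary together, which is the structured variation the second reason above refers to.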

## 2) Evidence Verification Agent

This agent is responsible for matching factual evidence with each candidate opinion Vk and verifying its reasonableness. This retrieval-enhanced verification process is inspired by retrieval-augmented generation frameworks that integrate external knowledge to improve factual grounding in language models [5]. Its core function is to calculate the factual matching degree between the opinion and the evidence:

<!-- formula-not-decoded -->

In the formula, ℰk is the set of evidence related to Vk retrieved from the external knowledge base 𝒦, and |ℰk| is the number of evidence items (the top 5 by default). Emb(⋅) is the embedding function based on Sentence-BERT, Tr(⋅) is the matrix trace, and ‖⋅‖F is the Frobenius norm; the score is essentially a modified cosine similarity. Sfact ∈ [0, 1], and a larger value indicates stronger factual support for the viewpoint [4]. A viewpoint enters the next stage only when Sfact(Vk, ℰk) ≥ τ (τ = 0.75 is the preset threshold).
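A minimal sketch of the fact-matching score and threshold gate follows. Several details are assumptions made for illustration: embeddings are plain vectors (so the trace reduces to a dot product and the Frobenius norm to the Euclidean norm), the per-evidence similarities are averaged over the evidence set, and negative similarities are clamped to 0 so the score stays in [0, 1]. The function names are hypothetical.

```python
import math


def fact_similarity(a, b):
    """Trace/Frobenius-norm similarity; for vectors this is cosine similarity.

    Negative values are clamped to 0 (an assumption) to keep the score in [0, 1].
    """
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return max(0.0, dot / (norm_a * norm_b))


def s_fact(view_emb, evidence_embs, top_m=5):
    """Average similarity between a viewpoint and its first top_m evidence items."""
    evidence = evidence_embs[:top_m]
    if not evidence:
        return 0.0
    return sum(fact_similarity(view_emb, e) for e in evidence) / len(evidence)


def passes_verification(view_emb, evidence_embs, tau=0.75):
    """A viewpoint moves on to arbitration only if S_fact >= tau."""
    return s_fact(view_emb, evidence_embs) >= tau


view = [1.0, 0.0]
supported = passes_verification(view, [[1.0, 0.0], [2.0, 0.0]])
unsupported = passes_verification(view, [[1.0, 0.0], [0.0, 1.0]])
```

In the toy case, evidence that points in the same direction as the viewpoint passes the gate, while a mix of aligned and orthogonal evidence averages to 0.5 and is filtered out.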

## 3) Consistency Arbitration Agent

Its responsibility is to integrate the verified viewpoints and output a logically coherent unified conclusion. Its logical coherence evaluation formula is:

<!-- formula-not-decoded -->

Where LLMC is the arbitration-specific LLM, Promptcohe is the logical evaluation prompt, and wcohe and bcohe are linear transformation parameters; σ(⋅) is the Sigmoid function, which maps the evaluation result to the [0, 1] interval, and a higher Scohe indicates a more logically coherent conclusion.
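The scoring head described above can be illustrated as a sigmoid applied to a linear transformation. This sketch assumes that LLMC, given the evaluation prompt, yields a numeric feature vector; how those features are obtained is not specified in the paper, and `coherence_score` is a hypothetical name.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def coherence_score(features, w_cohe, b_cohe):
    """S_cohe = sigmoid(w_cohe . features + b_cohe), a value in [0, 1]."""
    z = sum(w * f for w, f in zip(w_cohe, features)) + b_cohe
    return sigmoid(z)


# Toy features standing in for the arbitration LLM's evaluation output.
score = coherence_score([0.8, 0.3], w_cohe=[2.0, 1.0], b_cohe=-0.5)
```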

## B. Self-Game Mechanism

To enrich the diversity of reasoning paths, a self-game mechanism between agents is designed to generate multi-path reasoning chains through viewpoint confrontation:

$$\omega_k^{(t+1)} = \omega_k^{(t)} + \eta \, \nabla_{\omega_k} \sum_{j \neq k} \left( S_{fact}(V_k, \mathcal{E}_k) - S_{fact}(V_j, \mathcal{E}_j) \right)^2 \tag{4}$$

In the formula, η is the learning rate (default 0.01), and ∇ ωk represents the gradient with respect to the weight vector ωk . This formula updates the viewpoint weights by maximizing the difference in factual matching between the current viewpoint and other viewpoints, thereby promoting the generation of more diverse and effective reasoning paths.
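One plausible reading of this update is gradient ascent on the squared differences between a viewpoint's fact-matching score and those of the other viewpoints. The sketch below follows that reading. The objective form, the finite-difference gradient, and `toy_score` (a stand-in mapping from a weight vector to a score) are all assumptions made for illustration.

```python
import math


def toy_score(omega):
    """Hypothetical stand-in for S_fact as a function of the weight vector."""
    return 1.0 / (1.0 + math.exp(-sum(omega)))


def diversity_objective(k, omegas, score):
    """Sum over j != k of (S(omega_k) - S(omega_j))^2."""
    s_k = score(omegas[k])
    return sum((s_k - score(w)) ** 2 for j, w in enumerate(omegas) if j != k)


def numeric_grad(f, x, h=1e-5):
    """Central finite-difference gradient of f at x."""
    grad = []
    for i in range(len(x)):
        up = x[:i] + [x[i] + h] + x[i + 1:]
        down = x[:i] + [x[i] - h] + x[i + 1:]
        grad.append((f(up) - f(down)) / (2.0 * h))
    return grad


def self_game_step(k, omegas, score, eta=0.01):
    """Return the updated omega_k after one ascent step; inputs are not mutated."""
    def objective(w_k):
        trial = omegas[:k] + [w_k] + omegas[k + 1:]
        return diversity_objective(k, trial, score)

    grad = numeric_grad(objective, omegas[k])
    return [w + eta * g for w, g in zip(omegas[k], grad)]


omegas = [[0.2, 0.1], [0.0, 0.0], [-0.3, 0.1]]
new_omega_0 = self_game_step(0, omegas, toy_score)
```

With η = 0.01 the step is small, so each update nudges a viewpoint away from the others rather than replacing it outright.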

## C. Reward Model

A composite reward function integrating factual consistency and logical coherence is constructed to guide multi-agent collaborative optimization:

$$R = \lambda \, S_{fact}(V_k, \mathcal{E}_k) + (1 - \lambda) \, S_{cohe} - \gamma \cdot KL\left( P(V_k) \,\|\, P_{ref}(V) \right) \tag{5}$$

Where λ = 0.6 is the factual consistency weight and (1 - λ) is the logical coherence weight; γ = 0.1 is the regularization coefficient, and KL(⋅ ‖ ⋅) is the KL divergence, used to constrain the difference between the opinion distribution P(Vk) and the reference distribution Pref(V), avoiding excessive divergence of opinions; a larger R indicates better agent collaboration.
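The composite reward, read as R = λ·Sfact + (1 - λ)·Scohe - γ·KL(P ‖ Pref), can be sketched as follows. Treating the opinion and reference distributions as discrete probability vectors is an assumption made for illustration, and the function names are hypothetical.

```python
import math


def kl_divergence(p, q):
    """KL(p || q) for discrete distributions; terms with p_i = 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)


def composite_reward(s_fact, s_cohe, p, p_ref, lam=0.6, gamma=0.1):
    """R = lam * S_fact + (1 - lam) * S_cohe - gamma * KL(p || p_ref)."""
    return lam * s_fact + (1.0 - lam) * s_cohe - gamma * kl_divergence(p, p_ref)


p_ref = [0.5, 0.3, 0.2]
aligned = composite_reward(0.8, 0.9, p=[0.5, 0.3, 0.2], p_ref=p_ref)
drifted = composite_reward(0.8, 0.9, p=[0.9, 0.05, 0.05], p_ref=p_ref)
```

When the opinion distribution matches the reference, the KL term vanishes and the reward is just the weighted sum of the two scores; the drifted distribution is penalized in proportion to γ.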

## D. Collaborative Training Strategy

Multi-agent collaborative training is achieved using Improved Proximal Policy Optimization (PPO) to avoid inference collapse and loop generation. The objective function is:

$$L_{ppo} = \mathbb{E}_t \left[ \min \left( r_t(\theta) A_t, \; \mathrm{clip}\left( r_t(\theta), 1 - \epsilon, 1 + \epsilon \right) A_t \right) \right] + \beta \, H(P(\theta)) \tag{6}$$

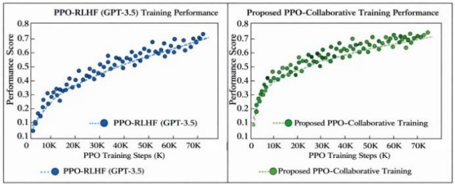

The use of PPO for collaborative optimization is motivated by its demonstrated effectiveness in cooperative multi-agent reinforcement learning settings [6]. In the formula, rt(θ) = πθ(at ∣ st) / πθold(at ∣ st) is the policy update ratio, πθ is the current policy, and πθold is the old policy; At is the advantage function, and ϵ = 0.2 is the clipping coefficient; H(P(θ)) is the policy entropy, and β = 0.05 is the entropy regularization coefficient. The entropy term encourages policy exploration and avoids inference loops caused by local optima.

Figure 2 compares the performance of traditional PPO-RLHF (GPT-3.5) and the proposed multi-agent PPO collaborative training over the same training steps. The x-axis shows PPO steps (in thousands), and the y-axis shows cumulative performance scores. While both start similarly, the proposed model shows more stable and significant gains beyond 50,000 steps, indicating convergence to a superior, stable policy. This highlights the effectiveness of the collaborative mechanism in reducing policy collapse and improving inference stability. Compared to earlier methods like MADDPG [7-8], PPO-based strategies provide better training stability in cooperative tasks, supporting our optimization design.

Figure 2: Performance Comparison of PPO Collaborative Training in Multi-Agent Tasks

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Chart: PPO-RLHF (GPT-3.5) vs. Proposed PPO-Collaborative Training Performance

### Overview

The image presents two scatter plots comparing the training performance of PPO-RLHF (GPT-3.5) and a proposed PPO-Collaborative Training method. The plots show the performance score as a function of PPO training steps (in thousands).

### Components/Axes

**Left Chart:**

* **Title:** PPO-RLHF (GPT-3.5) Training Performance

* **X-axis:** PPO Training Steps (K)

* Scale: 0 to 70K, with tick marks at 0, 10K, 20K, 30K, 40K, 50K, 60K, and 70K.

* **Y-axis:** Performance Score

* Scale: 0.1 to 0.8, with tick marks at 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, and 0.8.

* **Legend:** PPO-RLHF (GPT-3.5) (represented by blue dots and a dashed blue trendline)

**Right Chart:**

* **Title:** Proposed PPO-Collaborative Training Performance

* **X-axis:** PPO Training Steps (K)

* Scale: 0 to 70K, with tick marks at 0, 10K, 20K, 30K, 40K, 50K, 60K, and 70K.

* **Y-axis:** Performance Score

* Scale: 0.1 to 0.8, with tick marks at 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, and 0.8.

* **Legend:** Proposed PPO-Collaborative Training (represented by green dots and a dashed green trendline)

### Detailed Analysis

**Left Chart: PPO-RLHF (GPT-3.5)**

* **Trend:** The performance score generally increases with the number of training steps. The increase is rapid initially, then slows down as the number of steps increases.

* **Data Points:**

* At 0K steps, the performance score is approximately 0.1.

* At 10K steps, the performance score is approximately 0.3.

* At 20K steps, the performance score is approximately 0.4.

* At 30K steps, the performance score is approximately 0.5.

* At 40K steps, the performance score is approximately 0.55.

* At 50K steps, the performance score is approximately 0.6.

* At 60K steps, the performance score is approximately 0.65.

* At 70K steps, the performance score is approximately 0.7.

**Right Chart: Proposed PPO-Collaborative Training**

* **Trend:** The performance score generally increases with the number of training steps. The increase is rapid initially, then slows down as the number of steps increases.

* **Data Points:**

* At 0K steps, the performance score is approximately 0.15.

* At 10K steps, the performance score is approximately 0.4.

* At 20K steps, the performance score is approximately 0.5.

* At 30K steps, the performance score is approximately 0.6.

* At 40K steps, the performance score is approximately 0.65.

* At 50K steps, the performance score is approximately 0.68.

* At 60K steps, the performance score is approximately 0.7.

* At 70K steps, the performance score is approximately 0.72.

### Key Observations

* Both training methods show an increase in performance score as the number of training steps increases.

* The Proposed PPO-Collaborative Training method appears to have a higher initial performance score and a faster rate of improvement in the early stages of training compared to PPO-RLHF (GPT-3.5).

* Both methods seem to plateau in performance as the number of training steps increases beyond 40K.

### Interpretation

The data suggests that the Proposed PPO-Collaborative Training method may be more efficient than PPO-RLHF (GPT-3.5), achieving higher performance scores with fewer training steps, especially in the initial stages. However, both methods appear to converge to similar performance levels as the training progresses. This could indicate that the collaborative training approach provides a better starting point or a faster learning rate, but the ultimate performance ceiling may be similar for both methods. Further investigation with longer training durations and different evaluation metrics would be needed to confirm these observations.

</details>
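For reference, the clipped surrogate with an entropy bonus used in this subsection can be written in a few lines. This is a generic sketch of standard PPO clipping with ϵ = 0.2 and β = 0.05, computed over a small batch of per-step values; it does not reproduce the paper's specific modifications to PPO, and the function name is hypothetical.

```python
def ppo_objective(ratios, advantages, entropies, eps=0.2, beta=0.05):
    """Mean over steps of min(r * A, clip(r, 1 - eps, 1 + eps) * A) + beta * H."""
    total = 0.0
    for r, adv, ent in zip(ratios, advantages, entropies):
        clipped_r = min(max(r, 1.0 - eps), 1.0 + eps)   # clip the ratio
        total += min(r * adv, clipped_r * adv) + beta * ent
    return total / len(ratios)


# A ratio above 1 + eps with positive advantage is capped at (1 + eps) * A.
capped = ppo_objective([1.5], [1.0], [0.0])
# A ratio below 1 - eps with negative advantage keeps the more pessimistic term.
pessimistic = ppo_objective([0.5], [-1.0], [0.0])
```

The clipping removes the incentive to move the policy ratio outside [1 - ϵ, 1 + ϵ], which is the mechanism usually credited for PPO's training stability.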

## E. Retrieval Enhancement Module

External knowledge is dynamically supplemented during the evidence verification stage. The retrieval probability model is as follows:

$$P(e \mid V_k) = \frac{\exp\left( \mathrm{Sim}\left( \mathrm{Emb}(e), \mathrm{Emb}(V_k) \right) / \alpha \right)}{\sum_{e' \in \mathcal{K}} \exp\left( \mathrm{Sim}\left( \mathrm{Emb}(e'), \mathrm{Emb}(V_k) \right) / \alpha \right)} \tag{7}$$

Where Sim(⋅,⋅) is the cosine similarity, and α = 1.5 is the temperature coefficient used to adjust the confidence level of the retrieval; the matching probability of each knowledge item e and opinion Vk is obtained by normalization using the Softmax function, and the top M = 5 knowledge items with the highest probabilities are selected as evidence to strengthen the factual support of the opinion. This modular integration strategy is conceptually related to neuro-symbolic systems that combine language models with external tools and knowledge sources [9-10].
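The retrieval step can be sketched as a temperature-scaled softmax over cosine similarities followed by top-M selection. Dividing the similarity by α is an assumption about how the temperature enters; because the softmax is monotone in the similarity, the top-M ranking is the same for any α > 0. Function names and the toy vectors are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two vectors (0 if either has zero norm)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieval_probs(view_emb, knowledge_embs, alpha=1.5):
    """Softmax over Sim(Emb(e), Emb(V_k)) / alpha for every knowledge item e."""
    logits = [cosine(view_emb, e) / alpha for e in knowledge_embs]
    peak = max(logits)                          # subtract max for stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [v / total for v in exps]


def top_m(probs, m=5):
    """Indices of the m most probable knowledge items, best first."""
    return sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:m]


knowledge = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
probs = retrieval_probs([1.0, 0.0], knowledge)
selected = top_m(probs, m=2)
```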

## III. EXPERIMENTAL DESIGN AND RESULT ANALYSIS

## A. Experimental Datasets

The experiment uses three complex reasoning datasets to ensure result generality: ① HotpotQA: a multi-hop QA benchmark with 113K training and 74K validation samples, requiring reasoning across 2-5 documents. About 60% are "bridging" questions, stressing cross-document logic. ② 2WikiMultihopQA: based on Wikipedia, with 25K training samples and 3.2 average reasoning steps per question. It focuses on entity-based long-chain reasoning. ③ MeetingBank: a group dialogue dataset with 5K+ real meeting samples, requiring integration of multi-round discussions to derive consistent conclusions.

The three datasets correspond to "document-level multihop," "entity-level multi-hop," and "dialogue-level integration" scenarios, respectively, comprehensively validating the model's performance across various complex inference tasks.

## B. Baseline Model and Evaluation Metrics

The baselines cover mainstream complex reasoning methods to ensure a fair comparison: ① Single LLMs: GPT-3.5, Llama 2-7B (fine-tuned); ② Multi-agent models: AutoGPT, MetaGPT; ③ Retrieval-augmented models: RAG (Retrieval-Augmented Generation), REALM. GPT-3.5 is selected as a baseline because of its strong instruction-following ability, obtained through reinforcement learning from human feedback.

Evaluation metrics focus on core performance and stability: ① Multi-hop reasoning accuracy (Acc): the exact-match rate between the reasoning conclusion and the reference answer, measuring correctness; ② Consistency index (Cons): the logical consistency rate of the conclusion across repeated reasoning, calculated from the overlap of the results of 5 consecutive reasoning iterations, measuring stability; ③ Reasoning efficiency (Time): the average reasoning time per sample (in seconds), measuring practicality.
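The first two metrics can be illustrated with a short sketch. The paper does not give the exact overlap formula for Cons, so the version below, which takes the share of the 5 runs that agree with the most frequent answer, is an assumption; the normalization (lowercasing and whitespace collapsing) and the function names are likewise illustrative.

```python
from collections import Counter


def normalize(answer):
    """Lowercase and collapse whitespace before comparing answers."""
    return " ".join(answer.lower().split())


def exact_match_accuracy(predictions, references):
    """Fraction of predictions that exactly match their reference answer."""
    hits = sum(normalize(p) == normalize(r) for p, r in zip(predictions, references))
    return hits / len(references)


def consistency(run_answers):
    """Share of repeated runs that agree with the most frequent answer."""
    counts = Counter(normalize(a) for a in run_answers)
    return counts.most_common(1)[0][1] / len(run_answers)


acc = exact_match_accuracy(["Paris", "Berlin"], ["paris", "Rome"])
cons = consistency(["Paris", "paris", "Paris ", "Lyon", "Paris"])
```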

## C. Experimental Procedure and Parameter Settings

The experiment includes three steps: ① Data preprocessing: standardize formats and extract questions, context, and answers. ② Baseline setup: the GPT-3.5 baseline uses sequential prompting without state sharing. Step 2 inputs the raw question to obtain an initial reasoning chain; Step 3 reuses that output as context for extended reasoning; Step 4 merges the prior chains into a final answer. No memory compression or pruning is used, which causes performance drops on long chains due to token overflow and diluted context and underscores the need for collaborative memory and role division. ③ Performance testing: run each model 10 times on the test set and average the results.

Key parameter configurations are as follows: number of opinion generation agents K = 3, number of evidence retrievals M = 5, fact matching threshold τ = 0.75, reward function weight λ = 0.6, PPO clipping coefficient ϵ = 0.2, and entropy regularization coefficient β = 0.05.
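For reproducibility, the settings reported in this paper can be collected in one configuration object. The sketch below gathers the values listed above together with η = 0.01, γ = 0.1, and α = 1.5 from Section II; the class name, field names, and validation rules are hypothetical conveniences rather than part of the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeliberationConfig:
    """Hyperparameters reported in the paper (field names are illustrative)."""
    num_viewpoints: int = 3              # K, opinion generation agents
    num_evidence: int = 5                # M, evidence items retrieved
    fact_threshold: float = 0.75         # tau, fact matching threshold
    reward_fact_weight: float = 0.6      # lambda, factual consistency weight
    kl_coefficient: float = 0.1          # gamma, KL regularization
    ppo_clip: float = 0.2                # epsilon, PPO clipping coefficient
    entropy_coefficient: float = 0.05    # beta, entropy regularization
    self_game_lr: float = 0.01           # eta, self-game learning rate
    retrieval_temperature: float = 1.5   # alpha, retrieval temperature

    def validate(self):
        """Raise ValueError if a setting falls outside its sensible range."""
        if self.num_viewpoints < 1 or self.num_evidence < 1:
            raise ValueError("K and M must be positive integers")
        if not 0.0 <= self.fact_threshold <= 1.0:
            raise ValueError("tau must lie in [0, 1]")
        if not 0.0 <= self.reward_fact_weight <= 1.0:
            raise ValueError("lambda must lie in [0, 1]")
        if self.retrieval_temperature <= 0.0:
            raise ValueError("alpha must be positive")


config = DeliberationConfig()
config.validate()
```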

## D. Experimental Results and Analysis

## 1) Performance of the Main Experiment

Table I shows the comprehensive performance comparison of each model on the three datasets. The proposed model outperforms every baseline on accuracy and consistency. Relative to GPT-3.5, it improves accuracy by 16.8, 14.3, and 19.2 percentage points on HotpotQA, 2WikiMultihopQA, and MeetingBank, respectively, and improves consistency by 21.5 percentage points. Its inference time (3.9 s) is higher than that of a single LLM (1.8-2.1 s) but lower than that of the other multi-agent models (4.3-4.7 s), achieving a balance between accuracy, stability, and efficiency.

TABLE I. COMPARISON OF OVERALL PERFORMANCE OF VARIOUS MODELS ON THE THREE DATASETS

| Model | HotpotQA (Acc/%) | 2WikiMultihopQA (Acc/%) | MeetingBank (Acc/%) | Cons/% | Time/s |
|-----------------------------------|-------------------|--------------------------|----------------------|----------|----------|
| GPT-3.5 | 62.3 | 58.7 | 51.2 | 65.8 | 2.1 |
| Llama 2-7B | 59.1 | 55.3 | 48.6 | 63.2 | 1.8 |
| AutoGPT | 68.5 | 63.2 | 57.9 | 70.2 | 4.3 |
| MetaGPT | 70.2 | 65.1 | 59.4 | 72.5 | 4.7 |
| RAG | 71.1 | 66.5 | 60.3 | 72.4 | 3.5 |
| REALM | 72.4 | 67.8 | 61.7 | 73.8 | 3.8 |
| Proposed model | 79.1 | 73.0 | 70.4 | 87.3 | 3.9 |
| Absolute difference (vs GPT-3.5) | +16.8 | +14.3 | +19.2 | +21.5 | +1.8 |
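The last row of Table I can be reproduced as simple differences between the proposed model's row and the GPT-3.5 row, which shows that the reported gains are absolute differences (percentage points for Acc and Cons, seconds for Time). The short check below uses only values from the table; the variable names are illustrative.

```python
gpt35 = {"HotpotQA": 62.3, "2WikiMultihopQA": 58.7, "MeetingBank": 51.2,
         "Cons": 65.8, "Time": 2.1}
proposed = {"HotpotQA": 79.1, "2WikiMultihopQA": 73.0, "MeetingBank": 70.4,
            "Cons": 87.3, "Time": 3.9}

# Absolute difference per column, rounded to one decimal as in the table.
difference = {name: round(proposed[name] - gpt35[name], 1) for name in gpt35}

# Relative change per column, in percent of the GPT-3.5 value.
relative = {name: 100.0 * (proposed[name] - gpt35[name]) / gpt35[name]
            for name in gpt35}
```

Expressed relative to the GPT-3.5 baseline, the same gains are larger as percentages; for example, the HotpotQA gain is roughly 27% of the baseline accuracy.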

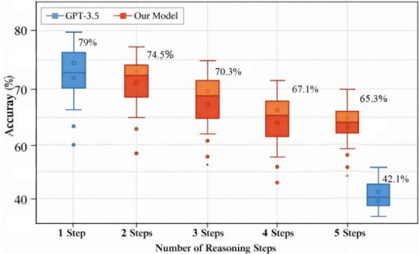

Figure 3 compares Acc between the proposed model and GPT-3.5 across reasoning steps (on 2WikiMultihopQA). The x-axis shows steps (1-5), and the y-axis shows Acc (%). The box plot shows that GPT-3.5 accuracy drops sharply to 42.1% at 5 steps, while the proposed model maintains 65.3%, indicating that the multi-agent architecture is more resistant to long-chain degradation.

Figure 3. Comparison of Model Accuracy under Different Inference Steps.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Box Plot: Accuracy vs. Number of Reasoning Steps for GPT-3.5 and Our Model

### Overview

The image is a box plot comparing the accuracy of GPT-3.5 and "Our Model" across different numbers of reasoning steps (1 to 5). The y-axis represents accuracy in percentage, and the x-axis represents the number of reasoning steps. The plot shows the distribution of accuracy for each model at each step count.

### Components/Axes

* **Title:** Implicit, but the plot compares accuracy vs. reasoning steps.

* **X-axis:** "Number of Reasoning Steps" with categories: 1 Step, 2 Steps, 3 Steps, 4 Steps, 5 Steps.

* **Y-axis:** "Accuracy (%)" with a scale from approximately 40% to 80%.

* **Legend:** Located at the top-left of the chart.

* Blue: GPT-3.5

* Red/Orange: Our Model

### Detailed Analysis

The plot displays box plots for each model at each step. The box represents the interquartile range (IQR), the line inside the box represents the median, and the whiskers extend to the data points within 1.5 times the IQR. Points outside the whiskers are plotted as outliers.

**GPT-3.5:**

* **1 Step:** The box plot is centered around 73% accuracy, with a median around 74%. The top of the box is near 76%, and the bottom of the box is near 71%. The maximum value is near 79%. There is one outlier at approximately 60%.

* **5 Steps:** The box plot is centered around 40% accuracy, with a median around 41%. The top of the box is near 43%, and the bottom of the box is near 38%. The maximum value is near 42.1%.

**Our Model:**

* **2 Steps:** The box plot is centered around 72% accuracy, with a median around 73%. The top of the box is near 75%, and the bottom of the box is near 69%. The maximum value is near 74.5%. There is one outlier at approximately 58%.

* **3 Steps:** The box plot is centered around 69% accuracy, with a median around 70%. The top of the box is near 72%, and the bottom of the box is near 65%. The maximum value is near 70.3%. There is one outlier at approximately 57%.

* **4 Steps:** The box plot is centered around 65% accuracy, with a median around 66%. The top of the box is near 68%, and the bottom of the box is near 62%. The maximum value is near 67.1%. There is one outlier at approximately 53%.

* **5 Steps:** The box plot is centered around 63% accuracy, with a median around 64%. The top of the box is near 66%, and the bottom of the box is near 60%. The maximum value is near 65.3%. There is one outlier at approximately 59%.

### Key Observations

* GPT-3.5 has a significantly higher accuracy with 1 step compared to 5 steps.

* "Our Model" consistently decreases in accuracy as the number of reasoning steps increases.

* "Our Model" outperforms GPT-3.5 at 2, 3, 4 and 5 steps.

* The spread of accuracy (IQR) for "Our Model" appears to be relatively consistent across different numbers of reasoning steps.

### Interpretation

The data suggests that GPT-3.5 is more accurate with fewer reasoning steps, while "Our Model" shows a gradual decline in accuracy as the number of reasoning steps increases. "Our Model" consistently outperforms GPT-3.5 at 2, 3, 4 and 5 steps. This could indicate that "Our Model" is better suited for complex reasoning tasks, but its performance degrades as the complexity increases. The outliers in both models suggest that there are instances where the models perform significantly worse than their average performance. The box plot provides a visual representation of the distribution of accuracy for each model at each step count, allowing for a comparison of their performance.

</details>

## 2) Validation of Model Structure Effectiveness

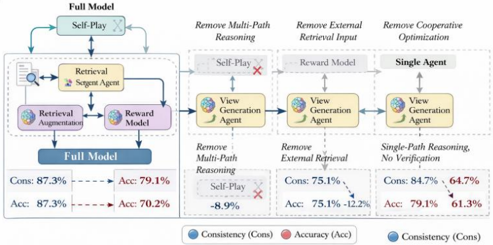

To assess module importance, ablation tests were conducted with four settings: ① no self-play; ② no retrieval enhancement; ③ no reward model; ④ single-agent (only opinion generation). Figure 4 shows changes in Cons (left y-axis, %) and Acc (right y-axis, %) across these settings. The x-axis shows the models: full / -selfplay / -retrieval / -reward / single-agent. The single-agent setup yields Cons 64.7% and Acc 61.3%. The full model improves on it by 22.6 percentage points in Cons and 17.8 percentage points in Acc. Without retrieval, Cons drops by 12.2 percentage points; without self-play, Acc drops by 8.9 percentage points, which supports the contribution of each module.

Figure 4. Comparison of Consistency and Accuracy.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Diagram: Model Ablation Study

### Overview

The image presents a diagram illustrating an ablation study of a "Full Model" by systematically removing components and analyzing the impact on consistency (Cons) and accuracy (Acc). The diagram shows the original model and three ablated versions, each with a specific component removed. The performance metrics (consistency and accuracy) are provided for each model configuration.

### Components/Axes

* **Header:** "Full Model", "Remove Multi-Path Reasoning", "Remove External Retrieval Input", "Remove Cooperative Optimization"

* **Nodes:**

* "Self-Play" (top, connected to Retrieval Sergent Agent)

* "Retrieval Sergent Agent"

* "Retrieval Augmentation"

* "Reward Model"

* "View Generation Agent"

* "Single Agent"

* **Metrics:**

* "Cons" (Consistency) - Represented by blue circles in the legend.

* "Acc" (Accuracy) - Represented by red circles in the legend.

* **Legend:** Located at the bottom of the image.

* Blue circle: "Consistency (Cons)"

* Red circle: "Accuracy (Acc)"

### Detailed Analysis

**1. Full Model (Leftmost)**

* **Components:** "Self-Play", "Retrieval Sergent Agent", "Retrieval Augmentation", "Reward Model"

* **Metrics:**

* Cons: 87.3%

* Acc: 87.3%

* Cons (after ablation): 79.1%

* Acc (after ablation): 70.2%

* **Flow:** The diagram shows a flow from "Retrieval Sergent Agent" to "Retrieval Augmentation" and "Reward Model". "Retrieval Augmentation" and "Reward Model" are interconnected. "Self-Play" connects to "Retrieval Sergent Agent".

**2. Remove Multi-Path Reasoning**

* **Components Removed:** "Self-Play" (indicated by a red "X")

* **Components Present:** "View Generation Agent"

* **Metrics:**

* Self-Play: -8.9%

* Cons: 75.1%

* Acc: 75.1% -12.2%

* **Flow:** The flow is from "View Generation Agent" to the next stage.

**3. Remove External Retrieval Input**

* **Components Present:** "Reward Model", "View Generation Agent"

* **Metrics:**

* Cons: 75.1%

* Acc: 75.1% -12.2%

* **Flow:** The flow is from "View Generation Agent" to the next stage.

**4. Remove Cooperative Optimization (Rightmost)**

* **Components:** "Single Agent", "View Generation Agent"

* **Metrics:**

* Cons: 84.7%, 64.7%

* Acc: 79.1%, 61.3%

* **Text:** "Single-Path Reasoning, No Verification"

* **Flow:** The diagram ends with "View Generation Agent".

### Key Observations

* The "Full Model" has the highest consistency and accuracy.

* Removing "Self-Play" in the "Remove Multi-Path Reasoning" stage results in a decrease in performance.

* Removing "External Retrieval Input" also leads to a decrease in performance.

* Removing "Cooperative Optimization" results in the lowest consistency and accuracy.

### Interpretation

The diagram illustrates the impact of different components on the overall performance of the "Full Model". Removing "Cooperative Optimization" has the most significant negative impact, suggesting that this component is crucial for achieving high consistency and accuracy. The ablation study demonstrates the importance of each component in the model's architecture and provides insights into their individual contributions to the overall performance. The removal of "Self-Play" also has a negative impact, indicating its importance in the model's reasoning process. The diagram highlights the effectiveness of the full model by showing how removing components degrades performance.

</details>

Further analysis shows that the retrieval enhancement module reduces factual errors by supplementing external facts, improving consistency. The self-game mechanism promotes multi-path reasoning, reducing logical blind spots and enhancing accuracy. The reward model, via multi-objective collaborative optimization, avoids goal conflicts and maintains performance balance. In contrast, single-agent models lack division of labor and coordination, fail to meet multidimensional reasoning needs, and struggle with factual verification, resulting in performance drops. This comparison highlights the advantage of the multi-agent role-based architecture, which addresses fact verification, logical integrity, and goal consistency through modular collaboration. A single agent, limited in cognitive scope, cannot balance factual accuracy and logical rigor or verify conclusions from multiple perspectives, which explains its core limitations. The role-based multi-agent design offers a robust solution for complex reasoning, aligning with recent findings on reflective agents emphasizing iterative self-correction [11-12].

## IV. CONCLUSION

The proposed group deliberation multi-agent dialogue model combines a three-level role-based architecture, a self-game mechanism, a retrieval enhancement module, and a collaborative training strategy to address logical collapse, cyclic generation, and insufficient factuality in the complex reasoning of single models. Experiments on three typical datasets show that the model's reasoning accuracy and consistency are significantly better than the baseline while maintaining balanced reasoning efficiency, providing reliable support for scenarios such as multi-hop question answering and group decision-making.

Despite the promising performance, several limitations should be noted. First, the reported improvements were obtained under controlled experimental settings with fixed model configurations and dataset splits, and may not fully generalize to other task distributions or prompt formulations. Second, due to computational constraints, key hyperparameters were selected based on preliminary validation rather than exhaustive search. Third, while repeated experiments reduce variance, formal statistical significance testing and detailed qualitative error analysis were not conducted. Future work will focus on automated hyperparameter optimization, rigorous significance evaluation, and systematic analysis of failure cases, particularly in scenarios involving ambiguous or conflicting evidence.
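As an illustration of the significance evaluation left to future work, the following is a minimal sketch of a one-sided paired bootstrap test over per-example correctness. It is not part of the reported experiments: the function name `paired_bootstrap_pvalue`, its parameters, and the toy 0/1 correctness lists are all hypothetical and stand in for real per-question scores of a baseline and the proposed model on the same evaluation set.

```python
import random


def paired_bootstrap_pvalue(baseline_correct, model_correct,
                            n_resamples=2000, seed=0):
    """One-sided paired bootstrap (illustrative sketch).

    Inputs are 0/1 correctness flags for the SAME examples, in the same order.
    Returns (observed accuracy gain, fraction of resamples with no gain).
    """
    if len(baseline_correct) != len(model_correct):
        raise ValueError("both systems must be scored on the same examples")
    n = len(model_correct)
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible

    # Per-example difference: +1 only the model is right, -1 only the baseline.
    diffs = [m - b for m, b in zip(model_correct, baseline_correct)]
    observed_gain = sum(diffs) / n

    not_better = 0
    for _ in range(n_resamples):
        # Resample examples with replacement, keeping each pair together.
        resample_total = sum(diffs[rng.randrange(n)] for _ in range(n))
        if resample_total <= 0:
            not_better += 1
    return observed_gain, not_better / n_resamples


if __name__ == "__main__":
    # Hypothetical toy data, NOT results from the paper.
    baseline = [1] * 60 + [0] * 40
    model = [1] * 75 + [0] * 25
    gain, p_value = paired_bootstrap_pvalue(baseline, model)
    print(f"accuracy gain = {gain:.3f}, bootstrap p-value = {p_value:.4f}")
```

Resampling paired differences, rather than each system independently, keeps question difficulty matched between the two systems, which is why a paired design is a natural fit when both models are evaluated on identical dataset splits.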

## REFERENCES

- [1] Du, Y., Li, S., Torralba, A., Tenenbaum, J. B., & Mordatch, I. Improving Factuality and Reasoning in Language Models through Multi-Agent Debate. International Conference on Learning Representations (ICLR), 2024.

- [2] Song T, Tan Y, Zhu Z, et al. Multi-agents are social groups: Investigating social influence of multiple agents in human-agent interactions[J]. Proceedings of the ACM on Human-Computer Interaction, 2025, 9(7): 1-33.

- [3] Li, G., Hammoud, H., Itani, H., Khizbullin, D., & Ghanem, B. CAMEL: Communicative Agents for 'Mind' Exploration of Large Language Models. Advances in Neural Information Processing Systems (NeurIPS), 2023.

- [4] Wang, X., Wei, J., Schuurmans, D., et al. Self-Consistency Improves Chain of Thought Reasoning in Language Models. International Conference on Learning Representations (ICLR), 2023.

- [5] Lewis, P., Perez, E., Piktus, A., et al. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. Advances in Neural Information Processing Systems (NeurIPS), 2020.

- [6] Yu, C., Velu, A., Vinitsky, E., Gao, J., Wang, Y., Bayen, A., & Wu, Y. The Surprising Effectiveness of PPO in Cooperative Multi-Agent Games. Advances in Neural Information Processing Systems (NeurIPS), 2022.

- [7] Lowe, R., Wu, Y., Tamar, A., Harb, J., Abbeel, O. P., & Mordatch, I. Multi-Agent Actor-Critic for Mixed Cooperative-Competitive Environments. Advances in Neural Information Processing Systems (NeurIPS), 2017.

- [8] Ouyang, L., Wu, J., Jiang, X., et al. Training Language Models to Follow Instructions with Human Feedback. Advances in Neural Information Processing Systems (NeurIPS), 2022.

- [9] Zhang, Z., Liu, Z., Zhou, M., et al. Multi-Agent Reinforcement Learning: A Selective Overview of Theories and Algorithms. Artificial Intelligence, vol. 285, 2020.

- [10] Sun Y, Liu X. Research and Application of a Multi-Agent-Based Intelligent Mine Gas State Decision-Making System[J]. Applied Sciences, 2025, 15(2): 968.

- [11] Shinn, N., et al. Reflexion: Language Agents with Verbal Reinforcement Learning. NeurIPS Workshop, 2023.

- [12] Yao, S., et al. Tree of Thoughts: Deliberate Problem Solving with Large Language Models. Advances in Neural Information Processing Systems (NeurIPS), 2023.