# AMAP Agentic Planning Technical Report

**Authors**: AMAP AI Agent Team, Yulan Hu, Xiangwen Zhang, Sheng Ouyang, Hao Yi, Lu Xu, Qinglin Lang, Lide Tan, Xiang Cheng, Tianchen Ye, Zhicong Li, Ge Chen, Wenjin Yang, Zheng Pan, Shaopan Xiong, Siran Yang, Ju Huang, Yan Zhang, Jiamang Wang, Yong Liu, Yinfeng Huang, Ning Wang, Tucheng Lin, Xin Li, Ning Guo



AMAP AI Agent LLM Team

## Abstract

We present STAgent, an agentic large language model tailored for spatio-temporal understanding and designed to solve complex tasks such as constrained point-of-interest (POI) discovery and itinerary planning. STAgent is a specialized model that interacts with ten distinct tools in spatio-temporal scenarios, enabling it to explore, verify, and refine intermediate steps during complex reasoning, while effectively preserving its general capabilities. We build these capabilities through three key contributions: (1) a stable tool environment that supports ten domain-specific tools and enables asynchronous rollout and training; (2) a hierarchical data curation framework that finds high-quality data like needles in a haystack, retaining less than 1% of the raw queries while emphasizing both diversity and difficulty; and (3) a cascaded training recipe that starts with a seed SFT stage acting as a guardian to measure query difficulty, followed by a second SFT stage fine-tuned on queries with high certainty and a final RL stage that leverages queries with low certainty. Initialized from Qwen3-30B-A3B-2507, which provides a strong SFT foundation and a basis for assessing sample difficulty, STAgent achieves promising performance on TravelBench while maintaining its general capabilities across a wide range of general benchmarks, demonstrating the effectiveness of our proposed agentic model.

## 1 Introduction

The past year has witnessed rapid development of Large Language Models (LLMs) that invoke tools for complex task reasoning, significantly pushing the frontier of general intelligence Team et al. (2025); Zeng et al. (2025); Liu et al. (2025); Yang et al. (2025). Tool-integrated reasoning (TIR) Qu et al. (2025) enables LLMs to interact with a tool environment and decide their next move based on tool feedback Gou et al. (2023); Chen et al. (2025b); Dong et al. (2025); Shang et al. (2025). However, existing TIR efforts mostly focus on scenarios such as mathematical reasoning and code testing Zhang et al. (2025); Lin & Xu (2025), while solutions for more practical real-world settings remain scarce.

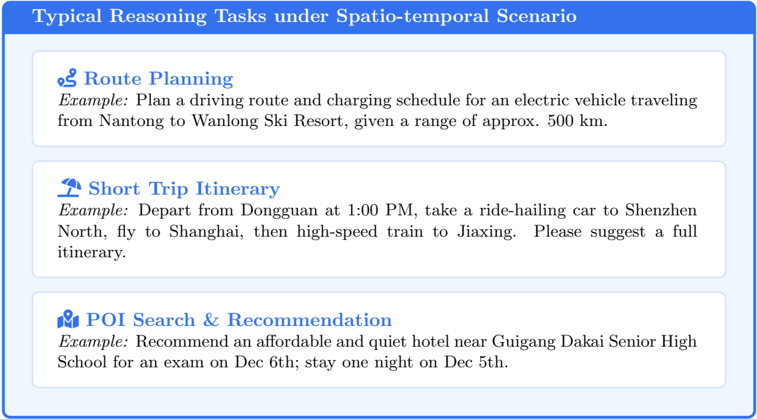

Based on cognitive cost and processing speed, real-world reasoning tasks can be categorized into System 1 and System 2 modes Li et al. (2025b): the former is rapid, whereas the latter requires extensive and complex deliberation. Reasoning tasks in spatio-temporal scenarios Zheng et al. (2025b); Lei et al. (2025) are typical System 2 tasks. As depicted in Figure 2, such tasks involve identifying locations, designing driving routes, or planning travel itineraries subject to numerous constraints Ning et al. (2025); Xie et al. (2024), and resolving them requires coordinating heterogeneous external tools Zhang et al. (2025); Xu et al. (2025). Because TIR interleaves thought generation with tool execution, it allows the model to verify intermediate steps and dynamically adjust its planning trajectory based on observation feedback, which gives it an inherent advantage on these real-world tasks.

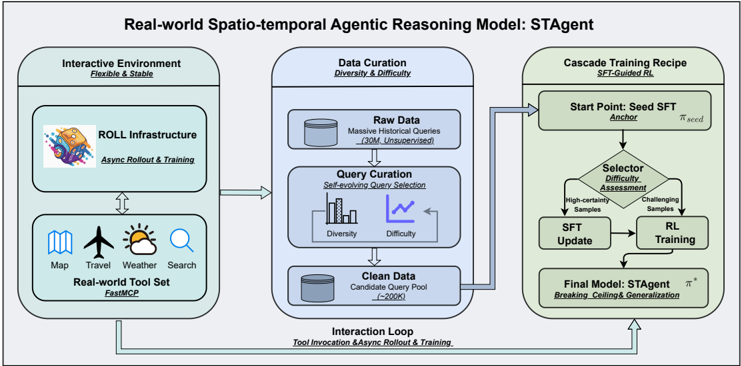

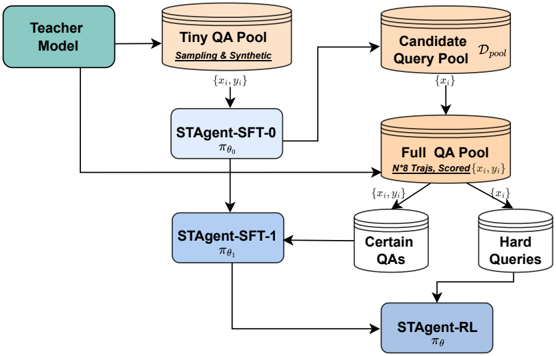

Figure 1: The overall framework of STAgent, a comprehensive pipeline for real-world spatio-temporal reasoning. The framework consists of three key phases: (1) Robust Interactive Environment, supported by the ROLL infrastructure and the FastMCP protocol to enable efficient, asynchronous tool-integrated reasoning; (2) High-Quality Data Construction, which uses a self-evolving selection framework to filter diverse and challenging queries from massive unsupervised data; and (3) Cascade Training Recipe, an SFT-guided RL paradigm that categorizes samples by difficulty to synergize supervised fine-tuning with reinforcement learning.

<details>

<summary>Image 2 Details</summary>

### Visual Description

Diagram titled "Real-world Spatio-temporal Agentic Reasoning Model: STAgent", with three panels joined by a bottom loop labeled "Tool Invocation & Async Rollout & Training":

1. **Interactive Environment**: ROLL Infrastructure ("Async Rollout & Training") and a Real-world Tool Set (Map, Travel, Weather, Search) exposed through FastMCP.

2. **Data Curation**: Raw Data ("Massive Historical Queries", unsupervised) → Query Curation ("Self-evolving Query Selection", balancing diversity and difficulty) → Clean Data ("Candidate Query Pool", C=200K).

3. **Cascade Training Recipe**: "Seed SFT Anchor" → "Selector Difficulty Assessment", which splits queries into High-certainty Samples (SFT Update) and Challenging Samples (RL Training) → Final Model: STAgent, labeled with ceiling breaking and generalization.

</details>

Figure 2: Typical reasoning tasks in spatio-temporal scenarios.

<details>

<summary>Image 3 Details</summary>

### Visual Description

Banner titled "Typical Reasoning Tasks under Spatio-temporal Scenario", with three example tasks:

- **Route Planning**: "Plan a driving route and charging schedule for an electric vehicle traveling from Nantong to Wanlong Ski Resort, given a range of approx. 500 km."

- **Short Trip Itinerary**: "Depart from Dongguan at 1:00 PM, take a ride-hailing car to Shenzhen North, fly to Shanghai, then high-speed train to Jiaxing. Please suggest a full itinerary."

- **POI Search & Recommendation**: "Recommend an affordable and quiet hotel near Guigang Dakai Senior High School for an exam on Dec 6th; stay one night on Dec 5th."

</details>

However, unlike general reasoning tasks such as mathematics Shang et al. (2025) or coding Zeng et al. (2025), real-world tasks pose several inherent challenges. First, how can a flexible and stable reasoning environment be constructed? On the one hand, a feasible environment requires a toolset capable of handling massive numbers of concurrent tool calls, issued by both offline data curation and online reinforcement learning (RL) Bian et al. (2025). On the other hand, during training, keeping tool calls and trajectory rollouts effectively synchronized and guaranteeing that the model receives accurate reward signals are the cornerstones of effective TIR for such complex tasks. Second, how can high-quality training data be curated?

In real-world spatio-temporal scenarios, massive numbers of user queries are collected and stored in databases; however, this data is unsupervised and lacks essential metadata, such as the category and difficulty of each query Yu et al. (2025), so it gives the model no clear direction for optimization Li et al. (2025a). It is therefore critical to construct a query taxonomy that supports high-quality data selection for model optimization. Third, how can training for real-world TIR be conducted effectively? Existing TIR efforts mostly focus on adapting algorithms to tools, for instance by monitoring entropy changes around interleaved tool calls to guide further token rollout Dong et al. (2025), or by increasing rollout batch sizes to mitigate tool noise Shang et al. (2025). Nevertheless, the uncertain environments and diverse tasks of real-world scenarios pose extra challenges for these methods. Deriving a more tailored training recipe is therefore key to raising the upper bound of TIR performance.

In this work, we propose STAgent, a pioneering agentic model designed for real-world spatio-temporal TIR. We develop a comprehensive pipeline encompassing an Interactive Environment, High-Quality Data Curation, and a Cascade Training Recipe, as shown in Figure 1. STAgent features three primary aspects. For environment and infrastructure, we establish a robust RL training environment supporting ten domain-specific tools across four categories: map, travel, weather, and information retrieval. On the one hand, we encapsulate these tools using FastMCP¹, standardizing parameter formats and invocation protocols, which substantially simplifies future tool modification. On the other hand, we collaborated with the ROLL Wang et al. (2025)² team to optimize the RL training infrastructure, which offers two core features: asynchronous rollout and training. Compared with the popular open-source framework Verl³, ROLL yields an 80% improvement in training efficiency. For data, we design a meticulous query selection framework that hierarchically extracts high-quality queries from massive historical candidates. Focusing on query diversity and difficulty, this self-evolving framework filters approximately 200,000 queries from an original historical dataset of over 30 million; these serve as the candidate query pool for subsequent SFT and RL training. For training, we design a cascaded paradigm, specifically an SFT-guided RL approach, to ensure continuous improvement in model capability. A Seed SFT model serves as an evaluator that assesses the difficulty of the query pool and categorizes queries for subsequent SFT updates and RL training: the SFT update uses samples of higher certainty, while RL targets the samples that remain challenging for the updated SFT model. This paradigm significantly enhances the generalization of the SFT model while enabling the RL model to push beyond performance ceilings.
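The SFT-guided split can be sketched as a pass-rate partition. This is a minimal illustration under stated assumptions, not the report's procedure: difficulty is approximated by the fraction of sampled rollouts a verifier accepts, and `attempt`, the threshold `high`, and the choice to set aside never-solved queries are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple


def pass_rate(query: str, attempt: Callable[[str], bool], k: int = 8) -> float:
    """Fraction of k sampled attempts on `query` that a verifier accepts.

    `attempt` is a hypothetical callable: it samples one rollout from the
    evaluator model (the seed SFT model, or later the updated SFT model)
    and returns True if the rollout is judged correct.
    """
    return sum(1 for _ in range(k) if attempt(query)) / k


def split_by_certainty(
    rates: Dict[str, float], high: float = 0.75
) -> Tuple[List[str], List[str]]:
    """Partition queries into an SFT pool and an RL pool by pass rate.

    `high` is an illustrative threshold, not a value taken from the report.
    """
    sft_pool: List[str] = []
    rl_pool: List[str] = []
    for query, rate in rates.items():
        if rate >= high:
            sft_pool.append(query)  # high certainty: reliable SFT targets
        elif rate > 0.0:
            rl_pool.append(query)   # mixed outcomes: informative for RL
        # rate == 0.0: never solved by the evaluator; set aside in this sketch
    return sft_pool, rl_pool


if __name__ == "__main__":
    rates = {"q1": 1.0, "q2": 0.5, "q3": 0.0, "q4": 0.75}
    print(split_by_certainty(rates))  # (['q1', 'q4'], ['q2'])
```

Because the recipe draws RL samples from the updated SFT model's assessment, `split_by_certainty` would be applied a second time to pass rates recomputed with that model.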

The final model, STAgent, is built upon Qwen3-30B-A3B-2507 Yang et al. (2025). Notably, during the SFT stage, STAgent incorporates only a small amount of general instruction-following data Xu et al. (2025) to strengthen tool invocation, while the vast majority of the data is domain-specific. As a specialized model, STAgent shows significant advantages on TravelBench Cheng et al. (2025) over models with larger parameter counts. Furthermore, despite not being specifically tuned for the general domain, STAgent improves on numerous general-domain benchmarks, demonstrating strong generalization.

## 2 Methodology

## 2.1 Overview

We formally present STAgent in this section, focusing on three key components:

- Tool environment construction. We introduce details of the in-domain tools used in Section 2.2.

- High-quality prompt curation. We present the hierarchical prompt curation pipeline in Section 2.3, including the derivation of a prompt taxonomy, large-scale prompt annotation, and difficulty measurement.

- Cascaded agentic post-training recipe. We present the post-training procedure in Section 2.4, Section 2.5, and Section 2.6, which correspond to reward design, agentic SFT, and SFT-guided RL training, respectively.

¹ https://github.com/jlowin/fastmcp

² https://github.com/alibaba/ROLL

³ https://github.com/volcengine/verl

## 2.2 Environment Construction

To enable STAgent to interact with real-world spatio-temporal services in a controlled and reproducible manner, we developed a high-fidelity sandbox environment built on FastMCP. This environment bridges the agent's reasoning and the underlying spatio-temporal APIs, providing a standardized interface for tool invocation during both training and evaluation. To reduce API latency and cost during large-scale RL training, we implemented a tool-level LRU cache with parameter normalization, which maximizes cache hit rates.
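A tool-level LRU cache with parameter normalization can be sketched with the standard library alone. This is a minimal illustration, not the sandbox's implementation: the normalization rules (trimming and lower-casing strings, rounding floats such as coordinates to four decimals, sorting keys) and the `search_places` stand-in tool are assumptions.

```python
import json
from collections import OrderedDict
from typing import Any, Callable, Dict


def normalize_params(params: Dict[str, Any], ndigits: int = 4) -> str:
    """Map semantically equivalent argument dicts to one canonical cache key."""

    def norm(value: Any) -> Any:
        if isinstance(value, str):
            return " ".join(value.strip().lower().split())  # case and whitespace
        if isinstance(value, float):
            return round(value, ndigits)  # 4 decimals of latitude is about 11 m
        if isinstance(value, (list, tuple)):
            return [norm(v) for v in value]
        if isinstance(value, dict):
            return {k: norm(v) for k, v in value.items()}
        return value

    return json.dumps(norm(params), sort_keys=True, ensure_ascii=False)


class ToolLRUCache:
    """LRU cache keyed on (tool name, normalized parameters)."""

    def __init__(self, capacity: int = 1024) -> None:
        self.capacity = capacity
        self._store: "OrderedDict[str, Any]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def call(self, tool_name: str, fn: Callable[..., Any], **params: Any) -> Any:
        key = tool_name + "|" + normalize_params(params)
        if key in self._store:
            self._store.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = fn(**params)  # the real (slow, billed) API call happens only on a miss
        self._store[key] = result
        if len(self._store) > self.capacity:
            self._store.popitem(last=False)  # evict the least recently used entry
        return result


if __name__ == "__main__":
    def search_places(keyword: str, lat: float, lng: float) -> str:
        return f"POIs for {keyword!r} near ({lat}, {lng})"  # stand-in for a real tool

    cache = ToolLRUCache(capacity=2)
    cache.call("search_places", search_places, keyword="Coffee ", lat=31.230416, lng=121.473701)
    cache.call("search_places", search_places, keyword="coffee", lat=31.23042, lng=121.4737)
    print(cache.hits, cache.misses)  # 1 1
```

Normalizing before hashing is what turns superficially different calls (extra whitespace, capitalization, coordinate jitter) into cache hits; how aggressively to round is a trade-off between hit rate and returning results for a slightly different location.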

Our tool library comprises 10 specialized tools spanning four functional categories, designed to cover the full spectrum of spatio-temporal user needs identified in our Intent Taxonomy (Section 2.3). All tool outputs are post-processed into structured natural language to facilitate the agent's comprehension and reduce hallucination risks. Table 1 summarizes the tool definitions; further details are given in Appendix A.1.

Table 1: Summary of the tool library for the Amap Agent sandbox environment.

| Category | Tool | Description |
|---|---|---|
| Map & Navigation | map search places | Search POIs by keyword, location, or region |
| | map compute routes | Route planning supporting multiple transport modes |
| | map search along route | Find POIs along a route corridor |
| | map search central places | Locate optimal meeting points for multiple origins |
| | map search ranking list | Query curated ranking lists |
| Travel | travel search flights | Search flights with optional multi-day range |
| | travel search trains | Query train schedules and fares |
| Weather | weather current conditions | Get real-time weather and AQI |
| | weather forecast days | Retrieve multi-day forecasts |
| Information | web search | Open-domain web search |

## 2.3 Prompt Curation

To endow STAgent with comprehensive spatio-temporal reasoning capabilities, we synthesized a high-fidelity instruction dataset grounded in large-scale, real-world user behavior. This dataset spans the full spectrum of user needs, from atomic queries such as POI retrieval to composite, multi-constraint tasks such as intricate itinerary planning.

Our primary data source is anonymized online user logs spanning a three-month window and containing about 30 million queries. The critical challenge lies in distilling these noisy, unstructured interactions into a structured, highly diverse instruction dataset suitable for training a sophisticated agent. To this end, we constructed a hierarchical Intent Taxonomy. This taxonomy serves as a rigorous framework for precise annotation, quantitative distribution analysis, and controlled sampling, ensuring that the dataset maximizes both Task Type Diversity (comprehensive intent coverage) and Difficulty Diversity (progression from elementary to complex reasoning).

## 2.3.1 Seed-Driven Taxonomy Evolution

We propose a Seed-Driven Evolutionary Framework to construct a taxonomy that guarantees both completeness and orthogonality (i.e., non-overlapping, independent dimensions). Instead of relying on a static classification, our approach starts from a high-quality kernel of seed prompts and iteratively expands domain coverage by combining the generative power of LLMs with rigorous human oversight. The process unfolds in four phases:

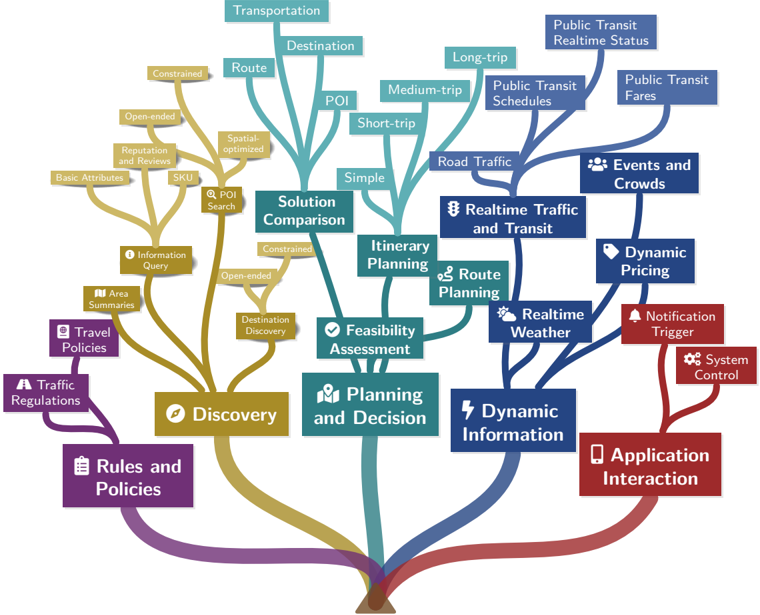

Figure 3: A visual taxonomy of our intent classification system. The hierarchical structure is organized into five primary categories: Rules and Policies, Discovery, Planning and Decision, Dynamic Information, and Application Interaction. The taxonomy further branches into 16 second-level categories and terminates in 30 fine-grained leaf nodes, capturing the multi-faceted complexity of real-world user queries in navigation and travel scenarios.

<details>

<summary>Image 4 Details</summary>

### Visual Description

Hierarchical diagram of the intent taxonomy. Legend: Rules and Policies (purple), Discovery (gold), Planning and Decision (teal), Dynamic Information (blue), Application Interaction (red). Node labels legible in the figure include:

- Travel Policies; Traffic Regulations

- Information Query (Basic Attributes, Reputation and Reviews, SKU)

- Destination Discovery; Spatial-optimized; POI Search; Constrained/Open-ended

- Feasibility Assessment; Solution Comparison

- Itinerary Planning (Simple, Short-trip, Medium-trip, Long-trip)

- Route Planning (Transportation, Destination, Route, POI)

- Real-time Traffic and Transit (Public Transit Real-time Status, Public Transit Fares, Road Traffic); Real-time Weather; Dynamic Pricing; Events and Crowds

- Notification Trigger; System Control

</details>

Stage 1: Seed Initialization. We manually curate a small, high-variance set of $n$ seed prompts ($D_{\text{seed}} \subset D_{\text{pool}}$) to represent the core diversity of the domain. Experts annotate these seeds with open-ended tags to capture abstract intent features. Each query is mapped to a tuple:

$$S_i = (q_i, T_i), \quad \text{s.t. } |T_i| = k$$

where $T_i$ denotes the $k$ orthogonal or complementary intent nodes covered by instruction $q_i$.

Stage 2: LLM-Driven Category Induction. Using the annotated seeds, we prompt an LLM to induce orthogonal Level-1 categories, ensuring granular alignment across the domain.

Stage 3: Iterative Refinement Loop. To prevent hallucinated categories, we implement a strict check-update cycle. The LLM re-annotates $D_{\text{seed}}$ using the generated categories; human experts then review these annotations to identify ambiguity or coverage gaps and feed their findings back to the LLM for taxonomy refinement. This 'Tag-Feedback-Correction' cycle is executed over several iterations:

$$T^{(k+1)} = \mathrm{Refine}\big(\mathrm{Annotate}(D_{\text{seed}}, T^{(k)})\big)$$

The process terminates when $T^{(k+1)} \approx T^{(k)}$, signifying that the system has converged to a stable benchmark taxonomy $T^{*}$.
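The Tag-Feedback-Correction loop is a fixed-point iteration that stops once the taxonomy no longer changes. The sketch below is hypothetical throughout: the taxonomy is modeled as a flat set of category labels, `toy_annotate` and `toy_refine` are trivial stand-ins for the LLM annotation pass and the expert-guided refinement, and "$T^{(k+1)} \approx T^{(k)}$" is operationalized as Jaccard similarity above a tolerance, a criterion the report does not specify.

```python
from typing import Callable, FrozenSet, List, Tuple

Taxonomy = FrozenSet[str]
Annotations = List[Tuple[str, FrozenSet[str]]]


def jaccard(a: Taxonomy, b: Taxonomy) -> float:
    """Overlap between two category sets (1.0 means identical)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def evolve_taxonomy(
    seeds: List[str],
    initial: Taxonomy,
    annotate: Callable[[List[str], Taxonomy], Annotations],
    refine: Callable[[Annotations], Taxonomy],
    tol: float = 0.95,
    max_iters: int = 10,
) -> Tuple[Taxonomy, int]:
    """Iterate T(k+1) = Refine(Annotate(D_seed, T(k))) until T(k+1) ≈ T(k)."""
    current = initial
    for it in range(1, max_iters + 1):
        updated = refine(annotate(seeds, current))
        if jaccard(updated, current) >= tol:
            return updated, it  # converged: this plays the role of T*
        current = updated
    return current, max_iters  # budget exhausted without convergence


# Toy stand-ins for the LLM annotator and the expert-guided refinement.
def toy_annotate(seeds: List[str], tax: Taxonomy) -> Annotations:
    out: Annotations = []
    for s in seeds:
        labels = frozenset(c for c in tax if c in s)
        out.append((s, labels or frozenset({"other:" + s.split()[0]})))
    return out


def toy_refine(ann: Annotations) -> Taxonomy:
    # Promote every "other:<x>" tag to a first-class category <x>.
    return frozenset(
        label[len("other:"):] if label.startswith("other:") else label
        for _, labels in ann
        for label in labels
    )


if __name__ == "__main__":
    seeds = ["hotel near station", "route to airport", "weather tomorrow"]
    print(evolve_taxonomy(seeds, frozenset({"hotel"}), toy_annotate, toy_refine))
```

In this toy run the first pass discovers two new categories and the second pass reproduces the same set, so the loop stops after two iterations.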

Stage 4: Dynamic Taxonomy Expansion. To capture long-tail intents beyond the initial seeds, we enforce an 'Other' category at each node. By labeling raw logs at massive scale and analyzing the samples that fall into the 'Other' category, we discover and merge emerging categories, allowing the taxonomy to evolve dynamically.
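One simple way to act on the 'Other' buckets is to count recurring candidate intents under each node and promote those that reach a support threshold. This is a sketch under assumptions the report does not state: each 'Other' query is assumed to already carry a free-text candidate intent (for example, produced by the annotating LLM), matching is case-insensitive exact string equality, and `min_support` is a hypothetical parameter.

```python
from collections import Counter
from typing import Dict, List, Set


def expand_taxonomy(
    node_children: Dict[str, Set[str]],
    other_hits: Dict[str, List[str]],
    min_support: int = 50,
) -> Dict[str, Set[str]]:
    """Promote frequent candidate intents found under each node's 'Other' bucket.

    node_children: parent node -> current child categories (including 'Other').
    other_hits: parent node -> candidate intents of queries routed to 'Other'.
    Returns a new mapping; the input is not modified.
    """
    expanded = {node: set(children) for node, children in node_children.items()}
    for node, candidates in other_hits.items():
        children = expanded.setdefault(node, {"Other"})
        existing = {c.lower() for c in children}
        counts = Counter(c.strip().lower() for c in candidates)
        for intent, support in counts.most_common():
            if support >= min_support and intent not in existing:
                children.add(intent)  # new sibling; 'Other' remains as a catch-all
                existing.add(intent)
    return expanded


if __name__ == "__main__":
    taxonomy = {"Dynamic Information": {"Real-time Weather", "Other"}}
    hits = {"Dynamic Information": ["EV charging status"] * 3 + ["parking fee"]}
    print(expand_taxonomy(taxonomy, hits, min_support=3))
```

Keeping 'Other' after each promotion is what lets the same procedure run again on the next batch of logs.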

This mechanism transforms the taxonomy from a static tree into an evolving system capable of adapting to the open-world distribution of user queries. The resulting finalized Intent Taxonomy is visualized in Figure 3.

## 2.3.2 Annotation and Data Curation

Building on this stabilized, mutually orthogonal taxonomy, we deployed a high-capacity teacher LLM to perform large-scale intent classification on the structured log data. The prompt template used for this annotation is detailed in Appendix A.2. To ensure that the training data achieves both high information density and minimal redundancy, we implemented a rigorous two-phase process:

Precise Multi-Dimensional Annotation. We treat intent understanding as a multi-label classification task. Each user instruction is mapped to a composite label vector $V = \langle I_{\text{primary}}, I_{\text{secondary}}, C_{\text{constraints}} \rangle$.

- $I_{\text{primary}}$ and $I_{\text{secondary}}$ represent leaf nodes in our fine-grained Intent Taxonomy.

- $C_{\text{constraints}}$ captures specific auxiliary dimensions (e.g., spatial range, time budget, vehicle type).

This granular tagging captures the semantic nuance of complex queries, distinguishing between simple keyword searches and multi-constraint planning tasks.
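The composite label $V$ maps naturally onto a small record type. This sketch is illustrative only: the field names, the use of `None` for a missing secondary intent, the JSON shape assumed for the teacher LLM's output, and the `is_multi_constraint` heuristic are hypothetical, since the actual annotation schema is given in Appendix A.2.

```python
import json
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class IntentLabel:
    """Composite label V = <I_primary, I_secondary, C_constraints>."""

    primary: str                     # leaf node of the Intent Taxonomy
    secondary: Optional[str] = None  # optional second leaf node
    constraints: Dict[str, str] = field(default_factory=dict)  # auxiliary dimensions

    def bucket(self) -> Tuple[str, Optional[str]]:
        """Key used to group samples by <I_primary, I_secondary>."""
        return (self.primary, self.secondary)

    def is_multi_constraint(self) -> bool:
        """Illustrative heuristic: more than one auxiliary constraint."""
        return len(self.constraints) > 1


def parse_label(raw: str) -> IntentLabel:
    """Parse a hypothetical JSON annotation emitted by the teacher LLM."""
    obj = json.loads(raw)
    return IntentLabel(
        primary=obj["primary"],
        secondary=obj.get("secondary"),
        constraints={k: str(v) for k, v in obj.get("constraints", {}).items()},
    )


if __name__ == "__main__":
    raw = (
        '{"primary": "long trip itinerary", "secondary": "real-time weather",'
        ' "constraints": {"time_budget": "3 days", "vehicle_type": "EV"}}'
    )
    label = parse_label(raw)
    print(label.bucket(), label.is_multi_constraint())
    # ('long trip itinerary', 'real-time weather') True
```

The `bucket()` key is the same $\langle I_{\text{primary}}, I_{\text{secondary}} \rangle$ tuple that the semantic deduplication step partitions on.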

Controlled Sampling via Funnel Filtering. Based on the annotation results, we applied a three-stage Funnel Filtering Strategy to construct the final curated dataset. This pipeline systematically eliminates redundancy at the lexical, semantic, and geometric levels:

- Lexical Redundancy Elimination: We applied corpus-level Locality-Sensitive Hashing (LSH) across all data to efficiently remove near-duplicate strings and literal repetitions ('garbage data'), significantly reducing the initial volume.

- Semantic Redundancy Elimination: To ensure high intra-class variance, we partitioned the dataset into buckets based on the $\langle I_{\text{primary}}, I_{\text{secondary}} \rangle$ tuple. Within each bucket, we performed embedding-based similarity search to prune semantically redundant samples, i.e., distinct phrasings that lie within a predefined distance threshold of one another in the latent space. To handle the scale of our dataset, we used Faiss to accelerate this search, replacing the quadratic $O(N^2)$ brute-force pairwise comparison with indexed nearest-neighbor search of sub-linear (per query) to near-linear (overall) complexity, which significantly reduced preprocessing overhead.

- Geometric Redundancy Elimination: From the remaining pool, we employed the K-Center Greedy algorithm to select the most representative samples. By iteratively selecting the point farthest from those already chosen, i.e., greedily maximizing the minimum distance between selected points in the embedding space, this step preserves long-tail and corner-case queries that are critical for robust agent performance.
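Corpus-level near-duplicate removal with LSH is commonly implemented with MinHash signatures and banding. The self-contained sketch below illustrates that standard technique; the report does not state which LSH family, shingle size, band layout, or similarity threshold it used, so character 3-gram shingles, 16 bands of 4 rows, and a 0.8 verification threshold are illustrative assumptions.

```python
import hashlib
from typing import Dict, List, Set, Tuple


def shingles(text: str, n: int = 3) -> Set[str]:
    """Character n-grams of the case- and whitespace-normalized text."""
    t = " ".join(text.lower().split())
    return {t[i:i + n] for i in range(max(1, len(t) - n + 1))}


def minhash(sh: Set[str], num_perm: int) -> List[int]:
    """MinHash signature: one minimum per salted 64-bit blake2b hash function."""
    sig = []
    for seed in range(num_perm):
        salt = seed.to_bytes(8, "little")
        sig.append(min(
            int.from_bytes(hashlib.blake2b(s.encode("utf-8"), digest_size=8, salt=salt).digest(), "little")
            for s in sh
        ))
    return sig


def lsh_dedup(docs: List[str], bands: int = 16, rows: int = 4, threshold: float = 0.8) -> List[int]:
    """Return indices of documents kept after near-duplicate removal.

    Band collisions only nominate candidates; a candidate counts as a
    duplicate when signature agreement (an estimate of Jaccard similarity)
    reaches `threshold`.
    """
    buckets: Dict[Tuple[int, Tuple[int, ...]], List[int]] = {}
    signatures: Dict[int, List[int]] = {}
    kept: List[int] = []
    for idx, doc in enumerate(docs):
        sig = minhash(shingles(doc), bands * rows)
        keys = [(b, tuple(sig[b * rows:(b + 1) * rows])) for b in range(bands)]
        candidates = {j for k in keys for j in buckets.get(k, [])}
        is_dup = any(
            sum(x == y for x, y in zip(sig, signatures[j])) / len(sig) >= threshold
            for j in candidates
        )
        if is_dup:
            continue  # near-duplicate of an earlier kept document
        for k in keys:
            buckets.setdefault(k, []).append(idx)
        signatures[idx] = sig
        kept.append(idx)
    return kept


if __name__ == "__main__":
    queries = [
        "Navigate to Shanghai Hongqiao Railway Station",
        "navigate  to shanghai hongqiao railway station",
        "Weather forecast for Hangzhou tomorrow",
        "Navigate to Shanghai Hongqiao Railway Station.",
    ]
    print(lsh_dedup(queries))
```

Banding keeps the candidate search cheap (only documents sharing a band bucket are compared), while the signature-agreement check filters out accidental collisions.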
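Within each $\langle I_{\text{primary}}, I_{\text{secondary}} \rangle$ bucket, semantic pruning can be sketched as a greedy pass that keeps a query only if its cosine similarity to every previously kept query stays below a threshold. For unit-normalized embeddings this is equivalent to a Euclidean distance threshold, since $\lVert a-b \rVert^2 = 2 - 2\cos(a,b)$. This brute-force NumPy version only makes the logic explicit; at the report's scale the nearest-neighbor lookup would be delegated to Faiss, which is not shown. The embeddings, bucket keys, and 0.9 threshold are assumptions.

```python
from collections import defaultdict
from typing import Dict, Hashable, List, Sequence

import numpy as np


def prune_bucket(emb: np.ndarray, threshold: float = 0.9) -> List[int]:
    """Greedy semantic dedup: keep row i unless it is too similar to a kept row."""
    unit = emb / np.linalg.norm(emb, axis=1, keepdims=True)
    kept: List[int] = []
    for i in range(len(unit)):
        if kept and float(np.max(unit[kept] @ unit[i])) >= threshold:
            continue  # within the distance threshold of an already kept query
        kept.append(i)
    return kept


def prune_by_bucket(
    keys: Sequence[Hashable], emb: np.ndarray, threshold: float = 0.9
) -> List[int]:
    """Apply prune_bucket independently inside each <I_primary, I_secondary> bucket."""
    groups: Dict[Hashable, List[int]] = defaultdict(list)
    for idx, key in enumerate(keys):
        groups[key].append(idx)
    kept: List[int] = []
    for members in groups.values():
        local = prune_bucket(emb[members], threshold)
        kept.extend(members[j] for j in local)
    return sorted(kept)


if __name__ == "__main__":
    emb = np.array([[1.0, 0.0], [0.99, 0.05], [0.0, 1.0], [1.0, 0.0]])
    keys = [("route planning", None)] * 3 + [("poi search", None)]
    print(prune_by_bucket(keys, emb))
```

Row 1 is dropped as a paraphrase of row 0, while row 3 survives despite being identical to row 0 because it sits in a different intent bucket, which is the point of bucketing before pruning.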
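K-Center Greedy (farthest-first traversal) repeatedly adds the point whose distance to its nearest already-selected point is largest. A minimal NumPy sketch, assuming Euclidean distance on query embeddings and a deterministic start at index 0; the report does not specify the metric, the starting point, or the selection budget.

```python
from typing import List

import numpy as np


def k_center_greedy(emb: np.ndarray, budget: int, start: int = 0) -> List[int]:
    """Select up to `budget` row indices from `emb` by farthest-first traversal."""
    n = emb.shape[0]
    budget = min(budget, n)
    if budget <= 0:
        return []
    selected = [start]
    # distance from every point to its nearest selected center
    min_dist = np.linalg.norm(emb - emb[start], axis=1)
    while len(selected) < budget:
        nxt = int(np.argmax(min_dist))  # farthest point from the current selection
        selected.append(nxt)
        new_dist = np.linalg.norm(emb - emb[nxt], axis=1)
        min_dist = np.minimum(min_dist, new_dist)  # refresh nearest-center distances
    return selected


if __name__ == "__main__":
    pts = np.array([[0.0, 0.0], [0.1, 0.0], [10.0, 0.0], [0.0, 5.0], [10.1, 0.0]])
    print(k_center_greedy(pts, 3))  # [0, 4, 3]
```

The isolated point at (0, 5) is chosen ahead of the near-duplicates of the first two picks, which is how this step keeps long-tail queries in the pool.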

## 2.3.3 Difficulty and Diversity

Real-world geospatial tasks vary significantly in cognitive load. To ensure that STAgent is robust across this spectrum, we rigorously stratified our training data. Beyond mere coverage, the primary objective of this stratification is to enable difficulty-based curriculum learning. In RL, a static data distribution often leads to training inefficiency: early-stage models facing excessively hard tasks receive zero rewards (vanishing gradients), while late-stage models facing trivial tasks receive constant perfect rewards (no reward variance). To address this, we employed an Execution-Simulation Scoring Mechanism to label data complexity, allowing us to dynamically align training data with model proficiency. This mechanism evaluates difficulty along three orthogonal dimensions:
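The vanishing-signal argument can be checked numerically under one assumption that this section does not state: a group-relative advantage in the style of GRPO, where each of several rollouts for the same query is scored against the group's mean and standard deviation. When every rollout fails, or every rollout succeeds, all advantages are zero and the query contributes no policy-gradient signal.

```python
from typing import Sequence

import numpy as np


def group_advantages(rewards: Sequence[float], eps: float = 1e-6) -> np.ndarray:
    """GRPO-style advantage: (r - mean) / (std + eps) within one rollout group."""
    r = np.asarray(rewards, dtype=float)
    return (r - r.mean()) / (r.std() + eps)


def is_informative(rewards: Sequence[float]) -> bool:
    """A group yields a non-zero advantage only if its rewards are not all equal."""
    return max(rewards) != min(rewards)


if __name__ == "__main__":
    too_hard = [0.0, 0.0, 0.0, 0.0]  # early model on an overly hard query
    too_easy = [1.0, 1.0, 1.0, 1.0]  # late model on a trivial query
    matched = [1.0, 0.0, 1.0, 0.0]   # difficulty matched to proficiency
    for name, rewards in [("hard", too_hard), ("easy", too_easy), ("matched", matched)]:
        print(name, group_advantages(rewards).round(3), is_informative(rewards))
```

Difficulty labels make it possible to skip the first two kinds of groups in advance instead of spending rollouts on them.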

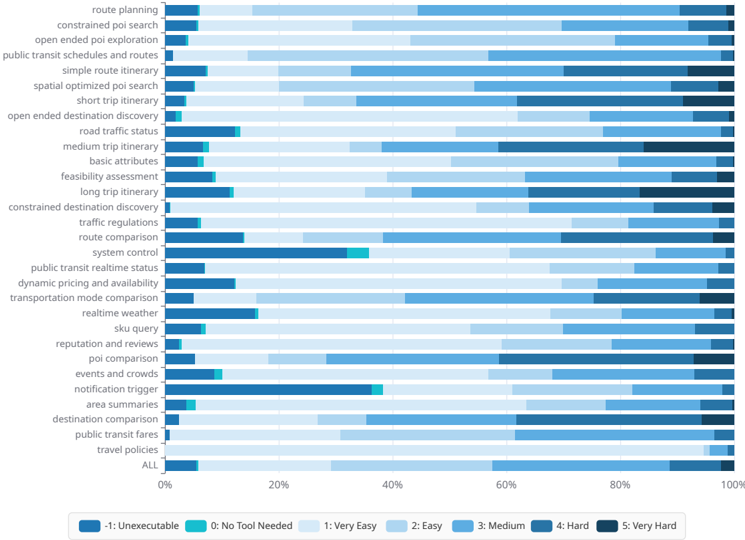

Figure 4: Fine-grained Difficulty Distribution across 30 Geospatial Domains. The visualization reveals the distinct complexity profiles of different tasks. While atomic queries (e.g., basic attributes) cluster in low-difficulty regions (Scores 1-2), composite tasks (e.g., long trip itinerary) exhibit a significant proportion of high-complexity reasoning (Scores 4-5). The visible segments of Scores -1 and 0 (denoted as Ext.1) across domains such as System Control highlight our active sampling of boundary cases for hallucination mitigation.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Horizontal Bar Chart: Task Difficulty Distribution Across Transportation Functions

### Overview

The chart visualizes the distribution of difficulty levels for 28 transportation-related tasks, ranging from "route planning" to "travel policies." Each task is represented by a horizontal bar segmented into color-coded proportions corresponding to difficulty levels (-1: Unexecutable to 5: Very Hard). The "ALL" category at the bottom aggregates difficulty distributions across all tasks.

### Components/Axes

- **Y-Axis**: Task categories (28 transportation functions + "ALL" aggregate)

- **X-Axis**: Percentage scale (0% to 100%)

- **Legend**:

- Dark blue: -1 (Unexecutable)

- Teal: 0 (No Tool Needed)

- Light blue: 1 (Very Easy)

- Medium blue: 2 (Easy)

- Darker blue: 3 (Medium)

- Very dark blue: 4 (Hard)

- Black: 5 (Very Hard)

- **Key Elements**:

- "ALL" category at the bottom

- Color-coded segments per task

- Percentage markers at 0%, 20%, 40%, 60%, 80%, 100%

### Detailed Analysis

1. **Task-Specific Distributions**:

- **Route Planning**: 95% "Medium" (dark blue), 5% "Hard" (very dark blue)

- **Constrained POI Search**: 85% "Medium", 10% "Hard", 5% "Very Hard"

- **Public Transit Schedules**: 70% "Medium", 20% "Easy", 10% "Very Easy"

- **System Control**: 30% "Very Hard", 40% "Hard", 30% "Medium"

- **Notification Trigger**: 60% "Very Easy", 30% "Easy", 10% "Medium"

- **Public Transit Fares**: 50% "Medium", 30% "Easy", 20% "Very Easy"

2. **Aggregate Trends**:

- **ALL Category**:

- 35% "Medium" (largest segment)

- 25% "Easy"

- 20% "Hard"

- 15% "Very Easy"

- 5% "Very Hard"

3. **Notable Outliers**:

- **System Control**: Highest "Very Hard" (30%) and "Hard" (40%) segments

- **Notification Trigger**: Largest "Very Easy" (60%) + "Easy" (30%) combined

- **Public Transit Real-Time Status**: Dominant "Medium" (50%) + "Easy" (30%)

### Key Observations

- **Dominant Difficulty**: "Medium" difficulty (dark blue) appears most frequently across tasks.

- **Low Executability**: Only 2 tasks ("route planning," "constrained poi search") show "Unexecutable" (-1) segments.

- **High Complexity**: "System control" and "long trip itinerary" have significant "Hard" (4) and "Very Hard" (5) proportions.

- **Simplicity Cluster**: Tasks like "notification trigger" and "public transit fares" show majority "Very Easy" to "Easy" distributions.

### Interpretation

The data suggests transportation tasks generally fall into moderate difficulty ("Medium" or "Easy"), with functions such as "system control" and "long trip itinerary" being notably complex. The "ALL" category's distribution indicates a skew toward "Medium," implying most functions require moderate reasoning effort. Outliers like "notification trigger" (simplest) and "system control" (most complex) highlight variability in task complexity. The -1 (Unexecutable) and 0 (No Tool Needed) segments correspond to boundary cases that were deliberately sampled for hallucination mitigation, rather than indicating infeasible functions.

</details>

- Cognitive Load in Tool Selection: Measures the ambiguity of intent mapping. It spans from Explicit Mapping (Score 1-2, e.g., 'Navigate to X') to Implicit Reasoning (Score 4-5, e.g., 'Find a quiet place to read within a 20-minute drive,' requiring abstract intent decomposition).

- Execution Chain Depth: Quantifies the logical complexity of the solution path, tracking the number and type of tool invocations and dependency depth (e.g., sequential vs. parallel execution).

- Constraint Complexity: Assesses the density of constraints (spatial, temporal, and preferential) that the agent must jointly optimize.

To operationalize this mechanism at scale, we utilize a strong teacher LLM as an automated evaluator. This evaluator analyzes the structured user logs and assesses them against the three critical competencies to assign a scalar difficulty score $r \in \{-1, 0, 1, \dots, 5\}$ to each sample. The specific prompt template used for this evaluation is detailed in Appendix A.3.

To validate the efficacy of this scoring mechanism, we visualize the distribution of annotated samples across 30 domains in Figure 4. The distribution aligns intuitively with task semantics, providing strong support for our curriculum strategy:

- Spectrum Coverage for Curriculum Learning: As shown in the 'ALL' bar at the bottom of Figure 4, the aggregated dataset achieves a balanced stratification. This allows us to construct training batches that progressively shift from 'Atomic Operations' (Score 1-2) to 'Complex Reasoning' (Score 4-5) throughout the training lifecycle, preventing the reward collapse issues mentioned above.

- Domain-Specific Complexity Profiles: The scorer correctly identifies the inherent hardness of different intents. For instance, Long-trip Itinerary is dominated by Score 4-5 samples (dark blue segments), reflecting its need for multi-constraint optimization. In contrast, Traffic Regulations consists primarily of Score 1-2 samples, confirming the scorer's ability to distinguish between retrieval and reasoning tasks.
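The report does not give the exact batching schedule. A minimal sketch of difficulty-aware batch construction, assuming the scores are grouped into four hypothetical bands and that sampling mass moves linearly from atomic (Score 1-2) to complex (Score 4-5) samples as training progresses; `BANDS`, `band_weights`, and `sample_batch` are illustrative names, not the authors' code.

```python
import random
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical grouping of the difficulty score r in {-1, 0, 1, ..., 5} into bands.
BANDS: Dict[str, set] = {
    "boundary": {-1, 0},  # unexecutable / no tool needed (irrelevance samples)
    "atomic": {1, 2},
    "medium": {3},
    "complex": {4, 5},
}

def band_weights(progress: float) -> Dict[str, float]:
    """Sampling weight per band at training progress in [0, 1] (assumed linear schedule)."""
    p = min(max(progress, 0.0), 1.0)
    return {
        "boundary": 0.1,                       # constant share of boundary cases
        "atomic": 0.5 * (1.0 - p) + 0.1 * p,   # 0.5 at start, 0.1 at end
        "medium": 0.3,
        "complex": 0.1 * (1.0 - p) + 0.5 * p,  # 0.1 at start, 0.5 at end
    }

def sample_batch(samples: List[Tuple[str, int]], progress: float,
                 batch_size: int, rng: random.Random) -> List[str]:
    """Draw a band for each slot according to the schedule, then a query from that band."""
    by_band: Dict[str, List[str]] = defaultdict(list)
    for query, score in samples:
        for band, scores in BANDS.items():
            if score in scores:
                by_band[band].append(query)
    weights = band_weights(progress)
    bands = [b for b in BANDS if by_band[b]]  # skip bands with no samples
    chosen = rng.choices(bands, weights=[weights[b] for b in bands], k=batch_size)
    return [rng.choice(by_band[b]) for b in chosen]

pool = [("q_easy", 1), ("q_mid", 3), ("q_hard", 5), ("q_reject", -1)]
rng = random.Random(0)
early = sample_batch(pool, progress=0.0, batch_size=8, rng=rng)
late = sample_batch(pool, progress=1.0, batch_size=8, rng=rng)
```

Keeping a constant share for the boundary band reflects the emphasis on Score -1/0 samples described next; that fixed share is itself an assumption.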

By curating batches based on these scores, we ensure that STAgent receives a consistent gradient signal throughout its training lifecycle. Furthermore, we place special emphasis on boundary conditions to enhance reliability:

Irrelevance and Hallucination Mitigation (Score -1/0). A critical failure mode in agents is the tendency to hallucinate actions for unsolvable queries. To mitigate this, models must be trained to explicitly reject unanswerable queries or to seek alternative solutions when the desired tools are unavailable.

To achieve this, we constructed a dedicated Irrelevance Dataset (denoted as Ext.1 in Figure 4). By training on these negative samples, the STAgent learns to recognize the boundaries of its toolset, significantly reducing hallucination rates in open-world deployment.

## 2.4 Reward Design

To evaluate the quality of agent interactions, we employ a rubrics-as-reward (Hashemi et al., 2024; Gunjal et al., 2025) method to assess trajectories based on three core dimensions: Reasoning and Proactive Planning, Information Fidelity and Integration, and Presentation and Service Loop. The scalar reward $R \in [0, 1]$ is derived from the following criteria:

- Dimension 1, Reasoning and Proactive Planning: This dimension evaluates the agent's ability to formulate an economical and effective execution plan. A key metric is proactivity: when faced with ambiguous or slightly incorrect user premises (e.g., mismatched location names), the agent is rewarded for correcting the error and actively attempting to solve the underlying intent, rather than passively rejecting the request. It also penalizes the inclusion of redundant parameters in tool calls.

- Dimension 2, Information Fidelity and Integration: This measures how accurately the agent extracts and synthesizes information from tool outputs. We enforce a strict veto policy for hallucinations: any fabrication of factual data (e.g., time, price, and distance) that cannot be grounded in the tool response results in an immediate reward of 0. Conversely, the agent is rewarded for correctly identifying and rectifying factual errors in the user's query using tool evidence.

- Dimension 3, Presentation and Service Loop: This assesses whether the final response effectively closes the service loop. We prioritize responses that are structured, helpful, and provide actionable next steps. The agent is penalized for being overly conservative or terminating the service flow due to minor input errors.

Crucially, we recognize that different user intents require different capabilities. Therefore, we implement a Dynamic Scoring Mechanism where the evaluator autonomously determines the importance of each dimension based on the task type, rather than using fixed weights.

Dynamic Weight Determination. For every query, the evaluator first analyzes the complexity of the user's request and categorizes it into one of three scenarios to assign specific weights ($w$):

- Scenario A: Complex Planning (e.g., 'Plan a 3-day itinerary'). The system prioritizes logical coherence and error handling. Reference Weights: $w_{\text{reas}} \approx 0.6$, $w_{\text{info}} \approx 0.3$, $w_{\text{pres}} \approx 0.1$.

- Scenario B: Information Retrieval (e.g., 'What is the weather today?'). The system prioritizes factual accuracy and data extraction. Reference Weights: $w_{\text{reas}} \approx 0.2$, $w_{\text{info}} \approx 0.6$, $w_{\text{pres}} \approx 0.2$.

- Scenario C: Consultation & Explanation (e.g., 'Explain this policy'). The system prioritizes clarity and user interaction. Reference Weights: $w_{\text{reas}} \approx 0.3$, $w_{\text{info}} \approx 0.3$, $w_{\text{pres}} \approx 0.4$.

Score Aggregation and Hallucination Veto. Once the weights are established, the final reward $R$ is calculated as a weighted sum of the dimension ratings $s_k \in [0, 1]$. To ensure factual reliability, we introduce a Hard Veto mechanism for hallucinations. Let $\mathbb{1}_{H=0}$ be an indicator function, where $H = 1$ denotes the presence of hallucinated facts. The final reward is formulated as:

$$R = \mathbb{1}_{H=0} \times \sum_{k \in \{\text{reas}, \text{info}, \text{pres}\}} w_k \, s_k$$

This formula ensures that any trajectory containing hallucinations is immediately penalized with a zero score, regardless of its reasoning or presentation quality.
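A minimal sketch of the aggregation rule with the hard veto. In the described method the evaluator chooses the weights per query; here the reference weights of Scenarios A-C are hard-coded as an assumption, and the scenario keys and the function name are hypothetical.

```python
from typing import Dict

# Reference weights from Scenarios A-C; the real evaluator sets weights per query.
REFERENCE_WEIGHTS: Dict[str, Dict[str, float]] = {
    "complex_planning": {"reas": 0.6, "info": 0.3, "pres": 0.1},
    "information_retrieval": {"reas": 0.2, "info": 0.6, "pres": 0.2},
    "consultation": {"reas": 0.3, "info": 0.3, "pres": 0.4},
}

def rubric_reward(scenario: str, ratings: Dict[str, float], hallucinated: bool) -> float:
    """R = 1[H=0] * sum_k w_k * s_k, with each dimension rating s_k in [0, 1]."""
    if hallucinated:  # hard veto: any ungrounded fact zeroes the reward
        return 0.0
    for k, s in ratings.items():
        if not 0.0 <= s <= 1.0:
            raise ValueError(f"rating {k}={s} is outside [0, 1]")
    weights = REFERENCE_WEIGHTS[scenario]
    return sum(weights[k] * ratings[k] for k in weights)

r = rubric_reward("complex_planning", {"reas": 1.0, "info": 0.5, "pres": 0.0}, hallucinated=False)
```

Because each weight set sums to 1, the reward stays in $[0, 1]$ whenever the ratings do.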

## 2.5 Supervised Fine-Tuning

Our Supervised Fine-Tuning (SFT) stage is designed to transition the base model from a general-purpose LLM into a specialized spatio-temporal agent. Rather than merely teaching the model to follow instructions, our primary objective is to develop three core agentic capabilities:

Strategic Planning. The ability to decompose abstract user queries (e.g., 'Plan a next-weekend trip') into a logical chain of executable steps.

Precise Tool Orchestration. The capacity to orchestrate a series of tool calls that fulfill the task, with syntactically correct parameters.

Grounded Summarization. The capability to synthesize a final answer from heterogeneous tool outputs (e.g., maps, weather, flights) into a coherent, well-organized response without hallucinating parameters not present in the observations.

## 2.5.1 In-Domain Data Construction

We construct our training data from the curated high-quality prompt pool derived in Section 2.3. To address the long-tail distribution of real-world scenarios where frequent tasks like navigation dominate while complex planning tasks are scarce, we employ a hybrid construction strategy:

Offline Sampling with Strong LLMs. For collected queries, we utilize a Strong LLM (DeepSeek-R1) to generate tool-integrated reasoning (TIR) trajectories. To ensure data quality, we generate $K = 8$ candidate trajectories per query and employ a Verifier (Gemini-3-Pro-Preview) to score them based on the reward dimensions defined in Section 2.4. Only trajectories achieving perfect scores across all dimensions are retained.
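The candidate filter can be sketched as follows, assuming the verifier returns one rating in $[0, 1]$ per rubric dimension and that a "perfect score" means 1.0 on each. `toy_generate` and `toy_verify` are hypothetical stand-ins for the DeepSeek-R1 and Gemini-3-Pro-Preview calls.

```python
import itertools
from typing import Callable, Dict, List

DIMENSIONS = ("reas", "info", "pres")  # rubric dimensions from Section 2.4

def sample_and_filter(query: str,
                      generate: Callable[[str], str],
                      verify: Callable[[str], Dict[str, float]],
                      k: int = 8) -> List[str]:
    """Sample k teacher trajectories and keep those rated 1.0 on every dimension."""
    candidates = [generate(query) for _ in range(k)]
    kept = []
    for traj in candidates:
        scores = verify(traj)  # one verifier call per trajectory
        if all(scores.get(d, 0.0) >= 1.0 for d in DIMENSIONS):
            kept.append(traj)
    return kept

# Toy stand-ins for the teacher and verifier (illustration only).
_ids = itertools.count()

def toy_generate(query: str) -> str:
    return f"{query}-traj{next(_ids)}"

def toy_verify(traj: str) -> Dict[str, float]:
    idx = int(traj.rsplit("traj", 1)[1])
    perfect = idx % 3 == 0  # pretend every third trajectory is flawless
    return {d: 1.0 if perfect else 0.8 for d in DIMENSIONS}

kept = sample_and_filter("q1", toy_generate, toy_verify, k=8)
```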

Synthetic Long-Tail Generation. To bolster the model's performance on rare, complex tasks (e.g., multi-city itinerary planning with budget constraints), we employ In-Context Learning (ICL) to synthesize data. We sample complex tool combinations rarely seen in the existing data distribution and prompt a Strong LLM to synthesize user queries ($q_s$) that require these specific tools in random order. These synthetic queries are then validated through the offline sampling pipeline to ensure executability.
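One way to find "rarely seen" tool combinations is to count co-occurrences in logged trajectories, keep combinations below a count threshold, and shuffle the tool order before prompting. This is a sketch under that assumption; the tool names, the threshold, and the prompt wording are hypothetical.

```python
import random
from collections import Counter
from itertools import combinations
from typing import List, Sequence, Tuple

def rare_tool_combos(logged_tool_sets: List[Sequence[str]], all_tools: Sequence[str],
                     size: int, max_count: int) -> List[Tuple[str, ...]]:
    """Return tool combinations of `size` that co-occur in at most `max_count` logs."""
    counts: Counter = Counter()
    for tools in logged_tool_sets:
        counts.update(combinations(sorted(set(tools)), size))
    return [c for c in combinations(sorted(set(all_tools)), size) if counts[c] <= max_count]

def synthesis_prompt(combo: Tuple[str, ...], rng: random.Random) -> str:
    """Build a prompt asking a strong LLM for a query needing these tools in random order."""
    order = list(combo)
    rng.shuffle(order)
    return ("Write a realistic user request that can only be solved by calling "
            "these tools in this order: " + " -> ".join(order))

logs = [["poi_search", "route_plan"], ["route_plan", "poi_search"], ["weather"]]
tools = ["poi_search", "route_plan", "weather", "flight_search"]
rare = rare_tool_combos(logs, tools, size=2, max_count=0)
prompt = synthesis_prompt(rare[0], random.Random(0))
```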

## 2.5.2 Multi-Step Tool-Integrated Reasoning SFT

We formalize the agent's interaction as a multi-step trajectory optimization problem. Unlike single-turn conversation, agentic reasoning requires the model to alternate between reasoning, tool invocation, and processing tool observations.

We model the probability of a trajectory o as the product of conditional probabilities over tokens. The training objective minimizes the negative log-likelihood:

$$\mathcal{L}_{\mathrm{SFT}}(\theta) = -\frac{1}{C} \sum_{t=1}^{|o_i|} I(o_{i,t}) \log \pi_\theta\left(o_{i,t} \mid q, o_{i,<t}\right)$$

where $o_{i,t}$ denotes the $t$-th token of trajectory $o_i$ and $q$ is the user query. In practice, $q$ is optionally augmented with a corresponding user profile including user state (e.g., current location) and preferences (e.g., personal interests). $I(o_{i,t})$ is an indicator function used for masking, and $C$ is the number of unmasked tokens:

$$C = \sum_{t=1}^{|o_i|} I(o_{i,t})$$

Text segments corresponding to tool observations have $I(o_{i,t}) = 0$ and therefore do not contribute to the final loss.
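A minimal pure-Python sketch of the masked objective for a single trajectory, assuming per-token log-probabilities are already available; a real trainer would compute the same quantity on batched tensors. Names are illustrative.

```python
import math
from typing import List

def masked_sft_loss(token_logprobs: List[float], mask: List[int]) -> float:
    """L = -(1/C) * sum_t I(o_t) * log pi(o_t | q, o_<t), with C = sum_t I(o_t).

    token_logprobs: log-probability the policy assigns to each trajectory token.
    mask: 1 for model-generated tokens (reasoning, tool calls, answer),
          0 for tool-observation tokens, which are excluded from the loss.
    """
    if len(token_logprobs) != len(mask):
        raise ValueError("token_logprobs and mask must have the same length")
    c = sum(mask)
    if c == 0:
        return 0.0  # nothing to learn from a fully masked trajectory
    return -sum(lp for lp, m in zip(token_logprobs, mask) if m) / c

logps = [math.log(0.5), math.log(0.1), math.log(0.25)]
mask = [1, 0, 1]  # the middle token belongs to a tool observation
loss = masked_sft_loss(logps, mask)
```

The observation token's probability has no effect on the result, which is exactly what the mask is for: the model is not trained to predict tool outputs it cannot control.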

## 2.5.3 Dynamic Capability-Aware Curriculum

A core challenge in model training is determining which data points provide the highest information gain Li et al. (2025a). Static difficulty metrics are insufficient because difficulty is inherently relative to the policy model's current parameterization: a task may be trivial for a strong LLM yet lie outside the support of our policy model. Training on trivial samples yields vanishing gradients and increases the risk of overfitting, while training on impossible samples leads to high-bias updates and potential distribution collapse. In fact, samples that are trivial for a strong model but difficult for a weak model indicate a distribution gap between the two, and forcing the weak model to fit the strong model's distribution in such cases can compromise generalization Burns et al. (2023). To bridge this gap, we define learnability not as a static property of the prompt, but as the dynamic relationship between task difficulty and the policy's current capability. Learnable tasks are those that reside on the model's decision boundary: currently uncertain but solvable. Figure 5 illustrates the training procedure of the SFT phase.

Figure 5: The training procedure in the SFT phase.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Flowchart: STAgent Training Pipeline

### Overview

The diagram illustrates a multi-stage training pipeline for STAgent. It begins with a Teacher Model and progresses through iterative refinement of query-answer pairs (QAs) and model training phases. The pipeline emphasizes hierarchical data curation and model adaptation, culminating in a reinforcement learning (RL) stage.

### Components/Axes

- **Key Components**:

- **Teacher Model**: Initial data source.

- **Tiny QA Pool**: Contains sampled/synthetic QA pairs `{x_i, y_i}`.

- **Candidate Query Pool**: Derived from Tiny QA Pool, labeled `{x_i}`.

- **Full QA Pool**: Aggregates N*8 Trajs, Scored QA pairs `{x_i, y_i}`.

- **Certain QAs**: Subset of Full QA Pool with high confidence.

- **Hard Queries**: Subset of Full QA Pool with low confidence.

- **STAgent-SFT-0**: Initial supervised fine-tuning (SFT) model with parameters `π_θ₀`.

- **STAgent-SFT-1**: Second SFT iteration with parameters `π_θ₁`.

- **STAgent-RL**: Final RL-trained model with parameters `π_θ`.

- **Flow Direction**:

- Arrows indicate data flow and training progression (left to right, top to bottom).

### Detailed Analysis

1. **Data Flow**:

- The Teacher Model generates the Tiny QA Pool via sampling and synthetic data.

- The Candidate Query Pool (`{x_i}`) is extracted from the Tiny QA Pool.

- The Full QA Pool combines N*8 Trajs, Scored QA pairs, sourced from both the Candidate Query Pool and Tiny QA Pool.

- The Full QA Pool is split into **Certain QAs** (high-confidence pairs) and **Hard Queries** (low-confidence pairs).

2. **Model Training**:

- **STAgent-SFT-0**: Trained on the Tiny QA Pool using SFT with initial parameters `π_θ₀`.

- **STAgent-SFT-1**: Further refined using SFT on the Certain QAs (high-certainty queries), updating parameters to `π_θ₁`.

- **STAgent-RL**: Final model trained via RL on the Hard Queries (low-certainty queries).

3. **Key Relationships**:

- The Tiny QA Pool serves as the foundational dataset for subsequent stages.

- The Full QA Pool holds the N×8 scored trajectories obtained by probing STAgent-SFT-0 on the candidate queries; these scores separate Certain QAs from Hard Queries.

- STAgent-SFT-1 learns from the Certain QAs (reliable), while STAgent-RL explores the Hard Queries (challenging).

### Key Observations

- **Hierarchical Refinement**: The pipeline progresses from simple sampling (Tiny QA Pool) to complex RL training (STAgent-RL), suggesting iterative improvement.

- **Data Segmentation**: Splitting the Full QA Pool into Certain QAs and Hard Queries routes high-certainty data to the second SFT stage and low-certainty data to RL.

- **Model Evolution**: The transition from SFT-0 to SFT-1 to RL indicates a staged approach to model adaptation, with SFT providing initial supervision and RL enabling dynamic learning.

### Interpretation

This pipeline demonstrates a structured approach to training STAgent. By starting with a Teacher Model and iteratively refining data and models, the design prioritizes scalability and adaptability. SFT establishes a baseline, and RL then optimizes performance on harder, real-world cases. The segmentation of the Full QA Pool into Certain QAs and Hard Queries reflects attention to data quality and difficulty. The diagram contains no numerical values; it conveys the conceptual flow rather than quantitative results.

</details>

To address this, we introduce a Dynamic Capability-Aware Curriculum that actively selects data points based on the policy model's evolving capability distribution. This process consists of four phases:

Phase 1: Policy Initialization. We first establish a baseline capability to allow for meaningful self-assessment. We randomly subsample 10% of the curated prompt pool (denoted as $\mathcal{D}_{\text{pool}}$) as a Tiny Dataset and generate ground-truth trajectories using the Strong LLM: for each prompt, we sample $K = 8$ trajectories and employ a verifier to select the highest-scored generation as the ground truth. We warm up our policy model by training on this subset to obtain the initial policy $\pi_{\theta_0}$.
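Phase 1 can be sketched as below, assuming a seeded uniform subsample and a scalar verifier score per trajectory (best-of-$K$ selection). `build_tiny_dataset`, `toy_generate`, and `toy_score` are hypothetical; the toy functions only stand in for the Strong LLM and the verifier.

```python
import itertools
import random
from typing import Callable, List, Tuple

def build_tiny_dataset(pool: List[str], generate: Callable[[str], str],
                       score: Callable[[str], float], frac: float = 0.1,
                       k: int = 8, seed: int = 0) -> List[Tuple[str, str]]:
    """Subsample `frac` of the pool and keep the best of k teacher trajectories per query."""
    rng = random.Random(seed)
    tiny = rng.sample(pool, max(1, round(frac * len(pool))))
    dataset = []
    for q in tiny:
        candidates = [generate(q) for _ in range(k)]
        dataset.append((q, max(candidates, key=score)))  # highest verifier score wins
    return dataset

# Toy teacher and verifier (illustration only).
_ids = itertools.count()

def toy_generate(q: str) -> str:
    return f"{q}#{next(_ids)}"

def toy_score(traj: str) -> float:
    return float((int(traj.split("#")[1]) * 7) % 11)

tiny_sft = build_tiny_dataset([f"q{i}" for i in range(50)], toy_generate, toy_score)
```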

Phase 2: Distributional Capability Probing. To construct a curriculum aligned with the policy's intrinsic capabilities, we perform a distributional probe of the initialized policy $\pi_{\theta_0}$ against the full unlabeled pool $\mathcal{D}_{\text{pool}}$. Rather than relying on external difficulty heuristics, we estimate the local solvability of each task. For every query $q_i \in \mathcal{D}_{\text{pool}}$, we generate $K = 8$ trajectories $\{o_{i,k}\}_{k=1}^{K} \sim \pi_{\theta_0}(\cdot \mid q_i)$ and use a verifier to evaluate their rewards $r_{i,k}$. This yields the mean $\hat{\mu}_i$ and variance $\hat{\sigma}_i^2$ of the empirical reward distribution.

Here, $\hat{\sigma}_i^2$ serves as an approximate proxy for uncertainty: a high value indicates that the policy behaves inconsistently on $q_i$ yet is capable of generating high-reward solutions. Unlike random noise, when paired with a non-zero mean, this variance signifies the presence of a learnable signal within the policy's sampling space.

Phase 3: Signal-to-Noise Filtration. We formulate data selection as a signal maximization problem. Effective training samples must possess high gradient variance to drive learning while ensuring the gradient direction is grounded in the policy model's distribution. Based on the estimated statistics, we categorize the data into three regions:

- Trivial Region ($\hat{\mu} \approx 1$, $\hat{\sigma}^2 \to 0$): Tasks where the policy has converged to high-quality solutions with high confidence. Training on these samples yields negligible gradients ($\nabla \mathcal{L} \approx 0$) and potentially leads to overfitting.

- Noise Region ($\hat{\mu} \approx 0$, $\hat{\sigma}^2 \to 0$): Tasks effectively outside the policy's current capability scope or containing noisy data. Training the model on these samples often leads to hallucination or negative transfer, as the ground truth lies too far outside the policy's effective support.

- Learnable Region (high $\hat{\sigma}^2$, non-zero $\hat{\mu}$): Tasks that maximize the training signal. These samples lie on the decision boundary, where the policy is inconsistent but capable.

We retain only the Learnable Region. To quantify learnability on a per-query basis, we introduce the Learnability Potential Score $S_i = \hat{\sigma}_i^2 \cdot \hat{\mu}_i$. This metric inherently prioritizes samples that are simultaneously uncertain (high $\hat{\sigma}_i^2$) and feasibly solvable (non-zero $\hat{\mu}_i$), maximizing the expected improvement in policy robustness. The scores are normalized to the range $[0, 1]$.
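Phases 2 and 3 can be sketched as follows. The region thresholds (`var_eps`, `mu_eps`), the use of population variance, and min-max normalization of $S_i$ are assumptions; the report only states that the scores are normalized to $[0, 1]$.

```python
import statistics
from typing import Dict, List

def region(mu: float, var: float, var_eps: float = 1e-3, mu_eps: float = 0.05) -> str:
    """Assign a query to a region from its empirical reward mean and variance."""
    if var > var_eps and mu > mu_eps:
        return "learnable"  # inconsistent but capable
    if mu >= 1.0 - mu_eps:
        return "trivial"    # reliably solved
    if mu <= mu_eps:
        return "noise"      # reliably failed
    return "other"          # low variance at an intermediate mean; not covered by the text

def learnability_scores(rollout_rewards: Dict[str, List[float]]) -> Dict[str, float]:
    """Keep learnable queries and min-max normalize S_i = var_i * mu_i to [0, 1]."""
    raw = {}
    for qid, rewards in rollout_rewards.items():
        mu = statistics.fmean(rewards)
        var = statistics.pvariance(rewards, mu)
        if region(mu, var) == "learnable":
            raw[qid] = var * mu
    if not raw:
        return {}
    lo, hi = min(raw.values()), max(raw.values())
    span = hi - lo
    return {q: (s - lo) / span if span > 0 else 1.0 for q, s in raw.items()}

probe = {  # K = 8 verifier rewards per query under pi_theta_0
    "solved": [1.0] * 8,              # trivial region
    "hopeless": [0.0] * 8,            # noise region
    "mixed": [1.0, 0.0] * 4,          # mu = 0.5,   S = 0.125
    "mostly": [1.0] * 6 + [0.0] * 2,  # mu = 0.75,  S = 0.140625
    "rare": [1.0] + [0.0] * 7,        # mu = 0.125, S = 0.013671875
}
scores = learnability_scores(probe)
```

With binary rewards, $\hat{\sigma}_i^2 = \hat{\mu}_i(1 - \hat{\mu}_i)$, so $S_i = \hat{\mu}_i^2(1 - \hat{\mu}_i)$, which peaks at $\hat{\mu}_i = 2/3$. This is why the query solved in 6 of 8 rollouts outranks the one solved in 4 of 8.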

Phase 4: Adaptive Trajectory Synthesis. Having identified the high-value distribution, we employ an adaptive compute allocation strategy to construct the final SFT dataset, treating the Strong LLM as an expensive oracle. We allocate the sampling budget $B_i$ in proportion to the task's learnability rank, $B_i \propto \operatorname{rank}(S_i)$. Tasks with more uncertainty receive up to $K_{\max} = 8$ samples from the Strong LLM to maximize the probability of recovering a valid reasoning path, while easier tasks receive fewer calls. This ensures that the supervision signal is densest where the policy model is most uncertain, effectively correcting the policy's decision boundary with high-precision ground truth.
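A sketch of rank-proportional budget allocation, assuming budgets are rounded up and floored at one teacher call so that even the lowest-ranked retained query keeps some supervision; `allocate_budget` and `k_min` are hypothetical.

```python
import math
from typing import Dict

def allocate_budget(scores: Dict[str, float], k_max: int = 8, k_min: int = 1) -> Dict[str, int]:
    """Teacher samples per query with B_i proportional to rank(S_i); the top rank gets k_max."""
    ordered = sorted(scores, key=scores.get)  # ascending score -> ranks 1..n
    n = len(ordered)
    return {q: max(k_min, math.ceil(k_max * rank / n))
            for rank, q in enumerate(ordered, start=1)}

budget = allocate_budget({"a": 0.0, "b": 0.5, "c": 0.9, "d": 1.0})
```

Using ranks rather than raw scores makes the allocation insensitive to how the normalized scores are spread out; only their order matters.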

Finally, we aggregate the verified trajectories obtained from this phase. We empirically evaluate various data mixing ratios to determine the optimal training distribution, obtaining $\pi_{\theta_1}$, which serves as the backbone model for subsequent RL training.

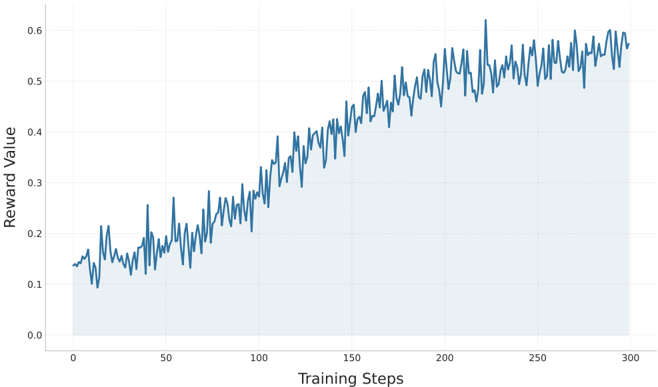

## 2.6 RL

The agentic post-training for STAgent follows the 'SFT-Guided RL' training paradigm, with the RL policy model initialized from $\pi_{\theta_1}$. This initialization provides the agent with foundational instruction-following capabilities prior to the exploration phase in the sandbox environment.

GRPO (Shao et al., 2024) has become the de facto standard for reasoning tasks. Training is conducted within a high-fidelity sandbox environment (described in Section 2.2), which simulates real-world online scenarios. This setup compels the agent to interact dynamically with the environment, specifically learning to verify and execute tool calls to resolve user queries effectively. The training objective seeks to maximize the expected reward while restricting the policy update to prevent significant deviations from the reference model. We formulate the GRPO objective for STAgent as follows:
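Assuming STAgent follows the standard GRPO formulation of Shao et al. (2024), the objective can be sketched as below. Here $\mathcal{D}$ denotes the training query distribution; it, the clipping range $\epsilon$, and the per-token ratio $\rho_{i,t}(\theta)$ are standard GRPO notation assumed for this sketch and are not specified in this report.

$$
\mathcal{J}_{\mathrm{GRPO}}(\theta) = \mathbb{E}_{q \sim \mathcal{D},\ \{o_i\}_{i=1}^{G} \sim \pi_{\theta_{\text{old}}}(\cdot \mid q)} \left[ \frac{1}{G} \sum_{i=1}^{G} \frac{1}{|o_i|} \sum_{t=1}^{|o_i|} \left( \min\left( \rho_{i,t}(\theta)\, \hat{A}_i,\ \operatorname{clip}\left( \rho_{i,t}(\theta), 1 - \epsilon, 1 + \epsilon \right) \hat{A}_i \right) - \beta\, D_{\mathrm{KL}}\left( \pi_\theta \,\|\, \pi_{\mathrm{ref}} \right) \right) \right]
$$

$$
\rho_{i,t}(\theta) = \frac{\pi_\theta(o_{i,t} \mid q, o_{i,<t})}{\pi_{\theta_{\text{old}}}(o_{i,t} \mid q, o_{i,<t})}
$$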

where $\pi_\theta$ and $\pi_{\theta_{\text{old}}}$ denote the current and old policies, respectively. The optimization process operates iteratively on batches of queries. For each query $q$, we sample a group of $G$ independent trajectories $\{o_1, o_2, \dots, o_G\}$ from the old policy $\pi_{\theta_{\text{old}}}$. Once the trajectories are fully rolled out, the reward function detailed in Section 2.4 evaluates the quality of the interaction. The advantage $\hat{A}_i$ for the $i$-th trajectory is computed as

$$\hat{A}_i = \frac{r_i - \operatorname{mean}(\{r_1, \dots, r_G\})}{\operatorname{std}(\{r_1, \dots, r_G\}) + \delta},$$

where $\delta$ is a small constant for numerical stability. $D_{\mathrm{KL}}$ refers to the KL divergence between the current policy $\pi_\theta$ and the reference policy $\pi_{\mathrm{ref}}$, and $\beta$ is the KL coefficient.
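The group-relative advantage can be sketched in a few lines. Using the population standard deviation and $\delta = 10^{-6}$ are assumptions; implementations differ on both.

```python
import statistics
from typing import List

def group_advantages(rewards: List[float], delta: float = 1e-6) -> List[float]:
    """A_i = (r_i - mean(r)) / (std(r) + delta) over one group of G rollouts."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + delta) for r in rewards]

adv = group_advantages([1.0, 0.0, 1.0, 0.0])
flat = group_advantages([0.7, 0.7, 0.7, 0.7])
```

A group whose rollouts all receive the same reward yields zero advantage for every trajectory and hence no learning signal, which is consistent with the motivation for filtering out trivial and noise-region queries before training.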

STAgent is built upon Qwen3-30B-A3B, which features a Mixture-of-Experts (MoE) architecture. In practice, we employ a GRPO variant, Group Sequence Policy Optimization (GSPO) (Zheng et al., 2025a), to stabilize training. Unlike the standard token-wise formulation, GSPO enforces a sequence-level optimization constraint by redefining the importance ratio. Specifically, it computes the ratio as the geometric mean of the token-level likelihood ratios over the entire generated trajectory:

$$s_i(\theta) = \left( \frac{\pi_\theta(o_i \mid q)}{\pi_{\theta_{\text{old}}}(o_i \mid q)} \right)^{\frac{1}{|o_i|}} = \exp\left( \frac{1}{|o_i|} \sum_{t=1}^{|o_i|} \log \frac{\pi_\theta(o_{i,t} \mid q, o_{i,<t})}{\pi_{\theta_{\text{old}}}(o_{i,t} \mid q, o_{i,<t})} \right)$$
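The difference between token-level and sequence-level ratios can be sketched as follows; the function names are illustrative, and the inputs are assumed to be per-token log-probabilities under $\pi_\theta$ and $\pi_{\theta_{\text{old}}}$.

```python
import math
from typing import List

def token_ratios(new_logps: List[float], old_logps: List[float]) -> List[float]:
    """Per-token importance ratios pi_theta / pi_theta_old (GRPO-style)."""
    return [math.exp(n - o) for n, o in zip(new_logps, old_logps)]

def gspo_sequence_ratio(new_logps: List[float], old_logps: List[float]) -> float:
    """s_i = exp(mean_t log(pi_theta / pi_theta_old)): geometric mean of token ratios."""
    if len(new_logps) != len(old_logps) or not new_logps:
        raise ValueError("need equal-length, non-empty log-prob lists")
    return math.exp(sum(n - o for n, o in zip(new_logps, old_logps)) / len(new_logps))

new = [math.log(0.5), math.log(0.2)]
old = [math.log(0.25), math.log(0.4)]
ratios = token_ratios(new, old)     # approximately [2.0, 0.5]
s = gspo_sequence_ratio(new, old)   # approximately 1.0
```

In the example, the two tokens move in opposite directions, so the sequence-level ratio stays at 1. GSPO then clips and weights each whole trajectory by this single length-normalized $s_i(\theta)$ rather than by each token's own ratio.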

## 3 Experiments

## 3.1 Experiment Setups

Our evaluation protocol is designed to comprehensively assess STAgent across two primary dimensions: domain-specific expertise and general-purpose capabilities across a wide spectrum of tasks.

## In-domain Evaluation

To evaluate STAgent's specialized performance in real-world and simulated travel scenarios, we conduct assessments in two environments:

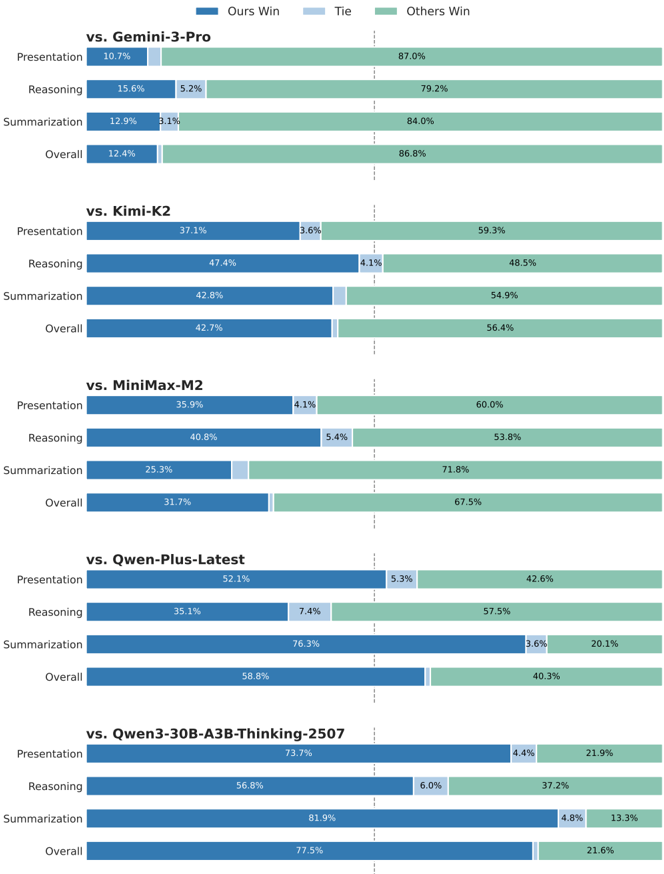

In-domain Online Evaluation. To rapidly evaluate performance in real online environments, we extracted 1,000 high-quality queries covering five task types and seven difficulty levels (as detailed in Section 2.3). For each query, we performed inference 8 times and calculated the average scores. We employ Gemini-3-flash-preview as the judge to compute pairwise win rates between Amap Agent and the baselines across different dimensions.

In-domain Offline Evaluation. We evaluate Amap Agent on TravelBench Cheng et al. (2025), a static sandbox environment containing multi-turn, single-turn, and unsolvable subsets. Following the default protocol, we run inference three times per query (temperature 0.7) and report the average results. GPT-4.1-0414 is used to simulate the user, and Gemini-3-flash-preview serves as the grading model.

## General Capabilities Evaluation

To comprehensively evaluate the general capabilities of our model, we conduct assessments across a diverse set of benchmarks. Unless otherwise specified, we standardize the evaluation settings across all general benchmarks with a temperature of 0.6 and a maximum generation length of 32k tokens. The benchmarks are categorized as follows:

Tool Use & Agentic Capabilities. To test the model's proficiency in tool use and complex agentic interactions, we utilize ACEBench Chen et al. (2025a) and $\tau^2$-Bench Barres et al. (2025). Furthermore, we evaluate function-calling capabilities using BFCL v3 Patil et al. (2025).

Mathematical Reasoning. We assess advanced mathematical problem-solving abilities using AIME 24 and AIME 25 AIME (2025), which serve as proxies for complex logical reasoning.

Coding. We employ LiveCodeBench Jain et al. (2024) to evaluate coding capabilities on contamination-free problems. We evaluate on both the v5 (167 problems) and v6 (175 problems) subsets. Specifically, we set the temperature to 0.2, sample once per problem, and report the Pass@1 accuracy.

General & Alignment Tasks. We evaluate general knowledge and language understanding using MMLU-Pro Wang et al. (2024) and, specifically for Chinese proficiency, C-Eval Huang et al. (2023). To assess how well the model aligns with human preferences and instruction following, we employ ArenaHard-v2.0 Li et al. (2024) and IFEval Zhou et al. (2023), respectively.

## 3.2 Main Results

Figure 6: Amap in-domain online benchmark evaluation.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Bar Chart: Model Performance Comparison Across Evaluation Criteria

### Overview

The chart compares the performance of a reference model ("Ours") against five other models (Gemini-3-Pro, Kimi-K2, MiniMax-M2, Qwen-Plus-Latest, Qwen3-30B-A3B-Thinking-2507) across four evaluation criteria: Presentation, Reasoning, Summarization, and Overall. Results are segmented into three categories: "Ours Win" (blue), "Tie" (light blue), and "Others Win" (green), with percentages displayed for each segment.

### Components/Axes

- **X-Axis**: Evaluation criteria (Presentation, Reasoning, Summarization, Overall) for each model comparison.

- **Y-Axis**: Models being compared (Gemini-3-Pro, Kimi-K2, MiniMax-M2, Qwen-Plus-Latest, Qwen3-30B-A3B-Thinking-2507), listed vertically.

- **Legend**:

- Blue = "Ours Win"

- Light Blue = "Tie"

- Green = "Others Win"

- **Data Format**: Stacked horizontal bars with percentage labels for each segment.

### Detailed Analysis

#### vs. Gemini-3-Pro

- **Presentation**: 10.7% (Ours Win), 5.2% (Tie), 87.0% (Others Win)

- **Reasoning**: 15.6% (Ours Win), 5.2% (Tie), 79.2% (Others Win)

- **Summarization**: 12.9% (Ours Win), 3.1% (Tie), 84.0% (Others Win)

- **Overall**: 12.4% (Ours Win), 1.0% (Tie), 86.8% (Others Win)

#### vs. Kimi-K2

- **Presentation**: 37.1% (Ours Win), 3.6% (Tie), 59.3% (Others Win)

- **Reasoning**: 47.4% (Ours Win), 4.1% (Tie), 48.5% (Others Win)

- **Summarization**: 42.8% (Ours Win), 1.0% (Tie), 54.9% (Others Win)

- **Overall**: 42.7% (Ours Win), 1.0% (Tie), 56.4% (Others Win)

#### vs. MiniMax-M2

- **Presentation**: 35.9% (Ours Win), 4.1% (Tie), 60.0% (Others Win)

- **Reasoning**: 40.8% (Ours Win), 5.4% (Tie), 53.8% (Others Win)

- **Summarization**: 25.3% (Ours Win), 1.0% (Tie), 71.8% (Others Win)

- **Overall**: 31.7% (Ours Win), 1.0% (Tie), 67.5% (Others Win)

#### vs. Qwen-Plus-Latest

- **Presentation**: 52.1% (Ours Win), 5.3% (Tie), 42.6% (Others Win)

- **Reasoning**: 35.1% (Ours Win), 7.4% (Tie), 57.5% (Others Win)

- **Summarization**: 76.3% (Ours Win), 3.6% (Tie), 20.1% (Others Win)

- **Overall**: 58.8% (Ours Win), 1.0% (Tie), 40.3% (Others Win)

#### vs. Qwen3-30B-A3B-Thinking-2507

- **Presentation**: 73.7% (Ours Win), 4.4% (Tie), 21.9% (Others Win)

- **Reasoning**: 56.8% (Ours Win), 6.0% (Tie), 37.2% (Others Win)

- **Summarization**: 81.9% (Ours Win), 4.8% (Tie), 13.3% (Others Win)

- **Overall**: 77.5% (Ours Win), 1.0% (Tie), 21.6% (Others Win)

### Key Observations

1. **Performance Trends**:

- "Ours Win" percentages increase significantly against weaker models (e.g., Qwen-Plus-Latest: 58.8% Overall vs. 40.3% Others Win).

- Against stronger models (e.g., Gemini-3-Pro), "Ours Win" remains low (12.4% Overall), with "Others Win" dominating (86.8%).

- "Tie" percentages are consistently low across all comparisons, never exceeding 7.4% (Reasoning vs. Qwen-Plus-Latest).

2. **Category-Specific Insights**:

- **Reasoning**: Highest "Ours Win" against Qwen3-30B-A3B-Thinking-2507 (56.8%).

- **Summarization**: Strongest performance against Qwen-Plus-Latest (76.3% Ours Win).

- **Presentation**: Weakest performance against Gemini-3-Pro (10.7% Ours Win).

3. **Anomalies**:

- "Others Win" dominates in most comparisons, suggesting the reference model often underperforms relative to other competitors.

- Qwen3-30B-A3B-Thinking-2507 shows the most favorable results for "Ours Win" across all categories.

### Interpretation

The data indicates that the reference model ("Ours") performs best against Qwen3-30B-A3B-Thinking-2507, winning every criterion, and against Qwen-Plus-Latest in Summarization and Presentation, while trailing Qwen-Plus-Latest in Reasoning. It trails Gemini-3-Pro, Kimi-K2, and MiniMax-M2 on every criterion, most sharply against Gemini-3-Pro. The model's overall win rate rises as the strength of the compared model decreases, highlighting its relative advantages in specific evaluation criteria.

</details>

Amap in-domain online benchmark. The experimental results in Figure 6 show clear gains of STAgent over its baseline. Compared with the baseline model, Qwen3-30B-A3B-Thinking-2507, STAgent achieves substantial improvements across all three evaluation dimensions. Notably, in contextual summarization/extraction (win rate: 81.9%) and content presentation (win rate: 73.7%), our model exhibits a robust capability to accurately synthesize preceding information and to present solutions that align with user requirements. Compared with Qwen-Plus-Latest, STAgent shows a performance gap in reasoning and planning but achieves a marginal lead in presentation and a significant advantage in summarization. Against MiniMax-M2, Kimi-K2, and Gemini-3-Pro-preview, a performance gap persists across all three dimensions; we attribute this primarily to the constraints of model scale (30B), which limit further enhancement of STAgent's capabilities. Overall, these results validate the efficacy and promising potential of our proposed solution in strengthening tool invocation, response extraction, and summarization within map-based scenarios.

Amap in-domain offline benchmark. As shown in Table 2, our model trained on top of Qwen3-30B achieves consistent improvements across all three subtasks, with relative gains of +11.7% (Multi-turn), +5.8% (Single-turn), and +26.1% (Unsolved) over the Qwen3-30B-Thinking baseline. It also delivers a higher overall score (70.3) than substantially larger models such as DeepSeek R1 (64.7) and Qwen3-235B-Instruct (69.9). Notably, our model attains the best performance on the Multi-turn subtask (66.6) and the second-best performance on the Single-turn subtask (73.4), which further supports the effectiveness of our training pipeline.

General domain evaluation. The main experimental results, summarized in Table 3, support several key observations. First, STAgent achieves a significant performance leap on the in-domain benchmark (TravelBench), improving by 10.1 points over its initialization model, Qwen3-30B-A3B-Thinking. Notably, STAgent surpasses larger-scale models, including Qwen3-235B-A22B-Thinking-2507 and DeepSeek-R1-0528, while performing comparably to Qwen3-235B-A22B-Instruct-2507. Second, on the tool-use benchmarks STAgent gains 4.4 points on BFCL v3 and stays within one point of its initialization model on ACEBench and $\tau^2$-Bench, which suggests that training on our private domain tools generalizes to tool calling in other functional domains. Finally, the model largely maintains its performance on public benchmarks for mathematics, coding, and general capabilities: most changes are within two points, with ArenaHard-v2.0 (-5.0) the largest drop. This indicates that our domain-specific reinforcement learning process does not substantially degrade general-purpose performance or fundamental reasoning skills. Despite being a specialized model for the spatio-temporal domain, STAgent still achieves strong performance in general domains while maintaining its strong in-domain capabilities, demonstrating the effectiveness of our training methodology.

Table 2: Results on TravelBench, covering three subtasks: Multi-turn, Single-turn, and Unsolved. Ins = Instruct; Th = Thinking.

| Model | Multi-turn | Single-turn | Unsolved | Overall |
|--------------------------|--------------|---------------|------------|-----------|
| DeepSeek-R1-0528 | 34.3 | 76.1 | 83.7 | 64.7 |
| Qwen3-235B-A22B-Ins-2507 | 60.1 | 69.7 | 80.0 | 69.9 |
| Qwen3-235B-A22B-Th-2507 | 56.6 | 73.2 | 51.7 | 60.5 |
| Qwen3-30B-A3B-Th-2507 | 59.6 | 69.4 | 56.3 | 61.8 |
| Qwen3-14B | 47.0 | 57.0 | 54.0 | 52.7 |
| Qwen3-4B | 42.0 | 42.1 | 73.0 | 52.4 |
| STAgent | 66.6 | 73.4 | 71.0 | 70.3 |

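Table 3: Model performance across general and in-domain benchmarks. Ins = Instruct; Th = Thinking. ∆ (30B-A3B) is the score difference between STAgent and its initialization model, Qwen3-30B-A3B-2507; "-" marks results that are not reported.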

| Domain | Benchmark | Qwen3-4B-Thinking-2507 | Qwen3-14B | Qwen3-30B-A3B-2507 | Qwen3-235B-A22B-Ins-2507 | Qwen3-235B-A22B-Th-2507 | DeepSeek-R1-0528 | STAgent | ∆ (30B-A3B) |
|-----------|------------------|---------------------------|-------------|-----------------------|-----------------------------|----------------------------|---------------------|-----------|---------------|
| Tool Use | ACEBench | 71.7 | 69.8 | 75.7 | 75.6 | 75.7 | - | 75.3 | -0.4 |
| Tool Use | Tau2-Bench | 46.2 | 37.6 | 47.7 | 52.4 | 58.5 | 52.7 | 47.0 | -0.7 |
| Tool Use | BFCL V3 | 71.2 | 70.4 | 72.4 | 70.9 | 71.9 | 63.8 | 76.8 | +4.4 |
| Math | AIME 24 | 83.8 | 79.3 | 91.3 | 80.8 | 93.8 | 91.4 | 90.2 | -0.9 |
| Math | AIME 25 | 81.3 | 70.4 | 85.0 | 70.3 | 92.3 | 87.5 | 85.2 | +0.2 |
| Coding | LiveCodeBench-v5 | 61.7 | 63.5 | 70.1 | 57.5 | 68.3 | - | 70.7 | +0.6 |
| Coding | LiveCodeBench-v6 | 55.2 | 55.4 | 66.0 | 51.8 | 74.1 | 73.3 | 66.3 | +0.3 |
| General | ArenaHard-v2.0 | 34.9 | 30.4 | 51.4 | 79.2 | 79.7 | - | 46.4 | -5.0 |
| General | IFEval | 87.4 | 85.4 | 88.9 | 88.7 | 87.8 | 79.1 | 87.1 | -1.8 |
| General | MMLU-Pro | 74.0 | 77.4 | 80.9 | 83.0 | 84.4 | 85.0 | 80.5 | -0.4 |
| General | C-Eval | 72.3 | 87.5 | 87.1 | 90.7 | 92.0 | 91.5 | 87.9 | +0.8 |
| In-domain | TravelBench | 60.8 | 52.7 | 60.2 | 69.9 | 60.5 | 64.7 | 70.3 | +10.1 |

## 4 Conclusion

In this work, we present STAgent, an agentic model specifically designed to address complex reasoning tasks in real-world spatio-temporal scenarios. A stable tool-calling environment, high-quality data curation, and a difficulty-aware training recipe collectively contribute to the model's performance. Specifically, we constructed a tool-calling environment that supports tools across 10 distinct domains and enables stable, highly concurrent operation. Furthermore, we curated a set of high-quality candidate queries from a massive corpus of real-world historical queries, retaining less than 1% of the raw data. Finally, we designed an SFT-guided RL training strategy so that the model's capabilities continue to improve throughout training. Empirical results demonstrate that STAgent, built on Qwen3-30B-A3B-Thinking-2507, outperforms substantially larger models, including the Qwen3-235B-A22B variants and DeepSeek-R1-0528, on the domain-specific TravelBench benchmark while maintaining strong generalization across general tasks. We believe this work not only provides a robust solution for spatio-temporal intelligence but also offers a scalable and effective paradigm for developing specialized agents in other complex, open-ended real-world environments.
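The abstract describes the SFT-guided recipe as a seed SFT model that measures query difficulty, a second SFT stage on high-certainty queries, and a final RL stage on low-certainty queries. The routing step can be sketched as follows, assuming certainty is estimated as the seed model's pass rate over k sampled rollouts. The pass-rate proxy, k = 8, the 0.75 threshold, and the names `pass_rate`, `route_queries`, and `Split` are hypothetical; the report does not specify them here.

```python
import itertools
from dataclasses import dataclass, field
from typing import Callable, List, Sequence

# Hypothetical sketch: certainty = seed-SFT pass rate; k and sft_min are illustrative only.

@dataclass
class Split:
    sft: List[str] = field(default_factory=list)  # high-certainty queries -> second SFT stage
    rl: List[str] = field(default_factory=list)   # low-certainty queries -> RL stage

def pass_rate(query: str, rollout_succeeds: Callable[[str], bool], k: int) -> float:
    """Fraction of k seed-model rollouts on `query` that a verifier marks as successful."""
    return sum(rollout_succeeds(query) for _ in range(k)) / k

def route_queries(queries: Sequence[str], rollout_succeeds: Callable[[str], bool],
                  k: int = 8, sft_min: float = 0.75) -> Split:
    """Send queries the seed model already solves reliably to SFT and the rest to RL."""
    split = Split()
    for q in queries:
        target = split.sft if pass_rate(q, rollout_succeeds, k) >= sft_min else split.rl
        target.append(q)
    return split

# Usage with a deterministic stand-in for "sample a rollout and verify it".
outcomes = {
    "easy": itertools.cycle([True]),                       # 8/8 successes -> SFT
    "hard": itertools.cycle([True, False, False, False]),  # 2/8 successes -> RL
}
split = route_queries(["easy", "hard"], lambda q: next(outcomes[q]))
print(split)  # Split(sft=['easy'], rl=['hard'])
```

In a real pipeline `rollout_succeeds` would sample from the seed SFT model in the tool environment and score the trajectory; the two-way split mirrors the high-certainty/low-certainty division described in the abstract.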

## Author Contributions

## Project Leader

Yulan Hu

## Core Contributors

Xiangwen Zhang, Sheng Ouyang, Hao Yi, Lu Xu, Qinglin Lang, Lide Tan, Xiang Cheng

## Contributors

Tianchen Ye, Zhicong Li, Ge Chen, Wenjin Yang, Zheng Pan, Shaopan Xiong, Siran Yang, Ju Huang, Yan Zhang, Jiamang Wang, Yong Liu, Yinfeng Huang, Ning Wang, Tucheng Lin, Xin Li, Ning Guo

## Acknowledgments

We extend our sincere gratitude to the ROLL team (Wang et al., 2025) for their exceptional support with the training infrastructure. Their asynchronous rollout and training strategy yielded a nearly 80% increase in training efficiency. We also thank Professor Yong Liu of the Gaoling School of Artificial Intelligence, Renmin University of China, for his insightful guidance on our SFT-guided RL training recipe.

## References

- AIME. AIME problems and solutions. https://artofproblemsolving.com/wiki/index.php/AIME_Problems_and_Solutions, 2025.

- Victor Barres, Honghua Dong, Soham Ray, Xujie Si, and Karthik Narasimhan. τ²-bench: Evaluating conversational agents in a dual-control environment, 2025. URL https://arxiv.org/abs/2506.07982.

- Song Bian, Minghao Yan, Anand Jayarajan, Gennady Pekhimenko, and Shivaram Venkataraman. What limits agentic systems efficiency? arXiv preprint arXiv:2510.16276, 2025.

- Collin Burns, Pavel Izmailov, Jan Hendrik Kirchner, Bowen Baker, Leo Gao, Leopold Aschenbrenner, Yining Chen, Adrien Ecoffet, Manas Joglekar, Jan Leike, et al. Weak-to-strong generalization: Eliciting strong capabilities with weak supervision. arXiv preprint arXiv:2312.09390, 2023.

- Chen Chen, Xinlong Hao, Weiwen Liu, Xu Huang, Xingshan Zeng, Shuai Yu, Dexun Li, Yuefeng Huang, Xiangcheng Liu, Wang Xinzhi, and Wu Liu. ACEBench: A comprehensive evaluation of LLM tool usage. In Christos Christodoulopoulos, Tanmoy Chakraborty, Carolyn Rose, and Violet Peng (eds.), Findings of the Association for Computational Linguistics: EMNLP 2025, pp. 12970-12998, Suzhou, China, November 2025a. Association for Computational Linguistics. ISBN 979-8-89176-335-7. doi: 10.18653/v1/2025.findings-emnlp.697. URL https://aclanthology.org/2025.findings-emnlp.697/.

- Mingyang Chen, Linzhuang Sun, Tianpeng Li, Haoze Sun, Yijie Zhou, Chenzheng Zhu, Haofen Wang, Jeff Z Pan, Wen Zhang, Huajun Chen, et al. Learning to reason with search for LLMs via reinforcement learning. arXiv preprint arXiv:2503.19470, 2025b.

- Xiang Cheng, Yulan Hu, Xiangwen Zhang, Lu Xu, Zheng Pan, Xin Li, and Yong Liu. TravelBench: A real-world benchmark for multi-turn and tool-augmented travel planning. 2025. URL https://api.semanticscholar.org/CorpusID:284313793.

- Guanting Dong, Hangyu Mao, Kai Ma, Licheng Bao, Yifei Chen, Zhongyuan Wang, Zhongxia Chen, Jiazhen Du, Huiyang Wang, Fuzheng Zhang, et al. Agentic reinforced policy optimization. arXiv preprint arXiv:2507.19849, 2025.

- Zhibin Gou, Zhihong Shao, Yeyun Gong, Yelong Shen, Yujiu Yang, Minlie Huang, Nan Duan, and Weizhu Chen. ToRA: A tool-integrated reasoning agent for mathematical problem solving. arXiv preprint arXiv:2309.17452, 2023.

- Anisha Gunjal, Anthony Wang, Elaine Lau, Vaskar Nath, Yunzhong He, Bing Liu, and Sean M Hendryx. Rubrics as rewards: Reinforcement learning beyond verifiable domains. In NeurIPS 2025 Workshop on Efficient Reasoning, 2025.

- Helia Hashemi, Jason Eisner, Corby Rosset, Benjamin Van Durme, and Chris Kedzie. LLM-Rubric: A multidimensional, calibrated approach to automated evaluation of natural language texts. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 13806-13834, 2024.

- Yuzhen Huang, Yuzhuo Bai, Zhihao Zhu, Junlei Zhang, Jinghan Zhang, Tangjun Su, Junteng Liu, Chuancheng Lv, Yikai Zhang, Jiayi Lei, Yao Fu, Maosong Sun, and Junxian He. C-Eval: A multi-level multi-discipline Chinese evaluation suite for foundation models, 2023. URL https://arxiv.org/abs/2305.08322.

- Naman Jain, King Han, Alex Gu, Wen-Ding Li, Fanjia Yan, Tianjun Zhang, Sida Wang, Armando Solar-Lezama, Koushik Sen, and Ion Stoica. LiveCodeBench: Holistic and contamination free evaluation of large language models for code, 2024. URL https://arxiv.org/abs/2403.07974.

- Mingcong Lei, Yiming Zhao, Ge Wang, Zhixin Mai, Shuguang Cui, Yatong Han, and Jinke Ren. STMA: A spatio-temporal memory agent for long-horizon embodied task planning, 2025. URL https://arxiv.org/abs/2502.10177.

- Ang Li, Zhihang Yuan, Yang Zhang, Shouda Liu, and Yisen Wang. Know when to explore: Difficulty-aware certainty as a guide for LLM reinforcement learning. arXiv preprint arXiv:2509.00125, 2025a.

- Tianle Li, Wei-Lin Chiang, Evan Frick, Lisa Dunlap, Tianhao Wu, Banghua Zhu, Joseph E Gonzalez, and Ion Stoica. From crowdsourced data to high-quality benchmarks: Arena-Hard and BenchBuilder pipeline. arXiv preprint arXiv:2406.11939, 2024.

- Zhong-Zhi Li, Duzhen Zhang, Ming-Liang Zhang, Jiaxin Zhang, Zengyan Liu, Yuxuan Yao, Haotian Xu, Junhao Zheng, Pei-Jie Wang, Xiuyi Chen, et al. From system 1 to system 2: A survey of reasoning large language models. arXiv preprint arXiv:2502.17419, 2025b.

- Heng Lin and Zhongwen Xu. Understanding tool-integrated reasoning, 2025. URL https://arxiv.org/abs/2508.19201.

- Aixin Liu, Aoxue Mei, Bangcai Lin, Bing Xue, Bingxuan Wang, Bingzheng Xu, Bochao Wu, Bowei Zhang, Chaofan Lin, Chen Dong, et al. DeepSeek-V3.2: Pushing the frontier of open large language models. arXiv preprint arXiv:2512.02556, 2025.

- Yansong Ning, Rui Liu, Jun Wang, Kai Chen, Wei Li, Jun Fang, Kan Zheng, Naiqiang Tan, and Hao Liu. DeepTravel: An end-to-end agentic reinforcement learning framework for autonomous travel planning agents. arXiv preprint arXiv:2509.21842, 2025.

- Shishir G Patil, Huanzhi Mao, Fanjia Yan, Charlie Cheng-Jie Ji, Vishnu Suresh, Ion Stoica, and Joseph E. Gonzalez. The Berkeley Function Calling Leaderboard (BFCL): From tool use to agentic evaluation of large language models. In Forty-second International Conference on Machine Learning, 2025. URL https://openreview.net/forum?id=2GmDdhBdDk.

- Changle Qu, Sunhao Dai, Xiaochi Wei, Hengyi Cai, Shuaiqiang Wang, Dawei Yin, Jun Xu, and Ji-Rong Wen. Tool learning with large language models: A survey. Frontiers of Computer Science, 19(8):198343, 2025.

- Ning Shang, Yifei Liu, Yi Zhu, Li Lyna Zhang, Weijiang Xu, Xinyu Guan, Buze Zhang, Bingcheng Dong, Xudong Zhou, Bowen Zhang, et al. rStar2-Agent: Agentic reasoning technical report. arXiv preprint arXiv:2508.20722, 2025.

- Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Yang Wu, et al. DeepSeekMath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300, 2024.

- Kimi Team, Yifan Bai, Yiping Bao, Guanduo Chen, Jiahao Chen, Ningxin Chen, Ruijue Chen, Yanru Chen, Yuankun Chen, Yutian Chen, et al. Kimi K2: Open agentic intelligence. arXiv preprint arXiv:2507.20534, 2025.

- Weixun Wang, Shaopan Xiong, Gengru Chen, Wei Gao, Sheng Guo, Yancheng He, Ju Huang, Jiaheng Liu, Zhendong Li, Xiaoyang Li, et al. Reinforcement learning optimization for large-scale learning: An efficient and user-friendly scaling library. arXiv preprint arXiv:2506.06122, 2025.

- Yubo Wang, Xueguang Ma, Ge Zhang, Yuansheng Ni, Abhranil Chandra, Shiguang Guo, Weiming Ren, Aaran Arulraj, Xuan He, Ziyan Jiang, Tianle Li, Max Ku, Kai Wang, Alex Zhuang, Rongqi Fan, Xiang Yue, and Wenhu Chen. MMLU-Pro: A more robust and challenging multi-task language understanding benchmark, 2024. URL https://arxiv.org/abs/2406.01574.

- Jian Xie, Kai Zhang, Jiangjie Chen, Tinghui Zhu, Renze Lou, Yuandong Tian, Yanghua Xiao, and Yu Su. TravelPlanner: A benchmark for real-world planning with language agents. arXiv preprint arXiv:2402.01622, 2024.

- Zhangchen Xu, Adriana Meza Soria, Shawn Tan, Anurag Roy, Ashish Sunil Agrawal, Radha Poovendran, and Rameswar Panda. Toucan: Synthesizing 1.5M tool-agentic data from real-world MCP environments. arXiv preprint arXiv:2510.01179, 2025.

- An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, et al. Qwen3 technical report. arXiv preprint arXiv:2505.09388, 2025.

- Zhaochen Yu, Ling Yang, Jiaru Zou, Shuicheng Yan, and Mengdi Wang. Demystifying reinforcement learning in agentic reasoning. arXiv preprint arXiv:2510.11701, 2025.

- Aohan Zeng, Xin Lv, Qinkai Zheng, Zhenyu Hou, Bin Chen, Chengxing Xie, Cunxiang Wang, Da Yin, Hao Zeng, Jiajie Zhang, et al. GLM-4.5: Agentic, reasoning, and coding (ARC) foundation models. arXiv preprint arXiv:2508.06471, 2025.

- Guibin Zhang, Hejia Geng, Xiaohang Yu, Zhenfei Yin, Zaibin Zhang, Zelin Tan, Heng Zhou, Zhongzhi Li, Xiangyuan Xue, Yijiang Li, et al. The landscape of agentic reinforcement learning for LLMs: A survey. arXiv preprint arXiv:2509.02547, 2025.

- Chujie Zheng, Shixuan Liu, Mingze Li, Xiong-Hui Chen, Bowen Yu, Chang Gao, Kai Dang, Yuqiong Liu, Rui Men, An Yang, et al. Group sequence policy optimization. arXiv preprint arXiv:2507.18071, 2025a.

- Haozhen Zheng, Beitong Tian, Mingyuan Wu, Zhenggang Tang, Klara Nahrstedt, and Alex Schwing. Spatio-temporal LLM: Reasoning about environments and actions. arXiv preprint arXiv:2507.05258, 2025b.

- Jeffrey Zhou, Tianjian Lu, Swaroop Mishra, Siddhartha Brahma, Sujoy Basu, Yi Luan, Denny Zhou, and Le Hou. Instruction-following evaluation for large language models. arXiv preprint arXiv:2311.07911, 2023.

## A Tool Schemas and Prompt Templates

## A.1 Tool schemas

## 1. Map & Navigation Tools

- map search places: Searches for points of interest (POIs) based on keywords, categories, or addresses. Supports nearby search with a customizable radius, administrative-region filtering, and multiple sorting options (distance, rating, price). Returns rich metadata, including ratings, prices, business hours, and user reviews.