Toward Safe and Responsible AI Agents:

A Three-Pillar Model for

Transparency, Accountability, and Trustworthiness

Edward C. Cheng

echeng04@stanford.edu

Jeshua Cheng

jeshua.cheng@inquiryon.com

Alice Siu asiu@stanford.edu

[Warning: Draw object ignored]

Abstract – This paper presents a conceptual and operational framework for developing and operating safe and trustworthy AI agents based on a Three-Pillar Model grounded in transparency, accountability, and trustworthiness. Building on prior work in Human-in-the-Loop systems, reinforcement learning, and collaborative AI, the framework defines an evolutionary path toward autonomous agents that balances increasing automation with appropriate human oversight. The paper argues that safe agent autonomy must be achieved through progressive validation, analogous to the staged development of autonomous driving, rather than through immediate full automation. Transparency and accountability are identified as foundational requirements for establishing user trust and for mitigating known risks in generative AI systems, including hallucinations, data bias, and goal misalignment, such as the inversion problem. The paper further describes three ongoing work streams supporting this framework: public deliberation on AI agents conducted by the Stanford Deliberative Democracy Lab, cross-industry collaboration through the Safe AI Agent Consortium, and the development of open tooling for an agent operating environment aligned with the Three-Pillar Model. Together, these contributions provide both conceptual clarity and practical guidance for enabling the responsible evolution of AI agents that operate transparently, remain aligned with human values, and sustain societal trust.

Keywords— Generative AI, AI Agent, Human-in-the-Loop, HITL, RLHF, Responsible AI, Trustworthy AI

[Warning: Draw object ignored]

1. Introduction

The emergence of AI agents marks a new phase in the evolution of generative AI. While traditional chatbots focus on generating text-based responses, AI agents extend this capability into real-world action. These systems can execute tasks, reason over goals, and make decisions on behalf of humans. This shift from text generation to autonomous task execution holds the key to unlocking the economic and practical value of generative AI. Yet, as these systems gain autonomy and agency, the risks of error, bias, and misalignment also multiply. When AI agents make consequential real-life decisions, such as transferring funds, filing drug prescriptions, drafting contracts, or guiding robotic actions, their mistakes may lead to financial losses, privacy breaches, or even physical harms. These errors may arise from training biases, lack of situational context, hallucinated reasoning, or misalignment between user intent and model objectives. Consequently, the field is confronted with an urgent challenge: how to ensure safe, transparent, and accountable AI agents that enhance productivity without compromising accuracy, trust or human values.

A growing body of recent research has emerged to address this challenge, focusing on the Human-in-the-Loop (HITL) paradigm and its extensions as means to govern, calibrate, and align AI agent behavior. These works explore how human expertise, oversight, and ethical grounding can be woven into the AI learning and action loop to produce systems that are both impactful and controllable. Collectively, they represent a growing consensus that human-AI collaboration, rather than full automation, is the most promising pathway toward efficient, effective, and safe AI agents that will result in higher productivity gain [1].

To organize this literature, we can group the representative surveys into three major thematic clusters that trace the conceptual evolution of safe AI agent design:

1. Foundational theories of human-in-the-loop AI and machine learning.

1. Operational frameworks and platforms for human-AI collaboration.

1. Emerging approaches for uncertainty alignment and human-governed AI agents.

1. Foundational Theories of Human-in-the-Loop AI

Early research established the theoretical and ethical foundations for integrating humans into the AI lifecycle. Zanzotto (2019) proposed Human-in-the-loop Artificial Intelligence (HitAI) as both a moral and structural correction to the unregulated growth of autonomous AI [2]. He argued that humans are not mere annotators but the original “knowledge producers” whose insights underpin AI performance and thus must remain central to both credit and control. Wu et al. (2022) expanded this notion through a systematic survey of HITL for machine learning, framing it as a data-centric methodology that unites human cognition with computational scalability. They demonstrated that effective human involvement improves labeling efficiency, interpretability, and robustness, forming the foundation for iterative feedback loops in model development [3].

Building on these theoretical bases, Mosqueira-Rey et al. (2023) presented a unifying taxonomy of Human-in-the-Loop Machine Learning (HITL-ML) paradigms [4]. They identified key interaction modes, which include Active Learning, Interactive ML, Machine Teaching, Curriculum Learning, and Explainable AI. They revealed that human-AI relationships exist along a continuum of control: from machine-driven query optimization to human-driven knowledge transfer and interpretation. These early frameworks collectively redefined HITL as not simply supervision, but shared agency between human reasoning and machine inference, setting the epistemic groundwork for subsequent advances in safety and transparency.

Extending the HITL perspective beyond technical design, recent studies from MIT Sloan introduced a management-oriented framework known as AI Alignment. This paradigm emphasizes that model accuracy, reliability in real-world contexts, and stakeholder relevance must be achieved through continuous human engagement. It reframes human involvement not only as a safeguard but also as a means for organizations to learn and adapt as they deploy AI. Grounded in empirical case studies, this framework shows that practices such as expert feedback and stakeholder participation are essential for building safe, context-aware AI systems [5]. A complementary MIT Sloan study found that asking critical safety questions early in the AI development process helps prevent systemic errors and security vulnerabilities, further reinforcing the importance of proactive human oversight [6].

1. Operational Frameworks for Safe and Collaborative AI Agents

As Human-in-the-Loop principles matured, a second wave of research shifted toward practical frameworks and system architectures that enable effective human-AI collaboration in real-world, embodied environments. Bellos and Siskind (2025) exemplify this transition by introducing a structured evaluation framework, a multimodal dataset, and an augmented-reality (AR) AI agent designed to guide humans through complex physical tasks such as culinary cooking and battlefield medicine. Their empirical studies demonstrate that interactive, context-aware guidance significantly improves task success rates, reduces procedural errors, and enhances user experience. Importantly, their results also show that exposure to AI-assisted guidance leads to measurable improvements in subsequent unassisted task performance, indicating that AI agents can support not only immediate task completion but also longer-term human skill acquisition. These findings position AI agents as collaborative partners that augment human capability rather than as purely automated systems [7].

In parallel, Mozannar et al. (2025) introduced Magentic-UI, an open-source user-interface platform for human-in-the-loop agentic systems. Built on Microsoft’s Magentic-One framework, it enables users to co-plan, co-execute, approve, and verify AI actions in complex digital tasks such as coding and document handling [8]. The platform embeds human oversight through structured, repeatable mechanisms. It supports co-planning, co-tasking, action approval, and answer verification, establishing a controlled environment for studying trust calibration, safety, and usability in AI agents. Together, these efforts move the field from abstract advocacy to practical system engineering, demonstrating that safety and transparency can be designed into agent interfaces, workflows, and orchestration protocols.

1. Emerging Approaches for Uncertainty-Aware and Human-Governed AI Agents

Recent work has deepened the mathematical and procedural foundations of safety and alignment. Retzlaff et al. (2024) surveyed the domain of Human-in-the-Loop Reinforcement Learning (HITL-RL), arguing that reinforcement learning (RL) inherently depends on human feedback and should be understood as a HITL paradigm. Their work outlined design requirements such as feedback quality, trust calibration, and explainability for moving from human-guided to human-governed learning [9]. Complementing this, Ren et al. (2023) proposed the KNOWNO (“Know When You Don’t Know”) framework for LLM-driven robotic planners to identify critical moments that require human involvement. By employing conformal prediction to quantify uncertainty, KNOWNO enables robots to detect when their confidence falls below a safety threshold and proactively request human input to ensure safe and reliable task execution [10]. This model of uncertainty alignment provides formal statistical guarantees on task success while minimizing unnecessary human intervention. This work represents a crucial step toward self-aware, help-seeking agents.

At a broader institutional level, research from Harvard University has expanded the discussion of AI safety to include ethics, governance, and societal accountability. Allen et al. (2024) proposed a democratic model of power-sharing liberalism, emphasizing human flourishing, shared authority, and institutional accountability. They argued that AI governance must move beyond risk management to actively promote public goods, equality, and autonomy through inclusive participation and transparent oversight. Their framework identifies six core governance tasks: mitigating harm, managing emergent capabilities, preventing misuse, advancing public benefit, building human capital, and strengthening democratic capacity [11]. Complementing this perspective, Barroso and Mello (2024) examined AI as both a revolutionary and perilous force shaping humanity’s future, calling for a global governance framework grounded in human dignity, transparency, accountability, and democratic oversight [12]. Together, these contributions frame AI not as a force to restrain but as a catalyst for renewing democracy and reinforcing collective well-being.

Finally, Natarajan et al. (2025) reframed the entire discussion through the concept of AI-in-the-Loop (AI2L). Their analysis reveals that many systems labeled as HITL should be considered as AI2L, where humans, not AI, remain the decision-makers. They argue that this distinction is critical for designing systems that emphasize collaboration over automation, human impact over algorithmic efficiency, and co-adaptive intelligence over substitution [13]. This reorientation marks a philosophical inflection point: moving from human-assisted AI to AI-assisted humanity.

1. Toward a Framework for Safe, Transparent AI Agents

Across these studies, a clear trajectory emerges. The field has progressed from recognizing the ethical necessity of human oversight, to engineering collaborative systems, and to developing experimentally grounded mechanisms for uncertainty and governance. Collectively, these efforts affirm that the challenge of AI agent safety, transparency, and alignment is both urgent and tractable. Embedding humans as teachers, collaborators, and governors within the AI lifecycle consistently improves reliability and trustworthiness, yet fragmentation persists across methodologies and evaluation metrics.

This paper advances the next step to synthesize these developments into a unified conceptual framework and a set of guiding principles that integrate HITL, AI2L, uncertainty alignment, and human-governed learning into a progressively improving autonomous environment. Together, these foundations define an operational setting for a new generation of AI agents that are transparent by design, collaborative by nature, and accountable in operation, with the explicit goal of enabling increasing level of autonomy in a safe, controlled, and trustworthy manner.

1. The Evolution Path Towards Autonomous Agents

The vision of achieving fully autonomous AI agents represents one of the most ambitious goals in artificial intelligence. However, this vision cannot be realized in a single leap. It must evolve through progressive stages of validation and oversight, where human involvement is reduced only as confidence in the system’s performance and alignment grows through proven safety, reliability, and accountability. This evolutionary approach has clear precedents in other industries, particularly in the development of autonomous driving.

1. Lessons from Autonomous Driving

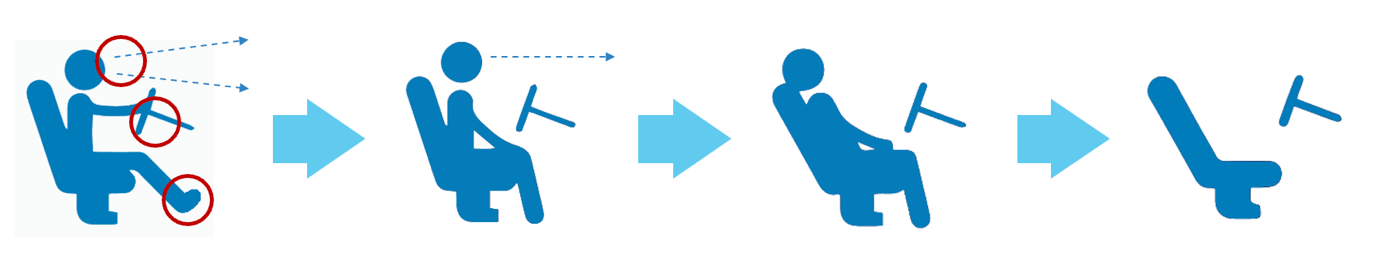

The field of autonomous driving provides an instructive example of how automation can evolve responsibly. Early driver-assist systems such as adaptive cruise control and lane-keeping support were designed to assist rather than replace human judgment. These systems required the driver to maintain foot on the pedal, hands on the wheel, and eyes on the road at all times. As perception models, control algorithms, and sensor fusion technologies advanced, vehicles began to handle more complex scenarios independently, such as automatic parking and highway lane changes. At this stage, the human driver could briefly disengage from active control but still had to monitor the road and be prepared to intervene if necessary.

<details>

<summary>SafeAIAgent-img001.png Details</summary>

### Visual Description

## Diagram: Driver Posture and Vehicle Control Transition Sequence

### Overview

The image is a four-panel sequential diagram illustrating the progressive change in a driver's posture and the state of vehicle controls, likely depicting a transition from active driving to a non-driving or post-event state. The sequence flows from left to right, connected by light blue directional arrows. The first panel contains specific annotations (red circles and dashed lines) that are absent in the subsequent panels.

### Components/Axes

* **Panels:** Four distinct panels arranged horizontally.

* **Primary Elements (in each panel):**

* A blue silhouette of a person (driver) seated in a car seat.

* A simplified representation of a steering wheel.

* A car seat with a headrest.

* **Annotations (Panel 1 only):**

* Three red circles highlighting specific areas.

* Two dashed blue arrows originating from the driver's head area, pointing forward and slightly upward.

* **Transition Indicators:** Three large, light blue, right-pointing arrows placed between the panels, indicating the direction of sequence.

### Detailed Analysis

**Panel 1 (Leftmost):**

* **Driver Posture:** Upright seated position. Hands are placed on the steering wheel. Feet appear to be positioned near the pedals.

* **Annotations:**

* **Red Circle 1:** Positioned around the driver's head/eye level.

* **Red Circle 2:** Positioned around the driver's hands on the steering wheel.

* **Red Circle 3:** Positioned around the driver's feet/ankle area.

* **Dashed Arrows:** Two lines emanate from the head circle, indicating the driver's forward line of sight.

**Panel 2:**

* **Driver Posture:** The driver's torso is reclined slightly. Hands are no longer on the steering wheel; they are resting in the lap. Feet remain in a similar position.

* **Annotations:** None. The dashed sightline is replaced by a single, horizontal dashed arrow pointing forward from the head level, suggesting a fixed, forward gaze.

**Panel 3:**

* **Driver Posture:** The driver's torso is reclined significantly further. The head is tilted back against the headrest. Hands remain in the lap. The steering wheel appears to have moved forward, away from the driver.

**Panel 4 (Rightmost):**

* **Driver Posture:** The seat is now empty. The seatback is in a highly reclined position.

* **Steering Wheel:** The steering wheel is shown detached and tilted forward, away from the seat.

### Key Observations

1. **Progressive Reclining:** There is a clear, continuous trend of the driver's seatback reclining from an upright driving position to a fully reclined, non-driving position.

2. **Control Disengagement:** The driver's hands move from actively gripping the steering wheel (Panel 1) to resting passively in the lap (Panels 2 & 3), culminating in the complete absence of a driver (Panel 4).

3. **Steering Wheel Movement:** The steering wheel's position relative to the seat changes, moving forward and away from the occupant as the sequence progresses.

4. **Annotation Focus:** The red circles in Panel 1 specifically highlight the three primary points of driver-vehicle interaction: visual input (head/eyes), manual control (hands/wheel), and pedal operation (feet). These annotations are not present in the subsequent "result" states.

5. **Gaze Direction:** The change from two diverging dashed arrows (Panel 1) to a single horizontal arrow (Panel 2) suggests a shift from active scanning of the environment to a fixed, possibly passive, forward gaze.

### Interpretation

This diagram visually narrates a process of **driver disengagement** from the active control of a vehicle. The sequence likely illustrates one of the following scenarios:

* **Autonomous Vehicle Handover:** The transition from human driving (Panel 1) to a state where the vehicle's automated system takes over, allowing the driver to recline and relax (Panels 2-3), potentially leading to a "driver absent" state if the vehicle is fully autonomous (Panel 4).

* **Post-Crash or Medical Event Positioning:** It could depict the recommended or observed positioning of a driver following a collision or a medical emergency, where the seat is reclined to aid breathing or facilitate extraction, and the steering wheel is moved away to create space.

* **Safety System Demonstration:** The diagram may be explaining the function of an advanced safety system that automatically adjusts the seat and steering wheel position upon detecting a specific event (e.g., a pre-collision warning, driver drowsiness, or the initiation of an autonomous driving mode).

The **core message** is the transformation of the driver's role from an active operator to a passive occupant, accompanied by corresponding mechanical adjustments to the vehicle's interior. The red circles in the first panel serve as a baseline, emphasizing the critical interaction points that are subsequently relinquished. The empty final seat is a powerful visual endpoint, indicating a complete transfer of control away from the human.

</details>

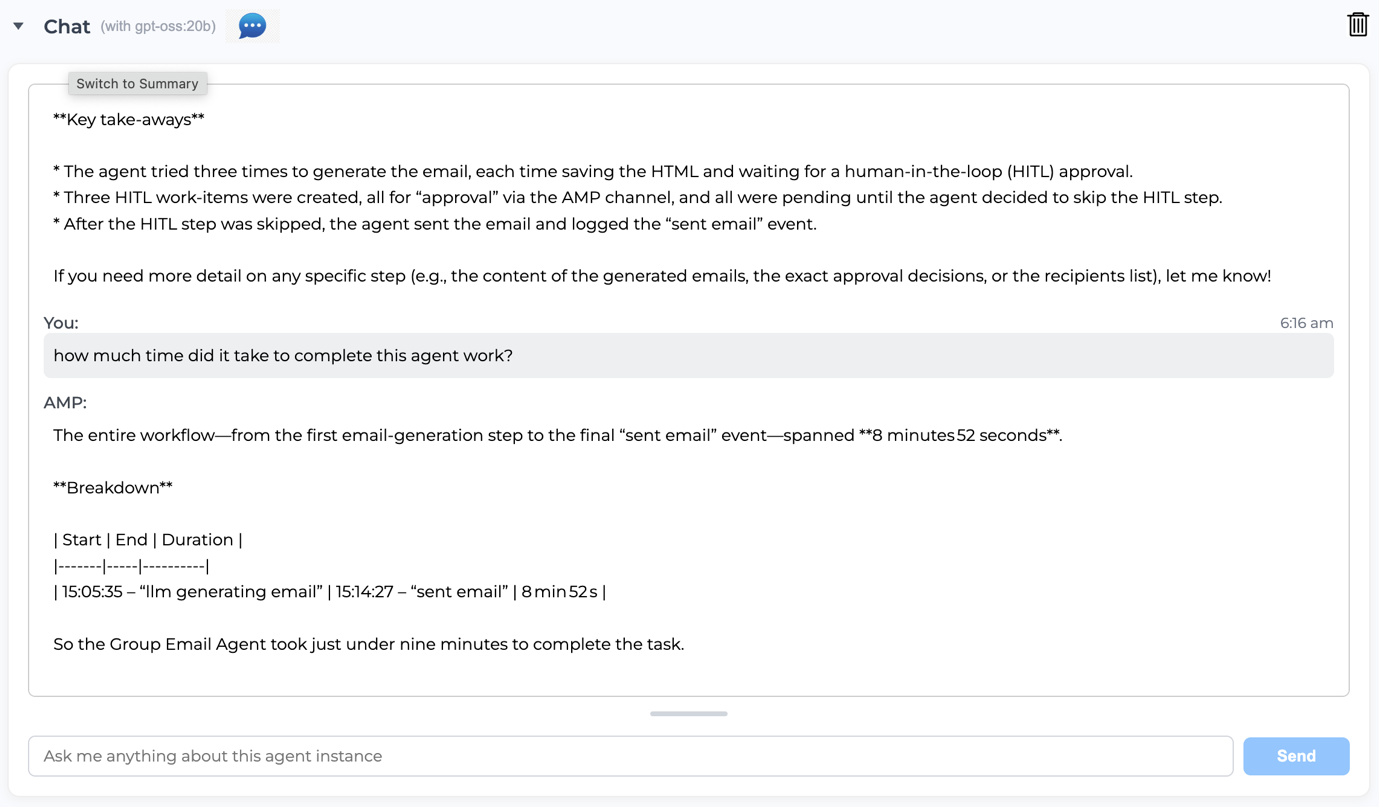

Figure 1: Full Autonomous Driving was a Gradual Evolving Process

This gradual and transparent evolution allowed engineers to identify edge cases, improve algorithms, and refine user interfaces based on real-world feedback. Most importantly, it allowed trust of the society to grow incrementally. Each technological improvement was accompanied by clearer communication about the system’s limitations and capabilities. Drivers learned when to rely on the system and when to take over. Through testing, validation, and iterative learning, both the technology and its human users matured on autonomous driving together. Only through this patient process did the industry approach Level 4 and Level 5 autonomy, where vehicles can operate without human intervention in most or all conditions [12, 13]. The success of this journey lies not only in technological innovation but also in earning human trust through transparency, communication of system limits, and clear accountability.

1. Parallels in AI Agents Development

A similar path must be followed in the evolution of autonomous AI agents. These systems act on behalf of humans in both digital and physical environments, making decisions that can have significant consequences. Like early autonomous vehicles required drivers to remain attentive, current AI agents still depend on Human-in-the-Loop (HITL) oversight to ensure that their actions align with human intent. Human involvement serves as both a safeguard and a source of learning, helping the system adapt responsibly. As discussed in the earlier introduction, research by Wu (2022), Mosqueira-Rey (2023), and Retzlaff (2024) consistently shows that HITL systems improve interpretability, accountability, and model reliability [3, 4, 9]. Rather than viewing human oversight as an administrative overhead, it should be recognized as a critical step in the learning and governance process that helps agents mature progressively.

1. HITL as a Mechanism for Trust and Safety

Human oversight is particularly essential during the intermediate stages of agent development and deployment. At this point, agents are capable of complex reasoning but still lack the contextual, ethical, and situational awareness required for independent operation [16]. Well-designed HITL mechanisms allow humans to validate outputs, correct errors, and prevent harm caused by hallucinations, data biases, or incorrect assumptions. This feedback loop not only safeguards users but also enables the system to learn and improve over time. As the system demonstrates consistent accuracy and reliability, the level of human intervention can be reduced. However, this reduction must be based on measurable improvements, not assumption.

The importance of this gradual approach becomes even more evident in trust-sensitive domains such as finance, human resources, healthcare, legal, and areas that require regulatory compliance. Human oversight ensures share responsibility between humans and AI, maintaining compliance with both legal standard and societal expectations. Just as self-driving systems underwent years of supervised testing before being trusted on public roads, autonomous AI agents must demonstrate reliability before operating independently in high-stakes environments. Yet, their journey to full automation will likely unfold more rapidly, driven by the accelerating pace of AI research and development.

1. A Collaborative Path Toward Full Autonomy

The journey toward fully autonomous agents is both a technological and social process. Technological progress enables higher levels of independence, while social acceptance depends on observable safety and accountability. Research from Bellos (2025) and Mozannar (2025) has shown that when humans and AI collaborate effectively, the result is higher task success rates, improved trust, and greater user confidence [7, 8]. Collaboration thus provides a bridge between current assisted systems and the future of full autonomy.

This process can be viewed as four evolutionary stages of AI agency:

1. Assisted Agents: Humans make decisions while AI supports them through recommendations and reasoning.

1. Collaborative Agents: Humans and AI share responsibility in decision-making and task execution, combining human contextual understanding with AI computational precision and scalability. Human participation remains essential within the agentic workflow, as it enriches situational and semantic context, ensuring that AI agents produce responses and actions that are relevant, accurate, and aligned with user intent and real-world constraints [16].

1. Supervised Autonomy: AI operates independently in constrained environments while remaining accountable through human review.

1. Full Autonomy with Human Governance: AI functions independently within transparent, auditable frameworks that preserve human oversight at the policy level.

Advancement through these stages must be validated by evidence of safety, predictability, and alignment with human intent. This progressive process reflects the same progression that made autonomous driving successful. Skipping these steps would risk premature deployment and loss of confidence, which could set back both innovation and adoption.

1. Toward Trustworthy Autonomy

True autonomy cannot be declared by design; it must be demonstrated through experience and data. Each stage of progress should confirm that the agent can act responsibly and transparently within defined boundaries. By embedding Human-in-the-Loop principles throughout the development process ensures that autonomy and trust grow in tandem. As seen in autonomous driving, confidence arises from steady progress and accountable design. While AI agents may reach maturity more quickly due to faster digital feedback loops and lower physical risks, their path to autonomy must still be guided by the same principles of transparency, validation, and ethical oversight.

1. A Three-Pillar Model for a Safe AI-Agent Operating Environment

In the previous sections, we demonstrated that as AI systems evolve from passive chatbots to fully autonomous agents capable of acting on behalf of humans, the potential for both benefit and harm expands dramatically. In addition, as AI agents evolve to become increasingly independent of humans, their autonomy must emerge through a gradual, trust-building process in which human oversight and collaboration remain essential until AI systems demonstrate consistent reliability and alignment.

Building on these foundations, this section proposes that to enable this evolutionary process to unfold safely and productively, AI agents must operate within a structured environment designed to support growth, supervision, and accountability. Without such an environment, autonomous evolution would occur in an uncontrolled manner, exposing organizations and individuals to unacceptable risks.

To address this need, we propose a Three-Pillar Model (3PM) to support a safe AI-agent operating environment. This model defines the fundamental principles and environmental conditions required to develop, deploy, and operate safe autonomous agents while maintaining a balance between automation and human collaboration. The three pillars are:

1. Transparency of AI Agents ensures visibility into how agents operate across their life cycles.

1. Accountability in Decision-Making provides mechanisms to attribute and explain decisions made by both humans and AI.

1. Trustworthiness through Human-AI Collaboration establishes confidence in agentic systems through well-timed human oversight and fallback safeguards.

Together, these pillars create the foundation for a safe and productive ecosystem where AI agents and humans can share responsibilities and co-evolve toward higher levels of autonomy. They support the long-term goal of achieving responsible, human-aligned AI while ensuring that enterprises can realize measurable return on investment through efficient, reliable, and trustworthy automation.

1. Pillar One: Transparency and Building Trust with AI

Transparency provides the visibility necessary for humans to understand, monitor, guide, and audit agent behavior. It allows operators to know how the agent works, what it is doing, and why it acts in a particular way. This visibility is critical during the evolutionary path described earlier, because it enables humans to supervise and calibrate the agent’s performance as autonomy increases.

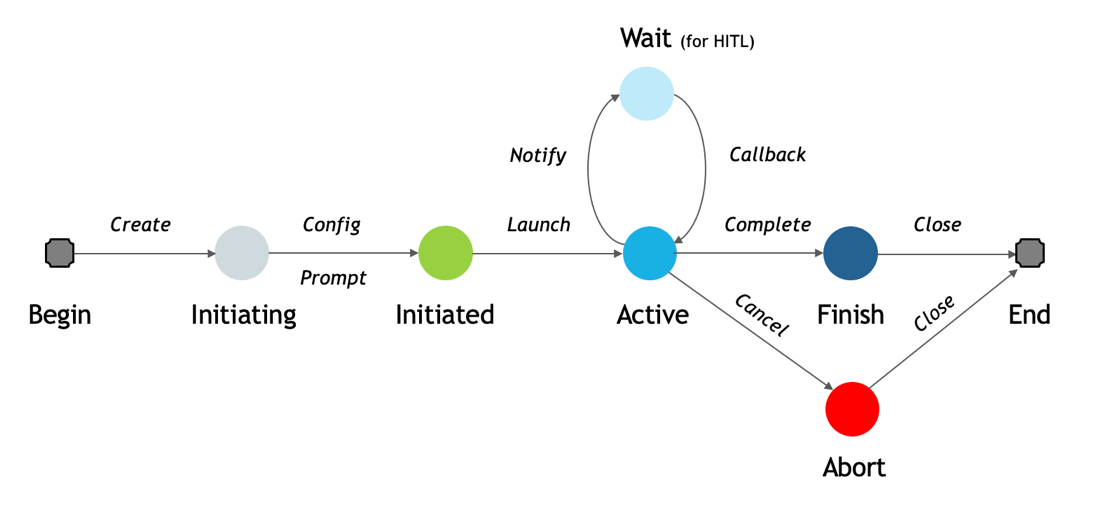

Every agent instance passes through a lifecycle consisting of three stages: initiation, active operation, and completion or termination. Transparency must exist throughout each stage to make the process comprehensible and auditable.

<details>

<summary>SafeAIAgent-img002.png Details</summary>

### Visual Description

## State Diagram: Process Workflow with Human-In-The-Loop (HITL) Intervention

### Overview

The image displays a state transition diagram (or workflow) illustrating the lifecycle of a process. The flow moves generally from left to right, starting at "Begin" and ending at "End," with a central "Active" state that can loop to a "Wait" state for human intervention and can also branch to an "Abort" state. The diagram uses colored circles to represent states and labeled arrows to represent transitions or actions.

### Components/States

The diagram consists of eight distinct states, represented by circles or rounded squares, arranged in a specific spatial layout:

1. **Begin** (Far left): A gray rounded square. This is the entry point.

2. **Initiating** (Left of center): A light gray circle.

3. **Initiated** (Center-left): A green circle.

4. **Active** (Center): A cyan (bright blue) circle. This is a central hub state.

5. **Wait (for HITL)** (Above "Active"): A light blue circle. The label includes "(for HITL)" in parentheses, indicating "Human-In-The-Loop."

6. **Finish** (Center-right): A dark blue circle.

7. **Abort** (Below "Finish"): A red circle.

8. **End** (Far right): A gray rounded square. This is the terminal point.

### Detailed Analysis: Transitions and Flow

The states are connected by directional arrows, each labeled with an action or event that triggers the transition. The flow is as follows:

* **From "Begin" to "Initiating":** Transition labeled *Create*.

* **From "Initiating" to "Initiated":** Two parallel transitions are shown:

* An arrow labeled *Config*.

* An arrow labeled *Prompt*.

* **From "Initiated" to "Active":** Transition labeled *Launch*.

* **From "Active" to "Wait (for HITL)":** Transition labeled *Notify*.

* **From "Wait (for HITL)" back to "Active":** Transition labeled *Callback*. This creates a loop between the "Active" and "Wait" states.

* **From "Active" to "Finish":** Transition labeled *Complete*.

* **From "Active" to "Abort":** Transition labeled *Cancel*.

* **From "Finish" to "End":** Transition labeled *Close*.

* **From "Abort" to "End":** Transition labeled *Close*.

### Key Observations

1. **Central Hub:** The "Active" state is the most connected node, with four outgoing transitions (*Notify*, *Complete*, *Cancel*) and one incoming from the loop (*Callback*).

2. **Human-In-The-Loop Loop:** The "Wait (for HITL)" state forms a dedicated loop with "Active," indicating a process that can pause for human input and then resume.

3. **Two Terminal Paths:** The process can conclude via two distinct paths to the "End" state: a successful path through "Finish" or a failure/termination path through "Abort." Both final transitions are labeled *Close*.

4. **Color Semantics:** Colors appear to signify state type:

* **Gray (Begin/End):** Terminal/Bookend states.

* **Green (Initiated):** A "ready" or "prepared" state.

* **Cyan (Active):** The primary operational state.

* **Light Blue (Wait):** A passive, waiting state.

* **Dark Blue (Finish):** A successful completion state.

* **Red (Abort):** A critical failure or cancellation state.

5. **Parallel Initialization:** The "Initiating" to "Initiated" step has two labels (*Config*, *Prompt*), suggesting these actions may happen concurrently or are both required to advance the state.

### Interpretation

This diagram models a robust, interruptible process workflow. It is not a simple linear sequence but a state machine that accounts for real-world complexities.

* **Process Resilience:** The inclusion of a "Wait (for HITL)" loop demonstrates a design for processes requiring human oversight, approval, or input, making the system collaborative rather than fully autonomous.

* **Error Handling:** The explicit "Abort" state, reachable from "Active" via a *Cancel* action, provides a clear pathway for graceful termination upon failure or user intervention, separate from successful completion.

* **Lifecycle Clarity:** The flow clearly demarcates phases: initialization (*Create, Config, Prompt*), execution (*Launch, Active*), potential interruption (*Notify, Callback*), and conclusion (*Complete/Cancel -> Finish/Abort -> Close -> End*).

* **Ambiguity in "Close":** The use of the same label *Close* for transitions from both "Finish" and "Abort" to "End" is notable. It suggests that the final cleanup or resource release action is identical regardless of whether the process succeeded or was aborted, which is a sensible design for resource management.

**In essence, this diagram depicts a managed process that can be configured, launched, actively run with the option for human pause/resume, and then formally concluded through either success or cancellation.**

</details>

Figure 2 Agent State Transition Diagram Within the 3-Pillar Model

Initiation State. During initiation, a human defines the scope, context, and objectives of the agent’s work. This stage establishes the foundation for safe collaboration. For example, a Research Agent tasked with supporting a product’s go-to-market strategy must receive a clearly defined configuration that includes market segments, data sources, and success criteria. By setting these parameters, the human ensures that the agent’s goals are properly aligned with organizational objectives and ethical standards. This stage also serves as a point of human control, where configurations, role definitions, and constraints can be verified before the agent begins operation.

Active State. Once launched, the agent enters its active state, where it performs the actions for which it was designed. For instance, a Research Agent may conduct web searches and synthesize findings. Likewise, a Payment Agent may initiate payment transactions. A Collection Letter Agent may draft personalized communications based on debtor information and credit conditions. During this phase, activity recording and observability become essential. The environment must automatically generate activity journals that record the agent’s decisions, interactions, and results.

These logs enable oversight and provide a transparent record for post-task evaluation. Moreover, during this phase, the Human-in-the-Loop (HITL) mechanism plays an important role. When the agent encounters uncertainty or ambiguity, it may consult a human collaborator for guidance. Depending on task complexity and risk level, human involvement can vary from direct supervision to collaborative decision-making to minimal observation. Transparency allows both sides to know when and why such handoffs occur.

Abort State. Both human operators and authorized AI subsystems should have the ability to abort or suspend an active agent when necessary. Abort events may occur if the agent cannot fulfill its mission due to missing resources, time constraints, or safety violations. The authority to abort should follow clearly defined governance rules, reflecting the contractual and regulatory conditions under which the agent operates.

Finish State. When an agent finishes or terminates its task, it should produce a clear output along with a record of its entire operation. Transparency requires three complementary forms of documentation:

1. State transition records: Marking changes from initiation to finish.

1. Work progress records: Showing the detailed actions taken by the agent.

1. HITL records: capturing every human–AI interaction and decision.

These records serve as the backbone of transparency within the agent operating environment. They allow developers, regulators, and users to reconstruct events, assess system performance, and identify opportunities for improvement. Without sufficient transparency, human collaborators cannot effectively supervise agent behavior, learn from outcomes, or develop trust in autonomous agent systems. While these three record types are not exhaustive, they represent the minimum information required to achieve acceptable transparency. In practice, the agent system may also maintain additional journals, such as system logs, user feedback logs, performance metrics, and other operational traces, to further support monitoring, analysis, and continuous improvement.

1. Pillar Two: Accountability and Responsibility

While transparency answers what happened, accountability answers why it happened and who is responsible. In the previous section on the evolutionary path, we emphasized that autonomy must be earned gradually. Accountability provides the ethical and operational framework that makes this process safe. As AI agents gain more independence, the environment must ensure that each decision, whether made by a human or AI, is traceable to its source and understandable and explainable in context.

Achieving accountability requires comprehensive decision journaling that records not only the outcomes but also the reasoning and contextual factors behind each choice. This is closely related to the principle of explainability in AI. Agents must be able to provide, upon request, the rationale for their decisions, including the data sources consulted, the constraints considered, and the degree of confidence associated with their outputs.

A practical example illustrates this need. Suppose an automated food-ordering agent failed to account for a customer’s allergy to wheat or soy, resulting in a serious medical incident. In such a case, assigning responsibility requires a clear understanding of each participant’s role in the agentic workflow. Was the customer’s input ambiguous? Did a human worker at the restaurant fail to verify the order details during preparation? Did the AI agent miscommunicate the constraints? Or did the underlying language model generate an inaccurate summary of the order that omitted critical information? Without explicit records of each decision and the reasoning behind it, no clear accountability can be established or assigned.

Accountability serves both corrective and developmental purposes. From a legal or regulatory perspective, it ensures that organizations can assign responsibility when things go wrong. From a technical perspective, it enables learning and continuous improvement. By identifying which part of the agentic workflow led to an undesirable outcome, AI and engineers can make targeted improvements to prevent recurrence. Accountability thus becomes the engine of continuous improvement within the agent ecosystem, reinforcing the learning loop necessary for safe autonomy and growing trust.

1. Pillar Three: Trustworthiness & Human-in-the-Loop

The third pillar, trustworthiness, unites and build on top of the previous two. Transparency makes operations visible, accountability clarifies responsibility, and trustworthiness converts these attributes into confidence and willingness to rely on autonomous systems.

As discussed in the evolutionary path section, human trust is not granted by design but earned through consistent, observable, and reliable performance. During the early phases of adoption, enterprises and end users will trust AI agents only if they can see clear boundaries of control and know that humans can intervene when necessary. Therefore, the operating environment must include mechanisms to specify risk thresholds and escalation rules that determine when human oversight is required.

For example, in domains such as finance or healthcare, high-risk actions such as large transactions or clinical recommendations should automatically trigger human review. These checkpoints form structured Human-in-the-Loop interventions that ensure oversight at critical moments. Conversely, in high-volume, low-risk tasks, AI may operate independently for greater efficiency. Over time, as the system demonstrates reliability, the frequency of human interventions can be gradually reduced, following the same incremental trust-building logic that was illustrated in the autonomous driving analogy. However, any decision to increase the level of autonomy must be explicitly approved by a human authority and clearly documented. In addition, periodic spot checks should be conducted to verify safety and correctness, even after incremental advances in autonomous decision-making have been introduced.

Trustworthiness also recognizes that in some contexts, AI can be more dependable than humans. Machines do not suffer from fatigue, emotional fluctuation, or inconsistency, and in repetitive or data-intensive tasks, AI may exhibit higher reliability than human operators. Accordingly, a trustworthy operating environment must support mutual confidence. Humans must trust AI agents to function within clearly defined safety boundaries, while AI systems must be designed to rely on validated human inputs and to defer judgment appropriately when required. The objective is not blind reliance but calibrated trust, grounded in empirical performance evidence and shared accountability. To support this calibration, every decision and every change must be properly recorded and remain auditable.

Finally, trustworthiness ensures that when failures occur, they do not propagate unchecked. The environment must include robust fallback and recovery mechanisms that detect anomalies based on historical patterns, suspend automated actions, and transfer control to human operators before harm occurs. These safety measures ensure that risk remains manageable in very large-scale deployments with thousands of concurrently operating agents, even as autonomy levels continue to increase.

1. Integrating the Three Pillars in the Evolutionary Process

The Three-Pillar Model is not a theoretical abstraction but a practical extension of the evolutionary approach described earlier. As agents progress from Assisted to Collaborative, to Supervised Autonomy, and ultimately to Full Autonomy under Human Governance, the balance among the three pillars must evolve in parallel with each successive stage of autonomy.

In early stages, transparency plays the dominant role, ensuring that every action is observable, explainable, and auditable. As systems progress into collaborative stages, accountability becomes increasingly important because humans and AI share responsibility for decisions and outcomes. In the later stages, once agents have demonstrated consistent reliability and alignment, trustworthiness becomes the decisive factor that enables increasing levels of autonomy. Importantly, companies and users will always retain the ability to determine the degree of autonomy they are comfortable and willing to grant to different agents operating in their environments. This flexibility allows organizations to balance efficiency with risk tolerance, enabling a gradual and confident transition toward greater autonomy while maintaining control and trust throughout the process.

These pillars together form a feedback ecosystem in which humans and AI learn from each other. Transparency provides data for accountability. Accountability identifies what needs improvement. Trustworthiness motivates greater delegation of control. Through this cycle, autonomy grows safely and progressively.

In conclusion, the 3PM for agent creation, deployment, and operation establishes the essential conditions for safe evolution toward autonomous agents. It ensures that the journey from collaboration to independence occurs within a structure that is observable, responsible, and trustworthy. Only through such an environment can enterprises accelerate adoption, build user confidence, and achieve the full potential of AI agents while preserving human values and safety.

1. A Sample Use Case: Group Email Agent

To illustrate the application of the Three-Pillar Model within a practical context, we consider a Group Email Agent operating in an enterprise-grade agentic environment. This use case demonstrates how transparency, accountability, and trustworthiness jointly ensure safe and effective collaboration between humans and AI.

A Group Email Agent is a common and valuable application for enterprises that need to compose, review, and distribute communications to internal employees, customers, or business partners. Such messages can include policy updates, marketing announcements, product release communications, event invitations, or crisis management notifications. Because of their wide impact, group emails typically require coordination among multiple stakeholders, including representatives from the business unit, marketing and communications teams, legal and compliance departments, and senior management. These participants contribute to drafting, editing, verifying, and approving both the message content and the list of recipients. The Group Email Agent acts as an author, a coordinator, and executor, automating repetitive tasks while preserving human oversight where contextual understanding and judgment are critical.

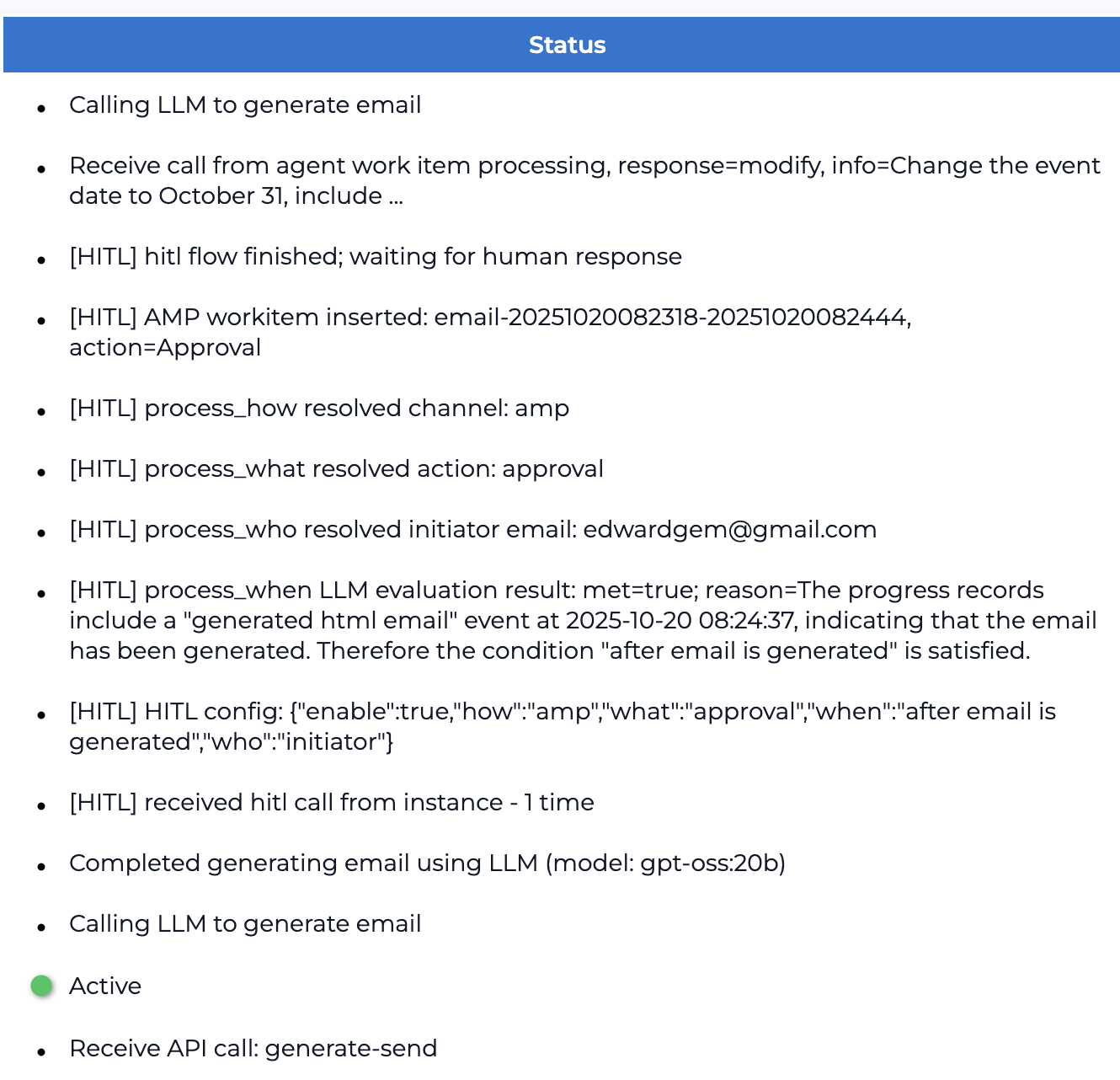

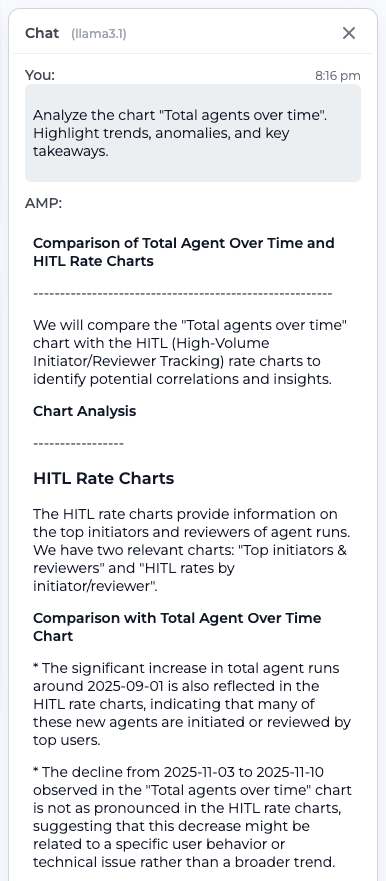

Figure 3 displays the agent activity records captured by the system throughout the lifecycle of a Group Email Agent instance. These records include state transitions, detailed task progress, and Human-in-the-Loop interactions, illustrating how the operating environment maintains continuous transparency and traceability from initiation to completion.

<details>

<summary>SafeAIAgent-img003.png Details</summary>

### Visual Description

## Status Log: Automated Email Generation with Human-in-the-Loop (HITL) Workflow

### Overview

The image displays a status log or console output from an automated system. It details the sequential steps of a workflow that involves generating an email using a Large Language Model (LLM) and incorporating a Human-in-the-Loop (HITL) approval process. The log entries are presented as a bulleted list under a blue header titled "Status."

### Components/Axes

* **Header:** A solid blue bar at the top containing the centered white text "Status".

* **Main Content:** A vertical list of bullet points on a white background, detailing system events and process states.

* **Status Indicator:** A green circular dot followed by the word "Active" near the bottom of the list, indicating the current operational state of the process or a specific step.

### Detailed Analysis / Content Details

The log entries, transcribed in the order they appear, are as follows:

1. `Calling LLM to generate email`

2. `Receive call from agent work item processing, response=modify, info=Change the event date to October 31, include ...`

3. `[HITL] hitl flow finished; waiting for human response`

4. `[HITL] AMP workitem inserted: email-20251020082318-20251020082444, action=Approval`

5. `[HITL] process_how resolved channel: amp`

6. `[HITL] process_what resolved action: approval`

7. `[HITL] process_who resolved initiator email: edwardgem@gmail.com`

8. `[HITL] process_when LLM evaluation result: met=true; reason=The progress records include a "generated html email" event at 2025-10-20 08:24:37, indicating that the email has been generated. Therefore the condition "after email is generated" is satisfied.`

9. `[HITL] HITL config: {"enable":true,"how":"amp","what":"approval","when":"after email is generated","who":"initiator"}`

10. `[HITL] received hitl call from instance - 1 time`

11. `Completed generating email using LLM (model: gpt-oss:20b)`

12. `Calling LLM to generate email`

13. `Active` (preceded by a green dot)

14. `Receive API call: generate-send`

**Language:** All text is in English.

### Key Observations

* **Sequential Process:** The log shows a clear sequence: an initial LLM call, a modification request from an agent, the triggering and completion of an HITL approval flow, and a subsequent LLM call.

* **HITL Workflow Details:** The HITL process is extensively logged. It uses an "amp" channel for an "approval" action initiated by "edwardgem@gmail.com". The trigger condition ("after email is generated") was met based on a timestamped event.

* **System Configuration:** The HITL configuration is explicitly stated in JSON format, defining the enable state, channel, action, trigger condition, and responsible party.

* **Model Identification:** The LLM used for email generation is specified as "gpt-oss:20b".

* **Active State:** The green "Active" indicator appears after a second "Calling LLM to generate email" entry and before an API call to "generate-send," suggesting the system is currently in an active processing state for sending the generated email.

### Interpretation

This log depicts a sophisticated, auditable automated workflow for creating and dispatching communications. The data suggests a system designed for tasks requiring both automation and human oversight.

* **Process Flow:** The workflow appears to be: 1) Generate email draft via LLM, 2) Receive a request to modify the draft (e.g., change a date), 3) Re-generate or finalize the email, 4) Route the finalized email for human approval via an "AMP" channel (likely referring to a specific workflow or messaging platform), 5) Upon approval, proceed to send the email via an API call.

* **Human-in-the-Loop Integration:** The detailed HITL logs demonstrate a robust integration where human approval is a gated step. The system automatically evaluates if its pre-conditions (email generation) are met before requesting human intervention, and it logs the resolution (channel, action, initiator).

* **Audit Trail:** The log serves as a precise audit trail, capturing timestamps, user emails, configuration states, and model versions. This is crucial for debugging, compliance, and understanding the history of a specific automated action.

* **Current State:** The final entries indicate the process has moved past the approval stage and is now actively executing the "generate-send" API call, meaning the approved email is likely being dispatched.

</details>

Figure 3: Agent Activity Records Captured While Running a Group Email Agent

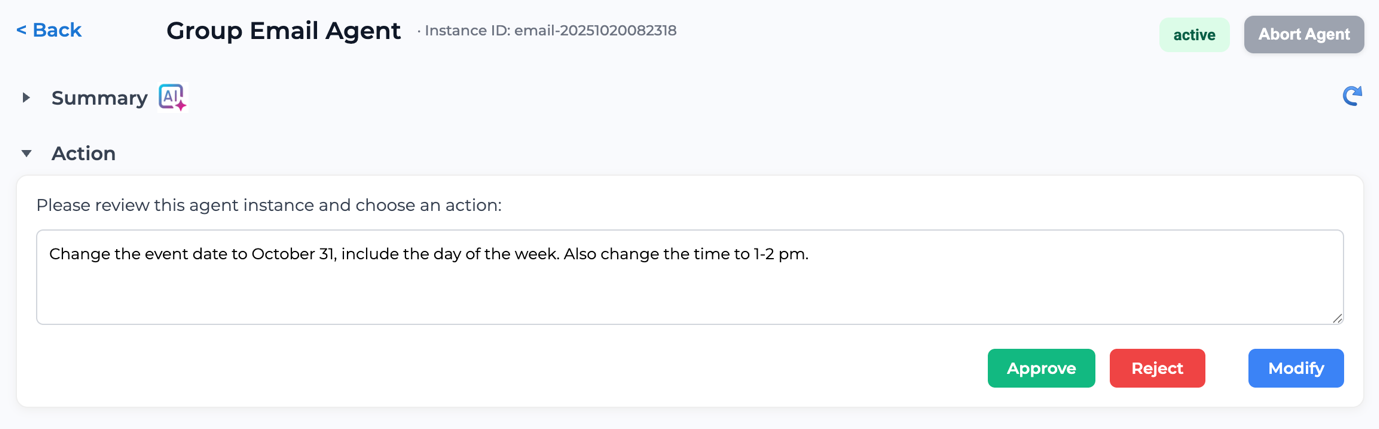

Figure 4 demonstrates a user interface (UI) portal that enables an authorized human participant to provide contextual inputs, review agent activities, and intervene when necessary. This interface supports Human-in-the-Loop collaboration by allowing users to configure, guide, or correct the agent’s actions in real time, ensuring that human oversight remains an integral part of the agent’s operational workflow.

<details>

<summary>SafeAIAgent-img004.png Details</summary>

### Visual Description

## UI Screenshot: Group Email Agent Instance Review Interface

### Overview

This image is a screenshot of a web-based user interface for reviewing and managing an automated "Group Email Agent" instance. The interface presents a specific agent task for human approval or modification. The overall design is clean and functional, with a light theme and clear visual hierarchy.

### Components & Layout

The interface is structured into distinct horizontal sections from top to bottom:

1. **Header/Navigation Bar (Top):**

- **Left:** A `< Back` navigation link.

- **Center:** The title `Group Email Agent` followed by a unique identifier: `Instance ID: email-20251020082318`.

- **Right:** A status badge labeled `active` (green text on a light green background) and a button labeled `Abort Agent` (gray background).

2. **Collapsible Sections (Middle):**

- **Summary Section:** A collapsed section indicated by a right-pointing arrow (`▶`) and the label `Summary`. It includes a small icon with the text `AI` and a sparkle symbol.

- **Action Section:** An expanded section indicated by a down-pointing arrow (`▼`) and the label `Action`. This is the primary focus of the screen.

3. **Action Panel (Main Content):**

- **Instruction Text:** A line of text reads: `Please review this agent instance and choose an action:`

- **Text Input/Display Area:** A large, bordered text box containing the agent's proposed action or message. The text inside is: `Change the event date to October 31, include the day of the week. Also change the time to 1-2 pm.`

- **Action Buttons (Bottom-right of panel):** Three colored buttons are aligned horizontally:

- `Approve` (Green background)

- `Reject` (Red background)

- `Modify` (Blue background)

### Detailed Content Details

- **Instance Identification:** The agent instance is uniquely identified as `email-20251020082318`.

- **Agent Status:** The agent is currently `active`.

- **Proposed Agent Action:** The core content is the instruction within the text box, which specifies two modifications to an event:

1. **Date Change:** To `October 31` and to `include the day of the week`.

2. **Time Change:** To `1-2 pm`.

- **User Decision Path:** The interface provides three clear, mutually exclusive actions for the reviewer: `Approve` the proposed change, `Reject` it, or `Modify` it (presumably to edit the instruction before approval).

### Key Observations

- **Human-in-the-Loop Design:** This interface is a classic example of a human-in-the-loop (HITL) system, where an AI agent performs a task but requires human validation before execution.

- **Clear Status and Control:** The `active` status and the prominent `Abort Agent` button in the header provide immediate system state awareness and an emergency stop function.

- **Action-Oriented Layout:** The design prioritizes the decision-making task. The proposed action is centrally displayed in a large text area, and the decision buttons are large, color-coded (green for go, red for stop, blue for edit), and placed in a conventional location for form submission.

- **Context Preservation:** The `Summary` section is collapsed, suggesting it contains background information that can be accessed if needed, keeping the primary action view uncluttered.

### Interpretation

This screenshot captures a critical governance point in an automated workflow. The system is designed to manage tasks that require precision and have potential consequences (like changing event details for a group), ensuring a human reviews and authorizes significant modifications.

- **What the data suggests:** The agent has parsed a request or identified a need to update an event's schedule. The specificity of the instruction ("include the day of the week") indicates the agent is attempting to fulfill a detailed requirement, not just make a generic change.

- **How elements relate:** The header provides system context and control. The `Action` panel is the interactive core, presenting the agent's output (the proposed change) and the user's input mechanisms (the buttons). The `Summary` likely provides the history or rationale leading to this proposed action.

- **Notable Anomalies/Considerations:** The instance ID `email-20251020082318` suggests a timestamp (October 20, 2025, 08:23:18), which could be used for logging and auditing. The interface does not show the original event details or the source of the change request, implying that context is either in the collapsed `Summary` or known to the user from prior interaction. The user's decision here will directly determine whether an automated email is sent with the updated event information.

</details>

Figure 4: UI for Obtaining Human-in-the-Loop Inputs During an Agentic Workflow

Figure 5 illustrates how users can interact with the large language model (LLM) to discover, query, and inspect the progress of agents operating within the environment. Through conversational interfaces, users can retrieve explanations, review activity logs, and monitor task completion status. This interactive transparency fosters mutual understanding and trust between humans and AI agents, allowing confidence to grow naturally as agents demonstrate reliability and accountability over time.

<details>

<summary>SafeAIAgent-img005.png Details</summary>

### Visual Description

## Screenshot: AI Agent Workflow Chat Interface

### Overview

This image is a screenshot of a chat interface displaying a conversation between a user and an AI system named "AMP." The conversation summarizes the actions and timing of an automated "Group Email Agent" that attempted to generate and send an email, involving human-in-the-loop (HITL) approval steps. The interface includes a header, a scrollable chat history, and a message input area.

### Components/Axes

* **Header Bar (Top):**

* Left: A dropdown arrow and the label "Chat (with gpt-oss:20b)" next to a blue chat bubble icon.

* Right: A trash can icon (likely for deleting the chat).

* **Chat History Area (Center):**

* Contains a structured message from "AMP" (presumably the system) and a user query.

* A button labeled "Switch to Summary" is positioned at the top-left of the message container.

* **Message Input Area (Bottom):**

* A text input field with the placeholder text: "Ask me anything about this agent instance".

* A blue "Send" button to the right of the input field.

* A thin horizontal divider line above the input area.

### Content Details

The chat history contains the following text, transcribed precisely:

**Message from AMP (System):**

```

**Key take-aways**

* The agent tried three times to generate the email, each time saving the HTML and waiting for a human-in-the-loop (HITL) approval.

* Three HITL work-items were created, all for "approval" via the AMP channel, and all were pending until the agent decided to skip the HITL step.

* After the HITL step was skipped, the agent sent the email and logged the "sent email" event.

If you need more detail on any specific step (e.g., the content of the generated emails, the exact approval decisions, or the recipients list), let me know!

```

**User Query (Timestamp: 6:16 am):**

```

how much time did it take to complete this agent work?

```

**Response from AMP:**

```

The entire workflow—from the first email-generation step to the final "sent email" event—spanned **8 minutes 52 seconds**.

**Breakdown**

| Start | End | Duration |

|-------|-----|----------|

| 15:05:35 – "I'm generating email" | 15:14:27 – "sent email" | 8 min 52 s |

So the Group Email Agent took just under nine minutes to complete the task.

```

### Key Observations

1. **Workflow Summary:** The agent's process involved three iterative attempts to generate an email, each pausing for HITL approval. The HITL steps were ultimately bypassed.

2. **Timing Data:** The total workflow duration is explicitly stated as 8 minutes and 52 seconds. A data table provides the start event ("I'm generating email" at 15:05:35), the end event ("sent email" at 15:14:27), and the calculated duration.

3. **Interface Elements:** The UI is minimal, with a clear separation between the historical chat log and the current input field. The "Switch to Summary" button suggests an alternative view mode is available.

4. **Language:** All text in the image is in English.

### Interpretation

This screenshot documents the execution log and performance metrics of an autonomous email-sending agent. The data demonstrates a specific failure mode or design choice in the agent's workflow: it created multiple HITL approval requests but proceeded without receiving them, indicating either a timeout, a deliberate override, or a system configuration that allows skipping approvals after a certain point.

The primary factual information extracted is the agent's **total task completion time (8m 52s)** and the **sequence of its key actions** (generate → wait for HITL → skip HITL → send). The conversation itself serves as a technical audit trail, answering a user's query about process duration with precise timestamps. The interface is designed for querying and reviewing the actions of AI agent instances.

</details>

Figure 5: Transparency Enables Human-AI Collaboration with Trustworthiness in Agent Operation

By systematically capturing and recording agent activities, the operating environment enables a high degree of transparency that supports comprehensive analytics on both agent behavior and Human-in-the-Loop interactions. This transparency makes it possible to surface aggregated insights through a dashboard component, which serves as a central interface for monitoring, managing, and improving a large-scale agent operating environment. The dashboard plays a critical role in supporting operational oversight, performance evaluation, and continuous improvement, while also informing decisions about when and how to safely increase the level of autonomy within agentic workflows.

Figure 6 illustrates the dashboard view, which presents a collection of analytic charts summarizing agent execution patterns, lifecycle states, intervention frequencies, and HITL engagement metrics. These visualizations allow users to quickly assess system health, identify bottlenecks, detect anomalous behavior, and understand where human involvement is most frequently required. By consolidating this information at scale, the dashboard enables organizations to manage thousands of concurrently operating agents in a controlled and informed manner.

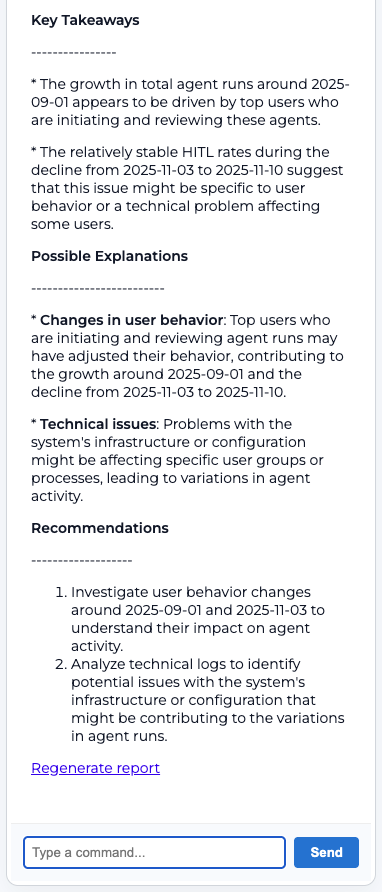

In addition to static visualization, the dashboard integrates interactive analysis through a natural language interface powered by a large language model. As shown in Figure 7, selecting a chart allows users to open an LLM-driven chat window that generates a contextual analysis report explaining observed trends and patterns. Users can further engage in dialogue with the LLM to ask follow-up questions, explore root causes, and derive business insights related to efficiency, risk, and workflow optimization. This combination of visual analytics and conversational analysis supports deeper understanding of agentic behavior and helps users identify targeted opportunities to refine processes, improve safety, and incrementally advance the autonomy of the overall agentic workflow system.

<details>

<summary>SafeAIAgent-img006.png Details</summary>

### Visual Description

## Dashboard: Realtime Adoption & Quality Trends

### Overview

This image displays a comprehensive analytics dashboard titled "Realtime adoption & quality trends." It presents a series of charts and metrics organized into three main sections: Volume & Adoption, Quality & HITL (Human-In-The-Loop), and Performance & Operations. The dashboard is filtered for a specific date range and user group, showing weekly aggregated data.

### Components/Axes

**Header & Filters:**

* **Title:** "Realtime adoption & quality trends" (top-left).

* **Notification:** "Edwardgem, you have 3 work items awaiting your attention" (top-center).

* **Filter Bar:**

* **Start date:** 08/01/2025

* **End date:** 12/03/2025

* **Agent types:** "5 selected"

* **User:** "All users"

* **Granularity:** "Weekly"

**Section 1: Volume & Adoption**

* **Chart 1: Total agents over time** (Line Chart)

* **Y-axis:** Linear scale from 0 to 8000.

* **X-axis:** Date range from approximately 2025-08-11 to 2025-12-01.

* **Chart 2: Volume by agent type** (100% Stacked Bar Chart)

* **Y-axis:** Percentage scale from 0% to 100%.

* **X-axis:** Date markers for 2025-08-11, 2025-09-15, 2025-10-20, 2025-12-01.

* **Legend (Bottom):** Customer-Support (blue), Group-Email (yellow), Invoice-Payment (green), Newsletter (purple), Research (pink).

* **Chart 3: Top agent types** (Horizontal Bar Chart)

* **X-axis:** Linear scale from 0 to 40,000.

* **Y-axis (Categories):** Invoice-Payment, Customer-Support, Group-Email, Research, Newsletter.

**Section 2: Quality & HITL**

* **Chart 4: Finished vs aborted** (Area Chart)

* **Y-axis:** Linear scale from 0 to 8000.

* **X-axis:** Date range from approximately 2025-08-11 to 2025-12-01.

* **Legend (Bottom):** finished (green line/area), aborted (pink line/area).

* **Chart 5: Error distribution** (Donut Chart)

* **Segments (from largest to smallest):** none (light purple), validation_error (dark blue), timeout (orange), user_cancelled (red), system_error (purple).

* **Legend (Bottom):** none, validation_error, timeout, user_cancelled, system_error.

* **Chart 6: HITL rate (%)** (Line Chart)

* **Y-axis:** Percentage scale from 0% to 80%.

* **X-axis:** Date range from approximately 2025-08-11 to 2025-12-01.

**Section 3: Performance & Operations**

* **Chart 7: Average duration by agent type** (Vertical Bar Chart)

* **Y-axis:** Linear scale from 0m to 333m (likely minutes).

* **X-axis (Categories):** Customer-Support, Invoice-Payment, Research. (Note: Only three categories are labeled, but there appear to be 5 bars total).

* **Chart 8: Queue wait trend** (Line Chart)

* **Y-axis:** Linear scale from 0s to 60s (seconds).

* **X-axis:** Date range from approximately 2025-08-11 to 2025-12-01.

* **Chart 9: Concurrency heatmap** (Heatmap)

* **Y-axis:** Linear scale from 0 to 23 (likely representing hours of the day).

* **X-axis:** Date range from approximately 2025-10-05 to 2025-12-04.

* **Color Scale:** Light purple to dark purple, indicating increasing concurrency intensity.

### Detailed Analysis

**Volume & Adoption:**

1. **Total agents over time:** The line shows a sharp increase starting around late August 2025, peaking at approximately 6,500 agents in mid-September. It then plateaus around 5,500-6,000 before a steep decline beginning in late November, dropping below 2,000 by early December.

2. **Volume by agent type:** The composition changes significantly over time. In early August, "Customer-Support" (blue) and "Group-Email" (yellow) dominate. By mid-September, "Invoice-Payment" (green) becomes the largest segment, comprising over 50% of the volume, and maintains this dominance through December. "Research" (pink) and "Newsletter" (purple) remain small, stable segments.

3. **Top agent types:** "Invoice-Payment" has the highest volume, with its bar extending to approximately 40,000. "Customer-Support" is next at ~15,000, followed by "Group-Email" (~12,000), "Research" (~8,000), and "Newsletter" (~2,000).

**Quality & HITL:**

1. **Finished vs aborted:** The "finished" (green) and "aborted" (pink) lines follow a nearly identical trend to the "Total agents over time" chart. The "finished" volume is consistently higher than "aborted." Both peak in mid-September (~6,000 finished, ~500 aborted) and decline sharply in late November.

2. **Error distribution:** The vast majority of outcomes fall into the "none" category (light purple), representing successful completions without errors. The remaining errors are distributed among "validation_error," "timeout," "user_cancelled," and "system_error," with each appearing to be less than 10% of the total.

3. **HITL rate (%):** The Human-In-The-Loop rate shows a clear downward trend. It starts near 80% in early August, drops to around 60% by mid-September, and falls further to fluctuate between 40-50% from October through December.

**Performance & Operations:**

1. **Average duration by agent type:** "Invoice-Payment" has the longest average duration, reaching the top of the scale at ~333 minutes. "Research" is next at ~180m, followed by "Customer-Support" at ~160m. Two unlabeled bars show much shorter durations (~30m and ~60m).

2. **Queue wait trend:** The average queue wait time remains relatively stable, fluctuating between approximately 45 and 55 seconds throughout the period, with a very slight downward trend visible from October onward.

3. **Concurrency heatmap:** The heatmap shows consistent, high concurrency (darker purple bands) across most hours of the day (Y-axis 0-23) throughout the displayed period (Oct-Dec). There are no obvious low-concurrency hours or days, suggesting sustained, around-the-clock usage.

### Key Observations

* **Volume Peak and Decline:** A major adoption event occurred in September 2025, driving a peak in total agent volume, which was not sustained and declined sharply by year-end.

* **Dominance of Invoice-Payment:** The "Invoice-Payment" agent type became the dominant use case by volume in September and also has the longest average task duration.

* **Improving Automation:** The steadily decreasing HITL rate suggests the system is becoming more automated or reliable over time, requiring less human intervention.

* **Stable Operational Metrics:** Despite large swings in volume, queue wait times remained stable, indicating robust operational scaling. Concurrency was consistently high.

* **Error Profile:** Errors are a small minority of outcomes, with "none" (success) being the overwhelming category.

### Interpretation

This dashboard tells a story of a system experiencing a rapid, successful adoption surge for a specific function (Invoice-Payment) in Q3 2025, followed by a significant contraction in overall usage by Q4. The data suggests the initial spike may have been due to a specific project, campaign, or onboarding wave that concluded.

The concurrent decline in the HITL rate is a positive indicator of system maturation—as usage patterns became established (particularly for the dominant Invoice-Payment flow), the agents likely required less human oversight. The stability of queue wait times amidst volatile volume is a key operational success, showing the backend infrastructure could handle the peak load without degrading user experience. The persistent high concurrency in the heatmap indicates the tool is integral to continuous, likely global, business processes.

The sharp drop in total agents at the end of the period raises questions: Was this a planned wind-down, a seasonal effect, or an indication of a problem? The dashboard itself doesn't provide the cause, but it clearly flags this trend as requiring investigation. The dominance of a single agent type (Invoice-Payment) also presents a risk; the system's health is heavily tied to the demand for that one function.

</details>

Figure 6 Analytic Charts Illustrating Realtime Adoption and Quality Trends of the Agentic System

<details>

<summary>SafeAIAgent-img007.png Details</summary>

### Visual Description

## Chat Interface Screenshot: Technical Analysis Conversation

### Overview

This image is a screenshot of a chat interface window titled "Chat (llama3.1)". The window displays a conversation between a user ("You") and an AI assistant ("AMP"). The user requests an analysis of a chart titled "Total agents over time," and AMP provides a detailed comparative analysis with another set of charts called "HITL Rate Charts." The interface includes standard UI elements like a close button and a timestamp.

### Components/Axes

* **Window Header:** Located at the top. Contains the title "Chat (llama3.1)" on the left and a close button (an "X" icon) on the right.

* **Conversation Area:** The main body of the window, containing the message thread.

* **Message Bubbles:** Two distinct message bubbles are present.

* **User Message ("You"):** Positioned at the top of the conversation area. Includes a timestamp "8:16 pm" in the top-right corner of the bubble.

* **Assistant Message ("AMP"):** Positioned below the user's message. This is a longer, multi-paragraph response with formatted headings and bullet points.

* **Text Content:** All text is in English. The content is a technical analysis comparing data trends.

### Detailed Analysis / Content Details

**1. User Message (Top Bubble):**

* **Sender Label:** "You:"

* **Timestamp:** "8:16 pm"

* **Message Text:** "Analyze the chart "Total agents over time". Highlight trends, anomalies, and key takeaways."

**2. Assistant Message (AMP - Bottom Bubble):**

* **Sender Label:** "AMP:"

* **Main Title:** "Comparison of Total Agent Over Time and HITL Rate Charts"

* **Divider Line:** A dashed line (`-------------------`) separates the title from the body.

* **Introductory Paragraph:** "We will compare the "Total agents over time" chart with the HITL (High-Volume Initiator/Reviewer Tracking) rate charts to identify potential correlations and insights."

* **Section 1 Title:** "Chart Analysis"

* **Divider Line:** Another dashed line (`-------------------`).

* **Section 2 Title:** "HITL Rate Charts"

* **Section 2 Text:** "The HITL rate charts provide information on the top initiators and reviewers of agent runs. We have two relevant charts: "Top initiators & reviewers" and "HITL rates by initiator/reviewer"."

* **Section 3 Title:** "Comparison with Total Agent Over Time Chart"

* **Bullet Point 1:** "* The significant increase in total agent runs around 2025-09-01 is also reflected in the HITL rate charts, indicating that many of these new agents are initiated or reviewed by top users."

* **Bullet Point 2:** "* The decline from 2025-11-03 to 2025-11-10 observed in the "Total agents over time" chart is not as pronounced in the HITL rate charts, suggesting that this decrease might be related to a specific user behavior or technical issue rather than a broader trend."

### Key Observations

* **Structured Analysis:** AMP's response is methodically structured, moving from a general comparison statement to specific chart descriptions and then to a direct point-by-point comparison.

* **Specific Date Ranges:** The analysis references precise date ranges for observed trends: a spike around "2025-09-01" and a decline from "2025-11-03 to 2025-11-10".

* **Acronym Definition:** The acronym "HITL" is explicitly defined as "High-Volume Initiator/Reviewer Tracking".

* **Chart References:** AMP references three distinct charts by name: 1) "Total agents over time", 2) "Top initiators & reviewers", and 3) "HITL rates by initiator/reviewer".

* **Causal Hypothesis:** The analysis proposes a hypothesis for the observed data discrepancy (the decline not being as pronounced in HITL charts), attributing it to "specific user behavior or technical issue".

### Interpretation

This screenshot captures a moment of technical data analysis within a conversational AI interface. The core information is not the visual chart itself, but the **textual interpretation of chart data** provided by the AI assistant.

The analysis suggests a multi-chart dashboard is being examined. The key insight is a **correlation** between overall agent activity and activity from a subset of "top users" (initiators/reviewers) during a period of growth (September 2025). However, a **divergence** appears during a period of decline (early November 2025), where the drop in overall activity is not mirrored as strongly in the top-user metrics. This leads AMP to infer that the November decline may be **non-systemic**—potentially caused by a localized issue affecting a broader user base rather than a change in the behavior of the core, high-volume users.

The chat interface itself is minimal, with a focus on the textual content. The "llama3.1" in the title likely indicates the underlying language model version powering the chat assistant. The conversation demonstrates the AI's capability to perform comparative analysis across multiple data sources and generate hypotheses based on observed trends and anomalies.

</details>

<details>

<summary>SafeAIAgent-img008.png Details</summary>

### Visual Description

## Screenshot: Technical Report Summary

### Overview

The image is a screenshot of a digital interface displaying a structured technical report summary. The report analyzes variations in "agent runs" and "HITL rates" over specific date ranges, offering key takeaways, possible explanations, and recommendations. The interface includes interactive elements for report regeneration and command input.

### Components/Axes

The content is organized into distinct textual sections within a light-themed interface. There are no traditional chart axes or legends. The primary components are:

1. **Header Section:** Contains the title "Key Takeaways".

2. **Body Sections:** Contain bulleted and numbered lists under the headings "Key Takeaways", "Possible Explanations", and "Recommendations".

3. **Interactive Footer:** Contains a "Regenerate report" link, a text input field labeled "Type a command...", and a "Send" button.

### Detailed Analysis / Content Details

**Text Transcription (English):**

**Key Takeaways**

-------------------

* The growth in total agent runs around 2025-09-01 appears to be driven by top users who are initiating and reviewing these agents.

* The relatively stable HITL rates during the decline from 2025-11-03 to 2025-11-10 suggest that this issue might be specific to user behavior or a technical problem affecting some users.

**Possible Explanations**

-------------------

* **Changes in user behavior:** Top users who are initiating and reviewing agent runs may have adjusted their behavior, contributing to the growth around 2025-09-01 and the decline from 2025-11-03 to 2025-11-10.

* **Technical issues:** Problems with the system's infrastructure or configuration might be affecting specific user groups or processes, leading to variations in agent activity.

**Recommendations**

-------------------

1. Investigate user behavior changes around 2025-09-01 and 2025-11-03 to understand their impact on agent activity.

2. Analyze technical logs to identify potential issues with the system's infrastructure or configuration that might be contributing to the variations in agent runs.

[Link Text: Regenerate report] (Displayed in purple, underlined)

[Input Field Placeholder: Type a command...]

[Button Label: Send] (Displayed in blue)

### Key Observations

* **Temporal Focus:** The analysis centers on two specific date ranges: around **2025-09-01** (associated with growth) and from **2025-11-03 to 2025-11-10** (associated with a decline).

* **Metric Correlation:** The report links changes in "total agent runs" with the stability of "HITL rates" (Human-In-The-Loop rates) to form its hypothesis.

* **Dual Hypothesis:** The "Possible Explanations" section consistently presents two parallel theories for the observed phenomena: one behavioral (user actions) and one technical (system issues).

* **Action-Oriented:** The "Recommendations" directly map to the two hypotheses, suggesting parallel investigative paths.