# Two Pathways to Truthfulness: On the Intrinsic Encoding of LLM Hallucinations


## Abstract

Despite their impressive capabilities, large language models (LLMs) frequently generate hallucinations. Previous work shows that their internal states encode rich signals of truthfulness, yet the origins and mechanisms of these signals remain unclear. In this paper, we demonstrate that truthfulness cues arise from two distinct information pathways: (1) a Question-Anchored pathway that depends on question–answer information flow, and (2) an Answer-Anchored pathway that derives self-contained evidence from the generated answer itself. First, we validate and disentangle these pathways through attention knockout and token patching. Afterwards, we uncover notable and intriguing properties of these two mechanisms. Further experiments reveal that (1) the two mechanisms are closely associated with LLM knowledge boundaries; and (2) internal representations are aware of their distinctions. Finally, building on these insightful findings, two applications are proposed to enhance hallucination detection performance. Overall, our work provides new insight into how LLMs internally encode truthfulness, offering directions for more reliable and self-aware generative systems.


Wen Luo $\heartsuit$, Guangyue Peng $\heartsuit$, Wei Li $\heartsuit$, Shaohang Wei $\heartsuit$, Feifan Song $\heartsuit$, Liang Wang ♠, Nan Yang ♠, Xingxing Zhang ♠, Jing Jin $\heartsuit$, Furu Wei ♠, Houfeng Wang $\heartsuit$ (corresponding author)

$\heartsuit$ State Key Laboratory of Multimedia Information Processing, School of Computer Science, Peking University

♠ Microsoft Research Asia

## 1 Introduction

Despite their remarkable capabilities in natural language understanding and generation, large language models (LLMs) often produce hallucinations, i.e., outputs that appear plausible but are factually incorrect. This phenomenon poses a critical challenge for deploying LLMs in real-world applications where reliability and trustworthiness are paramount (Shi et al., 2024; Bai et al., 2024). One line of research tackles hallucination detection from an extrinsic perspective (Min et al., 2023; Hu et al., 2025; Huang et al., 2025), evaluating only the model’s outputs while disregarding its internal dynamics. Although such approaches can identify surface-level textual inconsistencies, their extrinsic focus limits the insight they offer into the underlying causes of hallucinations. Complementing these efforts, another line of work investigates the intrinsic properties of LLMs, revealing that their internal representations encode rich truthfulness signals (Burns et al., 2023; Li et al., 2023; Chen et al., 2024; Orgad et al., 2025; Niu et al., 2025). These internal truthfulness signals can be exploited to detect an LLM’s own generative hallucinations by training a linear classifier (i.e., a probe) on its hidden representations. However, while prior work establishes the presence of such cues, the mechanisms by which they arise and operate remain largely unexplored. Recent studies indicate well-established mechanisms in LLMs that underpin complex capabilities such as in-context learning (Wang et al., 2023), long-context retrieval (Wu et al., 2025), and reasoning (Qian et al., 2025). This observation naturally leads to a key question: how do truthfulness cues arise and function within LLMs?

In this paper, we uncover that truthfulness signals in LLMs arise from two distinct information pathways: (1) a Question-Anchored (Q-Anchored) pathway, which depends on the flow of information from the input question to the generated answer, and (2) an Answer-Anchored (A-Anchored) pathway, which derives self-contained evidence directly from the model’s own outputs. We begin with a preliminary study using saliency analysis to quantify information flow potentially relevant to hallucination detection. Results reveal a bimodal distribution of dependency on question–answer interactions, suggesting heterogeneous truthfulness encoding mechanisms. To validate this hypothesis, we design two experiments across 4 diverse datasets using 12 models that vary in both architecture and scale, including base, instruction-tuned, and reasoning-oriented models. By (i) blocking critical question–answer information flow through attention knockout (Geva et al., 2023; Fierro et al., 2025) and (ii) injecting hallucinatory cues into questions via token patching (Ghandeharioun et al., 2024; Todd et al., 2024), we disentangle these truthfulness pathways. Our analyses confirm that Q-Anchored signals rely heavily on question-derived cues, whereas A-Anchored signals are robust to their removal and primarily originate from the generated answer itself.

Building on this foundation, we further investigate emergent properties of these truthfulness pathways through large-scale experiments. Our findings highlight two intriguing characteristics: (1) Association with knowledge boundaries: Q-Anchored encoding predominates for well-established facts that fall within the knowledge boundary, whereas A-Anchored encoding is favored in long-tail cases. (2) Self-awareness: LLM internal states can distinguish which mechanism is being employed, suggesting intrinsic awareness of pathway distinctions.

Finally, these analyses not only deepen our mechanistic understanding of hallucinations but also enable practical applications. Specifically, by leveraging the fundamentally different dependencies of the truthfulness pathways and the model’s intrinsic awareness, we propose two pathway-aware strategies to enhance hallucination detection. (1) Mixture-of-Probes (MoP): Motivated by the specialization of internal pathways, MoP employs a set of expert probing classifiers, each tailored to capture distinct truthfulness encoding mechanisms. (2) Pathway Reweighting (PR): From the perspective of selectively emphasizing pathway-relevant internal cues, PR modulates information intensity to amplify signals that are most informative for hallucination detection, aligning internal activations with pathway-specific evidence. Experiments demonstrate that our proposed methods consistently outperform competing approaches, achieving up to a 10% AUC gain across various datasets and models.

Overall, our key contributions are summarized as follows:

- (Mechanism) We conduct a systematic investigation into how internal truthfulness signals emerge and operate within LLMs, revealing two distinct information pathways: a Question-Anchored pathway that relies on question–answer information flow, and an Answer-Anchored pathway that derives self-contained evidence from the generated output.

- (Discovery) Through large-scale experiments across multiple datasets and model families, we identify two key properties of these mechanisms: (i) association with knowledge boundaries, and (ii) intrinsic self-awareness of pathway distinctions.

- (Application) Building on these findings, we propose two pathway-aware detection methods that exploit the complementary nature of the two mechanisms to enhance hallucination detection, providing new insights for building more reliable generative systems.

## 2 Background

### 2.1 Hallucination Detection

Given an LLM $f$, we denote the dataset as $D=\{(q_i,\hat{y}^f_i,z^f_i)\}_{i=1}^{N}$, where $q_i$ is the question, $\hat{y}^f_i$ the model’s answer in open-ended generation, and $z^f_i\in\{0,1\}$ indicates whether the answer is hallucinatory. The task is to predict $z^f_i$ given the input $x^f_i=[q_i,\hat{y}^f_i]$ for each instance. Cases in which the model refuses to answer are excluded, as they are not genuine hallucinations and can be trivially classified. Methods based on internal signals assume access to the model’s hidden representations but no external resources (e.g., retrieval systems or fact-checking APIs) (Xue et al., 2025a). Within this paradigm, probing trains a lightweight linear classifier on hidden activations to discriminate between hallucinatory and factual outputs, and has been shown to be among the most effective approaches in this class of internal-signal-based methods (Orgad et al., 2025).
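The probing setup can be illustrated with a minimal sketch. It assumes the hidden activations have already been extracted into a matrix; the activations below are synthetic, and the names `train_probe` and `predict_proba`, the learning rate, and the step count are hypothetical choices for illustration rather than the configuration used in this paper.

```python
import numpy as np

def train_probe(H, z, lr=0.1, steps=500):
    """Fit a logistic-regression probe on hidden states H (N x d), labels z (N,)."""
    n, d = H.shape
    w = np.zeros(d)
    b = 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(H @ w + b)))  # predicted P(hallucination)
        grad = p - z                             # gradient of BCE loss w.r.t. logits
        w -= lr * (H.T @ grad) / n
        b -= lr * grad.mean()
    return w, b

def predict_proba(H, w, b):
    """Probe output: probability that each instance is hallucinatory."""
    return 1.0 / (1.0 + np.exp(-(H @ w + b)))

# Synthetic stand-in for hidden activations (assumption: a single linear
# direction separates hallucinatory from factual instances).
rng = np.random.default_rng(0)
d = 16
direction = rng.normal(size=d)
z = rng.integers(0, 2, size=400)
H = rng.normal(size=(400, d)) + np.outer(2 * z - 1, direction)

w, b = train_probe(H, z)
acc = ((predict_proba(H, w, b) > 0.5).astype(int) == z).mean()
```

In practice `H` would hold the activations of one Transformer layer at a chosen token position, with one probe trained per layer.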

### 2.2 Exact Question and Answer Tokens

To analyze the origins and mechanisms of truthfulness signals in LLMs, we primarily focus on exact tokens in question–answer pairs. Not all tokens contribute equally to detecting factual errors: some carry core information essential to the meaning of the question or answer, while others provide peripheral details. We draw on semantic frame theory (Baker et al., 1998; Pagnoni et al., 2021), which represents a situation or event along with its participants and their roles. In the theory, frame elements are categorized as: (1) Core frame elements, which define the situation itself, and (2) Non-core elements, which provide additional, non-essential context.

As shown in Table 1, we define: (1) Exact question tokens: core frame elements in the question, typically including the exact subject and property tokens (e.g., *South Carolina* and *capital*). (2) Exact answer tokens: core frame elements in the answer that convey the critical information required to respond correctly (e.g., *Columbia*). Humans tend to rely more on core elements when detecting errors, as these tokens carry the most precise information. Consistent with this intuition, recent work (Orgad et al., 2025) shows that probing activations on the exact answer tokens offers the strongest signal for hallucination detection, outperforming all other token choices. Motivated by these findings, our analysis mainly centers on exact tokens to probe truthfulness signals in LLMs. Moreover, to validate the robustness of our conclusions, we also conduct comprehensive experiments using alternative, non-exact-token configurations (see Appendix B.2).

| Question: What is the capital of South Carolina? |
| --- |
| Answer: It is Columbia, a hub for government, culture, and education that houses the South Carolina State House and the University of South Carolina. |

Table 1: Example of exact question and answer tokens. The token types are: exact property (*capital*), exact subject (*South Carolina*), and exact answer (*Columbia*).

## 3 Two Internal Truthfulness Pathways

We begin with a preliminary analysis using metrics based on saliency scores (§ 3.1). The quantitative results reveal two distinct information pathways for truthfulness encoding: (1) a Question-Anchored (Q-Anchored) Pathway, which relies heavily on exact question tokens (i.e., the questions), and (2) an Answer-Anchored (A-Anchored) Pathway, in which the truthfulness signal is largely independent of the question-to-answer information flow. Section 3.2 presents experiments validating this hypothesis. In particular, we show that the Q-Anchored Pathway depends critically on information flowing from the question to the answer, whereas the signals along the A-Anchored Pathway are primarily derived from the LLM-generated answer itself.

### 3.1 Saliency-Driven Preliminary Study

This section investigates the intrinsic characteristics of LLM attention interactions and their potential role in truthfulness encoding. We employ saliency analysis (Simonyan et al., 2014), a widely used interpretability method, to reveal how attention among tokens influences probe decisions. Following common practice (Michel et al., 2019; Wang et al., 2023), we compute the saliency score as:

$$
S^l(i,j)=\left|A^l(i,j)\,\frac{\partial L(x)}{\partial A^l(i,j)}\right|, \tag{1}
$$

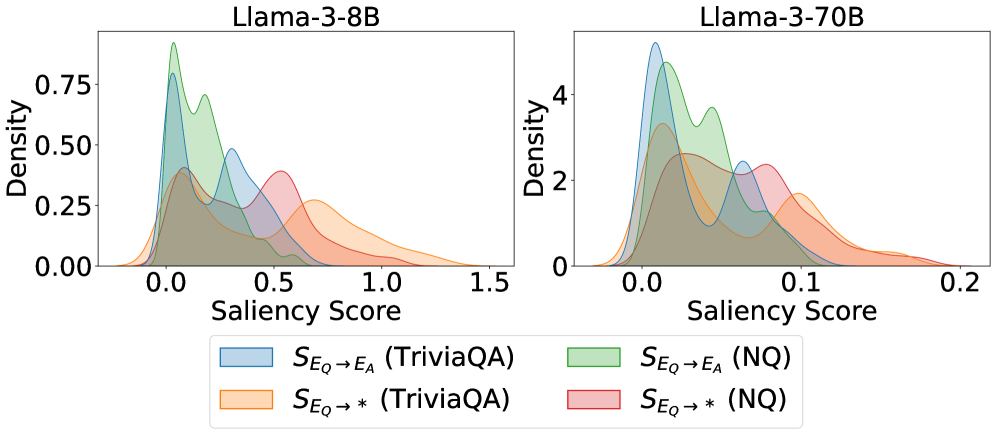

where $S^l$ denotes the saliency score matrix of the $l$-th layer, $A^l$ represents the attention weights of that layer, and $L$ is the loss function for hallucination detection (i.e., the binary cross-entropy loss). Scores are averaged over all attention heads within each layer. In particular, $S^l(i,j)$ quantifies the saliency of attention from query $i$ to key $j$, capturing how strongly the information flow from $j$ to $i$ contributes to the detection. We study two types of information flow: (1) $S_{E_Q\to E_A}$, the saliency of direct information flow from the exact question tokens to the exact answer tokens, and (2) $S_{E_Q\to *}$, the saliency of the total information disseminated by the exact question tokens.
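The saliency score and the two flow quantities can be sketched as follows. This is an illustration under stated assumptions: the gradient `grad_A` is a random placeholder for what an autograd framework would return, the absolute value is taken per head before averaging, and the flows are aggregated by summation, with `*` read as all query positions. The exact aggregation is not specified in this section, and `E_Q`, `E_A`, `saliency`, and `flow` are hypothetical names and positions.

```python
import numpy as np

def saliency(A, grad_A):
    """Eq. (1) per head, |A * dL/dA|, then averaged over heads.

    A, grad_A: arrays of shape (heads, T, T); rows are queries, columns are keys.
    """
    return np.abs(A * grad_A).mean(axis=0)

def flow(S, queries, keys):
    """Total saliency on attention edges from `keys` (sources) to `queries` (targets)."""
    return S[np.ix_(queries, keys)].sum()

rng = np.random.default_rng(0)
heads, T = 2, 6
A = np.tril(rng.random((heads, T, T)))    # causal attention weights
A /= A.sum(axis=-1, keepdims=True)        # each row sums to 1
grad_A = rng.normal(size=(heads, T, T))   # placeholder for dL/dA (assumption)

S = saliency(A, grad_A)
E_Q = [1, 2]                 # hypothetical exact question token positions
E_A = [4]                    # hypothetical exact answer token position
all_pos = list(range(T))

S_q_to_a = flow(S, E_A, E_Q)        # S_{E_Q -> E_A}
S_q_to_all = flow(S, all_pos, E_Q)  # S_{E_Q -> *}
```

Because the attention weights are causal, entries above the diagonal of `S` are zero, so only positions at or after a question token contribute to `S_q_to_all`.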

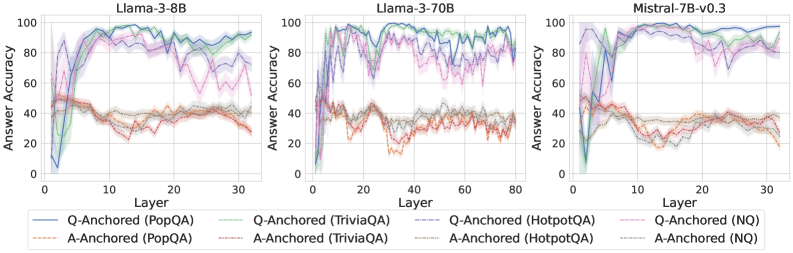

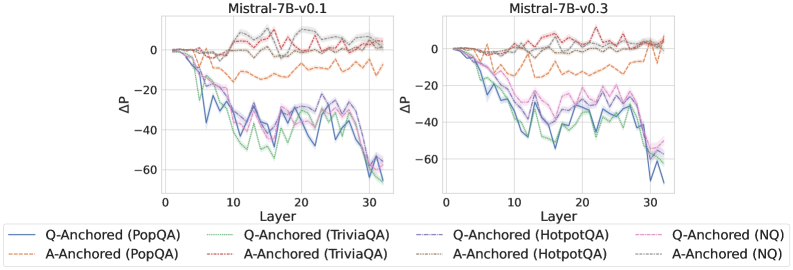

Results

<details>

<summary>x1.png Details</summary>

### Visual Description


## Density Plots: Saliency Score Distributions for Llama-3 Models

### Overview

The image displays two side-by-side kernel density estimation (KDE) plots comparing the distribution of "Saliency Scores" for two different-sized language models (Llama-3-8B and Llama-3-70B) across two question-answering datasets (TriviaQA and NQ). The plots visualize how model attention or attribution (saliency) is distributed for different input-output mappings.

### Components/Axes

* **Titles:**

* Left Plot: `Llama-3-8B`

* Right Plot: `Llama-3-70B`

* **Axes:**

* **X-axis (both plots):** `Saliency Score`

* Llama-3-8B scale: 0.0 to 1.5, with major ticks at 0.0, 0.5, 1.0, 1.5.

* Llama-3-70B scale: 0.0 to 0.2, with major ticks at 0.0, 0.1, 0.2. **Note the significant difference in scale between the two models.**

* **Y-axis (both plots):** `Density`

* Llama-3-8B scale: 0.00 to 0.75, with major ticks at 0.00, 0.25, 0.50, 0.75.

* Llama-3-70B scale: 0 to 4, with major ticks at 0, 2, 4.

* **Legend:** Located at the bottom center, spanning both plots. It defines four data series by color and label:

1. **Light Blue:** `S_{E_Q -> E_A} (TriviaQA)` - Saliency of information flow from the exact question tokens to the exact answer tokens for TriviaQA.

2. **Light Orange:** `S_{E_Q -> *} (TriviaQA)` - Saliency of the total information disseminated by the exact question tokens (`*`) for TriviaQA.

3. **Light Green:** `S_{E_Q -> E_A} (NQ)` - Saliency of information flow from the exact question tokens to the exact answer tokens for Natural Questions (NQ).

4. **Light Red/Pink:** `S_{E_Q -> *} (NQ)` - Saliency of the total information disseminated by the exact question tokens (`*`) for NQ.

### Detailed Analysis

**Llama-3-8B (Left Plot):**

* **Trend Verification:** All four distributions are right-skewed, with the bulk of density concentrated at lower saliency scores (0.0 to 0.75) and long tails extending to higher values.

* **Data Series Analysis:**

* `S_{E_Q -> E_A} (TriviaQA)` (Blue): Has the highest peak density (~0.8) at a very low score (~0.05). Shows a secondary, smaller peak/hump around 0.3.

* `S_{E_Q -> E_A} (NQ)` (Green): Has the second-highest peak (~0.75) also near 0.05. Its distribution is slightly broader than the blue series, with a notable shoulder around 0.2.

* `S_{E_Q -> *}` (TriviaQA & NQ) (Orange & Red): These distributions are much flatter and broader. Their peaks are lower (~0.4 for Orange, ~0.35 for Red) and occur at higher saliency scores (around 0.5-0.6). They have significantly longer and heavier tails extending past 1.0.

**Llama-3-70B (Right Plot):**

* **Trend Verification:** Distributions are more peaked and concentrated within a much narrower range (0.0 to 0.2) compared to the 8B model. They are less skewed.

* **Data Series Analysis:**

* `S_{E_Q -> E_A} (TriviaQA)` (Blue): Exhibits the highest and sharpest peak (density >4) at a very low score (~0.02).

* `S_{E_Q -> E_A} (NQ)` (Green): Has the second-highest peak (~3.8) at a similarly low score (~0.03). It shows a distinct secondary peak/hump around 0.08.

* `S_{E_Q -> *}` (TriviaQA & NQ) (Orange & Red): These are again broader and flatter than the `E_A` series. Their peaks are lower (density ~2.5-3) and occur at slightly higher scores (around 0.05-0.07). Their tails are shorter, mostly contained below 0.15.

### Key Observations

1. **Model Size Effect:** The 70B model's saliency scores are an order of magnitude smaller (x-axis max 0.2 vs. 1.5) and more densely concentrated (y-axis max 4 vs. 0.75) than the 8B model's. This suggests the larger model's attributions are more focused and consistent.

2. **Attribution Target Effect:** For both models and both datasets, the saliency from Question to Answer (`S_{E_Q -> E_A}`) distributions are sharper and peak at lower scores than the saliency from Question to all tokens (`S_{E_Q -> *}`). This indicates that attribution to the specific answer is more concentrated than diffuse attribution to the entire output.

3. **Dataset Effect:** The difference between datasets (TriviaQA vs. NQ) is less pronounced than the difference between models or attribution targets. However, for the `S_{E_Q -> E_A}` metric, the NQ distribution (Green) consistently shows a more prominent secondary hump compared to TriviaQA (Blue).

### Interpretation

This visualization provides a technical comparison of model interpretability metrics. The data suggests that **larger models (70B) develop more precise and consistent internal attribution pathways** (lower, narrower saliency score distributions) compared to smaller models (8B), which have more variable and diffuse attributions.

Furthermore, the analysis distinguishes between **targeted attribution** (to the answer) and **diffuse attribution** (to all tokens). The consistently sharper peaks for `S_{E_Q -> E_A}` imply that when the model's attention is measured specifically on the answer-generating process, the signal is cleaner and more localized. The broader `S_{E_Q -> *}` distributions reflect the noisier, more distributed nature of attention across an entire generated sequence.

The secondary humps, particularly visible in the NQ `S_{E_Q -> E_A}` distributions for both models, may indicate a sub-population of questions or answer types where the model's saliency pattern differs systematically. This could be an avenue for further investigation into dataset properties or model behavior. The stark contrast in scale between the 8B and 70B models is the most striking finding, highlighting a fundamental shift in how larger models process and attribute information.

</details>

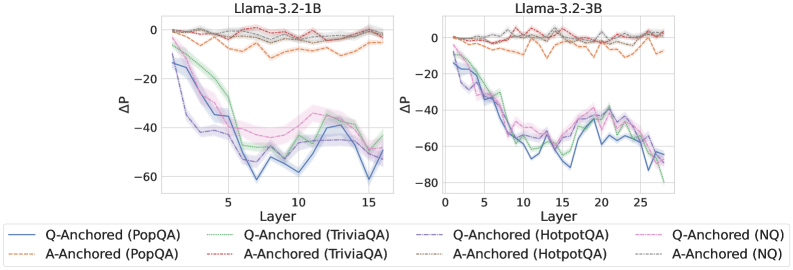

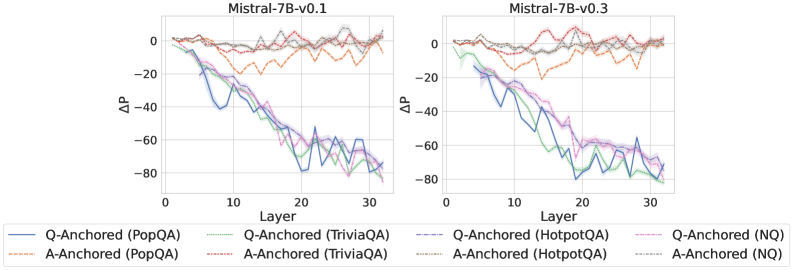

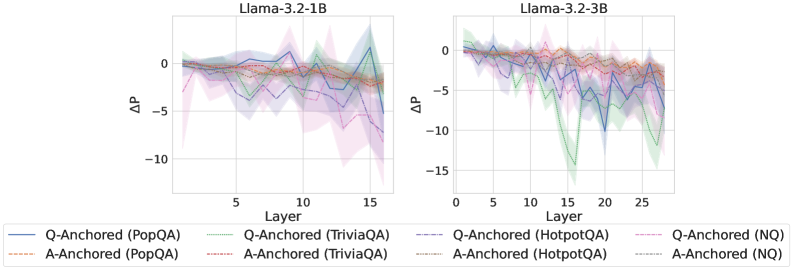

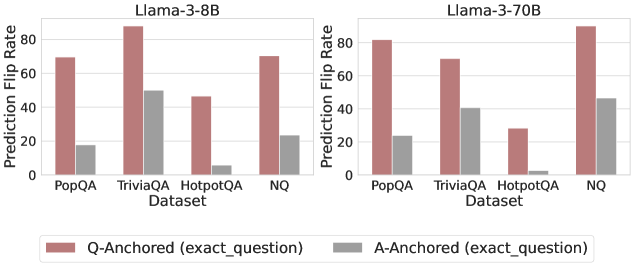

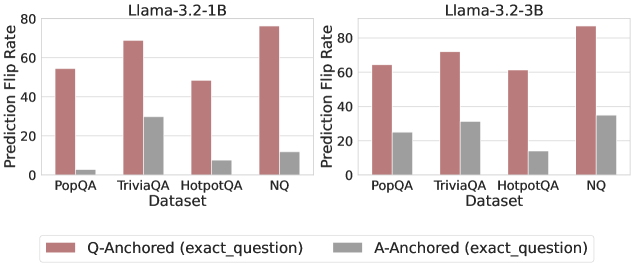

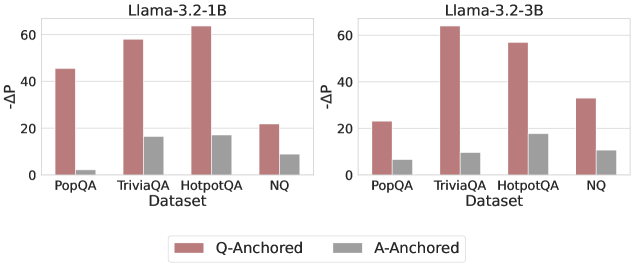

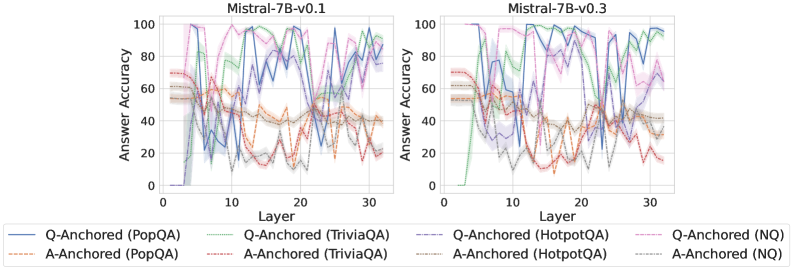

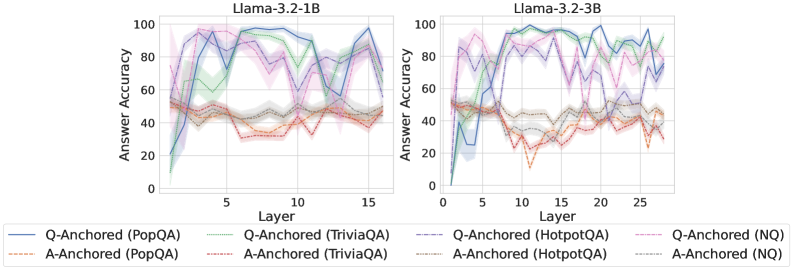

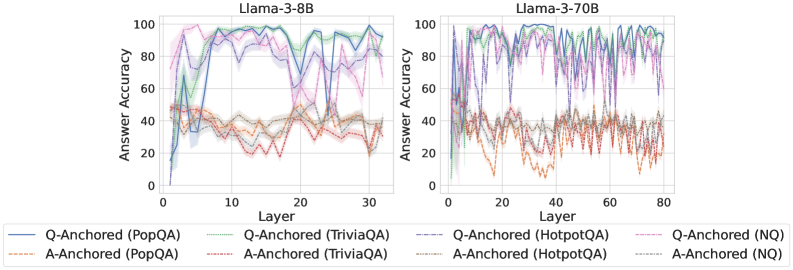

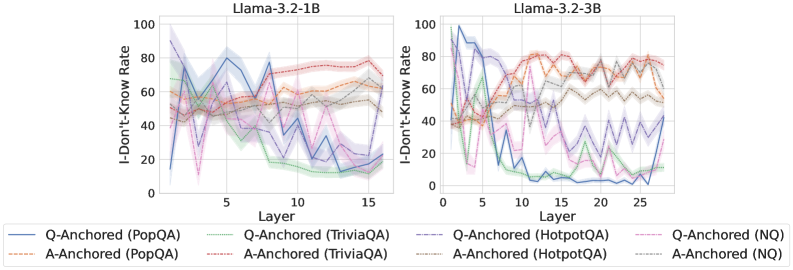

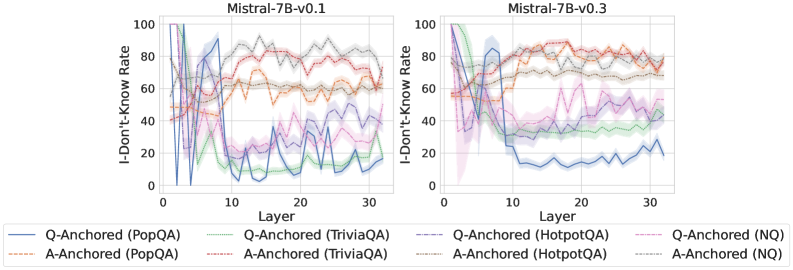

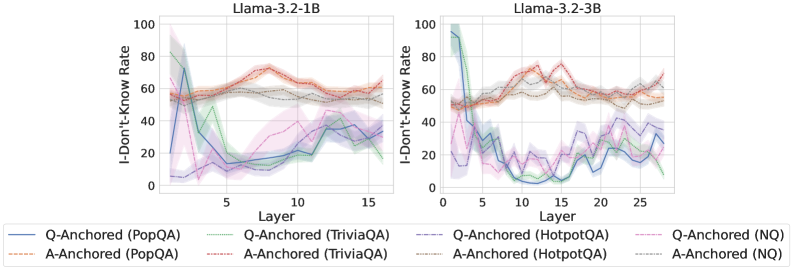

Figure 1: Kernel density estimates of saliency-score distributions for critical question-to-answer information flows. The bimodal pattern suggests two distinct information mechanisms.

We present kernel density estimates of the saliency scores on the TriviaQA (Joshi et al., 2017) and Natural Questions (Kwiatkowski et al., 2019) datasets. As shown in Figure 1, the probability densities reveal a clear bimodal distribution: for all examined information types originating from the question, the probability mass concentrates around two peaks, one near zero saliency and another at a substantially higher value. The near-zero peak suggests that, for a substantial subset of samples, the question-to-answer information flow contributes minimally to hallucination detection, whereas the higher peak reflects strong dependence on such flow.
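To make the notion of a bimodal density concrete, the sketch below evaluates a Gaussian kernel density estimate on synthetic scores. The two-component sample, the fixed bandwidth of 0.05, and the helper names are assumptions chosen for illustration; they are not the saliency scores or the estimator settings behind Figure 1.

```python
import numpy as np

def gaussian_kde(samples, grid, bandwidth):
    """Evaluate a Gaussian kernel density estimate of `samples` on `grid`."""
    u = (grid[:, None] - samples[None, :]) / bandwidth
    norm = len(samples) * bandwidth * np.sqrt(2.0 * np.pi)
    return np.exp(-0.5 * u**2).sum(axis=1) / norm

# Synthetic scores: one group near zero saliency, one at a higher value.
rng = np.random.default_rng(0)
low = rng.normal(0.05, 0.02, size=500)
high = rng.normal(0.60, 0.10, size=500)
scores = np.abs(np.concatenate([low, high]))  # saliency scores are non-negative

grid = np.linspace(0.0, 1.0, 201)
density = gaussian_kde(scores, grid, bandwidth=0.05)

def density_at(x):
    """Density at the grid point closest to x."""
    return density[np.argmin(np.abs(grid - x))]

peak_low, valley, peak_high = density_at(0.05), density_at(0.30), density_at(0.60)
```

A density that is higher at both group centers than at the point between them is the signature of bimodality described above.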

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Charts: Layer-wise ΔP for Different QA Datasets and Anchoring Methods

### Overview

The image contains three horizontally arranged line charts, each plotting the change in probability (ΔP) across the layers of a different large language model. The charts compare two groups of instances ("Q-Anchored" and "A-Anchored") across four question-answering (QA) datasets. The overall trend shows a significant negative ΔP for Q-Anchored instances as the probed layer gets deeper, while A-Anchored instances remain stable near zero.

### Components/Axes

* **Chart Titles (Top Center):**

* Left Chart: `Llama-3-8B`

* Middle Chart: `Llama-3-70B`

* Right Chart: `Mistral-7B-v0.3`

* **X-Axis (Bottom):**

* Label: `Layer`

* Scale (Left Chart): 0 to 30, with major ticks at 0, 10, 20, 30.

* Scale (Middle Chart): 0 to 80, with major ticks at 0, 20, 40, 60, 80.

* Scale (Right Chart): 0 to 30, with major ticks at 0, 10, 20, 30.

* **Y-Axis (Left):**

* Label: `ΔP`

* Scale (All Charts): -80 to 0, with major ticks at -80, -60, -40, -20, 0.

* **Legend (Bottom Center, spanning all charts):**

* **Q-Anchored (Solid Lines):**

* Blue: `Q-Anchored (PopQA)`

* Green: `Q-Anchored (TriviaQA)`

* Purple: `Q-Anchored (HotpotQA)`

* Pink: `Q-Anchored (NQ)`

* **A-Anchored (Dashed Lines):**

* Orange: `A-Anchored (PopQA)`

* Red: `A-Anchored (TriviaQA)`

* Brown: `A-Anchored (HotpotQA)`

* Gray: `A-Anchored (NQ)`

### Detailed Analysis

**1. Llama-3-8B (Left Chart):**

* **Q-Anchored Lines (Solid):** All four lines (blue, green, purple, pink) begin near ΔP = 0 at Layer 0. They exhibit a steep, near-parallel decline starting around Layer 2-3. By Layer 30, all have dropped to approximately ΔP = -70 to -80. The pink line (NQ) appears slightly higher (less negative) than the others between layers 10-25.

* **A-Anchored Lines (Dashed):** All four dashed lines (orange, red, brown, gray) remain tightly clustered around ΔP = 0 across all 30 layers, showing minimal fluctuation.

**2. Llama-3-70B (Middle Chart):**

* **Q-Anchored Lines (Solid):** The decline begins later, around Layer 10. The drop is more volatile, with a significant dip and partial recovery between Layers 20-40. The lowest point for most lines is around Layer 30 (ΔP ≈ -70 to -80). After Layer 40, the lines trend downward again, ending near ΔP = -80 at Layer 80. The purple line (HotpotQA) shows the most pronounced volatility.

* **A-Anchored Lines (Dashed):** Similar to the 8B model, these lines stay near ΔP = 0 with minor noise across all 80 layers.

**3. Mistral-7B-v0.3 (Right Chart):**

* **Q-Anchored Lines (Solid):** The decline starts around Layer 5. The slope is steadier and less steep than in the Llama-3-8B chart. By Layer 30, the lines converge to a range of approximately ΔP = -60 to -75. The blue line (PopQA) is consistently the lowest (most negative) from Layer 15 onward.

* **A-Anchored Lines (Dashed):** Consistent with the other models, these lines hover around ΔP = 0 for the entire layer range.

### Key Observations

1. **Anchored Mode Disparity:** There is a stark and consistent contrast between the two groups of instances. ΔP for Q-Anchored instances becomes strongly negative as the probed layer gets deeper, while ΔP for A-Anchored instances stays near zero at every layer.

2. **Model Architecture Influence:** The layer at which the Q-Anchored decline begins and its trajectory varies by model. Llama-3-8B shows the earliest and smoothest drop. Llama-3-70B shows a delayed but more complex, volatile pattern. Mistral-7B shows an intermediate, steadier decline.

3. **Dataset Similarity:** Within each anchoring method, the four QA datasets (PopQA, TriviaQA, HotpotQA, NQ) follow very similar trajectories, suggesting the observed phenomenon is general across these datasets rather than dataset-specific.

4. **Spatial Layout:** The legend is positioned centrally below all three charts, allowing for direct color/line-style comparison across the different model panels.

### Interpretation

Read together with the Figure 2 caption, ΔP is measured under attention knockout of the exact question tokens, and the layer axis is the layer on which the probe is trained rather than a depth through which information is progressively lost.

* **Q-Anchored instances:** The strongly negative ΔP indicates that the probe's predictions for these instances depend on information flowing from the exact question tokens. Blocking that flow changes the probe output substantially, and more so for probes trained on deeper layers.

* **A-Anchored instances:** The flat lines near zero indicate that the probe's predictions for these instances are largely unaffected by the knockout, consistent with a truthfulness signal that does not rely on question-to-answer information flow.

* **Model differences:** The differences between Llama-3 (8B vs. 70B) and Mistral suggest that model scale and architecture influence *where* the drop begins and how smooth it is, but the contrast between Q-Anchored and A-Anchored instances appears in all three models.

</details>

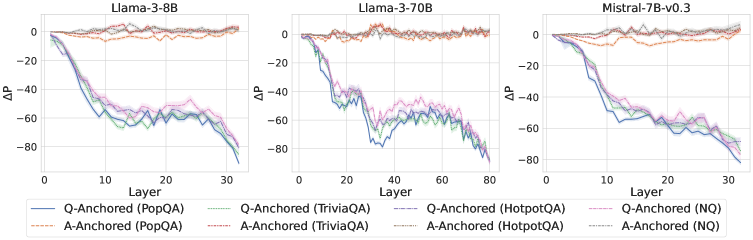

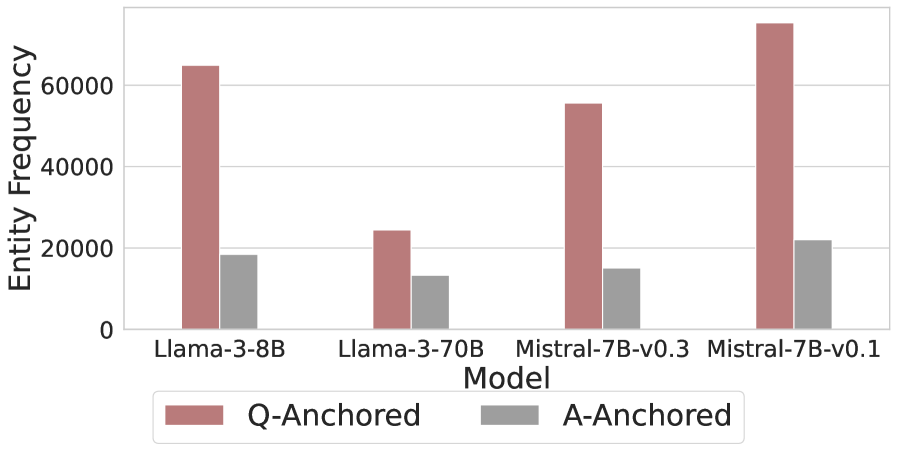

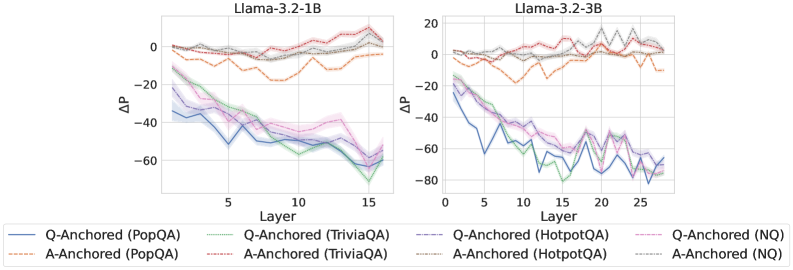

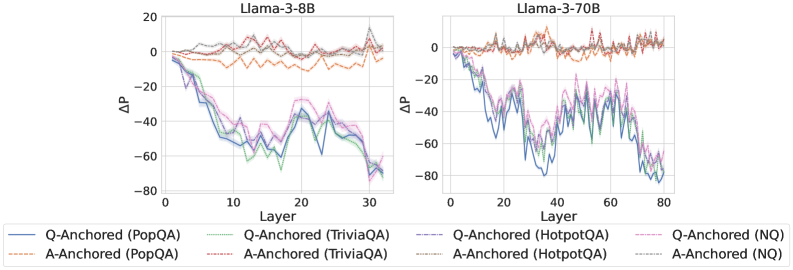

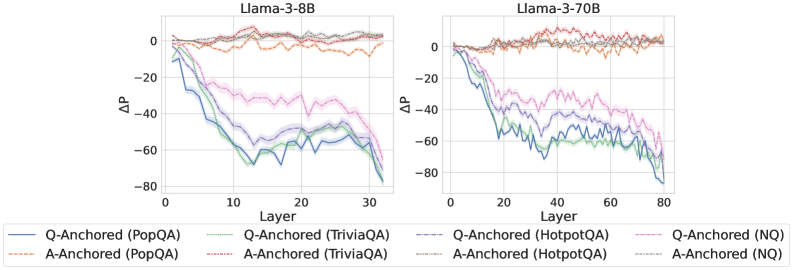

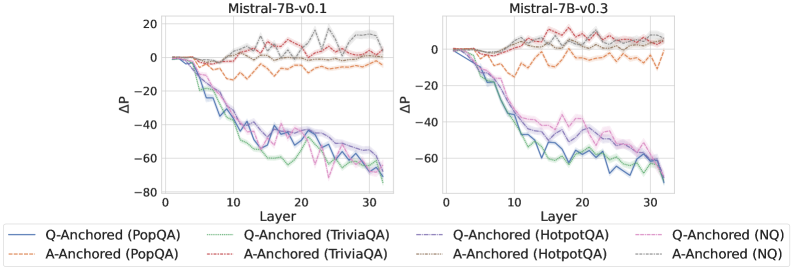

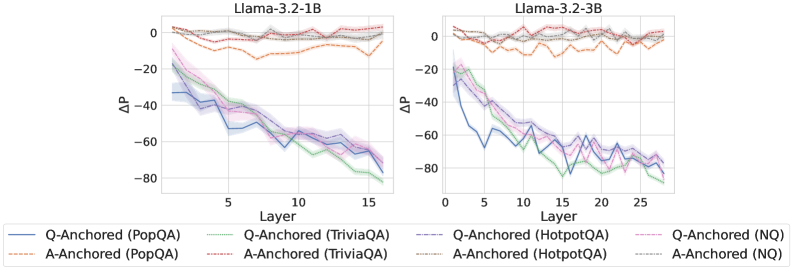

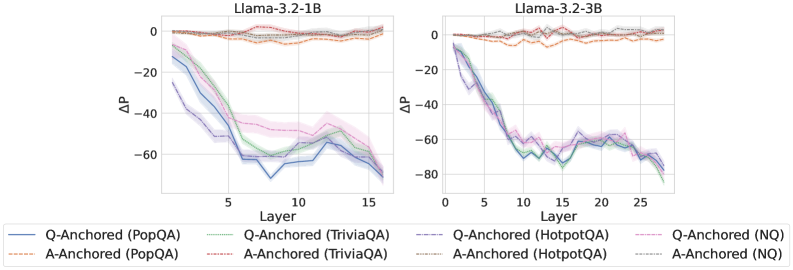

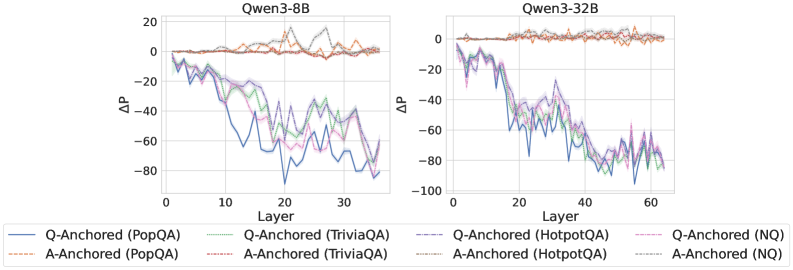

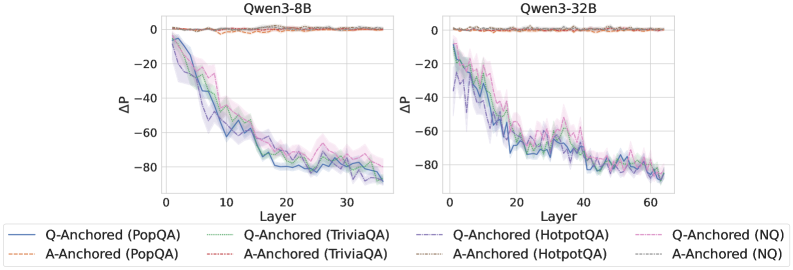

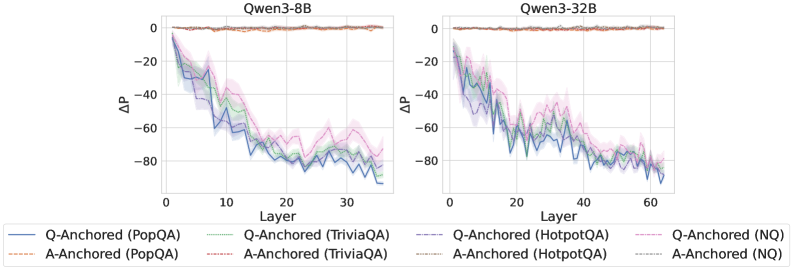

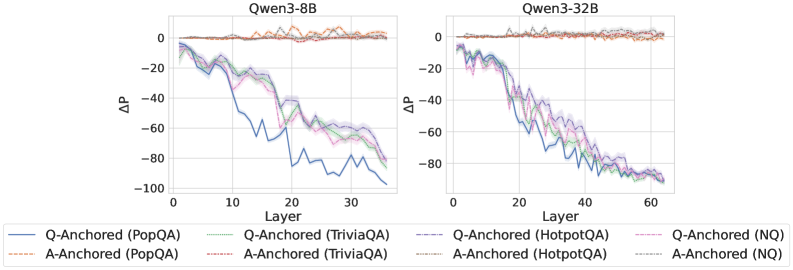

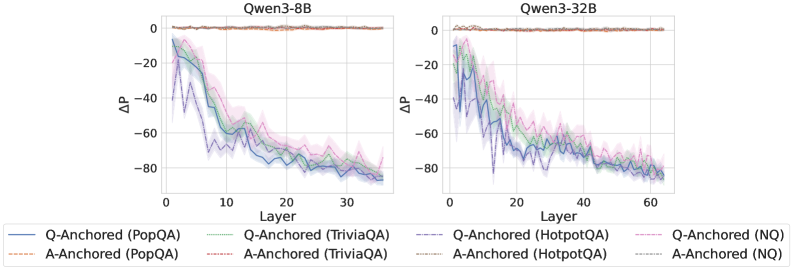

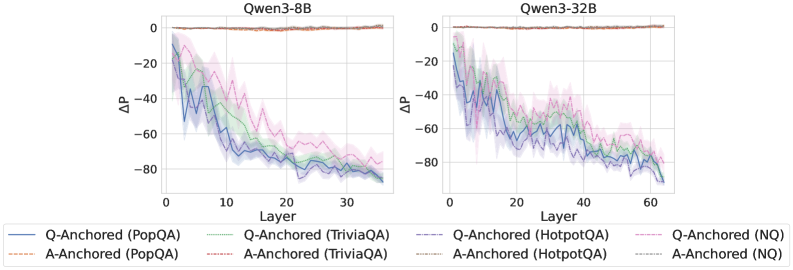

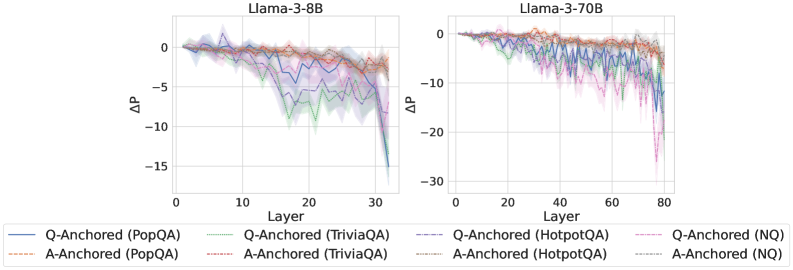

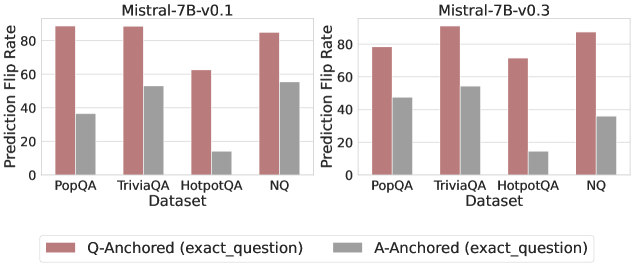

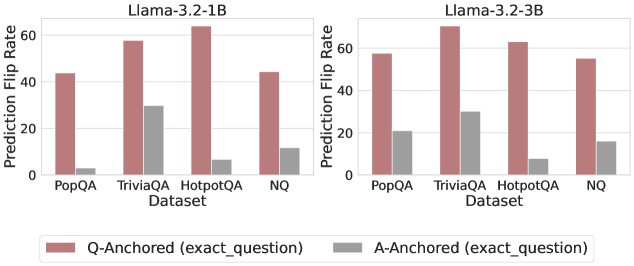

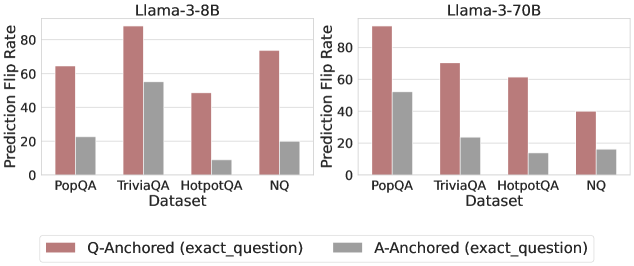

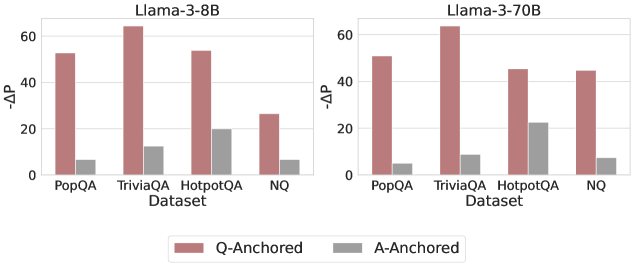

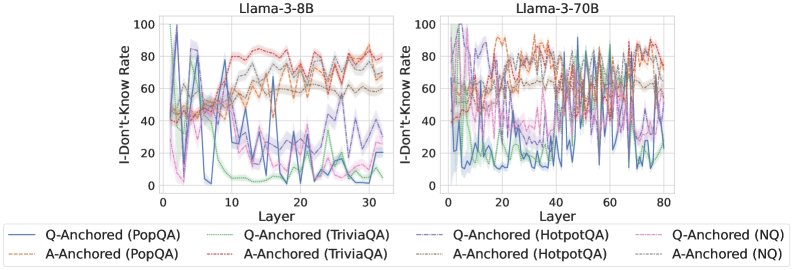

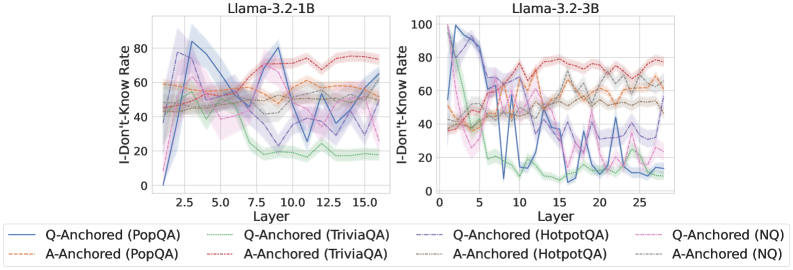

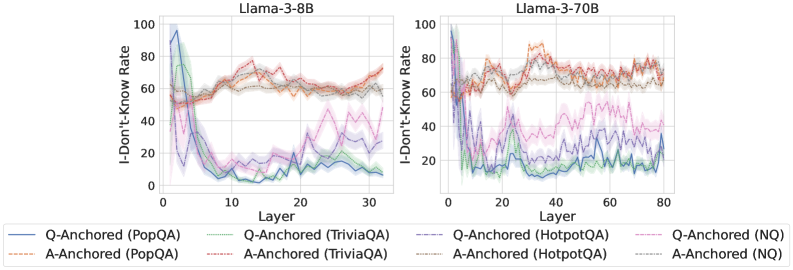

Figure 2: $\Delta P$ under attention knockout. The layer axis indicates the Transformer layer on which the probe is trained. Shaded regions indicate 95% confidence intervals. Full results in Appendix C.

Hypothesis

These observations lead to the hypothesis that there are two distinct mechanisms of internal truthfulness encoding for hallucination detection: (1) one characterized by strong reliance on the key question-to-answer information from the exact question tokens, and (2) one in which truthfulness encoding is largely independent of the question. We validate the proposed hypothesis through further experiments in the next section.

### 3.2 Disentangling Information Mechanisms

We hypothesize that the internal truthfulness encoding operates through two distinct information flow mechanisms, driven by the attention modules within Transformer blocks. To validate the hypothesis, we first block information flows associated with the exact question tokens and analyze the resulting changes in the probe’s predictions. Subsequently, we apply a complementary technique, called token patching, to further substantiate the existence of these two mechanisms. Finally, we demonstrate that the self-contained information from the LLM-generated answer itself drives the truthfulness encoding for the A-Anchored type.

#### 3.2.1 Experimental Setup

Our analysis covers a diverse collection of 12 LLMs that vary in both scale and architectural design. Specifically, we consider three categories: (1) base models, including Llama-3.2-1B (Grattafiori et al., 2024), Llama-3.2-3B, Llama-3-8B, Llama-3-70B, Mistral-7B-v0.1 (Jiang et al., 2023), and Mistral-7B-v0.3; (2) instruction-tuned models, including Llama-3.2-3B-Instruct, Llama-3-8B-Instruct, Mistral-7B-Instruct-v0.1, and Mistral-7B-Instruct-v0.3; and (3) reasoning-oriented models, namely Qwen3-8B (Yang et al., 2025) and Qwen3-32B. We conduct experiments on 4 widely used question-answering datasets: PopQA (Mallen et al., 2023), TriviaQA (Joshi et al., 2017), HotpotQA (Yang et al., 2018), and Natural Questions (Kwiatkowski et al., 2019). Additional implementation details are provided in Appendix B.

#### 3.2.2 Identifying Anchored Modes via Attention Knockout

Experiment

To investigate whether internal truthfulness encoding operates via distinct information mechanisms, we perform an attention knockout experiment targeting the exact question tokens. Specifically, for a probe trained on representations from the $k$-th layer, we set $A^l(i,E_Q)=0$ for layers $l\in\{1,\dots,k\}$ and positions $i>E_Q$, i.e., positions after the exact question tokens. This procedure blocks the information flow from question tokens to subsequent positions in the representation. We then examine how the probe’s predictions respond to this intervention. To provide a clearer picture, instances are categorized according to whether their prediction $\hat{z}$ changes after the attention knockout:

$$
\mathrm{Mode}(x)=\begin{cases}\text{Q-Anchored},&\text{if } \hat{z}\neq\tilde{\hat{z}}\\
\text{A-Anchored},&\text{otherwise}\end{cases} \tag{2}
$$

where $\hat{z}$ and $\tilde{\hat{z}}$ denote predictions before and after the attention knockout, respectively.
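The knockout and the categorization in Eq. (2) can be sketched on a toy attention tensor. This sketch makes several assumptions: it operates on the attention weights of a single layer (the experiment applies the intervention to layers $1,\dots,k$), it reads $i>E_Q$ as positions after the last exact question token, it zeroes the weights literally without renormalizing the rows, and the function names and token positions are hypothetical.

```python
import numpy as np

def knockout(A, question_idx):
    """Zero attention from later positions to the exact question tokens.

    A: attention weights of one layer, shape (heads, T, T); rows are queries.
    Returns a modified copy; the input is left untouched.
    """
    A = A.copy()
    later = np.arange(A.shape[-1]) > max(question_idx)  # query positions i > E_Q
    for j in question_idx:
        A[:, later, j] = 0.0
    return A

def mode(z_before, z_after):
    """Eq. (2): an instance is Q-Anchored if the probe prediction flips."""
    return "Q-Anchored" if z_before != z_after else "A-Anchored"

rng = np.random.default_rng(0)
A = np.tril(rng.random((2, 6, 6)))   # toy causal attention, 2 heads, 6 tokens
A /= A.sum(axis=-1, keepdims=True)
E_Q = [1, 2]                         # hypothetical exact question token positions

A_ko = knockout(A, E_Q)
labels = [mode(1, 0), mode(1, 1)]    # flipped vs. unchanged probe prediction
```

In a real model the modified weights would be used in the forward pass of every knocked-out layer, and the probe would then be re-evaluated on the resulting layer-$k$ representation to obtain $\tilde{\hat{z}}$.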

<details>

<summary>x3.png Details</summary>

### Visual Description


## Bar Chart Series: Prediction Flip Rates Across Models and Datasets

### Overview

The image displays three grouped bar charts arranged horizontally, comparing the "Prediction Flip Rate" of three different language models across four question-answering datasets. The charts share a common y-axis and x-axis structure, with a unified legend at the bottom.

### Components/Axes

* **Chart Titles (Top Center of each subplot):**

* Left Chart: `Llama-3-8B`

* Middle Chart: `Llama-3-70B`

* Right Chart: `Mistral-7B-v0.3`

* **Y-Axis (Left side of each subplot):**

* Label: `Prediction Flip Rate`

* Scale: 0 to 80, with major tick marks at 0, 20, 40, 60, 80.

* **X-Axis (Bottom of each subplot):**

* Label: `Dataset`

* Categories (from left to right within each chart): `PopQA`, `TriviaQA`, `HotpotQA`, `NQ`.

* **Legend (Bottom Center, spanning all charts):**

* Four categories, each represented by a colored bar:

1. `Q-Anchored (exact_question)` - Light red/salmon color.

2. `Q-Anchored (random)` - Dark red/maroon color.

3. `A-Anchored (exact_question)` - Light gray color.

4. `A-Anchored (random)` - Dark gray/charcoal color.

### Detailed Analysis

Data is presented as grouped bars for each dataset within each model chart. Values are approximate visual estimates from the bar heights.

**1. Llama-3-8B Chart (Left)**

* **PopQA:** Q-Anchored (exact) ~75, Q-Anchored (random) ~10, A-Anchored (exact) ~38, A-Anchored (random) ~2.

* **TriviaQA:** Q-Anchored (exact) ~78, Q-Anchored (random) ~12, A-Anchored (exact) ~35, A-Anchored (random) ~2.

* **HotpotQA:** Q-Anchored (exact) ~72, Q-Anchored (random) ~15, A-Anchored (exact) ~12, A-Anchored (random) ~5.

* **NQ:** Q-Anchored (exact) ~74, Q-Anchored (random) ~12, A-Anchored (exact) ~20, A-Anchored (random) ~2.

**2. Llama-3-70B Chart (Middle)**

* **PopQA:** Q-Anchored (exact) ~74, Q-Anchored (random) ~18, A-Anchored (exact) ~30, A-Anchored (random) ~2.

* **TriviaQA:** Q-Anchored (exact) ~78, Q-Anchored (random) ~20, A-Anchored (exact) ~35, A-Anchored (random) ~5.

* **HotpotQA:** Q-Anchored (exact) ~75, Q-Anchored (random) ~20, A-Anchored (exact) ~10, A-Anchored (random) ~8.

* **NQ:** Q-Anchored (exact) ~58, Q-Anchored (random) ~18, A-Anchored (exact) ~22, A-Anchored (random) ~8.

**3. Mistral-7B-v0.3 Chart (Right)**

* **PopQA:** Q-Anchored (exact) ~75, Q-Anchored (random) ~10, A-Anchored (exact) ~25, A-Anchored (random) ~2.

* **TriviaQA:** Q-Anchored (exact) ~80, Q-Anchored (random) ~12, A-Anchored (exact) ~30, A-Anchored (random) ~2.

* **HotpotQA:** Q-Anchored (exact) ~78, Q-Anchored (random) ~10, A-Anchored (exact) ~10, A-Anchored (random) ~8.

* **NQ:** Q-Anchored (exact) ~76, Q-Anchored (random) ~18, A-Anchored (exact) ~26, A-Anchored (random) ~2.

### Key Observations

1. **Dominant Series:** The `Q-Anchored (exact_question)` bar (light red) is consistently the tallest across all models and datasets, typically ranging between 70-80.

2. **Lowest Series:** The `A-Anchored (random)` bar (dark gray) is consistently the shortest, often near or below 5.

3. **Model Comparison:** The `Llama-3-70B` model shows a notably lower `Q-Anchored (exact_question)` rate for the `NQ` dataset (~58) compared to its performance on other datasets and compared to the other two models on `NQ`.

4. **Dataset Sensitivity:** The `HotpotQA` dataset generally shows lower flip rates for the `A-Anchored (exact_question)` condition (light gray) compared to `PopQA` and `TriviaQA` across all models.

5. **Anchoring Effect:** For a given anchoring type (Q or A), the "exact_question" variant consistently results in a higher flip rate than the "random" variant.

### Interpretation

This visualization reports the prediction flip rate: how often the truthfulness probe's prediction changes after question tokens are patched. Samples are grouped by encoding pathway (Q-Anchored vs. A-Anchored) and by which tokens are patched (exact question tokens vs. random tokens).

* **Core Finding:** Q-Anchored samples are highly sensitive to patching of the exact question tokens, with flip rates of roughly 70-80 in most settings. A-Anchored samples are far less sensitive under the same patching, and patching random tokens yields low flip rates for both pathways.

* **Model Scale:** The larger `Llama-3-70B` model shows the same overall pattern as the smaller models. Its lower `Q-Anchored (exact_question)` value on `NQ` (~58) is the main exception and suggests some dataset dependence.

* **Dataset Nature:** The lower flip rates for `A-Anchored (exact_question)` on `HotpotQA` (~10-12) may indicate that, for this multi-hop dataset, A-Anchored probe predictions depend even less on the question tokens than they do on the other datasets.

* **Practical Implication:** The gap between exact-token and random-token patching indicates that the flips are driven by the injected question content rather than by generic disruption from token replacement.

</details>

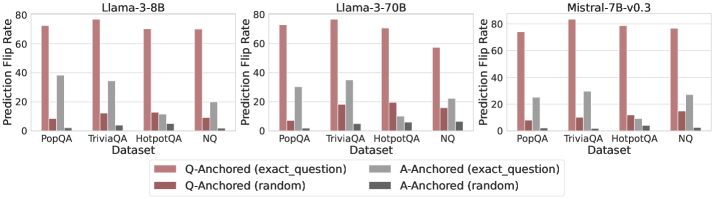

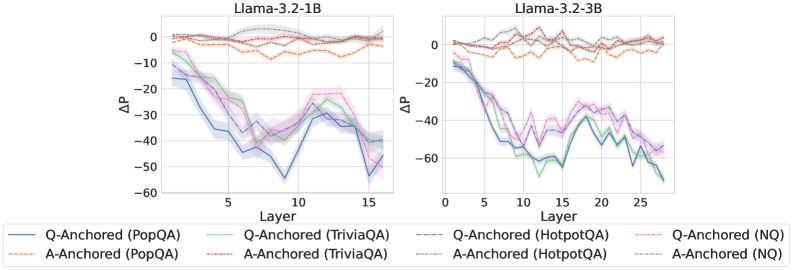

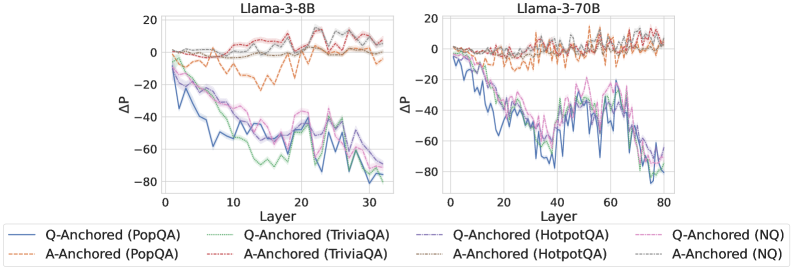

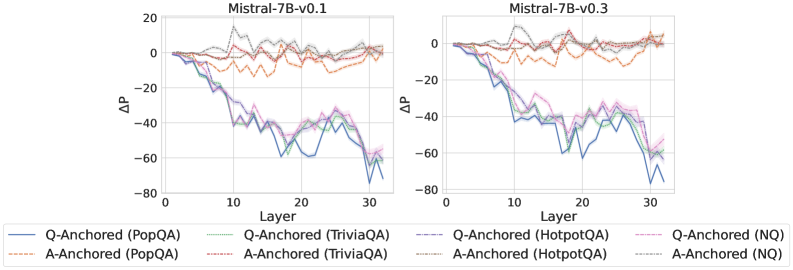

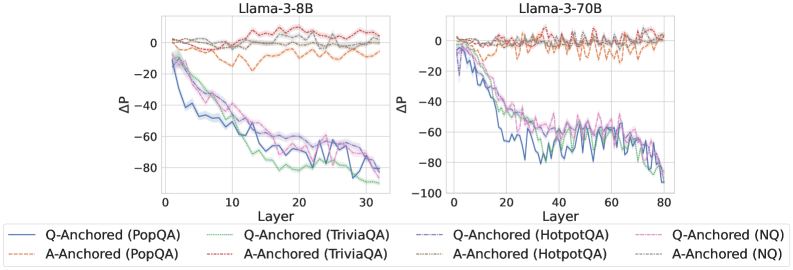

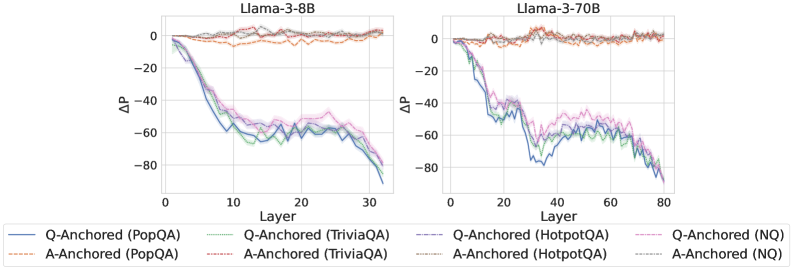

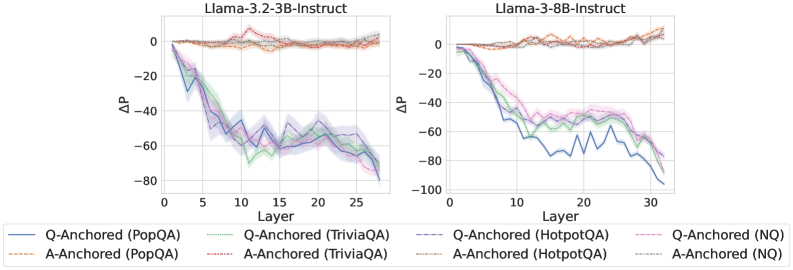

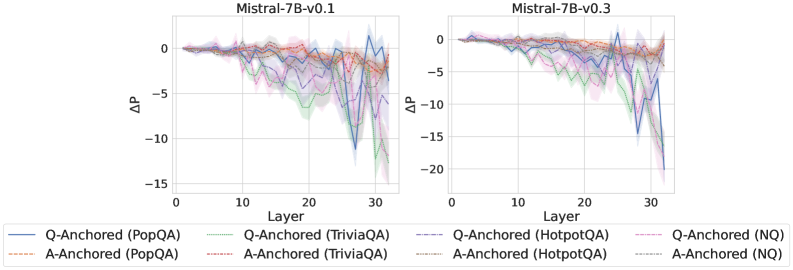

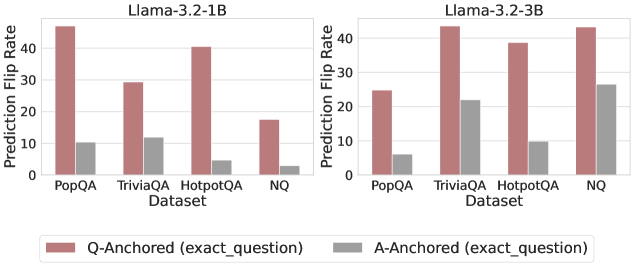

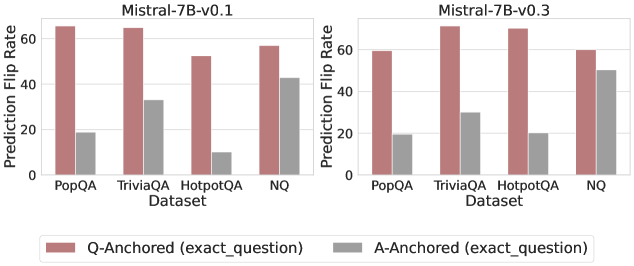

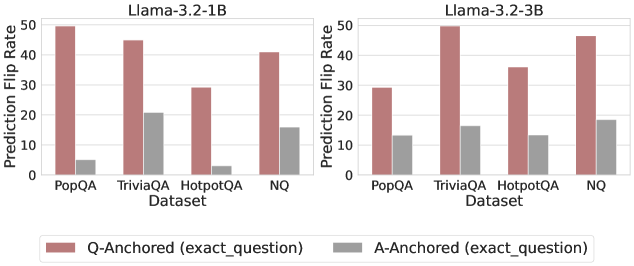

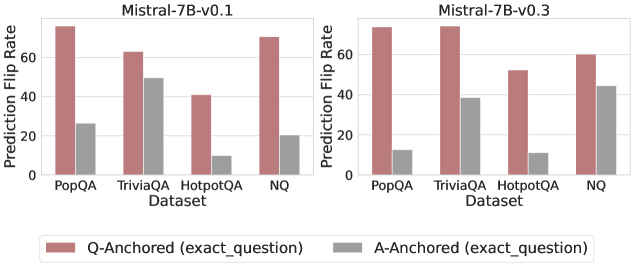

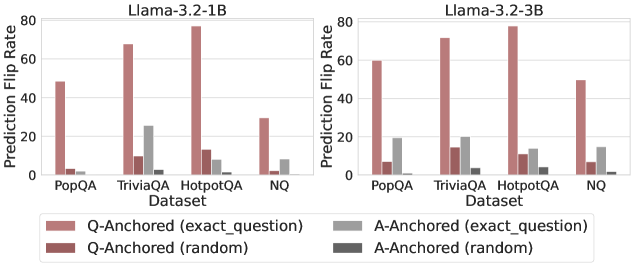

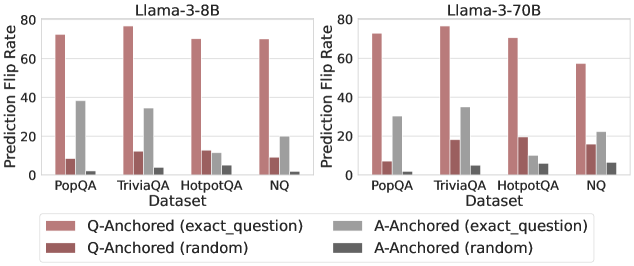

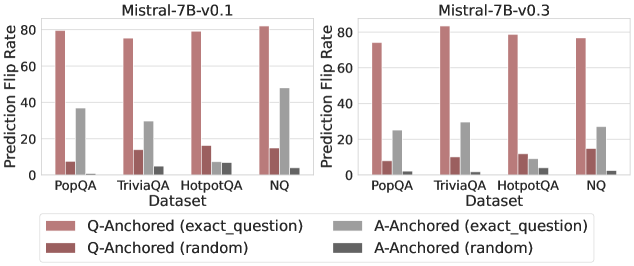

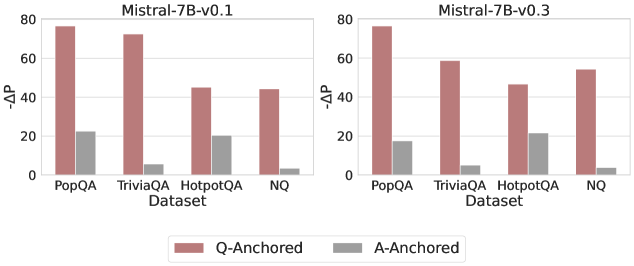

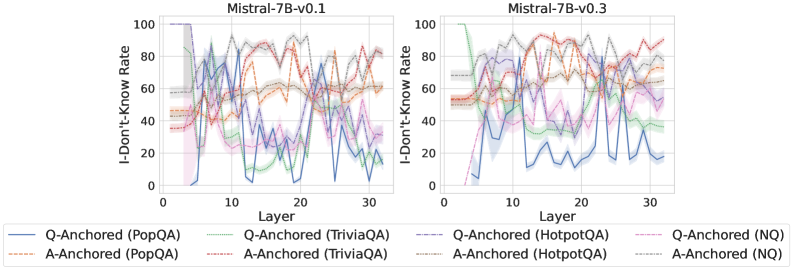

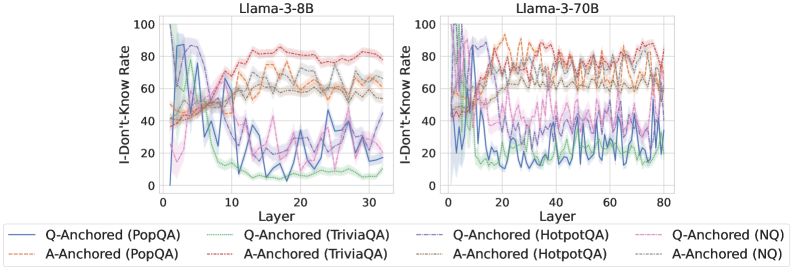

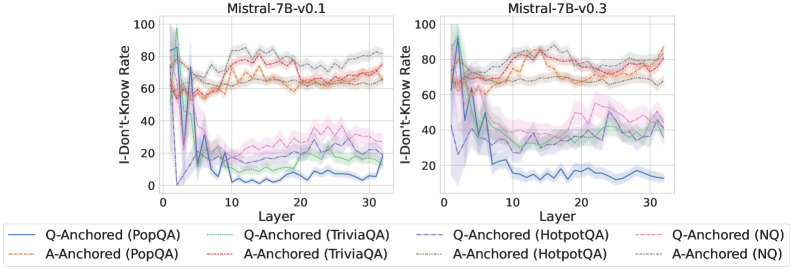

Figure 3: Prediction flip rate under token patching. Q-Anchored samples demonstrate significantly higher sensitivity than their A-Anchored counterparts when hallucinatory cues are injected through the exact question tokens. Full results in Appendix D.

Results

The results in Figure 2 and Appendix C reveal a clear bifurcation of behaviors: for one subset of instances, probabilities shift substantially, while for another subset, probabilities remain nearly unchanged across all layers. Shaded regions indicate 95% confidence intervals, confirming that this qualitative separation is statistically robust. This sharp divergence supports the hypothesis that internal truthfulness encoding operates via two distinct mechanisms with respect to question–answer information. In Appendix C, we conduct a comprehensive analysis of alternative configurations for token selection, activation extraction, and various instruction- or reasoning-oriented models, and observe consistent patterns across all settings. Moreover, Figure 16 in Appendix C shows that blocking information from randomly selected question tokens yields negligible changes, in contrast to blocking exact question tokens, underscoring the nontrivial nature of the identified mechanisms.

#### 3.2.3 Further Validation via Token Patching

Experiment

To further validate our findings, we employ a critical token patching technique to investigate how the internal representations of the LLM respond to hallucinatory signals originating from exact question tokens under the two proposed mechanisms. Given a context sample $d_c$ , we randomly select a patch sample $d_p$ and replace the original question tokens $E_Q^c$ in $d_c$ with the exact question tokens $E_Q^p$ from $d_p$ . This operation introduces hallucinatory cues into the context sample, allowing us to assess whether the LLM’s internal states appropriately reflect the injected changes. We restrict our analysis to context instances where the original LLM answers are factual, ensuring that any observed changes can be attributed solely to the injected hallucinatory cues.
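The patching operation described above can be sketched as a span replacement on token lists. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `patch_question_tokens`, the half-open span convention, and the toy samples are all hypothetical.

```python
from typing import List, Tuple

Span = Tuple[int, int]  # half-open [start, end) token indices (assumed convention)


def patch_question_tokens(
    context_tokens: List[str],
    context_span: Span,
    patch_tokens: List[str],
    patch_span: Span,
) -> List[str]:
    """Replace the exact question tokens of the context sample with those of the patch sample."""
    c_start, c_end = context_span
    p_start, p_end = patch_span
    if not (0 <= c_start <= c_end <= len(context_tokens)):
        raise ValueError("context_span is out of range")
    if not (0 <= p_start <= p_end <= len(patch_tokens)):
        raise ValueError("patch_span is out of range")
    # Keep everything outside the exact question span of the context sample.
    return context_tokens[:c_start] + patch_tokens[p_start:p_end] + context_tokens[c_end:]


# Toy samples (hypothetical): the answer stays fixed while the question entity changes,
# so the originally factual answer no longer matches the question.
context = ["Who", "wrote", "Hamlet", "?", "Shakespeare"]
patch = ["Who", "wrote", "Ulysses", "?", "Joyce"]
patched = patch_question_tokens(context, (2, 3), patch, (2, 3))
```

In this toy case the answer token is left untouched, which mirrors the idea that any change in the probe's output should come from the injected question tokens alone.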

Results

We measure the sensitivity of the truthfulness signals using the prediction flip rate, defined as the frequency with which the probe’s prediction changes after hallucinatory cues are introduced. Figure 3 and Appendix D present the results of the best-performing layer of each model on four datasets when patching the exact question tokens. Across models and datasets, the Q-Anchored mode exhibits significantly higher sensitivity than the A-Anchored mode when exposed to hallucination cues from the questions. Furthermore, within each pathway, the flip rates when exact question tokens are patched are substantially higher than those observed when random tokens are patched, ruling out the possibility that the observed effects are mainly due to general semantic disruption from token replacement. These consistent results provide further support for our hypothesis that the two information pathways rely on distinct mechanisms.
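The flip-rate metric can be illustrated with a short sketch. It assumes binary probe labels (1 = predicted truthful, 0 = predicted hallucinated) and reports a percentage to match the scale of Figure 3. Both choices, along with the function name and the toy labels, are assumptions made for the example.

```python
from typing import Sequence


def prediction_flip_rate(before: Sequence[int], after: Sequence[int]) -> float:
    """Percentage of samples whose probe label differs before and after patching."""
    if len(before) != len(after):
        raise ValueError("before and after must have the same length")
    if len(before) == 0:
        raise ValueError("at least one sample is required")
    flips = sum(1 for b, a in zip(before, after) if b != a)
    return 100.0 * flips / len(before)


# Hypothetical probe labels for five originally factual samples.
before = [1, 1, 1, 1, 1]
after_exact = [0, 0, 1, 0, 0]   # after patching exact question tokens
after_random = [1, 1, 1, 0, 1]  # after patching random tokens

exact_rate = prediction_flip_rate(before, after_exact)
random_rate = prediction_flip_rate(before, after_random)
```

Comparing `exact_rate` with `random_rate` corresponds to the exact-versus-random contrast used above to rule out generic disruption.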

#### 3.2.4 What Drives A-Anchored Encoding?

Experiment

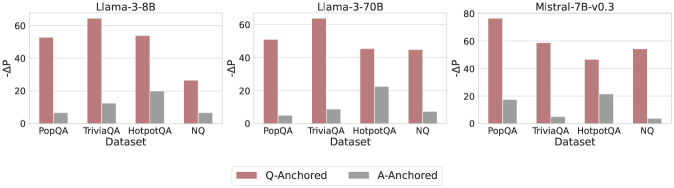

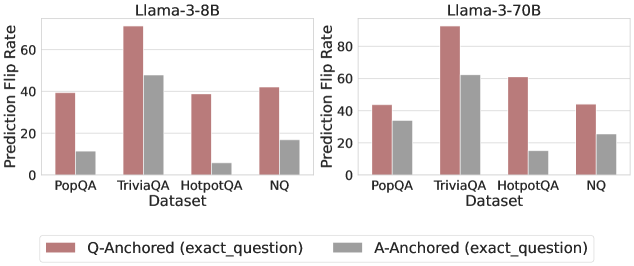

Since the A-Anchored mode operates largely independently of the question-to-answer information flow, it is important to investigate the source of information it uses to identify hallucinations. To this end, we remove the questions entirely from each sample and perform a separate forward pass using only the LLM-generated answers. This procedure yields answer-only hidden states, which are subsequently provided as input to the probe. We then evaluate how the probe’s predictions change under this “answer-only” condition. This setup enables us to assess whether A-Anchored predictions rely primarily on the generated answer itself rather than on the original question.

Results

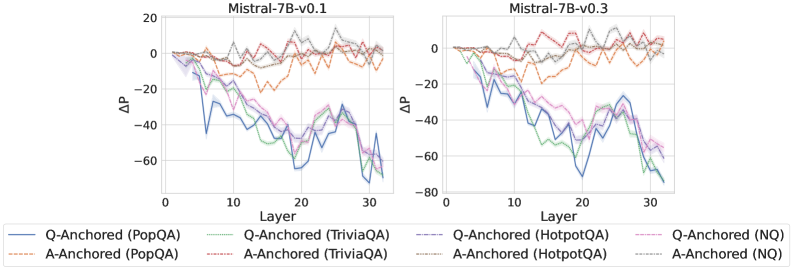

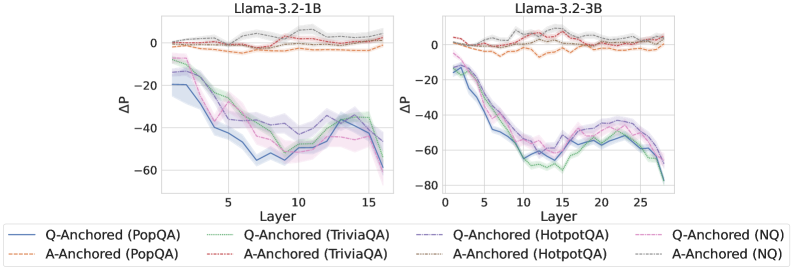

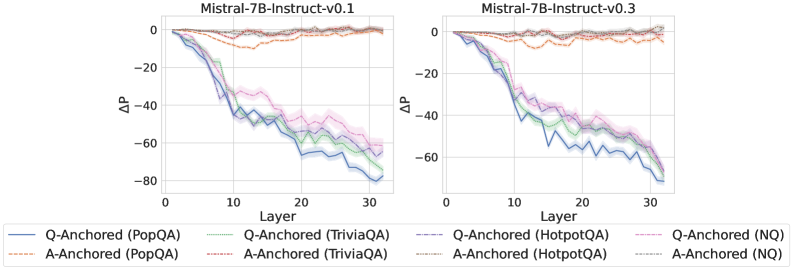

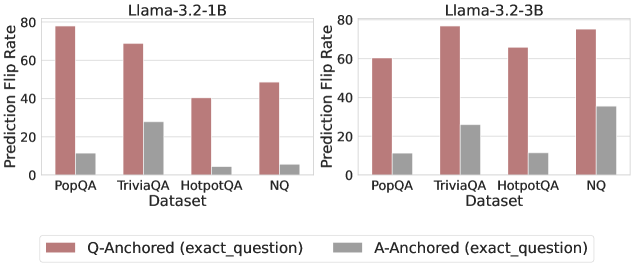

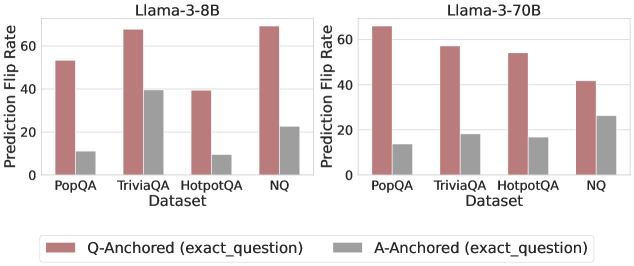

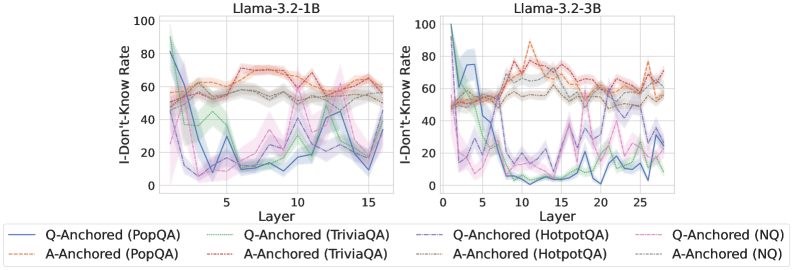

As shown in Figure 4 and Appendix E, Q-Anchored instances exhibit substantial changes in prediction probability when the question is removed, reflecting their dependence on question-to-answer information. In contrast, A-Anchored instances remain largely invariant, indicating that the probe continues to detect hallucinations using information encoded within the LLM-generated answer itself. These findings suggest that the A-Anchored mechanism primarily leverages self-contained answer information to build signals about truthfulness.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Grouped Bar Charts: Model Performance Comparison (ΔΔP)

### Overview

The image displays three horizontally arranged grouped bar charts, each comparing the performance of a different large language model (LLM) on four question-answering datasets. The performance metric is labeled "ΔΔP" on the y-axis. Each chart compares two experimental conditions: "Q-Anchored" and "A-Anchored."

### Components/Axes

* **Chart Titles (Top Center):** "Llama-3-8B", "Llama-3-70B", "Mistral-7B-v0.3"

* **Y-Axis Label (Left Side):** "ΔΔP" (Delta Delta P). The scale varies slightly:

* Llama-3-8B and Llama-3-70B: 0 to 60, with major ticks at 0, 20, 40, 60.

* Mistral-7B-v0.3: 0 to 80, with major ticks at 0, 20, 40, 60, 80.

* **X-Axis Label (Bottom Center of each chart):** "Dataset"

* **X-Axis Categories (Bottom of each chart):** Four datasets are listed from left to right: "PopQA", "TriviaQA", "HotpotQA", "NQ".

* **Legend (Bottom Center of entire image):** A horizontal legend defines the bar colors:

* **Reddish-Brown Bar:** "Q-Anchored"

* **Gray Bar:** "A-Anchored"

### Detailed Analysis

**Chart 1: Llama-3-8B**

* **Trend:** For all four datasets, the Q-Anchored (reddish-brown) bar is substantially taller than the A-Anchored (gray) bar.

* **Data Points (Approximate ΔΔP):**

* **PopQA:** Q-Anchored ≈ 55, A-Anchored ≈ 8.

* **TriviaQA:** Q-Anchored ≈ 65 (highest in this chart), A-Anchored ≈ 12.

* **HotpotQA:** Q-Anchored ≈ 55, A-Anchored ≈ 20 (highest A-Anchored value in this chart).

* **NQ:** Q-Anchored ≈ 28, A-Anchored ≈ 8.

**Chart 2: Llama-3-70B**

* **Trend:** Consistent with the 8B model, Q-Anchored significantly outperforms A-Anchored across all datasets.

* **Data Points (Approximate ΔΔP):**

* **PopQA:** Q-Anchored ≈ 52, A-Anchored ≈ 6.

* **TriviaQA:** Q-Anchored ≈ 65 (highest in this chart), A-Anchored ≈ 10.

* **HotpotQA:** Q-Anchored ≈ 48, A-Anchored ≈ 22 (highest A-Anchored value in this chart).

* **NQ:** Q-Anchored ≈ 46, A-Anchored ≈ 9.

**Chart 3: Mistral-7B-v0.3**

* **Trend:** The pattern holds. Q-Anchored bars are taller than A-Anchored bars for every dataset.

* **Data Points (Approximate ΔΔP):**

* **PopQA:** Q-Anchored ≈ 78 (highest value across all charts), A-Anchored ≈ 18.

* **TriviaQA:** Q-Anchored ≈ 60, A-Anchored ≈ 6.

* **HotpotQA:** Q-Anchored ≈ 45, A-Anchored ≈ 20.

* **NQ:** Q-Anchored ≈ 55, A-Anchored ≈ 5.

### Key Observations

1. **Consistently Larger Q-Anchored Shift:** The most prominent pattern is that the "Q-Anchored" bar is taller than the "A-Anchored" bar for every model-dataset combination shown.

2. **Dataset Variability:** The bar heights vary by dataset. "TriviaQA" and "PopQA" often show the highest Q-Anchored values, while "NQ" and "HotpotQA" sometimes show lower ones.

3. **Model Comparison:** The Mistral-7B-v0.3 model shows the single highest value (≈78 on PopQA). The two Llama-3 models (8B and 70B) show broadly similar profiles.

4. **A-Anchored Stability:** The A-Anchored values are consistently low, generally at or below about 20, with "HotpotQA" often being the dataset where they are highest.

### Interpretation

The y-axis label, transcribed above as "ΔΔP", appears to correspond to the $-\Delta P$ named in the Figure 4 caption: the change in the probe's prediction probability when the question is removed and only the LLM-generated answer is passed through the model. A larger value therefore indicates stronger dependence on the question, not better performance.

Read this way, the tall Q-Anchored bars show that Q-Anchored predictions shift substantially once the question is removed, while the short A-Anchored bars show that A-Anchored predictions remain largely stable. The pattern holds for all three models and all four datasets.

The variation across datasets changes the size of the gap but not its direction. The largest Q-Anchored value is Mistral-7B-v0.3 on PopQA (≈78).

</details>

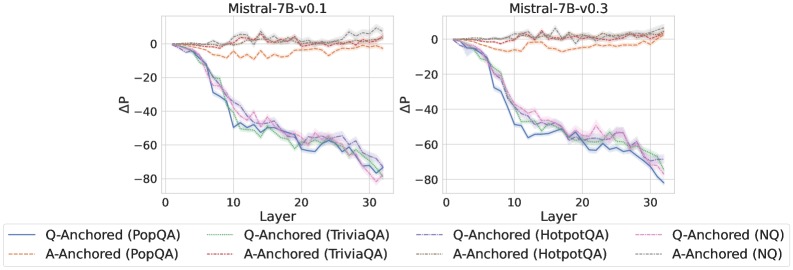

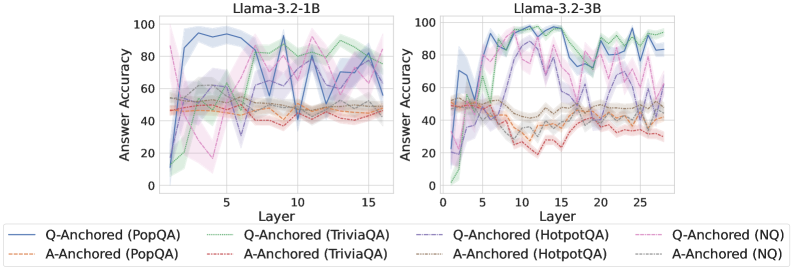

Figure 4: $-\Delta P$ with only the LLM-generated answer. Q-Anchored instances exhibit substantial shifts, whereas A-Anchored instances remain stable, confirming that A-Anchored truthfulness encoding relies on information in the LLM-generated answer itself. Full results in Appendix E.

## 4 Properties of Truthfulness Pathways

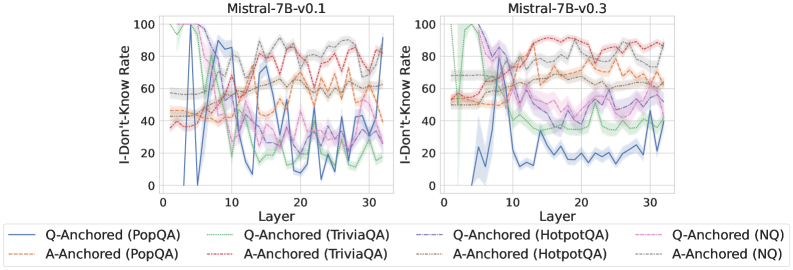

This section examines notable properties and distinct behaviors of intrinsic truthfulness encoding: (1) Associations with knowledge boundaries: samples within the LLM’s knowledge boundary tend to encode truthfulness via the Q-Anchored pathway, whereas samples beyond the boundary often rely on the A-Anchored signal; (2) Self-awareness: internal representations can be used to predict which mechanism is being employed, suggesting that LLMs possess intrinsic awareness of pathway distinctions.

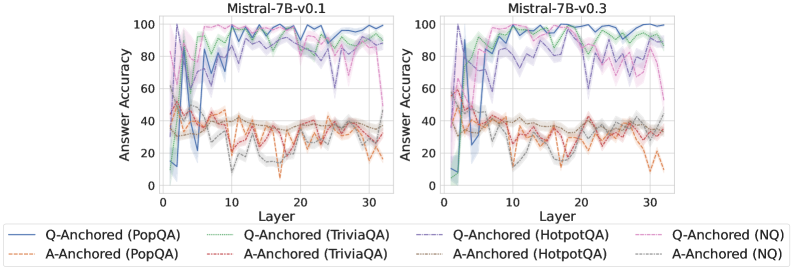

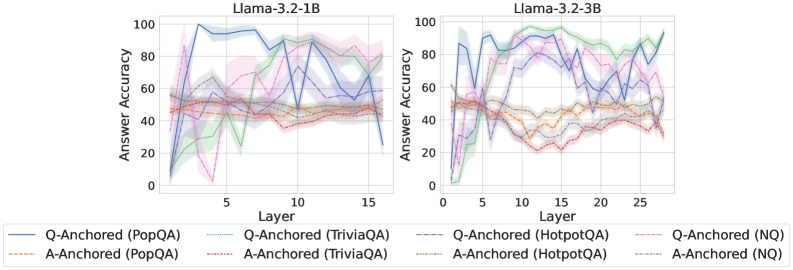

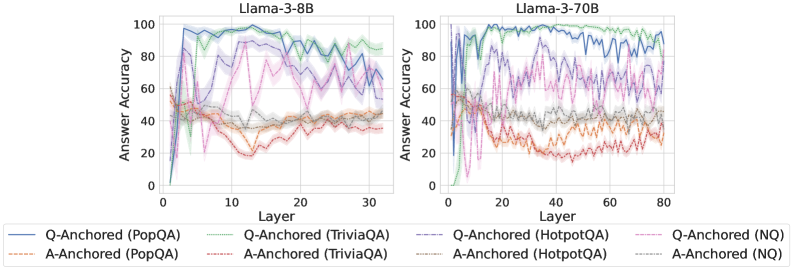

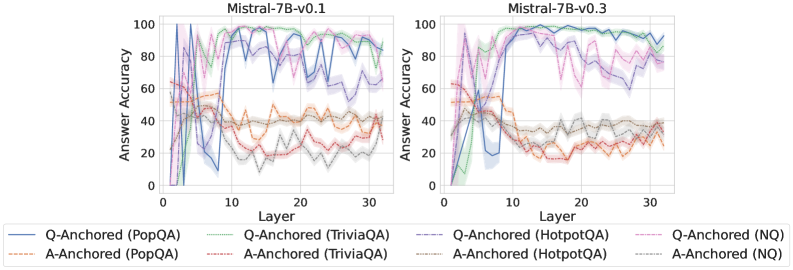

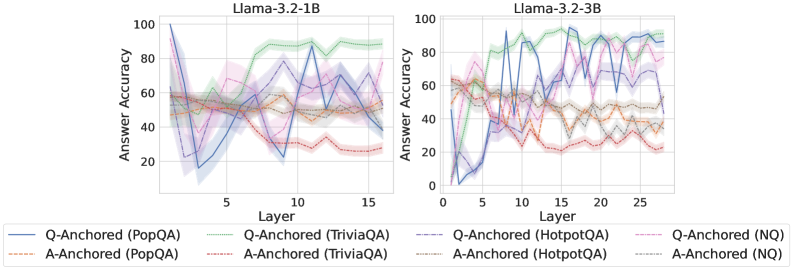

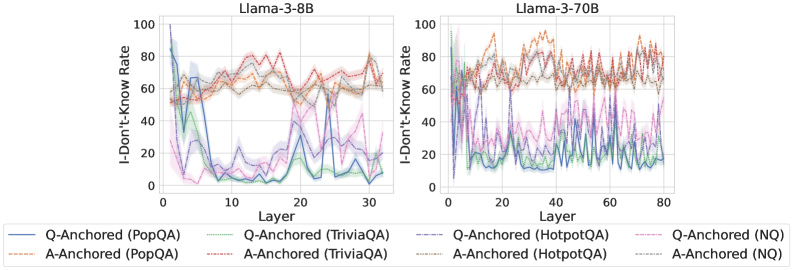

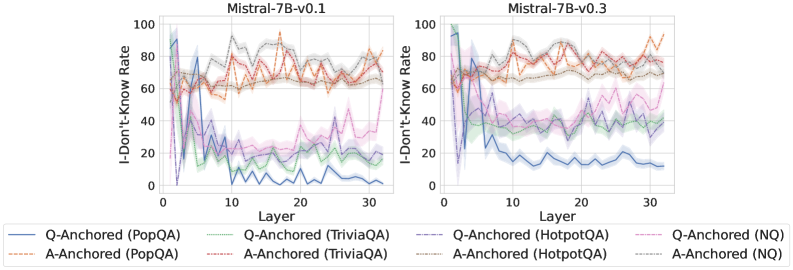

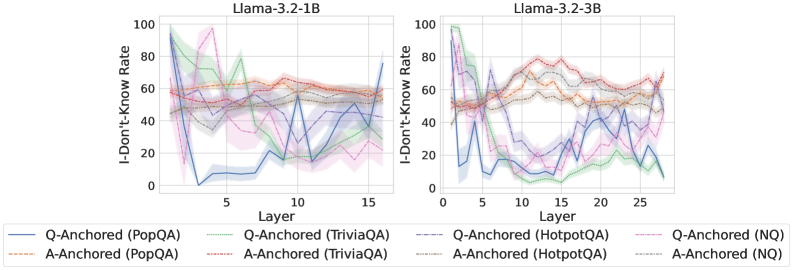

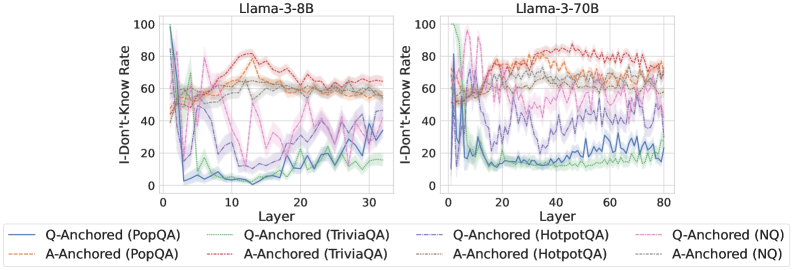

### 4.1 Associations with Knowledge Boundaries

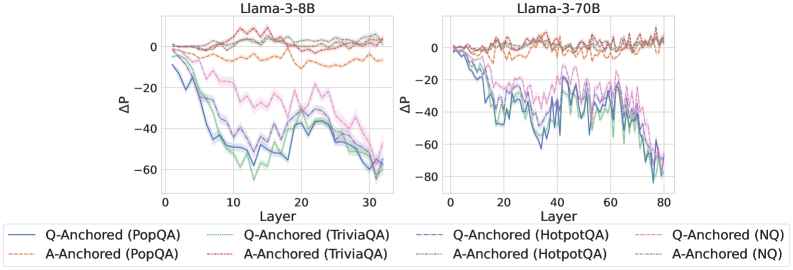

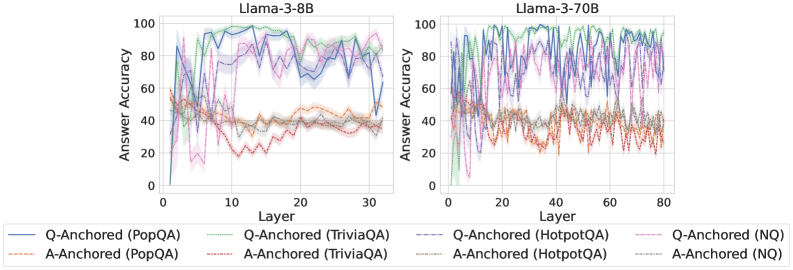

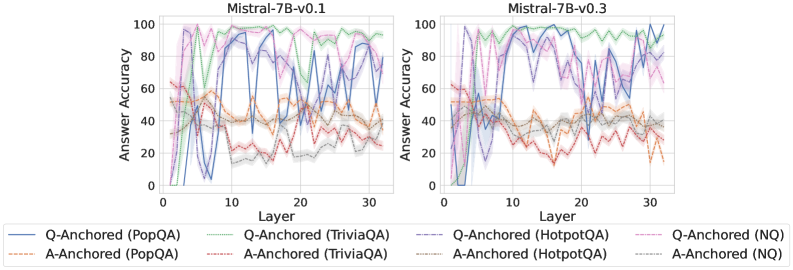

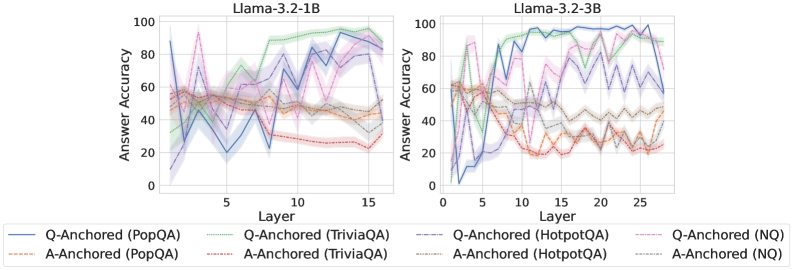

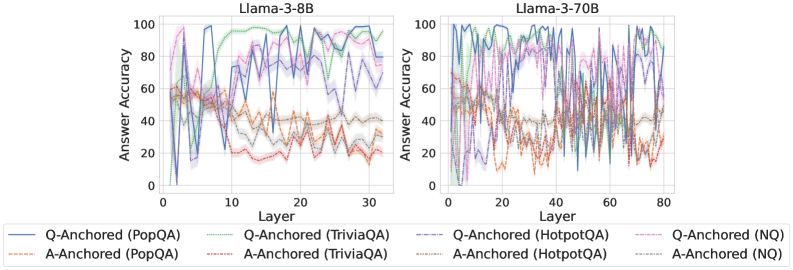

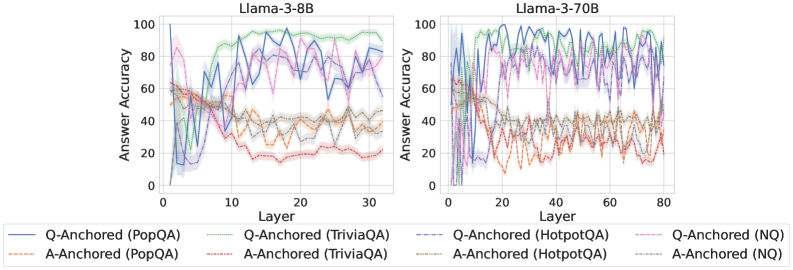

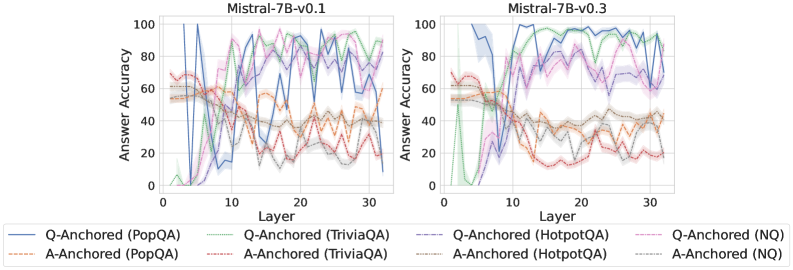

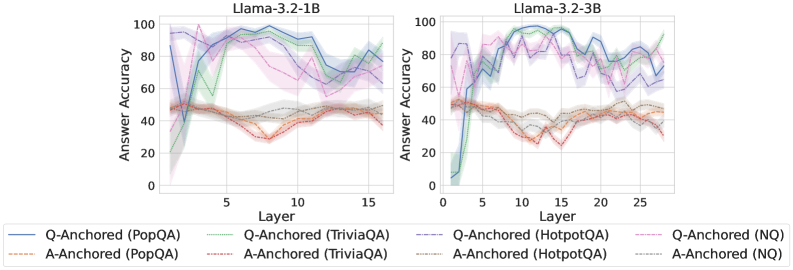

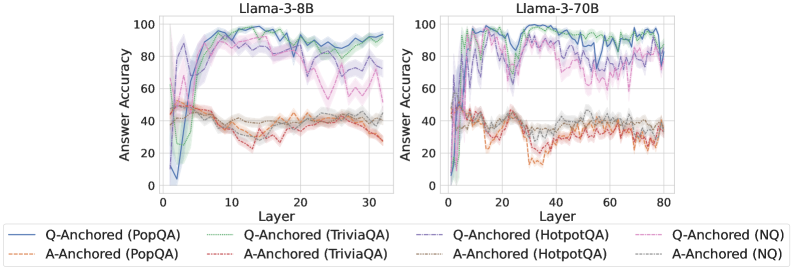

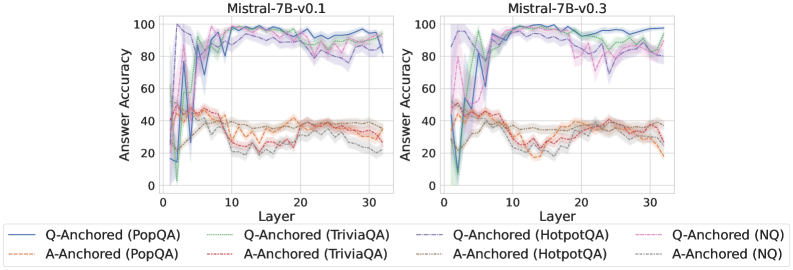

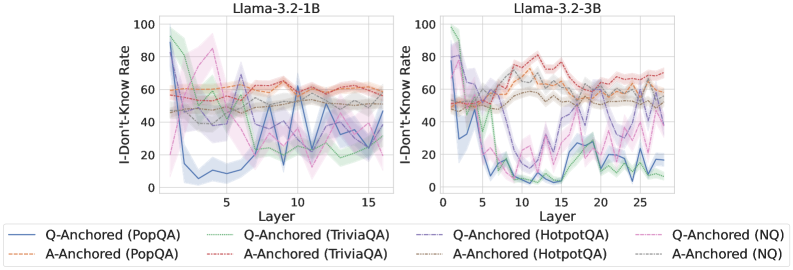

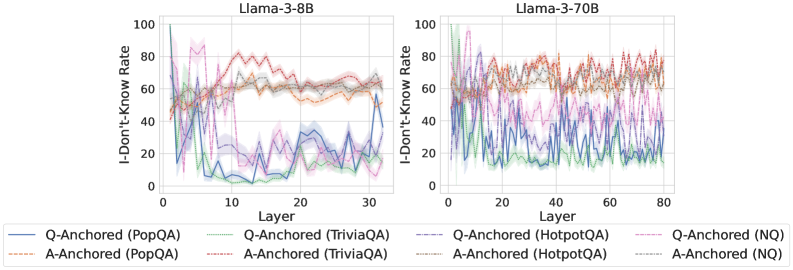

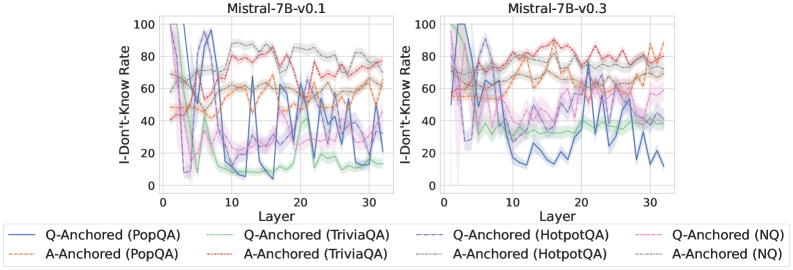

We find that distinct patterns of truthfulness encoding are closely associated with the knowledge boundaries of LLMs. To characterize these boundaries, three complementary metrics are employed: (1) Answer accuracy, the most direct indicator of an LLM’s factual competence; (2) I-don’t-know rate (shown in Appendix G), which reflects the model’s ability to recognize and express its own knowledge limitations; (3) Entity popularity, which is widely used to distinguish between common and long-tail factual knowledge (Mallen et al., 2023).

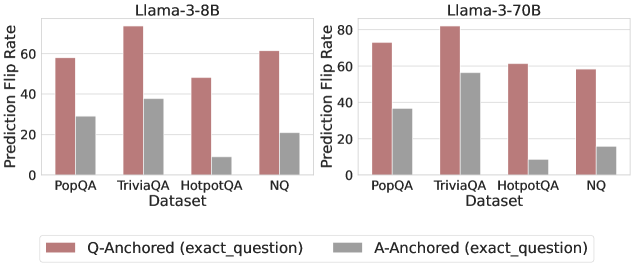

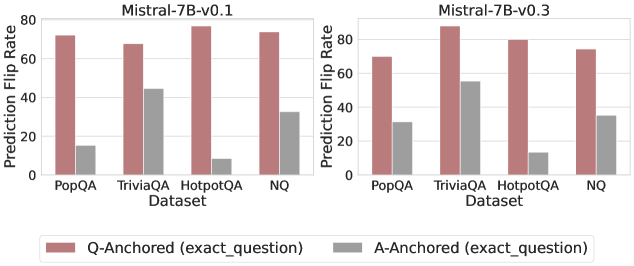

As shown in Figure 5 and Appendix F, Q-Anchored samples achieve significantly higher accuracy than those driven by the A-Anchored pathway. The results for the I-don’t-know rate, reported in Appendix G, exhibit trends consistent with answer accuracy, further indicating stronger knowledge handling in Q-Anchored samples. Moreover, entity popularity, shown in Figure 6, provides a more fine-grained perspective on knowledge boundaries. Specifically, Q-Anchored samples tend to involve more popular entities, whereas A-Anchored samples are more frequently associated with less popular, long-tail factual knowledge. These findings suggest that truthfulness encoding is strongly aligned with the availability of stored knowledge: when LLMs possess the requisite knowledge, they predominantly rely on question–answer information flow (Q-Anchored); when knowledge is unavailable, they instead draw upon internal patterns within their own generated outputs (A-Anchored).

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Charts: Answer Accuracy Across Layers for Three Language Models

### Overview

The image displays three horizontally arranged line charts, each plotting "Answer Accuracy" (y-axis) against "Layer" (x-axis) for a specific large language model. The charts compare the performance of two anchoring methods ("Q-Anchored" and "A-Anchored") across four question-answering datasets (PopQA, TriviaQA, HotpotQA, NQ). The overall visual suggests an analysis of how model performance on different knowledge-intensive tasks evolves through the layers of the neural network.

### Components/Axes

* **Chart Titles (Top Center of each plot):**

* Left Chart: `Llama-3-8B`

* Middle Chart: `Llama-3-70B`

* Right Chart: `Mistral-7B-v0.3`

* **Y-Axis (Left side of each plot):**

* Label: `Answer Accuracy`

* Scale: 0 to 100, with major ticks at 0, 20, 40, 60, 80, 100.

* **X-Axis (Bottom of each plot):**

* Label: `Layer`

* Scale:

* Llama-3-8B: 0 to 30 (ticks every 10).

* Llama-3-70B: 0 to 80 (ticks every 20).

* Mistral-7B-v0.3: 0 to 30 (ticks every 10).

* **Legend (Bottom Center, spanning all charts):**

* **Q-Anchored Series (Solid Lines):**

* Blue solid line: `Q-Anchored (PopQA)`

* Green solid line: `Q-Anchored (TriviaQA)`

* Gray solid line: `Q-Anchored (HotpotQA)`

* Purple solid line: `Q-Anchored (NQ)`

* **A-Anchored Series (Dashed Lines):**

* Orange dashed line: `A-Anchored (PopQA)`

* Red dashed line: `A-Anchored (TriviaQA)`

* Brown dashed line: `A-Anchored (HotpotQA)`

* Pink dashed line: `A-Anchored (NQ)`

### Detailed Analysis

**General Trend Verification:**

* **Q-Anchored Lines (Solid):** For all three models, these lines typically start at very low accuracy (near 0-20%) in the earliest layers, rise sharply to a peak in the middle layers, and then often show a decline or increased volatility in the later layers.

* **A-Anchored Lines (Dashed):** These lines generally start at a moderate accuracy level (around 40-50%), exhibit less dramatic swings than the Q-Anchored lines, and often trend slightly downward or remain relatively flat across layers.

**Model-Specific Data Points (Approximate Values):**

1. **Llama-3-8B:**

* **Q-Anchored (PopQA - Blue):** Starts near 0%, peaks at ~95% around layer 15, declines to ~80% by layer 30.

* **Q-Anchored (TriviaQA - Green):** Follows a similar but slightly lower trajectory than PopQA, peaking near 90%.

* **Q-Anchored (NQ - Purple):** Shows high volatility, with multiple peaks and troughs between 60-95% after layer 10.

* **A-Anchored Series:** All cluster between 30-50% accuracy, showing a slight downward trend. PopQA (Orange) and TriviaQA (Red) are the lowest, often below 40%.

2. **Llama-3-70B:**

* **Q-Anchored Series:** All four datasets show a rapid rise to high accuracy (>80%) by layer 10-20. PopQA (Blue) and TriviaQA (Green) maintain very high accuracy (~90-98%) with less decline in later layers compared to the 8B model. NQ (Purple) and HotpotQA (Gray) are more volatile.

* **A-Anchored Series:** Again cluster in the 30-50% range, with a clearer downward trend for PopQA (Orange) and TriviaQA (Red), dropping to near 20% by layer 80.

3. **Mistral-7B-v0.3:**

* **Q-Anchored Series:** Similar rapid initial rise. PopQA (Blue) and TriviaQA (Green) reach near-perfect accuracy (~95-100%) and sustain it. NQ (Purple) and HotpotQA (Gray) are lower and more volatile, ranging between 60-90%.

* **A-Anchored Series:** Follow the established pattern, hovering between 20-40% with a slight downward slope.

### Key Observations

1. **Consistent Accuracy Gap:** Beyond the earliest layers, the Q-Anchored series (solid lines) lie well above the A-Anchored series (dashed lines) for all three models and all four QA datasets.

2. **Layer-wise Evolution:** Q-Anchored accuracy is not static. It follows a "rise-peak-decline" shape, and the peak layer varies by model and dataset.

3. **Dataset Differences:** HotpotQA (Gray lines) and NQ (Purple lines) generally show lower and more volatile Q-Anchored accuracy than PopQA and TriviaQA.

4. **Model Scale Effect:** The larger Llama-3-70B model sustains high Q-Anchored accuracy into later layers, whereas the decline is more pronounced for the smaller Llama-3-8B.

5. **A-Anchored Stability:** The A-Anchored series are lower and vary much less across layers.

### Interpretation

The two series are groups of samples, not prompting methods: each line gives the answer accuracy of the samples whose truthfulness encoding follows that pathway. The higher accuracy of Q-Anchored samples is consistent with the Figure 5 caption, which links the pathway used for truthfulness encoding to the LLM's knowledge boundaries.

The lower accuracy among A-Anchored samples suggests that these samples more often involve knowledge the model lacks. Differences between datasets affect the size of the gap but not its direction.

</details>

Figure 5: Comparison of answer accuracy between pathways. Q-Anchored samples show higher accuracy than A-Anchored ones, highlighting the association between truthfulness encoding and LLM knowledge boundaries. Full results in Appendices F and G.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Grouped Bar Chart: Entity Frequency by Model and Anchoring Method

### Overview

This is a grouped bar chart comparing the "Entity Frequency" for four different large language models, each evaluated under two anchoring conditions: "Q-Anchored" and "A-Anchored". The chart visually demonstrates a consistent and significant difference in entity frequency between the two anchoring methods across all models.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **X-Axis (Horizontal):** Labeled "Model". It lists four distinct models:

1. `Llama-3-8B`

2. `Llama-3-70B`

3. `Mistral-7B-v0.3`

4. `Mistral-7B-v0.1`

* **Y-Axis (Vertical):** Labeled "Entity Frequency". The scale runs from 0 to over 60,000, with major gridlines at intervals of 20,000 (0, 20000, 40000, 60000).

* **Legend:** Positioned at the bottom center of the chart.

* A reddish-brown (terracotta) square corresponds to the label `Q-Anchored`.

* A grey square corresponds to the label `A-Anchored`.

* **Data Series:** For each model on the x-axis, there are two adjacent bars:

* The left bar (reddish-brown) represents the `Q-Anchored` value.

* The right bar (grey) represents the `A-Anchored` value.

### Detailed Analysis

**Trend Verification:** For every model, the `Q-Anchored` bar (reddish-brown, left) is substantially taller than the corresponding `A-Anchored` bar (grey, right). This indicates a consistent trend where the Q-Anchored method yields a much higher entity frequency than the A-Anchored method.

**Approximate Data Points (Spatially Grounded):**

1. **Llama-3-8B:**

* `Q-Anchored` (left, reddish-brown): The bar extends slightly above the 60,000 gridline. Estimated value: ~64,000.

* `A-Anchored` (right, grey): The bar is just below the 20,000 gridline. Estimated value: ~18,000.

2. **Llama-3-70B:**

* `Q-Anchored` (left, reddish-brown): The bar is slightly above the 20,000 gridline. Estimated value: ~24,000.

* `A-Anchored` (right, grey): The bar is below the 20,000 gridline, lower than the Llama-3-8B A-Anchored bar. Estimated value: ~13,000.

3. **Mistral-7B-v0.3:**

* `Q-Anchored` (left, reddish-brown): The bar is between the 40,000 and 60,000 gridlines, closer to 60,000. Estimated value: ~55,000.

* `A-Anchored` (right, grey): The bar is below the 20,000 gridline, similar in height to the Llama-3-70B A-Anchored bar. Estimated value: ~15,000.

4. **Mistral-7B-v0.1:**

* `Q-Anchored` (left, reddish-brown): This is the tallest bar in the chart, extending well above the 60,000 gridline. Estimated value: ~75,000.

* `A-Anchored` (right, grey): The bar is just above the 20,000 gridline. Estimated value: ~22,000.

### Key Observations

1. **Dominant Pathway Gap:** The most striking pattern is the disparity between Q-Anchored and A-Anchored frequencies. Based on the estimates above, Q-Anchored values are roughly 1.8 to 3.7 times higher than their A-Anchored counterparts for the same model.

2. **Variation Across Models:** The Q-Anchored values differ considerably between models. `Mistral-7B-v0.1` shows the highest frequency, followed by `Llama-3-8B`, then `Mistral-7B-v0.3`, with `Llama-3-70B` showing the lowest.

3. **A-Anchored Consistency:** In contrast, the A-Anchored frequencies are relatively consistent and low across all models, clustering between approximately 13,000 and 22,000.

4. **Smallest Gap:** `Llama-3-70B` has both the lowest Q-Anchored value (~24,000) and the smallest ratio between the two bars (about 1.8).

### Interpretation

The two bars are groups of PopQA samples, not prompting methods: each bar reports the entity frequency, a popularity measure, of the samples whose truthfulness encoding follows that pathway. The higher Q-Anchored values indicate that these samples involve more popular entities, while A-Anchored samples skew toward long-tail entities, in line with the Figure 6 caption.

The Q-Anchored values vary considerably across models (≈24,000 to ≈75,000), whereas the A-Anchored values stay within a narrow range (≈13,000 to ≈22,000). The gap is present for every model shown.

</details>

Figure 6: Entity frequency distributions for both pathways on PopQA. Q-Anchored samples concentrate on more popular entities, whereas A-Anchored samples skew toward long-tail entities.

### 4.2 Self-Awareness of Pathway Distinctions

Given that LLMs encode truthfulness via two distinct mechanisms, this section investigates whether their internal representations contain discriminative information that can be used to distinguish between these mechanisms. To this end, we train probing classifiers on the models’ original internal states (i.e., without knockout interventions) to predict which mechanism is being utilized.

Table 2 reports the pathway classification results of the best-performing layers in hallucination detection across different models. Our findings demonstrate that different mechanisms can be reliably inferred from internal representations, suggesting that, in addition to encoding truthfulness, LLMs exhibit intrinsic awareness of pathway distinctions. These findings highlight a potential avenue for fine-grained improvements targeting specific truthfulness encoding mechanisms.

| Datasets | Llama-3-8B | Llama-3-70B | Mistral-7B-v0.3 |
| --- | --- | --- | --- |
| PopQA | 87.80 | 92.66 | 87.64 |
| TriviaQA | 75.10 | 83.91 | 85.87 |
| HotpotQA | 86.31 | 87.34 | 92.13 |
| NQ | 78.31 | 84.14 | 84.83 |

Table 2: AUCs for encoding pathway classification. The predictability from internal representations indicates that LLMs possess intrinsic awareness of pathway distinctions.
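For readers unfamiliar with the metric in Table 2, the sketch below computes AUC from labels and classifier scores using its pairwise-ranking definition. It is a generic, dependency-free illustration rather than the evaluation code used in the paper. The function name, the label convention (1 = Q-Anchored, 0 = A-Anchored), and the toy scores are assumptions. The function returns a value in [0, 1], whereas the table appears to report AUC multiplied by 100.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the probability that a positive sample is scored above a negative one.

    Ties between a positive and a negative score count as half a win.
    """
    if len(labels) != len(scores):
        raise ValueError("labels and scores must have the same length")
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical pathway labels and self-awareness probe scores.
labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.5, 0.1]
auc = roc_auc(labels, scores)
```

The quadratic loop is fine for illustration. A rank-based implementation would be preferable for large evaluation sets.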

## 5 Pathway-Aware Detection

Building on these findings, we explore how the discovered pathway distinctions can be leveraged to improve hallucination detection. Specifically, two simple yet effective pathway-aware strategies are proposed: (1) Mixture-of-Probes (MoP) (§ 5.1), which allows expert probes to specialize in the Q-Anchored and A-Anchored pathways respectively, and (2) Pathway Reweighting (PR) (§ 5.2), a plug-and-play approach that amplifies pathway-relevant cues salient for detection.

### 5.1 Mixture-of-Probes

Motivated by the fundamentally different dependencies of the two encoding pathways and the LLMs’ intrinsic awareness of them, we propose a Mixture-of-Probes (MoP) framework that explicitly captures this heterogeneity. Rather than training a single probe to handle all inputs, MoP employs two pathway-specialized experts and leverages the self-awareness probe (§ 4.2) as a gating network to combine their predictions. Let $h^{l^*}(x) \in \mathbb{R}^d$ be the token hidden state from the best detection layer $l^*$. Two expert probes $p_Q(\cdot)$ and $p_A(\cdot)$ are trained separately on Q-Anchored and A-Anchored samples, and the self-awareness probe provides a gating coefficient $\pi_Q = \pi(h^{l^*}(x)) \in [0,1]$. The final prediction is a convex combination, requiring no extra training:

$$

p_{\mathrm{MoP}}\bigl(z=1 \mid h^{l^*}(x)\bigr) = \pi_Q \, p_Q\bigl(z=1 \mid h^{l^*}(x)\bigr) + (1-\pi_Q) \, p_A\bigl(z=1 \mid h^{l^*}(x)\bigr). \tag{3}

$$
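The convex combination in Eq. (3) can be sketched in a few lines. This is an illustrative sketch only: the probes are passed in as plain callables that map a hidden state to a probability, and the name `mop_predict` and the constant toy probes are hypothetical stand-ins for trained classifiers.

```python
from typing import Callable, Sequence

Probe = Callable[[Sequence[float]], float]  # hidden state -> probability in [0, 1]


def mop_predict(h: Sequence[float], gate: Probe, expert_q: Probe, expert_a: Probe) -> float:
    """Mixture-of-Probes prediction: pi_Q * p_Q(h) + (1 - pi_Q) * p_A(h)."""
    pi_q = gate(h)
    if not 0.0 <= pi_q <= 1.0:
        raise ValueError("gate must return a probability in [0, 1]")
    return pi_q * expert_q(h) + (1.0 - pi_q) * expert_a(h)


# Toy probes (hypothetical constants standing in for trained classifiers).
h = [0.1, -0.3, 0.7]
mixed = mop_predict(h, gate=lambda _: 0.25, expert_q=lambda _: 0.8, expert_a=lambda _: 0.4)
only_q = mop_predict(h, gate=lambda _: 1.0, expert_q=lambda _: 0.8, expert_a=lambda _: 0.4)
```

Because the gate and both experts are trained beforehand, combining them at inference time involves no additional fitting, which matches the "no extra training" remark above.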

### 5.2 Pathway Reweighting

From the perspective of emphasizing pathway-relevant internal cues, we introduce a plug-and-play Pathway Reweighting (PR) method that directly modulates the question–answer information flow. The key idea is to adjust the attention from exact answer tokens to question tokens according to the predicted pathway, amplifying the signals most salient for hallucination detection. For each layer $l \le l^*$, two learnable scalars $\alpha_Q^l, \alpha_A^l > 0$ are introduced. Given the self-awareness probability $\pi_Q = \pi(h^{l^*}(x))$, we rescale the attention edges from $i \in E_A$ to $j \in E_Q$ to construct representations tailored for detection:

$$

\tilde{A}^l(i,j)=\begin{cases}\bigl[1+s(h^{l^*}(x))\bigr]A^l(i,j), & i \in E_A,\ j \in E_Q,\\ A^l(i,j), & \text{otherwise},\end{cases} \tag{4}

$$

where

$$

s(h^{l^*}(x)) = \pi_Q \, \alpha_Q^l - (1-\pi_Q) \, \alpha_A^l. \tag{5}

$$

The extra parameters serve as a lightweight adapter, used only during detection to guide salient truthfulness cues and omitted during generation, leaving the generation capacity unaffected.
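Eqs. (4) and (5) can be illustrated for a single attention matrix of one layer. The sketch below is a minimal rendering under stated assumptions: attention is a plain nested list, `answer_idx` and `question_idx` stand for $E_A$ and $E_Q$, and no renormalization or clipping is applied after rescaling, since the text above does not specify either. All function and variable names are hypothetical.

```python
from typing import List, Sequence


def pathway_scale(pi_q: float, alpha_q: float, alpha_a: float) -> float:
    """s = pi_Q * alpha_Q - (1 - pi_Q) * alpha_A, following Eq. (5)."""
    return pi_q * alpha_q - (1.0 - pi_q) * alpha_a


def reweight_attention(
    attn: Sequence[Sequence[float]],
    answer_idx: Sequence[int],
    question_idx: Sequence[int],
    pi_q: float,
    alpha_q: float,
    alpha_a: float,
) -> List[List[float]]:
    """Scale answer-to-question attention edges by (1 + s), leaving other edges unchanged."""
    s = pathway_scale(pi_q, alpha_q, alpha_a)
    out = [list(row) for row in attn]  # copy so the input matrix is not modified
    for i in answer_idx:
        for j in question_idx:
            out[i][j] = (1.0 + s) * attn[i][j]
    return out


# Toy causal attention over 3 tokens: tokens 0-1 are the question, token 2 is the answer.
attn = [
    [1.0, 0.0, 0.0],
    [0.5, 0.5, 0.0],
    [0.2, 0.3, 0.5],
]
boosted = reweight_attention(attn, [2], [0, 1], pi_q=1.0, alpha_q=0.5, alpha_a=0.5)
damped = reweight_attention(attn, [2], [0, 1], pi_q=0.0, alpha_q=0.5, alpha_a=0.5)
```

With a confident Q-Anchored prediction ($\pi_Q = 1$) the answer-to-question edges are amplified, and with a confident A-Anchored prediction ($\pi_Q = 0$) they are attenuated. Intermediate values interpolate between the two.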

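| Method | Llama-3-8B: PopQA | Llama-3-8B: TriviaQA | Llama-3-8B: HotpotQA | Llama-3-8B: NQ | Mistral-7B-v0.3: PopQA | Mistral-7B-v0.3: TriviaQA | Mistral-7B-v0.3: HotpotQA | Mistral-7B-v0.3: NQ |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| P(True) | 55.85 | 49.92 | 52.14 | 53.27 | 45.49 | 47.61 | 57.87 | 52.79 |
| Logits-mean | 74.52 | 60.39 | 51.94 | 52.63 | 69.52 | 66.76 | 55.45 | 57.88 |
| Logits-min | 85.36 | 70.89 | 61.28 | 56.50 | 87.05 | 77.33 | 68.08 | 54.40 |
| Probing Baseline | 88.71 | 77.58 | 82.23 | 70.20 | 87.39 | 81.74 | 83.19 | 73.60 |
| MoP-RandomGate | 75.52 | 69.17 | 79.88 | 66.56 | 79.81 | 70.88 | 72.23 | 61.19 |
| MoP-VanillaExperts | 89.11 | 78.73 | 84.57 | 71.21 | 88.53 | 80.93 | 82.93 | 73.77 |
| MoP | 92.11 | 81.18 | 85.45 | 74.64 | 91.66 | 83.57 | 85.82 | 76.87 |
| PR | 94.01 | 83.13 | 87.81 | 79.10 | 93.09 | 84.36 | 89.03 | 79.09 |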

Table 3: Comparison of hallucination detection performance (AUC). Full results in Appendix H.

### 5.3 Experiments

Setup

The experimental setup follows Section 3.2.1. We compare our methods against several baselines that rely on model internals, including (1) P(True) (Kadavath et al., 2022), (2) uncertainty-based metrics (Aichberger et al., 2024; Xue et al., 2025a), and (3) probing classifiers (Chen et al., 2024; Orgad et al., 2025). Results are averaged over three random seeds. Additional implementation details are provided in Appendix B.5 and B.6.

Results

As shown in Table 3 and Appendix H, both MoP and PR consistently outperform competing approaches across different datasets and model scales. Specifically, for MoP, we further examine two ablated variants: (1) MoP-RandomGate, which randomly routes the two pathway experts without leveraging the self-awareness probe; and (2) MoP-VanillaExperts, which replaces the expert probes with two vanilla probes to serve as a simple ensemble strategy. Both ablated variants underperform MoP on every dataset in Table 3: MoP-RandomGate falls below even the probing baseline, and MoP-VanillaExperts stays within about 2.5 AUC points of it. This underscores the roles of pathway specialization and self-awareness gating. PR attains the highest AUC in every column of Table 3, indicating that dynamically adjusting the focus on salient truthfulness cues is particularly effective. These results demonstrate that explicitly modeling truthfulness encoding heterogeneity can effectively translate the insights of our analysis into practical gains for hallucination detection.

## 6 Related Work

Hallucination detection in LLMs has received increasing attention because of its critical role in building reliable and trustworthy generative systems (Tian et al., 2024; Shi et al., 2024; Bai et al., 2024). Existing approaches can be broadly grouped by whether they rely on external resources (e.g., retrieval systems or fact-checking APIs). Externally assisted methods cross-verify output texts against external knowledge bases (Min et al., 2023; Hu et al., 2025; Huang et al., 2025) or specialized LLM judges (Luo et al., 2024; Bouchard and Chauhan, 2025; Zhang et al., 2025). Resource-free methods avoid external data and instead exploit the model’s own intermediate computations. Some leverage the model’s self-awareness of knowledge boundaries (Kadavath et al., 2022; Luo et al., 2025), while others use uncertainty-based measures (Aichberger et al., 2024; Xue et al., 2025a), treating confidence as a proxy for truthfulness. These techniques analyze output distributions (e.g., logits) (Aichberger et al., 2024), variance across multiple samples (e.g., consistency) (Min et al., 2023; Aichberger et al., 2025), or other statistical indicators of prediction uncertainty (Xue et al., 2025b). Another line of work trains linear probing classifiers on hidden representations to capture intrinsic truthfulness signals. Prior work (Burns et al., 2023; Li et al., 2023; Chen et al., 2024; Orgad et al., 2025) shows that LLMs encode rich latent features correlated with factual accuracy, enabling efficient detection with minimal overhead. Yet the mechanisms behind these internal truthfulness encodings remain poorly understood. Our work addresses this gap by dissecting how such intrinsic signals emerge and operate, revealing distinct information pathways that not only yield explanatory insights but also enhance detection performance.

## 7 Conclusion

We investigate how LLMs encode truthfulness, revealing two complementary pathways: a Question-Anchored pathway relying on question–answer flow, and an Answer-Anchored pathway extracting self-contained evidence from generated outputs. Analyses across datasets and models highlight their ties to knowledge boundaries and intrinsic self-awareness. Building on these insights, we further propose two applications to improve hallucination detection. Overall, our findings not only advance mechanistic understanding of intrinsic truthfulness encoding but also offer practical applications for building more reliable generative systems.

## Limitations

This work provides a systematic analysis of intrinsic truthfulness encoding mechanisms in LLMs and demonstrates their utility for hallucination detection. One limitation is that, as in prior work on mechanistic interpretability, our analyses and pathway-aware applications assume access to internal model representations, which may not be available in strictly black-box settings. In such scenarios, additional engineering or alternative approximations may be required for practical deployment, which we leave for future work.

## Ethics Statement

Our work presents minimal potential for negative societal impact, primarily due to the use of publicly available datasets and models. This accessibility inherently reduces the risk of adverse effects on individuals or society.

## References

- Aichberger et al. (2024) Lukas Aichberger, Kajetan Schweighofer, Mykyta Ielanskyi, and Sepp Hochreiter. 2024. Semantically diverse language generation for uncertainty estimation in language models. arXiv preprint arXiv:2406.04306.

- Aichberger et al. (2025) Lukas Aichberger, Kajetan Schweighofer, Mykyta Ielanskyi, and Sepp Hochreiter. 2025. Improving uncertainty estimation through semantically diverse language generation. In The Thirteenth International Conference on Learning Representations, ICLR 2025, Singapore, April 24-28, 2025. OpenReview.net.

- Bai et al. (2024) Ge Bai, Jie Liu, Xingyuan Bu, Yancheng He, Jiaheng Liu, Zhanhui Zhou, Zhuoran Lin, Wenbo Su, Tiezheng Ge, Bo Zheng, and Wanli Ouyang. 2024. MT-Bench-101: A fine-grained benchmark for evaluating large language models in multi-turn dialogues. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), ACL 2024, Bangkok, Thailand, August 11-16, 2024, pages 7421–7454. Association for Computational Linguistics.

- Baker et al. (1998) Collin F Baker, Charles J Fillmore, and John B Lowe. 1998. The Berkeley FrameNet project. In 36th Annual Meeting of the Association for Computational Linguistics and 17th International Conference on Computational Linguistics, Volume 1, pages 86–90.

- Bouchard and Chauhan (2025) Dylan Bouchard and Mohit Singh Chauhan. 2025. Uncertainty quantification for language models: A suite of black-box, white-box, LLM judge, and ensemble scorers. arXiv preprint arXiv:2504.19254.

- Burns et al. (2023) Collin Burns, Haotian Ye, Dan Klein, and Jacob Steinhardt. 2023. Discovering latent knowledge in language models without supervision. In The Eleventh International Conference on Learning Representations, ICLR 2023, Kigali, Rwanda, May 1-5, 2023. OpenReview.net.

- Chen et al. (2024) Chao Chen, Kai Liu, Ze Chen, Yi Gu, Yue Wu, Mingyuan Tao, Zhihang Fu, and Jieping Ye. 2024. INSIDE: LLMs’ internal states retain the power of hallucination detection. In The Twelfth International Conference on Learning Representations, ICLR 2024, Vienna, Austria, May 7-11, 2024. OpenReview.net.

- Fierro et al. (2025) Constanza Fierro, Negar Foroutan, Desmond Elliott, and Anders Søgaard. 2025. How do multilingual language models remember facts? In Findings of the Association for Computational Linguistics, ACL 2025, Vienna, Austria, July 27 - August 1, 2025, pages 16052–16106. Association for Computational Linguistics.

- Geva et al. (2023) Mor Geva, Jasmijn Bastings, Katja Filippova, and Amir Globerson. 2023. Dissecting recall of factual associations in auto-regressive language models. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, EMNLP 2023, Singapore, December 6-10, 2023, pages 12216–12235. Association for Computational Linguistics.

- Ghandeharioun et al. (2024) Asma Ghandeharioun, Avi Caciularu, Adam Pearce, Lucas Dixon, and Mor Geva. 2024. Patchscopes: A unifying framework for inspecting hidden representations of language models. In Forty-first International Conference on Machine Learning, ICML 2024, Vienna, Austria, July 21-27, 2024. OpenReview.net.

- Grattafiori et al. (2024) Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, Amy Yang, Angela Fan, Anirudh Goyal, Anthony Hartshorn, Aobo Yang, Archi Mitra, Archie Sravankumar, Artem Korenev, Arthur Hinsvark, and 542 others. 2024. The Llama 3 herd of models. Preprint, arXiv:2407.21783.

- Hu et al. (2025) Wentao Hu, Wengyu Zhang, Yiyang Jiang, Chen Jason Zhang, Xiaoyong Wei, and Qing Li. 2025. Removal of hallucination on hallucination: Debate-augmented RAG. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), ACL 2025, Vienna, Austria, July 27 - August 1, 2025, pages 15839–15853. Association for Computational Linguistics.

- Huang et al. (2025) Lei Huang, Xiaocheng Feng, Weitao Ma, Yuchun Fan, Xiachong Feng, Yuxuan Gu, Yangfan Ye, Liang Zhao, Weihong Zhong, Baoxin Wang, Dayong Wu, Guoping Hu, Lingpeng Kong, Tong Xiao, Ting Liu, and Bing Qin. 2025. Alleviating hallucinations from knowledge misalignment in large language models via selective abstention learning. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), ACL 2025, Vienna, Austria, July 27 - August 1, 2025, pages 24564–24579. Association for Computational Linguistics.

- Jiang et al. (2023) Albert Q. Jiang, Alexandre Sablayrolles, Arthur Mensch, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Florian Bressand, Gianna Lengyel, Guillaume Lample, Lucile Saulnier, Lélio Renard Lavaud, Marie-Anne Lachaux, Pierre Stock, Teven Le Scao, Thibaut Lavril, Thomas Wang, Timothée Lacroix, and William El Sayed. 2023. Mistral 7B. Preprint, arXiv:2310.06825.

- Joshi et al. (2017) Mandar Joshi, Eunsol Choi, Daniel Weld, and Luke Zettlemoyer. 2017. TriviaQA: A large scale distantly supervised challenge dataset for reading comprehension. In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 1601–1611, Vancouver, Canada. Association for Computational Linguistics.

- Kadavath et al. (2022) Saurav Kadavath, Tom Conerly, Amanda Askell, T. J. Henighan, Dawn Drain, Ethan Perez, Nicholas Schiefer, Zachary Dodds, Nova Dassarma, Eli Tran-Johnson, Scott Johnston, Sheer El-Showk, Andy Jones, Nelson Elhage, Tristan Hume, Anna Chen, Yuntao Bai, Sam Bowman, Stanislav Fort, and 17 others. 2022. Language models (mostly) know what they know. arXiv preprint arXiv:2207.05221.

- Kwiatkowski et al. (2019) Tom Kwiatkowski, Jennimaria Palomaki, Olivia Redfield, Michael Collins, Ankur Parikh, Chris Alberti, Danielle Epstein, Illia Polosukhin, Jacob Devlin, Kenton Lee, Kristina Toutanova, Llion Jones, Matthew Kelcey, Ming-Wei Chang, Andrew M. Dai, Jakob Uszkoreit, Quoc Le, and Slav Petrov. 2019. Natural questions: A benchmark for question answering research. Transactions of the Association for Computational Linguistics, 7:452–466.

- Li et al. (2023) Kenneth Li, Oam Patel, Fernanda B. Viégas, Hanspeter Pfister, and Martin Wattenberg. 2023. Inference-time intervention: Eliciting truthful answers from a language model. In Advances in Neural Information Processing Systems 36: Annual Conference on Neural Information Processing Systems 2023, NeurIPS 2023, New Orleans, LA, USA, December 10 - 16, 2023.

- Luo et al. (2024) Wen Luo, Tianshu Shen, Wei Li, Guangyue Peng, Richeng Xuan, Houfeng Wang, and Xi Yang. 2024. HalluDial: A large-scale benchmark for automatic dialogue-level hallucination evaluation. Preprint, arXiv:2406.07070.

- Luo et al. (2025) Wen Luo, Feifan Song, Wei Li, Guangyue Peng, Shaohang Wei, and Houfeng Wang. 2025. Odysseus navigates the sirens’ song: Dynamic focus decoding for factual and diverse open-ended text generation. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 27200–27218, Vienna, Austria. Association for Computational Linguistics.

- Mallen et al. (2023) Alex Mallen, Akari Asai, Victor Zhong, Rajarshi Das, Daniel Khashabi, and Hannaneh Hajishirzi. 2023. When not to trust language models: Investigating effectiveness of parametric and non-parametric memories. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 9802–9822, Toronto, Canada. Association for Computational Linguistics.

- Michel et al. (2019) Paul Michel, Omer Levy, and Graham Neubig. 2019. Are sixteen heads really better than one? Advances in neural information processing systems, 32.

- Min et al. (2023) Sewon Min, Kalpesh Krishna, Xinxi Lyu, Mike Lewis, Wen-tau Yih, Pang Wei Koh, Mohit Iyyer, Luke Zettlemoyer, and Hannaneh Hajishirzi. 2023. FActScore: Fine-grained atomic evaluation of factual precision in long form text generation. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, EMNLP 2023, Singapore, December 6-10, 2023, pages 12076–12100. Association for Computational Linguistics.

- Niu et al. (2025) Mengjia Niu, Hamed Haddadi, and Guansong Pang. 2025. Robust hallucination detection in LLMs via adaptive token selection. arXiv preprint arXiv:2504.07863.

- Orgad et al. (2025) Hadas Orgad, Michael Toker, Zorik Gekhman, Roi Reichart, Idan Szpektor, Hadas Kotek, and Yonatan Belinkov. 2025. LLMs know more than they show: On the intrinsic representation of LLM hallucinations. In The Thirteenth International Conference on Learning Representations, ICLR 2025, Singapore, April 24-28, 2025. OpenReview.net.

- Pagnoni et al. (2021) Artidoro Pagnoni, Vidhisha Balachandran, and Yulia Tsvetkov. 2021. Understanding factuality in abstractive summarization with FRANK: A benchmark for factuality metrics. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pages 4812–4829.

- Qian et al. (2025) Chen Qian, Dongrui Liu, Haochen Wen, Zhen Bai, Yong Liu, and Jing Shao. 2025. Demystifying reasoning dynamics with mutual information: Thinking tokens are information peaks in LLM reasoning. arXiv preprint arXiv:2506.02867.

- Shi et al. (2024) Zhengliang Shi, Shuo Zhang, Weiwei Sun, Shen Gao, Pengjie Ren, Zhumin Chen, and Zhaochun Ren. 2024. Generate-then-ground in retrieval-augmented generation for multi-hop question answering. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), ACL 2024, Bangkok, Thailand, August 11-16, 2024, pages 7339–7353. Association for Computational Linguistics.

- Simonyan et al. (2014) Karen Simonyan, Andrea Vedaldi, and Andrew Zisserman. 2014. Deep inside convolutional networks: Visualising image classification models and saliency maps. In 2nd International Conference on Learning Representations, ICLR 2014, Banff, AB, Canada, April 14-16, 2014, Workshop Track Proceedings.

- Tian et al. (2024) Yuanhe Tian, Ruyi Gan, Yan Song, Jiaxing Zhang, and Yongdong Zhang. 2024. ChiMed-GPT: A Chinese medical large language model with full training regime and better alignment to human preferences. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), ACL 2024, Bangkok, Thailand, August 11-16, 2024, pages 7156–7173. Association for Computational Linguistics.

- Todd et al. (2024) Eric Todd, Millicent L. Li, Arnab Sen Sharma, Aaron Mueller, Byron C. Wallace, and David Bau. 2024. Function vectors in large language models. In The Twelfth International Conference on Learning Representations, ICLR 2024, Vienna, Austria, May 7-11, 2024. OpenReview.net.

- Wang et al. (2023) Lean Wang, Lei Li, Damai Dai, Deli Chen, Hao Zhou, Fandong Meng, Jie Zhou, and Xu Sun. 2023. Label words are anchors: An information flow perspective for understanding in-context learning. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pages 9840–9855.

- Wu et al. (2025) Wenhao Wu, Yizhong Wang, Guangxuan Xiao, Hao Peng, and Yao Fu. 2025. Retrieval head mechanistically explains long-context factuality. In The Thirteenth International Conference on Learning Representations, ICLR 2025, Singapore, April 24-28, 2025. OpenReview.net.

- Xue et al. (2025a) Boyang Xue, Fei Mi, Qi Zhu, Hongru Wang, Rui Wang, Sheng Wang, Erxin Yu, Xuming Hu, and Kam-Fai Wong. 2025a. UAlign: Leveraging uncertainty estimations for factuality alignment on large language models. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 6002–6024, Vienna, Austria. Association for Computational Linguistics.

- Xue et al. (2025b) Yihao Xue, Kristjan Greenewald, Youssef Mroueh, and Baharan Mirzasoleiman. 2025b. Verify when uncertain: Beyond self-consistency in black box hallucination detection. arXiv preprint arXiv:2502.15845.

- Yang et al. (2025) An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, Chujie Zheng, Dayiheng Liu, Fan Zhou, Fei Huang, Feng Hu, Hao Ge, Haoran Wei, Huan Lin, Jialong Tang, and 41 others. 2025. Qwen3 technical report. Preprint, arXiv:2505.09388.

- Yang et al. (2018) Zhilin Yang, Peng Qi, Saizheng Zhang, Yoshua Bengio, William Cohen, Ruslan Salakhutdinov, and Christopher D. Manning. 2018. HotpotQA: A dataset for diverse, explainable multi-hop question answering. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, pages 2369–2380, Brussels, Belgium. Association for Computational Linguistics.

- Zhang et al. (2025) Yue Zhang, Yafu Li, Leyang Cui, Deng Cai, Lemao Liu, Tingchen Fu, Xinting Huang, Enbo Zhao, Yu Zhang, Yulong Chen, and 1 others. 2025. Siren’s song in the AI ocean: A survey on hallucination in large language models. Computational Linguistics, pages 1–46.

## Appendix A LLM Usage

In this work, we employ LLMs solely for language refinement to enhance clarity and explanatory quality. All content has been carefully verified for factual accuracy, and the authors take full responsibility for the entire manuscript. The core ideas, experimental design, and methodological framework are conceived and developed independently by the authors, without the use of LLMs.

## Appendix B Implementation Details

### B.1 Identifying Exact Question and Answer Tokens