## Imandra CodeLogician: Neuro-Symbolic Reasoning for Precise Analysis of Software Logic

Hongyu Lin 1 , Samer Abdallah 1 , Makar Valentinov 1 , Paul Brennan 1 , Elijah Kagan 1 , Christoph M. Wintersteiger 1 , Denis Ignatovich 1 , and Grant Passmore ∗ 1,2

1 Imandra Inc.

2 Clare Hall, University of Cambridge

{ hongyu,samer,makar,paul,elijah,christoph,denis,grant } @imandra.ai

February 10, 2026

## Abstract

Large Language Models (LLMs) have shown strong performance on code understanding and software engineering tasks, yet they fundamentally lack the ability to perform precise, exhaustive mathematical reasoning about program behavior. Existing benchmarks either focus on mathematical proof automation, largely disconnected from real-world software, or on engineering tasks that do not require semantic rigor.

We present CodeLogician 1 , a neurosymbolic agent and framework for precise analysis of software logic, and its integration with ImandraX 2 , an industrial automated reasoning engine successfully deployed in financial markets, safety-critical systems and government applications. Unlike prior approaches that use formal methods primarily to validate or filter LLM outputs, CodeLogician uses LLMs to help construct explicit formal models ('mental models') of software systems, enabling automated reasoning to answer rich semantic questions beyond binary verification outcomes. While the current implementation builds on ImandraX and benefits from its unique features designed for large-scale industrial formal verification and software understanding, the framework is explicitly designed to be reasoner-agnostic and can support additional formal reasoning backends.

To rigorously evaluate mathematical reasoning about software logic, we introduce a new benchmark dataset code-logic-bench 3 targeting the previously unaddressed middle ground between theorem proving and software engineering benchmarks. The benchmark measures correctness and efficacy of reasoning about program state spaces, control flow, coverage constraints, decision boundaries, and edge cases, with ground truth defined via formal modeling and automated decomposition.

Using this benchmark set, we compare LLM-only reasoning against LLMs augmented with CodeLogician . Across all evaluated models, formal augmentation with CodeLogician yields substantial and consistent improvements, closing a 41-47 percentage point gap in reasoning accuracy and achieving complete coverage while LLM-only approaches remain approximate or incorrect. These results demonstrate that the neurosymbolic integration of LLMs with formal reasoning engines is essential for scaling program analysis beyond heuristic reasoning toward rigorous, autonomous software understanding and formal verification.

∗ The author would like to thank the Isaac Newton Institute for Mathematical Sciences, Cambridge, for support and hospitality during the programme Big Proof: Formalizing Mathematics at Scale , where some of the work on this paper was undertaken. This work was supported by EPSRC grant EP/Z000580/1.

1 https://www.codelogician.dev

2 https://www.imandra.ai/core

3 https://github.com/imandra-ai/code-logic-bench

## Contents

| Introduction | Introduction | 4 |

|---------------------------------------------------------------------------------------------------------------------------------|---------------------------------------------------------------------------------------------------------------------------------|----------------------------------------------------------------------|

| 1.1 Beyond Verification-as-Filtering | . . . . . . | . . . . . . . . . . 4 |

| | . . . . | . . . . . . . . . . 4 |

| 1.2 | Neuro-Symbolic Complementarity IML Agent . . . . . . | . . . . . . . . . . 5 . . . . . . . . . . . . . . . . . . . . 7 |

| 1.3 ImandraX and Reasoner-Agnostic Design | 1.3 ImandraX and Reasoner-Agnostic Design | . . . . . . . . . . 4 |

| 1.4 The Missing Benchmark: Mathematical Reasoning About . | 1.4 The Missing Benchmark: Mathematical Reasoning About . | . . . . . . . . . . 5 |

| 1.5 Contributions . . . . . . . . . . . . . . . . . . . . . . . | 1.5 Contributions . . . . . . . . . . . . . . . . . . . . . . . | 5 |

| CodeLogician overview | CodeLogician overview | CodeLogician overview |

| 2.1 Positioning 2.2 Extensible | and Scope . . . . . . . . . . . Reasoner-Oriented Design . . . | . . . . . . . . . . 5 . . . . . . . . . . 6 |

| 2.3 Modes of Operation . . . 2.4 Governance and Feedback | . . . . . . . . . . . . . . . . . . | . . . . . . . . . . 6 . . . . . . . . . . 7 |

| 2.5 Relationship to the CodeLogician 2.6 Summary . . . . . . . . . . . . | 2.5 Relationship to the CodeLogician 2.6 Summary . . . . . . . . . . . . | 7 |

| Overview of ImandraX | Overview of ImandraX | Overview of ImandraX |

| | | 7 |

| 3 | 3 | 3 |

| | Language | . . . . . . . . . . 8 |

| 3.1 Formal Models in the Imandra Modeling 3.2 Verification Goals . . . . . . . . . . . . . | . | . . . . . . . . . . 8 |

| 3.3 Region | Decomposition . . . . . . . . . . . | . . . . . . . . . . 9 |

| 3.4 Focusing Decomposition: Side | Conditions | . . . . . . . . . . 10 |

| 3.5 Test Generation from Regions . . . . 3.6 Summary . . . . . . . . . . . . . . . | . . . . . . | . . . . . . . . . . 11 . . . . . . . . . . 11 |

| | | 11 |

| Autoformalization of source code | Autoformalization of source code | Autoformalization of source code |

| | | 12 |

| 4.3 Abstraction Levels . . . . . . . 4.4 Dealing with Unknowns . . . | . . . . . . | . . . . . . . . . . |

| | . . . . | . . . . . . . . . . 13 |

| . . . | . . . . | . . . . . . . . . . 13 |

| 4.4.1 Opaque Functions . . . . . . | . . . . | . . . . . . . . . . 13 |

| 4.4.2 Axioms and Assumptions | 4.4.2 Axioms and Assumptions | 4.4.2 Axioms and Assumptions |

| 4.4.3 Approximation Functions 4.5 Summary . . . . . . . . . . . . | . . . . . . . . . | . . . . . . . . . . 13 . . . . . . . . . . 13 |

| CodeLogician IML Agent | CodeLogician IML Agent | CodeLogician IML Agent |

| . | . | . |

| . 5.1 From 5.2 Verification | Source Code to Formal Models . . . Goals and Logical Properties | . . . . . . . . . . 14 . . . . . . . . . . 14 |

| | . . . . . . . | . . . . . . . . . . 14 |

| 5.3 Beyond Yes/No Verification . 5.4 Agent Architecture and State | . . . . . . | . . . . . . . . . . 14 |

| . 5.5 Model Refinement and | . . | . . . . . . . . . . 15 |

| Executability . . . . . | . . | . . . . . . . . . . 15 |

| 5.6 Assessing Model Correctness . | 5.6 Assessing Model Correctness . | . . . . . . . . . . 15 |

| 5.7 Region decomposition, test case generation, and refinement 5.8 Interfaces . . . . . . . . . . . . . . . . . . . . . . . . . | 5.7 Region decomposition, test case generation, and refinement 5.8 Interfaces . . . . . . . . . . . . . . . . . . . . . . . . . | . . . . . . . . . . 16 |

| 5.8.1 Programmatic Access via python RemoteGraph . . | 5.8.1 Programmatic Access via python RemoteGraph . . | . . . . . . . . . . 17 |

| 5.8.2 VS Code Extension . . . . . . . . . . . . . 5.9 Summary . . . . . . . . . . . . . . . . . . . . . . | 5.8.2 VS Code Extension . . . . . . . . . . . . . 5.9 Summary . . . . . . . . . . . . . . . . . . . . . . | . . . . . . . . . . 17 |

| Server | Server | Server |

| CodeLogician | CodeLogician | CodeLogician |

| 6 | 6 | 17 |

| 6.1 Server | Responsibilities . . . . . . . . . . . Formalization via | . . . . . . . . . . 19 . . . . . . . . . . 19 |

| | . . . . . | . . . . . . . . . . |

| 6.2 Strategies: Project-Wide | 6.2 Strategies: Project-Wide | 19 |

| 6.3 Metamodel-Based Formalization 6.4 Core Definitions . | . . . . . . . . . . . . | . . . . . . . . . . |

| . | . | 19 20 |

| 6.5 Formalization Levels . . . . . . . . . 6.6 Conditions Triggering | 6.5 Formalization Levels . . . . . . . . . 6.6 Conditions Triggering | 6.5 Formalization Levels . . . . . . . . . 6.6 Conditions Triggering |

| Re-Formalization . | Re-Formalization . | 20 |

| 6.7 . . . | 6.7 . . . | 6.7 . . . |

| | Autoformalization Workflow . . . . | |

| . | . | . |

| . | . | . |

| . | . | . |

| . | . | . |

| . | . | . |

| . | . | |

| | . . . 20 | . . . 20 |

| | . . . . . | . . . . . |

| | . . . | . . . |

| | . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . |

| | | . . . . . . . . . . . . . . . . . |

| | 6.8 Minimal Re-Formalization | Strategy . . . . . | 21 |

|-----|-------------------------------------------------------------------------------------------------|-------------------------------------------------------------------------------------------------|------|

| | 6.9 Summary and Error Resilience . | . . . . . . | 21 |

| 7 | Performance | Performance | 23 |

| | Dataset . . . . . . . . . | . . . . . . . . . . . | 23 |

| | Metrics . . . . . . . . . . . . . | . . . . . . . | 24 |

| | 7.2.1 | State Space Estimation Accuracy . . | 24 |

| | 7.2.2 | Outcome Precision . . . . . . . . . . | 24 |

| | 7.2.3 | Direction Accuracy . . . . . . . . . . | 25 |

| | 7.2.4 | Coverage Completeness . . . . . . . | 25 |

| | 7.2.5 | Control Flow Understanding . . . . | 25 |

| | 7.2.6 | Edge Case Detection . . . . . . . . . | 26 |

| | 7.2.7 | Decision Boundary Clarity . . . . . | 26 |

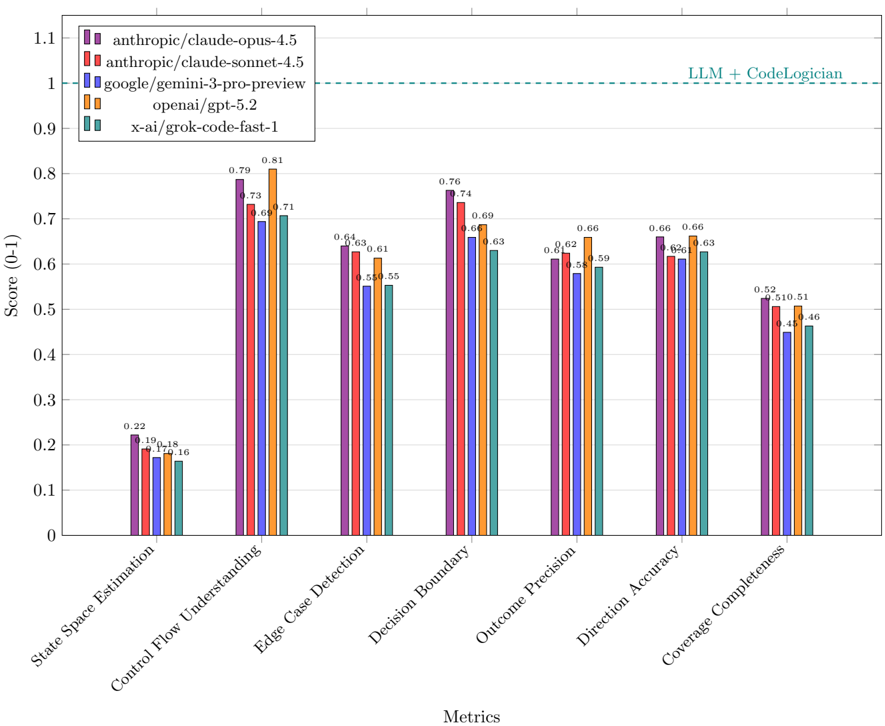

| 7.3 | Evaluation . . . . . . . . . . . | . . . . . . . . | 27 |

| | 7.3.1 | Answer Generation . . . . . . . . . . | 27 |

| | 7.3.2 | Answer Evaluation (LLM-as-a-Judge) | 27 |

| | 7.4 Results . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 7.4 Results . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | 27 |

| | 7.4.1 | Overall Performance . . . . . . . . . | 27 |

| | 7.4.2 | Model Comparison by Metric . . . . | 29 |

| 8 | | | 32 |

| | Case Studies | Case Studies | |

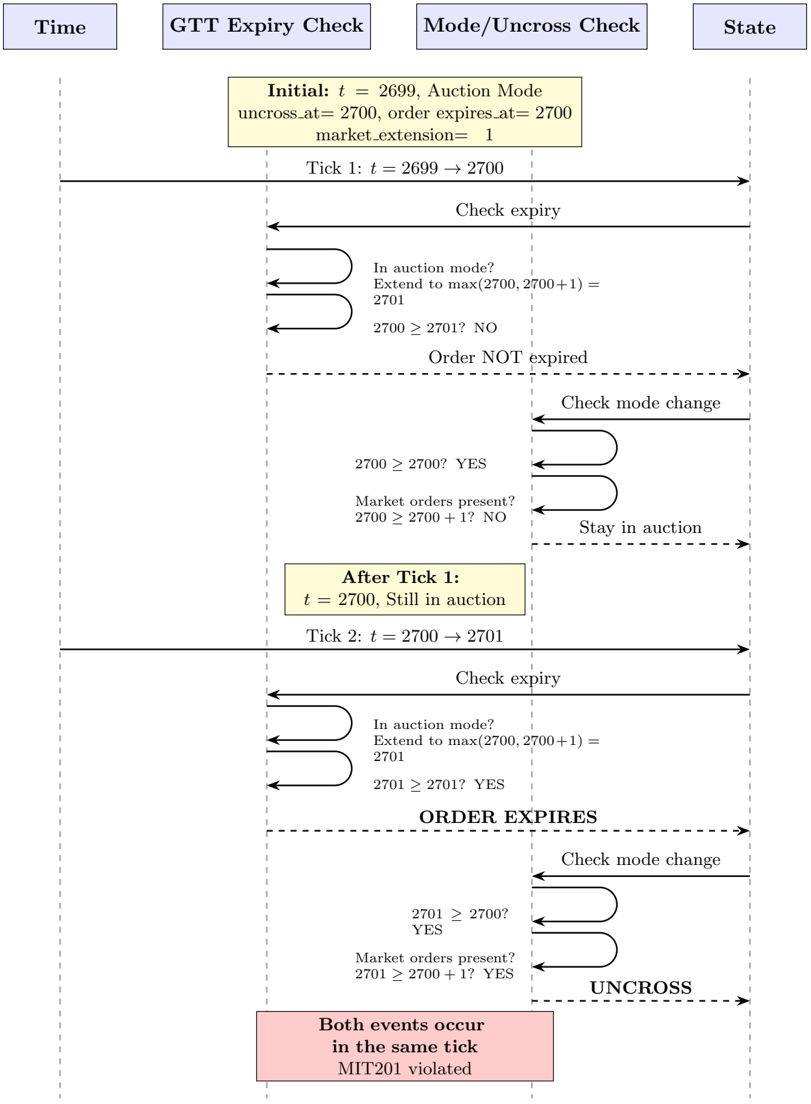

| | 8.1 LSE GTT Order Expiry | . . . . . . . . . . . | 32 |

| | 8.1.1 Verification . . . | . . . . . . . . . . . | 34 |

| | 8.2 London Stock Exchange Fee Schedule | . . . | 34 |

| | 8.2.1 | Verified Invariants . . . . . . . . . . | 34 |

| | 8.2.2 | Region Decomposition . . . . . . . . | 35 |

| | Multilateral Netting Engine . | . . . . . . . . | 35 |

| | 8.3.1 Input Validation . | . . . . . . . . . . | 36 |

| | 8.3.2 | Floating Point Precision . . . . . . . | 36 |

| 9 | Conclusion and Future Work | Conclusion and Future Work | 36 |

| A | LSE GTT Order Expiry | LSE GTT Order Expiry | 38 |

| | A.1 Python model . . . . . . . | . . . . . . . . . . | 38 |

| | A.2 Sequence diagram . . | . . . . . . . . . . . . . | 45 |

| B | Multilateral Netting Engine | Multilateral Netting Engine | 46 |

| | B.1 Python model . . . . . | . . . . . . . . . . . . | 46 |

| | B.2 Evaluation | Example: Transportation Risk Manager | 48 |

| | B.2.1 | The Three Questions . . . . . . . . . | 48 |

| | B.2.2 | Question 1: State Space Estimation | 48 |

| | B.2.3 | Question 2: Conditional Analysis . . | 49 |

| | B.2.4 | Question 3: Property Verification . . | 50 |

| | B.2.5 | Summary . . . . . . . . . . . . . . . | 51 |

## 1 Introduction

The rapid adoption of Large Language Models (LLMs) in software development has brought the notion of 'reasoning about code' into the mainstream. LLMs are now widely used for tasks such as code completion, refactoring, documentation, and test generation, and have demonstrated strong capabilities in understanding control flow and translating between programming languages. However, despite these advances, LLMs remain fundamentally limited in their ability to perform precise, exhaustive mathematical reasoning about program behavior.

In parallel, the field of automated reasoning-often referred to as symbolic AI or formal methodshas long focused on mathematically precise analysis of programs expressed as formal models. Decades of research and industrial deployment have produced powerful techniques for verifying correctness, exhaustively exploring program behaviors, and identifying subtle edge cases. Yet these techniques have historically required specialized expertise, limiting their accessibility and integration into everyday software development workflows.

This paper introduces CodeLogician , a neuro-symbolic framework that bridges these two paradigms. CodeLogician combines LLM-driven agents with ImandraX , an automated reasoning engine that has been successfully applied in financial markets, safety-critical systems, and government agencies. With CodeLogician , LLMs are used to translate source code into executable formal models and to interpret reasoning results, while automated reasoning engines perform exhaustive mathematical analysis of those models.

## 1.1 Beyond Verification-as-Filtering

Much of the existing work that combines LLMs with formal methods treats symbolic systems as external 'validators'. In these approaches, LLMs generate code, specifications, or proofs, and automated reasoning tools are applied post hoc to check consistency or correctness, typically yielding a binary yes/no outcome. While such verification-as-filtering is valuable for enforcing correctness constraints, it does not fundamentally expand the reasoning capabilities of LLMs.

In particular, verification-as-filtering does not help LLMs construct accurate internal models of program behavior, reason about large or infinite state spaces, or answer rich semantic questions involving constraints, decision boundaries, and edge cases. As a result, LLMs remain confined to approximate and heuristic reasoning, even when formal tools are present in the pipeline.

CodeLogician adopts a different perspective. Rather than using formal methods to police LLM outputs, we use LLMs to construct explicit formal mental models of software systems expressed in mathematical logic. Automated reasoning engines are then applied directly to these models to answer questions that go far beyond satisfiability or validity, including exhaustive behavioral coverage, precise boundary identification, and systematic test generation. In this setting, LLMs act as translators and orchestrators, while mathematical reasoning is delegated to tools designed explicitly for that purpose.

## 1.2 Neuro-Symbolic Complementarity

The strengths and weaknesses of LLMs closely mirror those of symbolic reasoning systems. LLMs excel at translation, abstraction, and working with incomplete information, but struggle with precise logical reasoning. Symbolic systems, by contrast, require strict formalization and domain discipline, but excel at exhaustive and exact reasoning once a model is available.

CodeLogician embraces this neuro-symbolic complementarity. LLMs bridge the gap between informal source code and formal logic, while automated reasoning engines provide mathematical guarantees about program behavior. This separation of concerns enables a scalable and principled approach to reasoning about real-world software systems.

## 1.3 ImandraX and Reasoner-Agnostic Design

At the core of CodeLogician lies ImandraX , an automated reasoning engine operating over the Imandra Modeling Language (IML), a pure functional language based on a subset of OCaml with extensions for specification and verification [14]. Beyond traditional theorem proving and formal verification, ImandraX supports advanced analysis techniques such as region decomposition, enabling systematic exploration of program state spaces and automated generation of high-coverage test suites.

While CodeLogician currently integrates ImandraX as its primary reasoning backend, the framework is explicitly designed to be reasoner-agnostic . Additional formal reasoning tools can be incorporated without architectural changes, allowing CodeLogician to evolve alongside advances in automated reasoning.

## 1.4 The Missing Benchmark: Mathematical Reasoning About Software

Despite rapid progress in evaluating LLMs, existing benchmarks fall into two largely disconnected categories. Mathematical proof benchmarks evaluate abstract symbolic reasoning over mathematical domains, but do not address reasoning about real-world software behavior. Engineering benchmarks, such as those focused on debugging or patch generation, evaluate practical development skills but do not require precise or exhaustive semantic reasoning.

What is missing is a benchmark that evaluates an LLM's ability to apply mathematical reasoning to executable software logic . To address this gap, we introduce a new benchmark dataset code-logicbench [9] designed to measure reasoning about program state spaces, constraints, decision boundaries, and edge cases. Ground truth is defined via formal modeling and automated reasoning, enabling objective evaluation of correctness, coverage, and boundary precision. The benchmark supports principled comparison between LLM-only reasoning and LLMs augmented with formal reasoning via CodeLogician .

## 1.5 Contributions

This paper makes the following contributions:

- We present CodeLogician , a neuro-symbolic framework that teaches LLM-driven agents to construct formal models of software systems and reason about them using automated reasoning engines.

- We introduce a new benchmark dataset targeting mathematical reasoning about software logic, filling a critical gap between existing mathematical and engineering-oriented benchmarks.

- We provide a rigorous empirical evaluation demonstrating substantial improvements when LLMs are augmented with formal reasoning, compared to LLM-only approaches.

## 2 CodeLogician overview

CodeLogician is a neuro-symbolic, agentic governance framework for AI-assisted software development. It provides a uniform orchestration layer that exposes formal reasoning engines (called 'reasoners'), autoformalization agents, and analysis workflows through consistent interfaces. It supports multiple modes of use, including direct invocation of reasoners, agent-driven auto-formalization, and LLM-assisted utilities for documentation and analysis. It is available as both CLI and server-based workflows-with an interactive TUI that can invoke agents over entire directories-making formal reasoning, verification, and test-case generation accessible across development pipelines.

Rather than competing with LLM-based coding tools, CodeLogician is designed to augment and guide them by supplying mathematically grounded feedback, proofs, counterexamples, and exhaustive behavioral analyses.

At its core, CodeLogician serves as a source of mathematical ground truth . Where LLM-based tools operate probabilistically, CodeLogician anchors reasoning about software behavior in formal logic, ensuring that conclusions are derived from explicit models and automated reasoning rather than statistical inference.

## 2.1 Positioning and Scope

CodeLogician targets reasoning tasks that arise above the level of individual lines of code, where system behavior emerges from the interaction of components, requirements, and environments. Typical application areas include architectural reasoning, multi-system integration, protocol and workflow validation, and functional correctness of critical business logic.

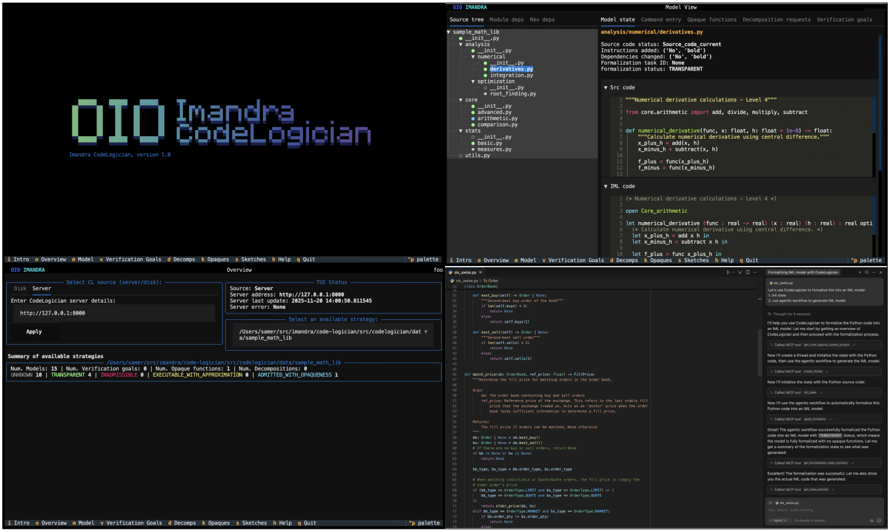

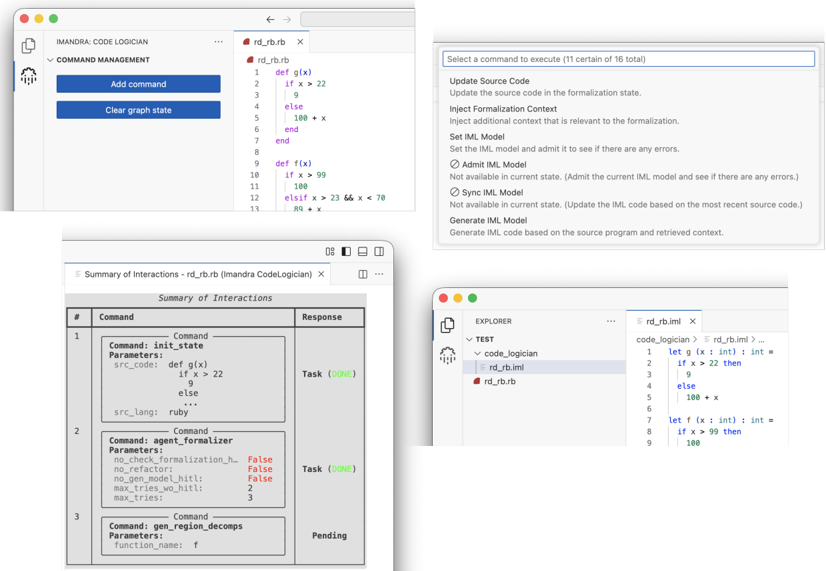

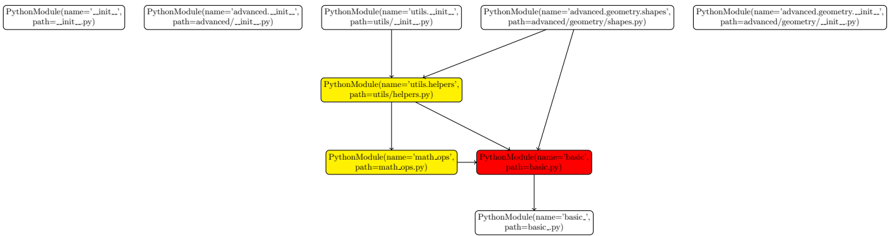

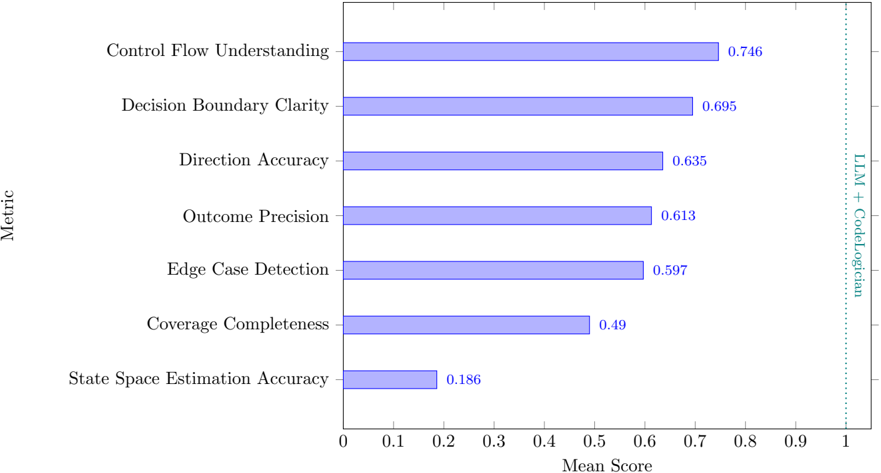

Figure 1: Imandra CodeLogician , neurosymbolic agent and framework for precise reasoning about software logic, with CLI, MCP and VS Code interfaces. Available from www.codelogician.dev .

<details>

<summary>Image 1 Details</summary>

### Visual Description

## IDE Screenshot: Imandra CodeLogician

### Overview

The image is a screenshot of the Imandra CodeLogician IDE, showing multiple panels with code, project structure, server status, and available strategies. It displays a complex software development environment with various tools and information related to formal verification and code analysis.

### Components/Axes

**1. Main IDE Window (Top-Left):**

* **Header:** "DIO IMANDRA CodeLogician, version 1.0" in pixelated font.

* **Source Tree:** Shows the project structure with files and directories.

* Root directory: `sample_math_lib`

* Subdirectories: `analysis`, `core`, `stats`, `utils.py`

* Files: `__init__.py`, `numerical`, `derivatives.py`, `integration.py`, `optimization`, `root_finding.py`, `advanced.py`, `arithmetic.py`, `comparison.py`, `basic.py`, `measures.py`

* **Tabs:** "Source tree", "Module deps", "Rev deps"

**2. Model View Window (Top-Right):**

* **Header:** "Model View"

* **Tabs:** "Model state", "Command entry", "Opaque Functions", "Decomposition requests", "Verification goals"

* **File Path:** `analysis/numerical/derivatives.py`

* **Model State Information:**

* Source code status: `Source_code_current`

* Instructions added: `('No', 'bold')`

* Dependencies changed: `('No', 'bold')`

* Formalization task ID: `None`

* Formalization status: `TRANSPARENT`

* **Src code:** Python code snippet for numerical derivative calculations.

* Includes imports: `from core.arithmetic import add, divide, multiply, subtract`

* Function definition: `def numerical_derivative(func, x: float, h: float = 1e-8) -> float:`

* **DML code:** DML (Declarative Machine Language) code snippet for numerical derivative calculations.

* Includes: `open Core_arithmetic`

* Function definition: `let numerical_derivative func: real -> real -> real -> real -> real -> real opti`

**3. Server Status Window (Bottom-Left):**

* **Header:** "DIO IMANDRA"

* **Tabs:** "Disk", "Server"

* **Server Details:**

* Enter CodeLogician server details: `http://127.0.0.1:8000`

* Apply button

* **Overview:**

* Source: `Server`

* Server address: `http://127.0.0.1:8000`

* Server last update: `2023-11-28 14:00:58.811545`

* Server error: `None`

* **Available Strategy:**

* Path: `/Users/samer/src/imandra/code-logician/src/codelogician/data/sample_math_lib`

* **Summary of Available Strategies:**

* Num. Models: 15

* Num. Verification goals: 1

* Num. Opaque functions: 1

* Num. Decompositions: 0

* TRANSPARENT: 4

* ADMISSIBLE: 0

* EXECUTABLE_WITH_APPROXIMATION: 1

* ADMITTED_WITH_OPAQUENESS: 1

**4. Code Editor Window (Bottom-Center):**

* Displays Python code, likely from one of the files in the project.

* The code appears to define a class `Order` with methods like `next_best_wait`, `next_wait`, `next_sell_wait`.

* Includes comments explaining the purpose of the code.

**5. Formatting Window (Bottom-Right):**

* **Header:** "Formatting IML Model with Codelogician"

* **Content:** Text describing how to use CodeLogician to formalize IML models.

* "Let's use Codelogician to formalize this into an IML model."

* "Use agents workflow to generate IML model."

* "Thought for 4 seconds"

* "I'll help you use Codelogician to formalize the Python code into an IML model. Let me start by getting an overview of CodeLogician and then proceed with the formalization process."

* "Called MCP tool: get_codelogician_overview"

* "Now I'll create a thread and initialize the state with the Python code, then use the agents workflow to generate the IML model."

* "Called MCP tool: new_thread"

* "Now I'll initialize the state with the Python source code."

* "Called MCP tool: init_state"

* "Now I'll use the agents workflow to automatically formalize the Python code into an IML model."

* "Called MCP tool: agents_workflow"

* "Great! The agents workflow successfully formalized the Python code into an IML model with 15 models, which means the model is fully formalized with no opaque functions. Let's get a summary of the formalization state to see what was generated."

* "Called MCP tool: get_formalization_state"

* "Excellent! The formalization was successful. Let me also show you the actual IML code that was generated."

* "Called MCP tool: get_iml_code"

* "ds_swaps.py"

* "import_export_utils"

### Detailed Analysis or Content Details

* **Project Structure:** The project `sample_math_lib` contains modules for analysis, core functionality, and statistics. The `analysis` module includes numerical methods and derivatives.

* **Code Snippets:** The Python code shows numerical derivative calculations using central difference. The DML code provides a declarative representation of the same calculations.

* **Server Status:** The CodeLogician server is running locally at `http://127.0.0.1:8000`.

* **Available Strategies:** The IDE provides strategies for formal verification, including transparent, admissible, executable with approximation, and admitted with opaqueness.

* **Formalization Process:** The formatting window describes the process of using CodeLogician to formalize Python code into an IML model.

### Key Observations

* The IDE is designed for formal verification and code analysis.

* It supports both Python and DML code.

* The IDE provides tools for managing projects, analyzing code, and formalizing models.

* The formalization process involves multiple steps, including getting an overview, creating a thread, initializing the state, and running the agents workflow.

### Interpretation

The screenshot demonstrates the Imandra CodeLogician IDE in action, showcasing its capabilities for formal verification and code analysis. The IDE provides a comprehensive environment for developers to analyze, formalize, and verify their code. The presence of both Python and DML code suggests a hybrid approach to software development, where Python is used for implementation and DML is used for formal specification and verification. The detailed server status and available strategies indicate a focus on ensuring the correctness and reliability of the code. The formatting window provides a step-by-step guide to using CodeLogician, making it accessible to developers with varying levels of expertise.

</details>

While CodeLogician can, in principle, reason about low-level implementation details, its primary value lies in governing system-level behavior. Domains such as memory safety, SQL injection detection, or hardware-adjacent concerns are already well served by specialized static analyzers and runtime tools. CodeLogician complements these point solutions by providing a framework for validating architectural intent, invariants, and cross-component interactions with mathematical completeness.

## 2.2 Extensible Reasoner-Oriented Design

CodeLogician is explicitly designed as a multi-reasoner framework. Its first release integrates the ImandraX automated reasoning engine, which supports executable formal models, proof-oriented verification, and exhaustive state-space analysis. However, the framework is intentionally reasoner-agnostic and is architected to host additional formal tools (e.g., temporal logic model checkers such as TLA+) under the same orchestration and governance layer.

This design allows CodeLogician to evolve alongside advances in formal methods without coupling its user-facing workflows to a single solver, logic, or verification paradigm.

## 2.3 Modes of Operation

CodeLogician exposes its capabilities through several complementary modes of operation, all backed by the same underlying orchestration and reasoning infrastructure.

Direct invocation. In direct mode, users or external tools invoke reasoning engines and agents explicitly. This mode supports fine-grained control over formalization, verification, and analysis steps and is particularly useful for expert users and debugging complex models.

Agent-mediated workflows. In agentic modes, CodeLogician coordinates LLM-driven agents that automatically formalize source code, refine models, generate verification goals, and invoke reasoning backends. These workflows enable end-to-end analysis with minimal human intervention while retaining the ability to request feedback when ambiguities or inconsistencies arise.

Bring-Your-Own-Agent (BYOA). CodeLogician can be used as a reasoning backend for externally hosted LLM agents. In this mode, an LLM interacts with CodeLogician through structured commands, receives formal feedback, and iteratively improves its own formalization behavior. This enables tight integration with existing LLM-based coding assistants while preserving a clear separation between statistical generation and logical reasoning.

Server-based operation. For large-scale or continuous analysis, CodeLogician can be deployed in a server mode that monitors codebases or projects and incrementally updates formal models and analyses in response to changes. This supports use cases such as continuous verification, regression analysis, and long-running agentic workflows.

## 2.4 Governance and Feedback

A central contribution of CodeLogician is its role as a governance layer for AI-assisted development. Rather than merely accepting or rejecting LLM outputs, CodeLogician provides structured, semantically rich feedback grounded in formal reasoning. This includes:

- proofs of correctness when properties hold,

- counterexamples when properties fail,

- explicit characterization of decision boundaries and edge cases,

- quantitative coverage information derived from exhaustive analysis.

This feedback can be consumed by humans, external agents, or LLMs themselves, enabling iterative improvement and learning. In this sense, CodeLogician does not merely analyze code-it shapes how AI systems reason about software over time.

## 2.5 Relationship to the CodeLogician IML Agent

The CodeLogician IML Agent described in Section 5 is one concrete realization of the framework's capabilities. It implements an end-to-end autoformalization pipeline that translates source code into executable formal models and invokes ImandraX for analysis.

More generally, the framework supports multiple agents, reasoning engines, and workflows operating over shared representations and governed by the same orchestration logic. This separation between framework and agents allows CodeLogician to scale across domains, reasoning techniques, and modes of interaction.

## 2.6 Summary

CodeLogician is a unifying framework for integrating LLMs with formal reasoning engines in a principled, extensible manner. By providing orchestration, governance, and structured feedback around automated reasoning, it enables AI-assisted software development to move beyond heuristic reasoning toward mathematically grounded analysis. The framework establishes a firm foundation upon which multiple agents and reasoners can coexist, evolve, and collaborate in the service of rigorous software engineering.

## 3 Overview of ImandraX

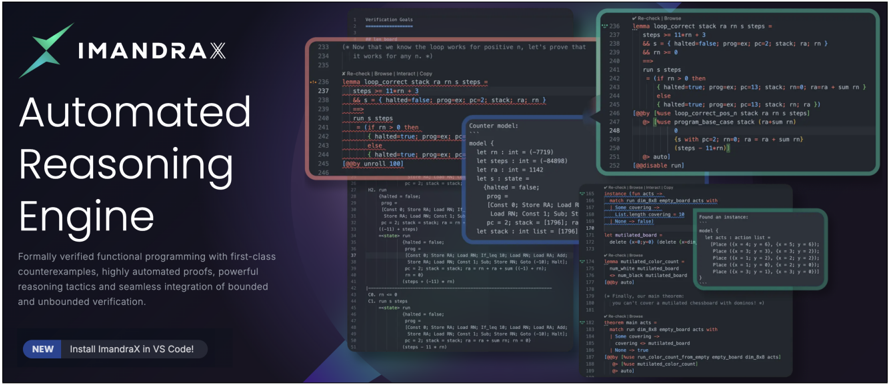

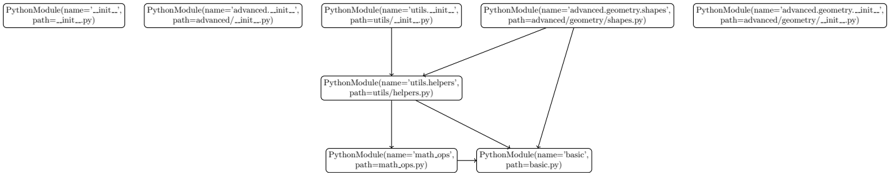

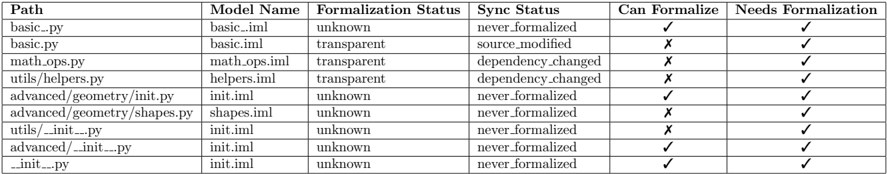

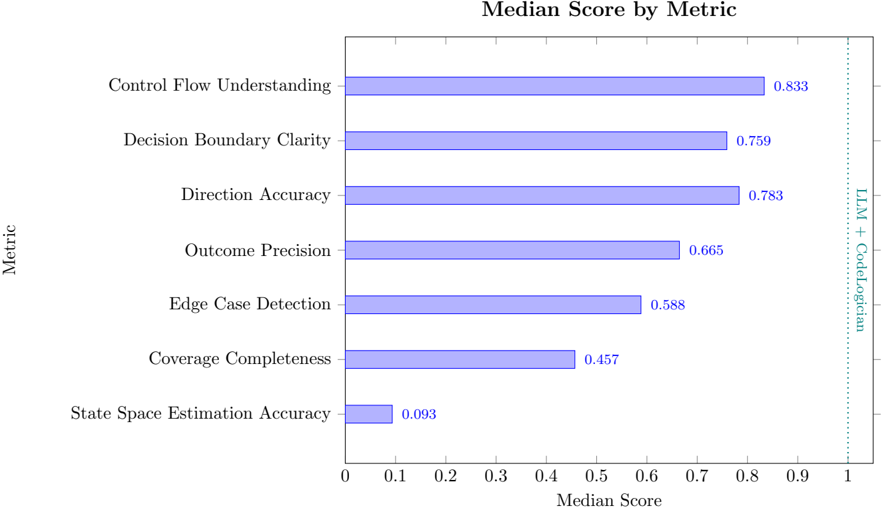

ImandraX (cf. Fig 2) is an automated reasoning engine for analyzing executable mathematical models of software systems. Its input language is the Imandra Modeling Language (IML), a pure functional subset of OCaml augmented with directives for specification and reasoning (e.g., verify , lemma and instance ) [14]. IML models are ordinary programs, but they are also formal models : ImandraX contains a mechanized formal semantics for IML, and thus IML code is both executable code and rigorous mathematical logic to which ImandraX applies automated reasoning.

Figure 2: The ImandraX automated reasoning engine and theorem prover. Interfaces are available for both humans (VS Code) and AI assistants (MCP and CLI), and may be installed from www.imandra.ai/core .

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Screenshot: ImandraX Automated Reasoning Engine

### Overview

The image is a promotional screenshot for ImandraX, an "Automated Reasoning Engine". It showcases the software's capabilities through code snippets and interface elements. The screenshot highlights features like formal verification, counterexample generation, automated proofs, and integration with VS Code.

### Components/Axes

The image is divided into several regions:

1. **Header (Left):** Contains the ImandraX logo and the title "Automated Reasoning Engine". It also includes a brief description of the software's capabilities and a call to action to install ImandraX in VS Code.

2. **Code Snippets (Center & Right):** Displays various code snippets, likely demonstrating the ImandraX's functionality. These snippets are annotated with callouts and highlights.

3. **Interface Elements (Overlaid):** Shows pop-up windows and interface elements, providing a glimpse into the user experience.

### Detailed Analysis or ### Content Details

**1. Header (Left):**

* **Logo:** The ImandraX logo is present at the top-left.

* **Title:** "Automated Reasoning Engine" is prominently displayed.

* **Description:** "Formally verified functional programming with first-class counterexamples, highly automated proofs, powerful reasoning tactics and seamless integration of bounded and unbounded verification."

* **Call to Action:** "NEW Install ImandraX in VS Code!"

**2. Code Snippets (Center):**

* **Verification Goals:** The code snippet starts with a comment indicating "Verification Goals".

* **Loop Correctness:** A section of code focuses on verifying the correctness of a loop.

* Line 233: `(* Now that we know the loop works for positive n, let's prove that it works for any n. *)`

* Line 236: `@Re-check | Browse | Interact | Copy`

* Line 237: `lemma loop_correct stack ra rn s steps =`

* Line 238: `steps == 11*rn + 3`

* Line 239: `&& s == {halted=false; prog_ex: pc=2; stack; ra; rn}`

* Line 240: `&& rn >= 0`

* Line 241: `run s steps`

* Line 242: `(if rn > 0 then`

* Line 243: `{halted=true; prog_ex: pc`

* Line 244: `else`

* Line 245: `{halted=true; prog_ex: pc`

* Line 246: `(by unroll 100)`

* **Counter Model:** A section defines a counter model.

* Line 248: `Counter model:`

* Line 249: `model {`

* Line 250: `let rn : int = (-7719)`

* Line 251: `let steps : int = (-84898)`

* Line 252: `let ra : int = 1142`

* Line 253: `let s : state =`

* Line 254: `(halted = false;`

* Line 255: `prog =`

* Line 256: `[Const 0; Store RA; Load RN;`

* Line 257: `Load RN; Const 1; Sub; St`

* Line 258: `pc = 2; stack = [1796]; ra`

* Line 259: `let stack : int list = [1796]`

**3. Code Snippets (Right):**

* **More Code:**

* Line 236: `@Re-check`

* Line 237: `lemma loop_correct stack ra rn s steps`

* Line 238: `steps == 11*rn + 3`

* Line 239: `&& s == {halted=false; prog_ex: pc=2; stack; ra; rn}`

* Line 241: `run s steps`

* Line 242: `(if rn > 0 then`

* Line 243: `{halted=true; prog_ex: pc=13; stack; rn@; ra@ra = sum rn}`

* Line 244: `else`

* Line 245: `{halted=true; prog_ex: pc=13; stack; rn; ra}`

* Line 246: `(by Nuse loop_correct_pos_n stack ra rn s steps)`

* Line 247: `Nuse program_base_case stack (ra=sum rn)`

* Line 248: `{s with pc=2; rn=0; ra = ra + sum rn}`

* Line 249: `(steps = -11+rn)}`

* Line 251: `@auto`

* Line 252: `@disable run`

**4. Interface Elements (Overlaid):**

* **Instance Search:** A pop-up window shows search results for instances.

* `Re-check | Browse | Interact | Copy`

* `match run dim_8x8 empty_board acts with`

* `Some covering ->`

* `List. length covering = 18`

* `None = false`

* **Mutilated Board:** A section related to a "mutilated board".

* `let mutilated_board =`

* `delete (x-y: x-y-0) (delete`

* **Theorem Main Acts:**

* `Re-check | Browse | Interact | Copy`

* `match run dim_8x8 empty_board acts with`

* `Some covering ->`

* `covering -> mutilated_board`

* `None -> true`

* `(by Nuse run_color_count_from_empty_empty_board acts)`

* **Found an Instance:** Another pop-up window shows a found instance.

* `Found an instance:`

* `model {`

* `let acts : action list =`

* `Place ((x = 4; y = 0), (x = 5; y = 6));`

* `Place ((x = 3; y = 3), (x = 3; y = 2));`

* `Place ((x = 1; y = 2), (x = 2; y = 2));`

* `Place ((x = 1; y = 0), (x = 2; y = 1));`

* `Place ((x = 3; y = 1), (x = 3; y = 0));`

* `}`

### Key Observations

* The code snippets use a functional programming style.

* The interface elements suggest a user-friendly environment for interacting with the reasoning engine.

* The examples focus on loop verification, counter model generation, and board-related problems.

### Interpretation

The screenshot aims to convey the power and ease of use of the ImandraX Automated Reasoning Engine. It highlights the software's ability to formally verify code, generate counterexamples, and automate proofs. The code snippets and interface elements demonstrate the practical application of these features. The focus on specific examples, such as loop verification and board-related problems, suggests the software's versatility. The call to action encourages users to try ImandraX in VS Code, indicating a seamless integration with a popular development environment. Overall, the screenshot presents ImandraX as a valuable tool for developers seeking to improve the reliability and correctness of their code.

</details>

CodeLogician relies on ImandraX as its primary reasoning backend 4 . In the autoformalization workflow (cf. Section 4), LLM-driven agents translate source code into executable IML models, make abstraction choices explicit, and introduce assumptions where required. ImandraX then performs the mathematical analysis of these models, enabling both proof-oriented verification and richer forms of behavioral understanding.

This section summarizes the two ImandraX capabilities most heavily used by CodeLogician : verification goals and state-space region decomposition. As in Section 4, we emphasize that LLMs are used to construct explicit formal mental models, while ImandraX is used to reason about them.

## 3.1 Formal Models in the Imandra Modeling Language (IML)

IML inherits the modeling discipline discussed in Section 4: models are pure (no side effects), statically typed functional programs in ImandraX 's fragment of OCaml, structured to support inductive reasoning modulo decision procedures. Imperative constructs such as loops are represented via recursion, and systems with mutable state are modeled as state machines in which the evolving state is carried explicitly through function inputs and outputs.

The question is therefore not whether a system can be modeled, but at what level of abstraction it should be modeled (Section 4). ImandraX provides analysis capabilities that remain meaningful across these abstraction levels, provided the model is executable and its assumptions are made explicit.

## 3.2 Verification Goals

Formal verification in ImandraX is performed via the statement and analysis of verification goals (VGs): boolean-valued IML functions that encode properties of the system being analyzed. VGs are (typically) implicitly universally quantified: when one proves a theorem in ImandraX , one establishes that a boolean-valued VG is true for all possible inputs. Unlike testing, which evaluates finitely many concrete executions, VGs quantify over (typically) infinite input spaces and yield either: (i) a proof that the property holds universally, or (ii) a counterexample witness demonstrating failure.

As programs and datatypes may involve recursion over structures of arbitrary depth, these proofs may involve induction. A unique feature of ImandraX is its seamless integration of bounded and unbounded verification. Every goal may be subjected to both bounded model checking and full inductive theorem proving. Bounded proofs are automatic based on ImandraX 's recursive function unrolling modulo decision procedures, and typically take place in a setting for which they are complete

4 https://www.imandra.ai/core

```

order1 = { order2 = { order3 = {

peg = NEAR; peg = NEAR; order_type = PEGGED_CI; qty = 8400;

order_type = PEGGED; order_type = PEGGED_CI; qty = 8400;

qty = 1800; qty = 8400; price = 10.0;

price = 10.5; price = 12.0; time = 236;

time = 237; time = 237; ...

... ... };

}; };

```

Figure 3: Counterexample for Order Ranking Transitivity

for counterexamples. ImandraX contains deep automation for constructing inductive proofs, including a lifting of the Boyer-Moore inductive waterfall to ImandraX 's typed, higher-order setting [14]. Moreover, ImandraX contains a rich tactic language for the interactive construction of proofs and counterexamples, and for more general system and state-space exploration.

For example, consider the transitivity of an order ranking function for a trading venue [16]. This may naturally be conjectured as a lemma, which may then be subjected to verification:

```

lemma rank_transitivity side order1 order2 order3 mkt =

order_higher_ranked(side,order1,order2,mkt) &&

order_higher_ranked(side,order2,order3,mkt)

==>

order_higher_ranked(side,order1,order3,mkt)

```

In practice, verification is iterative: counterexamples often reveal missing preconditions, modeling gaps, or specification errors. For example, in Fig. 3 we see a (truncated version of) an ImandraXsynthesized counterexample for rank transitivity of a real-world trading venue [16].

In ImandraX , all counterexamples are 'first-class' objects which may be reflected in the runtime and computed with directly via ImandraX 's eval directive. This unified computational environment for programs, conjectures, counterexamples and proofs is important for the analysis of real-world software. Verification goals should immediately be amenable to automated analysis, counterexamples for false goals should be synthesized efficiently, and these counterexamples should be available for direct execution through the system under analysis, enabling rapid triage and understanding for goal violations and false conjectures. This use of counterexamples aligns with the autoformalization perspective: constructing a good formal model is a refinement process in which assumptions and intended semantics are progressively made explicit, and counterexamples to conjectures can rapidly help one to fix faulty logic and recognize missing assumptions.

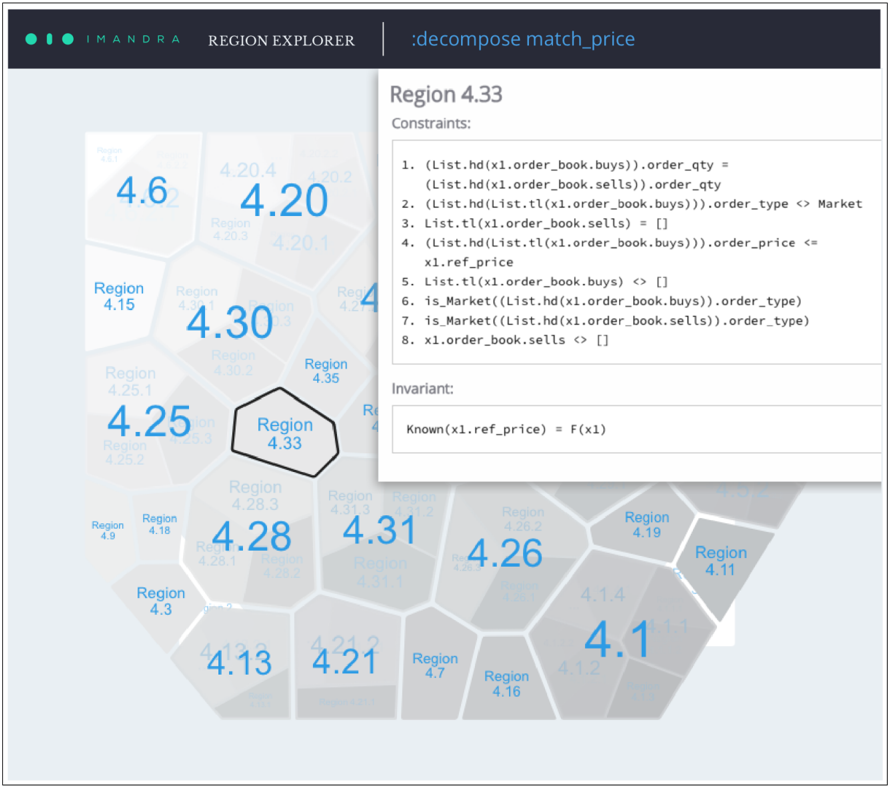

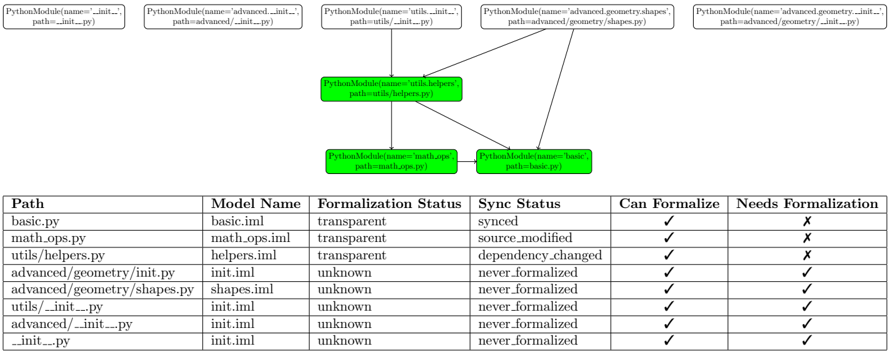

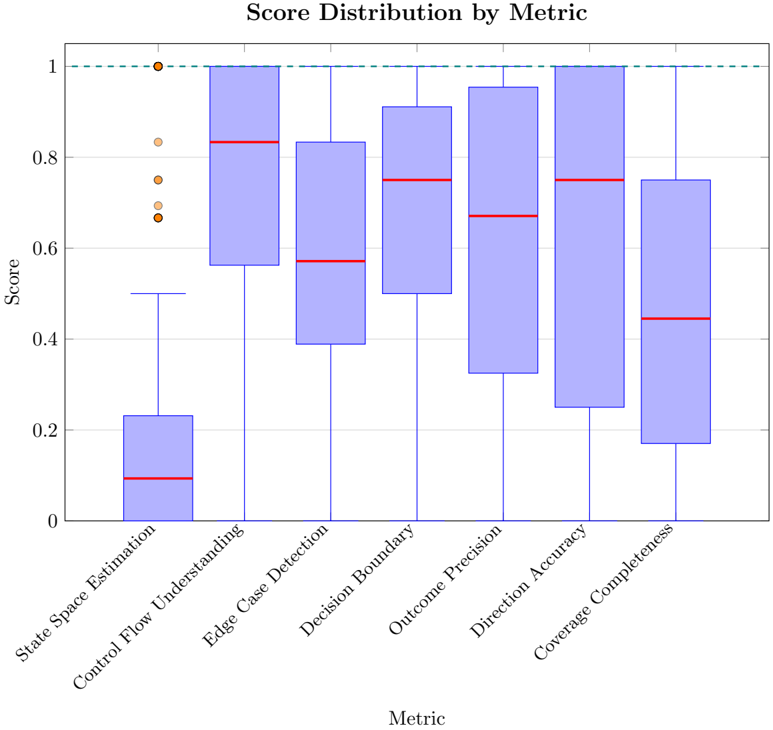

## 3.3 Region Decomposition

Many questions central to program understanding are not naturally expressed as binary VGs. To support richer semantic analysis, ImandraX provides region decomposition , which partitions a function's input space into finitely many symbolic regions corresponding to distinct behaviors.

Each region comprises:

- constraints over inputs that characterize when execution enters the region,

- an invariant result describing the output (or a symbolic characterization of it) for all inputs satisfying the constraints, and

- sample points witnessing satisfiable instances of the constraints.

Region decomposition provides a mathematically precise notion of 'edge cases' and directly supports the systematic test generation goals described in Section 4.

ImandraX decomposes function into regions corresponding to the distinct path conditions induced by their control flow, each with explicit constraints and invariant outputs. These decompositions may be performed relative to logical side-conditions and configurable abstraction boundaries called basis functions to tailor state-space exploration for a particular goal. In real software systems, small

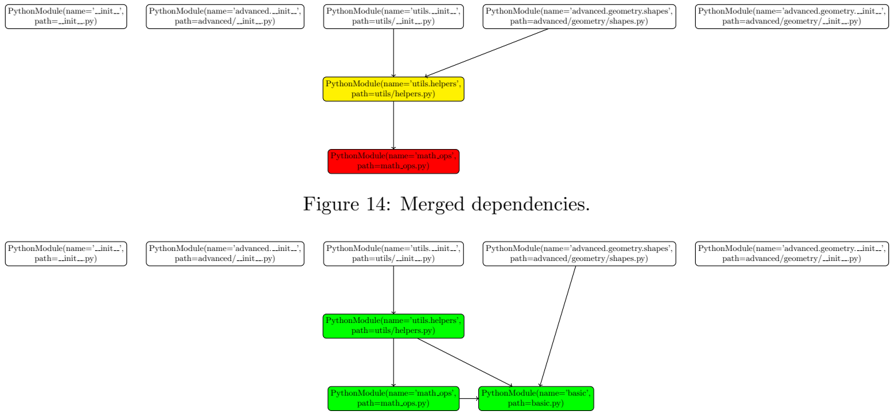

Figure 4: A principal region decomposition of a trading venue pricing function

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Region Explorer: Decompose Match Price

### Overview

The image shows a visualization of regions with associated numerical values, likely representing some kind of data analysis or clustering. A panel on the right displays constraints and invariants for a selected region (4.33). The regions are spatially arranged and labeled with numerical identifiers.

### Components/Axes

* **Header:** Contains "IMANDRA REGION EXPLORER" on the left and ":decompose match\_price" on the right.

* **Main Chart:** A map-like structure composed of irregular polygons, each representing a region. Each region is labeled with a numerical identifier (e.g., "Region 4.33") and a numerical value (e.g., "4.33").

* **Right Panel:** Displays information about the selected region (Region 4.33). It includes "Constraints" and "Invariant" sections.

### Detailed Analysis or Content Details

**Region Map:**

The region map consists of several regions, each labeled with a "Region X.XX" identifier and a corresponding numerical value. The regions are arranged spatially, and their sizes vary. Here's a breakdown of the visible regions and their values:

* Region 4.6: Located in the top-left corner.

* Region 4.20: Located near the top-center. Sub-regions 4.20.4, 4.20.3, 4.20.2, and 4.20.1 are nearby.

* Region 4.15: Located to the left of Region 4.30.

* Region 4.30: Located in the upper-center area. Sub-regions 4.30.1 and 4.30.2 are nearby.

* Region 4.25: Located below Region 4.15. Value is 4.25. Sub-regions 4.25.1, 4.25.2, and 4.25.3 are nearby.

* Region 4.35: Located to the right of Region 4.30.

* Region 4.33: Located in the center, highlighted with a thicker border.

* Region 4.28: Located below Region 4.33. Value is 4.28. Sub-regions 4.28.1, 4.28.2, and 4.28.3 are nearby.

* Region 4.31: Located to the right of Region 4.28. Value is 4.31. Sub-regions 4.31.1, 4.31.2, and 4.31.3 are nearby.

* Region 4.26: Located to the right of Region 4.31. Value is 4.26. Sub-regions 4.26.1 and 4.26.2 are nearby.

* Region 4.9: Located in the bottom-left corner.

* Region 4.18: Located above Region 4.9.

* Region 4.3: Located to the left of Region 4.13.

* Region 4.13: Located in the bottom-left area.

* Region 4.21: Located to the right of Region 4.13. Value is 4.21. Sub-region 4.21.2 is nearby.

* Region 4.7: Located to the right of Region 4.21.

* Region 4.16: Located below Region 4.7.

* Region 4.19: Located to the right of Region 4.26.

* Region 4.11: Located to the right of Region 4.19.

* Region 4.1: Located in the bottom-right corner. Sub-regions 4.1.1, 4.1.2, and 4.1.4 are nearby.

**Right Panel - Region 4.33:**

* **Constraints:** A list of logical constraints, expressed in a formal language, likely related to order book data.

1. (List.hd(x1.order\_book.buys)).order\_qty = (List.hd(x1.order\_book.sells)).order\_qty

2. (List.hd(List.tl(x1.order\_book.buys))).order\_type <> Market

3. List.tl(x1.order\_book.sells) = \[\]

4. (List.hd(List.tl(x1.order\_book.buys))).order\_price <= x1.ref\_price

5. List.tl(x1.order\_book.buys) <> \[\]

6. is\_Market((List.hd(x1.order\_book.buys)).order\_type)

7. is\_Market((List.hd(x1.order\_book.sells)).order\_type)

8. x1.order\_book.sells <> \[\]

* **Invariant:** A statement of invariance, also expressed in a formal language.

* Known(x1.ref\_price) = F(x1)

### Key Observations

* The regions are spatially organized, suggesting a relationship between their values and their positions.

* Region 4.33 is highlighted, indicating it is the currently selected or focused region.

* The constraints and invariants provide a formal specification of the properties of Region 4.33.

* The constraints involve comparisons of order quantities, order types, and order prices, suggesting the data relates to a financial market or trading system.

### Interpretation

The image represents a system for analyzing and decomposing match prices in a financial market or similar domain. The region map provides a visual representation of different price levels or market states, while the constraints and invariants define the logical rules that govern the behavior of the selected region. The "decompose match\_price" label suggests that the system is used to break down the components of a match price and understand the factors that influence it. The constraints likely represent conditions that must be satisfied for a match to occur in that region, while the invariant represents a property that remains constant within that region. The system allows users to explore different regions and understand their specific characteristics.

</details>

boundary differences (e.g., a flipped inequality) often lead to qualitatively different outcomes; region decomposition isolates these differences and makes the boundaries of logically invariant behavior explicit and auditable.

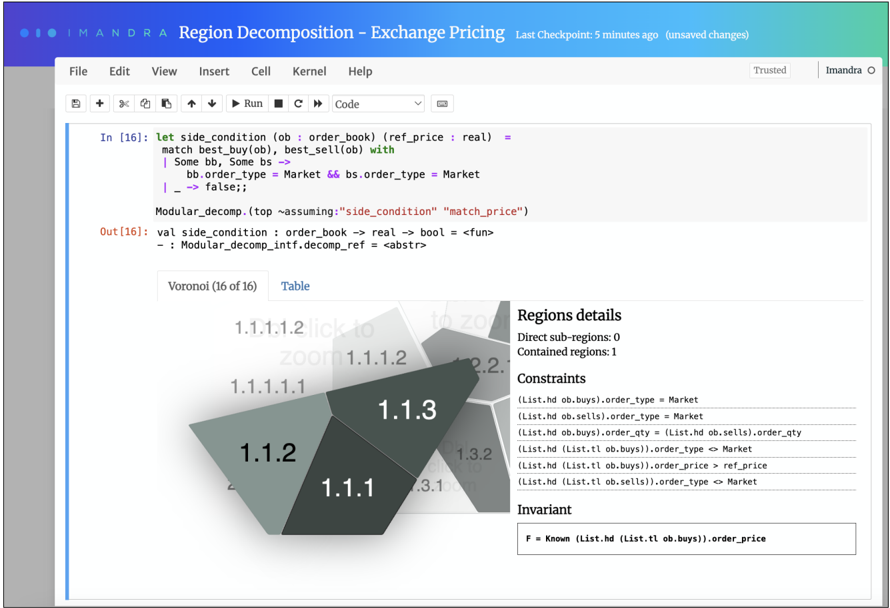

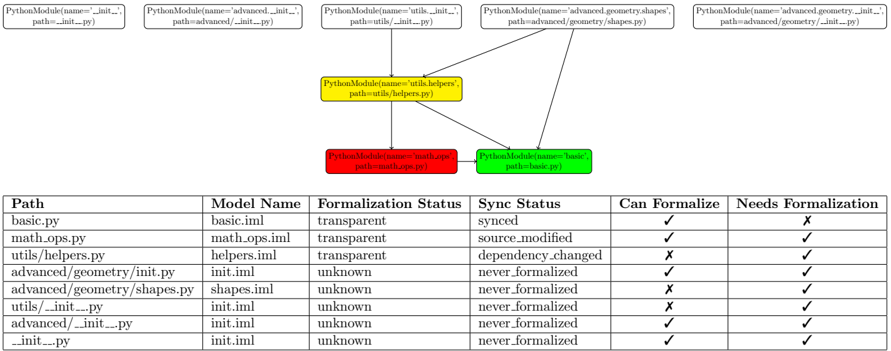

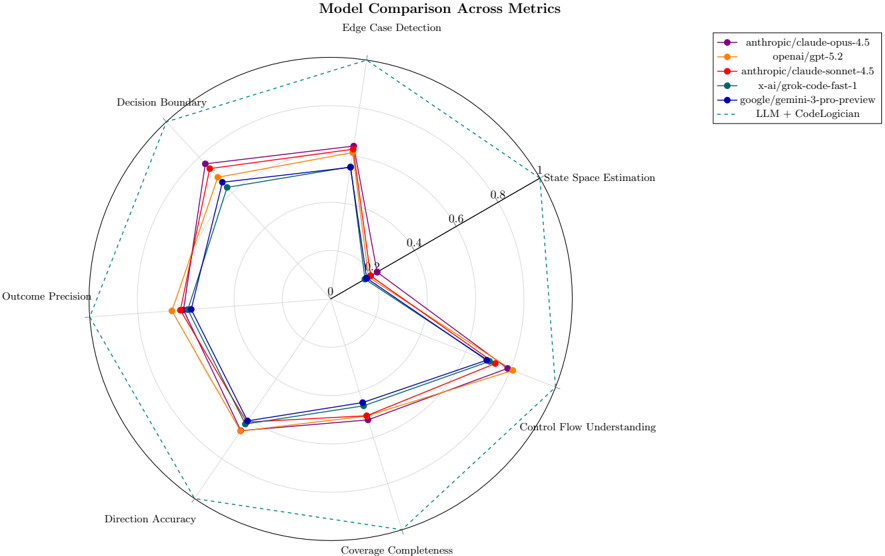

## 3.4 Focusing Decomposition: Side Conditions and Basis

Autoformalization often produces models whose full state spaces are too large-or simply too broadfor a given analysis objective. ImandraX provides two complementary mechanisms to focus decomposition, mirroring the abstraction discipline in Section 4.

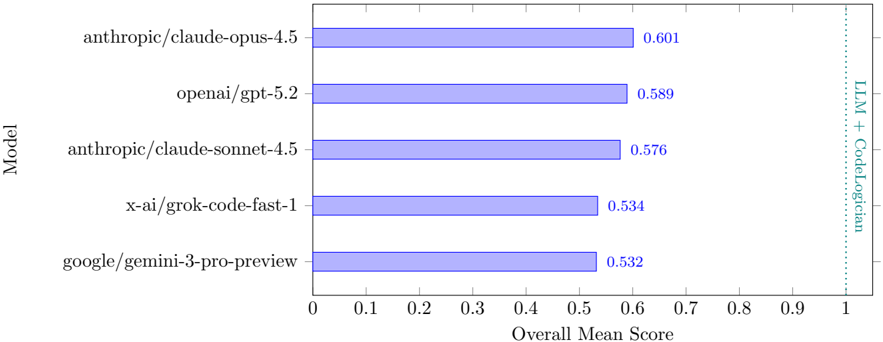

Side conditions. A side condition is a boolean predicate with the same signature as the decomposed function. When supplied, ImandraX explores only regions for which the side condition holds. This is useful for restricting analysis to scenarios of operational or regulatory interest. Figure 5 illustrates a region decomposition query augmented with a side-condition.

Basis functions. A basis specifies functions to be treated as atomic during decomposition. ImandraX will not explore their internal branching structure, reducing the number of regions and improving interpretability. Basis selection provides a principled way to 'abstract away' auxiliary computations while retaining their influence on the surrounding model.

Figure 5: A refined decomposition of the venue pricing function with a side-condition

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Voronoi Diagram: Region Decomposition - Exchange Pricing

### Overview

The image presents a screenshot of a software interface, likely related to financial modeling or algorithmic trading, featuring a Voronoi diagram, code snippets, and region details. The diagram visually represents a region decomposition, while the code defines a side condition and a modular decomposition. Constraints and invariants are also listed.

### Components/Axes

* **Header:** "IMANDRA Region Decomposition - Exchange Pricing" with "Last Checkpoint: 5 minutes ago (unsaved changes)" and "Trusted" and user "Imandra O"

* **Menu Bar:** "File Edit View Insert Cell Kernel Help"

* **Code Section:** Contains code snippets in a functional programming language (likely OCaml or similar). Includes definitions for `side_condition` and `Modular_decomp`.

* **Voronoi Diagram:** A diagram composed of several polygonal regions, each labeled with a numerical identifier (e.g., 1.1.1, 1.1.2, 1.1.3). The diagram is labeled "Voronoi (16 of 16)".

* **Regions Details:** A section providing information about the selected region. Includes "Direct sub-regions: 0" and "Contained regions: 1".

* **Constraints:** A list of constraints related to order types, quantities, and prices.

* **Invariant:** A statement of an invariant condition.

### Detailed Analysis or Content Details

**Code Section:**

* `In [16]: let side_condition (ob : order_book) (ref_price : real) =`

`match best_buy(ob), best_sell(ob) with`

`| Some bb, Some bs ->`

`bb.order_type = Market && bs.order_type = Market`

`| _ -> false;;`

* `Modular_decomp.(top ~assuming:"side_condition" "match_price")`

* `Out [16]: val side_condition : order_book -> real -> bool = <fun>`

`- : Modular_decomp_intf.decomp_ref = <abstr>`

**Voronoi Diagram:**

The diagram is composed of several regions, each with a label. The labels and approximate relative sizes are:

* 1.1.1: Darkest region, bottom-right.

* 1.1.2: Medium-dark region, left of 1.1.1.

* 1.1.3: Light region, top-right of 1.1.1.

* 1.1.1.1: Very light region, top-left of 1.1.1.

* 1.1.1.1.1: Very light region, top-left of 1.1.1.1.

* 1.1.1.1.2: Very light region, above 1.1.1.1.1.

* 1.3.2: Light region, right of 1.1.3.

* 2.2: Light region, above 1.1.3.

* 3.1: Light region, right of 1.1.1.

**Regions Details:**

* Direct sub-regions: 0

* Contained regions: 1

**Constraints:**

* `(List.hd ob.buys).order_type = Market`

* `(List.hd ob.sells).order_type = Market`

* `(List.hd ob.buys).order_qty = (List.hd ob.sells).order_qty`

* `(List.hd (List.tl ob.buys)).order_type = Market`

* `(List.hd (List.tl ob.buys)).order_price > ref_price`

* `(List.hd (List.tl ob.sells)).order_type = Market`

**Invariant:**

* `F = Known (List.hd (List.tl ob.buys)).order_price`

### Key Observations

* The code defines a `side_condition` function that checks if the order types of the best buy and best sell orders are both "Market".

* The Voronoi diagram visually partitions the space based on some criteria, likely related to the conditions defined in the code.

* The constraints specify relationships between order types, quantities, and prices, suggesting a system for managing and validating orders.

* The invariant states that the order price is known, implying that the system has a way to determine or track the price of orders.

### Interpretation

The image represents a system for analyzing and managing exchange pricing using region decomposition. The Voronoi diagram likely visualizes different regions or states in the order book, with the code defining the conditions and constraints that govern these regions. The constraints ensure that orders meet certain criteria, while the invariant provides a guarantee about the order price. This system could be used for algorithmic trading, risk management, or market surveillance. The "Region Decomposition" likely refers to partitioning the order book state space into regions with similar properties, allowing for more efficient analysis and decision-making. The "Exchange Pricing" aspect suggests that the system is focused on understanding and predicting price movements in the exchange.

</details>

Together, side-conditions and basis functions provide a rich query language for targeting the analysis of system state spaces towards particular classes of behaviors [11, 12].

## 3.5 Test Generation from Regions

Each region produced by decomposition includes sample points satisfying its constraints. These witnesses can be extracted to generate tests that correspond directly to semantic distinctions in program behavior, rather than to ad hoc input distributions. In CodeLogician , this capability underpins automated test generation: agents use regions to produce tests that cover all behavioral cases discovered by decomposition, consistent with the 'exhaustive coverage' objective motivating autoformalization.

## 3.6 Summary

ImandraX provides the formal reasoning substrate for CodeLogician . Verification goals support prooforiented analysis, while region decomposition exposes the structure of program state spaces, makes decision boundaries explicit, and enables systematic test-case generation. Together with the autoformalization process (Section 4), these capabilities allow LLM-driven agents to construct formal mental models of software and to answer rich semantic questions beyond binary verification outcomes.

## 4 Autoformalization of source code

Autoformalization is the process of translating executable source code into precise, mathematical models suitable for automated reasoning. While this translation is partially automated by CodeLogician, it relies on a particular way of thinking about software-one that emphasizes explicit state, clear abstraction boundaries, and well-defined assumptions. Just as developers strive to write clear code , effective automated reasoning depends on writing or synthesizing clear formal models .

## 4.1 Pure Functional Modeling

All formal models produced by CodeLogician are expressed in the Imandra Modeling Language (IML), a pure functional subset of OCaml. Purity here means the absence of side effects: functions cannot mutate global state or influence values outside their scope. Instead, all state changes must be made explicit via function arguments and return values.

This restriction is not a fundamental limitation. Programs with side effects can be systematically transformed into state-machine models in which the evolving program state is explicitly carried through computations. Such techniques are well established in the literature and trace back to foundational work by Boyer and Moore [4, 3]. For example, this (infinite) state-machine model approach underlies successful formal verification of highly complex industrial systems, including large-scale financial market infrastructure [16, 15], complex hardware models [17], robotic controllers integrating deep neural networks [6], and high-frequency communication protocols [10].

## 4.2 Key Modeling Constructs

Several structural features of IML are particularly important for automated reasoning:

Static Types IML is a statically typed functional language. All variable types are determined before execution and cannot change dynamically. This eliminates entire classes of ambiguity present in dynamically typed languages. During autoformalization, CodeLogician extracts type information from source programs-using static annotations where available and inference otherwise-and enforces type consistency in the generated models. Type errors detected by ImandraX often correspond directly to logical inconsistencies in the original program.

Recursion and Induction Loops in imperative source code are rewritten as recursive functions. Both for and while constructs are mapped to structurally recursive definitions. This transformation is essential because reasoning about unbounded iteration requires inductive reasoning, which is naturally expressed over recursive functions.

State Machines Many real-world programs are best modeled as state machines. These machines are typically infinite-state, as the state may include complex data structures such as lists, maps, or trees in addition to numeric values. State-machine modeling is particularly effective for representing code with side effects and for capturing the behavior of complex reactive systems, such as trading platforms or protocol handlers.

## 4.3 Abstraction Levels

A central question in autoformalization is not merely whether a program can be modeled, but at what level of abstraction it should be modeled . The appropriate abstraction depends on the properties one wishes to analyze.

We distinguish three broad abstraction levels:

High-Level Models High-level models typically arise from domain-specific languages (DSLs) designed to describe complex systems concisely. In such cases, direct use of CodeLogician is often unnecessary. Instead, the DSL itself is translated into IML. For example, the Imandra Protocol Language (IPL) provides a compact notation for symbolic state-transition systems and compiles into IML with significant structural expansion. Reasoning at this level focuses on system-wide invariants and high-level behavioral properties.

Mid-Level Models Application-level software represents the primary target for CodeLogician. At this level, source programs can be translated directly into IML without introducing an intermediate DSL. This category includes most business logic, data-processing pipelines, and control-heavy application code.

Low-Level Models Low-level code, such as instruction sequences or hardware-near implementations, can also be modeled in ImandraX, but doing so requires an explicit formalization of the execution environment (e.g., memory model, virtual machine, or instruction semantics). Such models are outside the intended scope of CodeLogician, which assumes access to semantic abstractions of the underlying execution platform.

## 4.4 Dealing with Unknowns

Real-world programs inevitably rely on external libraries, services, or system calls. Autoformalization therefore requires explicit handling of unknown or partially specified behavior. CodeLogician adopts techniques analogous to mocking in software testing, but within a formal reasoning framework.

## 4.4.1 Opaque Functions

IML supports opaque functions , which declare a function's type without specifying its implementation. Opaque functions allow models to type-check and support reasoning without making any assumptions about the function's internal behavior.

For example, calls to external libraries such as pseudo-random number generators are introduced as opaque functions during autoformalization. Reasoning about code that depends on such functions remains sound, but necessarily conservative.

## 4.4.2 Axioms and Assumptions

To refine reasoning about opaque functions, users (or agents) may introduce axioms that constrain their behavior. These axioms encode assumptions about external components, such as value ranges or monotonicity properties. Introducing axioms narrows the set of possible behaviors considered during reasoning and can significantly strengthen the conclusions that can be drawn.

Importantly, axioms are explicit and local: reasoning results are valid only under the stated assumptions, making the modeling process auditable and transparent.

## 4.4.3 Approximation Functions

In many cases, opaque functions can be replaced with sound approximations. For common mathematical functions, CodeLogician provides libraries of approximation functions, such as bounded Taylorseries expansions for trigonometric operations. These approximations allow models to remain executable and enable test generation, while still supporting rigorous reasoning within known bounds.

## 4.5 Summary

Autoformalization is not merely a syntactic translation process. It is a disciplined approach to modeling software behavior in which state, control flow, assumptions, and abstraction boundaries are made explicit. By combining LLM-driven translation with principled formal modeling techniques, CodeLogician enables automated reasoning engines to answer deep semantic questions about software behavior that are inaccessible to LLMs alone.

## 5 CodeLogician IML Agent

CodeLogician is a neuro-symbolic agent framework designed to enable Large Language Models (LLMs) to reason rigorously about software systems. Rather than asking LLMs to reason directly about source code, CodeLogician teaches LLM-driven agents to construct explicit formal models in mathematical logic and to delegate semantic reasoning to automated reasoning engines.

The current implementation integrates the ImandraX automated reasoning engine together with static analysis tools and auxiliary knowledge sources within an agentic architecture built on LangGraph. CodeLogician exposes both low-level programmatic commands and high-level agentic workflows, allowing users and external agents to flexibly combine automated reasoning with guided interaction. When required information is missing or inconsistencies are detected, the agent can request human

feedback, enabling mixed-initiative refinement. The framework can also be embedded into external agent systems via a remote graph API.

## 5.1 From Source Code to Formal Models

At the core of CodeLogician lies the process of autoformalization (Section 4). Given a source program written in a conventional programming language (e.g., Python), the agent incrementally constructs an executable formal model in the Imandra Modeling Language (IML).

Autoformalization is not a single translation step, but a structured refinement process that involves:

- extracting structural, control-flow, and type information from the source program,

- refactoring imperative constructs into pure functional representations,

- making implicit assumptions explicit (e.g., about external libraries or partial functions),

- synthesizing an IML model that is admissible to the reasoning engine.

The resulting model is functionally equivalent to the source program at the chosen level of abstraction and suitable for mathematical analysis by ImandraX.

## 5.2 Verification Goals and Logical Properties

Once a formal model has been constructed, CodeLogician assists in formulating verification goals (VGs). A verification goal is a boolean-valued predicate over the model that expresses a property expected to hold for all possible inputs. Unlike traditional testing, verification goals quantify symbolically over infinite input spaces and admit mathematical proof or counterexample.

For example, consider a ranking function used in a financial trading system. A fundamental correctness requirement is transitivity: if one order ranks above a second, and the second ranks above a third, then the first must rank above the third. Such properties are expressed directly as verification goals in IML and discharged by ImandraX.

When a verification goal does not hold, ImandraX produces a counterexample witness. These counterexamples are concrete executions that violate the property and often expose subtle logical flaws that are difficult to detect through testing or informal inspection. In industrial case studies, this approach has uncovered violations of core invariants in production systems that would otherwise remain hidden.

## 5.3 Beyond Yes/No Verification

While the proof or refutation of a verification goal determines whether or not a property is true , many forms of program understanding require richer, non-binary kinds of semantic information. CodeLogician therefore relies not only on formal verification, but also on exhaustive behavioral analysis techniques provided by ImandraX.

In particular, CodeLogician uses state-space exploration to identify decision boundaries, semantic edge cases, and invariant behaviors. These capabilities enable explanations, documentation, and test generation that go far beyond yes/no verification and are central to CodeLogician's value proposition.

## 5.4 Agent Architecture and State

CodeLogician is implemented as a stateful agent whose execution is organized around an explicit agent state . This state captures all artifacts relevant to the reasoning process and evolves as the agent performs analysis and refinement.

The agent state includes:

- the original source program,

- extracted formalization metadata (types, control flow, assumptions),

- the generated IML model,

- the model's admission and executability status,

- verification goals, decomposition requests, and related artifacts.

Agent operations fall into three broad categories:

- State transformations , which update the agent state explicitly (e.g., modifying the source program or model);

- Agentic workflows , which orchestrate multi-step processes such as end-to-end autoformalization and analysis;

- Low-level commands , which perform individual actions such as model admission, verification, or decomposition.

This separation allows users and external agents to mix automated and guided reasoning as appropriate.

## 5.5 Model Refinement and Executability

Autoformalization proceeds as an iterative refinement process. The immediate objective is to produce a model that is admissible to ImandraX, meaning that it is well-typed and free of missing definitions. A further objective is to make the model executable , eliminating opaque functions either by implementation or by sound approximation.

These two stages correspond to:

- Admitted models , which can be verified but may contain unresolved abstractions;

- Executable models , which support full behavioral analysis and test generation.

This refinement process mirrors established practices in model-based software engineering and digital twinning. By working with an executable mathematical model, CodeLogician enables rigorous reasoning about systems whose direct analysis in the source language would be impractical.

## 5.6 Assessing Model Correctness

A natural question arises when constructing formal models: how do we know the model is correct? CodeLogician adopts a notion of correctness based on functional equivalence at the chosen abstraction level. Two programs are considered equivalent if they produce the same observable outputs for the same inputs within the modeled domain.

Exhaustive behavioral analysis plays a central role in this assessment. By enumerating all distinct behavioral regions of the model, CodeLogician provides a structured and auditable view of program behavior. This makes mismatches between intended and modeled semantics explicit and actionable.

## 5.7 Region decomposition, test case generation, and refinement

The final stage is where Imandra shows its power. We perform region decomposition on the IML code to obtain a finite number of symbolically described regions such that (a) the union of all regions covers the entire state-space, and (b) in each region, the behavior of the system is invariant.

Consider the following IML code that models order discount logic:

```

type priority = Standard | Premium

type order = { amount: int; customer: priority }

let discount = fun o ->

match o.customer with

| Premium -> if o.amount > 100 then 20 else 10

| Standard -> if o.amount > 100 then 10 else 0

```

Region decomposition partitions the input space into four distinct regions based on customer type and order amount. For each region, ImandraX provides concrete witness values that satisfy the region's constraints. To bridge the gap between these symbolic results and executable test cases, we developed imandrax-codegen , an open-source code generation tool. Given the decomposition artifacts, the tool automatically generates code including type definitions and test cases. We use Python as the target language in this example; support for additional languages is planned:

```

@dataclass

class order:

amount: int

customer: priority

@dataclass

class Standard:

pass

@dataclass

class Premium:

pass

priority = Standard | Premium

def test_1():

"""test_1

- invariant: 0

- constraints:

- not (o.customer = Premium)

- o.amount <= 100

"""

result: int = discount(o=order(0, Standard()))

expected: int = 0

assert result == expected

def test_2():

"""test_2

- invariant: 10

- constraints:

- not (o.customer = Premium)

- o.amount >= 101

"""

result: int = discount(o=order(101, Standard()))

expected: int = 10

assert result == expected

# ... test_3, test_4 are omitted for brevity

```

This workflow ensures that we have test cases that cover all possible behavioral regions of the system-in this example, all four combinations of customer priority and order amount threshold.

The entire pipeline is configurable, allowing for a balance between automation and human intervention. For example, the user can choose to double-check refactored code in intermediate steps, provide additional human feedback on error messages or let the agent handle it by itself, etc. And as mentioned in the previous section, FDB provides critical RAG support in all stages of the pipeline.

## 5.8 Interfaces

CodeLogician exposes both input and output interfaces designed to be simultaneously user-friendly and programmatically accessible. The interfaces follow a simple input schema paired with a rich, structured output format, enabling use in interactive development environments as well as integration into larger agent-based systems.

The input interface accepts source programs (currently Python) as textual input, together with optional configuration parameters that control the autoformalization and reasoning process. This minimal input design allows CodeLogician to be invoked uniformly from command-line tools, IDEs, or external agents.

The output interface is structured using Pydantic models, providing strong validation, typing, and serialization guarantees. The output schema captures detailed artifacts produced during each reasoning

attempt, including:

- generated IML models,

- compilation and admission results,

- verification outcomes and counterexamples,

- region decomposition results and associated metadata.

This structured representation makes CodeLogician suitable both for standalone use and as a component within larger neuro-symbolic pipelines.

CodeLogician also exposes configuration parameters that control the degree of automation and human intervention. Parameters such as confirm\_refactor , decomp , max\_attempts , and max\_attempts\_wo\_human\_feedback allow users to trade off full automation against mixed-initiative interaction, depending on the complexity and criticality of the target code.

## 5.8.1 Programmatic Access via python RemoteGraph

CodeLogician can be directly integrated with other LangGraph agents using the RemoteGraph interface. By specifying the agent's URI within Imandra Universe and supplying an API key, external agents can invoke CodeLogician as a remote reasoning component. This enables composition with other agentic workflows, including multi-agent systems and tool-augmented LLM pipelines. In addition to programmatic access, CodeLogician is also available through a web-based chat interface in Imandra Universe.

## 5.8.2 VS Code Extension

CodeLogician is distributed with a free Visual Studio Code (VS Code) extension [8], providing a lightweight and accessible interface for interactive use. The extension communicates with the CodeLogician agent via the RemoteGraph interface and exposes core commands through the VS Code command palette.

The extension is built around a command-queue abstraction that allows users to sequence multiple operations-such as autoformalization, verification, and decomposition-before execution. It supports simultaneous formalization of multiple source files and provides visibility into the agent's internal state, including generated models and reasoning artifacts.

Figure 6 illustrates the VS Code extension's command management system, state viewer, and an example IML model produced by CodeLogician.

## 5.9 Summary

The CodeLogician IML agent operationalizes the neuro-symbolic approach advocated in this paper. LLMs are used to construct formal mental models of software systems, while automated reasoning engines provide mathematically precise analysis. By combining autoformalization, verification goals, and exhaustive behavioral analysis within an agentic framework, CodeLogician enables rigorous reasoning about real-world software systems beyond the capabilities of LLM-only approaches.

## 6 CodeLogician Server

The CodeLogician server provides a persistent backend for project-wide formalization and analysis. It manages formalization tasks, maintains analysis state across sessions, and serves multiple client interfaces (TUI and CLI). Unlike one-shot invocation modes, the server is designed for continuous and incremental operation: it caches results, reacts to code changes, and recomputes only the minimal set of affected artifacts required to keep formal models consistent with the underlying code base.

Figure 6: CodeLogician VS Code extension, showing command management, agent state visualization, and a generated IML model.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Software Interface: Imandra Code Logician

### Overview

The image shows a software interface, likely for a tool named "Imandra Code Logician." It displays various panels related to code analysis, command execution, and interaction summaries. The interface includes code editors, command management options, and a summary of interactions.

### Components/Axes

* **Top-Left Panel:**

* Header: "IMANDRA: CODE LOGICIAN"

* Section: "COMMAND MANAGEMENT"

* Buttons: "Add command", "Clear graph state"

* **Top-Center Panel:**

* File Name: "rd_rb.rb"

* Code Editor: Contains Ruby code defining functions `g(x)` and `f(x)`.

* **Top-Right Panel:**

* Title: "Select a command to execute (11 certain of 16 total)"

* List of Commands: "Update Source Code", "Inject Formalization Context", "Set IML Model", "Admit IML Model" (Not available in current state), "Sync IML Model" (Not available in current state), "Generate IML Model"

* **Bottom-Left Panel:**

* Title: "Summary of Interactions - rd_rb.rb (Imandra CodeLogician)"

* Table: "Summary of Interactions" with columns "#", "Command", and "Response".

* **Bottom-Right Panel:**

* Explorer: Shows a file tree with "TEST" as the root, containing "code_logician", "rd_rb.iml", and "rd_rb.rb".

* Code Editor: Displays the content of "rd_rb.iml", which contains code defining functions `g(x)` and `f(x)` using a syntax similar to OCaml.

### Detailed Analysis

**Top-Left Panel:**

* The "COMMAND MANAGEMENT" section provides buttons to "Add command" and "Clear graph state".

**Top-Center Panel:**

* The file "rd_rb.rb" contains the following Ruby code:

```ruby

def g(x)

if x > 22

9

else

100 + x

end

end

def f(x)

if x > 99

100

elsif x > 23 && x < 70

89 + x

end

end

```

**Top-Right Panel:**

* The command selection panel indicates that 11 out of 16 commands are available.

* The "Admit IML Model" and "Sync IML Model" commands are "Not available in current state".

**Bottom-Left Panel:**

* The "Summary of Interactions" table has the following entries:

* **Row 1:**

* Command: `init_state`

* Parameters:

* `src_code`: `def g(x) if x > 22 9 else end`

* `src_lang`: `ruby`

* Response: `Task (DONE)`

* **Row 2:**

* Command: `agent_formalizer`

* Parameters:

* `no_check_formalization_h`: `False`

* `no_refactor`: `False`

* `no_gen_model_hitl`: `False`

* `max_tries_wo_hitl`: `2`

* `max_tries`: `3`