# AI for Mathematics: Progress, Challenges, and Prospects

**Authors**: Haocheng Ju, Bin Dong

> School of Mathematical Sciences, Peking University, Beijing 100871, China

> Corresponding authorBeijing International Center for Mathematical Research, Peking University, Beijing 100871, ChinaCenter for Machine Learning Research, Peking University, Beijing 100871, ChinaCenter for Intelligent Computing, Great Bay Institute for Advanced Study, Great Bay University, Dongguan 523000, ChinaZhongguancun Academy, Beijing 100094, China

Abstract

AI for Mathematics (AI4Math) has emerged as a distinct field that leverages machine learning to navigate mathematical landscapes historically intractable for early symbolic systems. While mid-20th-century symbolic approaches successfully automated formal logic, they faced severe scalability limitations due to the combinatorial explosion of the search space. The recent integration of data-driven approaches has revitalized this pursuit. In this review, we provide a systematic overview of AI4Math, highlighting its primary focus on developing AI models to support mathematical research. Crucially, we emphasize that this is not merely the application of AI to mathematical activities; it also encompasses the development of stronger AI systems where the rigorous nature of mathematics serves as a premier testbed for advancing general reasoning capabilities. We categorize existing research into two complementary directions: problem-specific modeling, involving the design of specialized architectures for distinct mathematical tasks, and general-purpose modeling, focusing on foundation models capable of broader reasoning, retrieval, and exploratory workflows. We conclude by discussing key challenges and prospects, advocating for AI systems that go beyond facilitating formal correctness to enabling the discovery of meaningful results and unified theories, recognizing that the true value of a proof lies in the insights and tools it offers to the broader mathematical landscape.

1 Introduction

The automation of mathematical reasoning has been a central objective of artificial intelligence (AI) since its inception. The dream of mechanizing reasoning predates the digital computer, tracing back to the 1920s when David Hilbert proposed a program to formalize all of mathematics, aimed at proving every theorem within a consistent axiomatic system. While this optimism was theoretically challenged by Kurt Gödel’s incompleteness theorems in 1931 [59], which demonstrated that any sufficiently rich formal system is necessarily incomplete, Gödel’s results did not close the subject. As noted by Ulam [133], von Neumann viewed these discoveries as a prompt to rethink the role of formalism rather than abandon it. Later research has further shown that large portions of classical mathematics admit finitistic reductions [128], keeping the dream of partial mechanization alive.

With the advent of digital computers, these theoretical ideas were translated into practice. In the 1950s, Martin Davis implemented a decision procedure for Presburger arithmetic [30, 38], arguably the first instance of a computer mechanically verifying logical statements. Shortly after, Newell, Simon, and Shaw developed the “Logic Theorist” [109], widely considered the first true AI program, which performed theorem proving in symbolic logic. A particularly influential milestone in this symbolic era was Wen-Tsun Wu’s method of mechanical theorem proving in geometry [149, 151]. By translating geometric problems into systems of algebraic equations and applying the characteristic set method, Wu demonstrated that complex logical consequences could be derived algorithmically.

However, most of these symbolic methods faced a critical bottleneck: the combinatorial explosion of the search space. As proofs grew in complexity, the number of possible logical paths expanded exponentially, rendering exhaustive search intractable. In recent decades, the integration of machine learning has revitalized this pursuit, offering data-driven approaches to navigate these complex landscapes. Since the 2010s, connectionist AI Connectionist AI is an AI paradigm inspired by the interconnected structure of neurons in the human brain. It enables perception, reasoning, and decision-making through multi-layer networks of nodes that learn patterns from data by optimizing connection weights. has risen to prominence, achieving remarkable success in computer vision and natural language processing. Mathematicians have subsequently begun leveraging these models to identify patterns that guide human intuition [37, 64, 41], to construct counterexamples via reinforcement learning (RL) [136, 61, 126], and to train neural theorem provers [150, 11]. More recently, the rapid progress of large language models (LLMs) has enabled the generation of new mathematical constructions [119, 110, 55], autoformalization [153, 107], and collaborative theorem proving [176].

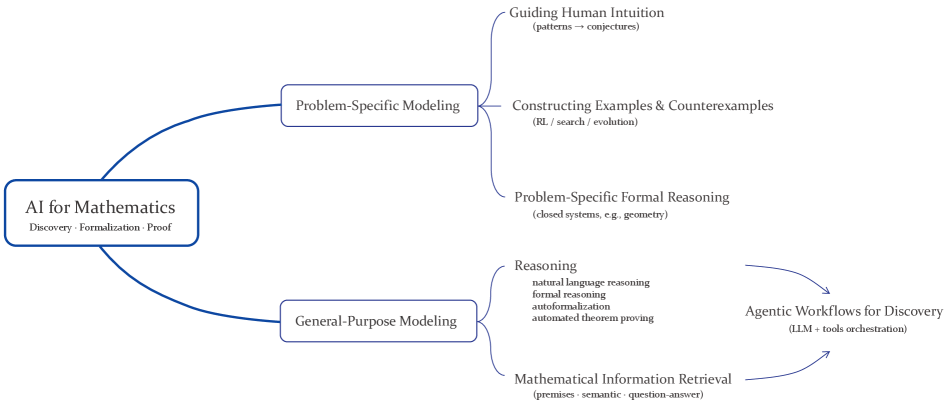

This convergence has given rise to the interdisciplinary field of AI for Mathematics (AI4Math). We emphasize that AI4Math is not merely the application of AI tools to mathematical tasks; it also encompasses the development of stronger AI systems where the rigorous nature of mathematics serves as a premier testbed for advancing general reasoning capabilities. Broadly speaking, as illustrated in Figure 1, research in this field can be categorized into two complementary directions:

- Problem-Specific Modeling: This involves the design of specialized architectures tailored to specific research questions or narrow classes of problems, such as guiding intuition in knot theory or reasoning within closed geometric systems. Beyond their high effectiveness for targeted tasks, these models typically require significantly less data and computational resources, making them accessible to a broader range of researchers. However, they rarely transfer to other domains without substantial modification.

- General-Purpose Modeling: This focuses on the development of foundation models, ranging from specialized math-specific language models to general-purpose reasoning engines, designed to support broader workflows across diverse mathematical areas. While offering versatility, these approaches demand massive training datasets, substantial computational resources, and significant engineering expertise. Furthermore, they may not achieve the same degree of specificity or effectiveness as specialized models when applied to narrowly defined mathematical problems. This category encompasses advances in natural language reasoning, the bridging of informal and formal mathematics through autoformalization, and the construction of agentic systems capable of automated theorem proving and information retrieval.

It is worth noting that the scope of AI4Math technically extends beyond logical reasoning to include AI for Computational Mathematics and Scientific Computing. This domain focuses on building AI models to assist in numerical computations, such as for solving partial differential equations (PDEs), optimization, and inverse problems. The roots of this approach trace back to the 1980s and 1990s, where researchers explored the use of shallow neural networks to approximate solutions to differential equations. However, the field experienced a renaissance around 2015 with the advent of deep learning. While these computational advances constitute a vital pillar of the broader AI4Math landscape, this review restricts its focus to mathematical reasoning—encompassing discovery, formalization, and proof. We refer readers interested in the computational aspects of AI4Math to other comprehensive surveys [6, 79, 19, 148, 42, 24].

In this paper, we provide a systematic overview of the progress, challenges, and prospects of AI4Math. Our goal is not to be exhaustive, but to highlight representative works that illustrate the field’s evolution. Readers interested in specific subareas may consult existing surveys on automated reasoning in geometry [29, 152] or deep learning for theorem proving [95]. Section 2 examines problem-specific modeling, while Section 3 reviews general-purpose modeling. Finally, Section 4 discusses the key challenges ahead, advocating for systems that move beyond simple verification toward the discovery of deep mathematical insights.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: AI for Mathematics

### Overview

The image is a concept diagram illustrating the application of Artificial Intelligence (AI) in the field of Mathematics. It outlines two primary approaches: Problem-Specific Modeling and General-Purpose Modeling, and their respective sub-categories and applications. The diagram uses a mind-map style layout with connecting lines to show relationships between concepts.

### Components/Axes

* **Central Node:** "AI for Mathematics" with sub-categories "Discovery", "Formalization", and "Proof".

* **Main Branches:**

* "Problem-Specific Modeling"

* "General-Purpose Modeling"

* **Sub-Branches of "Problem-Specific Modeling":**

* "Guiding Human Intuition" (patterns -> conjectures)

* "Constructing Examples & Counterexamples" (RL / search / evolution)

* "Problem-Specific Formal Reasoning" (closed systems, e.g., geometry)

* **Sub-Branches of "General-Purpose Modeling":**

* "Reasoning" (natural language reasoning, formal reasoning, autoformalization, automated theorem proving)

* "Mathematical Information Retrieval" (premises, semantic, question-answer)

* "Agentic Workflows for Discovery" (LLM + tools orchestration)

### Detailed Analysis or ### Content Details

The diagram starts with the central concept of "AI for Mathematics," which is further divided into three aspects: Discovery, Formalization, and Proof. From this central node, two main branches emerge: Problem-Specific Modeling and General-Purpose Modeling.

* **Problem-Specific Modeling:** This branch focuses on AI techniques tailored to specific mathematical problems. It includes:

* **Guiding Human Intuition:** Using AI to identify patterns and form conjectures.

* **Constructing Examples & Counterexamples:** Employing AI techniques like Reinforcement Learning (RL), search algorithms, or evolutionary algorithms to generate examples and counterexamples.

* **Problem-Specific Formal Reasoning:** Applying AI to formal reasoning within closed systems, such as geometry.

* **General-Purpose Modeling:** This branch focuses on AI techniques that can be applied to a broader range of mathematical problems. It includes:

* **Reasoning:** Utilizing AI for natural language reasoning, formal reasoning, autoformalization, and automated theorem proving.

* **Mathematical Information Retrieval:** Using AI to retrieve relevant mathematical information based on premises, semantics, and question-answering.

* **Agentic Workflows for Discovery:** Orchestrating Large Language Models (LLMs) and other tools to create workflows for mathematical discovery.

### Key Observations

* The diagram highlights the dual approach of using AI in mathematics: one focused on specific problems and the other on general-purpose techniques.

* The sub-branches provide concrete examples of how AI can be applied in each approach.

* The diagram emphasizes the role of AI in both assisting human mathematicians (e.g., guiding intuition) and automating mathematical tasks (e.g., automated theorem proving).

### Interpretation

The diagram illustrates the multifaceted role of AI in mathematics, spanning from assisting human intuition to automating complex reasoning processes. The distinction between problem-specific and general-purpose modeling suggests a strategic approach to AI implementation, where tailored solutions are used for specific challenges, while broader techniques are applied to more general mathematical tasks. The inclusion of "Agentic Workflows for Discovery" indicates a forward-looking perspective on AI's potential to drive new mathematical discoveries through automated exploration and reasoning. The diagram suggests that AI is not just a tool for solving existing problems but also a catalyst for generating new mathematical knowledge.

</details>

Figure 1: Landscape of AI4Math research directions. AI4Math research can be broadly divided into two complementary paradigms: problem-specific modeling and general-purpose modeling. Problem-specific modeling encompasses three major lines of work: using machine learning to guide human intuition, constructing examples and counterexamples, and performing formal reasoning in closed systems. General-purpose modeling focuses on mathematical reasoning and mathematical information retrieval; together, these capabilities underpin emerging agentic workflows for mathematical discovery. This diagram is intended to organize and clarify conceptual relationships among research directions, rather than to provide a one-to-one correspondence with the sections of this review.

2 Problem-Specific Modeling

With the rapid advancement of data-driven techniques, researchers started to design specialized machine learning models tailored to specific mathematical research problems. These efforts generally fall into three distinct directions: identifying patterns in high-dimensional data to guide human intuition and inspire new conjectures; constructing examples or counterexamples to rigorously test or disprove mathematical hypotheses; and performing formal reasoning within closed axiomatic systems, such as Euclidean geometry. In this section, we review recent developments across these three areas and discuss their respective advantages and limitations.

2.1 Guiding Human Intuition through Machine Learning

One of the earliest examples of utilizing machine learning to assist in formulating mathematical conjectures is [21]. In this study, the authors employed linear regression to predict the rank of the geometric gauge group across a large ensemble of F-theory geometries, successfully rediscovering an existing conjecture regarding gauge group rank. Building on this, they applied logistic regression to a classification problem involving $E_{6}$ gauge groups; through attribution analysis of the model, they formulated a genuinely new conjecture that was subsequently proved by human mathematicians.

However, the pivotal study that demonstrated the broad potential of deep learning in mathematical research is [37]. The central contribution of this work is a systematic framework designed to accelerate the conjecture generation process—traditionally a time-consuming cycle where mathematicians hypothesize relationships, attempt proofs, and refine their ideas iteratively. In the workflow proposed by [37], mathematicians begin by hypothesizing that a relationship may exist between two mathematical objects. A specially designed neural network is then trained to predict one quantity from the features of the other. Attribution methods are subsequently used to identify the most influential input components, thereby guiding mathematicians toward more precise and refined conjectures. This cycle repeats until a mathematically meaningful statement is formulated. Using this approach, the authors discovered a new relationship between algebraic and geometric invariants in knot theory [36] and proposed a candidate algorithm predicted by the combinatorial invariance conjecture for symmetric groups [17].

Inspired by this paradigm, [41] designed another AI-guided intuition framework to the study of affine Deligne–Lusztig varieties (ADLV). This work not only independently rediscovered the classical virtual dimension formula in arithmetic geometry but also established a novel, precise lower-bound theorem. By providing a quantitative characterization that fills an important theoretical gap, this result demonstrates the efficacy of AI-guided intuition in facilitating rigorous discoveries within deep areas of pure mathematics.

Beyond refining conjectures, machine learning has also proven effective in uncovering entirely new mathematical phenomena. A notable example is [65], where the authors represented elliptic curves as vectors and trained a logistic regression classifier to distinguish curves of different ranks. To interpret the classifier’s strong performance, the authors performed Principal Component Analysis (PCA) on the vector representations. Upon plotting the averages of Frobenius traces for elliptic curves of fixed rank over a given conductor range, they revealed a surprising oscillatory pattern, which they termed “murmurations.” This phenomenon has since been the subject of in-depth theoretical study [178, 90, 18]. A growing body of literature continues to leverage machine learning to discover or rediscover relationships across various domains [21, 81, 47, 14, 16, 91, 5, 62, 123, 87, 89].

2.2 Constructing Examples and Counterexamples

One of the pioneering works to utilize machine learning for constructing examples and counterexamples is [136]. In this study, the author encodes graphs as 0–1 sequences and applies the deep cross-entropy method from RL to search for graph constructions that serve as counterexamples to existing conjectures. Following this precedent, RL has been adopted for a variety of structural problems. For instance, [13] models the Hironaka game, a framework central to the resolution of singularities in algebraic geometry, as a Markov decision process (MDP). By combining Monte Carlo tree search with Deep Q-Networks, the authors successfully trained an agent to replace singular points with smooth ones, achieving near-optimal resolutions for du Val singularities. Similarly, [126] investigates the Andrews–Curtis (AC) conjecture. After first employing classical search algorithms to identify AC triviality for infinite subfamilies of the Miller–Schupp series and to obtain length reductions in the Akbulut–Kirby series, the authors formulate the general problem as an MDP. They trained an RL agent on problem instances of varying difficulty, ultimately discovering two novel AC trivializations of balanced presentations that had eluded classical search methods.

Beyond RL, a diverse array of other machine learning techniques has been proposed for mathematical construction. [4] trains a Transformer model on synthetic data to predict Lyapunov functions for stable dynamical systems; the trained model is then used to discover novel Lyapunov functions for non-polynomial systems. In combinatorics, [22] introduces an iterative bootstrapping procedure. This method begins by generating candidate constructions via a local search algorithm, trains a neural network on the highest-scoring candidates, and then samples new seeds from the network to initialize the next round of local search. Using this approach, the authors successfully discovered a counterexample to a 30-year-old conjecture. [bérczi2026flowbasedextremalmathematicalstructure] proposes a geometry-aware conditional flow-matching model for continuous geometric optimization problems, interleaving flow integration with projection onto the constraint manifold. The method initializes a training set using stochastic relaxation with perturbations, trains a conditional flow-matching model with geometric penalties, and employs geometry-aware sampling together with reward-guided policy optimization to iteratively refine configurations via local search. This approach discovers configurations that match or exceed the best known results in problems such as circle packing maximizing the sum of radii and the Heilbronn triangle problem. Additionally, [15] applies genetic algorithms to generate reflexive polytopes in dimensions 2 through 5, identifying several previously unknown polytopes in dimension 5. Other works leveraging machine learning for the construction of examples or counterexamples include [26, 27, 61, 129, 44].

As LLMs continue to grow more capable, LLM-based agents have also improved, and several recent works have demonstrated their potential for discovering new mathematical constructions. FunSearch [119] searches for programs that generate desired constructions using an evolutionary approach. For problems equipped with a well-defined evaluator, FunSearch employs an off-the-shelf LLM to iteratively evolve low-scoring candidate programs into higher-scoring ones. This is achieved by maintaining a large, diverse pool of programs and repeatedly prompting the LLM to improve upon earlier candidates. Using this method, FunSearch discovered new constructions of large cap sets that surpass the best previously known results in extremal combinatorics. Building on this line of work, AlphaEvolve [110] uses a stronger LLM and extends the evolutionary process to entire code files rather than single functions, while also optimizing multiple metrics simultaneously. AlphaEvolve has produced improved constructions for several problems, including the Minimum Overlap Problem and the Kissing Numbers problem in 11 dimensions. Open-source implementations inspired by AlphaEvolve include OpenEvolve [125], ShinkaEvolve [88], and DeepEvolve [97]. AlphaEvolve-style agents are particularly suitable for finding new constructions in mathematical problems that can be approached by writing code and evaluated with a well-defined scoring function.

2.3 Problem-Specific Formal Reasoning

AlphaGeometry [132] represents a neuro-symbolic approach to solving Olympiad-level Euclidean geometry problems. It integrates a symbolic geometric reasoning engine with a language model designed to suggest auxiliary constructions. The symbolic component, based on a deductive database (DD) [28] and algebraic rules (AR), exhaustively derives the deduction closure of a given set of premises. Since purely symbolic deduction cannot introduce new geometric objects, a capability often required for complex proofs, the system utilizes a language model to propose useful auxiliary points. To circumvent the scarcity of human proof data, the authors generated a large-scale dataset of synthetic proof graphs by alternating symbolic deduction with random point insertions, subsequently extracting minimal proofs via traceback. During inference, the system operates in a loop: the symbolic engine expands the deduction closure, the model proposes high-probability auxiliary points, and the cycle repeats until the target conclusion is reached. This architecture allows AlphaGeometry to significantly outperform heuristic-based systems, reaching performance comparable to an IMO silver medalist.

Its successor, AlphaGeometry2 [25], further advances this paradigm through enhancements in both expressivity and efficiency. The formal geometry language was expanded to support locus descriptions, linear geometric relations, and non-constructive statements, while the underlying symbolic engine was re-engineered for greater speed and robustness. These improvements enabled the generation of a larger, more diverse synthetic training set for the language model. Furthermore, AlphaGeometry2 introduces a novel search algorithm, the Shared Knowledge Ensemble of Search Trees (SKEST), which executes multiple search trees in parallel. By allowing these trees to exchange discovered information, SKEST significantly improves the exploration of the auxiliary construction space. Consequently, AlphaGeometry2 achieves gold-medalist performance on IMO-level geometry problems. Beyond neural approaches for auxiliary point generation, recent work such as HAGeo [46] proposes a purely heuristic-based strategy that introduces auxiliary constructions with favorable geometric properties, including intersections of lines and circles, midpoints, and point reflections, and likewise achieves gold-medal-level performance. Additional work on Euclidean geometry problem solving can be found in [170, 66, 177, 171, 166].

2.4 Discussion

The approaches discussed in this section, identifying patterns to guide intuition, constructing counterexamples, and performing formal reasoning in closed systems, each offer distinct advantages while presenting unique challenges.

The AI-guided intuition paradigm is powerful because it enables mathematicians to uncover patterns in high-dimensional data that are otherwise difficult or time-consuming to detect manually, effectively reducing the search space in exploratory research. However, this approach is not universally applicable. It relies on careful problem selection, as the target question must admit the generation of sufficiently large and representative datasets. Furthermore, successful implementation demands a high barrier of dual expertise: beyond standard machine learning considerations such as architecture design and loss engineering, deep mathematical insight is indispensable for interpreting model outputs and transforming empirical correlations into rigorous mathematical theory. Ultimately, since the final proof and verification are typically carried out by human mathematicians, the degree of automation in this workflow remains limited.

Conversely, employing machine learning to construct examples and counterexamples can significantly accelerate the formulation and testing of conjectures by discovering objects that defy human intuition. Yet, this direction also faces technical hurdles, particularly regarding out-of-distribution (OOD) generalization. For instance, the backward generation method in [4], which constructs problems from sampled solutions, may yield training sets with specific distributions. This can present challenges for generalizing to typical problem instances and often requires carefully designed procedures, such as the diversity promoting mechanism for Lyapunov functions proposed by the authors, to ensure robust performance. Additionally, when RL is employed, mapping a mathematical problem onto an MDP is non-trivial. Defining appropriate state representations, action spaces, and reward functions can be intricate [136], and the learning process may be further complicated by sparse rewards and long planning horizons [126].

Systems like FunSearch and AlphaEvolve represent a powerful shift toward automated mathematical discovery through program evolution. These agents excel at construction-type problems, such as finding extremal configurations in combinatorics or improving algorithmic bounds, where the search space can be navigated by iteratively refining code and verified through a well-defined scoring function. However, this paradigm remains inherently constrained by its reliance on such evaluators. While highly effective at identifying specific mathematical objects (e.g., cap sets or high-dimensional sphere packings), these systems do not explicitly engage with the formulation of conceptual frameworks or structural principles that underlie general theories or connect disparate mathematical domains. Their success is therefore currently concentrated in verifiable construction, leaving the discovery of transferable abstractions and unifying insights as an open frontier.

Finally, problem-specific formal reasoning systems, such as AlphaGeometry, demonstrate that combining symbolic engines with neural language models can achieve expert-level performance in structured domains. However, the success of these systems typically relies on the availability of domain-specific symbolic engines (e.g., deductive databases for geometry [28]) and the capability to generate large-scale synthetic data. Consequently, these architectures are often tightly tailored to their specific problem scope and may not transfer to other areas of mathematics without substantial modification.

3 General-Purpose Modeling

General-purpose modeling marks a shift from specialized algorithms designed for isolated problems to adaptable systems capable of handling broad areas of mathematics. Unlike problem-specific modeling, which requires bespoke features and architectures for each new task, general-purpose approaches leverage foundation models trained on vast corpora to learn universal representations of mathematical knowledge. These models aim to support a wide spectrum of activities, from solving diverse problem sets to retrieving theorems and orchestrating complex workflows, without requiring substantial modification for each new domain.

We categorize recent progress in general-purpose modeling into three complementary directions: natural language reasoning models that leverage the intuitive power of language; formal reasoning models that ensure rigor through interaction with proof assistants; and mathematical information retrieval systems that ground reasoning in established knowledge. This section begins by analyzing the capabilities and inherent limitations of foundation models (especially LLMs), establishing the context for our detailed review of these three key areas.

3.1 Foundation Models and LLMs

Compared with traditional machine learning models that are trained for a single, narrowly defined task, LLMs serve as foundation models: a single architecture trained on a broad collection of data and tasks in a unified manner. Mathematically, this distinction represents a paradigm shift from function approximation to operator approximation, which is a process intimately related to meta-learning. A traditional model is typically designed to approximate a specific, fixed mapping $f:\mathcal{X}→\mathcal{Y}$ between Euclidean spaces, such as classifying objects in an image, by interpolating discrete data points sampled from that specific manifold.

In contrast, foundation models perform task interpolation rather than simple data interpolation. They are trained over a vast distribution of tasks $\{T_{i}\}$ , where each task effectively defines a distinct mapping $f_{i}$ . By learning to generalize across this distribution, the foundation model does not merely approximate a single function; instead, it approximates a global operator (or functional) $\Psi$ acting on a function space. Given a task specification or context as input, $\Psi$ instantiates the specific mapping $f_{i}$ required for that context. In essence, while traditional machine learning approximates functions, foundation models approximate the operators that generate and manipulate those functions.

A critical factor in their success is the capability to process diverse data types; tokenization converts heterogeneous inputs into a common sequence representation, and the next-token prediction objective provides a single learning rule that applies uniformly across all tasks the model encounters. Attention-based architectures are pivotal in this regime. Beyond effectively scaling with model size and data volume, the attention mechanism serves as the key engine for reasoning by enforcing long-context coherence during training. This allows the model to capture and maintain complex dependencies over extended sequences—a prerequisite for logical deduction. Through exposure to diverse domains and supervision signals, the model is forced to compress vast amounts of heterogeneous data into shared internal representations and to discover a common low-dimensional structure across tasks and languages. A natural hypothesis is that a key component of this low-dimensional structure corresponds to general-purpose reasoning abilities that can be expressed in different languages and domains.

Mathematics fits naturally into this framework. Mathematical work is governed by strict logical rules, and many mathematical tasks can be phrased as producing the next meaningful step in a calculation, derivation, or proof—exactly the kind of stepwise structure that the next-token prediction objective is designed to model. Consequently, when an LLM is trained as a foundation model on sufficiently rich mathematical and scientific corpora, the same mechanisms that support cross-domain generalization and long-context coherence can be harnessed to learn and use a wide range of mathematical reasoning abilities.

However, a fundamental gap remains between mastering standardized examinations and engaging in research-level mathematics. While current models excel at solving well-defined undergraduate problems, research demands open-ended exploration, absolute logical rigor, and the ability to navigate the “long tail” of specialized domain knowledge—challenges where stochastic text generation often falls short. To elevate AI from a competent solver to a reliable research partner, general-purpose modeling must therefore advance beyond simple next-token prediction, integrating formal verification, semantic retrieval, and agentic workflows to bridge the divide between plausible text and rigorous truth.

3.2 Natural Language Reasoning

Current approaches to natural language mathematical reasoning generally fall into two categories: math-specific LLMs and general-purpose reasoning models.

Math-specific LLMs are typically adapted from general foundation models through specialized pre-training and post-training pipelines. During pre-training, filtering pipelines [124, 160] extract high-quality mathematical content from web corpora (e.g., Common Crawl), textbooks, and research papers to maximize domain relevance. Post-training refines these models using supervised fine-tuning (SFT) and RL. The data for SFT is often structured as Chain-of-Thought (CoT) [147] pairs, consisting of problems and step-by-step solutions, or Tool-Integrated Reasoning (TIR) [60] examples that incorporate external code execution. A prominent example is NuminaMath [93], which won the first AIMO Progress Prize by fine-tuning on high-quality CoT and TIR datasets. While models in this category [10, 124, 160, 163] excel at elementary and competition-level benchmarks (e.g., GSM8K [31], MATH [67], AIME), their capacity for advanced mathematics remains less explored.

Concurrently, general-purpose LLMs have achieved significant mathematical milestones driven by scale and novel inference strategies. Early iterations like GPT-3 struggled with basic arithmetic, whereas GPT-4 [1] achieved 92.0% on GSM8K. A paradigm shift occurred with the introduction of test-time scaling, where models dedicate increased computation to reasoning during inference. OpenAI’s o1 model demonstrated strong performance on the AIME, and subsequent reasoning models [63, 131, 159, 33] have further validated this approach. By 2025, enhanced reasoning models, such as Google’s Gemini Deep Think, reached gold-medal performance at the International Mathematical Olympiad (IMO) using purely natural-language reasoning, marking a definitive maturity for the technology in the domain of high-school olympiad mathematics.

However, transitioning from Olympiad problems to higher mathematics presents a steeper challenge. Prior studies indicate that while GPT-4 can assist with undergraduate topics, it requires critical human oversight [32] and often fails at the graduate level [50]. Recent benchmarks quantify this gap: [78] reported that DeepSeek-R1 achieves 71.0% proof accuracy on graduate-level algebra (FATE-H) but drops to 33.0% on PhD qualifying exams (FATE-X). Similarly, on the FrontierMath Benchmark [56], which consists of unpublished research-level problems, Gemini 3 Pro scores 18.75% on the research-level split (Tier 4) https://epoch.ai/frontiermath, indicating that robust research-level reasoning remains an open problem.

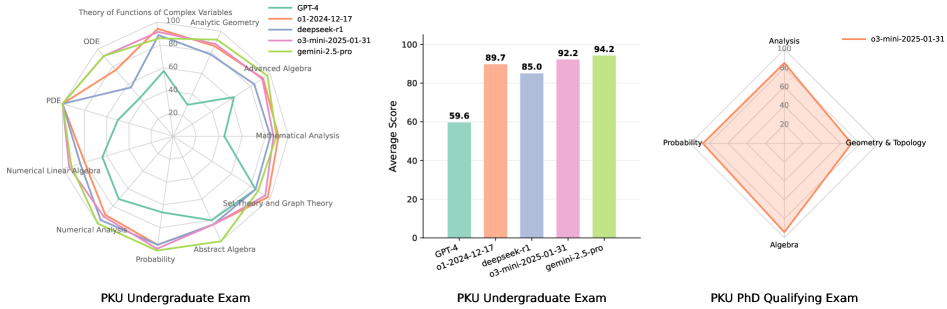

To empirically assess the current capabilities of state-of-the-art models, we constructed a dataset of 100 problems drawn from the final exams of 11 undergraduate mathematics subjects at Peking University (PKU). We evaluated five models: GPT-4, o1, o3-mini, DeepSeek-R1, and Gemini 2.5 Pro. Sample problems and model responses are provided in Appendix B. Human experts graded the outputs on a 0–5 scale (criteria in Table 1); the normalized results are presented in Figure 2 (left and middle). While GPT-4 scores below 60, reasoning-enhanced models (OpenAI o-series, DeepSeek-R1, Gemini 2.5 Pro) show substantial improvement, with several exceeding a score of 90.

Furthermore, we evaluated o3-mini on 58 problems from PKU PhD Qualifying Exams in Analysis, Probability, Algebra, and Geometry & Topology. As shown in Figure 2 (right), o3-mini achieves an average score of 84.4. A closer examination of the subject-wise performance reveals a notable divergence: the model exhibits its strongest proficiency in Algebra while scoring lowest in Geometry & Topology. Under the assumption that these exams pose a comparable level of challenge for human students, this suggests that current AI systems are comparatively more adept at handling abstract algebraic structures than tasks requiring geometric intuition. Although these results must be interpreted with caution due to potential data contamination and the specific nature of exam problems compared to open research, they provide strong evidence that top-tier models can now handle a significant portion of graduate-level mathematics.

Synthesizing these findings, we observe a clear trajectory: the mathematical reasoning abilities of LLMs have progressed from mastering elementary calculations and high-school competitions to achieving competence in undergraduate curriculum, and are now beginning to penetrate the domain of graduate and research-level mathematics [20, 74].

<details>

<summary>x2.png Details</summary>

### Visual Description

## Chart Analysis: PKU Exam Performance Comparison

### Overview

The image presents a comparison of the performance of different AI models (GPT-4, 01-2024-12-17, deepseek-r1, o3-mini-2025-01-31, and gemini-2.5-pro) on PKU (Peking University) undergraduate and PhD qualifying exams. The data is visualized using two radar charts and one bar chart. The radar charts show performance across different subject areas for the undergraduate and PhD exams, while the bar chart shows the average score on the undergraduate exam.

### Components/Axes

**Left Radar Chart (PKU Undergraduate Exam):**

* **Title:** PKU Undergraduate Exam

* **Axes:**

* Theory of Functions of Complex Variables

* Analytic Geometry

* Advanced Algebra

* Mathematical Analysis

* Set Theory and Graph Theory

* Abstract Algebra

* Probability

* Numerical Analysis

* Numerical Linear Algebra

* PDE

* ODE

* **Scale:** 0 to 100, with increments of 20.

* **Legend (Top-Right):**

* GPT-4 (Teal)

* 01-2024-12-17 (Orange)

* deepseek-r1 (Blue)

* 03-mini-2025-01-31 (Pink)

* gemini-2.5-pro (Lime Green)

**Center Bar Chart (PKU Undergraduate Exam - Average Score):**

* **Title:** PKU Undergraduate Exam

* **X-axis:** AI Models (GPT-4, 01-2024-12-17, deepseek-r1, 03-mini-2025-01-31, gemini-2.5-pro)

* **Y-axis:** Average Score, ranging from 0 to 100.

* **Bars:** Each bar represents the average score of a specific AI model. The colors of the bars match the colors in the radar chart legend.

**Right Radar Chart (PKU PhD Qualifying Exam):**

* **Title:** PKU PhD Qualifying Exam

* **Axes:**

* Analysis

* Geometry & Topology

* Algebra

* Probability

* **Scale:** 0 to 100, with increments of 20.

* **Legend (Top-Right):**

* 03-mini-2025-01-31 (Orange)

### Detailed Analysis

**Left Radar Chart (PKU Undergraduate Exam):**

* **GPT-4 (Teal):** Generally scores between 20 and 40 across most subjects, with a peak around 60 in Advanced Algebra.

* **01-2024-12-17 (Orange):** Scores vary more widely, with peaks around 80 in Theory of Functions of Complex Variables and Analytic Geometry, but lower scores in Probability and Numerical Analysis (around 20-40).

* **deepseek-r1 (Blue):** Shows a relatively consistent performance across subjects, mostly between 60 and 80.

* **03-mini-2025-01-31 (Pink):** Similar to deepseek-r1, with scores generally between 60 and 80.

* **gemini-2.5-pro (Lime Green):** Outperforms the other models in most subjects, with scores generally between 80 and 100.

**Center Bar Chart (PKU Undergraduate Exam - Average Score):**

* **GPT-4 (Teal):** Average score of 59.6.

* **01-2024-12-17 (Orange):** Average score of 89.7.

* **deepseek-r1 (Blue):** Average score of 85.0.

* **03-mini-2025-01-31 (Pink):** Average score of 92.2.

* **gemini-2.5-pro (Lime Green):** Average score of 94.2.

**Right Radar Chart (PKU PhD Qualifying Exam):**

* **03-mini-2025-01-31 (Orange):** Scores approximately 80 in Analysis, Geometry & Topology, Algebra, and Probability.

### Key Observations

* The gemini-2.5-pro model generally outperforms the other models on the PKU undergraduate exam, both in individual subjects and in average score.

* GPT-4 shows the lowest average score on the undergraduate exam.

* The 03-mini-2025-01-31 model shows consistent performance across all subjects in the PhD qualifying exam.

* The bar chart confirms the relative performance of the models as seen in the radar chart for the undergraduate exam.

### Interpretation

The data suggests that the gemini-2.5-pro model is the most proficient among the tested AI models in the PKU undergraduate exam, while GPT-4 lags behind. The 03-mini-2025-01-31 model demonstrates strong performance in the PKU PhD qualifying exam. The radar charts provide a detailed view of the strengths and weaknesses of each model across different subject areas, while the bar chart provides a concise summary of their overall performance. The consistent performance of 03-mini-2025-01-31 across the PhD qualifying exam subjects suggests a well-rounded understanding of the core concepts. The variability in performance of the 01-2024-12-17 model in the undergraduate exam indicates potential specialization in certain areas.

</details>

Figure 2: Performance of five LLMs across different Peking University (PKU) exams. Left: Scores on 11 undergraduate subjects. Middle: Average scores of the five LLMs across these subjects. Right: o3-mini’s performance on four PhD qualifying exam fields (average score: 84.4).

3.3 Formal Reasoning

Although top-tier LLMs can now solve certain graduate-level and even certain research-level problems, evaluating their capabilities remains a significant bottleneck involving substantial human effort. As the complexity of mathematics increases, assessment depends heavily on domain experts; yet, because natural language is inherently imprecise, even experienced mathematicians can misjudge arguments. A notable historical example occurred in 1994, when a result published in the Annals of Mathematics claimed that the Busemann–Petty problem has a negative solution in dimensions at least four [167]. This conclusion was later shown to be incorrect [83, 82], and a 1999 paper established that the problem in fact possesses a positive solution in dimension four [168]. Similarly, a paper [121] published in the Annals of Mathematics in 2004 was retracted in 2023 [122] after a counterexample to its main result, discovered in 2006, was identified [84]. These cases illustrate that errors can persist even within the rigorous peer-review processes of top journals. To enable fast and reliable verification of mathematical reasoning, research must therefore move toward a more mechanical and unambiguous framework. Formal systems provide exactly such a foundation. In this section, we discuss the benefits of formal systems for mathematical research and their utility in enhancing the reasoning abilities of LLMs, followed by a review of recent progress in automated theorem proving and autoformalization.

3.3.1 Formal Systems

Formal systems provide a precise symbolic language paired with rigorously defined mechanisms for constructing and verifying proofs. Various systems exist, distinguished by their underlying logical foundations: HOL systems (e.g., HOL Light, Isabelle/HOL) utilize simple type theory; Coq (now known as Rocq) and Lean employ dependent type theory; Metamath operates on first-order logic with explicitly specified axioms; and Mizar is grounded in Tarski–Grothendieck set theory. Once a mathematical argument is translated into the formal language of an interactive theorem prover (ITP), it can be verified with absolute rigor. Had the erroneous 1994 result regarding the Busemann–Petty problem been formalized in such a system, the underlying logical flaws would likely have been detected immediately, preventing the publication of an incorrect claim.

Beyond their intrinsic value to mathematical correctness, formal systems offer a critical advantage for AI development: they provide reliable, verifiable supervision. Unlike elementary mathematics, where answers are often numeric and easily checked, advanced proof-oriented problems lack simple verifiers, making it difficult to generate reliable training signals. Interactive theorem provers bridge this gap by providing precise feedback on the validity of every logical step. This capability supplies high-quality training signals for RL, thereby enabling models to develop stronger reasoning capabilities in rigorous settings.

Among interactive theorem provers, Lean [39, 40] has cultivated a particularly robust ecosystem. Its unified mathematical library, mathlib4, has expanded rapidly through large-scale community collaboration and, as of December 2025, contains over 250,000 theorems and 120,000 definitions. A landmark achievement in this domain is the Liquid Tensor Experiment, led by Johan Commelin, which formalized a central theorem of Peter Scholze on liquid vector spaces. Scholze, who initially harbored doubts about the proof’s correctness, remarked that the theorem might be his “most important to date” https://xenaproject.wordpress.com/2020/12/05/liquid-tensor-experiment/. The project, which spanned approximately 18 months, not only verified the result but also led to a simplification of the original Clausen–Scholze proof, helping Scholze deepen his understanding of the argument’s structure https://xenaproject.wordpress.com/2021/06/05/half-a-year-of-the-liquid-tensor-experiment-amazing-developments/. Furthermore, the Liquid Tensor Experiment catalyzed the development of algebraic infrastructure within mathlib4: it drove the early formalization of homological algebra and category theory and attracted a wave of algebraists to the community. Other notable milestones include the formalization of the Polynomial Freiman–Ruzsa (PFR) conjecture https://teorth.github.io/pfr//, the sphere eversion theorem https://leanprover-community.github.io/sphere-eversion/, ongoing work on formalizing the solution to the sphere packing problem in dimension 8 https://thefundamentaltheor3m.github.io/Sphere-Packing-Lean/, and the long-term effort to formalize Fermat’s Last Theorem https://leanprover-community.github.io/blog/posts/FLT-announcement/ lead by Kevin Buzzard. Expanding into applied mathematics, recent work has also established the formal foundations for numerical optimization in Lean4, specifically verifying the convergence of first-order algorithms [92].

However, these projects remain labor-intensive, requiring experts to manually translate definitions and proofs into code. This high cost motivates the development of autoformalization tools and automated theorem provers to accelerate the digitalization of mathematical knowledge—the conversion of standard informal mathematics into rigorous formal systems such as Lean.

3.3.2 Autoformalization

Autoformalization is the task of translating mathematical statements and proofs from natural language into formal code in an autonomous manner (e.g. by a language model). Early efforts in this domain employed sequence-to-sequence models trained on aligned data. For instance, [144] constructed datasets by informalizing Mizar statements to train translation models. Addressing the scarcity of aligned pairs, [143] subsequently explored unsupervised approaches based on cycle-consistency losses, where models learned to reconstruct statements by translating them between informal and formal domains without explicit supervision.

The advent of LLMs fundamentally shifted this paradigm. Research demonstrated that off-the-shelf LLMs could generate reasonable formalizations via few-shot prompting [153, 51, 3, 9]. Crucially, [153] observed an asymmetry: translating formal code to natural language (informalization) is significantly easier for models than the reverse. This insight catalyzed the creation of large-scale synthetic datasets, where LLMs are used to informalize massive formal libraries (such as mathlib4) to generate high-quality aligned pairs for training specialized autoformalizers [75, 102, 53, 99].

Recent work focuses on improving the quality and grounding of these systems. Herald [53] introduces a hierarchical informalization strategy that respects the dependency graph of the library mathlib. By translating declarations in topological order, it ensures that the natural language descriptions of prerequisite concepts are available when translating dependent theorems. Herald further augments its data via tactic-based state synthesis and achieves over 96% statement autoformalization accuracy on the miniF2F validation set. To enhance grounding, RAutoformalizer [99] employs retrieval mechanisms to anchor generated code in existing formal declarations. Addressing the challenge of missing concepts, common in research-level mathematics, [140] proposed Aria, an LLM-based agentic system. More generally, an LLM-based agent refers to a system that operates through an explicit interaction loop with its environment: it maintains intermediate states, reasons over observations, performs multi-step planning, and selects actions accordingly. These actions may include invoking external tools such as semantic retrieval, symbolic reasoning modules, or code synthesis components, with feedback from the environment used to guide subsequent decisions. Such agentic designs enable the decomposition of complex tasks into structured subtasks and support iterative refinement beyond single-pass generation [141]. Within this framework, Aria decomposes informal statements into a dependency graph of concepts; if a concept is absent from the library mathlib, the agent recursively defines it from the bottom up using semantic search and synthesis, effectively handling the “long tail” of mathematical vocabulary.

Evaluation and Verification

Evaluating statement autoformalization is non-trivial. Once a statement is correctly autoformalized, evaluating an autoformalized proof becomes straightforward due to the formal system, which makes statement autoformalization the primary difficulty from an evaluation perspective. While human expert review is the gold standard, it is unscalable. The challenge, therefore, lies in developing automated metrics for correctness.

- With Ground Truth: When a reference formal statement exists, correctness can be assessed via logical equivalence rather than mere string matching. For example, BEq [99] utilizes a neural theorem prover to check if the generated statement and the ground truth can be derived from one another. Similar equivalence-checking approaches are explored in [100, 113].

- Without Ground Truth (Semantic Verification): In the absence of a reference, one must verify semantic correctness —whether the formal code faithfully captures the intent of the informal statement. A naive approach involves “back-translation”, where an LLM translates the code back to English for comparison [162, 53]. However, this is prone to errors as LLMs may miss subtle logical discrepancies. To mitigate this, [158] proposes Mathesis, a fine-grained evaluation framework. Mathesis decomposes statements into assumptions and conclusions, evaluates the alignment of each component separately, and aggregates these scores using a fuzzy integral to rigorously reject inconsistencies. To further assist verification, Aria [140] enriches the context by retrieving detailed metadata (types, values, informal descriptions) for every term in the formal statement, enabling more accurate semantic judgments.

Reliable verifiers are not only essential for evaluation but also serve as critical reward models for RL, creating a feedback loop that iteratively improves autoformalization performance [158, 102, 70].

Note: This section has focused on statement autoformalization. The autoformalization of proofs, which requires translating logical reasoning steps rather than just definitions, is inextricably linked to automated theorem proving. We therefore discuss proof autoformalization in the context of proof generation in the following section.

3.3.3 Automated Theorem Proving

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Proof Tree and State Transition

### Overview

The image depicts a diagram illustrating a proof tree and state transition process, likely within a formal verification or automated theorem proving context. It shows the flow from a proof tree to a state, involving tactic application, premise retrieval from a math library, and tactic generation. A code snippet on the right provides a concrete example.

### Components/Axes

* **Proof Tree:** Located on the left side of the diagram. It represents the hierarchical structure of the proof.

* Node 1: (S: Type u\_1) (inst +: Mul S) (h₁: ∀ (a b: S), a\*b=a) (h₂: ∀ (a b: S), a\*b=b\*a)

* Node 2: (S: Type u\_1) (inst +: Mul S) (h₁: ∀ (a b: S), a\*b=a) (h₂: ∀ (a b: S), a\*b=b\*a)

* **State:** Located on the top-right side of the diagram. It represents the current state of the proof.

* Node: (S: Type u\_1) (inst +: Mul S) (h₁: ∀ (a b: S), a\*b=a) (h₂: ∀ (a b: S), a\*b=b\*a) |- Cardinal.mk S ≤ 1

* **Tactic:** Labels the action applied to the proof tree.

* `rw [Cardinal.le_one_iff_subsingleton]`

* **Math Library:** Represented by a building icon, located in the upper-right quadrant.

* **Premise Retriever:** A process that retrieves relevant premises from the math library.

* **Retrieved Premises:** A list of theorems retrieved from the math library.

* theorem `Cardinal.le_one_iff_subsingleton`

* theorem `Cardinal.mk_eq_one`

* theorem `Cardinal.eq_one_iff_unique`

* **Tactic Generator:** A process that generates tactics based on the retrieved premises.

* **Code Snippet:** Located on the right side of the diagram. It provides a code example.

* `import Mathlib`

* `variable {S: Type*} [Mul S]`

* `example (h₁: ∀ (a b: S), a * b = a) (h₂: ∀ (a b: S), a * b = b * a): Cardinal.mk S ≤ 1 := by`

* `rw [Cardinal.le_one_iff_subsingleton]`

* `refine (?_)`

* `intro a b`

* `rw [← h₁ a b]`

* `rw [h₂ a b]`

* `exact h₁ b a`

### Detailed Analysis

* The proof tree starts with an initial state and branches out as tactics are applied.

* The tactic `rw [Cardinal.le_one_iff_subsingleton]` is applied to the initial state in the proof tree.

* The state transitions from the initial state to a state where `Cardinal.mk S ≤ 1` needs to be proven.

* The premise retriever retrieves relevant theorems from the math library.

* The tactic generator generates tactics based on the retrieved premises.

* The code snippet provides a concrete example of how the tactic `Cardinal.le_one_iff_subsingleton` is used in a proof.

### Key Observations

* The diagram illustrates the process of automated theorem proving, where tactics are applied to a proof tree to reach a desired state.

* The math library provides a source of relevant theorems that can be used to generate tactics.

* The code snippet provides a concrete example of how the process works in practice.

### Interpretation

The diagram demonstrates a simplified model of automated theorem proving. The proof tree represents the exploration of different proof paths, guided by tactics. The state represents the current goal or assertion to be proven. The math library and premise retriever provide the necessary knowledge to guide the proof search. The code snippet provides a practical example, showing how these components interact in a real-world scenario. The diagram highlights the interplay between symbolic reasoning (proof tree), knowledge retrieval (math library), and tactic application in the context of formal verification.

</details>

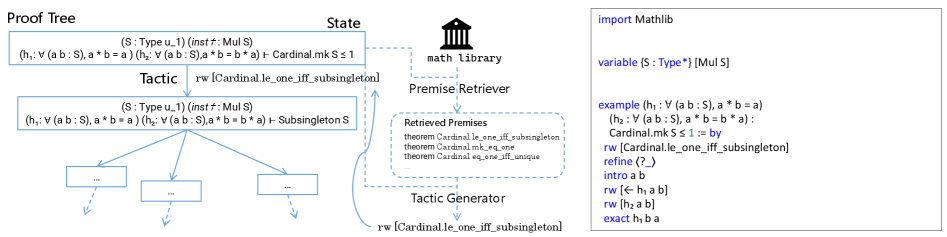

Figure 3: Example of a proof step generation method and the complete proof it produces. Left: An illustration of a proof tree, where each node represents a proof state and each edge corresponds to a tactic. Applying an appropriate tactic transforms the current proof state into the next one. At each step, the proof step generation method retrieves relevant premises and proposes several candidate tactics to try. Right: The complete proof ultimately obtained by this process.

Automated theorem proving in formal systems aims to generate valid proofs for formal statements. Deep learning–based approaches to this task fall into two broad categories: single-model approaches and agentic approaches. Single-model approaches can be further divided into proof step generation and whole-proof generation methods.

Proof Step Generation

Proof step generation methods formulate theorem proving as a tree search problem. In this framework, each node in the search tree corresponds to a proof state, and each action corresponds to the application of a tactic that transitions the prover to a new proof state. A proof is successfully constructed once a path is found to a state with no remaining goals. Figure 3 illustrates an example of a proof tree produced by such a method, alongside the final proof.

The strengths of proof step generation lie in reusability and exploration. During search, proof states are reusable: if a newly encountered state coincides with one previously explored, they can be merged. Furthermore, the system exhibits strong exploration capabilities by attempting multiple tactics at each step. However, these methods often suffer from slow inference speeds due to the computational cost of tree search, difficulty in training stability, and a heavy reliance on efficient interactive tools for both training and inference.

One of the earliest neural approaches in this domain is Holophrasm [150], which employs Monte Carlo Tree Search (MCTS) for exploration. Holophrasm integrates three neural components: a relevance network for retrieving useful theorems, a generative network for proposing variable substitutions, and a value network for estimating provability. Subsequent work largely treated tactic prediction as a classification problem, with representative examples including GamePad [69], DeepHOL [11], and graph-based approaches [111]. Moving beyond pure classification, GPT-f [115] trains a Transformer to generate proof steps via a conditional language modeling objective, constructing proofs using best-first search. Similarly, [86] introduces a hypertree-based search combined with an online training strategy, where policy and critic models are periodically updated on data collected from repeated proof searches.

A major challenge in this domain is the scarcity of large-scale formal data. To mitigate this bottleneck, researchers have pursued two complementary directions: improving model performance under limited formal data, and synthesizing additional formal data. For the former, ABEL [58] proposes an online reinforcement learning framework with prioritized sampling to encourage exploration; notably, its RL stage is trained on only 244 formal statements yet achieves performance comparable to the state of the art at the time. For the latter, REAL-Prover [127] proposes an integrated pipeline comprising a statement autoformalizer to translate informal statements, a retrieval-augmented prover that generates tactics conditioned on relevant premises, and an expert iteration paradigm. In this loop, the model trains on generated state-tactic pairs, performs proof search, and iteratively collects new training data from successful searches. Building on the retrieval-augmented architecture of REAL-Prover, the authors introduced the reap https://github.com/frenzymath/reap tactic as a concrete instantiation of retrieval-guided proof automation. The reap tactic is currently in a beta-testing phase and maintained as a candidate feature branch for mathlib4.

A notable milestone is AlphaProof [72]. AlphaProof trains a 3B-parameter proof network that jointly outputs tactics and value estimates. Its training pipeline involves pretraining on 300B tokens, supervised fine-tuning on 300k state-tactic pairs, and reinforcement learning on 80 million autoformalized statements. These formal statements are derived from approximately one million informal problems, where the autoformalization model is trained on triplets of (informal statement, formalization chain-of-thought, formal statement), with multiple distinct translations generated per problem. For particularly challenging tasks, AlphaProof applies test-time reinforcement learning to adapt to the problem structure by constructing and training on a specialized curriculum. As a result, it achieves performance comparable to an IMO silver medalist. Other notable approaches include [76, 114, 139, 161, 156, 94, 157].

Whole-Proof Generation

In contrast, whole-proof generation methods aim to produce an entire formal proof, possibly augmented with inline comments, in a single forward pass. Their primary advantages are high inference speed and independence from interactive tools during generation. However, their exploration capability is limited compared to step-wise search; they often rely on behavior cloning and are more susceptible to error accumulation since they lack access to intermediate proof states.

This paradigm relies heavily on data quality and quantity. Since there is no principled a priori method for determining data quality, evaluation is often indirect via model performance. To address data quantity, [154] proposed an integrated pipeline involving automatic statement formalization, filtering (to remove trivial or false statements), statement proving, and iterative training on the resulting verified pairs. Building on this, DeepSeek-Prover-V1.5 [155] improved performance by constructing richer datasets that include informal proofs written before formal code and inline informal comments, and by applying Reinforcement Learning from Verifier Feedback (RLVF). Other works adopting this paradigm include [49, 10, 163, 43, 169, 137, 96].

Agentic Approaches

Agentic approaches [77, 138, 135, 23, 78, 57, 2] represent a paradigm shift from single-model systems to modular workflows. These methods decompose theorem proving into coordinated sub-tasks, such as retrieval, decomposition, and verification, orchestrated through structured workflows that integrate language models with external tools. The effectiveness of these approaches relies on three core components: robust retrieval systems, the reasoning capabilities of LLMs, and workflows designed to mimic the mathematical research process.

Draft, Sketch, and Prove (DSP) [77] is a prototype of this paradigm. It generates an informal proof, translates it into a formal sketch with open subgoals, and closes them using a lightweight prover. LEGO-Prover [138] extends this by maintaining a persistent lemma pool. Uniquely, it evolves verified lemmas into new ones through strategies such as dimension extension, key concept identification, parameterization, and complexity enhancement. Hilbert [135] transforms informal proofs into formal sketches via recursive subgoal decomposition, guided by theorem retrieval. Seed-Prover-1.5 [23] similarly employs a specialized sketch model and a dedicated prover model, achieving 80% accuracy on the graduate-level benchmark FATE-H and 33% accuracy on the PhD-level benchmark FATE-X [78]. Notably, for agentic approaches such as Seed-Prover, the widely used miniF2F benchmark [172] has largely been saturated and can be regarded as effectively solved. Addressing the granularity gap between informal reasoning and formal code, [146] proposes a two-stage Chain of States (CoS) framework. By extracting a sequence of intermediate formal states aligned with the logical flow of the informal argument before generating the specific transition tactics, this approach significantly reduces the complexity of tactic generation under constrained computational budgets.

Advanced agents like Aristotle [2] interleave informal reasoning with formal verification. Aristotle drafts proofs as sequences of lemmas, formalizes them, and attempts verification, refining its outputs iteratively based on feedback. Together with a geometry solver, it achieved performance at the level of an IMO gold medal. Numina-Lean-Agent [98] equips the coding agent Claude Code with Lean-LSP-MCP [45] for interacting with Lean, a semantic search engine for theorem retrieval, an informal prover [71], and an external LLM for additional reasoning support. It successfully solved all problems in the 2025 Putnam Competition, an achievement first reported by AxiomProver [8]. Numina-Lean-Agent also formalized a research paper on Brascamp–Lieb inequalities [12] by interacting with mathematicians through iterative refinement of a natural language blueprint, represented as a dependency graph for both statements and proofs. Finally, the Gauss agent [107] demonstrated the power of human-AI collaboration by formalizing the strong Prime Number Theorem within three weeks, leveraging expert scaffolding. These results demonstrate that carefully designed agentic workflows can effectively exploit both intrinsic reasoning capabilities and external tools to achieve substantial gains in automated theorem proving.

The practical impact of formal reasoning agents is perhaps most clearly illustrated by their emerging role in recent work on conjectures posed by Paul Erdős, as discussed by Terence Tao https://github.com/teorth/erdosproblems/wiki/AI-contributions-to-Erd%C5%91s-problems. During late 2025 and early 2026, agentic systems such as Aristotle became integral components of a high-leverage verification workflow: frontier language models (e.g., Gemini Deep Think, ChatGPT) were used to generate candidate constructions or heuristic arguments, which were then rigorously formalized and mechanically checked in Lean. This pipeline has already yielded formally verified counterexamples to several Erdős problems (notably Problem #205) and partial resolutions of more intricate problems (e.g., #367), while many related cases remain open. Importantly, these examples are best viewed as representative rather than definitive: the process is ongoing, and further results continue to emerge. While formalization dramatically reduces the risk of irreparable hallucinations, it does not eliminate all failure modes—models may still exploit unintended problem specifications or rediscover arguments later found in the literature. Nevertheless, these developments mark a qualitative shift. Formal reasoning agents now function as reliable research copilots, systematically converting heuristic insight into certified mathematical knowledge and mitigating long-standing verification bottlenecks in exploratory mathematics.

3.4 Mathematical Information Retrieval

Mathematical Information Retrieval concerns the task of retrieving mathematical content, including formulas, theorems, and problem solutions, from large collections of mathematical documents. In contrast to standard text retrieval, MIR must explicitly account for the distinctive structure and semantics of mathematical expressions. Mathematical formulas are inherently structured objects whose meaning depends on symbolic composition and relational structure rather than mere lexical overlap. Consequently, an effective MIR system must address challenges such as matching mathematical structure and symbolic patterns, while simultaneously leveraging surrounding textual context to resolve ambiguity and interpret meaning.

Crucially, MIR is not merely a search tool for human users but a foundational component of modern automated theorem proving (ATP) and AI agent systems. In the context of ATP, premise retrieval, the task of identifying useful theorems, lemmas, or definitions from a vast library to prove a new theorem, is often the primary bottleneck. As mathematical libraries grow to contain hundreds of thousands of formal statements (e.g., mathlib4), the ability to efficiently retrieve the “needle in the haystack” determines whether a prover can solve a problem or times out. For agentic systems, MIR enables access to long-term mathematical memory, allowing agents to ground their reasoning in established knowledge rather than hallucinating unsupported facts. This requires a shift from traditional keyword-based matching to reasoning-based retrieval. A robust MIR model must understand logical implication and mathematical equivalence; for instance, it must recognize that a theorem stating “a square matrix has a non-zero determinant” is a critical premise required to answer a query about whether “the columns of the matrix form a linearly independent set,” despite the absence of shared keywords between the two statements.

Depending on the granularity of the retrieval target and the nature of the query, MIR encompasses several closely related tasks. Prominent examples include premise retrieval, semantic retrieval, and question-answer retrieval.

Premise Retrieval

In automated theorem proving, a central subproblem is premise retrieval: given a conjecture and a large library of existing mathematical statements, the system must identify which premises are likely to be useful for constructing a proof. Early approaches relied primarily on handcrafted similarity measures and heuristics [68, 108]. Variants and extensions of these ideas, including tree-based similarity scores, have continued to be explored in more recent work [145]. In parallel, lightweight machine learning methods such as k-nearest neighbors or sparse naive Bayes were also investigated for premise selection [35].

Over the past decade, deep learning methods have increasingly been applied to premise retrieval. A representative early neural approach is DeepMath [73], which encodes conjectures and candidate premises separately and the resulting representations are concatenated and passed through a fully connected network that predicts whether a premise is useful for proving the conjecture. Training is supervised using existing proofs, where premises appearing in proofs are treated as positive examples and hard negative mining is employed to construct informative negative samples. Subsequent work sought to better exploit the internal structure of logical formulas. FormulaNet [142], for instance, represents each formula as a graph derived from its syntactic structure, with nodes corresponding to constants, variables, or quantifiers. A graph neural network is then used to compute embeddings, which are combined and fed into a classifier to estimate relevance.

Beyond pairwise scoring models, later work explored graph-level representations over entire libraries of statements. In [48], the authors construct a global graph in which nodes correspond to mathematical statements and directed edges encode premise–conclusion relationships extracted from proofs. Premise selection for a new conjecture is formulated as a link prediction problem, and a graph convolutional network is used to score potential edges based on textual and structural features of nodes. In parallel, another line of research adopts embedding-based retrieval methods, treating each mathematical statement as text and encoding it into a single vector using a learned embedding model. Relevance is then assessed via similarity in the embedding space, often followed by a learned re-ranking stage to refine the retrieved candidate set. Training typically relies on contrastive objectives that draw conjectures closer to premises appearing in their proofs while pushing them away from irrelevant statements. Representative examples of this approach include [161, 130, 127].

Semantic Retrieval

Semantic retrieval aims to identify mathematically equivalent or closely related statements from a mathematical corpus based on their meaning rather than surface-level similarity. This task is motivated by practical use cases such as theorem search in large mathematical libraries. For example, users of Lean frequently need to locate relevant theorems in mathlib4 when constructing proofs. In this setting, a query may be expressed either in natural language or in formal code, while the retrieval corpus typically consists of formal declarations from mathlib4. To bridge the gap between informal queries and formal corpora, LeanSearch http://leansearch.net/ constructs an aligned informal–formal corpus derived from mathlib4 and performs retrieval over the combined representation [52]. This approach enables semantic matching across representation modalities and significantly improves retrieval effectiveness for natural language queries. At present, LeanSearch is officially supported by the Lean community and has been integrated into mathlib4 as a standard client for semantic retrieval. This level of community endorsement and infrastructural integration makes LeanSearch a practical and sustainable component of the mathlib ecosystem, rather than a standalone research prototype. In addition to LeanSearch, several other semantic search tools have been developed for mathlib4, including Moogle https://www.moogle.ai/, LeanExplore [7], LeanFinder [101], and LeanDex https://leandex.projectnumina.ai/.

Formula retrieval constitutes an important subtask of semantic retrieval, where the query is a mathematical formula or a formula pattern and the objective is to retrieve semantically related formulas from a collection of documents. This setting introduces unique challenges. Formulas that represent the same mathematical concept may differ significantly in surface form due to notational variation or algebraic properties such as commutativity. Conversely, formulas with similar visual appearance may encode different meanings when interpreted in different mathematical contexts. Traditional approaches to formula retrieval are largely based on tree representations that encode the structural organization of mathematical expressions. In these methods, a formula is represented as a tree, and similarity is defined over trees or their substructures. A widely used representation is the Symbol Layout Tree (SLT) [164], in which nodes correspond to symbols and edges encode spatial relationships such as superscript, subscript, or adjacency. Another prominent representation is the Operator Tree (OPT) [54], where internal nodes represent operators and leaf nodes represent operands. Compared with SLTs, operator trees abstract away visual layout and focus on mathematical operations and their hierarchical relationships. Tree-based retrieval algorithms typically compare formulas by matching subtrees or paths, or by computing tree edit distances. For example, Approach0 [175, 174] represents formulas as operator trees and uses leaf-to-root paths as basic retrieval units. It performs retrieval in two stages, first identifying candidate formulas whose paths overlap with those of the query, and then re-ranking candidates based on similarity measures derived from the largest common subtrees.

Beyond traditional symbolic matching, recent work has explored the use of text embedding models for formula retrieval. Early approaches adapt techniques from natural language representation by linearizing structured formula encodings and embedding them in a continuous vector space. For instance, Tangent-CFT [105] performs a depth-first traversal over SLT and OPT, tokenizes the resulting tuple sequences, and applies text embedding models to obtain formula representations. Concurrent work augments formula representations with surrounding textual context to better capture semantic meaning [104, 85]. For example, MathAMR [104] integrates formulas into their linguistic context by combining Abstract Meaning Representation (AMR) graphs with OPTs, replacing formula nodes in the AMR graph by the root nodes of the corresponding OPTs, and embedding the resulting linearized graphs using Sentence-BERT.

Question-Answer Retrieval

Question–answer (QA) retrieval concerns the task of retrieving mathematical answers, explanations, or supporting documents in response to a natural language query. Mathematical questions are inherently multimodal, typically combining natural language with symbolic expressions, formulas, or diagrams, and candidate answers exhibit similar structure. As a result, relevance in mathematical QA retrieval is defined by semantic adequacy, namely whether an answer correctly and meaningfully addresses the question, for example by providing a valid solution, proof, or conceptual explanation, rather than by surface-level lexical overlap.

Traditional general-purpose text retrieval methods, such as BM25 [117, 118] and TF–IDF, rely on sparse vector representations of queries and documents and assess relevance through weighted vector similarity [120]. While these methods can be directly applied to question–answer retrieval, they often perform poorly in mathematical settings. This limitation stems from their dependence on exact term matching and their inability to capture the semantics of mathematical language or the structural relationships encoded in mathematical formulas. With the rise of deep learning, research shifted toward neural retrieval models based on pretrained transformers. A common approach is to pretrain and fine-tune transformer models on large-scale mathematical corpora to obtain representations better aligned with mathematical syntax and semantics. For example, MathBERT [112] was pretrained on math-heavy corpora containing formulas and introduced objectives such as masked formula substructure prediction to better model mathematical symbols in context. Building on dense retrieval paradigms, [116] investigated the use of ColBERT [80] on the ARQMath benchmarks [106, 165], fine-tuning neural retrievers on millions of question–answer pairs with negatives selected via rule-based heuristics. Recognizing the complementary strengths of symbolic and neural methods, several hybrid approaches have also been proposed. Mabowdor [173], for example, combines dense passage retrieval with parallel sparse retrieval based on structure-aware mathematical indexing and traditional text-based methods, merging their outputs via a learned weighted scheme. This hybrid strategy achieved strong performance in ARQMath-3 [103], highlighting the effectiveness of integrating classical mathematical structure with neural semantic representations for QA retrieval.

4 Challenges and Prospects