# Knowledge Graphs are Implicit Reward Models: Path-Derived Signals Enable Compositional Reasoning

**Authors**:

- Yuval Kansal (Princeton University)

- &Niraj K. Jha (Princeton University)

## Abstract

Large language models have achieved near-expert performance in structured reasoning domains like mathematics and programming, yet their ability to perform compositional multi-hop reasoning in specialized scientific fields remains limited. We propose a bottom-up learning paradigm in which models are grounded in axiomatic domain facts and compose them to solve complex, unseen tasks. To this end, we present a post-training pipeline, based on a combination of supervised fine-tuning and reinforcement learning (RL), in which knowledge graphs act as implicit reward models. By deriving novel reward signals from knowledge graph paths, we provide verifiable, scalable, and grounded supervision that encourages models to compose intermediate axioms rather than optimize only final answers during RL. We validate this approach in the medical domain, training a 14B model on short-hop reasoning paths (1-3 hops) and evaluating its zero-shot generalization to complex multi-hop queries (4-5 hops). Our experiments show that path-derived rewards act as a “compositional bridge,” enabling our model to significantly outperform much larger models and frontier systems like GPT-5.2 and Gemini 3 Pro, on the most difficult reasoning tasks. Furthermore, we demonstrate the robustness of our approach to adversarial perturbations against option-shuffling stress tests. This work suggests that grounding the reasoning process in structured knowledge is a scalable and efficient path toward intelligent reasoning.

footnotetext: Preprint. Under review.

## 1 Introduction

Recent advances in language models have revealed that reasoning capabilities can be significantly enhanced through a combination of high-quality pretraining, supervised fine-tuning (SFT), carefully tuned reinforcement learning (RL)-based post-training, and strategic use of additional test-time compute (OpenAI, 2025; Google DeepMind, 2025; Yang et al., 2025; Muennighoff et al., 2025). The resulting systems achieve near-expert performance in well-structured domains, such as mathematics and programming, where high-quality data have been curated, reasoning steps are clear, ground truth is unambiguous, and intermediate verification is tractable (Lightman et al., 2023; Anthropic, 2025). However, true human-level intelligence in specialized fields requires more than just general pattern matching or long-form generation; it requires compositional reasoning: the ability to reliably combine axiomatic facts for complex multi-hop problem solving Kamp & Partee (1995); Fodor (1975). While current large language models (LLMs) excel when reasoning steps are clear and carefully curated expert data are available, compositional reasoning in high-stakes scientific domains, where reasoning paths are multi-faceted, remains elusive (Yin et al., 2025; Kim et al., 2025).

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Question/Diagram: Hypogammaglobulinemia Mechanisms

### Overview

The image presents a medical question regarding the causes of hypogammaglobulinemia in a 58-year-old male patient, along with multiple-choice options and a diagram illustrating potential causal relationships between different medical conditions. The correct answer is indicated as option B.

### Components/Axes

The image contains the following components:

* **Question Text:** A detailed patient case description.

* **Options:** Four multiple-choice answers (A, B, C, D).

* **Correct Answer:** Highlighted as "B".

* **Diagram:** A circular diagram with four interconnected nodes representing medical conditions.

* **Node Labels:** Each node is labeled with a specific condition.

* **Connecting Arrows:** Arrows labeled "maybe_cause" connect the nodes, indicating potential causal relationships.

### Content Details

**Question Text:**

"A 58-year-old male presents with a 6-month history of intermittent flushing, diarrhea, and new-onset wheezing. Serum 5-HIAA is markedly elevated. Over the past month, he has also developed significant edema and ascites. Laboratory investigations reveal low serum albumin, IgG, IgA, and IgM levels. His C3 and C4 complement levels are normal. A CT scan reveals a mass in the ileum with liver metastases. Which of the following mechanisms is MOST likely contributing to this patient's hypogammaglobulinemia?"

**Options:**

A. Direct bone marrow suppression by tumor metastases.

B. Loss of immunoglobulins due to a protein-losing enteropathy secondary to chronic diarrhea and complement hyperactivation.

C. Decreased immunoglobulin production secondary to tryptophan depletion by the tumor, impairing B-cell function.

D. Immune complex formation with vasoactive substances released by the tumor, leading to clearance of immunoglobulins.

**Diagram Details:**

The diagram consists of four nodes arranged in a circular fashion, connected by arrows labeled "maybe_cause".

* **Node 1 (Bottom-Left):** "Carcinoid tumours and carcinoid syndrome" - Represented by a brain icon.

* **Node 2 (Left):** "Malabsorption syndrome" - Represented by an intestine icon.

* **Node 3 (Right):** "Complement hyperactivation angiopatic thrombosis and protein-losing enteropathy" - Represented by a blood vessel icon.

* **Node 4 (Bottom-Right):** "Hypogammaglobulinemia" - Represented by a vial icon.

The arrows indicate the following potential causal relationships:

* Carcinoid tumours/syndrome -> Malabsorption syndrome

* Malabsorption syndrome -> Complement hyperactivation/protein-losing enteropathy

* Complement hyperactivation/protein-losing enteropathy -> Hypogammaglobulinemia

### Key Observations

* The diagram suggests a chain of events leading to hypogammaglobulinemia, starting with carcinoid tumors and culminating in immunoglobulin loss.

* The "maybe_cause" label indicates that the relationships are not definitively established, but rather represent potential contributing factors.

* The correct answer (B) aligns with the pathway highlighted in the diagram, specifically the role of protein-losing enteropathy in immunoglobulin loss.

### Interpretation

The image presents a clinical reasoning scenario. The question tests the understanding of the mechanisms underlying hypogammaglobulinemia in the context of a complex clinical presentation. The diagram serves as a visual aid to illustrate the potential pathways involved, emphasizing the role of gastrointestinal dysfunction (malabsorption and protein-losing enteropathy) as a key contributor. The use of "maybe_cause" acknowledges the complexity of the disease process and the possibility of multiple contributing factors. The correct answer (B) highlights the importance of considering protein-losing enteropathy, which is consistent with the patient's symptoms (diarrhea, edema, ascites) and laboratory findings (low serum albumin, immunoglobulins). The diagram is a simplified representation of a complex biological system, and the relationships depicted should be interpreted with caution. The diagram's structure suggests a causal chain, but correlation does not equal causation. The diagram is a visual aid to help understand the potential mechanisms, but it does not provide definitive proof of causality.

</details>

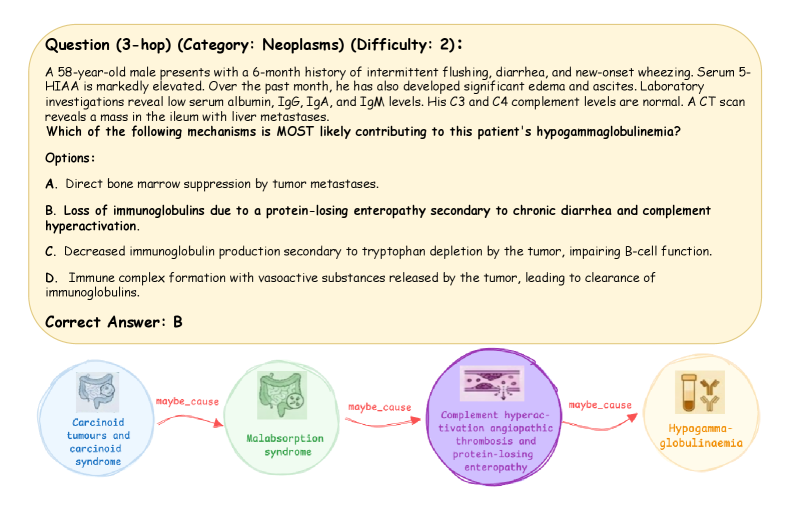

Figure 1: Compositional Reasoning: A sample 3-hop query that requires systematic traversal of axiomatic triples to make a grounded, multi-step clinical deduction.

To bridge this gap, we argue in favor of a bottom-up learning paradigm: grounding models in axiomatic facts and then composing these fundamentals into sophisticated domain knowledge. Knowledge graphs (KGs) provide a natural and promising scaffold for this grounding; they encode entities and relations in a structured, interpretable fashion that can represent the building blocks of domain knowledge at scale. Recent work Dedhia et al. (2025); Wang et al. (2024) has shown how high-quality data can be curated from such graphs and used to fine-tune models to obtain better reasoning traces. However, good static data is just the first step towards mastering the process of composition. Beyond high-quality data, robust reward design is a key lever for shaping models that can compose axiomatic facts from a domain to arrive at a logical conclusion.

Existing post-training methods, e.g., reinforcement learning from human feedback Ouyang et al. (2022) and direct preference optimization Rafailov et al. (2023), optimize models to match human preference with final outputs, not the process that produced them. Proxy reward signals, such as reward length and alignment with expert-written answers, while useful, fail to account for the composition intricacies needed to answer a complex multi-hop query. In practice, reward models often conflate superficial correlates (fluency, deference) with quality, thus leading to reward over-optimization and brittle answers (Shrivastava et al., 2025). In safety-critical domains, the result is a mismatch between human-liked style and ground-truth validity (Damani et al., 2025; Weng, 2024; Rafailov et al., 2023). Whereas process supervision (rewarding intermediate steps) has shown promise in mathematics and logic Zhang et al. (2025); Cui et al. (2025); Wang et al. (2025); Lightman et al. (2023), curating and scaling expert-annotated data for other domains are notoriously difficult to achieve and nontrivial. This raises a key question: How can we build systems and reward signals at scale that promote grounded compositional reasoning in multi-hop tasks without relying on expensive human-in-the-loop annotations?

KGs offer an implicit solution to this scaling problem. In a KG, domain-specific concepts and their relationships are represented as axiomatic triples $(head,relation,tail)$ . Our core insight is that by comparing the reasoning and assertions of a model during post-training against relevant triples and the chain of axiomatic facts required to solve the problem, we can turn the match (or mismatch) into a high-quality reward signal. Instead of an answer that “looks good,” this lets us reward the model to the degree its response is supported by verifiable domain knowledge and implicitly reward it for correctly composing facts to produce a solution. This is readily scalable without requiring external expert supervision and further enables us to move away from top-down distillation and ground the model’s reasoning in the field’s fundamental building blocks.

In this article, we realize this idea through a Base Model $\rightarrow$ SFT [Low-Rank Adaptation (LoRA)] $\rightarrow$ RL [Group Relative Policy Optimization (GRPO)] post-training pipeline that uses a grounded KG to derive a novel reward signal to enable compositional reasoning (Hu et al., 2022; Guo et al., 2025; Yasunaga et al., 2021). Whereas the approach can be generally applied, we study it in the medical domain, a field that serves as a rigorous stress test for compositional reasoning. Medical knowledge inherently requires multi-hop reasoning; a single clinical diagnosis may require navigating from a patient’s demographics and medical history to symptoms, from those symptoms to a disease, and finally to a drug (a sample multi-hop query is shown in Fig. 1). By training a Qwen3 14B model Yang et al. (2025) on simple 1-, 2-, and 3-hop reasoning paths derived from a KG, we probe whether it can learn the underlying “logic of composition” to solve unseen, complex medical queries, ranging from 2- to 5-hop, in the ICD-Bench test suite (Dedhia et al., 2025). Our results indicate that this grounded SFT+RL approach leads to large accuracy improvements on the most difficult questions, and remains robust under stress tests, such as option shuffling and ICD-10 category breakdowns (Organization, 1992). We find that while SFT provides the necessary knowledge base, RL acts as the “compositional bridge.” We demonstrate that insights learned on an 8B model transfer effectively to a 14B model, outperforming larger reasoning and frontier models.

Our core contributions can be summarized as follows:

- A Grounded, Scalable Reinforcement Learning with Verifiable Rewards (RLVR) Pipeline: We introduce a scalable SFT+RL post-training framework designed to enable compositional reasoning in models using KGs as a verifiable ground truth.

- KG-Path Inspired Reward: We conduct a thorough investigation to design a novel reward signal derived from the KG that encourages compositional reasoning, correctness, and enables process supervision at scale.

- Compositional Generalization: We demonstrate how training on 1-to-3-hop paths enables a model to generalize to difficult and longer 4-, 5-hop questions, significantly outperforming base models and larger models.

- Robustness & Real-World Validation: We stratify our model’s performance by different difficulty levels, on real-world medical categories (ICD-10), and its resilience against adversarial option shuffling.

## 2 Related Work

### 2.1 Role of SFT and RL in Reasoning

Recent studies have intensely debated the distinct contributions of SFT and RL to model performance (Jin et al., 2025; Kang et al., 2025; Matsutani et al., 2025). The authors of Chu et al. (2025) argue that “SFT memorizes, RL generalizes,” claiming that while SFT stabilizes outputs, it struggles with out-of-distribution scenarios that RL can navigate. The authors of Rajani et al. (2025) characterize GRPO as a “scalpel” that amplifies existing capabilities and SFT as a “hammer” to overwrite prior knowledge. Our findings align with these dynamics. We use SFT to instill atomic domain knowledge in the model and RL to amplify compositional logic required to connect such knowledge.

Contrary to the findings in Yue et al. (2025), our results on unseen 4-, 5-hop queries demonstrate that when rewards are grounded in relevant axiomatic primitives, RL can elicit novel compositional abilities beyond the baseline. This echoes the findings in Yuan et al. (2025) that demonstrate that RL can teach models to compose old skills into new ones; we validate this in a high-stakes real-world domain rather than a synthetic one.

### 2.2 RL on KGs

Traditional applications of RL on KGs, e.g., Das et al. (2017) and Xiong et al. (2017), primarily focus on traversing graph structures to complete missing triples (link prediction) or find missing entities. The authors of Lin et al. (2018) further refine this approach with reward shaping to improve multi-hop reasoning, but still largely confine themselves to the task of graph completion instead of open-ended question answering in a real-world setting. In more recent works, the authors of Wang et al. (2024) propose “Learning to Plan,” where KGs guide the retrieval process for retrieval-augmented generation systems, and those of Yan et al. (2025) introduce RL from KG feedback to replace human feedback with KG signals. While promising, these approaches often limit the role of the KG to retrieval planning or simple search tools for alignment.

Our work differs fundamentally by centrally positioning KGs as a dense process verifier for real-world multi-hop reasoning. The authors of Khatwani et al. (2025) use LLMs as a reward model for KG reasoning, but found the approach to be brittle, with poor transfer to downstream diagnostic tasks. We attribute this to the lack of a compositional training curriculum; by combining the bottom-up data curation of Dedhia et al. (2025) with treating the KG as a reward model to derive path-aligned signals, we overcome this limitation. Furthermore, unlike Gunjal et al. (2025) that uses unstructured LLM-created rubrics as rewards, or rule-based Logic-RL Xie et al. (2025), we derive our signal directly from grounded axiomatic paths of the KG.

## 3 Preliminaries

### 3.1 Notation and RL for Language Models

We treat an LLM as a stochastic policy $\pi_{\theta}$ that maps a query $q$ [multiple-choice question (MCQ) task] to a distribution over possible completions $y$ . Each completion $y$ comprises a reasoning trace $r$ (chain-of-thought) Wei et al. (2022) and a final answer $\hat{a}$ (spanning A-D). Each training task $q$ is associated with a ground-truth answer $a^{*}$ and a ground-truth KG path $P={(h_{i},r_{i},t_{i})}^{L}_{i=1}$ (see Section 4.1).

A composite scalar reward function $R(y)$ , derived from the KG, is used to score a generated completion $y$ (see Section 4.4). The RL objective is to maximize the expected reward under the prompt distribution:

$$

\mathbf{J}(\theta)=\mathbf{E}_{q\sim D}\mathbf{E}_{y\sim\pi_{\theta}(\cdot|q)}[R(y)]

$$

Whereas the response $y$ is produced token-by-token, we treat the entire completion as a single trajectory for reward assignment, following common practice in LLM post-training.

Policy updates are performed using GRPO, a popular proximal policy optimization-like optimizer Schulman et al. (2017) that drops the critic and estimates advantages at the group level using normalization (Guo et al., 2025). See Appendix E for details of our hyperparameter configuration for the SFT and RL stages.

### 3.2 SFT Followed by RL

Our training recipe follows the widely adopted Base Model $\rightarrow$ SFT $\rightarrow$ RL framework for improving LLMs. First, an SFT stage initializes the policy (base LLM) to produce high-quality, KG-grounded reasoning traces. A subsequent RL stage refines the policy directly to optimize the reward signal and enable compositional reasoning. Formally, SFT minimizes the negative log-likelihood on a supervised dataset consisting of question-answer (QA) tasks paired with reference reasoning traces and answers.

In our experiments, the SFT stage provides broad KG coverage, whereas the RL stage is deliberately small: a design choice motivated by observed instability in an RL-from-scratch approach and because targeted RL with good reward can enable compositional abilities when built atop SFT initialization (Section 4.2, Appendix G have more details).

### 3.3 Medical KG: Unified Medical Language System (UMLS)

We instantiate our framework on a standard biomedical KG based on UMLS Bodenreider (2004), which encodes canonical medical ontology in a structured graph format. Each fact is represented as a triple $(head,relation,tail)$ and multi-hop paths $P={(h_{i},r_{i},t_{i})}_{i=1}^{L}$ serve as axiomatic compositional primitives used to generate QA tasks and path-alignment reward signals, and evaluate correctness. Full QA task generation and reward-design choices/strategies are described in the following section.

## 4 Methodology

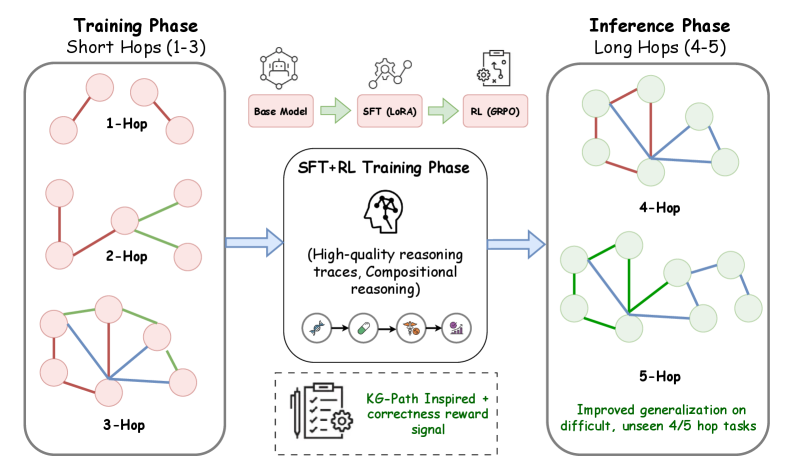

This section describes our data construction and training pipeline. We emphasize the sequential decisions we make: dataset choices, SFT warm start, RL budget, reward design, and experiments that guide these choices. Our training pipeline is designed to transition a base model from broad competence to deep, compositional medical-domain reasoning. Fig. 2 presents an overview of our pipeline.

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Diagram: Training and Inference Phases for Reasoning

### Overview

This diagram illustrates a two-phase process: a Training Phase involving short reasoning hops (1-3) and an Inference Phase involving longer reasoning hops (4-5). The diagram depicts the flow of information and the components used in each phase, focusing on the progression from base models to improved generalization through reinforcement learning.

### Components/Axes

The diagram is divided into three main sections: "Training Phase (Short Hops 1-3)", "SFT+RL Training Phase", and "Inference Phase (Long Hops 4-5)". Within each phase, there are visual representations of reasoning hops, depicted as node-link diagrams. The SFT+RL Training Phase section contains icons representing different model components and training techniques.

### Detailed Analysis or Content Details

**Training Phase (Short Hops 1-3):**

* **1-Hop:** A network of 5 nodes connected by 5 edges. The edges are colored green.

* **2-Hop:** A network of 5 nodes connected by 7 edges. The edges are colored red and green.

* **3-Hop:** A network of 5 nodes connected by 9 edges. The edges are colored red and green.

**SFT+RL Training Phase:**

* **Base Model:** Represented by a brain icon.

* **SFT (LoRA):** Represented by a chain-link icon.

* **RL (GRPO):** Represented by a robot icon.

* **Text:** "(High-quality reasoning traces, Compositional reasoning)"

* **KG-Path Inspired + correctness reward signal:** Represented by a gear icon.

**Inference Phase (Long Hops 4-5):**

* **4-Hop:** A network of 5 nodes connected by 9 edges. The edges are colored green and blue.

* **5-Hop:** A network of 5 nodes connected by 11 edges. The edges are colored green and blue.

* **Text:** "Improved generalization on difficult, unseen 4/5 hop tasks"

**Arrows:**

* Blue arrows indicate the flow of information from the Training Phase to the SFT+RL Training Phase, and from the SFT+RL Training Phase to the Inference Phase.

### Key Observations

* The complexity of the reasoning hops increases from the Training Phase to the Inference Phase, as indicated by the increasing number of edges in the node-link diagrams.

* The color of the edges changes from red/green in the Training Phase to green/blue in the Inference Phase, potentially indicating a shift in the type of reasoning or information flow.

* The SFT+RL Training Phase acts as a bridge between the Training and Inference Phases, utilizing different model components to enhance reasoning capabilities.

* The diagram highlights the importance of high-quality reasoning traces and compositional reasoning in the training process.

### Interpretation

The diagram illustrates a methodology for improving reasoning capabilities in a model. The training phase focuses on shorter reasoning chains (1-3 hops) to establish a foundational understanding. This is then enhanced through Supervised Fine-Tuning (SFT) with LoRA and Reinforcement Learning (RL) using GRPO, resulting in high-quality reasoning traces and compositional reasoning. Finally, the model is tested on longer, more complex reasoning chains (4-5 hops) during the inference phase, demonstrating improved generalization on difficult tasks. The change in edge colors between the training and inference phases could signify a change in the type of reasoning being performed, potentially moving from exploratory reasoning (red) to more confident or established reasoning (blue). The use of KG-Path inspired methods and correctness reward signals suggests a focus on grounding the reasoning process in knowledge graphs and ensuring the accuracy of the results. The overall goal is to create a model that can effectively handle complex reasoning tasks by building upon a solid foundation and leveraging advanced training techniques.

</details>

Figure 2: SFT+RL pipeline overview: Schematic of the pipeline from SFT to KG-grounded RL. While SFT enables domain-specific grounding, the path-derived reward signal during RL provides the process supervision necessary for compositional reasoning.

### 4.1 Data Construction and Axiomatic Grounding

Given our testbed that involves compositional reasoning and to ensure our model learns true depth rather than mere pattern matching, we adopt the data-generation and curation pipeline from Dedhia et al. (2025), which enables scalable generation of multi-hop reasoning questions grounded in a medical KG. The structured representation of medical concepts, such as diseases, drugs, signs, and mechanisms, in a KG as $(head,relation,tail)$ triples enables question generation directly from verifiable and grounded KG paths. Questions are generated in natural language in an MCQ format using a backend LLM by traversing $n$ -length paths within the KG, where $n$ represents the number of “hops” required to link a starting node to a final node. This enables precise control over the compositional complexity of each query. Furthermore, each question is paired with a rich reasoning trace and a ground truth path: a sequence of $(head,relation,tail)$ triples that constitutes a verifiable logical chain. The pipeline helps stratify questions by hop length, difficulty, and ICD-10 category, and enforces strict separation between the training and test sets at the path and entity levels to avoid leakage.

We generate a training set of 24,660 QA tasks designed to ensure maximum node coverage across the KG (Yasunaga et al., 2021). For evaluation, we use ICD-Bench, a non-overlapping test set of 3,675 questions (Dedhia et al., 2025). Importantly, the training set consists of 1-3 hop paths, whereas ICD-Bench includes 2-5 hop path tasks across 15 ICD-10 categories to test zero-shot compositional generalization at different difficulty levels [1 (very easy) - 5 (very hard)]. More analysis of overlaps between the training and test sets is presented in Appendix D.

Our training pipeline consists of three stages: Base Model $\rightarrow$ SFT (LoRA) $\rightarrow$ RL (GRPO). This design reflects our central hypothesis that compositional reasoning emerges most reliably when models are first grounded in rich reasoning traces via supervised learning and then tuned using scalable process-aligned rewards derived from the KG paths. We emphasize that all training data and rewards are derived from the same KG to ensure consistency between training and evaluation.

### 4.2 RL Alone is Insufficient

A core finding of our work is that the Zero-RL approach, applying GRPO directly to the base LLM, is insufficient for deep domain expertise at our model scale. The model requires an understanding of the domain axioms before it can learn to compose. Starting from the base model (Qwen3 8B), we apply GRPO directly using subsets of training data: 5k, 10k, and all 24.66k examples. Across these settings, we find that while RL improves performance relative to the base model, it does not consistently outperform SFT-only training on the base model. Interestingly, the budget of 5k examples yields the strongest results among all other settings, suggesting how large-scale vanilla RL without proper grounding is insufficient for compositional behavior, motivating the use of SFT for an initial warm start.

Based on these observations, we use 5k examples in the RL stage and the remaining 19.66k in the SFT stage. The base model is fine-tuned using LoRA on 19.66k examples, followed by GRPO on the remaining 5k examples as per the SFT+RL pipeline.

See Appendix A, B for detailed ablations with the Zero-RL and SFT+RL pipelines on the Qwen3 8B model, respectively. See Appendix H for our GRPO training prompt.

### 4.3 Reward Design Exploration for Compositional Reasoning

A central goal and a novel contribution of this work is to design scalable reward signals during training that enable compositional reasoning beyond surface-level understanding. Towards this end, we conduct extensive ablation studies using a combination of four distinct reward signals to determine which best fosters verifiable composition:

- Binary Correctness $(R_{bin})$ : A simple outcome-based signal that rewards the final answer.

- Similarity $(R_{sim})$ : A distillation-based reward that measures the Jaccard similarity between the model output and an expert reasoning trace (generated by Gemini 2.5 Pro during data curation).

- Thinking Quality $(R_{think})$ : A reward designed to score the thinking quality and length of the generation.

- Path Alignment $(R_{path})$ : A novel reward that scores the model based on the coverage of the ground-truth KG triples in the model response.

We conduct a systematic exploration by evaluating a combination of these rewards, always including $R_{bin}$ (+1 for correctness, 0 otherwise) as a minimal signal. Empirically, we discover that $R_{think}$ is often unstable and leads to reward hacking, generating inefficacious chains. $R_{sim}$ also proved sub-optimal, suggesting distillation rewards over-optimize aesthetic mimicry rather than true logical composition.

Our findings highlight the power of simplicity: The combination of path alignment and binary correctness provides the strongest signal for composition. Whereas $R_{bin}$ optimizes correctness, $R_{path}$ rewards the model for identifying and applying the axiomatic facts (triples) required to compose the correct solution. To further strengthen the outcome signal, we replace the simple $R_{bin}$ with negative sampling reinforcement Zhu et al. (2025), which penalizes incorrect generations by upweighting the negative reward, thereby encouraging the model to explore alternative/correct trajectories.

### 4.4 KG-Grounded Reward Formulation

To provide a robust reward signal to enable composition, we develop a composite reward that balances outcome correctness with path-level alignment grounded in the KG. Let the model generate a response $y$ for a question $q$ with the reasoning trace $r$ and a final answer $\hat{a}$ . For each QA task, there is a ground truth answer $a^{*}$ and a ground-truth KG path $P={(h_{i},r_{i},t_{i})}_{i=1}^{L}$ , where $L$ is the path length. The total reward is then a combination of the two rewards:

$$

\vskip-5.0ptR_{total}(y)=R_{bin}(\hat{a},a^{*})+R_{path}(r,P)\vskip-5.0pt

$$

Binary Correctness Reward: This provides a minimal but necessary supervision signal on the final answer.

$$

\vskip-5.0ptR_{\text{bin}}(\hat{a},a^{*})=\begin{cases}\alpha,&\text{if }\hat{a}=a^{*}\\

-\beta,&\text{otherwise}\end{cases}\vskip-5.0pt

$$

where $\alpha,\beta>0$ and $\beta>\alpha$ . This asymmetric design ensures stable learning by reinforcing exploration of correct alternate paths. We use $\alpha=0.1,\beta=1$ in accordance with investigations by (Zhu et al., 2025).

Path Alignment Reward (KG-grounded): The primary technical innovation is $R_{path}$ , which provides automated and scalable process supervision by evaluating whether the reasoning trace of the model aligns with the ground-truth KG path $P$ during RL post-training. We first tokenize and normalize the reasoning trace $r$ to extract a set of textual tokens, $T(r)$ . We derive a corresponding set of path tokens, $T(P)$ , from the ground-truth path $P$ , representing the entities in $P$ . The core signal is path coverage:

$$

\vskip-5.0pt\text{coverage}(r,P)=\frac{\mid T(r)\cap T(P)\mid}{\mid T(P)\mid}\vskip-5.0pt

$$

We include a minimum-hit constraint that requires alignment with at least two distinct path entities to discourage trivial matches and promote logical composition. We apply an additional repetition penalty to reduce reward hacking and avoid linguistic collapse. The final reward is defined as:

$$

\vskip-5.0pt\begin{split}R_{path}(r,P)=&\min(\gamma_{1}\cdot\text{coverage}(r,P)\\

&+\gamma_{2}\cdot\mathbf{I}(|T(r)\cap T(P)|\geq 2),R_{max}),\end{split}\vskip-5.0pt

$$

scaled by a repetition penalty factor, $\phi_{rep}$ , and clipped to a fixed maximum. We use $\gamma_{1}=1.2,\gamma_{2}=0.3$ , $R_{max}=1.5$ .

The total reward, $R_{total}$ , is process-level, grounded, and compositional. It is readily scalable and easily verifiable as a result of the grounding in the KG. Furthermore, unlike similarity-based distillation or AI-based rubric rewards Gunjal et al. (2025), alignment is scored against true domain structure rather than stylistic mimicry. We present a formal formulation of $R_{sim}$ and $R_{think}$ in Appendix C.

### 4.5 Scaling and Benchmarking

We evaluate our pipeline on the Qwen3 8B model before scaling the findings to the 14B variant without modification. The 14B model trained on our pipeline not only generalizes to 2- and 3-hop tasks, but also to 4-and 5-hop tasks with remarkable efficacy, surpassing much larger frontier models. In addition to overall accuracy, we stratify performance by hop length, difficulty level, ICD-10 category, and robustness under the option-shuffling stress test.

## 5 Results

Setup: We initially evaluate three systems: Base Qwen3 14B model, model trained using LoRA on the full training set (24,660 QA tasks), and our proposed SFT+RL pipeline (SFT on 19,660 tasks followed by GRPO on the remaining 5k tasks) based on leveraging the KG-derived reward. We do not report results for models trained using the Zero-RL approach, described in Section 4.2, since all of them performed worse than SFT on the full dataset (see Appendix A for details). All evaluations use the held-out test ICD-Bench test set (3,675 tasks) to validate whether path-derived signals truly enable compositional reasoning. Finally, we evaluate our SFT+RL model against larger frontier and reasoning models. See Appendix F for sample model responses of our final Qwen3 14B SFT+RL model.

### 5.1 Scaling Composition: From Short-Hop Training to Long-Hop Reasoning

The primary claim of this work is that KGs function as implicit reward models, and grounding the model reasoning in path-derived signals enables it to learn the underlying logic of composition rather than regurgitating information seen during training.

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Line Chart: Accuracy vs. Reasoning Hops

### Overview

This line chart depicts the relationship between the number of reasoning hops and the accuracy of three different models: a Base Model, an SFT (Supervised Fine-Tuning) Only model, and an SFT+RL (Reinforcement Learning) model. The chart illustrates how accuracy changes as the complexity of reasoning increases, indicated by the number of reasoning hops. A shaded region in the upper-right corner highlights the "Generalization (unseen complexity)" and a corresponding accuracy increase of +11.1%.

### Components/Axes

* **X-axis:** Number of Reasoning Hops (ranging from 2 to 5).

* **Y-axis:** Accuracy (%) (ranging from 60% to 95%).

* **Data Series:**

* Base Model (Blue, dashed circle line)

* SFT Only (Magenta, dashed square line)

* SFT+RL (Orange, dashed diamond line)

* **Legend:** Located in the bottom-right corner, clearly labeling each data series with its corresponding color and marker.

* **Annotation:** "Generalization (unseen complexity)" with "+11.1%" positioned in the top-right corner, indicating an accuracy improvement.

### Detailed Analysis

* **Base Model (Blue):** The line starts at approximately 69% accuracy at 2 reasoning hops, decreases to around 64% at 3 hops, and then gradually increases to approximately 70% at 5 hops. The trend is generally flat with a slight dip in the middle.

* **SFT Only (Magenta):** The line begins at approximately 76% accuracy at 2 reasoning hops, decreases to around 74% at 3 hops, increases to approximately 80% at 4 hops, and then slightly decreases to around 79% at 5 hops. This line shows a more pronounced increase between 3 and 4 hops.

* **SFT+RL (Orange):** The line starts at approximately 85% accuracy at 2 reasoning hops, decreases to around 81% at 3 hops, and then increases sharply to approximately 87% at 4 hops, and then slightly decreases to around 86% at 5 hops. This line consistently demonstrates the highest accuracy across all reasoning hops.

**Specific Data Points (approximate):**

| Reasoning Hops | Base Model (%) | SFT Only (%) | SFT+RL (%) |

|---|---|---|---|

| 2 | 69 | 76 | 85 |

| 3 | 64 | 74 | 81 |

| 4 | 68 | 80 | 87 |

| 5 | 70 | 79 | 86 |

### Key Observations

* The SFT+RL model consistently outperforms both the Base Model and the SFT Only model across all reasoning hops.

* The Base Model exhibits the lowest accuracy and the most fluctuating performance.

* All models show a dip in accuracy at 3 reasoning hops, potentially indicating a point of increased complexity.

* The largest performance gain for the SFT+RL model occurs between 3 and 4 reasoning hops.

* The annotation highlights a significant generalization improvement of +11.1% at 5 reasoning hops, specifically related to unseen complexity.

### Interpretation

The data suggests that incorporating Reinforcement Learning (RL) into Supervised Fine-Tuning (SFT) significantly improves the model's ability to handle complex reasoning tasks. The SFT+RL model demonstrates a clear advantage in accuracy, particularly as the number of reasoning hops increases, indicating a better capacity for generalization to unseen complexities. The dip in accuracy at 3 reasoning hops for all models could represent a threshold where the reasoning process becomes more challenging, requiring more sophisticated learning techniques. The +11.1% generalization improvement at 5 hops further emphasizes the benefits of the SFT+RL approach for tackling complex, real-world problems. The Base Model's lower performance suggests that simply scaling up the model size or training data may not be sufficient to achieve high accuracy in complex reasoning scenarios; targeted fine-tuning and reinforcement learning are crucial.

</details>

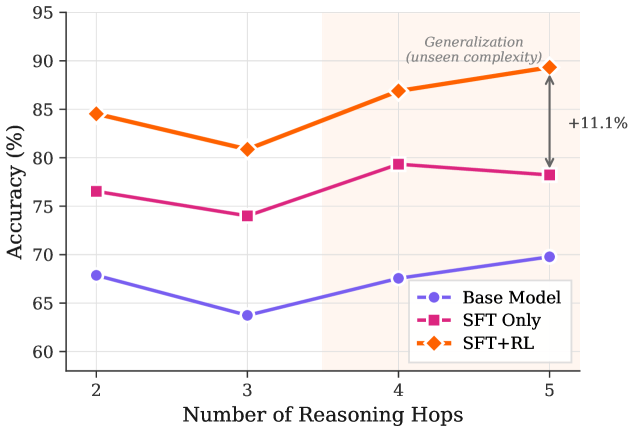

Figure 3: Accuracy by Hop Length: Our SFT+RL model not only outperforms baselines on 2-3 hop tasks but exhibits a positive performance gradient on unseen 4-, 5-hop reasoning tasks, validating the “compositional bridge” enabled by path-aligned rewards.

Path-derived Signals Enable Compositional Reasoning: Whereas the model was exposed to 1-, 2-, and 3-hop paths during the SFT+RL training phase, it remained totally naive to tasks involving 4-, 5-hop reasoning. As shown in Fig. 3, the SFT+RL model demonstrates substantially stronger generalization to longer paths, achieving a notable gain of 7.5% on unseen 4-hop and 11.1% on unseen 5-hop questions relative to the SFT-only approach. This improvement is not attributable to exposure to longer chains, since the training and evaluation distributions are identical across all models. Instead, it reflects the effect of path-derived signals introduced during RL. By rewarding assertions that align the model directly with the ground-truth KG path, the model learns the logic of composition. Importantly, the generalization gap between the SFT-only and SFT+RL approaches widens as hop-length increases. This is a hallmark of genuine compositional learning. The KG-derived reward signal $(R_{path})$ enables the model to decompose long-horizon reasoning into verifiable steps and compose reasoning beyond the complexity observed during training.

### 5.2 Robustness to Tasks Involving Reasoning Depth

A significant challenge in medical reasoning is maintaining integrity as the complexity of tasks increases. Aggregate performance metrics often obscure model failure on long-tail high-difficulty scenarios, where deep reasoning is paramount. We assign difficulty ratings from 1 (very easy) to 5 (very hard) Dedhia et al. (2025) to each ICD-Bench question and assess model performance as a function of question difficulty.

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Bar Chart: Accuracy vs. Difficulty Level for Different Models

### Overview

This bar chart compares the accuracy of three different models – Base Model, SFT Only, and SFT+RL – across five difficulty levels: (Very Easy), (Easy), (Medium), (Hard), and (Very Hard). Accuracy is measured as a percentage, ranging from 0% to 100%.

### Components/Axes

* **X-axis:** Difficulty Level, with categories: 1 (Very Easy), 2 (Easy), 3 (Medium), 4 (Hard), 5 (Very Hard).

* **Y-axis:** Accuracy (%), ranging from 0 to 100.

* **Legend:** Located in the top-left corner, identifying the three data series:

* Base Model (Purple)

* SFT Only (Magenta/Pink)

* SFT+RL (Orange)

### Detailed Analysis

The chart consists of five groups of three bars, one for each model at each difficulty level.

**Difficulty Level 1 (Very Easy):**

* Base Model: Approximately 84% accuracy.

* SFT Only: Approximately 88% accuracy.

* SFT+RL: Approximately 94% accuracy.

**Difficulty Level 2 (Easy):**

* Base Model: Approximately 58% accuracy.

* SFT Only: Approximately 76% accuracy.

* SFT+RL: Approximately 82% accuracy.

**Difficulty Level 3 (Medium):**

* Base Model: Approximately 48% accuracy.

* SFT Only: Approximately 69% accuracy.

* SFT+RL: Approximately 76% accuracy.

**Difficulty Level 4 (Hard):**

* Base Model: Approximately 40% accuracy.

* SFT Only: Approximately 65% accuracy.

* SFT+RL: Approximately 68% accuracy.

**Difficulty Level 5 (Very Hard):**

* Base Model: Approximately 30% accuracy.

* SFT Only: Approximately 52% accuracy.

* SFT+RL: Approximately 62% accuracy.

**Trends:**

* **Base Model:** Accuracy decreases consistently as difficulty level increases.

* **SFT Only:** Accuracy decreases as difficulty level increases, but at a slower rate than the Base Model.

* **SFT+RL:** Accuracy decreases as difficulty level increases, but generally maintains the highest accuracy across all difficulty levels.

### Key Observations

* SFT+RL consistently outperforms both the Base Model and SFT Only across all difficulty levels.

* The Base Model exhibits the most significant drop in accuracy as difficulty increases.

* The difference in accuracy between the models is most pronounced at higher difficulty levels.

* All models show a clear negative correlation between difficulty level and accuracy.

### Interpretation

The data suggests that incorporating Reinforcement Learning (RL) with Supervised Fine-Tuning (SFT) significantly improves model performance, particularly on more challenging tasks. The Base Model, lacking fine-tuning, struggles with increasing difficulty, indicating the importance of adapting the model to specific task complexities. The SFT Only model shows improvement over the Base Model, demonstrating the benefit of supervised learning. However, the SFT+RL model's consistent superiority highlights the added value of reinforcement learning in optimizing performance. The decreasing accuracy across all models with increasing difficulty is expected, as tasks become inherently more complex and require more sophisticated reasoning and problem-solving capabilities. The gap between the models widens at higher difficulty levels, suggesting that RL is particularly effective in tackling complex challenges. This data supports the idea that a combination of supervised and reinforcement learning techniques is crucial for building robust and adaptable models.

</details>

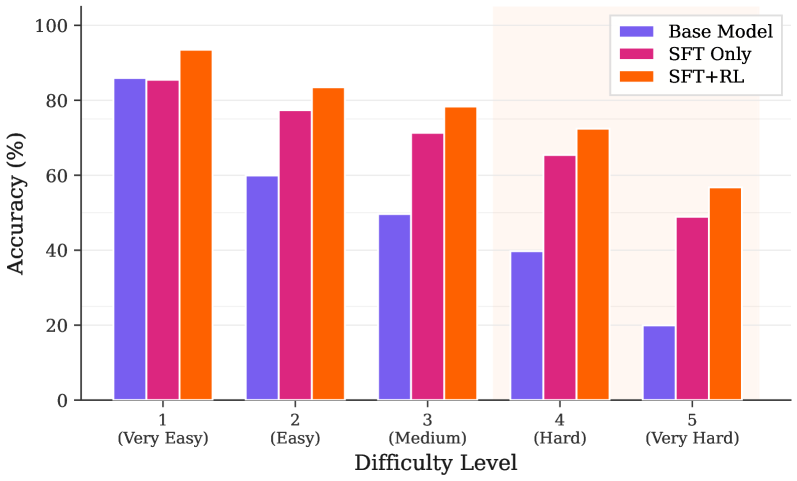

Figure 4: Accuracy by Difficulty Level: Whereas the Base Model’s reasoning collapses as task complexity increases, the SFT+RL pipeline exhibits robustness, maintaining a consistent lead over the SFT-only baseline across all levels.

Dominance in High-Complexity Tasks: The results shown in Fig. 4 demonstrate that the SFT+RL pipeline, grounded in path-derived signals, provides the most significant gains as task complexity increases. On Level-5 tasks, the base model accuracy collapses to 19.94%, indicating worse-than-random-guess accuracy in complex clinical scenarios. Whereas the SFT-only approach improves this to 48.93%, our SFT+RL model achieves 56.75%, nearly tripling the base model performance.

Consistency across all Difficulty Levels: On Level-1 tasks, our model reaches a near-ceiling accuracy (93.49%) and the performance gap remains robust across difficulty levels, with the SFT+RL model consistently maintaining a 7-10% lead over the SFT-only model. This demonstrates that by using the KG as an implicit reward model, we raise the performance floor for the most complex queries. On Level-5 tasks, where even large frontier models struggle, maintaining over 56% accuracy is a testament to how path-aligned rewards do more than just stylistic inference; they enable the model to reliably compose multi-step chains.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bar Chart: Improvement over Base Model by Medical Specialty

### Overview

This is a horizontal bar chart comparing the improvement over a base model for two different approaches: "SFT Only" and "SFT+RL (Ours)", across various medical specialties. The improvement is measured in percentage (%). The chart displays the performance gain for each specialty using two distinct colored bars.

### Components/Axes

* **Y-axis (Vertical):** Lists medical specialties: Ear, Congenital, Neoplasms, Circulatory, Pharmacology, Eye, Musculoskeletal, Blood/Immune, Infectious, Respiratory, Skin, Endocrine, Digestive, Nervous, Mental Health.

* **X-axis (Horizontal):** Represents the "Improvement over Base Model (%)", ranging from 0 to 25.

* **Legend (Bottom-Right):**

* Magenta/Purple bar: "SFT Only"

* Orange bar: "SFT+RL (Ours)"

### Detailed Analysis

The chart consists of 15 horizontal bars, grouped by medical specialty. For each specialty, there are two bars representing the improvement achieved by "SFT Only" and "SFT+RL (Ours)".

Here's a breakdown of the approximate improvement percentages for each specialty:

* **Ear:** SFT Only ~14%, SFT+RL ~22%

* **Congenital:** SFT Only ~11%, SFT+RL ~19%

* **Neoplasms:** SFT Only ~12%, SFT+RL ~21%

* **Circulatory:** SFT Only ~8%, SFT+RL ~23%

* **Pharmacology:** SFT Only ~7%, SFT+RL ~16%

* **Eye:** SFT Only ~10%, SFT+RL ~18%

* **Musculoskeletal:** SFT Only ~12%, SFT+RL ~17%

* **Blood/Immune:** SFT Only ~10%, SFT+RL ~19%

* **Infectious:** SFT Only ~8%, SFT+RL ~13%

* **Respiratory:** SFT Only ~9%, SFT+RL ~16%

* **Skin:** SFT Only ~8%, SFT+RL ~18%

* **Endocrine:** SFT Only ~9%, SFT+RL ~17%

* **Digestive:** SFT Only ~7%, SFT+RL ~15%

* **Nervous:** SFT Only ~8%, SFT+RL ~16%

* **Mental Health:** SFT Only ~5%, SFT+RL ~12%

**Trends:**

* The "SFT+RL (Ours)" consistently outperforms "SFT Only" across all medical specialties.

* The orange bars (SFT+RL) generally slope upwards, indicating a positive correlation between specialty and improvement.

* The magenta bars (SFT Only) also show a general upward trend, but less pronounced than the orange bars.

### Key Observations

* The largest improvement difference between the two methods is observed in the "Circulatory" specialty, with SFT+RL achieving approximately 23% improvement compared to SFT Only's 8%.

* The smallest improvement difference is in "Mental Health", with SFT+RL at 12% and SFT Only at 5%.

* "SFT+RL (Ours)" consistently provides a substantial improvement over "SFT Only" in all categories, suggesting the reinforcement learning component is beneficial.

### Interpretation

The data suggests that incorporating Reinforcement Learning (RL) with Supervised Fine-Tuning (SFT) significantly enhances model performance across a diverse range of medical specialties. The consistent outperformance of "SFT+RL (Ours)" indicates that RL effectively refines the model's capabilities beyond what can be achieved through SFT alone. The varying degrees of improvement across specialties might reflect the complexity of each field or the availability of relevant training data. The largest gains in "Circulatory" could indicate that this specialty benefits most from the RL component, potentially due to the intricate relationships within the circulatory system. The relatively smaller gain in "Mental Health" might suggest that this area is already well-represented in the base model or that the RL component has limited impact due to the subjective nature of the data. Overall, the chart demonstrates the potential of RL to improve the accuracy and effectiveness of models in medical applications.

</details>

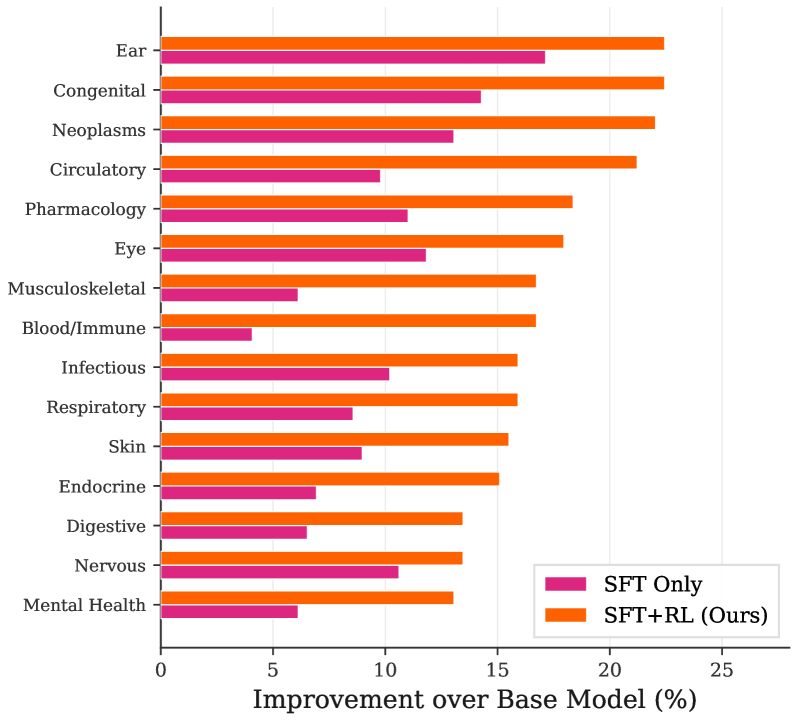

Figure 5: Accuracy by ICD-10 Category: Path-aligned rewards consistently improve performance across all 15 medical sub-domains.

### 5.3 ICD-10 Category Analysis: KG-grounded Gains Are Broadly Distributed

To connect our empirical evaluation to real-world, clinically meaningful structures, we follow the categorization introduced in Dedhia et al. (2025) that groups ICD-Bench questions into 15 ICD-10 categories in the data curation step. This classification lets us evaluate the breadth of our approach, enabling us to measure whether improvements of the SFT+RL model are concentrated in a few medical sub-domains or distributed across practical specialties.

The analysis, visualized in Fig. 5, reveals that our SFT+RL pipeline consistently achieves the highest accuracy and maintains a substantial lead over the SFT-only baseline across all medical categories. Some of the most significant improvements occur in high-stakes areas such as “Blood and Immune System Diseases” and “Circulatory System Diseases,” where diagnostic reasoning often requires complex, multi-hop evidence composition. This is consistent with our design choice to derive the reward from KG paths; the path-alignment reward can assign a meaningful intermediate signal and better shape compositional behavior.

### 5.4 Robustness to Format Perturbation

A common failure mode for LLMs is reliance on superficial cues, e.g., the order of options and answer in a multiple-choice list, rather than the true logical content and chain of reasoning. To evaluate the robustness of our models against such positional bias, we subject them to Stress-Test 3 (option shuffling) Gu et al. (2025). In this test, the order of incorrect distractor options is randomized while keeping the correct answer choice constant.

Table 1: Analysis of Option Format Perturbation.

| SFT-Only | 75.95% | 74.91% | $-1.04\$ |

| --- | --- | --- | --- |

| SFT+RL (Ours) | 83.62% | 82.45% | $-1.17\$ |

The results in Table 1 demonstrate a remarkable degree of robustness of our approach compared to frontier models analyzed in the literature. Whereas leading systems, such as GPT-5 and Gemini-2.5 Pro, have been shown to suffer performance drops of 4-6% under similar perturbations (in text-only medical contexts) Gu et al. (2025), our models maintain nearly stable performance with a negligible drop of $\sim 1\$ .

Importance of High-Quality Data: This resilience highlights a fundamental axiom of machine learning: use of high-quality, grounded data is paramount. Our data curation and training pipeline incentivizes the model to identify the correct answer based on a verifiable reasoning path rather than shortcut patterns seen during unstructured training. The fact that even the SFT-only model trained on high-quality traces exhibits such stability suggests that grounding the model in structured domain axiomatic knowledge is as critical as subsequent RL optimization. By grounding the model and ensuring it learns true logical composition, we move closer to systems capable of genuine domain competence rather than “illusion of readiness.”

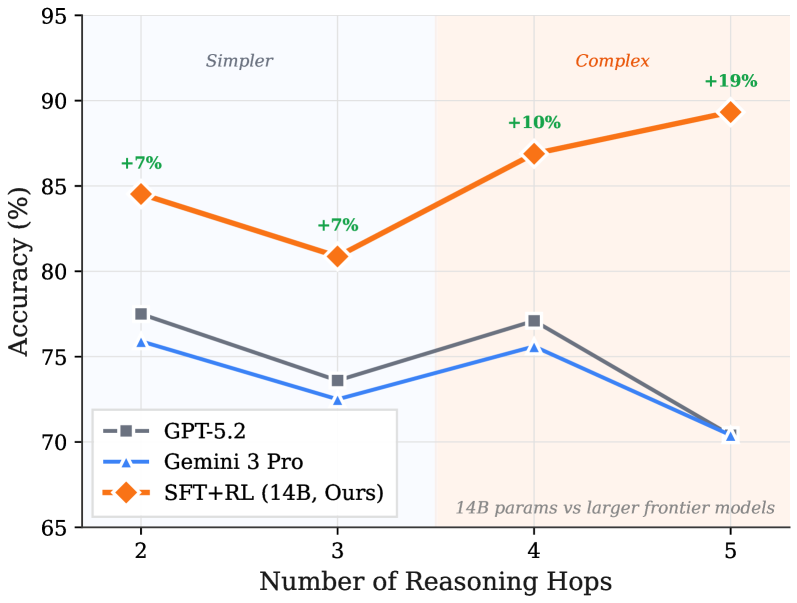

### 5.5 Algorithmic Efficiency vs. Scale: Surpassing Frontier Models

One of our central claims is that careful reward design and bottom-up data curation can enable compositional reasoning that outperforms top-down brute-force scaling. To validate this, we compare our 14B SFT+RL model against two distinct classes of benchmarks: (1) large frontier models (GPT-5.2, Gemini 3 Pro) that represent the ceiling of generalist zero-shot reasoning, and (2) QwQ-Med-3 (32B), a domain-expert model distilled for medical reasoning.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Reasoning Hops for Language Models

### Overview

This line chart compares the accuracy of three language models – GPT-5.2, Gemini 3 Pro, and SFT+RL (14B, Ours) – as the number of reasoning hops increases. The chart highlights a performance difference between a smaller model (SFT+RL) and larger frontier models (GPT-5.2 and Gemini 3 Pro) as the complexity of the reasoning task increases. The x-axis represents the number of reasoning hops, and the y-axis represents the accuracy in percentage. The chart is divided into two regions labeled "Simpler" and "Complex" based on the number of reasoning hops.

### Components/Axes

* **X-axis:** Number of Reasoning Hops (Scale: 2, 3, 4, 5)

* **Y-axis:** Accuracy (%) (Scale: 65, 70, 75, 80, 85, 90, 95)

* **Legend:**

* GPT-5.2 (Grey dashed line)

* Gemini 3 Pro (Blue dashed line)

* SFT+RL (14B, Ours) (Orange solid line)

* **Regions:**

* "Simpler" (Reasoning Hops 2 & 3) - Light orange background

* "Complex" (Reasoning Hops 4 & 5) - Light green background

* **Annotation:** "14B params vs larger frontier models" (Bottom-right corner)

* **Percentage Change Annotations:** "+7%", "+7%", "+10%", "+19%" (placed near the SFT+RL data points)

### Detailed Analysis

* **GPT-5.2 (Grey dashed line):** The line starts at approximately 76% accuracy at 2 reasoning hops, decreases slightly to around 74% at 3 hops, increases to approximately 76% at 4 hops, and then drops sharply to around 70% at 5 hops.

* **Gemini 3 Pro (Blue dashed line):** The line begins at approximately 73% accuracy at 2 reasoning hops, decreases to around 72% at 3 hops, remains relatively stable at approximately 73% at 4 hops, and then declines to around 69% at 5 hops.

* **SFT+RL (14B, Ours) (Orange solid line):** The line starts at approximately 84% accuracy at 2 reasoning hops, decreases to around 81% at 3 hops, increases significantly to approximately 87% at 4 hops, and continues to increase to approximately 91% at 5 hops. The percentage changes are annotated as +7% (from 2 to 3 hops), +7% (from 3 to 4 hops), +10% (from 4 to 5 hops), and +19% (overall from 2 to 5 hops).

### Key Observations

* SFT+RL (14B) consistently outperforms GPT-5.2 and Gemini 3 Pro across all reasoning hop levels.

* The performance gap between SFT+RL and the other models widens as the number of reasoning hops increases, particularly in the "Complex" region (4 and 5 hops).

* GPT-5.2 and Gemini 3 Pro exhibit a decreasing trend in accuracy as the number of reasoning hops increases, suggesting they struggle with more complex reasoning tasks.

* SFT+RL demonstrates an increasing trend in accuracy with more reasoning hops, indicating its ability to handle more complex tasks effectively.

### Interpretation

The data suggests that the SFT+RL (14B) model, despite being smaller in parameter size compared to GPT-5.2 and Gemini 3 Pro, is more robust and effective at handling complex reasoning tasks. The increasing accuracy of SFT+RL with more reasoning hops indicates that it benefits from deeper reasoning chains, while the larger models appear to be hindered by increased complexity. This could be due to differences in training data, model architecture, or optimization techniques. The annotation "14B params vs larger frontier models" explicitly highlights this comparison. The division into "Simpler" and "Complex" regions suggests a transition point where the benefits of the SFT+RL model become more pronounced. The consistent decline in performance for the larger models as reasoning hops increase could indicate issues with error propagation or difficulty maintaining coherence over longer reasoning chains. The +19% overall improvement for SFT+RL is a significant finding, demonstrating its potential as a competitive alternative to larger language models for reasoning-intensive tasks.

</details>

Figure 6: Accuracy Comparisons against Frontier Models by Hop Level: Whereas the accuracy of generalist giants decays on longer chains, our 14B SFT+RL model achieves its highest accuracy on unseen 5-hop queries, validating the KG as a superior supervisor for complex composition.

Superiority over Frontier Models: We evaluate the zero-shot performance of the model against leading generalist models in Fig. 6. Our results demonstrate that grounding a smaller model in a domain’s axioms enables it to surpass larger, generally trained giants at complex reasoning tasks. We also note a striking trend: Whereas GPT-5.2 and Gemini 3 Pro maintain respectable performance on shorter hops, their accuracy stagnates or declines as the hop count increases. In contrast, our model exhibits a positive compositional gradient and achieves its highest accuracy (89.33%) on the 5-hop queries. This supports our claim that KG-grounded path-derived signals teach the model how to compose axioms, not just standard pattern matching.

Surpassing Expert-distilled Scale (SFT-Only): Finally, we compare our model with the QwQ-Med-3 model presented in Dedhia et al. (2025), which was trained on a similar data distribution. For a fair comparison, we use the majority-voting ( $n=16$ ) metric, matching the aggregation metric used in their study (see Table 2).

Table 2: Performance by difficulty level (using majority voting).

| 1 | 96.75% | 94.23% | $-2.52\$ |

| --- | --- | --- | --- |

| 2 | 83.79% | 85.63% | $+1.84\$ |

| 3 | 79.33% | 80.33% | $+1.00\$ |

| 4 | 70.56% | 71.50% | $+0.94\$ |

| 5 | 49.69% | 59.05% | $+9.36\$ |

Whereas the QwQ-Med-3 model holds a slight advantage on lower-difficulty tasks, which typically rely on factual recall, our model successfully bridges the recall-reasoning gap. By using the KG as an implicit reward model, we enable a smaller architecture to “out-reason” a much larger model. This confirms our premise that while scale is a powerful tool for breadth of knowledge, path-aligned rewards are the true bridge to deep compositional reasoning.

## 6 Discussion

The ideas and results presented in this work highlight a fundamental shift in how we approach the development of expert-level reasoning. By using a KG as an implicit reward model, we demonstrate how bottom-up primitives in a domain can serve as highly scalable, verifiable process supervisors. Unlike contemporary human-in-the-loop process supervision, which is prohibitively expensive and infeasible to scale to millions of reasoning chains across domains, our path-derived reward signal provides an automated, scalable axiomatic grounding mechanism.

Furthermore, our findings reinforce the basic axiom of machine learning: Good data are paramount! We show that when models are trained with proper axiomatic grounding, they develop a robust skill for compositional reasoning beyond simple factual recall. This is most evident by our model’s ability to surpass much larger generalist giants. While brute-force scaling continues to dominate the search for general intelligence, our work suggests a more efficient path towards building superintelligent systems: building small, specialized models that master composition within their respective domains.

Finally, our reward design mechanism is inherently scalable and domain-agnostic. Any scientific or technical field that can be represented as a structured KG (from organic chemistry to case law) is a candidate for this pipeline. As domain KGs continue to expand in coverage and fidelity, they offer a practical route to building systems that reason from first principles rather than surface correlations or simple pattern matching. We posit this work as an early step toward scalable, verifiable domain-specific superintelligence, and we encourage future research to explore richer graph structures, broader domains, and tighter integration between symbolic knowledge and neural architectures to build better reasoning systems.

## 7 Conclusion

We introduced a simple, general idea: Treat KGs as implicit reward models and use path-derived rewards in a scalable fashion to teach models how to compose domain primitives into longer reasoning chains. We combined SFT with a compact RL stage and a KG path-aligned reward (plus correctness) to synthesize models that can generalize from 1-3-hop training to unseen 4-5-hop problems, improve baseline accuracy on the hardest tasks, and remain robust under format perturbations. Our recipe yields consistent gains over an SFT-only approach and can outperform much larger models, validating that good grounded data and reward design, not just scale, are central to compositional reasoning. Although we have successfully validated our claims within the medical domain, we acknowledge that this is only a starting point. We encourage the community to further investigate how structured KGs can be leveraged as reward supervisors to build the next generation of superintelligent systems.

## Acknowledgments

The experiments reported in this paper were performed on the computational resources managed and supported by Princeton Research Computing, Princeton AI Lab, and the Princeton Language and Intelligence Initiative at Princeton University.

## References

- Anthropic (2025) Anthropic. Claude Opus 4.5 System Card Technical Report, 2025. URL https://assets.anthropic.com/m/64823ba7485345a7/Claude-Opus-4-5-System-Card.pdf.

- Bodenreider (2004) Bodenreider, O. The Unified Medical Language System (UMLS): Integrating Biomedical Terminology. Nucleic acids research, 32:D267–D270, 2004.

- Chu et al. (2025) Chu, T., Zhai, Y., Yang, J., Tong, S., Xie, S., Schuurmans, D., Le, Q. V., Levine, S., and Ma, Y. SFT Memorizes, RL Generalizes: A Comparative Study of Foundation Model Post-Training. arXiv preprint arXiv:2501.17161, 2025.

- Cui et al. (2025) Cui, G., Yuan, L., Wang, Z., Wang, H., Li, W., He, B., Fan, Y., Yu, T., Xu, Q., Chen, W., Yuan, J., Chen, H., Zhang, K., Lv, X., Wang, S., Yao, Y., Han, X., Peng, H., Cheng, Y., Liu, Z., Sun, M., Zhou, B., and Ding, N. Process Reinforcement Through Implicit Rewards. arXiv preprint arXiv:2502.01456, 2025.

- Damani et al. (2025) Damani, M., Puri, I., Slocum, S., Shenfeld, I., Choshen, L., Kim, Y., and Andreas, J. Beyond Binary Rewards: Training LMs to Reason About Their Uncertainty. arXiv preprint arXiv:2507.16806, 2025.

- Das et al. (2017) Das, R., Dhuliawala, S., Zaheer, M., Vilnis, L., Durugkar, I., Krishnamurthy, A., Smola, A., and McCallum, A. Go for a Walk and Arrive at the Answer: Reasoning Over Paths in Knowledge Bases Using Reinforcement Learning. arXiv preprint arXiv:1711.05851, 2017.

- Dedhia et al. (2025) Dedhia, B., Kansal, Y., and Jha, N. K. Bottom-up Domain-specific Superintelligence: A Reliable Knowledge Graph is What We Need. arXiv preprint arXiv:2507.13966, 7 2025.

- Fodor (1975) Fodor, J. A. The Language of Thought, volume 5. Harvard University Press, 1975.

- Google DeepMind (2025) Google DeepMind. Gemini 3 Pro Model Card, November 2025. URL https://storage.googleapis.com/deepmind-media/Model-Cards/Gemini-3-Pro-Model-Card.pdf. Technical Report.

- Gu et al. (2025) Gu, Y., Fu, J., Liu, X., Valanarasu, J. M. J., Codella, N. C., Tan, R., Liu, Q., Jin, Y., Zhang, S., Wang, J., et al. The Illusion of Readiness in Health AI. arXiv preprint arXiv:2509.18234, 2025.

- Gunjal et al. (2025) Gunjal, A., Wang, A., Lau, E., Nath, V., Liu, B., and Hendryx, S. Rubrics as Rewards: Reinforcement Learning Beyond Verifiable Domains. arXiv preprint arXiv:2507.17746, 2025.

- Guo et al. (2025) Guo, D., Yang, D., Zhang, H., Song, J., Zhang, R., Xu, R., Zhu, Q., Ma, S., Wang, P., Bi, X., et al. Deepseek-R1: Incentivizing Reasoning Capability in LLMs Via Reinforcement Learning. arXiv preprint arXiv:2501.12948, 2025.

- Hu et al. (2022) Hu, E. J., Shen, Y., Wallis, P., Allen-Zhu, Z., Li, Y., Wang, S., Wang, L., Chen, W., et al. LoRA: Low-Rank Adaptation of Large Language Models. In Proceedings of International Conference on Learning Representations, 1(2):3, 2022.

- Jin et al. (2025) Jin, H., Luan, S., Lyu, S., Rabusseau, G., Rabbany, R., Precup, D., and Hamdaqa, M. RL Fine-Tuning Heals OOD Forgetting In SFT. arXiv preprint arXiv:2509.12235, 2025.

- Kamp & Partee (1995) Kamp, H. and Partee, B. Prototype Theory and Compositionality. Cognition, 57(2):129–191, 1995.

- Kang et al. (2025) Kang, F., Kuchnik, M., Padthe, K., Vlastelica, M., Jia, R., Wu, C.-J., and Ardalani, N. Quagmires in SFT-RL Post-Training: When High SFT Scores Mislead and What to Use Instead. arXiv preprint arXiv:2510.01624, 2025.

- Khatwani et al. (2025) Khatwani, S., Cheng, H., Afshar, M., Dligach, D., and Gao, Y. Brittleness and Promise: Knowledge Graph Based Reward Modeling for Diagnostic Reasoning. arXiv preprint arXiv:2509.18316, 2025.

- Kim et al. (2025) Kim, Y., Jeong, H., Chen, S., Li, S. S., Lu, M., Alhamoud, K., Mun, J., Grau, C., Jung, M., Gameiro, R., Fan, L., Park, E., Lin, T., Yoon, J., Yoon, W., Sap, M., Tsvetkov, Y., Liang, P., Xu, X., Liu, X., McDuff, D., Lee, H., Park, H. W., Tulebaev, S., and Breazeal, C. Medical Hallucination in Foundation Models and Their Impact on Healthcare. arXiv preprint arXiv:2503.05777, 2025.

- Lightman et al. (2023) Lightman, H., Kosaraju, V., Burda, Y., Edwards, H., Baker, B., Lee, T., Leike, J., Schulman, J., Sutskever, I., and Cobbe, K. Let’s Verify Step by Step. arXiv preprint arXiv:2305.20050, 2023.

- Lin et al. (2018) Lin, X. V., Socher, R., and Xiong, C. Multi-Hop Knowledge Graph Reasoning With Reward Shaping. arXiv preprint arXiv:1808.10568, 2018.

- Matsutani et al. (2025) Matsutani, K., Takashiro, S., Minegishi, G., Kojima, T., Iwasawa, Y., and Matsuo, Y. RL Squeezes, SFT Expands: A Comparative Study of Reasoning LLMs. arXiv preprint arXiv:2509.21128, 2025.

- Muennighoff et al. (2025) Muennighoff, N., Yang, Z., Shi, W., Li, X. L., Fei-Fei, L., Hajishirzi, H., Zettlemoyer, L., Liang, P., Candès, E., and Hashimoto, T. B. s1: Simple Test-Time Scaling. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, pp. 20286–20332, 2025.

- OpenAI (2025) OpenAI. Introducing GPT-5.2. https://openai.com/index/introducing-gpt-5-2/, 2025. Accessed: 2026.

- Organization (1992) Organization, W. H. International Statistical Classification of Diseases and Related Health Problems 10th Revision (ICD-10). World Health Organization, 1992.

- Ouyang et al. (2022) Ouyang, L., Wu, J., Jiang, X., Almeida, D., Wainwright, C., Mishkin, P., Zhang, C., Agarwal, S., Slama, K., Ray, A., et al. Training Language Models to Follow Instructions with Human Feedback. Advances in Neural Information Processing Systems, 35:27730–27744, 2022.

- Rafailov et al. (2023) Rafailov, R., Sharma, A., Mitchell, E., Ermon, S., Manning, C. D., and Finn, C. Direct Preference Optimization: Your Language Model is Secretly a Reward Model. Advances in Neural Information Processing Systems, 36, 2023.

- Rajani et al. (2025) Rajani, N., Gema, A. P., Goldfarb-Tarrant, S., and Titov, I. Scalpel vs. Hammer: GRPO Amplifies Existing Capabilities, SFT Replaces Them. arXiv preprint, 2025. Preprint.

- Schulman et al. (2017) Schulman, J., Wolski, F., Dhariwal, P., Radford, A., and Klimov, O. Proximal Policy Optimization Algorithms. arXiv preprint arXiv:1707.06347, 2017.

- Shrivastava et al. (2025) Shrivastava, V., Awadallah, A., Balachandran, V., Garg, S., Behl, H., and Papailiopoulos, D. Sample More to Think Less: Group Filtered Policy Optimization for Concise Reasoning. arXiv preprint arXiv:2508.09726, 2025.

- Wang et al. (2025) Wang, G., Li, J., Sun, Y., Chen, X., Liu, C., Wu, Y., Lu, M., Song, S., and Abbasi-Yadkori, Y. Hierarchical Reasoning Model. arXiv preprint arXiv:2506.21734, 2025.

- Wang et al. (2024) Wang, J., Chen, M., Hu, B., Yang, D., Liu, Z., Shen, Y., Wei, P., Zhang, Z., Gu, J., Zhou, J., Pan, J. Z., Zhang, W., and Chen, H. Learning to Plan for Retrieval-Augmented Large Language Models from Knowledge Graphs. In Findings of the Association for Computational Linguistics: EMNLP 2024, pp. 7813–7835. Association for Computational Linguistics, 2024.

- Wei et al. (2022) Wei, J., Wang, X., Schuurmans, D., Bosma, M., Xia, F., Chi, E., Le, Q. V., Zhou, D., et al. Chain-of-Thought Prompting Elicits Reasoning in Large Language Models. Advances in Neural Information Processing Systems, 35:24824–24837, 2022.

- Weng (2024) Weng, L. Reward Hacking in Reinforcement Learning. https://lilianweng.github.io/posts/2024-11-28-reward-hacking/, 2024. Lil’Log blog post.

- Xie et al. (2025) Xie, T., Gao, Z., Ren, Q., Luo, H., Hong, Y., Dai, B., Zhou, J., Qiu, K., Wu, Z., and Luo, C. Logic-RL: Unleashing LLM Reasoning with Rule-Based Reinforcement Learning. arXiv preprint arXiv:2502.14768, 2025.

- Xiong et al. (2017) Xiong, W., Hoang, T., and Wang, W. Y. DeepPath: A Reinforcement Learning Method for Knowledge Graph Reasoning. arXiv preprint arXiv:1707.06690, 2017.

- Yan et al. (2025) Yan, L., Tang, C., Guan, Y., Wang, H., Wang, S., Liu, H., Yang, Y., and Jiang, J. RLKGF: Reinforcement Learning from Knowledge Graph Feedback without Human Annotations. In Findings of the Association for Computational Linguistics: ACL 2025, pp. 6619–6633, 2025.

- Yang et al. (2025) Yang, A., Li, A., Yang, B., Zhang, B., Hui, B., Zheng, B., Yu, B., Gao, C., Huang, C., Lv, C., et al. Qwen3 Technical Report. arXiv preprint arXiv:2505.09388, 2025.

- Yasunaga et al. (2021) Yasunaga, M., Ren, H., Bosselut, A., Liang, P., and Leskovec, J. QA-GNN: Reasoning with Language Models and Knowledge Graphs for Question Answering. arXiv preprint arXiv:2104.06378, 2021.

- Yin et al. (2025) Yin, M., Qu, Y., Yang, L., Cong, L., and Wang, M. Toward Scientific Reasoning in LLMs: Training from Expert Discussions via Reinforcement Learning. arXiv preprint arXiv:2505.19501, 2025.

- Yuan et al. (2025) Yuan, L., Chen, W., Zhang, Y., Cui, G., Wang, H., You, Z., Ding, N., Liu, Z., Sun, M., and Peng, H. From $f(x)$ and $g(x)$ to $f(g(x))$ : LLMs Learn New Skills in RL by Composing Old Ones. arXiv preprint arXiv:2509.25123, 2025.

- Yue et al. (2025) Yue, Y., Chen, Z., Lu, R., Zhao, A., Wang, Z., Song, S., and Huang, G. Does Reinforcement Learning Really Incentivize Reasoning Capacity in LLMs Beyond the Base Model? arXiv preprint arXiv:2504.13837, 2025.

- Zhang et al. (2025) Zhang, Z., Zheng, C., Wu, Y., Zhang, B., Lin, R., Yu, B., Liu, D., Zhou, J., and Lin, J. The Lessons of Developing Process Reward Models in Mathematical Reasoning. arXiv preprint arXiv:2501.07301, 2025.

- Zhu et al. (2025) Zhu, X., Xia, M., Wei, Z., Chen, W.-L., Chen, D., and Meng, Y. The Surprising Effectiveness of Negative Reinforcement in LLM Reasoning. arXiv preprint arXiv:2506.01347, 2025.

## Appendix A Zero-RL and Reward Ablation Studies on Qwen3 8B

In this section, we provide the empirical details of our experiments with the “Zero-RL” approach (applying RL directly to the base model without a prior SFT phase). These ablations were instrumental in determining our final training scale, pipeline design, and reward configuration.

### A.1 Zero-RL Performance and Scaling

We evaluate the performance of the Qwen3 8B base model using the GRPO algorithm across three data scales: 5k, 10k, and 24.66k examples. For each scale, we test four reward configurations: (1) path alignment (path overlap), (2) Jaccard similarity (distillation), (3) binary correctness, and (4) all rewards combined (see Table 3). All experiments are performed on the Qwen3 8B model using 8 H100 NVIDIA GPUs.

Note that we always include the binary correctness reward as a minimal signal.

Table 3: Ablation study on reward components across training scales on Qwen3 8B.

| Training Size | Reward | Accuracy | $\sim$ Training Time (hrs) |

| --- | --- | --- | --- |

| 0 | Baseline | 64.98% | – |

| 5k | Path Align. | 67.51% | 12 |

| Jaccard Sim. | 68.03% | 12 | |

| Binary | 69.36% | 12 | |

| 10k | Path Align. | 64.24% | 24 |

| Jaccard Sim. | 65.74% | 24 | |

| Binary | 67.56% | 24 | |

| All Rewards | 68.44% | 24 | |

| 24.66k | Path Align. | 68.41% | 65 |

| Jaccard Sim. | 65.77% | 65 | |

| Binary | 70.18% | 65 | |

| All Rewards | 68.44% | 65 | |

Key Observations:

- SFT Requirement: Whereas Zero-RL provides a marginal improvement over the base model, no configuration reaches the performance levels of the SFT-only baseline (70.86%) or our final SFT+RL model ( $\sim$ 82%). This confirms that the model requires a grounded factual foundation from SFT before it can effectively leverage RL for compositional reasoning. In the final SFT+RL pipeline, path alignment, along with binary correctness (with upweighted negative reward), provides the best and most reliable signal.

- Scale vs. Efficiency: Performance gains from 5k to 24.66k examples are minimal (e.g., a $\sim$ 0.8% gain for binary correctness reward) while significantly increasing training time from 12 to 65 hours. This justifies our decision to set the RL stage to use a high-quality 5k subset of our final pipeline.

- Reward Stability: The binary correctness reward remains the most stable signal in the Zero-SFT setting. The distillation-based rewards are prone to reward hacking and stylistic mimicry without providing superior reasoning abilities.

### A.2 GRPO on LoRA Parameters Only

We also explore a variant of the pipeline where we perform SFT followed by GRPO, with updates limited only to the LoRA modules. This configuration yields an accuracy of 66.75% on the 8B model. The significantly lower performance compared to our full SFT+RL results suggests that compositional reasoning requires more extensive/aggressive weight updates during RL than those afforded by the restricted LoRA-only approach.

## Appendix B SFT+RL Ablation Studies on Qwen3 8B

This study investigates the synergy between the binary reward and path alignment reward, and provides the final missing link by ablating the specific reward components used in the RL stage. Whereas Appendix A focuses on the Zero-RL settings, the following experiments demonstrate the impact of reward designs on the model that has already undergone SFT. We conduct these ablations on the Qwen3 8B model to efficiently identify the optimal configuration for the larger 14B SFT+RL pipeline.

We specifically evaluate the addition of negative reinforcement Zhu et al. (2025) by penalizing the model more for incorrect final answers than correct reasoning paths. As shown in Table 4, the combination of path-derived signals and negative binary reward provides the most robust reasoning improvements, significantly outperforming configurations that rely on binary signals alone or a combination of all rewards.

Table 4: Ablation study on reward components with the SFT+RL training pipeline (8B Model). All RL runs are conducted for 5k steps following a 19.66k SFT baseline.

| 19.66k SFT + 5k RL | Path Alignment Only | 79.29% |

| --- | --- | --- |

| Path Alignment + Binary | 68.03% | |

| Binary Only (Normal) | 79.46% | |

| Negative Binary Only | 79.54% | |

| All Rewards | 55.21% | |

| Path Align. + Negative Binary | 82.20% | |

Key Insights:

- The Synergy of Negative Reinforcement: Consistent with the findings of Zhu et al. (2025), we observe that “Normal” binary rewards (positive reinforcement only) can be unstable when combined with path-aligned rewards. Transitioning to negative binary rewards while rewarding valid intermediate paths yields our highest accuracy of 82.20%.

- The “All Rewards” Failure: Attempting to combine all reward signals (path alignment, Jaccard similarity, normal binary, and thinking quality) leads to a significant performance collapse (55.21%). This suggests that over-optimizing the reward signal can lead to conflicting gradients or reward hacking, where the model fails to optimize for the primary reasoning task.

- Effect of the Path Alignment Signal: Even without outcome-based binary rewards, path alignment only provides a substantial $\sim$ 9% boost over the SFT baseline. This confirms that the KG itself acts as a sufficient implicit reward model to guide the model towards better compositional reasoning.

## Appendix C Additional Reward Function Formulations

In addition to the path-alignment reward, $R_{path}$ , and the binary correctness reward, $R_{bin}$ , we investigate two alternative reward functions for RL experiments: semantic answer similarity (distillation-based reward) ( $R_{sim}$ ) and thinking quality ( $R_{think}$ ). Whereas these functions provided some learning signals, they are ultimately less effective than path-derived rewards in enabling deep compositional reasoning.

### C.1 Semantic Answer Similarity Reward $(R_{sim})$

The semantic answer similarity (or distillation) reward, $R_{sim}$ , is designed to distill the reasoning patterns of the SFT model by rewarding semantic overlap between the model-generated reasoning and the ground truth answer reasoning trace.

The reward is calculated using the Jaccard similarity of normalized token sets:

$$

R_{sim}=\frac{|T_{model}\cap T_{target}|}{|T_{model}\cup T_{target}|}\times\phi_{rep},

$$

where $T_{model}$ is the set of unique tokens from the thinking trace of the model, $T_{target}$ is the token set of the ground truth reasoning text (distilled from a larger LLM), and $\phi_{rep}$ is a repetition penalty factor used to discourage repetitive generation. This function encourages the model to mention the correct clinical entities and mechanisms associated with the target answer, though it does not explicitly verify the logical validity of the connections between those entities.

### C.2 Thinking Quality Reward $(R_{think})$

The thinking quality reward, $R_{think}$ , is designed to encourage the model to produce well-organized, stepwise reasoning. It evaluates the response based on its adherence to logical formatting cues. It is calculated as a weighted sum of three structural components:

- Structure (50%): A binary indicator of whether the reasoning trace exceeds a minimum length threshold (20 characters).

- Step-wise Keywords (30%): A normalized count of logical-thinking indicator words such as “first,” “then,” “therefore,” and “finally.”

- Enumeration (20%): A score based on the presence of numbered lists (e.g., “1.,” “2.”), which often indicate a systematic breakdown of a multi-hop clinical diagnostic problem.

In addition, we employ a penalty, $\phi_{rep}$ , to discourage repetitive output. Whereas $R_{think}$ shows initial promise in improving the readability of the model outputs, it often leads to reward hacking in contrast to the rigorous logical traversal induced by path-derived reward signals.

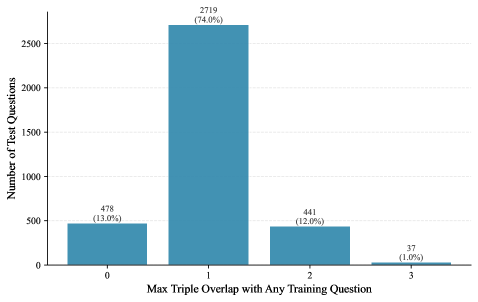

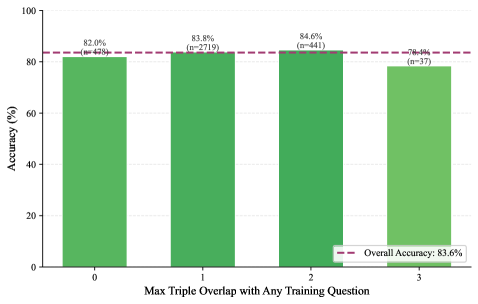

## Appendix D Train-Test Split Overlap Analysis