# A Syllogistic Probe: Tracing the Evolution of Logic Reasoning in Large Language Models

**Authors**:

- Zhengqing Zang $^{1,3*}$, Yuqi Ding $^{2,3*}$, Yanmei Gu $^{3\dagger}$, Changkai Song $^{3}$, Zhengkai Yang $^{3}$, Guoping Du $^{4}$, Junbo Zhao $^{1,3}$, Haobo Wang $^{1\dagger}$

$^{1}$ Zhejiang University, $^{2}$ University of Chinese Academy of Social Sciences, $^{3}$ Ant Group, $^{4}$ Chinese Academy of Social Sciences

{zangzq, wanghaobo}@zju.edu.cn, dingyuqi@ucass.edu.cn, yanmeigu.gym@antgroup.com

$^{*}$ These authors contributed equally. $^{\dagger}$ Corresponding authors.

## Abstract

Human logic has gradually shifted from intuition-driven inference to rigorous formal systems. Motivated by recent advances in large language models (LLMs), we explore whether LLMs exhibit a similar evolution in their underlying logical framework. Using existential import as a probe, we evaluate syllogisms under both traditional and modern logic. Through extensive experiments testing state-of-the-art (SOTA) LLMs on a new syllogism dataset, we arrive at three main findings: (i) scaling model size promotes the shift toward modern logic; (ii) thinking serves as an efficient accelerator beyond parameter scaling; and (iii) the Base model plays a crucial role in determining how easily and stably this shift can emerge. Beyond these core factors, we conduct additional experiments for an in-depth analysis of the syllogistic reasoning properties of current LLMs.

## 1 Introduction

Human logic has evolved from earlier, more intuition-driven accounts of valid inference Aristotle (1984) to increasingly rigorous formal frameworks Enderton (1972). In particular, the development of symbolic logic clarified the semantics of quantification and enabled precise validity checking under explicit model-theoretic interpretations, laying the foundation for contemporary logical analysis.

Recently, neural networks have evolved from early, relatively simple architectures with limited capacity for logical reasoning to today’s large language models (LLMs), which have achieved remarkable progress across natural language processing tasks. State-of-the-art models such as GPT-5 OpenAI (2025a) and Gemini-3-Pro-Preview deepmind (2025) often rival human experts on complex reasoning tasks, ranging from commonsense reasoning Bang et al. (2023); Bisk et al. (2019) to mathematical problem-solving Phan et al. (2025); Wei et al. (2023). These advances raise a natural question: do LLMs exhibit an analogous evolution in their underlying logical framework? If so, what changes, and how does the change emerge?



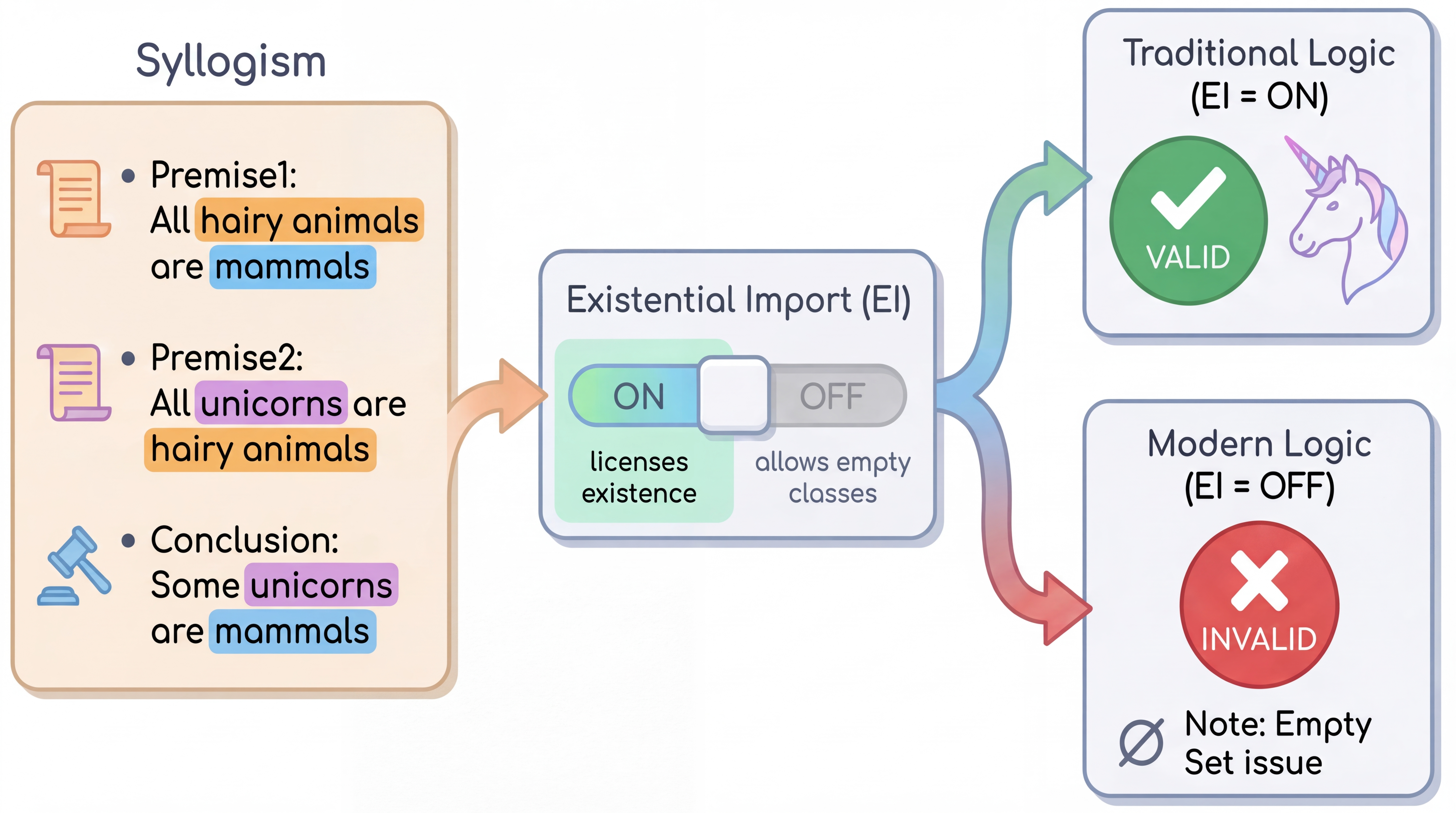

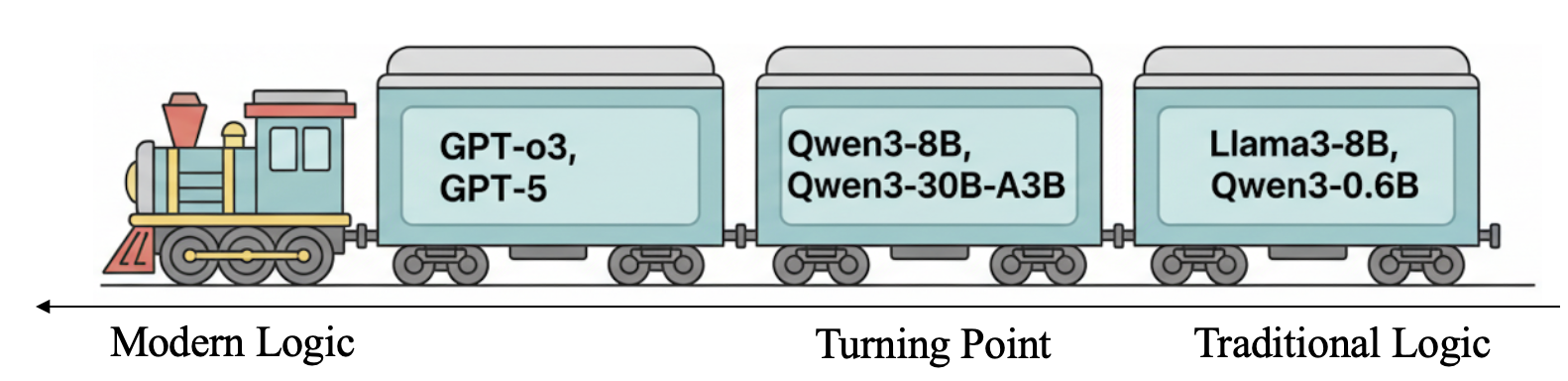

Figure 1: Illustration of the existential import problem and of the traced shift in model logic.



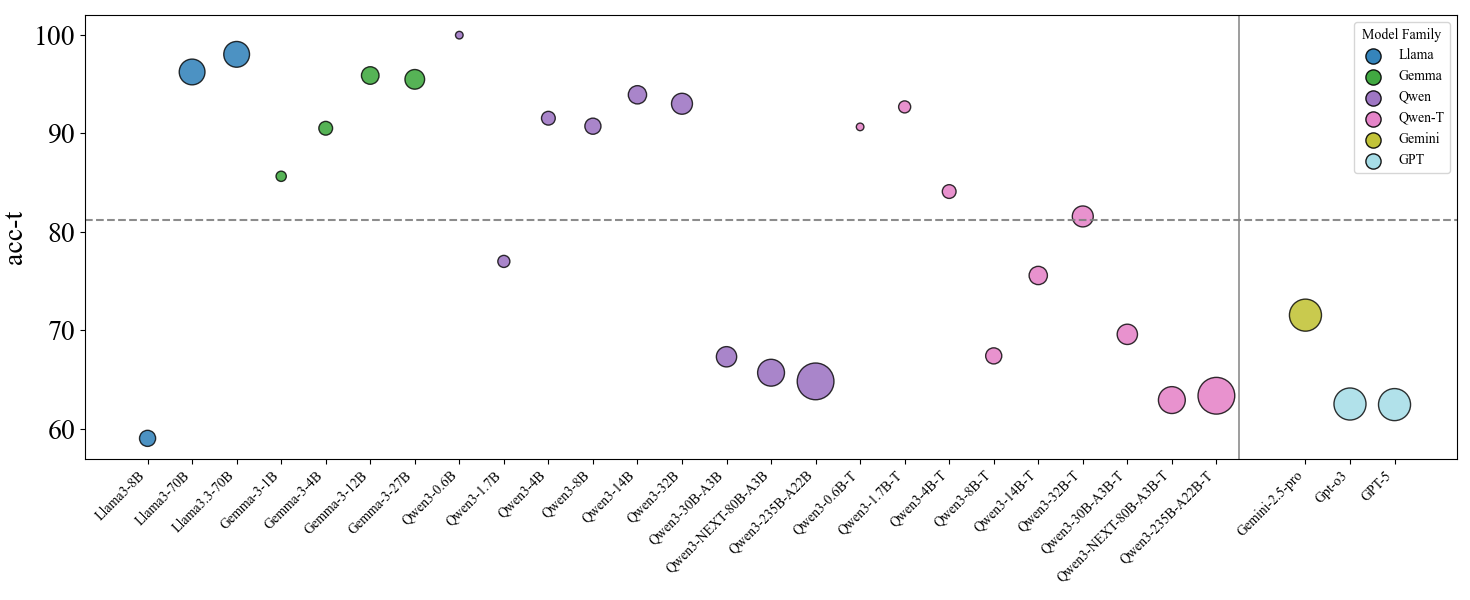

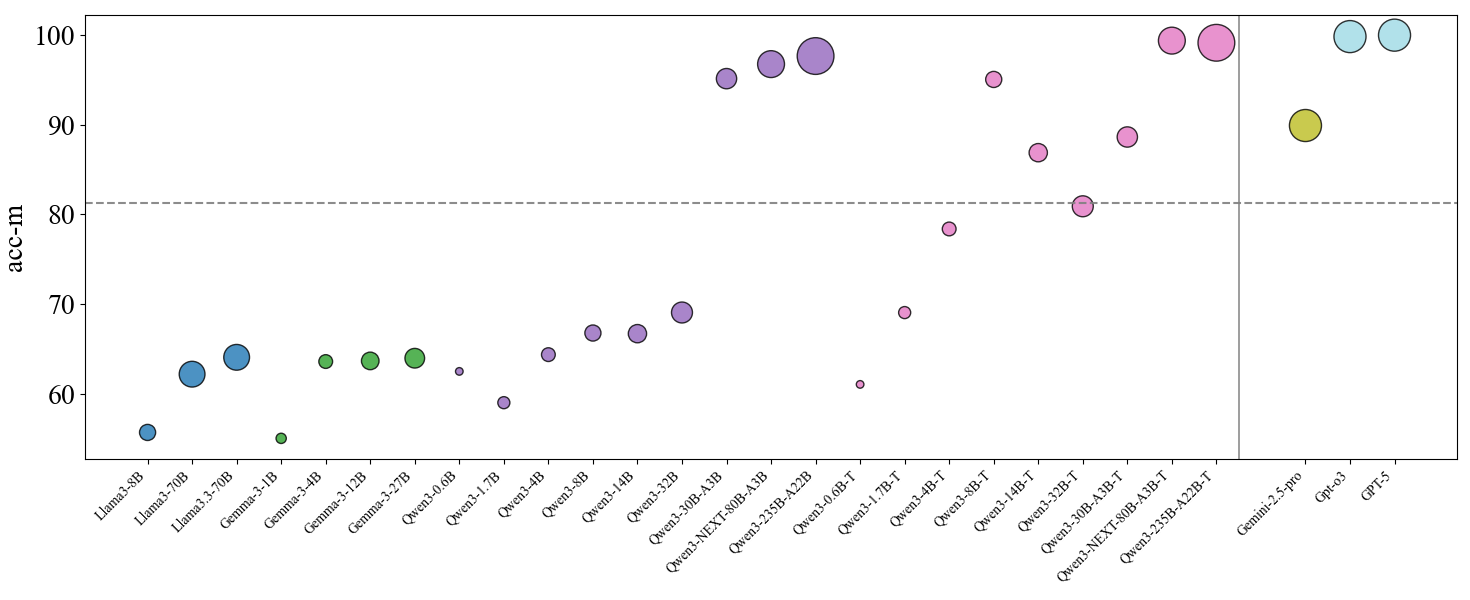

Figure 2: Overall performance of auto-regressive models under traditional logic and modern logic. The upper panel shows model performance under the traditional-logic criterion ($\text{Acc}_{t}$), and the lower panel shows performance under the modern-logic criterion ($\text{Acc}_{m}$). Point size is proportional to model scale, and color denotes model family. Qwen-T denotes Qwen thinking models or thinking mode. Closed-source models are drawn with a fixed medium point size for visualization only; this size does not reflect their true parameter counts. The horizontal dashed line marks the boundary between traditional and modern logic.

Existing reasoning benchmarks Han et al. (2024) increasingly target first-order logic (modern logic), examining whether models can follow this more rigorous, formal style of reasoning. In syllogistic reasoning, however, existing datasets Ando et al. (2023); Nguyen et al. (2025); Wu et al. (2023) typically treat traditional logic as the implicit default. This creates a systematic bias. On the one hand, a model may score high simply because it has learned dataset-specific shortcuts for traditional syllogisms, not because it has rigorous reasoning ability that transfers to new settings. On the other hand, a model may score low because it adopts the modern-logic view and therefore refuses to infer existence from a statement like “All unicorns are hairy animals”, which is then marked as wrong. Worse still, these unstated conventions conflate a model’s reasoning ability with how well it matches the evaluation convention, making the scores hard to interpret.
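To make this bias concrete, the sketch below scores two idealized answerers against both conventions. It is our own illustration, not the paper's evaluation code, and it assumes that $\text{Acc}_{t}$ and $\text{Acc}_{m}$ are plain accuracies against traditional-logic and modern-logic gold labels over the 24 syllogistic forms of Section 2.2 (all valid traditionally, 9 of them invalid without existential import). The names `gold_labels` and `accuracy` are hypothetical.

```python
# Hypothetical illustration (not the paper's evaluation code).
N_BOTH = 15    # forms valid in both traditional and modern logic
N_EI_ONLY = 9  # forms valid only under existential import


def gold_labels(convention: str) -> list:
    """Validity labels for the 24 forms under one evaluation convention."""
    if convention == "traditional":
        return [True] * (N_BOTH + N_EI_ONLY)
    if convention == "modern":
        return [True] * N_BOTH + [False] * N_EI_ONLY
    raise ValueError(f"unknown convention: {convention}")


def accuracy(predictions: list, labels: list) -> float:
    """Percentage of predictions that agree with the gold labels."""
    hits = sum(pred == gold for pred, gold in zip(predictions, labels))
    return 100.0 * hits / len(labels)


# Idealized answerers: one always says "valid", one applies the Boolean reading perfectly.
answerers = {
    "always valid": gold_labels("traditional"),
    "strict modern": gold_labels("modern"),
}
for name, preds in answerers.items():
    acc_t = accuracy(preds, gold_labels("traditional"))
    acc_m = accuracy(preds, gold_labels("modern"))
    print(f"{name}: Acc_t = {acc_t:.2f}, Acc_m = {acc_m:.2f}")
```

Under these assumptions, a model that always answers "valid" is capped at 62.50 on $\text{Acc}_{m}$, and a perfectly modern reasoner is capped at 62.50 on $\text{Acc}_{t}$. These values are close to the extremes reported in the results table of Section 2.2 (e.g., Qwen3-0.6B at 100.00 / 62.50).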

In this study, we focus on the syllogism Aristotle (1984), a classic and well-studied form of deductive reasoning. Syllogisms are evaluated differently under two frameworks: Traditional Logic (Aristotelian) and Modern Logic (Boolean interpretation). The key difference between them lies in existential import (EI) Parsons and Ciola (2025): traditional logic typically assumes that the relevant terms are non-empty, whereas modern logic makes existential commitments only when they are explicitly stated. The syllogism in Figure 1 is typically treated as "valid" in traditional logic, because the universal statement about unicorns (Premise 2: All unicorns are hairy animals) is taken to presuppose that unicorns exist. In modern logic, by contrast, the conclusion does not follow unless the existence of unicorns is separately asserted ("Some unicorns exist"), since the premises can be true even when there are no unicorns.

To trace the evolution of logical reasoning in LLMs, we use existential import as a probe and conduct a series of investigations on a new syllogism dataset. Our key findings can be summarized as follows:

(1) Controlled evidence across open-source model families and scales. We run systematic evaluations on the Qwen 3 Yang et al. (2025), Llama 3 Grattafiori et al. (2024), and Gemma 3 Team et al. (2025) series across model sizes and training variants. We find that $\text{Acc}_{m}$ rises with model size in all model families. Models in the Llama 3 and Gemma 3 series largely retain a traditional-logic reasoning style, whereas the Qwen series shows a clear shift in its logical paradigm from traditional to modern logic. We also identify a turning point at which consistency fluctuates during the transition.

(2) Thinking as an efficient driver beyond parameter scaling. By comparing matched-size models, we show that RL-trained thinking variants can strongly accelerate the shift toward modern logic and improve consistency. Prompted chain-of-thought yields only a partial shift, and distillation alone does not reliably produce strict modern logic behavior, suggesting that the transition is driven more by post-training optimization of reasoning policies than by scale or imitation learning alone.

(3) Base-model constraints on learnability and stability. We evaluate Base models and show that they set the starting point for post-training. When the Base model already shows signals aligned with modern logic, post-training shifts are easier and more stable. Otherwise, the shift is harder and less stable.

We further report experiments on prompt variations, the emptiness of minor terms, cross-lingual gaps, and architectural effects (including diffusion-based LLMs), providing an in-depth analysis of the syllogistic reasoning properties of current LLMs.

## 2 Background and Dataset Construction

### 2.1 Syllogism and Existential Import

Aristotle characterizes a syllogism as consisting of two premises and a conclusion Aristotle (1984), where each statement is a categorical proposition relating a subject term ($S$) to a predicate term ($P$). Within the syllogism’s structure, the conclusion’s subject ($S$) is called the minor term, and its predicate ($P$) the major term. In standard form, there are four categorical proposition types (A/E/I/O):

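| Type | Name | Standard form |
| --- | --- | --- |
| A | universal affirmative | All $S$ are $P$ |
| E | universal negative | No $S$ are $P$ |
| I | particular affirmative | Some $S$ are $P$ |
| O | particular negative | Some $S$ are not $P$ |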

In this paper, we use traditional logic to denote the Aristotelian syllogistic framework, and modern logic to denote the Boolean interpretation of categorical propositions Boole (1854). For reference, under modern logic these four forms are typically rendered as:

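| Type | Modern (first-order) rendering |
| --- | --- |
| A | $\forall x\,(Sx\rightarrow Px)$ |
| E | $\forall x\,(Sx\rightarrow \neg Px)$ |
| I | $\exists x\,(Sx\wedge Px)$ |
| O | $\exists x\,(Sx\wedge \neg Px)$ |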

The core distinction is existential import (EI): whether a proposition is taken to imply that its subject class is non-empty Parsons and Ciola (2025).

$\bullet$ In traditional logic, universal propositions (A/E) are typically assumed to have EI: for instance, "All $S$ are $P$" is read as implying that the class $S$ is not empty.

$\bullet$ In modern logic, universal propositions lack EI. "All $S$ are $P$" is formalized as a conditional, $\forall x\,(Sx\rightarrow Px)$, which can remain true even if no $S$ exists (i.e., it is vacuously true).
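The contrast between the two readings can be sketched by evaluating each proposition type over finite sets. This is a minimal illustration under a stated simplification of ours: EI is modelled by requiring a non-empty subject class for the universal forms A and E, while I and O are unchanged. The function `holds` is hypothetical.

```python
def holds(form: str, s: frozenset, p: frozenset, existential_import: bool) -> bool:
    """Truth of a categorical proposition relating subject class s to predicate class p.

    With existential_import=True (a simplified traditional reading), the
    universal forms A and E additionally require s to be non-empty.
    """
    if form == "A":    # All S are P:      forall x (Sx -> Px)
        truth = s <= p
    elif form == "E":  # No S are P:       forall x (Sx -> not Px)
        truth = not (s & p)
    elif form == "I":  # Some S are P:     exists x (Sx and Px)
        return bool(s & p)
    elif form == "O":  # Some S are not P: exists x (Sx and not Px)
        return bool(s - p)
    else:
        raise ValueError(f"unknown proposition type: {form}")
    # Only A and E reach this point; EI adds the existence requirement on S.
    return truth and (bool(s) or not existential_import)


unicorns = frozenset()  # empty extension
hairy_animals = frozenset({"dog", "cat"})
print(holds("A", unicorns, hairy_animals, existential_import=False))  # True: vacuously true
print(holds("A", unicorns, hairy_animals, existential_import=True))   # False: existence presupposed
```

With an empty class of unicorns, "All unicorns are hairy animals" comes out vacuously true under the Boolean reading and false once existence is presupposed.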

We illustrate this contrast with the unicorn example in Figure 1. Its two premises (“All hairy animals are mammals” and “All unicorns are hairy animals”) jointly entail the universal statement “All unicorns are mammals”. Under the traditional EI reading, this universal is commonly taken to license the existential conclusion “Some unicorns are mammals.” Under modern logic, however, the premises entail only $\forall x\,(Ux\rightarrow Mx)$ (where $U$ and $M$ stand for being a unicorn and being a mammal) and do not imply $\exists x\,Ux$; therefore the existential conclusion does not follow unless an explicit existence premise is added.
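Validity itself can be checked by brute force. The sketch below is our own illustration, not the paper's evaluation code: a syllogism counts as valid if no model makes the premises true and the conclusion false, and the traditional reading is modelled by discarding models in which any of the three terms is empty. Because all predicates are monadic, it suffices to enumerate which of the $2^3 = 8$ membership types are inhabited. The names `MINOR`, `MIDDLE`, `MAJOR`, `models`, and `valid` are hypothetical; the middle term (hairy animals) is the term shared by the two premises.

```python
from itertools import product

MINOR, MIDDLE, MAJOR = 0, 1, 2  # hypothetical indices: unicorns, hairy animals, mammals

# An individual matters only through the classes it belongs to, so a model is the
# set of inhabited membership types (in_minor, in_middle, in_major). For monadic
# predicates, enumerating all 2**8 - 1 non-empty sets of types is a complete check.
TYPES = list(product([False, True], repeat=3))


def models():
    for mask in range(1, 2 ** len(TYPES)):  # mask 0 would be the empty domain
        yield [t for i, t in enumerate(TYPES) if (mask >> i) & 1]


def holds(form, subj, pred, model):
    """Boolean (modern) truth of an A/E/I/O proposition in a model."""
    members = [t for t in model if t[subj]]
    if form == "A":
        return all(t[pred] for t in members)
    if form == "E":
        return not any(t[pred] for t in members)
    if form == "I":
        return any(t[pred] for t in members)
    if form == "O":
        return any(not t[pred] for t in members)
    raise ValueError(f"unknown proposition type: {form}")


def valid(premises, conclusion, existential_import):
    """True if no admitted model makes every premise true and the conclusion false.

    existential_import=True admits only models where all three terms are non-empty.
    """
    for model in models():
        if existential_import and not all(any(t[k] for t in model) for k in (MINOR, MIDDLE, MAJOR)):
            continue
        if all(holds(*prem, model) for prem in premises) and not holds(*conclusion, model):
            return False
    return True


# Figure 1: all hairy animals are mammals; all unicorns are hairy animals;
# therefore some unicorns are mammals.
unicorn = ([("A", MIDDLE, MAJOR), ("A", MINOR, MIDDLE)], ("I", MINOR, MAJOR))
print(valid(*unicorn, existential_import=True))   # True: valid in traditional logic
print(valid(*unicorn, existential_import=False))  # False: invalid in modern logic
```

Restricting the admitted models, rather than changing the truth conditions of A and E, keeps a single Boolean evaluator for both logics and makes explicit that the traditional verdict depends only on which models are allowed. In modern logic the countermodel is simply one hairy mammal and no unicorns.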

### 2.2 Dataset Construction

We build our dataset with a multi-stage agent pipeline that proposes terms and relations, checks factual consistency, and enforces logical constraints before generating syllogistic instances. Using this process, we generate 100 concept triplets whose minor term has an empty extension and 100 whose minor term has a non-empty extension. Each triplet is instantiated in Chinese and English and in 15+9 syllogistic forms (the 15 forms valid under both readings plus the 9 forms valid only under existential import), yielding $200 \times 2 \times 24 = 9600$ syllogisms in total. Further details of the data construction are given in Appendix 7.4.
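The 15+9 split and the total of 9600 items can be reproduced with the same brute-force idea over all 4 figures × 64 moods = 256 standard forms. This sketch assumes the textbook figure layout (major premise first, conclusion relating the minor term $S$ to the major term $P$) and again models traditional logic as "all three terms non-empty"; it is an illustration, not the authors' generation pipeline, and all names in it are hypothetical.

```python
from itertools import product

MINOR, MIDDLE, MAJOR = 0, 1, 2  # hypothetical indices for S, the middle term, and P

# A model is the set of inhabited membership types (in_minor, in_middle, in_major);
# for monadic predicates these 255 non-empty models are exhaustive.
TYPES = list(product([False, True], repeat=3))
MODELS = [[t for i, t in enumerate(TYPES) if (mask >> i) & 1]
          for mask in range(1, 2 ** len(TYPES))]

# (subject, predicate) of the major and minor premise in each of the four figures.
FIGURES = {
    1: ((MIDDLE, MAJOR), (MINOR, MIDDLE)),
    2: ((MAJOR, MIDDLE), (MINOR, MIDDLE)),
    3: ((MIDDLE, MAJOR), (MIDDLE, MINOR)),
    4: ((MAJOR, MIDDLE), (MIDDLE, MINOR)),
}


def holds(form, subj, pred, model):
    """Boolean truth of a categorical proposition A/E/I/O in a model."""
    members = [t for t in model if t[subj]]
    if form == "A":
        return all(t[pred] for t in members)
    if form == "E":
        return not any(t[pred] for t in members)
    if form == "I":
        return any(t[pred] for t in members)
    return any(not t[pred] for t in members)  # "O"


def valid(mood, figure, existential_import):
    """mood is e.g. "AAI": forms of the major premise, minor premise, and conclusion."""
    major, minor = FIGURES[figure]
    for model in MODELS:
        if existential_import and not all(any(t[k] for t in model) for k in range(3)):
            continue  # traditional reading: skip models with an empty term
        if (holds(mood[0], *major, model) and holds(mood[1], *minor, model)
                and not holds(mood[2], MINOR, MAJOR, model)):
            return False
    return True


forms = [("".join(m), f) for m in product("AEIO", repeat=3) for f in FIGURES]
traditional = {fm for fm in forms if valid(*fm, existential_import=True)}
modern = {fm for fm in forms if valid(*fm, existential_import=False)}
print(len(forms), len(traditional), len(modern), len(traditional - modern))
# (100 empty + 100 non-empty minor-term triplets) x 2 languages x 24 forms
print((100 + 100) * 2 * len(traditional))
```

It should report 24 traditionally valid forms, of which 15 remain valid in modern logic and 9 depend on existential import, and a dataset size of 9600. The unicorn syllogism of Figure 1 is the first-figure mood AAI (Barbari), one of the 9.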

| Model | ZH+ $\text{Acc}_{t}$ | ZH+ $\text{Acc}_{m}$ | ZH+ Cons | ZH- $\text{Acc}_{t}$ | ZH- $\text{Acc}_{m}$ | ZH- Cons | EN+ $\text{Acc}_{t}$ | EN+ $\text{Acc}_{m}$ | EN+ Cons | EN- $\text{Acc}_{t}$ | EN- $\text{Acc}_{m}$ | EN- Cons |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| *Qwen Series – Dense Models* | | | | | | | | | | | | |
| Qwen3-0.6B | 100.00 | 62.50 | 100.00 | 99.96 | 62.46 | 95.83 | 100.00 | 62.50 | 100.00 | 100.00 | 62.50 | 100.00 |
| Qwen3-0.6B-Thinking | 94.71 | 61.04 | 4.17 | 92.96 | 61.12 | 16.67 | 86.67 | 60.25 | 0.00 | 88.33 | 61.75 | 4.17 |
| Qwen3-1.7B | 97.00 | 62.42 | 50.00 | 95.58 | 60.92 | 37.50 | 75.21 | 59.71 | 16.67 | 35.17 | 47.58 | 4.17 |
| Qwen3-1.7B-Thinking | 92.92 | 67.67 | 29.17 | 94.29 | 67.71 | 50.00 | 91.62 | 70.54 | 54.17 | 91.96 | 70.29 | 58.33 |
| Qwen3-4B | 92.46 | 67.12 | 45.83 | 94.46 | 67.04 | 54.17 | 85.79 | 61.62 | 4.17 | 93.50 | 61.67 | 12.50 |
| Qwen3-4B-Thinking | 82.54 | 79.96 | 62.50 | 85.33 | 77.08 | 58.33 | 83.62 | 78.88 | 66.67 | 84.92 | 77.58 | 62.50 |
| Qwen3-8B | 94.12 | 67.46 | 33.33 | 96.67 | 65.42 | 62.50 | 85.46 | 69.58 | 4.17 | 86.71 | 64.62 | 0.00 |
| Qwen3-8B-Thinking | 67.83 | 94.50 | 54.17 | 71.62 | 90.88 | 62.50 | 64.83 | 97.67 | 75.00 | 65.29 | 97.21 | 66.67 |
| Qwen3-14B | 97.75 | 64.50 | 66.67 | 99.25 | 63.25 | 87.50 | 87.12 | 70.96 | 25.00 | 91.58 | 68.08 | 20.83 |
| Qwen3-14B-Thinking | 72.96 | 89.54 | 62.50 | 76.50 | 86.00 | 66.67 | 74.92 | 87.50 | 58.33 | 77.92 | 84.50 | 58.33 |
| Qwen3-32B | 91.67 | 70.33 | 58.33 | 95.54 | 66.96 | 75.00 | 91.00 | 70.50 | 45.83 | 93.88 | 68.46 | 54.17 |
| Qwen3-32B-Thinking | 82.21 | 80.29 | 62.50 | 85.75 | 76.75 | 62.50 | 77.96 | 84.50 | 62.50 | 80.38 | 82.08 | 62.50 |
| *Qwen Series – MoE Models* | | | | | | | | | | | | |
| Qwen3-30B-A3B-Instruct | 66.58 | 95.83 | 70.83 | 71.96 | 90.54 | 66.67 | 64.00 | 98.50 | 75.00 | 66.71 | 95.71 | 66.67 |
| Qwen3-30B-A3B-Thinking | 69.17 | 93.33 | 62.50 | 71.50 | 91.00 | 62.50 | 67.71 | 86.12 | 16.67 | 70.00 | 84.08 | 8.33 |
| Qwen3-NEXT-80B-A3B-Instruct | 65.58 | 96.92 | 66.67 | 70.08 | 92.42 | 66.67 | 62.71 | 99.62 | 70.83 | 64.38 | 98.12 | 62.50 |
| Qwen3-NEXT-80B-A3B-Thinking | 62.71 | 99.79 | 83.33 | 63.08 | 99.42 | 79.17 | 62.88 | 98.96 | 50.00 | 62.96 | 99.38 | 75.00 |
| Qwen3-235B-A22B-Instruct | 66.17 | 96.33 | 66.67 | 67.83 | 94.67 | 66.67 | 62.54 | 99.88 | 87.50 | 62.71 | 99.79 | 83.33 |
| Qwen3-235B-A22B-Thinking | 62.71 | 99.79 | 83.33 | 62.88 | 99.62 | 83.33 | 64.75 | 97.75 | 62.50 | 63.08 | 99.42 | 70.83 |
| *Gemma Series* | | | | | | | | | | | | |
| Gemma-3-1B-IT | 87.96 | 53.29 | 0.00 | 77.62 | 51.71 | 0.00 | 90.29 | 57.54 | 0.00 | 86.71 | 57.54 | 0.00 |
| Gemma-3-4B-IT | 94.46 | 63.38 | 16.67 | 77.88 | 63.54 | 0.00 | 95.00 | 63.08 | 12.50 | 94.79 | 64.38 | 25.00 |
| Gemma-3-12B-IT | 98.54 | 63.38 | 41.67 | 98.96 | 62.88 | 45.83 | 93.67 | 63.42 | 20.83 | 92.38 | 64.96 | 20.83 |
| Gemma-3-27B-IT | 95.33 | 62.00 | 16.67 | 94.17 | 61.58 | 20.83 | 96.54 | 65.71 | 50.00 | 95.96 | 66.54 | 66.67 |
| *Llama Series* | | | | | | | | | | | | |
| Llama3-8B-Instruct | 75.12 | 60.21 | 0.00 | 63.29 | 53.79 | 0.00 | 50.25 | 56.88 | 0.00 | 47.42 | 51.83 | 0.00 |
| Llama3-70B-Instruct | 98.58 | 63.17 | 58.33 | 96.88 | 62.71 | 45.83 | 98.88 | 62.54 | 62.50 | 90.67 | 60.29 | 20.83 |
| Llama3.3-70B-Instruct | 96.08 | 65.92 | 58.33 | 97.88 | 63.96 | 62.50 | 99.08 | 63.00 | 87.50 | 99.12 | 63.38 | 79.17 |
| *Closed-source Models* | | | | | | | | | | | | |
| Claude-3.7-Sonnet | 85.29 | 76.54 | 45.83 | 90.46 | 71.71 | 50.00 | 70.33 | 92.00 | 54.17 | 73.08 | 89.42 | 62.50 |
| Claude-4.5-Sonnet | 81.38 | 81.12 | 62.50 | 93.96 | 68.57 | 62.50 | 70.01 | 92.52 | 66.67 | 84.11 | 78.40 | 62.50 |
| Gemini-2.5-Pro | 71.92 | 89.33 | 29.17 | 76.17 | 83.50 | 25.00 | 65.17 | 97.33 | 70.83 | 72.92 | 89.50 | 58.33 |
| Gemini-3-Pro-Preview | 73.11 | 89.20 | 54.17 | 99.00 | 63.48 | 66.67 | 63.48 | 99.00 | 79.17 | 98.41 | 64.02 | 70.83 |
| GPT-4o-2024-11-20 | 93.17 | 68.42 | 41.67 | 96.17 | 65.71 | 50.00 | 93.33 | 68.75 | 50.00 | 94.04 | 67.83 | 50.00 |
| GPT-4.1-2025-04-14 | 80.38 | 80.04 | 33.33 | 85.08 | 76.67 | 45.83 | 80.04 | 82.38 | 58.33 | 81.54 | 80.96 | 62.50 |
| GPT-o3 | 62.38 | 99.54 | 87.50 | 62.58 | 99.92 | 91.67 | 62.50 | 100.00 | 100.00 | 62.58 | 99.92 | 95.83 |
| GPT-5-2025-08-07 | 62.50 | 100.00 | 100.00 | 62.50 | 100.00 | 100.00 | 62.50 | 100.00 | 100.00 | 62.50 | 100.00 | 100.00 |

Table 1: Results for various models by language and the subject term's existence condition (+: non-empty extension; -: empty extension). Detailed metrics (e.g., precision and recall) are reported in Appendix 7.6.2.

## 3 Experiment Design

### 3.1 The 15+9 Distinction of Valid Syllogistic Forms

This disagreement over EI directly creates a split in the set of valid syllogistic forms. A form is defined by its mood (the A/E/I/O pattern) and figure (term arrangement).

- Traditional Logic recognizes 24 valid forms.

- Modern Logic accepts only 15 of these as unconditionally valid. The remaining 9 forms are rejected precisely because they commit the existential fallacy.
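The 15+9 split can be made concrete by brute force. The sketch below enumerates all 256 mood/figure combinations and checks each one over Venn-diagram models. In these models, each of the 8 regions of the S/M/P diagram is either inhabited or empty, which gives 256 models.

Modern validity quantifies over all of these models. As a simplifying assumption, the traditional reading keeps only models where S, M, and P are all non-empty. Function names are illustrative and are not taken from the paper's code.

```python
from itertools import product

# Venn-diagram semantics (our assumption): each of the 8 regions of the
# S/M/P diagram is a triple (in_S, in_M, in_P); a model is the list of
# inhabited regions. The 2**8 = 256 models cover every emptiness pattern.
REGIONS = list(product((0, 1), repeat=3))
TERM = {"S": 0, "M": 1, "P": 2}

def holds(kind, x, y, model):
    """Truth value of the categorical proposition kind(x, y) in a model."""
    in_y = [bool(r[TERM[y]]) for r in model if r[TERM[x]]]  # x-regions inside y?
    if kind == "A":                      # All x are y
        return all(in_y)
    if kind == "E":                      # No x are y
        return not any(in_y)
    if kind == "I":                      # Some x are y
        return any(in_y)
    if kind == "O":                      # Some x are not y
        return any(not v for v in in_y)
    raise ValueError(kind)

# (major premise terms, minor premise terms) per figure; conclusion is always S-P.
FIGURES = {
    1: (("M", "P"), ("S", "M")),
    2: (("P", "M"), ("S", "M")),
    3: (("M", "P"), ("M", "S")),
    4: (("P", "M"), ("M", "S")),
}

def models(require_nonempty_terms):
    """Yield every model, optionally only those where S, M and P are all non-empty."""
    for bits in product((False, True), repeat=len(REGIONS)):
        model = [r for r, b in zip(REGIONS, bits) if b]
        if require_nonempty_terms and not all(
            any(r[i] for r in model) for i in range(3)
        ):
            continue
        yield model

def valid_forms(require_nonempty_terms):
    """Forms whose conclusion holds in every model satisfying both premises."""
    ms = list(models(require_nonempty_terms))
    valid = set()
    for mood in product("AEIO", repeat=3):
        for fig, (maj, mnr) in FIGURES.items():
            if all(
                holds(mood[2], "S", "P", m)
                for m in ms
                if holds(mood[0], *maj, m) and holds(mood[1], *mnr, m)
            ):
                valid.add("".join(mood) + f"-{fig}")
    return valid

modern = valid_forms(require_nonempty_terms=False)
traditional = valid_forms(require_nonempty_terms=True)
print(len(modern), len(traditional))  # expected: 15 24
print(sorted(traditional - modern))
```

Under these assumptions, the script should report 15 modern-valid forms and 24 traditionally valid ones. The difference should be exactly the nine existential-import-dependent forms: AAI-1, EAO-1, AEO-2, EAO-2, AAI-3, EAO-3, AAI-4, AEO-4, and EAO-4.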

As shown in Appendix 7.2, we use the 15+9 split to distinguish traditional from modern validity, and we report accuracy under each logic paradigm accordingly.

We further compare a baseline prompt with a Prior-check prompt. The Prior-check prompt adds "Do you think {major term}, {middle term}, {minor term} are empty sets? Keep that in mind and answer:" at the beginning of the prompt, explicitly asking the model to first consider whether the concepts are empty in the given setting. This tests whether making the existence status explicit shifts the model's behavior between traditional and modern logic.
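The sketch below shows how the Prior-check prompt could be assembled. The prefix string is the one quoted above. The function name `build_prompt`, the newline separator, and the example syllogism are hypothetical, since the baseline prompt wording is not given in this section.

```python
# Prefix quoted from the text; the placeholders are filled with the three terms.
PRIOR_CHECK_PREFIX = (
    "Do you think {major}, {middle}, {minor} are empty sets? "
    "Keep that in mind and answer:"
)

def build_prompt(base_prompt: str, major: str, middle: str, minor: str,
                 prior_check: bool = False) -> str:
    """Return the baseline prompt, optionally prefixed with the Prior-check question.

    The newline between prefix and baseline prompt is an assumption.
    """
    if not prior_check:
        return base_prompt
    prefix = PRIOR_CHECK_PREFIX.format(major=major, middle=middle, minor=minor)
    return f"{prefix}\n{base_prompt}"

# Hypothetical AAI-1 instance: major term P, middle term M, minor term S.
base = ("All horned horses are mammals. All unicorns are horned horses. "
        "Therefore, some unicorns are mammals. Is this syllogism valid?")
print(build_prompt(base, "mammals", "horned horses", "unicorns", prior_check=True))
```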

We evaluate model behavior under both traditional and modern logic, and we also examine how stable the model's reasoning is across instances of the same syllogistic form.

We first report traditional-logic accuracy ( $\text{Acc}_{t}$ ), defined as the proportion of instances in which the model accepts the conclusion, since all 24 moods are valid under existential import. We then report modern-logic accuracy ( $\text{Acc}_{m}$ ), defined with respect to modern semantics: the model should accept instances from the 15 moods that are valid in modern logic and reject instances from the 9 moods that are valid only under existential import. Higher $\text{Acc}_{t}$ indicates behavior closer to traditional logic, while higher $\text{Acc}_{m}$ indicates behavior more consistent with modern logic.
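The two accuracies can be computed from per-instance verdicts as sketched below. This sketch rests on our own assumptions:

- Each record is a `(form, predicted_valid)` pair.
- Forms are labelled in mood-figure notation.
- `accuracies` is a hypothetical helper name.

```python
# The 15 forms valid in modern logic and the 9 that need existential import.
MODERN_VALID = {
    "AAA-1", "EAE-1", "AII-1", "EIO-1", "EAE-2", "AEE-2", "EIO-2", "AOO-2",
    "AII-3", "IAI-3", "OAO-3", "EIO-3", "AEE-4", "IAI-4", "EIO-4",
}
EI_DEPENDENT = {
    "AAI-1", "EAO-1", "AEO-2", "EAO-2", "AAI-3", "EAO-3", "AAI-4", "AEO-4", "EAO-4",
}

def accuracies(records):
    """Return (Acc_t, Acc_m) in percent for (form, predicted_valid) records."""
    records = list(records)
    n = len(records)
    # Traditional logic: every one of the 24 forms is valid, so accept = correct.
    acc_t = sum(pred for _, pred in records) / n
    # Modern logic: accept the 15 modern-valid forms, reject the other 9.
    acc_m = sum(pred == (form in MODERN_VALID) for form, pred in records) / n
    return 100 * acc_t, 100 * acc_m

forms = sorted(MODERN_VALID | EI_DEPENDENT)
always_valid = [(f, True) for f in forms for _ in range(100)]
modern_model = [(f, f in MODERN_VALID) for f in forms for _ in range(100)]
print(accuracies(always_valid))   # (100.0, 62.5)
print(accuracies(modern_model))   # (62.5, 100.0)
```

A model that accepts every instance gets (100.0, 62.5), because 15 of the 24 forms are modern-valid. A perfectly modern model gets (62.5, 100.0). These match the Qwen3-0.6B and GPT-5-2025-08-07 patterns in Table 1.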

Moreover, for each language and concept-emptiness set, we report a consistency score (Cons) of $\frac{n}{24}$, where $n$ is the number of moods for which the model gives the same answer to every instance of that mood. In addition, we report precision and recall separately on the two mood subsets (the 15 unconditionally valid moods and the 9 existential-import-dependent moods). This better characterizes how the model distinguishes between the two logic regimes; details are given in Appendix 7.5.
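The sketch below implements the consistency score as we read the definition above. A form counts toward $n$ only if every one of its instances in a given language/emptiness set receives the same verdict. The helper name and record format are assumptions.

```python
from collections import defaultdict

TOTAL_FORMS = 24  # denominator fixed by the definition Cons = n / 24

def consistency(records):
    """Cons in percent for (form, predicted_valid) records of one language/emptiness set."""
    answers = defaultdict(set)
    for form, pred in records:
        answers[form].add(pred)
    # A form is consistent if all of its instances got the same verdict.
    n = sum(len(verdicts) == 1 for verdicts in answers.values())
    return 100 * n / TOTAL_FORMS

uniform = [(f"F{i}", True) for i in range(24) for _ in range(100)]
one_flip = uniform[:-1] + [("F23", False)]  # a single disagreeing instance
print(consistency(uniform))   # 100.0
print(consistency(one_flip))  # about 95.83
```

Each inconsistent form costs $100/24 \approx 4.17$ points. This is why every Cons value in Table 1 is a multiple of 4.17.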

## 4 Results and Analysis

### 4.1 Main Results

#### 4.1.1 Scaling Effects of Logical Evolution

Advanced models exhibit modern-logic behavior.

Advanced closed-source LLMs (e.g., Gemini-2.5-Pro Comanici et al. (2025), GPT-o3 OpenAI (2025b), GPT-5 OpenAI (2025a)) increasingly prefer modern logic and score relatively low under traditional logic (see Table 1). This change is not only about higher accuracy. It also suggests that models are moving toward a more rule-based and principled way of analyzing validity.

Motivated by this, we ask a basic question: how does the preference for modern logic emerge as models are developed and scaled up? To study this in a controlled way, we turn to open-source model families in which we can compare many related checkpoints. Concretely, we evaluate the Qwen Yang et al. (2025), Llama Grattafiori et al. (2024), and Gemma Team et al. (2025) series. Overall, we find that as model size increases, $\text{Acc}_{m}$ generally rises across all three families, indicating that the models' logical reasoning becomes more rigorous as parameters scale up.

Clear family-specific scaling patterns.

We further conduct a detailed analysis of the three major model families. The Qwen series provides comprehensive coverage of a wide scale range (from 0.6B to 235B parameters), multiple variants, and different architectures (including dense and mixture-of-experts models). We therefore primarily analyze Qwen models and report them as our main results.

Among these three families, we find clear family-specific scaling patterns in logical behavior. Qwen shows a scaling trend that includes a clear logic paradigm shift. For small to mid-sized non-thinking and instruction-tuned Qwen models, $\text{Acc}_{t}$ remains very high, indicating a strong preference for the traditional logic. However, when moving to larger Qwen models—especially thinking variants and some large instruction-tuned variants—the pattern can flip, with $\text{Acc}_{m}$ becoming much higher than $\text{Acc}_{t}$ . This trend holds in both Chinese and English, suggesting it is not tied to a single language setting. In contrast, for Llama and Gemma, models at different sizes mostly follow the traditional logic. Scaling mainly makes them stronger within the traditional logic.

We hypothesize that at small sizes the model gradually grasps traditional logic to improve inference performance, whereas at larger sizes it switches to full modern logic in order to solve more complex problems. We also observe that consistency scores fluctuate as Qwen models scale up, and this instability is most likely near the turning point where the model's logic switches. This suggests that the transition from traditional to modern logic is not always smooth. During the change, the model may mix surface patterns learned from data with the more rigorous reasoning of modern logic, which can temporarily lead to disagreements across closely related test cases.

Takeaway 1

As models scale up, their logical judgments clearly shift from traditional logic to modern logic, matching the direction we see in advanced closed-source models.

#### 4.1.2 Thinking as an Efficient Driver of the Logic Evolution

Thinking accelerates the logic shift at fixed scale.

Since Thinking directly strengthens a model’s multi-step reasoning process, it enables more consistent rule-based inference with less reliance on scale alone. We compare same-sized Instruct/Non-thinking models with their Thinking counterparts. The results show that the thinking mechanism can strongly speed up the shift from the traditional logic to the modern logic. This is most obvious in the Qwen3-8B pair: while Qwen3-8B still mostly follows the traditional logic, Qwen3-8B-Thinking moves clearly toward the modern logic stance. For larger models where the Instruct version is already strongly modern-logic-aligned, the Thinking version often further improves $\text{Acc}_{m}$ and increases consistency across closely related test cases.
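As a quick arithmetic check of the Qwen3-8B observation, the sketch below takes the EN+ $\text{Acc}_{m}$ values of the dense Qwen pairs from Table 1. It then computes the Thinking minus non-thinking gap for each size. The choice of the EN+ column is ours, for brevity. The other three columns also peak at 8B.

```python
# EN+ Acc_m from Table 1 as (non-thinking, Thinking) pairs for dense Qwen3 models.
en_plus_acc_m = {
    "0.6B": (62.50, 60.25),
    "1.7B": (59.71, 70.54),
    "4B": (61.62, 78.88),
    "8B": (69.58, 97.67),
    "14B": (70.96, 87.50),
    "32B": (70.50, 84.50),
}

# Gap in percentage points contributed by the Thinking variant at each size.
gains = {size: round(think - base, 2) for size, (base, think) in en_plus_acc_m.items()}
print(gains)
print(max(gains, key=gains.get))  # 8B
```

The gap is about 28 points at 8B, the largest in the dense series. This is consistent with the claim that Thinking has its most visible effect at this size.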

A natural explanation is that reinforcement learning (RL) makes the model rely more on step-by-step, rule-like deduction, and also helps it give more stable answers when two cases are very similar. In this sense, Thinking does not just add better instruction following. It changes the decision criterion and makes the logic paradigm shift more likely.

Thinking is an efficient alternative to parameter scaling.

Under modern logic, Qwen3-8B-Thinking reaches a performance level close to that of Qwen3-30B-A3B-Instruct, even though it uses far fewer parameters. Hence, increasing model size is not the only way to obtain strong modern-logic behavior: RL training with explicit reasoning traces can partly replace the need for more parameters by changing how the model uses its capacity. In practice, scaling tends to improve broad robustness but is expensive, while RL-based thinking can be a more focused and compute-efficient way to push the model toward modern logic. The best results still come from combining a large model with further RL enhancement.

CoT Prompting and Distillation are insufficient.

To further investigate the effectiveness of the Thinking mechanism, we conduct two additional experiments. First, starting from the Instruct models, we add an explicit CoT-trigger prompt (e.g., "Let's think step by step."). The results are reported in Appendix 7.6.4. We find that the Instruct+CoT setting can induce a partial shift toward modern logic, but the shift is limited. In contrast, the Thinking models produce a more complete transition in the underlying logical criterion. This further supports our main finding that RL-trained thinking promotes the logic shift.

In addition, we examine several models distilled from a large RL-trained model (e.g., DeepSeek-R1-Distill-Llama-8B DeepSeek-AI et al. (2025)). The results in Appendix 7.6.4 suggest that the benefits of RL training do not automatically carry over into rigorous modern logic in every model. Instead, achieving a stable shift to modern logic appears to require careful, task-aware design. At least in our setting, distillation from DeepSeek-R1 alone is far from sufficient to produce the same level of strict modern-logic behavior.

Takeaway 2

The thinking process derived from RL can push a smaller model into modern logic.

#### 4.1.3 Base Models as the Starting Point and a Constraint

Base models shape what post-training can achieve.

Scaling and RL can change a model's logical stance, but these changes do not start from nowhere. Here we test a more basic question: how much of the final behavior is already shaped by the underlying Base model? To answer this, we evaluate several Base models (Appendix 7.6.5). Overall, the Base model sets the starting point for post-training. It strongly affects both (i) what the later Instruct/Thinking models can learn and (ii) how stable the learned criterion will be.

Modern-logic signals at the Base stage enable easier shifts.

For Qwen3-8B, where we later observe a clear shift toward modern logic, an important signal is already visible at the Base stage. Qwen3-8B-Base achieves a relatively high $rec_{V}$ (recall on the modern-valid moods). In most settings, its $rec_{I}$ (recall of "invalid" on the existential-import-dependent moods) is also clearly higher than that of other Base models. This suggests that Qwen3-8B-Base is not fully locked into traditional logic. Instead, it already shows some ability to separate the modern-valid moods from the remaining moods, leaving room for post-training to strengthen modern logic. This may explain why RL in the Thinking variant can push Qwen3-8B toward modern logic more easily.

In contrast, Gemma and Llama Base models often have low $rec_{V}$, meaning they frequently fail to recognize modern-valid moods and tend to answer "invalid" by default. This also explains their seemingly high $rec_{I}$ on the existential-import-dependent subset. That high $rec_{I}$ is largely caused by a general rejection tendency rather than genuine sensitivity to existential import.
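The rejection-bias argument can be illustrated with degenerate baselines. We make two assumptions about the metrics:

- $rec_{V}$ is the share of instances from the 15 modern-valid forms that are predicted VALID.
- $rec_{I}$ is the share of instances from the 9 existential-import-dependent forms that are predicted INVALID.

The exact definitions are in the appendix, and `subset_recalls` is a hypothetical helper.

```python
MODERN_VALID = {
    "AAA-1", "EAE-1", "AII-1", "EIO-1", "EAE-2", "AEE-2", "EIO-2", "AOO-2",
    "AII-3", "IAI-3", "OAO-3", "EIO-3", "AEE-4", "IAI-4", "EIO-4",
}
EI_DEPENDENT = {
    "AAI-1", "EAO-1", "AEO-2", "EAO-2", "AAI-3", "EAO-3", "AAI-4", "AEO-4", "EAO-4",
}

def subset_recalls(records):
    """Return (rec_V, rec_I) for (form, predicted_valid) records (assumed definitions)."""
    on_valid = [pred for form, pred in records if form in MODERN_VALID]
    on_ei = [pred for form, pred in records if form in EI_DEPENDENT]
    rec_v = sum(on_valid) / len(on_valid)             # VALID on modern-valid forms
    rec_i = sum(not p for p in on_ei) / len(on_ei)    # INVALID on EI-dependent forms
    return rec_v, rec_i

forms = sorted(MODERN_VALID | EI_DEPENDENT)
print(subset_recalls([(f, False) for f in forms]))              # (0.0, 1.0)
print(subset_recalls([(f, True) for f in forms]))               # (1.0, 0.0)
print(subset_recalls([(f, f in MODERN_VALID) for f in forms]))  # (1.0, 1.0)
```

A model that always answers INVALID scores a perfect $rec_{I}$ while its $rec_{V}$ is zero. A high $rec_{I}$ is therefore informative only together with a high $rec_{V}$.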

The effect of the Base model is strong but not absolute.

Small models (e.g., Qwen3-8B) benefit the most when the Base already shows modern logic signals. Larger models can still learn the modern logic through post-training (e.g., Qwen3-30B-A3B), but the learned shift is not always stable: under Thinking, judgments can fluctuate, and in some cases the model can drift back toward a traditional pattern. This suggests that post-training can move the decision criterion, but the base model still influences how reliable that move will be.

Takeaway 3

The base model is the starting point. If it already leans toward modern logic, post-training shifts are easier and more stable.

<details>

<summary>images/qwen3-4b_24x4_heatmap.png Details</summary>

Heatmap (Qwen3-4B): number of predicted VALID answers per cell (color scale 55 to 100) for each of the 24 syllogistic forms (rows: the 15 modern-valid forms AAA-1 to EIO-4 above a red line, the 9 existential-import-dependent forms AAI-1 to EAO-4 below it) under the zh+, zh-, en+, and en- settings (columns).

</details>

(a) Qwen3-4B

<details>

<summary>images/qwen3-8b_24x4_heatmap.png Details</summary>

Heatmap (Qwen3-8B): number of predicted VALID answers per cell (color scale 65 to 100) for each of the 24 syllogistic forms (rows: the 15 modern-valid forms AAA-1 to EIO-4 above a red line, the 9 existential-import-dependent forms AAI-1 to EAO-4 below it) under the zh+, zh-, en+, and en- settings (columns).

</details>

(b) Qwen3-8B

<details>

<summary>images/qwen3-next-80b-a3b-instruct_24x4_heatmap.png Details</summary>

Heatmap (Qwen3-NEXT-80B-A3B-Instruct): number of predicted VALID answers per cell (color scale 0 to 100) for each of the 24 syllogistic forms (rows: the 15 modern-valid forms AAA-1 to EIO-4 above a red line, the 9 existential-import-dependent forms AAI-1 to EAO-4 below it) under the zh+, zh-, en+, and en- settings (columns).

</details>

(c) Qwen3-NEXT-80B-A3B-Instruct

<details>

<summary>images/qwen3-235b-a22b-thinking_24x4_heatmap.png Details</summary>

### Visual Description

## Heatmap: Syllogism Format Prediction Validity

### Overview

The image is a heatmap visualizing the number of predicted "VALID" outcomes for various syllogism formats across four different conditions. The data is presented in a grid where color intensity represents the count, with a clear separation between two distinct groups of syllogism formats.

### Components/Axes

* **Vertical Axis (Y-axis):** Labeled **"Syllogism Format"**. It lists 24 distinct categorical formats (mood plus figure), grouped into two sections separated by a horizontal red line.

* **Top Section (Above Red Line):** AAA-1, EAE-1, AII-1, EIO-1, EAE-2, AEE-2, EIO-2, AOO-2, AII-3, IAI-3, OAO-3, EIO-3, AEE-4, IAI-4, EIO-4.

* **Bottom Section (Below Red Line):** AAI-1, EAO-1, AEO-2, EAO-2, AAI-3, EAO-3, AAI-4, AEO-4, EAO-4.

* **Horizontal Axis (X-axis):** Represents four conditions combining prompt language with, most likely, the minor-term existence setting studied in Section 4.2.2. The labels are:

* `zh+` (Chinese, minor term presumably non-empty)

* `zh-` (Chinese, minor term presumably empty)

* `en+` (English, minor term presumably non-empty)

* `en-` (English, minor term presumably empty)

* **Color Scale/Legend:** Located on the right side. It is a vertical gradient bar labeled **"The number of predicted VALID"**.

* **Scale:** Ranges from **0** (black/dark purple) to **100** (light yellow/cream).

* **Gradient:** Black (0) -> Dark Purple -> Purple -> Magenta -> Orange -> Light Yellow (100).

### Detailed Analysis

The heatmap reveals a stark dichotomy between the two groups of syllogism formats.

**1. Top Group (Above Red Line - 15 formats):**

* **Trend:** All cells in this group are a uniform, very light yellow color.

* **Data Points:** Based on the color scale, the value for every cell in this group is approximately **95-100**. There is no visible variation across the four conditions (`zh+`, `zh-`, `en+`, `en-`) for any of these formats. They consistently show the maximum number of predicted VALID outcomes.

**2. Bottom Group (Below Red Line - 9 formats):**

* **Trend:** This group shows significant variation and much lower values overall. The colors range from black to purple.

* **Data Points (Approximate by Row and Column):**

* **AAI-1:** `zh+` (~5, dark purple), `zh-` (~0, black), `en+` (~10, dark purple), `en-` (~5, dark purple).

* **EAO-1:** `zh+` (~0, black), `zh-` (~10, dark purple), `en+` (~30, purple), `en-` (~5, dark purple).

* **AEO-2:** `zh+` (~0, black), `zh-` (~0, black), `en+` (~25, purple), `en-` (~15, dark purple).

* **EAO-2:** `zh+` (~0, black), `zh-` (~0, black), `en+` (~35, magenta/purple), `en-` (~0, black).

* **AAI-3:** `zh+` (~0, black), `zh-` (~0, black), `en+` (~0, black), `en-` (~0, black).

* **EAO-3:** `zh+` (~0, black), `zh-` (~10, dark purple), `en+` (~30, purple), `en-` (~5, dark purple).

* **AAI-4:** `zh+` (~0, black), `zh-` (~0, black), `en+` (~10, dark purple), `en-` (~5, dark purple).

* **AEO-4:** `zh+` (~0, black), `zh-` (~0, black), `en+` (~20, purple), `en-` (~0, black).

* **EAO-4:** `zh+` (~0, black), `zh-` (~10, dark purple), `en+` (~30, purple), `en-` (~10, dark purple).

### Key Observations

1. **Binary Performance:** The red line acts as a perfect separator. Syllogism formats above it are predicted as VALID nearly 100% of the time across all tested conditions. Formats below it are predicted as VALID far less frequently.

2. **Condition Sensitivity in the Bottom Group:** Within the bottom group, the `en+` condition (English; presumably non-empty minor term) shows the highest values (brightest colors) for every format except AAI-3, where all cells are 0. The model is thus most likely to answer VALID on these conditionally valid formats when prompted in English.

3. **Near-Zero VALID Counts:** Several cells in the bottom group, particularly for `zh+` and `zh-`, are black, indicating 0 or nearly 0 predicted VALID outcomes.

4. **Format Patterns:** The bottom group consists exclusively of formats starting with "AAI", "EAO", or "AEO". The top group contains a wider variety, including "AAA", "EAE", "AII", etc.

### Interpretation

This heatmap likely illustrates the results of an experiment testing an AI model's ability to identify logically valid syllogisms. The "Syllogism Format" labels (e.g., AAA-1) are standard notation in categorical logic, where letters denote the type of proposition (A=Universal Affirmative, E=Universal Negative, I=Particular Affirmative, O=Particular Negative) and numbers denote the figure (arrangement of terms).

* **What the data suggests:** The model separates the two groups sharply. It labels the 15 top formats VALID almost every time and rarely labels the 9 bottom formats VALID, which is the expected pattern for a model following modern logic.

* **How elements relate:** The red line marks a fundamental logical distinction. The top group contains exactly the 15 **unconditionally valid** moods (e.g., AAA-1, EAE-1), which are valid under both traditional and modern logic. The bottom group contains the 9 **conditionally valid** moods, which are valid only under existential import (traditional logic) and invalid under modern logic.

* **Notable anomaly/trend:** The VALID counts for the conditionally valid group are not uniformly zero. The higher counts under `en+` show where the model most often reverts to the traditional-logic answer, possibly because of patterns in its training data. `AAI-3` is the one mood with zero VALID predictions in every condition, i.e., fully consistent modern-logic behavior.

**In essence, the heatmap shows a sharp split that matches the unconditional/conditional distinction, with language and minor-term conditions modulating only the model's residual traditional-logic answers on the conditionally valid forms.**

</details>

(d) Qwen3-235B-A22B-Thinking

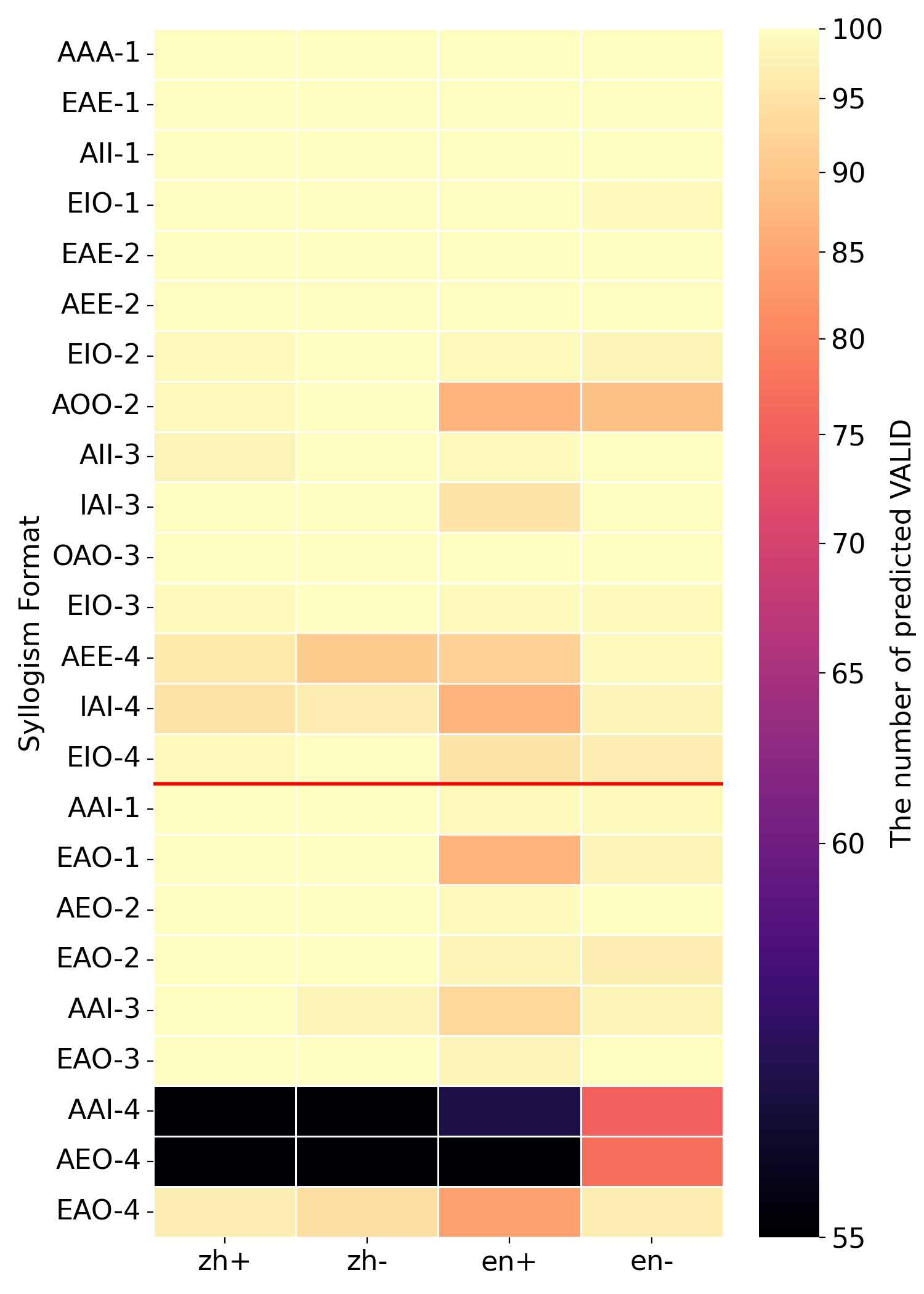

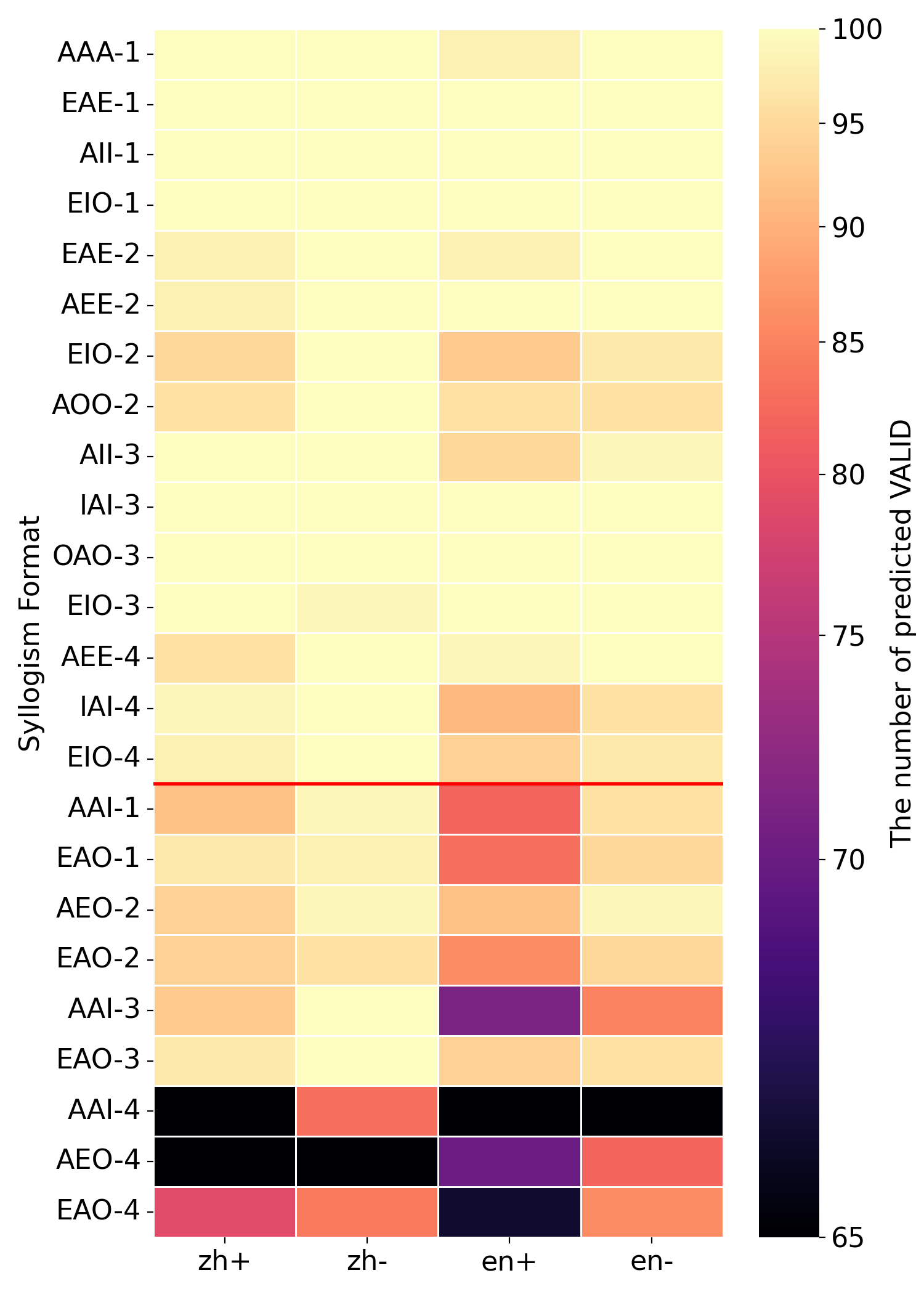

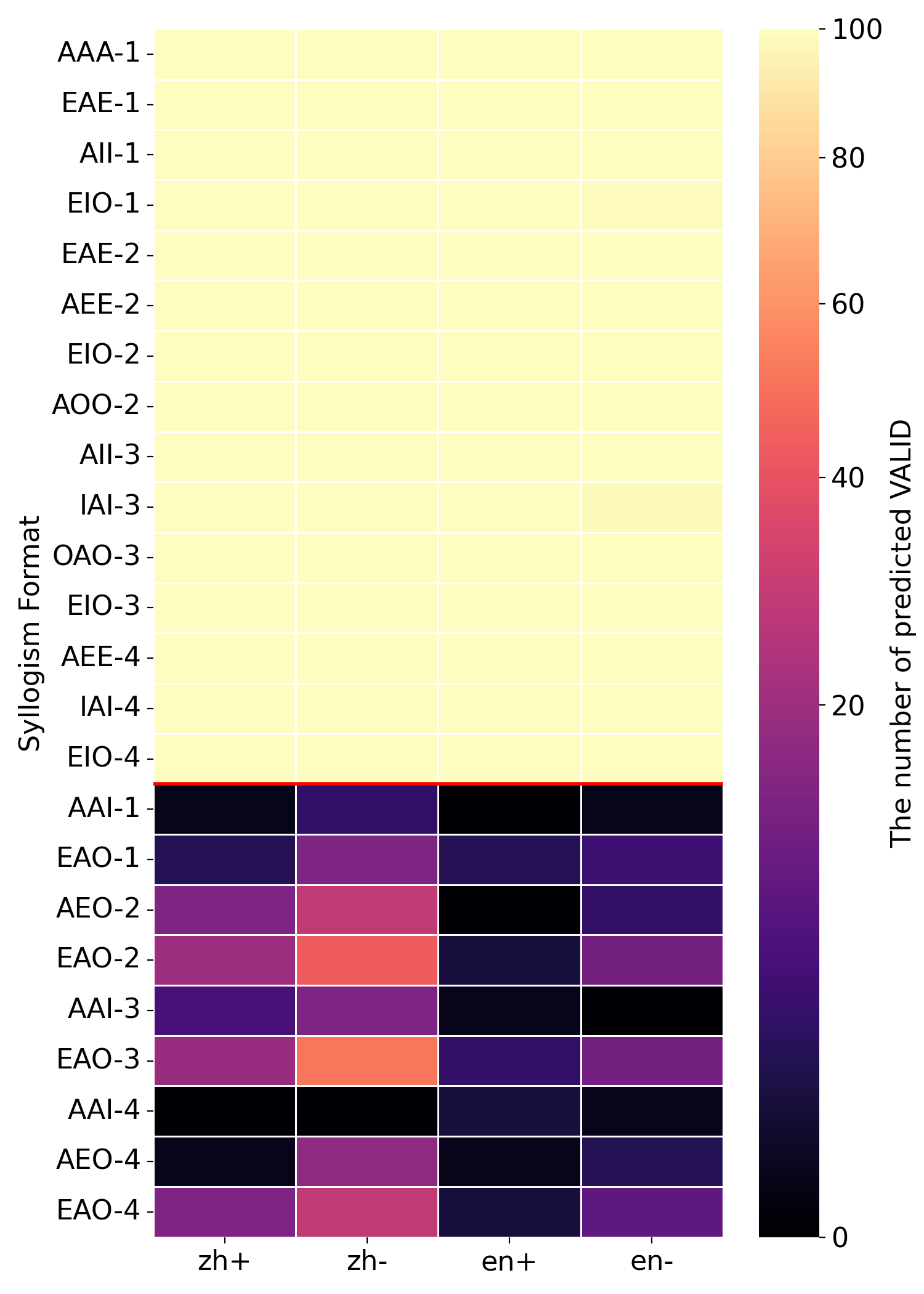

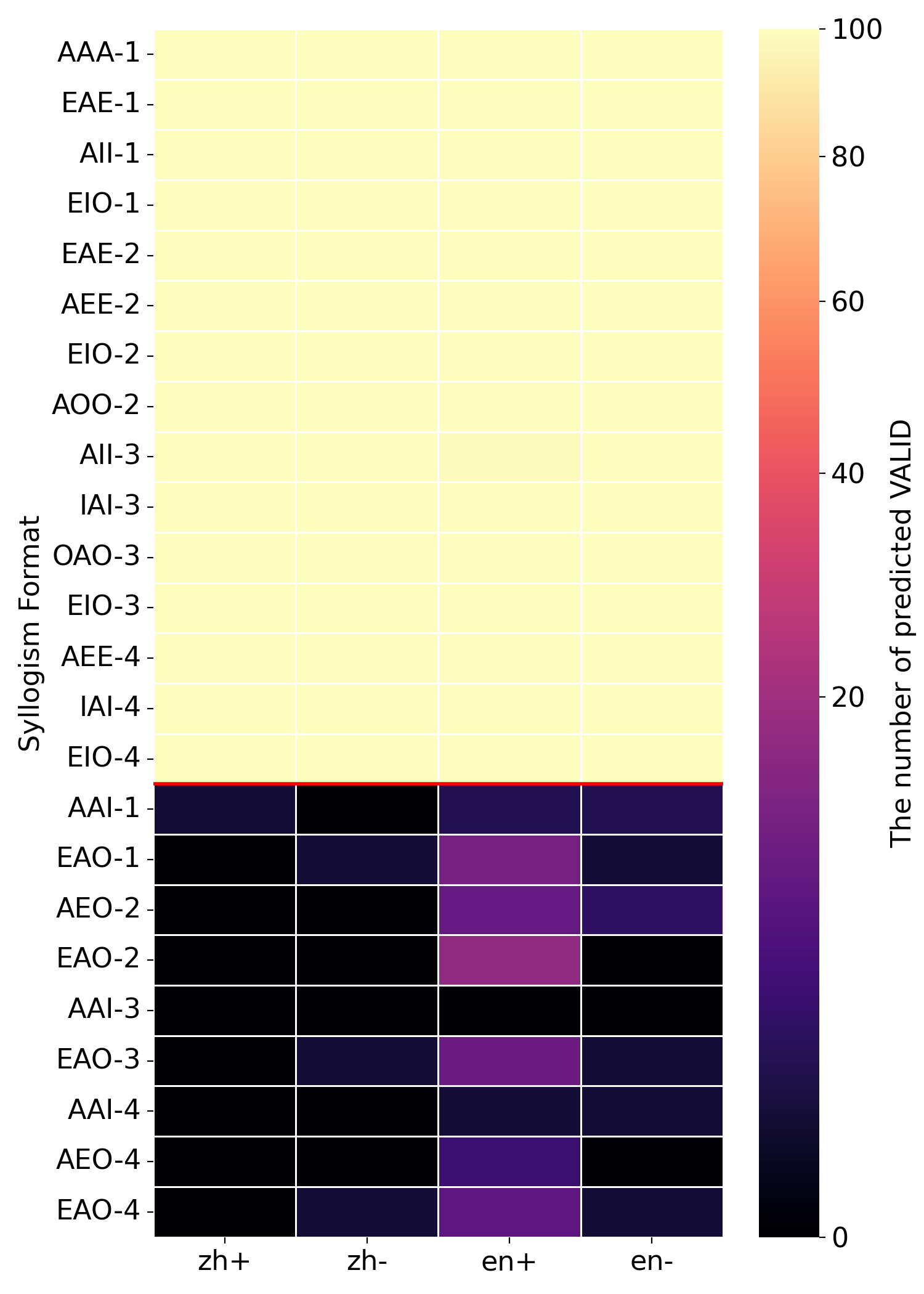

Figure 3: Heatmaps of the number of predicted VALID answers per syllogistic mood for models exhibiting the two types of logic. (a) and (b) follow traditional logic, while (c) and (d) follow modern logic.
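The red line in each panel separates moods by whether they depend on existential import. A minimal sketch of that split, assuming the standard Boolean (set-theoretic) reading of A/E/I/O propositions, in which terms may be empty and universal propositions carry no existential import: it searches a four-element domain for countermodels and, for each mood that has one, reports which single term must be non-empty for the argument to go through. The helper names (`holds`, `has_countermodel`) are illustrative, not the authors' code. Four elements suffice because a countermodel here needs at most four witnesses (up to two particular premises, one falsifier of a universal conclusion, and one member of a required non-empty term).

```python
from itertools import product

# Boolean (modern) reading: terms denote possibly empty sets, and the
# universal forms A and E carry no existential import.
def holds(form, x, y):
    if form == "A":  # All x are y
        return x <= y
    if form == "E":  # No x is y
        return not (x & y)
    if form == "I":  # Some x is y
        return bool(x & y)
    if form == "O":  # Some x is not y
        return bool(x - y)
    raise ValueError(f"unknown proposition type: {form}")

# Figure -> (major-premise subject/predicate, minor-premise subject/predicate).
# The conclusion is always "S ... P".
FIGURES = {1: ("MP", "SM"), 2: ("PM", "SM"), 3: ("MP", "MS"), 4: ("PM", "MS")}

# Every subset of a four-element domain.
SUBSETS = [frozenset(i for i in range(4) if (mask >> i) & 1) for mask in range(16)]

def has_countermodel(mood, figure, nonempty=""):
    """True if some model over the four-element domain makes both premises
    true and the conclusion false. `nonempty` lists terms (e.g. "S") that
    are required to have members."""
    major, minor = FIGURES[figure]
    for s, m, p in product(SUBSETS, repeat=3):
        env = {"S": s, "M": m, "P": p}
        if any(not env[t] for t in nonempty):
            continue
        if (holds(mood[0], env[major[0]], env[major[1]])
                and holds(mood[1], env[minor[0]], env[minor[1]])
                and not holds(mood[2], env["S"], env["P"])):
            return True
    return False

# The 24 heatmap rows: moods valid under traditional logic.
MOODS = ["AAA-1", "EAE-1", "AII-1", "EIO-1", "EAE-2", "AEE-2", "EIO-2", "AOO-2",
         "AII-3", "IAI-3", "OAO-3", "EIO-3", "AEE-4", "IAI-4", "EIO-4",
         "AAI-1", "EAO-1", "AEO-2", "EAO-2", "AAI-3", "EAO-3", "AAI-4",
         "AEO-4", "EAO-4"]

unconditional, conditional = [], {}
for label in MOODS:
    mood, fig = label.split("-")
    if not has_countermodel(mood, int(fig)):
        unconditional.append(label)
    else:
        # Terms whose non-emptiness alone restores validity.
        conditional[label] = [t for t in "SMP"
                              if not has_countermodel(mood, int(fig), t)]

print(len(unconditional), conditional)
```

Under these assumptions the search returns the 15 unconditionally valid moods above the red line and, for the remaining 9, the minor term S for AAI-1, EAO-1, AEO-2, EAO-2 and AEO-4, the middle term M for AAI-3, EAO-3 and EAO-4, and the major term P for AAI-4.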

### 4.2 Further Analysis

#### 4.2.1 Prior-check Prompt

To ensure that the measured modern-logic performance is not a prompting artifact, we introduce a Prior-check prompt that explicitly asks the model to check the relevant existence condition before making a validity judgment. The goal is to make the model perform the semantic check that modern-logic evaluation requires, without changing the logical content of the task.

Main effect: higher $\mathrm{Acc}_{m}$ without stance flipping.

As a control, we report results with the baseline prompt in Appendix 7.6.3. The Prior-check prompt increases $\mathrm{Acc}_{m}$ for most models while keeping their overall logical stance stable and easy to interpret. This suggests that the prompt improves compliance with modern logic rather than introducing a systematic bias.

Turning-point instability.

A notable exception appears in the Qwen3-30B-A3B pair. Although the Instruct variant behaves in line with modern logic, the Thinking variant shifts back toward traditional logic. This suggests that Qwen3-30B-A3B is close to the turning point between the two paradigms, where long thinking traces may sometimes bring back traditional defaults. Such fluctuations show that a model's stance can be fragile during the logic transition.

#### 4.2.2 Emptiness of the Minor Term

Empty minor terms are consistently harder.

Under both the Prior-check prompt and the baseline setting, models show lower $rec_{I}$ when the minor term is empty than when it is non-empty.

One likely reason is that empty minor terms make counterexamples harder to construct. To judge an argument invalid under modern logic, the model often needs to consider a situation in which the premises are true but the conclusion is false. When the minor term is empty, this kind of reasoning is less intuitive because there are no concrete instances to reason about. As a result, the model tends to fall back on traditional logic, which increases false positives and reduces $rec_{I}$. This result highlights how permeable formal reasoning is to world knowledge: plausibility priors can leak into it and interfere with rule-governed validity judgments.
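To make the counterexample argument concrete, here is a small sketch (our illustration under the Boolean reading of categorical propositions, not the paper's procedure) that enumerates all models of AAI-1 (Barbari: All M are P; All S are M; therefore Some S are P) over a three-element domain. Every countermodel it finds has an empty minor term S: as soon as S has a member, the premises push that member into P and the conclusion holds. A counterexample therefore exists only in exactly the situation with no S-instances to reason about.

```python
from itertools import product

# All subsets of a three-element domain; terms may denote the empty set.
SUBSETS = [frozenset(i for i in range(3) if (mask >> i) & 1) for mask in range(8)]

def barbari_countermodels():
    """AAI-1: All M are P; All S are M; therefore Some S are P (modern reading)."""
    found = []
    for s, m, p in product(SUBSETS, repeat=3):
        premises_true = m <= p and s <= m
        conclusion_true = bool(s & p)
        if premises_true and not conclusion_true:
            found.append((s, m, p))
    return found

cms = barbari_countermodels()
print(len(cms), all(not s for s, _, _ in cms))  # → 27 True
```

The 27 countermodels are exactly the pairs with M a subset of P and S empty; requiring S to be non-empty, as traditional logic's existential import does, eliminates all of them.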

Mood-specific error concentration suggests data imprinting.

To further probe knowledge effects in syllogistic reasoning, we visualize the number of "valid" answers across languages, minor-term existence settings, and all 24 syllogistic moods in Figure 3, which compares four models under the Prior-check prompt. Regardless of whether a model generally aligns with traditional or modern logic, errors concentrate on a few specific moods rather than being evenly distributed. For example, although Qwen3-4B is overall closer to traditional logic, it displays a strong tendency toward modern logic on the AAI-4 and AEO-4 forms. One possible explanation is that certain moods are more frequent in training data, so those forms are learned better. This supports the view that LLMs' logical behavior is shaped by training data rather than reflecting an abstract reasoning ability that generalizes uniformly.
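Heatmap counts like those in Figure 3 can be assembled by tallying VALID answers per mood and condition. A sketch under stated assumptions: the record layout `(mood, language, minor_nonempty, prediction)` and the helper names are hypothetical, and reading `+`/`-` as a non-empty/empty minor term is our interpretation of the column labels.

```python
from collections import Counter

# Heatmap column order. Mapping "+"/"-" to non-empty/empty minor term is an
# assumption, not something stated on the figure itself.
CONDITIONS = [("zh", True), ("zh", False), ("en", True), ("en", False)]

def column_label(lang, minor_nonempty):
    return f"{lang}{'+' if minor_nonempty else '-'}"

def valid_count_matrix(records, moods):
    """records: iterable of hypothetical (mood, language, minor_nonempty, prediction) tuples.
    Returns one row per mood with the number of VALID answers in each column."""
    counts = Counter(
        (mood, column_label(lang, nonempty))
        for mood, lang, nonempty, pred in records
        if pred == "VALID"
    )
    columns = [column_label(lang, nonempty) for lang, nonempty in CONDITIONS]
    # Counter returns 0 for (mood, column) pairs that never received a VALID answer.
    return [[counts[(mood, col)] for col in columns] for mood in moods]

toy = [
    ("AAI-1", "zh", True, "VALID"),
    ("AAI-1", "zh", False, "VALID"),
    ("AAI-1", "zh", False, "INVALID"),
    ("EAO-1", "en", True, "VALID"),
]
matrix = valid_count_matrix(toy, ["AAI-1", "EAO-1"])
print(matrix)  # → [[1, 1, 0, 0], [0, 0, 1, 0]]
```

With the full 24-mood list as `moods`, the result is a 24×4 matrix in the same row and column layout as the panels.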

#### 4.2.3 Cross-lingual Gaps

Clear language-dependent effect.

Comparing three open-source series, we find that the Qwen and LLaMA series generally perform better in Chinese than in English, whereas Gemma shows the opposite pattern, with higher performance in English. The difference is most visible in accuracy measured under each model's dominant logical stance.

This cross-lingual gap suggests that current LLMs' logical ability is not fully language-agnostic but remains strongly shaped by language-specific patterns in training data. What looks like "logical reasoning" in these models is still partly tied to the language they operate in, rather than being a truly language-independent skill.
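One way to operationalize "accuracy measured under each model's dominant logical stance" is sketched below, as an illustration rather than the paper's exact protocol: score predictions against both traditional and modern gold labels, treat whichever stance scores higher overall as dominant, and then compare per-language accuracy under that stance. The item layout `(language, prediction, gold_traditional, gold_modern)`, the helper names, and the tie-breaking rule (ties go to traditional) are all assumptions.

```python
def accuracy(pairs):
    """Fraction of (prediction, gold) pairs that agree."""
    pairs = list(pairs)
    return sum(pred == gold for pred, gold in pairs) / len(pairs)

def dominant_stance_gap(items):
    """items: hypothetical (language, prediction, gold_traditional, gold_modern) tuples."""
    acc_t = accuracy((pred, g_t) for _, pred, g_t, _ in items)
    acc_m = accuracy((pred, g_m) for _, pred, _, g_m in items)
    stance = "traditional" if acc_t >= acc_m else "modern"
    gold_idx = 2 if stance == "traditional" else 3
    per_lang = {}
    for lang in sorted({item[0] for item in items}):
        subset = [item for item in items if item[0] == lang]
        per_lang[lang] = accuracy((item[1], item[gold_idx]) for item in subset)
    return stance, per_lang

toy = [
    ("zh", "VALID", "VALID", "VALID"),
    ("zh", "INVALID", "VALID", "INVALID"),
    ("en", "INVALID", "VALID", "INVALID"),
    ("en", "VALID", "VALID", "INVALID"),
]
stance, per_lang = dominant_stance_gap(toy)
print(stance, per_lang)  # → modern {'en': 0.5, 'zh': 1.0}
```

Scoring each language under the same dominant stance keeps the cross-lingual comparison from mixing two different notions of correctness.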

#### 4.2.4 Architecture and Reasoning Ability

We next study how model architecture relates to logical reasoning behavior in two settings: (i) open-source auto-regressive (AR) LLMs, comparing dense models with mixture-of-experts (MoE) models (Table 1); and (ii) emerging diffusion LLMs (dLLMs) (Table 9).

MoE in AR models correlates with more modern-leaning behavior.

Within AR models, MoE variants in the Qwen family exhibit a stronger tendency toward modern logic than same-generation dense models. A plausible explanation is the combined effect of MoE efficiency and model scaling. MoE architectures make it easier to train models with higher effective capacity under similar compute, and the shift toward modern logic becomes more likely as model size increases.

dLLMs mostly follow traditional logic.

Most dLLMs still predominantly follow traditional logic. The only exception is LLaDA2.0-flash, a 100B model with an MoE architecture, which again reflects the joint impact of MoE architecture and model scaling.

## 5 Related Works

In recent years, many benchmarks have been proposed for syllogistic reasoning. ENN Dong et al. (2020) constructed syllogisms extracted from WordNet Miller (1995); the syllogisms take the form of triplets without natural language descriptions. Syllo-Figure Peng et al. (2020) and NeuBAROCO Ando et al. (2023) are two natural language syllogism datasets derived from existing datasets. Syllo-Figure derives omitted syllogisms from SNLI Bowman et al. (2015), has annotators rewrite the missing premise, and targets identifying the specific figure. NeuBAROCO transforms questions from BAROCO Shikishima et al. (2009) into a natural language inference (NLI) format. Beyond categorical syllogisms, SylloBase Wu et al. (2023) covers more types and patterns of syllogism, spanning a complete taxonomy of syllogistic reasoning patterns. Several studies also focus on human-like biases in syllogistic reasoning, such as belief bias Nguyen et al. (2025); Ando et al. (2023) and atmosphere effects Ando et al. (2023). However, all of these works assume existential import by default, i.e., they approach the task under a traditional logic setting. To examine different models' tendencies under different logical paradigms and gain deeper insights, we use existential import as a probe and conduct a series of investigations.

## 6 Conclusion and Discussion

This work studies whether LLMs' syllogistic validity judgments shift toward the more rigorous criterion of modern logic as models develop. Across all models, $\mathrm{Acc}_{m}$ generally increases with scale, but only the Qwen series exhibits a clear logic shift, consistent with the behavior of advanced closed-source models. Matched-size comparisons further show that RL-trained Thinking variants efficiently accelerate this shift and improve consistency; in contrast, CoT prompting induces only a limited move toward modern logic, and distillation alone does not reliably yield strictly modern-logic behavior.