# LLM-42: Enabling Determinism in LLM Inference with Verified Speculation

Abstract

In LLM inference, the same prompt may yield different outputs across different runs. At the system level, this non-determinism arises from floating-point non-associativity combined with dynamic batching and GPU kernels whose reduction orders vary with batch size. A straightforward way to eliminate non-determinism is to disable dynamic batching during inference, but doing so severely degrades throughput. Another approach is to make kernels batch-invariant; however, this tightly couples determinism to kernel design, requiring new implementations. This coupling also imposes fixed runtime overheads, regardless of how much of the workload actually requires determinism.

Inspired by ideas from speculative decoding, we present LLM-42 Many developers unknowingly set 42 as a random state variable (seed) to ensure reproducibility, a reference to The Hitchhiker’s Guide to the Galaxy. The number itself is arbitrary, but widely regarded as a playful tradition. —a scheduling-based approach to enable determinism in LLM inference. Our key observation is that if a sequence is in a consistent state, the next emitted token is likely to be consistent even with dynamic batching. Moreover, most GPU kernels use shape-consistent reductions. Leveraging these insights, LLM-42 decodes tokens using a non-deterministic fast path and enforces determinism via a lightweight verify–rollback loop. The verifier replays candidate tokens under a fixed-shape reduction schedule, commits those that are guaranteed to be consistent across runs, and rolls back those violating determinism. LLM-42 mostly re-uses existing kernels unchanged and incurs overhead only in proportion to the traffic that requires determinism.

1 Introduction

LLMs are becoming increasingly more powerful [openai2022gpt4techreport, kaplan2020scalinglaws]. However, a key challenge many users usually face with LLMs is their non-determinism [he2025nondeterminism, atil2024nondeterminism, yuan2025fp32death, song2024greedy]: the same model can produce different outputs across different runs of a given prompt, even with identical decoding parameters and hardware. Enabling determinism in LLM inference (aka deterministic inference) has gained significant traction recently for multiple reasons. It helps developers isolate subtle implementation bugs that arise only under specific batching choices; improves reward stability in reinforcement-learning training [zhang2025-deterministic-tp]; is essential for integration testing in large-scale systems. Moreover, determinism underpins scientific reproducibility [song2024greedy] and enables traceability [rainbird2025deterministic]. Consequently, users have increasingly requested support for deterministic inference in LLM serving engines [Charlie2025, Anadkat2025consistent].

He et al. [he2025nondeterminism] showed that non-determinism in LLM inference at the system-level stems from the non-associativity of floating-point arithmetic LLM outputs can also vary due to differences in sampling strategies (e.g., top-k, top-p, or temperature). Our goal is to ensure that output is deterministic for fixed sampling hyper-parameters; different hyper-parameters can result in different output and this behavior is intentional.. This effect manifests in practice because most core LLM operators—including matrix multiplications, attention, and normalization—rely on reduction operations, and GPU kernels for these operators choose different reduction schedules to maximize performance at different batch sizes. The same study also proposed batch-invariant computation as a means to eliminate non-determinism. In this approach, a kernel processes each input token using a single, universal reduction strategy independent of batching. Popular LLM serving systems such as vLLM and SGLang have recently adopted this approach [SGLangTeam2025, vllm-batch-invariant-2025].

While batch-invariant computation guarantees determinism, we find that this approach is fundamentally over-constrained. Enforcing a fixed reduction strategy for every token—regardless of model phase or batch geometry—strips GPU kernels of the very parallelism they are built to exploit. For example, the batch-invariant GEMM kernels provided by He et al. do not use the split-K strategy that is otherwise commonly used to accelerate GEMMs at low batch sizes [tritonfusedkernel-splitk-meta, nvidia_cutlass_blog]. Furthermore, most GPU kernels are not batch-invariant to begin with, so insisting on batch-invariant execution effectively demands new kernel implementations, increasing engineering and maintenance overhead. Finally, batch-invariant execution makes determinism the default for all requests, even when determinism is undesirable or even harmful [det-inf-kills].

Our observations suggest that determinism can be enabled with a simpler approach. (O1) Token-level inconsistencies are rare: as long as a sequence remains in a consistent state, the next emitted token is mostly identical across runs; sequence-level divergence arises mainly from autoregressive decoding after the first inconsistent token. (O2) Most GPU kernels already use shape-consistent reduction schedules: they apply the same reduction strategy on all inputs of a given shape, but potentially different reduction strategies on inputs of different shapes. (O3) Determinism requires only position-consistent reductions: a particular token position must use the same reduction schedule across runs, but different positions within or across sequences can use different reduction schedules. (O4) Real-world LLM systems require determinism only for select tasks (e.g., evaluation, auditing, continuous-integration pipelines), while creative workloads benefit from the stochasticity of LLMs.

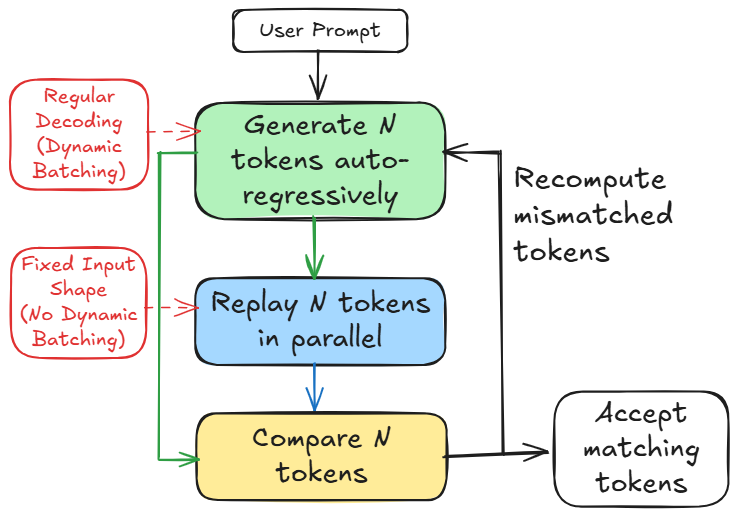

Based on these observations, we introduce LLM-42, a scheduling-based approach to deterministic LLM inference inspired by speculative decoding. In speculative decoding [specdecoding-icml2023, chen2023accelerating, xia2023speculative, specinfer-2024], a fast path produces multiple candidate tokens while a verifier validates their correctness. We observe that the same structure can be repurposed to enable determinism (see Figure 1): fast path can optimize for high throughput token generation while a verifier can enforce determinism. Table 1 highlights key differences between conventional speculative decoding and our approach.

<details>

<summary>figures_h100_pcie/diagram/banner.png Details</summary>

### Visual Description

## Flowchart: Token Generation and Processing Workflow

### Overview

The diagram illustrates a workflow for processing user prompts through token generation, parallel replay, comparison, and acceptance. It includes two alternative decoding methods (dynamic vs. fixed batching) and a recomputation step for mismatched tokens.

### Components/Axes

- **Nodes**:

- **User Prompt** (topmost input)

- **Generate N tokens auto-regressively** (green box)

- **Replay N tokens in parallel** (blue box)

- **Compare N tokens** (yellow box)

- **Accept matching tokens** (final output)

- **Regular Decoding (Dynamic Batching)** (red box, left)

- **Fixed Input Shape (No Dynamic Batching)** (red box, right)

- **Arrows**:

- Solid arrows indicate primary flow.

- Dashed arrows represent alternative paths.

- Feedback loop for recomputing mismatched tokens.

### Detailed Analysis

1. **User Prompt** → **Generate N tokens auto-regressively** (green):

- Initial step where tokens are generated based on the prompt.

2. **Branching Paths**:

- **Regular Decoding (Dynamic Batching)** (red, left):

- Tokens are processed with dynamic batching for efficiency.

- **Fixed Input Shape (No Dynamic Batching)** (red, right):

- Tokens are processed with a fixed input shape, avoiding dynamic batching.

3. **Replay N tokens in parallel** (blue):

- Tokens are replayed in parallel, likely for optimization or redundancy.

4. **Compare N tokens** (yellow):

- Tokens are compared, possibly for consistency or error-checking.

5. **Accept matching tokens** (final step):

- Only tokens that match criteria are accepted.

6. **Recompute mismatched tokens**:

- A feedback loop ensures mismatched tokens are reprocessed.

### Key Observations

- **Dynamic vs. Fixed Batching**: The red boxes highlight two decoding strategies, suggesting trade-offs between flexibility (dynamic) and stability (fixed).

- **Parallel Replay**: The blue box emphasizes parallelism, likely to reduce latency or improve throughput.

- **Feedback Mechanism**: The recomputation step ensures robustness by addressing mismatches.

### Interpretation

This workflow optimizes token processing by balancing efficiency (dynamic batching) and reliability (fixed input shape). Parallel replay and comparison steps suggest a focus on scalability and accuracy. The recomputation loop indicates a system designed to handle errors iteratively, ensuring only validated tokens are accepted. The diagram likely represents a machine learning or NLP pipeline where token generation and validation are critical for downstream tasks like text generation or translation.

</details>

Figure 1: Overview of LLM-42.

LLM-42 employs a decode–verify–rollback protocol that decouples regular decoding from determinism enforcement. It generates candidate output tokens using standard fast-path autoregressive decoding whose output is largely—but not provably—consistent across runs (O1). Determinism is guaranteed by a verifier that periodically replays a fixed-size window of recently generated tokens. Because the input shape is fixed during verification, replayed tokens follow a consistent reduction order (O2) and serve as a deterministic reference execution. Tokens are released to the user only after verification. When the verifier detects a mismatch, LLM-42 rolls the sequence back to the last matching token and resumes decoding from this known-consistent state. In general, two factors make this approach practical: (1) verification is low cost i.e., like prefill, it is typically 1-2 orders of magnitude more efficient than the decode phase, and (2) rollbacks are infrequent: more than half of the requests complete without any rollback, and only a small fraction require multiple rollbacks.

| Fast path generates tokens using some form of approximation | No approximation; only floating-point rounding errors |

| --- | --- |

| Low acceptance rate and hence limited speculation depth (2-8 tokens) | High acceptance rate and hence longer speculation (32-64 tokens) |

| Typically uses separate draft and target models | Uses the same model for decoding and verification |

Table 1: Speculative decoding vs. LLM-42.

By decoupling determinism from token generation, LLM-42 enables determinism to be enforced selectively, preserving the natural variability and creativity of LLM outputs where appropriate. This separation also helps performance: the fast path can use whatever batch sizes and reduction schedules are most efficient, prefill computation can follow different reduction strategy than decode (O3) and its execution need not be verified (in our design, prefill is deterministic by construction), and finally, verification can be skipped entirely for requests that do not need determinism (O4).

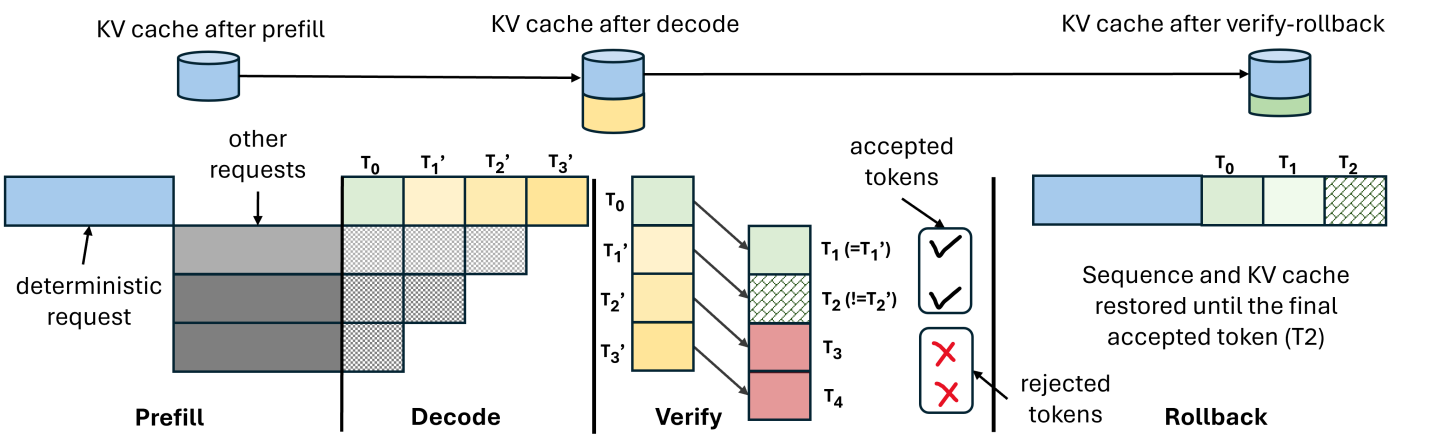

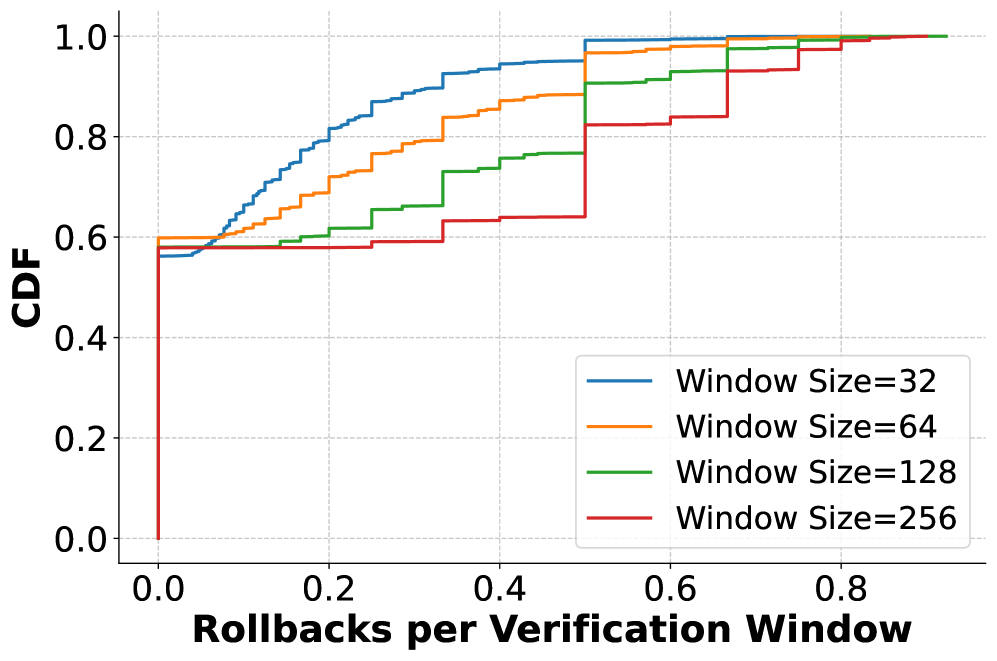

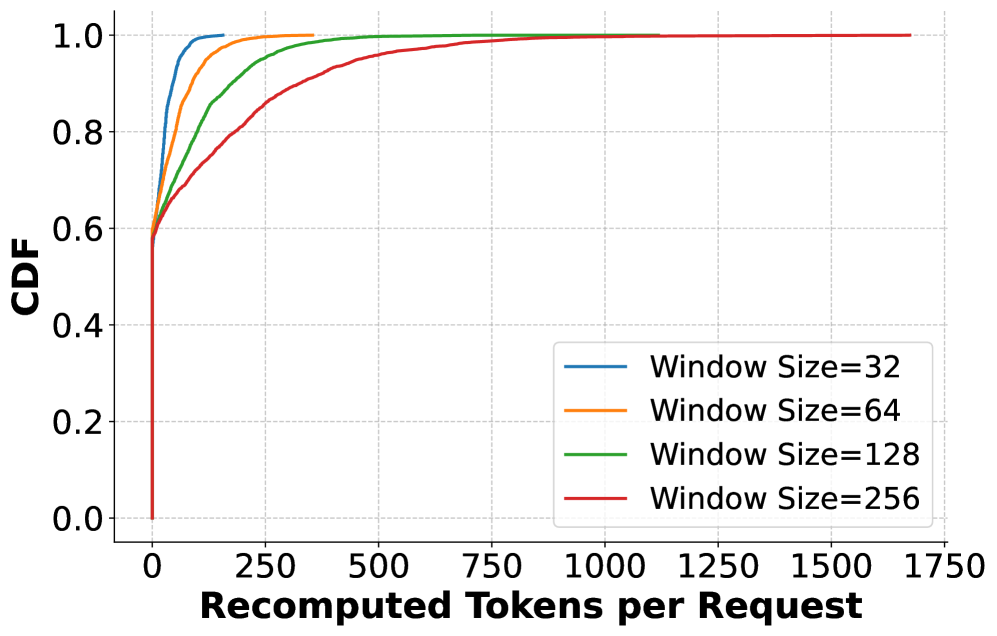

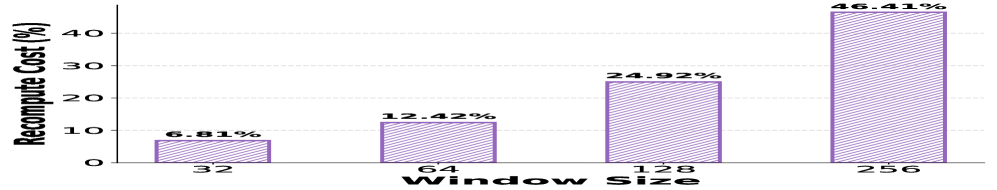

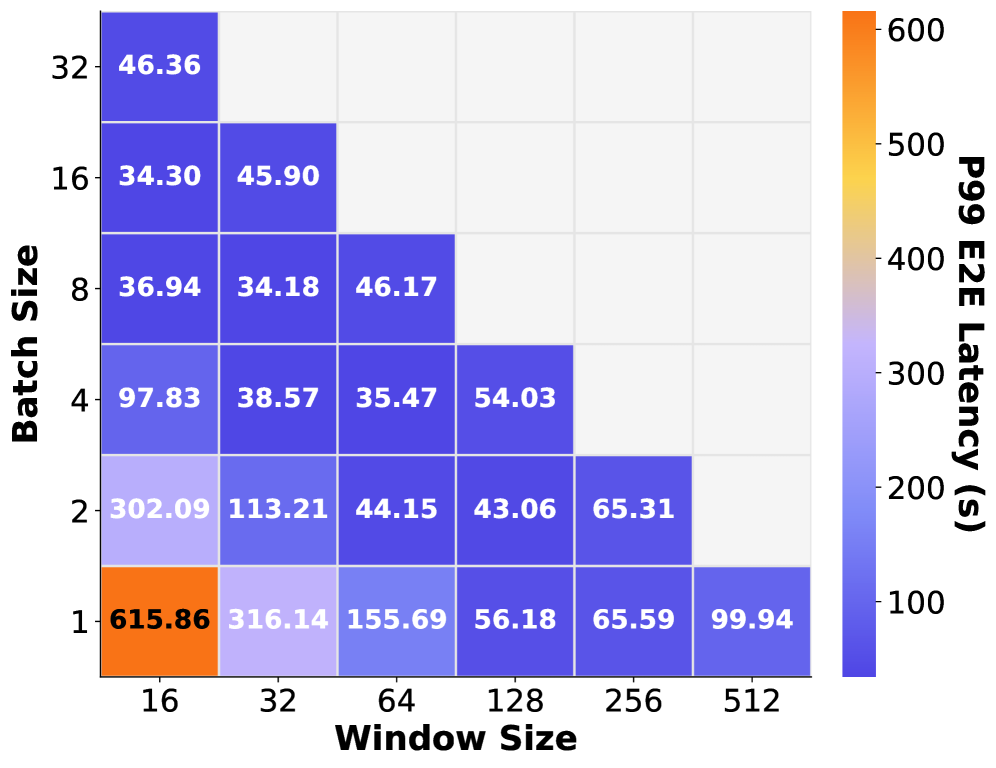

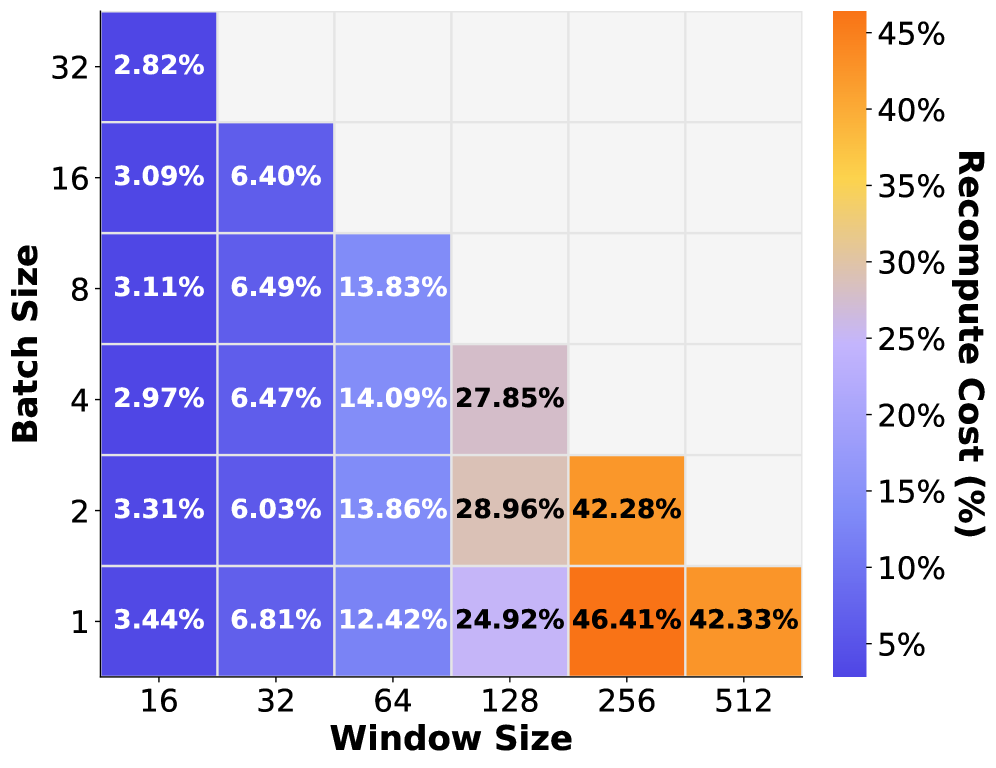

The efficiency of our approach critically depends on the size of verification window i.e., number of tokens verified together. Smaller windows incur high verification overhead since their computation is largely memory-bound but require less recomputation on verification failures. In contrast, larger windows incur lower verification cost due to being compute-bound, but increase recomputation cost by triggering longer rollbacks on mismatches. To balance this trade-off, we introduce grouped verification: instead of verifying a large window of a single request, we verify smaller fixed-size windows of multiple requests together. This design preserves the low rollback cost of small windows while amortizing verification overhead. Overall, we make the following contributions:

- We present the first systematic analysis of batch-invariant computation to highlight the performance and engineering cost associated with this approach.

- We present an alternate approach LLM-42 to enable determinism in LLM inference.

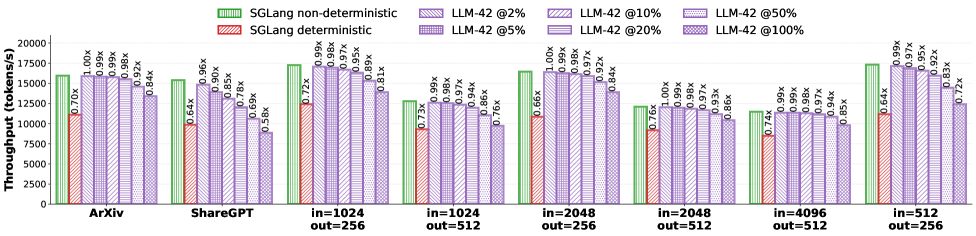

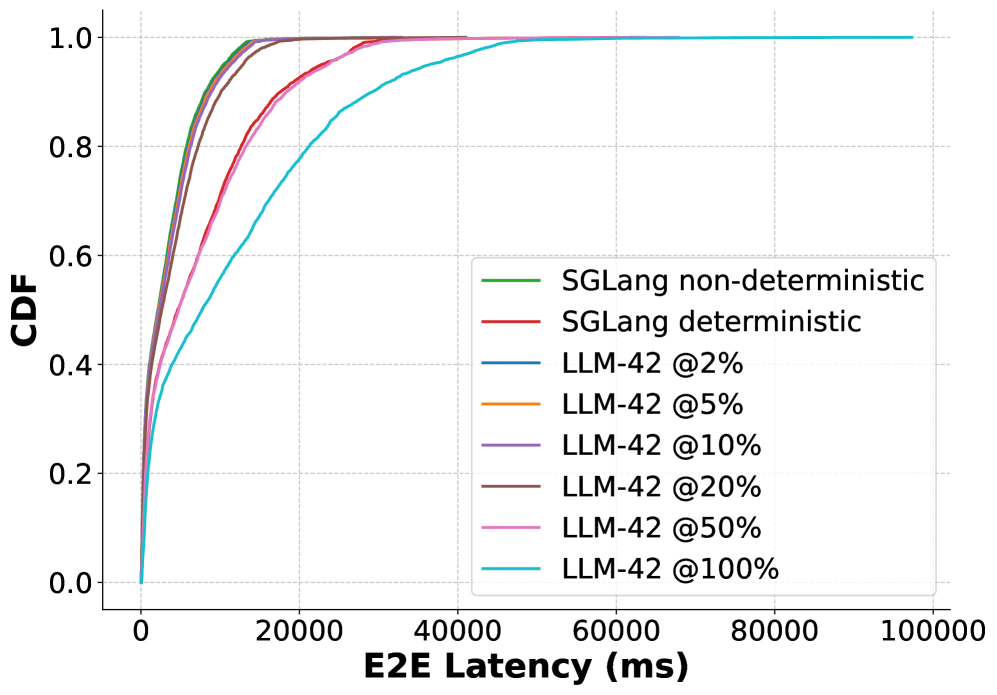

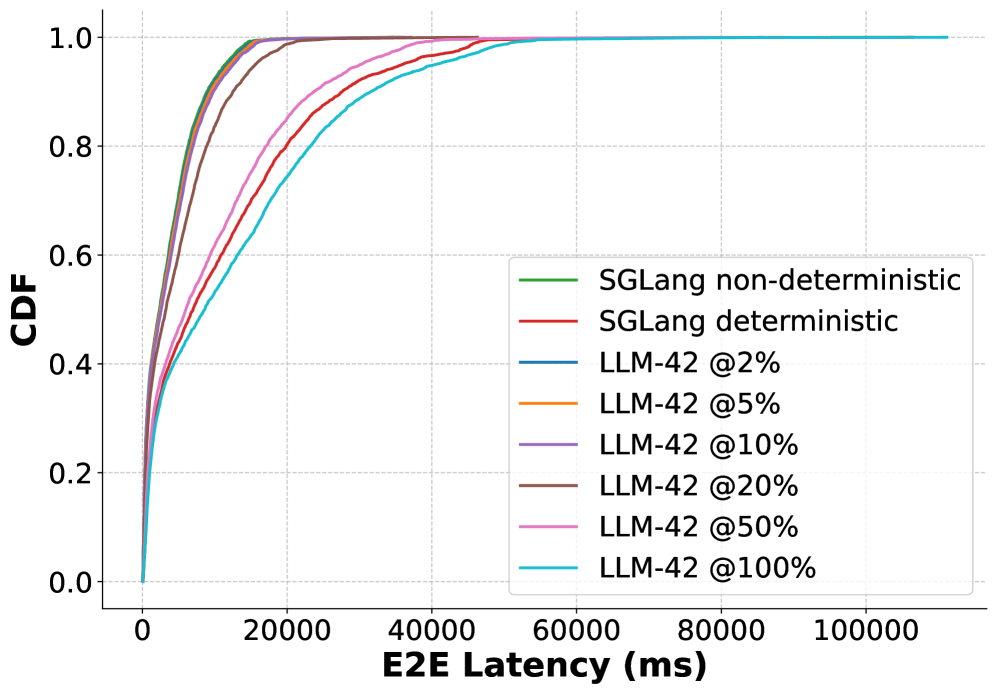

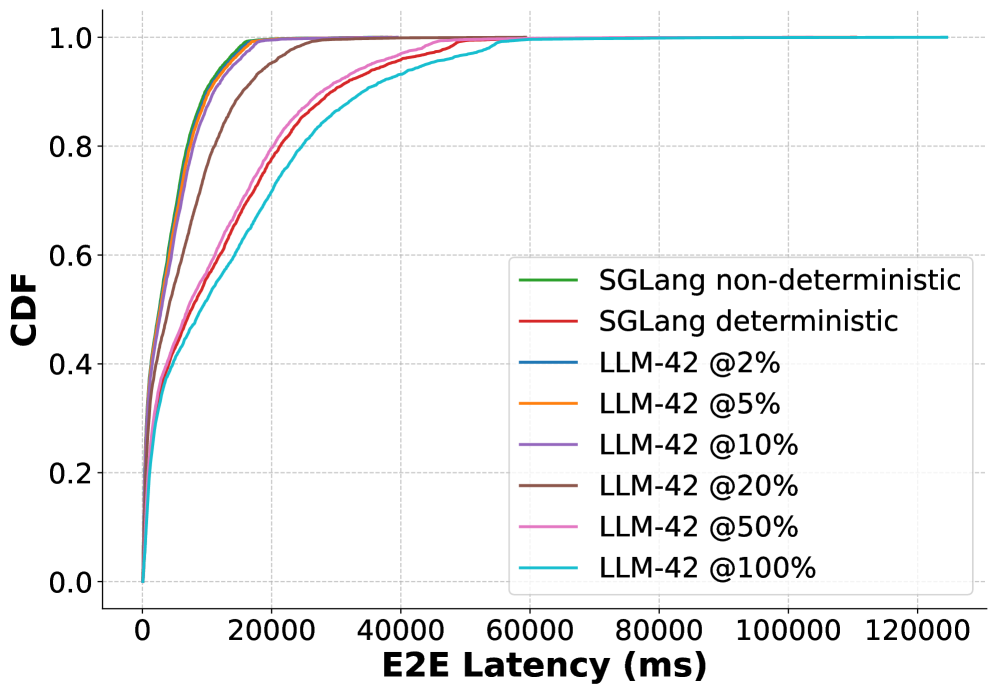

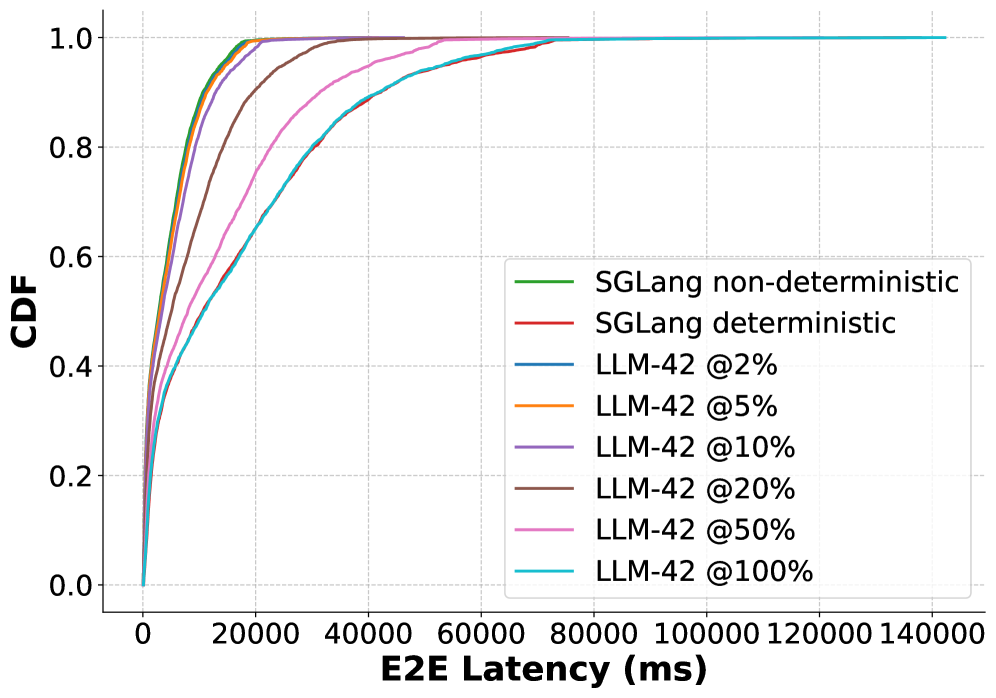

- We implement LLM-42 on top of SGLang and show that its overhead is proportional to fraction of traffic that requires determinism; it retains near-peak performance when deterministic traffic is low, whereas SGLang incurs high overhead of up to 56% in deterministic mode. Our source code will be available at https://github.com/microsoft/llm-42.

2 Background and Motivation

<details>

<summary>figures_h100_pcie/diagram/llm-arch.png Details</summary>

### Visual Description

## Diagram: Autoregressive Model Architecture

### Overview

The diagram illustrates the architecture of an autoregressive model, depicting the flow of data from input to output through a series of processing components. The structure includes embedding, attention mechanisms, normalization layers, feed-forward networks (FFN), and a sampler, repeated across multiple layers.

### Components/Axes

- **Input**: Starting point for the model.

- **Embedding**: Converts input into vector representations.

- **Attention**: Processes relationships between input elements (marked with "KV Cache" for key-value storage).

- **All Reduce RMSNorm**: Normalization layer applied across distributed computations.

- **FFN**: Feed-forward neural network for non-linear transformations.

- **Sampler**: Generates output tokens autoregressively.

- **x L layers**: Indicates repetition of the core block (L times).

### Detailed Analysis

1. **Input → Embedding**: Raw input is transformed into embeddings for further processing.

2. **Attention + KV Cache**: Attention mechanism computes contextual relationships, with KV Cache storing intermediate key-value pairs for efficiency.

3. **All Reduce RMSNorm**: Normalizes activations across distributed nodes (appears twice in the flow).

4. **FFN**: Applies non-linear transformations to embeddings.

5. **Sampler**: Produces output tokens sequentially, characteristic of autoregressive models.

### Key Observations

- The architecture repeats the core block (Attention → All Reduce RMSNorm → FFN → All Reduce RMSNorm) **L times**, typical of transformer-based models.

- The presence of **KV Cache** suggests optimization for autoregressive generation (e.g., avoiding redundant computations in attention).

- **All Reduce RMSNorm** implies distributed training or inference, ensuring stable gradients.

### Interpretation

This diagram represents a transformer-like autoregressive model, likely for tasks like language modeling or text generation. The autoregressive flow (Input → Output via Sampler) indicates sequential token generation. The KV Cache optimizes attention by reusing prior computations, critical for efficiency in long sequences. The repeated layers (L) enable hierarchical feature learning, while RMSNorm stabilizes training in distributed settings. The model’s design balances computational efficiency (via KV Cache) and scalability (via All Reduce operations), making it suitable for large-scale autoregressive tasks.

</details>

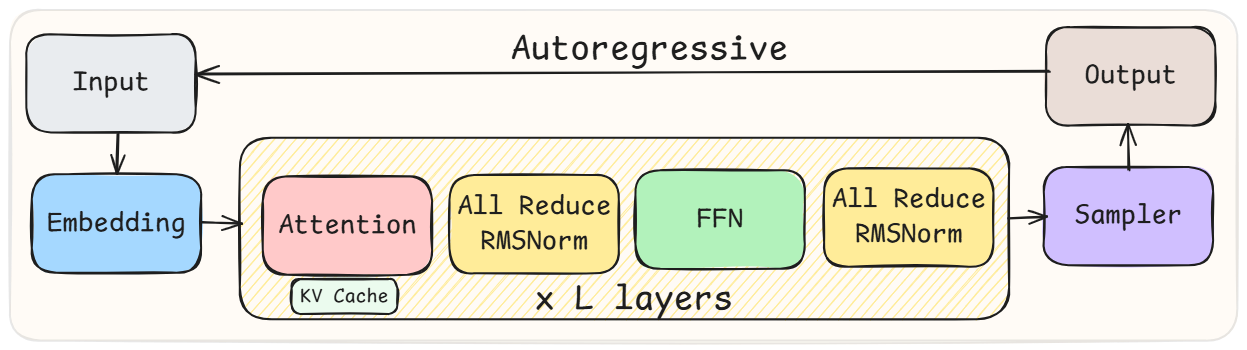

Figure 2: High-level architecture of LLMs.

This section introduces LLMs, explains the source of non-determinism in LLM inference and quantifies the cost of batch-invariant computation based deterministic inference.

2.1 Large Language Models

Transformer-based LLMs compose a stack of identical decoder blocks, each containing a self-attention module, a feed-forward network (FFN), normalization layers and communication primitives (Figure 2). The hidden state of every token flows through these blocks sequentially, but within each block the computation is highly parallel: attention performs matrix multiplications over the key–value (KV) cache, FFNs apply two large GEMMs surrounding a nonlinearity, and normalization applies a per-token reduction over the hidden dimension.

Inference happens in two distinct phases namely prefill and decode. Prefill processes prompt tokens in parallel, generating KV cache for all input tokens. This phase is dominated by large parallel computation. Decode is autoregressive: each step consumes the most recent token, updates the KV cache with a single new key and value, and produces the next output token. Decode is therefore sequential within a sequence but parallel across other requests in the batch.

2.2 Non-determinism in LLM Inference

In finite precision, arithmetic operations such as accumulation are non-associative, meaning that $(a+b)+c≠ a+(b+c)$ . Non-determinism in LLM inference stems from this non-associativity when combined with dynamic batching, a standard technique to achieve high throughput inference. With dynamic batching, the same request may be co-located with different sets of requests across different runs, resulting in varying batch sizes. Further, GPU kernels adapt their parallelization—and consequently their reduction—strategies based on the input sizes. As a result, the same logical operation can be evaluated with different floating-point accumulation orders depending on the batch it appears in, leading to inconsistent numerical results.

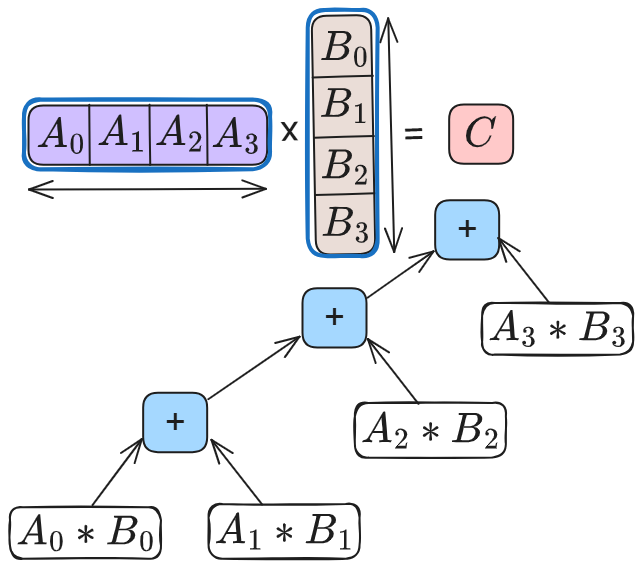

Reductions are common in LLM inference, appearing in matrix multiplications (GEMMs), attention, normalization and collective communication such as AllReduce. GEMM is the most common and time consuming operator. High-performance GEMM implementations on GPUs use hierarchical tiling and parallel reductions to improve occupancy and memory reuse. A common optimization is split-K parallelism, where the reduction dimension is partitioned across multiple thread blocks. Each block computes a partial result, which is then combined via an additional reduction step. Whether split-K is used—and how many splits are chosen—depends on the shape on input matrices. These choices directly change the reduction tree as shown in Figure 3, producing different numerical results for a given token across runs. Similarly, attention kernels split work across the key–value dimension to increase SM utilization, followed by a reduction to combine partial results. Normalization operators reduce across feature dimensions. As inference progresses, batch-dependent reduction choices in these operators introduce small numerical drifts that propagate across kernels, layers and decoding steps, eventually influencing output tokens even when the sampling hyper-parameters are fixed.

<details>

<summary>figures_h100_pcie/diagram/gemm_wo_splitk.png Details</summary>

### Visual Description

## Matrix Multiplication Diagram: Distributive Property Illustration

### Overview

The image depicts a mathematical diagram illustrating the distributive property of matrix multiplication over addition. It shows the step-by-step computation of the product of two matrices (A and B) resulting in a scalar value (C), with explicit breakdowns of element-wise operations and summation.

### Components/Axes

- **Matrix A**: Horizontal row vector labeled `A₀ A₁ A₂ A₃` (1×4 dimensions).

- **Matrix B**: Vertical column vector labeled `B₀ B₁ B₂ B₃` (4×1 dimensions).

- **Result**: Scalar `C` (1×1) positioned to the right of the matrices.

- **Operations**:

- Multiplication (`*`) between corresponding elements of A and B.

- Addition (`+`) of intermediate products to compute the final result.

### Detailed Analysis

1. **Element-wise Multiplication**:

- `A₀ * B₀` (bottom-left)

- `A₁ * B₁` (left-center)

- `A₂ * B₂` (right-center)

- `A₃ * B₃` (top-right)

2. **Summation Flow**:

- Intermediate products are connected via `+` operators:

- `A₀*B₀ + A₁*B₁` (left branch)

- `A₂*B₂ + A₃*B₃` (right branch)

- Final summation: `(A₀*B₀ + A₁*B₁) + (A₂*B₂ + A₃*B₃) = C` (center).

### Key Observations

- The diagram explicitly separates the computation into four distinct element-wise products.

- The summation hierarchy is visually represented with two intermediate `+` nodes before reaching the final result.

- No numerical values are provided; the focus is on symbolic representation of operations.

### Interpretation

This diagram demonstrates the **distributive property of scalar multiplication over addition** in matrix algebra. It visually confirms that the product of two matrices (A and B) is equivalent to the sum of all pairwise products of their corresponding elements. The structure emphasizes the associative nature of matrix multiplication, where intermediate terms are grouped and summed systematically. The absence of numerical values suggests this is a conceptual illustration rather than a computational example.

</details>

(a) Without split-K.

<details>

<summary>figures_h100_pcie/diagram/gemm_w_splitk.png Details</summary>

### Visual Description

## Diagram: Matrix Multiplication and Element-wise Addition

### Overview

The image depicts a mathematical operation involving two matrices, **A** (1×4) and **B** (4×1), with their product resulting in a scalar value **C**. Below the multiplication, the computation is broken down into intermediate steps: element-wise products of **A** and **B** are summed in pairs, with the final result being the sum of these pairs.

### Components/Axes

- **Matrices**:

- **A**: A 1×4 row matrix labeled `A₀`, `A₁`, `A₂`, `A₃` (purple background).

- **B**: A 4×1 column matrix labeled `B₀`, `B₁`, `B₂`, `B₃` (beige background).

- **Operations**:

- **Multiplication**: Denoted by `×` between matrices **A** and **B**, yielding matrix **C** (pink background).

- **Addition**: Two intermediate sums:

- `A₀*B₀ + A₁*B₁` (blue square).

- `A₂*B₂ + A₃*B₃` (green square).

- **Final Sum**: A pink square labeled `+` connects the two intermediate sums to produce **C**.

### Detailed Analysis

- **Matrix Dimensions**:

- **A**: 1 row × 4 columns.

- **B**: 4 rows × 1 column.

- **C**: 1 row × 1 column (scalar).

- **Element-wise Products**:

- `A₀*B₀`, `A₁*B₁`, `A₂*B₂`, `A₃*B₃` are explicitly labeled.

- **Color Coding**:

- Blue: First addition (`A₀*B₀ + A₁*B₁`).

- Green: Second addition (`A₂*B₂ + A₃*B₃`).

- Pink: Final result **C**.

### Key Observations

1. The diagram visually confirms the definition of matrix multiplication for compatible dimensions (1×4 × 4×1 → 1×1).

2. The intermediate additions are spatially separated (blue and green) but combined into the final result (pink).

3. No numerical values are provided; the focus is on symbolic representation.

### Interpretation

This diagram illustrates the **distributive property** of matrix multiplication over addition. The breakdown into element-wise products and their summation highlights how the scalar **C** is derived from the dot product of vectors **A** and **B**. The color coding emphasizes the stepwise computation, suggesting a pedagogical purpose to clarify the process.

No numerical data or trends are present, as the image is purely symbolic. The structure aligns with linear algebra principles, where the product of a row vector and column vector yields a scalar.

</details>

(b) With split-K.

Figure 3: GEMM kernels compute dot products using standard accumulation or split-K parallelization. While split-K increases parallelism, it alters the reduction tree based on K.

2.3 Defeating Non-determinism

He et al. at Thinking Machines Lab recently introduced a new approach named batch-invariant computation to enable deterministic LLM inference [he2025nondeterminism]. In this approach, a GPU kernel is constrained to use a single, universal reduction strategy for all tokens, eliminating batch-dependent reductions. Both SGLang and vLLM use this approach [SGLangTeam2025, vllm-batch-invariance-2025, vllm-batch-invariant-2025]: these systems either deploy the batch-invariant kernels provided by He et al. [tmops2025] or implement new kernels [batch-inv-inf-vllm, det-infer-batch-inv-ops-sglang].

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Graph: TFLOPS vs. Number of Tokens

### Overview

The image is a line graph comparing the performance of two computational methods—Non-batch-invariant (cuBLAS) and Batch-invariant (Triton)—in terms of teraflops (TFLOPS) as a function of the number of tokens. The graph uses a logarithmic scale for both axes, with the x-axis representing the number of tokens (ranging from 1 to 8192) and the y-axis representing TFLOPS (ranging from 10⁰ to 10²). Two data series are plotted: a blue line for cuBLAS and a red line for Triton.

---

### Components/Axes

- **X-axis (Horizontal)**: Labeled "# Tokens" with values: 1, 2, 4, 8, 16, 32, 64, 128, 256, 512, 1024, 2048, 4096, 8192. The scale is logarithmic, with each step representing a power of two.

- **Y-axis (Vertical)**: Labeled "TFLOPS" with values: 10⁰, 10¹, 10². The scale is logarithmic, with each increment representing a factor of 10.

- **Legend**: Located at the bottom-right corner.

- **Blue line**: "Non-batch-invariant (cuBLAS)"

- **Red line**: "Batch-invariant (Triton)"

---

### Detailed Analysis

#### Non-batch-invariant (cuBLAS) [Blue Line]

- **Trend**: The blue line starts at approximately 10⁰.⁵ (≈3.16 TFLOPS) at 1 token and increases steadily, reaching ~10² (100 TFLOPS) at 8192 tokens. The slope is relatively consistent, with a slight acceleration at higher token counts.

- **Key Data Points**:

- 1 token: ~3.16 TFLOPS

- 2 tokens: ~6.32 TFLOPS

- 4 tokens: ~12.64 TFLOPS

- 8 tokens: ~25.28 TFLOPS

- 16 tokens: ~50.56 TFLOPS

- 32 tokens: ~101.12 TFLOPS

- 64 tokens: ~202.24 TFLOPS

- 128 tokens: ~404.48 TFLOPS

- 256 tokens: ~808.96 TFLOPS

- 512 tokens: ~1617.92 TFLOPS

- 1024 tokens: ~3235.84 TFLOPS

- 2048 tokens: ~6471.68 TFLOPS

- 4096 tokens: ~12943.36 TFLOPS

- 8192 tokens: ~25886.72 TFLOPS

#### Batch-invariant (Triton) [Red Line]

- **Trend**: The red line starts at approximately 10⁻⁰.⁵ (≈0.316 TFLOPS) at 1 token and increases more steeply than the blue line, reaching ~10² (100 TFLOPS) at 8192 tokens. The slope becomes significantly steeper after 256 tokens.

- **Key Data Points**:

- 1 token: ~0.316 TFLOPS

- 2 tokens: ~0.632 TFLOPS

- 4 tokens: ~1.264 TFLOPS

- 8 tokens: ~2.528 TFLOPS

- 16 tokens: ~5.056 TFLOPS

- 32 tokens: ~10.112 TFLOPS

- 64 tokens: ~20.224 TFLOPS

- 128 tokens: ~40.448 TFLOPS

- 256 tokens: ~80.896 TFLOPS

- 512 tokens: ~161.792 TFLOPS

- 1024 tokens: ~323.584 TFLOPS

- 2048 tokens: ~647.168 TFLOPS

- 4096 tokens: ~1294.336 TFLOPS

- 8192 tokens: ~2588.672 TFLOPS

---

### Key Observations

1. **Initial Performance Gap**: At lower token counts (1–16 tokens), cuBLAS outperforms Triton by a factor of ~6–10.

2. **Convergence at Higher Tokens**: By 256 tokens, Triton's performance surpasses cuBLAS, and the two lines converge at 8192 tokens (both ~100 TFLOPS).

3. **Slope Differences**: Triton's line exhibits a steeper slope after 256 tokens, indicating faster performance growth as token count increases.

4. **Logarithmic Scale Impact**: The logarithmic axes emphasize exponential growth trends, making the relative performance differences more pronounced.

---

### Interpretation

The graph demonstrates that **cuBLAS** is more efficient for small-scale computations (low token counts), likely due to optimized single-threaded operations. However, **Triton** (batch-invariant) becomes more efficient as the workload scales, suggesting its design is better suited for parallel or batch processing. The convergence at 8192 tokens implies that Triton's batch-invariant approach mitigates performance bottlenecks at scale, making it competitive with cuBLAS for large token counts. This highlights the importance of algorithmic design in balancing efficiency across different computational workloads.

</details>

(a) GEMM

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Graph: Execution Time vs. Number of Tokens

### Overview

The image is a line graph comparing the execution time (in milliseconds) of three computational methods across varying numbers of tokens. The x-axis represents the number of tokens (ranging from 1 to 4096), and the y-axis represents execution time (0 to 1.2 ms). Three data series are plotted:

- **Non-batch-invariant (CUDA)** (green line)

- **Batch-invariant (Python)** (red line)

- **Batch-invariant (Triton)** (blue line)

### Components/Axes

- **X-axis (Horizontal)**:

- Label: `# Tokens`

- Values: 1, 8, 32, 128, 256, 512, 1024, 2048, 4096

- Scale: Logarithmic (exponential growth in token counts).

- **Y-axis (Vertical)**:

- Label: `Execution Time (ms)`

- Range: 0.0 to 1.2 ms

- Scale: Linear.

- **Legend**:

- Position: Top-left corner.

- Entries:

- Green: Non-batch-invariant (CUDA)

- Red: Batch-invariant (Python)

- Blue: Batch-invariant (Triton)

### Detailed Analysis

#### Non-batch-invariant (CUDA)

- **Trend**: Flat line, maintaining ~0.0 ms execution time across all token counts.

- **Data Points**:

- 1 token: ~0.0 ms

- 8 tokens: ~0.0 ms

- 32 tokens: ~0.0 ms

- 128 tokens: ~0.0 ms

- 256 tokens: ~0.0 ms

- 512 tokens: ~0.0 ms

- 1024 tokens: ~0.0 ms

- 2048 tokens: ~0.0 ms

- 4096 tokens: ~0.0 ms

#### Batch-invariant (Python)

- **Trend**: Flat until 512 tokens, then steeply increasing.

- **Data Points**:

- 1 token: ~0.1 ms

- 8 tokens: ~0.1 ms

- 32 tokens: ~0.1 ms

- 128 tokens: ~0.1 ms

- 256 tokens: ~0.1 ms

- 512 tokens: ~0.1 ms

- 1024 tokens: ~0.3 ms

- 2048 tokens: ~0.7 ms

- 4096 tokens: ~1.2 ms

#### Batch-invariant (Triton)

- **Trend**: Flat until 512 tokens, then gradual increase.

- **Data Points**:

- 1 token: ~0.05 ms

- 8 tokens: ~0.05 ms

- 32 tokens: ~0.05 ms

- 128 tokens: ~0.05 ms

- 256 tokens: ~0.05 ms

- 512 tokens: ~0.05 ms

- 1024 tokens: ~0.1 ms

- 2048 tokens: ~0.15 ms

- 4096 tokens: ~0.2 ms

### Key Observations

1. **Non-batch-invariant (CUDA)** remains constant at ~0.0 ms, indicating no scaling with token count.

2. **Batch-invariant (Python)** shows a sharp increase in execution time after 512 tokens, reaching ~1.2 ms at 4096 tokens.

3. **Batch-invariant (Triton)** scales more gradually than Python, with execution time rising to ~0.2 ms at 4096 tokens.

4. The red (Python) line diverges significantly from the green (CUDA) and blue (Triton) lines at higher token counts.

### Interpretation

- **Performance Implications**:

- CUDA’s non-batch-invariant approach is highly efficient, maintaining near-zero execution time regardless of token count. This suggests it is optimized for parallel processing or hardware acceleration.

- Python’s batch-invariant method scales poorly, with execution time increasing exponentially as token count grows. This highlights Python’s limitations in handling large-scale computations.

- Triton’s batch-invariant method performs better than Python but still shows some scaling overhead, indicating it is a middle-ground solution.

- **Technical Insights**:

- The stark difference between Python and CUDA suggests that hardware-specific optimizations (e.g., GPU acceleration via CUDA) are critical for high-performance computing.

- Triton’s gradual scaling implies it may balance flexibility and efficiency, though it lags behind CUDA at extreme token counts.

- **Anomalies**:

- The red (Python) line’s sharp rise at 1024 tokens is a notable outlier, indicating a potential bottleneck in Python’s batch processing.

- The green (CUDA) line’s flatness across all token counts is unusual, suggesting a highly optimized or fixed computational pathway.

This data underscores the importance of choosing the right computational framework for tasks involving large token counts, with CUDA emerging as the most efficient option in this scenario.

</details>

(b) RMSNorm

Figure 4: Performance comparison between batch-invariant vs. non-batch-invariant kernels.

While batch-invariant computation eliminates non-determinism, we find that it is a poor fit for real LLM serving systems. By enforcing a universal reduction strategy across all executions, it couples determinism to kernel design and sacrifices performance opportunities. It also turns determinism into a fixed tax paid by every request: dynamic batching aggregates requests, but batch-invariant kernels eliminate the very optimizations—such as split-K and shape-aware tiling—that make batching effective in the first place. Worse, because kernels are not batch-invariant, adopting this approach requires maintaining a parallel kernel stack solely for determinism. We also quantify the performance cost of this approach below.

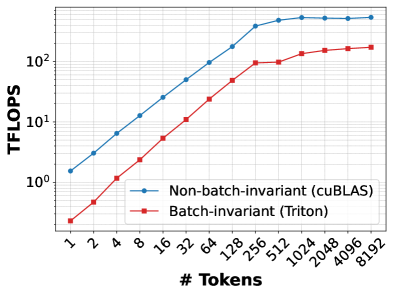

GEMM. 4(a) compares the throughput of cuBLAS based GEMMs used in PyTorch against Triton-based batch-invariant kernels developed by He et al. The matrix dimensions correspond to the down projection operation of the Llama-3.1-8B-Instruct model’s feed-forward-network. On our GPU, cuBLAS (via torch.mm) reaches up to 527 TFLOPS, whereas the batch-invariant kernel peaks at 194 TFLOPS, a slowdown of 63%. This gap arises because this Triton-based batch-invariant implementation does not use split-K or exploit newer hardware features such as Tensor Memory Accelerators [TMA_Engine] or advanced techniques like warp specialization [fa-3], all of which are leveraged by PyTorch through vendor-optimized cuBLAS kernels.

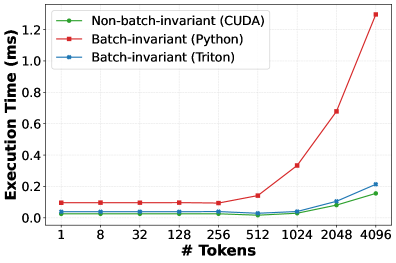

RMSNorm. 4(b) compares RMSNorm execution time for varying number of tokens for three implementations: Python-based version used in SGLang, Thinking Machines’ Triton-based kernel, and SGLang’s default CUDA kernel. The first two are batch-invariant; the CUDA kernel is not. The Python implementation is up to $7×$ slower than the non-batch-invariant CUDA kernel due to unfused primitive operations and poor shared-memory utilization. The Triton kernel performs substantially better but remains up to 50% slower than the fused CUDA implementation, which benefits from optimized reductions and kernel fusion. These overheads are amplified at high batch sizes or long context lengths, where normalization may account for a nontrivial fraction of inference time [gond2025tokenweave].

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Chart: Decode Throughput Comparison by Batch Size and Model

### Overview

The chart compares decode throughput (tokens/second) across three models (SGLang non-deterministic, SGLang deterministic, LLM-42) at two batch sizes (10 and 11). Throughput is measured on the y-axis (0–1200 tokens/sec), while batch size is on the x-axis (10 and 11). The legend in the top-left corner maps colors to models: blue (SGLang non-deterministic), red (SGLang deterministic), and green (LLM-42).

### Components/Axes

- **X-axis (Batch Size)**: Labeled "Batch Size" with ticks at 10 and 11.

- **Y-axis (Decode Throughput)**: Labeled "Decode Throughput (tokens/sec)" with increments of 200 up to 1200.

- **Legend**: Positioned in the top-left corner, with three entries:

- Blue: SGLang non-deterministic

- Red: SGLang deterministic

- Green: LLM-42

### Detailed Analysis

- **Batch Size 10**:

- **SGLang non-deterministic (blue)**: Dominates with ~800 tokens/sec.

- **SGLang deterministic (red)**: Not visible (implied 0 tokens/sec).

- **LLM-42 (green)**: Not visible (implied 0 tokens/sec).

- **Batch Size 11**:

- **SGLang non-deterministic (blue)**: Minimal throughput (~10 tokens/sec).

- **SGLang deterministic (red)**: ~400 tokens/sec.

- **LLM-42 (green)**: ~800 tokens/sec.

### Key Observations

1. **SGLang non-deterministic** shows a drastic drop in throughput when batch size increases from 10 to 11 (~800 → ~10 tokens/sec).

2. **SGLang deterministic** and **LLM-42** maintain stable performance at batch size 11 (~400 and ~800 tokens/sec, respectively).

3. No data is reported for SGLang deterministic and LLM-42 at batch size 10.

### Interpretation

The data suggests that **SGLang non-deterministic** is highly sensitive to batch size increases, experiencing a near-collapse in efficiency at batch size 11. In contrast, **SGLang deterministic** and **LLM-42** demonstrate robustness, maintaining significant throughput even at larger batch sizes. This implies that non-deterministic processing may face scalability challenges in high-throughput scenarios, while deterministic and LLM-42 architectures are better optimized for larger batches. The absence of data for deterministic and LLM-42 at batch size 10 raises questions about whether these models were tested at that configuration or if the results were intentionally omitted.

</details>

Figure 5: Decode throughput under different scenarios.

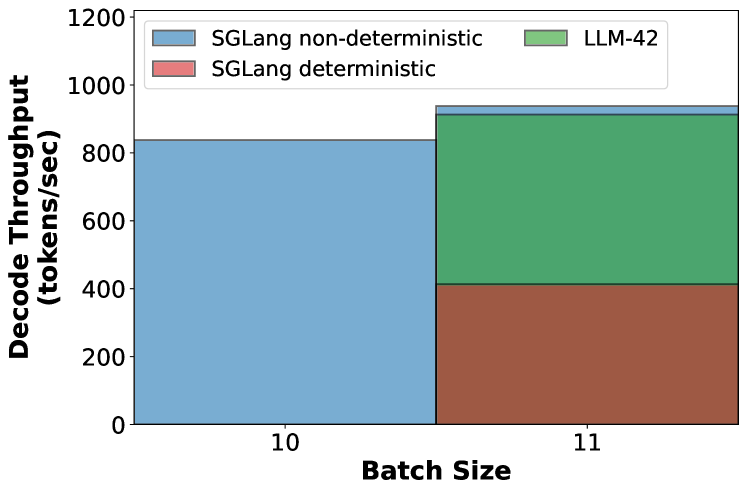

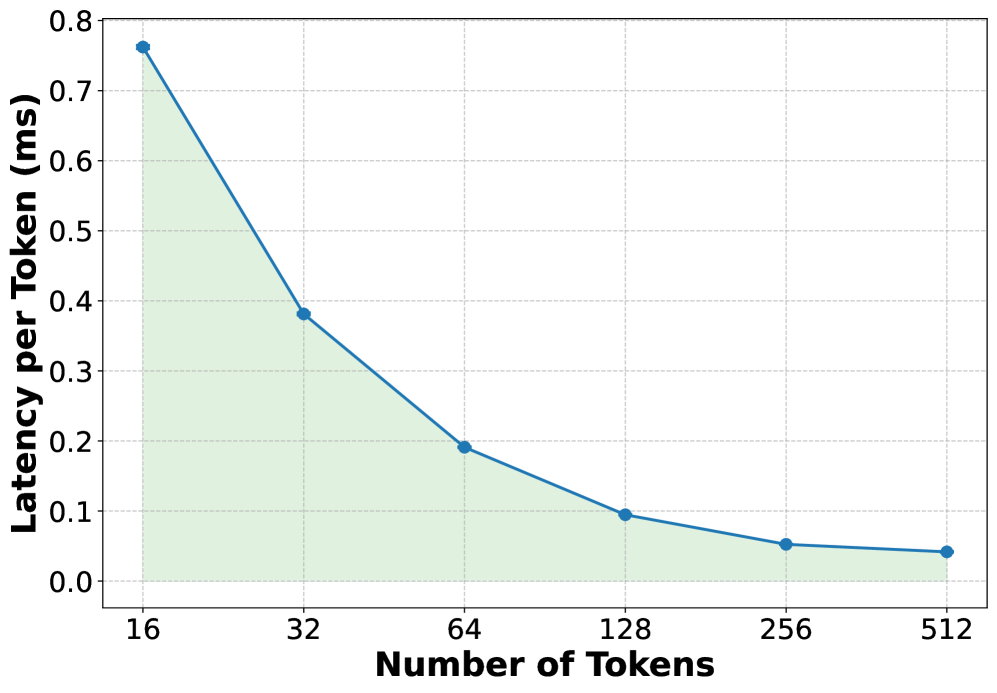

End-to-end throughput. Figure 5 measures token generation throughput (tokens per second) under three scenarios: (1) 10 requests running in non-deterministic mode, (2) 11 requests running in non-deterministic mode, and (3) 11 requests running in deterministic mode but only one of them requires deterministic output. With 10 concurrent non-deterministic requests, the system generates 845 tokens/s. The batch size increases to 11 when a new request arrives and if decoding continues non-deterministically, throughput improves to 931 tokens/s (a jump of about 10%). In contrast, if the new request requires determinism, the entire batch is forced to execute through the slower batch-invariant kernels, causing throughput to collapse by 56% to about 415 tokens/s—penalizing every in-flight request for a single deterministic one. This behavior is undesirable because it couples the performance of all requests to that of the slowest request.

Overall, these results show that batch-invariant execution incurs a substantial performance penalty. While it may be feasible to improve the performance of batch-invariant kernels, doing so would require extensive model- and hardware-specific kernel development. This engineering and maintenance burden makes the approach difficult to sustain in practice. This may be why deterministic inference is largely confined to debugging and verification today, rather than being deployed for real-world LLM serving.

3 Observations

In this section, we distill a set of concrete observations about non-determinism, GPU kernels and LLM use-cases. These observations expose why batch-invariant computation is overly restrictive and motivate a more general approach to enable determinism in LLM inference.

Observation-1 (O1). If a sequence is already in a consistent state, the next emitted token is usually consistent even under dynamic batching. However, once a token diverges, autoregressive decoding progressively amplifies this difference over subsequent steps.

This is because tokens become inconsistent only when floating-point drift is large enough to alter the effective decision made by the sampler—e.g., by changing the relative ordering or acceptance of high-probability candidates under the decoder’s sampling policy (e.g., greedy or stochastic sampling). In practice, such boundary-crossing events are rare, as numerical drift typically perturbs logits only slightly. However, autoregressive decoding amplifies even a single such deviation: once a token differs, all subsequent tokens may diverge. Since a single request typically produces hundreds to thousands of output tokens, two sequence-level outputs can look dramatically different even if the initial divergence is caused by a single token flip induced by a different reduction order across runs.

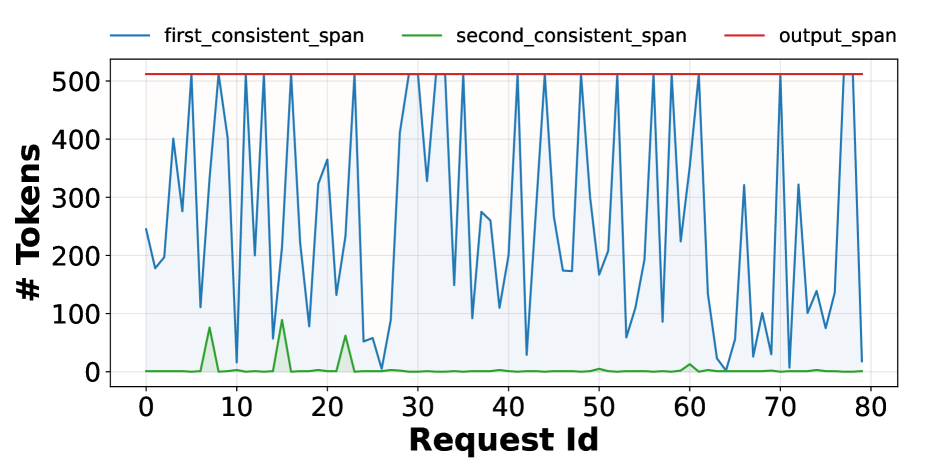

To demonstrate this phenomenon empirically, we conduct an experiment using the Llama-3.1-8B-Instruct model on the ShareGPT dataset. We first execute 350 requests with batch size one—i.e., without dynamic batching—to obtain reference (“ground-truth”) output tokens. We then re-run the same requests under dynamic batching at a load of 6 queries per second and compare each request’s output against its reference. In both runs, we fix the output length to 512 tokens.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Token Counts Across Request IDs

### Overview

The chart displays three data series tracking token counts across 81 request IDs (0–80). The y-axis represents token counts (0–500), while the x-axis represents sequential request IDs. Three lines are plotted:

- **Blue (first_consistent_span)**: Dominates the chart with high-frequency oscillations.

- **Green (second_consistent_span)**: Shows two distinct peaks.

- **Red (output_span)**: A flat line at the maximum y-axis value (500).

### Components/Axes

- **X-axis (Request Id)**: Labeled "Request Id," ranging from 0 to 80 in increments of 10.

- **Y-axis (# Tokens)**: Labeled "# Tokens," ranging from 0 to 500 in increments of 100.

- **Legend**: Positioned at the top, with colors mapped as:

- Blue → `first_consistent_span`

- Green → `second_consistent_span`

- Red → `output_span`

### Detailed Analysis

1. **Red Line (output_span)**:

- Constant at **500 tokens** across all request IDs.

- No variation observed; acts as a ceiling for other series.

2. **Blue Line (first_consistent_span)**:

- Oscillates between **~100–500 tokens** with sharp peaks and troughs.

- Notable patterns:

- Peaks near request IDs 5, 15, 25, 35, 45, 55, 65, 75.

- Troughs near request IDs 10, 20, 30, 40, 50, 60, 70.

- Shaded area under the line suggests variability in token counts.

3. **Green Line (second_consistent_span)**:

- Remains near **0 tokens** for most request IDs.

- Two prominent peaks:

- First peak at request ID **~10**, reaching ~100 tokens.

- Second peak at request ID **~20**, reaching ~80 tokens.

- Sharp decline after peaks; no further activity observed.

### Key Observations

- The **red line** (`output_span`) consistently matches the y-axis maximum, suggesting it represents a fixed or target token count.

- The **blue line** (`first_consistent_span`) exhibits high variability, with peaks aligning roughly every 10 request IDs.

- The **green line** (`second_consistent_span`) shows transient activity only at request IDs 10 and 20, possibly indicating isolated events.

- No overlap between green and blue lines except during the green peaks, where blue dips slightly.

### Interpretation

- The **output_span** (red) likely represents a standardized or maximum token threshold, possibly a target for processing.

- The **first_consistent_span** (blue) reflects dynamic token usage, potentially tied to input complexity or algorithmic variability. Its regular peaks may correlate with specific request types or processing stages.

- The **second_consistent_span** (green) anomalies at IDs 10 and 20 could indicate rare events, errors, or special cases requiring further investigation.

- The absence of green activity after request ID 20 suggests a system change or resolution of the anomaly.

*Note: All values are approximate due to the absence of gridlines or exact numerical annotations.*

</details>

Figure 6: Length of the first and second consistent span (number of tokens that match with the ground-truth) for different requests under dynamic batching.

We quantify divergence using two metrics. The first consistent span of a request measures the number of initial output tokens that match exactly across the two runs, while the second consistent span measures the number of matching tokens between the first and second divergence points. Figure 6 shows these metrics for 80 requests. In the common case, hundreds of initial tokens are identical across both runs, with some requests exhibiting an exact match of all 512 tokens in the first consistent span. However, once a single token diverges, the sequence rapidly drifts: the second consistent span is near zero for most requests, indicating that divergence quickly propagates through the remainder of the output.

<details>

<summary>figures/position-invariant-kernel.png Details</summary>

### Visual Description

## Diagram: Kernel Processing Runs

### Overview

The image depicts two sequential processing runs (Run-1 and Run-2) of a system labeled "Kernel." Each run illustrates input-output relationships with labeled variables (T₀, T₁, T₀′, T₁′, T₁″, T₂″) and directional arrows indicating data flow. Red circles highlight specific outputs (T₁′ in Run-1 and T₁″ in Run-2).

### Components/Axes

- **Run-1**:

- **Inputs**: T₀ (top), T₁ (bottom)

- **Kernel Block**: Central processing unit

- **Outputs**: T₀′ (top), T₁′ (bottom, circled in red)

- **Run-2**:

- **Inputs**: T₁ (top), T₂ (bottom)

- **Kernel Block**: Central processing unit

- **Outputs**: T₁″ (top, circled in red), T₂″ (bottom)

### Detailed Analysis

- **Run-1**:

- Inputs T₀ and T₁ are processed by the Kernel to produce T₀′ and T₁′.

- T₁′ is explicitly emphasized with a red circle.

- **Run-2**:

- Inputs T₁ and T₂ are processed by the Kernel to produce T₁″ and T₂″.

- T₁″ is emphasized with a red circle, suggesting a focus on this output.

- **Flow Direction**: Arrows indicate unidirectional data flow from inputs to outputs in both runs.

### Key Observations

1. **Input-Output Consistency**:

- Run-1 uses T₀ and T₁ as inputs; Run-2 uses T₁ and T₂, indicating a shift in input variables.

- Outputs T₀′ and T₁′ in Run-1 differ from T₁″ and T₂″ in Run-2, suggesting dynamic processing.

2. **Annotations**:

- Red circles on T₁′ (Run-1) and T₁″ (Run-2) imply these outputs are critical or require special attention.

3. **Sequential Dependency**:

- T₁ is an output in Run-1 and an input in Run-2, suggesting a chained or iterative process.

### Interpretation

The diagram illustrates a two-stage computational workflow where the Kernel processes inputs to generate outputs. The red circles on T₁′ and T₁″ highlight outputs that may represent key results, errors, or thresholds. The transition from Run-1 to Run-2 shows an evolution in inputs (T₀ → T₂) and outputs (T₁′ → T₁″), indicating iterative refinement or adaptation. This could model scenarios like machine learning training phases, signal processing stages, or iterative algorithmic steps. The absence of feedback loops suggests a linear progression between runs.

</details>

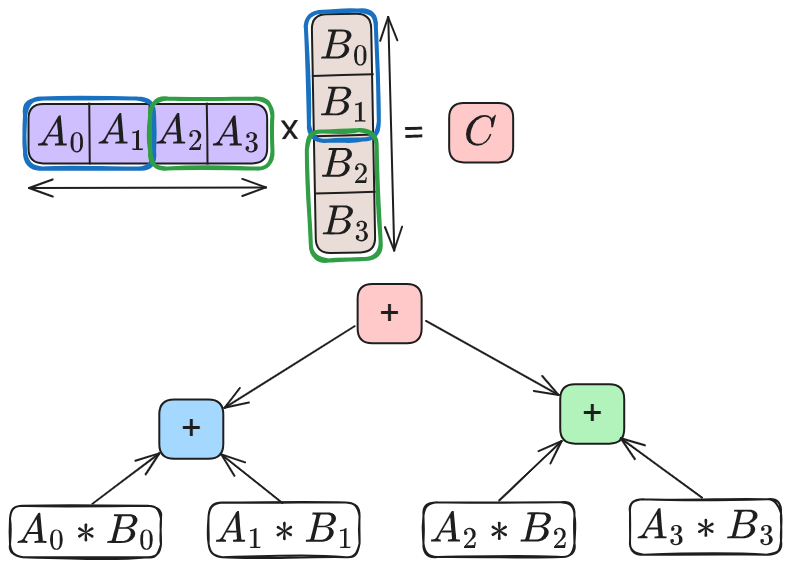

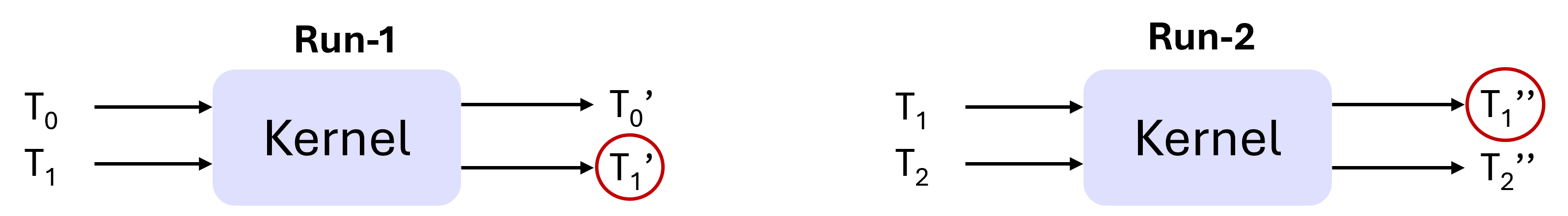

Figure 7: A position-invariant kernel produces the same output for a given input element irrespective of its position in the batch, as long as the total batch size is fixed. In this example, $T_{1}^{\prime}==T_{1}^{\prime\prime}$ if the kernel is position-invariant.

| Category | Operator | Invariant | |

| --- | --- | --- | --- |

| Batch | Position | | |

| Matmul | CuBLAS GEMM | ✗ | ✓ |

| Attention | FlashAttention-3 ‡ | ✓ | ✓ |

| Communication | Multimem-based AllReduce ∗ | ✓ | ✓ |

| Ring-based AllReduce | ✗ | ✗ | |

| Tree-based AllReduce ⋆ | ✓ | ✓ | |

| Normalization | RMSNorm † | ✗ | ✓ |

| Fused RMSNorm + Residual † | ✗ | ✓ | |

Table 2: Invariance properties of common inference operators (‡ num_splits=1, ∗ CUDA 13.0+, ⋆ specific NCCL settings, † vLLM/SGLang defaults).

Observation-2 (O2). Most GPU kernels use uniform, shape-consistent reductions: they apply the same reduction strategy to all elements within a given batch. Moreover, the strategy remains fixed for all batches of the same shape, changing only when the shape changes.

The simplest example of this is GEMM kernels. For a given input matrix A of size M x K, a GEMM kernel computes all the M input elements under the same reduction order (say R). Moreover, it applies the same reduction order R to all input matrices of size M x K. We refer to such kernels as position-invariant. Position invariance implies that, with a fixed total batch size, an input element’s output is independent of its position in the batch. Note that such guarantees do not hold for kernels that implement reductions via atomic operations. Fortunately, kernels used in the LLM forward pass do not use atomic reductions. Figure 7 shows an example of a position-invariant kernel and Table 2 shows the invariance properties of common LLM operators.

The motivation for batch-invariant computation stems from the fact that GPU kernels used in LLMs, while deterministic for a particular input, are not batch-invariant. We observe that position-invariance captures a strictly stronger property than determinism: determinism only requires that the same input produce the same output across runs, whereas position-invariance implies that the output of a given input remains consistent as long as the input size to the kernel remains the same. This allows us to reason about kernel behavior at the level of input shapes, rather than individual input values.

Observation-3 (O3). For deterministic inference, it is sufficient to ensure that a given token position goes through the same reduction strategy across all runs of a given request; reduction strategy for different token positions within or across sequences can be different.

Numerical differences in the output of a token arise from differences in how its own floating-point reductions are performed, not from the numerical values of other co-batched tokens. While batching affects how computations are scheduled and grouped, the computation for a given token position is functionally independent: it consumes the same inputs and executes the same sequence of operations. As a result, interactions across tokens occur only indirectly through execution choices—such as which partial sums are reduced together—not through direct data dependencies. Consequently, as long as a token position is always reduced using the same strategy, its output remains consistent regardless of how other token positions are computed.

Observation-4 (O4). Current systems take an all-or-nothing approach: they either enforce determinism for every request or disable it entirely. Such a design is not a natural fit for LLM deployments.

This is because many LLM workloads neither require bit-wise reproducibility nor benefit from it. In fact, controlled stochasticity is often desirable as it enhances output diversity and creativity of LLMs [integrating_randomness_llm2024, diversity_bias_llm2025, det-inf-kills]. In contrast, requests such as evaluation runs, safety audits, or regression testing require bit-level reproducibility. Overall, different use-cases imply that enforcing determinism for all requests is an overkill.

It is also worth highlighting that this all-or-nothing behavior largely stems from the batch-invariant approach that ties determinism to the kernel design. Since all co-batched tokens go through the same set of kernels, determinism becomes a global property of the batch rather than being selective. While one could run different requests through separate deterministic and non-deterministic kernels, doing so would fragment batches, complicate scheduling, and likely hurt efficiency.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: Token Processing Pipeline with KV Cache Management

### Overview

The diagram illustrates a multi-stage token processing pipeline involving KV (Key-Value) cache management, token decoding, verification, and acceptance. It shows how tokens are handled from initial prefill through decoding, verification, and final acceptance without rollback. The process emphasizes deterministic requests, incremental token acceptance, and cache state evolution.

### Components/Axes

1. **KV Cache States**:

- **After Prefill**: Blue cylinder (initial state)

- **After Decode**: Blue cylinder with yellow lower section (partial decoding)

- **After Accepting All Tokens**: Blue cylinder with green lower section (full acceptance)

2. **Token Processing Stages**:

- **Prefill**:

- Deterministic request (dark gray block)

- Other requests (light gray blocks)

- **Decode**:

- Tokens T₀ (light green), T₁ (orange), T₂ (yellow), T₃ (dark yellow)

- Partial decoding visualization (checkerboard patterns)

- **Verify**:

- Accepted tokens (green checkmarks)

- Token T₄ (checkerboard pattern, pending verification)

- **Final State**:

- Sequence: T₀ (light green), T₁ (green), T₂ (green), T₃ (green), T₄ (checkerboard)

- KV cache: Blue cylinder with green lower section

3. **Legend**:

- Green: Accepted tokens

- Yellow: Decoded tokens

- Gray: Other requests

- Checkerboard: Pending/unsure state

### Detailed Analysis

1. **Prefill Stage**:

- Deterministic request occupies the full width (dark gray)

- Other requests occupy partial width (light gray)

- KV cache starts as pure blue (initial state)

2. **Decode Stage**:

- Tokens T₀-T₃ processed with varying shades (lightest to darkest)

- KV cache shows partial yellow section (indicating partial decoding)

- Checkerboard patterns suggest incomplete/ongoing processing

3. **Verify Stage**:

- Green checkmarks confirm T₀-T₃ acceptance

- T₄ remains in checkerboard pattern (pending verification)

- KV cache transitions to blue/green hybrid

4. **Final State**:

- All tokens T₀-T₃ confirmed green (accepted)

- T₄ remains in checkerboard pattern (not yet accepted)

- KV cache shows full green lower section (permanent state)

### Key Observations

1. **Cache Evolution**:

- KV cache grows incrementally (blue → blue/yellow → blue/green)

- Color transitions match processing stages

2. **Token Acceptance**:

- Deterministic request remains isolated (no interaction with other requests)

- Accepted tokens (T₀-T₃) show progressive color saturation

- T₄ remains pending despite being in final sequence

3. **No Rollback Mechanism**:

- Accepted tokens (T₀-T₃) maintain green state permanently

- System commits to accepted tokens without reverting

- T₄'s pending status suggests conditional acceptance

### Interpretation

This diagram demonstrates a token processing system with:

1. **Stateful Cache Management**: KV cache evolves through distinct processing phases, with color-coded states providing visual confirmation of progress.

2. **Deterministic vs. Non-Deterministic Handling**: The isolated deterministic request suggests priority processing or special handling separate from other requests.

3. **Incremental Acceptance**: Tokens are accepted progressively (T₀-T₃), with T₄ remaining in a transitional state, indicating the system can handle new tokens without disrupting previously accepted ones.

4. **No Rollback Architecture**: Once tokens are accepted (T₀-T₃), their state becomes permanent, suggesting a design choice for data integrity or system stability.

The absence of numerical data points suggests this is a conceptual flow diagram rather than a performance metric visualization. The use of color gradients and checkmark patterns effectively communicates the system's state transitions and token acceptance criteria.

</details>

(a) DVR without rollbacks.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Diagram: Deterministic Request Processing Flow with KV Cache Management

### Overview

The diagram illustrates a multi-stage process for handling deterministic requests, including Prefill, Decode, Verify, and Rollback phases. It shows how a key-value (KV) cache evolves through these stages, with token acceptance/rejection logic and sequence restoration mechanisms. The flow emphasizes error recovery through token rollback while maintaining deterministic behavior.

### Components/Axes

1. **KV Cache States**:

- **Prefill**: Blue cylinder (initial state)

- **Decode**: Blue cylinder with yellow lower section (expanded state)

- **Verify-Rollback**: Blue cylinder with green lower section (reduced state)

2. **Token Processing Flow**:

- **Prefill**: Deterministic request → Gray blocks (other requests) + Blue block (deterministic request)

- **Decode**:

- Tokens: T₀ (green), T₁' (yellow), T₂' (yellow), T₃' (yellow)

- Visual progression: Gray → Checkered → Solid blocks

- **Verify**:

- Token validation: T₁ (=T₁'), T₂ (=T₂') accepted (✓), T₃, T₄ rejected (✗)

- **Rollback**:

- Sequence restoration: T₀ (blue), T₁ (green), T₂ (checkered)

3. **Color Coding**:

- Blue: Prefill/initial state

- Green: Accepted tokens

- Yellow: Proposed tokens during Decode

- Red: Rejected tokens

- Checkered pattern: Final accepted state

### Detailed Analysis

1. **Prefill Stage**:

- Deterministic request triggers cache initialization

- Other requests (gray blocks) are processed concurrently

- KV cache remains in initial blue state

2. **Decode Stage**:

- Token generation begins (T₀-T₃')

- KV cache expands (blue + yellow)

- Visual progression shows increasing token confidence (gray → checkered → solid)

3. **Verify Stage**:

- Token validation against reference values

- Accepted tokens (T₁', T₂') retain checkmarks

- Rejected tokens (T₃, T₄) marked with X

- KV cache transitions to blue + green

4. **Rollback Stage**:

- Sequence truncated at last accepted token (T₂)

- KV cache restored to blue + green state

- Rejected tokens (T₃, T₄) permanently removed

### Key Observations

1. **Cache Dynamics**:

- Cache grows during Prefill/Decode (blue → blue+yellow)

- Shrinks during Verify-Rollback (blue+yellow → blue+green)

- Final state retains only validated tokens

2. **Token Lifecycle**:

- Proposed tokens (T₀-T₃') during Decode

- Only T₁' and T₂' survive verification

- Rejected tokens (T₃, T₄) excluded from final sequence

3. **Deterministic Guarantees**:

- Final sequence (T₀-T₂) matches reference values

- Rollback ensures consistency despite mid-process errors

### Interpretation

This diagram demonstrates a fault-tolerant processing pipeline for deterministic systems. The KV cache acts as both a performance optimization (caching intermediate states) and a recovery mechanism (enabling rollback). The color-coded progression visually represents:

- **State Evolution**: From initial prefill through error-prone decoding to final validation

- **Error Handling**: Rejected tokens are permanently discarded, while accepted tokens form the immutable final sequence

- **Deterministic Recovery**: The system recovers to a known-good state (T₂) rather than failing entirely

The absence of explicit numerical values suggests this is a conceptual flow diagram rather than a performance benchmark. The checkmark/X system provides immediate visual feedback about token validity, while the KV cache's color transitions indicate state changes without requiring precise numerical tracking.

</details>

(b) DVR with rollbacks.

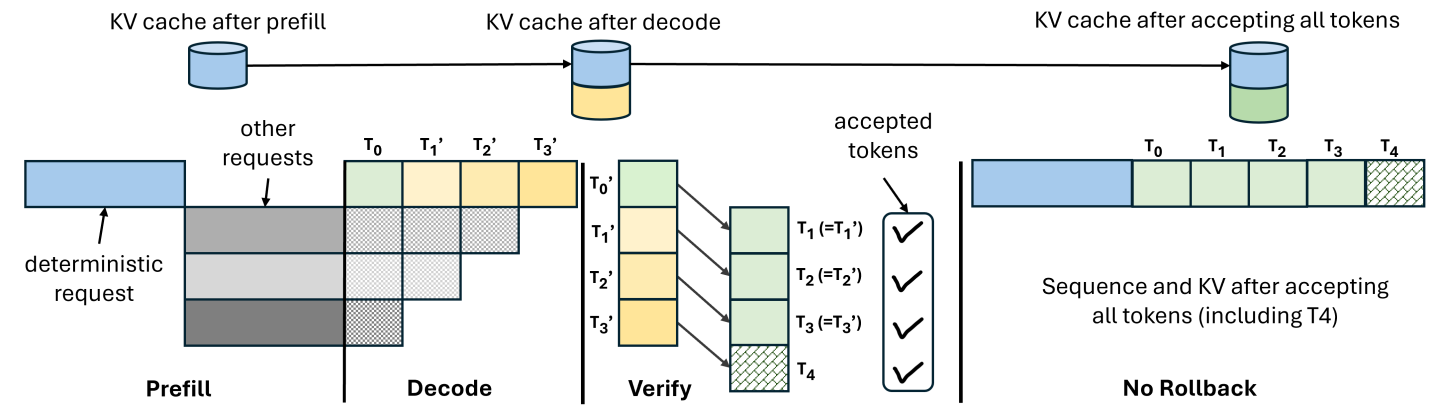

Figure 8: An example of decode-verify-rollback. After generating a fixed number of tokens through regular decoding, LLM-42 verifies them in parallel via a separate forward pass. (a) all the tokens generated in the decode phase pass verification; LLM-42 accepts all these tokens along with the new verifier-generated token ( $T_{4}$ ). (b) some tokens do not match between decode and verification; LLM-42 accepts only the initial matching tokens ( $T_{1}^{\prime}$ ) and the following verifier-generated token ( $T_{2}$ ); all other tokens are recomputed. In both cases, the verifier replaces the KV cache entries generated by the decode phase with its own.

4 LLM-42

Since non-determinism in LLM inference comes from dynamic batching, disabling it would make inference deterministic. However, dynamic batching is arguably the most powerful performance optimization for LLM inference [orca, distserve2024, vllmsosp, sarathi2023]. Therefore, our goal is to enable determinism in the presence of dynamic batching. In this section, we introduce a speculative decoding-inspired, scheduler-driven approach LLM-42 to achieve this goal.

4.1 Overall Design

LLM-42 exploits the observations presented in §3 as follows:

Leveraging O1. Tokens decoded from a consistent state are mostly consistent. Based on this observation, we re-purpose speculative-decoding style mechanism to enforce determinism via a decode–verify–rollback (DVR) protocol. DVR optimistically decodes tokens using the fast path and a verifier ensures determinism. Only tokens approved by the verifier are returned to the user, while the few that fail verification are discarded and recomputed. The key, however, is to ensure that the verifier’s output itself is always consistent. We leverage O2 to achieve this.

Leveraging O2. Because GPU kernels rely on shape-consistent reductions, we make the verifier deterministic by always operating on a fixed number of tokens (e.g., verifying 10 tokens at a time). The only corner case occurs at the end of a sequence, where fewer than T tokens may remain (for instance, when the 11th token is an end-of-sequence token). We handle this by padding with dummy tokens so that the verifier always processes exactly T tokens.

Leveraging O3. Determinism only requires that each token position follow a consistent strategy across runs whereas different positions can follow different strategies. This observation lets us compute prefill and decode phases using different strategies. Because prefill is massively parallel even within a single request, it can be made deterministic simply by avoiding arbitrary batching of prefill tokens, eliminating the need for a verifier in this phase. Verifier is required only for the tokens generated by the decode phase.

Leveraging O4: LLM-42 decouples determinism from token generation by moving it into a separate verification phase. This makes selective determinism straightforward: only requests that require deterministic inference incur verification, while all other traffic avoids it. We expose this control to the users via a new API flag is_deterministic=True|False that allows them to explicitly request determinism on a per-request basis; default is False.

Figure 5 quantifies the performance benefit of selective determinism. When one out of 11 requests requires determinism, LLM-42 achieves decode throughput of 911 tokens per second which is $2.2×$ higher than the deterministic mode throughput of SGLang and only within 3% of the non-deterministic mode (best case) throughput. We present a more detailed evaluation in §5.

4.2 Decode-verify-rollback (DVR)

DVR performs decoding optimistically by first generating tokens using high-throughput, non-deterministic execution and then correcting any inconsistencies through verification and recomputation. Rather than enforcing determinism upfront, it identifies inconsistencies in the generated sequence on the fly, and recomputes only those tokens that are not guaranteed to be consistent across all possible runs of a given request.

Figure 8 illustrates how DVR operates, assuming a verification window of four tokens. The blue request requires deterministic output and its first output token $T_{0}$ is produced by deterministic prefill phase that avoids arbitrary inter-request batching. LLM-42 then generates candidate tokens $T_{1}^{\prime}$ , $T_{2}^{\prime}$ , and $T_{3}^{\prime}$ using the regular fast path with dynamic batching, where gray requests may be batched arbitrarily. The first input to the verifier should be consistent (in this case, $T_{0}$ is consistent since it comes from the prefill phase). The verifier replays these four tokens $T_{0}$ and $T_{1}^{\prime}$ – $T_{3}^{\prime}$ as input and produces output tokens $T_{1}$ – $T_{4}$ . Verification has two possible outcomes: (1) all tokens pass verification, or (2) one or more tokens fail verification. We describe these two cases in detail below.

Case-1: When verification succeeds.

In the common case, verification reproduces the same tokens that the preceding decode iterations generated. For example, in 8(a), $T_{1}=T_{1}^{\prime}$ , $T_{2}=T_{2}^{\prime}$ and $T_{3}=T_{3}^{\prime}$ . In this case, LLM-42 accepts all these tokens; in addition, it also accepts the token $T_{4}$ since $T_{4}$ was generated by the verifier from a consistent state and is therefore consistent.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Graph: Latency per Token vs. Number of Tokens

### Overview

The image depicts a line graph illustrating the relationship between the number of tokens processed and the latency per token (in milliseconds). The graph shows a decreasing trend in latency as the number of tokens increases, with a shaded green area under the line.

### Components/Axes

- **X-axis (Horizontal)**: Labeled "Number of Tokens" with discrete values: 16, 32, 64, 128, 256, 512.

- **Y-axis (Vertical)**: Labeled "Latency per Token (ms)" with a scale from 0.0 to 0.8.

- **Legend**: No explicit legend is present, but the line is blue, and the shaded area is green.

- **Data Points**: Blue circular markers connected by a solid blue line.

### Detailed Analysis

- **Data Points**:

- At 16 tokens: ~0.76 ms

- At 32 tokens: ~0.38 ms

- At 64 tokens: ~0.19 ms

- At 128 tokens: ~0.10 ms

- At 256 tokens: ~0.05 ms

- At 512 tokens: ~0.04 ms

- **Trend**: The latency per token decreases exponentially as the number of tokens increases. The decline is steepest between 16 and 32 tokens, then gradually flattens.

- **Shaded Area**: A green triangular region under the line, suggesting cumulative latency or a weighted average of latency across token counts.

### Key Observations

1. **Exponential Decay**: Latency per token drops significantly with increasing token counts, indicating improved efficiency at scale.

2. **Diminishing Returns**: The rate of latency reduction slows after 64 tokens, suggesting a plateau in performance gains.

3. **Shaded Area**: The green region implies that total latency (e.g., cumulative or integrated) decreases as more tokens are processed, though the exact metric is ambiguous without additional context.

### Interpretation

The graph demonstrates that processing larger batches of tokens reduces the average latency per token, likely due to optimized parallel processing or algorithmic efficiency. The shaded area may represent the total latency over the token range, highlighting that larger token counts minimize overall computational overhead. This trend is critical for applications requiring high-throughput token processing, such as natural language processing or real-time data analysis. The flattening curve at higher token counts suggests a limit to scalability, possibly due to hardware constraints or algorithmic bottlenecks.

</details>

(a) Verification latency

<details>

<summary>x8.png Details</summary>

### Visual Description

## CDF Chart: Rollbacks per Verification Window

### Overview

The chart displays cumulative distribution functions (CDFs) for rollbacks per verification window across four window sizes: 32, 64, 128, and 256. The x-axis represents "Rollbacks per Verification Window" (0.0–0.8), and the y-axis represents "CDF" (0.0–1.0). Each line corresponds to a window size, with larger windows requiring more rollbacks to reach higher CDF values.

### Components/Axes

- **Y-Axis (CDF)**: Labeled "CDF" with ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

- **X-Axis (Rollbacks per Verification Window)**: Labeled "Rollbacks per Verification Window" with ticks at 0.0, 0.2, 0.4, 0.6, 0.8.

- **Legend**: Located in the bottom-right corner, mapping colors to window sizes:

- Blue: Window Size = 32

- Orange: Window Size = 64

- Green: Window Size = 128

- Red: Window Size = 256

### Detailed Analysis

1. **Window Size = 32 (Blue Line)**:

- Starts at (0.0, 0.6) and rises sharply.

- Reaches 1.0 CDF at approximately **0.2 rollbacks**.

- Stepwise increases indicate discrete probability thresholds.

2. **Window Size = 64 (Orange Line)**:

- Begins at (0.0, 0.6) and rises more gradually than the blue line.

- Reaches 1.0 CDF at approximately **0.4 rollbacks**.

- Intermediate steps suggest slower accumulation of rollback tolerance.

3. **Window Size = 128 (Green Line)**:

- Starts at (0.0, 0.6) and rises even more gradually.

- Reaches 1.0 CDF at approximately **0.6 rollbacks**.

- Flatter steps reflect reduced sensitivity to rollbacks.

4. **Window Size = 256 (Red Line)**:

- Begins at (0.0, 0.6) and rises most gradually.

- Reaches 1.0 CDF at approximately **0.8 rollbacks**.

- Nearly linear progression indicates minimal rollback impact.

### Key Observations

- **Inverse Relationship**: Larger window sizes require more rollbacks to achieve the same CDF (e.g., 256 requires ~0.8 rollbacks vs. 32’s ~0.2).

- **Stepwise Behavior**: All lines exhibit step functions, typical of CDFs, with abrupt transitions between probability thresholds.

- **Consistency**: All lines start at (0.0, 0.6), suggesting a baseline CDF of 60% at zero rollbacks.

### Interpretation

The chart demonstrates that **larger verification windows are less sensitive to rollbacks**, requiring significantly more rollbacks to achieve the same confidence level (CDF). For example:

- A window size of 32 achieves 100% CDF with only ~0.2 rollbacks, while a window size of 256 requires ~0.8 rollbacks.

- This implies that systems using larger windows may tolerate more rollbacks before reaching full confidence, which could be critical for fault tolerance or reliability analysis. The stepwise nature of the CDFs suggests discrete event modeling, possibly reflecting probabilistic thresholds in system behavior.

</details>

(b) Rollbacks

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Chart: Cumulative Distribution Function (CDF) vs. Recomputed Tokens per Request

### Overview

The chart illustrates the relationship between the cumulative distribution function (CDF) and recomputed tokens per request for four different window sizes (32, 64, 128, 256). The CDF represents the probability that a request will use up to a specified number of tokens. Larger window sizes correspond to higher token thresholds before reaching a CDF of 1.0.

### Components/Axes

- **X-axis**: "Recomputed Tokens per Request" (range: 0–1750, increments of 250).

- **Y-axis**: "CDF" (range: 0.0–1.0, increments of 0.2).

- **Legend**: Located in the bottom-right corner, with four colored lines:

- Blue: Window Size = 32

- Orange: Window Size = 64

- Green: Window Size = 128

- Red: Window Size = 256

### Detailed Analysis

1. **Blue Line (Window Size = 32)**:

- Steepest slope, reaching CDF = 1.0 at approximately **250 tokens**.

- Rapidly plateaus after 250 tokens.

2. **Orange Line (Window Size = 64)**:

- Reaches CDF = 1.0 at approximately **300 tokens**.

- Slope is less steep than the blue line but steeper than green/red lines.

3. **Green Line (Window Size = 128)**:

- Reaches CDF = 1.0 at approximately **400 tokens**.

- Slope is gentler than orange and blue lines.

4. **Red Line (Window Size = 256)**:

- Reaches CDF = 1.0 at approximately **500 tokens**.

- Flattest slope among all lines.

All lines plateau at CDF = 1.0 beyond their respective token thresholds, indicating no further increase in probability for higher token counts.

### Key Observations

- **Inverse Relationship**: Larger window sizes correlate with higher token thresholds before reaching CDF = 1.0.

- **Steepness Gradient**: Smaller window sizes (e.g., 32) exhibit steeper curves, indicating a sharper transition to maximum probability.

- **Consistency**: All lines eventually reach CDF = 1.0, suggesting a hard upper limit on token usage per request.

### Interpretation

The data demonstrates that window size directly impacts the token capacity a system can handle before reaching maximum probability (CDF = 1.0). Larger windows (e.g., 256) allow significantly more tokens per request compared to smaller windows (e.g., 32), reflecting a trade-off between window size and computational efficiency. The CDF approaching 1.0 implies that beyond these thresholds, the likelihood of exceeding token limits becomes negligible. This could inform system design decisions, such as optimizing window sizes for specific workloads or balancing resource allocation.

</details>

(c) CDF of recomputed tokens

<details>

<summary>x10.png Details</summary>

### Visual Description

## Bar Chart: Recompute Cost by Window Size

### Overview

The chart illustrates the relationship between window size and recompute cost as a percentage. It uses four vertical bars to represent different window sizes (32, 64, 128, 256) and their corresponding recompute costs. The y-axis scales from 0% to 40% in 10% increments, with the highest bar exceeding this range at 46.41%.

### Components/Axes

- **X-axis (Window Size)**: Labeled with discrete values: 32, 64, 128, 256.

- **Y-axis (Recompute Cost %)**: Scaled from 0% to 40% in 10% increments, with an extended range to accommodate the 46.41% value.

- **Legend**: A single entry for "Recompute Cost (%)" represented by purple bars with diagonal hatching.

- **Bars**: Four purple bars with diagonal hatching, positioned at the specified window sizes.

### Detailed Analysis

- **Window Size 32**: Recompute cost = 6.81% (shortest bar).

- **Window Size 64**: Recompute cost = 12.42% (moderate increase).

- **Window Size 128**: Recompute cost = 24.92% (significant jump).

- **Window Size 256**: Recompute cost = 46.41% (highest value, exceeding the y-axis maximum).

### Key Observations

1. **Exponential Growth**: Recompute cost increases non-linearly with window size. The jump from 128 to 256 results in a 100% increase in cost (24.92% → 46.41%).

2. **Threshold Effect**: The largest window size (256) accounts for over 46% of recompute costs, suggesting diminishing returns or inefficiencies at scale.

3. **Visual Consistency**: All bars use the same purple color and diagonal hatching, confirming a single data series.

### Interpretation

The data demonstrates that recompute costs scale disproportionately with window size, particularly beyond 128. This implies that larger window sizes may introduce significant computational overhead, potentially impacting system performance or resource allocation. The sharp increase at 256 suggests a critical threshold where optimization strategies (e.g., parallel processing, caching) might be necessary to mitigate costs. The absence of other data series or variables indicates this analysis focuses solely on the direct relationship between window size and recompute efficiency.

</details>

(d) Total recomputation overhead

Figure 9: The cost of verification and recomputation with varying window sizes.

Case-2: When verification fails.

Occasionally, a verification pass disagrees with one or more decoded tokens, e.g., only $T_{1}^{\prime}$ matches with the verifier’s output in 8(b). In this case, LLM-42 commits the output only up to the last matching token ( $T_{1}^{\prime}$ ); all subsequent tokens ( $T_{2}^{\prime}$ and $T_{3}^{\prime}$ ) are rejected. The verifier-generated token that appears immediately after the last matching position ( $T_{2}$ ) is also accepted and the KV cache is truncated at this position. The next decode iteration resumes from this repaired, consistent state.

Making KV cache consistent.

During the decode phase, the KV cache is populated by fast-path iterations that execute under dynamic batching. Consequently, even when token verification succeeds, the KV cache corresponding to the verified window may still be inconsistent, since it was produced by non-deterministic execution. This inconsistency could affect tokens generated in future iterations despite the current window being verified. Token-level verification alone is therefore insufficient without repairing the KV cache. To address this, we overwrite the KV cache entries produced during decoding with the corresponding entries from the verification pass. This ensures that both the emitted tokens and the KV cache state are consistent for subsequent decode iterations, eliminating downstream divergence.

Guaranteed forward progress.

Note that each verification pass produces at least one new consistent output token as shown in Figure 8. As a result, DVR guarantees forward progress even in contrived worst-case scenarios that might trigger rollbacks for every optimistically generated token. We have not observed any such scenario in our experiments.