# Dynamic Thinking-Token Selection for Efficient Reasoning in Large Reasoning Models

**Authors**: Zhenyuan Guo, Tong Chen, Wenlong Meng, Chen Gong, Xin Yu, Chengkun Wei, Wenzhi Chen

Abstract

Large Reasoning Models (LRMs) excel at solving complex problems by explicitly generating a reasoning trace before deriving the final answer. However, these extended generations incur substantial memory footprint and computational overhead, bottlenecking LRMs’ efficiency. This work uses attention maps to analyze the influence of reasoning traces and uncover an interesting phenomenon: only some decision-critical tokens in a reasoning trace steer the model toward the final answer, while the remaining tokens contribute negligibly. Building on this observation, we propose Dyn amic T hinking-Token S election (DynTS). This method identifies decision-critical tokens and retains only their associated Key-Value (KV) cache states during inference, evicting the remaining redundant entries to optimize efficiency. Across six benchmarks, DynTS surpasses the state-of-the-art KV cache compression methods, improving Pass@1 by $2.6\%$ under the same budget. Compared to vanilla Transformers, it reduces inference latency by $1.84–2.62×$ and peak KV-cache memory footprint by $3.32–5.73×$ without compromising LRMs’ reasoning performance. The code is available at the github link. https://github.com/Robin930/DynTS

KV Cache Compression, Efficient LRM, LLM

1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

## Token Retention Methods Diagram

### Overview

The image is a diagram illustrating different methods for token retention in language models. It compares Transformers, SnapKV, StreamingLLM, H2O, and DynTS, showing which tokens each method keeps based on different criteria.

### Components/Axes

* **Methods (Left Column):** Lists the different language model methods being compared: Transformers, SnapKV, StreamingLLM, H2O, and DynTS.

* **Tokens (Top Row):** Represents the sequence of tokens processed by each method. Each token is represented by a square.

* **Keeps (Right Column):** A legend explaining the color-coding used to indicate which tokens are kept by each method.

* White: All Tokens

* Orange: High Importance Prefill Tokens

* Yellow: Attention Sink Tokens

* Light Blue: Local Tokens

* Green: Heavy-Hitter Tokens

* Red: Predicted Importance Tokens

### Detailed Analysis

* **Transformers:** All tokens are kept (represented by white squares).

* **SnapKV:** Keeps some tokens as "High Importance Prefill Tokens" (orange squares) within a "Prompt" region. An "Observation Window" is also indicated. The remaining tokens are gray, implying they are not retained.

* **StreamingLLM:** Keeps a few "Attention Sink Tokens" (yellow squares) at the beginning, followed by "Local Tokens" (light blue squares).

* **H2O:** Keeps some "Heavy-Hitter Tokens" (green squares) and then "Local Tokens" (light blue squares).

* **DynTS:** Keeps "Predicted Importance Tokens" (red squares) and "Local Tokens" (light blue squares). Arrows point from the red and gray tokens to a box labeled "Answer," indicating the predicted importance of tokens to the final answer.

### Key Observations

* Transformers retain all tokens, while the other methods selectively retain tokens based on different criteria.

* SnapKV focuses on retaining tokens from the prompt.

* StreamingLLM and H2O retain a combination of specific token types (Attention Sink, Heavy-Hitter) and local tokens.

* DynTS retains tokens based on their predicted importance to the final answer.

### Interpretation

The diagram illustrates different strategies for managing and retaining tokens in language models. The methods vary in their approach, with some focusing on retaining important tokens from the prompt (SnapKV), others on specific token types (StreamingLLM, H2O), and others on predicted importance to the final answer (DynTS). The choice of method likely depends on the specific application and the trade-off between computational cost and performance. The diagram highlights the evolution from retaining all tokens (Transformers) to more selective retention strategies.

</details>

<details>

<summary>x2.png Details</summary>

### Visual Description

## Bar Chart: Accuracy vs. KV Cache Length

### Overview

The image is a bar chart comparing the accuracy of different models (Transformers, DynTS, Window StreamingLLM, SepLLM, H2O, SnapKV, R-KV) against their KV Cache Length. Accuracy is represented by gray bars, while KV Cache Length is represented by a blue dashed line with square markers.

### Components/Axes

* **X-axis:** Model names (Transformers, DynTS, Window StreamingLLM, SepLLM, H2O, SnapKV, R-KV)

* **Left Y-axis:** Accuracy (%), ranging from 0 to 70.

* **Right Y-axis:** KV Cache Length, ranging from 2k to 20k.

* **Legend:**

* Gray: Accuracy

* Blue: KV Cache Length

### Detailed Analysis

* **Accuracy (Gray Bars):**

* Transformers: 63.6%

* DynTS: 63.5%

* Window StreamingLLM: 49.4%

* SepLLM: 51.6%

* H2O: 54.5%

* SnapKV: 58.8%

* R-KV: 59.8%

* R-KV: 60.9%

* **KV Cache Length (Blue Dashed Line):**

* Transformers: Starts at approximately 17k, then drops sharply.

* DynTS: Drops to approximately 3k.

* Window StreamingLLM: Remains relatively constant at approximately 4k.

* SepLLM: Remains relatively constant at approximately 4k.

* H2O: Remains relatively constant at approximately 4k.

* SnapKV: Remains relatively constant at approximately 4k.

* R-KV: Remains relatively constant at approximately 4k.

### Key Observations

* Transformers and DynTS have the highest accuracy.

* Transformers has a significantly higher KV Cache Length compared to other models.

* DynTS has a low KV Cache Length despite having high accuracy.

* Window StreamingLLM, SepLLM, H2O, SnapKV, and R-KV have similar KV Cache Lengths.

### Interpretation

The chart suggests that DynTS achieves comparable accuracy to Transformers but with a significantly reduced KV Cache Length. This implies that DynTS is more memory-efficient. The other models (Window StreamingLLM, SepLLM, H2O, SnapKV, and R-KV) have lower accuracy and similar, low KV Cache Lengths. The data demonstrates a trade-off between accuracy and memory usage, with DynTS potentially offering a better balance. The high KV Cache Length of Transformers may be a limiting factor in certain applications.

</details>

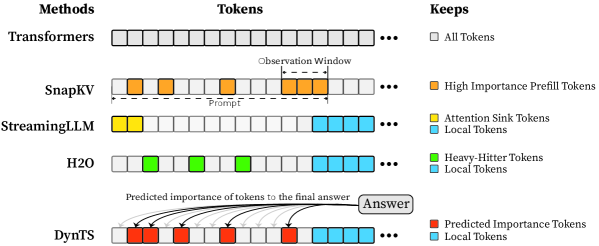

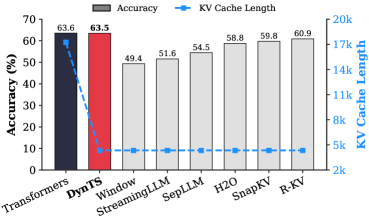

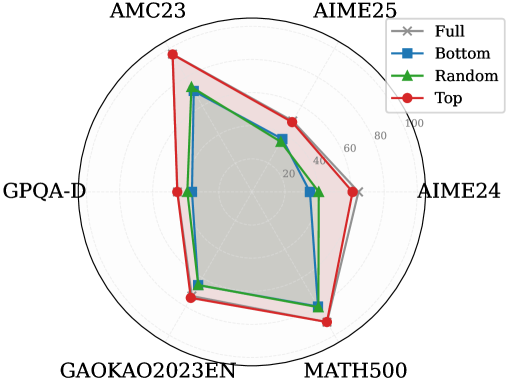

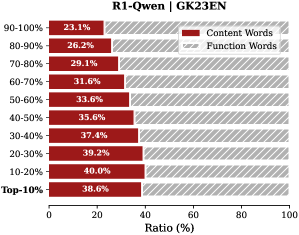

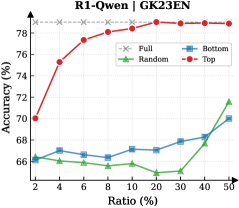

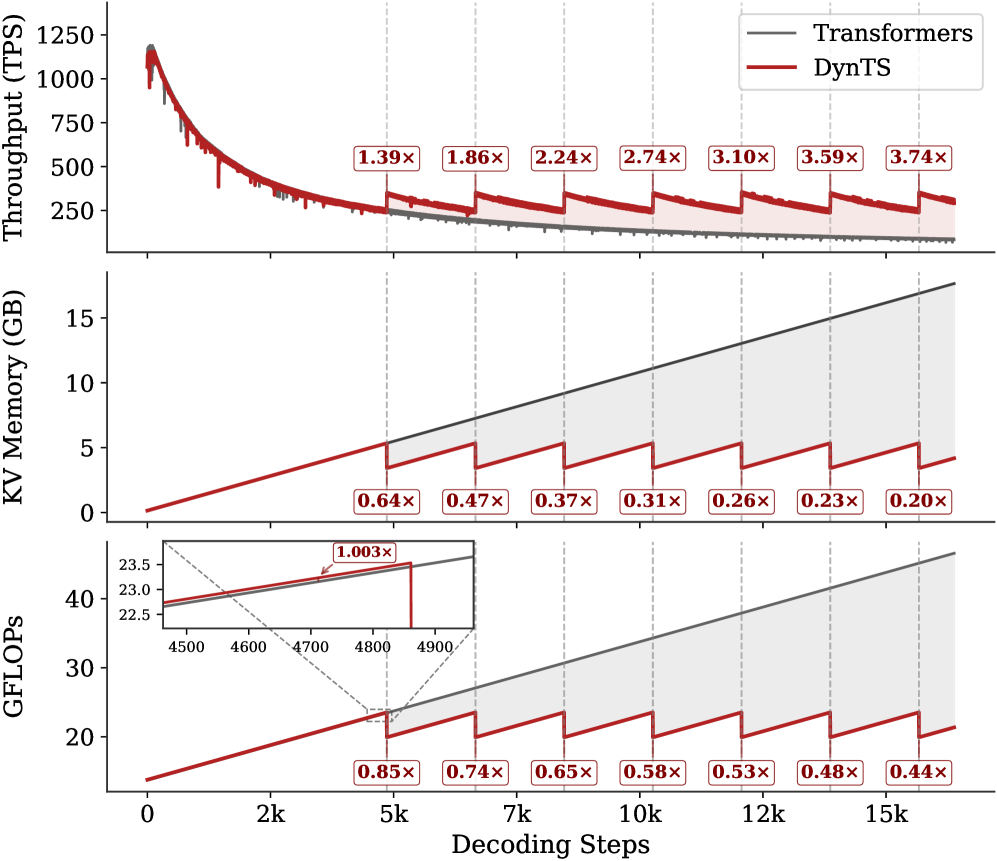

Figure 1: (Left) Comparison of token selection strategies across different KV cache eviction methods. In each row, colored blocks denote the retained high-importance tokens, while grey blocks represent the evicted tokens during LRM inference. (Right) The average reasoning performance and KV cache memory footprint on DeepSeek-R1-Distall-Llama-8B and DeepSeek-R1-Distall-Qwen-7B across six reasoning benchmarks.

Recent advancements in Large Reasoning Models (LRMs) (Chen et al., 2025) have significantly strengthened the reasoning capabilities of Large Language Models (LLMs). Representative models such as DeepSeek-R1 (Guo et al., 2025), Gemini-3-Pro (DeepMind, 2025), and ChatGPT-5.2 (OpenAI, 2025) support deep thinking mode to strengthen reasoning capability in the challenging mathematics, programming, and science tasks (Zhang et al., 2025b). These models spend a substantial number of intermediate thinking tokens on reflection, reasoning, and verification to derive the correct response during inference (Feng et al., 2025). However, the thinking process necessitates the immense KV cache memory footprint and attention-related computational cost, posing a critical deployment challenge in resource-constrained environments.

KV cache compression techniques aim to optimize the cache state by periodically evicting non-essential tokens (Shi et al., 2024; WEI et al., 2025; Liu et al., 2025b; Qin et al., 2025), typically guided by predefined token retention rules (Chen et al., 2024; Xiao et al., 2024; Devoto et al., 2024) or attention-based importance metrics (Zhang et al., 2023; Li et al., 2024; Choi et al., 2025). Nevertheless, incorporating them into the inference process of LRMs faces two key limitations: (1) Methods designed for long-context prefilling are ill-suited to the short-prefill and long-decoding scenarios of LRMs; (2) Methods tailored for long-decoding struggle to match the reasoning performance of the Full KV baseline (SOTA $60.9\%$ vs. Full KV $63.6\%$ , Fig. 1 Left). Specifically, in LRM inference, the model conducts an extensive reasoning process and then summarizes the reasoning content to derive the final answer (Minegishi et al., 2025). This implies that the correctness of the final answer relies on the thinking tokens within the preceding reasoning (Bogdan et al., 2025). However, existing compression methods cannot identify the tokens that are essential to the future answer. This leads to a significant misalignment between the retained tokens and the critical thinking tokens, resulting in degradation in the model’s reasoning performance.

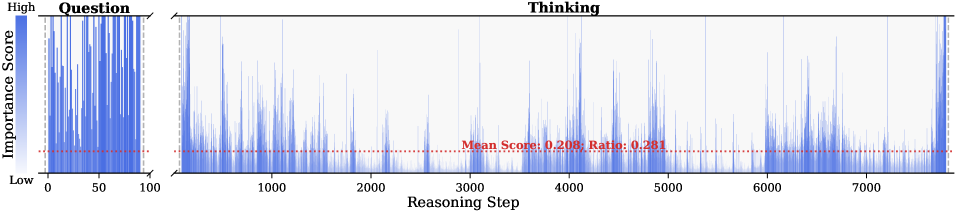

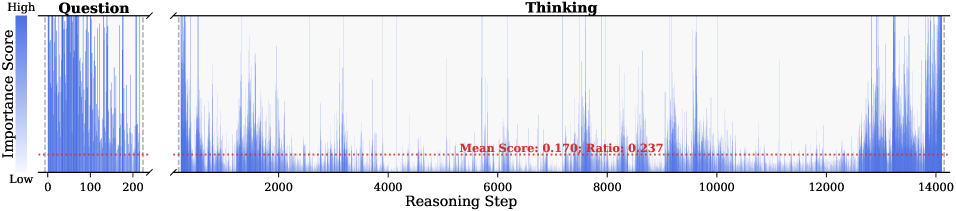

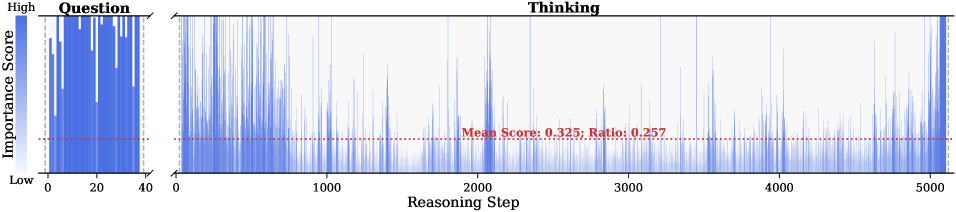

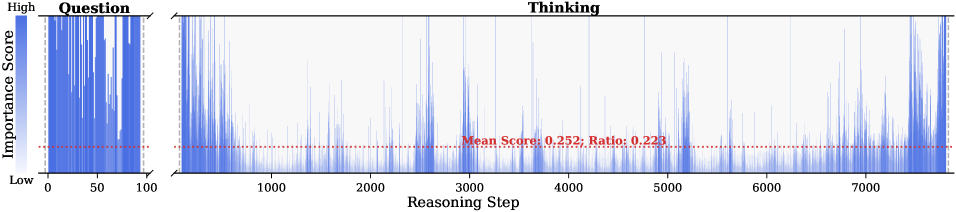

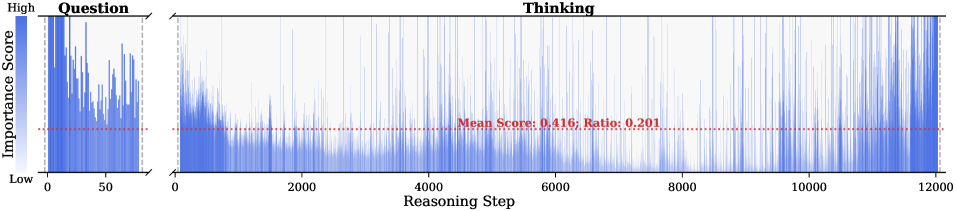

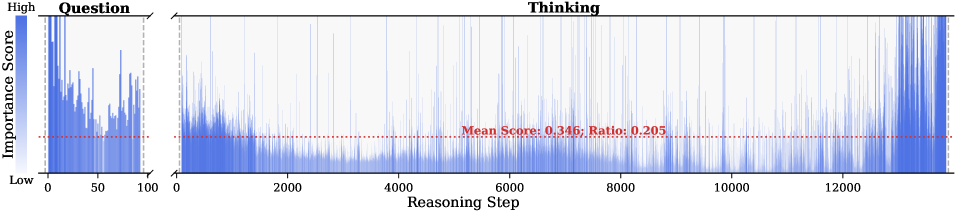

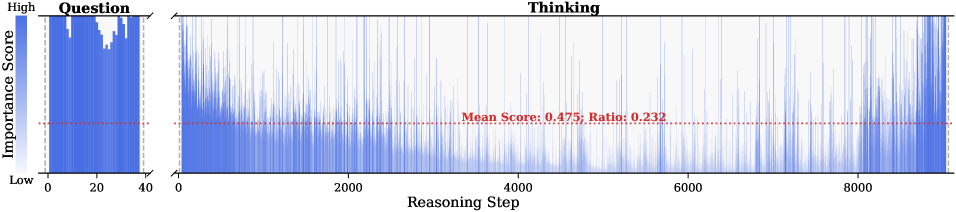

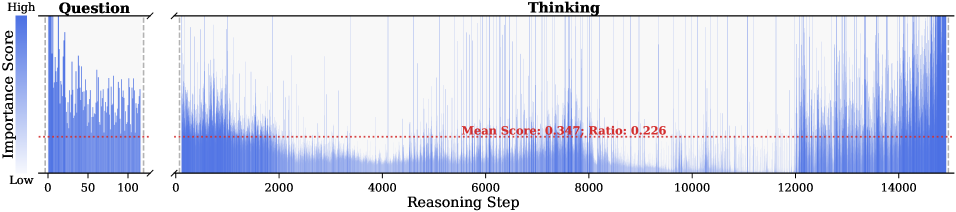

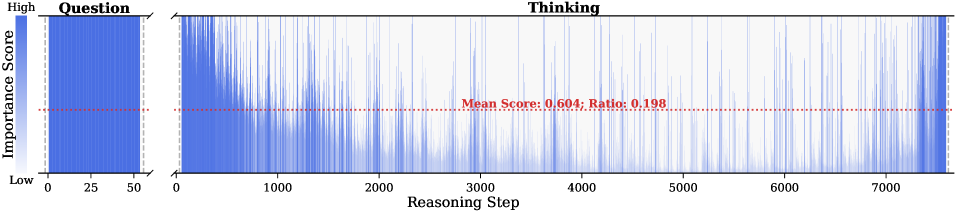

To address this issue, we analyze the LRM’s generated content and study which tokens are most important for the model to steer the final answer. Some works point out attention weights capturing inter-token dependencies (Vaswani et al., 2017; Wiegreffe and Pinter, 2019; Bogdan et al., 2025), which can serve as a metric to assess the importance of tokens. Consequently, we decompose the generated content into a reasoning trace and a final answer, and then calculate the importance score of each thinking token in the trajectory by aggregating the attention weights from the answer to thinking tokens. We find that only a small subset of thinking tokens ( $\sim 20\%$ tokens in the reasoning trace, see Section § 3.1) have significant scores, which may be critical for the final answer. To validate these hypotheses, we retain these tokens and prompt the model to directly generate the final answer. Experimental results show that the model maintains close accuracy compared to using the whole KV cache. This reveals a Pareto principle The Pareto principle, also known as the 80/20 rule, posits that $20\%$ of critical factors drive $80\%$ of the outcomes. In this paper, it implies that a small fraction of pivotal thinking tokens dictates the correctness of the model’s final response. in LRMs: only a small subset of decision-critical thinking tokens with high importance scores drives the model toward the final answer, while the remaining tokens contribute negligibly.

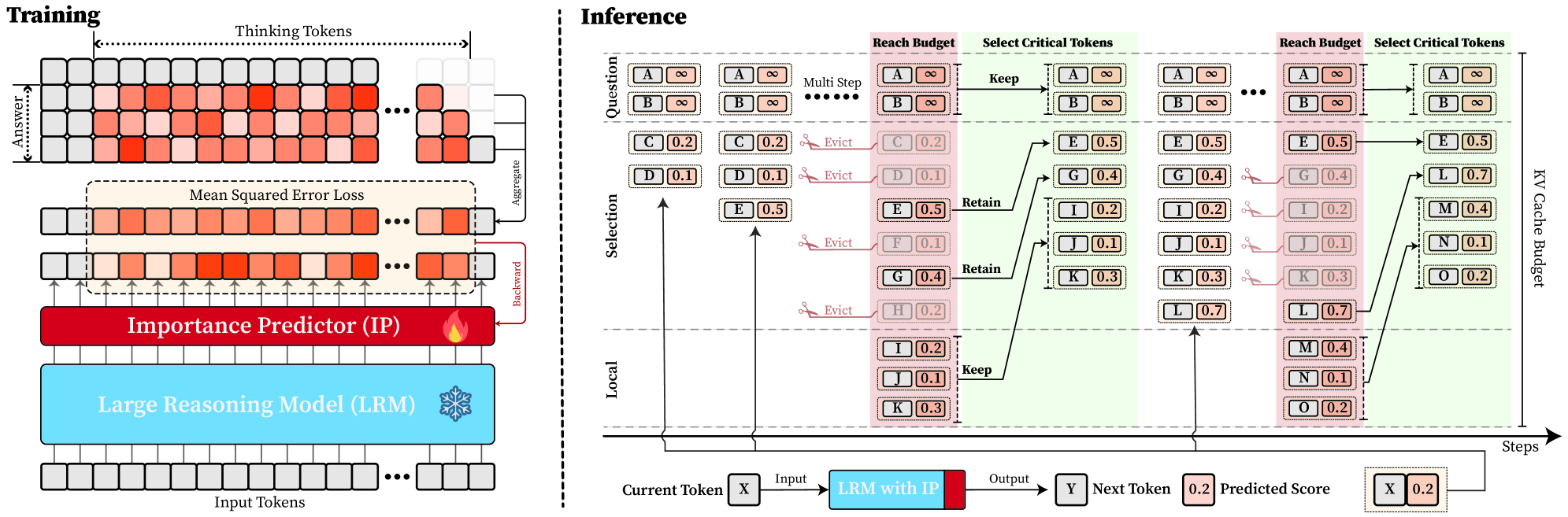

Based on the above insight, we introduce DynTS (Dyn amic T hinking-Token S election), a novel method for dynamically predicting and selecting decision-critical thinking tokens on-the-fly during decoding, as shown in Fig. 1 (Left). The key innovation of DynTS is the integration of a trainable, lightweight Importance Predictor at the final layer of LRMs, enabling the model to dynamically predict the importance of each thinking token to the final answer. By utilizing importance scores derived from sampled reasoning traces as supervision signals, the predictor learns to distinguish between critical tokens and redundant tokens. During inference, DynTS manages memory through a dual-window mechanism: generated tokens flow from a Local Window (which captures recent context) into a Selection Window (which stores long-term history). Once the KV cache reaches the budget, the system retains the KV cache of tokens with higher predicted importance scores in the Select Window and all tokens in the Local Window (Zhang et al., 2023; Chen et al., 2024). By evicting redundant KV cache entries, DynTS effectively reduces both system memory pressure and computational overhead. We also theoretically analyze the computational overhead introduced by the importance predictor and the savings from cache eviction, and derive a Break-Even Condition for net computational gain.

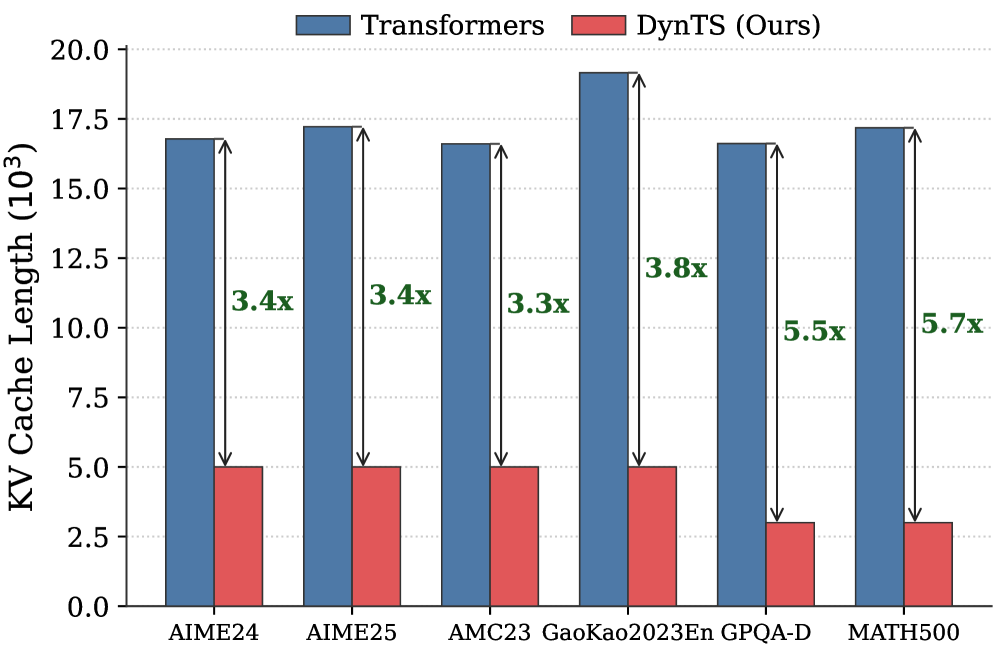

Then, we train the Importance Predictor based on the MATH (Hendrycks et al., 2021) train set, and evaluate DynTS on the other six reasoning benchmarks. The reasoning performance and KV cache length compare with the SOTA KV cache compression method, as reported in Fig. 1 (Right). Our method reduces the KV cache memory footprint by up to $3.32–5.73×$ without compromising reasoning performance compared to the full-cache transformer baseline. Within the same budget, our method achieves a $2.6\%$ improvement in accuracy over the SOTA KV cache compression approach.

2 Preliminaries

Large Reasoning Model (LRM).

Unlike standard LLMs that directly generate answers, LRMs incorporate an intermediate reasoning process prior to producing the final answer (Chen et al., 2025; Zhang et al., 2025a; Sui et al., 2025). Given a user prompt $\mathbf{x}=(x_{1},...,x_{M})$ , the model generated content represents as $\mathbf{y}$ , which can be decomposed into a reasoning trace $\mathbf{t}$ and a final answer $\mathbf{a}$ . The trajectory is delimited by a start tag <think> and an end tag </think>. Formally, the model output is defined as:

$$

\mathbf{y}=[\texttt{<think>},\mathbf{t},\texttt{</think>},\mathbf{a}], \tag{1}

$$

where the trajectory $\mathbf{t}=(t_{1},...,t_{L})$ composed of $L$ thinking tokens, and $\mathbf{a}=(a_{1},...,a_{K})$ represents the answer composed of $K$ tokens. During autoregressive generation, the model conducts a reasoning phase that produces thinking tokens $t_{i}$ , followed by an answer phase that generates the answer token $a_{i}$ . This process is formally defined as:

$$

P(\mathbf{y}|\mathbf{x})=\underbrace{\prod_{i=1}^{L}P(t_{i}|\mathbf{x},\mathbf{t}_{<i})}_{\text{Reasoning Phase}}\cdot\underbrace{\prod_{j=1}^{K}P(a_{j}|\mathbf{x},\mathbf{t},\mathbf{a}_{<j})}_{\text{Answer Phase}} \tag{2}

$$

Since the length of the reasoning trace significantly exceeds that of the final answer ( $L\gg K$ ) (Xu et al., 2025), we focus on selecting critical thinking tokens in the reasoning trace to reduce memory and computational overhead.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: Question Importance Score vs. Step

### Overview

The image presents a line chart that visualizes the importance score of questions over a series of steps. The chart is divided into two sections: the "Question" section on the left, spanning steps 0-200, and the "Thinking" section on the right, spanning steps 200-14000. A horizontal dotted red line indicates the mean score and ratio.

### Components/Axes

* **Title:** Thinking

* **Y-axis:** "Importance Score" with a gradient scale from "Low" to "High" on the left.

* **X-axis:** "(Step)" ranging from 0 to approximately 14000. Tick marks are present at 0, 200, 2000, 4000, 6000, 8000, 10000, and 12000.

* **Data Series:** A blue line representing the importance score at each step.

* **Mean Score Line:** A horizontal dotted red line indicating "Mean Score: 0.126; Ratio: 0.211".

### Detailed Analysis

* **Question Section (Steps 0-200):** The importance score fluctuates rapidly and generally remains high. The blue line shows many peaks reaching near the "High" level of the y-axis.

* **Thinking Section (Steps 200-14000):** The importance score is generally lower than in the "Question" section. There are several spikes in importance score at approximately steps 2000, 4000, 6000, 10000, and 13000. The score remains relatively low between these spikes.

* **Mean Score:** The red dotted line representing the mean score is positioned relatively low on the y-axis, suggesting that the average importance score is closer to "Low" than "High".

### Key Observations

* The "Question" section exhibits significantly higher and more consistent importance scores compared to the "Thinking" section.

* The "Thinking" section shows periodic spikes in importance score, indicating moments of increased questioning or uncertainty.

* The mean score is relatively low, suggesting that the overall importance of questions is not consistently high throughout the entire process.

### Interpretation

The chart likely represents the importance of questions during a problem-solving or learning process. The initial "Question" phase (0-200 steps) is characterized by high questioning activity, possibly reflecting initial exploration and information gathering. The "Thinking" phase (200-14000 steps) shows a decrease in overall questioning, with intermittent spikes indicating moments where questions become more critical, perhaps when encountering challenges or new information. The relatively low mean score suggests that questioning is not a constant activity but rather a strategic one, used when needed to navigate the problem space. The ratio of 0.211 may represent the proportion of steps where the importance score exceeds a certain threshold, further emphasizing the intermittent nature of high-importance questions.

</details>

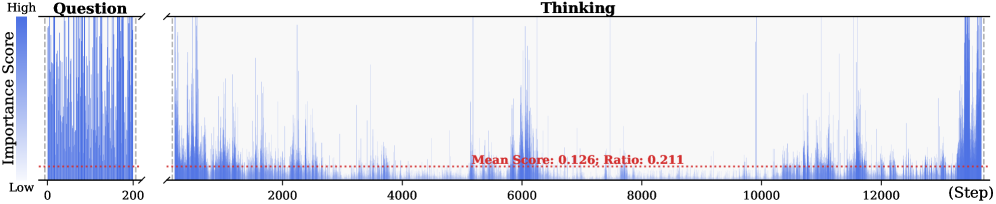

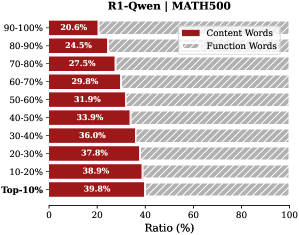

Figure 2: Importance scores of question tokens and thinking tokens in a reasoning trace, computed based on attention contributions to the answer. Darker colors indicate higher importance. The red dashed line shows the mean importance score, and the annotated ratio indicates the fraction of tokens with importance above the mean.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Ratio

### Overview

The image is a line chart comparing the accuracy of different methods ("Full", "Random", "Bottom", "Top") against varying ratios, ranging from 2% to 50%. The y-axis represents accuracy in percentage, and the x-axis represents the ratio in percentage.

### Components/Axes

* **X-axis:** Ratio (%), with markers at 2, 4, 6, 8, 10, 20, 30, 40, and 50.

* **Y-axis:** Accuracy (%), with markers at 65, 70, 75, 80, 85, 90, and 95.

* **Legend:** Located in the top-right of the chart.

* "Full" - Dashed gray line with 'x' markers.

* "Random" - Solid green line with triangle markers.

* "Bottom" - Solid blue line with square markers.

* "Top" - Solid red line with circle markers.

### Detailed Analysis

* **Full (Dashed Gray Line with 'x' markers):** This line remains almost constant at approximately 96% accuracy across all ratios.

* Ratio 2%: ~96%

* Ratio 50%: ~96%

* **Random (Solid Green Line with Triangle markers):** This line shows a general upward trend, indicating increasing accuracy with higher ratios.

* Ratio 2%: ~64%

* Ratio 6%: ~66%

* Ratio 20%: ~70%

* Ratio 50%: ~85%

* **Bottom (Solid Blue Line with Square markers):** This line also shows an upward trend, but with some fluctuations.

* Ratio 2%: ~66%

* Ratio 6%: ~63%

* Ratio 20%: ~70%

* Ratio 50%: ~80%

* **Top (Solid Red Line with Circle markers):** This line starts high and plateaus after a certain ratio.

* Ratio 2%: ~88%

* Ratio 6%: ~93%

* Ratio 20%: ~94%

* Ratio 50%: ~96%

### Key Observations

* The "Full" method consistently achieves the highest accuracy, remaining stable across all ratios.

* The "Top" method starts with high accuracy and quickly approaches the "Full" method's performance.

* The "Random" and "Bottom" methods show increasing accuracy as the ratio increases, but they remain significantly lower than the "Full" and "Top" methods.

* The "Bottom" method has a slight dip in accuracy between ratios 2% and 6%.

### Interpretation

The chart suggests that the "Full" method is the most reliable, maintaining high accuracy regardless of the ratio. The "Top" method is also effective, quickly reaching a high accuracy level. The "Random" and "Bottom" methods are less accurate, but their performance improves with higher ratios. This could indicate that as the ratio increases, the information captured by these methods becomes more relevant, leading to better accuracy. The "Bottom" method's initial dip might suggest that at very low ratios, it captures less useful information compared to the "Random" method.

</details>

<details>

<summary>x5.png Details</summary>

### Visual Description

## Radar Chart: Performance Comparison

### Overview

The image is a radar chart comparing the performance of four different methods (Full, Bottom, Random, Top) across six categories: AIME24, AIME25, AMC23, GPQA-D, GAOKAO2023EN, and MATH500. The chart visualizes the relative strengths and weaknesses of each method in each category.

### Components/Axes

* **Axes:** The chart has six radial axes, each representing a category. The categories are:

* AIME24

* AIME25

* AMC23

* GPQA-D

* GAOKAO2023EN

* MATH500

* **Scale:** The radial scale ranges from 0 to 100, with markers at 20, 40, 60, 80, and 100.

* **Legend:** Located in the top-right corner, the legend identifies the four methods:

* Full (Gray line with an 'x' marker)

* Bottom (Blue line with a square marker)

* Random (Green line with a triangle marker)

* Top (Red line with a circle marker)

### Detailed Analysis

Here's a breakdown of the performance of each method in each category:

* **Full (Gray):**

* AIME24: Approximately 28

* AIME25: Approximately 28

* AMC23: Approximately 28

* GPQA-D: Approximately 28

* GAOKAO2023EN: Approximately 28

* MATH500: Approximately 28

* Trend: The "Full" method has a constant value across all categories.

* **Bottom (Blue):**

* AIME24: Approximately 20

* AIME25: Approximately 40

* AMC23: Approximately 80

* GPQA-D: Approximately 30

* GAOKAO2023EN: Approximately 20

* MATH500: Approximately 20

* Trend: The "Bottom" method shows variability, peaking at AMC23.

* **Random (Green):**

* AIME24: Approximately 25

* AIME25: Approximately 45

* AMC23: Approximately 75

* GPQA-D: Approximately 35

* GAOKAO2023EN: Approximately 25

* MATH500: Approximately 25

* Trend: The "Random" method shows variability, peaking at AMC23.

* **Top (Red):**

* AIME24: Approximately 30

* AIME25: Approximately 95

* AMC23: Approximately 95

* GPQA-D: Approximately 20

* GAOKAO2023EN: Approximately 10

* MATH500: Approximately 10

* Trend: The "Top" method shows significant variability, with high values for AIME25 and AMC23, and low values for GAOKAO2023EN and MATH500.

### Key Observations

* The "Full" method has a constant value across all categories.

* The "Top" method performs exceptionally well in AIME25 and AMC23 but poorly in GAOKAO2023EN and MATH500.

* The "Bottom" and "Random" methods show similar trends, with a peak in AMC23.

### Interpretation

The radar chart provides a clear visualization of the strengths and weaknesses of each method across different categories. The "Top" method appears to be highly specialized, excelling in some areas but failing in others. The "Full" method provides a baseline performance across all categories. The "Bottom" and "Random" methods offer intermediate performance, with a notable strength in AMC23. The choice of method would depend on the specific requirements and priorities of the task.

</details>

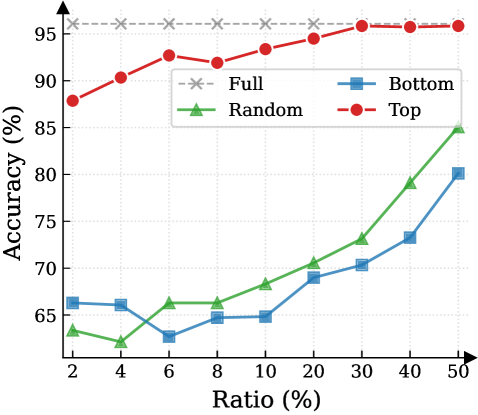

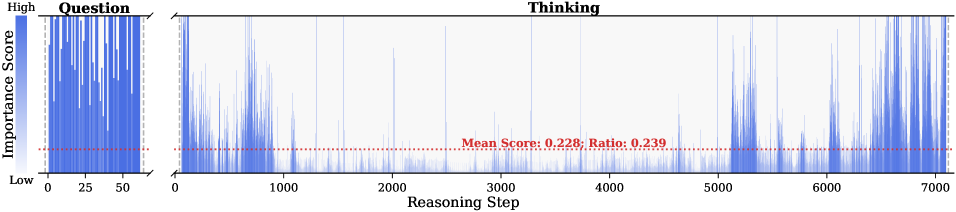

Figure 3: (Left) Reasoning performance trends as a function of thinking token retention ratio, where the $x$ -axis indicates the retention percentage and the $y$ -axis is the accuracy. (Right) Accuracy across all datasets when retaining $30\%$ of the thinking tokens.

Attention Mechanism.

Attention Mechanism is a core component of Transformer-based LRMs, such as Multi-Head Attention (Vaswani et al., 2017), Grouped-Query Attention (Ainslie et al., 2023), and their variants. To highlight the memory challenges in LRMs, we formulate the attention computation at the token level. Consider the decode step $t$ . Let $\mathbf{h}_{t}∈\mathbb{R}^{d}$ be the input hidden state of the current token. The model projects $\mathbf{h}_{t}$ into query, key, and value vectors:

$$

\mathbf{q}_{t}=\mathbf{W}_{Q}\mathbf{h}_{t},\quad\mathbf{k}_{t}=\mathbf{W}_{K}\mathbf{h}_{t},\quad\mathbf{v}_{t}=\mathbf{W}_{V}\mathbf{h}_{t}, \tag{3}

$$

where $\mathbf{W}_{Q},\mathbf{W}_{K},\mathbf{W}_{V}$ are learnable projection matrices. The query $\mathbf{q}_{t}$ attends to the keys of all preceding positions $j∈\{1,...,t\}$ . The attention weight $a_{t,j}$ between the current token $t$ and a past token $j$ is:

$$

\alpha_{t,j}=\frac{\exp(e_{t,j})}{\sum_{i=1}^{t}\exp(e_{t,i})},\qquad e_{t,j}=\frac{\mathbf{q}_{t}^{\top}\mathbf{k}_{j}}{\sqrt{d_{k}}}. \tag{4}

$$

These scores represent the relevance of the current step to the $j$ -th token. Finally, the output of the attention head $\mathbf{o}_{t}$ is the weighted sum of all historical value vectors:

$$

\mathbf{o}_{t}=\sum_{j=1}^{t}\alpha_{t,j}\mathbf{v}_{j}. \tag{5}

$$

As Equation 5 implies, calculating $\mathbf{o}_{t}$ requires access to the entire sequence of past keys and values $\{\mathbf{k}_{j},\mathbf{v}_{j}\}_{j=1}^{t-1}$ . In standard implementation, these vectors are stored in the KV cache to avoid redundant computation (Vaswani et al., 2017; Pope et al., 2023). In the LRMs’ inference, the reasoning trace is exceptionally long, imposing significant memory bottlenecks and increasing computational overhead.

3 Observations and Insight

This section presents the observed sparsity of thinking tokens and the Pareto Principle in LRMs, serving as the basis for DynTS. Detailed experimental settings and additional results are provided in Appendix § B.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Diagram: Training and Inference Process with Importance Predictor

### Overview

The image presents a diagram illustrating the training and inference processes of a Large Reasoning Model (LRM) enhanced with an Importance Predictor (IP). The diagram is split into two main sections: "Training" on the left and "Inference" on the right. The training section shows how the IP is trained using Mean Squared Error Loss, while the inference section demonstrates how the IP is used to manage a KV Cache Budget during multi-step reasoning.

### Components/Axes

**Training Section:**

* **Title:** Training

* **Elements:**

* Input Tokens: A series of gray boxes at the bottom, representing input tokens.

* Large Reasoning Model (LRM): A light blue box above the input tokens. A snowflake icon is present on the right side of the box.

* Importance Predictor (IP): A red box above the LRM. A flame icon is present on the right side of the box.

* Mean Squared Error Loss: A dashed box above the IP, containing a series of boxes with varying shades of red, representing the error loss.

* Thinking Tokens: A series of boxes with varying shades of red, representing the model's internal "thinking" process.

* Answer: A series of gray boxes, representing the correct answer.

* Arrows: Arrows indicate the flow of information:

* Upward arrows from the LRM to the IP and then to the Mean Squared Error Loss.

* "Aggregate" arrow pointing from the Thinking Tokens to the Mean Squared Error Loss.

* "Backward" arrow pointing from the Mean Squared Error Loss back to the IP.

**Inference Section:**

* **Title:** Inference

* **Axes:**

* Vertical Axis: Labeled "Selection" in the middle, and "Local" at the bottom.

* Horizontal Axis: Labeled "Steps" at the bottom-right, indicating the progression of the inference process.

* **Elements:**

* Question: Labeled at the top, containing token pairs A and B, each with a value of infinity.

* KV Cache Budget: Labeled on the right side, representing the memory allocated for key-value pairs.

* Reach Budget: A pink shaded region.

* Select Critical Tokens: A green shaded region.

* Tokens: Represented by boxes containing a letter and a numerical value. The color of the box indicates the token's importance or relevance.

* Arrows: Arrows indicate the flow of information and dependencies between tokens.

* Text Labels: "Evict," "Keep," and "Retain" indicate actions taken on tokens.

* Current Token: Labeled at the bottom-left, showing "Current Token X".

* LRM with IP: A light blue box with a red section on the right, representing the LRM enhanced with the IP.

* Next Token: Labeled at the bottom-right, showing "Next Token Y".

* Predicted Score: A box containing "0.2" with a red fill, representing the predicted score for the next token.

### Detailed Analysis

**Training Section:**

* The "Thinking Tokens" section shows a sequence of tokens, with the intensity of the red color indicating the level of "thinking" or processing associated with each token. The first 10 tokens are colored, with the 6th token being the most intense red. The last 3 tokens are gray.

* The "Mean Squared Error Loss" section shows a similar sequence of tokens, with the intensity of the red color indicating the magnitude of the error. The 6th token is the most intense red.

* The "Importance Predictor (IP)" receives input from the "Large Reasoning Model (LRM)" and is trained using the "Mean Squared Error Loss."

**Inference Section:**

* The "Question" tokens A and B have infinite values and are kept throughout the process.

* The "Selection" section shows a series of tokens (C, D, E, F, G, H) with associated values (0.2, 0.1, 0.5, 0.1, 0.4, 0.2). Some tokens are evicted based on their values.

* The "Local" section shows tokens (I, J, K) with associated values (0.2, 0.1, 0.3). These tokens are kept.

* The "Reach Budget" region highlights tokens that are considered for retention in the KV Cache.

* The "Select Critical Tokens" region shows the tokens that are ultimately retained in the KV Cache.

* The KV Cache Budget section shows the final set of tokens (L, M, N, O) with associated values (0.7, 0.4, 0.1, 0.2).

* The process starts with a "Current Token X," which is fed into the "LRM with IP" to predict the "Next Token Y" and its associated score.

**Token Values and Actions:**

| Token | Value | Action (Inference) |

|-------|-------|--------------------|

| A | ∞ | Keep |

| B | ∞ | Keep |

| C | 0.2 | Evict |

| D | 0.1 | Evict |

| E | 0.5 | Retain |

| F | 0.1 | Evict |

| G | 0.4 | Retain |

| H | 0.2 | Evict |

| I | 0.2 | Keep |

| J | 0.1 | Keep |

| K | 0.3 | Keep |

| L | 0.7 | - |

| M | 0.4 | - |

| N | 0.1 | - |

| O | 0.2 | - |

### Key Observations

* The "Training" section focuses on minimizing the error between the model's "thinking" process and the correct answer.

* The "Inference" section demonstrates how the IP is used to selectively retain important tokens in the KV Cache, optimizing memory usage and potentially improving performance.

* Tokens with higher values are more likely to be retained in the KV Cache.

* The "Reach Budget" and "Select Critical Tokens" regions visually represent the token selection process.

### Interpretation

The diagram illustrates a system where an Importance Predictor (IP) is trained to identify and retain critical tokens during the inference process of a Large Reasoning Model (LRM). The training process uses Mean Squared Error Loss to refine the IP's ability to predict the importance of tokens. During inference, the IP helps manage the KV Cache Budget by selectively retaining tokens based on their predicted importance, as indicated by their numerical values. This approach aims to optimize memory usage and potentially improve the efficiency and performance of the LRM by focusing on the most relevant information. The "Evict," "Keep," and "Retain" actions demonstrate the dynamic management of the KV Cache, highlighting the IP's role in prioritizing and preserving important tokens while discarding less relevant ones.

</details>

Figure 4: Overview of DynTS. (Left) Importance Predictor Training. The upper heatmap visualizes attention weights, where orange intensity represents the importance of thinking tokens to the answer. The lower part shows a LRM integrated with an Importance Predictor (IP) to learn these importance scores. (Right) Inference with KV Cache Selection. The model outputs the next token and a predicted importance score of the current token. When the cache budget is reached, the selection strategy retains the KV cache of question tokens, local tokens, and top-k thinking tokens based on the predicted importance score.

3.1 Sparsity for Thinking Tokens

Previous works (Bogdan et al., 2025; Zhang et al., 2023; Singh et al., 2024) have shown that attention weights (Eq. 4) serve as a reliable proxy for token importance. Building on this insight, we calculate an importance score for each question and thinking token by accumulating the attention they receive from all answer tokens. Formally, the importance scores are defined as:

$$

I_{x_{j}}=\sum_{i=1}^{K}\alpha_{a_{i},x_{j}},\qquad I_{t_{j}}=\sum_{i=1}^{K}\alpha_{a_{i},t_{j}}, \tag{6}

$$

where $I_{x_{j}}$ and $I_{t_{j}}$ denote the importance scores of the $j$ -th question token $x_{j}$ and thinking token $t_{j}$ . Here, $\alpha_{a_{i},x_{j}}$ and $\alpha_{a_{i},t_{j}}$ represent the attention weights from the $i$ -th answer token $a_{i}$ to the corresponding question or thinking token, and $K$ is the total number of answer tokens. We perform full autoregressive inference on LRMs to extract attention weights and compute token-level importance scores for both question and thinking tokens.

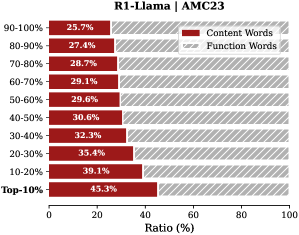

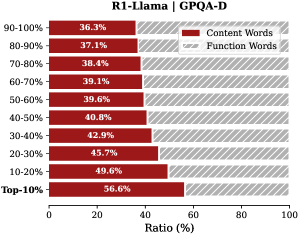

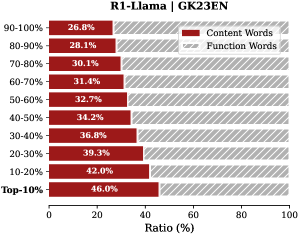

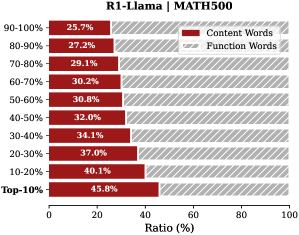

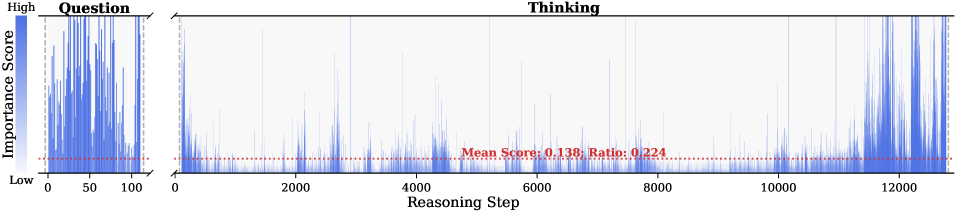

Observation. As illustrated in Fig. 2, the question tokens (left panel) exhibit consistently significant and dense importance scores. In contrast, the thinking tokens (right panel) display a highly sparse distribution. Despite the extensive reasoning trace (exceeding 12k tokens), only $21.1\%$ of thinking tokens exceed the mean importance score. This indicates that the vast majority of reasoning steps exert only a marginal influence on the final answer.

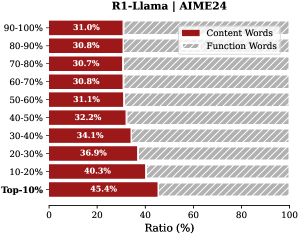

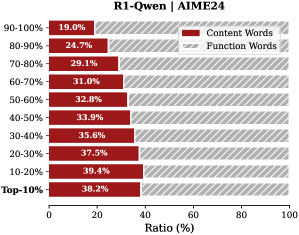

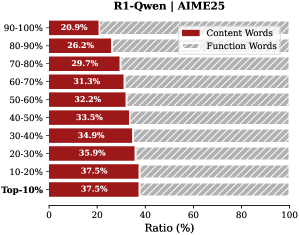

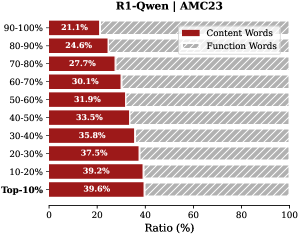

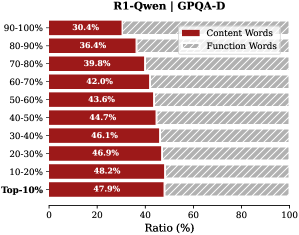

Analysis. Follow attention-based methods (Cai et al., 2025; Li et al., 2024; Cai et al., 2024), tokens with higher importance scores intuitively correspond to decision-critical reasoning steps, which are critical for the model to generate the final answer. The low-importance tokens serve as syntactic scaffolding or intermediate states that become redundant after reasoning progresses (We report the ratio of Content Words, see Appendix B.2). Consequently, we hypothesize that the model maintains close reasoning performance to that of the full token sequence, even when it selectively retains only these critical thinking tokens.

3.2 Pareto Principle in LRMs

To validate the aforementioned hypothesis, we retain all question tokens while preserving only the top- $p\%$ of thinking tokens ranked by importance score, and prompt the model to directly generate the final answer.

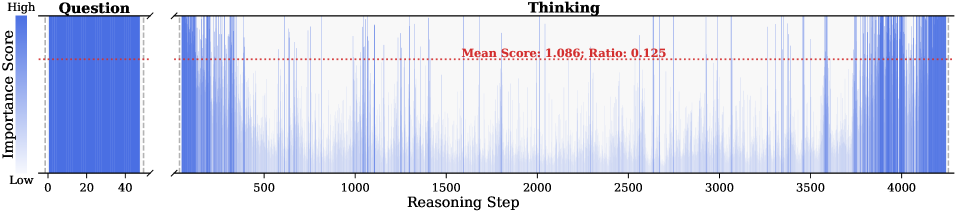

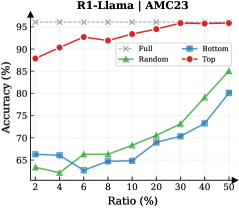

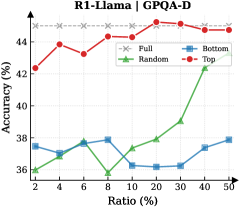

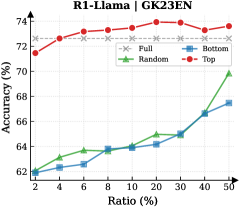

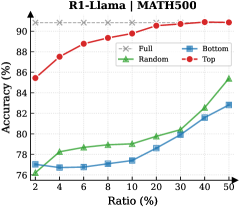

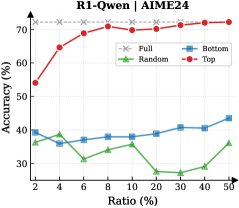

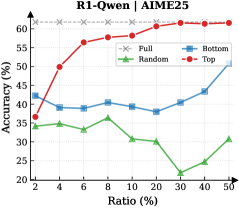

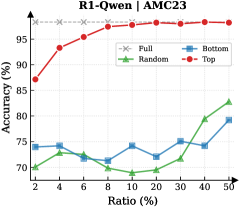

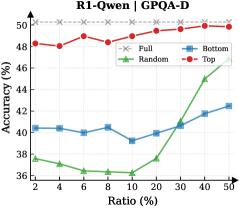

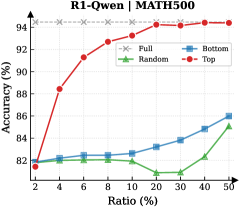

Observation. As illustrated in Fig. 3 (Left), the importance-based top- $p\%$ selection strategy substantially outperforms both random- and bottom-selection baselines. Notably, the model recovers nearly its full performance (grey dashed line) when retaining only $\sim 30\%$ thinking tokens with top importance scores. Fig. 3 (Right) further confirms this trend across six diverse datasets, where the performance polygon under the top- $30\%$ retention strategy almost completely overlaps with the full thinking tokens.

Insights. These empirical results illustrate and reveal the Pareto Principle in LRM reasoning: Only a small subset of thinking tokens ( $30\%$ ) with high importance scores serve as “pivotal nodes,” which are critical for the model to output a final answer, while the remaining tokens contribute negligibly to the outcome. This finding provides strong empirical support for LRMs’ KV cache compression, indicating that it is possible to reduce memory footprint and computational overhead without sacrificing performance.

4 Dynamic Thinking-Token Selection

Building on the Pareto Principle in LRMs, critical thinking tokens can be identified via the importance score computed by Equation 6. However, this computation requires the attention weights from the answer to the thinking tokens, which are inaccessible until the model completes the entire decoding stage. To address this limitation, we introduce an Importance Predictor that dynamically estimates the importance score of each thinking token during inference time. Furthermore, we design a decoding-time KV cache Selection Strategy that retains critical thinking tokens and evicts redundant ones. We refer to this approach as DynTS (Dyn amic T hinking Token S election), and the overview is illustrated in Fig. 4.

4.1 Importance Predictor

Integrate Importance Predictor in LRMs.

Transformer-based Large Language Models (LLMs) typically consist of stacked Transformer blocks followed by a language modeling head (Vaswani et al., 2017), where the output of the final block serves as a feature representation of the current token. Building on this architecture, we attach an additional lightweight MLP head to the final hidden state, named as Importance Predictor (Huang et al., 2024). It is used to predict the importance score of the current thinking token during model inference, capturing its contribution to the final answer. Formally, we define the modified LRM as a mapping function $\mathcal{M}$ that processes the input sequence $\mathbf{x}_{≤ t}$ to produce a dual-output tuple comprising the next token $x_{t+1}$ and the current importance score $s_{x_{t}}$ :

$$

\mathcal{M}(\mathbf{x}_{\leq t})\rightarrow(x_{t+1},s_{x_{t}}) \tag{7}

$$

Predictor Training.

To obtain supervision signals for training, we prompt the LRMs based on the training dataset to generate complete sequences denoted as $\{x_{1... M},t_{1... L},a_{1... K}\}$ , filtering out incorrect or incomplete reasoning. Here, $x$ , $t$ , and $a$ represent the question, thinking, and answer tokens, respectively. Based on the observation in Section § 3, the thinking tokens significantly outnumber answer tokens ( $L\gg K$ ), and question tokens remain essential. Therefore, DynTS only focuses on predicting the importance of thinking tokens. By utilizing the attention weights from answer to thinking tokens, we derive the ground-truth importance score $I_{t_{i}}$ for each thinking token according to Equation 6. Finally, the Importance Predictor parameters can be optimized by minimizing the Mean Squared Error (MSE) loss (Wang and Bovik, 2009) as follows:

$$

\mathcal{L}_{\text{MSE}}=\frac{1}{K}\sum_{i=1}^{K}(I_{t_{i}}-s_{t_{i}})^{2}. \tag{8}

$$

To preserve the LRMs’ original performance, we freeze the backbone parameters and optimize the Importance Predictor exclusively. The trained model can predict the importance of thinking tokens to the answer. This paper focuses on mathematical reasoning tasks. We optimize the Importance Predictor only on the MATH training set and validated across six other datasets (See Section § 6.1).

4.2 KV Cache Selection

During LRMs’ inference, we establish a maximum KV cache budget $B$ , which is composed of a question window $W_{q}$ , a selection window $W_{s}$ , and a local window $W_{l}$ , formulated as $B=W_{q}+W_{s}+W_{l}$ . Specifically, the question window stores the KV caches of question tokens generated during the prefilling phase, i.e., the window size $W_{q}$ is equal to the number of question tokens $M$ ( $W_{q}=M$ ). Since these tokens are critical for the final answer (see Section § 3), we assign an importance score of $+∞$ to these tokens, ensuring their KV caches are immune to eviction throughout the inference process.

In the subsequent decoding phase, we maintain a sequential stream of tokens. Newly generated KV caches and their corresponding importance scores are sequentially appended to the selection window ( $W_{s}$ ) and the local window ( $W_{l}$ ). Once the total token count reaches the budget limit $B$ , the critical token selection process is triggered, as illustrated in Fig. 4 (Right). Within the selection window, we retain the KV caches of the top- $k$ tokens with the highest scores and evict the remainder. Simultaneously, drawing inspiration from (Chen et al., 2024; Zhang et al., 2023; Zhao et al., 2024), we maintain the KV caches within the local window to ensure the overall coherence of the subsequently generated sequence. This inference process continues until decoding terminates.

5 Theoretical Overhead Analysis

In DynTS, the KV cache selection strategy reduces computational overhead by constraining cache length, while the importance predictor introduces a slight overhead. In this section, we theoretically analyze the trade-off between these two components and derive the Break-even Condition required to achieve net computational gains.

Notions. Let $\mathcal{M}_{\text{base}}$ be the vanilla LRM with $L$ layers and hidden dimension $d$ , and $\mathcal{M}_{\text{opt}}$ be the LRM with Importance Predictor (MLP: $d→ 2d→ d/2→ 1$ ). We define the prefill length as $M$ and the current decoding step as $i∈\mathbb{Z}^{+}$ . For vanilla decoding, the effective KV cache length grows linearly as $S_{i}^{\text{base}}=M+i$ . While DynTS evicts $K$ tokens by the KV Cache Selection when the effective KV cache length reaches the budget $B$ . Resulting in the effective length $S_{i}^{\text{opt}}=M+i-n_{i}· K$ , where $n_{i}=\max\left(0,\left\lfloor\frac{(M+i)-B}{K}\right\rfloor+1\right)$ denotes the count of cache eviction event at step $i$ . By leveraging Floating-Point Operations (FLOPs) to quantify computational overhead, we establish the following theorem. The detailed proof is provided in Appendix A.

**Theorem 5.1 (Computational Gain)**

*Let $\Delta\mathcal{C}(i)$ be the reduction FLOPs achieved by DynTS at decoding step $i$ . The gain function is derived as the difference between the eviction event savings from KV Cache Selection and the introduced overhead of the predictor:

$$

\Delta\mathcal{C}(i)=\underbrace{n_{i}\cdot 4LdK}_{\text{Eviction Saving}}-\underbrace{(6d^{2}+d)}_{\text{Predictor Overhead}}, \tag{9}

$$*

Based on the formulation above, we derive a critical corollary regarding the net computational gain.

**Corollary 5.2 (Break-even Condition)**

*To achieve a net computational gain ( $\Delta\mathcal{C}(i)>0$ ) at the $n_{i}$ -th eviction event, the eviction volume $K$ must satisfy the following inequality:

$$

K>\frac{6d^{2}+d}{n_{i}\cdot 4Ld}\approx\frac{1.5d}{n_{i}L} \tag{10}

$$*

This inequality provides a theoretical lower bound for the eviction volume $K$ . demonstrating that the break-even point is determined by the model’s architectural (hidden dimension $d$ and layer count $L$ ).

Table 1: Performance comparison of different methods on R1-Llama and R1-Qwen. We report the average Pass@1 and Throughput (TPS) across six benchmarks. “Transformers” denotes the full cache baseline, and “Window” represents the local window baseline.

| Transformers Window StreamingLLM | 47.3 18.6 20.6 | 215.1 447.9 445.8 | 28.6 14.6 16.6 | 213.9 441.3 445.7 | 86.5 59.5 65.0 | 200.6 409.4 410.9 | 46.4 37.6 37.8 | 207.9 408.8 407.4 | 73.1 47.0 53.4 | 390.9 622.6 624.6 | 87.5 58.1 66.1 | 323.4 590.5 592.1 | 61.6 39.2 43.3 | 258.6 486.7 487.7 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| SepLLM | 30.0 | 448.2 | 20.0 | 445.1 | 71.0 | 414.1 | 39.7 | 406.6 | 61.4 | 635.0 | 74.5 | 600.4 | 49.4 | 491.6 |

| H2O | 38.6 | 426.2 | 22.6 | 423.4 | 82.5 | 396.1 | 41.6 | 381.5 | 67.5 | 601.8 | 82.7 | 573.4 | 55.9 | 467.1 |

| SnapKV | 39.3 | 438.2 | 24.6 | 436.3 | 80.5 | 406.9 | 41.9 | 394.1 | 68.7 | 615.7 | 83.1 | 584.5 | 56.3 | 479.3 |

| R-KV | 44.0 | 437.4 | 26.0 | 434.7 | 86.5 | 409.5 | 44.5 | 394.9 | 71.4 | 622.6 | 85.2 | 589.2 | 59.6 | 481.4 |

| DynTS (Ours) | 49.3 | 444.6 | 29.3 | 443.5 | 87.0 | 412.9 | 46.3 | 397.6 | 72.3 | 631.8 | 87.2 | 608.2 | 61.9 | 489.8 |

| R1-Qwen | | | | | | | | | | | | | | |

| Transformers | 52.0 | 357.2 | 35.3 | 354.3 | 87.5 | 376.2 | 49.0 | 349.4 | 77.9 | 593.7 | 91.3 | 517.3 | 65.5 | 424.7 |

| Window | 41.3 | 650.4 | 31.3 | 643.0 | 82.0 | 652.3 | 45.9 | 634.1 | 71.8 | 815.2 | 85.0 | 767.0 | 59.5 | 693.7 |

| StreamingLLM | 42.0 | 655.7 | 29.3 | 648.5 | 85.0 | 657.2 | 45.9 | 631.1 | 71.2 | 824.0 | 85.8 | 786.1 | 59.8 | 700.5 |

| SepLLM | 38.6 | 650.0 | 31.3 | 647.6 | 85.5 | 653.2 | 45.6 | 639.5 | 72.0 | 820.1 | 84.4 | 792.2 | 59.6 | 700.4 |

| H2O | 42.6 | 610.9 | 33.3 | 610.7 | 84.5 | 609.9 | 48.1 | 593.6 | 74.1 | 780.1 | 87.0 | 725.4 | 61.6 | 655.1 |

| SnapKV | 48.6 | 639.6 | 33.3 | 633.1 | 87.5 | 633.2 | 46.5 | 622.0 | 74.9 | 787.4 | 88.2 | 768.7 | 63.2 | 680.7 |

| R-KV | 44.0 | 639.5 | 32.6 | 634.7 | 85.0 | 636.8 | 47.2 | 615.1 | 75.8 | 792.8 | 88.8 | 765.5 | 62.2 | 680.7 |

| DynTS (Ours) | 52.0 | 645.6 | 36.6 | 643.0 | 88.5 | 646.0 | 48.1 | 625.7 | 76.4 | 788.5 | 90.0 | 779.5 | 65.3 | 688.1 |

6 Experiment

This section introduces experimental settings, followed by the results, ablation studies on retanind tokens and hyperparameters, and the Importance Predictor analysis. For more detailed configurations and additional results, please refer to Appendix C and D.

6.1 Experimental Setup

Models and Datasets. We conduct experiments on two mainstream LRMs: R1-Qwen (DeepSeek-R1-Distill-Qwen-7B) and R1-Llama (DeepSeek-R1-Distill-Llama-8B) (Guo et al., 2025). To evaluate the performance and robustness of our method across diverse tasks, we select five mathematical reasoning datasets of varying difficulty levels—AIME24 (Zhang and Math-AI, 2024), AIME25 (Zhang and Math-AI, 2025), AMC23 https://huggingface.co/datasets/math-ai/amc23, GK23EN (GAOKAO2023EN) https://huggingface.co/datasets/MARIO-Math-Reasoning/Gaokao2023-Math-En, and MATH500 (Hendrycks et al., 2021) —along with the GPQA-D (GPQA-Diamond) (Rein et al., 2024) scientific question-answering dataset as evaluation benchmarks.

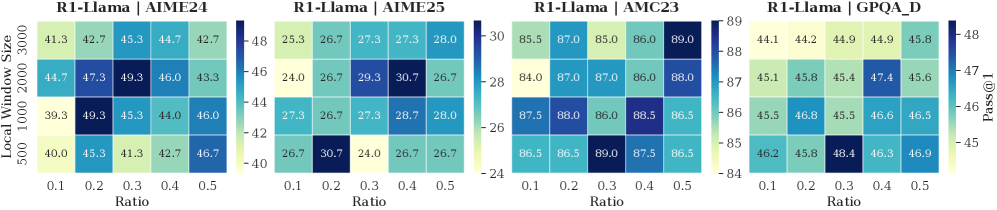

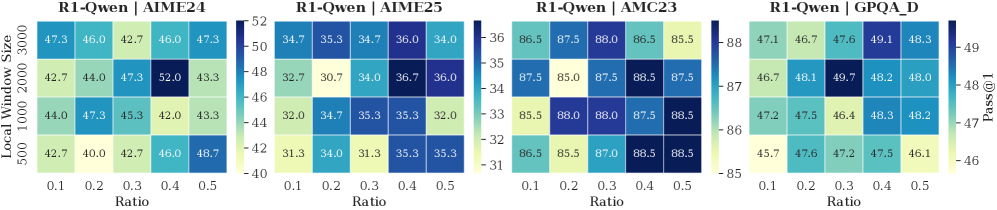

Implementation Details. (1) Training Settings: To train the importance predictor, we sample the model-generated contents with correct answers from the MATH training set and calculate the importance scores of thinking tokens. We freeze the model backbone and optimize only the predictor ( $3$ -layer MLP), setting the number of training epochs to 15, the learning rate to $5\text{e-}4$ , and the maximum sequence length to 18,000. (2) Inference Settings. Following (Guo et al., 2025), setting the maximum decoding steps to 16,384, the sampling temperature to 0.6, top- $p$ to 0.95, and top- $k$ to 20. We apply budget settings based on task difficulty. For challenging benchmarks (AIME24, AIME25, AMC23, and GPQA-D), we set the budget $B$ to 5,000 with a local window size of 2,000; For simpler tasks, the budget is set to 3,000 with a local window of 1,500 for R1-Qwen and 1,000 for R1-Llama. The token retention ratio in the selection window is set to 0.4 for R1-Qwen and 0.3 for R1-Llama. We generate 5 responses for each problem and report the average Pass@1 as the evaluation metric.

Baselines. Our approach focuses on compressing the KV cache by selecting critical tokens. Therefore, we compare our method against the state-of-the-art KV cache compressing approaches. These include StreamingLLM (Xiao et al., 2024), H2O (Zhang et al., 2023), SepLLM (Chen et al., 2024), and SnapKV (Li et al., 2024) (decode-time variant (Liu et al., 2025a)) for LLMs, along with R-KV (Cai et al., 2025) for LRMs. To ensure a fair comparison, all methods were set with the same token overhead and maximum budget. We also report results for standard Transformers and local window methods as evaluation baselines.

6.2 Main Results

Reasoning Accuracy. As shown in Table 1, our proposed DynTS consistently outperforms all other KV cache eviction baselines. On R1-Llama and R1-Qwen, DynTS achieves an average accuracy of $61.9\%$ and $65.3\%$ , respectively, significantly surpassing the runner-up methods R-KV ( $59.6\%$ ) and SnapKV ( $63.2\%$ ). Notably, the overall reasoning capability of DynTS is on par with the Full Cache Transformers baseline ( $61.9\%$ vs. $61.6\%$ on R1-Llama, $65.3\%$ vs. $65.5\%$ on R1-Llama). Even outperforms the Transformers on several challenging tasks, such as AIME24 on R1-Llama, where it improves accuracy by $2.0\%$ ; and AIME25 on R1-Qwen, where it improves accuracy by $1.3\%$ .

Table 2: Ablation study on different token retention strategies in DynTS, where w.o. Q / T / L denotes the removal of Question tokens (Q), critical Thinking tokens (T), and Local window tokens (L), respectively. T-Random and T-Bottom represent strategies that select thinking tokens randomly and the tokens with the bottom-k importance scores, respectively.

| DynTS w.o. L w.o. Q | 49.3 40.6 19.3 | 29.3 23.3 14.6 | 87.0 86.5 59.0 | 46.3 46.3 38.1 | 72.3 72.0 47.8 | 87.2 85.5 59.8 | 61.9 59.0 39.8 |

| --- | --- | --- | --- | --- | --- | --- | --- |

| w.o. T | 44.0 | 27.3 | 85.0 | 44.0 | 71.5 | 85.9 | 59.6 |

| T-Random | 24.6 | 16.0 | 59.5 | 37.4 | 51.7 | 63.9 | 42.2 |

| T-Bottom | 20.6 | 15.3 | 59.0 | 37.3 | 47.3 | 59.5 | 39.8 |

| R1-Qwen | | | | | | | |

| DynTS | 52.0 | 36.6 | 88.5 | 48.1 | 76.4 | 90.0 | 65.3 |

| w.o. L | 42.0 | 32.0 | 87.5 | 46.3 | 75.2 | 87.0 | 61.6 |

| w.o. Q | 46.0 | 36.0 | 86.0 | 43.9 | 75.1 | 89.0 | 62.6 |

| w.o. T | 47.3 | 34.6 | 85.5 | 49.1 | 75.1 | 89.2 | 63.5 |

| T-Random | 46.0 | 32.6 | 84.5 | 47.5 | 73.8 | 86.9 | 61.9 |

| T-Bottom | 38.0 | 30.0 | 80.0 | 44.3 | 69.8 | 83.3 | 57.6 |

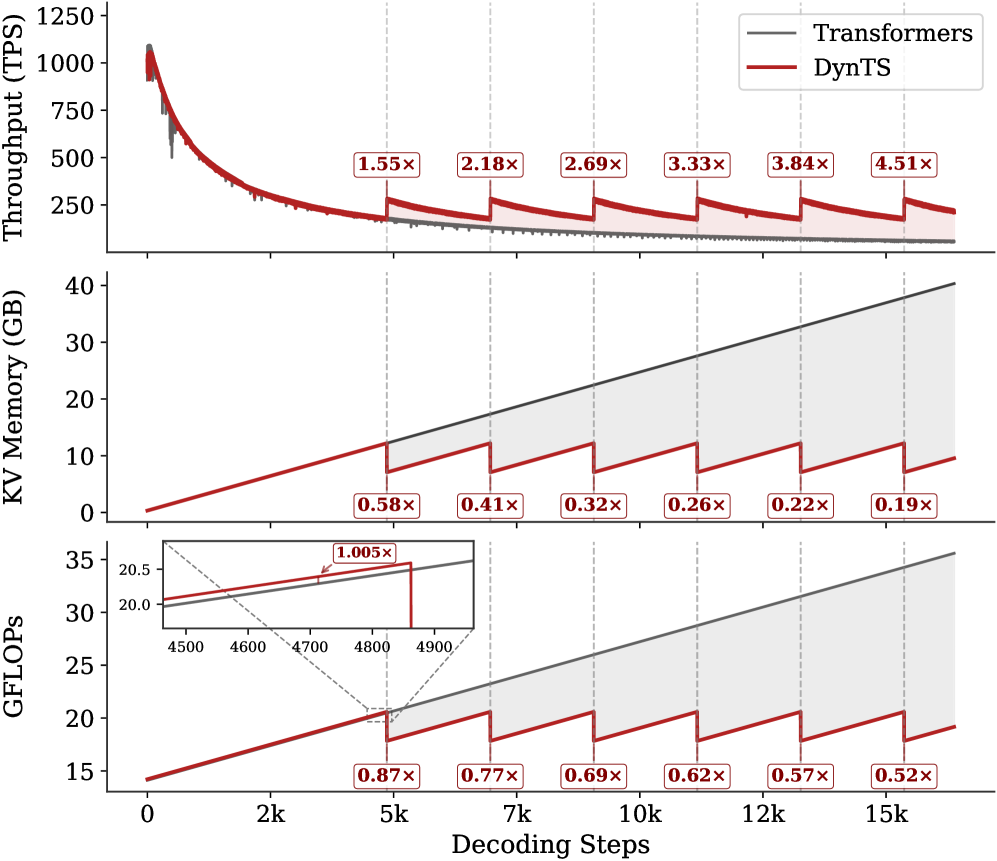

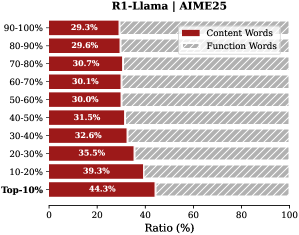

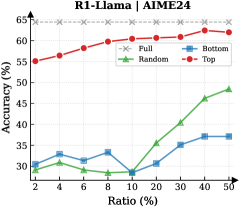

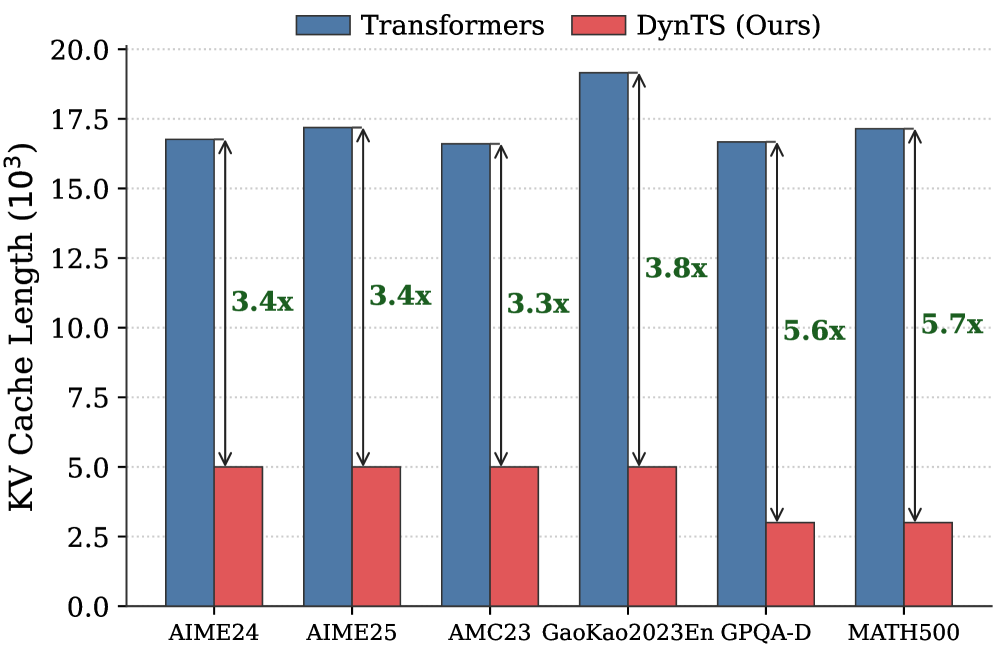

Inference Efficiency. Referring to Table 1, DynTS achieves $1.9×$ and $1.6×$ speedup compared to standard Transformers on R1-Llama and R1-Qwen, respectively, across all benchmarks. While DynTS maintains throughput comparable to other KV Cache compression methods. Further observing Figure 5, as the generated sequence length grows, standard Transformers suffer from linear accumulation in both memory footprint and compute overhead (GFLOPs), leading to continuous throughput degradation. In contrast, DynTS effectively bounds resource consumption. The distinctive sawtooth pattern illustrates our periodic compression mechanism, where the inflection points correspond to the execution of KV Cache Selection to evict the KV pairs of non-essential thinking tokens. Consequently, the efficiency advantage escalates as the decoding step increases, DynTS achieves a peak speedup of 4.51 $×$ , compresses the memory footprint to 0.19 $×$ , and reduces the compute overhead to 0.52 $×$ compared to the full-cache baseline. The zoom-in view reveals that the computational cost drops below the baseline immediately after the first KV cache eviction. This illustrates that our experimental settings are rational, as they satisfy the break-even condition ( $K=900≥\frac{1.5d}{n_{i}L}=192$ ) outlined in Corollary 5.2.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Performance Comparison: Transformers vs. DynTS

### Overview

The image presents a comparative performance analysis between "Transformers" (represented by gray lines) and "DynTS" (represented by red lines) across three key metrics: Throughput (TPS), KV Memory (GB), and GFLOPS, plotted against Decoding Steps. The chart aims to illustrate the efficiency and resource utilization of DynTS relative to Transformers during a decoding process. The x-axis represents decoding steps, ranging from 0 to 15k.

### Components/Axes

* **Top Chart:**

* Y-axis: Throughput (TPS), ranging from 0 to 1250.

* X-axis: Decoding Steps (shared across all charts).

* Legend (top-right):

* Transformers (gray line)

* DynTS (red line)

* **Middle Chart:**

* Y-axis: KV Memory (GB), ranging from 0 to 40.

* X-axis: Decoding Steps.

* **Bottom Chart:**

* Y-axis: GFLOPS, ranging from 15 to 35.

* X-axis: Decoding Steps.

* Inset: Zoomed view of GFLOPS between 4500 and 4900 decoding steps, Y-axis ranging from 20.0 to 20.5.

* **X-Axis (shared):** Decoding Steps, labeled from 0 to 15k in increments of 2k, with vertical dashed lines at approximately 5k, 7k, 10k, 12k, and 15k.

### Detailed Analysis

**1. Throughput (TPS):**

* **Transformers (gray):** Starts at approximately 1100 TPS and rapidly decreases to around 200 TPS, then gradually declines further, approaching 100 TPS by 15k decoding steps.

* **DynTS (red):** Starts at approximately 1100 TPS, decreases to around 300 TPS, and then exhibits a saw-tooth pattern, with periodic increases at intervals marked by vertical dashed lines.

* **Ratio Markers:**

* At 5k steps: 1.55x

* At 7k steps: 2.18x

* At 10k steps: 2.69x

* At 12k steps: 3.33x

* At 15k steps: 3.84x

* Beyond 15k steps: 4.51x

**2. KV Memory (GB):**

* **Transformers (gray):** Increases linearly from approximately 0 GB to 40 GB over 15k decoding steps.

* **DynTS (red):** Increases in a saw-tooth pattern, with linear increases followed by sharp drops at intervals marked by vertical dashed lines.

* **Ratio Markers:**

* At 5k steps: 0.58x

* At 7k steps: 0.41x

* At 10k steps: 0.32x

* At 12k steps: 0.26x

* At 15k steps: 0.22x

* Beyond 15k steps: 0.19x

**3. GFLOPS:**

* **Transformers (gray):** Increases linearly from approximately 14 GFLOPS to 34 GFLOPS over 15k decoding steps.

* **DynTS (red):** Increases in a saw-tooth pattern, with linear increases followed by sharp drops at intervals marked by vertical dashed lines.

* **Ratio Markers:**

* At 5k steps: 0.87x

* At 7k steps: 0.77x

* At 10k steps: 0.69x

* At 12k steps: 0.62x

* At 15k steps: 0.57x

* Beyond 15k steps: 0.52x

* **Inset Details:** The inset shows a zoomed-in view around 4500-4900 decoding steps. The Transformers line (gray) is slightly above the DynTS line (red), with a ratio marker of 1.005x near the 4800 step mark.

### Key Observations

* **Throughput:** DynTS maintains a higher throughput than Transformers after the initial drop, as indicated by the ratios greater than 1.

* **KV Memory:** DynTS uses significantly less KV Memory than Transformers, as indicated by the ratios less than 1.

* **GFLOPS:** DynTS requires fewer GFLOPS than Transformers, as indicated by the ratios less than 1.

* **Saw-tooth Pattern:** The saw-tooth pattern in DynTS's KV Memory and GFLOPS usage suggests a periodic memory release or optimization strategy.

### Interpretation

The data suggests that DynTS offers a more efficient alternative to Transformers, particularly in terms of KV Memory usage and GFLOPS. While the initial throughput is similar, DynTS manages to maintain a higher throughput while consuming fewer resources as the decoding process progresses. The saw-tooth pattern indicates a memory management strategy that periodically reduces memory footprint and computational load, leading to improved efficiency. The ratios provided at specific decoding steps quantify the performance gains achieved by DynTS over Transformers. The inset highlights a specific region where the GFLOPS performance is very close, but DynTS still maintains a slight advantage.

</details>

Figure 5: Real-time throughput, memory, and compute overhead tracking over total decoding step. The inflection points in the sawtooth correspond to the steps where DynTS executes KV Cache Selection.

6.3 Ablation Study

Impact of Retained Token. As shown in Tab. 2, the full DynTS method outperforms all ablated variants, achieving the highest average accuracy on both R1-Llama ( $61.9\%$ ) and R1-Qwen ( $65.3\%$ ). This demonstrates that all retained tokens of DynTS are critical for the model to output the correct final answer. Moreover, we observe that the strategy for selecting thinking tokens plays a critical role in the model’s reasoning performance. When some redundant tokens are retained (T-Random and T-Bottom strategies), there is a significant performance drop compared to completely removing thinking tokens ( $59.6\%→ 39.8\%$ on R1-Llama and $63.5\%→ 57.6\%$ on R1-Qwen). This finding demonstrates the effectiveness of our Importance Predictor to identify critical tokens. It also explains why existing KV cache compression methods hurt model performance: inadvertently retaining redundant tokens. Finally, the local window is crucial for preserving local linguistic coherence, which contributes to stable model performance.

<details>

<summary>x8.png Details</summary>

### Visual Description

## Heatmap: R1-Llama vs. R1-Qwen Performance

### Overview

The image presents two heatmaps comparing the performance of "R1-Llama" and "R1-Qwen" models. The heatmaps visualize the "Pass@1" metric across different "Local Window Sizes" and "Ratio" values. The color intensity represents the Pass@1 score, with lighter shades indicating lower scores and darker shades indicating higher scores.

### Components/Axes

* **Titles:** "R1-Llama" (left heatmap), "R1-Qwen" (right heatmap)

* **Y-axis (Local Window Size):** 500, 1000, 2000, 3000

* **X-axis (Ratio):** 0.1, 0.2, 0.3, 0.4, 0.5

* **Color Legend (Pass@1):** Ranges from approximately 50 (lightest shade) to 56 (darkest shade). The legend shows a continuous color gradient.

### Detailed Analysis

**R1-Llama Heatmap:**

| Local Window Size | Ratio 0.1 | Ratio 0.2 | Ratio 0.3 | Ratio 0.4 | Ratio 0.5 |

| ----------------- | --------- | --------- | --------- | --------- | --------- |

| 3000 | 49.1 | 50.1 | 50.6 | 50.7 | 51.4 |

| 2000 | 49.5 | 51.7 | 52.8 | 52.5 | 50.9 |

| 1000 | 49.9 | 52.7 | 51.0 | 51.9 | 51.7 |

| 500 | 49.8 | 52.1 | 50.7 | 50.8 | 51.7 |

* **Trend:** The Pass@1 score for R1-Llama generally increases as the Ratio increases from 0.1 to 0.2. After 0.2, the performance fluctuates. The performance is generally lower for a Local Window Size of 3000.

**R1-Qwen Heatmap:**

| Local Window Size | Ratio 0.1 | Ratio 0.2 | Ratio 0.3 | Ratio 0.4 | Ratio 0.5 |

| ----------------- | --------- | --------- | --------- | --------- | --------- |

| 3000 | 53.9 | 53.9 | 53.2 | 54.4 | 53.8 |

| 2000 | 52.4 | 51.9 | 54.6 | 56.3 | 53.7 |

| 1000 | 52.2 | 54.4 | 53.8 | 53.3 | 53.0 |

| 500 | 51.5 | 51.8 | 52.0 | 54.3 | 54.6 |

* **Trend:** The Pass@1 score for R1-Qwen shows a more pronounced increase with higher Ratio values, particularly at a Local Window Size of 2000 and Ratio of 0.4, where the performance peaks.

### Key Observations

* R1-Qwen generally outperforms R1-Llama across most configurations.

* The highest Pass@1 score is achieved by R1-Qwen with a Local Window Size of 2000 and a Ratio of 0.4 (56.3).

* R1-Llama's performance seems less sensitive to changes in Ratio and Local Window Size compared to R1-Qwen.

### Interpretation

The heatmaps provide a visual comparison of the performance of two models, R1-Llama and R1-Qwen, under varying configurations of "Local Window Size" and "Ratio." The data suggests that R1-Qwen is a superior model, achieving higher Pass@1 scores across most parameter settings. The optimal configuration for R1-Qwen appears to be a Local Window Size of 2000 and a Ratio of 0.4, indicating that these settings are crucial for maximizing its performance. R1-Llama's relatively stable performance across different configurations might suggest a more robust but less optimized model. The choice of model and configuration should be guided by the specific application and the trade-off between performance and sensitivity to parameter tuning.

</details>

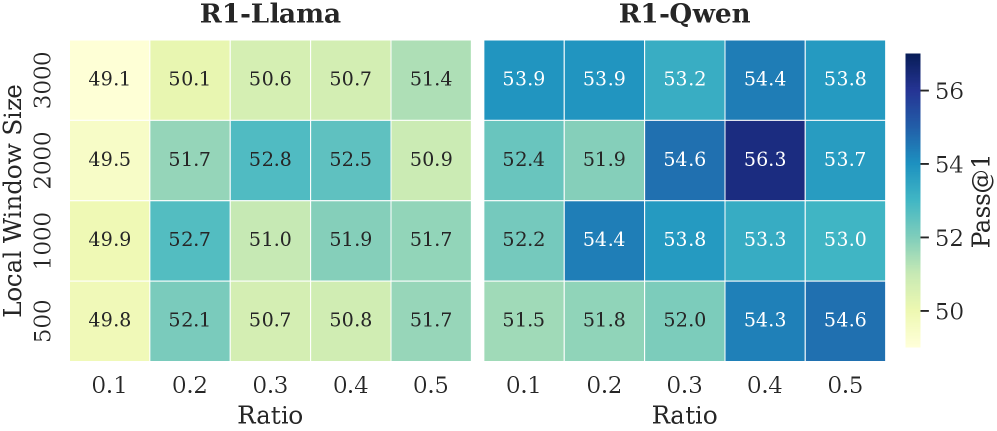

Figure 6: The accuracy of R1-Llama and R1-Qwen across different local window sizes and selection window retention ratios.

Local Window & Retention Ratio. As shown in Fig. 6, we report the model’s reasoning performance across different configurations. The performance improves with a larger local window and a higher retention ratio within a reasonable range. These two settings respectively ensure local contextual coherence and an adequate number of thinking tokens. Setting either to overly small values leads to pronounced performance degradation. However, excessively large values introduce a higher proportion of non-essential tokens, which in turn negatively impacts model performance. Empirically, a local window size of approximately 2,000 and a retention ratio of 0.3–0.4 yield optimal performance. We further observe that R1-Qwen is particularly sensitive to the local window size. This may be caused by the Dual Chunk Attention introduced during the long-context pre-training stage (Yang et al., 2025), which biases attention toward tokens within the local window.

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Chart: MSE Loss, Kendall, and Overlap Rate vs. Step

### Overview

The image presents two line charts stacked vertically. The top chart displays the MSE Loss and Kendall values against the step number. The bottom chart shows the overlap rate (%) for different top percentages (20% to 90%) against the step number. Both charts share the same x-axis (Step).

### Components/Axes

**Top Chart:**

* **Title:** Value vs. Step

* **Y-axis Label:** Value

* **Y-axis Scale:** 0 to 3, with tick marks at 0, 1, 2, and 3.

* **X-axis Label:** Step (shared with the bottom chart)

* **Legend (Top-Right):**

* Blue line: MSE Loss

* Orange line: Kendall

**Bottom Chart:**

* **Title:** Overlap Rate (%) vs. Step

* **Y-axis Label:** Overlap Rate (%)

* **Y-axis Scale:** 20 to 100, with tick marks at 20, 40, 60, 80, and 100.

* **X-axis Label:** Step

* **X-axis Scale:** 0 to 400, with tick marks at intervals of 50 (0, 50, 100, 150, 200, 250, 300, 350, 400).

* **Legend (Bottom-Right):**

* Dark Purple: Top-20%

* Purple: Top-30%

* Dark Blue: Top-40%

* Light Blue: Top-50%

* Teal: Top-60%

* Green: Top-70%

* Light Green: Top-80%

* Yellow-Green: Top-90%

### Detailed Analysis

**Top Chart:**

* **MSE Loss (Blue):** Starts at approximately 3, rapidly decreases to around 0.2 within the first 50 steps, and then fluctuates around 0.2 for the remaining steps.

* **Kendall (Orange):** Starts at approximately 1.2, decreases to around 0.5 within the first 50 steps, and then remains relatively stable around 0.5 for the remaining steps.

**Bottom Chart:**

* **Top-20% (Dark Purple):** Starts at approximately 20%, increases to around 65% within the first 100 steps, and then fluctuates around 65% for the remaining steps.

* **Top-30% (Purple):** Starts at approximately 22%, increases to around 75% within the first 100 steps, and then fluctuates around 75% for the remaining steps.

* **Top-40% (Dark Blue):** Starts at approximately 25%, increases to around 82% within the first 100 steps, and then fluctuates around 82% for the remaining steps.

* **Top-50% (Light Blue):** Starts at approximately 30%, increases to around 88% within the first 100 steps, and then fluctuates around 88% for the remaining steps.

* **Top-60% (Teal):** Starts at approximately 35%, increases to around 92% within the first 100 steps, and then fluctuates around 92% for the remaining steps.

* **Top-70% (Green):** Starts at approximately 40%, increases to around 95% within the first 100 steps, and then fluctuates around 95% for the remaining steps.

* **Top-80% (Light Green):** Starts at approximately 42%, increases to around 97% within the first 100 steps, and then fluctuates around 97% for the remaining steps.

* **Top-90% (Yellow-Green):** Starts at approximately 45%, increases to around 98% within the first 100 steps, and then fluctuates around 98% for the remaining steps.

### Key Observations

* Both MSE Loss and Kendall values decrease significantly in the initial steps and then stabilize.

* The overlap rate for all top percentages increases rapidly in the initial steps and then stabilizes.

* Higher top percentages generally have higher overlap rates.

* The most significant changes in both charts occur within the first 100 steps.

### Interpretation

The charts illustrate the training process of a model, likely related to ranking or information retrieval. The MSE Loss and Kendall values in the top chart indicate the model's error and ranking correlation, respectively. The decrease in these values suggests that the model is learning and improving its performance.

The bottom chart shows the overlap rate between the top-ranked items predicted by the model and the ground truth. The increasing overlap rates for different top percentages indicate that the model is becoming more accurate in identifying the most relevant items. The higher overlap rates for higher top percentages suggest that the model is better at ranking the most relevant items at the top of the list.

The stabilization of both charts after the first 100 steps suggests that the model has reached a point of diminishing returns, where further training may not significantly improve its performance.

</details>

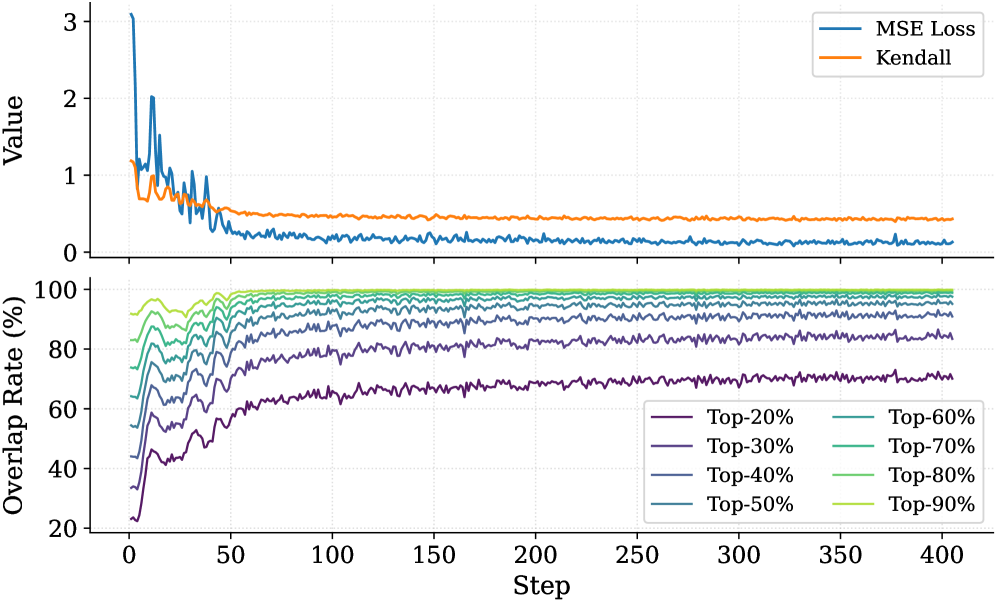

Figure 7: The top panel illustrates the convergence of MSE Loss and the Kendall rank correlation coefficient over training steps. The bottom panel tracks the overlap rate of the top- $20\%$ ground-truth tokens within the top- $p\%$ ( $p∈[20,90]$ ) predicted tokens.

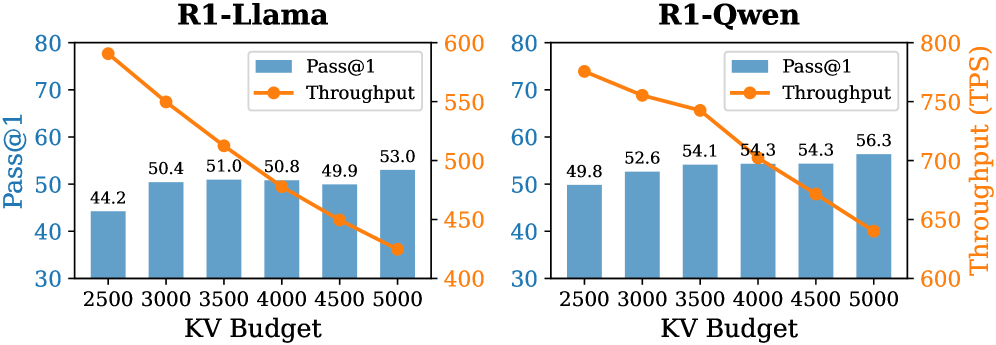

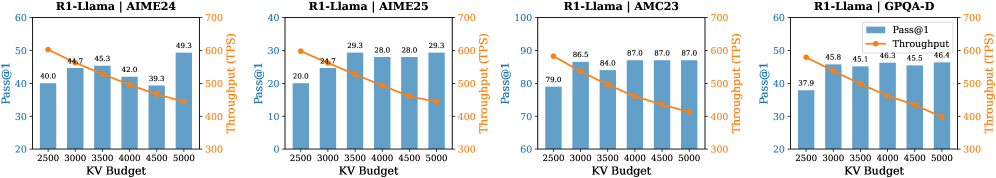

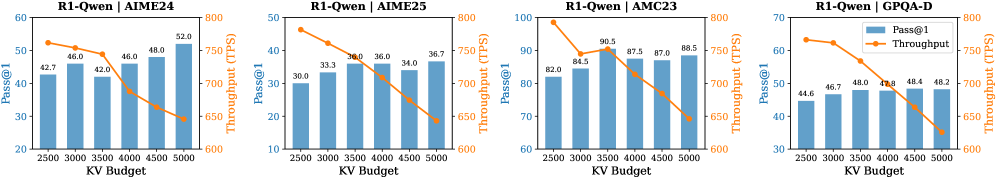

Budget. We report the model’s reasoning performance and throughput in different budget settings in Fig. 8. As expected, as the KV budget increases, the accuracy of R1-Llama and R1-Qwen improves, and the throughput decreases. At the maximum evaluated budget of 5000, DynTS delivers its strongest reasoning results ( $53.0\%$ for R1-Llama and $56.3\%$ for R1-Qwen), minimizing the performance gap with the full-cache baseline.

<details>

<summary>x10.png Details</summary>

### Visual Description

## Bar and Line Chart: R1-Llama vs. R1-Qwen Performance

### Overview

The image presents two combined bar and line charts comparing the performance of "R1-Llama" and "R1-Qwen" models. Each chart plots "Pass@1" (as blue bars) and "Throughput" (as an orange line) against varying "KV Budget" values. The charts aim to illustrate the relationship between KV Budget, Pass@1 accuracy, and Throughput for each model.

### Components/Axes

* **Titles:**

* Left Chart: "R1-Llama"

* Right Chart: "R1-Qwen"

* **X-Axis (Shared):** "KV Budget" with values 2500, 3000, 3500, 4000, 4500, and 5000.

* **Left Y-Axis:** "Pass@1" ranging from 30 to 80.

* **Right Y-Axis:** "Throughput (TPS)" ranging from 600 to 800 (for R1-Qwen) and 400 to 600 (for R1-Llama).

* **Legend (Top-Center of each chart):**

* Blue bars: "Pass@1"

* Orange line: "Throughput"

### Detailed Analysis

**R1-Llama Chart:**

* **Pass@1 (Blue Bars):** The Pass@1 accuracy generally increases with the KV Budget.

* KV Budget 2500: Pass@1 = 44.2

* KV Budget 3000: Pass@1 = 50.4

* KV Budget 3500: Pass@1 = 51.0

* KV Budget 4000: Pass@1 = 50.8

* KV Budget 4500: Pass@1 = 49.9

* KV Budget 5000: Pass@1 = 53.0

* **Throughput (Orange Line):** The Throughput decreases as the KV Budget increases.

* KV Budget 2500: Throughput = 578 TPS (approximate)

* KV Budget 3000: Throughput = 525 TPS (approximate)

* KV Budget 3500: Throughput = 490 TPS (approximate)

* KV Budget 4000: Throughput = 470 TPS (approximate)

* KV Budget 4500: Throughput = 450 TPS (approximate)

* KV Budget 5000: Throughput = 420 TPS (approximate)

**R1-Qwen Chart:**

* **Pass@1 (Blue Bars):** The Pass@1 accuracy generally increases with the KV Budget.

* KV Budget 2500: Pass@1 = 49.8

* KV Budget 3000: Pass@1 = 52.6

* KV Budget 3500: Pass@1 = 54.1

* KV Budget 4000: Pass@1 = 54.3

* KV Budget 4500: Pass@1 = 54.3

* KV Budget 5000: Pass@1 = 56.3

* **Throughput (Orange Line):** The Throughput decreases as the KV Budget increases.

* KV Budget 2500: Throughput = 740 TPS (approximate)

* KV Budget 3000: Throughput = 700 TPS (approximate)

* KV Budget 3500: Throughput = 670 TPS (approximate)

* KV Budget 4000: Throughput = 670 TPS (approximate)

* KV Budget 4500: Throughput = 650 TPS (approximate)

* KV Budget 5000: Throughput = 620 TPS (approximate)

### Key Observations

* For both models, increasing the KV Budget generally improves the Pass@1 accuracy.

* For both models, increasing the KV Budget leads to a decrease in Throughput.

* R1-Qwen consistently achieves higher Throughput compared to R1-Llama across all KV Budget values.

* R1-Qwen also generally achieves higher Pass@1 accuracy compared to R1-Llama across all KV Budget values.

### Interpretation

The charts illustrate a trade-off between accuracy (Pass@1) and speed (Throughput) when adjusting the KV Budget for both R1-Llama and R1-Qwen models. Increasing the KV Budget allows the models to achieve higher accuracy, likely due to increased capacity to store and process information. However, this comes at the cost of reduced Throughput, possibly because larger KV Budgets require more computational resources and time to manage.

R1-Qwen appears to be a more efficient model than R1-Llama, as it achieves both higher accuracy and higher throughput across the tested KV Budget range. This suggests that R1-Qwen may have a more optimized architecture or implementation.

The data suggests that the optimal KV Budget would depend on the specific application and the relative importance of accuracy and speed. If accuracy is paramount, a higher KV Budget would be preferred. If speed is more critical, a lower KV Budget might be more suitable.

</details>

Figure 8: Accuracy and throughput across varying KV budgets.

6.4 Analysis of Importance Predictor

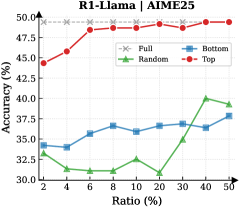

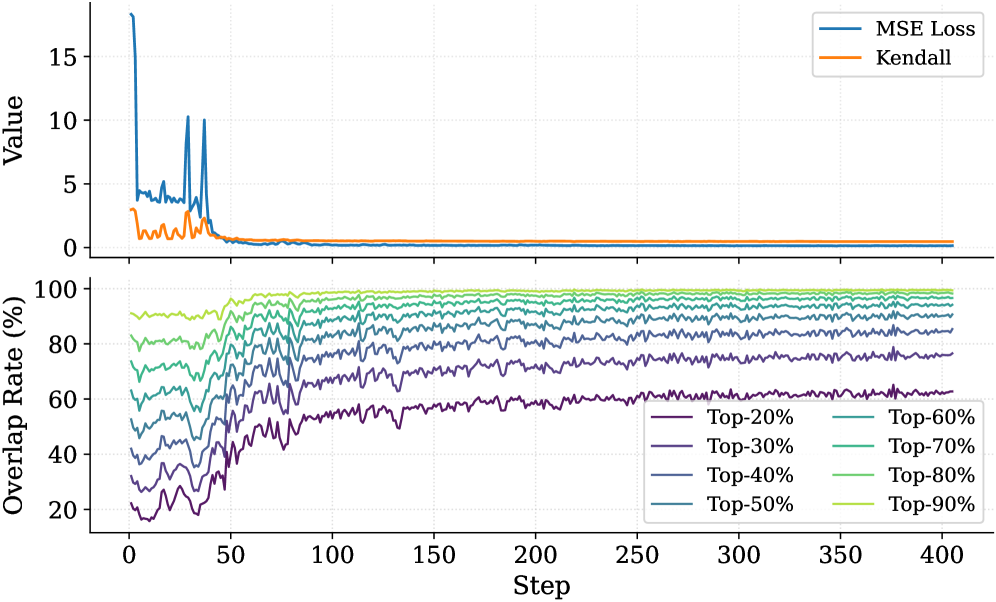

To validate that the Importance Predictor effectively learns the ground-truth thinking token importance scores, we report the MSE Loss and the Kendall rank correlation coefficient (Abdi, 2007) in the top panel of Fig. 7. As the number of training steps increases, both metrics exhibit clear convergence. The MSE loss demonstrates that the predictor can fit the true importance scores. The Kendall coefficient measures the consistency of rankings between the ground-truth importance scores and the predicted values. This result indicates that the predictor successfully captures each thinking token’s importance to the answer. Furthermore, we analyze the overlap rate of predicted critical thinking tokens, as shown in the bottom panel of Fig. 7. Notably, at the end of training, the overlap rate of critical tokens within the top $30\%$ of the predicted tokens exceeds $80\%$ . This confirms that the Importance Predictor in DynTS effectively identifies the most pivotal tokens, ensuring the retention of essential thinking tokens even at high compression rates.

7 Related Work

Recent works on KV cache compression have primarily focused on classical LLMs, applying eviction strategies based on attention scores or heuristic rules. One line of work addresses long-context pruning at the prefill stage. Such as SnapKV (Li et al., 2024), PyramidKV (Cai et al., 2024), and AdaKV (Feng et al., 2024). However, they are ill-suited for the inference scenarios of LRMs, which have short prefill tokens followed by long decoding steps. Furthermore, several strategies have been proposed specifically for the decoding phase. For instance, H2O (Zhang et al., 2023) leverages accumulated attention scores, StreamingLLM (Xiao et al., 2024) retains attention sinks and recent tokens, and SepLLM (Chen et al., 2024) preserves only the separator tokens. More recently, targeting LRMs, (Cai et al., 2025) introduced RKV, which adds a similarity-based metric to evict redundant tokens, while RLKV (Du et al., 2025) utilizes reinforcement learning to retain critical reasoning heads. However, these methods fail to accurately assess the contribution of intermediate tokens to the final answer. Consequently, they risk erroneously evicting decision-critical tokens, compromising the model’s reasoning performance.

8 Conclusion and Discussion

In this work, we investigated the relationship between the reasoning traces and their final answers in LRMs. Our analysis revealed a Pareto Principle in LRMs: only the decision-critical thinking tokens ( $20\%\sim 30\%$ in the reasoning traces) steer the model toward the final answer. Building on this insight, we proposed DynTS, a novel KV cache compression method. Departing from current strategies that rely on local attention scores for eviction, DynTS introduces a learnable Importance Predictor to predict the contribution of the current token to the final answer. Based on the predicted score, DynTS retains pivotal KV cache. Empirical results on six datasets confirm that DynTS outperforms other SOTA baselines. We also discuss the limitations of DynTS and outline potential directions for future improvement. Please refer to Appendix E for details.

Impact Statement

This paper presents work aimed at advancing the field of KV cache compression. There are many potential societal consequences of our work, none of which we feel must be specifically highlighted here. The primary impact of this research is to improve the memory and computational efficiency of LRM’s inference. By reducing memory requirements, our method helps lower the barrier to deploying powerful models on resource-constrained edge devices. We believe our work does not introduce specific ethical or societal risks beyond the general considerations inherent to advancing generative AI.

References

- H. Abdi (2007) The kendall rank correlation coefficient. Encyclopedia of measurement and statistics 2, pp. 508–510. Cited by: §6.4.

- J. Ainslie, J. Lee-Thorp, M. De Jong, Y. Zemlyanskiy, F. Lebrón, and S. Sanghai (2023) Gqa: training generalized multi-query transformer models from multi-head checkpoints. arXiv preprint arXiv:2305.13245. Cited by: §2.

- P. C. Bogdan, U. Macar, N. Nanda, and A. Conmy (2025) Thought anchors: which llm reasoning steps matter?. arXiv preprint arXiv:2506.19143. Cited by: §1, §1, §3.1.

- Z. Cai, W. Xiao, H. Sun, C. Luo, Y. Zhang, K. Wan, Y. Li, Y. Zhou, L. Chang, J. Gu, et al. (2025) R-kv: redundancy-aware kv cache compression for reasoning models. In The Thirty-ninth Annual Conference on Neural Information Processing Systems, Cited by: §3.1, §6.1, §7.

- Z. Cai, Y. Zhang, B. Gao, Y. Liu, Y. Li, T. Liu, K. Lu, W. Xiong, Y. Dong, J. Hu, et al. (2024) Pyramidkv: dynamic kv cache compression based on pyramidal information funneling. arXiv preprint arXiv:2406.02069. Cited by: §3.1, §7.

- G. Chen, H. Shi, J. Li, Y. Gao, X. Ren, Y. Chen, X. Jiang, Z. Li, W. Liu, and C. Huang (2024) Sepllm: accelerate large language models by compressing one segment into one separator. arXiv preprint arXiv:2412.12094. Cited by: §1, §1, §4.2, §6.1, §7.

- Q. Chen, L. Qin, J. Liu, D. Peng, J. Guan, P. Wang, M. Hu, Y. Zhou, T. Gao, and W. Che (2025) Towards reasoning era: a survey of long chain-of-thought for reasoning large language models. arXiv preprint arXiv:2503.09567. Cited by: §1, §2.

- D. Choi, J. Lee, J. Tack, W. Song, S. Dingliwal, S. M. Jayanthi, B. Ganesh, J. Shin, A. Galstyan, and S. B. Bodapati (2025) Think clearly: improving reasoning via redundant token pruning. arXiv preprint arXiv:2507.08806 4. Cited by: §1.

- G. DeepMind (2025) A new era of intelligence with gemini 3. Note: https://blog.google/products/gemini/gemini-3/#gemini-3-deep-think Cited by: §1.

- A. Devoto, Y. Zhao, S. Scardapane, and P. Minervini (2024) A simple and effective $L\_2$ norm-based strategy for kv cache compression. arXiv preprint arXiv:2406.11430. Cited by: §1.

- W. Du, L. Jiang, K. Tao, X. Liu, and H. Wang (2025) Which heads matter for reasoning? rl-guided kv cache compression. arXiv preprint arXiv:2510.08525. Cited by: §7.

- S. Feng, G. Fang, X. Ma, and X. Wang (2025) Efficient reasoning models: a survey. arXiv preprint arXiv:2504.10903. Cited by: §1.

- Y. Feng, J. Lv, Y. Cao, X. Xie, and S. K. Zhou (2024) Ada-kv: optimizing kv cache eviction by adaptive budget allocation for efficient llm inference. arXiv preprint arXiv:2407.11550. Cited by: §7.

- D. Guo, D. Yang, H. Zhang, J. Song, R. Zhang, R. Xu, Q. Zhu, S. Ma, P. Wang, X. Bi, et al. (2025) Deepseek-r1: incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948. Cited by: §B.1, §1, §6.1, §6.1.

- D. Hendrycks, C. Burns, S. Kadavath, A. Arora, S. Basart, E. Tang, D. Song, and J. Steinhardt (2021) Measuring mathematical problem solving with the math dataset. arXiv preprint arXiv:2103.03874. Cited by: §1, §6.1.

- W. Huang, Z. Zhai, Y. Shen, S. Cao, F. Zhao, X. Xu, Z. Ye, Y. Hu, and S. Lin (2024) Dynamic-llava: efficient multimodal large language models via dynamic vision-language context sparsification. arXiv preprint arXiv:2412.00876. Cited by: §4.1.

- W. Kwon, Z. Li, S. Zhuang, Y. Sheng, L. Zheng, C. H. Yu, J. Gonzalez, H. Zhang, and I. Stoica (2023) Efficient memory management for large language model serving with pagedattention. In Proceedings of the 29th symposium on operating systems principles, pp. 611–626. Cited by: §B.1.

- Y. Li, Y. Huang, B. Yang, B. Venkitesh, A. Locatelli, H. Ye, T. Cai, P. Lewis, and D. Chen (2024) Snapkv: llm knows what you are looking for before generation. Advances in Neural Information Processing Systems 37, pp. 22947–22970. Cited by: §1, §3.1, §6.1, §7.

- M. Liu, A. Palnitkar, T. Rabbani, H. Jae, K. R. Sang, D. Yao, S. Shabihi, F. Zhao, T. Li, C. Zhang, et al. (2025a) Hold onto that thought: assessing kv cache compression on reasoning. arXiv preprint arXiv:2512.12008. Cited by: §6.1.

- Y. Liu, J. Fu, S. Liu, Y. Zou, S. Zhang, and J. Zhou (2025b) KV cache compression for inference efficiency in llms: a review. In Proceedings of the 4th International Conference on Artificial Intelligence and Intelligent Information Processing, pp. 207–212. Cited by: §1.

- G. Minegishi, H. Furuta, T. Kojima, Y. Iwasawa, and Y. Matsuo (2025) Topology of reasoning: understanding large reasoning models through reasoning graph properties. arXiv preprint arXiv:2506.05744. Cited by: §1.

- OpenAI (2025) OpenAI. External Links: Link Cited by: §1.