# Dynamic Thinking-Token Selection for Efficient Reasoning in Large Reasoning Models

**Authors**: Zhenyuan Guo, Tong Chen, Wenlong Meng, Chen Gong, Xin Yu, Chengkun Wei, Wenzhi Chen

## Abstract

Large Reasoning Models (LRMs) excel at solving complex problems by explicitly generating a reasoning trace before deriving the final answer. However, these extended generations incur substantial memory footprint and computational overhead, bottlenecking LRMs’ efficiency. This work uses attention maps to analyze the influence of reasoning traces and uncover an interesting phenomenon: only some decision-critical tokens in a reasoning trace steer the model toward the final answer, while the remaining tokens contribute negligibly. Building on this observation, we propose Dyn amic T hinking-Token S election (DynTS). This method identifies decision-critical tokens and retains only their associated Key-Value (KV) cache states during inference, evicting the remaining redundant entries to optimize efficiency. Across six benchmarks, DynTS surpasses the state-of-the-art KV cache compression methods, improving Pass@1 by $2.6\$ under the same budget. Compared to vanilla Transformers, it reduces inference latency by $1.84–2.62\times$ and peak KV-cache memory footprint by $3.32–5.73\times$ without compromising LRMs’ reasoning performance. The code is available at the github link. https://github.com/Robin930/DynTS

KV Cache Compression, Efficient LRM, LLM

## 1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Token Selection Strategies in Language Model Methods

### Overview

This diagram illustrates and compares five different methods for processing and retaining tokens from an input prompt in large language models. It visually demonstrates how each method selects which tokens to keep for computation, highlighting strategies for efficiency and context management. The diagram is structured as a table with rows for each method and columns for the method name, a visual representation of its token sequence, and a legend explaining the token types.

### Components/Axes

The diagram is organized into three main columns:

1. **Methods (Left Column):** Lists the five methods being compared: `Transformers`, `SnapKV`, `StreamingLLM`, `H2O`, and `DynTS`.

2. **Tokens (Center Column):** Displays a horizontal sequence of squares representing tokens for each method. The sequence is truncated with an ellipsis (`...`) on the right, indicating a continuing sequence. A dashed box labeled `Observation Window` is shown within the `SnapKV` row.

3. **Keeps (Right Column / Legend):** A key that maps colors to the type of token each method retains.

* **White Square:** `All Tokens`

* **Orange Square:** `High Importance Prefill Tokens`

* **Yellow Square:** `Attention Sink Tokens`

* **Light Blue Square:** `Local Tokens`

* **Green Square:** `Heavy-Hitter Tokens`

* **Red Square:** `Predicted Importance Tokens`

### Detailed Analysis

The diagram details the token retention strategy for each method:

* **Transformers:**

* **Visual Trend:** The entire token sequence is composed of white squares.

* **Data Points/Strategy:** Retains **All Tokens** from the prompt for processing. This is the baseline, full-context approach.

* **SnapKV:**

* **Visual Trend:** The sequence contains a mix of white and orange squares. A dashed box labeled `Observation Window` encloses a cluster of orange squares towards the right side of the visible sequence. A label `Prompt` points to the beginning of the sequence.

* **Data Points/Strategy:** Selectively keeps **High Importance Prefill Tokens** (orange). The `Observation Window` suggests these important tokens are identified within a specific segment of the prompt. The remaining tokens (white) are presumably discarded or not used for the key-value cache.

* **StreamingLLM:**

* **Visual Trend:** The sequence starts with a few yellow squares on the far left, followed by white squares, and ends with a block of light blue squares on the far right.

* **Data Points/Strategy:** Keeps two types of tokens: **Attention Sink Tokens** (yellow) from the very beginning of the sequence and **Local Tokens** (light blue) from the most recent part of the sequence. The middle tokens (white) are not retained.

* **H2O (Heavy-Hitter Oracle):**

* **Visual Trend:** The sequence shows green squares interspersed among white squares in the first half, followed by a block of light blue squares at the end.

* **Data Points/Strategy:** Keeps **Heavy-Hitter Tokens** (green), which are likely tokens that receive high attention scores, scattered throughout the prompt, and **Local Tokens** (light blue) from the recent context.

* **DynTS (Dynamic Token Selection):**

* **Visual Trend:** The sequence begins with red squares, followed by white squares, and ends with light blue squares. Curved arrows originate from the red squares and point to a box labeled `Answer`. Text above the arrows reads: `Predicted importance of tokens to the final answer`.

* **Data Points/Strategy:** Keeps **Predicted Importance Tokens** (red) and **Local Tokens** (light blue). The arrows indicate that the red tokens are dynamically selected based on a model's prediction of their importance for generating the final `Answer`.

### Key Observations

1. **Progression of Selectivity:** There is a clear evolution from the `Transformers` method (keeping everything) to increasingly selective strategies (`SnapKV`, `StreamingLLM`, `H2O`, `DynTS`) that aim to reduce computational load by retaining only a subset of tokens.

2. **Common Element:** Four of the five methods (all except `Transformers`) explicitly retain **Local Tokens** (light blue) from the end of the sequence, underscoring the importance of recent context.

3. **Diverse Selection Criteria:** The methods use different heuristics to select non-local tokens: fixed position (`StreamingLLM`'s attention sinks), attention scores (`H2O`'s heavy-hitters), importance within a window (`SnapKV`), or predicted relevance to the output (`DynTS`).

4. **Spatial Layout:** The `Observation Window` in `SnapKV` is positioned in the center-right of its token sequence. The `Answer` box for `DynTS` is placed to the right of its token sequence, with arrows creating a visual flow from selected tokens to the output.

### Interpretation

This diagram serves as a technical comparison of context window management techniques in efficient language model inference. It demonstrates the core challenge: balancing the need for long-context understanding with the computational cost of processing every token.

* **What the data suggests:** The field is moving beyond simply truncating context (which would be represented by keeping only local tokens) towards more intelligent, dynamic selection mechanisms. Methods like `DynTS` represent a shift towards task-aware processing, where token retention is directly tied to the goal of generating a correct answer.

* **How elements relate:** The `Tokens` column visually contrasts the "full" sequence of `Transformers` with the "sparse" or "filtered" sequences of the other methods. The `Keeps` legend is essential for decoding the strategy behind each pattern. The `Observation Window` and `Answer` annotations provide crucial context for understanding the operational logic of `SnapKV` and `DynTS`, respectively.

* **Notable patterns/anomalies:** The most striking pattern is the universal retention of local tokens. This implies that regardless of the selection strategy for earlier context, the most recent information is consistently deemed critical. The `DynTS` method is unique in its explicit, goal-oriented selection mechanism, visualized by the predictive arrows, suggesting a more sophisticated, possibly model-driven approach compared to the heuristic-based methods above it.

</details>

<details>

<summary>x2.png Details</summary>

### Visual Description

## Dual-Axis Bar & Line Chart: Model Accuracy vs. KV Cache Length

### Overview

This image is a technical comparison chart evaluating eight different models or methods (Transformers, DynTS, Window, StreamingLLM, SepLLM, H2O, SnapKV, R-KV) on two metrics: Accuracy (%) and KV Cache Length. It uses a dual-axis design with bars representing accuracy (left y-axis) and a line representing KV cache length (right y-axis).

### Components/Axes

* **Chart Type:** Combined bar chart and line chart with dual y-axes.

* **X-Axis (Categories):** Lists eight models/methods. From left to right: `Transformers`, `DynTS`, `Window`, `StreamingLLM`, `SepLLM`, `H2O`, `SnapKV`, `R-KV`.

* **Primary Y-Axis (Left):** Labeled `Accuracy (%)`. Scale runs from 0 to 70 in increments of 10.

* **Secondary Y-Axis (Right):** Labeled `KV Cache Length`. Scale runs from 2k to 20k in increments of 3k (2k, 5k, 8k, 11k, 14k, 17k, 20k).

* **Legend:** Positioned at the top center of the chart area.

* A gray rectangle is labeled `Accuracy`.

* A blue line with a circular marker is labeled `KV Cache Length`.

* **Data Series:**

1. **Accuracy (Bars):** The height of each bar corresponds to the accuracy percentage. The first two bars are colored distinctly (dark blue for `Transformers`, red for `DynTS`), while the remaining six bars are gray.

2. **KV Cache Length (Line):** A blue dashed line with circular markers connects data points for each model, corresponding to the right y-axis.

### Detailed Analysis

**Accuracy Data (Bars):**

* `Transformers`: 63.6% (Dark blue bar, tallest)

* `DynTS`: 63.5% (Red bar, nearly equal to Transformers)

* `Window`: 49.4% (Gray bar)

* `StreamingLLM`: 51.6% (Gray bar)

* `SepLLM`: 54.5% (Gray bar)

* `H2O`: 58.8% (Gray bar)

* `SnapKV`: 59.8% (Gray bar)

* `R-KV`: 60.9% (Gray bar)

**KV Cache Length Data (Line - Estimated from visual position against right axis):**

* **Trend Verification:** The line shows a dramatic, steep downward slope from the first point to the second, followed by a nearly flat, low plateau for the remaining six points.

* `Transformers`: ~18k (Highest point, near the 17k-20k range)

* `DynTS`: ~4k (Sharp drop, positioned just above the 2k line)

* `Window`: ~3.5k

* `StreamingLLM`: ~3.5k

* `SepLLM`: ~3.5k

* `H2O`: ~3.5k

* `SnapKV`: ~3.5k

* `R-KV`: ~3.5k

*(Note: Exact values for KV Cache Length are not labeled; these are visual approximations. The line markers for the last six models are all clustered closely together just above the 2k axis line.)*

### Key Observations

1. **Accuracy Cluster:** `Transformers` and `DynTS` form a high-accuracy cluster (~63.5%), significantly outperforming the other six methods, which range from 49.4% to 60.9%.

2. **Efficiency Leap:** There is a massive reduction in KV Cache Length between `Transformers` (~18k) and `DynTS` (~4k), despite nearly identical accuracy.

3. **Performance Plateau:** The six methods from `Window` to `R-KV` show a gradual, incremental improvement in accuracy (from 49.4% to 60.9%) while maintaining a consistently low and similar KV Cache Length (~3.5k).

4. **Visual Emphasis:** The use of distinct colors (dark blue, red) for the first two bars visually highlights them as the primary subjects of comparison against the baseline (gray) methods.

### Interpretation

This chart demonstrates a critical trade-off and advancement in the efficiency of language model inference, specifically regarding the Key-Value (KV) cache, which stores attention keys and values and is a major consumer of memory.

* **The Core Finding:** The `DynTS` method achieves accuracy on par with the standard `Transformers` model while reducing the KV cache memory footprint by approximately 78% (from ~18k to ~4k). This suggests `DynTS` is a highly efficient optimization that preserves performance.

* **The Landscape of Alternatives:** The other six methods (`Window`, `StreamingLLM`, etc.) represent a different point on the efficiency-accuracy curve. They achieve even lower cache usage (~3.5k) but at a notable cost to accuracy (5-14 percentage points lower than `Transformers`/`DynTS`). Their incremental accuracy improvements suggest ongoing refinement within this "low-cache" paradigm.

* **Strategic Implication:** The data positions `DynTS` as a potentially optimal solution for scenarios requiring both high accuracy and memory efficiency. The chart argues that significant cache reduction is possible without the accuracy penalty incurred by the other listed methods. The visualization effectively makes the case for `DynTS` as a superior approach by placing its red bar directly beside the high-accuracy `Transformers` baseline while showing its dramatic drop on the cache-length line.

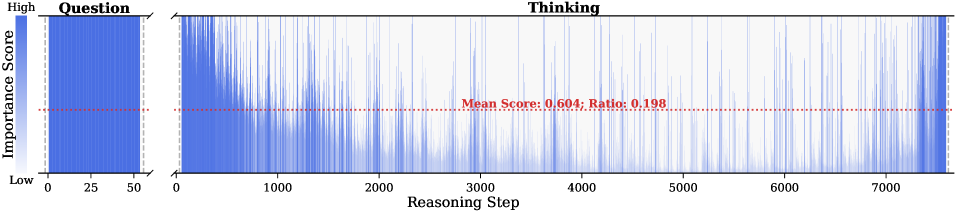

</details>

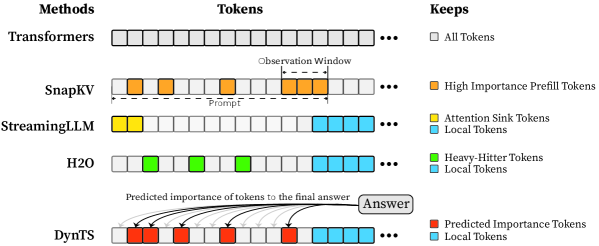

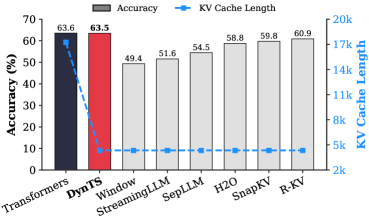

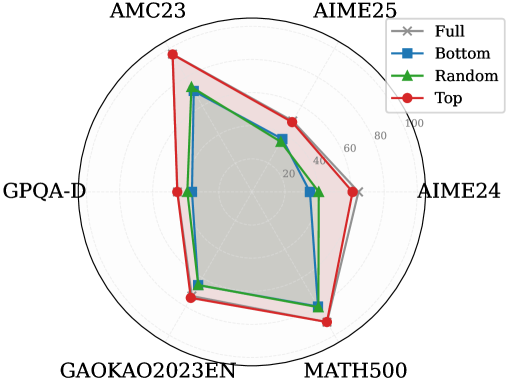

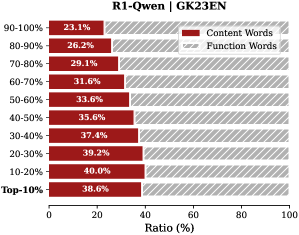

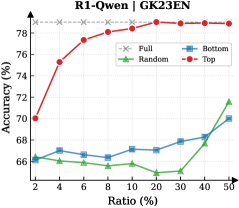

Figure 1: (Left) Comparison of token selection strategies across different KV cache eviction methods. In each row, colored blocks denote the retained high-importance tokens, while grey blocks represent the evicted tokens during LRM inference. (Right) The average reasoning performance and KV cache memory footprint on DeepSeek-R1-Distall-Llama-8B and DeepSeek-R1-Distall-Qwen-7B across six reasoning benchmarks.

Recent advancements in Large Reasoning Models (LRMs) (Chen et al., 2025) have significantly strengthened the reasoning capabilities of Large Language Models (LLMs). Representative models such as DeepSeek-R1 (Guo et al., 2025), Gemini-3-Pro (DeepMind, 2025), and ChatGPT-5.2 (OpenAI, 2025) support deep thinking mode to strengthen reasoning capability in the challenging mathematics, programming, and science tasks (Zhang et al., 2025b). These models spend a substantial number of intermediate thinking tokens on reflection, reasoning, and verification to derive the correct response during inference (Feng et al., 2025). However, the thinking process necessitates the immense KV cache memory footprint and attention-related computational cost, posing a critical deployment challenge in resource-constrained environments.

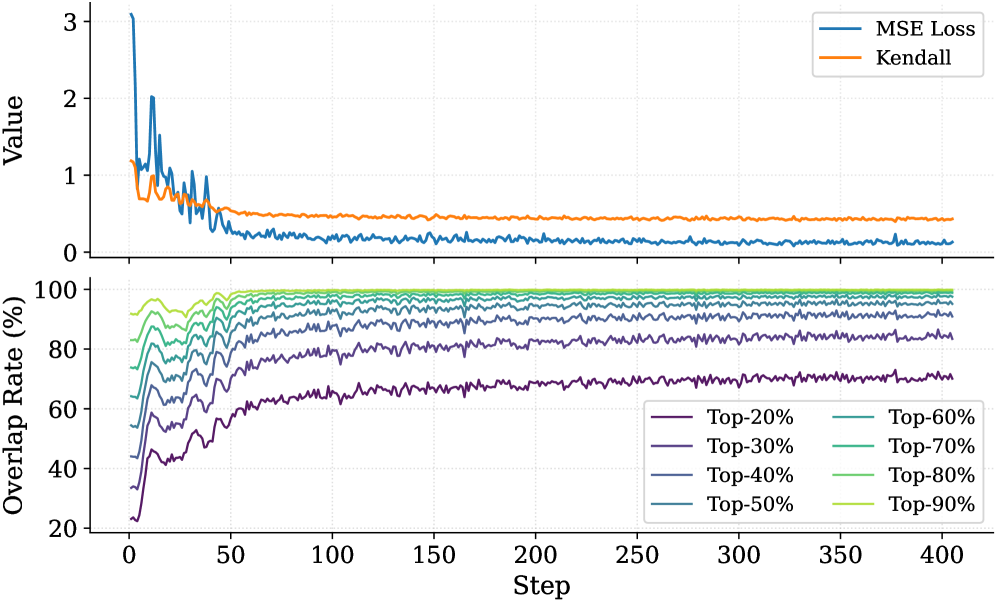

KV cache compression techniques aim to optimize the cache state by periodically evicting non-essential tokens (Shi et al., 2024; WEI et al., 2025; Liu et al., 2025b; Qin et al., 2025), typically guided by predefined token retention rules (Chen et al., 2024; Xiao et al., 2024; Devoto et al., 2024) or attention-based importance metrics (Zhang et al., 2023; Li et al., 2024; Choi et al., 2025). Nevertheless, incorporating them into the inference process of LRMs faces two key limitations: (1) Methods designed for long-context prefilling are ill-suited to the short-prefill and long-decoding scenarios of LRMs; (2) Methods tailored for long-decoding struggle to match the reasoning performance of the Full KV baseline (SOTA $60.9\$ vs. Full KV $63.6\$ , Fig. 1 Left). Specifically, in LRM inference, the model conducts an extensive reasoning process and then summarizes the reasoning content to derive the final answer (Minegishi et al., 2025). This implies that the correctness of the final answer relies on the thinking tokens within the preceding reasoning (Bogdan et al., 2025). However, existing compression methods cannot identify the tokens that are essential to the future answer. This leads to a significant misalignment between the retained tokens and the critical thinking tokens, resulting in degradation in the model’s reasoning performance.

To address this issue, we analyze the LRM’s generated content and study which tokens are most important for the model to steer the final answer. Some works point out attention weights capturing inter-token dependencies (Vaswani et al., 2017; Wiegreffe and Pinter, 2019; Bogdan et al., 2025), which can serve as a metric to assess the importance of tokens. Consequently, we decompose the generated content into a reasoning trace and a final answer, and then calculate the importance score of each thinking token in the trajectory by aggregating the attention weights from the answer to thinking tokens. We find that only a small subset of thinking tokens ( $\sim 20\$ tokens in the reasoning trace, see Section § 3.1) have significant scores, which may be critical for the final answer. To validate these hypotheses, we retain these tokens and prompt the model to directly generate the final answer. Experimental results show that the model maintains close accuracy compared to using the whole KV cache. This reveals a Pareto principle The Pareto principle, also known as the 80/20 rule, posits that $20\$ of critical factors drive $80\$ of the outcomes. In this paper, it implies that a small fraction of pivotal thinking tokens dictates the correctness of the model’s final response. in LRMs: only a small subset of decision-critical thinking tokens with high importance scores drives the model toward the final answer, while the remaining tokens contribute negligibly.

Based on the above insight, we introduce DynTS (Dyn amic T hinking-Token S election), a novel method for dynamically predicting and selecting decision-critical thinking tokens on-the-fly during decoding, as shown in Fig. 1 (Left). The key innovation of DynTS is the integration of a trainable, lightweight Importance Predictor at the final layer of LRMs, enabling the model to dynamically predict the importance of each thinking token to the final answer. By utilizing importance scores derived from sampled reasoning traces as supervision signals, the predictor learns to distinguish between critical tokens and redundant tokens. During inference, DynTS manages memory through a dual-window mechanism: generated tokens flow from a Local Window (which captures recent context) into a Selection Window (which stores long-term history). Once the KV cache reaches the budget, the system retains the KV cache of tokens with higher predicted importance scores in the Select Window and all tokens in the Local Window (Zhang et al., 2023; Chen et al., 2024). By evicting redundant KV cache entries, DynTS effectively reduces both system memory pressure and computational overhead. We also theoretically analyze the computational overhead introduced by the importance predictor and the savings from cache eviction, and derive a Break-Even Condition for net computational gain.

Then, we train the Importance Predictor based on the MATH (Hendrycks et al., 2021) train set, and evaluate DynTS on the other six reasoning benchmarks. The reasoning performance and KV cache length compare with the SOTA KV cache compression method, as reported in Fig. 1 (Right). Our method reduces the KV cache memory footprint by up to $3.32–5.73\times$ without compromising reasoning performance compared to the full-cache transformer baseline. Within the same budget, our method achieves a $2.6\$ improvement in accuracy over the SOTA KV cache compression approach.

## 2 Preliminaries

#### Large Reasoning Model (LRM).

Unlike standard LLMs that directly generate answers, LRMs incorporate an intermediate reasoning process prior to producing the final answer (Chen et al., 2025; Zhang et al., 2025a; Sui et al., 2025). Given a user prompt $\mathbf{x}=(x_{1},\dots,x_{M})$ , the model generated content represents as $\mathbf{y}$ , which can be decomposed into a reasoning trace $\mathbf{t}$ and a final answer $\mathbf{a}$ . The trajectory is delimited by a start tag <think> and an end tag </think>. Formally, the model output is defined as:

$$

\mathbf{y}=[\texttt{<think>},\mathbf{t},\texttt{</think>},\mathbf{a}], \tag{1}

$$

where the trajectory $\mathbf{t}=(t_{1},\dots,t_{L})$ composed of $L$ thinking tokens, and $\mathbf{a}=(a_{1},\dots,a_{K})$ represents the answer composed of $K$ tokens. During autoregressive generation, the model conducts a reasoning phase that produces thinking tokens $t_{i}$ , followed by an answer phase that generates the answer token $a_{i}$ . This process is formally defined as:

$$

P(\mathbf{y}|\mathbf{x})=\underbrace{\prod_{i=1}^{L}P(t_{i}|\mathbf{x},\mathbf{t}_{<i})}_{\text{Reasoning Phase}}\cdot\underbrace{\prod_{j=1}^{K}P(a_{j}|\mathbf{x},\mathbf{t},\mathbf{a}_{<j})}_{\text{Answer Phase}} \tag{2}

$$

Since the length of the reasoning trace significantly exceeds that of the final answer ( $L\gg K$ ) (Xu et al., 2025), we focus on selecting critical thinking tokens in the reasoning trace to reduce memory and computational overhead.

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Time Series Chart: Importance Score Analysis Across "Question" and "Thinking" Phases

### Overview

The image displays a two-panel time series chart plotting an "Importance Score" over sequential "Steps." The chart is divided into two distinct temporal phases: a short "Question" phase on the left and a much longer "Thinking" phase on the right. A horizontal red dashed line indicates a mean score across the entire dataset.

### Components/Axes

* **Chart Title/Sections:**

* **Left Panel Title:** "Question" (positioned top-left).

* **Right Panel Title:** "Thinking" (positioned top-center).

* **Y-Axis:**

* **Label:** "Importance Score" (rotated vertically on the far left).

* **Scale:** Qualitative, marked only with "High" at the top and "Low" at the bottom. No numerical tick marks are provided.

* **X-Axis:**

* **Label:** "(Step)" (positioned bottom-right).

* **Scale:** Linear numerical scale. The "Question" panel spans steps 0 to 200. The "Thinking" panel spans steps 0 to over 12,000, with major tick marks at 0, 2000, 4000, 6000, 8000, 10000, and 12000.

* **Data Series:**

* A single data series is plotted as a filled area chart (or very dense line chart) in a semi-transparent blue color. The height of the blue area at any given step represents the Importance Score.

* **Reference Line & Annotation:**

* A red dashed horizontal line runs across both panels at a low position on the y-axis.

* **Annotation Text (centered in the "Thinking" panel, above the red line):** "Mean Score: 0.126; Ratio: 0.211"

### Detailed Analysis

* **"Question" Phase (Steps 0-200):**

* **Trend:** The Importance Score is consistently very high throughout this phase. The blue area frequently reaches the top of the chart ("High") and shows dense, rapid fluctuations. There is no period of low score within this window.

* **"Thinking" Phase (Steps 0-12,000+):**

* **Trend:** The Importance Score is highly variable and generally much lower than in the "Question" phase. The data shows a pattern of sporadic, sharp spikes interspersed with long periods of very low scores near the baseline.

* **Notable Clusters:** There are visible clusters of higher activity (more frequent and taller spikes) around steps 0-1000, 5000-6000, and 11,000-12,000.

* **Mean Line:** The red dashed line, representing a mean score of 0.126, sits very close to the bottom of the chart. The vast majority of the data points in the "Thinking" phase appear to be at or below this line, with only the sharp spikes exceeding it.

* **Statistical Annotation:**

* **Mean Score:** 0.126. This quantifies the average importance across all steps shown.

* **Ratio:** 0.211. This likely represents the proportion of steps where the Importance Score is above the mean (0.126), or another defined threshold. Given the visual distribution, it suggests only about 21.1% of steps are considered "important" by this metric.

### Key Observations

1. **Phase Dichotomy:** There is a stark contrast between the "Question" phase (uniformly high importance) and the "Thinking" phase (sporadic, spike-driven importance).

2. **Sparingly High Importance:** In the long "Thinking" phase, high importance scores are rare events, occurring as isolated spikes or brief clusters rather than sustained periods.

3. **Low Baseline:** The calculated mean score (0.126) is very low on the qualitative scale, confirming that the baseline state for the "Thinking" process is one of low measured importance.

4. **Spatial Layout:** The "Question" panel is compressed (200 steps) on the left, while the "Thinking" panel dominates the chart width (>12,000 steps), visually emphasizing the longer duration of the thinking process.

### Interpretation

This chart likely visualizes the output of an analytical model (e.g., an attention mechanism or an interpretability tool) applied to a two-stage process, such as a language model answering a query.

* **What it Suggests:** The "Question" phase is uniformly critical, implying the model dedicates consistent, high focus to parsing and understanding the input query. The subsequent "Thinking" phase is characterized by a low-level background process punctuated by brief moments of high computational or "attentional" importance. These spikes may correspond to key reasoning steps, retrieval of specific information, or decision points in the generation process.

* **Relationship Between Elements:** The "Question" sets the stage with high importance, defining the problem space. The "Thinking" phase then executes a process where most steps are routine (low score), but critical operations (high-score spikes) drive the solution forward. The mean score and ratio provide a quantitative summary of this sparsity.

* **Notable Anomaly/Pattern:** The most significant pattern is the extreme sparsity of high-importance events in the "Thinking" phase. This suggests the underlying process is not uniformly demanding; instead, it operates with a low overhead most of the time, concentrating its "effort" into discrete, intense bursts. This could be an efficient computational strategy or an inherent property of the reasoning process being measured. The clusters of spikes may indicate phases of complex integration or multi-step reasoning.

</details>

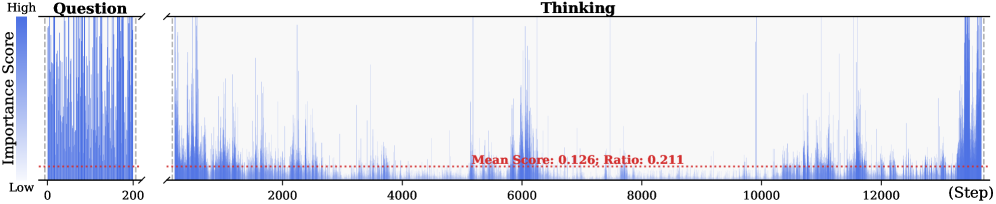

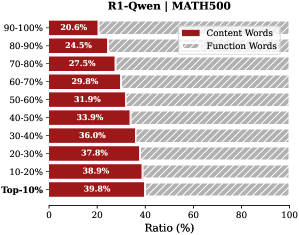

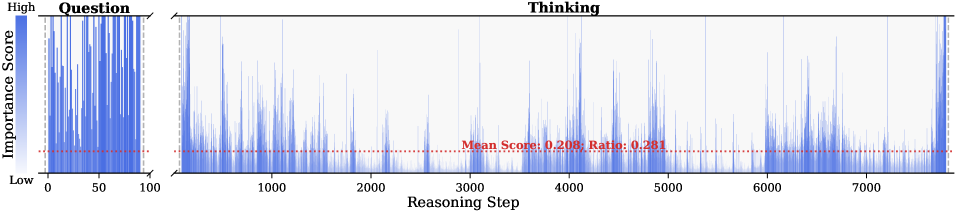

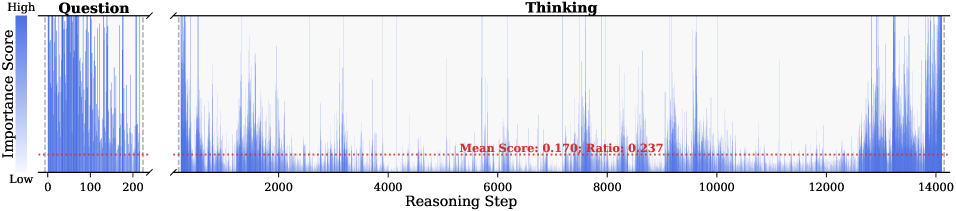

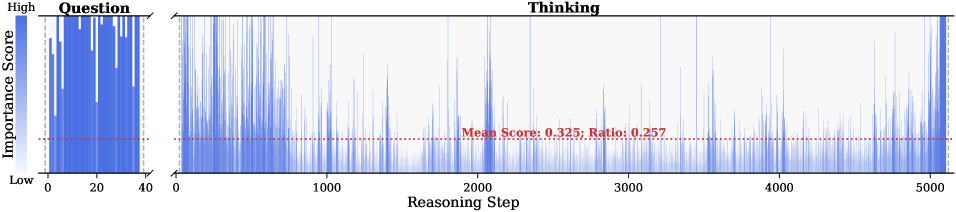

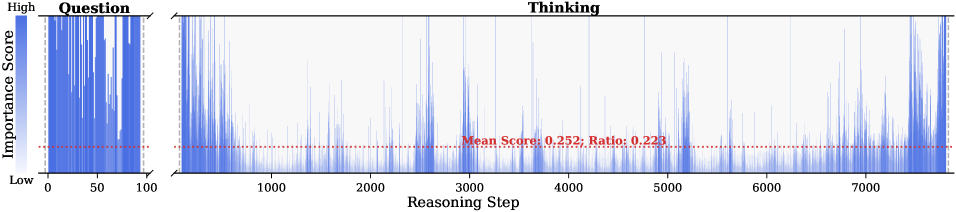

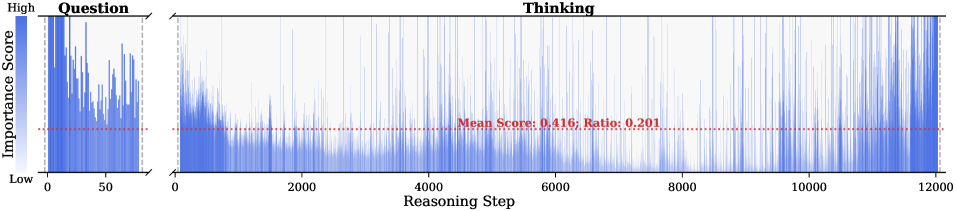

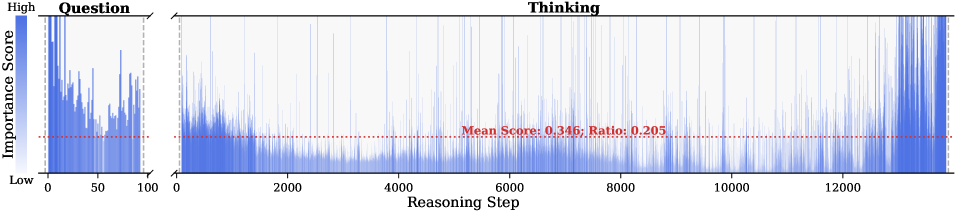

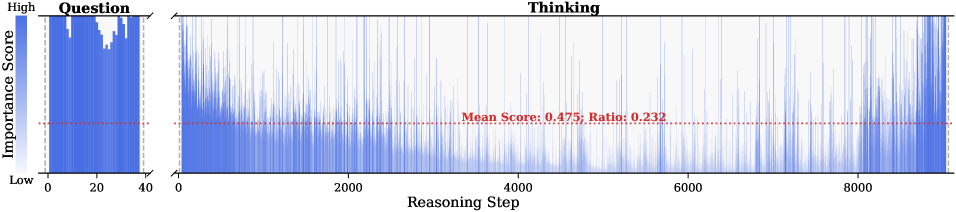

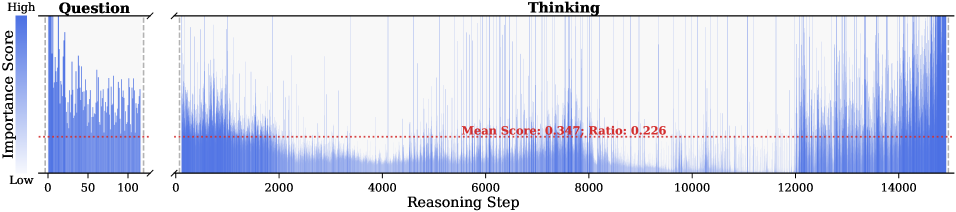

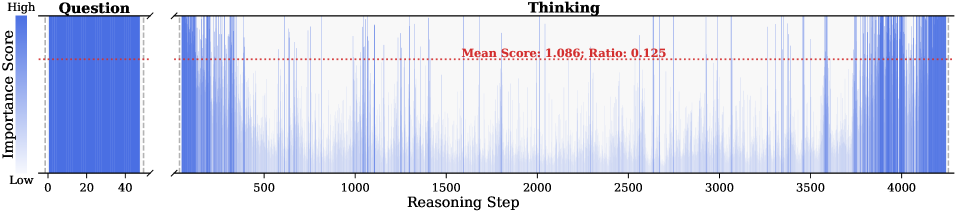

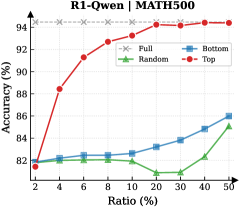

Figure 2: Importance scores of question tokens and thinking tokens in a reasoning trace, computed based on attention contributions to the answer. Darker colors indicate higher importance. The red dashed line shows the mean importance score, and the annotated ratio indicates the fraction of tokens with importance above the mean.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Ratio for Different Sampling Methods

### Overview

The image is a line chart comparing the performance (Accuracy %) of four different methods or data sampling strategies across a range of ratios (Ratio %). The chart demonstrates how accuracy changes as the ratio increases for each method.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:**

* **Label:** `Ratio (%)`

* **Scale:** Logarithmic or non-linear scale. The marked values are: 2, 4, 6, 8, 10, 20, 30, 40, 50.

* **Y-Axis:**

* **Label:** `Accuracy (%)`

* **Scale:** Linear scale from 65 to 95, with major gridlines at intervals of 5%.

* **Legend:** Located in the top-right quadrant of the chart area. It contains four entries:

1. `Full` - Gray dashed line with 'x' markers.

2. `Random` - Green solid line with upward-pointing triangle markers.

3. `Bottom` - Blue solid line with square markers.

4. `Top` - Red solid line with circle markers.

### Detailed Analysis

**Data Series and Trends:**

1. **Full (Gray, dashed line, 'x' markers):**

* **Trend:** Perfectly horizontal, constant line.

* **Data Points:** Maintains an accuracy of approximately **95%** across all ratio values from 2% to 50%. This appears to be the baseline or upper-bound performance.

2. **Top (Red, solid line, circle markers):**

* **Trend:** Consistently upward-sloping, showing the highest performance among the non-full methods.

* **Data Points (Approximate):**

* Ratio 2%: ~88%

* Ratio 4%: ~90%

* Ratio 6%: ~92.5%

* Ratio 8%: ~92%

* Ratio 10%: ~93%

* Ratio 20%: ~94%

* Ratio 30%: ~95% (converges with the 'Full' line)

* Ratio 40%: ~95%

* Ratio 50%: ~95%

3. **Random (Green, solid line, triangle markers):**

* **Trend:** Initial slight dip, followed by a steady, strong upward slope.

* **Data Points (Approximate):**

* Ratio 2%: ~63%

* Ratio 4%: ~62% (lowest point)

* Ratio 6%: ~66%

* Ratio 8%: ~66%

* Ratio 10%: ~68%

* Ratio 20%: ~70%

* Ratio 30%: ~73%

* Ratio 40%: ~79%

* Ratio 50%: ~85%

4. **Bottom (Blue, solid line, square markers):**

* **Trend:** Initial decline, a period of stagnation, then a steady upward slope.

* **Data Points (Approximate):**

* Ratio 2%: ~66%

* Ratio 4%: ~66%

* Ratio 6%: ~63% (lowest point)

* Ratio 8%: ~65%

* Ratio 10%: ~65%

* Ratio 20%: ~69%

* Ratio 30%: ~70%

* Ratio 40%: ~73%

* Ratio 50%: ~80%

### Key Observations

1. **Performance Hierarchy:** There is a clear and consistent performance order: `Full` > `Top` > `Random` > `Bottom` for almost all ratio values. The `Top` method significantly outperforms `Random` and `Bottom`.

2. **Convergence:** The `Top` method's accuracy converges with the `Full` baseline at a ratio of approximately **30%** and remains equal thereafter.

3. **Low-Ratio Instability:** Both the `Random` and `Bottom` methods show a performance dip or stagnation at very low ratios (between 2% and 10%) before beginning a consistent climb.

4. **Growth Rate:** The `Random` method shows the steepest rate of improvement (slope) from ratio 10% onward, closing some of the gap with the `Top` method at higher ratios. The `Bottom` method improves at a slower, steadier rate.

5. **Legend Placement:** The legend is positioned in the upper right, overlapping slightly with the `Full` and `Top` data lines but not obscuring critical data points.

### Interpretation

This chart likely illustrates the effectiveness of different data selection or sampling strategies for a machine learning or statistical model, where "Ratio (%)" represents the percentage of data used (e.g., for training, fine-tuning, or as a subset for evaluation).

* **`Full`** represents the model's performance using the complete dataset, serving as the gold standard.

* **`Top`** likely refers to selecting data points based on a high-confidence or high-relevance score. Its rapid ascent to match `Full` performance suggests that a small subset (30%) of the most "important" data can be as effective as the entire dataset for this task.

* **`Random`** represents a naive random sampling baseline. Its lower performance indicates that data quality/importance matters more than sheer volume. Its strong improvement with more data shows that random sampling eventually becomes effective, but requires a much larger ratio.

* **`Bottom`** likely refers to selecting the lowest-confidence or least-relevant data points. Its poor performance, especially at low ratios, confirms that low-quality data is detrimental. Its eventual rise suggests that even low-quality data provides some signal when enough of it is used.

**The core insight** is that intelligent data selection (`Top`) is highly efficient, achieving maximum performance with a fraction of the data. This has significant implications for reducing computational costs, training time, and data storage requirements without sacrificing model accuracy. The dip in `Random` and `Bottom` at low ratios may indicate a critical threshold of data needed to overcome noise or establish a reliable pattern.

</details>

<details>

<summary>x5.png Details</summary>

### Visual Description

\n

## Radar Chart: Performance Comparison Across Mathematical and Reasoning Benchmarks

### Overview

This image is a radar chart (also known as a spider chart) comparing the performance of four different methods or models across six distinct benchmarks. The chart uses a radial layout where each axis represents a benchmark, and the distance from the center represents a score, likely a percentage or accuracy metric. The four methods are distinguished by different colors and marker shapes.

### Components/Axes

* **Chart Type:** Radar Chart.

* **Benchmarks (Axes):** Six axes radiate from the center, each labeled with a benchmark name. Starting from the top and moving clockwise:

1. `AMC23`

2. `AIME25`

3. `AIME24`

4. `MATH500`

5. `GAOKAO2023EN`

6. `GPQA-D`

* **Radial Scale:** Concentric circles represent the scoring scale. The innermost circle is unlabeled (likely 0). The labeled circles, moving outward, are marked: `20`, `40`, `60`, `80`, and `100` at the outermost edge.

* **Legend:** Located in the top-right corner of the chart area. It defines four data series:

* `Full`: Gray line with 'x' markers.

* `Bottom`: Blue line with square markers.

* `Random`: Green line with triangle markers.

* `Top`: Red line with circle markers.

* **Data Series:** Four polygons are plotted, each connecting the scores of one method across all six benchmarks.

### Detailed Analysis

The chart compares the performance profiles of the four methods. Below is an analysis of each benchmark, describing the visual trend (from lowest to highest score) and providing approximate score values based on the radial scale.

**1. AMC23 (Top Axis):**

* **Trend:** The `Top` (red) method scores significantly higher than the others. `Full` (gray) and `Random` (green) are close, with `Bottom` (blue) scoring the lowest.

* **Approximate Scores:**

* Top: ~95

* Random: ~75

* Full: ~70

* Bottom: ~65

**2. AIME25 (Top-Right Axis):**

* **Trend:** `Top` (red) again leads. `Full` (gray) and `Random` (green) are tightly clustered in the middle. `Bottom` (blue) is the lowest.

* **Approximate Scores:**

* Top: ~70

* Random: ~55

* Full: ~50

* Bottom: ~45

**3. AIME24 (Right Axis):**

* **Trend:** `Top` (red) is the highest. `Full` (gray) and `Random` (green) are nearly identical and in the middle. `Bottom` (blue) is the lowest.

* **Approximate Scores:**

* Top: ~65

* Random: ~50

* Full: ~50

* Bottom: ~40

**4. MATH500 (Bottom-Right Axis):**

* **Trend:** `Top` (red) has the highest score. `Random` (green) and `Bottom` (blue) are very close, with `Full` (gray) scoring slightly lower than them.

* **Approximate Scores:**

* Top: ~85

* Random: ~75

* Bottom: ~73

* Full: ~68

**5. GAOKAO2023EN (Bottom-Left Axis):**

* **Trend:** `Top` (red) is the highest. `Random` (green) and `Bottom` (blue) are again very close. `Full` (gray) is the lowest.

* **Approximate Scores:**

* Top: ~80

* Random: ~70

* Bottom: ~68

* Full: ~60

**6. GPQA-D (Left Axis):**

* **Trend:** `Top` (red) is the highest. `Full` (gray) and `Random` (green) are close in the middle. `Bottom` (blue) is the lowest.

* **Approximate Scores:**

* Top: ~60

* Random: ~45

* Full: ~42

* Bottom: ~35

### Key Observations

1. **Consistent Leader:** The `Top` method (red line/circles) achieves the highest score on every single benchmark, forming the outermost polygon.

2. **Middle Cluster:** The `Full` (gray/x) and `Random` (green/triangles) methods frequently perform similarly, often occupying the middle range of scores. Their lines overlap or run close together on several axes (AIME24, AIME25, GPQA-D).

3. **Lower Performer:** The `Bottom` method (blue/squares) is consistently among the lowest-scoring, often forming the innermost polygon, though it is sometimes very close to `Random` (e.g., MATH500, GAOKAO2023EN).

4. **Benchmark Difficulty:** The spread between the highest (`Top`) and lowest (`Bottom`) scores varies by benchmark. The spread appears largest on `AMC23` and `GPQA-D`, suggesting these benchmarks may differentiate the methods more starkly. The spread is narrower on `MATH500` and `GAOKAO2023EN`.

### Interpretation

This radar chart visually demonstrates a clear performance hierarchy among the four evaluated methods across a suite of mathematical and reasoning benchmarks.

* **What the data suggests:** The method labeled `Top` is unequivocally the strongest performer, suggesting it represents a high-performing model, a model fine-tuned on top-tier data, or an optimized configuration. The `Bottom` method's consistently lower scores imply it may represent a baseline, a model trained on lower-quality data, or a less optimized setup.

* **Relationship between elements:** The close performance of `Random` and `Full` is intriguing. It suggests that, for these benchmarks, a model using a random subset of data (`Random`) performs comparably to one using the full dataset (`Full`). This could indicate diminishing returns from the full dataset or that the random subset is sufficiently representative. The `Bottom` method's proximity to `Random` on some tasks further implies that selecting the "bottom" performers for training may not be worse than random selection, and is often better than using the full, potentially noisy, dataset.

* **Notable patterns:** The consistent dominance of `Top` across diverse benchmarks (from AMC/AIME competitions to the Chinese Gaokao and the graduate-level GPQA-D) indicates robust and generalizable superior performance. The chart effectively argues that the strategy or data represented by `Top` is highly effective. The investigation would next focus on understanding what "Top" specifically refers to (e.g., top 10% of data, a top-performing model variant) to replicate its success.

</details>

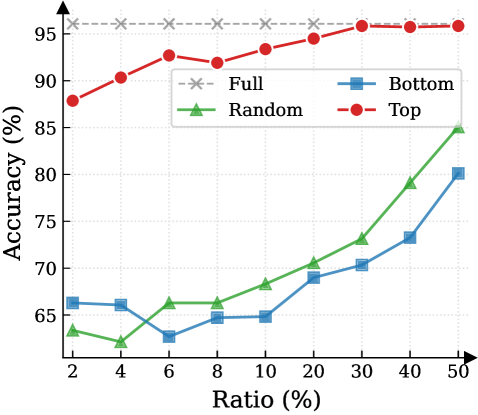

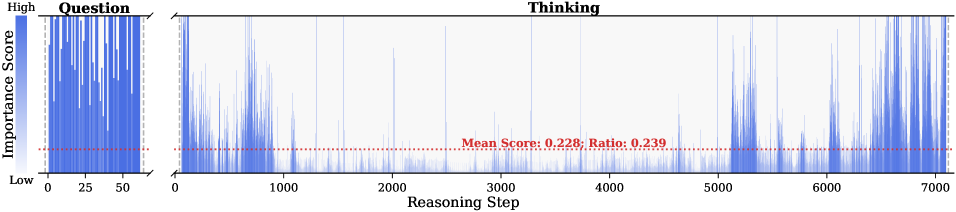

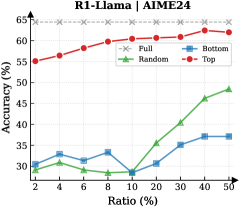

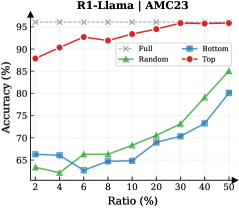

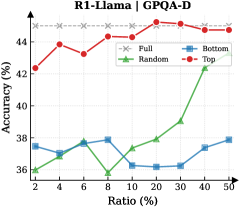

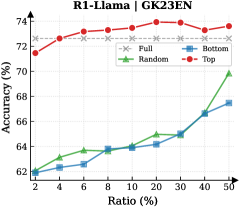

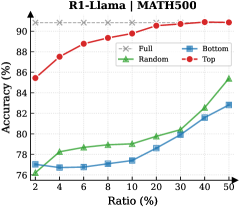

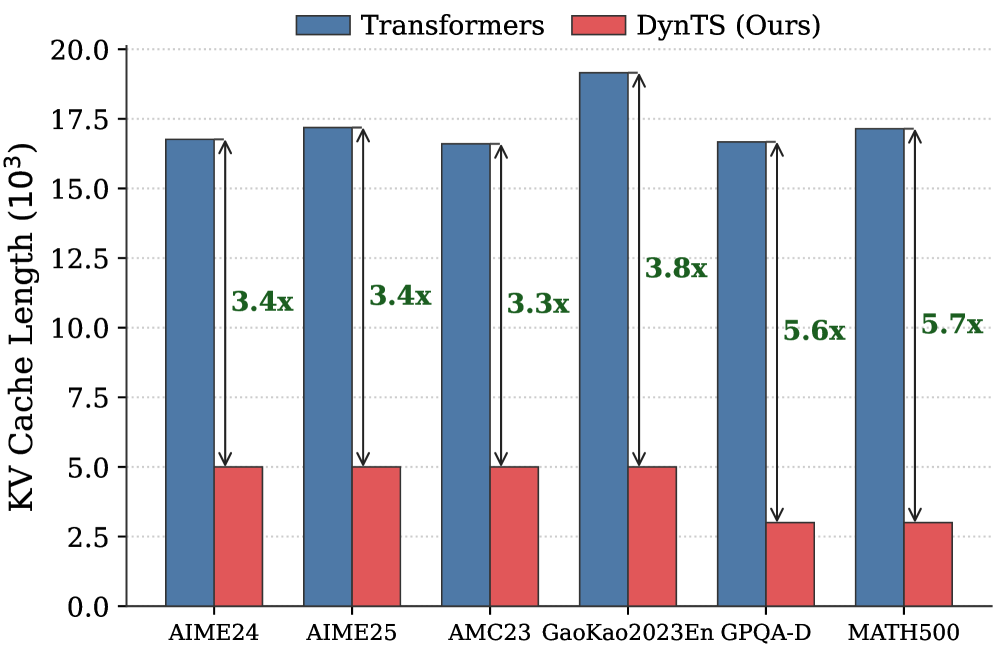

Figure 3: (Left) Reasoning performance trends as a function of thinking token retention ratio, where the $x$ -axis indicates the retention percentage and the $y$ -axis is the accuracy. (Right) Accuracy across all datasets when retaining $30\$ of the thinking tokens.

#### Attention Mechanism.

Attention Mechanism is a core component of Transformer-based LRMs, such as Multi-Head Attention (Vaswani et al., 2017), Grouped-Query Attention (Ainslie et al., 2023), and their variants. To highlight the memory challenges in LRMs, we formulate the attention computation at the token level. Consider the decode step $t$ . Let $\mathbf{h}_{t}\in\mathbb{R}^{d}$ be the input hidden state of the current token. The model projects $\mathbf{h}_{t}$ into query, key, and value vectors:

$$

\mathbf{q}_{t}=\mathbf{W}_{Q}\mathbf{h}_{t},\quad\mathbf{k}_{t}=\mathbf{W}_{K}\mathbf{h}_{t},\quad\mathbf{v}_{t}=\mathbf{W}_{V}\mathbf{h}_{t}, \tag{3}

$$

where $\mathbf{W}_{Q},\mathbf{W}_{K},\mathbf{W}_{V}$ are learnable projection matrices. The query $\mathbf{q}_{t}$ attends to the keys of all preceding positions $j\in\{1,\dots,t\}$ . The attention weight $a_{t,j}$ between the current token $t$ and a past token $j$ is:

$$

\alpha_{t,j}=\frac{\exp(e_{t,j})}{\sum_{i=1}^{t}\exp(e_{t,i})},\qquad e_{t,j}=\frac{\mathbf{q}_{t}^{\top}\mathbf{k}_{j}}{\sqrt{d_{k}}}. \tag{4}

$$

These scores represent the relevance of the current step to the $j$ -th token. Finally, the output of the attention head $\mathbf{o}_{t}$ is the weighted sum of all historical value vectors:

$$

\mathbf{o}_{t}=\sum_{j=1}^{t}\alpha_{t,j}\mathbf{v}_{j}. \tag{5}

$$

As Equation 5 implies, calculating $\mathbf{o}_{t}$ requires access to the entire sequence of past keys and values $\{\mathbf{k}_{j},\mathbf{v}_{j}\}_{j=1}^{t-1}$ . In standard implementation, these vectors are stored in the KV cache to avoid redundant computation (Vaswani et al., 2017; Pope et al., 2023). In the LRMs’ inference, the reasoning trace is exceptionally long, imposing significant memory bottlenecks and increasing computational overhead.

## 3 Observations and Insight

This section presents the observed sparsity of thinking tokens and the Pareto Principle in LRMs, serving as the basis for DynTS. Detailed experimental settings and additional results are provided in Appendix § B.

<details>

<summary>x6.png Details</summary>

### Visual Description

\n

## Diagram: Large Reasoning Model (LRM) with Importance Predictor (IP) - Training and Inference Architecture

### Overview

This technical diagram illustrates the architecture and workflow of a system that augments a Large Reasoning Model (LRM) with a trainable Importance Predictor (IP). The system is designed to optimize the Key-Value (KV) cache during inference by predicting the importance of tokens and selectively retaining or evicting them to meet a computational budget. The diagram is split into two primary sections: **Training** (left) and **Inference** (right).

### Components/Axes

The diagram is a flowchart/block diagram with the following major components and labels:

**Training Section (Left Panel):**

* **Input Tokens:** A sequence of grey squares at the bottom, representing the initial input.

* **Large Reasoning Model (LRM):** A large blue block labeled "Large Reasoning Model (LRM)" with a snowflake icon (❄️), indicating it is a frozen (non-trainable) component.

* **Importance Predictor (IP):** A red block labeled "Importance Predictor (IP)" with a fire icon (🔥), indicating it is a trainable component. It sits above the LRM.

* **Thinking Tokens:** A grid of squares above the IP, color-coded in shades of orange/red. A label "Thinking Tokens" with a double-headed arrow spans the top of this grid.

* **Answer:** A vertical label on the left side of the Thinking Tokens grid.

* **Mean Squared Error Loss:** A dashed box encompassing a row of orange/red squares, labeled "Mean Squared Error Loss".

* **Aggregate:** An arrow pointing from the right end of the Thinking Tokens grid to the loss calculation.

* **Backward:** An arrow pointing from the loss calculation back to the Importance Predictor (IP), indicating the backpropagation path for training.

**Inference Section (Right Panel):**

* **Steps:** A horizontal axis at the bottom labeled "Steps", indicating the progression of the inference process.

* **KV Cache Budget:** A vertical label on the far right, indicating the constraint on the cache size.

* **Multi Step:** A label at the top left of the inference flow.

* **Token Blocks:** Tokens are represented as pairs of boxes (e.g., `[A][∞]`, `[C][0.2]`). The first box contains a letter (token identifier), and the second contains a numerical score (predicted importance) or the infinity symbol (∞).

* **Process Labels:** The flow is annotated with labels: "Question", "Selection", "Local", "Reach Budget", "Select Critical Tokens", "Keep", "Evict", and "Retain".

* **LRM with IP:** A combined blue and red block at the bottom, labeled "LRM with IP".

* **Current Token / Next Token:** Boxes labeled "X" (Current Token) and "Y" (Next Token).

* **Predicted Score:** A box showing a numerical value (e.g., `0.2`).

**Color Coding & Legend:**

* **Grey Squares:** Input tokens, non-critical tokens, or evicted tokens.

* **Orange/Red Squares:** "Thinking Tokens" or tokens with high predicted importance scores.

* **Blue Block:** Frozen LRM component.

* **Red Block:** Trainable Importance Predictor (IP) component.

* **Pink/Red Background Shading:** Highlights tokens involved in the "Evict" action.

* **Green Background Shading:** Highlights the final set of retained tokens within the "KV Cache Budget".

### Detailed Analysis

The diagram details a two-phase process:

**1. Training Phase:**

* Input tokens are fed into the frozen **Large Reasoning Model (LRM)**.

* The LRM produces "Thinking Tokens" (a sequence of hidden states or activations).

* The trainable **Importance Predictor (IP)** processes these tokens.

* The system calculates a **Mean Squared Error Loss** by comparing the IP's predictions against an aggregated target derived from the thinking tokens.

* The loss is backpropagated (**Backward** arrow) to update only the Importance Predictor (IP), leaving the LRM frozen.

**2. Inference Phase (Multi-Step Process):**

The process flows from left to right across multiple steps, governed by a **KV Cache Budget**.

* **Initial State:** A set of tokens (A, B, C, D, E...) with their predicted importance scores (e.g., A: ∞, B: ∞, C: 0.2, D: 0.1, E: 0.5).

* **Selection & Eviction:** Based on the scores and the budget, tokens are processed:

* Tokens with infinite score (∞) or high score (e.g., E: 0.5) are **Kept** or **Retained**.

* Tokens with low scores (e.g., C: 0.2, D: 0.1, F: 0.1, H: 0.2) are marked for **Evict**ion (shown with scissors icons and pink background).

* **Local Context:** A separate set of recent tokens (I, J, K) is always **Kept** in a local cache.

* **Budget Enforcement:** The system selects the critical tokens (those not evicted and the local context) to fit within the **KV Cache Budget** (green shaded area). For example, after eviction, the retained set might be A, B, E, G, I, J, K.

* **Next Step Generation:** The current token (X) and the retained KV cache are input to the **LRM with IP**. The model outputs the next token (Y) and a new predicted importance score (e.g., 0.2) for the current token (X), which is then added to the cache for the next step. This cycle repeats.

### Key Observations

* **Selective Retention:** The core mechanism is the dynamic selection of tokens based on a learned importance score, not just recency.

* **Budget-Conscious:** The entire inference process is constrained by a predefined **KV Cache Budget**, forcing a trade-off between context length and computational efficiency.

* **Hybrid Cache:** The cache appears to consist of two parts: a **budget-constrained set** of historically important tokens and a **fixed local context** of recent tokens.

* **Training Focus:** Only the Importance Predictor is trained, using a self-supervised signal (MSE loss on thinking tokens), making the approach potentially efficient.

* **Symbolism:** The snowflake (❄️) on the LRM and fire (🔥) on the IP clearly distinguish between frozen and trainable components.

### Interpretation

This diagram presents a method for making large reasoning models more efficient during inference. The key innovation is decoupling the "reasoning" capability (frozen in the massive LRM) from the "memory management" capability (trainable in the lightweight IP).

The system learns *what to remember*. Instead of naively keeping all previous tokens in the KV cache (which grows linearly and becomes prohibitively expensive), it trains a predictor to assign importance scores. During inference, it actively curates the cache, evicting low-importance tokens to stay within a fixed budget. This allows the model to maintain a long effective context (by keeping critical past information) while controlling computational cost and memory usage.

The "Thinking Tokens" and the use of MSE loss suggest the IP is trained to predict which intermediate states of the LRM's reasoning process are most valuable for future generation. The multi-step inference flow demonstrates a practical, closed-loop system where the model's own predictions (both for the next token and for token importance) continuously update its working memory. This represents a shift from static context windows to dynamic, learned memory management for large language models.

</details>

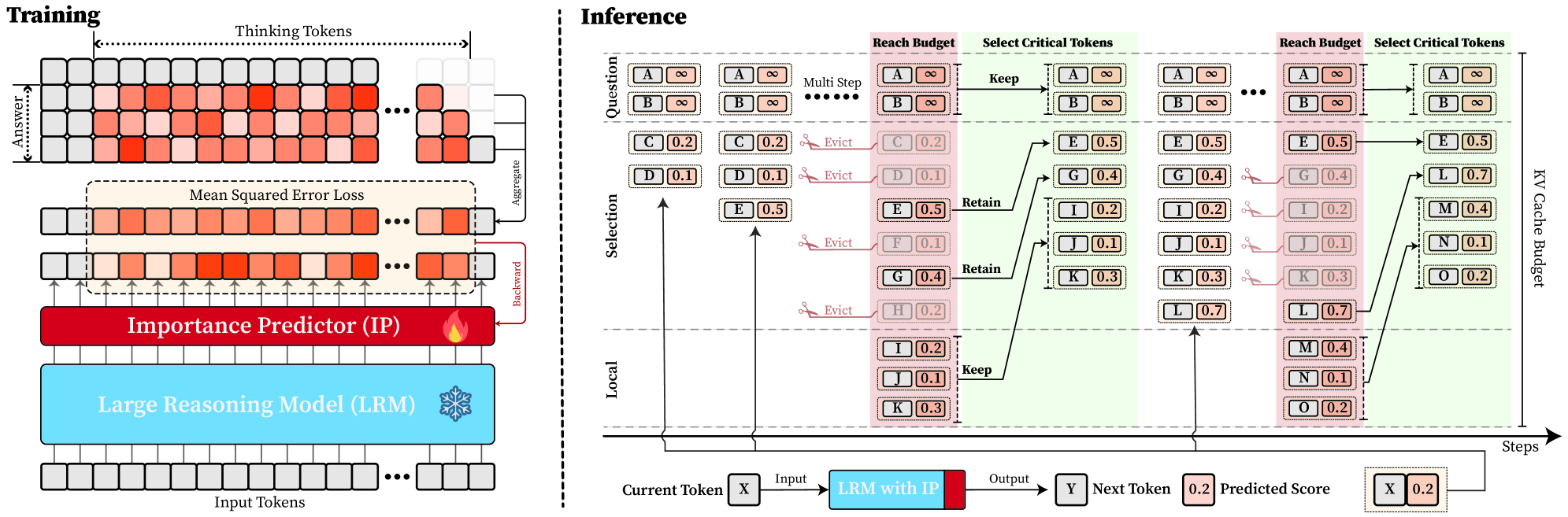

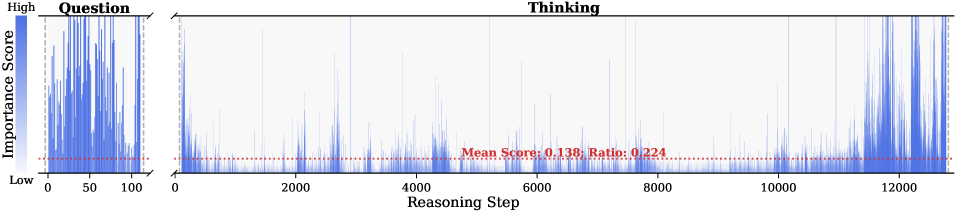

Figure 4: Overview of DynTS. (Left) Importance Predictor Training. The upper heatmap visualizes attention weights, where orange intensity represents the importance of thinking tokens to the answer. The lower part shows a LRM integrated with an Importance Predictor (IP) to learn these importance scores. (Right) Inference with KV Cache Selection. The model outputs the next token and a predicted importance score of the current token. When the cache budget is reached, the selection strategy retains the KV cache of question tokens, local tokens, and top-k thinking tokens based on the predicted importance score.

### 3.1 Sparsity for Thinking Tokens

Previous works (Bogdan et al., 2025; Zhang et al., 2023; Singh et al., 2024) have shown that attention weights (Eq. 4) serve as a reliable proxy for token importance. Building on this insight, we calculate an importance score for each question and thinking token by accumulating the attention they receive from all answer tokens. Formally, the importance scores are defined as:

$$

I_{x_{j}}=\sum_{i=1}^{K}\alpha_{a_{i},x_{j}},\qquad I_{t_{j}}=\sum_{i=1}^{K}\alpha_{a_{i},t_{j}}, \tag{6}

$$

where $I_{x_{j}}$ and $I_{t_{j}}$ denote the importance scores of the $j$ -th question token $x_{j}$ and thinking token $t_{j}$ . Here, $\alpha_{a_{i},x_{j}}$ and $\alpha_{a_{i},t_{j}}$ represent the attention weights from the $i$ -th answer token $a_{i}$ to the corresponding question or thinking token, and $K$ is the total number of answer tokens. We perform full autoregressive inference on LRMs to extract attention weights and compute token-level importance scores for both question and thinking tokens.

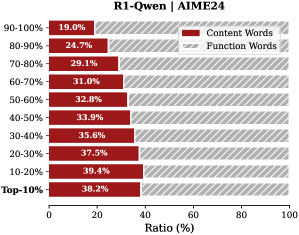

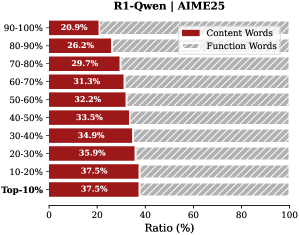

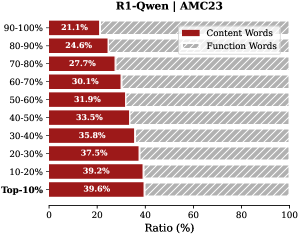

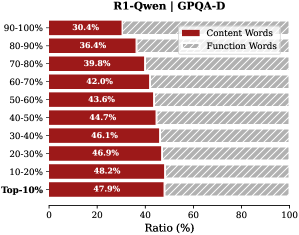

Observation. As illustrated in Fig. 2, the question tokens (left panel) exhibit consistently significant and dense importance scores. In contrast, the thinking tokens (right panel) display a highly sparse distribution. Despite the extensive reasoning trace (exceeding 12k tokens), only $21.1\$ of thinking tokens exceed the mean importance score. This indicates that the vast majority of reasoning steps exert only a marginal influence on the final answer.

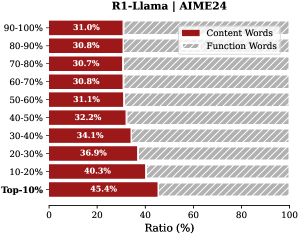

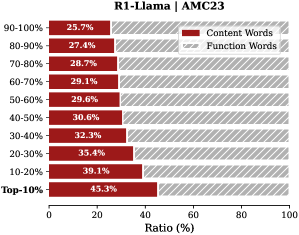

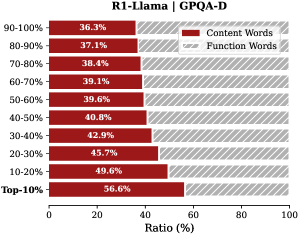

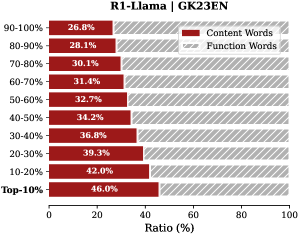

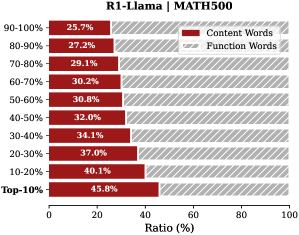

Analysis. Follow attention-based methods (Cai et al., 2025; Li et al., 2024; Cai et al., 2024), tokens with higher importance scores intuitively correspond to decision-critical reasoning steps, which are critical for the model to generate the final answer. The low-importance tokens serve as syntactic scaffolding or intermediate states that become redundant after reasoning progresses (We report the ratio of Content Words, see Appendix B.2). Consequently, we hypothesize that the model maintains close reasoning performance to that of the full token sequence, even when it selectively retains only these critical thinking tokens.

### 3.2 Pareto Principle in LRMs

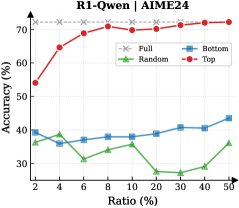

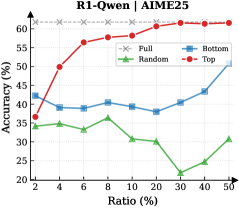

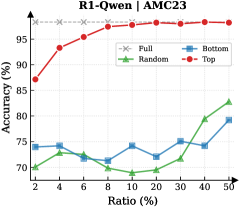

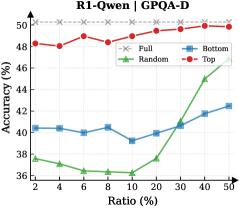

To validate the aforementioned hypothesis, we retain all question tokens while preserving only the top- $p\$ of thinking tokens ranked by importance score, and prompt the model to directly generate the final answer.

Observation. As illustrated in Fig. 3 (Left), the importance-based top- $p\$ selection strategy substantially outperforms both random- and bottom-selection baselines. Notably, the model recovers nearly its full performance (grey dashed line) when retaining only $\sim 30\$ thinking tokens with top importance scores. Fig. 3 (Right) further confirms this trend across six diverse datasets, where the performance polygon under the top- $30\$ retention strategy almost completely overlaps with the full thinking tokens.

Insights. These empirical results illustrate and reveal the Pareto Principle in LRM reasoning: Only a small subset of thinking tokens ( $30\$ ) with high importance scores serve as “pivotal nodes,” which are critical for the model to output a final answer, while the remaining tokens contribute negligibly to the outcome. This finding provides strong empirical support for LRMs’ KV cache compression, indicating that it is possible to reduce memory footprint and computational overhead without sacrificing performance.

## 4 Dynamic Thinking-Token Selection

Building on the Pareto Principle in LRMs, critical thinking tokens can be identified via the importance score computed by Equation 6. However, this computation requires the attention weights from the answer to the thinking tokens, which are inaccessible until the model completes the entire decoding stage. To address this limitation, we introduce an Importance Predictor that dynamically estimates the importance score of each thinking token during inference time. Furthermore, we design a decoding-time KV cache Selection Strategy that retains critical thinking tokens and evicts redundant ones. We refer to this approach as DynTS (Dyn amic T hinking Token S election), and the overview is illustrated in Fig. 4.

### 4.1 Importance Predictor

#### Integrate Importance Predictor in LRMs.

Transformer-based Large Language Models (LLMs) typically consist of stacked Transformer blocks followed by a language modeling head (Vaswani et al., 2017), where the output of the final block serves as a feature representation of the current token. Building on this architecture, we attach an additional lightweight MLP head to the final hidden state, named as Importance Predictor (Huang et al., 2024). It is used to predict the importance score of the current thinking token during model inference, capturing its contribution to the final answer. Formally, we define the modified LRM as a mapping function $\mathcal{M}$ that processes the input sequence $\mathbf{x}_{\leq t}$ to produce a dual-output tuple comprising the next token $x_{t+1}$ and the current importance score $s_{x_{t}}$ :

$$

\mathcal{M}(\mathbf{x}_{\leq t})\rightarrow(x_{t+1},s_{x_{t}}) \tag{7}

$$

#### Predictor Training.

To obtain supervision signals for training, we prompt the LRMs based on the training dataset to generate complete sequences denoted as $\{x_{1\dots M},t_{1\dots L},a_{1\dots K}\}$ , filtering out incorrect or incomplete reasoning. Here, $x$ , $t$ , and $a$ represent the question, thinking, and answer tokens, respectively. Based on the observation in Section § 3, the thinking tokens significantly outnumber answer tokens ( $L\gg K$ ), and question tokens remain essential. Therefore, DynTS only focuses on predicting the importance of thinking tokens. By utilizing the attention weights from answer to thinking tokens, we derive the ground-truth importance score $I_{t_{i}}$ for each thinking token according to Equation 6. Finally, the Importance Predictor parameters can be optimized by minimizing the Mean Squared Error (MSE) loss (Wang and Bovik, 2009) as follows:

$$

\mathcal{L}_{\text{MSE}}=\frac{1}{K}\sum_{i=1}^{K}(I_{t_{i}}-s_{t_{i}})^{2}. \tag{8}

$$

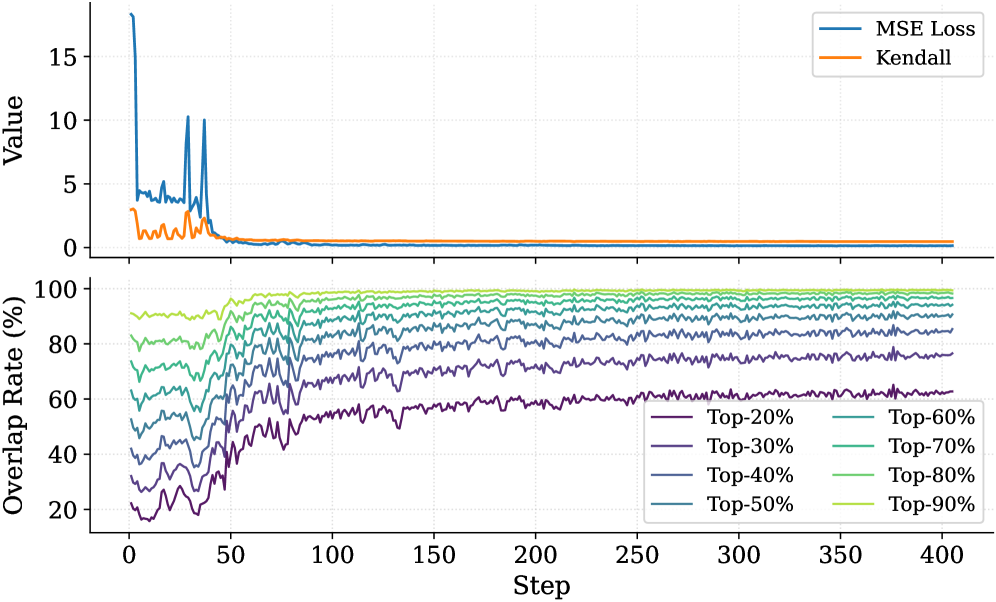

To preserve the LRMs’ original performance, we freeze the backbone parameters and optimize the Importance Predictor exclusively. The trained model can predict the importance of thinking tokens to the answer. This paper focuses on mathematical reasoning tasks. We optimize the Importance Predictor only on the MATH training set and validated across six other datasets (See Section § 6.1).

### 4.2 KV Cache Selection

During LRMs’ inference, we establish a maximum KV cache budget $B$ , which is composed of a question window $W_{q}$ , a selection window $W_{s}$ , and a local window $W_{l}$ , formulated as $B=W_{q}+W_{s}+W_{l}$ . Specifically, the question window stores the KV caches of question tokens generated during the prefilling phase, i.e., the window size $W_{q}$ is equal to the number of question tokens $M$ ( $W_{q}=M$ ). Since these tokens are critical for the final answer (see Section § 3), we assign an importance score of $+\infty$ to these tokens, ensuring their KV caches are immune to eviction throughout the inference process.

In the subsequent decoding phase, we maintain a sequential stream of tokens. Newly generated KV caches and their corresponding importance scores are sequentially appended to the selection window ( $W_{s}$ ) and the local window ( $W_{l}$ ). Once the total token count reaches the budget limit $B$ , the critical token selection process is triggered, as illustrated in Fig. 4 (Right). Within the selection window, we retain the KV caches of the top- $k$ tokens with the highest scores and evict the remainder. Simultaneously, drawing inspiration from (Chen et al., 2024; Zhang et al., 2023; Zhao et al., 2024), we maintain the KV caches within the local window to ensure the overall coherence of the subsequently generated sequence. This inference process continues until decoding terminates.

## 5 Theoretical Overhead Analysis

In DynTS, the KV cache selection strategy reduces computational overhead by constraining cache length, while the importance predictor introduces a slight overhead. In this section, we theoretically analyze the trade-off between these two components and derive the Break-even Condition required to achieve net computational gains.

Notions. Let $\mathcal{M}_{\text{base}}$ be the vanilla LRM with $L$ layers and hidden dimension $d$ , and $\mathcal{M}_{\text{opt}}$ be the LRM with Importance Predictor (MLP: $d\to 2d\to d/2\to 1$ ). We define the prefill length as $M$ and the current decoding step as $i\in\mathbb{Z}^{+}$ . For vanilla decoding, the effective KV cache length grows linearly as $S_{i}^{\text{base}}=M+i$ . While DynTS evicts $K$ tokens by the KV Cache Selection when the effective KV cache length reaches the budget $B$ . Resulting in the effective length $S_{i}^{\text{opt}}=M+i-n_{i}\cdot K$ , where $n_{i}=\max\left(0,\left\lfloor\frac{(M+i)-B}{K}\right\rfloor+1\right)$ denotes the count of cache eviction event at step $i$ . By leveraging Floating-Point Operations (FLOPs) to quantify computational overhead, we establish the following theorem. The detailed proof is provided in Appendix A.

**Theorem 5.1 (Computational Gain)**

*Let $\Delta\mathcal{C}(i)$ be the reduction FLOPs achieved by DynTS at decoding step $i$ . The gain function is derived as the difference between the eviction event savings from KV Cache Selection and the introduced overhead of the predictor:

$$

\Delta\mathcal{C}(i)=\underbrace{n_{i}\cdot 4LdK}_{\text{Eviction Saving}}-\underbrace{(6d^{2}+d)}_{\text{Predictor Overhead}}, \tag{9}

$$*

Based on the formulation above, we derive a critical corollary regarding the net computational gain.

**Corollary 5.2 (Break-even Condition)**

*To achieve a net computational gain ( $\Delta\mathcal{C}(i)>0$ ) at the $n_{i}$ -th eviction event, the eviction volume $K$ must satisfy the following inequality:

$$

K>\frac{6d^{2}+d}{n_{i}\cdot 4Ld}\approx\frac{1.5d}{n_{i}L} \tag{10}

$$*

This inequality provides a theoretical lower bound for the eviction volume $K$ . demonstrating that the break-even point is determined by the model’s architectural (hidden dimension $d$ and layer count $L$ ).

Table 1: Performance comparison of different methods on R1-Llama and R1-Qwen. We report the average Pass@1 and Throughput (TPS) across six benchmarks. “Transformers” denotes the full cache baseline, and “Window” represents the local window baseline.

| Transformers Window StreamingLLM | 47.3 18.6 20.6 | 215.1 447.9 445.8 | 28.6 14.6 16.6 | 213.9 441.3 445.7 | 86.5 59.5 65.0 | 200.6 409.4 410.9 | 46.4 37.6 37.8 | 207.9 408.8 407.4 | 73.1 47.0 53.4 | 390.9 622.6 624.6 | 87.5 58.1 66.1 | 323.4 590.5 592.1 | 61.6 39.2 43.3 | 258.6 486.7 487.7 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| SepLLM | 30.0 | 448.2 | 20.0 | 445.1 | 71.0 | 414.1 | 39.7 | 406.6 | 61.4 | 635.0 | 74.5 | 600.4 | 49.4 | 491.6 |

| H2O | 38.6 | 426.2 | 22.6 | 423.4 | 82.5 | 396.1 | 41.6 | 381.5 | 67.5 | 601.8 | 82.7 | 573.4 | 55.9 | 467.1 |

| SnapKV | 39.3 | 438.2 | 24.6 | 436.3 | 80.5 | 406.9 | 41.9 | 394.1 | 68.7 | 615.7 | 83.1 | 584.5 | 56.3 | 479.3 |

| R-KV | 44.0 | 437.4 | 26.0 | 434.7 | 86.5 | 409.5 | 44.5 | 394.9 | 71.4 | 622.6 | 85.2 | 589.2 | 59.6 | 481.4 |

| DynTS (Ours) | 49.3 | 444.6 | 29.3 | 443.5 | 87.0 | 412.9 | 46.3 | 397.6 | 72.3 | 631.8 | 87.2 | 608.2 | 61.9 | 489.8 |

| R1-Qwen | | | | | | | | | | | | | | |

| Transformers | 52.0 | 357.2 | 35.3 | 354.3 | 87.5 | 376.2 | 49.0 | 349.4 | 77.9 | 593.7 | 91.3 | 517.3 | 65.5 | 424.7 |

| Window | 41.3 | 650.4 | 31.3 | 643.0 | 82.0 | 652.3 | 45.9 | 634.1 | 71.8 | 815.2 | 85.0 | 767.0 | 59.5 | 693.7 |

| StreamingLLM | 42.0 | 655.7 | 29.3 | 648.5 | 85.0 | 657.2 | 45.9 | 631.1 | 71.2 | 824.0 | 85.8 | 786.1 | 59.8 | 700.5 |

| SepLLM | 38.6 | 650.0 | 31.3 | 647.6 | 85.5 | 653.2 | 45.6 | 639.5 | 72.0 | 820.1 | 84.4 | 792.2 | 59.6 | 700.4 |

| H2O | 42.6 | 610.9 | 33.3 | 610.7 | 84.5 | 609.9 | 48.1 | 593.6 | 74.1 | 780.1 | 87.0 | 725.4 | 61.6 | 655.1 |

| SnapKV | 48.6 | 639.6 | 33.3 | 633.1 | 87.5 | 633.2 | 46.5 | 622.0 | 74.9 | 787.4 | 88.2 | 768.7 | 63.2 | 680.7 |

| R-KV | 44.0 | 639.5 | 32.6 | 634.7 | 85.0 | 636.8 | 47.2 | 615.1 | 75.8 | 792.8 | 88.8 | 765.5 | 62.2 | 680.7 |

| DynTS (Ours) | 52.0 | 645.6 | 36.6 | 643.0 | 88.5 | 646.0 | 48.1 | 625.7 | 76.4 | 788.5 | 90.0 | 779.5 | 65.3 | 688.1 |

## 6 Experiment

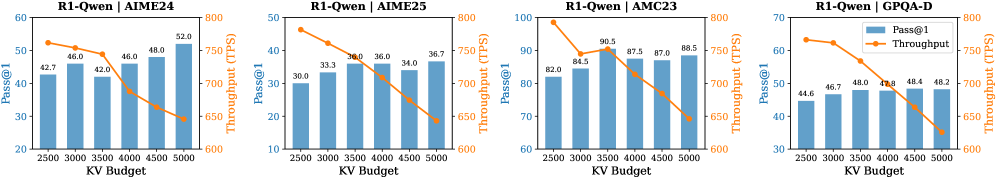

This section introduces experimental settings, followed by the results, ablation studies on retanind tokens and hyperparameters, and the Importance Predictor analysis. For more detailed configurations and additional results, please refer to Appendix C and D.

### 6.1 Experimental Setup

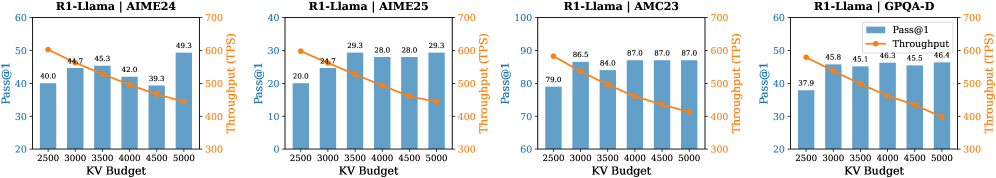

Models and Datasets. We conduct experiments on two mainstream LRMs: R1-Qwen (DeepSeek-R1-Distill-Qwen-7B) and R1-Llama (DeepSeek-R1-Distill-Llama-8B) (Guo et al., 2025). To evaluate the performance and robustness of our method across diverse tasks, we select five mathematical reasoning datasets of varying difficulty levels—AIME24 (Zhang and Math-AI, 2024), AIME25 (Zhang and Math-AI, 2025), AMC23 https://huggingface.co/datasets/math-ai/amc23, GK23EN (GAOKAO2023EN) https://huggingface.co/datasets/MARIO-Math-Reasoning/Gaokao2023-Math-En, and MATH500 (Hendrycks et al., 2021) —along with the GPQA-D (GPQA-Diamond) (Rein et al., 2024) scientific question-answering dataset as evaluation benchmarks.

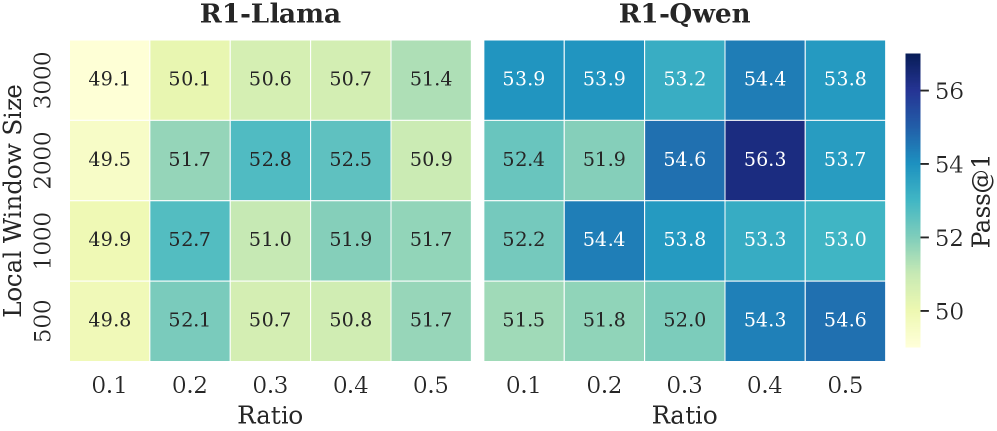

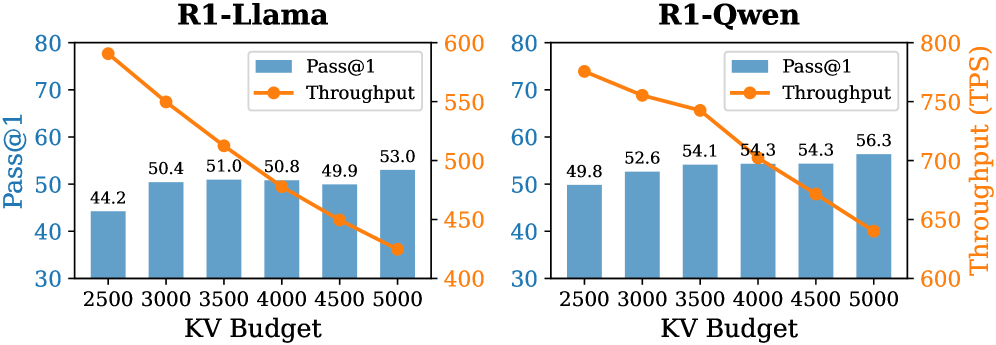

Implementation Details. (1) Training Settings: To train the importance predictor, we sample the model-generated contents with correct answers from the MATH training set and calculate the importance scores of thinking tokens. We freeze the model backbone and optimize only the predictor ( $3$ -layer MLP), setting the number of training epochs to 15, the learning rate to $5\text{e-}4$ , and the maximum sequence length to 18,000. (2) Inference Settings. Following (Guo et al., 2025), setting the maximum decoding steps to 16,384, the sampling temperature to 0.6, top- $p$ to 0.95, and top- $k$ to 20. We apply budget settings based on task difficulty. For challenging benchmarks (AIME24, AIME25, AMC23, and GPQA-D), we set the budget $B$ to 5,000 with a local window size of 2,000; For simpler tasks, the budget is set to 3,000 with a local window of 1,500 for R1-Qwen and 1,000 for R1-Llama. The token retention ratio in the selection window is set to 0.4 for R1-Qwen and 0.3 for R1-Llama. We generate 5 responses for each problem and report the average Pass@1 as the evaluation metric.

Baselines. Our approach focuses on compressing the KV cache by selecting critical tokens. Therefore, we compare our method against the state-of-the-art KV cache compressing approaches. These include StreamingLLM (Xiao et al., 2024), H2O (Zhang et al., 2023), SepLLM (Chen et al., 2024), and SnapKV (Li et al., 2024) (decode-time variant (Liu et al., 2025a)) for LLMs, along with R-KV (Cai et al., 2025) for LRMs. To ensure a fair comparison, all methods were set with the same token overhead and maximum budget. We also report results for standard Transformers and local window methods as evaluation baselines.

### 6.2 Main Results

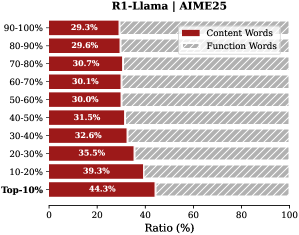

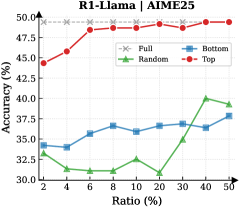

Reasoning Accuracy. As shown in Table 1, our proposed DynTS consistently outperforms all other KV cache eviction baselines. On R1-Llama and R1-Qwen, DynTS achieves an average accuracy of $61.9\$ and $65.3\$ , respectively, significantly surpassing the runner-up methods R-KV ( $59.6\$ ) and SnapKV ( $63.2\$ ). Notably, the overall reasoning capability of DynTS is on par with the Full Cache Transformers baseline ( $61.9\$ vs. $61.6\$ on R1-Llama, $65.3\$ vs. $65.5\$ on R1-Llama). Even outperforms the Transformers on several challenging tasks, such as AIME24 on R1-Llama, where it improves accuracy by $2.0\$ ; and AIME25 on R1-Qwen, where it improves accuracy by $1.3\$ .

Table 2: Ablation study on different token retention strategies in DynTS, where w.o. Q / T / L denotes the removal of Question tokens (Q), critical Thinking tokens (T), and Local window tokens (L), respectively. T-Random and T-Bottom represent strategies that select thinking tokens randomly and the tokens with the bottom-k importance scores, respectively.

| DynTS w.o. L w.o. Q | 49.3 40.6 19.3 | 29.3 23.3 14.6 | 87.0 86.5 59.0 | 46.3 46.3 38.1 | 72.3 72.0 47.8 | 87.2 85.5 59.8 | 61.9 59.0 39.8 |

| --- | --- | --- | --- | --- | --- | --- | --- |

| w.o. T | 44.0 | 27.3 | 85.0 | 44.0 | 71.5 | 85.9 | 59.6 |

| T-Random | 24.6 | 16.0 | 59.5 | 37.4 | 51.7 | 63.9 | 42.2 |

| T-Bottom | 20.6 | 15.3 | 59.0 | 37.3 | 47.3 | 59.5 | 39.8 |

| R1-Qwen | | | | | | | |

| DynTS | 52.0 | 36.6 | 88.5 | 48.1 | 76.4 | 90.0 | 65.3 |

| w.o. L | 42.0 | 32.0 | 87.5 | 46.3 | 75.2 | 87.0 | 61.6 |

| w.o. Q | 46.0 | 36.0 | 86.0 | 43.9 | 75.1 | 89.0 | 62.6 |

| w.o. T | 47.3 | 34.6 | 85.5 | 49.1 | 75.1 | 89.2 | 63.5 |

| T-Random | 46.0 | 32.6 | 84.5 | 47.5 | 73.8 | 86.9 | 61.9 |

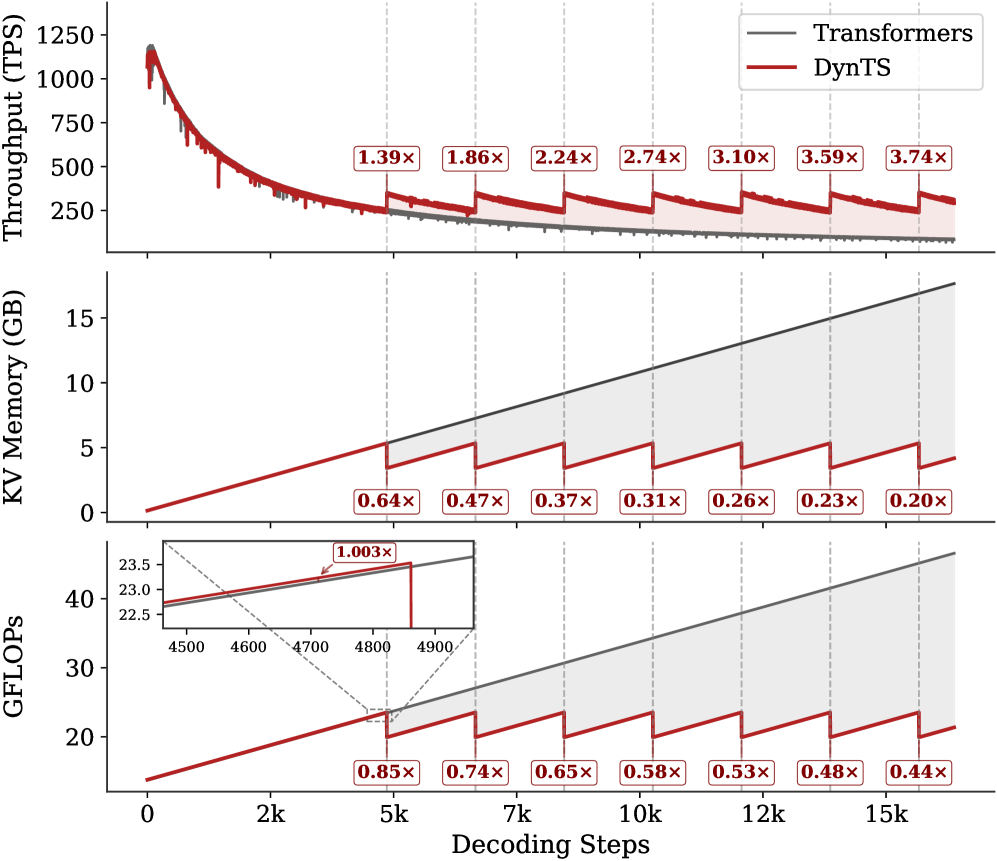

| T-Bottom | 38.0 | 30.0 | 80.0 | 44.3 | 69.8 | 83.3 | 57.6 |

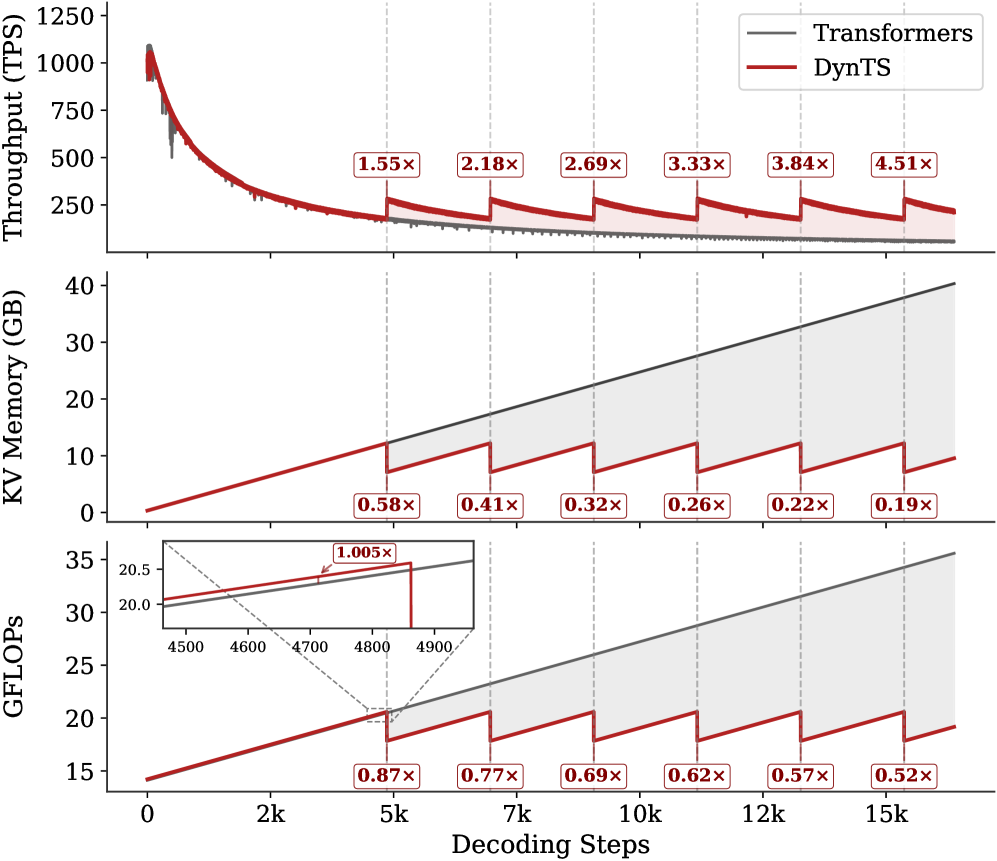

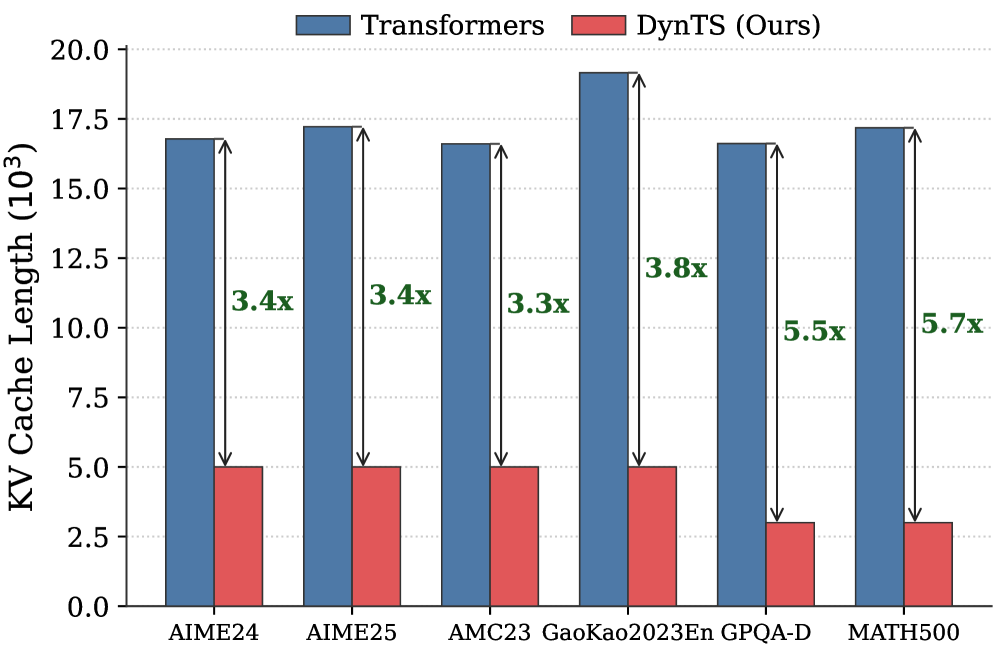

Inference Efficiency. Referring to Table 1, DynTS achieves $1.9\times$ and $1.6\times$ speedup compared to standard Transformers on R1-Llama and R1-Qwen, respectively, across all benchmarks. While DynTS maintains throughput comparable to other KV Cache compression methods. Further observing Figure 5, as the generated sequence length grows, standard Transformers suffer from linear accumulation in both memory footprint and compute overhead (GFLOPs), leading to continuous throughput degradation. In contrast, DynTS effectively bounds resource consumption. The distinctive sawtooth pattern illustrates our periodic compression mechanism, where the inflection points correspond to the execution of KV Cache Selection to evict the KV pairs of non-essential thinking tokens. Consequently, the efficiency advantage escalates as the decoding step increases, DynTS achieves a peak speedup of 4.51 $\times$ , compresses the memory footprint to 0.19 $\times$ , and reduces the compute overhead to 0.52 $\times$ compared to the full-cache baseline. The zoom-in view reveals that the computational cost drops below the baseline immediately after the first KV cache eviction. This illustrates that our experimental settings are rational, as they satisfy the break-even condition ( $K=900\geq\frac{1.5d}{n_{i}L}=192$ ) outlined in Corollary 5.2.

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## Multi-Panel Line Chart: Performance Comparison of Transformers vs. DynTS

### Overview

This image is a technical performance comparison chart consisting of three vertically stacked line plots sharing a common x-axis. It compares two systems: "Transformers" (represented by a gray line) and "DynTS" (represented by a red line) across three metrics—Throughput, KV Memory usage, and GFLOPs—as a function of decoding steps. The chart demonstrates the performance advantages of DynTS over standard Transformers, particularly as the sequence length (decoding steps) increases.

### Components/Axes

* **Shared X-Axis (Bottom):**

* **Label:** `Decoding Steps`

* **Scale:** Linear, from 0 to 15,000 (15k).

* **Major Tick Markers:** 0, 2k, 5k, 7k, 10k, 12k, 15k.

* **Top Panel - Y-Axis:**

* **Label:** `Throughput (TPS)` (Transactions Per Second).

* **Scale:** Linear, from 0 to 1250.

* **Major Tick Markers:** 0, 250, 500, 750, 1000, 1250.

* **Middle Panel - Y-Axis:**

* **Label:** `KV Memory (GB)` (Key-Value Memory in Gigabytes).

* **Scale:** Linear, from 0 to 40.

* **Major Tick Markers:** 0, 10, 20, 30, 40.

* **Bottom Panel - Y-Axis:**

* **Label:** `GFLOPs` (Giga Floating-Point Operations).

* **Scale:** Linear, from 15 to 35.

* **Major Tick Markers:** 15, 20, 25, 30, 35.

* **Legend (Top-Right of Top Panel):**

* **Position:** Top-right corner of the topmost chart.

* **Items:**

* A gray line labeled `Transformers`.

* A red line labeled `DynTS`.

* **Annotations:** Each panel contains red-bordered boxes with multiplier values (e.g., `1.55x`, `0.58x`) placed at specific decoding steps (5k, 7k, 10k, 12k, 15k). These indicate the performance ratio of DynTS relative to Transformers at those points.

* **Inset (Bottom Panel):** A small zoomed-in chart within the GFLOPs panel, focusing on the x-axis range of approximately 4500 to 4900 decoding steps.

### Detailed Analysis

**1. Top Panel: Throughput (TPS)**

* **Trend Verification:** Both lines show a steep, concave-upward decline in throughput as decoding steps increase from 0. The gray Transformers line declines smoothly. The red DynTS line follows a similar initial decline but exhibits a distinctive **sawtooth pattern**, with periodic sharp upward jumps followed by gradual declines.

* **Data Points & Annotations:**

* At ~0 steps: Both start near 1100 TPS.

* At 5k steps: DynTS shows a `1.55x` speedup over Transformers.

* At 7k steps: `2.18x` speedup.

* At 10k steps: `2.69x` speedup.

* At 12k steps: `3.33x` speedup.

* At 15k steps: `3.84x` speedup.

* A final annotation at the far right (beyond 15k) shows `4.51x`.

* **Interpretation:** DynTS maintains significantly higher throughput than Transformers as sequence length grows. The sawtooth pattern suggests periodic optimization or state reset events that temporarily boost performance.

**2. Middle Panel: KV Memory (GB)**

* **Trend Verification:** The gray Transformers line shows a **steady, linear increase** in memory usage. The red DynTS line also increases linearly but with a **sawtooth pattern of sharp drops**, resetting to a lower baseline at regular intervals.

* **Data Points & Annotations (DynTS Memory Reduction Factor vs. Transformers):**

* At 5k steps: `0.58x` (DynTS uses 58% of Transformers' memory).

* At 7k steps: `0.41x`.

* At 10k steps: `0.32x`.

* At 12k steps: `0.26x`.

* At 15k steps: `0.22x`.

* At the far right: `0.19x`.

* **Spatial Grounding:** The gray line is consistently above the red line. The shaded area between them represents the memory savings achieved by DynTS, which widens dramatically as steps increase.

* **Interpretation:** Transformers' memory grows without bound, which is a known limitation. DynTS employs a mechanism (likely eviction or compression) that periodically reduces KV cache memory, leading to massive savings (over 80% at 15k steps) and enabling longer context processing.

**3. Bottom Panel: GFLOPs**

* **Trend Verification:** Similar to the memory panel, the gray Transformers line shows a **steady, linear increase** in computational cost. The red DynTS line increases with a **sawtooth pattern of drops**.

* **Inset Analysis:** The inset zooms in on the region around 4500-4900 steps. It shows that just before a drop, DynTS's GFLOPs are slightly higher than Transformers (`1.005x` annotation), indicating a minor overhead before the optimization event triggers a significant reduction.

* **Data Points & Annotations (DynTS GFLOPs Reduction Factor vs. Transformers):**

* At 5k steps: `0.87x`.

* At 7k steps: `0.77x`.

* At 10k steps: `0.69x`.

* At 12k steps: `0.62x`.

* At 15k steps: `0.57x`.

* At the far right: `0.52x`.

* **Interpretation:** DynTS reduces the computational operations required for long-context inference. The savings grow with sequence length, complementing the memory savings and contributing to the higher throughput observed in the top panel.

### Key Observations

1. **Correlated Sawtooth Pattern:** The periodic drops in Memory and GFLOPs for DynTS are perfectly aligned vertically across the middle and bottom panels. These events correspond to the upward jumps in Throughput in the top panel, confirming they are the same optimization mechanism.

2. **Diverging Performance Gap:** The performance advantage of DynTS over Transformers (both in speedup and resource reduction) is not constant; it **widens progressively** as the number of decoding steps increases.

3. **Linear vs. Bounded Growth:** Transformers exhibit linear, unbounded growth in memory and compute. DynTS transforms this into a bounded, sawtooth growth pattern, which is crucial for scalability.

4. **Minor Overhead:** The inset in the GFLOPs chart reveals a very slight (`0.5%`) computational overhead for DynTS immediately before its optimization cycle, which is negligible compared to the subsequent ~50% reduction.

### Interpretation

This chart provides strong empirical evidence for the efficacy of the "DynTS" system in addressing the core scalability challenges of standard Transformer models for long-sequence inference.

* **What the data suggests:** The data demonstrates that DynTS implements a dynamic, periodic optimization strategy for the Key-Value (KV) cache. This strategy successfully curbs the linear growth in memory and computation that plagues standard Transformers.

* **How elements relate:** The three panels are causally linked. The periodic KV cache management events (seen as drops in Memory and GFLOPs) directly cause the periodic boosts in Throughput. This creates a virtuous cycle: saving memory and compute enables higher throughput, which is sustained over longer sequences.

* **Notable Implications:** The widening performance gap indicates that DynTS becomes **increasingly more valuable** for longer contexts. The final `4.51x` throughput and `0.19x` memory usage at extreme lengths suggest it could enable applications (e.g., very long document analysis, extended dialogues) that are prohibitively expensive with standard Transformers. The system trades a tiny, periodic computational overhead for massive, sustained savings in memory and operations, leading to a net positive impact on performance and scalability.

</details>

Figure 5: Real-time throughput, memory, and compute overhead tracking over total decoding step. The inflection points in the sawtooth correspond to the steps where DynTS executes KV Cache Selection.

### 6.3 Ablation Study

Impact of Retained Token. As shown in Tab. 2, the full DynTS method outperforms all ablated variants, achieving the highest average accuracy on both R1-Llama ( $61.9\$ ) and R1-Qwen ( $65.3\$ ). This demonstrates that all retained tokens of DynTS are critical for the model to output the correct final answer. Moreover, we observe that the strategy for selecting thinking tokens plays a critical role in the model’s reasoning performance. When some redundant tokens are retained (T-Random and T-Bottom strategies), there is a significant performance drop compared to completely removing thinking tokens ( $59.6\$ on R1-Llama and $63.5\$ on R1-Qwen). This finding demonstrates the effectiveness of our Importance Predictor to identify critical tokens. It also explains why existing KV cache compression methods hurt model performance: inadvertently retaining redundant tokens. Finally, the local window is crucial for preserving local linguistic coherence, which contributes to stable model performance.

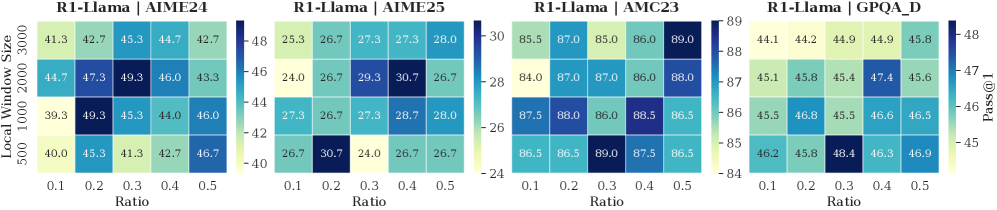

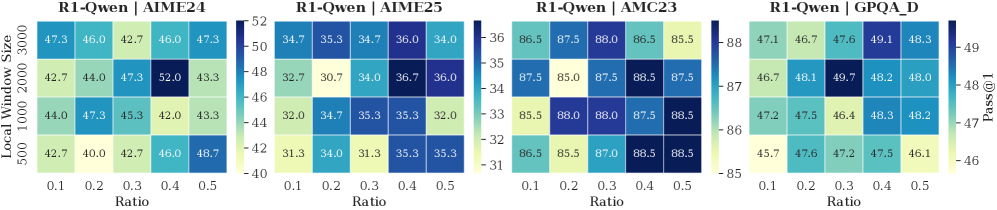

<details>

<summary>x8.png Details</summary>

### Visual Description

## Heatmap Comparison: R1-Llama vs. R1-Qwen Performance (Pass@1)

### Overview

The image displays two side-by-side heatmaps comparing the performance of two models, "R1-Llama" and "R1-Qwen," across different configurations. Performance is measured by the "Pass@1" metric, visualized through a color gradient. The analysis explores how this metric changes with variations in "Local Window Size" and "Ratio."

### Components/Axes

* **Titles:** Two main titles are positioned at the top: "R1-Llama" (left heatmap) and "R1-Qwen" (right heatmap).

* **Y-Axis (Left):** Labeled "Local Window Size." It has four discrete, categorical values listed from top to bottom: 3000, 2000, 1000, 500.

* **X-Axis (Bottom):** Labeled "Ratio." It has five discrete, categorical values listed from left to right: 0.1, 0.2, 0.3, 0.4, 0.5.

* **Color Scale/Legend (Right):** A vertical color bar labeled "Pass@1" on its right side. The scale ranges from approximately 50 (light yellow) to 56 (dark blue). Tick marks are present at 50, 52, 54, and 56.

* **Data Grids:** Each heatmap is a 4-row by 5-column grid. Each cell contains a numerical value representing the Pass@1 score for a specific combination of Local Window Size and Ratio.

### Detailed Analysis

**R1-Llama Heatmap (Left):**

* **Row 1 (Local Window Size 3000):** Values from left to right (Ratio 0.1 to 0.5): 49.1, 50.1, 50.6, 50.7, 51.4. The color transitions from light yellow to light green.

* **Row 2 (Local Window Size 2000):** Values: 49.5, 51.7, 52.8, 52.5, 50.9. Colors range from light yellow to teal, with the highest value (52.8) at Ratio 0.3.

* **Row 3 (Local Window Size 1000):** Values: 49.9, 52.7, 51.0, 51.9, 51.7. Colors are a mix of light yellow and teal.

* **Row 4 (Local Window Size 500):** Values: 49.8, 52.1, 50.7, 50.8, 51.7. Colors are similar to Row 3.

**R1-Qwen Heatmap (Right):**

* **Row 1 (Local Window Size 3000):** Values: 53.9, 53.9, 53.2, 54.4, 53.8. Colors are shades of medium blue.

* **Row 2 (Local Window Size 2000):** Values: 52.4, 51.9, 54.6, 56.3, 53.7. This row contains the highest value in the entire chart (56.3 at Ratio 0.4), shown in dark blue.

* **Row 3 (Local Window Size 1000):** Values: 52.2, 54.4, 53.8, 53.3, 53.0. Colors are shades of blue.

* **Row 4 (Local Window Size 500):** Values: 51.5, 51.8, 52.0, 54.3, 54.6. Colors range from light blue to medium blue.

### Key Observations

1. **Overall Performance Gap:** The R1-Qwen model consistently achieves higher Pass@1 scores than the R1-Llama model across all tested configurations. The R1-Qwen cells are predominantly blue (scores >52), while R1-Llama cells are mostly yellow-green (scores <53).

2. **Peak Performance:** The absolute highest Pass@1 score (56.3) is achieved by R1-Qwen with a Local Window Size of 2000 and a Ratio of 0.4.

3. **Sensitivity to Parameters:**

* For **R1-Llama**, performance does not show a strong, consistent trend with increasing Ratio or decreasing Window Size. The highest scores are scattered (e.g., 52.8 at Size 2000/Ratio 0.3, 52.7 at Size 1000/Ratio 0.2).

* For **R1-Qwen**, there is a more noticeable pattern. Performance tends to be higher at moderate Ratios (0.3-0.5) compared to the lowest Ratio (0.1). The configuration of Size 2000/Ratio 0.4 is a clear outlier peak.