# Visual Generation Unlocks Human-Like Reasoning through Multimodal World Models

**Authors**: Jialong Wu, Xiaoying Zhang, Hongyi Yuan, Xiangcheng Zhang, Tianhao Huang, Changjing He, Chaoyi Deng, Renrui Zhang, Youbin Wu, Mingsheng Long

[1] Tsinghua University [2] ByteDance Seed [*] Work done at ByteDance Seed [†] Corresponding authors

(January 27, 2026)

## Abstract

Humans construct internal models of the world and reason by manipulating the concepts within these models. Recent advances in artificial intelligence (AI), particularly chain-of-thought (CoT) reasoning, approximate such human cognitive abilities, where world models are believed to be embedded within large language models. Expert-level performance in formal and abstract domains such as mathematics and programming has been achieved in current systems, which rely predominantly on verbal reasoning as their primary information-processing pathway. However, they still lag far behind humans in domains like physical and spatial intelligence, which require richer representations and prior knowledge. The emergence of unified multimodal models (UMMs) capable of both verbal and visual generation has therefore sparked interest in more human-like reasoning grounded in complementary multimodal pathways, though a clear consensus on their benefits has not yet been reached. From a world-model perspective, this paper presents the first principled study of when and how visual generation benefits reasoning. Our key position is the visual superiority hypothesis: for certain tasks–particularly those grounded in the physical world–visual generation more naturally serves as world models, whereas purely verbal world models encounter bottlenecks arising from representational limitations or insufficient prior knowledge. Theoretically, we formalize internal world modeling as a core component of deliberate CoT reasoning and analyze distinctions among different forms of world models from both informativeness and knowledge aspects. Empirically, we identify and design tasks that necessitate interleaved visual-verbal CoT reasoning, constructing a new evaluation suite, VisWorld-Eval. Through controlled experiments on a state-of-the-art UMM, we show that interleaved CoT significantly outperforms purely verbal CoT on tasks that favor visual world modeling. 
Conversely, it offers no clear advantage for tasks that do not require explicit visual modeling. Together, these insights and findings clarify the applicability and potential of multimodal world modeling and reasoning for more powerful, human-like multimodal AI. We publicly release our evaluation suite to facilitate further research.

[Project Lead] Jialong Wu, Mingsheng Long, Xiaoying Zhang. [Project Page] https://thuml.github.io/Reasoning-Visual-World

## 1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: World Models in Human Minds and Multimodal AI Reasoning

### Overview

This image is a technical diagram or infographic illustrating the concept of "World Models" in human cognition and how similar principles are applied to reasoning in Multimodal AI. It is divided into three primary horizontal sections, each with a blue-bordered header. The diagram uses a combination of illustrations, text, equations, and example outputs to draw parallels between human mental processes and AI reasoning capabilities.

### Components/Axes

The diagram is structured into three main sections:

1. **Top Section: "World Models in Human Minds"**

* **Left Sub-section: "World Model: Mental Model of the World"**

* Illustration: A person thinking, with a thought bubble containing a flowchart and a globe.

* Text: "Approximate" and "Feedback" with arrows pointing to a realistic image of Earth.

* **Right Sub-section: "Dual Representations of World Knowledge"**

* Two columns:

* **Left Column: "Verbal/Symbolic Knowledge"**

* Illustration: A person shooting a basketball.

* Mathematical equations: `y = ax² + bx + c` and `F = ma`.

* Text: "Dislike in Daily Life" (in red).

* **Right Column: "Visual/Imagery Knowledge"**

* Illustration: A basketball trajectory diagram over a court.

* A red YouTube play button icon.

* Text: "Prefer in Daily Life" (in green).

2. **Middle Section: "Reasoning with Verbal World Modeling in Multimodal AI"**

* This section is divided into three vertical columns, each with a title and an example.

* **Column 1: "Mathematical Reasoning" & "Puzzle Solving"**

* Contains a math problem and its step-by-step solution.

* Contains a puzzle state description.

* **Column 2: "Travel Planning"**

* Contains a task description and a structured plan with `<think>`, `STATE`, and `ACTION` tags.

* **Column 3: "Everyday Activity Planning"**

* Contains a goal and a sequence of images showing cooking steps.

* Contains a `<think>` block describing the cooking process.

3. **Bottom Section: "Reasoning with Visual World Modeling in Multimodal AI"**

* **Title: "Real-World Spatial Reasoning"**

* **Left:** A question box: "When you took the photo in Figure 1, where was the iron refrigerator located relative to you?" with two small interior photos below.

* **Center & Right:** A sequence of three larger photos showing different views of a room (a doorway, a fireplace, a window). Each photo is accompanied by a `<think>` block of text describing the AI's reasoning process to locate the refrigerator.

### Detailed Analysis / Content Details

**Top Section Content:**

* **Text Transcription:**

* Main Header: "World Models in Human Minds"

* Left Sub-header: "World Model: Mental Model of the World"

* Right Sub-header: "Dual Representations of World Knowledge"

* Labels: "Approximate", "Feedback", "Verbal/Symbolic Knowledge", "Visual/Imagery Knowledge", "Dislike in Daily Life", "Prefer in Daily Life".

* Equations: `y = ax² + bx + c`, `F = ma`.

**Middle Section Content (Verbal World Modeling):**

* **Mathematical Reasoning:**

* **Question:** "If a > 1, then the sum of the real solutions of √(a - √(a + x)) = x is equal to"

* **Response:** "<think> To solve the equation √(a - √(a + x)) = x, let's start by squaring both... (a - √(a + x)) = x² => √(a + x) = a - x². Rearrange to isolate the inner square root term: (a - x²)² = a + x => a² - 2ax² + (x²)² = a + x => x⁴ - 2ax² - x + a² - a = 0 ..."

* **Puzzle Solving:**

* **Text:** "<think>From S at [452, 59], the only available move is West to [359, 59] ......."

* **Travel Planning:**

* **Task:** "Plan a trip with a budget of $1,700."

* **Plan:** "<think>STATE: Initial Budget: $1700, Spent: $0. Day 1: transportation: PENDING. Available: Flight F3573659: $474. ACTION: Plan day 1 transportation. Select Flight F3573659. NEXT STATE: After this action, you've spent $474, leaving you $1226 from your $1700 budget..."

* **Legend:** Green box labeled "State/Observation", Pink box labeled "Action".

* **Everyday Activity Planning:**

* **Goal:** "Cooking tomato and eggs"

* **Text:** "<think>... ACTION: Cook the eggs in the pan. STATE: This action changes the state of the eggs from liquid to a partially cooked state. The eggs are now partially cooked and are being transformed into curds. The heat from the pan cooks the eggs, and the stirring action ensures even cooking..."

**Bottom Section Content (Visual World Modeling):**

* **Question:** "When you took the photo in Figure 1, where was the iron refrigerator located relative to you?"

* **Reasoning Sequence (Transcribed from `<think>` blocks):**

1. "<think>First, let's analyze the images. [...] It's not visible in that initial view, so I need to change my perspective."

2. "The refrigerator is not visible in this 90-degree left turn view, [...] To be thorough, I will also check the view to the right."

3. "[...] My initial turn was 90 degrees left, but the refrigerator isn't at that exact angle. Let's try a smaller turn to the left. [...]"

### Key Observations

1. **Structural Parallelism:** The diagram explicitly parallels human cognitive processes (top section) with AI reasoning frameworks (middle and bottom sections). The "Dual Representations" in humans map to "Verbal World Modeling" and "Visual World Modeling" in AI.

2. **Use of `<think>` Tags:** The AI reasoning examples in both verbal and visual sections are annotated with `<think>` blocks, simulating an internal monologue or chain-of-thought process, mirroring the human "thought bubble" in the top section.

3. **Preference Dichotomy:** The human section highlights a preference for visual/imagery knowledge in daily life ("Prefer in Daily Life") over verbal/symbolic knowledge ("Dislike in Daily Life").

4. **Task Diversity:** The verbal modeling section demonstrates application across diverse domains: abstract math, logistics (travel), and procedural tasks (cooking). The visual section focuses on spatial reasoning.

5. **Iterative Process:** The visual reasoning example shows an iterative, hypothesis-testing approach ("not visible... need to change perspective... try a smaller turn").

### Interpretation

This diagram serves as a conceptual framework arguing that advanced AI, particularly multimodal AI, should emulate the structure of human world models to achieve robust reasoning.

* **Core Thesis:** Human intelligence relies on an internal, approximate world model that integrates both verbal/symbolic and visual/imagery knowledge. The diagram posits that for AI to reason effectively about the real world, it must develop analogous internal models.

* **Relationship Between Elements:** The top section establishes the human cognitive foundation. The middle section shows how AI can use a *verbal* world model (structured states, actions, and symbolic reasoning) to plan and solve problems. The bottom section shows how AI can use a *visual* world model (understanding spatial relationships and perspective from images) to answer questions about the physical world.

* **Underlying Message:** The inclusion of the "Dislike/Prefer" labels suggests that while symbolic reasoning is powerful, grounding AI in visual and experiential knowledge (like human preference) may lead to more intuitive and applicable intelligence. The `<think>` tags are crucial—they indicate that the reasoning process itself, not just the final output, is a key component of the model.

* **Investigative Reading:** The diagram implies that current AI often excels at one type of reasoning (e.g., verbal/symbolic for LLMs) but true "world modeling" requires the integration of both streams, much like the human mind. The travel planning example shows a stateful, grounded process, while the spatial reasoning example shows an embodied, perspective-taking process. Together, they illustrate a move from static pattern recognition towards dynamic, model-based reasoning.

</details>

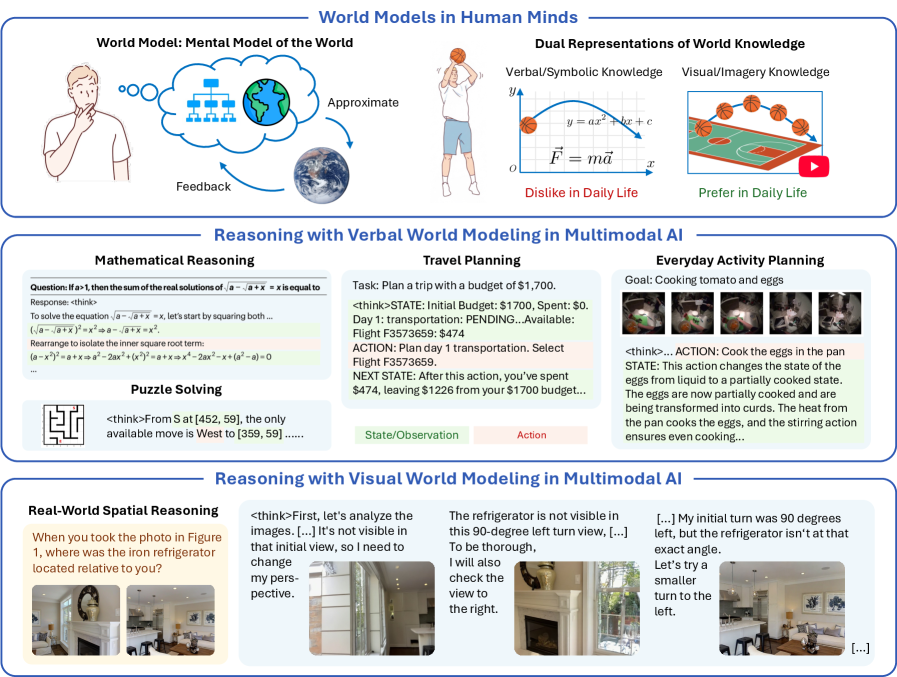

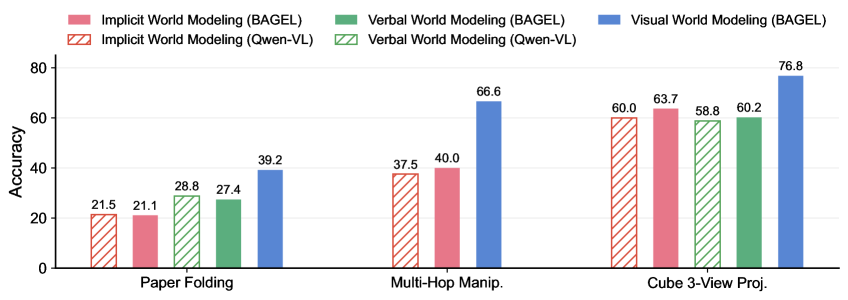

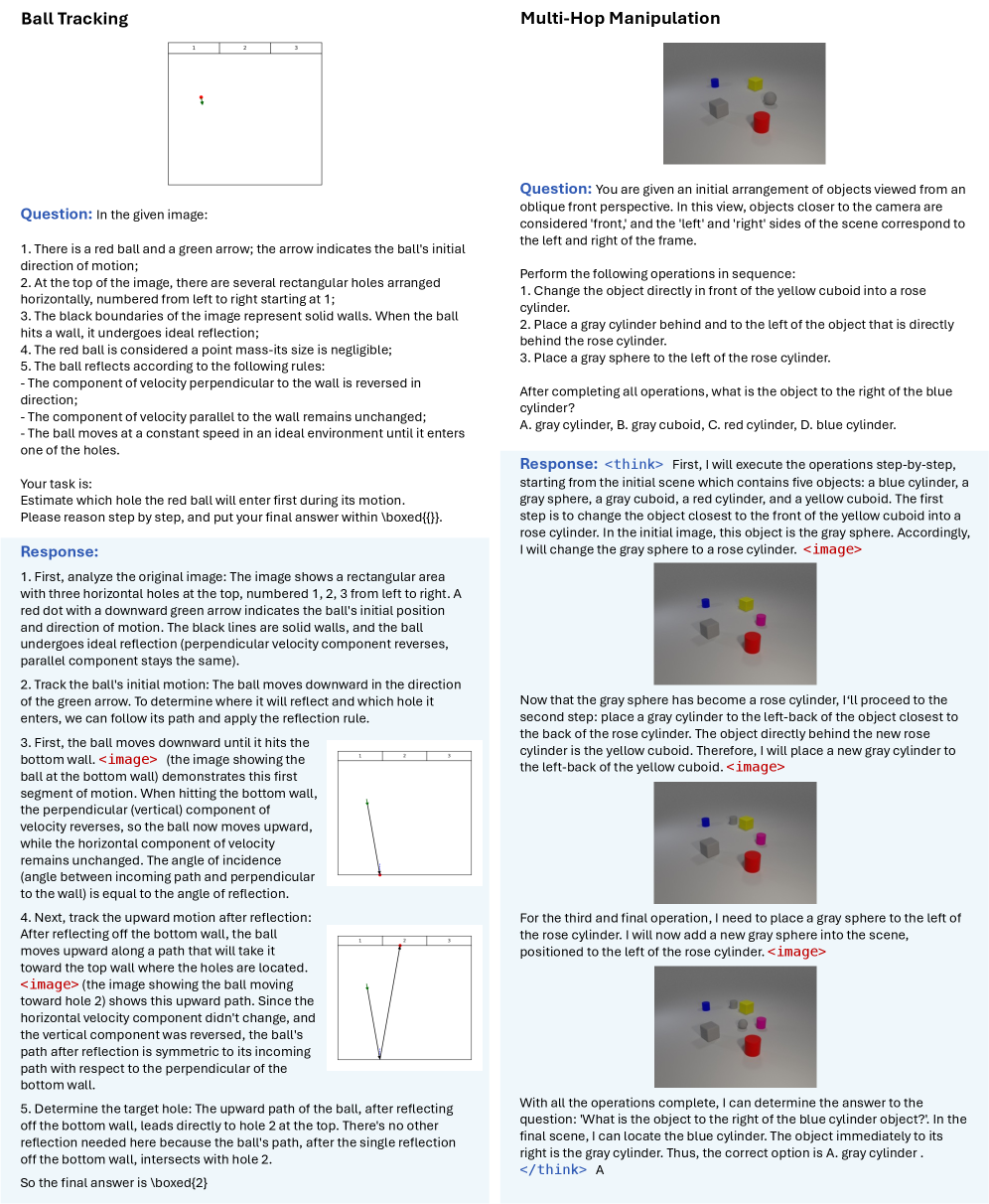

Figure 1: Overview of a world-model perspective on multimodal reasoning. (a) Humans construct mental models of the world, representing information and knowledge through two complementary channels–verbal and visual–to support reasoning, planning, and decision-making. (b) Recent advances in large language models (LLMs) and vision language models (VLMs) largely rely on verbal chain-of-thought reasoning, leveraging primarily verbal and symbolic world knowledge. (c) Unified multimodal models (UMMs) open a new paradigm by using visual generation for visual world modeling, advancing more human-like reasoning on tasks grounded in the physical world. Examples of reasoning with verbal world modeling are adapted from Guo et al. [18], Du et al. [14], Chen et al. [9], Zhang et al. [72].

Humans construct internal mental models of the external world that represent objects and concepts, along with their relationships, structures, and operational mechanisms [11, 16]. These models support reasoning and decision-making by enabling mental simulation, allowing individuals to anticipate the outcome of actions without actually taking them [40]. For example, if a glass of water is spilled on the table, people can rapidly mentally simulate the ensuing events: the water falling downward, spreading across the surface, and potentially dripping onto the floor. Such predictions lead them to quickly move valuable items away or reach for a towel. Beyond physical systems, mental models also extend to any domain where relational structures can be simulated, such as mathematics and logic [31, 32], making them fundamental to how humans understand and interact with all aspects of the world.

Cross-disciplinary researchers in philosophy, psychology, cognitive science, and related fields have a long history of developing computational models of human mental models [44]. Among them, artificial intelligence (AI) shares a core ambition of building machines that reason like people. Although debates remain, recent breakthroughs, especially in large language models (LLMs) and chain-of-thought (CoT) reasoning, have made a substantial step towards approximating human reasoning grounded in mental models of the world, often referred to as world models [24, 34] in the AI literature. During chain-of-thought reasoning, LLMs explore, reflect, and backtrack within the structured solution space, guided by world knowledge acquired through large-scale pre-training. These capabilities have already driven progress in diverse domains, including programming [18], mathematics [57, 18], scientific discovery [53], clinical medicine [58], and robotics [42].

Such reasoning capabilities have also been extended to multimodal AI systems, particularly vision language models (VLMs) [28, 6, 19, 70]. These systems typically incorporate visual inputs by aligning visual representations with the embedding space of LLMs, resulting in reasoning that remains primarily constrained to a linguistic space. In contrast, human mental models operate over multiple forms of mental representations. Dual-coding theory [45] suggests that the mind processes information through two complementary codes: verbal and imagery (particularly visual) representations. These pathways can function independently but often collaborate to support reasoning. Indeed, visual imagery has been shown to have advantages over words in memory encoding and retrieval [33], and individuals with aphantasia, who lack the ability to visualize mental imagery, exhibit worse performance on tasks such as visual search [43]. This evidence from psychology and cognitive science therefore suggests that the absence of a dedicated visual information pathway may explain why current multimodal AI systems excel in formal and abstract domains dominated by verbal world knowledge, yet continue to fall far short of human performance on tasks involving physical and spatial reasoning [49, 8], which fundamentally depend on visual world modeling.

Next-generation multimodal AI systems are increasingly built upon unified multimodal models (UMMs) [54, 63, 62, 13], which seamlessly integrate both verbal and visual generation capabilities. The newly introduced visual generation component offers the potential to explicitly realize visual world modeling, a critical element of multimodal world models in human-like reasoning that current systems largely lack. This naturally raises the question: Can current UMMs truly leverage their visual generation capability to enhance reasoning and thereby narrow the performance gap between multimodal AI and humans? A growing body of preliminary research [36, 77, 38, 76, 17] has begun exploring this question from different perspectives. However, the findings so far remain inconclusive. Reported empirical results are mixed, with no consistent evidence that visual generation reliably improves reasoning performance. Moreover, the evaluation tasks used in current studies are designed heuristically, lacking a principled basis for understanding when and how visual generation can meaningfully contribute to multimodal reasoning.

In this paper, we present the first principled study of when and how visual generation benefits reasoning from a world-model perspective (see Figure 1), making both theoretical and empirical contributions.

Theoretically, we rigorously bridge the concepts of world models and reasoning. (1) World model formulations: We formulate multimodal world models to approximate the underlying multi-observable Markov decision processes (MOMDP) of tasks, and define two fundamental capabilities of world models, namely world reconstruction and world simulation. (2) World model-based reasoning: To realize world models for reasoning, we introduce three reasoning formulations. Two rely solely on verbal CoTs through implicit or verbal world modeling, while the third interleaves verbal and visual CoTs that explicitly incorporate visual generation as a form of visual world modeling. (3) The visual superiority hypothesis: Under this framework, we analyze the distinctions among different world models, highlighting the richer informativeness and complementary prior knowledge afforded by visual world modeling. These insights motivate our central hypothesis that visual world modeling is superior for certain tasks, particularly those grounded in the physical world.

Empirically, we validate these insights through a series of controlled experiments. (4) The VisWorld-Eval suite: We identify and design tasks that specifically isolate and demand each atomic world model capability, forming a new evaluation suite to facilitate future research. This suite, VisWorld-Eval, collects seven tasks spanning both synthetic and real-world domains. (5) Empirical evaluation: Experiments with a state-of-the-art UMM [13] on VisWorld-Eval reveal findings consistent with our insights and theoretical analysis. In tasks where verbal world modeling suffers from representational bottlenecks or insufficient prior knowledge, interleaved CoT delivers substantial performance improvements. By contrast, it offers no clear advantages in tasks such as mazes and Sokoban, whose simple states do not require explicit visual world modeling. We further conduct dedicated analyses, including evidence revealing emergent implicit world modeling in the maze task.

We hope this work provides early evidence for the central role of multimodal world models in general-purpose AI, in which complementary verbal and visual knowledge emerge from generative modeling across modalities, with the latter being especially valuable for bringing human-like intelligence into the physical world.

## 2 Related Work

World models. The field of world models is rapidly evolving, yet remains far from reaching consensus on definitions or methodologies. Although psychology and cognitive science suggest that human mental models rely on compact representations that discard irrelevant details, how to scale approaches capable of learning such abstract representations [48, 26, 34] to arbitrary domains and modalities is still unclear. Consequently, most current techniques preserve complete information of observations, either through reconstructable latent representations [24, 25] or directly at the level of raw data. Prominent examples include modern video generation world models [12, 1, 2, 64], which capture concrete pixel-level dynamics. In contrast, language inherently provides a higher level of abstraction, making it more similar to human mental representations [60, 65, 59, 71, 9]. This motivates the promise of unified multimodal models that generate both language and visuals as a new direction for building more human-like world models.

Unified multimodal models. Multimodal understanding [28, 6, 19] and visual generation [47, 50] have long developed in isolation. Recently, there has been growing interest in integrating these two capabilities into a single unified model. This can be straightforwardly achieved by forwarding the representations of vision language models to an external visual generation module [56, 46]. A more unified approach is to model both language and visual modalities within a single backbone. While language is predominantly modeled through autoregressive next-token prediction, the design space of visual modalities spans a wide spectrum, from discrete tokenization with autoregressive [62, 54, 63] or masked modeling [66, 22], to continuous tokenization with diffusion or flow-based modeling [75, 41, 13]. Among these efforts, BAGEL [13] is one of the most widely adopted open-source models achieving state-of-the-art performance. Despite substantial progress in building unified multimodal models (UMMs), existing evaluations still primarily assess their understanding and generation capabilities separately. One widely recognized advantage of UMMs lies in leveraging their reasoning abilities to handle complex instructions and thereby enhance visual generation or editing [74, 21]. Yet when and how visual generation, in turn, enhances reasoning remains insufficiently explored, lacking solid empirical evidence and community consensus.

Benchmarking visual generation for reasoning. This paper contributes to a growing line of research on visual generation for reasoning. RealUnify [52] and Uni-MMMU [77] design tasks in which generation is expected to enhance reasoning, but report mixed results without revealing clear trends regarding the benefits of visual generation. ROVER [38] reveals fundamental limitations of current models in generating meaningful visual reasoning steps, often resulting in minimal or even negative gains in final accuracy. In contrast, MIRA [76] conducts a sanity test by providing manually annotated visual cues, thereby bypassing the evaluation of visual world modeling capability. While the aforementioned works evaluate zero-shot performance, ThinkMorph [17] fine-tunes UMMs to reveal emergent reasoning behaviors but restricts each CoT to a single intermediate image, thereby not fully exploiting the potential of interleaved CoT. Our work distinguishes itself through a world-model perspective that enables a principled investigation, allowing us to both demonstrate and systematically explain when visual generation yields positive gains and when it does not.

## 3 A World Model Perspective on Multimodal Reasoning

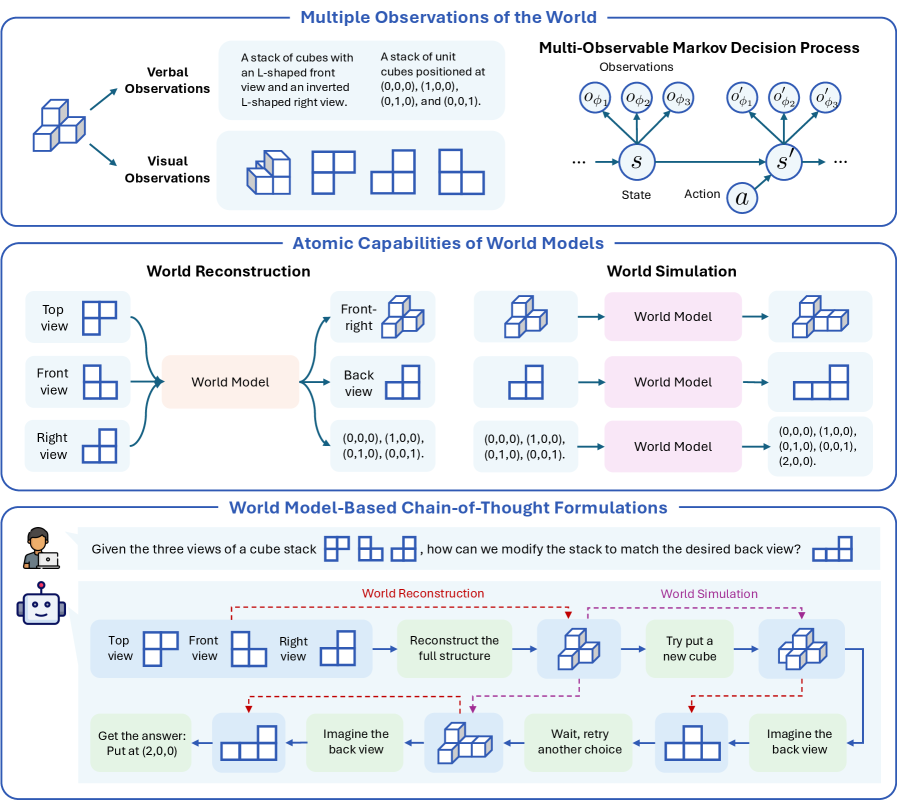

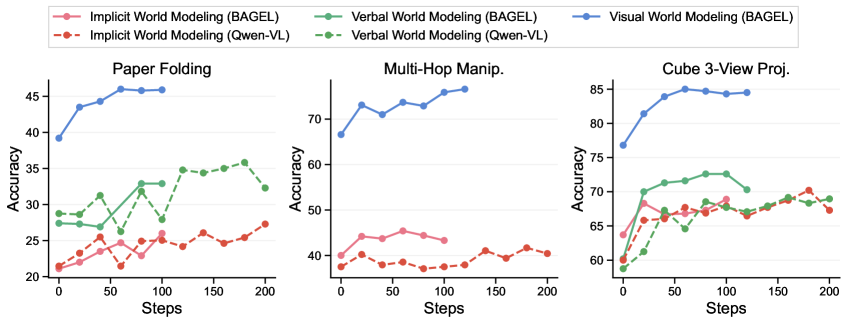

Inspired by the aforementioned connections between human cognition and artificial intelligence, we formalize our world-model perspective on multimodal reasoning (see Figure 2) in this section.

### 3.1 Formulation: Multiple Observations of the World

Without loss of generality, the world of a specific task can be formulated as a multi-observable Markov decision process (MOMDP) $\mathcal{M}=(\mathcal{S},\mathcal{A},p,\Phi,\mathcal{O}_{\phi},e_{\phi})$, where $\mathcal{S}$ denotes the state space, $\mathcal{A}$ the action space, $p$ the transition function, $\Phi$ the parameter space of observation functions, $\mathcal{O}_{\phi}$ the observation space, and $e_{\phi}$ the observation function. Each $s\in\mathcal{S}$ represents the underlying state of the world, which is typically hidden and not directly observable. Instead, it can be perceived through different instantiations of observations (hereafter also referred to as views) [27], given by $o=e_{\phi}(s)\in\mathcal{O}_{\phi}$, parameterized by $\phi\in\Phi$. As illustrated in Figure 2a, such views can span multiple modalities: for example, visual observations corresponding to different camera poses, or verbal descriptions expressed with different emphases or styles. When an action $a\in\mathcal{A}$ is applied to the current state, the world transitions according to the dynamics $s^{\prime}\sim p(s^{\prime}\mid s,a)$ and yields new observations.
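To make the MOMDP notation concrete, the following is a minimal sketch built on the cube-stack example of Figure 2. It is an illustration under stated assumptions, not the paper's implementation: the axis conventions, the three view names, the helper names (`observe`, `transition`), and the deterministic "add one cube" dynamics are all hypothetical choices made for this example.

```python
from typing import Callable, Dict, FrozenSet, Tuple

Cube = Tuple[int, int, int]   # (x, y, z) cell of one unit cube (assumed axes)
State = FrozenSet[Cube]       # hidden state s: the set of occupied cells


def front_view(s: State) -> FrozenSet[Tuple[int, int]]:
    """Visual view: project along y, keeping the (x, z) silhouette."""
    return frozenset((x, z) for x, _, z in s)


def top_view(s: State) -> FrozenSet[Tuple[int, int]]:
    """Visual view: project along z, keeping the (x, y) silhouette."""
    return frozenset((x, y) for x, y, _ in s)


def verbal_view(s: State) -> str:
    """Verbal view: describe the state as a sorted coordinate list."""
    return "cubes at " + ", ".join(str(c) for c in sorted(s))


# Phi: each key is a view parameter phi, each value the observation function e_phi.
VIEWS: Dict[str, Callable[[State], object]] = {
    "front": front_view,
    "top": top_view,
    "verbal": verbal_view,
}


def observe(s: State, phi: str) -> object:
    """o = e_phi(s): the same hidden state seen through view phi."""
    return VIEWS[phi](s)


def transition(s: State, a: Cube) -> State:
    """Deterministic special case of p(s' | s, a): action a adds one cube."""
    return s | {a}


s = frozenset({(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)})
s_next = transition(s, (2, 0, 0))
for phi in VIEWS:
    print(phi, observe(s, phi), "->", observe(s_next, phi))
```

The point of the sketch is that a single hidden state `s` yields several observations, some visual (silhouettes) and some verbal (a coordinate list), and that an action changes the state once while every view changes accordingly.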

### 3.2 Atomic Capabilities of World Models

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: World Model Capabilities and Formulations

### Overview

This image is a technical diagram illustrating the concept of "World Models" in artificial intelligence, specifically focusing on their atomic capabilities (reconstruction and simulation) and their application in chain-of-thought reasoning. The diagram is divided into three horizontal panels, each exploring a different facet of the concept using a consistent example of 3D cube structures.

### Components/Axes

The diagram is structured into three main panels, each with a blue header:

1. **Top Panel: "Multiple Observations of the World"**

* **Left Section:** Shows a 3D cube structure (an L-shape with an inverted L on top) and two types of observations:

* **Verbal Observations:** Text descriptions: "A stack of cubes with an L-shaped front view and an inverted L-shaped right view." and "A stack of unit cubes positioned at (0,0,0), (1,0,0), (0,1,0), and (0,0,1)."

* **Visual Observations:** Four 2D line-drawing projections of the cube stack from different angles.

* **Right Section:** A diagram titled **"Multi-Observable Markov Decision Process"**.

* **Components:** A state circle (`s`), an action circle (`a`), and a next state circle (`s'`). Arrows indicate transitions.

* **Observations:** Above the state circles are observation nodes (`o_φ1`, `o_φ2`, `o_φ3` for state `s`; `o'_φ1`, `o'_φ2`, `o'_φ3` for state `s'`), indicating multiple observation types for each state.

* **Legend/Labels:** "Observations" (top), "State" (below `s`), "Action" (below `a`).

2. **Middle Panel: "Atomic Capabilities of World Models"**

* **Left Sub-panel: "World Reconstruction"**

* **Inputs:** Three 2D views labeled "Top view", "Front view", "Right view".

* **Process:** Arrows point from the views into a central pink box labeled "World Model".

* **Outputs:** Arrows point from the "World Model" to:

1. A 3D reconstruction of the cube stack.

2. A "Back view" (2D projection).

3. A coordinate list: "(0,0,0), (1,0,0), (0,1,0), (0,0,1)".

* **Right Sub-panel: "World Simulation"**

* **Inputs:** Three different starting states (a 3D cube stack, a 2D view, and a coordinate list).

* **Process:** Each input has an arrow pointing to a pink "World Model" box.

* **Outputs:** Each "World Model" box has an arrow pointing to a predicted next state:

1. A new 3D cube configuration.

2. A new 2D view.

3. A new coordinate list: "(0,0,0), (1,0,0), (0,1,0), (0,0,1), (2,0,0)".

3. **Bottom Panel: "World Model-Based Chain-of-Thought Formulations"**

* **Problem Statement:** A user icon asks: "Given the three views of a cube stack [icon] [icon] [icon], how can we modify the stack to match the desired back view [icon]?"

* **Process Flow:** A robot icon initiates a flowchart with two main phases, connected by red dashed lines indicating feedback loops.

* **Phase 1: "World Reconstruction"** (Left side, blue background)

* Steps: "Top view", "Front view", "Right view" → "Reconstruct the full structure" (3D icon) → "Imagine the back view" (2D icon).

* **Phase 2: "World Simulation"** (Right side, pink background)

* Steps: "Try put a new cube" (3D icon) → "Imagine the back view" (2D icon) → Decision point: "Wait, retry another choice" (loops back) or proceed.

* **Final Output:** An arrow leads to "Get the answer: Put at (2,0,0)".

### Detailed Analysis

* **Cube Structure:** The primary example is a 4-cube structure. Its verbal description and coordinate list define it as occupying positions (0,0,0), (1,0,0), (0,1,0), and (0,0,1) in a 3D grid.

* **World Model Functions:**

* **Reconstruction:** The model takes multiple 2D perspectives (top, front, right) as input and infers the complete 3D structure, its other 2D projections (back view), and its explicit coordinate representation.

* **Simulation:** The model takes a current state (in any representation: 3D, 2D, or coordinates) and an implied action (e.g., "add a cube") to predict the resulting future state. The example shows adding a cube at (2,0,0).

* **Chain-of-Thought Logic:** The bottom panel demonstrates a problem-solving loop. The agent first reconstructs the current object from given views. It then simulates potential actions (adding a cube), imagines the resulting back view, and compares it to the target. If mismatched, it loops back to try a different action. The solution is to place a cube at coordinate (2,0,0).

### Key Observations

1. **Multi-Modal Representation:** The diagram emphasizes that a "world" can be represented interchangeably as 3D models, 2D views, or numerical coordinates. The World Model operates across these modalities.

2. **MDP Integration:** The top-right explicitly frames the problem within a Multi-Observable Markov Decision Process, where a single underlying state (`s`) can produce multiple observation types (`o_φ`).

3. **Feedback-Driven Reasoning:** The chain-of-thought process is not linear but iterative, using simulation and imagination ("Imagine the back view") to test hypotheses before committing to an action.

4. **Consistent Example:** The same 4-cube L-shaped structure is used throughout all panels, providing a concrete thread to understand the abstract concepts.

### Interpretation

This diagram argues that robust AI reasoning about the physical world requires two core, interconnected capabilities: **reconstruction** (building an internal model from partial sensory data) and **simulation** (predicting the consequences of actions within that model). The "World Model" is presented as the central engine enabling both.

The **Peircean investigative reading** suggests the diagram is making a case for a specific architecture of intelligence:

* **The Sign (Representation):** The cube in its various forms (3D, 2D, coordinates) is the representational sign.

* **The Object (The Actual World):** The true, complete 3D structure is the object the signs point to.

* **The Interpretant (The Reasoning Process):** The chain-of-thought flowchart is the interpretant—the process of using signs (reconstructions) and predictive models (simulations) to derive meaning and solve problems. The feedback loops are critical, showing that understanding is an active, abductive process of hypothesizing and testing.

The practical implication is that for an AI to answer a question like "how do I change this object to look like that?", it cannot rely on pattern matching alone. It must first *understand* the current state (reconstruction), then *imagine* the effects of its actions (simulation), and use that internal simulation to guide its physical or logical intervention. The coordinate "(2,0,0)" is not just an answer; it's the output of a simulated experiment conducted within the model's internal world.

</details>

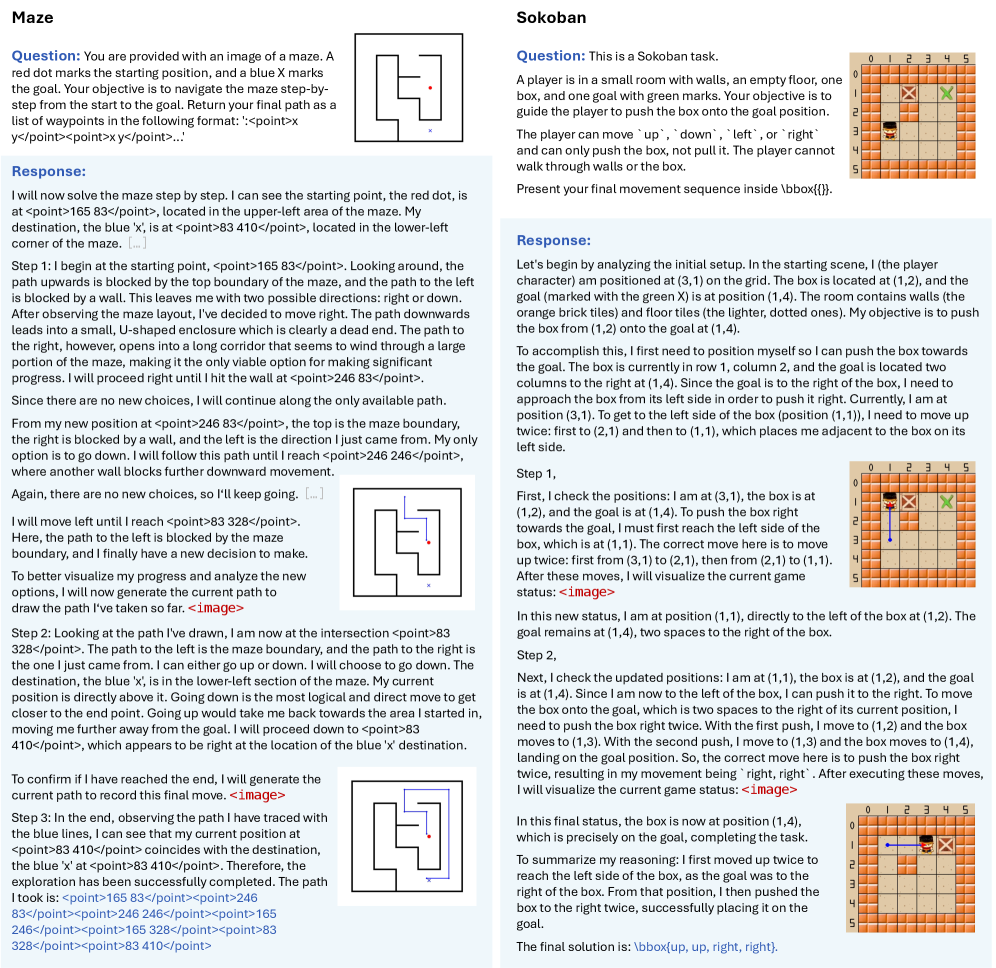

Figure 2: Theoretical formulation of the world model perspective on multimodal reasoning. (a) Observations of the same underlying world state can span multiple modalities, including verbal and visual observations, each reflecting different views or emphases. (b) Two atomic capabilities of world models are defined: world reconstruction, which infers complete structure from partial observations and enables novel view synthesis, and world simulation, which models dynamics to predict future observations. (c) Chain-of-thought reasoning includes internal world modeling, by explicitly maintaining an evolving sequence of observations, generated through either of the atomic world model capabilities.

A world model, analogous to human mental models, is then expected to support two fundamental capabilities [34], illustrated in Figure 2b. The first is called world reconstruction. Humans are remarkably skilled at mentally reconstructing the structure of an environment from only a few partial observations [71], grounded in their prior knowledge of the world. Such mental reconstruction allows them to imagine novel views of the same underlying state, supporting skills such as mental rotation. Formally, the perception component of a world model encodes $n$ observations from limited views into an internal representation: $\hat{s}=\operatorname{enc}(o_{\phi_{1}},\dots,o_{\phi_{n}})\approx s$. This representation approximates the true state and can then be decoded to generate an unseen observation: $\hat{o}_{\phi_{n+1}}=\operatorname{dec}(\hat{s},\phi_{n+1})\approx e_{\phi_{n+1}}(s)$, providing an internal "experience" of navigating the world. (We set aside the debate between compact and comprehensive representations. By treating abstract observations, such as sketches, and high-fidelity observations as different view specifications, this formulation allows the internal representation to flexibly adjust to the level of detail required by the desired views.) In modern generative models, including UMMs, since their latent representations are not explicitly defined, the world reconstruction capability can be realized through end-to-end novel view generation:

$$

\displaystyle p_{\theta}(o_{\phi_{n+1}}\mid o_{\phi_{1}},\dots,o_{\phi_{n}}), \tag{1}

$$

which implicitly learns the internal representations required to synthesize the new view.

The second capability is world simulation. Humans can mentally simulate how the world evolves into the future, supporting reasoning and decision-making, either purely in their minds or with external aids such as a scratchpad. Formally, this corresponds to the prediction component of a world model, which predicts the transition of the current state and action: $\hat{s}^{\prime}\sim\operatorname{pred}(\hat{s},a)$ , providing an internal "experience" of interacting with the world. Similarly, for modern generative models, this capability is more typically realized through predictions of future observations:

$$

\displaystyle p_{\theta}(o_{t+1}\mid o_{\leq t},a_{\leq t}). \tag{2}

$$

In our new evaluation suite, we deliberately curate tasks that specifically demand each capability, allowing us to independently validate its contribution to multimodal reasoning (see Section 4.1).

### 3.3 Deliberate Reasoning with World Modeling Across Modalities

We then formalize how world-modeling capabilities within multimodal models contribute to reasoning. Given a question $Q$ and input images $I$ , the chain-of-thought reasoning process of a multimodal AI system can be expressed as a sequence of intermediate steps (or thoughts) $R=\tau_{1},\tau_{2},\dots,\tau_{H}$ , followed by the answer $A$ . Although this general formulation treats each reasoning step $\tau_{i}$ as an unconstrained, free-form operation, our world model perspective suggests that humans reason by prediction and planning, and each step inherently manipulates the underlying world observations of the problem [59, 10, 72]. We therefore refine the reasoning formulation as $\tau_{i}=(r_{i},o_{i})$ to explicitly incorporate an evolving sequence of observations:

$$

\displaystyle R=\left(r_{1},o_{1}\right),\left(r_{2},o_{2}\right),\dots,\left(r_{H},o_{H}\right), \tag{3}

$$

where $r_{i}$ denotes a logical reasoning step based on the accumulated context, typically expressed in text, and $o_{i}$ denotes the observation generated at that step. (We use $i$ to index reasoning steps in order to distinguish them from the true time step $t$ of the underlying MOMDP; the two are not generally aligned, as the reasoning may include branching and backtracking.) Specifically, the input images serve as the initial observation $o_{0}=I$, and subsequent observations are generated from previous reasoning and observations by invoking the atomic world-modeling capabilities: world reconstruction (Eq. (1)) and world simulation (Eq. (2)), where reasoning steps imply actions $a$ and view transformations $\phi$, as illustrated in Figure 2c.

This formulation is modality-agnostic, allowing observations, and thus world modeling, to arise across various modalities. We focus specifically on verbal and visual observations, motivated by dual-coding theory in human cognition and by the fact that UMMs are equipped to generate both. This yields several concrete CoT instantiations. Specifically, verbal world modeling produces purely verbal CoTs, with $o_{i}$ as verbal descriptions, whereas visual world modeling produces verbal-visual interleaved CoTs, with $o_{i}$ as generated images. In addition, prior work has discovered that language models can implicitly learn world models with emergent internal representations of board-game states without explicit supervision [37]. Motivated by this, we also consider implicit world modeling, in which no explicit observation is generated ($o_{i}=\emptyset$). (In practice, strictly distinguishing implicit from verbal world modeling can be difficult, because the reasoning part $r_{i}$ often contains partial descriptions of the current state. In this work, we treat verbal world modeling as explicitly expressing world states or observations in text, such as coordinates or symbolic matrices.)
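
The formulation in Eq. (3) and its three instantiations can be pictured as a small data structure. The following is a minimal sketch, not the authors' implementation: the class names `Step` and `ChainOfThought`, the method names, and the rule used to label a mixed chain are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Union

import numpy as np

# An observation o_i is absent (implicit), text (verbal), or an image array (visual).
Observation = Optional[Union[str, np.ndarray]]


@dataclass
class Step:
    reasoning: str                   # r_i: verbal reasoning step
    observation: Observation = None  # o_i: generated observation, if any


@dataclass
class ChainOfThought:
    question: str      # Q, also written r_0
    image: np.ndarray  # I, also written o_0
    steps: List[Step] = field(default_factory=list)

    def mode(self) -> str:
        """Label the chain by the kind of observations it contains (illustrative rule)."""
        if any(isinstance(s.observation, np.ndarray) for s in self.steps):
            return "visual"
        if any(isinstance(s.observation, str) for s in self.steps):
            return "verbal"
        return "implicit"

    def prefix(self, i: int, with_reasoning: bool = False) -> list:
        """Context before step i: (r_0, o_0, ..., r_{i-1}, o_{i-1}), optionally followed by r_i."""
        ctx = [self.question, self.image]
        for step in self.steps[: i - 1]:
            ctx += [step.reasoning, step.observation]
        if with_reasoning:
            ctx.append(self.steps[i - 1].reasoning)
        return ctx


cot = ChainOfThought(
    question="Where is the box after the push?",
    image=np.zeros((4, 4)),
    steps=[Step("Push right once.", "box at (1, 2)"), Step("So the box is at column 2.")],
)
print(cot.mode())          # verbal
print(len(cot.prefix(2)))  # 4 entries: r_0, o_0, r_1, o_1
```

Under this sketch, the reasoning step $r_{i}$ is conditioned on `prefix(i)`, and the observation $o_{i}$ on `prefix(i, with_reasoning=True)`.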

### 3.4 The Visual Superiority Hypothesis

Contemporary LLMs and VLMs have achieved impressive performance in structured and abstract domains, such as mathematics and programming, largely driven by large-scale language-centric pre-training and verbal chain-of-thought post-training. Although these models have accumulated extensive verbal and symbolic knowledge, their understanding of the visual world remains limited when trained under purely verbal supervision. As a result, they continue to struggle with tasks grounded in basic physical and spatial intuition that even young children naturally master [49, 8].

Visual world modeling is therefore essential for endowing multimodal AI with complementary forms of information and knowledge. (1) In terms of informativeness, while verbal and symbolic representations capture high-level semantic abstractions, they often suffer from ambiguity and representational bottlenecks. In contrast, visual observations are more concrete and information-rich, directly encoding physical properties such as motion and spatial relationships. This provides precise, fine-grained grounding for reasoning about the complex real world, particularly in spatial and physical tasks. (2) In terms of prior knowledge, visual world knowledge is inherently complementary to symbolic knowledge. Humans and animals acquire much of this knowledge (e.g., physical interactions and spatial transformations) through perception, largely independent of language. Consequently, humans naturally represent and communicate such knowledge visually—for example, by sketching an approximate parabolic trajectory without performing explicit calculations. This suggests that different aspects of world knowledge are concentrated in different data modalities, and learning from large-scale generative modeling of visual data can thereby expand the effective knowledge landscape available for multimodal reasoning.

We next formalize and justify these insights through theoretical analysis, with formal statements and proofs provided in Appendix 7.

Informativeness. For notational convenience, we denote the question $Q$ as $r_{0}$ , the input images as $o_{0}$ , and the final answer as $r_{H+1}$ . Prefixes of a CoT are defined as $R_{i}=(r_{0},o_{0},r_{1},o_{1},\dots,r_{i-1},o_{i-1}),\tilde{R}_{i}=(r_{0},o_{0},r_{1},o_{1},\dots,r_{i-1},o_{i-1},r_{i})$ . We use $\mathbb{H}(\cdot)$ and $\mathbb{I}(\cdot;\cdot)$ to denote Shannon entropy and mutual information, respectively. We first establish that the end-to-end answer error admits an upper bound that naturally decomposes into reasoning and world-modeling errors.

**Theorem 1**

*Let $p$ denote the distribution over optimal chain-of-thoughts and answers, and let $p_{\theta}$ be a learned reasoning model. Then the following inequality holds:

$$

\begin{aligned}
\operatorname{KL}\big(p(A\mid Q,I)\,\|\,p_{\theta}(A\mid Q,I)\big) &\leq \operatorname{KL}\big(p(R,A\mid Q,I)\,\|\,p_{\theta}(R,A\mid Q,I)\big) \\
&= \sum_{i=1}^{H+1}\underbrace{\mathbb{E}_{p}\left[\operatorname{KL}\big(p(r_{i}\mid R_{i})\,\|\,p_{\theta}(r_{i}\mid R_{i})\big)\right]}_{\textnormal{reasoning errors}}+\sum_{i=1}^{H}\underbrace{\mathbb{E}_{p}\left[\operatorname{KL}\big(p(o_{i}\mid\tilde{R}_{i})\,\|\,p_{\theta}(o_{i}\mid\tilde{R}_{i})\big)\right]}_{\textnormal{world-modeling errors}}.
\end{aligned} \tag{4}

$$*
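
The inequality and the chain-rule decomposition in Eq. (4) can be checked numerically on a toy problem. The sketch below is an illustration under stated assumptions, not part of the paper's proof: it uses a single reasoning step ($H=1$), drops the conditioning on $(Q,I)$, and draws two arbitrary discrete joint distributions over $(r_{1},o_{1},A)$ to play the roles of $p$ and $p_{\theta}$.

```python
import numpy as np


def kl(p, q):
    """KL divergence between two discrete distributions with full support."""
    p = np.asarray(p, float).ravel()
    q = np.asarray(q, float).ravel()
    return float(np.sum(p * (np.log(p) - np.log(q))))


rng = np.random.default_rng(0)
n_r, n_o, n_a = 3, 4, 2  # support sizes of r_1, o_1, A (arbitrary)

# Random joints over (r_1, o_1, A); the +0.1 keeps every entry strictly positive.
p = rng.random((n_r, n_o, n_a)) + 0.1
p /= p.sum()
q = rng.random((n_r, n_o, n_a)) + 0.1
q /= q.sum()

answer_kl = kl(p.sum(axis=(0, 1)), q.sum(axis=(0, 1)))  # left-hand side of Eq. (4)
joint_kl = kl(p, q)                                     # KL over (R, A)

p_r, q_r = p.sum(axis=(1, 2)), q.sum(axis=(1, 2))       # marginals of r_1
p_ro, q_ro = p.sum(axis=2), q.sum(axis=2)               # marginals of (r_1, o_1)

# Reasoning error for r_1, world-modeling error for o_1, reasoning error for A = r_2.
err_r1 = kl(p_r, q_r)
err_o1 = sum(p_r[r] * kl(p_ro[r] / p_r[r], q_ro[r] / q_r[r]) for r in range(n_r))
err_a = sum(
    p_ro[r, o] * kl(p[r, o] / p_ro[r, o], q[r, o] / q_ro[r, o])
    for r in range(n_r)
    for o in range(n_o)
)

print(answer_kl <= joint_kl)                           # marginalizing cannot increase KL
print(np.isclose(joint_kl, err_r1 + err_o1 + err_a))   # chain rule of KL
```

Both checks hold for any pair of full-support joints, since they are instances of the data-processing inequality and the chain rule for KL divergence.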

This decomposition reveals a fundamental trade-off between the informativeness of world models for reasoning and the fidelity of the world model itself. In the case of implicit world modeling, where $o_{i}=\emptyset$, the world-modeling error terms vanish. However, this typically comes at the cost of increased uncertainty and learning difficulty in reasoning, as all state transitions must be implicitly encoded. Empirically, world models that explicitly track the task states, serving as verbal or visual sketchpads, are generally beneficial for reasoning. We next examine the reasoning component of Eq. (4) to elucidate the factors underlying these benefits.

**Theorem 2**

*Let $s_{i}$ denote the latent states associated with the observations $o_{i}$ . Under appropriate assumptions, the reduction in reasoning uncertainty achieved by explicit world modeling satisfies the following properties:

1. Reasoning uncertainty does not increase: $\mathbb{H}(r_{i}|o_{0},r_{0:i-1})-\mathbb{H}(r_{i}|R_{i})=\mathbb{I}(o_{1:i-1};r_{i}|o_{0},r_{0:i-1})\geq 0.$

2. The reasoning uncertainty improvement is bounded by both (i) the information that observations provide about the underlying states and (ii) the information that the reasoning step requires about those states:

$$

\mathbb{I}(o_{1:i-1};r_{i}|o_{0},r_{0:i-1})\leq\min\left(\mathbb{I}(o_{1:i-1};s_{1:i-1}),\mathbb{I}(r_{i};s_{0:i-1},r_{0:i-1})\right). \tag{5}

$$*

The uncertainty of the target distribution is closely related to sample efficiency and learning difficulty. Consequently, the upper bound on the improvement of reasoning uncertainty (Eq. (5)) highlights another trade-off in the choice of observation modality for world modeling. The first term indicates that observations should be sufficiently informative about the underlying latent states. In contrast, the second suggests that they need only preserve the task-relevant aspects of the states required to select appropriate reasoning steps. Excessively detailed observations may be unnecessary and even detrimental, increasing world modeling errors.
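
A toy calculation can make the two terms of the bound concrete. The sketch below is a deliberately simplified setting, not the setting of Theorem 2: there is no reasoning context, the latent state $s$ is uniform over eight values, the reasoning step $r$ needs only one bit of $s$, and three hypothetical observation types are compared. All names are illustrative.

```python
from collections import Counter

import numpy as np


def entropy(samples):
    """Shannon entropy (bits) of the empirical distribution of `samples`."""
    counts = np.array(list(Counter(samples).values()), float)
    probs = counts / counts.sum()
    return float(-(probs * np.log2(probs)).sum())


def mutual_info(xs, ys):
    """I(X; Y) = H(X) + H(Y) - H(X, Y), in bits."""
    return entropy(xs) + entropy(ys) - entropy(list(zip(xs, ys)))


states = list(range(8))            # enumerating a uniform latent state s (3 bits)
reasoning = [s % 2 for s in states]  # r depends only on the parity of s (1 bit)

observations = {
    "full": states,                          # o reveals all of s
    "task_relevant": [s % 2 for s in states],  # o reveals only the bit that r needs
    "irrelevant": [s // 2 for s in states],    # o reveals the other two bits
}

results = {}
for name, obs in observations.items():
    gain = mutual_info(obs, reasoning)  # reduction in reasoning uncertainty
    bound = min(mutual_info(obs, states), mutual_info(reasoning, states))
    results[name] = (gain, bound)
    print(f"{name:14s} I(o;r) = {gain:.1f} bits, bound = {bound:.1f} bits")
```

In this toy case, the full observation and the task-relevant one reduce reasoning uncertainty equally (one bit each), while the irrelevant observation carries two bits about the state yet does not help at all, which mirrors the point that extra detail beyond what the reasoning step requires brings no benefit.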

Prior knowledge. Although visual world models are more informative, they are intrinsically more difficult to learn from scratch due to the high dimensionality and complexity of visual observations. Fortunately, modern AI systems are typically large-scale pre-trained, which endows them with strong prior knowledge and enables faster convergence and improved generalization during downstream post-training. As discussed earlier, humans tend to represent different aspects of world knowledge through different modalities. Consequently, for a given downstream task, the distribution shift between its transition distribution and that learned during large-scale Internet pre-training can vary substantially across modalities. The generalization bound in Theorem 6 of Appendix 7.2 suggests that this modality-dependent distribution shift is closely related to the post-training sample efficiency of the corresponding world model. This highlights the importance of acquiring broad prior knowledge across modalities during pre-training, and of leveraging the proper modality whose priors are best aligned with the downstream task.

Drawing on the above analysis, we formulate our central hypothesis regarding when and how visual generation benefits reasoning, thereby helping narrow the gap between multimodal AI and human capabilities.

The Visual Superiority Hypothesis: In multimodal reasoning tasks grounded in the physical world, visual generation as a world model yields representations that are more informative and knowledge-rich than those produced by verbal world models.

## 4 Experiment Settings

Finally, we empirically validate the insights and theoretical analyses presented above through a series of controlled experiments. In this section, we describe the evaluation tasks and model training procedures.

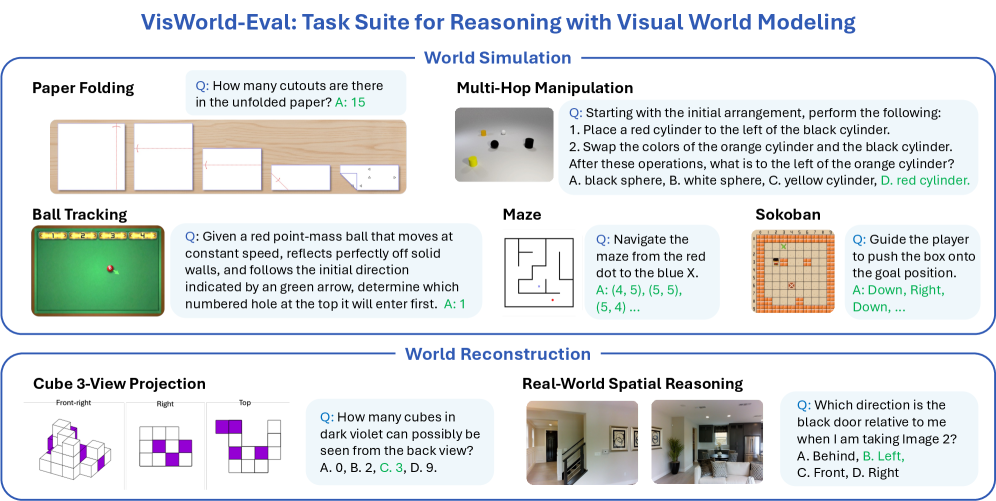

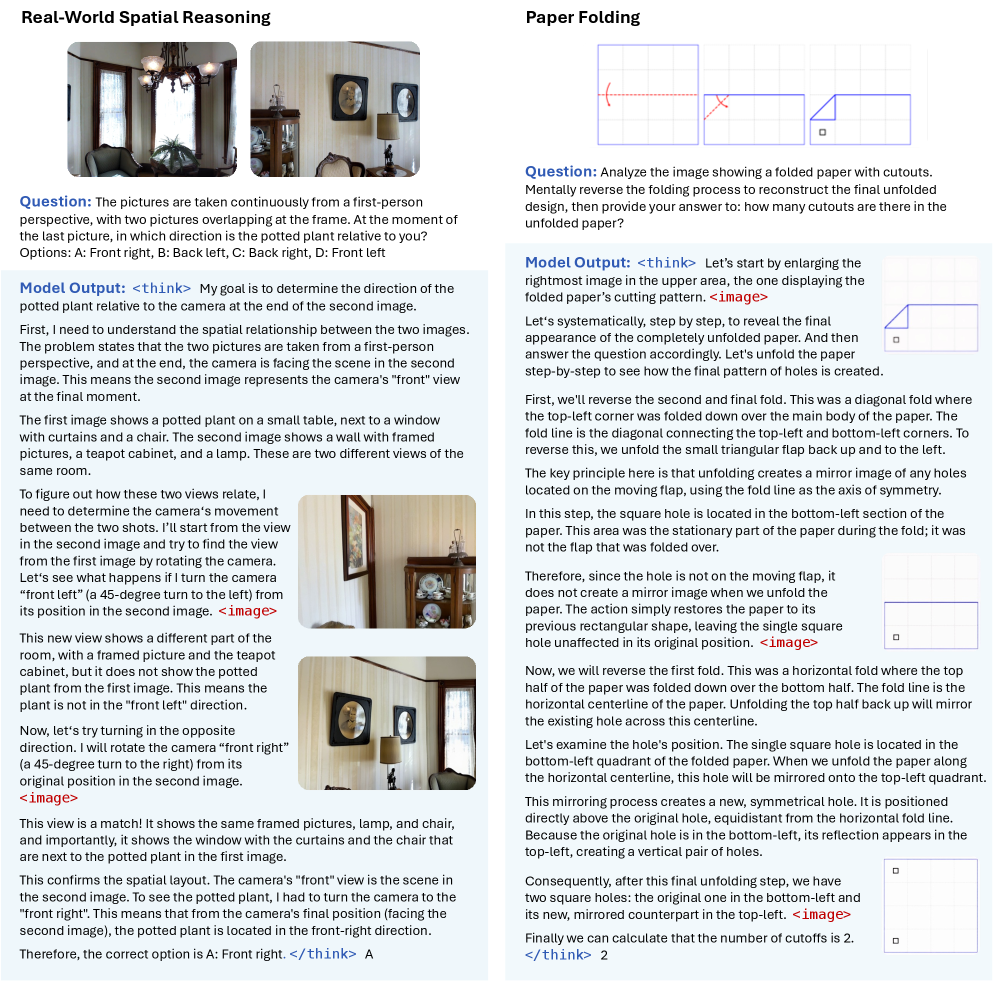

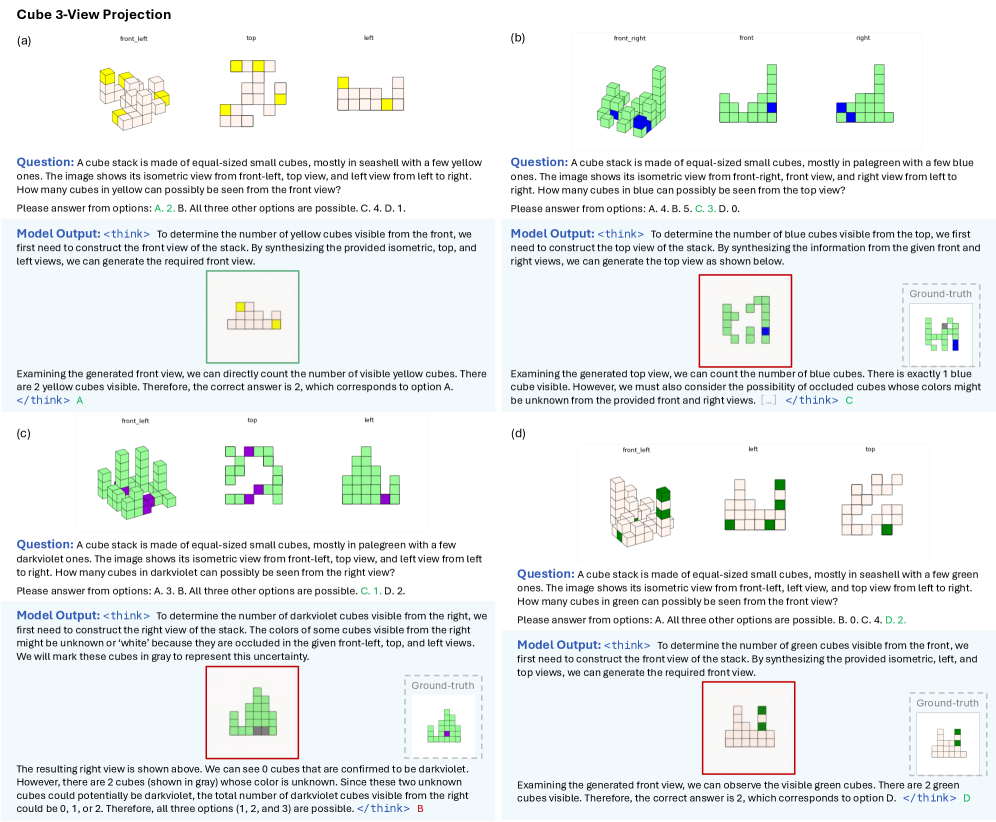

### 4.1 VisWorld-Eval: Task Suite for Reasoning with Visual World Modeling

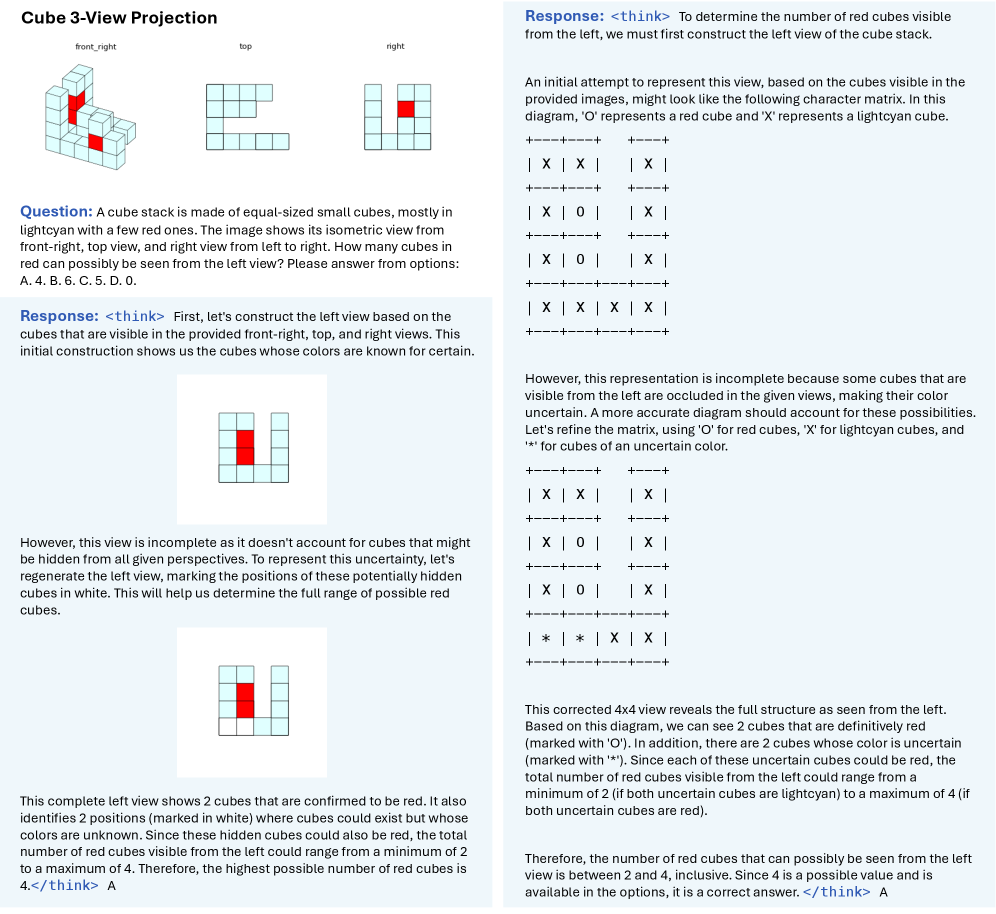

While prior work has primarily designed evaluation tasks heuristically, we take a principled approach and evaluate multimodal reasoning on tasks designed to target specific world-model capabilities. Building on related benchmarks, we identify and curate a total of seven tasks, forming an evaluation suite tailored to assess reasoning with visual world modeling. All tasks are framed as question answering with concise, verifiable answers, and performance is measured by answer accuracy. We refer to this suite as VisWorld-Eval, and summarize it in Figure 3.

World simulation. We consider the following tasks that primarily require simulating world dynamics over time: (1) Paper folding: Adapted from SpatialViz-Bench [61], this task presents a sequence of paper folds followed by hole punching, and asks for the distribution of holes after the paper is unfolded. Successfully solving this task requires simulating the unfolding process, relying on prior knowledge of symmetry and spatial transformations that is commonly grounded in visual experience. (2) Multi-hop manipulation: Built upon CLEVR [30], this task features a scene containing objects with various shapes and colors that undergo a sequence of operations, such as addition, removal, or color changes. The final question queries properties of the resulting layouts. Since target objects of operations are often specified via relative spatial relationships, this task places strong demands on state tracking and spatial understanding. (3) Ball tracking: Adapted from RBench-V [20], this task evaluates physical dynamics simulation by requiring the model to infer the trajectory of a ball undergoing ideal specular reflections within a given scene and to predict which numbered hole it will ultimately enter. In addition, we include (4) Maze [29] and (5) Sokoban [55], as these two grid-world tasks are commonly used in prior work studying visual generation for reasoning [67, 36].
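
To make the kind of dynamics involved in ball tracking concrete, the following is a minimal sketch of an ideal specular-reflection simulator in an axis-aligned rectangle. It is an illustration only and not the benchmark's data generator: the function name `first_hole`, the representation of holes as intervals on the top edge, and the absence of interior obstacles are all assumptions.

```python
def first_hole(pos, vel, width, height, holes, max_bounces=100):
    """Return the id of the first top-edge hole the ball enters, or None.

    pos, vel: (x, y) position and velocity of a point-mass ball.
    holes: dict mapping hole id -> (x_min, x_max) interval on the edge y = height.
    Walls are the four sides of the rectangle [0, width] x [0, height].
    """
    x, y = pos
    vx, vy = vel
    inf = float("inf")
    for _ in range(max_bounces):
        # Time until the ball reaches the vertical / horizontal wall it moves towards.
        tx = (width - x) / vx if vx > 0 else (-x / vx if vx < 0 else inf)
        ty = (height - y) / vy if vy > 0 else (-y / vy if vy < 0 else inf)
        t = min(tx, ty)
        if t == inf:  # the ball is not moving
            return None
        x, y = x + vx * t, y + vy * t
        if ty <= tx and vy > 0:  # the ball has reached the top edge
            for hole_id, (lo, hi) in holes.items():
                if lo <= x <= hi:
                    return hole_id
        # Specular reflection: flip the velocity component normal to the wall that was hit.
        if tx <= ty:
            vx = -vx
        if ty <= tx:
            vy = -vy
    return None


holes = {1: (1.0, 3.0), 2: (4.0, 6.0), 3: (7.0, 9.0)}
# The ball bounces off the right wall once and reaches the top edge at x = 2.
print(first_hole((2.0, 2.0), (2.0, 1.0), 10.0, 10.0, holes))  # 1
```

A verbal chain of thought would have to carry out this coordinate arithmetic in text at every bounce, whereas a visual world model can render the trajectory directly.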

World reconstruction. We also evaluate tasks that emphasize reconstructing underlying world structure from partial observations: (6) Cube 3-view projection: Adapted from SpatialViz-Bench [61], this task provides an isometric view and two orthographic views of a connected cube stack, and asks about an unseen viewpoint. Solving the task requires reconstructing the full 3D structure and mentally rotating or projecting it into the queried view, a process closely aligned with human visual mental representations. (7) Real-world spatial reasoning: We focus on the positional relationship subset of MMSI-Bench [69]. Given multiple views of a realistic scene, these tasks ask about positional relationships among the cameras, objects, and regions. Successfully answering these questions requires constructing a coherent spatial mental model of the scene from limited viewpoints to support accurate spatial reasoning.
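
The geometric core of the cube 3-view task can be sketched with a voxel grid: once the 3D structure is known, any axis-aligned view is a projection of it. The example below is a simplified illustration, assuming a hypothetical 3x3x3 stack and computing only occupancy silhouettes; it ignores cube colors and occlusion ordering, which the actual task also involves.

```python
import numpy as np

# voxels[x, y, z] is True where a unit cube is present (hypothetical layout).
voxels = np.zeros((3, 3, 3), dtype=bool)
for cube in [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)]:
    voxels[cube] = True


def silhouettes(v):
    """Occupancy silhouettes of a voxel grid along the three axes, plus the back view."""
    front = v.any(axis=1)  # collapse y: image indexed by (x, z)
    return {
        "top": v.any(axis=2),    # collapse z: image indexed by (x, y)
        "front": front,
        "right": v.any(axis=0),  # collapse x: image indexed by (y, z)
        "back": front[::-1, :],  # same silhouette as the front view, mirrored left-right
    }


views = silhouettes(voxels)
print(views["front"].astype(int))
print(views["back"].astype(int))
```

The hard part of the task is the inverse direction, namely inferring `voxels` from a few given views before projecting to the queried one, which is what the world reconstruction capability is meant to capture.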

For each task, we construct SFT data by designing different CoT patterns with implicit, verbal, or visual world modeling, enabling controlled comparative evaluations. The data construction pipeline and examples across tasks are presented in Appendix 8.1.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: VisWorld-Eval Task Suite for Reasoning with Visual World Modeling

### Overview

The image is an informational diagram or infographic presenting "VisWorld-Eval," a task suite designed for evaluating reasoning capabilities involving visual world modeling. The diagram is organized into two primary sections: "World Simulation" and "World Reconstruction." Each section contains multiple example tasks, each presented with a title, a visual representation, a question (Q), and an answer (A). The overall design uses a clean, academic style with blue section headers and task titles.

### Components/Sections

The diagram is structured as follows:

1. **Main Title:** "VisWorld-Eval: Task Suite for Reasoning with Visual World Modeling" (centered at the top).

2. **Section 1: World Simulation** (top half, enclosed in a blue-bordered box).

* Contains five distinct tasks: Paper Folding, Multi-Hop Manipulation, Ball Tracking, Maze, and Sokoban.

3. **Section 2: World Reconstruction** (bottom half, enclosed in a blue-bordered box).

* Contains two distinct tasks: Cube 3-View Projection and Real-World Spatial Reasoning.

### Detailed Analysis / Content Details

#### **Section 1: World Simulation**

* **Task 1: Paper Folding** (Top-left)

* **Visual:** A sequence of four images showing a piece of paper being folded and cut.

* **Question (Q):** "How many cutouts are there in the unfolded paper?"

* **Answer (A):** "15" (displayed in green text).

* **Task 2: Multi-Hop Manipulation** (Top-right)

* **Visual:** A top-down view of a scene with several colored 3D objects (cylinders, spheres) on a gray surface.

* **Question (Q):** "Starting with the initial arrangement, perform the following: 1. Place a red cylinder to the left of the black cylinder. 2. Swap the colors of the orange cylinder and the black cylinder. After these operations, what is to the left of the orange cylinder?"

* **Answer (A):** "D. red cylinder." (The correct option is highlighted in green).

* **Task 3: Ball Tracking** (Middle-left)

* **Visual:** A green rectangular field with a red ball and a green arrow indicating its initial direction. The top edge has numbered holes (1 through 5).

* **Question (Q):** "Given a red point-mass ball that moves at constant speed, reflects perfectly off solid walls, and follows the initial direction indicated by a green arrow, determine which numbered hole at the top it will enter first."

* **Answer (A):** "1" (displayed in green text).

* **Task 4: Maze** (Center)

* **Visual:** A simple line-drawn maze with a red dot at the entrance and a blue 'X' at the goal.

* **Question (Q):** "Navigate the maze from the red dot to the blue X."

* **Answer (A):** "(4, 5), (5, 5), (5, 4) ..." (displayed in green text, indicating a sequence of coordinates).

* **Task 5: Sokoban** (Middle-right)

* **Visual:** A grid-based puzzle game screenshot showing a player character, boxes, and goal positions.

* **Question (Q):** "Guide the player to push the box onto the goal position."

* **Answer (A):** "Down, Right, Down, ..." (displayed in green text, indicating a sequence of moves).

#### **Section 2: World Reconstruction**

* **Task 6: Cube 3-View Projection** (Bottom-left)

* **Visual:** Three orthographic projection views of a 3D cube structure, labeled "Front-right," "Right," and "Top." The cubes are white with some faces colored dark violet.

* **Question (Q):** "How many cubes in dark violet can possibly be seen from the back view?"

* **Answer (A):** "C. 3." (The correct option is highlighted in green).

* **Task 7: Real-World Spatial Reasoning** (Bottom-right)

* **Visual:** Two photographs of an indoor living room scene, labeled "Image 1" and "Image 2," taken from different perspectives.

* **Question (Q):** "Which direction is the black door relative to me when I am taking Image 2?"

* **Answer (A):** "B. Left." (The correct option is highlighted in green).

### Key Observations

1. **Task Diversity:** The suite covers a wide range of reasoning types: spatial manipulation (Paper Folding, Multi-Hop Manipulation), physics prediction (Ball Tracking), path planning (Maze, Sokoban), geometric projection (Cube 3-View), and egocentric spatial understanding (Real-World Spatial Reasoning).

2. **Answer Format:** Answers are presented in different formats depending on the task: numerical (15, 1), multiple-choice (D, C, B), coordinate sequences, and action sequences.

3. **Visual Grounding:** Every task is paired with a specific visual input (diagram, simulation screenshot, or photograph) that is essential for solving the problem.

4. **Language:** All primary text (titles, questions, instructions) is in English. No other languages are present in the image.

### Interpretation

This diagram serves as a high-level overview of a benchmark designed to test artificial intelligence systems on complex visual reasoning. The tasks are not simple pattern recognition; they require an internal model of how objects behave in space and time (World Simulation) or how 3D scenes relate to 2D representations (World Reconstruction).

The "World Simulation" tasks test an agent's ability to mentally simulate the consequences of actions (folding, moving objects, ball physics, navigation) within a defined visual environment. The "World Reconstruction" tasks test the ability to infer 3D structure from 2D views or to understand spatial relationships from a changing first-person perspective.

The inclusion of both synthetic (e.g., Maze, Sokoban) and real-world (photographs) visual inputs suggests the benchmark aims to evaluate generalization across domains. The variety in answer formats indicates it assesses not just the correct outcome but also the ability to generate precise procedural knowledge (paths, move sequences). Overall, VisWorld-Eval appears to be a comprehensive test for embodied AI or advanced visual reasoning systems that need to interact with or understand dynamic visual worlds.

</details>

Figure 3: The VisWorld-Eval suite for assessing multimodal reasoning with visual world modeling. VisWorld-Eval comprises seven tasks spanning both synthetic and real-world domains, each designed to isolate and demand specific atomic world-model capabilities.

Table 1: Zero-shot evaluation of advanced VLMs on VisWorld-Eval. We report the average accuracy over five tasks (excluding Maze and Sokoban) and over all seven tasks.

| Models | Paper Folding | Multi-Hop Manip. | Ball Tracking | Cube 3-View | MMSI (Pos. Rel.) | Maze | Sokoban | Overall (5 tasks) | Overall (7 tasks) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Proprietary Models | | | | | | | | | |
| Gemini 3 Flash | 25.6 | 75.4 | 55.3 | 52.7 | 41.3 | 73.9 | 99.3 | 50.0 | 60.5 |
| Gemini 3 Pro | 27.0 | 74.5 | 44.7 | 53.3 | 49.6 | 33.5 | 90.2 | 49.8 | 53.2 |
| Seed 1.8 | 10.6 | 75.2 | 24.4 | 42.5 | 38.8 | 83.9 | 68.3 | 38.3 | 49.1 |
| GPT 5.1 | 6.4 | 73.9 | 34.8 | 44.5 | 44.8 | 0.6 | 62.8 | 40.8 | 38.2 |
| o3 | 13.5 | 68.1 | 24.7 | 37.7 | 44.4 | 0.0 | 36.0 | 37.6 | 32.0 |
| Open-Source Models | | | | | | | | | |
| Qwen3-VL-8B-Thinking [5] | 11.0 | 49.3 | 17.8 | 21.2 | 27.7 | 0.0 | 5.8 | 25.4 | 18.9 |
| BAGEL-7B-MoT [13] | 11.2 | 31.6 | 19.4 | 26.8 | 27.2 | 0.0 | 0.2 | 23.2 | 16.6 |

Evaluation of advanced VLMs. Table 1 reports the zero-shot performance of advanced VLMs on VisWorld-Eval. Overall, these models perform suboptimally, highlighting limitations of current multimodal AI systems. Among them, Gemini 3 Flash and Gemini 3 Pro markedly outperform the other models in overall accuracy; however, their performance remains far from satisfactory on challenging tasks like paper folding, ball tracking, cube 3-view projection, and real-world spatial reasoning.
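
As a reading aid for Table 1, the two Overall columns can be recomputed from the per-task entries. The sketch below assumes that each Overall value is an unweighted mean over tasks, which the table does not state explicitly; because the per-task entries are themselves rounded, the recomputed means are only expected to match the reported ones to within about 0.1.

```python
# Per-task accuracies in Table 1 column order:
# Paper Folding, Multi-Hop Manip., Ball Tracking, Cube 3-View, MMSI (Pos. Rel.), Maze, Sokoban,
# followed by the reported Overall (5 tasks) and Overall (7 tasks).
table_1 = {
    "Gemini 3 Flash": ([25.6, 75.4, 55.3, 52.7, 41.3, 73.9, 99.3], 50.0, 60.5),
    "Gemini 3 Pro": ([27.0, 74.5, 44.7, 53.3, 49.6, 33.5, 90.2], 49.8, 53.2),
    "Seed 1.8": ([10.6, 75.2, 24.4, 42.5, 38.8, 83.9, 68.3], 38.3, 49.1),
    "GPT 5.1": ([6.4, 73.9, 34.8, 44.5, 44.8, 0.6, 62.8], 40.8, 38.2),
    "o3": ([13.5, 68.1, 24.7, 37.7, 44.4, 0.0, 36.0], 37.6, 32.0),
    "Qwen3-VL-8B-Thinking": ([11.0, 49.3, 17.8, 21.2, 27.7, 0.0, 5.8], 25.4, 18.9),
    "BAGEL-7B-MoT": ([11.2, 31.6, 19.4, 26.8, 27.2, 0.0, 0.2], 23.2, 16.6),
}


def mean(values):
    return sum(values) / len(values)


gaps = {}
for model, (scores, reported_5, reported_7) in table_1.items():
    overall_5 = mean(scores[:5])  # excludes Maze and Sokoban (the last two columns)
    overall_7 = mean(scores)
    gaps[model] = max(abs(overall_5 - reported_5), abs(overall_7 - reported_7))
    print(f"{model:22s} 5 tasks: {overall_5:.2f}  7 tasks: {overall_7:.2f}")

max_gap = max(gaps.values())
```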

### 4.2 Unified Multimodal Model Training and Evaluation

Evaluation protocol. To investigate the benefits of visual generation in multimodal reasoning, we evaluate post-trained UMMs, rather than the zero-shot performance of base models. To the best of our knowledge, no open-source model has been natively optimized for interleaved verbal-visual generation for reasoning. Even commercial closed-source models currently exhibit fundamental limitations in generating visual intermediate reasoning steps [38, 76]. Focusing on post-trained models, therefore, provides a more meaningful estimate of the upper bound for multimodal reasoning performance, while reducing confounding effects arising from insufficient pre-training due to limited interleaved data availability or quality.

Model training. We adopt BAGEL [13], a state-of-the-art open-source unified multimodal model, as our base model. Most experiments are conducted by supervised fine-tuning (SFT) on task-specific datasets, where verbal and visual generation in both chain-of-thought reasoning and final answers are optimized using cross-entropy and flow-matching losses, respectively. Specifically, the loss for reasoning with visual world modeling is as follows:

$$

\mathcal{L}_{\theta}(Q,I,R,A)=-\sum_{i=1}^{H+1}\sum_{j=1}^{|r_{i}|}\log p_{\theta}\left(r_{i,j}\mid r_{i,<j},R_{i}\right)+\sum_{i=1}^{H}\mathbb{E}_{t,\epsilon}\left\|v_{\theta}(o_{i}^{t},t\mid\tilde{R}_{i})-(\epsilon-o_{i})\right\|_{2}^{2}, \tag{6}

$$

where $o_{i}^{t}=to_{i}+(1-t)\epsilon$ are noisy observations. We emphasize that in our formulation, $r_{i}$ refers to a verbal reasoning step, instead of a reward. We also perform reinforcement learning from verifiable rewards (RLVR) following SFT. During RL, only the verbal generation component is optimized by GRPO [18], while visual generation is regularized via the KL-divergence with respect to the SFT-trained reference model:

$$

\begin{aligned}
\mathcal{J}_{\theta}(Q,I)=\mathbb{E}_{o,r\sim p_{\theta_{\text{old}}}}\Bigg[&\sum_{i=1}^{H+1}\sum_{j=1}^{|r_{i}|}\Bigg(\min\Big(\frac{p_{\theta}\left(r_{i,j}\mid r_{i,<j},R_{i}\right)}{p_{\theta_{\text{old}}}\left(r_{i,j}\mid r_{i,<j},R_{i}\right)}{A},\ \text{clip}\Big(\frac{p_{\theta}\left(r_{i,j}\mid r_{i,<j},R_{i}\right)}{p_{\theta_{\text{old}}}\left(r_{i,j}\mid r_{i,<j},R_{i}\right)},1-\varepsilon,1+\varepsilon\Big){A}\Big)\Bigg) \\
&-\sum_{i=1}^{H}\mathbb{E}_{t,\epsilon}\left\|v_{\theta}(o_{i}^{t},t\mid\tilde{R}_{i})-v_{\theta_{\text{ref}}}(o_{i}^{t},t\mid\tilde{R}_{i})\right\|_{2}^{2}\Bigg].
\end{aligned} \tag{7}

$$

Full implementation details and hyperparameters are provided in Appendix 8.2.
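
The individual terms of Eqs. (6) and (7) can be sketched in a few lines of NumPy. This is a toy illustration under several assumptions and not BAGEL's or the authors' training code: the function names are hypothetical, $t$ is assumed to be sampled uniformly from $[0,1)$, the expectation over $(t,\epsilon)$ is replaced by a single sample, the clipping range of 0.2 is an arbitrary choice, and the advantage is passed in as a given number rather than computed from grouped rollouts.

```python
import numpy as np


def token_nll(logits, targets):
    """First term of Eq. (6): summed negative log-likelihood of the text tokens.

    logits: (T, V) unnormalized scores; targets: length-T token ids.
    """
    logits = np.asarray(logits, float)
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerically stable log-softmax
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(targets)), targets].sum())


def flow_matching_term(velocity_fn, o, rng):
    """One-sample estimate of the second term of Eq. (6) for a single observation o."""
    t = rng.uniform()                      # assumption: t ~ Uniform[0, 1)
    eps = rng.standard_normal(o.shape)
    o_t = t * o + (1.0 - t) * eps          # noisy observation, as defined after Eq. (6)
    target = eps - o                       # target copied verbatim from Eq. (6);
                                           # sign conventions vary across codebases
    return float(np.sum((velocity_fn(o_t, t) - target) ** 2))


def clipped_surrogate(logp_new, logp_old, advantage, clip_eps=0.2):
    """Clipped policy-ratio term of Eq. (7), summed over text tokens."""
    ratio = np.exp(np.asarray(logp_new) - np.asarray(logp_old))
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps)
    return float(np.minimum(ratio * advantage, clipped * advantage).sum())


rng = np.random.default_rng(0)
print(token_nll(np.zeros((3, 4)), [0, 1, 2]))                      # 3 * log(4) for uniform logits
print(flow_matching_term(lambda o_t, t: np.zeros_like(o_t), np.ones((2, 2)), rng))
print(clipped_surrogate(np.log([2.0]), np.log([1.0]), advantage=1.0))  # ratio 2 is clipped to 1.2
```

During RL in this formulation, only the clipped term updates text generation, while the squared difference between the current and reference velocity predictions keeps visual generation close to the SFT model.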

## 5 Experimental Results

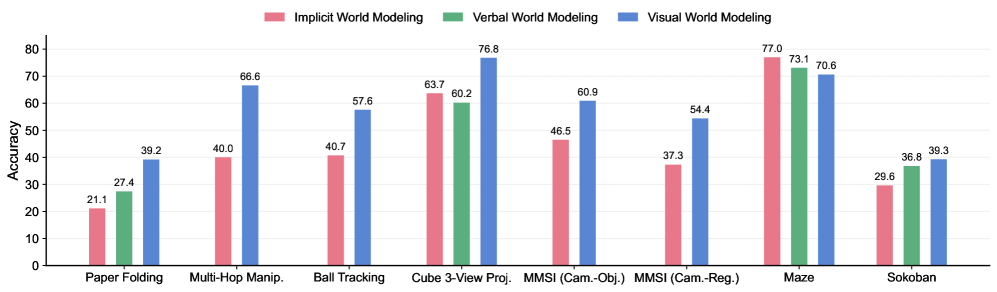

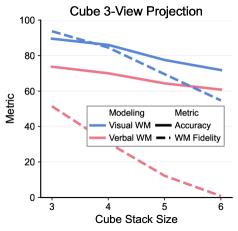

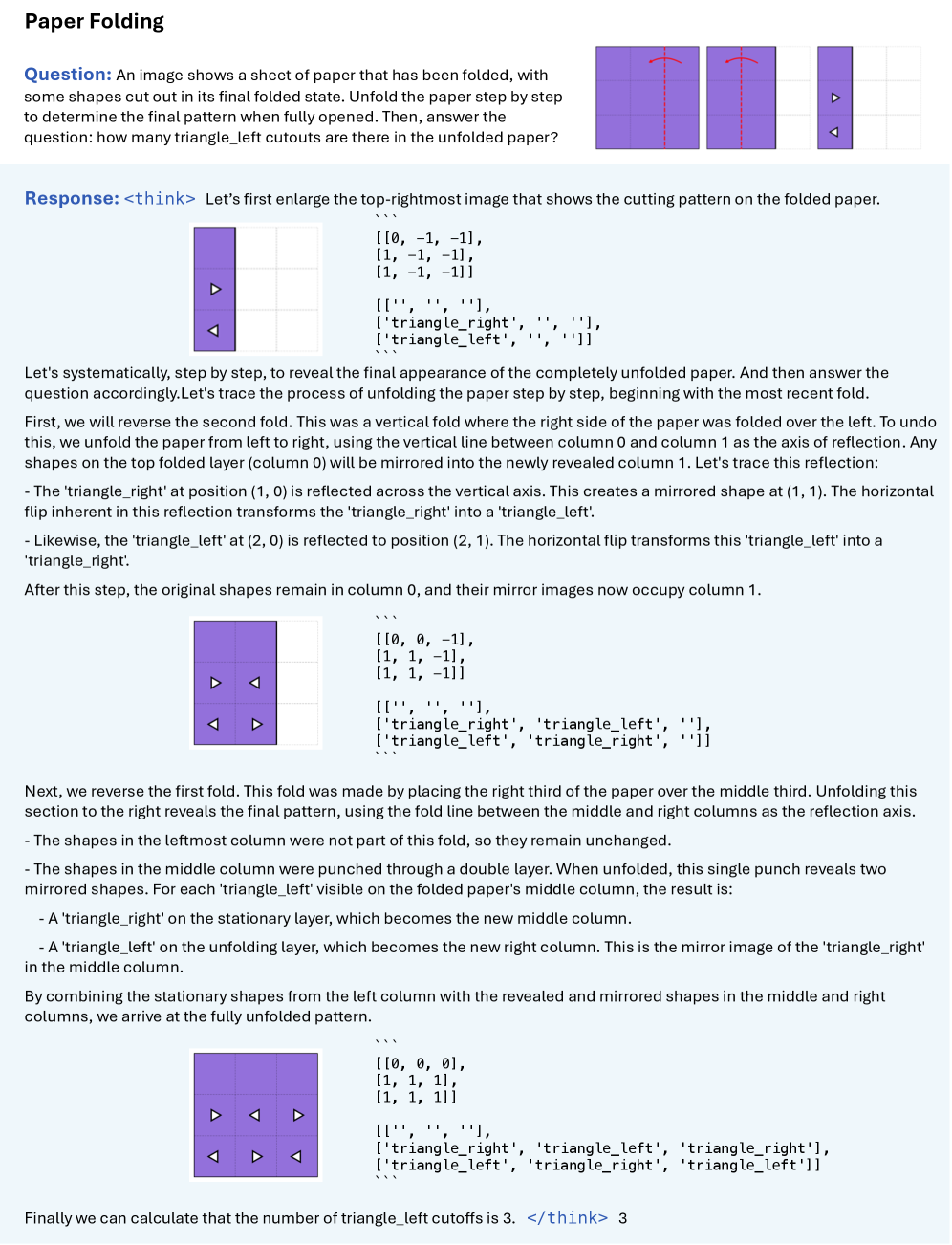

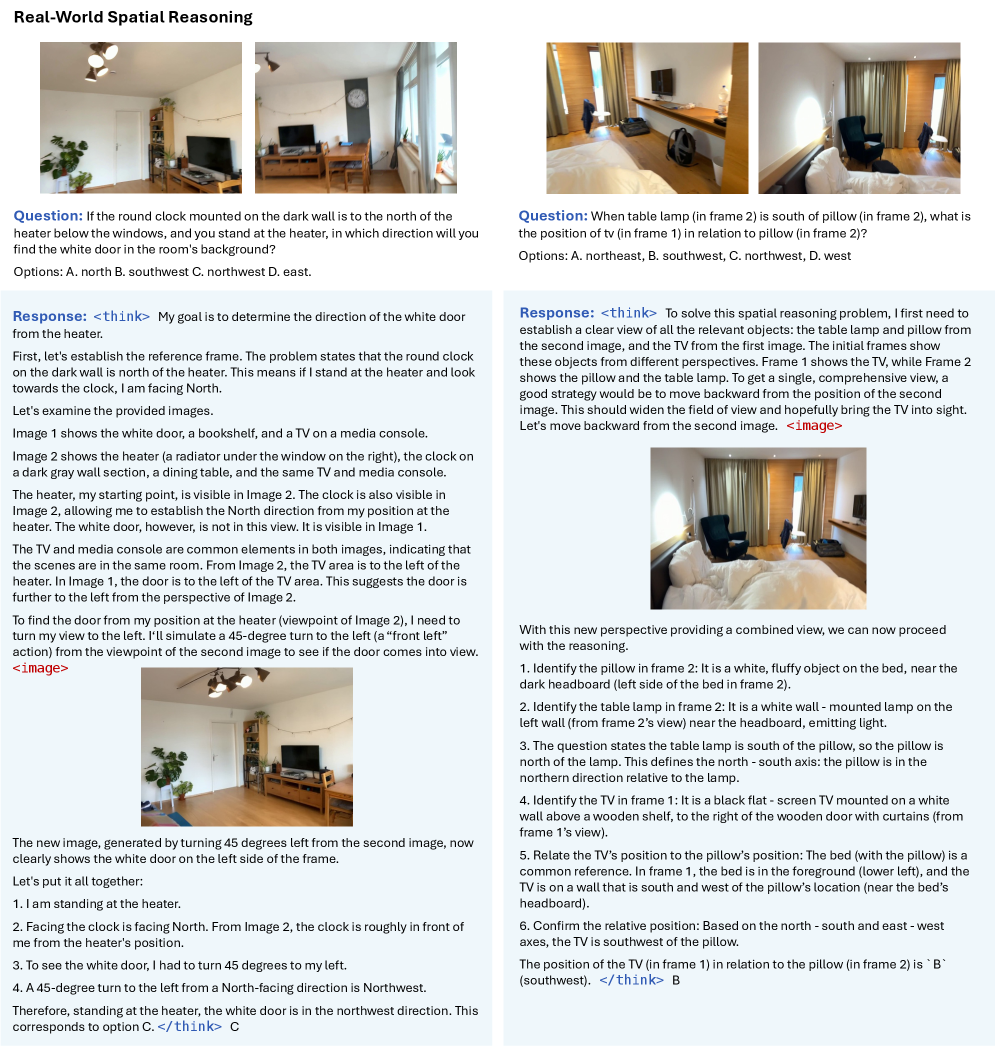

In this section, we demonstrate that visual world modeling boosts multimodal reasoning through two atomic capabilities: world simulation (Section 5.1) and world reconstruction (Section 5.2). We also identify tasks in which it is unhelpful (Section 5.3), where implicit or verbal world modeling is sufficient, and analyze these cases in detail. Interestingly, we reveal emergent internal representations in UMMs that support implicit world modeling on simple maze tasks.

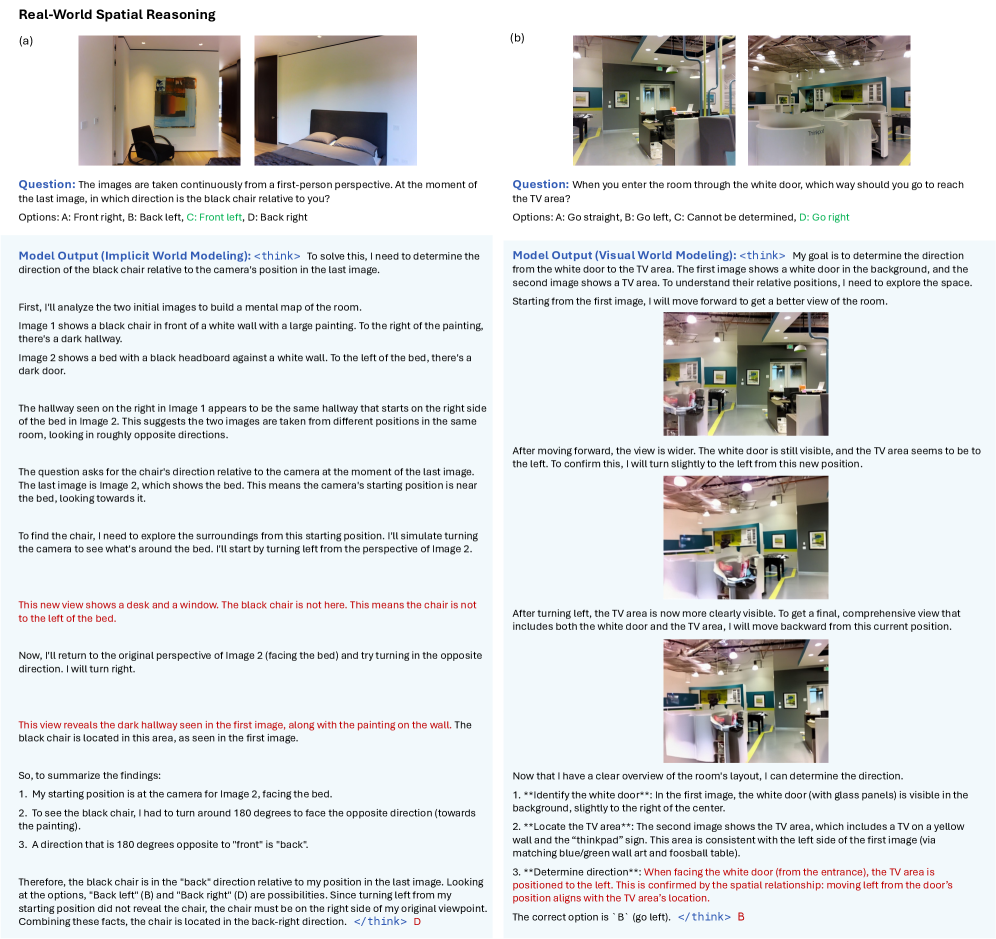

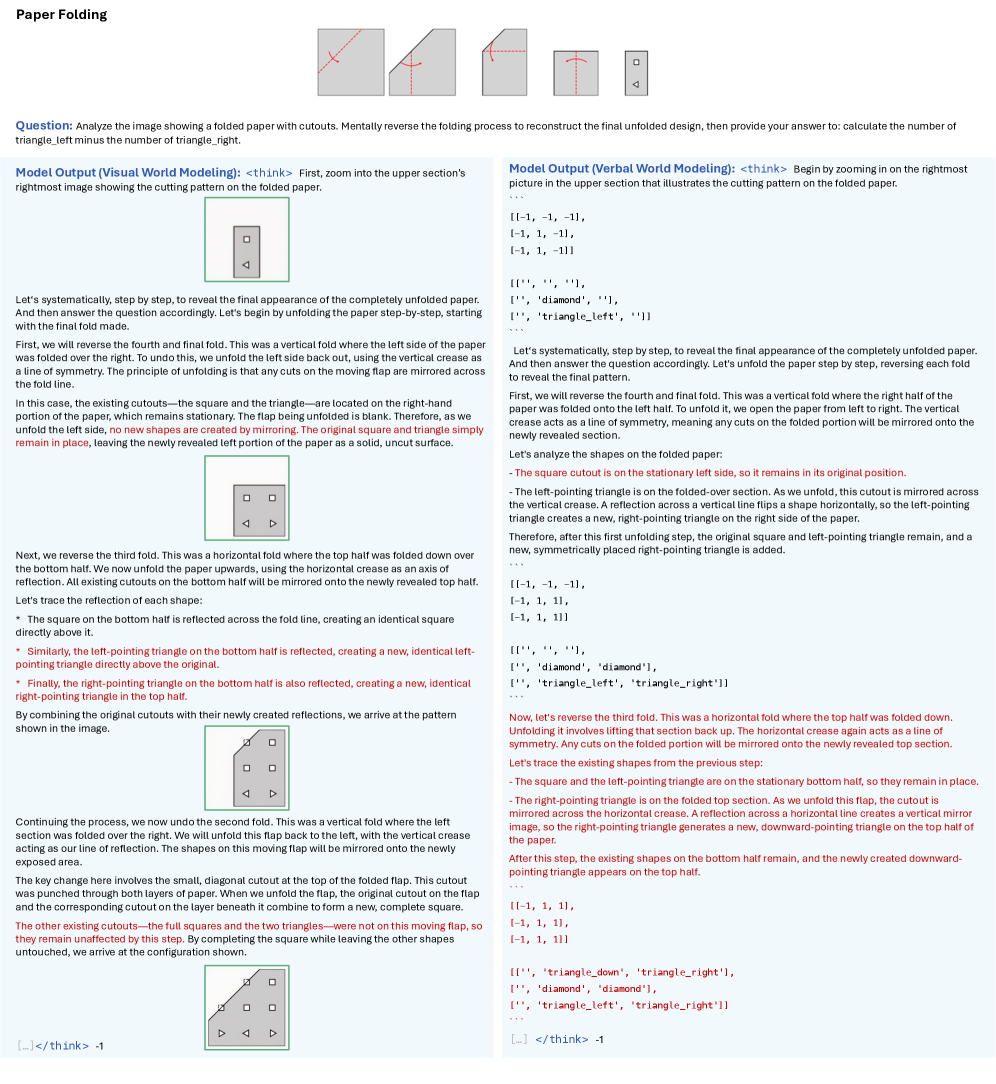

### 5.1 Visual World Simulation Boosts Multimodal Reasoning

Main results. Figure 4 summarizes the performance of SFT-trained UMMs under different chain-of-thought formulations across all tasks. We observe that interleaved CoT with visual world modeling significantly outperforms its purely verbal counterparts on three world simulation tasks: paper folding, multi-hop manipulation, and ball tracking. We attribute these gains to both the richer expressiveness and the stronger prior knowledge afforded by the visual modality. In particular, in tasks such as multi-hop manipulation and ball tracking, it is difficult for models to precisely ground object coordinates and perform arithmetic operations without external tools, with the latter task being especially challenging. Thus, verbal world modeling is inappropriate and omitted in these tasks. This difficulty also exacerbates ambiguity and hallucinations in purely verbal reasoning. Similarly, in paper folding, although models can track the states of holes, it remains difficult to completely depict the paper contour during unfolding. Moreover, as showcased in Figures 9 and 16, the spatial transformation involved in paper unfolding critically relies on an understanding of geometric symmetry, which can be more naturally learned from visual data like images and videos.

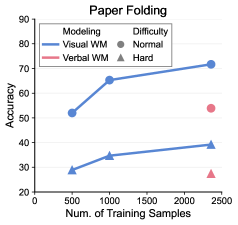

Sample efficiency. To further demonstrate the stronger prior knowledge embedded in the visual modality, we compare the sample efficiency of verbal and visual world modeling on the paper folding task. As shown in Figure 6, reasoning with visual world modeling is substantially more sample-efficient, achieving performance comparable to verbal world modeling with more than $4\times$ less SFT data.

### 5.2 Visual World Reconstruction Boosts Multimodal Reasoning

Main results. As shown in Figure 4, multimodal reasoning tasks that rely on world reconstruction capabilities also benefit substantially from visual world modeling. In the cube 3-view task, predicting a novel view of stacked cubes when it is denoted as symbolic character matrices suffers from limited prior knowledge, whereas visually rotating objects is richly covered by pre-training on large-scale Internet videos. For MMSI tasks, fully describing a novel view of a realistic scene using text alone is ill-suited, for the same reasons as in the previous subsection, and we also observe hallucinations in purely verbal reasoning, which lacks grounding in visual generation. Among the positional-relationship subtasks in MMSI-Bench, we observe consistent improvements only on camera-object and camera-region; we attribute the lack of gains on the other subtasks to current UMMs' limitations in both spatial understanding during verbal reasoning and generation quality in visual world modeling. Full quantitative results and qualitative examples are provided in Appendix 9. We expect these limitations to be mitigated in future work with stronger base models.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Grouped Bar Chart: World Modeling Accuracy Across Tasks

### Overview

The image displays a grouped bar chart comparing the accuracy of three different world modeling approaches—Implicit, Verbal, and Visual—across eight distinct tasks. The chart is designed to show performance differences, with each task cluster containing up to three colored bars representing the modeling types.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **Y-Axis:** Labeled "Accuracy". The scale runs from 0 to 80, with major tick marks at intervals of 10 (0, 10, 20, 30, 40, 50, 60, 70, 80).

* **X-Axis:** Lists eight task categories. From left to right:

1. Paper Folding

2. Multi-Hop Manip. (presumably "Manipulation")

3. Ball Tracking

4. Cube 3-View Proj. (presumably "Projection")

5. MMSI (Cam.-Obj.) (presumably "Camera-Object")

6. MMSI (Cam.-Reg.) (presumably "Camera-Region")

7. Maze

8. Sokoban

* **Legend:** Positioned at the top center of the chart. It defines the three data series:

* **Pink Bar:** Implicit World Modeling

* **Green Bar:** Verbal World Modeling

* **Blue Bar:** Visual World Modeling

* **Data Labels:** Each bar has its exact accuracy value printed directly above it.

### Detailed Analysis

Below is the extracted data for each task, following the order of bars (Pink, Green, Blue) as defined by the legend.

1. **Paper Folding:**

* Implicit (Pink): 21.1

* Verbal (Green): 27.4

* Visual (Blue): 39.2

* *Trend:* Accuracy increases sequentially from Implicit to Verbal to Visual.

2. **Multi-Hop Manip.:**

* Implicit (Pink): 40.0

* Verbal (Green): 40.7

* Visual (Blue): 66.6

* *Trend:* Implicit and Verbal are nearly equal, while Visual shows a significant jump in accuracy.

3. **Ball Tracking:**

* Implicit (Pink): 40.7

* Verbal (Green): **No bar present.**

* Visual (Blue): 57.6

* *Trend:* Visual outperforms Implicit. Data for Verbal World Modeling is absent for this task.

4. **Cube 3-View Proj.:**

* Implicit (Pink): 63.7

* Verbal (Green): 60.2

* Visual (Blue): 76.8

* *Trend:* Visual is highest, followed by Implicit, then Verbal.

5. **MMSI (Cam.-Obj.):**

* Implicit (Pink): 46.5

* Verbal (Green): **No bar present.**

* Visual (Blue): 60.9

* *Trend:* Visual significantly outperforms Implicit. Data for Verbal is absent.

6. **MMSI (Cam.-Reg.):**

* Implicit (Pink): 37.3

* Verbal (Green): **No bar present.**

* Visual (Blue): 54.4

* *Trend:* Visual significantly outperforms Implicit. Data for Verbal is absent.

7. **Maze:**

* Implicit (Pink): 77.0

* Verbal (Green): 73.1

* Visual (Blue): 70.6

* *Trend:* This is the only task where accuracy *decreases* across the sequence, with Implicit performing best.

8. **Sokoban:**

* Implicit (Pink): 29.6

* Verbal (Green): 36.8

* Visual (Blue): 39.3

* *Trend:* Accuracy increases sequentially from Implicit to Verbal to Visual.

### Key Observations

* **Performance Leader:** Visual World Modeling (blue bars) achieves the highest accuracy in 7 out of the 8 tasks (Paper Folding, Multi-Hop Manip., Ball Tracking, Cube 3-View Proj., MMSI (Cam.-Obj.), MMSI (Cam.-Reg.), and Sokoban).

* **Notable Exception:** The **Maze** task is a clear outlier. Here, Implicit World Modeling (77.0) outperforms both Verbal (73.1) and Visual (70.6). This is the only task where the pink bar is the tallest.

* **Missing Data:** Verbal World Modeling (green bars) has no recorded accuracy for three tasks: Ball Tracking, MMSI (Cam.-Obj.), and MMSI (Cam.-Reg.).

* **Highest & Lowest Scores:** The highest accuracy on the chart is 77.0 (Implicit, Maze). The lowest accuracy is 21.1 (Implicit, Paper Folding).

* **Task Difficulty:** Tasks like Paper Folding and Sokoban show relatively low accuracy across all models (<40), suggesting they are more challenging. In contrast, Maze and Cube 3-View Proj. show high accuracy (>60) for all models that attempted them.

### Interpretation

The data suggests a strong, general advantage for **Visual World Modeling** across a variety of tasks involving spatial reasoning, object manipulation, and projection. Its consistently high performance indicates that a visual representation is effective for modeling these types of physical or geometric environments.

The **Maze** task's inverted trend is the most notable exception. Implicit modeling leads by a modest margin (77.0 versus 73.1 for Verbal and 70.6 for Visual), which suggests that for grid navigation, explicit verbal or visual state tracking adds little beyond what the model already tracks internally. The chart alone does not establish why; one possibility is that the required state, a small set of coordinates, is simple enough to be encoded implicitly.

The absence of data for **Verbal World Modeling** in several tasks (Ball Tracking and both MMSI variants) may indicate that a verbal or language-based representation was deemed unsuitable or was not tested for those specific, potentially highly dynamic or visual-spatial, tasks. Where it was tested, it fell between Implicit and Visual in four of five tasks (Paper Folding, Multi-Hop Manip., Maze, and Sokoban); the exception is Cube 3-View Proj., where Verbal (60.2) scored below Implicit (63.7).

In summary, the chart demonstrates that the optimal world modeling strategy is **task-dependent**. While visual models are broadly robust, specialized tasks like maze navigation may favor implicit learning. The results could guide the selection or hybridization of modeling approaches for artificial intelligence systems based on the problem domain.

</details>

Figure 4: Performance of SFT-trained UMMs with different world model-based chain-of-thought formulations across seven tasks from VisWorld-Eval. Refer to Table 1 for zero-shot performance of advanced VLMs.

Effects of task difficulties. Figure 6 analyzes performance on the cube 3-view projection task across varying sizes of input cube stacks. We observe a consistent advantage of reasoning with visual world modeling over verbal world modeling across all difficulty levels. Notably, for cube stacks of size six, which lie outside the training distribution, visual world modeling still yields a performance improvement of approximately $10\%$.

World model fidelity. Modern AI models are known to exhibit hallucinations along their reasoning trajectories, even when producing correct final answers [38]. We therefore evaluate the fidelity of world modeling in the cube 3-view projection task by comparing ground-truth views with the intermediate views generated verbally or visually during reasoning. To focus on structural correctness, we compare only the shapes of the views and completely ignore color information. Even under this relaxed evaluation setting, Figure 6 shows that verbal world modeling exhibits dramatically low fidelity, with scores degrading to near zero. Notably, approximately half of the samples require predicting the opposite view of a given input view, a transformation that only involves horizontal mirroring. Visual world modeling, benefiting from stronger prior knowledge of such geometric transformations, captures these patterns effectively and achieves fidelity scores consistently exceeding $50\%$.
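The shape-only comparison described above can be illustrated with a minimal sketch. The scoring rule is not specified in the text, so this assumes an exact match of occupancy masks; the view encoding (a grid of color names, with `None` for empty cells) and all function names are hypothetical.

```python
def shape_mask(view):
    """Reduce a colored grid view to occupancy: True where a cube is visible."""
    return [[cell is not None for cell in row] for row in view]

def mirror(view):
    """Horizontally mirror a view by reversing each row."""
    return [list(reversed(row)) for row in view]

def shape_fidelity(predicted, truths):
    """Fraction of predicted views whose shape matches the ground truth exactly.

    Assumption: exact-match scoring on occupancy masks, colors ignored.
    """
    hits = sum(shape_mask(p) == shape_mask(t) for p, t in zip(predicted, truths))
    return hits / len(truths)

# Hypothetical 2x2 front view; None marks an empty cell.
front = [["red", None], ["blue", "green"]]
back_truth = mirror(front)  # opposite view is the horizontal mirror

pred_wrong_color = [[None, "blue"], ["red", "red"]]      # right shape, wrong colors
pred_wrong_shape = [["red", None], ["blue", "green"]]    # forgot to mirror

score = shape_fidelity(
    [pred_wrong_color, pred_wrong_shape],
    [back_truth, back_truth],
)
print(score)
```

Under this assumed rule, a prediction with incorrect colors but the correct silhouette still counts as faithful, while an unmirrored view does not.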

### 5.3 Visual World Modeling is Unhelpful for Certain Tasks

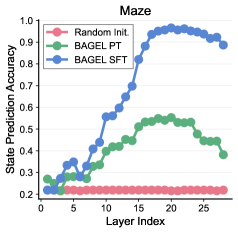

Main results. (Un)surprisingly, we do not observe notable improvements on grid-world tasks, including maze and Sokoban. In the maze tasks, reasoning with implicit world modeling—without explicitly tracking coordinates—achieves the best performance with a slight advantage. These results are consistent with recent empirical findings [14]. We argue that this is also well explained by our world model perspective. In these tasks, state tracking is relatively simple, typically requiring the maintenance of only one or two two-dimensional coordinates, which can be adequately handled through verbal reasoning alone. Furthermore, in the maze task, we hypothesize that such world modeling can be implicitly encoded in the model’s hidden representations [37], which helps explain the competitive performance of verbal reasoning without explicit coordinate tracking.
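To make the claim about simple state tracking concrete, the following is a minimal sketch of a maze world model whose entire state is one two-dimensional coordinate. The grid encoding, the slide-until-wall movement rule, the sentence template, and the `[masked]` placeholder string are all assumptions made for illustration and are not taken from the benchmark.

```python
# Hypothetical grid encoding: '#' is a wall, '.' is free space.
MAZE = [
    "#####",
    "#...#",
    "#.#.#",
    "#...#",
    "#####",
]
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}

def slide_until_wall(maze, pos, direction):
    """Advance from pos in one direction until the next cell is a wall."""
    dr, dc = MOVES[direction]
    r, c = pos
    while maze[r + dr][c + dc] != "#":
        r, c = r + dr, c + dc
    return (r, c)

def narrate(maze, start, directions, explicit=True):
    """Emit one reasoning line per move, with or without the coordinate."""
    pos, lines = start, []
    for d in directions:
        pos = slide_until_wall(maze, pos, d)
        where = f"({pos[0]},{pos[1]})" if explicit else "[masked]"
        lines.append(f"Move {d} and proceed until I hit a wall, at {where}.")
    return pos, lines

# Explicit (verbal) tracking writes the coordinate; the implicit variant hides it.
final_pos, verbal = narrate(MAZE, (1, 1), ["right", "down"])
_, implicit = narrate(MAZE, (1, 1), ["right", "down"], explicit=False)
print(verbal)
print(implicit)
```

The two traces differ only in whether the coordinate is written out, which is the distinction between verbal and implicit world modeling in this setting.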

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: BAGEL Model Architecture with MLP Head

### Overview

The image is a technical diagram illustrating a neural network architecture named "BAGEL." It depicts a multi-layered core model connected to a Multi-Layer Perceptron (MLP) head, which produces a specific coordinate output and a visualization. A text sequence with a masked token is shown below, suggesting the model's application in a language or sequence modeling task.

### Components/Axes

The diagram consists of three primary visual components arranged horizontally and vertically:

1. **BAGEL Core Model (Left/Center):**

* A large, light-blue rounded rectangle containing the model name and its internal structure.

* **Logo/Text:** A blue circular logo with a pattern of dots, followed by the text "**BAGEL**" in large, bold, blue sans-serif font.

* **Layer Stack:** Inside the rectangle, three horizontal bars represent model layers, labeled from bottom to top:

* `Layer 1`

* `Layer 2`

* `Layer N` (with ellipsis `...` between Layer 2 and Layer N, indicating a variable number of intermediate layers).

* A vertical arrow points upward from `Layer 1` through `Layer 2` to `Layer N`, indicating the flow of information through the stack.

2. **MLP Head & Output (Right):**

* A green rounded rectangle labeled "**MLP**" in black text.

* A blue arrow originates from the `Layer 2` bar within the BAGEL block and points to the MLP block, indicating a connection or data flow from that specific layer.

* **Output Visualization:** Above the MLP block, a small bar chart is depicted inside square brackets `[ ]`. It contains five vertical red bars of varying heights. The first two bars are the tallest, followed by three shorter bars of roughly equal height.

* **Coordinate Label:** Above the bar chart, the text "**Coordinate: (1,3)**" is displayed. The coordinate `(1,3)` is in red font.

3. **Contextual Text (Bottom):**

* A line of black monospaced text is positioned below the main diagram: `... proceed until I hit a wall, at [masked] ...`

* The token `[masked]` is highlighted in red font, matching the color of the coordinate output.

### Detailed Analysis

* **Spatial Grounding:** The BAGEL model block occupies the left and central portion of the image. The MLP head and its outputs are positioned to the right of the BAGEL block. The contextual text runs along the bottom edge.

* **Data Flow:** The diagram shows a clear flow: Information from `Layer 2` of the BAGEL model is fed into an MLP head. The MLP then produces two outputs: a specific coordinate value `(1,3)` and a feature vector visualized as a bar chart.

* **Text Transcription:**

* `BAGEL`

* `Layer 1`

* `Layer 2`

* `Layer N`

* `MLP`

* `Coordinate: (1,3)`

* `... proceed until I hit a wall, at [masked] ...`

* **Color Coding:**

* **Blue:** Used for the BAGEL model name, logo, and the connecting arrow, associating these elements with the core model.

* **Green:** Used for the MLP block, distinguishing it as a separate component.

* **Red:** Used for the output coordinate `(1,3)`, the bars in the output visualization, and the `[masked]` token. This color highlights the model's specific outputs and the target of the prediction task.

### Key Observations

1. **Layer-Specific Extraction:** The arrow is drawn from `Layer 2`, not the final `Layer N`, showing that a probe reads an intermediate representation. The accompanying text states that hidden representations are extracted at each layer, so this arrow is best read as one example of a per-layer probe.

2. **Probe Output:** The bracketed bar chart and the label `Coordinate: (1,3)` both sit above the MLP. The bar chart is unlabeled; it plausibly depicts the probe's output scores, with `(1,3)` as the decoded coordinate.

3. **Masked Token Context:** The `[masked]` token stands in for a point coordinate that has been hidden in the reasoning text. The coordinate `(1,3)` is the value the probe recovers for that hidden point, not the position of a token within a sequence.

### Interpretation

This diagram illustrates a **probing setup** applied to the **BAGEL model**: a small MLP is trained to read a hidden point coordinate out of the model's internal representations.

* **What it suggests:** The probe does not read the model's final output. It taps the hidden state at a given layer (`Layer 2` in the drawing) at the position of the `[masked]` token. Per the accompanying text, such probes are trained at each layer, so `Layer 2` serves as an example rather than a fixed design choice.

* **Relationship of Elements:** The BAGEL core supplies the representations, and the MLP is a probe that maps them to a concrete output, the coordinate `(1,3)`. The red color shared by the output coordinate and the `[masked]` token links them: the probe's output is the coordinate that was masked out of the reasoning text.

* **Notable Pattern:** The output is a **coordinate `(1,3)`**, not a word. This fits a probing task in which the target is the point state that the reasoning text leaves implicit. The bar chart above the MLP may depict the probe's output scores over candidate coordinates, although the diagram does not say so.

In essence, the diagram depicts probing for an **implicit world model**: if a lightweight MLP can recover the masked coordinate from BAGEL's hidden states, the model is tracking that state internally even though it never writes the coordinate out.

</details>

Figure 5: Probing implicit world models, by training a set of probes, i.e., MLPs which infer the masked point coordinates during reasoning from internal representations.

Demystifying implicit world modeling. To validate this hypothesis, we probe the internal representations of models, as illustrated in Figure 5. We consider the same architecture, BAGEL, with three different sets of weights: a randomly initialized model, the pre-trained model, and the model supervised fine-tuned on CoT data in the implicit world modeling format, in which special tokens mask all explicit point coordinates during the reasoning process. For each model, we extract the hidden representations of these special tokens at each layer. We then train multilayer perceptrons (MLPs) on these representations to predict the underlying true point coordinates.

Figure 6 reports the prediction accuracy on a validation set. As expected, the randomly initialized model completely fails to internally track point states, achieving only random-guess accuracy on $5\times 5$ mazes. In contrast, the pre-trained model [13] already exhibits emergent representations that are predictive of maze states. Notably, we observe a non-monotonic trend across layers: prediction accuracy increases from lower layers (which capture low-level features) to middle layers, and then decreases toward the final layers, which are likely specialized for next-token prediction. Finally, supervised fine-tuning on domain-specific data, despite providing no explicit coordinate supervision, substantially enhances this internal predictability, achieving near-perfect accuracy. These in-depth results help explain our main experimental findings: as the model already possesses the capability for implicit world modeling, it does not necessarily benefit from explicit verbal world modeling, let alone more complex forms of visual world modeling.
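The probing logic can be sketched on synthetic data. This is not the authors' setup: a nearest-centroid classifier stands in for the MLP probes, the "hidden states" are made-up two-dimensional vectors rather than BAGEL activations, and the chance level of 1/25 assumes the probe predicts a single cell of a $5\times 5$ grid.

```python
import random

GRID = 5  # 5x5 maze, so 25 candidate (row, col) cells

def make_states(noise, informative, per_cell, rng):
    """Synthetic stand-in for hidden states at masked-coordinate tokens."""
    data = []
    for r in range(GRID):
        for c in range(GRID):
            for _ in range(per_cell):
                base = (r, c) if informative else (0.0, 0.0)
                vec = [base[0] + rng.gauss(0, noise), base[1] + rng.gauss(0, noise)]
                data.append((vec, (r, c)))
    return data

def fit_centroids(train):
    """Fit the probe: average the training vectors of each coordinate label."""
    sums, counts = {}, {}
    for vec, label in train:
        acc = sums.setdefault(label, [0.0] * len(vec))
        for i, v in enumerate(vec):
            acc[i] += v
        counts[label] = counts.get(label, 0) + 1
    return {lab: [v / counts[lab] for v in acc] for lab, acc in sums.items()}

def predict(centroids, vec):
    """Return the label whose centroid is closest in squared distance."""
    return min(
        centroids,
        key=lambda lab: sum((a - b) ** 2 for a, b in zip(vec, centroids[lab])),
    )

def probe_accuracy(train, val):
    centroids = fit_centroids(train)
    return sum(predict(centroids, v) == y for v, y in val) / len(val)

rng = random.Random(0)
# Two hypothetical "layers": one carries no coordinate signal, one carries it cleanly.
layers = {"uninformative": (1.0, False), "informative": (0.05, True)}
results = {}
for name, (noise, informative) in layers.items():
    train = make_states(noise, informative, per_cell=20, rng=rng)
    val = make_states(noise, informative, per_cell=10, rng=rng)
    results[name] = probe_accuracy(train, val)

chance = 1 / GRID**2  # 0.04 under the single-cell assumption
print(results, chance)
```

A representation that carries no coordinate signal leaves the probe near chance, while one that encodes the coordinate lets even a simple probe recover it, which is the contrast the layer-wise probing results draw between the randomly initialized and trained models.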

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart with Scatter Points: Paper Folding

### Overview

The image displays a line chart titled "Paper Folding," plotting model accuracy against the number of training samples. It compares two modeling approaches ("Visual WM" and "Verbal WM") across two difficulty levels ("Normal" and "Hard"). The "Normal" difficulty is represented by lines connecting data points, while the "Hard" difficulty is represented by isolated scatter points at the highest training sample count.

### Components/Axes

* **Title:** "Paper Folding" (centered at the top).

* **X-Axis:** Labeled "Num. of Training Samples." The scale runs from 0 to 2500, with major tick marks at 0, 500, 1000, 1500, 2000, and 2500.

* **Y-Axis:** Labeled "Accuracy." The scale runs from 20 to 90, with major tick marks at 20, 30, 40, 50, 60, 70, 80, and 90.

* **Legend (Top-Left Corner):**

* **Modeling:**

* Blue line with circle markers: "Visual WM"

* Red line with triangle markers: "Verbal WM"

* **Difficulty:**

* Circle marker: "Normal"

* Triangle marker: "Hard"

### Detailed Analysis

**Data Series & Trends:**