# REASON: Accelerating Probabilistic Logical Reasoning for Scalable Neuro-Symbolic Intelligence

**Authors**: Zishen Wan, Che-Kai Liu, Jiayi Qian, Hanchen Yang, Arijit Raychowdhury, Tushar Krishna

## Abstract

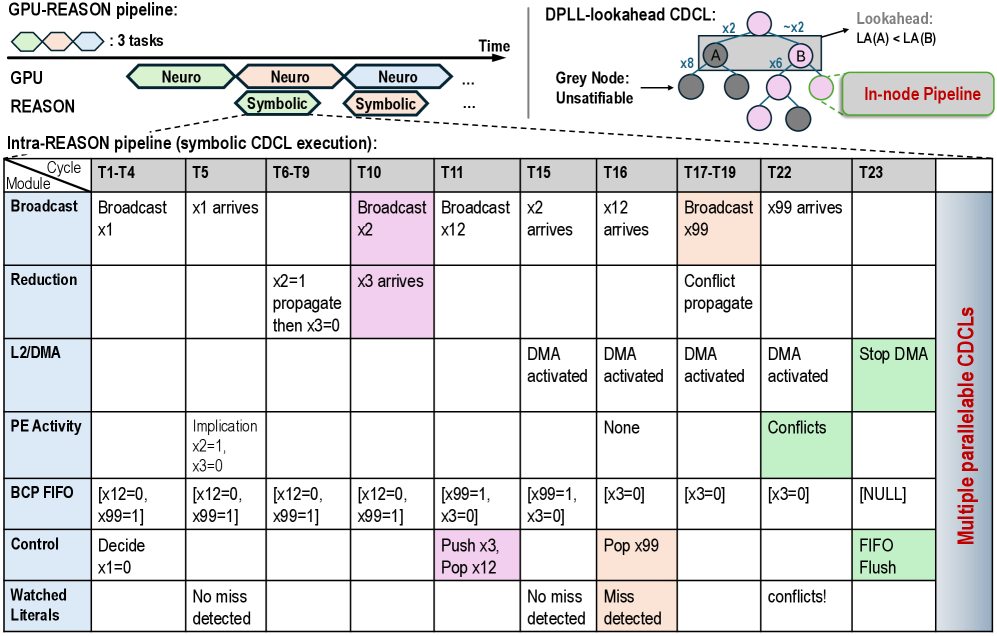

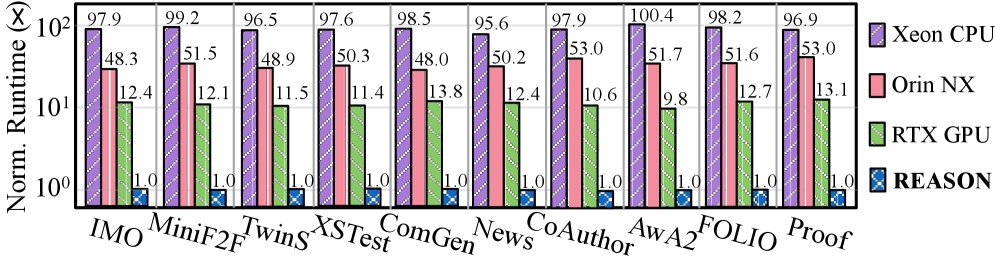

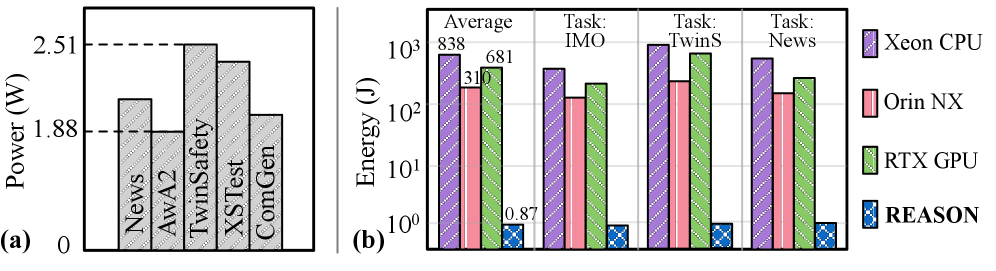

Neuro-symbolic AI systems integrate neural perception with symbolic and probabilistic reasoning to enable data-efficient, interpretable, and robust intelligence beyond purely neural models. Although this compositional paradigm has shown superior performance in domains such as mathematical reasoning, planning, and verification, its deployment remains challenging due to severe inefficiencies in symbolic and probabilistic inference. Through systematic analysis of representative neuro-symbolic workloads, we identify probabilistic logical reasoning as the inefficiency bottleneck, characterized by irregular control flow, low arithmetic intensity, uncoalesced memory accesses, and poor hardware utilization on CPUs and GPUs.

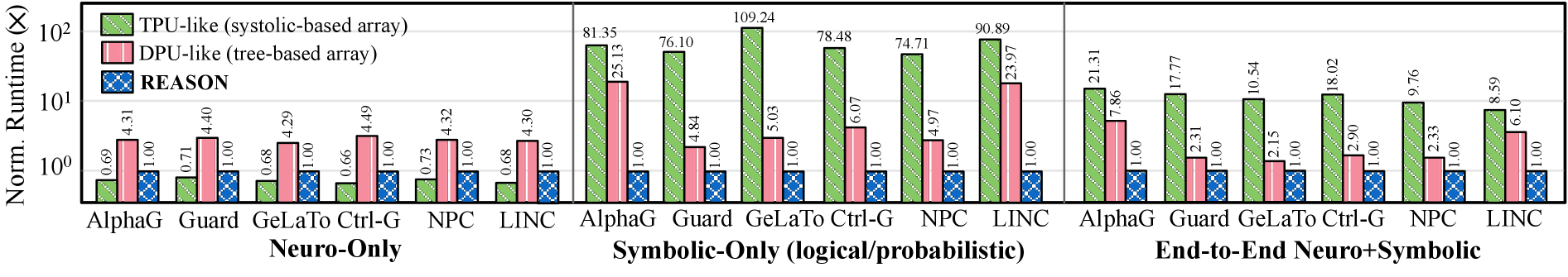

This paper presents REASON, an integrated acceleration framework for probabilistic logical reasoning in neuro-symbolic AI. At the algorithm level, REASON introduces a unified directed acyclic graph representation that captures common structure across symbolic and probabilistic models, coupled with adaptive pruning and regularization. At the architecture level, REASON features a reconfigurable, tree-based processing fabric optimized for irregular traversal, symbolic deduction, and probabilistic aggregation. At the system level, REASON is tightly integrated with GPU streaming multiprocessors through a programmable interface and multi-level pipeline that efficiently orchestrates neural, symbolic, and probabilistic execution. Evaluated across six neuro-symbolic workloads, REASON achieves 12-50 $\times$ speedup and 310-681 $\times$ energy efficiency over desktop and edge GPUs under TSMC 28 nm node. REASON enables real-time probabilistic logical reasoning, completing end-to-end tasks in 0.8 s with 6 mm ${}^{\text{2}}$ area and 2.12 W power, demonstrating that targeted acceleration of probabilistic logical reasoning is critical for practical and scalable neuro-symbolic AI and positioning REASON as a foundational system architecture for next-generation cognitive intelligence.

## I Introduction

Large Language Models (LLMs) have demonstrated remarkable capabilities in natural language understanding, image recognition, and complex pattern learning from vast datasets [23, 46, 42, 16]. However, despite their success, LLMs often struggle with factual accuracy, hallucinations, multi-step reasoning, and interpretability [35, 62, 2, 61]. These limitations have spurred the development of compositional AI systems, which integrate neural with symbolic and probabilistic reasoning to create robust, transparent, and intelligent cognitive systems. footnotetext: † Corresponding author

One promising compositional paradigm is neuro-symbolic AI, which integrates neural, symbolic, and probabilistic components into a unified cognitive architecture [60, 1, 72, 9, 75]. In this system, the neural module captures the statistical, pattern-matching behavior of learned models, performing rapid function approximation and token prediction for intuitive perception and feature extraction. The symbolic and probabilistic modules perform explicit, verifiable reasoning that is structured, interpretable, and robust under uncertainty, managing logic-based reasoning and probabilistic updates. This paradigm integrates intuitive generalization and deliberate reasoning.

Neuro-symbolic AI has demonstrated superior abstract deduction, complex question answering, mathematical reasoning, logical reasoning, and cognitive robotics [28, 66, 55, 81, 12, 38, 41, 71]. Its ability to learn efficiently from fewer data points, produce transparent and verifiable outputs, and robustly handle uncertainty and ambiguity makes it particularly advantageous compared to purely neural approaches. For example, recently Meta’s LIPS [28] and Google’s AlphaGeometry [66] leverage compositional neuro-symbolic approaches to solve complex math problems and achieve a level of human Olympiad gold medalists. R 2 -Guard [20] leverages LLM and probabilistic models to improve robust reasoning capability and resilience against jailbreaks. They represent a paradigm shift for AI that requires robust, verifiable, and explainable reasoning.

Despite impressive algorithmic advances in neuro-symbolic AI – often demonstrated on large-scale distributed GPU clusters – efficient deployment at the edge remains a fundamental challenge. Neuro-symbolic agents, particularly in robotics, planning, interactive cognition, and verification, require real-time logical inference to interact effectively with physical environments and multi-agent systems. For example, Ctrl-G, a text-infilling neuro-symbolic agent [83], must execute hundreds of reasoning steps per second to remain responsive, yet current implementations take over 5 minutes on a desktop GPU to complete a single task. This latency gap makes practical deployment of neuro-symbolic AI systems challenging.

To understand the root causes of this inefficiency, we systematically analyze a diverse set of neuro-symbolic workloads and uncover several system- and architecture-level challenges. Symbolic and probabilistic kernels frequently dominate end-to-end runtime and exhibit highly irregular execution characteristics, including heterogeneous compute patterns and memory-bound behavior with low ALU utilization. These kernels suffer from limited exploitable parallelism and irregular, uncoalesced memory accesses, leading to poor performance and efficiency on CPU and GPU architectures.

To address these challenges, we develop an integrated acceleration framework, REASON, which to the best of our knowledge, is the first to accelerate probabilistic logical reasoning-based neuro-symbolic AI systems. REASON is designed to close the efficiency gap of compositional AI by jointly optimizing algorithms, architecture, and system integration for the irregular and heterogeneous workloads inherent to neuro-symbolic reasoning.

At the algorithm level, REASON introduces a unified directed acyclic graph (DAG) representation that captures shared computational structure across symbolic and probabilistic kernels. An adaptive pruning and regularization technique further reduces model size and computational complexity while preserving task accuracy. At the architecture level, REASON features a flexible design optimized for various irregular symbolic and probabilistic computations, leveraging the unified DAG representation. The architecture comprises reconfigurable tree-based processing elements (PEs), compiler-driven workload mapping, and memory layout to enable highly parallel and energy-efficient symbolic and probabilistic computation. At the system level, REASON is tightly integrated with GPU streaming multiprocessors (SMs), forming a heterogeneous system with a programmable interface and multi-level execution pipeline that efficiently orchestrates neural, symbolic, and probabilistic kernels while maintaining high hardware utilization and scalability as neuro-symbolic models evolve. Notably, unlike conventional tree-like computing arrays optimized primarily for neural workloads, REASON provides reconfigurable support for neural, symbolic, and probabilistic kernels within a unified execution fabric, enabling efficient and scalable neuro-symbolic AI systems.

This paper, therefore, makes the following contributions:

- We conduct a systematic workload characterization of representative logical- and probabilistic-reasoning-based neuro-symbolic AI models, identifying key performance bottlenecks and architectural optimization opportunities (Sec. II, Sec. III).

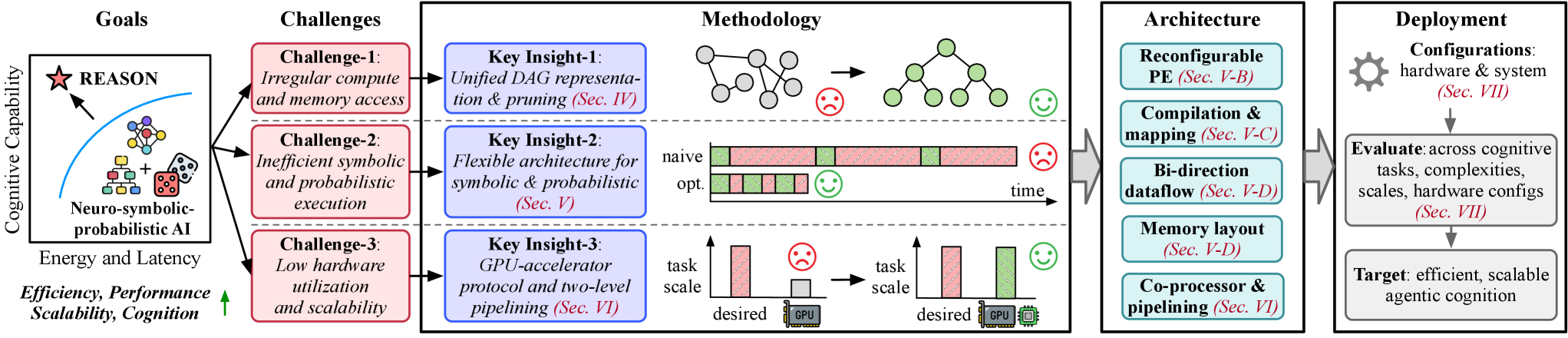

- We propose REASON, an integrated co-design framework, to efficiently accelerate probabilistic logical reasoning in neuro-symbolic AI, enabling practical and scalable deployment of compositional intelligence (Fig. 4).

- REASON introduces cross-layer innovations spanning (i) a unified DAG representation with adaptive pruning at the algorithm level (Sec. IV), (ii) a reconfigurable symbolic/probabilistic architecture and compiler-driven dataflow and mapping at the hardware level (Sec. V), and (iii) a programmable system interface with a multi-level execution pipeline at the system level (Sec. VI) to improve neuro-symbolic efficiency.

- Evaluated across cognitive tasks, REASON enables flexible support for symbolic and probabilistic operations, achieving 12-50 $\times$ speedup and 310-681 $\times$ energy efficiency compared to desktop and edge GPUs. REASON enables fast and efficient logical and probabilistic reasoning in 0.8 s per task with 6 mm 2 area and 2.12 W power consumption. (Sec. VII).

## II Neuro-Symbolic AI Systems

This section presents the preliminaries of neuro-symbolic AI with its algorithm flow (Sec. II-A), scaling performance analysis (Sec. II-B), and key computational primitives (Sec. II-C).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Hybrid AI Approaches: Neuro-Symbolic Integration

### Overview

The image illustrates a hybrid AI approach that combines neural (Neuro) and symbolic (Symbolic) methods. It shows how deep neural networks (DNNs) and large language models (LLMs) can be integrated with logical and probabilistic reasoning for various applications. The diagram highlights the strengths of each approach and provides examples of their combined use in different domains.

### Components/Axes

* **Top-Left:** "Neuro" - Represents the neural approach to AI.

* "DNN/LLM (Fast Thinking)" - Indicates the use of Deep Neural Networks and Large Language Models for fast processing.

* **Top-Center:** "Symbolic" - Represents the symbolic approach to AI.

* "Logical (Slow Thinking)" - Indicates logical reasoning processes.

* "Probabilistic (Bayesian Thinking)" - Indicates probabilistic reasoning processes.

* **Top-Right:** Examples of symbolic representations.

* "First-Order Logic (FOL) Boolean Satisfiability (SAT)" - Shows a logical representation with variables x1, x2, x3, x4 and outputs y1, y2.

* "Probabilistic Circuit (PC)" - Illustrates a probabilistic circuit with variables x1, x2, x3, x4.

* "Hidden Markov Model (HMM)" - Depicts a Hidden Markov Model with states S1, S2, S3 and observations X1, X2, X3.

* **Bottom:** "Application Examples" - Lists various applications of the hybrid approach.

* "Commonsense Reason:" - Application example.

* "Cognitive Robotics:" - Application example.

* "Medical Diagnosis:" - Application example.

* "Question Answering:" - Application example.

* "Math Solving:" - Application example.

### Detailed Analysis or ### Content Details

**Neuro Component:**

* The "Neuro" component is represented by a pink box containing the text "DNN/LLM (Fast Thinking)". A network of interconnected nodes is shown to the left of the text.

**Symbolic Component:**

* The "Symbolic" component is divided into two sub-components: "Logical (Slow Thinking)" in a light green box and "Probabilistic (Bayesian Thinking)" in a light blue box.

* The "Logical" component contains a diagram of a tree-like structure.

* The "Probabilistic" component contains an image of dice.

**Symbolic Examples:**

* **First-Order Logic (FOL) Boolean Satisfiability (SAT):**

* Variables: x1, x2, x3, x4

* Outputs: y1, y2

* Logical gates: AND (∩), OR (∪), NOT (¬)

* The diagram shows a network of logical gates connecting the input variables to the output variables.

* **Probabilistic Circuit (PC):**

* Variables: x1, x2, x3, x4

* The diagram shows a circuit with addition (+) and multiplication (×) operations.

* **Hidden Markov Model (HMM):**

* States: S1, S2, S3, ...

* Observations: X1, X2, X3, ...

* The diagram shows a sequence of states connected by arrows, with each state emitting an observation.

**Application Examples:**

The application examples are structured as follows:

* **Commonsense Reason:** feature extraction -> rule logic -> uncertainty infer.

* **Cognitive Robotics:** scene graph -> logic-based planning -> uncertainty infer.

* **Medical Diagnosis:** feature extraction -> rule reasoning -> likelihood infer.

* **Question Answering:** parsing -> symbolic query planning -> missing fact infer.

* **Math Solving:** initial sol. gen. -> algebra solver -> uncertainty infer.

The application examples follow a pattern of:

1. Initial stage (pink box)

2. Intermediate stage (green box)

3. Final stage (blue box)

### Key Observations

* The diagram illustrates a clear distinction between neural and symbolic AI approaches.

* The application examples demonstrate how these approaches can be combined to solve complex problems.

* The symbolic examples provide concrete illustrations of logical and probabilistic reasoning.

### Interpretation

The image presents a high-level overview of hybrid AI systems, emphasizing the integration of neural and symbolic methods. The "Neuro" component, representing DNNs and LLMs, is characterized by "Fast Thinking," suggesting its efficiency in tasks like pattern recognition and data processing. In contrast, the "Symbolic" component, encompassing "Logical (Slow Thinking)" and "Probabilistic (Bayesian Thinking)," highlights the strengths of symbolic AI in reasoning, planning, and handling uncertainty.

The application examples demonstrate how these two approaches can be combined to leverage their respective strengths. For instance, in "Commonsense Reason," feature extraction (neural) is followed by rule logic (symbolic) and uncertainty inference (symbolic), showcasing a pipeline where neural networks extract relevant features, and symbolic methods perform reasoning based on those features.

The symbolic examples (FOL, PC, HMM) provide concrete illustrations of the types of representations and reasoning techniques used in symbolic AI. These examples highlight the ability of symbolic AI to represent knowledge explicitly and perform logical or probabilistic inference.

Overall, the image suggests that hybrid AI systems can offer a more robust and versatile approach to AI by combining the strengths of neural and symbolic methods. This integration allows for the development of systems that can not only learn from data but also reason, plan, and handle uncertainty in a more human-like manner.

</details>

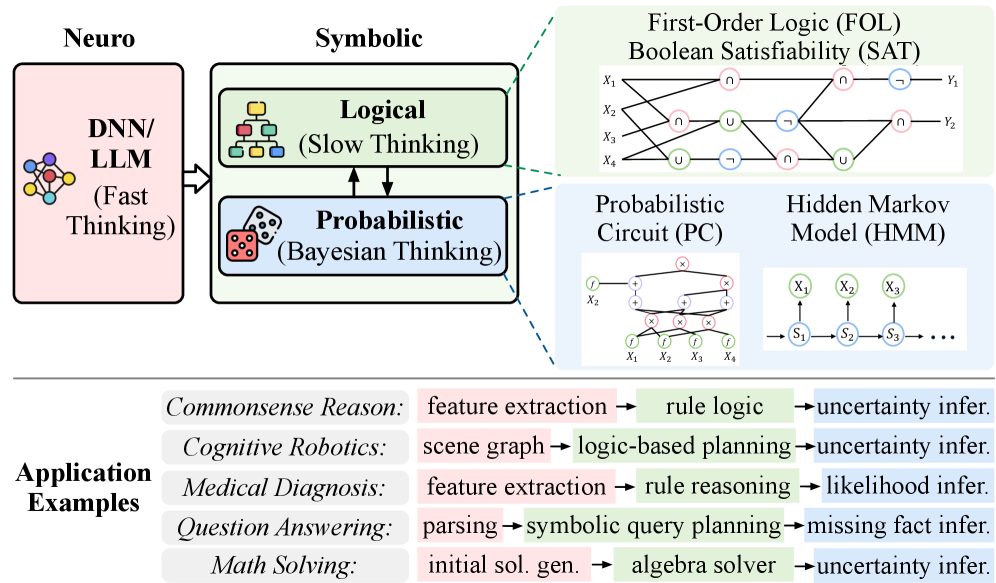

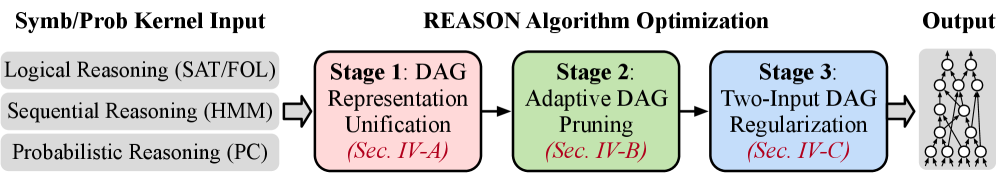

Figure 1: Neuro-symbolic algorithmic flow and operations. The neural module serves as a perceptual and intuitive engine for representation learning, while the symbolic module performs structured logical reasoning with probabilistic inference. This compositional pipeline enables complex cognitive tasks across diverse scenarios.

### II-A Neuro-Symbolic Cognitive Intelligence

LLMs and DNNs excel at natural language understanding and image recognition. However, they remain prone to factual errors, hallucinations, challenges in complex multi-step reasoning, and vulnerability to out-of-distribution or adversarial inputs. Their black-box nature also impedes interpretability and formal verification, undermining trust in safety-critical domains. These limitations motivate the development of compositional systems that integrate neural models with symbolic and probabilistic reasoning to achieve greater robustness, transparency, and intelligence.

Neuro-symbolic AI represents a paradigm shift toward more integrated and trustworthy intelligence by combining neural, symbolic, and probabilistic techniques. This hybrid approach has shown superior performance in abstract deduction [81, 12], complex question answering [38, 41], and logical reasoning [66, 55]. By learning from limited data and producing transparent, verifiable outputs, neuro-symbolic systems provide a foundation for cognitive intelligence. Fig. 1 presents a unified neuro-symbolic pipeline, illustrating how its components collaborate to solve complex tasks.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Chart: Scaling Performance and Efficiency Analysis

### Overview

The image presents four scatter plots analyzing the scaling performance and efficiency of different models across various tasks. The first three plots (a, b, c) depict "Task Accuracy vs. Model Size" for Complex Reasoning, Math Reasoning, and Question-Answering tasks, respectively. The fourth plot (d) shows "Task Runtime vs. Complexity" for Neuro-symbolic and RL-based models.

### Components/Axes

**General:**

* **Title:** Scaling Performance Analysis: Task Accuracy vs. Model Size (for plots a, b, c) and Scaling Efficiency Analysis: Task Runtime vs. Complexity (for plot d)

* Each plot is labeled with a letter: (a), (b), (c), (d) in the bottom left corner.

**Plot (a): Complex Reasoning Tasks**

* **X-axis:** Model Size (7B, 8B, 13B, 70B, GPT)

* **Y-axis:** Task Accuracy (%) (Scale from 0 to 100, increments of 20)

* **Legend (top-left):**

* Blue Square: Textedit (C)

* Orange Triangle: ACLUTRR (C)

* Green Circle: ProofWriter (C)

* Gray Square: Textedit (M)

* Gray Triangle: ACLUTRR (M)

* Gray Circle: ProofWriter (M)

**Plot (b): Math Reasoning Tasks**

* **X-axis:** Model Size (7B, 8B, 13B, 70B, GPT)

* **Y-axis:** Task Accuracy (%) (Scale from 0 to 100, increments of 20)

* **Legend (top-left):**

* Blue Square: GSM8K (C)

* Orange Triangle: SVAMP (C)

* Green Circle: TabMWP (C)

* Red Diamond: In-Domain GSM8K (C)

* Purple Diamond: In-Domain MATH (C)

**Plot (c): Question-Answering Tasks**

* **X-axis:** Model Size (7B, 8B, 13B, 70B, GPT)

* **Y-axis:** Task Accuracy (%) (Scale from 30 to 80, increments of 10)

* **Legend (top-left):**

* Blue Square: AmbigNQ (C)

* Orange Triangle: TriviaQA (C)

* Green Circle: HotpotQA (C)

* Gray Square: AmbigNQ (M)

* Gray Triangle: TriviaQA (M)

* Gray Circle: HotpotQA (M)

**Plot (d): Task Runtime vs. Complexity**

* **X-axis:** Inter. Math Olympics reasoning (Year_Problem) (00_P1, 08_P6, 04_P1, 12_P5, 20_P6, 19_P6)

* **Y-axis:** Task runtime (min) (Scale from 5 to 30, increments of 5)

* **Legend (top-right):**

* Blue Circle: Neuro-symbolic models (AlphaGeometry)

* Gray Circle: RL-based CoT reasoning models

* There is a right-pointing arrow at the bottom of the chart, indicating increasing complexity.

### Detailed Analysis

**Plot (a): Complex Reasoning Tasks**

* **Textedit (C):** Accuracy increases from approximately 85% at 7B to 98% at 70B.

* **ACLUTRR (C):** Accuracy increases from approximately 58% at 7B to 70% at 70B.

* **ProofWriter (C):** Accuracy increases from approximately 20% at 7B to 65% at 13B, then to 70% at 70B.

* **Textedit (M):** Accuracy increases from approximately 45% at 7B to 48% at 8B, then to 55% at 13B, and then decreases to 45% at 70B.

* **ACLUTRR (M):** Accuracy increases from approximately 25% at 7B to 35% at 13B, then to 45% at 70B.

* **ProofWriter (M):** Accuracy increases from approximately 20% at 7B to 25% at 8B, then to 25% at 13B, and then to 30% at 70B.

**Plot (b): Math Reasoning Tasks**

* The data points are scattered, making it difficult to discern clear trends for each model.

* **GSM8K (C):** Accuracy ranges from approximately 20% to 55%.

* **SVAMP (C):** Accuracy ranges from approximately 25% to 75%.

* **TabMWP (C):** Accuracy ranges from approximately 30% to 95%.

* **In-Domain GSM8K (C):** Accuracy ranges from approximately 40% to 75%.

* **In-Domain MATH (C):** Accuracy ranges from approximately 10% to 35%.

**Plot (c): Question-Answering Tasks**

* **AmbigNQ (C):** Accuracy increases from approximately 65% at 7B to 70% at 8B, then to 75% at 13B, and then to 80% at 70B.

* **TriviaQA (C):** Accuracy increases from approximately 55% at 7B to 68% at 8B, then to 60% at 13B, and then to 80% at 70B.

* **HotpotQA (C):** Accuracy increases from approximately 38% at 7B to 40% at 8B, then to 65% at 13B, and then to 75% at 70B.

* **AmbigNQ (M):** Accuracy increases from approximately 45% at 7B to 55% at 8B, then to 58% at 13B, and then to 75% at 70B.

* **TriviaQA (M):** Accuracy increases from approximately 50% at 7B to 58% at 8B, then to 58% at 13B, and then to 75% at 70B.

* **HotpotQA (M):** Accuracy increases from approximately 30% at 7B to 30% at 8B, then to 40% at 13B, and then to 50% at 70B.

**Plot (d): Task Runtime vs. Complexity**

* **Neuro-symbolic models (AlphaGeometry):** Task runtime increases linearly from approximately 8 minutes to 15 minutes as complexity increases.

* **RL-based CoT reasoning models:** Task runtime increases linearly from approximately 12 minutes to 28 minutes as complexity increases.

### Key Observations

* For Complex Reasoning and Question-Answering tasks, the "C" versions of the models generally outperform the "M" versions.

* In Math Reasoning tasks, the performance varies significantly across different models and model sizes.

* In the Task Runtime vs. Complexity plot, RL-based CoT reasoning models consistently have higher task runtime compared to Neuro-symbolic models (AlphaGeometry).

* The GPT model size is only present in the Task Accuracy plots, and not in the Task Runtime plot.

### Interpretation

The data suggests that increasing model size generally improves task accuracy for Complex Reasoning and Question-Answering tasks, but the effect is less consistent for Math Reasoning tasks. The difference in performance between "C" and "M" versions of the models indicates that certain model architectures or training methods are more effective for specific tasks. The Task Runtime vs. Complexity plot highlights a trade-off between model type and computational cost, with Neuro-symbolic models demonstrating lower runtime compared to RL-based models for the same level of complexity. The arrow on the x-axis of plot (d) indicates that the problems are ordered by increasing difficulty.

</details>

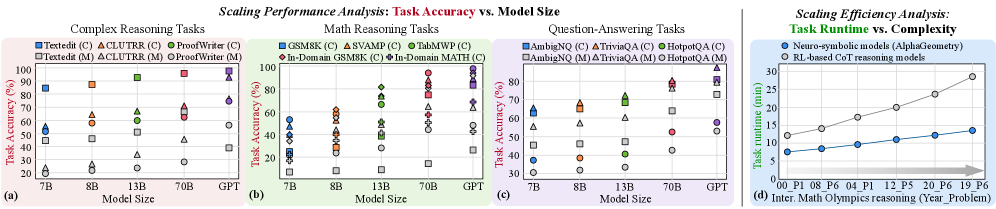

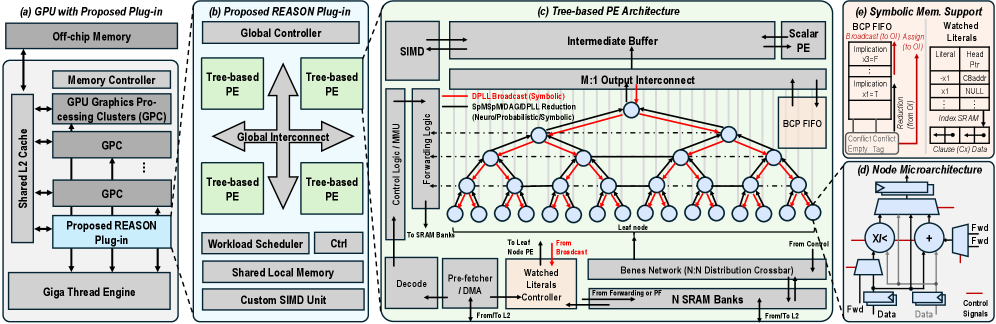

Figure 2: Scaling performance and efficiency. (a)-(c) Task accuracy of compositional LLM-symbolic models (C) and monolithic LLMs (M - shown in gray) across model sizes on complex reasoning, mathematical reasoning, and question-answering tasks. (d) Runtime efficiency comparison between LLM-symbolic models and RL-based CoT models on mathematical reasoning tasks [76].

Neural module. The neural module serves as the perceptual and intuitive engine, typically DNN or LLM, excelling at processing high-dimensional sensory inputs (e.g., images, audio, text) and converting them into feature representations. It handles perception, feature extraction, and associative learning, providing the abstractions needed for higher-level cognition.

Symbolic module. The symbolic module is the logical core operating on neural abstractions and includes symbolic and probabilistic operations. Logical components apply formal logic, rules, and ontologies for structured reasoning and planning, enabling logically sound solutions. Probabilistic components manage uncertainty by representing knowledge probabilistically, supporting belief updates and decision-making under ambiguity, reflecting a nuanced reasoning model.

Together, these modules form a complementary reasoning hierarchy. Neural module captures statistical, pattern-matching behavior of learned models, producing rapid but non-verifiable outputs (Fast Thinking), while symbolic modules perform explicit, verifiable reasoning that is structured and reliable (Slow Thinking). The probabilistic module complements both and enables robust planning under ambiguity (Bayesian Thinking). This framework integrates intuitive generalization with deliberate reasoning.

### II-B Scaling Performance Analysis of Neuro-Symbolic Systems

Scaling performance analysis. Neuro-symbolic AI systems exhibit superior reasoning capability and scaling behavior compared to monolithic LLMs on complex tasks. We compare representative neuro-symbolic systems against monolithic LLMs across complex reasoning, mathematical reasoning, and question-answering benchmarks (Fig. 2 (a)-(c)). The results reveal two advantages. First, higher accuracy: compositional neuro-symbolic models consistently outperform monolithic LLMs of comparable size. Second, improved scaling efficiency: smaller neuro-symbolic models are sufficient to match or exceed the performance of significantly larger closed-source LLMs. Together, these results highlight the potential scaling limitations of monolithic LLMs and the efficiency benefits of compositional neuro-symbolic reasoning.

Comparison with RL-based reasoning models. Beyond monolithic LLMs, recent advancements in reinforcement learning (RL) and chain-of-thought (CoT) prompting improve LLM reasoning accuracy but incur significant computational and scalability overheads (Fig. 2 (d)). First, computational cost: RL-based reasoning often requires hundreds to thousands of LLM queries per decision step, resulting in prohibitively high inference latency and energy consumption. Second, scalability: task-specific fine-tuning constrains generality, whereas neuro-symbolic models use symbolic and probabilistic reasoning modules or tools without retraining. Fig. 2 (d) reveals that neuro-symbolic models AlphaGeometry [66] achieve over $2\times$ efficiency gains and superior data efficiency compared to CoT-based LLMs on mathematical reasoning tasks.

### II-C Computational Primitives in Neuro-Symbolic AI

We identify the core computational primitives that are commonly used in neuro-symbolic AI systems (Fig. 1). While neural modules rely on DNNs or LLMs for perception and representation learning, the symbolic and probabilistic components implement structured reasoning. In particular, logical reasoning is typically realized through First-Order Logic (FOL) and Boolean Satisfiability (SAT), probabilistic reasoning through Probabilistic Circuits (PCs), and sequential reasoning through Hidden Markov Models (HMMs). Together, these primitives form the algorithmic foundation of neuro-symbolic systems that integrate learning, logic, and uncertainty-aware inference.

First-Order Logic (FOL) and Boolean Satisfiability (SAT). FOL provides a formal symbolic language for representing structured knowledge using predicates, functions, constants, variables and quantifiers ( $\forall,\exists$ ), combined with logical connectives. For instance, the statement “every student has a mentor” can be expressed as $\forall x\bigl(\mathrm{Student}(x)\to\exists y\,(\mathrm{Mentor}(y)\wedge\mathrm{hasMentor}(x,y))\bigr)$ , where predicates encode properties and relations over domain elements. FOL semantics map symbols to domain objects and relations, enabling precise and interpretable logical reasoning. SAT operates over propositional logic and asks whether a conjunctive normal form (CNF) formula $\varphi=\bigwedge_{i=1}^{m}\Bigl(\bigvee_{j=1}^{k_{i}}l_{ij}\Bigr)$ admits a satisfying assignment, where each literal $l_{ij}$ is a Boolean variable or its negation. Modern SAT solvers extend the DPLL algorithm with conflict-driven clause learning (CDCL), incorporating non-chronological backtracking and clause learning to improve scalability [40, 33]. Cube-and-conquer further parallelizes search by splitting into “cube” DPLL subproblems and concurrent CDCL “conquer” solvers [13, 67]. Together, FOL’s expressive representations and SAT’s solving mechanisms form the logic backbone of neuro-symbolic systems, enabling exact logical inference alongside neural learning.

| Representative Neuro- Symbolic Workloads | AlphaGeometry [66] | R 2 -Guard [20] | GeLaTo [82] | Ctrl-G [83] | NeuroPC [6] | LINC [52] | |

| --- | --- | --- | --- | --- | --- | --- | --- |

| Deployment Scenario | Application | Math theorem proving & reasoning | Unsafety detection | Constrained text generation | Interactive text editing, text infilling | Classification | Logical reasoning, Deductive reasoning |

| Advantage vs. LLM | Higher deductive reasoning, higher generalization | Higher LLM resilience, higher data efficiency, effective adaptability | Guaranteed constraint satisfaction, higher generalization | Guaranteed constraints satisfaction, higher generalization | Enhanced interpretability, theoretical guarantee | Higher precision, reduced overconfidence, higher scalability | |

| Computation Pattern | Neural | LLM | LLM | LLM | LLM | DNN | LLM |

| Symbolic | First-order logic, SAT solver, acyclic graph | First-order logic, probabilistic circuit, Hidden Markov model | First-order logic, SAT solver, Hidden Markov model | Hidden Markov model, probabilistic circuits | First-order logic, probabilistic circuit | First-order logic, solver | |

TABLE I: Representative neuro-symbolic workloads. Selected neuro-symbolic workloads used in our analysis, spanning diverse application domains, deployment scenarios, and neural-symbolic computation patterns.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Chart/Diagram Type: Multi-Panel Performance Comparison

### Overview

The image presents a multi-panel figure comparing the performance of different systems (AlphaGeo, R2-Guard, GeLaTo, Ctrl-G, NPC, LINC, LLaMA-3-8B, and NeuroPC) across various tasks and hardware configurations. The figure consists of four subplots: (a) Runtime percentage breakdown between Neuro and Symbolic components, (b) Runtime latency for different tasks with "Small" and "Large" configurations, (c) Runtime latency on different hardware (A6000 and Orin), and (d) Attainable performance versus operation intensity.

### Components/Axes

**Panel (a): Stacked Bar Chart - Runtime Percentage**

* **Title:** None explicitly given, but implied as "Runtime Percentage"

* **Y-axis:** Runtime Percentage, ranging from 0% to 100%.

* **X-axis:** Different systems: AlphaGeo, R2-Guard, GeLaTo, Ctrl-G, NPC, LINC. Each system is evaluated on different tasks such as IMO, MiniF2F, Twins, XSTest, Method, ComGen, ReviewGen, News, ComGen, TextF, Math, AwA2, FOLIO, Proof.

* **Legend (top-right):**

* Red (slanted lines): Neuro

* Green (dotted): Symbolic

**Panel (b): Grouped Bar Chart - Runtime Latency (min)**

* **Title:** None explicitly given, but implied as "Runtime Latency (min)"

* **Y-axis:** Runtime Latency (min), ranging from 0 to 12.

* **X-axis:** Different systems: Alpha, R2-G, GeLaTo, Ctrl-G, LINC. Each system is evaluated on "Small" and "Large" configurations.

* **Tasks (top):** IMO, Safety, CoGen, Text, FOLIO

* **Legend:**

* Red (slanted lines): Lower portion of the bar

* Green (dotted): Upper portion of the bar

**Panel (c): Grouped Bar Chart - Runtime Latency (min)**

* **Title:** None explicitly given, but implied as "Runtime Latency (min)"

* **Y-axis:** Runtime Latency (min), ranging from 0 to 24.

* **X-axis:** Different systems: Alpha, R2-G. Each system is evaluated on A6000 and Orin hardware.

* **Tasks (top):** MiniF, XSTest

* **Legend:**

* Red (slanted lines): Lower portion of the bar

* Green (dotted): Upper portion of the bar

**Panel (d): Scatter Plot - Attainable Performance vs. Operation Intensity**

* **Y-axis:** Attainable Performance (TFLOPS/s), logarithmic scale from 10<sup>-1</sup> to 10<sup>2</sup>.

* **X-axis:** Operation Intensity (FLOPS/Byte), logarithmic scale from 10<sup>-1</sup> to 10<sup>2</sup>.

* **Data Points:**

* AlphaGeo (Symb)

* LINC (Symb)

* Ctrl-G (Symb)

* R2-Guard (Symb)

* GeLaTo (Symb)

* NeuroPC (Symb)

* LLaMA-3-8B (Neuro)

### Detailed Analysis

**Panel (a): Runtime Percentage**

* **AlphaGeo:** Neuro runtime ranges from approximately 32.6% (IMO) to 65.1% (ReviewGen). Symbolic runtime ranges from 67.4% (IMO) to 34.9% (ReviewGen).

* **R2-Guard:** Neuro runtime ranges from approximately 39.8% (MiniF2F) to 36.1% (ComGen). Symbolic runtime ranges from 60.2% (MiniF2F) to 63.9% (ComGen).

* **GeLaTo:** Neuro runtime ranges from approximately 36.5% (Twins) to 39.9% (TextF). Symbolic runtime ranges from 63.5% (Twins) to 60.1% (TextF).

* **Ctrl-G:** Neuro runtime ranges from approximately 33.2% (XSTest) to 32.3% (Math). Symbolic runtime ranges from 66.8% (XSTest) to 67.7% (Math).

* **NPC:** Neuro runtime ranges from approximately 42.1% (Method) to 49.5% (AwA2). Symbolic runtime ranges from 57.9% (Method) to 50.5% (AwA2).

* **LINC:** Neuro runtime ranges from approximately 63.4% (ComGen) to 66.0% (FOLIO) to 64.3% (Proof). Symbolic runtime ranges from 36.6% (ComGen) to 34.0% (FOLIO) to 35.7% (Proof).

**Panel (b): Runtime Latency (min)**

* **Alpha:** Small configuration has a Neuro runtime of approximately 1.5 min and a Symbolic runtime of approximately 3.5 min. Large configuration has a Neuro runtime of approximately 2.2 min and a Symbolic runtime of approximately 4.8 min.

* **R2-G:** Small configuration has a Neuro runtime of approximately 0.5 min and a Symbolic runtime of approximately 2.5 min. Large configuration has a Neuro runtime of approximately 2.0 min and a Symbolic runtime of approximately 4.0 min.

* **GeLaTo:** Small configuration has a Neuro runtime of approximately 2.0 min and a Symbolic runtime of approximately 3.0 min. Large configuration has a Neuro runtime of approximately 4.5 min and a Symbolic runtime of approximately 3.5 min.

* **Ctrl-G:** Small configuration has a Neuro runtime of approximately 0.5 min and a Symbolic runtime of approximately 2.5 min. Large configuration has a Neuro runtime of approximately 1.5 min and a Symbolic runtime of approximately 5.5 min.

* **LINC:** Small configuration has a Neuro runtime of approximately 1.0 min and a Symbolic runtime of approximately 4.0 min. Large configuration has a Neuro runtime of approximately 2.5 min and a Symbolic runtime of approximately 7.5 min.

**Panel (c): Runtime Latency (min)**

* **Alpha:** A6000 has a Neuro runtime of approximately 1.0 min and a Symbolic runtime of approximately 3.0 min. Orin has a Neuro runtime of approximately 2.0 min and a Symbolic runtime of approximately 4.0 min.

* **R2-G:** A6000 has a Neuro runtime of approximately 0.5 min and a Symbolic runtime of approximately 2.5 min. Orin has a Neuro runtime of approximately 1.0 min and a Symbolic runtime of approximately 3.0 min.

**Panel (d): Attainable Performance vs. Operation Intensity**

* **LLaMA-3-8B (Neuro):** Located at approximately (1, 40) on the log-log scale.

* **AlphaGeo (Symb):** Located at approximately (0.2, 2).

* **LINC (Symb):** Located at approximately (0.1, 0.8).

* **Ctrl-G (Symb):** Located at approximately (10, 1).

* **R2-Guard (Symb):** Located at approximately (10, 2).

* **GeLaTo (Symb):** Located at approximately (0.1, 0.2).

* **NeuroPC (Symb):** Located at approximately (10, 0.1).

### Key Observations

* Panel (a) shows the percentage of runtime attributed to Neuro and Symbolic components across different systems and tasks. The Symbolic component generally dominates the runtime.

* Panel (b) shows that the "Large" configuration generally increases the runtime latency compared to the "Small" configuration.

* Panel (c) shows that the Orin hardware generally results in higher runtime latency compared to the A6000 hardware.

* Panel (d) shows the relationship between attainable performance and operation intensity for different systems. LLaMA-3-8B (Neuro) has a relatively high attainable performance, while NeuroPC (Symb) has a relatively low attainable performance.

### Interpretation

The data suggests that the Symbolic component often contributes more to the overall runtime than the Neuro component across various tasks and systems. The "Large" configurations in Panel (b) likely represent larger problem sizes or more complex scenarios, leading to increased runtime latency. The hardware comparison in Panel (c) indicates that the Orin hardware, despite potentially being more powerful, does not always translate to lower runtime latency, possibly due to overhead or optimization issues. The scatter plot in Panel (d) provides insights into the performance characteristics of different systems, with LLaMA-3-8B (Neuro) demonstrating a higher attainable performance compared to other systems. The placement of the systems on the plot indicates their suitability for different types of workloads based on their operation intensity and attainable performance.

</details>

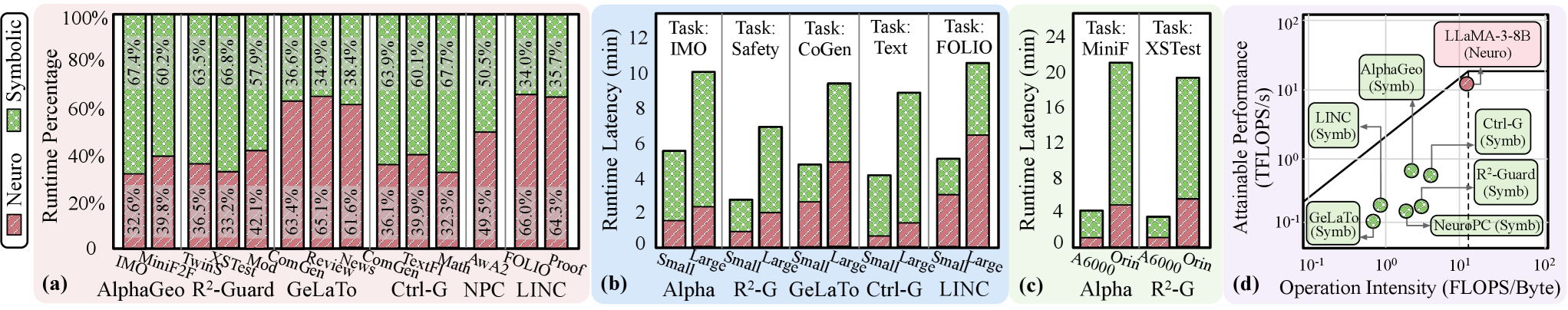

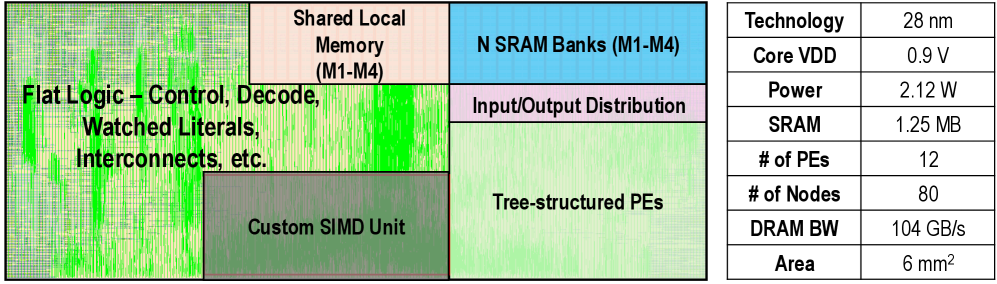

Figure 3: End-to-end neuro-symbolic workload characterization. (a) Benchmark six neuro-symbolic workloads (AlphaGeometry, R 2 -Guard, GeLaTo, Ctrl-G, NeuroPC, LINC) on CPU+GPU system, showing symbolic and probabilistic may serve as system bottlenecks. (b) Benchmark neuro-symbolic workloads on tasks with different scales, indicating that real-time performance cannot be satisfied and the potential efficiency issues. (c) Benchmark on A6000 and Orin GPU. (d) Roofline analysis, indicating server memory-bound of symbolic and probabilistic kernels.

Probabilistic Circuits (PC). PCs represent tractable probabilistic models over variables $\mathbf{X}$ as directed acyclic graphs [30, 22, 32]. Each node $n$ performs a probabilistic computation: leaf nodes specify primitive distributions $f_{n}(x)$ , while interior nodes combine their children $ch(n)$ via

$$

p_{n}(x)=\begin{cases}f_{n}(x),&\text{if }n\text{ is a leaf node}\\

\prod_{c\in\mathrm{ch}(n)}p_{c}(x),&\text{if }n\text{ is a product node}\\

\sum_{c\in\mathrm{ch}(n)}\theta_{n,c}p_{c}(x),&\text{if }n\text{ is a sum node}\end{cases} \tag{1}

$$

where $\theta_{n,c}$ denotes the non-negative weight associated with child $c$ . This recursive structure guarantees exact inference (e.g., marginals, conditionals) in time linear in circuit size. PCs’ combination of expressiveness and tractable computation makes them an ideal probabilistic backbone for neuro-symbolic systems, where neural modules learn circuit parameters while symbolic engines perform probabilistic reasoning.

Hidden Markov Model (HMM). HMMs are probabilistic model for sequential data [43], where a system evolves through hidden states governed by the first-order Markov property: the state at time step $t$ depends only on the state at time step $t-1$ . Each hidden state emits observations according to a probabilistic distribution. The joint distribution over sequence of hidden states $z_{1:T}$ and observations $x_{1:T}$ is given by

$$

p(z_{1:T},x_{1:T})=p(z_{1})p(x_{1}\mid z_{1})\prod_{t=2}^{T}p(z_{t}\mid z_{t-1})p(x_{t}\mid z_{t}) \tag{2}

$$

where $p(z_{1})$ is the initial state distribution, $p(z_{t}\mid z_{t-1})$ the transition probability, and $p(x_{t}\mid z_{t})$ the emission probability. HMMs naturally support sequential inference tasks such as filtering, smoothing, and decoding, enabling temporal reasoning in neuro-symbolic pipelines.

## III Neuro-Symbolic Workload Characterization

This section characterizes the system behavior of various neuro-symbolic workloads (Sec. III-A - III-B) and provides workload insights for computer architects (Sec. III-C - III-D).

Profiling workloads. To conduct comprehensive profiling analysis, we select six state-of-the-art representative neuro-symbolic workloads, as listed in Tab. I, covering a diverse range of applications and underlying computational patterns.

Profiling setup. We profile and analyze the selected neuro-symbolic models in terms of runtime, memory, and compute operators using cProfile for latency measurement, and NVIDIA Nsight for kernel-level profiling and analysis. Experiments are conducted on the system with NVIDIA A6000 GPU, Intel Sapphire Rapids CPUs, and DDR5 DRAM. Our software environment includes PyTorch 2.5 and JAX 0.4.6. We also conduct profiling on Jetson Orin [49] for edge scenario deployment. We track control and data flow by analyzing the profiling results in trace view and graph execution format.

### III-A Compute Latency Analysis

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Goals, Challenges, Methodology, Architecture, Deployment

### Overview

The image presents a diagram outlining the goals, challenges, methodology, architecture, and deployment aspects of a system, likely related to cognitive computing or AI. It illustrates the progression from high-level objectives to specific implementation details.

### Components/Axes

**Goals:**

* **Y-axis:** Cognitive Capability

* **X-axis:** Energy and Latency

* **Curve:** A blue curve indicates the desired relationship between cognitive capability and energy/latency.

* **Point:** A red star labeled "REASON" is positioned at the top-left of the graph, indicating a target state.

* **Text:** "Neuro-symbolic-probabilistic AI" is positioned in the center of the graph.

* **Text:** "Efficiency, Performance, Scalability, Cognition" with a green upward arrow.

**Challenges:**

* **Challenge-1:** Irregular compute and memory access

* **Challenge-2:** Inefficient symbolic and probabilistic execution

* **Challenge-3:** Low hardware utilization and scalability

**Methodology:**

* **Key Insight-1:** Unified DAG representation & pruning (Sec. IV)

* Diagram: A graph of interconnected nodes transforms into a tree structure. A red sad face is below the initial graph, and a green happy face is below the tree structure.

* **Key Insight-2:** Flexible architecture for symbolic & probabilistic (Sec. V)

* Diagram: A timeline labeled "naive" shows alternating red (cross-hatched) and green blocks. A timeline labeled "opt." shows a higher proportion of green blocks. A red sad face is to the right of the "naive" timeline, and a green happy face is to the right of the "opt." timeline.

* **Key Insight-3:** GPU-accelerator protocol and two-level pipelining (Sec. VI)

* Diagram: Two bar graphs labeled "task scale". The first shows a red (cross-hatched) bar for "desired" and a smaller gray bar for "GPU". The second shows a green bar for "desired" and a green bar for "GPU" and a green happy face.

**Architecture:**

* Reconfigurable PE (Sec. V-B)

* Compilation & mapping (Sec. V-C)

* Bi-direction dataflow (Sec. V-D)

* Memory layout (Sec. V-D)

* Co-processor & pipelining (Sec. VI)

**Deployment:**

* Configurations: hardware & system (Sec. VII)

* Evaluate: across cognitive tasks, complexities, scales, hardware configs (Sec. VII)

* Target: efficient, scalable agentic cognition

### Detailed Analysis

* **Goals:** The graph illustrates the trade-off between cognitive capability and energy/latency. The "REASON" point represents an ideal state of high cognitive capability with low energy/latency.

* **Challenges:** The challenges highlight key bottlenecks in achieving the desired goals, including irregular memory access, inefficient execution, and low hardware utilization.

* **Methodology:** The methodology section outlines key insights and approaches to address the identified challenges. These include unified DAG representation, flexible architecture, and GPU-accelerator protocols. The diagrams show the progression from a less optimal state (red sad face) to a more optimal state (green happy face).

* **Architecture:** The architecture section lists key components of the system, including reconfigurable processing elements, compilation and mapping strategies, bi-directional dataflow, memory layout, and co-processor pipelining.

* **Deployment:** The deployment section outlines the steps involved in deploying the system, including configuration, evaluation, and targeting efficient and scalable agentic cognition.

### Key Observations

* The diagram presents a clear and concise overview of the system's goals, challenges, methodology, architecture, and deployment.

* The use of diagrams and visual cues (e.g., happy/sad faces, upward arrow) effectively communicates the key concepts.

* The references to specific sections (e.g., Sec. IV, Sec. V-B) provide pointers to more detailed information.

### Interpretation

The diagram illustrates a systematic approach to developing a cognitive computing system. It starts with clearly defined goals, identifies key challenges, proposes innovative methodologies, outlines the system architecture, and describes the deployment process. The emphasis on efficiency, performance, scalability, and cognition suggests a focus on building a high-performance system that can effectively address complex cognitive tasks. The progression from challenges to key insights and methodologies demonstrates a problem-solving approach. The architecture and deployment sections provide a roadmap for implementing and deploying the system. The overall diagram suggests a well-thought-out and comprehensive approach to cognitive computing system development.

</details>

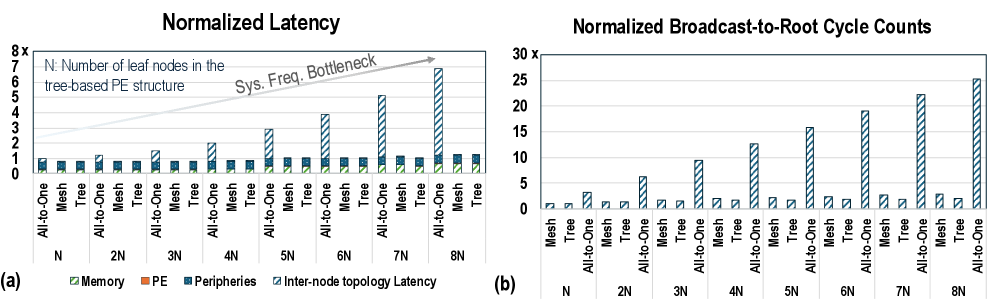

Figure 4: REASON overview. REASON is an integrated acceleration framework for probabilistic logical reasoning grounded neuro-symbolic AI with the goal to achieve efficient and scalable agentic cognition. REASON addresses the challenges of irregular compute and memory, symbolic and probabilistic latency bottleneck, and hardware underutilization, by proposing methodologies including unified DAG representation, reconfigurable PE, efficient dataflow, mapping, scalable architecture, two-level parallelism and programming interface. REASON is deployed across cognitive tasks and consistently demonstrates performance-efficiency improvements for compositional neuro-symbolic systems.

Latency bottleneck. We characterize the latency of representative neuro-symbolic workloads (Fig. 3 (a)). Compared to neuro kernels, symbolic and probabilistic kernels are not negligible in latency and may become system bottlenecks. For instance, the neural (symbolic) components account for 36.2% (63.8%), 37.3% (62.7%), 63.4% (36.6%), 36.1% (63.9%), 49.5% (50.5%), and 65.2% (34.8%) of runtime in AlphaGeometry, R 2 -Guard, GeLaTo, Ctrl-G, NeuroPC, and LINC, respectively. Symbolic kernels dominate AlphaGeometry’s runtime, and probabilistic kernels dominate R 2 -Guard and Ctrl-G’s, due to high irregular memory access, wrap divergence, thread underutilization, and execution parallelism. FLOPS and latency measurements further highlight this inefficiency. Notably, when using a smaller LLM (LLaMA-7B) for GeLaTo and LINC, overall accuracy remains stable, but the symbolic latency rises to 69.0% and 65.5%, respectively. We observe consistent trends in the Orin NX-based platform (Fig. 3 (c)). Symbolic components count for 63.8% of AlphaGeometry runtime on A6000 while its FLOPS count for only 19.3%, indicating inefficient hardware utilization.

Latency scalability. We evaluate runtime across reasoning tasks of varying difficulty and scale (Fig. 3 (b)). We observe that the relative runtime distribution between neural and symbolic components remains consistent of a single workload across task sizes. Total runtime increases with task complexity and scale. While LLM kernels scale efficiently due to their tensor-based GPU-friendly inference, logical and probabilistic kernels scale poorly due to the exponential growth of search space, making them slower compared to monolithic LLMs.

### III-B Roofline & Symbolic Operation & Inefficiency Analysis

Memory-bounded operation. Fig. 3 (d) presents a roofline analysis of GPU memory bandwidth versus compute efficiency. We observe that the symbolic and probabilistic components are typically memory-bound, limiting performance efficiency. For example, R 2 -Guard’s probabilistic circuits use sparse, scattered accesses for marginalization, and Ctrl-G’s HMM iteratively reads and writes state probabilities. Low compute per element makes these workloads constrained by memory access, underutilizing GPU compute resources.

TABLE II: Hardware inefficiency analysis. The compute, memory, and communication characteristics of representative neural, symbolic, and probabilistic kernels executed on CPU/GPU platform.

| | Neural Kernel | Symbolic Kernel | Probabilistic Kernel | | | |

| --- | --- | --- | --- | --- | --- | --- |

| MatMul | Softmax | Sparse MatVec | Logic | Marginal | Bayesian | |

| Compute Efficiency | | | | | | |

| Compute Throughput (%) | 96.8 | 62.2 | 32.5 | 14.7 | 35.0 | 31.1 |

| ALU Utilization (%) | 98.4 | 72.0 | 43.9 | 29.3 | 48.5 | 52.8 |

| Memory Behavior | | | | | | |

| L1 Cache Throughput (%) | 82.4 | 58.0 | 27.1 | 20.6 | 32.4 | 37.1 |

| L2 Cache Throughput (%) | 41.7 | 27.6 | 18.3 | 12.4 | 24.2 | 27.5 |

| L1 Cache Hit Rate (%) | 88.5 | 85.0 | 53.6 | 37.0 | 42.4 | 40.7 |

| L2 Cache Hit Rate (%) | 73.4 | 66.7 | 43.9 | 32.7 | 50.2 | 47.6 |

| DRAM BW Utilization (%) | 39.8 | 28.6 | 57.4 | 70.3 | 60.8 | 68.0 |

| Control Divergence and Scheduling | | | | | | |

| Warp Execution Efficiency (%) | 96.3 | 94.1 | 48.8 | 54.0 | 59.3 | 50.6 |

| Branch Efficiency (%) | 98.0 | 98.7 | 60.0 | 58.1 | 63.4 | 66.9 |

| Eligible Warps/Cycle (%) | 7.2 | 7.0 | 2.4 | 2.1 | 2.8 | 2.5 |

Hardware inefficiency analysis. We leverage Nsight Systems and Nsight Compute [51, 50] to analyze the computational, memory, and control irregularity of neural, symbolic, and probabilistic kernels, as listed in Tab. II. We observe that: First, compute throughput and ALU utilization: neural kernels achieve high throughput and ALU utilization, while symbolic/probabilistic kernels have low throughput and idle ALUs. Second, memory access and cache utilization: neural kernels see high L1 cache hit rates; symbolic kernels incur cache misses and stalls, and probabilistic kernels face high memory pressure. Third, DRAM bandwidth (BW) utilization and data movement overhead: neural workloads use on-chip caches with minimal DRAM usage, but symbolic/probabilistic workloads are DRAM-bound with heavy random-access overhead.

Sparsity analysis. We observe high, heterogeneous, irregular, and data-dependent sparsity across neuro-symbolic workloads. Symbolic and probabilistic kernels are often extremely sparse, exhibiting on average 82%, 87%, 75%, 83%, 89%, and 83% sparsity across six representative neuro-symbolic workloads, respectively, with many sparse computational paths based on low activation or probability mass. This observation motivates our adaptive DAG pruning (Sec IV-B).

### III-C Unique Characteristics of Neuro-Symbolic vs LLMs

In summary, neuro-symbolic workloads exhibit distinct characteristics compared to monolithic LLMs in compute kernels, memory behavior, dataflow, and performance scaling.

Compute kernels. LLMs are dominated by regular, highly parallel tensor operations well suited to GPUs. In contrast, neuro-symbolic workloads comprise heterogeneous symbolic and probabilistic kernels with irregular control flow, low arithmetic intensity, and poor cache locality, leading to low GPU utilization and frequent performance bottlenecks.

Memory behavior. Symbolic and probabilistic kernels are primarily memory-bound, operating over large, sparse, and irregular data structures. Probabilistic reasoning further increases memory pressure through large intermediate state caching, creating challenging trade-offs between latency, bandwidth, and on-chip storage.

Dataflow and parallelism. Neuro-symbolic workloads exhibit dynamic and tightly coupled data dependencies. Symbolic and probabilistic computations often depend on neural outputs or require compilation into LLM-compatible structures, resulting in serialized execution, limited parallelism, and amplified end-to-end latency.

Performance scaling. LLMs scale efficiently across GPUs via optimized data and model parallelism. In contrast, symbolic workloads are difficult to parallelize due to recursive control dependencies, while probabilistic kernels incur substantial inter-node communication, limiting scalability on multi-GPUs.

### III-D Identified Opportunities for Neuro-Symbolic Optimization

While neuro-symbolic systems show promise, improving their efficiency is critical for real-time and scalable deployment. Guided by the profiling insights above, we introduce REASON (Fig. 4), an algorithm-hardware co-design framework for accelerated probabilistic logical reasoning in neuro-symbolic AI. Algorithmically, a unified representation with adaptive pruning reduces memory footprint (Sec. IV). In hardware architecture, a flexible architecture and dataflow support various symbolic and probabilistic operations (Sec. V). REASON further provides adaptive scheduling and orchestration of heterogeneous LLM-symbolic agentic workloads through a programmable interface (Sec. VI). Across reasoning tasks, REASON consistently boosts performance, efficiency, and accuracy (Sec. VII).

## IV REASON: Algorithm Optimizations

This section introduces the algorithmic optimizations in REASON for symbolic and probabilistic reasoning kernels. We present a unified DAG-based computational representation (Sec. IV-A), followed by adaptive pruning (Sec. IV-B) and regularization techniques (Sec. IV-C) that jointly reduce model complexity and enable efficient neuro-symbolic systems.

### IV-A Stage 1: DAG Representation Unification

Motivation. Despite addressing different reasoning goals, symbolic and probabilistic reasoning kernels often share common underlying computational patterns. For instance, logical deduction in FOL, constraint propagation in SAT, and marginal inference in PCs all rely on iterative graph-based computations. Capturing this shared structure is essential to system acceleration. DAGs provide a natural abstraction to unify these diverse kernels under a flexible computational model.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: REASON Algorithm Optimization

### Overview

The image is a flowchart illustrating the REASON Algorithm Optimization process. It shows the input, three stages of the algorithm, and the output.

### Components/Axes

* **Header:**

* "Symb/Prob Kernel Input" (top-left)

* "REASON Algorithm Optimization" (top-center)

* "Output" (top-right)

* **Input:**

* A gray box containing:

* "Logical Reasoning (SAT/FOL)"

* "Sequential Reasoning (HMM)"

* "Probabilistic Reasoning (PC)"

* **Stages:**

* Stage 1: A rounded-corner red box labeled "Stage 1: DAG Representation Unification (Sec. IV-A)"

* Stage 2: A rounded-corner green box labeled "Stage 2: Adaptive DAG Pruning (Sec. IV-B)"

* Stage 3: A rounded-corner blue box labeled "Stage 3: Two-Input DAG Regularization (Sec. IV-C)"

* **Flow:** Arrows indicate the flow from input to Stage 1, Stage 1 to Stage 2, Stage 2 to Stage 3, and Stage 3 to output.

* **Output:** A gray box containing a diagram of a directed acyclic graph (DAG).

### Detailed Analysis or Content Details

* **Input:** The input consists of three types of reasoning: Logical, Sequential, and Probabilistic. The abbreviations in parentheses (SAT/FOL, HMM, PC) likely refer to specific methods or formalisms used for each type of reasoning.

* **Stage 1:** DAG Representation Unification: This stage likely involves converting the input into a unified Directed Acyclic Graph (DAG) representation. The reference "(Sec. IV-A)" suggests that more details can be found in Section IV-A of a related document.

* **Stage 2:** Adaptive DAG Pruning: This stage likely involves pruning or simplifying the DAG based on some adaptive criteria. The reference "(Sec. IV-B)" suggests that more details can be found in Section IV-B of a related document.

* **Stage 3:** Two-Input DAG Regularization: This stage likely involves regularizing the DAG to have two inputs per node. The reference "(Sec. IV-C)" suggests that more details can be found in Section IV-C of a related document.

* **Output:** The output is a DAG, visually represented as a network of nodes (circles) and directed edges (arrows).

### Key Observations

* The diagram presents a sequential process, with each stage building upon the previous one.

* The use of DAGs is central to the algorithm, as indicated by its presence in all three stages.

* The references to sections IV-A, IV-B, and IV-C suggest that this diagram is part of a larger document that provides more detailed explanations of each stage.

### Interpretation

The diagram illustrates the REASON Algorithm Optimization process, which takes symbolic and probabilistic reasoning inputs and transforms them into an optimized DAG representation through three key stages: DAG Representation Unification, Adaptive DAG Pruning, and Two-Input DAG Regularization. The algorithm aims to create a structured and simplified DAG that can be used for further processing or analysis. The use of specific techniques like SAT/FOL, HMM, and PC for the input reasoning types suggests that the algorithm is designed to handle a variety of reasoning tasks.

</details>

| SAT/FOL PC HMM | Literals and logical operators Primitive distributions, sum and product nodes Hidden state variables at each time step | Logical dependencies between literals, clauses, and formulas Weighted dependencies encoding probabilistic factorization State transition and emission dependencies | Search and deduction via traversal (DPLL/CDCL) Bottom-up probability aggregation and top-down flow propagation Sequential message passing (forward–backward, decoding) |

| --- | --- | --- | --- |

Figure 5: Unified DAG representations of neuro-symbolic kernels. Logical (SAT/FOL), probabilistic (PC), and sequential (HMM) reasoning are expressed using DAG abstraction. Nodes represent atomic reasoning operations, edges encode dependency structure, and graph traversals implement inference procedures. This unification enables shared compilation, pruning, and hardware mapping in REASON.

Methodology. We unify symbolic and probabilistic reasoning kernels under a DAG abstraction, where each node represents an atomic reasoning operation and each directed edge encodes a data/control dependency (Fig. 5). This representation enables a uniform compilation flow – construction, transformation, and scheduling – across heterogeneous kernels (logical deduction, constraint solving, probabilistic aggregation, and sequential message passing), and serves as the algorithmic substrate for subsequent pruning and regularization.

#### For FOL and SAT solvers

DAG nodes represent variables and logical connectives, with edges indicating dependencies between literals and clauses. We represent a propositional CNF formula $\varphi=\bigwedge_{i=1}^{m}\Bigl(\bigvee_{j=1}^{k_{i}}l_{ij}\Bigr)$ as DAG with three layers: literal nodes for each literal $l_{ij}$ , clause nodes implementing disjunction over literals in $\bigvee_{j}l_{ij}$ , and formula nodes implementing conjunction over clauses $\bigwedge_{i}$ . In SAT, DAG captures the branching and conflict resolution structures in DPLL/CDCL procedures. In FOL, formulas are encoded as DAGs where inference rules act as graph transformation operators that derive contradictions through node and edge expansion. The compiler converts FOL and SAT inputs (clauses in CNF or quantifier-free predicates) into DAGs via: Step- 1 Normalization: predicates are transformed to CNF, removing quantifiers and forming disjunctions of literals. Step- 2 Node creation: each literal becomes a leaf node, each clause an OR node over its literals, and the formula an AND node over clauses. Step- 3 Edge encoding: edges capture dependencies (literal $\rightarrow$ clause $\rightarrow$ formula), while watch-lists as metadata.

#### For PCs

DAG nodes correspond to sum (mixture) or product (factorization) operations $p_{n}(x)$ over input $x$ (to variable $\mathbf{X}$ ), with children $ch(x)$ . Leaves represent primitive distributions $f_{n}(x)$ . Edges model conditional dependencies. The DAG structure facilitates efficient inference through bottom-up probability evaluation, exploiting structural independence and enabling effective pruning and memorization during probability queries (Eq. 1). The compiler converts PC into DAGs through: Step- 1 Graph extraction: nodes represent random variables, factors, or sub-circuits parsed from expressions such as $P_{n}(x)$ . Step- 2 Node typing: arithmetic operators map to sum nodes for marginalization and product nodes for factor conjunction, while leaf nodes store constants or probabilities.

#### For HMMs

The unrolled DAG spans time steps, with nodes representing transition factors $p(z_{t}|z_{t-1})$ and emission factors $p(x_{t}|z_{t})$ (Eq. 2), and edges connecting factors across adjacent time steps to reflect Markov dependency. Sequential inference (filtering/smoothing/decoding) becomes structured message passing on this DAG: each step aggregates contributions from predecessor states through transition factors and then applies emission factors. The compiler converts HMMs into DAGs through: Step- 1 Sequence unroll: Each time step becomes a DAG layer, representing states and transitions. Step- 2 Node mapping: Product nodes combine transition and emission probabilities; sum nodes aggregate over prior states.

The unified DAG abstraction lays the algorithmic foundation for subsequent pruning, regularization, and hardware mapping, supporting efficient acceleration of neuro-symbolic workloads.

<details>

<summary>x6.png Details</summary>

### Visual Description

## System Architecture Diagrams

### Overview

The image presents a set of diagrams illustrating the architecture and components of a system incorporating a "Proposed REASON Plug-in." It includes diagrams of a GPU with the plug-in, the plug-in itself, a tree-based processing element (PE) architecture, a node microarchitecture, and symbolic memory support.

### Components/Axes

* **(a) GPU with Proposed Plug-in:**

* **Components:** Off-chip Memory, Memory Controller, GPU Graphics Processing Clusters (GPC), Shared L2 Cache, Proposed REASON Plug-in, Giga Thread Engine.

* **Flow:** Arrows indicate data flow between components.

* **(b) Proposed REASON Plug-in:**

* **Components:** Global Controller, Tree-based PE (x4), Global Interconnect, Workload Scheduler, Ctrl, Shared Local Memory, Custom SIMD Unit.

* **Flow:** Arrows indicate data flow between components. The Global Interconnect has arrows pointing in all four cardinal directions.

* **(c) Tree-based PE Architecture:**

* **Components:** Intermediate Buffer, M:1 Output Interconnect, Tree structure with nodes, Leaf node, Control Logic/MMU, Forwarding Logic, Decode, Pre-fetcher/DMA, Watched Literals Controller, Benes Network (N:N Distribution Crossbar), N SRAM Banks.

* **Data Flow:**

* DPLL Broadcast (Symbolic) and SpMSPM/DAG/DPLL Reduction (Neuro/Probabilistic/Symbolic) flow through the tree structure.

* Red arrows indicate "From Broadcast".

* Black arrows indicate other data flow.

* "To Leaf Node PE" and "From Broadcast" are indicated at the bottom.

* "From/To L2" is indicated at the bottom.

* **(d) Node Microarchitecture:**

* **Components:** Logic gates, adders, multiplexers, Fwd, Data, Control Signals.

* **Flow:** Arrows indicate data flow and control signals.

* **(e) Symbolic Mem. Support:**

* **Components:** BCP FIFO, Watched Literals, Index SRAM, Clause (Cx) Data.

* **BCP FIFO:** Shows "Broadcast (to Ol) Assign (to Ol)" and "Reduction (from Ol)".

* **Watched Literals:** Table with columns "Literal" and "Head Ptr". Example entries: "-x1" with "C8addr", "x1" with "NULL".

### Detailed Analysis or Content Details

* **GPU with Proposed Plug-in:**

* The Proposed REASON Plug-in is positioned between the GPC and the Giga Thread Engine.

* The Shared L2 Cache connects to the Memory Controller and the GPCs.

* **Proposed REASON Plug-in:**

* The Global Controller is connected to four Tree-based PEs via the Global Interconnect.

* The Workload Scheduler and Ctrl are connected to the Shared Local Memory and Custom SIMD Unit.

* **Tree-based PE Architecture:**

* The tree structure has multiple layers of nodes, culminating in the Leaf nodes.

* The M:1 Output Interconnect connects the top node to the Intermediate Buffer.

* The Watched Literals Controller is connected to the N SRAM Banks via the Benes Network.

* **Node Microarchitecture:**

* The diagram shows a detailed view of a node, including logic gates, adders, and multiplexers.

* "XI<" is a key component.

* **Symbolic Mem. Support:**

* The BCP FIFO handles broadcast and reduction operations.

* The Watched Literals table stores literal and head pointer information.

* The Index SRAM and Clause (Cx) Data are used for clause data storage.

### Key Observations

* The Proposed REASON Plug-in is designed to integrate with a GPU architecture.

* The Tree-based PE Architecture is a key component of the plug-in.

* Symbolic memory support is provided through the BCP FIFO and Watched Literals.

* The Node Microarchitecture provides a detailed view of the processing elements.

### Interpretation

The diagrams illustrate a complex system architecture designed for specialized processing, likely related to symbolic computation or reasoning. The Proposed REASON Plug-in appears to be an accelerator designed to enhance the capabilities of a GPU. The tree-based architecture suggests a hierarchical processing approach, while the symbolic memory support indicates the system's ability to handle symbolic data. The node microarchitecture provides insight into the low-level operations performed by the processing elements. The integration of these components suggests a system optimized for tasks requiring both parallel processing and symbolic reasoning.

</details>

Figure 6: Overview of the REASON hardware acceleration system. (a) Integration of REASON as a GPU co-processor. (b) REASON plug-in architecture with PEs, shared local memory, and global scheduling. (c) Tree-based PE architecture enabling broadcast, reduction, and irregular DAG execution. (d) Micro-architecture of a tree node supporting arithmetic and logical operations. (e) FIFO and memory layout supporting symbolic reasoning.

### IV-B Stage 2: Adaptive DAG Pruning

Motivation. While the unified DAG representation provides a common abstraction, it may contain significant redundancy, such as logically implied literals, inactive substructures, or low-probability paths, that inflate DAG size and degrade performance without improving inference quality.

Methodology. We propose adaptive DAG pruning, a semantics-preserving optimization that identifies and removes redundant paths in symbolic and probabilistic DAGs. For symbolic kernels, pruning targets literals and clauses that are logically redundant. For probabilistic kernels, pruning eliminates low-activation edges that minimally impact inference. This process significantly reduces model size and computational complexity while preserving correctness of logical and probabilistic inference.

#### Pruning of FOL and SAT via implication graph

For SAT solvers and FOL reasoning, we prune redundant literals using implication graphs. Given a CNF formula $\varphi=\bigwedge_{i}\left(\bigvee_{j}l_{ij}\right)$ , each binary clause $(l\lor l^{\prime})$ induces two directed implication edges: $\bar{l}\rightarrow l^{\prime}$ and $\bar{l^{\prime}}\rightarrow l$ . The resulting implication graph captures logical dependencies among literals. We perform a depth-first traversal to compute reachability relationships between literals. If a literal $l^{\prime}$ is always implied by another literal $l$ , then $l^{\prime}$ is a hidden literal. Clauses containing both $l$ and $l^{\prime}$ can safely drop $l^{\prime}$ , reducing clause width without semantic changes. For instance, a clause $C=(l\lor l^{\prime})$ is reduced to $C^{\prime}=(l)$ . This procedure removes redundant literals (e.g., hidden tautologies and failed literals), preserves satisfiability, and runs in time linear in the size of the implication graph.

#### Pruning of PCs and HMMs via circuit flow

For probabilistic DAGs such as PCs and HMMs, we prune edges based on probability flow, which quantifies each edge’s contribution to the overall likelihood.

In HMMs, the DAG is unrolled over time steps, with nodes representing transition factors $p(z_{t}\mid z_{t-1})$ and emission factors $p(x_{t}\mid z_{t})$ . We compute expected transition and emission usage via the forward-backward algorithm, yielding posterior state and transition probabilities. Edges corresponding to transitions or emissions with consistently low posterior probability are pruned, as their contribution to the joint likelihood $p(z_{1:T},x_{1:T})$ is negligible. This pruning preserves inference fidelity while reducing state-transition complexity.

In PCs, sum node $n$ computes $p_{n}(x)=\sum_{c\in\mathrm{ch}(n)}\theta_{n,c}\,p_{c}(x)$ , where $\theta_{n,c}\geq 0$ denotes the weight associated with child $c$ . For an input $x$ , we define the circuit flow through edge $(n,c)$ as $F_{n,c}(x)=\frac{\theta_{n,c}\,p_{c}(x)}{p_{n}(x)}\cdot F_{n}(x)$ , where $F_{n}(x)$ denotes the top-down flow reaching node $n$ . Intuitively, $F_{n,c}(x)$ measures the fraction of probability mass passing through edge $(n,c)$ for input $x$ . Given a dataset $\mathcal{D}$ , the cumulative flow for edge $(n,c)$ is $F_{n,c}(\mathcal{D})=\sum_{x\in\mathcal{D}}F_{n,c}(x)$ . Edges with the smallest cumulative flow are pruned, as they contribute least to the overall model likelihood. The resulting decrease in average log-likelihood is bounded by $\Delta\log\mathcal{L}\leq\frac{1}{|\mathcal{D}|}\sum_{x\in\mathcal{D}}F_{n,c}(x)$ , providing a theoretically grounded criterion for safe pruning.

### IV-C Stage 3: Two-Input DAG Regularization

Methodology. After pruning, the resulting DAGs may still have high fan-in or irregular branching, which hinders efficient hardware execution. To address this, we apply a regularization step that transforms DAGs into a canonical two-input form. Specifically, nodes with more than two inputs are recursively decomposed into balanced binary trees composed of two-input intermediate nodes, preserving the original computation semantics. This normalization promotes uniformity, enabling efficient parallel scheduling, pipelining, and mapping onto REASON architecture, without sacrificing model fidelity or expressive power.

For each symbolic or probabilistic kernel, the compiler generates an initial DAG, applies adaptive pruning, and then performs two-input regularization to produce a unified balanced representation. These DAGs are constructed offline and used to generate an execution binary that is programmed onto REASON hardware. This unification-pruning-regularization flow decouples algorithmic complexity from runtime execution and enables predictable performance.

## V REASON: Hardware Architecture

REASON features flexible co-processor plug-in architecture (Sec. V-A), reconfigurable symbolic/probabilistic PEs (Sec. V-B), flexible support for symbolic and probabilistic kernels (Sec. V-C - V-D). Sec. V-E presents cycle-by-cycle execution pipeline analysis. Sec. V-F discusses design space exploration and scalability.

### V-A Overall Architecture

Neuro-symbolic workloads exhibit heterogeneous compute and memory patterns with diverse sparsity, diverging from the GEMM-centric design of conventional hardware. Built on the unified DAG representation and optimizations (Fig. IV), REASON is a reconfigurable and flexible architecture designed to efficiently execute the irregular computations of symbolic and probabilistic reasoning stages in neuro-symbolic AI.

Overview. REASON operates as a programmable co-processor tightly integrated with GPU SMs, forming a heterogeneous system architecture. (Fig. 6 (a)). In this system, REASON serves as an efficient and reconfigurable “slow thinking” engine, accelerating symbolic and probabilistic kernels that are poorly suited to GPU execution. As illustrated in Fig. 6 (b), REASON comprises an array of tree-based PE cores that act as the primary computation engines. A global controller and workload scheduler manage the workload mapping. A shared local memory serves as a unified scratchpad for all cores. Communication between cores and shared memory is handled by a high-bandwidth global interconnect.

Tree architecture. Each PE core is organized as a tree-structured compute engine, as shown in Fig. 6 (c). Each tree node integrates a specialized datapath, memory subsystem, and control logic optimized for executing DAG-based symbolic and probabilistic operations.

Reconfigurable tree engine (RTE). At the core of each PE is a Reconfigurable Tree Engine (RTE), whose datapath forms a bidirectional tree of PEs (Fig. 6 (d)). The RTE supports both SAT-style symbolic broadcast patterns and probabilistic aggregation operations. A Benes network interconnect enables N-to-N routing, decoupling SRAM banking from DAG mapping and simplifying compilation of irregular graph structures (Sec. V-C). Forwarding logic routes intermediate and irregular outputs back to SRAM for subsequent batches.

Memory subsystem. To tackle the memory-bound nature of symbolic and probabilistic kernels, the RTE is backed by a set of dual-port, wide-bitline SRAM banks arranged as a banked L1 cache. A memory front-end with a prefetcher and high-throughput DMA engine moves data from shared scratchpad. A control/memory management unit (MMU) block handles address translation across the distributed memory system.

Core control and execution. A scalar PE acts as the core-level controller, fetching and issuing VLIW-like instructions that configure the RTE, memory subsystem, and specialized units. Outputs from the RTE are buffered before being consumed by the scalar PE or the SIMD Unit, which provides support for executing parallelable subset of symbolic solvers.

### V-B Reconfigurable Symbolic/Probabilistic PE

The PE architecture is designed to support a wide range of symbolic and probabilistic computation patterns via a VLIW-driven cycle-reconfigurable datapath. Each PE can switch among three operational modes to efficiently execute heterogeneous kernels mapped from the unified DAG representation.

Probabilistic mode. In probabilistic mode, the node executes irregular DAGs derived from unified probabilistic representations (Sec. V-C). The nodes are programmed by the VLIW instruction stream to perform arithmetic operations, either addition or multiplication, required by the DAG node mapped onto them. This mode supports probabilistic aggregation patterns such as sum-product computation and likelihood propagation, enabling efficient execution of PCs and HMMs.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Diagram: Unified Representation to Reordering

### Overview

The image is a diagram illustrating a five-step process, starting with a unified representation and ending with reordering. Each step is visually represented with diagrams and labels, showing the transformation of data and processes involved.

### Components/Axes

* **Step 1: Unified Representation:** Shows a complex network of nodes (A, B, C, D, E, F, G, H, I) and connections.

* **Step 2: Block Decomposition (BD):** Illustrates the decomposition of the network into blocks with "Intra-block Regularization" and "Inner-block Regularization" highlighted.

* **Step 3: PE and Register Mapping:** Depicts the assignment of blocks to Processing Elements (PEs) and a "Tree global scratchpad".

* **Step 4: Tree Mapping:** Shows a single PE with a tree structure mapped to a "Local PE SRAM".

* **Step 5: Reordering:** Represents the reordering process with labels "Load", "Block", "No-op", and "Block" at time steps T=0, T=1, T=2, and T=3 respectively.

### Detailed Analysis

* **Step 1: Unified Representation:**

* Node A is at the bottom-left.

* Nodes B and C are slightly above and to the right of A.

* Node D is above B and C.

* Nodes E, F, and G are to the right of D.

* Nodes H and I are at the top, with H to the left of I.

* Red arrows indicate connections from A to H and I, and from H to I.

* **Step 2: Block Decomposition (BD):**

* Two blocks are shown, each containing a tree structure.

* The top block shows two trees.

* The bottom block shows a tree and a few individual nodes.

* A red arrow labeled "Intra-block Regularization" connects node A (colored red) from Step 1 to the top tree.

* A green arrow labeled "Inner-block Regularization" connects a node within the bottom block (colored green) to the top of the tree in the bottom block.

* **Step 3: PE and Register Mapping:**

* An array of 8 "PE" blocks is shown.

* The text "Assign based on BD" is below the PE array.

* A table labeled "Tree global scratchpad" is shown below the assignment instruction.

* **Step 4: Tree Mapping:**

* A "Single PE" block is shown.

* A tree structure is mapped to a "Local PE SRAM".

* Arrows indicate data flow within the PE.

* **Step 5: Reordering:**

* Four blocks are labeled "Load", "Block", "No-op", and "Block".

* These blocks correspond to time steps T=0, T=1, T=2, and T=3 respectively.

### Key Observations

* The diagram illustrates a multi-step process for mapping a unified representation onto processing elements.

* Block decomposition and regularization are key steps in the process.

* The final step involves reordering operations for efficient execution.

### Interpretation

The diagram outlines a process for optimizing the execution of a complex task on parallel processing elements. The initial unified representation is decomposed into blocks, which are then assigned to PEs. Regularization techniques are applied to improve the structure of the blocks. The tree mapping step maps the computational structure onto the local memory of each PE. Finally, the reordering step optimizes the sequence of operations for efficient execution. The process aims to leverage parallel processing to accelerate the execution of the task.

</details>