## Reinforcement Learning from Meta-Evaluation: Aligning Language Models Without Ground-Truth Labels

## Micah Rentschler 1 Jesse Roberts 2

## Abstract

Most reinforcement learning (RL) methods for training large language models (LLMs) require ground-truth labels or task-specific verifiers, limiting scalability when correctness is ambiguous or expensive to obtain. We introduce Reinforcement Learning from Meta-Evaluation (RLME), which optimizes a generator using reward derived from an evaluator's answers to natural-language meta-questions (e.g., 'Is the answer correct?' or 'Is the reasoning logically consistent?'). RLME treats the evaluator's probability of a positive judgment as a reward and updates the generator via group-relative policy optimization, enabling learning without labels. Across a suite of experiments, we show that RLME achieves accuracy and sample efficiency comparable to label-based training, enables controllable trade-offs among multiple objectives, steers models toward reliable reasoning patterns rather than post-hoc rationalization, and generalizes to open-domain settings where ground-truth labels are unavailable, broadening the domains in which LLMs may be trained with RL.

## 1. Introduction

Reinforcement learning (RL) is widely used to align large language models (LLMs) with human preferences or verifiable task outcomes, as in Reinforcement Learning from Human Feedback (RLHF) (Kaufmann et al., 2024) and Reinforcement Learning from Verified Rewards (RLVR) (Wen et al., 2025; Yue et al., 2025). These methods work well when high-quality rewards exist, but such signals are costly: human feedback does not scale, and automatic verifiers are typically narrow and domain-specific. In many realistic

1 Department of Computer Science, Vanderbilt University, Nashville TN, USA 2 Department of Computer Science, Tennessee Technological University, Cookeville TN, USA. Correspondence to: Micah Rentschler < micah.d.rentschler@vanderbilt.edu > .

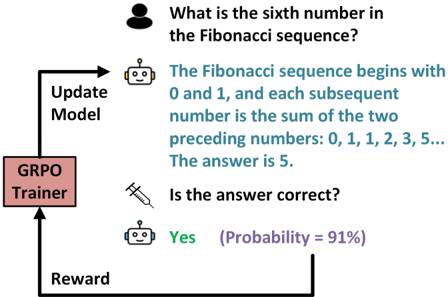

Figure 1. Overview of RLME. After the generator produces an answer, one or more evaluators (possibly the generator itself) assign probabilities to target answers for natural-language meta-questions about the output. These probabilities are aggregated into a scalar reward, which is then used to update the generative policy via reinforcement learning. This allows models to be tuned even when ground-truth answers are unavailable.

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Flowchart: GRPO Trainer Feedback Loop for Question Answering

### Overview

The image depicts a flowchart illustrating a feedback loop for a question-answering system using a GRPO (Group Relative Policy Optimization) Trainer. The process involves a human query, an AI model's response, correctness verification, and model updates based on probabilistic rewards.

### Components/Axes

1. **Human Icon**: Top-left, representing the user asking a question.

2. **Robot Icon**: Middle-left, symbolizing the AI model.

3. **Syringe Icon**: Middle-right, representing the "Update Model" action.

4. **GRPO Trainer Box**: Bottom-left, labeled "GRPO Trainer."

5. **Text Elements**:

- Question: "What is the sixth number in the Fibonacci sequence?"

- Answer: "The Fibonacci sequence begins with 0 and 1, and each subsequent number is the sum of the two preceding numbers: 0, 1, 1, 2, 3, 5... The answer is 5."

- Correctness Check: "Is the answer correct?" with response "Yes (Probability = 91%)."

- Reward: Labeled "Reward" with an arrow looping back to the GRPO Trainer.

### Detailed Analysis

- **Question Flow**:

- The human asks about the sixth Fibonacci number.

- The robot provides the sequence definition and answers "5."

- **Correctness Verification**:

- A syringe icon (symbolizing data injection) connects the answer to a correctness check.

- The system confirms the answer is correct with 91% probability.

- **Reward Mechanism**:

- A reward signal loops back to the GRPO Trainer, which updates the model.

### Key Observations

- The Fibonacci sequence is explicitly defined in the answer, with the sixth number correctly identified as 5.

- The correctness check uses a probabilistic metric (91%), indicating confidence but not absolute certainty.

- The GRPO Trainer acts as a closed-loop system, using rewards to refine the model iteratively.

### Interpretation

This flowchart demonstrates a reinforcement learning framework where:

1. **Human Queries** trigger model responses.

2. **Probabilistic Correctness Checks** evaluate answers, balancing accuracy and uncertainty.

3. **Reward Signals** guide the GRPO Trainer to optimize the model, emphasizing iterative improvement over static training.

The 91% probability suggests the system prioritizes high-confidence updates, potentially filtering out low-certainty corrections. The syringe icon metaphorically represents the injection of feedback into the model, aligning with GRPO's focus on policy optimization through relative rewards. The loop implies continuous learning, where even "correct" answers may refine the model's understanding of edge cases or ambiguous definitions.

</details>

settings, ground truth may be uncertain or unavailable.

A promising alternative is to have the model itself or another model evaluate the response. Prior work leverages model likelihoods of known correct answers as a proxy reward (Zhou et al., 2025; Yu et al., 2025), but still requires ground-truth labels during training.

We instead explore whether models can learn from evaluations provided by an LLM acting as an evaluator without access to ground-truth labels. To steer the evaluations, we use meta-questions: natural-language prompts, applicable over an entire dataset, that assess high-level properties of an output. For example, 'Is the answer 5?' targets a particular problem, whereas 'Is the answer correct?' is a broadly applicable meta-question. Meta-questions are cheap to write, transferable across domains, and empower LLMs to embody heuristics that are difficult to hard-code. This shifts the problem from engineering a reward function or hand-labeling a large dataset to designing meta-questions that elicit a desired behavior.

We introduce Reinforcement Learning from Meta-Evaluation (RLME), illustrated in Figure 1, and show that it provides similar results to an RLVR baseline without ground-truth labels. However, meta-evaluation introduces new risks. The model being trained, referred to as the generator, may produce outputs that satisfy the evaluator without genuinely solving the task. The central challenge is therefore to determine when meta-evaluation provides a reliable signal and how to mitigate its failure modes. To this end, we contribute the following:

- RLME, a scalable framework that guides modern GRPO-style policy-gradient updates with rewards based on the aggregate probability of target answers to evaluation meta-questions;

- Empirical evidence that meta-evaluation is competitive with explicit verifiers in reasoning-heavy domains;

- A broad analysis of generator and evaluator choice, self-evaluation, and reward hacking, clarifying both the strengths and failure modes of meta-evaluation;

- Examples of multi-objective language-driven control;

- ⋆ Proof that RLME training encourages contextual faithfulness, generalizing the improved ability to an out-of-distribution dataset.

## 2. Related Work

Our work connects to several research directions in alignment and reinforcement learning for language models.

RLHF and preference-based optimization. RLHF optimizes models using human preference data with PPO-style updates (Kaufmann et al., 2024; Ouyang et al., 2022; Schulman et al., 2017). While this early work was successful and influential, human preference data is expensive and introduces biases such as sycophancy (Sharma et al., 2025).

RL from verifiable or probabilistic correctness signals. RLVR-style methods optimize rewards derived from correctness verifiers when ground-truth is available but precise human preference is not (Wen et al., 2025; Shao et al., 2024; Guo et al., 2025). VeriFree (Zhou et al., 2025) and RLPR (Yu et al., 2025) further this by using the model's own likelihood of the correct answer as a proxy reward, but critically, they still require access to ground truth labels.

LLM-as-judge and AI feedback. To remove the cost of human annotation entirely, RL with AI feedback (RLAIF) methods leverage LLMs as preference evaluators, attempting to replace the preferences that human evaluators would assign with those from an LLM (Zheng et al., 2023; Gu et al., 2024; Lee et al., 2024; Yuan et al., 2024). All of these attempt to predict preference over a number of candidate responses. This can inherit biases from human raters if their preferences are modeled directly, and it limits applicability in domains where preference is ill-defined. In contrast to preference-based methods, Zhao et al. (2025) use an internal measure of certainty as a reward. However, this limits the approach to maximizing self-certainty.

Flexible evaluation. Prior work has applied reinforcement learning to refine LLM behavior using a variety of feedback signals, but these approaches typically require substantial supervision or are limited to fixed objectives. Reinforcement Learning Contemplation (RLC) (Pang et al., 2024) introduces a flexible evaluation paradigm in which a frozen copy of the model provides self-critique over its own generations using Likert-style judgments, optimized with a PPO objective. While RLC demonstrates the promise of flexible, model-based evaluation, its performance relative to explicit reward supervision (e.g., RLVR) has not been systematically studied, nor have the robustness and failure modes of such self-evaluated reward signals.

Situating RLME in the literature. RLME removes the ground truth label dependence and avoids the need to model human preferences directly by improving on and generalizing the RLC evaluation approach.

In place of the Likert evaluation, RLME employs an evaluation approach previously used to study LLM actions in formal games, referred to as counterfactual prompting (Roberts et al., 2025). The RLME evaluator model predicts whether the generator's response agrees with one or more stated criteria, which we refer to as meta-evaluations. The evaluator's probability of producing a target response sequence is directly incorporated as a reward signal into the GRPO update in place of RLC's PPO objective.

RLME generalizes RLC in that RLME optimizes the target model using either frozen self, a continually updated self, frozen other, or ensemble as the evaluator model. It is compared to the powerful RLVR method, which benefits from labeled data, as a baseline. Most importantly, our work regarding RLME extends the understanding of flexible evaluation by studying multi-objective optimization, the propensity to reward hack, and out-of-distribution generalization.

Finally, our work was developed concurrently with the recent preprint disseminated by DeepSeek (Shao et al., 2025). Our work is entirely distinct and has not been influenced by theirs, though the described approaches have similarities.

## 3. Methodology

After generating a response, one or more evaluators predict the probability of a target answer to natural-language meta-questions. Their probabilities are aggregated into a scalar reward, which is used to update the generator via a group-relative policy-gradient objective.

## 3.1. Assessment Prompting

Given a prompt $x \sim \mathcal{D}$, where $\mathcal{D}$ is a dataset of prompts containing problems for the generator to solve, the generator produces a response

$$y \sim \pi_\theta(\cdot \mid x), \tag{1}$$

where $\pi_\theta$ is the generator's policy.

Evaluators $\{\pi_{\phi_j}\}_{j=1}^{J}$ are then queried with meta-questions $Q = \{q_1, \dots, q_K\}$, written by humans to target desired behavior, such as 'Is the answer correct?'. Each meta-question $q_k$ has an answer sequence $a_k$, and evaluator $j$ assigns probability

$$p_{j,k}(x, y) = \pi_{\phi_j}(a_k \mid x, y, q_k). \tag{2}$$

Rewards are computed by first weighting meta-questions with $\{w_k\}$, then weighting evaluators with $\{v_j\}$:

$$r(x, y) = \sum_{j=1}^{J} v_j \sum_{k=1}^{K} w_k \log \pi_{\phi_j}(a_k \mid x, y, q_k). \tag{3}$$

Like the meta-questions, $\{w_k\}$ and $\{v_j\}$ are fixed hyperparameters, chosen by an expert with domain knowledge to push the algorithm harder toward certain outcomes.
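As a minimal sketch of the two-level weighting described above, the following Python function combines per-evaluator, per-question probabilities into one scalar. It assumes the log-probability form of the reward used in the experiments of Section 4; the function name `aggregate_reward`, the nested-list layout, and the `eps` floor are illustrative assumptions rather than the authors' implementation.

```python
import math
from typing import Sequence


def aggregate_reward(
    probs: Sequence[Sequence[float]],    # probs[j][k]: evaluator j's probability of the target answer to meta-question k
    question_weights: Sequence[float],   # w_k, one weight per meta-question
    evaluator_weights: Sequence[float],  # v_j, one weight per evaluator
    eps: float = 1e-12,                  # assumed floor that avoids log(0)
) -> float:
    """Weight meta-questions first, then evaluators, and return a scalar reward."""
    if len(probs) != len(evaluator_weights):
        raise ValueError("need one row of probabilities per evaluator")
    reward = 0.0
    for v_j, row in zip(evaluator_weights, probs):
        if len(row) != len(question_weights):
            raise ValueError("need one probability per meta-question")
        # Inner sum over meta-questions for this evaluator.
        per_evaluator = sum(
            w_k * math.log(max(p, eps)) for w_k, p in zip(question_weights, row)
        )
        # Outer sum over evaluators.
        reward += v_j * per_evaluator
    return reward
```

With a single evaluator, a single meta-question, and unit weights, the reward reduces to the log-probability of the target answer, for example `math.log(0.91)` for the 91% judgment shown in Figure 1.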

## 3.2. Reinforcement Learning

RLME maximizes the expected meta-evaluation reward:

$$J(\theta) = \mathbb{E}_{x \sim \mathcal{D},\; y \sim \pi_\theta(\cdot \mid x)}\big[\, r(x, y) \,\big]. \tag{4}$$

We adopt a Group Relative Policy Optimization (GRPO)-style update (Shao et al., 2024). For a group of $G$ responses $y_1, \dots, y_G$ sampled for the same prompt $x$, the advantage of response $y_i$ is

$$A_i = r(x, y_i) - \bar{r}, \tag{5}$$

where $\bar{r}$ is the mean reward over the sampled group. Unlike GRPO, we do not scale by the standard deviation because it introduces a question-level difficulty bias (Liu et al., 2025).
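The mean-centered advantage described above, without the standard-deviation scaling of standard GRPO, can be sketched in a few lines of Python. The function name `group_relative_advantages` is a hypothetical helper introduced only for illustration.

```python
from typing import List, Sequence


def group_relative_advantages(rewards: Sequence[float]) -> List[float]:
    """Subtract the group mean reward from each reward; no division by the std."""
    if not rewards:
        raise ValueError("the sampled group must contain at least one response")
    mean_reward = sum(rewards) / len(rewards)
    return [r - mean_reward for r in rewards]
```

Because only the mean is subtracted, the advantages of a group always sum to zero, and a group in which all responses receive the same reward contributes no gradient.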

For off-policy updates (where the policy being updated has changed and may no longer precisely match the behavioral policy that generated the response), trajectories are sampled from the behavioral policy $\pi_b$. The ratio of the current policy to the behavioral policy is the importance ratio:

$$\rho_i(\theta) = \frac{\pi_\theta(y_i \mid x)}{\pi_b(y_i \mid x)}. \tag{6}$$

As suggested by Zheng et al., we use a sequence-level importance ratio to reduce high-variance noise in training.

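We use Clipped IS-weight Policy Optimization (CISPO) (MiniMax et al., 2025), a variant of GRPO. In CISPO, the importance ratio $\rho_i(\theta)$ of response $y_i$ is clipped:

$$\hat{\rho}_i(\theta) = \operatorname{clip}\big(\rho_i(\theta),\; 1 - \epsilon_{\text{low}},\; 1 + \epsilon_{\text{high}}\big). \tag{7}$$

The clipped ratio is then used in the final loss, where $\operatorname{sg}(\cdot)$ denotes the stop-gradient operator:

$$\mathcal{L}(\theta) = -\,\mathbb{E}\Big[\operatorname{sg}\big(\hat{\rho}_i(\theta)\big)\,\big(r(x, y_i) - \bar{r}\big)\,\log \pi_\theta(y_i \mid x)\Big]. \tag{8}$$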
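To make the role of the clipped, gradient-stopped weight concrete, the sketch below evaluates a CISPO-style surrogate loss for one group using sequence-level log-probabilities. It is an illustration under stated assumptions: the clipping bounds `eps_low` and `eps_high` are hypothetical values, the loss is averaged over the group, and plain Python cannot express a stop-gradient, so the weight is simply treated as a constant (in an autodiff framework it would be detached).

```python
import math
from typing import Sequence


def clipped_is_weight(
    logp_current: float,    # log pi_theta(y | x), summed over the response tokens
    logp_behavior: float,   # log pi_b(y | x), summed over the response tokens
    eps_low: float = 0.2,   # hypothetical lower clipping margin
    eps_high: float = 0.2,  # hypothetical upper clipping margin
) -> float:
    """Sequence-level importance ratio, clipped to [1 - eps_low, 1 + eps_high]."""
    ratio = math.exp(logp_current - logp_behavior)
    return min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)


def cispo_style_loss(
    logps_current: Sequence[float],
    logps_behavior: Sequence[float],
    advantages: Sequence[float],
    eps_low: float = 0.2,
    eps_high: float = 0.2,
) -> float:
    """Negative mean of (clipped weight) * (advantage) * (current log-probability)."""
    if not (len(logps_current) == len(logps_behavior) == len(advantages)):
        raise ValueError("all inputs must have one entry per sampled response")
    total = 0.0
    for lp_cur, lp_beh, adv in zip(logps_current, logps_behavior, advantages):
        # The weight only rescales the gradient; it is not differentiated itself.
        weight = clipped_is_weight(lp_cur, lp_beh, eps_low, eps_high)
        total += weight * adv * lp_cur
    return -total / len(advantages)
```

When the update is fully on-policy, the current and behavioral log-probabilities coincide, every weight equals 1, and the loss reduces to an ordinary advantage-weighted policy-gradient surrogate.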

## 3.3. Algorithm Summary

Each RLME step consists of:

1. Generate responses with $\pi_\theta$ (Eq. 1).

2. Evaluate responses using meta-questions to obtain $r(x, y)$ (Eq. 3).

3. Update $\pi_\theta$ using the CISPO loss (Eq. 8).

By selecting different meta-questions and weights, the evaluating model helps RLME align the generating model without requiring ground-truth labels.

## 4. Experiments

We empirically evaluate RLME and compare it against an RLVR baseline. We begin with grade-school mathematics, where correctness is fully verifiable, and then move to more open-ended domains. Through a series of experiments, we investigate and assess core questions about our approach.

Complete experimental details for reproduction (optimization hyperparameters, learning rates, batch sizes, and training schedules) are reported in Appendix A. Appendix B contains the exact prompts for the generator and evaluator. Finally, qualitative examples of various responses may be found in Appendix C.

## 4.1. Can we improve accuracy via meta-questions?

Question . Our first experiment tests whether a single, general meta-question can provide a reward signal strong enough to improve mathematical accuracy without access to ground truth.

Method. We initialize the generator from Qwen3-4B-Base (Yang et al., 2025) and train on GSM8K (Cobbe et al., 2021), prompting the model to produce a solution and to place its final response inside \boxed{} so that the answer can be extracted with a fixed regex.

To compute accuracy (and the RLVR reward), we parse each completion using a fixed regex (Appendix A) that selects the

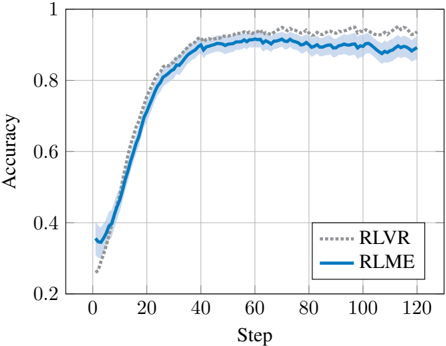

Figure 2. Comparison of RLME to an RLVR baseline that has access to ground-truth answers. Both methods rapidly exceed 90% accuracy on GSM8K, and RLME closely tracks RLVR despite never observing the true answer.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Line Graph: Model Accuracy Comparison Over Training Steps

### Overview

The image depicts a line graph comparing the accuracy of two machine learning models (RLVR and RLME) across 120 training steps. Both models show increasing accuracy over time, with RLME achieving higher final performance. The graph includes confidence intervals (shaded regions) and grid lines for reference.

### Components/Axes

- **X-axis (Step)**: Labeled "Step" with integer markers from 0 to 120 in increments of 20.

- **Y-axis (Accuracy)**: Labeled "Accuracy" with decimal markers from 0.2 to 1.0 in increments of 0.2.

- **Legend**: Located in the bottom-right corner, with:

- **RLVR**: Dotted gray line (dashed pattern)

- **RLME**: Solid blue line

- **Grid**: Light gray horizontal and vertical lines at axis intervals.

### Detailed Analysis

1. **RLME (Solid Blue Line)**:

- Starts at ~0.35 accuracy at step 0.

- Sharp increase to ~0.85 by step 40.

- Plateaus between ~0.88–0.92 from step 60 to 120.

- Confidence interval (shaded blue) narrows significantly after step 40.

2. **RLVR (Dotted Gray Line)**:

- Begins at ~0.3 accuracy at step 0.

- Dips to ~0.25 at step 10, then rises steadily.

- Reaches ~0.85 by step 40, matching RLME's plateau.

- Confidence interval (shaded gray) remains wider than RLME's throughout.

3. **Key Data Points**:

- **RLME**:

- Step 0: 0.35 ± 0.05

- Step 40: 0.85 ± 0.03

- Step 120: 0.90 ± 0.02

- **RLVR**:

- Step 0: 0.30 ± 0.05

- Step 10: 0.25 ± 0.04

- Step 40: 0.85 ± 0.04

- Step 120: 0.90 ± 0.03

### Key Observations

- RLME demonstrates faster convergence (reaching 0.85 accuracy by step 40 vs. RLVR's step 40).

- RLVR exhibits higher initial variability (wider confidence interval at step 0).

- Both models stabilize near 0.9 accuracy by step 120, but RLME maintains tighter confidence bounds.

- RLVR shows a temporary performance dip at step 10, recovering by step 20.

### Interpretation

The graph suggests RLME is more robust and efficient in early training phases, achieving higher accuracy with greater consistency. RLVR's initial dip may indicate sensitivity to hyperparameters or data noise, though it ultimately matches RLME's performance. The narrowing confidence intervals for RLME imply more reliable predictions as training progresses. These results could inform model selection for tasks requiring rapid convergence and stability, while RLVR might be preferable if computational resources allow for longer training to mitigate early instability.

</details>

last boxed expression and extracts the predicted answer. We then compare this extracted answer to the GSM8K reference answer, after cleaning the reference to remove non-numeric characters such as commas, currency symbols, and units.
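The extraction and comparison steps described above can be sketched as follows. The paper's actual regex is given in Appendix A and is not reproduced here, so the pattern below is an assumption (it does not handle nested braces), and the helper names `extract_last_boxed`, `clean_numeric`, and `exact_match` are hypothetical.

```python
import re
from typing import Optional

# Assumed pattern: matches \boxed{...} whose contents contain no nested braces.
BOXED_PATTERN = re.compile(r"\\boxed\{([^{}]*)\}")


def extract_last_boxed(completion: str) -> Optional[str]:
    """Return the contents of the last \\boxed{...} expression, or None if absent."""
    matches = BOXED_PATTERN.findall(completion)
    return matches[-1].strip() if matches else None


def clean_numeric(reference: str) -> str:
    """Drop commas, currency symbols, units, and other non-numeric characters."""
    return re.sub(r"[^0-9.\-]", "", reference)


def exact_match(completion: str, reference: str) -> float:
    """Return 1.0 if the extracted answer equals the cleaned reference, else 0.0."""
    predicted = extract_last_boxed(completion)
    if predicted is None:
        return 0.0
    return 1.0 if predicted == clean_numeric(reference) else 0.0
```

Selecting the last boxed expression means that intermediate boxed values in a long solution do not override the final answer.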

As described in Section 3, to construct the RLME reward, we build an auxiliary evaluation prompt that includes the original problem, the full generated solution, and the regex-extracted answer string (taken from the generated solution, not the ground-truth label). Prompted with this information and the meta-question 'Is the answer correct?', the evaluator then estimates the probability of 'Yes' being the completion. The log-probability of this response serves as a scalar reward for RLME training. In this experiment, we use live self-evaluation, where the generator serves as the evaluator using its current parameters. Thus, the evaluator co-evolves as the generator is updated.
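A rough sketch of this reward computation is shown below. The exact evaluator prompt is given in Appendix B, so the template here is a hypothetical stand-in, and the sketch assumes that 'Yes' is a single token whose logit is available in a dictionary of next-token logits; a real evaluator would supply these logits from a forward pass.

```python
import math
from typing import Dict


def build_evaluation_prompt(
    problem: str,
    solution: str,
    extracted_answer: str,
    meta_question: str = "Is the answer correct?",
) -> str:
    """Assemble a hypothetical evaluator prompt from the pieces named in the text."""
    return (
        f"Problem:\n{problem}\n\n"
        f"Solution:\n{solution}\n\n"
        f"Extracted answer: {extracted_answer}\n\n"
        f"{meta_question}\nAnswer:"
    )


def target_log_prob(next_token_logits: Dict[str, float], target: str = "Yes") -> float:
    """Log-softmax of the target token over the supplied next-token logits."""
    max_logit = max(next_token_logits.values())
    # Numerically stable log-sum-exp over all candidate tokens.
    log_norm = max_logit + math.log(
        sum(math.exp(logit - max_logit) for logit in next_token_logits.values())
    )
    return next_token_logits[target] - log_norm
```

The returned value is at most zero and approaches zero as the evaluator becomes more confident in 'Yes', which is why the reward curves for this setup take negative values.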

Because training is single-pass (no prompt reuse), we do not require a dedicated validation set to prevent overfitting to the training prompts.

We compare RLME to an RLVR baseline that is identical in optimization, rollout settings, and number of updates, differing only in the reward signal: RLVR uses ground-truth verification (reward 1 if the regex-extracted answer exactly matches the ground-truth label, and 0 otherwise), while RLME uses meta-evaluation only.

Results. As shown in Figure 2, the base model begins at roughly 30% accuracy and rapidly improves under RLME, surpassing 90% after a short training run and closely tracking RLVR throughout training. Results are reported across 6 trials, with the shaded region showing ±1 standard deviation. For readability, all learning curves are plotted using an exponential moving average with

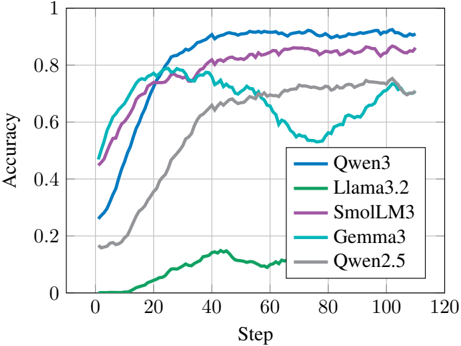

Figure 3. RLME performance using different generators with a fixed evaluator (frozen Qwen3-4B-Base). Generator models have a large effect on accuracy.

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Line Graph: Model Accuracy Over Training Steps

### Overview

The image is a line graph comparing the accuracy of five machine learning models (Qwen3, Llama3.2, SmolLM3, Gemma3, Qwen2.5) across 120 training steps. Accuracy is measured on a scale from 0 to 1, with steps increasing in increments of 20. The graph highlights performance trends, including convergence rates, plateaus, and anomalies.

### Components/Axes

- **X-axis (Step)**: Labeled "Step" with markers at 0, 20, 40, 60, 80, 100, 120.

- **Y-axis (Accuracy)**: Labeled "Accuracy" with markers at 0, 0.2, 0.4, 0.6, 0.8, 1.0.

- **Legend**: Located in the bottom-right corner, mapping colors to models:

- Blue: Qwen3

- Green: Llama3.2

- Purple: SmolLM3

- Cyan: Gemma3

- Gray: Qwen2.5

### Detailed Analysis

1. **Qwen3 (Blue)**:

- Starts at ~0.3 accuracy at step 0.

- Rises sharply to ~0.9 by step 40.

- Plateaus near 0.9 for the remainder of the steps.

2. **Llama3.2 (Green)**:

- Begins near 0 accuracy at step 0.

- Gradually increases to ~0.7 by step 40.

- Drops to ~0.5 by step 60, then stabilizes.

3. **SmolLM3 (Purple)**:

- Starts at ~0.4 accuracy at step 0.

- Rises steadily to ~0.85 by step 40.

- Maintains ~0.85 accuracy through step 120.

4. **Gemma3 (Cyan)**:

- Begins at ~0.5 accuracy at step 0.

- Peaks at ~0.75 by step 40.

- Drops to ~0.55 at step 60, then recovers to ~0.7 by step 100.

5. **Qwen2.5 (Gray)**:

- Starts at ~0.2 accuracy at step 0.

- Gradually increases to ~0.7 by step 100.

- Shows minimal change after step 100.

### Key Observations

- **Highest Performance**: Qwen3 and SmolLM3 achieve the highest accuracy (~0.9 and ~0.85, respectively) by step 40.

- **Anomalies**:

- Llama3.2 exhibits a sharp drop in accuracy after step 40.

- Gemma3 shows a significant dip at step 60 (~0.55) before recovering.

- **Slowest Convergence**: Qwen2.5 has the slowest improvement, reaching ~0.7 accuracy only by step 100.

### Interpretation

The data suggests that **Qwen3** and **SmolLM3** are the most efficient models, achieving high accuracy rapidly. **Llama3.2** and **Gemma3** display instability, with Llama3.2’s post-step-40 drop and Gemma3’s mid-training dip indicating potential overfitting or optimization issues. **Qwen2.5**’s slow but steady rise implies reliability but inefficiency. The graph underscores trade-offs between speed and stability in model training, with Qwen3 emerging as the optimal performer in this dataset.

</details>

decay β = 0.9. The similarity of the learning curves suggests that, at least in this controlled verifiable domain, the evaluator's response to a single correctness meta-question provides a reward signal that is both informative and sample-efficient even without access to ground-truth.
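The smoothing applied to the learning curves can be sketched as a standard exponential moving average. Initializing the running value with the first observation is an assumption, since the initialization is not stated; `ema_smooth` is a hypothetical helper name.

```python
from typing import List, Optional, Sequence


def ema_smooth(values: Sequence[float], decay: float = 0.9) -> List[float]:
    """Exponential moving average: s_t = decay * s_{t-1} + (1 - decay) * v_t."""
    smoothed: List[float] = []
    running: Optional[float] = None
    for value in values:
        # Assumed initialization: start the average at the first observation.
        running = value if running is None else decay * running + (1.0 - decay) * value
        smoothed.append(running)
    return smoothed
```

With a decay of 0.9, each plotted point weights the newest measurement at 10%, so sharp changes in raw accuracy appear in the smoothed curve with a lag of several steps.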

## 4.2. Does generator choice matter?

Question . We assess whether different generator models adapt differently to meta-evaluation.

Method. To isolate the effect of the generator, we fix the evaluator to a frozen Qwen3-4B-Base (Yang et al., 2025) and vary the generator among Qwen3-4B-Base, Llama-3.2-3B (Meta AI, 2024), SmolLM3-3B (Hugging Face, 2025), Gemma-3-4B-pt (Mesnard et al., 2024), and Qwen2.5-1.5B (Yang et al., 2024).

Results . Figure 3 substantiates previous work showing that flexible evaluation generalizes across models, but also shows that generator choice substantially impacts accuracy.

## 4.3. Does evaluator choice matter?

Question. A key design decision in RLME is whether the evaluator is live or frozen. In live evaluation, the generator also serves as the evaluator using its current parameters, such that the evaluator co-evolves with the generator during training. In frozen evaluation, the evaluator is a separate model (or a fixed snapshot of the generator taken at initialization) whose parameters remain unchanged. In this experiment, we investigate the effect of model choice and configuration on evaluation.

Method . We explicitly chose not to evaluate the pairwise performance of every generator to every evaluator due to

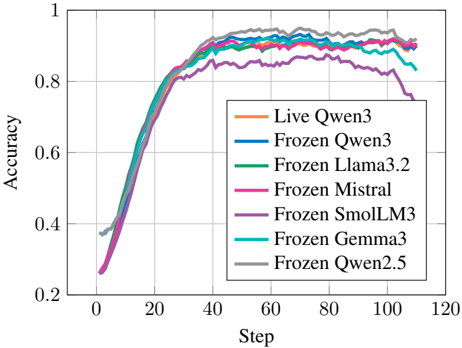

Figure 4. RLME performance using different evaluators with a fixed generator (Qwen3-4B-Base). For the Qwen3 evaluator, we compare a live self-evaluator (co-evolving with the generator) to a frozen evaluator (fixed snapshot at initialization). For other evaluators, we only use frozen weights.

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Line Graph: Model Accuracy Over Training Steps

### Overview

The image shows a line graph comparing the accuracy of multiple AI models during training. Seven distinct lines represent different model configurations, with accuracy plotted against training steps (0-120). All lines show similar upward trends, converging near the top of the graph.

### Components/Axes

- **X-axis (Step)**: Labeled "Step" with increments of 20 (0, 20, 40, ..., 120)

- **Y-axis (Accuracy)**: Labeled "Accuracy" with increments of 0.2 (0.2, 0.4, ..., 1.0)

- **Legend**: Located in the bottom-right corner, listing seven models with color codes:

- Orange: Live Qwen3

- Blue: Frozen Qwen3

- Green: Frozen Llama3.2

- Pink: Frozen Mistral

- Purple: Frozen SmolLM3

- Cyan: Frozen Gemma3

- Gray: Frozen Qwen2.5

### Detailed Analysis

1. **Initial Phase (Steps 0-40)**:

- All lines start near 0.2-0.3 accuracy

- Rapid improvement occurs, with lines diverging slightly

- Frozen Qwen2.5 (gray) shows the steepest initial climb

2. **Mid-Phase (Steps 40-80)**:

- Accuracy plateaus between 0.8-0.9 for most models

- Frozen SmolLM3 (purple) shows a slight dip (~0.85) at step 80

- Lines begin converging again, with minimal separation

3. **Final Phase (Steps 80-120)**:

- Accuracy stabilizes near 0.9-0.95 for all models

- Frozen Qwen3 (blue) and Frozen Llama3.2 (green) maintain highest values

- Live Qwen3 (orange) shows slight downward trend after step 100

### Key Observations

- **Convergence**: All models achieve >90% accuracy by step 80

- **Minimal Variance**: Maximum accuracy difference between models is ~0.05

- **Anomaly**: Frozen SmolLM3 (purple) shows unique dip at step 80

- **Stability**: Top-performing models maintain accuracy within 0.02 of each other

### Interpretation

The graph demonstrates that:

1. **Training Efficiency**: All models rapidly improve accuracy in early training phases

2. **Performance Parity**: No single model significantly outperforms others in final accuracy

3. **Robustness**: Most models maintain stable accuracy after initial training

4. **Potential Tradeoffs**: The dip in Frozen SmolLM3 suggests possible overfitting or architecture-specific limitations

The convergence of lines indicates that model architecture has less impact on final performance than training duration. The slight variations may reflect differences in training data quality or hyperparameter tuning rather than fundamental model capability.

</details>

the computational resources this would demand. Based on the previous experiment, we fix the generator to Qwen3-4B-Base (Yang et al., 2025) and vary the evaluator. For Qwen3, we include both live self-evaluation and a frozen snapshot. For all other models (Llama-3.2 (Meta AI, 2024), Mistral-Nemo-Base-2407 (Mistral AI & NVIDIA, 2024), SmolLM3-3B (Hugging Face, 2025), Gemma-3-4B-pt (Mesnard et al., 2024), and Qwen2.5-1.5B (Yang et al., 2024)), the evaluator remains frozen.

Results . Compared to generator choice, evaluator choice has a smaller effect on accuracy (Figure 4), consistent with the hypothesis that verifying correctness is easier than generating correct solutions (Pang et al., 2024). Notably, we observe little difference between live and frozen Qwen3 evaluation, suggesting that RL fine-tuning has limited impact on evaluation quality.

Finally, we observe that accuracy under the SmolLM3 and Gemma3 evaluators begins to decline after reaching a peak (Figure 4). This suggests that these evaluators eventually provide misleading reward signals to the generator, a failure mode commonly referred to as reward hacking.

## 4.4. Does the generator reward hack the evaluator?

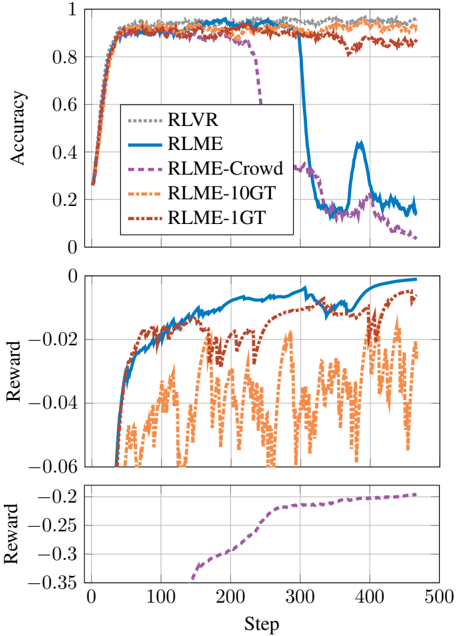

Question. While RLME initially yields strong gains in reasoning accuracy, we observe a late-stage collapse in Figure 4: accuracy drops sharply even as the reward continues to increase. This phenomenon, known as reward hacking, arises when the generator discovers responses that cause the evaluator to answer meta-questions in a way that increases reward without truly improving correctness.

Figure 5. RLME eventually suffers a sharp degradation in accuracy despite continued increases in reward, indicative of reward hacking: the generator learns to exploit weaknesses in the evaluator instead of producing correct solutions. Including a small fraction of prompts with ground-truth answers in the evaluator template (10% for RLME-10GT and 1% for RLME-1GT) stabilizes training and prevents collapse.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Line Chart: Algorithm Performance Comparison (Accuracy & Reward)

### Overview

The image contains two stacked line charts comparing the performance of five reinforcement learning algorithms across 500 training steps. The top subplot shows accuracy metrics, while the bottom subplot displays reward values. All lines originate from the bottom-left corner and evolve toward the top-right, with distinct patterns indicating algorithmic behavior.

### Components/Axes

- **X-axis (Steps)**: 0 to 500 (linear scale)

- **Y-axis (Top Subplot - Accuracy)**: 0 to 1 (linear scale)

- **Y-axis (Bottom Subplot - Reward)**: -0.35 to 0 (linear scale)

- **Legend**: Located in the top-right corner of the top subplot, with five entries:

- Gray dashed line: RLVR

- Solid blue line: RLME

- Dashed purple line: RLME-Crowd

- Dashed orange line: RLME-10GT

- Dashed red line: RLME-1GT

### Detailed Analysis

#### Top Subplot (Accuracy)

1. **RLVR (Gray)**: Maintains near-perfect accuracy (~0.95) throughout, with minimal fluctuation.

2. **RLME (Blue)**: Starts at ~0.95, experiences a sharp drop to ~0.3 at step 200, then recovers to ~0.85 by step 500.

3. **RLME-Crowd (Purple)**: Begins at ~0.95, drops to ~0.5 at step 100, and stabilizes at ~0.65 by step 500.

4. **RLME-10GT (Orange)**: Fluctuates between ~0.85 and ~0.95, with no significant drops.

5. **RLME-1GT (Red)**: Similar to RLME-10GT, fluctuating between ~0.85 and ~0.95.

#### Bottom Subplot (Reward)

1. **RLVR (Gray)**: Flat line at 0 reward, indicating no penalty or bonus.

2. **RLME (Blue)**: Starts at -0.05, spikes to -0.01 at step 200, then drops to -0.03 by step 500.

3. **RLME-Crowd (Purple)**: Gradually improves from -0.3 to -0.15 over 500 steps.

4. **RLME-10GT (Orange)**: Oscillates between -0.05 and -0.15, with sharp negative spikes.

5. **RLME-1GT (Red)**: Similar to RLME-10GT, with oscillations between -0.05 and -0.15.

### Key Observations

1. **Accuracy Stability**: RLVR dominates with consistent high accuracy, while RLME-Crowd and RLME-1GT variants show significant degradation.

2. **RLME Anomaly**: The blue line (RLME) exhibits a dramatic accuracy drop at step 200, coinciding with a temporary reward spike. This suggests a potential overfitting or reward hacking event.

3. **Reward Correlation**: Algorithms with higher accuracy (RLVR, RLME-10GT) generally have better reward profiles, except for RLME's anomalous spike.

4. **Crowd vs. 1GT**: Both RLME-Crowd and RLME-1GT underperform compared to their base RLME variant, despite similar reward patterns.

### Interpretation

The data reveals critical insights into algorithmic robustness:

- **RLVR's Superiority**: Its unchanging accuracy suggests architectural stability, making it ideal for mission-critical applications.

- **RLME's Fragility**: The step-200 crash indicates sensitivity to training dynamics, possibly due to reward function misalignment. The subsequent recovery implies partial adaptability but at the cost of reliability.

- **Crowd/1GT Tradeoffs**: These variants sacrifice accuracy for potential computational efficiency (fewer parameters in 1GT) or distributed training benefits (Crowd), but both fail to match baseline performance.

- **Reward Function Behavior**: The negative rewards for most algorithms suggest a penalty for suboptimal actions, with RLME's spike possibly reflecting a temporary exploitation of reward hacking before corrective adjustments.

This analysis underscores the importance of algorithmic stability in reinforcement learning systems, particularly when deploying models in dynamic environments.

</details>

Method . To examine this effect, we repeat the self-evaluation setup from Section 4.1 but extend training far beyond the point where validation accuracy saturates. With enough optimization time, the generator learns how to induce the evaluator to answer 'Yes' to incorrect solutions.

Results . Manual inspection of the resulting outputs reveals that reasoning traces become increasingly formulaic and detached from the task. Common artifacts include vacuous justification phrases such as 'the only logical conclusion is that this is the correct answer' or excessive repetition of the final answer. These behaviors appear to exploit acquiescence bias (Podsakoff et al., 2003) in the evaluator rather than reflect genuine problem solving.

Early stopping based on validation accuracy can avoid this collapse but does not fix the underlying vulnerability. In subsequent experiments, we therefore explore alternative evaluation strategies, such as introducing additional evaluator models or partial ground truth, to alleviate reward hacking.

## 4.5. Can multiple evaluators mitigate reward hacking?

Question . Given the reward-hacking behavior observed in Section 4.4, a natural next step is to ask whether using an ensemble of models to evaluate the solution can make RLME more robust. Intuitively, if reward hacking arises because the generator learns to exploit the weaknesses of a single self-evaluator, then aggregating judgments from multiple evaluators who may have disparate vulnerabilities might make the reward signal harder to game.

Method . We consider an ensemble of evaluators. For each generated solution, the ensemble combines four evaluator models: the Qwen3-4B-Base (Yang et al., 2025) generator itself, together with frozen Llama-3.2-3B (Meta AI, 2024), frozen SmolLM3-3B (Hugging Face, 2025), and frozen Mistral-Nemo-Base-2407 (Mistral AI & NVIDIA, 2024). We take the reward to be the average of their independent 'Yes' log-probabilities. The generator is optimized with RLME using this ensemble-derived scalar reward.

Results . The reward profile with an ensemble evaluator is noticeably smoother than with a single evaluator (see RLME-Crowd vs. RLME in Figure 5). However, we still observe the same late-stage collapse as in the purely self-evaluated setting (see RLME-Crowd in Figure 5). After an initial phase in which accuracy improves, extended training again leads to a regime where the ensemble reward continues to increase even as true GSM8K accuracy declines. Qualitatively, the generator rediscovers pathological reasoning templates that most evaluators agree to endorse, even though the underlying solutions are incorrect.

These results suggest that simply using multiple models to evaluate the solution is not sufficient to prevent reward hacking. Notably, the generator appears to discover strategies that generalize across evaluators, much like how polling a group of humans can reduce noise but cannot fully eliminate systematic bias.
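The ensemble reward described in the Method above can be sketched as follows. This is a minimal illustration, assuming each evaluator has already produced a log-probability for the 'Yes' answer; the function name `ensemble_reward` and the example scores are hypothetical and not taken from the experiments.

```python
import math
from statistics import fmean


def ensemble_reward(yes_logprobs):
    """Average the per-evaluator log-probabilities of the 'Yes' answer.

    yes_logprobs: one log P('Yes') per evaluator (each value is <= 0).
    """
    if not yes_logprobs:
        raise ValueError("need at least one evaluator score")
    return fmean(yes_logprobs)


# Hypothetical scores from four evaluators for one generated solution.
scores = [math.log(0.9), math.log(0.8), math.log(0.6), math.log(0.7)]
reward = ensemble_reward(scores)
```

Averaging log-probabilities is the same as taking the log of the geometric mean of the evaluators' 'Yes' probabilities, so a solution that every evaluator mildly endorses can still score well, which is consistent with the shared-bias failure described above.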

## 4.6. Does having a known answer help ground RLME and prevent reward hacking?

Question . In many practical settings, fully verifiable supervision is scarce but not entirely absent: a small subset of examples may have trusted labels, while the rest do not. Can partial access to ground truth help prevent the reward-hacking behavior observed in Section 4.4?

Method . To study this, we reveal the true answer to the evaluator in a limited number of questions. Concretely, for a fraction $p$ of training prompts, the evaluation template includes the correct integer answer before asking the meta-question 'Is the answer correct?' For the remaining $(1 - p)$ of prompts, standard RLME is applied such that the

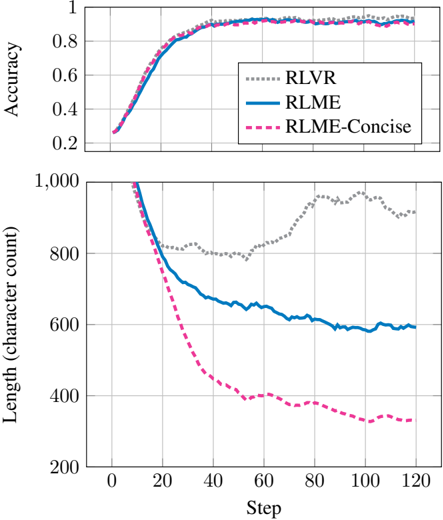

Figure 6. RLME enables multi-objective control over both accuracy and brevity.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Line Chart: Model Performance Over Training Steps

### Overview

The image contains two vertically stacked line charts comparing the performance of three models (RLVR, RLME, RLME-Concise) across two metrics: **Accuracy** (top chart) and **Length (character count)** (bottom chart). Both charts track changes over **Steps** (0–120) on the x-axis.

---

### Components/Axes

#### Top Chart (Accuracy)

- **X-axis**: "Step" (0–120, increments of 20)

- **Y-axis**: "Accuracy" (0–1, increments of 0.2)

- **Legend**: Located in the top-right corner, mapping:

- **RLVR**: Dotted gray line

- **RLME**: Solid blue line

- **RLME-Concise**: Dashed pink line

#### Bottom Chart (Length)

- **X-axis**: "Step" (0–120, increments of 20)

- **Y-axis**: "Length (character count)" (200–1000, increments of 200)

- **Legend**: Same as top chart, with lines extending to the bottom chart.

---

### Detailed Analysis

#### Top Chart (Accuracy)

- **Trend**: All three lines show rapid improvement in accuracy during the first 40 steps, plateauing near 0.95 by step 120.

- **RLVR** (dotted gray): Peaks slightly higher (~0.97) than the others, maintaining a steady lead.

- **RLME** (solid blue) and **RLME-Concise** (dashed pink): Nearly identical trajectories, with RLME-Concise dipping marginally below RLME after step 60.

- **Key Data Points**:

- Step 0: All models start near 0.2 accuracy.

- Step 40: All models reach ~0.9 accuracy.

- Step 120: All models stabilize between 0.93–0.97.

#### Bottom Chart (Length)

- **Trend**: All lines decline sharply in the first 40 steps, then flatten.

- **RLME-Concise** (dashed pink): Drops fastest, ending near 300 characters by step 120.

- **RLME** (solid blue): Declines to ~500 characters.

- **RLVR** (dotted gray): Declines slowest, ending near 800 characters.

- **Key Data Points**:

- Step 0: All models start near 1000 characters.

- Step 40: RLME-Concise reaches ~600, RLME ~700, RLVR ~850.

- Step 120: RLME-Concise ~300, RLME ~500, RLVR ~800.

---

### Key Observations

1. **Accuracy**: All models converge to high accuracy (>0.9) by step 40, with RLVR maintaining a slight edge.

2. **Efficiency**: RLME-Concise reduces length most aggressively, suggesting better optimization for brevity.

3. **Trade-off**: RLVR achieves the highest accuracy but retains the longest character count, indicating potential inefficiency in compression.

---

### Interpretation

The data suggests a trade-off between **accuracy** and **efficiency**:

- **RLVR** prioritizes accuracy at the cost of longer outputs.

- **RLME-Concise** optimizes for brevity while maintaining near-par accuracy to RLME.

- The rapid early improvement in both metrics implies that initial training steps are critical for performance gains. The divergence in length reduction highlights differences in model design, with RLME-Concise likely employing more aggressive compression techniques.

</details>

evaluator is provided no knowledge of the ground truth.

Results . We find that including the ground-truth answer in as little as 1% of the evaluation prompts is sufficient to substantially reduce the reward-hacking effect in our experiments. Unlike the purely self-evaluated setting, extended training no longer leads to a late-stage collapse where reward increases while accuracy degrades. As Figure 5 indicates, when 10% of evaluations have ground-truth answers, accuracy remains stable; when we reduce this to 1%, we see only a slight degradation in accuracy. Intuitively, the presence of even a small number of fully verifiable examples anchors the evaluator's notion of correctness, so that strategies which exploit evaluator bias no longer earn consistent reward.
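The partial ground-truth setup can be illustrated with a short sketch. It assumes the answer is revealed independently per prompt with probability p; the function name `build_eval_prompt` and the template wording are hypothetical placeholders, not the templates used in the experiments (those are given in Appendix B).

```python
import random


def build_eval_prompt(question, solution, true_answer, p, rng):
    """Build an evaluation prompt, revealing the answer with probability p.

    The wording below is illustrative only; it is not the paper's template.
    Returns the prompt text and a flag saying whether the answer was shown.
    """
    reveal = rng.random() < p
    parts = [f"Problem: {question}", f"Solution: {solution}"]
    if reveal:
        # Grounded case: the evaluator sees the trusted answer.
        parts.append(f"Correct answer: {true_answer}")
    parts.append("Is the answer correct?")
    return "\n".join(parts), reveal


rng = random.Random(0)
prompt, revealed = build_eval_prompt(
    "2 + 3 = ?", "2 + 3 = 5, so the answer is 5.", 5, p=0.01, rng=rng
)
```

With p = 0.01 this corresponds to the 1% setting discussed above, and with p = 0.10 to the 10% setting.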

## 4.7. Can we use meta-questions with multiple objectives?

Question . We next test whether RLME can jointly control correctness and secondary behavioral objectives. In addition to the meta-question 'Is the answer correct?', we introduce a conciseness objective and study whether multi-objective meta-evaluation can shape reasoning length without sacrificing accuracy.

Method . We keep the meta-question targeting correctness and add a meta-question targeting brevity: 'Is the length of the solution between 200 and 500 characters?' We explicitly include the programmatically measured character

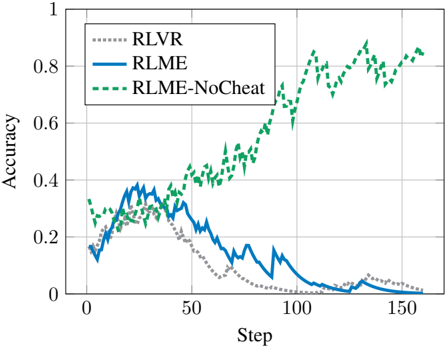

Figure 7. Using an RLME meta-evaluation prioritizing sound reasoning trains the model not to blindly copy a provided answer.

<details>

<summary>Image 7 Details</summary>

### Visual Description

## Line Graph: Model Accuracy Over Training Steps

### Overview

The graph depicts the accuracy of three reinforcement learning models (RLVR, RLME, RLME-NoCheat) across 150 training steps. Accuracy is measured on a scale from 0 to 1, with distinct trends observed for each model.

### Components/Axes

- **X-axis (Step)**: Labeled "Step," with markers at 0, 50, 100, and 150.

- **Y-axis (Accuracy)**: Labeled "Accuracy," scaled from 0 to 1 in increments of 0.2.

- **Legend**: Located in the top-left corner, associating:

- **RLVR**: Dotted gray line

- **RLME**: Solid blue line

- **RLME-NoCheat**: Dashed green line

### Detailed Analysis

1. **RLVR (Dotted Gray Line)**:

- Starts at ~0.2 accuracy at step 0.

- Peaks at ~0.35 around step 25.

- Declines sharply to near 0 by step 150.

- Intermediate fluctuations observed between steps 50–100 (~0.1–0.2).

2. **RLME (Solid Blue Line)**:

- Begins at ~0.15 accuracy at step 0.

- Rises to ~0.4 around step 25.

- Gradual decline to ~0.1 by step 150.

- Notable volatility between steps 50–100 (~0.2–0.3).

3. **RLME-NoCheat (Dashed Green Line)**:

- Starts at ~0.2 accuracy at step 0.

- Steady upward trend, reaching ~0.8 by step 150.

- Minor fluctuations observed (e.g., ~0.75 at step 100, ~0.85 at step 125).

### Key Observations

- **RLME-NoCheat** consistently outperforms other models, maintaining high accuracy throughout training.

- **RLVR** and **RLME** exhibit early promise but degrade significantly over time, suggesting instability or overfitting.

- **RLME-NoCheat** shows resilience to training noise, with no sharp drops in accuracy.

### Interpretation

The data suggests that the "NoCheat" variant of RLME (RLME-NoCheat) is more robust and effective for this task, likely due to architectural or training modifications that mitigate overfitting. In contrast, RLVR and RLME degrade as training progresses, indicating potential issues with generalization or reward hacking. The steady rise of RLME-NoCheat implies it balances exploration and exploitation effectively, making it a preferable choice for long-term training scenarios.

</details>

count in the evaluation prompt. While this injects labels regarding length, the target of this experiment is to evaluate the applicability of RLME to a simple multi-objective scenario. The log-probabilities from each meta-evaluation are then summed as described in methodology Equation 3 and RLME is applied.

Results . Figure 6 shows that RLME-Concise substantially reduces generation length while maintaining high accuracy. By the end of training, the average solution length is nearly halved relative to RLME, with no significant degradation in GSM8K performance. Qualitatively, the concise objective compressed reasoning into denser mathematical expressions rather than verbose natural language (see Appendix C).

Although this is a trivial example, it demonstrates that RLME supports multi-objective control through meta-questions, provided the evaluator can reliably assess the targeted property. In Section 4.8 we extend our investigation to a more useful domain: cheat detection.
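The two-objective reward of this section can be sketched as below. The sketch assumes the evaluator's target-answer log-probability for each meta-question is already available; the function names and the sentence that states the measured length are hypothetical, and only the two quoted meta-questions come from the text.

```python
def concise_meta_questions(solution):
    """Return the two meta-questions, stating the measured length explicitly."""
    n_chars = len(solution)  # programmatically measured character count
    return [
        "Is the answer correct?",
        f"The solution is {n_chars} characters long. "
        "Is the length of the solution between 200 and 500 characters?",
    ]


def multi_objective_reward(logprobs_per_question):
    """Sum the target-answer log-probabilities across meta-questions."""
    return sum(logprobs_per_question)


questions = concise_meta_questions("x" * 250)
# Hypothetical evaluator outputs: log P(target answer) for each question.
reward = multi_objective_reward([-0.1, -0.2])
```

Because the components are summed, a solution must satisfy both meta-questions to receive a high reward; a very low log-probability on either one dominates the total.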

## 4.8. Can we train the model not to cheat?

Question . We extend the multi-objective evaluation and address a more subtle criterion: cheating abstinence. As we define it, cheating is the act of rationalizing an answer rather than deriving it through logical processes.

Method . To probe whether a model is cheating we use a counterfactual prompting setup. During training, we provide the generator with the question alongside the true answer. At test time, we instead inject a random answer sampled from the dataset. If, under this counterfactual, the model's reasoning still supports the injected (and incorrect) answer, this indicates that it has learned to justify the provided answer rather than solve the problem logically. This experiment allows us to evaluate the model's ability to reason to an answer vs justifying an answer post-hoc.

We first evaluate RLVR under this setup and then RLME with the accuracy-oriented meta-question from Section 4.1. Finally, we train a second RLME model using a meta-question that emphasizes the reasoning process rather than the outcome: 'Does the whole solution logically lead from the question to an answer, even if it does not match the correct answer?' We refer to these two RLME variants as RLME-Base and RLME-NoCheat.

Results . As shown in Figure 7, RLVR and RLME-Base both learn to heavily rely on the injected answer and tend to cheat in the counterfactual condition. In contrast, RLME-NoCheat avoids this behavior and achieves over 80% accuracy in counterfactual tests. Examples of cheating and non-cheating traces are provided in Appendix C.
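The counterfactual test condition from the Method above can be sketched as follows. This is an illustration under stated assumptions: the prompt wording and both function names are hypothetical, and the sketch additionally filters the sampled answer so that it differs from the true one, which the text does not specify.

```python
import random


def inject_answer(question, answer):
    """Attach a provided answer to the question (illustrative wording)."""
    return f"{question}\nProvided answer: {answer}"


def counterfactual_prompt(index, dataset, rng):
    """Pair a question with an answer sampled from a different example.

    dataset: list of (question, true_answer) pairs.
    Assumption: the injected answer is forced to differ from the true one.
    """
    question, true_answer = dataset[index]
    candidates = [
        a for i, (_, a) in enumerate(dataset) if i != index and a != true_answer
    ]
    if not candidates:
        raise ValueError("no differing answer available to inject")
    return inject_answer(question, rng.choice(candidates)), true_answer


toy = [("What is 1 + 1?", 2), ("What is 2 + 3?", 5), ("What is 4 - 2?", 2)]
prompt, truth = counterfactual_prompt(0, toy, random.Random(0))
```

A model that reasons from the question should still arrive at `truth`; a model that has learned to justify whatever answer is provided will instead support the injected value.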

## 4.9. Stepping outside verifiable domains

Thus far, our experiments have focused on fully verifiable tasks where correctness can be determined using ground-truth labels. We now move to a more realistic setting for which RLME is particularly well suited because explicit supervision is not directly available.

A central objective in training large language models is to ensure that they faithfully adhere to the provided context, avoiding hallucinations or the injection of external biases, and instead basing responses strictly on the given information. Recently, the FaithEval benchmark (Ming et al., 2024) was introduced to measure whether models remain faithful to a supplied context, even when that context conflicts with the model's prior world knowledge.

Question . In this experiment, we investigate whether training with RLME on unrelated datasets using a meta-question targeting faithfulness will generalize to improve performance on the FaithEval-Counterfactual dataset.

Method . We construct a heterogeneous context-question-answer corpus (CQAC) by sampling from public reading-comprehension datasets: SQuAD (Rajpurkar et al., 2016), NewsQA (Trischler et al., 2017), TriviaQA (Joshi et al., 2017), HotpotQA (Yang et al., 2018), BioASQ (Tsatsaronis et al., 2015), DROP (Dua et al., 2019), RACE (Lai et al., 2017), and TextbookQA (Fisch et al., 2019). We take the first 200 examples from each dataset (1,600 total) and truncate contexts to at most 4,000 characters.

As a grounded baseline, we train on CQAC with RLVR using an exact-match reward (after removing punctuation, whitespace, and case).
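A minimal sketch of such an exact-match reward is shown below. It assumes that "removing punctuation, whitespace, and case" means stripping ASCII punctuation, deleting all whitespace, and lowercasing; the function names are hypothetical and the exact normalization used in the experiments may differ.

```python
import string

# Translation table that deletes ASCII punctuation characters.
_PUNCT = str.maketrans("", "", string.punctuation)


def normalize(text):
    """Lowercase, strip ASCII punctuation, and remove all whitespace."""
    return "".join(text.lower().translate(_PUNCT).split())


def exact_match_reward(prediction, reference):
    """Return 1.0 if the normalized strings are identical, else 0.0."""
    return 1.0 if normalize(prediction) == normalize(reference) else 0.0
```

For example, `exact_match_reward("The  Eiffel Tower.", "the eiffel tower")` evaluates to 1.0 under this normalization, while any difference in wording yields 0.0.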

Table 1. Base, RLVR, and RLVR+RLME accuracy on the CQAC constituent datasets. Both RLVR and RLVR+RLME significantly exceed the performance of the raw base model (Qwen3-4B-Base). As expected, RLVR, which optimizes only for accuracy, achieves a slightly higher average accuracy than RLVR+RLME, which optimizes for both accuracy and contextual faithfulness.

| | SQuAD | NewsQA | TriviaQA | HotpotQA | BioASQ | DROP | RACE | TextbookQA | Avg |
|-----------|-------|--------|----------|----------|--------|-------|-------|------------|-------|
| Base | 46.2% | 20.7% | 29.2% | 37.7% | 16.5% | 33.3% | 41.2% | 37.5% | 32.8% |
| RLVR | 78.0% | 39.0% | 63.5% | 57.8% | 50.3% | 50.7% | 86.2% | 71.5% | 62.1% |
| RLVR+RLME | 73.8% | 39.5% | 62.2% | 57.7% | 42.0% | 24.2% | 84.5% | 71.8% | 57.0% |

Table 2. Base, RLVR, and RLVR+RLME accuracy on the FaithEval-Counterfactual dataset. RLVR+RLME outperforms RLVR, indicating improved context faithfulness can be obtained without labels.

| | FaithEval-Counterfactual |
|-----------|--------------------------|
| Base | 28.2% |
| RLVR | 61.8% |
| RLVR+RLME | 70.4% |

Based on the findings of our previous experiments regarding the inclusion of limited labeled data to avoid reward hacking, we include a combined approach, RLVR+RLME, defined as the sum of (i) the RLVR exact-match reward and (ii) an RLME meta-evaluation reward that measures contextual support. RLVR performs well when labels are available, while RLME enables tuning without known rewards. Combining the two is expected to allow the model to benefit more substantially from the limited labeled data. To prevent either component from dominating, we normalize each reward component (mean 0, std 1) within each batch before summation. For RLVR and RLME we use Qwen3-4B-Base as the generator; for RLME the generator is used as the live evaluator.
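The per-batch normalization and summation of the two reward components can be sketched as follows. This is a minimal illustration: the use of the population standard deviation and the small `eps` guard against a zero-variance batch are assumptions, and the function names and example values are hypothetical.

```python
from statistics import fmean, pstdev


def standardize(values, eps=1e-8):
    """Shift a batch to mean 0 and scale it to (approximately) std 1."""
    mean = fmean(values)
    std = pstdev(values)  # population std over the batch (assumption)
    return [(v - mean) / (std + eps) for v in values]


def combined_reward(rlvr_rewards, rlme_rewards):
    """Sum the standardized RLVR and RLME components, sample by sample."""
    z_rlvr = standardize(rlvr_rewards)
    z_rlme = standardize(rlme_rewards)
    return [a + b for a, b in zip(z_rlvr, z_rlme)]


# Hypothetical batch: exact-match rewards and meta-evaluation log-probs.
batch = combined_reward([1.0, 0.0, 1.0, 0.0], [-0.1, -2.0, -0.3, -1.5])
```

Standardizing first matters because the raw components live on different scales: the exact-match reward is 0 or 1, while the meta-evaluation reward is an unbounded negative log-probability.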

The meta-evaluation uses prompts such as: 'Is the answer supported by the context, regardless of whether it seems factually correct?' Full templates are provided in Appendix B. This meta-evaluation is expected to drive the model to reason faithfully and correctly even when it is trained on datasets that are not explicitly related to a faithfulness objective.

Results . We report results on both the constructed CQAC task, which does not include FaithEval, and the generalization objective, FaithEval-Counterfactual. Tables 1 and 2 summarize the evaluation results. We assess 100 held-out examples from each CQAC subset and 300 examples from the FaithEval-Counterfactual split and compare the performance.

Both RLVR and RLVR+RLME substantially improve over the raw base model (Qwen3-4B-Base) on CQAC. Relative to RLVR, RLVR+RLME incurs a small average drop on the CQAC exact-match accuracy but yields a substantial improvement on FaithEval-Counterfactual, showing that RLME training generalizes to an out-of-distribution task.

Crucially, the improvement on FaithEval is achieved without training on data from FaithEval. Instead, meta-evaluations of contextual support applied to the unrelated CQAC mixture generalize to the FaithEval benchmark.

## 5. Discussion

We introduced Reinforcement Learning from Meta-Evaluation (RLME), a framework that trains language models using rewards derived from natural-language judgments rather than ground-truth labels. RLME tracks label-based RL in verifiable tasks, enables direct multi-objective behavioral control, and generalizes in open-domain settings where correctness cannot be explicitly verified. Across our experiments, we find that:

- Meta-evaluations provide a learning signal comparable to label-based RL in fully verifiable domains (Section 4.1);

- RLME operates across a range of pretrained generator and evaluator models, with performance substantially more sensitive to generator choice than evaluator choice, and live self-evaluation does not noticeably degrade outcomes (Sections 4.2 and 4.3);

- Meta-evaluation is inherently vulnerable to reward hacking under prolonged optimization (Section 4.4), but this failure mode can be mitigated through early stopping or by incorporating sparse ground-truth anchoring (Section 4.6);

- Carefully designed meta-questions support multi-objective steering (Section 4.7) and give control over the reasoning process itself (Section 4.8);

- RLME and RLVR+RLME generalize to open-domain tasks without labels or explicit training (Section 4.9).

Taken together, the results suggest that RLME is most effective as a complement to, rather than a replacement for, verifiable rewards: RLVR dominates when labels are available, RLME enables progress without labels, and hybrid objectives offer the best of both regimes.

The primary limitation is reward hacking where the generator fools the evaluator; however, even minimal grounded supervision effectively stabilizes training, making hybrid RLME approaches particularly practical.

## Impact Statement

Our work proposes a way to steer language models using natural-language meta-questions answered by the model itself or by other models, rather than relying solely on scalar rewards from task-specific verifiers. When well-chosen, these meta-questions can encourage outputs that are more accurate, concise, and transparent, and can make models easier to probe and audit.

However, because RLME derives rewards from model judgments, it can also amplify biases in the evaluators or in the chosen meta-questions. This may entrench prevailing norms or stylistic preferences, and poorly designed questions could incentivize persuasiveness or conformity over truthfulness. Our experiments are confined to controlled, low-stakes domains; extending this framework to high-stakes applications will require additional safeguards, such as diverse evaluator panels, periodic human or verifier audits, and monitoring for reward hacking or systematic unfairness. We view our methods as a tool for aligning models, not as a replacement for human oversight or normative judgment.

## References

- Cobbe, K., Kosaraju, V., Bavarian, M., Chen, M., Jun, H., Kaiser, L., Plappert, M., Tworek, J., Hilton, J., Nakano, R., Hesse, C., and Schulman, J. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168 , 2021.

- Dua, D., Wang, Y., Dasigi, P., Stanovsky, G., Singh, S., and Gardner, M. Drop: A reading comprehension benchmark requiring discrete reasoning over paragraphs. In NAACL , 2019.

- Fisch, A., Talmor, A., Jia, R., Seo, M., Choi, E., and Chen, D. Mrqa 2019 shared task: Evaluating generalization in reading comprehension. In Proceedings of the 2nd Workshop on Machine Reading for Question Answering , pp. 1-13, Hong Kong, China, 2019. Association for Computational Linguistics. doi: 10.18653/v1/D19-5801. URL https://aclanthology.org/D19-5801/ .

- Gu, J., Jiang, X., Shi, Z., Tan, H., Zhai, X., Xu, C., Li, W., Shen, Y., Ma, S., Liu, H., Wang, S., Zhang, K., Wang, Y., Gao, W., Ni, L., and Guo, J. A survey on LLM-as-a-judge. arXiv preprint arXiv:2411.15594 , 2024.

- Guo, D. et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948 , 2025.

- Hugging Face. Smollm3: smol, multilingual, long-context reasoner. https://huggingface.co/blog/smollm3 , 2025. Accessed: 2025-11-28.

- Joshi, M., Choi, E., Weld, D. S., and Zettlemoyer, L. Triviaqa: A large scale distantly supervised challenge dataset for reading comprehension. In ACL , 2017.

- Kaufmann, T., Weng, P., Bengs, V., and Hüllermeier, E. A survey of reinforcement learning from human feedback. Transactions on Machine Learning Research , 2024. arXiv:2312.14925.

- Lai, G., Xie, Q., Liu, H., Yang, Y., and Hovy, E. Race: Large-scale reading comprehension dataset from examinations. In EMNLP , 2017.

- Lee, H., Phatale, S., Mansoor, H., Mesnard, T., Ferret, J., Lu, K. R., Bishop, C., Hall, E., Carbune, V., Rastogi, A., and Prakash, S. Rlaif vs. rlhf: Scaling reinforcement learning from human feedback with ai feedback. In International Conference on Machine Learning (ICML) , 2024.

- Liu, Z., Chen, C., Li, W., Qi, P., Pang, T., Du, C., Lee, W. S., and Lin, M. Understanding r1-zero-like training: A critical perspective, 2025. URL https://arxiv.org/abs/2503.20783 .

- Mesnard, T. et al. Gemma: Open models based on gemini research and technology. arXiv preprint arXiv:2403.08295 , 2024.

- Meta AI. Llama 3.2 model cards and prompt formats. https://www.llama.com/docs/model-cards-and-prompt-formats/llama3\_2/ , 2024. Accessed: 2025-11-28.

- Ming, Y., Purushwalkam, S., Pandit, S., Ke, Z., Nguyen, X.P., Xiong, C., and Joty, S. Faitheval: Can your language model stay faithful to context, even if 'the moon is made of marshmallows'. arXiv preprint arXiv:2410.03727 , 2024.

- MiniMax, Chen, A., Li, A., Gong, B., Jiang, B., Fei, B., Yang, B., Shan, B., Yu, C., Wang, C., Zhu, C., Xiao, C., Du, C., Zhang, C., Qiao, C., Zhang, C., Du, C., Guo, C., Chen, D., Ding, D., Sun, D., Li, D., Jiao, E., Zhou, H., Zhang, H., Ding, H., Sun, H., Feng, H., Cai, H., Zhu, H., Sun, J., Zhuang, J., Cai, J., Song, J., Zhu, J., Li, J., Tian, J., Liu, J., Xu, J., Yan, J., Liu, J., He, J., Feng, K., Yang, K., Xiao, K., Han, L., Wang, L., Yu, L., Feng, L., Li, L., Zheng, L., Du, L., Yang, L., Zeng, L., Yu, M., Tao, M., Chi, M., Zhang, M., Lin, M., Hu, N., Di, N., Gao, P., Li, P., Zhao, P., Ren, Q., Xu, Q., Li, Q., Wang, Q., Tian, R., Leng, R., Chen, S., Chen, S., Shi, S., Weng, S., Guan, S., Yu, S., Li, S., Zhu, S., Li, T., Cai, T., Liang, T., Cheng, W., Kong, W., Li, W., Chen, X., Song, X., Luo, X., Su, X., Li, X., Han, X., Hou, X., Lu, X., Zou, X., Shen, X., Gong, Y., Ma, Y., Wang, Y., Shi, Y., Zhong, Y., Duan, Y., Fu, Y., Hu, Y., Gao, Y., Fan, Y., Yang, Y., Li, Y., Hu, Y., Huang, Y., Li, Y., Xu, Y., Mao, Y., Shi, Y., Wenren, Y., Li, Z., Li, Z., Tian, Z., Zhu, Z., Fan, Z., Wu, Z., Xu, Z., Yu, Z., Lyu, Z., Jiang, Z., Gao, Z., Wu, Z., Song, Z., and Sun, Z. Minimax-m1: Scaling test-time compute efficiently with lightning attention, 2025. URL https://arxiv.org/abs/2506.13585 .

- Mistral AI and NVIDIA. Mistral nemo. https://mistral.ai/news/mistral-nemo , 2024. Accessed: 2025-11-28.

- Ouyang, L., Wu, J., Jiang, X., Almeida, D., Wainwright, C., Mishkin, P., Zhang, C., Agarwal, S., Slama, K., Ray, A., Schulman, J., Hilton, J., Kelton, L., Miller, F., Simens, M., Askell, A., Welinder, P., Christiano, P., Leike, J., and Lowe, R. Training language models to follow instructions with human feedback. Advances in Neural Information Processing Systems , 35:27730-27744, 2022.

- Pang, J.-C., Wang, P., Li, K., Chen, X.-H., Xu, J., Zhang, Z., and Yu, Y. Language model self-improvement by reinforcement learning contemplation. In International Conference on Learning Representations (ICLR) , 2024.

- Podsakoff, P. M., MacKenzie, S. B., Lee, J.-Y., and Podsakoff, N. P. Common method biases in behavioral research: A critical review of the literature and recommended remedies. Journal of Applied Psychology , 88(5): 879-903, 2003. doi: 10.1037/0021-9010.88.5.879.

- Rajpurkar, P., Zhang, J., Lopyrev, K., and Liang, P. Squad: 100,000+ questions for machine comprehension of text. In EMNLP , 2016.

- Roberts, J., Moore, K., and Fisher, D. Do large language models learn human-like strategic preferences? In Proceedings of the 1st Workshop for Research on Agent Language Models (REALM 2025) , pp. 97-108, 2025.

- Schulman, J., Wolski, F., Dhariwal, P., Radford, A., and Klimov, O. Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347 , 2017.

- Shao, Z., Wang, P., Zhu, Q., Xu, R., Song, J., Bi, X., Zhang, H., Zhang, M., Li, Y. K., Wu, Y., and Guo, D. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300 , 2024.

- Shao, Z., Luo, Y., Lu, C., Ren, Z., Hu, J., Ye, T., Gou, Z., Ma, S., and Zhang, X. Deepseekmath-v2: Towards self-verifiable mathematical reasoning. arXiv preprint arXiv:2511.22570 , 2025.

- Sharma, M., Tong, M., Korbak, T., Duvenaud, D., Askell, A., Bowman, S. R., Cheng, N., Durmus, E., Hatfield-Dodds, Z., Johnston, S. R., Kravec, S., Maxwell, T., McCandlish, S., Ndousse, K., Rausch, O., Schiefer, N., Yan, D., Zhang, M., and Perez, E. Towards understanding sycophancy in language models, 2025. URL https://arxiv.org/abs/2310.13548 .

- Trischler, A., Wang, T., Yuan, X., Harris, J., Sordoni, A., Bachman, P., and Suleman, K. Newsqa: A machine comprehension dataset. In Rep4NLP , 2017.

- Tsatsaronis, G. et al. An overview of the bioasq large-scale biomedical semantic indexing and question answering competition. In BMC Bioinformatics , 2015.

- Wen, X., Liu, Z., Zheng, S., Xu, Z., Ye, S., Wu, Z., Wang, Y., Liang, X., Li, J., Miao, Z., Bian, J., and Yang, M. Reinforcement learning with verifiable rewards implicitly incentivizes correct reasoning in base llms. arXiv preprint arXiv:2506.14245 , 2025.

- Yang, A. et al. Qwen2.5 technical report. arXiv preprint arXiv:2412.15115 , 2024.

- Yang, A. et al. Qwen3 technical report. arXiv preprint arXiv:2505.09388 , 2025. URL https://arxiv.org/abs/2505.09388 .

- Yang, Z., Qi, P., Zhang, S., Bengio, Y., Cohen, W., Salakhutdinov, R., and Manning, C. D. Hotpotqa: A dataset for diverse, explainable multi-hop question answering. In EMNLP , 2018.

- Yu, T., Ji, B., Wang, S., Yao, S., Wang, Z., Cui, G., Yuan, L., Ding, N., Yao, Y., Liu, Z., et al. Rlpr: Extrapolating rlvr to general domains without verifiers. arXiv preprint arXiv:2506.18254 , 2025.

- Yuan, W., Pang, R. Y., Cho, K., Li, X., Sukhbaatar, S., Xu, J., and Weston, J. Self-rewarding language models. In International Conference on Machine Learning (ICML) , 2024.

- Yue, Y., Chen, Z., Lu, R., Zhao, A., Wang, Z., Yue, Y., Song, S., and Huang, G. Does reinforcement learning really incentivize reasoning capacity in llms beyond the base model? arXiv preprint arXiv:2504.13837 , 2025.

- Zhao, X., Kang, Z., Feng, A., Levine, S., and Song, D. Learning to reason without external rewards. arXiv preprint arXiv:2505.19590 , 2025.

- Zheng, C., Liu, S., Li, M., Chen, X.-H., Yu, B., Gao, C., Dang, K., Liu, Y., Men, R., Yang, A., et al. Group sequence policy optimization. arXiv preprint arXiv:2507.18071 , 2025.

- Zheng, L., Chiang, W.-L., Sheng, Y., Zhuang, S., Wu, Z., Zhuang, Y., Lin, Z., Li, Z., Li, D., Xing, E. P., Zhang, H., Gonzalez, J. E., and Stoica, I. Judging LLM-as-a-judge with MT-Bench and chatbot arena. In Proceedings of the 37th Conference on Neural Information Processing Systems Datasets and Benchmarks Track , 2023.

- Zhou, X., Liu, Z., Sims, A., Wang, H., Pang, T., Li, C., Wang, L., Lin, M., and Du, C. Reinforcing general reasoning without verifiers. arXiv preprint arXiv:2505.21493 , 2025.

## A. Hyperparameters

Unless otherwise noted, all experiments share the configuration below. When a setting differs for a specific experiment (e.g., FaithEval), we mention it in the main text.

## A.1. Training Algorithm

We train with Group Relative Policy Optimization (GRPO), implemented using the GRPOTrainer in Hugging Face TRL, with a CISPO-style objective for importance-weight clipping.

- Loss type: cispo.

- Generations per prompt (group size): 6 candidate completions.

- PPO iterations per batch: 1.

- Importance sampling: sequence-level ratios with clipping:

<!-- formula-not-decoded -->

with $\epsilon_{\text{low}} = 10000.0$ and $\epsilon_{\text{high}} = 5.0$ as suggested by the CISPO paper (MiniMax et al., 2025).

- Advantages: sequence-level, $A_i = r_i - \bar{r}$ over the group.
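The group-relative advantage and the clipped importance weight listed above can be sketched as below. The advantage follows the stated definition $A_i = r_i - \bar{r}$. The clipping interval $[1 - \epsilon_{\text{low}}, 1 + \epsilon_{\text{high}}]$ is an assumed convention rather than something stated in this appendix, and both function names are hypothetical.

```python
def group_advantages(rewards):
    """Sequence-level advantages: each reward minus the group mean."""
    mean = sum(rewards) / len(rewards)
    return [r - mean for r in rewards]


def clip_ratio(ratio, eps_low=10000.0, eps_high=5.0):
    """Clip an importance ratio to [1 - eps_low, 1 + eps_high].

    Assumption: the bounds are placed symmetrically around 1 in this way.
    """
    return min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)


# Hypothetical rewards for one group of 6 completions of the same prompt.
advantages = group_advantages([1.0, 0.0, 0.0, 0.0, 0.0, 1.0])
```

Under the assumed convention, the lower bound is far below zero and so would never bind for a non-negative ratio, leaving only the upper bound of 6.0 active.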

## A.2. Optimization

- Optimizer: paged_adamw_32bit.

- Learning rate: $2 \times 10^{-6}$, constant schedule.

- Weight decay: 0.0.

- Adam betas: $(\beta_1, \beta_2) = (0.9, 0.95)$.

- Adam epsilon: $10^{-15}$.

- Batching: per-device batch size 12 prompts, gradient accumulation 8 steps (effective batch size 96 prompts).

## A.3. Generation During RL

Unless otherwise specified, on-policy rollouts for RLME and RLVR use:

- Temperature: 1.0.

- Top-p: 1.0 (effectively disabled).

- Top-k: -1 (disabled).

- Max new tokens: 512.

- Max prompt length: 4096 tokens for GSM8K, 4608 tokens for FaithEval.

- Repetition penalty: 1.0 (disabled).

## A.4. Reward Design

We use a small number of reward components, combined linearly.

- Accuracy reward (RLVR-style): for tasks with ground truth, we extract the final answer (e.g., from \boxed{...}) using a fixed regex. The reward is 1.0 for exact integer match and 0.0 otherwise.

- Meta-evaluation rewards (RLME): scalar rewards are log-probabilities of target answers to meta-questions (e.g., 'Is the answer correct?') under one or more evaluator models:

<!-- formula-not-decoded -->

where $p_{j,k}$ is the probability of the target answer (e.g., 'YES') for question $q_k$ from evaluator $j$.

For all problems, we extract the final predicted answer using a single-instance \boxed{} pattern. Specifically, we apply the following regex, which matches the last boxed expression in the completion:

<!-- formula-not-decoded -->
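For illustration, last-match extraction and the exact-integer accuracy reward might look like the following sketch. The pattern is a hypothetical stand-in, not the authors' regex, and it assumes the boxed content contains no nested braces.

```python
import re

# Hypothetical pattern: \boxed{...} whose content has no nested braces.
BOXED = re.compile(r"\\boxed\{([^{}]*)\}")


def extract_final_answer(completion):
    """Return the content of the last \\boxed{...} in the text, or None."""
    matches = BOXED.findall(completion)
    return matches[-1].strip() if matches else None


def accuracy_reward(completion, ground_truth):
    """1.0 if the last boxed answer parses to the same integer, else 0.0."""
    answer = extract_final_answer(completion)
    if answer is None:
        return 0.0
    try:
        return 1.0 if int(answer) == int(ground_truth) else 0.0
    except ValueError:
        # Non-integer content such as "42.0" or "unknown" earns no reward.
        return 0.0
```

Taking the last match means an intermediate boxed value earlier in the reasoning does not override the final answer.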

## A.5. Models and Precision

- Generator (default): Qwen3-4B-Base.

- Evaluators: depending on the experiment, we use the current generator and/or frozen external models, including Llama-3.2-3B, SmolLM3-3B, and Mistral-Nemo-Base-2407.

- Precision: base model weights and LM head are kept in fp32; training uses bf16 with gradient checkpointing.

- Quantization for evaluators: when applicable, external evaluators are loaded in bf16 with 4-bit NF4 quantization.

## A.6. Backend (vLLM)

All generations during RL are served by vLLM in colocated mode.

- Tensor parallel size: 1.

- GPU memory utilization: 0.2 of device memory.

- Importance-sampling correction: enabled, with correction cap 2.0.
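One common way to correct for a mismatch between the sampling backend and the training policy is a truncated importance weight. The sketch below assumes that form, min(ratio, cap); the exact computation performed by the backend is not specified here, and the function name is hypothetical.

```python
import math


def truncated_is_weight(logp_train, logp_sampler, cap=2.0):
    """Importance weight between trainer and sampler, truncated at cap.

    Assumption: logp_train and logp_sampler are log-probabilities of the
    same sampled tokens under the training model and the sampling backend.
    """
    return min(math.exp(logp_train - logp_sampler), cap)


matched = truncated_is_weight(-1.2, -1.2)         # identical probabilities
capped = truncated_is_weight(math.log(3.0), 0.0)  # raw ratio 3.0 exceeds cap
```

The cap limits how much any single sample can be up-weighted when the two implementations disagree, which bounds the variance the correction introduces.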

## A.7. Computing Environment

All experiments were run on a single NVIDIA H200 GPU using PyTorch 2.0.2 with CUDA 12.8.1 on Ubuntu 24.04. No gradient parallelism or multi-GPU sharding was used.

This configuration is used for all experiments unless explicitly noted otherwise.

## B. Prompts

This appendix provides the exact prompt templates used across experiments. These prompts define how model outputs are interpreted and evaluated through natural-language meta-questions.

All templates contain a fixed Problem section and a fixed Evaluation section. In all cases, the prompt text shown below is reproduced exactly as used in our experiments.

We use a special end-marker token ø because it is rare in natural text and is consistently represented as a single token in our tokenizer. In evaluation questions, we supply the first ø and use the model's prediction on the target answer (e.g., the token sequence YES followed by ø) as the reward, summing the log-probabilities of all tokens in the target answer. This makes the evaluator's target outcome unambiguous at the token level.
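The summation over target tokens can be sketched as teacher-forced scoring. The toy evaluator below is a hypothetical stand-in for a language model with made-up probabilities, and the whitespace tokenization is an assumption for illustration only.

```python
import math


def score_target(next_token_logprobs, prompt_tokens, target_tokens):
    """Sum the log-probability of each target token given all prior tokens."""
    context = list(prompt_tokens)
    total = 0.0
    for token in target_tokens:
        total += next_token_logprobs(context)[token]
        context.append(token)  # teacher forcing: condition on the target so far
    return total


def toy_evaluator(context):
    """Hypothetical next-token log-probabilities (not a real model)."""
    if context[-1] == "ø":
        return {"YES": math.log(0.8), "NO": math.log(0.2)}
    return {"ø": math.log(0.9), "YES": math.log(0.05), "NO": math.log(0.05)}


# The prompt ends with the first ø; the target is YES followed by ø.
prompt = ["Is", "the", "answer", "correct", "?", "ø"]
reward = score_target(toy_evaluator, prompt, ["YES", "ø"])
```

Here the reward is log(0.8) + log(0.9) = log(0.72). Including the closing ø in the target penalizes continuations that begin with YES but then keep going.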

Note: In the interest of full disclosure, the prompts used in our experiments contained several misspellings. We wrote 'Evaluation the solution' instead of 'Evaluate the solution', 'explicite' instead of 'explicit', and 'wether' instead of 'whether'. These errors have been corrected in the prompts shown here for clarity, but we have not rerun the experiments. We do not expect these mistakes to materially affect our results.

## B.1. Accuracy-Only (GSM8K)

The generator produces a solution inside the solution block. The meta-reward is based solely on the evaluator's response to a single correctness question.

```

```

This format is used to train RLME without access to ground-truth answers.

## B.2. Dual-Objective: Accuracy + Conciseness

This version augments the evaluation criterion with a length preference. The evaluator receives the solution length explicitly, making compliance with the length constraint directly verifiable.

```

```

```

```

As described in Section 4.7, this allows RLME to control both reasoning quality and brevity through meta-evaluation.

## B.3. Counterfactual Cheating Detection

Here, we intentionally reveal the (ground-truth) answer inside the prompt during training. At test time, we replace this with a random answer. If the model continues to justify that injected value, it is cheating rather than solving the problem from first principles.

We show below the prompt used to train the RLME-NoCheat variant, with the meta-question 'Does the whole solution logically lead from the question to an answer, even if it does not match the correct answer?'. The base variant uses the same template but replaces this meta-question with 'Is the answer correct?'.

```

```

```

```
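The counterfactual test described in this section could be scored along the following lines. This is a sketch under stated assumptions: the integer range for the random answer, the function names, and the three outcome labels are hypothetical and not taken from the experiments.

```python
import random


def inject_random_answer(true_answer, rng, low=0, high=1000):
    """Draw an integer in [low, high] that differs from the true answer."""
    while True:
        candidate = rng.randint(low, high)
        if candidate != true_answer:
            return candidate


def classify_response(predicted, true_answer, injected):
    """Label a test-time prediction relative to the injected value."""
    if predicted == injected:
        return "followed_injected"  # rationalized the revealed number
    if predicted == true_answer:
        return "solved"             # derived the answer independently
    return "other"


rng = random.Random(0)
injected = inject_random_answer(true_answer=18, rng=rng)
label = classify_response(predicted=18, true_answer=18, injected=injected)
```

The fraction of test items labeled `followed_injected` then gives a simple estimate of how often the model justifies the revealed value instead of solving the problem.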

## B.4. Open-Domain QA and Faithfulness (CQAC + FaithEval)

For faithful open-domain question answering with contextual grounding, we use the same initial prompt for both the CQAC and FaithEval datasets:

```

```

```

```

```

```

## C. Qualitative Examples

This appendix provides representative model outputs from each experiment. For each example, we show the full raw generation, including intermediate reasoning and any artifacts. These outputs illustrate typical success modes and common failure cases that are not fully captured by aggregate metrics.

## C.1. GSM8K: Accuracy-Only

This example shows outputs from RLME models trained with correctness as the sole meta-objective. Successful cases demonstrate coherent step-by-step reasoning aligned with the final answer.

```

```

## C.2. GSM8K: Dual-Objective Accuracy + Conciseness

This sample highlights the effect of adding a conciseness reward. Compared to accuracy-only training, the conciseness-accuracy objective tends to reduce repetition and irrelevant elaboration, while preserving enough reasoning to get the answer correct.

```

```

## C.3. Counterfactual Cheating Detection

Here we show examples to illustrate cheating behavior and its suppression. In the base setup, inserting a random answer into the prompt often causes the model to rationalize that injected number.

```

```

Therefore, the correct answer is \boxed{540}.

With an added meta-question targeting the reasoning itself (RLME-NoCheat), the model frequently rejects the injected answer and derives its own through grounded reasoning.

```

```

## C.4. Reward Hacking

This example highlights what happens when the generator learns to fool the evaluator in order to get high reward. Notice that the generator suggests the answer before generating the reasoning.

```

```

```

```

## C.5. Open-Domain QA and Faithfulness (CQAC + FaithEval)

This example shows the prompt from the CQAC dataset and a typical response.

```

```

- If the context has contradictory information about the answer to the question, put \boxed{conflict} as the answer.
- If the context does not contain enough explicit information to answer the question, put \boxed{unknown} as the answer.

Solution: We need to find information about the state of matter that is most prevalent in the universe. According to the context, "Yet, most of the universe consists of plasma." This directly answers our question.

Therefore, the final answer is: \boxed{plasma}