# Pushing the Boundaries of Natural Reasoning: Interleaved Bonus from Formal-Logic Verification in Language Models

**Authors**: Chuxue Cao, Jinluan Yang, Haoran Li, Kunhao Pan, Zijian Zhao, Zhengyu Chen, Yuchen Tian, Lijun Wu, Conghui He, Sirui Han, Yike Guo

Abstract

Large Language Models (LLMs) show remarkable capabilities, yet their stochastic next-token prediction creates logical inconsistencies and reward hacking that formal symbolic systems avoid. To bridge this gap, we introduce a formal logic verification-guided framework that dynamically interleaves formal symbolic verification with the natural language generation process, providing real-time feedback to detect and rectify errors as they occur. Distinguished from previous neuro-symbolic methods limited by passive post-hoc validation, our approach actively penalizes intermediate fallacies during the reasoning chain. We operationalize this framework via a novel two-stage training pipeline that synergizes formal logic verification-guided supervised fine-tuning and policy optimization. Extensive evaluation on six benchmarks spanning mathematical, logical, and general reasoning demonstrates that our 7B and 14B models outperform state-of-the-art baselines by average margins of 10.4% and 14.2%, respectively. These results validate that formal verification can serve as a scalable mechanism to significantly push the performance boundaries of advanced LLM reasoning. We will release our data and models for further exploration soon.

Machine Learning, ICML

1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

## Screenshot: Problem-Solving Scenario with Logic Consistency Analysis

### Overview

The image presents a multi-part problem-solving scenario involving relational logic comparisons between four individuals (Alice, Bob, Charlie, Diana) and a bar chart analyzing logic consistency in reasoning chains. The content includes:

1. A textual problem with multiple reasoning approaches

2. A bar chart comparing logic consistency percentages

3. Conflicting conclusions from different reasoning methods

### Components/Axes

**Textual Elements:**

- **Problem Statement (Top):**

- "Alice > Bob, Charlie < Alice, Diana > Charlie. Who scores higher: Bob or Diana?"

- Three reasoning approaches:

- **NL Reasoning (Left):**

- "Charlie < Diana < Alice > Bob → Therefore: Diana > Bob"

- Answer marked incorrect (red X)

- **NL Reasoning (Right):**

- Identical steps to left panel

- Answer marked correct (green checkmark)

- **Formal Logic Reasoning (Bottom-Left):**

- Code snippets using a solver:

```python

solver.add(bob > diana)

result = solver.check()

solver.add(diana > bob)

result = solver.check()

```

- Compiler output: "Unknown"

- Answer: "Relationship is undetermined"

- **Bar Chart (Bottom-Right):**

- **X-Axis:** "Logic Consistency in NL Reasoning Chains"

- **Y-Axis:** "Percentage (%)"

- **Legend (Right):**

- Blue: Consistent Logic

- Red: Inconsistent Logic

- **Categories:**

- Correct CoT (60.7% blue / 39.3% red)

- Wrong CoT (47.6% blue / 52.4% red)

### Detailed Analysis

**Textual Reasoning:**

1. **NL Reasoning Panels:**

- Both panels derive "Diana > Bob" through transitive logic:

- Charlie < Diana < Alice > Bob

- Contradiction: Left panel marks this answer incorrect despite valid logic

- Right panel accepts the same conclusion as correct

2. **Formal Logic Reasoning:**

- Uses SAT solver with conflicting constraints:

- First constraint: `bob > diana`

- Second constraint: `diana > bob`

- Solver returns "Unknown" due to contradictory inputs

- Final answer acknowledges indeterminacy

**Bar Chart Analysis:**

- **Correct CoT:**

- Consistent Logic: 60.7%

- Inconsistent Logic: 39.3%

- **Wrong CoT:**

- Consistent Logic: 47.6%

- Inconsistent Logic: 52.4%

- **Color Verification:**

- Blue bars consistently represent Consistent Logic across categories

- Red bars represent Inconsistent Logic

### Key Observations

1. **Logic Consistency Trends:**

- Consistent Logic dominates in Correct CoT (60.7% vs 39.3%)

- Inconsistent Logic becomes dominant in Wrong CoT (52.4% vs 47.6%)

2. **Reasoning Method Conflicts:**

- Natural Language Reasoning produces contradictory conclusions

- Formal Logic/Solver approach identifies indeterminacy

3. **Answer Discrepancies:**

- Two identical NL Reasoning chains receive conflicting validity markers

- Compiler output rejects both conclusions

### Interpretation

The data reveals fundamental challenges in automated reasoning systems:

1. **NL Reasoning Limitations:**

- High consistency in Correct CoT suggests surface-level pattern matching

- Collapse in performance for Wrong CoT indicates poor error handling

- Conflicting validity markers demonstrate unreliability in self-assessment

2. **Formal Logic Shortcomings:**

- Solver's "Unknown" output exposes inability to resolve contradictory constraints

- Highlights need for constraint validation before problem formulation

3. **Educational Implications:**

- 60.7% consistency in Correct CoT suggests NL reasoning works for straightforward cases

- 52.4% inconsistent logic in Wrong CoT reveals critical failure modes

- Contradictory conclusions between identical reasoning chains indicate non-determinism

4. **Technical System Design:**

- The system appears to lack:

- Constraint consistency checking

- Reasoning chain validation

- Error propagation mechanisms

- The green checkmark on the right panel suggests possible human intervention or post-hoc validation

This analysis demonstrates the complex interplay between human-like reasoning patterns and formal logic systems, revealing both potential and limitations in current automated reasoning approaches.

</details>

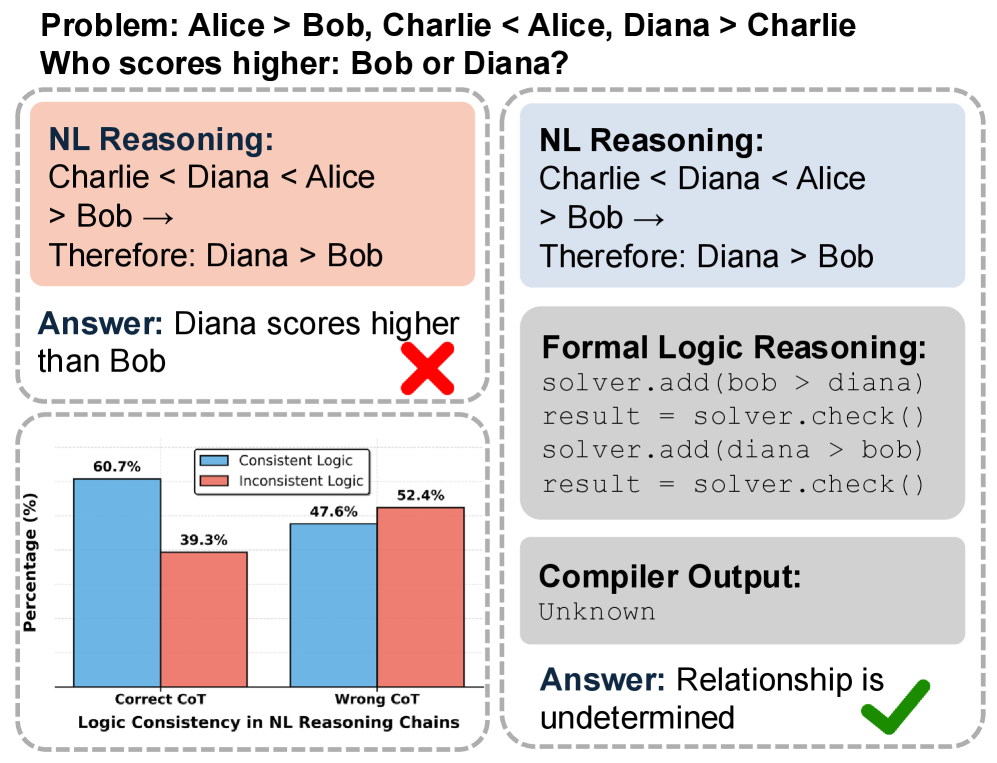

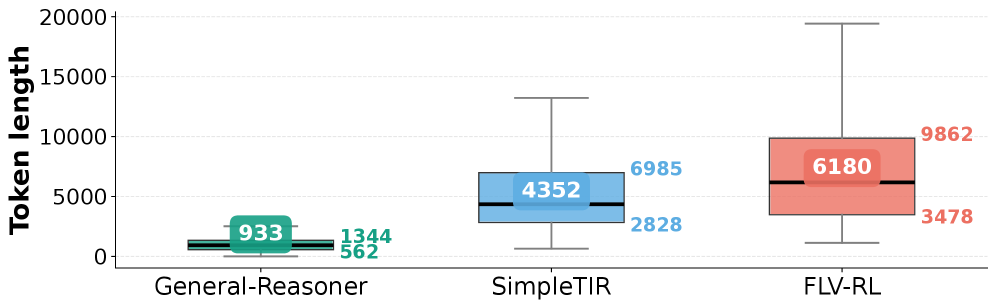

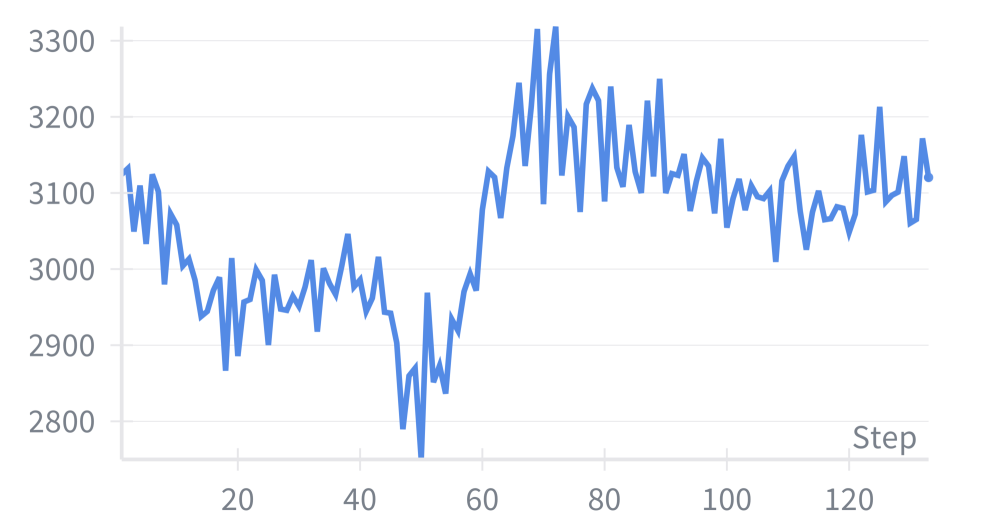

Figure 1: Comparison between natural language reasoning and formal logic verification-guided reasoning. Formal verification detects logical errors in natural language reasoning and provides corrected reasoning paths. “NL” means Natural Language.

Large Language Models (LLMs) demonstrate impressive proficiency in mathematical and logical reasoning (Ahn et al., 2024; Ji et al., 2025; Liu et al., 2025b; Chen et al., 2025a), yet their probabilistic decoding process lacks inherent mechanisms to ensure consistency (Sheng et al., 2025b; Baker et al., 2025). This tension creates significant risks, including hallucinations (Li and Ng, 2025; Sheng et al., 2025b), safety vulnerabilities (Zhou et al., 2025; Cao et al., 2025b), and reward hacking (Chen et al., 2025b; Baker et al., 2025). Although recent efforts have employed model-based verifiers to offer denser feedback than sparse ground-truth labels (Ma et al., 2025; Liu et al., 2025c; Guo et al., 2025b), they often overlook intermediate reasoning steps. To enforce more rigorous supervision, subsequent research has incorporated formal tools like theorem provers and code interpreters (Ospanov et al., 2025; Kamoi et al., 2025; Liu et al., 2025a) to address this drawback. However, existing formal approaches face critical limitations: they are often restricted to specific domains (e.g., Mathematics) (Ospanov et al., 2025; Liu et al., 2025a), rely on uncertain autoformalization (Ospanov et al., 2025; Feng et al., 2025b), or utilize post-hoc verification that cannot actively prevent error propagation (Kamoi et al., 2025; Feng et al., 2025b). This yields the primary question to be explored:

(Q) Can we utilize formal verification to further enhance LLM reasoning across diverse domains?

To explore this question, we first quantified the logical consistency gap in current LLMs by formally verifying generated reasoning chains. As shown in Figure 1, a critical finding emerges: even chains that arrive at correct final answers suffer from substantial logical inconsistency, with 39.3% of steps formally disproved, a trend consistent with previous research (Sheng et al., 2025b; Leang et al., 2025). For chains leading to incorrect answers, this failure rate rises to 52.4%. The comparative example in Figure 1 illustrates this gap: natural language reasoning incorrectly concludes “Diana $>$ Bob” from the given constraints, while formal verification identifies the incorrect conclusion and leads to a correct answer. This phenomenon reveals pervasive “reward hacking,” where models exploit superficial patterns to reach correct labels without establishing sound logical foundations (Skalse et al., 2022). Ultimately, these results expose a fundamental limitation of natural language reasoning: without explicit verification mechanisms, models cannot guarantee reasoning validity or global logical coherence across multi-step inference.
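The Figure 1 example can be reproduced with a small satisfiability check. The sketch below is illustrative only: it replaces the solver shown in the figure with brute-force enumeration over a tiny integer domain, and the helper names (`premises`, `satisfiable`) are hypothetical. It tests each hypothesis against the premises and reports the relationship as undetermined when both are satisfiable.

```python
from itertools import product

NAMES = ("alice", "bob", "charlie", "diana")

def premises(s):
    # Alice > Bob, Charlie < Alice, Diana > Charlie (from the Figure 1 problem)
    return (s["alice"] > s["bob"]
            and s["charlie"] < s["alice"]
            and s["diana"] > s["charlie"])

def satisfiable(hypothesis, domain=range(4)):
    """Return a witness assignment where premises and hypothesis both hold, else None.

    Assumption: four distinct values are enough to realize any ordering of
    four variables, so this small domain stands in for an SMT solver here.
    """
    for values in product(domain, repeat=len(NAMES)):
        s = dict(zip(NAMES, values))
        if premises(s) and hypothesis(s):
            return s
    return None

bob_higher = satisfiable(lambda s: s["bob"] > s["diana"])
diana_higher = satisfiable(lambda s: s["diana"] > s["bob"])

if bob_higher is not None and diana_higher is not None:
    verdict = "undetermined"      # both orderings are consistent with the premises
elif diana_higher is not None:
    verdict = "Diana > Bob"
elif bob_higher is not None:
    verdict = "Bob > Diana"
else:
    verdict = "tie or inconsistent premises"

print(verdict)
```

Because each hypothesis has a witness, neither “Diana $>$ Bob” nor “Bob $>$ Diana” follows from the premises, which is the error the natural language chain in Figure 1 makes.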

To bridge this gap, we propose a novel framework that synergizes probabilistic natural language reasoning with formal symbolic verification. Unlike prior approaches that rely on static filtering or narrow domains, our method dynamically interleaves formal verification into the generation process. By incorporating feedback from satisfiability results, counterexamples, and execution outputs, we extend the standard chain-of-thought paradigm to enable real-time error detection and rectification. To effectively operationalize this interleaved reasoning approach, we introduce a formal logic verification-guided training framework comprising two synergistic stages: (i) Supervised Fine-tuning (SFT), which employs a hierarchical data synthesis pipeline that mitigates the noise of raw autoformalization by enforcing execution-based validation, thereby ensuring high alignment between natural language and formal proofs; and (ii) Reinforcement Learning (RL), which utilizes Group Relative Policy Optimization (GRPO) with a composite reward function to enforce structural integrity and penalize logical fallacies. Empirical evaluations across logical, mathematical, and general reasoning domains demonstrate that this framework substantially enhances reasoning accuracy, highlighting the potential of formal verification to push the performance boundaries of LLM reasoning. Our contributions are summarized as follows:

- We propose the first framework that dynamically interleaves formal verification into LLM reasoning across diverse domains, utilizing the real-time feedback via symbolic interpreters to transcend the limitations of passive post-hoc filtering and domain-specific theorem proving.

- We introduce a two-stage training framework that combines formal verification-guided supervised fine-tuning with policy optimization, featuring a novel data synthesis pipeline with execution-based validation to enforce logical soundness and structural integrity.

- Extensive evaluations on six benchmarks demonstrate that our models break performance ceilings, surpassing SOTA by 10.4% (7B) and 14.2% (14B). This validates the scalability and effectiveness of our proposed method.

2 Related Works

2.1 Large Language Models for Natural Reasoning

Supervised fine-tuning (SFT) on chain-of-thought examples (Wei et al., 2022) and step-by-step solutions (Cobbe et al., 2021) has been foundational for developing reasoning capabilities in LLMs, with recent efforts curating high-quality datasets across mathematics (LI et al., 2024), code (Xu et al., 2025), and science (Wang et al., 2022). However, SFT alone cannot effectively optimize for complex objectives beyond imitation and struggles with multi-step error correction (Lightman et al., 2023; Uesato et al., 2022; Zhou et al., 2026). Recent RL advances using outcome-based optimization methods have achieved remarkable success in mathematical reasoning (Cobbe et al., 2021; Zeng et al., 2025), code generation (Le et al., 2022; Feng et al., 2025a), and general-domain reasoning (Ma et al., 2025; Chen et al., 2025c). However, optimizing solely for final answer correctness creates perverse incentives where models learn correct conclusions through logically invalid pathways (Uesato et al., 2022), leading to reward hacking (Skalse et al., 2022) and brittleness under distribution shift (Hendrycks et al., 2021). To address these limitations, process-based rewards incorporate feedback from intermediate steps, providing dense supervision through human-annotated judgments (Uesato et al., 2022; Lightman et al., 2023; She et al., 2025; Khalifa et al., 2025). However, the probabilistic nature of language model-based verifiers introduces errors and biases (Zheng et al., 2023), limiting their ability to detect subtle logical inconsistencies that emerge during multi-step reasoning.

2.2 Formal Reasoning and Verification

Recent work has integrated formal verification tools, including theorem provers (Yang et al., 2023; Cao et al., 2025a; Tian et al., 2025), code interpreters (Feng et al., 2025a), and symbolic solvers (Li et al., 2025a), to provide machine-checkable validation beyond the biases of LLM-as-a-judge approaches (Li and Ng, 2025; Uesato et al., 2022; Lightman et al., 2023). This direction is increasingly recognized as critical for grounding generative models in verifiable systems (Ren et al., 2025; Wang et al., 2025; Hu et al., 2025). Existing approaches differ in how verification is applied. HERMES (Ospanov et al., 2025) interleaves informal reasoning with Lean-verified steps, ensuring real-time soundness but requiring mature formal libraries. Safe (Liu et al., 2025a) applies post-hoc verification to audit completed reasoning chains, though this passive mode cannot prevent error accumulation during generation. VeriCoT (Feng et al., 2025b) checks logical consistency on extracted first-order logic, while others train verifier models using formal tool signals (Kamoi et al., 2025). Tool-integrated methods (Xue et al., 2025; Zeng et al., 2025; Li et al., 2025b; Feng et al., 2025a) embed interpreter calls into generation for calculation or simulation, but often lack strict logical guarantees. These approaches face key limitations: specialization to mathematical theorem proving, treating verification as separate filtering without guiding generation, or relying on uncertain logic extraction and neural verifiers. In contrast, we propose verification-guided reasoning that extends formal verification to general logical domains and employs real-time feedback as dynamic, in-process guidance to steer reasoning trajectories and enable self-correction beyond specialized formal tasks.

3 Preliminaries

<details>

<summary>x2.png Details</summary>

### Visual Description

## Bar Chart: Performance Comparison of Natural-SFT and FLV-SFT Across Domains

### Overview

The chart compares the number of correct answers achieved by two methods, **Natural-SFT** (blue) and **FLV-SFT** (red), across three domains: **Logical**, **Mathematical**, and **General**. Percentage improvements for FLV-SFT over Natural-SFT are annotated in green text above each red bar.

### Components/Axes

- **X-axis (Domain)**: Categorized into "Logical," "Mathematical," and "General."

- **Y-axis (Number of Correct Answers)**: Ranges from 0 to 350 in increments of 50.

- **Legend**: Located in the top-right corner, with:

- **Blue**: Natural-SFT

- **Red**: FLV-SFT

- **Annotations**: Green text above each red bar indicates percentage improvement (e.g., "+32.8%").

### Detailed Analysis

1. **Logical Domain**:

- Natural-SFT: 219 correct answers.

- FLV-SFT: 291 correct answers (+32.8% improvement).

2. **Mathematical Domain**:

- Natural-SFT: 163 correct answers.

- FLV-SFT: 243 correct answers (+49.3% improvement).

3. **General Domain**:

- Natural-SFT: 166 correct answers.

- FLV-SFT: 213 correct answers (+28.5% improvement).

### Key Observations

- FLV-SFT consistently outperforms Natural-SFT in all domains.

- The largest improvement is in the **Mathematical domain** (+49.3%), followed by **Logical** (+32.8%) and **General** (+28.5%).

- Natural-SFT scores highest in the Logical domain (219) and is nearly flat across the Mathematical and General domains (163 and 166).

### Interpretation

The data show that **FLV-SFT improves performance** across all tested domains, with the largest gain in **Mathematical tasks** (+49.3%). This suggests FLV-SFT may be particularly effective in structured, rule-based reasoning. The smaller improvement in the **General domain** (+28.5%) implies potential limitations in handling less structured or ambiguous problems. The chart itself does not specify which methodological differences produce the gap. The y-axis scale (0–350) contextualizes the absolute performance: FLV-SFT achieves 47 to 80 more correct answers per domain than Natural-SFT.

</details>

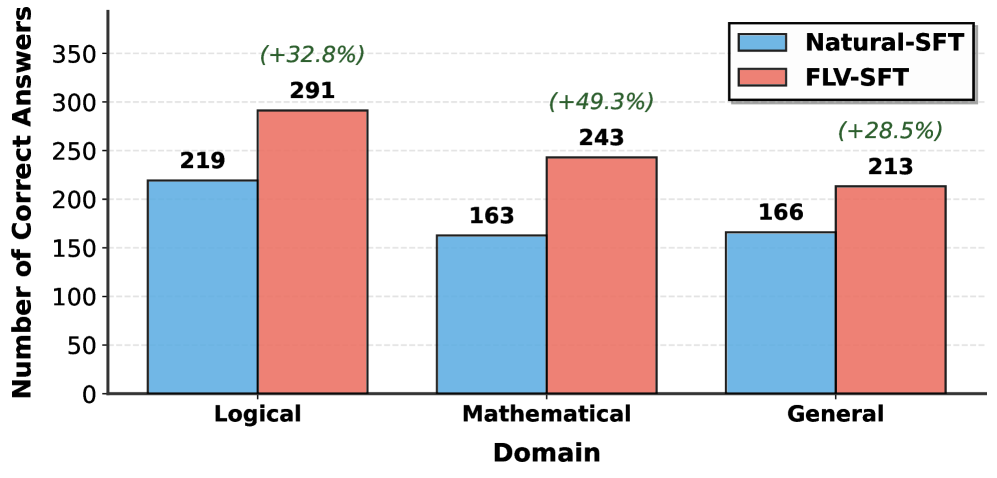

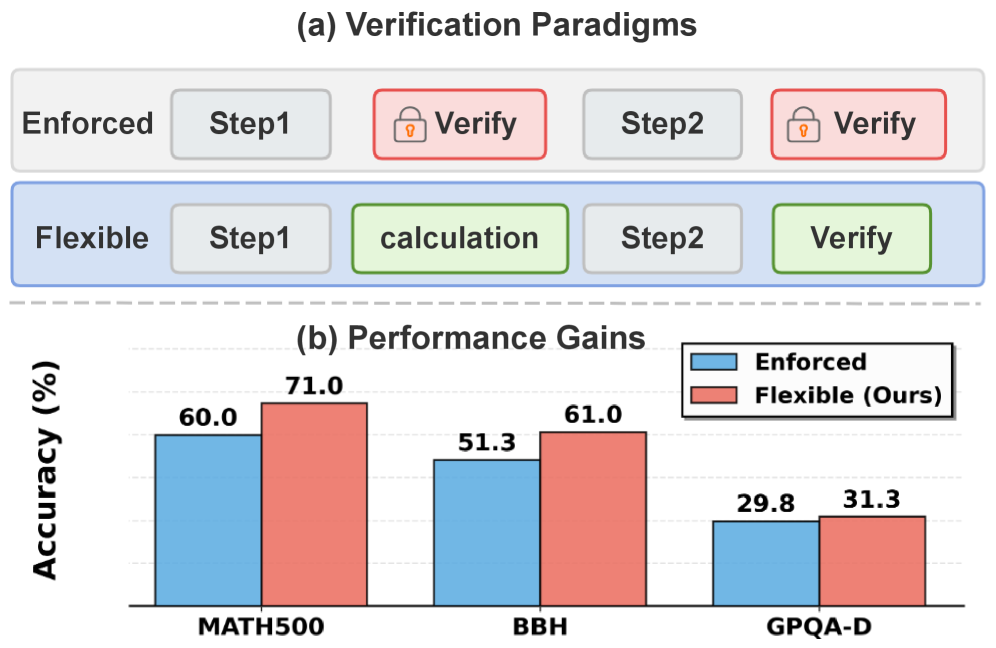

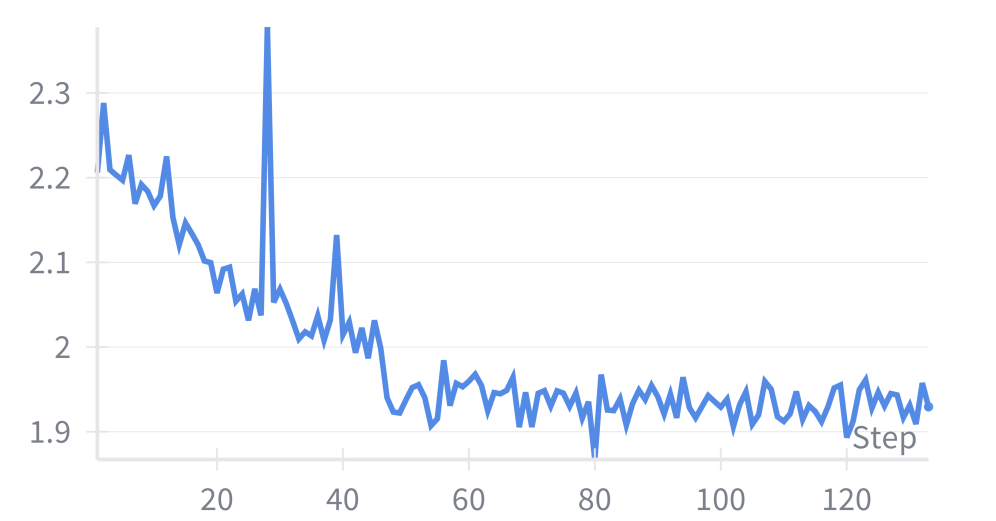

Figure 2: Number of correct answers using natural language reasoning versus formal logic verification. We randomly sampled 500 instances from each domain for this comparison.

We first present empirical evidence illustrating the gap between probabilistic natural language reasoning and formal verification in LLM reasoning. We then formally define our reasoning paradigm and introduce the preliminaries on the symbolic verification methods utilized in our framework.

3.1 Natural vs. Formal Reasoning in LLMs

Our previous experiments demonstrate that LLMs lack mechanisms to ensure global logical consistency (Figure 1), motivating us to explore formal logic verification. Formal logic provides a rigorous framework where reasoning steps can be reliably validated using formal solvers. As shown in Figure 2, integrating formal logic verification with natural language reasoning yields significant performance improvements across diverse domains. We compare two approaches: Natural-SFT, which relies solely on natural language reasoning, and FLV-SFT, which incorporates formal logic verification. Across 500 randomly sampled instances from each domain, FLV-SFT consistently outperforms Natural-SFT, achieving 291 vs. 219 correct answers in the Logical domain (+32.8%), 243 vs. 163 in the Mathematical domain (+49.3%), and 213 vs. 166 in the General domain (+28.5%). These substantial improvements across all three categories demonstrate that formal verification effectively addresses the consistency gaps inherent in purely neural approaches. These results underscore the significant potential of formal verification to bridge the reasoning gap and strongly motivate our approach of interleaving natural language reasoning with formal verification throughout the reasoning process.

3.2 Problem Formulation

Formally, let $\mathcal{D}=\{(x,y)\}$ be a dataset of reasoning tasks, where $x$ denotes the input context (e.g., problem description) and $y$ denotes the ground-truth answer.

Standard CoT Paradigm. In conventional Chain-of-Thought reasoning, an LLM $P_{\theta}$ generates a sequence of reasoning steps $z=(s_{1},s_{2},...,s_{n})$ in natural language, aiming to maximize:

$$

P_{\theta}(y,z\mid x)=P_{\theta}(y\mid z,x)\cdot\prod_{i=1}^{n}P_{\theta}(s_{i}\mid s_{<i},x) \tag{1}

$$

However, this objective does not guarantee that $z$ is logically valid or formally verifiable.

Our Paradigm: Formal Logic Verification-Guided Reasoning. We propose augmenting the reasoning chain with formal verification at each step. Specifically, we define an extended reasoning chain $z^{\prime}=(s_{1},f_{1},v_{1},s_{2},f_{2},v_{2},...,s_{n},f_{n},v_{n})$ , where:

- $s_{i}$ : Natural language reasoning step (as in standard CoT)

- $f_{i}$ : Formal specification that encodes the logical correctness of $s_{i}$ (e.g., symbolic constraints, SAT clauses, SMT formulas, or executable code)

- $v_{i}$ : Formal Logic Verification result returned by a formal verifier $\mathcal{V}$ when applied to $f_{i}$

During training, our objective is to maximize the likelihood of correct final answers:

$$

\max_{\theta}\mathbb{E}_{(x,y)\sim\mathcal{D}}\left[\log P_{\theta}(y,z^{\prime}\mid x)\right] \tag{2}

$$

During inference, the verification function $\mathcal{V}$ takes the formal reasoning as input and returns detailed feedback at each reasoning step. This feedback may include satisfiability results, counterexamples, proof traces, execution outputs, or error messages, which guide the model to generate logically sound and verifiable subsequent reasoning steps.
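The inference procedure described above can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not the authors' implementation: `propose` stands in for the policy $P_{\theta}$, `verify` stands in for the verifier $\mathcal{V}$, and the toy arithmetic checker, the `max_calls` budget, and all function names are hypothetical.

```python
from typing import Callable, List, Optional, Tuple

Step = Tuple[str, str, str]  # (s_i, f_i, v_i)

def interleaved_reasoning(
    x: str,
    propose: Callable[[str, List[Step]], Tuple[str, Optional[str]]],
    verify: Callable[[str], str],
    max_calls: int = 8,
) -> List[Step]:
    """Build z' = (s_1, f_1, v_1, ..., s_n, f_n, v_n) one verified step at a time."""
    chain: List[Step] = []
    for _ in range(max_calls):
        s_i, f_i = propose(x, chain)   # natural-language step plus formal spec
        if f_i is None:                # no formal spec: treat as the final step
            chain.append((s_i, "", ""))
            break
        v_i = verify(f_i)              # feedback, e.g. a result or a counterexample
        chain.append((s_i, f_i, v_i))  # feedback is visible to the next proposal
    return chain

# Toy stand-ins (hypothetical): the "policy" first makes an arithmetic slip,
# then corrects itself after reading the verifier feedback.
def toy_propose(x, chain):
    if not chain:
        return "17 * 3 is 41.", "17 * 3 == 41"
    if chain[-1][2].startswith("refuted"):
        return "The check failed; 17 * 3 is 51.", "17 * 3 == 51"
    return "Final answer: 51", None

def toy_verify(f: str) -> str:
    lhs, rhs = f.split("==")
    a, b = (int(t) for t in lhs.split("*"))
    return "verified" if a * b == int(rhs) else f"refuted: {a} * {b} = {a * b}"

chain = interleaved_reasoning("What is 17 * 3?", toy_propose, toy_verify)
for s, f, v in chain:
    print(s, "|", f, "|", v)
```

The point of the design is that each $v_{i}$ enters the context before $s_{i+1}$ is generated, so an error is corrected at the step where it occurs instead of being filtered after the whole chain is complete.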

<details>

<summary>x3.png Details</summary>

### Visual Description

## Flowchart: Two-Stage SFT and RL Process with Formal Verification

### Overview

The diagram illustrates a two-stage technical process combining Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL) with formal logic verification. It includes input distillation, filtering, verification loops, and reward optimization. Key components include policy models, verifiers, and GRPO (Group Relative Policy Optimization) advantage calculations.

### Components/Axes

1. **Stage 1: SFT**

- Input box labeled "Input q" (green)

- Robot icon labeled "Distill" with red X (rejected) and green check (accepted)

- Filtering step labeled "Filter by Fail rate"

- Output: "Difficult Questions" with formal logic verification

- Verification components:

- "Formal Logic Verification Trace"

- "Natural Language Reasoning"

- "Formal Logic Reasoning" (code snippet)

- Verifier with green check (verified) and red X (rejected)

- Outputs: "Verified" (green) and "Rejected" (red) paths

- FLV-SFT Model (robot icon)

2. **Stage 2: RL**

- Interleaved Reasoning Loop:

- Step tn: "Natural Language Reasoning & Formal Action" with code snippet

- Step tn+1: "Verifier Observation" with question/answer

- Step tn: "Next Natural Language Reasoning"

- Policy Model (robot icon)

- Reward system:

- "Correct Reward"

- "Instruct Reward"

- GRPO Advantage A_i (graph icon)

3. **Legend**

- Green checkmark: Accepted/Verified

- Red X: Rejected/Failed

- Positioned in top-left corner

### Detailed Analysis

- **Stage 1 Flow**:

1. Input q → Distill (robot)

2. Filtering by fail rate → Difficult Questions

3. Formal verification:

- Natural Language Reasoning → Formal Logic Reasoning (code: `implies(And(both_optimal, duality_holds), both_feasible)`)

- Verifier checks equivalence (e.g., "Are the triple iff and strong duality equivalent? False")

4. Verified questions proceed to SFT; rejected go to FLV-SFT Model

- **Stage 2 Flow**:

1. Policy Model generates reasoning steps

2. Interleaved loop:

- Natural Language Reasoning + Formal Action (code execution)

- Verifier observation feedback

- Iterative refinement of reasoning

3. GRPO Advantage A_i calculation (graph icon) for reward optimization

### Key Observations

1. **Dual Verification**: Questions undergo both natural language and formal logic verification

2. **Iterative Refinement**: Stage 2 uses an explicit interleaved reasoning loop for continuous improvement

3. **Reward Structure**: Combines correct/instruct rewards with GRPO optimization

4. **Failure Handling**: Rejected questions in Stage 1 are reprocessed through FLV-SFT Model

5. **Code Integration**: Direct execution of formal logic (Z3 solver) within reasoning steps

### Interpretation

This diagram represents a hybrid AI training pipeline that:

1. **Filters low-quality inputs** through fail rate analysis before formal verification

2. **Combines symbolic reasoning** (Z3 solver) with natural language processing in an RL framework

3. **Optimizes rewards** using GRPO advantage calculations, suggesting a focus on policy gradient methods

4. **Emphasizes verification** at multiple stages, indicating safety-critical applications

5. **Uses visual metaphors** (robot icons, checkmarks) to represent automated processes

The architecture suggests a system designed for complex reasoning tasks requiring both linguistic understanding and formal verification, with continuous improvement through reinforcement learning. The GRPO component implies optimization of policy updates using advantage estimation, likely for more efficient learning than standard PPO.

</details>

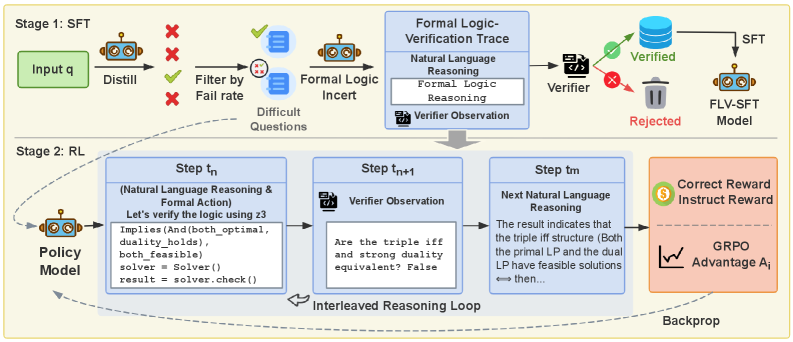

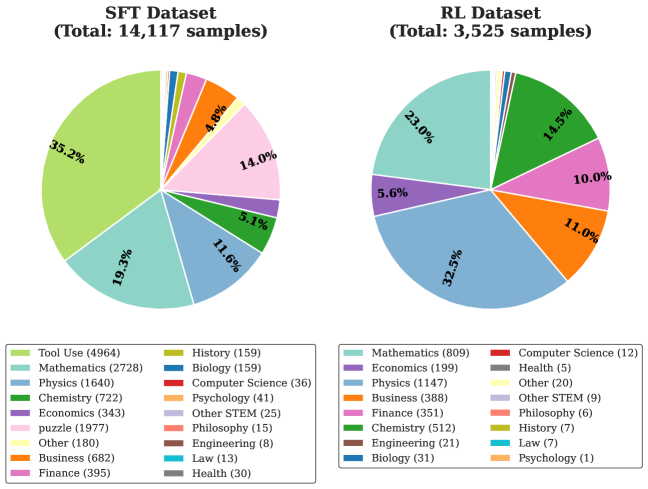

Figure 3: Overview of the formal logic verification-guided training framework. The framework operates in two stages: (1) SFT: A teacher model synthesizes formal logic verification traces, which are validated by a verifier. A subset of verified samples is used to fine-tune the model, while challenging samples are reserved for RL training. (2) RL: The policy model generates natural language reasoning followed by formal reasoning. A formal interpreter verifies the formal reasoning and provides feedback, enabling iterative refinement until the model produces a final answer or reaches the maximum number of interpreter calls. Rewards computed from verification outcomes are used to calculate advantages and update the policy model via reinforcement learning.

4 Methodology

To address logical inconsistencies and hallucinations in pure natural language reasoning (Section 3), we propose integrating formal logic verification into the reasoning process. Our approach consists of two stages: (i) Supervised Fine-tuning to enable the model to generate interleaved natural language and formal proofs, and (ii) Reinforcement Learning to optimize the model using composite rewards that enforce logical soundness and correctness.

4.1 Formal Logic Verification-Guided SFT

The primary goal of the SFT stage is to align the model’s output distribution with a structured reasoning format that supports self-verification. Since large-scale datasets containing interleaved reasoning and formal proofs are scarce, we employ a hierarchical formal proof data synthesis pipeline.

4.1.1 Data Synthesis Pipeline

CoT Generation. Given a raw reasoning problem $q$ , we first employ a strong teacher model to generate $K=4$ candidate reasoning chains. A judge model evaluates the correctness of the final answers to calculate pass rates. We select a subset of verified chains that yield correct solutions for subsequent processing. Let $z$ denote a selected correct reasoning chain. To incorporate formal logic, we utilize an LLM to decompose $z$ into discrete logical modules $\{s_{k}\}_{k=1}^{N}$ . For each module $s_{k}$ , the LLM synthesizes a corresponding formal proof $f_{k}$ and predicts an expected execution output $v_{k}^{\text{exp}}$ . The prompt template is provided in Figure 9.
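The pass-rate step can be illustrated as follows. This is a sketch under assumptions: the judge model is replaced by a whitespace-insensitive string comparison, and `score_candidates` and the sample chains are hypothetical names and data used only for illustration.

```python
from typing import Callable, List, Tuple

K = 4  # number of candidate chains per problem, as stated in the text

Chain = Tuple[str, str]  # (reasoning text, final answer)

def score_candidates(
    chains: List[Chain],
    reference: str,
    judge: Callable[[str, str], bool],
) -> Tuple[float, List[Chain]]:
    """Return (pass_rate, correct_chains) for one problem."""
    correct = [c for c in chains if judge(c[1], reference)]
    return len(correct) / len(chains), correct

# Hypothetical stand-in for the judge model: exact match after trimming whitespace.
def toy_judge(answer: str, reference: str) -> bool:
    return answer.strip() == reference.strip()

candidates = [
    ("chain A ...", "12"),
    ("chain B ...", "12"),
    ("chain C ...", "15"),
    ("chain D ...", " 12 "),
]
assert len(candidates) == K

rate, kept = score_candidates(candidates, "12", toy_judge)
print(rate, len(kept))
```

Only chains in `kept` would move on to decomposition and formalization; the pass rate gives a per-problem difficulty signal.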

Execution-based Validation and Correction. To ensure the fidelity of synthesized formal proofs, we implement a rigorous validation mechanism. Each generated formal proof $f_{k}$ is executed in a sandbox to obtain the actual output $v_{k}^{\text{act}}$ . We then perform a three-stage validation:

Stage 1: Exact Match. If the actual output exactly matches the expected output ( $v_{k}^{\text{act}}=v_{k}^{\text{exp}}$ ), the proof is accepted immediately and integrated into the training data.

Stage 2: Semantic Equivalence Check. In cases where $v_{k}^{\text{act}}\neq v_{k}^{\text{exp}}$ , we employ a verification model to assess whether the discrepancy is semantically negligible (e.g., differences in capitalization, output ordering, or numerical precision). If the outputs are deemed equivalent under mathematical or logical semantics, we proceed to Stage 3.

Stage 3: Proof Rewriting. When minor inconsistencies are detected, we require the LLM to regenerate the natural language reasoning $s_{k}^{\prime}$ conditioned on the actual execution result $v_{k}^{\text{act}}$ . This ensures that the natural language reasoning module $s_{k}^{\prime}$ , the formal proof $f_{k}^{\prime}$ , and the execution output $v_{k}^{\text{act}}$ maintain strict logical coherence. Proofs that fail both exact match and semantic equivalence checks are discarded. The resulting training instance is structured as follows:

$$

\begin{split}z_{\text{aug}}=\bigoplus_{k=1}^{N}\Big(s_{k}\oplus\texttt{<code>}f_{k}^{\prime}\texttt{</code>}\\
\oplus\texttt{<interpreter>}v_{k}^{\text{act}}\texttt{</interpreter>}\Big)\end{split} \tag{3}

$$

where $f_{k}^{\prime}$ denotes the validated formal proof and $v_{k}^{\text{act}}$ is the verified execution output. This pipeline ensures that every training example $(s,f,v)$ exhibits perfect alignment between natural language hypotheses, formal logic reasoning, and execution feedback, thereby providing high-quality supervision for the model to learn reliable reasoning patterns. See Appendix B for dataset construction details.
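The three-stage validation and the tagged layout of Eq. (3) can be sketched as below. Several parts are assumptions made for illustration: `run_snippet` is a toy executor and not a real sandbox, `normalize` is a crude stand-in for the verification model used in Stage 2, and `rewrite` stands in for the LLM call that regenerates the natural language step in Stage 3.

```python
import contextlib
import io
from typing import Callable, List, Optional, Tuple

Module = Tuple[str, str, str]  # (s_k, f_k, v_k_act)

def run_snippet(code: str) -> str:
    """Toy executor: capture what `code` prints. NOT a real sandbox."""
    buf = io.StringIO()
    with contextlib.redirect_stdout(buf):
        exec(code, {})
    return buf.getvalue().strip()

def normalize(out: str) -> str:
    """Stand-in for the semantic-equivalence model: ignore case and whitespace."""
    return "".join(out.lower().split())

def validate_module(
    s_k: str, f_k: str, v_exp: str, rewrite: Callable[[str, str], str]
) -> Optional[Module]:
    """Return a validated (s, f, v_act) triple, or None if the proof is discarded."""
    v_act = run_snippet(f_k)
    if v_act == v_exp:                          # Stage 1: exact match
        return s_k, f_k, v_act
    if normalize(v_act) == normalize(v_exp):    # Stage 2: negligible discrepancy
        return rewrite(s_k, v_act), f_k, v_act  # Stage 3: rewrite s_k around v_act
    return None                                 # fails both checks: discard

def to_training_text(modules: List[Module]) -> str:
    """Concatenate validated modules using the tags of Eq. (3)."""
    return "".join(
        f"{s}<code>{f}</code><interpreter>{v}</interpreter>" for s, f, v in modules
    )

toy_rewrite = lambda s, v: f"{s} (interpreter output: {v})"

exact = validate_module("The sum is 5.", "print(2 + 3)", "5", toy_rewrite)
near = validate_module("The comparison holds.", "print(3 > 2)", "true", toy_rewrite)
bad = validate_module("The sum is 6.", "print(2 + 3)", "6", toy_rewrite)

print(exact, near, bad, sep="\n")
print(to_training_text([exact]))
```

In this sketch the interpreter output that ends up in the training text is always the actual one, so the natural language step, the formal proof, and the recorded output cannot disagree.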

4.1.2 Optimization Objective

Given the augmented training dataset $\mathcal{D}_{\text{SFT}}=\{(q_{i},z_{\text{aug},i})\}_{i=1}^{M}$ , we optimize the model parameters $\theta$ by maximizing the log-likelihood of generating structured reasoning sequences:

$$

\mathcal{L}_{\text{SFT}}(\theta)=-\mathbb{E}_{(q,z_{\text{aug}})\sim\mathcal{D}_{\text{SFT}}}\left[\log P_{\theta}(z_{\text{aug}}\mid q)\right] \tag{4}

$$

This can be decomposed into the sequential generation of reasoning modules, formal proofs, and execution outputs:

$$

\begin{split}\mathcal{L}_{\text{SFT}}(\theta)=-\mathbb{E}_{(q,z_{\text{aug}})\sim\mathcal{D}_{\text{SFT}}}\Bigg[\sum_{k=1}^{N}\Big(\log P_{\theta}(s_{k}\mid q,z_{<k})\\
+\log P_{\theta}(f_{k}^{\prime}\mid q,z_{<k},s_{k})+\log P_{\theta}(v_{k}^{\text{act}}\mid q,z_{<k},s_{k},f_{k}^{\prime})\Big)\Bigg]\end{split} \tag{5}

$$

where $z_{<k}$ denotes all previous modules. We train using AdamW with cosine learning rate scheduling and gradient clipping. This stage enables the model to generate verifiable, formally grounded reasoning chains.
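The step from Eq. (4) to Eq. (5) is the chain rule of probability applied to the concatenated sequence of Eq. (3). Written out for a single training instance, assuming each module contributes exactly its three segments in order:

$$
\log P_{\theta}(z_{\text{aug}}\mid q)=\sum_{k=1}^{N}\Big[\log P_{\theta}(s_{k}\mid q,z_{<k})+\log P_{\theta}(f_{k}^{\prime}\mid q,z_{<k},s_{k})+\log P_{\theta}(v_{k}^{\text{act}}\mid q,z_{<k},s_{k},f_{k}^{\prime})\Big]
$$

Taking the negative expectation over $\mathcal{D}_{\text{SFT}}$ on both sides recovers Eq. (5).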

4.2 Formal Verification-Guided Policy Optimization

To further enhance the formal logic verification-guided reasoning capabilities of LLMs, we employ reinforcement learning. The core of this stage is a multi-dimensional reward function that provides fine-grained feedback on structure, semantics, and computational efficiency.
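The introduction names Group Relative Policy Optimization (GRPO) as the policy optimizer. As a point of reference, the commonly used group-relative advantage normalizes each sampled response's reward by the mean and standard deviation of its group. The sketch below shows that standard form; whether this paper uses exactly this variant is an assumption, and the function name and `eps` constant are hypothetical.

```python
from statistics import mean, pstdev
from typing import List

def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Standard GRPO-style advantage: (r_i - group mean) / (group std + eps)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

# Rewards for one group of responses sampled for the same prompt (illustrative values).
group = [1.2, -0.8, -1.5, 1.2]
advantages = group_relative_advantages(group)
print(advantages)
```

Responses that score above the group average receive positive advantages and the rest negative ones, so no separate value network is needed to form a baseline.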

4.2.1 Hierarchical Reward Design

To ensure both generation stability and reasoning rigor, we design a hierarchical reward function $R(y)$ that evaluates responses in a strictly prioritized order: Format Integrity $\succ$ Structural Compliance $\succ$ Logical Correctness. The unified reward is formulated as:

$$

R(y)=\begin{cases}R_{\text{fatal}}&y\in\mathbb{C}_{\text{fatal}}\qquad\text{(L1: Fatal)}\\
R_{\text{invalid}}&y\in\mathbb{C}_{\text{invalid}}\qquad\text{(L2: Format)}\\
R_{\text{total}}(y)&\text{otherwise}\qquad\text{(L3: Valid)}\end{cases} \tag{6}

$$

The total reward for valid responses is:

$$

R_{\text{total}}(y)=R_{\text{struct}}(y)+R_{\text{logic}}(y) \tag{7}

$$

Level 1 & 2: Penalties for Malformed Generation. We first filter out pathological behaviors to prevent reward hacking and infinite loops during training.

- Fatal Errors ( $\mathbb{C}_{\text{fatal}}$ ): Responses with severe and unrecoverable execution failures (e.g., timeouts, repetition loops, excessive tool calls). We assign a harsh penalty $R_{\text{fatal}}=-\gamma_{\text{struct}}-W$ to strictly inhibit these states, where $W>0$ is a correctness weight hyperparameter.

- Format Violations ( $\mathbb{C}_{\text{invalid}}$ ): Responses that are technically executable but structurally flawed (e.g., missing termination tags, no extractable final answer, excessive verbosity). These incur a moderate penalty $R_{\text{invalid}}=-\beta_{\text{struct}}-W$ , where $\gamma_{\text{struct}}>\beta_{\text{struct}}>0$ .

Level 3: Incentives for Valid Reasoning. For responses that pass the format checks ( $y\notin\mathbb{C}_{\text{fatal}}\cup\mathbb{C}_{\text{invalid}}$ ), the reward is a composite of structural quality and logical correctness.

(i) Structural Reward $R_{\text{struct}}(y)$ : Encourages concise and compliant tool usage.

$$

R_{\text{struct}}(y)=\alpha-\lambda_{\text{tag}}\cdot N_{\text{undef}}-\lambda_{\text{call}}\cdot\max(N_{\text{call}}-N_{\text{max}},0) \tag{8}

$$

Here, $\alpha$ is a base bonus, $N_{\text{undef}}$ tracks undefined tags, and the last term penalizes excessive tool invocations beyond a threshold $N_{\text{max}}$ .

(ii) Logical Correctness Reward $R_{\text{logic}}(y)$ : Evaluates the final answer $\hat{a}$ against the ground truth $a^{*}$ .

$$

R_{\text{logic}}(y)=\begin{cases}W-\lambda_{\text{len}}\cdot\Delta_{\text{len}}(\hat{a},a^{*})&\text{if }\hat{a}=a^{*}\\

-W&\text{if }\hat{a}\neq a^{*}\end{cases} \tag{9}

$$

where $\Delta_{\text{len}}$ penalizes length discrepancies to discourage verbose reasoning and promote conciseness. Detailed hyperparameter settings are provided in Appendix C.
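The prioritized evaluation in Eqs. 6 to 9 can be sketched as a short function. This is a minimal illustration rather than the training implementation: the `Response` fields, the `RewardConfig` container, and every numeric default are hypothetical placeholders (the actual hyperparameters are given in Appendix C), chosen only to satisfy $\gamma_{\text{struct}}>\beta_{\text{struct}}>0$ and $W>0$.

```python
from dataclasses import dataclass


@dataclass
class RewardConfig:
    # All values below are illustrative assumptions, not the paper's settings.
    W: float = 1.0             # correctness weight, W > 0
    gamma_struct: float = 1.0  # fatal penalty scale
    beta_struct: float = 0.5   # format penalty scale (gamma_struct > beta_struct > 0)
    alpha: float = 0.2         # base structural bonus
    lambda_tag: float = 0.05   # penalty per undefined tag
    lambda_call: float = 0.05  # penalty per tool call beyond n_max
    lambda_len: float = 0.01   # weight of the length-discrepancy penalty
    n_max: int = 4             # tool-call threshold N_max (assumed value)


@dataclass
class Response:
    # Hypothetical summary of one rollout y, produced by upstream checks.
    is_fatal: bool         # y in C_fatal (timeout, repetition loop, ...)
    is_invalid: bool       # y in C_invalid (missing tags, no final answer, ...)
    n_undef: int           # number of undefined tags
    n_call: int            # number of tool invocations
    answer_correct: bool   # whether the final answer matches the ground truth
    delta_len: float       # length discrepancy Delta_len


def hierarchical_reward(y, cfg=None):
    """Evaluate R(y) in priority order: fatal, then format, then valid."""
    cfg = cfg or RewardConfig()
    if y.is_fatal:                       # Level 1, Eq. 6
        return -cfg.gamma_struct - cfg.W
    if y.is_invalid:                     # Level 2, Eq. 6
        return -cfg.beta_struct - cfg.W
    # Level 3: structural reward, Eq. 8
    r_struct = (cfg.alpha
                - cfg.lambda_tag * y.n_undef
                - cfg.lambda_call * max(y.n_call - cfg.n_max, 0))
    # Level 3: logical correctness reward, Eq. 9
    if y.answer_correct:
        r_logic = cfg.W - cfg.lambda_len * y.delta_len
    else:
        r_logic = -cfg.W
    return r_struct + r_logic            # Eq. 7
```

Because the fatal and format branches return before any Level 3 term is computed, a malformed response cannot recover reward through a correct final answer, which is the intent of the strict ordering.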

Table 1: Comparative evaluation between our proposed formal logic verification (FLV) methods (rows marked “Ours”) and other approaches. Bold values denote the best results within each model scale. KOR-Bench and BBH contain multiple subfields and report macro-averaged scores.

| Model | KOR-Bench (Logical) | BBH (Logical) | MATH500 (Mathematical) | AIME24 (Mathematical) | GPQA-D (General) | TheoremQA (General) | AVG |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Qwen2.5-7B | | | | | | | |
| Base | 13.2 | 41.9 | 60.2 | 6.5 | 29.3 | 29.1 | 30.0 |
| Qwen2.5-7B-Instruct | 40.2 | 67.0 | 75.0 | 9.4 | 33.8 | 36.6 | 43.7 |
| SimpleRL-Zoo | 34.2 | 59.8 | 74.0 | 14.8 | 24.2 | 43.1 | 41.7 |
| General-Reasoner | 43.9 | 61.9 | 73.4 | 12.7 | **38.9** | 45.3 | 46.0 |
| RLPR | 42.2 | 66.2 | 77.2 | 14.2 | 37.9 | 44.3 | 47.0 |
| SynLogic | 48.1 | 66.5 | 74.6 | 15.4 | 27.8 | 39.2 | 45.3 |
| ZeroTIR | 30.0 | 40.0 | 62.4 | 28.5 | 28.8 | 36.4 | 37.7 |
| SimpleTIR | 37.0 | 62.0 | **82.6** | **41.0** | 22.7 | 51.1 | 49.4 |
| FLV-SFT (Ours) | 48.0 | 68.5 | 77.2 | 20.0 | 32.3 | 53.0 | 49.8 |
| FLV-RL (Ours) | **51.0** | **70.0** | 78.6 | 20.8 | 35.4 | **55.7** | **51.9** |
| Qwen2.5-14B | | | | | | | |
| Base | 37.4 | 52.0 | 65.4 | 3.6 | 32.8 | 33.0 | 37.4 |
| Qwen2.5-14B-Instruct | 51.5 | 72.9 | 77.4 | 12.2 | 41.4 | 41.9 | 49.6 |
| SimpleRL-Zoo | 37.2 | 72.7 | 77.2 | 12.9 | 39.4 | 48.9 | 48.1 |
| General-Reasoner | 41.3 | 71.5 | 78.6 | 17.5 | **43.4** | 55.3 | 51.3 |
| FLV-SFT (Ours) | 54.0 | 77.5 | 79.8 | 21.9 | 40.4 | 60.6 | 55.7 |
| FLV-RL (Ours) | **57.0** | **78.0** | **81.4** | **30.2** | 41.4 | **63.5** | **58.6** |

4.2.2 Optimization Objective

We optimize $\pi_{\theta}$ using GRPO. For each input $x\sim\mathcal{D}_{\text{difficult}}$ , we sample $G$ outputs $\{y_{1},\ldots,y_{G}\}$ and compute:

$$

\begin{split}\mathcal{L}_{\text{GRPO}}(\theta)&=\mathbb{E}_{x\sim\mathcal{D}_{\text{difficult}}}\Bigg[\frac{1}{G}\sum_{i=1}^{G}\sum_{t=1}^{|y_{i}|}\bigg(\\

&\qquad\min\big(r_{i,t}\hat{A}_{i},\mathrm{clip}(r_{i,t},1-\epsilon,1+\epsilon)\hat{A}_{i}\big)\\

&\qquad-\beta\log\frac{\pi_{\theta}(y_{i,t}|x,y_{i,<t})}{\pi_{\text{ref}}(y_{i,t}|x,y_{i,<t})}\bigg)\Bigg]\end{split} \tag{10}

$$

where $r_{i,t}=\pi_{\theta}(y_{i,t}|x,y_{i,<t})/\pi_{\text{old}}(y_{i,t}|x,y_{i,<t})$ . The advantage $\hat{A}_{i}$ is group-normalized on $R(y_{i})$ , stabilizing training by emphasizing relative quality over absolute reward.
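To make the two ingredients of Eq. 10 concrete, the sketch below computes group-normalized advantages and the per-token term inside the sum. It assumes the common GRPO convention of subtracting the group mean and dividing by the group standard deviation; the function names and the stabilizing constant `eps` are illustrative, and the defaults for the clip ratio and KL coefficient are the values reported in Section 5.1.

```python
import math
from statistics import mean, pstdev


def group_normalized_advantages(rewards, eps=1e-6):
    """Advantage A_i for each of the G rollouts of one prompt.

    Assumes mean/std normalization within the group; eps avoids division
    by zero when every rollout receives the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def token_objective(logp_new, logp_old, logp_ref, advantage,
                    clip_eps=0.3, beta=0.05):
    """Per-token term of Eq. 10 from log-probabilities of the sampled token."""
    ratio = math.exp(logp_new - logp_old)             # r_{i,t}
    clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
    surrogate = min(ratio * advantage, clipped * advantage)
    kl_term = logp_new - logp_ref                     # log(pi_theta / pi_ref)
    return surrogate - beta * kl_term
```

Since the advantages are centered within each group, a rollout is reinforced only when it scores above its siblings for the same prompt, regardless of the absolute scale of $R(y)$.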

5 Experiment

5.1 Experimental Setup

Models. We utilize the Qwen2.5-7B and Qwen2.5-14B (Qwen-Team, 2025) as our backbone architectures. These models serve as the initialization point for both the SFT and subsequent Policy Optimization stages.

Training Data. Our training corpus is constructed using three datasets: WebInstruct-Verified (Ma et al., 2025), K&K (Xie et al., 2024), and NuminaMath-TIR (LI et al., 2024). These sources provide a collection of diverse, verifiable reasoning tasks across multiple domains. We employ DeepSeek-R1 (Guo et al., 2025a) for data distillation and difficulty assessment via pass-rate. We utilize GPT-4o (Hurst et al., 2024) as a judge for answer correctness and Claude-Sonnet-4.5 (Anthropic, 2024) to synthesize the interleaved formal logic steps as detailed in Section 4. The RL data is selected based on the pass rate of answers generated by the teacher model DeepSeek-R1, retaining only questions with a pass rate below 50%. The categorical distribution of our curated training data is illustrated in Figure 7.
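The pass-rate filter for the RL subset amounts to a one-line selection rule. The sketch below assumes each question comes with a list of boolean correctness judgments for the teacher's sampled answers; the record layout and the function names are hypothetical, and only the 50% threshold comes from the text.

```python
def pass_rate(correct_flags):
    """Fraction of teacher answers judged correct for one question."""
    return sum(correct_flags) / len(correct_flags)


def select_rl_questions(records, threshold=0.5):
    """Keep question ids whose teacher pass rate is strictly below threshold.

    records: dict mapping question id -> list of bools (one per teacher sample).
    Questions without any sampled answer are skipped.
    """
    return [qid for qid, flags in records.items()
            if flags and pass_rate(flags) < threshold]
```

For example, a question answered correctly in 1 of 4 teacher samples (pass rate 0.25) is retained, while one answered correctly in 2 of 4 (pass rate 0.5) is not.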

Evaluation. We conduct a comprehensive evaluation across three distinct reasoning domains to assess models:

- Logical Reasoning: We employ KOR-Bench (Ma et al., 2024) to evaluate knowledge-grounded logical reasoning across diverse domains and BBH (Suzgun et al., 2023) for challenging tasks requiring multi-step deduction.

- Mathematical Reasoning: We evaluate performance on MATH-500 (Hendrycks et al., 2024) for competition-level mathematics problems and AIME 2024 for Olympiad-level mathematical reasoning challenges.

- General Reasoning: We utilize GPQA-Diamond (Rein et al., 2024) for graduate-level reasoning in subdomains including physics, chemistry, and biology. Additionally, we use TheoremQA (Chen et al., 2023) to assess graduate-level theorem application across mathematics, physics, Electrical Engineering & Computer Science (EE&CS), and Finance, testing the model’s ability to correctly apply and reason with formal theorems.

All evaluations use OpenCompass (Contributors, 2023) with greedy decoding, except AIME24 which reports avg@16 from sampling runs following RLPR (Yu et al., 2025).
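The two aggregate metrics used here can be written down in a few lines. This sketch assumes that avg@k means the accuracy averaged over k sampled answers per problem and then over problems, and that a macro-average is the unweighted mean of per-subfield scores; the function names are illustrative and are not part of OpenCompass.

```python
def avg_at_k(per_problem_samples):
    """avg@k in percent.

    per_problem_samples: list with one entry per problem, each a list of
    k correctness values (0 or 1) for the k sampled answers.
    """
    per_problem = [sum(s) / len(s) for s in per_problem_samples]
    return 100.0 * sum(per_problem) / len(per_problem)


def macro_average(subfield_scores):
    """Unweighted mean over subfields, ignoring subfield sizes."""
    return sum(subfield_scores.values()) / len(subfield_scores)
```

With $k=16$ and the 30 problems of AIME 2024, each sampled answer moves the reported score by $100/(16\cdot 30)\approx 0.2$ points, which is why sampling-based averaging is preferred over a single greedy run on such a small benchmark.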

Baselines. To validate the effectiveness of our framework, we compare our approach against seven categories of models: (i) Base Models: Qwen2.5-7B/14B (Qwen-Team, 2025), establishing the baseline performance to measure the net gain of our training methodology. (ii) SimpleRL-Zoo (Zeng et al., 2025), a comprehensive collection of mathematics-focused RL models. (iii) General-Reasoner (Ma et al., 2025), a suite of general-domain RL models trained using a model-based verifier. (iv) RLPR-7B (Yu et al., 2025), a general-domain RL model trained via a simplified verifier-free framework. (v) SynLogic-7B (Liu et al., 2025b), a specialized model trained to enhance the logical reasoning capabilities of LLMs. (vi) ZeroTIR (Mai et al., 2025), a tool-integrated reasoning model specifically designed to execute Python code for solving mathematical problems. (vii) SimpleTIR (Xue et al., 2025), a multi-turn tool-integrated reasoning model for mathematical reasoning problems.

Implementation Details. We implement our RL training using the verl framework (Sheng et al., 2025a), following ToRL (Li et al., 2025b). SFT Stage: We use a learning rate of $1\times 10^{-5}$ with cosine scheduling and a global batch size of 32. The model is trained for 3 epochs. RL Stage: We employ a learning rate of $5\times 10^{-7}$ . We generate 8 rollouts per prompt with a temperature of 1.0. To prevent policy divergence, we set the KL coefficient to 0.05 and the clip ratio to 0.3. The training utilizes a batch size of 1024 and a context length of 16,384 tokens. Training proceeds for 120 steps on a cluster of 16 NVIDIA H800 GPUs. To manage computational overhead, we limit the formal verification process to a maximum of 4 iterative rounds.

5.2 Main Results

Table 2: We compare the performance of our proposed method (FLV) against natural language baselines across two training stages: SFT and RL (GRPO). Natural-SFT/RL denotes models trained on the same data but without formal logic verification components. FLV-SFT/RL denotes our method incorporating formal logic modules and execution feedback.

| Model | KOR-Bench (Logical) | BBH (Logical) | MATH500 (Mathematical) | AIME24 (Mathematical) | GPQA-D (General) | TheoremQA (General) | AVG |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Base | 13.2 | 41.9 | 60.2 | 6.5 | 29.3 | 29.1 | 30.0 |
| Natural-SFT | 30.4 | 55.9 | 56.6 | 8.5 | 27.3 | 39.1 | 36.3 |
| FLV-SFT (Ours) | 48.0 | 68.5 | 77.2 | 20.0 | 32.3 | 53.0 | 49.8 |
| Natural-RL | 35.7 | 55.4 | 54.4 | 4.8 | 30.3 | 41.2 | 37.0 |
| FLV-RL (Ours) | 51.0 | 70.0 | 78.6 | 20.8 | 35.4 | 55.7 | 51.9 |

Table 1 presents the comprehensive evaluation of our Formal Logic Verification (FLV) approach against standard baselines across Qwen2.5-7B and 14B scales.

Formal logic verification-guided methods outperform traditional natural language-based methods. As shown in the results, our proposed FLV framework demonstrates superior performance compared to standard natural language reasoning approaches. Notably, even our supervised fine-tuning stage (FLV-SFT) surpasses all comparative RL baselines in average score on the 7B scale. On Qwen2.5-7B, FLV-SFT achieves an average score of 49.8, outperforming the strongest natural language baseline (RLPR, 47.0) by 2.8 points. This suggests that integrating formal logic verification during the SFT phase provides a more robust reasoning foundation than standard RL training on natural language chains alone. Furthermore, applying Group Relative Policy Optimization (FLV-RL) yields consistent improvements over the SFT stage. For the 14B model, FLV-RL raises the average score from 55.7 to 58.6, with the largest gains on hard mathematical tasks such as AIME 2024 (+8.3 points) and on theorem application in TheoremQA (+2.9 points). This indicates that our verifier-guided RL refines the policy beyond the supervised baseline.

Formal logic verification unlocks model reasoning potential, achieving SOTA with limited data. Despite utilizing a concise training set (approx. 17k samples total), our approach establishes new state-of-the-art performance among models of similar size, significantly outperforming baselines that typically rely on larger-scale data consumption. (i) On the challenging AIME 2024 benchmark, our FLV-RL-14B model achieves 30.2%, nearly doubling the performance of the General-Reasoner baseline (17.5%) and far exceeding the Base model (3.6%). Similarly, on MATH-500, we achieve 81.4%, surpassing all 14B baselines. (ii) We observe dominant performance on TheoremQA (63.5% on 14B), outperforming the nearest competitor by over 8 points. In logical reasoning (KOR-Bench), our method achieves a 15.7-point improvement over the General-Reasoner on the 14B scale (57.0 vs 41.3). While FLV shows a slight weakness on GPQA-Diamond (likely due to benchmark reliability issues discussed in Appendix G), our method consistently excels in tasks requiring rigorous multi-step deduction and symbolic manipulation, supporting the hypothesis that formal verification serves as a catalyst for deep reasoning capabilities.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: SimpleTIR vs FLV-RL Usage Distribution by Category

### Overview

The chart compares the usage distribution of two methods, **SimpleTIR** (blue) and **FLV-RL** (red), across three categories: **Other**, **Numerical/Scientific**, and **Symbolic/Logic**. Percentages represent the proportion of usage for each method within their respective categories. Arrows at the bottom indicate which method dominates in each category.

### Components/Axes

- **Y-Axis (Categories)**:

- "Other" (top)

- "Numerical/Scientific" (middle)

- "Symbolic/Logic" (bottom)

- **X-Axis (Percentage)**: Ranges from 0% to 80%.

- **Legend**:

- Blue = SimpleTIR

- Red = FLV-RL

- **Title**: "SimpleTIR vs FLV-RL Usage Distribution by Category"

### Detailed Analysis

1. **Other Category**:

- SimpleTIR: 35.7% (blue bar)

- FLV-RL: 17.1% (red bar)

- **Trend**: SimpleTIR usage is twice that of FLV-RL.

2. **Numerical/Scientific Category**:

- SimpleTIR: 21.8% (blue bar)

- FLV-RL: 20.4% (red bar)

- **Trend**: SimpleTIR usage slightly exceeds FLV-RL by 1.4%.

3. **Symbolic/Logic Category**:

- SimpleTIR: 42.5% (blue bar)

- FLV-RL: 62.5% (red bar)

- **Trend**: FLV-RL usage dominates by 20 percentage points.

### Key Observations

- **Symbolic/Logic Dominance**: FLV-RL uses significantly more in this category (62.5%) compared to SimpleTIR (42.5%).

- **Usage in Other Categories**: SimpleTIR leads in "Other" and "Numerical/Scientific." The margin is narrow for "Numerical/Scientific" (1.4 percentage points) but wide for "Other" (18.6 percentage points).

- **Proportional Distribution**: Both methods' percentages sum to 100% across categories, indicating normalized usage distributions.

### Interpretation

The data suggests **FLV-RL is specialized for Symbolic/Logic tasks**, allocating over 60% of its usage there, while **SimpleTIR is more generalized**, with balanced but slightly higher usage in "Other" and "Numerical/Scientific" categories. The stark contrast in Symbolic/Logic usage implies FLV-RL may be optimized for logic-based workflows, whereas SimpleTIR serves broader applications. The narrow margins in non-symbolic categories highlight potential overlap in functionality, but FLV-RL’s specialization gives it a clear advantage in logic-heavy scenarios.

</details>

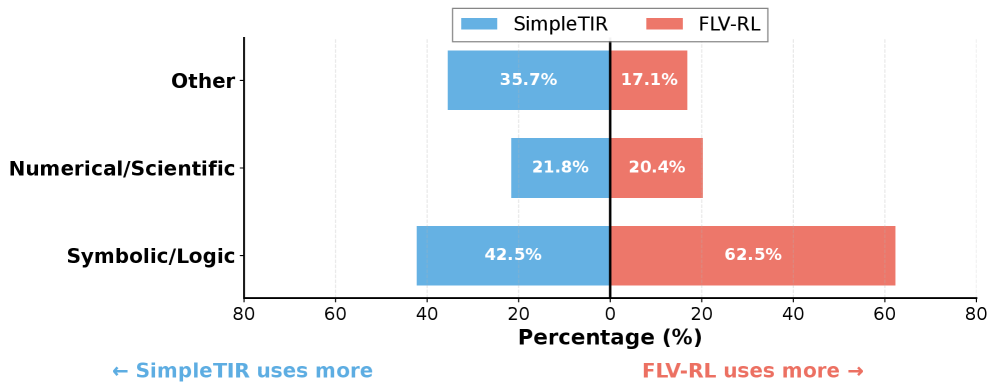

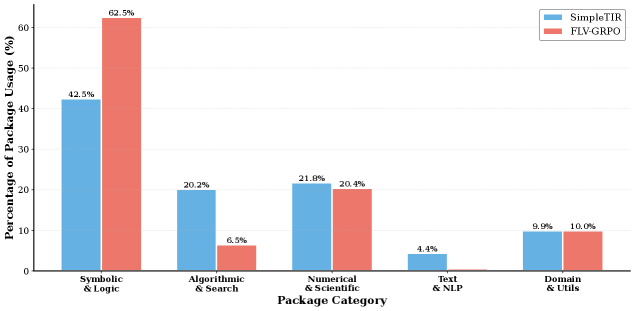

Figure 4: Distribution of Python package types invoked by SimpleTIR (blue) vs. FLV-RL (red) across three categories.

Formal verification instills a shift from calculation to symbolic reasoning, enabling superior generalization. While tool-integrated baselines like SimpleTIR primarily utilize tools as “solvers” for direct computation (achieving 41.0 on AIME24), this paradigm struggles with tasks requiring rigorous logical deduction. In contrast, our FLV framework employs formal methods as a “verifier” to enforce logical consistency. This approach yields stronger performance on logic-heavy benchmarks such as KOR-Bench (51.0 vs. 37.0 for SimpleTIR) and GPQA-Diamond (35.4 vs. 28.8 for ZeroTIR). To understand the mechanism behind this reliability, we analyze the distribution of invoked Python packages in Figure 4. The data reveals a distinct behavioral shift: whereas SimpleTIR relies heavily on generic utility packages (Other, 35.7% of its calls vs. 17.1% for FLV-RL), FLV-RL shifts its usage toward Symbolic/Logic libraries. These formal tools constitute 62.5% of FLV-RL’s calls, 20 percentage points more than for SimpleTIR (42.5%). Meanwhile, the usage of Numerical/Scientific libraries remains stable ( $\sim$ 21%), indicating that our method’s gains are driven specifically by the adoption of symbolic logic engines to verify reasoning processes, rather than merely computing numerical answers. See Appendix 9 for the package categorization principles.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Box Plot: Token Length Distribution Across Models

### Overview

The image is a comparative box plot visualizing the distribution of token lengths generated by three language models: **General-Reasoner**, **SimpleTIR**, and **FLV-RL**. The y-axis represents token length (0–20,000), while the x-axis categorizes the models. Each box plot includes a median line, quartile boundaries, and whiskers extending to minimum/maximum values. Numerical annotations highlight key statistics.

---

### Components/Axes

- **Y-Axis**: "Token length" (linear scale, 0–20,000).

- **X-Axis**: Three categories:

- **General-Reasoner** (green box)

- **SimpleTIR** (blue box)

- **FLV-RL** (red box)

- **Legend**: Implicit color coding (no explicit legend box). Colors correspond to model names.

- **Annotations**:

- **Median values** (bold black lines within boxes):

- General-Reasoner: **933**

- SimpleTIR: **4,352**

- FLV-RL: **6,180**

- **Maximum values** (above whiskers):

- General-Reasoner: **1,344**

- SimpleTIR: **6,985**

- FLV-RL: **9,862**

- **Minimum values** (below whiskers):

- General-Reasoner: **562**

- SimpleTIR: **2,828**

- FLV-RL: **3,478**

---

### Detailed Analysis

1. **General-Reasoner** (Green):

- Narrowest distribution (IQR: ~382 tokens).

- Median token length is the lowest (**933**), with a maximum of **1,344** and minimum of **562**.

- Symmetrical distribution with no extreme outliers.

2. **SimpleTIR** (Blue):

- Wider distribution (IQR: ~4,127 tokens).

- Median (**4,352**) is ~4.7x higher than General-Reasoner.

- Maximum (**6,985**) and minimum (**2,828**) suggest variability in output complexity.

3. **FLV-RL** (Red):

- Broadest distribution (IQR: ~5,382 tokens).

- Highest median (**6,180**), ~6.6x higher than General-Reasoner.

- Extreme maximum (**9,862**) and moderate minimum (**3,478**), indicating potential for outlier token lengths.

---

### Key Observations

- **Token Length Trends**:

- FLV-RL consistently generates longer token sequences than the other models.

- SimpleTIR shows intermediate complexity, with token lengths ~4.7x higher than General-Reasoner.

- **Outliers**:

- FLV-RL’s maximum (**9,862**) is about 7.3x General-Reasoner’s maximum (**1,344**), suggesting potential inefficiencies or task-specific variability.

- **Distribution Shape**:

- General-Reasoner’s box is tightly clustered, while FLV-RL’s box is elongated, reflecting greater variability in output length.

---

### Interpretation

The data suggests that **FLV-RL** prioritizes longer, potentially more detailed responses compared to **General-Reasoner** and **SimpleTIR**. The significant difference in median token lengths (933 vs. 6,180) may indicate architectural differences (e.g., FLV-RL’s reliance on chain-of-thought reasoning) or task-specific optimizations. The high maximum token length for FLV-RL raises questions about computational efficiency or the need for post-processing to trim excessive outputs.

The **General-Reasoner**’s compact distribution implies a focus on concise, precise outputs, which could be advantageous for latency-sensitive applications. However, its lower median token length might limit its ability to handle complex reasoning tasks requiring extended context.

The **SimpleTIR** model balances token length and variability, suggesting a middle-ground approach between brevity and depth. Its wider IQR highlights potential inconsistencies in output quality or task adaptability.

---

### Spatial Grounding & Color Verification

- **General-Reasoner** (green): Leftmost box, median **933**, max **1,344**, min **562**.

- **SimpleTIR** (blue): Center box, median **4,352**, max **6,985**, min **2,828**.

- **FLV-RL** (red): Rightmost box, median **6,180**, max **9,862**, min **3,478**.

All colors align with model labels, confirming accurate legend association.

---

### Final Notes

This visualization underscores trade-offs between token efficiency and response depth across models. FLV-RL’s performance may suit tasks requiring detailed explanations, while General-Reasoner excels in brevity. Further analysis of task-specific metrics (e.g., accuracy, latency) would clarify practical implications.

</details>

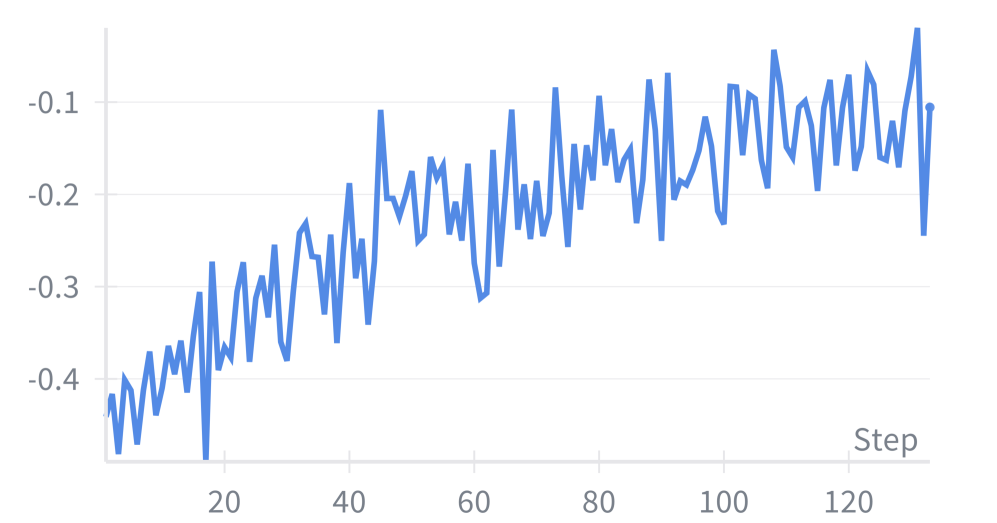

Figure 5: Token length distribution comparison across General-Reasoner, SimpleTIR, and FLV-RL. The box plots illustrate the median token usage (center line) and interquartile ranges.

Efficiency Analysis. We analyze the computational cost of our approach by comparing the token length distributions of the natural language baseline (General-Reasoner), tool-integrated reasoning (SimpleTIR), and our FLV-RL method (Figure 5). FLV-RL produces the longest responses, with a median of 6,180 tokens versus 4,352 for SimpleTIR and 933 for General-Reasoner. We argue that this overhead is justified by the performance gains observed across diverse domains: the additional tokens are spent on formal verification, which we regard as a necessary trade-off for stronger generalization and logical soundness in high-stakes reasoning tasks.

5.3 Ablation Studies

To evaluate the individual contributions of our proposed components, we conducted an ablation study examining two critical dimensions: the impact of FLV versus pure natural language reasoning, and the effectiveness of different training paradigms. Results are presented in Table 2.

Impact of formal logic verification. Comparing FLV-based models against natural language baselines trained on identical data reveals substantial improvements. FLV-SFT achieves 49.8% average accuracy versus 36.3% for Natural-SFT, with particularly strong gains on logic-intensive tasks (KOR-Bench: +17.6 points, TheoremQA: +13.9 points). This indicates that formal proofs and execution validation improve reasoning by grounding outputs in verifiable logic rather than probabilistic patterns.

Impact of multi-stage training. Supervised fine-tuning establishes a strong foundation, improving the average from 30.0% (Base) to 49.8% (FLV-SFT). Policy optimization yields a further gain to 51.9% (FLV-RL). Notably, natural language baselines barely improve with RL (37.0% vs 36.3%), while FLV-RL improves on FLV-SFT across all six benchmarks, suggesting that formal verification provides more stable and reliable reward signals for policy optimization.
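The ablation figures can be re-derived directly from the rows of Table 2. The short script below is only a bookkeeping aid: it recomputes each model's six-benchmark average and the per-benchmark difference between any two rows. The dictionary and helper names are illustrative.

```python
BENCHMARKS = ["KOR-Bench", "BBH", "MATH500", "AIME24", "GPQA-D", "TheoremQA"]

# Scores copied from Table 2, in the order of BENCHMARKS.
TABLE2 = {
    "Base":        [13.2, 41.9, 60.2, 6.5, 29.3, 29.1],
    "Natural-SFT": [30.4, 55.9, 56.6, 8.5, 27.3, 39.1],
    "FLV-SFT":     [48.0, 68.5, 77.2, 20.0, 32.3, 53.0],
    "Natural-RL":  [35.7, 55.4, 54.4, 4.8, 30.3, 41.2],
    "FLV-RL":      [51.0, 70.0, 78.6, 20.8, 35.4, 55.7],
}


def average(model):
    """Unweighted mean over the six benchmarks, rounded to one decimal."""
    scores = TABLE2[model]
    return round(sum(scores) / len(scores), 1)


def gains(model, reference):
    """Per-benchmark difference (in points) of model over reference."""
    return {
        bench: round(a - b, 1)
        for bench, a, b in zip(BENCHMARKS, TABLE2[model], TABLE2[reference])
    }


if __name__ == "__main__":
    for name in TABLE2:
        print(f"{name:12s} AVG = {average(name)}")
    print("FLV-SFT vs Natural-SFT:", gains("FLV-SFT", "Natural-SFT"))
    print("FLV-RL  vs FLV-SFT:    ", gains("FLV-RL", "FLV-SFT"))
```

Reading the differences per benchmark, rather than only through the average, shows where formal verification matters most and where RL adds little on top of SFT.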

5.4 Verification Paradigm: Balancing Formalism and Computational Fluency

Our initial data construction enforced explicit verification outputs (e.g., proved / disproved) after each logical module. However, this rigid format introduced two critical issues: (i) formal language redundancy, and (ii) suppression of direct calculation. When computational verification was needed, models would bypass direct arithmetic in favor of indirect validation via z3-solver (e.g., asserting A + B == C is proved rather than computing the sum), significantly degrading mathematical performance. To address this, we adopted a flexible verification strategy that decouples calculation from validation: (i) Calculation as inference: Models invoke numerical tools directly during reasoning without mandatory verification keywords. (ii) Logic as validation: Formal verification serves as post-hoc validation rather than a per-step constraint. Figure 6 compares performance across logic, general, and math subsets under both paradigms. The flexible approach substantially improves math scores while preserving logical reasoning capability. Representative cases are detailed in Appendix E.
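The difference between the two paradigms can be illustrated with a toy example. The code below is a stand-in written in plain Python with exact rational arithmetic; it does not call z3-solver or reproduce our data format, and all function names are hypothetical. Under the enforced style the model must first state a candidate result and then have it judged, whereas under the flexible style it computes the result directly and validates only the final answer.

```python
from fractions import Fraction


def check_claim(a, b, c):
    """Enforced style: judge a pre-stated claim a + b == c."""
    return "proved" if Fraction(a) + Fraction(b) == Fraction(c) else "disproved"


def compute_sum(a, b):
    """Flexible style, calculation as inference: just compute the value."""
    return Fraction(a) + Fraction(b)


def validate_final(terms, final_answer):
    """Flexible style, logic as validation: one check on the final answer."""
    total = sum((Fraction(t) for t in terms), Fraction(0))
    return "proved" if total == Fraction(final_answer) else "disproved"


if __name__ == "__main__":
    # Enforced: a wrong guess for the intermediate value is only rejected,
    # and the model must guess again without ever computing the sum.
    print(check_claim("1/2", "1/3", "4/5"))   # guessed value
    # Flexible: compute intermediate values directly, validate once at the end.
    running = compute_sum(compute_sum("1/2", "1/3"), "1/6")
    print(running, validate_final(["1/2", "1/3", "1/6"], running))
```

The enforced variant turns every arithmetic step into a guess-and-check loop, which matches the degradation on mathematical benchmarks described above; the flexible variant reserves verification for the point where it adds information.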

<details>

<summary>x6.png Details</summary>

### Visual Description

## Combined Diagram and Bar Chart: Verification Paradigms and Performance Gains

### Overview

The image contains two primary components:

1. **(a) Verification Paradigms**: A comparative diagram illustrating two verification workflows ("Enforced" and "Flexible") with labeled steps and verification points.

2. **(b) Performance Gains**: A grouped bar chart comparing accuracy (%) between "Enforced" and "Flexible" paradigms across three tasks: MATH500, BBH, and GPQA-D.

---

### Components/Axes

#### (a) Verification Paradigms

- **Structure**:

- **Enforced**:

- Step1 (gray box) → Verify (red box with lock icon) → Step2 (gray box) → Verify (red box with lock icon).

- **Flexible**:

- Step1 (gray box) → calculation (green box) → Step2 (gray box) → Verify (green box).

- **Colors**:

- Enforced: Blue background with red-highlighted "Verify" steps.

- Flexible: Light blue background with green-highlighted "Verify" step.

- **Text**:

- Labels: "Enforced", "Flexible", "Step1", "Step2", "Verify", "calculation".

- Icons: Lock symbols in red "Verify" steps (Enforced) and green "Verify" step (Flexible).

#### (b) Performance Gains

- **Axes**:

- **Y-axis**: Accuracy (%) from 0 to 80 (linear scale).

- **X-axis**: Tasks labeled "MATH500", "BBH", "GPQA-D".

- **Bars**:

- **Enforced**: Blue bars (left in each group).

- **Flexible (Ours)**: Red bars (right in each group).

- **Legend**:

- Located in the top-right corner of the chart.

- Blue = Enforced, Red = Flexible (Ours).

---

### Detailed Analysis

#### (a) Verification Paradigms

- **Enforced Workflow**:

- Two reasoning steps (Step1 and Step2), each followed by a mandatory "Verify" check (red boxes with locks).

- **Flexible Workflow**:

- Inserts a "calculation" phase (green box) between Step1 and Step2, followed by a single "Verify" step (green box) at the end.

- **Spatial Notes**:

- Enforced is positioned above Flexible, separated by a dashed line.

- "Verify" steps are visually emphasized via color (red/green) and lock icons.

#### (b) Performance Gains

- **Data Points**:

- **MATH500**:

- Enforced: 60.0%

- Flexible: 71.0%

- **BBH**:

- Enforced: 51.3%

- Flexible: 61.0%

- **GPQA-D**:

- Enforced: 29.8%

- Flexible: 31.3%

- **Trends**:

- Flexible paradigm consistently outperforms Enforced across all tasks.

- Largest gain in MATH500 (+11.0 points), followed by BBH (+9.7 points), and minimal gain in GPQA-D (+1.5 points).

---

### Key Observations

1. **Performance Gains**:

- Flexible paradigm improves accuracy by **11.0 points (MATH500)**, **9.7 points (BBH)**, and **1.5 points (GPQA-D)** compared to Enforced.

2. **Verification Step Impact**:

- Enforced requires a verification after every step, while Flexible allows a direct calculation phase between steps and uses a single verification at the end.

3. **Task-Specific Variability**:

- GPQA-D shows the smallest gain, suggesting task-dependent effectiveness of the Flexible approach.

---

### Interpretation

- **Paradigm Effectiveness**:

The Flexible paradigm’s higher accuracy suggests that reducing rigid verification steps (e.g., allowing direct calculation without a mandatory check after each step) improves performance. This may indicate that overly strict verification introduces unnecessary constraints.

- **Task Dependency**:

The minimal gain in GPQA-D implies that the benefits of flexibility are more pronounced in tasks like MATH500 and BBH, which may involve more structured or calculative reasoning.

- **Design Implications**:

The diagram highlights a trade-off between verification rigor and efficiency. The Flexible approach’s success suggests that adaptive verification (e.g., calculation-phase validation) could be prioritized in workflows without compromising accuracy.

---

**Note**: All values and trends are extracted directly from the chart and diagram labels. Colors and spatial relationships were cross-verified with the legend and positional cues.

</details>

Figure 6: Enforced vs. Flexible Verification Paradigms. (a) Enforced verification imposes rigid checkpoints throughout the reasoning process, while flexible verification enables adaptive utilization of logic verification. (b) Performance gains after switching to flexible reasoning across three representative benchmarks.

6 Conclusion

In this work, we addressed the fundamental tension between probabilistic language generation and logical consistency in LLM reasoning by introducing a framework that dynamically integrates formal logic verification into the reasoning process. Through our two-stage training methodology combining FLV-SFT’s rigorous data synthesis pipeline with formal logic verification-guided policy optimization, we demonstrated that real-time symbolic feedback can effectively mitigate logical fallacies that plague standard Chain-of-Thought approaches. Empirical evaluation across six diverse benchmarks validates our approach, with our 7B and 14B models achieving average improvements of 10.4% and 14.2% respectively over SOTA baselines, while providing interpretable step-level correctness guarantees. Beyond performance gains, our framework establishes a principled foundation for trustworthy reasoning systems by bridging neural fluency with symbolic rigor, thereby enabling more robust logical inference. This opens pathways toward more reliable AI that scales effectively to complex real-world problems across domains requiring strict logical soundness.

Impact Statement

This paper presents work whose goal is to advance the field of Machine Learning. There are many potential societal consequences of our work, none of which we feel must be specifically highlighted here.

References

- J. Ahn, R. Verma, R. Lou, D. Liu, R. Zhang, and W. Yin (2024) Large language models for mathematical reasoning: progresses and challenges. arXiv preprint arXiv:2402.00157. Cited by: §1.

- Anthropic (2024) Claude 3.5 sonnet model card addendum. Claude-3.5 Model Card. Cited by: §5.1.

- B. Baker, J. Huizinga, L. Gao, Z. Dou, M. Y. Guan, A. Madry, W. Zaremba, J. Pachocki, and D. Farhi (2025) Monitoring reasoning models for misbehavior and the risks of promoting obfuscation. arXiv preprint arXiv:2503.11926. Cited by: §1.

- C. Cao, M. Li, J. Dai, J. Yang, Z. Zhao, S. Zhang, W. Shi, C. Liu, S. Han, and Y. Guo (2025a) Towards advanced mathematical reasoning for llms via first-order logic theorem proving. arXiv preprint arXiv:2506.17104. Cited by: §2.2.

- C. Cao, H. Zhu, J. Ji, Q. Sun, Z. Zhu, Y. Wu, J. Dai, Y. Yang, S. Han, and Y. Guo (2025b) SafeLawBench: towards safe alignment of large language models. arXiv preprint arXiv:2506.06636. Cited by: §1.

- J. Chen, Q. He, S. Yuan, A. Chen, Z. Cai, W. Dai, H. Yu, Q. Yu, X. Li, J. Chen, et al. (2025a) Enigmata: scaling logical reasoning in large language models with synthetic verifiable puzzles. arXiv preprint arXiv:2505.19914. Cited by: §1.

- W. Chen, M. Yin, M. Ku, P. Lu, Y. Wan, X. Ma, J. Xu, X. Wang, and T. Xia (2023) Theoremqa: a theorem-driven question answering dataset. arXiv preprint arXiv:2305.12524. Cited by: 3rd item.

- Y. Chen, J. Benton, A. Radhakrishnan, J. Uesato, C. Denison, J. Schulman, A. Somani, P. Hase, M. Wagner, F. Roger, et al. (2025b) Reasoning models don’t always say what they think. arXiv preprint arXiv:2505.05410. Cited by: §1.

- Z. Chen, J. Yang, T. Xiao, R. Zhou, L. Zhang, X. Xi, X. Shi, W. Wang, and J. Wang (2025c) Reinforcement learning for tool-integrated interleaved thinking towards cross-domain generalization. arXiv preprint arXiv:2510.11184. Cited by: §2.1.

- K. Cobbe, V. Kosaraju, M. Bavarian, M. Chen, H. Jun, L. Kaiser, M. Plappert, J. Tworek, J. Hilton, R. Nakano, et al. (2021) Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168. Cited by: §2.1.

- OpenCompass Contributors (2023) OpenCompass: a universal evaluation platform for foundation models. Note: https://github.com/open-compass/opencompass Cited by: §5.1.

- J. Feng, S. Huang, X. Qu, G. Zhang, Y. Qin, B. Zhong, C. Jiang, J. Chi, and W. Zhong (2025a) Retool: reinforcement learning for strategic tool use in llms. arXiv preprint arXiv:2504.11536. Cited by: §2.1, §2.2.

- Y. Feng, N. Weir, K. Bostrom, S. Bayless, D. Cassel, S. Chaudhary, B. Kiesl-Reiter, and H. Rangwala (2025b) VeriCoT: neuro-symbolic chain-of-thought validation via logical consistency checks. arXiv preprint arXiv:2511.04662. Cited by: §1, §2.2.

- D. Guo, D. Yang, H. Zhang, J. Song, R. Zhang, R. Xu, Q. Zhu, S. Ma, P. Wang, X. Bi, et al. (2025a) Deepseek-r1: incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948. Cited by: §5.1.

- J. Guo, Z. Chi, L. Dong, Q. Dong, X. Wu, S. Huang, and F. Wei (2025b) Reward reasoning model. arXiv preprint arXiv:2505.14674. Cited by: §1.

- D. Hendrycks, C. Burns, S. Kadavath, A. Arora, S. Basart, E. Tang, D. Song, and J. Steinhardt (2021) Measuring mathematical problem solving with the MATH dataset. In Thirty-fifth Conference on Neural Information Processing Systems Datasets and Benchmarks Track (Round 2), Cited by: §2.1.

- D. Hendrycks, C. Burns, S. Kadavath, A. Arora, S. Basart, E. Tang, D. Song, and J. Steinhardt (2024) Measuring mathematical problem solving with the MATH dataset. arXiv preprint arXiv:2103.03874. Cited by: 2nd item.

- J. Hu, J. Zhang, Y. Zhao, and T. Ringer (2025) HybridProver: augmenting theorem proving with llm-driven proof synthesis and refinement. arXiv preprint arXiv:2505.15740. Cited by: §2.2.

- A. Hurst, A. Lerer, A. P. Goucher, A. Perelman, A. Ramesh, A. Clark, A. Ostrow, A. Welihinda, A. Hayes, A. Radford, et al. (2024) Gpt-4o system card. arXiv preprint arXiv:2410.21276. Cited by: §5.1.

- Y. Ji, X. Tian, S. Zhao, H. Wang, S. Chen, Y. Peng, H. Zhao, and X. Li (2025) AM-thinking-v1: advancing the frontier of reasoning at 32b scale. arXiv preprint arXiv:2505.08311. Cited by: §1.

- R. Kamoi, Y. Zhang, N. Zhang, S. S. S. Das, and R. Zhang (2025) Training step-level reasoning verifiers with formal verification tools. arXiv preprint arXiv:2505.15960. Cited by: §1, §2.2.

- M. Khalifa, R. Agarwal, L. Logeswaran, J. Kim, H. Peng, M. Lee, H. Lee, and L. Wang (2025) Process reward models that think. arXiv preprint arXiv:2504.16828. Cited by: §2.1.

- H. Le, Y. Wang, A. D. Gotmare, S. Savarese, and S. C. H. Hoi (2022) Coderl: mastering code generation through pretrained models and deep reinforcement learning. Advances in Neural Information Processing Systems 35, pp. 21314–21328. Cited by: §2.1.

- J. O. J. Leang, G. Hong, W. Li, and S. B. Cohen (2025) Theorem prover as a judge for synthetic data generation. arXiv preprint arXiv:2502.13137. Cited by: §1.

- C. Li, Z. Tang, Z. Li, M. Xue, K. Bao, T. Ding, R. Sun, B. Wang, X. Wang, J. Lin, et al. (2025a) CoRT: code-integrated reasoning within thinking. arXiv preprint arXiv:2506.09820. Cited by: §2.2.

- J. Li, E. Beeching, L. Tunstall, B. Lipkin, R. Soletskyi, S. C. Huang, K. Rasul, L. Yu, A. Jiang, Z. Shen, Z. Qin, B. Dong, L. Zhou, Y. Fleureau, G. Lample, and S. Polu (2024) NuminaMath. Numina. Note: [https://huggingface.co/AI-MO/NuminaMath-CoT](https://github.com/project-numina/aimo-progress-prize/blob/main/report/numina_dataset.pdf) Cited by: §2.1, §5.1.

- J. Li and H. T. Ng (2025) The hallucination dilemma: factuality-aware reinforcement learning for large reasoning models. arXiv preprint arXiv:2505.24630. Cited by: §1, §2.2.

- X. Li, H. Zou, and P. Liu (2025b) ToRL: scaling tool-integrated rl. arXiv preprint arXiv:2503.23383. Cited by: §2.2, §5.1.

- H. Lightman, V. Kosaraju, Y. Burda, H. Edwards, B. Baker, T. Lee, J. Leike, J. Schulman, I. Sutskever, and K. Cobbe (2023) Let’s verify step by step. In The Twelfth International Conference on Learning Representations, Cited by: §2.1, §2.2.

- C. Liu, Y. Yuan, Y. Yin, Y. Xu, X. Xu, Z. Chen, Y. Wang, L. Shang, Q. Liu, and M. Zhang (2025a) Safe: enhancing mathematical reasoning in large language models via retrospective step-aware formal verification. arXiv preprint arXiv:2506.04592. Cited by: §1, §2.2.

- J. Liu, Y. Fan, Z. Jiang, H. Ding, Y. Hu, C. Zhang, Y. Shi, S. Weng, A. Chen, S. Chen, et al. (2025b) SynLogic: synthesizing verifiable reasoning data at scale for learning logical reasoning and beyond. arXiv preprint arXiv:2505.19641. Cited by: §1, §5.1.

- S. Liu, H. Liu, J. Liu, L. Xiao, S. Gao, C. Lyu, Y. Gu, W. Zhang, D. F. Wong, S. Zhang, et al. (2025c) Compassverifier: a unified and robust verifier for llms evaluation and outcome reward. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, pp. 33454–33482. Cited by: §1.

- K. Ma, X. Du, Y. Wang, H. Zhang, Z. Wen, X. Qu, J. Yang, J. Liu, M. Liu, X. Yue, W. Huang, and G. Zhang (2024) KOR-bench: benchmarking language models on knowledge-orthogonal reasoning tasks. arXiv preprint arXiv:2410.06526. Cited by: 1st item.

- X. Ma, Q. Liu, D. Jiang, G. Zhang, Z. Ma, and W. Chen (2025) General-reasoner: advancing llm reasoning across all domains. arXiv preprint arXiv:2505.14652. Cited by: Appendix C, §1, §2.1, §5.1, §5.1.

- X. Mai, H. Xu, W. Wang, Y. Zhang, W. Zhang, et al. (2025) Agentic rl scaling law: spontaneous code execution for mathematical problem solving. In The Thirty-ninth Annual Conference on Neural Information Processing Systems, Cited by: §5.1.

- A. Ospanov, Z. Feng, J. Sun, H. Bai, X. Shen, and F. Farnia (2025) HERMES: towards efficient and verifiable mathematical reasoning in llms. arXiv preprint arXiv:2511.18760. Cited by: §1, §2.2.

- Qwen-Team (2025) Qwen2.5 technical report. arXiv preprint arXiv:2412.15115. Cited by: §5.1, §5.1.

- D. Rein, B. L. Hou, A. C. Stickland, J. Petty, R. Y. Pang, J. Dirani, J. Michael, and S. R. Bowman (2024) Gpqa: a graduate-level google-proof q&a benchmark. In First Conference on Language Modeling, Cited by: 3rd item.

- Z. Ren, Z. Shao, J. Song, H. Xin, H. Wang, W. Zhao, L. Zhang, Z. Fu, Q. Zhu, D. Yang, et al. (2025) Deepseek-prover-v2: advancing formal mathematical reasoning via reinforcement learning for subgoal decomposition. arXiv preprint arXiv:2504.21801. Cited by: §2.2.

- S. She, J. Liu, Y. Liu, J. Chen, X. Huang, and S. Huang (2025) R-prm: reasoning-driven process reward modeling. arXiv preprint arXiv:2503.21295. Cited by: §2.1.

- G. Sheng, C. Zhang, Z. Ye, X. Wu, W. Zhang, R. Zhang, Y. Peng, H. Lin, and C. Wu (2025a) Hybridflow: a flexible and efficient rlhf framework. In Proceedings of the Twentieth European Conference on Computer Systems, pp. 1279–1297. Cited by: §5.1.

- J. Sheng, L. Lyu, J. Jin, T. Xia, A. Gu, J. Zou, and P. Lu (2025b) Solving inequality proofs with large language models. arXiv preprint arXiv:2506.07927. Cited by: §1, §1.

- J. Skalse, N. Howe, D. Krasheninnikov, and D. Krueger (2022) Defining and characterizing reward gaming. Advances in Neural Information Processing Systems 35, pp. 9460–9471. Cited by: §1, §2.1.

- M. Suzgun, N. Scales, N. Schärli, S. Gehrmann, Y. Tay, H. W. Chung, A. Chowdhery, Q. Le, E. Chi, D. Zhou, et al. (2023) Challenging big-bench tasks and whether chain-of-thought can solve them. In Findings of the Association for Computational Linguistics: ACL 2023, pp. 13003–13051. Cited by: 1st item.

- Y. Tian, R. Huang, X. Wang, J. Ma, Z. Huang, Z. Luo, H. Lin, D. Zheng, and L. Du (2025) EvolProver: advancing automated theorem proving by evolving formalized problems via symmetry and difficulty. arXiv preprint arXiv:2510.00732. Cited by: §2.2.

- J. Uesato, N. Kushman, R. Kumar, F. Song, N. Siegel, L. Wang, A. Creswell, G. Irving, and I. Higgins (2022) Solving math word problems with process-and outcome-based feedback. arXiv preprint arXiv:2211.14275. Cited by: §2.1, §2.2.

- H. Wang, M. Unsal, X. Lin, M. Baksys, J. Liu, M. D. Santos, F. Sung, M. Vinyes, Z. Ying, Z. Zhu, et al. (2025) Kimina-prover preview: towards large formal reasoning models with reinforcement learning. arXiv preprint arXiv:2504.11354. Cited by: §2.2.

- Y. Wang, S. Mishra, P. Alipoormolabashi, Y. Kordi, A. Mirzaei, A. Arunkumar, A. Ashok, A. S. Dhanasekaran, A. Naik, D. Stap, et al. (2022) Super-naturalinstructions: generalization via declarative instructions on 1600+ nlp tasks. arXiv preprint arXiv:2204.07705. Cited by: §2.1.

- J. Wei, X. Wang, D. Schuurmans, M. Bosma, F. Xia, E. Chi, Q. V. Le, D. Zhou, et al. (2022) Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems 35, pp. 24824–24837. Cited by: §2.1.

- C. Xie, Y. Huang, C. Zhang, D. Yu, X. Chen, B. Y. Lin, B. Li, B. Ghazi, and R. Kumar (2024) On memorization of large language models in logical reasoning. arXiv preprint arXiv:2410.23123. Cited by: §5.1.

- Z. Xu, Y. Liu, Y. Yin, M. Zhou, and R. Poovendran (2025) Kodcode: a diverse, challenging, and verifiable synthetic dataset for coding. arXiv preprint arXiv:2503.02951. Cited by: §2.1.

- Z. Xue, L. Zheng, Q. Liu, Y. Li, X. Zheng, Z. Ma, and B. An (2025) Simpletir: end-to-end reinforcement learning for multi-turn tool-integrated reasoning. arXiv preprint arXiv:2509.02479. Cited by: §2.2, §5.1.

- K. Yang, A. Swope, A. Gu, R. Chalamala, P. Song, S. Yu, S. Godil, R. J. Prenger, and A. Anandkumar (2023) Leandojo: theorem proving with retrieval-augmented language models. Advances in Neural Information Processing Systems 36, pp. 21573–21612. Cited by: §2.2.

- T. Yu, B. Ji, S. Wang, S. Yao, Z. Wang, G. Cui, L. Yuan, N. Ding, Y. Yao, Z. Liu, et al. (2025) RLPR: extrapolating rlvr to general domains without verifiers. arXiv preprint arXiv:2506.18254. Cited by: §5.1, §5.1.

- S. Zeng (2026) External Links: Link Cited by: Appendix G.

- W. Zeng, Y. Huang, Q. Liu, W. Liu, K. He, Z. Ma, and J. He (2025) Simplerl-zoo: investigating and taming zero reinforcement learning for open base models in the wild. arXiv preprint arXiv:2503.18892. Cited by: §2.1, §2.2, §5.1.

- L. Zheng, W. Chiang, Y. Sheng, S. Zhuang, Z. Wu, Y. Zhuang, Z. Lin, Z. Li, D. Li, E. Xing, et al. (2023) Judging llm-as-a-judge with mt-bench and chatbot arena. Advances in neural information processing systems 36, pp. 46595–46623. Cited by: §2.1.

- K. Zhou, C. Liu, X. Zhao, S. Jangam, J. Srinivasa, G. Liu, D. Song, and X. E. Wang (2025) The hidden risks of large reasoning models: a safety assessment of r1. arXiv preprint arXiv:2502.12659. Cited by: §1.

- Y. Zhou, C. Cao, J. Yang, L. Wu, C. He, S. Han, and Y. Guo (2026) LRAS: advanced legal reasoning with agentic search. arXiv preprint arXiv:2601.07296. Cited by: §2.1.

Limitations

Despite significant improvements in logical reasoning capabilities, our framework has two primary limitations. First, integrating real-time formal verification introduces computational overhead, increasing RL training time by approximately 2× compared to standard baselines. We consider this cost acceptable given the performance gains (average improvements of 10.4% and 14.2% for the 7B and 14B models, respectively) and the superior data efficiency: we achieve comparable results using only a fraction of the training data required by existing methods, so reduced data collection costs offset the increased training time. Second, our data synthesis pipeline faces formalization challenges when translating natural language into verifiable formal representations. While conversion success rates are high in structured domains such as mathematics and logic, ambiguous or commonsense-heavy descriptions may produce mapping errors that generate incorrect verification feedback. This limits generalizability to open-ended reasoning tasks and highlights the need for more robust auto-formalization techniques.

Appendix A Reward Calculation Pseudocode

Table 3: Hierarchical reward function for formal logic verification-guided policy optimization

| Input: Output $y$, ground-truth answer $a^{*}$, predicted answer $\hat{a}$ |
| --- |
| Output: Total reward $R(y)$ |
| Hyperparameters: |
| $W=3$ (correctness weight) |
| $\gamma_{\text{struct}}=3.0$, $\beta_{\text{struct}}=1.0$ (severity penalties) |
| $\alpha=1.0$ (base structural score) |
| $\lambda_{\text{tag}}=0.005$, $\tau_{\text{tag}}=200$ (tag penalty coefficients) |
| $\lambda_{\text{call}}=0.5$, $N_{\text{max}}=3$ (tool call limits) |
| $\lambda_{\text{len}}=0.04$, $\delta_{\text{max}}=10$ (length penalty) |
| Step 1: Check Fatal Errors ($\mathbb{C}_{\text{fatal}}$) |
| if token-level repetition detected or |
| execution timeout or |
| tool calls $>2\times N_{\text{max}}$ or |
| multiple termination tags then |
| return $R(y)=-\gamma_{\text{struct}}-W=-8.0$ |
| Step 2: Check Invalid Format ($\mathbb{C}_{\text{invalid}}\setminus\mathbb{C}_{\text{fatal}}$) |
| if solution extraction fails or |
| solution length $>512$ tokens or |
| missing closing tag or |
| $N_{\text{max}}<$ tool calls $\leq 2\times N_{\text{max}}$ then |
| return $R(y)=-\beta_{\text{struct}}-W=-6.0$ |
| Step 3: Compute Structural Reward $R_{\text{struct}}(y)$ |
| $N_{\text{undef}}=$ count of undefined tags |
| $N_{\text{call}}=$ count of tool invocations |
| $R_{\text{struct}}(y)=\alpha-\lambda_{\text{tag}}\cdot\min(N_{\text{undef}},\tau_{\text{tag}})-\lambda_{\text{call}}\cdot\max(N_{\text{call}}-N_{\text{max}},0)$ |
| Step 4: Compute Correctness Reward $R_{\text{correct}}(y)$ |
| $f_{\text{len}}(\hat{a},a^{*})=\min(\lvert\text{len}(\hat{a})-\text{len}(a^{*})\rvert,\delta_{\text{max}})$ |
| if $\hat{a}$ matches $a^{*}$ then |
| $R_{\text{correct}}(y)=W-\lambda_{\text{len}}\cdot f_{\text{len}}(\hat{a},a^{*})$ |
| else |
| $R_{\text{correct}}(y)=-W$ |
| Step 5: Compute Total Reward |
| $R(y)=R_{\text{struct}}(y)+R_{\text{correct}}(y)$ |

Table 3 provides the complete algorithmic implementation of our multi-component reward function used in FLV-RL training. The pseudocode details the step-by-step computation of format rewards, correctness rewards, and formal verification rewards, including all constraint checks and penalty mechanisms described in Section 4.
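As an illustration of Steps 3 to 5, consider a hypothetical response (the counts are assumed for the example and are not taken from our experiments) that passes both screening steps, contains $N_{\text{undef}}=2$ undefined tags, makes $N_{\text{call}}=2$ tool calls, and whose extracted answer matches the ground truth with a length difference of 4. With the hyperparameters listed in Table 3:

$$
\begin{aligned}
R_{\text{struct}}(y) &= 1.0 - 0.005\cdot\min(2, 200) - 0.5\cdot\max(2-3, 0) = 0.99,\\
R_{\text{correct}}(y) &= 3 - 0.04\cdot\min(4, 10) = 2.84,\\
R(y) &= 0.99 + 2.84 = 3.83.
\end{aligned}
$$

Had the same response produced a non-matching answer, the correctness term would be $-W=-3$ and the total would be $0.99-3=-2.01$.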

The time complexity for calculating the reward $R(y)$ is dominated by the verification of structural constraints and semantic correctness. Let $L$ denote the length of the generated response $y$ in tokens. The initial screening for pathological states ( $\mathbb{C}_{\text{fatal}}$ ) and invalid formats ( $\mathbb{C}_{\text{invalid}}$ ) requires a linear scan of the output tokens to detect repetition loops, count tool invocations ( $N_{\text{call}}$ ), and validate tags, resulting in $O(L)$ complexity. If the response is valid, computing $R_{\text{struct}}(y)$ involves constant-time arithmetic operations after the initial scan. The semantic verification $R_{\text{correct}}(y)$ depends on the evaluation metric; assuming string matching or metric comparison between the extracted answer $\hat{a}$ and ground truth $a^{*}$ , this step operates in $O(|\hat{a}|+|a^{*}|)$ . Therefore, the total time complexity per generation is $O(L)$ , ensuring the reward calculation remains efficient and does not introduce significant computational overhead during training.
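The five steps of Table 3 can be sketched as a single function. This is a minimal illustration under stated assumptions, not our training code: the `ResponseFeatures` container and its field names are hypothetical stand-ins for the result of the $O(L)$ scan, answer matching is assumed to be whitespace-stripped string equality, and answer length is assumed to be measured in characters. The two early-exit penalties are computed from the formulas $-\gamma_{\text{struct}}-W$ and $-\beta_{\text{struct}}-W$ with the listed hyperparameters rather than hard-coded.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RewardConfig:
    """Hyperparameter values as listed in Table 3."""
    W: float = 3.0
    gamma_struct: float = 3.0
    beta_struct: float = 1.0
    alpha: float = 1.0
    lambda_tag: float = 0.005
    tau_tag: int = 200
    lambda_call: float = 0.5
    n_max: int = 3
    lambda_len: float = 0.04
    delta_max: int = 10
    max_solution_tokens: int = 512


@dataclass
class ResponseFeatures:
    """Hypothetical summary of one response after a single linear scan."""
    has_repetition: bool
    timed_out: bool
    n_termination_tags: int
    has_closing_tag: bool
    n_tool_calls: int
    n_undefined_tags: int
    solution_tokens: int
    predicted: Optional[str]  # None means solution extraction failed


def compute_reward(f: ResponseFeatures, truth: str,
                   cfg: Optional[RewardConfig] = None) -> float:
    cfg = cfg or RewardConfig()

    # Step 1: fatal errors end the computation with the largest penalty.
    if (f.has_repetition or f.timed_out
            or f.n_tool_calls > 2 * cfg.n_max
            or f.n_termination_tags > 1):
        return -cfg.gamma_struct - cfg.W

    # Step 2: invalid (but non-fatal) formats receive a milder penalty.
    if (f.predicted is None
            or f.solution_tokens > cfg.max_solution_tokens
            or not f.has_closing_tag
            or cfg.n_max < f.n_tool_calls <= 2 * cfg.n_max):
        return -cfg.beta_struct - cfg.W

    # Step 3: structural reward with capped tag and tool-call penalties.
    r_struct = (cfg.alpha
                - cfg.lambda_tag * min(f.n_undefined_tags, cfg.tau_tag)
                - cfg.lambda_call * max(f.n_tool_calls - cfg.n_max, 0))

    # Step 4: correctness reward (assumed: stripped exact string match).
    if f.predicted.strip() == truth.strip():
        f_len = min(abs(len(f.predicted) - len(truth)), cfg.delta_max)
        r_correct = cfg.W - cfg.lambda_len * f_len
    else:
        r_correct = -cfg.W

    # Step 5: total reward.
    return r_struct + r_correct


if __name__ == "__main__":
    example = ResponseFeatures(
        has_repetition=False, timed_out=False, n_termination_tags=1,
        has_closing_tag=True, n_tool_calls=3, n_undefined_tags=2,
        solution_tokens=100, predicted="42",
    )
    print(compute_reward(example, "42"))
```

Passing pre-computed features keeps the arithmetic in Steps 3 to 5 constant-time, in line with the complexity argument above; only the construction of `ResponseFeatures` would need to touch all $L$ tokens.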

Appendix B Dataset Construction Details

Our data construction pipeline systematically processes a reasoning question through multiple stages of generation, logic extraction, formal translation, and verification to create high-quality training data for supervised fine-tuning. The resulting dataset combines natural language reasoning with formally verified logical modules, enabling our models to learn both human-readable reasoning patterns and mathematically sound logical validation.