# Localizing and Correcting Errors for LLM-based Planners

**Authors**: Aditya Kumar, William Cohen

Abstract

Large language models (LLMs) have demonstrated strong reasoning capabilities on math and coding, but frequently fail on symbolic classical planning tasks. Our studies, as well as prior work, show that LLM-generated plans routinely violate domain constraints given in their instructions (e.g., walking through walls). To address this failure, we propose iteratively augmenting instructions with Localized In-Context Learning (L-ICL) demonstrations: targeted corrections for specific failing steps. Specifically, L-ICL identifies the first constraint violation in a trace and injects a minimal input-output example giving the correct behavior for the failing step. Our proposed technique of L-ICL is much effective than explicit instructions or traditional ICL, which adds complete problem-solving trajectories, and many other baselines. For example, on an 8×8 gridworld, L-ICL produces valid plans 89% of the time with only 60 training examples, compared to 59% for the best baseline, an increase of 30%. L-ICL also shows dramatic improvements in other domains (gridworld navigation, mazes, Sokoban, and BlocksWorld), and on several LLM architectures.

Machine Learning, ICML

1 Introduction

Large language models (LLMs) and agentic systems reason and plan effectively in domains such as mathematics, coding, and question answering (Khattab et al., 2023; Yao et al., 2023a), suggesting that modern LLMs possess strong general planning capabilities. However, studies on classical planning benchmarks reveals a more nuanced picture: LLMs frequently fail, even on simple planning tasks that symbolic planners solve easily (Valmeekam et al., 2023; Stechly et al., 2024). Past researchers have analyzed plans produced by LLMs such as SearchFormer (Lehnert et al., 2024), which are fine-tuned to generate structured reasoning chains that can be parsed, and shown that LLMs frequently violate domain constraints given in their instructions (Stechly et al., 2024). For example, LLMs might propose plans that walk through a wall in a maze, or pick up a block when the robot’s gripper is already occupied.

Table 1: Performance on an 8 $×$ 8 two-room gridworld using DeepSeek V3. Paths start in one room and end in the other. Valid plans never leave the grid or cross walls; Successful plans reach their goals; and Optimal plans are successful and use the minimum number of steps. L-ICL[ $m$ ] denotes our method trained on $m$ examples, with the corresponding character count of L-ICL examples provided. All experiments are provided with an ASCII representation of the grid.

| Zero-Shot | 16 | 0 | 0 |

| --- | --- | --- | --- |

| RAG-ICL [10k chars] | 20 | 6 | 6 |

| RAG-ICL [20k chars] | 21 | 9 | 9 |

| ReAct | 48 | 41 | 37 |

| Self-Consistency ( $k{=}5$ ) | 59 | 45 | 43 |

| Self-Refine ( $k{=}5$ ) | 51 | 44 | 38 |

| PTP PTP, introduced in (Cohen and Cohen, 2024) describes a method to prompt LLMs with partially specified programs / L-ICL [ $m=0$ ] | 40 | 33 | 28 |

| L-ICL [ours, $m=60$ , 2k chars] | 89 | 89 | 77 |

Table 1 demonstrates this on a very simple 8 $×$ 8 two-room gridworld navigation task. Despite receiving complete information about the domain (grid layout and obstacles) no baseline method produces valid plans even 60% of the time. Agentic and test-time-scaling approaches perform better, but still produce many invalid plans. We conjecture that LLMs cannot build valid plans for this task because they fail to access the necessary domain-specific knowledge in the prompt consistently. This hypothesis is consistent with the failure of LLMs in these domains, and with their success in math and coding, where the necessary knowledge is general, and hence learnable in pre-training or fine-tuning.

In-context learning (ICL) is a natural remedy. However, complete solution trajectories demonstrate that plans work, not why individual steps are valid—leaving constraints implicit. As Table 1 shows, even 20,000 characters of retrieved trajectories RAG-ICL, retrieving demonstrations for tasks with similar start and end goals. yield only 9% success. The rules must still be inferred, and inference fails.

L-ICL escapes this trap by letting failures reveal which constraints need explicit specification. Rather than full trajectories, we augment prompts with localized examples that demonstrate correct behavior on individual steps where models err. We call this approach Localized In-Context Learning (L-ICL). This approach achieves higher performance with much less context: 2,000 characters of targeted corrections outperforms 20,000 characters of trajectories. Generating L-ICL examples requires analyzing and correcting reasoning traces at training time, which we enable by prompting models to produce structured reasoning traces, and then correcting the traces with a symbolic planner. Thus, L-ICL might be viewed as distilling domain knowledge from a symbolic system into an LLM.

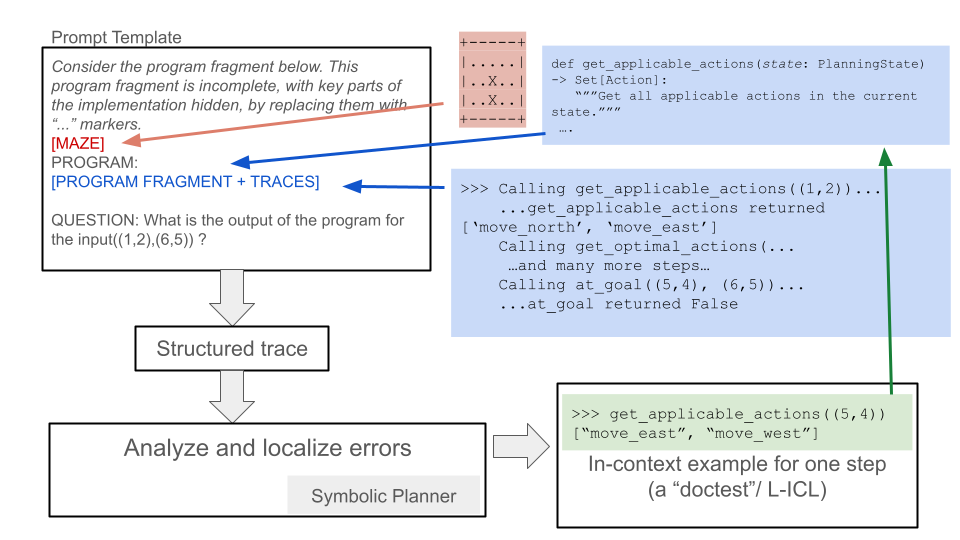

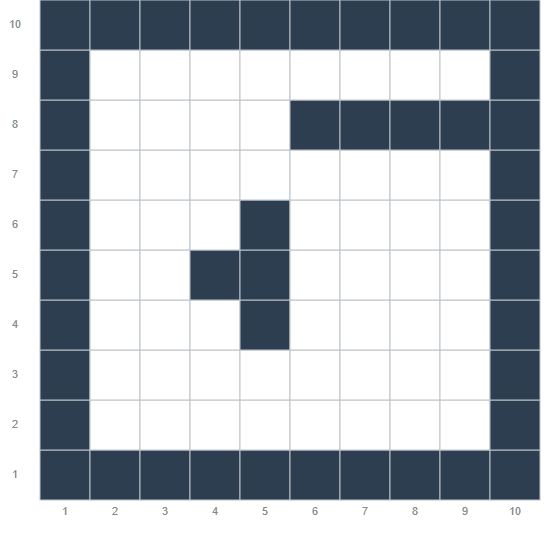

Figure 1 summarizes our approach, which builds on Program Trace Prompting (PTP) (Cohen and Cohen, 2024). PTP recasts reasoning as producing a “program trace” for a partially specified program. A PTP prompt includes, for each type of reasoning “step”, documentation (but not code) for a corresponding subroutine, along with (optional) example inputs and outputs. For instance, a gridworld navigation task might include a subroutine get_applicable_actions(cell) that returns the set of obstacle-free cells adjacent to the input cell. Because no executable code is provided in PTP, just documentation, the LLM must infer how to perform the reasoning step: e.g., in gridworld navigation, the LLM must infer which moves are valid for a task. PTP’s prompting scheme provides a natural insertion point for localized corrections: when a subroutine call fails, we locally augment that subroutine’s documentation by adding a new input/output example. The input/output examples use Python’s doctest syntax, a format well-represented in LLM training data, so readily understandable by code-trained LLMs.

<details>

<summary>graphs/corrected/Research_Presentation.png Details</summary>

### Visual Description

## Diagram: Prompt Template and Error Analysis Flow

### Overview

The image presents a diagram illustrating a prompt template used in conjunction with program analysis and error localization. It outlines the process of using a program fragment with hidden implementation details, tracing its execution, and analyzing the results to identify errors. The diagram includes code snippets, example calls, and a symbolic planner component.

### Components/Axes

The diagram consists of the following components:

1. **Prompt Template (Top-Left)**:

* Text: "Prompt Template"

* Text: "Consider the program fragment below. This program fragment is incomplete, with key parts of the implementation hidden, by replacing them with "..." markers."

* Label: "[MAZE]" (connected by a red arrow to a maze representation)

* Label: "PROGRAM: [PROGRAM FRAGMENT + TRACES]" (connected by a blue arrow to program execution traces)

* Text: "QUESTION: What is the output of the program for the input((1,2),(6,5)) ?"

2. **Maze Representation (Top-Center)**:

* A grid-like structure with "..." and "X" characters.

* Appears to represent a maze or grid-based environment.

* The grid contains the following elements:

* `+` characters at the corners.

* `-` characters forming the top and bottom borders.

* `|` characters forming the left and right borders.

* `.` and `X` characters within the grid.

* The grid appears to be 3 rows by 5 columns.

* Row 1: `...+...`

* Row 2: `|.X.X.|`

* Row 3: `|.X.X.|`

* Row 4: `...+...`

3. **Code Snippet (Top-Right)**:

* `def get_applicable_actions(state: PlanningState) -> Set[Action]:`

* `"""Get all applicable actions in the current state."""`

4. **Execution Traces (Center-Right)**:

* `>>> Calling get_applicable_actions((1,2))...`

* `...get_applicable_actions returned ['move_north', 'move_east']`

* `Calling get_optimal_actions(...`

* `...and many more steps...`

* `Calling at_goal((5,4), (6,5))...`

* `...at_goal returned False`

5. **"Structured trace" (Center)**:

* A rectangular box with the text "Structured trace" inside.

* Connected by a downward arrow from the "Prompt Template" box.

6. **"Analyze and localize errors" (Bottom-Left)**:

* A rectangular box with the text "Analyze and localize errors" inside.

* Connected by a downward arrow from the "Structured trace" box.

* "Symbolic Planner" is written below the box.

7. **In-context Example (Bottom-Right)**:

* `>>> get_applicable_actions((5,4))`

* `["move_east", "move_west"]`

* `In-context example for one step (a "doctest"/ L-ICL)`

### Detailed Analysis or ### Content Details

* **Prompt Template**: The prompt template defines the structure of the input provided to the system. It includes a program fragment with missing parts (indicated by "..." markers), a maze representation, and a question about the program's output for a given input.

* **Maze Representation**: The maze representation provides a context or environment for the program to operate within. The 'X' characters likely represent obstacles or walls.

* **Code Snippet**: The code snippet defines a function `get_applicable_actions` that takes a `PlanningState` as input and returns a set of possible actions.

* **Execution Traces**: The execution traces show the sequence of function calls and return values during the program's execution. It includes calls to `get_applicable_actions` and `at_goal`, along with intermediate steps.

* **Structured Trace**: This represents the structured representation of the program's execution, which is used for error analysis.

* **Analyze and localize errors**: This step involves analyzing the structured trace to identify and locate errors in the program.

* **Symbolic Planner**: The symbolic planner is a component used to reason about the program's behavior and identify potential errors.

* **In-context Example**: This provides a specific example of how the `get_applicable_actions` function is used in a particular state.

### Key Observations

* The diagram illustrates a process for analyzing and localizing errors in a program by using a prompt template, tracing the program's execution, and analyzing the results.

* The maze representation provides a context for the program to operate within.

* The execution traces show the sequence of function calls and return values during the program's execution.

* The symbolic planner is used to reason about the program's behavior and identify potential errors.

### Interpretation

The diagram describes a system for automated program debugging. The "Prompt Template" provides a structured input to the system, including a program fragment, a maze environment, and a question. The system then executes the program fragment, generating "Traces" of its execution. These traces are converted into a "Structured Trace," which is then analyzed to "localize errors." The "Symbolic Planner" likely uses symbolic execution or other formal methods to reason about the program's behavior and identify discrepancies between the expected and actual behavior. The "In-context example" provides a concrete illustration of how the `get_applicable_actions` function works, which can be used to guide the error localization process. The overall goal is to automate the process of finding and fixing bugs in programs.

</details>

Figure 1: Overview of L-ICL. The prompt template follows PTP: it includes documentation for each subroutine but no executable code. Prompting an LLM produces a trace that follows the format of the $k$ provided example traces. The trace is parsed to find the first failing step, and the failing input is passed to an oracle that returns the correct output. This yields a localized example (e.g., $x{=}\texttt{(5,4)}$ , $y{=}\texttt{['move\_east','move\_west']}$ ) that is inserted into the subroutine’s documentation. This process iterates over training instances to accumulate examples in a failure-driven manner.

Given a planning task, we first prompt the LLM to generate a trace using the PTP format. We then analyze this trace programmatically to identify the first failing step, i.e., the first subroutine call whose output violates domain constraints. An oracle (a symbolic simulator or verifier) provides the correct output for that input, yielding a localized correction. This correction is then inserted into the prompt. For instance, if the LLM’s first invalid move is from cell $(3,4)$ , we L-ICL will add to the prompt an example showing get_applicable_actions((3,4)) should return [’move_north’, ’move_south’]. This localized correction directly addresses the failure, and of course can also be generalized by the LLM to other similar cases.

This process iterates over multiple training instances, accumulating a bank of targeted examples that progressively refine the model’s understanding of domain constraints. Crucially, the oracle is required only during training.

Experimentally, prompt augmentation with L-ICL dramatically reduces domain violations, and thus improves LLM planning performance across multiple domains. Beyond the results of Table 1 and other gridworld tasks, we evaluate on classical planning benchmarks like BlocksWorld and Sokoban, seeing similar gains. L-ICL is also remarkably sample-efficient: peak performance is typically achieved with only 30–60 training examples. L-ICL works on multiple LLM architectures (DeepSeek V3, DeepSeek V3.1, Claude Haiku 4.5, Claude Sonnet 4.5), and learned constraints can transfer across problem sizes (see Appendix B).

To summarize our contributions: (1) Using the PTP variant of semi-structured reasoning, we precisely measure constraint violation rates in LLM-generated plans across multiple planning domains, revealing that such violations are the dominant failure mode. (2) We introduce L-ICL, a method that improves planning validity through localized, failure-driven corrections, and show that targeted examples outperform retrieval of complete trajectories even when the latter uses 10 $×$ more context. (3) We demonstrate consistent improvements across multiple planning domains and four LLM architectures. (4) We release our benchmark suite and code to facilitate future research on LLM planning.

2 Related Work

2.1 LLM Planning: Capabilities and Limitations

The planning capabilities of LLMs remain contested. One line of work reports strong performance on some planning tasks when LLMs are augmented with appropriate scaffolding: e.g., Tree of Thoughts achieves 74% on Game of 24 versus 4% for chain-of-thought (Yao et al., 2023a), RAP-MCTS reaches 100% on Blocksworld instances requiring 6 or fewer steps (Hao et al., 2023), and ReAct improves interactive decision-making by 34% over baselines (Yao et al., 2023b). However, systematic evaluation on classical planning benchmarks reveals persistent failures. Valmeekam et al. (2023) show GPT-4 achieves only 12% success on International Planning Competition (IPC) domains; and Stechly et al. (2024) demonstrate that chain-of-thought improvements are brittle and fail to generalize beyond surface patterns. The LLM-Modulo framework (Kambhampati et al., 2024) argues that LLMs function as approximate knowledge sources rather than autonomous planners, achieving strong results only when paired with external verifiers. Kaesberg et al. (2025) also documented that LLMs are challenged by 2D navigation tasks, similar to ones we study here. Most recently, Shojaee et al. (2025) identify a “complexity collapse” phenomenon: reasoning models’ performance degrades sharply beyond certain problem complexities, with accuracy dropping to zero on harder instances even when token budgets remain available.

We follow Stechly et al. (2024) in working to diagnose why LLMs violate constraints using structured reasoning chains; however, we work with PTP as a prompting scheme, rather than models fine-tuned to produce structured reasoning chains, allowing us to consider more kinds of models, and more powerful ones. With L-ICL, we also propose a practical method to reduce these violations. Our work confirms that constraint violations are a common failure mode, and shows that targeted corrections outperform both agentic scaffolding and retrieval-based ICL approaches.

2.2 Approaches to Improve LLM Reasoning

Prior work addresses LLM reasoning limitations through three main strategies: structured output formats, test-time compute scaling, and in-context learning. Structured Reasoning. Chain-of-thought prompting (Wei et al., 2022) improves performance by eliciting intermediate steps, though explanations may be unfaithful to actual computation (Turpin et al., 2023). PTP (Cohen and Cohen, 2024) offers interpretable traces: prompts specify subroutine signatures without implementations, and the LLM produces structured outputs that can be parsed and verified (Leng et al., 2025). We build on PTP because its explicit subroutine structure provides natural insertion points for localized corrections. Test-Time Compute. Several methods improve reasoning by expending more computation at inference. Self-Consistency (Wang et al., 2023) aggregates multiple sampled paths via majority voting; Tree of Thoughts (Yao et al., 2023a) explores branching reasoning trajectories; and Self-Refine (Madaan et al., 2023) iteratively improves outputs through self-critique. Tool-augmented approaches interleave reasoning with execution: Program of Thoughts (Chen et al., 2022), PAL (Gao et al., 2023), and Chain of Code (Li et al., 2023) generate executable code, while ReAct (Yao et al., 2023b) interleaves reasoning with tool calls. These methods require multiple LLM calls or external tools at inference. Critically, Stechly et al. (2025) show that LLM self-verification is unreliable, making self-critique ineffective for planning. In-Context Learning. ICL enables task adaptation through examples (Brown et al., 2020), with effectiveness depending on example selection (Liu et al., 2022) and format (Min et al., 2022). For planning, a natural approach is retrieving complete solution trajectories (RAG-ICL). However, we find this ineffective: 20,000 characters of retrieved trajectories yield only 9% success on our gridworld benchmark. Complete trajectories demonstrate that solutions work but leave implicit why individual steps are valid. L-ICL addresses this by providing localized input-output pairs that directly encode constraints. Table 2 summarizes how L-ICL relates to prior approaches.

Table 2: Comparison of L-ICL with related approaches. L-ICL uniquely combines example-based training with localized feedback while requiring only single-pass inference.

| Self-Refine Tree of Thoughts Self-Consistency | none none none | many many many | none none none |

| --- | --- | --- | --- |

| ReAct | none | many | none |

| ReAct + oracle f/b | none | many | yes |

| Fine-tuning | trajectory | one | none |

| RAG-ICL | trajectory | one | none |

| L-ICL (ours) | one step | one | train only |

3 Method

We first describe Program Trace Prompting (PTP), the structured reasoning framework underlying our approach. We then introduce Localized In-Context Learning (L-ICL), our method for iteratively injecting domain constraints into the prompt. Finally, we describe our experimental domains and evaluation setup.

3.1 Background: Program Trace Prompting

Program Trace Prompting (PTP) (Cohen and Cohen, 2024) recasts reasoning as producing an execution trace for a partially specified program. A PTP prompt contains documentation for each subroutine (function name, typed arguments, return type, and a natural language description of its purpose), a small number of example traces showing how subroutines are called, and the query problem to solve. Crucially, subroutine implementations are withheld; the LLM must infer correct behavior from context. For planning tasks, we define subroutines corresponding to planning primitives. For instance, a gridworld navigation task includes a subroutine that returns applicable actions from a given state (those that stay in bounds and avoid walls), a subroutine that returns the resulting state after executing an action, and a subroutine that checks whether the current state satisfies the goal. The LLM generates a trace by repeatedly invoking these subroutines, producing outputs consistent with the documentation and examples. Because the trace follows a predictable structure, we can parse it programmatically and verify each step against a ground-truth oracle. This explicit subroutine structure provides natural insertion points for corrections: when a specific subroutine call fails, we can augment that subroutine’s documentation without modifying the rest of the prompt. Full subroutine specifications for each domain appear in Appendix E.

3.2 Localized In-Context Learning (L-ICL)

The key insight behind L-ICL is that domain constraints can be taught more effectively through targeted examples than through complete solution trajectories. When an LLM violates a constraint (e.g., proposing to move through a wall), traditional approaches either reject the entire plan or provide feedback on the final outcome. L-ICL instead identifies the precise point of failure and injects a minimal correction for that specific subroutine call. First Failure Identification. Given an LLM-generated trace, we parse each subroutine call and verify its output against an oracle. Let $c_{1},c_{2},...,c_{n}$ denote the sequence of subroutine calls in the trace. We identify the first failing call $c_{i^{*}}$ such that the LLM’s output differs from the oracle’s:

$$

i^{*}=\min\{i:\text{LLM}(c_{i})\neq\text{Oracle}(c_{i})\}

$$

Focusing on the first failure is deliberate. Planning errors cascade: an invalid move at step $k$ renders all subsequent state representations incorrect, making later “errors” artifacts of the initial mistake rather than independent failures. Correcting the root cause addresses multiple downstream errors simultaneously. Localized Correction. For the failing call $c_{i^{*}}$ with input $x$ and incorrect output $\hat{y}$ , we query the oracle to obtain the correct output $y^{*}=\text{Oracle}(x)$ . This yields a correction tuple $(f,x,y^{*})$ where $f$ is the subroutine name. We format this correction as a doctest-style example and insert it into the documentation for subroutine $f$ , augmenting the original description with an additional input-output pair. This format, drawn from Python’s widely used doctest convention, is well-represented in LLM training data. Appendix E.3 provides concrete examples of the correction format. Iterative Accumulation. L-ICL iterates over a set of training problems $\{P_{1},P_{2},...,P_{m}\}$ . For each problem, we generate a trace using the current prompt, identify the first failing subroutine call (if any), and add the corresponding correction to the prompt. Corrections accumulate across training problems, progressively “hardening” the prompt to avoid constraint violations. Algorithm 1 provides pseudocode. L-ICL converges quickly: we see diminishing returns after only 30–60 training examples on our benchmark tasks (see Section 4).

Algorithm 1 Localized In-Context Learning (L-ICL)

0: Base prompt $\mathcal{P}_{0}$ with PTP structure, training problems $\{P_{1},...,P_{m}\}$ , oracle $\mathcal{O}$

0: Augmented prompt $\mathcal{P}$

$\mathcal{P}←\mathcal{P}_{0}$

$\mathcal{C}←\emptyset$ $\triangleright$ Correction set

for $j=1$ to $m$ do

$\tau←\textsc{GenerateTrace}(\mathcal{P}_{0},P_{j})$

$\{c_{1},...,c_{n}\}←\textsc{ParseCalls}(\tau)$

for $i=1$ to $n$ do

$(f,x,\hat{y})← c_{i}$

$y^{*}←\mathcal{O}(f,x)$

if $\hat{y}≠ y^{*}$ then

$\mathcal{C}←\mathcal{C}\cup\{(f,x,y^{*})\}$ $\triangleright$ Record first failure

break

end if

end for

end for

$\mathcal{P}←\textsc{InsertCorrections}(\mathcal{P}_{0},\mathcal{C})$ $\triangleright$ Batch update

return $\mathcal{P}$

3.3 Experimental Domains

We design our experimental domains as a progressive ablation study that isolates different facets of planning difficulty. Starting from simple navigation, we incrementally add complexity along several axes: spatial structure, action diversity, state tracking requirements, and strategic reasoning. Table 3 summarizes how each domain isolates specific challenges.

Table 3: Progressive ablation across experimental domains. Each domain adds complexity along one or more axes while controlling others.

| 8 $×$ 8 Grid | Simple | 4 | 1 | No |

| --- | --- | --- | --- | --- |

| 10 $×$ 10 Maze | Complex | 4 | 1 | No |

| Sokoban Grid | Complex | 4 | 1 | No |

| Full Sokoban | Complex | 8 | 3 | Yes |

| BlocksWorld | None | 2 | 5 | No |

The 8 $×$ 8 Two-Room Gridworld is our simplest setting, testing basic spatial reasoning: an agent must navigate between two rooms connected by a single doorway. The 10 $×$ 10 Maze increases spatial complexity with narrow corridors and dead ends, requiring longer plans (typically 15–25 steps versus 8–12 for the gridworld). Full Sokoban introduces the critical challenge of multi-object state tracking (an agent and a box), where the agent must coordinate its position with multiple box positions, and where certain pushes lead to irreversible trap states. Sokoban-Style Gridworld ablates Sokoban by removing pushable boxes, but keeping the spatial layout and action semantics, isolating the effect of richer environment structure. Finally, BlocksWorld differs qualitatively from navigation: every object (block) is dynamic, constraints depend on relational configurations rather than spatial positions, and we provide an algorithmic sketch to test whether L-ICL can improve adherence to prescribed planning strategies. Full domain specifications appear in Appendix C.

3.4 Baselines and Metrics

We compare L-ICL against several approaches spanning prompting strategies, agentic methods, and retrieval. Zero-Shot. The LLM receives the problem description and instructions with no in-context examples, measuring baseline capability without demonstration. RAG-ICL. We retrieve complete CoT-formatter solution trajectories for similar problems based on start/goal similarity, and evaluate at 10k and 20k character budgets. ReAct. The LLM is instructed to interleave reasoning and action selection in its output, following the prompt format specified in Appendix F.2. We evaluate a prompt-only version and an oracle-augmented version that queries a verifier during planning. Self-Consistency. Majority voting with $k{=}5$ reasoning paths sampled at temperature 0.7. Self-Refine. The LLM generates a solution, then critiques and refines it, based on its own feedback, for $k{=}5$ iterations. Tree-of-Thoughts. The LLM explores a tree of intermediate steps, evaluating and pruning branches (prompt-only, no external search). Crucially, ReAct (Oracle) queries the verifier at test time for each proposed action, while L-ICL uses the oracle only during training. At inference, L-ICL requires a single forward pass with no external dependencies. For L-ICL, we report results with different numbers of training examples $m$ (denoted L-ICL[ $m$ ]) to assess sample efficiency.

We evaluate plans along three axes that form a natural hierarchy. A plan is valid if it violates no domain constraints (e.g., no wall collisions). A plan is successful if it is valid and reaches the goal state. A plan is optimal if it is successful and uses the minimum number of steps. Hence, a large valid-to-success gap indicates the model follows rules but fails to reach goals, and a large success-to-optimal gap indicates inefficient but functional plans.

3.5 Experimental Setup

Our primary experiments use DeepSeek V3 (DeepSeek-AI, 2024), with additional evaluation on DeepSeek V3.1, Claude 4.5 Haiku, and Claude Sonnet 4.5 (Anthropic, 2025) to assess cross-architecture generalization. For each domain, we generate 100 test problems with random start and goal configurations. Training problems for L-ICL are drawn from a disjoint pool of 250 instances. For domains other than blocks world, prompts use a textual state representation, as suggested in Figure 1, and unless stated otherwise, use an ASCII representation of the grid. Oracles are domain-specific: simple simulators for gridworlds and mazes, and the Fast Downward planner (Helmert, 2006) and tools like the K-Star Planner (Katz and Lee, 2023; Lee et al., 2023) for Sokoban and BlocksWorld. We use temperature 1 for optimal model performance (DeepSeek-AI, 2024) unless stated. L-ICL is trained on up to 240 examples.

4 Results

We evaluate L-ICL across our domain suite, demonstrating that localized corrections dramatically improve constraint adherence while remaining sample-efficient. We ask four key questions about L-ICL: (1) Does it learn domain constraints? (2) Is it more efficient than retrieval-based ICL? (3) Does it require explicit spatial representations? (4) Does it generalize across LLM architectures?

4.1 L-ICL Learns Domain Constraints

Table 4 presents our main results across all domains. L-ICL consistently outperforms all baselines, often by substantial margins. Beyond raw performance gains, the pattern of results across our progressive domain suite reveals which aspects of planning L-ICL addresses effectively.

Table 4: Main results across all domains. We report %(V)alid and %(S)uccessful. All baselines receive ASCII grid representations. L-ICL[ $m$ ] denotes training on $m$ examples. Best results in bold, second-best underlined. $\dagger$ ReAct (Oracle f/b) receives oracle feedback at inference time. ∗ L-ICL (no grid) methods are handicapped: they receive no ASCII grid, and rely purely on L-ICL to infer structure.

| | 8 $×$ 8 Grid | 10 $×$ 10 Maze | Sokoban Grid | Full Sokoban | BlocksWorld | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Method | V | S | V | S | V | S | V | S | V | S |

| Zero-Shot | 16 | 0 | 3 | 0 | 15 | 0 | 1 | 0 | 10 | 10 |

| RAG-ICL (10k chars) | 20 | 6 | 7 | 1 | 17 | 4 | 31 | 11 | 25 | 25 |

| RAG-ICL (20k chars) | 21 | 9 | 7 | 4 | 25 | 10 | 36 | 15 | 32 | 32 |

| ReAct (Prompt-Only) | 48 | 41 | 6 | 5 | 19 | 12 | 1 | 0 | 46 | 45 |

| Self-Consistency ( $k{=}5$ ) | 59 | 45 | 3 | 3 | 10 | 5 | 2 | 1 | 31 | 31 |

| Self-Refine ( $k{=}5$ ) | 51 | 44 | 3 | 1 | 13 | 8 | 0 | 0 | 49 | 49 |

| ToT (Prompt-Only) | 33 | 12 | 1 | 0 | 3 | 2 | 0 | 0 | 50 | 40 |

| ReAct (Oracle f/b) † | 55 | 45 | 6 | 5 | 21 | 13 | 3 | 0 | 51 | 51 |

| L-ICL[ $m{=}0$ ] (ours) | 40 | 33 | 20 | 16 | 21 | 17 | 19 | 13 | 50 | 48 |

| L-ICL[ $m{=}60$ ] (ours) | 89 | 89 | 40 | 21 | 63 | 49 | 46 | 20 | 68 | 66 |

| L-ICL[ $m{=}0$ ] ∗ (ours) | 19 | 12 | 7 | 6 | 10 | 8 | 12 | 9 | 50 | 48 |

| L-ICL[ $m{=}60$ ] ∗ (ours) | 73 | 63 | 57 | 27 | 62 | 44 | 42 | 14 | 68 | 66 |

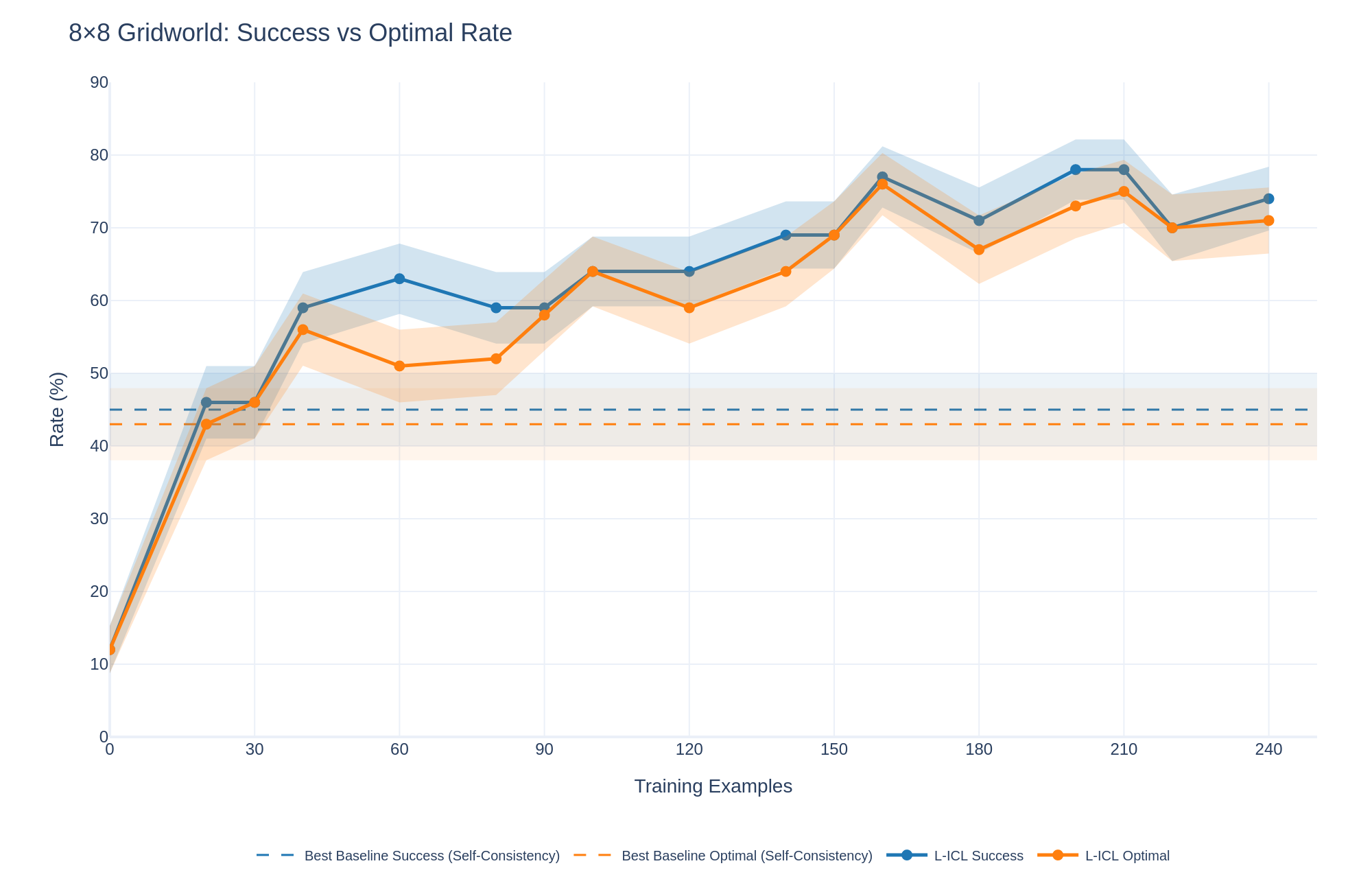

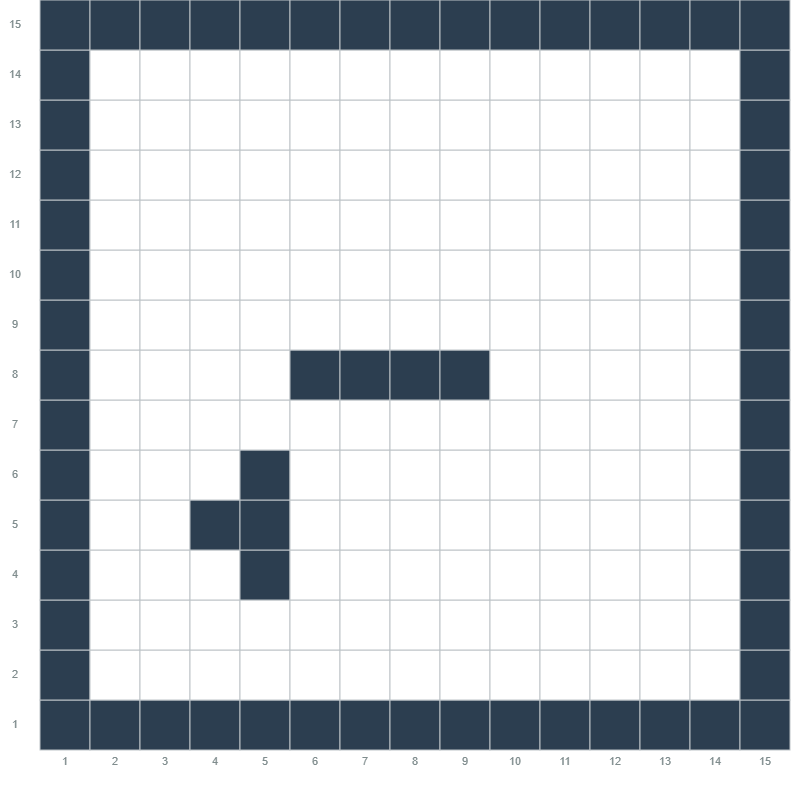

8 $×$ 8 Gridworld. The complete failure of zero-shot prompting (0%) on this simple two-room task is striking: the model receives full information about walls, start, and goal, yet fails completely. This reveals that the bottleneck is not knowledge but application. L-ICL achieves 63% success, demonstrating that localized corrections bridge this gap. Figure 2 shows rapid improvement in the first 30 examples, with continued gains for $≈$ 160 examples before plateauing. 10 $×$ 10 Maze. The maze’s narrow corridors and longer optimal paths (15–25 steps) challenge all methods. L-ICL reaches 27% success where baselines achieve at most 5%. Notably, valid rates reach 57%, indicating that most L-ICL plans respect maze constraints even when they fail to reach the goal. This valid-to-success gap suggests that constraint satisfaction and goal-directed search are separable challenges; L-ICL addresses the former effectively. Sokoban Grid. Despite adopting Sokoban’s richer spatial structure, this domain (without pushable boxes) yields results intermediate between the prior domains: L-ICL achieves 49% success versus 13% for the best baseline. The similarity suggests that spatial complexity, not action vocabulary, dominates difficulty in navigation tasks. Full Sokoban. Introducing pushable boxes causes the sharpest performance degradation across all methods. L-ICL improves success from 13% to only 20%, yet increases valid action rates from 19% to 46%. This dissociation isolates multi-object state tracking as a distinct challenge: L-ICL teaches which pushes are legal, but coordinating agent and box positions toward the goal requires capabilities beyond constraint satisfaction, furhter analyzed in Appendix A. BlocksWorld. This domain differs qualitatively: constraints are relational (“block A is on block B”) rather than spatial, and every object is dynamic. L-ICL still improves success from 48% to 66%, demonstrating that localized corrections generalize beyond navigation.

<details>

<summary>graphs/misc/8x8_nogrid_success_optimal_combined.png Details</summary>

### Visual Description

## Line Chart: 8x8 Gridworld: Success vs Optimal Rate

### Overview

The image is a line chart comparing the success rate and optimal rate of two methods, "Best Baseline" and "L-ICL," across varying numbers of training examples in an 8x8 Gridworld environment. The chart displays the performance of each method, along with shaded regions indicating variability or confidence intervals.

### Components/Axes

* **Title:** 8x8 Gridworld: Success vs Optimal Rate

* **X-axis:** Training Examples, with markers at 0, 30, 60, 90, 120, 150, 180, 210, and 240.

* **Y-axis:** Rate (%), with markers at 0, 10, 20, 30, 40, 50, 60, 70, 80, and 90.

* **Legend:** Located at the bottom of the chart.

* Best Baseline Success (Self-Consistency) - Dashed Blue Line

* Best Baseline Optimal (Self-Consistency) - Dashed Orange Line

* L-ICL Success - Solid Blue Line

* L-ICL Optimal - Solid Orange Line

### Detailed Analysis

* **Best Baseline Success (Self-Consistency):** Represented by a dashed blue line. The line is approximately flat at a rate of 45%.

* **Best Baseline Optimal (Self-Consistency):** Represented by a dashed orange line. The line is approximately flat at a rate of 45%.

* **L-ICL Success:** Represented by a solid blue line.

* Starts at approximately 10% at 0 Training Examples.

* Rises sharply to approximately 46% at 30 Training Examples.

* Increases to approximately 63% at 60 Training Examples.

* Decreases slightly to approximately 59% at 90 Training Examples.

* Increases to approximately 63% at 120 Training Examples.

* Increases to approximately 69% at 150 Training Examples.

* Increases to approximately 77% at 180 Training Examples.

* Decreases slightly to approximately 73% at 210 Training Examples.

* Increases to approximately 74% at 240 Training Examples.

* **L-ICL Optimal:** Represented by a solid orange line.

* Starts at approximately 10% at 0 Training Examples.

* Rises sharply to approximately 46% at 30 Training Examples.

* Decreases slightly to approximately 51% at 60 Training Examples.

* Increases to approximately 63% at 90 Training Examples.

* Increases to approximately 65% at 120 Training Examples.

* Increases to approximately 69% at 150 Training Examples.

* Decreases to approximately 67% at 180 Training Examples.

* Increases to approximately 78% at 210 Training Examples.

* Decreases slightly to approximately 71% at 240 Training Examples.

### Key Observations

* The "Best Baseline" methods (both Success and Optimal) remain relatively constant across all training examples, hovering around 45%.

* The "L-ICL" methods (both Success and Optimal) show a significant increase in rate as the number of training examples increases, particularly in the early stages.

* The "L-ICL Success" rate is generally higher than the "L-ICL Optimal" rate, especially after 60 training examples.

* Both "L-ICL" lines show some fluctuation, but generally trend upwards.

### Interpretation

The data suggests that the "L-ICL" methods are more effective than the "Best Baseline" methods in the 8x8 Gridworld environment, as they achieve higher success and optimal rates with increasing training examples. The "Best Baseline" methods appear to have a fixed performance level, regardless of the number of training examples. The fluctuations in the "L-ICL" lines could be due to the learning process, where the model adjusts its strategy based on the training data. The shaded regions around the lines likely represent the variance in the results across multiple runs or experiments, indicating the reliability of the observed trends.

</details>

Figure 2: 8 $×$ 8 Gridworld learning curves. Success and Optimal rates vs. training examples. L-ICL (without being given the ASCII grid) improves rapidly in the first 30–60 examples, substantially outperforming all baselines, which are given access to the ASCII grid (horizontal line shows best baseline).

4.2 L-ICL Is More Efficient Than Retrieval-Based ICL

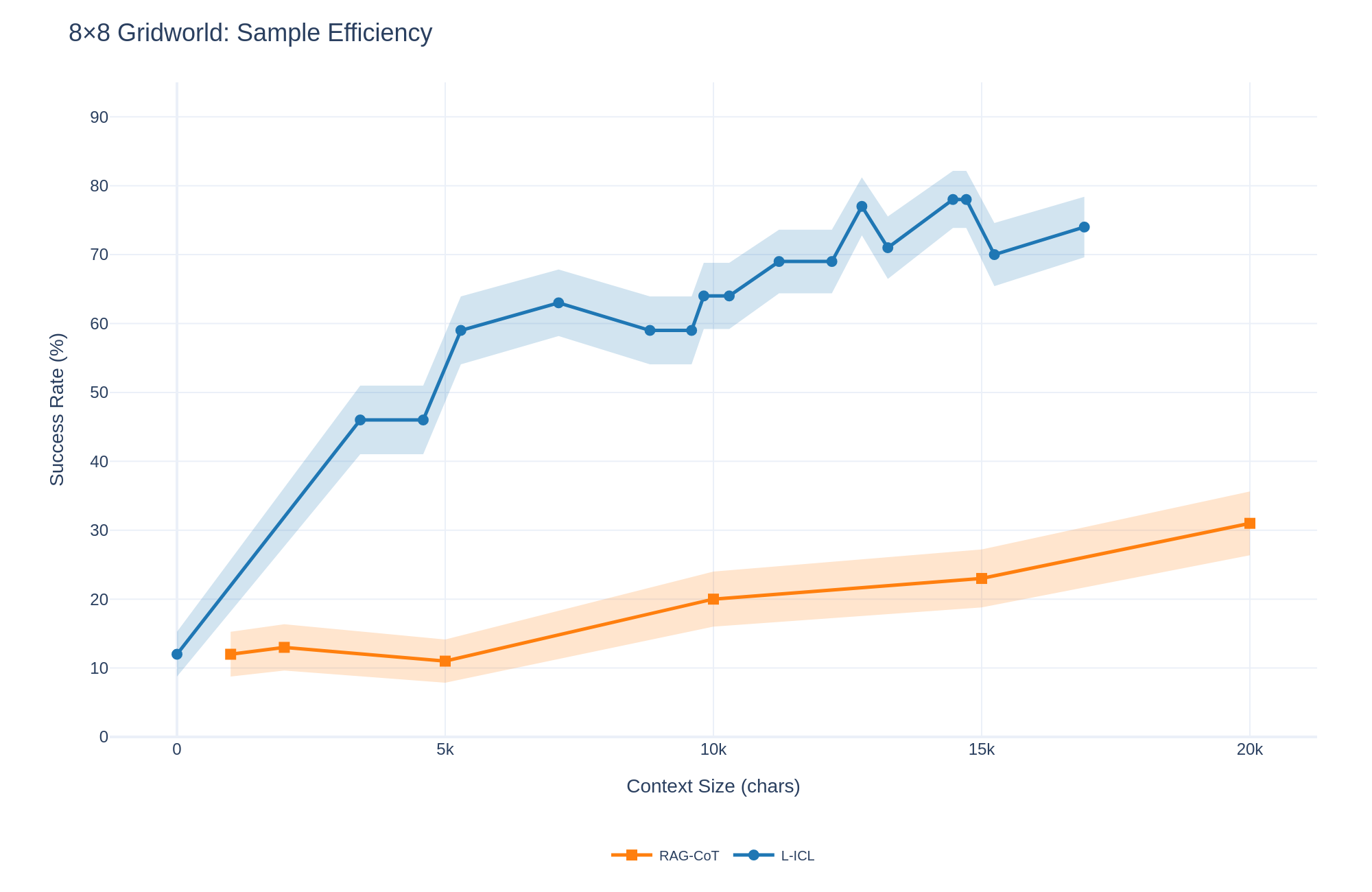

A key advantage of L-ICL is sample efficiency: localized corrections convey more information per token than complete solution trajectories. Figure 3 compares L-ICL and RAG-ICL as a function of context size. RAG-ICL with 20,000 characters of retrieved trajectories achieves 16% success. L-ICL matches this performance with approximately 5,000 characters and reaches 63% success with 7,000 characters. At matched context size, L-ICL outperforms RAG-ICL by 40+ percentage points. This efficiency stems from the compression achieved by localized examples. A complete trajectory demonstrates that a solution works but leaves implicit why individual steps are valid. A local example like get_applicable_actions((3,4)) -> [’move_north’,’move_south’] directly encodes that eastward movement from (3,4) is blocked.

<details>

<summary>graphs/efficiency/8x8_grid_nogrid_efficiency.png Details</summary>

### Visual Description

## Line Chart: 8x8 Gridworld: Sample Efficiency

### Overview

The image is a line chart comparing the sample efficiency of two methods, RAG-CoT and L-ICL, in an 8x8 Gridworld environment. The chart plots the success rate (in percentage) against the context size (in characters). Both lines have shaded regions around them, indicating a confidence interval or standard deviation.

### Components/Axes

* **Title:** 8x8 Gridworld: Sample Efficiency

* **X-axis:** Context Size (chars), with markers at 0, 5k, 10k, 15k, and 20k.

* **Y-axis:** Success Rate (%), with markers at 0, 10, 20, 30, 40, 50, 60, 70, 80, and 90.

* **Legend:** Located at the bottom of the chart.

* RAG-CoT (orange line with square markers)

* L-ICL (blue line with circle markers)

### Detailed Analysis

* **RAG-CoT (orange line):**

* The line starts at approximately 12% success rate at 0 context size.

* It dips slightly to around 11% at 5k context size.

* It then increases to approximately 20% at 10k context size.

* Continues to increase to approximately 23% at 15k context size.

* Reaches approximately 31% at 20k context size.

* The trend is generally upward, indicating increasing success rate with larger context size.

* **L-ICL (blue line):**

* The line starts at approximately 12% success rate at 0 context size.

* It increases sharply to approximately 46% at 5k context size.

* It plateaus around 60-65% between 5k and 10k context size.

* It fluctuates between 70% and 80% between 10k and 15k context size.

* It ends at approximately 74% at 20k context size.

* The trend is initially sharply upward, then plateaus with some fluctuations, indicating a higher success rate compared to RAG-CoT, especially with larger context sizes.

### Key Observations

* L-ICL consistently outperforms RAG-CoT across all context sizes.

* L-ICL shows a significant initial improvement in success rate with increasing context size, while RAG-CoT's improvement is more gradual.

* The shaded regions around the lines suggest that the variance in success rate is higher for L-ICL than for RAG-CoT, especially at larger context sizes.

### Interpretation

The chart demonstrates that L-ICL is more sample efficient than RAG-CoT in the 8x8 Gridworld environment. This means that L-ICL achieves a higher success rate with the same amount of context. The initial sharp increase in L-ICL's success rate suggests that it benefits more from the initial context provided, while RAG-CoT requires a larger context size to achieve comparable performance. The fluctuations in L-ICL's success rate at larger context sizes could indicate that it is more sensitive to the specific context provided, leading to higher variance in performance. The data suggests that L-ICL is a better choice for this task, especially when context size is limited.

</details>

Figure 3: Sample efficiency: L-ICL vs. RAG-ICL. Success rate vs. context size (characters) on 8 $×$ 8 Gridworld. L-ICL achieves higher performance with substantially less context.

4.3 L-ICL Does Not Need Full Domain Knowledge

In Table 4, in the tasks aside from BlocksWorld, all prompting schemes use an ASCII grid visualization of the gridworld to be explored (preliminary experiments suggested this approach was most effective for these tasks.) Since L-ICL learns to correct domain violations, a natural question is whether the ASCII grid is actually necessary for it: can it learn the domain from examples alone?

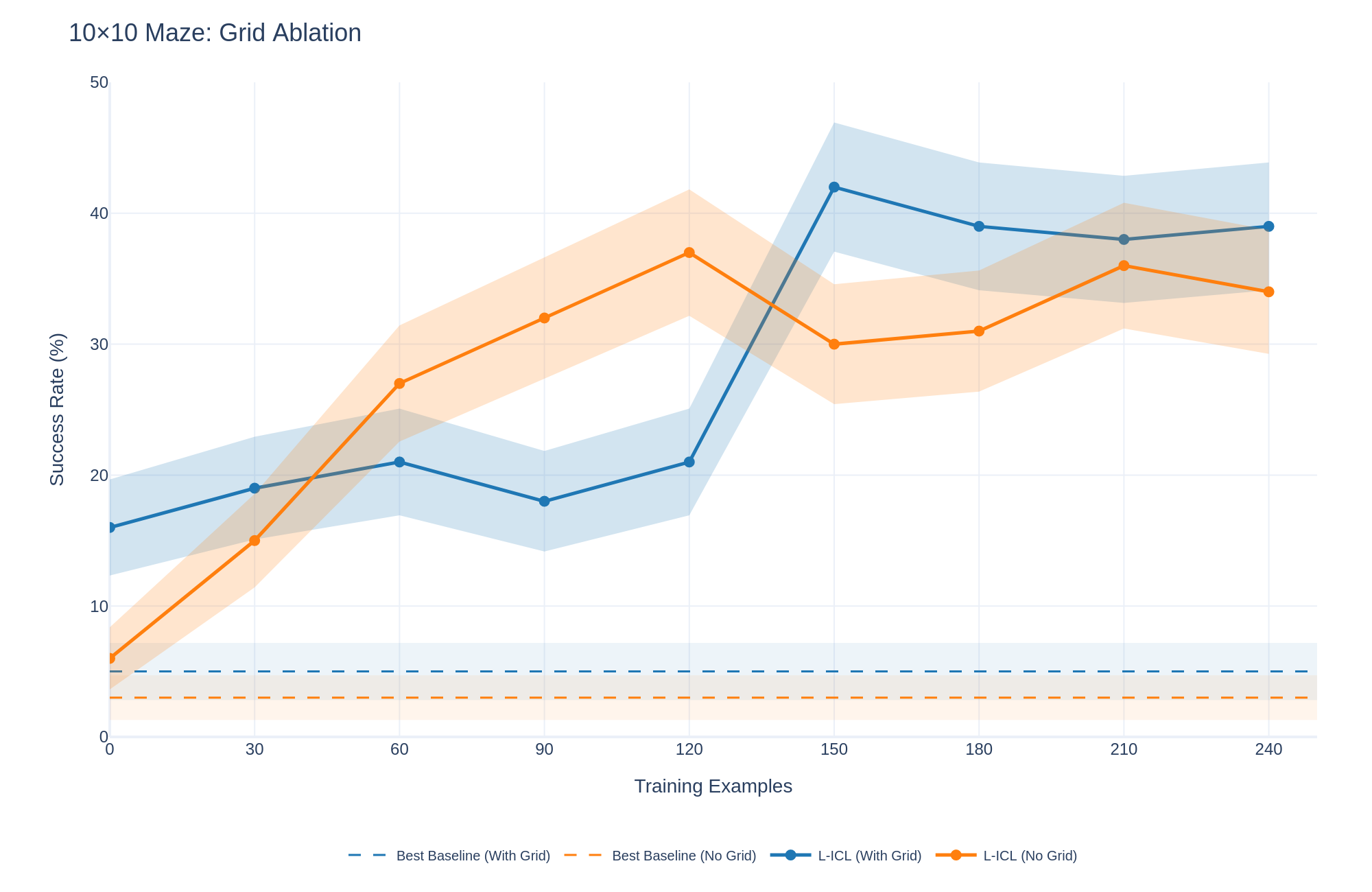

Figure 4 shows the learning curve for L-ICL on the 10x10 grid task with and without the ASCII visualization of the grid. The visualization accelerates performance early on (21% at $m{=}30$ with grid vs. 15% without), but peak performance is comparable (39% vs. 37%). Thus, L-ICL does not require visual scaffolding, although the grid provides useful inductive bias during early training. However, to obtain the full benefit of such scaffolding, the LLM requires some L-ICL training; with more examples being needed for more complex domains. Thus, the 8 $×$ 8 grid almost immediately benefits, whereas all harder domains only display the benefit of the scaffolded version over the non-scaffolded version later on in their training, as seen in the figure.

<details>

<summary>graphs/misc/10x10_maze_grid_ablation.png Details</summary>

### Visual Description

## Line Chart: 10x10 Maze: Grid Ablation

### Overview

The image is a line chart comparing the success rate (%) of different models (L-ICL and Best Baseline) with and without a grid, across varying numbers of training examples. The x-axis represents the number of training examples, and the y-axis represents the success rate in percentage. Shaded regions around the lines indicate variability or confidence intervals.

### Components/Axes

* **Title:** 10x10 Maze: Grid Ablation

* **X-axis:**

* **Label:** Training Examples

* **Scale:** 0, 30, 60, 90, 120, 150, 180, 210, 240

* **Y-axis:**

* **Label:** Success Rate (%)

* **Scale:** 0, 10, 20, 30, 40, 50

* **Legend:** Located at the bottom of the chart.

* Best Baseline (With Grid) - solid blue line

* Best Baseline (No Grid) - solid orange line

* L-ICL (With Grid) - blue line with circular markers

* L-ICL (No Grid) - orange line with circular markers

### Detailed Analysis

* **Best Baseline (With Grid):** (solid blue line)

* This line is nearly flat, hovering around a success rate of approximately 4%.

* Training Examples: 0, Success Rate: ~4%

* Training Examples: 240, Success Rate: ~4%

* **Best Baseline (No Grid):** (solid orange line)

* This line is also nearly flat, hovering around a success rate of approximately 2%.

* Training Examples: 0, Success Rate: ~2%

* Training Examples: 240, Success Rate: ~2%

* **L-ICL (With Grid):** (blue line with circular markers)

* The line starts at approximately 16% and generally increases, peaking around 150 training examples, then slightly decreasing and stabilizing.

* Training Examples: 0, Success Rate: ~16%

* Training Examples: 30, Success Rate: ~19%

* Training Examples: 60, Success Rate: ~21%

* Training Examples: 90, Success Rate: ~18%

* Training Examples: 120, Success Rate: ~22%

* Training Examples: 150, Success Rate: ~42%

* Training Examples: 180, Success Rate: ~39%

* Training Examples: 210, Success Rate: ~38%

* Training Examples: 240, Success Rate: ~39%

* **L-ICL (No Grid):** (orange line with circular markers)

* The line starts low, increases sharply until 120 training examples, then decreases slightly before increasing again and stabilizing.

* Training Examples: 0, Success Rate: ~6%

* Training Examples: 30, Success Rate: ~14%

* Training Examples: 60, Success Rate: ~27%

* Training Examples: 90, Success Rate: ~32%

* Training Examples: 120, Success Rate: ~37%

* Training Examples: 150, Success Rate: ~30%

* Training Examples: 180, Success Rate: ~31%

* Training Examples: 210, Success Rate: ~36%

* Training Examples: 240, Success Rate: ~34%

### Key Observations

* The "Best Baseline" models (both with and without grid) perform significantly worse than the "L-ICL" models.

* The "L-ICL (With Grid)" model shows a higher peak success rate compared to the "L-ICL (No Grid)" model.

* Both "L-ICL" models show a general increasing trend in success rate as the number of training examples increases, although there are fluctuations.

* The shaded regions around the "L-ICL" lines indicate variability in performance, which is more pronounced at certain training example counts.

### Interpretation

The chart suggests that using L-ICL significantly improves the success rate in the 10x10 Maze task compared to the "Best Baseline" models. The presence of a grid further enhances the performance of the L-ICL model, especially around 150 training examples. The variability indicated by the shaded regions suggests that the performance of the L-ICL models can fluctuate, possibly due to the stochastic nature of the training process or the complexity of the maze environment. The flat lines of the "Best Baseline" models indicate that they are not learning effectively from the training examples.

</details>

Figure 4: Grid representation ablation on 10 $×$ 10 Maze. The ASCII grid accelerates early learning but does not change peak performance. Without L-ICL, the grid provides little benefit.

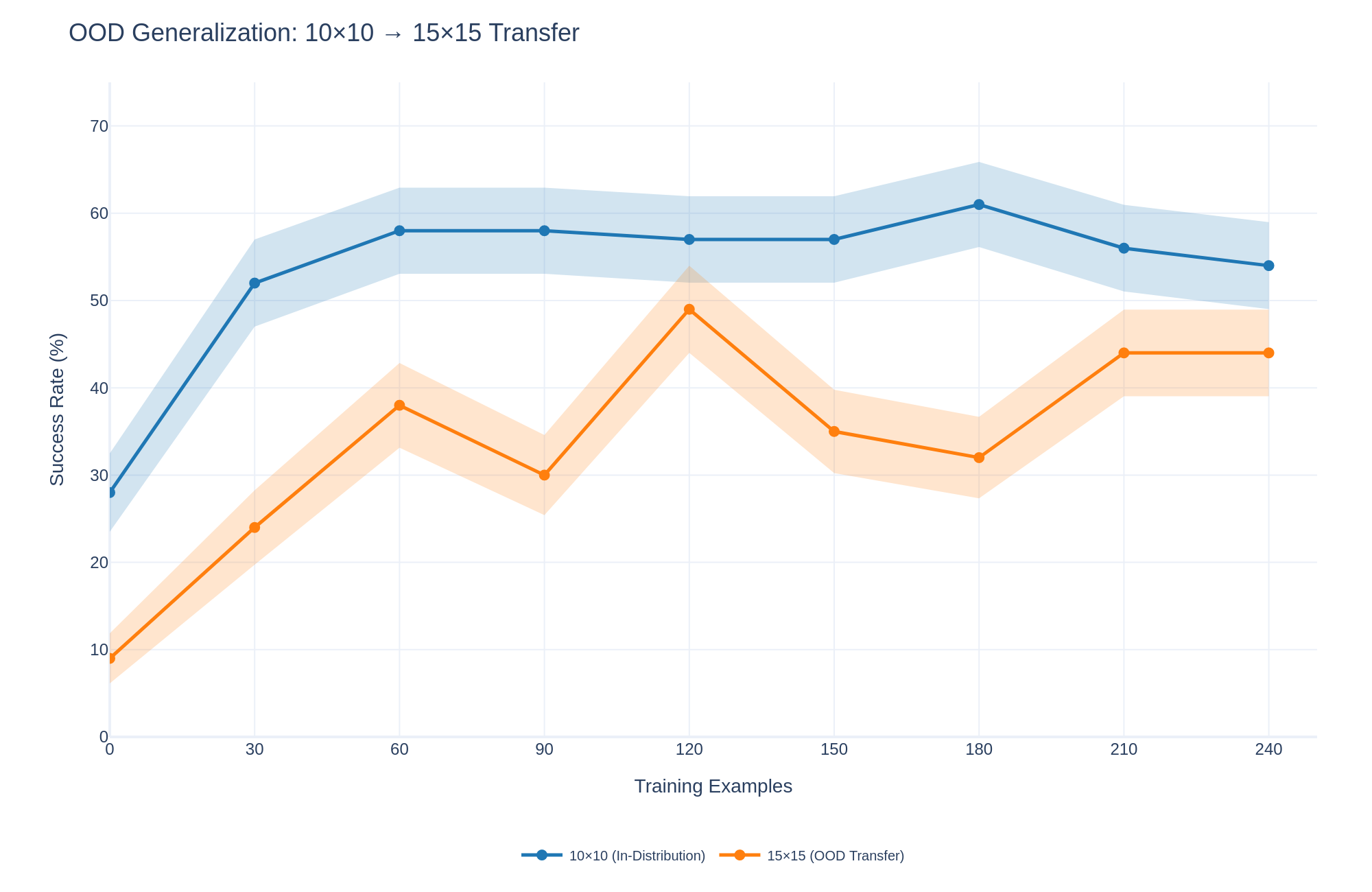

4.4 L-ICL Works On Many LLM Architectures

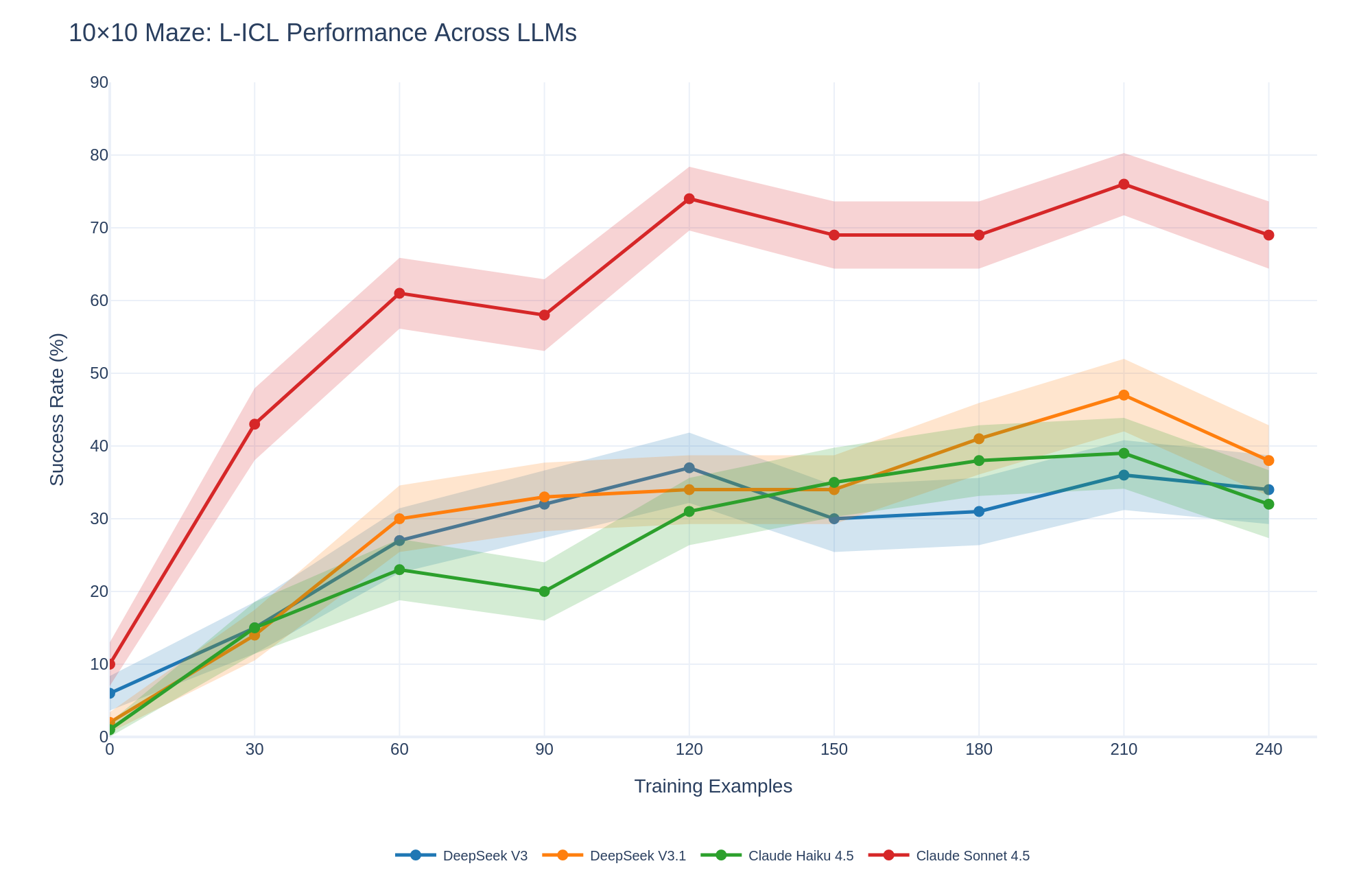

To assess whether L-ICL’s benefits are architecture-specific, we evaluate on three additional models: DeepSeek V3.1, Claude 4.5 Haiku, and Claude Sonnet 4.5. Figure 5 shows results on the 10 $×$ 10 Maze. All models improve substantially with L-ICL. Claude Sonnet 4.5 shows the strongest gains (10% to 74%), followed by DeepSeek V3.1 (2% to 47%) and Claude 4.5 Haiku (1% to 39%). The relative ordering changes with training: at $m{=}0$ models are comparable, but by $m{=}120$ Claude Sonnet 4.5 leads substantially. This suggests stronger models leverage accumulated corrections more effectively, though all models benefit.

<details>

<summary>graphs/misc/llm_ablation_success.png Details</summary>

### Visual Description

## Line Chart: 10x10 Maze: L-ICL Performance Across LLMs

### Overview

The image is a line chart comparing the performance of four different Large Language Models (LLMs) on a 10x10 maze task. The chart plots the success rate (%) of each model against the number of training examples. The models compared are DeepSeek V3, DeepSeek V3.1, Claude Haiku 4.5, and Claude Sonnet 4.5. The chart includes shaded regions around each line, representing the uncertainty or variance in the performance.

### Components/Axes

* **Title:** 10x10 Maze: L-ICL Performance Across LLMs

* **X-axis:** Training Examples

* Scale: 0 to 240, with markers at 0, 30, 60, 90, 120, 150, 180, 210, and 240.

* **Y-axis:** Success Rate (%)

* Scale: 0 to 90, with markers at 0, 10, 20, 30, 40, 50, 60, 70, 80, and 90.

* **Legend:** Located at the bottom of the chart.

* DeepSeek V3 (Blue)

* DeepSeek V3.1 (Orange)

* Claude Haiku 4.5 (Green)

* Claude Sonnet 4.5 (Red)

### Detailed Analysis

* **DeepSeek V3 (Blue):**

* Trend: Generally increasing, but plateaus and slightly decreases towards the end.

* Data Points:

* 0 Training Examples: ~2%

* 30 Training Examples: ~10%

* 60 Training Examples: ~27%

* 90 Training Examples: ~33%

* 120 Training Examples: ~37%

* 150 Training Examples: ~30%

* 180 Training Examples: ~32%

* 210 Training Examples: ~38%

* 240 Training Examples: ~35%

* **DeepSeek V3.1 (Orange):**

* Trend: Increasing, peaks around 210 training examples, then decreases.

* Data Points:

* 0 Training Examples: ~3%

* 30 Training Examples: ~12%

* 60 Training Examples: ~30%

* 90 Training Examples: ~33%

* 120 Training Examples: ~35%

* 150 Training Examples: ~38%

* 180 Training Examples: ~43%

* 210 Training Examples: ~47%

* 240 Training Examples: ~38%

* **Claude Haiku 4.5 (Green):**

* Trend: Increasing, plateaus, and slightly decreases towards the end.

* Data Points:

* 0 Training Examples: ~5%

* 30 Training Examples: ~15%

* 60 Training Examples: ~22%

* 90 Training Examples: ~18%

* 120 Training Examples: ~32%

* 150 Training Examples: ~35%

* 180 Training Examples: ~38%

* 210 Training Examples: ~35%

* 240 Training Examples: ~32%

* **Claude Sonnet 4.5 (Red):**

* Trend: Rapidly increasing initially, plateaus, and then slightly decreases.

* Data Points:

* 0 Training Examples: ~7%

* 30 Training Examples: ~43%

* 60 Training Examples: ~61%

* 90 Training Examples: ~58%

* 120 Training Examples: ~74%

* 150 Training Examples: ~69%

* 180 Training Examples: ~68%

* 210 Training Examples: ~76%

* 240 Training Examples: ~69%

### Key Observations

* Claude Sonnet 4.5 (Red) significantly outperforms the other models, achieving a much higher success rate with fewer training examples.

* DeepSeek V3 (Blue) has the lowest overall performance.

* DeepSeek V3.1 (Orange) and Claude Haiku 4.5 (Green) have similar performance trends, with DeepSeek V3.1 generally performing slightly better.

* All models show diminishing returns with increased training examples, with performance plateauing or even decreasing after a certain point.

* The shaded regions indicate variability in performance, with Claude Sonnet 4.5 showing the widest range of variability.

### Interpretation

The chart demonstrates the effectiveness of different LLMs in solving a 10x10 maze task through In-Context Learning (ICL). Claude Sonnet 4.5 exhibits superior learning capabilities, achieving high success rates with fewer training examples, suggesting a more efficient learning algorithm or a better-suited architecture for this specific task. The plateauing or decreasing performance after a certain number of training examples suggests that the models may be overfitting to the training data or reaching the limits of what can be learned through ICL for this particular maze complexity. The variability in performance, as indicated by the shaded regions, highlights the instability or sensitivity of the models to different training sets or initial conditions. The data suggests that model selection and optimization of training examples are crucial for maximizing performance in ICL tasks.

</details>

Figure 5: L-ICL across LLM architectures. Success rate on 10 $×$ 10 Maze for four models. All improve substantially; Claude Sonnet 4.5 shows the largest gains (10% $→$ 74%).

4.5 Summary of Findings

(1) L-ICL dramatically improves constraint adherence, achieving consistently higher success rates than baselines across all domains. (2) L-ICL is sample-efficient: 30–90 training examples typically suffice, and L-ICL outperforms RAG-ICL while using 4 $×$ less context. (3) Explicit spatial representations are not required: ASCII grids accelerate early learning but do not change peak performance. (4) L-ICL generalizes across architectures: four LLMs from different families all benefit substantially. (5) Multi-object tracking and strategic planning remain challenging: the valid-to-success gap in Sokoban and BlocksWorld indicates that localized corrections address constraint violations but do not fully solve long-horizon coordination (see Appendix A).

5 Discussion

Our experiments demonstrate that L-ICL consistently improves LLM planning performance, often by substantial margins. Beyond raw performance gains, these results support a specific conceptual interpretation that clarifies both what L-ICL achieves and where challenges remain.

5.1 L-ICL as In-Context Unit Testing

In software engineering, unit testing is a means of “hardening” code subroutines (i.e., making them more reliable and predictable), and it is considered good practice to use unit tests even when end-to-end tests exist. ICL demonstrations instruct a model as to desired behavior, rather than confirming that it has a desired behavior; modulo this important difference, however, L-ICL demonstrations are analogous to unit tests, and traditional ICL demonstrations are analogous to end-to-end tests. L-ICL demonstrations can be viewed as a technique for “hardening” individual reasoning steps, in that they makes an LLM’s instruction-following behavior more reliable and consistent.

Full-trajectory demonstrations are more like end-to-end tests; in software engineering, these tests have a different role than unit tests, confirming that individual modules interact correctly: in LLM terms, they encourage process correctness, and only incidentally encourage step correctness. In planning tasks, an invalid plan may have many correctly perform steps and only a single invalidly performed step, so adding a full-trajectory demonstration is at best an inefficient way to improve performance, in terms of the useful information per prompt token, relative to accumulating local demonstrations in a failure-driven way.

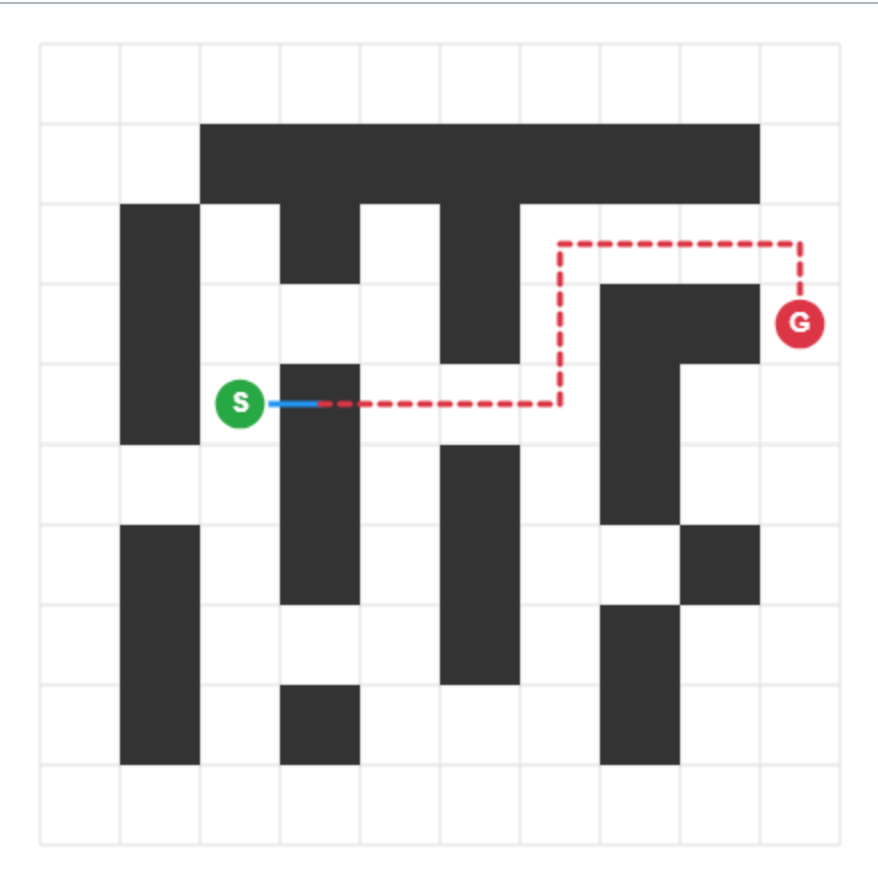

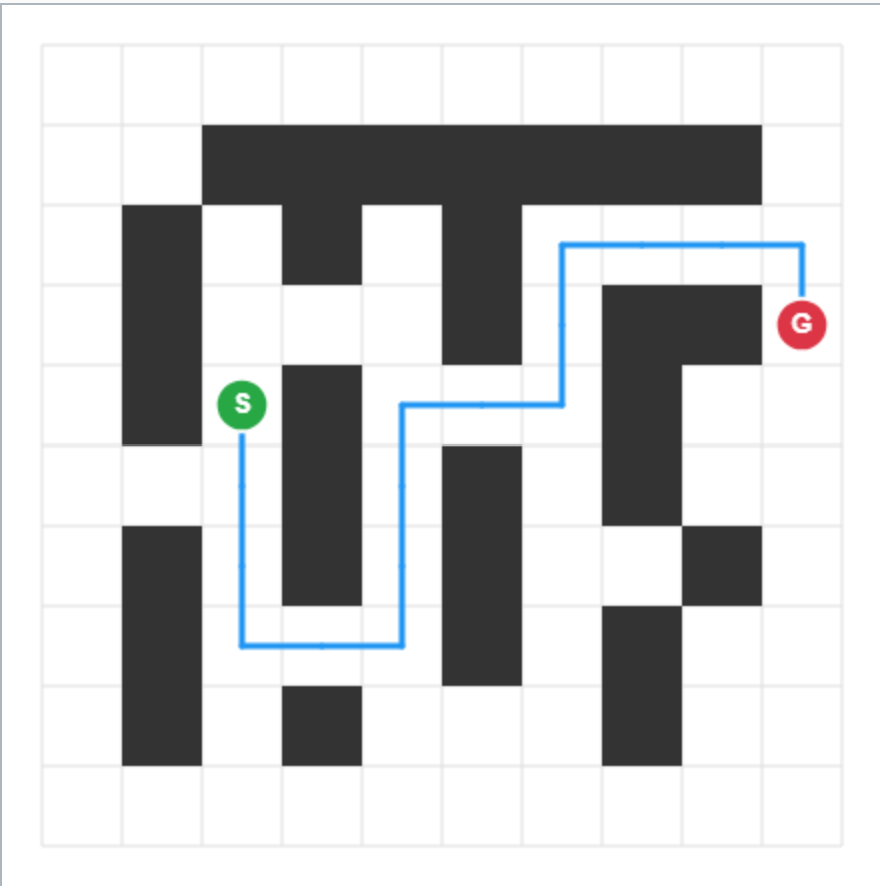

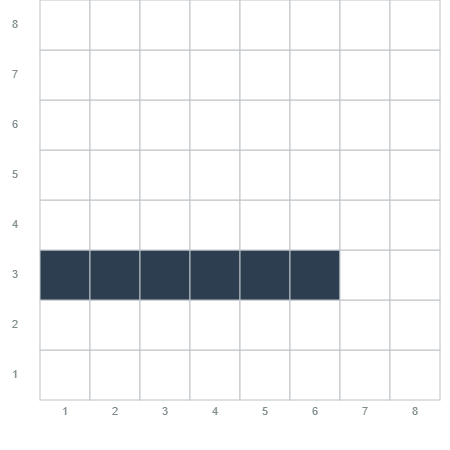

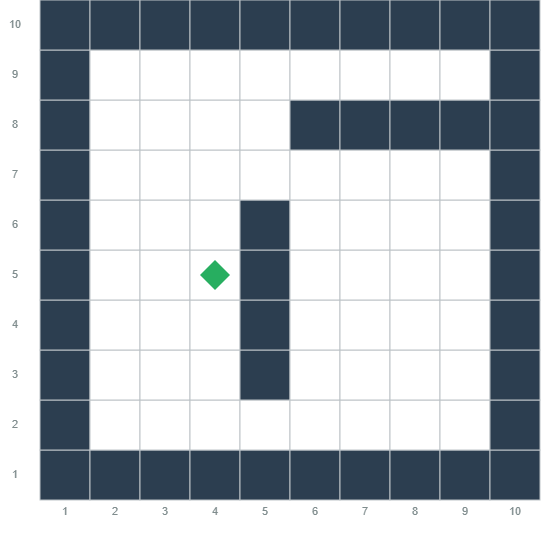

5.2 Qualitative Evidence: From Guessing to Navigation

Figure 6 provides visual evidence of L-ICL’s effect. At $m{=}0$ , the model proposes moves without regard for walls, quickly entering invalid states. By $m{=}60$ , it produces a coherent start-to-goal path respecting all walls. Crucially, this improvement occurs without the model ever seeing the ASCII grid. The doctests encode constraints implicitly through input-output pairs, and the model learns to satisfy them. This demonstrates that L-ICL induces a transferable constraint prior rather than memorizing specific layouts.

<details>

<summary>graphs/misc/maze_pictures/0_final.png Details</summary>

### Visual Description

## Maze Diagram: Pathfinding

### Overview

The image is a diagram of a maze on a grid. The maze consists of black blocks representing walls and white blocks representing open paths. A green circle labeled "S" marks the start point, and a red circle labeled "G" marks the goal point. A blue line segment extends from "S", and a red dashed line indicates a possible path from the end of the blue line to "G".

### Components/Axes

* **Grid:** The maze is laid out on a square grid.

* **Walls:** Black blocks represent the walls of the maze.

* **Paths:** White blocks represent the open paths within the maze.

* **Start Point (S):** A green circle labeled "S" indicates the starting location.

* **Goal Point (G):** A red circle labeled "G" indicates the goal location.

* **Initial Path:** A short blue line segment extends from the start point "S".

* **Proposed Path:** A red dashed line shows a possible path from the end of the blue line to the goal point "G".

### Detailed Analysis

* **Start Point (S):** Located approximately at grid coordinates (2, 3) from the top-left corner.

* **Goal Point (G):** Located approximately at grid coordinates (8, 2) from the top-left corner.

* **Initial Path:** The blue line extends one grid unit to the right from "S".

* **Proposed Path:** The red dashed line starts from the end of the blue line, moves horizontally to the right, then upwards, then horizontally to the right again, and finally downwards to reach "G". The path avoids the black wall blocks.

### Key Observations

* The maze has a complex structure with multiple dead ends and turns.

* The proposed path is not a straight line and requires navigating around the walls.

* The initial blue line segment indicates a starting direction.

### Interpretation

The diagram illustrates a pathfinding problem within a maze. The goal is to find a path from the start point "S" to the goal point "G" while avoiding the walls. The red dashed line represents a possible solution, demonstrating a route that navigates the maze's obstacles. The initial blue line segment could represent an initial move or direction chosen by a pathfinding algorithm. The diagram is a visual representation of a common problem in computer science and robotics.

</details>

<details>

<summary>graphs/misc/maze_pictures/60_final.png Details</summary>

### Visual Description

## Maze Navigation Diagram

### Overview

The image depicts a maze with a solution path highlighted. The maze consists of black blocks representing walls and white blocks representing open space. A blue line indicates the path from the starting point (marked with a green "S") to the goal (marked with a red "G"). The maze is overlaid on a light gray grid.

### Components/Axes

* **Maze:** A grid of black and white squares representing walls and open paths, respectively.

* **Start Point (S):** A green circle containing the letter "S", located on the left side of the maze.

* **Goal Point (G):** A red circle containing the letter "G", located on the right side of the maze.

* **Solution Path:** A blue line indicating the path from the start point to the goal point.

* **Grid:** A light gray grid providing a visual reference for the maze structure.

### Detailed Analysis

The maze is approximately 10x10 grid cells. The start point "S" is located at approximately (2,5) in grid coordinates, where (0,0) is the top-left corner. The goal point "G" is located at approximately (8,2).

The blue solution path starts at "S" and moves:

1. Down one cell.

2. Right two cells.

3. Up four cells.

4. Right two cells.

5. Down two cells.

6. Right one cell.

7. Up two cells.

8. Right one cell to reach "G".

The black blocks form a complex arrangement of walls, creating a non-trivial path between "S" and "G".

### Key Observations

* The solution path is not a straight line; it requires multiple turns to navigate the maze.

* The maze has several dead ends and blocked pathways.

* The solution path appears to be the shortest possible route through the maze.

### Interpretation

The diagram illustrates a pathfinding problem and a possible solution. The maze represents a complex environment, and the blue line represents a successful navigation strategy. The presence of the start and goal points, along with the solution path, suggests a problem-solving scenario where an agent needs to find the optimal route between two points in a constrained environment. The diagram could be used to demonstrate pathfinding algorithms or to visualize the complexity of navigating a maze.

</details>

Figure 6: From blind guessing to structured navigation. Two rollouts on the same held-out maze as training examples $m$ increase. At $m{=}0$ (left), the model ignores walls entirely. By $m{=}60$ (right), the model produces a valid trajectory without ever seeing the grid representation, demonstrating that L-ICL induces transferable constraint knowledge.

5.3 Limitations and Scope

One limitation is that L-ICL requires an oracle that can verify constraint satisfaction and provide correct outputs during training; however, this oracle is needed only during training —at test time, L-ICL requires a single forward pass with no external dependencies, distinguishing it from methods like ReAct with oracle feedback that require verification at inference. Extending to domains without formal specifications may require weaker supervision (learned verifiers, stronger models) that could introduce noise.

A second limitation of this work is that we have only addressed one problem for LLM planners: their difficulty in correctly applying domain knowledge. LLM planners also struggle with strategic reasoning, i.e., performing valid actions in a way that quickly reaches the goal. While L-ICL excels improving validity, this does not always lead to good strategic reasoning, as shown by the valid-to-success gap in Sokoban (46% valid, 20% success). We leave to future work the question of whether localized corrections, or some extension of them, can also correct strategic failures, which seem to require multi-step lookahead, or whether L-ICL must be combined with complementary approaches such as search or value functions.

A third limitation of this paper is that we consider only formally-describable planning benchmarks from the LLM planning literature. Transfer to open-ended natural-language tasks is not studied.

6 Conclusion

We began with a puzzle: LLMs receive complete specifications of domain constraints yet routinely violate them. For example, stating that an agent cannot walk through walls is insufficient, because models do not consistently apply that information at test time. L-ICL addresses this issue in a simple way: when a constraint is violated, we add a minimal input-output example correcting that error, hence putting additional emphasis on the precise knowledge that was not applied. These minimal corrections are accumulating during training, progressively distilling behavioral knowledge from an oracle symbolic system into the prompt. The improvement is remarkable: on an 8 $×$ 8 gridworld where zero-shot prompting achieves 0% success, L-ICL reaches 89% with only 60 training examples, and L-ICL consistently outperforms other baselines across domains.

One key finding is that demonstration structure matters more than quantity. L-ICL achieves higher performance with 2,000 characters of targeted corrections than RAG-ICL achieves with 20,000 characters of complete trajectories. Complete solutions demonstrate that a plan works; localized examples demonstrate why individual steps are valid. This compression explains L-ICL’s sample efficiency and suggests a broader principle: LLM reliability can be improved by making implicit knowledge explicit at the point of application. This also reduces prompt engineering burden: rather than exhaustively specifying every constraint upfront, practitioners can let L-ICL discover them through failure-driven corrections.

L-ICL does not solve planning. The valid-to-success gap in Sokoban shows that respecting domain constraints is necessary but not sufficient; strategic reasoning remains challenging in this domain. We view this not as a limitation but as a clarification of scope. L-ICL provides a procedural hardening layer: a reliable foundation of constraint-satisfying primitives on which higher-level reasoning can build. Just as unit tests do not write the program but ensure its components behave correctly, L-ICL does not plan but ensures that proposed actions respect domain physics. We hope this decomposition proves useful for future work on LLM reasoning systems.

Impact Statement

This paper presents work whose goal is to advance the field of Machine Learning. There are many potential societal consequences of our work, none which we feel must be specifically highlighted here.

References

- Anthropic (2025) Claude 4.5 model family. Note: https://www.anthropic.com/claude Sonnet 4.5 released September 2025; Haiku 4.5 released October 2025 Cited by: §3.5.

- T. Brown, B. Mann, N. Ryder, M. Subbiah, J. D. Kaplan, P. Dhariwal, A. Neelakantan, P. Shyam, G. Sastry, A. Askell, et al. (2020) Language models are few-shot learners. In Advances in Neural Information Processing Systems, Vol. 33, pp. 1877–1901. Cited by: §2.2.

- W. Chen, X. Ma, X. Wang, and W. W. Cohen (2022) Program of thoughts prompting: disentangling computation from reasoning for numerical reasoning tasks. arXiv preprint arXiv:2211.12588. Cited by: §2.2.

- C. A. Cohen and W. W. Cohen (2024) Watch your steps: observable and modular chains of thought. arXiv preprint arXiv:2409.15359. Cited by: §1, §2.2, §3.1, footnote 1.

- DeepSeek-AI (2024) DeepSeek-V3 technical report. arXiv preprint arXiv:2412.19437. Cited by: §3.5.

- G. Francés, M. Ramirez, and Collaborators (2018) Tarski: an AI planning modeling framework. GitHub. Note: https://github.com/aig-upf/tarski Cited by: §E.5.

- L. Gao, A. Madaan, S. Zhou, U. Alon, P. Liu, Y. Yang, J. Callan, and G. Neubig (2023) PAL: program-aided language models. In International Conference on Machine Learning, pp. 10764–10799. Cited by: §2.2.

- S. Hao, Y. Gu, H. Ma, J. J. Hong, Z. Wang, D. Z. Wang, and Z. Hu (2023) Reasoning with language model is planning with world model. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pp. 8154–8173. Cited by: §2.1.

- M. Helmert (2006) The fast downward planning system. In Journal of Artificial Intelligence Research, Vol. 26, pp. 191–246. Cited by: §E.4.2, §E.5, §3.5.

- R. Howey, D. Long, and M. Fox (2004) VAL: automatic plan validation, continuous effects and mixed initiative planning using pddl. In 16th IEEE International Conference on Tools with Artificial Intelligence, Vol. , pp. 294–301. External Links: Document Cited by: §E.4.1.

- L. B. Kaesberg, J. P. Wahle, T. Ruas, and B. Gipp (2025) SPaRC: a spatial pathfinding reasoning challenge. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, C. Christodoulopoulos, T. Chakraborty, C. Rose, and V. Peng (Eds.), Suzhou, China, pp. 10359–10390. External Links: Link, Document, ISBN 979-8-89176-332-6 Cited by: §2.1.

- S. Kambhampati, K. Valmeekam, L. Guan, M. Verma, K. Stechly, S. Bhambri, L. Saldyt, and A. Murthy (2024) Position: llms can’t plan, but can help planning in llm-modulo frameworks. In Proceedings of the 41st International Conference on Machine Learning, ICML’24. Cited by: §2.1.

- M. Katz and J. Lee (2023) K* search over orbit space for top-k planning. In Proceedings of the 32nd International Joint Conference on Artificial Intelligence (IJCAI 2023), Cited by: §E.5, §3.5.

- O. Khattab, A. Singhvi, P. Maheshwari, Z. Zhang, K. Santhanam, S. Vardhamanan, S. Haq, A. Sharma, T. T. Joshi, H. Moazam, H. Miller, M. Zaharia, and C. Potts (2023) DSPy: compiling declarative language model calls into self-improving pipelines. External Links: 2310.03714, Link Cited by: §1.

- J. Lee, M. Katz, and S. Sohrabi (2023) On k* search for top-k planning. In Symposium on Combinatorial Search, External Links: Link Cited by: §3.5.

- L. Lehnert, S. Sukhbaatar, D. Su, Q. Zheng, P. McVay, M. Rabbat, and Y. Tian (2024) Beyond A*: better planning with transformers via search dynamics bootstrapping. arXiv preprint arXiv:2402.14083. Cited by: §1.

- J. Leng, C. A. Cohen, Z. Zhang, C. Xiong, and W. W. Cohen (2025) Semi-structured llm reasoners can be rigorously audited. External Links: 2505.24217, Link Cited by: §2.2.

- C. Li, J. Liang, A. Zeng, X. Chen, K. Hausman, D. Sadigh, S. Levine, L. Fei-Fei, F. Xia, and B. Ichter (2023) Chain of code: reasoning with a language model-augmented code emulator. arXiv preprint arXiv:2312.04474. Cited by: §2.2.

- J. Liu, D. Shen, Y. Zhang, B. Dolan, L. Carin, and W. Chen (2022) What makes good in-context examples for GPT-3?. In Proceedings of Deep Learning Inside Out (DeeLIO 2022): The 3rd Workshop on Knowledge Extraction and Integration for Deep Learning Architectures, E. Agirre, M. Apidianaki, and I. Vulić (Eds.), Dublin, Ireland and Online, pp. 100–114. External Links: Link, Document Cited by: §2.2.

- A. Madaan, N. Tandon, P. Gupta, S. Hallinan, L. Gao, S. Wiegreffe, U. Alon, N. Dziri, S. Prabhumoye, Y. Yang, S. Gupta, B. P. Majumder, K. Hermann, S. Welleck, A. Yazdanbakhsh, and P. Clark (2023) Self-refine: iterative refinement with self-feedback. In Advances in Neural Information Processing Systems, Vol. 36. Cited by: §D.1.4, §2.2.

- S. Min, X. Lyu, A. Holtzman, M. Arber, M. Lewis, H. Hajishirzi, and L. Zettlemoyer (2022) Rethinking the role of demonstrations: what makes in-context learning work?. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, pp. 11048–11064. Cited by: §2.2.

- P. Shojaee, I. Mirzadeh, K. Alizadeh, M. Horton, S. Bengio, and M. Farajtabar (2025) The illusion of thinking: understanding the strengths and limitations of reasoning models via the lens of problem complexity. In Advances in Neural Information Processing Systems, Vol. 38. Cited by: §2.1.

- K. Stechly, K. Valmeekam, and S. Kambhampati (2024) Chain of thoughtlessness? an analysis of cot in planning. In Advances in Neural Information Processing Systems, Vol. 37. Cited by: 3rd item, §F.1.3, §1, §2.1, §2.1.

- K. Stechly, K. Valmeekam, and S. Kambhampati (2025) On the self-verification limitations of large language models on reasoning and planning tasks. In The Thirteenth International Conference on Learning Representations, External Links: Link Cited by: §D.1.4, §2.2.

- M. Turpin, J. Michael, E. Perez, and S. R. Bowman (2023) Language models don’t always say what they think: unfaithful explanations in chain-of-thought prompting. In Thirty-seventh Conference on Neural Information Processing Systems, External Links: Link Cited by: §2.2.

- K. Valmeekam, M. Marquez, S. Sreedharan, and S. Kambhampati (2023) On the planning abilities of large language models–a critical investigation. In Advances in Neural Information Processing Systems, Vol. 36. Cited by: §1, §2.1.

- X. Wang, J. Wei, D. Schuurmans, Q. Le, E. Chi, S. Narang, A. Chowdhery, and D. Zhou (2023) Self-consistency improves chain of thought reasoning in language models. In International Conference on Learning Representations, Cited by: §D.1.3, §2.2.

- J. Wei, X. Wang, D. Schuurmans, M. Bosma, B. Ichter, F. Xia, E. Chi, Q. Le, and D. Zhou (2022) Chain-of-thought prompting elicits reasoning in large language models. In Advances in Neural Information Processing Systems, Vol. 35, pp. 24824–24837. Cited by: §2.2.

- S. Yao, D. Yu, J. Zhao, I. Shafran, T. L. Griffiths, Y. Cao, and K. Narasimhan (2023a) Tree of thoughts: deliberate problem solving with large language models. In Advances in Neural Information Processing Systems, Vol. 36. Cited by: §D.1.6, §1, §2.1, §2.2.

- S. Yao, J. Zhao, D. Yu, N. Du, I. Shafran, K. Narasimhan, and Y. Cao (2023b) ReAct: synergizing reasoning and acting in language models. In International Conference on Learning Representations, Cited by: §D.1.5, §2.1, §2.2.

Appendix A Analysis: The Valid-to-Success Gap

While L-ICL dramatically improves constraint adherence, a gap often remains between validity and success. This gap is most pronounced in Full Sokoban, where L-ICL achieves 46% valid plans but only 20% success (Table 4). Understanding this gap illuminates both L-ICL’s strengths and its limitations.

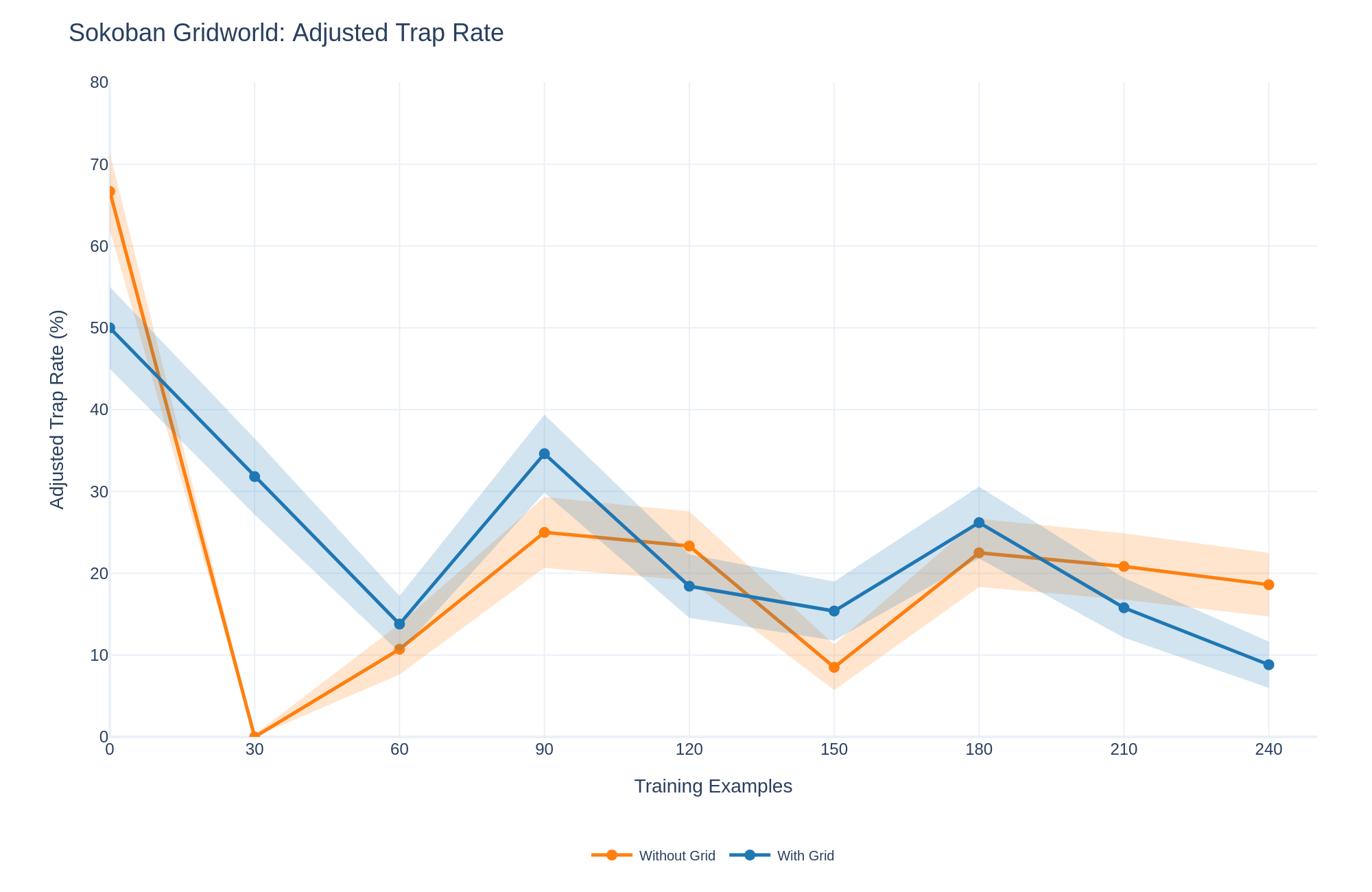

A.1 Trap Rate Analysis

In Sokoban, certain states are traps: configurations from which the goal is unreachable regardless of future actions (e.g., a box pushed into a corner). We measure the adjusted trap rate: among valid plans, what fraction enters a trap state?

Figure 7 shows that L-ICL reduces trap rates. On Sokoban Grid, the adjusted trap rate drops from 50% at $m{=}0$ to 10% at $m{=}210$ . This indicates that L-ICL teaches not only immediate constraint satisfaction but also some degree of trap avoidance.

However, the absolute trap rate remains non-negligible, and the valid-to-success gap persists. We hypothesize that trap avoidance requires multi-step lookahead that localized corrections cannot fully provide. A correction like “pushing box B east from (3,4) is valid” does not encode that this push leads to an unsolvable configuration three moves later. Addressing this limitation may require complementary approaches such as search or learned value functions.

<details>

<summary>graphs/misc/sokoban_trap_rate_adjusted.png Details</summary>

### Visual Description

## Line Chart: Sokoban Gridworld: Adjusted Trap Rate

### Overview

The image is a line chart comparing the adjusted trap rate (%) in a Sokoban Gridworld environment with and without a grid, as a function of the number of training examples. The chart displays two lines, one representing "Without Grid" (orange) and the other representing "With Grid" (blue). Shaded regions around each line indicate the uncertainty or variability in the data.

### Components/Axes

* **Title:** Sokoban Gridworld: Adjusted Trap Rate

* **X-axis:** Training Examples, with markers at 0, 30, 60, 90, 120, 150, 180, 210, and 240.

* **Y-axis:** Adjusted Trap Rate (%), with markers at 0, 10, 20, 30, 40, 50, 60, 70, and 80.

* **Legend:** Located at the bottom of the chart.

* Orange line: Without Grid

* Blue line: With Grid

### Detailed Analysis

**Without Grid (Orange Line):**

* **Trend:** The line starts high, drops sharply, then increases, and then decreases again, showing a fluctuating pattern.

* **Data Points:**

* 0 Training Examples: Approximately 68%

* 30 Training Examples: Approximately 0%

* 60 Training Examples: Approximately 11%

* 90 Training Examples: Approximately 25%

* 120 Training Examples: Approximately 23%

* 150 Training Examples: Approximately 8%

* 180 Training Examples: Approximately 23%

* 210 Training Examples: Approximately 21%

* 240 Training Examples: Approximately 19%

**With Grid (Blue Line):**

* **Trend:** The line starts high, drops, then increases, and then decreases again, showing a fluctuating pattern.

* **Data Points:**

* 0 Training Examples: Approximately 50%

* 30 Training Examples: Approximately 38%

* 60 Training Examples: Approximately 14%

* 90 Training Examples: Approximately 35%

* 120 Training Examples: Approximately 18%

* 150 Training Examples: Approximately 15%

* 180 Training Examples: Approximately 26%

* 210 Training Examples: Approximately 15%

* 240 Training Examples: Approximately 9%

### Key Observations

* Initially, the "Without Grid" trap rate is higher than the "With Grid" trap rate.

* Both lines show a significant drop in the trap rate between 0 and 60 training examples.

* Both lines fluctuate, indicating that the adjusted trap rate varies with the number of training examples.

* The shaded regions around the lines suggest variability in the trap rate for both conditions.

### Interpretation

The chart compares the adjusted trap rate in a Sokoban Gridworld environment with and without a grid. The data suggests that initially, the absence of a grid leads to a higher trap rate. However, as the number of training examples increases, both conditions exhibit fluctuating trap rates. The variability indicated by the shaded regions suggests that the performance is not consistent and may depend on other factors not explicitly represented in the chart. The "With Grid" line generally shows a lower trap rate after the initial drop, suggesting that the grid provides some benefit in reducing the likelihood of traps.

</details>

Figure 7: Trap rate decreases with L-ICL. Adjusted trap rate (fraction of valid plans entering unsolvable states) on Sokoban Grid. L-ICL reduces trap rates from 50% to 10%, indicating partial learning of strategic constraints.

A.2 Multi-Object State Tracking

Comparing Sokoban Grid (no boxes) to Full Sokoban reveals the cost of multi-object tracking. With identical spatial layouts, Sokoban Grid achieves 49% success while Full Sokoban reaches only 20%. The difference lies in state complexity: Full Sokoban requires tracking the agent position and all box positions, with constraints that depend on their joint configuration.

This difficulty is also evident in BlocksWorld, where every object is dynamic. L-ICL improves BlocksWorld success from 48% to 66%, but a gap remains between validity (68%) and success. The pattern suggests that relational constraint learning, while improved by L-ICL, remains more challenging than spatial constraint learning.

A.3 Decomposing Planning Difficulty

The valid-to-success gap reveals a clean decomposition of planning difficulty:

1. Constraint satisfaction: Generating actions that respect domain physics. L-ICL addresses this effectively across all domains.

1. Strategic selection: Among valid actions, choosing those that lead toward the goal without entering traps. This requires multi-step reasoning that localized corrections do not directly provide.

This decomposition suggests a practical architecture: use L-ICL to harden constraint satisfaction, then layer strategic reasoning (search, learned policies, or hierarchical planning) on top. The hardened base ensures that any action proposed by the strategic layer is physically valid, separating concerns and simplifying both components.

Table 5 summarizes the valid-to-success gaps across domains, highlighting where strategic failures dominate.

Table 5: Valid-to-success gap analysis across domains with L-ICL[ $m{=}60$ ]. Larger gaps indicate that constraint satisfaction alone is insufficient—strategic reasoning is the bottleneck.

| 8 $×$ 8 Grid 10 $×$ 10 Maze Sokoban Grid | 89 57 63 | 89 27 49 | 0 30 14 |

| --- | --- | --- | --- |

| Full Sokoban | 46 | 20 | 26 |

| BlocksWorld | 68 | 66 | 2 |