# Kimi K2.5: Visual Agentic Intelligence

**Authors**: Kimi Team

## Abstract

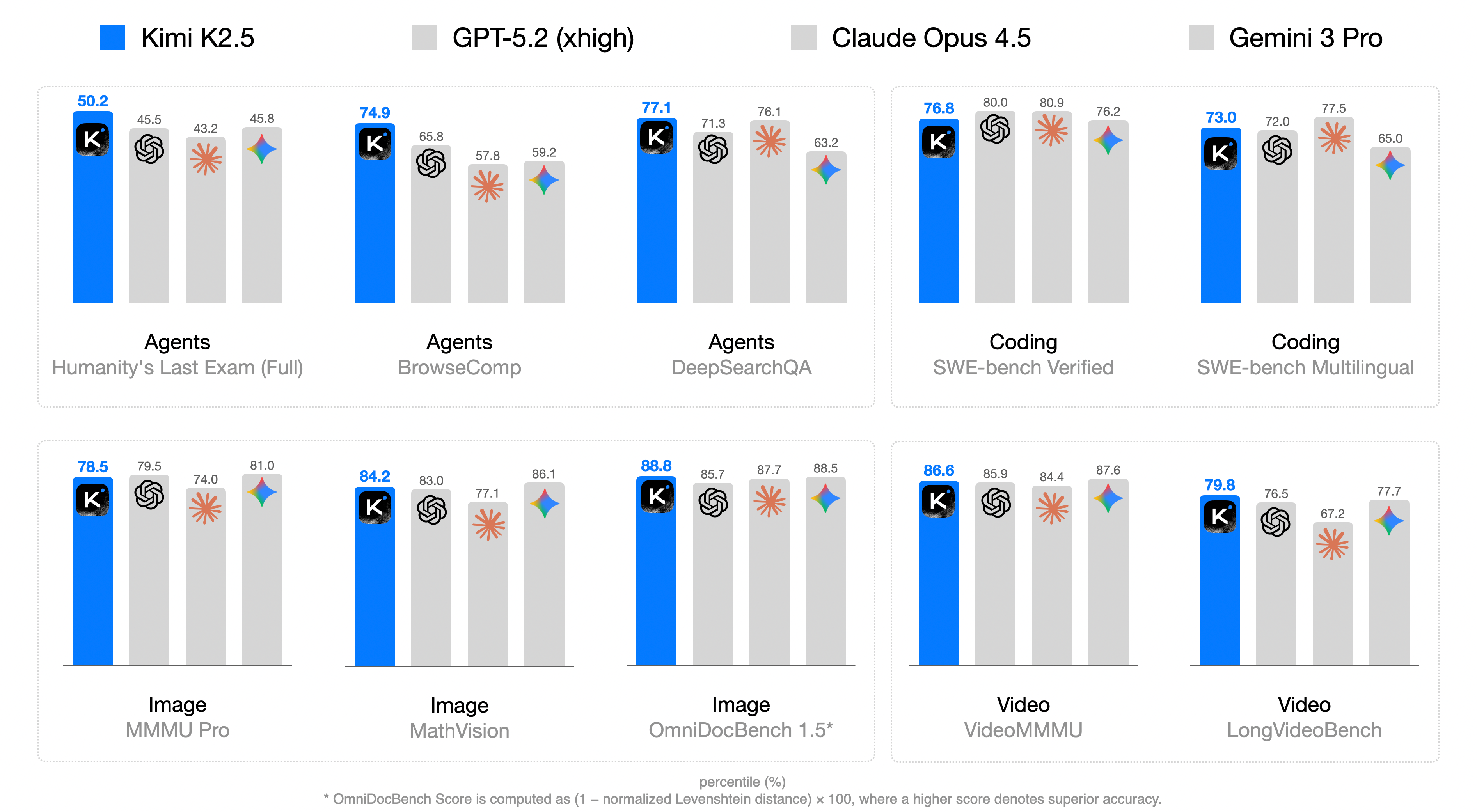

We introduce Kimi K2.5, an open-source multimodal agentic model designed to advance general agentic intelligence. K2.5 emphasizes the joint optimization of text and vision so that the two modalities enhance each other. This includes a series of techniques such as joint text-vision pre-training, zero-vision SFT, and joint text-vision reinforcement learning. Building on this multimodal foundation, K2.5 introduces Agent Swarm, a self-directed parallel agent orchestration framework that dynamically decomposes complex tasks into heterogeneous sub-problems and executes them concurrently. Extensive evaluations show that Kimi K2.5 achieves state-of-the-art results across various domains including coding, vision, reasoning, and agentic tasks. Agent Swarm also reduces latency by up to $4.5×$ over single-agent baselines. We release the post-trained Kimi K2.5 model checkpoint at https://huggingface.co/moonshotai/Kimi-K2.5 to facilitate future research and real-world applications of agentic intelligence.

<details>

<summary>figures/k25-main-result.png Details</summary>

### Visual Description

## Grouped Bar Chart: AI Model Performance Across Diverse Benchmarks

### Overview

This image is a grouped bar chart comparing the performance of four AI models, **Kimi K2.5** (blue bars), **GPT-5.2 (xhigh)** (light gray bars), **Claude Opus 4.5** (light gray bars with orange star icon), and **Gemini 3 Pro** (light gray bars with blue star icon), across 10 benchmarks grouped into four categories: Agents, Coding, Image, and Video. Performance is reported as percentage scores (%), with higher scores indicating better performance. A footnote clarifies the score calculation for one benchmark.

### Components/Axes

- **Legend (Top)**: Four models with distinct visual identifiers:

- Kimi K2.5: Blue bar + black square icon with white "K"

- GPT-5.2 (xhigh): Light gray bar + black spiral icon

- Claude Opus 4.5: Light gray bar + orange star icon

- Gemini 3 Pro: Light gray bar + blue star icon

- **Benchmark Categories (X-axis Groupings)**:

- Agents: Humanity's Last Exam (Full), BrowseComp, DeepSearchQA

- Coding: SWE-bench Verified, SWE-bench Multilingual

- Image: MMMU Pro, MathVision, OmniDocBench 1.5*

- Video: VideoMMMU, LongVideoBench

- **Score Metric**: Percentage scores (%) (implied by the footnote and numerical labels above bars)

- **Footnote (Bottom)**: "* OmniDocBench Score is computed as (1 – normalized Levenshtein distance) × 100, where a higher score denotes superior accuracy."

### Detailed Analysis

Below are the scores (%) for each model across all benchmarks (scores labeled above bars):

#### Agents Category

| Benchmark | Kimi K2.5 | GPT-5.2 (xhigh) | Claude Opus 4.5 | Gemini 3 Pro |
|----------------------------|-----------|-----------------|-----------------|--------------|
| Humanity's Last Exam (Full)| 50.2 | 45.5 | 43.2 | 45.8 |
| BrowseComp | 74.9 | 65.8 | 57.8 | 59.2 |
| DeepSearchQA | 77.1 | 71.3 | 76.1 | 63.2 |

#### Coding Category

| Benchmark | Kimi K2.5 | GPT-5.2 (xhigh) | Claude Opus 4.5 | Gemini 3 Pro |
|----------------------------|-----------|-----------------|-----------------|--------------|
| SWE-bench Verified | 76.8 | 80.0 | 80.9 | 76.2 |
| SWE-bench Multilingual | 73.0 | 72.0 | 77.5 | 65.0 |

#### Image Category

| Benchmark | Kimi K2.5 | GPT-5.2 (xhigh) | Claude Opus 4.5 | Gemini 3 Pro |
|----------------------------|-----------|-----------------|-----------------|--------------|
| MMMU Pro | 78.5 | 79.5 | 74.0 | 81.0 |
| MathVision | 84.2 | 83.0 | 77.1 | 86.1 |
| OmniDocBench 1.5* | 88.8 | 85.7 | 87.7 | 88.5 |

#### Video Category

| Benchmark | Kimi K2.5 | GPT-5.2 (xhigh) | Claude Opus 4.5 | Gemini 3 Pro |
|----------------------------|-----------|-----------------|-----------------|--------------|
| VideoMMMU | 86.6 | 85.9 | 84.4 | 87.6 |
| LongVideoBench | 79.8 | 76.5 | 67.2 | 77.7 |

### Key Observations

1. **Agents Benchmarks**: Kimi K2.5 leads in all three agent-focused tasks (50.2–77.1), with a significant margin in BrowseComp (74.9 vs. next-highest 65.8).

2. **Coding Benchmarks**: Claude Opus 4.5 outperforms others in both SWE-bench tasks (80.9 in Verified, 77.5 in Multilingual), while GPT-5.2 is competitive in SWE-bench Verified (80.0).

3. **Image Benchmarks**: Gemini 3 Pro leads in MMMU Pro (81.0) and MathVision (86.1), while Kimi K2.5 narrowly leads in OmniDocBench 1.5 (88.8 vs. Gemini’s 88.5).

4. **Video Benchmarks**: Gemini 3 Pro leads in VideoMMMU (87.6), and Kimi K2.5 leads in LongVideoBench (79.8).

5. **Outlier**: Claude Opus 4.5 has a notably low score in LongVideoBench (67.2), far below the other three models (76.5–79.8).

6. **Consistency**: Kimi K2.5 is top or near-top in 8 of 10 benchmarks, showing strong cross-category performance.

### Interpretation

This chart illustrates the competitive landscape of leading AI models across specialized tasks. Each score is a benchmark-specific percentage, so higher values indicate better capability on that task, but values are not directly comparable across benchmarks. Key takeaways:

- **Kimi K2.5** excels in agent-oriented tasks (e.g., browsing, deep search) and document understanding (OmniDocBench 1.5), suggesting strong reasoning and information retrieval abilities.

- **Claude Opus 4.5** dominates coding benchmarks, indicating superior software engineering and code generation capabilities.

- **Gemini 3 Pro** performs best in image understanding tasks (MMMU Pro, MathVision), highlighting strengths in visual reasoning.

- The OmniDocBench 1.5 footnote clarifies that its score measures document accuracy via Levenshtein distance, making Kimi’s lead here meaningful for document processing use cases.

Overall, the data shows no single model dominates all tasks—each has niche strengths, which is critical for users selecting models for specific applications (e.g., coding vs. image analysis).

</details>

Figure 1: Kimi K2.5 main results.
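The OmniDocBench footnote in Figure 1 defines the score as (1 - normalized Levenshtein distance) × 100. The sketch below illustrates that arithmetic for a pair of strings. Normalizing by the length of the longer string is an assumption, since the footnote does not state the normalizer, and `edit_score` is a hypothetical name rather than part of any benchmark toolkit.

```python
def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def edit_score(prediction: str, reference: str) -> float:
    """(1 - normalized Levenshtein distance) * 100; higher is better."""
    # Assumption: normalize by the longer string's length.
    longest = max(len(prediction), len(reference))
    if longest == 0:
        return 100.0
    return (1 - levenshtein(prediction, reference) / longest) * 100
```

Under this normalization, a four-character prediction with one wrong character scores 75, and an exact match scores 100.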

## 1 Introduction

Large Language Models (LLMs) are rapidly evolving toward agentic intelligence. Recent advances, such as GPT-5.2 [41], Claude Opus 4.5 [4], Gemini 3 Pro [19], and Kimi K2-Thinking [1], demonstrate substantial progress in agentic capabilities, particularly in tool calling and reasoning. These models increasingly exhibit the ability to decompose complex problems into multi-step plans and to execute long sequences of interleaved reasoning and actions.

In this report, we introduce the training methods and evaluation results of Kimi K2.5. Concretely, we improve the training of K2.5 over previous models in the following two key aspects.

Joint Optimization of Text and Vision. A key insight from developing K2.5 is that jointly optimizing text and vision enhances both modalities and avoids conflict between them. We devise a set of techniques for this purpose. During pre-training, in contrast to conventional approaches that add visual tokens to a text backbone at a late stage [7, 20], we find that early vision fusion at a lower vision ratio tends to yield better results for a fixed total budget of vision-text tokens. Therefore, K2.5 mixes text and vision tokens at a constant ratio throughout the entire training process.

Architecturally, Kimi K2.5 employs MoonViT-3D, a native-resolution vision encoder incorporating the NaViT packing strategy [14], enabling variable-resolution image inputs. For video understanding, we introduce a lightweight 3D ViT compression mechanism: consecutive frames are grouped in fours, processed through the shared MoonViT encoder, and temporally averaged at the patch level. This design allows Kimi K2.5 to process videos up to $4×$ longer within the same context window while maintaining complete weight sharing between image and video encoders.
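The frame-grouping step described above can be sketched in a few lines. This is a minimal pure-Python illustration, not the MoonViT-3D implementation: `encode_frame` is a hypothetical stand-in for the shared image encoder, and averaging a short trailing group as-is is an assumption the report does not address.

```python
from typing import Callable, List

Patch = List[float]          # one patch embedding
FrameFeats = List[Patch]     # all patch embeddings of one frame


def temporal_pool(frames: list,
                  encode_frame: Callable[[object], FrameFeats],
                  group_size: int = 4) -> List[FrameFeats]:
    """Encode each frame with the shared encoder, then average every
    `group_size` consecutive frames patch by patch."""
    pooled = []
    for start in range(0, len(frames), group_size):
        group = [encode_frame(f) for f in frames[start:start + group_size]]
        n = len(group)  # assumption: a short trailing group is averaged as-is
        num_patches, dim = len(group[0]), len(group[0][0])
        pooled.append([
            [sum(g[p][d] for g in group) / n for d in range(dim)]
            for p in range(num_patches)
        ])
    return pooled
```

With `group_size=4`, eight input frames produce two pooled feature maps, so the number of visual tokens per video drops by a factor of four, which is where the longer-video headroom comes from.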

During post-training, we introduce zero-vision SFT—text-only SFT alone activates visual reasoning and tool use. We find that adding human-designed visual trajectories at this stage hurts generalization. In contrast, text-only SFT performs better—likely because joint pretraining already establishes strong vision-text alignment, enabling capabilities to generalize naturally across modalities. We then apply joint RL on both text and vision tasks. Crucially, we find visual RL enhances textual performance rather than degrading it, with improvements on MMLU-Pro and GPQA-Diamond. This bidirectional enhancement—text bootstraps vision, vision refines text—represents superior cross-modal alignment in joint training.

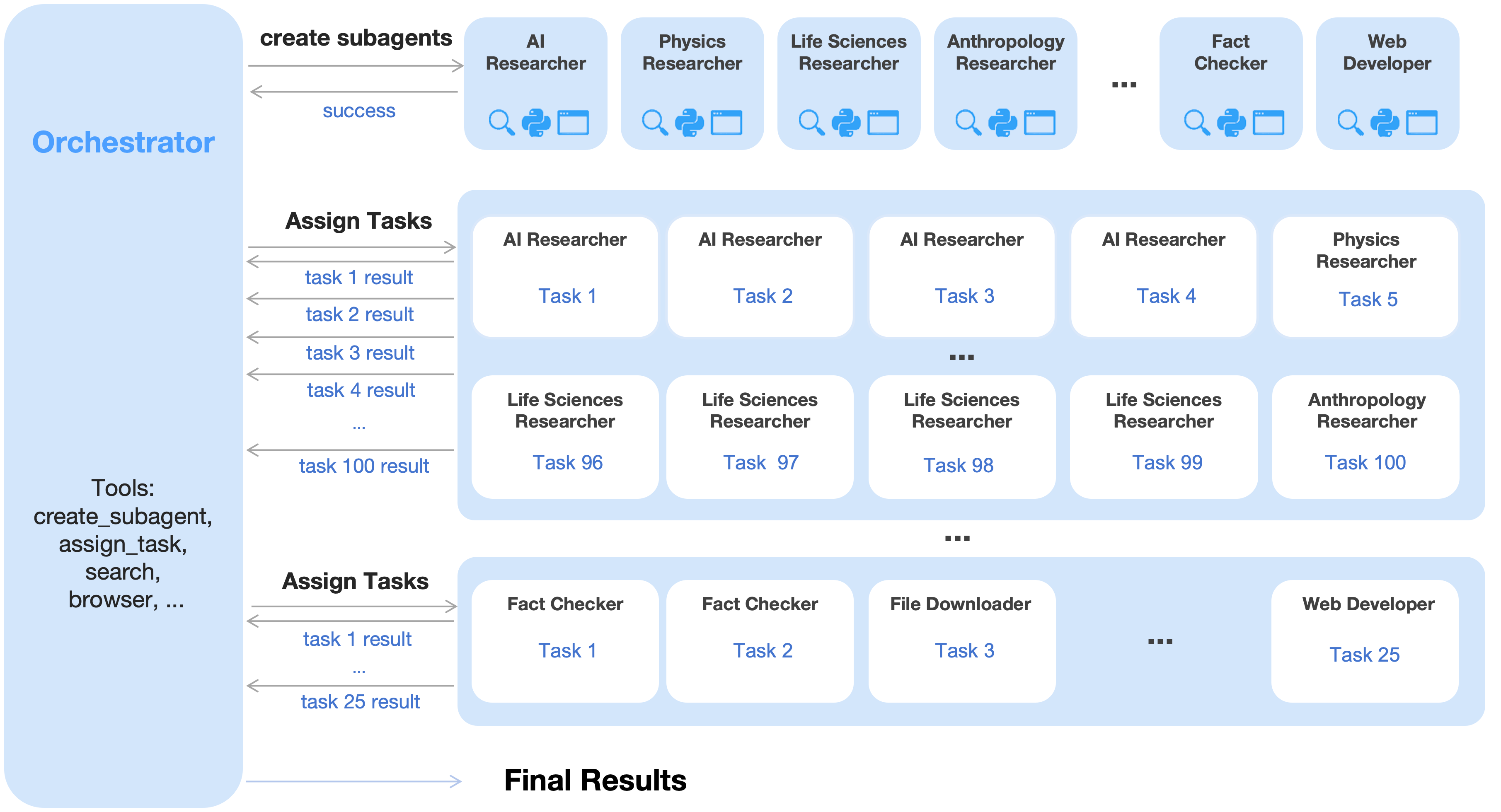

Agent Swarm: Parallel Agent Orchestration. Most existing agentic models rely on sequential execution of tool calls. Even systems capable of hundreds of reasoning steps, such as Kimi K2-Thinking [1], suffer from linear scaling of inference time, leading to unacceptable latency and limiting task complexity. As agentic workloads grow in scope and heterogeneity—e.g., building a complex project that involves massive-scale research, design, and development—the sequential paradigm becomes increasingly inefficient.

To overcome the latency and scalability limits of sequential agent execution, Kimi K2.5 introduces Agent Swarm, a dynamic framework for parallel agent orchestration. We propose a Parallel-Agent Reinforcement Learning (PARL) paradigm that departs from traditional agentic RL [2]. In addition to optimizing tool execution via verifiable rewards, the model is equipped with interfaces for sub-agent creation and task delegation. During training, sub-agents are frozen and their execution trajectories are excluded from the optimization objective; only the orchestrator is updated via reinforcement learning. This decoupling circumvents two challenges of end-to-end co-optimization: credit assignment ambiguity and training instability. Agent Swarm enables complex tasks to be decomposed into heterogeneous sub-problems executed concurrently by domain-specialized agents, transforming task complexity from linear scaling to parallel processing. In wide-search scenarios, Agent Swarm reduces inference latency by up to $4.5×$ while improving item-level F1 from 72.8% to 79.0% compared to single-agent baselines.

Kimi K2.5 represents a unified architecture for general-purpose agentic intelligence, integrating vision and language, thinking and instant modes, chats and agents. It achieves strong performance across a broad range of agentic and frontier benchmarks, including state-of-the-art results in visual-to-code generation (image/video-to-code) and real-world software engineering in our internal evaluations, while scaling both the diversity of specialized agents and the degree of parallelism. To accelerate community progress toward General Agentic Intelligence, we open-source our post-trained checkpoints of Kimi K2.5, enabling researchers and developers to explore, refine, and deploy scalable agentic intelligence.

## 2 Joint Optimization of Text and Vision

Kimi K2.5 is a native multimodal model built upon Kimi K2 through large-scale joint pre-training on approximately 15 trillion mixed visual and text tokens. Unlike vision-adapted models that compromise either linguistic or visual capabilities, our joint pre-training paradigm enhances both modalities simultaneously. This section describes the multimodal joint optimization methodology that extends Kimi K2 to Kimi K2.5.

### 2.1 Native Multimodal Pre-Training

Table 1: Performance comparison across different vision-text joint-training strategies. Early fusion with a lower vision ratio yields better results given a fixed total vision-text token budget.

| | Vision Injection Timing | Vision-Text Ratio | Vision Knowledge | Vision Reasoning | OCR | Text Knowledge | Text Reasoning | Code |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Early | 0% | 10%:90% | 25.8 | 43.8 | 65.7 | 45.5 | 58.5 | 24.8 |
| Mid | 50% | 20%:80% | 25.0 | 40.7 | 64.1 | 43.9 | 58.6 | 24.0 |
| Late | 80% | 50%:50% | 24.2 | 39.0 | 61.5 | 43.1 | 57.8 | 24.0 |

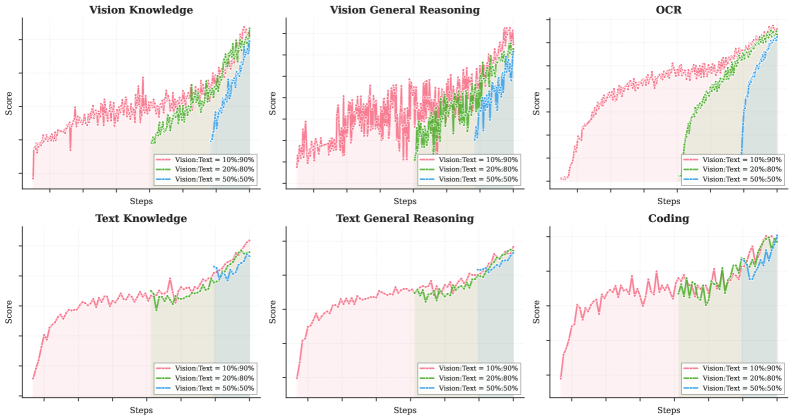

A key design question for multimodal pre-training is: given a fixed vision-text token budget, what is the optimal vision-text joint-training strategy? Conventional wisdom [7, 20] suggests that introducing vision tokens predominantly in the later stages of LLM training at high ratios (e.g., 50% or higher) should accelerate multimodal capability acquisition, treating multimodal capability as a post-hoc add-on to linguistic competence.

However, our experiments (as shown in Table 1 and Figure 9) reveal a different story. We conducted ablation studies varying the vision ratio and vision injection timing while keeping the total vision and text token budgets fixed. To strictly meet the targets for different ratios, we pre-trained the model on text-only data for a specifically calculated number of tokens before introducing vision data. Surprisingly, we found that the vision ratio has minimal impact on final multimodal performance. In fact, early fusion with a lower vision ratio yields better results given a fixed total vision-text token budget. This motivates our native multimodal pre-training strategy: rather than aggressive vision-heavy training concentrated at the end, we adopt a moderate vision ratio integrated early in the training process, allowing the model to naturally develop balanced multimodal representations while benefiting from extended co-optimization of both modalities.
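The "specifically calculated number of tokens" follows from simple budget arithmetic, sketched below. The sketch assumes that vision tokens make up 10% of the total budget in every setting, which is inferred from the Early row of Table 1 rather than stated in the text; `text_only_fraction` is a hypothetical helper name.

```python
from fractions import Fraction


def text_only_fraction(vision_budget: Fraction, mixed_vision_ratio: Fraction) -> Fraction:
    """Fraction of total training tokens that must be text-only before vision
    data is introduced, so that the mixed phase at `mixed_vision_ratio`
    consumes exactly `vision_budget` (vision tokens / total tokens)."""
    if not 0 < vision_budget <= mixed_vision_ratio <= 1:
        raise ValueError("need 0 < vision_budget <= mixed_vision_ratio <= 1")
    mixed_phase = vision_budget / mixed_vision_ratio  # share of training that is mixed
    return 1 - mixed_phase


# Assumption: vision tokens are 10% of the total budget, as the Early row implies.
budget = Fraction(1, 10)
for name, ratio in [("Early", Fraction(10, 100)),
                    ("Mid", Fraction(20, 100)),
                    ("Late", Fraction(50, 100))]:
    print(name, float(text_only_fraction(budget, ratio)))
```

Under that assumption the text-only prefix comes out to 0, 1/2, and 4/5 of training, which matches the 0%, 50%, and 80% injection timings listed in Table 1.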

### 2.2 Zero-Vision SFT

Pretrained VLMs do not naturally perform vision-based tool-calling, which poses a cold-start problem for multimodal RL. Conventional approaches address this issue through manually annotated or prompt-engineered chain-of-thought (CoT) data [7], but such methods are limited in diversity, often restricting visual reasoning to simple diagrams and primitive tool manipulations (crop, rotate, flip).

We observe that high-quality text SFT data are relatively abundant and diverse. We therefore propose zero-vision SFT, an approach that uses only text SFT data to activate visual and agentic capabilities during post-training. In this approach, all image manipulations are proxied through programmatic operations in IPython, effectively serving as a generalization of traditional vision tool-use. This "zero-vision" activation enables diverse reasoning behaviors, including pixel-level operations such as object size estimation via binarization and counting, and generalizes to visually grounded tasks such as object localization, counting, and OCR.
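To make "object size estimation via binarization and counting" concrete, here is a pure-Python sketch of the kind of snippet a model might run in an IPython tool call. The threshold, the nested-list image format, and the function names are illustrative assumptions; real tool calls would more likely go through an imaging library.

```python
from typing import List

Image = List[List[int]]  # grayscale pixels, 0-255


def binarize(img: Image, threshold: int = 128) -> List[List[int]]:
    """Map each pixel to 1 (foreground) if it is >= threshold, else 0."""
    return [[1 if px >= threshold else 0 for px in row] for row in img]


def object_sizes(mask: List[List[int]]) -> List[int]:
    """Return the pixel count of every 4-connected foreground region."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    sizes = []
    for y in range(h):
        for x in range(w):
            if mask[y][x] == 0 or seen[y][x]:
                continue
            stack, size = [(y, x)], 0
            seen[y][x] = True
            while stack:  # iterative flood fill
                cy, cx = stack.pop()
                size += 1
                for ny, nx in ((cy + 1, cx), (cy - 1, cx), (cy, cx + 1), (cy, cx - 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        stack.append((ny, nx))
            sizes.append(size)
    return sizes
```

The length of the returned list gives an object count and each entry gives an object's area in pixels, so one routine covers both counting and size estimation.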

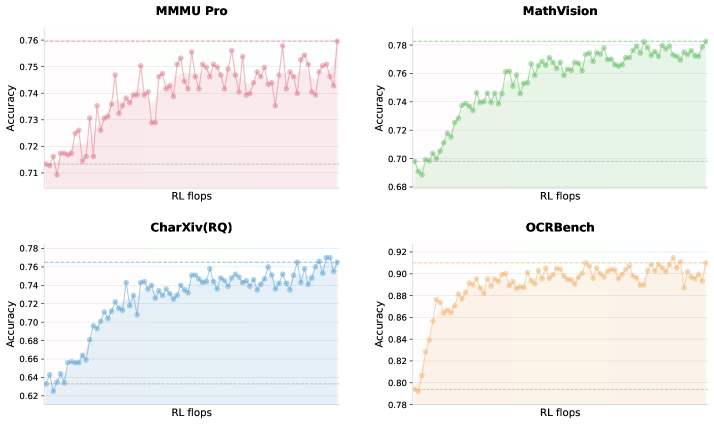

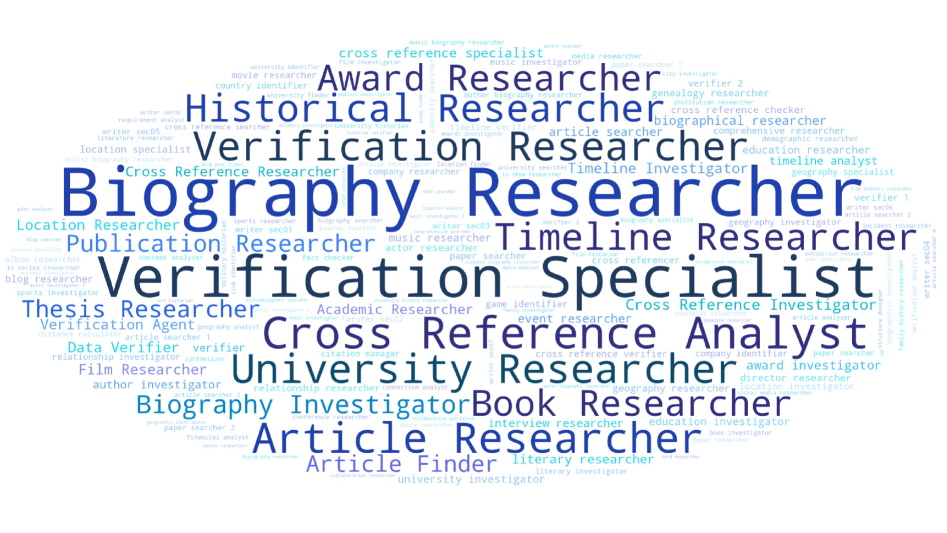

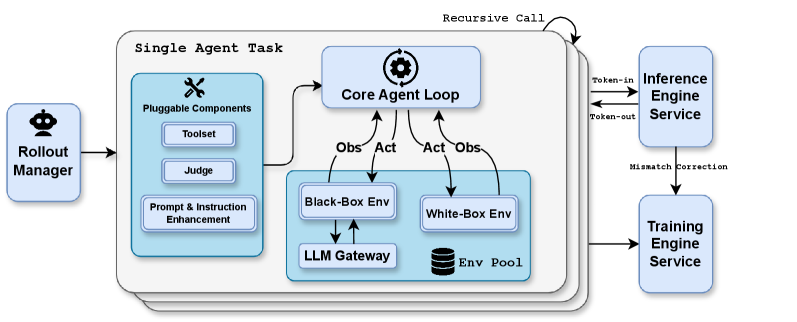

Figure 2 illustrates the RL training curves, where the starting points are obtained from zero-vision SFT. The results show that zero-vision SFT is sufficient for activating vision capabilities while ensuring generalization across modalities. This phenomenon is likely due to the joint pretraining of text and vision data as described in Section 2.1. Compared to zero-vision SFT, our preliminary experiments show that text-vision SFT yields much worse performance on visual, agentic tasks, possibly because of the lack of high-quality vision data.

### 2.3 Joint Multimodal Reinforcement Learning (RL)

In this section, we describe the methodology implemented in K2.5 that enables effective multimodal RL, from outcome-based visual RL to emergent cross-modal transfer that enhances textual performance.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Charts: Model Accuracy vs. RL Flops

### Overview

The image displays four separate line charts arranged in a 2x2 grid. Each chart plots the "Accuracy" of a different model or benchmark against "RL flops" (Reinforcement Learning floating-point operations). The charts illustrate how performance changes with increased computational training effort. All charts share the same x-axis label but have different y-axis scales and data trends.

### Components/Axes

* **Common Elements:**

* **X-axis (All Charts):** Labeled "RL flops". The axis represents a progression of increasing computational effort, though specific numerical markers are not visible.

* **Y-axis (All Charts):** Labeled "Accuracy". The scale and range differ for each chart.

* **Chart Type:** Line chart with data points marked by small circles. Each chart uses a distinct color for its line and a light shaded area beneath it.

* **Individual Chart Details (Spatial Grounding):**

1. **Top-Left Chart: "MMMU Pro"**

* **Title:** "MMMU Pro" (centered at top).

* **Y-axis Scale:** Ranges from approximately 0.71 to 0.76. Major gridlines are visible at 0.71, 0.72, 0.73, 0.74, 0.75, 0.76.

* **Line Color:** Red.

2. **Top-Right Chart: "MathVision"**

* **Title:** "MathVision" (centered at top).

* **Y-axis Scale:** Ranges from approximately 0.68 to 0.78. Major gridlines are visible at 0.68, 0.70, 0.72, 0.74, 0.76, 0.78.

* **Line Color:** Green.

3. **Bottom-Left Chart: "CharXiv(RQ)"**

* **Title:** "CharXiv(RQ)" (centered at top).

* **Y-axis Scale:** Ranges from approximately 0.62 to 0.78. Major gridlines are visible at 0.62, 0.64, 0.66, 0.68, 0.70, 0.72, 0.74, 0.76, 0.78.

* **Line Color:** Blue.

4. **Bottom-Right Chart: "OCRBench"**

* **Title:** "OCRBench" (centered at top).

* **Y-axis Scale:** Ranges from approximately 0.78 to 0.92. Major gridlines are visible at 0.78, 0.80, 0.82, 0.84, 0.86, 0.88, 0.90, 0.92.

* **Line Color:** Orange.

### Detailed Analysis

* **MMMU Pro (Red Line - Top-Left):**

* **Trend Verification:** The line shows a general upward trend with significant volatility. It starts low, rises sharply, then enters a phase of high-frequency oscillation within a band.

* **Data Points (Approximate):** Begins near 0.715. Shows a steep climb to ~0.745. The majority of subsequent data points fluctuate between ~0.735 and ~0.755, with a final point near the top of the range at ~0.76.

* **MathVision (Green Line - Top-Right):**

* **Trend Verification:** Shows a strong, consistent upward trend that begins to plateau in the latter half.

* **Data Points (Approximate):** Starts at the lowest point on its chart, ~0.69. Climbs steadily, crossing 0.74 and 0.76. The trend flattens in the upper region, with most later points oscillating between ~0.77 and ~0.78.

* **CharXiv(RQ) (Blue Line - Bottom-Left):**

* **Trend Verification:** Exhibits a very clear and steady upward trend with moderate noise.

* **Data Points (Approximate):** Begins at the lowest value across all charts, ~0.62. Shows a consistent rise, passing through 0.66, 0.70, and 0.74. The final data points are near ~0.77.

* **OCRBench (Orange Line - Bottom-Right):**

* **Trend Verification:** Demonstrates a rapid initial ascent followed by a stable plateau with minor fluctuations.

* **Data Points (Approximate):** Starts at ~0.79. Increases very quickly to ~0.88. The line then stabilizes, with the majority of points oscillating in a narrow band between ~0.89 and ~0.91. The final point is slightly lower, near ~0.90.

### Key Observations

1. **Universal Improvement:** All four benchmarks show a positive correlation between "RL flops" and "Accuracy," indicating that increased computational training generally improves model performance on these tasks.

2. **Performance Ceiling & Volatility:** Each model appears to approach a performance ceiling. MMMU Pro shows the most volatile plateau, while OCRBench shows the most stable one.

3. **Benchmark Difficulty:** The starting and ending accuracy values suggest varying difficulty. CharXiv(RQ) starts lowest (~0.62), implying it may be the most challenging task initially. OCRBench reaches the highest absolute accuracy (~0.91), suggesting models achieve higher proficiency on this task relative to the others.

4. **Learning Rate:** The slope of the initial ascent varies. OCRBench and MathVision show very steep initial learning curves, while CharXiv(RQ) has a more gradual, sustained climb.

### Interpretation

This composite figure likely comes from a research paper or technical report evaluating the scaling laws of a vision-language model or a reinforcement learning process. The data suggests that:

* **Investment Pays Off:** Allocating more computational resources (RL flops) during training yields measurable accuracy gains across diverse multimodal benchmarks (visual reasoning, math, chart understanding, OCR).

* **Task-Specific Scaling:** The model's learning dynamics are task-dependent. Some tasks (like OCRBench) are mastered quickly and then refined, while others (like CharXiv(RQ)) show continuous, steady improvement, indicating they may require more data or complexity to master.

* **Stability vs. Volatility:** The stability of the plateau (e.g., OCRBench vs. MMMU Pro) may reflect the nature of the task. Noisy tasks with less clear-cut answers might lead to more volatile performance metrics even after extensive training.

* **Practical Implication:** For a practitioner, this chart helps decide the optimal training budget. For example, training beyond a certain point for OCRBench yields diminishing returns, whereas for CharXiv(RQ), further investment might still be beneficial. The charts provide a visual cost-benefit analysis for scaling training compute.

</details>

Figure 2: Vision RL training curves on vision benchmarks starting from minimal zero-vision SFT. By scaling vision RL FLOPs, the performance continues to improve, demonstrating that zero-vision activation paired with long-running RL is sufficient for acquiring robust visual capabilities.

Outcome-Based Visual RL

Following the zero-vision SFT, the model requires further refinement to reliably incorporate visual inputs into reasoning. Text-initiated activation alone exhibits notable failure modes: visual inputs are sometimes ignored, and images may not be attended to when necessary. We employ outcome-based RL on tasks that explicitly require visual comprehension for correct solutions. We categorize these tasks into three domains:

- Visual grounding and counting: Accurate localization and enumeration of objects within images;

- Chart and document understanding: Interpretation of structured visual information and text extraction;

- Vision-critical STEM problems: Mathematical and scientific questions filtered to require visual inputs.

Outcome-based RL on these tasks improves both basic visual capabilities and more complex agentic behaviors. Extracting these trajectories for rejection-sampling fine-tuning (RFT) enables a self-improving data pipeline, allowing subsequent joint RL stages to leverage richer multimodal reasoning traces.
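The trajectory-extraction step can be pictured as a simple filter over RL rollouts. The sketch below assumes a verifiable outcome check is available; the `Trajectory` fields, the `is_correct` callback, and the `keep_per_prompt` cap are hypothetical and are not described in the report.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Trajectory:
    prompt_id: str
    response: str
    answer: str


def rejection_sample(trajs: List[Trajectory],
                     is_correct: Callable[[Trajectory], bool],
                     keep_per_prompt: int = 1) -> List[Trajectory]:
    """Keep up to `keep_per_prompt` verified-correct trajectories per prompt."""
    kept: Dict[str, List[Trajectory]] = {}
    for t in trajs:
        bucket = kept.setdefault(t.prompt_id, [])
        if len(bucket) < keep_per_prompt and is_correct(t):
            bucket.append(t)
    return [t for bucket in kept.values() for t in bucket]
```

Capping the number of kept samples per prompt is one common way to stop easy prompts from dominating the resulting fine-tuning set.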

Visual RL Improves Text Performance

Table 2: Cross-Modal Transfer: Vision RL Improves Textual Knowledge

| Benchmark | Before Vision-RL | After Vision-RL | Improvement |
| --- | --- | --- | --- |
| MMLU-Pro | 84.7 | 86.4 | +1.7 |
| GPQA-Diamond | 84.3 | 86.4 | +2.1 |
| LongBench v2 | 56.7 | 58.9 | +2.2 |

To investigate potential trade-offs between visual and textual performance, we evaluated text-only benchmarks before and after visual RL. Surprisingly, outcome-based visual RL produced measurable improvements in textual tasks, including MMLU-Pro (84.7% $→$ 86.4%), GPQA-Diamond (84.3% $→$ 86.4%), and LongBench v2 (56.7% $→$ 58.9%) (Table 2). Analysis suggests that visual RL enhances calibration in areas requiring structured information extraction, reducing uncertainty on queries that resemble visually grounded reasoning (e.g., counting, OCR). These findings indicate that visual RL can contribute to cross-modal generalization, improving textual reasoning without observable degradation of language capabilities.

Joint Multimodal RL

Motivated by the finding that robust visual capabilities can emerge from zero-vision SFT paired with vision RL, which further enhances general text abilities, we adopt a joint multimodal RL paradigm during Kimi K2.5's post-training. Departing from conventional modality-specific expert divisions, we organize RL domains not by input modality but by abilities: knowledge, reasoning, coding, agentic, etc. These domain experts jointly learn from both pure-text and multimodal queries, while the Generative Reward Model (GRM) similarly optimizes across heterogeneous traces without modality barriers. This paradigm ensures that capability improvements acquired through either textual or visual inputs generalize to related abilities in the other modality, thereby maximizing cross-modal capability transfer.

## 3 Agent Swarm

The primary challenge of existing agent-based systems lies in their reliance on sequential execution of reasoning and tool-calling steps. While this structure may be effective for simpler, short-horizon tasks, it becomes inadequate as the complexity of the task increases and the accumulated context grows. As tasks evolve to contain broad information gathering and intricate, multi-branch reasoning, sequential systems often encounter significant bottlenecks [6, 4, 5]. The limited capacity of a single agent working through each step one by one can lead to the exhaustion of practical reasoning depth and tool-call budgets, ultimately hindering the system’s ability to handle more complex scenarios.

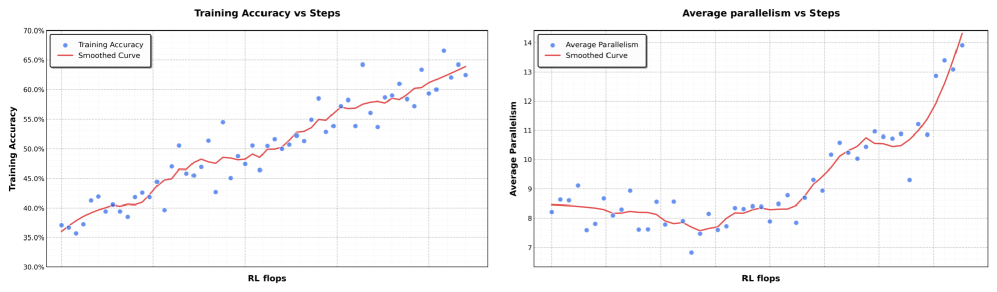

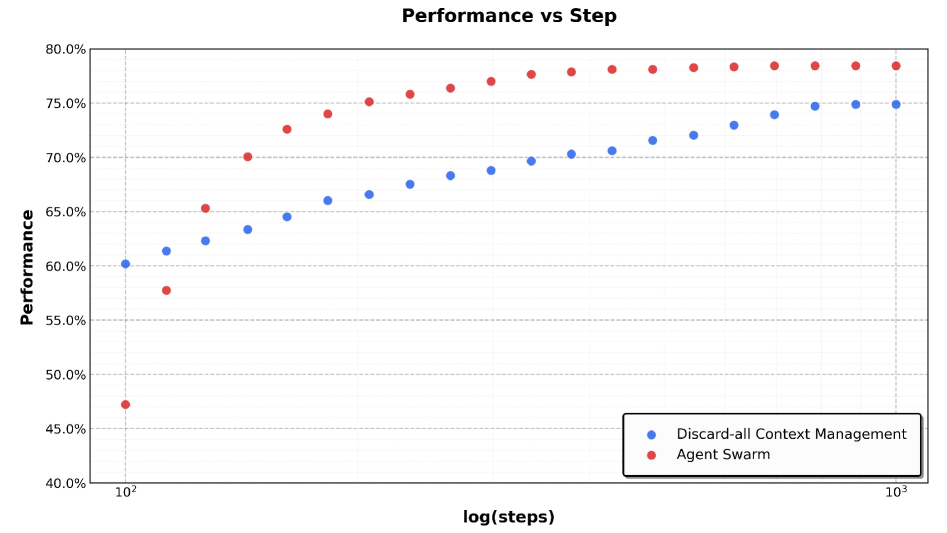

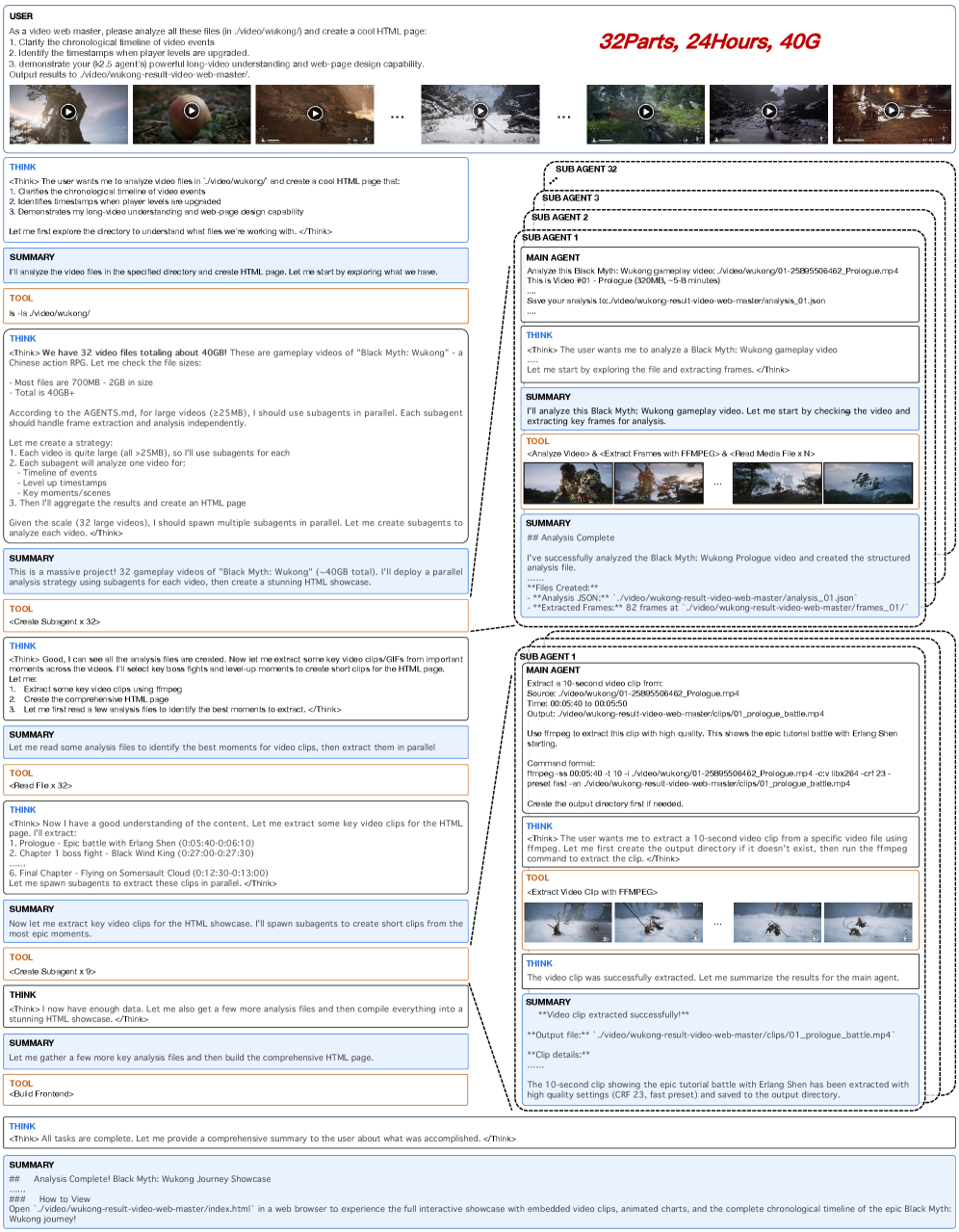

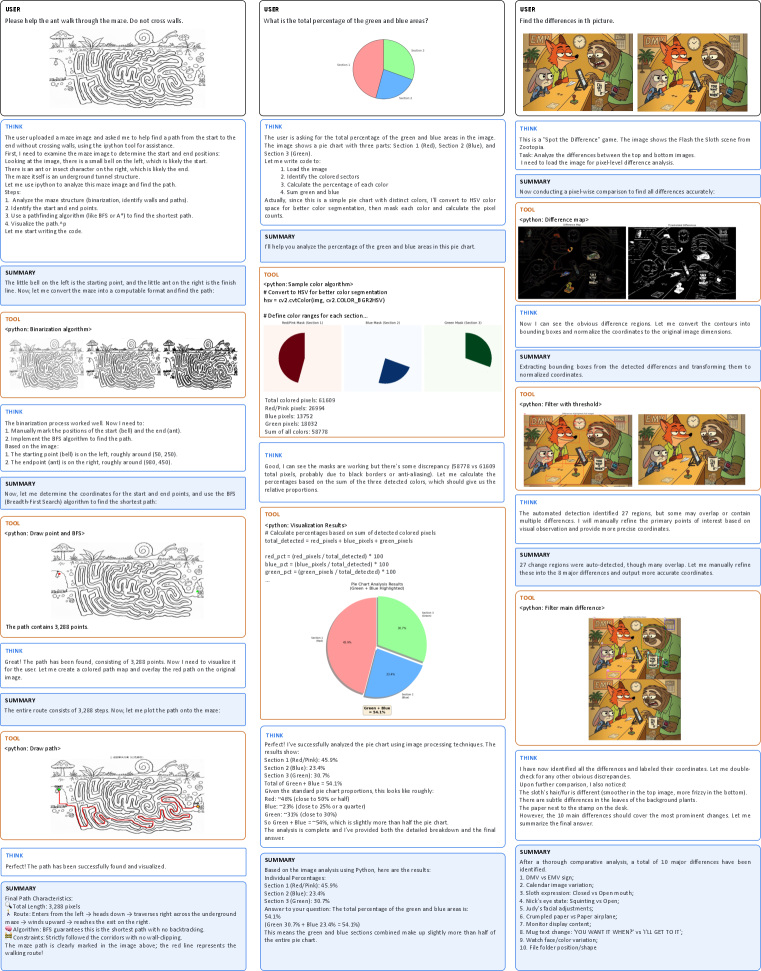

To address this, we introduce Agent Swarm and Parallel Agent Reinforcement Learning (PARL). Instead of executing a task as a reasoning chain or relying on pre-specified parallelization heuristics, K2.5 initiates an Agent Swarm through dynamic task decomposition, subagent instantiation, and parallel subtask scheduling. Importantly, parallelism is not presumed to be inherently advantageous; decisions regarding whether, when, and how to parallelize are explicitly learned through environmental feedback and RL-driven exploration. As shown in Figure 4, the progression of performance demonstrates this adaptive capability, with the cumulative reward increasing smoothly as the orchestrator optimizes its parallelization strategy throughout training.

<details>

<summary>figures/multi-agent-rl-system.png Details</summary>

### Visual Description

## System Architecture Diagram: Multi-Agent Orchestration Workflow

### Overview

The image is a technical diagram illustrating a hierarchical multi-agent system orchestrated by a central "Orchestrator." The system demonstrates a workflow where the Orchestrator creates specialized subagents, assigns tasks to them in parallel, collects results, and produces a final output. The diagram is structured in three horizontal tiers, showing the flow from agent creation to task execution and result aggregation.

### Components/Axes

**Primary Component (Left Column):**

* **Orchestrator:** A large, light-blue vertical rectangle on the left side. It acts as the central controller.

* **Tools List:** Located within the Orchestrator box, listing its capabilities: `create_subagent`, `assign_task`, `search`, `browser, ...`.

**Top Tier (Agent Creation):**

* **Action:** An arrow labeled `create subagents` points from the Orchestrator to a row of subagent boxes.

* **Subagent Types (Top Row, Left to Right):**

* `AI Researcher`

* `Physics Researcher`

* `Life Sciences Researcher`

* `Anthropology Researcher`

* `...` (ellipsis indicating more types)

* `Fact Checker`

* `Web Developer`

* **Subagent Icons:** Each subagent box contains three small icons: a magnifying glass (search), a Python logo, and a browser window.

* **Feedback:** A return arrow labeled `success` points back to the Orchestrator.

**Middle Tier (Research Task Assignment):**

* **Action:** An arrow labeled `Assign Tasks` points from the Orchestrator to a large container box.

* **Task Grid:** Inside the container, white rounded rectangles represent individual tasks assigned to specific subagents.

* **Row 1:** `AI Researcher` (Task 1), `AI Researcher` (Task 2), `AI Researcher` (Task 3), `AI Researcher` (Task 4), `Physics Researcher` (Task 5).

* **Ellipsis:** `...` (indicating tasks 6-95 are not shown).

* **Row 2:** `Life Sciences Researcher` (Task 96), `Life Sciences Researcher` (Task 97), `Life Sciences Researcher` (Task 98), `Life Sciences Researcher` (Task 99), `Anthropology Researcher` (Task 100).

* **Result Flow:** Multiple return arrows point back to the Orchestrator, labeled sequentially: `task 1 result`, `task 2 result`, `task 3 result`, `task 4 result`, `...`, `task 100 result`.

**Bottom Tier (Utility Task Assignment):**

* **Action:** A second arrow labeled `Assign Tasks` points from the Orchestrator to another container box.

* **Task Grid:** Shows tasks for different agent types.

* `Fact Checker` (Task 1), `Fact Checker` (Task 2), `File Downloader` (Task 3), `...`, `Web Developer` (Task 25).

* **Result Flow:** Return arrows labeled `task 1 result`, `...`, `task 25 result`.

**Final Output:**

* A single arrow points from the bottom of the Orchestrator to the text `Final Results` at the bottom center of the diagram.

### Detailed Analysis

**Workflow Sequence:**

1. **Initialization:** The Orchestrator uses its `create_subagent` tool to instantiate a pool of specialized agents (Researchers, Fact Checker, Web Developer).

2. **Parallel Task Execution (Research):** The Orchestrator uses `assign_task` to distribute 100 discrete tasks (Task 1 to Task 100) across the researcher agents. The diagram shows a clear mapping: AI Researchers handle the initial batch (Tasks 1-4), a Physics Researcher handles Task 5, Life Sciences Researchers handle a later batch (Tasks 96-99), and an Anthropology Researcher handles Task 100. This implies task specialization or load distribution.

3. **Parallel Task Execution (Utilities):** A separate batch of 25 tasks (Task 1 to Task 25) is assigned to utility agents like Fact Checkers, a File Downloader, and a Web Developer.

4. **Result Aggregation:** All task results (100 from researchers, 25 from utilities) are sent back to the Orchestrator.

5. **Synthesis:** The Orchestrator processes the aggregated results and outputs the `Final Results`.

**Spatial & Visual Relationships:**

* The Orchestrator is the persistent, central entity on the left.

* Subagent creation is a one-to-many relationship shown at the top.

* Task assignment is a one-to-many relationship shown in two distinct parallel batches (middle and bottom).

* The use of ellipses (`...`) in both the agent list and task lists indicates the system is scalable and can handle more agent types and tasks than are explicitly drawn.

### Key Observations

* **Scalability:** The diagram emphasizes scalability through the use of ellipses and numbered tasks (up to 100 and 25), suggesting the system can manage a large volume of concurrent operations.

* **Specialization:** Agents are domain-specific (AI, Physics, Life Sciences, Anthropology) or role-specific (Fact Checker, Web Developer, File Downloader), indicating a design for complex, multi-disciplinary problems.

* **Tool Integration:** Each subagent is equipped with a standard set of tools (search, coding, browsing), enabling them to perform autonomous research and development tasks.

* **Centralized Control:** All coordination, task assignment, and result collection flows through the single Orchestrator, highlighting a hub-and-spoke control model.

### Interpretation

This diagram models a **scalable, multi-agent AI system for complex research and development projects**. The Orchestrator functions as a project manager or "conductor," breaking down a large, overarching problem into discrete sub-tasks. These tasks are then delegated to a fleet of specialized AI agents that can work in parallel, leveraging their domain expertise and tool access.

The separation into two task assignment batches (100 research tasks, 25 utility tasks) suggests a possible two-phase workflow: a primary research phase followed by a validation/implementation phase (fact-checking, file handling, web development). The system's strength lies in its ability to **parallelize work across many specialized agents**, dramatically speeding up processes that would be sequential for a single AI. The final output is a synthesized product of all these distributed efforts. This architecture is indicative of advanced AI frameworks designed for autonomous scientific discovery, large-scale data analysis, or complex software development projects.

</details>

Figure 3: An agent swarm has a trainable orchestrator that dynamically creates specialized frozen subagents and decomposes complex tasks into parallelizable subtasks for efficient distributed execution.

Architecture and Learning Setup

The PARL framework adopts a decoupled architecture comprising a trainable orchestrator and frozen subagents instantiated from fixed intermediate policy checkpoints. This design deliberately avoids end-to-end co-optimization to circumvent two fundamental challenges: credit assignment ambiguity and training instability. In this multi-agent setting, outcome-based rewards are inherently sparse and noisy; a correct final answer does not guarantee flawless subagent execution, just as a failure does not imply universal subagent error. By freezing the subagents and treating their outputs as environmental observations rather than differentiable decision points, we disentangle high-level coordination logic from low-level execution proficiency, leading to more robust convergence. To improve efficiency, we first train the orchestrator using small-size subagents before transitioning to larger models. Our RL framework also supports dynamically adjusting the inference instance ratios between subagents and the orchestrator, thereby maximizing the resource usage across the cluster.

PARL Reward

Training a reliable parallel orchestrator is challenging due to the delayed, sparse, and non-stationary feedback inherent in independent subagent execution. To address this, we define the PARL reward as:

$$

r_{\text{PARL}}(x,y)=\lambda_1\cdot\underbrace{r_{\text{parallel}}}_{\text{instantiation reward}}+\lambda_2\cdot\underbrace{r_{\text{finish}}}_{\text{sub-agent finish rate}}+\underbrace{r_{\text{perf}}(x,y)}_{\text{task-level outcome}} .

$$

The performance reward $r_{\text{perf}}$ evaluates the overall success and quality of the solution $y$ for a given task $x$. This is augmented by two auxiliary rewards, each addressing a distinct challenge in learning parallel orchestration. The reward $r_{\text{parallel}}$ is introduced to mitigate serial collapse, a local optimum where the orchestrator defaults to single-agent execution. By incentivizing subagent instantiation, this term encourages the exploration of concurrent scheduling spaces. The $r_{\text{finish}}$ reward focuses on the successful completion of assigned subtasks. It is used to prevent spurious parallelism, a reward-hacking behavior in which the orchestrator increases parallel metrics dramatically by spawning many subagents without meaningful task decomposition. By rewarding completed subtasks, $r_{\text{finish}}$ enforces feasibility and guides the policy toward valid and effective decompositions.

To ensure the final policy optimizes for the primary objective, the hyperparameters $λ_1$ and $λ_2$ are annealed to zero over the course of training.
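The reward combination and annealing described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the linear decay schedule, the initial coefficient values, and the function names `anneal` and `parl_reward` are assumptions made for the example, since the text only states that $λ_1$ and $λ_2$ are annealed to zero.

```python
def anneal(initial: float, step: int, total_steps: int) -> float:
    """Decay a coefficient linearly from `initial` to 0 (the linear shape is an assumption)."""
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    frac = min(max(step / total_steps, 0.0), 1.0)
    return initial * (1.0 - frac)


def parl_reward(r_parallel: float, r_finish: float, r_perf: float,
                step: int, total_steps: int,
                lambda1_init: float = 0.1, lambda2_init: float = 0.1) -> float:
    """r_PARL = lambda1 * r_parallel + lambda2 * r_finish + r_perf.

    The initial lambda values are hypothetical placeholders.
    """
    lam1 = anneal(lambda1_init, step, total_steps)
    lam2 = anneal(lambda2_init, step, total_steps)
    return lam1 * r_parallel + lam2 * r_finish + r_perf


# Early in training the auxiliary terms contribute; at the end only r_perf remains.
early = parl_reward(r_parallel=1.0, r_finish=0.5, r_perf=1.0, step=0, total_steps=100)
late = parl_reward(r_parallel=1.0, r_finish=0.5, r_perf=1.0, step=100, total_steps=100)
```

With these placeholder values, the auxiliary terms add 0.15 to the reward at step 0 and nothing at the final step, so the policy is ultimately optimized for the task-level outcome alone.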

Critical Steps as Resource Constraint

To measure computational time cost in a parallel-agent setting, we define critical steps by analogy to the critical path in a computation graph. We model an episode as a sequence of execution stages indexed by $t=1,\dots,T$. In each stage, the main agent executes an action, which corresponds to either direct tool invocation or the instantiation of a group of subagents running in parallel. Let $S_{\text{main}}^{(t)}$ denote the number of steps taken by the main agent in stage $t$ (typically $S_{\text{main}}^{(t)}=1$), and $S_{\text{sub},i}^{(t)}$ denote the number of steps taken by the $i$-th subagent in that parallel group. The duration of stage $t$ is governed by the longest-running subagent within that cohort. Consequently, the total critical steps for an episode are defined as

$$

\text{CriticalSteps}=\sum_{t=1}^{T}\left(S_{\text{main}}^{(t)}+\max_{i}S_{\text{sub},i}^{(t)}\right).

$$

By constraining training and evaluation using critical steps rather than total steps, the framework explicitly incentivizes effective parallelization. Excessive subtask creation that does not reduce the maximum execution time of parallel groups yields little benefit under this metric, while well-balanced task decomposition that shortens the longest parallel branch directly reduces critical steps. As a result, the orchestrator is encouraged to allocate work across subagents in a way that minimizes end-to-end latency, rather than merely maximizing concurrency or total work performed.
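To make the metric concrete, the following sketch computes critical steps and, for contrast, total steps for a toy episode. The episode representation (a list of `(main_steps, subagent_steps)` pairs) and the function names are assumptions for illustration; a stage with no subagents is treated as contributing a maximum of zero.

```python
from typing import List, Sequence, Tuple

Stage = Tuple[int, Sequence[int]]  # (main-agent steps, steps of each parallel subagent)


def critical_steps(stages: List[Stage]) -> int:
    """Sum over stages of main steps plus the longest subagent in that stage."""
    total = 0
    for main_steps, sub_steps in stages:
        longest = max(sub_steps) if sub_steps else 0
        total += main_steps + longest
    return total


def total_steps(stages: List[Stage]) -> int:
    """Sum of all steps taken by the main agent and every subagent."""
    return sum(main_steps + sum(sub_steps) for main_steps, sub_steps in stages)


episode = [
    (1, []),         # direct tool call by the main agent
    (1, [3, 5, 4]),  # three subagents in parallel; the 5-step one dominates
    (1, [2, 2]),     # two balanced subagents
]
crit = critical_steps(episode)  # 1 + (1 + 5) + (1 + 2) = 10
work = total_steps(episode)     # 1 + (1 + 12) + (1 + 4) = 19
```

In this toy episode, adding a fourth subagent with 5 or fewer steps to the second stage would raise total steps but leave critical steps unchanged, which is why the metric rewards shortening the longest branch rather than spawning more branches.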

Prompt Construction for Parallel-agent Capability Induction

To incentivize the orchestrator to leverage the advantages of parallelization, we construct a suite of synthetic prompts designed to stress the limits of sequential agentic execution. These prompts emphasize either wide search, requiring simultaneous exploration of many independent information sources, or deep search, requiring multiple reasoning branches with delayed aggregation. We additionally include tasks inspired by real-world workloads, such as long-context document analysis and large-scale file downloading. When executed sequentially, these tasks are difficult to complete within fixed reasoning-step and tool-call budgets. By construction, they encourage the orchestrator to allocate subtasks in parallel, enabling completion within fewer critical steps than would be feasible for a single sequential agent. Importantly, the prompts do not explicitly instruct the model to parallelize. Instead, they shape the task distribution such that parallel decomposition and scheduling strategies are naturally favored.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Dual-Panel Line Charts with Scatter Points

### Overview

The image displays two side-by-side charts that plot different performance metrics against a common computational cost metric ("RL flops"). Both charts use a scatter plot of individual data points (blue dots) overlaid with a red smoothed trend line. The charts appear to analyze the training progression of a machine learning model, likely in a Reinforcement Learning (RL) context.

### Components/Axes

**Common Elements:**

* **X-Axis (Both Charts):** Label: "RL flops". This axis represents the computational cost or training steps, measured in floating-point operations (flops) for a Reinforcement Learning process. The scale is linear but unlabeled with specific numerical markers.

* **Legend (Both Charts):** Positioned in the top-left corner of each chart's plot area.

* Left Chart: "Training Accuracy" (blue dots), "Smoothed Curve" (red line).

* Right Chart: "Average Parallelism" (blue dots), "Smoothed Curve" (red line).

**Left Chart: "Training Accuracy vs Steps"**

* **Y-Axis:** Label: "Training Accuracy". Scale: Linear, ranging from 30.0% to 70.0% with major gridlines at 5.0% intervals (30.0%, 35.0%, 40.0%, 45.0%, 50.0%, 55.0%, 60.0%, 65.0%, 70.0%).

**Right Chart: "Average parallelism vs Steps"**

* **Y-Axis:** Label: "Average Parallelism". Scale: Linear, ranging from 7 to 14 with major gridlines at integer intervals (7, 8, 9, 10, 11, 12, 13, 14).

### Detailed Analysis

**Left Chart - Training Accuracy:**

* **Trend Verification:** The data series shows a clear, consistent upward trend. The blue dots and the red smoothed curve both slope upward from left to right.

* **Data Points & Values:**

* **Start (Low RL flops):** Training accuracy begins at approximately 35-37%.

* **Mid-Range:** Accuracy crosses the 50% threshold at a mid-point on the x-axis. The data shows moderate scatter around the trend line.

* **End (High RL flops):** The final data points cluster between approximately 62% and 66%. The smoothed curve ends at roughly 63-64%.

* **Distribution:** The scatter of blue dots around the red line is relatively uniform, suggesting consistent variance in accuracy measurements throughout training.

**Right Chart - Average Parallelism:**

* **Trend Verification:** The trend is non-linear. It begins relatively flat, shows a slight dip, then rises gradually before a sharp, accelerating increase at the far right.

* **Data Points & Values:**

* **Start (Low RL flops):** Average parallelism starts around 8.0-8.5.

* **Mid-Range (Dip & Plateau):** There is a noticeable dip where values fall to approximately 7.5-8.0. Following this, the metric recovers and plateaus in the 8.0-9.0 range for a significant portion of the x-axis.

* **End (High RL flops):** A sharp, near-exponential increase occurs. The final data points reach values between 13.0 and 14.0, with the smoothed curve ending at approximately 14.0.

* **Distribution:** The scatter is tighter during the initial flat/dip phase and increases significantly during the final sharp rise, indicating greater variability in parallelism at higher computational scales.

### Key Observations

1. **Positive Correlation:** Both training accuracy and average parallelism show a positive correlation with increased RL flops (training steps/computation).

2. **Divergent Growth Patterns:** While accuracy grows in a roughly linear fashion, parallelism exhibits a "hockey stick" or phase-change growth pattern, with a dramatic acceleration after a long period of modest change.

3. **Initial Parallelism Dip:** The right chart shows a distinct, temporary decrease in average parallelism early in training before it begins its sustained increase.

4. **Increased Variance at Scale:** The scatter (variance) of the "Average Parallelism" data points increases markedly during its final growth phase, unlike the more consistent scatter in the accuracy chart.

### Interpretation

These charts together suggest a narrative about the training dynamics of this RL system:

* **Performance Improves with Compute:** The left chart confirms the expected outcome: investing more computational resources (RL flops) leads to a steady improvement in the model's task performance (accuracy).

* **System Behavior Changes with Scale:** The right chart reveals a more complex underlying system behavior. The "Average Parallelism" likely measures how the computational workload is distributed (e.g., across multiple processors or threads). The initial dip and plateau suggest an initial phase where the system's parallelization strategy is stable or even slightly hindered. The final sharp rise indicates a **critical scaling point** where the system's architecture or the nature of the task allows for a massive increase in parallel execution efficiency.

* **Implication:** The most significant gains in computational efficiency (parallelism) are unlocked only after a substantial amount of training has already occurred. This could imply that the model's structure or the problem's state space evolves to become more amenable to parallel processing later in training. The increased variance at high parallelism might reflect instability or sensitivity in the system when operating at this high-efficiency frontier.

**Language Declaration:** All text in the image is in English.

</details>

Figure 4: In our parallel-agent reinforcement learning environment, the training accuracy increases smoothly as training progresses. At the same time, the level of parallelism during training also gradually increases.

## 4 Method Overview

### 4.1 Foundation: Kimi K2 Base Model

The foundation of Kimi K2.5 is Kimi K2 [53], a trillion-parameter mixture-of-experts (MoE) transformer [59] model pre-trained on 15 trillion high-quality text tokens. Kimi K2 employs the token-efficient MuonClip optimizer [29, 33] with QK-Clip for training stability. The model comprises 1.04 trillion total parameters with 32 billion activated parameters, utilizing 384 experts with 8 activated per token (sparsity of 48). For detailed descriptions of MuonClip, architecture design, and training infrastructure, we refer to the Kimi K2 technical report [53].
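The sparsity figure quoted above follows directly from the expert counts. The short check below reproduces it and also derives the fraction of parameters activated per token; the variable names are ours, and the activated fraction is a derived quantity rather than a number reported in the text.

```python
total_experts = 384
active_experts_per_token = 8
sparsity = total_experts // active_experts_per_token  # 384 / 8 = 48

total_params = 1.04e12   # 1.04 trillion total parameters
active_params = 32e9     # 32 billion activated parameters
active_fraction = active_params / total_params  # roughly 3.1% of parameters per token
```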

### 4.2 Model Architecture

The multimodal architecture of Kimi K2.5 consists of three components: a three-dimensional native-resolution vision encoder (MoonViT-3D), an MLP projector, and the Kimi K2 MoE language model, following the design principles established in Kimi-VL [54].

MoonViT-3D: Shared Embedding Space for Images and Videos

In Kimi-VL, we employ MoonViT to natively process images at their original resolutions, eliminating the need for complex sub-image splitting and splicing operations. Initialized from SigLIP-SO-400M [77], MoonViT incorporates the patch packing strategy from NaViT [14], where single images are divided into patches, flattened, and sequentially concatenated into 1D sequences, thereby enabling efficient simultaneous training on images at varying resolutions.

To maximize the transfer of image understanding capabilities to video, we introduce MoonViT-3D with a unified architecture, fully shared parameters, and a consistent embedding space. By generalizing the “patch n’ pack” philosophy to the temporal dimension, up to four consecutive frames are treated as a spatiotemporal volume: 2D patches from these frames are jointly flattened and packed into a single 1D sequence, allowing the identical attention mechanism to operate seamlessly across both space and time. While the extra temporal attention improves understanding of high-speed motion and visual effects, the parameter sharing maximizes knowledge generalization from static images to dynamic videos, achieving strong video understanding performance (see Table 4) without requiring specialized video modules or architectural bifurcation. Prior to the MLP projector, lightweight temporal pooling aggregates patches within each temporal chunk, yielding $4×$ temporal compression to significantly extend feasible video length. The result is a unified pipeline where knowledge and ability obtained from image pretraining transfer holistically to videos through one shared parameter space and feature representation.
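The chunking and temporal pooling steps can be sketched on already-encoded patch features as below. This is a toy illustration under stated assumptions: mean pooling is assumed (the text only says "lightweight temporal pooling"), every frame in a video is assumed to have the same number of patches, and the attention encoder itself is omitted. The names `chunk_frames`, `temporal_pool`, and `pooled_video_tokens` are hypothetical.

```python
from typing import List

Vector = List[float]
Frame = List[Vector]  # one feature vector per spatial patch


def chunk_frames(frames: List[Frame], chunk_size: int = 4) -> List[List[Frame]]:
    """Group consecutive frames into temporal chunks of up to `chunk_size` frames."""
    return [frames[i:i + chunk_size] for i in range(0, len(frames), chunk_size)]


def temporal_pool(chunk: List[Frame]) -> Frame:
    """Average features across the frames of one chunk, per patch position (assumed mean pooling)."""
    n_frames = len(chunk)
    n_patches = len(chunk[0])
    dim = len(chunk[0][0])
    return [
        [sum(frame[p][d] for frame in chunk) / n_frames for d in range(dim)]
        for p in range(n_patches)
    ]


def pooled_video_tokens(frames: List[Frame], chunk_size: int = 4) -> List[Vector]:
    """Return one pooled token per patch position per temporal chunk."""
    tokens: List[Vector] = []
    for chunk in chunk_frames(frames, chunk_size):
        tokens.extend(temporal_pool(chunk))
    return tokens


# 8 frames, 2 patches per frame, 1-dimensional features for readability.
video = [[[float(t)], [float(t) + 10.0]] for t in range(8)]
tokens = pooled_video_tokens(video)
tokens_before = sum(len(frame) for frame in video)  # 16 patch tokens before pooling
```

Here 16 patch tokens collapse to 4, matching the $4×$ temporal compression when every chunk holds four frames.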

### 4.3 Pre-training Pipeline

As illustrated in Table 3, Kimi K2.5’s pre-training builds upon the Kimi K2 language model checkpoint and processes approximately 15T tokens across three stages: first, standalone ViT training to establish a robust native-resolution visual encoder; second, joint pre-training to simultaneously enhance language and multimodal capabilities; and third, mid-training on high-quality data and long-context activation to refine capabilities and extend context windows.

Table 3: Overview of training stages: data composition, token volumes, sequence lengths, and trainable components.

| Stages | ViT Training | Joint Pre-training | Joint Long-context Mid-training |

| --- | --- | --- | --- |

| Data | Alt text, Synthesis Caption, Grounding, OCR, Video | + Text, Knowledge, Interleaving, Video, OS Screenshot | + High-quality Text & Multimodal, Long Text, Long Video, Reasoning, Long-CoT |

| Sequence length | 4096 | 4096 | 32768 $→$ 262144 |

| Tokens | 1T | 15T | 500B $→$ 200B |

| Training | ViT | ViT & LLM | ViT & LLM |

ViT Training Stage

MoonViT-3D is continually pre-trained from SigLIP [77] on image-text and video-text pairs, where the text components consist of a variety of targets: image alt texts, synthetic captions of images and videos, grounding bboxes, and OCR texts. Unlike the implementation in Kimi-VL [54], this continual pre-training does not include a contrastive loss and instead uses only a cross-entropy loss $\mathcal{L}_{\text{caption}}$ for caption generation conditioned on input images and videos. We adopt a two-stage alignment strategy. In the first stage, we update MoonViT-3D to align it with Moonlight-16B-A3B [33] via the caption loss, consuming about 1T tokens with very few training FLOPs. This stage primarily enables MoonViT-3D to understand high-resolution images and videos. A very short second stage follows, updating only the MLP projector to bridge the ViT with the 1T LLM for smoother joint pre-training.

Joint Training Stages

The joint pre-training stage continues from a near-end Kimi K2 checkpoint over an additional 15T vision-text tokens at 4K sequence length. The data recipe extends Kimi K2’s pre-training distribution by introducing unique tokens, adjusting data proportions with increased weight on coding-related content, and controlling maximum epochs per data source. The third stage performs long-context activation with integrated higher-quality mid-training data, sequentially extending context length via YaRN [44] interpolation. This yields significant generalization improvements in long-context text understanding and long video comprehension.

### 4.4 Post-Training

#### 4.4.1 Supervised Fine-Tuning

Following the SFT pipeline established by Kimi K2 [53], we developed K2.5 by synthesizing high-quality candidate responses from K2, K2 Thinking and a suite of proprietary in-house expert models. Our data generation strategy employs specialized pipelines tailored to specific domains, integrating human annotation with advanced prompt engineering and multi-stage verification. This methodology produced a large-scale instruction-tuning dataset featuring diverse prompts and intricate reasoning trajectories, ultimately training the model to prioritize interactive reasoning and precise tool-calling for complex, real-world applications.

#### 4.4.2 Reinforcement Learning

Reinforcement learning constitutes a crucial phase of our post-training. To facilitate joint optimization across text and vision modalities, as well as to enable PARL for agent swarm, we develop a Unified Agentic Reinforcement Learning Environment (Appendix D) and optimize the RL algorithms. Both text-vision joint RL and PARL are built upon the algorithms described in this section.

Policy Optimization

For each problem $x$ sampled from a dataset $D$, $K$ responses $\{y_1,\dots,y_K\}$ are generated using the previous policy $\pi_{\text{old}}$. We optimize the model $\pi_\theta$ with respect to the following objective:

$$

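L_{\text{RL}}(\theta)=\mathbb{E}_{x\sim D}\left[\frac{1}{N}\sum_{j=1}^{K}\sum_{i=1}^{|y_j|}\text{Clip}\left(\frac{\pi_\theta(y_j^i\mid x,y_j^{0:i})}{\pi_{\text{old}}(y_j^i\mid x,y_j^{0:i})},\alpha,\beta\right)\left(r(x,y_j)-\bar{r}(x)\right)-\tau\left(\log\frac{\pi_\theta(y_j^i\mid x,y_j^{0:i})}{\pi_{\text{old}}(y_j^i\mid x,y_j^{0:i})}\right)^2\right] . \tag{1}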

$$

Here $\alpha,\beta,\tau>0$ are hyperparameters, $y_j^{0:i}$ is the prefix up to the $i$-th token of the $j$-th response, $N=\sum_{j=1}^{K}|y_j|$ is the total number of generated tokens in a batch, and $\bar{r}(x)=\frac{1}{K}\sum_{j=1}^{K}r(x,y_j)$ is the mean reward of all generated responses.

This loss function departs from the policy optimization algorithm used in K1.5 [30] by introducing a token-level clipping mechanism designed to mitigate the off-policy divergence amplified by discrepancies between training and inference frameworks. The mechanism functions as a simple gradient masking scheme: policy gradients are computed normally for tokens with log-ratios within the interval $[α,β]$ , while gradients for tokens falling outside this range are zeroed out. Notably, a key distinction from standard PPO clipping [50] is that our method relies strictly on the log-ratio to explicitly bound off-policy drift, regardless of the sign of the advantages. This approach aligns with recent strategies proposed to stabilize large-scale RL training [74, 78]. Empirically, we find this mechanism essential for maintaining training stability in complex domains requiring long-horizon, multi-step tool-use reasoning. We employ the MuonClip optimizer [29, 33] to minimize this objective.
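As a rough numerical illustration, the sketch below evaluates the objective in Eq. (1) for one problem from per-token log-probabilities. It follows the equation as written, clipping the probability ratio to $[α,β]$ and subtracting the squared log-ratio penalty. It computes only the scalar value: the gradient masking described in the text depends on the autograd framework and is not modeled here. The function name, argument layout, and the hyperparameter values in the usage line are assumptions.

```python
import math
from typing import List


def clip(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def rl_objective(logp_new: List[List[float]], logp_old: List[List[float]],
                 rewards: List[float], alpha: float, beta: float, tau: float) -> float:
    """Scalar value of Eq. (1) for one problem with K sampled responses.

    logp_new[j][i] and logp_old[j][i] are log-probabilities of token i of
    response j under the current and the previous policy, respectively.
    """
    k = len(rewards)
    mean_reward = sum(rewards) / k
    n_tokens = sum(len(tokens) for tokens in logp_new)
    total = 0.0
    for j in range(k):
        advantage = rewards[j] - mean_reward
        for lp_new, lp_old in zip(logp_new[j], logp_old[j]):
            log_ratio = lp_new - lp_old
            ratio = math.exp(log_ratio)
            total += clip(ratio, alpha, beta) * advantage - tau * log_ratio ** 2
    return total / n_tokens


# On-policy check: identical log-probs give ratio 1 and zero penalty.
logp = [[-1.0], [-1.0, -2.0, -0.5]]
value = rl_objective(logp, logp, rewards=[1.0, 0.0], alpha=0.8, beta=1.2, tau=0.1)
```

In the on-policy case the value reduces to the token-weighted mean advantage, here $(1\cdot 0.5 + 3\cdot(-0.5))/4 = -0.25$, which shows that the $1/N$ normalization weights longer responses more heavily.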

Reward Function

We apply a rule-based outcome reward for tasks with verifiable solutions, such as reasoning and agentic tasks. To optimize resource consumption, we also incorporate a budget-control reward aimed at enhancing token efficiency. For general-purpose tasks, we employ Generative Reward Models (GRMs) that provide granular evaluations aligned with Kimi’s internal value criteria. In addition, for visual tasks, we design task-specific reward functions to provide fine-grained supervision. For visual grounding and point localization tasks, we employ an F1-based reward with soft matching: grounding tasks derive soft matches from Intersection over Union (IoU) and point tasks derive soft matches from Gaussian-weighted distances under optimal matching. For polygon segmentation tasks, we rasterize the predicted polygon into a binary mask and compute the segmentation IoU against the ground-truth mask to assign the reward. For OCR tasks, we adopt normalized edit distance to quantify character-level alignment between predictions and ground-truth. For counting tasks, rewards are assigned based on the absolute difference between predictions and ground-truth. Furthermore, we synthesize complex visual puzzle problems and utilize an LLM verifier (Kimi K2) to provide feedback.
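Two of the building blocks named above, normalized edit distance for OCR and box IoU for grounding, can be sketched in a few lines. The mapping from normalized edit distance to a reward (one minus the distance divided by the longer string's length) is an assumption, as is the `(x1, y1, x2, y2)` box format; the soft-matching F1 aggregation built on top of IoU is not shown.

```python
from typing import Tuple


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance via a rolling dynamic-programming row."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(prev[j] + 1,                # deletion
                           cur[j - 1] + 1,             # insertion
                           prev[j - 1] + (ca != cb)))  # substitution or match
        prev = cur
    return prev[-1]


def ocr_reward(pred: str, truth: str) -> float:
    """Assumed reward: 1 minus edit distance normalized by the longer string."""
    longest = max(len(pred), len(truth))
    if longest == 0:
        return 1.0
    return 1.0 - edit_distance(pred, truth) / longest


Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), assumed format


def box_iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0
```

For instance, `ocr_reward("abcd", "abcf")` gives 0.75 under this mapping, and two 2×2 boxes offset by one unit in each direction have an IoU of 1/7.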

Generative Reward Models

Kimi K2 leverages a self-critique rubric reward for open-ended generation [53], and K2.5 extends this line of work by systematically deploying Generative Reward Models (GRMs) across a broad range of agentic behaviors and multimodal trajectories. Rather than limiting reward modeling to conversational outputs, we apply GRMs on top of verified reward signals in diverse environments, including chat assistants, coding agents, search agents, and artifact-generating agents. Notably, GRMs function not as binary adjudicators, but as fine-grained evaluators aligned with Kimi’s values that are critical to user experiences, such as helpfulness, response readiness, contextual relevance, appropriate level of detail, aesthetic quality of generated artifacts, and strict instruction following. This design allows the reward signal to capture nuanced preference gradients that are difficult to encode with purely rule-based or task-specific verifiers. To mitigate reward hacking and overfitting to a single preference signal, we employ multiple alternative GRM rubrics tailored to different task contexts.

Token Efficient Reinforcement Learning

Token efficiency is central to LLMs with test-time scaling. While test-time scaling inherently trades computation for reasoning quality, practical gains require algorithmic innovations that actively navigate this trade-off. Our previous findings indicate that imposing a problem-dependent budget effectively constrains inference-time compute, incentivizing the model to generate more concise chain of thought reasoning patterns without unnecessary token expansion [30, 53]. However, we also observe a length-overfitting phenomenon: models trained under rigid budget constraints often fail to generalize to higher compute scales. Consequently, they cannot effectively leverage additional inference-time tokens to solve complex problems, instead defaulting to truncated reasoning patterns.

To this end, we propose Toggle, a training heuristic that alternates between inference-time scaling and budget-constrained optimization: for learning iteration $t$ , the reward function is defined by

$$

\tilde{r}(x,y)=\begin{cases}r(x,y)\cdot\mathbb{I}\left\{\frac{1}{K}\sum_{i=1}^{K}r(x,y_i)<\lambda\ \text{or}\ |y_i|\leq\text{budget}(x)\right\}&\text{if }\lfloor t/m\rfloor \bmod 2=0\ (\text{Phase0})\\ r(x,y)&\text{if }\lfloor t/m\rfloor \bmod 2=1\ (\text{Phase1})\end{cases} .

$$

where $λ$ and $m$ are hyper-parameters of the algorithm and $K$ is the number of rollouts per problem. Specifically, the algorithm alternates between two optimization phases every $m$ iterations:

- Phase0 (budget limited phase): The model is trained to solve the problem within a task-dependent token budget. To prevent a premature sacrifice of quality for efficiency, this constraint is conditionally applied: it is only enforced when the model’s mean accuracy for a given problem exceeds the threshold $λ$ .

- Phase1 (standard scaling phase): The model generates responses up to the maximum token limit, encouraging the model to leverage computation for better inference-time scaling.

The problem-dependent budget is estimated from the $ρ$ -th percentile of token lengths among the subset of correct responses:

$$

\text{budget}(x)=\text{Percentile}\left(\{|y_i|\mid r(x,y_i)=1,\ i=1,\dots,K\},\rho\right) . \tag{2}

$$

This budget is estimated once at the beginning of training and remains fixed thereafter. Notably, Toggle functions as a stochastic alternating optimization for a bi-objective problem. It is specifically designed to reconcile reasoning capabilities with computational efficiency.
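The two-phase reward and the budget estimate in Eq. (2) can be sketched as follows. Several details are assumptions made for illustration: the nearest-rank percentile definition, reading the length condition as applying to the response being scored, falling back to the unmodified reward when no correct response exists, and all function names.

```python
import math
from typing import List, Optional


def percentile(values: List[int], rho: float) -> int:
    """Nearest-rank percentile for rho in (0, 100] (the definition is an assumption)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(rho / 100.0 * len(ordered)))
    return ordered[rank - 1]


def estimate_budget(lengths: List[int], rewards: List[float], rho: float) -> Optional[int]:
    """Eq. (2): rho-th percentile of token lengths among correct responses."""
    correct = [n for n, r in zip(lengths, rewards) if r == 1]
    return percentile(correct, rho) if correct else None


def toggle_rewards(rewards: List[float], lengths: List[int], budget: Optional[int],
                   lam: float, t: int, m: int) -> List[float]:
    """Apply the Toggle reward to the K rollouts of one problem at iteration t."""
    phase = (t // m) % 2
    if phase == 1 or budget is None:
        return list(rewards)  # Phase1: standard scaling, reward unchanged
    mean_accuracy = sum(rewards) / len(rewards)
    shaped = []
    for r, n in zip(rewards, lengths):
        # Phase0: keep the reward if the problem is still hard or the response fits the budget.
        keep = mean_accuracy < lam or n <= budget
        shaped.append(r if keep else 0.0)
    return shaped


lengths = [100, 200, 300, 400]
rewards = [1.0, 1.0, 0.0, 1.0]
budget = estimate_budget(lengths, rewards, rho=50)               # 200
phase0 = toggle_rewards(rewards, lengths, budget, lam=0.5, t=0, m=10)
phase1 = toggle_rewards(rewards, lengths, budget, lam=0.5, t=10, m=10)
```

In this toy example the mean accuracy (0.75) exceeds $λ=0.5$, so in Phase0 the correct but over-budget 400-token response loses its reward, while in Phase1 it keeps it.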

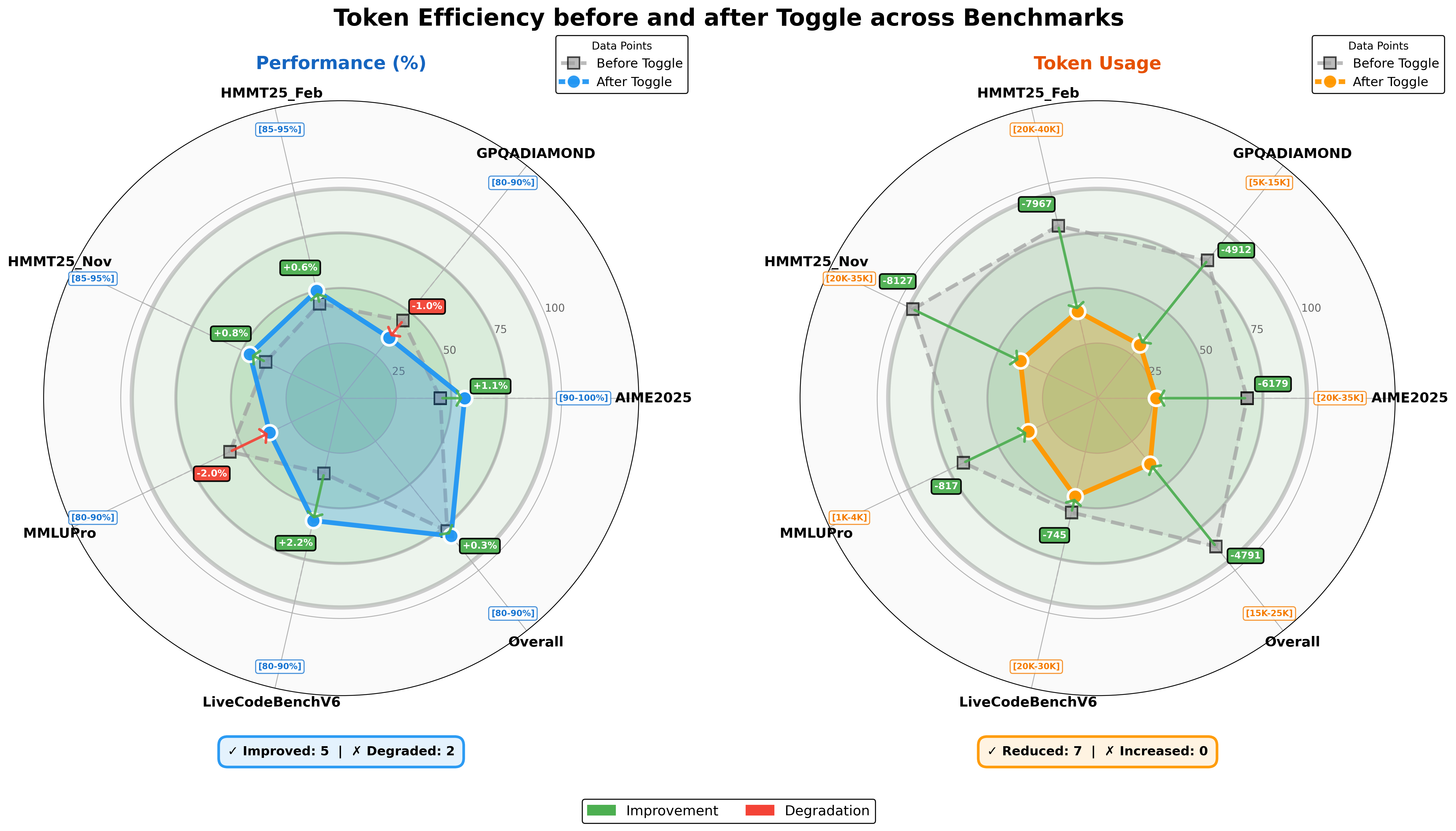

<details>

<summary>figures/te-k2-thinking-radar.png Details</summary>

### Visual Description

## Dual Radar Chart: Token Efficiency before and after Toggle across Benchmarks

### Overview

The image contains two side-by-side radar (spider) charts comparing system performance (left, in %) and token usage (right, in numerical values) **before** (gray squares) and **after** (blue/orange circles) a “Toggle” (configuration/model change) across seven benchmarks: *HMMT25_Feb, GPQADIAMOND, AIME2025, Overall, LiveCodeBenchV6, MMLUPro, HMMT25_Nov*.

### Components/Axes

#### Left Chart: Performance (%)

- **Axes**: Radial axes with benchmarks (HMMT25_Feb, GPQADIAMOND, AIME2025, Overall, LiveCodeBenchV6, MMLUPro, HMMT25_Nov) around the perimeter.

- **Legend**: “Data Points” (gray square = *Before Toggle*, blue circle = *After Toggle*).

- **Performance Ranges** (blue boxes):

- HMMT25_Feb: [85–95%]

- GPQADIAMOND: [80–90%]

- AIME2025: [90–100%]

- Overall: [80–90%]

- LiveCodeBenchV6: [80–90%]

- MMLUPro: [80–90%]

- HMMT25_Nov: [85–95%]

- **Change Indicators**: Green boxes (+X% = improvement) or red boxes (-X% = degradation) between *Before* and *After* points.

#### Right Chart: Token Usage

- **Axes**: Same benchmarks as the left chart.

- **Legend**: “Data Points” (gray square = *Before Toggle*, orange circle = *After Toggle*).

- **Token Ranges** (orange boxes):

- HMMT25_Feb: [20K–40K]

- GPQADIAMOND: [5K–15K]

- AIME2025: [20K–35K]

- Overall: [15K–25K]

- LiveCodeBenchV6: [20K–30K]

- MMLUPro: [1K–4K]

- HMMT25_Nov: [20K–35K]

- **Change Indicators**: Green boxes (-X = token reduction) between *Before* and *After* points.

#### Bottom Legends

- Left (Performance): *“✓ Improved: 5 | ✗ Degraded: 2”* (blue box).

- Right (Token Usage): *“✓ Reduced: 7 | ✗ Increased: 0”* (orange box).

- Overall: Green = *Improvement*, Red = *Degradation*.

### Detailed Analysis (Performance Chart)

| Benchmark | Before → After (Change) | Performance Range |

|-----------------|-------------------------|-------------------|

| HMMT25_Feb | +0.6% (improvement) | [85–95%] |

| GPQADIAMOND | -1.0% (degradation) | [80–90%] |

| AIME2025 | +1.1% (improvement) | [90–100%] |

| Overall | +0.3% (improvement) | [80–90%] |

| LiveCodeBenchV6 | +2.2% (improvement) | [80–90%] |

| MMLUPro | -2.0% (degradation) | [80–90%] |

| HMMT25_Nov | +0.8% (improvement) | [85–95%] |

### Detailed Analysis (Token Usage Chart)

| Benchmark | Before → After (Change) | Token Range |

|-----------------|-------------------------|-------------------|

| HMMT25_Feb | -7967 (reduction) | [20K–40K] |

| GPQADIAMOND | -4912 (reduction) | [5K–15K] |

| AIME2025 | -6179 (reduction) | [20K–35K] |

| Overall | -4791 (reduction) | [15K–25K] |

| LiveCodeBenchV6 | -745 (reduction) | [20K–30K] |

| MMLUPro | -817 (reduction) | [1K–4K] |

| HMMT25_Nov | -8127 (reduction) | [20K–35K] |

### Key Observations

- **Performance**: 5/7 benchmarks improved (green), 2 degraded (red: *GPQADIAMOND, MMLUPro*). Largest improvement: *LiveCodeBenchV6* (+2.2%); largest degradation: *MMLUPro* (-2.0%).

- **Token Usage**: All 7 benchmarks reduced tokens (green). Largest reduction: *HMMT25_Nov* (-8127); smallest: *LiveCodeBenchV6* (-745).

- **Correlation**: Most performance improvements align with token reductions, except *GPQADIAMOND* (performance degraded, tokens reduced) and *MMLUPro* (performance degraded, tokens reduced).

### Interpretation

The “Toggle” (configuration/model change) **improves efficiency** by reducing token usage across all benchmarks (7/7) and enhancing performance in most cases (5/7). The two performance degradations (*GPQADIAMOND, MMLUPro*) suggest the Toggle may not be optimal for all tasks, but token efficiency is consistently improved. This implies the Toggle optimizes resource usage (tokens) while maintaining or enhancing performance in most scenarios, making it a beneficial change for overall efficiency—though task-specific tuning may be needed for the two degraded benchmarks.

</details>

Figure 5: Comparison of model performance and token usage for Kimi K2 Thinking following token-efficient RL.

We evaluate the effectiveness of Toggle on K2 Thinking [1]. As shown in Figure 5, we observe a consistent reduction in output length across nearly all benchmarks. On average, Toggle decreases output tokens by 25% to 30% with a negligible impact on performance. We also observe that redundant patterns in the chain-of-thought, such as repeated verifications and mechanical calculations, decrease substantially. Furthermore, Toggle shows strong domain generalization. For example, when trained exclusively on mathematics and programming tasks, the model still achieves consistent token reductions on GPQA and MMLU-Pro with only marginal degradation in performance (Figure 5).

### 4.5 Training Infrastructure

Kimi K2.5 inherits the training infrastructure from Kimi K2 [53] with minimal modifications. For multimodal training, we propose Decoupled Encoder Process, where the vision encoder is incorporated into the existing pipeline with negligible additional overhead.

#### 4.5.1 Decoupled Encoder Process (DEP)

In a typical multimodal training paradigm utilizing Pipeline Parallelism (PP), the vision encoder and text embedding are co-located in the first stage of the pipeline (Stage-0). However, due to the inherent variations of multimodal input size (e.g., image counts and resolutions), Stage-0 suffers from drastic fluctuations in both computational load and memory usage. This forces existing solutions to adopt custom PP configurations for vision-language models — for instance, [54] manually adjusts the number of text decoder layers in Stage-0 to reserve memory. While this compromise alleviates memory pressure, it does not fundamentally resolve the load imbalance caused by multimodal input sizes. More critically, it precludes the direct reuse of parallel strategies that have been highly optimized for text-only training.

Leveraging the unique topological position of the visual encoder within the computation graph — specifically, its role as the start of the forward pass and the end of the backward pass — our training uses Decoupled Encoder Process (DEP), which is composed of three stages in each training step:

- Balanced Vision Forward: We first execute the forward pass for all visual data in the global batch. Because the vision encoder is small, we replicate it on all GPUs regardless of other parallelism strategies. During this phase, the forward computational workload is evenly distributed across all GPUs based on load metrics (e.g., image or patch counts). This eliminates load-imbalance caused by PP and visual token counts. To minimize peak memory usage, we discard all intermediate activations, retaining only the final output activations. The results are gathered back to PP Stage-0;

- Backbone Training: This phase performs the forward and backward passes for the main transformer backbone. By discarding intermediate activations in the preceding phase, we can now fully leverage any efficient parallel strategies validated in pure text training. After this phase, gradients are accumulated at the visual encoder output;

- Vision Recomputation & Backward: We re-compute the vision encoder forward pass, followed by a backward pass to compute gradients for parameters in the vision encoder.

DEP not only achieves load-balance, but also decouples the optimization strategy of the vision encoder and the main backbone. K2.5 seamlessly inherits the parallel strategy of K2, achieving a multimodal training efficiency of 90% relative to text-only training. We note a concurrent work, LongCat-Flash-Omni [55], shares a similar design philosophy.
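The Balanced Vision Forward phase distributes visual inputs across GPUs according to a load metric such as patch count. The text does not specify the balancing algorithm, so the sketch below substitutes a standard greedy heuristic (largest item first, assigned to the currently least-loaded GPU) purely for illustration. The function name and the sample patch counts are hypothetical.

```python
import heapq
from typing import List, Tuple


def balance_vision_load(patch_counts: List[int], num_gpus: int) -> Tuple[List[List[int]], List[int]]:
    """Greedy assignment of samples to GPUs by descending patch count.

    Returns the sample indices assigned to each GPU and the resulting per-GPU load.
    This heuristic is a stand-in, not the method used in the paper.
    """
    heap = [(0, gpu) for gpu in range(num_gpus)]  # (current load, gpu id)
    heapq.heapify(heap)
    assignment: List[List[int]] = [[] for _ in range(num_gpus)]
    order = sorted(range(len(patch_counts)), key=lambda i: patch_counts[i], reverse=True)
    for idx in order:
        load, gpu = heapq.heappop(heap)  # least-loaded GPU so far
        assignment[gpu].append(idx)
        heapq.heappush(heap, (load + patch_counts[idx], gpu))
    loads = [sum(patch_counts[i] for i in items) for items in assignment]
    return assignment, loads


# Five images with very different patch counts, spread over two GPUs.
assignment, loads = balance_vision_load([900, 100, 400, 400, 200], num_gpus=2)
```

Because the encoder is replicated on every GPU, any sample can be routed to any device, which is what makes this kind of global rebalancing possible independently of the pipeline-parallel layout.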

## 5 Evaluations

### 5.1 Main Results

#### 5.1.1 Evaluation Settings

Benchmarks

We evaluate Kimi K2.5 on a comprehensive benchmark suite spanning text-based reasoning, competitive and agentic coding, multimodal understanding (image and video), autonomous agentic execution, and computer use. Our benchmark taxonomy is organized along the following capability axes:

- Reasoning & General: Humanity’s Last Exam (HLE) [46], AIME 2025 [40], HMMT 2025 (Feb) [58], IMO-AnswerBench [36], GPQA-Diamond [47], MMLU-Pro [64], SimpleQA Verified [21], AdvancedIF [22], and LongBench v2 [8].

- Coding: SWE-Bench Verified [28], SWE-Bench Pro (public) [15], SWE-Bench Multilingual [28], Terminal Bench 2.0 [38], PaperBench (CodeDev) [52], CyberGym [66], SciCode [56], OJBench (cpp) [65], and LiveCodeBench (v6) [27].

- Agentic Capabilities: BrowseComp [68], WideSearch [69], DeepSearchQA [60], FinSearchComp (T2&T3) [25], Seal-0 [45], and GDPVal [43].

- Image Understanding: (math & reasoning) MMMU-Pro [76], MMMU (val) [75], CharXiv (RQ) [67], MathVision [61] and MathVista (mini) [35]; (vision knowledge) SimpleVQA [12] and WorldVQA https://github.com/MoonshotAI/WorldVQA; (perception) ZeroBench (w/ and w/o tools) [48], BabyVision [11], BLINK [17] and MMVP [57]; (OCR & document) OCRBench [34], OmniDocBench 1.5 [42] and InfoVQA [37].

- Video Understanding: VideoMMMU [24], MMVU [79], MotionBench [23], Video-MME [16] (with subtitles), LongVideoBench [70], and LVBench [62].

- Computer Use: OSWorld-Verified [72, 73], and WebArena [80].

Table 4: Performance comparison of Kimi K2.5 against open-source and proprietary models. Bold denotes the global SOTA. Data points marked with * are taken from our internal evaluations. † denotes scores on the text-only subset.

| Benchmark | Kimi K2.5 | Claude Opus 4.5 | GPT-5.2 (xhigh) | Gemini 3 Pro | DeepSeek-V3.2 | Qwen3-VL-235B-A22B |
| --- | --- | --- | --- | --- | --- | --- |
| Model type | | Proprietary | Proprietary | Proprietary | Open Source | Open Source |
| Reasoning & General | | | | | | |
| HLE-Full | 30.1 | 30.8 | 34.5 | 37.5 | 25.1† | - |
| HLE-Full w/ tools | 50.2 | 43.2 | 45.5 | 45.8 | 40.8† | - |
| AIME 2025 | 96.1 | 92.8 | 100 | 95.0 | 93.1 | - |
| HMMT 2025 (Feb) | 95.4 | 92.9* | 99.4 | 97.3* | 92.5 | - |
| IMO-AnswerBench | 81.8 | 78.5* | 86.3 | 83.1* | 78.3 | - |
| GPQA-Diamond | 87.6 | 87.0 | 92.4 | 91.9 | 82.4 | - |
| MMLU-Pro | 87.1 | 89.3* | 86.7* | 90.1 | 85.0 | - |
| SimpleQA Verified | 36.9 | 44.1 | 38.9 | 72.1 | 27.5 | - |
| AdvancedIF | 75.6 | 63.1 | 81.1 | 74.7 | 58.8 | - |
| LongBench v2 | 61.0 | 64.4* | 54.5* | 68.2* | 59.8* | - |
| Coding | | | | | | |
| SWE-Bench Verified | 76.8 | 80.9 | 80.0 | 76.2 | 73.1 | - |
| SWE-Bench Pro (public) | 50.7 | 55.4* | 55.6 | - | - | - |
| SWE-Bench Multilingual | 73.0 | 77.5 | 72.0 | 65.0 | 70.2 | - |
| Terminal Bench 2.0 | 50.8 | 59.3 | 54.0 | 54.2 | 46.4 | - |
| PaperBench (CodeDev) | 63.5 | 72.9* | 63.7* | - | 47.1 | - |
| CyberGym | 41.3 | 50.6 | - | 39.9* | 17.3* | - |
| SciCode | 48.7 | 49.5 | 52.1 | 56.1 | 38.9 | - |
| OJBench (cpp) | 57.4 | 54.6* | - | 68.5* | 54.7* | - |
| LiveCodeBench (v6) | 85.0 | 82.2* | - | 87.4* | 83.3 | - |
| Agentic | | | | | | |
| BrowseComp | 60.6 | 37.0 | 65.8 | 37.8 | 51.4 | - |
| BrowseComp (w/ ctx manage) | 74.9 | 57.8 | - | 59.2 | 67.6 | - |
| BrowseComp (Agent Swarm) | 78.4 | - | - | - | - | - |
| WideSearch | 72.7 | 76.2* | - | 57.0 | 32.5* | - |
| WideSearch (Agent Swarm) | 79.0 | - | - | - | - | - |
| DeepSearchQA | 77.1 | 76.1* | 71.3* | 63.2* | 60.9* | - |
| FinSearchComp (T2&T3) | 67.8 | 66.2* | - | 49.9 | 59.1* | - |
| Seal-0 | 57.4 | 47.7* | 45.0 | 45.5* | 49.5* | - |
| GDPVal-AA | 41.0 | 45.0 | 48.0 | 35.0 | 34.0 | - |
| Image | | | | | | |
| MMMU-Pro | 78.5 | 74.0 | 79.5* | 81.0 | - | 69.3 |
| MMMU (val) | 84.3 | 80.7 | 86.7* | 87.5* | - | 80.6 |
| CharXiv (RQ) | 77.5 | 67.2* | 82.1 | 81.4 | - | 66.1 |
| MathVision | 84.2 | 77.1* | 83.0 | 86.1* | - | 74.6 |
| MathVista (mini) | 90.1 | 80.2* | 82.8* | 89.8* | - | 85.8 |
| SimpleVQA | 71.2 | 69.7* | 55.8* | 69.7* | - | 56.8* |
| WorldVQA | 46.3 | 36.8 | 28.0 | 47.4 | - | 23.5 |
| ZeroBench | 9 | 3* | 9* | 8* | - | 4* |
| ZeroBench w/ tools | 11 | 9* | 7* | 12* | - | 3* |
| BabyVision | 36.5 | 14.2 | 34.4 | 49.7 | - | 22.2 |
| BLINK | 78.9 | 68.8* | - | 78.7* | - | 68.9 |
| MMVP | 87.0 | 80.0* | 83.0* | 90.0* | - | 84.3 |
| OmniDocBench 1.5 | 88.8 | 87.7* | 85.7 | 88.5 | - | 82.0* |
| OCRBench | 92.3 | 86.5* | 80.7* | 90.3* | - | 87.5 |
| InfoVQA (test) | 92.6 | 76.9* | 84* | 57.2* | - | 89.5 |
| Video | | | | | | |
| VideoMMMU | 86.6 | 84.4* | 85.9 | 87.6 | - | 80.0 |
| MMVU | 80.4 | 77.3* | 80.8* | 77.5* | - | 71.1 |
| MotionBench | 70.4 | 60.3* | 64.8* | 70.3 | - | - |
| Video-MME | 87.4 | 77.6* | 86.0* | 88.4* | - | 79.0 |
| LongVideoBench | 79.8 | 67.2* | 76.5* | 77.7* | - | 65.6* |
| LVBench | 75.9 | 57.3 | - | 73.5* | - | 63.6 |
| Computer Use | | | | | | |
| OSWorld-Verified | 63.3 | 66.3 | 8.6* | 20.7* | - | 38.1 |
| WebArena | 58.9 | 63.4* | - | - | - | 26.4* |

Table 5: Performance and token efficiency of some reasoning models. Average output token counts (in thousands) are shown in parentheses.

| Benchmark | Kimi K2.5 | Kimi K2 Thinking | Gemini-3.0 Pro | DeepSeek-V3.2 Thinking |
| --- | --- | --- | --- | --- |
| AIME 2025 | 96.1 (25k) | 94.5 (30k) | 95.0 (15k) | 93.1 (16k) |
| HMMT Feb 2025 | 95.4 (27k) | 89.4 (35k) | 97.3 (16k) | 92.5 (19k) |
| HMMT Nov 2025 | 91.1 (24k) | 89.2 (32k) | 94.5 (15k) | 90.2 (18k) |
| IMO-AnswerBench | 81.8 (36k) | 78.6 (37k) | 83.1 (18k) | 78.3 (27k) |
| LiveCodeBench | 85.0 (18k) | 82.6 (25k) | 87.4 (13k) | 83.3 (16k) |
| GPQA Diamond | 87.6 (14k) | 84.5 (13k) | 91.9 (8k) | 82.4 (7k) |
| HLE-Text | 31.5 (24k) | 23.9 (29k) | 38.4 (13k) | 25.1 (21k) |
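As a rough reading aid for Table 5, the token counts can be averaged per model. The snippet below copies the per-benchmark averages (in thousands of output tokens) from the table and takes an unweighted mean over the seven benchmarks. That aggregation is an assumption made here for illustration, not a metric reported by the authors, and the model labels follow the two-line column header of Table 5.

```python
# Average output tokens (in thousands) per benchmark, copied from Table 5.
# Row order: AIME 2025, HMMT Feb 2025, HMMT Nov 2025, IMO-AnswerBench,
# LiveCodeBench, GPQA Diamond, HLE-Text.
tokens_k = {
    "Kimi K2.5": [25, 27, 24, 36, 18, 14, 24],
    "Kimi K2 Thinking": [30, 35, 32, 37, 25, 13, 29],
    "Gemini-3.0 Pro": [15, 16, 15, 18, 13, 8, 13],
    "DeepSeek-V3.2 Thinking": [16, 19, 18, 27, 16, 7, 21],
}

# Unweighted mean across benchmarks (an illustrative aggregate, not from the paper).
mean_tokens = {model: sum(v) / len(v) for model, v in tokens_k.items()}

# Relative token reduction of K2.5 compared with K2 Thinking under that aggregate.
reduction_vs_k2 = 1 - mean_tokens["Kimi K2.5"] / mean_tokens["Kimi K2 Thinking"]

for model, mean in mean_tokens.items():
    print(f"{model}: {mean:.1f}k tokens on average")
print(f"K2.5 vs K2 Thinking: {reduction_vs_k2:.1%} fewer output tokens")
```

Under this simple average, K2.5 uses fewer output tokens than K2 Thinking but more than Gemini-3.0 Pro and DeepSeek-V3.2 Thinking.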

Baselines

We benchmark against state-of-the-art proprietary and open-source models. For proprietary models, we compare against Claude Opus 4.5 (with extended thinking) [4], GPT-5.2 (with xhigh reasoning effort) [41], and Gemini 3 Pro (with high reasoning-level) [19]. For open-source models, we include DeepSeek-V3.2 (with thinking mode enabled) [13] for text benchmarks, while vision benchmarks report Qwen3-VL-235B-A22B-Thinking [7] instead.

Evaluation Configurations

Unless otherwise specified, all Kimi K2.5 evaluations use temperature = 1.0, top-p = 0.95, and a context length of 256k tokens. Scores for benchmarks without publicly available results were obtained by re-evaluating the models under identical conditions and are marked with an asterisk (*). The full evaluation settings can be found in Appendix E.
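To make the two sampling parameters concrete, the following is a minimal pure-Python sketch of temperature scaling followed by top-p (nucleus) truncation. It illustrates the general technique only. The function names and the example logits are assumptions, and the sketch says nothing about how the actual inference stack implements sampling.

```python
import math
import random


def nucleus(logits, temperature=1.0, top_p=0.95):
    """Return (token indices, probabilities) of the smallest top-p set."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:  # stop once the kept mass reaches top_p
            break
    return kept, [probs[i] for i in kept]


def sample_top_p(logits, temperature=1.0, top_p=0.95, rng=random):
    """Draw one token index from the renormalized nucleus."""
    kept, weights = nucleus(logits, temperature, top_p)
    return rng.choices(kept, weights=weights, k=1)[0]


# Illustrative logits for a four-token vocabulary.
example_logits = [5.0, 4.0, 0.0, -5.0]
kept_default, _ = nucleus(example_logits)               # temperature=1.0, top_p=0.95
kept_greedy_like, _ = nucleus(example_logits, top_p=0.1)
rng = random.Random(0)
draws = [sample_top_p(example_logits, rng=rng) for _ in range(200)]
print(kept_default, kept_greedy_like, sorted(set(draws)))
```

With these logits, the two most likely tokens already cover more than 95% of the probability mass, so the low-probability tail is never sampled.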

#### 5.1.2 Evaluation Results

Comprehensive results comparing Kimi K2.5 against proprietary and open-source baselines are presented in Table 4. We highlight key observations across core capability domains:

Reasoning and General

Kimi K2.5 is competitive with top-tier proprietary models on rigorous STEM benchmarks. On the math benchmark AIME 2025, K2.5 scores 96.1%, approaching GPT-5.2’s perfect score while outperforming Claude Opus 4.5 (92.8%) and Gemini 3 Pro (95.0%). This performance extends to HMMT 2025 (95.4%) and IMO-AnswerBench (81.8%), demonstrating K2.5’s reasoning depth. Kimi K2.5 also exhibits strong knowledge and scientific reasoning capabilities, scoring 36.9% on SimpleQA Verified, 87.1% on MMLU-Pro, and 87.6% on GPQA-Diamond. On HLE without tools, K2.5 achieves an HLE-Full score of 30.1%, with 31.5% on the text subset and 21.3% on the image subset. When tool use is enabled, K2.5’s HLE-Full score rises to 50.2% (51.8% on text, 39.8% on image), significantly outperforming Gemini 3 Pro (45.8%) and GPT-5.2 (45.5%). Beyond reasoning and knowledge, K2.5 shows strong instruction-following performance (75.6% on AdvancedIF) and competitive long-context ability, achieving 61.0% on LongBench v2.
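The HLE subset scores can be sanity-checked against the HLE-Full scores. The sketch below assumes that HLE-Full is a question-count-weighted average of the text and image subsets (an assumption, since the aggregation is not spelled out here) and solves for the implied text share in both settings. The helper name is hypothetical.

```python
def implied_text_fraction(full, text, image):
    """Solve full = f * text + (1 - f) * image for the text share f."""
    return (full - image) / (text - image)


# Scores reported above: (HLE-Full, text subset, image subset).
no_tools = implied_text_fraction(30.1, 31.5, 21.3)
with_tools = implied_text_fraction(50.2, 51.8, 39.8)

print(f"implied text share without tools: {no_tools:.3f}")
print(f"implied text share with tools:    {with_tools:.3f}")
```

Both settings imply a text share of roughly 86% to 87%, so the reported subset and full scores are mutually consistent under this assumption.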

Complex Coding and Software Engineering

Kimi K2.5 exhibits strong software engineering capabilities, especially on realistic coding and maintenance tasks. It achieves 76.8% on SWE-Bench Verified and 73.0% on SWE-Bench Multilingual, outperforming Gemini 3 Pro while remaining competitive with Claude Opus 4.5 and GPT-5.2. On LiveCodeBench v6, Kimi K2.5 reaches 85.0%, surpassing DeepSeek-V3.2 (83.3%) and Claude Opus 4.5 (82.2%), highlighting its robustness on live, continuously updated coding challenges. On Terminal Bench 2.0, PaperBench, and SciCode, it scores 50.8%, 63.5%, and 48.7% respectively, demonstrating stable competition-level performance in automated software engineering and problem solving across diverse domains. In addition, K2.5 attains 41.3% on CyberGym, where the task is to find previously discovered vulnerabilities in real open-source software projects given only a high-level description of the weakness, further underscoring its effectiveness in security-oriented software analysis.

Agentic Capabilities

Kimi K2.5 establishes new state-of-the-art performance on complex agentic search and browsing tasks. On BrowseComp, K2.5 achieves 60.6% without context management and 74.9% with Discard-all context management [13], substantially outperforming GPT-5.2’s reported 65.8%, Claude Opus 4.5 (37.0%), and Gemini 3 Pro (37.8%). On WideSearch, K2.5 reaches an item-F1 of 72.7%. On DeepSearchQA (77.1%), FinSearchComp (T2&T3) (67.8%), and Seal-0 (57.4%), K2.5 leads all evaluated models, demonstrating superior capacity for agentic deep research, information synthesis, and multi-step tool orchestration.
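For readers unfamiliar with item-level F1, the following sketch shows a simplified set-based version: each item is a (row key, column, value) triple, and precision and recall are computed over exact matches. This is an illustrative assumption. The official WideSearch scorer may normalize or judge matches differently, and the example data is invented.

```python
def item_f1(predicted, gold):
    """Set-based F1 over items, using exact matching (simplified sketch)."""
    pred, ref = set(predicted), set(gold)
    true_positives = len(pred & ref)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(pred)
    recall = true_positives / len(ref)
    return 2 * precision * recall / (precision + recall)


# Hypothetical example: items are (row key, column, value) triples.
gold_items = [
    ("Model A", "year", "2021"),
    ("Model A", "license", "MIT"),
    ("Model B", "year", "2022"),
    ("Model B", "license", "Apache-2.0"),
    ("Model C", "year", "2023"),
]
predicted_items = [
    ("Model A", "year", "2021"),
    ("Model A", "license", "MIT"),
    ("Model B", "year", "2022"),
    ("Model B", "license", "GPL-3.0"),  # wrong value, counts against precision
]

score = item_f1(predicted_items, gold_items)
print(f"item-F1 = {score:.3f}")
```

Here three of four predicted items are correct (precision 0.75) and three of five gold items are recovered (recall 0.6), so the metric rewards both accuracy and coverage of the retrieved table.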

Vision Reasoning, Knowledge and Perception

Kimi K2.5 demonstrates strong visual reasoning and world knowledge capabilities. It scores 78.5% on MMMU-Pro, which spans multi-disciplinary multimodal tasks. For world knowledge question answering, K2.5 achieves 71.2% on SimpleVQA and 46.3% on WorldVQA. For visual reasoning, it achieves 84.2% on MathVision, 90.1% on MathVista (mini), and 36.5% on BabyVision. For OCR and document understanding, K2.5 delivers outstanding results with 77.5% on CharXiv (RQ), 92.3% on OCRBench, 88.8% on OmniDocBench 1.5, and 92.6% on InfoVQA (test). On the challenging ZeroBench, Kimi K2.5 achieves 9% without tools and 11% with tool augmentation, matching GPT-5.2 (9%) in the former setting and trailing only Gemini 3 Pro (12%) in the latter (Table 4). On the basic visual perception benchmarks BLINK (78.9%) and MMVP (87.0%), Kimi K2.5 is also competitive, demonstrating robust real-world visual perception.

Video Understanding

Kimi K2.5 achieves state-of-the-art performance across diverse video understanding tasks. It attains 86.6% on VideoMMMU and 80.4% on MMVU, rivaling frontier models. With the context-compression and dense temporal understanding abilities of MoonViT-3D, Kimi K2.5 also establishes new global SOTA records in long-video comprehension, with 75.9% on LVBench and 79.8% on LongVideoBench when fed over 2,000 frames, while demonstrating robust dense-motion understanding with 70.4% on the highly-dimensional MotionBench.

Computer-Use Capability

Kimi K2.5 demonstrates state-of-the-art computer-use capability on real-world tasks. On the computer-use benchmark OSWorld-Verified [72, 73], it achieves a 63.3% success rate relying solely on GUI actions without external tools. This substantially outperforms open-source models such as Qwen3-VL-235B-A22B (38.1%) and OpenAI’s computer-use agent framework Operator (o3-based) (42.9%), while remaining competitive with the current leading CUA model, Claude Opus 4.5 (66.3%). On WebArena [80], an established benchmark for GUI-based web browsing, Kimi K2.5 achieves a 58.9% success rate, surpassing OpenAI’s Operator (58.1%) and approaching the performance of Claude Opus 4.5 (63.4%).

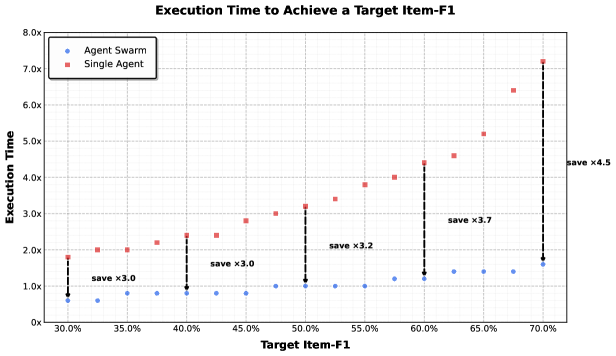

### 5.2 Agent Swarm Results

Benchmarks

To rigorously evaluate the effectiveness of the agent swarm framework, we select three representative benchmarks that collectively cover deep reasoning, large-scale retrieval, and real-world complexity:

- BrowseComp: A challenging deep-research benchmark that requires multi-step reasoning and complex information synthesis.

- WideSearch: A benchmark designed to evaluate the ability to perform broad, multi-step information seeking and reasoning across diverse sources.

- In-house Swarm Bench: An internally developed Swarm benchmark, designed to evaluate the agent swarm performance under real-world, high-complexity conditions. It covers four domains: WildSearch (unconstrained, real-world information retrieval over the open web), Batch Download (large-scale acquisition of diverse resources), WideRead (large-scale document comprehension involving more than 100 input documents), and Long-Form Writing (coherent generation of extensive content exceeding 100k words). This benchmark incorporates extreme-scale scenarios that stress-test the orchestration, scalability, and coordination capabilities of agent-based systems.

Table 6: Performance comparison of Kimi K2.5 Agent Swarm against single-agent and proprietary baselines on agentic search benchmarks. Bold denotes the best result per benchmark.

| Benchmark | K2.5 Agent Swarm | Kimi K2.5 | Claude Opus 4.5 | GPT-5.2 | GPT-5.2 Pro |
| --- | --- | --- | --- | --- | --- |
| BrowseComp | 78.4 | 60.6 | 37.0 | 65.8 | 77.9 |
| WideSearch | 79.0 | 72.7 | 76.2 | - | - |
| In-house Swarm Bench | 58.3 | 41.6 | 45.8 | - | - |

Performance