# HugRAG: Hierarchical Causal Knowledge Graph Design for RAG

**Authors**: Nengbo Wang, Tuo Liang, Vikash Singh, Chaoda Song, Van Yang, Yu Yin, Jing Ma, Jagdip Singh, Vipin Chaudhary

Abstract

Retrieval augmented generation (RAG) has enhanced large language models by enabling access to external knowledge, with graph-based RAG emerging as a powerful paradigm for structured retrieval and reasoning. However, existing graph-based methods often over-rely on surface-level node matching and lack explicit causal modeling, leading to unfaithful or spurious answers. Prior attempts to incorporate causality are typically limited to local or single-document contexts and also suffer from information isolation that arises from modular graph structures, which hinders scalability and cross-module causal reasoning. To address these challenges, we propose HugRAG, a framework that rethinks knowledge organization for graph-based RAG through causal gating across hierarchical modules. HugRAG explicitly models causal relationships to suppress spurious correlations while enabling scalable reasoning over large-scale knowledge graphs. Extensive experiments demonstrate that HugRAG consistently outperforms competitive graph-based RAG baselines across multiple datasets and evaluation metrics. Our work establishes a principled foundation for structured, scalable, and causally grounded RAG systems.

Machine Learning, ICML

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Comparison of RAG Methods for Blackout Analysis

### Overview

The image compares three Retrieval-Augmented Generation (RAG) methods—Standard RAG, Graph-based RAG, and HugRAG—using diagrams and text to explain how each handles a query about citywide commute delays caused by a blackout. The diagrams use color-coded nodes and connections to represent knowledge graphs, causal relationships, and modularity.

---

### Components/Axes

1. **Query & Answer Section**

- **Text**:

- Query: *"Why did citywide commute delays surge right after the blackout?"*

- Answer: *"Blackout knocked out signal controllers, intersections went flashing, gridlock spread."*

- **Highlighted Text**:

- *"Substation fault caused a citywide blackout"* (Standard RAG)

- *"Signal controller network lost power. Many junctions went flashing"* (Standard RAG)

2. **Standard RAG Diagram**

- **Text**:

- *"Substation fault caused a citywide blackout"*

- *"Stop and go backups and gridlock across major corridors"*

- *"Signal controller network lost power. Many junctions went flashing"*

- **Color Legend**:

- Black dots: Knowledge Graph

- Blue: Seed Node

- Yellow: N-hop Nodes (Spurious Nodes)

- Gray: Graph Modules

- Blue arrows: Causal Gate

- Blue arrows with checkmark: Causal Path

3. **Graph-based RAG Diagram**

- **Nodes**:

- **M1**: Power Outage (yellow), Substation restored (yellow), Blackout (yellow)

- **M2**: Signal Control (black), Controllers down (black), Flashing mode (black)

- **M3**: Road Outcomes (black), Traffic Delays (black), Unmanaged junctions (black)

- **Connections**:

- M1 → M2 (Power Outage → Signal Control)

- M2 → M3 (Gridlock → Traffic Delays)

- **Text**:

- *"Hard to break communities / intrinsic modularity"*

4. **HugRAG Diagram**

- **Nodes**:

- **M1**: Power Outage (blue), Substation restored (blue), Blackout (blue)

- **M2**: Signal Control (black), Controllers down (black), Flashing mode (black)

- **M3**: Road Outcomes (black), Traffic Delays (black), Unmanaged junctions (black)

- **Connections**:

- M1 → M2 → M3 (Causal Path: Power Outage → Signal Control → Traffic Delays)

- **Text**:

- *"Break information isolation & Identify causal path"*

---

### Detailed Analysis

1. **Standard RAG**

- **Text Content**:

- Highlights "Substation fault caused a citywide blackout" and "Signal controller network lost power."

- Misses key context: *"Semantic search misses key context"* (marked with an X).

- **Diagram**:

- No explicit nodes or connections. Relies on textual summaries.

2. **Graph-based RAG**

- **Nodes**:

- **M1 (Power Outage)**: Yellow (Seed Node)

- **M2 (Signal Control)**: Black (Knowledge Graph)

- **M3 (Road Outcomes)**: Black (Knowledge Graph)

- **Connections**:

- M1 → M2 (Power Outage → Signal Control)

- M2 → M3 (Gridlock → Traffic Delays)

- **Text**:

- *"Hard to break communities / intrinsic modularity"* (indicates limitations in modularity).

3. **HugRAG**

- **Nodes**:

- **M1 (Power Outage)**: Blue (N-hop Node)

- **M2 (Signal Control)**: Black (Knowledge Graph)

- **M3 (Road Outcomes)**: Black (Knowledge Graph)

- **Connections**:

- M1 → M2 → M3 (Causal Path: Power Outage → Signal Control → Traffic Delays)

- **Text**:

- *"Break information isolation & Identify causal path"* (emphasizes causal reasoning).

---

### Key Observations

1. **Standard RAG**

- Relies on text summaries but fails to capture causal relationships (e.g., "Semantic search misses key context").

- Highlights critical events (substation fault, signal controller failure) but lacks structural analysis.

2. **Graph-based RAG**

- Uses nodes to represent events (e.g., Power Outage, Signal Control) and connections to show relationships.

- Struggles with modularity ("Hard to break communities"), suggesting difficulty in isolating specific causal chains.

3. **HugRAG**

- Explicitly identifies a **causal path** (M1 → M2 → M3) using blue arrows with checkmarks.

- Combines modularity (graph modules) with causal reasoning (causal gates).

---

### Interpretation

1. **Standard RAG**

- Provides a basic summary but lacks depth in explaining causality. Its failure to capture key context (e.g., "signal controller network lost power") suggests limitations in retrieval precision.

2. **Graph-based RAG**

- Represents events as nodes and relationships as edges but cannot fully disentangle modular components (e.g., "intrinsic modularity" issue). This may lead to incomplete or fragmented explanations.

3. **HugRAG**

- Outperforms others by explicitly tracing the causal chain (Power Outage → Signal Control → Traffic Delays). The use of **causal gates** and **causal paths** indicates a structured approach to linking events, making it more effective for complex queries.

4. **Color Coding Significance**

- **Yellow (Seed Nodes)**: Highlight critical events (e.g., Power Outage) in Graph-based RAG.

- **Blue (N-hop Nodes)**: Represent intermediate steps in HugRAG’s causal path.

- **Black (Knowledge Graph)**: Core events (e.g., Signal Control) are consistently marked across methods.

---

### Conclusion

The diagram illustrates how HugRAG’s integration of causal reasoning and modularity surpasses Standard RAG and Graph-based RAG in explaining complex, interconnected events like blackouts. By breaking information isolation and identifying causal paths, HugRAG provides a more accurate and structured analysis of the root causes and consequences of citywide commute delays.

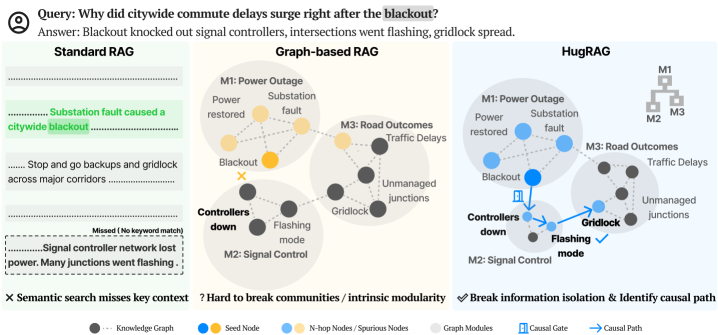

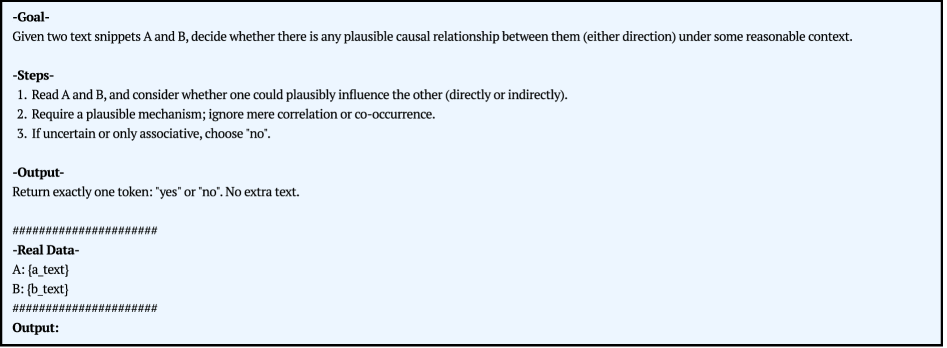

</details>

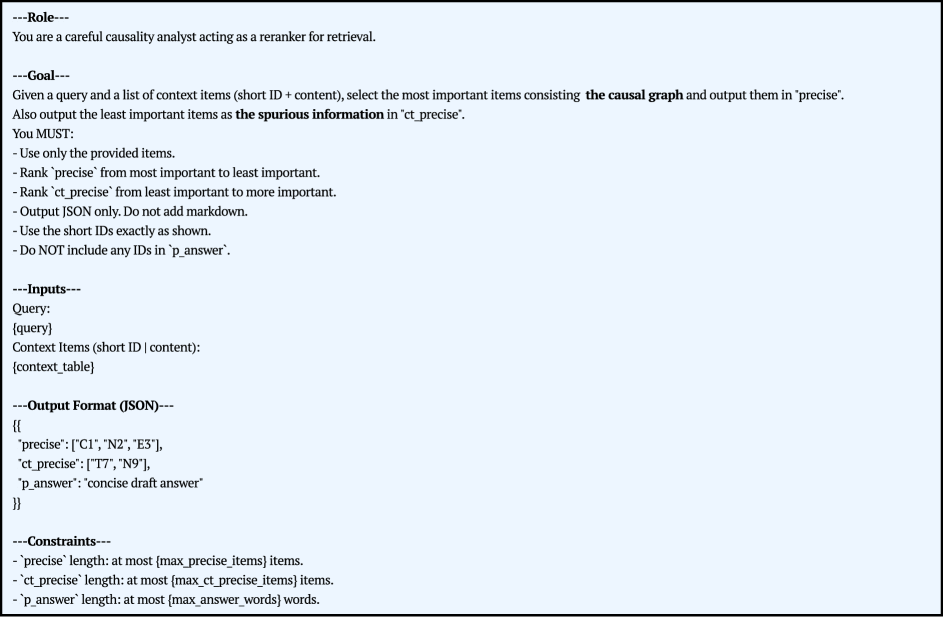

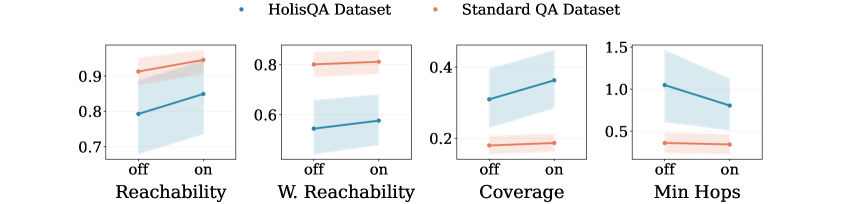

Figure 1: Comparison of three retrieval paradigms, Standard RAG, Graph-based RAG, and HugRAG, on a citywide blackout query. Standard RAG misses key evidence under semantic retrieval. Graph-based RAG can be trapped by intrinsic modularity or grouping structure. HugRAG leverages hierarchical causal gates to bridge modular boundaries, effectively breaking information isolation and explicitly identifying the underlying causal path.

1 Introduction

While Retrieval-Augmented Generation (RAG) effectively extends Large Language Models (LLMs) with external knowledge (Lewis et al., 2021), traditional pipelines predominantly rely on text chunking and semantic embedding search. This paradigm implicitly frames knowledge access as a flat similarity matching problem, overlooking the structured and interdependent nature of real-world concepts. Consequently, as knowledge bases scale in complexity, these methods struggle to maintain retrieval efficiency and reasoning fidelity.

Graph-based RAG has emerged as a promising solution to address these gaps, led by frameworks like GraphRAG (Edge et al., 2024) and extended through agentic search (Ravuru et al., 2024), GNN-guided refinement (Liu et al., 2025b), and hypergraph representations (Luo et al., ). However, three unintended limitations still persist. First, current research prioritizes retrieval policies while overlooking knowledge graph organization. As graphs scale, intrinsic modularity (Fortunato and Barthélemy, 2007) often restricts exploration within dense modules, triggering information isolation. Common grouping strategies ranging from communities (Edge et al., 2024), passage nodes (Gutiérrez et al., 2025), node-edge sets (Guo et al., 2024) to semantic grouping (Zhang et al., 2025) often inadvertently reinforce these boundaries, severely limiting global recall. Second, most formulations rely on semantic proximity and superficial traversal on graphs without causal awareness, leading to a locality issue where spurious nodes and irrelevant noise degrade precision (see Figure 1). Despite the inherent causal discovery potential of LLMs, this capability remains largely untapped for filtering noise within RAG pipelines. Finally, these systemic flaws are often masked by popular QA datasets evaluation, which reward entity-level “hits” over holistic comprehension. Consequently, there is a pressing need for a retrieval framework that reconciles global knowledge accessibility with local reasoning precision to support robust, causally-grounded generation.

To address these challenges, we propose HugRAG, a framework that rethinks knowledge graph organization through hierarchical causal gate structures. HugRAG formulates the knowledge graph as a multi-layered representation where fine-grained facts are organized into higher-level schemas, enabling multi-granular reasoning. This hierarchical architecture, integrated with causal gates, establishes logical bridges across modules, thereby naturally breaking information isolation and enhancing global recall. During retrieval, HugRAG transcends pointwise semantic matching to explicit reasoning over causal graphs. By actively distinguishing genuine causal dependencies from spurious associations, HugRAG mitigates the locality issue and filters retrieval noise to ensure precise, grounded, and interpretable generation.

To validate the effectiveness of HugRAG, we conduct extensive evaluations across datasets in multiple domains, comparing it against a diverse suite of competitive RAG baselines. To address the previously identified limitations of existing QA datasets, we introduce a large-scale cross-domain dataset HolisQA focused on holistic comprehension, designed to evaluate reasoning capabilities in complex, real-world scenarios. Our results consistently demonstrate that causal gating and causal reasoning effectively reconcile the trade-off between recall and precision, significantly enhancing retrieval quality and answer reliability.

| Method Standard RAG (Lewis et al., 2021) Graph RAG (Edge et al., 2024) | Knowledge Graph Organization Flat text chunks, unstructured. $\mathcal{G}_{\text{idx}}=\{d_{i}\}_{i=1}^{N}$ Partitioned communities with summaries. $\mathcal{G}_{\text{idx}}=\{\text{Sum}(c)\mid c∈\mathcal{C}\}$ | Retrieval and Generation Process Semantic vector search over chunks. $S=\mathrm{TopK}(\text{sim}(q,d_{i}));\;\;y=\mathsf{G}(q,S)$ Map-Reduce over community summaries. $A_{\text{part}}=\{\mathsf{G}(q,\text{Sum}(m))\};\;\;y=\mathsf{G}(A_{\text{part}})$ |

| --- | --- | --- |

| Light RAG (Guo et al., 2024) | Dual-level indexing (Entities + Relations). $\mathcal{G}_{\text{idx}}=(V_{\text{ent}}\cup V_{\text{rel}},E)$ | Keyword-based vector retrieval + neighbor. $K_{q}=\mathsf{Key}(q);\;\;S=\mathrm{Vec}(K_{q},\mathcal{G}_{\text{idx}})\cup\mathcal{N}_{1}$ |

| HippoRAG 2 (Gutiérrez et al., 2025) | Dense-sparse integration (Phrase + Passage). $\mathcal{G}_{\text{idx}}=(V_{\text{phrase}}\cup V_{\text{doc}},E)$ | PPR diffusion from LLM-filtered seeds. $U_{\text{seed}}=\mathsf{Filter}(q,V);\;\;S=\mathsf{PPR}(U_{\text{seed}},\mathcal{G}_{\text{idx}})$ |

| LeanRAG (Zhang et al., 2025) | Hierarchical semantic clusters (GMM). $\mathcal{G}_{\text{idx}}=\text{Tree}(\text{Semantic Aggregation})$ | Bottom-up traversal to LCA (Ancestor). $U=\mathrm{TopK}(q,V);\;\;S=\mathsf{LCA}(U,\mathcal{G}_{\text{idx}})$ |

| CausalRAG (Wang et al., 2025a) | Flat graph structure. $\mathcal{G}_{\text{idx}}=(V,E)$ | Top-K retrieval + Implicit causal reasoning. $S=\mathsf{Expand}(\mathrm{TopK}(q,V));\;\;y=\mathsf{G}(q,S)$ |

| \rowcolor gray!10 HugRAG (Ours) | Hierarchical Causal Gates across modules. $\mathcal{G}_{\text{idx}}=\mathcal{H}=\{H_{0},...,H_{L}\}$ | Causal Gating + Causal Path Filtering. $S=\underbrace{\mathsf{Traverse}(q,\mathcal{H})}_{\text{Break Isolation}}\cap\underbrace{\mathsf{Filter}_{\text{causal}}(S)}_{\text{Reduce Noise}}$ |

Table 1: Comparison of RAG frameworks based on knowledge organization and retrieval mechanisms. Notation: $\mathcal{M}$ modules, $\text{Sum}(·)$ summary, $\mathsf{PPR}$ Personalized PageRank, $\mathcal{H}$ hierarchy, $\mathcal{N}_{1}$ 1-hop neighborhood.

2 Related Work

2.1 RAG

Retrieval augmented generation grounds LLMs in external knowledge, but chunk level semantic search can be brittle and inefficient for large, heterogeneous, or structured corpora (Lewis et al., 2021). Graph-based RAG has therefore emerged to introduce structure for more informed retrieval.

Graph-based RAG.

GraphRAG constructs a graph structured index of external knowledge and performs query time retrieval over the graph, improving question focused access to large scale corpora (Edge et al., 2024). Building on this paradigm, later work studies richer selection mechanisms over structured graph. Agent driven retrieval explores the search space iteratively (Ravuru et al., 2024). Critic guided or winnowing style methods prune weak contexts after retrieval (Dong et al., ; Wang et al., 2025b). Others learn relevance scores for nodes, subgraphs, or reasoning paths, often with graph neural networks (Liu et al., 2025b). Representation extensions include hypergraphs for higher order relations (Luo et al., ) and graph foundation models for retrieval and reranking (Wang et al., ).

Knowledge Graph Organization.

Despite these advances, limitations related to graph organization remain underexamined. Most work emphasizes retrieval policies, while the organization of the underlying knowledge graph is largely overlooked, which strongly influences downstream retrieval behavior. As graphs scale, intrinsic modularity can emerge (Fortunato and Barthélemy, 2007; Newman, 2018), making retrieval prone to staying within dense modules rather than crossing them, largely limiting the retrieved information. Moreover, many work assume grouping knowledge for efficiency at scale, such as communities (Edge et al., 2024), phrases and passages (Gutiérrez et al., 2025), node edge sets (Guo et al., 2024), or semantic aggregation (Zhang et al., 2025) (see Table 1), which can amplify modular confinement and yield information isolation. This global issue primarily manifests as reduced recall. Some hierarchical approaches like LeanRAG attempt to bridge these gaps via semantic aggregation, but they remain constrained by semantic clustering and rely on tree-structured traversals (Zhang et al., 2025), often failing to capture logical dependencies that span across semantically distinct clusters.

Retrieval Issue.

A second limitation concerns how retrieval is formulated. Much work operates as a multi-hop search over nodes or subgraphs (Gutiérrez et al., 2025; Liu et al., 2025a), prioritizing semantic proximity to the query without explicit awareness of the reasoning in this searching process. This design can pull in topically similar yet causally irrelevant evidence, producing conflated retrieval results. Even when the correct fact node is present, the generator may respond with generic or superficial content, and the extra noise can increase the risk of hallucination. We view this as a locality issue that lowers precision.

QA Evaluation Issue.

These tendencies can be reinforced by common QA evaluation practice. First, many QA datasets emphasize short answers such as names, nationalities, or years (Kwiatkowski et al., 2019; Rajpurkar et al., 2016), so hitting the correct entity in the graph may be sufficient even without reasoning. Second, QA datasets often comprise thousands of independent question-answer-context triples. However, many approaches still rely on linear context concatenation to construct a graph, and then evaluate performance on isolated questions. This setup largely reduces the incentive for holistic comprehension of the underlying material, even though such end-to-end understanding is closer to real-world use cases. Third, some datasets are stale enough that answers may be partially memorized by pretrained LLM models, confounding retrieval quality with parametric knowledge. Therefore, these QA dataset issues are critical for evaluating RAG, yet relatively few works explicitly address them by adopting open-ended questions and fresher materials in controlled experiments.

2.2 Causality

LLM for Identifying Causality.

LLMs have demonstrated exceptional potential in causal discovery. By leveraging vast domain knowledge, LLMs significantly improve inference accuracy compared to traditional methods (Ma, 2024). Frameworks like CARE further prove that fine-tuned LLMs can outperform state-of-the-art algorithms (Dong et al., 2025). Crucially, even in complex texts, LLMs maintain a direction reversal rate under 1.1% (Saklad et al., 2026), ensuring highly reliable results.

Causality and RAG.

While LLMs increasingly demonstrate reliable causal reasoning capabilities, explicitly integrating causal structures into RAG remains largely underexplored. Current research predominantly focuses on internal attribution graphs for model interpretability (Walker and Ewetz, 2025; Dai et al., 2025), rather than external knowledge retrieval. Recent advances like CGMT (Luo et al., 2025) and LACR (Zhang et al., 2024) have begun to bridge this gap, utilizing causal graphs for medical reasoning path alignment or constraint-based structure induction. However, these works inherently differ in scope from our objective, as they prioritize rigorous causal discovery or recovery tasks in specific domain, which limits their scalability to the noisy, open-domain environments that we address. Existing causal-enhanced RAG frameworks either utilize causal feedback implicitly in embedding (Khatibi et al., 2025) or, like CausalRAG (Wang et al., 2025a), are restricted to small-scale settings with implicit causal reasoning. Consequently, a significant gap persists in leveraging causal graphs to guide knowledge graph organization and retrieval across large-scale, heterogeneous knowledge bases. Note that in this work, we use the term causal to denote explicit logical dependencies and event sequences described in the text, rather than statistical causal discovery from observational data.

3 Problem Formulation

We aim to retrieve an optimal subgraph $S^{*}⊂eq\mathcal{G}$ for a query $q$ to generate an answer $y$ . Graph-based RAG ( $S=\mathcal{R}(q,\mathcal{G})$ ) usually faces two structural bottlenecks.

1. Global Information Isolation (Recall Gap).

Intrinsic modularity often traps retrieval in local seeds, missing relevant evidence $v^{*}$ located in topologically distant modules (i.e., $S\cap\{v^{*}\}=\emptyset$ as no path exists within $h$ hops). HugRAG introduces causal gates across $\mathcal{H}$ , to bypass modular boundaries and bridge this gap. The efficacy of causal gates is empirically verified in Appendix E and further analyzed in the ablation study (see Section 5.3).

2. Local Spurious Noise (Precision Gap).

Semantic similarity $\text{sim}(q,v)$ often retrieves topically related but causally irrelevant nodes $\mathcal{V}_{sp}$ , diluting precision (where $|S\cap\mathcal{V}_{sp}|\gg|S\cap\mathcal{V}_{causal}|$ ). We address this by leveraging LLMs to identify explicit causal paths, filtering $\mathcal{V}_{sp}$ to ensure groundedness. While as discussed LLMs have demonstrated causal identification capabilities surpassing human experts (Ma, 2024; Dong et al., 2025) and proven effectiveness in RAG (Wang et al., 2025a), we further corroborate the validity of identified causal paths through expert knowledge across different domains (see Section 5.1). Consequently, HugRAG redefines retrieval as finding a mapping $\Phi:\mathcal{G}→\mathcal{H}$ and a causal filter $\mathcal{F}_{c}$ to simultaneously minimize isolation and spurious noise.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Flowchart Diagram: Knowledge Graph Construction and Query Processing Pipeline

### Overview

The diagram illustrates a two-phase system for knowledge graph construction and query processing. The left side depicts an offline "Graph Construction" pipeline, while the right side shows an online "Retrieve and Answer" workflow. Both phases involve hierarchical graph structures, causal reasoning, and large language model (LLM) integration.

### Components/Axes

**Left Section (Graph Construction - Offline):**

1. **Input**: Raw Texts → Knowledge Graph (via IE) → Vector Store

2. **Processing Steps**:

- Partition → Hierarchical Graph

- Embed → Identify Causality (LLM) → Graph with Causal Gates

3. **Output**: Multi-layered hierarchical graph (H₀ to Hₙ) with causal gates

**Right Section (Retrieve and Answer - Online):**

1. **Input**: Query → Embed and Score

2. **Processing Steps**:

- Top K entities → N-hop via gates, cross modules

- Distinguish Causal vs Spurious (LLM) → Context

3. **Output**: Answer with Query

**Visual Elements**:

- **Colors**:

- Blue: LLM components (Identify Causality, Distinguish Causal vs Spurious)

- Green: Causal gates

- **Arrows**: Indicate data flow direction

- **Hierarchical Structure**: Stacked graph layers (H₀ to Hₙ) in both sections

### Detailed Analysis

**Offline Phase**:

1. Raw texts are processed through Information Extraction (IE) to create a Knowledge Graph.

2. The graph is partitioned and embedded into a Vector Store.

3. A Hierarchical Graph is constructed, with layers labeled H₀ to Hₙ.

4. Causal relationships are identified using LLM, resulting in a Graph with Causal Gates.

**Online Phase**:

1. Queries are embedded and scored against the vector store.

2. Top K relevant entities are retrieved using N-hop traversal through causal gates and cross-module connections.

3. LLM distinguishes between causal and spurious relationships to refine context.

4. Final answer is generated by combining query context with retrieved information.

### Key Observations

1. **Modular Design**: The system separates offline graph construction from online query processing.

2. **Causal Reasoning**: Explicit emphasis on identifying and leveraging causal relationships throughout the pipeline.

3. **LLM Integration**: Used at two critical points - causality identification and spurious relationship filtering.

4. **Hierarchical Navigation**: Query processing involves multi-layered graph traversal (N-hop) through causal gates.

5. **Contextualization**: Final answer generation combines retrieved entities with context-aware reasoning.

### Interpretation

This architecture demonstrates a sophisticated approach to knowledge graph utilization:

- The offline phase focuses on building a causally-aware graph structure optimized for efficient querying.

- The online phase leverages this preprocessed structure to enable context-aware question answering.

- The use of LLM for both causality identification and spurious relationship filtering suggests an attempt to mitigate hallucination risks in knowledge graph applications.

- The hierarchical graph structure with causal gates implies a design for handling complex, multi-relational data while maintaining query efficiency.

The system appears to balance computational intensity between offline preprocessing and online query handling, potentially optimizing for real-time performance while maintaining high-quality causal reasoning capabilities.

</details>

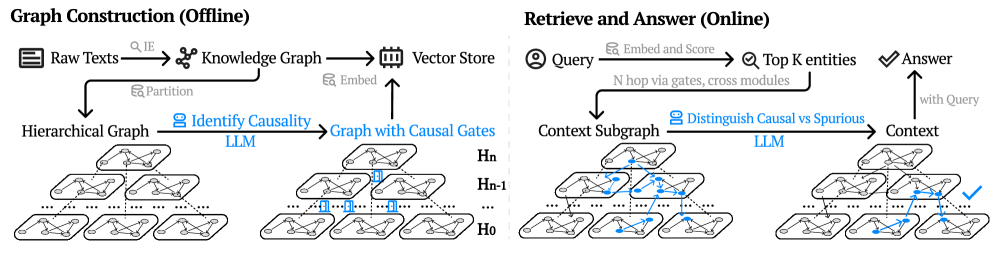

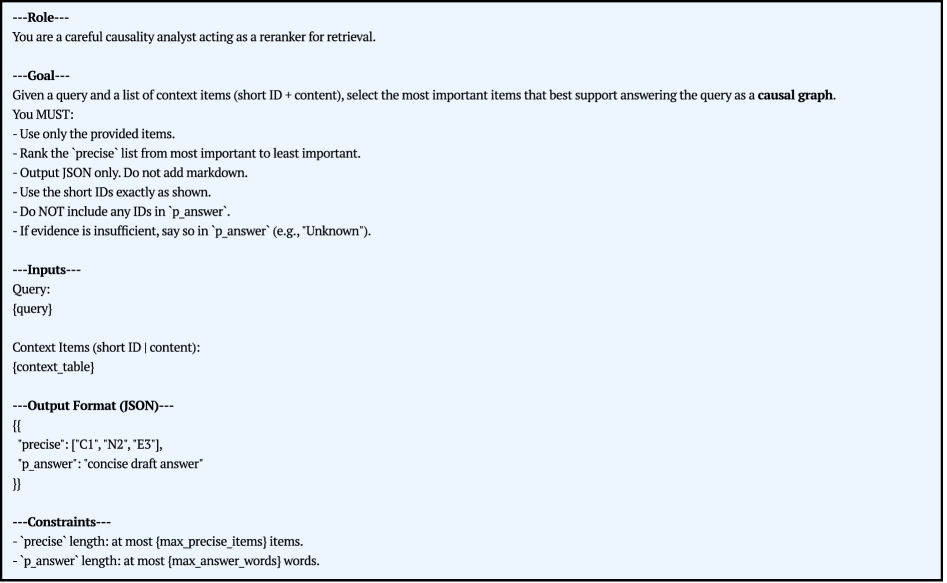

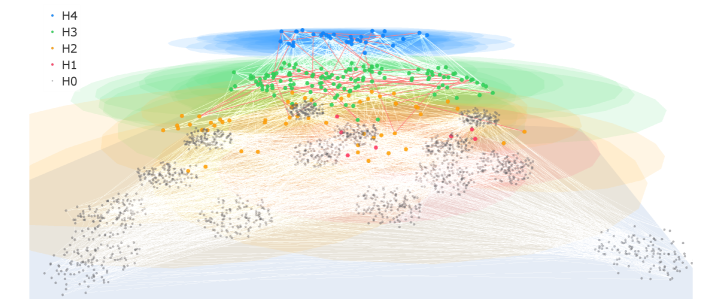

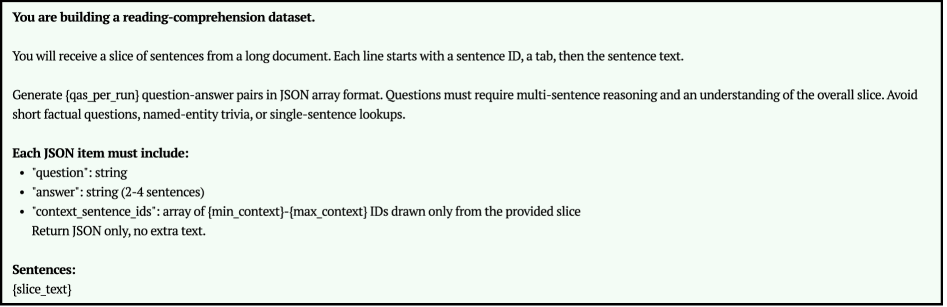

Figure 2: Overview of the HugRAG pipeline. In the offline stage, raw texts are embedded to build a knowledge graph and a vector store, then partitioning forms a hierarchical graph and an LLM identifies causal relations to construct a graph with causal gates. In the online stage, the query is embedded and scored to retrieve top K entities, then N hop traversal uses causal gates to cross modules and assemble a context subgraph; an LLM further distinguishes causal versus spurious relations to produce the final context and answer.

Algorithm 1 HugRAG Algorithm Pipeline

0: Corpus $\mathcal{D}$ , query $q$ , hierarchy levels $L$ , seed budget $\{K_{\ell}\}_{\ell=0}^{L}$ , hop $h$ , gate threshold $\tau$

0: Answer $y$ , Support Subgraph $S^{*}$

1: // Phase 1: Offline Hierarchical Organization

2: $G_{0}=(V_{0},E_{0})←\textsc{BuildBaseGraph}(\mathcal{D})$

3: $\mathcal{H}=\{H_{0},...,H_{L}\}←\textsc{LeidenPartition}(G_{0},L)$ {Organize into modules $\mathcal{M}$ }

4: $\mathcal{G}_{c}←\emptyset$

5: for all pair $(m_{i},m_{j})∈\textsc{ModulePairs}(\mathcal{M})$ do

6: $score←\textsc{LLM-EstCausal}(m_{i},m_{j})$

7: if $score≥\tau$ then

8: $\mathcal{G}_{c}←\mathcal{G}_{c}\cup\{(m_{i}→ m_{j},score)\}$ {Establish causal gates}

9: end if

10: end for

11: // Phase 2: Online Retrieval & Reasoning

12: $U←\bigcup_{\ell=0}^{L}\mathrm{TopK}(\text{sim}(q,u),K_{\ell},H_{\ell})$ {Multi-level semantic seeding}

13: $S_{raw}←\textsc{GatedTraversal}(U,\mathcal{H},\mathcal{G}_{c},h)$ {Break isolation via gates}

14: $S^{*}←\textsc{CausalFilter}(q,S_{raw})$ {Remove spurious nodes $\mathcal{V}_{sp}$ }

15: $y←\textsc{LLM-Generate}(q,S^{*})$

4 Method

Overview.

As illustrated in Figure 2, HugRAG operates in two distinct phases to address the aforementioned structural bottlenecks. In the offline phase, we construct a hierarchical knowledge structure $\mathcal{H}$ partitioned into modules, which are then interconnected via causal gates $\mathcal{G}_{c}$ to enable logical traversals. In the online phase, HugRAG performs a gated expansion to break modular isolation, followed by a causal filtering step to eliminate spurious noise. The overall procedure is formalized in Algorithm 1, and we detail each component in the subsequent sections.

4.1 Hierarchical Graph with Causal Gating

To address the global information isolation challenge (Section 3), we construct a multi-scale knowledge structure that balances global retrieval recall with local precision.

Hierarchical Module Construction.

We first extract a base entity graph $G_{0}=(V_{0},E_{0})$ from the corpus $\mathcal{D}$ using an information extraction pipeline (see details in Appendix B.1), followed by entity canonicalization to resolve aliasing. To establish the hierarchical backbone $\mathcal{H}=\{H_{0},...,H_{L}\}$ , we iteratively partition the graph into modules using the Leiden algorithm (Traag et al., 2019), which optimizes modularity to identify tightly-coupled semantic regions. Formally, at each level $\ell$ , nodes are partitioned into modules $\mathcal{M}_{\ell}=\{m_{1}^{(\ell)},...,m_{k}^{(\ell)}\}$ . For each module, we generate a natural language summary to serve as a coarse-grained semantic anchor.

Offline Causal Gating.

While hierarchical modularity improves efficiency, it risks trapping retrieval within local boundaries. We introduce Causal Gates to explicitly model cross-module affordances. Instead of fully connecting the graph, we construct a sparse gate set $\mathcal{G}_{c}$ . Specifically, we identify candidate module pairs $(m_{i},m_{j})$ that are topologically distant but potentially logically related. An LLM then evaluates the plausibility of a causal connection between their summaries. We formally define the gate set via an indicator function $\mathbb{I}(·)$ :

$$

\mathcal{G}_{c}=\left\{(m_{i}\to m_{j})\mid\mathbb{I}_{\text{causal}}(m_{i},m_{j})=1\right\}, \tag{1}

$$

where $\mathbb{I}_{\text{causal}}$ denotes the LLM’s assessment (see Appendix B.1 for construction prompts and the Top-Down Hierarchical Pruning strategy we employed to mitigate the $O(N^{2})$ evaluation complexity). These gates act as shortcuts in the retrieval space, permitting the traversal to jump across disjoint modules only when logically warranted, thereby breaking information isolation without causing semantic drift (see Appendix C for visualizations of hierarchical modules and causal gates).

4.2 Retrieve Subgraph via Causally Gated Expansion

Given the hierarchical structure $\mathcal{H}$ and causal gates $\mathcal{G}_{c}$ , HugRAG retrieves a support subgraph $S$ by coupling multi-granular anchoring with a topology-aware expansion. This process is designed to maximize recall (breaking isolation) while suppressing drift (controlled locality).

Multi-Granular Hybrid Seeding.

Graph-based RAG often struggles to effectively differentiate between local details and global contexts within multi-level structures (Zhang et al., 2025; Edge et al., 2024). We overcome this by identifying a seed set $U$ across multiple levels of the hierarchy. We employ a hybrid scoring function $s(q,v)$ that interpolates between semantic embedding similarity and lexical overlap (details in Appendix B.2). This function is applied simultaneously to fine-grained entities in $H_{0}$ and coarse-grained module summaries in $H_{\ell>0}$ . Crucially, to prevent the semantic redundancy problem where seeds cluster in a single redundant neighborhood, we apply a diversity-aware selection strategy (MMR) to ensure the initial seeds $U$ cover distinct semantic facets of the query. This yields a set of anchors that serve as the starting nodes for expansion.

Gated Priority Expansion.

Starting from the seed set $U$ , we model retrieval as a priority-based traversal over a unified edge space $\mathcal{E}_{\text{uni}}$ . This space integrates three distinct types of connectivity: (1) Structural Edges ( $E_{\text{struc}}$ ) for local context, (2) Hierarchical Edges ( $E_{\text{hier}}$ ) for vertical drill-down, and (3) Causal Gates ( $\mathcal{G}_{c}$ ) for cross-module reasoning.

$$

\mathcal{E}_{\text{uni}}={E}_{\text{struc}}\cup E_{\text{hier}}\cup\mathcal{G}_{c}. \tag{2}

$$

The expansion follows a Best-First Search guided by a query-conditioned gain function. For a frontier node $v$ reached from a predecessor $u$ at hop $t$ , the gain is defined as:

$$

\text{Gain}(v)=s(q,v)\cdot\gamma^{t}\cdot w(\text{type}(u,v)), \tag{3}

$$

where $\gamma∈(0,1)$ is a standard decay factor to penalize long-distance traversal. The weight function $w(·)$ adjusts traversal priorities: we simply assign higher importance to causal gates and hierarchical links to encourage logic-driven jumps over random structural walks. By traversing $\mathcal{E}_{\text{uni}}$ , HugRAG prioritizes paths that drill down (via $E_{\text{hier}}$ ), explore locally (via $E_{\text{struc}}$ ), or leap to a causally related domain (via $\mathcal{G}_{c}$ ), effectively breaking modular isolation. The expansion terminates when the gain drops below a threshold or the token budget is exhausted.

| Datasets | Nodes | Edges | Modules | Size (Char) | Domain |

| --- | --- | --- | --- | --- | --- |

| MS MARCO (Bajaj et al., 2018) | 3,403 | 3,107 | 446 | 1,557,990 | Web |

| NQ (Kwiatkowski et al., 2019) | 5,579 | 4,349 | 505 | 767,509 | Wikipedia |

| 2WikiMultiHopQA (Ho et al., 2020) | 10,995 | 8,489 | 1,088 | 1,756,619 | Wikipedia |

| QASC (Khot et al., 2020) | 77 | 39 | 4 | 58,455 | Science |

| HotpotQA (Yang et al., 2018) | 20,354 | 15,789 | 2,359 | 2,855,481 | Wikipedia |

| HolisQA-Biology | 1,714 | 1,722 | 165 | 1,707,489 | Biology |

| HolisQA-Business | 2,169 | 2,392 | 292 | 1,671,718 | Business |

| HolisQA-CompSci | 1,670 | 1,667 | 158 | 1,657,390 | Computer Science |

| HolisQA-Medicine | 1,930 | 2,124 | 226 | 1,706,211 | Medicine |

| HolisQA-Psychology | 2,019 | 1,990 | 211 | 1,751,389 | Psychology |

Table 2: Statistics of the datasets used in evaluation.

4.3 Causal Path Identification and Grounding

The raw subgraph $S_{raw}$ retrieved via gated expansion optimizes for recall but inevitably includes spurious associations (e.g., high-degree hubs or coincidental co-occurrences). To address the local spurious noise challenge (Section 3), HugRAG employs a causal path refinement stage to directly distill $S_{raw}$ into a causally grounded graph $S^{\star}$ . See Appendix D for a full example of the HugRAG pipeline.

Causal Path Refinement.

We formulate the path refinement task as a structural pruning process. We first linearize the subgraph $S_{raw}$ into a token-efficient table where each node and edge is mapped to a unique short identifier (see Appendix B.3). The LLM is then prompted to analyze the topology and output the subset of identifiers that constitute valid causal paths connecting the query to the potential answer. Leveraging the robust causal identification capabilities of LLMs (Saklad et al., 2026), this operation effectively functions as a reranker, distilling the noisy subgraph into an explicit causal structure:

$$

S^{\star}=\textsc{LLM-CausalExpert}(S_{raw},q). \tag{4}

$$

The returned subgraph $S^{\star}$ contains only model-validated nodes and edges, effectively filtering irrelevant context.

Spurious-Aware Grounding.

To further improve the precision of this selection, we employ a spurious-aware prompting strategy (see prompts in Appendix A.1). In this configuration, the LLM is instructed to explicitly distinguish between causal supports and spurious correlations during its reasoning process. While the prompt may ask the model to identify spurious items as an auxiliary reasoning step, the primary objective remains the extraction of the valid causal subset. This explicit contrast helps the model resist hallucinated connections induced by semantic similarity, yielding a cleaner $S^{\star}$ compared to standard selection prompts and consequently improving downstream generation quality. This mechanism specifically targets the precision challenges outlined in Section 4.2. Finally, the answer $y$ is generated by conditioning the LLM solely on the text content corresponding to the pruned subgraph $S^{\star}$ (see prompts in Appendix A.2), ensuring that the generation is strictly grounded in verified evidence.

5 Experiments

Overview.

We conducted extensive experiments on diverse datasets across various domains to comprehensively evaluate and compare the performance of HugRAG against competitive baselines. Our analysis is guided by the following five research questions:

RQ1 (Overall Performance). How does HugRAG compare against state-of-the-art graph-based baselines across diverse, real-world knowledge domains?

RQ2 (QA vs. Holistic Comprehension). Do popular QA datasets implicitly favor the entity-centric retrieval paradigm, thereby inflating graph-based RAG that finds the right node without assembling a support chain?

RQ3 (Trade-off Reconciliation). Can HugRAG simultaneously improve Context Recall (Globality) and Answer Relevancy (Precision), mitigating the classic trade-off via hierarchical causal gating?

RQ4 (Ablation Study). What are the individual contributions of different components in HugRAG?

RQ5 (Scalability Robustness). How does HugRAG’s performance scale and remain robust under varying context lengths?

Table 3: Main results on HolisQA across five domains. We report F1 (answer overlap), CR (Context Recall: how much gold context is covered by retrieved evidence), and AR (Answer Relevancy: evaluator-judged relevance of the answer to the question), all scaled to $\%$ for readability. Bold indicates best per column. NaiveGeneration has CR $=0$ by definition (no retrieval).

| Baselines \rowcolor black!10 Naive Baselines | Medicine F1 | Computer Science CR | Business AR | Biology F1 | Psychology CR | AR | F1 | CR | AR | F1 | CR | AR | F1 | CR | AR |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| NaiveGeneration | 12.63 | 0.00 | 44.70 | 18.93 | 0.00 | 48.79 | 18.58 | 0.00 | 46.14 | 11.71 | 0.00 | 45.76 | 22.91 | 0.00 | 50.00 |

| BM25 | 17.72 | 52.04 | 50.64 | 24.00 | 39.12 | 52.40 | 28.11 | 37.06 | 55.52 | 19.61 | 43.02 | 52.32 | 30.46 | 33.44 | 56.63 |

| StandardRAG | 26.87 | 61.08 | 56.24 | 28.87 | 49.44 | 57.10 | 47.57 | 46.79 | 67.42 | 28.31 | 42.69 | 57.58 | 37.19 | 52.21 | 59.85 |

| \rowcolor black!10 Graph-based RAG | | | | | | | | | | | | | | | |

| GraphRAG Global | 17.13 | 54.56 | 48.19 | 23.75 | 37.65 | 53.17 | 23.62 | 25.01 | 48.12 | 20.67 | 40.90 | 52.41 | 31.09 | 34.26 | 54.62 |

| GraphRAG Local | 19.03 | 56.07 | 49.52 | 25.10 | 39.90 | 53.30 | 25.01 | 27.36 | 49.05 | 22.21 | 41.88 | 52.73 | 32.31 | 35.22 | 55.02 |

| LightRAG | 12.16 | 52.38 | 44.15 | 22.59 | 41.86 | 51.62 | 29.98 | 34.22 | 54.50 | 17.70 | 41.24 | 50.32 | 33.63 | 45.54 | 56.42 |

| \rowcolor black!10 Structural / Causal Augmented | | | | | | | | | | | | | | | |

| HippoRAG2 | 21.12 | 57.50 | 51.08 | 16.94 | 21.05 | 47.29 | 21.10 | 18.34 | 45.83 | 12.60 | 16.85 | 44.56 | 20.10 | 34.13 | 46.77 |

| LeanRAG | 34.25 | 60.43 | 56.60 | 30.51 | 57.61 | 55.45 | 48.30 | 59.29 | 60.35 | 33.82 | 58.43 | 56.10 | 42.85 | 57.46 | 58.65 |

| CausalRAG | 31.12 | 58.90 | 58.77 | 30.98 | 54.10 | 57.54 | 45.20 | 44.55 | 66.10 | 33.50 | 51.20 | 58.90 | 42.80 | 55.60 | 61.90 |

| HugRAG (ours) | 36.45 | 69.91 | 60.65 | 31.60 | 60.94 | 58.34 | 51.51 | 67.34 | 68.76 | 34.80 | 61.97 | 59.99 | 44.42 | 60.87 | 63.53 |

Table 4: Main results on five QA datasets. Metrics follow Section 5: F1, CR (Context Recall), and AR (Answer Relevancy), reported in $\%$ . Bold and underline denote best and second-best per column.

| Baselines \rowcolor black!10 Naive Baselines | MSMARCO F1 | NQ CR | TwoWiki AR | QASC F1 | HotpotQA CR | AR | F1 | CR | AR | F1 | CR | AR | F1 | CR | AR |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| NaiveGeneration | 5.28 | 0.00 | 15.06 | 7.17 | 0.00 | 10.94 | 9.15 | 0.00 | 11.77 | 2.69 | 0.00 | 13.74 | 14.38 | 0.00 | 15.74 |

| BM25 | 6.97 | 45.78 | 20.33 | 4.68 | 49.98 | 9.13 | 9.43 | 37.12 | 13.73 | 2.49 | 6.12 | 13.17 | 15.81 | 41.08 | 16.08 |

| StandardRAG | 14.93 | 48.55 | 31.11 | 7.57 | 45.82 | 11.14 | 10.33 | 32.28 | 13.57 | 2.01 | 5.50 | 13.16 | 6.68 | 43.17 | 14.66 |

| \rowcolor black!10 Graph-based RAG | | | | | | | | | | | | | | | |

| GraphRAG Global | 9.41 | 3.65 | 13.08 | 3.91 | 4.48 | 8.00 | 1.41 | 9.42 | 9.55 | 0.68 | 3.38 | 3.56 | 6.28 | 14.59 | 16.26 |

| GraphRAG Local | 30.87 | 25.71 | 57.76 | 23.56 | 44.56 | 44.68 | 18.85 | 32.03 | 37.29 | 8.30 | 9.54 | 46.59 | 33.14 | 44.07 | 40.82 |

| LightRAG | 37.70 | 54.22 | 63.54 | 24.97 | 60.65 | 50.53 | 14.44 | 40.98 | 36.56 | 8.20 | 20.40 | 44.35 | 28.39 | 48.17 | 43.78 |

| \rowcolor black!10 Structural / Causal Augmented | | | | | | | | | | | | | | | |

| HippoRAG2 | 23.35 | 45.45 | 55.18 | 29.64 | 57.21 | 37.50 | 18.47 | 55.53 | 17.34 | 14.73 | 4.38 | 49.94 | 38.80 | 42.06 | 24.66 |

| LeanRAG | 38.02 | 54.01 | 58.49 | 35.46 | 65.91 | 49.87 | 20.27 | 40.53 | 38.37 | 13.19 | 22.80 | 45.51 | 48.68 | 46.29 | 43.50 |

| CausalRAG | 27.66 | 39.38 | 46.03 | 29.45 | 68.04 | 17.35 | 15.93 | 28.38 | 19.76 | 7.65 | 46.86 | 35.56 | 40.00 | 27.83 | 21.32 |

| HugRAG (ours) | 38.40 | 60.48 | 66.02 | 49.50 | 70.36 | 55.09 | 31.97 | 41.95 | 42.67 | 13.35 | 70.80 | 49.40 | 64.83 | 40.30 | 45.72 |

5.1 Experimental Setup

Datasets.

We evaluate HugRAG on a diverse suite of datasets covering complementary difficulty profiles. For standard evaluation, we use five established datasets: MS MARCO (Bajaj et al., 2018) and Natural Questions (Kwiatkowski et al., 2019) emphasize large-scale open-domain retrieval; HotpotQA (Yang et al., 2018) and 2WikiMultiHop (Ho et al., 2020) require evidence aggregation; and QASC (Khot et al., 2020) targets compositional scientific reasoning. However, these datasets often suffer from entity-centric biases and potential data leakage (memorization by LLMs). To rigorously test the holistic understanding capability of RAG, we introduce HolisQA, a dataset derived from high-quality academic papers sourced (Priem et al., 2022). Spanning over diverse domains (including Biology, Computer Science, Medicine, etc.), HolisQA features dense logical structures that naturally demand holistic comprehension (see more details in Appendix F.2). All dataset statistics are summarized in Table 2. While LLMs have demonstrated strong capabilities in identifying causality (Ma, 2024; Dong et al., 2025) and effectiveness in RAG (Wang et al., 2025a), to ensure rigorous evaluation, we incorporated cross-domain expert review to validate the quality of baseline answers and confirm the legitimacy of the induced causal relations.

Baselines.

We compare HugRAG against eight baselines spanning three retrieval paradigms. First, to cover Naive and Flat approaches, we include Naive Generation (no retrieval) as a lower bound, alongside BM25 (sparse) and Standard RAG (Lewis et al., 2021) (dense embedding-based), representing mainstream unstructured retrieval. Second, we evaluate established graph-based frameworks: GraphRAG (Local and Global) (Edge et al., 2024), utilizing community summaries; and LightRAG (Guo et al., 2024), relying on dual-level keyword-based search. Third, we benchmark against RAGs with structured or causal augmentation: HippoRAG 2 (Gutiérrez et al., 2025), utilizing passage nodes and Personalized PageRank diffusion; LeanRAG (Zhang et al., 2025), employing semantic aggregation hierarchies and tree-based LCA retrieval; and CausalRAG (Wang et al., 2025a), which incorporates causality without explicit causal reasoning. This selection comprehensively covers the spectrum from unstructured search to advanced structure-aware and causally augmented graph methods.

Metrics.

For metrics, we first report the token-level answer quality metric F1 for surface robustness. To measure whether retrieval actually supports generation, we additionally compute grounding metrics, context recall and answer relevancy (Es et al., 2024), which jointly capture coverage and answer quality (see Appendix F.4).

Implementation Details.

For all experiments, we utilize gpt-5-nano as the backbone LLM for both the open IE extraction and generation stages, and Sentence-BERT (Reimers and Gurevych, 2019) for semantic vectorization. For HugRAG, we set the hierarchical seed budget to $K_{L}=3$ for modules and $K_{0}=3$ for entities, causal gate is enabled by default except ablation study. Experiments run on a cluster using 10-way job arrays; each task uses 2 CPU cores and 16 GB RAM (20 cores, 160GB in total). See more implementation details in Appendix F.3.

5.2 Main Experiments

Overall Performance (RQ1).

HugRAG consistently achieves superior performance across all HolisQA domains and standard QA metrics (Section 5, Section 5). While traditional methods (e.g., BM25, Standard RAG) struggle with structural dependencies, graph-based baselines exhibit distinct limitations. GraphRAG-Global relies heavily on high-level community summaries and largely suffers from detailed QA tasks, necessitating its GraphRAG Local variant to balance the granularity trade-off. LightRAG struggles to achieve competitive results, limited by its coarse-grained key-value lookup mechanism. Regarding structurally augmented methods, while LeanRAG (utilizing semantic aggregation) and HippoRAG2 (leveraging phrase/passage nodes) yield slight improvements in context recall, they fail to fully break information isolation compared to our causal gating mechanism. Finally, although CausalRAG occasionally attains high Answer Relevancy due to its causal reasoning capability, it struggles to scale to large datasets due to the lack of efficient knowledge graph organization.

Holistic Comprehension vs. QA (RQ2).

The contrast between the results on HolisQA (Section 5) and standard QA datasets (Section 5) is revealing. On popular QA benchmarks, entity-centric methods like LightRAG, GraphRAG-Local, LeanRAG could occasionally achieve good scores. However, their performance degrades collectively and significantly on HolisQA. A striking counterexample is GraphRAG-Global: while its reliance on community summaries hindered performance on granular standard QA tasks, now it rebounds significantly in HolisQA. This discrepancy strongly suggests that standard QA datasets, which often favor short answers, implicitly reward the entity-centric paradigm. In contrast, HolisQA, with its open-ended questions and dense logical structures, necessitates a comprehensive understanding of the underlying document—a scenario closer to real-world applications. Notably, HugRAG is the only framework that remains robust across this paradigm shift, demonstrating competitive performance on both entity-centric QA and holistic comprehension tasks.

Reconciling the Accuracy-Grounding Trade-off (RQ3).

HugRAG effectively reconciles the fundamental tension between Recall and Precision. While hierarchical causal gating expands traversal boundaries to secure superior Context Recall (Globality), the explicit causal path identification rigorously prunes spurious noise to maintain high F1 Score and Answer Relevancy (Locality). This dual mechanism allows HugRAG to simultaneously optimize for global coverage and local groundedness, achieving a balance often missed by prior methods.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Chart: Model Performance Comparison Across Metrics

### Overview

The chart compares the performance scores of six different model configurations across three evaluation metrics: F1, CR (Causal Reasoning), and AR (Answer Relevance). Each configuration varies in the inclusion of three components: **H** (likely a feature/technique), **CG** (another feature/technique), and **Causal/SP-Causal** (reasoning mechanisms). The configurations are color-coded in the legend for clarity.

### Components/Axes

- **X-axis (Metrics)**: Labeled "Metric" with categories: **F1**, **CR**, **AR**.

- **Y-axis (Score)**: Labeled "Score" with a range from 0 to 70.

- **Legend**: Positioned in the top-right corner, with six configurations:

1. **w/o H · w/o CG · w/o Causal** (teal)

2. **w/ H · w/o CG · w/o Causal** (yellow)

3. **w/ H · w/ CG · w/o Causal** (blue)

4. **w/o H · w/o CG · w/ Causal** (pink)

5. **w/ H · w/ CG · w/ Causal** (green)

6. **w/ H · w/ CG · w/ SP-Causal** (orange)

### Detailed Analysis

#### F1 Metric

- **w/o H · w/o CG · w/o Causal** (teal): 26.8

- **w/ H · w/o CG · w/o Causal** (yellow): 24.0

- **w/ H · w/ CG · w/o Causal** (blue): 23.3

- **w/o H · w/o CG · w/ Causal** (pink): 30.1

- **w/ H · w/ CG · w/ Causal** (green): 36.8

- **w/ H · w/ CG · w/ SP-Causal** (orange): 38.6

#### CR Metric

- **w/o H · w/o CG · w/o Causal** (teal): 54.7

- **w/ H · w/o CG · w/o Causal** (yellow): 58.0

- **w/ H · w/ CG · w/o Causal** (blue): 60.2

- **w/o H · w/o CG · w/ Causal** (pink): 55.4

- **w/ H · w/ CG · w/ Causal** (green): 60.0

- **w/ H · w/ CG · w/ SP-Causal** (orange): 60.4

#### AR Metric

- **w/o H · w/o CG · w/o Causal** (teal): 55.7

- **w/ H · w/o CG · w/o Causal** (yellow): 53.6

- **w/ H · w/ CG · w/o Causal** (blue): 52.6

- **w/o H · w/o CG · w/ Causal** (pink): 60.0

- **w/ H · w/ CG · w/ Causal** (green): 64.1

- **w/ H · w/ CG · w/ SP-Causal** (orange): 67.4

### Key Observations

1. **F1 Metric**:

- The **SP-Causal** configuration (orange) achieves the highest score (38.6), outperforming all others.

- Including **H** and **CG** improves performance, but **Causal** alone (pink) underperforms compared to combinations with **H** and **CG**.

2. **CR Metric**:

- The **SP-Causal** configuration (orange) again leads with 60.4, followed closely by **Causal** (green, 60.0).

- **H** and **CG** inclusion consistently boosts scores, even without **Causal**.

3. **AR Metric**:

- **SP-Causal** (orange) dominates with 67.4, significantly higher than **Causal** (green, 64.1).

- **H** and **CG** inclusion improves performance, but **Causal** alone (pink) underperforms compared to combinations.

### Interpretation

- **H** and **CG** are critical for performance across all metrics, with **H** showing a stronger impact in F1 and CR.

- **Causal** and **SP-Causal** enhance performance, but **SP-Causal** (orange) consistently outperforms **Causal** (pink), especially in AR.

- The **w/ H · w/ CG · w/ SP-Causal** configuration (orange) is the most effective overall, suggesting that combining **H**, **CG**, and **SP-Causal** yields optimal results.

- **w/o H · w/o CG · w/o Causal** (teal) is the baseline, performing poorly across all metrics.

### Spatial Grounding & Trend Verification

- **Legend**: Top-right, aligned with bar colors.

- **Trends**:

- F1: Scores increase from teal (26.8) to orange (38.6).

- CR: Scores rise from teal (54.7) to orange (60.4).

- AR: Scores peak at orange (67.4), with a notable drop in blue (52.6) compared to other configurations.

- **Color Consistency**: All bars match their legend labels (e.g., orange = SP-Causal).

### Conclusion

The data demonstrates that **H** and **CG** are foundational for performance, while **SP-Causal** provides the greatest incremental improvement, particularly in AR. This suggests that advanced reasoning mechanisms (SP-Causal) paired with robust features (H, CG) are key to achieving high scores in complex tasks.

</details>

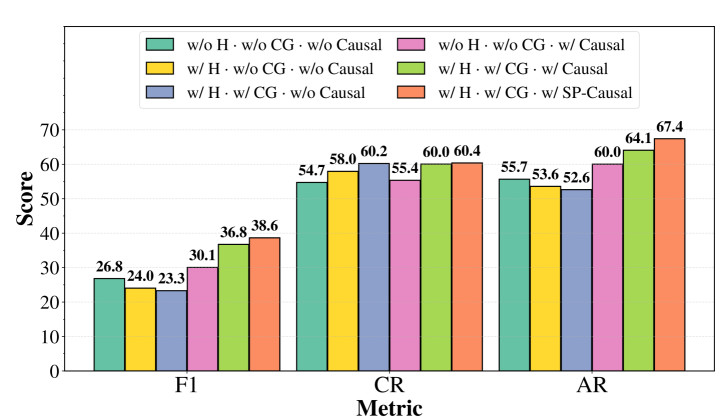

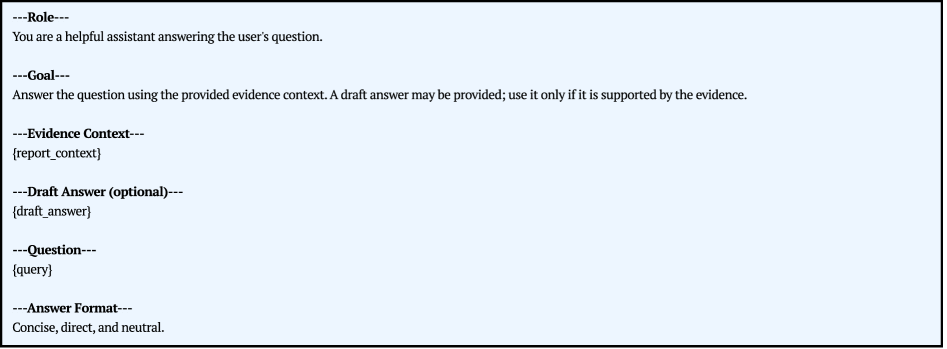

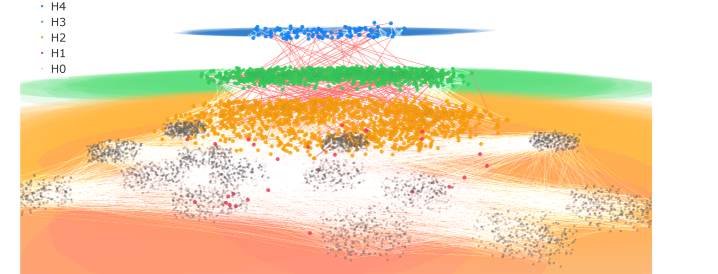

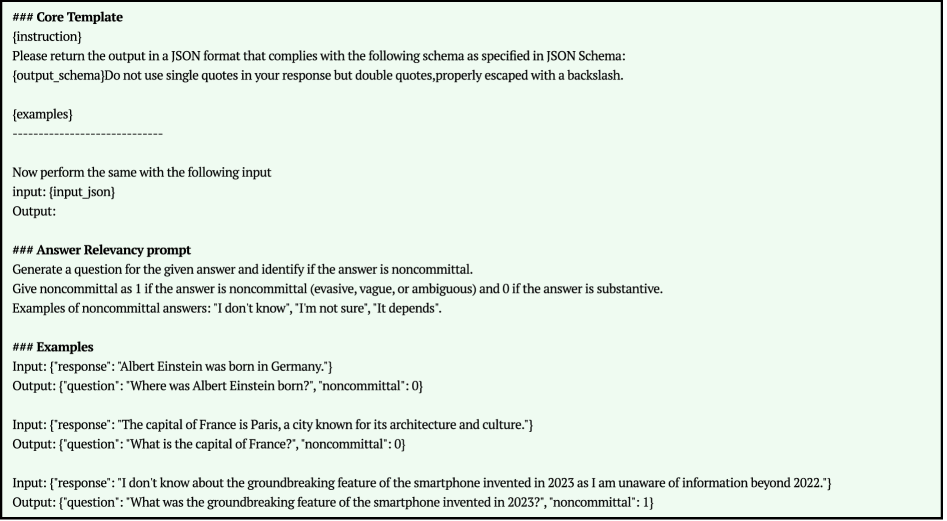

Figure 3: Ablation Study. H: Hierarchical Structure; CG: Causal Gates; Causal/SP-Causal: Standard vs. Spurious-Aware Causal Identification. w/o and w/ denote exclusion or inclusion.

5.3 Ablation Study

To address RQ4, we ablate hierarchy, causal gates, and causal path refinement components (see Figure 3), finding that their combination yields optimal results. Specifically, we observe a mutually reinforcing dynamic: while hierarchical gates break information isolation to boost recall, the spurious-aware causal identification is indispensable for filtering the resulting noise and achieving a significant improvement. This mutual reinforcement allows HugRAG to reconcile global coverage with local groundedness, significantly outperforming any isolated component.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Graph: RAG Model Performance vs. Source Text Length

### Overview

The graph compares the performance scores of various RAG (Retrieval-Augmented Generation) models across different source text lengths (5K to 1.5M characters). Performance is measured on a scale of 0-60, with higher scores indicating better performance.

### Components/Axes

- **X-axis**: Source Text Length (chars)

Markers: 5K, 10K, 25K, 100K, 300K, 750K, 1M, 1.5M

- **Y-axis**: Score (0-60)

- **Legend**: Positioned on the right, mapping colors/markers to models:

- Naive (gray circles)

- BM25 (gray squares)

- Standard RAG (gray triangles)

- GraphRAG Global (blue squares)

- GraphRAG Local (dark blue stars)

- LeanRAG (dashed blue line)

- LightRAG (light blue triangles)

- CausalRAG (light blue diamonds)

- HugRAG (red stars)

### Detailed Analysis

1. **HugRAG (red stars)**:

- Starts at ~55 at 5K, dips slightly to ~53 at 10K, then stabilizes between 55-57 up to 1.5M.

- **Trend**: Consistently highest performer across all text lengths.

2. **GraphRAG Global (blue squares)**:

- Starts at ~50 at 5K, drops to ~0 at 10K, rises to ~10 at 25K, peaks at ~15 at 100K, then declines to ~5 at 1.5M.

- **Trend**: Volatile, with significant drops at smaller text lengths.

3. **GraphRAG Local (dark blue stars)**:

- Starts at ~50 at 5K, dips to ~30 at 10K, peaks at ~40 at 100K, then declines to ~30 at 1.5M.

- **Trend**: Strong at mid-range text lengths but underperforms at extremes.

4. **LeanRAG (dashed blue line)**:

- Starts at ~50 at 5K, remains stable between 50-52 up to 1.5M.

- **Trend**: Most consistent high performer after HugRAG.

5. **LightRAG (light blue triangles)**:

- Starts at ~30 at 5K, dips to ~20 at 10K, rises to ~30 at 100K, then declines to ~25 at 1.5M.

- **Trend**: Moderate performance with mid-range peaks.

6. **CausalRAG (light blue diamonds)**:

- Starts at ~15 at 5K, rises to ~20 at 10K, stabilizes between 18-20 up to 1.5M.

- **Trend**: Steady but lower performance.

7. **Naive (gray circles)**:

- Starts at ~20 at 5K, dips to ~15 at 10K, stabilizes between 15-18 up to 1.5M.

- **Trend**: Low but stable.

8. **BM25 (gray squares)**:

- Starts at ~18 at 5K, dips to ~15 at 10K, stabilizes between 15-17 up to 1.5M.

- **Trend**: Slightly better than Naive but still low.

9. **Standard RAG (gray triangles)**:

- Starts at ~15 at 5K, dips to ~12 at 10K, rises to ~18 at 100K, then declines to ~15 at 1.5M.

- **Trend**: Volatile but outperforms Naive/BM25 at mid-range lengths.

### Key Observations

- **HugRAG** and **LeanRAG** dominate performance across all text lengths.

- **GraphRAG Global** and **Local** show significant drops at 10K but recover at larger lengths.

- Traditional models (**Naive**, **BM25**, **Standard RAG**) underperform but remain stable.

- **LightRAG** and **CausalRAG** exhibit moderate performance, with LightRAG peaking at 100K.

### Interpretation

The data suggests that newer RAG models (e.g., HugRAG, LeanRAG) outperform traditional methods (Naive, BM25) and even standard RAG variants, particularly as text length increases. GraphRAG models excel at larger text sizes but struggle with smaller inputs, indicating potential optimization opportunities. The consistency of LeanRAG and HugRAG highlights their robustness, making them ideal for applications requiring reliable performance across diverse text lengths. Traditional models, while less effective, may still be viable for specific use cases with limited computational resources.

</details>

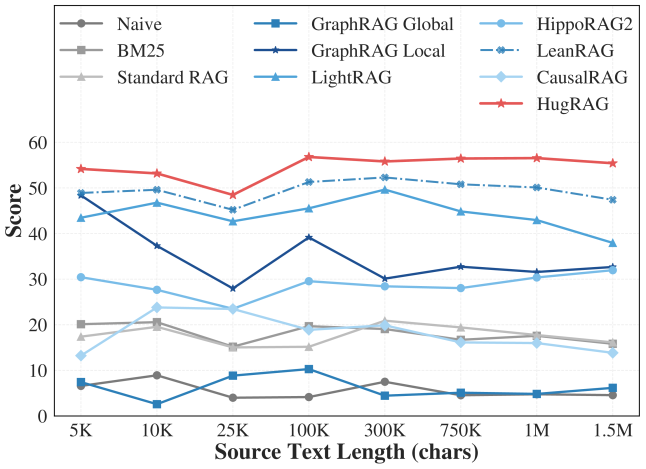

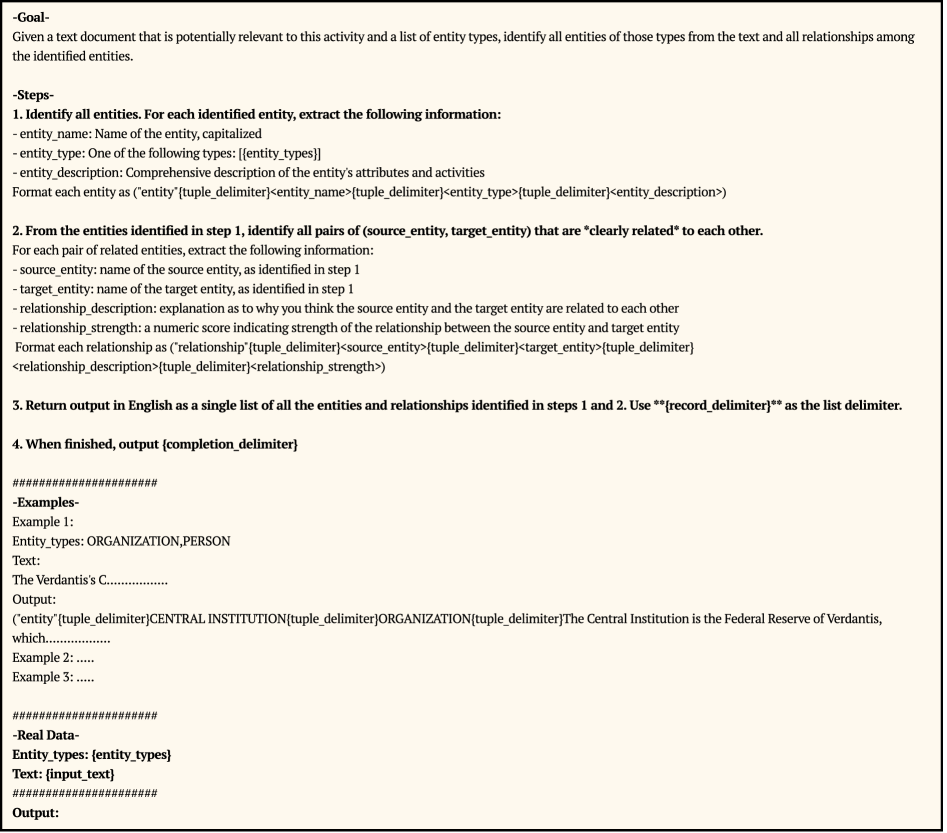

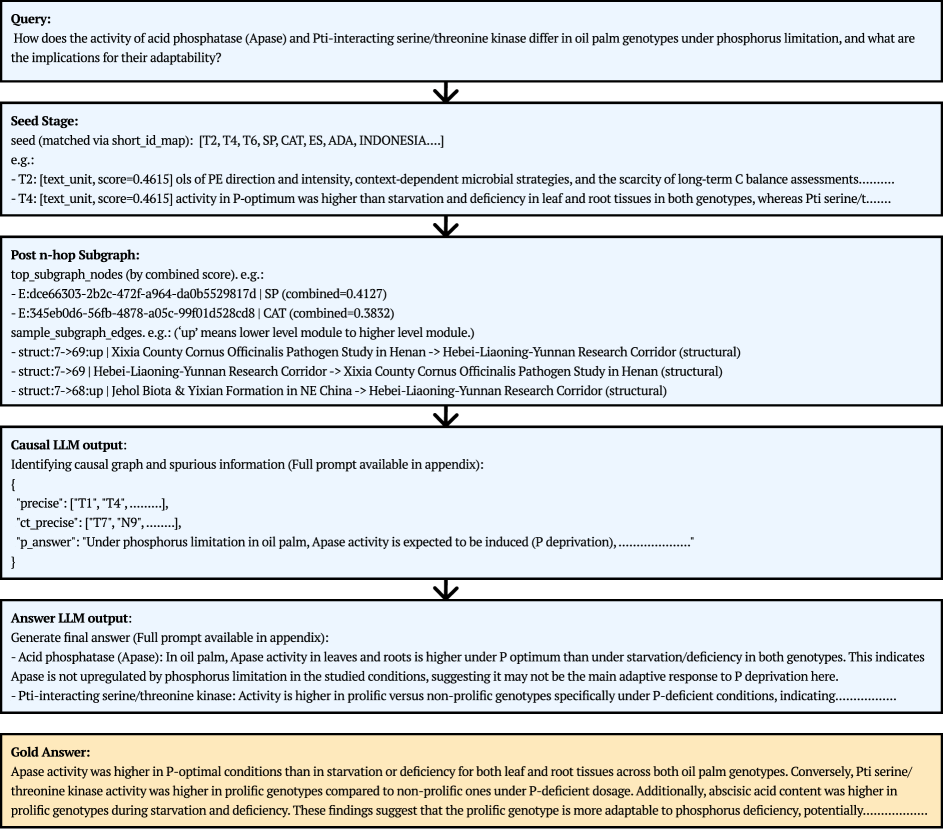

Figure 4: Scalability analysis of HugRAG and other RAG baselines across varying source text lengths (5K to 1.5M characters).

5.4 Scalability Analysis

Robustness to Information Scale (RQ5).

To assess robustness against information overload, we evaluated performance across varying source text lengths ( $5k$ to $1.5M$ characters) sampled from HolisQA, reporting the mean of F1, Context Recall, and Answer Relevancy (see Figure 4). As illustrated, HugRAG (red line) exhibits remarkable stability across all scales, maintaining high scores even at 1.5M characters. This confirms that our hierarchical causal gating structure effectively encapsulates complexity, enabling the retrieval process to scale via causal gates without degrading reasoning fidelity.

6 Conclusion

We introduced HugRAG to resolve information isolation and spurious noise in graph-based RAG. By leveraging hierarchical causal gating and explicit identification, HugRAG reconciles global context coverage with local evidence grounding. Experiments confirm its superior performance not only in standard QA but also in holistic comprehension, alongside robust scalability to large knowledge bases. Additionally, we introduced HolisQA to evaluate complex reasoning capabilities for RAG. We hope our findings contribute to the ongoing development of RAG research.

Impact Statement

This paper presents work whose goal is to advance the field of machine learning, specifically by improving the reliability and interpretability of retrieval-augmented generation. There are many potential societal consequences of our work, none of which we feel must be specifically highlighted here.

References

- P. Bajaj, D. Campos, N. Craswell, L. Deng, J. Gao, X. Liu, R. Majumder, A. McNamara, B. Mitra, T. Nguyen, M. Rosenberg, X. Song, A. Stoica, S. Tiwary, and T. Wang (2018) MS MARCO: A Human Generated MAchine Reading COmprehension Dataset. arXiv. External Links: 1611.09268, Document Cited by: Table 2, §5.1.

- X. Dai, K. Guo, C. Lo, S. Zeng, J. Ding, D. Luo, S. Mukherjee, and J. Tang (2025) GraphGhost: Tracing Structures Behind Large Language Models. arXiv. External Links: 2510.08613, Document Cited by: §2.2.

- [3] G. Dong, J. Jin, X. Li, Y. Zhu, Z. Dou, and J. Wen RAG-Critic: Leveraging Automated Critic-Guided Agentic Workflow for Retrieval Augmented Generation. Cited by: §2.1.

- J. Dong, Y. Liu, A. Aloui, V. Tarokh, and D. Carlson (2025) CARE: Turning LLMs Into Causal Reasoning Expert. arXiv. External Links: 2511.16016, Document Cited by: §2.2, §3, §5.1.

- D. Edge, H. Trinh, N. Cheng, J. Bradley, A. Chao, A. Mody, S. Truitt, and J. Larson (2024) From Local to Global: A Graph RAG Approach to Query-Focused Summarization. arXiv. External Links: 2404.16130 Cited by: Figure 8, §B.1, Table 1, §1, §2.1, §2.1, §4.2, §5.1.

- S. Es, J. James, L. Espinosa Anke, and S. Schockaert (2024) RAGAs: Automated Evaluation of Retrieval Augmented Generation. In Proceedings of the 18th Conference of the European Chapter of the Association for Computational Linguistics: System Demonstrations, N. Aletras and O. De Clercq (Eds.), St. Julians, Malta, pp. 150–158. External Links: Document Cited by: Figure 15, §F.3, §F.4, §5.1.

- S. Fortunato and M. Barthélemy (2007) Resolution limit in community detection. Proceedings of the National Academy of Sciences 104 (1), pp. 36–41. External Links: Document Cited by: §1, §2.1.

- Z. Guo, L. Xia, Y. Yu, T. Ao, and C. Huang (2024) LightRAG: Simple and Fast Retrieval-Augmented Generation. arXiv. External Links: 2410.05779 Cited by: Table 1, §1, §2.1, §5.1.

- B. J. Gutiérrez, Y. Shu, W. Qi, S. Zhou, and Y. Su (2025) From RAG to Memory: Non-Parametric Continual Learning for Large Language Models. arXiv. External Links: 2502.14802, Document Cited by: Table 1, §1, §2.1, §2.1, §5.1.

- X. Ho, A. D. Nguyen, S. Sugawara, and A. Aizawa (2020) Constructing A Multi-hop QA Dataset for Comprehensive Evaluation of Reasoning Steps. arXiv. External Links: 2011.01060, Document Cited by: Table 2, §5.1.

- E. Khatibi, Z. Wang, and A. M. Rahmani (2025) CDF-RAG: Causal Dynamic Feedback for Adaptive Retrieval-Augmented Generation. arXiv. External Links: 2504.12560, Document Cited by: §2.2.

- T. Khot, P. Clark, M. Guerquin, P. Jansen, and A. Sabharwal (2020) QASC: A Dataset for Question Answering via Sentence Composition. arXiv. External Links: 1910.11473, Document Cited by: Table 2, §5.1.

- T. Kwiatkowski, J. Palomaki, O. Redfield, M. Collins, A. Parikh, C. Alberti, D. Epstein, I. Polosukhin, J. Devlin, K. Lee, K. Toutanova, L. Jones, M. Kelcey, M. Chang, A. M. Dai, J. Uszkoreit, Q. Le, and S. Petrov (2019) Natural questions: a benchmark for question answering research. Transactions of the Association for Computational Linguistics 7, pp. 452–466. External Links: Document Cited by: §2.1, Table 2, §5.1.

- P. Lewis, E. Perez, A. Piktus, F. Petroni, V. Karpukhin, N. Goyal, H. Küttler, M. Lewis, W. Yih, T. Rocktäschel, S. Riedel, and D. Kiela (2021) Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. arXiv. External Links: 2005.11401, Document Cited by: Table 1, §1, §2.1, §5.1.

- H. Liu, Z. Wang, X. Chen, Z. Li, F. Xiong, Q. Yu, and W. Zhang (2025a) HopRAG: Multi-Hop Reasoning for Logic-Aware Retrieval-Augmented Generation. arXiv. External Links: 2502.12442, Document Cited by: §2.1.

- H. Liu, S. Wang, and J. Li (2025b) Knowledge Graph Retrieval-Augmented Generation via GNN-Guided Prompting. Cited by: §1, §2.1.

- H. Luo, J. Zhang, and C. Li (2025) Causal Graphs Meet Thoughts: Enhancing Complex Reasoning in Graph-Augmented LLMs. arXiv. External Links: 2501.14892, Document Cited by: §2.2.

- [18] H. Luo, Q. Lin, Y. Feng, Z. Kuang, M. Song, Y. Zhu, and L. A. Tuan HyperGraphRAG: Retrieval-Augmented Generation via Hypergraph-Structured Knowledge Representation. Cited by: §1, §2.1.

- J. Ma (2024) Causal Inference with Large Language Model: A Survey. arXiv. External Links: 2409.09822 Cited by: §2.2, §3, §5.1.

- M. Newman (2018) Networks. Vol. 1, Oxford University Press. External Links: Document, ISBN 978-0-19-880509-0 Cited by: §2.1.

- J. Priem, H. Piwowar, and R. Orr (2022) OpenAlex: A fully-open index of scholarly works, authors, venues, institutions, and concepts. Cited by: §F.2, §5.1.

- P. Rajpurkar, J. Zhang, K. Lopyrev, and P. Liang (2016) SQuAD: 100,000+ Questions for Machine Comprehension of Text. arXiv. External Links: 1606.05250, Document Cited by: §2.1.

- C. Ravuru, S. S. Sakhinana, and V. Runkana (2024) Agentic Retrieval-Augmented Generation for Time Series Analysis. arXiv. External Links: 2408.14484, Document Cited by: §1, §2.1.

- N. Reimers and I. Gurevych (2019) Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), Hong Kong, China, pp. 3980–3990. External Links: Document Cited by: §F.3, §5.1.

- R. Saklad, A. Chadha, O. Pavlov, and R. Moraffah (2026) Can Large Language Models Infer Causal Relationships from Real-World Text?. arXiv. External Links: 2505.18931, Document Cited by: §2.2, §4.3.

- V. Traag, L. Waltman, and N. J. van Eck (2019) From Louvain to Leiden: guaranteeing well-connected communities. Scientific Reports 9 (1), pp. 5233. External Links: 1810.08473, ISSN 2045-2322, Document Cited by: §B.1, §4.1.

- C. Walker and R. Ewetz (2025) Explaining the Reasoning of Large Language Models Using Attribution Graphs. arXiv. External Links: 2512.15663, Document Cited by: §2.2.

- N. Wang, X. Han, J. Singh, J. Ma, and V. Chaudhary (2025a) CausalRAG: Integrating Causal Graphs into Retrieval-Augmented Generation. In Findings of the Association for Computational Linguistics: ACL 2025, W. Che, J. Nabende, E. Shutova, and M. T. Pilehvar (Eds.), Vienna, Austria, pp. 22680–22693. External Links: Document, ISBN 979-8-89176-256-5 Cited by: Table 1, §2.2, §3, §5.1, §5.1.

- S. Wang, Z. Chen, P. Wang, Z. Wei, Z. Tan, Y. Meng, C. Shen, and J. Li (2025b) Separate the Wheat from the Chaff: Winnowing Down Divergent Views in Retrieval Augmented Generation. arXiv. External Links: 2511.04700, Document Cited by: §2.1.

- [30] X. Wang, Z. Liu, J. Han, and S. Deng RAG4GFM: Bridging Knowledge Gaps in Graph Foundation Models through Graph Retrieval Augmented Generation. Cited by: §2.1.

- Z. Yang, P. Qi, S. Zhang, Y. Bengio, W. W. Cohen, R. Salakhutdinov, and C. D. Manning (2018) HotpotQA: A Dataset for Diverse, Explainable Multi-hop Question Answering. arXiv. External Links: 1809.09600, Document Cited by: Table 2, §5.1.

- Y. Zhang, R. Wu, P. Cai, X. Wang, G. Yan, S. Mao, D. Wang, and B. Shi (2025) LeanRAG: Knowledge-Graph-Based Generation with Semantic Aggregation and Hierarchical Retrieval. arXiv. External Links: 2508.10391, Document Cited by: Table 1, §1, §2.1, §4.2, §5.1.

- Y. Zhang, Y. Zhang, Y. Gan, L. Yao, and C. Wang (2024) Causal Graph Discovery with Retrieval-Augmented Generation based Large Language Models. arXiv. External Links: 2402.15301 Cited by: §2.2.

Appendix A Prompts used in Online Retrieval and Reasoning

This section details the prompt engineering employed during the online retrieval phase of HugRAG. We rely on Large Language Models to perform two critical reasoning tasks: identifying causal paths within the retrieved subgraph and generating the final grounded answer.

A.1 Causal Path Identification

To address the local spurious noise issue, we design a prompt that instructs the LLM to act as a “causality analyst.” The model receives a linearized list of potential evidence (nodes and edges) and must select the subset that forms a coherent causal chain.

Spurious-Aware Selection (Main Setting).

Our primary prompt, illustrated in Figure 5, explicitly instructs the model to differentiate between valid causal supports (output in precise) and spurious associations (output in ct_precise). By forcing the model to articulate what is not causal (e.g., mere correlations or topical coincidence), we improve the precision of the selected evidence.

Standard Selection (Ablation).

To verify the effectiveness of spurious differentiation, we also use a simplified prompt variant shown in Figure 6. This version only asks the model to identify valid causal items without explicitly labeling spurious ones.

A.2 Final Answer Generation

Once the spurious-filtered support subgraph $S^{\star}$ is obtained, it is passed to the generation module. The prompt shown in Figure 7 is used to synthesize the final answer. Crucially, this prompt enforces strict grounding by instructing the model to rely only on the provided evidence context, minimizing hallucination.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Screenshot: Technical Document for Causal Graph Reranking Task

### Overview

The image is a screenshot of a technical document outlining a reranking task for a causality analyst. It defines the role, goal, inputs, output format, and constraints for processing queries and context items to extract causal relationships. The document emphasizes strict adherence to formatting rules and precise ranking of items based on causal graphs.

### Components/Axes

- **Role**: Defines the user as a "careful causality analyst acting as a reranker for retrieval."

- **Goal**:

- Select the most important items from provided context using a causal graph.

- Output items in `"precise"` (most important) and `"ct_precise"` (least important).

- Include a concise draft answer in `"p_answer"`.

- **Inputs**:

- `Query`: A placeholder for the input query (`[query]`).

- `Context Items`: A table of items with short IDs and content (`[context_table]`).

- **Output Format (JSON)**:

- `"precise"`: List of most important items (e.g., `"C1"`, `"N2"`, `"E3"`).

- `"ct_precise"`: List of least important items (e.g., `"T7"`, `"N9"`).

- `"p_answer"`: Concise draft answer (e.g., `"concise draft answer"`).

- **Constraints**:

- Maximum lengths for `"precise"`, `"ct_precise"`, and `"p_answer"` (variables like `max_precise_items` are placeholders).

### Detailed Analysis

- **Role**: Explicitly states the user’s role as a causality analyst focused on reranking for retrieval.

- **Goal**:

- Requires constructing a causal graph from context items to identify "spurious information" (least important items).

- Mandates ranking items in two directions: `precise` (most to least important) and `ct_precise` (least to more important).

- **Inputs**:

- The query and context items are placeholders, indicating this is a template for dynamic input.

- **Output Format**:

- JSON structure with strict formatting rules (no markdown, exact short IDs).

- Example values in the JSON are illustrative (e.g., `"C1"`, `"T7"`).

- **Constraints**:

- Maximum lengths for output fields are defined as variables (e.g., `max_precise_items`), suggesting configurability.

### Key Observations

- The document enforces strict adherence to formatting (e.g., no markdown, exact short IDs).

- The causal graph is central to determining item importance, with "spurious information" explicitly flagged as least important.

- Placeholders (`[query]`, `[context_table]`) indicate this is a reusable template for varied inputs.

### Interpretation

This document serves as a protocol for a reranking task in a retrieval system, where causality analysis is used to prioritize context items. The emphasis on causal graphs suggests the system aims to filter out irrelevant or misleading information ("spurious data") by leveraging causal relationships. The JSON output format ensures machine readability, while constraints like maximum lengths prevent overfitting or excessive output. The task highlights the importance of precise ranking in retrieval systems, where causal reasoning enhances relevance judgment beyond keyword matching.

</details>

Figure 5: Prompt for Causal Path Identification with Spurious Distinction (HugRAG Main Setting). The model is explicitly instructed to segregate non-causal associations into a separate list to enhance reasoning precision.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Screenshot: Role-Based Retrieval Instructions

### Overview

This image contains structured instructions for a causality analyst acting as a reranker for retrieval tasks. It defines roles, goals, input formats, output requirements, and constraints for processing queries and context items.

### Components/Axes

- **Sections**:

- **Role**: Defines the user's role as a "careful causality analyst acting as a reranker for retrieval."

- **Goal**: Lists mandatory tasks for the analyst.

- **Inputs**: Specifies query and context item formats.

- **Output Format (JSON)**: Describes the required JSON structure for responses.

- **Constraints**: Limits on output length and precision.

### Detailed Analysis

#### Role

- Text: "You are a careful causality analyst acting as a reranker for retrieval."

#### Goal

- Tasks:

1. Use only provided items.

2. Rank the `precise` list from most to least important.

3. Output JSON only (no markdown).

4. Use short IDs exactly as shown.

5. Exclude IDs in `p_answer`.

6. If evidence is insufficient, state "Unknown" in `p_answer`.

#### Inputs

- **Query**: Placeholder `{query}`.

- **Context Items**: Table format with short ID and content (placeholder `{context_table}`).

#### Output Format (JSON)

- Structure:

</details>

Figure 6: Ablation Prompt: Causal Path Identification without differentiating spurious relationships. This baseline is used to assess the contribution of the spurious filtering mechanism.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Screenshot: Prompt Template for a Helpful Assistant

### Overview

The image displays a structured prompt template designed to guide an AI assistant in answering user queries. It includes labeled sections for defining the assistant's role, goals, evidence context, optional draft answers, user questions, and response formatting requirements.

### Components/Axes

- **Labels**:

- `---Role---`

- `---Goal---`

- `---Evidence Context---`

- `---Draft Answer (optional)---`

- `---Question---`

- `---Answer Format---`

- **Text Content**:

- **Role**: "You are a helpful assistant answering the user's question."

- **Goal**: "Answer the question using the provided evidence context. A draft answer may be provided; use it only if it is supported by the evidence."

- **Evidence Context**: Placeholder `{report_context}` (no specific content provided).

- **Draft Answer**: Placeholder `{draft_answer}` (marked as optional).

- **Question**: Placeholder `{query}` (no specific query provided).

- **Answer Format**: "Concise, direct, and neutral."

### Detailed Analysis

- **Role and Goal**: Explicitly define the assistant's purpose and constraints (evidence-based responses, optional draft usage).

- **Evidence Context**: A variable placeholder for contextual data (e.g., reports, documents).

- **Draft Answer**: Optional input to guide the assistant, but responses must align with evidence.

- **Question**: User-provided query to be addressed.

- **Answer Format**: Emphasizes brevity, clarity, and neutrality in responses.

### Key Observations

- The template enforces a strict workflow: evidence → draft (optional) → final answer.

- Placeholders (`{report_context}`, `{draft_answer}`, `{query}`) indicate customizable inputs.

- No numerical data, charts, or diagrams are present; the focus is on textual structure.

### Interpretation

This template ensures the assistant prioritizes accuracy by grounding responses in provided evidence. The optional draft answer acts as a hint but does not override evidence-based reasoning. The emphasis on neutrality and conciseness suggests the template is designed for factual, unbiased information retrieval. The absence of specific data implies this is a generic framework adaptable to various domains (e.g., legal, technical, or general knowledge queries).

</details>

Figure 7: Prompt for Final Answer Generation. The model is conditioned solely on the filtered causal subgraph $S^{\star}$ to ensure groundedness.

Appendix B Algorithm Details of HugRAG

This section provides granular details on the offline graph construction process and the specific algorithms used during the online retrieval phase, complementing the high-level description in Section 4.

B.1 Graph Construction

Entity Extraction and Deduplication.

The base graph $H_{0}$ is constructed by processing text chunks using LLM. We utilize the prompt shown in Appendix 8, adapted from (Edge et al., 2024), to extract entities and relations (see prompts in Figure 8). Since raw extractions from different chunks inevitably contain duplicates (e.g., “J. Biden” vs. “Joe Biden”), we employ a two-stage deduplication strategy. First, we perform surface-level canonicalization using fuzzy string matching. Second, we use embedding similarity to identify semantically identical nodes, merging their textual descriptions and pooling their supporting evidence edges.

Hierarchical Partitioning.

We employ the Leiden algorithm (Traag et al., 2019) to maximize the modularity $Q$ of the partition. We recursively apply this partitioning to build bottom-up levels $H_{1},...,H_{L}$ , stopping when the summary of a module fits within a single context window.

Causal Gates.

The prompt we used to build causal gates is shown in Figure 9. Constructing causal gates via exhaustive pairwise verification across all modules results in a quadratic time complexity $O(N^{2})$ , where $N$ is the total number of modules. Consequently, as the hierarchy depth scales, this becomes computationally prohibitive for LLM-based verification. To address this, we implement a Top-Down Hierarchical Pruning strategy that constructs gates layer-by-layer, from the coarsest semantic level ( $H_{L}$ ) down to $H_{1}$ . The core intuition leverages the transitivity of causality: if a causal link is established between two parent modules, it implicitly covers the causal flow between their respective sub-trees (see full algorithm in Algorithm 2).

The pruning process follows three key rules:

1. Layer-wise Traversal: We iterate from top ( $L$ ) (usually sparse) to bottom ( $1$ ) (usually dense).

1. Intra-layer Verification: We first identify causal connections between modules within the current layer.

1. Inter-layer Look-Ahead Pruning: When searching for connections between a module $u$ (current layer) and modules in the next lower layer ( $l-1$ ), we prune the search space by:

- Excluding $u$ ’s own children (handled by hierarchical inclusion).

- Excluding children of modules already causally connected to $u$ . If $u→ v$ is established, we assume the high-level connection covers the relationship, skipping individual checks for $Children(v)$ .

This strategy ensures that we only expend computational resources on discovering subtle, granular causal links that were not captured at higher levels, effectively reducing the complexity from quadratic to near-linear in practice.

Algorithm 2 Top-Down Hierarchical Pruning for Causal Gates

0: Hierarchy $\mathcal{H}=\{H_{0},H_{1},...,H_{L}\}$

0: Set of Causal Gates $\mathcal{G}_{c}$

1: $\mathcal{G}_{c}←\emptyset$

2: for $l=L$ down to $1$ do

3: for each module $u∈ H_{l}$ do

4: // 1. Intra-layer Verification

5: $ConnectedPeers←\emptyset$

6: for $v∈ H_{l}\setminus\{u\}$ do

7: if $\text{LLM\_Verify}(u,v)$ then

8: $\mathcal{G}_{c}.\text{add}((u,v))$

9: $ConnectedPeers.\text{add}(v)$

10: end if

11: end for

12: // 2. Inter-layer Pruning (Look-Ahead)

13: if $l>1$ then

14: $Candidates← H_{l-1}$

15: // Prune own children

16: $Candidates← Candidates\setminus Children(u)$

17: // Prune children of connected parents

18: for $v∈ ConnectedPeers$ do

19: $Candidates← Candidates\setminus Children(v)$

20: end for

21: // Only verify remaining candidates

22: for $w∈ Candidates$ do

23: if $\text{LLM\_Verify}(u,w)$ then

24: $\mathcal{G}_{c}.\text{add}((u,w))$

25: end if

26: end for

27: end if

28: end for

29: end forreturn $\mathcal{G}_{c}$

B.2 Online Retrieval

Hybrid Scoring and Diversity.

To robustly anchor the query, our scoring function combines semantic and lexical signals:

$$

s_{\alpha}(q,x)=\alpha\cdot\cos(\mathrm{Enc}(q),\mathrm{Enc}(x))+(1-\alpha)\cdot\mathrm{Lex}(q,x), \tag{5}

$$