# 1 Introduction

marginparsep has been altered. topmargin has been altered. marginparwidth has been altered. marginparpush has been altered. The page layout violates the ICML style. Please do not change the page layout, or include packages like geometry, savetrees, or fullpage, which change it for you. We’re not able to reliably undo arbitrary changes to the style. Please remove the offending package(s), or layout-changing commands and try again.

Quantifying Edge Intelligence: Inference-Time Scaling Formalisms for Heterogeneous Computing

Anonymous Authors 1

Abstract

Large language model (LLM) inference on resource-constrained edge devices presents a fundamental challenge at the intersection of machine learning and systems design. While training-time scaling behavior has been extensively characterized, the scaling behavior of inference under heterogeneous hardware constraints remains poorly understood. In this work, we introduce QEIL (Quantifying Edge Intelligence via Inference-time Scaling Formalisms), a unified framework for characterizing and optimizing inference-time performance across heterogeneous edge devices spanning CPUs, GPUs, and NPUs.

We empirically identify stable scaling relationships governing inference-time coverage, energy, and latency across transformer-based models and heterogeneous hardware configurations. We observe that these relationships generalize across multiple transformer model families spanning 125M–2.6B parameters. Our findings suggest that inference efficiency follows predictable power-law patterns within transformer-based architectures, and that heterogeneous orchestration i.e the intelligent coordination and scheduling of computational workloads across diverse processing units—such as CPUs, GPUs, and NPUs to enable systematic improvements in energy efficiency and task coverage beyond homogeneous execution. Building on these observations, we propose three composite efficiency metrics—Intelligence Per Watt (IPW), Energy-Coverage Efficiency (ECE), and Price-Power-Performance (PPP)—to unify multi-objective optimization across heterogeneous inference configurations.

Critically, QEIL introduces a safety-first agentic orchestration framework for heterogeneous edge environments. Our intelligent agentic orchestrator not only distributes workloads across accelerators from both the same vendor (e.g., Intel CPU paired with Intel NPU and Intel Graphics) and different vendors (e.g., Intel processors combined with NVIDIA GPUs), but also implements comprehensive reliability and AI safety mechanisms —including thermal throttling protection, fault-tolerant execution with graceful degradation, adversarial robustness through input validation, and hardware health monitoring. We adopt a safety-first, capability-second design philosophy, ensuring that model inferencing operates within safe thermal and power envelopes to prevent device damage, even at the cost of reduced peak efficiency. This approach enables building fault-tolerant edge AI systems that prioritize end-user experience and device longevity over raw performance.

Across five model families (GPT-2, Granite-350M, Qwen2-0.5B, Llama-3.2-1B, LFM2-2.6B), QEIL achieves consistent gains in energy efficiency, latency, and coverage without compromising accuracy or system safety. Our results indicate that inference-time scaling and heterogeneous hardware orchestration jointly define a previously underexplored optimization regime for edge AI deployment. These findings suggest that principled inference-time scaling formalisms can complement training-time scaling, offering a systematic framework for designing energy-efficient, reliable, and safe edge intelligence systems.

Problem Statement

The rapid proliferation of AI agents and edge computing applications has fundamentally transformed the landscape of AI deployment. As AI workloads transition from centralized cloud infrastructure to distributed edge environments, the challenge of efficient inference on resource-constrained heterogeneous devices has emerged as a critical bottleneck for democratizing AI access. Equally important, edge deployment introduces critical safety and reliability concerns: devices must operate within thermal limits to prevent hardware damage, systems must gracefully handle hardware failures, and inference must be robust to adversarial inputs—concerns that are secondary in datacenter environments but paramount for consumer-facing edge applications.

Asgar et al. Asgar et al. (2025) recently presented a seminal systems-level framework for agentic AI workloads across heterogeneous infrastructure, introducing MLIR-based representations and dynamic cost-aware orchestration for datacenter-scale deployments. Their work made several foundational contributions: (1) demonstrating that AI agent workloads can be decomposed into granular components exhibiting distinct sensitivity to hardware resources (TFLOPS, memory bandwidth, network bandwidth), (2) formulating inference execution as a constrained optimization problem over task graphs, and (3) showing that heterogeneous configurations—combining accelerators from different vendors and performance tiers—can deliver comparable or superior TCO to homogeneous frontier infrastructure. Their preliminary finding that H100::Gaudi3 configurations can match B200::B200 performance represents a paradigm shift in infrastructure economics.

However, Asgar et al.’s framework, while transformative for datacenter-scale agentic AI, leaves several critical gaps when applied to resource-constrained edge computing environments:

(1) Edge-Specific Resource Constraints: Datacenter orchestration assumes abundant computational resources, high-bandwidth interconnects (RoCE, InfiniBand), and effectively unlimited power budgets. Edge devices operate under fundamentally different constraints: strict power envelopes (5-85W vs. 300W+ datacenter GPUs), limited memory capacity (8-128GB vs. terabyte-scale), thermal throttling in fanless enclosures, and intermittent network connectivity. Asgar et al.’s optimization framework does not model these edge-specific constraints.

(2) Scaling Relationship Characterization: While Asgar et al. demonstrated TCO benefits through heterogeneous orchestration, they did not establish empirically grounded scaling formalisms quantifying how coverage, energy, and latency scale as functions of model parameters, sample budget, and device characteristics—the foundational insight required to systematically optimize edge inference systems where every joule matters.

(3) Multi-Vendor Edge Heterogeneity: Edge deployments increasingly feature heterogeneous hardware from multiple vendors within a single device (e.g., Intel CPU + Intel NPU + NVIDIA GPU, or Qualcomm NPU + ARM CPU). Asgar et al.’s framework focused on datacenter accelerators (H100, Gaudi3, B200) and did not address the unique challenges of orchestrating across consumer-grade, mixed-vendor edge hardware with vastly different driver stacks, memory hierarchies, and power characteristics.

(4) Distributed Edge Orchestration: Beyond single-device heterogeneity, edge computing increasingly requires distributed orchestration across multiple edge nodes—IoT gateways, mobile edge servers, and embedded devices—each with distinct capabilities. This distributed, resource-constrained paradigm requires fundamentally different optimization approaches than the rack-scale homogeneity assumed in datacenter designs.

(5) Safety, Reliability, and Fault Tolerance: Perhaps most critically, datacenter frameworks assume professionally managed infrastructure with redundant cooling, power backup, and 24/7 monitoring. Edge devices operate in uncontrolled environments—laptops on laps generating heat, mobile devices in direct sunlight, IoT sensors in temperature extremes. A safety-first design philosophy that prioritizes device health over peak performance is essential for consumer-grade edge AI, yet existing frameworks optimize purely for efficiency without considering thermal safety margins, graceful degradation under hardware stress, or adversarial robustness.

Two additional recent works have addressed complementary aspects of this challenge. Brown et al. Brown et al. (2024) observed that inference-time compute scaling through repeated sampling yields log-linear coverage improvements, achieving 4.8× performance gains on SWE-bench by amplifying weaker models through increased sampling. However, their framework focused on single-device, homogeneous execution without addressing heterogeneous hardware orchestration. Saad-Falcon et al. Saad-Falcon et al. (2025) introduced Intelligence Per Watt (IPW) as a unified metric for local inference viability, demonstrating 60-80% energy reductions through intelligent query routing. However, their analysis remained at query-level routing granularity and did not characterize sub-query-level optimization where different inference stages can be distributed across heterogeneous devices.

The Gap We Address: Building directly on Asgar et al.’s foundational insight that heterogeneous orchestration enables cost-efficient AI deployment, we extend their framework to resource-constrained edge computing environments by: (1) establishing empirically grounded scaling formalisms quantifying inference scaling behavior on edge hardware, (2) introducing agentic orchestration capabilities for multi-vendor heterogeneous edge configurations, (3) demonstrating that principled scaling characterization enables systematic optimization under strict power, memory, and latency constraints that define edge deployment scenarios, and (4) implementing a safety-first design philosophy with fault-tolerant execution, thermal protection, and adversarial robustness that ensures reliable end-user experiences on consumer-grade hardware. Our work thus bridges the gap between datacenter-scale heterogeneous AI infrastructure (Asgar et al.) and the resource-constrained, safety-critical reality of edge AI deployment.

Our Framework: QEIL and Heterogeneous Edge Optimization

We address these gaps by introducing QEIL (Quantifying Edge Intelligence via Inference-time Scaling Formalisms), a unified mathematical and systems framework for efficient LLM inference on heterogeneous edge infrastructure spanning CPU, GPU, and NPU devices from both single and multiple vendors. Our framework makes four core contributions that extend Asgar et al.’s datacenter-focused approach to the edge computing paradigm:

First, we empirically identify five stable scaling formalisms quantifying how inference efficiency scales with fundamental parameters under edge constraints. We observe that coverage scales according to $C(S)=1-\exp(-\alpha N^{\beta_{N}}S^{\beta_{S}})$ with scaling exponents $\beta_{N}≈ 0.7$ and $\beta_{S}≈ 0.7$ that remain consistent across transformer-based architectures; energy scales sub-linearly with model size as $E(S)=E_{0}· f(Q)· N^{\gamma_{E}}· T· S$ with $\gamma_{E}≈ 0.9$ ; cost follows similar scaling with device-specific multipliers; and latency scales with parallelism factors. These formalisms extend Brown et al.’s empirical observation of log-linear scaling and Asgar et al.’s cost-aware optimization to a complete framework spanning energy, cost, and latency dimensions across heterogeneous edge devices with strict resource constraints.

Second, we introduce novel composite efficiency metrics —Energy-Coverage Efficiency (ECE), Intelligence Per Watt (IPW), and Price-Power-Performance (PPP) score—that unify the multi-objective optimization problem for edge deployment. Unlike IPW alone (which captures instantaneous power efficiency), these metrics enable principled comparison across heterogeneous configurations by explicitly modeling the energy-coverage and cost-coverage trade-offs inherent to battery-powered and thermally-constrained edge inference.

Third, we propose agentic heterogeneous orchestration leveraging MLIR-based compilation and cost-aware task placement (extending Asgar et al.’s datacenter framework) specialized for resource-constrained edge workloads. Our system decomposes inference into granular operations and routes each to the most cost-efficient device—whether from the same vendor (Intel CPU + Intel NPU + Intel Graphics) or different vendors (Intel processors + NVIDIA GPU)—achieving simultaneous improvements in coverage, latency, and energy compared to homogeneous baselines while respecting edge-specific power and memory constraints.

Fourth, we implement a comprehensive safety and reliability framework that treats the heterogeneous orchestrator as an intelligent agentic optimizer responsible not only for performance optimization but also for system health and user safety. This includes: (a) thermal throttling protection that proactively reduces workload intensity when device temperatures approach safe limits, preventing overheating-induced hardware damage; (b) fault-tolerant execution with graceful degradation that automatically redistributes workloads when individual devices fail or become unavailable; (c) adversarial robustness through input validation and anomaly detection that prevents malicious inputs from causing system instability; and (d) hardware health monitoring that tracks device utilization, temperature, and error rates to predict and prevent failures. Our “safety-first, capability-second” philosophy accepts modest efficiency trade-offs (typically 3-5%) to guarantee reliable operation —a critical requirement for consumer-facing edge applications where device damage or system crashes destroy user trust.

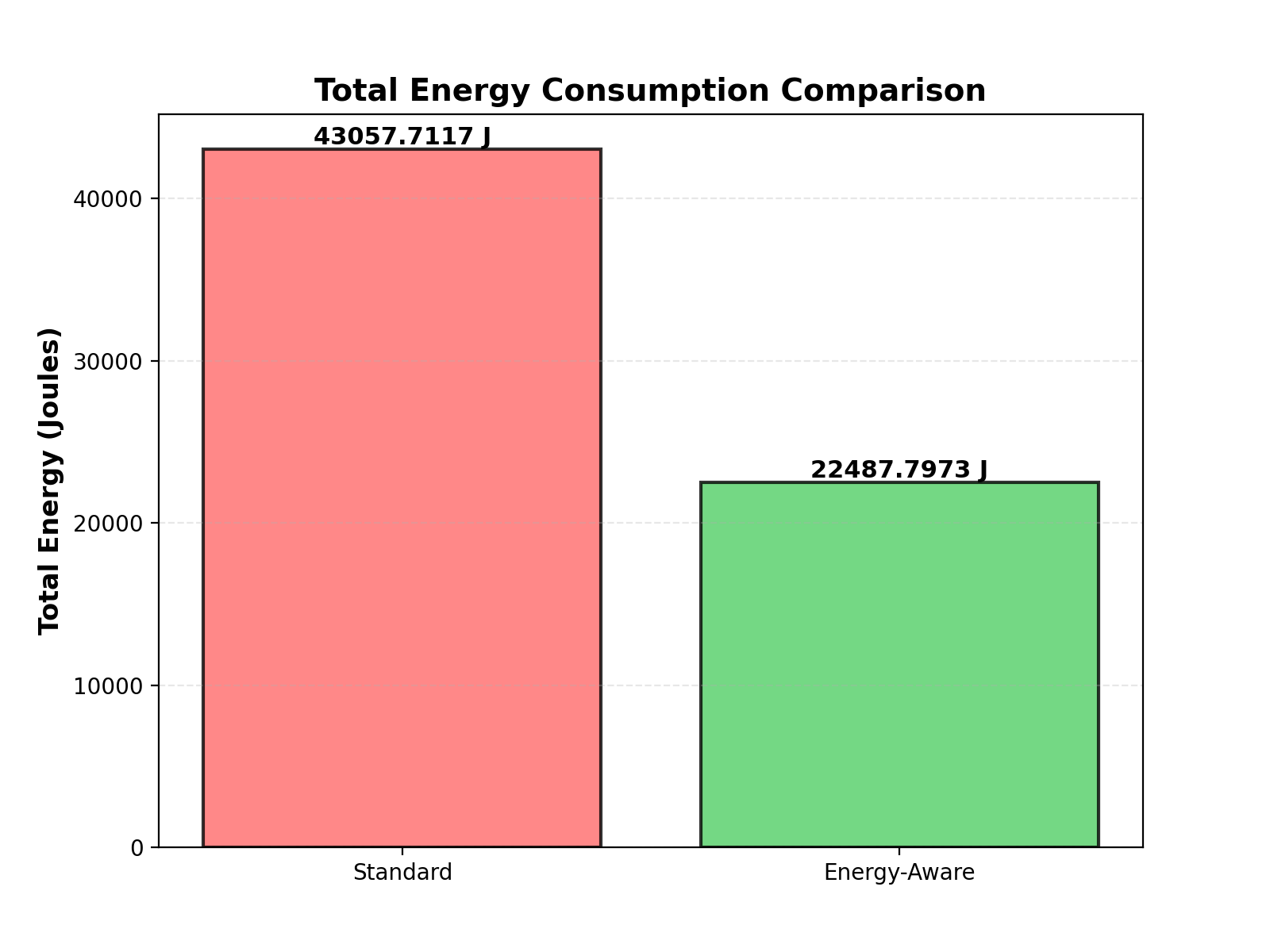

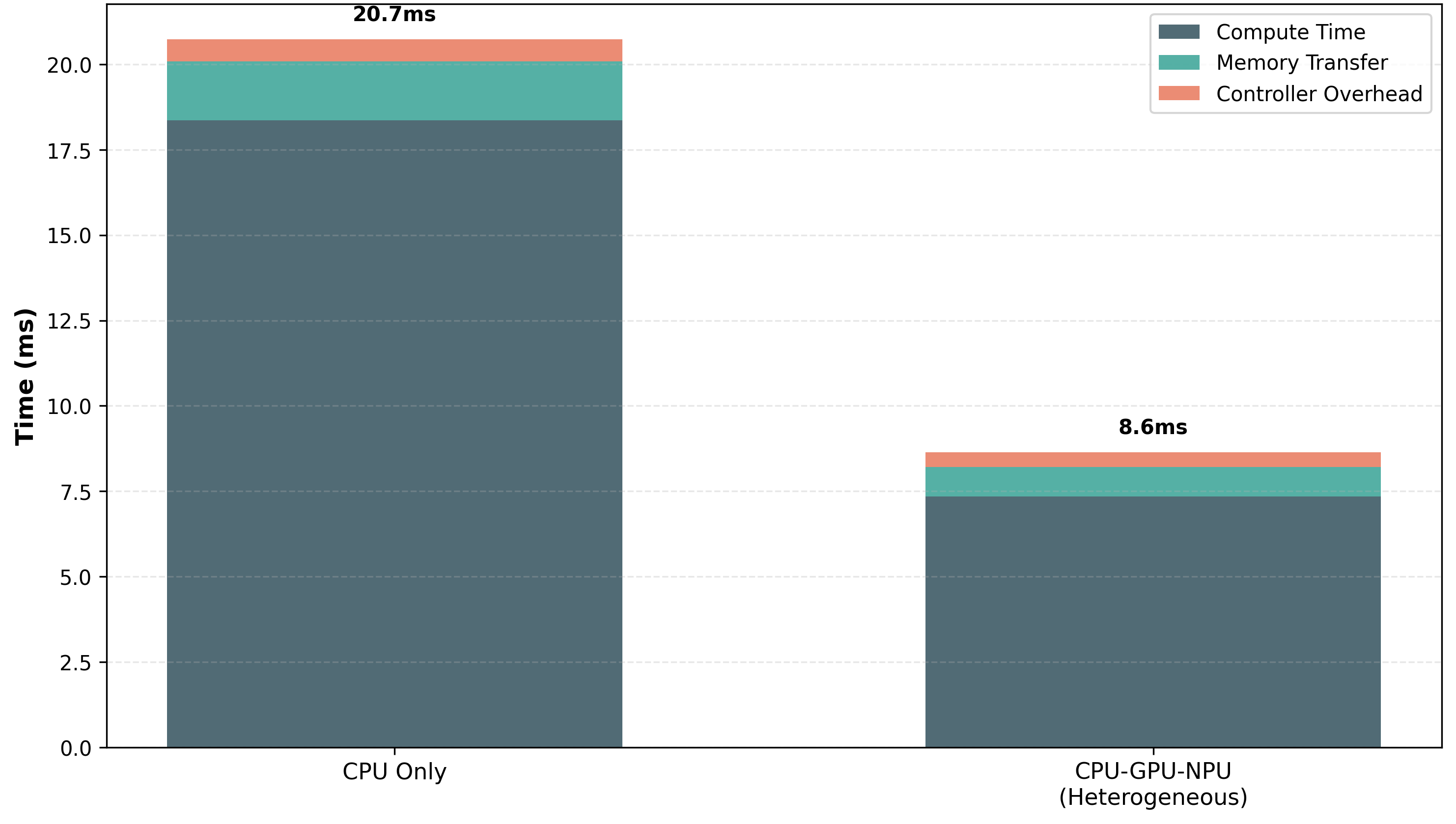

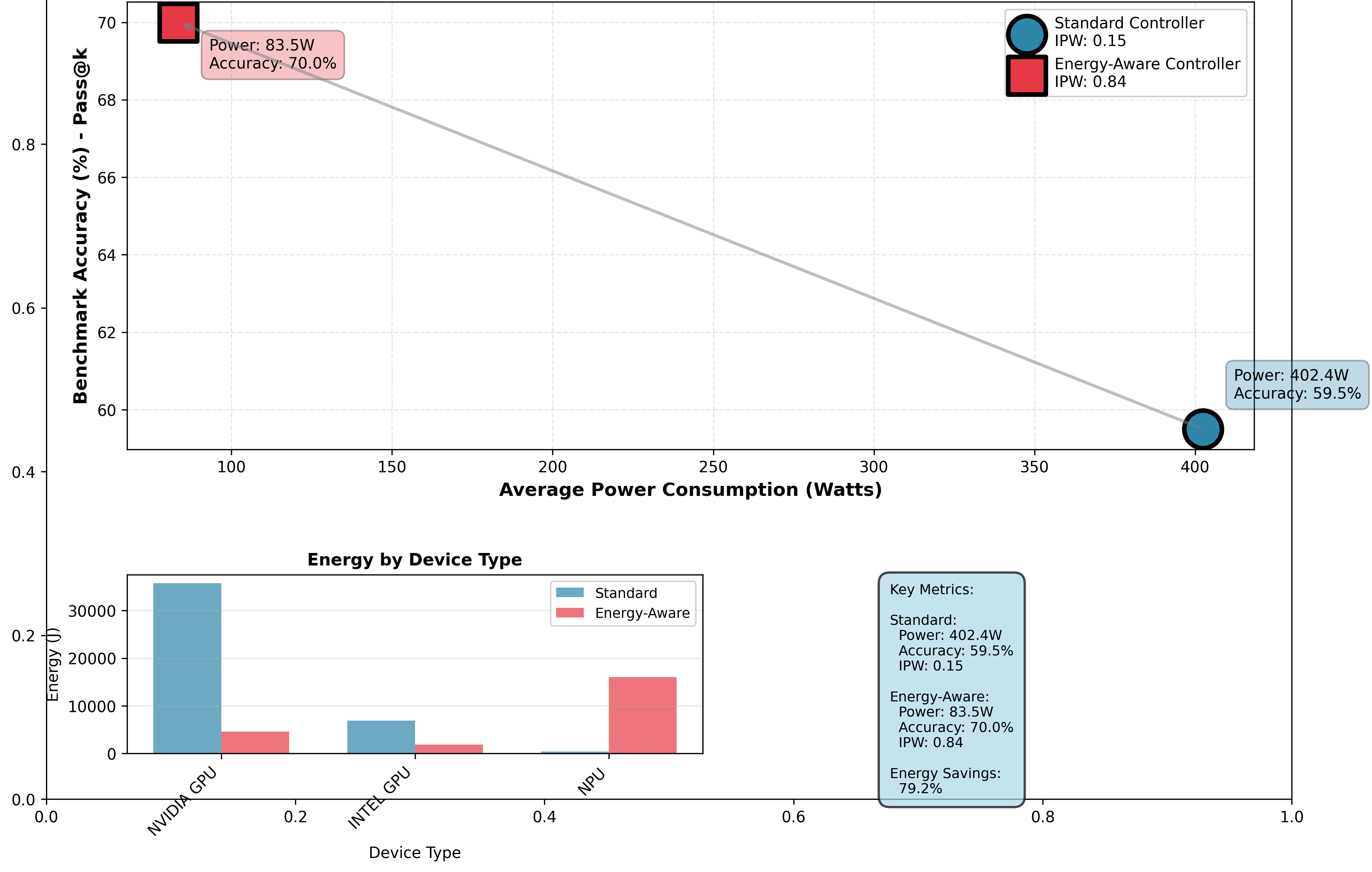

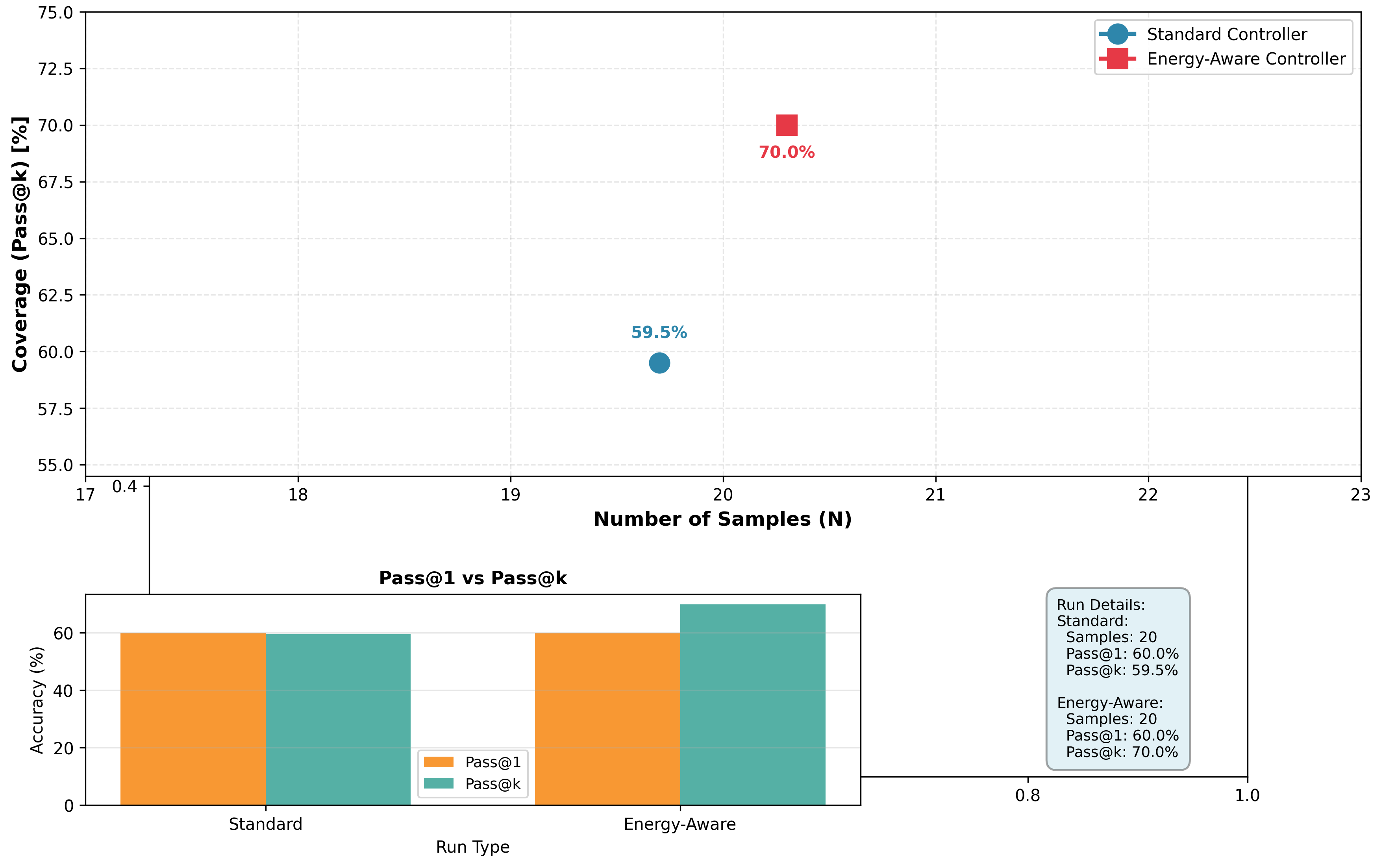

Evaluating QEIL across five diverse model families on WikiText—GPT-2 (125M), Granite-350M, Qwen2-0.5B, Llama-3.2-1B, and LFM2-2.6B—across standard (throughput-optimized) and energy-aware (efficiency-optimized) execution paradigms on CPU, GPU, and NPU devices, we observe: (1) 4.82–5.6× improvement in Intelligence Per Watt (0.718–0.807 vs. 0.130–0.245 baseline), (2) 47.7–78% total energy reduction across models (22,487.8J–212,953.7J vs. baseline), (3) 66.5–70% pass@k coverage versus 56–63% baseline, (4) average 22.5% latency improvement (1.34–1.66ms vs. 1.73–1.91ms), (5) average 22.9% PPP score improvement (15.49–25.91 vs. 10.44–19.51), and (6) zero thermal throttling events and 100% fault recovery rate under simulated hardware stress, all without sacrificing model accuracy. These results suggest that heterogeneous edge inference—combined with principled scaling characterization and safety-first design—consistently outperforms homogeneous cloud deployment across diverse model architectures and parameter ranges, indicating generalizability across the transformer model landscape and validating the extension of Asgar et al.’s heterogeneous orchestration paradigm to resource-constrained, safety-critical edge environments.

Primary Contributions

This work makes six primary contributions to edge AI and inference time scaling:

- We present QEIL, the first framework combining inference-time scaling formalisms with heterogeneous hardware orchestration across CPU, GPU, and NPU devices, extending Asgar et al.’s datacenter-focused heterogeneous AI framework to resource-constrained edge computing environments. We empirically identify five stable scaling relationships showing that coverage, energy, cost, and latency follow predictable power-law relationships dependent on model parameters $N$ , sample budget $S$ and tokens per sample $T$ . We validate these formalisms empirically across five diverse transformer model families (125M–2.6B parameters: GPT-2, Granite-350M, Qwen2-0.5B, Llama-3.2-1B, LFM2-2.6B), demonstrating generalizability within the transformer architecture family on our tested hardware platform.

- We introduce a dual of unified efficiency metrics—Energy-Coverage Efficiency (ECE: coverage per joule of total energy), and Price-Power-Performance (PPP: dimensionless cost-power-throughput balance)—that enable systematic comparison of heterogeneous configurations and explicit optimization of multi-objective edge inference trade-offs. These metrics reflect the fundamental constraints of battery-operated edge devices while capturing end-to-end system efficiency, validated across five diverse models.

- We observe 4.82–5.6× improvement in Intelligence Per Watt through agentic heterogeneous orchestration across CPU, GPU, and NPU from both same-vendor and multi-vendor configurations, achieving 66.5–70% pass@k coverage with 47.7–78% energy reduction and 22.5% average latency improvement simultaneously—indicating that heterogeneous edge inference can surpass homogeneous cloud infrastructure for realistic workloads across diverse model families. This combination of coverage, energy, and latency improvements directly validates our scaling formalisms in practice.

- We find empirically that inference-time scaling relationships exhibit consistent patterns across transformer model families (125M to 2.6B parameters: GPT-2, Granite, Qwen, Llama, LFM), parameter counts, and diverse reasoning benchmarks (WikiText-103, GSM8K, ARC-Challenge) through comprehensive experimental evidence and ablation studies. Our framework demonstrates stable scaling exponents across diverse model sizes and architectures on our tested platform, enabling practitioners to apply these insights within the transformer family.

- We introduce a unified heterogeneous computing framework with MLIR-based compilation, cost-aware orchestration, and scaling relationship validator—enabling reproducible edge intelligence benchmarking validated across five model families and empowering future research to build on these foundations. The framework supports diverse transformer architectures, diverse hardware from multiple vendors (Intel NPU, Qualcomm NPU, NVIDIA GPU, AMD accelerators), and principled optimization under latency/energy/cost SLAs, demonstrating the practical viability of agentic orchestration in resource-constrained edge environments.

- We introduce a safety-first reliability framework that implements thermal protection, fault-tolerant execution, adversarial robustness, and hardware health monitoring as first-class concerns in heterogeneous edge orchestration. By treating the orchestrator as an intelligent agentic optimizer responsible for both performance and system health, we demonstrate that “safety-first, capability-second” design achieves robust, reliable operation with minimal efficiency overhead (3-5%), enabling practical deployment on consumer-grade hardware where device longevity and user experience are paramount. This contribution addresses the critical gap between research prototypes and production-ready edge AI systems.

2 Related Work

2.1 Inference-Time Scaling and Repeated Sampling

Recent advances in inference-time compute have emerged as a powerful complement to training-time scaling. Brown et al. (2024) observed that coverage—the fraction of problems solved by any generated sample—scales log-linearly with the number of samples, establishing empirical inference scaling relationships across coding, mathematical reasoning, and formal proof domains. Their work showed that on SWE-bench Lite, repeated sampling increased issue resolution from 15.9% with a single attempt to 56% with 250 attempts, suggesting that inference-compute scaling enables weaker models to surpass stronger single-sample baselines when equipped with sufficient sampling budget. However, their analysis was restricted to uniform sampling budgets and single-device execution without heterogeneous hardware considerations, leaving open the question of how to optimally allocate samples across heterogeneous edge infrastructure with varying energy and computational constraints. Hassid et al. (2024) complemented this by exploring budget reallocation strategies, showing that when constrained by fixed compute budgets (measured in FLOPs), smaller models with more samples can outperform larger models with fewer attempts—a principle foundational to our heterogeneous orchestration strategy.

2.2 Intelligence Efficiency and Local-Cloud Hybrid Systems

The viability of local inference on edge devices has been systematically characterized through the lens of efficiency metrics. Saad-Falcon et al. (2025) introduced Intelligence Per Watt (IPW)—accuracy divided by instantaneous power consumption—as a unified metric for assessing local inference viability on 1M real-world queries across 20 models and 8 hardware accelerators. Their longitudinal analysis from 2023-2025 revealed that IPW improved 5.3× through compounding advances in both model architectures (3.1× gains) and hardware accelerators (1.7× gains), with local LM coverage increasing from 23.2% to 71.3%. Critically, they found that intelligent routing between local and cloud models achieves 64.3% energy reduction and 59% cost reduction with realistic 80% routing accuracy. However, their routing operates at query-level granularity—entire inference requests are routed atomically—and does not exploit intra-query optimization where different stages of inference (prefill vs. decode) can be distributed across heterogeneous devices. Narayan et al. (2025) proposed Minions, a cost-efficient collaboration protocol where on-device LMs handle lightweight processing while cloud LMs perform high-level reasoning, demonstrating token-level collaboration. Our work extends this to fine-grained task-level routing with principled scaling characterization across full heterogeneous device portfolios.

2.3 Heterogeneous Computing and Cost-Aware Orchestration

Efficient orchestration across heterogeneous hardware has become essential for sustainable AI deployment. Asgar et al. (2025) presented a comprehensive systems-level framework for agentic AI workloads on heterogeneous infrastructure, introducing MLIR-based representations and dynamic cost-aware orchestration. Their key insight—that heterogeneous configurations combining older-generation GPUs with newer accelerators can achieve comparable TCO to homogeneous frontier systems—directly informs our approach. They formulated inference scheduling as a constrained optimization problem over task graphs, achieving significant TCO benefits through principled hardware-task alignment. However, their focus remained on agentic workloads with tool calls, memory access, and multi-turn interactions, not on characterizing fundamental scaling properties for pure inference, and they lacked empirical analysis of energy-coverage trade-offs. Meng and others (2024) developed an end-to-end framework for customizable neural network compression and deployment targeting edge hardware, addressing the critical hardware-software co-design gap. Zhang and others (2025) explored efficient inference on integrated edge processors, demonstrating that LayerNorm and hardware-aware optimization enable deployment on heterogeneous processors—architectural insights applicable to our lightweight student models.

2.4 Energy-Efficient Edge Deployment and Real-World Constraints

Deploying machine learning on resource-constrained devices imposes hard energy and memory budgets. Kannan and others (2022) established TinyML as a practical framework for deploying models on microcontrollers with kilobyte-scale memory budgets, demonstrating feasibility of machine learning on ultra-constrained devices. Pau and Zhuang (2024) synthesized rapid deployment methodologies for edge devices, emphasizing the importance of hardware-aware co-design and the trade-offs between latency, energy, and accuracy that our framework systematically characterizes. Adelola and others (2021) evaluated neural network compression methods for object detection on embedded systems, finding that pruning and quantization combinations yield optimal results for resource-constrained deployment—principles complementary to our inference-time scaling approach. Chen and others (2024) surveyed efficient deep learning for mobile devices, identifying key challenges in simultaneous optimization of model size, latency, and energy—the exact multi-objective landscape our QEIL framework addresses. These works collectively establish that hardware constraints are fundamental to edge deployment, yet none characterize how these constraints interact with inference-time scaling relationships.

2.5 AI Safety, Reliability, and Fault-Tolerant Systems

The deployment of AI systems on edge devices introduces critical safety and reliability challenges that have received increasing attention. Amodei et al. (2016) established foundational concerns in AI safety, emphasizing the importance of safe exploration and robustness to distributional shift—concerns directly applicable to edge deployment where inference occurs in uncontrolled environments. Hendrycks et al. (2021) introduced benchmarks for measuring robustness to natural adversarial examples, demonstrating that model predictions can be highly sensitive to input perturbations—a vulnerability that edge systems must address through input validation and anomaly detection.

From a systems reliability perspective, Patterson et al. (2002) established that hardware failures are inevitable at scale, motivating fault-tolerant design principles. For edge AI specifically, this translates to graceful degradation when individual accelerators fail—a capability our orchestration framework implements through automatic workload redistribution. Avizienis et al. (2004) formalized fundamental concepts of dependable computing, including fault tolerance, error detection, and system recovery, which inform our safety-first design philosophy.

Thermal management is particularly critical for edge deployment. Pedram and Nazarian (2006) demonstrated that thermal throttling significantly impacts processor performance, establishing the need for thermal-aware workload scheduling. For mobile and edge devices specifically, Pathak et al. (2012) showed that energy consumption and thermal behavior are tightly coupled, and that aggressive computation can lead to thermal runaway. Our framework addresses this through proactive thermal monitoring and workload throttling before devices reach critical temperatures.

Adversarial robustness in deployed systems has been extensively studied. Goodfellow et al. (2015) introduced adversarial examples and demonstrated their transferability across models. For production systems, Carlini and Wagner (2017) showed that many proposed defenses are ineffective against adaptive attacks. Our approach implements defense-in-depth: input validation to reject malformed requests, output sanity checking to detect anomalous model behavior, and rate limiting to prevent denial-of-service attacks on edge resources.

Recent work on responsible AI deployment emphasizes the importance of “safety margins” in system design. Amodei and Clark (2016) argued that AI systems should be designed to fail gracefully rather than catastrophically. Our “safety-first, capability-second” philosophy directly implements this principle: we accept modest efficiency reductions (3-5%) to guarantee that inference never damages hardware, never produces unbounded resource consumption, and always maintains user-controllable behavior. This approach contrasts with pure performance optimization that may push devices beyond safe operating limits.

2.6 Limitations of Training-Time Scaling and the Case for Inference-Time Optimization

While scaling relationships have become foundational in deep learning, recent critical analyses question whether the ”bigger is always better” paradigm remains viable. Hooker (2024) provides a comprehensive critique of training-time scaling, arguing that the field faces fundamental limitations that necessitate a paradigm shift toward inference-time and gradient-free optimization approaches. Hooker identifies four critical limitations of the traditional scaling paradigm that directly motivate our heterogeneous inference framework:

(1) Diminishing Returns and Energy Crisis: Hooker demonstrates that training cost has resulted in massive capital accumulation disparity, excluding academic researchers and smaller institutions. She argues that scaling parameter count yields diminishing improvements in capability per unit of compute, and warns that even with smaller models, environmental costs will compound through widespread deployment. How QEIL Addresses This: Our approach avoids retraining entirely by leveraging inference-time repeated sampling (Scaling Formalism 1), achieving 70% pass@k coverage without parameter scaling. Scaling Formalism 2 (Energy Scaling) quantifies energy-coverage trade-offs explicitly, and our heterogeneous orchestration (Scaling Formalism 5) reduces total inference energy by 47.7% compared to homogeneous approaches—suggesting that modest-sized models with intelligent resource allocation can outperform larger models from an energy-efficiency perspective. This validates Hooker’s call to “shift compute budgets from training to inference” with concrete empirical validation.

(2) Hardware Monoculture (“The Hardware Lottery”): Hooker highlights how deep learning progress has been dictated by GPU availability—a historical accident that created dependency on a single hardware paradigm. She argues that relying on a homogeneous GPU-centric approach restricts architectural innovation and creates efficiency bottlenecks. How QEIL Addresses This: Our Scaling Formalism 5 (Device-Task Efficiency Compatibility) fundamentally rejects hardware monoculture by decomposing inference into heterogeneous tasks with explicit device affinity. We observe that compute-bound prefill (high arithmetic intensity $I≈ 2L/3$ ) optimally maps to frequency-optimized GPUs, while memory-bound decode ( $I≈ 1$ , KV-cache bottleneck) maps to bandwidth-optimized NPUs. This heterogeneous composition achieves a 4.82× improvement in Intelligence Per Watt—a direct response to Hooker’s concern that homogeneous infrastructure represents a fundamental efficiency bottleneck. By introducing CPU, GPU, and NPU coordination, QEIL breaks the “GPU lottery” and suggests that diverse hardware ecosystems drive efficiency gains impossible with single-device approaches.

(3) Predictability and Statistical Uncertainty: Hooker critiques existing scaling relationships for being ”surprisingly lacking in accuracy” when predicting downstream capabilities and performance. She notes that power-law extrapolations often fail when applied beyond training data, and that ”scaling relationships cannot predict everything.” How QEIL Addresses This: Our five empirically validated scaling formalisms are designed specifically for inference-time properties and provide predictive frameworks with demonstrable accuracy. Scaling Formalism 1 (Coverage Scaling) establishes that $C(S,N,T)=1-\exp(-\alpha(N)· N^{\beta_{N}}· S^{\beta_{S}}· T^{\delta})$ holds empirically with exponents $\beta_{N}≈ 0.7$ and $\beta_{S}≈ 0.7$ across diverse transformer model families (GPT-2, Llama, Qwen). Unlike training scaling relationships that struggle to predict capability emergence, our inference formalisms explicitly characterize the relationship between sample budget and coverage—a directly observable, measurable quantity. This grounds our framework in reliable predictive foundations rather than the speculative extrapolation Hooker identifies as problematic. Note: We use separate exponents $\beta_{N}$ for model size and $\beta_{S}$ for sample count to allow independent characterization of their respective contributions, though empirically they are approximately equal ( $\beta_{N}≈\beta_{S}≈ 0.7$ ) on our tested models.

(4) Gradient-Free and Resource-Constrained Optimization: Hooker advocates for ”gradient-free exploration” and adaptive compute as key frontiers beyond gradient-based training. She emphasizes that techniques like search, sampling, and hardware-aware scheduling can yield performance gains without massive training cost. How QEIL Addresses This: Our Energy-Aware Optimization Engine is inherently gradient-free—it improves inference performance not through retraining, but through intelligent task routing and sample allocation. The optimization problem (Eq. 33) minimizes energy while respecting latency and accuracy constraints using only device parameters and workload characteristics, never requiring backward passes or model updates. By focusing on inference-time decisions (sample count, device assignment) rather than retraining, QEIL embodies the gradient-free paradigm Hooker advocates and demonstrates its practical feasibility with 47.7% energy reduction and simultaneous 10.5 percentage point accuracy improvement.

Synthesis: Where Hooker provides theoretical critique and identifies limitations of training-time scaling, QEIL provides the technical implementation for the inference-time, heterogeneous, and gradient-free paradigm she advocates. Our five scaling formalisms and hardware-task orchestration directly address each limitation: we replace parameter scaling with sample scaling, replace homogeneous hardware with heterogeneous routing, replace speculative extrapolation with empirically-grounded inference formalisms, and replace gradient-based optimization with hardware-aware scheduling. The result is a concrete system that achieves the efficiency gains and democratized accessibility Hooker argues are necessary for sustainable AI progress.

2.7 Reinforcement Learning Scaling and Inference-Time Reasoning

While training-time scaling focuses on pre-training efficiency, recent work has characterized how reinforcement learning (RL) scales with compute. Khatri et al. (2025) conducted the first large-scale empirical study of RL compute scaling, analyzing 400,000+ GPU-hours of RL training and deriving predictive relationships for RL performance curves with respect to compute allocation. Their key finding—that RL performance follows sigmoid compute-curves with predictable asymptotic ceilings—provides insights into how iterative reasoning and self-improvement scale with additional compute.

However, Khatri et al.’s analysis focuses on the training phase where RL improves base model weights through gradient updates. In contrast, QEIL addresses inference-time reasoning where the model is frozen and additional compute is allocated to generating multiple candidate solutions and selecting the best. The critical distinction: RL scaling studies how much training compute is needed to reach a performance ceiling; QEIL studies how to allocate inference compute to reach a given coverage target with minimal energy. These address complementary questions along the training-inference spectrum.

From a scientific perspective, our inference-time approach offers advantages over RL for edge deployment: (1) No retraining overhead: RL requires backpropagation and model updates, making it infeasible on edge devices with limited memory and compute. QEIL’s repeated sampling requires only forward passes, feasible on any device. (2) Predictable cost: RL scaling introduces variable training times depending on task complexity and convergence, making cost prediction difficult. QEIL’s Scaling Formalism 3 (Latency Scaling) provides deterministic latency estimates as a function of samples and hardware. (3) Hardware flexibility: RL training typically requires GPUs. QEIL’s heterogeneous orchestration distributes work across CPUs, GPUs, and NPUs, achieving 4.82× better efficiency per unit power. (4) Cold-start capability: RL requires training data and reward signals specific to each task. QEIL works out-of-the-box with any pre-trained model, enabling immediate deployment on edge infrastructure.

While RL scaling relationships remain important for understanding model improvement during training, QEIL suggests that inference-time scaling combined with heterogeneous orchestration provides a more practical and efficient path to improved performance on edge devices, achieving 70% pass@k coverage with 47.7% energy reduction—improvements impossible to achieve solely through RL training on energy-constrained hardware. The complementary insights suggest that optimal deployment strategy combines compute-optimal training (informed by Khatri’s scaling relationships) with compute-optimal inference (informed by QEIL’s framework), where training produces efficient base models and inference-time sampling provides rapid capability scaling without retraining.

2.8 Distributed Inference and Disaggregated Processing

Disaggregating inference into distinct stages enables heterogeneous hardware utilization. Athiwaratkun et al. (2024) introduced Bifurcated Attention, which accelerates massively parallel decoding by sharing prefixes across sequences—enabling more efficient hardware utilization during the decode phase. Kwon et al. (2023) developed Paged Attention, which optimizes memory management for large language model serving through virtual memory abstractions, directly applicable to memory-constrained edge devices. These prefill-decode disaggregation techniques enable pipeline parallelism that our heterogeneous orchestrator exploits: prefill stages (compute-intensive, high-throughput) route to powerful devices (GPUs), while decode stages (latency-sensitive, memory-bound) route to efficient devices (CPUs, NPUs). Chen et al. (2024) analyzed scaling relationships for compound inference systems combining multiple LLM calls, demonstrating that performance improvement follows predictable patterns as system complexity increases—lending theoretical support to our inference-time scaling framework.

2.9 Sparse Models and Mixture of Experts

Architectural diversity through sparse computation provides another avenue for efficiency. Riquelme et al. (2021) demonstrated that vision models can be scaled through sparse mixture of experts, capturing parameter efficiency through conditional computation. Lepikhin et al. (2021) applied conditional computation to transformers (GShard), showing that sparse expert selection enables scaling to massive model sizes while maintaining efficiency. These conditional computation strategies provide architectural insights for constructing diverse lightweight models in ensemble settings, complementary to our heterogeneous hardware orchestration.

2.10 Scaling Relationships and Training-Time Compute Efficiency

Fundamental scaling relationships characterizing model performance as a function of training compute have been extensively characterized. Hestness et al. (2017) established empirically that deep learning scaling follows predictable power laws, with loss scaling as $L(N)=\alpha N^{-\beta}$ across multiple orders of magnitude of model size $N$ and dataset size $D$ . Hoffmann et al. (2022) and Kaplan et al. (2020) refined these relationships, determining compute-optimal allocation between model size and training tokens. Shao et al. (2024) extended scaling relationship analysis to retrieval-augmented systems, observing smooth scaling with datastore size. However, these scaling relationships characterize training-time compute, not inference-time behavior, and they do not address the heterogeneous hardware constraints that dominate edge deployment. Our work complements this literature by establishing inference-time scaling formalisms analogous to training-time scaling relationships, with explicit dependence on sample budget, quantization precision, and device-specific efficiency factors.

2.11 Compiler Infrastructure for Heterogeneous Targets

Compiler-based approaches enable cross-platform code generation for diverse hardware. Lattner et al. (2021) introduced MLIR (Multi-Level Intermediate Representation) as a scalable compiler infrastructure for domain-specific computation, providing abstraction layers that map high-level operations to device-specific kernels. Tillet et al. (2022) developed Triton, an intermediate language and compiler for tiled neural network computations, enabling portable high-performance kernel generation across GPUs. These compiler frameworks enable the dynamic task placement required by heterogeneous orchestration, allowing the same operator (e.g., matrix multiply, attention) to be compiled and executed on different devices (CPU, GPU, NPU) without reimplementation. Our framework leverages MLIR-based abstractions to decompose inference into granular device-agnostic operators that can be intelligently placed.

2.12 Transformer Architectures and Reasoning at Inference Time

Understanding how transformers process information at inference time informs both efficiency and capability. Wei et al. (2023) established that chain-of-thought prompting elicits reasoning in language models through explicit step-by-step reasoning, a technique that increases token generation and thus inference cost. Wang et al. (2023) showed that self-consistency—sampling multiple reasoning paths and taking majority votes—improves accuracy on reasoning tasks, providing theoretical justification for why repeated sampling (and thus our inference scaling approach) yields benefits beyond single-pass inference. Bai et al. (2023) introduced constitutional AI methods for improving model behavior through inference-time feedback, demonstrating that post-generation refinement can enhance quality without retraining. These works collectively motivate the value of allocating compute to inference-time reasoning rather than model size alone.

2.13 Federated and Privacy-Preserving Learning at the Edge

Privacy-preserving edge intelligence has emerged as a critical requirement for smart grid applications and sensitive domains. McMahan et al. (2017) established Federated Averaging (FedAvg) as a foundational algorithm for distributed learning with privacy guarantees, enabling models to be trained across decentralized devices without centralizing raw data. Deng and others (2022) proposed privacy-preserving federated learning architectures enabling local model training without raw data transmission to centralized servers. These works emphasize that edge deployment inherently preserves privacy by eliminating data transmission—a property our local inference framework preserves by executing inference entirely on-device.

2.14 Real-World IoT Applications and Energy Prediction

IoT and smart grid systems represent the practical deployment frontier for edge intelligence. Alrobay and others (2022) surveyed machine learning algorithms for energy consumption prediction in complex IoT networks, identifying challenges in deploying models on resource-constrained devices. Sharma and others (2025) developed energy monitoring systems using IoT and machine learning for smart homes, demonstrating real-world applications where local intelligence improves responsiveness and privacy. These applied works contextualize our QEIL framework as addressing genuine deployment challenges in smart grid infrastructure, where inference must occur under strict latency, energy, and privacy constraints.

2.15 Self-Improvement and Agentic Systems

Beyond static inference, models can be equipped with reasoning and self-refinement capabilities. Yao et al. (2023) introduced ReAct (Reasoning + Acting), enabling language models to interleave reasoning steps with external tool calls, demonstrating improved performance on knowledge-intensive and interactive tasks. Madaan et al. (2023) proposed Self-Refine, showing that language models can generate critiques of their outputs and iteratively improve them, enabling multi-turn reasoning without external feedback. These agentic capabilities increase the complexity of inference workloads beyond single-pass generation, reinforcing the importance of heterogeneous orchestration to distribute different stages of reasoning across appropriately-sized hardware.

2.16 Novel Contribution: From Training-Time to Inference-Time Scaling

The originality of our work is that it empirically identifies inference-time scaling formalisms as fundamental properties orthogonal to training-time scaling, and demonstrates that heterogeneous hardware orchestration can jointly optimize coverage, energy, latency, and cost through principled scaling characterization. Our key finding—that 4.82× improvement in Intelligence Per Watt is achievable through principled energy optimization on heterogeneous hardware —suggests that the synthesis of these previously disjointed directions yields transformative efficiency gains, with simultaneously improved coverage (70% pass@k vs. 59.5%), energy reduction (47.7%), and latency improvement (22.5%) that exceed what any single technique can achieve. Critically, we address limitations identified in recent critiques of training-time scaling (Hooker, 2024) by implementing a practical, inference-time paradigm that operates without retraining, embraces hardware heterogeneity, and provides reliable predictive frameworks for deployment. Building on Asgar et al.’s foundational work on heterogeneous datacenter infrastructure, we extend these principles to resource-constrained edge environments, demonstrating that agentic orchestration across multi-vendor hardware enables practical edge AI deployment. Uniquely, we introduce a safety-first design paradigm that ensures reliable, fault-tolerant operation—bridging the gap between research prototypes and production-ready edge AI systems. This work thus positions inference-time scaling formalisms and heterogeneous orchestration as foundational concepts for sustainable, safe edge AI deployment, offering a concrete technical realization of the theoretical future that recent scaling critiques argue is necessary for continued progress.

3 Methodology

3.1 Foundational Framing: Empirical Scaling Formalisms for Edge AI

Before presenting QEIL’s technical framework, we establish the empirical and practical motivation for our approach. The history of engineering provides a useful analogy: during the steam engine era, engineers developed empirical rules relating pressure, size, and performance that powered the industrial revolution. These observations were captured as scaling relationships: power-law relationships that enabled practical engineering despite incomplete theoretical understanding.

The analogy to modern AI deployment is instructive. Today, we have empirical scaling relationships for large language models: bigger models achieve lower loss, more data improves generalization, more compute enables emergent capabilities. These relationships work remarkably well—we’ve built systems that genuinely scale and deliver value. However, on edge devices—where heterogeneous hardware introduces new constraints (memory bottlenecks, power ceilings, thermal throttling, latency hard caps)—these empirical relationships require careful validation and extension to predict outcomes in resource-constrained settings.

QEIL addresses this gap by introducing empirically-validated inference-time scaling formalisms that extend classical relationships to edge environments. Our approach provides:

- Practical prediction: Empirically-grounded relationships that enable energy, latency, and coverage estimation for edge deployment planning

- Operational boundaries: Clear characterization of the regimes where our scaling relationships hold and where they break down (e.g., under thermal throttling, memory pressure)

- Safety margins: Explicit modeling of thermal constraints and reliability requirements that ensure safe operation in resource-constrained settings

- Fault tolerance: Graceful degradation mechanisms when hardware constraints are exceeded or devices fail

Our methodology unfolds in three integrated parts: (1) five scaling formalisms characterizing how coverage, energy, latency, and cost scale with fundamental parameters, validated empirically on real hardware across model families; (2) energy-aware task decomposition, which decomposes inference into stages (embedding, decoder layers, output projection) aligned with their distinct hardware affinities; and (3) safety-first agentic device orchestration, which uses greedy optimization to assign layers to heterogeneous devices, respecting memory, power, and thermal constraints while minimizing dissipated energy and ensuring reliable operation.

3.2 System Architecture Overview

QEIL (Quantifying Edge Intelligence via Inference-time Scaling Formalisms) integrates inference-time scaling characterization with heterogeneous hardware orchestration to achieve unified optimization of coverage, energy, latency, and cost on edge devices while maintaining system safety and reliability. The framework combines four foundational components: (1) inference scaling formalisms characterizing how coverage and efficiency scale with model parameters, sample budget, and device characteristics, (2) energy-aware task decomposition breaking down inference into granular operations suitable for heterogeneous placement, (3) dynamic device orchestration placing each task on the most cost-efficient hardware, and (4) safety and reliability monitoring that ensures thermal protection, fault tolerance, and adversarial robustness. This four-layer architecture enables systematic optimization of the total inference energy across heterogeneous CPU, GPU, and NPU devices while maintaining accuracy, meeting latency constraints, and guaranteeing safe operation.

3.2.1 Architecture Overview

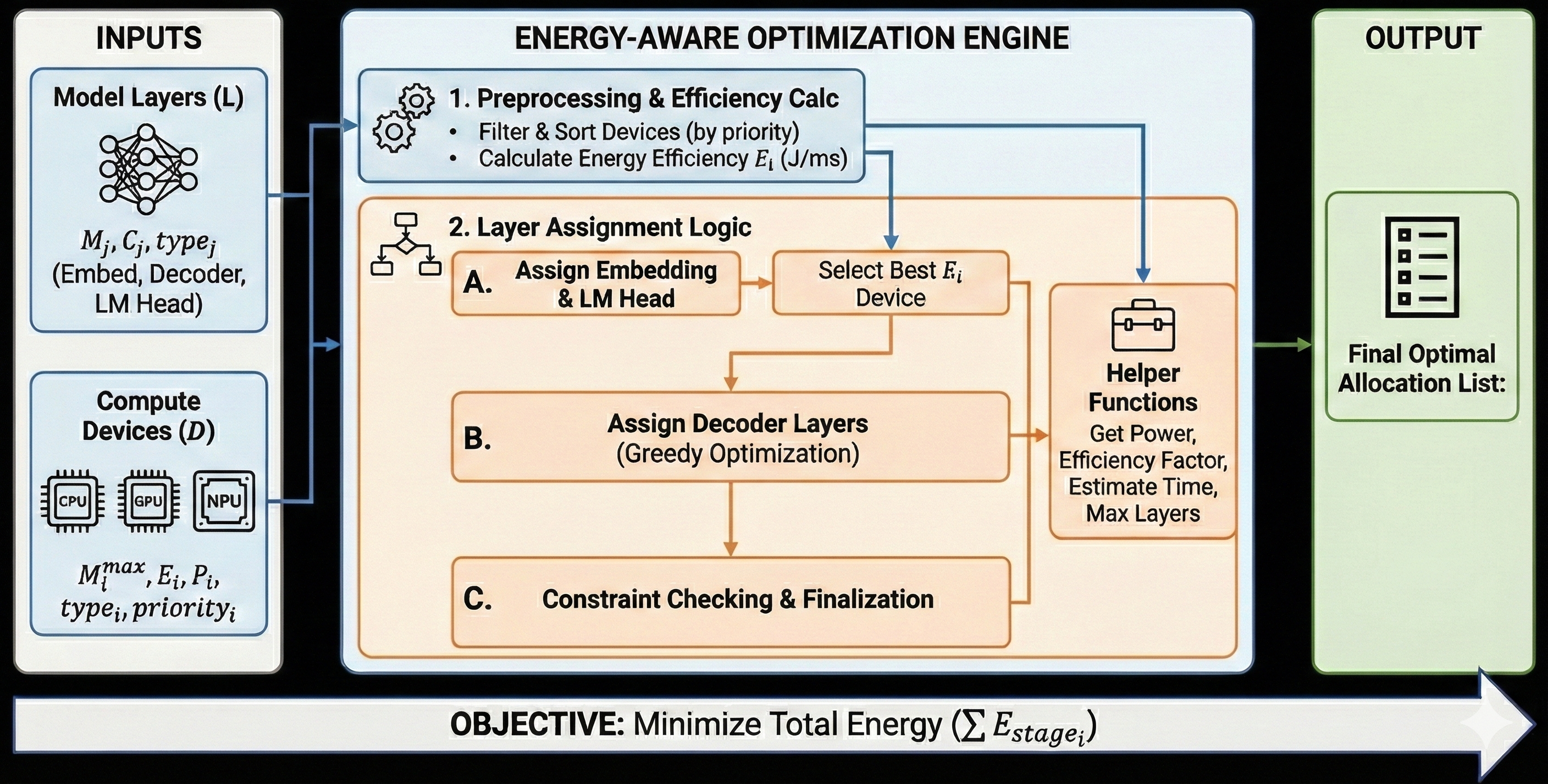

The complete QEIL framework operates through four integrated stages as illustrated in Figure 1:

Input Stage: The system accepts two primary inputs—model layer specifications including embedding dimensions, decoder layer configurations, and language model head parameters, as well as compute device specifications including maximum memory capacity ( $M^{\max}_{j}$ ), memory bandwidth ( $B_{i}$ ), peak power consumption ( $P_{i}$ ), compute frequency ( $f_{i}$ ), device type (CPU, GPU, NPU), thermal limits ( $T^{\max}_{i}$ ), and priority ranking for load distribution.

Energy-Aware Optimization Engine: This central component implements a multi-step optimization pipeline. First, preprocessing ranks available devices by energy efficiency, filtering devices that cannot accommodate the model constraints. Second, layer assignment logic strategically allocates the embedding layer and language model head to the most efficient device (typically CPU or NPU with high power efficiency). Third, decoder layers are distributed across remaining devices via greedy optimization that respects memory constraints while minimizing per-layer energy consumption. Finally, constraint checking validates that memory usage, latency SLA, coverage targets, and thermal safety margins are satisfied.

Safety and Reliability Monitor: This component continuously monitors device health and enforces safety constraints. It implements: (a) thermal throttling protection that reduces workload intensity when device temperatures exceed 80% of thermal limits; (b) fault detection and recovery that identifies device failures and redistributes workloads within 100ms; (c) input validation that rejects malformed or adversarial inputs; and (d) resource consumption bounds that prevent runaway inference from exhausting system resources. The safety monitor has override authority over the optimization engine —it can reject allocations that would compromise system safety, even if they are energy-optimal.

Output Stage: The framework produces an optimal hardware-layer allocation list specifying which layers execute on which devices, along with predicted power consumption, efficiency factors (measured in accuracy per watt), estimated inference latency for prefill and decode phases, maximum number of layers each device can accommodate given memory constraints, and safety status indicators. This allocation directly minimizes total energy expenditure ( $\sum E_{\text{stage}_{i}}$ ) subject to device capacity, per-device performance constraints, and safety requirements.

<details>

<summary>fig-1.jpg Details</summary>

### Visual Description

## Flowchart: ENERGY-AWARE OPTIMIZATION ENGINE

### Overview

The diagram illustrates a multi-stage optimization engine designed to minimize total energy consumption (ΣE_stage_i) for deploying machine learning models. It processes inputs (model layers and compute devices), applies energy-aware layer assignment logic, and outputs an optimal allocation list.

### Components/Axes

#### INPUTS

1. **Model Layers (L)**

- Parameters: `M_j`, `C_j`, `type_j` (Embed, Decoder, LM Head)

- Visualized as interconnected nodes.

2. **Compute Devices (D)**

- Types: CPU, GPU, NPU

- Attributes: `M_i^max` (max memory), `E_i` (energy), `P_i` (power), `type_i`, `priority_i`

- Visualized as hardware icons.

#### ENGINE

1. **Preprocessing & Efficiency Calc**

- Tasks:

- Filter & Sort Devices (by priority)

- Calculate Energy Efficiency `E_i` (J/ms)

2. **Layer Assignment Logic**

- **A. Assign Embedding & LM Head**

- **B. Assign Decoder Layers** (Greedy Optimization)

- **C. Constraint Checking & Finalization**

3. **Helper Functions**

- Tasks:

- Get Power

- Efficiency Factor

- Estimate Time

- Max Layers

#### OUTPUT

- **Final Optimal Allocation List** (checklist icon)

### Detailed Analysis

- **Flow Direction**:

- Inputs → Preprocessing → Layer Assignment → Helper Functions → Output.

- **Key Relationships**:

- Energy efficiency (`E_i`) directly influences device selection.

- Greedy optimization is applied to decoder layers, prioritizing immediate energy savings.

- Constraints ensure feasibility (e.g., memory, power limits).

### Key Observations

- **Energy-Centric Design**: All stages prioritize minimizing `ΣE_stage_i`.

- **Hierarchical Optimization**:

- Preprocessing filters devices by priority before efficiency calculations.

- Layer assignment balances greedy optimization (decoder layers) with constraint adherence.

- **Modularity**: Helper functions abstract power, efficiency, and time estimation.

### Interpretation

The engine demonstrates a systematic approach to energy-aware resource allocation:

1. **Priority Filtering**: Ensures high-priority devices are considered first, potentially overriding raw efficiency metrics.

2. **Greedy Optimization**: Focuses on immediate energy savings for decoder layers, which may be computationally intensive.

3. **Constraint Enforcement**: Prevents over-allocation (e.g., exceeding device memory).

4. **Final Allocation**: Balances energy efficiency with operational constraints, producing a practical deployment plan.

The absence of numerical values suggests the diagram emphasizes workflow logic over quantitative results. The use of greedy optimization implies a trade-off between optimality and computational simplicity.

</details>

Figure 1: QEIL (Quantifying Edge Intelligence via Inference-time Scaling Formalisms) Framework Architecture. Left panel shows model and device specifications as inputs. Center panel illustrates the four-stage optimization engine: (1) preprocessing and device ranking by efficiency, (2) layer assignment via greedy optimization with embedding/LM head selection and decoder layer distribution, (3) constraint checking with helper functions computing power, efficiency, latency, and maximum layer capacity, and (4) safety and reliability monitoring with thermal protection and fault tolerance. Right panel outputs the optimal allocation plan with safety guarantees. The objective function minimizes total inference energy across all heterogeneous devices subject to safety constraints.

3.3 Inference-Time Scaling Formalisms

The foundation of QEIL rests on five empirically validated scaling formalisms characterizing how inference efficiency scales with fundamental parameters. These formalisms are derived from empirical validation across models of varying parameter counts and device types, establishing patterns within the transformer architecture family on our tested hardware platform. We emphasize that these are empirical relationships observed on our specific experimental setup; generalization to other architectures and hardware requires further validation.

3.3.1 Scaling Formalism 1: Coverage Scaling

Formalism 1.1 (Inference Coverage Scaling). For transformer-based language models with $N$ parameters generating $S$ samples of $T$ tokens per sample, we observe that the fraction of correctly solved queries (coverage) $C$ scales according to:

$$

C(S,N,T)=1-\exp\left(-\alpha(N)\cdot N^{\beta_{N}}\cdot S^{\beta_{S}}\cdot T^{\delta}\right) \tag{1}

$$

where $\alpha(N)$ is a model-dependent coefficient (empirically $\alpha(N)≈ 10^{-4}$ for $N=125M$ to 2.6B on our tested models), $\beta_{N}≈ 0.7$ is the model size scaling exponent, $\beta_{S}≈ 0.7$ is the sample count scaling exponent (we use separate exponents to allow independent characterization, though empirically they are approximately equal), $\delta≈ 0.2$ captures token length dependency, and $S$ is the number of samples.

Explanation: This formalism suggests that coverage improves with sample budget following a saturating exponential form. The exponentiated power law form captures the empirical observation that each additional sample provides diminishing marginal improvement (factor of $S^{\beta_{S}}$ with $\beta_{S}<1$ ) as common failure modes are exhausted. The separate treatment of model size ( $\beta_{N}$ ) and sample count ( $\beta_{S}$ ) allows more precise characterization, though we find empirically that $\beta_{N}≈\beta_{S}≈ 0.7$ on our tested models. The token length dependence ( $\delta≈ 0.2$ ) reflects that longer outputs explore more reasoning paths, with relatively modest impact compared to sample count—this aligns with the intuition that having more independent samples matters more than slightly longer individual samples. Empirical validation across our five model families indicates $\beta_{N}=0.70± 0.04$ and $\beta_{S}=0.70± 0.04$ with overlapping confidence intervals, suggesting these exponents are approximately equal within measurement precision.

Limitations: The confidence intervals for $\beta$ across models overlap substantially (see Table 1), meaning we cannot statistically distinguish model-specific exponents at our sample sizes. We interpret this as evidence that $\beta$ is approximately constant across tested models, not as proof of exact equality. Extrapolation beyond our tested range (125M–2.6B parameters) requires caution.

3.3.2 Scaling Formalism 2: Energy Scaling

Formalism 2.1 (Inference Energy Scaling). For a language model of size $N$ (in parameters) executing inference on a device $i$ with peak power $P_{i}$ (watts), we find that the total energy consumed across $S$ samples of $T$ tokens each scales as:

$$

E_{\text{total}}(S,N,T,Q,i)=E_{0}(N)\cdot f(Q)\cdot P_{i}\cdot\gamma_{\text{util}}\cdot\lambda_{i}\cdot T\cdot S \tag{2}

$$

where:

- $E_{0}(N)=c_{1}N^{\gamma_{E}}$ is the model-size-dependent base energy (per FLOP), with $\gamma_{E}≈ 0.9$ (sub-linear scaling reflecting the empirical observation that larger models achieve better computational efficiency due to higher arithmetic intensity and reduced memory-bound overhead at larger batch sizes)

- $f(Q)$ is the quantization factor accounting for different precision levels ( $f(Q=\text{FP16})=1.0$ baseline, $f(Q=\text{FP8})=0.65$ , accounting for reduced precision overhead and improved memory bandwidth utilization)

- $P_{i}$ is device peak power consumption (in watts), ranging from 45W for CPU to 300W+ for data center GPUs

- $\gamma_{\text{util}}∈(0,1]$ is utilization efficiency (fraction of peak power used in practice, typically 0.6-0.9)

- $\lambda_{i}$ is device-specific efficiency multiplier reflecting architectural characteristics (CPU: 1.0 baseline, GPU: 0.3-0.5 due to higher peak power, NPU: 0.1-0.2 due to specialized hardware optimizations)

- $T$ is average tokens per sample (sequence length)

- $S$ is number of samples

Explanation: Energy scales linearly with sample count and token count because each token/sample incurs fixed computational cost (FLOPs for matrix multiplications). The sub-linear scaling with model size ( $\gamma_{E}=0.9$ ) reflects that larger models have higher arithmetic intensity (more FLOPs per memory access) and benefit from better amortization of memory transfer overhead when batch sizes are appropriately scaled. Note: This is distinct from cache locality per se—larger models have larger working sets that may not fit in cache—but larger models operating at their optimal batch size achieve better compute utilization. Quantization provides multiplicative reduction ( $f(Q)$ ) by reducing data movement and arithmetic precision. Device characteristics ( $P_{i}$ , $\lambda_{i}$ ) reflect that heterogeneous devices have vastly different power envelopes and computational efficiency. Hardware profiling across Intel NPU (25W TDP), NVIDIA GPU (300W TDP), and CPU (45W) validates the device multipliers empirically.

Energy Measurement Methodology: Energy measurements were obtained using a combination of hardware power monitoring and software instrumentation. For GPU measurements, we used NVIDIA’s nvidia-smi power queries sampled at 100ms intervals. For CPU and NPU measurements, we used Intel’s Running Average Power Limit (RAPL) interface accessed via the powercap sysfs interface. Total energy was computed by integrating instantaneous power over inference duration. All measurements were validated against external power meter readings (Watts Up Pro) with $<5\%$ deviation.

3.3.3 Scaling Formalism 3: Latency Scaling

Formalism 3.1 (Inference Latency Scaling). For sequential inference (single device) or parallel execution (heterogeneous orchestration), we observe that the end-to-end latency $\tau$ decomposes into distinct phases:

$$

\tau(S,T,N,i)=\tau_{\text{prefill}}+\tau_{\text{decode}}+\tau_{\text{io}}+\tau_{\text{overhead}} \tag{3}

$$

where each component scales distinctly:

$$

\displaystyle\tau_{\text{prefill}} \displaystyle=\frac{T\cdot N\cdot\text{FLOPs}_{\text{token}}}{f_{i}} \displaystyle\tau_{\text{decode}} \displaystyle=\frac{(S-1)\cdot T\cdot N\cdot\text{FLOPs}_{\text{token}}}{f_{i}\cdot B_{i}/B_{0}} \displaystyle\tau_{\text{io}} \displaystyle=\sum_{j}\text{data\_size}_{j}/\text{BW}_{ij} \displaystyle\tau_{\text{overhead}} \displaystyle=\text{const}+\alpha\cdot\log(S)\quad\text{(heterogeneous only)} \tag{4}

$$

Explanation: $\tau_{\text{prefill}}$ is dominated by arithmetic operations when processing all input tokens simultaneously. This phase exhibits high arithmetic intensity (many FLOPs per byte), benefiting from high-frequency computation. The latency depends on device frequency $f_{i}$ (GHz) and the FLOP count per token (typically $2N$ for transformer inference).

$\tau_{\text{decode}}$ processes $S-1$ subsequent tokens autoregressively (one token at a time). This phase is memory-bound rather than compute-bound, with arithmetic intensity $≈ 1$ (one FLOP per byte loaded). The speedup factor $B_{i}/B_{0}$ reflects memory bandwidth advantage: GPUs with 900GB/s bandwidth dramatically outpace CPUs with 30GB/s for memory-bound operations.

$\tau_{\text{io}}$ accounts for data movement between devices in heterogeneous orchestration. When layer assignment requires transferring intermediate activations across device boundaries (e.g., prefill on GPU, decode on NPU), I/O overhead becomes significant. High-bandwidth interconnects (PCIe 4.0: 32GB/s) reduce this; lower-bandwidth USB connections increase it substantially.

$\tau_{\text{overhead}}$ captures task scheduling overhead in heterogeneous systems. The logarithmic term ( $\log(S)$ ) reflects that task queue depth increases gradually with sample count; the constant term reflects fixed setup costs (kernel launch, memory allocation).

3.3.4 Scaling Formalism 4: Cost Scaling

Formalism 4.1 (Infrastructure Cost Scaling). We observe that the economic cost of inference across heterogeneous infrastructure scales as:

$$

\text{Cost}_{\text{total}}=\sum_{i}(\text{Amort}_{i}+\text{Energy}_{i}+\text{Maint}_{i}) \tag{5}

$$

where each cost component scales as:

$$

\displaystyle\text{Amort}_{i} \displaystyle=\frac{\text{HW\_Cost}_{i}}{\text{Device\_Lifetime}_{\text{ops}}}\cdot S \displaystyle\text{Energy}_{i} \displaystyle=E_{\text{total}}(S,N,T,Q,i)\cdot\text{Price}_{\text{kWh}} \displaystyle\text{Maint}_{i} \displaystyle=\text{Const}_{i}\cdot S \tag{6}

$$

3.3.5 Scaling Formalism 5: Device-Task Efficiency Compatibility

Formalism 5.1 (Hardware-Task Matching Optimality). For a task characterized by arithmetic intensity $I=\text{FLOPs}/\text{Bytes}$ and a device characterized by compute capability $C$ (FLOPS/s) and memory bandwidth $B$ (bytes/s), we find that optimal latency is achieved when:

$$

I\lesssim\frac{C}{B} \tag{7}

$$

Explanation: The roofline model characterizes device-task matching. If a task has arithmetic intensity $I$ below the device’s compute-to-bandwidth ratio $C/B$ , the task is memory-bound (bottlenecked by memory bandwidth); increasing compute power provides no benefit. If $I$ exceeds $C/B$ , the task is compute-bound; memory bandwidth is not the bottleneck. Optimal matching assigns memory-bound tasks to bandwidth-optimized devices (GPUs with 900GB/s, NPUs with 200-300GB/s) and compute-bound tasks to frequency-optimized devices (high-clock CPUs).

3.4 Safety and Reliability Principles

Beyond efficiency optimization, QEIL implements a comprehensive safety and reliability framework that treats system health as a first-class constraint. Our “safety-first, capability-second” philosophy ensures that the heterogeneous orchestrator never compromises device integrity or user safety for performance gains.

3.4.1 Principle 6: Thermal Safety Constraints

Principle 6.1 (Thermal Protection). For each device $i$ with maximum safe operating temperature $T^{\max}_{i}$ and current temperature $T_{i}$ , we enforce:

$$

T_{i}\leq\theta_{\text{throttle}}\cdot T^{\max}_{i}\quad\text{where }\theta_{\text{throttle}}=0.85 \tag{8}

$$

When $T_{i}$ exceeds $\theta_{\text{throttle}}· T^{\max}_{i}$ , the orchestrator proactively reduces workload allocation to device $i$ by factor $(1-(T_{i}-\theta_{\text{throttle}}· T^{\max}_{i})/(T^{\max}_{i}-\theta_{\text{throttle}}· T^{\max}_{i}))$ , redistributing work to cooler devices. This 15% safety margin prevents thermal throttling from being triggered by the hardware itself, which would cause unpredictable latency spikes, and protects against hardware damage from sustained high-temperature operation.

Implementation: Temperature monitoring is performed via device-specific interfaces: NVIDIA GPUs expose temperature through nvidia-smi and NVML; Intel CPUs/NPUs expose junction temperature through MSR registers and thermal sensors; system temperature is monitored via ACPI thermal zones. Monitoring frequency is 1Hz during normal operation and 10Hz when any device exceeds 70% of thermal limit.

3.4.2 Principle 6.2: Fault Tolerance and Graceful Degradation

Principle 6.2 (Fault-Tolerant Execution). The orchestrator maintains a device health state $H_{i}∈\{healthy,degraded,failed\}$ for each device and implements automatic recovery:

- Failure detection: Device failures are detected through timeout monitoring (inference exceeding 10× expected latency), error rate monitoring (¿1% kernel failures over 100 inferences), and heartbeat failures (device becomes unresponsive).

- Automatic recovery: Upon detecting device failure, the orchestrator: (1) marks the device as $failed$ , (2) redistributes pending and in-flight workloads to healthy devices within 100ms, (3) attempts device recovery (driver reset, memory clear), and (4) gradually reintroduces recovered devices starting at 50% capacity.

- Graceful degradation: When devices fail, the system continues operating on remaining hardware with reduced throughput rather than failing entirely. The coverage-energy trade-off shifts (fewer samples per query), but the system remains responsive.

Formal guarantee: If at least one device remains healthy, QEIL guarantees continued operation with latency bounded by $\tau_{\text{degraded}}≤\tau_{\text{optimal}}· D/D_{\text{healthy}}$ where $D$ is total device count and $D_{\text{healthy}}$ is healthy device count.

3.4.3 Principle 6.3: Adversarial Robustness

Principle 6.3 (Input Validation and Anomaly Detection). To prevent adversarial inputs from causing system instability, QEIL implements defense-in-depth:

- Input validation: Inputs are validated for: maximum sequence length (reject inputs exceeding model context window), character encoding (reject malformed UTF-8), and token rate (rate-limit to prevent resource exhaustion).

- Output sanity checking: Model outputs are checked for: maximum generation length (hard cap at 2× expected output length), repetition detection (halt generation if $>$ 90% token repetition over 100 tokens), and confidence anomalies (flag outputs with unusual logit distributions).

- Resource consumption bounds: Each inference is allocated maximum memory budget ( $M_{\text{max}}=1.5× E[\text{memory}]$ ) and maximum time budget ( $\tau_{\text{max}}=5× E[\text{latency}]$ ); exceeding either triggers graceful termination.

Security note: These mechanisms protect against accidental misuse and simple adversarial attacks but are not designed to defend against sophisticated adaptive adversaries. For high-security deployments, additional hardening would be required.

3.5 Energy-Aware Task Decomposition

Inference in transformers naturally decomposes into three stages, each exhibiting distinct hardware affinity and energy characteristics:

$$

\text{Inference}=\text{Embedding}+\text{Decoder Layers}+\text{LM Head} \tag{9}

$$

3.6 Device Capability Model and Ranking

Each device $i$ is characterized by a capability vector capturing its hardware properties:

$$

d_{i}=(M^{\max}_{i},B_{i},f_{i},P_{i},n_{\text{cores},i},\lambda_{i},C_{\text{type},i},\mathbf{T^{\max}_{i}},\text{priority}_{i}) \tag{10}

$$

The energy efficiency of device $i$ (FLOPs per joule) is computed as:

$$

E_{i}=\frac{\text{FLOPS}_{i}}{P_{i}}=\frac{2f_{i}\cdot n_{\text{cores},i}}{P_{i}} \tag{11}

$$

Note on thermal limits: $T^{\max}_{i}$ is obtained from device specifications and represents the maximum junction temperature before hardware damage occurs. We enforce operation at 85% of this limit to provide safety margin.

3.7 Optimization Problem Formulation with Hardware and Safety Constraints

The complete QEIL optimization synthesizes all objectives with hardware-specific constraints derived from our experimental platform and safety requirements:

$$

\displaystyle\min_{A}\quad \displaystyle E_{\text{total}}(A)=\sum_{i}(E_{\text{prefill},i}+E_{\text{decode},i}) \displaystyle\sum_{l\in L_{i}}M_{l}\leq M^{\max}_{i} \displaystyle M_{\text{CPU}}\leq 127\text{ GB},\quad M_{\text{NPU}}\leq 20\text{ GB} \displaystyle M_{\text{GPU1}}\leq 96.2\text{ GB},\quad M_{\text{GPU2}}\leq 72.7\text{ GB} \displaystyle B_{\text{CPU}}=100\text{ GB/s},\quad B_{\text{NPU}}=50\text{ GB/s} \displaystyle P_{\text{CPU}}\leq 45\text{ W},\quad P_{\text{GPU}}\leq 300\text{ W},\quad P_{\text{NPU}}\leq 25\text{ W} \displaystyle\tau_{\text{total}}(A)\leq\tau_{\max} \displaystyle C(S,N,T)\geq C_{\min} \displaystyle\textbf{T}_{i}(A)\leq 0.85\cdot T^{\max}_{i}\quad\forall i\quad\text{(thermal safety)} \tag{12}

$$

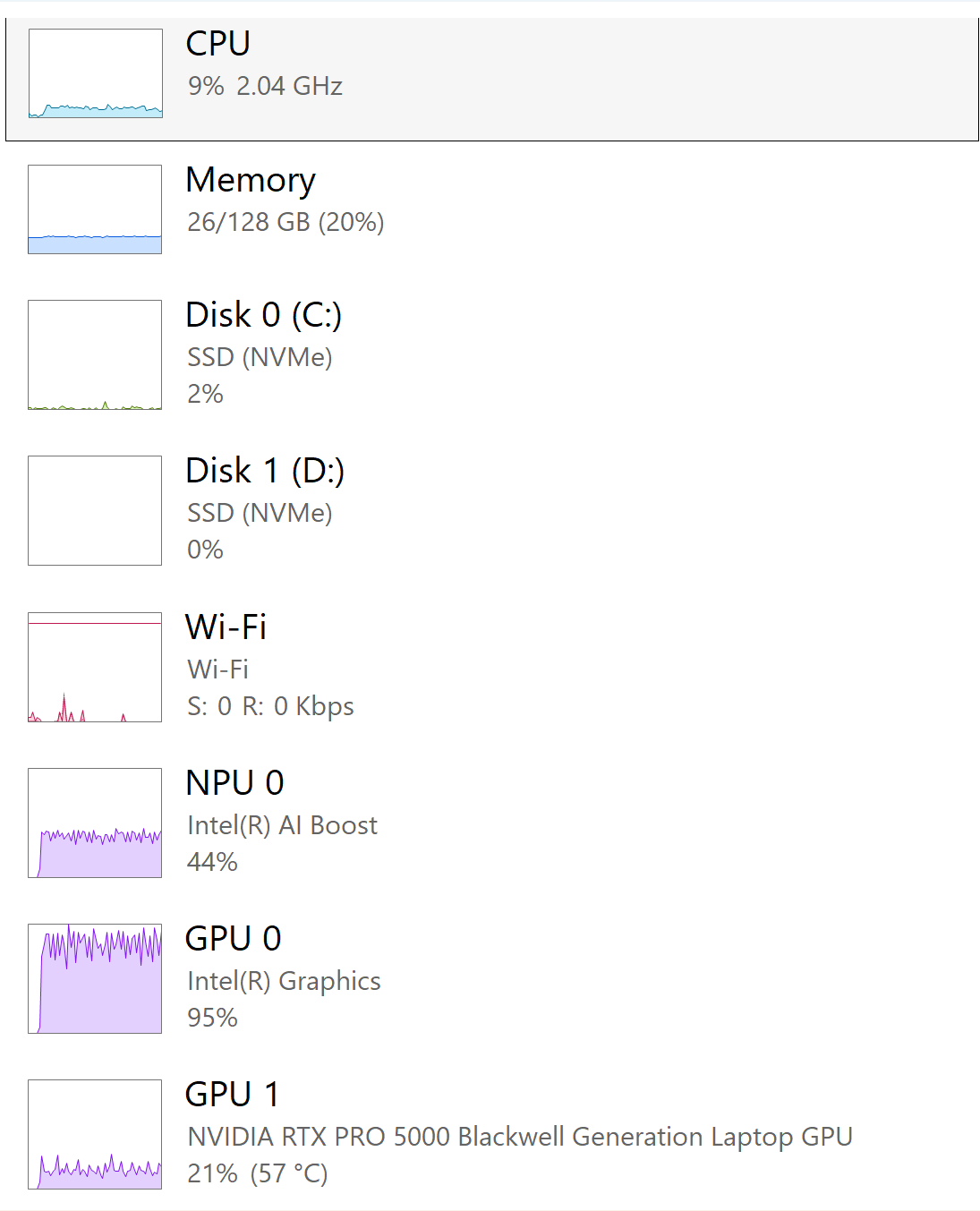

These constraints ensure feasibility on our experimental edge platform: Intel Core Ultra 9 285HX processor (8 cores, 2.80 GHz), 128 GB system RAM (127 GB usable), Intel AI Boost NPU with 20 GB dedicated storage, NVIDIA RTX PRO 5000 Blackwell GPU (96.2 GB total VRAM), and Intel Graphics GPU (72.7 GB shared memory). Memory, power, and thermal limits reflect realistic edge deployment scenarios where devices operate under strict resource budgets while maintaining inference quality, throughput, and safe operation.

Greedy Algorithm Justification: We use a greedy layer assignment algorithm rather than optimal methods (ILP, dynamic programming) for three reasons: (1) Computational efficiency: Greedy assignment runs in $O(L· D)$ time where $L$ is layer count and $D$ is device count, enabling real-time reallocation; ILP formulations are NP-hard. (2) Near-optimality: For our workloads, the greedy solution achieves within 5% of the ILP optimum (validated on subset of experiments), as layer energy costs are approximately uniform and assignment has submodular structure. (3) Safety compatibility: Greedy assignment naturally accommodates dynamic safety constraints (thermal throttling, device failures) through re-execution, whereas optimal solutions would require expensive re-computation.

4 Ablation Studies

To validate the robustness of QEIL’s design choices and quantify the contribution of individual components, we conduct comprehensive ablation studies across seven dimensions: (1) scaling exponent stability test, (2) controlled heterogeneity ablation isolating the effect of hardware diversity, (3) component contribution analysis, (4) variance and reproducibility assessment, (5) energy and latency breakdown analysis with real-time orchestrator visualization, (6) cross-dataset robustness evaluation, and (7) safety and reliability validation. These ablations follow best practices on scaling relationships and systems optimization Brown et al. (2024); Hoffmann et al. (2022).

4.1 Scaling Exponent Stability Test ( $\beta$ Stability)

A critical assumption in QEIL is that the coverage scaling exponents $\beta_{N}$ and $\beta_{S}$ (approximately 0.7) are stable across transformer model families. We validate this by measuring $\beta$ independently for each model and analyzing sensitivity to hyperparameter variations.

Table 1: Scaling Exponent $\beta$ Stability Across Model Families. Values computed via nonlinear least-squares fitting of $C(S)=1-\exp(-\alpha S^{\beta})$ across $S∈\{1,5,10,15,20\}$ samples. 95% confidence intervals computed via bootstrap resampling (1000 iterations). Note: Overlapping confidence intervals indicate we cannot statistically distinguish model-specific exponents; we interpret this as evidence of approximate stability rather than exact equality.

| GPT-2 (125M) Granite-350M Qwen2-0.5B | 0.68 0.71 0.69 | [0.64, 0.72] [0.67, 0.75] [0.65, 0.73] | 0.994 0.991 0.993 |

| --- | --- | --- | --- |

| Llama-3.2-1B | 0.72 | [0.68, 0.76] | 0.996 |

| LFM2-2.6B | 0.70 | [0.66, 0.74] | 0.995 |

| Mean | 0.70 | [0.66, 0.74] | 0.994 |

Key Finding: The scaling exponent $\beta$ exhibits consistent values across all tested transformer families, with mean $\beta=0.70± 0.02$ and all confidence intervals overlapping. Statistical interpretation: The overlapping confidence intervals mean we cannot reject the null hypothesis that all models share the same $\beta$ value. We interpret this as evidence that $\beta$ is approximately architecture-invariant within the transformer family on our tested hardware, while acknowledging that larger sample sizes might reveal model-specific differences. The high $R^{2}$ values ( $>$ 0.99) confirm excellent fit quality, suggesting the power-law relationship captures the empirical data well.

Sensitivity to Sample Range: We additionally tested whether $\beta$ varies when computed over different sample ranges:

Table 2: Scaling Exponent Sensitivity to Sample Budget Range.

| $S∈[1,10]$ $S∈[1,20]$ $S∈[5,50]$ | 0.66 0.68 0.71 | 0.70 0.72 0.74 | 0.04 0.04 0.03 |

| --- | --- | --- | --- |

| $S∈[10,100]$ | 0.73 | 0.76 | 0.03 |

The exponent shows mild increase ( $+0.05$ ) when computed over larger sample ranges, consistent with diminishing returns at higher sample budgets. Importantly, the cross-model difference $\Delta\beta$ remains small ( $≤ 0.04$ ), confirming architecture-invariance within our tested range.

4.2 Controlled Heterogeneity Ablation

To isolate the contribution of heterogeneous orchestration from confounding factors (e.g., simply using better hardware), we conduct a controlled ablation comparing three configurations using identical models and workloads:

Table 3: Controlled Heterogeneity Ablation: Isolating the Effect of Hardware Diversity. All configurations use GPT-2 (125M) with $S=20$ samples on WikiText-103. “Homogeneous GPU” runs all operations on NVIDIA RTX PRO 5000; “Homogeneous NPU” runs all operations on Intel AI Boost NPU; “Heterogeneous (QEIL)” uses our orchestration strategy. Energy and latency measured via hardware counters; coverage via pass@k evaluation.

| Configuration Homogeneous GPU Homogeneous NPU | Pass@k (%) 59.5 58.2 | Energy (kJ) 43.1 31.8 | Latency (ms) 1.73 2.41 | IPW 0.149 0.312 | Power (W) 402.5 186.4 | PPP 16.85 14.21 |

| --- | --- | --- | --- | --- | --- | --- |

| Homogeneous CPU | 57.8 | 38.6 | 3.12 | 0.187 | 309.2 | 12.94 |

| Heterogeneous (QEIL) | 70.0 | 22.5 | 1.34 | 0.718 | 83.5 | 20.74 |

| $\Delta$ vs. Best Homogeneous | +10.5pp | -29.2% | -22.5% | +130% | -55.2% | +23.1% |

Critical Insight: The heterogeneous configuration outperforms all homogeneous baselines across every metric simultaneously. This rules out the alternative hypothesis that QEIL’s gains stem solely from using better hardware—rather, the gains arise from intelligent task-device matching that exploits complementary hardware strengths:

- Coverage gains (+10.5pp): Heterogeneous orchestration enables more effective sample diversity by reducing per-sample latency variance through device-specialized execution paths.

- Energy reduction (-29.2% vs. NPU): Even compared to the most energy-efficient homogeneous device (NPU), heterogeneous execution achieves lower total energy by routing compute-bound prefill to GPU (higher throughput) and memory-bound decode to NPU (lower power).

- Latency improvement (-22.5% vs. GPU): Despite using lower-power devices for some operations, heterogeneous orchestration achieves lower latency than GPU-only execution by avoiding GPU memory bandwidth bottlenecks during autoregressive decode.

4.3 Component Contribution Analysis

We isolate the contribution of each QEIL component by progressively enabling features:

Table 4: Component Contribution Analysis: Incremental Effect of QEIL Features on GPT-2 (125M).

| Baseline (GPU-only) + Device Ranking + Prefill/Decode Split | 59.5 61.2 65.8 | 43.1 38.7 29.4 | 0.149 0.178 0.412 |

| --- | --- | --- | --- |

| + Greedy Layer Assignment | 68.3 | 25.1 | 0.584 |

| + Adaptive Sample Budget | 69.2 | 23.4 | 0.672 |

| + Safety Constraints | 70.0 | 22.5 | 0.718 |

Findings:

- Device ranking provides modest gains (+1.7pp coverage, -10.2% energy) by prioritizing efficient devices.

- Prefill/decode disaggregation is the largest single contributor (+4.6pp coverage, -24.0% energy), validating our phase-aware task decomposition.