# Large Language Model Reasoning Failures

**Authors**:

- Peiyang Song psong@caltech.edu (California Institute of Technology, Stanford University

Pengrui Han barryhan@carleton.edu)

> Equal contribution.Work done while Peiyang Song was a visiting researcher at Stanford University.

Abstract

Large Language Models (LLMs) have exhibited remarkable reasoning capabilities, achieving impressive results across a wide range of tasks. Despite these advances, significant reasoning failures persist, occurring even in seemingly simple scenarios. To systematically understand and address these shortcomings, we present the first comprehensive survey dedicated to reasoning failures in LLMs. We introduce a novel categorization framework that distinguishes reasoning into embodied and non-embodied types, with the latter further subdivided into informal (intuitive) and formal (logical) reasoning. In parallel, we classify reasoning failures along a complementary axis into three types: fundamental failures intrinsic to LLM architectures that broadly affect downstream tasks; application-specific limitations that manifest in particular domains; and robustness issues characterized by inconsistent performance across minor variations. For each reasoning failure, we provide a clear definition, analyze existing studies, explore root causes, and present mitigation strategies. By unifying fragmented research efforts, our survey provides a structured perspective on systemic weaknesses in LLM reasoning, offering valuable insights and guiding future research towards building stronger, more reliable, and robust reasoning capabilities. We additionally release a comprehensive collection of research works on LLM reasoning failures, as a GitHub repository at https://github.com/Peiyang-Song/Awesome-LLM-Reasoning-Failures, to provide an easy entry point to this area.

1 Introduction

“Failure is success if we learn from it.” – Malcolm Forbes

With the rise of powerful architectures (Vaswani et al., 2023; Jiang et al., 2024a; Gu and Dao, 2024; Hasani et al., 2020), efficient algorithms (Hu et al., 2021; Zhao et al., 2024b; Gretsch et al., 2024; 2025; Dao et al., 2022), and massive data (Cai et al., 2024; Raffel et al., 2020; Gao et al., 2020), Large Language Models (LLMs) have recently shown significant success across diverse domains. These range from traditional linguistic tasks such as machine translation (Zhu et al., 2024b; Tang et al., 2024), to mathematical (Shao et al., 2024; Yang et al., 2023a; 2024a) and even scientific (Zhang et al., 2024b; Wang et al., 2023b; Brodeur et al., 2024) discoveries. Among these achievements, reasoning as an emergent capability of LLMs (Wei et al., 2022a) has attracted particular interest (Huang and Chang, 2023; Yu et al., 2023b; Qiao et al., 2023).

LLMs have set impressive records in reasoning (Wu et al., 2025a; kıcıman2024causalreasoninglargelanguage; Plaat et al., 2024), though it remains controversial whether LLMs really leverage a human-like reasoning procedure when attempting these tasks (Jiang et al., 2024b; Fedorenko et al., 2024; Amirizaniani et al., 2024b; Zhang et al., 2022). This survey does not aim to settle this hot debate; rather we focus on an important area of study in LLM reasoning that has long been overlooked – LLM reasoning failures.

Extensive psychological research (Cannon and Edmondson, 2005; Maxwell, 2007; Coelho and McClure, 2004) underscores the importance of identifying and learning from failures in human development In fact, this theory has been confirmed even more broadly, in non-human animals (Spence, 1936).. Given that AI systems have historically drawn inspiration from human cognition (Schmidgall et al., 2023; Xu and Poo, 2023; Woźniak et al., 2020), we believe the same principle of learning from failures could similarly benefit the study of LLMs, since such failures can usually be traced back to fundamental elements and bring valuable insights to ultimate improvements (Dreyfus, 1992; Karl et al., 2024; An et al., 2024).

Despite some existing works that prospectively realized this importance and investigated LLM reasoning failures on a case-by-case basis (Williams and Huckle, 2024; Tie et al., 2024; Helwe et al., 2021; Borji, 2023), the topic remains fragmented, and underexplored as a unified research area. This fragmentation limits broader understanding, which is however a prerequisite for common patterns to be noticed, and thereby meaningful lessons to be derived. To close this gap, we present the first comprehensive survey dedicated to unifying studies on LLM reasoning failures. We identify meaningful patterns across failures, analyze underlying causes, and discuss potential mitigation strategies. We hope this work not only organizes the field but also stimulate further research and increased attention, toward improving the robustness and reliability of LLM reasoning. We additionally make public a comprehensive collection of research works on LLM reasoning failures, as a GitHub repository at https://github.com/Peiyang-Song/Awesome-LLM-Reasoning-Failures. This collection will be continuously updated as this area advances.

2 Definition and Formulation

2.1 Fundamentals of Reasoning

Human reasoning broadly refers to the ability to draw conclusions and make decisions based on available knowledge (Lohman and Lakin, 2011; Ribeiro et al., 2020). Within cognitive science and philosophy, reasoning has been studied through various frameworks. To systematically survey reasoning failures in LLMs, we propose a comprehensive taxonomy distinguishing reasoning along two primary axes: embodied versus non-embodied, with the latter further subdivided into informal and formal reasoning.

Non-embodied reasoning.

Non-embodied reasoning comprises cognitive processes not requiring physical interaction with environments. Within this category, informal reasoning encompasses intuitive judgments driven by inherent biases and heuristics, common in everyday decision-making and social activities (Piaget, 1952; Vygotsky, 1978; Kail, 1990). By contrast, formal reasoning involves explicit, rule-based manipulation of symbols, grounded in logic, mathematics, code, etc. (Copi et al., 2016; Mendelson, 2009; Liu et al., 2023b).

Embodied reasoning.

Embodied reasoning depends on physical interaction with environments, fundamentally relying on spatial intelligence and real-time feedback (Shapiro, 2019; Barsalou, 2008). This includes predicting and interpreting physical dynamics, and performing goal-directed behaviors constrained by real-world physical laws (Huang et al., 2022b; Lee-Cultura and Giannakos, 2020).

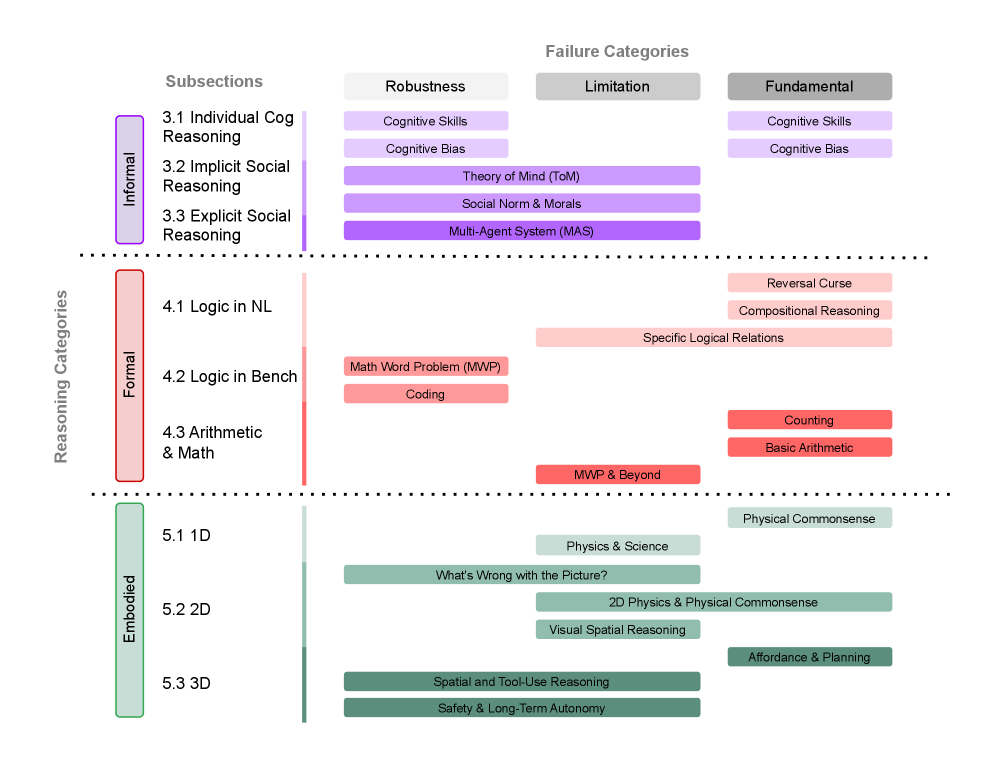

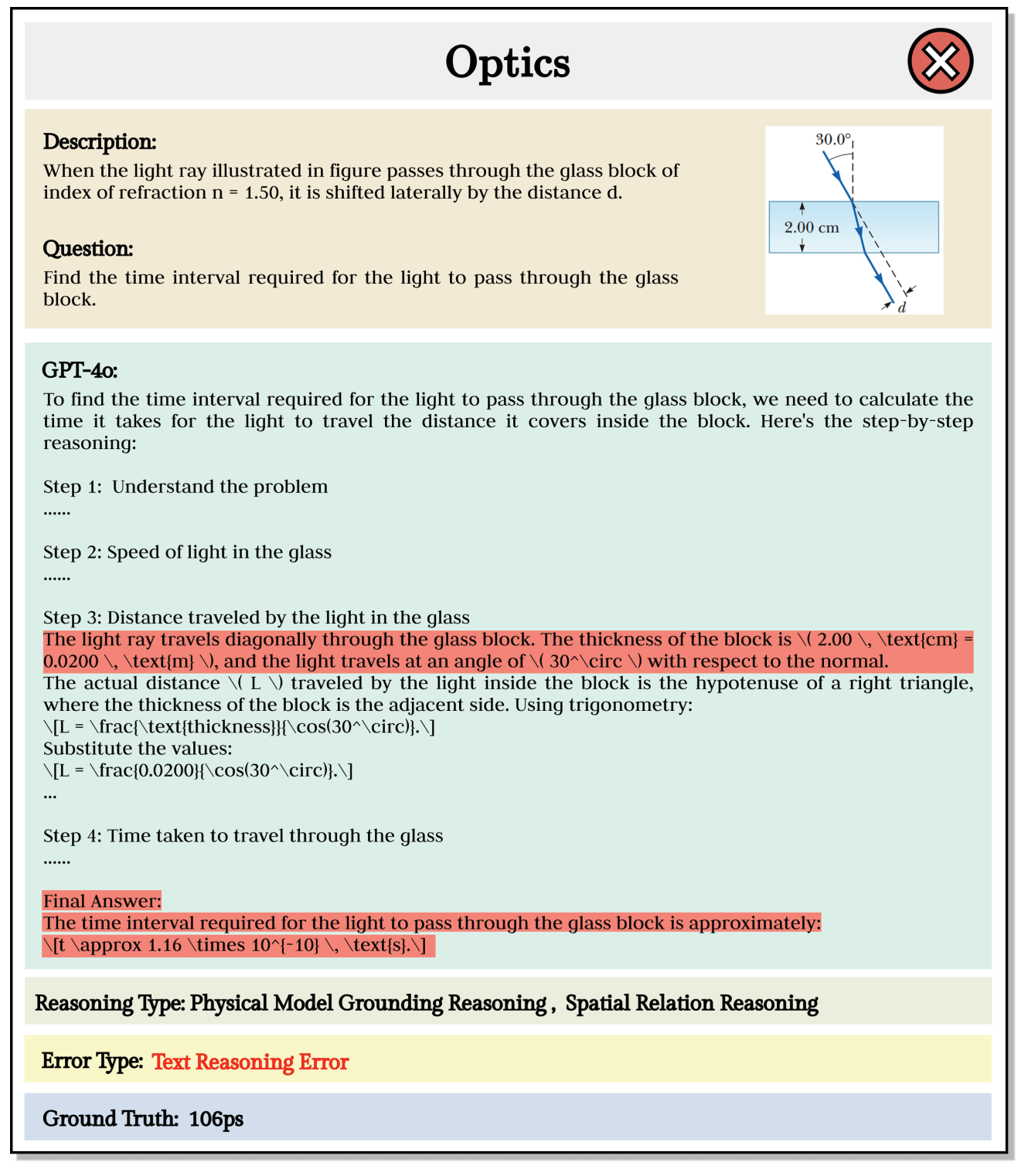

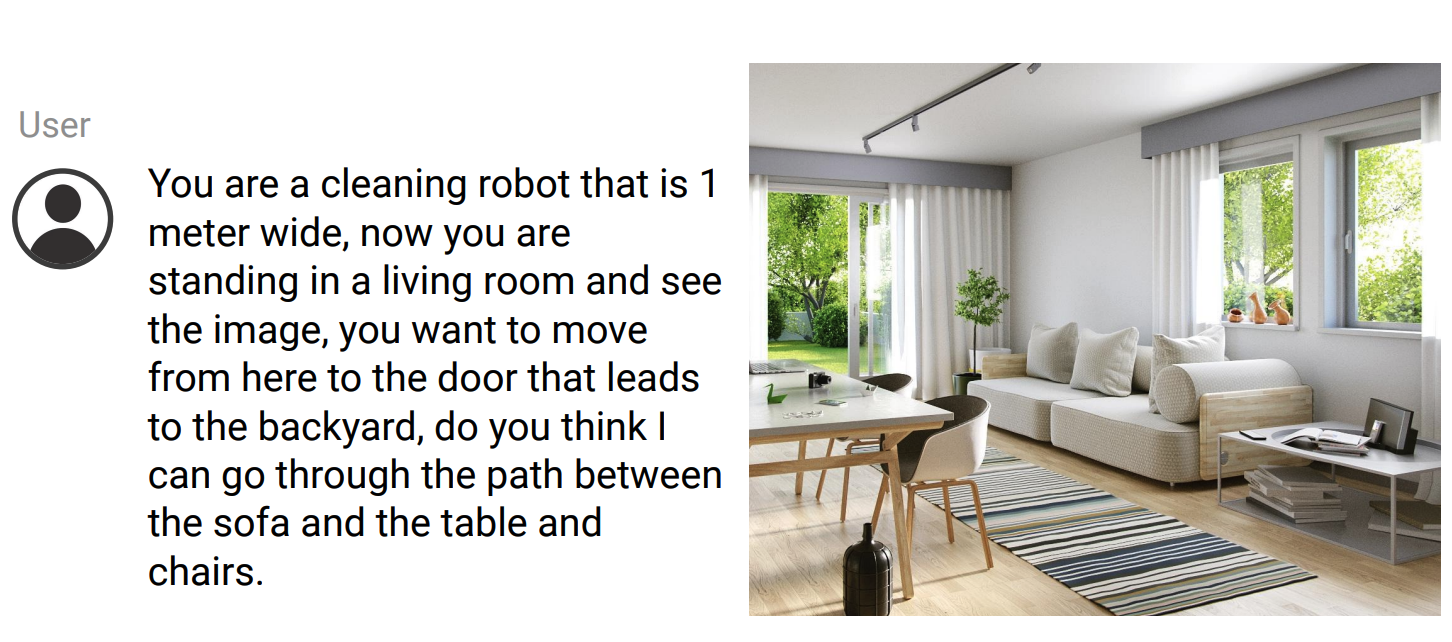

2.2 LLM Reasoning Failures & Common Research Practice

Despite advances in interpretability research (Dwivedi et al., 2023; Li et al., 2024e), LLMs remain largely black-box systems (Luo and Specia, 2024), reflecting the inherent complexity of human cognition they emulate (Castelvecchi, 2016). As such, reasoning abilities are typically assessed behaviorally by examining model outputs on carefully designed prompts and tasks (Ribeiro et al., 2020). We define LLM reasoning failures as cases where model responses significantly diverge from expected logical coherence, contextual relevance, or factual correctness. Failures can manifest in two broad ways. The first type is straightforward poor performance — the model fails decisively on a task, exposing clear deficiencies. The second, subtler type involves apparently adequate performance that is in fact unstable, indicating a robustness issue that reveals hidden vulnerabilities. The former category – straightforward failure – can be sub-divided into two, based on scope and nature. Fundamental failures are usually intrinsic to LLM architectures, manifesting broadly and universally across diverse downstream tasks. In contrast, application-specific limitations reflect shortcomings tied to particular domains of importance, where models underperform despite human expectations of competence. Together, these taxonomies — for reasoning and for failures — offer a comprehensive and mutually consistent framework. Figure 1 uses this framework to visualize a clear organization of topics in this survey.

Current research in this space typically begins with simple, intuitive tests that reveal glaring reasoning failures. These initial observations motivate larger-scale systematic evaluations, to confirm the generality and impact of identified failure modes. By explicitly defining and categorizing LLM reasoning failures according to our framework, this survey unifies fragmented research findings, highlights shared patterns, and directs focused efforts toward understanding and mitigating critical reasoning weaknesses. To help visualize the failure cases, we provide a few most representative examples for each of the failure case presented in this survey. The examples can be found in Appendix E.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Reasoning Categories and Failure Modes

### Overview

The image is a diagram categorizing reasoning abilities into Informal, Formal, and Embedded types. Each category is further broken down into subsections, and associated with potential failure categories: Robustness, Limitation, and Fundamental. The diagram uses color-coding to visually distinguish the different reasoning types and failure modes.

### Components/Axes

The diagram has two primary axes:

* **Vertical Axis:** "Reasoning Categories" with three main categories: Informal (pink), Formal (orange), and Embedded (green). These are numbered 3.1-3.3, 4.1-4.3, and 5.1-5.3 respectively.

* **Horizontal Axis:** "Failure Categories" with three categories: Robustness (light gray), Limitation (medium gray), and Fundamental (dark gray).

The diagram also includes subsections within each reasoning category, and specific failure modes associated with each subsection.

### Detailed Analysis or Content Details

**Informal Reasoning (Pink)**

* **3.1 Individual Cog Reasoning:** Associated with "Cognitive Skills" under Robustness and "Cognitive Skills" under Fundamental.

* **3.2 Implicit Social Reasoning:** Associated with "Cognitive Bias" under Robustness and "Cognitive Bias" under Fundamental.

* **3.3 Explicit Social Reasoning:** Associated with "Theory of Mind (ToM)" under Robustness, "Social Norm & Morals" under Limitation, and "Multi-Agent System (MAS)" under Limitation.

**Formal Reasoning (Orange)**

* **4.1 Logic in NL:** Associated with "Reversal Curse" and "Compositional Reasoning" under Limitation, and "Specific Logical Relations" under Fundamental.

* **4.2 Logic in Bench:** Associated with "Math Word Problem (MWP)" and "Coding" under Robustness.

* **4.3 Arithmetic & Math:** Associated with "MWP & Beyond" under Robustness, "Counting" and "Basic Arithmetic" under Fundamental.

**Embedded Reasoning (Green)**

* **5.1 1D:** Associated with "Physics & Science" and "What's Wrong with the Picture?" under Robustness, and "Physical Commonsense" under Fundamental.

* **5.2 2D:** Associated with "2D Physics & Physical Commonsense" under Robustness, "Visual Spatial Reasoning" under Limitation, and "Affordance & Planning" under Fundamental.

* **5.3 3D:** Associated with "Spatial & Tool-Use Reasoning" and "Safety & Long-Term Autonomy" under Robustness.

### Key Observations

* The "Fundamental" failure category appears to be consistently associated with core cognitive abilities (Cognitive Skills, Cognitive Bias, Counting, Basic Arithmetic).

* "Robustness" failures are more diverse, encompassing specific skills like Physics & Science, Math Word Problems, and Cognitive skills.

* "Limitation" failures seem to relate to higher-level reasoning abilities like Theory of Mind, Compositional Reasoning, and Visual Spatial Reasoning.

* The diagram suggests a hierarchy of reasoning complexity, moving from Informal (social and cognitive) to Formal (logical and mathematical) to Embedded (physical and spatial).

### Interpretation

This diagram presents a framework for understanding the different types of reasoning and the potential ways in which these reasoning processes can fail. The categorization into Informal, Formal, and Embedded reasoning reflects a progression in the complexity and abstraction of the reasoning task. The failure categories (Robustness, Limitation, and Fundamental) highlight different levels of cognitive vulnerability.

The association of "Fundamental" failures with core cognitive skills suggests that these failures represent basic limitations in the underlying cognitive architecture. "Robustness" failures indicate vulnerabilities to specific types of input or context, while "Limitation" failures point to constraints in the capacity for higher-level reasoning.

The diagram is likely intended to guide the development and evaluation of AI systems, by identifying the specific reasoning abilities that are most challenging to replicate and the types of failures that are most likely to occur. The diagram also provides a useful framework for understanding human reasoning errors. The use of color-coding and visual layout makes the information accessible and easy to understand.

</details>

Figure 1: A Taxonomy of LLM Reasoning Failures. We adopt a nuanced 2-axis structure (reasoning type $×$ failure type), with each row representing a reasoning category and each column a failure category. A more detailed explanation is presented in Section 2.

3 Reasoning Informally in Intuitive Applications

Humans naturally develop the capacity for informal reasoning early in life, relying on intuitive judgments shaped by cognitive processes and social experiences. Though often taken for granted, this forms the foundation of human reasoning and decision-making. In this section, we focus on failures exhibited by LLMs in such informal reasoning. We begin by examining reasoning failures in core cognitive abilities reflected in individual LLM behaviors; then explore those exposed in social contexts, expressed implicitly or explicitly.

3.1 Individual Cognitive Reasoning

Many reasoning failures exhibited by LLMs can be traced back to core human cognitive phenomena (Han et al., 2024b; Gong et al., 2024; Galatzer-Levy et al., 2024; Suri et al., 2024). These failures arise either because LLMs lack certain fundamental cognitive abilities possessed by humans – leading to errors that humans typically avoid (Han et al., 2024b) – or because LLMs replicate human-like cognitive biases and heuristics, resulting in analogous mistakes (Suri et al., 2024; Lampinen et al., 2024). In both cases, these failures relate closely to well-documented human cognitive phenomena and psychological evidence.

Fundamental Cognitive Skills.

Humans naturally possess a set of fundamental cognitive skills indispensable for reasoning. LLMs demonstrate systematic failures due to deficiencies in these areas. A prominent example is the set of core executive functions – working memory (Baddeley, 2020), inhibitory control (Diamond, 2013; Williams et al., 1999), and cognitive flexibility (Canas et al., 2006) – essential in human reasoning (Diamond, 2013). Working memory is the capacity to hold and manipulate information over short periods. LLMs’ limited working memory leads to failures when task demands exceed their capacity (Gong et al., 2024; Zhang et al., 2024a; Gong and Zhang, 2024; Upadhayay et al., 2025; Huang et al., 2025a). In particular, LLMs suffer from “proactive interference” to a much larger extent than humans, where earlier information significantly disrupts retrieval of newer updates (Wang and Sun, 2025). Inhibitory control – the ability to suppress impulsive or default responses when contexts demand – is also weak in LLMs, with them often sticking to previously learned patterns even when contexts shift (Han et al., 2024b; Patel et al., 2025). Lastly, cognitive flexibility, the skill of adapting to new rules or switching tasks efficiently, remains a challenge, especially in rapid task switching and adaptation to new instructions (Kennedy and Nowak, 2024).

Another key aspect is abstract reasoning (Guinungco and Roman, 2020), the cognitive ability to recognize patterns and relationships in intangible concepts. Even advanced LLMs struggle with abstract reasoning tasks, such as inferring underlying rules from limited examples, understanding implicit conceptual relationships, and reliably handling symbolic or temporal abstractions (Xu et al., 2023c; Gendron et al., 2023; Galatzer-Levy et al., 2024; Saxena et al., 2025).

These phenomena are fundamental reasoning failures that stem from intrinsic limitations of LLM architectures and training dynamics, and often manifest as robustness vulnerabilities across a wide range of tasks. Recent work attributes these failures to the underlying self-attention mechanism’s dispersal of focus under complex tasks (Gong and Zhang, 2024; Patel et al., 2025), and to the next token prediction training objective, which prioritizes statistical pattern completion over deliberate reasoning (Han et al., 2024b; enström2024reasoningtransformersmitigating). Some also point out that unlike humans – who develop fundamental cognitive functions through embodied, goal-driven interactions with the physical and social world (Pearce and Miller, 2025; Rodríguez, 2022; Jin et al., 2018) – LLMs learn passively from text alone, lacking grounding and experiential feedback to support the development. Efforts to enhance these skills correspondingly include advanced prompting like Chain-of-Thought (CoT) (Wei et al., 2022b), retrieval augmentation (Xu et al., 2023b), fine-tuning with deliberately injected interference (Li et al., 2022), multimodality (Hao et al., 2025), and architectural innovations to mimic human attention mechanisms (Wu et al., 2024d).

Cognitive Biases.

Cognitive biases – systematic deviations from rational judgment – are well-studied in human reasoning (Tversky and Kahneman, 1974; 1981). They arise from mental shortcuts, limited cognitive resources, or contextual influences, often leading to predictable errors. LLMs exhibit similar biases that systematically affect their reasoning across diverse tasks (Hagendorff, 2023; Bubeck et al., 2023). Since these biases are deeply ingrained from training data and model architecture, they permeate a wide range of downstream applications, necessitating careful identification and mitigation.

In humans, these biases become evident only when information is presented and their responses observed – similarly, in LLMs, cognitive biases manifest also through the processing of information. Here lie two interrelated factors: the content of information and the presentation of that information. Regarding content, LLMs struggle more with abstract or unfamiliar topics – a phenomenon known as the “content effect” (Lampinen et al., 2024) – and tend to favor information that aligns with prior context or assumptions, reflecting human-like confirmation bias (O’Leary, 2025b; Shi et al., 2024; Malberg et al., 2024; Wan et al., 2025; Zhu et al., 2024c). Social cognitive biases also influence LLM outputs, including group attribution bias (Hamilton and Gifford, 1976; Allison and Messick, 1985; Raj et al., 2025) and negativity bias (Rozin and Royzman, 2001), which prioritize popular content (Echterhoff et al., 2024; Lichtenberg et al., 2024; Jiang et al., 2025a) and negative inputs (Yu et al., 2024c; Malberg et al., 2024) respectively.

Equally important is how the same content is presented. LLMs are highly sensitive to the order in which information is given, exhibiting order bias (Koo et al., 2023; Pezeshkpour and Hruschka, 2023; Jayaram et al., 2024; Guan et al., 2025; Cobbina and Zhou, 2025), and show anchoring bias (Lieder et al., 2018; Rastogi et al., 2022), where early inputs disproportionately shape their reasoning (Lou and Sun, 2024; O’Leary, 2025a; Huang et al., 2025e; Wang et al., 2025b). Framing effects further influence outputs: logically equivalent but differently phrased prompts can lead to different results (Jones and Steinhardt, 2022; Suri et al., 2024; Nguyen, 2024; Lior et al., 2025; Robinson and Burden, 2025; Shafiei et al., 2025). Additionally, factors like narrative perspective (e.g., first-person vs. third-person) (Cohn et al., 2024; Lin et al., 2024b), prompt length or verbosity (Koo et al., 2023; Saito et al., 2023), and irrelevant or distracting information (Shi et al., 2023) further derail logical reasoning.

Cognitive biases constitute fundamental reasoning failures rooted in LLM training paradigms and architectures, and they manifest as robustness vulnerabilities across a wide range of downstream applications. The root causes of these cognitive biases in LLMs are threefold. First, biases are inherited from the pre-training data, where the linguistic patterns in human languages reflect cognitive errors (Itzhak et al., 2025). Second, architectural features of the model – such as the Transformer’s causal masking – introduce predispositions toward order-based biases independent of data (Wu et al., 2025b; Dufter et al., 2022). Third, alignment processes like Reinforcement Learning from Human Feedback (RLHF) amplify biases by aligning model behavior with human raters who are themselves biased (Sumita et al., 2025; Perez et al., 2023).

Mitigation strategies fall into three categories. Data-centric approaches focus on curating training data to reduce biased content (Sun et al., 2025a; Schmidgall et al., 2024; Han et al., 2024a). In-processing techniques, such as adversarial training, aim to prevent biased associations during model learning (Yang et al., 2023b; Cantini et al., 2024). Lastly, post-processing methods leverage prompt engineering or output filtering to steer model responses after training (Sumita et al., 2025; Lin and Ng, 2023). In this category, indirect methods like inducing specific model personalities have also shown promise in modulating biases (Shi et al., 2024; He and Liu, 2025). Nonetheless, even when mitigated in one context, cognitive biases often re-emerge when contexts shift. The diverse and penetrative nature of cognitive biases makes them difficult to be fully eliminated.

3.2 Implicit Social Reasoning

Certain cognitive reasoning failures manifest only within social contexts. We define implicit social reasoning as an individual model’s capacity to internally infer and reason about (1) others’ mental states (e.g., beliefs, emotions, intentions) and (2) shared social norms without requiring direct interaction.

Theory of Mind (ToM).

ToM is the cognitive ability to attribute mental states – beliefs, intentions, emotions – to oneself and others, and to understand that others’ mental states may differ from one’s own (Frith and Frith, 2005). ToM enables humans to interpret behaviors, predict actions, and navigate complex interpersonal interactions, central in social reasoning. Typically emerging in early childhood with milestones like passing false belief tasks (understand that others’ beliefs may be incorrect or different) (Wimmer and Perner, 1983), ToM has been a central focus in human psychology and cognitive science.

Under this inspiration, recent research evaluates the ToM capacity of LLMs, to gauge their ability to engage in social reasoning. Early studies focused on classic ToM tasks, such as false-belief (van Duijn et al., 2023; Kim et al., 2023), perspective-taking (infer what another individual perceives) (Sap et al., 2022), and unexpected content tasks (predicting what others would believe is inside a mislabeled unopened container) (Pi et al., 2024). Surprisingly, even advanced models such as GPT-4 struggle with these tasks trivial for human children. Moreover, minor modifications in task phrasing lead to drastic drops in performance, showing LLM ToM reasoning is unstable (Ullman, 2023; Kosinski, 2023; Pi et al., 2024; Shapira et al., 2023).

While there has been clear progress from early models like GPT-3 – which largely failed at ToM tasks – to newer models such as GPT-4o and reasoning models like o1-mini, which can solve many standard ToM tests, their underlying reasoning remains brittle under simple perturbations (Gu et al., 2024; Zhou et al., 2023d). Also, LLMs still struggle with higher-order, more complex aspects of ToM, such as predicting others’ behaviors, making appropriate moral or social judgments, and translating this understanding into coherent actions (He et al., 2023; Gu et al., 2024; Marchetti et al., 2025; Amirizaniani et al., 2024a; Strachan et al., 2024). Particularly, on dynamic, conversational benchmarks (Xiao et al., 2025; Kim et al., 2023), even state-of-the-art models fail to demonstrate consistent ToM capabilities and perform significantly worse than humans. Furthermore, current models exhibit deficits in emotional reasoning. This includes difficulties in emotional intelligence (EI) (Sabour et al., 2024; Hu et al., 2025; Amirizaniani et al., 2024b; Vzorinab et al., 2024), susceptibility to affective bias (Chochlakis et al., 2024), and limited understanding of cultural variations in emotional expression and interpretation (Havaldar et al., 2023).

While prompting techniques like CoT offer some improvements (Gandhi et al., 2024), fundamental gaps remain due to deeper limitations from the LLM architecture, training paradigms, and a lack of embodied cognition (Strachan et al., 2024; Sclar et al., 2023). Failures in this domain constitute important application-specific limitations, and because ToM underlies many socially grounded tasks, such failures often result in significant robustness vulnerabilities. Given ToM’s centrality to social reasoning, future work should move beyond prompting, to probe deeper root causes and general mitigation.

Social Norms and Moral Values.

LLMs also struggle with reasoning about social norms, moral values, and ethical principles that govern human behavior. Unlike humans, who develop moral and ethical reasoning through experience, LLMs, trained purely on text, often exhibit inconsistent and unreliable social, moral, and ethical reasoning (Ji et al., 2024; Jain et al., 2024b).

One key limitation is that LLMs cannot reason and apply moral values (Ji et al., 2024) and social norms (Jain et al., 2024b) consistently. They often produce contradictory ethical judgments or varied moral reasoning performance when questions are slightly reworded (Bonagiri et al., 2024), generalized (Tanmay et al., 2023), or presented in a different language (Agarwal et al., 2024). Fine-tuning further exacerbates these inconsistencies, leading to sometimes prioritizing task-specific optimization over ethical coherence (Yu et al., 2024a).

Beyond inconsistencies, LLMs show notable disparities compared to humans in reasoning with social norms and moral values. These models fail significantly in understanding real-world social norms (Rezaei et al., 2025), aligning with human moral judgments (Garcia et al., 2024; Takemoto, 2024), and adapting to cultural differences (Jiang et al., 2025b). Without consistent and reliable moral reasoning, LLMs are not fully ready for real-world decision-making involving ethical considerations.

These inconsistencies and disparities constitute important application-specific limitations for safety, privacy, sensitivity, and morality-related tasks, and such failures often create severe robustness vulnerabilities, including susceptibility to jailbreaks and other forms of manipulation. Many argue that these failures stem from a fundamental absence of robust, internalized representations of ethical principles, normative frameworks, and moral intentionality (Chakraborty et al., 2025; Wang et al., 2025a; Pock et al., 2023; Almeida et al., 2024). Although training procedures such as RLHF and instruction fine-tuning introduce alignment signals, they often operate superficially and fail to produce coherent moral behavior in complex contexts (Dahlgren Lindström et al., 2025; Wang et al., 2025a; Barnhart et al., 2025; Han et al., 2025). Current efforts to address these limitations mainly include prompt-based interventions (Chakraborty et al., 2025; Ma et al., 2023), internal activation steering (Tlaie, 2024; Turner et al., 2023), and direct fine-tuning on curated moral reasoning benchmarks (Senthilkumar et al., 2024; Karpov et al., 2024). However, in practice, these methods often suffer from the same limitations as RLHF, offering surface-level and task-specific improvements that remain vulnerable against prompt manipulations and jailbreaks.

3.3 Explicit Social Reasoning

In reasoning, “society” can refer to not just an abstract concept but real-world settings involving interactions among multiple agents. In Multi-Agent Systems (MAS), explicit social reasoning is the capacity of AI systems to collaboratively plan and solve complex tasks, an area challenging for current LLMs.

Currently, key challenges include (1) long-horizon planning, (2) communications and ToM, and (3) robustness and adaptability. Long-horizon planning is the ability to maintain coherent and coordinated strategies over extended interactions, where LLMs frequently fail (Li et al., 2023a; Cross et al., 2024; Guo et al., 2024c; Han et al., 2024c; Zhou et al., 2025) as they rely excessively on local or recent information (Piatti et al., 2024; Zhang et al., 2023; Han et al., 2024c). Furthermore, individual agents’ social reasoning failures (discussed in Section 3.2, e.g., inefficient communication and ToM) (Guo et al., 2024c; Agashe et al., 2024; Zhou et al., 2025), lead to misinterpretations and inaccurate representations of other agents, causing strategic misalignment (Pan et al., 2025; Li et al., 2023a; Cross et al., 2024; Han et al., 2024c). Lastly, MAS face robustness and adaptability issues (Li et al., 2023a; Cross et al., 2024), lacking resilience to disruptive or malicious disturbances (Huang et al., 2024) and struggling with task verification and termination (Pan et al., 2025; Baker et al., 2025).

These failures stem from both individual LLM capabilities and MAS system design (Pan et al., 2025), representing key application-specific failures, and they frequently manifest as robustness vulnerabilities in multi-agent settings. Standard LLMs, optimized for next-token prediction, lack the explicit reasoning depth needed for multi-step, jointly conditioned objectives, and their fragile ToM representations cause coordination breakdowns. Individual limitations in cognitive skills, such as working memory, and cognitive biases, such as the anchoring effect, can also lead to MAS failures like difficulties with long-horizon planning. On the system level, many MAS often lack effective robustness layers – clear role specifications, cross-verification among agents, and reliable termination checks – allowing errors to cascade (Huang et al., 2024; Pan et al., 2025).

Mitigation research thus targets (i) richer internal models like belief tracking and hypothesis testing (Li et al., 2023a; Cross et al., 2024), (ii) structured communication protocols with mandatory verification phases (Pan et al., 2025), and (iii) dedicated inspector or challenger agents that monitor and contest questionable outputs (Huang et al., 2024; Baker et al., 2025). While these approaches reduce errors, none eliminate them and all require significant task-specific engineering that is difficult to generalize. In parallel, the recent rise of context engineering (Mei et al., 2025) – which focuses on a systematic optimization of the entire information payload fed to an LLM during inference – is increasingly seen as a more robust alternative to traditional prompt engineering in MAS. Real-world deployment will hence require an integrated stack combining all three strands with domain fine-tuning and formal safety guarantees (Lindemann and Dimarogonas, 2025; de Witt, 2025).

4 Reasoning Formally in Logic

When reasoning goes beyond intuition, a formal framework is needed to ensure rigor. As introduced in Section 2, logic is directly about doing “correct” reasoning, ensuring premises support conclusions (Jaakko and Sandu, 2002). LLM failures in logical reasoning (Liu et al., 2025) thus pose serious risks, potentially leading to flawed thought processes and harmful decisions. Logic spans a continuum from implicit structures in natural languages (Iwańska, 1993), to explicit symbolic (Lewis et al., 1959) and mathematical (Shoenfield, 2018) representations. This section follows that progression, examining failures in increasingly formal reasoning paradigms.

4.1 Logic in Natural Languages

Reversal Curse.

While natural languages are not fully logical structures (Fedorenko et al., 2024), they do hold simple logical relations (Sampson, 1979; Stich, 1975) that humans trivially grasp. A representative failure of LLMs is reversal curse: despite being trained on “A is B,” models often fail to infer the equivalent “B is A” – a trivial bidirectional equivalence for humans. Such failures occur even when a factual sentence from training data is just restated as a question during inference. First observed by Berglund et al. (2023) as a fundamental failure that occur widely across tasks on GPT-based (Radford and Narasimhan, 2018) models, this phenomenon is later shown in Wu et al. (2024a) not to affect BERT (Devlin et al., 2019).

This failure has been attributed to uni-directional training objectives of Transformer-based LLMs (Lv et al., 2024; Lin et al., 2024c), which induce structural asymmetry in model weights (Zhu et al., 2024a) and inability to predict antecedent words within training data (Guo et al., 2024b; Youssef et al., 2024). Golovneva et al. (2024) further argues that scaling alone cannot resolve the issue due to Zipf’s law (Newman, 2005). Mitigation efforts accordingly center on reducing directional bias through training data augmentation. Early approaches syntactically reverse facts (Lu et al., 2024; Ma et al., 2024b), while later methods introduce substring-preserving reversals (Golovneva et al., 2024) and permuting semantic units in training data (Guo et al., 2024b). Despite differing in complexity, all methods share a common goal: exposing models to bidirectional formulations to restore logical symmetry.

Compositional Reasoning.

Compositional reasoning requires combining multiple pieces of knowledge or arguments into a coherent inference. Fundamental failures arise when LLMs are capable of each component but fail in integrating them. Studies show systematic failures in basic two-hop reasoning – combining only two facts across documents – and even worsening performance with increased compositional depth and the addition of distractors (Zhao and Zhang, 2024; Xu et al., 2024b; Guo et al., 2025a). This fundamental weakness extends beyond basic tasks, to compositions of math problems (Zhao et al., 2024c; Hosseini et al., 2024; Sun et al., 2025b) (i.e., LLMs succeed in individual problems but fail in composed ones), multi-fact claim verification (Dougrez-Lewis et al., 2024), and other inherently compositional tasks (Dziri et al., 2023).

This failure is attributed to an inability of holistic planning and in-depth thinking. CoT prompting improves on this by making reasoning steps explicit at inference time. Still, latent compositionality is more efficient in practice yet harder to achieve (Yang et al., 2024c). Toward this, Li et al. (2024f) identifies faulty implicit reasoning in mid-layer multi-head self-attention (MHSA) modules and edit them, while Zhou et al. (2024a) enhances training with graph-structured reasoning path data, similar to distilling CoT reasoning process into training data (Yu et al., 2024b). Both converge in spirit to improving latent compositional reasoning by explicitly guiding models’ internal reasoning mechanisms.

Specific Logical Relations.

Both reversal curse and compositional reasoning reflect fundamental failures affecting a broad range of reasoning tasks, exposed across general corpora or arbitrary logical statements. In contrast, another line of work focuses on specific logical relations, uncovering targeted LLM reasoning failures, which requires purpose-built datasets for quantitative analysis at scale. Using this approach, studies reveal LLM weaknesses in specific types of logic such as converse binary relations (Qi et al., 2023), syllogistic reasoning (Ando et al., 2023), causal inference (Joshi et al., 2024), and even shallow yes/no questions (Clark et al., 2019). Those weaknesses appear as both fundamental inabilities in reasoning with certain logic, and limitations in specific corresponding downstream applications: more complexities are added by testing divergences between factual inference and logical entailment (Chan et al., 2024), or putting causal reasoning in contexts (Zhao et al., 2024d). To scale up, some synthetically generate natural language data from symbolic templates (Wan et al., 2024; Wang et al., 2024; Gui et al., 2024). Alternatively, Chen et al. (2024d) seed known failures and leverage LLMs to synthetically expand the dataset. While root causes are harder to isolate for those specific logic, the curated datasets offer a natural mitigation by direct fine-tuning.

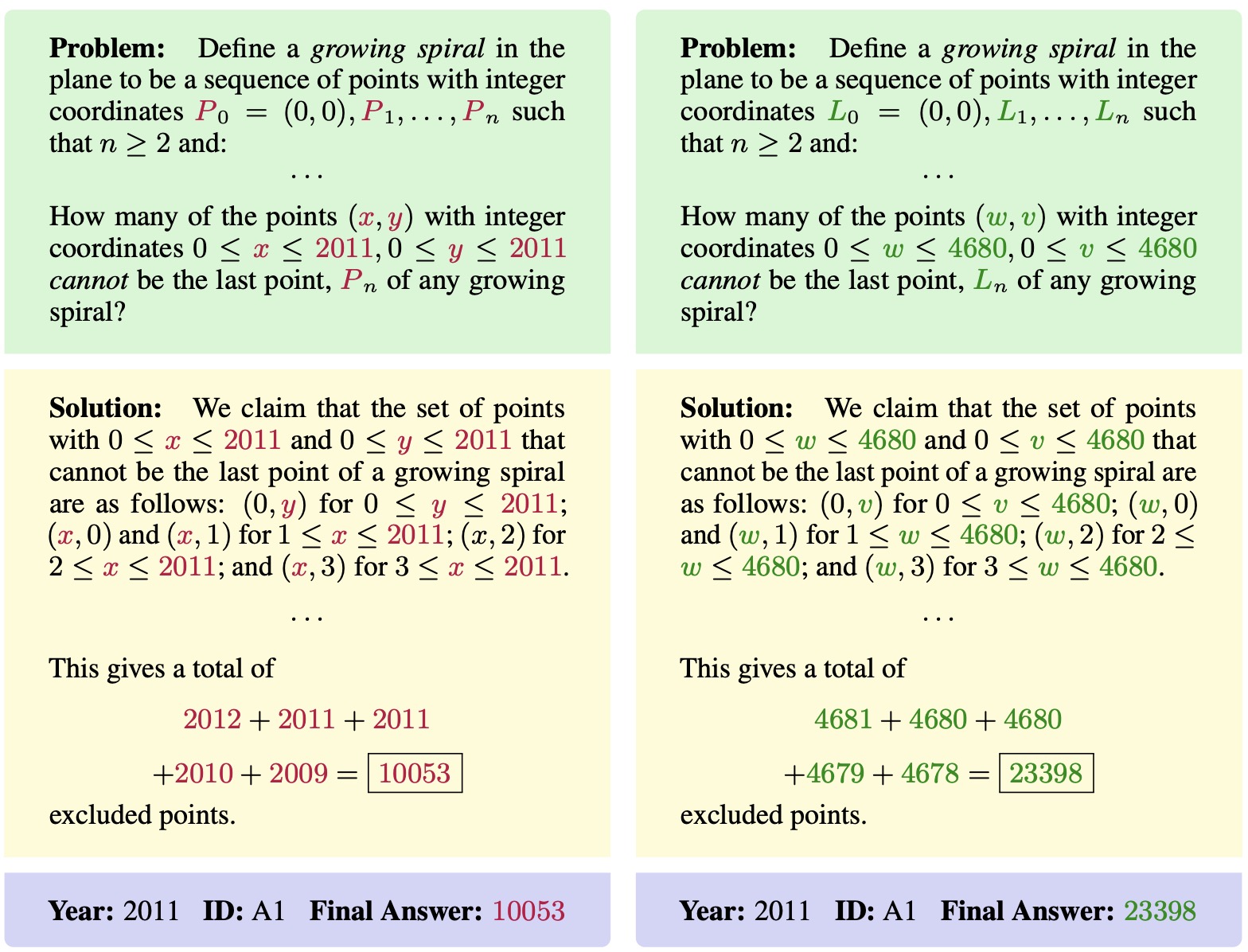

4.2 Logic in Benchmarks

While Section 4.1 studies LLM reasoning failures directly within natural language logic, another growing body of work leverages logical structures implicit in benchmarks to systematically uncover robustness issues in LLM reasoning. Motivated by rising concerns about the reliability of static benchmarks (Zhou et al., 2023c; Zheng et al., 2024b; Xu et al., 2024a; Patel et al., 2021), these studies introduce logic-preserving transformations based on particular task structures, such as reordering options in multiple-choice questions (MCQs) (Zheng et al., 2023; Pezeshkpour and Hruschka, 2023; Alzahrani et al., 2024; Gupta et al., 2024; Ni et al., 2024), rearranging parallel premises and events (Chen et al., 2024c; Yamin et al., 2024), or superficially editing unimportant contexts (e.g., character names) (Jiang et al., 2024b; Mirzadeh et al., 2024; Shi et al., 2023; Wang and Zhao, 2024). Such modifications keep the tasks semantically the same. Performance drops thus point to reduced trustworthiness and reveal critical robustness issues: despite strong static benchmark scores, the model’s reasoning must remain consistent on the reasoning tasks being tested.

Math Word Problem (MWP) Benchmarks.

Certain benchmarks inherently possess richer logical structures that facilitate targeted perturbations. MWPs exemplify this, as their logic can be readily abstracted into reusable templates. Researchers use this property to generate variants by sampling numeric values (Gulati et al., 2024; Qian et al., 2024; Li et al., 2024b) or substituting irrelevant entities (Shi et al., 2023; Mirzadeh et al., 2024). Structural transformations – such as exchanging known and unknown components (Deb et al., 2024; Guo et al., 2024a) or applying small alterations that change the logic needed to solve problems (Huang et al., 2025b) – further highlight deeper robustness limitations.

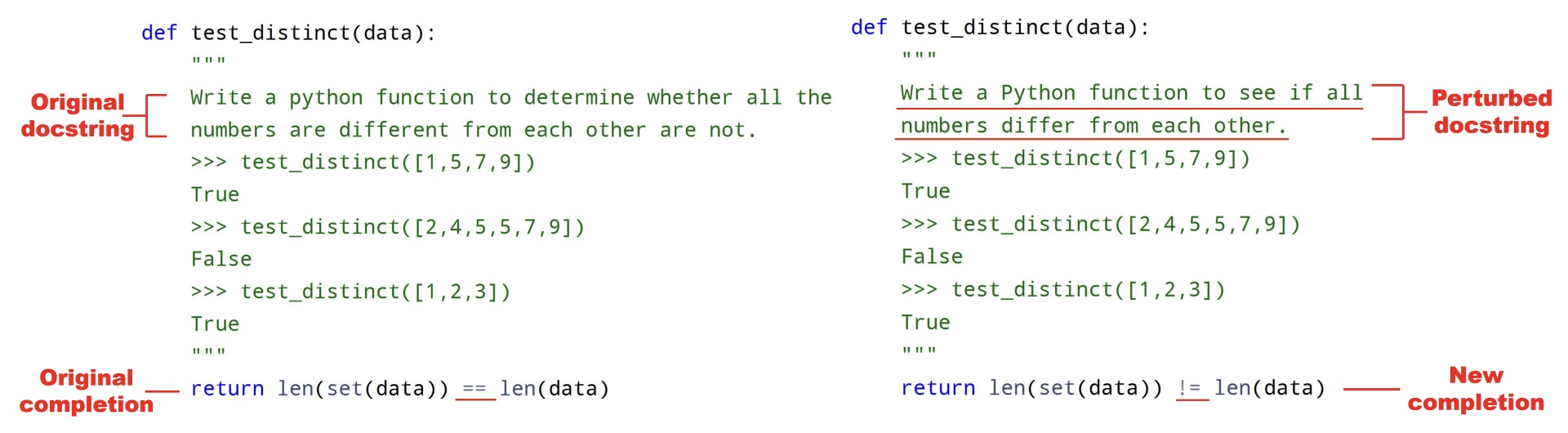

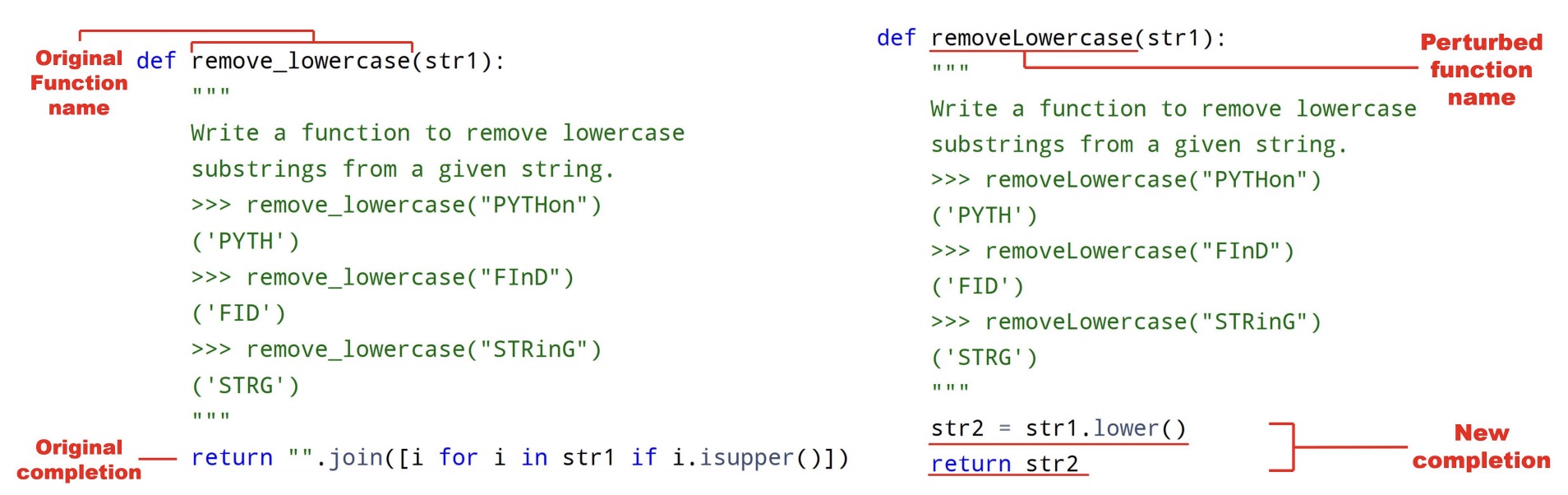

Coding Benchmarks.

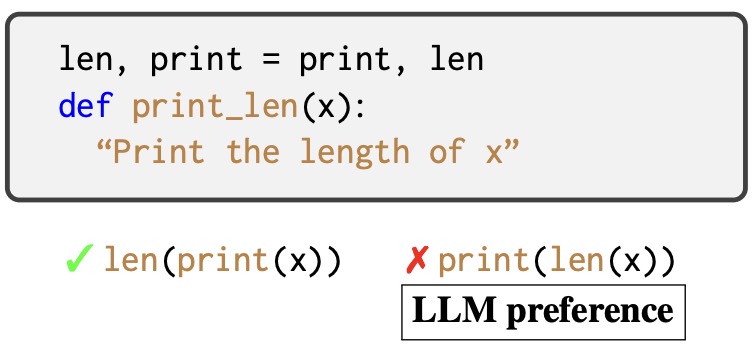

Another example is coding benchmarks, which ask to generate code snippets based on function definitions, doc strings specifying coding tasks, and optional starter code. Common transformations include syntactically editing doc strings (Xia et al., 2024; Wang et al., 2022; Sarker et al., 2024; Roh et al., 2025), renaming functions and variables (Wang et al., 2022; Hooda et al., 2024), and altering control-flow logic such as swapping if-else cases (Hooda et al., 2024). Beyond preserving the task logic, some studies introduce adversarial code changes to test whether LLMs identify and adapt to them (Miceli-Barone et al., 2023; Dinh et al., 2023), thereby evaluating deeper reliability. Beyond perturbations, a rising approach utilizes meta-theorems such as the Monadic Second-Order logic from CS theory to synthesize algorithmic coding problems at scale (Beniamini et al., 2025), posing a significant challenge even for state-of-the-art large reasoning models (LRMs) (Xu et al., 2025a).

Mitigation & Extensions.

These failures are attributed to a lack of robustness or overfitting to public datasets. Robustness-related issues are commonly mitigated by applying perturbations to diversify training data (Patel et al., 2021), thus enhancing resilience to variations. Though effective, these approaches are expensive in compute and limited in domain, making them hard to generalize. Overfitting concerns are addressed through dynamically evolving (Jain et al., 2024a; White et al., 2024) or privately maintained datasets (Rajore et al., 2024). They ensure rigorous evaluation, a necessary first step for steering LLM improvement toward better reasoning in the benchmark subjects.

Beyond individual benchmarks, Hong et al. (2024) automates a set of transformations across math and coding benchmarks, and Wu et al. (2024e) alters common assumptions of well-known tasks. Shojaee et al. (2025) further moves beyond standard math and coding benchmarks – which assess models solely by final-answer accuracy – by evaluating them on logic puzzles like the Tower of Hanoi, where both reasoning steps and solutions can be systematically assessed. The study finds that even state-of-the-art LRMs suffer an “accuracy collapse” as puzzle complexity increases, though Lawsen (2025) criticizes aspects of the experimental design, suggesting these may unfairly impact reported accuracy.

4.3 Arithmetic & Mathematics

Mathematics, historically a universal framework for rigorous reasoning (Shoenfield, 2018), has exposed fundamental limits in LLM reasoning, particularly in arithmetic-related tasks.

Counting.

Despite its simplicity, counting poses a notable fundamental challenge for LLMs (Xu and Ma, 2024; Chang and Bisk, 2024; Zhang and He, 2024; Fu et al., 2024; Conde et al., 2025; Yehudai et al., 2024), even the reasoning ones (Malek et al., 2025), which extend to basic character-level operations like reordering or replacement (Shin and Kaneko, 2024) and affect a wide range of downstream reasoning applications (Vo et al., 2025; Guo et al., 2025b; Parcalabescu et al., 2021). Although the failures manifest at the application level, much work suggest that they originate primarily from architectural and representational limits, including tokenization (Zhang et al., 2024f; Shin and Kaneko, 2024), positional encoding (Chang and Bisk, 2024), and training data composition (Allen-Zhu and Li, 2024), rather than from superficial prompting or task framing on the application-level. Mitigation via supervised fine-tuning (Zhang and He, 2024) and engaged reasoning (Xu and Ma, 2024) have been proposed, yet robust counting remains elusive for current models. Since the limitations largely arise from current LLM architectures, future work should consider deeper mitigation through architectural innovations.

Basic Arithmetic.

Another fundamental failure is that LLMs quickly fail in arithmetic as operands increase (Yuan et al., 2023; Testolin, 2024), especially in multiplication. Research shows models rely on superficial pattern-matching rather than arithmetic algorithms, thus struggling notably in middle-digits (Deng et al., 2024). Surprisingly, LLMs fail at simpler tasks (determining the last digit) but succeed in harder ones (first digit identification) (Gambardella et al., 2024). Those fundamental inconsistencies lead to failures for practical tasks like temporal reasoning (Su et al., 2024).

These issues stem from heuristic-driven reasoning strategies (Nikankin et al., 2024) and limited numerical precision (Feng et al., 2024a). Proposed solutions include detailed step-by-step training datasets (Yang et al., 2023c), digit-order reversals to focus attention on least significant digits – mirroring human multiplication strategies (Zhang-Li et al., 2024; Shen et al., 2024), LLM self-improvement methods (Lee et al., 2025), and neuro-symbolic augmentations that enable internal arithmetic reasoning (Dugan et al., 2024). Despite these advances, fundamental research on intrinsic arithmetic capabilities is increasingly overshadowed by the prevalent reliance on external tool use.

Math Word Problems & Beyond.

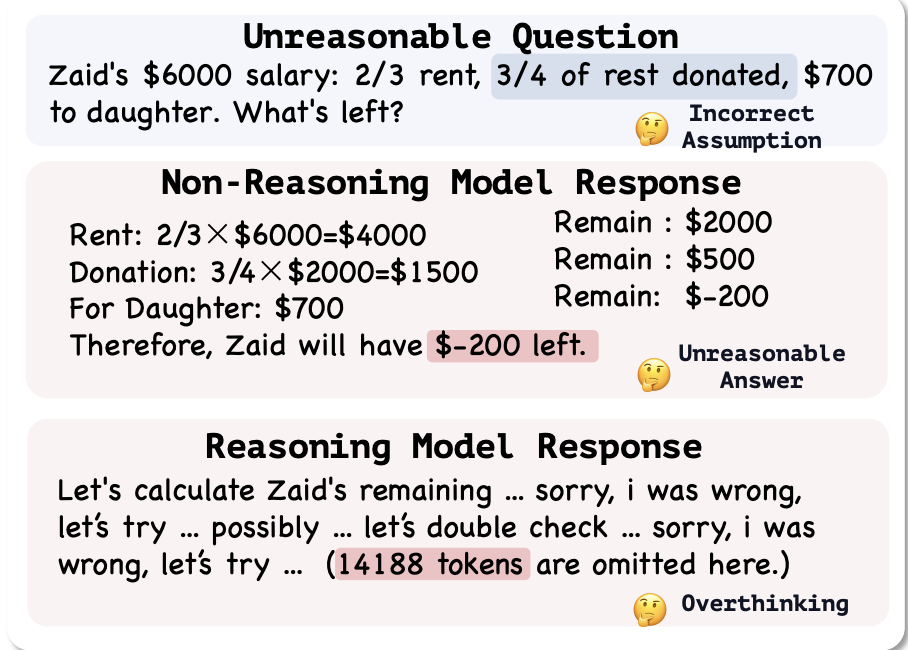

Beyond counting and basic arithmetic – two fundamental failures that propagate into many downstream reasoning applications – Math Word Problems (MWPs) represent a more specific yet highly consequential application domain. Math Word Problems (MWPs) combine arithmetic with contextual logical reasoning, making them a prominent application for assessing LLM capabilities. Beyond using transformations to expose reasoning flaws (Section 4.2), research identifies challenges ranging from specific simple tasks (Nezhurina et al., 2024) to large-scale evaluations on a domain of math (Wei et al., 2023b; Boye and Moell, 2025; Fan et al., 2024; Sun et al., 2025b). Additionally, LLMs exhibit susceptibility when faced with unsolvable or faulty MWPs (Ma et al., 2024a; Rahman et al., 2024; Tian et al., 2024). LLMs struggle even in assessing reasoning process on MWPs (Zhang et al., 2024g), an arguably easier task than generation. Given these persistent challenges, current efforts in MWPs prioritize developing general methods to improve overall reasoning performance rather than investigating and addressing individual failures.

5 Reasoning in Embodied Environments

Reasoning is not merely an abstract process; it is deeply grounded in reality (Shapiro and Spaulding, 2024), requiring the ability to perceive, interpret, predict, and act within the physical world, with accurate understanding of spatial relationships, object dynamics, and physical laws (Lee-Cultura and Giannakos, 2020). While humans (Varela et al., 2017) – and even many animals (Andrews and Monsó, 2021) – develop such embodied reasoning naturally through sensory and motor experiences, LLMs remain fundamentally limited by their lack of true physical grounding in the physical world. This gap leads to systematic errors and unrealistic predictions when LLMs attempt even basic physical reasoning (Wang et al., 2023c; Ghaffari and Krishnaswamy, 2024a). Despite growing interest in spatial intelligence, research into LLMs’ physical reasoning failures is still sparse. In this section, we survey failures across three progressively complex embodied reasoning modalities: (1) 1D text-based, (2) 2D perception-based, and (3) 3D real-world physical reasoning.

5.1 1D – Text-Based Physical Reasoning Failures

Text-Based Physical Commonsense Reasoning.

Physical commonsense reasoning refers to the intuitive understanding of how objects interact in the physical world. Failures of LLMs include lack of knowledge about object attributes (e.g., size, weight, softness) (Wang et al., 2023c; Liu et al., 2022b; Shu et al., 2023; Kondo et al., 2023), spatial relationships (e.g., above, inside, next to) (Liu et al., 2022b; Shu et al., 2023; Kondo et al., 2023), simple physical laws (e.g., gravity, motion, and force) (Gregorcic and Pendrill, 2023), and object affordance (possible actions/reactions an object can make) (Aroca-Ouellette et al., 2021; Adak et al., 2024; Pensa et al., 2024). Humans acquire this kind of reasoning effortlessly through embodied experience, whereas LLMs struggle in it, as they rely solely on textual data without direct perceptual or embodied experience. Even in purely text-based settings, when tasks require more than semantic comprehension, demanding real-world understanding, LLMs exhibit systematic failures. These failures are fundamental to current LLMs. While their architectures and training paradigms support impressive language-based learning, they lack the physical grounding.

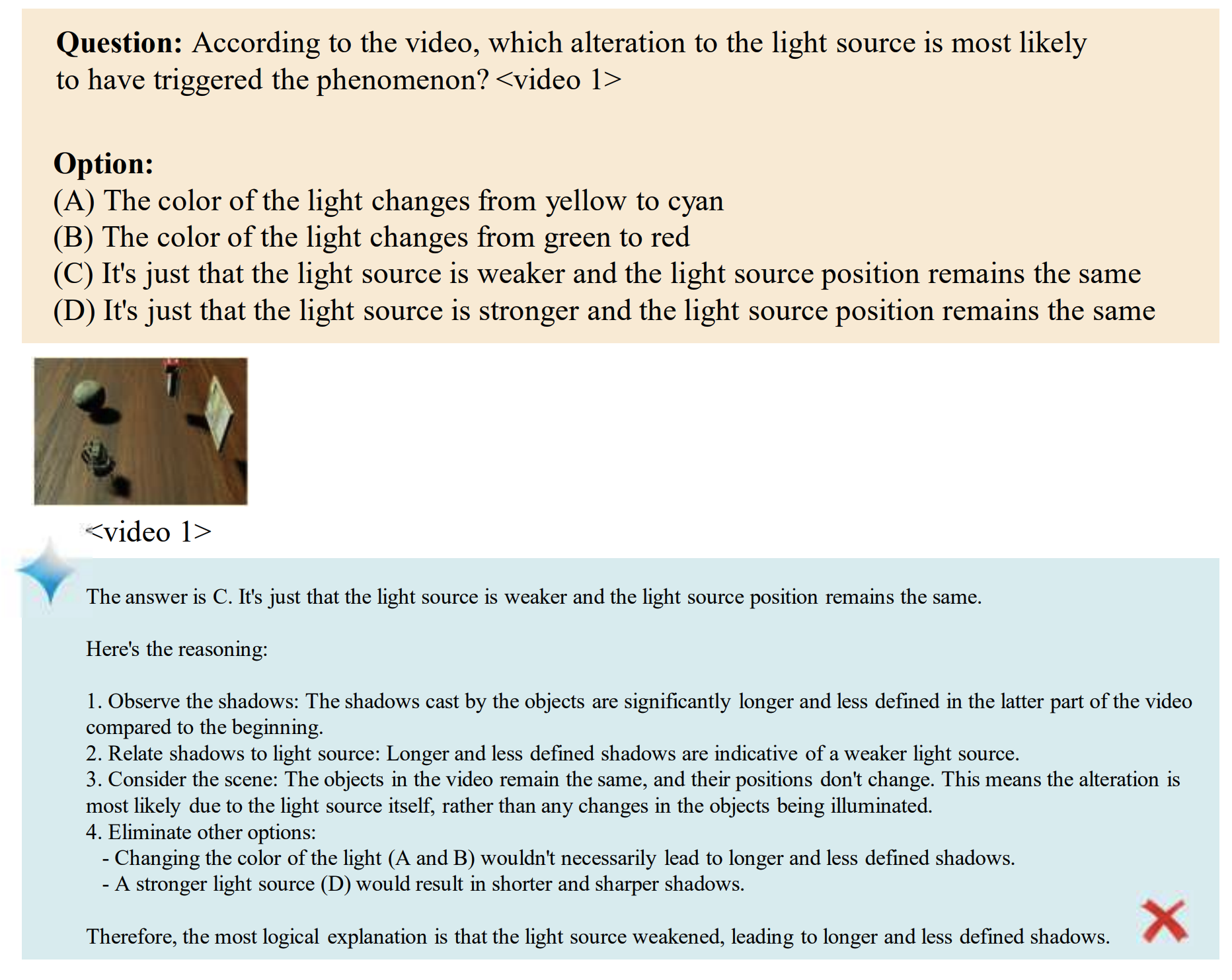

Physics & Scientific Reasoning.

Beyond basic physical commonsense, LLMs struggle with formal physics reasoning and scientific problem-solving, which require not just factual recall and intuition but multi-step logical deduction, quantitative reasoning, and correct use of physical laws – areas where even state-of-the-art models like o1 (Jaech et al., 2024) and o3-mini (OpenAI, 2025) have notable deficits (Zhang et al., 2025a; Xu et al., 2025b; Gupta, 2023; Chung et al., 2025; Zhang et al., 2025b; Qiu et al., 2025). Notably, even when LLMs possess these scientific skills, they often fail to apply them effectively in complex problems and real-world scientific discovery (Jaiswal et al., 2024; Ouyang et al., 2023; Chen et al., 2025). These failures result in strong limitations in LLMs’ application in scientific domains.

Text-Based Mitigation.

These failures largely reflect limitations inherent to the text modality, since semantic and linguistic understanding alone cannot guarantee grounded physical insight (Wang et al., 2023c; Zhang et al., 2025b). Text-based mitigation strategies focus on three fronts: training, prompting, and integration with external tools. First, LLMs are fine-tuned on corpora that explicitly encode structured physical knowledge – such as object attributes, spatial relationships, or physical laws – to better align model priors with real-world dynamics (Lyu et al., 2024; Wang et al., 2023c). Second, prompting methods like CoT encourage models to reason explicitly, reducing reliance on shallow text-based pattern-matching and enabling discovery of more nuanced causal and spatial relationships (Wei et al., 2022b; Ding et al., 2023). Third, LLMs are increasingly paired with external tools – such as code executors or physics engines – that allow models to verify, simulate, or compute outcomes directly and tangibly (Ma et al., 2024c; Cherian et al., 2024).

5.2 2D – Perception-Based Physical Reasoning Failures

What’s Wrong with the Picture?

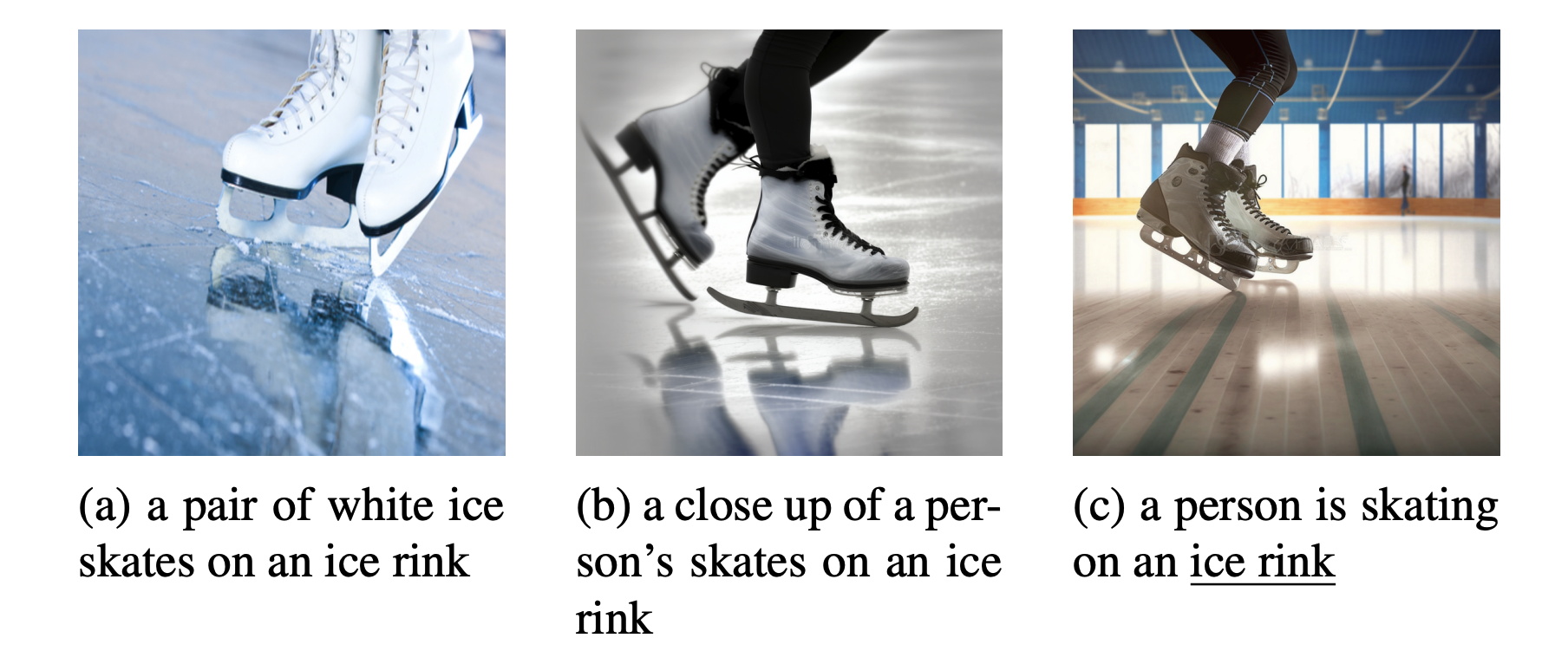

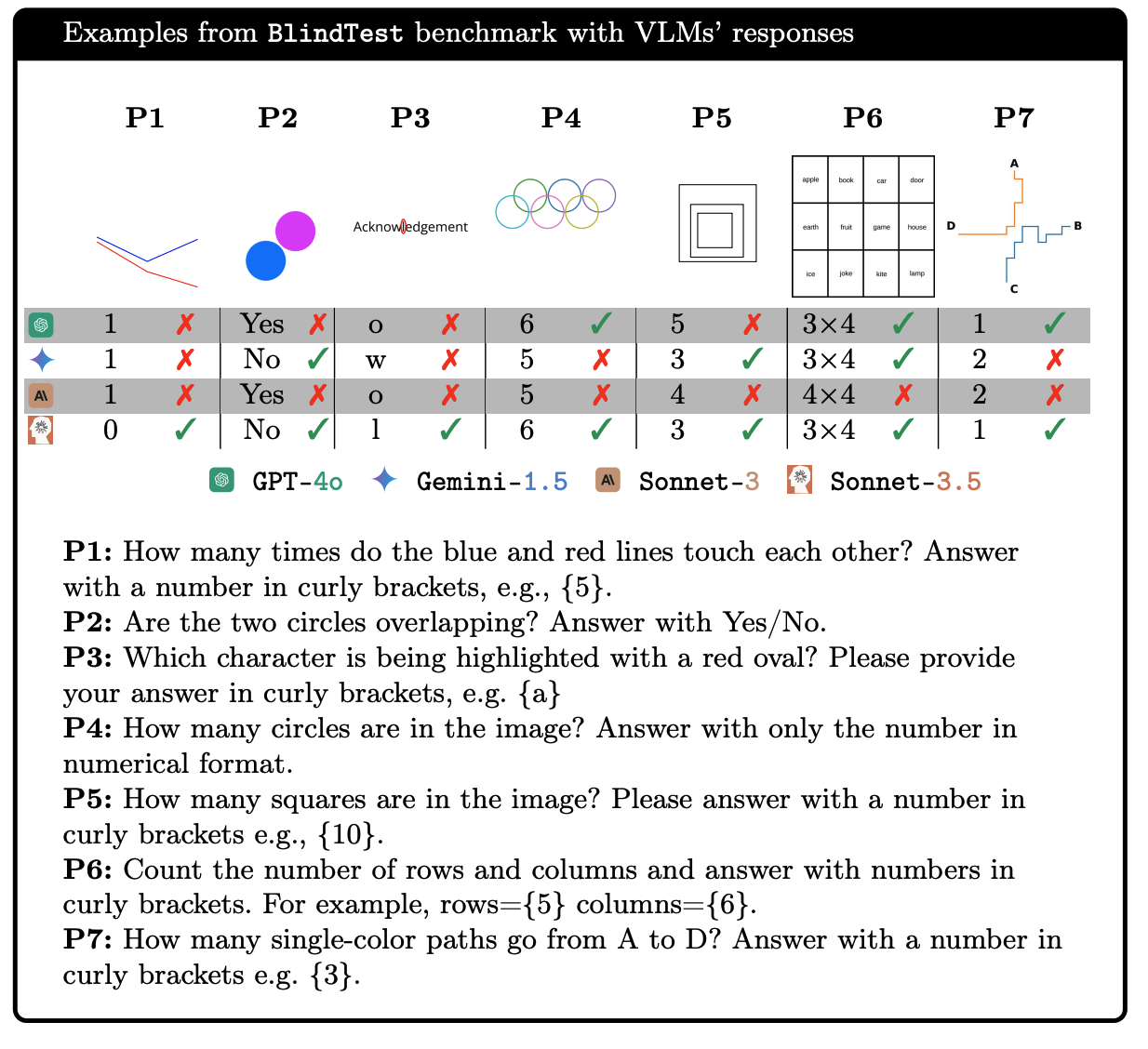

The classic “What’s Wrong with the Picture?” visual reasoning game challenges participants to spot anomalies in static images. Applied to vision-language models (VLMs), similar tasks reveal surprising failures in simple tasks such as anomaly detection (Bitton-Guetta et al., 2023; Zhou et al., 2023b), object counting and overlap identification (Rahmanzadehgervi et al., 2024), and spatial relation understanding from the image content (Liu et al., 2023a; Zhao et al., 2024a). These failures constitute key perception-related limitations and robustness vulnerabilities.

2D Physics and Physical Commonsense.

Extending beyond detecting simple anomalies or object properties in static images, VLMs face deeper challenges reasoning about the physics in visual contexts. Despite the addition of visual inputs, VLMs still struggle with physical commonsense (Li et al., 2024d; Ghaffari and Krishnaswamy, 2024b; Schulze Buschoff et al., 2025; Dagan et al., 2023; Balazadeh et al., 2024b; Chow et al., 2025; Bear et al., 2021; Xu et al., 2025c) and advanced physics (Ates et al., 2020; Anand et al., 2024; Shen et al., 2025), exhibiting performance gaps similar to those seen in text-only settings discussed in Section 5.1. Similar to the 1D setting, these weaknesses reflect fundamental failures of current models and lead to significant limitations in applying them to scientific and perception-based domains.

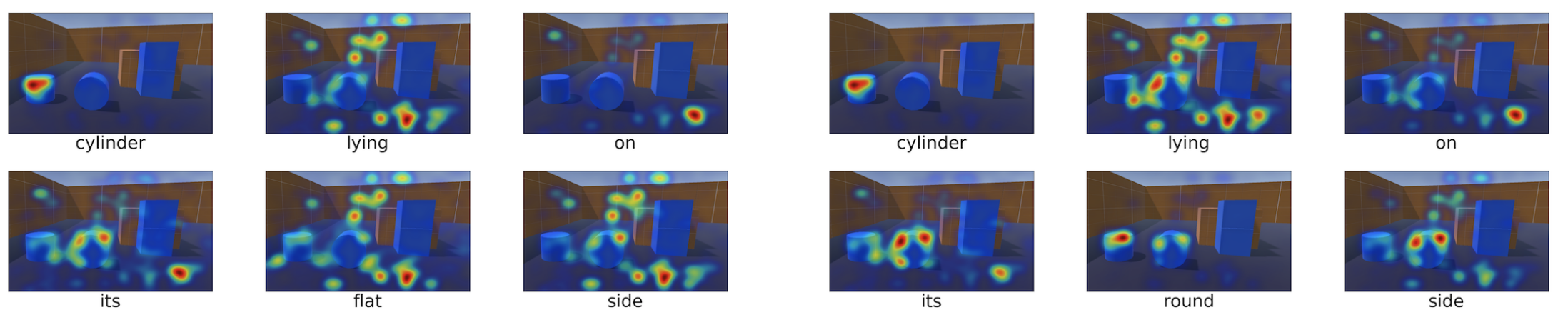

Visual Input for Spatial Reasoning.

Real-world spatial reasoning requires understanding evolving spatial relationships rather than isolated snapshots. Recent works use 2D simulated environments to test models’ grasp of motion and object interactions (e.g., predicting post-impact trajectories) (Cherian et al., 2024), spatial prediction and manipulation (e.g., object placement for stability) (Ghaffari and Krishnaswamy, 2024a), spatial communication and alignment (e.g., conveying location information) (Kar et al., 2025), and embodied planning in multi-step tasks (Chia et al., 2024; Paglieri et al., 2024; Xu et al., 2025c). While VLMs exhibit some basic spatial knowledge, they consistently fail to compose and apply it in dynamic, interactive tasks, revealing a gap in structured spatial reasoning. This failure is an indication of limitations on 2D relevant applications.

Perception-Based Mitigation.

These errors arise from three key sources. First, models often over-rely on text or common scenarios from their training data, rather than accurately interpreting visual inputs (Deng et al., 2025a; Bitton-Guetta et al., 2023; Zhou et al., 2023b). Second, some failures may be explained by the binding problem from cognitive science, where the brain – or a model – struggles to process multiple distinct objects simultaneously due to limited shared resources (Campbell et al., 2025). Third, just as language alone does not guarantee grounded physical understanding, visual inputs alone may also lack sufficient spatial semantics; plain image recognition does not automatically confer an understanding of spatial object dynamics and causality (Chen et al., 2024a; Qi et al., 2025). To mitigate, recent work focuses on curating balanced, augmented datasets to reduce bias toward text inputs, or directly using 2D physics data to improve physical understanding (Deng et al., 2025a; Balazadeh et al., 2024a). Another strategy targets training and model architecture (Cheng et al., 2024), by introducing spatially grounded, sequential attention mechanisms (Izadi et al., 2025) and leveraging reinforcement learning to align models with spatial commonsense (Sarch et al., 2025). Finally, beyond end-to-end learning, integration with external physical simulation tools has also emerged, to enable explicit trial-and-error (Liu et al., 2022a; Cherian et al., 2024; Zhu et al., 2025).

5.3 3D – Real-World Physical Reasoning Failures

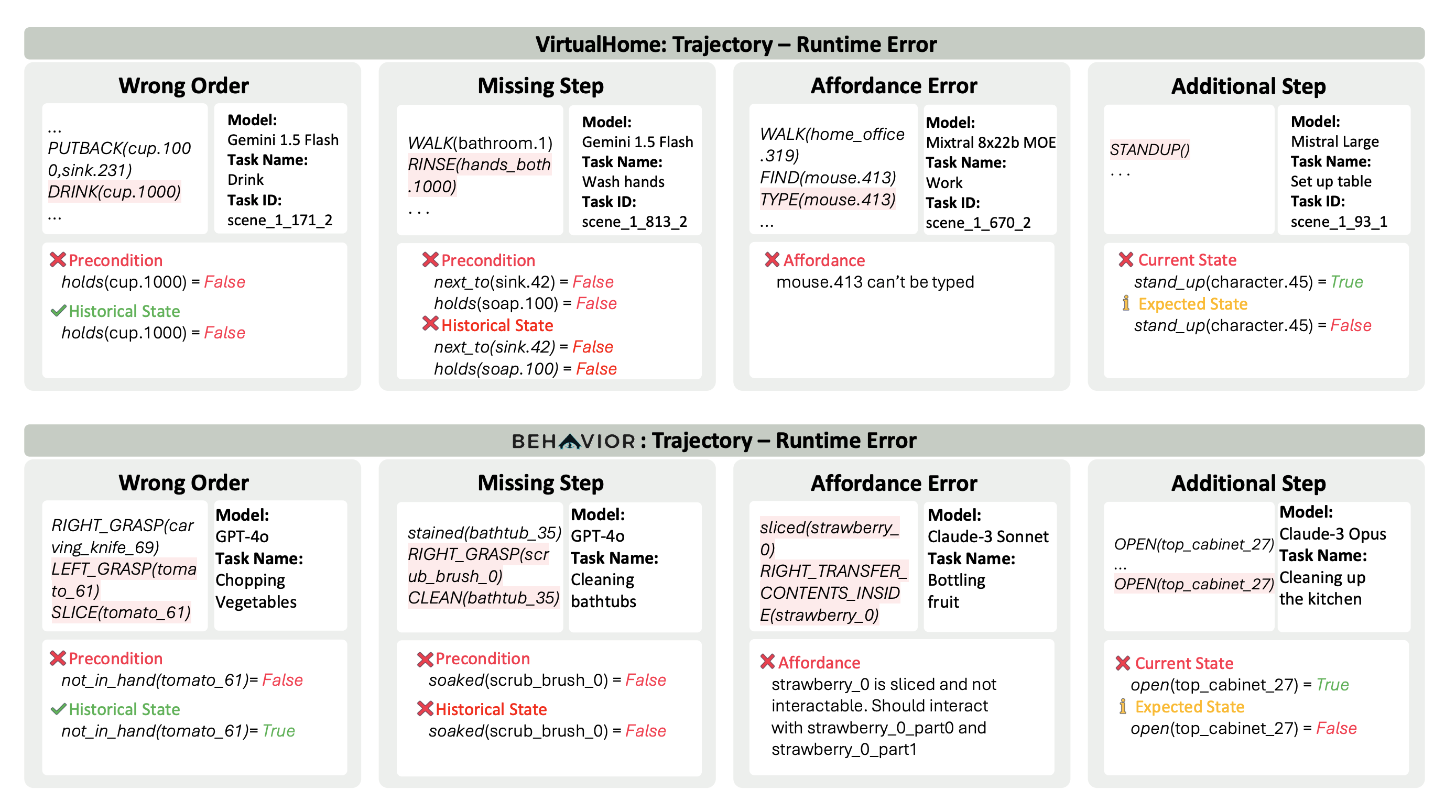

Real embodied reasoning requires agents to actively interact with their environment, through robotics or interactive simulations that go beyond static images or simple 2D snapshots. Such agents must process real-time goals and feedback, and execute physical actions. Unlike 1D (text-only) and 2D (image-based) tasks, 3D embodied reasoning centers on action rather than passive analysis. Despite advances in robotics and embodied AI, LLMs and VLMs face persistent challenges including inaccurate spatial modeling, unrealistic affordance prediction, tool-use failures, and unsafe behaviors. This subsection highlights these key failure cases from both simulated and real-world studies.

Real-World Failures in Affordance and Planning.

A key failure is models’ inability to generate feasible and coherent action plans. LLMs and VLMs often produce physically impossible or inefficient actions due to affordance errors (incorrect reasoning about possible object actions) (Ahn et al., 2022; Li et al., 2025; Hu et al., 2024; Huang et al., 2022a; Jin et al., 2024) and causal real-world reasoning limitations that cause illogical or looping behaviors (Jin et al., 2024; Hu et al., 2024). These fundamental shortcomings in modeling real-world affordances and planning significantly constrain the deployment of LLMs in embodied and real-world applications, motivating emerging research on world models and robotics systems that can more effectively perceive, plan, and interact with the physical environment.

Spatial and Tool-Use Reasoning.

Even when LLMs successfully decompose tasks and generate seemingly valid plans, failures arise due to poor spatial reasoning (Dao and Vu, 2025; Mecattaf et al., 2024) and the inability to generalize tool-use strategies (Xu et al., 2023a). Concretely, LLMs often struggle with 3D distance estimation (Mecattaf et al., 2024; Chen et al., 2024a), object localization (Mecattaf et al., 2024), and multi-step manipulation (Guran et al., 2024), leading to systematic failures in both spatial awareness and interaction with physical environments. These failures limit the adaptability of LLMs in many real-world settings where they must quickly understand, adapt to, and utilize the environment.

Safety and Long-Term Autonomy.

Safety and reliability of LLM-driven embodied agents are ongoing concerns. LLM-generated robotic task plans are highly sensitive to prompt phrasing (Liang et al., 2023) and vulnerable to adversarial manipulation (Zhang et al., 2024c). Moreover, these systems fail to align with human ethical requirements and are easily jailbroken to perform harmful actions, such as recording private information (Rezaei et al., 2025; Zhang et al., 2024c). These findings on limitations and robustness concerns underscore the urgent need for robust, self-correcting, and safety-aware embodied AI systems before real-world deployment.

Embodied Mitigation.

A critical factor underlying these failures is the auto-regressive nature of LLMs. Naive LLMs and VLMs generate plans step by step, lacking mechanisms to detect and correct earlier mistakes or execution errors (Liang et al., 2023; Huang et al., 2022b; Duan et al., 2024). Incorporating feedback mechanisms or explicit error-handling strategies significantly reduces these errors (Liang et al., 2023; Wang et al., 2023a). Another major factor is the absence of a robust internal world model (Dao and Vu, 2025; Wu et al., 2025a), which often forces LLMs to rely on external aids – such as explicit spatial prompts – to compensate for their limited spatial and real-world reasoning. To advance embodied intelligence, future research should focus on strengthening LLMs’ internal representations of space, including spatial memory, real-world causal dynamics, and quantitative spatial understanding.

6 Discussions & Conclusion

Along the Failure Axis.

While our main taxonomy organizes failures by reasoning type, examining them along the complementary failure axis reveals cross-cutting patterns. Fundamental failures – stemming from intrinsic architectural or training constraints – manifest across all reasoning types. For example, the reversal curse (Section 4.1), cognitive biases such as confirmation bias (Section 3.1), and working memory limitations that cause proactive interference (Section 3.1) appear in informal reasoning, formal logic, and embodied settings alike. Root cause analyses in those categories are particularly rich, suggesting meaningful methods not only for mitigating the specific failures, but for generally improving the architecture and our understanding of it. Application-specific limitations cluster in certain domains: Theory of Mind instability in implicit social reasoning (Section 3.2), inability to generalize to novel Math Word Problem structures in formal reasoning (Section 4.2), or systematic affordance prediction errors in 3D embodied reasoning (Section 5.3). These typically require domain-specific mitigation strategies, such as integrating physics simulators for embodied tasks or symbolic augmentation for mathematics. Tracing the failure cases back to fundamental elements in data or architecture has, on the other hand, attracted less attention from existing literature. Robustness issues cut across domains but are particularly well-studied in benchmark-based evaluations (Section 4.2) and social reasoning (Section 3.2, where minor, semantically-preserving perturbations – such as reordering options in multiple-choice questions, renaming variables in code, or paraphrasing moral dilemmas – can lead to large and inconsistent shifts in model outputs). Approaches to detect robustness issues largely revolve around applying such perturbations at scale, often automatically, to stress-test model stability. This perturbation-based paradigm has proven transferable across domains, from coding benchmarks to ToM evaluations, suggesting its utility as a unified detection methodology.

Suggestions for Future Directions.

Our survey highlights several gaps and opportunities. First, root cause analyses remain incomplete for some failures, including compositional reasoning breakdowns (Section 4.1), higher-order ToM failures (Section 3.2), physical commonsense gaps in 2D and 3D environments (Sections 5.2, 5.3), and brittle multi-agent planning (Section 3.3). Bridging these requires connecting behavioral errors to specific internal mechanisms, e.g., faulty attention head coordination or insufficient intermediate representation alignment. Second, the field would benefit from unified, persistent failure benchmarks that span all failure types, akin to the very recent effort Malek et al. (2025), updated regularly to test the latest general-purpose and reasoning-specialized models. Such benchmarks should preserve historically challenging cases while incorporating newly discovered ones, enabling longitudinal tracking of failure persistence. Third, failure-injection principles could be applied not only to dedicated robustness benchmarks but also to general reasoning benchmarks – by adding adversarial sections, multi-level task difficulty, or cross-domain compositions designed to trigger known weaknesses. Fourth, dynamic and event-driven benchmarks could combat overfitting and encourage continual improvement. Promising strategies include (i) (partially) private benchmarks (Phan et al., 2025; Rajore et al., 2024; Zhang et al., 2024d), (ii) dynamically evolving suites (Jain et al., 2024a; White et al., 2024; Zheng et al., 2025), and (iii) adapting regularly occurring events into benchmarks, such as annual competitions (e.g., AIMO AIMO Prize: https://aimoprize.com/. for mathematical reasoning), which naturally provide fresh, unseen evaluation items. In combination, these approaches would make reasoning evaluation both more comprehensive and more resistant to short-term overfitting.

A Broad Picture.

Admittedly, existing literature, and therefore this survey, may over-represent certain reasoning or failure types, leaving some areas less explored. In particular, multi-turn and interactive contexts remain closer to real-world deployment conditions but are underrepresented in current literature; persistent coordination breakdowns in multi-agent simulations (Section 3.3) illustrate the complexity and significance of these scenarios. Future work should expand benchmark diversity to better capture reasoning failures that arise in such realistic, interactive settings. Overall, the systematic study of reasoning failures in LLMs parallels fault-tolerance research in early computing and incident analysis in safety-critical industries: understanding and categorizing failure is a prerequisite for building resilient systems. By unifying fragmented observations into a structured, two-axis taxonomy, this survey lays a foundation for a mature subfield dedicated to anticipating, detecting, and mitigating reasoning failures. As reasoning-specialized models become more prevalent, sustained attention to failure modes will be essential to ensure that future LLMs not only perform better in reasoning tasks, but fail better (gracefully, transparently, recoverably).

Acknowledgments

We thank Gabriel Poesia for very helpful suggestions and valuable feedback on an initial version of this paper, and Emily Gu for early contributions and discussions on an initial version of Section 5. We greatly appreciate valuable suggestions from anonymous reviewers and action editor at TMLR, which helped strengthen this paper substantially.

References

- S. Adak, D. Agrawal, A. Mukherjee, and S. Aditya (2024) TEXT2AFFORD: probing object affordance prediction abilities of language models solely from text. arXiv preprint arXiv:2402.12881. Cited by: §5.1.

- U. Agarwal, K. Tanmay, A. Khandelwal, and M. Choudhury (2024) Ethical reasoning and moral value alignment of llms depend on the language we prompt them in. arXiv preprint arXiv:2404.18460. Cited by: §3.2.

- S. Agashe, Y. Fan, A. Reyna, and X. E. Wang (2024) LLM-coordination: evaluating and analyzing multi-agent coordination abilities in large language models. External Links: 2310.03903, Link Cited by: §3.3.

- M. Ahn, A. Brohan, N. Brown, Y. Chebotar, O. Cortes, B. David, C. Finn, C. Fu, K. Gopalakrishnan, K. Hausman, et al. (2022) Do as i can, not as i say: grounding language in robotic affordances. arXiv preprint arXiv:2204.01691. Cited by: §5.3.

- Z. Allen-Zhu and Y. Li (2024) Physics of language models: part 3.1, knowledge storage and extraction. External Links: 2309.14316, Link Cited by: §4.3.

- S. T. Allison and D. M. Messick (1985) The group attribution error. Journal of Experimental Social Psychology 21 (6), pp. 563–579. Cited by: §3.1.

- G. F.C.F. Almeida, J. L. Nunes, N. Engelmann, A. Wiegmann, and M. d. Araújo (2024) Exploring the psychology of llms’ moral and legal reasoning. Artificial Intelligence 333, pp. 104145. External Links: ISSN 0004-3702, Link, Document Cited by: §3.2.

- N. Alzahrani, H. A. Alyahya, Y. Alnumay, S. Alrashed, S. Alsubaie, Y. Almushaykeh, F. Mirza, N. Alotaibi, N. Altwairesh, A. Alowisheq, et al. (2024) When benchmarks are targets: revealing the sensitivity of large language model leaderboards. arXiv preprint arXiv:2402.01781. Cited by: §4.2.

- M. Amirizaniani, E. Martin, M. Sivachenko, A. Mashhadi, and C. Shah (2024a) Can llms reason like humans? assessing theory of mind reasoning in llms for open-ended questions. In Proceedings of the 33rd ACM International Conference on Information and Knowledge Management, pp. 34–44. Cited by: §3.2.

- M. Amirizaniani, E. Martin, M. Sivachenko, A. Mashhadi, and C. Shah (2024b) Do llms exhibit human-like reasoning? evaluating theory of mind in llms for open-ended responses. arXiv preprint arXiv:2406.05659. Cited by: §1, §3.2.

- S. An, Z. Ma, Z. Lin, N. Zheng, J. Lou, and W. Chen (2024) Learning from mistakes makes llm better reasoner. External Links: 2310.20689, Link Cited by: §1.

- A. Anand, J. Kapuriya, A. Singh, J. Saraf, N. Lal, A. Verma, R. Gupta, and R. Shah (2024) Mm-phyqa: multimodal physics question-answering with multi-image cot prompting. In Pacific-Asia Conference on Knowledge Discovery and Data Mining, pp. 53–64. Cited by: §5.2.

- R. Ando, T. Morishita, H. Abe, K. Mineshima, and M. Okada (2023) Evaluating large language models with neubaroco: syllogistic reasoning ability and human-like biases. External Links: 2306.12567, Link Cited by: §4.1.

- K. Andrews and S. Monsó (2021) Animal Cognition. In The Stanford Encyclopedia of Philosophy, E. N. Zalta (Ed.), Note: https://plato.stanford.edu/archives/spr2021/entries/cognition-animal/ Cited by: §5.

- S. Aroca-Ouellette, C. Paik, A. Roncone, and K. Kann (2021) Prost: physical reasoning about objects through space and time. Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021, pp. 4597–4608. External Links: Document Cited by: §5.1.

- T. Ates, M. S. Atesoglu, C. Yigit, I. Kesen, M. Kobas, E. Erdem, A. Erdem, T. Goksun, and D. Yuret (2020) Craft: a benchmark for causal reasoning about forces and interactions. arXiv preprint arXiv:2012.04293. Cited by: §5.2.

- A. Baddeley (2020) Working memory. Memory, pp. 71–111. Cited by: §3.1.

- H. Bai, Y. Sun, W. Hu, S. Qiu, M. Z. Huan, P. Song, R. Nowak, and D. Song (2025) How and why llms generalize: a fine-grained analysis of llm reasoning from cognitive behaviors to low-level patterns. arXiv preprint arXiv:2512.24063. Cited by: Appendix C.

- X. Bai, A. Wang, I. Sucholutsky, and T. L. Griffiths (2024) Measuring implicit bias in explicitly unbiased large language models. arXiv preprint arXiv:2402.04105. Cited by: Appendix D.

- B. Baker, J. Huizinga, L. Gao, Z. Dou, M. Y. Guan, A. Madry, W. Zaremba, J. Pachocki, and D. Farhi (2025) Monitoring reasoning models for misbehavior and the risks of promoting obfuscation. arXiv preprint arXiv:2503.11926. Cited by: §3.3, §3.3.

- V. Balazadeh, M. Ataei, H. Cheong, A. H. Khasahmadi, and R. G. Krishnan (2024a) Physics context builders: a modular framework for physical reasoning in vision-language models. arXiv preprint arXiv:2412.08619. Cited by: §5.2.

- V. Balazadeh, M. Ataei, H. Cheong, A. H. Khasahmadi, and R. G. Krishnan (2024b) Synthetic vision: training vision-language models to understand physics. arXiv preprint arXiv:2412.08619. Cited by: §5.2.

- L. Barnhart, R. A. Bafghi, S. Becker, and M. Raissi (2025) Aligning to what? limits to rlhf based alignment. External Links: 2503.09025, Link Cited by: §3.2.

- L. W. Barsalou (2008) Grounded cognition. Annu. Rev. Psychol. 59 (1), pp. 617–645. Cited by: §2.1.

- D. M. Bear, E. Wang, D. Mrowca, F. J. Binder, H. F. Tung, R. Pramod, C. Holdaway, S. Tao, K. Smith, F. Sun, et al. (2021) Physion: evaluating physical prediction from vision in humans and machines. arXiv preprint arXiv:2106.08261. Cited by: §5.2.

- Y. Bengio, S. Mindermann, D. Privitera, T. Besiroglu, R. Bommasani, S. Casper, Y. Choi, P. Fox, B. Garfinkel, D. Goldfarb, H. Heidari, A. Ho, S. Kapoor, L. Khalatbari, S. Longpre, S. Manning, V. Mavroudis, M. Mazeika, J. Michael, J. Newman, K. Y. Ng, C. T. Okolo, D. Raji, G. Sastry, E. Seger, T. Skeadas, T. South, E. Strubell, F. Tramèr, L. Velasco, N. Wheeler, D. Acemoglu, O. Adekanmbi, D. Dalrymple, T. G. Dietterich, E. W. Felten, P. Fung, P. Gourinchas, F. Heintz, G. Hinton, N. Jennings, A. Krause, S. Leavy, P. Liang, T. Ludermir, V. Marda, H. Margetts, J. McDermid, J. Munga, A. Narayanan, A. Nelson, C. Neppel, A. Oh, G. Ramchurn, S. Russell, M. Schaake, B. Schölkopf, D. Song, A. Soto, L. Tiedrich, G. Varoquaux, A. Yao, Y. Zhang, F. Albalawi, M. Alserkal, O. Ajala, G. Avrin, C. Busch, A. C. P. de Leon Ferreira de Carvalho, B. Fox, A. S. Gill, A. H. Hatip, J. Heikkilä, G. Jolly, Z. Katzir, H. Kitano, A. Krüger, C. Johnson, S. M. Khan, K. M. Lee, D. V. Ligot, O. Molchanovskyi, A. Monti, N. Mwamanzi, M. Nemer, N. Oliver, J. R. L. Portillo, B. Ravindran, R. P. Rivera, H. Riza, C. Rugege, C. Seoighe, J. Sheehan, H. Sheikh, D. Wong, and Y. Zeng (2025) International ai safety report. External Links: 2501.17805, Link Cited by: Appendix D.

- G. Beniamini, Y. Dor, A. Vinnikov, S. G. Peled, O. Weinstein, O. Sharir, N. Wies, T. Nussbaum, I. B. Shaul, T. Zekharya, Y. Levine, S. Shalev-Shwartz, and A. Shashua (2025) FormulaOne: measuring the depth of algorithmic reasoning beyond competitive programming. External Links: 2507.13337, Link Cited by: §4.2.

- L. Berglund, M. Tong, M. Kaufmann, M. Balesni, A. C. Stickland, T. Korbak, and O. Evans (2023) The reversal curse: llms trained on" a is b" fail to learn" b is a". arXiv preprint arXiv:2309.12288. Cited by: Table 7, §4.1.

- A. Bhattacharyya, S. Panchal, M. Lee, R. Pourreza, P. Madan, and R. Memisevic (2024) Look, remember and reason: grounded reasoning in videos with language models. External Links: 2306.17778, Link Cited by: Appendix C.

- F. Bianchi, P. Kalluri, E. Durmus, F. Ladhak, M. Cheng, D. Nozza, T. Hashimoto, D. Jurafsky, J. Zou, and A. Caliskan (2023) Easily accessible text-to-image generation amplifies demographic stereotypes at large scale. In 2023 ACM Conference on Fairness, Accountability, and Transparency, FAccT ’23, pp. 1493–1504. External Links: Link, Document Cited by: Appendix D.

- N. Bitton-Guetta, Y. Bitton, J. Hessel, L. Schmidt, Y. Elovici, G. Stanovsky, and R. Schwartz (2023) Breaking common sense: whoops! a vision-and-language benchmark of synthetic and compositional imagesbreaking common sense: whoops! a vision-and-language benchmark of synthetic and compositional images. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 2616–2627. Cited by: Table 12, §5.2, §5.2.

- V. K. Bonagiri, S. Vennam, M. Gaur, and P. Kumaraguru (2024) Measuring moral inconsistencies in large language models. arXiv preprint arXiv:2402.01719. Cited by: §3.2.

- A. Borah and R. Mihalcea (2024) Towards implicit bias detection and mitigation in multi-agent llm interactions. arXiv preprint arXiv:2410.02584. Cited by: Appendix D.

- A. Borji (2023) A categorical archive of chatgpt failures. External Links: 2302.03494, Link Cited by: §1.

- J. Boye and B. Moell (2025) Large language models and mathematical reasoning failures. External Links: 2502.11574, Link Cited by: §4.3.

- P. G. Brodeur, T. A. Buckley, Z. Kanjee, E. Goh, E. B. Ling, P. Jain, S. Cabral, R. Abdulnour, A. Haimovich, J. A. Freed, A. Olson, D. J. Morgan, J. Hom, R. Gallo, E. Horvitz, J. Chen, A. K. Manrai, and A. Rodman (2024) Superhuman performance of a large language model on the reasoning tasks of a physician. External Links: 2412.10849, Link Cited by: §1.

- S. Bubeck, V. Chandrasekaran, R. Eldan, J. Gehrke, E. Horvitz, E. Kamar, P. Lee, Y. T. Lee, Y. Li, S. Lundberg, et al. (2023) Sparks of artificial general intelligence: early experiments with gpt-4. arXiv preprint arXiv:2303.12712. Cited by: §3.1.

- T. Cai, X. Song, J. Jiang, F. Teng, J. Gu, and G. Zhang (2024) ULMA: unified language model alignment with human demonstration and point-wise preference. External Links: 2312.02554, Link Cited by: §1.

- D. Campbell, S. Rane, T. Giallanza, C. N. De Sabbata, K. Ghods, A. Joshi, A. Ku, S. Frankland, T. Griffiths, J. D. Cohen, et al. (2025) Understanding the limits of vision language models through the lens of the binding problem. Advances in Neural Information Processing Systems 37, pp. 113436–113460. Cited by: §5.2.

- J. J. Canas, I. Fajardo, and L. Salmeron (2006) Cognitive flexibility. International encyclopedia of ergonomics and human factors 1 (3), pp. 297–301. Cited by: §3.1.

- M. D. Cannon and A. C. Edmondson (2005) Failing to learn and learning to fail (intelligently): how great organizations put failure to work to innovate and improve. Long Range Planning 38 (3), pp. 299–319. Note: Organizational Failure External Links: ISSN 0024-6301, Document, Link Cited by: §1.

- R. Cantini, G. Cosenza, A. Orsino, and D. Talia (2024) Are large language models really bias-free? jailbreak prompts for assessing adversarial robustness to bias elicitation. In International Conference on Discovery Science, pp. 52–68. Cited by: Appendix D, §3.1.

- D. Castelvecchi (2016) Can we open the black box of ai?. Nature News 538 (7623), pp. 20. Cited by: §2.2.

- M. Chakraborty, L. Wang, and D. Jurgens (2025) Structured moral reasoning in language models: a value-grounded evaluation framework. External Links: 2506.14948, Link Cited by: §3.2.

- J. Chan, R. Gaizauskas, and Z. Zhao (2024) Rulebreakers challenge: revealing a blind spot in large language models’ reasoning with formal logic. External Links: 2410.16502, Link Cited by: §4.1.

- Y. Chang and Y. Bisk (2024) Language models need inductive biases to count inductively. External Links: 2405.20131, Link Cited by: §4.3.

- B. Chen, Z. Xu, S. Kirmani, B. Ichter, D. Sadigh, L. Guibas, and F. Xia (2024a) Spatialvlm: endowing vision-language models with spatial reasoning capabilities. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 14455–14465. Cited by: Table 15, §5.2, §5.3.

- J. Chen, H. Lin, X. Han, and L. Sun (2024b) Benchmarking large language models in retrieval-augmented generation. In Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 38, pp. 17754–17762. Cited by: Appendix D.

- T. Chen, S. Anumasa, B. Lin, V. Shah, A. Goyal, and D. Liu (2025) Auto-bench: an automated benchmark for scientific discovery in llms. arXiv preprint arXiv:2502.15224. Cited by: §5.1.

- X. Chen, R. A. Chi, X. Wang, and D. Zhou (2024c) Premise order matters in reasoning with large language models. External Links: 2402.08939, Link Cited by: §4.2.

- Y. Chen, Y. Liu, J. Yan, X. Bai, M. Zhong, Y. Yang, Z. Yang, C. Zhu, and Y. Zhang (2024d) See what llms cannot answer: a self-challenge framework for uncovering llm weaknesses. External Links: 2408.08978, Link Cited by: §4.1.

- A. Cheng, H. Yin, Y. Fu, Q. Guo, R. Yang, J. Kautz, X. Wang, and S. Liu (2024) Spatialrgpt: grounded spatial reasoning in vision-language models. Advances in Neural Information Processing Systems 37, pp. 135062–135093. Cited by: §5.2.

- A. Cherian, R. Corcodel, S. Jain, and D. Romeres (2024) Llmphy: complex physical reasoning using large language models and world models. arXiv preprint arXiv:2411.08027. Cited by: §5.1, §5.2, §5.2.

- I. Chern, S. Chern, S. Chen, W. Yuan, K. Feng, C. Zhou, J. He, G. Neubig, and P. Liu (2023) FacTool: factuality detection in generative ai–a tool augmented framework for multi-task and multi-domain scenarios, 2023. URL https://arxiv. org/abs/2307.13528. Cited by: Appendix D.

- Y. K. Chia, Q. Sun, L. Bing, and S. Poria (2024) Can-do! a dataset and neuro-symbolic grounded framework for embodied planning with large multimodal models. arXiv preprint arXiv:2409.14277. Cited by: §5.2.

- J. Cho, A. Zala, and M. Bansal (2023) DALL-eval: probing the reasoning skills and social biases of text-to-image generation models. External Links: 2202.04053, Link Cited by: Appendix D.

- G. Chochlakis, A. Potamianos, K. Lerman, and S. Narayanan (2024) The strong pull of prior knowledge in large language models and its impact on emotion recognition. arXiv preprint arXiv:2403.17125. Cited by: §3.2.

- W. Chow, J. Mao, B. Li, D. Seita, V. Guizilini, and Y. Wang (2025) PhysBench: benchmarking and enhancing vision-language models for physical world understanding. arXiv preprint arXiv:2501.16411. Cited by: Table 14, §5.2.

- Z. Chu, Z. Wang, and W. Zhang (2024) Fairness in large language models: a taxonomic survey. ACM SIGKDD explorations newsletter 26 (1), pp. 34–48. Cited by: Appendix D.

- D. J. Chung, Z. Gao, Y. Kvasiuk, T. Li, M. Münchmeyer, M. Rudolph, F. Sala, and S. C. Tadepalli (2025) Theoretical physics benchmark (tpbench)–a dataset and study of ai reasoning capabilities in theoretical physics. arXiv preprint arXiv:2502.15815. Cited by: §5.1.

- C. Clark, K. Lee, M. Chang, T. Kwiatkowski, M. Collins, and K. Toutanova (2019) BoolQ: exploring the surprising difficulty of natural yes/no questions. External Links: 1905.10044, Link Cited by: §4.1.

- K. Cobbina and T. Zhou (2025) Where to show demos in your prompt: a positional bias of in-context learning. arXiv preprint arXiv:2507.22887. Cited by: §3.1.

- P. R. P. Coelho and J. E. McClure (2004) Learning from Failure. Working Papers Technical Report 200402, Ball State University, Department of Economics. External Links: Link, Document Cited by: §1.

- M. Cohn, M. Pushkarna, G. O. Olanubi, J. M. Moran, D. Padgett, Z. Mengesha, and C. Heldreth (2024) Believing anthropomorphism: examining the role of anthropomorphic cues on trust in large language models. In Extended Abstracts of the CHI Conference on Human Factors in Computing Systems, pp. 1–15. Cited by: §3.1.

- K. M. Collins, S. Frieder, J. Bayer, J. Loader, J. Lim, P. Song, F. Zaiser, L. Zhou, S. Li, S. Looi, et al. (2025) AI impact on human proof formalization workflows. In The 5th Workshop on Mathematical Reasoning and AI at NeurIPS 2025, Cited by: Appendix C.

- J. Conde, G. Martínez, P. Reviriego, Z. Gao, S. Liu, and F. Lombardi (2025) Can chatgpt learn to count letters?. Computer 58 (3), pp. 96–99. Cited by: §4.3.

- I. M. Copi, C. Cohen, and K. McMahon (2016) Introduction to logic. Routledge. Cited by: §2.1.