# Towards Autonomous Mathematics Research

**Authors**: Tony Feng, Trieu H. Trinh, Garrett Bingham, Dawsen Hwang, Yuri Chervonyi, Junehyuk Jung, Joonkyung Lee, Carlo Pagano, Sang-hyun Kim, Federico Pasqualotto, Sergei Gukov, Jonathan N. Lee, Junsu Kim, Kaiying Hou, Golnaz Ghiasi, Yi Tay, YaGuang Li, Chenkai Kuang, Yuan Liu, Hanzhao Lin, Evan Zheran Liu, Nigamaa Nayakanti, Xiaomeng Yang, Heng-Tze Cheng, Demis Hassabis, Koray Kavukcuoglu, Quoc V. Le, Thang Luong, Project leads, Mathematicians. Work conducted under Google DeepMind

Corresponding author:

fengtony@google.com, thangluong@google.com. External affiliations: UC Berkeley (Tony Feng), Brown University (Junehyuk Jung), Yonsei University (Joonkyung Lee), Concordia University (Carlo Pagano), Korea Institute for Advanced Study (Sang-hyun Kim), UC San Diego (Federico Pasqualotto), Caltech (Sergei Gukov).

Abstract

Recent advances in foundational models have yielded reasoning systems capable of achieving a gold-medal standard at the International Mathematical Olympiad. The transition from competition-level problem solving to professional research, however, requires navigating vast literature and constructing long-horizon proofs. In this work, we introduce Aletheia, a math research agent that iteratively generates, verifies, and revises solutions end-to-end in natural language. Specifically, Aletheia is powered by by three main sources: (i) an advanced version of Gemini Deep Think for challenging reasoning problems, (ii) a novel inference-time scaling law that extends beyond Olympiad-level problems, and (iii) intensive tool use to navigate the complexities of mathematical research. We demonstrate the capability of Aletheia from Olympiad problems to PhD-level exercises and most notably, through several distinct milestones in AI-assisted mathematics research: (a) a research paper [Feng2026] generated by AI without any human intervention in calculating certain structure constants in arithmetic geometry called eigenweights; (b) a research paper [LeeSeo2026] demonstrating human-AI collaboration in proving bounds on systems of interacting particles called independent sets; and (c) an extensive semi-autonomous evaluation erdos of 700 open problems on Bloom’s Erdős Conjectures database, including autonomous solutions to four open questions. In order to help the public better understand the developments pertaining to AI and mathematics, we suggest quantifying standard levels of autonomy and novelty of AI-assisted results, as well as propose a novel concept of human-AI interaction cards for transparency. We conclude with reflections on human-AI collaboration in mathematics and share all prompts as well as model outputs at https://github.com/google-deepmind/superhuman/tree/main/aletheia.

1 Introduction

Recent years have witnessed rapid progress in the reasoning capabilities of natural language-based AI in competition mathematics luong2025robustmathematicalreasoning. Notably, AI models have already achieved gold-medal performance in the 2025 International Mathematical Olympiad (IMO) imo-gold, widely regarded as the world’s premier mathematics competition. This milestone motivates a fundamental question regarding AI-driven scientific discovery: can AI autonomously discover and prove new mathematical theorems? Transitioning from contest to research mathematics presents significant challenges. Unlike self-contained competition problems, research questions require synthesizing advanced techniques from an extensive body of literature. This poses a major hurdle for foundation or large language models, which often hallucinate and exhibit only a superficial understanding of specialized topics—limitations likely stemming from a scarcity of relevant training data.

To explore this challenge, we developed Aletheia, a math research agent that can iteratively generate, verify, and revise solutions end-to-end in natural language. Aletheia is powered by three main sources: (i) an advanced version of Gemini Deep Think deepmind:gemini2p5deepthink for tackling extremely hard reasoning problems, (ii) a novel inference-time scaling law that extends from Olympiad-level problems to PhD-level exercises, and (iii) intensive tool use such as Google Search and web browsing to navigate the complexities of mathematical research. On Olympiad-level problems, Aletheia, without internet access, has achieved a new state-of-the-art performance of $95.1\%$ accuracy on the advanced version of IMO-ProofBench luong2025robustmathematicalreasoning, according to human experts This significantly outperforms the previous best result of $65.7\%$ achieved by Gemini Deep Think (IMO Gold) in July 2025. See full leaderboard in https://imobench.github.io/.. Similarly, on an internal benchmark (FutureMath Basic) of PhD-level exercises, Aletheia also demonstrated state-of-the-art performance. Notably, this paper presents and reflects on the initial wave of mathematical research papers achieved by Aletheia See our project page https://github.com/google-deepmind/superhuman/tree/main/aletheia for detailed prompts and model outputs. in collaboration with mathematicians:

1. Reliable autonomous research. A research paper [Feng2026] generated by AI without any human intervention, calculating certain structure constants in arithmetic geometry called eigenweights.

1. AI-guided collaboration. A research paper [LeeSeo2026] demonstrating human-AI collaboration in proving bounds on systems of interacting particles called independent sets.

1. An extensive semi-autonomous evaluation on the Erdős problems. A report erdos on a systematic deployment of our agent on the 700 open problems on Bloom’s Erdős Conjectures database https://www.erdosproblems.com/., including four open questions of Erdős that it resolves, verified semi-autonomously using both AI grading and human experts. Beyond solving Erdős-1051 completely, our model drove a generalization which resulted in a research paper [BKKKZ].

1. In addition, the agent contributed intermediate propositions on two further research papers, [FYZ4] and [ACGKMP], improving prior proofs by the human authors.

Given the current pace of improvement, it seems inevitable that AI will play an increasing role in mathematics research. However, for the vast majority of mathematics research results, only a few experts are equipped to properly evaluate their novelty and significance. This evaluation gap has enabled misinformation about AI-generated mathematics to spread unchecked in popular media. With a view towards transparent communication of future developments to the public, we propose codifying representations of AI-generated mathematics according to a standard taxonomy of “Autonomous Mathematics Research Levels”, analogous to the SAE Levels of Vehicle Autonomy.

| | Primarily Human (secondary AI input) | Human-AI Collaboration | Essentially Autonomous |

| --- | --- | --- | --- |

| Level 0: Negligible Novelty | | | Erdős-652, 654, 1040 erdos |

| Level 1: Minor Novelty | | | Erdős-1051 erdos |

| Complexity Bounds [ACGKMP] | Generalized Erdős-1051 [BKKKZ] | Eigenweights [Feng2026] | |

| Level 2: Publishable Research ∗ | Arithmetic Volumes [FYZ4] | Independence Polynomials [LeeSeo2026] | |

| Level 3: Major Advance | | | |

| Level 4: Landmark Breakthrough | | | |

Table 1: Classification of all AI-assisted mathematics results encompassed in this work. Detailed descriptions of the categories, with discussion and examples, can be found in § 5.1. ∗ Works listed as Level 2 in this table have been submitted for publications.

While the ultimate specifics of such a taxonomy should be left to the mathematical community, we suggest features that would be informative, such as separate axes for representing the mathematical significance and the degree of AI contribution. For concreteness, we sketch one possible taxonomy in § 5.1, and use it to organize our own AI-assisted mathematics results, as displayed in Table 1. In particular, this contextualizes the ‘Open’ (according to ErdosProblems.com) Erdős problems that we solved, most of which turned out—despite being open for several decades—to be quite elementary. In fact, one such ‘Open’ problem (Erdős-397, posed in 1980) was eventually discovered to be nearly identical to a problem on the 2012 Team Selection Test for the Chinese IMO team AoPS12, a (challenging) exam for high school students; we exclude it from our classification since we consider the solution to be already in the literature. It also clarifies that our autonomous results, while milestones for artificial intelligence, are not claimed to be “major advances” for mathematics (as defined in § 5.1).

2 Aletheia agent: From Olympiads to Research-level Mathematics

This section outlines the methodological framework utilized in the development of agents capable of addressing research-grade mathematics. There are many challenges involved in transferring capabilities from contest problem-solving to mathematics research. While IMO problems require ingenuity, their solutions usually span only a few pages and rely only on standard theorems from the high school curriculum. By contrast, research-level mathematics draws on advanced techniques from vast literature, with papers often spanning dozens of pages. Human mathematicians, even IMO medalists, usually take many years of postgraduate study to reach the frontier of mathematical research. While foundation models possess a large knowledge base from pretraining, their understanding of advanced subjects remains superficial due to data scarcity, and they are also prone to hallucinations.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Flowchart: Aletheia Workflow Powered by Gemini Deep Think

### Overview

The image depicts a technical workflow diagram titled "Aletheia Powered by Gemini Deep Think." It illustrates a multi-stage process for problem-solving, featuring feedback loops and verification mechanisms. The diagram uses a parchment-style aesthetic with classical architectural elements (columns, laurel wreaths) and symbolic icons (scales of justice, sunburst).

### Components/Axes

1. **Title**: "Aletheia" (center top) with subtitle "Powered by Gemini Deep Think"

2. **Process Components**:

- **Problem** (leftmost box, scroll icon)

- **Generator** (second box, column icon)

- **Candidate Solution** (third box, scroll icon)

- **Reviser** (fourth box, column icon)

- **Verifier** (central circular seal with scales of justice and hand symbol)

- **Final Output** (rightmost box, sunburst icon)

3. **Arrows/Connections**:

- Red arrow labeled "Critically flawed" (from Verifier to Generator)

- Green arrow labeled "Correct" (from Verifier to Final Output)

- Gray arrow labeled "Minor fixes needed" (from Reviser to Candidate Solution)

4. **Visual Elements**:

- Parchment-textured background with geometric border

- Sunburst glow around Final Output

- Laurel wreath encircling Verifier seal

### Detailed Analysis

- **Problem → Generator**: Initial input flows to the Generator component

- **Generator → Candidate Solution**: Output from Generator becomes Candidate Solution

- **Candidate Solution → Reviser**: Revisions occur here before returning to Candidate Solution

- **Candidate Solution → Verifier**: Solutions are evaluated for correctness

- **Verifier → Final Output**: Correct solutions proceed to final output

- **Verifier → Generator**: Critically flawed solutions trigger re-generation

- **Reviser → Candidate Solution**: Iterative refinement process

### Key Observations

1. **Feedback Loops**: Two distinct feedback mechanisms exist:

- Red loop for critical flaws (immediate re-generation)

- Gray loop for minor fixes (iterative improvement)

2. **Verification Gatekeeper**: The Verifier acts as a decision node determining solution validity

3. **Iterative Design**: The Reviser component enables multiple refinement cycles

4. **Visual Hierarchy**: Central placement of Verifier emphasizes its critical role

### Interpretation

This flowchart represents an AI-assisted problem-solving framework with built-in quality control. The "Gemini Deep Think" power suggests advanced reasoning capabilities, while the Verifier's scales of justice symbolize ethical or logical validation. The dual feedback loops indicate a robust error-handling system:

- Critical flaws trigger complete re-generation (red path)

- Minor issues enable targeted improvements (gray path)

The sunburst effect on Final Output visually reinforces its importance as the endpoint. The classical design elements (columns, laurel wreaths) may symbolize the pursuit of truth (aletheia) through structured reasoning. Notably absent are quantitative metrics, suggesting this is a conceptual rather than performance-oriented diagram.

</details>

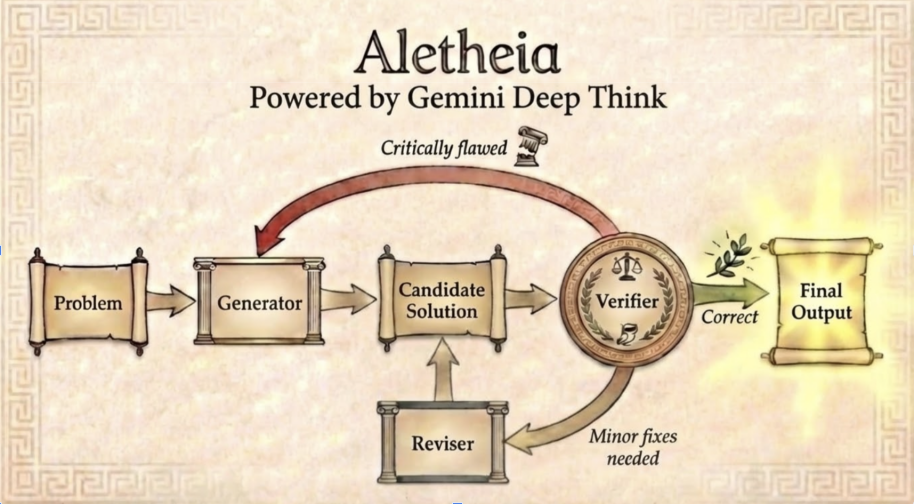

Figure 1: Overview of Aletheia, a math research agent powered by Deep Think that can iteratively generate, verify, and revise for research-level math problems.

To address these issues, we built a math research agent, internally codenamed Aletheia, on top of Gemini Deep Think. Aletheia include three subagents, a (solution) Generator, a Verifier, and a Reviser that interact continuously until a solution is found that the Verifier approves, or until the attempts reach a preset (hyperparameter) limit. The subagent orchestration is sketched in Figure 1. In turn, each of the three subagents involves internal orchestration of calls to a Gemini base model.

In contrast to AlphaGeometry Trinh2024-alphageometry-1, chervonyi2025goldmedalistperformancesolvingolympiad-alphageometry2 and AlphaProof Hubert2025, that use formal language, Aletheia operates end-to-end in natural language. At the same time, designs similar to Aletheia have been demonstrated elsewhere. For example, Huang–Yang huang2025winninggoldimo2025 showed that a manually crafted solver-verifier harness could boost GPT-5, Gemini 2.5 Pro, or Grok 4 to Gold Medal performance on the 2025 IMO. Another example is the FullProof system The authors were not aware of this work until after the results of this paper were collected. built by Salafatinos bryan2026motivic, which is another mathematics specific research agent incorporating a separate informal verifier mechanism.

2.1 Scaling Laws and the Evolution of Deep Think

<details>

<summary>AletheiaProofBench.png Details</summary>

### Visual Description

## LineGraph: Performance Comparison of Gemini Deep Think Versions and Aletheia

### Overview

The image is a line graph comparing the performance scores of two versions of "Gemini Deep Think" and a benchmark labeled "Aletheia" across varying inference-time compute levels (log scale). The y-axis represents performance scores (%), while the x-axis represents inference-time compute in logarithmic increments (2⁰ to 2¹¹). A red star marks a specific data point labeled "Aletheia."

---

### Components/Axes

- **X-Axis (Horizontal)**:

- Label: "Inference-Time Compute (Log Scale)"

- Values: 2⁰, 2¹, 2², ..., 2¹¹ (logarithmic scale).

- Position: Bottom of the graph.

- **Y-Axis (Vertical)**:

- Label: "Score (%)"

- Range: 0% to 90% (linear scale).

- Position: Left side of the graph.

- **Legend**:

- Located in the bottom-right corner.

- Colors and labels:

- **Blue line**: "Gemini Deep Think (advanced version, Jan 2026)"

- **Orange line**: "Gemini Deep Think (IMO Gold, Jul 2025)"

- **Red star**: "Aletheia"

---

### Detailed Analysis

#### Blue Line (Gemini Deep Think - Advanced Version, Jan 2026):

- **Trend**: Starts at 40% at 2⁰, rises sharply to 90% at 2⁸, then plateaus with minor fluctuations.

- **Key Data Points**:

- 2⁰: 40%

- 2³: 67%

- 2⁴: 73%

- 2⁵: 78%

- 2⁶: 82%

- 2⁷: 85%

- 2⁸: 90%

- 2⁹: 85%

- 2¹⁰: 90%

- 2¹¹: 88%

#### Orange Line (Gemini Deep Think - IMO Gold, Jul 2025):

- **Trend**: Starts at 33% at 2⁰, drops to 29% at 2³, then rises steadily to 68% at 2¹⁰, followed by a slight decline.

- **Key Data Points**:

- 2⁰: 33%

- 2³: 29%

- 2⁴: 43%

- 2⁵: 52%

- 2⁶: 58%

- 2⁷: 59%

- 2⁸: 63%

- 2⁹: 66%

- 2¹⁰: 68%

- 2¹¹: 65%

#### Red Star (Aletheia):

- **Position**: At 2⁸ on the x-axis and 90% on the y-axis.

- **Note**: Overlaps with the peak of the blue line at 2⁸.

---

### Key Observations

1. **Blue Line Dominance**: The advanced Gemini version (blue) consistently outperforms the IMO Gold version (orange) across all compute levels, with a significant gap at higher compute levels (e.g., 2⁸–2¹¹).

2. **Aletheia Outlier**: The red star ("Aletheia") aligns with the blue line’s peak at 2⁸, suggesting it may represent a benchmark or idealized performance.

3. **Orange Line Lag**: The IMO Gold version (orange) shows a delayed improvement, starting below the blue line and never surpassing it.

4. **Log Scale Impact**: The x-axis’s logarithmic scale emphasizes exponential growth in compute, making early improvements (e.g., 2⁰–2³) appear less impactful than later gains.

---

### Interpretation

- **Performance Scaling**: The advanced Gemini version demonstrates superior scalability, achieving near-maximal performance (90%) at 2⁸ compute, while the IMO Gold version lags significantly.

- **Aletheia’s Role**: The red star’s alignment with the blue line’s peak implies it may represent an aspirational or theoretical maximum, or a specific optimized configuration.

- **Version Comparison**: The advanced version (Jan 2026) outperforms the IMO Gold version (Jul 2025) by ~25% at 2⁸ compute, highlighting the importance of version updates.

- **Anomalies**: The orange line’s dip at 2³ (29%) suggests potential instability or inefficiency at mid-range compute levels.

This graph underscores the critical role of compute resources and version optimization in achieving high performance, with the advanced Gemini version setting a new benchmark.

</details>

(a) IMO-ProofBench Advanced (Olympiad Level).

<details>

<summary>AletheiaFutureMath.png Details</summary>

### Visual Description

## Line Graph: Performance vs. Compute (Log Scale)

### Overview

The image depicts a line graph comparing the performance (score %) of two systems—**Gemini Deep Think (advanced version, Jan 2026)** and **Aletheia**—across varying levels of inference-time compute (measured on a logarithmic scale). The x-axis represents compute in powers of 2 (2⁰ to 2¹¹), while the y-axis shows performance scores as percentages (0–40%). A red star marks a notable outlier for Aletheia.

---

### Components/Axes

- **X-Axis (Inference-Time Compute)**: Logarithmic scale from 2⁰ (1) to 2¹¹ (2048). Ticks labeled as 2⁰, 2¹, ..., 2¹¹.

- **Y-Axis (Score %)**: Linear scale from 0% to 40%, with increments of 10%.

- **Legend**:

- **Blue line**: Gemini Deep Think (advanced version, Jan 2026).

- **Red star**: Aletheia.

- **Data Points**:

- Blue line has 10 marked points at 2⁰, 2³, 2⁴, 2⁵, 2⁶, 2⁷, 2⁸, 2⁹, 2¹⁰, and 2¹¹.

- Red star is placed at 2⁹ (512 compute units).

---

### Detailed Analysis

#### Gemini Deep Think (Blue Line)

- **Trend**: The blue line shows a general upward trend with fluctuations.

- **Key Data Points**:

- 2⁰ (1 compute unit): 0% score.

- 2³ (8 compute units): ~19% score.

- 2⁴ (16 compute units): ~30% score.

- 2⁵ (32 compute units): ~19% score.

- 2⁶ (64 compute units): ~20.5% score.

- 2⁷ (128 compute units): ~17.5% score.

- 2⁸ (256 compute units): ~20.5% score.

- 2⁹ (512 compute units): ~22% score.

- 2¹⁰ (1024 compute units): ~34.5% score.

- 2¹¹ (2048 compute units): ~38% score.

- **Pattern**: Performance increases with compute, but there are dips (e.g., 2⁵ and 2⁷). The steepest rise occurs between 2⁹ and 2¹¹.

#### Aletheia (Red Star)

- **Position**: A single red star is placed at 2⁹ (512 compute units) with a score of ~45%, significantly higher than Gemini’s ~22% at the same compute level.

---

### Key Observations

1. **Gemini’s Performance**:

- Scores improve with increased compute, but the relationship is non-linear (e.g., dips at 2⁵ and 2⁷).

- The largest performance jump occurs between 2⁹ and 2¹¹ (from 22% to 38%).

2. **Aletheia’s Outlier**:

- At 2⁹ (512 compute units), Aletheia achieves a score of ~45%, far exceeding Gemini’s ~22% at the same compute level.

- This suggests Aletheia may be more efficient or optimized for this specific task.

---

### Interpretation

- **Gemini’s Scalability**: The data indicates that Gemini’s performance improves with compute, but the relationship is not strictly linear. The dips suggest potential inefficiencies or bottlenecks at certain compute levels.

- **Aletheia’s Advantage**: The red star at 2⁹ highlights a significant performance gap. This could imply that Aletheia is either more optimized for the task, uses compute more effectively, or has a different architectural design.

- **Log Scale Implications**: The logarithmic x-axis emphasizes the exponential growth of compute, making the performance differences at higher compute levels (e.g., 2¹⁰ and 2¹¹) more pronounced.

---

### Final Notes

- **Data Accuracy**: All values are approximate, as the graph lacks gridlines for precise extraction. The red star’s position at 2⁹ is explicitly marked, while Gemini’s scores are inferred from the line’s trajectory.

- **Contextual Relevance**: The graph compares two systems under the same compute constraints, suggesting a focus on efficiency or optimization trade-offs.

</details>

(b) FutureMath Basic (Ph.D. Level Exercises).

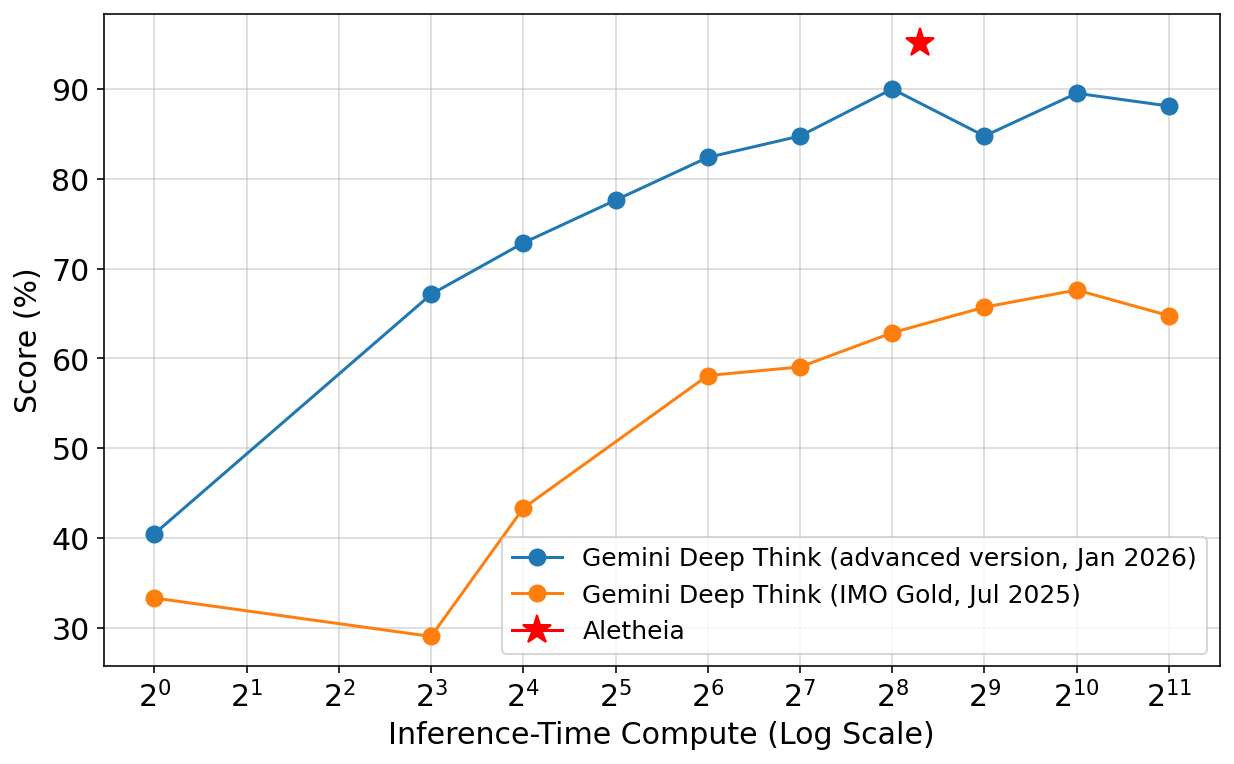

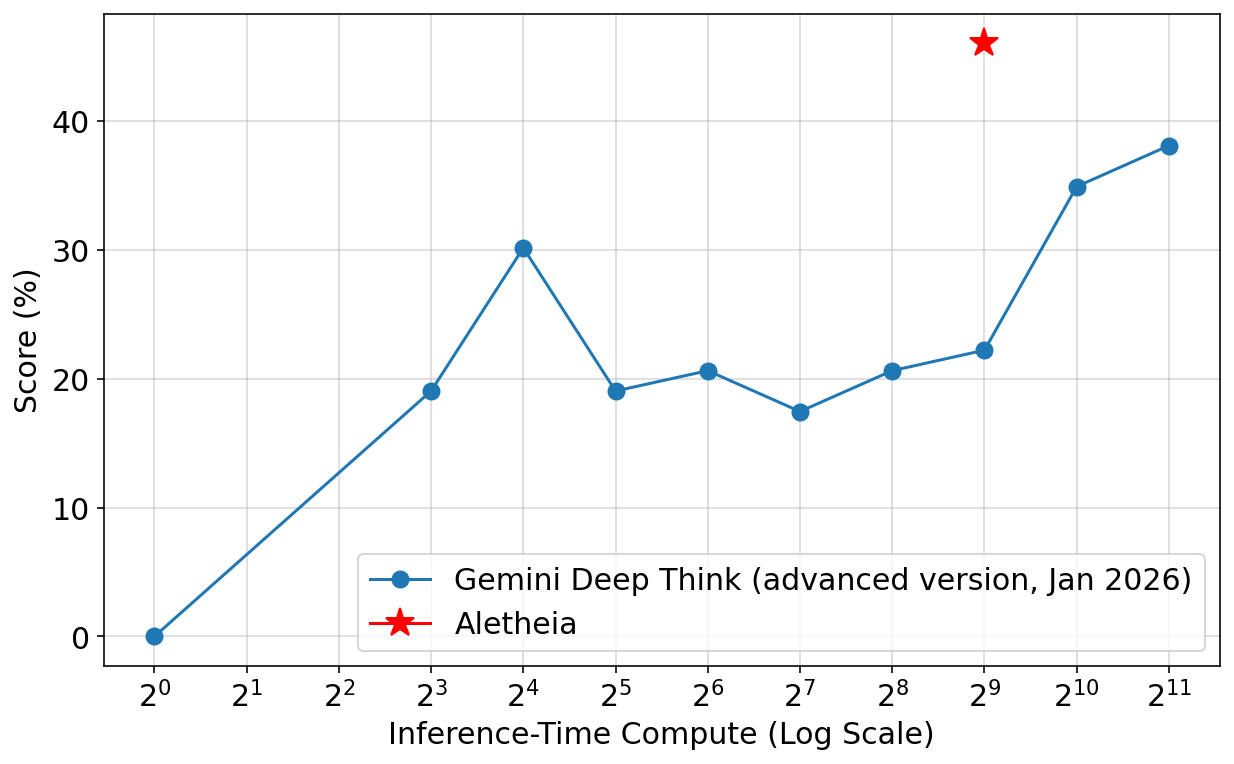

Figure 2: The latest advanced version of Deep Think, as of Jan 2026, has significantly outperformed the IMO-Gold version (Jul 2025) on Olympiad-level problems. The inference-time scaling law also transfers to PhD-level exercises. Aletheia makes further leaps in terms of reasoning quality with lower inference-time compute. All results were graded by human experts.

We first discuss some empirical findings about inference time scaling laws for Olympiad-level and PhD-level problems, which underlie the development of Deep Think. To investigate the inference scaling behavior, we evaluated different advanced versions of Gemini Deep Think deepmind:gemini2p5deepthink. Since Deep Think leverages parallel thinking to explore multiple ideas at once, we were able to flexibly adjust the amount of compute spent at inference time. To ensure that results were not cherry-picked, we ran the model exactly once for each problem at each compute scale, with tool use disabled, and the outputs were carefully graded according to the grading guideline.

For Olympiad level, we leveraged the the advanced subset of IMO-ProofBench luong2025robustmathematicalreasoning which consists of 30 problems similar in difficulty and problem style to those found at the IMO. This was the benchmark used during our IMO 2025 preparation. We observed that the inference-time compute could be increased by orders of magnitude while yielding substantial gains in accuracy, as graded by human experts, before eventually plateauing (Figure 2(a)). Ultimately, an advanced version of Gemini Deep Think achieved a gold-medal standard at the IMO in July 2025, perfectly solving five out of six problems imo-gold.

Building upon the key recipes developed for the IMO-gold model and incorporating a suite of novel technical improvements, we trained a stronger model, Gemini Deep Think (advanced version, Jan 2026), that significantly improved inference efficiency. The compute required to achieve equivalent performance on IMO-ProofBench was reduced by approximately two orders of magnitude (100x). Specifically, utilizing extreme scale and simple prompting, Gemini Deep Think (advanced version, Jan 2026), without internet access, demonstrated the potential to solve exceptionally difficult reasoning tasks such as IMO 2025 Problem 6 (see Appendix B), that our previous IMO-gold model failed to solve. Notably, the latest model also solved IMO 2024 Problem 3 with a minor mistake (achieved at $2^{7}$ scale) and completely solved Problem 5 (at $2^{8}$ scale) (see Appendix C) We caution that the model’s knowledge cutoff falls between IMO 2024 and IMO 2025, implying possible exposure to the IMO 2024 problems. Additionally, as IMO 2025 has been public for six months, we do not claim the problem was strictly unseen. We report these findings to transparently demonstrate the potential of our new Gemini Deep Think model. .

To further assess the readiness of our models for research-grade mathematics, we evaluated on FutureMath Basic, an internal benchmark designed to test PhD-level mathematics knowledge. We give an example of a FutureMath benchmark problem in appendix § A. Figure 2(b) demonstrated a similar scaling law for PhD-level problems, though with significantly lower accuracy compared to the competition problems. Corroborating these quantitative results, expert mathematicians who assessed the model noted that its susceptibility to mistakes and hallucinations inhibited its effectiveness on the longer, more complex reasoning required for research. With these findings, we concluded that inference-time scaling alone would not be sufficient, and we shifted our focus towards further improving and adapting our systems for research-level math.

2.2 Developing Agentic Harnesses for Research-Level Math

The design of Aletheia is motivated by an empirical observation: decoupling a reasoning model’s final output from its intermediate thinking tokens, and adding well-chosen prompt scaffolding, enables the model to recognize flaws it initially overlooked during generation. One possible explanation for this phenomenon is that the training process incentivizes the model to guess or bluff. Another hypothesis is that an extended thinking trace might act as misleading “supporting” context, artificially inflating the conditional probability of an erroneous solution.

Regardless of the underlying mechanism, we observed that explicitly separating out the verification step is effective in practice. Figure 2 depicts Aletheia ’s performance relative to Gemini Deep Think, the agent that achieved the 2025 IMO Gold Medal standard imo-gold. Due to its dynamic nature, Aletheia ’s total inference compute cannot be precisely controlled, but the results indicate that Aletheia outperforms Deep Think at similar (and indeed, larger) compute scales. On IMO-ProofBench Advanced, Aletheia achieved a 95.1% overall score, surpassing Deep Think across all tested compute scales using the same base model (Figure 2(a)). Moreover, on the 29 out of 30 problems where Aletheia actually returned a solution, its conditional accuracy reached 98.3%. On the FutureMath Basic (Figure 2(b)), Aletheia again outperformed Deep Think at all compute scales on the same base model. Notably, it returned solutions for fewer than 60% of these problems, and its conditional accuracy on the answered subset exceeded 82%. Thanks to its verification mechanism, Aletheia often admits failure to solve a problem, a key feature that improved the efficiency of our human-AI research collaborations.

2.3 Importance of Tool Use

Beyond its foundational natural language verifier, Aletheia relies heavily on tool use to navigate the complexities of mathematical research. We find that a foundation model operating without such tools is prone to frequent hallucinations—primarily in the form of spurious citations (see Figure 3 for an example), and to a lesser extent, computational inaccuracies. In contrast to the structured, bounded nature of IMO problems, mathematical research requires synthesizing published literature to address broader, open-ended questions. We observed that simply granting internet access is insufficient; however, when our model was extensively trained for tool use, the integration of Google Search and web browsing led to a substantial reduction in obvious citation hallucinations with fictitious titles and/or authors. This shifted citation errors to subtler hallucinations, for example where the reference exists but its results are not quoted properly (as illustrated in Figure 4).

Perhaps unexpectedly, our results indicate that the integration of Python as a tool yielded only marginal improvements in mitigating computational hallucinations. This suggests that the baseline proficiency of Gemini in these specific tasks is already high; consequently, further performance gains may require more specialized or sophisticated tools beyond standard code execution.

Sample Interaction

Prompt: Prove or disprove: the pretzel knot $P(-3,5,13)$ has infinite order in the smooth concordance group. Model output: … Proof. This is derived from Theorem 3.1 in [C. Livingston and S. Naik, “Ozsváth-Szabó and Rasmussen invariants of some pretzel knots”, Algebraic & Geometric Topology, 13(2) (2013), 1115-1124]. …

Figure 3: A hallucinated paper from the (truncated) output of a model without internet search capability. The red text refers to a completely fabricated paper.

Sample Interaction

Prompt: If $1=d_{1}<·s<d_{\tau(n)}=n$ are the divisors of $n$ , then let $\tau_{\perp}(n)$ count the number of $i$ for which $(d_{i},d_{i+1})=1$ . Is it true that $\tau_{\perp}(n)/\omega(n)→∞$ for almost all $n$ ? … Model output: A classical result by Galambos (1976) on the distribution of prime factors states that for almost all integers $n$ (on a set of asymptotic density 1), the prime factors satisfy the condition: $p_{j}>p_{1}p_{2}·s p_{j-1}\quad\text{for all }j>j_{0},$ (1) where $j_{0}$ is a fixed integer. … References 1.

Galambos, J. (1976). The sequences of prime divisors of integers.

Figure 4: When trained for tool use, the model tends not to fabricate papers, but can still cite results incorrectly. In this example, the referenced paper of Galambos exists, but the claimed “classical result” cannot be found there. Prompt and model output truncated.

3 Summary of Mathematical Research Results

Below we will briefly summarize the papers with substantial contributions from Aletheia that have already been publicly released. Due to timing, these results were obtained by Aletheia with a base model that was intermediate between the two base models depicted in Figure 2.

To be clear, the final versions of these papers were all written by human authors, starting from Aletheia ’s outputs. This was due to the principle held by the human authors involved that research papers should be authored exclusively by humans, even when AI contributions reach a level that would traditionally merit co-authorship. The primary reason is that authorship of a mathematics paper implies accountability for all of its contents, and this is a responsibility that only humans can bear. We emphasize that beyond mathematical correctness, authors also assume accountability for important aspects of exposition such as adequacy and accuracy of attributions, which cannot be guaranteed by formal verification.

3.1 Milestone A: Reliable Autonomous Research

The paper Eigenweights for Arithmetic Hirzebruch Proportionality [Feng2026] resolves a problem that arose in the work of Feng–Yun–Zhang [FYZ4] investigating an extension of the celebrated Hirzebruch Proportionality Principle [Hir58].

The backdrop. Hirzebruch’s original Proportionality Principle expresses the Chern numbers of an automorphic vector bundle on a compact locally symmetric space as a multiple of the corresponding Chern numbers on the compact dual variety, with a proportionality constant that can be interpreted as a value of the $L$ -function for the associated Gross motive [Gro97]. This formula was later generalized to non-compact locally symmetric spaces by Mumford [Mum77].

Recent work by Feng–Yun–Zhang [FYZ4] studies a variant of this principle, called “Arithmetic Hirzebruch Proportionality”, relating the “arithmetic volume” of Chern classes on moduli spaces of shtukas to differential operators applied to the $L$ -function of Gross motives. It can be seen as a generalization of Gaitsgory–Lurie’s resolution of Weil’s Tamagawa Number Conjecture [GL14]. The precise differential operator appearing in Arithmetic Hirzebruch Proportionality is governed by certain fundamental structure constants called eigenweights. In [FYZ4], the authors calculated some examples of eigenweights, but did not know how to determine all of them in closed form.

The story of the paper. In fact, this project began almost incidentally, while tracking partial progress on a problem that was submitted to an internal benchmark: the computation of a particular family of eigenweights (whose answer was already known to Feng–Yun–Zhang). Initially no model could solve it, but clear progress was visible as inference time computation was scaled, until the eventual IMO Gold model found the correct answer. Upon examining the AI-generated solution, the authors of [FYZ4] preferred it over their original one, leading them to replace the original proofs in their paper.

This success encouraged us to task Aletheia with calculating the eigenweights in general. Without any human intervention, Aletheia found an elegant way to do this, using techniques from a different field of mathematics Namely, algebraic combinatorics. unfamiliar to the authors of [FYZ4], and in the process resolved several questions left open in [FYZ4]. This is the subject of the paper [Feng2026], which is notable in that all of the mathematical content is fully generated by AI. While our ultimate goal is to empower human mathematicians with AI tools, we note the ability to generate correct results without human intervention as an important milestone for reliability and trustworthiness of natural language models.

3.2 Milestone B: Lower Bounds for Multivariate Independence Polynomials

The paper Lower bounds for multivariate independence polynomials and their generalisations [LeeSeo2026] establishes new mathematical inequalities that link the world of physics with the logic of discrete mathematics.

The backdrop. In physics, scientists often model how gas molecules occupy a space by imagining them as points on a network. Because these molecules naturally repel one another, no two molecules can occupy “neighboring” spots that are directly connected. Mathematicians call these valid arrangements independent sets, and they are a cornerstone of understanding complex systems in both physics and mathematics.

The story of the paper. Joonkyung Lee and Jaehyeon Seo first used Gemini 2.5 Deep Think to prove the key inequality needed to obtain a further generalization of a well-known result by Sah–Sawhney–Stoner–Zhao [sah2019number] on the lower bound for the number of (weighted) independent sets. Other models were also able to solve the problem: Gemini Deep Think, Aletheia, and—with some back and forth—GPT-5 Pro. Encouraged by this success, they tackled an even harder problem: modeling systems where two different types of molecules interact at the same time, such that different types do not repel each other. For this deeper challenge, Aletheia provided Other models were tasked with the same prompt, but Aletheia ’s output was the only one that the authors found useful. a high-level roadmap of insightful ideas that the authors then turned into a complete, rigorous proof.

The interesting feature here is that the workflow was somewhat reversed from the usual narrative of human-AI collaboration. Typical human-AI workflows involve decomposing a project into granular technical queries for the model to resolve. But in this project, Aletheia actually gave the “bigger picture” strategy for a deep result–such as suggesting the use of specific “dual sets”—leaving the human authors to fill in the rigorous execution. In some parts, the authors retained only the statements provided by the AI and produced the proofs independently, as if they were inspired by the model’s vision.

3.3 Milestone C: The Erdős Problems

Erdős was a prolific Hungarian mathematician who left a vast legacy of papers and unsolved conjectures. In 2023, Thomas Bloom launched ErdosProblems.com, a centralized repository designed to catalog these conjectures and track their resolutions. At the time of this writing, the database tracks 1,179 problems, with 483 (41%) classified as solved. We stress, however, that the “Open” status of a problem in this database does not always reflect the true state of the literature.

Recently, AI managed to solve a few problems marked “Open” in Bloom’s database. While some of these solutions were later discovered to already exist in the literature, others appear to be genuinely novel. To systematically document these advancements, Terence Tao tao2026erdosai established a community wiki dedicated to tracking AI-assisted progress on Erdős’s conjectures.

Because only success cases tend to be reported in public forums, these results do not provide a complete picture of AI capability. In order to understand this better, we initiated a case study on the Erdős problems in early December, 2025. From December 2–9, we deployed Aletheia against the 700 Erdős problems then marked as “Open” in Bloom’s database. Aletheia ’s informal verifier mechanism streamlined the initial pool of candidates: of the 700 original prompts, the model returned 212 responses as potentially correct. These candidates were then evaluated by a team of mathematicians. Most were not experts in the relevant problem domain, so we prioritized narrowing the pool of candidate solutions quickly (possibly at the cost of making noisier judgments) to a manageable size for our smaller core of domain experts. This step was essentially completed by December 21. Then our internal domain experts vetted the solutions carefully, consulting external experts when correctness was ascertained but novelty was unclear. In some cases, minor inaccuracies in the solutions were corrected.

Our ultimate findings were that 63 solutions were technically correct, but only 13 solutions correctly addressed the intended problem statement (either by invoking the literature, or by a novel argument). The remaining 50 of Aletheia ’s correct solutions were technically valid but mathematically vacuous, because the problem statements were interpreted in a way that did not capture Erdős’s intent, often (but not always) leading to trivial solutions. Finally, 12 of the responses were marked ambiguous, for example due to open-endedness of the question itself. In summary, out of the 200 solution candidates that we were definitely able to mark correct or incorrect, 137 ( $68.5\%$ ) of the responses were fundamentally flawed, while 63 ( $31.5\%$ ) of the responses were technically correct, of which only 13 ( $6.5\%$ ) were meaningfully correct. These 13 meaningfully correct results clustered naturally into four categories which we felt should be distinguished; see Table 2 for a summary. Autonomous Resolution.

On these problems, Aletheia found the first correct solution (as far as we can tell) in a mathematically substantive way. These include Erdős-652 and Erdős-1051. Partial AI Solution.

On these problems, there were multiple questions and Aletheia found the first correct solution to one of the questions. These include Erdős-654, and Erdős-1040. Independent Rediscovery.

On these problems, Aletheia found a correct solution, but human auditors subsequently found an independent solution already in the literature. These include Erdős-397, Erdős-659, Erdős-935, and Erdős-1089. The solutions appear to have been independently rediscovered by our model: we scanned the logs of Aletheia ’s reasoning trace to ensure that the solution was not pulled directly from the literature solution. It is of course possible that the solution was indirectly ingested from the literature solution, either implicitly through intermediate sources or during pretraining. This highlights a new danger that accompanies AI-generated mathematics: it is susceptible to “subconscious plagiarism” by reproducing knowledge acquired during pretraining, without attribution. Literature Identification.

On these problems, Aletheia found that a solution was already explicitly in the literature, despite the problem being marked “Open” on Bloom’s website at the time of model deployment. These include Erdős-333, Erdős-591, Erdős-705, Erdős-992, Erdős-1105.

To be clear, we make no claims of novelty for the latter two categories. The ‘4’ autonomous solutions cited above refer to Erdős-652, Erdős-654, Erdős-935, Erdős-1040, and Erdős-1051. In the estimation of our experts, none of the four individually rises to the level of a research paper. The solution to Erdős-1051 was generalized further, in a collaborative effort by Aletheia together with human mathematicians and Gemini Deep Think, to produce the research paper [BKKKZ].

| Autonomous Resolution | Autonomous novel solution. | 652 *, 1051 |

| --- | --- | --- |

| Partial AI Solution | Solved some part of a multi-part problem. | 654, 1040 |

| Independent Rediscovery | Found a correct solution later discovered to exist in the literature. | 397 *, 659 *, 935, 1089 |

| Literature Identification | Identified that the problem was already solved in the literature. | 333 *, 591, 705, 992, 1105 |

Table 2: Taxonomy of Aletheia results on Erdős problems. * Independently obtained by other parties after our initial evaluations were conducted, but prior to the publishing of this work.

Contextualizing the results. A disclaimer is necessary regarding the novelty of these results on Erdős problems. While we made considerable efforts to review the literature, it is certainly possible that we missed earlier solutions to these problems by human mathematicians. Therefore, our initial classification into categories is, at best, an upper bound on novelty. It is subject to revision after further investigation by the public. Indeed, previous AI-assisted work on Erdős problems 1026, 397, 333, and 281 was discovered, after initial announcements of novelty, be redundant with the literature For Erdős-281, we note that the AI solution is distinct from the previously existing literature solution.. To the outside observer, this may present a misleading impression of mathematics research: in the authors’ experience, it is very unusual for human-generated results to be redundant in this manner (in the modern era of communication). One reason why it seems to be happening so frequently with AI-generated work on Erdős problems is that the solutions are so simple that they would not necessarily attract attention if they originated from humans. For instance, Erdős-1089 is answered by an offhand remark in a 1981 paper Bannai81, where the authors seemed unaware that they had resolved an Erdős problem. Our takeaway from this experience is that many open Erdős problems remained unresolved out of obscurity rather than difficulty. We stress that the mathematical significance of such resolutions can only be accurately evaluated by expert mathematicians, even if the correctness can be ascertained by non-mathematicians or formal verifiers. See erdos for further discussion.

3.4 Bounds for Polynomial Dyadics

The paper Strongly Polynomial Policy Iteration for $L_{∞}$ Robust MDPs by Asadi–Chatterjee–Goharshady–Karrabi–Montaseri–Pagano [ACGKMP] proves complexity bounds for certain algorithms arising in Machine Learning and Game Theory called “Robust Markov Decision Processes (MDPs)”. For this paper, Aletheia provided an improvement to a crucial mathematical ingredient.

The backdrop. This work was originally a project of Asadi–Chatterjee–Goharshady–Karrabi–Montaseri, a team of computer scientists at ISTA. They were able to prove the desired strongly-polynomial time bound on Robust MDPs conditionally on a number-theoretic assertion: that specific bounded combinations of numbers are in polynomially many dyadic intervals. They reached out to number theorists for help, and eventually the problem arrived at Pagano, who, after some effort, could prove the desired complexity bound using a relatively advanced result from Number Theory called Siegel’s Lemma (a tool that does not immediately appear related to the question). Pagano shared the problem with several colleagues and some of them eventually found variants of the argument leading to the same complexity bound. The result was sufficient for the intended algorithmic application, but the optimality of the bounds was unclear.

The story of the paper. Pagano also contributed the problem to an internal benchmark, where the IMO Gold version of Deep Think provided a valid solution. However, Aletheia devised an independent argument (also making creative use of Siegel’s Lemma) that achieved the best bound among all human and autonomous attempts. In particular, this significantly improved the bound established originally by Pagano and the other mathematicians. Therefore, this argument was adopted for the eventual publication.

4 Analysis

4.1 Ablation studies

To contextualize Aletheia ’s performance, we conducted ablation studies using the Gemini Deep Think agent, operating at the IMO Gold scale with the identical underlying base model. We evaluated Deep Think using the same prompts that generated the research results detailed in § 3.

We first evaluate the 13 Erdős problems that Aletheia successfully solved, as listed in § 3.3. According to human expert grading Natural language verification by human experts inherently involves some subjectivity; see Footnote 3 of erdos., Gemini Deep Think correctly solved 8 of these 13 problems, while operating at almost exactly twice the average compute per problem as Aletheia. These results are summarized in Table 3.

| ✓ | ✓ | ✗ | ✓ | ✓ | ✓ | ✗ | ✓ | ✗ | ✗ | ✓ | ✓ | ✗ |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

Table 3: Performance of Gemini Deep Think (IMO scale) on the 13 Erdős problems successfully solved by Aletheia (§ 3.3). Both agents utilized the same base model, with Deep Think consuming roughly 2 $×$ the average compute per problem.

Next we compare performance on the specific prompts underlying the research papers documented in § 3. As summarized in Table 4, Deep Think successfully reproduced the correct answer for [FYZ4] but failed across all three prompts for [Feng2026]. For the prompts in [LeeSeo2026], it resolved the first prompt but failed on the second and far more crucial prompt. For [BKKKZ], the interaction was too messy to replicate exactly, but Deep Think essentially succeeded. Lastly, for [ACGKMP], Deep Think derived a satisfactory upper bound, although it was not as sharp as Aletheia ’s. On this set of prompts, the total compute used by the two agents turned out to be comparable. The results are summarized in Table 4.

| [FYZ4] | [Feng2026] | [LeeSeo2026] | [BKKKZ] | [ACGKMP] |

| --- | --- | --- | --- | --- |

| ✓ | ✗ | ✗ | ✓ | ✓ |

Table 4: Success of Gemini Deep Think (IMO scale) at reproducing the autonomous assistance utilized for our research papers. Both agents utilized the same base model and consumed similar total compute.

4.2 Weaknesses of AI

The results of this paper should not be interpreted as suggesting that AI can consistently solve research-level mathematics questions. In fact, our anecdotal experience is the opposite: success cases are rare, and an apt intuition for autonomous capabilities (and limitations) may currently be important for finding such cases. The papers [Feng2026, LeeSeo2026, ACGKMP] grew out of spontaneous positive outcomes in a wider benchmarking effort on research-level problems; for most of these problems, no autonomous progress was made.

The case study erdos on the Erdős problems offers a more quantitative picture. Table 5 illustrates the success rate on the 200 solution candidates that we were able to mark Correct or Incorrect (filtered from 700 open problems). In total, 31.5% of the solutions were technically correct under some interpretation of the question, but only 6.5% were meaningfully correct in addressing what we deemed to be the intended interpretation.

| Fundamentally Flawed Technically Correct Meaningfully Correct (subset) | 137 63 13 | 68.5% 31.5% 6.5% |

| --- | --- | --- |

| Total Candidates | 200 | 100.0% |

Table 5: Solution accuracy on 200 AI-generated responses, as graded by humans.

Thus, even with its verifier mechanism, the model is still highly prone to errors compared to humans. (We note, however, that the base model used on the Erdős problems is substantially weaker than the January 2026 base model referenced in § 3.) Furthermore, whenever there is room for ambiguity, the model exhibits a tendency to misinterpret the question in a way that is easiest to answer, even when such an interpretation would be obviously unintended to a human expert. This aligns with the well-known tendencies for “specification gaming” and “reward hacking” in machine learning. Finally, we reiterate that hallucination is still a common failure mode. Even with internet search capability to check references, the model tends to fabricate or misrepresent results from legitimate references in order to assert a solution (see Figure 4 for an example).

We identify several qualitative gaps between current autonomous mathematics research and human-generated research. To date, autonomous results have been relatively brief and elementary in comparison to typical human papers. Furthermore, success cases seem to arise from clever technical manipulations or vast knowledge retrieval, rather than what mathematicians would consider to be genuine creativity, although the latter concept is admittedly subjective.

5 Representing AI contributions to mathematics

Mathematics provides a domain that is—at least superficially—analogous to game-playing, making it a highly attractive target for AI researchers. In mathematics there is a near-universal consensus on starting points (mathematical axioms) and atomic moves (valid mathematical deductions), and a rich landscape of objectives (precise theorems and conjectures). However, the extreme difficulty and specialization of frontier mathematics research makes performance evaluation exceptionally challenging. For a given research problem, there may be only a handful of mathematicians in the world equipped to evaluate a potential solution, and such evaluations can take tremendous effort. (For example, in mathematics the peer review process typically exceeds a year, and it is common for research papers to surpass 100 pages in length.) Even given a solution that is known to be correct, perhaps through formal verification, it could be difficult for an outside observer to distinguish a major advance from a triviality.

This evaluation gap has enabled a surge in hyperbolic or misleading messaging regarding AI-generated mathematical content. Instead of using academic publishing venues with vetting and review by professional mathematicians, such claims are often propagated through social media channels. While AI proponents have obvious incentives to exaggerate the mathematical capabilities of AI, a somewhat perverse incentive has also arisen for mathematicians to overstate the contribution of AI to their own work, because doing so leads to a significant increase in publicity and attention. Moreover, misinformation propagates easily in this domain because the audience is ill-equipped to evaluate the claims.

Since we are developing tools for AI-assisted mathematics research, we also felt a responsibility to contribute to the discussion on responsible documentation, evaluation and communication of AI-generated results. In order to inform our thinking, we held a discussion with several mathematicians to understand the concerns of the mathematical community regarding AI. Mathematicians consulted (outside those among the authors of this paper) included Jarod Alper ⋆, Kevin Barreto, Sourav Chatterjee, Otis Chodosh, Michael Hutchings, Daniel Litt, Ciprian Manolescu, Mona Merling, Johannes Schmitt, Ravi Vakil, Shengtong Zhang. ⋆ Affiliated with Google DeepMind. One of the dominant themes of the discussion was the desire for transparency, both about the role of the AI and the technical importance of AI-assisted results. Motivated by this, we will define one possible framework for describing autonomous mathematics, and contextualize our own results within it for illustration. This should be considered as only a starting point in a broader discussion of responsible scientific communication. If any such standards are to be universally adopted, they must arise organically from the mathematical community.

5.1 Autonomous Mathematics Levels

Autonomous driving is classified according to standard “Levels”, measuring the degree of autonomy, defined by the Society of Automotive Engineers (SAE). A meaningful taxonomy of autonomous mathematics should have at least two dimensions, with one axis for the level of autonomy and another for the level of mathematical significance A similar example of such a division is in Epoch AI’s FrontierMath open problems, which are rated as “moderately interesting,” “solid result,” “major advance,” or “breakthrough.” epochai:2026:frontiermathopen. Maintaining a coarse division while preserving essential distinctions would minimize subjective disputes. We divide our own results into three broad levels of autonomy, described in Table 6.

| H | Primarily Human (secondary AI input) | Core mathematical content is human-generated; AI contributions are minor or auxiliary (e.g., literature search, basic calculations, writing routine proofs which the human author could have supplied) if any. |

| --- | --- | --- |

| C | Human-AI Collaboration | A substantive human-AI collaboration where both parties contribute in an essential way. |

| A | Essentially Autonomous | The core mathematical content is fully AI-generated without any essential human intervention. (Human authors are still ultimately responsible for the final paper content.) |

Table 6: Axis 1: Level of Autonomy. This axis quantifies the relative contribution between the human and AI agents.

5.1.1 Discussion and Examples for Level of Autonomy.

In these terms, we consider the paper [Feng2026] to be of Level A, as the human contribution is only in expository aspects, aside from posing the original questions. The results in [erdos] are also of Level A insofar as the role of humans was only to correct minor inaccuracies. We consider the papers [LeeSeo2026] and [BKKKZ] to be of Level C: both humans and AI contributed essential aspects of the papers. We consider the papers [FYZ4] and [ACGKMP] to be of Level H: while the written papers have non-trivial contributions from AI, these contributions form a minor portion of the paper and the human authors could have supplied those parts themselves.

Even with these broad subdivisions, there can be some ambiguity. A possible situation is that interacting with AI leads human mathematicians to a key idea or reference, but the AI itself does not truly make its own mathematical contribution. In other words, the AI contributes only via “inspiration”. Although the research may not have happened without AI assistance, we would consider such instances as Level H.

5.1.2 Discussion and Examples for Level of Significance.

We emphasize at the outset that these Levels fail to capture the depth and diversity of human mathematics research, and are only meant to contextualize autonomous contributions. Given the inherent subjectivity of mathematical significance, and the proven difficulty of comparing the work of (human) mathematicians, we felt that the Levels of Mathematical Significance should be coarse enough that they do not differentiate human-generated research. A possible exception is that there is near-universal consensus on the top 5 journals in mathematics In alphabetical order, these are: Acta Math., Annals of Math., Inventiones Math., Journal of the AMS, Publ. Math. IHES. We expect that any proposed list of “top $N$ journals” for a different value of $N$ would be met with significant contention, as would any attempted internal ranking of these five., so it could make sense to anchor one level to them. One would expect to find major advances published in these journals; as far as we are aware, no autonomous result has yet come close to that standard. A final category could be reserved for truly landmark breakthroughs of once-in-a-generation stature. Examples might include the proof of Fermat’s Last Theorem wiles1995modular, taylor1995ring or Perelman’s proof of the Poincaré Conjecture perelman2002entropy, perelman2003ricci, perelman2003finite. Although evident, it seems worth emphasizing that the Level of Mathematical Significance can only be evaluated by mathematicians, who are moreover experts on the particular domain of the problem.

| 0 | Negligible novelty | Suitable for Olympiad contests or Ph.D. student exercises. |

| --- | --- | --- |

| 1 | Minor novelty | A new result, but the techniques and novelty do not meet the standard for a professional research paper. |

| 2 | Publication Grade | Publishable in a research journal in good standing (that is not specifically intended for students or expository articles), following established peer-review practices. |

| 3 | Major Advance | At the highest level of typical human results; published in the top 5 general-interest journals in mathematics. |

| 4 | Landmark Breakthrough | A truly landmark breakthrough result of generational importance and influence. |

Table 7: Axis 2: Mathematical Significance of Result. This axis measures how significant the mathematical result is, regardless of how it was produced.

In these terms, we view our solutions to Erdős-652, 654, 935, 1040 as Level A0, and our solution to Erdős-1051 as Level A1. We view [Feng2026] as Level A2, [BKKKZ] and [LeeSeo2026] as Level C2, and [ACGKMP] and [FYZ4] as Level H2. We reiterate that the variation within Level 2 is deliberately wide to encompass the vast majority of papers written by professional mathematicians, ranging from very minor to extremely influential works. This appears to be the only way to avoid subjective disputes about human research. Because of the exceptional width of this category, the public should not understand a paper of level C2 or A2 as indicating that AI tools have reached the level of human mathematicians. For example, we view our own results within Level 2 [ACGKMP, BKKKZ, Feng2026, FYZ4, LeeSeo2026] as being very spread out in significance and difficulty.

5.2 Documenting Human-AI Collaboration

Another theme of concern in the discussion with mathematicians was how best to document AI-assisted mathematics, both in the interest of helping the community achieve effective usage of AI tools, and also in regulating the promotion of AI involvement as an attention-getting mechanism. Several examples of AI-assisted mathematics papers have recently appeared in the literature [bryan2026motivic, jang2025point, schmitt2025extremal], with each featuring its own method of documenting the AI contributions. In our work, the interactions were clean enough to be neatly captured by a “Human-AI Interaction (HAI) Card”, which we display below for the papers [Feng2026, LeeSeo2026, BKKKZ]. This terminology is inspired by the “model card” concept in describing AI models.

Human-AI Interaction Card for [Feng2026]

Query the computation of eigenweights (certain structure constants arising in arithmetic geometry) for groups of Type A.

Human

Aletheia

Fully correct solution using Atiyah–Bott localization, Schur polynomial manipulation, Frobenius character identities, and the Murnaghan–Nakayama rule.

Proceed to query eigenweights for groups of Type C.

Human

Aletheia

Fully correct solution, applying the same tools in a variant manner.

Proceed to query eigenweights for groups of Type D.

Human

Aletheia

Fully correct solution, applying the same tools in a variant manner.

Raw prompts and outputs: https://github.com/google-deepmind/superhuman/tree/main/aletheia

Human-AI Interaction Card for [LeeSeo2026]

Query a multivariate inequality on independent sets of graphs (generalization of sah2019number).

Human

Gemini 3 Deep Think

Fully correct solution.

Query challenging extension to semiproper colourings.

Human

Aletheia

Proof outline with crucial ideas, including dual sets and their log convexity, reduction techniques, and several key Lemmas.

Work out details of outline, devising new (simpler) proofs.

Human

Raw prompts and outputs: https://github.com/google-deepmind/superhuman/tree/main/aletheia

Human-AI Interaction Card for [BKKKZ]

Query Erdős-1051 as stated in [ErdosGraham1980], involving summation of $a_{n}a_{n+1}$ case.

Human

Aletheia

Correct solution.

State and prove a generalization to the $a_{n}·s a_{n+d-1}$ case, and requests a “meaningful, interesting generalization”.

Human

Gemini 3 Deep Think

Propose a generalization to the $a_{n}^{w_{0}}·s a_{n+d-1}^{w_{d-1}}$ case; provides non-rigorous heuristics.

Further generalize the theorem by changing the hypothesis $\lim=∞$ to a weaker condition $\limsup=∞$ ; complete a rigorous proof; identify examples demonstrating that the results are optimal.

Human

Raw prompts and outputs: https://github.com/google-deepmind/superhuman/tree/main/aletheia

In other instances, it may not be realistic to capture the interactions with the same template. While the specific presentation may be left to an author’s discretion, the field would benefit from a common baseline for transparency. For Level C or Level A results, where AI input is deemed essential, a possible baseline would be to expose at least the most important raw prompts and outputs that contain the essential new insights generated by AI. Specific standards are left for the community of mathematicians to decide. We note that, in a related direction, Tao has initiated an online discussion of “best practices for incorporating AI usage” tao2024bestpractices for mathematicians.

6 Reflections on the Impact of AI in Mathematics

To date, hype notwithstanding, the impact of artificial intelligence on pure mathematics research has been limited. While our results do solve some problems that seem to have eluded experts, they do not indicate that artificial intelligence has matched, or will match, the capabilities of human mathematicians. Rather, they illustrate how certain comparative advantages of AI models over humans can be useful for certain kinds of problems. This perhaps clarifies the directions where human researchers can expect the most impact from AI in the near future.

A first observation is that AI models exhibit a form of intelligence that diverges significantly from that of human scientists. In any specific subject, frontier models have much shallower knowledge than a domain expert, but they also possess superhuman breadth of knowledge, which could be the key to unlocking certain problems. The simple fact that artificial intelligence differs from human intelligence presents the possibility that it is better suited for solving some types of problems, for example those requiring vast memory, computation, or breadth of knowledge.

Another comparative strength of AI is that it is not constrained by human physical limitations. It is likely that many open questions lie within the reach of existing techniques, but are not resolved because of limited time and attention from the right experts, as demonstrated by our results on the Erdős problems erdos. This reinforces the point that AI is bottlenecked by very different factors compared to humans, which can be an advantage in the right context.

7 Related Work

Advances in Mathematical Reasoning

Recent advances in large language models have yielded systems capable of sophisticated mathematical reasoning deepmind:gemini2p5deepthink, openai:2025:gpt5_2, moving beyond standard benchmarks to competition-level problems. Notably, these models have achieved gold-medal standards at the International Mathematics Olympiad (IMO) imo-gold and demonstrated proficiency on difficult benchmarks like IMO-ProofBench luong2025robustmathematicalreasoning. New, more challenging benchmarks continue to emerge as the field shifts to focus on research-level math glazer2024frontiermath, nie2025uqassessinglanguagemodels, tao2026erdosai.

AI-Assisted Research Results

Already, AI has been successfully applied to assist mathematical research. Examples include research on extremal descendant integrals on moduli spaces [schmitt2025extremal], motivic classes of genus zero maps [bryan2026motivic], and convergence rates for Nesterov’s method [jang2025point]. Beyond these specific cases, AI has been used in other works across discrete analysis, convex analysis, and combinatorics [ivanisvili2025counterexamplemajorityoptimalitynicd, salim2025acceleratingmathematicalresearchlanguage]. Other studies show AI is useful for solving accessible conjectures or assisting with problems that require mathematical exploration [feldman2025godeltestlargelanguage, georgiev2025mathematicalexplorationdiscoveryscale]. Even more broadly, woodruff2026acceleratingscientificresearchgemini demonstrates a multitude of techniques and case studies for AI-assisted research across several mathematics-adjacent domains, including theoretical computer science, economics, and physics.

Frameworks for Human-AI Collaboration

A variety of human-AI collaborative implementations have emerged, ranging from fully agentic architectures with self-verification and refinement huang2025winninggoldimo2025, bryan2026motivic, to exploration methods such as AlphaEvolve novikov2025alphaevolvecodingagentscientific, wang2025thetaevolvetesttimelearningopen, yuksekgonul2026learningdiscovertesttime, to more collaboration-focused methods such as interactive protocols suggesting how to integrate AI as a research assistant li2025advancingmathematicalresearchhumanai, henkel2025mathematiciansassistantintegratingai. Despite the surge in attention bubeck2025earlyscienceaccelerationexperiments, zheng2025automationautonomysurveylarge, the community is still in the early stages of adapting AI for mathematical research, making it essential to establish transparent standards for how AI-generated mathematics is documented, evaluated, and communicated.

8 Conclusion

Ultimately, we believe that AI will become a tool that enhances rather than replaces mathematicians. Currently, natural language models struggle to reason reliably without human intervention to correct mistakes and hallucinations, while formal verification systems are not yet capable of even formulating the questions of interest on most research frontiers. For this reason, we have introduced specialized math reasoning agents, incorporating informal natural language verification, to help mathematicians harness the benefits of AI.

Acknowledgments.

Special thanks to the following experts for mathematical discussions on the work: Jarod Alper, Kevin Barreto, Thomas Bloom, Sourav Chatterjee, Otis Chodosh, Michael Hutchings, Seongbin Jeon, Youngbeom Jin, Aiden Yuchan Jung, Jiwon Kang, Jimin Kim, Vjekoslav Kovač, Daniel Litt, Ciprian Manolescu, Mona Merling, Agustin Moreno, Carl Schildkraut, Johannes Schmitt, Insuk Seo, Jaehyeon Seo, Cheng-Chiang Tsai, Ravi Vakil, Zhiwei Yun, Shengtong Zhang, Wei Zhang, Yufei Zhao. We also would like to thank Adam Brown, Alex Davies, Thomas Hubert, Pushmeet Kohli, Vinay Ramasesh, Eugénie Rives, Benoit Schillings, Divy Thakkar for feedback and support of the work.

References

Appendix A Case Study: FutureMath Basic

This is an example of FutureMath Basic problems that Aletheia solved using the search tool. Gemini Deep Think did not make much progress on this problem.

FM-Grad-011:

Let a positive integer $q≥ 3$ be given, and define $S=\{1,2,...,q\}$ . For a positive integer $N≥ 2$ and $x=(x_{1},...,x_{N})∈ S^{N}$ , define

$$

U_{N}(x)=\frac{1}{2N}\sum_{1\leq i,j\leq N}\mathbf{1}(x_{i}=x_{j}).

$$

For a real number $\beta>0$ , define

$$

Z_{N,\beta}=\sum_{x=(x_{1},\dots,x_{N})\in S^{N}}e^{\beta U_{N}(x)}.

$$

Find the maximum value of $\beta$ for which the following equality holds:

$$

\lim_{N\to\infty}\frac{1}{N}\log Z_{N,\beta}=\frac{\beta}{2q}+\log q.

$$

Short answer: $\frac{2(q-1)}{q-2}\log(q-1)$

Aletheia solution:

Large Deviation Analysis of the Phase Transition in the Mean-Field Potts Model

We determine the maximum inverse temperature $\beta$ for which the free energy density of the $q$ -state mean-field Potts model is determined by the uniform empirical distribution. Using large deviation theory, we rigorously reduce the optimization of the free energy functional to a one-dimensional problem and solve the resulting system of algebraic equations to identify the exact critical temperature of the first-order phase transition.

Introduction and Formulation via Empirical Measures

Let $S=\{1,2,...,q\}$ for an integer $q≥ 3$ . We consider the partition function

$$

Z_{N,\beta}=\sum_{x\in S^{N}}e^{\beta U_{N}(x)},

$$

where the energy function is given by $U_{N}(x)=\frac{1}{2N}\sum_{i,j=1}^{N}\mathbf{1}(x_{i}=x_{j})$ .

For a configuration $x=(x_{1},...,x_{N})∈ S^{N}$ , let $N_{k}(x)=\sum_{i=1}^{N}\mathbf{1}(x_{i}=k)$ denote the occupation number of state $k$ . The empirical probability measure of the configuration $x$ is the vector $p(x)=(p_{1}(x),...,p_{q}(x))∈\Delta_{q}$ , where $p_{k}(x)=N_{k}(x)/N$ and $\Delta_{q}=\{p∈\mathbb{R}^{q}:p_{k}≥ 0,\sum_{k}p_{k}=1\}$ is the $(q-1)$ -dimensional probability simplex. The energy can be expressed purely in terms of the empirical measure:

$$

U_{N}(x)=\frac{N}{2}\sum_{k=1}^{q}p_{k}(x)^{2}.

$$

By Sanov’s Theorem (see Ellis, 1985), the sequence of empirical measures satisfies a large deviation principle with rate function given by the negative of the Shannon entropy, $H(p)=-\sum_{k=1}^{q}p_{k}\log p_{k}$ . Applying Varadhan’s Lemma, the asymptotic free energy density is the supremum over the simplex of the difference between the energy and the entropy:

$$

\lim_{N\to\infty}\frac{1}{N}\log Z_{N,\beta}=\sup_{p\in\Delta_{q}}F_{\beta}(p), \tag{2}

$$

where the free energy functional is

$$

F_{\beta}(p)=\frac{\beta}{2}\sum_{k=1}^{q}p_{k}^{2}-\sum_{k=1}^{q}p_{k}\log p_{k}.

$$

The Uniform Distribution and Symmetry Breaking

At the uniform distribution $p^{*}=(1/q,1/q,...,1/q)$ , the value of the functional is

$$

F_{\beta}(p^{*})=\frac{\beta}{2}\sum_{k=1}^{q}\frac{1}{q^{2}}-\sum_{k=1}^{q}\frac{1}{q}\log\frac{1}{q}=\frac{\beta}{2q}+\log q.

$$

The equality stated in the problem holds if and only if $p^{*}$ is the global maximizer of $F_{\beta}(p)$ on $\Delta_{q}$ .

To identify all possible local maximizers of $F_{\beta}$ , we optimize $F_{\beta}(p)$ subject to $\sum_{k=1}^{q}p_{k}=1$ . Using a Lagrange multiplier $\lambda$ , the critical points in the interior of $\Delta_{q}$ satisfy the stationarity condition

$$

\beta p_{k}-\log p_{k}-1=\lambda\quad\text{for all }k=1,\dots,q.

$$

Define the function $f(z)=\beta z-\log z$ . Since $f^{\prime\prime}(z)=z^{-2}>0$ , $f(z)$ is strictly convex on $(0,1)$ . Thus, any horizontal line $f(z)=\lambda+1$ can intersect the graph of $f(z)$ at most twice. This implies that the components of any critical point $p$ can take at most two distinct values.

Consequently, any critical point must be of the form $p=(x,y,...,y)$ up to a permutation of the coordinates, where $x$ appears $m$ times and $y$ appears $q-m$ times. A standard second-order stability analysis of the Hessian of $F_{\beta}$ (Costeniuc, Ellis, and Touchette, 2005) reveals that local maxima can only occur when $m=1$ or $m=q$ . Therefore, apart from the uniform distribution $p^{*}$ , the only candidate for a global maximum is a distribution with one large component and $q-1$ small components: $p=(x,y,y,...,y)$ with $x+(q-1)y=1$ .

Eliminating $y=\frac{1-x}{q-1}$ , the objective function restricted to this symmetric subspace becomes a function of $x∈(0,1)$ :

$$

h(x)=\frac{\beta}{2}\left(x^{2}+\frac{(1-x)^{2}}{q-1}\right)-x\log x-(1-x)\log\left(\frac{1-x}{q-1}\right).

$$

Determination of the Critical Inverse Temperature

For $q≥ 3$ , the model undergoes a first-order phase transition (Wu, 1982). As $\beta$ increases, a secondary local maximum appears at some $x>1/q$ and eventually overtakes the uniform state. The critical inverse temperature $\beta_{\max}$ is defined as the point at which this secondary peak matches the height of the uniform state. This requires finding $x_{c}∈(1/q,1)$ and $\beta_{\max}$ such that:

1. Stationarity: $h^{\prime}(x_{c})=0$

1. Equal heights: $h(x_{c})=h(1/q)$

The derivative of $h(x)$ is

$$

h^{\prime}(x)=\beta\left(\frac{qx-1}{q-1}\right)-\log\left(\frac{x(q-1)}{1-x}\right).

$$

Setting $h^{\prime}(x_{c})=0$ yields

$$

\beta=\frac{q-1}{qx_{c}-1}\log\frac{x_{c}(q-1)}{1-x_{c}}. \tag{3}

$$

To determine $x_{c}$ , we substitute this expression for $\beta$ into the equal-height condition $h(x_{c})-h(1/q)=0$ . Using the algebraic identity $\frac{1}{2}\left(x_{c}^{2}+\frac{(1-x_{c})^{2}}{q-1}\right)-\frac{1}{2q}=\frac{(qx_{c}-1)^{2}}{2q(q-1)}$ , the condition becomes

$$

\frac{1}{2}\left(\frac{qx_{c}-1}{q}\right)\log\frac{x_{c}(q-1)}{1-x_{c}}-x_{c}\log x_{c}-(1-x_{c})\log\left(\frac{1-x_{c}}{q-1}\right)-\log q=0.

$$

This system of equations can be solved exactly. One can verify algebraically that $x_{c}=\frac{q-1}{q}$ is the unique non-trivial solution in the interval $(1/q,1]$ . With $x_{c}=\frac{q-1}{q}$ , we have $1-x_{c}=\frac{1}{q}$ , and the argument of the logarithm in (3) becomes $(q-1)^{2}$ . Substituting $x_{c}$ into (3) gives:

$$

\beta_{\max}=\frac{q-1}{q\left(\frac{q-1}{q}\right)-1}\log\left((q-1)^{2}\right)=\frac{2(q-1)}{q-2}\log(q-1).

$$

To verify the equal-height condition at this critical point, we compute $h(x_{c})-h(1/q)$ with $\beta_{\max}$ and $x_{c}=\frac{q-1}{q}$ :

$$

h(x_{c})-h(1/q)=\frac{\beta_{\max}}{2}\left(\frac{q^{2}-3q+3}{q(q-1)}-\frac{1}{q}\right)-\frac{q-2}{q}\log(q-1).

$$

Using $\frac{q^{2}-3q+3}{q(q-1)}-\frac{1}{q}=\frac{(q-2)^{2}}{q(q-1)}$ , this becomes

$$

\frac{1}{2}\left(\frac{2(q-1)}{q-2}\log(q-1)\right)\frac{(q-2)^{2}}{q(q-1)}-\frac{q-2}{q}\log(q-1)=0,

$$

which holds identically.

Conclusion

For $\beta≤\beta_{\max}$ , the uniform distribution $p^{*}=(1/q,...,1/q)$ is the global maximizer of the free energy functional $F_{\beta}(p)$ , and the limit of the free energy density is $\frac{\beta}{2q}+\log q$ . For $\beta>\beta_{\max}$ , the system undergoes a first-order phase transition; a non-uniform configuration yields a strictly larger free energy density, violating the equality.

Thus, the maximum value is

$$

\beta_{\max}=\frac{2(q-1)}{q-2}\log(q-1).

$$

References

1. Costeniuc, M., Ellis, R. S., and Touchette, H. (2005). Complete analysis of phase transitions and ensemble equivalence for the Curie–Weiss–Potts model. Journal of Mathematical Physics, 46(6), 063301.

1. Ellis, R. S. (1985). Entropy, Large Deviations, and Statistical Mechanics. Springer-Verlag. (Theorem II.7.1, Application to the Curie-Weiss model).

1. Wu, F. Y. (1982). The Potts model. Reviews of Modern Physics, 54(1), 235–268.

Appendix B Case Study: Solving IMO 2025 Problem 6

We consider the problem statement for IMO 2025 P6:

Consider a $2025× 2025$ grid of unit squares. Matilda wishes to place on the grid some rectangular tiles, possibly of different sizes, such that each side of every tile lies on a grid line and every unit square is covered by at most one tile.

Determine the minimum number of tiles Matilda needs to place so that each row and each column of the grid has exactly one unit square that is not covered by any tile.

We initialized the session by providing Gemini Deep Think (advanced version, Jan 2026) with the raw problem statement only, excluding any context regarding its origin (IMO) or specific formatting constraints. Consistent with standard competition rules, the model was evaluated in a restricted environment without access to the internet or external tools. We ran the model at extreme scale (equivalent to $2^{12}$ in Figure 2 a) and obtained the following response:

To find the absolute minimum number of rectangular tiles Matilda needs to place, we can model this problem using combinatorial geometry.

Since each row and each column must contain exactly one uncovered unit square, there are exactly $N=2025$ uncovered squares in total. We can treat these uncovered squares as “holes” acting as obstacles in our $2025× 2025$ grid. Because no two holes share a row or a column, their coordinates perfectly correspond to an $N× N$ permutation matrix.

Our goal is to choose the optimal permutation of holes to minimize the number of non-overlapping rectangular tiles required to perfectly cover the remaining $N^{2}-N$ squares.

1. Theoretical Lower Bound

When partitioning a rectilinear grid with $N$ isolated holes into rectangles, each hole introduces internal reflex (concave) corners into the free space. Every reflex corner must be resolved by the boundaries (cuts) of the rectangular tiles.

By analyzing the maximum number of non-intersecting internal cuts that can connect these concave corners, a known theorem in discrete geometry (recently featured in the European Girls’ Mathematical Olympiad) dictates that for any arrangement of holes corresponding to a permutation, the minimum number of rectangular tiles $T$ required to partition the remaining grid is bounded by:

$$

T\geq N+a+b-3

$$

where $a$ and $b$ are the lengths of the longest monotonically increasing and decreasing subsequences of the permutation formed by the holes’ coordinates. Geometrically, these subsequences represent the maximum number of holes stepping diagonally down-and-right ( $a$ ) and down-and-left ( $b$ ).

According to the Erdős-Szekeres Theorem (via Dilworth’s Theorem), any permutation sequence of length $N$ must contain an increasing subsequence of length $a$ and a decreasing subsequence of length $b$ such that:

$$

a\times b\geq N