# Internalizing Meta-Experience into Memory for Guided Reinforcement Learning in Large Language Models

Abstract

Reinforcement Learning with Verifiable Rewards (RLVR) has emerged as an effective approach for enhancing the reasoning capabilities of Large Language Models (LLMs). Despite its efficacy, RLVR faces a meta-learning bottleneck: it lacks mechanisms for error attribution and experience internalization intrinsic to the human learning cycle beyond practice and verification, thereby limiting fine-grained credit assignment and reusable knowledge formation. We term such reusable knowledge representations derived from past errors as meta-experience. Based on this insight, we propose M eta- E xperience L earning (MEL), a novel framework that incorporates self-distilled meta-experience into the model’s parametric memory. Building upon standard RLVR, we introduce an additional design that leverages the LLM’s self-verification capability to conduct contrastive analysis on paired correct and incorrect trajectories, identify the precise bifurcation points where reasoning errors arise, and summarize them into generalizable meta-experience. The meta-experience is further internalized into the LLM’s parametric memory by minimizing the negative log-likelihood, which induces a language-modeled reward signal that bridges correct and incorrect reasoning trajectories and facilitates effective knowledge reuse. Experimental results demonstrate that MEL achieves consistent improvements on benchmarks, yielding 3.92%–4.73% Pass@1 gains across varying model sizes.

Shiting Huang 1 Zecheng Li 1 Yu Zeng 1 Qingnan Ren 1 Zhen Fang 1 Qisheng Su 1 Kou Shi 1 Lin Chen 1 Zehui Chen 1 Feng Zhao 1 🖂 1 University of Science and Technology of China 🖂: Corresponding Author

1 Introduction

Reinforcement Learning (RL) has emerged as a pivotal paradigm for enhancing the reasoning capabilities of Large Language Models (LLMs) on complex tasks, such as mathematics, programming, and logic reasoning (Shao et al., 2024; Chen et al., 2025; Zeng et al., 2025a; Wang et al., 2025; Zeng et al., 2025b, 2026; Huang et al., 2026). By leveraging feedback signals obtained from interaction with the task environment, RL enables LLMs to move beyond passive imitation learning toward goal-directed reasoning and action (Schulman et al., 2017; Ouyang et al., 2022; Wulfmeier et al., 2024). Furthermore, by replacing learned reward models with programmatically verifiable signals, Reinforcement Learning with Verifiable Rewards (RLVR) eliminates the need for expensive human annotations and mitigates reward hacking, thereby enabling models to explore problem-solving strategies more effectively, which has contributed to its growing attention (Lambert et al., 2024).

However, RLVR still faces a fundamental bottleneck regarding the granularity and utilization of learning signals. From a meta-learning perspective, the human learning cycle involves three critical components: practice and verification, error attribution, and experience internalization. While RLVR primarily drives policy updates through practice and verification, it overlooks the critical stages of error attribution and experience internalization, both of which are essential for fine-grained credit assignment and the formation of reusable knowledge (Wu et al., 2025; Zhang et al., 2025a). In other words, RLVR is largely limited to assessing the overall quality of entire trajectories, while struggling to reason about fine-grained knowledge at the level of intermediate steps (Xie et al., 2025). Although RL approaches (Lightman et al., 2023; Khalifa et al., 2025) employing Process Reward Models (PRMs) to provide dense learning signals attempt to mitigate this limitation, their reliance on trained proxies makes them inherently susceptible to reward hacking (Cheng et al., 2025; Guo et al., 2025), and poses a fundamental tension with the RLVR paradigm, which is centered on programmatically verifiable rewards.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Standard RLVR vs. MEL (Ours)

### Overview

The image presents a comparative diagram illustrating the difference between a "Standard RLVR" approach and a "MEL (Ours)" approach, likely in the context of reinforcement learning. Both diagrams depict a decision tree-like structure, but the MEL approach incorporates a "Meta-Experience" component for knowledge-level optimization.

### Components/Axes

**Diagram (a): Standard RLVR**

* **Title:** (a) Standard RLVR (top-left)

* **Nodes:** A series of interconnected nodes, starting with a node labeled "Q" at the top. The nodes are arranged in a tree-like structure, branching downward. The nodes are colored either light green or light red.

* **Rewards:** "Reward=1" is associated with the left branch, and "Reward=0" is associated with the right branch.

* **Trajectory-Level Optimization:** Text label indicating "Trajectory-Level optimization"

* **Encourage/Suppress:** Arrows pointing downwards, labeled "Encourage" (green arrow) and "Suppress" (red arrow), indicating the effect of the reward on the optimization process.

* **Agent:** A sad-faced robot at the bottom, representing the agent being optimized.

**Diagram (b): MEL (Ours)**

* **Title:** (b) MEL (Ours) (top-right)

* **Nodes:** Similar to the Standard RLVR, with a "Q" node at the top and branching nodes below, colored light green or light red. The nodes are enclosed in a dashed-line box.

* **Rewards:** "Reward=1" is associated with the left branch, and "Reward=0" is associated with the right branch.

* **Trajectory-Level Optimization:** Text label indicating "Trajectory-Level optimization"

* **Encourage/Suppress:** Arrows pointing downwards, labeled "Encourage" (green arrow) and "Suppress" (red arrow), indicating the effect of the reward on the optimization process.

* **Meta-Experience:** A cloud-shaped element on the right, labeled "Meta-Experience" with the subtext "(bifurcation point, critique, heuristic)".

* **Knowledge-Level Optimization:** Text label indicating "Knowledge-level optimization"

* **Agent:** A happy-faced robot with a lightbulb above its head at the bottom, representing the agent being optimized.

### Detailed Analysis

**Diagram (a): Standard RLVR**

* The diagram starts with a "Q" node, which likely represents a state or query.

* The tree branches into two paths. The left path leads to a "Reward=1" node (green), and the right path leads to a "Reward=0" node (red).

* The "Encourage" arrow (green) suggests that a reward of 1 encourages the trajectory-level optimization.

* The "Suppress" arrow (red) suggests that a reward of 0 suppresses the trajectory-level optimization.

* The sad-faced robot indicates a potentially suboptimal outcome.

**Diagram (b): MEL (Ours)**

* The diagram also starts with a "Q" node and branches into two paths with "Reward=1" (green) and "Reward=0" (red) nodes.

* The "Encourage" and "Suppress" arrows function similarly to the Standard RLVR.

* The key difference is the "Meta-Experience" component, which receives input from the decision tree.

* The "Meta-Experience" component then feeds into "Knowledge-level optimization", which in turn influences the agent.

* The happy-faced robot with a lightbulb suggests an improved outcome due to the incorporation of meta-experience.

### Key Observations

* The primary difference between the two approaches is the addition of the "Meta-Experience" component in the MEL approach.

* The "Meta-Experience" component allows for knowledge-level optimization, which is absent in the Standard RLVR.

* The visual representation suggests that the MEL approach leads to a more positive outcome (happy robot with a lightbulb) compared to the Standard RLVR (sad robot).

### Interpretation

The diagram illustrates the advantage of incorporating meta-experience into reinforcement learning. The Standard RLVR relies solely on trajectory-level optimization based on immediate rewards. In contrast, the MEL approach leverages meta-experience to learn higher-level knowledge, which can then be used to improve the agent's performance. The "bifurcation point, critique, heuristic" subtext suggests that the meta-experience component analyzes decision points, provides critiques of past actions, and develops heuristics for future actions. This allows the agent to learn more effectively and achieve better results, as indicated by the happy robot with a lightbulb. The dashed box around the nodes in the MEL diagram might indicate that this part of the process is encapsulated or treated as a single unit within the larger system.

</details>

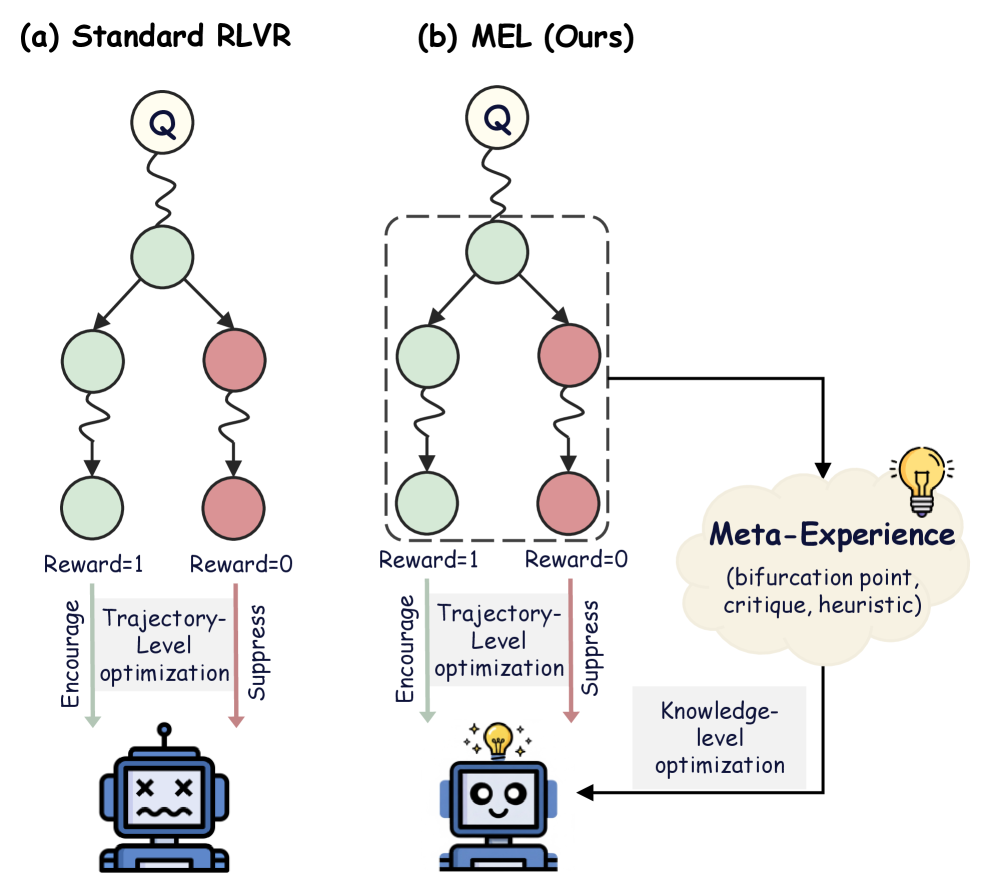

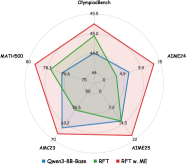

Figure 1: Paradigm comparison between standard RLVR and MEL, where MEL extends RLVR with an explicit knowledge-level learning loop.

Recently, a growing number of studies have explored integrating experience learning within the RLVR framework to address the above challenge. Early attempts, such as StepHint (Zhang et al., 2025c) utilizes experience as hints to elicit superior reasoning paths from the original problems, treating these trajectories as off-policy migration signals. However, the resulting off-policy deviation in response distribution can compromise optimization stability, undermining the theoretical benefits of on-policy reinforcement learning. To alleviate such instability, Scaf-GRPO (Zhang et al., 2025d) leverages superior models to generate multi-level knowledge-intensive experience, injecting them as on-policy prefixes for policy updates. Yet, while effective in teaching models to reason within specific experience-augmented distributions, such prefixes are unavailable during inference, inducing a severe distributional mismatch, thereby limiting performance gains. Critically, despite their differences, these approaches utilize retrieved experience primarily as external hints. While these strategies effectively elicit better reasoning paths during training, the resulting learning signals remain predominantly at the trajectory-level, yielding superficial corrections rather than intrinsic cognitive enhancements.

Building on this insight, we introduce the concept of meta-experience, elevating experience learning from trajectory-level instances to knowledge-level representations. Through contrastive analysis on paired correct and incorrect trajectories, we pinpoint the bifurcation points underlying reasoning failures and abstracts them into reusable meta-experiences. Accordingly, we propose M eta- E xperience L earning (MEL), a framework explicitly designed to enable knowledge-level internalization and reuse of meta-experiences. During training phase, MEL leverages meta-experiences to inject generalizable insights via a self-distillation mechanism, and internalizes them by minimizing the negative log-likelihood in the model’s parametric memory. As shown in Figure 1, MEL differs from standard RLVR, which relies on coarse-grained outcome rewards and treats correct and incorrect trajectories independently, by explicitly connecting them via meta-experiences. Hence, this process can be viewed as a language-modeled process-level reward signal, providing continuous and fine-grained guidance for improving reasoning capability. To further enhance stability and effectiveness during RLVR training, we propose empirical validation via replay, which uses meta-experiences as auxiliary in-context hints to assess their contribution to output accuracy. Meta-experiences that pass validation are integrated via negative log-likelihood minimization, while those that fail validation are excluded. In summary, our main contributions are as follows:

- We propose MEL, a novel framework that integrates self-distilled meta-experience with reinforcement learning, addressing the limitations of standard RLVR in error attribution and experience internalization by embedding these meta-experiences directly into the parametric memory of LLMs.

- We validate the effectiveness of MEL through extensive experiments on five challenging mathematical reasoning benchmarks across multiple LLM scales (4B, 8B, and 14B). Compared with both the vanilla GRPO baseline and the corresponding base models, MEL consistently improves performance across Pass@1, Avg@8, and Pass@8 metrics.

- Empirical results confirm that MEL seamlessly integrates with diverse paradigms (e.g., RFT, GRPO, REINFORCE++) to reshape reasoning patterns and elevate performance ceilings. Notably, these benefits exhibit strong scalability, becoming increasingly pronounced as model size expands.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Reinforcement Learning with Verifiable Rewards

### Overview

The image is a diagram illustrating a reinforcement learning framework with verifiable rewards. It shows the flow of information and processes involved in training a policy model using meta-experience and reinforcement learning with verifiable rewards. The diagram includes components such as a policy model, meta-experience, reinforcement learning with verifiable rewards, trajectories, rewards, and advantages. The diagram also shows the optimization processes at both knowledge and trajectory levels.

### Components/Axes

* **Question:** Located at the top-left, represents the initial query or task.

* **Policy Model:** A neural network-like structure that takes a question as input and outputs trajectories.

* **Trajectories:** Represented as Y1, Y2, ..., YG, these are the sequences of actions generated by the policy model.

* **Meta-Experience:** Located at the top-right, it consists of:

* **Bifurcation Point s\***: A branching point in the decision-making process.

* **Critique C:** A mechanism for evaluating the quality of the trajectories.

* **Heuristic H:** A rule or guideline used to improve the policy.

* **Reinforcement Learning with Verifiable Rewards:** Located at the bottom-center, it includes:

* **Contrastive Pair:** A pair of trajectories, one successful (green checkmark) and one unsuccessful (red "X").

* A sequence of interconnected circles, some green and some red, representing the states in the trajectories.

* **Reward:** r1, r2, ..., rG, representing the rewards associated with each trajectory.

* **Group Norm:** A normalization process applied to the rewards.

* **Advantage:** A1, A2, ..., AG, representing the advantage function for each trajectory.

* **Abstraction & Validation:** A process that transforms the question into a suitable format for the policy model and validates the model's output.

* **Knowledge-Level Optimization:** A feedback loop that uses meta-experience to improve the policy model.

* **Trajectory-Level Optimization:** A feedback loop that uses reinforcement learning with verifiable rewards to improve the policy model.

### Detailed Analysis or Content Details

* **Flow of Information:**

* A "Question" is fed into the "Policy Model".

* The "Policy Model" generates "Trajectories" (Y1, Y2, ..., YG).

* The "Trajectories" are used in "Reinforcement Learning with Verifiable Rewards".

* "Meta-Experience" is used for "Knowledge-Level Optimization" of the "Policy Model".

* "Reinforcement Learning with Verifiable Rewards" is used for "Trajectory-Level Optimization" of the "Policy Model".

* **Contrastive Pair Details:**

* The contrastive pair consists of two documents. The top document has a green checkmark, indicating a positive example. The bottom document has a red "X", indicating a negative example.

* The sequence of circles represents the states in the trajectories. Green circles represent successful states, while red circles represent unsuccessful states. The connections between the circles represent the transitions between states.

* **Reward and Advantage:**

* The rewards (r1, r2, ..., rG) are normalized using a "Group Norm" function.

* The normalized rewards are used to calculate the advantages (A1, A2, ..., AG).

### Key Observations

* The diagram illustrates a closed-loop system where the policy model is continuously improved using both meta-experience and reinforcement learning with verifiable rewards.

* The use of contrastive pairs in reinforcement learning helps to guide the policy model towards generating successful trajectories.

* The knowledge-level and trajectory-level optimization processes work together to improve the overall performance of the policy model.

### Interpretation

The diagram presents a sophisticated approach to reinforcement learning that incorporates meta-experience and verifiable rewards. This framework aims to improve the efficiency and effectiveness of policy learning by leveraging prior knowledge and providing clear signals for successful and unsuccessful trajectories. The use of contrastive pairs is a key element, as it allows the model to learn from both positive and negative examples, leading to more robust and reliable policies. The combination of knowledge-level and trajectory-level optimization ensures that the policy model is continuously refined at both high and low levels of abstraction. The diagram suggests a system designed for complex tasks where learning from experience and leveraging prior knowledge are crucial for success.

</details>

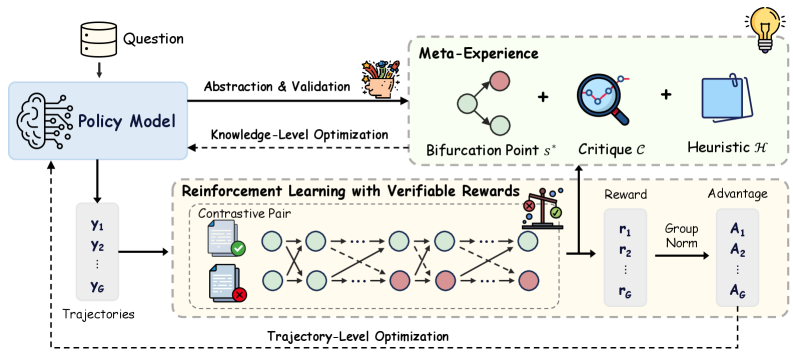

Figure 2: Overview of M eta- E xperience L earning (MEL), which constructs meta-experiences from contrastive pairs via abstraction and validation, thereby introducing an explicit knowledge-level learning loop on top of standard RLVR.

2 Related Work

Reinforcement Learning with Verifiable Rewards.

Reinforcement Learning with Verifiable Rewards (RLVR) leverages rule-based validators to provide deterministic feedback on models’ self-generated solutions (Lambert et al., 2024). Extensive research has systematically investigated RLVR, exploring how this paradigm improves the performance of complex reasoning (Jaech et al., 2024; Guo et al., 2025; Liu et al., 2025; Zhang et al., 2025b). The pioneering framework Group Relative Policy Optimization (GRPO) (Shao et al., 2024) estimates advantages via group-wise relative comparisons, eliminating the need for a separate value model. Building on this base method, recent studies have introduced a range of algorithmic variants to improve training stability and efficiency. For instance, REINFORCE++ (Hu, 2025) enhances stability through global advantage normalization; DAPO (Yu et al., 2025) mitigates entropy collapse and improves reward utilization via relaxed clipping and dynamic sampling; and GSPO (Zheng et al., 2025) reduces gradient estimation variance with sequence-level clipping. Despite these algorithmic advancements, a fundamental limitation persists: current RLVR methods predominantly rely on outcome-level rewards. This failure to assign fine-grained credit to specific knowledge points prevents the construction of reusable knowledge formation, fundamentally constraining the development of systematic and generalizable reasoning capabilities.

Experience Learning.

Recent studies have increasingly recognized that leveraging various forms of experience can substantially enhance LLM reasoning capabilities. One prominent line of research lies in test-time scaling methods, which store experience in external memory pools. For example, SpeedupLLM (Pan and Zhao, 2025) appends memories of previously reasoning traces as experience to accelerate inference, while Training Free GRPO (Cai et al., 2025) and ReasoningBank (Ouyang et al., 2025) distill accumulated experience into structured memory entries for retrieval-based augmentation. However, these approaches rely on ever-growing external memory, preventing the experience from being truly internalized and thus failing to substantively enhance intrinsic reasoning capabilities. Complementarily, another stream of research integrates experience directly into RL training as guiding signals. Methods such as Scaf-GRPO (Zhang et al., 2025d) and StepHint (Zhang et al., 2025c) employ external models to generate experiential hints, which are injected as prefixes or migration signals, to guide the policy toward higher-quality trajectories. Similarly, approaches like LUFFY (Yan et al., 2025) and SRFT (Fu et al., 2025) incorporate expert solution traces as additional experience. Despite improving exploration efficiency, these methods primarily induce trajectory-level imitation. Consequently, models become proficient at following specific patterns but fail to develop the meta-cognitive understanding required for establishing reusable knowledge structures.

3 Meta-Experience Learning

Human learning follows a recurrent cognitive cycle consisting of practice and verification, error attribution, and experience internalization, which in turn informs subsequent practice. Motivated by this cognitive process, we define meta-experience for LLMs as generalizable and reusable knowledge derived from accumulated reasoning trials, capturing both underlying knowledge concepts and common failure modes. Building on this notion, we propose M eta- E xperience L earning (MEL), a framework operating within the RLVR paradigm and expressly designed to internalize such self-distilled, knowledge-level insights into the model’s parametric memory. As illustrated in Figure 2, we first formalize the model exploration stage in RLVR (§ 3.1), then present the details of the Meta-Experience construction (§ 3.2). Finally, we describe the internalization mechanism (§ 3.3) for consolidating these insights into parametric memory, followed by the joint training objective for policy optimization (§ 3.4).

3.1 Explorative Rollout and Verifiable Feedback

Mirroring the “practice and check” phase in human learning, the RLVR framework engages the model in exploring potential solutions for reasoning tasks, while the environment serves as a deterministic verifier that provides verifiable feedback on the final answers. As mastering complex logic typically requires traversing the solution space through multiple attempts, we simulate this stochastic exploration by adopting the group rollout formulation from Group Relative Policy Optimization (GRPO) (Shao et al., 2024).

Formally, given a query $x$ sampled from the dataset $\mathcal{D}$ , the policy model $\pi_{\theta}$ performs stochastic exploration over the solution space and generates a group of $G$ independent reasoning trajectories $\mathcal{Y}=\{y_{1},y_{2},...,y_{G}\}.$ A rule-based verifier then evaluates each trajectory using a verification function $V(·)$ , which compares the extracted final answer from $y_{i}$ against the ground-truth answer $y^{*}$ and assigns a binary outcome reward:

$$

r_{i}=\mathbb{I}\big[V(y_{i},y^{*})\big]\in\{0,1\}. \tag{1}

$$

This process partitions the generated group $\mathcal{Y}$ into two distinct subsets: the set of correct trajectories $\mathcal{Y}^{+}=\{y_{i}\mid r_{i}=1\}$ and the set of incorrect trajectories $\mathcal{Y}^{-}=\{y_{i}\mid r_{i}=0\}$ .

The coexistence of $\mathcal{Y}^{+}$ and $\mathcal{Y}^{-}$ under the same prompt distribution suggests that the model is capable of solving the task, while producing diverse reasoning trajectories. For our method, such diversity constitutes a beneficial property and serves a dual role. On the one hand, it supplies the variance necessary for effective policy updates in standard RLVR. On the other hand, it enables the extraction of knowledge-level meta-expression through systematic contrast between correct and incorrect reasoning outcomes.

3.2 Meta-Experience Construction

Prior studies (Xie et al., 2025; Khalifa et al., 2025; Huang et al., 2025) have shown that effective learning does not arise from merely knowing that a final answer is incorrect, but rather from identifying the specific bifurcation point at which the reasoning process deviates from the correct trajectory, a critical cognitive process known as error attribution. Building on this insight, we leverage pairs of correct and incorrect trajectories to localize reasoning errors and distill such bifurcation points into explicit meta-experiences.

Locating the Bifurcation Point.

To extract knowledge-level learning signals from the exploration results, we focus on identifying the bifurcation points where the reasoning logic diverges into an erroneous path. With the exploration results partitioned into $\mathcal{Y}^{+}$ and $\mathcal{Y}^{-}$ by the verifier, we construct a set of contrastive pairs $\mathcal{P}_{x}=\{(y^{+},y^{-})\mid y^{+}∈\mathcal{Y}^{+},\,y^{-}∈\mathcal{Y}^{-}\}$ for each query $x$ , whose contrast naturally exposes the specific errors in the reasoning process. Such contrastive analysis requires the presence of both positive and negative trajectories; accordingly, we only consider gradient-informative samples with non-empty $\mathcal{Y}^{+}$ and $\mathcal{Y}^{-}$ .

For fine-grained comparison within each pair, each trajectory $y$ can be formatted as a reasoning chain $y=(s_{1},s_{2},...,s_{L},a)$ , where each $s_{t}$ denotes an atomic reasoning step and $a$ indicates the final answer. Since both trajectories originate from the same context, they typically share a correct reasoning path until a critical divergence step $s^{*}$ occurs.

Given deterministic verification signals and full access to the reasoning chains, identifying the bifurcation point can be viewed as a discriminative task that is easier than reasoning from scratch (Saunders et al., 2022; Swamy et al., 2025). Motivated by this observation, we task the policy model with analyzing each contrastive pair to identify the reasoning bifurcation point $s^{*}$ :

$$

\displaystyle s^{*} \displaystyle\sim\pi_{\theta}\big(\cdot\mid I,x,y^{+},y^{-}\big). \tag{2}

$$

Where $I$ denotes a structured instruction guiding introspective analysis.

Deep Diagnosis and Abstraction.

Identifying the bifurcation point $s^{*}$ localizes where the reasoning fails, serving as the raw material for subsequent learning. Anchored at $s^{*}$ , the policy model conducts a deep diagnostic analysis to contrast the strategic choices underlying the successful and failed trajectories. Specifically, the model examines the local reasoning context around $s^{*}$ to pinpoint the root cause of failure, such as violated assumptions, erroneous sub-goals, overlooked constraints, or the misuse of specific principles. Complementarily, it inspects the successful trajectory to uncover the mechanisms that prevented such pitfalls, including precise knowledge application, explicit constraint verification, coherent knowledge representations, or emergent self-correction behaviors. By jointly synthesizing these perspectives, the model distills the structural divergence between the correct and incorrect logic, crystallizing it into explicit knowledge. Formally, we model this diagnostic process as generating a critique $\mathcal{C}$ that encapsulates the error attribution, the comparative strategic gap, and the corresponding corrective principle:

$$

\displaystyle\mathcal{C}\sim\pi_{\theta}\big(\cdot\mid I,x,y^{+},y^{-},s^{*}\big). \tag{3}

$$

To ensure generalization, it is imperative for the model to distill instance-specific critiques into abstract heuristics capable of guiding future reasoning. This abstraction mechanism systematically strips away context-dependent variables, mapping the concrete logic of success and failure onto a generalized space of preconditions and responses. Structurally, such heuristics synthesize abstract problem categorization with the corresponding reasoning principles, encompassing the essential knowledge points, theoretical theorems, and decision criteria. Crucially, they also demarcate error-prone boundaries, explicitly highlighting potential pitfalls or latent constraints associated with the specific problem class. We define the extraction of this heuristic knowledge $\mathcal{H}$ as a generation process conditioned on the full critique context:

$$

\mathcal{H}\sim\pi_{\theta}\big(\cdot\mid I,x,y^{+},y^{-},s^{*},\mathcal{C}\big). \tag{4}

$$

Finally, we consolidate these components into a unified Meta-Experience tuple $\mathcal{M}$ , which elevates experience learning from trajectory-level instances to knowledge-level representations.

$$

\mathcal{M}=\big(s^{*},\mathcal{C},\mathcal{H}\big). \tag{5}

$$

This formulation enables meta-experiences to be reused across tasks that share analogous reasoning structures, serving as a fine-grained learning signal. By applying the extraction process across distinct contrastive pairs for a query $x$ , we construct a candidate pool of meta-experiences $\mathcal{D}_{\mathcal{M}}=\{(x,y^{+}_{i},y^{-}_{i},\mathcal{M}_{i})\}_{i=1}^{N}$ , where $N$ denotes the total number of meta-experiences derived from $x$ , and $(y^{+}_{i},y^{-}_{i})$ represents the specific contrastive pair used to derive $\mathcal{M}_{i}$ .

Empirical Validation via Replay.

Closing the cognitive loop requires re-instantiating theoretical insights derived from past failures in future problem-solving to assess their validity. We recognize that the raw meta-experience $\mathcal{M}$ may still suffer from intrinsic hallucinations or causal misalignment. To mitigate this, we conduct empirical verification by incorporating the extracted tuple $\mathcal{M}$ as short-term working memory into the prompt, thereby guiding the model to re-attempt the original query $x$ . This procedure tests whether the injected meta-experience can effectively steer the model away from the previously identified bifurcation point $s^{*}$ and toward a correct reasoning trajectory.

We retain a meta-experience only if the corresponding replay trajectory $y_{\text{val}}\sim\pi_{\theta}(·\mid x,\mathcal{M})$ satisfies the verifier by producing the correct answer.

$$

\mathcal{D}_{\mathcal{M^{*}}}=\left\{(x,y^{+},y^{-},\mathcal{M})\in\mathcal{D}_{\mathcal{M}}\;\middle|\;\mathbb{I}\big[V\!\left(y_{\text{val}},y^{*}\right)=1\big]\right\}. \tag{6}

$$

Consequently, this empirical validation preserves only high-quality meta-experiences for integration into parametric long-term memory, guaranteeing the reliability of the supervision signals used in the subsequent optimization phase.

3.3 Internalization Mechanism

The verified meta-experiences $\mathcal{D}_{\mathcal{M}^{*}}$ constitute a high-quality reservoir of reasoning guidance. However, treating these insights solely as retrieval-augmented memory imposes a substantial computational burden during the inference forward pass, as it necessitates processing elongated contexts for every query. To overcome this limitation, we propose to transfer these insights from the transient context window to the model’s parametric memory. Unlike the finite context buffer, the model parameters offer a virtually unlimited capacity for accumulating diverse meta-experiences, allowing the policy to internalize vast amounts of reasoning patterns without incurring inference-time latency.

We establish this internalization process as a self-distilled paradigm, where the model learns from its own verified experiences. Specifically, we employ fine-tuning based on the token-averaged negative log-likelihood (NLL) objective to compile the meta-experiences into the policy’s weights. Formally, given the retrospective context $C_{\text{retro}}=[I,x,y^{+},y^{-}]$ , the internalization loss is defined as:

$$

\displaystyle\mathcal{L}_{\text{NLL}}(\theta)=- \displaystyle\mathbb{E}_{(x,y^{+},y^{-},\mathcal{M}^{*})\sim\mathcal{D}_{\mathcal{M}^{*}}} \displaystyle\Big[\frac{1}{|\mathcal{M}^{*}|}\sum_{t=1}^{|\mathcal{M}^{*}|}\log\pi_{\theta}(\mathcal{M}^{*}_{t}\mid C_{\text{retro}},\mathcal{M}^{*}_{<t})\Big] \displaystyle=- \displaystyle\mathbb{E}_{x\sim\mathcal{D},\,\{y_{i}\}_{i=1}^{G}\sim\pi_{\theta_{\mathrm{old}}}(\cdot\mid x)} \displaystyle\Bigg[\mathbb{E}_{(y^{+},y^{-},\mathcal{M}^{*})\sim\mathcal{T}(x,\{y_{i}\}_{i=1}^{G})} \displaystyle\Big[\frac{1}{|\mathcal{M}^{*}|}\sum_{t=1}^{|\mathcal{M}^{*}|}\log\pi_{\theta}(\mathcal{M}^{*}_{t}\mid C_{\text{retro}},\mathcal{M}^{*}_{<t})\Big]\Bigg] \tag{7}

$$

where $\mathcal{T}(·)$ represents the stochastic construction process detailed in § 3.2.

Based on this formulation, the internalization process can be viewed as a specialized sampling form within the RLVR framework. By inverting the loss, we define the Meta-Experience Return $\mathcal{R}_{\text{MEL}}$ as the expected log-likelihood over the stochastically constructed verification set:

$$

\displaystyle\mathcal{R}_{\text{MEL}} \displaystyle=\mathbb{E}_{(y^{+},y^{-},\mathcal{M}^{*})\sim\mathcal{T}(x,\{y_{i}\}_{i=1}^{G})} \displaystyle\Bigg[\frac{1}{|\mathcal{M}^{*}|}\sum_{t=1}^{|\mathcal{M}^{*}|}\log\pi_{\theta}(\mathcal{M}^{*}_{t}\mid C_{\text{retro}},\mathcal{M}^{*}_{<t})\Bigg]. \tag{8}

$$

3.4 Joint Training Objective

Table 1: Main Results Comparison. Comparison of Pass@1, Avg@8, and Pass@8 accuracy (%) across different model scales. The best results within each model scale are marked in bold.

| Method Qwen3-4B-Base | AIME 2024 Pass@1 | AIME 2025 Avg@8 | AMC 2023 Pass@8 | Pass@1 | Avg@8 | Pass@8 | Pass@1 | Avg@8 | Pass@8 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Baseline | 13.33 | 9.90 | 30.00 | 10.00 | 6.56 | 23.33 | 45.00 | 42.73 | 72.50 |

| GRPO | 13.33 | 18.33 | 30.00 | 6.67 | 17.50 | 30.00 | 57.50 | 58.13 | 85.00 |

| \rowcolor MintCream MEL | 20.00 | 20.83 | 33.00 | 16.67 | 18.33 | 33.00 | 60.00 | 60.31 | 87.50 |

| Qwen3-8B-Base | | | | | | | | | |

| Baseline | 6.67 | 10.00 | 26.67 | 13.33 | 15.00 | 33.33 | 65.00 | 52.50 | 87.50 |

| GRPO | 16.67 | 24.58 | 43.33 | 20.00 | 20.83 | 36.67 | 67.50 | 69.06 | 87.50 |

| \rowcolor MintCream MEL | 30.00 | 25.42 | 60.00 | 23.33 | 23.33 | 36.67 | 70.00 | 70.31 | 90.00 |

| Qwen3-14B-Base | | | | | | | | | |

| Baseline | 13.33 | 10.83 | 36.67 | 6.66 | 9.58 | 33.33 | 60.00 | 51.25 | 82.50 |

| GRPO | 30.00 | 35.41 | 56.67 | 33.33 | 24.17 | 43.33 | 75.00 | 75.94 | 95.00 |

| \rowcolor MintCream MEL | 33.33 | 35.83 | 60.00 | 36.67 | 30.00 | 46.67 | 82.50 | 82.81 | 95.00 |

| Method Qwen3-4B-Base | MATH 500 Pass@1 | OlympiadBench Avg@8 | Average Pass@8 | Pass@1 | Avg@8 | Pass@8 | Pass@1 | Avg@8 | Pass@8 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Baseline | 74.20 | 65.74 | 89.60 | 39.17 | 35.37 | 60.38 | 36.34 | 32.06 | 55.16 |

| GRPO | 81.80 | 82.20 | 93.00 | 48.51 | 48.46 | 67.21 | 41.56 | 44.92 | 61.04 |

| \rowcolor MintCream MEL | 82.20 | 82.30 | 93.80 | 48.51 | 49.48 | 69.73 | 45.48 | 46.25 | 63.41 |

| Qwen3-8B-Base | | | | | | | | | |

| Baseline | 77.00 | 73.40 | 91.40 | 44.51 | 39.41 | 64.09 | 41.30 | 38.06 | 60.60 |

| GRPO | 84.40 | 86.28 | 95.40 | 53.56 | 54.60 | 73.74 | 48.43 | 51.07 | 67.33 |

| \rowcolor MintCream MEL | 86.60 | 86.70 | 96.20 | 54.01 | 55.60 | 73.00 | 52.79 | 52.27 | 71.17 |

| Qwen3-14B-Base | | | | | | | | | |

| Baseline | 80.80 | 74.15 | 93.60 | 45.25 | 40.50 | 65.58 | 41.21 | 37.26 | 62.34 |

| GRPO | 85.00 | 88.35 | 96.40 | 58.16 | 58.46 | 74.78 | 56.30 | 56.47 | 73.24 |

| \rowcolor MintCream MEL | 90.80 | 90.80 | 97.20 | 61.87 | 60.90 | 75.82 | 61.03 | 60.07 | 74.94 |

To simultaneously encourage solution exploration and consolidate the internalized meta-experiences, achieving dual optimization across trajectory-level behaviors and knowledge-level representations, we train the policy model $\pi_{\theta}$ using a joint optimization objective. To simultaneously encourage solution exploration and consolidate the internalized meta-experiences, achieving dual optimization across trajectory-level behaviors and knowledge-level representations, we train the policy model $\pi_{\theta}$ using a joint optimization objective. This objective synergizes the RLVR signal derived from diverse explorative rollouts with the supervised signal distilled from high-quality meta-experiences:

$$

\mathcal{J}(\theta)=\mathcal{J}_{\text{RLVR}}(\theta)+\mathcal{J}_{\text{MEL}}(\theta). \tag{9}

$$

We adopt GRPO (Shao et al., 2024) as the RLVR component and compute group-normalized advantages by standardizing rewards within the sampled group and broadcast them to each token. Let $y_{i,t}$ denote the $t$ -th token in trajectory $y_{i}$ and $y_{i,<t}$ , the corresponding prefix. Substituting the definition of $\mathcal{R}_{\text{MEL}}$ from Eq. 8, the joint objective in Eq. 9 is explicitly expanded as:

$$

\displaystyle\mathcal{J}(\theta)= \displaystyle\mathbb{E}_{x\sim\mathcal{D},\,\{y_{i}\}_{i=1}^{G}\sim\pi_{\theta_{\mathrm{old}}}(\cdot\mid x)} \displaystyle\Big[\frac{1}{G}\sum_{i=1}^{G}\frac{1}{|y_{i}|}\sum_{t=1}^{|y_{i}|}\min\Big(\rho_{i,t}(\theta)\hat{A}_{i,t},\; \displaystyle\mathrm{clip}\big(\rho_{i,t}(\theta),1-\epsilon,1+\epsilon\big)\hat{A}_{i,t}\Big)+\mathcal{R}_{\text{MEL}}\Big]. \tag{10}

$$

Although derived from a log-likelihood objective, its optimization gradient is mathematically equivalent to a policy gradient update where the reward signal is a constant positive scalar. Consequently, the total objective $\mathcal{J}(\theta)$ can be unified as maximizing the expected cumulative return of a hybrid reward function. In this unified view, the meta-experiences function as a dense process reward model.

Unlike the sparse outcome rewards in standard RLVR that only evaluate the final correctness, $\mathcal{R}_{\text{MEL}}$ provides explicit, step-by-step reinforcement for the reasoning process itself. This ensures that the model not only pursues correct outcomes via broad exploration but is also continuously shaped by the dense supervision of its own successful cognitive patterns, effectively bridging the gap between trajectory-level search and token-level knowledge encoding.

4 Experiments

Datasets.

We train our model on the DAPO-Math-17k dataset (Yu et al., 2025) and evaluate it on five challenging mathematical reasoning benchmarks: AIME24, AIME25, AMC23 (Li et al., 2024), MATH500 (Hendrycks et al., 2021), and OlympiadBench (He et al., 2024).

Setups.

All reinforcement learning training is conducted using the VERL framework (Sheng et al., 2024) on 8 $×$ H20 GPUs, with Math-Verify providing rule-based outcome verification. During training, we sample 8 responses per prompt at a temperature of 1.0 with a batch size of 128. Optimization uses a learning rate of $1× 10^{-6}$ and a mini-batch size of 128. For evaluation, we report Pass@1 at temperature 0, and Avg@8 and Pass@8 at temperature 0.6.

Models and Baselines.

To demonstrate the general applicability of MEL, we conduct experiments across a diverse range of model scales, including Qwen3-4B-Base, Qwen3-8B-Base, and Qwen3-14B-Base (Yang et al., 2025). We adopt GRPO (Shao et al., 2024) as the base reinforcement learning algorithm for MEL, and thus perform a direct and controlled comparison between the vanilla GRPO and our meta-experience learning approach.

4.1 Experimental Results

As shown in Table 1, MEL achieves consistent and significant improvements over vanilla GRPO and the basemodel across multiple benchmarks and model scales. We report three complementary metrics: Pass@1 reflects one-shot reliability, Avg@8 measures the average performance over 8 samples, and Pass@8 reports the best-of-8 success rate.

First, the gains in Pass@1 demonstrate that MEL substantially enhances the model’s confidence in following correct reasoning paths. Across all model scales, it achieves a consistent improvement of 3.92–4.73% over the strong GRPO baseline. This indicates that MEL effectively internalizes the explored insights into the model’s parametric memory. By consolidating these successful reasoning patterns, the model generates high-confidence solutions, markedly reducing the need for extensive test-time sampling. This reliability is further corroborated by the gains in Avg@8, which reveal that MEL significantly enhances reasoning consistency and output stability. High performance on this metric supports our hypothesis that internalized meta-experiences function as intrinsic process-level guidance, continuously steering the generation toward valid logic and effectively reducing variance across sampled outputs. Finally, the sustained gains in Pass@8 suggest that learning from meta-experience does not harm exploration; instead, it expands the reachable solution space and raises the upper bound of best-of- $k$ performance.

4.2 Training Dynamics and Convergence Analysis

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Charts: Training Reward vs. Training Steps for GRPO and MEL Models

### Overview

The image presents three line charts comparing the training reward of two models, GRPO and MEL, across different parameter sizes (4B, 8B, and 14B). Each chart plots "Training Reward" on the y-axis against "Training Steps" on the x-axis. The charts show the performance of each model over 120 training steps.

### Components/Axes

* **X-axis (Horizontal):** "Training Steps", ranging from 0 to 120 in increments of 20.

* **Y-axis (Vertical):** "Training Reward", ranging from 0.2 to 0.4 (left), 0.2 to 0.6 (center), and 0.2 to 0.7 (right), with increments of 0.1.

* **Legends (Top-Left of each chart):**

* **Left Chart:** GRPO (4B) (blue), MEL (4B) (pink)

* **Center Chart:** GRPO (8B) (blue), MEL (8B) (pink)

* **Right Chart:** GRPO (14B) (blue), MEL (14B) (pink)

* **Chart Titles:** Implicitly defined by the legend, indicating the model sizes (4B, 8B, 14B).

### Detailed Analysis

**Left Chart: 4B Parameter Size**

* **GRPO (4B) - Blue:** The line starts at approximately 0.15 and generally increases to around 0.38 by step 120. The trend is upward, with some fluctuations.

* **MEL (4B) - Pink:** The line starts at approximately 0.14 and increases to around 0.40 by step 120. The trend is upward, with some fluctuations.

**Center Chart: 8B Parameter Size**

* **GRPO (8B) - Blue:** The line starts at approximately 0.20 and increases to around 0.45 by step 120. The trend is upward, with some fluctuations.

* **MEL (8B) - Pink:** The line starts at approximately 0.20 and increases to around 0.50 by step 120. The trend is upward, with some fluctuations.

**Right Chart: 14B Parameter Size**

* **GRPO (14B) - Blue:** The line starts at approximately 0.20 and increases to around 0.52 by step 120. The trend is upward, with some fluctuations.

* **MEL (14B) - Pink:** The line starts at approximately 0.20 and increases to around 0.60 by step 120. The trend is upward, with some fluctuations.

### Key Observations

* Both GRPO and MEL models show an increase in training reward as the number of training steps increases across all parameter sizes.

* The MEL model generally achieves a higher training reward than the GRPO model for all parameter sizes.

* Increasing the parameter size from 4B to 14B appears to improve the final training reward for both models.

### Interpretation

The charts suggest that both GRPO and MEL models benefit from increased training steps, as evidenced by the upward trend in training reward. The MEL model consistently outperforms the GRPO model, indicating it may be a more effective architecture or training strategy for this particular task. Furthermore, increasing the model size (number of parameters) leads to improved performance, suggesting that the models can better capture the underlying patterns in the data with more capacity. The fluctuations in the lines indicate some variability in the training process, which is typical in machine learning. Overall, the data supports the idea that larger models, trained for longer durations, tend to achieve higher rewards in this context.

</details>

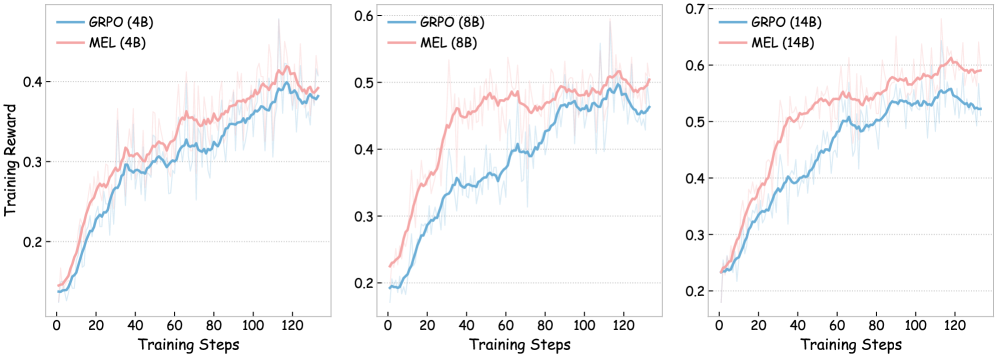

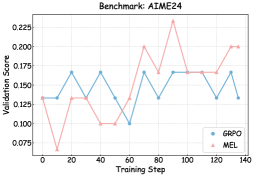

Figure 3: Training curves comparing GRPO and MEL.

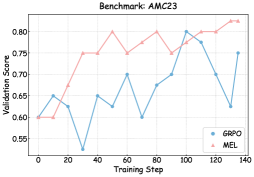

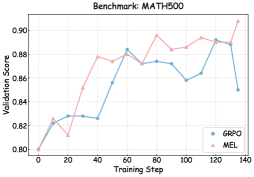

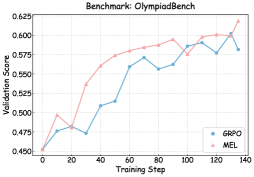

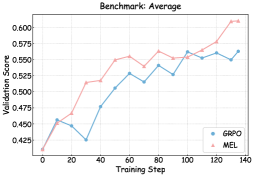

To understand the mechanisms driving the performance gains under MEL, we monitored the training dynamics and validation performance in Figures 3 and 6 – 8.

Vanilla GRPO methods often struggle to obtain positive reinforcement in the early stages, particularly when initial performance is low, due to the sparsity of outcome-based rewards. As illustrated in the training curve, vanilla GRPO exhibits a relatively slow ascent during the initial phase. In contrast, MEL demonstrates a sharp, rapid trajectory growth immediately from the onset of training. This acceleration is attributed to the internalized meta-experience return, $\mathcal{R}_{\text{MEL}}$ . By functioning as a dense, language-modeling process reward, $\mathcal{R}_{\text{MEL}}$ continuously provides informative gradient signals for every reasoning step, even when successful trajectories yielding positive reinforcement are scarce.

Beyond sample efficiency, MEL achieves a consistently higher performance upper bound. The training curves show that the average reward of MEL consistently surpasses that of vanilla GRPO throughout the entire training process. Crucially, the downstream validation trajectories reveal that even as performance growth begins to plateau in the later stages, MEL maintains a distinct and sustained advantage over the baseline. This phenomenon demonstrates that the internalization of meta-experiences empowers the model to effectively navigate and explore more complex, long-horizon solutions that remain inaccessible to the baseline.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Problem Solving Approaches in Geometry

### Overview

The image presents a geometry problem involving a triangle, its circumcircle, and tangents. It showcases three different approaches (GRPO, Meta-Experience, and MEL) to solving the problem, along with the initial problem statement.

### Components/Axes

* **Question (Top-Left)**: Defines the geometry problem.

* Triangle ABC has AB = 4, BC = 5, and CA = 6.

* Points A', B', C' are such that B'C' is tangent to the circumcircle of triangle ABC at A, C'A' is tangent to the circumcircle at B, and A'B' is tangent to the circumcircle at C.

* Find the length B'C'.

* **GRPO (Bottom-Left)**: Represents one approach to solving the problem.

* Begins with an initial statement of understanding the problem.

* Step 1: Find the circumcircle of triangle ABC.

* Semi-perimeter: s = (4 + 5 + 6) / 2 = 7.5

* Area: K = sqrt(s(s-a)(s-b)(s-c)) = sqrt(7.5 * 3.5 * 2.5 * 1.5)

* States a formula relating side lengths of the tangential triangle to the sides and angles of the original triangle:

* a' = 2R sin(A/2)

* b' = 2R sin(B/2)

* c' = 2R sin(C/2)

* Conclusion: The length B'C' is \boxed(2)

* **Meta-Experience in Early Stage (Top-Right)**: Describes meta-cognitive aspects of problem-solving.

* Failure Resolution Path & Error Pattern Recognition:

* Failure Point: The error occurs in the use of the circumcircle formula. The formula should be BC = 2R sin ∠BAC, but the incorrect solution uses BC = 2R sin(∠BAC / 2).

* Latent Cognitive Pattern: The error is due to a conceptual confusion in formula usage.

* Subject Heuristics (Internalized Experience):

* Angle Verification Rule: When using a formula of the form 2Rsin θ in a circumcircle, always ensure that θ is the full geometric angle spanning the chord or tangent segment, not a derived half-angle.

* Formula-Geometry Consistency Rule: Before applying trigonometric formulas for lengths on the circumcircle, confirm that the chosen angle corresponds exactly to the geometric length being calculated, in order to avoid errors caused by half-angle substitution.

* **MEL (Bottom-Right)**: Represents another approach to solving the problem.

* Begins with an initial statement of understanding the problem.

* Lists properties:

* Tangent to a Circle: A tangent to a circle is perpendicular to the radius at the point of tangency.

* Circumcircle: The circle that passes through all three vertices of a triangle.

* Step 1: Find the Circumradius R of triangle ABC.

* States a formula to find the circumradius of a triangle: R = abc / (4K), where a, b, c are the side lengths, and K is the area of the triangle.

* Uses Heron's formula: K = sqrt(s(s-a)(s-b)(s-c)), where s = (a+b+c)/2 is the semi-perimeter.

* States the length B'C' is related to the circumradius and the angles of the triangle and can be calculated using the formula: B'C' = 2R sin θ

* Final Answer: \boxed(5)

### Detailed Analysis or ### Content Details

* **GRPO Approach**:

* Calculates the semi-perimeter (s) as 7.5.

* Calculates the area (K) as sqrt(7.5 * 3.5 * 2.5 * 1.5).

* Applies formulas relating tangential triangle side lengths to the original triangle.

* Concludes that the length B'C' is 2.

* **Meta-Experience**:

* Identifies a common error in using the circumcircle formula, specifically halving the angle.

* Emphasizes the importance of using the full geometric angle in calculations.

* **MEL Approach**:

* Recalls properties of tangents and circumcircles.

* States the formula for circumradius (R) and Heron's formula for area (K).

* Relates B'C' to circumradius and angles using the formula B'C' = 2R sin θ.

* Concludes that the final answer is 5.

### Key Observations

* The GRPO approach arrives at an answer of 2, while the MEL approach arrives at an answer of 5.

* The Meta-Experience section highlights a common error in using the circumcircle formula, which might explain the discrepancy in the answers.

### Interpretation

The image illustrates different problem-solving strategies in geometry. The GRPO and MEL approaches represent direct attempts to solve the problem, while the Meta-Experience section provides a higher-level reflection on potential errors and heuristics. The discrepancy in the final answers suggests that one or both approaches contain errors, and the Meta-Experience section points to a potential source of error in the use of the circumcircle formula. The image demonstrates the importance of not only applying formulas but also understanding the underlying concepts and potential pitfalls.

</details>

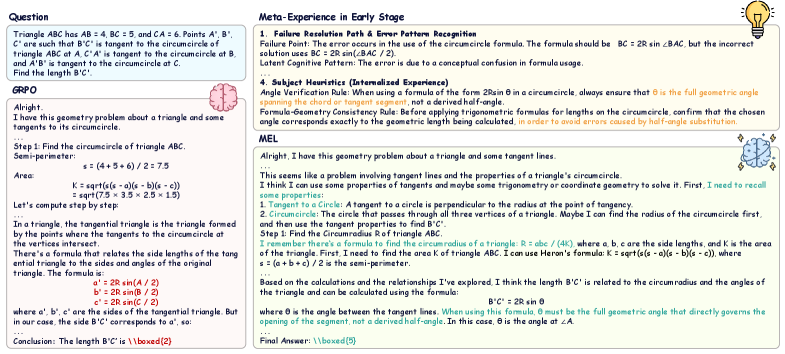

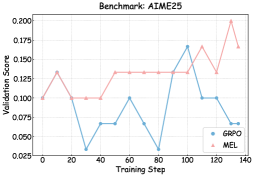

Figure 4: Case study comparing GRPO and MEL, with visualization of meta-experience in early stage.

4.3 How Meta-Experience Shapes Reasoning Patterns

To investigate how MEL shapes the model’s cognitive processes beyond numerical metrics, we conduct a qualitative analysis comparing the reasoning trajectories of MEL and the baseline GRPO model, as visualized in Figure 4.

A distinct behavioral divergence is observed from the onset of the solution. While the GRPO baseline tends to prioritize immediate execution through direct numerical operations, MEL adopts a structured preparatory strategy by explicitly outlining relevant theorems and formulas. Although the direct approach may appear efficient for simple queries, it increases the susceptibility to errors in complex tasks due to the lack of a holistic view of problem constraints.

Notably, MEL exhibits an emergent cognitive behavior. When applying specific theorems, it spontaneously activates internalized “bitter lessons” as endogenous safeguards to regulate its actions. These active signals effectively reduce reasoning drift by encouraging earlier constraint checking and consistent self-correction when the model enters uncertain regions.

4.4 Generality Across Learning Paradigms

<details>

<summary>x5.png Details</summary>

### Visual Description

## Radar Chart: Olympiabench

### Overview

The image is a radar chart comparing the performance of three models (Qwen3-8B-Base, RFT, and RFT w. ME) across five different benchmarks: AIME24, AIME25, AMC23, MATH500, and Olympiabench. The chart displays the scores achieved by each model on each benchmark, with higher scores indicating better performance.

### Components/Axes

* **Title:** Olympiabench

* **Axes:** The radar chart has five axes, each representing a different benchmark:

* AIME24 (at approximately 15 degrees)

* AIME25 (at approximately 70 degrees)

* AMC23 (at approximately 140 degrees)

* MATH500 (at approximately 210 degrees)

* Olympiabench (at approximately 300 degrees)

* **Scale:** The radial scale ranges from 0 to 80, with markers at 0, 25, 50, and 75.

* **Legend:** Located at the bottom of the chart:

* Blue: Qwen3-8B-Base

* Green: RFT

* Red: RFT w. ME

### Detailed Analysis

* **Qwen3-8B-Base (Blue):**

* AIME24: Approximately 9.9

* AIME25: Approximately 14.5

* AMC23: Approximately 53.2

* MATH500: Approximately 76.6

* Olympiabench: Approximately 44.6

* **RFT (Green):**

* AIME24: Approximately 6.9

* AIME25: Approximately 7.2

* AMC23: Approximately 38.6

* MATH500: Approximately 79.3

* Olympiabench: Approximately 45.0

* **RFT w. ME (Red):**

* AIME24: Approximately 15

* AIME25: Approximately 20

* AMC23: Approximately 70

* MATH500: Approximately 80

* Olympiabench: Approximately 45.5

### Key Observations

* RFT w. ME (Red) consistently outperforms the other two models across all benchmarks.

* Qwen3-8B-Base (Blue) generally performs better than RFT (Green), except for the MATH500 benchmark.

* All models perform relatively poorly on the AIME24 and AIME25 benchmarks compared to AMC23 and MATH500.

* The performance on Olympiabench is similar across all three models.

### Interpretation

The radar chart provides a visual comparison of the performance of three models on five different benchmarks. The RFT w. ME model demonstrates superior performance across all benchmarks, suggesting that the "ME" component significantly enhances the model's capabilities. The relatively low scores on AIME24 and AIME25 across all models indicate that these benchmarks may be more challenging or require different skills than AMC23 and MATH500. The similar performance on Olympiabench suggests that this benchmark may not be as discriminating between the models as the others. The data suggests that RFT w. ME is the most effective model among the three for the given set of benchmarks.

</details>

<details>

<summary>x6.png Details</summary>

### Visual Description

## Radar Chart: Olympiabench

### Overview

The image is a radar chart comparing the performance of three models (Qwen3-8B-Base, REINFORCE++, and REINFORCE++ w. ME) across five different benchmarks: Olympiabench, AIME24, AIME25, AMC23, and MATH500. The chart displays the performance of each model as a percentage score on each benchmark.

### Components/Axes

* **Title:** Olympiabench

* **Axes:** The chart has five axes, each representing a different benchmark. The axes are labeled as follows:

* Top: Olympiabench

* Top-Right: AIME24

* Bottom-Right: AIME25

* Bottom-Left: AMC23

* Top-Left: MATH500

* **Scale:** The radial scale ranges from 0 to 100, with intermediate values marked.

* **Legend:** Located at the bottom of the chart.

* Blue: Qwen3-8B-Base

* Green: REINFORCE++

* Red: REINFORCE++ w. ME

### Detailed Analysis

The chart displays the performance of each model on each benchmark. The values are approximate due to the resolution of the image.

* **Qwen3-8B-Base (Blue):**

* Olympiabench: ~62%

* AIME24: ~9.6%

* AIME25: ~15.6%

* AMC23: ~45.6%

* MATH500: ~78.6%

* **REINFORCE++ (Green):**

* Olympiabench: ~47.6%

* AIME24: ~79%

* AIME25: ~19.2%

* AMC23: ~69.2%

* MATH500: ~64.2%

* **REINFORCE++ w. ME (Red):**

* Olympiabench: ~54.2%

* AIME24: ~79%

* AIME25: ~26%

* AMC23: ~74%

* MATH500: ~86%

### Key Observations

* REINFORCE++ w. ME (Red) generally outperforms the other two models, especially on MATH500 and AMC23.

* Qwen3-8B-Base (Blue) performs well on MATH500 but poorly on AIME24 and AIME25.

* REINFORCE++ (Green) shows a balanced performance across all benchmarks, with a notable performance on AIME24.

* All models struggle on AIME25, with scores below 30%.

### Interpretation

The radar chart provides a visual comparison of the performance of three models across five different benchmarks. The data suggests that REINFORCE++ w. ME is the most effective model overall, particularly on MATH500 and AMC23. Qwen3-8B-Base shows strong performance on MATH500 but struggles on AIME24 and AIME25. REINFORCE++ offers a more balanced performance across all benchmarks. The low scores on AIME25 for all models indicate that this benchmark may be particularly challenging. The chart highlights the strengths and weaknesses of each model, allowing for a more informed decision when selecting a model for a specific task.

</details>

Figure 5: Impact of meta-experience across different training methods, including Rejection Sampling Fine-Tuning (RFT) and REINFORCE++. ME denotes Meta-Experience.

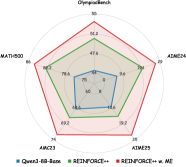

To demonstrate the versatility of meta-experience, we integrated it into RFT and REINFORCE++ using the Qwen-8B-Base model as the backbone and the same training set in our experiments. As shown in Figure 5, while vanilla RFT often suffers from rote memorization and tends to overfit to specific samples in this training set, the incorporation of meta-experiences introduces robust reasoning heuristics. This allows the model to internalize the underlying logic rather than merely imitating specific answers, thereby effectively mitigating overfitting and enhancing generalization to unseen test sets. Similarly, applying meta-experiences to REINFORCE++ significantly raises the performance ceiling on benchmarks. This confirms that the benefit of internalized meta-experiences is a universal enhancement, not limited to the GRPO framework.

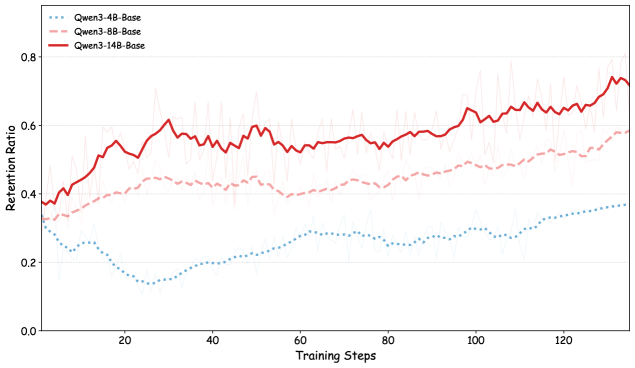

4.5 Scalability Analysis

As indicated by the training curves in Figure 3, the method exhibits a distinct positive scaling law: the performance margin between MEL and the baseline widens significantly as the model size increases. This phenomenon consistently extends to downstream validation benchmarks.

We attribute this effect to the quality of self-generated supervision, which is inherently bounded by the model’s intrinsic capability. As shown in Figure 9, the 14B model achieves a significantly higher yield rate of valid meta-experiences than its smaller counterparts. While limited-capacity models introduce noise due to imprecise error attribution, larger models benefit from stronger self-verification, enabling the distillation of high-quality heuristics that provide more accurate gradient signals and fully realize the potential of our framework.

5 Conclusion

In this paper, we introduced MEL, a novel framework designed to overcome the meta-learning bottleneck in standard RLVR by transforming instance-specific failure patterns into reusable cognitive assets. Unlike traditional methods that rely solely on outcome-oriented rewards, MEL empowers models to perform granular error attribution, distilling specific failure modes into natural language heuristics—termed Meta-Experiences. By internalizing these experiences into parametric memory, our approach bridges the gap between verifying a solution and understanding the underlying reasoning logic. Extensive empirical evaluations confirm that MEL consistently boosts mathematical reasoning across diverse model scales.

Impact Statement

This paper presents research aimed at advancing the field of reinforcement learning. While our work may have broader societal implications, we do not identify any specific impacts that require particular attention at this stage.

References

- Y. Cai, S. Cai, Y. Shi, Z. Xu, L. Chen, Y. Qin, X. Tan, G. Li, Z. Li, H. Lin, et al. (2025) Training-free group relative policy optimization. arXiv preprint arXiv:2510.08191. Cited by: §2.

- J. Chen, Q. He, S. Yuan, A. Chen, Z. Cai, W. Dai, H. Yu, Q. Yu, X. Li, J. Chen, et al. (2025) Enigmata: scaling logical reasoning in large language models with synthetic verifiable puzzles. arXiv preprint arXiv:2505.19914. Cited by: §1.

- J. Cheng, G. Xiong, R. Qiao, L. Li, C. Guo, J. Wang, Y. Lv, and F. Wang (2025) Stop summation: min-form credit assignment is all process reward model needs for reasoning. arXiv preprint arXiv:2504.15275. Cited by: §1.

- Y. Fu, T. Chen, J. Chai, X. Wang, S. Tu, G. Yin, W. Lin, Q. Zhang, Y. Zhu, and D. Zhao (2025) SRFT: a single-stage method with supervised and reinforcement fine-tuning for reasoning. arXiv preprint arXiv:2506.19767. Cited by: §2.

- D. Guo, D. Yang, H. Zhang, J. Song, R. Zhang, R. Xu, Q. Zhu, S. Ma, P. Wang, X. Bi, et al. (2025) Deepseek-r1: incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948. Cited by: §1, §2.

- C. He, R. Luo, Y. Bai, S. Hu, Z. Thai, J. Shen, J. Hu, X. Han, Y. Huang, Y. Zhang, et al. (2024) Olympiadbench: a challenging benchmark for promoting agi with olympiad-level bilingual multimodal scientific problems. In Proceedings of the Association for Computational Linguistics, pp. 3828–3850. Cited by: §4.

- D. Hendrycks, C. Burns, S. Kadavath, A. Arora, S. Basart, E. Tang, D. Song, and J. Steinhardt (2021) Measuring mathematical problem solving with the math dataset. arXiv preprint arXiv: 2103.03874. Cited by: §4.

- J. Hu (2025) Reinforce++: a simple and efficient approach for aligning large language models. arXiv preprint arXiv:2501.03262. Cited by: §2.

- S. Huang, Z. Fang, Z. Chen, S. Yuan, J. Ye, Y. Zeng, L. Chen, Q. Mao, and F. Zhao (2025) CRITICTOOL: evaluating self-critique capabilities of large language models in tool-calling error scenarios. arXiv preprint arXiv:2506.13977. Cited by: §3.2.

- W. Huang, Y. Zeng, Q. Wang, Z. Fang, S. Cao, Z. Chu, Q. Yin, S. Chen, Z. Yin, L. Chen, et al. (2026) Vision-deepresearch: incentivizing deepresearch capability in multimodal large language models. arXiv preprint arXiv:2601.22060. Cited by: §1.

- A. Jaech, A. Kalai, A. Lerer, A. Richardson, A. El-Kishky, A. Low, A. Helyar, A. Madry, A. Beutel, A. Carney, et al. (2024) Openai o1 system card. arXiv preprint arXiv:2412.16720. Cited by: §2.

- M. Khalifa, R. Agarwal, L. Logeswaran, J. Kim, H. Peng, M. Lee, H. Lee, and L. Wang (2025) Process reward models that think. arXiv preprint arXiv:2504.16828. Cited by: §1, §3.2.

- N. Lambert, J. Morrison, V. Pyatkin, S. Huang, H. Ivison, F. Brahman, L. J. V. Miranda, A. Liu, N. Dziri, S. Lyu, et al. (2024) Tulu 3: pushing frontiers in open language model post-training. arXiv preprint arXiv:2411.15124. Cited by: §1, §2.

- J. Li, E. Beeching, L. Tunstall, B. Lipkin, R. Soletskyi, S. Huang, K. Rasul, L. Yu, A. Q. Jiang, Z. Shen, et al. (2024) Numinamath: the largest public dataset in ai4maths with 860k pairs of competition math problems and solutions. Hugging Face repository 13 (9), pp. 9. Cited by: §4.

- H. Lightman, V. Kosaraju, Y. Burda, H. Edwards, B. Baker, T. Lee, J. Leike, J. Schulman, I. Sutskever, and K. Cobbe (2023) Let’s verify step by step. In Proceedings of the International Conference on Learning Representations, Cited by: §1.

- K. Liu, D. Yang, Z. Qian, W. Yin, Y. Wang, H. Li, J. Liu, P. Zhai, Y. Liu, and L. Zhang (2025) Reinforcement learning meets large language models: a survey of advancements and applications across the llm lifecycle. arXiv preprint arXiv:2509.16679. Cited by: §2.

- L. Ouyang, J. Wu, X. Jiang, D. Almeida, C. Wainwright, P. Mishkin, C. Zhang, S. Agarwal, K. Slama, A. Ray, et al. (2022) Training language models to follow instructions with human feedback. Advances in Neural Information Processing Systems 35, pp. 27730–27744. Cited by: §1.

- S. Ouyang, J. Yan, I. Hsu, Y. Chen, K. Jiang, Z. Wang, R. Han, L. T. Le, S. Daruki, X. Tang, et al. (2025) Reasoningbank: scaling agent self-evolving with reasoning memory. arXiv preprint arXiv:2509.25140. Cited by: §2.

- B. Pan and L. Zhao (2025) Can past experience accelerate llm reasoning?. arXiv preprint arXiv:2505.20643. Cited by: §2.

- W. Saunders, C. Yeh, J. Wu, S. Bills, L. Ouyang, J. Ward, and J. Leike (2022) Self-critiquing models for assisting human evaluators. arXiv preprint arXiv:2206.05802. Cited by: §3.2.

- J. Schulman, F. Wolski, P. Dhariwal, A. Radford, and O. Klimov (2017) Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347. Cited by: §1.

- Z. Shao, P. Wang, Q. Zhu, R. Xu, J. Song, X. Bi, H. Zhang, M. Zhang, Y. Li, Y. Wu, et al. (2024) Deepseekmath: pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300. Cited by: §1, §2, §3.1, §3.4, §4.

- G. Sheng, C. Zhang, Z. Ye, X. Wu, W. Zhang, R. Zhang, Y. Peng, H. Lin, and C. Wu (2024) HybridFlow: a flexible and efficient rlhf framework. arXiv preprint arXiv: 2409.19256. Cited by: §4.

- G. Swamy, S. Choudhury, W. Sun, Z. S. Wu, and J. A. Bagnell (2025) All roads lead to likelihood: the value of reinforcement learning in fine-tuning. arXiv preprint arXiv:2503.01067. Cited by: §3.2.

- Q. Wang, R. Ding, Y. Zeng, Z. Chen, L. Chen, S. Wang, P. Xie, F. Huang, and F. Zhao (2025) VRAG-rl: empower vision-perception-based rag for visually rich information understanding via iterative reasoning with reinforcement learning. arXiv preprint arXiv:2505.22019. Cited by: §1.

- R. Wu, X. Wang, J. Mei, P. Cai, D. Fu, C. Yang, L. Wen, X. Yang, Y. Shen, Y. Wang, et al. (2025) Evolver: self-evolving llm agents through an experience-driven lifecycle. arXiv preprint arXiv:2510.16079. Cited by: §1.

- M. Wulfmeier, M. Bloesch, N. Vieillard, A. Ahuja, J. Bornschein, S. Huang, A. Sokolov, M. Barnes, G. Desjardins, A. Bewley, et al. (2024) Imitating language via scalable inverse reinforcement learning. Advances in Neural Information Processing Systems 37, pp. 90714–90735. Cited by: §1.

- G. Xie, Y. Shi, H. Tian, T. Yao, and X. Zhang (2025) Capo: towards enhancing llm reasoning through verifiable generative credit assignment. arXiv e-prints, pp. arXiv–2508. Cited by: §1, §3.2.

- J. Yan, Y. Li, Z. Hu, Z. Wang, G. Cui, X. Qu, Y. Cheng, and Y. Zhang (2025) Learning to reason under off-policy guidance. arXiv preprint arXiv:2504.14945. Cited by: §2.

- A. Yang, A. Li, B. Yang, B. Zhang, B. Hui, B. Zheng, B. Yu, C. Gao, C. Huang, C. Lv, et al. (2025) Qwen3 technical report. arXiv preprint arXiv:2505.09388. Cited by: §4.

- Q. Yu, Z. Zhang, R. Zhu, Y. Yuan, X. Zuo, Y. Yue, W. Dai, T. Fan, G. Liu, L. Liu, et al. (2025) Dapo: an open-source llm reinforcement learning system at scale. arXiv preprint arXiv:2503.14476. Cited by: §2, §4.

- Y. Zeng, W. Huang, Z. Fang, S. Chen, Y. Shen, Y. Cai, X. Wang, Z. Yin, L. Chen, Z. Chen, et al. (2026) Vision-deepresearch benchmark: rethinking visual and textual search for multimodal large language models. arXiv preprint arXiv:2602.02185. Cited by: §1.

- Y. Zeng, W. Huang, S. Huang, X. Bao, Y. Qi, Y. Zhao, Q. Wang, L. Chen, Z. Chen, H. Chen, et al. (2025a) Agentic jigsaw interaction learning for enhancing visual perception and reasoning in vision-language models. arXiv preprint arXiv:2510.01304. Cited by: §1.

- Y. Zeng, Y. Qi, Y. Zhao, X. Bao, L. Chen, Z. Chen, S. Huang, J. Zhao, and F. Zhao (2025b) Enhancing large vision-language models with ultra-detailed image caption generation. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, pp. 26703–26729. Cited by: §1.

- K. Zhang, X. Chen, B. Liu, T. Xue, Z. Liao, Z. Liu, X. Wang, Y. Ning, Z. Chen, X. Fu, et al. (2025a) Agent learning via early experience. arXiv preprint arXiv:2510.08558. Cited by: §1.

- K. Zhang, Y. Zuo, B. He, Y. Sun, R. Liu, C. Jiang, Y. Fan, K. Tian, G. Jia, P. Li, et al. (2025b) A survey of reinforcement learning for large reasoning models. arXiv preprint arXiv:2509.08827. Cited by: §2.

- K. Zhang, A. Lv, J. Li, Y. Wang, F. Wang, H. Hu, and R. Yan (2025c) StepHint: multi-level stepwise hints enhance reinforcement learning to reason. arXiv preprint arXiv:2507.02841. Cited by: §1, §2.

- X. Zhang, S. Wu, Y. Zhu, H. Tan, S. Yu, Z. He, and J. Jia (2025d) Scaf-grpo: scaffolded group relative policy optimization for enhancing llm reasoning. arXiv preprint arXiv:2510.19807. Cited by: §1, §2.

- C. Zheng, S. Liu, M. Li, X. Chen, B. Yu, C. Gao, K. Dang, Y. Liu, R. Men, A. Yang, et al. (2025) Group sequence policy optimization. arXiv preprint arXiv:2507.18071. Cited by: §2.

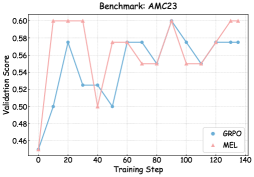

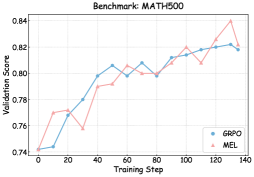

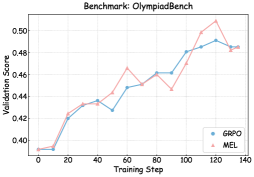

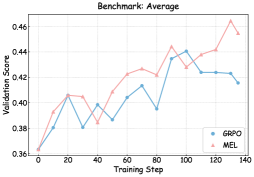

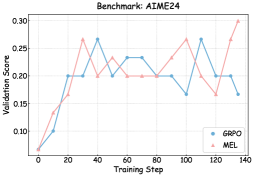

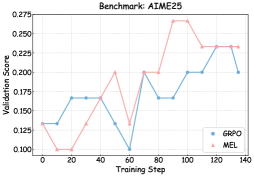

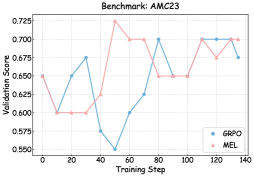

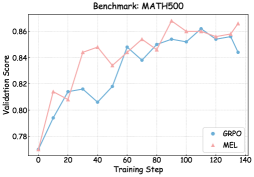

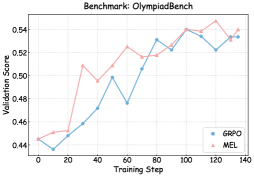

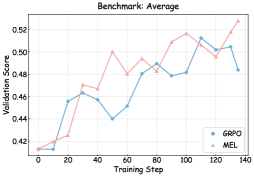

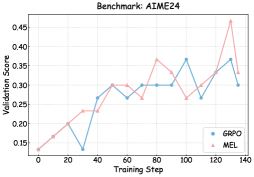

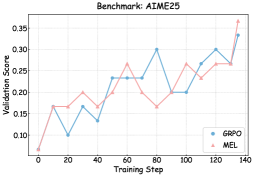

Appendix A Result of Performance Evolution

As illustrated in Figures 6, 7, and 8, we visualize the performance evolution of models with different scales (Qwen3-4B-Base, Qwen3-8B-Base, and Qwen3-14B-Base) across multiple benchmarks throughout training. It can be observed that MEL consistently outperforms standard GRPO in terms of average performance on all benchmarks.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Chart: Validation Score vs. Training Step for AIME24 Benchmark

### Overview

The image is a line chart comparing the validation scores of two models, GRPO and MEL, over training steps for the AIME24 benchmark. The x-axis represents the training step, and the y-axis represents the validation score.

### Components/Axes

* **Title:** Benchmark: AIME24

* **X-axis:** Training Step, with markers at 0, 20, 40, 60, 80, 100, 120, and 140.

* **Y-axis:** Validation Score, ranging from 0.075 to 0.225, with markers at intervals of 0.025.

* **Legend:** Located in the bottom-right corner.

* Blue line: GRPO

* Pink line: MEL

### Detailed Analysis

* **GRPO (Blue):**

* Starts at approximately 0.135 at step 0.

* Increases to approximately 0.165 by step 20.

* Decreases to approximately 0.135 by step 40.

* Increases to approximately 0.165 by step 40.

* Decreases to approximately 0.100 by step 60.

* Increases to approximately 0.165 by step 80.

* Remains at approximately 0.165 by step 100.

* Remains at approximately 0.165 by step 120.

* Increases to approximately 0.165 by step 140.

* **MEL (Pink):**

* Starts at approximately 0.135 at step 0.

* Decreases to approximately 0.070 by step 20.

* Increases to approximately 0.135 by step 40.

* Decreases to approximately 0.100 by step 60.

* Increases to approximately 0.200 by step 80.

* Increases to approximately 0.230 by step 100.

* Decreases to approximately 0.165 by step 120.

* Increases to approximately 0.200 by step 140.

### Key Observations

* GRPO shows a more stable validation score compared to MEL.

* MEL has a higher peak validation score around step 100, but also exhibits more fluctuation.

* Both models start at approximately the same validation score.

### Interpretation

The chart compares the performance of two models, GRPO and MEL, on the AIME24 benchmark. GRPO demonstrates more consistent performance across training steps, while MEL shows higher potential but also greater instability. The choice between the two models would depend on the specific requirements of the application, with GRPO being preferable if stability is paramount and MEL being considered if the potential for higher performance outweighs the risk of fluctuation.

</details>

<details>

<summary>x8.png Details</summary>

### Visual Description

## Chart: Validation Score vs. Training Step for AIME25 Benchmark

### Overview

The image is a line chart comparing the validation scores of two models, GRPO and MEL, over training steps for the AIME25 benchmark. The chart displays the validation score on the y-axis and the training step on the x-axis.

### Components/Axes

* **Title:** Benchmark: AIME25

* **X-axis:** Training Step, with markers at 0, 20, 40, 60, 80, 100, 120, and 140.

* **Y-axis:** Validation Score, with markers at 0.025, 0.050, 0.075, 0.100, 0.125, 0.150, 0.175, and 0.200.

* **Legend:** Located in the bottom-right corner.

* GRPO (Blue)

* MEL (Pink)

### Detailed Analysis

* **GRPO (Blue):**

* Starts at approximately 0.100 at training step 0.

* Decreases to approximately 0.033 at training step 20.

* Relatively stable around 0.067 between training steps 40 and 60.

* Decreases to approximately 0.025 at training step 80.

* Increases sharply to approximately 0.167 at training step 100.

* Decreases to approximately 0.100 at training step 120.

* Remains at approximately 0.100 at training step 140.

* **MEL (Pink):**

* Starts at approximately 0.100 at training step 0.

* Increases to approximately 0.133 at training step 20.

* Decreases to approximately 0.100 at training step 40.

* Relatively stable around 0.133 between training steps 60 and 100.

* Decreases to approximately 0.167 at training step 120.

* Decreases to approximately 0.133 at training step 120.

* Increases sharply to approximately 0.200 at training step 140.

* Decreases to approximately 0.167 at training step 140.

### Key Observations

* The GRPO model shows more volatility in its validation score compared to the MEL model.

* The MEL model generally maintains a higher validation score than the GRPO model, especially in the later training steps.

* Both models show fluctuations in their validation scores, indicating potential overfitting or the need for further optimization.

### Interpretation

The chart compares the performance of two models, GRPO and MEL, on the AIME25 benchmark. The validation scores indicate how well each model generalizes to unseen data during training. The MEL model appears to perform better overall, achieving higher validation scores and demonstrating more stability. The GRPO model, while showing some improvement during training, exhibits more significant fluctuations, suggesting it may be more sensitive to the training data or require different hyperparameter tuning. The sharp increase in MEL's validation score near the end of training suggests it may be converging towards a better solution, while GRPO's performance plateaus. These observations can inform decisions about model selection, hyperparameter tuning, and further experimentation to improve the performance of both models.

</details>

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Chart: Benchmark AMC23

### Overview

The image is a line chart comparing the validation scores of two models, GRPO and MEL, over training steps. The chart shows how the validation scores change as the models are trained.

### Components/Axes

* **Title:** Benchmark: AMC23

* **X-axis:** Training Step (ranging from 0 to 140)

* Axis markers: 0, 20, 40, 60, 80, 100, 120, 140

* **Y-axis:** Validation Score (ranging from 0.46 to 0.60)

* Axis markers: 0.46, 0.48, 0.50, 0.52, 0.54, 0.56, 0.58, 0.60

* **Legend:** Located in the bottom-right corner.

* GRPO (blue line with circle markers)

* MEL (pink line with triangle markers)

### Detailed Analysis

* **GRPO (blue line):**

* Trend: Initially increases, then fluctuates, and finally stabilizes.

* Data Points:

* (0, 0.45)

* (20, 0.50)

* (40, 0.525)

* (50, 0.50)

* (60, 0.575)

* (80, 0.55)

* (100, 0.60)

* (120, 0.575)

* (140, 0.575)

* **MEL (pink line):**

* Trend: Initially increases sharply, plateaus, then fluctuates before stabilizing.

* Data Points:

* (0, 0.45)

* (20, 0.60)

* (40, 0.60)

* (60, 0.575)

* (80, 0.55)

* (100, 0.575)

* (120, 0.55)

* (140, 0.575)

### Key Observations

* Both models start with the same validation score at the beginning of training.

* MEL initially performs better, reaching a higher validation score faster than GRPO.

* Both models appear to converge to a similar validation score towards the end of the training steps.

### Interpretation

The chart compares the performance of two models, GRPO and MEL, on the AMC23 benchmark. The validation scores indicate how well each model generalizes to unseen data during training. MEL shows a faster initial improvement, but both models eventually achieve similar performance levels. The fluctuations in validation scores suggest that both models experience some instability during training, possibly due to overfitting or other factors. The stabilization towards the end indicates that the models are converging and learning effectively.

</details>

<details>

<summary>x10.png Details</summary>

### Visual Description

## Line Chart: Benchmark MATH500

### Overview

The image is a line chart comparing the validation scores of two methods, GRPO and MEL, over training steps. The chart shows how the validation score changes as the training progresses for each method.

### Components/Axes

* **Title:** Benchmark: MATH500

* **X-axis:** Training Step (ranging from 0 to 140)

* Axis markers: 0, 20, 40, 60, 80, 100, 120, 140

* **Y-axis:** Validation Score (ranging from 0.74 to 0.84)

* Axis markers: 0.74, 0.76, 0.78, 0.80, 0.82, 0.84

* **Legend:** Located in the bottom-right corner.

* GRPO (blue line with circle markers)

* MEL (pink line with triangle markers)

### Detailed Analysis

* **GRPO (blue line):**

* Starts at approximately 0.74 at training step 0.

* Increases to approximately 0.77 at training step 20.

* Increases to approximately 0.80 at training step 40.

* Fluctuates around 0.80 between training steps 40 and 80.

* Gradually increases to approximately 0.82 at training step 120.

* Remains relatively stable around 0.82 between training steps 120 and 140.

* **MEL (pink line):**

* Starts at approximately 0.74 at training step 0.

* Increases to approximately 0.77 at training step 20.

* Increases to approximately 0.79 at training step 40.

* Fluctuates around 0.80 between training steps 40 and 80.

* Increases to approximately 0.82 at training step 100.

* Dips to approximately 0.81 at training step 120.

* Increases to approximately 0.84 at training step 130.

* Decreases to approximately 0.82 at training step 140.

### Key Observations

* Both GRPO and MEL show an increasing trend in validation score as the training step increases.

* MEL shows more fluctuation in validation score compared to GRPO, especially towards the end of the training steps.