# Latent Thoughts Tuning: Bridging Context and Reasoning with Fused Information in Latent Tokens

**Authors**: Weihao Liu, Dehai Min, Lu Cheng

## Abstract

While explicit Chain-of-Thought (CoT) equips Large Language Models (LLMs) with strong reasoning capabilities, it requires models to verbalize every intermediate step in text tokens, constraining the model's thoughts to the discrete vocabulary space. Recently, reasoning in continuous latent space has emerged as a promising alternative, enabling more robust inference and flexible computation beyond discrete token constraints. However, current latent paradigms often suffer from feature collapse and instability, stemming from distribution mismatches when recurrently using hidden states as the input embeddings, or alignment issues when relying on assistant models. To address this, we propose Latent Thoughts Tuning (LT-Tuning), a framework that redefines how latent thoughts are constructed and deployed. Instead of relying solely on raw hidden states, our method introduces a Context-Prediction-Fusion mechanism that jointly leverages contextual hidden states and predictive semantic guidance from the vocabulary embedding space. Combined with a progressive three-stage curriculum learning pipeline, LT-Tuning also enables dynamic switching between latent and explicit thinking modes. Experiments demonstrate that our method outperforms existing latent reasoning baselines, effectively mitigating feature collapse and achieving robust reasoning accuracy.

Machine Learning, ICML

## 1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Comparison of Latent Token Generation Methods

### Overview

The image is a technical diagram comparing five different approaches for generating latent tokens in AI models, particularly for reasoning tasks. Each method is presented as a horizontal flowchart showing the sequence of processing from a question ("Que.") to an answer ("Ans."). The diagram uses a consistent visual language with colored boxes and arrows to represent different types of tokens and processing steps.

### Components/Axes

The diagram is divided into five horizontal sections, each with a title and a flowchart. A legend at the bottom defines the token types:

* **Gray Box**: Latent Token

* **Green Box**: Text Token

**Section Titles (from top to bottom):**

1. Explicit CoT Reasoning

2. Latent Tokens From Model Hidden States (Coconut)

3. Latent Tokens From Probability Weighted Interpolation (Soft-Thinking)

4. Latent Tokens From Assistant Models (SoftCoT, SemCoT ...)

5. Dynamic Latent Token Generation with LT-Tuning (Ours)

**Flowchart Elements:**

* **Que.**: Blue box, representing the input question.

* **Ans.**: White box, representing the output answer.

* **Arrows**: Indicate the flow of information or processing sequence.

* **Text Labels**: Annotations describing specific processes or constraints within a method.

### Detailed Analysis

**1. Explicit CoT Reasoning**

* **Flow**: `Que.` -> [Green Token] -> ... -> [Green Token] -> `Ans.`

* **Description**: This represents a standard Chain-of-Thought (CoT) approach where reasoning steps are explicit, intermediate text tokens (green) are generated between the question and the final answer.

**2. Latent Tokens From Model Hidden States (Coconut)**

* **Flow**: `Que.` -> [Gray Token] -> [Gray Token] -> [Gray Token] -> `Ans.`

* **Annotation**: "Fixed Number" is written above the sequence of three gray latent tokens.

* **Description**: This method (Coconut) generates a fixed number of latent tokens (gray) derived from the model's hidden states. These tokens are not human-readable text but are used internally for reasoning before producing the final answer.

**3. Latent Tokens From Probability Weighted Interpolation (Soft-Thinking)**

* **Flow**: `Que.` -> [Green Token] -> [Gray Token with a small bar chart icon above it] -> [Green Token] -> `Ans.`

* **Annotation**: "Cold Stop" is written above a circular arrow pointing back to the gray token.

* **Description**: The Soft-Thinking method involves a mix of text and latent tokens. The gray token is annotated with a bar chart, suggesting it's generated via probability weighting or interpolation. The "Cold Stop" loop indicates a potential iterative or stopping condition based on confidence or probability.

**4. Latent Tokens From Assistant Models (SoftCoT, SemCoT ...)**

* **Flow**: `Que.` -> [Dashed box containing two gray tokens] -> [Green Token] -> [Green Token] -> `Ans.`

* **Annotation**: An arrow points from a blue box labeled "Assistant Model" to the dashed box containing the latent tokens.

* **Description**: This approach uses separate "Assistant Models" to generate the initial latent tokens (gray). These tokens are then processed by the main model to produce explicit text tokens (green) leading to the answer. The dashed box groups the assistant-generated latent tokens.

**5. Dynamic Latent Token Generation with LT-Tuning (Ours)**

* **Flow**: `Que.` -> [Gray Token] -> [Green Token] -> ... -> [Gray Token] -> [Green Token] -> `Ans.`

* **Annotation**: "Confidence-Driven, Context-Prediction Fusion" is written in red above the sequence.

* **Description**: The proposed method ("Ours") features a dynamic, interleaved sequence of latent (gray) and text (green) tokens. The red annotation specifies the core mechanisms: latent-token insertion is driven by model confidence, and each latent token is built by fusing contextual and predictive information. This suggests an adaptive process where the model decides when to use latent vs. explicit reasoning steps.

### Key Observations

1. **Progression of Complexity**: The diagram shows an evolution from purely explicit text reasoning (CoT) to purely latent reasoning (Coconut), then to hybrid models (Soft-Thinking, Assistant Models), culminating in a dynamic, interleaved approach (LT-Tuning).

2. **Token Type Legend**: The consistent use of green for text tokens and gray for latent tokens is critical for interpreting each method's strategy.

3. **Spatial Grounding**: The legend is positioned at the bottom-center. Each method's flowchart is left-aligned within its section. Annotations are placed directly above the relevant part of the flow they describe.

4. **Proposed Method Distinction**: The final method is labeled "(Ours)" and uses red text for its key mechanism, visually setting it apart as the authors' contribution. Its flow is the most complex, showing a non-uniform, interleaved pattern of token types.

### Interpretation

This diagram serves as a conceptual taxonomy and a pitch for a new method. It visually argues that existing approaches to latent reasoning fall into distinct categories: fully explicit, fully latent, or hybrid with fixed patterns. The proposed "LT-Tuning" method is presented as a more advanced, dynamic alternative.

The core innovation suggested is **adaptive reasoning granularity**. Instead of committing to a fixed number of latent steps (Coconut) or a simple alternation, the model using LT-Tuning can flexibly generate latent tokens for internal computation and text tokens for explicit reasoning at points where they are most useful, guided by confidence and context prediction. This aims to combine the interpretability of chain-of-thought with the efficiency and power of latent reasoning, potentially leading to more robust and capable AI reasoning systems. The diagram effectively communicates that the field is moving from static reasoning pathways toward dynamic, model-controlled reasoning processes.

</details>

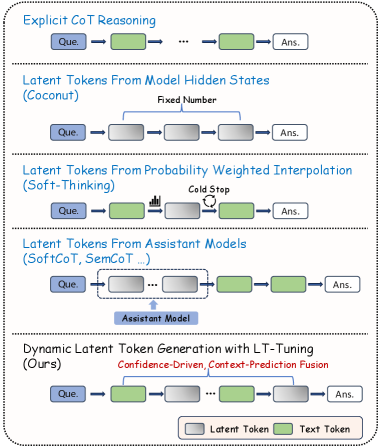

Figure 1: Comparison of reasoning paradigms. Explicit CoT verbalizes all steps as text tokens. Coconut uses a fixed number of latent tokens from hidden states. Soft-Thinking constructs latent tokens via probability-weighted interpolation with entropy-based stopping. Assistant-based methods rely on external models. Our LT-Tuning dynamically interleaves text and latent tokens through confidence-driven insertion and Context-Prediction Fusion.

The capability of Large Language Models (LLMs) to perform multi-step reasoning has largely depended on generating explicit text steps, known as Chain-of-Thought (CoT) (Wei et al., 2022; Chen et al., 2023). Although effective, this approach requires the model to reason in a discrete token sequence. As a result, the model either cannot “think twice before acting” or must pay the extra cost of extremely long text outputs (Jaech et al., 2024; Yeo et al., 2025; Guo et al., 2025; Seed et al., 2025) and self-reflection (Renze and Guven, 2024; Kang et al., 2025; Yu et al., 2025).

Motivated by these limitations, recent work has explored reasoning in continuous latent spaces as an alternative (Zhu et al., 2025a; Chen et al., 2025). By allowing models to reason directly in high-dimensional hidden states rather than explicit tokens (Hao et al., 2024; Shen et al., 2025; Wei et al., 2025), this line of research aims to decouple internal reasoning from explicit text generation. While promising, latent-space reasoning methods face two fundamental challenges:

- Constructing well-aligned latent representations. Latent tokens must be semantically expressive while remaining compatible with the model’s internal embedding space. Methods relying on external assistant models (Xu et al., 2025; He et al., 2025) struggle with representational misalignment, whereas purely intrinsic approaches (Chen et al., 2025) risk distribution mismatch between input embeddings and output hidden states—particularly in models with untied input and output embeddings—which can lead to instability or feature collapse.

- Adapting reasoning cost dynamically. Most existing methods employ static reasoning schedules, ignoring the fact that step difficulty varies. This fixed allocation is often inefficient, as it wastes computation on trivial steps while failing to provide sufficient depth for complex reasoning.

To address these challenges, we propose Latent Thoughts Tuning (LT-Tuning), a framework that enables LLMs to perform robust latent reasoning without external assistants. Figure 1 illustrates how our method differs from mainstream latent reasoning methods. Our core innovation is a Context-Prediction Fusion mechanism that constructs latent tokens by combining two complementary sources: the contextual history encoded in hidden states, and the predictive semantic guidance from probability-weighted vocabulary embeddings. This fusion bridges the gap between the model’s output space and input embedding manifold, mitigating feature collapse in larger models. Additionally, we introduce a confidence-driven strategy that allows the model to dynamically determine when to engage latent reasoning, avoiding the inefficiency of static allocation. The entire framework is trained through a three-stage curriculum that progressively transitions from purely explicit CoT to reasoning with latent thoughts.

Our main contributions can be summarized as follows.

(1) A unified latent reasoning method. We introduce LT-Tuning, a latent-space reasoning framework that enables adaptive and stable continuous reasoning without architectural modifications. The method integrates (i) confidence-driven dynamic decision on explicit CoT or latent reasoning, (ii) context–prediction fusion to construct well-aligned latent tokens by combining contextual hidden states with predictive semantic guidance, and (iii) a progressive curriculum learning strategy that stabilizes latent-space optimization and mitigates feature collapse.

(2) Comprehensive empirical evaluation and scaling analysis. We conduct extensive experiments on mathematical reasoning benchmarks across model scales from 1B to 8B parameters. Results show that LT-Tuning achieves the best average accuracy among latent reasoning baselines at all scales, with up to a 4.3-point absolute improvement in average accuracy over the strongest prior method. Notably, while prior approaches such as Coconut (Hao et al., 2024) degrade severely on larger models due to feature collapse, LT-Tuning continues to improve as model size grows.

## 2 Related Work

Explicit Reasoning. The emergence of Chain-of-Thought (CoT) prompting (Wei et al., 2022) marked a paradigm shift in how we elicit reasoning from large language models. By decomposing complex problems into verbalizable intermediate steps, CoT enables models to tackle tasks that would otherwise exceed their direct inference capabilities. Subsequent works have extended this paradigm through program-aided reasoning (Chen et al., 2023), self-consistency decoding (Wang et al., 2022), and tree-structured exploration (Yao et al., 2024). More recently, reasoning-focused models such as OpenAI o1 (Jaech et al., 2024) and DeepSeek-R1 (Guo et al., 2025) leverage reinforcement learning to generate extended reasoning chains, achieving strong performance on complex tasks. However, these approaches often produce extremely long reasoning traces, incurring substantial computational cost and inference latency. Although some works have explored condensed reasoning (Deng et al., 2023; Cheng and Van Durme, 2024), they share a fundamental constraint: reasoning must be externalized as discrete tokens, restricting the model’s “thoughts” to concepts expressible in natural language.

Reasoning with Latent Tokens. The constraints of explicit reasoning have motivated exploration into continuous latent spaces. Coconut (Hao et al., 2024) pioneered this direction by feeding the last hidden state directly as the next input embedding, enabling recurrent reasoning without token generation (Shen et al., 2025; Wei et al., 2025). However, this approach directly reuses hidden states as input embeddings, ignoring the distributional gap between the two spaces. Soft-Thinking (Zhang et al., 2025; Zhou et al., 2025) addresses this partially by constructing latent tokens via probabilistic mixtures over vocabulary embeddings, but discards the contextual information encoded in hidden states. Another line of work employs assistant models to generate latent representations (Xu et al., 2025; He et al., 2025), avoiding training large models but introducing potential misalignment between the assistant’s output space and the reasoning model’s embedding space. Recent work also explores reinforcement learning for latent reasoning (Butt et al., 2025). Additionally, recurrent transformers (Dehghani et al., 2018; Yang et al., 2023; Gatmiry et al., 2024) offer a related paradigm for iterative refinement, but typically require pretraining from scratch (Zhu et al., 2025b; Bae et al., 2025), limiting applicability to existing LLMs. In contrast, LT-Tuning enables recurrent latent computation through a post-training pipeline applicable to off-the-shelf models.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Three-Stage LLM Enhancement with Latent Tokens

### Overview

The image is a technical diagram illustrating a three-stage process for enhancing a Large Language Model (LLM) through the use of latent tokens and context-prediction fusion. The diagram is divided into three distinct, labeled sections (Stage 1, Stage 2, Stage 3), each depicting a different phase of the model's training or inference architecture. The overall flow suggests a progression from explicit training to a more complex, dynamic system that integrates predictive guidance and contextual history.

### Components/Axes

The diagram is not a chart with axes but a process flow diagram. Its primary components are:

* **Three Main Stages:** Labeled "Stage 1: Explicit CoT Training", "Stage 2: Learn Dynamic Latent Tokens Generation", and "Stage 3: Context-Prediction Fusion for Latent Tokens".

* **Central Processing Unit:** A large, light blue rectangle labeled "LLM" is present in all three stages, representing the core language model.

* **Token Types (Legend):** A legend in the bottom-right corner defines the color-coding for various elements:

* Light Green Rectangle: **Input Text Tokens**

* Light Purple Rectangle: **Label Text Tokens**

* Light Purple Rectangle (with a different shade/pattern): **Latent Tokens**

* Red Rectangle: **Final Answer**

* Blue Rectangle: **Hidden States**

* Yellow Rectangle: **Predicted Tokens**

* Blue Rectangle with a gray border: **Fused Embedding**

* Solid Black Arrow: **Input or Output**

* Dashed Red Arrow: **Latent Generation**

* Dashed Red Arrow with a dot: **Explicit Generation**

* Dashed Red Arrow with a circle: **Latent Fusion**

* **Flow Arrows:** Various solid and dashed arrows indicate the direction of data flow, generation, and fusion between components.

* **Sub-components:** Specific boxes within stages, such as "Predictive Guidance" and "Contextual History" in Stage 3.

### Detailed Analysis

**Stage 1: Explicit CoT Training**

* **Process:** This stage depicts a standard supervised training setup.

* **Flow:** A sequence of **Input Text Tokens** (light green) is fed into the **LLM**. The LLM processes them and outputs a sequence of **Label Text Tokens** (light purple), culminating in a **Final Answer** (red). The solid black arrows show a direct input-to-output mapping.

**Stage 2: Learn Dynamic Latent Tokens Generation**

* **Process:** This stage introduces the generation of **Latent Tokens** alongside standard text tokens.

* **Flow:**

1. **Input Text Tokens** (light green) enter the **LLM** (Step ①).

2. The LLM produces **Hidden States** (blue).

3. A decision point occurs based on confidence (illustrated by a bar chart icon and "High Conf." / "Low Conf." labels).

4. **Latent Tokens** (light purple, distinct from label tokens) are generated via **Latent Generation** (dashed red arrow) and fed back into the LLM (Step ②).

5. **Text Tokens** (light purple, likely label tokens) are generated via **Explicit Generation** (dashed red arrow with a dot) (Step ③).

6. The process outputs a **Final Answer** (red).

**Stage 3: Context-Prediction Fusion for Latent Tokens**

* **Process:** This is the most complex stage, adding predictive guidance and fusing information.

* **Flow:**

1. **Input Text Tokens** (light green) enter the **LLM** (Step ①).

2. The LLM produces **Hidden States** (blue).

3. **Predictive Guidance:** A box on the left provides a ranked list ("top-1, p₁", "top-2, p₂", etc.) of **Predicted Tokens** (yellow) with their probabilities. This guidance is weighted and used to inform the process.

4. **Contextual History:** A central box shows the integration of **Hidden States** (blue) with a history of previous states (represented by a bar chart icon and a red arrow labeled "Contextual History").

5. **Fusion:** The predictive guidance and contextual history are fused, creating a **Fused Embedding** (blue with gray border).

6. **Latent Token Generation:** The **Fused Embedding** is used to generate **Latent Tokens** (light purple) via **Latent Fusion** (dashed red arrow with a circle) (Step ②).

7. **Text Token Generation:** **Text Tokens** (light purple) are also generated (Step ③).

8. Both latent and text tokens are fed back into the LLM (Step ④), leading to the final output.

### Key Observations

1. **Progressive Complexity:** The architecture evolves from a simple encoder-decoder (Stage 1) to a system with internal latent feedback (Stage 2), and finally to a system that incorporates external predictive signals and historical context (Stage 3).

2. **Role of Latent Tokens:** Latent tokens are introduced in Stage 2 as an intermediate, non-textual representation that the model learns to generate dynamically based on confidence. They become a core component fused with other signals in Stage 3.

3. **Confidence-Based Routing:** Stage 2 explicitly shows a decision mechanism ("High Conf." / "Low Conf.") that likely determines when to generate latent versus explicit text tokens.

4. **Information Fusion:** Stage 3's key innovation is the "Fused Embedding," which combines real-time predictive guidance (from a separate module) with the model's own contextual history before generating latent tokens.

5. **Feedback Loops:** Stages 2 and 3 feature prominent feedback loops (dashed red arrows) where generated tokens (latent or text) are fed back into the LLM, suggesting an iterative or autoregressive refinement process.

### Interpretation

This diagram outlines a sophisticated method for improving the reasoning and generation capabilities of Large Language Models. The core idea is to move beyond generating only human-readable text tokens.

* **Stage 1** establishes a baseline by training the model to produce explicit Chain-of-Thought (CoT) reasoning steps.

* **Stage 2** enhances the model by teaching it to create its own internal "thought" representations (Latent Tokens). These tokens likely capture abstract reasoning states or hypotheses that are more flexible and powerful than discrete text. The confidence-based routing suggests the model learns to use this latent pathway when uncertain or when deeper reasoning is required.

* **Stage 3** refines how latent tokens are constructed. It does not rely solely on the raw hidden state. Instead, it fuses two complementary signals produced by the model itself: 1) **Predictive Guidance**, a probability-weighted combination of the top predicted tokens' embeddings, and 2) **Contextual History**, carried by the hidden states, which preserves continuity in long reasoning chains. By fusing these into a single embedding before generating latent tokens, the model can make more informed, context-aware, and guided "internal thoughts."

The overall progression suggests a research direction aimed at creating LLMs that can perform more deliberate, multi-step reasoning by developing an internal "workspace" of latent concepts, which can be dynamically shaped by both the model's own predictions and its historical context. This could lead to improvements in complex problem-solving, planning, and tasks requiring long-term coherence.

</details>

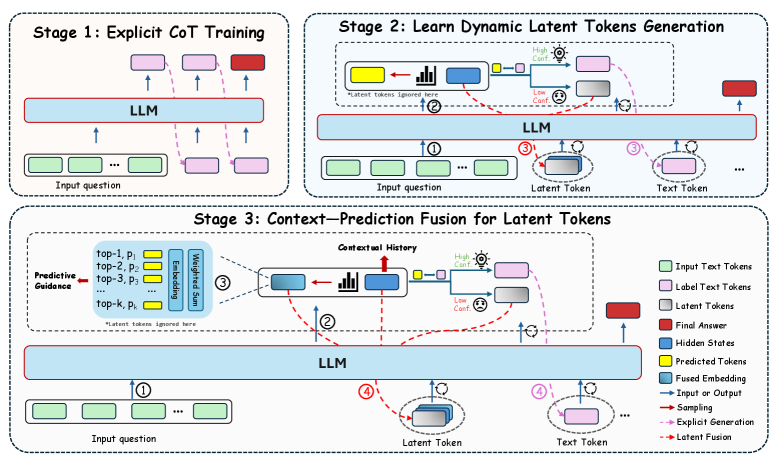

Figure 2: Overview of the three-stage LT-Tuning framework. Stage 1: standard explicit CoT fine-tuning to establish reasoning capabilities. Stage 2: learning to generate latent tokens with confidence-driven insertion, where hidden states serve as the initial latent representations. Stage 3: Context-Prediction Fusion, which combines contextual history information (hidden states) with predicted semantic guidance (fused embeddings) to construct high-quality latent tokens.

## 3 Preliminaries

### 3.1 Autoregressive Language Modeling

Let $V$ denote the discrete vocabulary and $X=\{x_1,\dots,x_T\}$ be a sequence of tokens with $x_t \in V$. A standard decoder-only Transformer (Vaswani et al., 2017) defines a probability distribution over the sequence through the autoregressive factorization:

$$
p_\theta(X)=\prod_{t=1}^{T} p_\theta(x_t \mid x_{<t}), \tag{1}
$$

where $x_{<t}$ represents the prefix history. The model processes the input through multiple layers, producing a final hidden state $h_t \in \mathbb{R}^d$ at step $t$. The probability distribution for the next token is obtained via a linear projection head $W_u \in \mathbb{R}^{|V| \times d}$ followed by a softmax function:

$$
p_\theta(x_{t+1} \mid x_{\le t})=\mathrm{Softmax}(W_u h_t). \tag{2}
$$

Crucially, the input embedding for the next step $t+1$ is strictly coupled to the discrete token selection: $e_{t+1}=\mathrm{Embed}(\arg\max p_\theta)$, or the embedding of a token sampled from the distribution. This restricts the reasoning trace to the discrete grid of $V$.
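To make the coupling concrete, the following minimal sketch computes Eq. 2 for a toy model and then selects the next input embedding greedily. The matrices `W_u` and `E`, the hidden state `h_t`, and the helper names are illustrative assumptions, not values or code from the paper.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(W, h):
    """Multiply a |V| x d matrix (list of rows) by a d-dimensional vector."""
    return [sum(w * x for w, x in zip(row, h)) for row in W]

# Toy setup (assumed values): vocabulary size |V| = 3, hidden size d = 2.
W_u = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # projection head
E = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]     # input embedding table
h_t = [0.5, 1.0]                             # final hidden state at step t

probs = softmax(matvec(W_u, h_t))            # Eq. 2
next_token = max(range(len(probs)), key=probs.__getitem__)  # greedy choice
e_next = E[next_token]                       # the only information passed on
```

Whatever the shape of `probs`, the next step only sees one row of `E`; the rest of the distribution is discarded, which is the restriction that latent reasoning aims to lift.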

### 3.2 Using Latent Tokens for Reasoning

Latent reasoning is typically formulated as a recurrent state evolution process over continuous vectors. Let $Z \subset \mathbb{R}^d$ be the continuous latent space. Unlike standard decoding, where the input at step $t$ is strictly constrained to the embedding $E(w_t)$ of a discrete token $w_t$, latent reasoning allows the model to process a sequence of continuous latent tokens $z_1,\dots,z_k$, where $z_i \in Z$. These vectors serve as the direct input to the Transformer block function $F_\theta$:

$$
h_t=F_\theta(h_{t-1}, z_t), \tag{3}
$$

where $h_t$ represents the updated contextualized state. This formulation allows the model to maintain and evolve a “thought process” in the high-dimensional vector space without collapsing into discrete tokens at every step.

For example, Coconut (Hao et al., 2024) treats the input latent embedding as a direct recurrence of the preceding output state, i.e., $z_t := h_{t-1}$. While computationally convenient, this approach introduces a distribution mismatch, as $h_{t-1}$ resides in the output contextualized space rather than the input embedding manifold for which the Transformer weights were trained. Furthermore, relying solely on the raw hidden state ignores the semantic probabilistic guidance provided by the vocabulary projection (Eq. 2), which typically helps organize reasoning steps.

Our goal is to resolve this mismatch by defining a constructive mapping function $\Phi(\cdot)$ such that the latent input is $z_t=\Phi(h_{t-1}, p_\theta(\cdot))$, effectively fusing the contextual history captured in the hidden state with the predictive semantic guidance of the vocabulary distribution to stabilize the latent reasoning trajectory.

## 4 Methodology

In this section, we present Latent Thoughts Tuning (LT-Tuning), a post-training framework designed to enhance latent reasoning capabilities. Unlike prior approaches that enforce a static allocation of latent tokens, our method empowers models to dynamically determine when to engage in latent reasoning and when to revert to explicit text generation. As illustrated in Figure 2, this is achieved through a progressive three-stage curriculum that evolves the latent space from a simple hidden-state recurrence into a guided latent reasoning process, effectively mitigating optimization instability.

### 4.1 Stage 1: Explicit Reasoning Warm-up

To establish a foundation for reasoning, we first perform supervised fine-tuning (SFT) on the pretrained base model using CoT data. Let $D=\{(x, y_{\text{cot}}, y_{\text{ans}})\}$ be the dataset containing questions, explicit reasoning steps, and final answers. The model is trained to maximize the likelihood of the target sequence $y$, i.e., the CoT steps followed by the answer:

$$
\mathcal{L}_{\text{CoT}}=-\sum_{t}\log p_\theta(y_t \mid x, y_{<t}). \tag{4}
$$

This stage ensures the model acquires the fundamental capability to decompose complex problems through step-by-step reasoning, serving as the foundation for the subsequent latent phases.
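As a small worked instance of Eq. 4, the sketch below sums the negative log-probabilities that a model assigns to each target token. The probabilities are made-up numbers for illustration, and `cot_loss` is a hypothetical helper name.

```python
import math

def cot_loss(token_probs):
    """Negative log-likelihood of a target sequence.

    token_probs[t] stands for p_theta(y_t | x, y_<t), the probability the
    model assigns to the t-th target token (CoT steps followed by the answer).
    """
    return -sum(math.log(p) for p in token_probs)

# Assumed per-token probabilities for a three-token target.
probs = [0.9, 0.5, 0.8]
loss = cot_loss(probs)   # equals -log(0.9 * 0.5 * 0.8)
```

The loss is zero only when every target token receives probability 1, and a single low-probability token (here 0.5) dominates the sum.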

### 4.2 Stage 2: Dynamic Latent Tokens Generation

To achieve more efficient and robust latent reasoning, instead of uniformly applying a fixed number of latent tokens, we train the model to dynamically determine whether to engage latent reasoning based on prediction confidence.

#### Confidence-Driven Data Construction.

We preprocess the training data by identifying positions where the model is uncertain. Specifically, for a target token $y_t$, if the model’s prediction confidence $p_\theta(y_t \mid y_{<t})$ falls below a threshold $\tau$, we insert `<thinking>` placeholders at that position:

$$
\mathrm{Mode}(t)=\begin{cases}\text{Latent (}\texttt{<thinking>}\text{)}, & \text{if } p_\theta(y_t \mid y_{<t})<\tau\\ \text{Explicit (Text)}, & \text{otherwise}\end{cases} \tag{5}
$$

Notably, the `<thinking>` token functions exclusively as a control signal. Since its input representation is dynamically derived from previous hidden states rather than a static embedding, the model treats it as a non-verbalizable latent step during generation.
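The data construction rule in Eq. 5 can be sketched as follows. This is an illustration under stated assumptions: one placeholder is inserted immediately before each low-confidence target token (the text does not fix the number of placeholders per position), and the confidences and the threshold `tau=0.5` are invented for the example.

```python
THINKING = "<thinking>"

def insert_thinking(tokens, confidences, tau):
    """Insert a placeholder before every target token whose confidence is below tau.

    confidences[t] stands for p_theta(y_t | y_<t) as scored by the Stage 1 model.
    """
    augmented = []
    for token, conf in zip(tokens, confidences):
        if conf < tau:            # Mode(t) = Latent
            augmented.append(THINKING)
        augmented.append(token)   # the explicit token is always kept as a target
    return augmented

tokens = ["3", "+", "4", "=", "7"]
confidences = [0.95, 0.90, 0.92, 0.97, 0.40]   # assumed values
augmented = insert_thinking(tokens, confidences, tau=0.5)
```

Here only the final token falls below the threshold, so the sequence gains a single latent step right before the uncertain prediction and is otherwise unchanged.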

#### Latent Token Initialization.

The input embeddings for `<thinking>` tokens are initialized using the hidden states $h_{t-1,I}$ from layer $I$ at position $t-1$. Because placeholders are inserted only at low-confidence positions, latent reasoning is reserved for uncertain steps, which prevents the model from learning spurious patterns on trivial tokens. The model is then trained to predict the subsequent explicit tokens conditioned on this mixed sequence of text and latent tokens.

### 4.3 Stage 3: Context-Prediction Fusion in Latent Tokens

While Stage 2 uses raw hidden states as latent token embeddings, this can cause distribution mismatch between the output and input spaces. Stage 3 addresses this by fusing two complementary sources of information.

#### Predictive Component.

Similar to Soft-Thinking, we compute a probability-weighted embedding from the model’s output distribution. Given the logits $l_{t-1}$ from the previous step, we apply temperature scaling and Top-$p$ filtering to focus on high-confidence predictions. After masking the `<thinking>` token and renormalizing, we compute:

$$
e_{\text{pred}}=\sum_{w\in V}\hat{P}(w)\cdot E(w), \tag{6}
$$

where $\hat{P}$ is the filtered and renormalized distribution and $E(w) \in \mathbb{R}^d$ is the embedding vector for token $w$. This projects the model’s predictive distribution onto the embedding manifold.
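A minimal sketch of the predictive component is given below. The order of operations is an assumption: the `<thinking>` logit is masked before the temperature-scaled softmax, then Top-$p$ filtering and renormalization follow. The helper names, the toy logits, and the embedding table are illustrative only.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; -inf logits receive probability 0."""
    m = max(logits)
    exps = [math.exp((v - m) / temperature) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_p_filter(probs, p):
    """Keep the smallest high-probability set whose mass reaches p, then renormalize."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= p:
            break
    filtered = [0.0] * len(probs)
    for i in kept:
        filtered[i] = probs[i] / mass
    return filtered

def predictive_embedding(logits, E, thinking_id, temperature, top_p):
    """Eq. 6: probability-weighted sum of vocabulary embeddings."""
    masked = [-math.inf if i == thinking_id else v for i, v in enumerate(logits)]
    p_hat = top_p_filter(softmax(masked, temperature), top_p)
    dim = len(E[0])
    e_pred = [sum(p_hat[w] * E[w][j] for w in range(len(E))) for j in range(dim)]
    return e_pred, p_hat

# Toy vocabulary of 4 tokens; index 3 plays the role of <thinking> (assumed).
logits = [2.0, 1.0, 0.0, 5.0]
E = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [9.0, 9.0]]
e_pred, p_hat = predictive_embedding(logits, E, thinking_id=3, temperature=1.0, top_p=0.9)
```

Although the `<thinking>` logit is the largest, masking removes it, and Top-$p$ keeps only the two most likely remaining tokens, so `e_pred` is a convex combination of their embeddings.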

#### Context-Prediction Fusion.

To avoid relying exclusively on this predictive vector, we fuse it with the hidden state to preserve contextual history. Specifically, we combine $e_{\text{pred}}$ with the hidden state $h_{t-1,I}$ from layer $I$:

$$
e_{\text{fusion}}=\alpha\cdot h_{t-1,I}+(1-\alpha)\cdot e_{\text{pred}}, \tag{7}
$$

where $α$ is a balancing coefficient. This fused representation serves as the input embedding $z_t$ for the <thinking> token, ensuring compatibility with the input space while retaining contextual information.
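The interpolation in Eq. 7 is simple enough to check by hand. The sketch below uses assumed two-dimensional vectors and also evaluates the two endpoints of $\alpha$, which correspond to the latent inputs attributed to Coconut ($z_t=h_{t-1}$) and Soft-Thinking ($z_t=e_{\text{pred}}$) in the baseline descriptions. The function name `fuse` is hypothetical.

```python
def fuse(h_ctx, e_pred, alpha):
    """Eq. 7: convex combination of the hidden state and the predictive embedding."""
    return [alpha * h + (1.0 - alpha) * e for h, e in zip(h_ctx, e_pred)]

h_ctx = [2.0, -2.0]    # assumed hidden state h_{t-1,I}
e_pred = [0.0, 1.0]    # assumed probability-weighted embedding

z = fuse(h_ctx, e_pred, alpha=0.25)              # 0.25 * h + 0.75 * e
z_hidden_only = fuse(h_ctx, e_pred, alpha=1.0)   # reduces to the raw hidden state
z_pred_only = fuse(h_ctx, e_pred, alpha=0.0)     # reduces to the predictive embedding
```

Intermediate values of `alpha` pull the raw hidden state toward the span of the vocabulary embeddings while retaining part of its contextual content.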

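**Algorithm 1** LT-Tuning Forward Pass

**Input:** Sequence $x$, model $M$, embedding matrix $E$, fusion weight $\alpha$, Top-$p$ threshold $p$, layer index $I$, temperature $T$

**Output:** Logits sequence $Y$

1. $\mathit{idx} \leftarrow 0$; $\mathit{KV} \leftarrow \emptyset$; $Y \leftarrow \emptyset$
2. **while** $\mathit{idx} < \mathrm{len}(x)$ **do**
    1. $k \leftarrow$ index of next `<thinking>` in $x[\mathit{idx}:]$, or $\mathrm{len}(x)$ if none
    2. $h, \mathit{logits}, \mathit{KV} \leftarrow M.\mathrm{forward}(x[\mathit{idx}:k], \mathit{KV})$
    3. Append $\mathit{logits}$ to $Y$
    4. **if** $k < \mathrm{len}(x)$ **then**
        1. Context component: $h_{\text{ctx}} \leftarrow h[-1][I]$
        2. Prediction component: $\hat{P} \leftarrow \mathrm{TopP}(\mathrm{Softmax}(\mathit{logits}[-1]/T),\ p)$; $e_{\text{pred}} \leftarrow \hat{P}\cdot E$
        3. Fusion: $e_{\text{fusion}} \leftarrow \alpha\cdot h_{\text{ctx}}+(1-\alpha)\cdot e_{\text{pred}}$
        4. Use $e_{\text{fusion}}$ as input embedding for position $k$
        5. $\mathit{idx} \leftarrow k+1$
    5. **else** break
3. **return** $Y$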
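The control flow of Algorithm 1 can be exercised end to end with a toy stand-in for the LLM. The sketch below is illustrative only and rests on several assumptions: `ToyModel` is not a Transformer (its hidden state is a running mean of the inputs, with a single layer, so the layer index $I$ is dropped), embeddings are tied, the `<thinking>` marker is a sentinel id outside the vocabulary (so no masking is needed), the sequence does not start with `<thinking>`, and the fused vector is consumed as the first input of the next segment.

```python
import math

THINK = -1  # hypothetical sentinel id for <thinking>; not a row of E

class ToyModel:
    """Stand-in for an LLM (assumption): hidden state = running mean of inputs,
    logits = E @ h with tied embeddings."""

    def __init__(self, E):
        self.E = E

    def forward(self, embeds, kv):
        hidden, logits = [], []
        for e in embeds:
            kv = kv + [e]  # the "cache" simply stores past input vectors
            h = [sum(col) / len(kv) for col in zip(*kv)]
            hidden.append(h)
            logits.append([sum(a * b for a, b in zip(row, h)) for row in self.E])
        return hidden, logits, kv

def softmax(logits, T):
    m = max(logits)
    exps = [math.exp((v - m) / T) for v in logits]
    s = sum(exps)
    return [e / s for e in exps]

def top_p(probs, p):
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= p:
            break
    return [probs[i] / mass if i in kept else 0.0 for i in range(len(probs))]

def lt_forward(x, model, alpha, p, T):
    kv, Y, pending, idx = [], [], None, 0
    hidden, logits = [], []
    while idx < len(x):
        # Next <thinking> position at or after idx, or len(x) if none.
        k = next((j for j in range(idx, len(x)) if x[j] == THINK), len(x))
        embeds = [model.E[t] for t in x[idx:k]]
        if pending is not None:  # fused vector fills the preceding <thinking> slot
            embeds, pending = [pending] + embeds, None
        if embeds:
            hidden, logits, kv = model.forward(embeds, kv)
            Y.extend(logits)
        if k == len(x):
            break
        h_ctx = hidden[-1]                                    # context component
        p_hat = top_p(softmax(logits[-1], T), p)              # prediction component
        e_pred = [sum(pw * row[j] for pw, row in zip(p_hat, model.E))
                  for j in range(len(h_ctx))]
        pending = [alpha * h + (1 - alpha) * e for h, e in zip(h_ctx, e_pred)]
        idx = k + 1
    if pending is not None:  # sequence ended on a <thinking> slot
        Y.extend(model.forward([pending], kv)[1])
    return Y

E = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
Y = lt_forward([0, 1, THINK, 2], ToyModel(E), alpha=0.5, p=0.9, T=1.0)
```

With this convention every position, text or latent, contributes exactly one row of logits, so `Y` stays aligned with the input sequence and a standard next-token loss could be applied to the explicit positions.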

## 5 Experiments

### 5.1 Setup

Models and Datasets. We conduct experiments on three model sizes to assess the robustness of our method. Specifically, we use Llama-3.2-1B, Llama-3.2-3B, and Llama-3.1-8B (Grattafiori et al., 2024) as the backbone LLMs. All models are trained on the GSM8K training set (Cobbe et al., 2021) and evaluated on four mathematical reasoning benchmarks: GSM8K-NL (Cobbe et al., 2021), ASDiv-Aug (Xu et al., 2025), MultiArith (Roy and Roth, 2015), and SVAMP (Patel et al., 2021). We report accuracy for all experiments. Dataset statistics are provided in Appendix A.

Implementation Details. We adjust batch size and learning rate for each model scale to accommodate GPU memory constraints and ensure stable optimization. For the 8B model, whose input and output embedding matrices are not shared, we add a lightweight adapter to bridge the representation gap. For the 1B and 3B models, no adapter is applied as they use tied input-output embeddings. All experiments were conducted on 4 $\times$ NVIDIA A100 80GB GPUs. Full training details and hyperparameters are provided in Appendix B.

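| Backbone | Method | GSM8K-NL | ASDiv-Aug | MultiArith | SVAMP | Average |
| --- | --- | --- | --- | --- | --- | --- |
| Llama-3.2-1B | Explicit CoT | 14.9 | 44.8 | 37.8 | 22.3 | 29.9 |
| Llama-3.2-1B | Soft-Thinking | 13.7 | 39.3 | 32.2 | 20.3 | 26.4 |
| Llama-3.2-1B | Coconut | 14.6 | 42.5 | 22.8 | 21.0 | 25.2 |
| Llama-3.2-1B | SoftCoT | 14.9 | 54.1 | 38.9 | 25.0 | 33.2 |
| Llama-3.2-1B | SemCoT | 15.0 | 40.0 | 33.3 | 25.5 | 28.5 |
| Llama-3.2-1B | LT-Tuning (ours) | 15.8 ($\uparrow$ +0.8) | 53.9 | 51.7 ($\uparrow$ +12.8) | 24.3 | 36.4 ($\uparrow$ +3.2) |
| Llama-3.2-3B | Explicit CoT | 29.5 | 69.8 | 57.2 | 45.7 | 50.5 |
| Llama-3.2-3B | Soft-Thinking | 24.2 | 70.3 | 46.1 | 43.7 | 44.5 |
| Llama-3.2-3B | Coconut | 31.8 | 61.9 | 63.3 | 44.0 | 50.3 |
| Llama-3.2-3B | SoftCoT | 26.9 | 55.5 | 57.8 | 34.5 | 43.7 |
| Llama-3.2-3B | SemCoT | 16.0 | 52.5 | 32.7 | 31.5 | 33.2 |
| Llama-3.2-3B | LT-Tuning (ours) | 32.1 ($\uparrow$ +0.3) | 67.2 | 64.4 ($\uparrow$ +1.1) | 45.7 | 52.4 ($\uparrow$ +1.9) |
| Llama-3.1-8B | Explicit CoT | 49.5 | 69.6 | 78.3 | 49.3 | 61.7 |
| Llama-3.1-8B | Soft-Thinking | 53.1 | 74.9 | 85.0 | 51.0 | 66.0 |
| Llama-3.1-8B | Coconut | 32.7 | 38.8 | 51.7 | 43.0 | 41.5 |
| Llama-3.1-8B | SoftCoT | 36.8 | 46.2 | 74.4 | 40.0 | 46.1 |
| Llama-3.1-8B | SemCoT | 21.5 | 77.0 | 67.8 | 46.5 | 53.2 |
| Llama-3.1-8B | LT-Tuning (ours) | 58.1 | 72.2 | 92.8 | 52.3 | 68.8 |
| Llama-3.1-8B | LT-Tuning + Adapter (ours) | 58.5 ($\uparrow$ +5.4) | 70.7 | 96.1 ($\uparrow$ +11.1) | 55.7 ($\uparrow$ +4.7) | 70.3 ($\uparrow$ +4.3) |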

Table 1: Main results on mathematical reasoning benchmarks. All models are fine-tuned on the GSM8K training set (Yu et al., 2023) and evaluated on four test benchmarks. Bold: best results. Underline: best baseline. ($\uparrow$): absolute gain over best baseline.

| Setting | GSM8K-NL | ASDiv-Aug | MultiArith | SVAMP | Average |
| :--- | :--- | :--- | :--- | :--- | :--- |
| **3B** | | | | | |
| LT-Tuning | 32.1 | 67.2 | 64.4 | 45.7 | 52.4 |
| w/o Stage 2 | 29.3 | 63.0 | 60.0 | 41.7 | 48.5 (↓ -3.9) |
| w/o Stage 3 | 31.2 | 52.8 | 56.1 | 37.3 | 44.4 (↓ -8.0) |
| **w/o Latent** | **26.0** | **55.0** | **51.1** | **32.3** | **41.1 (↓ -11.3)** |
| w/o TT-Latent | 24.9 | 62.0 | 42.8 | 43.3 | 43.3 (↓ -9.1) |
| **8B** | | | | | |
| LT-Tuning | 58.1 | 72.2 | 92.8 | 52.3 | 68.8 |
| w/o Stage 2 | 51.4 | 62.0 | 88.3 | 46.7 | 62.1 (↓ -6.7) |
| **w/o Stage 3** | **33.7** | **36.5** | **82.2** | **28.7** | **45.3 (↓ -23.5)** |
| w/o Latent | 49.7 | 58.7 | 93.3 | 44.7 | 61.6 (↓ -7.2) |
| w/o TT-Latent | 52.4 | 55.0 | 87.8 | 54.0 | 62.3 (↓ -6.5) |

Table 2: Ablation study on the contribution of each training stage and component. w/o Stage 2: static latent token allocation. w/o Stage 3: raw hidden states without fusion. w/o Latent: treat <thinking> tokens as pause tokens. w/o TT-Latent: use latent tokens while training but ignore them during Test-Time (TT). Highlighted: critical degradation.

### 5.2 Baselines

To comprehensively evaluate the effectiveness of our proposed framework, we compare LT-Tuning against a diverse set of baselines. They cover standard explicit reasoning as well as different strategies for utilizing latent representations in continuous-space reasoning: (1) Explicit CoT (Wei et al., 2022): Standard explicit reasoning where the model generates discrete text tokens as intermediate steps. (2) Coconut (Hao et al., 2024): An intrinsic latent reasoning method that directly feeds the previous hidden state as the next input embedding ($z_t = h_{t-1}$). (3) Soft-Thinking (Zhang et al., 2025): An intrinsic training-free method that constructs soft concept tokens via a probability-weighted sum of top-$k$ vocabulary embeddings, without incorporating hidden-state context ($z_t = e_{\text{pred}}$). (4) SoftCoT (Xu et al., 2025): An assistant-based method that uses a separate assistant model to speculatively generate instance-specific soft thought tokens as the initial chain of thoughts. (5) SemCoT (He et al., 2025): An assistant-based approach that employs a distilled model to generate semantically consistent latent embeddings through contrastive learning, improving the interpretability and stability of the latent space.
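To make the difference between the two intrinsic baselines concrete, the following minimal NumPy sketch contrasts how each one builds the next latent input. It is an illustration under stated assumptions, not the baselines' reference code: the function names are hypothetical, the toy dimensions are arbitrary, and renormalizing the probabilities over the top-$k$ tokens is an assumed detail.

```python
import numpy as np


def coconut_input(prev_hidden):
    """Coconut-style latent input: reuse the previous hidden state, z_t = h_{t-1}."""
    return prev_hidden


def soft_thinking_input(logits, embedding_matrix, k):
    """Soft-Thinking-style latent input: probability-weighted sum of the
    top-k vocabulary embeddings (z_t = e_pred), with no hidden-state context.

    Assumption: probabilities are renormalized over the top-k tokens.
    """
    top_idx = np.argsort(logits)[-k:]            # indices of the k largest logits
    top_logits = logits[top_idx]
    probs = np.exp(top_logits - top_logits.max())
    probs /= probs.sum()                         # softmax restricted to top-k
    return probs @ embedding_matrix[top_idx]     # convex combination of embeddings


# Toy example with a hypothetical vocabulary of 10 tokens and hidden size 4.
rng = np.random.default_rng(0)
embeddings = rng.normal(size=(10, 4))
logits = rng.normal(size=10)
hidden = rng.normal(size=4)

z_coconut = coconut_input(hidden)
z_soft = soft_thinking_input(logits, embeddings, k=3)
print(z_coconut.shape, z_soft.shape)
```

The first variant lives in the hidden-state space and the second in the span of vocabulary embeddings, which is the distinction the baseline descriptions above draw.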

### 5.3 Results and Analysis

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Chart: Average Number of <thinking> Tokens by Question Difficulty and Model Size

### Overview

This is a grouped bar chart comparing the average number of `<thinking>` tokens generated by three different model sizes (1B, 3B, and 8B parameters) across six levels of question difficulty (0 through 5). The chart illustrates how token usage varies with both model scale and task complexity.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **X-Axis (Horizontal):** Labeled **"Question Difficulty"**. It has six discrete categories marked with the integers: `0`, `1`, `2`, `3`, `4`, `5`.

* **Y-Axis (Vertical):** Labeled **"Average Number of <thinking> Tokens"**. The scale runs from 4 to 8, with major tick marks at every integer (4, 5, 6, 7, 8).

* **Legend:** Located in the **top-left corner** of the chart area. It defines the three data series:

* **Blue square:** `1B`

* **Red square:** `3B`

* **Green square:** `8B`

* **Data Series:** For each difficulty level on the x-axis, there is a cluster of three bars, one for each model size, ordered left-to-right as 1B (blue), 3B (red), 8B (green).

### Detailed Analysis

The following table reconstructs the approximate data points from the chart. Values are estimated based on the y-axis scale.

| Question Difficulty | 1B (Blue) Avg. Tokens | 3B (Red) Avg. Tokens | 8B (Green) Avg. Tokens |
| :--- | :--- | :--- | :--- |
| **0** | ~5.2 | ~3.9 | ~6.2 |
| **1** | ~5.6 | ~4.4 | ~6.9 |
| **2** | ~5.7 | ~4.5 | ~7.2 |
| **3** | ~5.8 | ~4.6 | ~7.4 |
| **4** | ~6.0 | ~4.7 | ~7.4 |
| **5** | ~5.7 | ~4.9 | ~7.8 |

**Trend Verification per Data Series:**

* **8B (Green):** The green bars show a clear and consistent **upward trend**. Starting at ~6.2 for difficulty 0, the height increases with each step, reaching its peak at ~7.8 for difficulty 5.

* **1B (Blue):** The blue bars show a **general upward trend with a slight dip at the end**. Values rise from ~5.2 (diff 0) to a peak of ~6.0 (diff 4), then decrease slightly to ~5.7 at difficulty 5.

* **3B (Red):** The red bars show a **gradual, consistent upward trend**. Starting at the lowest point of ~3.9 (diff 0), the height increases slowly but steadily across all difficulty levels, ending at ~4.9 (diff 5).

### Key Observations

1. **Consistent Hierarchy:** At every difficulty level, the 8B model (green) uses the most tokens, followed by the 1B model (blue), with the 3B model (red) using the fewest. This order is maintained without exception.

2. **Scale vs. Token Usage:** There is not a simple linear relationship between model size (1B, 3B, 8B) and token count. The 3B model consistently uses fewer tokens than the smaller 1B model.

3. **Impact of Difficulty:** All three models show an overall increase in average thinking tokens as question difficulty increases from 0 to 5, suggesting more complex problems require more internal processing (as measured by token generation).

4. **Anomaly at Difficulty 5 for 1B:** The 1B model's token count peaks at difficulty 4 and then drops at difficulty 5, breaking its upward trend. This is the only instance where a model's token count decreases at a higher difficulty level.

### Interpretation

This chart provides insight into the "thinking" behavior of language models of different scales. The data suggests several key points:

* **Processing Effort Scales with Problem Complexity:** The general upward trend for all models indicates that more difficult questions elicit longer internal reasoning chains (more `<thinking>` tokens). This aligns with the expectation that harder problems require more computation.

* **Model Size Does Not Dictate Token Efficiency:** The most striking finding is that the mid-sized 3B model is the most "token-efficient," using significantly fewer thinking tokens than both the smaller 1B and larger 8B models at all difficulty levels. This could imply differences in training, architecture, or internal reasoning strategies. The 8B model, while using the most tokens, may be engaging in more exhaustive or verbose reasoning.

* **Potential Saturation or Strategy Shift:** The dip in the 1B model's tokens at the highest difficulty (5) could indicate a few possibilities: the model may have hit a limit in its reasoning capacity for very hard problems, it might be employing a different, more direct strategy that requires fewer tokens, or the sample of questions at this difficulty may have properties that lead to shorter outputs.

* **Practical Implications:** For applications where computational cost (tied to token count) is a concern, the 3B model appears most efficient. However, efficiency must be balanced against performance accuracy, which is not shown here. The 8B model's high token usage suggests it may be capable of deeper reasoning but at a higher operational cost.

In summary, the chart reveals that thinking token usage is influenced by both model scale and task difficulty in non-trivial ways, with the 3B model demonstrating a uniquely efficient processing pattern across the tested difficulty spectrum.

</details>

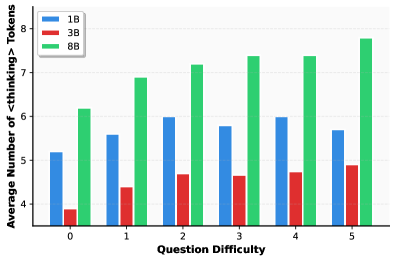

Figure 3: Average number of <thinking> tokens generated versus question difficulty across models of varying sizes. Difficulty is measured by the error rate of Llama-3.1-8B-Instruct over 5 sampling trials. Models generally demonstrate a positive correlation between question difficulty and the number of generated latent tokens, indicating that our method learns to adaptively scale latent reasoning effort based on problem complexity.

Table 1 presents results across three model scales on all four datasets. We make the following observations:

#### Consistent Improvements Across Scales.

LT-Tuning achieves the best average performance at all model scales: 36.4% (1B), 52.4% (3B), and 68.8% (8B). In contrast, baseline methods exhibit inconsistent behavior and lack scaling robustness. Most notably, Coconut performs reasonably on smaller models but degrades sharply at the 8B scale (average accuracy drops from 50.3% at 3B to 41.5% at 8B), falling below even explicit CoT. This degradation is consistent with our theoretical motivation: larger models with untied embedding weights suffer severely when hidden states are directly recycled as inputs. LT-Tuning exhibits healthy scaling behavior, with the 8B model exceeding Coconut's average accuracy by 27.3 points (68.8% vs. 41.5%). Adding an adapter layer for the 8B model further improves average accuracy to 70.3%, with notable gains on MultiArith (92.8% $\rightarrow$ 96.1%), indicating that explicit projection improves compatibility in architectures without weight tying.

Intrinsic vs. Assistant-based Methods. Assistant-based methods (SoftCoT, SemCoT) show erratic performance: SemCoT achieves 73.5% on ASDiv-Aug but collapses to 6.6% on MultiArith for the 3B model. This volatility suggests that externally generated representations may fail to align with specific reasoning patterns required by different tasks. In contrast, our intrinsic approach constructs latent tokens from the model’s own distributions, avoiding such alignment failures and delivering stable improvements across all benchmarks.

Adaptive Latent Computation for Varying Difficulty. We conducted a statistical analysis across the entire test set to examine the relationship between latent computational allocation and problem complexity. To quantify “difficulty”, we employed a consistency-based metric using Llama-3.1-8B-Instruct. Specifically, each question was sampled five times, and the difficulty score was defined as the number of incorrect responses (ranging from 0 to 5). As illustrated in Figure 3, we track the average number of generated <thinking> tokens across these difficulty tiers for the 1B, 3B, and 8B models. A positive correlation is observable, particularly in the 8B model, where the number of latent tokens grows consistently with problem difficulty. This indicates that LT-Tuning equips the model with difficulty-aware dynamic latent token generation, balancing inference efficiency against reasoning robustness.
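The difficulty metric and the per-tier aggregation behind Figure 3 can be sketched as follows. This is a minimal illustration with hypothetical function names and made-up toy records; it assumes correctness flags for the five sampled responses are already available.

```python
from collections import defaultdict


def difficulty_score(sample_correct):
    """Number of incorrect responses among the sampled attempts (0 to 5 for five samples)."""
    return sum(1 for is_correct in sample_correct if not is_correct)


def mean_tokens_by_difficulty(records):
    """Average number of <thinking> tokens per difficulty tier.

    Each record is (correctness flags of the reference model's samples,
    number of <thinking> tokens generated by the evaluated model).
    """
    buckets = defaultdict(list)
    for sample_correct, n_thinking in records:
        buckets[difficulty_score(sample_correct)].append(n_thinking)
    return {tier: sum(v) / len(v) for tier, v in sorted(buckets.items())}


# Toy records (illustrative values only, not taken from the paper).
toy_records = [
    ([True, True, True, True, True], 5),
    ([True, True, True, True, True], 7),
    ([True, False, True, False, True], 8),
    ([False, False, False, False, False], 9),
]
tier_means = mean_tokens_by_difficulty(toy_records)
print(tier_means)
```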

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Charts: Entropy and Attention Analysis During Generation

### Overview

The image contains two vertically stacked line charts, labeled (a) and (b), which compare the behavior of two models—"LT-Tuning" and "w/o Latent (Pause)"—across 400 generation steps. The charts track "Entropy" and "Attention Proportion to `<thinking>` Tokens," respectively. The overall visual suggests a comparison of model stability and focus during a text generation process.

### Components/Axes

* **Chart (a) - Top Chart:**

* **Title:** `(a) Entropy`

* **Y-axis Label:** `Entropy`

* **Y-axis Scale:** Linear, ranging from 0.0 to 1.5, with major ticks at 0.0, 0.5, 1.0, and 1.5.

* **X-axis (Shared):** `Generation Step`, ranging from 0 to 400, with major ticks every 50 steps.

* **Legend:** Positioned in the top-right corner of the plot area.

* Blue line: `LT-Tuning`

* Orange line: `w/o Latent (Pause)`

* **Chart (b) - Bottom Chart:**

* **Title:** `(b) Attention to <thinking> Tokens`

* **Y-axis Label:** `Attention Proportion`

* **Y-axis Scale:** Linear, ranging from 0.00 to 0.20, with major ticks at 0.00, 0.05, 0.10, 0.15, and 0.20.

* **X-axis (Shared):** `Generation Step`, ranging from 0 to 400.

* **Legend:** Positioned in the top-right corner of the plot area, identical to chart (a).

* Blue line: `LT-Tuning`

* Orange line: `w/o Latent (Pause)`

### Detailed Analysis

**Chart (a): Entropy**

* **LT-Tuning (Blue Line):** The line starts near 0.0. It exhibits a small, sharp peak to approximately 0.25 around step 25. Following this, it fluctuates at a low level, generally between 0.0 and 0.2, with a slight downward trend, approaching 0.0 by step 400. The line is relatively smooth with minor noise.

* **w/o Latent (Pause) (Orange Line):** This line starts higher, around 0.5. It shows an immediate, sharp peak to approximately 0.8 within the first 10-20 steps. It then declines to fluctuate around 0.3-0.4 until approximately step 250. After step 250, the line becomes highly volatile, with large, rapid oscillations between ~0.1 and ~1.2, continuing until step 400. The shaded area (likely representing variance or confidence interval) is much wider for this series, especially after step 250.

**Chart (b): Attention to `<thinking>` Tokens**

* **LT-Tuning (Blue Line):** The attention proportion starts near 0.00. It shows a small, broad rise to about 0.03-0.04 between steps 25-75. It then remains very low, near 0.00-0.01, until a dramatic, singular spike occurs at approximately step 325, reaching a peak of about 0.18. After this spike, it returns to near 0.00.

* **w/o Latent (Pause) (Orange Line):** This line also starts near 0.00. It remains flat until approximately step 225, where it begins a gradual rise. It forms a broader, multi-peaked region of elevated attention between steps 250-275, with a maximum peak of about 0.06. After step 275, it declines back to near 0.00. The shaded variance region is most prominent during its peak period.

### Key Observations

1. **Divergent Behavior Post-Step 250:** The most significant pattern is the dramatic divergence in behavior between the two models after generation step 250. The "w/o Latent (Pause)" model's entropy becomes highly unstable, while the "LT-Tuning" model's entropy remains low and stable.

2. **Attention Spike vs. Hump:** The "LT-Tuning" model exhibits a single, sharp, high-magnitude attention spike late in the process (step 325). In contrast, the "w/o Latent (Pause)" model shows a lower, broader "hump" of attention earlier (steps 250-275).

3. **Initial Transient:** Both models show an initial transient phase in the first ~50 steps, with the "w/o Latent (Pause)" model showing a much larger initial entropy spike.

4. **Correlation of Instability and Diffuse Attention:** The period of high entropy volatility in the "w/o Latent (Pause)" model (steps 250-400) coincides with its period of elevated, but diffuse, attention to thinking tokens.

### Interpretation

The data suggests a fundamental difference in how the two models manage their internal state during generation. The "LT-Tuning" method appears to promote stability (low, stable entropy) and punctuated, decisive focus (a single sharp attention spike). This could indicate a model that processes "thinking" in a concentrated, efficient burst.

Conversely, the model "w/o Latent (Pause)" demonstrates instability (high, volatile entropy) and a more diffuse, prolonged period of attention. This pattern might reflect a model that struggles to maintain a coherent internal state, leading to erratic confidence (entropy) and a scattered, less efficient allocation of attention to its reasoning process. The late, sharp spike in the LT-Tuning model's attention, occurring after a long period of low entropy, could signify a critical decision point or the culmination of a latent reasoning process that the "w/o Latent" model fails to replicate, instead exhibiting noisy behavior. The charts visually argue that the "LT-Tuning" technique leads to more controlled and potentially more reliable generation dynamics.

</details>

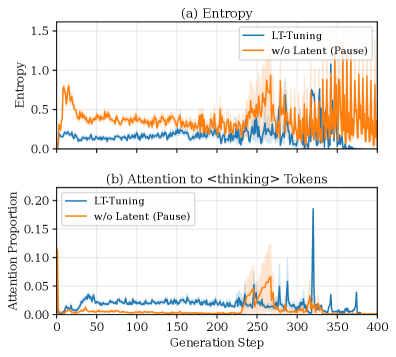

Figure 4: Visualization of step-wise model entropy and attention weights on latent tokens for Llama-3.1-8B. Shaded regions indicate ±1 standard error. Generation steps beyond 400 are truncated for clarity.

### 5.4 Ablation Study

To validate the contribution of each component, we conduct extensive ablation experiments on the 3B and 8B models. We ablate training stages (w/o Stage 2: static latent token allocation instead of confidence-driven dynamic allocation; w/o Stage 3: no latent fusion) and two variations of latent thinking strategies: w/o Latent for treating <thinking> tokens as explicit pause tokens (Goyal et al., 2024; Pfau et al., 2024) throughout the pipeline, and w/o TT-Latent for ignoring latent tokens during test time (Butt et al., 2025).

As shown in Table 2, removing Stage 2 reduces average accuracy by 3.9 points (3B) and 6.7 points (8B), demonstrating the importance of confidence-driven dynamic allocation. Stage 3 and latent reasoning are also critical, with their removal causing substantial performance drops. Notably, the dominant bottleneck differs by scale. For 3B, removing latent reasoning entirely (w/o Latent) leads to the largest degradation ($-11.3$ points), indicating that latent reasoning itself is most impactful at smaller scales. In contrast, for 8B, removing Stage 3 (fusion) causes the most severe drop ($-23.5$ points), while w/o Latent reduces accuracy by only 7.2 points. This supports our hypothesis that larger models suffer more from distribution mismatch, making high-quality latent token construction via fusion essential. Moreover, on 8B, w/o Latent (61.6%) significantly outperforms w/o Stage 3 (45.3%), showing that poorly constructed latent tokens can be worse than no latent reasoning at all. The w/o TT-Latent variant shows consistent degradation ($-9.1$ points for 3B, $-6.5$ points for 8B), indicating that latent reasoning at test time is necessary and beneficial.
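The per-scale comparison can be reproduced directly from the Average column of Table 2. The short sketch below, with illustrative helper names, recomputes each drop relative to the full LT-Tuning model and picks out the ablation with the largest drop at each scale.

```python
# Average accuracy (%) copied from the Average column of Table 2.
ABLATION_AVG = {
    "3B": {"LT-Tuning": 52.4, "w/o Stage 2": 48.5, "w/o Stage 3": 44.4,
           "w/o Latent": 41.1, "w/o TT-Latent": 43.3},
    "8B": {"LT-Tuning": 68.8, "w/o Stage 2": 62.1, "w/o Stage 3": 45.3,
           "w/o Latent": 61.6, "w/o TT-Latent": 62.3},
}


def drops(scale_rows):
    """Absolute drop (in points) of each ablation relative to full LT-Tuning."""
    full = scale_rows["LT-Tuning"]
    return {name: round(full - acc, 1)
            for name, acc in scale_rows.items() if name != "LT-Tuning"}


def dominant_bottleneck(scale_rows):
    """Ablation whose removal hurts average accuracy the most."""
    d = drops(scale_rows)
    return max(d, key=d.get)


for scale, rows in ABLATION_AVG.items():
    print(scale, drops(rows), "largest drop:", dominant_bottleneck(rows))
```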

## 6 In-Depth Analyses of LT-Tuning

To further demonstrate the effectiveness of our latent thinking approach, we conduct in-depth analyses of models trained with LT-Tuning.

<details>

<summary>x5.png Details</summary>

### Visual Description

## 3D Scatter Plot Series: LT-Tuning Method Comparison Across Steps

### Overview

The image displays a series of four 3D scatter plots arranged in a 2x2 grid. Each plot visualizes the spatial distribution of data points for three different methods at a specific training or processing "Step." The plots are designed to compare the evolution of these methods' representations in a three-dimensional latent or feature space.

### Components/Axes

* **Legend:** Positioned at the top center of the entire figure. It defines three data series:

* **Red Circle:** `LT-Tuning`

* **Blue Circle:** `LT-Tuning w/o Stage 3`

* **Green Circle:** `Coconut`

* **Plot Titles:** Each subplot is labeled with a step number in the top-left corner of its respective area:

* Top-left plot: `Step 1`

* Top-right plot: `Step 3`

* Bottom-left plot: `Step 4`

* Bottom-right plot: `Step 6`

* **Axes:** Each 3D plot has three axes labeled `X`, `Y`, and `Z`. The numerical ranges are consistent across plots but vary slightly in their displayed limits.

* **X-axis:** Ranges approximately from -1.5 to 1.5. Major tick marks are at -1.5, -1.0, -0.5, 0.0, 0.5, 1.0, 1.5.

* **Y-axis:** Ranges approximately from -1.0 to 1.0. Major tick marks are at -1.0, -0.5, 0.0, 0.5, 1.0.

* **Z-axis:** The vertical axis. Its range varies per plot to accommodate the data:

* Step 1: ~ -1 to 6

* Step 3: ~ -2 to 2

* Step 4: ~ -1 to 2

* Step 6: ~ -1 to 4

### Detailed Analysis

**Step 1 (Top-Left):**

* **LT-Tuning (Red):** A tight cluster of points located near the origin (X≈0, Y≈0) at a low Z-value (Z≈0).

* **Coconut (Green):** A distinct, tight cluster positioned at a higher Z-value (Z≈3-4) and slightly positive X and Y.

* **LT-Tuning w/o Stage 3 (Blue):** Points are widely scattered across the positive X and Y quadrant, spanning a broad range of Z-values from low to high.

**Step 3 (Top-Right):**

* **LT-Tuning (Red):** The cluster has moved significantly upward along the Z-axis (Z≈1-2) and appears slightly more elongated vertically.

* **Coconut (Green):** Remains a tight cluster, now at a lower Z-value (Z≈0) compared to Step 1.

* **LT-Tuning w/o Stage 3 (Blue):** Points remain widely scattered, primarily in the positive X/Y region, with Z-values mostly between -1 and 2.

**Step 4 (Bottom-Left):**

* **LT-Tuning (Red):** The points now form a clear, nearly vertical line or very tight column, extending from Z≈0 to Z≈2.

* **Coconut (Green):** Still a tight cluster, located at low Z (Z≈0) and near the origin.

* **LT-Tuning w/o Stage 3 (Blue):** Scattered distribution persists, similar to previous steps.

**Step 6 (Bottom-Right):**

* **LT-Tuning (Red):** The vertical line/column structure is even more pronounced and extends higher (Z≈0 to Z≈4).

* **Coconut (Green):** The cluster remains tight and is positioned at a mid-range Z-value (Z≈2).

* **LT-Tuning w/o Stage 3 (Blue):** Points continue to be scattered, with no obvious cohesive structure.

### Key Observations

1. **Structural Evolution of LT-Tuning (Red):** This series shows the most dramatic change. It progresses from a low-Z cluster (Step 1) to a high-Z cluster (Step 3), and finally organizes into a distinct vertical line/column by Steps 4 and 6. This suggests a strong, directional organization of its representations along the Z-dimension over time.

2. **Stability of Coconut (Green):** The Coconut method maintains a tight, cohesive cluster across all steps. Its primary change is its position along the Z-axis (high in Step 1, low in Steps 3 & 4, mid in Step 6), indicating stable internal relationships but shifting global placement.

3. **Persistent Dispersion of LT-Tuning w/o Stage 3 (Blue):** This method shows no trend toward cohesion. Its points remain widely scattered in the X-Y plane across all observed steps, suggesting an inability to form a structured representation without "Stage 3."

4. **Spatial Separation:** In all plots, the three methods occupy distinct regions of the 3D space, with minimal overlap. This indicates they learn fundamentally different representations.

### Interpretation

This visualization likely comes from a machine learning research context, comparing representation learning techniques. The data suggests:

* **The Critical Role of "Stage 3":** The stark contrast between `LT-Tuning` (red) and `LT-Tuning w/o Stage 3` (blue) implies that "Stage 3" is a crucial component for achieving structured, organized latent representations. Without it, the model's outputs remain entangled and dispersed.

* **Different Learning Dynamics:** The three methods exhibit distinct learning trajectories. `LT-Tuning` appears to undergo a phase transition from a cluster to a highly ordered linear structure, which could correspond to mastering a specific, hierarchical feature. `Coconut` learns a consistent, localized representation but its global position drifts. The version without Stage 3 fails to converge to a structured state.

* **Potential Meaning of the Z-axis:** The vertical (Z) axis seems to be the primary dimension of interest for `LT-Tuning`. Its progression along this axis could represent the learning of a semantic hierarchy, confidence score, or a primary factor of variation in the data. The final vertical line suggests all data points are being ordered along this single, dominant factor.

In summary, the chart provides visual evidence that the `LT-Tuning` method, particularly with Stage 3, successfully structures its internal representations in a way that the other compared methods do not, which may correlate with superior performance or interpretability.

</details>

Figure 5: PCA visualization of latent token embeddings across different reasoning steps for intrinsic methods on Llama-3.1-8B (we only show four key steps here for a better view). Each point represents a different sample from the test set. Coconut (green) exhibits severe feature collapse, where latent tokens from different samples converge to nearly identical points after just two reasoning steps. LT-Tuning w/o Stage 3 (blue) shows initial exploration in early positions but gradually collapses to similar representations in later steps. LT-Tuning (red) maintains semantic diversity even at six latent tokens, demonstrating its effectiveness in mitigating feature collapse while preserving exploration capacity in the latent space.

Generation Entropy and Attention Allocation. We analyzed the generation dynamics by computing the entropy of the output distribution and the attention allocated to <thinking> tokens at each generation step. Specifically, for each token position $t$, we compute the entropy $H_t = -\sum_i p_i \log p_i$, where $p_i$ is the softmax probability of token $i$. For attention analysis, we extract the last-layer attention weights, average across all heads, and compute the proportion of attention directed to <thinking> token positions. As shown in Figure 4, we tested the models with 100 samples and found that LT-Tuning effectively reduces uncertainty during generation and exhibits far fewer uncertainty peaks than using pause tokens (w/o Latent). Meanwhile, our method allocates substantially more attention to the latent <thinking> tokens than the baseline allocates to pause tokens. This suggests that the model actively leverages the information encoded in the generated latent tokens during reasoning, rather than merely benefiting from additional computation time as in the pause token approach.
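The two quantities described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than the analysis script used for Figure 4: the function names and array layouts (`logits` over the vocabulary, `attn` as heads × queries × keys from the last layer, and a boolean mask over key positions) are assumptions, and logits are assumed to be finite.

```python
import numpy as np


def token_entropy(logits):
    """Entropy H_t = -sum_i p_i log p_i of the softmax distribution over the vocabulary."""
    shifted = logits - logits.max(axis=-1, keepdims=True)   # numerical stability
    log_p = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    p = np.exp(log_p)
    return -(p * log_p).sum(axis=-1)


def thinking_attention_proportion(attn, thinking_mask):
    """Share of head-averaged attention that each query places on <thinking> positions.

    attn: (num_heads, query_len, key_len), each row summing to 1.
    thinking_mask: boolean array of shape (key_len,).
    """
    head_avg = attn.mean(axis=0)                    # average across heads
    return head_avg[:, thinking_mask].sum(axis=-1)  # mass on <thinking> keys


# Toy check: uniform logits give maximal entropy log(V).
uniform_entropy = token_entropy(np.zeros(8))
# Toy attention: 2 heads, 3 queries, 4 keys, uniform weights, one <thinking> key.
toy_attn = np.full((2, 3, 4), 0.25)
toy_mask = np.array([False, True, False, False])
toy_share = thinking_attention_proportion(toy_attn, toy_mask)
print(uniform_entropy, toy_share)
```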

Feature Collapse Mitigation. A key challenge in latent reasoning is feature collapse, where latent token representations from different samples converge to similar points, causing the model to lose the ability to maintain sample-specific reasoning information. To investigate whether different methods suffer from this problem, we visualize latent token embeddings using Principal Component Analysis (PCA) in Figure 5. Specifically, we prepend six latent tokens for each of twenty samples, extract the input embeddings at each position, and project them into 3D space. We show four key steps (1, 3, 4, 6) for clarity. The visualization reveals critical distinctions among methods. Coconut (green) exhibits severe feature collapse, with latent tokens from different samples converging to nearly identical points after just two reasoning steps. LT-Tuning w/o Stage 3 (blue) shows initial exploration in early positions but gradually collapses in later steps, suggesting that relying solely on hidden states is insufficient. In contrast, LT-Tuning (red) maintains semantic diversity even at Step 6, demonstrating that our fusion mechanism effectively mitigates feature collapse.
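A minimal sketch of the projection step, together with one possible collapse diagnostic, is given below. The PCA is implemented via SVD in NumPy; the mean pairwise distance is an illustrative measure chosen for this sketch, not a metric reported in the paper, and the toy embeddings are synthetic rather than extracted from any model.

```python
import numpy as np


def pca_project(x, n_components=3):
    """Project rows of x onto their top principal components (PCA via SVD)."""
    centered = x - x.mean(axis=0, keepdims=True)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T


def mean_pairwise_distance(points):
    """Average Euclidean distance between distinct points; values near zero
    indicate that representations from different samples have collapsed."""
    diffs = points[:, None, :] - points[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)
    n = len(points)
    return dists.sum() / (n * (n - 1))


# Synthetic stand-ins for latent-token embeddings of twenty samples at one step.
rng = np.random.default_rng(0)
diverse = rng.normal(size=(20, 16))                   # sample-specific embeddings
collapsed = 1.0 + 1e-3 * rng.normal(size=(20, 16))    # nearly identical embeddings

projected = pca_project(diverse)
spread_diverse = mean_pairwise_distance(diverse)
spread_collapsed = mean_pairwise_distance(collapsed)
print(projected.shape, spread_diverse, spread_collapsed)
```

Tracking such a spread value per latent position would give a scalar companion to the qualitative picture in Figure 5, under the assumption that distances in embedding space reflect sample-specific information.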

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Accuracy (%) vs. Layer Index for Various Benchmarks

### Overview

The image displays a line chart comparing the performance (accuracy percentage) of four different benchmarks or models across a series of layers, indexed from 1 to 12. The chart illustrates how accuracy changes as the layer index increases for each benchmark.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:**

* **Title:** "Layer Index"

* **Scale:** Linear, with major tick marks and labels at indices 1, 2, 3, 6, 8, 10, and 12.

* **Y-Axis:**

* **Title:** "Accuracy (%)"

* **Scale:** Linear, ranging from 0 to 80, with major tick marks every 10 units (0, 10, 20, 30, 40, 50, 60, 70, 80).

* **Legend:**

* **Position:** Bottom-left corner of the chart area.

* **Entries (from top to bottom as listed in legend):**

1. **GSM8K:** Blue line with circle markers.

2. **MATH (Aqua):** Orange line with square markers.

3. **MMLU:** Purple line with diamond markers.

4. **SVAMP:** Green line with triangle markers.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

1. **GSM8K (Blue line, circles):**

* **Trend:** Relatively flat, hovering in the low 30% range with minor fluctuations.

* **Data Points:**

* Layer 1: ~32%

* Layer 2: ~31%

* Layer 3: ~30%

* Layer 6: ~31%

* Layer 8: ~30%

* Layer 10: ~29%

* Layer 12: ~32%

2. **MATH (Aqua) (Orange line, squares):**

* **Trend:** Shows a general upward trend from layer 1 to 8, followed by a dip at layer 10 and a recovery at layer 12. It is the highest-performing series for most layers.

* **Data Points:**

* Layer 1: ~69%

* Layer 2: ~65%

* Layer 3: ~62%

* Layer 6: ~68%

* Layer 8: ~73% (Peak)

* Layer 10: ~64%

* Layer 12: ~71%

3. **MMLU (Purple line, diamonds):**

* **Trend:** Starts high, dips slightly at layer 3, recovers, and then shows a notable decline from layer 10 to 12.

* **Data Points:**

* Layer 1: ~70%

* Layer 2: ~68%

* Layer 3: ~66%

* Layer 6: ~69%

* Layer 8: ~70%

* Layer 10: ~70%

* Layer 12: ~61% (Significant drop)

4. **SVAMP (Green line, triangles):**

* **Trend:** Very stable and flat, consistently positioned in the mid-40% range across all layers.

* **Data Points:**

* Layer 1: ~45%

* Layer 2: ~46%

* Layer 3: ~45%

* Layer 6: ~42%

* Layer 8: ~45%

* Layer 10: ~45%

* Layer 12: ~43%

### Key Observations

* **Performance Hierarchy:** MATH (Aqua) and MMLU consistently achieve the highest accuracy (60-70%+ range), followed by SVAMP (~45%), with GSM8K being the lowest (~30%).

* **Stability:** SVAMP and GSM8K show remarkably stable performance across layers, with minimal variance. In contrast, MATH (Aqua) and MMLU exhibit more volatility.

* **Notable Anomaly:** The MMLU series experiences a sharp, significant drop in accuracy of approximately 9 percentage points between Layer 10 (~70%) and Layer 12 (~61%).

* **Peak Performance:** The highest single accuracy point on the chart is achieved by MATH (Aqua) at Layer 8 (~73%).

* **Layer Sensitivity:** The chart suggests that the performance of MATH (Aqua) and MMLU is more sensitive to the specific layer index than that of SVAMP or GSM8K.

### Interpretation

This chart likely visualizes the performance of a multi-layer neural network model (or models) on different reasoning or knowledge benchmarks. The "Layer Index" probably corresponds to the depth within the model's architecture.

* **What the data suggests:** The model's ability to solve different types of problems (as categorized by the benchmarks) is not uniform and evolves differently across its layers. The high and volatile performance on MATH (Aqua) and MMLU indicates these tasks engage complex, layer-sensitive processing pathways. The stability of SVAMP and GSM8K suggests these tasks rely on features that are either learned early and remain constant or are processed in a more layer-agnostic manner.

* **How elements relate:** The divergence in trends implies that deeper layers (e.g., 10-12) may be specializing or suffering from degradation for certain tasks (like MMLU) while continuing to benefit others (like MATH at layer 12). The consistent gap between benchmark groups highlights inherent differences in task difficulty or the model's inductive biases.

* **Notable Outlier:** The sharp decline in MMLU accuracy at the final layer is a critical finding. It could indicate overfitting, a breakdown in representation for that specific task at extreme depth, or an artifact of the model's training objective not aligning with the MMLU benchmark in the deepest layers. This warrants further investigation into the model's internal representations at layers 10 through 12.

</details>

Figure 6: Effect of hidden layer selection on Llama-3.2-3B. Performance remains stable across different layer indices, indicating that LT-Tuning is robust to this hyperparameter choice.

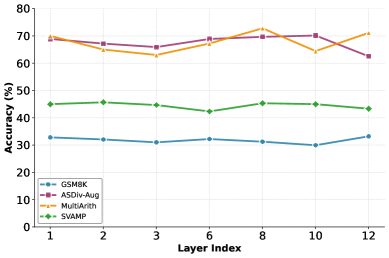

Layer Selection for Context Information. Traditional latent methods take the last-layer hidden state as the input embedding of the latent token (Hao et al., 2024; Shen et al., 2025; Wei et al., 2025). We therefore test the impact of extracting the past context information from different layers. Figure 6 shows that performance is relatively robust to the choice of hidden layer for context extraction: Llama-3.2-3B shows little performance change across layers, whereas for Llama-3.1-8B the last layer works best. The related analysis is provided in Appendix C.2. This robustness also suggests that the fusion learning in Stage 3 compensates for suboptimal layer choices, playing a more important role in the training framework.

## 7 Conclusion

In this work, we present Latent Thoughts Tuning (LT-Tuning), a novel framework that advances the capability of LLMs to reason within a continuous latent space. We identify a critical bottleneck in existing paradigms: the distribution mismatch and lack of semantic guidance arising from the direct recurrence of raw hidden states or the reliance on purely probabilistic vectors. To bridge this gap, we propose the Context-Prediction-Fusion mechanism, which synthesizes the dense semantic history of the model with the semantic foresight of the vocabulary distribution. Coupled with our confidence-driven dynamic switching and a progressive three-stage curriculum learning pipeline, our method effectively boosts the performance and efficiency of latent thinking. Empirical evaluations across model scales from 1B to 8B demonstrate that LT-Tuning significantly outperforms existing baselines on mathematical reasoning benchmarks while successfully mitigating feature collapse, a particularly severe issue in larger models with untied embeddings. By enabling LLMs to “think” with both historical context and predictive structure, LT-Tuning establishes a foundation for efficient, robust, and scalable latent cognition. Future work may explore the integration of process-based supervision or reinforcement learning to further refine latent reasoning within these fused latent paths.

## Impact Statement

This paper presents work whose goal is to advance the field of Machine Learning, specifically in improving the reasoning capabilities of Large Language Models (LLMs) through latent space computation. Our method enables more efficient and robust reasoning by reducing the reliance on verbose intermediate text generation, which has potential benefits for reducing computational costs and inference latency in deployed systems. We acknowledge several considerations regarding broader impact. On the positive side, more efficient reasoning could democratize access to capable AI systems by reducing computational requirements. The interpretability of our dynamic insertion mechanism, which explicitly marks positions of model uncertainty, may also provide useful signals for understanding model behavior. On the other hand, as with any advancement in language model capabilities, improved reasoning could be misused for generating more convincing misinformation or automating harmful content creation. However, we believe these risks are not uniquely exacerbated by our specific contributions, as our work focuses on the efficiency and robustness of reasoning rather than fundamentally new capabilities. We will release our code and trained models to facilitate reproducibility and encourage the research community to build upon this work responsibly.

## References

- S. Bae, Y. Kim, R. Bayat, S. Kim, J. Ha, T. Schuster, A. Fisch, H. Harutyunyan, Z. Ji, A. Courville, et al. (2025) Mixture-of-recursions: learning dynamic recursive depths for adaptive token-level computation. arXiv preprint arXiv:2507.10524. Cited by: §2.

- N. Butt, A. Kwiatkowski, I. Labiad, J. Kempe, and Y. Ollivier (2025) Soft tokens, hard truths. arXiv preprint arXiv:2509.19170. Cited by: §2, §5.4.

- W. Chen, X. Ma, X. Wang, and W. W. Cohen (2023) Program of thoughts prompting: disentangling computation from reasoning for numerical reasoning tasks. Transactions on Machine Learning Research. ISSN 2835-8856. Cited by: §1, §2.

- X. Chen, A. Zhao, H. Xia, X. Lu, H. Wang, Y. Chen, W. Zhang, J. Wang, W. Li, and X. Shen (2025) Reasoning beyond language: a comprehensive survey on latent chain-of-thought reasoning. arXiv preprint arXiv:2505.16782. Cited by: 1st item, §1.

- J. Cheng and B. Van Durme (2024) Compressed chain of thought: efficient reasoning through dense representations. arXiv preprint arXiv:2412.13171. Cited by: §2.

- K. Cobbe, V. Kosaraju, M. Bavarian, M. Chen, H. Jun, L. Kaiser, M. Plappert, J. Tworek, J. Hilton, R. Nakano, et al. (2021) Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168. Cited by: §5.1.

- M. Dehghani, S. Gouws, O. Vinyals, J. Uszkoreit, and L. Kaiser (2018) Universal transformers. In International Conference on Learning Representations, Cited by: §2.

- Y. Deng, K. Prasad, R. Fernandez, P. Smolensky, V. Chaudhary, and S. Shieber (2023) Implicit chain of thought reasoning via knowledge distillation. arXiv preprint arXiv:2311.01460. Cited by: §2.

- K. Gatmiry, N. Saunshi, S. J. Reddi, S. Jegelka, and S. Kumar (2024) Can looped transformers learn to implement multi-step gradient descent for in-context learning?. In International Conference on Machine Learning, pp. 15130–15152. Cited by: §2.

- S. Goyal, Z. Ji, A. S. Rawat, A. K. Menon, S. Kumar, and V. Nagarajan (2024) Think before you speak: training language models with pause tokens. In The Twelfth International Conference on Learning Representations, Cited by: §5.4.

- A. Grattafiori, A. Dubey, A. Jauhri, A. Pandey, A. Kadian, A. Al-Dahle, A. Letman, A. Mathur, A. Schelten, A. Vaughan, et al. (2024) The llama 3 herd of models. arXiv preprint arXiv:2407.21783. Cited by: §5.1.

- D. Guo, D. Yang, H. Zhang, J. Song, P. Wang, Q. Zhu, R. Xu, R. Zhang, S. Ma, X. Bi, et al. (2025) DeepSeek-r1 incentivizes reasoning in llms through reinforcement learning. Nature 645 (8081), pp. 633–638. Cited by: §1, §2.

- S. Hao, S. Sukhbaatar, D. Su, X. Li, Z. Hu, J. Weston, and Y. Tian (2024) Training large language models to reason in a continuous latent space. arXiv preprint arXiv:2412.06769. Cited by: Table 8, §1, §1, §2, §3.2, §5.2, §6.

- Y. He, W. Zheng, Y. Zhu, Z. Zheng, L. Su, S. Vasudevan, Q. Guo, L. Hong, and J. Li (2025) SemCoT: accelerating chain-of-thought reasoning through semantically-aligned implicit tokens. In The Thirty-ninth Annual Conference on Neural Information Processing Systems, Cited by: Table 8, 1st item, §2, §5.2.

- A. Jaech, A. Kalai, A. Lerer, A. Richardson, A. El-Kishky, A. Low, A. Helyar, A. Madry, A. Beutel, A. Carney, et al. (2024) Openai o1 system card. arXiv preprint arXiv:2412.16720. Cited by: §1, §2.

- L. Kang, Y. Deng, Y. Xiao, Z. Mo, W. S. Lee, and L. Bing (2025) First try matters: revisiting the role of reflection in reasoning models. arXiv preprint arXiv:2510.08308. Cited by: §1.

- I. Loshchilov and F. Hutter (2019) Decoupled weight decay regularization. In International Conference on Learning Representations, Cited by: §B.1.

- A. Patel, S. Bhattamishra, and N. Goyal (2021) Are nlp models really able to solve simple math word problems?. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pp. 2080–2094. Cited by: §5.1.

- J. Pfau, W. Merrill, and S. R. Bowman (2024) Let’s think dot by dot: hidden computation in transformer language models. In First Conference on Language Modeling, Cited by: §5.4.

- M. Renze and E. Guven (2024) Self-reflection in llm agents: effects on problem-solving performance. arXiv preprint arXiv:2405.06682. Cited by: §1.

- S. Roy and D. Roth (2015) Solving general arithmetic word problems. In Proceedings of the 2015 conference on empirical methods in natural language processing, pp. 1743–1752. Cited by: §5.1.

- B. Seed, J. Chen, T. Fan, X. Liu, L. Liu, Z. Lin, M. Wang, C. Wang, X. Wei, W. Xu, et al. (2025) Seed1.5-thinking: advancing superb reasoning models with reinforcement learning. arXiv preprint arXiv:2504.13914. Cited by: §1.

- Z. Shen, H. Yan, L. Zhang, Z. Hu, Y. Du, and Y. He (2025) CODI: compressing chain-of-thought into continuous space via self-distillation. arXiv preprint arXiv:2502.21074. Cited by: §1, §2, §6.

- A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, Ł. Kaiser, and I. Polosukhin (2017) Attention is all you need. Advances in neural information processing systems 30. Cited by: §3.1.

- X. Wang, J. Wei, D. Schuurmans, Q. V. Le, E. H. Chi, S. Narang, A. Chowdhery, and D. Zhou (2022) Self-consistency improves chain of thought reasoning in language models. In The Eleventh International Conference on Learning Representations, Cited by: §2.

- J. Wei, X. Wang, D. Schuurmans, M. Bosma, F. Xia, E. Chi, Q. V. Le, D. Zhou, et al. (2022) Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems 35, pp. 24824–24837. Cited by: §1, §2, §5.2.

- X. Wei, X. Liu, Y. Zang, X. Dong, Y. Cao, J. Wang, X. Qiu, and D. Lin (2025) SIM-cot: supervised implicit chain-of-thought. arXiv preprint arXiv:2509.20317. Cited by: §1, §2, §6.

- Y. Xu, X. Guo, Z. Zeng, and C. Miao (2025) SoftCoT: soft chain-of-thought for efficient reasoning with llms. In Proceedings of ACL, Cited by: Table 8, 1st item, §2, §5.1, §5.2.

- L. Yang, K. Lee, R. D. Nowak, and D. Papailiopoulos (2023) Looped transformers are better at learning learning algorithms. In The Twelfth International Conference on Learning Representations, Cited by: §2.

- S. Yao, D. Yu, J. Zhao, I. Shafran, T. Griffiths, Y. Cao, and K. Narasimhan (2024) Tree of thoughts: deliberate problem solving with large language models. Advances in Neural Information Processing Systems 36. Cited by: §2.

- E. Yeo, Y. Tong, M. Niu, G. Neubig, and X. Yue (2025) Demystifying long chain-of-thought reasoning in llms. arXiv preprint arXiv:2502.03373. Cited by: §1.

- L. Yu, W. Jiang, H. Shi, J. Yu, Z. Liu, Y. Zhang, J. T. Kwok, Z. Li, A. Weller, and W. Liu (2023) Metamath: bootstrap your own mathematical questions for large language models. arXiv preprint arXiv:2309.12284. Cited by: Table 1, Table 1.

- Z. Yu, W. Xia, X. Yan, B. XU, H. Zhang, Y. Du, and J. Wang (2025) Self-verifying reflection helps transformers with cot reasoning. In The Thirty-ninth Annual Conference on Neural Information Processing Systems, Cited by: §1.

- Z. Zhang, X. He, W. Yan, A. Shen, C. Zhao, S. Wang, Y. Shen, and X. E. Wang (2025) Soft thinking: unlocking the reasoning potential of llms in continuous concept space. arXiv preprint arXiv:2505.15778. Cited by: Table 8, §2, §5.2.

- Y. Zhou, Y. Wang, X. Yin, S. Zhou, and A. R. Zhang (2025) The geometry of reasoning: flowing logics in representation space. arXiv preprint arXiv:2510.09782. Cited by: §2.

- R. Zhu, T. Peng, T. Cheng, X. Qu, J. Huang, D. Zhu, H. Wang, K. Xue, X. Zhang, Y. Shan, et al. (2025a) A survey on latent reasoning. arXiv preprint arXiv:2507.06203. Cited by: §1.

- R. Zhu, Z. Wang, K. Hua, T. Zhang, Z. Li, H. Que, B. Wei, Z. Wen, F. Yin, H. Xing, et al. (2025b) Scaling latent reasoning via looped language models. arXiv preprint arXiv:2510.25741. Cited by: §2.

## Appendix A Dataset Statistics

Table 3 summarizes the metadata of the training data (GSM8K-NL) and evaluation benchmarks used in our experiments. All datasets focus on mathematical word problems requiring multi-step arithmetic reasoning.

| Dataset | #Train | #Test |
| --- | --- | --- |
| GSM8K-NL | 7,473 | 1,319 |
| ASDiv-Aug | 4,180 | 1,041 |
| MultiArith | 420 | 180 |
| SVAMP | 700 | 300 |

Table 3: Statistics of the training and evaluation datasets used in our experiments.

## Appendix B Training Configuration

### B.1 Training Hyperparameters

Table 4 presents the detailed training hyperparameters for each model scale across all three stages of LT-Tuning. We adopt different batch sizes and learning rates to accommodate the varying memory requirements and optimization dynamics of different model sizes.

| Model | Stage | LR | BS | Epochs | Optimizer | Scheduler |
| --- | --- | --- | --- | --- | --- | --- |
| Llama-3.2-1B | Stage 1 (CoT) | 5e-5 | 32 | 1 | AdamW | Cosine |
| Llama-3.2-1B | Stage 2 (Dynamic) | 5e-5 | 16 | 2 | AdamW | Cosine |
| Llama-3.2-1B | Stage 3 (Fusion) | 5e-5 | 16 | 7 | AdamW | Cosine |
| Llama-3.2-3B | Stage 1 (CoT) | 5e-5 | 16 | 1 | AdamW | Cosine |
| Llama-3.2-3B | Stage 2 (Dynamic) | 5e-5 | 8 | 2 | AdamW | Cosine |
| Llama-3.2-3B | Stage 3 (Fusion) | 5e-5 | 8 | 7 | AdamW | Cosine |
| Llama-3.1-8B | Stage 1 (CoT) | 1e-5 | 8 | 1 | AdamW | Cosine |
| Llama-3.1-8B | Stage 2 (Dynamic) | 1e-5 | 4 | 1 | AdamW | Cosine |
| Llama-3.1-8B | Stage 3 (Fusion) | 1e-5 | 4 | 3 | AdamW | Cosine |

Table 4: Training hyperparameters for different model scales. LR denotes learning rate and BS denotes batch size.

For all experiments, we use the AdamW optimizer (Loshchilov and Hutter, 2019) with $β_1=0.9$, $β_2=0.999$, and a weight decay of $0.01$.
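For convenience, the settings in Table 4 can be transcribed into a small lookup structure, as in the sketch below. The dictionary layout and the names `TABLE_4`, `ADAMW`, and `cosine_lr` are illustrative assumptions. The schedule function additionally assumes a plain cosine decay to zero with no warmup, since the exact scheduler shape is not specified here.

```python
import math

# (model, stage) -> settings transcribed from Table 4.
TABLE_4 = {
    ("Llama-3.2-1B", 1): {"lr": 5e-5, "batch_size": 32, "epochs": 1},
    ("Llama-3.2-1B", 2): {"lr": 5e-5, "batch_size": 16, "epochs": 2},
    ("Llama-3.2-1B", 3): {"lr": 5e-5, "batch_size": 16, "epochs": 7},
    ("Llama-3.2-3B", 1): {"lr": 5e-5, "batch_size": 16, "epochs": 1},
    ("Llama-3.2-3B", 2): {"lr": 5e-5, "batch_size": 8, "epochs": 2},
    ("Llama-3.2-3B", 3): {"lr": 5e-5, "batch_size": 8, "epochs": 7},
    ("Llama-3.1-8B", 1): {"lr": 1e-5, "batch_size": 8, "epochs": 1},
    ("Llama-3.1-8B", 2): {"lr": 1e-5, "batch_size": 4, "epochs": 1},
    ("Llama-3.1-8B", 3): {"lr": 1e-5, "batch_size": 4, "epochs": 3},
}

# AdamW settings shared by all runs, as stated in the text.
ADAMW = {"betas": (0.9, 0.999), "weight_decay": 0.01}


def cosine_lr(base_lr: float, step: int, total_steps: int) -> float:
    """Cosine decay from base_lr to 0 (assumed shape: no warmup, no floor)."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


stage3_8b = TABLE_4[("Llama-3.1-8B", 3)]
halfway_lr = cosine_lr(stage3_8b["lr"], step=50, total_steps=100)
```

Under these assumptions the learning rate is half its base value at the midpoint of training and reaches zero at the final step.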

## Appendix C Stage-Specific Implementation Details

### C.1 Stage 1: Explicit Reasoning Warm-up

In the first stage, we perform standard supervised fine-tuning on Chain-of-Thought (CoT) data. The CoT annotations are sourced from the GSM8K training set, where each problem is paired with a step-by-step natural language solution. We use the following prompt template:

Stage 1 Prompt Template

{question} {cot_reasoning} The final answer is:\n### {answer}.
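As a rough illustration, the template above can be filled with ordinary string formatting. The sketch assumes that `\n` in the template denotes a newline character; the helper name `build_stage1_example` and the toy problem are made up for this example and are not taken from the training data.

```python
# Template copied from the Stage 1 prompt; "\n" is assumed to be a real newline.
STAGE1_TEMPLATE = "{question} {cot_reasoning} The final answer is:\n### {answer}."


def build_stage1_example(question: str, cot_reasoning: str, answer: str) -> str:
    """Fill the Stage 1 template with one (question, CoT, answer) triple."""
    return STAGE1_TEMPLATE.format(
        question=question,
        cot_reasoning=cot_reasoning,
        answer=answer,
    )


# Hypothetical toy problem, for illustration only.
example = build_stage1_example(
    question="Tom has 3 apples and buys 2 more. How many apples does he have?",
    cot_reasoning="Tom starts with 3 apples. After buying 2 more he has 3 + 2 = 5 apples.",
    answer="5",
)
```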

### C.2 Stage 2: Dynamic Latent Tokens Generation

| Model | Threshold $τ$ | Max. Latent Tokens per Insert $k$ | Layer Selection $I$ |
| --- | --- | --- | --- |
| Llama-3.2-1B | 0.7 | 2 | -2 |
| Llama-3.2-3B | 0.7 | 2 | -2 |
| Llama-3.1-8B | 0.6 | 4 | -1 |

Table 5: Confidence threshold $τ$, hidden-state layer selection, and latent token configurations.
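The sketch below shows one plausible way the values in Table 5 could drive a confidence check. It assumes that confidence is the maximum next-token probability and that latent tokens are inserted when it falls below $τ$; this rule, together with the names `TABLE_5`, `softmax`, and `should_insert_latent`, is an assumption made for illustration rather than the exact procedure used in LT-Tuning.

```python
import math
from typing import List

# Per-model settings transcribed from Table 5.
TABLE_5 = {
    "Llama-3.2-1B": {"tau": 0.7, "max_latent_tokens": 2, "layer": -2},
    "Llama-3.2-3B": {"tau": 0.7, "max_latent_tokens": 2, "layer": -2},
    "Llama-3.1-8B": {"tau": 0.6, "max_latent_tokens": 4, "layer": -1},
}


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def should_insert_latent(logits: List[float], tau: float) -> bool:
    """Assumed rule: insert latent tokens when max probability is below tau."""
    return max(softmax(logits)) < tau


tau_1b = TABLE_5["Llama-3.2-1B"]["tau"]
confident = should_insert_latent([10.0, 0.0, 0.0], tau_1b)  # peaked distribution
uncertain = should_insert_latent([1.0, 1.0, 1.0], tau_1b)   # uniform distribution
```

With a peaked distribution the check does not trigger, while a uniform distribution over three tokens (maximum probability 1/3) does.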

#### Confidence Threshold Selection.