# LLM-Based Scientific Equation Discovery via Physics-Informed Token-Regularized Policy Optimization

**Authors**: Boxiao Wang, Kai Li, Tianyi Liu, Chen Li, Junzhe Wang, Yifan Zhang, Jian Cheng

> wangboxiao22@mails.ucas.ac.cn, ORCID 0009-0008-2970-7575, Institute of Automation, Chinese Academy of Sciences, Beijing, China

> kai.li@ia.ac.cn, Institute of Automation, Chinese Academy of Sciences, Beijing, China

> franktyliu@outlook.com, State Key Laboratory of Aerodynamics, Mianyang, Sichuan, China

> lichen@skla.cardc.cn, State Key Laboratory of Aerodynamics, Mianyang, Sichuan, China

> wangjunzhe21@mails.ucas.ac.cn, School of Mathematical Sciences, University of Chinese Academy of Sciences, Beijing, China

> yifan.zhang@ia.ac.cn, Institute of Automation, Chinese Academy of Sciences, Beijing, China

> jian.cheng@ia.ac.cn, Institute of Automation, Chinese Academy of Sciences, Beijing, China

Abstract.

Symbolic regression aims to distill mathematical equations from observational data. Recent approaches have successfully leveraged Large Language Models (LLMs) to generate equation hypotheses, capitalizing on their vast pre-trained scientific priors. However, existing frameworks predominantly treat the LLM as a static generator, relying on prompt-level guidance to steer exploration. This paradigm fails to update the model’s internal representations based on search feedback, often yielding physically inconsistent or mathematically redundant expressions. In this work, we propose PiT-PO (Physics-informed Token-regularized Policy Optimization), a unified framework that evolves the LLM into an adaptive generator via reinforcement learning. Central to PiT-PO is a dual-constraint mechanism that rigorously enforces hierarchical physical validity while simultaneously applying fine-grained, token-level penalties to suppress redundant structures. Consequently, PiT-PO aligns the LLM to produce equations that are both scientifically consistent and structurally parsimonious. Empirically, PiT-PO achieves state-of-the-art performance on standard benchmarks and successfully discovers novel turbulence models for challenging fluid dynamics problems. We also demonstrate that PiT-PO empowers small-scale models to outperform closed-source giants, democratizing access to high-performance scientific discovery.

1. Introduction

Symbolic Regression (SR) (Makke and Chawla, 2024b) stands as a cornerstone of data-driven scientific discovery, uniquely capable of distilling interpretable mathematical equations from observational data. Unlike black-box models that prioritize mere prediction, SR elucidates the fundamental mechanisms governing system behavior, proving instrumental in uncovering physical laws (Makke and Chawla, 2024a; Reuter et al., 2023), modeling chemical kinetics (Chen et al., 2025; Deng et al., 2023), and analyzing complex biological dynamics (Wahlquist et al., 2024; Shi et al., 2024).

However, the search for exact governing equations represents a formidable challenge, formally classified as an NP-hard problem (Virgolin and Pissis, 2022). To navigate this vast search space, algorithmic strategies have evolved from Genetic Programming (GP) (Schmidt and Lipson, 2009; Cranmer, 2023) and Reinforcement Learning (RL) (Petersen et al., 2021) to Transformer-based architectures that map numerical data directly to symbolic equations (Biggio et al., 2021; Kamienny et al., 2022; Zhang et al., 2025). Most recently, the advent of Large Language Models (LLMs) has introduced a new paradigm. Methods such as LLM-SR (Shojaee et al., 2025a) and LaSR (Grayeli et al., 2024) leverage the pre-trained scientific priors and in-context learning capabilities of LLMs to generate equation hypotheses. These approaches typically employ an evolutionary search paradigm, where candidates are evaluated, and high-performing solutions are fed back via prompt-level conditioning to steer subsequent generation.

Despite encouraging progress, existing LLM-based SR methods remain constrained by severe limitations. First, most approaches treat the LLM as a static generator, relying primarily on prompt-level, verbal guidance to steer the evolutionary search (Shojaee et al., 2025a; Grayeli et al., 2024). This “frozen” paradigm inherently neglects the opportunity to adapt and enhance the generative capability of the LLM itself based on evaluation signals, preventing the model from internalizing feedback and adjusting its generation strategies to the specific problem. Second, they typically operate in a physics-agnostic manner, prioritizing syntactic correctness over physical validity (Shojaee et al., 2025a; Grayeli et al., 2024). Without rigorous constraints, LLMs often generate equations that fit the data numerically but violate fundamental physical principles, rendering them prone to overfitting and practically unusable.

In this work, we propose to fundamentally shift the role of the LLM in SR from a static proposer to an adaptive generator. We establish a dynamic feedback loop in which evolutionary exploration and parametric learning reinforce each other: evolutionary search uncovers diverse candidate equations and generates informative evaluation signals, while parametric adaptation enables the LLM to consolidate effective symbolic patterns and guide subsequent exploration more efficiently. By employing in-search fine-tuning, i.e., updating the LLM parameters during the evolutionary search process, we move beyond purely verbal, prompt-level guidance and introduce numerical guidance that allows feedback to be directly internalized into the model parameters, progressively aligning the LLM with the intrinsic properties of the target system.

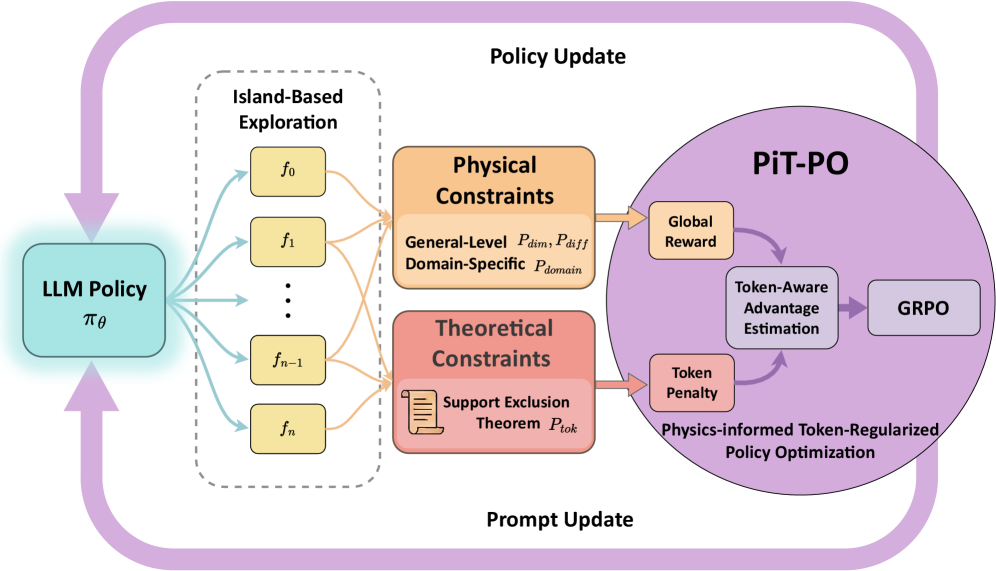

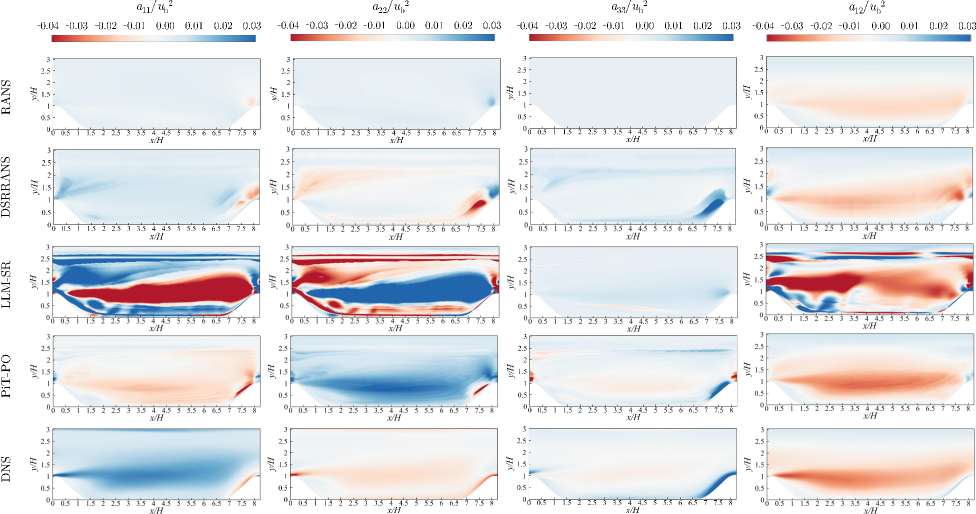

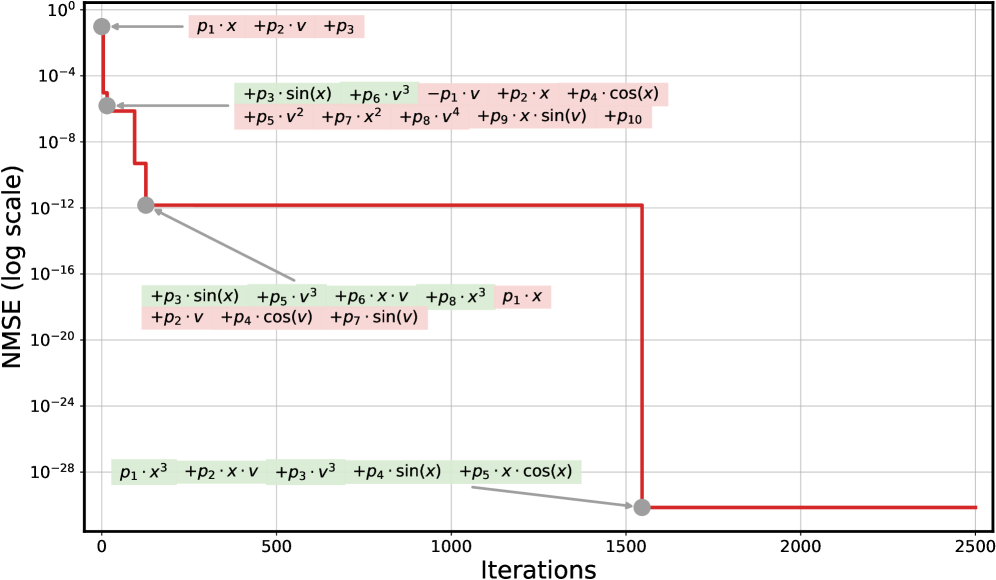

To realize this vision, we introduce PiT-PO (Physics-informed Token-regularized Policy Optimization), a unified framework that bridges LLM-driven evolutionary exploration with rigorous verification. PiT-PO is built upon two technical components. 1) In-Search LLM Evolution. We implement the numerical guidance via reinforcement learning, which efficiently updates the LLM’s parameters during the search process. Instead of relying on static pre-trained knowledge, this in-search policy optimization enables the LLM to dynamically align its generative distribution with the structural characteristics of the specific task, effectively transforming general scientific priors into domain-specific expertise on the fly. 2) Dual Constraints as Search Guidance. We enforce hierarchical physical constraints to ensure scientific validity, and we additionally incorporate a fine-grained regularization based on our proposed Support Exclusion Theorem. This theorem allows us to identify mathematically redundant terms and translate them into token-level penalties, effectively pruning the search space and guiding the LLM toward physically meaningful and structurally parsimonious equations.

Comprehensive experimental results demonstrate that PiT-PO achieves state-of-the-art performance across standard SR benchmarks, including the LLM-SR Suite (Shojaee et al., 2025a) and LLM-SRBench (Shojaee et al., 2025b), and recovers the largest number of ground-truth equations among all evaluated methods. In addition to synthetic benchmarks, the effectiveness of PiT-PO is validated on an application-driven turbulence modeling task involving flow over periodic hills. PiT-PO improves upon traditional Reynolds-Averaged Navier-Stokes (RANS) approaches by producing anisotropic Reynolds stresses closer to Direct Numerical Simulation (DNS) references. The learned method shows enhanced physical consistency, with reduced non-physical extremes and better flow field predictions. Finally, PiT-PO maintains robust performance even when using resource-constrained small models, such as a quantized version of Llama-8B, and remains efficient under strict wall-clock time budgets, establishing a practical methodology for automated scientific discovery.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: PiT-PO Framework

### Overview

The image presents a diagram of the Physics-informed Token-Regularized Policy Optimization (PiT-PO) framework. It illustrates the interaction between an LLM Policy, Island-Based Exploration, Physical and Theoretical Constraints, and the PiT-PO module itself, showing how these components contribute to policy and prompt updates.

### Components/Axes

* **LLM Policy:** Represented by a light blue rounded rectangle labeled "LLM Policy" with the notation "πθ" below it. Located on the left side of the diagram.

* **Island-Based Exploration:** Enclosed in a dashed gray rounded rectangle. Contains multiple yellow rounded rectangles labeled "f0", "f1", ..., "fn-1", "fn".

* **Physical Constraints:** An orange rounded rectangle labeled "Physical Constraints". Sub-labels include "General-Level Pdim, Pdiff" and "Domain-Specific Pdomain".

* **Theoretical Constraints:** A red rounded rectangle labeled "Theoretical Constraints". Contains a document icon and the sub-label "Support Exclusion Theorem Ptok".

* **PiT-PO:** A large purple circle containing several components:

* "Global Reward" (orange rounded rectangle)

* "Token-Aware Advantage Estimation" (gray rounded rectangle)

* "GRPO" (gray rounded rectangle)

* "Token Penalty" (red rounded rectangle)

* "Physics-informed Token-Regularized Policy Optimization" (text label at the bottom of the circle)

* **Policy Update:** Text label at the top of the diagram.

* **Prompt Update:** Text label at the bottom of the diagram.

### Detailed Analysis

* **Flow:**

* The LLM Policy (πθ) sends outputs (represented by light blue arrows) to the Island-Based Exploration module.

* The Island-Based Exploration module sends outputs (represented by orange arrows) to both the Physical Constraints and Theoretical Constraints modules.

* The Physical and Theoretical Constraints modules send outputs (represented by orange arrows) to the PiT-PO module's "Global Reward" and "Token Penalty" components, respectively.

* Within the PiT-PO module, there is a feedback loop between "Token-Aware Advantage Estimation" and "Token Penalty" (represented by purple arrows).

* "Token-Aware Advantage Estimation" sends output (represented by a purple arrow) to "GRPO".

* The PiT-PO module sends feedback (represented by a purple arrow) back to the LLM Policy (πθ), completing the loop.

* A large purple arrow loops from the PiT-PO module back to the LLM Policy, labeled "Prompt Update".

* A large purple arrow loops from the LLM Policy to the Physical and Theoretical Constraints, labeled "Policy Update".

* **Island-Based Exploration Details:**

* The Island-Based Exploration module contains multiple "f" nodes, indexed from 0 to n.

* **Constraint Details:**

* Physical Constraints are divided into General-Level (Pdim, Pdiff) and Domain-Specific (Pdomain) constraints.

* Theoretical Constraints are based on the Support Exclusion Theorem (Ptok).

### Key Observations

* The diagram illustrates a closed-loop system where the LLM Policy is updated based on feedback from the PiT-PO module, which incorporates physical and theoretical constraints.

* The Island-Based Exploration module seems to provide diverse inputs to the constraint modules.

* The PiT-PO module uses a token-aware approach, incorporating both global rewards and token penalties.

### Interpretation

The diagram describes the PiT-PO framework, which aims to improve LLM policies by incorporating physical and theoretical constraints during the optimization process. The Island-Based Exploration module likely serves to generate a diverse set of candidate policies, which are then evaluated against the constraints. The PiT-PO module uses a token-aware approach, suggesting that it considers the impact of individual tokens on the overall policy performance. The feedback loop ensures that the LLM policy is continuously refined based on the constraints and rewards. The "Prompt Update" and "Policy Update" arrows indicate that both the prompt and the policy of the LLM are being updated during the process. This framework appears to be designed to create more robust and reliable LLM policies by grounding them in real-world constraints and physics-based principles.

</details>

Figure 1. The overall framework of PiT-PO. PiT-PO transforms the LLM from a static proposer into an adaptive generator via a closed-loop evolutionary process. The framework integrates dual-constraint evaluation—comprising physical constraints and theoretical constraints—to generate fine-grained token-level learning signals. These signals guide the LLM policy update via reinforcement learning, ensuring the discovery of parsimonious, physically consistent equations.

2. Preliminaries

2.1. Problem Setup

In SR, a dataset of input–output observations is given:

$$

D=\{(x_{i},y_{i})\}_{i=1}^{n},\;x_{i}\in\mathbb{R}^{d},\;y_{i}\in\mathbb{R}. \tag{1}

$$

The objective is to identify a compact and interpretable function $f∈\mathcal{F}$ such that $f(x_{i})≈ y_{i}$ for the observed samples, while retaining the ability to generalize to unseen inputs.

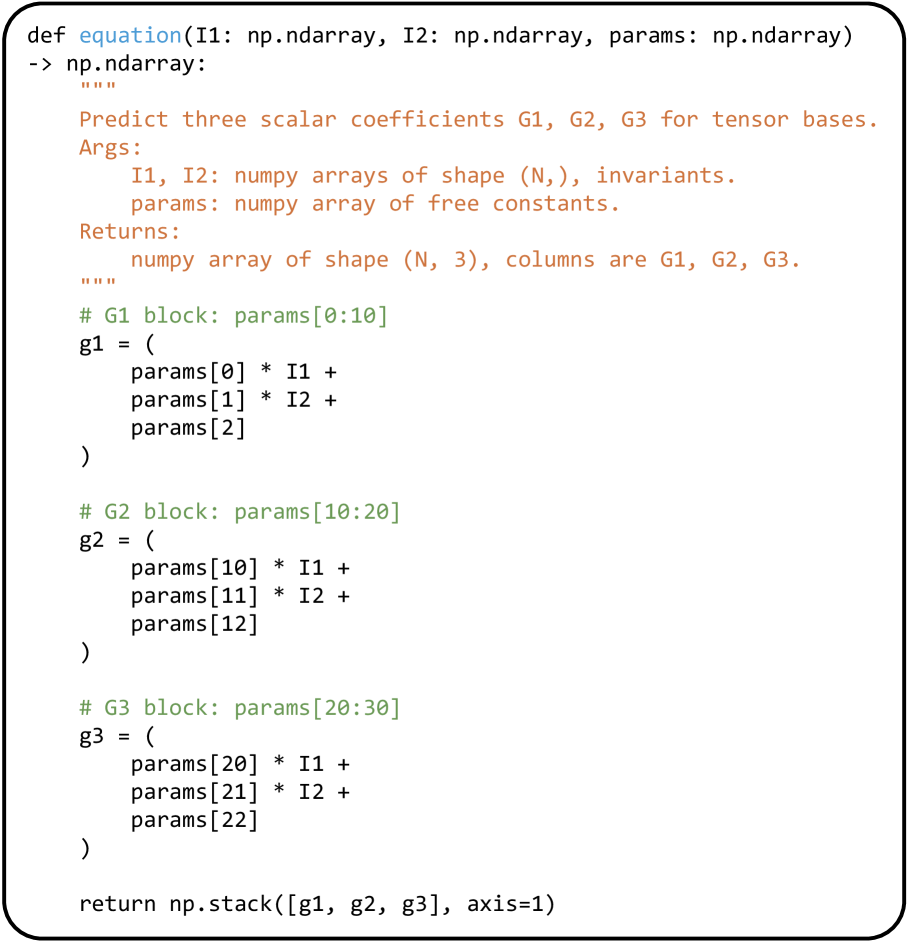

2.2. LLM-based SR Methods

Contemporary LLM-based approaches reformulate SR as an iterative program synthesis task. In this paradigm, typified by frameworks such as LLM-SR (Shojaee et al., 2025a), the discovery process is decoupled into two phases: structure proposal and parameter estimation. Specifically, the LLM functions as a symbolic generator, emitting functional skeletons with placeholders for learnable coefficients. A numerical optimizer (e.g., BFGS (Fletcher, 1987)) subsequently fits these constants to the observed data. To navigate the combinatorial search space, these methods employ an evolutionary-style feedback loop: high-fitness equations are maintained in a pool to serve as in-context examples, prompting the LLM to refine subsequent generations. Our work leverages this architecture as a backbone, but fundamentally redefines the LLM’s role from a static proposer to an adaptive generator.
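To make the two-phase split concrete, the following minimal sketch fits the placeholder constants of a hypothetical skeleton. The skeleton, the synthetic data, and the helper names (`skeleton`, `fit_constants`) are illustrative assumptions and are not taken from LLM-SR. To keep the example dependency-free, plain gradient descent with central finite differences stands in for the BFGS optimizer mentioned above.

```python
import math

def skeleton(x, params):
    """Hypothetical skeleton as an LLM might emit it; params are placeholders."""
    return params[0] * math.sin(x) + params[1] * x

def mse(params, xs, ys):
    """Mean squared error of the skeleton on the observations."""
    return sum((skeleton(x, params) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def fit_constants(xs, ys, n_params, lr=0.1, steps=5000, h=1e-6):
    """Fit the placeholders by gradient descent (a stand-in for BFGS)."""
    params = [0.0] * n_params
    for _ in range(steps):
        grad = []
        for j in range(n_params):
            up, down = params[:], params[:]
            up[j] += h
            down[j] -= h
            # Central finite-difference estimate of d(MSE)/d(params[j]).
            grad.append((mse(up, xs, ys) - mse(down, xs, ys)) / (2 * h))
        params = [p - lr * g for p, g in zip(params, grad)]
    return params

# Synthetic observations generated with constants 1.5 and -0.7 (assumed values).
xs = [-2.0 + 0.2 * i for i in range(21)]
ys = [1.5 * math.sin(x) - 0.7 * x for x in xs]
fitted = fit_constants(xs, ys, n_params=2)
```

In this setup the structure (which terms appear) comes from the generator, while the numerical values are recovered purely from data, which is why the quality of a skeleton can be scored only after fitting.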

2.3. Group Relative Policy Optimization

Group Relative Policy Optimization (GRPO) (Shao et al., 2024) is an RL algorithm tailored for optimizing LLMs on reasoning tasks, characterized by its efficient baseline estimation without the need for a separate value network. In the context of LLM-based SR, the generation process is modeled as a Markov Decision Process (MDP), where the LLM functions as a policy $\pi_{\theta}$ that generates a sequence of tokens $o=(t_{1},...,t_{L})$ given a prompt $q$ . For each $q$ , GRPO samples a group of $G$ outputs $\{o_{1},...,o_{G}\}$ from the sampling policy $\pi_{\theta_{old}}$ . GRPO maximizes the following surrogate objective:

$$

\mathcal{J}_{GRPO}(\theta)=\mathbb{E}_{q\sim P(Q),\{o_{i}\}\sim\pi_{\theta_{old}}}\left[\frac{1}{G}\sum_{i=1}^{G}\left(\frac{1}{L_{i}}\sum_{k=1}^{L_{i}}\mathcal{L}^{clip}_{i,k}(\theta)-\beta\mathbb{D}_{KL}(\pi_{\theta}||\pi_{ref})\right)\right], \tag{2}

$$

where $\pi_{ref}$ is the reference policy to prevent excessive deviation, and $\beta$ controls the KL-divergence penalty. The clipping term $\mathcal{L}^{clip}_{i,k}(\theta)$ ensures trust region updates:

$$

\mathcal{L}^{clip}_{i,k}(\theta)=\min\left(\frac{\pi_{\theta}(t_{i,k}|q,o_{i,<k})}{\pi_{\theta_{old}}(t_{i,k}|q,o_{i,<k})}\hat{A}_{i},\;\text{clip}\left(\frac{\pi_{\theta}(t_{i,k}|q,o_{i,<k})}{\pi_{\theta_{old}}(t_{i,k}|q,o_{i,<k})},1-\epsilon,1+\epsilon\right)\hat{A}_{i}\right). \tag{3}

$$

Here, $\epsilon$ is the clipping coefficient. A distinctive feature of GRPO lies in its advantage estimation: it computes the advantage $\hat{A}_{i}$ by standardizing the reward $R(o_{i})$ relative to the group:

$$

\hat{A}_{i}=\frac{R(o_{i})-\text{mean}(\{R(o_{j})\})}{\text{std}(\{R(o_{j})\})}. \tag{4}

$$

Consequently, every token within the sequence $o_{i}$ is assigned the exact same feedback signal. This coarse granularity treats valid and redundant terms indistinguishably, a limitation that our work addresses by introducing fine-grained, token-level regularization.
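As a rough illustration of Equations 3 and 4, the sketch below standardizes rewards within one group and evaluates the clipped term for a single token. The function names, the use of the population standard deviation, the small `eps` added to the denominator, and the default `clip_eps=0.2` are assumptions made for this example.

```python
import statistics

def group_advantages(rewards, eps=1e-8):
    """Equation 4: standardize rewards within one sampled group.

    Assumption: population standard deviation, with eps guarding against
    a group in which all rewards are identical.
    """
    mu = statistics.fmean(rewards)
    sigma = statistics.pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

def clipped_term(ratio, advantage, clip_eps=0.2):
    """Equation 3 for one token, given the ratio pi_theta / pi_theta_old."""
    clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
    return min(ratio * advantage, clipped_ratio * advantage)

# One group of G = 4 sampled equations with illustrative rewards.
advs = group_advantages([1.0, 2.0, 3.0, 6.0])
```

Because `group_advantages` returns one scalar per sequence, every token of a sequence shares that value, which is the coarse granularity discussed above.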

3. Method

We propose PiT-PO (Physics-informed Token-regularized Policy Optimization), a framework that evolves the LLM into an adaptive, physics-aware generator. PiT-PO establishes a closed-loop evolutionary process driven by two synergistic mechanisms: (1) a dual-constraint evaluation system that rigorously assesses candidates through hierarchical physical verification and theorem-guided redundancy pruning; and (2) a novel policy optimization strategy that updates the LLM using fine-grained, token-level feedback derived from these constraints. This combination effectively aligns the LLM with the intrinsic structure of the problem, guiding it toward solutions that are not only numerically accurate but also structurally parsimonious and scientifically consistent.

3.1. Dual-Constraint Learning Signals

Navigating the combinatorial space of symbolic equations requires rigorous guidance. We employ a reward system driven by dual constraints: physical constraints delineate the scientifically valid region, while theoretical constraints drive the search toward simpler equations by identifying and pruning redundant terms.

3.1.1. Hierarchical Physical Constraints.

To ensure scientific validity, we construct a hierarchical filter that categorizes constraints into two levels: general properties and domain-specific priors.

General-Level Constraints. We enforce fundamental physical properties applicable across scientific disciplines. To prune physically impossible structures (e.g., adding terms with mismatched units), we assign penalty-based rewards for Dimensional Homogeneity ( $P_{dim}$ ) and Differentiability ( $P_{diff}$ ). The former strictly penalizes equations with unit inconsistencies, while the latter enforces smoothness on the data-defined domain.

Domain-Specific Constraints. To tackle specialized tasks, we inject expert knowledge as inductive biases. We define the domain-specific penalty $P^{(j)}_{domain}$ to penalize candidate equations that violate the $j$ -th domain-specific constraint. Taking the turbulence modeling task (detailed in Appendix E.4) as a representative instantiation, we enforce four rigorous constraints: (1) Realizability (Pope, 2000), ensuring the Reynolds stress tensor has positive eigenvalues; (2) Boundary Condition Consistency (Monkewitz, 2021), requiring stresses to decay to zero at the wall; (3) Asymptotic Scaling (Tennekes and Lumley, 1972; Wang et al., 2019), enforcing the cubic relationship between stress and wall distance in the viscous sublayer; and (4) Energy Consistency (Pope, 2000; Mochizuki and Osaka, 2000), aligning predicted stress with turbulent kinetic energy production.

This hierarchical design effectively embeds physical consistency as a hard constraint in the reward function, prioritizing scientific validity over mere empirical fitting.

3.1.2. Theorem-Guided Mathematical Constraints

While physical constraints ensure validity, they do not prevent mathematical redundancy. To rigorously distinguish between essential terms and redundant artifacts, we introduce the Support Exclusion Theorem.

Let $\mathcal{S}$ denote the full support set containing all candidate basis functions $\{\phi_{j}\}$ . The ground truth equation is $f^{*}=\sum_{j\in\mathcal{S}^{\prime}}a_{j}\phi_{j}$ , where $\mathcal{S}^{\prime}\subseteq\mathcal{S}$ is the true support set (i.e., the indices of basis functions that truly appear in the governing equation), and $\{a_{j}\}_{j\in\mathcal{S}^{\prime}}$ are the corresponding true coefficients. Consider a candidate equation $f=\sum_{j\in\mathcal{K}}b_{j}\phi_{j}$ , where $\mathcal{K}\subseteq\mathcal{S}$ represents the current support set (i.e., the selected terms in the skeleton) and $\mathbf{b}=\{b_{j}\}_{j\in\mathcal{K}}$ are the optimized coefficients derived from the data. We define the empirical Gram matrix of these basis functions as $G\in\mathbb{R}^{|\mathcal{S}|\times|\mathcal{S}|}$ and the corresponding Projection Matrix as $T$ , where $T_{ij}:=G_{ji}/G_{ii}$ .

**Theorem 3.1 (Support Exclusion Theorem)**

*Assume the ground-truth support is finite and satisfies $|\mathcal{S}^{\prime}|≤ M$ , and let the true function coefficients be bounded by $A≤|a_{j}|≤ B$ for all $j∈\mathcal{S}^{\prime}$ . A term $\phi_{i}$ ( $i∈\mathcal{K}$ ) is theoretically guaranteed to be a false discovery (not in the true support $\mathcal{S}^{\prime}$ ) if its fitted coefficient magnitude satisfies:

$$

|b_{i}|<A-\left(\underbrace{\sum_{j\in\mathcal{K},j\neq i}(B+|b_{j}|)|T_{ij}|}_{\text{Internal Interference}}+\underbrace{B\sum_{k=1}^{m}s_{(k)}}_{\text{External Interference}}\right). \tag{5}

$$

$s_{(k)}$ denotes the $k$ -th largest value in $\{|T_{i\ell}|:\ell\in\mathcal{S}\setminus\mathcal{K}\}$ , and $m:=\min\!\big(M-1,\;|\mathcal{S}\setminus\mathcal{K}|\big)$ .*

Detailed definitions of all notations and the rigorous proof of Theorem 3.1 are provided in Appendix B. This theorem formalizes the intuition that coefficients of redundant terms (absent from the true support $\mathcal{S}^{\prime}$ ) have significantly smaller magnitudes than those of valid components.
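The bound in Equation 5 can be evaluated directly once $T$ , $\mathbf{b}$ , and the constants $A$ , $B$ , $M$ are available. The sketch below is one possible transcription; the function names, the list-of-lists layout of `T`, and all numerical values are hypothetical and chosen only to exercise the formula.

```python
def exclusion_threshold(i, support, selected, b, T, A, B, M):
    """Right-hand side of Equation 5 for term i in the current support."""
    # Internal interference: contributions from the other selected terms.
    internal = sum((B + abs(b[j])) * abs(T[i][j]) for j in selected if j != i)
    # External interference: the m largest |T_il| over unselected terms.
    outside = sorted((abs(T[i][l]) for l in support if l not in selected),
                     reverse=True)
    m = max(0, min(M - 1, len(outside)))
    external = B * sum(outside[:m])
    return A - (internal + external)

def is_false_discovery(i, support, selected, b, T, A, B, M):
    """True when |b_i| falls strictly below the Equation 5 threshold."""
    return abs(b[i]) < exclusion_threshold(i, support, selected, b, T, A, B, M)

# Toy setup (all values assumed): three basis functions, two selected.
support = [0, 1, 2]
selected = [0, 1]
T = [[1.0, 0.05, 0.1],
     [0.05, 1.0, 0.1],
     [0.1, 0.1, 1.0]]
b = {0: 1.0, 1: 0.01}
flags = {i: is_false_discovery(i, support, selected, b, T, A=0.5, B=2.0, M=2)
         for i in selected}
```

With these toy numbers the threshold for term 1 is about 0.15, so its fitted coefficient of 0.01 is flagged, while term 0 with coefficient 1.0 is not.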

Specifically, after fitting $\mathbf{b}$ , we compute the normalized coefficient ratio $\tau_{i}=|b_{i}|/(\sum_{j}|b_{j}|+\epsilon)$ . We introduce a threshold $\rho∈(0,1)$ to identify potentially redundant terms. Terms satisfying $\tau_{i}>\rho$ incur no penalty, while components with $\tau_{i}≤\rho$ are considered redundant. To suppress these redundancies, we define a token penalty for each token in redundant term $i$ :

$$

P_{tok}=p\cdot\max\left(0,-\log\left(|b_{i}|+\epsilon\right)\right), \tag{6}

$$

where $p>0$ is a scaling coefficient. We use a logarithmic scale to impose stronger penalties on terms with smaller coefficients.

By integrating this penalty into the policy optimization, we guide the LLM to reduce the probability of generating redundant terms, thereby steering the optimization toward parsimonious equations.
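A small sketch of the redundancy test and the penalty in Equation 6 follows. It returns one penalty per term, to be assigned to every token of that term; the function name and the values of `rho`, `p`, and `eps` are assumptions for illustration.

```python
import math

def term_penalties(coeffs, rho=0.05, p=0.1, eps=1e-8):
    """Per-term penalty P_tok: zero for kept terms, Equation 6 for redundant ones."""
    total = sum(abs(c) for c in coeffs) + eps
    penalties = []
    for c in coeffs:
        tau = abs(c) / total  # normalized coefficient ratio
        if tau > rho:
            penalties.append(0.0)  # term is kept, no penalty
        else:
            penalties.append(p * max(0.0, -math.log(abs(c) + eps)))
    return penalties

# Fitted coefficients of a three-term skeleton (illustrative values).
pens = term_penalties([2.0, 1.0, 0.001])
```

Note that, as written in Equation 6, the $\max(0,\cdot)$ clamp yields a zero penalty for any flagged term whose coefficient magnitude is at least $1-\epsilon$ , so the penalty acts only on small absolute coefficients.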

3.2. Token-Aware Policy Update

Our proposed PiT-PO operationalizes the hierarchical constraints and theoretical insights derived in Section 3.1. Unlike standard GRPO, which assigns a uniform scalar reward to the entire generated sequence, our method transitions the learning process from coarse-grained sequence scoring to fine-grained token-level credit assignment. This ensures that the policy not only learns to generate physically valid equations but also explicitly suppresses theoretically redundant terms.

3.2.1. Global Reward with Gated Constraints

The optimization is driven by a composite global reward, $R_{global}$ , which balances fitting accuracy, structural parsimony, and physical consistency. Formally, for a sampled equation $o_{i}$ , the rewards are defined as follows:

Fitting Accuracy ( $R_{fit}$ ). We use the normalized log-MSE to encourage precise data fitting:

$$

R_{fit}=-\alpha\log(\text{MSE}+\epsilon), \tag{7}

$$

where $\text{MSE}=\frac{1}{n}\sum_{i=1}^{n}(y_{i}-\hat{y}_{i})^{2}$ and $\hat{y}_{i}$ is the prediction of the fitted equation at $x_{i}$ .

Complexity Penalty ( $P_{cplx}$ ). Adhering to Occam’s Razor, we penalize structural complexity based on the Abstract Syntax Tree (AST) (Neamtiu et al., 2005) node count:

$$

P_{cplx}=\lambda_{len}\cdot\text{Length}(\text{AST}). \tag{8}

$$

In PiT-PO, each equation generated by the LLM is represented as a Python function and parsed into an AST, where each node corresponds to a variable or operator. The total node count provides a meaningful estimate of structural complexity.
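The node count can be approximated with Python's standard `ast` module, as in the sketch below. Counting every node returned by `ast.walk` (including structural nodes such as the module and argument list) is an assumption of this example, as are the function names and the value of `lambda_len`; the paper may count only variable and operator nodes.

```python
import ast

def ast_node_count(source):
    """Count all nodes in the AST of a Python source string."""
    tree = ast.parse(source)
    return sum(1 for _ in ast.walk(tree))

def complexity_penalty(source, lambda_len=0.01):
    """Equation 8 with an assumed weight lambda_len."""
    return lambda_len * ast_node_count(source)

# Two hypothetical candidate equations expressed as Python functions.
simple = "def equation(x, params):\n    return params[0] * x\n"
longer = "def equation(x, params):\n    return params[0] * x + params[1] * x ** 3\n"

penalty_simple = complexity_penalty(simple)
penalty_longer = complexity_penalty(longer)
```

Under this counting rule the longer expression always receives the larger penalty, since it adds nodes to the same function scaffold.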

Gated Physical Penalty ( $P_{phy}$ ). Imposing strict physical constraints too early can hinder exploration, causing the model to discard potentially promising functional forms. We therefore activate physical penalties only after the candidate equation reaches a baseline fitting accuracy threshold ( $\delta_{gate}$ ). Specifically, we define

where $\mathbb{1}(\cdot)$ is the indicator function. This mechanism effectively creates a soft curriculum: it allows “free” exploration in the early stages and enforces strict physical compliance only after the solution enters a plausible region.

The total reward $R_{global}$ is then formulated as:

$$

R_{global}(o_{i})=R_{fit}(o_{i})-P_{cplx}(o_{i})-P_{phy}(o_{i}). \tag{10}

$$
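The composite reward of Equation 10 might be assembled as sketched below. The gating rule used here (open the gate when $R_{fit}\geq\delta_{gate}$ and then subtract the unweighted sum of the physical penalties) is an assumption of this example and not the authors' exact definition; the function name and all default values are likewise hypothetical.

```python
import math

def global_reward(mse, ast_nodes, phys_penalties,
                  alpha=1.0, lambda_len=0.01, delta_gate=2.0, eps=1e-12):
    """Sketch of R_global = R_fit - P_cplx - P_phy with a gated P_phy."""
    r_fit = -alpha * math.log(mse + eps)   # Equation 7
    p_cplx = lambda_len * ast_nodes        # Equation 8
    # ASSUMPTION: the gate opens once the fit reward reaches delta_gate,
    # and open-gate penalties are summed without extra weights.
    gate_open = r_fit >= delta_gate
    p_phy = sum(phys_penalties) if gate_open else 0.0
    return r_fit - p_cplx - p_phy          # Equation 10

# A poorly fitting candidate: the gate stays closed, violations are ignored.
poor = global_reward(mse=1.0, ast_nodes=10, phys_penalties=[1.0, 0.5])
# A well-fitting candidate: the same violations now reduce the reward.
good = global_reward(mse=1e-4, ast_nodes=10, phys_penalties=[1.0, 0.5])
clean = global_reward(mse=1e-4, ast_nodes=10, phys_penalties=[])
```

The comparison between `good` and `clean` shows the intended effect of the gate: physical violations matter only among candidates that already fit the data reasonably well.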

3.2.2. Fine-Grained Advantage Estimation

Standard GRPO applies a uniform advantage across all tokens in a sequence. We refine this by synthesizing the global reward with the token-level penalty $P_{tok}$ (Equation 6). Specifically, we define the token-aware advantage $\hat{A}_{i,k}$ for the $k$ -th token in the $i$ -th sampled equation as:

$$

\hat{A}_{i,k}=\underbrace{\frac{R_{global}(o_{i})-\mu_{group}}{\sigma_{group}}}_{\text{Global Standardization}}-\underbrace{P_{i,k}}_{\text{Local Pruning}}. \tag{11}

$$

Here, the first term standardizes the global reward against the group statistics ( $\mu_{group},\sigma_{group}$ ), reinforcing equations that satisfy multi-objective criteria relative to their peers. The second term, $P_{i,k}$ , applies a targeted penalty to suppress redundancy. Specifically, we set $P_{i,k}=0$ if token $k$ belongs to a non-redundant term, and $P_{i,k}=P_{tok}$ otherwise. This ensures that penalties are applied exclusively to tokens contributing to mathematically redundant structures, while valid terms remain unaffected.
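Equation 11 can be sketched as follows for one group of sampled equations. The function name, the population standard deviation, the `eps` guard, and the toy rewards and penalties are assumptions for illustration.

```python
import statistics

def token_aware_advantages(global_rewards, token_penalties, eps=1e-8):
    """Equation 11: group-standardized reward minus per-token penalty.

    global_rewards[i] is R_global(o_i); token_penalties[i][k] is P_{i,k},
    which is zero for tokens of non-redundant terms.
    """
    mu = statistics.fmean(global_rewards)
    sigma = statistics.pstdev(global_rewards)
    advantages = []
    for reward, penalties in zip(global_rewards, token_penalties):
        base = (reward - mu) / (sigma + eps)  # shared by the whole sequence
        advantages.append([base - p for p in penalties])
    return advantages

# Two sampled equations; the last token of the first one is redundant.
rewards = [1.0, 3.0]
penalties = [[0.0, 0.0, 0.5], [0.0, 0.0]]
adv = token_aware_advantages(rewards, penalties)
```

Tokens of the same sequence now differ only where a penalty applies, so the gradient in the next equation pushes down the probability of the redundant tokens more strongly than that of their neighbors.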

Substituting this token-aware advantage into the GRPO objective, the policy gradient update of our PiT-PO becomes:

$$

\nabla\mathcal{J}_{PiT-PO}\propto\sum_{i,k}\hat{A}_{i,k}\nabla\log\pi_{\theta}(t_{i,k}|o_{i,<k}). \tag{12}

$$

This creates a dual-pressure optimization landscape: global rewards guide the policy toward physically consistent and accurate equations, while local penalties surgically excise redundant terms. This ensures the final output aligns with the sparse, underlying physical laws rather than merely overfitting numerical data.

**Algorithm 1** PiT-PO Overall Training Pipeline

**Input:** Dataset $D=\{(x_{i},y_{i})\}_{i=1}^{n}$ ; LLM $\pi_{\theta}$ ; number of islands $N$ ; group size $G$ ; iterations $T$ .

**Output:** Best equation $o^{*}$ .

* Initialize $o^{*}$ , $s^{*}$ , and buffers $\mathcal{B}_{j}\leftarrow\emptyset$ for $j=1,\dots,N$
* **for** $t\leftarrow 1$ **to** $T$ **do**
    * *Stage 1: Island-Based Exploration*
    * **for** $j\leftarrow 1$ **to** $N$ **do**
        * $q_{j}\leftarrow\text{BuildPrompt}(D,\mathcal{B}_{j})$ (in-context rule)
        * $\{o_{i}\}_{i=1}^{G}\sim\pi_{\theta}(\cdot\mid q_{j})$ (sample a group)
        * **for** $i\leftarrow 1$ **to** $G$ **do**
            * $(R_{i},\{P_{i,k}\})\leftarrow\text{DualConstraintEval}(o_{i},D)$ , where $R_{i}=R_{\mathrm{global}}(o_{i})$
            * $\mathcal{B}_{j}\leftarrow\mathcal{B}_{j}\cup\{(q_{j},o_{i},R_{i},\{P_{i,k}\})\}$
            * **if** $R_{i}>s^{*}$ **then** $o^{*}\leftarrow o_{i}$ ; $s^{*}\leftarrow R_{i}$
    * *Stage 2: In-Search LLM Evolution*
    * $\theta\leftarrow\text{PiT-PO\_Update}(\theta,\{\mathcal{B}_{j}\}_{j=1}^{N},\pi_{\theta})$
    * *Stage 3: Hierarchical Selection*
    * $\{\mathcal{B}_{j}\}_{j=1}^{N}\leftarrow\text{SelectAndReset}(\{\mathcal{B}_{j}\}_{j=1}^{N})$
* **return** $o^{*}$

3.3. Overall Training Pipeline

We orchestrate the PiT-PO framework through a closed-loop evolutionary RL cycle. As illustrated in Algorithm 1, the training process iterates through three synergistic phases:

Phase 1: Island-Based Exploration (Data Generation). To prevent premature convergence to local optima, a common pitfall in SR, we employ a standard multi-island topology ( $N$ islands) to structurally enforce search diversity (Cranmer, 2023; Romera-Paredes et al., 2024). Each island $j$ maintains an isolated experience buffer $\mathcal{B}_{j}$ , evolving its own lineage of equations. This information isolation allows distinct islands to cultivate diverse functional forms independently.

Phase 2: In-Search LLM Evolution (Policy Update). This phase transforms the collected data into parametric knowledge. We aggregate the trajectories from all $N$ islands into a global batch to perform policy optimization using PiT-PO. By minimizing the loss, the model explicitly lowers the probability of generating mathematically redundant tokens and physically inconsistent structures. To ensure computational efficiency during this iterative search, we implement the update using Low-Rank Adaptation (LoRA) (Hu et al., 2021).

Phase 3: Hierarchical Selection (Population Management). We apply standard survival-of-the-fittest mechanisms to maintain population quality. Local buffers $\mathcal{B}_{j}$ are updated by retaining only top-performing candidates, while underperforming islands are periodically reset with high-fitness seeds to escape local optima.
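One way the selection step could look is sketched below. The text does not specify how many candidates are retained, how often islands are reset, or how seeds are chosen, so `keep_top`, `reset_fraction`, and the choice of the single best island as seed donor are all assumptions of this example; the reset schedule ("periodically") is not modeled.

```python
def select_and_reset(buffers, keep_top=2, reset_fraction=0.5):
    """Keep the best entries per island, then reseed the weakest islands.

    buffers: list of islands, each a list of (equation, reward) pairs.
    """
    # Retain only the top-performing candidates on every island.
    pruned = [sorted(buf, key=lambda e: e[1], reverse=True)[:keep_top]
              for buf in buffers]
    best = [buf[0][1] if buf else float("-inf") for buf in pruned]
    order = sorted(range(len(pruned)), key=lambda j: best[j])  # worst first
    n_reset = min(int(len(pruned) * reset_fraction), len(pruned) - 1)
    losers, winners = order[:n_reset], order[n_reset:]
    # ASSUMPTION: reseed every reset island with the best equation overall.
    seed = pruned[winners[-1]][:1]
    for j in losers:
        pruned[j] = list(seed)
    return pruned

islands = [
    [("a", 1.0), ("b", 0.2), ("c", 0.5)],
    [("d", 3.0)],
    [("e", -1.0), ("f", 0.0)],
    [("g", 2.0), ("h", 2.5)],
]
result = select_and_reset(islands)
```

In this toy run the two weakest islands (indices 0 and 2) are replaced by the seed `("d", 3.0)`, while the two strongest islands keep their own top candidates.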

This cycle establishes a reciprocal reinforcement mechanism: the island-based exploration maintains search diversity, while policy update consolidates these findings into the model weights, progressively transforming the LLM into a domain-specialized scientific discoverer.

4. Experiments

4.1. Setup

Benchmarks. To provide a comprehensive evaluation of PiT-PO, we adopt two widely used benchmarks to compare against state-of-the-art baselines:

LLM-SR Suite (Shojaee et al., 2025a). This suite comprises four tasks spanning multiple scientific domains: Oscillation 1 & 2 (Nonlinear Oscillatory Systems) feature dissipative couplings and non-polynomial nonlinearities with explicit forcing, making recovery of the correct interaction terms from trajectory data non-trivial; E. coli Growth (Monod, 1949; Rosso et al., 1995) models multivariate population dynamics with strongly coupled, multiplicative effects from nutrients, temperature, and acidity; and Stress-Strain (Aakash et al., 2019) uses experimental measurements of Aluminum 6061-T651 and exhibits temperature-dependent, piecewise non-linear deformation behavior. Detailed information about these tasks is provided in Appendix D.1.

LLM-SRBench (Shojaee et al., 2025b). To evaluate generalization beyond canonical forms, we adopt the comprehensive LLM-SRBench benchmark, which contains 239 tasks organized into two complementary subsets, LSR-Transform and LSR-Synth. LSR-Transform changes the prediction target to rewrite well-known physics equations into less common yet analytically equivalent forms, producing 111 transformed tasks. This design aims to reduce reliance on direct memorization of canonical templates and tests whether a method can recover the same physical law under non-trivial variable reparameterizations. Complementarily, LSR-Synth composes equations from both known scientific terms and synthetic but plausible terms to further assess discovery beyond memorized templates: candidate terms are proposed by an LLM under domain context, assembled into full equations, and then filtered through multiple checks, including numerical solvability, contextual novelty, and expert plausibility, yielding 128 synthetic tasks. Further details are given in Appendix D.2.

Baselines.

We compare PiT-PO against representative baselines spanning both classical and LLM-based SR methods. For the four tasks in the LLM-SR Suite, we include GPlearn, a genetic programming-based SR approach; PySR (Cranmer, 2023), which couples evolutionary search with symbolic simplification; uDSR (Landajuela et al., 2022), which replaces the RNN policy in DSR with a pretrained Transformer and employs neural-guided decoding; RAG-SR (Zhang et al., 2025), which incorporates structure retrieval to assist equation generation; and LLM-SR (Shojaee et al., 2025a). For the broader LLM-SRBench benchmark, we further compare against leading LLM-based SR methods, including SGA (Ma et al., 2024), which integrates LLM-driven hypothesis proposal with physics-informed parameter optimization in a bilevel search framework, and LaSR (Grayeli et al., 2024), which leverages abstract symbolic concepts distilled from prior equations to guide hybrid LLM–evolutionary generation.

Evaluation metrics. We evaluate methods using Accuracy to Tolerance and Normalized Mean Squared Error (NMSE). For a tolerance $\tau$ , we report $\mathrm{Acc}_{\mathrm{all}}(\tau)$ (Biggio et al., 2021) and $\mathrm{Acc}_{\mathrm{avg}}(\tau)$ based on relative error: $\mathrm{Acc}_{\mathrm{all}}(\tau)=\mathbb{1}\!\bigl(\max_{1\le i\le N_{\mathrm{test}}}\bigl|\tfrac{\hat{y}_{i}-y_{i}}{y_{i}}\bigr|\le\tau\bigr)$ and $\mathrm{Acc}_{\mathrm{avg}}(\tau)=\tfrac{1}{N_{\mathrm{test}}}\sum_{i=1}^{N_{\mathrm{test}}}\mathbb{1}\!\bigl(\bigl|\tfrac{\hat{y}_{i}-y_{i}}{y_{i}}\bigr|\le\tau\bigr)$ , where $\hat{y}_{i}$ and $y_{i}$ denote the predicted and ground-truth values at the $i$ -th test point, respectively. We also report $\mathrm{NMSE}=\frac{1}{N_{\mathrm{test}}}\sum_{i=1}^{N_{\mathrm{test}}}\frac{(\hat{y}_{i}-y_{i})^{2}}{\mathrm{Var}(y)}$ to assess overall numerical accuracy. Finally, we adopt the Symbolic Accuracy (SA) metric (Shojaee et al., 2025b), which directly measures whether the discovered equation recovers the correct symbolic form (i.e., whether it is mathematically equivalent to the ground-truth equation up to fitted constants).
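The numerical metrics above can be computed as in the following sketch. The function names and the toy values are illustrative; the code assumes every $y_{i}$ is nonzero and uses the population variance for $\mathrm{Var}(y)$ , which is an assumption since the estimator is not specified.

```python
def acc_all(y_true, y_pred, tau):
    """1.0 if the worst-case relative error is within tau, else 0.0."""
    worst = max(abs((p - t) / t) for t, p in zip(y_true, y_pred))
    return float(worst <= tau)

def acc_avg(y_true, y_pred, tau):
    """Fraction of test points whose relative error is within tau."""
    hits = sum(abs((p - t) / t) <= tau for t, p in zip(y_true, y_pred))
    return hits / len(y_true)

def nmse(y_true, y_pred):
    """Mean squared error normalized by the variance of the targets."""
    n = len(y_true)
    mean_y = sum(y_true) / n
    var_y = sum((t - mean_y) ** 2 for t in y_true) / n
    mse = sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / n
    return mse / var_y

# Toy test set: relative errors are 0, 0.05, 0, and 0.2.
y_true = [1.0, 2.0, 4.0, 5.0]
y_pred = [1.0, 2.1, 4.0, 6.0]
scores = (acc_all(y_true, y_pred, 0.1),
          acc_avg(y_true, y_pred, 0.1),
          nmse(y_true, y_pred))
```

The example shows how the two accuracy variants diverge: a single point outside the tolerance drives $\mathrm{Acc}_{\mathrm{all}}$ to zero, while $\mathrm{Acc}_{\mathrm{avg}}$ still credits the three points that are within it.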

Hyperparameter Configurations. All experiments were run for 2,500 search iterations. To ensure a fair comparison, all hyperparameters related to LLM generation and search were kept consistent with the default configuration of LLM-SR. For the in-search policy optimization specific to PiT-PO, we use a learning rate of $1\times 10^{-6}$, a group size of $G=4$, and a multi-island setting of $N=4$, resulting in an effective per-device batch size of $G\times N=16$. The coefficient of the KL regularization term was set to 0.01, and the LoRA rank was set to $r=16$. In addition, experiments were conducted on a single NVIDIA RTX 3090 using 4-bit quantized Llama-3.2-1B-Instruct, Llama-3.2-3B-Instruct, and Llama-3.1-8B-Instruct (Kassianik et al., 2025) to evaluate the training stability and performance transferability of PiT-PO across different parameter scales under constrained compute and memory budgets. More details are in Appendix A.
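For readers who want to reproduce the setting, the reported values can be collected in a small configuration object. The sketch below only restates the numbers given above; the class and field names are hypothetical and do not correspond to any released code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyOptConfig:
    """Reported PiT-PO settings; all field names are illustrative only."""

    search_iterations: int = 2500
    learning_rate: float = 1e-6
    group_size: int = 4          # G in the text
    num_islands: int = 4         # N in the text
    kl_coefficient: float = 0.01
    lora_rank: int = 16          # r in the text

    @property
    def effective_batch_size(self) -> int:
        # G x N samples contribute to each per-device update.
        return self.group_size * self.num_islands


config = PolicyOptConfig()
print(config.effective_batch_size)
```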

| Method | Oscillation 1 Acc (%) | Oscillation 1 NMSE | Oscillation 2 Acc (%) | Oscillation 2 NMSE | E. coli Growth Acc (%) | E. coli Growth NMSE | Stress-Strain Acc (%) | Stress-Strain NMSE |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| GPlearn | 0.11 | 0.0972 | 0.05 | 0.2000 | 0.76 | 1.0023 | 28.43 | 0.3496 |
| uDSR | 1.78 | 0.0002 | 0.36 | 0.0856 | 1.12 | 0.5059 | 59.15 | 0.0639 |
| PySR | 3.80 | 0.0003 | 7.02 | 0.0002 | 2.80 | 0.4068 | 70.60 | 0.0347 |
| RAG-SR | 39.47 | 1.49e-6 | 0.43 | 0.0282 | 2.04 | 0.2754 | 76.28 | 0.0282 |
| LLM-SR (Mixtral) | 100.00 | 1.32e-11 | 99.98 | 1.18e-11 | 2.88 | 0.0596 | 71.44 | 0.0276 |
| LLM-SR (4o-mini) | 99.92 | 8.84e-12 | 99.97 | 8.70e-10 | 5.52 | 0.0453 | 85.33 | 0.0245 |
| LLM-SR (Llama-3.1-8B) | 59.58 | 1.17e-6 | 99.96 | 9.66e-10 | 4.86 | 0.0555 | 77.74 | 0.0246 |
| LLM-SR (Llama-3.2-3B) | 39.45 | 1.76e-6 | 66.34 | 6.99e-7 | 1.08 | 0.3671 | 74.78 | 0.0324 |
| LLM-SR (Llama-3.2-1B) | 3.28 | 4.47e-4 | 7.81 | 0.0002 | 1.70 | 0.5801 | 30.35 | 0.3801 |
| PiT-PO (Llama-3.1-8B) | 100.00 | 6.41e-31 | 99.99 | 2.11e-13 | 10.42 | 0.0090 | 84.45 | 0.0136 |
| PiT-PO (Llama-3.2-3B) | 100.00 | 7.58e-31 | 99.97 | 9.77e-10 | 7.01 | 0.0248 | 84.54 | 0.0156 |
| PiT-PO (Llama-3.2-1B) | 99.95 | 1.34e-11 | 99.97 | 1.70e-8 | 4.76 | 0.0240 | 76.91 | 0.1767 |

Table 1. Overall performance on LLM-SR Suite.

4.2. PiT-PO Demonstrates Superior Equation Discovery Capability

As evidenced in Table 1, PiT-PO establishes a new state of the art on the LLM-SR Suite. It attains the highest accuracy and the lowest NMSE in nearly all test cases; the one exception is Stress-Strain accuracy, where LLM-SR (4o-mini) is marginally higher (85.33 vs. 84.54). Crucially, when controlling for the LLM backbone, PiT-PO yields a substantial performance margin over LLM-SR, validating the effectiveness of our in-search policy optimization framework. Notably, PiT-PO is the only approach to successfully identify the exact ground-truth equation for Oscillation 1. This structural precision extends to the larger-scale LLM-SRBench (Table 2), where PiT-PO achieves the highest symbolic accuracy across all categories. These results collectively demonstrate that PiT-PO not only fits data numerically but also excels at uncovering the true underlying equations.

These quantitative gains are not accidental but stem from PiT-PO’s structural awareness. Analysis of the iterative trajectories of LLM-SR and PiT-PO in Appendix C.4 corroborates this conclusion: the iterations of LLM-SR remain persistently influenced by clearly incorrect terms, which violate physical meaning despite providing strong numerical fits, as well as by additional nuisance terms. Consequently, the search of LLM-SR often stagnates in a low-MSE regime without reaching the correct structure. In contrast, once PiT-PO enters the same regime, the dual constraints rapidly eliminate terms that improve fitting performance but are structurally incorrect. This behavior highlights the central advantage of the proposed dual-constraint learning signals, in which the physical constraints and token-level penalties provide data-driven signals for precise structural correction, guiding the LLM toward the true underlying equation rather than mere numerical overfitting.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Multiple Line Charts: NMSE vs. Iteration for Different Scenarios

### Overview

The image contains four line charts arranged in a 2x2 grid. Each chart plots the NMSE (Normalized Mean Squared Error) on a logarithmic scale against the iteration number. Two algorithms, LLM-SR (blue line) and PiT-PO (red line), are compared across four different scenarios: Oscillation 1, Oscillation 2, E. coli Growth, and Stress-Strain. Shaded regions around each line represent the uncertainty or variance associated with each algorithm's performance.

### Components/Axes

* **X-axis (all charts):** Iteration, with tick marks at 0, 625, 1250, 1875, and 2500.

* **Y-axis (all charts):** NMSE (log scale). The scale varies slightly between charts.

* Oscillation 1: 10^-25 to 10^-1

* Oscillation 2: 10^-11 to 10^-2

* E. coli Growth: 10^-2 to 10^0

* Stress-Strain: 10^-2 to 10^-1

* **Legend (top):** Located at the top of the image, spanning across the two top charts.

* LLM-SR: Blue line

* PiT-PO: Red line

* **Chart Titles:**

* Top-left: Oscillation 1

* Top-right: Oscillation 2

* Bottom-left: E. coli Growth

* Bottom-right: Stress-Strain

### Detailed Analysis

**1. Oscillation 1**

* **LLM-SR (blue):** The line starts at approximately 10^-1 and quickly drops to around 10^-6, where it remains relatively constant throughout the iterations.

* **PiT-PO (red):** The line starts around 10^-6, then drops in steps at approximately iterations 625, 1250, and 1875, reaching approximately 10^-24 by the end.

**2. Oscillation 2**

* **LLM-SR (blue):** The line starts at approximately 10^-2 and decreases to approximately 10^-6 by iteration 625, then remains relatively constant.

* **PiT-PO (red):** The line starts at approximately 10^-3 and decreases to approximately 10^-10 by iteration 1250, then remains relatively constant.

**3. E. coli Growth**

* **LLM-SR (blue):** The line starts at approximately 10^0 and decreases to approximately 10^-1 by iteration 625, then remains relatively constant.

* **PiT-PO (red):** The line starts at approximately 10^0 and decreases in steps at approximately iterations 625, 1250, and 1875, reaching approximately 10^-2 by the end.

**4. Stress-Strain**

* **LLM-SR (blue):** The line starts at approximately 10^-1 and decreases to approximately 10^-2 by iteration 1250, then remains relatively constant.

* **PiT-PO (red):** The line starts at approximately 10^-1 and decreases in steps at approximately iterations 625, 1250, and 1875, reaching approximately 10^-2 by the end.

### Key Observations

* In all four scenarios, both algorithms show a decrease in NMSE as the number of iterations increases, indicating improved performance over time.

* The PiT-PO algorithm tends to have more pronounced step-wise decreases in NMSE, while the LLM-SR algorithm often plateaus after an initial drop.

* The shaded regions indicate the variability in performance, with some scenarios showing wider bands than others.

### Interpretation

The charts compare the performance of two algorithms (LLM-SR and PiT-PO) in terms of NMSE across four different applications. The data suggests that both algorithms are effective in reducing error over iterations, but their convergence behavior differs. LLM-SR appears to converge quickly to a certain error level and then plateaus, while PiT-PO shows more gradual, step-wise improvements. The choice of algorithm may depend on the specific application and the desired trade-off between initial convergence speed and final error level. The shaded regions provide insight into the robustness of each algorithm, with wider bands indicating greater sensitivity to initial conditions or noise in the data.

</details>

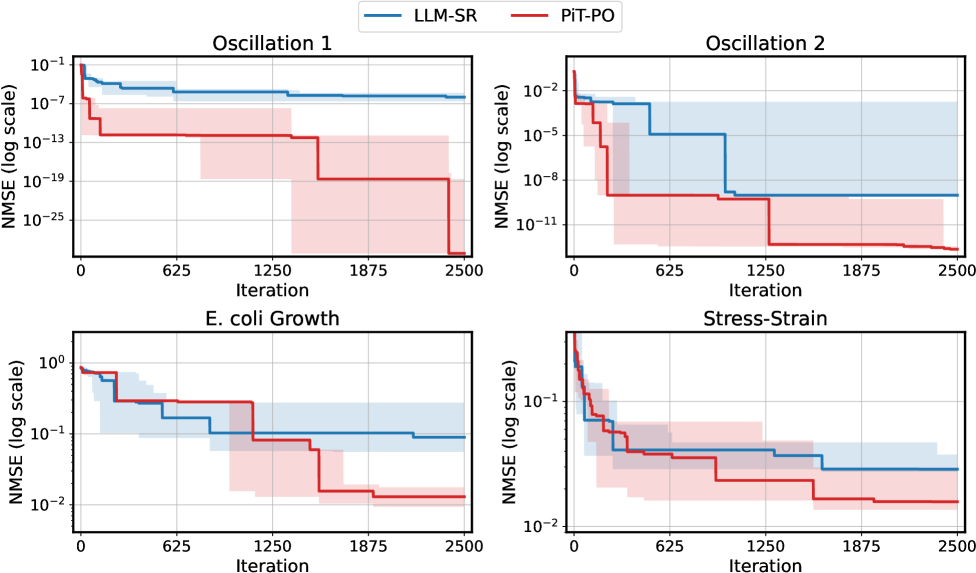

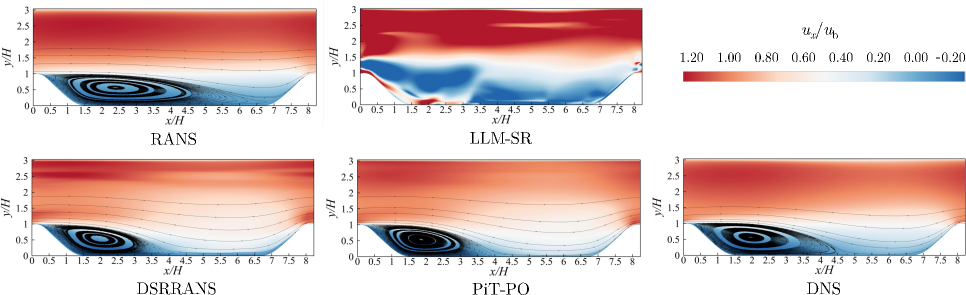

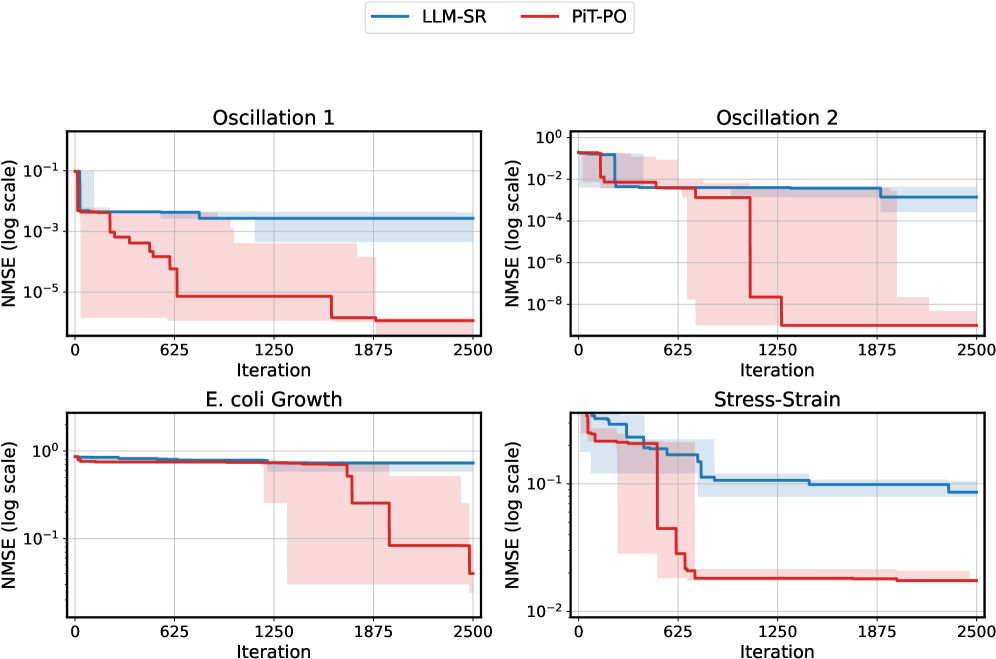

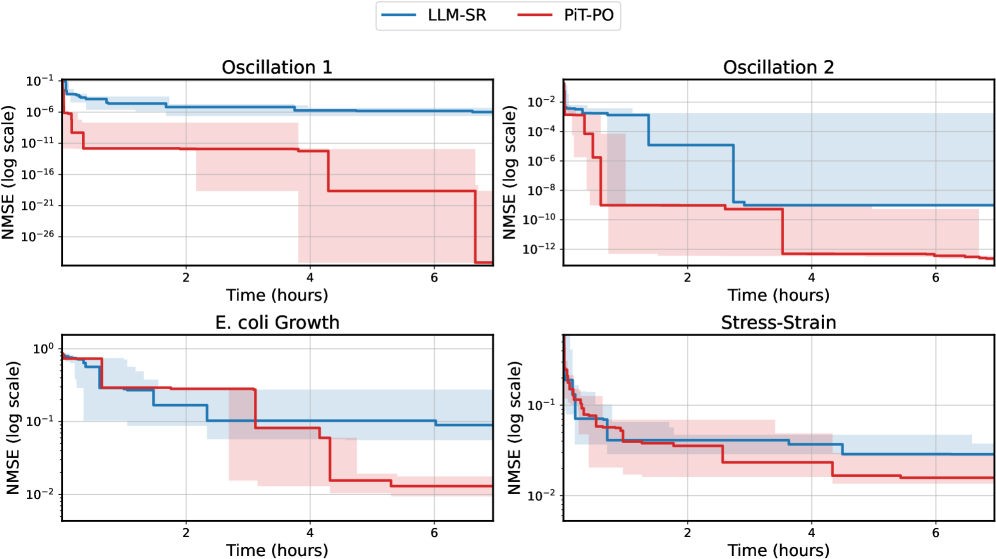

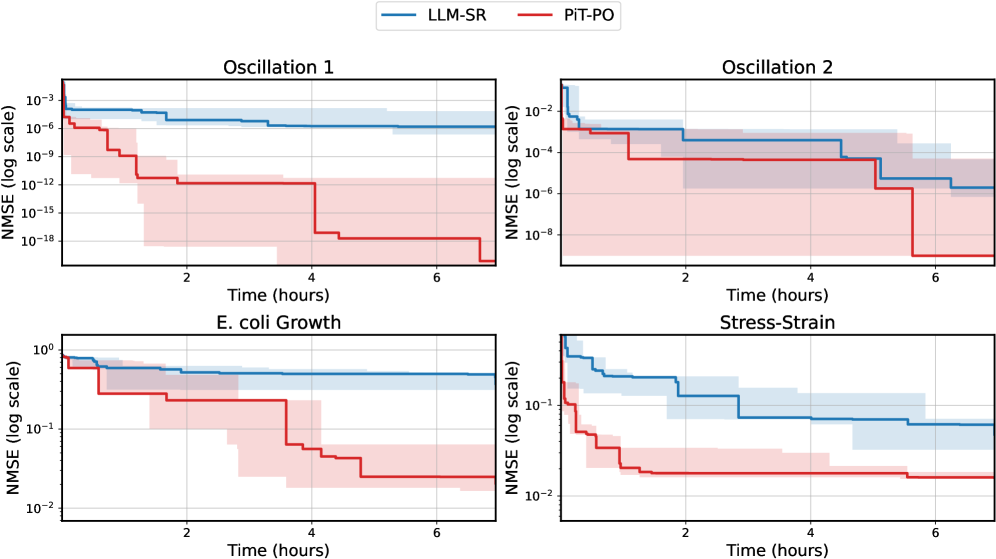

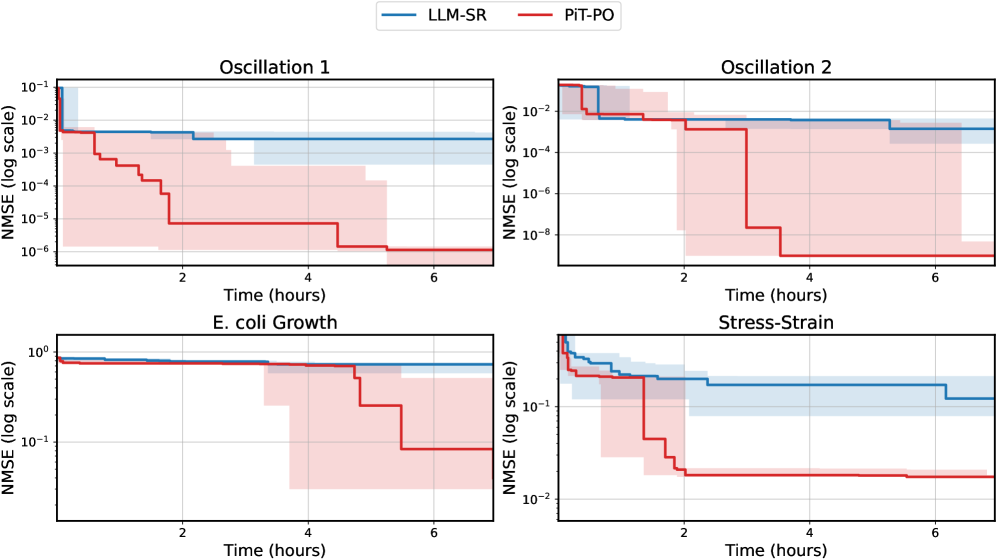

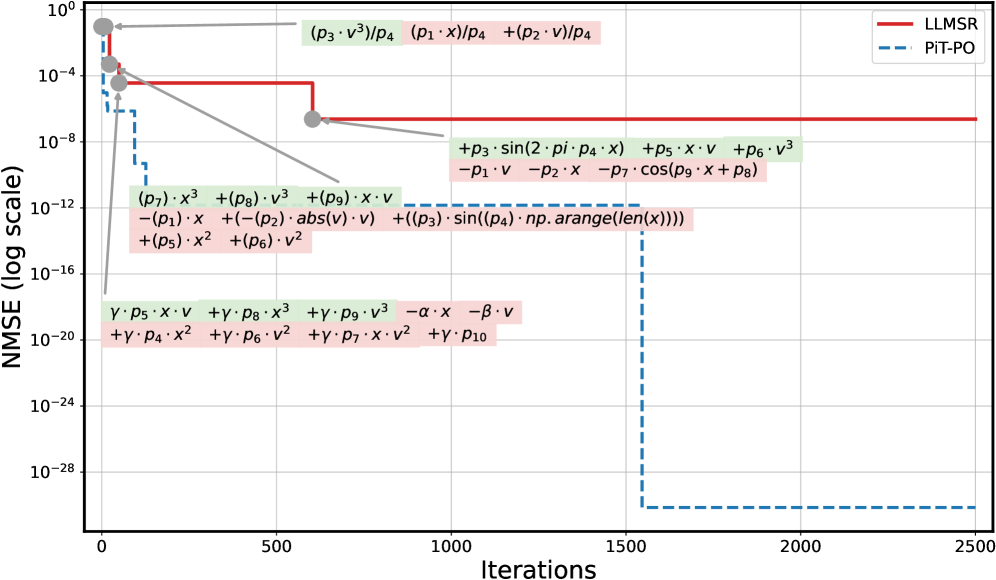

Figure 2. NMSE trajectories (log scale) over search iterations for LLM-SR and PiT-PO (Llama-3.1-8B) on LLM-SR Suite. Lines denote the median over seeds, and shaded regions indicate the min–max range. The remaining iteration curves for smaller backbones (3B and 1B) are deferred to Appendix C.1.

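| Method | LSR-Transform SA (%) | LSR-Transform Acc (%) | LSR-Transform NMSE | Chemistry SA (%) | Chemistry Acc (%) | Chemistry NMSE | Biology SA (%) | Biology Acc (%) | Biology NMSE | Physics SA (%) | Physics Acc (%) | Physics NMSE | Material Science SA (%) | Material Science Acc (%) | Material Science NMSE |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Direct Prompting | 3.61 | 1.801 | 0.3697 | 0.00 | 0.00 | 0.0644 | 0.00 | 0.00 | 0.5481 | 0.00 | 0.00 | 0.0459 | 0.00 | 0.00 | 0.0826 |
| SGA | 2.70 | 0.909 | 0.3519 | 0.00 | 8.33 | 0.0458 | 0.00 | 0.00 | 0.2416 | 0.00 | 2.27 | 0.1549 | 0.00 | 12.12 | 0.0435 |
| LaSR | 5.41 | 45.94 | 0.0021 | 0.00 | 27.77 | 2.77e-4 | 4.16 | 16.66 | 2.73e-4 | 4.54 | 25.02 | 0.0018 | 8.21 | 64.22 | 7.44e-5 |
| LLM-SR | 30.63 | 38.55 | 0.0101 | 8.33 | 66.66 | 8.01e-6 | 25.30 | 58.33 | 1.04e-6 | 6.97 | 34.09 | 1.23e-4 | 4.10 | 88.12 | 1.15e-7 |
| PiT-PO | 34.23 | 46.84 | 0.0056 | 13.89 | 77.78 | 4.13e-7 | 29.17 | 70.83 | 9.37e-8 | 11.36 | 40.91 | 6.57e-5 | 12.00 | 92.00 | 1.18e-8 |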

Table 2. Overall performance on LLM-SRBench (Llama-3.1-8B-Instruct).

4.3. PiT-PO Empowers Lightweight Backbones to Rival Large Models

As shown in Table 1, PiT-PO with the Llama-3.1-8B, Llama-3.2-3B, and Llama-3.2-1B backbones is competitive with, and often exceeds, LLM-SR running on substantially larger or proprietary models, including Mixtral 8$\times$7B and GPT-4o-mini.

These results indicate that PiT-PO effectively bridges the capability gap between lightweight open-source models and large-scale commercial systems. From a practical standpoint, this lowers the barrier to entry for scientific discovery: by delivering state-of-the-art performance on consumer-grade hardware (and remaining competitive even with a 1B backbone), PiT-PO reduces the dependence on massive compute and closed-source APIs, thereby democratizing access to powerful SR tools.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Chart: NMSE Comparison

### Overview

The image is a bar chart comparing the Normalized Mean Squared Error (NMSE) on a logarithmic scale for different methods: "w/o Phy", "w/o TokenReg", and "PiT-PO". The chart compares the NMSE for two conditions: In-Distribution (ID) and Out-of-Distribution (OOD).

### Components/Axes

* **Y-axis:** NMSE (log scale). The y-axis ranges from approximately 10<sup>-29</sup> to 10<sup>-11</sup>, with gridlines at each power of 10.

* **X-axis:** Categorical axis representing the different methods: "w/o Phy", "w/o TokenReg", and "PiT-PO".

* **Legend:** Located in the top-right corner.

* White square: ID (In-Distribution)

* Diagonal lines: OOD (Out-of-Distribution)

* **Bar Colors:**

* ID: Solid blue or orange

* OOD: Light blue or light orange with diagonal lines

### Detailed Analysis

* **w/o Phy:**

* ID (blue): NMSE = 7.60e-21

* OOD (light blue, diagonal lines): NMSE = 2.06e-10

* **w/o TokenReg:**

* ID (blue): NMSE = 2.77e-19

* OOD (light blue, diagonal lines): NMSE = 9.97e-11

* **PiT-PO:**

* ID (orange): NMSE = 6.40e-31

* OOD (light orange, diagonal lines): NMSE = 1.63e-30

### Key Observations

* For "w/o Phy" and "w/o TokenReg", the OOD NMSE is significantly higher than the ID NMSE.

* For "PiT-PO", both ID and OOD NMSE values are very low, close to the bottom of the scale (10<sup>-30</sup> range).

* The NMSE values for PiT-PO are several orders of magnitude lower than the other two methods.

### Interpretation

The chart demonstrates that the "PiT-PO" method significantly outperforms "w/o Phy" and "w/o TokenReg" in terms of NMSE, for both in-distribution and out-of-distribution data. The large difference between ID and OOD NMSE for "w/o Phy" and "w/o TokenReg" suggests that these methods do not generalize well to out-of-distribution data. In contrast, "PiT-PO" maintains a low NMSE even for OOD data, indicating better generalization capabilities.

</details>

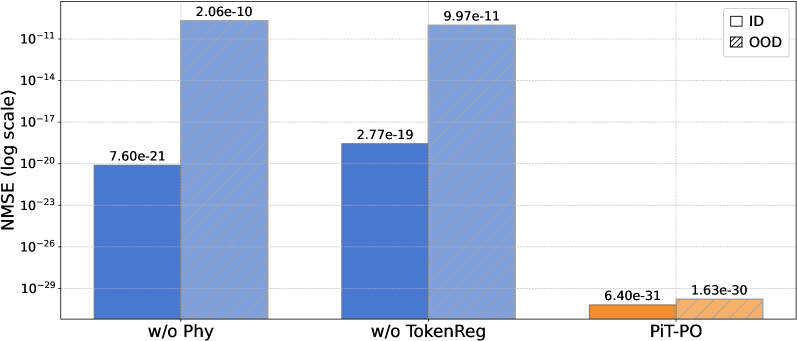

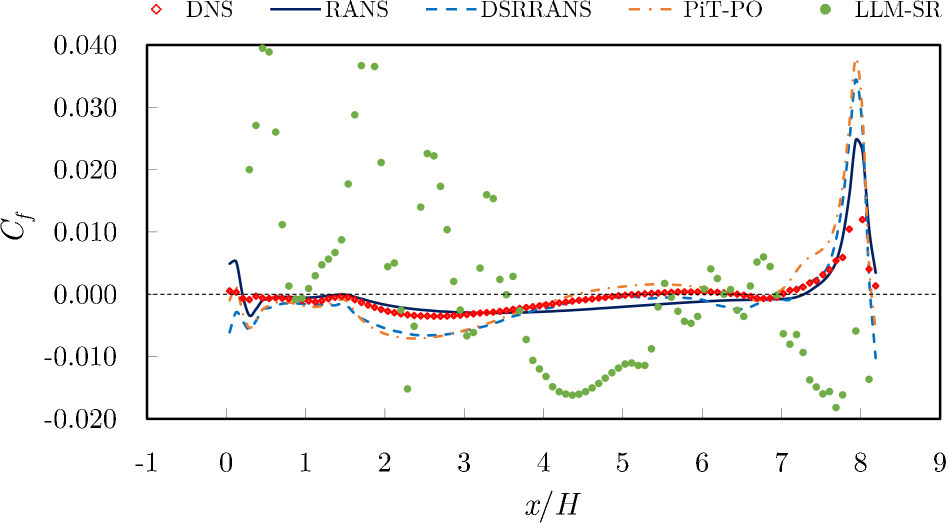

Figure 3. Ablation results of PiT-PO and its variants.

4.4. PiT-PO Enhances Search Efficiency and Breaks Stagnation

Figure 2 shows that PiT-PO achieves superior search efficiency in discovering accurate equations. In the early search stage, the red and blue curves are close across all four tasks: both methods primarily rely on the fitting signal (MSE) and therefore exhibit comparable per-iteration progress. As NMSE enters a lower regime, the trajectories consistently separate: PiT-PO exhibits abrupt step-wise drops while LLM-SR tends to plateau, yielding a clear red–blue gap in every subplot. Concretely, once the search reaches these lower-error regions, PiT-PO repeatedly exits stagnation and transitions to the next accuracy phase with orders-of-magnitude NMSE reductions (most prominently in Oscillation 1 and Oscillation 2, and also evident in E. coli Growth and Stress-Strain), whereas LLM-SR often remains trapped near its current error floor. This behavior confirms that the proposed dual-constraint mechanism effectively activates exactly when naive MSE feedback becomes insufficient. By penalizing physical inconsistencies and structural redundancy, PiT-PO forces the LLM to exit stagnation and transition toward the correct functional form.

While the in-search fine-tuning introduces a computational overhead, this cost is decisively outweighed by the substantial gains in performance. As detailed in Appendix C.2, PiT-PO maintains a significant performance edge even when evaluated under equivalent wall-clock time, demonstrating that the accelerated convergence speed effectively compensates for the additional training time.

4.5. Ablation Study

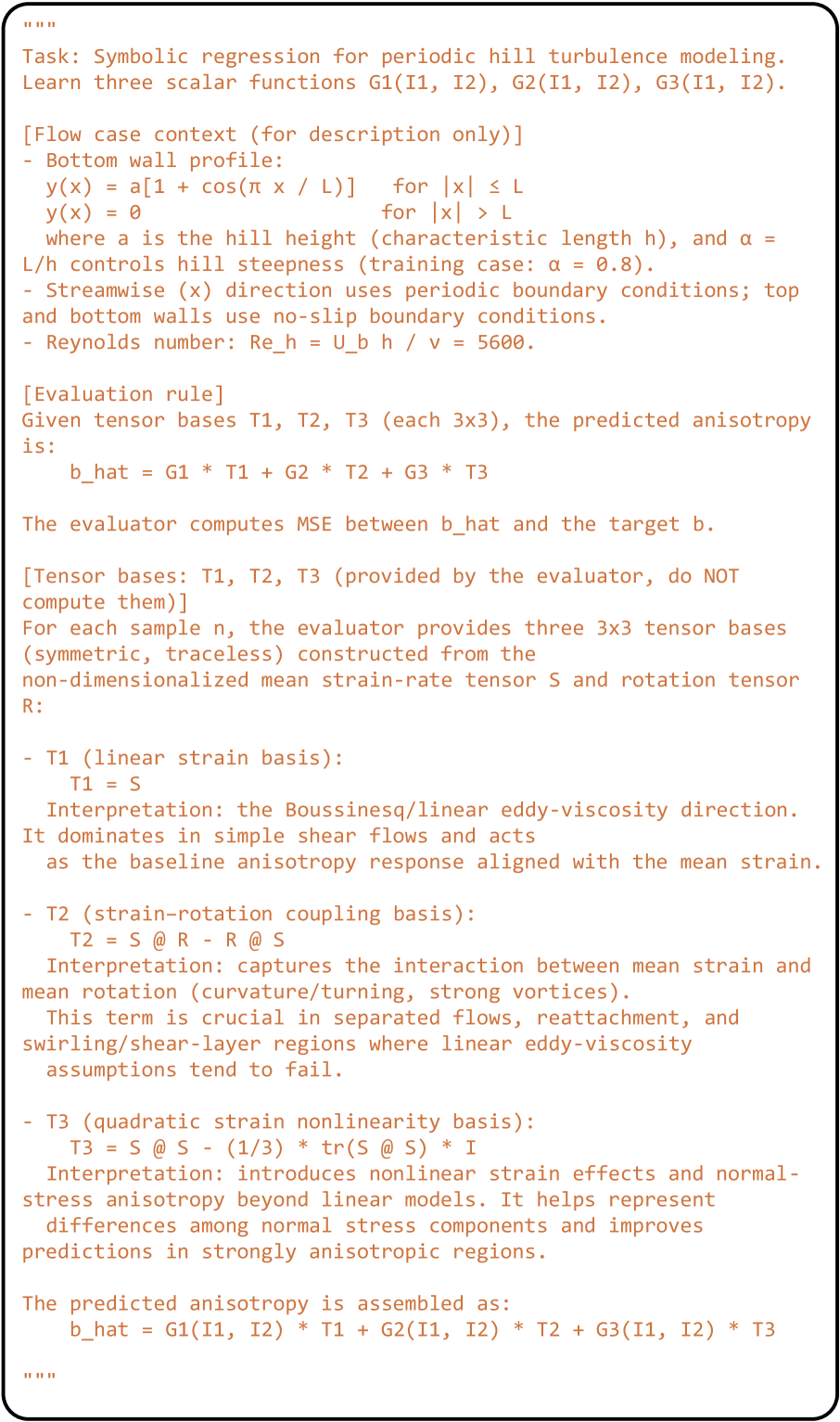

To rigorously validate the contribution of each algorithmic component, we conduct an ablation study across three settings: w/o Phy, which excludes the physics-consistency penalty $P_{\text{phy}}$ ; w/o TokenReg, which removes the redundancy-aware token-level regularization; and the full PiT-PO framework. As shown in Figure 3, removing any single component leads to a substantial deterioration of NMSE and a larger generalization gap between In-Distribution (ID) and Out-Of-Distribution (OOD) data. These empirical results underscore the necessity of the complete framework, demonstrating that the proposed dual constraints are indispensable for ensuring both search stability and robust generalization.
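The generalization gap mentioned above can be quantified as the number of orders of magnitude separating OOD and ID NMSE. The short sketch below does this arithmetic for the bar values annotated in Figure 3; the helper name `gap_in_decades` is hypothetical, and the use of a base-10 log ratio as the gap measure is our illustrative choice rather than a metric defined in the paper.

```python
import math

# (ID NMSE, OOD NMSE) pairs as annotated on the bars of Figure 3.
ablation_nmse = {
    "w/o Phy": (7.60e-21, 2.06e-10),
    "w/o TokenReg": (2.77e-19, 9.97e-11),
    "PiT-PO": (6.40e-31, 1.63e-30),
}


def gap_in_decades(id_nmse, ood_nmse):
    """Orders of magnitude by which OOD error exceeds ID error."""
    return math.log10(ood_nmse / id_nmse)


gaps = {name: gap_in_decades(*pair) for name, pair in ablation_nmse.items()}
for name, gap in gaps.items():
    print(f"{name}: {gap:.2f} decades")
```

Under this measure both ablated variants lose more than eight orders of magnitude when moving from ID to OOD data, whereas the full framework stays within a single order of magnitude.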

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Flow Channel with Varying Obstructions

### Overview

The image depicts a two-dimensional flow channel with obstructions of varying shapes and sizes. The channel is defined by solid walls and cyclic boundaries. The diagram illustrates the impact of different obstruction profiles on the flow.

### Components/Axes

* **X-axis:** x/H, ranging from 0 to 9.

* **Y-axis:** y/H, ranging from 0 to 3.

* **Boundaries:**

* Solid walls (top and bottom)

* Cyclic boundaries (left and right sides)

* **Obstructions:** Three different obstruction profiles are shown, each corresponding to a different value of alpha (α).

* α = 0.5 (brown line)

* α = 0.8 (teal line)

* α = 1.0 (dark teal line)

* **Flow Direction:** Indicated by a yellow arrow pointing from left to right.

* **Region H:** A yellow shaded region near the right side of the channel, labeled "H".

### Detailed Analysis

* **Channel Geometry:** The channel is rectangular, with the x-axis representing the horizontal length and the y-axis representing the vertical height.

* **Boundary Conditions:** The top and bottom boundaries are solid walls, implying no-slip conditions. The left and right boundaries are cyclic, indicating periodic boundary conditions.

* **Obstructions:**

* The obstruction for α = 0.5 (brown) starts at x/H ≈ 0, rises to y/H ≈ 1, remains constant until x/H ≈ 6, then drops back down to y/H ≈ 0.

* The obstruction for α = 0.8 (teal) starts at x/H ≈ 0, rises to y/H ≈ 1, remains constant until x/H ≈ 7, then drops back down to y/H ≈ 0.

* The obstruction for α = 1.0 (dark teal) starts at x/H ≈ 0, rises to y/H ≈ 1, remains constant until x/H ≈ 7.75, then drops back down to y/H ≈ 0.

* **Flow Direction:** The flow is from left to right, as indicated by the arrow. The obstructions will influence the flow field, creating pressure gradients and velocity variations.

* **Region H:** The yellow shaded region labeled "H" marks the hill height $H$, which serves as the reference length scale for the normalized axes.

### Key Observations

* The obstructions are located on the bottom wall of the channel.

* The parameter α controls the shape and size of the obstruction.

* As α increases, the hill profile is stretched in the streamwise direction and the domain becomes longer, while the hill height H stays fixed.

* The cyclic boundary conditions suggest that the flow is expected to repeat periodically along the x-axis.

### Interpretation

The diagram illustrates the impact of different obstruction profiles on the flow within a channel. The parameter α is a key design variable that controls the shape and size of the obstruction. By varying α, the flow field can be manipulated to achieve desired performance characteristics. The cyclic boundary conditions imply that this setup is modeling a repeating section of a larger flow domain. The "H" region likely indicates a reference height used for normalization or analysis of the flow behavior. The diagram is likely used to study the effects of different obstruction shapes on flow characteristics such as pressure drop, velocity distribution, and turbulence.

</details>

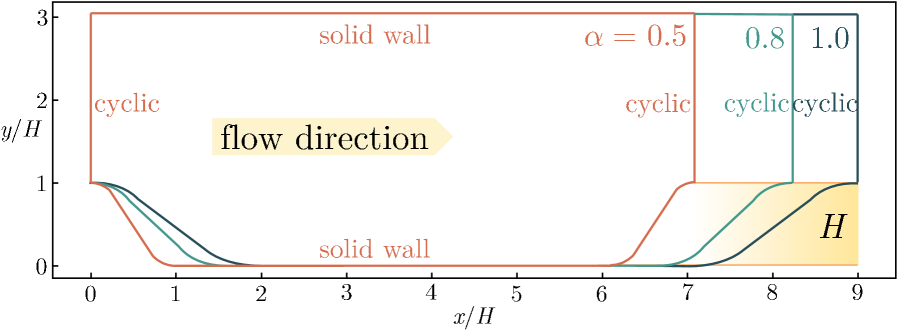

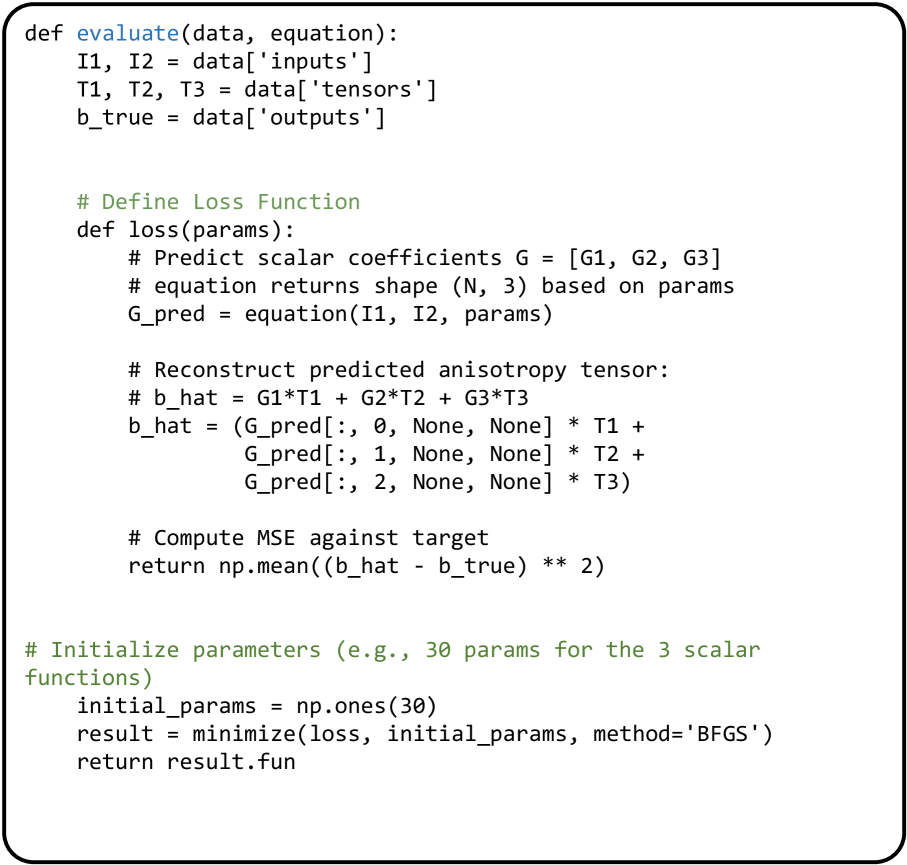

Figure 4. Schematic of the geometries for periodic hills.

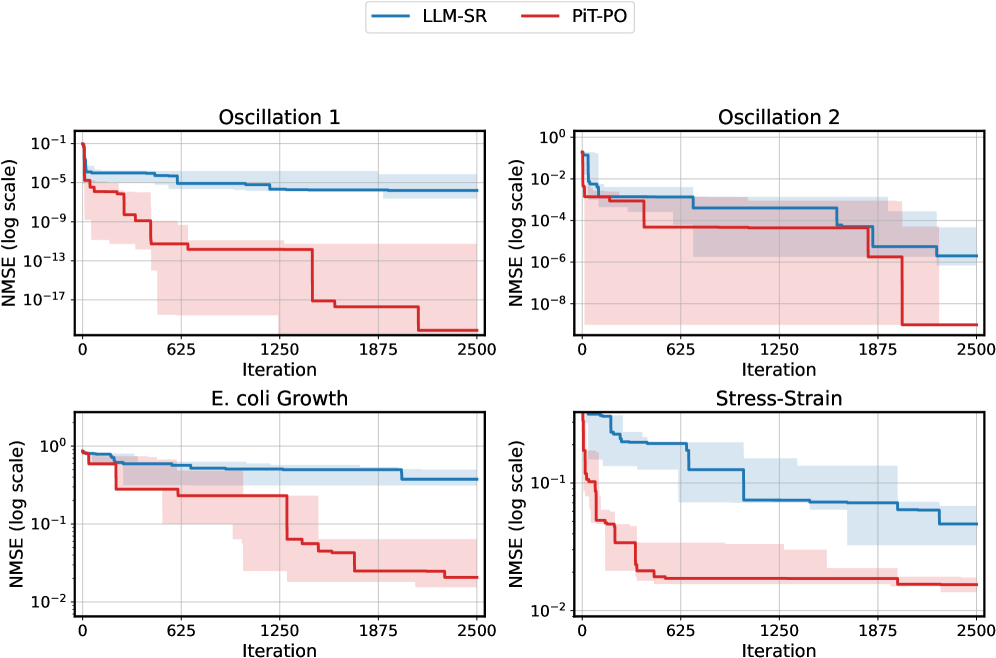

4.6. Case Study: Turbulence Modeling

To validate the practical utility of PiT-PO in high-fidelity scientific discovery, we select the Flow over Periodic Hills (Xiao et al., 2020) (Figure 4) as a testbed. This problem is widely recognized in Computational Fluid Dynamics (CFD) (Pope, 2000) as a benchmark for Separated Turbulent Flows, presenting complex features such as strong adverse pressure gradients, massive flow detachment, and reattachment.

Problem Definition and Physics: The geometry consists of a sequence of polynomially shaped hills arranged periodically in the streamwise direction. The flow is driven by a constant body force at a bulk Reynolds number of $Re_{b}=5600$ (based on hill height $H$ and bulk velocity $U_{b}$ ). The domain height is fixed at $L_{y}/H=3.036$ , while the streamwise length $L_{x}$ varies with the slope factor $\alpha$ according to $L_{x}/H=3.858\alpha+5.142$ . Periodic boundary conditions are applied in the streamwise direction, with no-slip conditions on the walls.
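As a quick check of the geometry relation above, the following sketch evaluates $L_{x}/H=3.858\alpha+5.142$ for the three slope factors shown in Figure 4 ($\alpha=0.5$, $0.8$, $1.0$). The function name is hypothetical; only the formula and the fixed height $L_{y}/H=3.036$ come from the text.

```python
DOMAIN_HEIGHT_OVER_H = 3.036  # L_y / H, fixed for all geometries


def streamwise_length_over_h(alpha: float) -> float:
    """L_x / H as a linear function of the slope factor alpha."""
    return 3.858 * alpha + 5.142


lengths = {alpha: streamwise_length_over_h(alpha) for alpha in (0.5, 0.8, 1.0)}
for alpha, length in lengths.items():
    print(f"alpha={alpha}: L_x/H={length:.3f}, L_y/H={DOMAIN_HEIGHT_OVER_H}")
```

The resulting lengths are approximately 7.071, 8.228, and 9.000, which matches the streamwise axis of Figure 4 extending to $x/H=9$ for $\alpha=1.0$.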

The Scientific Challenge: The challenge lies in the Separation Bubble (Pope, 2000), a region where turbulence exhibits strong anisotropy due to streamline curvature. Traditional Linear Eddy Viscosity Models (LEVM) (Pope, 2000), such as the $k$-$\omega$ SST model (Menter, 1994; Menter et al., 2003), rely on the Boussinesq hypothesis, which ties the Reynolds stress anisotropy linearly to the mean strain rate through a single scalar eddy viscosity and therefore cannot represent this anisotropy. Consequently, they systematically fail to predict key flow features, such as the separation bubble size and reattachment location.

Discovery Objective: Instead of fitting a simple curve, our goal is to discover a Non-linear Constitutive Relation for the Reynolds stress anisotropy tensor $a_{ij}$ and the dimensionless Reynolds stress anisotropy tensor $b_{ij}$ . By learning the Reynolds stress tensor $\tau_{ij}$ from high-fidelity Direct Numerical Simulation (DNS) (Pope, 2000) data, PiT-PO aims to formulate a symbolic correction term that captures the anisotropic physics missed by linear models.
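To make the target quantities concrete, the sketch below computes $a_{ij}$ and $b_{ij}$ from a Reynolds stress tensor under the common textbook convention (Pope, 2000): $k=\tfrac{1}{2}\tau_{kk}$, $a_{ij}=\tau_{ij}-\tfrac{2}{3}k\,\delta_{ij}$, and $b_{ij}=a_{ij}/(2k)$. This convention, with $\tau_{ij}$ taken as the kinematic Reynolds stress, is an assumption on our part; the exact definitions and sign conventions used in the experiments are those of Appendix E. The function name and the sample tensor are invented for illustration.

```python
def anisotropy_tensors(tau):
    """Return (k, a, b) for a symmetric 3x3 Reynolds stress tensor.

    Assumed convention: k = tr(tau)/2, a = tau - (2/3) k I, b = a / (2k).
    """
    k = 0.5 * sum(tau[i][i] for i in range(3))
    a = [
        [tau[i][j] - (2.0 / 3.0) * k * (1.0 if i == j else 0.0) for j in range(3)]
        for i in range(3)
    ]
    b = [[a[i][j] / (2.0 * k) for j in range(3)] for i in range(3)]
    return k, a, b


# Invented sample tensor; not taken from the DNS data.
tau_sample = [
    [0.04, -0.01, 0.00],
    [-0.01, 0.02, 0.00],
    [0.00, 0.00, 0.03],
]
k, a, b = anisotropy_tensors(tau_sample)
print(k, a[0][0], b[0][1])
```

By construction $a_{ij}$ is trace-free, so a linear eddy viscosity model only has to get the deviatoric part wrong for its anisotropy prediction to fail; this deviatoric part is what the symbolic correction term is meant to capture.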

Baselines: We follow standard turbulence modeling protocols and compare primarily against the standard $k$ - $\omega$ SST model of RANS. We also include LLM-SR and DSRRANS (Tang et al., 2023a), a strong SR-based turbulence modeling method specifically designed for turbulence tasks.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Heatmap: Reynolds Stress Anisotropy Components

### Overview

The image presents a series of heatmaps visualizing the Reynolds stress anisotropy components (a11/ub^2, a22/ub^2, a33/ub^2, a12/ub^2) for different turbulence models (RANS, DSRRANS, LLM-SR, PIT-PO, DNS) in a channel flow with a varying bottom topography. The x-axis represents the streamwise direction (x/H), and the y-axis represents the vertical direction (y/H). The color scale indicates the magnitude of the anisotropy components, with blue representing negative values and red representing positive values.

### Components/Axes

* **Rows (Turbulence Models):**

* RANS (Reynolds-Averaged Navier-Stokes)

* DSRRANS (SR-based turbulence modeling method of Tang et al., 2023a)

* LLM-SR (LLM-based symbolic regression baseline)

* PiT-PO (Physics-informed Token-regularized Policy Optimization)

* DNS (Direct Numerical Simulation)

* **Columns (Anisotropy Components):**

* Column 1: a11/ub^2

* Column 2: a22/ub^2

* Column 3: a33/ub^2

* Column 4: a12/ub^2

* **X-axis:** x/H (Streamwise direction), ranging from approximately 0 to 8, with tick marks at intervals of 0.5.

* **Y-axis:** y/H (Vertical direction), ranging from 0 to 3, with tick marks at intervals of 0.5.

* **Color Scale:** Ranges from approximately -0.04 (dark blue) to 0.03 (dark red).

### Detailed Analysis

**Row 1: RANS**

* **a11/ub^2:** Predominantly light blue, indicating slightly negative values.

* **a22/ub^2:** Predominantly light blue, indicating slightly negative values.

* **a33/ub^2:** Predominantly light blue, indicating slightly negative values.

* **a12/ub^2:** Predominantly light blue, indicating slightly negative values.

**Row 2: DSRRANS**

* **a11/ub^2:** Mostly light blue, with a slight increase in magnitude near the bottom topography.

* **a22/ub^2:** Mostly light blue, with a slight increase in magnitude near the bottom topography.

* **a33/ub^2:** Mostly light blue, with a slight increase in magnitude near the bottom topography.

* **a12/ub^2:** Mostly light blue, with a slight increase in magnitude near the bottom topography.

**Row 3: LLM-SR**

* **a11/ub^2:** Shows a distinct region of positive (red) values near the bottom topography, surrounded by negative (blue) values.

* **a22/ub^2:** Shows a distinct region of negative (blue) values near the bottom topography, surrounded by positive (red) values.

* **a33/ub^2:** Shows a complex pattern of positive and negative values, with a concentration of negative values near the bottom topography.

* **a12/ub^2:** Shows a complex pattern of positive and negative values, with a concentration of negative values near the bottom topography.

**Row 4: PIT-PO**

* **a11/ub^2:** Shows a region of positive (red) values near the bottom topography, surrounded by negative (blue) values.

* **a22/ub^2:** Shows a region of negative (blue) values near the bottom topography, surrounded by positive (red) values.

* **a33/ub^2:** Shows a complex pattern of positive and negative values, with a concentration of negative values near the bottom topography.

* **a12/ub^2:** Shows a complex pattern of positive and negative values, with a concentration of negative values near the bottom topography.

**Row 5: DNS**

* **a11/ub^2:** Shows a region of positive (red) values near the bottom topography, surrounded by negative (blue) values.

* **a22/ub^2:** Shows a region of negative (blue) values near the bottom topography, surrounded by positive (red) values.

* **a33/ub^2:** Shows a complex pattern of positive and negative values, with a concentration of negative values near the bottom topography.

* **a12/ub^2:** Shows a complex pattern of positive and negative values, with a concentration of negative values near the bottom topography.

### Key Observations

* RANS and DSRRANS models show relatively uniform and low magnitudes for all anisotropy components.

* LLM-SR, PIT-PO, and DNS models exhibit more complex patterns, particularly near the bottom topography.

* The a11/ub^2 component tends to be positive near the bottom topography for LLM-SR, PIT-PO, and DNS.

* The a22/ub^2 component tends to be negative near the bottom topography for LLM-SR, PIT-PO, and DNS.

* The a33/ub^2 and a12/ub^2 components show more intricate distributions with both positive and negative regions.

### Interpretation

The heatmaps illustrate the differences in how various turbulence models capture the Reynolds stress anisotropy in a channel flow with complex geometry. RANS and DSRRANS, being simpler models, predict relatively uniform anisotropy distributions. In contrast, LLM-SR, PIT-PO, and DNS, which are more sophisticated, resolve more complex anisotropy patterns, especially in the vicinity of the bottom topography. The DNS results, considered the most accurate, serve as a benchmark for evaluating the performance of the other models. The differences in anisotropy components suggest variations in how these models represent the turbulent stresses and their impact on the flow field. The alternating regions of positive and negative values indicate the presence of complex flow structures and gradients in the turbulent stresses.

</details>

Figure 5. Comparison of the four anisotropic Reynolds stress components for periodic hill training flow using RANS, DSRRANS, LLM-SR, PiT-PO and DNS, respectively.

We cast turbulence closure modeling as an SR problem (Tang et al., 2023b) (see Appendix E for details). After obtaining the final symbolic equation, we embed it into a RANS solver of OpenFOAM (Weller et al., 1998) and run CFD simulations on the periodic-hill configuration. We compare the resulting Reynolds-stress components, mean-velocity fields, and skin-friction profiles against DNS references. Figures 5–7 visualize these quantities, enabling a direct assessment of physical fidelity and flow-field prediction quality.

In the anisotropic Reynolds stress contours (Figure 5), both DSRRANS and PiT-PO improve on the traditional RANS approach. PiT-PO performs best: its contours match the DNS reference most closely, with lower error than DSRRANS and LLM-SR and less severe non-physical extremes.

The stream-wise velocity contours illustrate the correction of the separation bubble, a region of reversed flow that forms when fluid detaches from a surface. In Figure 6, PiT-PO most accurately represents the extent and shape of the recirculation zone, where fluid circulates within the separated region. Its prediction is closely consistent with the DNS data throughout the domain, particularly within the separation region and the recovery layer, where the flow re-attaches to the surface.

The skin friction coefficient (Figure 7), defined as the ratio of the wall shear stress to the dynamic pressure of the flow and evaluated along the bottom wall, is a sensitive indicator of flow separation. The $k$-$\omega$ SST model of RANS underestimates the magnitude of the skin friction and predicts a delayed reattachment location compared to the DNS. The learned model (PiT-PO) improves the prediction, aligning more closely with the DNS profile.
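The link between skin friction and separation can be illustrated with a short sketch: $C_{f}$ changes sign from positive to negative where the flow separates and from negative to positive where it reattaches. The code below assumes the reference dynamic pressure is $\tfrac{1}{2}\rho U_{b}^{2}$ (based on the bulk velocity), uses linear interpolation between wall samples, and runs on an invented wall-shear-stress profile; none of the numbers come from the simulations in this paper, and the function names are hypothetical.

```python
def skin_friction(tau_w, rho, u_b):
    """C_f = tau_w / (0.5 * rho * u_b**2), assuming a bulk-velocity reference."""
    return tau_w / (0.5 * rho * u_b ** 2)


def sign_changes(x, cf):
    """Locate sign changes of cf by linear interpolation between samples.

    Returns (kind, x_cross) pairs. Samples that are exactly zero are ignored.
    """
    events = []
    for i in range(len(x) - 1):
        c0, c1 = cf[i], cf[i + 1]
        if c0 * c1 < 0:
            x_cross = x[i] - c0 * (x[i + 1] - x[i]) / (c1 - c0)
            kind = "separation" if c0 > 0 else "reattachment"
            events.append((kind, x_cross))
    return events


# Invented wall shear stress samples along the bottom wall (illustrative only).
x_over_h = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
tau_wall = [0.004, -0.002, -0.003, -0.002, -0.001, 0.001, 0.003]
cf = [skin_friction(t, rho=1.0, u_b=1.0) for t in tau_wall]
events = sign_changes(x_over_h, cf)
print(events)
```

Comparing the reattachment location obtained this way for each model against the DNS value gives a single scalar summary of the delayed reattachment discussed above.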

These results demonstrate that PiT-PO can generate symbolic equations tailored to turbulence modeling and that, under a posteriori CFD evaluation, the resulting predictions more closely match DNS references, which increases the practical value of LLM-based SR in real scientific and engineering workflows. With the proposed dual constraints, PiT-PO provides targeted search and learning signals that enable the internalization of turbulence priors during equation discovery, thereby steering the model toward physically consistent and domain-relevant structures.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Flow Simulation Comparison

### Overview

The image presents a comparison of flow simulations using different computational models: RANS, LLM-SR, DSRRANS, PiT-PO, and DNS. Each simulation visualizes the flow field over the periodic hill, displaying the velocity component *u_x / u_b* as a color gradient and streamlines to indicate flow direction.

### Components/Axes

* **Title:** The title is not explicitly stated in the image, but the sub-titles of each subplot indicate the simulation method used.

* **X-axis:** *x/H*, ranging from 0 to 8. This represents the normalized horizontal distance.

* **Y-axis:** *y/H*, ranging from 0 to 3. This represents the normalized vertical distance.

* **Colorbar:** Located at the top-right, indicating the velocity component *u_x / u_b*. Red corresponds to positive values (1.20 to 0.40), white corresponds to 0.00, and blue corresponds to negative values (-0.20).

* **Streamlines:** Black lines indicating the direction of flow.

### Detailed Analysis

Each subplot represents a different simulation method:

1. **RANS (Reynolds-Averaged Navier-Stokes):**

* A large recirculation zone is visible behind the hill crest (x/H ≈ 1 to 4, y/H ≈ 0 to 1).

* The flow reattaches around x/H ≈ 6.

* The color gradient shows a relatively smooth transition from negative (blue) to positive (red) velocities.

2. **LLM-SR (LLM-based symbolic regression baseline):**

* The recirculation zone is less defined compared to RANS.

* Significant regions of negative velocity (blue) extend further upwards (y/H ≈ 1.5) and downstream.

* The color gradient shows more turbulent structures.

3. **DSRRANS (SR-based turbulence modeling method of Tang et al., 2023a):**

* The recirculation zone is similar to RANS but slightly smaller.

* The flow reattaches around x/H ≈ 6.

* The color gradient is smoother than LLM-SR but shows some turbulent features.

4. **PiT-PO (Physics-informed Token-regularized Policy Optimization):**

* The recirculation zone is similar in size and shape to RANS and DSRRANS.

* The flow reattaches around x/H ≈ 6.

* The color gradient is relatively smooth.

5. **DNS (Direct Numerical Simulation):**

* The recirculation zone is similar to RANS, DSRRANS, and PiT-PO.

* The flow reattaches around x/H ≈ 6.

* The color gradient is relatively smooth.

### Key Observations

* **Recirculation Zone:** All simulations show a recirculation zone behind the hill crest, but the size and intensity vary. LLM-SR shows a more extended region of negative velocity.

* **Flow Reattachment:** The flow reattaches at approximately the same location (x/H ≈ 6) in RANS, DSRRANS, PiT-PO, and DNS.

* **Turbulence:** LLM-SR exhibits more turbulent structures in the color gradient compared to the other methods.

### Interpretation

The image compares different turbulence closures for flow over periodic hills. RANS, DSRRANS, PiT-PO, and DNS show qualitatively similar results, with a well-defined recirculation zone and flow reattachment at approximately the same location. LLM-SR, on the other hand, predicts a more extended region of negative velocity and a less regular velocity field, so it departs more visibly from the DNS panel. DNS is the most accurate and most computationally expensive approach and serves as the reference against which the other panels are judged.

</details>

Figure 6. Non-dimensional stream-wise velocity contours obtained by the learned model and the standard $k$ - $\omega$ SST model of RANS, compared with DNS data.

5. Related Work

Traditional SR has been studied through several lines, including genetic programming, reinforcement learning (Petersen et al., 2021), and transformer-based generation (Biggio et al., 2021). Genetic programming (Koza, 1990) casts equation discovery as an evolutionary search over tree-structured programs, where candidate expressions are iteratively refined via mutation and crossover. Reinforcement learning-based SR, introduced by Petersen et al. (2021), has developed into a family of policy-optimization frameworks (Mundhenk et al., 2021; Landajuela et al., 2021; Crochepierre et al., 2022; Du et al., 2023) that formulate SR as a sequential decision-making process. More recently, transformer-based models (Valipour et al., 2021; Vastl et al., 2024; Kamienny et al., 2022; Li et al., 2023; Zhang et al., 2025) have been adopted for SR, using large-scale pretraining to map numerical data directly to equations. However, these methods typically fail to incorporate scientific prior knowledge.

Recent progress in natural language processing has further enabled LLM-based SR methods, including LLM-SR (Shojaee et al., 2025a), LaSR (Grayeli et al., 2024), ICSR (Merler et al., 2024), CoEvo (Guo et al., 2025), and SR-Scientist (Xia et al., 2025). LLM-SR exploits scientific priors that are implicitly captured by LLMs to propose plausible functional forms, followed by data-driven parameter estimation. LaSR augments SR with abstract concept generation to guide hypothesis formation, while ICSR reformulates training examples as in-context prompts to elicit function generation. However, these methods share a key limitation: they rely on the LLM as a frozen generator, which prevents search feedback from updating the generation strategy and thus restricts their ability to adapt to complex problems.
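To make the propose-then-fit pattern described above concrete, the following is a minimal sketch, not the implementation of LLM-SR or of any other cited method. It assumes that each proposed skeleton is linear in its free coefficients, so that ordinary least squares suffices; actual systems generally need a nonlinear optimizer. The skeleton strings, the helper names `fit_linear_skeleton` and `mse`, and the synthetic data are all hypothetical and stand in for LLM proposals and real observations.

```python
import math


def fit_linear_skeleton(basis, xs, ys):
    """Least-squares coefficients for y ~ sum_j c_j * basis[j](x).

    Assumes the basis functions are linearly independent on the data,
    so that the normal equations are non-singular.
    """
    n = len(basis)
    # Normal equations A c = b with A = Phi^T Phi and b = Phi^T y.
    A = [[0.0] * n for _ in range(n)]
    b = [0.0] * n
    for x, y in zip(xs, ys):
        phi = [f(x) for f in basis]
        for i in range(n):
            b[i] += phi[i] * y
            for j in range(n):
                A[i][j] += phi[i] * phi[j]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, n):
            factor = A[r][col] / A[col][col]
            for c in range(col, n):
                A[r][c] -= factor * A[col][c]
            b[r] -= factor * b[col]
    # Back substitution.
    coeffs = [0.0] * n
    for i in reversed(range(n)):
        s = sum(A[i][j] * coeffs[j] for j in range(i + 1, n))
        coeffs[i] = (b[i] - s) / A[i][i]
    return coeffs


def mse(basis, coeffs, xs, ys):
    """Mean squared error of a fitted skeleton on the data."""
    preds = [sum(c * f(x) for c, f in zip(coeffs, basis)) for x in xs]
    return sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(xs)


# Hypothetical skeletons standing in for LLM-proposed functional forms.
skeletons = {
    "c0*x + c1": [lambda x: x, lambda x: 1.0],
    "c0*x + c1*x**3": [lambda x: x, lambda x: x ** 3],
    "c0*sin(x) + c1": [math.sin, lambda x: 1.0],
}

# Synthetic observations generated from y = 2x - 0.5x^3 (illustrative only).
xs = [i / 10 for i in range(-20, 21)]
ys = [2.0 * x - 0.5 * x ** 3 for x in xs]

# Fit every skeleton, then rank the candidates by data fit.
results = {}
for name, basis in skeletons.items():
    coeffs = fit_linear_skeleton(basis, xs, ys)
    results[name] = (coeffs, mse(basis, coeffs, xs, ys))

best = min(results, key=lambda k: results[k][1])
print(best, results[best])
```

In this sketch the generator is fixed: the fitting error ranks the candidates but never changes how new skeletons are proposed, which is the frozen-generator setting discussed above.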

While some recent works, such as SOAR (Pourcel et al., 2025) and CALM (Huang et al., 2025), have begun to explore adaptive in-search tuning, they primarily focus on algorithm discovery or combinatorial optimization problems, whereas our method is specifically tailored for SR. By integrating hierarchical physical constraints and theorem-guided token regularization, PiT-PO establishes an adaptive framework capable of discovering accurate and physically consistent equations.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Cf vs x/H

### Overview

The image is a line chart comparing different computational fluid dynamics (CFD) models against Direct Numerical Simulation (DNS) data. The chart plots the skin friction coefficient (Cf) on the y-axis against the normalized distance (x/H) on the x-axis. Several models are compared: RANS, DSRRANS, PiT-PO, and LLM-SR.

### Components/Axes

* **Title:** Implicit, but the chart compares Cf vs x/H for different models.
* **X-axis:**
  * Label: x/H
  * Scale: -1 to 9, with tick marks at every integer value.
* **Y-axis:**
  * Label: Cf
  * Scale: -0.020 to 0.040, with tick marks at -0.020, -0.010, 0.000, 0.010, 0.020, 0.030, and 0.040.
* **Legend:** Located at the top of the chart.
  * DNS: Red diamonds
  * RANS: Solid dark blue line
  * DSRRANS: Dashed light blue line
  * PiT-PO: Dash-dotted orange line
  * LLM-SR: Green dots

### Detailed Analysis

* **DNS (Red Diamonds):**
  * Trend: Relatively flat near zero from x/H = -1 to approximately x/H = 7.5, then sharply increases to a peak around x/H = 8, followed by a sharp drop.
  * Data Points:
    * x/H = 0, Cf ≈ 0.001
    * x/H = 2, Cf ≈ -0.003
    * x/H = 4, Cf ≈ -0.003
    * x/H = 6, Cf ≈ 0.001
    * x/H = 8, Cf ≈ 0.022
* **RANS (Solid Dark Blue Line):**
  * Trend: Similar to DNS, relatively flat near zero from x/H = -1 to approximately x/H = 7.5, then increases to a peak around x/H = 8, followed by a drop.
  * Data Points:
    * x/H = 0, Cf ≈ -0.003
    * x/H = 2, Cf ≈ -0.004
    * x/H = 4, Cf ≈ -0.004
    * x/H = 6, Cf ≈ 0.000
    * x/H = 8, Cf ≈ 0.024
* **DSRRANS (Dashed Light Blue Line):**
  * Trend: Similar to DNS and RANS, relatively flat near zero from x/H = -1 to approximately x/H = 7.5, then increases to a peak around x/H = 8, followed by a sharp drop.
  * Data Points:
    * x/H = 0, Cf ≈ -0.006
    * x/H = 2, Cf ≈ -0.005
    * x/H = 4, Cf ≈ -0.004
    * x/H = 6, Cf ≈ -0.001
    * x/H = 8, Cf ≈ 0.038
* **PiT-PO (Dash-dotted Orange Line):**
  * Trend: Similar to DNS, RANS, and DSRRANS, relatively flat near zero from x/H = -1 to approximately x/H = 7.5, then increases to a peak around x/H = 8, followed by a drop.
  * Data Points:
    * x/H = 0, Cf ≈ -0.004
    * x/H = 2, Cf ≈ -0.004
    * x/H = 4, Cf ≈ -0.002
    * x/H = 6, Cf ≈ 0.001
    * x/H = 8, Cf ≈ 0.015
* **LLM-SR (Green Dots):**
  * Trend: Highly scattered data points. From x/H = -1 to approximately x/H = 3, the data points are scattered above the zero line. From x/H = 3 to x/H = 8, the data points are scattered below the zero line. There is a large amount of variance.
  * Data Points: The data is too scattered to provide accurate point estimates.

### Key Observations

* The RANS, DSRRANS, and PiT-PO models generally follow the trend of the DNS data, but there are some deviations, particularly around the peak at x/H = 8.

* The LLM-SR model shows a high degree of scatter and does not closely follow the trend of the DNS data.

* Apart from LLM-SR, the models stay close to zero for x/H values between 2 and 7.

* Apart from LLM-SR, the models show a peak in Cf around x/H = 8, as does the DNS data.

### Interpretation

The chart compares the performance of different CFD models in predicting the coefficient of friction (Cf) against DNS data, which is considered the most accurate. The RANS, DSRRANS, and PiT-PO models show reasonable agreement with the DNS data, suggesting they can capture the general trend of the flow. However, the DSRRANS model overestimates the peak at x/H = 8, while the PiT-PO model underestimates it. The LLM-SR model's high scatter indicates that it is not a reliable predictor of Cf in this scenario. The peak at x/H = 8 likely corresponds to a significant flow feature, such as a separation or reattachment point, which the models capture with varying degrees of accuracy.

</details>

Figure 7. Skin friction distribution along the bottom obtained by the learned model and the standard $k$-$\omega$ SST model of RANS, compared with DNS data.

6. Conclusion

In this work, we introduced PiT-PO, a unified framework that fundamentally transforms LLMs from static equation proposers into adaptive, physics-aware generators for SR. By integrating in-search policy optimization with a novel dual-constraint evaluation mechanism, PiT-PO rigorously enforces hierarchical physical validity while leveraging theorem-guided, token-level penalties to eliminate structural redundancy. This synergistic design aligns generation with numerical fitness, scientific consistency, and parsimony, establishing new state-of-the-art performance on SR benchmarks. Beyond synthetic tasks, PiT-PO demonstrates significant practical utility in turbulence modeling, where the discovered symbolic corrections improve Reynolds stress and flow-field predictions. Notably, PiT-PO achieves these results using small open-source backbones, making it a practical and accessible tool for scientific communities with limited computational resources. Looking forward, we plan to extend PiT-PO to broader scientific and engineering domains by enriching the library of domain-specific constraints and validating it across more complex, real-world systems. Moreover, we anticipate that integrating PiT-PO with larger-scale multi-modal foundation models could further unlock its potential in processing heterogeneous scientific data.

References

- Aakash et al. (2019) B. S. Aakash, JohnPatrick Connors, and Michael D Shields. 2019. Stress-strain data for aluminum 6061-T651 from 9 lots at 6 temperatures under uniaxial and plane strain tension. Data in Brief 25 (Aug 2019), 104085. doi: 10.1016/j.dib.2019.104085

- Biggio et al. (2021) Luca Biggio, Tommaso Bendinelli, Alexander Neitz, Aurelien Lucchi, and Giambattista Parascandolo. 2021. Neural Symbolic Regression that Scales. arXiv:2106.06427 [cs.LG] https://arxiv.org/abs/2106.06427

- Biggio* et al. (2021) L. Biggio*, T. Bendinelli*, A. Neitz, A. Lucchi, and G. Parascandolo. 2021. Neural Symbolic Regression that Scales. In Proceedings of 38th International Conference on Machine Learning (ICML 2021) (Proceedings of Machine Learning Research, Vol. 139). PMLR, 936–945. https://proceedings.mlr.press/v139/biggio21a.html *equal contribution.

- Chen et al. (2025) Jindou Chen, Jidong Tian, Liang Wu, ChenXinWei, Xiaokang Yang, Yaohui Jin, and Yanyan Xu. 2025. KinFormer: Generalizable Dynamical Symbolic Regression for Catalytic Organic Reaction Kinetics. In International Conference on Representation Learning, Y. Yue, A. Garg, N. Peng, F. Sha, and R. Yu (Eds.), Vol. 2025. 67058–67080. https://proceedings.iclr.cc/paper_files/paper/2025/file/a76b693f36916a5ed84d6e5b39a0dc03-Paper-Conference.pdf

- Cranmer (2023) Miles Cranmer. 2023. Interpretable Machine Learning for Science with PySR and SymbolicRegression.jl. arXiv:2305.01582 [astro-ph.IM] https://arxiv.org/abs/2305.01582

- Crochepierre et al. (2022) Laure Crochepierre, Lydia Boudjeloud-Assala, and Vincent Barbesant. 2022. Interactive Reinforcement Learning for Symbolic Regression from Multi-Format Human-Preference Feedbacks. In IJCAI 2022 - 31st International Joint Conference on Artificial Intelligence. Vienna, Austria. https://hal.science/hal-03695471

- Deng et al. (2023) Song Deng, Junjie Wang, Li Tao, Su Zhang, and Hongwei Sun. 2023. EV charging load forecasting model mining algorithm based on hybrid intelligence. Computers and Electrical Engineering 112 (2023), 109010. doi: 10.1016/j.compeleceng.2023.109010

- Du et al. (2023) Mengge Du, Yuntian Chen, and Dongxiao Zhang. 2023. DISCOVER: Deep identification of symbolically concise open-form PDEs via enhanced reinforcement-learning. arXiv:2210.02181 [cs.LG] https://arxiv.org/abs/2210.02181

- Fletcher (1987) Roger Fletcher. 1987. Practical Methods of Optimization (2nd ed.). John Wiley & Sons, Chichester, New York.

- Grayeli et al. (2024) Arya Grayeli, Atharva Sehgal, Omar Costilla-Reyes, Miles Cranmer, and Swarat Chaudhuri. 2024. Symbolic Regression with a Learned Concept Library. arXiv:2409.09359 [cs.LG] https://arxiv.org/abs/2409.09359

- Guo et al. (2025) Ping Guo, Qingfu Zhang, and Xi Lin. 2025. CoEvo: Continual Evolution of Symbolic Solutions Using Large Language Models. arXiv:2412.18890 [cs.AI] https://arxiv.org/abs/2412.18890

- Hu et al. (2021) Edward J. Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, and Weizhu Chen. 2021. LoRA: Low-Rank Adaptation of Large Language Models. arXiv:2106.09685 [cs.CL] https://arxiv.org/abs/2106.09685

- Huang et al. (2025) Ziyao Huang, Weiwei Wu, Kui Wu, Jianping Wang, and Wei-Bin Lee. 2025. CALM: Co-evolution of Algorithms and Language Model for Automatic Heuristic Design. arXiv:2505.12285 [cs.NE] https://arxiv.org/abs/2505.12285

- Kamienny et al. (2022) Pierre-Alexandre Kamienny, Stéphane d’Ascoli, Guillaume Lample, and François Charton. 2022. End-to-end symbolic regression with transformers. arXiv:2204.10532 [cs.LG] https://arxiv.org/abs/2204.10532

- Kassianik et al. (2025) Paul Kassianik, Baturay Saglam, Alexander Chen, Blaine Nelson, Anu Vellore, Massimo Aufiero, Fraser Burch, Dhruv Kedia, Avi Zohary, Sajana Weerawardhena, Aman Priyanshu, Adam Swanda, Amy Chang, Hyrum Anderson, Kojin Oshiba, Omar Santos, Yaron Singer, and Amin Karbasi. 2025. Llama-3.1-FoundationAI-SecurityLLM-Base-8B Technical Report. arXiv:2504.21039 [cs.CR] https://arxiv.org/abs/2504.21039

- Koza (1990) J.R. Koza. 1990. Genetically breeding populations of computer programs to solve problems in artificial intelligence. In Proceedings of the 2nd International IEEE Conference on Tools for Artificial Intelligence. 819–827. doi: 10.1109/TAI.1990.130444

- Landajuela et al. (2022) Mikel Landajuela, Chak Shing Lee, Jiachen Yang, Ruben Glatt, Claudio P Santiago, Ignacio Aravena, Terrell Mundhenk, Garrett Mulcahy, and Brenden K Petersen. 2022. A Unified Framework for Deep Symbolic Regression. In Advances in Neural Information Processing Systems, S. Koyejo, S. Mohamed, A. Agarwal, D. Belgrave, K. Cho, and A. Oh (Eds.), Vol. 35. Curran Associates, Inc., 33985–33998. https://proceedings.neurips.cc/paper_files/paper/2022/file/dbca58f35bddc6e4003b2dd80e42f838-Paper-Conference.pdf

- Landajuela et al. (2021) Mikel Landajuela, Brenden K. Petersen, Soo K. Kim, Claudio P. Santiago, Ruben Glatt, T. Nathan Mundhenk, Jacob F. Pettit, and Daniel M. Faissol. 2021. Improving exploration in policy gradient search: Application to symbolic optimization. arXiv:2107.09158 [cs.LG] https://arxiv.org/abs/2107.09158

- Li et al. (2023) Wenqiang Li, Weijun Li, Linjun Sun, Min Wu, Lina Yu, Jingyi Liu, Yanjie Li, and Song Tian. 2023. Transformer-based model for symbolic regression via joint supervised learning. In International Conference on Learning Representations. https://api.semanticscholar.org/CorpusID:259298765