# Neuro-symbolic Action Masking for Deep Reinforcement Learning

**Authors**: Shuai Han, Mehdi Dastani, Shihan Wang

Proc. of the 25th International Conference on Autonomous Agents and Multiagent Systems (AAMAS 2026), May 25 – 29, 2026, Paphos, Cyprus. C. Amato, L. Dennis, V. Mascardi, J. Thangarajah (eds.). Author affiliation: Utrecht University, Utrecht, the Netherlands.

## Abstract

Deep reinforcement learning (DRL) may explore infeasible actions during training and execution. Existing approaches assume a symbol grounding function that maps high-dimensional states to consistent symbolic representations, together with manually specified action masking techniques to constrain actions. In this paper, we propose Neuro-symbolic Action Masking (NSAM), a novel framework that automatically learns symbolic models, which are consistent with given domain constraints of high-dimensional states, in a minimally supervised manner during the DRL process. Based on the learned symbolic model of states, NSAM learns action masks that rule out infeasible actions. NSAM enables end-to-end integration of symbolic reasoning and deep policy optimization, where improvements in symbolic grounding and policy learning mutually reinforce each other. We evaluate NSAM on multiple domains with constraints, and experimental results demonstrate that NSAM significantly improves the sample efficiency of DRL agents while substantially reducing constraint violations.

Key words and phrases: Deep reinforcement learning, neuro-symbolic learning, action masking

doi: JWPH6906

## 1. Introduction

With the powerful representation capability of neural networks, deep reinforcement learning (DRL) has achieved remarkable success in a variety of complex domains that require autonomous agents, such as autonomous driving autodriving4_1; autodriving4_2; autodriving4_3, resource management resourcem4_1; resourcem4_2, algorithmic trading autotrading4_1; autotrading4_2 and robotics roboticRL4_1; roboticRL4_2; roboticRL4_3. However, in real-world scenarios, agents face the challenges of learning policies from few interactions roboticRL4_2 and keeping violations of domain constraints to a minimum during training and execution autodriving_safe. To address these challenges, an increasing number of neuro-symbolic reinforcement learning (NSRL) approaches have been proposed, aiming to exploit the structural knowledge of the problem to improve sample efficiency shindo2024blendrl; RM; nsrl2025_planning or to constrain agents to select actions PLPG; PPAM; nsrl2024_plpg_multi.

Among these NSRL approaches, a promising practice is to exclude infeasible actions for the agents. (We use the term infeasible actions throughout the paper; such actions can also be considered unsafe, unethical, or in general undesirable.) This is typically achieved by assuming a predefined symbolic grounding nsplanning or label function RM that maps high-dimensional states into symbolic representations, and by manually specifying action masking techniques actionmasking_app1; actionmasking_app3; actionmasking_app4. However, predefining the symbolic grounding function is often expensive neuroRM, as it requires complete knowledge of the environmental states, and can be practically impossible when the state space is high-dimensional or infinite. Learning symbolic grounding from environmental states is therefore crucial for NSRL approaches and remains a highly challenging problem neuroRM.

In particular, there are three main challenges. First, real-world environments must often satisfy complex constraints expressed in a domain-specific language, which makes learning the symbolic grounding function difficult ahmed2022semantic. Second, obtaining full supervision for learning symbolic representations in DRL environments is unrealistic, as those environments rarely provide the ground-truth symbolic description of every state. Finally, even if symbolic grounding can be learned, integrating it into reinforcement learning to achieve end-to-end learning remains a challenge.

To address these challenges, we propose Neuro-symbolic Action Masking (NSAM), a framework that integrates symbolic reasoning into deep reinforcement learning. The basic idea is to use probabilistic sentential decision diagrams (PSDDs) to learn symbolic grounding. PSDDs serve two purposes: they guarantee that any learned symbolic model satisfies domain constraints expressed in a domain-specific language kisa2014probabilistic, and they allow the agent to represent probability distributions over symbolic models conditioned on high-dimensional states. In this way, PSDDs bridge the gap between numerical states and symbolic reasoning without requiring manually defined mappings. Based on the learned PSDDs, NSAM combines action preconditions with the inferred symbolic model of numeric states to construct action masks, thereby filtering out infeasible actions. Crucially, this process relies only on minimal supervision in the form of action explorability feedback, rather than a full symbolic description of every state. Finally, NSAM is trained end-to-end, so that improvements in symbolic grounding and policy optimization mutually reinforce each other.

We evaluate NSAM on four DRL decision-making domains with domain constraints, and compare it against a series of state-of-the-art baselines. Experimental results demonstrate that NSAM not only learns more efficiently, consistently surpassing all baselines, but also substantially reduces constraint violations during training. The results further show that the symbolic grounding plays a crucial role in exploiting underlying knowledge structures for DRL.

## 2. Problem setting

We study reinforcement learning (RL) on a Markov Decision Process (MDP) RL1998 $\mathcal{M}=(\mathcal{S},\mathcal{A},\mathcal{T},R,\gamma)$ where $\mathcal{S}$ is a set of states, $\mathcal{A}$ is a finite set of actions, $\mathcal{T}:\mathcal{S}\times\mathcal{A}\times\mathcal{S}\rightarrow[0,1]$ is a transition function, $\gamma\in[0,1)$ is a discount factor and $R:\mathcal{S}\times\mathcal{A}\times\mathcal{S}\rightarrow\mathbb{R}$ is a reward function. An agent employs a policy $\pi$ to interact with the environment. At time step $t$, the agent takes action $a_{t}$ according to the current state $s_{t}$. The environment then transitions to the next state $s_{t+1}$ according to the transition function $\mathcal{T}$, and the agent receives the reward $r_{t}$. Then, the next round of interaction begins. The goal of the agent is to find the optimal policy $\pi^{*}$ that maximizes the expected return $\mathbb{E}[\sum_{t=0}^{T}\gamma^{t}r_{t}\mid\pi]$, where $T$ is the terminal time step.

To augment RL with symbolic domain knowledge, we extend the standard MDP with the modules $(\mathcal{P},\mathcal{AP},\phi)$, where $\mathcal{P}=\{p_{1},\ldots,p_{K}\}$ is a finite set of atomic propositions (each $p\in\mathcal{P}$ represents a Boolean property of a state $s\in\mathcal{S}$), $\mathcal{AP}=\{(a,\varphi)\mid a\in\mathcal{A},\varphi\in L(\mathcal{P})\}$ is the set of actions with their preconditions, $L(\mathcal{P})$ denotes the propositional language over $\mathcal{P}$, and $\phi\in L(\mathcal{P})$ is a domain constraint. We use $(a,\varphi)$ to state that action $a$ is explorable in a state if and only if its precondition $\varphi$ holds in that state. (All actions $a\in\mathcal{A}$ can in principle be chosen by the agent. However, we use the term explorable to distinguish actions whose preconditions are satisfied (safe, ethical, desirable actions) from those whose preconditions are not satisfied (unsafe, unethical, undesirable actions).) We use $|[\phi]|=\{\bm{m}\mid\bm{m}\models\phi\}$ to denote the set of all possible symbolic models of $\phi$, where a model is a truth assignment to all propositions in $\mathcal{P}$.
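The triple $(\mathcal{P},\mathcal{AP},\phi)$ can be made concrete with a small sketch. The domain below is a hypothetical one-cell toy example that does not appear in the paper: the proposition names, the actions and the helper functions `models` and `explorable` are all illustrative assumptions. It enumerates $|[\phi]|$ by brute force and checks which actions are explorable under a given model.

```python
from itertools import product

# Hypothetical toy domain (not from the paper): one cell that may hold 1 or 2.
P = ["cell_is_1", "cell_is_2"]


def phi(m):
    """Domain constraint: the cell cannot hold two numbers at once."""
    return not (m["cell_is_1"] and m["cell_is_2"])


# AP: each action paired with its precondition, a formula over P.
AP = {
    "write_1": lambda m: not m["cell_is_1"] and not m["cell_is_2"],
    "write_2": lambda m: not m["cell_is_1"] and not m["cell_is_2"],
    "noop": lambda m: True,
}


def models(constraint, props):
    """Enumerate all truth assignments to props that satisfy the constraint."""
    out = []
    for values in product([False, True], repeat=len(props)):
        m = dict(zip(props, values))
        if constraint(m):
            out.append(m)
    return out


def explorable(m):
    """Return the actions whose precondition holds in model m."""
    return [a for a, precondition in AP.items() if precondition(m)]


valid_models = models(phi, P)
empty_cell = {"cell_is_1": False, "cell_is_2": False}
filled_cell = {"cell_is_1": True, "cell_is_2": False}
print(len(valid_models), explorable(empty_cell), explorable(filled_cell))
```

Brute-force enumeration is only feasible for a handful of propositions; it is used here purely to illustrate the definitions.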

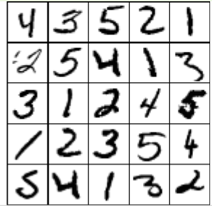

To illustrate how symbolic domain knowledge $(\mathcal{P},\mathcal{AP},\phi)$ is reflected in our formulation, we consider the Visual Sudoku task as a concrete example. In this environment, each state is represented as a non-symbolic image input. The properties of a state can be described using propositions in $\mathcal{P}$ . For example, the properties of the state in Figure 1(a) include ‘position (1,1) is number 1’, ‘position (1,2) is empty’, etc. Each action $a$ of filling a number in a certain position corresponds to a symbolic precondition $\varphi$ , represented by $(a,\varphi)\in\mathcal{AP}$ . For example, the action ‘filling number 1 at position (1,1)’ requires that both propositions ‘position (1,2) is number 1’ and ‘position (2,1) is number 1’ are false. Finally, $\phi$ is used to constrain the set of possible states, e.g., ‘position (1,1) is number 1’ and ‘position (1,1) is number 2’ cannot both be simultaneously true for a given state. To leverage this knowledge, challenges arise due to the following problems.

<details>

<summary>sudo1.png Details</summary>

### Visual Description

## Image Analysis: Divided Square with Diagonal Line

### Overview

The image depicts a square divided into four equal quadrants. A diagonal line, composed of dark pixels, is present in the top-left quadrant. The other three quadrants are empty.

### Components/Axes

* **Quadrants:** The square is divided into four quadrants by two perpendicular lines intersecting at the center.

* **Diagonal Line:** A line composed of dark pixels runs diagonally from the bottom-left to the top-right of the top-left quadrant.

### Detailed Analysis

* **Top-Left Quadrant:** Contains a diagonal line. The line appears to be approximately 1/3 the length of the quadrant's diagonal.

* **Top-Right Quadrant:** Empty.

* **Bottom-Left Quadrant:** Empty.

* **Bottom-Right Quadrant:** Empty.

### Key Observations

* The diagonal line is the only element present in the image besides the dividing lines of the square.

* The line is not perfectly straight, showing some pixelation.

### Interpretation

The image is a simple composition. Read together with the surrounding text, which describes the state in Figure 1(a) as ‘position (1,1) is number 1’ and ‘position (1,2) is empty’, the stroke in the top-left quadrant is most plausibly a handwritten digit 1 on a 2×2 Visual Sudoku board whose other three cells are empty.

</details>

(a)

<details>

<summary>sudo2.png Details</summary>

### Visual Description

## Image Analysis: Grid with Lines

### Overview

The image is a 2x2 grid. The top-left cell contains a vertical line, and the bottom-left cell contains a diagonal line. The other two cells are empty.

### Components/Axes

* **Grid:** 2x2 grid structure.

* **Top-Left Cell:** Contains a vertical line.

* **Bottom-Left Cell:** Contains a diagonal line.

* **Top-Right Cell:** Empty.

* **Bottom-Right Cell:** Empty.

### Detailed Analysis

* **Vertical Line:** Located in the top-left cell. It is approximately vertical, with some minor deviations.

* **Diagonal Line:** Located in the bottom-left cell. It slopes upwards from left to right.

### Key Observations

* The lines are simple, stylized representations.

* The grid provides a clear spatial context for the lines.

### Interpretation

The image presents a basic visual arrangement of lines within a grid. The vertical and diagonal lines are distinct elements, and their placement in the grid creates a simple composition. The empty cells contribute to the overall balance of the image. There is no data or specific information being conveyed beyond the visual arrangement.

</details>

(b)

<details>

<summary>sudo3.png Details</summary>

### Visual Description

## Image: Number Grid

### Overview

The image is a 2x2 grid. The top-left cell contains the number "1", and the bottom-left cell contains the number "2". The other two cells are empty.

### Components/Axes

* **Grid:** 2x2 grid structure.

* **Top-Left Cell:** Contains the number "1".

* **Bottom-Left Cell:** Contains the number "2".

* **Top-Right Cell:** Empty.

* **Bottom-Right Cell:** Empty.

### Detailed Analysis

* The number "1" in the top-left cell is vertically oriented and slightly distorted.

* The number "2" in the bottom-left cell is clearly visible and well-formed.

* The top-right and bottom-right cells are completely blank.

### Key Observations

* The grid contains only two numbers, "1" and "2", in the specified cells.

* The other cells are empty, suggesting a possible sequence or pattern that is incomplete.

### Interpretation

The image presents a simple numerical arrangement within a grid. The presence of "1" and "2" in adjacent cells might indicate a sequence or a counting exercise. The empty cells suggest that the sequence or pattern is not fully represented, leaving room for further interpretation or completion.

</details>

(c)

Figure 1. Example states in the Visual Sudoku environment

(P1) Numerical–symbolic gap. The domain knowledge is expressed over symbolic properties of states, but only raw numerical states are available.

(P2) Constraint satisfaction. The truth values of the propositions in $\mathcal{P}$ that are mapped from a DRL state $s$ must satisfy the domain constraint $\phi$.

<details>

<summary>pr.png Details</summary>

### Visual Description

## Data Table: Probability Distribution

### Overview

The image presents a data table showing a probability distribution over three binary variables (p1, p2, p3) and their corresponding probabilities (Pr). The table lists all possible combinations of the binary variables and their associated probabilities.

### Components/Axes

* **Columns:**

* p1: Binary variable 1 (0 or 1)

* p2: Binary variable 2 (0 or 1)

* p3: Binary variable 3 (0 or 1)

* Pr: Probability associated with the combination of p1, p2, and p3

### Detailed Analysis

The table contains the following data:

| p1 | p2 | p3 | Pr |
| -- | -- | -- | --- |
| 0 | 0 | 0 | 0.2 |
| 0 | 0 | 1 | 0.2 |
| 0 | 1 | 0 | 0 |
| 0 | 1 | 1 | 0.1 |
| 1 | 0 | 0 | 0 |
| 1 | 0 | 1 | 0.3 |
| 1 | 1 | 0 | 0.1 |
| 1 | 1 | 1 | 0.1 |

### Key Observations

* The probabilities sum to 1.0 (0.2 + 0.2 + 0 + 0.1 + 0 + 0.3 + 0.1 + 0.1 = 1.0).

* The combination (1, 0, 1) has the highest probability (0.3).

* The combinations (0, 1, 0) and (1, 0, 0) have zero probability.

### Interpretation

The table represents a joint probability distribution over three binary variables. It specifies the probability of each possible combination of the variables. The distribution is not uniform, as some combinations are more likely than others. The zero probabilities indicate that certain combinations are impossible or highly unlikely under the given distribution.

</details>

(a) Distribution

<details>

<summary>psdd_sdd.png Details</summary>

### Visual Description

## Logic Diagram: SDD Circuit

### Overview

The image presents a Boolean circuit built from OR and AND gates over the literals p1, p2, p3 and their negations. According to the caption of Figure 2, it is the SDD that represents the constraint (p1 ↔ p2) ∨ p3. The diagram is rendered in green.

### Components/Axes

* **Logic Gates:**

* OR Gate: Represented by a curved-bottom symbol. An OR gate indicates that the output event occurs if at least one of the input events occurs.

* AND Gate: Represented by a flat-bottom symbol. An AND gate indicates that the output event occurs only if all input events occur.

* **Events:** Represented by text labels (p1, p2, p3, ¬p1, ¬p2, ¬p3). The '¬' symbol indicates the negation of the event.

* **Legend:** Located in the top-left corner, explaining the symbols for OR and AND gates.

### Detailed Analysis

The circuit starts with a single output at the top and branches down to the input literals at the bottom.

* **Top Level:** A single OR gate at the top.

* **Second Level:** The top OR gate connects to two gates: an AND gate on the left and an OR gate on the right.

* **Third Level (Left Branch):** The AND gate connects to two gates: an AND gate on the left and an OR gate on the right.

* **Third Level (Right Branch):** The OR gate connects to a single event, p3.

* **Fourth Level (Left Branch):** The AND gate connects to two events: p3 and ¬p3.

* **Fourth Level (Right Branch):** The OR gate connects to two gates: an AND gate on the left and an AND gate on the right.

* **Bottom Level:** The bottom level consists of the following events: p1, p2, ¬p1, ¬p2, p1, ¬p2, p1, p2.

### Key Observations

* The diagram uses a combination of OR and AND gates to encode a Boolean formula.

* The negation symbol (¬) marks negated literals.

* The same literals feed several gates, so sub-circuits are shared across branches.

### Interpretation

The diagram is a visual representation of a Boolean function: the output gate evaluates to true exactly for the truth assignments that satisfy the encoded constraint. The gate that takes both p3 and ¬p3 as inputs most likely corresponds to a branch in which the value of p3 is unconstrained. The literals p1, p2, ¬p1 and ¬p2 are reused across several branches of the circuit.

</details>

(b) SDD

<details>

<summary>psdd.png Details</summary>

### Visual Description

## Logic Diagram: PSDD Circuit

### Overview

The image presents a Boolean circuit of AND and OR gates whose OR-gate inputs are annotated with numerical parameters. According to the caption of Figure 2, it is the PSDD that represents the distribution in Figure 2(a). The diagram is rendered in light green.

### Components/Axes

* **Logic Gates:** The diagram uses rounded-corner rectangles to represent logic gates.

* **Events:** Events are represented by the inputs to the logic gates, labeled with propositional variables (p1, p2, p3) or combinations thereof.

* **Probabilities:** Numerical values (e.g., 0.6, 0.4, 0.33) are associated with branches, representing probabilities of events occurring.

* **Labels:** The labels include propositional variables (p1, p2, p3) and their negations (¬p1, ¬p2, ¬p3).

### Detailed Analysis

The diagram can be broken down into levels, starting from the top:

* **Top Level:** A single OR gate at the top.

* The output of the top OR gate is connected to two inputs with probabilities 0.6 and 0.4.

* **Second Level:** The left input of the top OR gate is connected to an AND gate. The right input of the top OR gate is connected to an OR gate with an additional input.

* The right input of the top OR gate has an additional input labeled "p3".

* **Third Level:** The left AND gate is connected to two OR gates. The right OR gate is connected to a single AND gate.

* The left OR gate has probabilities 0.33 and 0.67.

* The right OR gate has probabilities 0.75 and 0.25.

* The left AND gate has probabilities 0.5 and 0.5, with labels "p3" and "¬p3".

* **Bottom Level:** The bottom level consists of inputs to the OR and AND gates, labeled with propositional variables and their negations.

* The left OR gate has inputs "p1" and "p2".

* The right OR gate has inputs "¬p1" and "¬p2".

* The left OR gate has inputs "p1" and "¬p2".

* The right OR gate has inputs "¬p1" and "p2".

The probabilities are associated with the branches leading into the logic gates. For example, the top OR gate has inputs with probabilities 0.6 and 0.4. The sum of these probabilities is 1.0.

### Key Observations

* The values attached to the inputs of each OR gate are parameters; at each annotated gate they sum to 1 (e.g., 0.6 + 0.4, 0.33 + 0.67, 0.75 + 0.25, 0.5 + 0.5).

* The logic gates (AND, OR) define which truth assignments are possible.

* The propositional variables (p1, p2, p3) and their negations are the inputs of the circuit.

### Interpretation

The diagram is the circuit of Figure 2(b) with a parameter attached to each OR-gate input, so that the circuit defines a probability distribution over truth assignments to p1, p2 and p3 instead of only a Boolean function. The gate over p3 and ¬p3 with parameters 0.5 and 0.5 most likely corresponds to a branch in which p3 is unconstrained and both values are equally likely.

</details>

(c) PSDD

<details>

<summary>vtree.png Details</summary>

### Visual Description

## Diagram: Tree Structure

### Overview

The image depicts a tree diagram with nodes labeled with numbers and variables. The tree has a hierarchical structure, branching from a root node to several leaf nodes. All nodes are green.

### Components/Axes

* **Nodes:** Represented by green circles, each labeled with a number.

* Node 0

* Node 1

* Node 2

* Node 3

* Node 4

* **Edges:** Represented by green lines connecting the nodes, indicating the relationships between them.

* **Labels:** Each node has a numerical label and some nodes have an additional variable label.

* Node 0 is labeled with "0" and "p₁"

* Node 1 is labeled with "1"

* Node 2 is labeled with "2" and "p₂"

* Node 3 is labeled with "3"

* Node 4 is labeled with "4" and "p₃"

### Detailed Analysis

The tree structure can be described as follows:

* Node 1 is the parent node, connected to nodes 0 and 2.

* Node 3 is the parent node, connected to nodes 1 and 4.

* Nodes 0, 2, and 4 are leaf nodes.

The connections are:

* Node 3 connects to Node 1.

* Node 3 connects to Node 4.

* Node 1 connects to Node 0.

* Node 1 connects to Node 2.

### Key Observations

* The tree has a clear hierarchical structure with a single root (Node 3).

* The nodes are labeled with consecutive numbers that are consistent with an in-order traversal of the tree (0, 1, 2 in the left subtree, 3 at the root, 4 on the right).

* The variables p₁, p₂, and p₃ are associated with the leaf nodes 0, 2, and 4, respectively.

### Interpretation

According to the caption of Figure 2, the diagram is the vtree used to group variables: a binary tree whose leaves correspond to the propositions p₁, p₂ and p₃ and whose internal nodes (1 and 3) determine how these propositions are split between the left and right parts of the circuit. The numerical labels are node indices.

</details>

(d) Vtree

<details>

<summary>nml.png Details</summary>

### Visual Description

## Logic Diagram: Prime and Sub-Prime Logic Gate Network

### Overview

The image depicts a logic diagram consisting of multiple AND gates feeding into a single OR gate. The inputs to the AND gates are labeled as "prime" and "sub", with numerical subscripts. The outputs of the AND gates are labeled with alpha symbols, also with numerical subscripts. The entire diagram is rendered in a light green color.

### Components/Axes

* **Logic Gates:** The diagram uses two types of logic gates: AND gates and an OR gate.

* **Inputs:** The inputs to the AND gates are labeled as "prime₁ sub₁ ... primeₙ subₙ".

* **Outputs:** The outputs of the AND gates are labeled as "α₁", "α₂", ..., "αₙ".

* **Connectors:** Lines connect the outputs of the AND gates to the inputs of the OR gate.

### Detailed Analysis

* **AND Gates:** There are multiple AND gates, each with two inputs labeled "primeᵢ" and "subᵢ", where 'i' ranges from 1 to 'n'. The AND gates are represented by a curved shape with two inputs and one output.

* **OR Gate:** A single OR gate is present at the top of the diagram. It has multiple inputs, each connected to the output of an AND gate. The OR gate is represented by a curved shape with multiple inputs and one output.

* **Input Labels:** The inputs to the AND gates are labeled as follows:

* "prime₁" and "sub₁" for the first AND gate.

* "primeₙ" and "subₙ" for the last AND gate.

* The inputs for the intermediate AND gates are represented by ellipsis (...).

* **Output Labels:** The outputs of the AND gates are labeled as follows:

* "α₁" for the first AND gate.

* "α₂" for the second AND gate.

* "αₙ" for the last AND gate.

* The outputs for the intermediate AND gates are represented by ellipsis (...).

* **Connections:** The outputs "α₁", "α₂", ..., "αₙ" are connected to the inputs of the OR gate.

### Key Observations

* The diagram represents a logic network where the outputs of multiple AND gates are combined using an OR gate.

* The inputs to the AND gates are labeled as "prime" and "sub", suggesting a relationship between these inputs.

* The number of AND gates is 'n', as indicated by the subscripts on the input and output labels.

### Interpretation

The diagram likely represents a logical function where the OR gate's output is true if at least one of the AND gates outputs a true value. Each AND gate requires both its "prime" and "sub" inputs to be true in order to output a true value. The overall function could be interpreted as a condition where at least one "prime" and its corresponding "sub" condition must be met for the final output to be true. The ellipsis indicate that the pattern continues for an unspecified number of AND gates.

</details>

(e) A general fragment

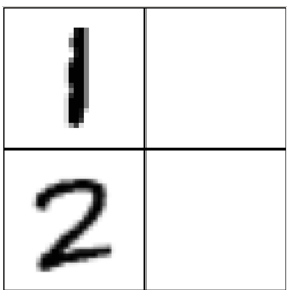

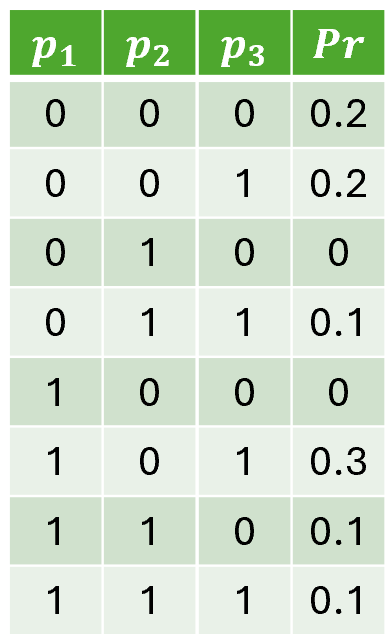

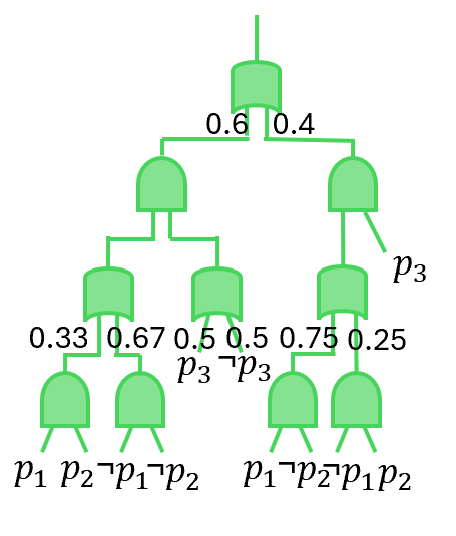

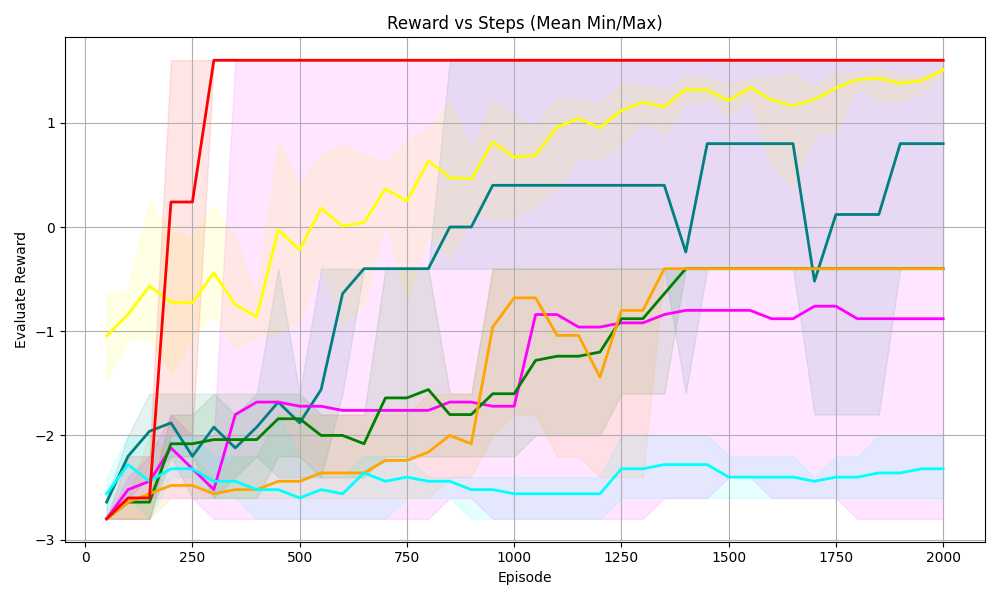

Figure 2. (a) An example of a joint distribution over three propositions $p_{1},p_{2}$ and $p_{3}$ with the constraint $(p_{1}\leftrightarrow p_{2})\lor p_{3}$ . (b) An SDD circuit with ‘OR’ and ‘AND’ logic gates that represents the constraint $(p_{1}\leftrightarrow p_{2})\lor p_{3}$ . (c) The PSDD circuit that represents the distribution in Fig. 2(a). (d) The vtree used to group variables. (e) A general fragment showing the structure of SDDs and PSDDs.

(P3) Minimal supervision. The RL environment cannot provide full ground truth of propositions at each state.

(P4) Differentiability. Symbolic reasoning with $\varphi$ introduces a non-differentiable process, which can conflict with gradient-based DRL algorithms that require differentiable policies.

(P5) End-to-end learning. Achieving end-to-end training on prediction of propositions, symbolic reasoning over preconditions and optimization of policy is challenging.

In summary, the above challenges fall into three categories. (P1–P3) concern learning symbolic models from high-dimensional states in DRL, which we address in Section 3. (P4) relates to the differentiability barrier when combining symbolic reasoning with gradient-based DRL, which we tackle in Section 4. (P5) raises the need for end-to-end training, which we present in Section 5.

## 3. Learning Symbolic Grounding

This section introduces how NSAM learns symbolic grounding. At a high level, the goal is to learn whether an action is explorable in a state. Specifically, the agent receives minimal supervision from state transitions after executing an action $a$ . Using this supervision, NSAM learns to estimate the symbolic model of the high-dimensional input state that is in turn used to check the satisfiability of action preconditions. To achieve this, Section 3.1 presents a knowledge compilation step to encode domain constraints into a symbolic structure, while Section 3.2 explains how this symbolic structure is parameterized and learned from minimal supervision.

### 3.1. Compiling the Knowledge

To address P2 (Constraint satisfaction), we introduce the Probabilistic Sentential Decision Diagram (PSDD) kisa2014probabilistic. PSDDs are designed to represent probability distributions $Pr(\bm{m})$ over possible models, where any model $\bm{m}$ that violates domain constraints is assigned zero probability conditionalPSDD. For example, consider the distribution in Figure 2(a). The first step in constructing a PSDD is to build a Boolean circuit that captures the entries whose probability values are always zero, as shown in Figure 2(b). Specifically, the circuit evaluates to $0$ for model $\bm{m}$ if and only if $\bm{m}\not\models\phi$ . The second step is to parameterize this Boolean circuit to represent the (non-zero) probability of valid entries, yielding the PSDD in Figure 2(c).

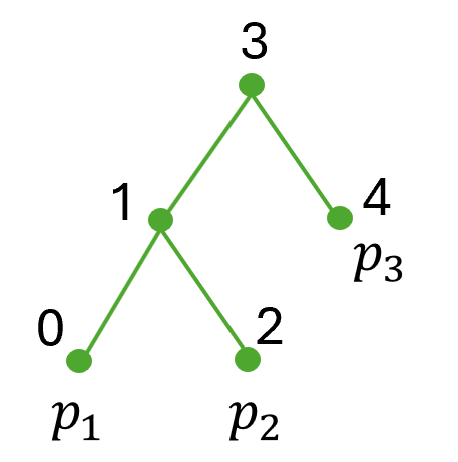

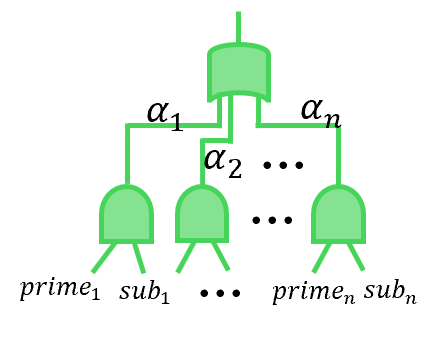

To obtain the Boolean circuit in Figure 2(b), we represent the domain constraint $\phi$ using a general data structure called a Sentential Decision Diagram (SDD) sdd. An SDD is a normal form of a Boolean formula that generalizes the well-known Ordered Binary Decision Diagram (OBDD) OBDD; OBDD2. SDD circuits satisfy specific syntactic and semantic properties defined with respect to a binary tree, called a vtree, whose leaves correspond to propositions (see Figure 2(d)). Following Darwiche’s definition sdd; psdd_infer1, an SDD normalized for a vtree $v$ is a Boolean circuit defined as follows: If $v$ is a leaf node labeled with variable $p$ , the SDD is either $p$ , $\neg p$ , $\top$ , $\bot$ , or an OR gate with inputs $p$ and $\neg p$ . If $v$ is an internal node, the SDD has the structure shown in Figure 2(e), where $\textit{prime}_{1},\ldots,\textit{prime}_{n}$ are SDDs normalized for the left child $v^{l}$ , and $\textit{sub}_{1},\ldots,\textit{sub}_{n}$ are SDDs normalized for the right child $v^{r}$ . SDD circuits alternate between OR gates and AND gates, with each AND gate having exactly two inputs. The OR gates are mutually exclusive in that at most one of their inputs evaluates to true under any circuit input sdd; psdd_infer1.

A PSDD is obtained by annotating each OR gate in an SDD with parameters $(\alpha_{1},\ldots,\alpha_{n})$ over its inputs kisa2014probabilistic; psdd_infer1, where $\sum_{i}\alpha_{i}=1$ (see Figure 2(e)). The probability distribution defined by a PSDD is as follows. Let $\bm{m}$ be a model that assigns truth values to the PSDD variables, and suppose the underlying SDD evaluates to $0$ under $\bm{m}$ ; then $Pr(\bm{m})=0$ . Otherwise, $Pr(\bm{m})$ is obtained by traversing the circuit downwards from the output gate and multiplying the parameters of the OR-gate inputs that evaluate to true under $\bm{m}$ .
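As a worked illustration of this definition, the sketch below hand-builds a small circuit for the constraint $(p_{1}\leftrightarrow p_{2})\lor p_{3}$ and evaluates $Pr(\bm{m})$ for all eight models. The tuple-based node encoding and the function `pr` are assumptions made for this example and are not the authors' implementation. The parameters are chosen to be consistent with the table in Figure 2(a); exact fractions 1/3 and 2/3 are used where the figure appears to show rounded values.

```python
from itertools import product

# Node encodings (hypothetical, for illustration only):
#   ("lit", var, value)             literal: var must equal value
#   ("top", var, theta)             OR(var, not var) with Pr(var=True) = theta
#   ("dec", [(prime, sub, theta)])  OR gate over AND(prime, sub) elements
EQUIV = ("dec", [(("lit", "p1", True), ("lit", "p2", True), 1 / 3),
                 (("lit", "p1", False), ("lit", "p2", False), 2 / 3)])
XOR = ("dec", [(("lit", "p1", True), ("lit", "p2", False), 0.75),
               (("lit", "p1", False), ("lit", "p2", True), 0.25)])
ROOT = ("dec", [(EQUIV, ("top", "p3", 0.5), 0.6),
                (XOR, ("lit", "p3", True), 0.4)])


def pr(node, m):
    """Probability of the complete assignment m under the circuit rooted at node."""
    kind = node[0]
    if kind == "lit":
        return 1.0 if m[node[1]] == node[2] else 0.0
    if kind == "top":
        return node[2] if m[node[1]] else 1.0 - node[2]
    # Decision node: primes are mutually exclusive, so at most one term is non-zero.
    return sum(theta * pr(prime, m) * pr(sub, m) for prime, sub, theta in node[1])


def phi(m):
    """The constraint (p1 <-> p2) or p3."""
    return (m["p1"] == m["p2"]) or m["p3"]


dist = {}
for values in product([False, True], repeat=3):
    m = dict(zip(["p1", "p2", "p3"], values))
    dist[values] = pr(ROOT, m)
    print(values, round(dist[values], 3))
```

Up to floating-point rounding, the eight values reproduce the table in Figure 2(a), and the two models that violate the constraint receive probability exactly zero because every product along their branches contains a false literal.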

The advantage of using PSDDs in our setting is twofold. First, PSDDs strictly enforce domain constraints by assigning zero probability to any model $\bm{m}$ that violates $\phi$ conditionalPSDD, thereby ensuring logical consistency (P2). Second, by ruling out impossible truth assignments through domain knowledge, PSDDs effectively reduce the scale of the probability distribution to be learned ahmed2022semantic.

In addition, PSDDs support tractable probabilistic queries PCbooks; psdd_infer1. PSDD compilation can be computationally expensive, since the size of the circuit can grow exponentially in the number of propositions and constraints, but it is a one-time offline cost. Once compilation is completed, PSDD inference takes time linear in the size of the circuit, making symbolic reasoning efficient during both training and execution psdd_infer1.

### 3.2. Learning the parameters of PSDD in DRL

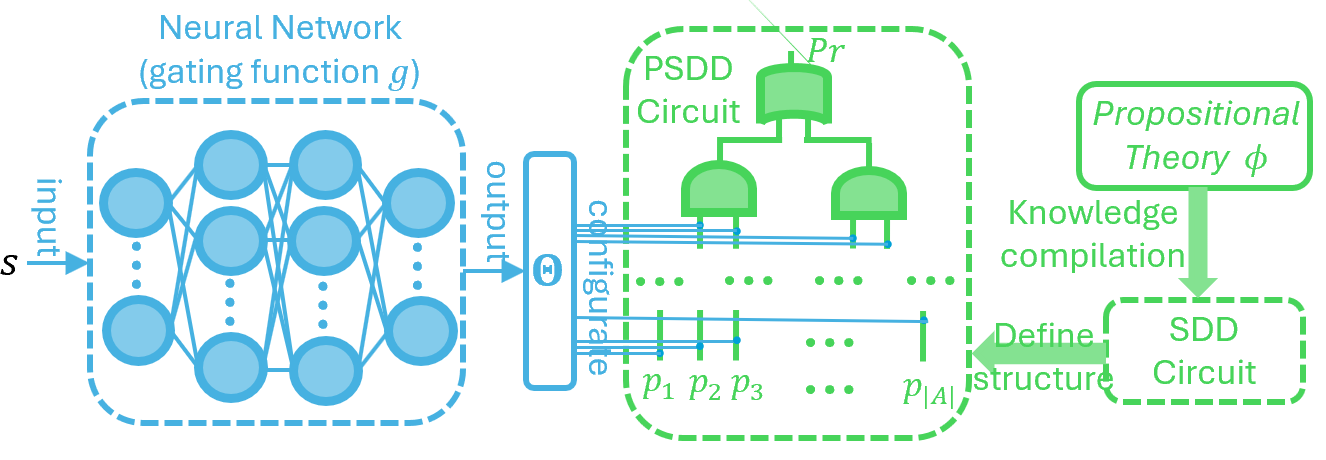

To address P1 (Numerical–symbolic gap), we need to learn distributions of models that satisfy the domain constraints. Inspired by recent deep supervised learning work on PSDDs ahmed2022semantic, we parameterize the PSDD using the output of a gating function $g$ . This gating function is a neural network that maps high-dimensional RL states to PSDD parameters $\bm{\Theta}=g(s)$ . This design allows the PSDD to represent state-conditioned distributions over propositions through its learned parameters, while strictly adhering to domain constraints (via its structure, which is defined by the symbolic knowledge $\phi$ ). The overall process is shown in Figure 3. We use $Pr(\bm{m}\mid\bm{\Theta}=g(s),\bm{m}\models\phi)$ to denote the probability of a model $\bm{m}$ that satisfies the domain constraint $\phi$ given the state $s$ (this is calculated by the PSDD in Figure 3).
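A minimal sketch of such a gating function is given below, under several assumptions that are not taken from the paper: the network is reduced to a single linear layer (`LinearGate` is a hypothetical name), the state is a short list of floats, and each OR gate receives its parameters through a softmax over its own slice of the output so that they are positive and sum to one.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    mx = max(logits)
    exps = [math.exp(x - mx) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


class LinearGate:
    """Hypothetical stand-in for the gating function g (one linear layer)."""

    def __init__(self, state_dim, gate_sizes, seed=0):
        rng = random.Random(seed)
        self.gate_sizes = gate_sizes  # number of inputs of each OR gate
        n_out = sum(gate_sizes)
        self.W = [[rng.gauss(0.0, 0.1) for _ in range(state_dim)]
                  for _ in range(n_out)]

    def __call__(self, s):
        logits = [sum(w * x for w, x in zip(row, s)) for row in self.W]
        theta, start = [], 0
        for k in self.gate_sizes:
            # One normalized parameter vector per OR gate.
            theta.append(softmax(logits[start:start + k]))
            start += k
        return theta


g = LinearGate(state_dim=4, gate_sizes=[2, 2, 2, 2])
theta = g([0.5, -1.0, 0.25, 2.0])
print(theta)
```

Because the normalization is applied per gate, any state yields valid PSDD parameters, and the constraint is enforced by the circuit structure rather than by the network.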

After initializing $g$ and the PSDD according to the structure in Figure 3, we obtain a distribution over $\bm{m}$ such that for all $\bm{m}\not\models\phi$ , $Pr(\bm{m}\mid\bm{\Theta}=g(s),\bm{m}\not\models\phi)=0$ . However, for the probability distribution over $\bm{m}$ that does satisfy $\phi$ , we still need to learn from data to capture the probability of different $\bm{m}$ by adjusting parameters of gating function $g$ . To train the PSDD from minimal supervision signals (for problem (P3) in Section 2), we construct the supervision data from $\Gamma_{\phi}$ , which consists of tuples $(s,a,s^{\prime},y)$ where transitions $(s,a,s^{\prime})$ are explored from the environment and $y$ is calculated by:

$$

y=\begin{cases}1,&\text{if }s\;\text{and}\;s^{\prime}\;\text{do not violate}\;\phi,\\

0,&\text{otherwise.}\end{cases} \tag{1}

$$

That is, the action $a$ is labeled as explorable (i.e., $y=1$) in state $s$ if it does not lead to a violation of the domain constraint $\phi$; otherwise the action $a$ is not explorable (i.e., $y=0$).
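The labeling rule in Equation (1) can be sketched in a few lines. The violation checker `has_duplicate` below is a hypothetical stand-in for whatever mechanism reports that a state violates $\phi$ (here, a toy one-row Sudoku-style rule); it is not part of the paper.

```python
from typing import Callable, Tuple, TypeVar

State = TypeVar("State")


def label_transition(s: State, a: int, s_next: State,
                     violates: Callable[[State], bool]) -> Tuple[State, int, State, int]:
    """Build the tuple (s, a, s', y) with y as in Equation (1)."""
    y = 1 if not violates(s) and not violates(s_next) else 0
    return (s, a, s_next, y)


def has_duplicate(cells: Tuple[int, ...]) -> bool:
    """Toy constraint check: a row violates phi if a non-zero value repeats."""
    filled = [c for c in cells if c != 0]
    return len(filled) != len(set(filled))


ok = label_transition((1, 0, 0), 1, (1, 2, 0), has_duplicate)
bad = label_transition((1, 0, 0), 1, (1, 1, 0), has_duplicate)
```

Only the violation signal is needed to build $y$; no truth value of an individual proposition is labeled.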

<details>

<summary>Framework.png Details</summary>

### Visual Description

## System Diagram: Neural Network Gating for PSDD and SDD Circuits

### Overview

The image is a system diagram illustrating the flow from a neural network to a PSDD circuit and then to an SDD circuit, involving propositional theory and knowledge compilation. The diagram shows how a neural network's output configures a PSDD circuit, which is then used to define the structure of an SDD circuit derived from a propositional theory.

### Components/Axes

* **Neural Network (gating function g):** Located on the left side of the diagram, represented by a dashed blue box containing a multi-layered neural network. It takes an input 'S' and produces an output.

* **Output Configuration:** The output of the neural network is labeled "output" and is connected to a block labeled "configure".

* **PSDD Circuit:** Located in the center of the diagram, enclosed in a dashed green box. It contains a circuit diagram with logic gates (AND/OR) and input lines labeled p1, p2, p3, ..., p|A|. The top of the PSDD circuit is labeled "Pr".

* **Propositional Theory φ:** Located on the right side of the diagram, enclosed in a rounded green box.

* **SDD Circuit:** Located below the "Propositional Theory φ", enclosed in a dashed green box.

* **Arrows:** Arrows indicate the flow of information and processes.

* An arrow from the Neural Network's "output" to the "configure" block.

* An arrow from the "configure" block to the PSDD circuit.

* An arrow from "Propositional Theory φ" to "SDD Circuit" labeled "Knowledge compilation".

* An arrow from the PSDD circuit to the SDD circuit labeled "Define structure".

### Detailed Analysis or Content Details

* **Neural Network:** The neural network has multiple layers of interconnected nodes. The input is labeled 'S', and the output is labeled 'output'.

* **PSDD Circuit:** The circuit consists of AND and OR gates. The inputs to the circuit are labeled p1, p2, p3, and p|A|. The top of the circuit is labeled "Pr".

* **Propositional Theory φ:** This block represents a propositional theory, which is compiled into an SDD circuit.

* **SDD Circuit:** The SDD circuit is derived from the propositional theory and its structure is defined by the PSDD circuit.

### Key Observations

* The diagram illustrates a process where a neural network's output is used to configure a PSDD circuit.

* The PSDD circuit then defines the structure of an SDD circuit, which is derived from a propositional theory.

* The flow is from left to right, starting with the neural network and ending with the SDD circuit.

### Interpretation

The diagram describes a system that integrates a neural network with logical circuits (PSDD and SDD) to perform knowledge compilation. The neural network acts as a gating function, influencing the configuration of the PSDD circuit. This configuration, in turn, guides the structure of the SDD circuit, which is derived from a propositional theory. This suggests a method for incorporating learned patterns from data (via the neural network) into logical reasoning and knowledge representation (via the SDD circuit). The system could be used to create more efficient or adaptive logical systems by leveraging the strengths of both neural networks and logical circuits.

</details>

Figure 3. The architecture design to calculate the probability of symbolic model $\bm{m}$ given DRL state $s$ .

Unlike a fully supervised setting that expensively requires labeling every propositional variable in $P$ , Eq. (1) only requires labeling whether a given state violates the domain constraint $\phi$ , which is a minimal supervision signal. In practice, the annotation of $y$ can be obtained either (i) by providing labeled data on whether the resulting state $s^{\prime}$ violates the constraint $\phi$ book2006, or (ii) via an automatic constraint-violation detection mechanism autochecking1; autochecking2.

We emphasize that action preconditions $\varphi$ and the domain constraints $\phi$ are two separate elements and are treated differently. We first automatically generate training data to learn PSDD parameters by constructing tuples $(s,a,s^{\prime},y)$ as defined in Equation (1). The argument $y$ in tuples $(s,a,s^{\prime},y)$ is then used as an indicator for action preconditions. Specifically, we use $y$ to label whether action $a$ is executable in state $s$, i.e., if transition $(s,a,s^{\prime})$ is explored by the DRL policy in non-violating states $s$ and $s^{\prime}$, then $y=1$, meaning that action $a$ is explorable in $s$; otherwise $y=0$. We thus use $y$ in $(s,a,s^{\prime},y)$ as a minimal supervision signal to estimate the probability of the precondition of action $a$ being satisfied in non-violating $s$ during PSDD training.

By continuously rolling out the DRL agent in the environment, we store $(s,a,s^{\prime},y)$ into a buffer $\mathcal{D}$ . After collecting sufficient data, we sample batches from $\mathcal{D}$ and update $g$ via stochastic gradient descent SDG; ADAM. Concretely, the update proceeds as follows. Given samples $(s,a,s^{\prime},y)$ , we first use the current PSDD to estimate the probability that action $a$ is explorable in state $s$ , i.e., the probability that $s$ satisfies the precondition $\varphi$ associated with $a$ in $\mathcal{AP}$ :

$$

\hat{P}(a|s)=\sum_{\bm{m}\models\varphi}Pr(\bm{m}|\bm{\Theta}=g(s),\bm{m}\models\phi) \tag{2}

$$

Note that $\hat{P}(a|s)$ here does not represent a policy as in standard DRL; rather, it denotes the estimated probability that action $a$ is explorable in state $s$. As shown in Equation (2), this probability is calculated by aggregating the probabilities of all models $\bm{m}$ that satisfy the precondition $\varphi$. In addition, to evaluate whether $\bm{m}\models\varphi$, we assign truth values to the leaf variables of $\varphi$'s SDD circuit based on $\bm{m}$ and propagate them bottom-up through the 'OR' and 'AND' gates; the Boolean value at the root indicates whether $\bm{m}$ satisfies $\varphi$.
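To make the sum in Equation (2) concrete, the sketch below enumerates a small, hand-written distribution over models. Enumeration is used only for readability, and the distribution, the variable order $(p_1,p_2)$, and the precondition are illustrative assumptions.

```python
from typing import Callable, Dict, Tuple

Model = Tuple[bool, ...]


def explorable_probability(model_probs: Dict[Model, float],
                           precondition: Callable[[Model], bool]) -> float:
    """Sum Pr(m) over all models m that satisfy the action's precondition."""
    return sum(p for m, p in model_probs.items() if precondition(m))


# Hypothetical distribution over models (p1, p2) satisfying phi = not (p1 and p2);
# the violating model (True, True) carries zero mass and is simply omitted.
model_probs = {
    (True, False): 0.30,
    (False, True): 0.28,
    (False, False): 0.42,
}

# Hypothetical precondition of some action a: "p1 is false".
p_hat = explorable_probability(model_probs, lambda m: not m[0])
```

Here `p_hat` adds the mass of the two models in which $p_1$ is false, giving approximately 0.70.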

Given the probability estimated from Equation (2), we compute the cross-entropy loss CROSSENTR by comparing it with the explorability label $y$. Specifically, for a single sample $(s,a,s^{\prime},y)$, the loss is:

$$

L_{g}=-\left[y\cdot\log(\hat{P}(a|s))+(1-y)\cdot\log(1-\hat{P}(a|s))\right] \tag{3}

$$

The intuition behind this loss is straightforward: at each $s$ it encourages the PSDD to assign higher probability to actions that are explorable (when $y=1$) and lower probability to those that are not explorable (when $y=0$).
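A direct transcription of Equation (3) for a single sample might look as follows. The clamping constant `eps` is an added assumption to avoid $\log 0$; it does not appear in the source.

```python
import math


def gating_loss(p_hat: float, y: int, eps: float = 1e-7) -> float:
    """Binary cross-entropy between the estimate P_hat(a|s) and the label y."""
    p = min(max(p_hat, eps), 1.0 - eps)  # assumed numerical safeguard
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))


# An explorable action (y=1) is penalized more when its estimate is low.
low_estimate = gating_loss(0.1, 1)
high_estimate = gating_loss(0.9, 1)
```

In an actual implementation the gradient of this quantity would be propagated through the PSDD into the gating network $g$; that step requires an automatic differentiation library and is not shown.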

## 4. Combining symbolic reasoning with gradient-based DRL

Through the training of the gating function defined in Equation (3), the PSDD in Figure 3 can predict, for a given DRL state, a distribution over the symbolic model $\bm{m}$ for atomic propositions in $\mathcal{P}$. This distribution can then be used to evaluate the truth values of the preconditions in $\mathcal{AP}$ and to reason about the explorability of actions. However, directly applying the symbolic logical formulas of preconditions to take actions results in non-differentiable decision-making sg2_1, which prevents gradient flow during policy optimization. This raises the key challenge of integrating symbolic reasoning with gradient-based DRL training in a way that preserves differentiability, i.e., problem (P4) in Section 2.

To address this issue, we employ the PSDD to perform a maximum a posteriori (MAP) query PCbooks, obtaining the most likely model $\hat{\bm{m}}$ for the current state. Based on $\hat{\bm{m}}$ and the precondition $\varphi$ of each action $a$, we re-normalize the action probabilities from a policy network. In this way, the learned symbolic representation from the PSDD can be used to constrain action selection, while the underlying policy network still provides a probability distribution that can be updated through gradient-based optimization.

Concretely, before the DRL agent makes a decision, we first use the PSDD to obtain the most likely model describing the state:

$$

\hat{\bm{m}}=\arg\max_{\bm{m}}Pr(\bm{m}|\bm{\Theta}=g(s),\bm{m}\models\phi) \tag{4}

$$

Importantly, the argmax operation on the PSDD does not require enumerating all possible $\bm{m}$. Instead, it can be computed in linear time with respect to the PSDD size by exploiting its structural properties of decomposability and determinism (see psdd_infer1). This linear-time inference makes PSDDs particularly attractive for DRL, where efficient evaluation of candidate actions is essential anokhinhandling.
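The idea behind the linear-time query can be illustrated with a max-product pass over a toy circuit: every OR gate keeps its best input instead of summing over inputs. The circuit (for the constraint $\neg(A\wedge B)$), its parameters, and the class names are hypothetical; this is a sketch of the general technique, not the authors' implementation.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple, Union


@dataclass
class Lit:
    var: str
    positive: bool


@dataclass
class Top:
    var: str
    theta: float  # Pr(var = True)


@dataclass
class Decision:
    elements: List[Tuple["Node", "Node", float]]  # (prime, sub, alpha)


Node = Union[Lit, Top, Decision]


def map_query(node: Node) -> Tuple[float, Dict[str, bool]]:
    """Return (probability, assignment) of the most likely model below node."""
    if isinstance(node, Lit):
        return 1.0, {node.var: node.positive}
    if isinstance(node, Top):
        value = node.theta >= 0.5
        return (node.theta if value else 1.0 - node.theta), {node.var: value}
    best_p, best_m = -1.0, {}
    for prime, sub, alpha in node.elements:
        # Decomposability: prime and sub mention disjoint variables,
        # so their best assignments can be found independently and merged.
        p_prime, m_prime = map_query(prime)
        p_sub, m_sub = map_query(sub)
        p = alpha * p_prime * p_sub
        # Determinism: each model follows one input, so max replaces sum.
        if p > best_p:
            best_p, best_m = p, {**m_prime, **m_sub}
    return best_p, best_m


root = Decision([
    (Lit("A", True), Lit("B", False), 0.3),
    (Lit("A", False), Top("B", 0.4), 0.7),
])
p_best, m_hat = map_query(root)
```

Each node is visited once in this tree-shaped example; a circuit with shared sub-circuits would need memoization to keep the pass linear in its size.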

After obtaining the symbolic model of the state, we renormalize the probability of each action $a$ according to its precondition $\varphi$ :

$$

\pi^{+}(s,a,\phi)=\frac{\pi(s,a)\cdot C_{\varphi}(\hat{\bm{m}})}{\sum_{a^{\prime}\in\mathcal{A}}\pi(s,a^{\prime})\cdot C_{\varphi^{\prime}}(\hat{\bm{m}})} \tag{5}

$$

where $\pi(s,a)$ denotes the probability of action $a$ at state $s$ predicted by the policy network, $C_{\varphi}(\hat{\bm{m}})$ is the evaluation of the SDD encoding of $\varphi$ under the model $\hat{\bm{m}}$, and $\varphi^{\prime}$ is the precondition of action $a^{\prime}$. The input of Equation (5) explicitly includes $\phi$, as $\phi$ is required for computing the model $\hat{\bm{m}}$ in Equation (4). Intuitively, $C_{\varphi}(\hat{\bm{m}})$ acts as a symbolic mask: it equals 1 if $\hat{\bm{m}}\models\varphi$ (i.e., the precondition is satisfied) and 0 otherwise. As a result, actions whose preconditions are violated are excluded from selection, while the probabilities of the remaining actions are renormalized into a new distribution. It is important to note that during execution, we use the PSDD (trained with $y$ via Equations (2) and (3)) to infer the most probable symbolic model of the current state (Equation (4)), and can therefore formally verify whether each action's precondition is satisfied under this symbolic model (via $C_{\varphi}$ in Equation (5)).
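Equation (5) amounts to an element-wise product followed by a renormalization, as in the sketch below. The source does not say what happens when every action is masked, so raising an error in that case is an assumption, as are the function name and the example numbers.

```python
from typing import List, Sequence


def mask_and_renormalize(policy_probs: Sequence[float],
                         mask: Sequence[int]) -> List[float]:
    """Apply a 0-1 mask C_phi(m_hat) to pi(s, .) and renormalize."""
    weighted = [p * c for p, c in zip(policy_probs, mask)]
    total = sum(weighted)
    if total == 0.0:
        # Assumed behaviour: the all-masked case is not covered by Equation (5).
        raise ValueError("every action is masked in this state")
    return [w / total for w in weighted]


# Hypothetical three-action policy where the second precondition fails.
masked = mask_and_renormalize([0.5, 0.3, 0.2], [1, 0, 1])
```

The masked action receives probability 0, and the two remaining actions keep their 5:2 ratio while summing to 1.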

According to prior work, such $0$ - $1$ masking and renormalization still yield a valid policy gradient, thereby preserving the theoretical guarantees of policy optimization actionmasking_vPG. In practice, we optimize the masked policy $\pi^{+}$ using the Proximal Policy Optimization (PPO) objective schulman2017ppo. Concretely, the loss is:

$$

\mathcal{L}_{\text{PPO}}(\pi^{+})=\mathbb{E}_{t}\!\left[\min\!\Big(\mathfrak{r}_{t}(\pi^{+})\,\hat{A}_{t},\text{clip}(\mathfrak{r}_{t}(\pi^{+}),1-\epsilon,1+\epsilon)\,\hat{A}_{t}\Big)\right] \tag{6}

$$

where $\mathfrak{r}_{t}(\pi^{+})$ denotes the probability ratio between the new and old masked policies, ‘ clip ’ is the clip function and $\hat{A}_{t}$ is the advantage estimate schulman2017ppo. In this way, the masked policy can be trained with PPO to effectively exploit symbolic action preconditions, leading to safer and more sample-efficient learning.
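The clipped term in Equation (6) can be computed per time step as sketched below, using plain Python lists instead of tensors. The function name, the default $\epsilon=0.2$, and the example numbers are assumptions for illustration.

```python
from typing import Sequence


def ppo_clip_objective(new_probs: Sequence[float],
                       old_probs: Sequence[float],
                       advantages: Sequence[float],
                       epsilon: float = 0.2) -> float:
    """Average clipped surrogate over a batch of (masked) action probabilities."""
    terms = []
    for p_new, p_old, adv in zip(new_probs, old_probs, advantages):
        ratio = p_new / p_old  # r_t(pi+), both taken from the masked policy
        clipped = min(max(ratio, 1.0 - epsilon), 1.0 + epsilon)
        terms.append(min(ratio * adv, clipped * adv))
    return sum(terms) / len(terms)


# Two hypothetical steps: ratio 1.5 with positive advantage,
# and ratio 0.5 with negative advantage.
value = ppo_clip_objective([0.6, 0.2], [0.4, 0.4], [1.0, -1.0])
```

In standard PPO this surrogate is maximized, so implementations that minimize a loss typically negate it before the gradient step.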

## 5. End-to-end training framework

After deriving the gating function loss of PSDD in Equation (3) and the DRL policy loss in Equation (6), we now introduce an end-to-end training framework that combines the two components.

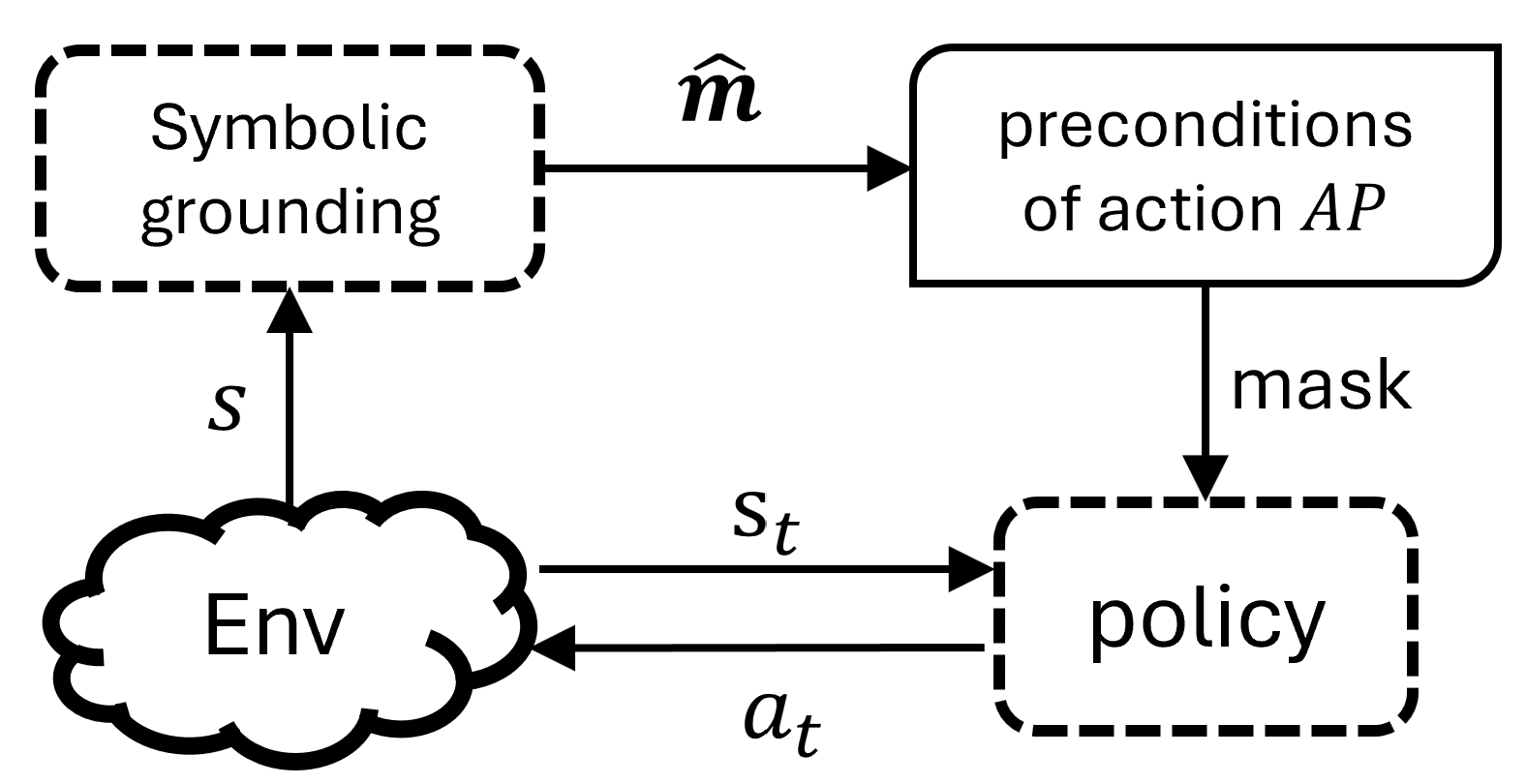

Before presenting the training procedure, we first summarize how the agent makes decisions, as illustrated in Figure 4. At each time step, the state $s$ is first input into the symbolic grounding module, whose internal structure is shown in Figure 3. Within this module, the PSDD produces the most probable symbolic description of the state, i.e., a model $\hat{\bm{m}}$, according to Equation (4). The agent then leverages the preconditions in $\mathcal{AP}$ (following Equation (5)) to mask the action distribution from the policy network, and samples an action from the renormalized distribution to interact with the environment.

<details>

<summary>probSetting.png Details</summary>

### Visual Description

## Diagram: Symbolic Grounding and Policy Interaction

### Overview

The image is a diagram illustrating the interaction between symbolic grounding, preconditions of an action, an environment, and a policy. It depicts a flow of information and control between these components.

### Components/Axes

* **Symbolic grounding:** A dashed-line rectangle at the top-left.

* **preconditions of action AP:** A solid-line rectangle with rounded corners at the top-right.

* **Env:** A cloud-shaped object at the bottom-left.

* **policy:** A dashed-line rectangle at the bottom-right.

* **Arrows:** Indicate the direction of information flow.

* An arrow labeled "$\hat{m}$" points from "Symbolic grounding" to "preconditions of action AP".

* An arrow labeled "s" points from "Env" to "Symbolic grounding".

* An arrow labeled "mask" points from "preconditions of action AP" to "policy".

* An arrow labeled "$s_t$" points from "Env" to "policy".

* An arrow labeled "$a_t$" points from "policy" to "Env".

### Detailed Analysis or Content Details

The diagram shows the following relationships:

1. Symbolic grounding provides information ($\hat{m}$) to the preconditions of an action AP.

2. The environment (Env) provides state information (s) to symbolic grounding.

3. The preconditions of action AP provide a mask to the policy.

4. The environment (Env) provides state information ($s_t$) to the policy.

5. The policy outputs an action ($a_t$) that affects the environment (Env).

### Key Observations

* The diagram represents a closed-loop system.

* Symbolic grounding and preconditions of action AP are at a higher level of abstraction compared to the environment and policy.

* The policy interacts directly with the environment.

### Interpretation

The diagram illustrates a system where symbolic knowledge (grounding and preconditions) influences a policy's behavior within an environment. The symbolic grounding provides a high-level understanding of the environment, which is used to define the preconditions for actions. The policy then uses these preconditions, along with the current state of the environment, to select an action. The action, in turn, affects the environment, creating a feedback loop. The "mask" suggests that the preconditions filter or constrain the policy's actions. The diagram suggests a hierarchical control structure where symbolic reasoning guides the policy's decision-making process.

</details>

Figure 4. An illustration of the decision process of our agent, where the symbolic grounding module is as in Figure 3 and $\hat{\bm{m}}$ is calculated via the PSDD by Equation (4).


Algorithm 1 Training framework.

1: Compile $\phi$ as SDD to obtain structure of PSDD

2: Initialize gating network $g$ according to the structure of PSDD

3: Initialize policy network $\pi$ , total step $T\leftarrow 0$

4: Initialize a data buffer $\mathcal{D}$ for learning PSDD

5: for $Episode=1\to M$ do

6: Reset $Env$ and get $s$

7: while not terminal do

8: Calculate action distribution before masking $\pi(s,a)$

9: Calculate $\Theta=g(s)$ and assign parameter $\Theta$ to PSDD

10: Calculate $\hat{\bm{m}}$ in Equation (4)

11: Calculate action distribution after masking $\pi^{+}(s,a,\phi)$

12: Sample an action $a$ from $\pi^{+}(s,a,\phi)$

13: Execute $a$ and get $r$ , $s^{\prime}$ from $Env$

14: Obtain the truth-value $y$ according to Equation (1)

15: Store $(s,a,s^{\prime},y)$ into $\mathcal{D}$

16: if terminal then

17: Update policy $\pi^{+}(s,a,\phi)$ using the trajectory of this episode with Equation (6)

18: end if

19: if $(T+1) \bmod freq_{g} = 0$ then

20: Sample batches from $\mathcal{D}$

21: Update gating function $g$ with Equation (3)

22: end if

23: $s\leftarrow s^{\prime}$ , $T\leftarrow T+1$

24: end while

25: end for

To achieve end-to-end training, we propose Algorithm 1. The key idea for this training framework is to periodically update the gating function of the PSDD during the agent’s interaction with the environment, while simultaneously training the policy network under the guidance of action masks. As the RL agent explores, it continuously generates minimally supervised feedback for the PSDD via $\Gamma_{\phi}$ , thereby improving the quality of the learned action masks. In turn, the improved action masking reduces infeasible actions and guides the agent toward higher rewards and more informative trajectories, which accelerates policy learning.

Concretely, before the start of training, the domain constraint $\phi$ is compiled into an SDD in Line 1, which determines both the structure of the PSDD and the output dimensionality of the gating function. Lines 2 $\sim$ 4 initialize the gating function, the RL policy network, and a replay buffer $\mathcal{D}$ that stores minimally supervised feedback for PSDD training. In Lines 5 $\sim$ 25, the agent interacts with the environment and jointly learns the gating function and policy network. At each step (Lines 8 $\sim$ 11), the agent computes the masked action distribution based on the current gating function and policy network, which is crucial to minimizing the selection of infeasible actions during training. At the end of each episode (Lines 16 $\sim$ 18), the policy network is updated using the trajectory of this episode, while the gating function is kept frozen. In addition, the gating function is periodically updated (Lines 19 $\sim$ 22) with frequency $freq_{g}$. This periodic update enables the PSDD to provide increasingly accurate action masks in subsequent interactions, which simultaneously improves policy optimization and reduces constraint violations.
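The control flow described above can be sketched as a plain Python loop in which every learning component is passed in as a callable. All argument names are hypothetical placeholders, the fallback for a fully masked state is an assumption, and the toy environment at the end exists only to make the snippet runnable.

```python
import random
from typing import Callable, List, Sequence, Tuple


def renormalize(probs: Sequence[float], mask: Sequence[int]) -> List[float]:
    weighted = [p * c for p, c in zip(probs, mask)]
    total = sum(weighted)
    # Assumption: fall back to the unmasked policy if every action is masked.
    return [w / total for w in weighted] if total > 0 else list(probs)


def train(env_reset: Callable, env_step: Callable, policy: Callable,
          mask_fn: Callable, violates: Callable, update_policy: Callable,
          update_gating: Callable, episodes: int, freq_g: int,
          batch_size: int, seed: int = 0) -> Tuple[list, int]:
    rng = random.Random(seed)
    buffer, t = [], 0
    for _ in range(episodes):
        s, done, trajectory = env_reset(), False, []
        while not done:
            # Masked action distribution from the policy and the grounded mask.
            masked = renormalize(policy(s), mask_fn(s))
            a = rng.choices(range(len(masked)), weights=masked)[0]
            s_next, r, done = env_step(s, a)
            # Minimal supervision label, as in Equation (1).
            y = 0 if violates(s) or violates(s_next) else 1
            buffer.append((s, a, s_next, y))
            trajectory.append((s, a, r))
            if done:
                update_policy(trajectory)      # once per episode
            if (t + 1) % freq_g == 0:
                k = min(batch_size, len(buffer))
                update_gating(rng.sample(buffer, k))  # every freq_g steps
            s, t = s_next, t + 1
    return buffer, t


# Toy usage: a counter environment that terminates after three steps.
counts = {"policy": 0, "gating": 0}
buffer, steps = train(
    env_reset=lambda: 0,
    env_step=lambda s, a: (s + 1, 1.0, s + 1 >= 3),
    policy=lambda s: [0.5, 0.5],
    mask_fn=lambda s: [1, 0],
    violates=lambda s: False,
    update_policy=lambda traj: counts.__setitem__("policy", counts["policy"] + 1),
    update_gating=lambda batch: counts.__setitem__("gating", counts["gating"] + 1),
    episodes=2, freq_g=2, batch_size=4,
)
```

With two episodes of three steps each and `freq_g=2`, the policy callback fires twice and the gating callback three times, which reflects the two different update schedules.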

## 6. Related work

Symbolic grounding in neuro-symbolic learning. In the literature on neuro-symbolic systems NSsys; NSsys2, symbol grounding refers to learning a mapping from raw data to symbolic representations, which is considered a key challenge for integrating neural networks with symbolic knowledge neuroRM. Various approaches have been proposed to address this challenge in deep supervised learning. Some approaches sg1_1; sg1_2; sg1_3 leverage logic abduction or consistency checking to periodically correct the output symbolic representations. To achieve end-to-end differentiable training, the most common methods embed symbolic knowledge into neural networks through differentiable relaxations of logic operators, such as fuzzy logic sg2_2 or Logic Tensor Networks (LTNs) sg2_1. These methods approximate logical operators with smooth functions, allowing symbolic supervision to be incorporated into gradient-based optimization sg2_2; sg2_3; sg2_4; neuroRM. More recently, advances in probabilistic circuits psdd_infer1; PCbooks have given rise to efficient methods that embed symbolic knowledge via differentiable probabilistic representations, such as PSDDs kisa2014probabilistic. In these methods, symbolic knowledge is first compiled into an SDD sdd to initialize the structure, after which a neural network is used to learn the parameters for predicting symbolic outputs ahmed2022semantic. This class of approaches has been successfully applied to structured output prediction tasks, including multi-label classification ahmed2022semantic and routing psdd_infer2.

Symbolic grounding is also crucial in DRL. NRM neuroRM learns to capture the symbolic structure of reward functions in non-Markovian reward settings. In contrast, our approach learns symbolic properties of states to constrain actions under Markovian reward settings. KCAC KCAC has extended PSDDs to MDPs with combinatorial action spaces, where symbolic knowledge is used to constrain action composition. Our work also uses PSDDs but differs from KCAC. We use preconditions of actions as symbolic knowledge to determine the explorability of each individual action in a standard DRL setting, whereas KCAC incorporates knowledge about valid combinations of actions in a DRL setting with combinatorial action spaces.

Action masking. In DRL, action masking refers to masking out invalid actions during training so that actions are sampled from a valid set actionmasking_vPG. Empirical studies in early real-world applications show that masking invalid actions can significantly improve the sample efficiency of DRL actionmasking_app1; actionmasking_app2; actionmasking_app3; actionmasking_app4; actionmasking_app5; actionmasking_app6. Following the systematic discussion of action masking in DRL actionmasking_review, actionmasking_onoffpolicy investigates the impact of action masking on both on-policy and off-policy algorithms. Works such as actionmasking_continous; actionmasking_continous2 extend action masking to continuous action spaces. actionmasking_vPG proves that binary action masking yields valid policy gradients during learning. In contrast to these approaches, our method does not assume that the set of invalid actions is predefined by the environment. Instead, we learn the set of invalid actions in each state for DRL using action precondition knowledge.

Another line of work employs a logical system (e.g., linear temporal logic LTL1985) to restrict the agent's actions shielding1; shielding2; shielding3; PPAM. These approaches require a predefined symbol grounding function to map states into their symbolic representations, whereas our method learns such a function (via PSDD) from data. PLPG PLPG learns the probability of applying shielding with action constraints formulated in probabilistic logics. By contrast, our preconditions are hard constraints expressed in propositional logic: if the precondition of an action is evaluated to be false, the action is strictly not explorable.

Cost-based safe reinforcement learning. In addition to action masking, a complementary approach is to jointly optimize rewards and a safety-related cost function to improve RL safety. In these cost-based settings, a policy is considered safe if its expected cumulative cost remains below a pre-specified threshold safeexp1; PPOlarg. A representative foundation of such cost-based approaches is the constrained Markov decision process (CMDP) framework CMDP1994, which aims to maximize expected reward while keeping costs below a threshold. Subsequent works often adopt Lagrangian relaxation to incorporate constraints into the optimization objective ppo-larg1; ppo-larg2; ppo-larg3; ppo-larg4; PPOlarg. However, these methods often suffer from unsafe behaviors in the early stages of training highvoil1. To address such issues, safe exploration approaches emphasize controlling the cost during exploration in unknown environments shielding1; shielding2. Recently, SaGui safeexp1 employed imitation learning and policy distillation to enable agents to acquire safe behaviors from a teacher agent during early training. RC-PPO RCPPO augmented unsafe states to allow the agent to anticipate potential future losses. While constraints can in principle be reformulated as cost functions, our approach does not rely on cost-based optimization. Instead, we directly exploit them to learn masks that avoid violations of action constraints.

## 7. Experiment

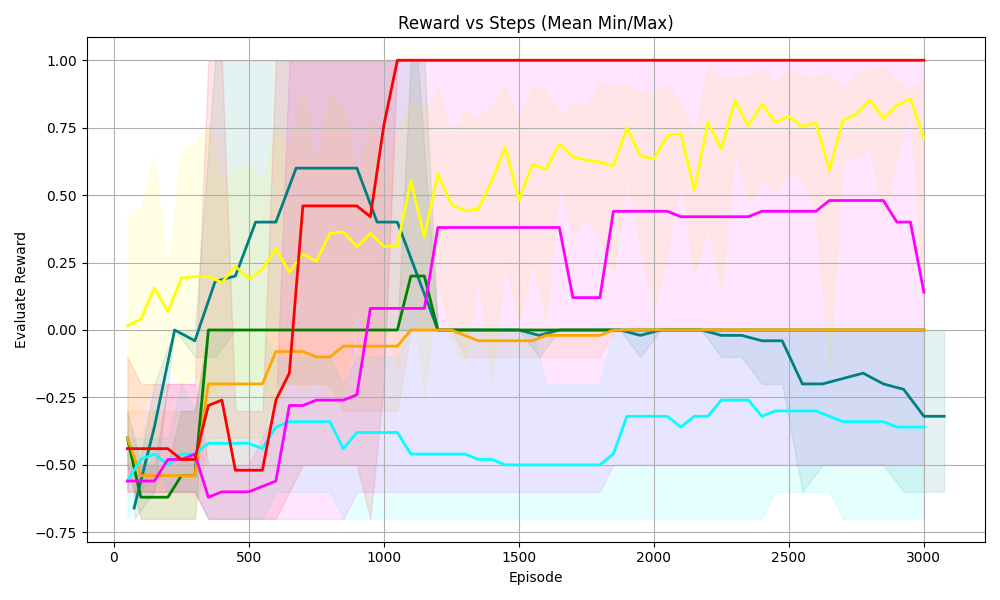

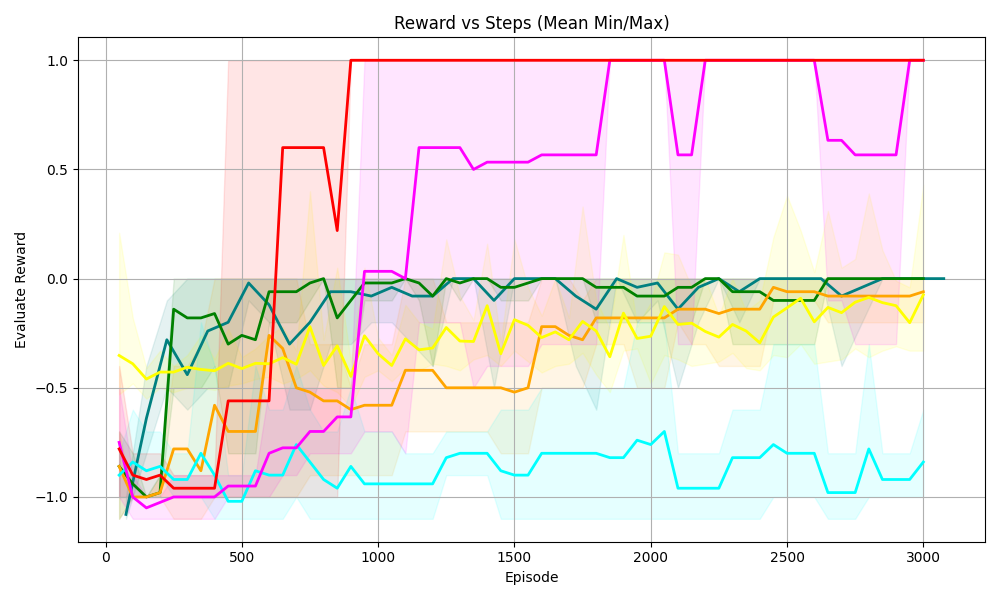

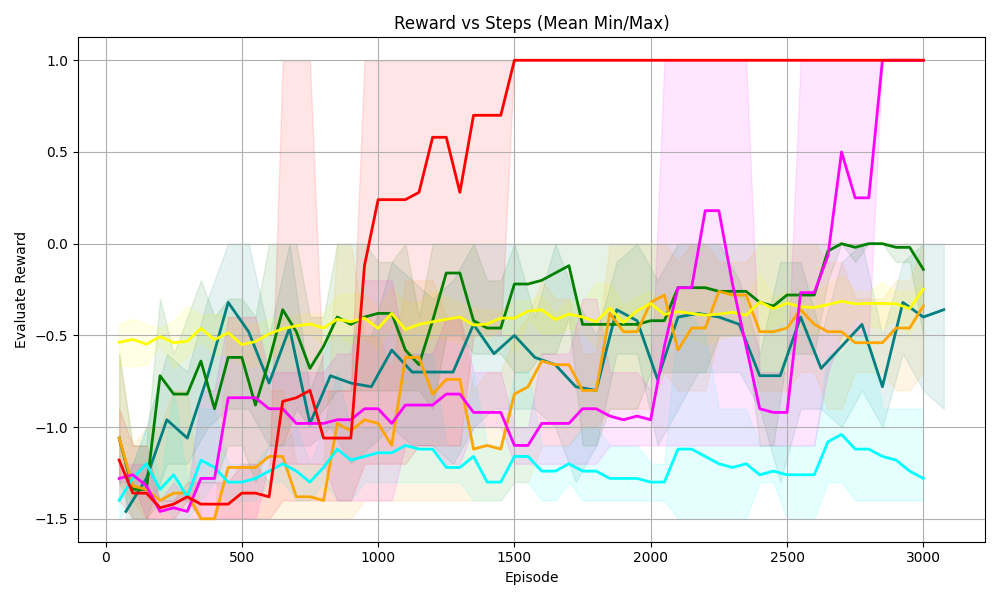

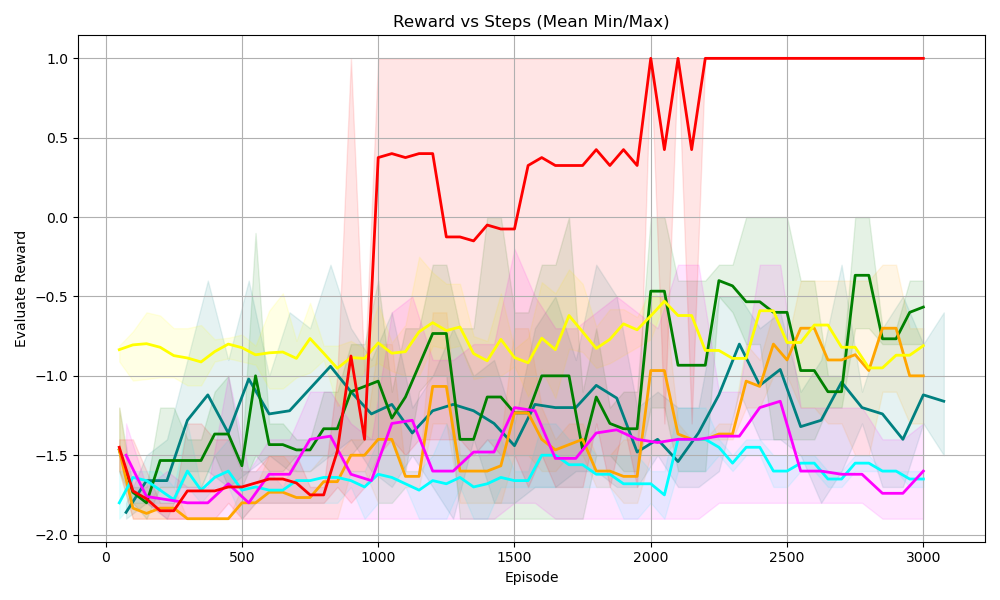

The experimental design aims to answer the following questions:

Q1: Without predefined symbolic grounding, can NSAM leverage symbolic knowledge to improve the sample efficiency of DRL?

Q2: By jointly learning symbolic grounding and masking strategies, can NSAM significantly reduce constraint violations during exploration, thereby enhancing safety?

Q3: In NSAM, is symbolic grounding with PSDDs more effective than replacing it with a module based on a standard neural network?

Q4: In what ways does symbolic knowledge contribute to the learning process of NSAM?

### 7.1. Environments

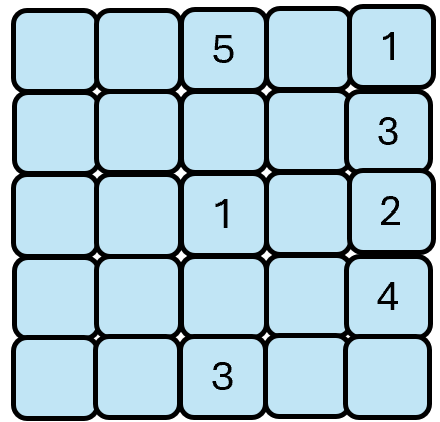

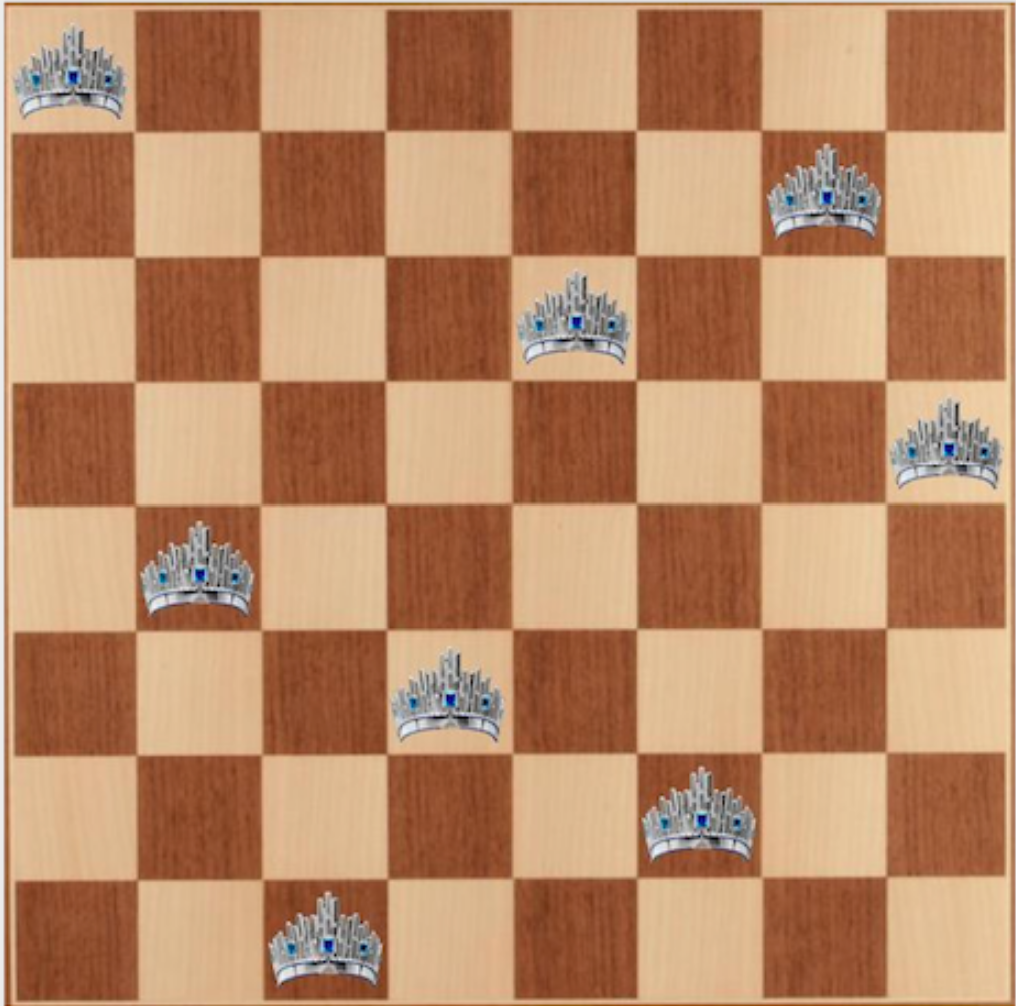

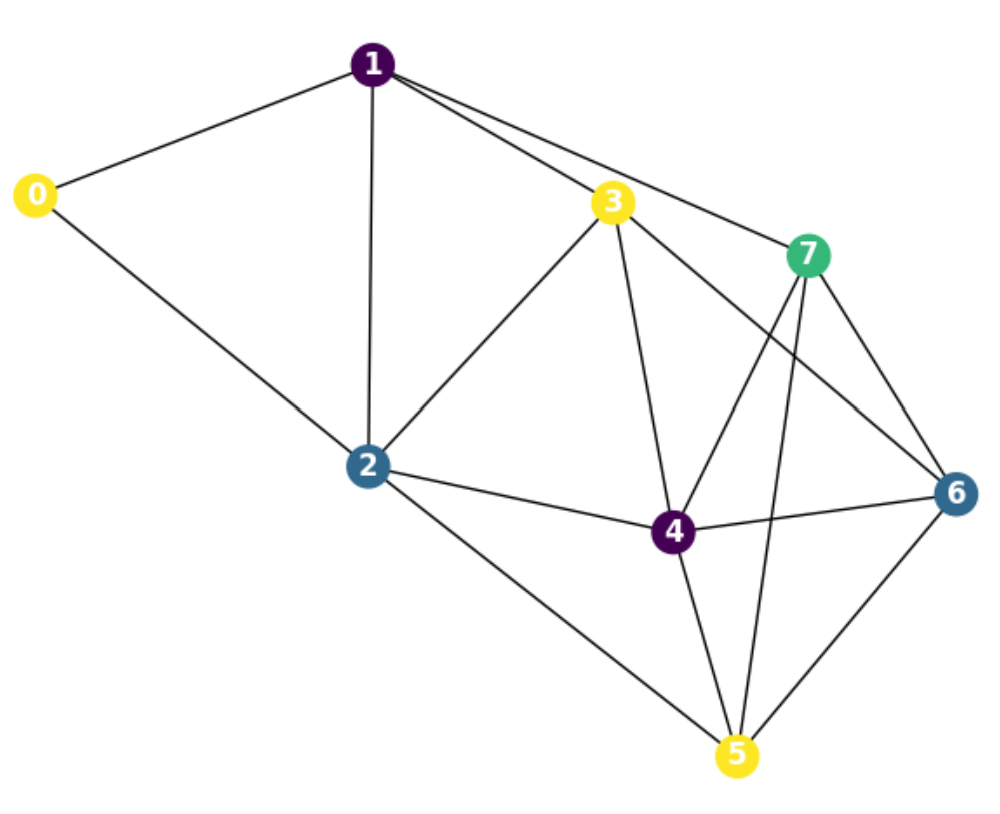

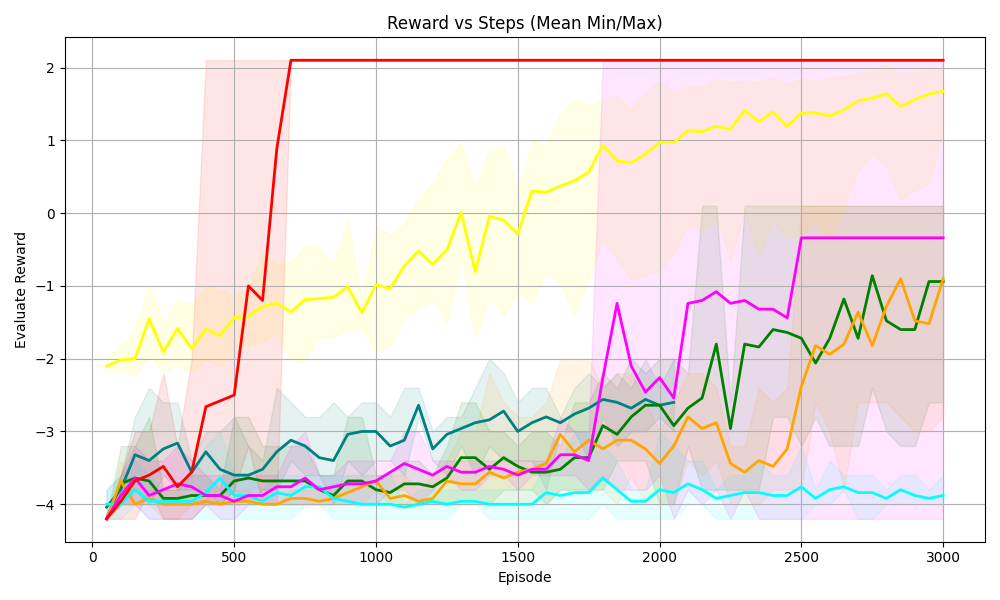

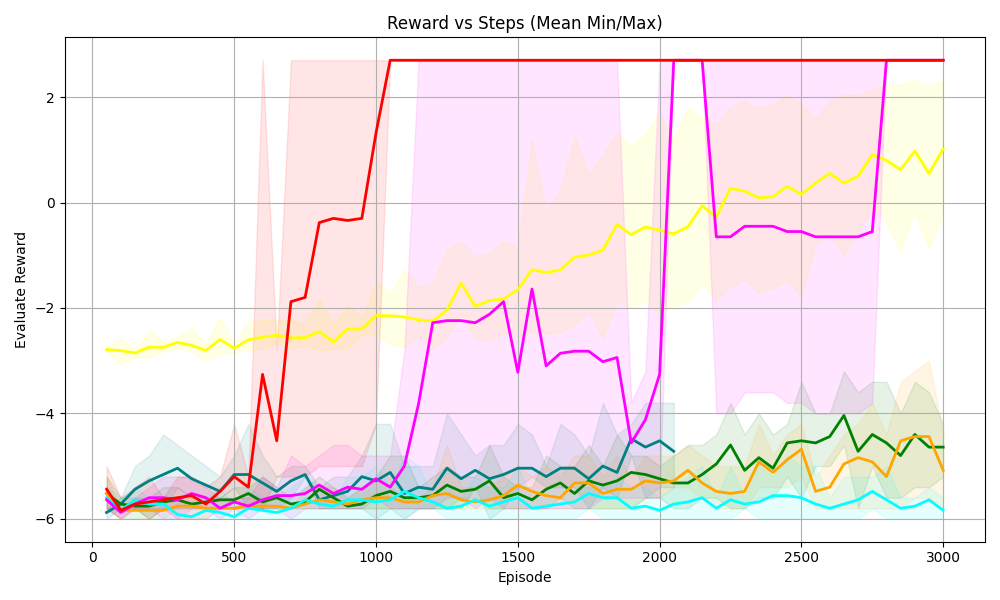

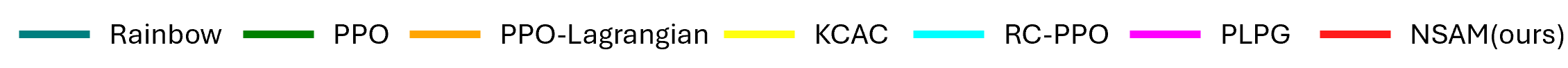

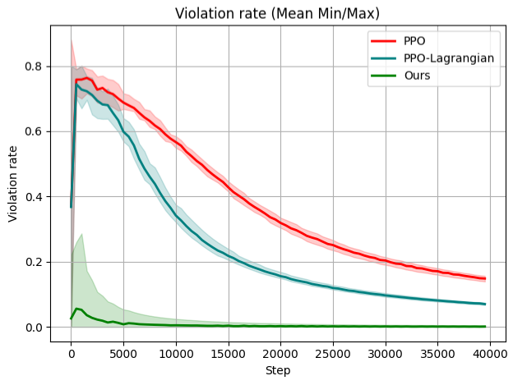

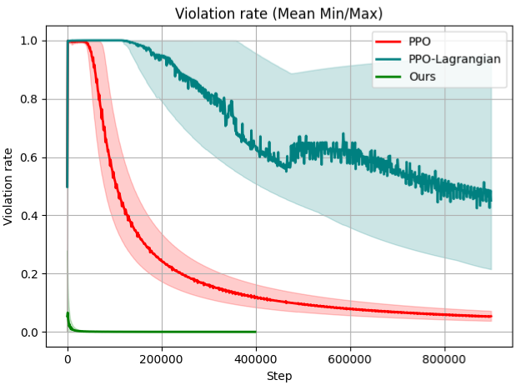

We evaluate NSAM on four highly challenging reinforcement learning domains with logical constraints, as shown in Figure 5. Across all these environments, agents receive inputs in the form of unknown representations such as vectors or images.

<details>

<summary>Sudoku.png Details</summary>

### Visual Description

## Grid Diagram: Numbered Cells

### Overview

The image is a 5x5 grid of rounded squares. Most squares are light blue and empty, but some contain a single digit number. The numbers present are 1, 2, 3, 4, and 5.

### Components/Axes

* **Grid:** 5 rows and 5 columns.

* **Cells:** Each cell is a rounded square with a light blue fill and a black border.

* **Numbers:** Digits 1 through 5 are present in some cells.

### Detailed Analysis

The grid is structured as follows:

| | Column 1 | Column 2 | Column 3 | Column 4 | Column 5 |
| :---- | :------- | :------- | :------- | :------- | :------- |
| Row 1 | | | 5 | | 1 |
| Row 2 | | | | | 3 |
| Row 3 | | | 1 | | 2 |
| Row 4 | | | | | 4 |
| Row 5 | | | 3 | | |

Specifically:

* The cell in Row 1, Column 3 contains the number 5.

* The cell in Row 1, Column 5 contains the number 1.

* The cell in Row 2, Column 5 contains the number 3.

* The cell in Row 3, Column 3 contains the number 1.

* The cell in Row 3, Column 5 contains the number 2.

* The cell in Row 4, Column 5 contains the number 4.

* The cell in Row 5, Column 3 contains the number 3.

### Key Observations

* The numbers are only present in Columns 3 and 5.

* Column 1, 2, and 4 are empty.

* The numbers in Column 5 are 1, 3, 2, 4 from top to bottom, with the last row empty.

* The numbers in Column 3 are 5, 1, 3 from top to bottom.

### Interpretation

The diagram appears to represent a sparse matrix or a visual representation of data points within a grid. The numbers could represent values, counts, or identifiers associated with specific locations in the grid. The pattern of numbered cells suggests a non-uniform distribution of data, with concentrations in Columns 3 and 5.

</details>

(a) Sudoku

<details>

<summary>nqueens.png Details</summary>

### Visual Description

## Chessboard Diagram: Crown Placement

### Overview

The image depicts a standard 8x8 chessboard with alternating light and dark squares. Seven crown icons are placed on various squares of the board. The crowns appear to be identical in design, featuring a silver or white base with blue jewel accents.

### Components/Axes

* **Chessboard:** An 8x8 grid with alternating light and dark squares. The light squares are a pale yellow or beige, while the dark squares are a medium brown.

* **Crown Icons:** Seven identical crown icons are placed on the board. The crowns are silver or white with blue jewels.

### Detailed Analysis

The crown icons are positioned on the following squares (using standard algebraic notation, assuming the bottom-left square is a1):

* **a8:** Top-left corner.

* **e8:** Top row, fifth column.

* **h6:** Sixth row, eighth column.

* **b4:** Fourth row, second column.

* **e4:** Fourth row, fifth column.

* **d2:** Second row, fourth column.

* **a1:** Bottom-left corner.

### Key Observations

* The crowns are not placed in any immediately obvious pattern.

* There are no crowns on the first or eighth columns, or the first or eighth rows, except for the corners.

* The crowns are scattered across the board.

### Interpretation

The image appears to be a visual puzzle or a representation of a specific game state or problem involving the placement of crowns on a chessboard. Without additional context, the significance of the crown positions is unclear. It could represent a strategic arrangement, a mathematical problem, or simply an artistic composition. The placement of the crowns in the corners and the scattering of the other crowns suggests a deliberate, but currently unknown, purpose.

</details>

(b) N-queens

<details>

<summary>coloringG.png Details</summary>

### Visual Description

## Graph Diagram: Network of Nodes

### Overview

The image depicts a graph diagram consisting of 8 nodes (labeled 0 through 7) connected by edges. The nodes are colored differently, and the edges represent relationships or connections between them.

### Components/Axes

* **Nodes:** 8 nodes, labeled 0, 1, 2, 3, 4, 5, 6, and 7. Each node is represented by a colored circle with a number inside.

* Node 0: Yellow

* Node 1: Purple

* Node 2: Dark Blue

* Node 3: Yellow

* Node 4: Purple

* Node 5: Yellow

* Node 6: Dark Blue

* Node 7: Green

* **Edges:** Black lines connecting the nodes, indicating relationships between them.

### Detailed Analysis

The graph shows the following connections:

* Node 0 (Yellow) is connected to Node 1 (Purple) and Node 2 (Dark Blue).

* Node 1 (Purple) is connected to Node 0 (Yellow), Node 2 (Dark Blue), and Node 3 (Yellow).

* Node 2 (Dark Blue) is connected to Node 0 (Yellow), Node 1 (Purple), Node 3 (Yellow), Node 4 (Purple), and Node 5 (Yellow).

* Node 3 (Yellow) is connected to Node 1 (Purple), Node 2 (Dark Blue), Node 4 (Purple), and Node 7 (Green).

* Node 4 (Purple) is connected to Node 2 (Dark Blue), Node 3 (Yellow), Node 5 (Yellow), Node 6 (Dark Blue), and Node 7 (Green).

* Node 5 (Yellow) is connected to Node 2 (Dark Blue), Node 4 (Purple), and Node 6 (Dark Blue).

* Node 6 (Dark Blue) is connected to Node 4 (Purple), Node 5 (Yellow), and Node 7 (Green).

* Node 7 (Green) is connected to Node 3 (Yellow), Node 4 (Purple), and Node 6 (Dark Blue).

### Key Observations

* Node 2 (Dark Blue) and Node 4 (Purple) have the most connections (5 edges each).

* Node 0 (Yellow) has the fewest connections (2 edges).

* The graph appears to be undirected, meaning the edges do not have a specific direction.

* Nodes 3, 4, 6, and 7 form a highly interconnected cluster.

### Interpretation

The graph diagram represents a network where nodes are interconnected based on certain relationships. The different colors assigned to the nodes could represent different categories or attributes. The connections between nodes indicate interactions or dependencies. The density of connections varies across the graph, with some nodes being more central or influential than others. The diagram could be used to visualize social networks, communication networks, or any system where entities are related to each other.

</details>

(c) Graph coloring

<details>

<summary>sudokuV.png Details</summary>

### Visual Description

## Image: Handwritten Digits Grid

### Overview

The image shows a 5x5 grid of handwritten digits, ranging from 1 to 5. Each cell contains a single digit written in black ink on a white background. The digits vary in style and clarity.

### Components/Axes

* **Grid:** 5 rows and 5 columns.

* **Digits:** Handwritten numbers from 1 to 5.

### Detailed Analysis

The grid contains the following digits, read row by row:

* **Row 1:** 4, 3, 5, 2, 1

* **Row 2:** 2, 5, 4, 1, 3

* **Row 3:** 3, 1, 2, 4, 5

* **Row 4:** 1, 2, 3, 5, 4

* **Row 5:** 5, 4, 1, 3, 2

### Key Observations

* The digits are handwritten and vary in style.

* All digits from 1 to 5 are represented.

* Each digit from 1 to 5 appears exactly once in every row and every column, so the arrangement forms a valid 5x5 Latin square.

### Interpretation

The image likely represents a completed 5x5 Visual Sudoku board in which each cell is rendered as a handwritten digit. The variability in handwriting styles highlights the challenge of recognizing the digits from raw pixels. The arrangement is not random: no digit repeats within any row or column.

</details>

(d) Visual Sudoku

Figure 5. Four tasks with logical constraints

<details>

<summary>S33.png Details</summary>

### Visual Description

## Line Chart: Reward vs Steps (Mean Min/Max)

### Overview

The image is a line chart that plots the "Evaluate Reward" against "Episode" steps. It shows the mean, minimum, and maximum reward values for multiple data series, each represented by a different colored line. The chart includes a grid for easier reading and shaded regions indicating the min/max range for each series.

### Components/Axes

* **Title:** Reward vs Steps (Mean Min/Max)

* **X-axis:** Episode, with markers at 0, 250, 500, 750, 1000, 1250, 1500, 1750, and 2000.

* **Y-axis:** Evaluate Reward, with markers at -3, -2, -1, 0, and 1.

* **Data Series:** There are six distinct data series, each represented by a different color: red, yellow, teal, dark green, orange, and magenta. Each series has a solid line representing the mean reward and a shaded area around the line representing the min/max range.
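A chart of this kind (a mean line with a min/max band) is typically produced by aggregating several independent runs at each evaluation point. The sketch below illustrates that aggregation only; the reward values are made up for illustration and are not data from the paper, and the name `aggregate` is hypothetical.

```python
from statistics import mean

# Hypothetical evaluation rewards: runs[k][t] is the reward of run k at the
# t-th evaluation point. These numbers are invented for illustration only.
runs = [
    [-3.0, -1.0, 1.0, 1.0],
    [-2.5, -0.5, 1.0, 1.5],
    [-3.0, 0.0, 0.5, 1.0],
]


def aggregate(runs):
    """Return a (mean, min, max) tuple per evaluation point across runs."""
    columns = list(zip(*runs))  # transpose: one tuple of rewards per point
    return [(mean(col), min(col), max(col)) for col in columns]


bands = aggregate(runs)
for t, (m, lo, hi) in enumerate(bands):
    print(f"point {t}: mean={m:.2f} min={lo:.2f} max={hi:.2f}")
```

When plotting, the mean would be drawn as the solid line and the region between the min and max values shaded, for example with matplotlib's `fill_between`.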

### Detailed Analysis

**Red Series:**

* **Trend:** The red line increases sharply from approximately -2.75 to 1.25 between episode 0 and 250, then remains constant at approximately 1.25 until episode 2000.

* **Min/Max Range:** The shaded area is relatively narrow, indicating a small variance.

**Yellow Series:**

* **Trend:** The yellow line starts at approximately -1 at episode 0 and fluctuates between -1 and 1.25 until episode 2000.

* **Min/Max Range:** The shaded area is wider than the red series, indicating a larger variance.

**Teal Series:**

* **Trend:** The teal line starts at approximately -2.75 at episode 0, increases to approximately -2.25 by episode 250, and then remains relatively constant between -2.25 and -2.5 until episode 2000.

* **Min/Max Range:** The shaded area is relatively narrow, indicating a small variance.

**Dark Green Series:**

* **Trend:** The dark green line starts at approximately -2.75 at episode 0, increases to approximately -1.75 by episode 500, fluctuates between -2 and -1 until episode 1250, and then remains relatively constant between -1.25 and -1.5 until episode 2000.

* **Min/Max Range:** The shaded area is wider than the red series, indicating a larger variance.

**Orange Series:**

* **Trend:** The orange line starts at approximately -2.75 at episode 0, increases to approximately -2.5 by episode 250, increases to approximately -2 by episode 500, increases to approximately -1.75 by episode 750, increases to approximately -0.5 by episode 1000, and then remains relatively constant between -0.5 and -0.25 until episode 2000.

* **Min/Max Range:** The shaded area is wider than the red series, indicating a larger variance.

**Magenta Series:**

* **Trend:** The magenta line starts at approximately -2.75 at episode 0, increases to approximately -2.5 by episode 250, increases to approximately -1.75 by episode 500, and then remains relatively constant between -1.75 and -1.5 until episode 2000.

* **Min/Max Range:** The shaded area is wider than the red series, indicating a larger variance.

### Key Observations

* The red series shows the most rapid and stable increase in reward, reaching a high plateau early on.

* The yellow series exhibits the most fluctuation in reward.

* The teal series shows the least improvement in reward over the episodes.

* The other series (dark green, orange, and magenta) show gradual improvements in reward over time.

* The min/max ranges vary across the series, indicating different levels of stability or variance in the reward values.

### Interpretation

The chart compares the performance of different agents or algorithms (represented by the different colored lines) in terms of reward earned over a series of episodes. The red series demonstrates the most successful and stable learning, quickly achieving a high reward and maintaining it. The yellow series, on the other hand, shows inconsistent performance. The other series show varying degrees of learning progress. The shaded areas provide insight into the consistency of the reward for each agent, with wider areas indicating more variability. The data suggests that the red agent/algorithm is the most effective in this environment, while the yellow agent/algorithm may require further tuning or a different approach.

</details>

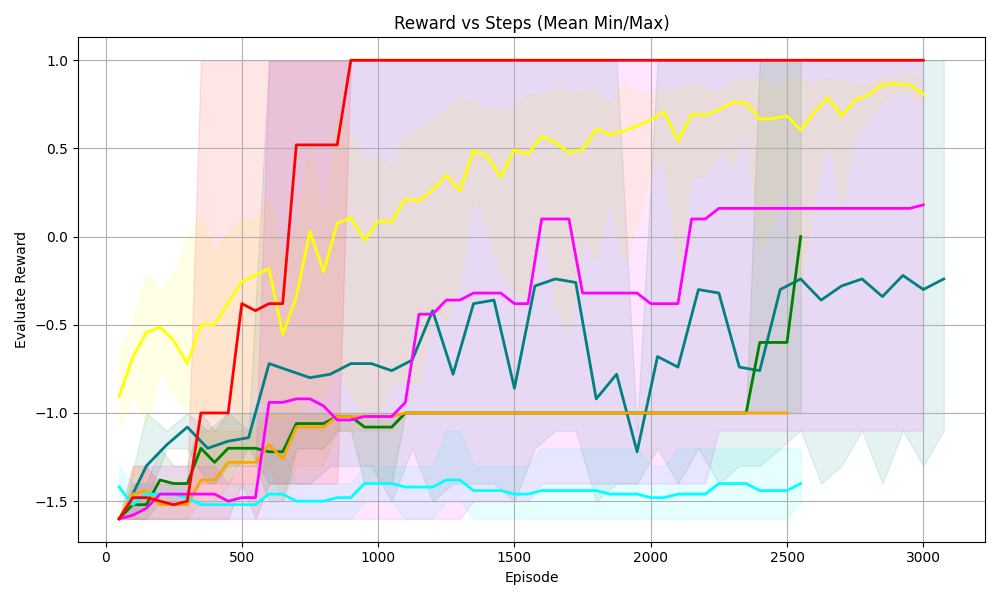

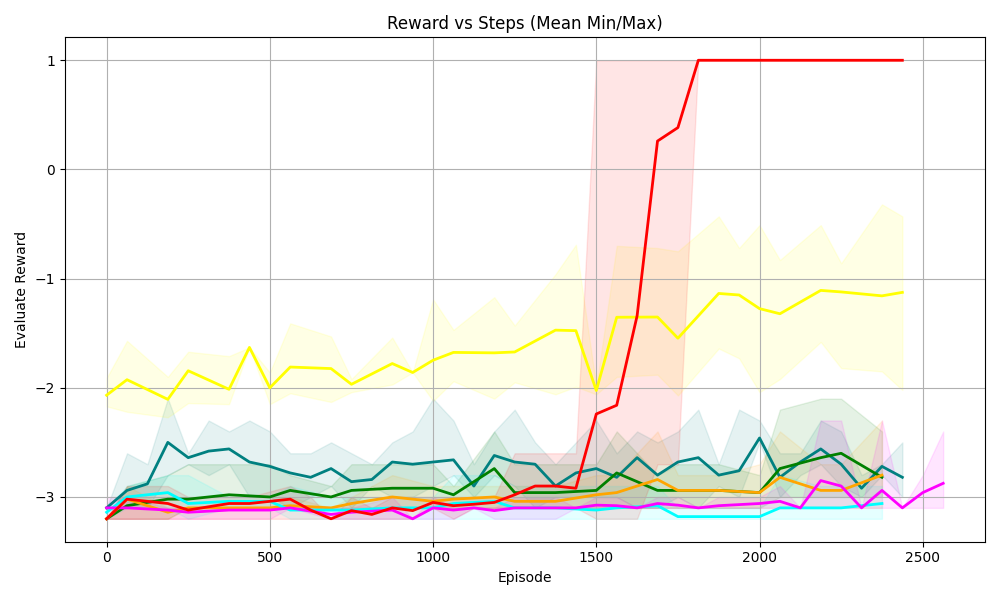

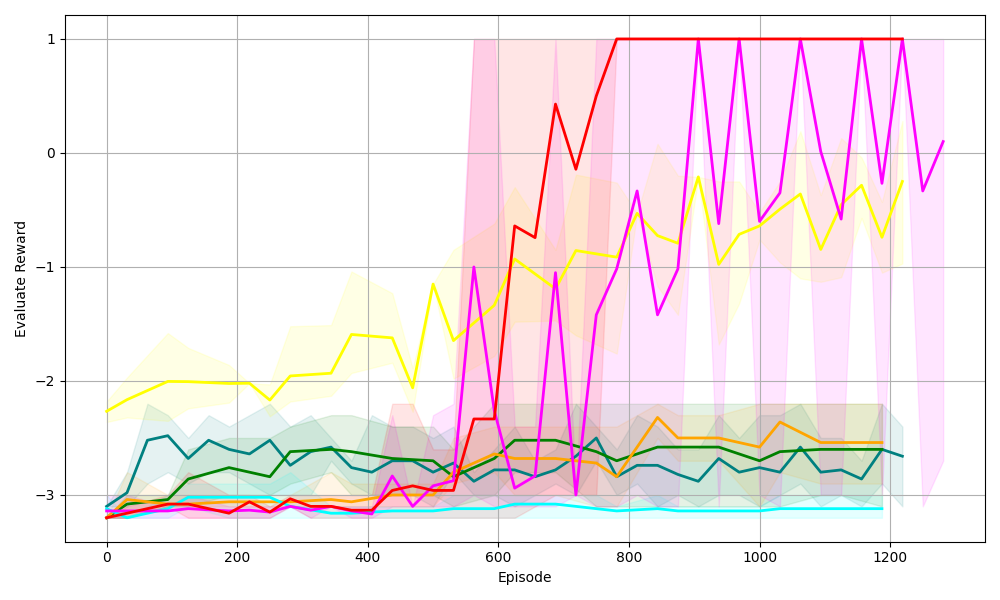

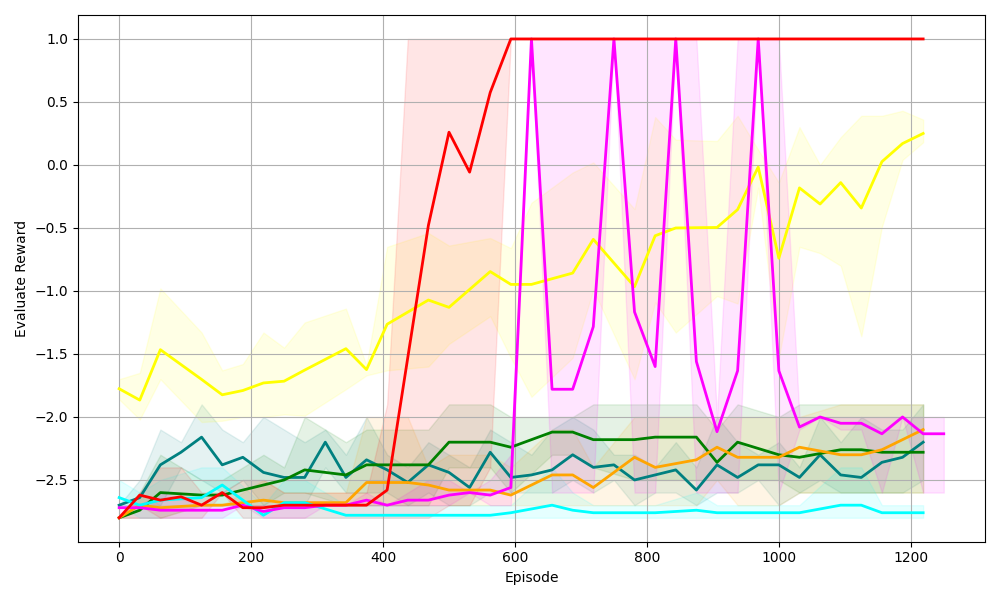

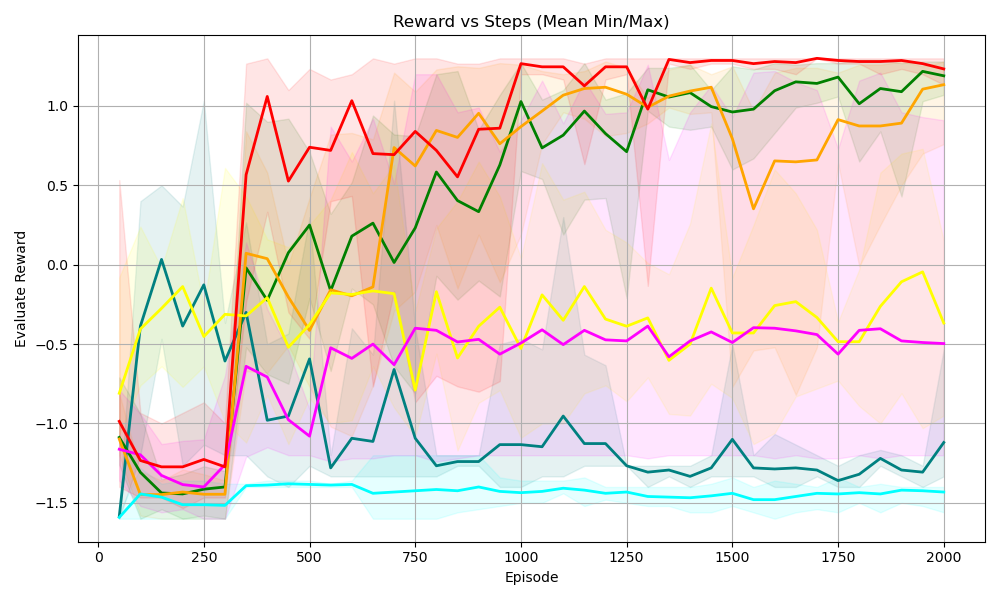

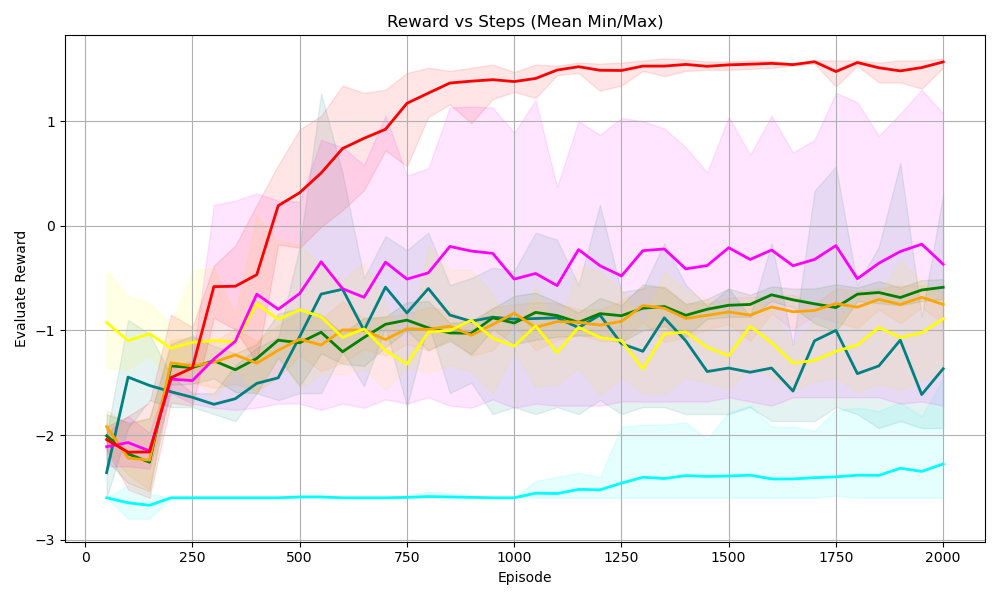

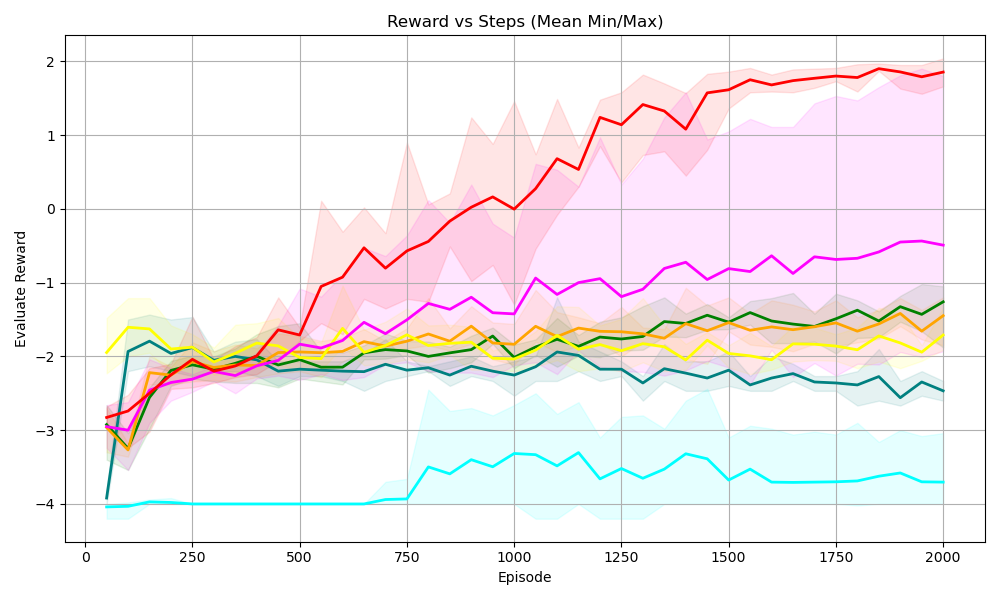

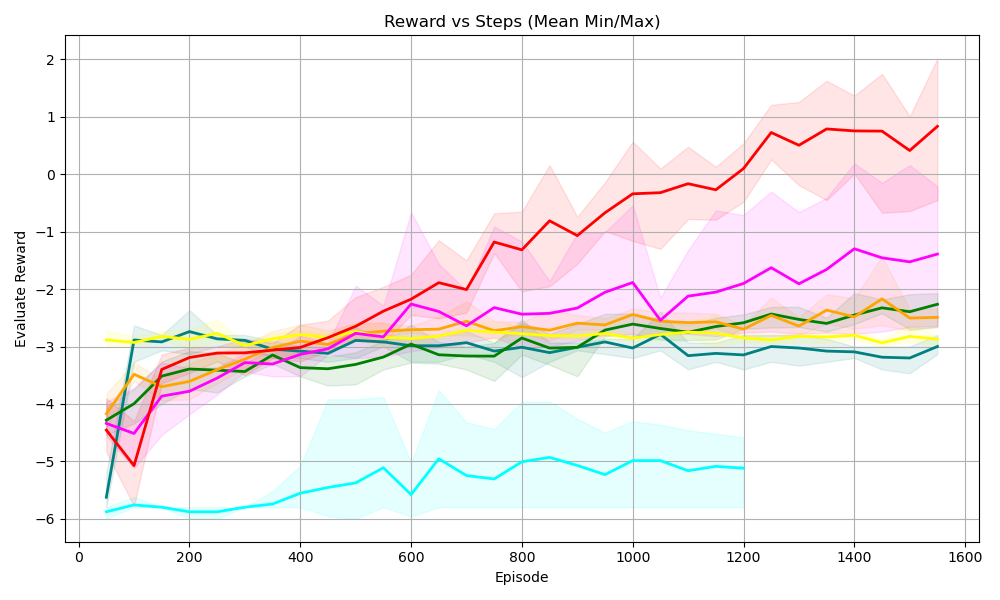

(a) Sudoku 3 $\times$ 3

<details>

<summary>S44.png Details</summary>

### Visual Description

## Chart: Reward vs Steps (Mean Min/Max)

### Overview

The image is a line chart displaying the "Evaluate Reward" versus "Episode" (steps). There are six lines, each representing a different algorithm or configuration. Each line is surrounded by a shaded region indicating the min/max range of the reward.

### Components/Axes

* **Title:** Reward vs Steps (Mean Min/Max)

* **X-axis:** Episode, ranging from 0 to 3000 in increments of 500.

* **Y-axis:** Evaluate Reward, ranging from -4 to 2 in increments of 1.

* **Grid:** Present in the background.

* **Data Series:** Multiple lines with different colors, each representing a different algorithm or configuration.

  * Red: Starts at approximately -4, increases sharply to approximately 2 by episode 750, and then remains constant at approximately 2.

  * Yellow: Starts at approximately -2, gradually increases to approximately 1.8 by episode 3000.

  * Green: Starts at approximately -4, fluctuates and gradually increases to approximately -1 by episode 3000.

  * Orange: Starts at approximately -4, fluctuates and gradually increases to approximately -1 by episode 3000.

  * Magenta: Starts at approximately -4, fluctuates, increases to approximately 0 by episode 2500, and then remains constant.

  * Cyan: Starts at approximately -4, remains relatively constant at approximately -4.

* **Shaded Regions:** Each of the six lines (red, yellow, green, orange, magenta, and cyan) has a shaded region around it, indicating the min/max range of the reward.

### Detailed Analysis

* **Red Line:** The red line shows a rapid increase in reward early in the training process, quickly reaching a plateau at a reward of approximately 2. This suggests a fast-learning algorithm.

* **Yellow Line:** The yellow line shows a more gradual increase in reward, eventually reaching a reward of approximately 1.8 by the end of the training process. This suggests a slower-learning algorithm.

* **Green Line:** The green line shows a fluctuating reward, with a gradual increase over time. This suggests an algorithm that is learning but experiencing some instability.

* **Orange Line:** The orange line shows a fluctuating reward, with a gradual increase over time. This suggests an algorithm that is learning but experiencing some instability.

* **Magenta Line:** The magenta line shows a fluctuating reward, with a gradual increase over time. This suggests an algorithm that is learning but experiencing some instability.

* **Cyan Line:** The cyan line shows a relatively constant reward, suggesting that the algorithm is not learning.

### Key Observations

* The red line shows the fastest learning and highest reward.

* The yellow line shows a slower learning but still achieves a high reward.

* The green, orange, and magenta lines show fluctuating rewards, suggesting instability.

* The cyan line shows no learning.

### Interpretation

The chart compares the performance of different algorithms or configurations in terms of reward versus episode. The red line represents the most successful algorithm, achieving a high reward quickly. The yellow line represents a slower-learning but still successful algorithm. The green, orange, and magenta lines represent algorithms that are learning but experiencing some instability. The cyan line represents an algorithm that is not learning. The shaded regions around the lines indicate the min/max range of the reward, providing a measure of the variability of the algorithm's performance. The chart suggests that the red algorithm is the most effective, while the cyan algorithm is the least effective. The other algorithms show varying degrees of success and instability.

</details>

(b) Sudoku 4 $\times$ 4

<details>

<summary>S55.png Details</summary>

### Visual Description

## Line Chart: Reward vs Steps (Mean Min/Max)

### Overview

The image is a line chart displaying the relationship between "Reward" and "Episode" steps. It shows multiple data series, each represented by a different colored line, along with shaded regions indicating the min/max range for each series. The chart aims to visualize the performance of different algorithms or configurations over time, as measured by the "Evaluate Reward" metric.

### Components/Axes

* **Title:** Reward vs Steps (Mean Min/Max)

* **X-axis:** Episode, ranging from 0 to 3000, with markers at 0, 500, 1000, 1500, 2000, 2500, and 3000.

* **Y-axis:** Evaluate Reward, ranging from -6 to 2, with markers at -6, -4, -2, 0, and 2.

* **Data Series:** There are multiple data series represented by different colored lines. The exact number of series is difficult to determine without a legend, but there are at least 6 distinct colors: red, yellow, magenta, green, orange, and cyan. A seventh color, teal, is also described below; it may be a separate series or the same line as cyan. Each series also has a shaded region around it, representing the minimum and maximum reward values at each episode.

### Detailed Analysis

Here's a breakdown of the trends observed for each data series:

* **Red Line:** Starts around -6. It increases sharply around episode 750 to approximately -0.25, then again around episode 1000 to a value of 2.5, and remains relatively constant at that level for the rest of the episodes. The shaded region around the red line is quite wide initially, narrowing significantly after the sharp increase.

* **Yellow Line:** Starts around -2.5 and remains relatively flat until episode 2000, then gradually increases to approximately 1 by episode 3000. The shaded region around the yellow line is relatively narrow.

* **Magenta Line:** Starts around -6. It fluctuates between -6 and -2 until episode 2000, where it sharply increases to approximately 2.5, then drops again to approximately -0.5 by episode 2500, and remains relatively constant. The shaded region around the magenta line is wide.

* **Green Line:** Starts around -6. It gradually increases to approximately -4.5 by episode 3000. The shaded region around the green line is relatively narrow.

* **Orange Line:** Starts around -6. It remains relatively flat around -6 until episode 2500, then gradually increases to approximately -4.5 by episode 3000. The shaded region around the orange line is relatively narrow.

* **Cyan Line:** Starts around -6. It remains relatively flat around -6 for the entire duration of the episodes. The shaded region around the cyan line is relatively narrow.

* **Teal Line:** Starts around -6. It remains relatively flat around -6 for the entire duration of the episodes. The shaded region around the teal line is relatively narrow.

### Key Observations

* The red and magenta lines show the most significant improvement in reward over the episodes, with the red line achieving a high reward relatively early in the training process.

* The yellow line shows a gradual improvement in reward over time.

* The green, orange, cyan, and teal lines show little to no improvement in reward over the episodes.

* The shaded regions indicate the variability in reward for each series. Some series have more consistent rewards (narrow shaded regions), while others have more variable rewards (wide shaded regions).

### Interpretation

The chart compares the performance of different algorithms or configurations (represented by the different colored lines) in terms of the "Evaluate Reward" metric over a series of episodes. The red line represents the most successful algorithm, as it achieves a high reward relatively early in the training process and maintains that level for the rest of the episodes. The magenta line also shows significant improvement, but it is more volatile than the red line. The yellow line shows a gradual improvement, while the green, orange, cyan, and teal lines show little to no improvement.