# Finding the Cracks: Improving LLMs Reasoning with Paraphrastic Probing and Consistency Verification

**Authors**: Weili Shi, Dongliang Guo, Lehan Yang, Tianlong Wang, Hanzhang Yuan, Sheng Li

## Abstract

Large language models have demonstrated impressive performance across a variety of reasoning tasks. However, their problem-solving ability often declines on more complex tasks due to hallucinations and the accumulation of errors within these intermediate steps. Recent work has introduced the notion of critical tokens –tokens in the reasoning process that exert significant influence on subsequent steps. Prior studies suggest that replacing critical tokens can refine reasoning trajectories. Nonetheless, reliably identifying and exploiting critical tokens remains challenging. To address this, we propose the P araphrastic P robing and C onsistency V erification (PPCV) framework. PPCV operates in two stages. In the first stage, we roll out an initial reasoning path from the original question and then concatenate paraphrased versions of the question with this reasoning path. And we identify critical tokens based on mismatches between the predicted top-1 token and the expected token in the reasoning path. A criterion is employed to confirm the final critical token. In the second stage, we substitute critical tokens with candidate alternatives and roll out new reasoning paths for both the original and paraphrased questions. The final answer is determined by checking the consistency of outputs across these parallel reasoning processes. We evaluate PPCV on mainstream LLMs across multiple benchmarks. Extensive experiments demonstrate PPCV substantially enhances the reasoning performance of LLMs compared to baselines.

Machine Learning, ICML

<details>

<summary>images/compare_ct_sc_gsm8k.png Details</summary>

### Visual Description

\n

## Line Chart: Pass@k vs. Number of Sample k

### Overview

This line chart illustrates the relationship between the "number of sample k" and the "pass@k (%)" metric for two different methods: "critical tokens" and "self-consistency". The chart displays how the percentage of successful passes (pass@k) changes as the number of samples (k) increases.

### Components/Axes

* **X-axis:** "number of sample k", ranging from 0 to 50, with markers at 0, 10, 20, 30, 40, and 50.

* **Y-axis:** "pass@k (%)", ranging from 70% to 90%, with markers at 70%, 72.5%, 75%, 77.5%, 80%, 82.5%, 85%, 87.5%, and 90%.

* **Legend:** Located in the bottom-right corner, identifying two data series:

* "critical tokens" - represented by a red line with triangle markers.

* "self-consistency" - represented by a purple line with star markers.

* **Gridlines:** Vertical dashed gridlines are present to aid in reading values.

### Detailed Analysis

**Critical Tokens (Red Line):**

The "critical tokens" line slopes generally upward, indicating an increase in pass@k as the number of samples (k) increases.

* At k = 0, pass@k is approximately 81%.

* At k = 10, pass@k is approximately 83%.

* At k = 20, pass@k is approximately 85%.

* At k = 30, pass@k is approximately 86%.

* At k = 40, pass@k is approximately 88%.

* At k = 50, pass@k is approximately 89%.

**Self-Consistency (Purple Line):**

The "self-consistency" line also slopes upward, but at a slower rate than the "critical tokens" line.

* At k = 0, pass@k is approximately 71%.

* At k = 10, pass@k is approximately 77%.

* At k = 20, pass@k is approximately 80%.

* At k = 30, pass@k is approximately 82%.

* At k = 40, pass@k is approximately 84%.

* At k = 50, pass@k is approximately 85%.

### Key Observations

* The "critical tokens" method consistently outperforms the "self-consistency" method across all values of k.

* Both methods show diminishing returns as k increases. The rate of improvement in pass@k decreases as k gets larger.

* The initial increase in pass@k is more pronounced for the "self-consistency" method between k=0 and k=10.

### Interpretation

The data suggests that increasing the number of samples (k) generally improves the pass@k metric for both "critical tokens" and "self-consistency" methods. However, the "critical tokens" method is more effective at achieving higher pass rates, especially as the number of samples increases. This could indicate that identifying and utilizing critical tokens is a more efficient strategy for improving performance than relying solely on self-consistency. The diminishing returns observed at higher values of k suggest that there is a point beyond which increasing the number of samples provides minimal additional benefit. This information is valuable for optimizing the sampling process and balancing performance gains with computational cost. The difference in initial slope suggests that self-consistency benefits more from a small number of samples, while critical tokens maintain a more consistent improvement.

</details>

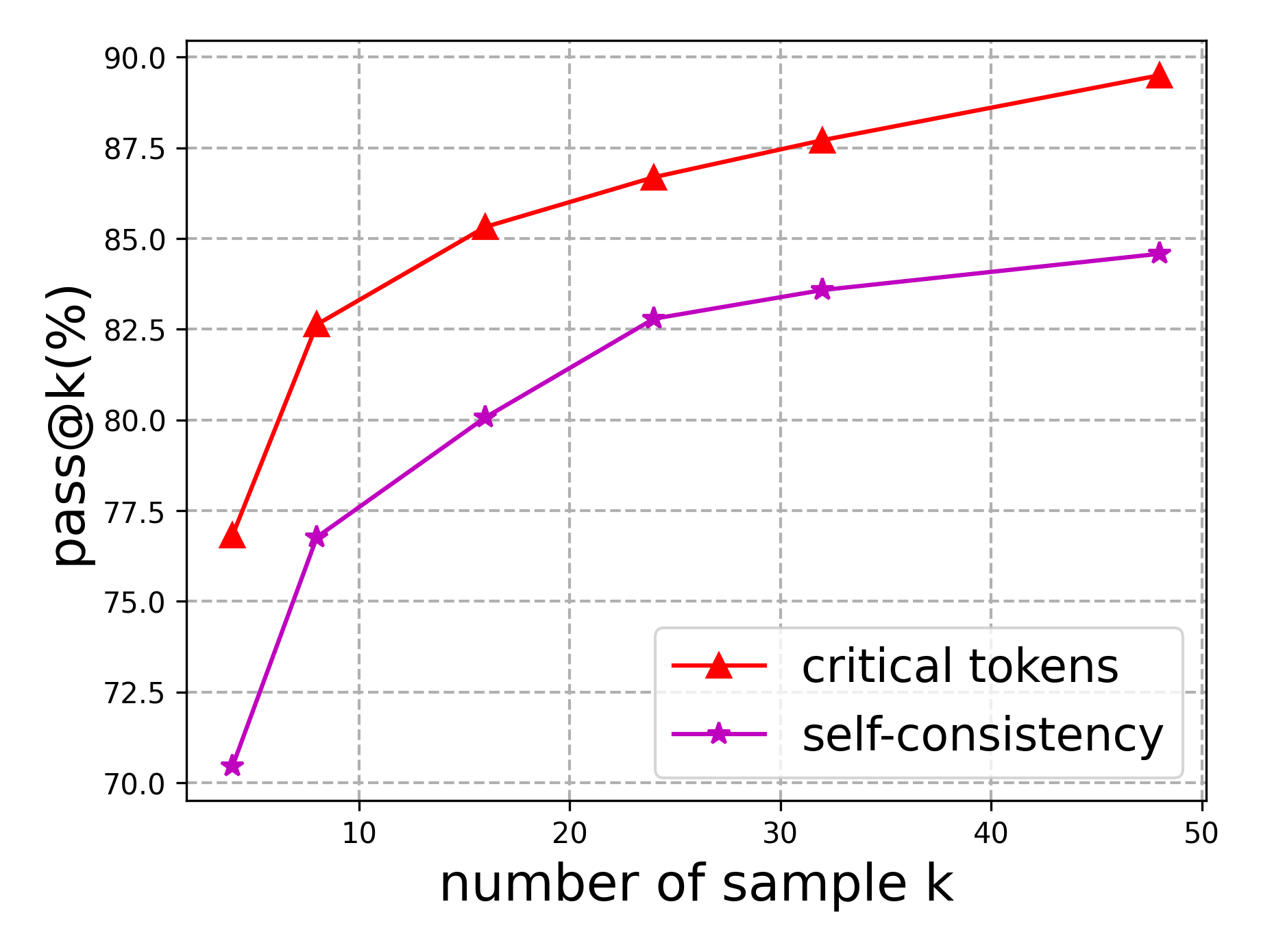

Figure 1: Comparison of the effects of critical tokens and Self-Consistency on the reasoning performance of LLMs, evaluated on samples from the GSM8K training data.

## 1 Introduction

The emergence of large language models (LLMs) (Brown et al., 2020; Grattafiori et al., 2024; Achiam et al., 2023; Yang et al., 2025a) has astonished the AI community with their remarkable capabilities across a wide range of reasoning tasks, including mathematical problem solving, programming, and planning. By generating intermediate reasoning steps through techniques such as chain-of-thought prompting (Wei et al., 2022; Kojima et al., 2022; Zhang et al., 2022; Sprague et al., 2025), LLMs can emulate human-like reasoning processes and achieve strong performance on diverse reasoning benchmarks. Despite their success, the problem-solving ability of LLMs often declines on complex reasoning tasks due to hallucinations and the accumulation of errors in intermediate steps (Lightman et al., 2023; Ling et al., 2023; Bubeck et al., 2023). To mitigate this issue, prior works (Wang et al., 2024; Yuan et al., 2025; Chen et al., 2024a; Chen and Li, 2024) have proposed the inference-time optimization paradigm, which evaluates and refines reasoning trajectories through self-correction by the LLM itself or with feedback from external verifiers such as process reward models (PRMs) (Uesato et al., 2022). However, existing studies (Yang et al., 2025b; Tyen et al., 2024; Stechly et al., 2025) suggest that LLMs struggle to reliably identify errors in their reasoning and often fail to correct previous outputs without external guidance. Moreover, obtaining high-quality, stepwise process supervision for training external verifiers is challenging and limits the practicality of these approaches (Feng et al., 2025).

<details>

<summary>images/para_example.png Details</summary>

### Visual Description

## Textual Document: Case Study on the Effects of Question Paraphrasing

### Overview

The image presents a side-by-side comparison of two versions of a word problem – an original question and a paraphrased question – along with their respective solution steps. The document also indicates the "ground truth" answer for both problems is 8. Each problem's solution is followed by a visual indicator (a red 'X' or a green checkmark) signifying whether the provided solution is correct.

### Components/Axes

The document is structured into two columns. Each column contains:

1. A question (labeled "Original question:" or "Paraphrased question:")

2. A statement of the "ground truth answer"

3. A "Response:" section detailing the solution steps.

4. A visual indicator of solution correctness.

### Detailed Analysis or Content Details

**Original Question:**

"In a card game, you are awarded 10 points if you win one round. While playing, Jane ends up with 60 points. If she lost 20 points, then how many rounds did she play? (ground truth answer: 8)"

**Original Question Response:**

Step 1: Determine the total points Jane earned by subtracting the points she lost from her final score. Total points earned = Final score - Points lost. Total points earned = 60 - 20. Total points earned = 40.

Step 2: Since Jane earns 10 points for each round she wins, we can find the number of rounds she played by dividing the total points earned by the points earned per round. Number of rounds = Total points earned / Points earned per round. Number of rounds = 40 / 10. Number of rounds = 4.

Therefore, Jane played 4 rounds. (Marked with a red 'X')

**Paraphrased Question:**

"At a bridge competition, every game won grants the player 10 points. Unfortunately, Jane started with an advantage but ended up losing 20 points and scoring only 60 points. If she had won x rounds and lost none, what would be the value of x? (ground truth answer: 8)"

**Paraphrased Question Response:**

Step 1: Understand the given information. Jane started with an advantage but ended up losing 20 points. She scored a total of 60 points. Every game won grants the player 10 points.

Step 2: Determine the total points Jane would have had if she hadn’t lost any points. Since she lost 20 points, we need to add 20 to her total score to find out how many points she would have had if she hadn’t lost any points. Total points without loss = 60 + 20 = 80 points.

Step 3: Calculate the number of games Jane won. Since every game won grants the player 10 points, we can divide the total points without loss by 10 to find the number of games she won. Number of games won = Total points without loss / 10 = 8. The number of games she won is equal to 8. (Marked with a green checkmark)

### Key Observations

* The original question's solution is incorrect, while the paraphrased question's solution is correct.

* The paraphrased question uses more complex language ("advantage," "x rounds") compared to the original question.

* The original question focuses on rounds played, while the paraphrased question focuses on games won.

* The visual indicators (X and checkmark) clearly denote the correctness of each solution.

### Interpretation

This document demonstrates the impact of question paraphrasing on problem-solving accuracy. The original question, while simpler in wording, leads to an incorrect solution (4 rounds). The paraphrased question, despite being more complex, guides the solver towards the correct answer (8 games won). This suggests that the way a question is framed can significantly influence a person's ability to understand and solve it. The paraphrasing appears to clarify the underlying mathematical relationship, leading to a more accurate result. The use of 'x' in the paraphrased question may encourage a more algebraic approach, which is appropriate for the problem. The document serves as a case study highlighting the importance of careful question design and the potential pitfalls of ambiguous or poorly worded problems. The red 'X' and green checkmark provide a clear visual assessment of the solution's validity, reinforcing the learning objective.

</details>

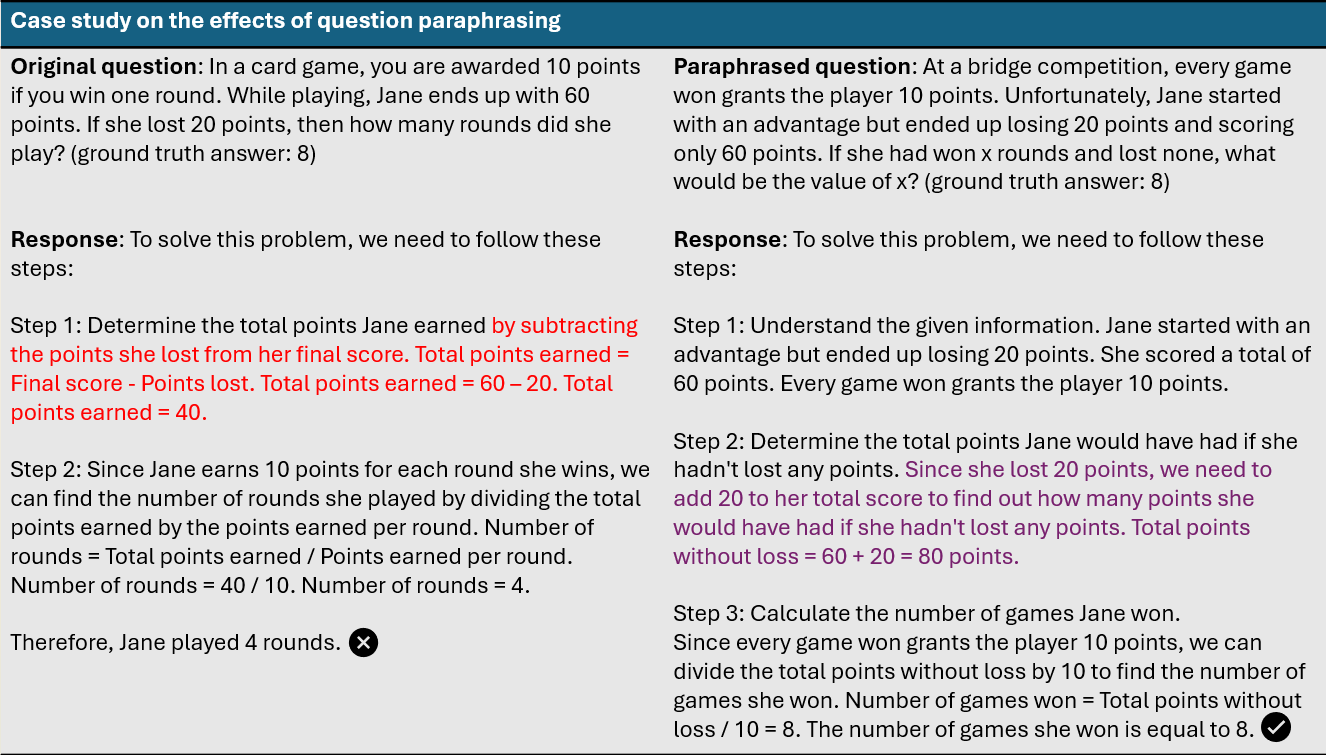

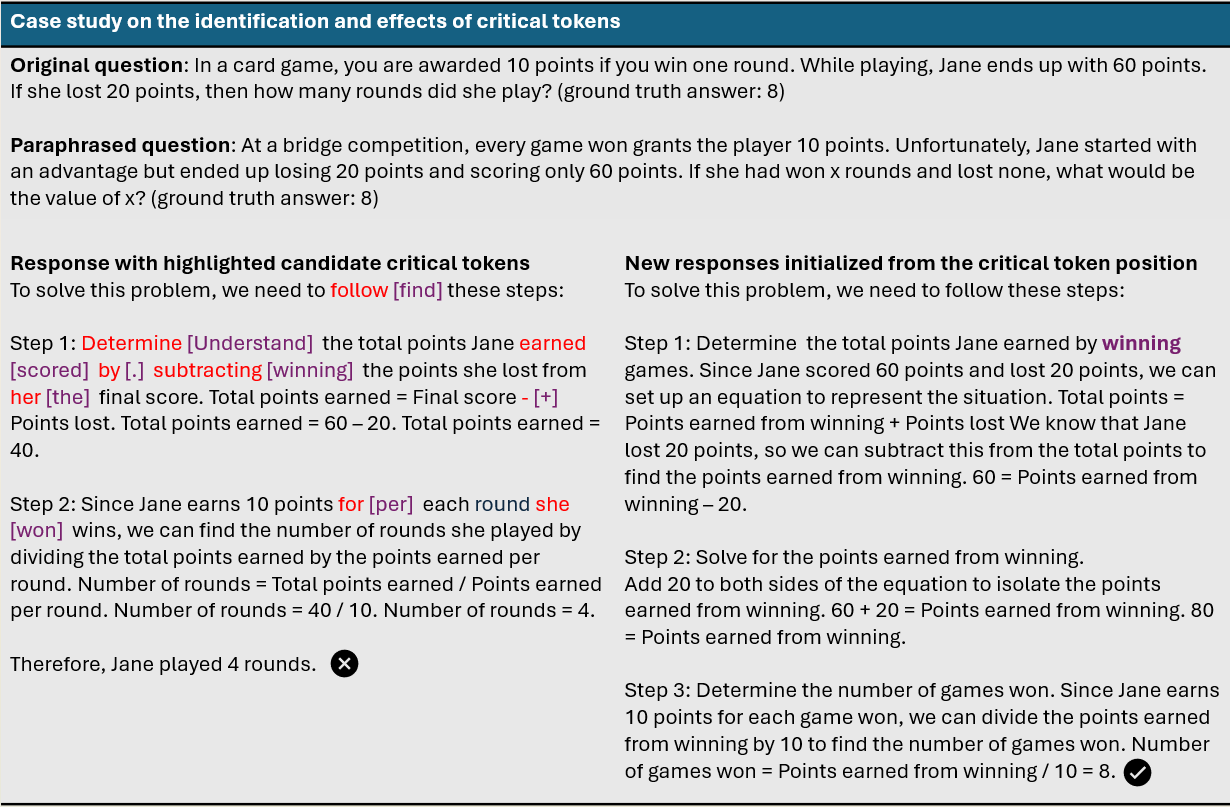

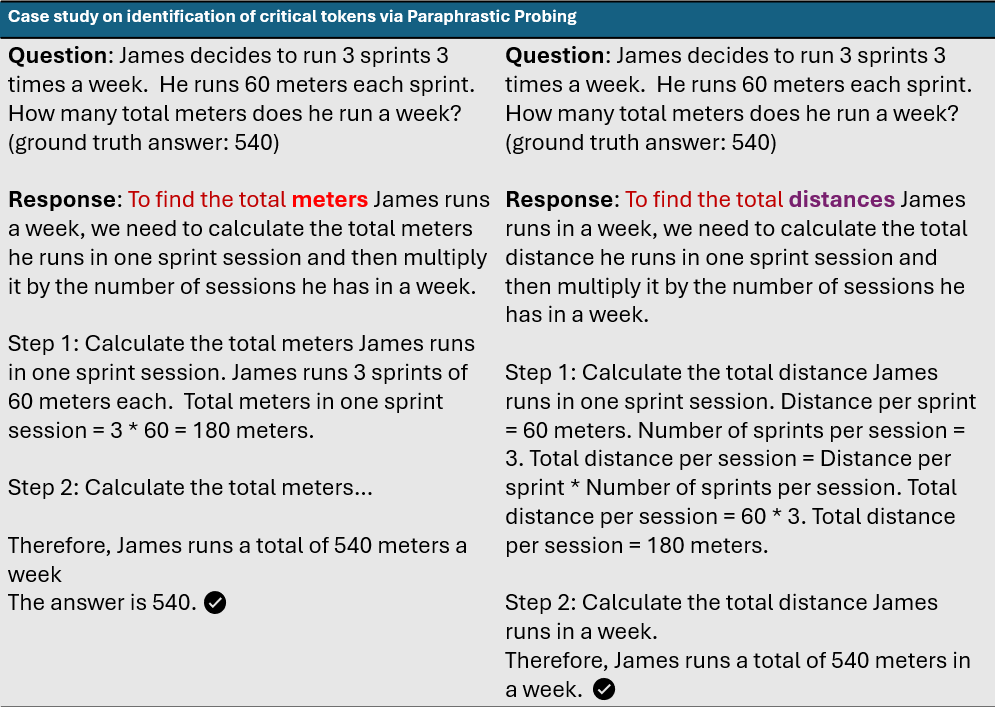

Figure 2: Case study illustrating that LLMs can refine intermediate incorrect reasoning segments (highlighted in red).

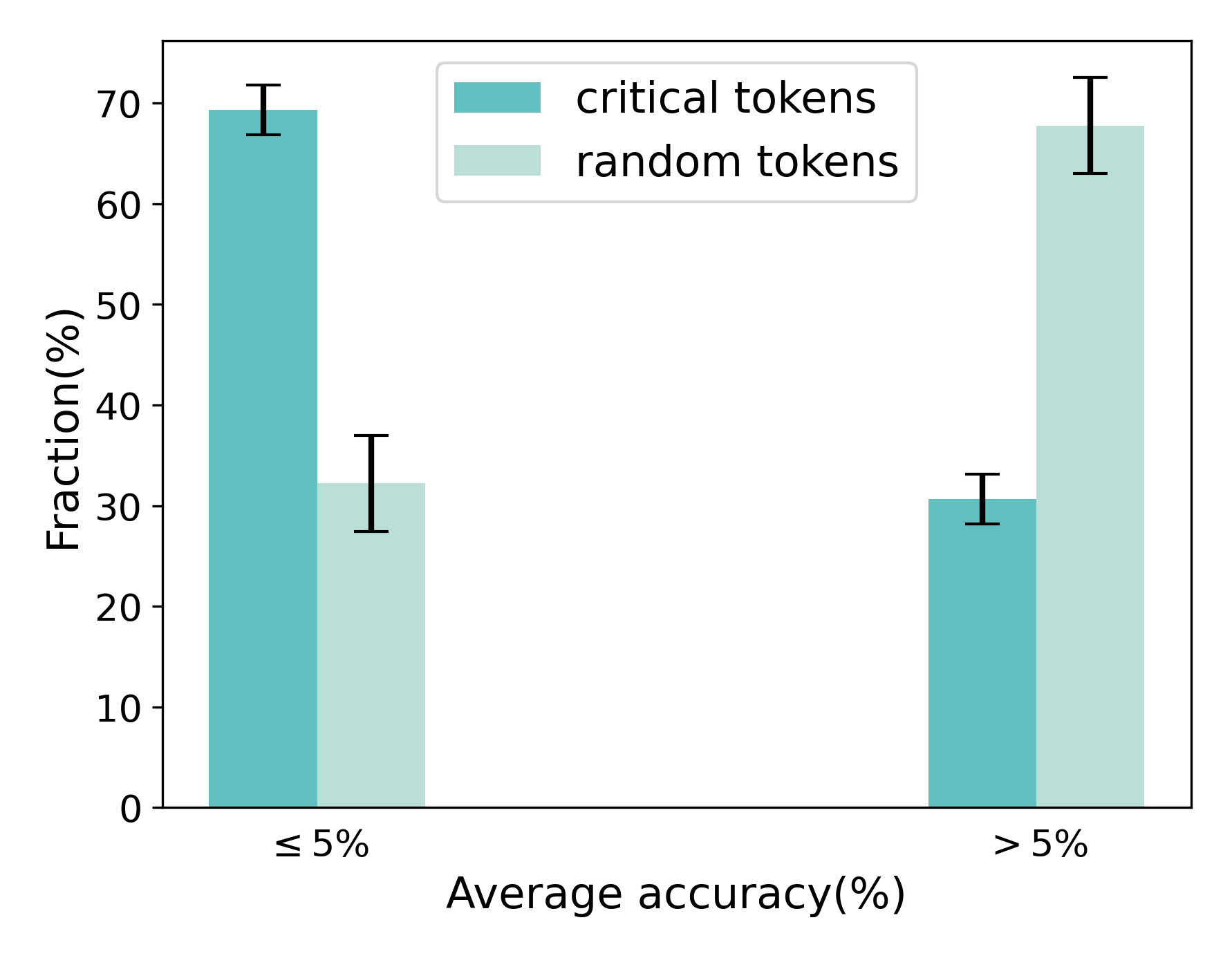

Recent work has introduced the concept of critical tokens (Lin et al., 2025), which play pivotal roles in intermediate reasoning steps and exert strong influence on subsequent reasoning and final outcomes. We evaluate the criticality of a token based on the average accuracy of sampling rollouts generated from the partial reasoning trajectories truncated at the token’s position. A token is identified as critical if it lies on an incorrect trajectory and satisfies the following conditions:

- The correctness score of rollouts initiated from the token is close to zero (e.g., below 5%);

- The correctness scores of rollouts initiated from subsequent tokens remain below a threshold (e.g., 10%).

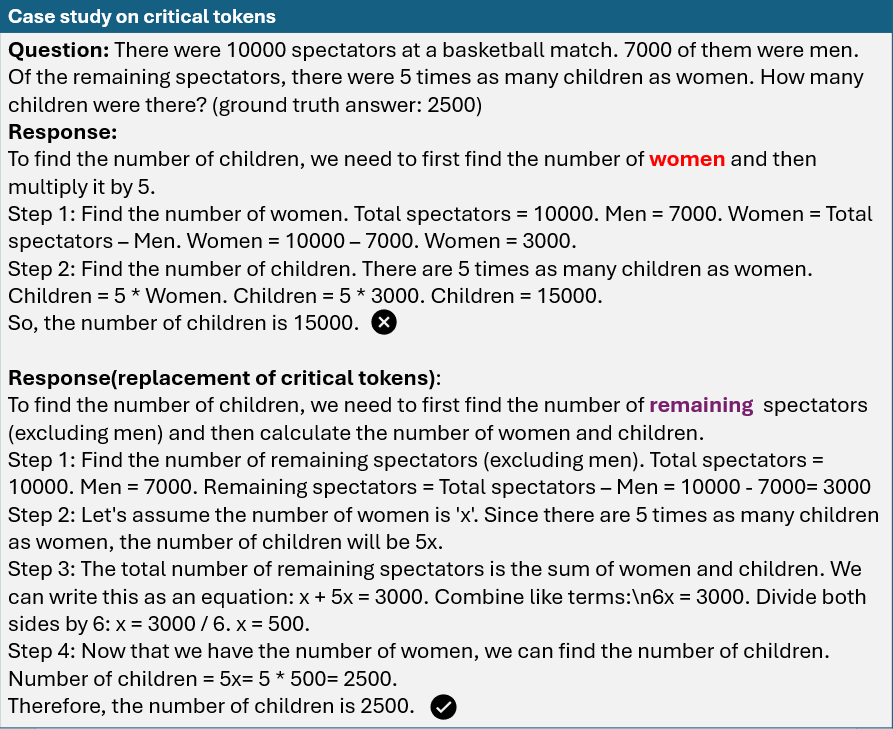

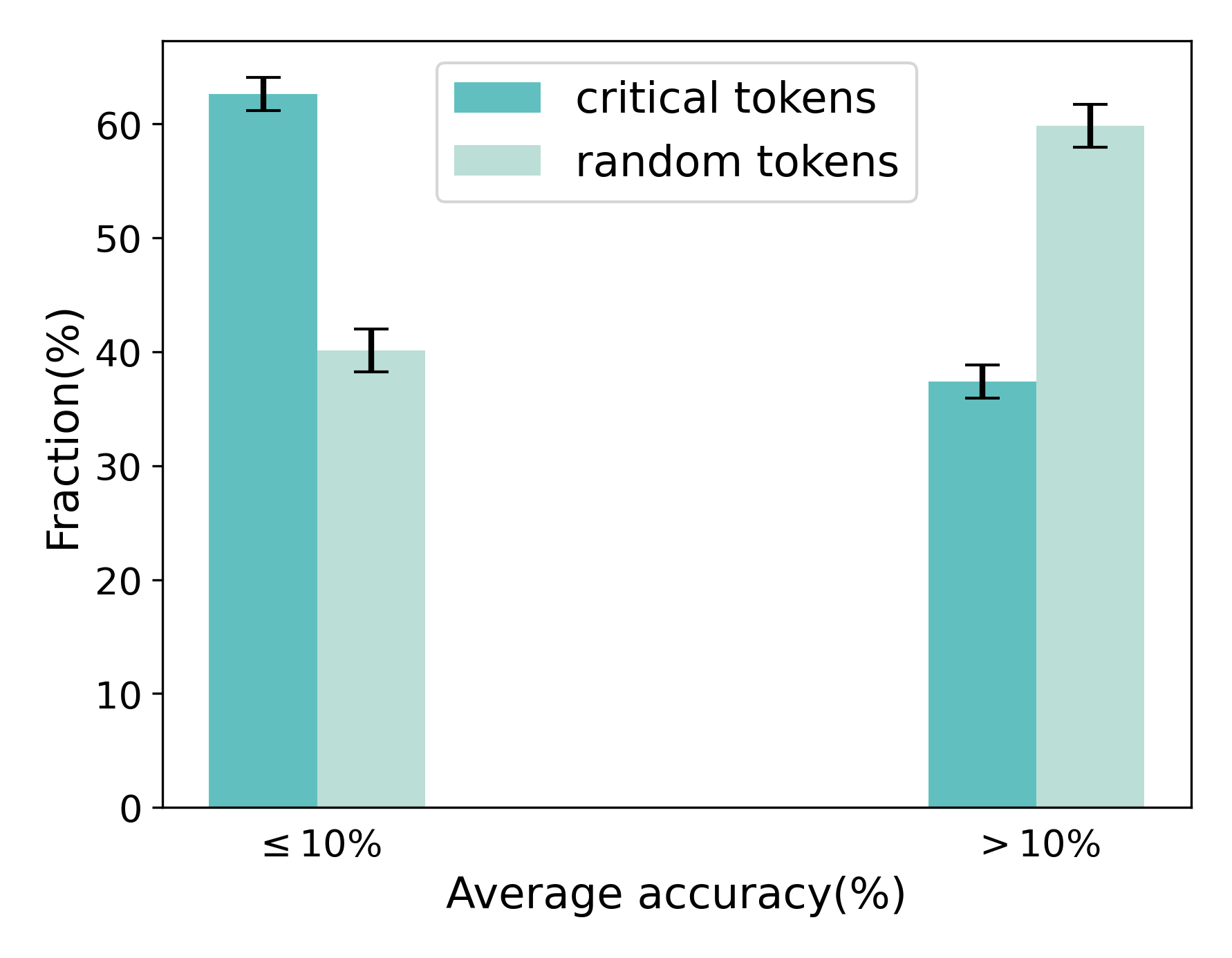

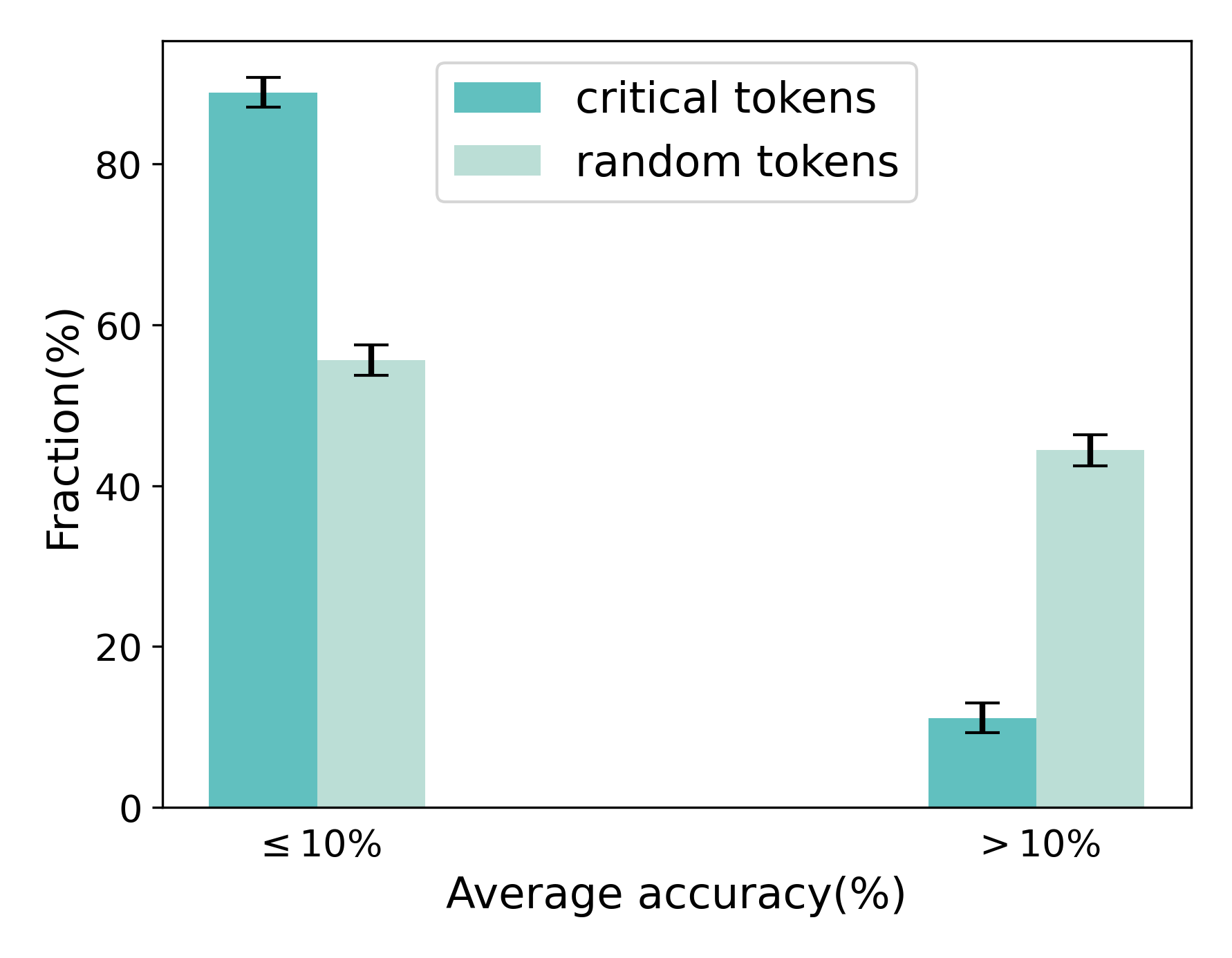

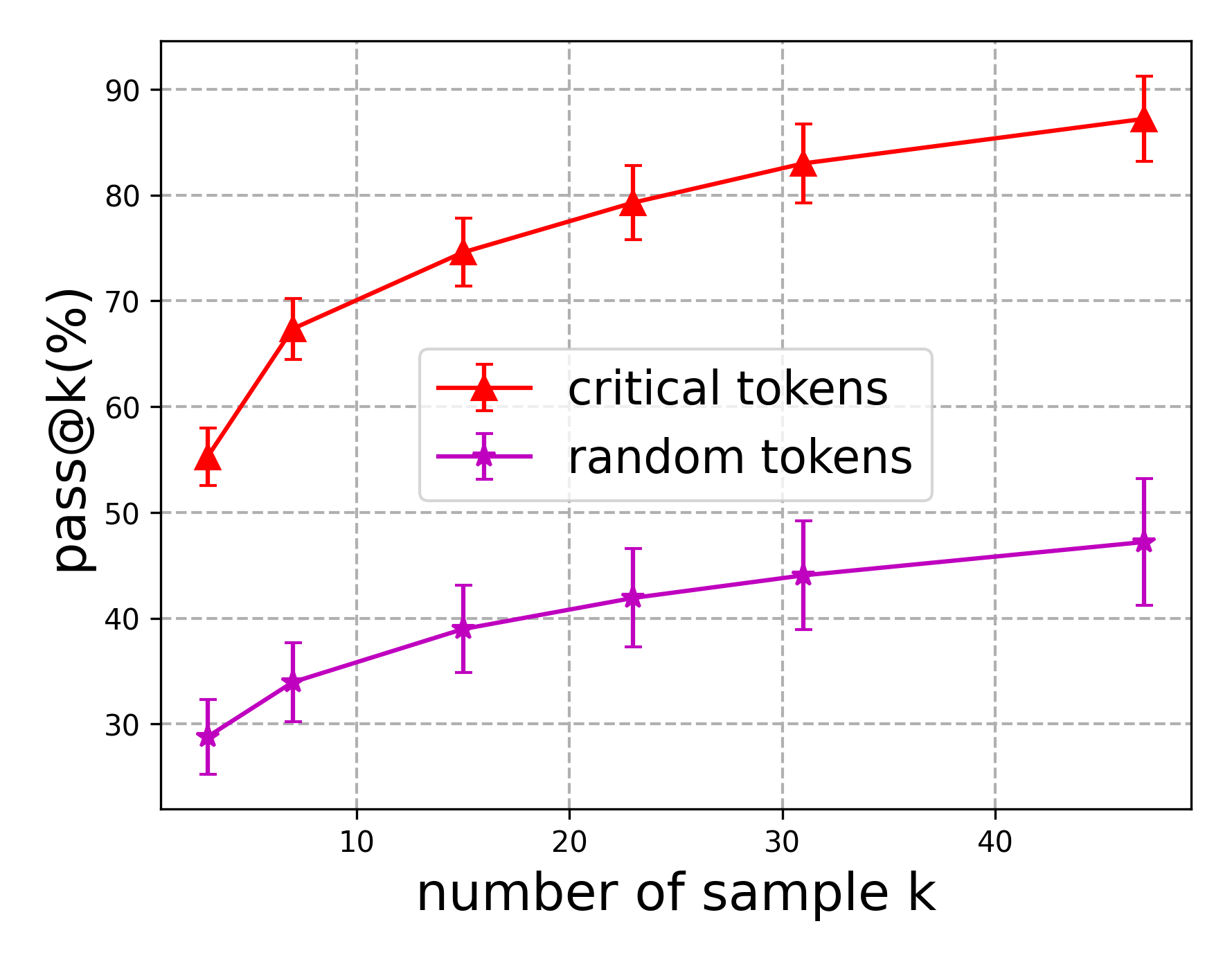

Prior studies suggest that critical tokens often diverge from human-annotated error tokens, yet they induce more sustained degradation in reasoning quality than other tokens. Moreover, as illustrated in Figure 3, replacing critical tokens in an incorrect reasoning trajectory with suitable candidate tokens can correct subsequent steps and lead to the right answer in new rollouts. To quantitatively assess the effectiveness of critical tokens, we conduct an empirical study using LLMs such as Llama-3.1-8B-Instruct (Grattafiori et al., 2024) on reasoning tasks. Specifically, we randomly sample 100 instances with incorrect reasoning steps from the GSM8K (Cobbe et al., 2021) training data. Following the criterion, we locate critical tokens through exhaustive search. We then truncate the reasoning path at the critical token, substitute it with alternative tokens, and roll out new reasoning paths. For example, as shown in Figure 3, the token “woman” is replaced with “remaining”. We evaluate performance using Pass@k and compare against Self-Consistency (Wang et al., 2023), which also samples multiple reasoning paths. As shown in Figure 1, critical token replacement provides a clear advantage in correcting errors compared to plain sampling. Nonetheless, reliably identifying and leveraging critical tokens for reasoning remains a nontrivial challenge.

<details>

<summary>images/critical_token_example.png Details</summary>

### Visual Description

\n

## Textual Document: Case Study on Critical Tokens

### Overview

The image presents a case study focused on evaluating responses to a mathematical word problem. It showcases two different approaches to solving the same problem, highlighting the importance of "critical tokens" in achieving the correct answer. The problem involves calculating the number of children at a basketball game given the total spectators, the number of men, and the ratio of children to women.

### Components/Axes

The document is structured into sections:

* **Question:** The original word problem.

* **Response:** An initial attempt at solving the problem, marked with a red 'X' indicating an incorrect answer.

* **Response(replacement of critical tokens):** A revised solution, marked with a green checkmark indicating a correct answer.

* Each response is broken down into numbered steps.

### Detailed Analysis or Content Details

**Question:**

"There were 10000 spectators at a basketball match. 7000 of them were men. Of the remaining spectators, there were 5 times as many children as women. How many children were there? (ground truth answer: 2500)"

**Response (Incorrect):**

"To find the number of children, we need to first find the number of women and then multiply it by 5.

Step 1: Find the number of women. Total spectators = 10000. Men = 7000. Women = Total spectators - Men. Women = 10000 - 7000. Women = 3000.

Step 2: Find the number of children. There are 5 times as many children as women. Children = 5 * Women. Children = 5 * 3000. Children = 15000.

So, the number of children is 15000."

**Response (Correct):**

"To find the number of children, we need to first find the number of remaining spectators (excluding men) and then calculate the number of women and children.

Step 1: Find the number of remaining spectators (excluding men). Total spectators = 10000. Men = 7000. Remaining spectators = Total spectators - Men = 10000 - 7000 = 3000

Step 2: Let's assume the number of women is 'x'. Since there are 5 times as many children as women, the number of children will be 5x.

Step 3: The total number of remaining spectators is the sum of women and children. We can write this as an equation: x + 5x = 3000. Combine like terms: \n6x = 3000. Divide both sides by 6: x = 3000 / 6. x = 500.

Step 4: Now that we have the number of women, we can find the number of children. Number of children = 5x = 5 * 500 = 2500.

Therefore, the number of children is 2500."

### Key Observations

* The initial response incorrectly calculates the number of women by subtracting the number of men from the total spectators without considering that the ratio of children to women applies *only* to the remaining spectators.

* The corrected response correctly identifies the need to first find the number of remaining spectators and then applies the ratio to that subset.

* The use of a variable 'x' in the corrected response allows for a proper algebraic solution.

* The "critical tokens" appear to be the understanding of the scope of the remaining spectators and the correct setup of the equation.

### Interpretation

This case study demonstrates the importance of careful reading and accurate interpretation of word problems. The initial response failed because it misapplied the given ratio to the entire spectator population instead of the remaining population after accounting for the men. The corrected response highlights the need to isolate the relevant subset of data before applying the given relationships. The use of algebraic representation (introducing 'x') is crucial for a correct solution. The "critical tokens" are not necessarily specific words, but rather the conceptual understanding of how to correctly frame the problem mathematically. The inclusion of the "ground truth answer" (2500) serves as a validation point and emphasizes the importance of verifying solutions. The visual cues (red 'X' and green checkmark) provide immediate feedback on the correctness of each approach.

</details>

Figure 3: An example demonstrating how substitution of a critical token (red) with a candidate token (purple) modifies the reasoning path and produces the correct answer.

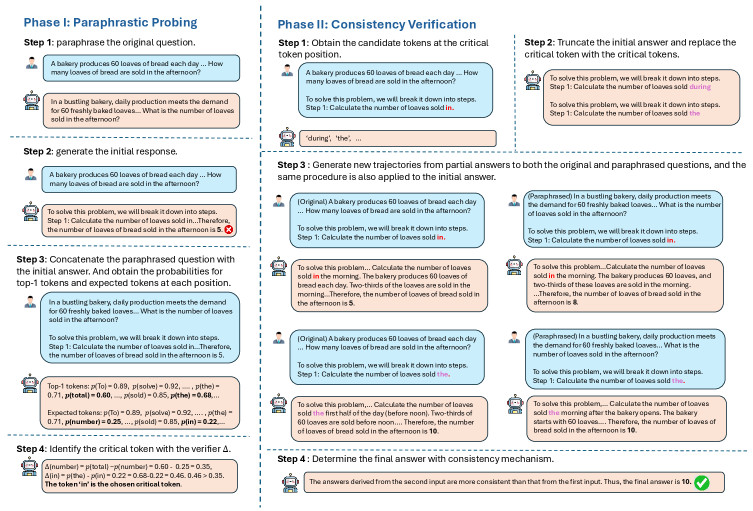

Recent studies (Zhou et al., 2024; Chen et al., 2024b), on surface form, the way questions, assumptions, and constraints are phrased, have revealed its subtle influence on the trajectory of intermediate reasoning steps, As shown in Figure 2. LLMs could adjust the intermediate steps under the paraphrased form of the question. This motivates us to explore the role of paraphrasing in the extraction and utilization of critical tokens for reasoning tasks. To this end, we propose the P araphrastic P robing and C onsistency V erification (PPCV) framework , a two-stage approach designed to leverage critical tokens to enhance the reasoning ability of LLMs. In the first stage, we probe critical tokens using paraphrased questions. Specifically, we first roll out the initial reasoning path from the original question, then concatenate paraphrased questions with this reasoning path. The resulting synthetic inputs are fed into the LLM to obtain token-level logits for each position in the reasoning path. Positions where the predicted top-1 token diverges from the expected token are flagged as potential pivotal points, as these positions are sensitive to paraphrased inputs and can trigger a pivot in the reasoning trajectory. Next, an empirical criterion is applied to determine the final critical token. In contrast to prior work (Lin et al., 2025), which depends on external models for identifying critical tokens with ambiguous criteria, our method introduces a self-contained mechanism that pinpoints critical tokens.

In the second stage, we leverage the extracted critical tokens to refine the initial reasoning path. Specifically, we select the top-K tokens (include critical token itself) at the critical token position and roll out new reasoning paths for both the original and paraphrased questions. Based on the empirical observation that trajectories leading to correct answers are robust to paraphrastic perturbations, we propose a paraphrase consistency mechanism. In contrast to Self-Consistency (Wang et al., 2023), which relies on majority voting, our method selects the final answer by comparing outputs from paraphrased and original questions and choosing the one with the most consistent matches. To address potential ties across multiple answers, we further introduce similarity-weighted paraphrase consistency, which incorporates similarity scores between paraphrased and original questions when computing consistency.

Compared with self-correction (Wu et al., 2024; Miao et al., 2024) and PRM-based methods (Wang et al., 2024; Yuan et al., 2025), our framework exploits critical tokens to refine reasoning trajectories without requiring step-level error detection by the LLM itself or auxiliary models. We evaluate our method on mainstream LLMs across mathematical and commonsense reasoning benchmarks, demonstrating consistent improvements in reasoning performance. The contributions of the paper is summarized as follows:

- We propose a novel two-stage framework, P araphrastic P robing and C onsistency V erification (PPCV) designed to extract and leverage critical tokens to enhance the reasoning performance of LLMs.

- We show that critical tokens can more effectively correct erroneous reasoning trajectories than traditional sampling methods like Self-Consistency. Furthermore, our approach successfully extracts these tokens through paraphrastic probing, achieving improved final results via paraphrase consistency.

- We evaluate our method on mainstream LLMs across various reasoning tasks, including math and logic reasoning. Experimental results show significant performance improvements over baseline methods.

## 2 Related Work

Inference-Time Optimization for LLM reasoning. With the advent of chain-of-thought (CoT) prompting, LLMs have demonstrated strong reasoning capabilities by producing intermediate steps during inference. This success has motivated a growing body of work (Wu et al., 2025; Snell et al., 2024) on augmenting reasoning trajectories at test time to further improve performance. Existing approaches can be broadly categorized into search-based methods (Bi et al., 2025; Yao et al., 2023; Hao et al., 2023; Xie et al., 2023; Besta et al., 2024), such as Tree-of-Thoughts (Yao et al., 2023), and sampling-based methods (Wang et al., 2023; Xu et al., 2025; Wan et al., 2025; Ma et al., 2025), such as Self-Consistency (Wang et al., 2023). However, due to intrinsic hallucinations (Bubeck et al., 2023), LLMs often generate erroneous intermediate steps, which can ultimately lead to incorrect answers, especially on complex problems. This limitation highlights the need for inference-time optimization of reasoning processes.

To address this issue, one line of research (Yin et al., 2024; Chen et al., 2024a; Ling et al., 2023; Wu et al., 2024; Miao et al., 2024; Madaan et al., 2023) designs instructional prompts that guide LLMs to detect and refine their own mistakes. Despite its appeal, prior work has shown that the effectiveness of self-correction is limited in practice. Another line of work (Wang et al., 2024; Yuan et al., 2025; He et al., 2024; Havrilla et al., 2024) introduces external verifiers, such as process reward models (Snell et al., 2024), to identify and filter out error-prone reasoning steps. These methods typically require high-quality training data for the verifier, with data scarcity often mitigated through strategies such as Monte Carlo Tree Search (Guan et al., 2025; Qi et al., 2025; Li, 2025; Zhang et al., 2024). In addition, a recent line of decoding-based approaches (Xu et al., 2025; Ma et al., 2025) seeks to improve reasoning by dynamically adjusting the next-token prediction based on future trajectory probing. In contrast, our method refines reasoning by directly leveraging critical tokens, without relying on stepwise verification or external verifiers. This design underscores both the utility and universality of our approach.

Paraphrasing for LLMs. A growing number of work (Zhou et al., 2024; Chen et al., 2024b) has examined the impact of a problem’s surface form on the reasoning ability of LLMs. Findings (Zhou et al., 2024; Chen et al., 2024b; Huang et al., 2025) suggest that even subtle modifications in phrasing can substantially affect both the reasoning process and the final outcome. Building on this observation, several methods (Yadav et al., 2024; Chen et al., 2024b) leverage beneficial paraphrasing to enhance LLM performance in tasks such as reasoning and intent classification. In addition, paraphrasing has been employed to assess model uncertainty (Gao et al., 2024; Tanneru et al., 2024), thereby enhancing the reliability and trustworthiness of LLM applications. In our work, we utilize paraphrasing as a principled tool to extract critical tokens and to aggregate answers.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Paraphrastic Probing & Consistency Verification

### Overview

The image presents a diagram illustrating a two-phase process: "Paraphrastic Probing" and "Consistency Verification". The diagram details steps within each phase, demonstrating how a question is paraphrased, an initial answer is generated, and then the consistency of that answer is verified through token manipulation and trajectory generation. The diagram uses a flowchart-like structure with text boxes and arrows to show the flow of information.

### Components/Axes

The diagram is divided into two main phases, labeled "Phase I: Paraphrastic Probing" (left side) and "Phase II: Consistency Verification" (right side). Each phase is further broken down into three steps, numbered 1-3. The diagram also includes a "Re-ranking & Selection" section at the bottom. Text boxes contain descriptions of each step, and arrows indicate the flow of the process. Highlighted text within the boxes indicates critical tokens.

### Detailed Analysis or Content Details

**Phase I: Paraphrastic Probing**

* **Step 1: Paraphrase the original question.**

* Original Question: "A bakery produces 60 loaves of bread each day… How many loaves of bread are sold in the afternoon?"

* Paraphrased Question: "In a bustling bakery, daily production meets the demand for 60 freshly baked loaves… What is the number of loaves sold in the afternoon?"

* Highlighted Token: "during"

* **Step 2: Generate the initial response.**

* Initial Response: "A bakery produces 60 loaves of bread each day… How many loaves of bread are sold in the afternoon?"

* To solve this problem, we will break it down into steps. Step 1: Calculate the number of loaves sold in… Therefore, the number of loaves of bread sold in the afternoon is 5."

* **Step 3: Concatenate the paraphrased question with the initial answer.**

* Concatenated Question/Answer: "In a bustling bakery, daily production meets the demand for 60 freshly baked loaves… What is the number of loaves sold in the afternoon? A bakery produces 60 loaves of bread each day… How many loaves of bread are sold in the afternoon? To solve this problem, we will break it down into steps. Step 1: Calculate the number of loaves sold in… Therefore, the number of loaves of bread sold in the afternoon is 5."

* Highlighted Tokens: "5", "afternoon"

**Phase II: Consistency Verification**

* **Step 1: Obtain the candidate tokens at the critical token position.**

* Question: "A bakery produces 60 loaves of bread each day… How many loaves of bread are sold in the afternoon?"

* Highlighted Tokens: "during", "the"

* **Step 2: Truncate the initial answer and replace the critical token with the critical tokens.**

* Truncated Answer: "To solve this problem, we will break it down into steps. Step 1: Calculate the number of loaves sold in…"

* **Step 3: Generate new trajectories from partial answers to both the original and paraphrased questions, and meet the same procedure as applied to the initial answer.**

* Original Question Trajectory: "Original) A bakery produces 60 loaves of bread each day… How many loaves of bread are sold in the afternoon? To solve this problem, we will break it down into steps. Step 1: Calculate the number of loaves sold in…"

* Paraphrased Question Trajectory: "Paraphrased) In a bustling bakery, daily production meets the demand for 60 freshly baked loaves… What is the number of loaves sold in the afternoon? To solve this problem, we will break it down into steps. Step 1: Calculate the number of loaves sold in…"

**Re-ranking & Selection (Bottom Section)**

* "Re-ranking & Selection: Re-rank the generated trajectories based on their likelihood and select the most consistent answer."

* Trajectory 1: "To solve this problem, we will break it down into steps. Step 1: Calculate the number of loaves sold in the morning. The bakery produces 60 loaves of bread each day, two-thirds of the loaves are sold in the morning. Therefore, the number of loaves sold in the afternoon is 5."

* Trajectory 2: "To solve this problem, we will break it down into steps. Step 1: Calculate the number of loaves sold in the morning. The bakery produces 60 loaves, and two-thirds of these loaves are sold in the morning… Therefore, the number of loaves of bread sold in the afternoon is 5."

* Trajectory 3: "To solve this problem, we will break it down into steps. Step 1: Calculate the number of loaves sold in the morning. The bakery produces 60 loaves. Two-thirds of the loaves are sold in the morning. Therefore, the number of loaves sold in the afternoon is 5."

* Trajectory 4: "To solve this problem, we will break it down into steps. Step 1: Calculate the number of loaves sold in the morning. The bakery produces 60 loaves. Therefore, the number of loaves sold in the afternoon is 5."

* Final Answer: "Therefore, the number of loaves of bread sold in the afternoon is 5."

### Key Observations

The diagram highlights the importance of token manipulation in verifying the consistency of answers generated from both original and paraphrased questions. The repeated answer of "5" suggests this is the expected solution to the problem. The process involves breaking down the problem into steps and using partial answers to assess consistency.

### Interpretation

This diagram illustrates a method for ensuring the robustness of question-answering systems. By paraphrasing the original question and then verifying the consistency of the answer through token replacement and trajectory generation, the system can mitigate the effects of subtle variations in wording. The process aims to identify answers that are not only correct but also stable across different phrasings of the same question. The focus on "critical tokens" suggests that certain words or phrases are more important for maintaining consistency than others. The final selection of the most consistent answer indicates a preference for solutions that are less sensitive to paraphrasing. The diagram demonstrates a sophisticated approach to natural language understanding and reasoning, aiming to build more reliable and trustworthy AI systems. The repeated calculation of 2/3 of 60 loaves being sold in the morning, and the subsequent calculation of the remaining loaves being sold in the afternoon, is a key element of the problem-solving process.

</details>

Figure 4: Illustration of the proposed paraphrastic probing and consistency verification (PPCV) framework. The framework comprises two stages: (i) probing critical tokens through paraphrased forms, and (ii) rolling out new reasoning steps with alternative tokens and selecting the final answer using the paraphrase consistency verification mechanism.

<details>

<summary>images/probe_illustration.png Details</summary>

### Visual Description

\n

## Textual Document: Case Study on Identification and Effects of Critical Tokens

### Overview

The image presents a case study examining the impact of "critical tokens" in solving mathematical word problems. It compares a response generated with highlighted candidate critical tokens to a new response initialized from those tokens. The study focuses on two similar word problems involving points earned in games and rounds, and demonstrates a step-by-step solution process.

### Components/Axes

The document is structured into sections:

* **Original Question:** A word problem about a card game.

* **Paraphrased Question:** A reworded version of the original problem, using a bridge competition context.

* **Response with Highlighted Critical Tokens:** A solution attempt with specific words highlighted.

* **New Responses Initialized from Critical Token Position:** A revised solution based on the identified critical tokens.

* **Step-by-Step Solutions:** Detailed steps for both approaches, with equations and calculations.

### Content Details

**Original Question:**

"In a card game, you are awarded 10 points if you win one round. While playing, Jane ends up with 60 points. If she lost 20 points, then how many rounds did she play? (ground truth answer: 8)"

**Paraphrased Question:**

"At a bridge competition, every game won grants the player 10 points. Unfortunately, Jane started with an advantage but ended up losing 20 points and scoring only 60 points. If she had won x rounds and lost none, what would be the value of x? (ground truth answer: 8)"

**Response with Highlighted Critical Tokens:**

"To solve this problem, we need to follow [find] these steps:

Step 1: Determine [Understand] the total points Jane earned [scored] by [.] subtracting [winning] the points she lost from her [the] final score. Total points earned = Final score - [†] Points lost. Total points earned = 60 - 20. Total points earned = 40.

Step 2: Since Jane earns 10 points for [per] each round she [won] wins, we can find the number of rounds played by dividing the total points earned by the points earned per round. Number of rounds = Total points earned / Points earned per round. Number of rounds = 40 / 10. Number of rounds = 4.

Therefore, Jane played 4 rounds. ❌"

**New Responses Initialized from Critical Token Position:**

"To solve this problem, we need to follow these steps:

Step 1: Determine the total points Jane earned by winning games. Since Jane scored 60 points and lost 20 points, we can set up an equation to represent the situation. Total points = Points earned from winning + Points lost. We know that Jane lost 20 points, so we can subtract this from the total points to find the points earned from winning. 60 = Points earned from winning - 20.

Step 2: Solve for the points earned from winning. Add 20 to both sides of the equation to isolate the points earned from winning. 60 + 20 = Points earned from winning. 80 = Points earned from winning.

Step 3: Determine the number of games won. Since Jane earns 10 points for each game won, we can divide the points earned from winning by 10 to find the number of games won. Number of games won = Points earned from winning / 10 = 8."

### Key Observations

* The initial response incorrectly calculates the total points earned (40 instead of 80) due to a misinterpretation of the problem.

* The highlighted "critical tokens" (e.g., "Understand", "find", "per") seem to guide the revised response towards a more accurate solution.

* The revised response uses a more formal equation-based approach, which clarifies the problem-solving process.

* The original response is marked with a red "❌", indicating an incorrect answer.

### Interpretation

This case study demonstrates the potential of identifying and utilizing "critical tokens" to improve the accuracy and clarity of solutions to mathematical word problems. The initial response, while attempting to follow a logical process, falters due to a misunderstanding of the problem's core elements. By focusing on key terms and relationships (highlighted as critical tokens), the revised response is able to construct a correct and well-explained solution. The use of equations in the revised response further enhances the clarity and rigor of the solution. The study suggests that incorporating critical token analysis into problem-solving strategies could be a valuable approach for both students and automated problem-solving systems. The difference in the final answer (4 vs. 8) highlights the significant impact of accurate problem interpretation. The use of both a card game and bridge competition context suggests the method is robust to changes in the problem's framing.

</details>

Figure 5: Case study illustrating the identification and effects of critical tokens identified by our method. Tokens highlighted in red indicate candidate critical tokens, whereas tokens highlighted in purple correspond to alternative tokens generated when conditioning on paraphrased questions.

## 3 Methodology

In this section, we present the two components of our framework in detail: paraphrastic probing and consistency verification. An detailed illustration of our framework is shown in Figure 4. We then discuss the proposed method and provide the complete algorithm.

### 3.1 Paraphrastic Probing

Previous findings (Zhou et al., 2024; Chen et al., 2024b; Huang et al., 2025) on the impact of a problem’s surface form suggest that the quality of intermediate reasoning steps is influenced not only by the underlying mathematical relationships and logic, but also by how the problem is expressed. Notably, LLMs are sometimes able to solve a paraphrased version of a problem that they fail to solve in its original form, highlighting the potential of paraphrasing to uncover pivotal tokens that are critical for successful reasoning. Motivated by this observation, we introduce paraphrastic probing to efficiently identify the critical token. Given the original question $q_{0}$ , we first prompt the LLM to generate multiple paraphrased forms, denoted as $q_{1},q_{2},...,q_{N}$ , where $N$ is the number of the paraphrased questions. We adopt Automatic Prompt Engineering (APE) (Zhou et al., 2022) to derive paraphrasing instructions that preserve semantic integrity and all numerical values, mathematical relationships, and core logical structures of the problem, while maximizing linguistic and contextual diversity. Additional details can be found in Appendix B. We then obtain the initial reasoning path $r^{q_{0}}_{0}$ for the original question using greedy decoding. This reasoning path is subsequently concatenated with each paraphrased question, and the resulting synthetic inputs are fed into the LLM to compute the token probability distribution at each position in $r^{q_{0}}_{0}$ . Specifically, the token probability distribution at $i$ th position conditioned on the paraphrased question $q_{n}$ is expressed as

$$

P_{i}^{q_{n}}=\text{LLM}(\tilde{a}_{i}|\mathcal{I},q_{n},r^{q_{0}}_{0,<i}), \tag{1}

$$

where $\mathcal{I}$ denotes the instruction prefix and $\tilde{a}_{i}$ represents the sampled token at $i$ th position. The token $\tilde{a}_{i}$ is regarded as a candidate critical token if predicted top-1 token does not match the expected token at the same position in $r^{q_{0}}_{0}$ , i.e.,

$$

\operatorname*{arg\,max}P_{i}^{q_{n}}\neq a_{i}, \tag{2}

$$

where $a_{i}$ denotes the token at the $i$ th position in $r^{q_{0}}_{0}$ .

<details>

<summary>images/condition_one_gsm8k.png Details</summary>

### Visual Description

\n

## Bar Chart: Fraction vs. Average Accuracy for Critical and Random Tokens

### Overview

This bar chart compares the fraction of tokens (expressed as a percentage) for "critical tokens" and "random tokens" based on their average accuracy (also expressed as a percentage). The chart displays two groups of bars, one for tokens with average accuracy less than or equal to 5% and another for tokens with average accuracy greater than 5%. Error bars are included for each bar, indicating the variability or uncertainty in the fraction values.

### Components/Axes

* **X-axis:** "Average accuracy (%)" with two categories: "≤ 5%" and "> 5%".

* **Y-axis:** "Fraction (%)" ranging from 0 to 70.

* **Legend:** Located in the top-right corner, distinguishing between "critical tokens" (represented by a teal color) and "random tokens" (represented by a light grey color).

* **Bars:** Represent the fraction of tokens for each category and token type.

* **Error Bars:** Black lines extending vertically from the top of each bar, indicating the standard error or confidence interval.

### Detailed Analysis

The chart presents four bars with associated error bars.

**For Average Accuracy ≤ 5%:**

* **Critical Tokens:** The bar is teal and reaches approximately 68% on the Y-axis. The error bar extends from approximately 63% to 73%.

* **Random Tokens:** The bar is light grey and reaches approximately 32% on the Y-axis. The error bar extends from approximately 27% to 37%.

**For Average Accuracy > 5%:**

* **Critical Tokens:** The bar is teal and reaches approximately 30% on the Y-axis. The error bar extends from approximately 25% to 35%.

* **Random Tokens:** The bar is light grey and reaches approximately 66% on the Y-axis. The error bar extends from approximately 61% to 71%.

### Key Observations

* When average accuracy is ≤ 5%, critical tokens have a significantly higher fraction than random tokens.

* When average accuracy is > 5%, random tokens have a significantly higher fraction than critical tokens.

* The error bars suggest a reasonable degree of uncertainty in the fraction estimates, but the differences between critical and random tokens within each accuracy category appear substantial.

### Interpretation

The data suggests a strong inverse relationship between average accuracy and the fraction of critical versus random tokens. Tokens with low average accuracy (≤ 5%) are predominantly critical tokens, while tokens with high average accuracy (> 5%) are predominantly random tokens. This could indicate that critical tokens are more challenging to predict or classify accurately, perhaps due to their inherent complexity or importance in the context. The higher fraction of random tokens with higher accuracy suggests they are easier to predict or classify.

The chart implies that focusing on improving the accuracy of critical tokens might be a key area for improvement, as they currently exhibit lower accuracy compared to random tokens. The error bars indicate that these differences are likely statistically significant, but further analysis would be needed to confirm this. The data could be used to inform strategies for model training or data selection, prioritizing critical tokens to enhance overall performance.

</details>

(a)

<details>

<summary>images/condition_two_gsm8k.png Details</summary>

### Visual Description

\n

## Bar Chart: Fraction vs. Average Accuracy for Token Types

### Overview

This bar chart compares the fraction of tokens (expressed as a percentage) for "critical tokens" and "random tokens" across two categories of average accuracy (also expressed as a percentage): "≤ 10%" and "> 10%". Error bars are included for each data point, indicating the variability or confidence interval.

### Components/Axes

* **X-axis:** "Average accuracy (%)" with two categories: "≤ 10%" and "> 10%".

* **Y-axis:** "Fraction (%)" ranging from 0% to 60%.

* **Legend:** Located in the top-right corner, distinguishing between:

* "critical tokens" (represented by a teal/green color)

* "random tokens" (represented by a light teal/green color)

* **Error Bars:** Vertical lines extending above each bar, representing the standard error or confidence interval.

### Detailed Analysis

The chart presents four data series, two for each accuracy category, and each series has an associated error bar.

**1. Average Accuracy ≤ 10%**

* **Critical Tokens:** The bar for critical tokens is approximately 62% tall, with an error bar extending to approximately 65%.

* **Random Tokens:** The bar for random tokens is approximately 40% tall, with an error bar extending to approximately 43%.

**2. Average Accuracy > 10%**

* **Critical Tokens:** The bar for critical tokens is approximately 36% tall, with an error bar extending to approximately 39%.

* **Random Tokens:** The bar for random tokens is approximately 60% tall, with an error bar extending to approximately 63%.

### Key Observations

* For tokens with average accuracy ≤ 10%, the fraction of critical tokens is significantly higher than that of random tokens.

* For tokens with average accuracy > 10%, the fraction of random tokens is significantly higher than that of critical tokens.

* The error bars suggest a relatively low degree of uncertainty in the measurements.

### Interpretation

The data suggests a strong inverse relationship between average accuracy and the fraction of critical tokens. When average accuracy is low (≤ 10%), critical tokens are more prevalent. Conversely, when average accuracy is high (> 10%), random tokens are more prevalent. This could indicate that critical tokens are more likely to be associated with errors or lower-performing elements, while random tokens are more likely to be associated with successful or higher-performing elements.

The chart implies that identifying and focusing on critical tokens might be a useful strategy for improving overall accuracy, particularly in scenarios where accuracy is initially low. The shift in prevalence between token types as accuracy increases suggests that addressing the issues related to critical tokens leads to improved performance, and they become less dominant as the system improves. The error bars indicate that these trends are relatively robust and not likely due to random chance.

</details>

(b)

<details>

<summary>images/compare_ct_rnd_gsm8k.png Details</summary>

### Visual Description

\n

## Line Chart: Pass@k vs. Number of Sample k

### Overview

This line chart compares the "pass@k%" metric for two categories: "critical tokens" and "random tokens" as a function of the "number of sample k". Error bars are included for each data point, indicating the variability or confidence interval.

### Components/Axes

* **X-axis:** "number of sample k". Scale ranges from approximately 0 to 45, with markers at 0, 10, 20, 30, and 40.

* **Y-axis:** "pass@k%". Scale ranges from approximately 50% to 87%, with markers at 50%, 55%, 60%, 65%, 70%, 75%, 80%, and 85%.

* **Data Series 1:** "critical tokens" - Represented by a red line with triangular markers and error bars.

* **Data Series 2:** "random tokens" - Represented by a purple line with square markers and error bars.

* **Legend:** Located in the bottom-right corner, clearly labeling each data series with its corresponding color.

### Detailed Analysis

**Critical Tokens (Red Line):**

The line representing "critical tokens" slopes generally upward, indicating an increasing "pass@k%" with increasing "number of sample k".

* At k = 0, pass@k% is approximately 71% ± 4%.

* At k = 10, pass@k% is approximately 78% ± 3%.

* At k = 20, pass@k% is approximately 82% ± 2%.

* At k = 30, pass@k% is approximately 84% ± 2%.

* At k = 40, pass@k% is approximately 85% ± 2%.

**Random Tokens (Purple Line):**

The line representing "random tokens" also slopes upward, but at a slower rate than the "critical tokens" line.

* At k = 0, pass@k% is approximately 52% ± 5%.

* At k = 10, pass@k% is approximately 58% ± 4%.

* At k = 20, pass@k% is approximately 61% ± 3%.

* At k = 30, pass@k% is approximately 63% ± 3%.

* At k = 40, pass@k% is approximately 65% ± 4%.

### Key Observations

* "Critical tokens" consistently achieve a higher "pass@k%" than "random tokens" across all values of "number of sample k".

* The difference in "pass@k%" between the two categories appears to be more pronounced at lower values of "number of sample k".

* The error bars suggest that the "critical tokens" data has slightly less variability than the "random tokens" data.

* Both lines appear to be approaching a plateau as "number of sample k" increases, suggesting diminishing returns.

### Interpretation

The data suggests that using "critical tokens" leads to a significantly higher "pass@k%" compared to using "random tokens". This implies that selecting tokens based on their importance or criticality is a more effective strategy for achieving a desired level of performance (as measured by "pass@k%"). The diminishing returns observed at higher values of "number of sample k" suggest that there is a point beyond which increasing the sample size provides minimal improvement. The smaller error bars for "critical tokens" indicate a more consistent and reliable performance compared to "random tokens". This chart likely represents the results of an experiment evaluating different token selection strategies in a machine learning or natural language processing context, where "pass@k%" is a metric for evaluating the quality of generated outputs. The "number of sample k" likely refers to the number of candidate tokens considered during the generation process.

</details>

(c)

<details>

<summary>images/density_gsm8k.png Details</summary>

### Visual Description

\n

## Histogram: Density of Consistency Score with Correct/Incorrect Answers

### Overview

The image presents a histogram comparing the density distribution of "consistency score" for two groups: those with "incorrect answers" and those with "correct answers". The x-axis represents the consistency score, and the y-axis represents the density (expressed as a percentage).

### Components/Axes

* **X-axis Title:** "consistency score"

* **X-axis Range:** 0 to 5

* **Y-axis Title:** "density (%)"

* **Y-axis Range:** 0 to 0.7

* **Legend:** Located in the top-right corner.

* "w incorrect answers" - represented by a light green color.

* "w correct answers" - represented by a light red color.

### Detailed Analysis

The histogram displays the distribution of consistency scores for each group.

**Incorrect Answers (Light Green):**

The distribution is heavily skewed towards lower consistency scores.

* Around a consistency score of 0, the density is approximately 0.68.

* The density decreases rapidly as the consistency score increases.

* Between consistency scores of 0 and 1, the density remains high, around 0.65-0.7.

* At a consistency score of 1, the density is approximately 0.65.

* At a consistency score of 2, the density drops to approximately 0.15.

* The density remains low for consistency scores greater than 2, fluctuating around 0.05-0.1.

**Correct Answers (Light Red):**

The distribution is skewed towards higher consistency scores.

* Around a consistency score of 5, the density is approximately 0.55.

* The density is relatively low for consistency scores below 3.

* At a consistency score of 0, the density is approximately 0.02.

* At a consistency score of 1, the density is approximately 0.03.

* At a consistency score of 2, the density is approximately 0.05.

* At a consistency score of 3, the density increases to approximately 0.12.

* At a consistency score of 4, the density increases to approximately 0.18.

* At a consistency score of 5, the density reaches approximately 0.55.

### Key Observations

* The distribution of consistency scores is significantly different for incorrect and correct answers.

* Incorrect answers tend to have lower consistency scores, while correct answers tend to have higher consistency scores.

* There is a clear separation between the two distributions, suggesting that consistency score is a useful indicator of answer correctness.

* The peak density for incorrect answers is around 0, while the peak density for correct answers is around 5.

### Interpretation

The data suggests a strong correlation between consistency score and answer correctness. Higher consistency scores are associated with correct answers, and lower consistency scores are associated with incorrect answers. This implies that the "consistency score" metric effectively measures the reliability or coherence of the reasoning process leading to an answer.

The stark difference in distributions indicates that the consistency score can be used as a predictor of answer accuracy. A model or system could potentially use this score to identify and flag potentially incorrect answers for review. The fact that the distributions are not entirely separated suggests that consistency score is not a perfect predictor, and some incorrect answers may still have relatively high consistency scores, and vice versa. Further investigation might be needed to understand the reasons for these outliers. The distributions are not normal, but rather heavily skewed, which suggests that the consistency score may be bounded or influenced by other factors.

</details>

(d)

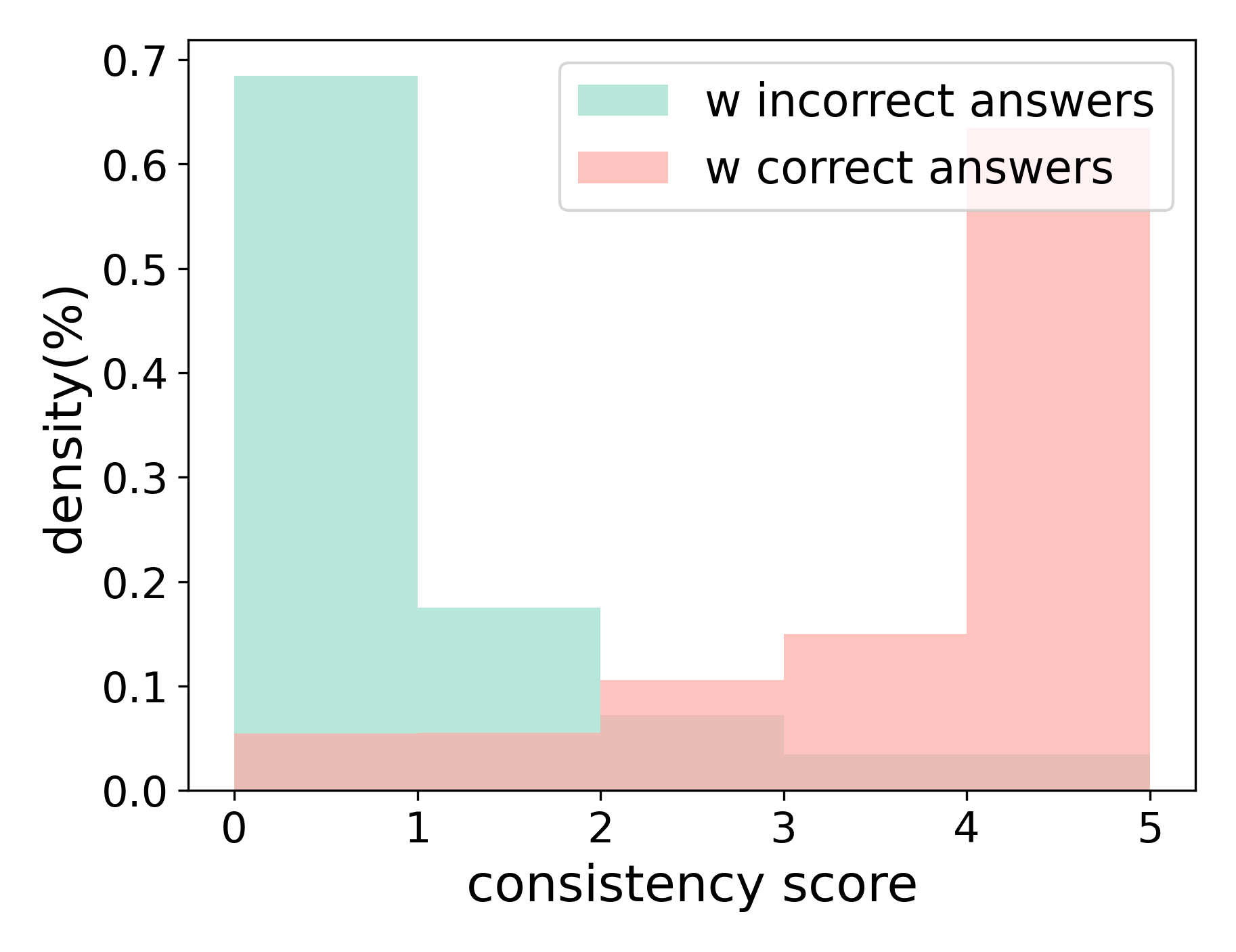

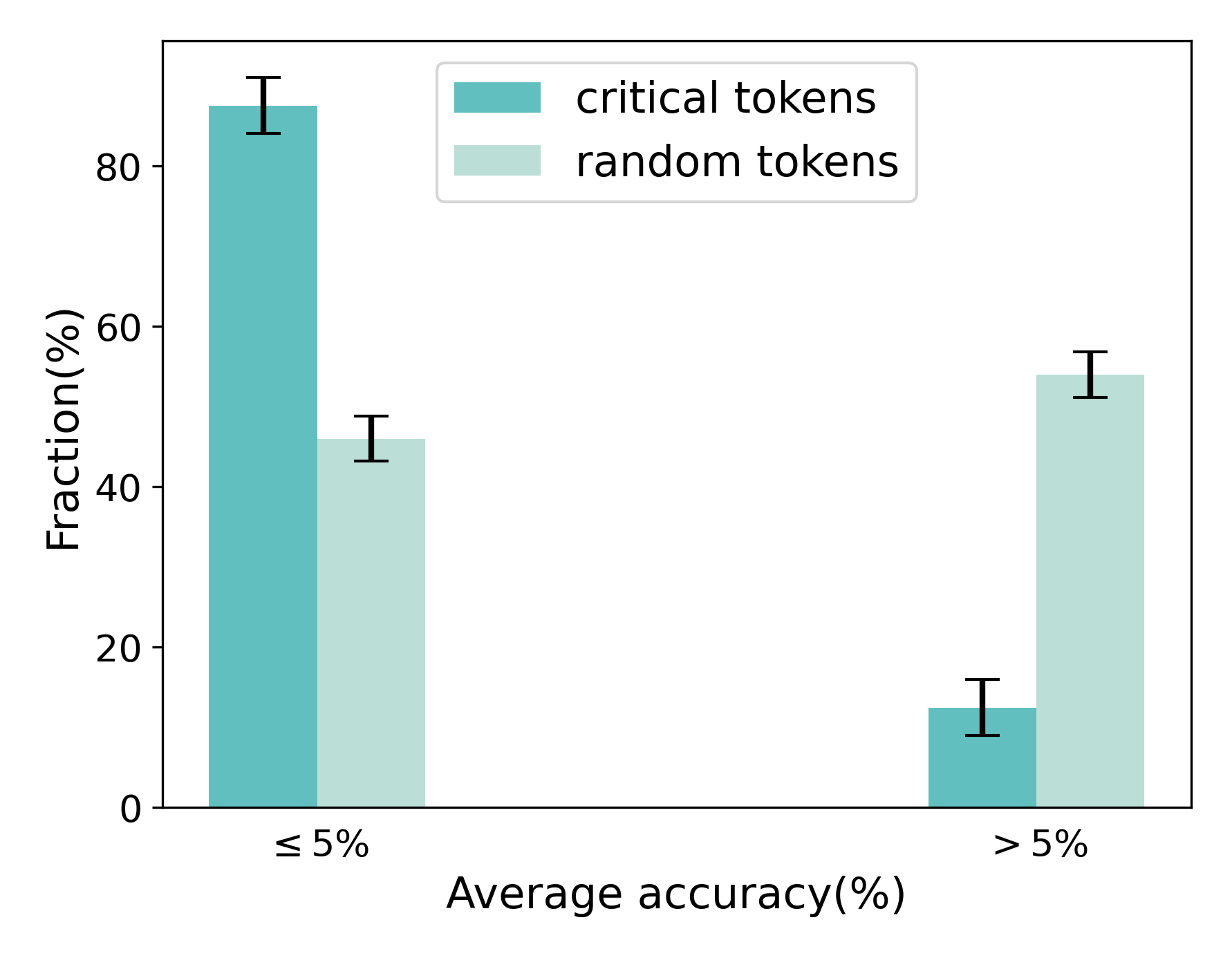

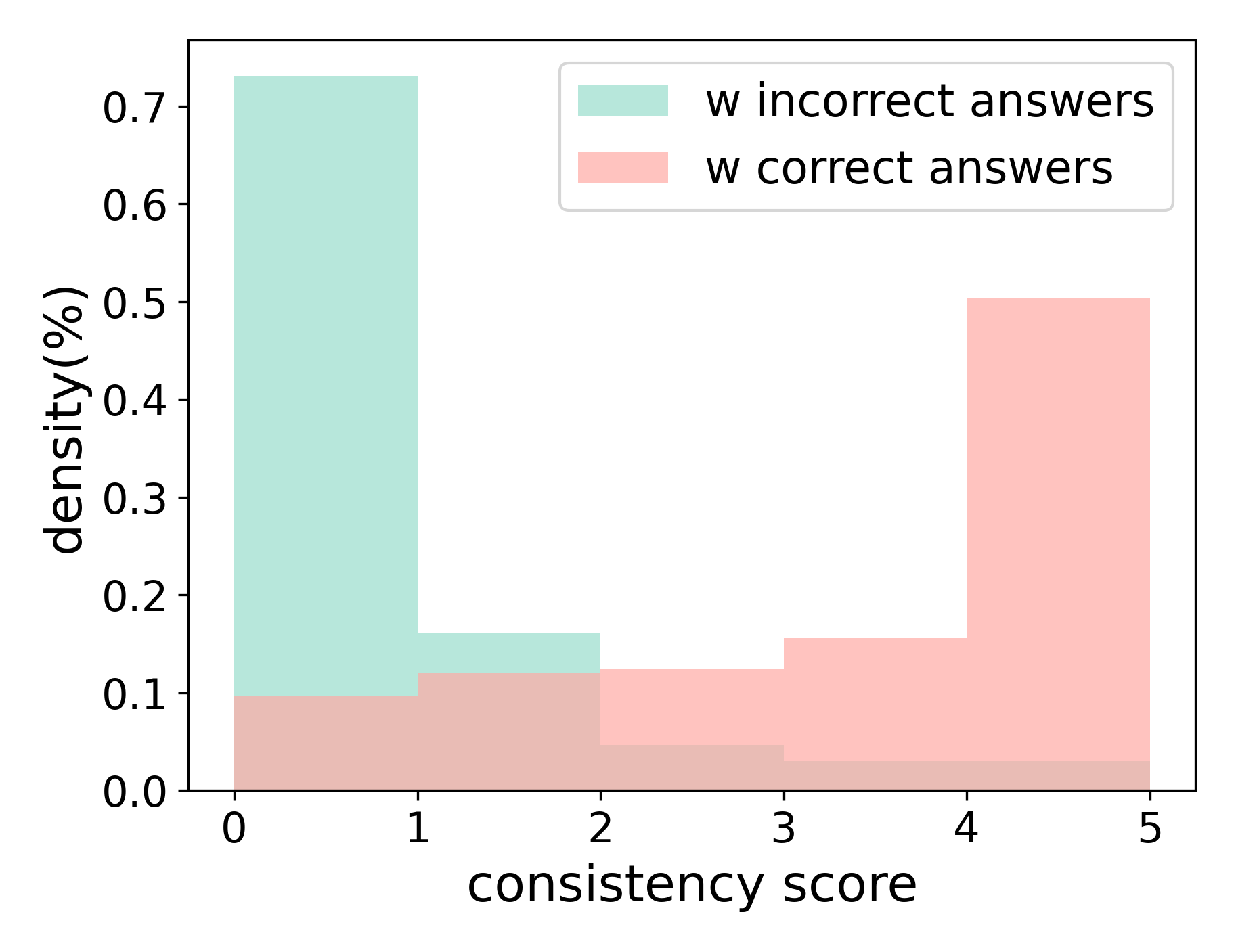

Figure 6: (a) Comparison of the fraction of tokens whose associated rollout correctness falls below or above 5%, for tokens extracted by our method versus randomly selected tokens. (b) Comparison of the fraction of the subsequent tokens whose associated rollout correctness falls below or above 10%, for tokens extracted by our method versus randomly selected tokens. (c) Comparison of the impact of extracted tokens by our method versus random tokens on LLM reasoning performance. (d) Comparison of the density distributions of consistency scores for rollouts with correct and incorrect answers.

To validate the effectiveness of our method in identifying critical tokens and to demonstrate the pivotal role these tokens play when extracted via paraphrastic probing, we conduct a case study illustrated in Figure 5. Because large language models can adjust their reasoning trajectories under the paraphrased form of the question, certain tokens in the original reasoning sequence produce different subsequent tokens when conditioned on paraphrased inputs. In this example, most candidate critical tokens are located within incorrect reasoning segments. Notably, when we identify “subtracting” as a critical token and replace it with an alternative token (i.e., “winning”), the resulting rollout corrects the erroneous reasoning steps and yields the correct final answer. This example highlights the impact of critical tokens and underscores the effectiveness of our method in identifying tokens that are pivotal to reasoning outcomes.

In addition, we conduct a quantitative analysis to examine the authenticity and impact of tokens extracted via paraphrastic probing, comparing them against randomly selected tokens. Specifically, we sample 100 instances with incorrect reasoning trajectories from the GSM8K (Cobbe et al., 2021) training sets. Following the paraphrastic probing pipeline, we identify candidate critical tokens in the early reasoning steps. In each run, we randomly sample 40% of the candidate critical tokens for evaluation and repeat the experiment independently 10 times. For comparison, we apply the same procedure to randomly selected tokens. All evaluations are conducted using Llama-3.1-8B-Instruct (Grattafiori et al., 2024). We first compute the average accuracy of rollouts generated from partial trajectories truncated at the position of the extracted tokens, and compare these results with those obtained from random-token truncation. As shown in Figure 6(a), a large proportion of the extracted tokens exhibit near-zero correctness, consistent with the first criterion of critical tokens. We further evaluate the average accuracy of rollouts initiated from subsequent tokens (5–10 tokens). The results in Figure 6(b) indicate that errors persist beyond the identified positions, supporting the second criterion that critical tokens induce sustained degradation in downstream reasoning. Finally, we substitute the extracted critical tokens with alternative tokens and roll out multiple new reasoning trajectories. As shown in Figure 6(c), replacing critical tokens leads to a significantly larger improvement in reasoning accuracy compared to substituting random tokens. These results further validate both the pivotal role of the identified critical tokens and the effectiveness of our paraphrastic probing method. Additional results can be found in Appendix A.

We introduce a heuristic verifier to select the final critical token from multiple candidates. For a candidate token $a_{i}$ and paraphrased question $q_{n}$ , the verification score is defined as

$$

\Delta_{q_{n}}(a_{i})=\max P^{q_{n}}_{i}-P^{q_{n}}_{i}(\tilde{a}_{i}=a_{i}). \tag{3}

$$

where $P^{q_{n}}_{i}$ denotes the predictive distribution at position $i$ on question $q_{n}$ . Intuitively, $\Delta$ measures how much the predicted top-1 token deviates from the expected token, indicating the token’s potential impact on the reasoning trajectory. For each extracted token $a_{i}$ ,we take the maximum score across paraphrases,

$$

\Delta(a_{i})=\max_{q_{n}}\Delta_{q_{n}}(a_{i}),\vskip-5.69054pt \tag{4}

$$

and select the final critical token as

$$

a_{c}=\operatorname*{arg\,max}_{i}\Delta(a_{i}).\vskip-5.69054pt \tag{5}

$$

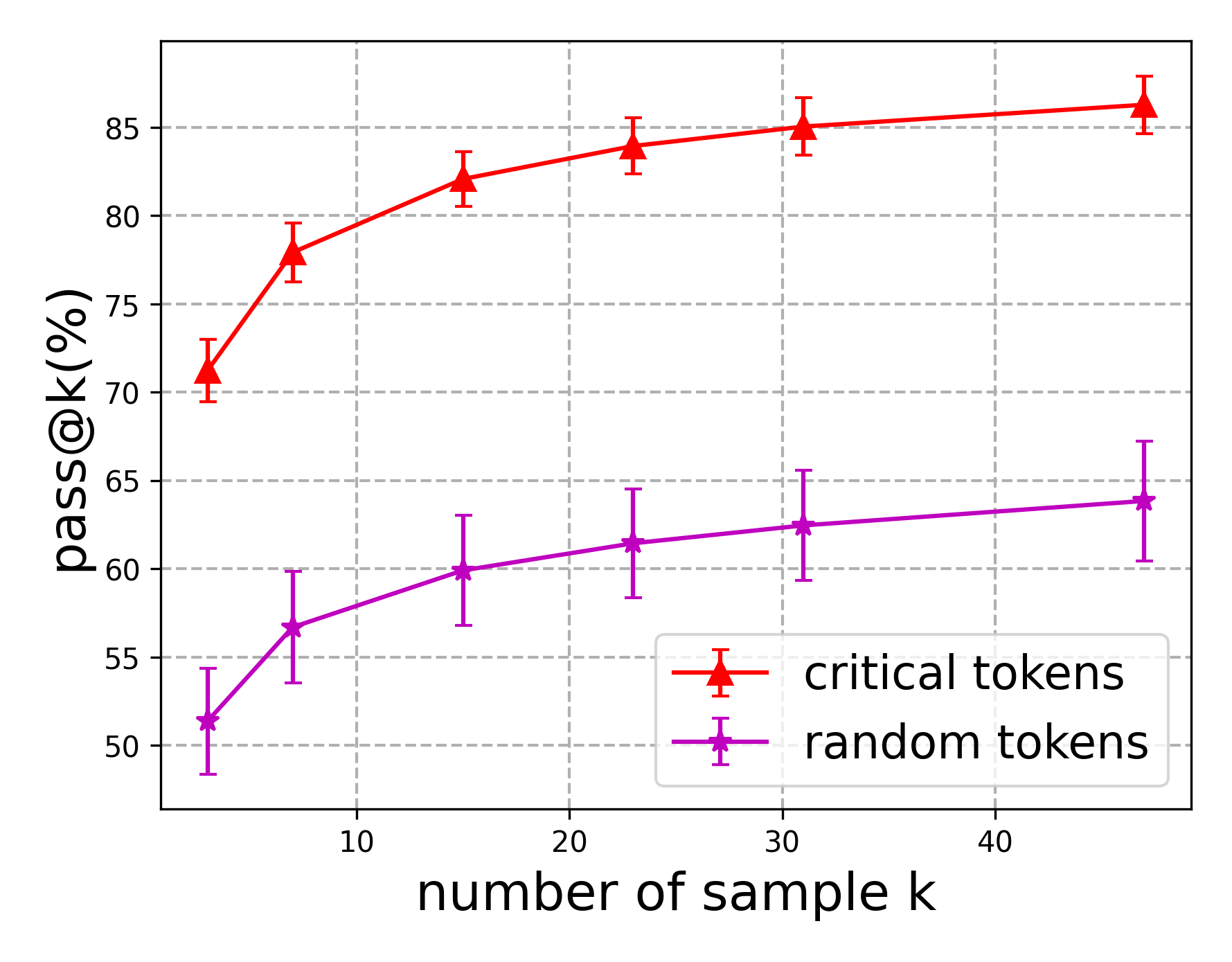

### 3.2 Consistency Verification

After identifying the final critical token $a_{c}$ , we aim to refine the original reasoning path with alternative tokens and achieve final answer with paraphrase consistency mechanism. Specifically, we generate a set of alternative tokens $a^{0}_{c},a^{1}_{c},a^{2}_{c},...,a^{K-1}_{c}$ using the LLM conditioned on original question $q_{0}$ , where $a^{0}_{c}$ is the original token in $r^{q_{0}}_{0}$ and the remaining tokens are sampled via top-K sampling. The initial reasoning path is truncated at the position of critical token, and each alternative token is concatenated to form synthetic inputs $\tilde{r}_{c}^{0},\tilde{r}_{c}^{1},\tilde{r}_{c}^{2},...,\tilde{r}_{c}^{K-1}$ . We then roll out new reasoning trajectories for each synthetic input with respect to both the original and paraphrased questions using greedy decoding, denoted as $r^{q_{0}}_{k},r^{q_{1}}_{k},...,r^{q_{N}}_{k}$ for $k=0,1,2,...,K-1$ . Next, for the rollout with the $k$ th alternative token, we compare the answers obtained from the paraphrased forms with that from the original form and compute a consistency score $c_{k}=\sum_{n-1}^{N}\mathbb{I}(\Phi(r^{q_{0}}_{k})=\Phi(r^{q_{n}}_{k}))$ , where $\Phi(\cdot)$ and $\mathbb{I}(\cdot)$ denotes the function that extracts the final answer from a reasoning trajectory and the indicator function, respectively. The answer associated with the highest consistency score is then selected as the final prediction

$$

\text{ans}_{f}=\Phi(r^{q_{0}}_{k}),\text{where}\,k=\operatorname*{arg\,max}_{k}c_{k}.\vskip-11.38109pt \tag{6}

$$

To justify our paraphrase consistency mechanism, we investigate the impact of paraphrased forms on LLM reasoning. We sample instances from the GSM8K (Cobbe et al., 2021) and follow our pipeline to extract critical tokens. From each truncated reasoning trajectory, we roll out multiple reasoning paths by concatenating alternative tokens. For each original question, we generate five paraphrased variants and compute the consistency score for resulting rollouts. The evaluation is conducted on Llama-3.1-8B-Instruct (Grattafiori et al., 2024). We then analyze the distribution of consistency scores for rollouts that yield correct versus incorrect answers. As shown in Figure 6(d), more than 90% of rollouts with correct answers achieve a consistency score of at least 1, whereas this proportion drops to around 30% for rollouts with incorrect answers. This sharp contrast indicates that correct rollouts are more robust across paraphrased variants, motivating the design of our paraphrase consistency mechanism to exploit this property for improved final predictions.

To address potential collisions when multiple answers obtain the same maximum consistency score, we introduce similarity-weighted consistency verification. Inspired by weighted majority voting (Dogan and Birant, 2019), this approach adjusts the influence of each paraphrased form on the consistency score according to its similarity to the original form. Intuitively, paraphrased forms with lower similarity should exert greater weight, as they provide stronger evidence of robustness, whereas those closely resembling the original form contribute less. Concretely, we first extract embeddings for both the original and paraphrased questions and compute their similarity scores as $s_{n}=\text{sim}(q_{0},q_{n})$ , where $\text{sim}(\cdot)$ denotes a similarity measure such as cosine similarity. We then derive weights via a softmax function $w_{n}=\text{softmax}(s_{n})=\frac{\exp(-\lambda s_{n})}{\sum_{n}\exp(-\lambda n)}$ , where $\lambda$ is the temperature scaling coefficient. Finally, the similarity-weighted consistency score is defined as $\tilde{c}_{k}=\sum_{n-1}^{N}w_{n}\mathbb{I}(\Phi(r^{q_{0}}_{k})=\Phi(r^{q_{n}}_{k}))$ . This ensures agreement with more diverse paraphrases contributes more strongly to the final decision.

Table 1: Comparison of our method with baseline approaches on Llama-3.1-8B-Instruct and Mistral-7B-Instruct-v0.2.

| Llama-3.1 Self-Consistency Tree-of-Thought | Chain-of-Thought 80.60 75.74 | 77.40 31.80 33.28 | 28.00 37.80 31.60 | 31.00 85.10 81.20 | 83.00 60.75 80.72 | 58.91 |

| --- | --- | --- | --- | --- | --- | --- |

| Guided Decoding | 75.51 | 32.45 | 31.20 | 81.70 | 81.74 | |

| Predictive Decoding | 81.43 | 40.26 | 34.00 | 85.90 | 84.56 | |

| Phi-Decoding | 86.58 | 39.88 | 38.20 | 84.50 | 85.41 | |

| PPCV (Ours) | 88.24 | 49.73 | 50.00 | 89.60 | 88.31 | |

| Mistral-7B | Chain-of-Thought | 46.45 | 26.91 | 12.20 | 62.40 | 41.42 |

| Self-Consistency | 50.38 | 28.65 | 14.20 | 66.70 | 44.54 | |

| Tree-of-Thought | 50.49 | 25.78 | 11.40 | 60.60 | 41.04 | |

| Guided Decoding | 50.79 | 27.07 | 14.00 | 62.90 | 39.51 | |

| Predictive Decoding | 55.67 | 27.07 | 14.40 | 62.10 | 47.87 | |

| Phi-Decoding | 56.60 | 28.43 | 13.40 | 63.20 | 60.24 | |

| PPCV (Ours) | 56.58 | 31.08 | 14.60 | 69.30 | 69.88 | |

Table 2: Comparison of our method with baseline approaches on Qwen3-32B (non-thinking mode).

| Qwen3-32B Guided Decoding Predictive Decoding | Chain-of-Thought 26.67 32.67 | 30.00 22.67 24.00 | 23.67 28.67 33.33 | 30.00 7.33 10.33 | 9.67 |

| --- | --- | --- | --- | --- | --- |

| Phi-Decoding | 33.60 | 24.33 | 36.67 | 10.67 | |

| PPCV (Ours) | 40.00 | 26.00 | 43.33 | 13.33 | |

### 3.3 Discussion

Our technical contributions differ from prior works in three distinct ways. First, prior works (Zhou et al., 2024; Chen et al., 2024b; Yadav et al., 2024) typically use paraphrasing merely to expand the solution space. In contrast, we introduce Paraphrastic Probing, a mechanism that uses paraphrasing to test the model’s internal confidence. By analyzing the discrepancy in token-level logits of the initial trajectory between the original and paraphrased questions, we can rigorously pinpoint the critical tokens that may lead to errors in the following steps.This transforms paraphrasing from a generation tool into a precise, token-level diagnostic tool. Second, prior works (Zhou et al., 2024; Chen et al., 2024b) typically rely on simple majority voting across multiple solutions. Our Paraphrase Consistency mechanism is technically distinct. It validates answers based on their robustness across semantic variations of the problem constraint. We further introduce a similarity-weighted consistency metric that weighs answers based on the linguistic diversity of the paraphrase, offering a more nuanced selection criterion than simple frequency counts. At last, a major technical limitation in current reasoning research is the reliance on external models or human-annotated error steps. Our method contributes a fully self-contained pipeline that identifies and corrects errors using the model’s own sensitivity to surface-form perturbations. More discussion on the impact of critical tokens on correct trajectory can be found in Appendix D.

Besides, although we select the top candidate for the primary experiments to maintain computational efficiency, the framework itself naturally extends to the multi–critical-token setting. For multiple critical tokens, we can generate alternative tokens for each identified position and apply paraphrase consistency across the new rollouts. This allows the model to refine multiple segments of its intermediate reasoning steps rather than only one. The details of the algorithm can be found in Appendix C.

Table 3: Comparison of model performance when using critical tokens versus random tokens.

| Chain-of-Thought random tokens critical tokens (Ours) | 77.40 82.08 88.24 | 28.00 40.29 49.73 | 31.00 42.12 50.00 | 83.00 84.77 89.60 | 58.91 75.68 88.31 |

| --- | --- | --- | --- | --- | --- |

Table 4: Comparison of our proposed paraphrase consistency against the majority voting.

| Chain-of-Thought majority voting paraphrase consistency (Ours) | 77.40 87.20 88.24 | 28.00 47.36 49.73 | 31.00 48.19 50.00 | 83.00 88.80 89.60 | 58.91 86.16 88.31 |

| --- | --- | --- | --- | --- | --- |

## 4 Experiments

In this section, we first describe the experimental setup, followed by the main results of our proposed method compared to the baselines. We also perform ablation study and computational cost analysis.

### 4.1 Setup

Datasets. To comprehensively assess our method, we evaluate it on seven benchmarks. Six focus on mathematical reasoning, including GSM8K (Cobbe et al., 2021), GSM-Hard (Gao et al., 2023), SVAMP (Patel et al., 2021), Math500 (Hendrycks et al., 2021), and the more challenging competition-level datasets AIME2024, AIME2025, BRUMO2025, and HMMT2025 (Balunović et al., 2025). In addition, we use ARC-Challenge (Clark et al., 2018) to evaluate knowledge reasoning ability of LLMs.

Baselines. In our experiments, we use Chain-of-Thought (CoT) (Wei et al., 2022), Self-Consistency (Wang et al., 2023), Tree-of-Thought (ToT) (Yao et al., 2023), Guided Decoding (Xie et al., 2023), Predictive Decoding (Ma et al., 2025), and Phi-Decoding (Xu et al., 2025) as baseline methods.

Metric. Following prior work, we adopt pass@k (k=1,4) as the primary evaluation metric.

Implementation Details. In our experiments, we adopt Llama-3.1-8B-Instruct (Grattafiori et al., 2024), Mistral-7B-Instruct-v0.2 (Jiang et al., 2023), Qwen-3-32B (Yang et al., 2025a) and DeepSeek-R1-Distill-Llama-70B as the target models. we employ the non-thinking mode for Qwen-3-32B. Throughout our method, we employ the same model for generating paraphrased problems, identifying critical tokens, and producing new rollouts. In the first stage, we generate 4 paraphrased variants for each problem in the math benchmarks and 3 variants for each problem in the ARC dataset. In the second stage, we select the top 10 tokens for new rollouts, with the temperature scaling coefficient $\lambda$ set to 2. For fair comparison, we ensure a comparable inference budget across methods. Specifically, we rollout 48 samples for Self-Consistency (Wang et al., 2023). For Predictive Decoding (Ma et al., 2025) and Phi-Decoding (Xu et al., 2025), we rollout 4-8 samples per foresight step, and each problem typically involves 5–8 foresight steps. We also adopt a zero-shot CoT prompt to elicit the new rollouts. For the baselines, we strictly follow their original settings, including temperature values, sampling strategies, and the number of few-shot examples. All experiments are conducted on NVIDIA A100 GPUs.

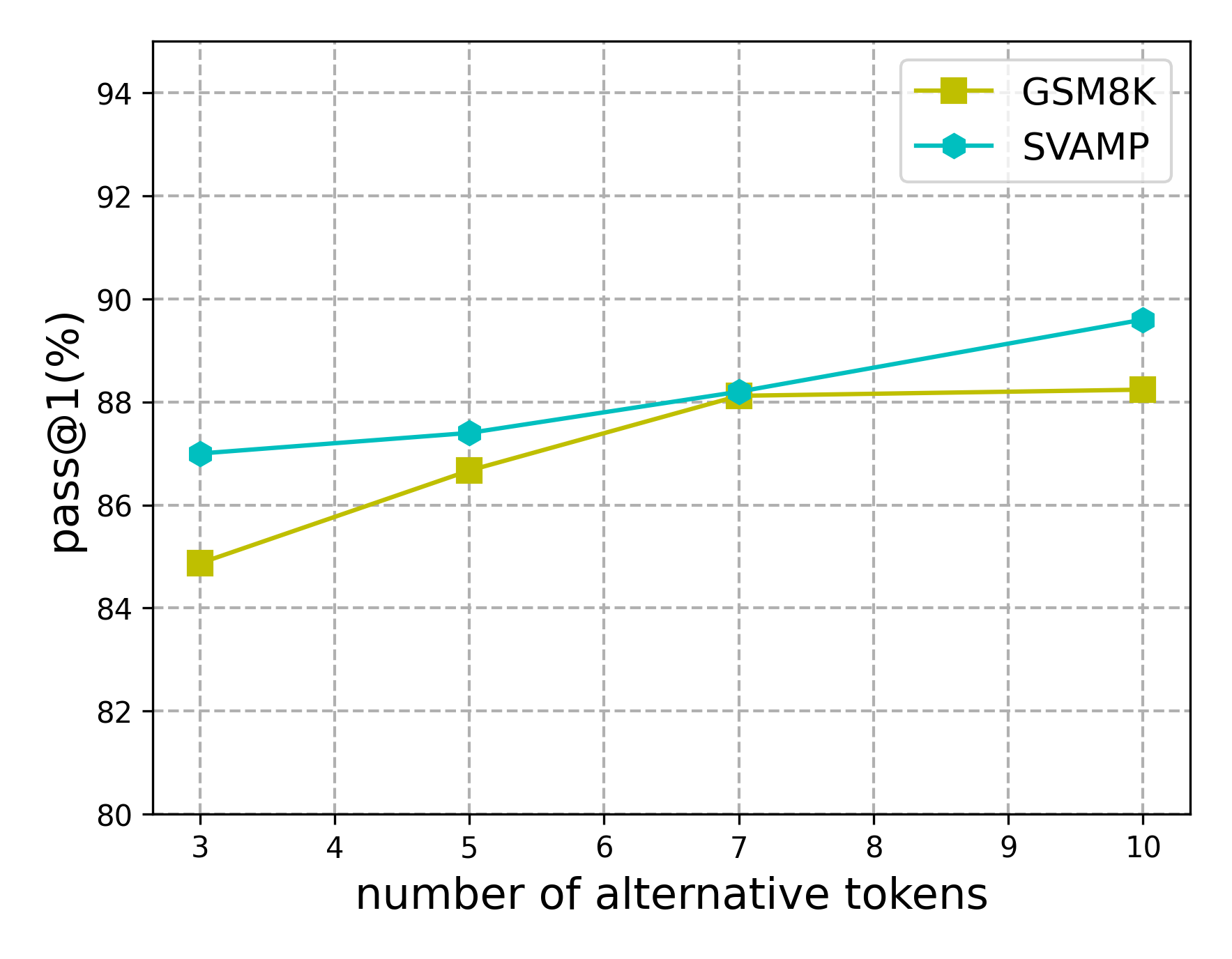

<details>

<summary>images/topk.png Details</summary>

### Visual Description

## Line Chart: Pass@1 Performance vs. Number of Alternative Tokens

### Overview

This line chart compares the Pass@1 performance of two models, GSM8K and SVAMP, as the number of alternative tokens increases from 3 to 10. The y-axis represents the Pass@1 accuracy (in percentage), and the x-axis represents the number of alternative tokens considered.

### Components/Axes

* **X-axis Title:** "number of alternative tokens"

* Scale: 3 to 10, with increments of 1.

* **Y-axis Title:** "pass@1 (%)"

* Scale: 80 to 94, with increments of 2.

* **Legend:** Located in the top-right corner.

* GSM8K: Represented by a yellow line with square markers.

* SVAMP: Represented by a teal line with circular markers.

* **Gridlines:** Present in both horizontal and vertical directions, aiding in value estimation.

### Detailed Analysis

**GSM8K (Yellow Line):**

The line representing GSM8K shows a slight upward trend, but plateaus after 7 alternative tokens.

* At 3 alternative tokens: Approximately 84.5% Pass@1.

* At 4 alternative tokens: Approximately 85.5% Pass@1.

* At 5 alternative tokens: Approximately 87% Pass@1.

* At 6 alternative tokens: Approximately 87.5% Pass@1.

* At 7 alternative tokens: Approximately 88% Pass@1.

* At 8 alternative tokens: Approximately 88% Pass@1.

* At 9 alternative tokens: Approximately 88% Pass@1.

* At 10 alternative tokens: Approximately 88% Pass@1.

**SVAMP (Teal Line):**

The line representing SVAMP shows a more consistent upward trend throughout the range of alternative tokens.

* At 3 alternative tokens: Approximately 87% Pass@1.

* At 4 alternative tokens: Approximately 87.5% Pass@1.

* At 5 alternative tokens: Approximately 88% Pass@1.

* At 6 alternative tokens: Approximately 88.5% Pass@1.

* At 7 alternative tokens: Approximately 88.5% Pass@1.

* At 8 alternative tokens: Approximately 89% Pass@1.

* At 9 alternative tokens: Approximately 89.5% Pass@1.

* At 10 alternative tokens: Approximately 90% Pass@1.

### Key Observations

* SVAMP consistently outperforms GSM8K across all tested numbers of alternative tokens.

* The performance gain for GSM8K diminishes significantly after 7 alternative tokens, suggesting a saturation point.

* SVAMP shows a more sustained improvement in Pass@1 as the number of alternative tokens increases.

* The difference in performance between the two models is relatively small, but noticeable.

### Interpretation

The chart demonstrates the impact of increasing the number of alternative tokens on the Pass@1 accuracy of two language models, GSM8K and SVAMP. The results suggest that while both models benefit from considering more alternatives, SVAMP is more effectively utilizing this increased search space. The plateauing performance of GSM8K indicates that its ability to leverage additional tokens is limited, potentially due to architectural constraints or training data characteristics. The consistent improvement of SVAMP suggests a more robust mechanism for exploring and selecting optimal solutions from a larger set of possibilities. This could be due to differences in model size, training methodology, or the specific tasks they were trained on. The data suggests that for maximizing Pass@1 accuracy, increasing the number of alternative tokens is a beneficial strategy, particularly for models like SVAMP that can effectively exploit this expanded search space.

</details>

(a)

<details>

<summary>images/time.png Details</summary>

### Visual Description

\n

## Bar Chart: Latency Comparison of Decoding Methods

### Overview

This bar chart compares the latency (in seconds) of several decoding methods – Chain-of-Thought, Predictive Decoding, Phi-Decoding, and four variations of PPCV (PPCV-T1 to PPCV-T4) – across five different datasets: GSM8K, GSMHard, Math500, SVAMP, and ARC. The chart uses stacked bars to represent the contribution of each decoding method to the total latency for each dataset.

### Components/Axes

* **X-axis:** Datasets - GSM8K, GSMHard, Math500, SVAMP, ARC.

* **Y-axis:** Latency (s) - Scale ranges from 0 to 40 seconds, with increments of 5 seconds.

* **Legend (Top-Right):**

* Chain-of-Thought (Light Teal)

* Predictive Decoding (Medium Teal)

* Phi-Decoding (Light Orange)

* PPCV-T1 (Ours) (Medium Orange)

* PPCV-T2 (Ours) (Dark Orange)

* PPCV-T3 (Ours) (Yellow)

* PPCV-T4 (Ours) (Pink)

### Detailed Analysis

Here's a breakdown of the latency values for each dataset and decoding method, based on the bar heights. Note that these are approximate values read from the chart.

* **GSM8K:**

* Chain-of-Thought: ~15s

* Predictive Decoding: ~2s

* Phi-Decoding: ~0.5s

* PPCV-T1: ~1s

* PPCV-T2: ~0.5s

* PPCV-T3: ~0.2s

* PPCV-T4: ~0.2s

* Total: ~19.4s

* **GSMHard:**

* Chain-of-Thought: ~24s

* Predictive Decoding: ~3s

* Phi-Decoding: ~1s

* PPCV-T1: ~1.5s

* PPCV-T2: ~0.5s

* PPCV-T3: ~0.3s

* PPCV-T4: ~0.3s

* Total: ~30.6s

* **Math500:**

* Chain-of-Thought: ~42s

* Predictive Decoding: ~2s

* Phi-Decoding: ~0.5s

* PPCV-T1: ~1.5s

* PPCV-T2: ~0.5s

* PPCV-T3: ~0.2s

* PPCV-T4: ~0.2s

* Total: ~46.9s

* **SVAMP:**

* Chain-of-Thought: ~13s

* Predictive Decoding: ~2s

* Phi-Decoding: ~0.5s

* PPCV-T1: ~1.5s

* PPCV-T2: ~0.5s

* PPCV-T3: ~0.2s

* PPCV-T4: ~0.2s

* Total: ~17.9s

* **ARC:**

* Chain-of-Thought: ~15s

* Predictive Decoding: ~2s

* Phi-Decoding: ~0.5s

* PPCV-T1: ~1.5s

* PPCV-T2: ~0.5s

* PPCV-T3: ~0.2s

* PPCV-T4: ~0.2s

* Total: ~19.9s

**Trends:**

* Chain-of-Thought consistently contributes the largest portion of latency across all datasets.

* PPCV-T3 and PPCV-T4 have very similar, minimal contributions to latency.

* Predictive Decoding and Phi-Decoding contribute relatively small amounts of latency compared to Chain-of-Thought.

* PPCV-T1 and PPCV-T2 contribute slightly more latency than PPCV-T3 and PPCV-T4.

### Key Observations

* The Math500 dataset exhibits the highest overall latency, primarily driven by the Chain-of-Thought method.

* The latency for Chain-of-Thought is significantly higher than all other methods across all datasets.

* The PPCV methods (T1-T4) consistently show low latency contributions.

* The differences in latency between the PPCV methods are relatively small.

### Interpretation

The chart demonstrates a clear trade-off between decoding method and latency. Chain-of-Thought, while potentially offering higher accuracy or quality of results (not shown in this chart), incurs a substantial latency cost. The PPCV methods, particularly T3 and T4, appear to offer a significant reduction in latency, albeit potentially at the expense of performance on the tasks. The consistent pattern across datasets suggests that this latency difference is inherent to the decoding methods themselves and not specific to the characteristics of the datasets. The "Ours" label on PPCV-T1 through PPCV-T4 indicates these methods were developed by the authors of the study, and the chart serves to highlight their efficiency gains compared to established methods like Chain-of-Thought, Predictive Decoding, and Phi-Decoding. The stacked bar format effectively visualizes the composition of total latency, allowing for a clear comparison of the contribution of each method.

</details>

(b)

<details>

<summary>images/throughput.png Details</summary>

### Visual Description

\n

## Bar Chart: Throughput Comparison of Decoding Methods

### Overview

This bar chart compares the throughput (tokens/second) of four different decoding methods – Chain-of-Thought, Predictive Decoding, Phi-Decoding, and PPCV (Ours) – across five different datasets: GSM8K, GSMHard, Math500, SVAMP, and ARC. Each dataset has four bars representing the throughput of each decoding method.

### Components/Axes

* **X-axis:** Datasets - GSM8K, GSMHard, Math500, SVAMP, ARC.

* **Y-axis:** Throughput (tokens/sec), ranging from 0 to 2000, with increments of 250.

* **Legend:** Located in the top-right corner, identifies the color-coding for each decoding method:

* Chain-of-Thought

* Predictive Decoding

* Phi-Decoding

* PPCV (Ours)

</details>

(c)

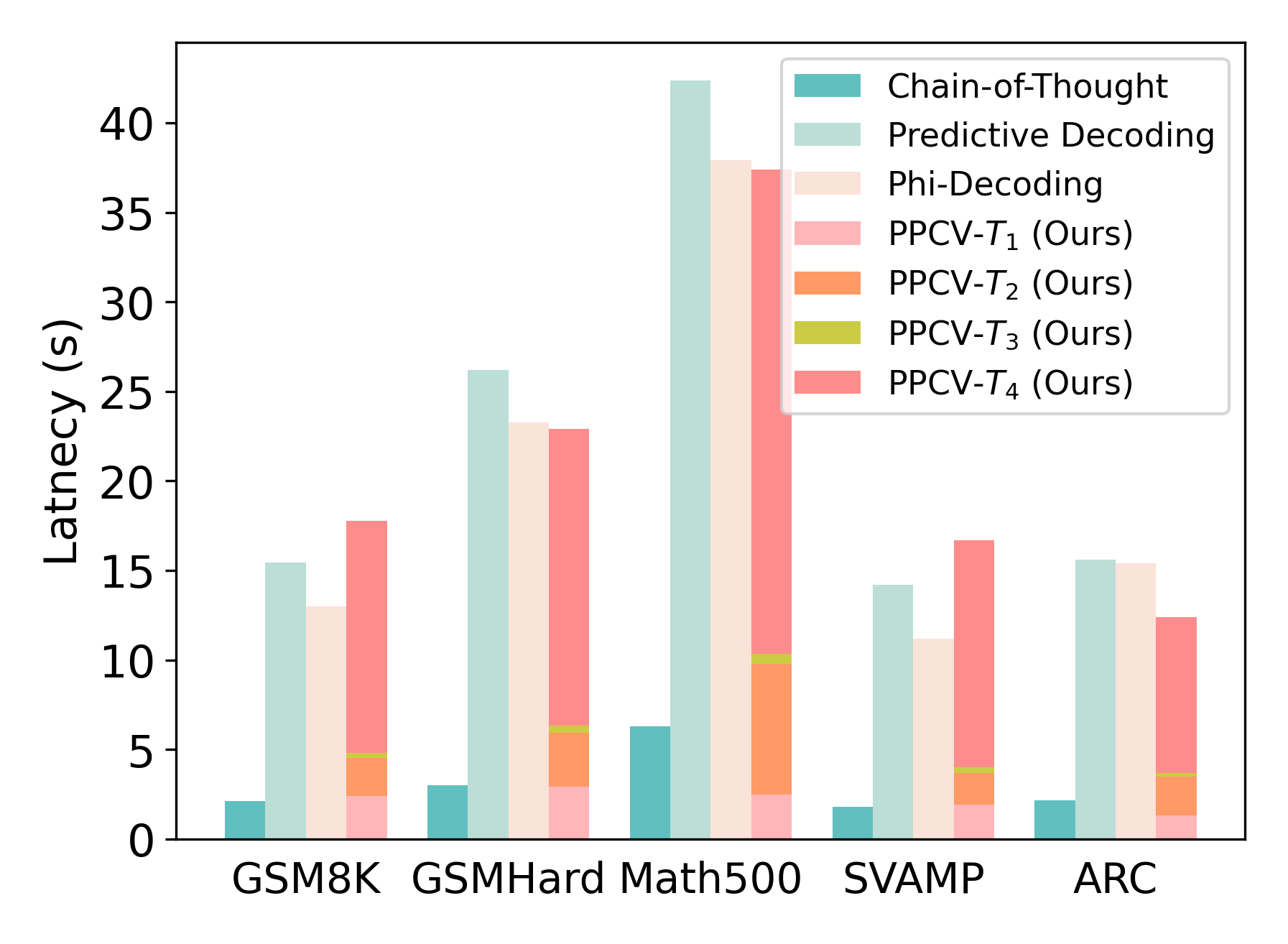

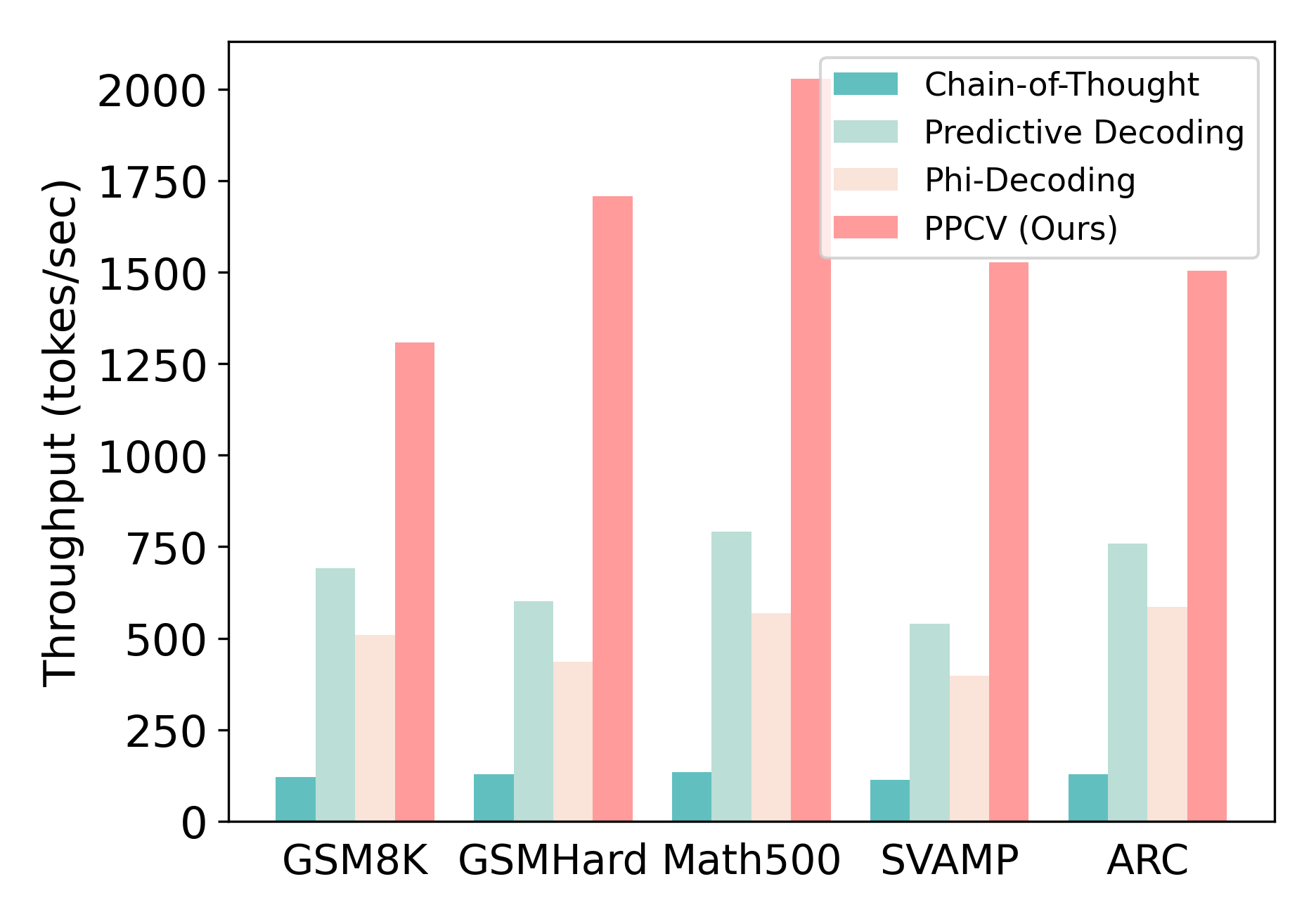

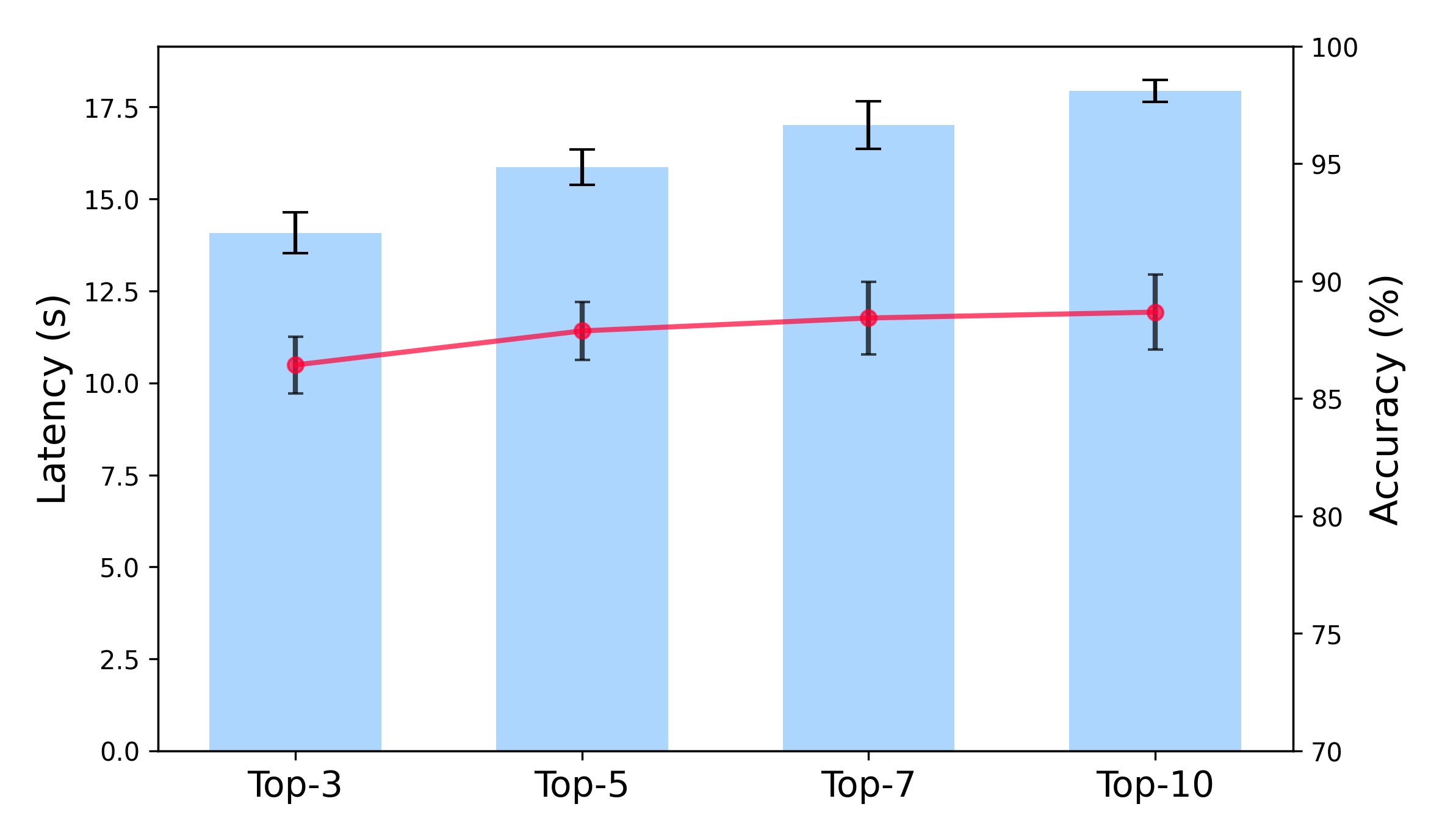

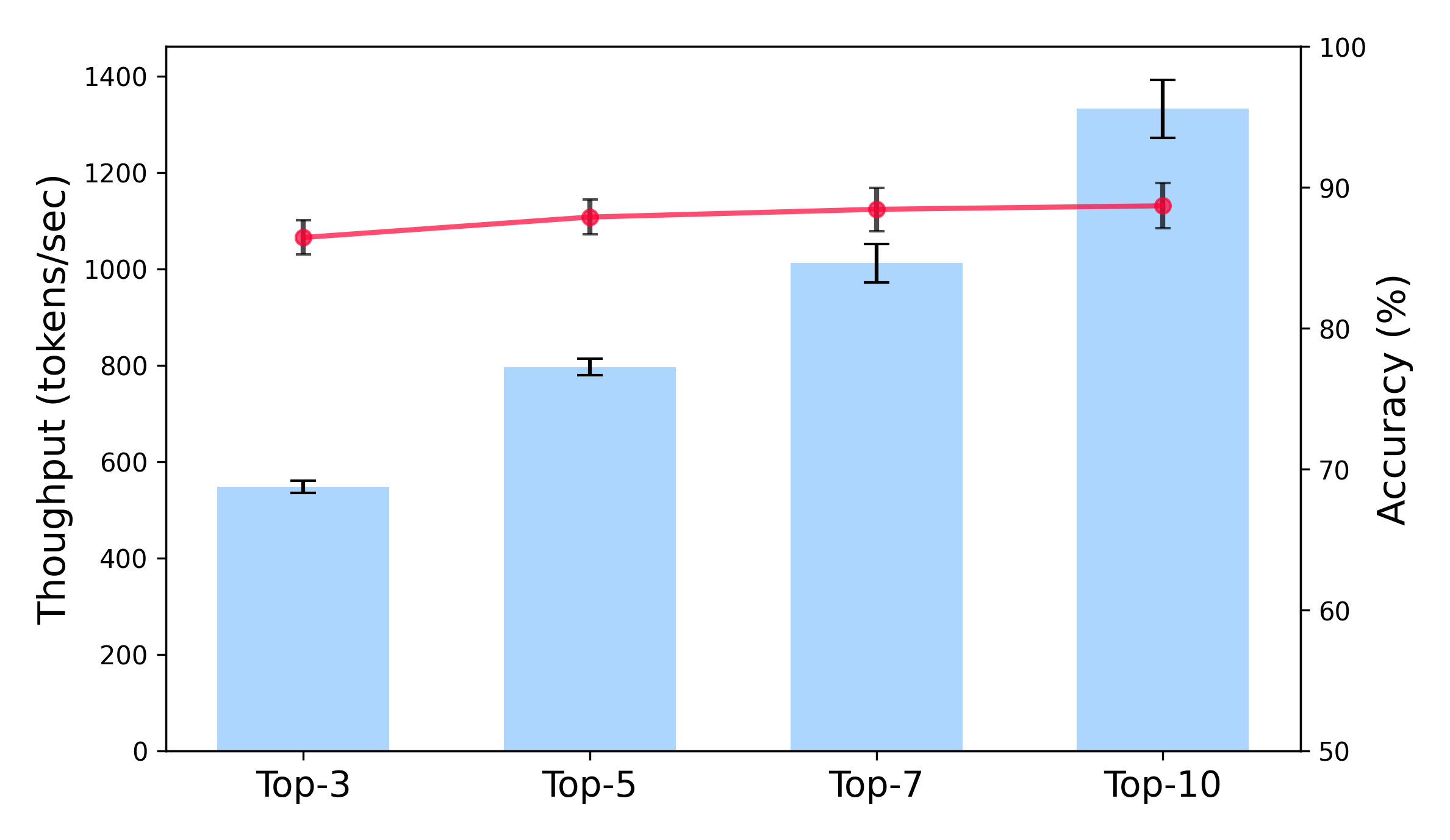

Figure 7: (a) The impact of number of sampled alternative tokens on the performance. (b) Latency comparison between the baselines and our method, measured as the average inference time per question (in seconds). $T_{1}$ , $T_{2}$ , $T_{3}$ , $T_{4}$ denote time for paraphrased question generation, initial answer generation, forward pass and new rollouts from truncated trajectories. (c) Throughput comparison between the baselines and our method, measured in output tokens per second.

### 4.2 Main Results

The main results are summarized in Table 1 and Table 2. The results indicate that Self-Consistency effectively improves the reasoning performance of LLMs compared to vanilla Chain-of-Thought prompting. For example, Llama-3.1-8B-Instruct (Grattafiori et al., 2024) achieves about 3% higher accuracy with Self-Consistency than with CoT. These findings suggest that augmenting reasoning during inference through sampling is an effective way to refine reasoning trajectories. Recent decoding-based methods, such as Predictive Decoding (Ma et al., 2025) and Phi-Decoding (Xu et al., 2025), also achieve strong results.Unlike prior works that rely on carefully designed prompts to self-correct errors in intermediate steps, these two methods modify the current step by probing future steps with pre-defined reward signals. Furthermore, our experimental results demonstrate that the proposed method consistently outperforms the baselines across most tasks, spanning both mathematical and knowledge reasoning, thereby highlighting its generalization ability across different reasoning settings. Notably, our method even surpasses the latest approaches such as Predictive Decoding (Ma et al., 2025) and Phi-Decoding (Xu et al., 2025). In particular, it achieves approximately 50.00% accuracy on the Math500 dataset (Hendrycks et al., 2021), exceeding these baselines considerably. The results on competition-level datasets further demonstrate the effectiveness of our method in enhancing the reasoning ability of LLMs. These results indicate that our method can effectively extract critical tokens that play a pivotal role in the final outcome and correct the reasoning trajectory by leveraging alternative tokens. Additional results can be found in Appendix E.

### 4.3 Ablation Study