# Jailbreaking Leaves a Trace: Understanding and Detecting Jailbreak Attacks from Internal Representations of Large Language Models

**Authors**: Sri Durga Sai Sowmya Kadali, Evangelos E. Papalexakis

> University of California, RiversideRiversideCAUSAskada009@ucr.edu

> University of California, RiversideRiversideCAUSAepapalex@cs.ucr.edu

(2026)

Abstract.

Jailbreaking large language models (LLMs) has emerged as a critical security challenge with the widespread deployment of conversational AI systems. Adversarial users exploit these models through carefully crafted prompts to elicit restricted or unsafe outputs, a phenomenon commonly referred to as Jailbreaking. Despite numerous proposed defense mechanisms, attackers continue to develop adaptive prompting strategies, and existing models remain vulnerable. This motivates approaches that examine the internal behavior of LLMs rather than relying solely on prompt-level defenses. In this work, we study jailbreaking from both security and interpretability perspectives by analyzing how internal representations differ between jailbreak and benign prompts. We conduct a systematic layer-wise analysis across multiple open-source models, including GPT-J, LLaMA, Mistral, and the state-space model Mamba2, and identify consistent latent-space patterns associated with adversarial inputs. We then propose a tensor-based latent representation framework that captures structure in hidden activations and enables lightweight jailbreak detection without model fine-tuning or auxiliary LLM-based detectors. We further demonstrate that these latent signals can be used to actively disrupt jailbreak execution at inference time. On an abliterated LLaMA 3.1 8B model, selectively bypassing high-susceptibility layers blocks 78% of jailbreak attempts while preserving benign behavior on 94% of benign prompts. This intervention operates entirely at inference time and introduces minimal overhead, providing a scalable foundation for achieving stronger coverage by incorporating additional attack distributions or more refined susceptibility thresholds. Our results provide evidence that jailbreak behavior is rooted in identifiable internal structures and suggest a complementary, architecture-agnostic direction for improving LLM security. Our implementation can be found here (jai, [n. d.]).

Jailbreaking, Large Language Models, LLM Internal Representations, Self-attention, Hidden Representation, Tensor Decomposition copyright: acmlicensed journalyear: 2026 doi: XXXXXXX.XXXXXXX conference: ; ; ccs: Computing methodologies Artificial intelligence ccs: Information systems Data mining ccs: Security and privacy Software and application security

1. Introduction

Large Language Models (LLMs) have demonstrated remarkable capabilities across a wide range of tasks and have become increasingly integrated into applications spanning diverse domains and user populations. Despite their utility and efforts to align them according to human and safety expectations (Wang et al., 2023; Zeng et al., 2024; Glaese et al., 2022), these models remain highly susceptible to adversarial exploitation, raising significant safety and security concerns given their widespread accessibility. Among such threats, Jailbreaking has emerged as a persistent and particularly concerning attack vector, wherein malicious actors craft carefully engineered prompts to circumvent built-in safety mechanisms (Chao et al., 2024; Zou et al., 2023) and elicit restricted, sensitive, or otherwise disallowed content (Liu et al., 2024). Jailbreak attacks pose substantial risks, as they enable users with harmful intent to manipulate LLMs into producing outputs that violate safety policies, including actionable instructions for malicious activities (Zhang et al., 2024; Wang et al., 2024a; Yu et al., 2023). The growing availability of jailbreak prompts in public repositories, research artifacts, and online forums further exacerbates this issue (Jiang et al., 2024; Shen et al., 2024).

To mitigate these risks, prior work has explored a range of defense strategies, including prompt-level filtering (Wang et al., 2024b), model-level interventions (Zeng et al., 2024; Xiong et al., 2025), reinforcement learning from human feedback (RLHF) (Bai et al., 2022), and the use of auxiliary safety models (Chen et al., 2023; Lu et al., 2024). While such approaches have demonstrated partial effectiveness, they are not without limitations. In practice, even well-aligned models can remain vulnerable under repeated or adaptive attack attempts. Moreover, no single defense mechanism has proven sufficient to counter the continually evolving landscape of jailbreak strategies.

In this study, we investigate a complementary and comparatively underexplored direction: leveraging internal model representations to distinguish jailbreak prompts from benign inputs and to guide mitigation. Our central hypothesis is that adversarial prompts induce distinct and detectable structural patterns within the hidden representations of LLMs, independent of output behavior. To evaluate this hypothesis, we extract layer-wise internal representations such as multi-head attention and layer output/hidden state representation from multiple models such as GPT-J, LlaMa, Mistral, and the state-space sequence model Mamba, and apply tensor decomposition (Sidiropoulos et al., 2017) techniques to characterize and compare latent-space behaviors across benign and jailbreak prompts. Building on this analysis, we further demonstrate how these latent representations can be used to identify layers that are particularly susceptible to adversarial manipulation and to intervene during inference by selectively bypassing such layers. This representation-centric framework not only enables reliable detection of jailbreak prompts but also provides a principled mechanism for mitigating harmful behavior without modifying model parameters or relying on output-level filtering. Together, our results suggest that internal representations offer a powerful and generalizable foundation for both understanding and defending against jailbreak attacks beyond surface-level text analysis. Our contributions are as follows:

- Layer-wise Jailbreak Signature: We show that jailbreak and benign prompts exhibit distinct, layer-dependent latent signatures in the internal representations of LLMs, which can be uncovered using tensor decomposition (Sidiropoulos et al., 2017).

- Effective Defense via Targeted Layer Bypass: We demonstrate that these latent signatures can be exploited at inference time to identify susceptible layers and disrupt jailbreak execution through targeted layer bypass.

2. Related work

2.1. Adversarial Attacks

Adversarial attacks on LLMs encompass a broad class of inputs intentionally crafted to induce unintended, incorrect, or unsafe behaviors (Zou et al., 2023; Chao et al., 2024). Unlike adversarial examples in vision or speech domains, which often rely on imperceptible input perturbations, attacks on LLMs primarily exploit semantic, syntactic, and contextual vulnerabilities in language understanding and generation. By manipulating instructions, context, or interaction structure, adversaries can steer models toward generating factually incorrect information, violating behavioral constraints, or producing harmful or sensitive content, posing significant risks to deployed systems (Liu et al., 2024). Existing adversarial strategies span a wide range of mechanisms, including prompt injection, role-playing and persona manipulation, instruction obfuscation, multi-turn coercion (Yu et al., 2023; Jiang et al., 2024), and indirect attacks embedded within external content such as documents or code, and even by fine-tuning (Yang et al., 2023; Yao et al., 2023). A central challenge in defending against these attacks is their adaptability: adversarial prompts are often transferable across models and can be easily modified to evade static defenses (Zou et al., 2023). As a result, surface-level or prompt-based mitigation strategies have shown limited robustness

2.2. Jailbreak attacks

A prominent and particularly challenging class of adversarial attacks on LLMs is jailbreaking. Jailbreak attacks aim to circumvent built-in safety mechanisms and alignment constraints, enabling the model to produce outputs that it is explicitly designed to refuse. These attacks often rely on prompt engineering techniques such as hypothetical scenarios, instruction overriding, contextual reframing, or step-by-step coercion, effectively manipulating the model’s internal decision-making processes (Wei et al., 2023). Unlike general adversarial prompting, jailbreak attacks explicitly target safety guardrails and content moderation policies, making them a critical concern from both security and governance perspectives (Liu et al., 2024; Zou et al., 2023; Jiang et al., 2024). Despite extensive efforts to harden models through alignment training and reinforcement learning from human feedback (Bai et al., 2022; Wang et al., 2023; Glaese et al., 2022), jailbreak prompts continue to evolve, highlighting fundamental limitations in current defense approaches. This motivates the need for methods that analyze jailbreak behavior at the level of internal model representations, rather than relying solely on external prompt or output inspection.

2.3. Jailbreak Defenses

Prior work on defending against jailbreak attacks in LLMs has primarily focused on prompt and output-level safeguards. Rule-based filtering and keyword matching are commonly used due to their low computational cost, but such approaches are brittle and easily bypassed through paraphrasing, obfuscation, or multi-turn prompting (Deng et al., 2024). Learning-based defenses, including supervised classifiers and auxiliary LLMs for intent detection or self-evaluation (Wang et al., 2025), improve robustness but introduce additional complexity, inference overhead, and new attack surfaces. Model-level defenses, such as alignment fine-tuning, reinforcement learning from human feedback (RLHF), and policy-based or constitutional training, aim to internalize safety constraints within the model (Ouyang et al., 2022). While effective to an extent, these approaches are resource-intensive and require continual updates as jailbreak strategies evolve. Moreover, even extensively aligned models remain susceptible to jailbreak attacks, indicating fundamental limitations in current training-based defenses. Overall, existing defenses largely treat jailbreak detection as a black-box problem and rely on external signals from prompts or generated outputs. In contrast, fewer works explore the internal representations of LLMs as a basis for defense (Candogan et al., 2025). This gap motivates approaches that leverage latent-space and layer-wise signals to identify jailbreak behavior in an interpretable and architecture-agnostic manner, without requiring additional fine-tuning or auxiliary models.

3. Preliminaries

3.1. Tensors

Tensors (Sidiropoulos et al., 2017) are defined as multi-dimensional arrays that generalize one-dimensional arrays (vectors) and two-dimensional arrays (matrices) to higher dimensions. The dimension of a tensor is traditionally referred to as its order, or equivalently, the number of modes, while the size of each mode is called its dimensionality. For instance, we may refer to a third-order tensor as a three-mode tensor $\boldsymbol{\mathscr{X}}∈\mathbb{R}^{I× J× K}$ .

3.2. Tensor Decomposition

Tensor Decomposition (Sidiropoulos et al., 2017) is a popular data science tool for discovering underlying low-dimensional patterns in the data. We focus on the CANDECOMP/PARAFAC (CP) decomposition model (Sidiropoulos et al., 2017), one of the most famous tensor decomposition models that decomposes a tensor into a sum of rank-one components. We use CP decomposition because of its simplicity and interpretability. The CP decomposition of a three-mode tensor $\boldsymbol{\mathscr{X}}∈\mathbb{R}^{I× J× K}$ is the sum of three-way outer products, that is, $\boldsymbol{\mathscr{X}}≈\sum_{r=1}^{R}\mathbf{a}_{r}\circ\mathbf{b}_{r}\circ\mathbf{c}_{r}$ , where $R$ is the rank of the decomposition, $\mathbf{a}_{r}∈\mathbb{R}^{I}$ , $\mathbf{b}_{r}∈\mathbb{R}^{J}$ , and $\mathbf{c}_{r}∈\mathbb{R}^{K}$ are the factor vectors and $\circ$ denotes the outer product. The rank of a tensor $\boldsymbol{\mathscr{X}}$ is the minimal number of rank-1 tensors required to exactly reconstruct it:

$$

\text{rank}(\boldsymbol{\mathscr{X}})=\min\left\{R:\boldsymbol{\mathscr{X}}=\sum_{r=1}^{R}\mathbf{a}_{r}\circ\mathbf{b}_{r}\circ\mathbf{c}_{r},\;\mathbf{a}_{r}\in\mathbb{R}^{I},\mathbf{b}_{r}\in\mathbb{R}^{J},\mathbf{c}_{r}\in\mathbb{R}^{K}\right\}

$$

Selecting an appropriate rank is critical, as it directly affects both the expressiveness and interpretability of the decomposition. Lower-rank approximations yield compact and computationally efficient representations, while higher ranks can capture richer structure at the cost of increased complexity and potential noise.

3.3. Transformer Architecture

A Transformer (Vaswani et al., 2023) is a neural network architecture designed for modeling sequential data through attention mechanisms rather than recurrence or convolution. Transformers process input sequences in parallel and capture long-range dependencies by explicitly modeling interactions between all tokens in a sequence.

A transformer consists of a stack of layers, each composed of two primary submodules: multi-head self-attention and a position-wise feed-forward network (FFN). Residual connections and layer normalization are applied around each submodule to stabilize training.

3.3.1. Multi-Head Self-Attention

Multi-head self-attention enables the model to attend to different parts of the input sequence simultaneously. Given an input representation $\mathbf{H}∈\mathbb{R}^{T× d}$ , each attention head projects $\mathbf{H}$ into query ( $\mathbf{Q}$ ), key ( $\mathbf{K}$ ), and value ( $\mathbf{V}$ ) matrices:

$$

\mathbf{Q}=\mathbf{H}\mathbf{W}^{Q},\quad\mathbf{K}=\mathbf{H}\mathbf{W}^{K},\quad\mathbf{V}=\mathbf{H}\mathbf{W}^{V}.

$$

Attention is computed as

$$

\mathrm{Attn}(\mathbf{Q},\mathbf{K},\mathbf{V})=\mathrm{softmax}\!\left(\frac{\mathbf{Q}\mathbf{K}^{\top}}{\sqrt{d_{k}}}\right)\mathbf{V},

$$

where $d_{k}$ is the dimensionality of each attention head. Multiple attention heads operate in parallel, and their outputs are concatenated and linearly projected, allowing the model to capture diverse relational patterns across tokens.

3.3.2. Layer Outputs and Hidden Representations

Each transformer layer produces a hidden representation (or layer output) that serves as input to the next layer. Formally, for layer $\ell$ , the output representation $\mathbf{H}^{(\ell)}$ is given by:

$$

\mathbf{H}^{(\ell)}=\mathrm{LN}\!\left(\mathbf{H}^{(\ell-1)}+\mathrm{MHA}\!\left(\mathbf{H}^{(\ell-1)}\right)\right),

$$

followed by

$$

\mathbf{H}^{(\ell)}=\mathrm{LN}\!\left(\mathbf{H}^{(\ell)}+\mathrm{FFN}\!\left(\mathbf{H}^{(\ell)}\right)\right),

$$

where $\mathrm{MHA}$ denotes multi-head attention, $\mathrm{FFN}$ denotes the feed-forward network, and $\mathrm{LN}$ denotes layer normalization.

The sequence of hidden states $\{\mathbf{H}^{(1)},...,\mathbf{H}^{(L)}\}$ captures increasingly abstract features, ranging from local syntactic patterns in early layers to semantic and task-relevant representations in deeper layers. These intermediate representations are commonly referred to as hidden layer activations and form the basis for interpretability and internal behavior analysis.

3.4. Model Types: Base, Instruction-Tuned, and Abliterated Models

Large language models (LLMs) can be categorized based on their training and alignment processes, which influence their behavior under adversarial conditions.

Base Models

These are pretrained on large-scale text corpora using self-supervised objectives without explicit instruction tuning. Examples include GPT-J and LLaMA. Base models capture broad language patterns but lack alignment with human preferences, making them prone to generate unrestricted or unsafe outputs.

Instruction-Tuned Models

Derived from base models via supervised fine-tuning on datasets containing human instructions and responses, these models improve instruction-following capabilities (Zhang et al., 2026) and enforce safety constraints, such as refusing harmful queries. While instruction tuning enhances safety, these models remain susceptible to sophisticated jailbreak prompts.

Abliterated Models

Abliterated models are instruction-tuned models where alignment or safety components have been removed, disabled, or bypassed (Young, 2026). Such models behave more like base models but may retain subtle differences due to prior fine-tuning. Abliterated models serve as valuable testbeds to study jailbreak vulnerabilities and internal representational changes resulting from alignment removal.

Analyzing these model types enables us to investigate how alignment and instruction tuning affect internal layer activations and latent patterns, informing the design of robust jailbreak detection methods that generalize across model variations.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Latent Analysis and Classifier Training & Jailbreak Mitigation at Inference

### Overview

This diagram illustrates a two-part process: first, the latent analysis and training of a classifier to distinguish between benign and jailbreak prompts to a Large Language Model (LLM); and second, the application of this classifier during inference to mitigate jailbreak attacks. The diagram uses a series of stacked blocks representing LLM layers, and visual representations of data transformations.

### Components/Axes

The diagram is divided into two main sections: "Latent Analysis and Classifier Training" (top) and "Jailbreak Mitigation at Inference" (bottom). Each section contains several components:

* **LLM Layers:** Represented as stacked rectangular blocks in teal.

* **Input Prompts:** "Benign" and "Jailbreak" prompts are shown as input to the LLM.

* **Intermediate Layers' Data:** Represented as stacked rectangular blocks in grey.

* **Layer 't' extracted for a single input prompt:** A rectangular block representing a specific layer's output.

* **3-mode activation tensor:** A 3D representation of the extracted layer data.

* **Decomposed Factors (CP Decomposition):** Represented as blocks labeled A, B, and C.

* **Resultant prompt layer in latent space:** A rectangular block representing the projected prompt.

* **Classifier:** Represented as a question mark within a circle.

* **Layers in red are bypassed:** Layers highlighted in red, indicating they are bypassed during inference.

* **Susceptible layer bypassing:** A visual representation of bypassed layers.

The diagram also includes labels for key concepts like "Sequence length", "Token embedding length", "Effective separation of factors in the latent space", "Training a classifier on prompt-mode factors", "Identify Jailbreak sensitive layers", and "Layer bypass prevented harmful generation".

### Detailed Analysis or Content Details

**Latent Analysis and Classifier Training (Top Section):**

1. **Input Prompts:** Two types of input prompts are shown: "Benign" (green checkmark) and "Jailbreak" (red lock).

2. **LLM Layers:** The LLM is represented by a stack of teal blocks.

3. **Intermediate Layers' Data:** The output of the LLM layers is represented by a stack of grey blocks.

4. **Layer 't' extracted for a single input prompt:** A single layer's output is extracted for analysis. The dimensions are labeled "Sequence length" and "Token embedding length".

5. **Construct 3-mode activation tensor:** The extracted layer data is transformed into a 3-mode tensor, visualized as a 3D block with dimensions "Sequence length", "Token embedding length", and "prompts".

6. **Decomposed Factors (CP Decomposition):** The 3-mode tensor is decomposed into factors A, B, and C.

7. **Effective separation of factors in the latent space:** Benign prompts are represented as green dots clustered together, while Jailbreak prompts are represented as red dots clustered separately. This indicates successful separation in the latent space.

8. **Training a classifier on prompt-mode factors:** The decomposed factors are used to train a classifier to distinguish between benign and jailbreak prompts.

**Jailbreak Mitigation at Inference (Bottom Section):**

1. **Inference time prompt:** A single prompt is input to the LLM during inference.

2. **LLM Layers:** The LLM is again represented by a stack of teal blocks.

3. **Intermediate Layers' Data:** The output of the LLM layers is represented by a stack of grey blocks.

4. **Layer output/MHA output:** The output of a specific layer is extracted.

5. **Resultant prompt layer in latent space:** The layer output is projected onto the learned latent factors.

6. **Classify using the trained factors:** The projected prompt is classified using the trained classifier. A question mark indicates the classification result.

7. **If layer wise Jailbreak Prob > threshold, layer might be showing more signals of jailbreak attack:** A conditional statement indicating that if the jailbreak probability for a layer exceeds a threshold, the layer is considered susceptible to jailbreak attacks.

8. **Layers in red are bypassed:** Layers identified as susceptible to jailbreak attacks are bypassed (highlighted in red).

9. **Layer bypass prevented harmful generation:** The diagram states that bypassing these layers prevents harmful generation.

### Key Observations

* The diagram highlights the importance of latent space analysis for identifying and mitigating jailbreak attacks.

* The use of CP decomposition to extract factors from the LLM layers is a key step in the process.

* The classifier is trained to distinguish between benign and jailbreak prompts based on these factors.

* During inference, the classifier is used to identify susceptible layers, which are then bypassed to prevent harmful generation.

* The diagram visually emphasizes the separation of benign and jailbreak prompts in the latent space.

### Interpretation

The diagram demonstrates a method for enhancing the security of LLMs against jailbreak attacks. By analyzing the latent representations of prompts, the system can identify layers that are vulnerable to manipulation and bypass them during inference. This approach aims to prevent the generation of harmful or unintended outputs. The use of CP decomposition suggests a dimensionality reduction technique to simplify the analysis of the high-dimensional latent space. The classifier acts as a gatekeeper, identifying potentially harmful prompts and triggering the bypassing mechanism. The diagram suggests a proactive approach to security, rather than relying solely on reactive measures. The conditional statement regarding the jailbreak probability threshold indicates a tunable parameter for controlling the sensitivity of the mitigation system. The diagram does not provide specific numerical data or performance metrics, but it clearly outlines the conceptual framework for a jailbreak mitigation strategy. The diagram is a conceptual illustration and does not provide details on the specific algorithms or implementation details used.

</details>

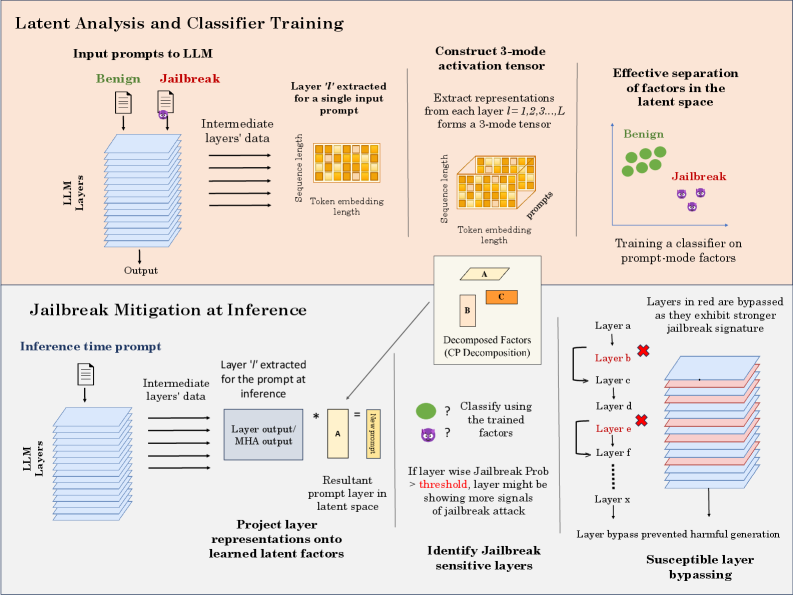

Figure 1. Proposed method: (top) Latent analysis and classifier training: self-attention and layer-output tensors are constructed from input prompts, decomposed via CP decomposition, and used to learn jailbreak-discriminative latent factors. (Bottom) Inference-time mitigation: internal representations from a new prompt are projected onto the learned factors to estimate layer-wise jailbreak susceptibility; layers exhibiting strong adversarial signals are bypassed to suppress jailbreak behavior.

4. Proposed Method

We study jailbreak behavior through internal model representations using two complementary analysis pipelines.

4.1. Hidden Representation Analysis

Model Suite and Representation Extraction

We perform hidden representation analysis by examining both multi-head self-attention outputs and layer-wise hidden representations across a diverse set of large language models. Specifically, we evaluate three base models: GPT-J-6B (Wang et al., 2021), LLaMA-3.1-8B (AI@Meta, 2024b), and Mistral-7B-v01 (Jiang et al., 2023); three instruction-tuned models: GPT-JT-6B (Computer, 2022), LLaMA-3.1-8B-Instruct (AI@Meta, 2024a), and Mistral-7B-Instruct-V0.1 (AI, 2023); one abliterated model, LLaMA-3.1-8B-Instruct-Abliterated (Labonne, [n. d.]); and one state-space sequence model, Mamba-2.8b-hf (mam, [n. d.]). This selection enables a systematic comparison across different stages of alignment and architectural paradigms.

The inclusion of base, instruction-tuned, and abliterated models is intentional. Base models offer insight into unaligned latent structures; instruction-tuned models show how safety fine-tuning alters internal processing; and abliterated models help isolate the role of alignment layers. We focus not on output quality but on how jailbreak and benign prompts are internally encoded across this alignment spectrum. From this perspective, the specific semantic quality of the output is not critical; instead, we focus on identifying discriminative patterns that persist across model variants. Together, these model categories enable us to analyze jailbreak behavior across the full alignment spectrum and assess whether latent-space signatures of jailbreak prompts are consistent and model-agnostic.

Tensor Construction and Latent Factors for Jailbreak Detection

For a given set of input prompts containing both benign and jailbreak instances, we extract multi-head attention representations and hidden state/layer output from each layer of the model. For a single prompt, the resulting representation has dimensions $1× T× d$ , where $T$ denotes the sequence length and $d$ is the hidden dimensionality. By stacking representations across multiple prompts, we construct a third-order tensor of size $N× T× d$ , where $N$ is the number of prompts as illustrated in Fig. 1.

To analyze the latent structure of these internal representations, we apply the CANDECOMP/PARAFAC (CP) tensor decomposition to factorize the tensor into three low-rank factors corresponding to the prompt, sequence, and hidden dimensions. The factor associated with the prompt mode captures latent patterns that reflect how different prompts are encoded internally across model layers. Prior work (Papalexakis, 2018; Zhao et al., 2019) has shown that tensor decomposition-derived latent patterns effectively capture meaningful structure for classification and detection tasks, even with limited data (Qazi et al., 2024; Kadali and Papalexakis, 2025).

We use these prompt-mode latent factors as features for a lightweight classifier that distinguishes jailbreak prompts from benign prompts. This classifier serves two purposes: to assess separability between prompt types in the latent space (Fig. 2), and as a mechanism to estimate layer-wise susceptibility to jailbreak behavior, which is leveraged by the mitigation method described next.

Layer-wise Separability and Susceptibility

To localize where jailbreak-related information is expressed within the network, we train separate classifiers on latent factors extracted from individual layers. This produces a layer-wise profile that quantifies how strongly each layer encodes representations associated with adversarial prompts. From an interpretability perspective, these layers can be viewed as critical representational stages where benign and adversarial behaviors diverge.

Importantly, we do not interpret high separability as evidence that a layer directly causes jailbreak behavior. Rather, it indicates that these layers capture discriminative representations associated with jailbreak prompts, making them especially informative for detection. This observation provides insight into how adversarial instructions propagate through the model and forms the basis for the inference-time intervention introduced in the following section.

<details>

<summary>figures/layer_9_layer_output_tsne_rank_20_regenerated.png Details</summary>

### Visual Description

\n

## Scatter Plot: t-SNE Visualization of Decomposed Factors

### Overview

This image presents a scatter plot generated using t-distributed Stochastic Neighbor Embedding (t-SNE). The plot visualizes the distribution of "Decomposed factors" across two dimensions (t-SNE Dimension 1 and t-SNE Dimension 2) for two categories: "Benign" and "Jailbreak". The points are color-coded to distinguish between the two categories.

### Components/Axes

* **Title:** "t-SNE Visualization of Decomposed factors" (centered at the top)

* **X-axis:** "t-SNE Dimension 1" (ranging approximately from -50 to 50)

* **Y-axis:** "t-SNE Dimension 2" (ranging approximately from -40 to 40)

* **Legend:** Located in the top-right corner.

* **Blue circles:** Labelled "Benign"

* **Red circles:** Labelled "Jailbreak"

* **Data Points:** Numerous circular points representing individual data instances.

### Detailed Analysis

The plot shows a clear separation between the "Benign" and "Jailbreak" categories.

**Benign (Blue):**

The "Benign" data points are primarily clustered in the left half of the plot, with a concentration between t-SNE Dimension 1 values of -40 and -10, and t-SNE Dimension 2 values of -10 and 30. There are a few scattered points extending towards the right, but the majority remain on the left.

* Approximate coordinates (sampled):

* (-42, 28)

* (-35, 15)

* (-25, 22)

* (-15, 5)

* (-5, -8)

* (-40, -15)

* (-30, -25)

**Jailbreak (Red):**

The "Jailbreak" data points are predominantly located in the right half of the plot, with a concentration between t-SNE Dimension 1 values of 10 and 45, and t-SNE Dimension 2 values of -30 and 20. There is a noticeable vertical spread, with points extending from approximately -30 to 20 on the t-SNE Dimension 2 axis.

* Approximate coordinates (sampled):

* (15, 18)

* (25, 8)

* (35, -5)

* (40, -20)

* (20, -30)

* (10, 10)

* (45, 5)

There is some overlap between the two categories in the central region of the plot (around t-SNE Dimension 1 = 0), but the overall separation is quite distinct.

### Key Observations

* The t-SNE visualization effectively separates the "Benign" and "Jailbreak" categories into distinct clusters.

* The "Benign" cluster is more tightly grouped than the "Jailbreak" cluster, suggesting greater homogeneity within the benign data.

* The "Jailbreak" cluster exhibits a wider range of values along both t-SNE dimensions, indicating more variability within the jailbreak data.

* There are a few "Benign" points that appear closer to the "Jailbreak" cluster, and vice versa, suggesting some instances may be misclassified or represent transitional states.

### Interpretation

The t-SNE plot demonstrates that the "Decomposed factors" can be used to effectively distinguish between "Benign" and "Jailbreak" instances. The clear separation suggests that the factors capture meaningful differences between these two categories. The wider spread of the "Jailbreak" cluster could indicate that jailbreak attempts are more diverse in their characteristics than benign operations. The overlap between the clusters suggests that some instances are not easily categorized, potentially due to noise or ambiguity in the data. This visualization is useful for understanding the underlying structure of the data and identifying potential features that contribute to the distinction between benign and jailbreak behavior. The t-SNE dimensionality reduction technique has successfully projected the high-dimensional data into a two-dimensional space while preserving the relative distances between data points, allowing for visual inspection of the clusters.

</details>

Figure 2. t-SNE visualization of prompt-mode CP factors for a representative model. Clear separation between benign and jailbreak clusters indicates that internal latent factors capture strong structure, motivating their use for jailbreak detection. Similar patterns are observed across models.

4.2. Layer-Aware Mitigation via Latent-Space Susceptibility

To mitigate jailbreak attacks, we propose a representation-level defense method, that leverages layer-wise susceptibility signals derived from internal representations. By identifying layers that strongly encode jailbreak-specific representations, we selectively bypass them during inference (Elhoushi et al., 2024; Shukor and Cord, 2024). While prior work has explored layer bypassing primarily for reducing computation and improving inference efficiency, our approach demonstrates that such bypassing can simultaneously reduce computational cost and mitigate jailbreak behaviors (Luo et al., 2025; Lawson and Aitchison, 2025).

We conduct this experiment on an abliterated model (LLaMA-3.1-8B). Base models are excluded from this intervention as they primarily perform next-token prediction without alignment constraints, making output-based safety evaluation less meaningful. Instruction-tuned models are also not ideal candidates, as their built-in guardrails obscure whether observed safety improvements arise from our method or from prior alignment. Abliterated models, which lack safety mechanisms while retaining instruction-tuned structure, provide a suitable testbed for isolating the effects of our approach.

Layer-wise Projection and Jailbreak Susceptibility Scoring

Given an input prompt, we extract intermediate representations from each transformer layer of the model. Let $\mathbf{x}∈\mathbb{R}^{d}$ denote the feature vector obtained from a specific layer (e.g., multi-head self-attention output or layer output).

Latent Projection.

For each layer, we project the extracted features onto a lower-dimensional latent space defined by factors obtained from tensor decomposition of the corresponding instruct model representations obtained in § 4.1. Let $\mathbf{W}∈\mathbb{R}^{d× r}$ denote the matrix of $r$ basis vectors (factors). The projected representation is computed as:

$$

\mathbf{z}=\mathbf{W}^{\top}\mathbf{x},

$$

where $\mathbf{z}∈\mathbb{R}^{r}$ is the latent feature representation. This operation constitutes a linear projection that preserves task-relevant structure encoded by the factors.

Layer-wise Jailbreak Probability Estimation.

The projected features are passed to a classifier trained to distinguish between benign and jailbreak prompts. For a logistic regression classifier, the probability of a jailbreak at a given layer is computed as:

$$

p=\sigma(\mathbf{w}^{\top}\mathbf{z}+b),

$$

where $\mathbf{w}$ and $b$ denote the classifier weights and bias, respectively, and $\sigma(·)$ is the sigmoid function:

$$

\sigma(x)=\frac{1}{1+e^{-x}}.

$$

Classifier Training Objective.

The classifier is trained using labeled projected representations $(\mathbf{z}_{i},y_{i})$ , where $y_{i}∈\{0,1\}$ indicates benign or jailbreak prompts. The parameters are optimized by minimizing the binary cross-entropy loss:

$$

\mathcal{L}=-\frac{1}{N}\sum_{i=1}^{N}\left[y_{i}\log p_{i}+(1-y_{i})\log(1-p_{i})\right],

$$

where $N$ denotes the number of samples and $p_{i}$ is the predicted probability for sample $i$ .

Layer Susceptibility Interpretation.

Layers exhibiting higher classification performance (e.g., F1 score) indicate stronger representational separability between benign and jailbreak prompts within the latent space. We interpret such layers as being more susceptible to jailbreak-style perturbations, as they encode discriminative adversarial features.

5. Experimental Evaluation

<details>

<summary>x2.png Details</summary>

### Visual Description

## Heatmaps: F1 Score Analysis - Transformer Models

### Overview

The image presents two heatmaps comparing the F1 scores of different Transformer models across various layers. The left heatmap displays "Layer Output F1 Scores", while the right heatmap shows "MHA Output F1 Scores". Both heatmaps use a color scale to represent F1 score values, ranging from 0.5 (red) to 1.0 (green). The models being compared are GPT-J-Base, GPT-J-Instruct, Llama-Base, Llama-Instruct, Llama-Abliterated, Mistral-Base, and Mistral-Instruct. The x-axis of both heatmaps represents the layer number, ranging from 0 to 31.

### Components/Axes

* **Title:** "F1 Score Analysis: Transformer Models" (centered at the top)

* **Left Heatmap Title:** "Layer Output F1 Scores" (top-left)

* **Right Heatmap Title:** "MHA Output F1 Scores" (top-right)

* **Y-axis Label (Both Heatmaps):** "Model" (left side)

* Categories: GPT-J-Base, GPT-J-Instruct, Llama-Base, Llama-Instruct, Llama-Abliterated, Mistral-Base, Mistral-Instruct

* **X-axis Label (Both Heatmaps):** "Layer Number" (bottom)

* Markers: 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19, 20, 21, 22, 23, 24, 25, 26, 27, 28, 29, 30, 31

* **Color Scale (Both Heatmaps):** Located to the right of each heatmap.

* Minimum: 0.5 (Red)

* Maximum: 1.0 (Green)

* Intermediate values are represented by shades of yellow and light green.

### Detailed Analysis or Content Details

**Left Heatmap: Layer Output F1 Scores**

* **GPT-J-Base:** Generally high F1 scores (approximately 0.95-1.0) across all layers, with a slight dip around layers 1-3 (approximately 0.9).

* **GPT-J-Instruct:** Similar to GPT-J-Base, with high F1 scores (approximately 0.95-1.0) across most layers. A more pronounced dip is observed around layers 1-5 (approximately 0.85-0.9).

* **Llama-Base:** F1 scores start lower (approximately 0.7-0.8) in the initial layers (0-5), then increase to around 0.9 by layer 10 and remain relatively stable.

* **Llama-Instruct:** Similar trend to Llama-Base, but with slightly higher initial F1 scores (approximately 0.8-0.9) and a faster increase to around 0.95 by layer 10.

* **Llama-Abliterated:** F1 scores are consistently lower than other models, ranging from approximately 0.6 to 0.8 across all layers.

* **Mistral-Base:** F1 scores start around 0.7-0.8 in the initial layers, increase to approximately 0.9 by layer 10, and remain relatively stable.

* **Mistral-Instruct:** Similar to Mistral-Base, but with slightly higher F1 scores (approximately 0.8-0.9) in the initial layers and a faster increase to around 0.95 by layer 10.

**Right Heatmap: MHA Output F1 Scores**

* **GPT-J-Base:** Very high and consistent F1 scores (approximately 0.95-1.0) across all layers.

* **GPT-J-Instruct:** Similar to GPT-J-Base, with very high and consistent F1 scores (approximately 0.95-1.0) across all layers.

* **Llama-Base:** F1 scores are generally high (approximately 0.85-0.95) across all layers, with a slight dip around layers 1-3 (approximately 0.8).

* **Llama-Instruct:** Similar to Llama-Base, with high F1 scores (approximately 0.9-1.0) across all layers.

* **Llama-Abliterated:** F1 scores are lower than other models, ranging from approximately 0.7 to 0.9 across all layers.

* **Mistral-Base:** F1 scores are generally high (approximately 0.85-0.95) across all layers.

* **Mistral-Instruct:** Similar to Mistral-Base, with high F1 scores (approximately 0.9-1.0) across all layers.

### Key Observations

* GPT-J models (Base and Instruct) consistently exhibit the highest F1 scores in both heatmaps.

* Llama-Abliterated consistently performs the worst across all layers and both types of F1 scores.

* Llama-Base and Mistral-Base show a clear trend of increasing F1 scores with layer number.

* The "MHA Output F1 Scores" heatmap generally shows higher F1 scores across all models compared to the "Layer Output F1 Scores" heatmap.

* The differences in F1 scores between models are more pronounced in the "Layer Output F1 Scores" heatmap, particularly in the initial layers.

### Interpretation

The data suggests that GPT-J models are the most effective in terms of F1 score, regardless of whether the evaluation is performed on layer outputs or MHA outputs. The consistent high performance of GPT-J models indicates a strong ability to learn and represent information across all layers. Llama-Abliterated's consistently lower scores suggest that the ablation process negatively impacts the model's performance.

The higher F1 scores observed in the "MHA Output F1 Scores" heatmap compared to the "Layer Output F1 Scores" heatmap may indicate that the multi-head attention mechanism is a crucial component for achieving high performance in these Transformer models. The increasing F1 scores with layer number for Llama-Base and Mistral-Base suggest that these models benefit from deeper architectures, allowing them to learn more complex representations.

The differences in performance between the "Base" and "Instruct" versions of each model are relatively small, suggesting that instruction tuning does not significantly impact F1 scores in this particular evaluation. However, further analysis may be needed to determine the impact of instruction tuning on other metrics, such as perplexity or human evaluation.

</details>

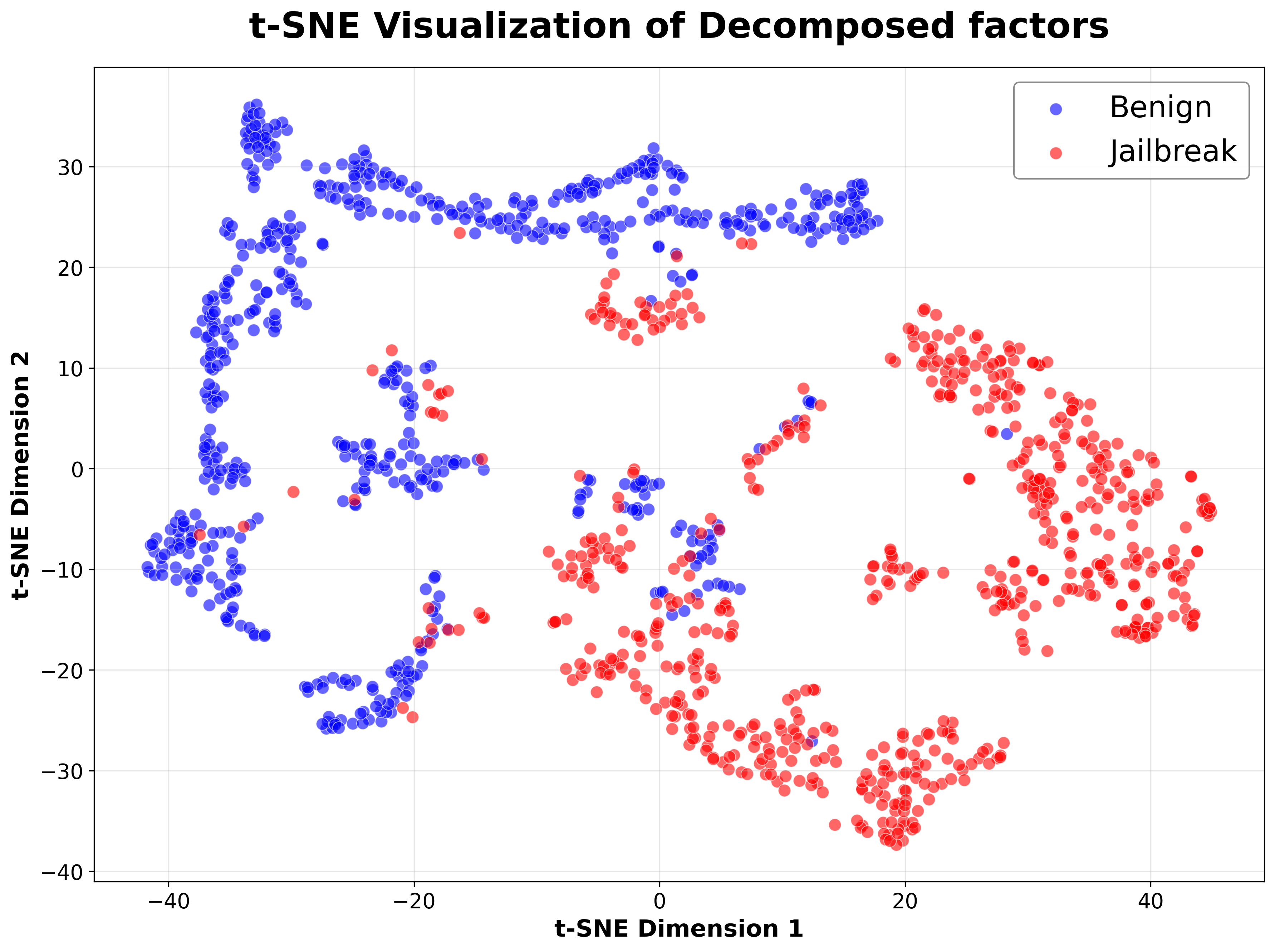

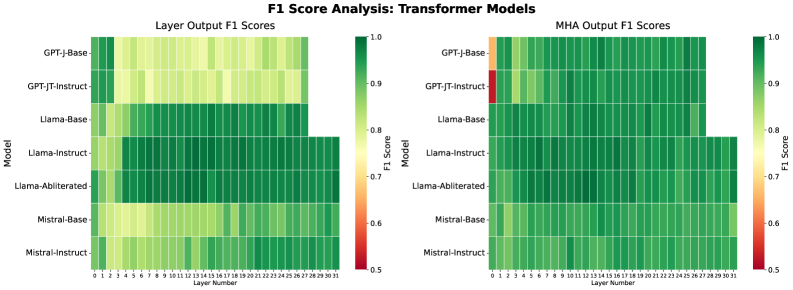

Figure 3. (Left) Layer-wise F1 scores using CP-decomposed Transformer layer outputs. (Right) Layer-wise F1 scores using CP-decomposed multi-head attention representations. Jailbreak and benign prompts become reliably separable at early depths, suggesting that adversarial intent is encoded shortly after input embedding. The strong performance of attention-based features further indicates that prompt-type information is reflected in token interaction structure as well as in hidden representations.

5.1. Datasets and Prompt Scope

We used two prompt sources with provenance relationships to separate representation learning from mitigation evaluation.

Training/representation analysis. For latent-space analysis, we use the Jailbreak Classification dataset from Hugging Face (Hao, [n. d.]). This dataset provides labeled benign and jailbreak prompts and is used to (i) extract layer-wise hidden representations and multi-head attention (MHA) outputs, (ii) learn CP decomposition factors for each layer and representation type, and (iii) train a lightweight classifier on the resulting latent features.

Test/mitigation evaluation. To evaluate layer-aware bypass at inference time, we construct a held-out test set of 200 prompts (100 benign, 100 jailbreak) sourced from the ‘In the Wild Jailbreak Prompts’ (Shen et al., 2024) prompt collection. (Hao, [n. d.]) reports that its jailbreak prompts are drawn from (Shen et al., 2024), which motivates this choice, as these evaluations are consistent and distinct from the training corpus.

Attack scope. Both datasets primarily consist of instruction-level jailbreaks (e.g., persona overrides, explicit safety negation, role-play framing, and meta-instructions). They do not include optimization-based attacks such as GCG (Zou et al., 2023), PAIR (Chao et al., 2024), or other gradient-guided adversarial suffix constructions. Since our framework operates on internal representations, extending it to additional attack families can be achieved by incorporating corresponding prompt distributions during factor learning; we leave such evaluations to future work.

5.2. Hidden Representation Analysis

We assess whether internal model representations can reliably separate jailbreak from benign prompts across model families, layers, and representation types. Our analysis spans eight models: base, instruction-tuned, abliterated, and state-space (Mamba), providing a broad view across training paradigms.

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Line Chart: Mamba-2.8B: Block vs Mixer Output F1 Scores

### Overview

This line chart compares the F1 scores of "Block Output" and "Mixer Output" across different layers in a Mamba-2.8B model. The x-axis represents the layer number, and the y-axis represents the F1 score. The chart displays the performance of each output type as the model depth increases.

### Components/Axes

* **Title:** Mamba-2.8B: Block vs Mixer Output F1 Scores

* **X-axis Label:** Layer

* **Y-axis Label:** F1 Score

* **Y-axis Scale:** Ranges from approximately 0.5 to 1.0, with tick marks at 0.6, 0.7, 0.8, 0.9, and 1.0.

* **X-axis Scale:** Ranges from 0 to 56, with tick marks at intervals of 8.

* **Legend:** Located in the bottom-left corner.

* **Blue Line:** Block Output

* **Pink/Magenta Line:** Mixer Output

### Detailed Analysis

The chart shows two lines representing the F1 scores for Block Output and Mixer Output as a function of layer number.

**Block Output (Blue Line):**

The line starts at approximately 0.85 at layer 0, exhibits some initial fluctuations, then generally increases to a plateau around 0.95 between layers 16 and 48. After layer 48, the line begins a slight downward trend, ending at approximately 0.92 at layer 56.

* Layer 0: ~0.85

* Layer 8: ~0.89

* Layer 16: ~0.93

* Layer 24: ~0.94

* Layer 32: ~0.95

* Layer 40: ~0.95

* Layer 48: ~0.95

* Layer 56: ~0.92

**Mixer Output (Pink/Magenta Line):**

The line begins at approximately 0.82 at layer 0, shows more pronounced fluctuations than the Block Output line, reaching a peak around 0.96 at layer 24. It then fluctuates around 0.94-0.95 until layer 48, after which it declines to approximately 0.91 at layer 56.

* Layer 0: ~0.82

* Layer 8: ~0.88

* Layer 16: ~0.92

* Layer 24: ~0.96

* Layer 32: ~0.94

* Layer 40: ~0.95

* Layer 48: ~0.94

* Layer 56: ~0.91

### Key Observations

* Both Block Output and Mixer Output achieve high F1 scores (above 0.9) across most layers.

* Mixer Output exhibits greater variability in F1 scores compared to Block Output.

* Mixer Output initially starts with a lower F1 score than Block Output but reaches a higher peak around layer 24.

* Both lines show a slight decline in F1 score towards the final layers (around layer 56).

### Interpretation

The data suggests that both Block Output and Mixer Output perform well in the Mamba-2.8B model, achieving high F1 scores across most layers. The Mixer Output demonstrates more dynamic behavior, with larger fluctuations in performance. The initial lower performance of Mixer Output, followed by a peak, could indicate a period of adaptation or learning within the model. The slight decline in both outputs towards the end suggests potential saturation or diminishing returns as the model depth increases. The consistent high performance of Block Output suggests it is a more stable component within the model. The differences in the curves could be indicative of the strengths and weaknesses of each output type within the Mamba architecture. Further investigation would be needed to understand the reasons behind these differences and their impact on the overall model performance.

</details>

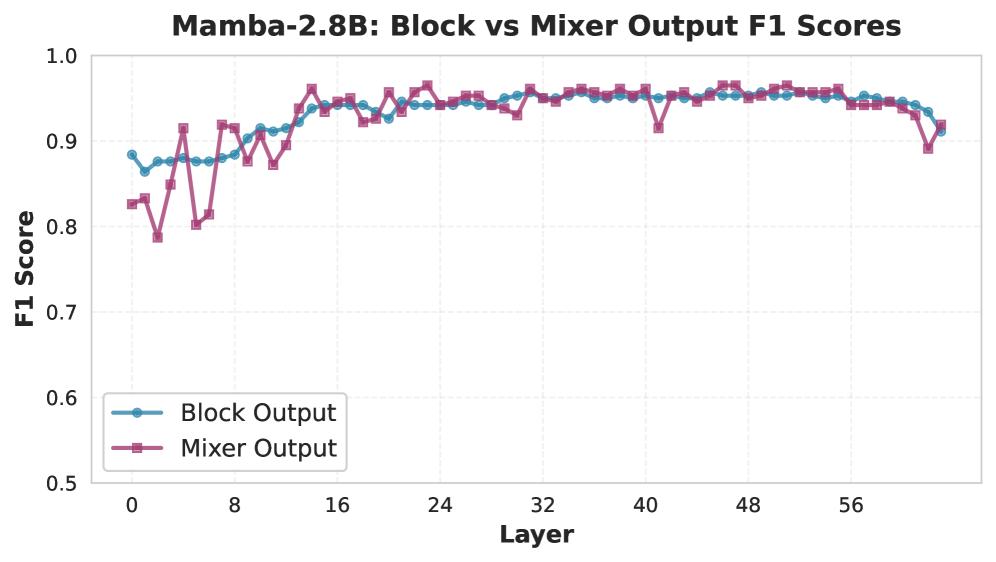

Figure 4. Layer-wise F1 scores for CP-decomposed Mamba representations (mixer and block outputs) showing early and increasing separability between benign and jailbreak prompts, indicating that state-space architectures encode adversarial prompt structure in their internal representations.

For each model, we extract hidden states and multi-head attention (MHA) outputs across all layers. These are aggregated into third-order tensors ( $N× T× d$ ) and decomposed using CP tensor decomposition (rank $r=20$ ) to obtain low-dimensional features for each prompt. We fix the CP decomposition rank to $r=20$ for all experiments, balancing expressiveness and efficiency as discussed in § 3.2, and to ensure consistent latent representations across models. A lightweight classifier is trained on these features to predict jailbreak status. Fig. 3 presents layer-wise F1 scores for each model. For Mamba, we report results from both the mixer (analogous to MHA) and full block output in Fig. 4.

Our results show a clear separation between the two prompt types in the learned latent space, achieving consistently high F1 scores across all evaluated models. These findings suggest that jailbreak behavior manifests as identifiable and discriminative patterns within internal representations, independent of output quality or alignment stage, and can be effectively leveraged for detection without model fine-tuning.

Qualitative Analysis.

For clarity of presentation, we visualize qualitative results for instruction-tuned models, which provide the most interpretable view of aligned internal dynamics. The qualitative patterns discussed here are representative of those observed across all evaluated models.

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Heatmaps: Activation Patterns for Language Models

### Overview

The image presents a 3x3 grid of heatmaps, visualizing activation patterns for three different language models (GPT-J 6B, LLaMA-3 1.8B, and Mistral 7B) under two conditions: "Benign" and "Jailbreak". The third heatmap in each row shows the "Difference" between the "Benign" and "Jailbreak" activations. Each heatmap plots activation values against "Key Token" and "Query Token" dimensions.

### Components/Axes

Each heatmap shares the following components:

* **Title:** Indicates the model and layer being visualized (e.g., "GPT-J 6B - Layer 7").

* **X-axis:** Labeled "Key Token", ranging from 0 to 448, with markers at intervals of 64.

* **Y-axis:** Labeled "Query Token", ranging from 0 to 448, with markers at intervals of 64.

* **Color Scale:** A continuous color scale ranging from approximately -10 to 4, with colors transitioning from dark purple/blue (low values) to yellow/red (high values).

* **Sub-Titles:** "Benign", "Jailbreak", and "Difference" indicate the condition being visualized.

### Detailed Analysis or Content Details

**Row 1: GPT-J 6B - Layer 7**

* **Benign:** A diagonal band of high activation (yellow/red) extends from the bottom-left to the top-right corner. Values appear to peak around 3-4. Activation is low (dark purple) elsewhere.

* **Jailbreak:** A mostly uniform dark purple/blue color indicates low activation across the entire heatmap. There's a slight diagonal band of slightly higher activation (purple) but significantly lower than the "Benign" case. Values are around -2 to -8.

* **Difference:** A diagonal band of positive values (yellow/red) mirrors the "Benign" heatmap, indicating a significant increase in activation in this region when the input is benign. Values range from approximately -2 to 4.

**Row 2: LLaMA-3 1.8B - Layer 4**

* **Benign:** A strong diagonal band of high activation (yellow/red) is present, similar to GPT-J, but more concentrated. Values peak around 3-4.

* **Jailbreak:** Predominantly dark purple/blue, indicating low activation. A faint diagonal band of slightly higher activation (purple) is visible. Values are around -2 to -8.

* **Difference:** A diagonal band of positive values (yellow/red) corresponds to the "Benign" heatmap, showing increased activation in the same region. Values range from approximately -2 to 4.

**Row 3: Mistral 7B - Layer 18**

* **Benign:** A diagonal band of high activation (yellow/red) is present, but it's broader and less sharply defined than in the other models. Values peak around 3-4.

* **Jailbreak:** A diagonal band of negative activation (dark blue/purple) is visible, contrasting with the "Benign" case. Values range from approximately -10 to -2.

* **Difference:** A diagonal band of positive values (yellow/red) indicates increased activation in the same region as the "Benign" heatmap. Values range from approximately -2 to 4.

### Key Observations

* All three models exhibit a strong diagonal activation pattern in the "Benign" condition.

* The "Jailbreak" condition consistently results in lower overall activation across all models.

* The "Difference" heatmaps highlight the regions where activation is most significantly affected by the "Jailbreak" condition.

* Mistral 7B shows a negative activation band in the "Jailbreak" condition, which is different from the other two models.

### Interpretation

The heatmaps suggest that the "Jailbreak" condition significantly alters the activation patterns within these language models. The strong diagonal activation in the "Benign" condition likely represents the model's normal processing of coherent input. The suppression of activation in the "Jailbreak" condition could indicate that the model is recognizing and attempting to mitigate potentially harmful or undesirable input. The "Difference" heatmaps visually confirm this, showing that the regions of highest activation in the "Benign" condition are most affected by the "Jailbreak" condition.

The negative activation band observed in Mistral 7B during the "Jailbreak" condition is particularly interesting. It could suggest a different mechanism for handling adversarial inputs compared to GPT-J and LLaMA-3. This could be due to architectural differences or training data.

The consistent diagonal pattern across all models and conditions suggests a fundamental aspect of how these models process information, potentially related to attention mechanisms or token relationships. The data suggests that jailbreaking attempts disrupt this normal processing pattern, leading to altered activation landscapes.

</details>

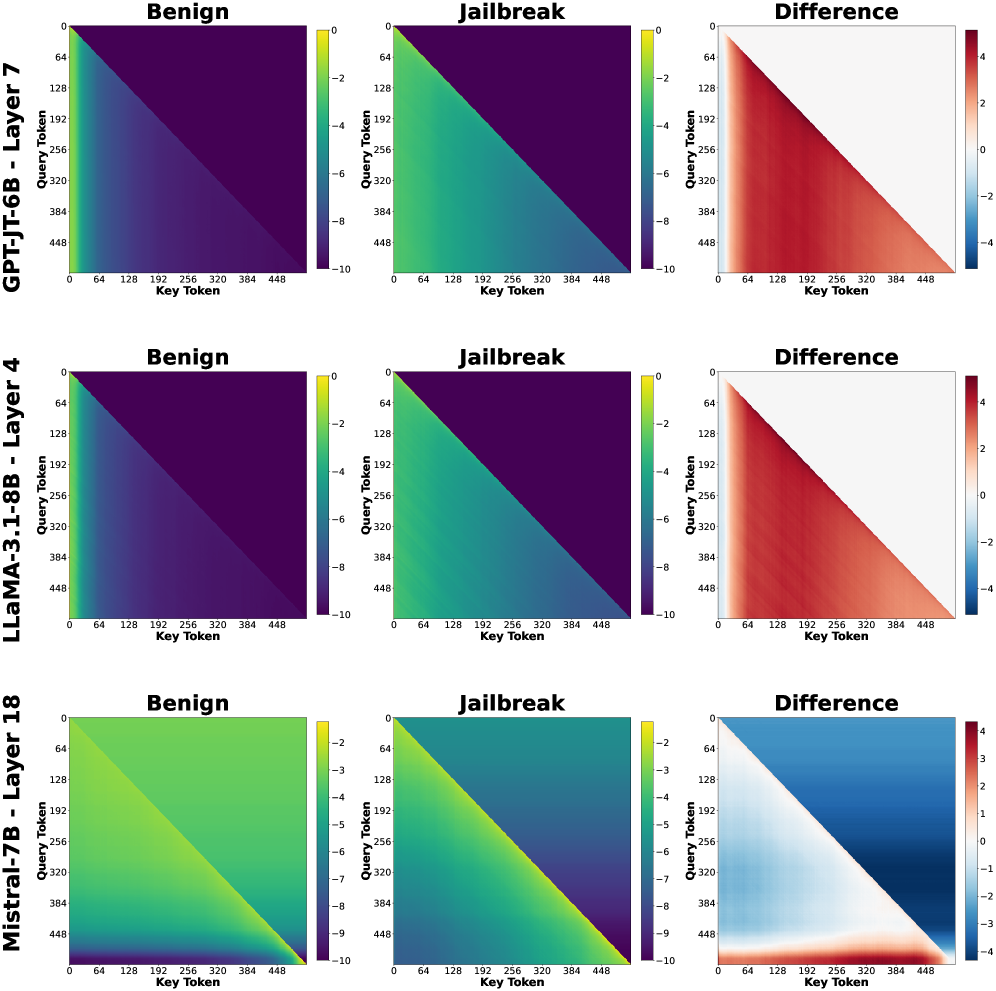

Figure 5. Self-attention maps for three instruction-tuned models, averaged over benign and jailbreak prompts (log 10 scale). Difference maps (right) highlight systematic but localized changes in attention patterns induced by jailbreak prompts, suggesting that adversarial intent manifests as targeted rerouting of attention rather than global disruption.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Heatmaps: Activation Analysis of Language Models - Benign vs. Jailbreak

### Overview

The image presents a 3x3 grid of heatmaps, comparing the activation patterns of three different language models (GPT-JT-6B, LLaMA-3-1.8B, and Mistral-7B) under benign and jailbreak prompts. Each model has three heatmaps: one for benign prompts, one for jailbreak prompts, and one showing the difference between the two. The heatmaps visualize activation levels across layers (y-axis) and token positions (x-axis).

### Components/Axes

* **Models:** GPT-JT-6B, LLaMA-3-1.8B, Mistral-7B (arranged vertically)

* **Prompts:** Benign, Jailbreak, Difference (arranged horizontally)

* **X-axis:** Token Position (ranging from approximately 0 to 448, with markers at 64, 128, 192, 256, 320, 384, and 448)

* **Y-axis:** Layer (ranging from approximately 0 to 24, with markers at 4, 8, 12, 16, 20, and 24)

* **Color Scales:** Each heatmap has a distinct color scale representing activation levels.

* GPT-JT-6B Difference: Ranges from -2 (blue) to 2 (red).

* LLaMA-3-1.8B Difference: Ranges from -0.2 (blue) to 0.2 (red).

* Mistral-7B Difference: Ranges from -1.5 (blue) to 1.5 (red).

* Benign and Jailbreak heatmaps use a similar color scheme, but the scales are not explicitly labeled.

### Detailed Analysis or Content Details

**GPT-JT-6B:**

* **Benign:** The heatmap is predominantly yellow/green, indicating moderate activation across all layers and token positions. There's a slight gradient, with higher activation in the middle layers (around layer 12-16).

* **Jailbreak:** Similar to the benign heatmap, it's mostly yellow/green. There's a slightly more pronounced gradient, with a potential increase in activation in the middle layers.

* **Difference:** The heatmap shows a clear pattern. The top half (layers 0-12) is predominantly blue (negative difference), indicating lower activation during jailbreak compared to benign prompts. The bottom half (layers 12-24) is predominantly red (positive difference), indicating higher activation during jailbreak. The strongest differences are around token positions 192-320.

**LLaMA-3-1.8B:**

* **Benign:** The heatmap is almost entirely yellow, indicating consistent moderate activation across all layers and token positions.

* **Jailbreak:** Similar to the benign heatmap, it's mostly yellow. There's a very subtle gradient.

* **Difference:** The heatmap shows very small differences. Most of the heatmap is white/light yellow (close to zero difference). There are some very faint blue and red areas, indicating minor activation differences.

**Mistral-7B:**

* **Benign:** The heatmap is predominantly yellow/green, with a gradient showing higher activation in the middle layers (around layer 12-16).

* **Jailbreak:** Similar to the benign heatmap, it's mostly yellow/green. There's a slightly more pronounced gradient.

* **Difference:** The heatmap shows a pattern similar to GPT-JT-6B, but more pronounced. The top half (layers 0-12) is predominantly blue (negative difference), and the bottom half (layers 12-24) is predominantly red (positive difference). The strongest differences are around token positions 192-320.

### Key Observations

* GPT-JT-6B and Mistral-7B exhibit a clear shift in activation patterns between benign and jailbreak prompts, with different layers responding differently.

* LLaMA-3-1.8B shows minimal activation differences between benign and jailbreak prompts.

* The difference heatmaps for GPT-JT-6B and Mistral-7B suggest that the lower layers are more suppressed during jailbreak, while the higher layers are more activated.

* The most significant differences in activation appear to occur around token positions 192-320 for GPT-JT-6B and Mistral-7B.

### Interpretation

The heatmaps suggest that GPT-JT-6B and Mistral-7B employ different activation strategies when processing benign versus jailbreak prompts. The layer-specific activation differences indicate that these models might be utilizing different parts of their neural networks to handle potentially harmful requests. The suppression of lower layers and activation of higher layers during jailbreak could be a mechanism for generating responses that bypass safety constraints.

LLaMA-3-1.8B's minimal activation differences suggest that it might be less sensitive to the type of prompt or that its activation patterns are more consistent regardless of the input. This could indicate a different internal representation of language or a more robust safety mechanism.

The concentration of differences around token positions 192-320 might correspond to the part of the prompt where the jailbreak attempt is most evident. This could be a region where the model detects a potentially harmful request and adjusts its activation accordingly.

These findings highlight the importance of analyzing internal activations to understand how language models respond to different types of prompts and to identify potential vulnerabilities. The differences in activation patterns across models suggest that different architectures and training procedures can lead to varying levels of sensitivity to jailbreak attempts.

</details>

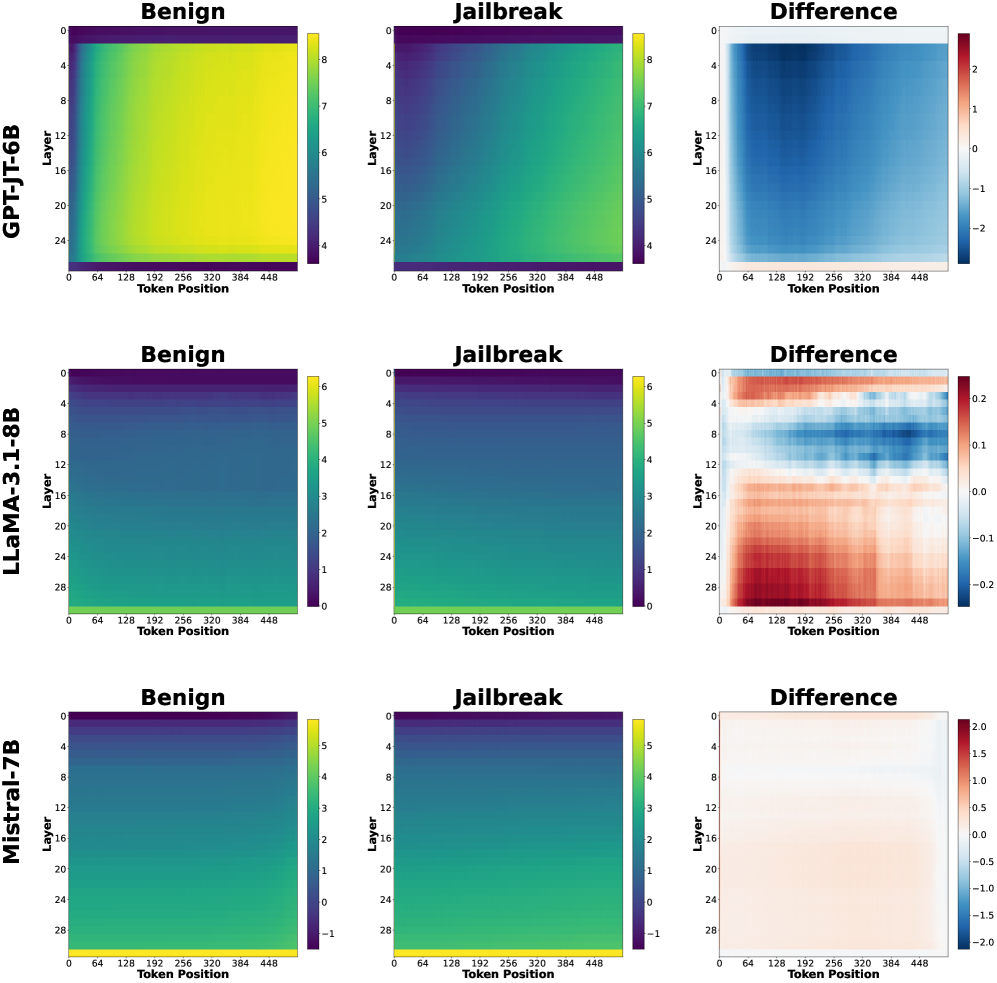

Figure 6. Layer-wise log-magnitude of hidden representations for benign (left) and jailbreak (middle) prompts, averaged across prompts, with their difference shown on the right. The difference heatmaps reveal consistent, localized deviations across layers, highlighting where adversarial prompts induce layer-dependent representational shifts.

Aggregated Self-Attention Heatmaps.

We use aggregated self-attention heatmaps to qualitatively assess how jailbreak prompts alter token-to-token information routing within the model. While attention alone does not encode semantic content, systematic differences in attention patterns can indicate how adversarial prompts redirect internal focus during processing.

For each instruction-tuned model and transformer layer $\ell$ , we extract the self-attention weight tensors

$$

\mathbf{A}^{(\ell)}\in\mathbb{R}^{N\times H\times T\times T},

$$

where $N$ is the number of prompts, $H$ the number of attention heads, and $T$ the (padded) token length. To obtain a stable, global view of attention behavior, we aggregate over both prompts and heads. Let $\mathcal{I}_{\text{ben}}$ and $\mathcal{I}_{\text{jb}}$ denote the index sets of benign and jailbreak prompts, respectively. We compute the class-wise, head-averaged attention maps:

$$

\bar{\mathbf{A}}^{(\ell)}_{\text{ben}}=\frac{1}{|\mathcal{I}_{\text{ben}}|H}\sum_{n\in\mathcal{I}_{\text{ben}}}\sum_{h=1}^{H}\mathbf{A}^{(\ell)}_{n,h,:,:},\qquad\bar{\mathbf{A}}^{(\ell)}_{\text{jb}}=\frac{1}{|\mathcal{I}_{\text{jb}}|H}\sum_{n\in\mathcal{I}_{\text{jb}}}\sum_{h=1}^{H}\mathbf{A}^{(\ell)}_{n,h,:,:}.

$$

To highlight systematic differences between prompt types, we additionally compute a per-layer difference map:

$$

\Delta\mathbf{A}^{(\ell)}=\bar{\mathbf{A}}^{(\ell)}_{\text{jb}}-\bar{\mathbf{A}}^{(\ell)}_{\text{ben}}.

$$

Visualizing $\bar{\mathbf{A}}^{(\ell)}{\text{ben}}$ , $\bar{\mathbf{A}}^{(\ell)}{\text{jb}}$ , and $\Delta\mathbf{A}^{(\ell)}$ (Fig. 6) shows that jailbreak prompts lead to consistent, localized changes in attention patterns. This indicates that adversarial prompts influence attention by selectively emphasizing specific instruction or control tokens, providing qualitative evidence that jailbreak behavior arises from targeted changes in information flow rather than global attention disruption.

Hidden-Representation Magnitude Heatmaps.

While attention maps reflect information routing, hidden representations capture the content and intensity of internal computation. We therefore analyze layer-wise hidden-state magnitudes to understand how strongly jailbreak prompts perturb internal activations across network depth.

For each layer $\ell$ , we extract hidden states

$$

\mathbf{H}^{(\ell)}\in\mathbb{R}^{N\times T\times D},

$$

where $D$ is the hidden dimensionality. To summarize activation strength across token positions, we compute the per-token $\ell_{2}$ magnitude:

$$

\mathbf{M}^{(\ell)}_{n,t}=\left\lVert\mathbf{H}^{(\ell)}_{n,t,:}\right\rVert_{2},\qquad\mathbf{M}^{(\ell)}\in\mathbb{R}^{N\times T}.

$$

We then average magnitudes across prompts within each class:

$$

\bar{\mathbf{M}}^{(\ell)}_{\text{ben}}(t)=\frac{1}{|\mathcal{I}_{\text{ben}}|}\sum_{n\in\mathcal{I}_{\text{ben}}}\mathbf{M}^{(\ell)}_{n,t},\qquad\bar{\mathbf{M}}^{(\ell)}_{\text{jb}}(t)=\frac{1}{|\mathcal{I}_{\text{jb}}|}\sum_{n\in\mathcal{I}_{\text{jb}}}\mathbf{M}^{(\ell)}_{n,t}.

$$

For visualization, we apply a logarithmic transform:

$$

\tilde{\mathbf{M}}^{(\ell)}(t)=\log\big(\bar{\mathbf{M}}^{(\ell)}(t)+\varepsilon\big),

$$

with a small $\varepsilon>0$ for numerical stability. We plot $\tilde{\mathbf{M}}^{(\ell)}(t)$ as heatmaps with layers on the y-axis and token positions on x-axis.

Although the averaged hidden-state magnitudes for benign and jailbreak prompts appear broadly similar (especially for LLaMA abd Mistral), their difference heatmaps reveal consistent, localized deviations across layers. This indicates that jailbreak behavior does not manifest as a global disruption of internal activations, but rather as subtle, structured changes superimposed on otherwise normal model processing.

5.3. Layer-Aware Mitigation via Latent-Space Susceptibility

We evaluate our second proposed method, layer-aware mitigation via latent-space susceptibility, to check whether representation-level signals can be used to suppress jailbreak execution during inference without relying on output-level filtering or fine-tuning.

Experimental Setup.

We conduct this experiment on the abliterated LLaMA 3.1 8B model described earlier. Evaluation is performed on a held-out set of 200 prompts (100 benign, 100 jailbreak), using latent factors learned during the analysis phase (§ 5.2).

Inference-time Susceptibility Scoring

Given an input prompt at inference time, we extract layer outputs and attention representations, project them onto the pre-learned CP factors, and use a lightweight classifier to compute a per-layer susceptibility score indicating the strength of jailbreak-correlated features. Layers whose predicted jailbreak probability exceeds a fixed threshold ( $\tau=0.7$ ) are treated as highly susceptible. The threshold $\tau$ is a tunable hyperparameter that controls the trade-off between mitigation strength and preservation of benign behavior.

Layer-/Head-Bypass Intervention.

Based on the susceptibility score, we selectively perform: (i) Layer Bypass: bypassing selected layer outputs; and (ii) MHA Bypass: bypassing selected attention components. This intervention is parameter-free (no fine-tuning), prompt-conditional (depends on the susceptibility profile), and does not require any output-side heuristics.

Output-Based Evaluation with LLM-Assisted Judging.

Since our goal is to prevent harmful compliance rather than optimize helpfulness, we evaluate mitigation effectiveness based on observed output behavior. Model responses are categorized as: (i) harmful completions, where the jailbreak intent succeeds; (ii) benign completions, where the model responds appropriately; and (iii) disrupted outputs, including truncated, repetitive, or incoherent text.

For jailbreak prompts, disrupted or non-compliant outputs are treated as successful defenses, while for benign prompts such outputs are undesirable. Output labels are assigned using an LLM-as-a-judge rubric, followed by manual review of ambiguous cases. This evaluation protocol follows established practice for open-ended generation assessment with human validation (Zheng et al., 2023; Dubois et al., 2024; Li et al., 2024). Based on these criteria, we define the confusion matrix as follows:

| Jailbreak | Harmful completion | False Negative (FN) |

| --- | --- | --- |

| Jailbreak | Disrupted/benign output | True Positive (TP) |

| Benign | Benign completion | True Negative (TN) |

| Benign | Disrupted output | False Positive (FP) |

Results.

Table 1 summarizes the confusion-matrix counts. Layer-guided bypass suppresses most jailbreak attempts (TP=78) while largely preserving benign behavior (TN=94). In contrast, MHA-only bypass results in substantially more jailbreak failures (FN=39), indicating that layer outputs capture a larger fraction of jailbreak-relevant computation than attention components alone.

To provide a compact summary aligned with prior jailbreak evaluations, we additionally report the attack success rate (ASR), defined as the fraction of jailbreak prompts that remain successful after mitigation. Layer-output bypass reduces ASR to $22\%$ (22/100), compared to $39\%$ for MHA-only bypass, highlighting the effectiveness of layer-level intervention.

Table 1. Confusion matrix counts for latent-space-guided mitigation (100 jailbreak and 100 benign prompts).

| Layer Bypass | 78 | 22 | 94 | 6 |

| --- | --- | --- | --- | --- |

| MHA Bypass | 61 | 39 | 92 | 8 |

Failure (False Negative) Analysis

We examine the 22 jailbreak prompts that remain harmful after layer-output bypass. The majority are persona- or roleplay-based prompt injections (e.g., “never refuse,” “no morals,” forced speaker tags such as “AIM:” and “[H4X]:”) that aim to establish persistent control over the model’s identity, tone, and formatting. Because such instructions are repeatedly reinforced throughout the prompt, elements of adversarial control can persist even when highly susceptible layers are bypassed.

Additional failures stem from susceptibility estimation: the intervention targets layers exceeding a fixed probability threshold chosen to preserve benign behavior. Attacks that distribute their influence across multiple layers, or weakly activate any single layer, may therefore evade suppression despite succeeding overall. Some failures also involve milder jailbreaks that retain adversarial framing without immediately producing explicit harmful content; under our conservative evaluation criterion, these are counted as failures.

These limitations are addressable within the proposed framework by expanding the diversity of jailbreak styles used for latent factor learning and by adopting adaptive or cumulative susceptibility criteria. Since the method operates entirely in latent space, such extensions require no architectural changes.

6. Conclusion

Our hypothesis and experiments indicate that internal representations of LLMs contain sufficiently strong and consistent signals to both detect jailbreak prompts and, in many cases, disrupt jailbreak execution at inference time. Importantly, these capabilities emerge from lightweight representation-level analysis and intervention, without requiring additional post-training, auxiliary models, or complex rule-based filtering. The consistency of these findings across diverse model families suggests that adversarial intent leaves stable latent-space signatures, motivating internal-representation monitoring as a practical and broadly applicable direction for understanding and mitigating jailbreak behavior.

7. GenAI Usage Disclosure

The authors acknowledge the use of AI-based writing and coding assistance tools during the preparation of this manuscript. These tools were used exclusively to improve clarity, organization, and academic tone of text written by the authors, as well as to assist with code formatting and plot generation. All scientific ideas, methodologies, analyses, and conclusions are the original intellectual contributions of the authors. No AI system was used to generate research ideas or substantive technical content, and all AI-assisted revisions were carefully reviewed and validated by the authors.

References

- (1)

- jai ([n. d.]) [n. d.]. Implementation of the proposed method. https://anonymous.4open.science/r/Jailbreaking-leaves-a-trace-Understanding-and-Detecting-Jailbreak-Attacks-in-LLMs-C401

- mam ([n. d.]) [n. d.]. Mamab2.8b. https://huggingface.co/state-spaces/mamba-2.8b-hf

- AI (2023) Mistral AI. 2023. Mistral 7B Instruct v0.1. https://huggingface.co/mistralai/Mistral-7B-Instruct-v0.1.

- AI@Meta (2024a) AI@Meta. 2024a. Llama 3 Instruct Models. https://ai.meta.com/llama/. LLaMA-3.1-8B-Instruct.

- AI@Meta (2024b) AI@Meta. 2024b. Llama 3 Models. https://ai.meta.com/llama/. LLaMA-3.1-8B base model.

- Bai et al. (2022) Yuntao Bai, Andy Jones, Kamal Ndousse, Amanda Askell, Anna Chen, Nova DasSarma, Dawn Drain, Stanislav Fort, Deep Ganguli, Tom Henighan, Nicholas Joseph, Saurav Kadavath, Jackson Kernion, Tom Conerly, Sheer El-Showk, Nelson Elhage, Zac Hatfield-Dodds, Danny Hernandez, Tristan Hume, Scott Johnston, Shauna Kravec, Liane Lovitt, Neel Nanda, Catherine Olsson, Dario Amodei, Tom Brown, Jack Clark, Sam McCandlish, Chris Olah, Ben Mann, and Jared Kaplan. 2022. Training a Helpful and Harmless Assistant with Reinforcement Learning from Human Feedback. arXiv:2204.05862 [cs.CL] https://arxiv.org/abs/2204.05862

- Candogan et al. (2025) Leyla Naz Candogan, Yongtao Wu, Elias Abad Rocamora, Grigorios Chrysos, and Volkan Cevher. 2025. Single-pass Detection of Jailbreaking Input in Large Language Models. Transactions on Machine Learning Research (2025). https://openreview.net/forum?id=42v6I5Ut9a

- Chao et al. (2024) Patrick Chao, Alexander Robey, Edgar Dobriban, Hamed Hassani, George J. Pappas, and Eric Wong. 2024. Jailbreaking Black Box Large Language Models in Twenty Queries. arXiv:2310.08419 [cs.LG] https://arxiv.org/abs/2310.08419

- Chen et al. (2023) Bocheng Chen, Advait Paliwal, and Qiben Yan. 2023. Jailbreaker in Jail: Moving Target Defense for Large Language Models. arXiv:2310.02417 [cs.CR] https://arxiv.org/abs/2310.02417

- Computer (2022) Together Computer. 2022. GPT-JT-6B: Instruction-Tuned GPT-J Model. https://huggingface.co/togethercomputer/GPT-JT-6B-v1.

- Deng et al. (2024) Yue Deng, Wenxuan Zhang, Sinno Jialin Pan, and Lidong Bing. 2024. Multilingual Jailbreak Challenges in Large Language Models. arXiv:2310.06474 [cs.CL] https://arxiv.org/abs/2310.06474

- Dubois et al. (2024) Yann Dubois, Aarohi Srivastava, Abhinav Venigalla, et al. 2024. Length-Controlled AlpacaEval: A Simple Way to Debias Automatic Evaluators. arXiv preprint arXiv:2404.04475 (2024). https://arxiv.org/abs/2404.04475

- Elhoushi et al. (2024) Mostafa Elhoushi, Akshat Shrivastava, Diana Liskovich, Basil Hosmer, Bram Wasti, Liangzhen Lai, Anas Mahmoud, Bilge Acun, Saurabh Agarwal, Ahmed Roman, Ahmed Aly, Beidi Chen, and Carole-Jean Wu. 2024. LayerSkip: Enabling Early Exit Inference and Self-Speculative Decoding. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers). Association for Computational Linguistics, 12622–12642. doi: 10.18653/v1/2024.acl-long.681

- Glaese et al. (2022) Amelia Glaese, Nat McAleese, Maja Trębacz, John Aslanides, Vlad Firoiu, Timo Ewalds, Maribeth Rauh, Laura Weidinger, Martin Chadwick, Phoebe Thacker, Lucy Campbell-Gillingham, Jonathan Uesato, Po-Sen Huang, Ramona Comanescu, Fan Yang, Abigail See, Sumanth Dathathri, Rory Greig, Charlie Chen, Doug Fritz, Jaume Sanchez Elias, Richard Green, Soňa Mokrá, Nicholas Fernando, Boxi Wu, Rachel Foley, Susannah Young, Iason Gabriel, William Isaac, John Mellor, Demis Hassabis, Koray Kavukcuoglu, Lisa Anne Hendricks, and Geoffrey Irving. 2022. Improving alignment of dialogue agents via targeted human judgements. arXiv:2209.14375 [cs.LG] https://arxiv.org/abs/2209.14375

- Hao ([n. d.]) Jack Hao. [n. d.]. Jailbreak Classification Dataset. https://huggingface.co/datasets/jackhhao/jailbreak-classification

- Jiang et al. (2023) Albert Q. Jiang, Alexandre Sablayrolles, et al. 2023. Mistral 7B. https://mistral.ai/news/introducing-mistral-7b/.

- Jiang et al. (2024) Liwei Jiang, Kavel Rao, Seungju Han, Allyson Ettinger, Faeze Brahman, Sachin Kumar, Niloofar Mireshghallah, Ximing Lu, Maarten Sap, Yejin Choi, and Nouha Dziri. 2024. WildTeaming at Scale: From In-the-Wild Jailbreaks to (Adversarially) Safer Language Models. arXiv:2406.18510 [cs.CL] https://arxiv.org/abs/2406.18510

- Kadali and Papalexakis (2025) Sri Durga Sai Sowmya Kadali and Evangelos Papalexakis. 2025. CoCoTen: Detecting Adversarial Inputs to Large Language Models through Latent Space Features of Contextual Co-occurrence Tensors. In Proceedings of the 34th ACM International Conference on Information and Knowledge Management (CIKM ’25). ACM, 4857–4861. doi: 10.1145/3746252.3760886

- Labonne ([n. d.]) M. Labonne. [n. d.]. Meta-Llama-3.1-8B-Instruct-abliterated. https://huggingface.co/mlabonne/Meta-Llama-3.1-8B-Instruct-abliterated. Hugging Face model card.

- Lawson and Aitchison (2025) Tim Lawson and Laurence Aitchison. 2025. Learning to Skip the Middle Layers of Transformers. arXiv:2506.21103 [cs.LG] https://arxiv.org/abs/2506.21103

- Li et al. (2024) Haitao Li, Qian Dong, Junjie Chen, Huixue Su, Yujia Zhou, Qingyao Ai, Ziyi Ye, and Yiqun Liu. 2024. LLMs-as-Judges: A Comprehensive Survey on LLM-based Evaluation Methods. arXiv:2412.05579 [cs.CL] https://arxiv.org/abs/2412.05579

- Liu et al. (2024) Xiaogeng Liu, Nan Xu, Muhao Chen, and Chaowei Xiao. 2024. AutoDAN: Generating Stealthy Jailbreak Prompts on Aligned Large Language Models. arXiv:2310.04451 [cs.CL] https://arxiv.org/abs/2310.04451

- Lu et al. (2024) Weikai Lu, Ziqian Zeng, Jianwei Wang, Zhengdong Lu, Zelin Chen, Huiping Zhuang, and Cen Chen. 2024. Eraser: Jailbreaking Defense in Large Language Models via Unlearning Harmful Knowledge. arXiv:2404.05880 [cs.CL] https://arxiv.org/abs/2404.05880

- Luo et al. (2025) Xuan Luo, Weizhi Wang, and Xifeng Yan. 2025. Adaptive layer-skipping in pre-trained llms. arXiv preprint arXiv:2503.23798 (2025).

- Ouyang et al. (2022) Long Ouyang, Jeff Wu, Xu Jiang, Diogo Almeida, Carroll L. Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, John Schulman, Jacob Hilton, Fraser Kelton, Luke Miller, Maddie Simens, Amanda Askell, Peter Welinder, Paul Christiano, Jan Leike, and Ryan Lowe. 2022. Training language models to follow instructions with human feedback. arXiv:2203.02155 [cs.CL] https://arxiv.org/abs/2203.02155

- Papalexakis (2018) Evangelos E. Papalexakis. 2018. Unsupervised Content-Based Identification of Fake News Articles with Tensor Decomposition Ensembles. https://api.semanticscholar.org/CorpusID:26675959

- Qazi et al. (2024) Zubair Qazi, William Shiao, and Evangelos E. Papalexakis. 2024. GPT-generated Text Detection: Benchmark Dataset and Tensor-based Detection Method. In Companion Proceedings of the ACM Web Conference 2024 (Singapore, Singapore) (WWW ’24). Association for Computing Machinery, New York, NY, USA, 842–846. doi: 10.1145/3589335.3651513

- Shen et al. (2024) Xinyue Shen, Zeyuan Chen, Michael Backes, Yun Shen, and Yang Zhang. 2024. “Do Anything Now”: Characterizing and Evaluating In-The-Wild Jailbreak Prompts on Large Language Models. In ACM SIGSAC Conference on Computer and Communications Security (CCS). ACM.

- Shukor and Cord (2024) Mustafa Shukor and Matthieu Cord. 2024. Skipping Computations in Multimodal LLMs. In Workshop on Responsibly Building the Next Generation of Multimodal Foundational Models. https://openreview.net/forum?id=qkmMvLckB9

- Sidiropoulos et al. (2017) Nicholas D Sidiropoulos, Lieven De Lathauwer, Xiao Fu, Kejun Huang, Evangelos E Papalexakis, and Christos Faloutsos. 2017. Tensor decomposition for signal processing and machine learning. IEEE Transactions on signal processing 65, 13 (2017), 3551–3582.

- Vaswani et al. (2023) Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, and Illia Polosukhin. 2023. Attention Is All You Need. arXiv:1706.03762 [cs.CL] https://arxiv.org/abs/1706.03762

- Wang et al. (2021) Ben Wang, Aran Komatsuzaki, and EleutherAI. 2021. GPT-J-6B. https://huggingface.co/EleutherAI/gpt-j-6B. Model card and weights.