# Experiential Reinforcement Learning

> Work done during internship at Microsoft’s Office of Applied Research

## Abstract

Reinforcement learning has become the central approach for language models (LMs) to learn from environmental reward or feedback. In practice, the environmental feedback is usually sparse and delayed. Learning from such signals is challenging, as LMs must implicitly infer how observed failures should translate into behavioral changes for future iterations. We introduce Experiential Reinforcement Learning (ERL), a training paradigm that embeds an explicit experience–reflection–consolidation loop into the reinforcement learning process. Given a task, the model generates an initial attempt, receives environmental feedback, and produces a reflection that guides a refined second attempt, whose success is reinforced and internalized into the base policy. This process converts feedback into structured behavioral revision, improving exploration and stabilizing optimization while preserving gains at deployment without additional inference cost. Across sparse-reward control environments and agentic reasoning benchmarks, ERL consistently improves learning efficiency and final performance over strong reinforcement learning baselines, achieving gains of up to +81% in complex multi-step environments and up to +11% in tool-using reasoning tasks. These results suggest that integrating explicit self-reflection into policy training provides a practical mechanism for transforming feedback into durable behavioral improvement.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Comparison of Three Learning Paradigms

### Overview

The image is a conceptual diagram comparing three machine learning paradigms: Direct Learning (Supervised Fine-Tuning, SFT), Reinforcement Learning (RLVR), and Experiential Learning (ERL). The diagram is divided into three vertical sections, each illustrating the core feedback loop of a paradigm. A horizontal arrow at the bottom indicates a conceptual progression from "Learning from Feedback" to "Learning from Experience."

### Components/Axes

The diagram has no traditional axes. Its components are labeled boxes, arrows, and text annotations arranged in three distinct columns.

**1. Left Column: Direct Learning (SFT)**

* **Title:** "Direct Learning (SFT)"

* **Components:**

* A box labeled "Policy" (top).

* A box labeled "Example" (bottom).

* A gray box labeled "Supervised Learning" positioned on the arrow from "Example" to "Policy".

* **Flow/Text:** An arrow points from "Example" to "Policy". The text on the arrow reads: `π_θ(·|x) ← y'`. This denotes the policy `π_θ` being updated with a target label `y'` given input `x`.

**2. Middle Column: Reinforcement Learning (RLVR)**

* **Title:** "Reinforcement Learning (RLVR)"

* **Components:**

* A box labeled "Policy" (top).

* A box labeled "Environment" (bottom).

* A green box labeled "Action" on the arrow from "Policy" to "Environment".

* A peach-colored box labeled "Scalar Reward" on the arrow from "Environment" to "Policy".

* **Flow/Text:**

* Arrow from "Policy" to "Environment": Labeled with `y ~ π_θ(·|x)`, indicating an action `y` is sampled from the policy.

* Arrow from "Environment" to "Policy": Labeled with `π_θ(·|x) ← r`, indicating the policy is updated based on a scalar reward `r`.

**3. Right Column: Experiential Learning (ERL)**

* **Title:** "Experiential Learning (ERL)"

* **Components:**

* A box labeled "Policy" (top).

* A box labeled "Environment" (bottom).

* A green box labeled "Action" on the arrow from "Policy" to "Environment".

* A blue box labeled "Experience Internalization" positioned between "Environment" and "Policy".

* A peach-colored box labeled "Self-Reflection" positioned below "Experience Internalization".

* **Flow/Text:**

* Arrow from "Policy" to "Environment": Labeled with `y ~ π_θ(·|x)`.

* Arrow from "Environment" to "Policy": This path is more complex. It flows through the "Experience Internalization" box.

* Text in the "Experience Internalization" box: `π_θ(·|x) ← π_θ(·|x, Δ)`. This suggests the policy is updated using an internalized version of experience, parameterized by `Δ`.

* Text in the "Self-Reflection" box: `Δ ~ π_θ(·|x, y, r)`. This indicates that the internal parameter `Δ` is generated by a self-reflection process based on the input `x`, action `y`, and reward `r`.

**Bottom Annotation:**

* A long, gray horizontal arrow spans the width of the diagram beneath the three columns.

* Left end label: "Learning from Feedback" (aligned under SFT and RLVR).

* Right end label: "Learning from Experience" (aligned under ERL).

### Detailed Analysis

The diagram illustrates an evolution in learning complexity:

1. **Direct Learning (SFT):** A simple, one-step supervised loop. The policy is directly corrected towards a known good example (`y'`). The feedback is the example itself.

2. **Reinforcement Learning (RLVR):** An interactive loop with an environment. The policy takes an action, receives a scalar reward signal from the environment, and updates accordingly. Feedback is indirect (a reward number).

3. **Experiential Learning (ERL):** An enhanced interactive loop that adds an internal cognitive layer. Instead of updating the policy directly from the reward `r`, the system first performs "Self-Reflection" to generate an internal representation `Δ` from the tuple `(x, y, r)`. This `Δ` is then used for "Experience Internalization," updating the policy in a more nuanced way (`π_θ(·|x, Δ)`). This suggests learning from a processed, internalized form of experience rather than the raw reward signal.

### Key Observations

* **Increasing Complexity:** The diagrams grow more complex from left to right, adding components (Environment, Action, Self-Reflection) and more sophisticated update rules.

* **Shift in Feedback Source:** The source of learning signal evolves: from a static `y'` (SFT), to an external scalar `r` (RLVR), to an internally generated `Δ` (ERL).

* **Spatial Grounding:** The "Self-Reflection" and "Experience Internalization" boxes in the ERL diagram are centrally located between the Environment and Policy, visually emphasizing their role as a mediating, internal process.

* **Color Coding:** Green is consistently used for the "Action" output. Peach/light red is used for reward-related signals (`r` in RLVR, `Self-Reflection` in ERL). Blue is introduced in ERL for the new "Experience Internalization" process.

### Interpretation

This diagram argues for a progression in machine learning paradigms towards more autonomous and introspective systems.

* **SFT** represents foundational learning from curated data, but it's limited to mimicking provided examples.

* **RLVR** introduces learning through trial-and-error interaction with an environment, a significant step towards autonomous decision-making. However, it relies on an external reward function, which can be sparse or poorly defined.

* **ERL** proposes a next step where the agent doesn't just react to rewards but actively *reflects* on its experiences (`x, y, r`) to form an internal understanding (`Δ`). This internalized experience then guides learning. The key innovation is the **Self-Reflection** module, which transforms raw experience into a form more suitable for deep learning. This mimics aspects of biological learning, where experience is consolidated and interpreted internally, potentially leading to more robust, generalizable, and sample-efficient learning. The bottom arrow frames this as a shift from learning based on external feedback to learning based on internalized experience.

</details>

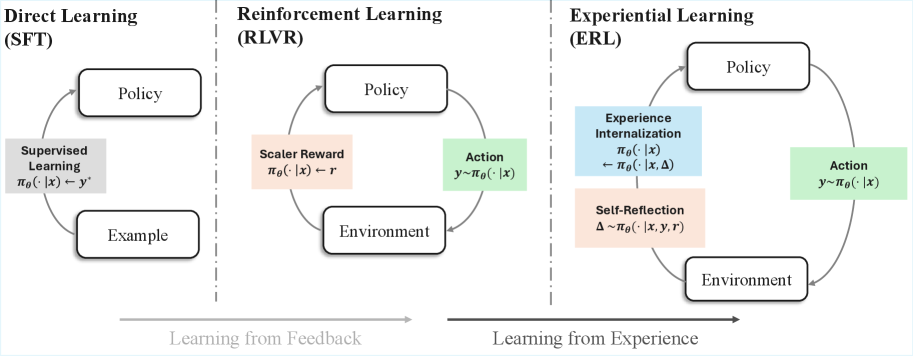

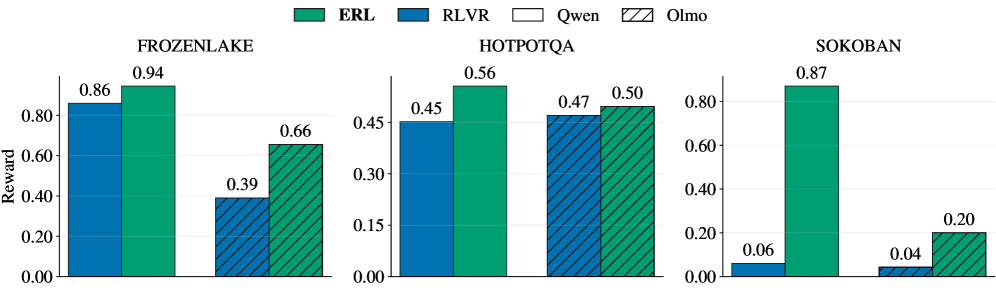

Figure 1: In Experiential Reinforcement Learning (ERL), instead of learning from feedback or outcome directly, an agent learns to (1) verbally reflect on its experience and observed outcome, and (2) internalize the reflections to induce behavioral changes in future iterations.

## 1 Introduction

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Comparison of RLVR and ERL Learning Paradigms in an Unknown Environment

### Overview

The image is a technical diagram comparing two learning paradigms—**RLVR** (Reinforcement Learning with Visual Representations, inferred) and **ERL** (Evolutionary Reinforcement Learning or a similar reflective variant, inferred)—applied to an agent acting in an unknown, grid-based environment. The diagram illustrates the process and outcome of each approach through sequential visual scenes and annotations. The overall task is defined at the top.

### Components/Axes

* **Header/Task Definition:** Text at the top reads: "Task: Act in an unknown environment ※ with no prior knowledge".

* **Main Sections:** The diagram is split horizontally by a dashed line into two primary sections:

* **Top Section:** Labeled **RLVR**.

* **Bottom Section:** Labeled **ERL**.

* **Visual Elements:** Each section contains a sequence of four grid-world screenshots. The environment consists of:

* **Walls:** Dark grey, textured blocks forming maze boundaries.

* **Floor:** Light beige tiles.

* **Agent:** A small human-like icon (blue shirt, brown hair).

* **Boxes:** Brown, square objects with a lighter square pattern on top.

* **Targets:** Small, pinkish-red dots on the floor.

* **Annotations & Flow:**

* **Arrows:** Grey arrows indicate the sequence of steps or flow of the process.

* **Text Labels:** Describe actions or states between scenes (e.g., "Trial & Error", "Forget", "Back & Forth", "Experience Internalization").

* **Outcome Labels:** Below the second and fourth scenes in the RLVR sequence, the text "No reward" appears.

* **Self-Reflection Box:** In the ERL sequence, between the third and fourth scenes, there is a text block titled "Self-Reflection:" containing three bullet points.

* **Legend/Key:** Implicitly defined by the consistent visual elements across all scenes (agent, boxes, walls, targets).

### Detailed Analysis

**RLVR Sequence (Top Section):**

1. **Scene 1 (Left):** Initial state. Agent is in the top-left area. Several boxes and targets are scattered.

2. **Transition 1:** Arrow labeled "Trial & Error" points to Scene 2.

3. **Scene 2:** Agent has moved. A blue line traces a path from the agent's previous position to its current one, ending at a red "X" over a box. This indicates a failed attempt to push the box onto a target. Text below: "No reward".

4. **Transition 2:** Arrow labeled "Forget" points to Scene 3.

5. **Scene 3:** The environment appears reset or unchanged from Scene 1. The agent is back near its starting position. An overarching dashed arrow labeled "Back & Forth" connects Scene 1 and Scene 3, indicating a cycle.

6. **Transition 3:** Arrow labeled "Trial & Error" points to Scene 4.

7. **Scene 4:** Visually identical to Scene 2, showing the same failed attempt. Text below: "No reward". The process is cyclical and unproductive.

**ERL Sequence (Bottom Section):**

1. **Scene 1 (Left):** Identical initial state to RLVR's Scene 1.

2. **Transition 1:** Arrow labeled "Trial & Error" points to Scene 2.

3. **Scene 2:** Identical to RLVR's Scene 2, showing the same failed attempt (agent path, red "X" on box).

4. **Transition 2:** Arrow points to a "Self-Reflection" text block.

5. **Self-Reflection Content:**

* "I guess..."

* "• [Wall Icon] is wall."

* "• I can control [Agent Icon]"

* "• Push [Box Icon] into [Target Icon]"

6. **Transition 3:** Arrow labeled "Experience Internalization" (above the dashed line) points from the Self-Reflection block to Scene 4. A dashed arrow also loops from Scene 4 back to Scene 1, suggesting a learned policy is applied from the start.

7. **Scene 4 (Right):** A new, successful state. The agent is in the bottom-right. Two boxes have been successfully pushed onto two target dots (the dots are now covered by the boxes). The layout of other boxes remains.

### Key Observations

1. **Identical Starting Conditions:** Both RLVR and ERL begin with the exact same environment configuration.

2. **Identical Initial Failure:** Both paradigms experience the same initial "Trial & Error" failure, depicted by the same scene with the red "X".

3. **Divergent Processes:** The critical difference occurs after the first failure.

* **RLVR** enters a "Forget" and "Back & Forth" loop, repeatedly attempting and failing the same action, leading to no reward.

* **ERL** engages in "Self-Reflection," deriving explicit rules about the environment's mechanics (walls, controllability, objective).

4. **Divergent Outcomes:** The reflection enables "Experience Internalization," leading to a successful outcome in the final ERL scene where boxes are on targets. RLVR shows no progress.

5. **Spatial Layout:** The legend (agent, box, target icons) is integrated directly into the "Self-Reflection" text, grounding the abstract rules in the visual symbols used in the diagrams.

### Interpretation

This diagram argues for the superiority of a reflective, model-based learning approach (ERL) over a purely trial-and-error, possibly memory-less approach (RLVR) in novel environments.

* **What the Data Suggests:** The data (visual outcomes) demonstrates that simply repeating failed actions (RLVR) is futile. In contrast, pausing to abstract rules from experience (ERL) allows the agent to build an internal model of the world ("wall," "control," "push into"). This model enables planning and successful task completion.

* **How Elements Relate:** The "Self-Reflection" block is the pivotal component. It transforms raw sensory experience (the failed trial) into declarative knowledge. The "Experience Internalization" arrow signifies the application of this knowledge to modify the agent's policy, leading to a different and successful outcome. The dashed loop in ERL suggests this learned policy can be applied from the outset of similar future tasks.

* **Notable Anomalies/Patterns:** The most striking pattern is the direct visual contrast between the cyclical, static failure of RLVR and the linear, progressive success of ERL. The identical starting points and initial failures make the comparison controlled and highlight the reflection phase as the sole differentiating variable responsible for the success. The diagram is a clear visual metaphor for the adage "learn from your mistakes" applied to artificial intelligence.

</details>

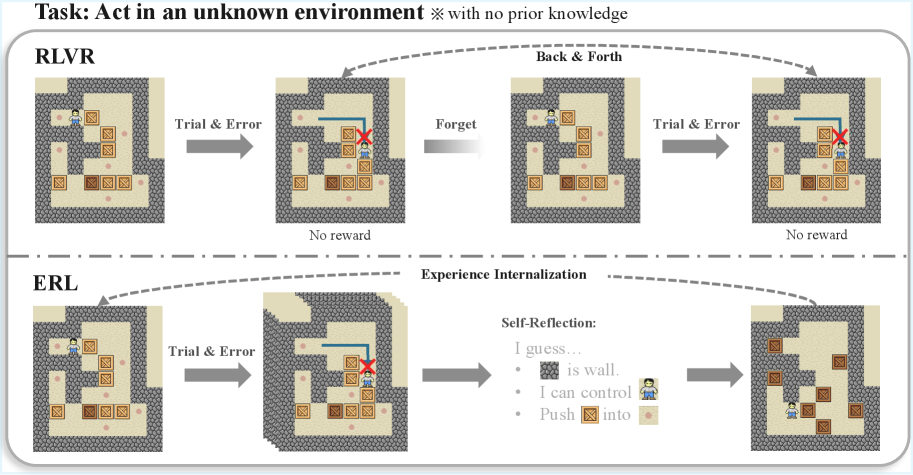

Figure 2: Conceptual comparison of learning dynamics in RLVR and Experiential Reinforcement Learning (ERL). RLVR relies on repeated trial-and-error driven by scalar rewards, leading to back-and-forth exploration without durable correction. ERL augments this process with an experience–reflection–consolidation loop that generates a revised attempt and internalizes successful corrections, enabling persistent behavioral improvement.

Large language models are increasingly deployed as decision-making agents that must act, observe feedback, and adapt their behavior in environments with delayed rewards and partial information (Singh et al., 2025; Yang et al., 2025; Song et al., 2025a; Bai et al., 2026). Reinforcement learning offers a natural framework for improving such agents. The task environments typically provide feedback in the form of outcome reward after an agent generates the entire trajectory. In practice, training agents against such sparse and delayed outcome signals remains difficult, as models must implicitly infer how to translate observed failures into corrective behavior, a process that is often unstable and sample-inefficient (Zhang et al., 2025; Shi et al., 2026). These challenges become more pronounced in agentic reasoning tasks, where multi-step decisions could amplify small errors and obscure credit assignment.

Humans address similar challenges through a process often described as experiential learning, in which effective adaptation arises from a cycle of experience, reflection, conceptualization, and experimentation (Kolb, 2014). After observing an outcome, a learner reflects on what occurred, forms revised internal models, and applies those revisions in subsequent attempts. This cycle transforms raw feedback into actionable behavioral corrections before those corrections are consolidated into future behavior. While language models have demonstrated reflection-like capabilities at inference time, standard reinforcement learning pipelines largely reduce feedback to scalar optimization signals, requiring policies to implicitly discover corrective structure through undirected exploration rather than explicit experiential revision.

This perspective highlights a progression in how language models learn from supervision and interaction, illustrated in Figures 1 and 2. In supervised fine-tuning (SFT), policies imitate fixed examples, enabling strong pattern reproduction but offering no mechanism for revising behavior once deployed. Reinforcement learning with verifiable rewards (RLVR) extends learning into interactive settings by optimizing scalar feedback, allowing agents to improve through trial-and-error; however, corrective structure must still be inferred implicitly from sparse or delayed rewards. As visualized in Figure 2, this can lead to repeated exploration without durable behavioral correction. A natural next step is to structure learning around experience itself, transforming feedback into intermediate reasoning that supports explicit revision and consolidation within each episode. Figure 1 conceptualizes this shift as moving from learning purely from feedback toward deliberate learning from experience.

In this work, we introduce Experiential Reinforcement Learning (ERL), a training paradigm that embeds an explicit experience–reflection–consolidation loop inside reinforcement learning. Instead of learning solely from outcome rewards, the model first produces an initial attempt, receives environment feedback, and generates a structured reflection describing how the attempt should be improved. This reflection conditions a refined second attempt, whose outcome is reinforced and internalized into the base policy. By converting feedback into intermediate reasoning signals, ERL enables the model to perform targeted behavioral correction before policy consolidation. Over time, these corrections become part of the policy itself, allowing improved behavior to persist even when reflection is absent at deployment. An overview of the algorithm is shown in Figure 3.

We evaluate ERL across sparse-reward control environments and agentic reasoning benchmarks spanning two model scales. ERL consistently outperforms RLVR in all six evaluated settings, achieving gains of up to +81% in Sokoban, +27% in FrozenLake, and up to +11% in HotpotQA. These results demonstrate that embedding structured experiential revision into training improves learning efficiency and produces stronger final policies across both control and reasoning tasks.

#### Contributions.

Our main contributions are:

- We introduce Experiential Reinforcement Learning (ERL), a reinforcement learning paradigm that incorporates an explicit experience–reflection–consolidation loop, enabling models to transform environment feedback into structured behavioral corrections.

- We propose an internalization mechanism that consolidates reflection-driven improvements into the base policy, preserving gains without requiring reflection at inference time.

- We demonstrate that experiential reinforcement learning improves training efficiency and final performance across agentic reasoning tasks.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: ERL: Experiential Reinforcement Learning

### Overview

The image is a technical flowchart illustrating a machine learning framework called "ERL: Experiential Reinforcement Learning." It depicts a multi-stage process for training a policy model, involving initial reinforcement learning (RL), a self-reflection phase, a second RL attempt, and a final internalization step via supervised fine-tuning (SFT). The diagram uses boxes, arrows, mathematical symbols, and icons to represent components, data flow, and learning mechanisms.

### Components/Axes

The diagram is organized into two primary horizontal rows, separated by a dashed gray line.

**Top Row (Three-Phase RL Process):**

This row is divided into three sequential phases by vertical dashed orange lines.

1. **First Attempt (RL):** The initial phase.

2. **Self-reflection (RL):** The middle, reflective phase. This section's title and its internal dashed box are colored orange.

3. **Second Attempt (RL):** The final RL phase.

**Bottom Row (Internalization):**

1. **Internalization (SFT):** A parallel process that runs concurrently with the top row's sequence.

**Key Components & Symbols:**

* **Policy Boxes:** Rectangular boxes labeled "Policy," each containing a network graph icon (three interconnected nodes) and a flame icon (🔥) in the top-right corner. There are four such boxes in total.

* **Arrows:** Solid black arrows indicate the primary flow of data and control.

* **Mathematical Symbols:**

* `x`: The input task.

* `y^(1)`: The output from the first policy attempt.

* `f`: Represents "Env. Feedback" (Environment Feedback).

* `⊕`: A summation or combination node (circle with a plus sign).

* `Δ`: Represents "Self-Reflection."

* `y^(2)`: The final output from the second policy attempt.

* **Text Labels:**

* "Task" (pointing to `x`).

* "Env. Feedback" (pointing to `f`).

* "Cross Episode Memory" (within an orange dashed box, pointing to a combination node).

* "Self-Reflection" (pointing to `Δ`).

### Detailed Analysis

The process flow is as follows:

**1. First Attempt (RL):**

* A task `x` is input into the first "Policy" model.

* This policy produces an initial output `y^(1)`.

**2. Self-reflection (RL):**

* The output `y^(1)` receives "Env. Feedback" `f`.

* This feedback (`f`) and the original task `x` are combined at a summation node (`⊕`).

* The combined signal is fed into a second "Policy" model.

* This policy generates a "Self-Reflection" signal `Δ`.

* **Crucially, there is a feedback loop:** The `Δ` signal is routed back via "Cross Episode Memory" to the summation node that feeds the *same* policy, creating an iterative refinement loop within this phase.

**3. Second Attempt (RL):**

* The original task `x` and the self-reflection signal `Δ` are combined at another summation node (`⊕`).

* This combined input is fed into a third "Policy" model.

* This final policy produces the output `y^(2)`.

**4. Internalization (SFT):**

* Running in parallel, the original task `x` is also fed directly into a fourth "Policy" model located in the bottom row.

* This policy's output is also `y^(2)`, indicating it produces the same final output as the top-row process. This suggests the knowledge or policy from the RL process is being "internalized" or distilled into a standalone model via Supervised Fine-Tuning (SFT).

### Key Observations

* **Iterative Refinement:** The "Self-reflection (RL)" phase is not a single step but contains an internal loop where the policy's own reflection (`Δ`) is fed back to improve itself, leveraging "Cross Episode Memory."

* **Two Pathways to Final Output:** The final output `y^(2)` is generated by two distinct pathways: the complex, multi-stage RL+Self-Reflection pipeline (top row) and a direct SFT pathway (bottom row). This implies the SFT model is trained to mimic the behavior of the fully refined RL policy.

* **Policy Iconography:** Every "Policy" box is marked with a flame icon (🔥), which commonly symbolizes an active, trained, or "hot" model in machine learning diagrams.

* **Color Coding:** Orange is used exclusively to highlight the "Self-reflection" phase and its associated memory component, emphasizing its central, distinctive role in the ERL framework.

### Interpretation

This diagram outlines a sophisticated reinforcement learning methodology designed to overcome the limitations of standard, single-attempt RL. The core innovation is the **"Self-reflection" phase**, which acts as an introspective correction mechanism. Instead of learning only from external environment feedback (`f`), the policy learns to generate and utilize its own internal critique (`Δ`), stored in a cross-episode memory, to iteratively refine its approach *before* committing to a final second attempt.

The parallel "Internalization (SFT)" pathway suggests a practical deployment strategy. The complex, computationally expensive ERL process (with its memory and reflection loops) is used to generate high-quality training data (`y^(2)` given `x`). A separate, likely simpler, policy model is then trained via supervised learning on this data. This allows the final deployed model to benefit from the advanced reasoning of ERL without requiring the reflection machinery to run in real-time.

In essence, ERL frames learning not as a single trial, but as a cycle of **action, feedback, introspection, and re-action**, followed by **knowledge distillation**. This mimics a more human-like learning process where experience is not just accumulated but actively reflected upon to improve future performance.

</details>

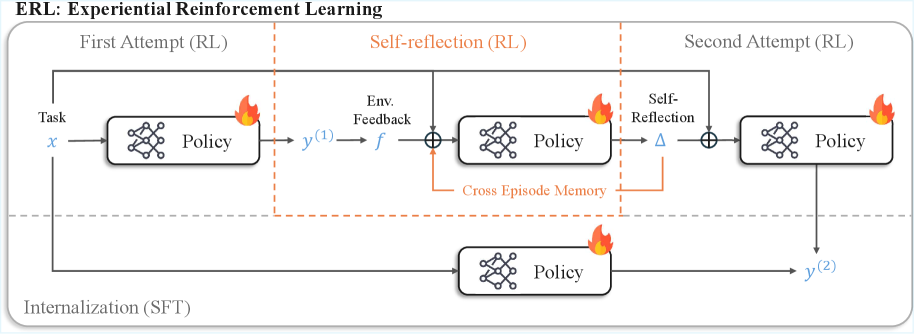

Figure 3: Overview of Experiential Reinforcement Learning (ERL). Given an input task $x$ , the language model first produces an initial attempt and receives environment feedback. The same model then generates a self-reflection conditioned on this attempt, which is used to guide a second attempt. Both attempts and reflections are optimized with reinforcement learning, while successful second attempts are internalized via self-distillation, so the model learns to reproduce improved behavior directly from the original input without self-reflection.

## 2 Experiential Reinforcement Learning (ERL)

Algorithm 1 Experiential Reinforcement Learning

1: Inputs: Language model $\pi_{\theta}$ ; dataset of questions $x$ ; reward threshold $\tau$ ; environment returning feedback $f$ and reward $r$ .

2: Initialize: reflection memory $m\leftarrow\emptyset$ .

3: repeat

4: Sample question $x$ from the dataset.

5: // First attempt

6: Sample an answer $y^{(1)}\sim\pi_{\theta}(\cdot\mid x)$ .

7: Obtain environment feedback and reward $(f^{(1)},r^{(1)})$ .

8: // Self-reflection

9: Sample a reflection $\Delta\sim\pi_{\theta}(\cdot\mid x,y^{(1)},f^{(1)},r^{(1)},m)$ .

10: // Second attempt

11: Sample a refined answer $y^{(2)}\sim\pi_{\theta}(\cdot\mid x,\Delta)$ .

12: Obtain environment feedback and reward $(f^{(2)},r^{(2)})$ .

13: Set reflection reward $\tilde{r}\leftarrow r^{(2)}$ .

14: Store reflection $m\leftarrow\Delta\;\;$ if $\;\;r^{(2)}>\tau$ .

15: // RL update

16: Update $\theta$ via $\mathcal{L}_{\text{policy}}(\theta)$ over the first attempt, reflection, and second attempt.

17: // Internalization

18: Update $\theta$ via $\mathcal{L}_{\text{distill}}(\theta)$ to internalize reflection, training $\pi_{\theta}$ to produce $y^{(2)}$ from $x$ only.

19: until converged

We introduce Experiential Reinforcement Learning (ERL), a training framework that enables a language model to iteratively improve its behavior through self-generated feedback and internalization. The key idea is to treat reflection as an intermediate reasoning signal that guides a refined second attempt, while reinforcement learning aligns both attempts with reward, and supervised distillation consolidates successful behaviors into the base policy. An overview is shown in Figure 3, and the core training loop appears in Algorithm 1. A detailed implementation, including memory persistence and gating logic, is provided in Appendix A.

Given an input task $x$ , the model $\pi_{\theta}$ first produces an initial response

$$

y^{(1)}\sim\pi_{\theta}(\cdot\mid x), \tag{1}

$$

which is evaluated by the environment to produce textual feedback $f^{(1)}$ and reward $r^{(1)}$ . Rather than immediately updating the policy, ERL optionally triggers a reflection-and-retry phase when the first attempt underperforms relative to a reward threshold $\tau$ . This selective retry mechanism focuses compute on trajectories that are most likely to benefit from revision while avoiding unnecessary refinement when performance is already sufficient. When triggered, the model generates a reflection

$$

\Delta\sim\pi_{\theta}(\cdot\mid x,y^{(1)},f^{(1)},r^{(1)},m), \tag{1}

$$

which serves as self-guidance describing how the initial attempt can be improved. Here, $m$ denotes a cross-episode reflection memory that persists successful corrective patterns discovered during training. This memory provides contextual priors that help stabilize reflection generation and encourage reuse of previously effective strategies. The model then produces a refined response

$$

y^{(2)}\sim\pi_{\theta}(\cdot\mid x,\Delta), \tag{2}

$$

and receives new feedback $(f^{(2)},r^{(2)})$ . Reflections that lead to sufficiently improved outcomes are stored back into memory,

$$

m\leftarrow\Delta\quad\text{if}\quad r^{(2)}>\tau, \tag{2}

$$

allowing corrective knowledge to accumulate across training episodes. The reflection is assigned reward $\tilde{r}=r^{(2)}$ , encouraging reflections that lead to improved downstream performance.

Both attempts and reflections are optimized using a reinforcement learning objective

$$

\mathcal{L}_{\text{policy}}(\theta)=-\mathbb{E}\!\left[A\,\log\pi_{\theta}(y\mid x,\cdot)\right],

$$

where $y$ denotes model outputs arising from the first attempt, reflection, or second attempt, and the conditioning context corresponds to the inputs specified in Algorithm 1. The advantage estimate $A$ is computed from the associated rewards.

While reflection and environment feedback provide strong training signals, such supervision is typically unavailable at deployment time, where the model must operate in a zero-shot setting. We therefore introduce an internalization step that converts reflection-guided improvements into persistent policy behavior. The goal is to make the model remember corrections discovered during training and avoid repeating the same mistakes when feedback is absent. We implement internalization via selective distillation: we supervise the model to imitate only successful second attempts while removing reflection context from the input. Concretely, given a training example $x$ , we generate a refined response $y^{(2)}$ and reward $r^{(2)}$ , and optimize

$$

\mathcal{L}_{\text{distill}}(\theta)=-\mathbb{E}\Big[\mathbb{I}\!\left(r^{(2)}>0\right)\,\log\pi_{\theta}\!\left(y^{(2)}\mid x\right)\Big], \tag{2}

$$

where $\mathbb{I}(\cdot)$ is the indicator function. This trains $\pi_{\theta}$ to reproduce improved behavior from the original input $x$ alone (no reflection), ensuring that lessons learned through feedback and self-reflection persist at test time.

By alternating between reinforcement learning, selective reflection, and distillation, ERL bootstraps self-improvement: reflections guide higher-quality retries, memory preserves effective corrective structure, reinforcement learning aligns behavior with reward, and distillation internalizes gains into the core model. Over time, this interaction stabilizes training, concentrates exploration on failure cases, and reduces dependence on explicit reflection at inference.

### 2.1 Comparison to Standard RLVR

Standard reinforcement learning with verifiable rewards (RLVR) optimizes a policy directly from scalar outcome signals. Given an input $x$ , the model samples a response $y\sim\pi_{\theta}(\cdot\mid x)$ and receives a reward $r$ , with policy updates derived from trajectory-level credit assignment. In this formulation, feedback influences learning only through reward-driven optimization, requiring the model to implicitly discover how failures should translate into behavioral change. Corrective structure therefore emerges slowly through repeated exploration, with no explicit mechanism for revising behavior within the same learning episode. This learning dynamic corresponds to trial-and-error optimization, as illustrated in Figure 2.

Experiential Reinforcement Learning (ERL) augments this loop with an explicit experience–reflection–consolidation stage embedded inside each trajectory. Instead of optimizing solely from outcome reward, the model converts environment feedback into a reflection that conditions a refined attempt. This intermediate revision produces a locally improved trajectory that is reinforced and later internalized through selective distillation, allowing the base policy to reproduce corrected behavior without reflection at inference. A cross-episode reflection memory further stabilizes this process by preserving corrective patterns that proved effective, allowing subsequent reflections to reuse prior improvements. Importantly, ERL preserves the underlying RLVR objective: policy gradients remain reward-driven, but operate over a richer trajectory structure that includes explicit behavioral correction. This reframing shifts feedback from a scalar endpoint signal to a catalyst for immediate revision, reducing reliance on undirected exploration while maintaining compatibility with standard reinforcement learning pipelines. This contrast between blind trial-and-error learning and reflection-guided revision is visualized in Figure 1 and Figure 2.

## 3 Experiment

We evaluate Experiential Reinforcement Learning (ERL) against standard RLVR on a set of agentic reasoning tasks.

### 3.1 Task

We evaluate ERL on three agentic reasoning tasks: Frozen Lake, Sokoban, and HotpotQA (Yang et al., 2018). Detailed environment descriptions are provided in Appendix B.

For Frozen Lake and Sokoban, we configure the environments with sparse terminal rewards following Wang et al. (2025) and Guertler et al. (2025). The agent receives reward only at episode completion: a reward of +1 is assigned for successfully achieving the objective and 0 otherwise. Crucially, we do not provide explicit game rules or environment dynamics. The model must infer task structure purely through interaction, with access limited to the available action set. This evaluation design is inspired by prior work on learning from experience, where the goal is to measure an agent’s ability to acquire task understanding through trial-and-error rather than relying on human-authored priors embedded in pretraining. The combination of sparse rewards and unknown dynamics therefore creates a challenging setting that emphasizes reasoning, planning, and experiential learning.

HotpotQA is adapted into an agentic multi-hop question-answering task following Search-R1 (Jin et al., 2025). Given a question, the model performs iterative tool-assisted retrieval before producing a final answer. To maintain consistency with the experiential learning setup, we provide only a default system prompt describing available tools, without additional task-specific guidance. Correctness is evaluated using token-level F1 against ground-truth answers. The reward function assigns 1.0 for exact matches, a proportional reward for partial matches with F1 score $\geq 0.3$ , and 0 otherwise.

### 3.2 Models and Baselines

In our experiments, we train Olmo-3-7B-Instruct (Olmo et al., 2025) and Qwen3-4B-Instruct-2507 (Yang et al., 2025) using both standard RLVR and our proposed ERL paradigm, with GRPO (Shao et al., 2024) serving as the underlying policy-gradient optimizer in all cases. To ensure stable training, we adopt common reinforcement learning techniques such as clipping, KL regularization, and importance sampling. Notably, the internalization stage in ERL naturally involves off-policy data, which can introduce additional instability. We therefore apply the same stabilization techniques during this phase to maintain consistent optimization behavior. Additionally, because ERL requires two attempts per task along with an additional reflection step, we allocate 10 rollouts per task for RLVR and half as many per task per attempt for ERL to equalize the training compute per task across methods. Full hyperparameters and implementation details are provided in Appendix C.

## 4 Result and Discussion

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Line Chart Grid: ERL vs. RLVR Training Performance

### Overview

The image displays a 2x3 grid of line charts comparing the training performance of two reinforcement learning methods, **ERL** (green line) and **RLVR** (blue line). Performance is measured by "Reward" over "Training wall-clock time (hours)". The top row shows results for the **Qwen3-4B-Instruct-2507** model, and the bottom row for the **Olmo-3-7B-Instruct** model. Each column corresponds to a different task environment: **FROZENLAKE**, **HOTPOTQA**, and **SOKOBAN**.

### Components/Axes

* **Legend:** Located at the top center of the entire figure. It defines two data series:

* **ERL:** Represented by a solid green line.

* **RLVR:** Represented by a solid blue line.

* **Y-Axis (All Charts):** Labeled **"Reward"**. The scale and range vary per chart.

* **X-Axis (All Charts):** Labeled **"Training wall-clock time (hours)"**. The scale and range vary per chart.

* **Row Labels (Left Side):**

* Top Row: **"Qwen3-4B-Instruct-2507"**

* Bottom Row: **"Olmo-3-7B-Instruct"**

* **Column Titles (Top of Each Chart):**

* Left Column: **"FROZENLAKE"**

* Middle Column: **"HOTPOTQA"**

* Right Column: **"SOKOBAN"**

### Detailed Analysis

**1. Top Row: Qwen3-4B-Instruct-2507**

* **FROZENLAKE (Top-Left):**

* **Axes:** Y-axis from 0.20 to 0.80. X-axis from 0 to 8 hours.

* **ERL (Green):** Starts near 0.20. Shows a steep, near-linear increase from ~1 to 4 hours, reaching ~0.85. Plateaus near 0.90 from 4 to 8 hours.

* **RLVR (Blue):** Starts near 0.20. Increases more gradually and linearly, reaching ~0.75 by 8 hours. Consistently below ERL after the first hour.

* **HOTPOTQA (Top-Middle):**

* **Axes:** Y-axis from 0.32 to 0.48. X-axis from 0 to 5 hours.

* **ERL (Green):** Starts near 0.32. Rises sharply to ~0.46 by 1 hour, then continues a slower ascent to a peak of ~0.49 at 3 hours, before a slight decline to ~0.48 at 5 hours.

* **RLVR (Blue):** Starts near 0.32. Rises to ~0.44 by 0.5 hours, then fluctuates between ~0.44 and ~0.46, ending near 0.44 at 5 hours. Generally below ERL after the initial rise.

* **SOKOBAN (Top-Right):**

* **Axes:** Y-axis from 0.00 to 0.80. X-axis from 0 to 32 hours.

* **ERL (Green):** Remains near 0.00 until ~12 hours. Then exhibits a very steep, sigmoidal rise, reaching ~0.70 by 20 hours and peaking near 0.85 at 32 hours.

* **RLVR (Blue):** Remains flat near 0.00 for the entire 32-hour duration.

**2. Bottom Row: Olmo-3-7B-Instruct**

* **FROZENLAKE (Bottom-Left):**

* **Axes:** Y-axis from 0.20 to 0.50. X-axis from 0 to 9 hours.

* **ERL (Green):** Starts near 0.20. Shows a generally upward trend with some fluctuations, reaching ~0.50 by 9 hours.

* **RLVR (Blue):** Starts near 0.20. Fluctuates between ~0.18 and ~0.25 for the first 4 hours, then rises to ~0.35 by 9 hours. Consistently below ERL.

* **HOTPOTQA (Bottom-Middle):**

* **Axes:** Y-axis from 0.24 to 0.48. X-axis from 0 to 4 hours.

* **ERL (Green):** Starts near 0.24. Rises steeply to ~0.46 by 1.5 hours, then continues a slower ascent to ~0.48 by 4 hours.

* **RLVR (Blue):** Starts near 0.24. Rises steeply to ~0.46 by 1.5 hours, tracking ERL closely. After 2 hours, it plateaus and slightly declines to ~0.45 by 4 hours, falling slightly below ERL.

* **SOKOBAN (Bottom-Right):**

* **Axes:** Y-axis from 0.00 to 0.16. X-axis from 0 to 80 hours.

* **ERL (Green):** Starts near 0.04. Rises to a peak of ~0.15 at ~24 hours, then fluctuates between ~0.11 and ~0.17 for the remainder, ending near 0.14 at 80 hours.

* **RLVR (Blue):** Starts near 0.04. Shows a slight decline, fluctuating near or below 0.02 for the entire 80-hour duration.

### Key Observations

1. **Consistent Superiority of ERL:** In all six charts, the ERL (green) method achieves a higher final reward than the RLVR (blue) method.

2. **Task-Dependent Learning Curves:** The shape of the learning curve is highly dependent on the task.

* **FROZENLAKE:** Shows steady, linear improvement for both models.

* **HOTPOTQA:** Shows rapid initial learning followed by a plateau or slight decline.

* **SOKOBAN:** Shows a long "warm-up" period (especially for Qwen) followed by a sharp phase transition for ERL, while RLVR fails to learn.

3. **Model-Dependent Performance Scale:** The absolute reward values differ significantly between models for the same task. For example, on SOKOBAN, Qwen3-4B reaches ~0.85 reward, while Olmo-3-7B only reaches ~0.16, suggesting the task is much harder for the latter model or the reward scale is different.

4. **RLVR's Struggle on SOKOBAN:** RLVR shows near-zero learning on the SOKOBAN task for both models, indicating a potential failure mode or incompatibility with this environment's structure.

### Interpretation

This grid of charts provides a comparative analysis of two training algorithms (ERL and RLVR) across diverse reasoning and planning tasks (FROZENLAKE: simple navigation; HOTPOTQA: multi-hop question answering; SOKOBAN: complex puzzle-solving) and two different language model bases.

The data strongly suggests that **ERL is a more robust and effective training method** than RLVR across this set of conditions. It not only achieves higher final performance but also demonstrates more consistent learning dynamics. The dramatic difference in the SOKOBAN task is particularly telling; ERL is capable of unlocking performance after a significant training period, while RLVR shows no progress. This could imply that ERL's optimization strategy is better suited for tasks requiring long-horizon planning or sparse rewards.

The variation in learning curve shapes (linear, saturating, sigmoidal) across tasks highlights that the **difficulty and learning dynamics are not uniform**. The long delay before learning in SOKOBAN for the Qwen model suggests a critical threshold of experience or internal representation must be reached before the skill can emerge. The lower overall performance of the Olmo model, especially on SOKOBAN, may indicate differences in model architecture, pre-training data, or inherent capability for these specific types of reasoning tasks.

In summary, the visualization serves as evidence for the efficacy of the ERL method and illustrates how task complexity and model architecture interact to shape the trajectory of reinforcement learning in language models.

</details>

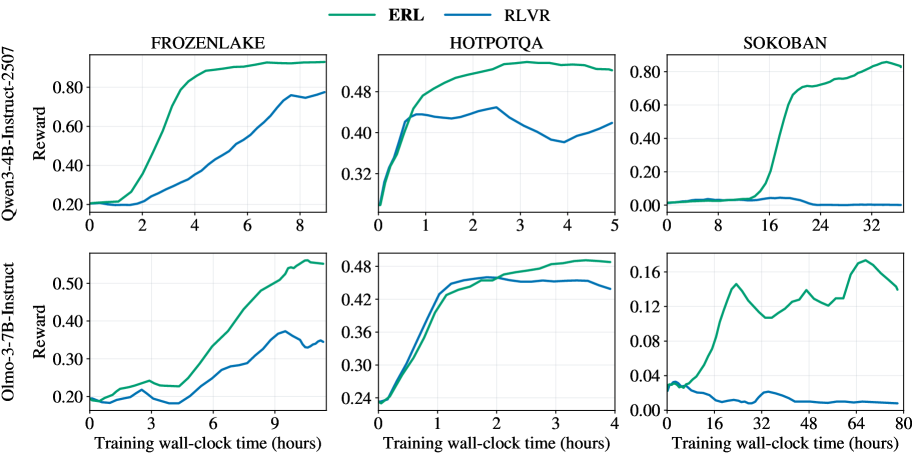

Figure 4: Validation reward trajectories versus training wall-clock time on FrozenLake, HotpotQA, and Sokoban for Qwen3-4B-Instruct-2507 and Olmo-3-7B-Instruct. ERL consistently achieves higher reward and faster improvement than RLVR across tasks and models.

We evaluate Experiential Reinforcement Learning (ERL) against standard RLVR across three environments spanning sparse-reward control (FrozenLake, Sokoban) and agentic reasoning (HotpotQA). Table 1 summarizes the final performance, while Figures 5 – 6 visualize the performance and learning dynamics. All curves are smoothed with a trailing moving average over 5 points. The same smoothing procedure is applied to all figures unless otherwise noted.

### 4.1 Performance Across Tasks

ERL consistently improves final evaluation performance over RLVR across all tasks and both model backbones. As shown in Table 1 and Figure 5, experiential training yields gains ranging from moderate improvements on HotpotQA to substantial improvements on Sokoban and Frozenlake.

The largest effect occurs in Sokoban, where Qwen3-4B-Instruct improves from 0.06 to 0.87 and Olmo3-7B-Instruct from 0.04 to 0.20. Sokoban requires long-horizon planning and recovery from compounding errors, making performance sensitive to how well the agent reasons about environment dynamics. Similarly, FrozenLake demands that the agent infer symbol semantics, action consequences, and terminal conditions purely through interaction under sparse rewards. Importantly, as described in Section 3, unlike many prior evaluation setups that provide explicit rules or environment structure, our environments expose only observations and action interfaces; the agent must infer task dynamics through trial-and-error. This design emphasizes learning from experience rather than relying on pre-specified priors, making structured revision particularly valuable. In these settings, the experience–reflection–consolidation loop enables the model to analyze failures, revise strategies, and internalize corrective behavior within each episode, producing large improvements in exploration efficiency and policy quality.

HotpotQA shows smaller but reliable gains. A likely explanation lies in differences in task structure. Compared to the grid-based control environments, HotpotQA presents a more homogeneous interaction pattern centered on repeated tool invocation and answer synthesis, with denser evaluation feedback and fewer latent dynamics to infer. Because RLVR already receives relatively informative gradients in this regime, the additional benefit of structured experiential revision is reduced. This contrast suggests that ERL yields the greatest advantage in environments where learning requires substantial reasoning about unknown dynamics and long-horizon consequences, rather than primarily optimizing over a stable interaction loop.

Importantly, improvements are observed across both models, indicating that the benefits of ERL arise from enhanced learning dynamics rather than architecture-specific effects.

<details>

<summary>x5.png Details</summary>

### Visual Description

\n

## Grouped Bar Chart: Model Performance Comparison Across Three Tasks

### Overview

The image displays a grouped bar chart comparing the performance (measured in "Reward") of four different models—ERL, RLVR, Qwen, and Olmo—across three distinct tasks or environments: FROZENLAKE, HOTPOTQA, and SOKOBAN. The chart is divided into three separate panels, one for each task.

### Components/Axes

* **Legend:** Located at the top center of the entire figure. It defines the four data series:

* **ERL:** Solid green bar.

* **RLVR:** Solid blue bar.

* **Qwen:** White bar with a black outline.

* **Olmo:** Hatched bar (diagonal lines) with a green outline.

* **Y-Axis (Common to all panels):** Labeled "Reward". The scale runs from 0.00 to approximately 0.90, with major tick marks at 0.00, 0.20, 0.40, 0.60, and 0.80. The exact upper limit varies slightly per panel to accommodate the data.

* **X-Axis (Per Panel):** Each panel represents a task, labeled at the top: "FROZENLAKE", "HOTPOTQA", and "SOKOBAN". Within each panel, four bars are grouped together, corresponding to the four models in the legend order (RLVR, ERL, Qwen, Olmo from left to right).

* **Data Labels:** Numerical values are printed directly above each bar, indicating the precise reward score.

### Detailed Analysis

**Panel 1: FROZENLAKE**

* **RLVR (Blue):** Reward = 0.86

* **ERL (Green):** Reward = 0.94 (Highest in this task)

* **Qwen (White):** Reward = 0.39

* **Olmo (Hatched):** Reward = 0.66

* **Trend:** ERL performs best, followed by RLVR. There is a significant drop-off for Qwen and Olmo, with Qwen scoring the lowest.

**Panel 2: HOTPOTQA**

* **RLVR (Blue):** Reward = 0.45

* **ERL (Green):** Reward = 0.56 (Highest in this task)

* **Qwen (White):** Reward = 0.47

* **Olmo (Hatched):** Reward = 0.50

* **Trend:** Performance is much more tightly clustered compared to FROZENLAKE. ERL again leads, but the margins are smaller. RLVR is the lowest performer here.

**Panel 3: SOKOBAN**

* **RLVR (Blue):** Reward = 0.06

* **ERL (Green):** Reward = 0.87 (Highest in this task)

* **Qwen (White):** Reward = 0.04

* **Olmo (Hatched):** Reward = 0.20

* **Trend:** ERL demonstrates dominant performance, achieving a reward score over 20 times higher than the next best model (Olmo). RLVR and Qwen show near-zero performance.

### Key Observations

1. **Consistent Leader:** The ERL model (green bar) achieves the highest reward score in all three tasks (0.94, 0.56, 0.87).

2. **Task-Dependent Performance:** The relative ranking and absolute performance of the other models (RLVR, Qwen, Olmo) vary dramatically by task. For example, RLVR is the second-best in FROZENLAKE (0.86) but the worst in HOTPOTQA (0.45).

3. **Extreme Disparity in SOKOBAN:** The SOKOBAN task shows the most extreme performance gap, with ERL excelling while the other three models fail almost completely (scores ≤ 0.20).

4. **Clustering in HOTPOTQA:** The HOTPOTQA task shows the most competitive and clustered results, with all models scoring between 0.45 and 0.56.

### Interpretation

This chart suggests that the **ERL model is robust and generalizes well** across diverse task types (a grid-world navigation task like FROZENLAKE, a question-answering task like HOTPOTQA, and a planning/puzzle task like SOKOBAN). Its performance is not only consistently high but also dominant in two of the three domains.

The performance of the other models is **highly task-specific**. This indicates that their underlying architectures or training may be specialized or lack the flexibility to handle different problem structures. The near-failure of RLVR, Qwen, and Olmo on SOKOBAN is particularly notable, suggesting this task requires a specific capability (e.g., long-horizon planning, spatial reasoning) that ERL possesses but the others lack.

From a technical evaluation perspective, this data would argue strongly for the superiority of the ERL approach in the tested environments. The stark contrast in SOKOBAN could be a key area for investigating the specific algorithmic or representational advantages of ERL.

</details>

Figure 5: Final evaluation reward on FrozenLake, HotpotQA, and Sokoban. ERL consistently outperforms RLVR for both Qwen3-4B-Instruct-2507 and Olmo-3-7B-Instruct.

### 4.2 Learning Efficiency and Optimization Dynamics

Figure 4 compares validation reward against wall-clock training time. Across tasks and models, ERL reaches higher reward earlier and maintains a persistent margin over RLVR. This acceleration is especially pronounced in FrozenLake and Sokoban, where RLVR progresses gradually while ERL rapidly approaches high-reward behavior.

These dynamics suggest that reflection introduces an intermediate corrective signal that reshapes exploration. Instead of relying solely on terminal reward propagation, the model conditions on feedback and self-generated critique to revise its behavior. This concentrates training updates on trajectories that are already partially aligned with the objective, reducing inefficient exploration.

Even in HotpotQA, where rewards are denser and the environment is comparatively simpler, ERL maintains a consistent performance advantage over RLVR. Across environments, these results indicate that ERL achieves higher final reward while improving learning efficiency, demonstrating that structured experiential revision leads to faster and more effective policy improvement.

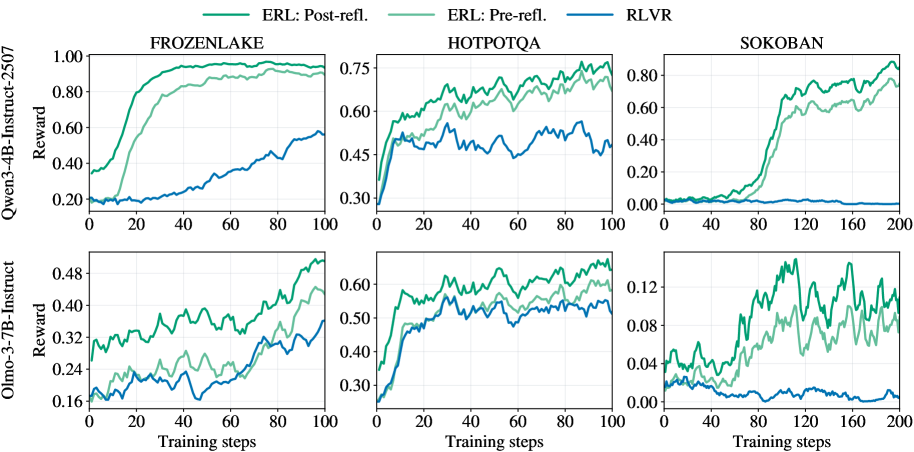

### 4.3 Mechanistic Role of Reflection

Figure 6 shows training reward trajectories for ERL before and after the reflection step, alongside RLVR. Across environments and models, post-reflection trajectories consistently achieve higher training reward than pre-reflection trajectories and also exceed RLVR.

This comparison highlights the immediate within-episode effect of reflection. After observing feedback from the first attempt, the model generates a structured revision that guides a second attempt with improved actions. The resulting gain in training reward indicates that reflection produces actionable corrections within the same episode, rather than only shaping behavior over long horizons. The sustained separation between pre- and post-reflection curves throughout training suggests that reflection serves as a systematic revision mechanism. By converting observed outcomes into targeted adjustments, it improves the quality of second attempts, which are subsequently reinforced and contribute to longer-term policy improvement.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Charts: Comparative Performance of ERL and RLVR Across Environments

### Overview

The image displays a set of six line charts arranged in a 2x3 grid. The charts compare the training performance (measured in "Reward") of three different reinforcement learning or training methods across three distinct environments. The comparison is conducted for two different base language models.

### Components/Axes

* **Grid Structure:** Two rows, three columns.

* **Row Labels (Y-axis titles for the entire row):**

* Top Row: `Qwen-3-4B-Instruct-2507` (Y-axis label: `Reward`)

* Bottom Row: `Olmo-3-7B-Instruct` (Y-axis label: `Reward`)

* **Column Labels (Chart Titles):**

* Left Column: `FROZENLAKE`

* Middle Column: `HOTPOTQA`

* Right Column: `SOKOBAN`

* **X-axis:** Labeled `Training steps` for all charts. The scale varies:

* FROZENLAKE and HOTPOTQA charts: 0 to 100 steps.

* SOKOBAN charts: 0 to 200 steps.

* **Y-axis:** Labeled `Reward` for all charts. The scale and range differ significantly per chart and model.

* **Legend:** Located at the top center of the entire figure. It defines three data series:

* `ERL: Post-refl.` (Dark Green line)

* `ERL: Pre-refl.` (Light Green line)

* `RLVR` (Blue line)

### Detailed Analysis

**1. Top Row: Qwen-3-4B-Instruct-2507 Model**

* **FROZENLAKE (Top-Left):**

* **Trend Verification:** Both ERL lines show a steep, sigmoidal increase, plateauing near the top. The RLVR line shows a slow, steady linear increase.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Starts ~0.35, rises sharply between steps 10-40, plateaus near 1.0 by step 60.

* `ERL: Pre-refl.`: Starts ~0.20, follows a similar but slightly delayed and lower trajectory than Post-refl., plateaus near 0.95.

* `RLVR`: Starts ~0.20, increases gradually to ~0.55 by step 100.

* **HOTPOTQA (Top-Middle):**

* **Trend Verification:** All three lines show an initial rapid rise followed by noisy, fluctuating plateaus. ERL lines consistently outperform RLVR.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Starts ~0.30, rises to ~0.70 by step 40, fluctuates between 0.65-0.75 thereafter.

* `ERL: Pre-refl.`: Follows a similar pattern to Post-refl. but is consistently lower, fluctuating between 0.60-0.70.

* `RLVR`: Starts ~0.30, rises to ~0.50 by step 20, then fluctuates noisily between 0.45-0.55.

* **SOKOBAN (Top-Right):**

* **Trend Verification:** ERL lines show a delayed but very sharp increase. RLVR remains near zero throughout.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Near 0 until step ~60, then rises sharply to ~0.80 by step 140, ending near 0.90.

* `ERL: Pre-refl.`: Follows a similar delayed rise but is lower, reaching ~0.75 by step 200.

* `RLVR`: Hovers near 0.00 for the entire 200 steps.

**2. Bottom Row: Olmo-3-7B-Instruct Model**

* **FROZENLAKE (Bottom-Left):**

* **Trend Verification:** All lines show a gradual, noisy upward trend. ERL lines are distinctly higher than RLVR.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Starts ~0.30, ends near 0.50.

* `ERL: Pre-refl.`: Starts ~0.18, ends near 0.44.

* `RLVR`: Starts ~0.18, ends near 0.36.

* **HOTPOTQA (Bottom-Middle):**

* **Trend Verification:** Similar pattern to the Qwen model on HOTPOTQA: rapid initial rise, then noisy plateaus with ERL leading.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Rises to ~0.60 by step 20, fluctuates between 0.55-0.65.

* `ERL: Pre-refl.`: Rises to ~0.55 by step 20, fluctuates between 0.50-0.60.

* `RLVR`: Rises to ~0.50 by step 20, fluctuates between 0.45-0.55.

* **SOKOBAN (Bottom-Right):**

* **Trend Verification:** ERL lines show high volatility with a general upward trend. RLVR shows a slight downward trend.

* **Data Points (Approximate):**

* `ERL: Post-refl.`: Highly volatile, ranging from ~0.04 to a peak near 0.14, ending around 0.10.

* `ERL: Pre-refl.`: Also volatile but generally lower than Post-refl., ranging from ~0.02 to 0.12.

* `RLVR`: Starts near 0.03, shows a slight decline, ending near 0.00.

### Key Observations

1. **Consistent Hierarchy:** In all six charts, the `ERL: Post-refl.` method (dark green) achieves the highest final reward, followed by `ERL: Pre-refl.` (light green), with `RLVR` (blue) performing the worst.

2. **Environment Difficulty:** The SOKOBAN environment appears to be the most challenging, especially for the RLVR method, which fails to learn (reward ~0) in both models. ERL methods show a significant learning delay in SOKOBAN with the Qwen model.

3. **Model Comparison:** The Qwen-3-4B model achieves higher absolute reward values (e.g., near 1.0 in FROZENLAKE) compared to the Olmo-3-7B model (max ~0.50 in FROZENLAKE), suggesting the tasks or reward scales may differ, or the Qwen model is more capable for these specific tasks.

4. **Learning Dynamics:** ERL methods typically show faster initial learning (steeper slopes) and higher asymptotic performance than RLVR. The "Post-refl." variant consistently offers a performance boost over "Pre-refl.".

### Interpretation

The data strongly suggests that the **ERL (Evolutionary Reinforcement Learning) methodology, particularly with post-reflection ("Post-refl."), is significantly more effective than the RLVR baseline** for training the evaluated language models on these sequential decision-making and reasoning tasks (FROZENLAKE, SOKOBAN, HOTPOTQA).

The consistent performance gap indicates that the evolutionary and reflective components of ERL provide a more robust learning signal or exploration strategy. The dramatic failure of RLVR in SOKOBAN highlights its potential inadequacy for sparse-reward, long-horizon planning tasks, where ERL's population-based approach may excel. The volatility in the Olmo-3-7B SOKOBAN chart suggests less stable training for that model-environment-method combination. Overall, the charts present compelling evidence for the superiority of the proposed ERL framework over the RLVR alternative across diverse tasks and model architectures.

</details>

Figure 6: Training reward trajectories for Qwen3-4B-Instruct-2507 and Olmo-3-7B-Instruct comparing RLVR with ERL before and after reflection. Post-reflection trajectories consistently achieve higher reward than both RLVR and pre-reflection trajectories.

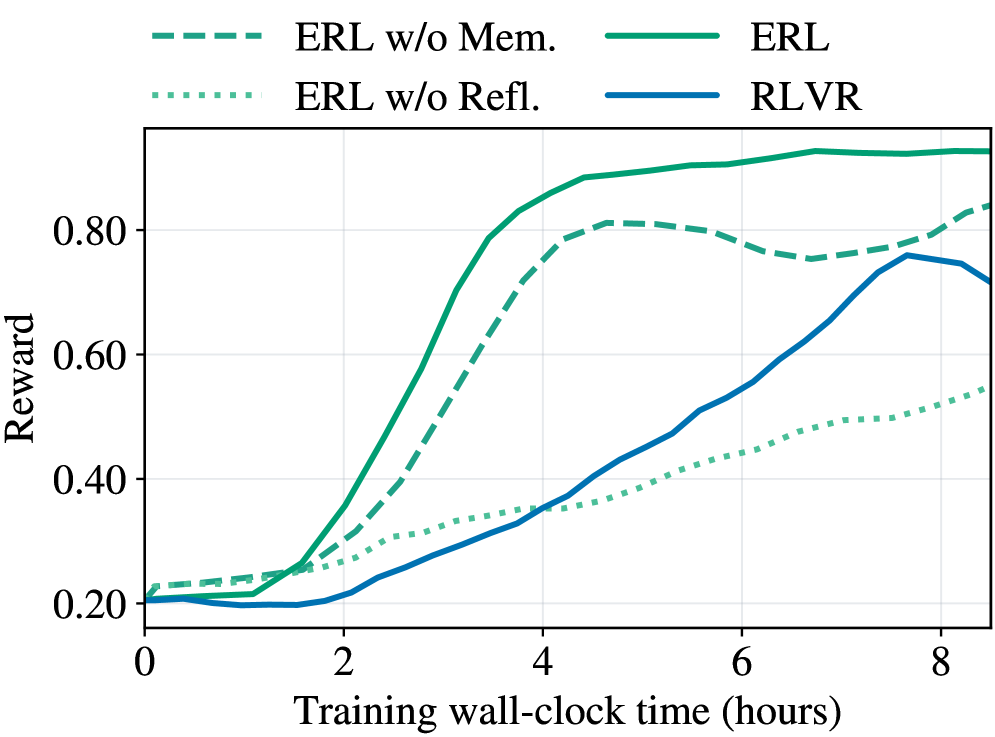

### 4.4 Ablation Study: Memory and Reflection Mechanisms

| Task Qwen3-4B-Instruct-2507 FrozenLake | RLVR 0.86 | ERL 0.94 | ERL w/o Mem. 0.86 (-0.08) | ERL w/o Refl. 0.60 (-0.34) |

| --- | --- | --- | --- | --- |

| HotpotQA | 0.45 | 0.56 | 0.56 (-0.00) | 0.48 (-0.08) |

| Sokoban | 0.06 | 0.87 | 0.87 (-0.00) | 0.59 (-0.28) |

| Olmo3-7B-Instruct | | | | |

| FrozenLake | 0.39 | 0.66 | 0.64 (-0.02) | 0.54 (-0.12) |

| HotpotQA | 0.47 | 0.50 | 0.47 (-0.03) | 0.46 (-0.04) |

| Sokoban | 0.04 | 0.20 | 0.24 (+0.04) | 0.06 (-0.14) |

Table 1: Final evaluation reward on FrozenLake, HotpotQA, and Sokoban. ERL performance is compared against ablation variants, with highlighted drops showing the performance degradation relative to ERL when removing memory reuse (w/o Mem.) or structured reflection (w/o Refl.).

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## Line Chart: Training Reward Over Time for Different Algorithms

### Overview

The image displays a line chart comparing the performance of four reinforcement learning algorithms over training time. The chart plots "Reward" on the vertical axis against "Training wall-clock time (hours)" on the horizontal axis. The primary purpose is to demonstrate the learning efficiency and final performance of the "ERL" algorithm and its variants compared to "RLVR".

### Components/Axes

* **Chart Type:** Multi-line chart.

* **X-Axis:**

* **Label:** "Training wall-clock time (hours)"

* **Scale:** Linear, from 0 to approximately 8.5 hours.

* **Major Tick Marks:** 0, 2, 4, 6, 8.

* **Y-Axis:**

* **Label:** "Reward"

* **Scale:** Linear, from 0.20 to approximately 0.95.

* **Major Tick Marks:** 0.20, 0.40, 0.60, 0.80.

* **Legend:** Positioned at the top center of the chart area. It contains four entries, each with a distinct line style and color:

1. `--- ERL w/o Mem.` (Dashed green line)

2. `... ERL w/o Refl.` (Dotted green line)

3. `─── ERL` (Solid green line)

4. `─── RLVR` (Solid blue line)

### Detailed Analysis

**Trend Verification & Data Point Extraction:**

1. **ERL (Solid Green Line):**

* **Trend:** Shows the fastest and highest learning curve. It begins at a reward of ~0.20, experiences a sharp, near-exponential increase starting around 1.5 hours, and plateaus at the highest reward level.

* **Approximate Data Points:**

* 0 hrs: ~0.20

* 2 hrs: ~0.35

* 4 hrs: ~0.85

* 6 hrs: ~0.92

* 8 hrs: ~0.93 (Peak appears around 7 hrs at ~0.94)

2. **ERL w/o Mem. (Dashed Green Line):**

* **Trend:** Follows a similar shape to the full ERL model but with consistently lower reward values after the initial phase. It peaks and then shows a slight decline before a final uptick.

* **Approximate Data Points:**

* 0 hrs: ~0.22

* 2 hrs: ~0.30

* 4 hrs: ~0.75

* 6 hrs: ~0.78

* 8 hrs: ~0.83 (Peak around 5 hrs at ~0.81, dip to ~0.75 at 7 hrs)

3. **RLVR (Solid Blue Line):**

* **Trend:** Starts the lowest, remains flat for the first 2 hours, then begins a steady, roughly linear increase. It surpasses the "ERL w/o Refl." model around 4 hours and peaks before a noticeable decline at the end of the plotted time.

* **Approximate Data Points:**

* 0 hrs: ~0.20

* 2 hrs: ~0.20

* 4 hrs: ~0.35

* 6 hrs: ~0.55

* 8 hrs: ~0.72 (Peak around 7.5 hrs at ~0.76)

4. **ERL w/o Refl. (Dotted Green Line):**

* **Trend:** Exhibits the slowest and most gradual improvement. It maintains a steady, shallow upward slope throughout the entire training period, never achieving a high reward.

* **Approximate Data Points:**

* 0 hrs: ~0.21

* 2 hrs: ~0.28

* 4 hrs: ~0.35

* 6 hrs: ~0.45

* 8 hrs: ~0.55

### Key Observations

1. **Performance Hierarchy:** The full ERL algorithm significantly outperforms all other variants and the RLVR baseline in both learning speed and final reward.

2. **Ablation Impact:** Removing the "Memory" component (`ERL w/o Mem.`) causes a moderate performance drop. Removing the "Reflection" component (`ERL w/o Refl.`) causes a severe performance degradation, resulting in the worst-performing model.

3. **Learning Dynamics:** ERL and its memory-ablated variant learn rapidly in the first 4 hours. RLVR has a delayed start but learns steadily. The reflection-ablated model learns slowly and linearly.

4. **Late-Stage Behavior:** The `ERL` and `ERL w/o Mem.` lines show signs of plateauing or slight fluctuation after 6 hours. The `RLVR` line shows a distinct performance drop after its peak at ~7.5 hours.

### Interpretation

This chart provides strong evidence for the efficacy of the proposed ERL (Evolutionary Reinforcement Learning) framework and the importance of its core components.

* **Component Contribution:** The stark contrast between the solid green line (full ERL) and the dotted green line (without Reflection) suggests that the "Reflection" mechanism is critical for efficient learning and achieving high performance. The "Memory" component also contributes positively, as its removal leads to a consistent performance gap.

* **Algorithmic Comparison:** ERL demonstrates superior sample efficiency (in wall-clock time) compared to RLVR. While RLVR eventually reaches a respectable reward level (~0.76), it takes nearly 7.5 hours to do so, a level that ERL surpasses in under 4 hours.

* **Practical Implication:** For applications where training time is a constraint, ERL is the clearly preferable approach based on this data. The ablation study (`w/o Mem.`, `w/o Refl.`) effectively isolates and validates the contribution of each architectural innovation within the ERL framework.

* **Anomaly/Note:** The final downturn in the RLVR curve could indicate instability in late-stage training or overfitting, a behavior not observed in the ERL variants within this time window.

</details>

Figure 7: Ablation study on Qwen3-4B-Instruct-2507 in FrozenLake. We compare full ERL with two variants: (1) no memory, which disables cross-episode reflection reuse, and (2) no reflection, which replaces structured self-reflection with raw first-attempt context and a generic retry instruction.

To understand how structured reflection and cross-episode memory contribute to performance, we conduct ablation studies across tasks and models. The quantitative results are reported in Table 1, and representative learning dynamics for FrozenLake with Qwen3-4B-Instruct-2507 are shown in Figure 7. These experiments isolate individual components of ERL while keeping the overall training setup fixed.

The no-memory variant disables cross-episode reflection storage. Reflections are still generated and used to guide the second attempt within each episode, but they are not retained for reuse in future episodes. As a result, corrective signals remain local to individual trajectories rather than accumulating into persistent behavioral priors.

The no-reflection variant preserves the two-attempt interaction structure but removes explicit structured reflection. Instead, the model receives the full first-attempt interaction history together with a generic instruction encouraging improvement. This design tests whether contextual reuse alone can replicate the benefits of structured reflective reasoning. The prompt template used in this setting is shown in Table 9 (Appendix).

The results in Table 1 show a consistent ordering across most tasks and models: full ERL achieves the strongest performance, followed by the no-memory variant, while the no-reflection variant exhibits the largest degradation in most settings. Figure 7 further illustrates that removing memory slows convergence, whereas removing reflection substantially reduces both learning speed and final reward. These findings support the core design intuition of ERL: reflection generates actionable behavioral corrections, and memory propagates those corrections across episodes to enable cumulative refinement.

At the same time, Table 1 reveals an important caveat. In the Olmo3-7B-Instruct Sokoban setting, the no-memory variant slightly outperforms full ERL. This suggests that when a model’s self-reflective ability is limited, or when the environment is complex and stochastic, persistent memory may propagate early inaccurate reflections, making recovery more difficult. In such cases, disabling cross-episode memory can mitigate the accumulation of erroneous priors. Nevertheless, across the broad set of tasks and models evaluated, ERL consistently delivers the strongest overall performance, demonstrating that structured reflection combined with persistent memory is highly effective in most practical settings.

## 5 Related Work

#### Reinforcement Learning for LLMs.

Reinforcement learning has become a central approach for improving large language models. Early work focused on reinforcement learning from human feedback (RLHF) to align model behavior with human preferences and conversational objectives (Ouyang et al., 2022; Christiano et al., 2023; Shi et al., 2024; 2025). More recent efforts extend RL to enhance mathematical reasoning, where verifiable or programmatic rewards derived from executable checks or formal answer verification provide structured supervision for reasoning and solution construction (OpenAI et al., 2024; Guo et al., 2025; Song et al., 2025b; Shi et al., 2026). In parallel, research on tool-using and agentic LLMs treats the model as a policy that interacts with external environments, alternating between actions and observations under task-dependent rewards to solve multi-step problems (Yao et al., 2023; Jin et al., 2025; Bai et al., 2026; Jiang et al., 2026). Despite their different goals, these approaches primarily treat environment feedback as a scalar optimization signal propagated through policy gradients, requiring the model to implicitly infer corrective structure through exploration. In contrast, our ERL paradigm introduces an explicit experience-reflection-consolidation loop that transforms environment feedback into structured behavioral revision before internalizing improvements into the base policy.

#### Learning from Experience.

A growing body of work argues that the next scaling regime for AI will come not from more static human text, but from agents generating ever-richer data through interaction-i.e., learning predominantly from experience. Silver and Sutton (2025) emphasizes that continual, agent-generated data streams and long-horizon decision-making as the route beyond imitation of human corpora. This motivates algorithmic mechanisms that convert failures into usable learning signal rather than relying on rare successes. In classic reinforcement learning, Andrychowicz et al. (2018) addresses sparse rewards by relabeling goals so that failed trajectories can still provide informative updates, substantially improving sample efficiency in goal-conditioned tasks. In the LLM-agent setting, Zhang et al. (2025) similarly targets the gap between imitation and full RL by training agents on their own interaction traces even when explicit rewards are unavailable, using the agent’s generated future states as supervision and including self-reflection as a way to learn from suboptimal actions. Meanwhile, inference-time reflection methods demonstrate that LLMs can critique and revise their own outputs to improve success (Zelikman et al., 2022; Madaan et al., 2023; Shinn et al., 2023), but typically require reflection or memory at deployment. Concurrent research explores integrating feedback-conditioned improvement directly into training. hübotter2026reinforcementlearningselfdistillation; Song et al. (2026) formalize RL with textual feedback by distilling a feedback-conditioned teacher policy into a student policy. ERL is aligned with this direction but emphasizes explicit self-reflection as an intermediate reasoning step embedded inside the RL trajectory, where an initial attempt is followed by reflection and a refined retry. Coupled with selective internalization and cross-episode memory, this design treats reflection as a structured credit-assignment mechanism that transforms raw experience into durable behavioral improvement without requiring reflection at inference time.

## 6 Conclusion

In this work, we presented Experiential Reinforcement Learning (ERL), a training paradigm that incorporates an explicit experience–reflection–consolidation stage into the reinforcement learning loop to convert environment feedback into structured behavioral correction. By pairing reflection-guided revision with selective internalization, ERL enables models to learn corrective strategies during training and consolidate them into a deployable policy that operates without reflection at inference time. Across sparse-reward control and agentic reasoning tasks, ERL improves learning efficiency, stabilizes optimization, and produces stronger final policies relative to standard reinforcement learning baselines. These results demonstrate that embedding structured experiential revision directly into the training process provides an effective mechanism for translating feedback into durable behavioral improvement. Looking forward, this work suggests a path toward reinforcement learning systems that are fundamentally grounded in experience, where explicit reflection and consolidation become core primitives for building agents that continually learn, adapt, and improve from their own interactions.

## Acknowledgements

The authors thank the members of the LIME Lab and Microsoft Office of Applied Research for their helpful discussions, feedback, and resources.

## References

- R. Agarwal, N. Vieillard, Y. Zhou, P. Stanczyk, S. Ramos Garea, M. Geist, and O. Bachem (2024) On-policy distillation of language models: learning from self-generated mistakes. In International Conference on Learning Representations, B. Kim, Y. Yue, S. Chaudhuri, K. Fragkiadaki, M. Khan, and Y. Sun (Eds.), Vol. 2024, pp. 21246–21263. External Links: Link Cited by: Appendix A.

- M. Andrychowicz, F. Wolski, A. Ray, J. Schneider, R. Fong, P. Welinder, B. McGrew, J. Tobin, P. Abbeel, and W. Zaremba (2018) Hindsight experience replay. External Links: 1707.01495, Link Cited by: §5.

- Y. Bai, Y. Bao, Y. Charles, C. Chen, G. Chen, H. Chen, H. Chen, J. Chen, N. Chen, R. Chen, Y. Chen, Y. Chen, Y. Chen, Z. Chen, J. Cui, H. Ding, M. Dong, A. Du, C. Du, D. Du, Y. Du, Y. Fan, Y. Feng, K. Fu, B. Gao, C. Gao, H. Gao, P. Gao, T. Gao, Y. Ge, S. Geng, Q. Gu, X. Gu, L. Guan, H. Guo, J. Guo, X. Hao, T. He, W. He, W. He, Y. He, C. Hong, H. Hu, Y. Hu, Z. Hu, W. Huang, Z. Huang, Z. Huang, T. Jiang, Z. Jiang, X. Jin, Y. Kang, G. Lai, C. Li, F. Li, H. Li, M. Li, W. Li, Y. Li, Y. Li, Y. Li, Z. Li, Z. Li, H. Lin, X. Lin, Z. Lin, C. Liu, C. Liu, H. Liu, J. Liu, J. Liu, L. Liu, S. Liu, T. Y. Liu, T. Liu, W. Liu, Y. Liu, Y. Liu, Y. Liu, Y. Liu, Z. Liu, E. Lu, H. Lu, L. Lu, Y. Luo, S. Ma, X. Ma, Y. Ma, S. Mao, J. Mei, X. Men, Y. Miao, S. Pan, Y. Peng, R. Qin, Z. Qin, B. Qu, Z. Shang, L. Shi, S. Shi, F. Song, J. Su, Z. Su, L. Sui, X. Sun, F. Sung, Y. Tai, H. Tang, J. Tao, Q. Teng, C. Tian, C. Wang, D. Wang, F. Wang, H. Wang, H. Wang, J. Wang, J. Wang, J. Wang, S. Wang, S. Wang, S. Wang, X. Wang, Y. Wang, Y. Wang, Y. Wang, Y. Wang, Y. Wang, Z. Wang, Z. Wang, Z. Wang, Z. Wang, C. Wei, Q. Wei, H. Wu, W. Wu, X. Wu, Y. Wu, C. Xiao, J. Xie, X. Xie, W. Xiong, B. Xu, J. Xu, L. H. Xu, L. Xu, S. Xu, W. Xu, X. Xu, Y. Xu, Z. Xu, J. Xu, J. Xu, J. Yan, Y. Yan, H. Yang, X. Yang, Y. Yang, Y. Yang, Z. Yang, Z. Yang, Z. Yang, H. Yao, X. Yao, W. Ye, Z. Ye, B. Yin, L. Yu, E. Yuan, H. Yuan, M. Yuan, S. Yuan, H. Zhan, D. Zhang, H. Zhang, W. Zhang, X. Zhang, Y. Zhang, Y. Zhang, Y. Zhang, Y. Zhang, Y. Zhang, Y. Zhang, Y. Zhang, Y. Zhang, Z. Zhang, H. Zhao, Y. Zhao, Z. Zhao, H. Zheng, S. Zheng, L. Zhong, J. Zhou, X. Zhou, Z. Zhou, J. Zhu, Z. Zhu, W. Zhuang, and X. Zu (2026) Kimi k2: open agentic intelligence. External Links: 2507.20534, Link Cited by: §1, §5.

- P. Christiano, J. Leike, T. B. Brown, M. Martic, S. Legg, and D. Amodei (2023) Deep reinforcement learning from human preferences. External Links: 1706.03741, Link Cited by: §5.

- T. Dao (2024) FlashAttention-2: faster attention with better parallelism and work partitioning. In International Conference on Learning Representations (ICLR), Cited by: Appendix C.

- M. Douze, A. Guzhva, C. Deng, J. Johnson, G. Szilvasy, P. Mazaré, M. Lomeli, L. Hosseini, and H. Jégou (2024) The faiss library. External Links: 2401.08281 Cited by: §B.3.

- L. Guertler, B. Cheng, S. Yu, B. Liu, L. Choshen, and C. Tan (2025) TextArena. External Links: 2504.11442, Link Cited by: §B.1, §B.2, §3.1.

- D. Guo, D. Yang, H. Zhang, J. Song, P. Wang, Q. Zhu, R. Xu, R. Zhang, S. Ma, X. Bi, X. Zhang, X. Yu, Y. Wu, Z. F. Wu, Z. Gou, Z. Shao, Z. Li, Z. Gao, A. Liu, B. Xue, B. Wang, B. Wu, B. Feng, C. Lu, C. Zhao, C. Deng, C. Ruan, D. Dai, D. Chen, D. Ji, E. Li, F. Lin, F. Dai, F. Luo, G. Hao, G. Chen, G. Li, H. Zhang, H. Xu, H. Ding, H. Gao, H. Qu, H. Li, J. Guo, J. Li, J. Chen, J. Yuan, J. Tu, J. Qiu, J. Li, J. L. Cai, J. Ni, J. Liang, J. Chen, K. Dong, K. Hu, K. You, K. Gao, K. Guan, K. Huang, K. Yu, L. Wang, L. Zhang, L. Zhao, L. Wang, L. Zhang, L. Xu, L. Xia, M. Zhang, M. Zhang, M. Tang, M. Zhou, M. Li, M. Wang, M. Li, N. Tian, P. Huang, P. Zhang, Q. Wang, Q. Chen, Q. Du, R. Ge, R. Zhang, R. Pan, R. Wang, R. J. Chen, R. L. Jin, R. Chen, S. Lu, S. Zhou, S. Chen, S. Ye, S. Wang, S. Yu, S. Zhou, S. Pan, S. S. Li, S. Zhou, S. Wu, T. Yun, T. Pei, T. Sun, T. Wang, W. Zeng, W. Liu, W. Liang, W. Gao, W. Yu, W. Zhang, W. L. Xiao, W. An, X. Liu, X. Wang, X. Chen, X. Nie, X. Cheng, X. Liu, X. Xie, X. Liu, X. Yang, X. Li, X. Su, X. Lin, X. Q. Li, X. Jin, X. Shen, X. Chen, X. Sun, X. Wang, X. Song, X. Zhou, X. Wang, X. Shan, Y. K. Li, Y. Q. Wang, Y. X. Wei, Y. Zhang, Y. Xu, Y. Li, Y. Zhao, Y. Sun, Y. Wang, Y. Yu, Y. Zhang, Y. Shi, Y. Xiong, Y. He, Y. Piao, Y. Wang, Y. Tan, Y. Ma, Y. Liu, Y. Guo, Y. Ou, Y. Wang, Y. Gong, Y. Zou, Y. He, Y. Xiong, Y. Luo, Y. You, Y. Liu, Y. Zhou, Y. X. Zhu, Y. Huang, Y. Li, Y. Zheng, Y. Zhu, Y. Ma, Y. Tang, Y. Zha, Y. Yan, Z. Z. Ren, Z. Ren, Z. Sha, Z. Fu, Z. Xu, Z. Xie, Z. Zhang, Z. Hao, Z. Ma, Z. Yan, Z. Wu, Z. Gu, Z. Zhu, Z. Liu, Z. Li, Z. Xie, Z. Song, Z. Pan, Z. Huang, Z. Xu, Z. Zhang, and Z. Zhang (2025) DeepSeek-r1 incentivizes reasoning in llms through reinforcement learning. Nature 645 (8081), pp. 633–638. External Links: ISSN 1476-4687, Link, Document Cited by: §5.

- B. Jiang, T. Shi, R. Kamoi, Y. Yuan, C. J. Taylor, L. Yang, P. Zhou, and S. Chen (2026) One model, all roles: multi-turn, multi-agent self-play reinforcement learning for conversational social intelligence. External Links: 2602.03109, Link Cited by: §5.

- B. Jin, H. Zeng, Z. Yue, J. Yoon, S. O. Arik, D. Wang, H. Zamani, and J. Han (2025) Search-r1: training LLMs to reason and leverage search engines with reinforcement learning. In Second Conference on Language Modeling, External Links: Link Cited by: §B.3, §3.1, §5.