# SRFed: Mitigating Poisoning Attacks in Privacy-Preserving Federated Learning with Heterogeneous Data

**Authors**:

- Yiwen Lu1,2Corresponding Author (School of Mathematics, Nanjing University, Nanjing 210093, China)

- 2E-mail: luyw@smail.nju.edu.cn

## Abstract

Federated Learning (FL) enables collaborative model training without exposing clients’ private data, and has been widely adopted in privacy-sensitive scenarios. However, FL faces two critical security threats: curious servers that may launch inference attacks to reconstruct clients’ private data, and compromised clients that can launch poisoning attacks to disrupt model aggregation. Existing solutions mitigate these attacks by combining mainstream privacy-preserving techniques with defensive aggregation strategies. However, they either incur high computation and communication overhead or perform poorly under non-independent and identically distributed (Non-IID) data settings. To tackle these challenges, we propose SRFed, an efficient Byzantine-robust and privacy-preserving FL framework for Non-IID scenarios. First, we design a decentralized efficient functional encryption (DEFE) scheme to support efficient model encryption and non-interactive decryption. DEFE also eliminates third-party reliance and defends against server-side inference attacks. Second, we develop a privacy-preserving defensive model aggregation mechanism based on DEFE. This mechanism filters poisonous models under Non-IID data by layer-wise projection and clustering-based analysis. Theoretical analysis and extensive experiments show that SRFed outperforms state-of-the-art baselines in privacy protection, Byzantine robustness, and efficiency.

## I Introduction

Federated learning (FL) has emerged as a promising paradigm for distributed machine learning, which enables multiple clients to collaboratively train a global model without sharing their private data. In a typical FL setup, multiple clients periodically train local models using their private data and upload model updates to a central server, which aggregates these updates to obtain a global model with enhanced performance. Due to its ability to protect data privacy, FL has been widely applied in real-world scenarios, such as intelligent driving [1, 2, 3, 4, 5], medical diagnosis [6, 7, 8, 9], and intelligent recommendation systems [10, 11, 12, 13].

Although FL avoids direct data exposure, it is not immune to privacy and security risks. Existing studies [14, 15, 16] have shown that the curious server may launch inference attacks to reconstruct sensitive data samples of clients from the model updates. This may lead to the leakage of clients’ sensitive information, e.g., medical diagnosis records, which can be exploited by adversaries for launching further malicious activities. Moreover, FL is also vulnerable to poisoning attacks [17, 18, 19]. The adversaries may manipulate some clients to execute malicious local training and upload poisonous model updates to mislead the global model [18, 20, 21]. This attack will damage the performance of the global model and lead to incorrect decisions in downstream tasks such as medical diagnosis and intelligent driving.

To address privacy leakage issues, existing privacy-preserving federated learning (PPFL) methods primarily rely on techniques such as differential privacy (DP) [22, 23, 24, 25, 26], secure multi-party computation (SMC) [27, 28], and homomorphic encryption (HE) [29, 30]. However, DP typically leads to a reduction in global model accuracy, while SMC and HE incur substantial computational and communication overheads in FL. Recently, lightweight functional encryption (FE) schemes [31, 32] have been applied in FL. FE enables the server to aggregate encrypted models and directly obtain the decrypted results via a functional key, which avoids the accuracy loss in DP and the extra communication overhead in HE/SMC. However, existing FE schemes rely on third parties for functional key generation, which introduces potential privacy risks.

To mitigate poisoning attacks, existing Byzantine-robust FL methods [33, 34, 35, 36] typically adopt defensive aggregation strategies, which filter out malicious model updates based on statistical distances or performance-based criteria. However, these strategies rely on the assumption that clients’ data distributions are homogeneous, leading to poor performance in non-independent and identically distributed (Non-IID) data settings. Moreover, they require access to plaintext model updates, which directly contradicts the design goal of PPFL [32]. Recently, privacy-preserving Byzantine-robust FL methods [37, 29] have been proposed to address both privacy and poisoning attacks. However, these methods still suffer from limitations such as accuracy loss, excessive overhead, and limited effectiveness in Non-IID environments, as they merely combine the PPFL with existing defensive aggregation strategies. As a result, there is still a lack of practical solutions that can simultaneously ensure privacy protection and Byzantine robustness in Non-IID data scenarios.

To address the limitations of existing FL solutions, we propose a novel Byzantine-robust privacy-preserving FL method, SRFed. SRFed achieves efficient privacy protection and Byzantine robustness in Non-IID data scenarios through two key designs. First, we propose a new functional encryption scheme, DEFE, to protect clients’ model privacy and resist inference attacks from the server. Compared with existing FE schemes, DEFE eliminates reliance on third parties through distributed key generation and improves decryption efficiency by reconstructing the ciphertext. Second, we develop a privacy-preserving robust aggregation strategy based on secure layer-wise projection and clustering. This strategy resists the poisoning attacks in Non-IID data scenarios. Specifically, this strategy first decomposes client models layer by layer, projects each layer onto the corresponding layer of the global model. Then, it performs clustering analysis on the projection vectors to filter malicious updates and aggregates the remaining benign models. DEFE supports the above secure layer-wise projection computation and enables privacy-preserving model aggregation. Finally, we evaluate SRFed on multiple datasets with varying levels of heterogeneity. Theoretical analysis and experimental results demonstrate that SRFed achieves strong privacy protection, Byzantine robustness, and high efficiency. In summary, the core contributions of this paper are as follows.

- We propose a novel secure and robust FL method, SRFed, which simultaneously guarantees privacy protection and Byzantine robustness in Non-IID data scenarios.

- We design an efficient functional encryption scheme, which not only effectively protects the local model privacy but also enables efficient and secure model aggregation.

- We develop a privacy-preserving robust aggregation strategy, which effectively defends against poisoning attacks in Non-IID scenarios and generates high-quality aggregated models.

- We implement the prototype of SRFed and validate its performance in terms of privacy preservation, Byzantine robustness, and computational efficiency. Experimental results show that SRFed outperforms state-of-the-art baselines in all aspects.

## II Related Works

### II-A Privacy-Preserving Federated Learning

To safeguard user privacy, current research on privacy-preserving federated learning (PPFL) mainly focuses on protecting gradient information. Existing solutions are primarily built upon four core technologies: Differential Privacy (DP) [22, 23, 24], Secure Multi-Party Computation (SMC) [27, 28], Homomorphic Encryption (HE) [29, 38, 30], and Functional Encryption (FE) [31, 32, 39, 40]. DP achieves data indistinguishability by injecting calibrated noise into raw data, thus ensuring privacy with low computational overhead. Miao et al. [24] proposed a DP-based ESFL framework that adopts adaptive local DP to protect data privacy. However, the injected noise inevitably degrades the model accuracy. To avoid accuracy loss, SMC and HE employ cryptographic primitives to achieve privacy preservation. SMC enables distributed aggregation while keeping local gradients confidential, revealing only the aggregated model update. Zhang et al. [27] introduced LSFL, a secure FL framework that applies secret sharing to split and transmit local parameters to two non-colluding servers for privacy-preserving aggregation. HE allows direct computation on encrypted data and produces decrypted results identical to plaintext computations. This property preserves privacy without sacrificing accuracy. Ma et al. [29] developed ShieldFL, a robust FL framework based on two-trapdoor HE, which encrypts all local gradients and achieves aggregation of encrypted gradients. Despite their strong privacy guarantees, SMC/HE-based FL methods incur substantial computation and communication overhead, posing challenges for large-scale deployment. To address these issues, FE has been introduced into FL. FE avoids noise injection and eliminates the high overhead caused by multi-round interactions or complex homomorphic operations. Chen et al. [31] proposed ESB-FL, an efficient secure FL framework based on non-interactive designated decrypter FE (NDD-FE), which protects local data privacy but relies on a trusted third-party entity. Yu et al. [40] further proposed PrivLDFL, which employs a dynamic decentralized multi-client FE (DDMCFE) scheme to preserve privacy in decentralized settings. However, both FE-based methods require discrete logarithm-based decryption, which is typically a time-consuming operation. To overcome these limitations, we propose a decentralized efficient functional encryption (DEFE) scheme that achieves privacy protection and high computational and communication efficiency.

TABLE I: COMPARISON BETWEEN OUR METHOD WITH PREVIOUS WORK

| Methods | Privacy Protection | Defense Mechanism | Efficient | Non-IID | Fidelity |

| --- | --- | --- | --- | --- | --- |

| ESFL [24] | Local DP | Local DP | ✓ | ✗ | ✗ |

| PBFL [37] | CKKS | Cosine similarity | ✗ | ✗ | ✓ |

| ESB-FL [31] | NDD-FE | ✗ | ✓ | ✗ | ✓ |

| Median [35] | ✗ | Median | ✓ | ✗ | ✓ |

| FoolsGold [33] | ✗ | Cosine similarity | ✓ | ✗ | ✓ |

| ShieldFL [29] | HE | Cosine similarity | ✗ | ✗ | ✓ |

| PrivLDFL [40] | DDMCFE | ✗ | ✓ | ✗ | ✓ |

| Biscotti [41] | DP | Euclidean distance | ✓ | ✗ | ✗ |

| SRFed | DEFE | Layer-wise projection and clustering | ✓ | ✓ | ✓ |

- Notes: The symbol ”✓” indicates that it owns this property; ”✗” indicates that it does not own this property. ”Fidelity” indicates that the method has no accuracy loss when there is no attack. ”Non-IID” indicates that the method is Byzantine-Robust under Non-IID data environments.

### II-B Privacy-Preserving Federated Learning Against Malicious Participants

To resist poisoning attacks, several defensive aggregation rules have been proposed in FL. FoolsGold [33], proposed by Fung et al. [33], reweights clients’ contributions by computing the cosine similarity of their historical gradients. Krum [34] selects a single client update that is closest, in terms of Euclidean distance, to the majority of other updates in each iteration. Median [35] mitigates the effect of malicious clients by taking the median value of each model parameter across all clients. However, the above aggregation rules require access to plaintext model updates. This makes them unsuitable for direct application in PPFL. To achieve Byzantine-robust PPFL, Shiyan et al. [41] proposed Biscotti. Biscotti leverages DP to protect local gradients while using the Krum algorithm to mitigate poisoning attacks. Nevertheless, the injected noise in DP reduces the accuracy of the aggregated model. To overcome this limitation, Zhang et al. [27] proposed LSFL. LSFL employs SMC to preserve privacy and uses Median-based aggregation for poisoning defense. However, its dual-server architecture introduces significant communication overhead. In addition, Ma et al. [29] and Miao et al. [37] proposed ShieldFL and PBFL, respectively. Both schemes adopt HE to protect local gradients and cosine similarity to defend against poisoning attacks. However, they suffer from high computational complexity and limited robustness under non-IID data settings. To address these challenges, we propose a novel Byzantine-robust and privacy-preserving federated learning method. Table I compares previous schemes with our method.

## III Problem Statement

### III-A System Model

The system model of SRFed comprises two roles: the aggregation server and clients.

- Clients: Clients are nodes with limited computing power and heterogeneous data. In real-world scenarios, data heterogeneity typically arises across clients (e.g., intelligent vehicles) due to differences in usage patterns, such as driving habits. Each client is responsible for training its local model based on its own data. To protect data privacy, the models are encrypted and submitted to the server for aggregation.

- Server: The server is a node with strong computing power (e.g., service provider of intelligent vehicles). It collects encrypted local models from clients, conducts model detection, and then aggregates selected models and distributes the aggregated model back to clients for the next training round.

### III-B Threat Model

We consider the following threat model:

1) Honest-But-Curious server: The server honestly follows the FL protocol but attempts to infer clients’ private data. Specifically, upon receiving encrypted local models from the clients, the server may launch inference attacks on the encrypted models and exploit intermediate computational results (e.g., layer-wise projections and aggregated outputs) to extract sensitive information of clients.

2) Malicious clients: We consider a FL scenario where a certain proportion of clients are malicious. These malicious clients conduct model poisoning attacks to poison the global model, thereby disrupting the training process. Specifically, we focus on the following attack types:

- Targeted poisoning attack. This attack aims to poison the global model so that it incurs erroneous predictions for the samples of a specific label. More specifically, we consider the prevalent label-flipping attack [29]. Malicious clients remap samples labeled $l_{src}$ to a chosen target label $l_{tar}$ to obtain a poisonous dataset $D_{i}^{*}$ . Subsequently, they train local models based on $D_{i}^{*}$ and submit the poisonous models to the server for aggregation. As a result, the global model is compromised, leading to misclassification of source-label samples as the target label during inference.

- Untargeted poisoning attack. This attack aims to degrade the global model’s performance on the test samples of all classes. Specifically, we consider the classic Gaussian Attack [27]. The malicious clients first train local models based on the clean dataset. Then, they inject Gaussian noise into the model parameters and submit the malicious models to the server. Consequently, the aggregated model exhibits low accuracy across test samples of all classes.

### III-C Design Goals

Under the defined threat model, SRFed aims to ensure the following security and performance guarantees:

- Confidentiality. SRFed should ensure that any unauthorized entities (e.g., the server) cannot infer clients’ private training data from the encrypted models or intermediate results.

- Robustness. SRFed should mitigate poisoning attacks launched by malicious clients under Non-IID data settings while maintaining the quality of the final aggregated model.

- Efficiency. SRFed should ensure efficient FL, with the introduced DEFE scheme and robust aggregation strategy incurring only limited computation and communication overhead.

## IV Building Blocks

### IV-A NDD-FE Scheme

NDD-FE [31] is a functional encryption scheme that supports the inner-product computation between a private vector $\boldsymbol{x}$ and a public vector $\boldsymbol{y}$ . NDD-FE involves three roles, i.e., generator, encryptor, and decryptor, to elaborate on its construction.

- NDD-FE.Setup( ${1}^{\lambda}$ ) $\rightarrow$ $pp$ : It is executed by the generator. It takes the security parameter ${1}^{\lambda}$ as input and generates the system public parameters $pp=(G,p,g)$ and a secure hash function $H_{1}$ .

- NDD-FE.KeyGen( $pp$ ) $\rightarrow$ $(pk,sk)$ : It is executed by all roles. It takes $pp$ as input and outputs public/secret keys $(pk,sk)$ . Let $(pk_{1},sk_{1}),$ $(pk_{2i},sk_{2i})$ and $(pk_{3},sk_{3})$ denote the public/secret key pairs of the generator, the $i$ -th encryptor and the decryptor, respectively.

- NDD-FE.KeyDerive( $pk_{1},sk_{1},\{pk_{2i}\}_{i=1,2,\dots,I},ctr,\boldsymbol{y},$ $aux$ ) $\rightarrow$ $sk_{\otimes}$ : It is executed by the generator. It takes $(pk_{1},sk_{1})$ , the $\{pk_{2i}\}_{i=1,2,\dots,I}$ of $I$ encryptors, an incremental counter $ctr$ , a vector $\boldsymbol{y}$ and auxiliary information $aux$ as input, and outputs the functional key $sk_{\otimes}$ .

- NDD-FE.Encrypt( $pk_{1},sk_{2i},pk_{3},ctr,x_{i},aux$ ) $\rightarrow$ $c_{i}$ : It is executed by the encryptor. It takes $pk_{1},(pk_{2i},sk_{2i}),$ $pk_{3},ctr,aux$ , and the data $x_{i}$ as input, and outputs the ciphertext $c_{i}=pk_{1}^{r_{i}^{ctr}}\cdot pk_{3}^{x_{i}}$ , where $r_{i}^{ctr}$ is generated by $H_{1}$ .

- NDD-FE.Decrypt( $pk_{1},sk_{\otimes},sk_{3},\{ct_{i}\}_{i=1,2,\dots,I},\boldsymbol{y}$ ) $\rightarrow$ $\langle\boldsymbol{x},\boldsymbol{y}\rangle$ : It is executed by the decryptor. It takes $pk_{1},sk_{\otimes},$ $sk_{3}$ , $\{ct_{i}\}_{i=1,2,\dots,I}$ and $\boldsymbol{y}$ as input. First, it outputs $g^{\langle\boldsymbol{x},\boldsymbol{y}\rangle}$ and subsequently calculates $\log(g^{\langle\boldsymbol{x},\boldsymbol{y}\rangle})$ to reconstruct the result of the inner product of $(\boldsymbol{x},\boldsymbol{y})$ .

### IV-B The Proposed Decentralized Efficient Functional Encryption Scheme

We propose a decentralized efficient functional encryption (DEFE) scheme for more secure and efficient inner product operations. Our DEFE is an adaptation of NDD-FE in three aspects:

- Decentralized authority: DEFE eliminates reliance on the third-party entities (e.g., the generator) by enabling encryptors to jointly generate the decryptor’s decryption key.

- Mix-and-Match attack resistance: DEFE inherently restricts the decryptor from obtaining the true inner product results, which prevents the decryptor from launching inference attacks.

- Efficient decryption: DEFE enables efficient decryption by modifying the ciphertext structure. This avoids the costly discrete logarithm computations in NDD-FE.

We consider our SRFed system with one decryptor (i.e., the server) and $I$ encryptors (i.e., the clients). The $i$ -th encryptor encrypts the $i$ -th component $x_{i}$ of the $I$ -dimensional message vector $\boldsymbol{x}$ . The message vector $\boldsymbol{x}$ and key vector $\boldsymbol{y}$ satisfy $\|\boldsymbol{x}\|_{\infty}\leq X$ and $\|\boldsymbol{y}\|_{\infty}\leq Y$ , with $X\cdot Y<N$ , where $N$ is the Paillier composite modulus [42]. Decryption yields $\langle\boldsymbol{x},\boldsymbol{y}\rangle\bmod N$ , which equals the integer inner product $\langle\boldsymbol{x},\boldsymbol{y}\rangle$ under these bounds. Let $M=\left\lfloor\frac{1}{2}\left(\sqrt{\frac{N}{I}}\right)\right\rfloor$ . We assume $X,Y<M$ in DEFE. Specifically, the construction of the DEFE scheme is as follows. The notations are described in Table II.

TABLE II: Notation Descriptions

| Notations | Descriptions | Notations | Descriptions |

| --- | --- | --- | --- |

| $pk,sk$ | Public/secret key | $skf$ | Functional key |

| $T$ | Total training round | $t$ | Training round |

| $I$ | Number of clients | $C_{i}$ | The $i$ -th client |

| $D_{i}$ | Dataset of $C_{i}$ | $D_{i}^{*}$ | Poisoned dataset |

| $l$ | Model layer | $\zeta$ | Length of $W_{t}$ |

| $W_{t}$ | Global model | $W_{t+1}$ | Aggregated model |

| $W_{t}^{i}$ | Benign model | $(W_{t}^{i})^{*}$ | Poisonous model |

| $|W_{t}^{(l)}|$ | Length of $W_{t}^{l}$ | $\lVert W_{t}^{(l)}\rVert$ | The Euclidean norm of $\lVert W_{t}^{(l)}\rVert$ |

| $\eta$ | Hash noise | $H_{1}$ | Hash function |

| $noise$ | Gaussian noise | $E_{t}^{i}$ | Encrypted update |

| $V_{t}^{i}$ | projection vector | $OA$ | Overall accuracy |

| $SA$ | Source accuracy | $ASR$ | Attack success rate |

- $\textbf{DEFE.Setup}(1^{\lambda},X,Y)\rightarrow pp$ : It takes the security parameter $1^{\lambda}$ as input and outputs the public parameters $pp$ , which include the modulus $N$ , generator $g$ , and hash function $H_{1}$ . It initializes by selecting safe primes $p=2p^{\prime}+1$ and $q=2q^{\prime}+1$ with $p^{\prime},q^{\prime}>2^{l(\lambda)}$ (where $l$ is a polynomial in $\lambda$ ), ensuring the factorization hardness of $N=pq$ is $2^{\lambda}$ -hard and $N>XY$ . A generator $g^{\prime}$ is uniformly sampled from $\mathbb{Z}_{N^{2}}^{*}$ , and $g=g^{\prime 2N}\mod N^{2}$ is computed to generate the subgroup of $(2N)$ -th residues in $\mathbb{Z}_{N^{2}}^{*}$ . Hash function $H_{1}:\mathbb{Z}\times\mathbb{N}\times\mathcal{AUX}\rightarrow\mathbb{Z}$ is defined, where $\mathcal{AUX}$ denotes auxiliary information (e.g., task identifier, timestamp).

- $\textbf{DEFE.KeyGen}(1^{\lambda},N,g)\rightarrow(pk_{i},sk_{i})$ : It is executed by $n$ encryptors. It takes $\lambda$ , $N$ , and $g$ as input, and outputs the corresponding key pair $(pk_{i},sk_{i})$ . For the $i$ -th encryptor, an integer $s_{i}$ is drawn from a discrete Gaussian distribution $D_{\mathbb{Z},\sigma}$ ( $\sigma>\sqrt{\lambda}\cdot N^{5/2}$ ), and the public key $h_{i}=g^{s_{i}}\mod N^{2}$ , forming key pair $(pk_{i}=h_{i},sk_{i}=s_{i})$ .

- $\textbf{DEFE.Encrypt}(pk_{i},sk_{i},ctr,x_{i},aux)\rightarrow ct_{i}$ : It is executed by $I$ encryptors. It takes key pair $(pk_{i},sk_{i})$ , counter $ctr$ , data $x_{i}\in\mathbb{Z}$ , and $aux$ as input, and outputs noise-augmented ciphertext $ct_{i}\in\mathbb{Z}_{N^{2}}$ . Considering the multi-round training process of FL, each $i$ -th encryptor generates a noise value $\eta_{t,i}$ for the $t$ -th round following the recursive relation $\eta_{t,i}=H_{1}(\eta_{t-1,i},pk_{i},ctr)\mod M$ , where $ctr$ is an incremental counter. The initial noise $\eta_{0,i}$ is uniformly set across all encryptors via a single communication. Using the noise-augmented data $x^{\prime}_{i}=x_{i}+\eta_{t,i}$ and secret key $sk_{i}$ , the encryptor computes the ciphertext $ct_{i}^{\prime}=(1+N)^{x^{\prime}_{i}}\cdot g^{r_{i}^{ctr}}\mod N^{2}$ with $r_{i}^{ctr}=H_{1}(sk_{i},ctr,aux)$ and $aux\in\mathcal{AUX}$ .

- $\textbf{DEFE.FunKeyGen}\bigl((pk_{i},sk_{i})_{i=1}^{I},ctr,y_{i},aux\bigr)\rightarrow skf_{i,\boldsymbol{y}}$ : It is executed by $I$ encryptors. Each encryptor computes its partial functional key $skf_{i,\boldsymbol{y}}$ . It takes the public/secret key pairs ${(pk_{i},sk_{i})}^{n}_{i=1}$ of encryptors and the $i$ -th component $y_{i}$ of the key vector $\boldsymbol{y}$ as inputs and outputs:

$$

skf_{i,\boldsymbol{y}}=r_{i}^{ctr}y_{i}+\sum\nolimits_{j=1}^{i-1}\varphi^{i,j}-\sum\nolimits_{j=i+1}^{I}\varphi^{i,j}, \tag{1}

$$

where $r_{i}^{ctr}=H_{1}(sk_{i},ctr,aux)$ and $\varphi^{i,j}=H_{1}(pk_{j}^{sk_{i}},ctr,$ $aux)$ . Note that $\varphi^{i,j}=\varphi^{j,i}$ .

- $\textbf{DEFE.FunKeyAgg}\bigl(\{skf_{i,\boldsymbol{y}}\}^{I}_{i=1})\rightarrow skf_{\boldsymbol{y}}$ : It is executed by the decryptor. It inputs partial functional keys $skf_{i,\boldsymbol{y}}$ and derives the final functional key:

$$

skf_{\boldsymbol{y}}=\sum\nolimits_{i=1}^{I}skf_{i,\boldsymbol{y}}=\sum\nolimits_{i=1}^{I}r_{i}^{ctr}\cdot y_{i}\in\mathbb{Z}. \tag{2}

$$

- $\textbf{DEFE.AggDec}(skf_{\boldsymbol{y}},\{ct_{i}\}^{I}_{i=1})\rightarrow\langle\boldsymbol{x^{\prime}},\boldsymbol{y}\rangle$ : It is executed by the decryptor. It first computes

$$

CT_{\boldsymbol{x^{\prime}}}=\left(\prod\nolimits_{i=1}^{I}ct_{i}^{y_{i}}\right)\cdot g^{-skf_{\boldsymbol{y}}}\mod N^{2}. \tag{3}

$$

Then, it outputs $\log_{(1+N)}(CT_{\boldsymbol{x^{\prime}}})=\frac{CT_{\boldsymbol{x^{\prime}}}-1\mod N^{2}}{N}=\langle\boldsymbol{x^{\prime}},\boldsymbol{y}\rangle.$

- $\textbf{DEFE.UsrDec}(\langle\boldsymbol{x^{\prime}},\boldsymbol{y}\rangle,\{pk_{i}\}^{I}_{i=1},\boldsymbol{y},ctr)\rightarrow\langle\boldsymbol{x},\boldsymbol{y}\rangle$ : It is executed by $I$ encryptors. During FL processes, each encryptor maintains a $I$ -dimensional noise list $\texttt{$L_{t}$}=[\eta_{t,i}]^{I}_{i=1}$ for each training round $t$ . Based on this, each encryptor can obtain the true inner product value: $\langle\boldsymbol{x},\boldsymbol{y}\rangle=\langle\boldsymbol{x^{\prime}},\boldsymbol{y}\rangle-\sum_{i=1}^{I}\eta_{t,i}\cdot y_{i}.$

## V System Design

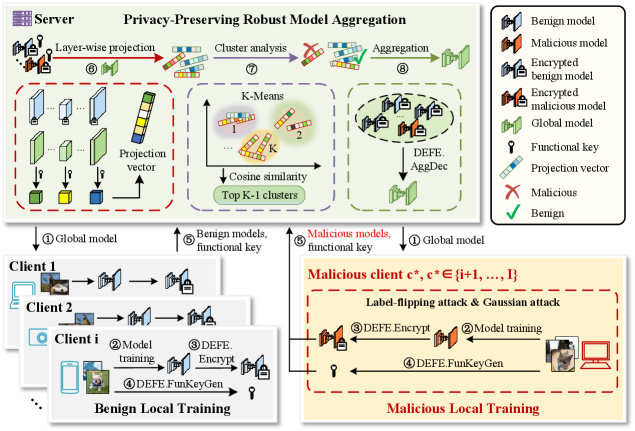

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Privacy-Preserving Robust Model Aggregation System

### Overview

This image is a technical system architecture diagram illustrating a **Privacy-Preserving Robust Model Aggregation** protocol for federated learning. It details the interaction between a central server and multiple clients (both benign and malicious), focusing on a secure aggregation process that uses encryption, clustering, and projection techniques to defend against model poisoning attacks (specifically label-flipping and Gaussian attacks). The diagram is divided into distinct regions: a Server process (top), Benign Local Training (bottom-left), and Malicious Local Training (bottom-right).

### Components/Axes

The diagram is organized into three primary spatial regions:

1. **Top Region: Server Process**

* **Title:** "Server Privacy-Preserving Robust Model Aggregation"

* **Components (Left to Right):**

* **Step ⑥ Layer-wise projection:** Shows model layers being processed into a "Projection vector".

* **Step ⑦ Cluster analysis:** Depicts a "K-Means" clustering process using "Cosine similarity" to find "Top K-1 clusters".

* **Step ⑧ Aggregation:** Illustrates the aggregation of models into a "Global model" using "DEFE: AggDec".

* **Legend (Top-Right Corner):** A box defining the symbols used throughout the diagram.

* `Benign model` (Green brain icon)

* `Malicious model` (Red brain icon)

* `Encrypted benign model` (Green brain with lock)

* `Encrypted malicious model` (Red brain with lock)

* `Global model` (Green brain with globe)

* `Functional key` (Key icon)

* `Projection vector` (Multi-colored bar)

* `Malicious` (Red X)

* `Benign` (Green checkmark)

2. **Bottom-Left Region: Benign Local Training**

* **Title:** "Benign Local Training"

* **Components:** Shows a stack of clients (Client 1, Client 2, Client i).

* **Process Flow (Numbered Steps):**

* ① `global model` (received from server)

* ② `Model training`

* ③ `DEFE: Encrypt`

* ④ `DEFE: FunKeyGen` (generates a `Functional key`)

* ⑤ `Benign models, functional key` (sent to server)

3. **Bottom-Right Region: Malicious Local Training**

* **Title:** "Malicious Local Training"

* **Subtitle:** "Malicious client `c*`, `c* ∈ {j+1, ..., I}`"

* **Attack Description:** "Label-flipping attack & Gaussian attack"

* **Process Flow (Numbered Steps):**

* ① `Global model` (received from server)

* ② `Model training` (with attack applied)

* ③ `DEFE: Encrypt`

* ④ `DEFE: FunKeyGen` (generates a `Functional key`)

* ⑤ `Malicious models, functional key` (sent to server)

### Detailed Analysis

The diagram outlines an 8-step server-side process that interacts with client-side training:

**Server-Side Workflow (Top Region):**

1. The server initiates the process by sending the `Global model` (Step ①) to all clients.

2. It receives models and keys from clients (Steps ⑤).

3. **Step ⑥ - Layer-wise projection:** The received models undergo a projection operation, resulting in a `Projection vector`. This is likely a dimensionality reduction or feature extraction step.

4. **Step ⑦ - Cluster analysis:** The projected vectors are clustered using `K-Means` based on `Cosine similarity`. The goal is to identify the `Top K-1 clusters`, presumably to isolate potentially malicious model updates.

5. **Step ⑧ - Aggregation:** The models from the identified benign clusters are aggregated using a function labeled `DEFE: AggDec` to produce an updated `Global model`. This new global model is then sent back to clients (Step ①), completing the cycle.

**Client-Side Workflow (Bottom Regions):**

* **Benign Clients:** Follow a standard secure training protocol: receive global model -> train locally -> encrypt model (`DEFE: Encrypt`) -> generate a functional key (`DEFE: FunKeyGen`) -> send encrypted model and key to server.

* **Malicious Clients:** Follow a similar technical flow but with a critical difference: their `Model training` (Step ②) incorporates a `Label-flipping attack & Gaussian attack`. Their output is a poisoned model, which is then encrypted and sent to the server alongside a functional key, mimicking the benign clients' communication pattern.

### Key Observations

1. **Symmetry in Communication:** Both benign and malicious clients perform identical post-training steps (encryption, key generation). This makes it difficult for the server to distinguish them based on communication protocol alone.

2. **Centralized Defense Mechanism:** The core defense lies entirely within the server's `Cluster analysis` (Step ⑦). The system assumes that malicious model updates will be statistically different enough (after projection) to form separate clusters, allowing the server to exclude them (`Top K-1 clusters`) before aggregation.

3. **Attack Specification:** The diagram explicitly names two attack vectors: `Label-flipping attack` (changing training data labels) and `Gaussian attack` (adding Gaussian noise to model updates).

4. **Notation:** The malicious client is denoted mathematically as `c*`, belonging to a set `{j+1, ..., I}`, suggesting a system with `I` total clients where the last `I-j` clients are malicious.

### Interpretation

This diagram presents a **defense-in-depth strategy for federated learning**. It addresses two critical challenges simultaneously:

* **Privacy:** Achieved through the `DEFE` (likely an acronym for a specific encryption scheme) processes (`Encrypt`, `FunKeyGen`, `AggDec`), which ensure the server cannot inspect raw model updates.

* **Robustness:** Achieved through the `Layer-wise projection` and `K-Means clustering` pipeline. The core hypothesis is that malicious model updates, even when encrypted, will project into a different region of the feature space than benign ones. By clustering in this space and aggregating only the largest cluster(s) (`Top K-1`), the server can dilute or exclude the influence of poisoned models.

The system's effectiveness hinges on the assumption that the projection and clustering can reliably separate benign from malicious updates *without* decrypting them. The inclusion of a `Functional key` for each client suggests it may be used in the secure aggregation decryption process (`AggDec`) or to verify client authenticity. The diagram effectively communicates a complex, multi-stage protocol where privacy and security are interwoven rather than treated as separate concerns.

</details>

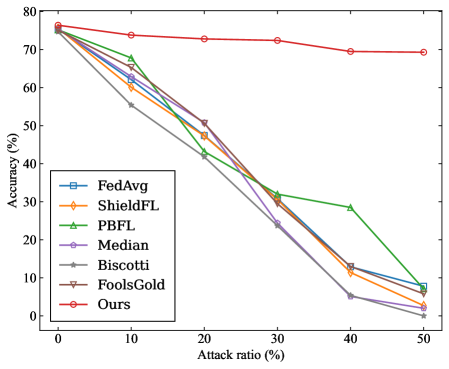

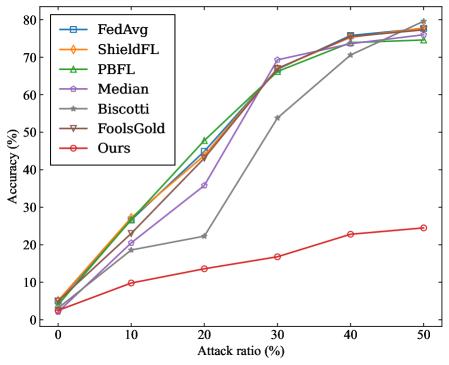

Figure 1: The workflow of SRFed.

### V-A High-Level Description of SRFed

The workflow of SRFed is illustrated in Figure 1. Specifically, SRFed iteratively performs the following three steps: 1) Initialization. The server initializes the global model $W_{0}$ and distributes it to all clients (step ①). 2) Local training. In the $t$ -th training iteration, each client $C_{i}$ receives the global model $W_{t}$ from the server and performs local training on its private dataset to obtain the local model $W_{t}^{i}$ (step ②). To protect model privacy, $C_{i}$ encrypts the local model and gets the encrypted model $E_{t}^{i}$ (step ③). Then, $C_{i}$ generates the functional key $skf_{t}^{i}$ for model detection (step ④), and uploads the encrypted model and the functional key to the server (step ⑤). 3) Privacy-preserving robust model aggregation. Upon receiving all encrypted local models $\{E_{t}^{i}\}_{i=1,...,I}$ , the server computes a layer-wise projection vector $V_{t}^{i}$ for each model based on the global model $W_{t}$ (step ⑥). The server then performs clustering analysis on $\{V_{t}^{i}\}_{i=1,...,I}$ to filter malicious models and identify benign models (step ⑦). Finally, the server aggregates these benign clients to update the global model $W_{t+1}$ (step ⑧).

### V-B Construction of SRFed

#### V-B 1 Initialization

In this phase, the server first executes the $\textbf{DEFE.Setup}(1^{\lambda},X,$ $Y)$ algorithm to generate the public parameters $pp$ , which are then made publicly available. Each client $C_{i}$ ( $i\in[1,I]$ ) subsequently generates its key pair $(pk_{i},sk_{i})$ by executing the $\textbf{DEFE.KeyGen}(1^{\lambda},N,g)$ algorithm. Finally, the server distributes the initial global model $W_{0}$ to all clients.

#### V-B 2 Local Training

The local training phase consists of three components: model training, model encryption, and functional key generation. 1) Model Training: In the $t$ -th ( $t\in[1,T]$ ) training round, once receiving the global model $W_{t}$ , each client $C_{i}$ utilizes its local dataset $D_{i}$ to update $W_{t}$ and obtains model update $W_{t}^{i}$ . For benign clients, they minimize their local objective function $L_{i}$ to obtain $W_{t}^{i}$ , i.e.,

$$

W_{t}^{i}=\arg\min_{W_{t}}L_{i}(W_{t},D_{i}). \tag{4}

$$

Malicious clients execute distinct update strategies based on their attack method. Specifically, to perform a Gaussian attack, malicious clients first conduct normal local training according to Equation (4) to obtain the benign model $W_{t}^{i}$ , and subsequently inject Gaussian noise $noise_{t}$ into it to produce a poisoned model $(W_{t}^{i})^{*}$ , i.e.,

$$

(W_{t}^{i})^{*}=W_{t}^{i}+noise_{t}. \tag{5}

$$

In addition, to launch a label-flipping attack, malicious clients first poison their training datasets by flipping all samples labeled as $l_{\text{src}}$ to a target class $l_{\text{tar}}$ . They then perform local training on the poisoned dataset $D_{i}^{*}(l_{\text{src}}\rightarrow l_{\text{tar}})$ to derive the poisoned model $(W_{t}^{i})^{*}$ , i.e.,

$$

(W_{t}^{i})^{*}=\arg\min_{W_{t}^{i}}L_{i}\left(W_{t}^{i},D_{i}^{*}(l_{\text{src}}\rightarrow l_{\text{tar}})\right). \tag{6}

$$

2) Model Encryption: To protect the privacy information of the local model, the clients exploit the DEFE scheme to encrypt their local models. Specifically, the clients first parse the local model as $W_{t}^{i}=[W_{t}^{(i,1)},\dots,W_{t}^{(i,l)},\dots,W_{t}^{(i,L)}]$ , where $W_{t}^{(i,l)}$ denotes the parameter set of the $l$ -th model layer. For each parameter element $W_{t}^{(i,l)}[\varepsilon]$ in $W_{t}^{(i,l)}$ , client $C_{i}$ executes the $\textbf{DEFE.Encrypt}(pk_{i},sk_{i},ctr,W_{t}^{(i,l)}[\varepsilon])$ algorithm to generate the encrypted parameter $E_{t}^{(i,l)}[\varepsilon]$ , i.e.,

$$

E_{t}^{(i,l)}[\varepsilon]=(1+N)^{{W_{t}^{(i,l)}}^{\prime}[\varepsilon]}\cdot g^{{r_{i}}^{ctr}}\bmod N^{2}, \tag{7}

$$

where ${W_{t}^{(i,l)}}^{\prime}[\varepsilon]$ is the parameter $W_{t}^{(i,l)}[\varepsilon]$ perturbed by a noise term $\eta$ , i.e.,

$$

{W_{t}^{(i,l)}}^{\prime}[\varepsilon]=W_{t}^{(i,l)}[\varepsilon]+\eta. \tag{8}

$$

In SRFed, the noise $\eta$ remains fixed for all clients during the first $T-1$ training rounds, and is set to $0$ in the final training round. Specifically, $\eta$ is an integer generated by client $C_{1}$ using the hash function $H_{1}$ during the first iteration, and is subsequently broadcast to all other clients for model encryption. The magnitude of $\eta$ is constrained by:

$$

m_{l}\cdot\eta^{2}\ll\lVert W_{0}^{(l)}\rVert^{2},\quad\forall l\in[1,L], \tag{9}

$$

where $W_{0}^{(l)}$ denotes the $l$ -th layer model parameters of the initial global model $W_{0}$ , and $m_{l}$ represents the number of parameters in $W_{0}^{(l)}$ . Finally, the client $C_{i}$ obtains the encrypted local model $E_{t}^{i}=[E_{t}^{(i,1)},\dots,E_{t}^{(i,l)},\dots,E_{t}^{(i,L)}]$ .

3) Functional Key Generation: Each client $C_{i}$ generates a functional key vector $skf_{t}^{i}=[skf_{t}^{(i,1)},skf_{t}^{(i,2)},\dots,skf_{t}^{(i,L)}]$ to enable the server to perform model detection on encrypted models. Specifically, for the $l$ -th layer of the global model $W_{t}$ , client $C_{i}$ executes the $\textbf{DEFE.FunKeyGen}(pk_{i},sk_{i},ctr,W_{t}^{(l)}[\varepsilon])$ algorithm to generate element-wise functional keys, i.e.,

$$

skf_{t}^{(i,l,\varepsilon)}=r_{i}^{ctr}\cdot W_{t}^{(l)}[\varepsilon]=H_{1}(sk_{i},ctr,\varepsilon)\cdot W_{t}^{(l)}[\varepsilon], \tag{10}

$$

where $W_{t}^{(l)}[\varepsilon]$ denotes the $\varepsilon$ -th parameter of $W_{t}^{(l)}$ . After processing all elements in $W_{t}^{(l)}$ , client $C_{i}$ obtains the set of element-wise functional keys $\{\{skf_{t}^{(i,l,\varepsilon)}\}_{\varepsilon=1}^{|W_{t}^{(l)}|}\}_{l=1}^{L}$ . Subsequently, the layer-level functional key is derived by aggregating the element-wise keys using the $\textbf{DEFE.FunKeyAgg}(\{skf_{t}^{(i,l,\varepsilon)}\}_{\varepsilon=1}^{|W_{t}^{(l)}|})$ algorithm, i.e.,

$$

skf_{t}^{(i,l)}=\sum\nolimits_{\varepsilon=1}^{|W_{t}^{(l)}|}skf_{t}^{(i,l,\varepsilon)}. \tag{11}

$$

This procedure is repeated for all layers to obtain the complete functional key vector $skf_{t}^{i}$ for client $C_{i}$ . Finally, each client uploads the encrypted local model $E_{t}^{i}$ and the corresponding functional key $skf_{t}^{i}$ to the server for subsequent model detection and aggregation.

#### V-B 3 Privacy-Preserving Robust Model Aggregation

To resist poisoning attacks from malicious clients, SRFed implements a privacy-preserving robust aggregation strategy, which enables secure detection and aggregation of encrypted local models without exposing private information. As illustrated in Figure 1, the proposed method performs layer-wise projection and clustering analysis to identify abnormal updates and ensure reliable model aggregation. Specifically, in each training round, the local model $W_{t}^{i}$ and the global model $W_{t}$ are decomposed layer by layer. For each layer, the parameters are projected onto the corresponding layer of the global model, and clustering is performed on the projection vectors to detect anomalous models. After that, the server filters malicious models and aggregates the remaining benign updates. Unlike prior defenses that rely on global statistical similarity between model updates [33, 29, 35], our approach captures fine-grained parameter anomalies and conducts clustering analysis to achieve effective detection even under non-IID data distributions.

1) Model Detection: Once receiving $E_{t}^{i}$ and $skf_{t}^{i}$ from client $C_{i}$ , the server computes the projection $V_{t}^{(i,l)}$ of $W_{t}^{(i,l)^{\prime}}$ onto $W_{t}^{(l)}$ , i.e.,

$$

V_{t}^{(i,l)}=\frac{\langle W_{t}^{(i,l)^{\prime}},W_{t}^{(l)}\rangle}{\lVert W_{t}^{(l)}\rVert_{2}}. \tag{12}

$$

Specifically, the server first executes the $\textbf{DEFE.AggDec}(skf_{t}^{(i,l)},$ $E_{t}^{(i,l)})$ algorithm, which effectively computes the inner product of $W_{t}^{(i,l)^{\prime}}$ and $W_{t}^{(l)}$ . This value is then normalized by $\lVert W_{t}^{(l)}\rVert_{2}$ to obtain

$$

V_{t}^{(i,l)}=\frac{\textbf{DEFE.AggDec}(skf_{t}^{(i,l)},E_{t}^{(i,l)})}{\lVert W_{t}^{(l)}\rVert_{2}}. \tag{13}

$$

By iterating over all $L$ layers, the server obtains the layer-wise projection vector $V_{t}^{i}=[V_{t}^{(i,1)},V_{t}^{(i,2)},\dots,V_{t}^{(i,L)}]$ corresponding to client $C_{i}$ . After computing projection vectors for all clients, the server clusters the set $\{V_{t}^{i}\}_{i=1}^{I}$ into $K$ clusters $\{\Omega_{1},\Omega_{2},\dots,\Omega_{K}\}$ using the K-Means algorithm. For each cluster $\Omega_{k}$ , the centroid vector $\bar{V}_{k}$ is computed, and the average cosine similarity $\overline{cs}_{k}$ between all vectors in the cluster and $\bar{V}_{k}$ is calculated. Finally, the $K-1$ clusters with the largest average cosine similarities are identified as benign clusters, while the remaining cluster is considered potentially malicious.

3) Model Aggregation: The server first maps the vectors in the selected $K-1$ clusters to their corresponding clients, generating a client list $L^{t}_{bc}$ and a weight vector $\gamma_{t}=(\gamma^{1}_{t},\dots,\gamma^{I}_{t})$ , where

$$

\gamma^{i}_{t}=\begin{cases}1&\text{if }C_{i}\in L^{t}_{bc},\\

0&\text{otherwise.}\end{cases} \tag{14}

$$

The server then distributes $\gamma_{t}$ to all clients. Upon receiving $\gamma^{i}_{t}$ , each client $C_{i}$ locally executes the $\textbf{DEFE.FunKeyGen}(pk_{i},sk_{i},$ $ctr,\gamma^{i}_{t},aux)$ algorithm to compute the partial functional key $skf^{(i,\mathsf{Agg})}_{t}$ as

$$

skf^{(i,\mathsf{Agg})}_{t}=r_{i}^{ctr}y^{i}_{t}+\sum_{j=1}^{i-1}\varphi^{i,j}-\sum_{j=i+1}^{n}\varphi^{i,j}. \tag{15}

$$

Each client uploads $skf^{(i,\mathsf{Agg})}_{t}$ to the server. Subsequently, the server executes the $\textbf{DEFE.FunKeyAgg}\left((skf^{(i,\mathsf{Agg})}_{t})_{i=1}^{I}\right)$ to compute the aggregation key as

$$

skf^{\mathsf{Agg}}_{t}=\sum_{i=1}^{I}skf^{(i,\mathsf{Agg})}_{t}. \tag{16}

$$

Finally, the server performs layer-wise aggregation to obtain the noise-perturbed global model $W_{t+1}^{\prime}$ as

$$

\displaystyle W_{t+1}^{(l)^{\prime}}[\varepsilon] \displaystyle=\frac{\text{DEFE.AggDec}\left(skf^{\mathsf{Agg}}_{t},\{E_{t}^{(i,l)}[\varepsilon]\}_{i=1}^{I}\right)}{n} \displaystyle=\frac{\langle(W_{t}^{(1,l)^{\prime}}[\varepsilon],\dots,W_{t}^{(I,l)^{\prime}}[\varepsilon]),\gamma_{t}\rangle}{n} \tag{17}

$$

where $n$ denotes the number of 1-valued elements in $L^{t}_{bc}$ . The server then distributes $W_{t+1}^{\prime}$ to all clients for the $(t+1)$ -th training round. Note that $W_{t+1}^{\prime}$ is noise-perturbed, the clients must remove the perturbation to recover the accurate global model $W_{t+1}$ . They will execute the $\textbf{DEFE.UsrDec}(W_{t+1}^{(l)^{\prime}}[\varepsilon],\gamma_{t})$ algorithm to restore the true global model parameter $W_{t+1}^{(l)}[\varepsilon]=W_{t+1}^{(l)^{\prime}}[\varepsilon]-\eta$ .

## VI Analysis

### VI-A Confidentiality

In this subsection, we demonstrate that our DEFE-based SRFed framework guarantees the confidentiality of clients’ local models under the Honest-but-Curious (HBCS) security setting.

**Definition VI.1 (Decisional Composite Residuosity (DCR) Assumption[43])**

*Selecting safe primes $p=2p^{\prime}+1$ and $q=2q^{\prime}+1$ with $p^{\prime},q^{\prime}>2^{l(\lambda)}$ , where $l$ is a polynomial in security parameter $\lambda$ , let $N=pq$ . The Decision Composite Residuosity (DCR) assumption states that, for any Probability Polynomial Time (PPT) adversary $\mathcal{A}$ and any distinct inputs $x_{0},x_{1}$ , the following holds:

$$

|Pr_{win}(\mathcal{A},(1+N)^{x_{0}}\cdot g^{r_{i}^{ctr}}\mod N^{2},x_{0},x_{1})-\frac{1}{2}|=negl(\lambda),

$$

where $Pr_{win}$ denotes the probability that the adversary $\mathcal{A}$ distinguishes ciphertexts.*

**Definition VI.2 (Honest but Curious Security (HBCS))**

*Consider the following game between an adversary $\mathcal{A}$ and a PPT simulator $\mathcal{A}^{*}$ , a protocol $\Pi$ is secure if the real-world view $\textbf{REAL}_{\mathcal{A}}^{\Pi}$ of $\mathcal{A}$ is computationally indistinguishable from the ideal-world view $\textbf{IDEAL}_{\mathcal{A}^{*}}^{\mathcal{F_{\Pi}}}$ of $\mathcal{A}^{*}$ , i.e., for all inputs $\hat{x}$ and intermediate results $\hat{y}$ from participants, it holds $\textbf{REAL}_{\mathcal{A}}^{\Pi}(\lambda,\hat{x},\hat{y})\overset{c}{\equiv}\textbf{IDEAL}_{\mathcal{A}^{*}}^{\mathcal{F_{\Pi}}}(\lambda,\hat{x},\hat{y})$ , where $\overset{c}{\equiv}$ denotes computationally indistinguishable.*

**Theorem VI.1**

*SRFed achieves Honest but Curious Security under the DCR assumption, which means that for all inputs $\{C_{t}^{i},{skf}_{t}^{i}\}_{i=1,...,I}$ and intermediate results ( $V_{t}^{i}$ , $W_{t+1}^{\prime}$ , $W_{T}$ ), SRFed holds: $\textbf{REAL}_{\mathcal{A}}^{SRFed}(C_{t}^{i},{skf}_{t}^{i},skf_{t}^{\mathsf{Agg}},V_{t}^{i},W_{t+1}^{\prime},W_{T})\overset{c}{\equiv}$ $\textbf{IDEAL}_{\mathcal{A}^{*}}^{\mathcal{F}_{SRFed}}(C_{t}^{i},{skf}_{t}^{i},skf_{t}^{\mathsf{Agg}},V_{t}^{i},W_{t+1}^{\prime},W_{T})$ .*

* Proof:*

To prove the security of SRFed, we just need to prove the confidentiality of the privacy-preserving defense strategy, since only it involves the computation of private data by unauthorized entities (i.e., the server). For the curious server, $\textbf{REAL}_{\mathcal{A}}^{SRFed}$ contains intermediate parameters and encrypted local models $\{C_{t}^{i}\}_{i=1,...,I}$ collected from each client during the execution of SRFed protocols. Besides, we construct a PPT simulator $\mathcal{A}^{*}$ to execute $\mathcal{F}_{SRFed}$ , which simulates each process of the privacy-preserving defensive aggregation strategy. The detailed proof is described below. Hyb 1 We initialize a series of random variables whose distributions are indistinguishable from $\textbf{REAL}_{\mathcal{A}}^{SRFed}$ during the real protocol execution. Hyb 2 In this hybrid, we change the behavior of simulated client $C_{i}$ $(i\in[1,I])$ . $C_{i}$ takes the selected random vector of random variables $\Theta_{W}$ as the local model $W_{t}^{i^{\prime}}$ , and uses the DEFE.Encrypt algorithm to encrypt $W_{t}^{i^{\prime}}$ . As only the original contents of ciphertexts have changed, it guarantees that the server cannot distinguish the view of $\Theta_{W}$ from the view of original $W_{t}^{i^{\prime}}$ according to the Definition (VI.1). Then, $C_{i}$ uses the DEFE.FunKeyGen algorithm to generate the key vector $skf_{t}^{i}=[skf_{t}^{(i,1)},skf_{t}^{(i,2)},\dots,skf_{t}^{(i,L)}].$ Note that each component of $skf_{t}^{i}$ essentially is the inner product result, thus revealing no secret information to the server. Hyb 3 In this hybrid, we change the input of the protocol of Secure Model Aggregation executed by the server with encrypted random variables instead of real encrypted model parameters. The server gets the plaintexts vector $V_{t}^{i}=[V_{t}^{(i,1)},V_{t}^{(i,2)},\dots,V_{t}^{(i,L)}]$ corresponding to $C_{i}$ , which is the layer-wise projection of $\Theta_{W}$ and $W_{t}$ . As the inputs $\Theta_{W}$ follow the same distribution as the real $W^{i^{\prime}}_{t}$ , the server cannot distinguish the $V_{t}^{i}$ between ideal world and real world without knowing further information about the inputs. Then, the server performs clustering based on $\{V_{t}^{i}\}^{I}_{i=1}$ to obtain $\{\Omega_{k}\}^{K}_{k=1}$ . Subsequently, it computes the average cosine similarity of all vectors within each cluster to their centroid, and assigns client weights accordingly. Since $\{V_{t}^{i}\}^{I}_{i=1}$ is indistinguishable between the ideal world and the real world, the intermediate variables calculated via $\{V_{t}^{i}\}^{I}_{i=1}$ above also inherit this indistinguishability. Hence, this hybrid is indistinguishable from the previous one. Hyb 4 In this hybrid, the aggregated model $W_{t+1}^{\prime}$ is computed by the DEFE.AggDec algorithm. $\mathcal{A}^{*}$ holds the view $\textbf{IDEAL}_{\mathcal{A}^{*}}^{\mathcal{F}_{SRFed}}$ $=$ $(C_{t}^{i},{skf}_{t}^{i},skf_{t}^{\mathsf{Agg}},V_{t}^{i},W_{t+1}^{\prime},W_{T})$ , where $skf_{t}^{\mathsf{Agg}}$ is obtained by the interaction of non-colluding clients and server, the full security property of DEFE and the non-colluding setting ensure the security of $skf_{t}^{\mathsf{Agg}}$ . Among the elements of intermediate computation, the local model $W_{t}^{i^{\prime}}$ is encrypted, which is consistent with the previous hybrid. Throughout the $T$ -round iterative process, the server obtains the noise-perturbed aggregated model $W_{t+1}^{\prime}=W_{t+1}+\eta$ via the secure model aggregation when $0\leq t<T-1$ . Thus, the server cannot infer the real $W_{t+1}$ , and cannot distinguish the $W_{t+1}^{\prime}$ between ideal world and real world. When $t=T-1$ , since the distribution of $\Theta_{W}$ remains identical to that of $W_{T}$ , the probability that the server can distinguish the final averaged aggregated model $W_{T}$ is negligible. Hence, this hybrid is indistinguishable from the previous one. Hyb 5 When $0\leq t<T$ , all clients further execute the DEFE.UsrDec algorithm to restore the $W_{t+1}$ . This process is independent of the server, hence this hybrid is indistinguishable from the previous one. The argument above proves that the output of $\textbf{IDEAL}_{\mathcal{A}^{*}}^{\mathcal{F}_{SRFed}}$ is indistinguishable from the output of $\textbf{REAL}_{\mathcal{A}}^{SRFed}$ . Thus, it proves that SRFed guarantees HBCS. ∎

### VI-B Robustness

To theoretically analyze the robustness of SRFed against poisoning attacks, we first prove the following theorem.

**Theorem VI.2**

*When the noise perturbation $\eta$ satisfies the constraint in (9), the clustering results of SRFed over all $T$ iterations remain approximately equivalent to those obtained using the original local models $\{W_{t}^{i}\}_{1,2,...,I}$ .*

* Proof:*

Let $\overline{\eta}$ be a vector of the same shape as $W_{t}^{(i,l)^{\prime}}$ with all entries equal to $\eta$ , and ${V_{t}^{i}}_{real}$ be the real projection vector derived from the noise-free models. We discuss the following three cases. ① $t=0:$ For any $i\in[1,I]$ , $W_{0}^{(i,l)^{\prime}}=W_{0}^{(i,l)}+\overline{\eta}$ , we have

$$

\displaystyle V_{0}^{i} \displaystyle=\frac{\langle W_{0}^{(i,l)^{\prime}},W_{0}^{(l)}\rangle}{\lVert W_{0}^{(l)}\rVert_{2}}=\frac{\langle W_{0}^{(i,l)}+\overline{\eta},W_{0}^{(l)}\rangle}{\lVert W_{0}^{(l)}\rVert_{2}}={V_{0}^{i}}_{real}+\frac{\langle\overline{\eta},W_{0}^{(l)}\rangle}{\lVert W_{0}^{(l)}\rVert_{2}}. \tag{18}

$$

Note that $\frac{\langle\overline{\eta},W_{0}^{(l)}\rangle}{\lVert W_{0}^{(l)}\rVert_{2}}$ is identical for any client, the clustering result of $\{V_{0}^{i}\}^{I}_{i=1}$ is entirely equivalent to that of $\{{V_{0}^{i}}_{real}\}^{I}_{i=1}$ based on the underlying computation of K-Means. ② $0<t<T:$ For any $i\in[1,I]$ , $W_{t}^{(i,l)^{\prime}}=W_{t}^{(i,l)}+\overline{\eta}$ . Correspondingly, $W_{t}^{(l)^{\prime}}=W_{t}^{(l)}+\overline{\eta}$ , and we have

$$

\begin{split}V_{t}^{i}&=\frac{\langle W_{t}^{(i,l)^{\prime}},W_{t}^{(l)^{\prime}}\rangle}{\lVert W_{t}^{(l)^{\prime}}\rVert_{2}}\\

&=\frac{\langle W_{t}^{(i,l)},W_{t}^{(l)}\rangle+\langle W_{t}^{(i,l)},\overline{\eta}\rangle+\langle\overline{\eta},W_{t}^{(l)}\rangle+\langle\overline{\eta},\overline{\eta}\rangle}{\sqrt[]{\lVert W_{t}^{(l)}\rVert_{2}^{2}+2\langle W_{t}^{(l)},\overline{\eta}\rangle+\lVert\overline{\eta}\rVert_{2}^{2}}}.\end{split} \tag{19}

$$

By combining the above equation with the constraint (9), $V_{t}^{i}$ is approximately equivalent to the real value of ${V_{t}^{i}}_{real}$ . ③ $t=T:$ For any $i\in[1,I]$ , $W_{T}^{(i,l)^{\prime}}=W_{T}^{(i,l)}$ . Correspondingly, $W_{T}^{(l)^{\prime}}=W_{T}^{(l)}+\overline{\eta}$ , and we have

$$

\displaystyle V_{T}^{i} \displaystyle=\frac{\langle W_{T}^{(i,l)},W_{T}^{(l)^{\prime}}\rangle}{\lVert W_{T}^{(l)^{\prime}}\rVert_{2}}=\frac{\langle W_{T}^{(i,l)},W_{T}^{(l)}\rangle+\langle W_{T}^{(i,l)},\overline{\eta}\rangle}{\sqrt[]{\lVert W_{T}^{(l)}\rVert_{2}^{2}+2\langle W_{T}^{(l)},\overline{\eta}\rangle+\lVert\overline{\eta}\rVert_{2}^{2}}}. \tag{20}

$$

Similarly, by combining the above equation with the constraint (9), $V_{T}^{i}$ is approximately equivalent to the real value of ${V_{T}^{i}}_{real}$ . Therefore, across all iterations, the clustering results based on $\{V_{t}^{i}\}$ closely approximate those derived from the original local models, confirming that the introduced perturbation does not affect model detection. ∎

Then, we introduce a key assumption, which has been proved in [37, 32]. This assumption reveals the essential difference between malicious and benign models and serves as a core basis for subsequent robustness analysis.

**Assumption VI.1**

*An error term $\tau^{(t)}$ exists between the average malicious gradients $\mathbf{W}_{t}^{i*}$ and the average benign gradients $\mathbf{W}_{t}^{i}$ due to divergent training objectives. This is formally expressed as:

$$

\sum_{C_{i}\in\mathcal{M}}\mathbf{W}_{t}^{i*}=\sum_{C_{i}\in\mathcal{B}}\mathbf{W}_{t}^{i}+\tau^{(t)}. \tag{21}

$$

The magnitude of $\tau^{(t)}$ exhibits a positive correlation with the number of iterative training rounds.*

**Theorem VI.3**

*SRFed guarantees robustness to malicious clients in non-IID settings, provided that most clients are benign.*

* Proof:*

In the secure model aggregation phase of SRFed, the server collects the encrypted model $C_{t}^{i}$ and the corresponding key vectors $skf_{t}^{i}$ from each client, then computes the projection $V_{t}^{(i,l)}$ of $W_{t}^{(i,l)}$ onto $W_{t}^{(l)}$ , i.e., $\frac{\langle W_{t}^{(i,l)},W_{t}^{(l)}\rangle}{\lVert W_{t}^{(l)}\rVert_{2}}$ . By iterating over $L$ layers, the server obtains the layer-wise projection vector $V_{t}^{i}=[V_{t}^{(i,1)},V_{t}^{(i,2)},\dots,V_{t}^{(i,L)}]$ corresponding to $C_{i}$ . Subsequently, the server performs clustering on the projection vectors $\{V_{t}^{i}\}^{I}_{i=1}$ . Based on the Assumption (VI.1), a non-negligible divergence $\tau^{(t)}$ emerges between benign and malicious local models, which grows with the number of iterations. Meanwhile, by independently projecting each layer’s parameters onto the corresponding layer of the global model, our operation eliminates cross-layer interference. This ensures that malicious modifications confined to specific layers can be detected significantly more effectively. Therefore, our clustering approach successfully distinguishes between benign and malicious models by grouping them into separate clusters. Due to significant distribution divergence, malicious models exhibit a lower average cosine similarity to their cluster center. Consequently, our scheme filters out the cluster containing malicious models by computing average cosine similarity, ultimately achieving robust to malicious clients. ∎

### VI-C Efficiency

**Theorem VI.4**

*The computation and communication complexities of SRFed are $\mathcal{O}(T_{lt})+\mathcal{O}(\zeta T_{me-defe})+\mathcal{O}(T_{md-defe})+\mathcal{O}(T_{ma-defe})$ and $\mathcal{O}(I\zeta|w_{defe}|)+\mathcal{O}(IL|w|)$ , respectively.*

* Proof:*

To evaluate the efficiency of SRFed, we analyze its computational and communication overhead per training iteration and compare it with ShieldFL [29]. ShieldFL is an efficient PPFL framework based on the partially homomorphic encryption (PHE) scheme. The comparative results are presented in Table III. Specifically, the computational overhead of SRFed comprises four components: local training $\mathcal{O}(T_{lt})$ , model encryption $\mathcal{O}(\zeta T_{me-defe})$ , model detection $\mathcal{O}(T_{md-defe})$ , and model aggregation $\mathcal{O}(T_{ma-defe})$ . For model encryption, FE inherently offers a lightweight advantage over PHE, leading to $\mathcal{O}(\zeta T_{me-defe})<\mathcal{O}(\zeta T_{me-phe})$ . In terms of model detection, SRFed performs this process primarily on the server side using plaintext data, whereas ShieldFL requires multiple rounds of interaction to complete the encrypted model detection. This results in $\mathcal{O}(T_{md-defe})\ll\mathcal{O}(T_{md-phe})$ . Furthermore, SRFed enables the server to complete decryption and aggregation simultaneously. In contrast, ShieldFL necessitates aggregation prior to decryption and involves interactions with a third party, resulting in significantly higher overhead, i.e., $\mathcal{O}(T_{ma-defe})\ll\mathcal{O}(T_{ma-phe})$ . Overall, these characteristics collectively render SRFed more efficient than ShieldFL. The communication overhead of SRFed comprises two components: the encrypted models $\mathcal{O}(I\zeta|w_{defe}|)$ and key vectors $\mathcal{O}(IL|w|)$ uploaded by $I$ clients, where $\zeta$ denotes the model dimension, $L$ is the number of layers, $|w_{defe}|$ and $|w|$ are the communication complexity of a single DEFE ciphertext and a single plaintext, respectively. Since $|w|$ is significantly lower than $|w_{defe}|$ , and $|w_{defe}|$ and $|w_{phe}|$ are nearly equivalent, SRFed reduces the overall communication complexity by approximately $\mathcal{O}(12I\zeta|w_{phe}|)$ compared to ShieldFL. This reduction in overhead is primarily attributed to SRFed’s lightweight DEFE scheme, which eliminates extensive third-party interactions. ∎

TABLE III: Comparison of computation and communication overhead between different methods

| Method | SRFed | ShieldFL |

| --- | --- | --- |

| Comp. | $\mathcal{O}(T_{lt})+\mathcal{O}(\zeta T_{me-defe})$ | $\mathcal{O}(T_{lt})+\mathcal{O}(\zeta T_{me-phe})$ |

| $+\mathcal{O}(T_{ma-defe})$ | $+\mathcal{O}(\zeta T_{md-phe})$ | |

| $+\mathcal{O}(T_{ma-defe})$ | $+\mathcal{O}(T_{md-phe})$ | |

| Comm. | $\mathcal{O}(I\zeta|w_{defe}|)^{1}+\mathcal{O}(IL|w|)^{2}$ | $\mathcal{O}(13I\zeta|w_{phe}|)^{\mathrm{3}}$ |

- Notes: ${}^{\mathrm{1,2,3}}|w_{defe}|$ , $|w|$ and $|w_{phe}|$ denote the communication complexity of a DEFE ciphertext, a plaintext, and a PHE ciphertext, respectively.

## VII Experiments

### VII-A Experimental Settings

#### VII-A 1 Implementation

We implement SRFed on a small-scale local network. Each machine in the network is equipped with the following hardware configuration: an Intel Xeon CPU E5-1650 v4, 32 GB of RAM, an NVIDIA GeForce GTX 1080 Ti graphics card, and a network bandwidth of 40 Mbps. Additionally, the implementation of the DEFE scheme is based on the NDD-FE scheme [31], and the code implementation of FL processes is referenced to [44].

#### VII-A 2 Dataset and Models

We evaluate the performance of SRFed on two datasets:

- MNIST [45]: This dataset consists of 10 classes of handwritten digit images, with 60,000 training samples and 10,000 test samples. Each sample is a grayscale image of 28 × 28 pixels. The global model used for this dataset is a Convolutional Neural Network (CNN) model, which includes two convolutional layers followed by two fully connected layers.

- CIFAR-10 [46]: This dataset contains RGB color images across 10 categories, including airplane, car, bird, cat, deer, dog, frog, horse, boat, and truck. It consists of 50,000 training images and 10,000 test samples. Each sample is a 32 × 32 pixel color image. The global model used for this dataset is a CNN model, which includes three convolutional layers, one pooling layer, and two fully connected layers.

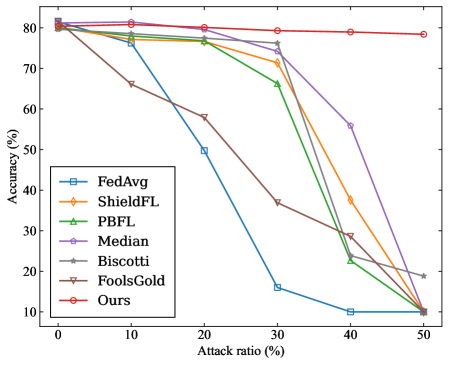

#### VII-A 3 Baselines

To evaluate the robustness of the proposed SRFed method, we conduct comparative experiments against several advanced baseline methods, including FedAvg [47], ShieldFL [29], PBFL [37], Median [35], Biscotti [41], and FoolsGold [33]. Furthermore, to evaluate the efficiency of SRFed, we compare it with representative methods such as ShieldFL [29] and ESB-FL [31].

#### VII-A 4 Experimental parameters

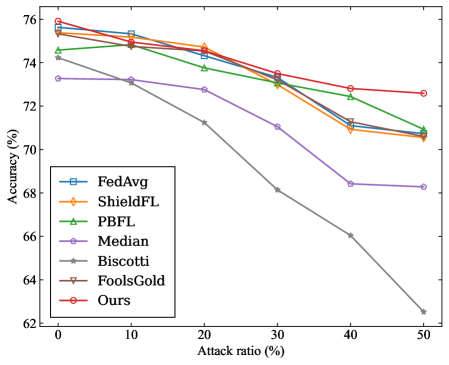

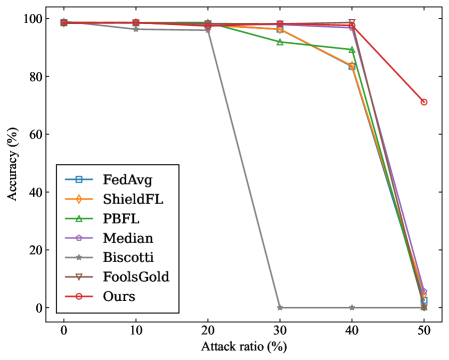

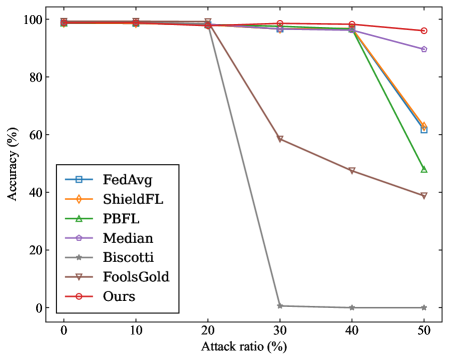

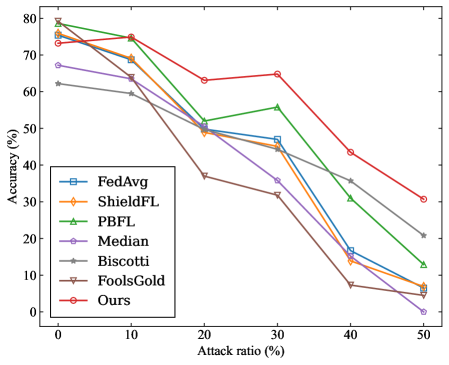

In all experiments, the number of local clients is set to 20, the number of training rounds is set to 100, the batchsize is set to 64, and the number of local training epochs is set to 10. We use the stochastic gradient descent (SGD) to optimize the model, with a learning rate of 0.01 and a momentum of 0.5. Additionally, our experiments are conducted under varying levels of data heterogeneity, with the data distributions configured as follows:

- MNIST: Two distinct levels of data heterogeneity are configured by sampling from a Dirichlet distribution with the parameters $\alpha=0.2$ and $\alpha=0.8$ , respectively, to simulate Non-IID data partitions across clients.

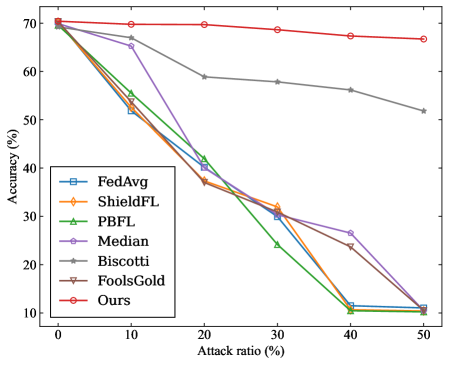

- CIFAR-10: Two distinct levels of data heterogeneity are configured by sampling from a Dirichlet distribution with the parameters $\alpha=0.2$ and $\alpha=0.6$ , respectively, to simulate Non-IID data partitions across clients.

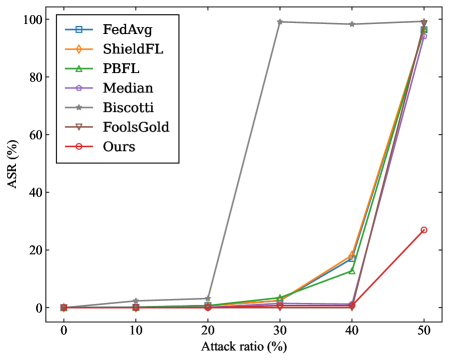

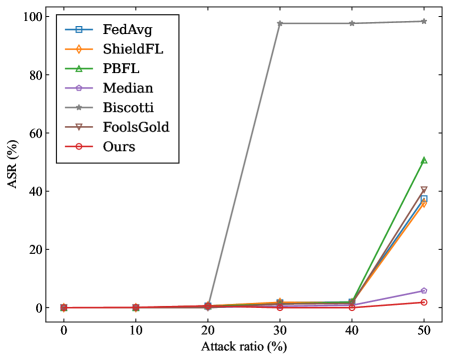

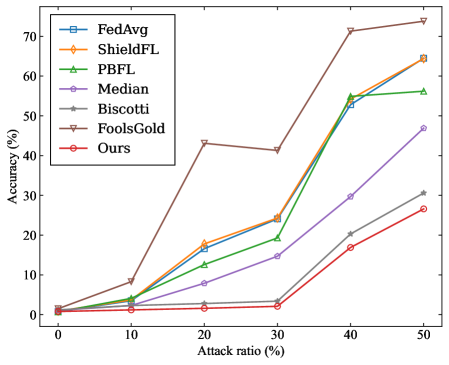

#### VII-A 5 Attack Scenario

In each benchmark, the adversary can control a certain proportion of clients to launch poisoning attacks, with the proportion varying across {0%, 10%, 20%, 30%, 40%, 50%}. The attack scenario parameters are configured as follows:

- Targeted Poisoning Attack: we consider the mainstream label-flipping attack. For experiments on the MNIST dataset, the training samples originally labeled as ”0” are reassigned to the target label ”4”. For the CIFAR-10 dataset, the training samples originally labeled as ”airplane” are reassigned to the target label ”deer”.

- Untargeted Poisoning Attack: We consider the commonly used Gaussian attack. In experiments, malicious clients inject noise that follows a Gaussian distribution $\mathcal{N}(0,0.5^{2})$ into their local model updates.

#### VII-A 6 Evaluation Metrics

For each benchmark experiment, we adopt the following evaluation metrics on the test dataset to quantify the impact of poisoning attacks on the aggregated model in FL.

- Overall Accuracy (OA): It is the ratio of the number of samples correctly predicted by the model in the test dataset to the total number of predictions for all samples in the test dataset.

- Source Accuracy (SA): It specifically refers to the ratio of the number of correctly predicted flip class samples by the model to the total number of flip class samples in the dataset.

- Attack Success Rate (ASR): It is defined as the proportion of source-class samples that are misclassified as the target class by the aggregated model.

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Line Chart: Accuracy vs. Attack Ratio for Federated Learning Methods

### Overview

The image is a line chart comparing the performance (accuracy) of seven different federated learning methods or defense mechanisms as the ratio of adversarial attacks increases. The chart demonstrates how each method's accuracy degrades under increasing attack pressure.

### Components/Axes

* **X-Axis:** Labeled "Attack ratio (%)". It represents the percentage of malicious participants or attacks in the system. The axis has major tick marks at 0, 10, 20, 30, 40, and 50.

* **Y-Axis:** Labeled "Accuracy (%)". It represents the model's accuracy. The axis has major tick marks at 84, 86, 88, 90, 92, 94, and 96.

* **Legend:** Located in the bottom-left quadrant of the chart area. It lists seven data series with corresponding line colors and marker symbols:

1. **FedAvg:** Blue line with square markers (□).

2. **ShieldFL:** Orange line with diamond markers (◇).

3. **PBFL:** Green line with upward-pointing triangle markers (△).

4. **Median:** Purple line with circle markers (○).

5. **Biscotti:** Gray line with star/asterisk markers (☆).

6. **FoolsGold:** Brown line with downward-pointing triangle markers (▽).

7. **Ours:** Red line with circle markers (○).

### Detailed Analysis

The chart plots Accuracy (%) against Attack ratio (%). All methods start at a high accuracy (approximately 96%) when the attack ratio is 0%. As the attack ratio increases, the accuracy of most methods declines, but at dramatically different rates.

**Data Series Trends & Approximate Values:**

1. **Ours (Red line, ○):**

* **Trend:** Shows the most robust performance. The line remains nearly flat, exhibiting only a very slight downward slope.

* **Data Points:** ~96.2% (0%), ~96.1% (10%), ~96.1% (20%), ~96.0% (30%), ~95.9% (40%), ~95.8% (50%).

2. **Median (Purple line, ○):**

* **Trend:** Very stable, similar to "Ours" but with a marginally steeper decline at the highest attack ratio.

* **Data Points:** ~96.0% (0%), ~95.9% (10%), ~95.8% (20%), ~95.7% (30%), ~95.6% (40%), ~95.0% (50%).

3. **FedAvg (Blue line, □), ShieldFL (Orange line, ◇), PBFL (Green line, △), FoolsGold (Brown line, ▽):**

* **Trend:** These four methods follow a very similar pattern. They maintain high accuracy (~96%) until an attack ratio of 30-40%, after which they experience a sharp, precipitous drop.

* **Data Points (Approximate for the group):** ~96.0% (0%), ~96.0% (10%), ~95.8% (20%), ~95.5% (30%). At 40%, they begin to diverge slightly: FedAvg/PBFL ~95.0%, ShieldFL ~94.8%, FoolsGold ~94.5%. At 50%, they all drop significantly: FedAvg ~86.5%, PBFL ~87.0%, ShieldFL ~87.0%, FoolsGold ~85.2%.

4. **Biscotti (Gray line, ☆):**

* **Trend:** Exhibits the worst performance and earliest degradation. The line shows a steady, steep downward slope from the beginning.

* **Data Points:** ~96.0% (0%), ~95.5% (10%), ~91.0% (20%), ~86.5% (30%), ~83.5% (40%), ~83.2% (50%).

### Key Observations

1. **Clear Performance Tiers:** The methods cluster into three distinct performance tiers under attack:

* **Tier 1 (Highly Robust):** "Ours" and "Median" maintain accuracy above ~95% even at a 50% attack ratio.

* **Tier 2 (Moderately Robust, then Collapse):** FedAvg, ShieldFL, PBFL, and FoolsGold are resilient up to a ~30-40% attack ratio but fail catastrophically beyond that point.

* **Tier 3 (Vulnerable):** Biscotti's accuracy degrades linearly and significantly with any increase in attack ratio.

2. **Critical Threshold:** For four of the seven methods, an attack ratio between 30% and 40% appears to be a critical failure threshold.

3. **"Ours" is the Top Performer:** The method labeled "Ours" demonstrates the highest and most consistent accuracy across the entire range of attack ratios tested.

### Interpretation

This chart is a robustness evaluation of federated learning aggregation or defense strategies. The "Attack ratio" simulates an increasingly hostile environment where a larger portion of participating devices are malicious (e.g., sending poisoned model updates).

* **What the data suggests:** The proposed method ("Ours") and the "Median" aggregation are significantly more resilient to Byzantine (malicious) attacks than the other compared methods. Their design likely incorporates robust statistics or other mechanisms that are not easily fooled by a high volume of adversarial inputs.

* **How elements relate:** The sharp drop-off for FedAvg, ShieldFL, PBFL, and FoolsGold indicates they have a breaking point. Their defense mechanisms may work well when malicious actors are a minority but become overwhelmed and fail completely when malicious participants approach or exceed 40-50% of the total. Biscotti's linear decline suggests its defense is fundamentally less effective, offering little resistance as attacks increase.

* **Notable Anomaly:** The near-identical performance of FedAvg, ShieldFL, PBFL, and FoolsGold until the 30% mark is striking. It suggests that under moderate attack conditions, these different methods offer similar levels of protection, and their key differentiator is their failure mode under extreme conditions.

* **Practical Implication:** For a real-world federated learning system where security is a concern, choosing an aggregation method from Tier 1 ("Ours" or "Median") would provide a much larger safety margin. Methods from Tier 2 might be acceptable only if the system can guarantee the attack ratio will never approach the 30-40% threshold.

</details>

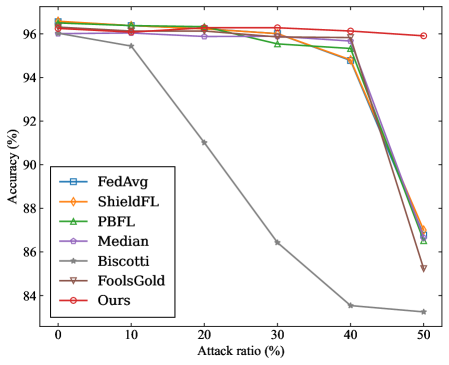

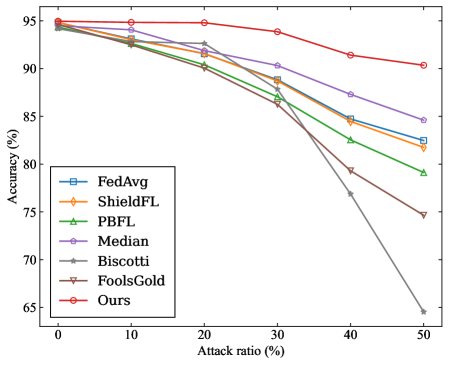

(a) MNIST ( $\alpha$ =0.2)

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: Accuracy vs. Attack Ratio for Federated Learning Methods

### Overview

The image is a line chart comparing the performance (accuracy) of seven different federated learning methods or algorithms as the percentage of malicious participants (attack ratio) in the system increases. The chart demonstrates how each method's accuracy degrades under increasing adversarial pressure.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:** Labeled **"Attack ratio (%)"**. It has major tick marks at 0, 10, 20, 30, 40, and 50.

* **Y-Axis:** Labeled **"Accuracy (%)"**. It has major tick marks at 70, 75, 80, 85, 90, and 95.

* **Legend:** Positioned in the **bottom-left corner** of the chart area. It contains seven entries, each with a unique color and marker symbol:

1. **FedAvg:** Blue line with square markers (□).

2. **ShieldFL:** Orange line with diamond markers (◇).

3. **PBFL:** Green line with upward-pointing triangle markers (△).

4. **Median:** Purple line with circle markers (○).

5. **Biscotti:** Gray line with star/asterisk markers (*).

6. **FoolsGold:** Brown line with downward-pointing triangle markers (▽).

7. **Ours:** Red line with pentagon markers (⬠).

### Detailed Analysis

The chart plots Accuracy (%) against Attack ratio (%). Below is an analysis of each data series, with approximate values read from the chart. Values are approximate due to visual estimation.

**1. FedAvg (Blue, □):**

* **Trend:** Starts high and remains nearly flat until a sharp decline after 40% attack ratio.

* **Data Points:** ~96% at 0%, ~96% at 10%, ~96% at 20%, ~96% at 30%, ~96% at 40%, ~92% at 50%.

**2. ShieldFL (Orange, ◇):**

* **Trend:** Very similar to FedAvg, maintaining high accuracy before a late decline.

* **Data Points:** ~96% at 0%, ~96% at 10%, ~96% at 20%, ~96% at 30%, ~96% at 40%, ~92.5% at 50%.

**3. PBFL (Green, △):**

* **Trend:** Follows the same high-accuracy plateau as FedAvg and ShieldFL but begins its decline slightly earlier.

* **Data Points:** ~96% at 0%, ~96% at 10%, ~96% at 20%, ~96% at 30%, ~96% at 40%, ~91% at 50%.

**4. Median (Purple, ○):**

* **Trend:** Starts slightly lower than the top group and shows a very gradual, linear decline.

* **Data Points:** ~94% at 0%, ~94% at 10%, ~93% at 20%, ~93% at 30%, ~93% at 40%, ~92% at 50%.

**5. Biscotti (Gray, *):**

* **Trend:** Exhibits the most severe and rapid degradation. It starts high but plummets dramatically between 20% and 30% attack ratio.

* **Data Points:** ~96% at 0%, ~95% at 10%, ~93% at 20%, **~73% at 30%**, ~72% at 40%, ~68% at 50%.

**6. FoolsGold (Brown, ▽):**

* **Trend:** Starts lower than most and shows a steady, moderate decline.

* **Data Points:** ~94.5% at 0%, ~94% at 10%, ~90.5% at 20%, ~90% at 30%, ~88.5% at 40%, ~88.5% at 50%.

**7. Ours (Red, ⬠):**

* **Trend:** Maintains the highest and most stable accuracy across the entire range, with only a very slight dip at the highest attack ratio.

* **Data Points:** ~96% at 0%, ~96% at 10%, ~96% at 20%, ~96% at 30%, ~96% at 40%, ~95% at 50%.

### Key Observations

1. **Performance Clustering:** Three distinct performance clusters are visible:

* **High-Resilience Group:** "Ours", FedAvg, ShieldFL, and PBFL maintain ~96% accuracy until 40% attack ratio.

* **Moderate-Resilience Group:** "Median" and "FoolsGold" start lower and degrade more gradually.

* **Low-Resilience Outlier:** "Biscotti" experiences a catastrophic failure, losing over 20 percentage points of accuracy between 20% and 30% attack ratio.

2. **Critical Threshold:** The 20%-30% attack ratio range is a critical point where the performance of several methods diverges sharply.

3. **Top Performer:** The method labeled "Ours" demonstrates superior robustness, maintaining near-peak accuracy even at a 50% attack ratio, while all other methods show some decline.

### Interpretation

This chart is a robustness evaluation of federated learning algorithms against poisoning attacks (where a fraction of participants are malicious). The "Attack ratio (%)" represents the proportion of malicious clients.

* **What the data suggests:** The proposed method ("Ours") is significantly more robust to adversarial attacks than the six baseline methods compared. It maintains model accuracy even when half the participants are malicious.

* **How elements relate:** The x-axis (attack strength) is the independent variable testing the systems. The y-axis (accuracy) is the dependent variable measuring system performance. The legend identifies the different defensive strategies being tested.

* **Notable trends/anomalies:**

* The **Biscotti** method's sharp drop indicates a specific vulnerability that is triggered once the attack ratio exceeds 20%. This is a critical failure mode.

* The **FedAvg, ShieldFL, and PBFL** methods show a "cliff-edge" failure pattern—they are highly effective up to a point (40% attack ratio) but then degrade quickly.

* The **"Ours"** method's flat line suggests it employs a fundamentally different or more effective defense mechanism that does not degrade linearly or catastrophically with increased attack strength.

* **Peircean Investigation:** The chart is an **index** of resilience (the lines point to the performance outcome) and a **symbol** of a comparative study (the legend encodes the meaning of each line). The stark visual contrast between the red "Ours" line and the plummeting gray "Biscotti" line is the most significant **iconic** representation of the claimed improvement. The data argues that the new method solves a stability problem present in prior work.

</details>

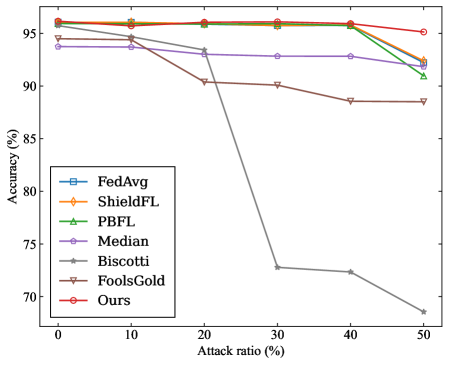

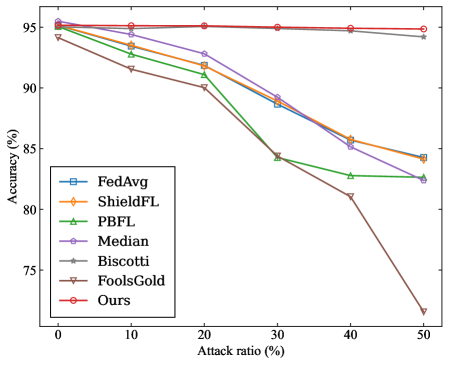

(b) MNIST ( $\alpha$ =0.8)

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Accuracy of Federated Learning Defense Mechanisms Under Poisoning Attacks

### Overview

This image is a line chart comparing the performance (accuracy) of seven different federated learning defense mechanisms as the ratio of malicious attacks increases. The chart demonstrates how each method's accuracy degrades under increasing adversarial pressure.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:** Labeled **"Attack ratio (%)"**. It represents the percentage of malicious participants or updates in the federated learning system. The axis has major tick marks at 0, 10, 20, 30, 40, and 50.

* **Y-Axis:** Labeled **"Accuracy (%)"**. It represents the model's classification accuracy. The axis ranges from approximately 57.5% to 76%, with major tick marks at 57.5, 60, 62.5, 65, 67.5, 70, 72.5, and 75.

* **Legend:** Located in the **bottom-left quadrant** of the plot area. It lists seven data series with corresponding line colors and marker styles:

1. **FedAvg** (Light blue line, square marker `□`)

2. **ShieldFL** (Orange line, plus marker `+`)

3. **PBFL** (Green line, upward-pointing triangle marker `△`)

4. **Median** (Purple line, circle marker `○`)

5. **Biscotti** (Gray line, left-pointing triangle marker `◁`)

6. **FoolsGold** (Brown line, downward-pointing triangle marker `▽`)

7. **Ours** (Red line, pentagon marker `⬠`)

### Detailed Analysis

The chart plots Accuracy (%) against Attack ratio (%). Below is an analysis of each data series, including approximate values and visual trends.

**Trend Verification & Data Points (Approximate):**

1. **FedAvg (Light blue, `□`):**

* **Trend:** Starts high, remains relatively stable until a 30% attack ratio, then declines sharply.

* **Points:** (0%, ~75.5%), (10%, ~75.0%), (20%, ~73.5%), (30%, ~73.5%), (40%, ~69.5%), (50%, ~68.5%).

2. **ShieldFL (Orange, `+`):**

* **Trend:** Starts as the highest, shows a steady, near-linear decline across all attack ratios.

* **Points:** (0%, ~76.0%), (10%, ~75.0%), (20%, ~73.5%), (30%, ~73.5%), (40%, ~69.0%), (50%, ~69.0%).

3. **PBFL (Green, `△`):**

* **Trend:** Starts very high, declines gradually and consistently.

* **Points:** (0%, ~75.5%), (10%, ~74.5%), (20%, ~74.0%), (30%, ~72.0%), (40%, ~71.0%), (50%, ~68.5%).

4. **Median (Purple, `○`):**

* **Trend:** Starts significantly lower than the top group, shows a very gradual, shallow decline.

* **Points:** (0%, ~68.5%), (10%, ~69.0%), (20%, ~68.0%), (30%, ~67.5%), (40%, ~64.5%), (50%, ~64.0%).

5. **Biscotti (Gray, `◁`):**

* **Trend:** Starts moderately high but exhibits the steepest and most consistent decline of all methods.

* **Points:** (0%, ~73.0%), (10%, ~70.0%), (20%, ~66.5%), (30%, ~64.5%), (40%, ~61.5%), (50%, ~56.5%).

6. **FoolsGold (Brown, `▽`):**

* **Trend:** Starts high, declines steadily, with a particularly sharp drop between 30% and 40% attack ratio.

* **Points:** (0%, ~73.5%), (10%, ~73.5%), (20%, ~72.5%), (30%, ~71.5%), (40%, ~67.0%), (50%, ~62.5%).

7. **Ours (Red, `⬠`):**

* **Trend:** Starts among the top group, maintains high accuracy robustly until a 30% attack ratio, then declines but remains competitive.

* **Points:** (0%, ~75.5%), (10%, ~74.0%), (20%, ~73.5%), (30%, ~73.5%), (40%, ~69.0%), (50%, ~68.5%).

### Key Observations

1. **Performance Hierarchy at Low Attack (0%):** ShieldFL, FedAvg, PBFL, and "Ours" are tightly clustered at the top (~75.5-76%). FoolsGold and Biscotti are slightly lower (~73-73.5%). Median is a clear outlier, starting much lower (~68.5%).

2. **Robustness to Increasing Attacks:** The method labeled **"Ours"** and **FedAvg** show a "plateau-then-drop" pattern, maintaining high accuracy up to a 30% attack ratio before declining. **ShieldFL** and **PBFL** show a more linear, graceful degradation.

3. **Most Vulnerable Method:** **Biscotti** demonstrates the poorest robustness, suffering the most severe and consistent drop in accuracy, falling from ~73% to ~56.5%.

4. **Critical Threshold:** A notable inflection point occurs for several methods (FedAvg, FoolsGold, "Ours") between **30% and 40% attack ratio**, where the rate of accuracy loss increases sharply.

5. **Final Ranking at 50% Attack:** The order from highest to lowest accuracy is approximately: ShieldFL ≈ PBFL ≈ FedAvg ≈ "Ours" (all ~68.5-69%) > Median (~64%) > FoolsGold (~62.5%) > Biscotti (~56.5%).

### Interpretation

This chart is a comparative robustness analysis for federated learning (FL) systems under data poisoning attacks. The "Attack ratio" simulates an adversary controlling a fraction of the participating clients or their model updates.