## [Diagram]: Transformer Language Model with Depth and Width Pruning

### Overview

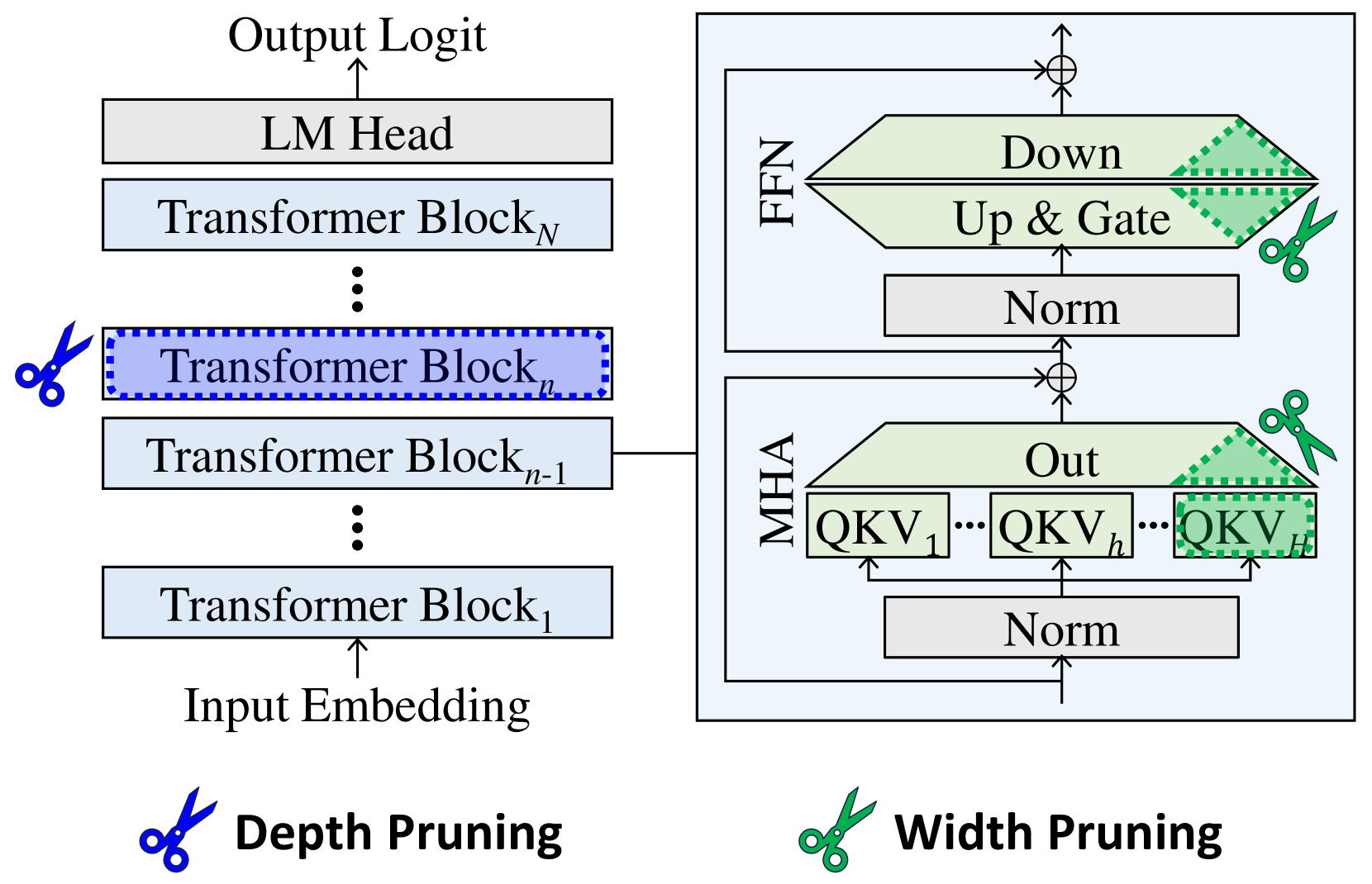

The image is a technical diagram illustrating a **Transformer-based language model** architecture, with two model compression techniques: **Depth Pruning** (removing entire Transformer blocks) and **Width Pruning** (pruning components within a Transformer block). The left side shows the overall model structure, while the right side provides a detailed view of a single Transformer block’s internal components.

### Components (Diagram Elements)

#### Left Side (Model Architecture)

- **Input Embedding**: Feeds into the first Transformer block (`Transformer Block₁`).

- **Transformer Blocks**: Stacked sequentially from `Transformer Block₁` to `Transformer Blockₙ`.

  - `Transformer Blockₙ` (top) connects to the **LM Head** (Language Model Head), which produces the **Output Logit**.

  - `Transformer Blockₙ₋₁` (middle) is highlighted with a blue dashed border and a **blue scissors icon** (labeled *Depth Pruning*), indicating pruning of entire blocks.

- **Depth Pruning**: Blue scissors icon + label *“Depth Pruning”* (bottom-left) denotes removing entire Transformer blocks to reduce model depth.
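Depth pruning as drawn on the left side can be sketched as dropping entries from an ordered stack of blocks. The following is a minimal illustration, not the method of any particular paper or library: `Block`, `depth_prune`, `run_stack`, and `make_block` are hypothetical names, and each toy "block" simply adds a constant instead of computing attention and feed-forward layers.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

Vector = List[float]


@dataclass
class Block:
    """Stand-in for one Transformer block (hypothetical; real blocks hold MHA and FFN weights)."""
    name: str
    fn: Callable[[Vector], Vector]


def depth_prune(blocks: Sequence[Block], drop: Sequence[int]) -> List[Block]:
    """Return the stack with the blocks at the given 0-based indices removed."""
    drop_set = set(drop)
    return [b for i, b in enumerate(blocks) if i not in drop_set]


def run_stack(blocks: Sequence[Block], x: Vector) -> Vector:
    """Apply the blocks in order, mirroring Block_1 -> ... -> Block_N in the diagram."""
    for b in blocks:
        x = b.fn(x)
    return x


def make_block(i: int) -> Block:
    # Toy behaviour (assumed): block i+1 adds (i+1) to every element.
    return Block(name=f"block_{i + 1}", fn=lambda x, i=i: [v + (i + 1) for v in x])


stack = [make_block(i) for i in range(4)]   # N = 4 blocks
pruned = depth_prune(stack, drop=[2])       # remove block N-1 (index 2 when N = 4)

print([b.name for b in pruned])
print(run_stack(stack, [0.0]), run_stack(pruned, [0.0]))
```

The remaining blocks keep their original order and weights; only the stack gets shorter. Choosing which blocks to drop is a separate question that the diagram does not address.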

#### Right Side (Transformer Block Detail)

- **Transformer Blockₙ₋₁** (connected to the left) is expanded to show internal components:

  - **MHA (Multi-Head Attention)**:

    - Contains per-head `QKV` projections, apparently `QKV₁` through `QKVₕ`, with a **green scissors icon** on `QKVₕ` indicating pruning of attention heads.

    - Includes an *“Out”* (output) layer and a *“Norm”* (normalization) layer below MHA.

  - **FFN (Feed-Forward Network)**:

    - Contains *“Up & Gate”* (the input-side projections) and *“Down”* (the output projection), with a **green scissors icon** on the FFN indicating pruning of FFN components.

    - Includes a *“Norm”* (normalization) layer below FFN.

- **Width Pruning**: Green scissors icon + label *“Width Pruning”* (bottom-right) denotes pruning components *within* a Transformer block (e.g., attention heads in MHA, neurons in FFN).
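The attention-head half of width pruning can be sketched as slicing weights by head. This is a minimal illustration under assumed conventions: one `QKV` matrix per head, and an "Out" matrix whose rows are grouped by head. The function name `prune_heads`, the layout, and the toy sizes are all assumptions made for the example, not details taken from the diagram.

```python
from typing import List, Sequence, Tuple

Matrix = List[List[float]]  # row-major nested lists


def prune_heads(
    qkv_heads: List[Matrix],
    out_proj: Matrix,
    head_dim: int,
    keep: Sequence[int],
) -> Tuple[List[Matrix], Matrix]:
    """Keep only the listed heads (0-based indices).

    qkv_heads: one QKV weight matrix per head (QKV_1 ... QKV_H).
    out_proj:  "Out" weights with H * head_dim rows, grouped by head (assumed layout).
    """
    kept_qkv = [qkv_heads[h] for h in keep]
    kept_out: Matrix = []
    for h in keep:
        # Each head owns a contiguous slice of head_dim rows in the Out projection.
        kept_out.extend(out_proj[h * head_dim:(h + 1) * head_dim])
    return kept_qkv, kept_out


# Toy sizes (assumed): d_model = 8, H = 4 heads, head_dim = 2.
d_model, num_heads, head_dim = 8, 4, 2
qkv_heads = [
    [[float(h)] * (3 * head_dim) for _ in range(d_model)] for h in range(num_heads)
]
out_proj = [[float(r // head_dim)] * d_model for r in range(num_heads * head_dim)]

# Drop the last head, the one marked with scissors in the diagram.
kept_qkv, kept_out = prune_heads(qkv_heads, out_proj, head_dim, keep=[0, 1, 2])
print(len(kept_qkv), len(kept_out), len(kept_out[0]))
```

Removing a head shrinks both the per-head `QKV` weights and the matching rows of "Out", while the block's input and output width (`d_model`) stays the same.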

### Detailed Analysis (Component Breakdown)

- **Depth Pruning (Left)**: The blue scissors icon next to `Transformer Blockₙ₋₁` (blue dashed border) shows that entire Transformer blocks (layers) can be removed, compressing the model by reducing its number of layers.

- **Width Pruning (Right)**: Within a Transformer block (`Transformer Blockₙ₋₁`), two components are pruned:

  - **MHA (Multi-Head Attention)**: The green scissors on `QKVₕ` (one of the attention heads) indicates pruning of attention heads (reducing the number of parallel attention mechanisms).

  - **FFN (Feed-Forward Network)**: The green scissors on the FFN (spanning *“Up & Gate”* and *“Down”*) indicates pruning of FFN neurons (reducing the intermediate size of the feed-forward network).

- **Transformer Block Structure (Right)**: Each block has:

  - MHA (with `QKV` heads, *“Out”*, and *“Norm”*).

  - FFN (with *“Up & Gate”*, *“Down”*, and *“Norm”*).

- Arrows show data flow: Input Embedding → `Transformer Block₁` → ... → `Transformer Blockₙ₋₁` → `Transformer Blockₙ` → LM Head → Output Logit. The right-side panel is an expanded view of `Transformer Blockₙ₋₁`, not an additional stage in the flow.
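The FFN half of width pruning can be sketched with a gated feed-forward layer. The diagram only names "Up & Gate" and "Down"; the specific form used here, `Down(silu(x·Gate) * (x·Up))`, is one common gated variant and is an assumption, as are the helper names `vec_mat`, `gated_ffn`, and `prune_ffn`. The toy weights are contrived so that the removed neuron has an all-zero "Down" row, which makes its removal exactly lossless; in general, pruning changes the output.

```python
import math
from typing import List, Sequence, Tuple

Matrix = List[List[float]]  # row-major nested lists
Vector = List[float]


def vec_mat(x: Vector, w: Matrix) -> Vector:
    """Row vector times matrix: x has len(w) entries, the result has len(w[0])."""
    return [sum(xi * row[j] for xi, row in zip(x, w)) for j in range(len(w[0]))]


def silu(v: float) -> float:
    return v / (1.0 + math.exp(-v))


def gated_ffn(x: Vector, up: Matrix, gate: Matrix, down: Matrix) -> Vector:
    """Assumed gated form: Down(silu(x @ Gate) * (x @ Up))."""
    u = vec_mat(x, up)
    g = vec_mat(x, gate)
    hidden = [silu(gj) * uj for gj, uj in zip(g, u)]
    return vec_mat(hidden, down)


def prune_ffn(
    up: Matrix, gate: Matrix, down: Matrix, keep: Sequence[int]
) -> Tuple[Matrix, Matrix, Matrix]:
    """Keep only the listed intermediate neurons.

    A neuron is a column of Up and Gate and the matching row of Down.
    """
    up_k = [[row[j] for j in keep] for row in up]
    gate_k = [[row[j] for j in keep] for row in gate]
    down_k = [down[j] for j in keep]
    return up_k, gate_k, down_k


# Toy sizes (assumed): d_model = 2, d_ff = 3. Neuron 2 has a zero Down row.
up = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
gate = [[0.5, -0.5, 1.0], [1.0, 0.0, -1.0]]
down = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
x = [1.0, -1.0]

full = gated_ffn(x, up, gate, down)
up_k, gate_k, down_k = prune_ffn(up, gate, down, keep=[0, 1])
pruned = gated_ffn(x, up_k, gate_k, down_k)
print(full, pruned)
```

Pruning a neuron removes matching slices from all three FFN matrices at once, which is why the scissors in the diagram span both "Up & Gate" and "Down".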

### Key Observations

- **Pruning Types**: Two distinct pruning strategies:

  - *Depth Pruning*: Removes entire Transformer blocks (layers) to reduce model depth.

  - *Width Pruning*: Prunes components *within* a block (attention heads in MHA, neurons in FFN) to reduce model width.

- **Visual Cues**: Blue scissors (Depth) vs. Green scissors (Width) distinguish pruning types. A dashed blue box highlights the target block for depth pruning.

- **Labels**: All text in the diagram is in English. Key labels: *Input Embedding*, *Transformer Block₁*, ..., *Transformer Blockₙ*, *LM Head*, *Output Logit*, *MHA*, *QKV₁*, *QKVₕ*, *Out*, *Norm*, *FFN*, *Up & Gate*, *Down*, *Depth Pruning*, *Width Pruning*.

### Interpretation

This diagram explains how to compress a Transformer-based language model using two complementary pruning techniques:

- **Depth Pruning** reduces model depth by removing entire layers (Transformer blocks), which can decrease computational cost and memory usage but may impact performance if critical layers are removed.

- **Width Pruning** reduces model width by pruning redundant components *within* layers (e.g., attention heads in MHA, neurons in FFN), preserving depth but reducing per-layer complexity.

The diagram shows that model compression can target both *layer-wise* (depth) and *component-wise* (width) redundancy, using scissors icons and labels to mark each method. The right-side detail shows the internal structure of a Transformer block and where width pruning occurs (MHA heads and FFN), while the left side illustrates depth pruning (removing blocks). Because the two techniques target different sources of redundancy, they offer separate ways to trade model size against performance.
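To make the size trade-off concrete, the weight count of the block stack can be worked out for both strategies. This is a back-of-the-envelope sketch: the configuration values are illustrative and not taken from the diagram, the function names are hypothetical, and the count ignores biases, Norm parameters, the input embedding, and the LM head.

```python
def block_params(d_model: int, num_heads: int, head_dim: int, d_ff: int) -> int:
    """Weights in one block (assumed simplification: no biases, no Norm parameters)."""
    attn = 3 * d_model * num_heads * head_dim   # QKV projections for all heads
    attn += num_heads * head_dim * d_model      # Out projection
    ffn = 2 * d_model * d_ff                    # Up & Gate
    ffn += d_ff * d_model                       # Down
    return attn + ffn


def stack_params(num_blocks: int, d_model: int, num_heads: int, head_dim: int, d_ff: int) -> int:
    """Weights in the whole stack of identical blocks."""
    return num_blocks * block_params(d_model, num_heads, head_dim, d_ff)


# Illustrative baseline (assumed): 12 blocks, d_model = 768, 12 heads of size 64, d_ff = 2048.
baseline = stack_params(12, 768, 12, 64, 2048)

# Depth pruning: remove 3 of the 12 blocks, leave each block untouched.
depth_pruned = stack_params(9, 768, 12, 64, 2048)

# Width pruning: keep all 12 blocks, remove 3 heads and a quarter of the FFN neurons in each.
width_pruned = stack_params(12, 768, 9, 64, 1536)

print(baseline, depth_pruned, width_pruned)
print(depth_pruned / baseline, width_pruned / baseline)
```

With these particular numbers both strategies keep 75% of the stack's weights, so the choice between them comes down to how much accuracy and latency each one costs, which parameter counts alone do not capture.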