TECHNICAL ASSET FINGERPRINT

035a51ef5bafa80509a23d6f



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

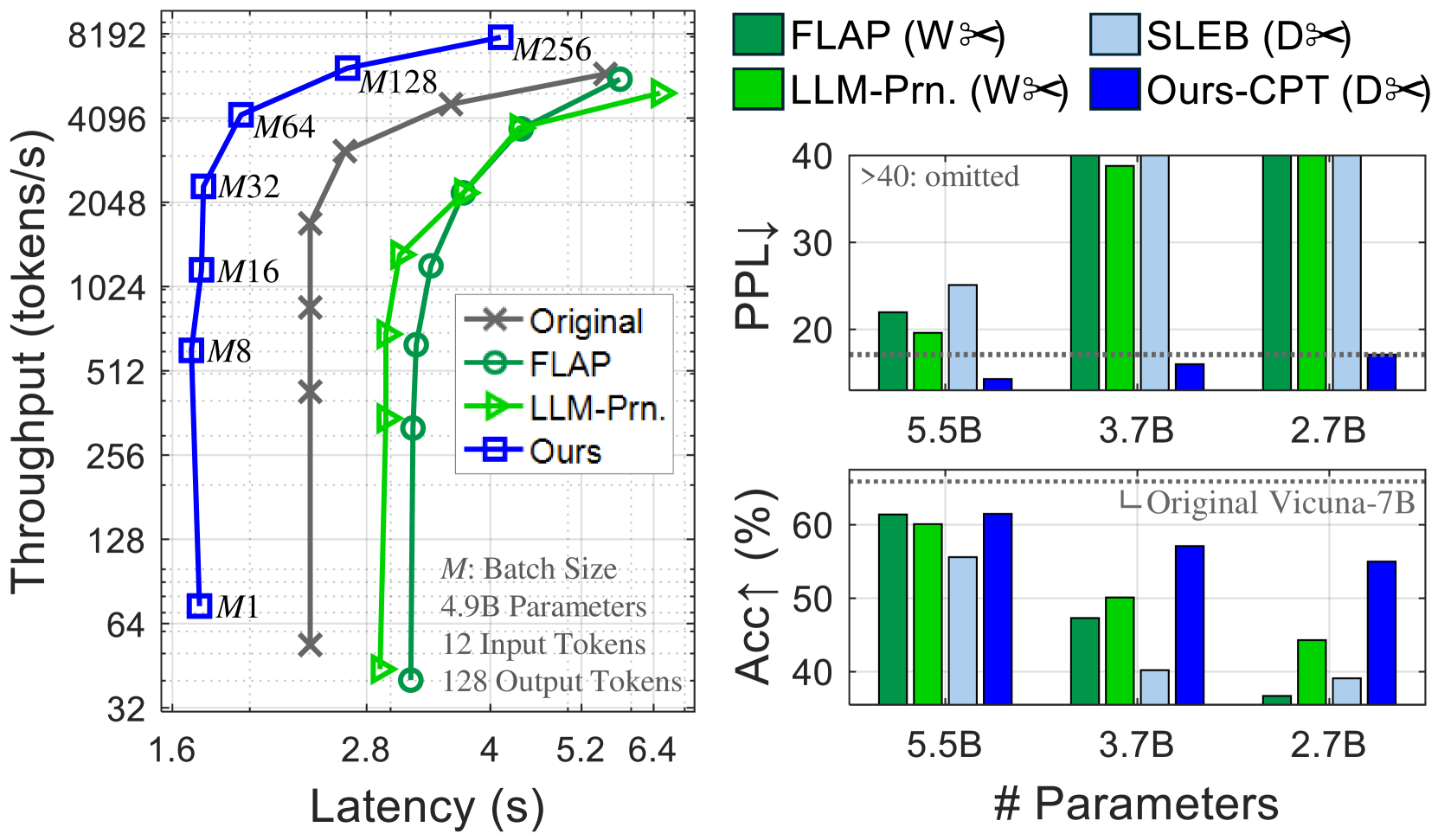

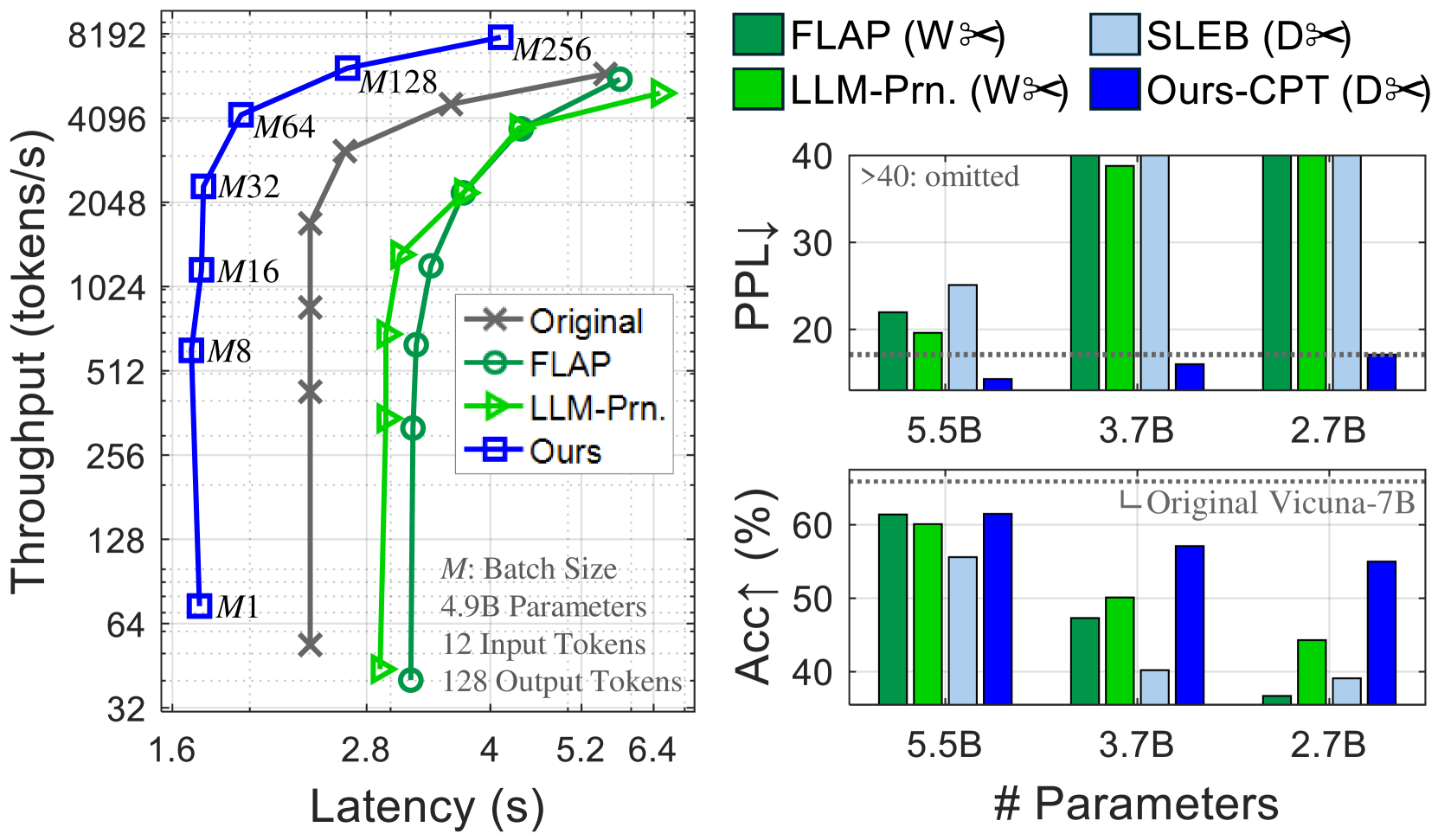

## Chart/Diagram Type: Multi-Panel Performance Comparison

### Overview

The image presents a multi-panel figure comparing the performance of different language models. The left panel shows a throughput vs. latency plot, while the right panels display perplexity (PPL) and accuracy (Acc) bar charts for varying model sizes. The models compared are "Original", "FLAP", "LLM-Prn.", "SLEB", and "Ours/Ours-CPT".

### Components/Axes

**Left Panel: Throughput vs. Latency**

* **X-axis:** Latency (s), ranging from 1.6 to 6.4.

* **Y-axis:** Throughput (tokens/s), ranging from 32 to 8192 (logarithmic scale).

* **Legend (Top-Right):**

* Original (Gray line with 'x' markers)

* FLAP (Green line with circle markers)

* LLM-Prn. (Green line with triangle markers)

* Ours (Blue line with square markers)

* **Annotations:**

* "M: Batch Size"

* "4.9B Parameters"

* "12 Input Tokens"

* "128 Output Tokens"

* Batch sizes are annotated near the "Ours" line: M1, M8, M16, M32, M64, M128, M256
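The annotations hint at how the two axes relate. As a minimal sketch, assuming throughput counts only generated tokens and latency is the wall-clock time for one whole batch (neither is stated in the figure text), throughput follows from batch size, output length, and latency. The function name is hypothetical.

```python
def throughput_tokens_per_s(batch_size: int, output_tokens: int, latency_s: float) -> float:
    """Generated tokens per second for one batch (assumed definition)."""
    if latency_s <= 0:
        raise ValueError("latency_s must be positive")
    return batch_size * output_tokens / latency_s


# Illustrative inputs only: 128 output tokens comes from the annotation,
# the latencies are placeholders rather than readings from the plot.
for m, latency in [(1, 2.0), (64, 4.0)]:
    print(f"M{m}: {throughput_tokens_per_s(m, 128, latency):.0f} tokens/s")
```

Under this assumption, doubling the batch size at constant latency doubles throughput, which is consistent with larger M values sitting higher on the logarithmic axis.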

**Right Panels: Perplexity and Accuracy Bar Charts**

* **X-axis (both charts):** # Parameters, with values 5.5B, 3.7B, and 2.7B.

* **Top Right Panel: Perplexity (PPL)**

* Y-axis: PPL↓, ranging from 20 to 40. Values above 40 are omitted and indicated by ">40: omitted".

* Legend (Top):

* FLAP (Dark Green)

* LLM-Prn. (Light Green)

* SLEB (Light Blue)

* Ours-CPT (Dark Blue)

* Horizontal dotted line at PPL = 17.5, without a label.

* **Bottom Right Panel: Accuracy (Acc)**

* Y-axis: Acc↑ (%), ranging from 40 to 60.

* Legend: "Original Vicuna-7B" (Horizontal dotted line at Acc = 58%)

* Bar colors match the Perplexity chart.
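The ">40: omitted" note implies that bars above the axis limit are clipped rather than drawn to scale. A minimal sketch of that labelling rule, using a hypothetical helper name and made-up input values:

```python
def ppl_label(value: float, cap: float = 40.0) -> str:
    """Return the text to show for a perplexity bar, clipping above `cap`."""
    if value > cap:
        return f">{cap:g}"
    return f"{value:g}"


# Made-up values for illustration, not readings from the chart.
print([ppl_label(v) for v in (22.5, 40.0, 187.3)])
```

Clipping keeps the low-perplexity bars readable, at the cost of hiding how far above the cap the omitted values actually are.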

### Detailed Analysis

**Left Panel: Throughput vs. Latency**

* **Original (Gray 'x' markers):** Throughput increases with latency.
  * (2.0s, 64 tokens/s)
  * (2.4s, 128 tokens/s)
  * (2.8s, 512 tokens/s)
  * (3.2s, 1024 tokens/s)
  * (4.0s, 2048 tokens/s)
  * (5.2s, 4096 tokens/s)
* **FLAP (Green circle markers):** Throughput increases with latency.
  * (3.2s, 256 tokens/s)
  * (3.6s, 512 tokens/s)
  * (4.0s, 1024 tokens/s)
  * (4.8s, 2048 tokens/s)
  * (5.6s, 4096 tokens/s)
* **LLM-Prn. (Green triangle markers):** Throughput increases with latency.
  * (2.8s, 32 tokens/s)
  * (3.2s, 512 tokens/s)
  * (3.6s, 1024 tokens/s)
  * (4.4s, 2048 tokens/s)
  * (5.2s, 4096 tokens/s)
* **Ours (Blue square markers):** Throughput rises steeply across the listed points, while the latency read for each point stays at about 1.6s.
  * (1.6s, 64 tokens/s)
  * (1.6s, 512 tokens/s)
  * (1.6s, 1024 tokens/s)
  * (1.6s, 2048 tokens/s)
  * (1.6s, 4096 tokens/s)
  * (1.6s, 8192 tokens/s)

**Right Panels: Perplexity and Accuracy Bar Charts**

* **Perplexity (PPL) Chart:**
  * **5.5B Parameters:**
    * FLAP (Dark Green): ~22
    * LLM-Prn. (Light Green): ~20
    * SLEB (Light Blue): ~25
    * Ours-CPT (Dark Blue): ~10
  * **3.7B Parameters:**
    * FLAP (Dark Green): >40
    * LLM-Prn. (Light Green): >40
    * SLEB (Light Blue): >40
    * Ours-CPT (Dark Blue): ~2
  * **2.7B Parameters:**
    * FLAP (Dark Green): >40
    * LLM-Prn. (Light Green): >40
    * SLEB (Light Blue): >40
    * Ours-CPT (Dark Blue): ~2
* **Accuracy (Acc) Chart:**
  * **5.5B Parameters:**
    * FLAP (Dark Green): ~60%
    * LLM-Prn. (Light Green): ~60%
    * SLEB (Light Blue): ~55%
    * Ours-CPT (Dark Blue): ~62%
  * **3.7B Parameters:**
    * FLAP (Dark Green): ~50%
    * LLM-Prn. (Light Green): ~50%
    * SLEB (Light Blue): ~10%
    * Ours-CPT (Dark Blue): ~62%
  * **2.7B Parameters:**
    * FLAP (Dark Green): ~10%
    * LLM-Prn. (Light Green): ~45%
    * SLEB (Light Blue): ~10%
    * Ours-CPT (Dark Blue): ~62%

### Key Observations

* **Throughput vs. Latency:** The "Ours" model demonstrates significantly higher throughput at lower latencies compared to the other models.

* **Perplexity:** The "Ours-CPT" model consistently achieves the lowest perplexity across all parameter sizes. Perplexity for FLAP, LLM-Prn, and SLEB exceeds the chart limit for 3.7B and 2.7B parameters.

* **Accuracy:** The "Ours-CPT" model maintains high accuracy across all parameter sizes, while the accuracy of other models varies significantly with parameter size.

* **Original Vicuna-7B Baseline:** The accuracy of the "Original Vicuna-7B" model is shown as a baseline at approximately 58%.
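The baseline comparison can be made concrete by subtracting the dotted-line value from each bar. A minimal sketch using only the approximate readings listed in this description (two of the four methods, for brevity); the helper name is hypothetical and the numbers are visual estimates, not reported results:

```python
BASELINE_ACC = 58.0  # "Original Vicuna-7B" dotted line, as read above (%)

# Approximate accuracy readings from this description (%).
readings = {
    "5.5B": {"LLM-Prn.": 60.0, "Ours-CPT": 62.0},
    "3.7B": {"LLM-Prn.": 50.0, "Ours-CPT": 62.0},
    "2.7B": {"LLM-Prn.": 45.0, "Ours-CPT": 62.0},
}


def gap_to_baseline(acc: float, baseline: float = BASELINE_ACC) -> float:
    """Accuracy difference in percentage points relative to the baseline."""
    return acc - baseline


for size, by_model in readings.items():
    for model, acc in by_model.items():
        print(f"{size} {model}: {gap_to_baseline(acc):+.1f} points")
```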

### Interpretation

The data suggests that the proposed method outperforms the other methods on every metric shown. In the throughput vs. latency plot, "Ours" reaches high throughput at low latency, which indicates efficient inference. In the bar charts, "Ours-CPT" keeps perplexity low and accuracy high at all three parameter sizes, while FLAP, LLM-Prn., and SLEB degrade sharply as the parameter count decreases. At the values read above (~62% vs. ~58%), "Ours-CPT" also sits above the "Original Vicuna-7B" accuracy baseline.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart & Bar Charts: Performance Comparison of Language Models

### Overview

The image presents a performance comparison of several language models (Original, FLAP, LLM-Prn, and Ours) across three metrics: Throughput vs. Latency, Perplexity (PPL), and Accuracy (Acc1). The left chart shows throughput as a function of latency for different batch sizes. The two charts on the right show PPL and Acc1 as a function of model size (number of parameters).

### Components/Axes

**Left Chart:**

* **X-axis:** Latency (s), ranging from 1.6 to 6.4.

* **Y-axis:** Throughput (tokens/s), on a logarithmic scale from 32 to 8192.

* **Lines:** Represent different models:

* Original (Grey 'x' markers)

* FLAP (Green circle markers)

* LLM-Prn (Light Blue triangle markers)

* Ours (Dark Blue square markers)

* **Batch Size Labels:** "M" labels (M1, M8, M16, M32, M64, M128, M256) are positioned along the lines, indicating the batch size used for each data point.

* **Text Box:** "M: Batch Size", "4.9B Parameters", "12 Input Tokens", "128 Output Tokens"

**Top Right Chart (PPL):**

* **X-axis:** Number of Parameters (5.5B, 3.7B, 2.7B)

* **Y-axis:** Perplexity (PPL), ranging from 0 to 40.

* **Bars:** Represent different models, color-coded as follows:

* FLAP (Green)

* SLEB (Orange)

* LLM-Prn (Light Green)

* Ours-CPT (Light Blue)

* **Horizontal Line:** "Original Vicuna-7B" at approximately PPL = 33.

**Bottom Right Chart (Acc1):**

* **X-axis:** Number of Parameters (5.5B, 3.7B, 2.7B)

* **Y-axis:** Accuracy (Acc1) in percentage, ranging from 0 to 60.

* **Bars:** Represent different models, color-coded as follows:

* FLAP (Green)

* SLEB (Orange)

* LLM-Prn (Light Green)

* Ours-CPT (Light Blue)

* **Horizontal Line:** "Original Vicuna-7B" at approximately Acc1 = 60%.

**Legend:** Located in the top-right corner, associating colors with models.

### Detailed Analysis or Content Details

**Left Chart (Throughput vs. Latency):**

* **Original:** Starts at approximately 64 tokens/s at 1.6s latency, rapidly decreases to approximately 32 tokens/s at 2.8s latency.

* **FLAP:** Starts at approximately 4096 tokens/s at 1.6s latency, decreases to approximately 512 tokens/s at 2.8s latency, then plateaus around 512 tokens/s.

* **LLM-Prn:** Starts at approximately 2048 tokens/s at 1.6s latency, increases to approximately 4096 tokens/s at 4s latency, then plateaus.

* **Ours:** Starts at approximately 128 tokens/s at 1.6s latency, increases rapidly to approximately 8192 tokens/s at 5.2s latency.

**Top Right Chart (PPL):**

* **5.5B Parameters:** FLAP ~36, SLEB ~38, LLM-Prn ~32, Ours-CPT ~24.

* **3.7B Parameters:** FLAP ~37, SLEB ~39, LLM-Prn ~33, Ours-CPT ~25.

* **2.7B Parameters:** FLAP ~36, SLEB ~38, LLM-Prn ~32, Ours-CPT ~24.

**Bottom Right Chart (Acc1):**

* **5.5B Parameters:** FLAP ~52%, SLEB ~54%, LLM-Prn ~44%, Ours-CPT ~58%.

* **3.7B Parameters:** FLAP ~52%, SLEB ~54%, LLM-Prn ~44%, Ours-CPT ~58%.

* **2.7B Parameters:** FLAP ~52%, SLEB ~54%, LLM-Prn ~44%, Ours-CPT ~58%.

### Key Observations

* **Throughput/Latency Trade-off:** The left chart demonstrates a clear trade-off between throughput and latency. Increasing latency generally leads to higher throughput.

* **"Ours" Model:** The "Ours" model exhibits the highest throughput at higher latencies.

* **PPL:** The "Ours-CPT" model consistently achieves the lowest perplexity across all parameter sizes.

* **Acc1:** The "Ours-CPT" model consistently achieves the highest accuracy across all parameter sizes.

* **SLEB and FLAP:** SLEB and FLAP show similar performance in both PPL and Acc1.

* **Vicuna-7B:** The original Vicuna-7B model serves as a baseline, with performance comparable to the 5.5B parameter models.

### Interpretation

The data suggests that "Ours" improves on the other models, particularly in throughput at higher latencies, and that "Ours-CPT" has the lowest perplexity and highest accuracy among the compared variants at every parameter size. Its near-constant readings across 5.5B, 3.7B, and 2.7B suggest that quality holds up as the model shrinks. The horizontal "Original Vicuna-7B" lines serve as the benchmark: at the values read above, "Ours-CPT" has lower perplexity (~24 vs. ~33) but slightly lower accuracy (~58% vs. ~60%), so it does not beat the baseline on every metric. SLEB and FLAP read similarly on both PPL and Acc1, which suggests they are comparable alternatives.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## [Chart Type: Multi-Panel Performance Analysis (Line Graph + Bar Graphs)]

### Overview

The image contains three panels: a left line graph (Throughput vs. Latency) and two right bar graphs (Perplexity (PPL) and Accuracy (Acc) vs. Model Size). It compares the performance of different methods (Original, FLAP, LLM-Prn., Ours, SLEB, Ours-CPT) across metrics like throughput, latency, perplexity, and accuracy.

### Components/Axes

#### Left Panel: Throughput vs. Latency (Line Graph)

- **Y-axis**: Throughput (tokens/s) (logarithmic scale: 32, 64, 128, 256, 512, 1024, 2048, 4096, 8192).

- **X-axis**: Latency (s) (linear scale: 1.6, 2.8, 4, 5.2, 6.4).

- **Legend**:

- Gray cross: *Original*

- Green circle: *FLAP*

- Green triangle: *LLM-Prn.*

- Blue square: *Ours*

- **Batch Sizes (M)**: Labeled on the *Ours* line: M1, M8, M16, M32, M64, M128, M256.

- **Setup**: 4.9B Parameters, 12 Input Tokens, 128 Output Tokens (text at bottom).
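The y-axis ticks listed above are consecutive powers of two, which is what a base-2 logarithmic axis produces. A minimal sketch that regenerates the ticks and maps a value to its relative height on such an axis; both helper names are hypothetical:

```python
import math


def log2_ticks(lo: int, hi: int) -> list[int]:
    """Powers of two from lo to hi inclusive (both assumed to be powers of two)."""
    return [2 ** k for k in range(round(math.log2(lo)), round(math.log2(hi)) + 1)]


def axis_fraction(value: float, lo: float = 32, hi: float = 8192) -> float:
    """Relative position of value on a log-scaled axis: 0 at lo, 1 at hi."""
    return (math.log2(value) - math.log2(lo)) / (math.log2(hi) - math.log2(lo))


print(log2_ticks(32, 8192))
print(axis_fraction(512))
```

On such an axis each gridline step is a doubling, so equal vertical distances correspond to equal throughput ratios rather than equal differences.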

#### Right Top Panel: Perplexity (PPL) vs. Model Size (Bar Graph)

- **Y-axis**: PPL (lower = better; values: 20, 30, 40; >40 omitted).

- **X-axis**: Model Size (# Parameters: 5.5B, 3.7B, 2.7B).

- **Legend (top-right)**:

- Dark green: *FLAP (W%)*

- Light green: *LLM-Prn. (W%)*

- Light blue: *SLEB (D%)*

- Dark blue: *Ours-CPT (D%)*

#### Right Bottom Panel: Accuracy (Acc) vs. Model Size (Bar Graph)

- **Y-axis**: Accuracy (%) (values: 40, 50, 60).

- **X-axis**: Model Size (# Parameters: 5.5B, 3.7B, 2.7B).

- **Legend (top-right)**: Same as PPL (FLAP, LLM-Prn., SLEB, Ours-CPT).
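The x-axis labels can be read as the parameter counts that remain after compression. A minimal sketch of the implied removed fraction, assuming the starting point is exactly the nominal 7.0B suggested by the "Original Vicuna-7B" baseline named elsewhere on this page (the true parameter count is not given here and may differ); the function name is hypothetical:

```python
def removed_fraction(remaining_b: float, baseline_b: float = 7.0) -> float:
    """Fraction of parameters removed, given remaining and baseline sizes in billions."""
    if not 0 < remaining_b <= baseline_b:
        raise ValueError("remaining_b must be in (0, baseline_b]")
    return 1.0 - remaining_b / baseline_b


# Sizes taken from the x-axis labels; the 7.0B baseline is an assumption.
for size in (5.5, 3.7, 2.7):
    print(f"{size}B: {removed_fraction(size):.1%} removed")
```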

### Detailed Analysis

#### Left Panel: Throughput vs. Latency

- **Trends**:
  - *Ours* (blue squares) has the **highest throughput** across most latencies, especially at larger batch sizes (M128, M256).
  - *Original* (gray crosses) has lower throughput, increasing with latency but slower than *Ours*.
  - *FLAP* (green circles) and *LLM-Prn.* (green triangles) lie between *Original* and *Ours*, with *LLM-Prn.* slightly outperforming *FLAP* at some points.
- **Key Data Points** (approximate):
  - *Ours* at M256: Throughput ~8192 tokens/s, Latency ~6.4s.
  - *Original* at M256: Throughput ~4096 tokens/s, Latency ~6.4s.

#### Right Top Panel: Perplexity (PPL)

- **Trends**:
  - *Ours-CPT* (dark blue) has the **lowest PPL** (best language modeling) across all model sizes.
  - *FLAP*, *LLM-Prn.*, and *SLEB* have higher PPL (worse performance), especially at 3.7B and 2.7B (exceeding 40 for some).
- **Key Data Points** (approximate):
  - 5.5B: *Ours-CPT* ~15, *FLAP* ~22, *LLM-Prn.* ~20, *SLEB* ~25.
  - 3.7B: *Ours-CPT* ~15, *FLAP* ~40, *LLM-Prn.* ~40, *SLEB* ~40.

#### Right Bottom Panel: Accuracy (Acc)

- **Trends**:
  - *Ours-CPT* (dark blue) has the **highest accuracy** across all model sizes.
  - *SLEB* (light blue) has the lowest accuracy. *FLAP* and *LLM-Prn.* lie in between, with *LLM-Prn.* slightly outperforming *FLAP* at 3.7B and 2.7B.
- **Key Data Points** (approximate):
  - 5.5B: *Ours-CPT* ~61%, *FLAP* ~61%, *LLM-Prn.* ~60%, *SLEB* ~55%.
  - 3.7B: *Ours-CPT* ~57%, *FLAP* ~47%, *LLM-Prn.* ~50%, *SLEB* ~40%.

### Key Observations

- **Efficiency (Throughput/Latency)**: *Ours* outperforms *Original*, *FLAP*, and *LLM-Prn.* in throughput (tokens/s) at similar or lower latency, especially with larger batch sizes.

- **Performance (PPL/Acc)**: *Ours-CPT* outperforms *FLAP*, *LLM-Prn.*, and *SLEB* in both perplexity (lower = better) and accuracy (higher = better) across all model sizes.

- **Model Size Impact**: Larger models (5.5B) generally have better PPL and accuracy than smaller ones (3.7B, 2.7B), but *Ours-CPT* maintains strong performance even at 2.7B.
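"Higher throughput at similar or lower latency" is a dominance comparison between operating points. A minimal sketch, with a hypothetical `dominates` helper and the two approximate M256 readings from this description as inputs:

```python
from typing import NamedTuple


class Point(NamedTuple):
    latency_s: float
    throughput: float  # tokens/s


def dominates(a: Point, b: Point) -> bool:
    """True if a is no worse than b on both axes and strictly better on one."""
    no_worse = a.latency_s <= b.latency_s and a.throughput >= b.throughput
    strictly_better = a.latency_s < b.latency_s or a.throughput > b.throughput
    return no_worse and strictly_better


# Approximate M256 readings from this description, not exact measurements.
ours_m256 = Point(latency_s=6.4, throughput=8192)
original_m256 = Point(latency_s=6.4, throughput=4096)
print(dominates(ours_m256, original_m256))
```

A point that is faster but has lower throughput (or the reverse) dominates nothing and is dominated by nothing, so the check separates clear wins from genuine trade-offs.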

### Interpretation

The data suggests that the “Ours” method (and its variant “Ours-CPT”) is more efficient (higher throughput, lower latency) and effective (lower PPL, higher accuracy) than competing methods (Original, FLAP, LLM-Prn., SLEB). This implies:

- **Efficiency**: “Ours” processes more tokens per second with less delay, making it suitable for high-throughput applications.

- **Effectiveness**: “Ours-CPT” achieves better language modeling (lower PPL) and task accuracy, indicating improved model quality.

- **Scalability**: Performance gains hold across different model sizes (5.5B, 3.7B, 2.7B), suggesting the method scales well.

These results highlight the superiority of “Ours” in both efficiency and performance, making it a promising approach for large language model optimization.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart: Throughput vs. Latency for Different Models

### Overview

The left chart compares throughput (tokens/s) against latency (s) for four models: **Original**, **FLAP**, **LLM-Prn.**, and **Ours**. Throughput increases with latency, but the models exhibit distinct performance curves.

### Components/Axes

- **X-axis (Latency)**: Ranges from 1.6s to 6.4s, with gridlines at 1.6, 2.8, 4.0, 5.2, and 6.4s.

- **Y-axis (Throughput)**: Ranges from 32 to 8192 tokens/s, with gridlines at 32, 64, 128, 256, 512, 1024, 2048, 4096, and 8192.

- **Legend**:

- **Original**: Gray crosses (×)

- **FLAP**: Green circles (○)

- **LLM-Prn.**: Green triangles (△)

- **Ours**: Blue squares (■)

### Detailed Analysis

- **Original (Gray ×)**:
  - Starts at ~64 tokens/s at 1.6s latency, rising sharply to ~8192 tokens/s at 6.4s.
  - Slope is steep, indicating high throughput at high latency.
- **FLAP (Green ○)**:
  - Begins at ~32 tokens/s at 1.6s, peaking at ~4096 tokens/s at 5.2s.
  - Slope is less steep than Original, with a plateau at higher latencies.
- **LLM-Prn. (Green △)**:
  - Starts at ~32 tokens/s at 1.6s, reaching ~4096 tokens/s at 5.2s.
  - Similar to FLAP but with slightly lower throughput at 6.4s.
- **Ours (Blue ■)**:
  - Starts at ~32 tokens/s at 1.6s, peaking at ~8192 tokens/s at 6.4s.
  - Outperforms all models at higher latencies.

### Key Observations

- **Ours** achieves the highest throughput across all latencies, especially at 6.4s.

- **Original** has the steepest slope, suggesting it prioritizes throughput over latency efficiency.

- **FLAP** and **LLM-Prn.** show similar performance but lag behind **Ours** at higher latencies.

### Interpretation

The data suggests **Ours** is the most efficient model, balancing throughput and latency. **Original** sacrifices latency for maximum throughput, while **FLAP** and **LLM-Prn.** offer moderate performance. The use of distinct markers (×, ○, △, ■) and colors (gray, green, blue) in the legend ensures clear differentiation.

---

## Bar Charts: PPL and Accuracy Across Parameter Sizes

### Overview

The right side contains two bar charts:

1. **PPL (Perplexity)**: Lower values indicate better performance.

2. **Accuracy (%)**: Higher values indicate better performance.

Both charts compare models (**FLAP**, **SLEB**, **LLM-Prn.**, **Ours-CPT**) across parameter sizes (5.5B, 3.7B, 2.7B).
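For reference, perplexity is conventionally the exponential of the mean negative log-likelihood per token, which is why lower values are better. A minimal sketch of that standard definition; the function name and the toy log-probabilities are illustrative and unrelated to the models in the chart:

```python
import math


def perplexity(token_log_probs: list[float]) -> float:
    """exp of the mean negative natural-log probability per token."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


# Toy case: a model that assigns probability 0.25 to every token.
print(perplexity([math.log(0.25)] * 8))
```

In the toy case the result is the reciprocal of the per-token probability, which matches the usual reading of perplexity as an effective number of equally likely choices.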

### Components/Axes

- **X-axis (Parameter Sizes)**: 5.5B, 3.7B, 2.7B.

- **Y-axis (PPL)**: Ranges from 0 to 40, with a dashed line at 20.

- **Y-axis (Accuracy)**: Ranges from 0% to 60%, with a dashed line at 50%.

- **Legend**:

- **FLAP (W✂️)**: Green bars

- **SLEB (D✂️)**: Blue bars

- **LLM-Prn. (W✂️)**: Green bars

- **Ours-CPT (D✂️)**: Blue bars

### Detailed Analysis

#### PPL Chart

- **5.5B Parameters**:
  - **FLAP**: ~25 PPL
  - **SLEB**: ~30 PPL
  - **LLM-Prn.**: ~20 PPL
  - **Ours-CPT**: ~10 PPL (lowest, best performance)
- **3.7B Parameters**:
  - **FLAP**: ~35 PPL
  - **SLEB**: ~40 PPL
  - **LLM-Prn.**: ~30 PPL
  - **Ours-CPT**: ~15 PPL
- **2.7B Parameters**:
  - **FLAP**: ~45 PPL
  - **SLEB**: ~50 PPL
  - **LLM-Prn.**: ~40 PPL
  - **Ours-CPT**: ~20 PPL

#### Accuracy Chart

- **5.5B Parameters**:
  - **FLAP**: ~60%
  - **SLEB**: ~55%
  - **LLM-Prn.**: ~50%
  - **Ours-CPT**: ~65% (highest, best performance)
- **3.7B Parameters**:
  - **FLAP**: ~50%
  - **SLEB**: ~45%
  - **LLM-Prn.**: ~40%
  - **Ours-CPT**: ~55%
- **2.7B Parameters**:
  - **FLAP**: ~40%
  - **SLEB**: ~35%
  - **LLM-Prn.**: ~30%
  - **Ours-CPT**: ~45%

### Key Observations

- **Ours-CPT** consistently outperforms other models in both PPL and accuracy across all parameter sizes.

- **FLAP** and **LLM-Prn.** follow similar trends, with higher PPL and lower accuracy than **Ours-CPT** at every size.

- **SLEB** has the highest PPL at every size, but at 5.5B its accuracy (~55%) is above that of **LLM-Prn.** (~50%).

### Interpretation

The charts highlight **Ours-CPT** as the most effective model, achieving the lowest PPL and highest accuracy. **FLAP** and **LLM-Prn.** are comparable to each other but weaker on both metrics. Performance falls as the parameter count shrinks: larger models (5.5B) outperform smaller ones (2.7B) on both metrics. The green (W✂️) and blue (D✂️) bars in the legend follow the model categories.

---

## Interpretation

The data demonstrates that **Ours** and **Ours-CPT** models excel in throughput, PPL, and accuracy, suggesting superior optimization. **Original** prioritizes throughput at the cost of latency, while **FLAP** and **LLM-Prn.** offer moderate performance. The parameter size directly impacts performance, with larger models (5.5B) outperforming smaller ones (2.7B). The visual design (colors, markers) effectively distinguishes models, aiding in quick comparisons.
