## Diagram: Recurrent Neural Network Architecture with Input Injection

### Overview

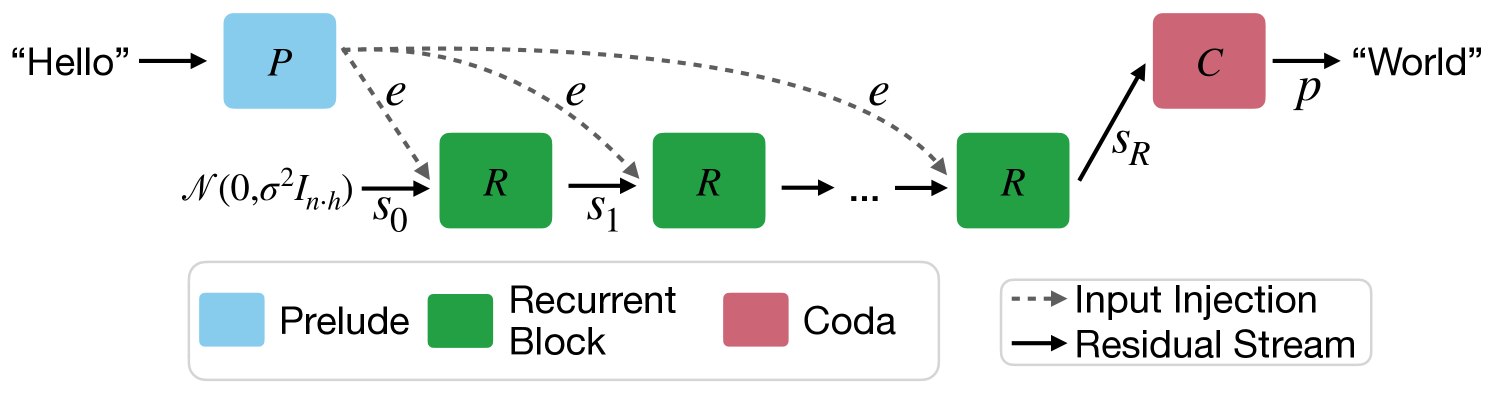

The image displays a technical flow diagram of a neural network architecture that maps an input text (**"Hello"**) to an output text (**"World"**), for example a language model producing a continuation of its prompt. The architecture consists of three component types: a Prelude, a series of Recurrent Blocks, and a Coda. The diagram illustrates how data flows between these components.

### Components/Axes

The diagram is composed of labeled blocks, directional arrows with annotations, and a two-part legend.

**Main Components (from left to right):**

1. **Prelude (Blue Block):** Labeled with the letter **"P"**. It receives the initial input text **"Hello"**.

2. **Recurrent Blocks (Green Blocks):** A sequence of blocks, each labeled with the letter **"R"**. The diagram shows three explicit blocks with an ellipsis **"..."** between the second and third, indicating a variable or repeated number of such blocks.

3. **Coda (Red Block):** Labeled with the letter **"C"**. It produces the final output text **"World"**.

**Arrows and Annotations:**

* **Input Text:** The string **"Hello"** with an arrow pointing into the Prelude block.

* **Output Text:** The string **"World"** with an arrow originating from the Coda block.

* **Input Injection (Dashed Arrows):** Three dashed gray arrows, each labeled with the letter **"e"**, originate from the Prelude block and point to each of the visible Recurrent Blocks.

* **Residual Stream (Solid Arrows):**

    * A solid black arrow labeled **"s₀"** points from a mathematical expression (described below) into the first Recurrent Block.

    * A solid black arrow labeled **"s₁"** points from the first Recurrent Block to the second.

    * A solid black arrow points from the second Recurrent Block to the ellipsis **"..."**.

    * A solid black arrow points from the ellipsis **"..."** to the final Recurrent Block.

    * A solid black arrow labeled **"s_R"** points from the final Recurrent Block to the Coda block.

* **Mathematical Notation:** The expression **"𝒩(0, σ²I_{n·h})"** is positioned to the left of the first Recurrent Block, with the arrow labeled **"s₀"** pointing from it into the block. It denotes a zero-mean Gaussian with covariance σ²I_{n·h}: each of the n·h entries of s₀ is drawn independently with variance σ². Here n is likely the sequence length and h the hidden dimension, so the expression specifies a random initialization of the residual stream state.
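A minimal NumPy sketch of what the s₀ notation means, assuming (as above) that n is the sequence length and h the hidden dimension; the function name `sample_initial_state` and the sizes used are hypothetical. Storing s₀ as an (n, h) array of independent N(0, σ²) entries is equivalent to a single draw from 𝒩(0, σ²I_{n·h}) over the flattened n·h vector.

```python
import numpy as np

def sample_initial_state(n: int, h: int, sigma: float, seed: int = 0) -> np.ndarray:
    """Draw s0 ~ N(0, sigma^2 I_{n*h}), stored as an (n, h) array.

    An isotropic covariance sigma^2 * I means every one of the n*h entries
    is an independent Gaussian with mean 0 and standard deviation sigma.
    (n = sequence length and h = hidden size are assumptions.)
    """
    rng = np.random.default_rng(seed)
    return sigma * rng.standard_normal((n, h))

n, h, sigma = 5, 64, 1.0  # hypothetical sizes: 5 tokens, hidden width 64
s0 = sample_initial_state(n, h, sigma)
print(s0.shape)              # (5, 64)
print(s0.reshape(-1).size)   # 320, i.e. n * h
```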

**Legend (Bottom of Diagram):**

* **Component Legend (Left Box):**

    * Blue square: **"Prelude"**

    * Green square: **"Recurrent Block"**

    * Red square: **"Coda"**

* **Arrow Legend (Right Box):**

    * Dashed gray arrow: **"Input Injection"**

    * Solid black arrow: **"Residual Stream"**

### Detailed Analysis

The diagram defines a specific computational flow:

1. The input sequence ("Hello") is first processed by the **Prelude (P)**.

2. The Prelude produces an embedding, denoted **"e"**, which is **injected** (via the dashed arrows) into **every** Recurrent Block in the sequence. Because `s₀` is sampled from noise rather than produced by the Prelude, these dashed arrows are the only path by which information about the input reaches the Recurrent Blocks.

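3. The core processing occurs in the chain of **Recurrent Blocks (R)**, connected sequentially by the **Residual Stream**, a solid pathway carrying state information (`s₀`, `s₁`, ..., `s_R`). Each block takes the previous state and the injected `e` and produces the next state, i.e. `s_i = R(e, s_{i-1})`.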

4. The initial state `s₀` of the residual stream is drawn from a normal distribution **𝒩(0, σ²I_{n·h})**, indicating a randomized initialization.

5. The final state `s_R` from the last Recurrent Block is passed to the **Coda (C)**.

6. The Coda processes this final state to generate the output sequence ("World").
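The six steps above can be sketched as one forward pass. This is a toy NumPy illustration, not the diagram authors' implementation: the sizes, the random weights, and the functions `prelude`, `recurrent_block` and `coda` are hypothetical, and modeling input injection as concatenating `[s, e]` and projecting back to width h is an assumption about how the dashed arrows combine with the state.

```python
import numpy as np

rng = np.random.default_rng(0)
n, h, vocab = 4, 16, 10  # hypothetical sizes

# Hypothetical toy parameters; real blocks would be learned network layers.
W_embed = rng.standard_normal((vocab, h)) * 0.1  # Prelude: token embedding
W_rec = rng.standard_normal((2 * h, h)) * 0.1    # one Recurrent Block, reused every step
W_out = rng.standard_normal((h, vocab)) * 0.1    # Coda: projection to output scores

def prelude(tokens):
    """P: map token ids of shape (n,) to the injected embedding e of shape (n, h)."""
    return np.tanh(W_embed[tokens])

def recurrent_block(s, e):
    """R: update the residual stream s using the injected input e.

    Assumption: injection = concatenate [s, e] along the feature axis and
    project back to width h; the result is added to s residually.
    """
    return s + np.tanh(np.concatenate([s, e], axis=-1) @ W_rec)

def coda(s):
    """C: map the final state s_R to output scores of shape (n, vocab)."""
    return s @ W_out

def forward(tokens, num_steps, sigma=1.0):
    e = prelude(tokens)                       # step 1-2: encode input once
    s = sigma * rng.standard_normal(e.shape)  # step 4: s0 ~ N(0, sigma^2 I_{n*h})
    for _ in range(num_steps):                # step 3: iterate the same block
        s = recurrent_block(s, e)             # e is re-injected at every step
    return coda(s)                            # step 5-6: decode s_R

tokens = np.array([1, 4, 2, 7])
logits = forward(tokens, num_steps=8)
print(logits.shape)  # (4, 10)
```

Since `num_steps` is an ordinary argument, the same weights can be unrolled for more or fewer iterations, matching the ellipsis in the diagram.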

### Key Observations

* **Repeated Input Injection:** The Prelude's output (`e`) is fed to every Recurrent Block, not just the first one. In a plain stack of blocks, the input would enter only at the first block and later blocks would see it only indirectly through the state; here each block receives it directly.

* **Residual Stream as State Carrier:** The primary sequential connection between Recurrent Blocks is explicitly labeled as a "Residual Stream," implying the network uses a residual learning framework where the stream carries the evolving hidden state.

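* **Variable Depth:** The ellipsis **"..."** between Recurrent Blocks indicates that the architecture is not fixed to three blocks. The final state is labeled `s_R`, suggesting that `R` denotes the total number of iterations. Because every block carries the same label **"R"**, the blocks may be repeated applications of one block with shared weights, although the diagram alone does not confirm this.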

* **Clear Separation of Concerns:** The three component types (Prelude, Recurrent Block, Coda) have distinct colors and roles, suggesting a modular design where each handles a specific part of the sequence transformation task (encoding, iterative processing, decoding).
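If the identically labeled blocks do share weights (an assumption, as noted under Variable Depth), depth and parameter count decouple: running more iterations adds compute but no new parameters. The arithmetic below makes that concrete for a hypothetical configuration of 2 Prelude layers, 4 layers per Recurrent Block and 2 Coda layers; these numbers are illustrative and not taken from the diagram.

```python
def effective_depth(prelude_layers: int, recurrent_layers: int,
                    coda_layers: int, num_steps: int) -> int:
    """Layers executed when the (assumed shared) recurrent block is unrolled num_steps times."""
    return prelude_layers + num_steps * recurrent_layers + coda_layers

def unique_layers(prelude_layers: int, recurrent_layers: int, coda_layers: int) -> int:
    """Layers with their own parameters; a weight-tied recurrent block counts only once."""
    return prelude_layers + recurrent_layers + coda_layers

# Hypothetical configuration: 2 prelude, 4 recurrent, 2 coda layers.
for r in (1, 4, 16):
    print(r, effective_depth(2, 4, 2, r), unique_layers(2, 4, 2))
# 1 8 8
# 4 20 8
# 16 68 8
```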

### Interpretation

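This diagram illustrates a **modified recurrent architecture** in which the recurrence appears to run over processing iterations rather than over tokens: the initial state s₀ has n·h entries, which (if n is the sequence length) covers the whole sequence at once, and the Recurrent Block is applied repeatedly to that state. Its distinguishing design choice, input injection, plausibly serves to keep the input available at every iteration; whether it also mitigates vanishing gradients cannot be read from the diagram.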

* **What it demonstrates:** The architecture decouples the initial input processing (Prelude) from the core recurrent computation (Recurrent Blocks) and the final output generation (Coda). The key feature shown is the **"Input Injection"** mechanism. By feeding the Prelude's output (`e`) directly into every Recurrent Block, the model ensures that the original input information is never more than one step away from any point in the processing chain. This likely keeps the input from being washed out over many iterations and provides a stable reference signal throughout the recurrent processing.

* **Relationships:** The Prelude acts roughly as an encoder, the Recurrent Blocks form the iterative core whose state evolves along the Residual Stream, and the Coda acts as a decoder. Input Injection creates a bypass that lets the encoded input influence every recurrent iteration directly.

* **Notable Anomalies/Patterns:** The most notable pattern is the dual-path information flow: the sequential **Residual Stream** (carrying the evolving state `s_i`) and the repeated **Input Injection** (carrying the fixed input context `e`). This combines direct input access, as in a feed-forward stack with skip connections, with sequential state evolution, as in a recurrent network. Initializing `s₀` with Gaussian noise, rather than with zeros or with the Prelude's output, is unusual; it means the input can reach the Recurrent Blocks only through injection, which underlines how central that mechanism is.
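The claims that injection provides a stable reference signal, and that a random `s₀` leaves injection as the only route for the input, can be checked on a toy model. The sketch below swaps the Recurrent Block for a hypothetical linear contraction `s_{k+1} = A s_k + B e` (chosen because its behavior is easy to verify; it is not the architecture's actual block). With injection, two different random starts converge to the same fixed point `(I - A)^{-1} B e`, which depends only on `e`; without injection, the state decays toward zero and retains nothing about the input.

```python
import numpy as np

rng = np.random.default_rng(1)
h = 8
# Hypothetical linear "recurrent block": s_{k+1} = A s_k + B e.
# A is rescaled to spectral norm 0.5, so the update is a contraction.
A = rng.standard_normal((h, h))
A *= 0.5 / np.linalg.norm(A, 2)
B = rng.standard_normal((h, h))

def run(s0, e, steps, inject=True):
    """Iterate the toy block; inject=False drops the B @ e term (no input injection)."""
    s = s0
    for _ in range(steps):
        s = A @ s + (B @ e if inject else 0.0)
    return s

e = rng.standard_normal(h)
s0_a, s0_b = rng.standard_normal(h), rng.standard_normal(h)  # two random initial states

# With injection: both starts reach the same e-dependent fixed point s* = (I - A)^{-1} B e.
fixed_point = np.linalg.solve(np.eye(h) - A, B @ e)
print(np.allclose(run(s0_a, e, 60), fixed_point))  # True
print(np.allclose(run(s0_b, e, 60), fixed_point))  # True

# Without injection: the state shrinks by at least half per step and forgets e entirely.
print(np.linalg.norm(run(s0_a, e, 60, inject=False)) < 1e-12)  # True
```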