## Diagram: Knowledge Retrieval with LLMs

### Overview

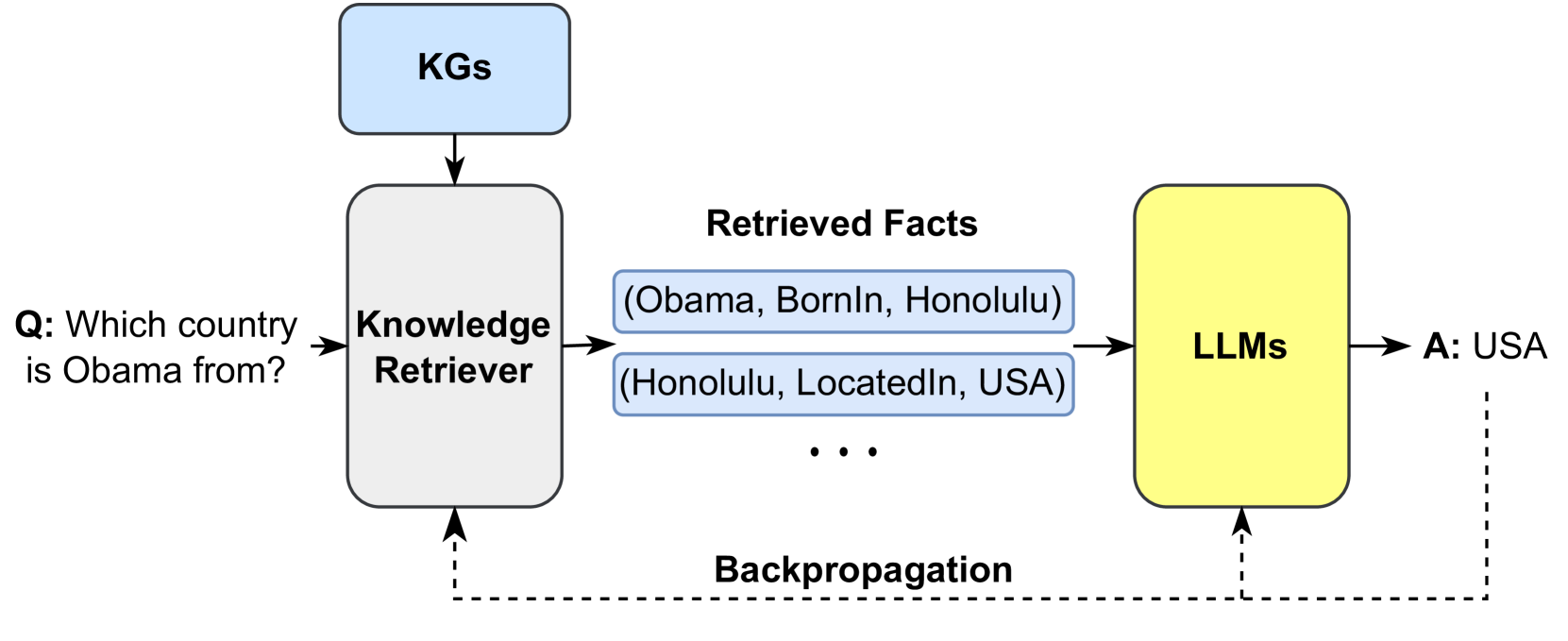

The image is a diagram of knowledge-graph-augmented question answering with Large Language Models (LLMs). A question is passed to a knowledge retriever, which fetches relevant facts from Knowledge Graphs (KGs). The LLMs then generate an answer from those facts, and a backpropagation path links the LLMs back to the retriever so that retrieval can be refined.

### Components/Axes

* **KGs:** A blue rounded rectangle at the top, representing Knowledge Graphs.

* **Knowledge Retriever:** A white rounded rectangle in the center-left, responsible for retrieving relevant facts.

* **Retrieved Facts:** Two blue rounded rectangles containing facts: "(Obama, BornIn, Honolulu)" and "(Honolulu, LocatedIn, USA)". An ellipsis "..." indicates more facts may be retrieved.

* **LLMs:** A yellow rounded rectangle on the right, representing Large Language Models.

* **Question:** "Q: Which country is Obama from?", positioned to the left of the Knowledge Retriever.

* **Answer:** "A: USA", the output of the LLMs, positioned to the right of the LLMs.

* **Backpropagation:** A dashed line connecting the LLMs and the Knowledge Retriever, indicating the path along which a training signal flows back to refine the retriever.
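The KG and retriever components can be made concrete with a small data-structure sketch. It assumes the KG is stored as a flat list of (head, relation, tail) triples indexed by head entity, and that the Knowledge Retriever is a breadth-first traversal from a seed entity. The diagram does not specify how retrieval is done; `build_index`, `retrieve`, and the `Profession` triple are hypothetical.

```python
from collections import defaultdict

# A knowledge graph stored as (head, relation, tail) triples. The first two
# facts come from the diagram; the Profession fact is a hypothetical extra.
Triple = tuple[str, str, str]

KG: list[Triple] = [
    ("Obama", "BornIn", "Honolulu"),
    ("Honolulu", "LocatedIn", "USA"),
    ("Obama", "Profession", "Politician"),  # hypothetical distractor fact
]


def build_index(triples):
    """Index triples by head entity for fast neighbour lookup."""
    index = defaultdict(list)
    for h, r, t in triples:
        index[h].append((h, r, t))
    return index


def retrieve(index, seed, max_hops=2):
    """Collect every triple reachable from `seed` within `max_hops` hops (BFS)."""
    facts = []
    frontier = [seed]
    visited = {seed}
    for _ in range(max_hops):
        next_frontier = []
        for entity in frontier:
            # .get avoids inserting empty lists into the defaultdict
            for triple in index.get(entity, []):
                facts.append(triple)
                tail = triple[2]
                if tail not in visited:
                    visited.add(tail)
                    next_frontier.append(tail)
        frontier = next_frontier
    return facts
```

Starting from `"Obama"`, two hops reach both facts shown in the diagram, but the hypothetical `Profession` fact is retrieved as well. Plain graph traversal has no notion of relevance to the question, which is one motivation for a trainable retriever.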

### Content Details

1. **Question Input:** The process begins with the question "Q: Which country is Obama from?".

2. **Knowledge Retrieval:** The Knowledge Retriever uses the KGs to find relevant facts.

3. **Retrieved Facts:** The retrieved facts include:

* "(Obama, BornIn, Honolulu)"

* "(Honolulu, LocatedIn, USA)"

* "..." (representing additional facts)

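4. **LLM Processing:** The LLMs combine the retrieved facts to generate an answer: Obama was born in Honolulu, and Honolulu is located in the USA, so the answer requires chaining two facts.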

5. **Answer Output:** The LLMs output the answer "A: USA".

6. **Backpropagation:** A backpropagation loop connects the LLMs and the Knowledge Retriever, suggesting a mechanism for refining the retrieval process based on the LLMs' output.
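The forward steps above (question, retrieval, LLM processing, answer) can be traced end to end in a toy sketch. Because no real model is called, `fake_llm` is a hypothetical rule-based stand-in that chains a `BornIn` fact with a `LocatedIn` fact. Entity linking by substring match (`link_entities`) and the prompt layout in `build_prompt` are likewise assumptions, not part of the diagram.

```python
# Hypothetical end-to-end sketch: question -> retriever -> (fake) LLM -> answer.
KG = [
    ("Obama", "BornIn", "Honolulu"),
    ("Honolulu", "LocatedIn", "USA"),
]


def link_entities(question, kg):
    """Naive entity linking: KG head entities that appear verbatim in the question."""
    heads = {h for h, _, _ in kg}
    return [e for e in heads if e in question]


def retrieve(seeds, kg, max_hops=2):
    """Follow outgoing edges from the seed entities for up to max_hops hops."""
    facts, frontier = [], list(seeds)
    for _ in range(max_hops):
        hop = [t for t in kg if t[0] in frontier and t not in facts]
        facts.extend(hop)
        frontier = [t[2] for t in hop]
    return facts


def build_prompt(question, facts):
    """Serialize retrieved triples as context ahead of the question."""
    lines = [f"({h}, {r}, {t})" for h, r, t in facts]
    return "Facts:\n" + "\n".join(lines) + f"\nQ: {question}\nA:"


def fake_llm(prompt):
    """Stand-in for an LLM: parses triples from the prompt, chains BornIn -> LocatedIn."""
    triples = [tuple(line.strip("()").split(", "))
               for line in prompt.splitlines() if line.startswith("(")]
    born = {h: t for h, r, t in triples if r == "BornIn"}
    located = {h: t for h, r, t in triples if r == "LocatedIn"}
    for _person, city in born.items():
        if city in located:
            return located[city]
    return "unknown"


def answer(question, kg=KG):
    seeds = link_entities(question, kg)
    facts = retrieve(seeds, kg)
    return fake_llm(build_prompt(question, facts))
```

Calling `answer("Which country is Obama from?")` links `Obama`, retrieves both triples over two hops, and the stand-in returns `"USA"`. Without the `LocatedIn` fact in the prompt, it would return `"unknown"`, which shows why multi-hop retrieval matters for this question.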

### Key Observations

* The diagram illustrates a pipeline where a question is answered by retrieving facts from a knowledge graph and processing them with a large language model.

* The backpropagation loop suggests that the retriever is trainable rather than fixed: a signal derived from the LLMs' output is propagated back to refine which facts are retrieved.
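One common way to realize such a loop, assumed here rather than stated by the diagram, is the marginal-likelihood objective used in retrieval-augmented generation (RAG). The retriever gives each candidate fact a learnable score, a softmax turns the scores into retrieval probabilities, and the loss is the negative log of the answer probability marginalized over facts. The per-fact LLM likelihoods below are made-up illustrative numbers, and scoring single facts is a simplification, since the example question actually needs two facts together.

```python
import math

# Hypothetical sketch of the backpropagation loop under a RAG-style objective:
#   loss = -log sum_i softmax(s)_i * p(answer | fact_i)
facts = [
    ("Obama", "BornIn", "Honolulu"),
    ("Honolulu", "LocatedIn", "USA"),
    ("Obama", "Profession", "Politician"),  # hypothetical distractor
]
# Assumed (not measured) LLM likelihoods of the gold answer "USA" given each fact.
p_answer_given_fact = [0.4, 0.9, 0.01]


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    z = sum(exps)
    return [e / z for e in exps]


def loss_and_grad(scores, likelihoods):
    """Negative marginal log-likelihood and its gradient w.r.t. retriever scores."""
    w = softmax(scores)
    p = sum(wi * li for wi, li in zip(w, likelihoods))
    # d(-log p)/ds_i = -w_i * (l_i - p) / p
    grad = [-wi * (li - p) / p for wi, li in zip(w, likelihoods)]
    return -math.log(p), grad


def train(scores, likelihoods, lr=1.0, steps=50):
    """Plain gradient descent on the retriever scores; the LLM stays frozen."""
    for _ in range(steps):
        _, grad = loss_and_grad(scores, likelihoods)
        scores = [s - lr * g for s, g in zip(scores, grad)]
    return scores
```

Starting from uniform scores, gradient descent raises the score of the fact under which the LLM finds the gold answer most likely and lowers the distractor, so retrieval is refined by the LLM's output while the KG itself is unchanged.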

### Interpretation

The diagram depicts a system that combines knowledge graphs and large language models to answer questions. Relevant facts are retrieved from the knowledge graph, fed into the LLM, and used to generate an answer. The backpropagation loop indicates a learning mechanism in which the retriever improves according to how well the retrieved facts support the LLM's answers. This architecture highlights the integration of structured knowledge (KGs) with the reasoning capabilities of LLMs to improve question-answering performance.