
## Diagram: Knowledge Retrieval and LLM Interaction

### Overview

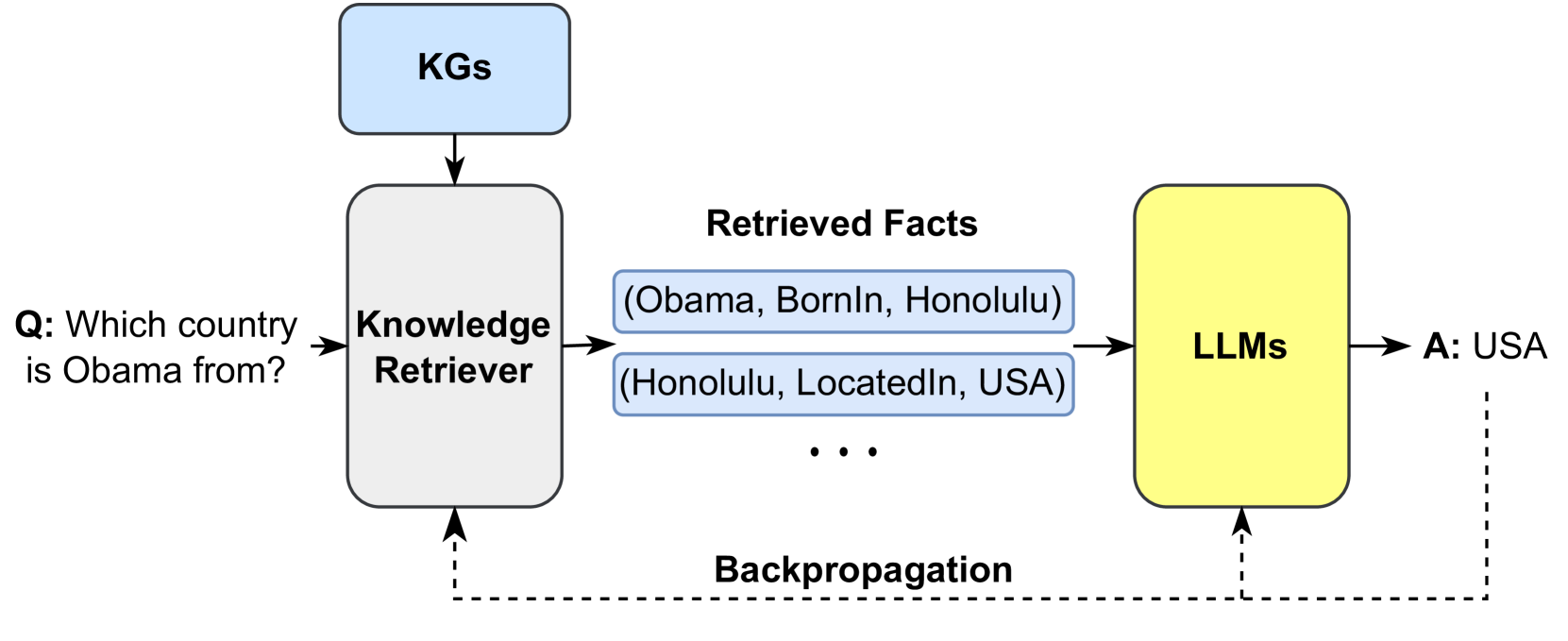

This diagram illustrates how knowledge retrieval is integrated with Large Language Models (LLMs) to answer a question. The process begins with a question, retrieves relevant facts from Knowledge Graphs (KGs), and passes those facts to the LLMs to inform the response. A dashed backpropagation path from the LLMs back to the Knowledge Retriever is also shown.

### Components/Axes

The diagram consists of four main components:

1. **KGs (Knowledge Graphs):** A rectangular block at the top, representing the source of knowledge.

2. **Knowledge Retriever:** A rectangular block in the center-left, responsible for retrieving facts.

3. **Retrieved Facts:** A rectangular block in the center, displaying the facts retrieved by the Knowledge Retriever.

4. **LLMs (Large Language Models):** A rectangular block at the center-right, representing the language model that generates the answer.

Additionally, there are:

* **Question:** "Q: Which country is Obama from?" positioned on the left.

* **Answer:** "A: USA" positioned on the right.

* **Backpropagation:** A dashed arrow labeled "Backpropagation" running from the LLMs block back to the Knowledge Retriever block.

* **Arrows:** Solid arrows indicating the flow of information.

### Detailed Analysis or Content Details

The diagram shows the following flow:

1. A question, "Q: Which country is Obama from?", is input to the **Knowledge Retriever**.

2. The **Knowledge Retriever** queries the **KGs** (Knowledge Graphs).

3. The **Knowledge Retriever** outputs **Retrieved Facts**, which are displayed as two fact tuples within a rectangular block:

   * "(Obama, BornIn, Honolulu)"

   * "(Honolulu, LocatedIn, USA)"

   * An ellipsis ("...") indicates that more retrieved facts exist but are not shown.

4. The **Retrieved Facts** are fed into the **LLMs** (Large Language Models).

5. The **LLMs** generate the answer "A: USA".

6. A dashed arrow labeled "Backpropagation" indicates a feedback loop from the **LLMs** to the **Knowledge Retriever**.
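
The forward flow in steps 1 to 5 can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the diagram does not say how the retriever selects facts or how the prompt is formatted, so `retrieve_facts`, `build_prompt`, `answer_question`, and the two-hop traversal are hypothetical, and the LLM is represented by any callable that maps a prompt string to an answer string.

```python
from typing import Callable, List, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)

# Toy knowledge graph containing only the two facts shown in the diagram.
KG: List[Triple] = [
    ("Obama", "BornIn", "Honolulu"),
    ("Honolulu", "LocatedIn", "USA"),
]


def retrieve_facts(question: str, kg: List[Triple], max_hops: int = 2) -> List[Triple]:
    """Hypothetical retriever: start from entities named in the question
    and follow outgoing edges for up to max_hops steps."""
    frontier = {head for head, _, _ in kg if head.lower() in question.lower()}
    facts: List[Triple] = []
    for _ in range(max_hops):
        next_frontier = set()
        for triple in kg:
            head, _, tail = triple
            if head in frontier and triple not in facts:
                facts.append(triple)
                next_frontier.add(tail)
        frontier = next_frontier
    return facts


def build_prompt(question: str, facts: List[Triple]) -> str:
    """Assumed prompt layout: retrieved facts first, then the question."""
    fact_lines = "\n".join(f"({h}, {r}, {t})" for h, r, t in facts)
    return f"Facts:\n{fact_lines}\nQ: {question}\nA:"


def answer_question(question: str, kg: List[Triple], llm: Callable[[str], str]) -> str:
    """Retrieve facts, build the prompt, and hand it to the LLM callable."""
    facts = retrieve_facts(question, kg)
    return llm(build_prompt(question, facts))


if __name__ == "__main__":
    # Stand-in for a real LLM call; it simply echoes the prompt it receives.
    print(answer_question("Which country is Obama from?", KG, llm=lambda p: p))
```

Passing the LLM as a callable keeps the retriever and the generator separate, which mirrors the separate blocks in the diagram.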

### Key Observations

The diagram highlights the role of external knowledge sources (KGs) in grounding LLM answers. The backpropagation path suggests that the Knowledge Retriever can be refined using the error signal from the LLM's output. The two retrieved facts, Obama's birthplace and Honolulu's location, form a two-hop chain (Obama → Honolulu → USA) that connects the question to the answer.
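
One way such a backpropagation signal could reach the retriever is sketched below. The diagram does not specify a training objective, so everything here is an assumption: the retriever is modeled as a softmax over per-fact scores, the loss is the negative log of the answer likelihood marginalized over facts, and the likelihood values are made up for illustration.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over retriever scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def retriever_gradient(scores: List[float], answer_likelihoods: List[float]) -> List[float]:
    """Gradient of loss = -log(sum_i p_i * l_i) with respect to each score,
    where p = softmax(scores) and l_i is the (assumed) probability that the
    LLM gives the correct answer when conditioned on fact i."""
    probs = softmax(scores)
    marginal = sum(p * l for p, l in zip(probs, answer_likelihoods))
    return [-p * (l - marginal) / marginal for p, l in zip(probs, answer_likelihoods)]


def update_scores(scores: List[float], answer_likelihoods: List[float], lr: float = 1.0) -> List[float]:
    """One gradient-descent step on the retriever scores."""
    grads = retriever_gradient(scores, answer_likelihoods)
    return [s - lr * g for s, g in zip(scores, grads)]


if __name__ == "__main__":
    # Hypothetical numbers: the first fact helps the LLM far more than the second.
    scores = [0.0, 0.0]
    likelihoods = [0.9, 0.1]
    print(update_scores(scores, likelihoods))
```

Under this objective, a fact that makes the correct answer more likely than average has its score raised, and a less helpful fact has its score lowered.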

### Interpretation

This diagram illustrates a Retrieval-Augmented Generation (RAG) approach to question answering. Rather than relying solely on its pre-trained knowledge, the LLM draws on an external knowledge base to produce more accurate and contextually relevant answers. The backpropagation loop suggests a learning mechanism in which the training signal from the LLM's output improves the Knowledge Retriever over time. RAG is a widely used pattern for making LLM-based systems more reliable. The diagram also emphasizes the system's modularity: knowledge storage (KGs), knowledge retrieval (Knowledge Retriever), and answer generation (LLMs) are separate components. The fact tuples suggest that the KGs store knowledge as structured (head, relation, tail) triples. The ellipsis indicates that the two facts shown are only a subset of what the retriever returns.