
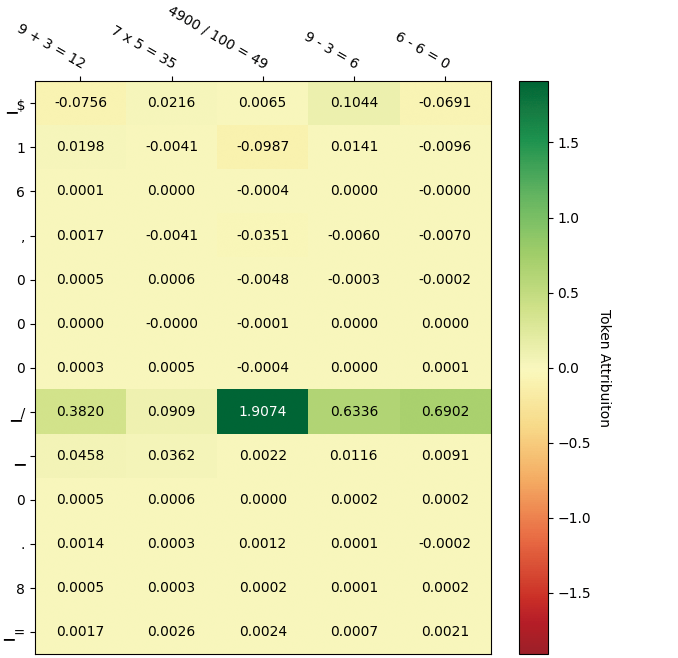

## Heatmap: Token Attribution for Arithmetic Operations

### Overview

The image displays a heatmap visualizing "Token Attribution" scores for various arithmetic operations. The heatmap is a grid where rows represent individual tokens (symbols or characters) and columns represent five distinct arithmetic expressions. Each cell's color and numerical value indicate the attribution score of that token for the corresponding operation, with a color scale ranging from red (negative attribution) to green (positive attribution).

### Components/Axes

* **Column Headers (Top, angled):** Five arithmetic expressions:

    1. `9 + 3 = 12`

    2. `7 x 5 = 35`

    3. `4900 / 100 = 49`

    4. `9 - 3 = 6`

    5. `6 - 6 = 0`

* **Row Labels (Left side, vertical):** A sequence of 13 tokens/symbols, listed top to bottom: `$`, `1`, `6`, `,`, `0`, `0`, `0`, `_/`, `-`, `0`, `.`, `8`, `_=`

* **Color Scale/Legend (Right side, vertical):** A vertical bar labeled "Token Attribution". The scale runs from approximately -1.5 (dark red) at the bottom, through 0 (light yellow) in the middle, to +1.5 (dark green) at the top. Major tick marks are at -1.5, -1.0, -0.5, 0.0, 0.5, 1.0, 1.5.

* **Data Grid:** A 13-row by 5-column grid of cells. Each cell contains a numerical attribution score and is colored according to the scale.
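The color scale described above can be sketched as a simple diverging mapping. This is only an illustration: the anchor colors, the linear interpolation, and the clipping at ±1.5 are assumptions, and the function name `score_to_rgb` is hypothetical. The figure's actual colormap is not known from the description.

```python
# Hypothetical anchor colors for a red-yellow-green diverging scale.
RED = (165, 0, 38)         # assumed color at -limit
YELLOW = (255, 255, 191)   # assumed color at 0
GREEN = (0, 104, 55)       # assumed color at +limit


def score_to_rgb(score, limit=1.5):
    """Map an attribution score to an (r, g, b) tuple.

    Scores are clipped to [-limit, limit] (an assumption), then linearly
    interpolated from YELLOW toward GREEN (positive) or RED (negative).
    """
    clipped = max(-limit, min(limit, score))
    weight = abs(clipped) / limit          # 0 at the center, 1 at either end
    end = GREEN if clipped >= 0 else RED
    return tuple(round(y + (e - y) * weight) for y, e in zip(YELLOW, end))


print(score_to_rgb(0.0))      # center of the scale
print(score_to_rgb(0.6336))   # a mid-green cell
print(score_to_rgb(1.9074))   # above the top tick, so clipped under this assumption
```

Under this assumed mapping, any score beyond the ±1.5 tick marks renders with the same color as the end of the scale, so color alone would not distinguish 1.5 from 1.9074; the printed cell value would.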

### Detailed Analysis

**Attribution Scores by Row (Token):**

* **Row 1 (`$`):** -0.0756, 0.0216, 0.0065, 0.1044, -0.0691

* **Row 2 (`1`):** 0.0198, -0.0041, -0.0987, 0.0141, -0.0096

* **Row 3 (`6`):** 0.0001, 0.0000, -0.0004, 0.0000, -0.0000

* **Row 4 (`,`):** 0.0017, -0.0041, -0.0351, -0.0060, -0.0070

* **Row 5 (`0`):** 0.0005, 0.0006, -0.0048, -0.0003, -0.0002

* **Row 6 (`0`):** 0.0000, -0.0000, -0.0001, 0.0000, 0.0000

* **Row 7 (`0`):** 0.0003, 0.0005, -0.0004, 0.0000, 0.0001

* **Row 8 (`_/`):** **0.3820, 0.0909, 1.9074, 0.6336, 0.6902** (This row has the highest magnitude values, colored in shades of green).

* **Row 9 (`-`):** 0.0458, 0.0362, 0.0022, 0.0116, 0.0091

* **Row 10 (`0`):** 0.0005, 0.0006, 0.0000, 0.0002, 0.0002

* **Row 11 (`.`):** 0.0014, 0.0003, 0.0012, 0.0001, -0.0002

* **Row 12 (`8`):** 0.0005, 0.0003, 0.0002, 0.0001, 0.0002

* **Row 13 (`_=`):** 0.0017, 0.0026, 0.0024, 0.0007, 0.0021

**Attribution Scores by Column (Operation):**

* **Column 1 (`9 + 3 = 12`):** Values range from -0.0756 to 0.3820. The token `_/` has the highest positive attribution (0.3820).

* **Column 2 (`7 x 5 = 35`):** Values are generally low, ranging from -0.0041 to 0.0909. The token `_/` has the highest attribution (0.0909).

* **Column 3 (`4900 / 100 = 49`):** Contains the single highest value in the entire heatmap: **1.9074** for the token `_/`. Other values are mostly near zero or slightly negative.

* **Column 4 (`9 - 3 = 6`):** Values range from -0.0060 to 0.6336. The token `_/` has the highest attribution (0.6336).

* **Column 5 (`6 - 6 = 0`):** Values range from -0.0691 to 0.6902. The token `_/` has the highest attribution (0.6902).
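The per-column ranges above can be recomputed from the row values. The following is a minimal sketch that assumes the scores are transcribed exactly as listed in the by-row section; the names `TOKENS`, `COLUMNS`, `SCORES`, and `summarize_columns` are illustrative and not part of the figure.

```python
# Row labels, column headers, and scores transcribed from the description.
TOKENS = ["$", "1", "6", ",", "0", "0", "0", "_/", "-", "0", ".", "8", "_="]
COLUMNS = ["9 + 3 = 12", "7 x 5 = 35", "4900 / 100 = 49", "9 - 3 = 6", "6 - 6 = 0"]
SCORES = [
    [-0.0756, 0.0216, 0.0065, 0.1044, -0.0691],
    [0.0198, -0.0041, -0.0987, 0.0141, -0.0096],
    [0.0001, 0.0000, -0.0004, 0.0000, -0.0000],
    [0.0017, -0.0041, -0.0351, -0.0060, -0.0070],
    [0.0005, 0.0006, -0.0048, -0.0003, -0.0002],
    [0.0000, -0.0000, -0.0001, 0.0000, 0.0000],
    [0.0003, 0.0005, -0.0004, 0.0000, 0.0001],
    [0.3820, 0.0909, 1.9074, 0.6336, 0.6902],
    [0.0458, 0.0362, 0.0022, 0.0116, 0.0091],
    [0.0005, 0.0006, 0.0000, 0.0002, 0.0002],
    [0.0014, 0.0003, 0.0012, 0.0001, -0.0002],
    [0.0005, 0.0003, 0.0002, 0.0001, 0.0002],
    [0.0017, 0.0026, 0.0024, 0.0007, 0.0021],
]


def summarize_columns(scores, tokens, columns):
    """Return the min, max, and top-scoring row for each column."""
    summary = []
    for j, name in enumerate(columns):
        col = [row[j] for row in scores]
        top = max(range(len(col)), key=lambda i: col[i])  # index of the largest score
        summary.append({
            "column": name,
            "min": min(col),
            "max": col[top],
            "top_row": top + 1,          # 1-based, matching the row numbering above
            "top_token": tokens[top],
        })
    return summary


for entry in summarize_columns(SCORES, TOKENS, COLUMNS):
    print(entry)
```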

### Key Observations

1. **Dominant Token:** The token represented by `_/` (row 8) has overwhelmingly the highest attribution scores across all five arithmetic operations, peaking at 1.9074 for the division problem. Its cells are distinctly green.

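2. **Low Attribution for Most Tokens:** Most cells outside row 8 have attribution scores very close to zero (e.g., 0.0001, -0.0004). Their cells are light yellow, indicating minimal contribution. The main exceptions are `$` (up to 0.1044 in magnitude), `1` (-0.0987 in the division column), `,` (-0.0351 in the division column), and `-` (up to 0.0458), all of which remain small next to row 8.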

3. **Operation-Specific Patterns:** While `_/` is dominant everywhere, its strength varies by column. It is strongest for the division operation (`4900 / 100 = 49`, 1.9074), moderately high for the two subtraction operations (`9 - 3 = 6` at 0.6336 and `6 - 6 = 0` at 0.6902), lower for addition (0.3820), and weakest for multiplication (0.0909).

4. **Negative Attributions:** A few tokens show small negative attributions (e.g., `$` for `9+3=12` at -0.0756, `1` for `4900/100=49` at -0.0987), suggesting a slight negative influence on the model's output for those specific problems.
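The dominance of row 8 and the handful of non-negligible cells elsewhere can be checked numerically. The sketch below is one possible way to do so, under the assumption that "dominance" is measured as a share of the summed absolute attribution per column; the 0.01 threshold and the helper names `row_share` and `cells_above` are arbitrary illustrative choices.

```python
# Scores transcribed from the by-row list; rows follow TOKENS order.
TOKENS = ["$", "1", "6", ",", "0", "0", "0", "_/", "-", "0", ".", "8", "_="]
SCORES = [
    [-0.0756, 0.0216, 0.0065, 0.1044, -0.0691],
    [0.0198, -0.0041, -0.0987, 0.0141, -0.0096],
    [0.0001, 0.0000, -0.0004, 0.0000, -0.0000],
    [0.0017, -0.0041, -0.0351, -0.0060, -0.0070],
    [0.0005, 0.0006, -0.0048, -0.0003, -0.0002],
    [0.0000, -0.0000, -0.0001, 0.0000, 0.0000],
    [0.0003, 0.0005, -0.0004, 0.0000, 0.0001],
    [0.3820, 0.0909, 1.9074, 0.6336, 0.6902],
    [0.0458, 0.0362, 0.0022, 0.0116, 0.0091],
    [0.0005, 0.0006, 0.0000, 0.0002, 0.0002],
    [0.0014, 0.0003, 0.0012, 0.0001, -0.0002],
    [0.0005, 0.0003, 0.0002, 0.0001, 0.0002],
    [0.0017, 0.0026, 0.0024, 0.0007, 0.0021],
]
DOMINANT_ROW = 7  # 0-based index of row 8 (`_/`)


def row_share(scores, row_index):
    """Fraction of each column's total absolute attribution held by one row."""
    shares = []
    for j in range(len(scores[0])):
        total = sum(abs(row[j]) for row in scores)
        shares.append(abs(scores[row_index][j]) / total)
    return shares


def cells_above(scores, tokens, threshold, skip_row):
    """List (row number, token, column number, score) with |score| >= threshold."""
    return [
        (i + 1, tokens[i], j + 1, value)
        for i, row in enumerate(scores)
        if i != skip_row
        for j, value in enumerate(row)
        if abs(value) >= threshold
    ]


print([round(s, 3) for s in row_share(SCORES, DOMINANT_ROW)])
for cell in cells_above(SCORES, TOKENS, 0.01, DOMINANT_ROW):
    print(cell)
```

With this measure, row 8 holds more than half of the absolute attribution in every column, and the cells that clear the 0.01 threshold outside row 8 all belong to `$`, `1`, `,`, and `-`.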

### Interpretation

This heatmap is likely the output of an interpretability technique (like integrated gradients or attention rollout) applied to a neural network (e.g., a language model) that solves arithmetic problems. It reveals which input tokens the model "attends to" or relies on most when computing each result.

* **The `_/` Token is Key:** The symbol `_/` is most likely the tokenizer's representation of the division operator (`/`), with the leading `_` plausibly marking a preceding space. Its large attribution score for the division problem (`4900 / 100 = 49`) is logically consistent: the division operator would be the most critical component for that calculation. Its high attribution for the other operations is more puzzling. Read top to bottom, the row labels concatenate to roughly `$16,000 /-0.8 =`, so `_/` appears to be the operator of the expression formed by the row tokens, which may explain why it stands out in every column. Alternatively, the token may carry a general "operator" or "computation" signal in the model's internal representation, or the pattern may be an artifact of the tokenization scheme.

* **Model Focus:** The model appears to focus sharply on the core operator token and largely ignores the numerical digits and punctuation (commas, decimal points, equals signs) for these particular problems. This suggests the model may have learned a strategy where identifying the operation type is the primary step, with the specific numbers being processed in a more distributed, less token-attributable way.

* **Anomaly/Insight:** The near-zero attribution for most number tokens (`0`, `1`, `6`, `8`) is striking. It implies that for these clean, single-step equations, the model's reasoning is not strongly tied to individual digit tokens in the input, but rather to a higher-level understanding encapsulated by the operator token. This could be a sign of efficient abstraction or, conversely, a limitation in how the model processes numerical values.
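Integrated gradients, named above as one technique that could produce a heatmap like this, can be illustrated on a toy function. The sketch below is not the method used for the figure, which is unknown. The `model` function, the all-zero baseline, and the finite-difference gradients are stand-in assumptions; a real setup would typically differentiate a network's output with respect to token embeddings and then sum over embedding dimensions to obtain one score per token.

```python
import math


def model(x):
    """Toy differentiable scalar function standing in for a network output."""
    return math.tanh(2.0 * x[0] + 0.5 * x[1] * x[2])


def gradient(f, x, eps=1e-6):
    """Central-difference estimate of the gradient of f at x."""
    grad = []
    for i in range(len(x)):
        hi, lo = list(x), list(x)
        hi[i] += eps
        lo[i] -= eps
        grad.append((f(hi) - f(lo)) / (2 * eps))
    return grad


def integrated_gradients(f, x, baseline, steps=200):
    """Midpoint Riemann-sum approximation of integrated gradients.

    attribution_i = (x_i - baseline_i) * average gradient_i along the
    straight-line path from baseline to x.
    """
    total = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        total = [t + g for t, g in zip(total, gradient(f, point))]
    return [(xi - b) * t / steps for xi, b, t in zip(x, baseline, total)]


x = [1.0, 2.0, -1.0]
baseline = [0.0, 0.0, 0.0]
attributions = integrated_gradients(model, x, baseline)

print(attributions)
# Completeness check: attributions should sum to roughly f(x) - f(baseline).
print(sum(attributions), model(x) - model(baseline))
```

The completeness property is what makes scores of this kind comparable across inputs: they add up to the change in the output relative to the baseline, so one large score leaves little to distribute among the remaining inputs.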