## System Architecture Diagram: Single Agent Task Framework

### Overview

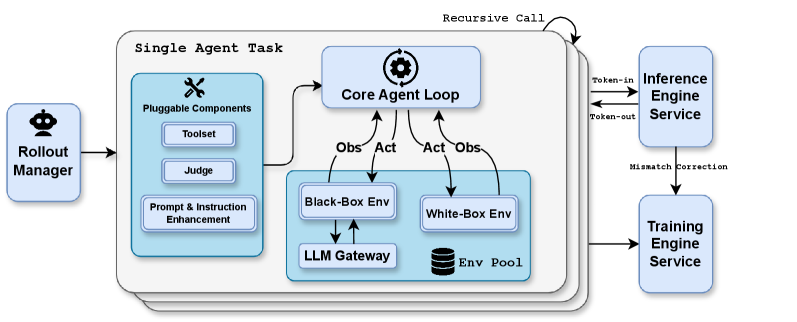

The image is a system architecture diagram showing the components and data flow of a "Single Agent Task" framework. It depicts a modular design built from pluggable components, a core processing loop, two environment types, and external services for inference and training. The overall flow suggests a system for developing, testing, and refining AI agents.

### Components

The diagram is organized into several interconnected blocks and services:

1. **Rollout Manager** (Far left, blue box with a crown icon): The entry point or orchestrator that initiates the process.

2. **Single Agent Task** (Large central container): The main processing unit, containing:

    * **Pluggable Components** (Left sub-box, blue): A module containing:
        * `Toolset`
        * `Judge`
        * `Prompt & Instruction Enhancement`

    * **Core Agent Loop** (Top-center sub-box, blue with gear icon): The central processing engine.

    * **Environment Layer** (Bottom-center sub-box, blue):
        * `Black-Box Env` (Left)
        * `White-Box Env` (Right)
        * `LLM Gateway` (Below Black-Box Env)
        * `Env Pool` (Database icon, below White-Box Env)

3. **External Services** (Right side):

    * `Inference Engine Service` (Top blue box)
    * `Training Engine Service` (Bottom blue box)

**Labels and Text Flow:**

* Arrows indicate data/control flow. Key labels on arrows include:
    * `Obs` (Observation) and `Act` (Action) between the Core Agent Loop and both environments.
    * `Token-in` and `Token-out` between the Core Agent Loop and the Inference Engine Service.
    * `Mismatch Correction` from the Inference Engine Service to the Training Engine Service.
    * `Recursive Call` looping back from the Core Agent Loop to itself.

* The `LLM Gateway` has a bidirectional arrow connecting it to the `Black-Box Env`.

* The `Env Pool` is connected to the `White-Box Env`.
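The arrows listed above can be written down as a small labeled edge list, which makes the data flow easy to query. This is only a sketch of the description, not part of the framework itself: the helper name `outgoing` is hypothetical, and the directions chosen for `Token-in`/`Token-out` and for the `Env Pool` link are assumptions, since the diagram description does not state them.

```python
from collections import defaultdict

# (source, target, label) triples transcribed from the arrow descriptions.
# Assumed directions: Token-in flows to the inference engine, Token-out flows
# back, and the Env Pool feeds the White-Box Env. Unlabeled arrows use "".
EDGES = [
    ("Core Agent Loop", "Black-Box Env", "Act"),
    ("Black-Box Env", "Core Agent Loop", "Obs"),
    ("Core Agent Loop", "White-Box Env", "Act"),
    ("White-Box Env", "Core Agent Loop", "Obs"),
    ("Core Agent Loop", "Inference Engine Service", "Token-in"),
    ("Inference Engine Service", "Core Agent Loop", "Token-out"),
    ("Inference Engine Service", "Training Engine Service", "Mismatch Correction"),
    ("Core Agent Loop", "Core Agent Loop", "Recursive Call"),
    ("Black-Box Env", "LLM Gateway", ""),
    ("LLM Gateway", "Black-Box Env", ""),
    ("Env Pool", "White-Box Env", ""),
]


def outgoing(node):
    """Return {target: [labels]} for every arrow leaving `node`."""
    result = defaultdict(list)
    for src, dst, label in EDGES:
        if src == node:
            result[dst].append(label)
    return dict(result)


if __name__ == "__main__":
    for target, labels in outgoing("Core Agent Loop").items():
        print(target, labels)
```

Querying `outgoing("Core Agent Loop")` lists every component the loop sends data to, including itself via the recursive call.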

### Detailed Analysis

The system operates through a defined sequence of interactions:

1. **Initiation:** The `Rollout Manager` sends a task to the `Single Agent Task` unit.

2. **Agent Core Processing:** The `Core Agent Loop` is the central hub. It:

    * Receives configuration and tools from the `Pluggable Components`.
    * Engages in a **recursive call** loop with itself.
    * Exchanges `Token-in` and `Token-out` with the external `Inference Engine Service`.
    * Sends `Act` (actions) to and receives `Obs` (observations) from two types of environments.

3. **Environment Interaction:**

    * The `Black-Box Env` interacts with the `LLM Gateway` over a bidirectional link (likely for API-based model calls).
    * The `White-Box Env` draws from an `Env Pool` (suggesting a repository of accessible, internal environments).

4. **Learning & Correction:** An arrow labeled `Mismatch Correction` runs from the `Inference Engine Service` to the `Training Engine Service`; the diagram does not show what kind of mismatch is involved or where it is detected. The `Training Engine Service` also receives a direct input from the `Single Agent Task` unit, indicating a feedback loop for model improvement.
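The interaction sequence above can be sketched as a minimal observe-infer-act loop. Everything here is illustrative: `EchoEnv`, `fake_inference`, and `core_agent_loop` are hypothetical names, the inference service is replaced by a local stub, and the recursive call and training feedback are left out because the diagram does not specify how they work.

```python
from typing import Callable, List, Tuple


class EchoEnv:
    """Toy environment: the episode ends when the agent emits 'done'."""

    def reset(self) -> str:
        return "start"

    def step(self, act: str) -> Tuple[str, bool]:
        # Return the observation (Obs) and whether the episode is finished.
        return f"saw:{act}", act == "done"


def fake_inference(token_in: List[str]) -> List[str]:
    """Stand-in for the Inference Engine Service: Token-in -> Token-out."""
    # Emit 'done' once the context holds three entries, otherwise 'probe'.
    return ["done"] if len(token_in) >= 3 else ["probe"]


def core_agent_loop(
    env: EchoEnv,
    infer: Callable[[List[str]], List[str]],
    max_steps: int = 10,
) -> List[Tuple[str, str]]:
    """Run one episode and return the list of (Act, Obs) pairs."""
    context = [env.reset()]
    trajectory = []
    for _ in range(max_steps):
        token_out = infer(context)      # Token-in / Token-out exchange
        act = " ".join(token_out)       # decode tokens into an action
        obs, done = env.step(act)       # send Act, receive Obs
        trajectory.append((act, obs))
        context.append(obs)
        if done:
            break
    return trajectory


if __name__ == "__main__":
    for act, obs in core_agent_loop(EchoEnv(), fake_inference):
        print(act, "->", obs)
```

In a real deployment the `infer` callable would presumably be a client for the Inference Engine Service, and the returned trajectory is the kind of data the Single Agent Task unit could forward to training.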

### Key Observations

* **Modularity:** The system is highly modular, with clear separation between the agent's core logic (`Core Agent Loop`), its configurable tools (`Pluggable Components`), and the environments it operates in.

* **Dual Environment Strategy:** The explicit separation of `Black-Box` and `White-Box` environments is a key architectural choice. This suggests the system is designed to handle scenarios where the agent has limited visibility (black-box) versus full access (white-box) to the environment's internal state.

* **Closed-Loop Learning:** The `Mismatch Correction` connection from the `Inference Engine Service` to the `Training Engine Service` creates a feedback path from inference to training, which suggests that rollout results are used to improve the model.

* **Central Orchestration:** The `Core Agent Loop` acts as the central nervous system, coordinating between tools, environments, and external services.
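One way to picture the dual environment strategy is a shared `Obs`/`Act` interface in which only the white-box variant exposes its internal state. The sketch below is an assumption about how such a split might look, not the framework's actual API; the class names mirror the diagram labels, while `inspect` and the counter state are invented for illustration.

```python
from abc import ABC, abstractmethod
from typing import Dict, Optional


class Env(ABC):
    """Common Obs/Act interface so one agent loop can drive either kind."""

    @abstractmethod
    def step(self, act: str) -> str:
        """Apply an action and return the resulting observation."""

    def inspect(self) -> Optional[Dict[str, int]]:
        """Return internal state if the environment exposes it, else None."""
        return None


class WhiteBoxEnv(Env):
    """Environment whose internal state is visible to the caller."""

    def __init__(self) -> None:
        self._state = {"counter": 0}

    def step(self, act: str) -> str:
        if act == "inc":
            self._state["counter"] += 1
        return f"counter={self._state['counter']}"

    def inspect(self) -> Optional[Dict[str, int]]:
        return dict(self._state)  # hand out a copy, not the live state


class BlackBoxEnv(Env):
    """Environment that only reveals what it chooses to return from step()."""

    def __init__(self) -> None:
        self._hidden = 0

    def step(self, act: str) -> str:
        if act == "inc":
            self._hidden += 1
        return "ok"


if __name__ == "__main__":
    for env in (WhiteBoxEnv(), BlackBoxEnv()):
        print(type(env).__name__, env.step("inc"), env.inspect())
```

Because both classes satisfy the same interface, the agent loop does not need to know which kind it is driving; only tooling that wants to examine state (for example a judge) has to check whether `inspect` returns anything.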

### Interpretation

This diagram represents a sophisticated framework for developing and training AI agents in a controlled, iterative manner. The architecture supports an investigative cycle in the Peircean sense (hypothesize, test, revise): the agent tries actions (`Act`) in environments (both opaque and transparent), observes outcomes (`Obs`), and the system appears to use discrepancies (the `Mismatch Correction` signal) to correct and improve the underlying models.

Reading between the lines, this appears to be a research or production framework for:

1. **Benchmarking & Evaluation:** The `Judge` component and dual environments allow for rigorous testing of agent performance under different conditions.

2. **Iterative Refinement:** The recursive call and training feedback loop point to continuous, incremental agent improvement, although the diagram does not show how training updates are applied.

3. **Hybrid Model Deployment:** The use of both an `LLM Gateway` (for black-box, possibly commercial APIs) and an `Env Pool` (for white-box, custom environments) indicates a flexible approach to leveraging different types of models and simulation environments.

The system's goal is likely to create more robust, reliable, and self-improving AI agents by systematically exposing them to varied challenges and using the resulting data to correct inference errors.