
## Diagram: Knowledge Graph Reasoning Pipeline

### Overview

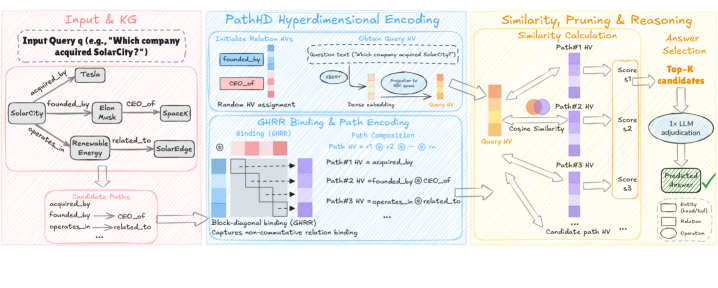

This diagram illustrates a pipeline for knowledge graph reasoning, taking a natural language query as input and producing a predicted answer. The pipeline consists of three main stages: Input & Knowledge Graph, PathHD Hyperdimensional Encoding, and Similarity, Pruning & Reasoning. The diagram visually represents the flow of information and the key operations performed at each stage.

### Components

The diagram is divided into three main sections, visually separated by background colors:

* **Input & KG (Leftmost, Pink Background):** Represents the input query and the knowledge graph.

* **PathHD Hyperdimensional Encoding (Center, Blue Background):** Details the process of encoding paths from the knowledge graph into hyperdimensional vectors.

* **Similarity, Pruning & Reasoning (Rightmost, Yellow Background):** Shows how similarity is calculated between the query vector and path vectors, followed by pruning and answer selection.

Key elements include:

* **Entities:** Represented by rounded rectangles (e.g., "SolarCity", "Tesla", "Elon Musk", "SpaceX", "Renewable Energy", "SolarEdge").

* **Relations:** Represented by arrows connecting entities (e.g., "acquired\_by", "founded\_by", "CEO\_of", "operates\_by", "related\_to").

* **Vectors:** Represented by vertical purple bars labeled "HV" (HyperVector).

* **Operations:** Represented by boxes with text (e.g., "Initialize Relation HVs", "Obtain Query HV", "Dense embedding", "Path Composition", "Cosine Similarity", "1x LLM adjudication").

* **Legend:** Located at the bottom-right, defining the shapes used for entities, relations, and operations.

### Detailed Analysis

**1. Input & KG:**

* **Input Query:** "Which company acquired SolarCity?"

* **Knowledge Graph:** Shows relationships between entities:

    * SolarCity `acquired_by` Tesla

    * Tesla `founded_by` Elon Musk

    * Elon Musk `CEO_of` SpaceX

    * Renewable Energy `operates_by` SolarEdge

    * SolarEdge `related_to` Renewable Energy

* **Candidate Paths:** Lists possible paths: `acquired_by`, `founded_by`, `CEO_of`, `related_to`.
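
The knowledge graph and candidate paths in this stage can be sketched in Python. The triples are copied from the diagram description. The helper names `build_adjacency` and `enumerate_paths`, the choice of start entity, and the two-hop limit are assumptions made for illustration, since the diagram does not say how candidate paths are enumerated.

```python
from collections import defaultdict

# Triples copied from the diagram description: (head, relation, tail).
TRIPLES = [
    ("SolarCity", "acquired_by", "Tesla"),
    ("Tesla", "founded_by", "Elon Musk"),
    ("Elon Musk", "CEO_of", "SpaceX"),
    ("Renewable Energy", "operates_by", "SolarEdge"),
    ("SolarEdge", "related_to", "Renewable Energy"),
]


def build_adjacency(triples):
    """Map each head entity to its outgoing (relation, tail) edges."""
    adjacency = defaultdict(list)
    for head, relation, tail in triples:
        adjacency[head].append((relation, tail))
    return adjacency


def enumerate_paths(adjacency, start, max_hops=2):
    """Return every relation path of 1..max_hops edges that starts at `start`.

    Each result is a (relations, end_entity) pair. Hypothetical helper:
    the hop limit is an assumption, not something the diagram specifies.
    """
    paths = []
    stack = [(start, ())]
    while stack:
        node, relations = stack.pop()
        if relations:
            paths.append((relations, node))
        if len(relations) == max_hops:
            continue  # hop budget used up, do not expand further
        for relation, tail in adjacency.get(node, []):
            stack.append((tail, relations + (relation,)))
    return paths


adjacency = build_adjacency(TRIPLES)
candidate_paths = enumerate_paths(adjacency, "SolarCity", max_hops=2)
for relations, end_entity in candidate_paths:
    print(" -> ".join(relations), "ends at", end_entity)
```

Starting from SolarCity, the one-hop path `acquired_by` ends at Tesla, which is the entity the example query asks for.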

**2. PathHD Hyperdimensional Encoding:**

* **Initialize Relation HVs:** Initializes hypervectors for each relation (e.g., `founded_by`, `CEO_of`).

* **Obtain Query HV:** Converts the input query text ("Which company acquired SolarCity?") into a query hypervector. This involves a "Projection to HV" step.

* **Random HV assignment:** Assigns random hypervectors.

* **GHRR Binding & Path Encoding:**

    * **Binding (GHRR):** A visual representation of a matrix with pink and white blocks, labeled "Non-diagonal binding (GHRR) Captures non-commutative relation binding".

    * **Path Composition:** Combines relation hypervectors to create path hypervectors. Examples:

        * Path #1 HV: `acquired_by`

        * Path #2 HV: `founded_by @ CEO_of`

        * Path #3 HV: `operates_by @ related_to`
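
The non-commutative binding named in this stage can be illustrated with a minimal NumPy sketch. It assumes GHRR stands for Generalized Holographic Reduced Representations, where a hypervector is a stack of small unitary matrices and binding multiplies corresponding blocks. The dimension, block size, similarity normalization, and the reading of `@` as left-to-right binding are all assumptions, because the diagram specifies none of them.

```python
import numpy as np


def random_ghrr_hv(rng, dim=256, block=3):
    """Draw `dim` random unitary (block x block) matrices, stacked into one array."""
    shape = (dim, block, block)
    z = rng.standard_normal(shape) + 1j * rng.standard_normal(shape)
    # The Q factor of a QR decomposition is unitary.
    return np.stack([np.linalg.qr(z_j)[0] for z_j in z])


def bind(a, b):
    """Bind two hypervectors by multiplying corresponding blocks; order matters."""
    return a @ b


def encode_path(relation_hvs, relations):
    """Compose a path hypervector by binding relation hypervectors left to right."""
    hv = relation_hvs[relations[0]]
    for name in relations[1:]:
        hv = bind(hv, relation_hvs[name])
    return hv


def similarity(a, b):
    """Real part of tr(a_j b_j^H), averaged over blocks; 1.0 for identical inputs."""
    dim, block, _ = a.shape
    return float(np.real(np.sum(a * np.conj(b))) / (dim * block))


rng = np.random.default_rng(0)
relation_names = ["acquired_by", "founded_by", "CEO_of", "operates_by", "related_to"]
relation_hvs = {name: random_ghrr_hv(rng) for name in relation_names}

path_1 = encode_path(relation_hvs, ["acquired_by"])
path_2 = encode_path(relation_hvs, ["founded_by", "CEO_of"])
path_2_reversed = encode_path(relation_hvs, ["CEO_of", "founded_by"])

same = similarity(path_2, path_2)              # identical paths
swapped = similarity(path_2, path_2_reversed)  # same relations, opposite order
print(f"same order: {same:.3f}, swapped order: {swapped:.3f}")
```

Because matrix multiplication does not commute, `founded_by` followed by `CEO_of` yields a different hypervector than the reverse order, and the swapped similarity falls well below 1. With `block=1` each block is a unit complex scalar, binding becomes commutative, and the order of relations is lost.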

**3. Similarity, Pruning & Reasoning:**

* **Similarity Calculation:** Calculates the cosine similarity between the query hypervector and each path hypervector.

    * Path #1 HV: Score s1

    * Path #2 HV: Score s2

    * Path #3 HV: Score s3

* **Top-K candidates:** Selects the top K candidate paths based on their similarity scores.

* **1x LLM adjudication:** Uses a Large Language Model (LLM) to adjudicate the top candidates and select the final predicted answer.

* **Predicted Answer:** A green checkmark indicates the predicted answer.
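
The scoring and pruning steps can be sketched as follows. This is a toy illustration under stated assumptions: the path hypervectors are random bipolar stand-ins, not real encodings, and the query hypervector is synthetic, built as a noisy copy of Path #1 so that the ranking has a known winner. The dimension, the value of K, and the helper names are hypothetical. The LLM adjudication call is not shown.

```python
import numpy as np


def cosine_similarity(a, b):
    """Cosine of the angle between two real vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


def top_k(scores, k):
    """Return the k (name, score) pairs with the highest scores, best first."""
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:k]


rng = np.random.default_rng(0)
dim = 2048  # assumed dimensionality; the diagram does not give one

# Random bipolar stand-ins for the three path hypervectors.
path_hvs = {
    "Path #1: acquired_by": rng.choice([-1.0, 1.0], size=dim),
    "Path #2: founded_by @ CEO_of": rng.choice([-1.0, 1.0], size=dim),
    "Path #3: operates_by @ related_to": rng.choice([-1.0, 1.0], size=dim),
}

# Synthetic query: Path #1 with 30% of its components flipped.
query_hv = path_hvs["Path #1: acquired_by"].copy()
flipped = rng.choice(dim, size=dim * 3 // 10, replace=False)
query_hv[flipped] *= -1.0

scores = {name: cosine_similarity(query_hv, hv) for name, hv in path_hvs.items()}
candidates = top_k(scores, k=2)  # these would go to the single LLM adjudication call
for name, score in candidates:
    print(f"{score:+.3f}  {name}")
```

Pruning to the top K before the LLM step keeps the prompt small: only the K highest-scoring paths need to be described to the model, however many paths were scored.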

**Legend:**

* Rounded Rectangle: Entity (e.g., SolarCity)

* Arrow: Relation (e.g., acquired\_by)

* Rectangle: Operation (e.g., Path Composition)

### Key Observations

* The pipeline uses hyperdimensional computing to represent relations, candidate paths, and the query as hypervectors.

* The GHRR binding mechanism is highlighted as a key component for capturing non-commutative relation binding.

* An LLM is used as a final step to refine the answer selection process.

* The diagram emphasizes the flow of information from the input query through the knowledge graph encoding and reasoning stages to the final prediction.

### Interpretation

This diagram shows an approach to knowledge graph reasoning that combines hyperdimensional computing with LLM adjudication. Hypervectors give every candidate path a fixed-size representation, so the query can be compared with each path using a single cosine similarity. The GHRR binding step is labeled non-commutative, which suggests it is meant to preserve the order of relations along a path. The final LLM step likely disambiguates among the top-K candidates and produces the answer in natural language, and the "1x" label suggests a single LLM call. The pipeline appears designed for queries that require multi-hop reasoning over the knowledge graph.

The diagram is a conceptual, high-level overview, not a presentation of data. It gives no implementation details such as the dimensionality of the hypervectors or the architecture of the LLM.