## Diagram: Kernel Processing Architecture

### Overview

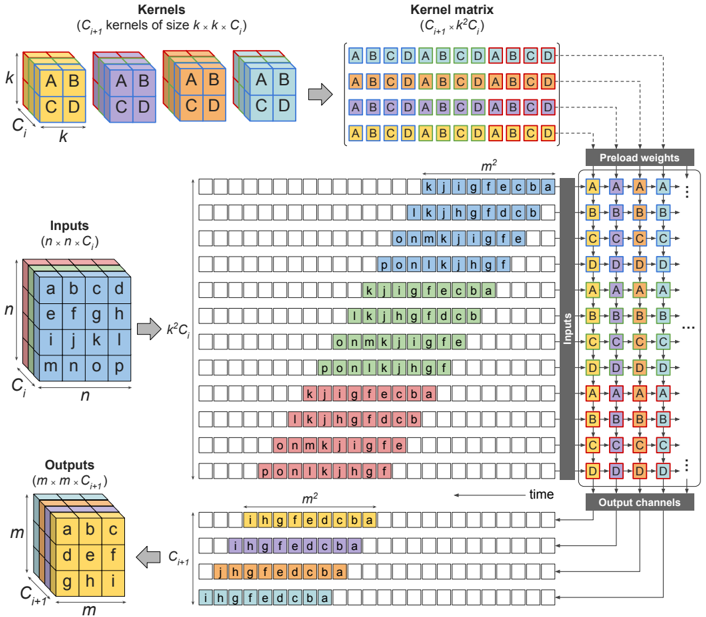

This diagram illustrates a computational architecture for processing inputs through a series of kernels, kernel matrices, and precomputed weights. It depicts the flow of data from raw inputs to transformed outputs, emphasizing the role of kernel combinations and weight matrices in the transformation process.

### Components/Axes

1. **Kernels Section**:
   - **Labels**:
     - "Kernels (C_k kernels of size k x k, C)"
     - Grid of colored blocks labeled with combinations such as `AB` and `CD`, repeated across the grid.
   - **Structure**:
     - A 2D grid of `k x k` kernels, each drawn as a color-coded block (e.g., purple for `AB`, orange for `CD`).
     - `k` gives the spatial size of each kernel and `C_k` the number of kernels; `C` appears to be the channel depth of each kernel, matching the `C` channels of the inputs.

2. **Kernel Matrix Section**:
   - **Labels**:
     - "Kernel matrix (C_k x C_k)"
   - **Structure**:
     - A grid of letters (A–D) representing combinations of kernels.
     - Each cell in the matrix corresponds to a specific kernel combination (e.g., `ABCD`).

3. **Inputs Section**:
   - **Labels**:
     - "Inputs (n x n x C)"
   - **Structure**:
     - A 3D grid of letters (a–o) arranged in `n x n` spatial dimensions and `C` channels.
     - Example: `a b c d` (row 1), `e f g h` (row 2), etc.

4. **Outputs Section**:
   - **Labels**:
     - "Outputs (n x m x C_out)"
   - **Structure**:
     - A 3D grid of letters (a–o) in which the input letters appear rearranged into descending runs (e.g., `i h g f`, `d c b a`).

5. **Preload Weights Section**:
   - **Labels**:
     - "Preload weights"
   - **Structure**:
     - A grid of letters (A–D) and numbers (1–4), likely representing weight matrices or transformation rules.
     - Example: `A1 B2 C3 D4` in rows.
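The diagram labels the kernels and the kernel matrix but does not show how one becomes the other. A common technique, assumed here rather than read from the diagram, is to flatten each `k x k x C` kernel into a single row, giving a matrix with `C_k` rows and `k*k*C` columns. That is the usual shape for this technique and is not necessarily the `C_k x C_k` shape labeled in the diagram. `flatten_kernels` is a hypothetical helper written only for illustration.

```python
from typing import List

# A kernel is indexed as kernel[row][col][channel], i.e. shape k x k x C.
Kernel = List[List[List[float]]]


def flatten_kernels(kernels: List[Kernel]) -> List[List[float]]:
    """Flatten C_k kernels of shape k x k x C into a C_k x (k*k*C) matrix.

    Hypothetical helper: row i holds kernel i in (row, col, channel) order.
    """
    return [
        [kernel[r][c][ch]
         for r in range(len(kernel))
         for c in range(len(kernel[r]))
         for ch in range(len(kernel[r][c]))]
        for kernel in kernels
    ]


# Two 2 x 2 single-channel kernels (C_k = 2, k = 2, C = 1).
kernels = [
    [[[1.0], [2.0]], [[3.0], [4.0]]],
    [[[5.0], [6.0]], [[7.0], [8.0]]],
]
print(flatten_kernels(kernels))  # [[1.0, 2.0, 3.0, 4.0], [5.0, 6.0, 7.0, 8.0]]
```

Fixing one flattening order, (row, column, channel) here, matters because the input patches must later be flattened in the same order for the dot products to line up.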

### Detailed Analysis

- **Kernels to Kernel Matrix**:
  - Kernels (`AB`, `CD`, etc.) are combined into a `C_k x C_k` matrix, where each cell holds a kernel combination (e.g., `ABCD`).
  - The matrix is color-coded to match the original kernels (e.g., purple for `AB`, orange for `CD`).

- **Inputs to Outputs**:
  - Inputs (`n x n x C`) are processed through the kernel matrix and preload weights to produce outputs (`n x m x C_out`).
  - The output grid lists the input letters in rearranged order (e.g., `i h g f`, `d c b a`), suggesting that the diagram traces how input values are reordered as they are combined with the kernels.

- **Preload Weights**:
  - The weights grid (`A1 B2 C3 D4`) pairs each kernel letter with an index, suggesting a structured mapping of weights onto the kernel matrix, possibly for optimization or efficiency.
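One standard way to process inputs "through a kernel matrix" is the im2col formulation of convolution: gather every `k x k x C` input patch into a flat vector, then take its dot product with each flattened kernel. The sketch below assumes that formulation with stride 1, no padding, and kernels flattened in (row, column, channel) order. `im2col` and `conv_as_matmul` are hypothetical names, and the resulting `(n-k+1) x (n-k+1) x C_k` output shape is a property of this sketch, not a reading of the diagram's `n x m x C_out` label.

```python
from typing import List

Tensor3 = List[List[List[float]]]  # indexed [row][col][channel]


def im2col(inputs: Tensor3, k: int) -> List[List[float]]:
    """Collect every k x k x C patch (stride 1, no padding) as a flat row."""
    n_rows, n_cols, n_ch = len(inputs), len(inputs[0]), len(inputs[0][0])
    patches = []
    for i in range(n_rows - k + 1):
        for j in range(n_cols - k + 1):
            patches.append([inputs[i + r][j + c][ch]
                            for r in range(k)
                            for c in range(k)
                            for ch in range(n_ch)])
    return patches


def conv_as_matmul(inputs: Tensor3, kernel_matrix: List[List[float]], k: int) -> Tensor3:
    """Convolve by taking the dot product of each patch with every kernel row.

    kernel_matrix has one flattened kernel per row (C_k rows), in the same
    (row, col, channel) order that im2col uses for the patches.
    """
    out_rows = len(inputs) - k + 1
    out_cols = len(inputs[0]) - k + 1
    patches = im2col(inputs, k)
    outputs = []
    for i in range(out_rows):
        row = []
        for j in range(out_cols):
            patch = patches[i * out_cols + j]
            # One output channel per kernel: channel o = kernel o . patch
            row.append([sum(w * x for w, x in zip(kernel, patch))
                        for kernel in kernel_matrix])
        outputs.append(row)
    return outputs


# 3 x 3 single-channel input holding 1..9, and two flattened 2 x 2 kernels:
# one adds the top-left and bottom-right values, one sums the whole patch.
inputs = [[[float(3 * r + c + 1)] for c in range(3)] for r in range(3)]
kernel_matrix = [[1.0, 0.0, 0.0, 1.0], [1.0, 1.0, 1.0, 1.0]]
print(conv_as_matmul(inputs, kernel_matrix, k=2))
# [[[6.0, 12.0], [8.0, 16.0]], [[12.0, 24.0], [14.0, 28.0]]]
```

Every kernel row is reused at every spatial position, which is what turns the whole convolution into one dense matrix product.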

### Key Observations

1. **Color-Coded Kernels**:
   - Kernels are distinctly color-coded (e.g., purple for `AB`, orange for `CD`), ensuring clear differentiation in the kernel matrix.

2. **Spatial Dimensions**:
   - Inputs and outputs are 3D grids, with spatial dimensions (`n x n` and `n x m`) and channel dimensions (`C` and `C_out`).

3. **Kernel Combinations**:
   - The kernel matrix explicitly shows how individual kernels are combined (e.g., `AB` and `CD` into `ABCD`), indicating a hierarchical or composite processing approach.

4. **Preload Weights**:
   - The weights grid (`A1 B2 C3 D4`) implies a systematic application of weights to the kernel matrix, possibly for bias or scaling.
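The "Preload weights" label is consistent with, though not confirmed as, a weight-stationary dataflow of the kind used in systolic-array accelerators: each processing element (PE) holds one weight for the whole computation, input values stream across the PE rows with a one-cycle skew per row, and partial sums accumulate down the columns. The cycle-level simulation below is a minimal sketch under that assumption. `weight_stationary_matmul` is a hypothetical name, and the `K x N` PE grid is sized to the weight matrix rather than taken from the diagram.

```python
from typing import List

Matrix = List[List[float]]


def weight_stationary_matmul(x: Matrix, w: Matrix) -> Matrix:
    """Simulate a K x N weight-stationary systolic array computing x @ w.

    x is M x K (streamed in), w is K x N (preloaded, one weight per PE).
    """
    m_rows, k_dim, n_cols = len(x), len(w), len(w[0])
    # Preload: PE (i, j) keeps w[i][j] for the whole computation.
    a_reg = [[0.0] * n_cols for _ in range(k_dim)]  # inputs moving right
    p_reg = [[0.0] * n_cols for _ in range(k_dim)]  # partial sums moving down
    y = [[0.0] * n_cols for _ in range(m_rows)]

    for t in range(m_rows + k_dim + n_cols - 2):
        new_a = [[0.0] * n_cols for _ in range(k_dim)]
        new_p = [[0.0] * n_cols for _ in range(k_dim)]
        for i in range(k_dim):
            for j in range(n_cols):
                if j == 0:
                    # Row i is skewed by i cycles: x[m][i] enters at cycle m + i.
                    m = t - i
                    new_a[i][j] = x[m][i] if 0 <= m < m_rows else 0.0
                else:
                    new_a[i][j] = a_reg[i][j - 1]  # shift input one PE right
                above = p_reg[i - 1][j] if i > 0 else 0.0
                new_p[i][j] = above + new_a[i][j] * w[i][j]  # multiply-accumulate
        a_reg, p_reg = new_a, new_p
        # The bottom PE of column j emits y[m][j] at cycle m + (K - 1) + j.
        for j in range(n_cols):
            m = t - (k_dim - 1) - j
            if 0 <= m < m_rows:
                y[m][j] = p_reg[k_dim - 1][j]
    return y


x = [[1.0, 2.0], [3.0, 4.0]]  # M x K inputs, streamed row by row
w = [[5.0, 6.0], [7.0, 8.0]]  # K x N weights, preloaded into the PE grid
print(weight_stationary_matmul(x, w))  # [[19.0, 22.0], [43.0, 50.0]]
```

The appeal of this arrangement is that each weight is loaded once and then reused for every input row that streams through, instead of being fetched again per input.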

### Interpretation

This diagram represents a **convolutional or matrix-based processing pipeline**, likely for tasks like image recognition or signal processing. The architecture emphasizes:

- **Kernel Reuse**: Kernels (`AB`, `CD`) are reused and combined into a matrix for efficient computation.

- **Weight Preloading**: Preload weights (`A1 B2 C3 D4`) suggest that weights are prepared and loaded ahead of time, which would reduce per-input computational overhead.

- **Dimension Change**: Inputs (`n x n x C`) are transformed into outputs (`n x m x C_out`), indicating a change in spatial and/or channel dimensions; the labels alone do not show whether the outputs are smaller than the inputs.
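Whether the outputs are actually smaller than the inputs depends on stride and padding, which the diagram does not show. The standard per-axis output size for a convolution is `floor((n + 2p - k) / s) + 1` for input size `n`, kernel size `k`, padding `p`, and stride `s`. The helper below applies that formula; its name and parameters are illustrative assumptions, and it assumes the same stride and padding on both axes.

```python
from typing import Tuple


def conv_output_shape(height: int, width: int, k: int, c_out: int,
                      stride: int = 1, padding: int = 0) -> Tuple[int, int, int]:
    """Return (out_height, out_width, c_out) for a k x k convolution.

    Hypothetical helper; stride and padding are assumed equal on both axes.
    """
    out_h = (height + 2 * padding - k) // stride + 1
    out_w = (width + 2 * padding - k) // stride + 1
    return out_h, out_w, c_out


print(conv_output_shape(32, 32, k=3, c_out=16))             # (30, 30, 16): shrinks
print(conv_output_shape(32, 32, k=3, c_out=16, padding=1))  # (32, 32, 16): preserved
print(conv_output_shape(32, 32, k=3, c_out=16, stride=2))   # (15, 15, 16): downsampled
```

The same kernel size can therefore shrink, preserve, or downsample the spatial dimensions, while the channel count is set independently by the number of kernels.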

The use of color-coding and structured grids highlights the importance of **kernel organization** and **weight management** in the processing pipeline. This architecture likely aims to balance computational efficiency with the ability to capture complex patterns in the input data.