## Line Chart: CIFAR-100 Test Accuracy vs. Parameter d₁

### Overview

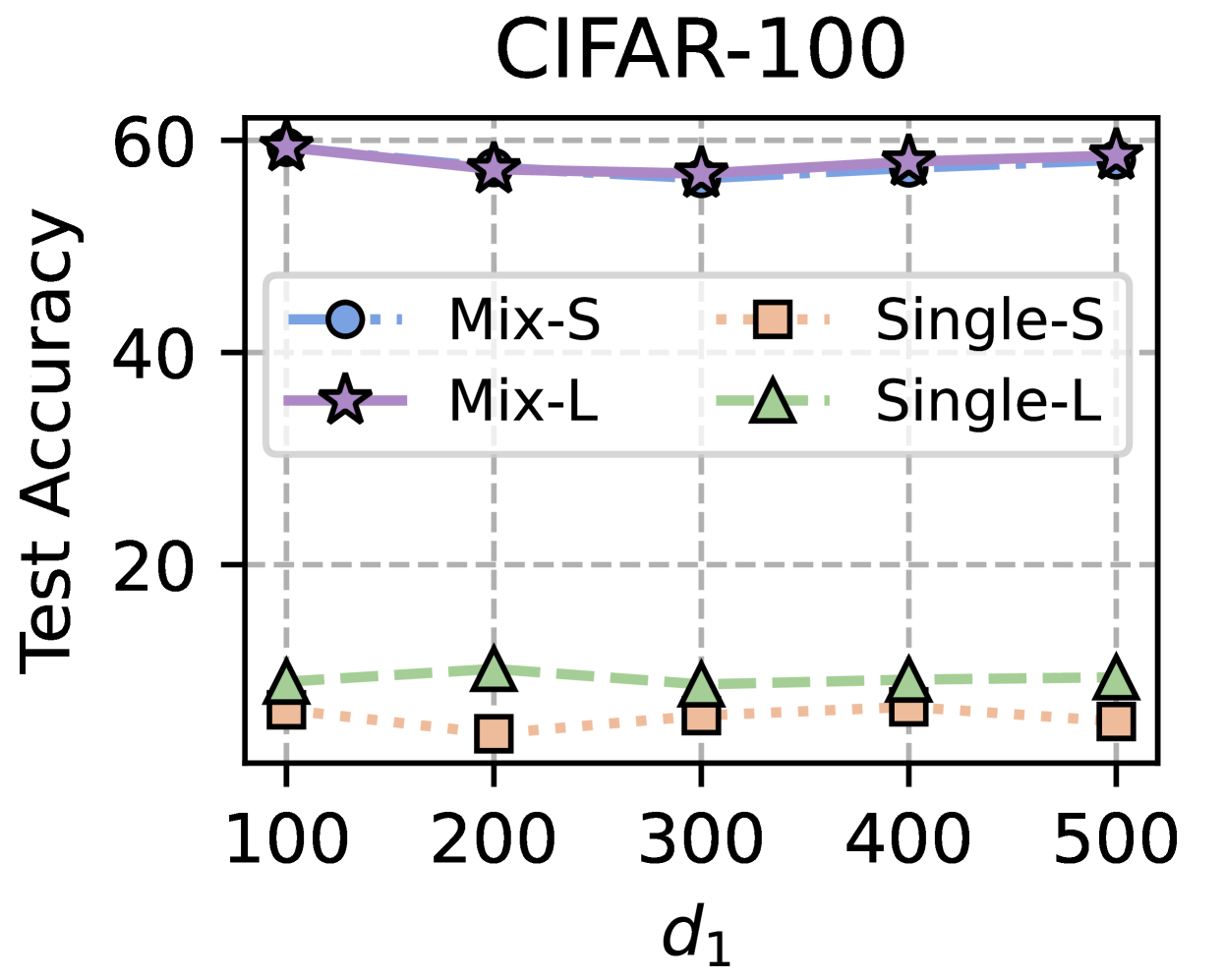

The image displays a line chart titled "CIFAR-100," plotting "Test Accuracy" (y-axis) against a parameter labeled "d₁" (x-axis). The chart compares the performance of four different methods or model variants across five discrete values of d₁. The data is presented using lines with distinct markers, and a legend is provided to identify each series.

### Components/Axes

* **Title:** "CIFAR-100" (centered at the top).

* **Y-Axis:**

    * **Label:** "Test Accuracy" (rotated vertically on the left).

    * **Scale:** Linear scale from 0 to 60, with major tick marks and labels at 0, 20, 40, and 60.

* **X-Axis:**

    * **Label:** "d₁" (centered at the bottom).

    * **Scale:** Discrete values at 100, 200, 300, 400, and 500.

* **Legend:** Positioned in the upper-middle area of the plot, slightly overlapping the data lines. It contains four entries:

    1. **Mix-S:** Blue circle marker with a dash-dot line (`-·-`).

    2. **Mix-L:** Purple star marker with a solid line (`-`).

    3. **Single-S:** Orange square marker with a dotted line (`···`).

    4. **Single-L:** Green triangle marker with a dashed line (`--`).

* **Grid:** A light gray dashed grid is present for both major x and y ticks.

### Detailed Analysis

The chart shows the test accuracy for four series as d₁ increases from 100 to 500.

**1. Mix-L (Purple Stars, Solid Line):**

* **Trend:** This series has the highest accuracy at every value of d₁. It starts at approximately 59.5 at d₁=100, dips to ~58.0 at d₁=200 and ~57.5 at d₁=300, then recovers to ~58.5 at d₁=400 and ~59.0 at d₁=500. The line is nearly flat, indicating high and stable performance.

* **Data Points (Approximate):**

    * d₁=100: ~59.5

    * d₁=200: ~58.0

    * d₁=300: ~57.5

    * d₁=400: ~58.5

    * d₁=500: ~59.0

**2. Mix-S (Blue Circles, Dash-Dot Line):**

* **Trend:** This series performs just below Mix-L. It follows a similar pattern: starting high (~58.5 at d₁=100), dipping slightly at d₁=200 (~57.5) and 300 (~57.0), then rising again at d₁=400 (~58.0) and 500 (~58.5). The performance gap between Mix-L and Mix-S is small but consistent.

* **Data Points (Approximate):**

    * d₁=100: ~58.5

    * d₁=200: ~57.5

    * d₁=300: ~57.0

    * d₁=400: ~58.0

    * d₁=500: ~58.5

**3. Single-L (Green Triangles, Dashed Line):**

* **Trend:** This series shows significantly lower accuracy than the "Mix" variants. It starts at ~10 at d₁=100, peaks at ~12 at d₁=200, then gradually declines to ~10 at d₁=300, ~9 at d₁=400, and ~8 at d₁=500. The trend is a slight arch, peaking at d₁=200.

* **Data Points (Approximate):**

    * d₁=100: ~10.0

    * d₁=200: ~12.0

    * d₁=300: ~10.0

    * d₁=400: ~9.0

    * d₁=500: ~8.0

**4. Single-S (Orange Squares, Dotted Line):**

* **Trend:** This series has the lowest accuracy. It begins at ~7 at d₁=100, dips to its lowest point of ~5 at d₁=200, recovers to ~7 at d₁=300, peaks at ~8 at d₁=400, then falls back to ~7 at d₁=500. Its trend is roughly inverse to Single-L between d₁=100 and 300.

* **Data Points (Approximate):**

    * d₁=100: ~7.0

    * d₁=200: ~5.0

    * d₁=300: ~7.0

    * d₁=400: ~8.0

    * d₁=500: ~7.0

### Key Observations

1. **Performance Hierarchy:** There is a clear and large performance gap between the "Mix" methods (Mix-L, Mix-S) and the "Single" methods (Single-L, Single-S). The "Mix" methods achieve ~57-60% accuracy, while the "Single" methods never exceed ~12%.

2. **Stability:** The "Mix" methods are remarkably stable across the range of d₁, with variations of only ~1.5-2 percentage points. The "Single" methods vary by ~3-4 percentage points, which is a large swing relative to their very low accuracy band.

3. **L vs. S:** Within each group ("Mix" and "Single"), the "L" variant outperforms the "S" variant at every d₁. The margin is much smaller in the high-performing "Mix" group (~0.5-1 percentage points) than in the "Single" group (~1-7 percentage points).

4. **Anomaly at d₁=200:** At d₁=200, the two "Single" methods show opposite movements: Single-L reaches its peak, while Single-S reaches its trough. This suggests a potential interaction effect at this specific parameter value.
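The stability and L-vs-S observations above can be checked directly against the approximate data points. The following is a minimal sketch in plain Python; the values are the visual estimates listed in the Detailed Analysis, and the names `D1`, `ACC`, `spread`, and `margin` are illustrative choices, not taken from the source of the chart.

```python
# Approximate accuracies read off the chart (visual estimates, not exact results).
D1 = [100, 200, 300, 400, 500]
ACC = {
    "Mix-L":    [59.5, 58.0, 57.5, 58.5, 59.0],
    "Mix-S":    [58.5, 57.5, 57.0, 58.0, 58.5],
    "Single-L": [10.0, 12.0, 10.0, 9.0, 8.0],
    "Single-S": [7.0, 5.0, 7.0, 8.0, 7.0],
}


def spread(values):
    """Max minus min accuracy across all d1 settings, in percentage points."""
    return max(values) - min(values)


def margin(group):
    """Per-d1 margin of the L variant over the S variant within one group."""
    return [l - s for l, s in zip(ACC[f"{group}-L"], ACC[f"{group}-S"])]


spreads = {name: spread(vals) for name, vals in ACC.items()}
margins = {group: margin(group) for group in ("Mix", "Single")}

# d1 value at which the Single-L vs. Single-S margin is largest.
widest_single_gap_d1 = D1[margins["Single"].index(max(margins["Single"]))]

for name, s in spreads.items():
    print(f"{name}: spread = {s:.1f} points")
for group, m in margins.items():
    print(f"{group}: L minus S per d1 = {m}")
print("Largest Single-L vs. Single-S margin at d1 =", widest_single_gap_d1)
```

Because the inputs are estimates from the figure, the computed spreads and margins carry the same reading uncertainty as the data points themselves.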

### Interpretation

This chart likely comes from a machine learning paper evaluating model performance on the CIFAR-100 image classification dataset. The parameter `d₁` could represent a dimensionality, width, or capacity parameter of the model.

The data suggest that the "Mix" strategy (which might involve mixing data, features, or models) is substantially better than the "Single" strategy on this task, with an advantage of roughly 50 percentage points at every tested `d₁`. The "L" (likely "Large") variants outperform the "S" (likely "Small") variants, which would be expected if larger models have greater capacity.
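As a rough check on the size of the Mix-over-Single advantage, the group means can be compared at each `d₁`. This is only a sketch over the approximate readings from the chart; averaging the two variants in each group is an assumption made here for illustration, and the names `MIX`, `SINGLE`, and `group_mean` are hypothetical.

```python
# Approximate accuracies read off the chart (visual estimates, not exact results).
D1 = [100, 200, 300, 400, 500]
MIX = {
    "Mix-L": [59.5, 58.0, 57.5, 58.5, 59.0],
    "Mix-S": [58.5, 57.5, 57.0, 58.0, 58.5],
}
SINGLE = {
    "Single-L": [10.0, 12.0, 10.0, 9.0, 8.0],
    "Single-S": [7.0, 5.0, 7.0, 8.0, 7.0],
}


def group_mean(group, i):
    """Mean accuracy of all series in a group at the i-th d1 value."""
    values = [series[i] for series in group.values()]
    return sum(values) / len(values)


# Gap between the Mix group mean and the Single group mean at each d1.
gaps = [group_mean(MIX, i) - group_mean(SINGLE, i) for i in range(len(D1))]

for d1, gap in zip(D1, gaps):
    print(f"d1={d1}: Mix minus Single = {gap:.2f} points")
```

With these estimates the gap stays between about 49 and 51 percentage points across the tested range.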

The most intriguing finding is the divergent behavior of the Single-L and Single-S models at `d₁=200`, where Single-L peaks and Single-S reaches its minimum. This could indicate that the single-component models are sensitive to `d₁` in a way that depends on model size, although the differences amount to only a few percentage points read approximately from the chart. In contrast, the mixed approaches are robust to changes in this parameter. The primary takeaway is that the "Mix" strategy is both more accurate and more stable, making it the more reliable choice for any `d₁` setting within the tested range.