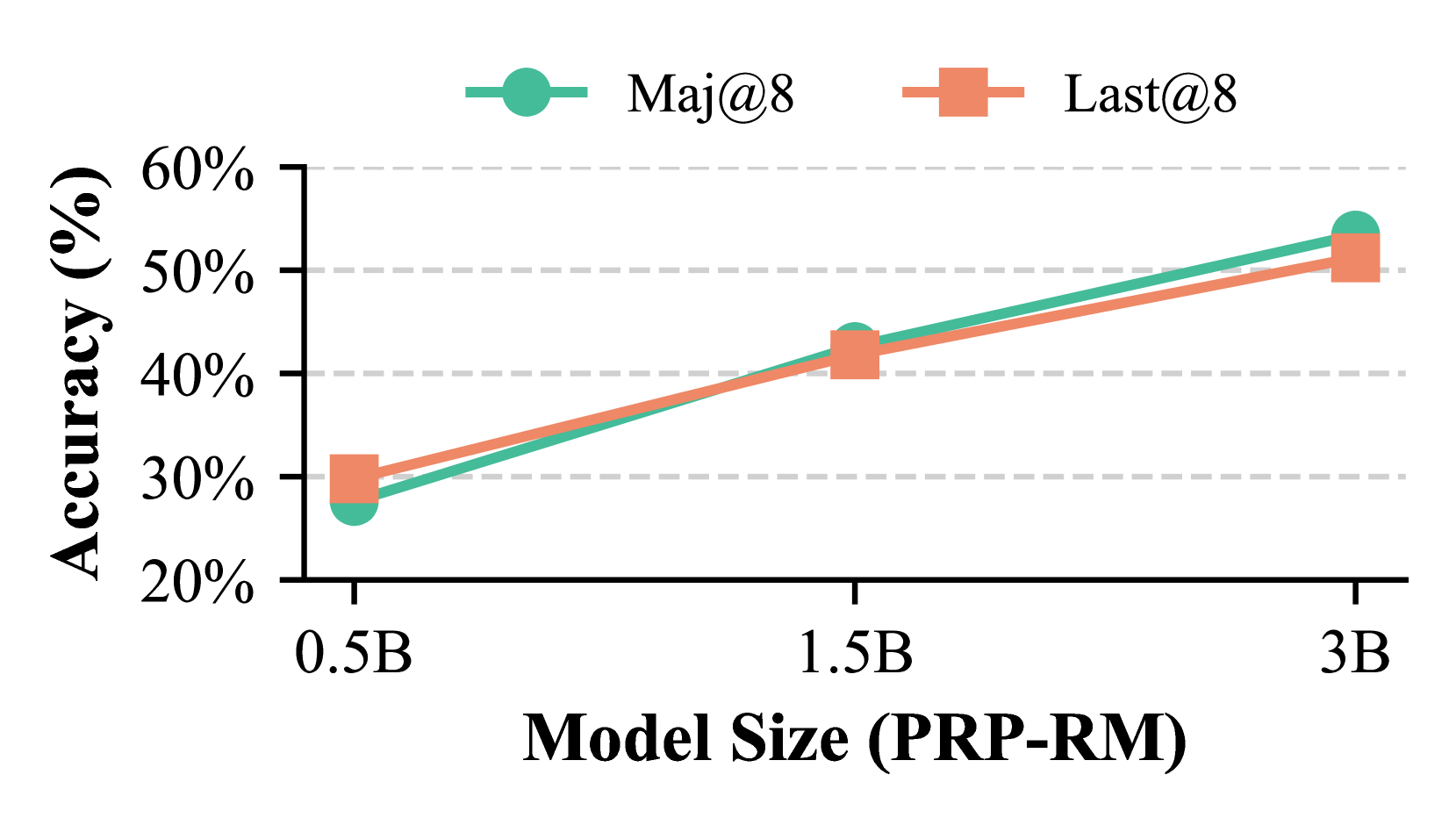

## Line Chart: Accuracy vs. Model Size

### Overview

This line chart depicts the relationship between model size (PRP-RM) and accuracy for two metrics: Maj@8 and Last@8. The chart shows how accuracy changes as the model size increases from 0.5B to 3B.

### Components/Axes

* **X-axis:** Model Size (PRP-RM) with markers at 0.5B, 1.5B, and 3B.

* **Y-axis:** Accuracy (%) with a scale ranging from 20% to 60%, incremented by 10%. Horizontal dashed lines mark 30%, 40%, and 50%.

* **Legend:** Located at the top-right of the chart.

  * Maj@8: Represented by a teal line with circular markers.

  * Last@8: Represented by a salmon-colored line with square markers.

### Detailed Analysis

* **Maj@8 (Teal Line):** The line rises at every step, gaining about 10 points from 0.5B to 1.5B and about 17 points from 1.5B to 3B.

  * At 0.5B, the accuracy is approximately 27%.

  * At 1.5B, the accuracy is approximately 37%.

  * At 3B, the accuracy is approximately 54%.

* **Last@8 (Salmon Line):** The line also rises at every step, gaining about 20 points from 0.5B to 1.5B and about 8 points from 1.5B to 3B. Its overall gain (about 28 points) is close to that of Maj@8 (about 27 points).

  * At 0.5B, the accuracy is approximately 24%.

  * At 1.5B, the accuracy is approximately 44%.

  * At 3B, the accuracy is approximately 52%.

### Key Observations

* Both metrics show a positive correlation between model size and accuracy. Larger models generally perform better.

* Neither metric leads at every model size. Maj@8 is higher at 0.5B (about 27% vs. 24%) and at 3B (about 54% vs. 52%), while Last@8 is higher at 1.5B (about 44% vs. 37%).

* Diminishing returns appear only for Last@8, whose gain shrinks from about 20 points (0.5B to 1.5B) to about 8 points (1.5B to 3B). Maj@8 shows the opposite pattern: its gain grows from about 10 points to about 17 points.
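The per-interval gains and the leading metric at each size can be checked directly from the values listed under Detailed Analysis. The sketch below is only an arithmetic check: the numbers are approximate readings from the chart, not exact data, and the names `SIZES`, `ACCURACY`, `gains`, and `leader_per_size` are illustrative.

```python
# Approximate accuracy values (percent) read off the chart; not exact data.
SIZES = ["0.5B", "1.5B", "3B"]
ACCURACY = {
    "Maj@8": [27, 37, 54],
    "Last@8": [24, 44, 52],
}


def gains(values):
    """Return the change between each pair of consecutive points."""
    return [b - a for a, b in zip(values, values[1:])]


def leader_per_size(accuracy):
    """Return the name of the higher-scoring metric at each model size."""
    names = list(accuracy)
    return [max(names, key=lambda n: accuracy[n][i]) for i in range(len(SIZES))]


step_gains = {name: gains(vals) for name, vals in ACCURACY.items()}
leaders = leader_per_size(ACCURACY)

for name, g in step_gains.items():
    print(f"{name}: +{g[0]} points (0.5B to 1.5B), +{g[1]} points (1.5B to 3B)")
for size, name in zip(SIZES, leaders):
    print(f"{size}: {name} is higher")
```

Because the inputs are eyeballed from the plot, differences of a point or two (such as the gap at 3B) should be treated as within reading error.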

### Interpretation

The data suggests that increasing the model size (PRP-RM) improves accuracy for both Maj@8 and Last@8. The rate of improvement differs by metric: Last@8 gains most between 0.5B and 1.5B and flattens afterward, while Maj@8 gains most between 1.5B and 3B. The two curves cross twice, so neither metric is uniformly better. The largest gap is at 1.5B, where Last@8 leads by about 7 points, and it narrows to about 2 points in favor of Maj@8 at 3B. With only three model sizes and values read approximately from the chart, these trends are tentative, and the chart alone does not show whether accuracy would keep rising beyond 3B. The chart does not define the two metrics. Their names suggest that each aggregates 8 outputs per problem (for example, a majority vote for Maj@8 and the final output for Last@8), but this reading is an assumption rather than something the figure states.