## Diagram: Robotic Task Execution System

### Overview

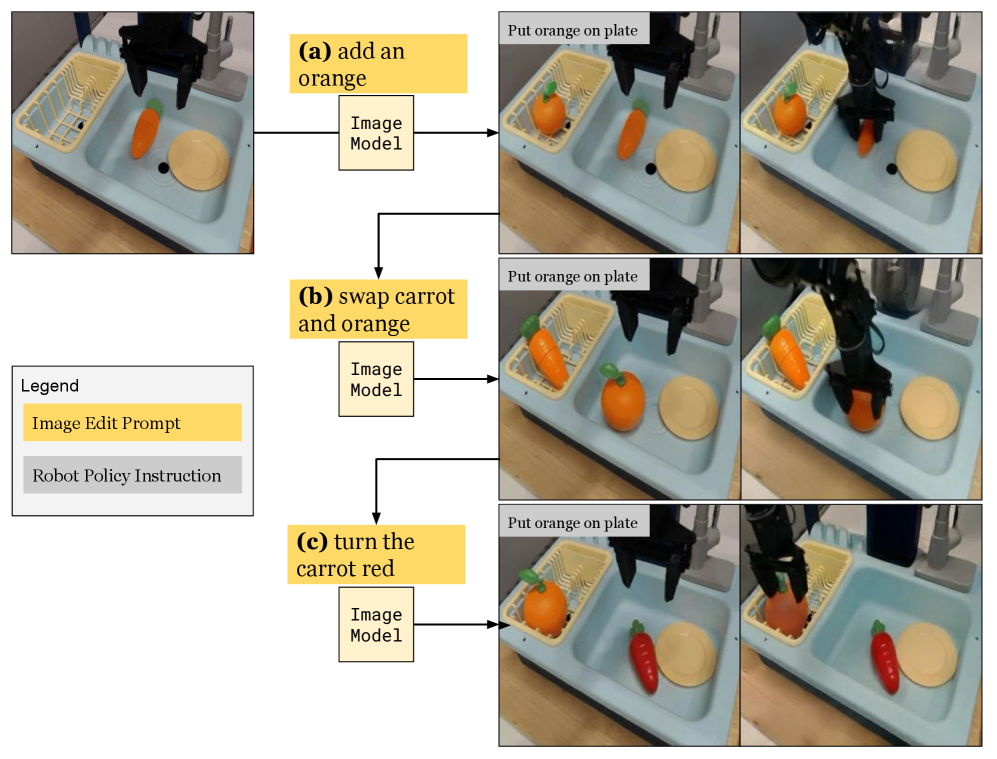

The diagram shows a robotic system working in a simulated kitchen sink environment across three tasks (a, b, c). In each task, an image model applies an image edit prompt to the scene, and a robot policy then executes an instruction on the objects involved (orange, carrot, plate). Color coding distinguishes image edit prompts (yellow) from robot policy instructions (gray).

### Components/Axes

1. **Legend** (left side):

- **Image Edit Prompt** (yellow): Represents task-specific instructions for image manipulation

- **Robot Policy Instruction** (gray): Indicates the robotic action to be executed

2. **Task Structure** (vertical flow):

- Each task (a, b, c) contains:

  - Input image of the sink environment

  - Image model processing

  - Output robot policy instruction

  - Execution result image

3. **Spatial Layout**:

- Legend positioned in bottom-left quadrant

- Task components arranged in three vertical columns

- Robot arm shown in action across all task executions
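The per-task structure listed above can be sketched as a small data model. This is only an illustration: the `TaskPanel` class, its field names, and the `LEGEND` mapping are hypothetical names chosen here, not part of the diagram or of any underlying system.

```python
from dataclasses import dataclass

# Legend colors as described in the diagram (key names are illustrative).
LEGEND = {
    "image_edit_prompt": "yellow",
    "robot_policy_instruction": "gray",
}


@dataclass(frozen=True)
class TaskPanel:
    """One column of the diagram, read top to bottom (hypothetical model)."""
    label: str               # panel label: "a", "b", or "c"
    image_edit_prompt: str   # yellow box: instruction given to the image model
    policy_instruction: str  # gray box: instruction given to the robot policy

    def stages(self) -> list[str]:
        # The four components listed for every task, in vertical order.
        return [
            "input image",
            f"image model ({self.image_edit_prompt})",
            f"robot policy ({self.policy_instruction})",
            "execution result image",
        ]


panel_a = TaskPanel("a", "Add an orange", "Put orange on plate")
for stage in panel_a.stages():
    print(stage)
```

Keeping the prompt and the policy instruction as separate fields mirrors the legend's two colors, so each box in a column maps to exactly one field.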

### Detailed Analysis

1. **Task (a): Add an orange**

- Image Model Input: Sink with plate and carrot

- Robot Policy: "Put orange on plate"

- Execution: Orange placed in dish rack

2. **Task (b): Swap carrot and orange**

- Image Model Input: Sink with orange and carrot

- Robot Policy: "Put orange on plate"

- Execution: Orange moved to dish rack, carrot placed in sink

3. **Task (c): Turn the carrot red**

- Image Model Input: Sink with orange and carrot

- Robot Policy: "Put orange on plate"

- Execution: Carrot color changed to red while maintaining position

### Key Observations

- All three tasks use the same robot policy instruction ("Put orange on plate") even though their image edit prompts differ

- Color coding strictly follows legend: yellow for image prompts, gray for robot policies

- Robot arm maintains consistent positioning across all task executions

- Object positions change according to task requirements while maintaining spatial relationships
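The observation about the shared robot policy instruction can be checked mechanically once the prompts and instructions from the Detailed Analysis are written down. A minimal sketch, in which the `tasks` dictionary and the `shared_instruction` helper are hypothetical names introduced for illustration:

```python
# Image edit prompt and robot policy instruction per task, as transcribed
# in the Detailed Analysis section.
tasks = {
    "a": ("Add an orange", "Put orange on plate"),
    "b": ("Swap carrot and orange", "Put orange on plate"),
    "c": ("Turn the carrot red", "Put orange on plate"),
}


def shared_instruction(tasks):
    """Return the policy instruction if every task uses the same one, else None."""
    instructions = {policy for _prompt, policy in tasks.values()}
    return instructions.pop() if len(instructions) == 1 else None


prompts = {prompt for prompt, _policy in tasks.values()}
print(shared_instruction(tasks))  # Put orange on plate
print(len(prompts))               # 3
```

Three distinct prompts map to a single instruction, which is the pattern the observation describes.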

### Interpretation

This diagram demonstrates a vision-guided robotic system capable of:

1. Interpreting task descriptions through image models

2. Generating appropriate robotic actions based on environmental context

3. Acting on scenes that have been edited in different ways (object addition, object swap, color change)

The system shows:

- **Task-Image-Execution Correlation**: Each task's image model output directly informs the robot's physical action

- **Color-Coded Workflow**: Visual distinction between image edit prompts (yellow) and robot policy instructions (gray)

- **Environmental Adaptation**: Maintains object relationships while performing specific manipulations

The same robot policy instruction appears in all three tasks, which suggests either a limitation in the system's task interpretation or a deliberate design choice for demonstration purposes. The color modification task (c) implies potential integration with computer vision systems capable of object recognition and property alteration.