## Diagram: Flow of Information Processing

### Overview

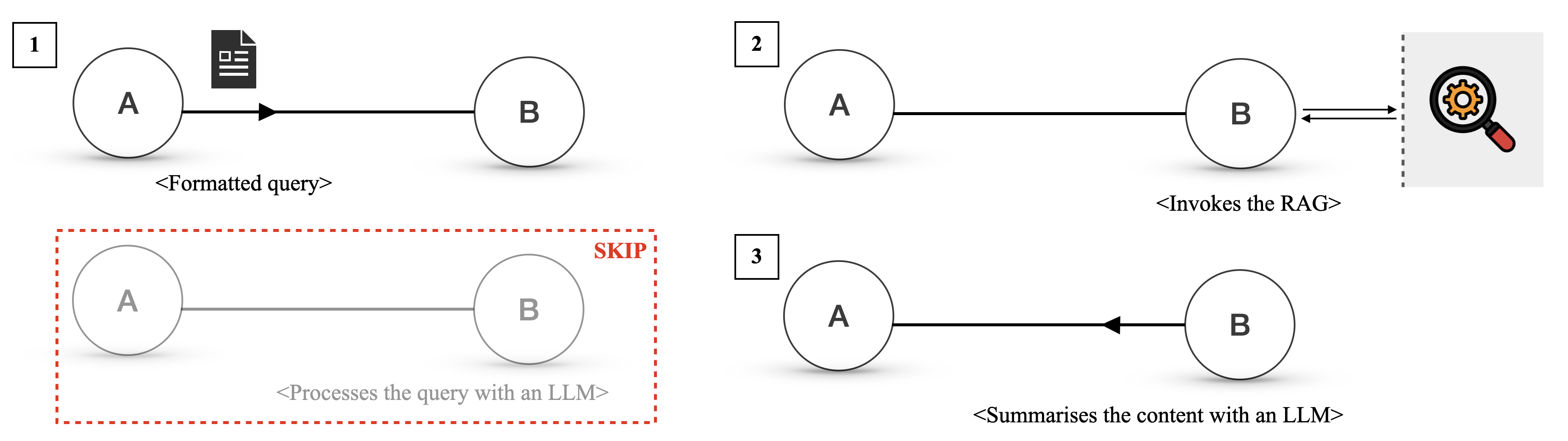

The image contains three numbered diagrams (1, 2, and 3), each illustrating one stage of information processing in a system that appears to combine a Large Language Model (LLM) with a Retrieval-Augmented Generation (RAG) component. Circles labeled 'A' and 'B' represent entities or processes, arrows between them indicate data flow, and each diagram carries descriptive text below it.

### Components/Axes

There are no traditional axes or legends in this diagram. The key components are:

* **Circles labeled 'A' and 'B'**: These represent abstract entities or stages in a process.

* **Arrows**: Indicate the direction of data flow or interaction between 'A' and 'B'.

* **Icons**: A document icon in Diagram 1 and a magnifying glass with gears icon in Diagram 2.

* **Dashed elements**: A red dashed box in Diagram 1 highlights a skipped process. A grey dashed vertical line in Diagram 2 separates 'B' from the RAG component.

* **Numbers (1, 2, 3)**: Used to sequentially label each distinct diagram.

* **Descriptive Text (in angle brackets)**: Provides context for each diagram's depicted process.

### Detailed Analysis

**Diagram 1:**

* **Label:** 1

* **Components:** Circle 'A', Circle 'B', a document icon next to 'A', a solid arrow pointing from 'A' to 'B'. A red dashed box encloses a greyed-out representation of 'A' and 'B' connected by a grey line, with the text "SKIP" and "<Processes the query with an LLM>" below it.

* **Text:** `<Formatted query>` below the arrow from 'A' to 'B'.

* **Description:** This diagram shows a formatted query originating from 'A' and being sent to 'B'. The red dashed box indicates that a process involving 'A' and 'B' ("Processes the query with an LLM") is skipped.

**Diagram 2:**

* **Label:** 2

* **Components:** Circle 'A', Circle 'B', a solid arrow pointing from 'A' to 'B', a double-headed arrow between 'B' and a RAG component (represented by a dashed vertical line and a magnifying glass with gears icon).

* **Text:** `<Invokes the RAG>` below the double-headed arrow.

* **Description:** This diagram illustrates 'A' sending a query to 'B', and 'B' then invoking the RAG component. The double-headed arrow suggests a two-way interaction between 'B' and the RAG.

**Diagram 3:**

* **Label:** 3

* **Components:** Circle 'A', Circle 'B', a solid arrow pointing from 'B' to 'A'.

* **Text:** `<Summarises the content with an LLM>` below the arrow from 'B' to 'A'.

* **Description:** This diagram shows content being summarized by an LLM, with the summarized content flowing from 'B' back to 'A'.

### Key Observations

* The diagrams depict a sequential or conditional flow of information.

* Diagram 1 suggests an initial formatting of a query.

* Diagram 2 shows a critical step where a RAG system is invoked, implying retrieval of information.

* Diagram 3 indicates a summarization step, likely performed by an LLM, with the output returning to the originating entity ('A').

* The "SKIP" annotation in Diagram 1 marks the step "Processes the query with an LLM" as bypassed: the formatted query travels from 'A' to 'B' without LLM pre-processing. The diagram does not show whether this bypass is unconditional or applies only in certain scenarios.

### Interpretation

Taken together, the diagrams illustrate a plausible workflow for a system that combines a Large Language Model (LLM) with Retrieval-Augmented Generation (RAG).

* **Diagram 1** likely represents the initial stage, where a user's input (or the output of an internal process) is turned into a structured "formatted query" that later components can consume. The "SKIP" annotation around the LLM processing step suggests that the query is formatted and forwarded without being processed by an LLM at this point. Whether that step is always omitted or is merely optional cannot be determined from the image.

* **Diagram 2** is the core of the RAG interaction. 'A' sends a query to 'B' (which could be an orchestrator or a specific module). 'B' then "invokes the RAG," meaning it queries an external knowledge base or data source to retrieve relevant information. The double-headed arrow between 'B' and the RAG icon signifies that 'B' sends a query to RAG and receives results back. This retrieved information is crucial for grounding the LLM's responses.

* **Diagram 3** shows the final stage where 'B' (having potentially processed retrieved information) "summarises the content with an LLM." The arrow pointing from 'B' back to 'A' indicates that the summarized output is returned to the initial entity or process 'A'. This suggests that 'A' is the recipient of the final, processed information, which could be an answer to the original query, a summary of retrieved documents, or some other form of generated content.

In essence, the diagrams demonstrate a process where a query is formatted, relevant information is retrieved via RAG, and then an LLM is used to synthesize and summarize this information before returning it. The skipped LLM processing in Diagram 1 might imply that the RAG step is prioritized or that the LLM is only engaged for specific types of queries or after retrieval. This workflow is a common pattern in modern LLM applications to improve accuracy and reduce hallucinations by providing external context.
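The three stages described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the system shown in the image: the names `format_query`, `retrieve`, `summarise`, and `answer`, the in-memory `CORPUS`, and the word-overlap ranking are all hypothetical, and `summarise` is a plain truncation stub standing in for a real LLM call.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    text: str


# Hypothetical in-memory corpus standing in for the RAG knowledge source.
CORPUS = [
    Document("RAG retrieves passages relevant to a query."),
    Document("An LLM can summarise retrieved passages."),
    Document("Bananas are rich in potassium."),
]


def tokens(text: str) -> set[str]:
    """Lower-case a string and split it into a set of word tokens."""
    return set(re.findall(r"\w+", text.lower()))


def format_query(raw: str) -> str:
    """Diagram 1 (A -> B): normalise raw input into a formatted query.

    No LLM is involved here, mirroring the "SKIP" box in the diagram.
    """
    return " ".join(raw.lower().split())


def retrieve(query: str, corpus: list[Document], top_k: int = 2) -> list[Document]:
    """Diagram 2 (B <-> RAG): rank documents by naive word overlap.

    Real systems typically use embeddings or a search index; word overlap
    is an assumption made to keep the sketch self-contained.
    """
    query_terms = tokens(query)
    scored = [(len(query_terms & tokens(doc.text)), doc) for doc in corpus]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in ranked[:top_k] if score > 0]


def summarise(docs: list[Document], max_chars: int = 80) -> str:
    """Diagram 3 (B -> A): placeholder for an LLM summarisation call.

    This stub only concatenates and truncates the retrieved text.
    """
    joined = " ".join(doc.text for doc in docs)
    if len(joined) <= max_chars:
        return joined
    return joined[: max_chars - 3] + "..."


def answer(raw: str) -> str:
    """Run the three stages in order and return the result to the caller ('A')."""
    query = format_query(raw)
    docs = retrieve(query, CORPUS)
    return summarise(docs)


if __name__ == "__main__":
    print(answer("  What does   RAG retrieve? "))
```

Keeping each stage as a separate function mirrors the one-diagram-per-step layout of the image, and makes it straightforward to replace the stubbed retriever or summariser with real components.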