TECHNICAL ASSET FINGERPRINT

0ebfc77702f33798ee4c9b04

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

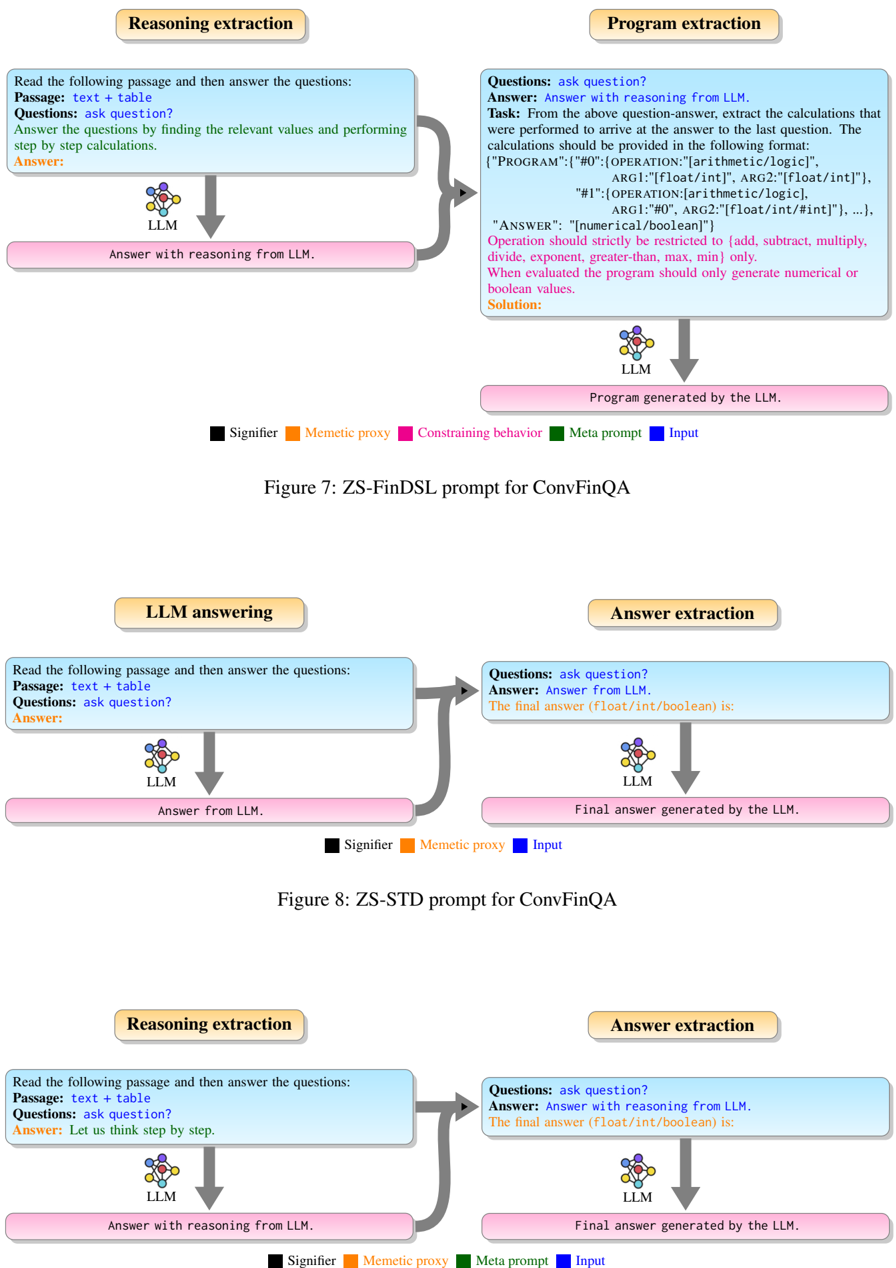

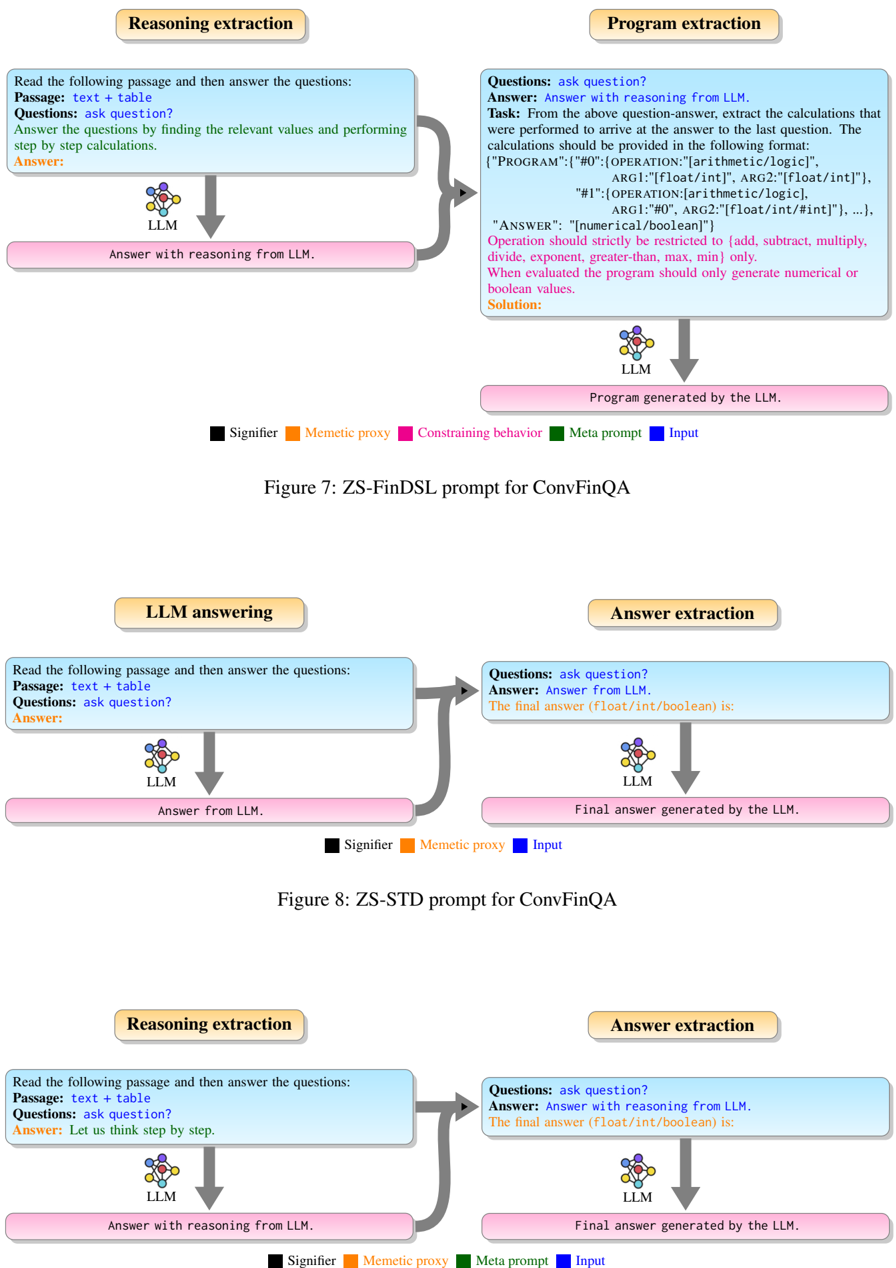

## Diagram: Prompt Engineering Strategies for ConvFinQA

### Overview

The image presents three diagrams illustrating different prompt engineering strategies (ZS-FinDSL, ZS-STD, and a third unnamed strategy) used with a Large Language Model (LLM) for the ConvFinQA task. Each diagram outlines the flow of information and processing steps involved in answering questions based on a given passage and table.

### Components/Axes

**General Components (Present in all diagrams):**

* **Input Text Box (Light Blue):** Contains the initial prompt instructions and data.

* **LLM Icon:** Represents the Large Language Model processing the input.

* **Output Text Box (Light Pink):** Displays the LLM's response or generated output.

* **Arrows:** Indicate the flow of information between components.

* **Legend (Bottom):** Defines the color-coded elements:

* Black: Signifier

* Orange: Memetic proxy

* Pink: Constraining behavior (Only in Figure 7)

* Green: Meta prompt

* Blue: Input

**Figure 7: ZS-FinDSL prompt for ConvFinQA**

* **Top-Left Box (Reasoning extraction):**

* Title: Reasoning extraction

* Text:

* Read the following passage and then answer the questions:

* Passage: text + table

* Questions: ask question?

* Answer the questions by finding the relevant values and performing step by step calculations.

* Answer:

* **Top-Right Box (Program extraction):**

* Title: Program extraction

* Text:

* Questions: ask question?

* Answer: Answer with reasoning from LLM.

* Task: From the above question-answer, extract the calculations that were performed to arrive at the answer to the last question. The calculations should be provided in the following format:

* {"PROGRAM": {"#0": {OPERATION:"[arithmetic/logic]", ARG1:"[float/int]", ARG2:"[float/int]"}, "#1":{OPERATION: [arithmetic/logic], ARG1:"#0", ARG2:"[float/int/#int]"}, ...}, "ANSWER": "[numerical/boolean]"}

* Operation should strictly be restricted to {add, subtract, multiply, divide, exponent, greater-than, max, min} only.

* When evaluated the program should only generate numerical or boolean values.

* Solution:

* **Bottom-Left Box:** Answer with reasoning from LLM.

* **Bottom-Right Box:** Program generated by the LLM.

**Figure 8: ZS-STD prompt for ConvFinQA**

* **Top-Left Box (LLM answering):**

* Title: LLM answering

* Text:

* Read the following passage and then answer the questions:

* Passage: text + table

* Questions: ask question?

* Answer:

* **Top-Right Box (Answer extraction):**

* Title: Answer extraction

* Text:

* Questions: ask question?

* Answer: Answer from LLM.

* The final answer (float/int/boolean) is:

* **Bottom-Left Box:** Answer from LLM.

* **Bottom-Right Box:** Final answer generated by the LLM.

**Third Diagram (Unnamed)**

* **Top-Left Box (Reasoning extraction):**

* Title: Reasoning extraction

* Text:

* Read the following passage and then answer the questions:

* Passage: text + table

* Questions: ask question?

* Answer: Let us think step by step.

* **Top-Right Box (Answer extraction):**

* Title: Answer extraction

* Text:

* Questions: ask question?

* Answer: Answer with reasoning from LLM.

* The final answer (float/int/boolean) is:

* **Bottom-Left Box:** Answer with reasoning from LLM.

* **Bottom-Right Box:** Final answer generated by the LLM.

### Detailed Analysis

**Figure 7: ZS-FinDSL prompt for ConvFinQA**

1. **Reasoning extraction:** The LLM receives a passage and table, and is prompted to answer questions by finding relevant values and performing step-by-step calculations.

2. **Program extraction:** The LLM extracts the calculations performed to arrive at the answer. The calculations are formatted as a program of arithmetic/logic operations on float/int values; when evaluated, the program should yield only numerical or boolean values.

3. The "Reasoning extraction" box connects to the "Program extraction" box with a gray arrow.

4. The LLM icon below the "Reasoning extraction" box connects to the "Answer with reasoning from LLM" box with a downward arrow.

5. The LLM icon below the "Program extraction" box connects to the "Program generated by the LLM" box with a downward arrow.
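The two-stage flow described for Figure 7 can be sketched as a simple prompt chain. This is a minimal illustration rather than the authors' implementation: the `llm` callable, the function names, and the line breaks inside the prompts are assumptions, while the prompt wording follows the figure text transcribed above.

```python
from typing import Callable, Tuple

# Program-extraction instructions, copied from the figure transcription.
PROGRAM_TASK = (
    "Task: From the above question-answer, extract the calculations that were "
    "performed to arrive at the answer to the last question. The calculations "
    "should be provided in the following format:\n"
    '{"PROGRAM": {"#0": {OPERATION:"[arithmetic/logic]", ARG1:"[float/int]", '
    'ARG2:"[float/int]"}, "#1":{OPERATION: [arithmetic/logic], ARG1:"#0", '
    'ARG2:"[float/int/#int]"}, ...}, "ANSWER": "[numerical/boolean]"}\n'
    "Operation should strictly be restricted to {add, subtract, multiply, "
    "divide, exponent, greater-than, max, min} only.\n"
    "When evaluated the program should only generate numerical or boolean values.\n"
    "Solution:"
)


def build_reasoning_prompt(passage: str, questions: str) -> str:
    """Stage 1 prompt: ask for an answer with step-by-step calculations."""
    return (
        "Read the following passage and then answer the questions:\n"
        f"Passage: {passage}\n"
        f"Questions: {questions}\n"
        "Answer the questions by finding the relevant values and "
        "performing step by step calculations.\n"
        "Answer:"
    )


def build_program_prompt(questions: str, reasoning: str) -> str:
    """Stage 2 prompt: embed the stage 1 output and ask for a program."""
    return (
        f"Questions: {questions}\n"
        f"Answer: {reasoning}\n"
        + PROGRAM_TASK
    )


def run_zs_findsl(
    passage: str, questions: str, llm: Callable[[str], str]
) -> Tuple[str, str]:
    """Chain the two calls; `llm` is a hypothetical text-in, text-out function."""
    reasoning = llm(build_reasoning_prompt(passage, questions))
    program = llm(build_program_prompt(questions, reasoning))
    return reasoning, program
```

Passing the model as a plain callable keeps the sketch independent of any particular LLM client library.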

**Figure 8: ZS-STD prompt for ConvFinQA**

1. **LLM answering:** The LLM receives a passage and table, and is prompted to answer questions.

2. **Answer extraction:** The LLM extracts the final answer, which is a float, integer, or boolean value.

3. The "LLM answering" box connects to the "Answer extraction" box with a gray arrow.

4. The LLM icon below the "LLM answering" box connects to the "Answer from LLM" box with a downward arrow.

5. The LLM icon below the "Answer extraction" box connects to the "Final answer generated by the LLM" box with a downward arrow.

**Third Diagram (Unnamed)**

1. **Reasoning extraction:** The LLM receives a passage and table, and is prompted to answer questions by thinking step by step.

2. **Answer extraction:** The LLM extracts the final answer, which is a float, integer, or boolean value.

3. The "Reasoning extraction" box connects to the "Answer extraction" box with a gray arrow.

4. The LLM icon below the "Reasoning extraction" box connects to the "Answer with reasoning from LLM" box with a downward arrow.

5. The LLM icon below the "Answer extraction" box connects to the "Final answer generated by the LLM" box with a downward arrow.

### Key Observations

* Figure 7 (ZS-FinDSL) involves a two-step process: reasoning extraction followed by program extraction.

* Figure 8 (ZS-STD) is simpler: the LLM answers directly, and a second prompt extracts the final answer.

* The third diagram is similar to ZS-STD but includes the prompt "Let us think step by step" in the reasoning extraction phase.

* The legend provides a color-coding scheme for different elements in the diagrams.

### Interpretation

The diagrams illustrate different approaches to prompt engineering for question answering tasks using LLMs. ZS-FinDSL aims to extract the reasoning process as a program, while ZS-STD directly extracts the answer. The third diagram combines elements of both, prompting the LLM to think step by step before extracting the answer. The choice of prompt engineering strategy can significantly impact the LLM's performance and the interpretability of its reasoning process. The diagrams highlight the importance of carefully designing prompts to elicit the desired behavior from the LLM.

EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Diagram: LLM Prompting Strategies for ConvFinQA

### Overview

This image presents three distinct flowcharts, each illustrating a different prompting strategy for Large Language Models (LLMs) in the context of ConvFinQA (Conversational Financial Question Answering). Each flowchart details a multi-step process involving an LLM to either extract reasoning, generate programs, or provide answers, often chaining the output of one LLM step as input to another. The diagrams are titled "Figure 7: ZS-FinDSL prompt for ConvFinQA", "Figure 8: ZS-STD prompt for ConvFinQA", and a third unnamed diagram that follows a similar two-stage structure.

### Components/Axes

The image is composed of three vertically stacked diagrams. Each diagram features:

* **Process Headers (Light Brown/Gold Rectangles)**: These labels describe the overall task of a specific flow, such as "Reasoning extraction", "Program extraction", "LLM answering", and "Answer extraction". They are positioned at the top of each processing column.

* **Input Prompt Boxes (Light Blue Rectangles)**: These boxes contain the textual prompts given to the LLM. They are positioned below the process headers.

* **LLM Component (Multi-colored Brain-like Icon)**: Represented by a stylized brain icon with multiple colored spheres (purple, blue, green, orange, red, yellow), labeled "LLM". This signifies the Large Language Model processing the input. It is positioned below the input prompt boxes.

* **LLM Output Boxes (Pink Rectangles)**: These boxes contain the textual output generated by the LLM. They are positioned below the LLM component.

* **Flow Arrows (Dark Gray)**: Straight arrows indicate a direct downward flow from input to LLM, and from LLM to output. Curved arrows indicate the output of one LLM process serving as input to another LLM process, flowing from the bottom-left output box to the top-right input box.

* **Legends (Bottom-center of each Figure)**: Each figure includes a legend defining different types of prompt elements by color.

* **Figure 7 Legend (from left to right)**:

* Black square: "Signifier"

* Orange square: "Memetic proxy"

* Magenta square: "Constraining behavior"

* Dark Green square: "Meta prompt"

* Dark Blue square: "Input"

* **Figure 8 Legend (from left to right)**:

* Black square: "Signifier"

* Orange square: "Memetic proxy"

* Dark Blue square: "Input"

* **Bottom Diagram Legend (from left to right)**:

* Black square: "Signifier"

* Orange square: "Memetic proxy"

* Dark Green square: "Meta prompt"

* Dark Blue square: "Input"

### Detailed Analysis

#### Figure 7: ZS-FinDSL prompt for ConvFinQA

This diagram illustrates a two-stage process: "Reasoning extraction" followed by "Program extraction".

1. **Reasoning extraction (Left Flow)**:

* **Input Prompt (Light Blue Box, top-left)**:

* "Read the following passage and then answer the questions:" (Black text, Signifier)

* "**Passage**: text + table" (Black text, Signifier)

* "**Questions**: ask question?" (Black text, Signifier)

* "**Answer**: Answer the questions by finding the relevant values and performing" (Black text, Signifier)

* "step by step calculations." (Black text, Signifier)

* "Answer:" (Black text, Signifier)

* **LLM Component**: Processes the prompt.

* **Output (Pink Box, bottom-left)**: "Answer with reasoning from LLM."

2. **Program extraction (Right Flow)**:

* **Input Prompt (Light Blue Box, top-right)**: This prompt receives input from the "Answer with reasoning from LLM." output.

* "**Questions**: ask question?" (Black text, Signifier)

* "**Answer**: Answer with reasoning from LLM." (Black text, Signifier)

* "**Task**: From the above question-answer, extract the calculations that" (Black text, Signifier)

* "were performed to arrive at the answer to the last question. The" (Black text, Signifier)

* "calculations should be provided in the following format:" (Black text, Signifier)

* "{\"PROGRAM\":{\"#0\":{\"OPERATION\":[arithmetic/logic]," (Black text, Signifier)

* "ARG1:\"[float/int]\", ARG2:\"[float/int]\"}," (Black text, Signifier)

* "\"#1\":{\"OPERATION\":[arithmetic/logic]," (Black text, Signifier)

* "ARG1:\"[float/int]\", ARG2:\"[float/int]\"}, ...}," (Black text, Signifier)

* "\"ANSWER\": \"[numerical/boolean]\"}" (Black text, Signifier)

* "Operation should strictly be restricted to {add, subtract, multiply," (Magenta text, Constraining behavior)

* "divide, exponent, greater-than, max, min} only." (Magenta text, Constraining behavior)

* "When evaluated the program should only generate numerical or" (Magenta text, Constraining behavior)

* "boolean values." (Magenta text, Constraining behavior)

* "Solution:" (Black text, Signifier)

* **LLM Component**: Processes this prompt.

* **Output (Pink Box, bottom-right)**: "Program generated by the LLM."

* **Flow**: The output of the "Reasoning extraction" LLM ("Answer with reasoning from LLM.") feeds into the "Program extraction" LLM's input prompt via a curved dark gray arrow.
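To make the program format concrete, the following sketch evaluates a program of the shape described in the "Program extraction" prompt. It rests on several assumptions: the LLM output is strict JSON with quoted keys (the prompt itself shows some keys unquoted), "exponent" means exponentiation, "greater-than" means `>`, and steps are keyed `#0`, `#1`, and so on. The function names and the sample values are hypothetical.

```python
import json
import operator

# Operation names come from the prompt; the Python mappings are assumptions.
OPERATIONS = {
    "add": operator.add,
    "subtract": operator.sub,
    "multiply": operator.mul,
    "divide": operator.truediv,
    "exponent": operator.pow,
    "greater-than": operator.gt,
    "max": max,
    "min": min,
}


def resolve(arg, results):
    """Return an earlier step's result for '#k' references, else a float."""
    if isinstance(arg, str) and arg.startswith("#"):
        return results[arg]
    return float(arg)


def evaluate_program(raw: str):
    """Evaluate the steps in index order and return the last step's value."""
    program = json.loads(raw)["PROGRAM"]
    results = {}
    last = None
    for key in sorted(program, key=lambda k: int(k.lstrip("#"))):
        step = program[key]
        fn = OPERATIONS[step["OPERATION"]]
        last = fn(resolve(step["ARG1"], results), resolve(step["ARG2"], results))
        results[key] = last
    return last


# Illustrative input with made-up numbers: (120 - 100) / 100.
example = json.dumps({
    "PROGRAM": {
        "#0": {"OPERATION": "subtract", "ARG1": "120", "ARG2": "100"},
        "#1": {"OPERATION": "divide", "ARG1": "#0", "ARG2": "100"},
    },
    "ANSWER": "0.2",
})
result = evaluate_program(example)  # 0.2 for this input
```

The sketch does no error handling: an unknown operation, a forward reference, or a division by zero raises an exception.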

#### Figure 8: ZS-STD prompt for ConvFinQA

This diagram illustrates a two-stage process: "LLM answering" followed by "Answer extraction".

1. **LLM answering (Left Flow)**:

* **Input Prompt (Light Blue Box, top-left)**:

* "Read the following passage and then answer the questions:" (Black text, Signifier)

* "**Passage**: text + table" (Black text, Signifier)

* "**Questions**: ask question?" (Black text, Signifier)

* "**Answer**:" (Black text, Signifier)

* **LLM Component**: Processes the prompt.

* **Output (Pink Box, bottom-left)**: "Answer from LLM."

2. **Answer extraction (Right Flow)**:

* **Input Prompt (Light Blue Box, top-right)**: This prompt receives input from the "Answer from LLM." output.

* "**Questions**: ask question?" (Black text, Signifier)

* "**Answer**: Answer from LLM." (Black text, Signifier)

* "The final answer (float/int/boolean) is:" (Black text, Signifier)

* **LLM Component**: Processes this prompt.

* **Output (Pink Box, bottom-right)**: "Final answer generated by the LLM."

* **Flow**: The output of the "LLM answering" LLM ("Answer from LLM.") feeds into the "Answer extraction" LLM's input prompt via a curved dark gray arrow.

#### Third Diagram (Bottom, Unnamed)

This diagram illustrates a two-stage process: "Reasoning extraction" followed by "Answer extraction".

1. **Reasoning extraction (Left Flow)**:

* **Input Prompt (Light Blue Box, top-left)**:

* "Read the following passage and then answer the questions:" (Black text, Signifier)

* "**Passage**: text + table" (Black text, Signifier)

* "**Questions**: ask question?" (Black text, Signifier)

* "**Answer**: Let us think step by step." (Black text, Signifier)

* "Answer:" (Black text, Signifier)

* **LLM Component**: Processes the prompt.

* **Output (Pink Box, bottom-left)**: "Answer with reasoning from LLM."

2. **Answer extraction (Right Flow)**:

* **Input Prompt (Light Blue Box, top-right)**: This prompt receives input from the "Answer with reasoning from LLM." output.

* "**Questions**: ask question?" (Black text, Signifier)

* "**Answer**: Answer with reasoning from LLM." (Black text, Signifier)

* "The final answer (float/int/boolean) is:" (Black text, Signifier)

* **LLM Component**: Processes this prompt.

* **Output (Pink Box, bottom-right)**: "Final answer generated by the LLM."

* **Flow**: The output of the "Reasoning extraction" LLM ("Answer with reasoning from LLM.") feeds into the "Answer extraction" LLM's input prompt via a curved dark gray arrow.
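The answer-extraction stage ends with the cue "The final answer (float/int/boolean) is:", so the model's reply still has to be converted into a typed value. A minimal sketch of that conversion follows; the function name, the yes/no mapping to booleans, and the comma handling are assumptions for illustration and are not taken from the figure.

```python
import re
from typing import Optional, Union


def parse_final_answer(text: str) -> Optional[Union[bool, int, float]]:
    """Convert a free-text reply into a bool, int, or float (None if neither)."""
    cleaned = text.strip().lower()
    # Boolean replies: treating yes/no as True/False is an assumption.
    if re.search(r"\b(true|yes)\b", cleaned):
        return True
    if re.search(r"\b(false|no)\b", cleaned):
        return False
    # First number in the reply, allowing a sign and thousands separators.
    match = re.search(r"-?\d[\d,]*\.?\d*", cleaned)
    if match is None:
        return None
    number = match.group().replace(",", "")
    return float(number) if "." in number else int(number)
```

Percent signs, currency symbols, and unit words are ignored here; a production parser would need rules for them.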

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: ZS-FinDSL and ZS-STD Prompts for ConvFinQA

### Overview

The image presents a series of diagrams illustrating the process of question answering using Large Language Models (LLMs) for the ConvFinQA dataset. There are three distinct diagram sets, each depicting a different stage: Reasoning and Extraction, Program Extraction, and LLM Answering/Answer Extraction. Each diagram uses a similar visual structure with boxes representing LLMs, text blocks representing input/output, and colored lines representing different types of prompts or data flow.

### Components/Axes

Each diagram set contains the following components:

* **Input:** A text block labeled "Passage: text + table" and "Questions: ask question?".

* **LLM:** A box labeled "LLM".

* **Output:** A text block labeled "Answer:".

* **Prompt/Data Flow:** Colored lines connecting the input, LLM, and output. These lines are labeled with terms like "Signifier", "Memetic proxy", "Constraining behavior", "Meta prompt", and "Input".

* **Diagram Titles:** "Figure 7: ZS-FinDSL prompt for ConvFinQA", "Figure 8: ZS-STD prompt for ConvFinQA".

* **Text Blocks within LLM boxes:** These contain descriptions of the LLM's task.

### Detailed Analysis

**Diagram 1: Reasoning and Extraction (Figure 7)**

* **Input:** "Passage: text + table", "Questions: ask question?".

* **LLM:** "Answer with reasoning from LLM."

* **Output:** "Answer:".

* **Prompt/Data Flow:**

* A blue line labeled "Signifier" connects the input to the LLM.

* A green line labeled "Memetic proxy" connects the input to the LLM.

* A purple line labeled "Constraining behavior" connects the input to the LLM.

* A yellow line labeled "Meta prompt" connects the input to the LLM.

**Diagram 2: Program Extraction (Figure 7)**

* **Input:** "Questions: ask question?".

* **LLM:** "Answer with reasoning from LLM."

* **Output:** "Program generated by the LLM."

* **Text within LLM box:**

* "Task: From the above question-answer, extract the calculations that were performed to arrive at the answer to the last question. The calculations should be provided in the following format: '[PROGRAM: {"0": operation(arg1, arg2, logic)}, {"1": operation(arg1, arg2, logic)}]'".

* "ARG1: [float/int/logic], ARG2: [float/int/logic]".

* "OPERATION: [arithmetic/logic]".

* "ARG1: "#0", ARG2: "[float/int/#int]", …".

* "ANSWER: “numerical/boolean”".

* "Operation should strictly be restricted to {add, subtract, multiply, divide, exponent, greater-than, max, min} only."

* "When evaluated the program should only generate numerical or boolean values."

* "Solution:"

* **Prompt/Data Flow:**

* A blue line labeled "Signifier" connects the input to the LLM.

* A green line labeled "Memetic proxy" connects the input to the LLM.

**Diagram 3: LLM Answering (Figure 8)**

* **Input:** "Passage: text + table", "Questions: ask question?".

* **LLM:** "Answer from LLM."

* **Output:** "Answer:".

* **Prompt/Data Flow:**

* A blue line labeled "Signifier" connects the input to the LLM.

**Diagram 4: Answer Extraction (Figure 8)**

* **Input:** "Questions: ask question?".

* **LLM:** "Answer with reasoning from LLM."

* **Output:** "Final answer (float/int/boolean) is:".

* **Text within LLM box:** "Final answer (float/int/boolean) is:"

* **Prompt/Data Flow:**

* A blue line labeled "Signifier" connects the input to the LLM.

* A yellow line labeled "Input" connects the input to the LLM.

**Diagram 5: Reasoning Extraction (Figure 8)**

* **Input:** "Passage: text + table", "Questions: ask question?".

* **LLM:** "Answer with reasoning from LLM."

* **Output:** "Answer: Let us think step by step.".

* **Prompt/Data Flow:**

* A blue line labeled "Signifier" connects the input to the LLM.

* A green line labeled "Memetic proxy" connects the input to the LLM.

* A yellow line labeled "Meta prompt" connects the input to the LLM.

**Diagram 6: Answer Extraction (Figure 8)**

* **Input:** "Questions: ask question?".

* **LLM:** "Answer with reasoning from LLM."

* **Output:** "Final answer (float/int/boolean) is:".

* **Prompt/Data Flow:**

* A blue line labeled "Signifier" connects the input to the LLM.

* A yellow line labeled "Final answer generated by the LLM".

### Key Observations

* The diagrams consistently use the same visual structure, highlighting a standardized process.

* The "Program Extraction" diagram contains the most detailed textual information, outlining the specific format and constraints for the LLM's output.

* The prompts labeled "Signifier", "Memetic proxy", "Constraining behavior", and "Meta prompt" appear to guide the LLM's reasoning and output generation.

* The diagrams illustrate a multi-stage process, starting with input, progressing through LLM processing, and culminating in an answer or program output.

### Interpretation

These diagrams demonstrate two different prompting strategies – ZS-FinDSL and ZS-STD – for tackling the ConvFinQA dataset. The diagrams visually represent how LLMs are used to process text and tables, extract reasoning, generate programs (in the case of ZS-FinDSL), and ultimately provide answers. The different colored lines represent different types of prompts or data flow, suggesting that the researchers are experimenting with various techniques to improve the LLM's performance. The detailed instructions within the "Program Extraction" diagram indicate a focus on structured output and adherence to specific constraints. The consistent use of the LLM box and input/output blocks suggests a standardized workflow for evaluating and comparing the effectiveness of different prompting strategies. The diagrams are a visual representation of a research process aimed at improving the ability of LLMs to solve complex financial question-answering tasks. The use of terms like "Signifier" and "Memetic proxy" suggests a theoretical framework related to information transfer and representation within the LLM.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram Set: LLM Prompting Strategies for ConvFinQA

### Overview

The image displays three separate flow diagrams illustrating different prompting strategies for using Large Language Models (LLMs) on the ConvFinQA task. Each diagram shows a two-stage process where an initial prompt is sent to an LLM, and its output is then processed or used in a second stage. The diagrams are labeled as Figure 7, Figure 8, and an unlabeled third figure. A color-coded legend is provided for each diagram to identify the function of different text elements.

### Components/Axes

The image is composed of three distinct diagrams arranged vertically.

**Common Elements Across Diagrams:**

* **Process Boxes:** Each diagram has two main process boxes (e.g., "Reasoning extraction", "Program extraction") with a beige background and rounded corners.

* **Prompt Boxes:** Within each process box, there is a light blue box containing the prompt text sent to the LLM.

* **LLM Icon:** A stylized brain/chip icon labeled "LLM" represents the language model.

* **Output Boxes:** Pink boxes show the output generated by the LLM.

* **Arrows:** Grey arrows indicate the flow of information from input prompts to the LLM and then to the output.

* **Legends:** Each diagram has a legend at the bottom explaining the color-coding of text within the prompt boxes.

**Legend Key (from the diagrams):**

* **Black Square:** Signifier

* **Orange Square:** Memetic proxy

* **Pink Square:** Constraining behavior (Only in Figure 7)

* **Green Square:** Meta prompt

* **Blue Square:** Input

### Detailed Analysis

#### **Figure 7: ZS-FinDSL prompt for ConvFinQA (Top Diagram)**

* **Left Process - "Reasoning extraction":**

* **Prompt Text:** "Read the following passage and then answer the questions: Passage: text + table Questions: ask question? Answer the questions by finding the relevant values and performing step by step calculations. Answer:"

* **LLM Output:** "Answer with reasoning from LLM."

* **Right Process - "Program extraction":**

* **Prompt Text:** "Questions: ask question? Answer: Answer with reasoning from LLM. Task: From the above question-answer, extract the calculations that were performed to arrive at the answer to the last question. The calculations should be provided in the following format: {"PROGRAM":{"#0":{"OPERATION":["arithmetic/logic"], "ARG1":["float/int"], "ARG2":["float/int"]}, "#1":{"OPERATION":["arithmetic/logic"], "ARG1":"#0", "ARG2":["float/int/#int"]}, ...}, "ANSWER": ["numerical/boolean"]} Operation should strictly be restricted to {add, subtract, multiply, divide, exponent, greater-than, max, min} only. When evaluated the program should only generate numerical or boolean values. Solution:"

* **LLM Output:** "Program generated by the LLM."

* **Flow:** The reasoning from the first stage is fed as input into the second stage, which instructs the LLM to convert that reasoning into a structured program (FinDSL format).

#### **Figure 8: ZS-STD prompt for ConvFinQA (Middle Diagram)**

* **Left Process - "LLM answering":**

* **Prompt Text:** "Read the following passage and then answer the questions: Passage: text + table Questions: ask question? Answer:"

* **LLM Output:** "Answer from LLM."

* **Right Process - "Answer extraction":**

* **Prompt Text:** "Questions: ask question? Answer: Answer from LLM. The final answer (float/int/boolean) is:"

* **LLM Output:** "Final answer generated by the LLM."

* **Flow:** The initial answer from the LLM is fed into a second prompt that explicitly asks for the final answer in a specific data type (float/int/boolean). This is a simpler, two-step extraction process.

#### **Unlabeled Diagram (Bottom Diagram)**

* **Left Process - "Reasoning extraction":**

* **Prompt Text:** "Read the following passage and then answer the questions: Passage: text + table Questions: ask question? Answer: Let us think step by step."

* **LLM Output:** "Answer with reasoning from LLM."

* **Right Process - "Answer extraction":**

* **Prompt Text:** "Questions: ask question? Answer: Answer with reasoning from LLM. The final answer (float/int/boolean) is:"

* **LLM Output:** "Final answer generated by the LLM."

* **Flow:** Similar to Figure 8, but the first-stage prompt explicitly includes the chain-of-thought directive "Let us think step by step." The reasoned answer is then passed to the second stage for final answer extraction.

### Key Observations

1. **Progression of Complexity:** The diagrams show a progression from a simple answer extraction pipeline (Figure 8) to one that incorporates explicit step-by-step reasoning (Bottom Diagram), and finally to a complex pipeline that converts natural language reasoning into a formal, executable program (Figure 7).

2. **Structured Output:** Figure 7 is unique in requiring a highly structured JSON-like output ("PROGRAM") with defined operations and arguments, moving beyond free-text answers.

3. **Prompt Engineering Techniques:** The diagrams illustrate different prompt engineering techniques:

* **Zero-Shot (ZS):** Implied by the figure titles.

* **Chain-of-Thought (CoT):** Explicitly used in the bottom diagram ("Let us think step by step").

* **Two-Stage Prompting:** All diagrams use a two-stage process where the output of one LLM call becomes the input for the next.

4. **Legend Consistency:** The color-coding for "Signifier" (black), "Memetic proxy" (orange), and "Input" (blue) is consistent across all three legends. "Constraining behavior" (pink) appears only in Figure 7, while "Meta prompt" (green) appears in Figure 7 and in the bottom diagram but not in Figure 8.

### Interpretation

These diagrams represent different methodological approaches to solving complex financial question-answering (ConvFinQA) using LLMs. The core challenge is to move from raw text and tables to precise numerical or boolean answers.

* **Figure 8 (ZS-STD)** represents a baseline approach: ask the model for an answer, then ask it again to format that answer. It relies on the LLM's inherent reasoning but doesn't explicitly guide or verify the process.

* **The Bottom Diagram** introduces a **chain-of-thought** prompt, encouraging the model to externalize its reasoning process before the final extraction. This is a common technique to improve accuracy on multi-step problems.

* **Figure 7 (ZS-FinDSL)** represents the most sophisticated approach. It doesn't just seek an answer or reasoning; it seeks to **extract and formalize the computational logic** behind the reasoning into a domain-specific language (FinDSL). This has significant implications:

* **Interpretability:** The generated program makes the model's calculation steps explicit and auditable.

* **Verifiability:** The structured program could potentially be executed or verified by a separate system, reducing reliance on the LLM's internal, opaque computation.

* **Generalization:** It frames the problem as one of "program synthesis" from natural language, which is a powerful paradigm for handling complex, multi-step tasks.
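The verifiability point can be illustrated with a small structural check that a separate system might run before executing a generated program. This is a sketch under assumptions: the program has already been parsed into a Python dict keyed by step (`#0`, `#1`, and so on), steps appear in order, and the function name and error messages are hypothetical. The allowed operation names are the ones listed in the Figure 7 prompt.

```python
ALLOWED_OPERATIONS = {
    "add", "subtract", "multiply", "divide",
    "exponent", "greater-than", "max", "min",
}


def validate_program(program: dict) -> list:
    """Return a list of problems found; an empty list means the program passes."""
    errors = []
    seen = set()  # step keys defined so far (relies on dict insertion order)
    for key, step in program.items():
        op = step.get("OPERATION")
        if op not in ALLOWED_OPERATIONS:
            errors.append(f"{key}: operation {op!r} is not allowed")
        for name in ("ARG1", "ARG2"):
            arg = step.get(name)
            if isinstance(arg, str) and arg.startswith("#"):
                # References must point at a step that was already defined.
                if arg not in seen:
                    errors.append(f"{key}: {name} refers to {arg}, not an earlier step")
            else:
                try:
                    float(arg)
                except (TypeError, ValueError):
                    errors.append(f"{key}: {name}={arg!r} is not numeric")
        seen.add(key)
    return errors
```

A check like this only confirms that the program is well-formed; it says nothing about whether the extracted values match the passage or table.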

The progression suggests a research direction focused on increasing the reliability, transparency, and formal rigor of LLM outputs for financial analysis, moving from black-box answers to transparent, structured computations. Only Figure 7 carries "Constraining behavior" elements, which highlights that the FinDSL approach requires more carefully engineered prompts to guide the model toward the desired structured output format.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: ZS-FinDSL and ZS-STD Prompts for ConvFinQA

### Overview

The image contains three diagrams (Figure 7, 8, 9) illustrating workflows for a ConvFinQA system. Each diagram outlines steps for **Reasoning extraction**, **Program extraction**, **LLM answering**, and **Answer extraction**, with color-coded elements (Signifier, Memetic proxy, Constraining behavior, Meta prompt, Input) and directional arrows indicating process flow.

---

### Components/Axes

#### Figure 7: ZS-FinDSL Prompt

- **Components**:

- **Reasoning extraction**: "Read the following passage and then answer the questions: Passage: text + table. Questions: ask question? Answer: Answer with reasoning from LLM."

- **Program extraction**: "From the above question-answer, extract last calculations... Operation should strictly be restricted to [add, subtract, multiply, divide, exponent, greater-than, max, min] only."

- **LLM**: Arrows connect to "Answer with reasoning from LLM" and "Program generated by the LLM."

- **Legend**:

- **Signifier** (black), **Memetic proxy** (orange), **Constraining behavior** (pink), **Meta prompt** (green), **Input** (blue).

#### Figure 8: ZS-STD Prompt

- **Components**:

- **LLM answering**: "Read the following passage and then answer the questions: Passage: text + table. Questions: ask question? Answer: Answer from LLM."

- **Answer extraction**: "The final answer (float/int/boolean) is: [answer]."

- **LLM**: Arrows connect to "Answer from LLM" and "Final answer generated by the LLM."

- **Legend**:

- **Signifier** (black), **Memetic proxy** (orange), **Input** (blue).

#### Figure 9: ZS-STD Prompt (Alternative)

- **Components**:

- **Reasoning extraction**: "Read the following passage and then answer the questions: Passage: text + table. Questions: ask question? Answer: Let us think step by step."

- **Answer extraction**: "The final answer (float/int/boolean) is: [answer]."

- **LLM**: Arrows connect to "Answer with reasoning from LLM" and "Final answer generated by the LLM."

- **Legend**:

- **Signifier** (black), **Memetic proxy** (orange), **Meta prompt** (green), **Input** (blue).

---

### Detailed Analysis

#### Figure 7: ZS-FinDSL Prompt

- **Textual Content**:

- **Reasoning extraction** requires the LLM to generate answers with explicit reasoning.

- **Program extraction** mandates strict adherence to predefined operations (e.g., no custom functions).

- **LLM** outputs both reasoning and program code.

- **Flow**:

- Input (text + table) → Reasoning extraction → Program extraction → LLM → Program generation.

#### Figure 8: ZS-STD Prompt

- **Textual Content**:

- **LLM answering** focuses on direct answers without explicit reasoning.

- **Answer extraction** isolates the final numerical/boolean result.

- **Flow**:

- Input (text + table) → LLM answering → Answer extraction → LLM → Final answer.

#### Figure 9: ZS-STD Prompt (Alternative)

- **Textual Content**:

- **Reasoning extraction** emphasizes step-by-step thinking ("Let us think step by step").

- **Answer extraction** mirrors Figure 8 but includes reasoning in the LLM output.

- **Flow**:

- Input (text + table) → Reasoning extraction → Answer extraction → LLM → Final answer.

---

### Key Observations

1. **Legend Consistency**:

- **Signifier** (black) and **Input** (blue) are consistently used across all figures.

- **Memetic proxy** (orange) appears in all figures, suggesting it represents contextual or auxiliary data.

- **Constraining behavior** (pink) appears only in Figure 7, while **Meta prompt** (green) appears in Figures 7 and 9 but not in Figure 8.

2. **Process Differences**:

- **Figure 7** emphasizes program generation from reasoning, while **Figures 8 and 9** focus on direct answer extraction.

- **Figure 9** introduces a "step-by-step" reasoning prompt, distinct from the other figures.

3. **Color-Coding**:

- **Signifier** (black) likely marks critical elements (e.g., questions, answers).

- **Memetic proxy** (orange) may denote contextual or secondary information.

---

### Interpretation

The diagrams illustrate two workflows for ConvFinQA:

1. **ZS-FinDSL (Figure 7)**: Designed for tasks requiring programmatic reasoning (e.g., financial calculations). The LLM generates both reasoning and executable code, constrained by strict operation rules.

2. **ZS-STD (Figures 8 and 9)**: Focuses on direct answer extraction, with variations in prompting (e.g., "step-by-step" reasoning in Figure 9).

**Notable Trends**:

- **Program extraction** (Figure 7) is more rigid, enforcing specific operations, while **answer extraction** (Figures 8/9) allows flexibility in output formats (float/int/boolean).

- The inclusion of **Meta prompt** (green) in Figure 9 suggests additional constraints or metadata handling.

**Implications**:

- The system adapts to different task requirements: structured program generation vs. direct answer retrieval.

- Color-coded elements (e.g., **Memetic proxy**) likely serve to differentiate contextual or auxiliary data from core inputs.

---

**Note**: No numerical data or charts are present; the diagrams focus on process flow and textual instructions.
