## Diagram: Transformer Model with KV Cache for Sequence Processing

### Overview

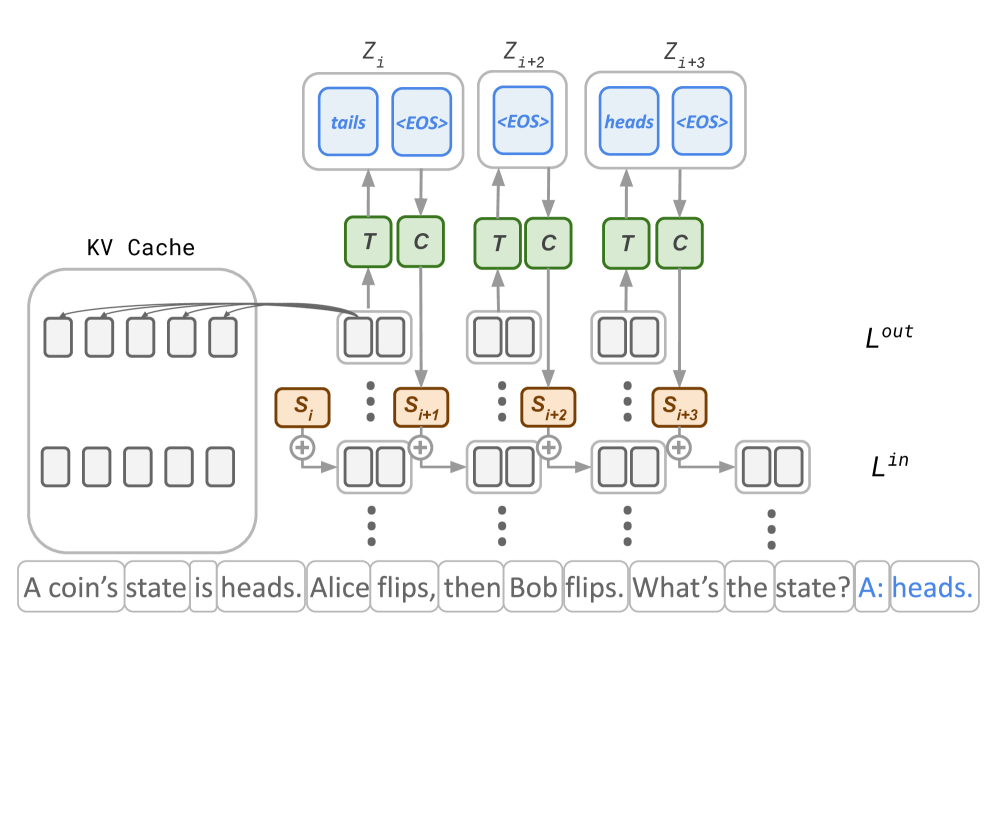

This image is a technical diagram illustrating the architecture and data flow of a transformer-based model processing a sequential reasoning task. It specifically highlights the use of a Key-Value (KV) Cache for efficient inference. The diagram shows how an input text sequence is processed through multiple layers, with intermediate states and cached values, to generate output tokens.

### Components/Axes

The diagram is organized into several distinct layers and components, flowing from bottom to top:

1. **Input Sequence (Bottom Layer):** A horizontal sequence of text tokens forming a complete question and answer.

* **Full Transcription:** "A coin's state is heads. Alice flips, then Bob flips. What's the state? A: heads."

* The final token "heads." is highlighted in blue, indicating it is the generated answer.

2. **KV Cache (Left Side):** A large, rounded rectangular block labeled "KV Cache". It contains two rows of empty rectangular boxes (5 in the top row, 5 in the bottom row), representing stored Key and Value vectors from previous processing steps. An arrow originates from this cache and points towards the processing chain on the right.

3. **Hidden State / Embedding Layer (L<sup>in</sup>):** A series of brown, rounded rectangular boxes labeled sequentially: `S_i`, `S_{i+1}`, `S_{i+2}`, `S_{i+3}`. These are connected by arrows and plus signs (`+`), indicating a sequential or residual connection. This layer is labeled `L^{in}` on the right side.

4. **Processing Blocks (Middle Layer):** Above each `S` box, there is a pair of green, rounded rectangular boxes labeled `T` and `C`. These likely represent Transformer blocks or specific operations (e.g., Attention and Feed-Forward networks). Arrows connect the `S` boxes to these `T`/`C` pairs.

5. **Output / Prediction Layer (L<sup>out</sup>):** At the top, there are three larger, rounded rectangular blocks labeled `Z_i`, `Z_{i+2}`, and `Z_{i+3}`; no `Z_{i+1}` block is shown. Each contains one or two smaller blue boxes representing output tokens:

* `Z_i`: Contains "tails" and "<EOS>".

* `Z_{i+2}`: Contains "<EOS>".

* `Z_{i+3}`: Contains "heads" and "<EOS>".

* This layer is labeled `L^{out}` on the right side.

6. **Data Flow Arrows:** Arrows indicate the direction of data propagation:

* From the KV Cache to the `S` boxes.

* Sequentially between the `S` boxes.

* From the `S` boxes up to the `T`/`C` blocks.

* From the `T`/`C` blocks up to the `Z` output blocks.

### Detailed Analysis

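* **Sequence Processing:** The diagram models the processing of the input sequence shown at the bottom. The question portion has 13 whitespace-separated words, followed by the answer "A: heads."; the diagram does not show how the text is tokenized. The indices `i`, `i+1`, `i+2`, `i+3` on the `S` boxes are consecutive, so they appear to mark four successive steps. The diagram does not state which tokens these steps correspond to, though the reasoning steps ("flips") and the final answer are plausible candidates.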

* **KV Cache Role:** The KV Cache stores intermediate representations (Keys and Values) from earlier tokens. The arrow from the cache to the `S` boxes indicates that processing for later steps (`S_{i+1}`, etc.) reuses this cached information instead of recomputing keys and values for every earlier token, which is a key optimization for autoregressive generation.

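* **Output Tokens:** The `Z` blocks show the model's outputs at different steps. `Z_i` contains "tails" and an end-of-sequence token. "tails" is not the final answer, but it matches the coin's state after a single flip (heads, then tails after Alice flips), so it is plausibly an intermediate state rather than an error; the diagram does not label it either way. `Z_{i+2}` contains only "<EOS>". `Z_{i+3}` contains "heads", the final answer, followed by "<EOS>". The presence of multiple `<EOS>` tokens suggests that each step can terminate its own short output.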

* **Layer Labels:** `L^{in}` and `L^{out}` clearly demarcate the input embedding/hidden state layer and the final output/logit layer, respectively.
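
The caching idea described under "KV Cache Role" can be sketched in a few lines. This is a minimal single-head attention example in plain Python, not the model in the diagram: the projection matrices `W_Q`, `W_K`, `W_V`, the 2-dimensional inputs, and the `KVCache` class are all hypothetical values chosen for illustration. It shows that appending one key/value pair per step gives the same attention output as recomputing every key and value from scratch.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def matvec(W, x):
    return [dot(row, x) for row in W]

def attend(q, keys, values):
    """Scaled dot-product softmax attention of one query over keys/values."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [dot(q, k) * scale for k in keys]
    m = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(dim)]

class KVCache:
    """Hypothetical cache: one key and one value vector per past position."""
    def __init__(self):
        self.keys = []
        self.values = []

    def append(self, k, v):
        self.keys.append(k)
        self.values.append(v)

# Toy projection matrices (assumed values, illustration only).
W_Q = [[1.0, 0.0], [0.0, 1.0]]
W_K = [[0.5, 0.1], [0.2, 0.7]]
W_V = [[1.0, 0.3], [0.0, 1.0]]

def step_with_cache(x, cache):
    # Project only the newest input, then attend over everything cached so far.
    cache.append(matvec(W_K, x), matvec(W_V, x))
    return attend(matvec(W_Q, x), cache.keys, cache.values)

def step_without_cache(xs):
    # Re-project every input seen so far, then attend from the last one.
    keys = [matvec(W_K, x) for x in xs]
    values = [matvec(W_V, x) for x in xs]
    return attend(matvec(W_Q, xs[-1]), keys, values)

inputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
cache = KVCache()
cached_outputs = [step_with_cache(x, cache) for x in inputs]
full_outputs = [step_without_cache(inputs[: t + 1]) for t in range(len(inputs))]
```

Both lists hold the same vectors; the cached path simply performs one key/value projection per step instead of one per earlier position.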

### Key Observations

1. **Skipped Output Index:** The `S` boxes are indexed consecutively (`S_i` through `S_{i+3}`), but the output layer shows only `Z_i`, `Z_{i+2}`, and `Z_{i+3}`; there is no `Z_{i+1}` block. This suggests that not every step produces output tokens, or that the diagram shows only selected outputs.

2. **Intermediate Prediction:** The output at `Z_i` includes "tails," which is not the final answer. It does match the coin's state after one flip of a coin that starts at heads, which suggests the model may generate or track intermediate states during its reasoning process.

3. **Architectural Clarity:** Each step has a separate pair of `T` and `C` blocks. The diagram does not define these labels. One possible reading is that they stand for the two sub-layers of a transformer block (attention and the position-wise feed-forward network), but this is an inference and other readings are possible.

4. **Visual Emphasis:** The final answer token "heads." in the input sequence is highlighted in blue, matching the color of the output tokens in the `Z` blocks, creating a visual link between the generated output and its place in the sequence.
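
The state tracking behind the example question can be written out explicitly. The sketch below assumes that "flips" means turning the coin over exactly once; the function name `track_coin` is hypothetical and is not taken from the diagram. Under that assumption, the intermediate state after Alice's flip is "tails" and the state after Bob's flip is "heads", which lines up with the tokens shown in `Z_i` and `Z_{i+3}`.

```python
def track_coin(initial, flippers):
    """Return the coin's state before any flip and after each flip."""
    opposite = {"heads": "tails", "tails": "heads"}
    states = [initial]
    for _ in flippers:
        # Assumption: every flip turns the coin over exactly once.
        states.append(opposite[states[-1]])
    return states

states = track_coin("heads", ["Alice", "Bob"])
intermediate_state = states[1]   # state after Alice's flip
final_state = states[-1]         # state after Bob's flip
```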

### Interpretation

This diagram serves as an explanatory model for how a large language model (LLM) performs multi-step reasoning while maintaining efficiency. The core message is the **integration of a KV Cache within the autoregressive generation loop**.

* **What it demonstrates:** It shows that for a complex query requiring state tracking ("coin flips"), the model doesn't process the entire text from scratch for each new token. Instead, it leverages cached computations (KV Cache) from previous steps (`L^{in}` states `S_i`, etc.) to efficiently compute new hidden states and generate subsequent tokens (`Z_{i+2}`, `Z_{i+3}`).

* **Relationships:** The flow is layered: Input tokens → Hidden States (`S`) → Transformer Operations (`T/C`) → Output Predictions (`Z`). The KV Cache acts as a memory bank that feeds into each step, enabling context-aware generation without recomputing the keys and values of all earlier tokens at every step, a cost that would otherwise grow quadratically with the number of steps.

* **Notable Insight:** The "tails" output at `Z_i` is not the final answer, but it is not necessarily an error: if each flip turns the coin over, the state after Alice's flip is tails, and the state after Bob's flip is heads again, matching `Z_{i+3}`. The diagram may therefore show the model emitting or tracking intermediate states before it reaches the final answer. This is consistent with "chain-of-thought" behavior in LLMs, where intermediate outputs reflect the problem-solving process even when those tokens are not part of the final desired response. The diagram thus illustrates not just the architecture, but a plausible mechanism for **step-by-step reasoning** within a transformer.
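
To make the efficiency claim concrete, the sketch below counts how many key/value projections are needed to process a given number of steps, with and without a cache. It is a simplified counting model under stated assumptions (one position added per step, one key/value projection per position); the function name `kv_projections` is hypothetical, and the count ignores the attention-score computation itself, which still grows with context length at every step.

```python
def kv_projections(num_steps: int, use_cache: bool) -> int:
    """Count key/value projections needed across `num_steps` decoding steps."""
    computed = 0
    cached_positions = 0
    for context_len in range(1, num_steps + 1):
        if use_cache:
            # Only positions that are not yet in the cache need projecting.
            computed += context_len - cached_positions
            cached_positions = context_len
        else:
            # Every position in the context is projected again at each step.
            computed += context_len
    return computed

with_cache = kv_projections(4, use_cache=True)      # 4
without_cache = kv_projections(4, use_cache=False)  # 1 + 2 + 3 + 4 = 10
```

In this model the cached count grows linearly with the number of steps, while the uncached count grows as n(n+1)/2.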